Strategies for Limit of Detection Optimization in Inorganic Trace Analysis: From Fundamentals to Advanced Validation

Abstract

This article provides a comprehensive guide to limit of detection (LOD) optimization for inorganic trace analysis, addressing the critical needs of researchers and drug development professionals. It explores foundational LOD concepts and definitions from international standards, examines advanced methodological approaches including novel sorbents and extraction techniques, details systematic troubleshooting for signal enhancement, and compares validation protocols for regulatory compliance. By synthesizing current methodologies and validation frameworks, this resource enables scientists to achieve superior analytical sensitivity for reliable ultratrace quantification in complex matrices.

Understanding LOD Fundamentals: Definitions, Standards, and Statistical Basis

Core Definitions and Troubleshooting FAQs

What are the fundamental definitions of LOB, LOD, and LOQ?

The Limit of Blank (LOB) is the highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested [1]. It represents the measurement background noise level.

The Limit of Detection (LOD) is the lowest analyte concentration likely to be reliably distinguished from the LOB and at which detection is feasible. It is the smallest amount of analyte that can be detected, though not necessarily quantified as an exact value [1] [2] [3].

The Limit of Quantitation (LOQ), sometimes called the Lower Limit of Quantitation (LLOQ), is the lowest concentration at which the analyte can not only be reliably detected but also measured within predefined goals for bias (accuracy) and imprecision (precision) [1] [4]. The LOQ cannot be lower than the LOD [1].

How are LOB, LOD, and LOQ mathematically related?

The following table summarizes the typical calculations for these parameters, often based on the standard deviation (SD) of measurements [1] [2] [5].

| Parameter | Typical Calculation Formula | Statistical Basis |
|---|---|---|
| LOB | Mean~blank~ + 1.645(SD~blank~) [1] | One-sided 95th percentile of blank measurements (assuming a Gaussian distribution) [1]. |
| LOD | LOB + 1.645(SD~low concentration sample~) [1] or 3.3(SD)/Slope [2] [5] | Ensures 95% probability that a sample at the LOD concentration will be distinguished from the LOB [1]. The factor 3.3 derives from 1.645 (for α-error) + 1.645 (for β-error) [5]. |
| LOQ | 10(SD)/Slope [2] [5] | The concentration where the signal is 10 times the noise, meeting predefined bias and imprecision goals [2]. |

A practical analogy for understanding these limits

Imagine two people talking near a jet engine [2]:

- LOB is the noise of the engine with no one talking.

- LOD is when you can detect that a person is speaking (you see lips moving) but cannot understand any words.

- LOQ is when the engine noise is low enough that you can clearly hear and understand every word.

Why is my calculated LOD different from the value in a reagent package insert?

This is a common issue. A manufacturer's LOD is established using multiple instruments and reagent lots to capture the expected performance of the typical population of analyzers and reagents, often with 60 replicates [1]. Your verification in a single lab, typically with 20 replicates, captures a smaller range of variability, which can lead to a different result [1]. Furthermore, the calculation method might differ. Always follow a standardized protocol like CLSI EP17 for verification [1].

What should I do if my sample measurements are consistently below the LOD?

Measurements below the LOD are not reliably distinguishable from the assay background noise [1]. In this situation:

- Do not report a numerical value, as it carries a high risk of being a false positive or false negative [3].

- Report the result as "< LOD" along with the specific LOD value for your assay.

- Consider a more sensitive analytical method if detection at lower concentrations is critical for your clinical or research purpose [4].

How can I improve my assay's Limit of Detection?

A low LOD requires both a low LOB and a clear signal from low-concentration samples [4]. To optimize your LOD:

- Minimize Background Noise (Lower the LOB): Investigate and reduce sources of non-specific binding in immunoassays or background signal in your instrumentation [4].

- Reduce Variability: Improve pipetting technique, use high-quality reagents from a single lot if possible, and ensure instrument maintenance to lower the standard deviation of low-concentration samples [1].

- Increase Signal Strength: Optimize reaction conditions (e.g., temperature, incubation time) to enhance the analytical signal for trace-level analytes.

Standards-Based Guidance and Experimental Protocols

The table below compares the key characteristics, sample requirements, and purposes of these three parameters based on CLSI guidelines [1].

| Feature | Limit of Blank (LOB) | Limit of Detection (LOD) | Limit of Quantitation (LOQ) |
|---|---|---|---|
| Definition | Highest apparent concentration from a blank sample [1]. | Lowest concentration distinguished from LOB [1]. | Lowest concentration measured with defined precision and bias [1]. |
| Sample Type | Sample containing no analyte (e.g., zero calibrator) [1]. | Sample with a low concentration of analyte [1]. | Sample with analyte at or above the LOD [1]. |
| Primary Goal | Define the assay's background noise and false-positive rate (α-error) [1] [3]. | Define the reliable detection limit, controlling false-negative rate (β-error) [3]. | Define the reliable quantification limit for reporting numerical results [1]. |
| Typical Replicates | Establishment: 60, Verification: 20 [1]. | Establishment: 60, Verification: 20 [1]. | Establishment: 60, Verification: 20 [1]. |

Experimental Protocol: Determining LOB and LOD per CLSI EP17

This protocol provides a detailed methodology for establishing LOB and LOD, as outlined in CLSI EP17 [1].

1. Experimental Design:

- Samples: Prepare a blank sample (containing no analyte) and a low-concentration sample (with analyte near the expected LOD).

- Replicates: A minimum of 20 replicate measurements for each sample is recommended for verification studies. Manufacturers should use 60 replicates to establish the parameters [1].

- Matrix: Ensure samples are in a matrix commutable with patient specimens.

2. Data Collection:

- Analyze the blank sample and the low-concentration sample in replicate over multiple days to capture inter-assay variability.

- Record the measured concentration value for each replicate.

3. Calculation and Analysis:

- Calculate LOB: Compute the mean and standard deviation (SD~blank~) of the blank sample measurements. LOB = mean~blank~ + 1.645(SD~blank~) [1].

- Calculate LOD: Compute the mean and standard deviation (SD~low~) of the low-concentration sample. LOD = LOB + 1.645(SD~low~) [1].

- Verification: Confirm the LOD by testing a sample with analyte at the calculated LOD concentration. No more than 5% of the results (roughly 1 in 20) should fall below the LOB. If more do, the LOD must be re-estimated using a higher concentration sample [1].
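The calculation and verification steps of this protocol can be sketched in Python. This is a minimal illustration, assuming normally distributed replicate results that are already in concentration units; the function names and the replicate values in the usage section are hypothetical, and CLSI EP17 itself allows approaches that are not shown here.

```python
import statistics

Z_95 = 1.645  # one-sided 95th percentile of the standard normal distribution


def limit_of_blank(blank_results: list[float]) -> float:
    """Parametric LOB = mean_blank + 1.645 * SD_blank."""
    return statistics.mean(blank_results) + Z_95 * statistics.stdev(blank_results)


def limit_of_detection(lob: float, low_results: list[float]) -> float:
    """LOD = LOB + 1.645 * SD_low, from replicates of a low-concentration sample."""
    return lob + Z_95 * statistics.stdev(low_results)


def lod_verified(results_at_lod: list[float], lob: float, max_fraction: float = 0.05) -> bool:
    """Verification rule: no more than 5% of results at the LOD may fall below the LOB."""
    below = sum(1 for r in results_at_lod if r < lob)
    return below / len(results_at_lod) <= max_fraction


if __name__ == "__main__":
    # Hypothetical replicate results (concentration units); a real study uses >= 20 each.
    blank = [0.00, 0.02, -0.01, 0.01, 0.03, -0.02, 0.01, 0.00, 0.02, -0.01]
    low = [0.11, 0.09, 0.14, 0.08, 0.12, 0.10, 0.13, 0.07, 0.11, 0.10]
    lob = limit_of_blank(blank)
    lod = limit_of_detection(lob, low)
    print(f"LOB = {lob:.3f}, LOD = {lod:.3f}")
```

If `lod_verified` returns `False` for replicates measured at the calculated LOD, the protocol calls for re-estimating the LOD with a higher-concentration sample.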

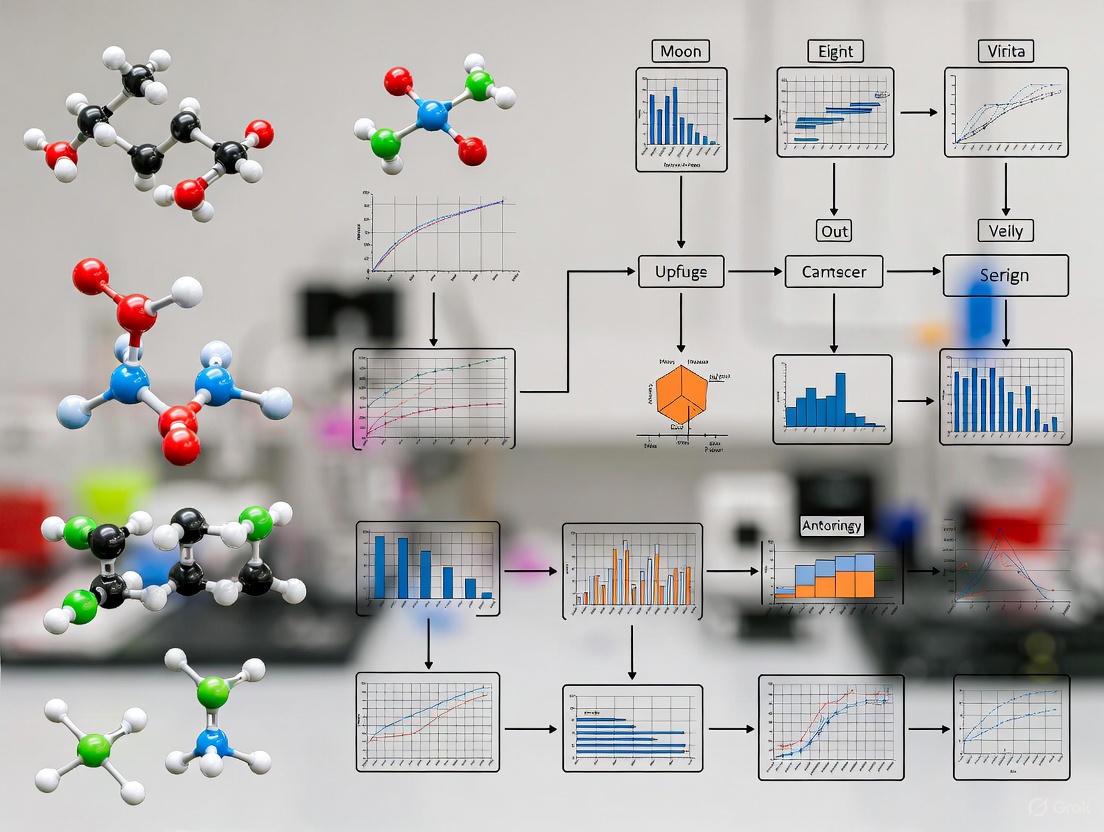

Visual Workflow for Determining LOB and LOD

The following diagram illustrates the decision and calculation process for determining LOB and LOD according to CLSI guidelines.

Statistical Principles Behind LOD and LOQ Factors

The common factors of 3.3 and 10 used in LOD and LOQ calculations are derived from statistical principles of error [5]. The factor 3.3 for LOD is the sum of two one-sided critical values of the standard normal distribution (z ≈ 1.645 each; the corresponding Student t-values approach 1.645 as the number of replicates becomes large), set to control both the α-error (false positive, the risk of concluding that the analyte is present when it is not) and the β-error (false negative, the risk of concluding that the analyte is absent when it is present) at 5% each [3] [5]. This ensures a 95% probability that a sample at the LOD concentration will be correctly distinguished from a blank [1]. The factor of 10 for LOQ is chosen to provide a signal sufficiently large relative to noise to allow for quantification with acceptable precision and bias [2].
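The origin of the 3.3 factor can be checked numerically with Python's standard-library `statistics.NormalDist` (Python 3.8+). The hypothetical `lod_factor` function below simply adds the two one-sided normal quantiles; it ignores the small-sample Student t correction, so it reproduces only the large-sample value.

```python
from statistics import NormalDist


def lod_factor(alpha: float = 0.05, beta: float = 0.05) -> float:
    """Sum of one-sided standard normal quantiles z(1 - alpha) + z(1 - beta)."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    return std_normal.inv_cdf(1 - alpha) + std_normal.inv_cdf(1 - beta)


print(f"z(0.95) = {NormalDist().inv_cdf(0.95):.3f}")        # prints "z(0.95) = 1.645"
print(f"factor (alpha = beta = 0.05) = {lod_factor():.2f}")  # prints "factor (alpha = beta = 0.05) = 3.29"
print(f"factor (alpha = beta = 0.01) = {lod_factor(0.01, 0.01):.2f}")
```

Tightening both error rates to 1% raises the factor noticeably, which is why stricter risk requirements translate directly into a higher reported LOD.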

ICH Q2(R2) Approaches for LOD and LOQ Determination

The ICH guideline describes several acceptable methods for determining LOD and LOQ [2]:

- Visual Evaluation: The LOD is determined by analyzing samples with known analyte concentrations and establishing the minimum level at which the analyte can be reliably detected. This is often used for non-instrumental methods [2].

- Signal-to-Noise Ratio: This approach is applicable to analytical procedures that exhibit baseline noise. An S/N ratio between 3:1 and 2:1 is generally considered acceptable for estimating LOD, while a typical ratio for LOQ is 10:1 [2] [3].

- Standard Deviation of the Response: Based on the standard deviation of the response (σ) and the slope of the calibration curve (S). The following formulas are used [2]:

  - LOD = 3.3 σ / S

  - LOQ = 10 σ / S

The Scientist's Toolkit: Essential Reagents and Materials

For experiments focused on determining limits of detection and quantitation, especially in trace analysis, the quality and consistency of materials are paramount. The following table lists key reagents and their critical functions.

| Research Reagent / Material | Function in LOD/LOQ Studies |
|---|---|
| Blank Matrix | A sample material free of the target analyte, used to establish the baseline signal (background noise) and calculate the Limit of Blank (LOB) [1] [6]. |
| Primary Reference Material | A certified material with a known, precise concentration of the analyte, used to prepare accurate calibrators and low-concentration samples for LOD/LOQ determination [1]. |
| Low-Level Quality Control (LLQC) Sample | A sample spiked with the analyte at a concentration near the expected LOD/LOQ, used to assess assay performance and variability at the low end of the measuring interval [1]. |
| High-Purity Solvents & Water | Used for preparing samples and standards to minimize background contamination and interference that can adversely affect the LOB and LOD [6]. |
| Commutable Patient-like Matrix | A matrix that behaves like real patient samples (e.g., serum, plasma) is essential for validation to ensure that performance characteristics determined in the study reflect real-world usage [1]. |

In inorganic trace analysis, accurately determining the limit of detection (LOD) is a core part of method validation. The reliability of a method's detection capability is governed by how it manages Type I (false positive) and Type II (false negative) errors. Setting the LOD therefore means balancing the risks of these two errors against the requirements of the specific analytical method. This guide addresses common challenges and provides practical protocols for optimizing detection limits in inorganic trace analysis.

Understanding Type I and Type II Errors in Detection Context

Core Definitions

| Error Type | Statistical Term | Analytical Consequence | Risk Controlled By |
|---|---|---|---|
| Type I Error | False Positive (α) | Concluding an analyte is present when it is not [3] | Setting the Critical Level (LC) [3] |
| Type II Error | False Negative (β) | Concluding an analyte is absent when it is present [3] | Setting the Detection Limit (LD) [3] |

The Decision Process and Error Relationship

The following workflow visualizes the decision-making process for analyte detection and where Type I and Type II errors occur.

Experimental Protocol: Determining LOD with Controlled Error Rates

This procedure outlines how to experimentally establish the Critical Level (LC) and Limit of Detection (LD) for methods like ICP-MS or ICP-OES used in inorganic trace analysis [3].

Step-by-Step Methodology

- Sample Preparation: Obtain a test sample with low analyte concentration, near the expected LOD. If a real sample is unavailable, prepare an artificially spiked sample [3].

- Replicate Analysis: Analyze a minimum of 10 portions of the sample, following the complete, validated analytical procedure. Specify the precision conditions (e.g., repeatability) under which the analysis is performed [3].

- Blank Measurement: Analyze multiple blank samples (not containing the analyte) to characterize the background signal and its variability [3].

- Data Conversion: Convert all measured signals (responses) into concentration units. This is typically done by subtracting the average blank signal and dividing by the slope of the analytical calibration curve [3].

- Statistical Calculation:

  - Calculate the standard deviation (SD or s0) of the concentrations obtained from the blank measurements.

  - Compute the Critical Level, LC = t(1−α, ν) × s0, where t(1−α, ν) is the one-sided t-value for the desired confidence (1−α) and degrees of freedom (ν) [3].

  - Compute the Limit of Detection, LD = LC + t(1−β, ν) × sD, where sD is the standard deviation at a low concentration near the LOD. If s0 ≈ sD and α = β = 0.05, this simplifies to LD ≈ 2 × t(0.95, ν) × s0 [3].
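The statistical calculation can be sketched in Python using only the standard library. Because the standard library has no Student t quantile function, the helper below (`t_one_sided`, a hypothetical name) inverts a numerically integrated t distribution; in practice `scipy.stats.t.ppf(1 - alpha, df)` would normally be used instead. The sketch assumes both inputs are already blank-corrected concentrations and uses n - 1 degrees of freedom for each replicate set.

```python
import math
import statistics


def t_one_sided(p: float, df: int) -> float:
    """One-sided Student t quantile t(p, df) for 0.5 < p < 1 (valid while the quantile is < 50)."""
    if not 0.5 < p < 1:
        raise ValueError("p must lie between 0.5 and 1")
    coef = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)) / math.sqrt(df * math.pi)

    def pdf(x: float) -> float:
        return coef * (1 + x * x / df) ** (-(df + 1) / 2)

    def cdf(x: float, steps: int = 2000) -> float:
        # Simpson's rule on [0, x]; the distribution is symmetric, so CDF(0) = 0.5.
        h = x / steps
        total = pdf(0.0) + pdf(x)
        for i in range(1, steps):
            total += (4 if i % 2 else 2) * pdf(i * h)
        return 0.5 + total * h / 3

    lo, hi = 0.0, 50.0
    for _ in range(50):  # bisection on the monotone CDF
        mid = (lo + hi) / 2
        if cdf(mid) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def critical_level_and_detection_limit(blank_conc: list[float], low_conc: list[float],
                                       alpha: float = 0.05, beta: float = 0.05) -> tuple[float, float]:
    """LC = t(1-alpha, nu0) * s0 and LD = LC + t(1-beta, nuD) * sD."""
    s0 = statistics.stdev(blank_conc)
    s_d = statistics.stdev(low_conc)
    lc = t_one_sided(1 - alpha, len(blank_conc) - 1) * s0
    ld = lc + t_one_sided(1 - beta, len(low_conc) - 1) * s_d
    return lc, ld
```

With 10 replicates (ν = 9), the one-sided 95% t-value is about 1.833, so small replicate sets give a noticeably larger LD than the large-sample factor of 1.645 would suggest.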

Research Reagent Solutions

| Item | Function in LOD Determination |
|---|---|
| High-Purity Blank | A matrix-matched sample without the analyte; essential for estimating the mean and standard deviation of the background signal (s₀) [3]. |
| Traceable Standard | A certified reference material or single-element standard with a known, low concentration of the analyte, used to prepare test samples near the LOD [7]. |
| LC-MS Grade Solvents | High-purity solvents (e.g., acids for digestion/dilution) minimize background contamination and signal noise, which is critical for ultra-trace analysis [8]. |
| Teflon/Quartz Filters | Used for collecting particulate matter (e.g., PM2.5); their low inherent levels of inorganic elements prevent contamination during environmental sampling [9]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Our method validation shows a good LOD, but we are getting a high rate of false negatives with low-level samples. What is the most likely cause? A: This typically indicates that the risk of a Type II error (β) is too high. The LOD was likely set based solely on the blank's variability (LC) without sufficiently accounting for the precision of samples containing the analyte near the detection limit. Re-estimate LD by including the standard deviation (sD) from repeated measurements of a sample at the suspected LOD concentration, as described in the protocol for determining LOD with controlled error rates [3].

Q2: Can I use a signal-to-noise (S/N) ratio of 3:1 to define my LOD for a chromatographic method? A: Using an S/N of 3:1 is a common and often acceptable practice in chromatography, as it approximates a critical level that controls false positives. The ICH guidelines allow this approach. However, you must be aware that this primarily addresses the Type I error risk. For a definitive LOD that also controls Type II errors, you should subsequently validate this value by analyzing multiple samples prepared at that S/N-based concentration and confirming the detection reliability with the desired confidence [3].

Q3: What is the practical difference between the Limit of Detection (LOD) and the Limit of Quantification (LOQ)? A: The LOD is the lowest concentration that can be detected but not necessarily quantified with acceptable precision. It is concerned with answering "Is it there?" and is governed by the control of both Type I and Type II errors. The LOQ is the lowest concentration that can be quantitatively determined with stated, acceptable precision and accuracy (e.g., ≤20% RSD). The LOQ is always greater than the LOD, typically by a factor of 3 to 5 [10] [11].

Troubleshooting Common Scenarios

| Problem | Possible Root Cause | Suggested Solution |
|---|---|---|
| High False Positives | Critical Level (LC) is set too low, increasing α risk [3]. | Re-evaluate blank variability. Increase LC by using a higher confidence level (e.g., from 95% to 99%) for the t-value [3]. |
| High False Negatives | LOD is underestimated; β risk is too high at the reported LOD [3]. | Determine LD using the full formula that includes sD. Use a more sensitive analytical line or pre-concentrate the sample [3] [8]. |
| Irreproducible LOD | High and variable background noise or contamination [8]. | Implement rigorous system cleaning protocols, use higher purity reagents (LC-MS grade), and ensure proper sample clean-up (e.g., Solid-Phase Extraction) [8]. |
| LOD not fit for purpose | The defined LOD does not meet the regulatory or research requirement for the target analyte. | Employ graphical validation strategies like the "Uncertainty Profile," which provides a more realistic assessment of the lowest quantifiable concentration by incorporating measurement uncertainty [11]. |

Advanced Concepts: Graphical Validation Strategies

Modern validation approaches like the Uncertainty Profile offer a robust alternative to classical methods for assessing LOD and LOQ. This graphical tool combines a β-content tolerance interval with predefined acceptance limits. The method is considered valid for concentrations where the entire uncertainty interval falls within the acceptance limits. The intersection point of the uncertainty profile and the acceptability limit provides a rigorously defined LOQ, offering a more realistic and reliable assessment of the method's capabilities, especially at low concentrations [11].

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between LOD and LOQ in trace analysis?

The Limit of Detection (LOD) represents the lowest concentration of an analyte that can be detected but not necessarily quantified, defined as 3×SD₀, where SD₀ is the standard deviation as the concentration approaches zero. The Limit of Quantitation (LOQ) represents the lowest concentration that can be quantitatively measured with acceptable precision and accuracy, defined as 10×SD₀, providing an uncertainty of approximately ±30% at the 95% confidence level. These parameters are essential for demonstrating method capability and defining the working range for inorganic trace analysis [12].

FAQ 2: How does ICH Q2(R2) address analytical procedure validation for inorganic trace analysis?

ICH Q2(R2) provides a comprehensive framework for validating analytical procedures, emphasizing characteristics like specificity, accuracy, precision, linearity, range, LOD, and LOQ [13]. The guideline applies to analytical procedures used for release and stability testing of commercial drug substances and products, including both chemical and biological/biotechnological materials. In July 2025, ICH released updated training materials to support harmonized global understanding and consistent application of these validation requirements [14].

FAQ 3: Why is measurement traceability critical in ISO 17025 for trace element analysis?

Measurement traceability under ISO 17025 ensures your laboratory's results can be linked to recognized national or international standards through an unbroken chain of comparisons with documented uncertainties [15]. This is critical because it establishes metrological traceability, building trust in your data's reliability and ensuring worldwide recognition of your measurement results. Without this documented chain, you cannot prove the validity of your analytical results to clients or regulators [15].

FAQ 4: What approach should I take when my detection limits don't meet requirements?

When detection limits are inadequate, systematically investigate these key areas: First, assess spectral interferences by examining alternative analytical lines using single-element standards [7]. Second, consider sample preparation adjustments, such as increasing sample concentration factor or optimizing dilution protocols [7]. Third, evaluate instrumental parameters including RF power, nebulizer type, and integration times to enhance sensitivity [12].

Troubleshooting Guide: Detection Limit Optimization

Table 1: Common Detection Limit Problems and Solutions

| Problem | Possible Causes | Recommended Solutions |
|---|---|---|
| Poor Signal-to-Noise Ratio | Instrument drift, suboptimal detection parameters, low light throughput | Increase sample concentration; optimize RF power, nebulizer flow, and integration time; verify detector performance [7] [12] |
| Spectral Interferences | Direct spectral overlap, matrix effects, polyatomic ions | Select alternative analytical lines; use collision/reaction cells (ICP-MS); implement mathematical correction techniques [7] |
| High Method Blanks | Contaminated reagents, environmental contamination, insufficient cleaning | Use high-purity reagents; implement rigorous blank monitoring; enhance cleaning protocols between samples [7] |
| Inconsistent Results | Uncontrolled method parameters, sample introduction issues, matrix variations | Conduct robustness testing; control critical parameters (temperature, reagent concentration); use internal standards [12] |

Table 2: Validation Characteristics for Trace Analysis Methods

| Validation Characteristic | Definition | Acceptance Criteria Example |
|---|---|---|
| Specificity | Ability to measure the analyte accurately in the presence of interferences | No significant interference from matrix; confirmed via standard additions [12] |
| Accuracy/Bias | Closeness between measured value and true value | ±10% relative at 10 ppm level; verified via CRM analysis [7] [12] |
| Repeatability (Precision) | Agreement under the same conditions over a short time | RSD <5% for mid-range concentrations [12] |
| Linearity | Ability to obtain results proportional to analyte concentration | R² > 0.998 over specified range [7] |
| Range | Interval between upper and lower concentration levels | LOQ to 1000×LOQ or point where linearity ends [12] |
| Robustness | Capacity to remain unaffected by small parameter variations | Deliberate variations in power, temperature, or reagent concentration yield <10% signal change [12] |

Advanced Optimization Protocol

Spectral Line Selection Workflow:

- Characterize Multiple Lines: Begin with 5-6 potential analytical lines for your element [7]

- Interference Testing: Analyze high-purity single-element solutions of potential interferents at expected sample concentrations [7]

- Sensitivity Assessment: Determine Instrument Detection Limits (IDLs) for each line [7]

- Composite Spectrum Modeling: Use stored single-element spectra to construct simulated sample spectra for interference prediction [7]
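The final decision in this workflow, choosing among the characterized lines, can be sketched as follows. The `CandidateLine` record, the IDL definition used here (3 × blank-intensity SD divided by the calibration slope), and the example wavelengths and intensities are hypothetical assumptions for illustration, not instrument-vendor definitions.

```python
import statistics
from dataclasses import dataclass


@dataclass
class CandidateLine:
    """Hypothetical summary of one candidate emission line."""
    wavelength_nm: float
    blank_signals: list[float]  # replicate blank intensities at this line
    slope: float                # calibration sensitivity, intensity per concentration unit
    interfered: bool            # flagged during single-element interference testing

    def idl(self) -> float:
        """Assumed IDL definition: 3 * SD(blank intensity) / slope, in concentration units."""
        return 3 * statistics.stdev(self.blank_signals) / self.slope


def best_line(lines: list[CandidateLine]) -> CandidateLine:
    """Pick the interference-free line with the lowest IDL."""
    clean = [line for line in lines if not line.interfered]
    if not clean:
        raise ValueError("all candidate lines are interfered")
    return min(clean, key=lambda line: line.idl())


if __name__ == "__main__":
    # Hypothetical data for three lines of one element.
    candidates = [
        CandidateLine(200.0, [105.0, 98.0, 101.0, 96.0, 100.0], slope=850.0, interfered=False),
        CandidateLine(210.0, [40.0, 42.0, 39.0, 41.0, 43.0], slope=900.0, interfered=True),
        CandidateLine(220.0, [60.0, 63.0, 58.0, 61.0, 62.0], slope=400.0, interfered=False),
    ]
    chosen = best_line(candidates)
    print(f"selected {chosen.wavelength_nm} nm, IDL = {chosen.idl():.4f}")
```

Excluding interfered lines before ranking by IDL reflects the workflow's order: a very sensitive line is of little use if an interferent in the sample overlaps it.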

Sample Introduction Optimization:

- For radial view ICP-OES: Prepare standards at 0.0, 1, 10, 100, and 1000 µg/mL [7]

- For axial view ICP-OES: Prepare standards at 0.0, 0.1, 1, 10, and 100 µg/mL [7]

- For quadrupole ICP-MS: Prepare standards at 0, 1, 10, 100, and 1000 ng/mL [7]

Experimental Protocols

Protocol 1: Method Validation for Trace Element Analysis

Scope: This protocol establishes validation procedures for quantitative trace element analysis according to ICH Q2(R2) and ISO 17025 requirements.

Materials and Equipment:

- Certified reference materials (CRMs) traceable to national standards [12]

- High-purity single-element standards with certified impurity profiles [7]

- Optimized ICP-OES or ICP-MS system with documented calibration [15]

Procedure:

Specificity Assessment

- Analyze blank, standard, and potential interferent solutions separately

- Compare results with and without internal standardization [12]

- Document all spectral interferences and correction methods applied

Accuracy Determination

- Analyze certified reference materials (CRMs) matrix-matched to the samples

- Perform spike recovery studies at multiple concentration levels

- Calculate percent recovery: (Measured Concentration/Expected Concentration) × 100 [12]
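The recovery calculation can be expressed directly; the sketch below also checks the result against a ±10% window, an assumption borrowed from the accuracy example in the validation-characteristics table rather than a fixed requirement. Function names and the CRM values are hypothetical.

```python
def percent_recovery(measured: float, expected: float) -> float:
    """Recovery (%) = measured concentration / expected concentration * 100."""
    if expected <= 0:
        raise ValueError("expected concentration must be positive")
    return measured / expected * 100.0


def recovery_acceptable(recovery_pct: float, tolerance_pct: float = 10.0) -> bool:
    """True if recovery lies within 100% +/- tolerance_pct."""
    return abs(recovery_pct - 100.0) <= tolerance_pct


if __name__ == "__main__":
    # Hypothetical CRM: certified 10.0 mg/kg, measured 9.6 mg/kg.
    rec = percent_recovery(9.6, 10.0)
    print(f"recovery = {rec:.1f}%, acceptable: {recovery_acceptable(rec)}")
```

For spike recovery studies at several levels, the same check is simply applied at each spiking level.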

Precision Evaluation

- Analyze homogeneous sample at least 11 times at low, mid, and high concentrations

- Calculate standard deviation and relative standard deviation (RSD)

- Repeat over multiple days for intermediate precision [12]
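The precision steps can be sketched with a one-way ANOVA over days, which is one common (ISO 5725-style) way to separate within-day repeatability from between-day variation. The protocol itself does not prescribe this model, so treat it as an assumption; the sketch also assumes a balanced design (the same number of replicates every day), and the function names are hypothetical.

```python
import math
import statistics


def rsd_percent(values: list[float]) -> float:
    """Relative standard deviation (%) of replicate results."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def precision_from_days(days: list[list[float]]) -> tuple[float, float]:
    """Return (repeatability SD, intermediate-precision SD) from a balanced days x replicates design.

    s_r^2 = MS_within; s_between^2 = max(0, (MS_between - MS_within) / n);
    s_I^2 = s_r^2 + s_between^2.
    """
    k = len(days)
    n = len(days[0])
    if k < 2 or n < 2 or any(len(d) != n for d in days):
        raise ValueError("need >= 2 days with the same number (>= 2) of replicates each")
    day_means = [statistics.mean(d) for d in days]
    grand_mean = statistics.mean(day_means)
    ms_within = sum((x - m) ** 2 for d, m in zip(days, day_means) for x in d) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in day_means) / (k - 1)
    s_between_sq = max(0.0, (ms_between - ms_within) / n)
    return math.sqrt(ms_within), math.sqrt(ms_within + s_between_sq)
```

Dividing either SD by the grand mean (and multiplying by 100) gives the corresponding RSD for comparison with the repeatability criterion.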

Linearity and Range Establishment

- Prepare calibration standards across anticipated working range

- Analyze in triplicate from low to high concentration

- Calculate correlation coefficient, y-intercept, and slope of regression line [7]

LOD and LOQ Determination

- Analyze blank solution at least 10 times

- Calculate standard deviation (SD₀) of blank responses

- LOD = 3 × SD₀, LOQ = 10 × SD₀ [12]

Protocol 2: Measurement Traceability Documentation

Purpose: Establish an unbroken chain of calibration traceable to SI units as required by ISO 17025 [15].

Procedure:

Reference Standard Selection

- Identify appropriate national or international standards for each measurement [15]

- Select reference materials with certified values and documented uncertainties

Calibration Hierarchy Establishment

- Document complete chain from working standards to primary standards

- Ensure each calibration step includes measurement uncertainty [15]

- Maintain certificates for all reference materials and calibrations

Uncertainty Budget Development

- Identify all uncertainty sources: equipment, environment, operator, method [15]

- Quantify Type A (statistical) and Type B (other information) uncertainties

- Combine uncertainties using appropriate mathematical models
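The uncertainty-budget steps can be sketched for the simplest case: a purely multiplicative measurement model with uncorrelated inputs, where relative standard uncertainties combine as a root sum of squares (the first-order GUM propagation law). The budget entries and their values in the usage section are hypothetical, and correlated inputs or non-multiplicative models would need the full propagation formula.

```python
import math
import statistics


def type_a_standard_uncertainty(replicates: list[float]) -> float:
    """Type A: experimental standard deviation of the mean, s / sqrt(n)."""
    return statistics.stdev(replicates) / math.sqrt(len(replicates))


def type_b_rectangular(half_width: float) -> float:
    """Type B: standard uncertainty of a +/- half_width limit, assuming a rectangular distribution."""
    return half_width / math.sqrt(3)


def combined_relative_uncertainty(relative_components: dict[str, float]) -> float:
    """Root sum of squares of relative standard uncertainties (uncorrelated, multiplicative model)."""
    return math.sqrt(sum(u * u for u in relative_components.values()))


if __name__ == "__main__":
    result = 12.4  # hypothetical measured concentration, µg/L
    budget = {
        "calibration standard (certificate)": 0.005,               # hypothetical relative u
        "volumetric dilution (tolerance)": type_b_rectangular(0.002),
        "repeatability": type_a_standard_uncertainty([12.1, 12.5, 12.4, 12.6, 12.3]) / result,
    }
    u_rel = combined_relative_uncertainty(budget)
    expanded = 2 * u_rel * result  # coverage factor k = 2 (approx. 95% coverage)
    print(f"u_c,rel = {u_rel:.4f}; U (k=2) = {expanded:.2f} µg/L")
```

Listing each contribution separately in the budget also shows which source dominates, which is usually where improvement effort pays off.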

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Trace Element Analysis

| Reagent/Material | Function | Critical Quality Attributes |
|---|---|---|
| Single-Element Standards | Instrument calibration, line characterization | Certified purity, documented trace metal impurities, stability [7] |
| Certified Reference Materials | Method validation, accuracy verification | Matrix-matched, certified values with uncertainties, traceability [12] |
| High-Purity Acids & Reagents | Sample preparation, dilution | Low trace metal background, lot-to-lot consistency [7] |
| Internal Standard Solutions | Correction for instrumental drift, matrix effects | Non-interfering spectral lines, similar behavior to analytes [12] |
| Quality Control Materials | Ongoing method performance verification | Homogeneous, stable, concentrations at decision levels [15] |

Workflow Visualization

Analytical Method Lifecycle Workflow

Measurement Traceability Chain

The Role of Blank Samples and Background Signals in LOD Determination

Frequently Asked Questions (FAQs)

Q1: Why can't I achieve the low detection limits claimed by my ICP instrument manufacturer? A1: Manufacturer specifications are typically determined under ideal, interference-free conditions using pure standard solutions. In real-world analysis, your sample matrix introduces effects such as ion quenching, high salt content, and spectral interferences that can raise the practical detection limit. Furthermore, the background signal and its variability from your reagents and sample matrix are often higher than those of the ultra-pure blanks used by manufacturers. Using a standard additions approach and ensuring your reagent blank closely matches the sample matrix can provide a more realistic estimation of your achievable Limit of Detection (LOD) [16].

Q2: What is the fundamental difference between the Limit of Blank (LoB) and the Limit of Detection (LOD)?

A2: The Limit of Blank (LoB) is the highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested. It is calculated as LoB = mean_blank + 1.645(SD_blank) and represents the 95th percentile of the blank distribution, helping to control false positives. The Limit of Detection (LOD), on the other hand, is the lowest analyte concentration that can be reliably distinguished from the LoB. It is calculated as LOD = LoB + 1.645(SD_low concentration sample) and is set to also control the risk of false negatives. The LOD is always a higher, more conservative value than the LoB [1].

Q3: My blank samples show no analyte signal. How can I calculate the LOD? A3: A blank with no signal and zero standard deviation presents a calculation problem. In this case, you cannot use statistical methods that rely on the standard deviation of the blank. Alternative approaches include:

- Experimental Serial Dilution: Prepare and analyze samples with serially decreasing concentrations of the analyte. The LOD can be taken as the lowest concentration that yields a signal-to-noise ratio (S/N) greater than 3 or 3.3 [17].

- Calibration Curve Method: If a calibration curve is feasible at low concentrations, the LOD can be estimated from its parameters, typically as LOD = 3.3 × σ / Slope, where σ is the residual standard deviation of the regression (or the standard deviation of the y-intercept) and Slope is the calibration slope [17].

Q4: How does sample matrix affect LOD determination using blanks? A4: The sample matrix is a critical factor. A pure solvent blank will not account for matrix-induced signal suppression or enhancement. For a realistic LOD, your blank should be a matrix blank—a sample that is identical to your test samples but without the target analyte. This ensures that the background signal and its variability (standard deviation) used in LOD calculations accurately reflect the analytical conditions, including matrix effects that can significantly degrade the practical detection limit [16] [18].

Key Concepts and Statistical Definitions

The following table summarizes the core parameters involved in characterizing the detection capabilities of an analytical method.

Table 1: Key Definitions in Detection Limit Determination

| Parameter | Definition | Typical Calculation | Purpose |
|---|---|---|---|
| Limit of Blank (LoB) | The highest apparent analyte concentration expected from a blank sample [1]. | LoB = mean_blank + 1.645(SD_blank) [1] | To establish a threshold for distinguishing a real signal from background noise, controlling false positives. |
| Limit of Detection (LOD) | The lowest analyte concentration that can be reliably distinguished from the LoB [1]. | LOD = LoB + 1.645(SD_low concentration sample) [1] | To define the lowest concentration at which detection is feasible, controlling both false positives and false negatives. |
| Signal-to-Noise (S/N) | A ratio comparing the magnitude of the analyte signal to the background noise [3]. | S/N = Analyte Response / Amplitude of Noise [17] | A practical, instrumental approach for estimating LOD, often targeting S/N ≥ 3 [3] [17]. |
| False Positive (Type I Error, α) | The probability of concluding the analyte is present when it is not [3]. | - | The risk set by the choice of critical level (e.g., α=0.05 for 5% risk) [3]. |
| False Negative (Type II Error, β) | The probability of failing to detect the analyte when it is present [3]. | - | The risk set by the choice of LOD (e.g., β=0.05 for 5% risk) [3]. |

Experimental Protocols

Protocol 1: Establishing LoB and LOD following CLSI EP17 Guidelines

This protocol provides a standardized method for determining LoB and LOD, requiring a significant number of replicates to ensure statistical reliability [1].

Step 1: Prepare Samples

- Blank Sample: A sample that does not contain the analyte but contains all other components of the matrix (e.g., a placebo or analyte-free biological fluid).

- Low Concentration Sample: A sample spiked with the analyte at a concentration near the expected LOD. This sample should be prepared in the same matrix as the blank.

Step 2: Data Acquisition

- Analyze a minimum of 20 replicates (for a verification) to 60 replicates (for a full establishment) of both the blank sample and the low-concentration sample. These analyses should be performed over multiple days and using different reagent lots to capture routine experimental variance [1].

Step 3: Calculation

- Calculate LoB:

LoB = mean_blank + 1.645(SD_blank)

- Note: This formula estimates the one-sided 95th percentile of the blank results (assuming a Gaussian distribution), so about 5% of blank measurements will exceed the LoB.

- Calculate LOD:

LOD = LoB + 1.645(SD_low concentration sample)

- Note: This formula ensures that 95% of the signals from a sample at the LOD will exceed the LoB.

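The two Step 3 formulas translate directly into code. This is a minimal sketch assuming replicate results already expressed in concentration units and approximately Gaussian; the function name `lob_lod_ep17` is hypothetical, and EP17 also describes non-parametric options that are not shown here.

```python
import statistics

def lob_lod_ep17(blank_results, low_results, z=1.645):
    """Parametric LoB and LOD following the Step 3 formulas.

    Both arguments are replicate results in concentration units.
    z = 1.645 is the one-sided 95th percentile of the standard normal
    distribution, i.e. alpha = beta = 0.05 for Gaussian data.
    """
    lob = statistics.mean(blank_results) + z * statistics.stdev(blank_results)
    # The SD of the low-concentration sample sets the margin above the LoB
    lod = lob + z * statistics.stdev(low_results)
    return lob, lod
```

In practice each list would hold the 20 to 60 replicates described in Step 2, collected across days and reagent lots.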

Step 4: Verification

- Analyze multiple replicates (e.g., 20) of a sample prepared at the calculated LOD concentration.

- The result is verified if no more than 5% of the measured values fall below the LoB [1].
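The Step 4 acceptance rule is a simple count. The sketch below is illustrative only; `verify_lod` and its 5% default are written from the criterion above, not taken from a published implementation.

```python
def verify_lod(results_at_lod, lob, max_fraction_below=0.05):
    """Return (passed, fraction) for the Step 4 check: no more than 5% of
    replicate results for a sample prepared at the LOD may fall below the LoB."""
    n_below = sum(1 for r in results_at_lod if r < lob)
    fraction = n_below / len(results_at_lod)
    return fraction <= max_fraction_below, fraction
```

With 20 replicates, this means at most one result may fall below the LoB.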

Protocol 2: Rapid LOD Estimation via Signal-to-Noise (S/N) Ratio

This method is commonly used in chromatographic and spectroscopic techniques and is often integrated into instrument software [3] [17].

Step 1: System Setup and Analysis

- Optimize and stabilize your instrument system.

- Inject a blank matrix sample and analyze the region where the analyte peak is expected. Measure the peak-to-peak noise amplitude (h~noise~) over a distance equivalent to 20 times the peak width at half-height [3].

- Inject a sample with a low concentration of the analyte and measure the height of the analyte peak (H~analyte~).

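Step 2: Calculation and Determination

- Calculate the S/N ratio from H~analyte~ and h~noise~. When h~noise~ is the peak-to-peak noise, pharmacopoeial methods use S/N = 2H~analyte~/h~noise~, that is, the peak height divided by the noise amplitude.

- The LOD is the analyte concentration that gives S/N ≈ 3 [3] [17].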
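The Step 1 measurements can be turned into an LOD estimate with a few lines of code. This minimal sketch assumes the pharmacopoeial convention S/N = 2H/h, where h is the peak-to-peak noise of the blank, and a peak height proportional to concentration near the LOD. The function names are hypothetical, and instrument software may define S/N differently (for example with RMS noise).

```python
def signal_to_noise(h_analyte, h_noise_pp):
    """S/N = 2H/h, where h_noise_pp is the peak-to-peak baseline noise of the blank
    (assumption: pharmacopoeial convention; 2H/h equals H divided by the noise amplitude)."""
    return 2.0 * h_analyte / h_noise_pp

def lod_from_sn(conc_sample, h_analyte, h_noise_pp, target_sn=3.0):
    """Scale the test concentration to the one expected to give S/N = target_sn,
    assuming peak height is proportional to concentration at this level."""
    sn = signal_to_noise(h_analyte, h_noise_pp)
    return conc_sample * target_sn / sn
```

Because the linearity assumption may fail close to the baseline, a standard prepared at the estimated concentration is the more reliable confirmation.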

Visual Workflow for LOD Determination

The following diagram illustrates the statistical relationship between blank measurements, low-concentration sample measurements, and the definitions of LoB and LOD, incorporating the risks of false positives and false negatives.

Diagram 1: LOD Determination Workflow

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Essential Materials for Accurate LOD Determination in Trace Analysis

| Material / Solution | Critical Function in LOD Context |
|---|---|
| High-Purity Matrix Blank | Serves as the foundational sample for measuring the method's background signal (LoB). Its composition must match the test samples to accurately account for matrix effects [16]. |
| Certified Single-Element Standards | Used to prepare low-concentration spiked samples for LOD calculation and verification. Certificates of Analysis (CoA) with reported trace metal impurities are vital to avoid misidentifying impurities as interferences [7]. |
| High-Purity Acids & Reagents | Essential for sample preparation and dilution. Contaminants in reagents contribute directly to the blank signal, artificially raising the calculated LoB and LOD. |
| Certified Reference Material (CRM) | Used to validate the accuracy and detection capability of the final method. A CRM with an analyte concentration near the LOD provides the best confirmation that the method is "fit-for-purpose" [7]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between the Critical Level (LC) and the Limit of Detection (LOD)?

The Critical Level (LC) and Limit of Detection (LOD) are distinct statistical concepts used for decision-making and capability assessment, respectively [3].

- Critical Level (LC): This is a decision threshold. An observed signal above the LC leads to the conclusion that the analyte is detected. This level is set to control the probability of a false positive (Type I error, α), where you incorrectly conclude the analyte is present when it is not [3] [19].

- Limit of Detection (LOD): This is the lowest true concentration of an analyte that an analytical method can reliably detect. It is defined as the concentration where the probability of a false negative (Type II error, β) is acceptably low. A signal from an analyte at the LOD will exceed the LC with high probability (1-β) [3].
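The difference between L~C~ and the LOD shows up clearly as detection probabilities. This sketch assumes Gaussian results with a constant standard deviation σ (in concentration units), a blank mean of zero and α = β = 0.05; `detection_probability` is a hypothetical helper built on the standard library's `statistics.NormalDist`.

```python
from statistics import NormalDist

def detection_probability(true_conc, l_c, sigma):
    """Probability that a single result exceeds the critical level L_C when the
    true concentration is true_conc (Gaussian results, constant sigma)."""
    return 1.0 - NormalDist(mu=true_conc, sigma=sigma).cdf(l_c)

sigma = 1.0
z = NormalDist().inv_cdf(0.95)   # ~1.645 for alpha = 0.05
l_c = z * sigma                  # critical level (blank mean taken as 0)
l_d = 2 * z * sigma              # LOD for alpha = beta = 0.05
for label, c in [("blank", 0.0), ("L_C", l_c), ("L_D", l_d)]:
    print(f"{label}: P(detected) = {detection_probability(c, l_c, sigma):.3f}")
```

The loop prints detection probabilities of 0.050, 0.500 and 0.950. A blank is falsely "detected" 5% of the time, a sample whose true concentration equals L~C~ is missed half the time, and only at L~D~ does the false-negative risk drop to β.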

FAQ 2: Why can't I simply use a signal-to-noise ratio of 3 as my LOD for all methods?

While a signal-to-noise (S/N) ratio of 3 is a common and practical approximation for the LOD in techniques such as chromatography, it is a simplification [3]. It does not formally account for the risks of false positives and false negatives. Modern international standards (ISO, IUPAC) define the LOD in terms of these error probabilities (α and β). For methods requiring strict validation or regulatory compliance, the statistical approach based on the standard deviation is more robust and defensible [3] [20].

FAQ 3: How do I estimate the standard deviation of the blank (σ₀) in practice?

The standard deviation of the blank can be estimated in several ways [3] [20]:

- Replicate Blank Measurements: Analyze a minimum of 10 portions of a blank sample (a sample without the analyte) through the complete analytical procedure. The standard deviation of the resulting calculated concentrations is your estimate (s₀) [3].

- Background Signal: In some techniques, you can measure the background signal (e.g., baseline noise in a chromatogram) and convert it to concentration units using the calibration function [3].

- Low-Level Sample: If a true blank is unavailable, you can use a test sample with a very low analyte concentration, close to the expected LOD [3].

FAQ 4: What is the relationship between LOD and Limit of Quantification (LOQ)?

The Limit of Quantification (LOQ) is the lowest concentration at which an analyte can not only be reliably detected but also quantified with acceptable precision and accuracy [21]. While the LOD is primarily concerned with the signal being distinguishable from the blank, the LOQ requires a higher signal to ensure the quantitative measurement is sufficiently precise. A common convention is to set the LOQ at a value corresponding to 10 times the standard deviation of the blank [21].

Troubleshooting Guides

Problem 1: High False Positive Rate

- Symptoms: The method frequently indicates the presence of the analyte in blank samples.

- Potential Causes & Solutions:

- Cause: The Critical Level (LC) is set too low.

- Solution: Recalculate LC using the correct standard deviation of the blank (s₀) and the desired significance level (typically α=0.05, which corresponds to a 5% false positive rate). Ensure you are using the appropriate value from the t-distribution (e.g., t~1-α,ν~) if the standard deviation is estimated from a limited number of replicates [3].

- Cause: Contamination from reagents, labware, or the environment is inflating the blank signal.

- Solution: Implement rigorous contamination control protocols. Use high-purity reagents and dedicated, clean labware. Assess the impact of emerging contaminants on your specific inorganic analysis [22].

Problem 2: High False Negative Rate

- Symptoms: The method fails to detect the analyte in samples known to contain it at concentrations near the claimed LOD.

- Potential Causes & Solutions:

- Cause: The method's LOD is over-optimistic (too low), potentially due to an underestimated standard deviation or use of an S/N ratio without verification.

- Solution: Re-evaluate the LOD using the proper statistical procedure, which must account for both Type I (α) and Type II (β) errors. Then analyze samples spiked at the claimed LOD. At a correctly estimated LOD, only about β (e.g., 5%) of the results should fall below L~C~. If the LOD was underestimated, for example by setting it equal to L~C~, approximately 50% will fall below it [3].

- Cause: Poor method sensitivity or significant signal suppression from the sample matrix.

- Solution: Optimize the analytical instrumentation. For trace analysis, incorporate a pre-concentration step, such as Solid-Phase Extraction (SPE) using selective sorbents like Layered Double Hydroxides (LDHs), to enhance the signal [23].

Problem 3: Inconsistent LOD Values

- Symptoms: Replicate experiments to determine the LOD yield widely varying results.

- Potential Causes & Solutions:

- Cause: An insufficient number of replicate measurements were used to estimate the standard deviation, leading to high uncertainty.

- Solution: Increase the number of replicate blank or low-concentration sample measurements. A minimum of 10 replicates is recommended, but more may be needed for a stable estimate [3] [20].

- Cause: Instability in the analytical system (e.g., drifting baseline, fluctuating instrument response).

- Solution: Perform instrument maintenance and calibration to ensure stable operation. The precision conditions (e.g., repeatability) under which the LOD is estimated must be clearly defined and controlled [3].
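A short simulation shows why a handful of replicates gives unstable LOD values. This Monte Carlo sketch assumes Gaussian measurements with a true standard deviation of 1 and reports the range that contains the sample standard deviation in 90% of simulated experiments. The helper names are hypothetical.

```python
import math
import random

def sample_sd(values):
    """Sample standard deviation (n - 1 denominator)."""
    m = sum(values) / len(values)
    return math.sqrt(sum((v - m) ** 2 for v in values) / (len(values) - 1))

def sd_spread(n_replicates, trials=5000, seed=1):
    """Monte Carlo: 5th and 95th percentiles of s/sigma for n Gaussian replicates."""
    rng = random.Random(seed)
    ratios = sorted(
        sample_sd([rng.gauss(0.0, 1.0) for _ in range(n_replicates)])  # true sigma = 1
        for _ in range(trials)
    )
    return ratios[int(0.05 * trials)], ratios[int(0.95 * trials)]

for n in (10, 20, 60):
    lo, hi = sd_spread(n)
    print(f"n = {n:2d}: s/sigma between {lo:.2f} and {hi:.2f} in 90% of trials")
```

Chi-square theory predicts roughly 0.61 to 1.37 for n = 10, and the simulation should agree closely. Because the LOD scales directly with s, this spread carries straight through to the reported LOD.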

Core Equations and Data

The following equations form the statistical foundation for calculating the Critical Level and the Limit of Detection.

Table 1: Fundamental Equations for LOD Determination

| Term | Symbol | Equation | Description & Notes |
|---|---|---|---|
| Critical Level | L~C~ | $L_C = t_{1-\alpha,\nu} \cdot s_0$ [3] | The decision limit to control false positives. If the measured signal > L~C~, the analyte is "detected." |
| Limit of Detection | L~D~ | $L_D = L_C + t_{1-\beta,\nu} \cdot s_D \approx 2 \cdot t_{1-\alpha,\nu} \cdot s_0$ [3] | The true concentration that will be detected with high probability (1-β). The approximation holds if α=β and s₀ ≈ s~D~. |
| Simplified LOD | L~D~ | $L_D = 3.3 \cdot s_0$ [3] | A common simplification when α=β=0.05 and a sufficient number of replicates are used, so that the t-value approaches the normal z-value (2 × 1.645 ≈ 3.3). |
| Signal-to-Noise LOD | L~D~ | $L_D = \frac{3 \cdot h_{noise}}{R}$ [3] | A chromatographic approach where h~noise~ is half the maximum baseline noise and R is the response factor (peak height per unit concentration). |
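When s₀ comes from few replicates, the Table 1 equations need Student's t quantiles. This sketch computes them with the standard library only, using Simpson integration of the t density plus bisection. If SciPy is available, `scipy.stats.t.ppf(1 - alpha, nu)` gives the same values. The sketch assumes s~D~ = s₀ and ν = n − 1, and the function names are hypothetical.

```python
import math

def t_pdf(x, nu):
    """Student's t probability density with nu degrees of freedom."""
    log_c = math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2) - 0.5 * math.log(nu * math.pi)
    return math.exp(log_c) * (1 + x * x / nu) ** (-(nu + 1) / 2)

def t_cdf(x, nu, steps=2000):
    """CDF for x >= 0: 0.5 plus Simpson's rule on [0, x] (density is symmetric)."""
    h = x / steps
    total = t_pdf(0.0, nu) + t_pdf(x, nu)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, nu)
    return 0.5 + total * h / 3

def t_quantile(p, nu):
    """One-sided quantile t_{p,nu} for 0.5 < p < 1, found by bisection."""
    lo, hi = 0.0, 100.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if t_cdf(mid, nu) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def critical_level_and_lod(s0, n, alpha=0.05, beta=0.05):
    """L_C = t_{1-alpha,nu} * s0 and L_D = L_C + t_{1-beta,nu} * s_D, with s_D = s0."""
    nu = n - 1
    l_c = t_quantile(1 - alpha, nu) * s0
    l_d = l_c + t_quantile(1 - beta, nu) * s0
    return l_c, l_d
```

For n = 10 (ν = 9), the sketch gives L~C~ ≈ 1.83 s₀ and L~D~ ≈ 3.67 s₀. Both are noticeably above the large-sample value of 3.3 s₀, which is why the simplified form should only be used with enough replicates.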

Where:

- t~1-α, ν~, t~1-β, ν~: Critical values from the Student's t-distribution for probabilities 1-α and 1-β with ν degrees of freedom.

- s₀: Estimated standard deviation of the blank (in concentration units).

- s~D~: Estimated standard deviation at the LOD (often assumed to be equal to s₀).

- α: Probability of a false positive (Type I error). Typically set to 0.05.

- β: Probability of a false negative (Type II error). Typically set to 0.05.

Experimental Protocol: Estimating LOD for a Chromatographic Method

This is a detailed methodology for establishing the LOD based on the statistical evaluation of blank measurements [3].

- Preparation: Obtain a test sample where the concentration of the target component is low, ideally close to the expected detection limit. A blank sample (without the analyte) is also required.

- Analysis: Analyze a minimum of 10 portions of this test sample (or blank), following the complete analytical procedure from sample preparation to final measurement. The precision conditions (e.g., repeatability) must be specified.

- Data Conversion: For each measurement, convert the instrument response (e.g., peak area) into a concentration value. This is typically done by subtracting any blank signal and dividing by the slope of the analytical calibration curve.

- Standard Deviation Calculation: Calculate the standard deviation (s₀) of the resulting concentration values from the replicate analyses.

- Calculation: Compute the Critical Level (L~C~) and the Limit of Detection (L~D~) using the equations provided in Table 1 (e.g., Equations 4 and 5 from the literature [3]).

Visual Workflow: From Signal to Detection Limit

The following diagram illustrates the logical relationship between the blank signal, the Critical Level, and the Limit of Detection, including the associated statistical risks.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Enhancing Detection in Inorganic Trace Analysis

| Item | Function in Analysis | Example Application |
|---|---|---|
| Layered Double Hydroxides (LDHs) | Advanced sorbents for Solid-Phase Extraction (SPE). Their tunable composition allows for selective adsorption and pre-concentration of target oxyanions from sample matrices [23]. | Separation and pre-concentration of inorganic oxyanions of chromium, arsenic, and selenium from aqueous matrices prior to spectrometric detection [23]. |
| High-Purity Reference Materials | Certified materials used for instrument calibration, method validation, and ensuring accuracy and traceability of results. Critical for reliable LOD determination [22]. | Used in QC protocols to confirm method performance and for spiking experiments in recovery studies to validate LOD/LOQ [22] [6]. |
| Specialized Sorbents (SPE Columns) | Used in sample preparation to isolate and enrich analytes, thereby improving sensitivity and mitigating matrix effects that can impair detection limits [23]. | Conventional, dispersive (DSPE), and magnetic (MSPE) SPE procedures for the clean-up and pre-concentration of trace elements [23]. |
| ICP-MS Tuning Solutions | Standardized solutions used to optimize instrument parameters (nebulizer flow, torch position, ion lens voltages) for maximum sensitivity and stability [20]. | Essential for achieving the lowest possible instrumental detection limit (IDL) for elements like arsenic in ICP-MS, which directly influences the method detection limit (MDL) [20]. |

Advanced Methodologies for Enhanced Sensitivity in Trace Analysis

Fundamental Principles and Material Selection

FAQ: What are the core advantages of LDHs and biochar for preconcentration in trace analysis?

Answer: Both Layered Double Hydroxides (LDHs) and biochar offer unique structural properties that make them highly effective as sorbents for the preconcentration of trace inorganic analytes, directly contributing to lower limits of detection.

Layered Double Hydroxides (LDHs): LDHs are a class of synthetic clay materials with the general formula $[M^{2+}_{1-x}M^{3+}_{x}(OH)_2]^{x+}[A^{n-}]_{x/n} \cdot m\mathrm{H_2O}$, where $M^{2+}$ and $M^{3+}$ are di- and trivalent metal cations and $A^{n-}$ is an interlayer anion [24] [25]. Their key advantages include:

- Designable Structure: The metal cation composition and interlayer anion can be precisely tuned to enhance affinity for specific target analytes [24] [26] [25].

- Multiple Adsorption Mechanisms: They can capture contaminants through anion exchange, surface adsorption, and ligand exchange, making them versatile for various ionic species [24].

- Functionalization Potential: Their structure can be easily modified with other functional materials, like graphene quantum dots, to significantly boost extraction efficiency and introduce new properties [25] [27].

Biochar: Biochar is a carbon-rich porous material produced from the pyrolysis of organic biomass under oxygen-limited conditions [28] [29]. Its advantages include:

- High Surface Area and Porosity: It possesses a complex, honeycomb-like network of pores, providing numerous adsorption sites. The surface area can range from low (<250 m²/g) to very high (>500 m²/g), comparable to activated carbon [28].

- Eco-friendly and Cost-Effective: It can be produced from abundant agricultural waste, making it a sustainable and economical choice [30] [31].

- Rich Surface Functional Groups: Its surface contains functional groups (e.g., carboxyl, hydroxyl) that facilitate the adsorption of metal ions [30] [28].

FAQ: How does sorbent preconcentration optimize the Limit of Detection (LOD)?

Answer: Preconcentration using solid-phase sorbents like LDHs and biochar is a critical sample preparation step that directly improves the Limit of Detection (LOD) by addressing two key factors:

- Analyte Enrichment: It transfers the target trace analytes from a large volume of sample onto a much smaller volume of sorbent. Subsequent elution (desorption) releases the analytes into a minimal volume of solvent, significantly increasing their concentration [27].

- Matrix Clean-up: The process separates the analytes from a complex sample matrix, reducing potential interferences during the final instrumental analysis (e.g., by ICP-MS or HPLC). A cleaner sample gives a lower background and therefore a higher signal-to-noise ratio, which supports more accurate quantification at trace levels.
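The enrichment effect can be expressed as a simple calculation. This rough sketch assumes that the effective enrichment factor is the sample-to-eluent volume ratio multiplied by the fractional recovery. It also assumes the instrumental LOD in the eluent is unchanged and the procedural blank is not enriched along with the analyte, which is often untrue in practice. Both function names are hypothetical.

```python
def enrichment_factor(v_sample_ml, v_eluent_ml, recovery=1.0):
    """Effective enrichment: volume ratio times fractional recovery (0-1)."""
    return (v_sample_ml / v_eluent_ml) * recovery

def method_lod_after_preconcentration(instrument_lod, ef):
    """Rough LOD in the original sample: instrumental LOD divided by the enrichment
    factor (assumes blank contributions do not scale with enrichment)."""
    return instrument_lod / ef
```

For example, loading 100 mL, eluting into 2 mL and recovering 90% gives an enrichment factor of 45. If reagent or sorbent blanks rise with the procedure, the real improvement will be smaller.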

Experimental Protocols for Sorbent Synthesis and Application

Protocol: Synthesis of Carbonate-Free Mg-Al LDH for Enhanced Performance

Background: Standard LDH coprecipitation can incorporate carbonate ions (CO₃²⁻) from the air, which strongly bind to the LDH layers and reduce capacity for other anions. Creating a carbonate-free LDH is essential for maximizing preconcentration efficiency [27].

Materials:

- Magnesium nitrate hexahydrate (Mg(NO₃)₂·6H₂O) and Aluminum nitrate nonahydrate (Al(NO₃)₃·9H₂O)

- Sodium hydroxide (NaOH)

- Deionized water, degassed by boiling and cooling under an inert atmosphere

- Nitrogen (N₂) gas supply

Procedure:

- Solution Preparation: Prepare a 1.5 M mixed metal nitrate solution with a Mg²⁺/Al³⁺ molar ratio of 3:1. Separately, prepare a 2.0 M NaOH solution. Use degassed deionized water for all solutions.

- Coprecipitation: Set up the reactor with a constant flow of N₂ gas to maintain an inert atmosphere. Add the metal nitrate solution and the NaOH solution dropwise simultaneously into a reaction vessel containing a small amount of degassed water. Maintain vigorous stirring and keep the pH between 9.5 and 10.0.

- Aging: After complete addition, continue stirring the slurry under N₂ atmosphere for 24 hours at room temperature.

- Washing and Drying: Recover the solid product by centrifugation. Wash the precipitate several times with degassed deionized water and ethanol. Finally, dry the product in an oven at 60-70°C overnight [27].
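The reagent quantities for the Solution Preparation step can be worked out as below. This sketch assumes that "1.5 M" refers to the total metal concentration, uses approximate molar masses, and is based on the layer formula [Mg₀.₇₅Al₀.₂₅(OH)₂]⁰·²⁵⁺. That layer holds 2 OH⁻ per metal atom, with interlayer nitrate balancing the charge. The result is therefore a stoichiometric minimum, and additional NaOH is needed to hold the pH at 9.5 to 10. The function name is hypothetical.

```python
# Approximate molar masses (g/mol) from standard atomic weights
M_MG_NITRATE_HEXAHYDRATE = 256.41   # Mg(NO3)2.6H2O
M_AL_NITRATE_NONAHYDRATE = 375.13   # Al(NO3)3.9H2O

def mgal_ldh_reagents(volume_l, total_metal_molar=1.5, mg_to_al=3.0, naoh_molar=2.0):
    """Salt masses for the mixed nitrate solution and the minimum NaOH.

    Assumes total_metal_molar is the combined Mg + Al concentration. The
    hydroxide layer contains 2 OH- per metal atom, so the stoichiometric
    minimum is 2 mol OH- per mol of total metal.
    """
    mol_metal = total_metal_molar * volume_l
    mol_al = mol_metal / (mg_to_al + 1.0)
    mol_mg = mol_metal - mol_al
    mol_oh = 2.0 * mol_metal
    return {
        "mg_nitrate_g": mol_mg * M_MG_NITRATE_HEXAHYDRATE,
        "al_nitrate_g": mol_al * M_AL_NITRATE_NONAHYDRATE,
        "naoh_min_mol": mol_oh,
        "naoh_min_volume_l": mol_oh / naoh_molar,
    }
```

For 100 mL of metal solution, the sketch gives about 28.85 g of the magnesium salt, 14.07 g of the aluminium salt, and at least 150 mL of 2.0 M NaOH.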

Protocol: Dispersive Solid-Phase Extraction (d-SPE) using LDH/GQD Composite

Background: This protocol describes using an LDH composite for efficient extraction of organic and inorganic analytes from water samples, a key preconcentration step [27].

Materials:

- Synthesized LDH or LDH/GQD composite sorbent

- Water sample (e.g., lake, river, wastewater)

- Appropriate elution solvent (e.g., methanol, acidic solution)

- Centrifuge tubes, centrifuge, vortex mixer

Procedure:

- Sorbent Addition: Weigh a precise amount of the sorbent (e.g., 10-20 mg) into a centrifuge tube containing a known volume of the water sample.

- Extraction: Vortex the mixture vigorously to ensure complete dispersion of the sorbent and maximize contact with the analytes. Continue the extraction for a predetermined time (e.g., 5-15 minutes).

- Separation: Centrifuge the tubes to separate the sorbent from the liquid phase.

- Elution: Carefully decant the supernatant. Add a small volume of a strong elution solvent to the sorbent pellet. Vortex to desorb the concentrated analytes.

- Analysis: Separate the eluent from the sorbent via centrifugation and filter it if necessary. The resulting eluent is now preconcentrated and ready for analysis by techniques like HPLC or ICP-MS [27].

Figure 1: Workflow for dispersive Solid-Phase Extraction using LDHs.

Protocol: Functionalization of LDH with Graphene Quantum Dots (GQDs)

Background: Incorporating GQDs into LDHs creates a composite material that combines the high surface area and rich functionality of GQDs with the layered structure of LDHs, leading to a dramatic increase in extraction efficiency [27].

Materials:

- Pre-synthesized carbonate-free Mg-Al LDH

- Citric acid (for GQD synthesis)

- Deionized water

Procedure:

- GQD Synthesis: Heat citric acid at 200°C for 30 minutes. The liquid will carbonize to form GQDs. Dissolve the resulting yellow-orange product in deionized water to create a 20% w/v GQD solution [27].

- Composite Formation: Add the carbonate-free LDH powder to the GQD solution under stirring. Use an optimal ratio of 1.0 mL of 20% w/v GQD solution per gram of LDH.

- Loading and Drying: Continue stirring for several hours to allow the GQDs to intercalate and bind to the LDH layers. Separate the solid composite by centrifugation and dry it at low temperature.

Performance: This functionalization can lead to an 80% increase in extraction efficiency compared to bare LDH [27].

Sorbent Properties and Performance Data

Table: Classification and Applications of Biochar Based on Surface Area

| Surface Area Category | Range (m²/g) | Ideal Preconcentration Applications | Key Considerations for LOD Optimization |
|---|---|---|---|
| Low | < 250 | Solid fuel for sample digestion [28] | Lower affinity for trace metals. Primarily useful as a matrix for other processes. |
| Moderate | 250 - 500 | Preconcentration of organic pollutants [28]; water treatment for cation removal [28] | Good balance between capacity and cost. Suitable for less complex matrices. |
| High | > 500 | Preconcentration of heavy metals (Pb²⁺, Cu²⁺, Cd²⁺) [28] [31]; capture of CO₂ for analysis [28] | Highest adsorption capacity, directly leading to greater enrichment factors and lower LODs. Chemical functionalization can further enhance selectivity. |

Table: Metal Combinations in LDHs for Targeting Specific Anions

| Divalent Metal (M²⁺) | Trivalent Metal (M³⁺) | Target Anion/Application | Key Performance Insight |
|---|---|---|---|
| Mg, Ca, Ba, Mn, Co, Ni, Cu, Zn [24] | Cr, Fe, Al, Bi, Ga [24] | Iodate (IO₃⁻) decontamination | Multi-metal LDHs (quaternary, quinary) identified by machine learning show superior performance due to synergistic effects [24]. |
| Mg [32] | Fe [32] | Arsenic (As) removal | The Fe component enables strong adsorption of arsenic oxyanions. |
| Fe [32] | Mn, Zr [32] | Arsenic (As) removal | The Mn component can oxidize As(III) to As(V), while Zr enhances overall adsorption capacity, creating a powerful ternary system [32]. |
| Not Specified | Lanthanides (e.g., Dy³⁺) [33] | Heavy metal detection (Pb²⁺, Cu²⁺) | LDHs intercalated with organic molecules (e.g., stilbene) can be used for phosphorescence-based sensing of adsorbed metals. |

Troubleshooting Common Experimental Issues

FAQ: My LDH sorbent shows low adsorption capacity. What could be wrong?

Answer: Low capacity can stem from several factors related to synthesis and application:

- Problem: Incorrect pH during Use. The pH of the sample solution drastically affects the surface charge of the LDH and the speciation of the target analyte.

- Solution: Conduct preliminary experiments to determine the optimal pH for your specific LDH-analyte pair. For example, adsorption of arsenic on Fe-Mn-Zr composites is most effective at acidic to neutral pH [32].

- Problem: Presence of Carbonate Ions. If the LDH was synthesized without excluding air, carbonate ions can occupy the interlayer space, reducing availability for target anions [27].

- Solution: Synthesize LDHs under a nitrogen atmosphere using degassed solutions to produce a carbonate-free sorbent with higher anion exchange capacity [27].

- Problem: Suboptimal Metal Ratio. The molar ratio of M²⁺ to M³⁺ is critical for layer charge and reactivity. A standard ratio is often 2:1 to 4:1, but this should be optimized.

- Solution: Use design of experiment (DoE) approaches, like the Taguchi method, to optimize the metal composition for your target analyte. This was successfully used to develop a highly effective Fe-Mn-Zr sorbent [32].
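A Taguchi screening can be organized around the standard L9(3⁴) orthogonal array. This array runs up to four factors at three levels in nine experiments, and every pair of columns contains each level combination exactly once. The sketch below is illustrative only. The mapping of columns to factors (for example Fe, Mn and Zr proportions) is a hypothetical choice, not the design used in the cited Fe-Mn-Zr study. It applies the "larger-is-better" S/N ratio suited to a response such as adsorption capacity.

```python
import math
from collections import defaultdict

# Standard Taguchi L9(3^4) orthogonal array: 9 runs, up to 4 factors at 3 levels.
L9 = [
    (1, 1, 1, 1), (1, 2, 2, 2), (1, 3, 3, 3),
    (2, 1, 2, 3), (2, 2, 3, 1), (2, 3, 1, 2),
    (3, 1, 3, 2), (3, 2, 1, 3), (3, 3, 2, 1),
]

def sn_larger_is_better(replicates):
    """Taguchi S/N ratio (dB) for a response to maximize: -10 log10(mean(1/y^2))."""
    return -10 * math.log10(sum(1 / y ** 2 for y in replicates) / len(replicates))

def main_effects(sn_per_run, n_factors=3):
    """Mean S/N at each level of each factor; the preferred level has the highest mean."""
    effects = []
    for f in range(n_factors):
        by_level = defaultdict(list)
        for run, sn in zip(L9, sn_per_run):
            by_level[run[f]].append(sn)
        effects.append({lvl: sum(v) / len(v) for lvl, v in sorted(by_level.items())})
    return effects
```

Each run's replicate capacities go into `sn_larger_is_better`, and the nine resulting S/N values go into `main_effects`. A confirmation experiment at the predicted best combination should follow.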

FAQ: I am experiencing poor recovery of my target analyte during elution. How can I improve this?

Answer: Poor recovery indicates the analytes are not being effectively desorbed from the sorbent.

- Problem: Elution Solvent is Too Weak. The binding affinity between the sorbent and analyte may be too strong for a mild solvent to break.

- Solution: Use a stronger elution solvent. For metal ions adsorbed on biochar or LDHs, a small volume of dilute acid (e.g., 1-2% HNO₃) is typically effective. For organic molecules, a solvent such as methanol or acetonitrile is used [27].

- Problem: Incomplete Contact during Elution. Simply adding solvent may not be sufficient.

- Solution: Ensure thorough mixing (vortexing or shaking) during the elution step to maximize contact between the eluent and the sorbent [27].

FAQ: My biochar's performance seems to degrade over time. Is this expected?

Answer: Yes, this is a recognized phenomenon known as "biochar ageing." Ageing alters the physicochemical properties of biochar, which can impact its long-term effectiveness for preconcentration [29].

- Cause: Ageing can be caused by chemical oxidation, dry-wet cycles, and freeze-thaw cycles in the environment. This process increases oxygen content, forms more oxygen-containing functional groups, can clog pores, and significantly lowers the pH of the biochar [29].

- Effect on Analysis: Ageing can change the adsorption/desorption dynamics. One study showed that aged biochar increased the exchangeable (bioavailable) fractions of Cu, Cd, and Pb, which could lead to inaccurate results in stability studies or re-release of preconcentrated analytes [29].

- Solution: For consistent analytical results, use freshly prepared biochar and store it properly. When studying long-term trends, account for ageing effects in your experimental design.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Reagents for LDH and Biochar-based Preconcentration

| Reagent / Material | Function in Preconcentration | Example Application |
|---|---|---|
| Metal Nitrate Salts (e.g., Mg(NO₃)₂, Al(NO₃)₃, Fe(NO₃)₃) | Precursors for the synthesis of LDHs, providing the divalent and trivalent metal cations for the layered structure [24] [27]. | Synthesis of Mg-Al LDH for anion exchange [27]. |
| Graphene Quantum Dots (GQDs) | Functionalizing agent to enhance LDH sorption properties. GQDs provide a high surface area and abundant oxygen-containing functional groups (-OH, -COOH) [27]. | Creating LDH/GQD composites for increased extraction efficiency of benzophenones and parabens [27]. |
| Citric Acid | A common and safe carbon source for the synthesis of GQDs [27]. | Production of GQDs for functionalizing LDHs. |
| Hydrogen Peroxide (H₂O₂) | A chemical oxidizing agent used in artificial ageing studies to simulate long-term environmental effects on biochar [29]. | Evaluating the long-term stability and performance of biochar sorbents. |
| Lanthanide Salts (e.g., Dy(NO₃)₃, Eu(NO₃)₃) | Used to form lanthanide-containing LDHs, which can be part of sensing systems due to their luminescence properties [33]. | Developing LDH-based phosphorescence sensors for heavy metals like Pb²⁺ and Cu²⁺ [33]. |

Figure 2: A decision guide for selecting between LDH and Biochar-based sorbents.

Solid-Phase Extraction (SPE) is a fundamental sample preparation technique that enables the purification, separation, and concentration of analytes from complex sample matrices. In trace analysis, effective matrix cleanup is paramount for achieving optimal limits of detection (LOD). By selectively removing interfering compounds, SPE techniques significantly reduce background noise and matrix effects that can compromise analytical sensitivity and accuracy. The evolution from traditional SPE to more advanced formats, including dispersive SPE (dSPE) and magnetic SPE (MSPE), has provided researchers with a versatile toolkit for diverse analytical challenges. This is especially true for complex environmental, biological, pharmaceutical, and food samples.

This technical support center addresses the most common experimental challenges encountered when implementing SPE, dSPE, and MSPE methodologies, with particular emphasis on their application in LOD optimization for trace analysis. The guidance provided is specifically framed within the rigorous requirements of drug development and research environments, where reproducibility, sensitivity, and efficiency are critical.

Understanding SPE Techniques and Configurations

Core Principles and Technique Comparison

SPE operates on the principle of differential affinity, where analytes of interest are selectively retained on a solid sorbent while matrix components are washed away. The fundamental process involves four key stages: conditioning (to activate the sorbent), sample loading (where analytes are retained), washing (to remove impurities), and elution (to recover purified analytes) [34] [35]. This process effectively bridges the gap between sample collection and analysis, serving to preconcentrate target analytes while removing matrix interferents that could cause ion suppression in mass spectrometric detection or deteriorate chromatographic performance [36] [35].

The continuing development of SPE has led to several specialized configurations, each with distinct advantages for particular applications. The table below summarizes the key characteristics of these techniques:

Table 1: Comparison of Solid-Phase Extraction Techniques

| Parameter | SPE Cartridge | dSPE | MSPE |
|---|---|---|---|
| Classification | Exhaustive flow-through equilibrium [36] | Non-exhaustive batch equilibrium [36] | Non-exhaustive batch equilibrium [36] |
| Mechanism | Sample flows through a packed sorbent bed [34] | Sorbent dispersed in sample solution [36] | Magnetic sorbent separated by external magnet [36] |
| Typical Sorbent Mass | 4–30 mg [36] (up to several grams for larger volumes [36]) | 4–400 µg [36] | Varies with synthesis |
| Primary Benefits | Wide range of sorbents; established protocols [36] | Simplicity, shorter extraction time; no conditioning required [36] | Rapid separation; reusability; avoids centrifugation/filtration [36] |
| Common Applications | Wide variety of sample matrices [36] | QuEChERS methods; pesticide residues [36] | Environmental and biomedical samples [36] |
| Limitations | Possible channeling; sluggish flow; plugging [36] | Decreased breakthrough volume; potential for small sample loss [36] | Sorbent synthesis required; limited commercial availability [36] |

The following diagram illustrates the generalized operational workflow for SPE, dSPE, and MSPE techniques, highlighting their parallel steps and key decision points for method optimization.

Troubleshooting Guides

Poor Recovery

Poor recovery is the most frequently encountered problem in SPE and can severely impact quantitative accuracy and LOD [34] [37].

- Problem: The analyte is present in the loading or wash fraction, indicating insufficient retention.

- Solution: Verify that conditioning steps were performed correctly and the sorbent bed did not dry out before loading [34] [35]. Adjust the sample pH to ensure the analyte is in a neutral state for reversed-phase mechanisms or in a charged state for ion-exchange [34] [37]. Consider using a sorbent with greater affinity for your analyte [37] [35]. Reduce the flow rate during sample loading to increase interaction time with the sorbent [35].

- Problem: The analyte is retained but not fully eluting.

- Solution: Increase the strength of the elution solvent (e.g., higher organic percentage) or adjust its pH to convert the analyte to its non-retained form [34]. Increase the elution volume or perform two sequential elutions with smaller volumes [34] [35]. For strong secondary interactions, switch to a less retentive sorbent (e.g., C4 instead of C18) or add modifiers to the elution solvent [34] [37].
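Collecting and analyzing the load, wash and elution fractions separately is a practical way to tell these two problems apart. The sketch below performs that mass balance. The 5% threshold and the function name are hypothetical choices, and "unaccounted" analyte may also reflect losses to labware or evaporation rather than incomplete elution.

```python
def spe_mass_balance(spiked_amount, load, wash, eluate, tol=0.05):
    """Fraction-collection diagnostic for poor SPE recovery.

    All amounts share the same units; tol is a hypothetical 5% threshold.
    """
    fractions = {
        "load": load / spiked_amount,
        "wash": wash / spiked_amount,
        "eluate": eluate / spiked_amount,
    }
    unaccounted = 1.0 - sum(fractions.values())
    if fractions["load"] + fractions["wash"] > tol:
        diagnosis = "insufficient retention: analyte breaks through in load/wash"
    elif unaccounted > tol:
        diagnosis = "analyte retained but not eluted (or lost): strengthen elution"
    else:
        diagnosis = "mass balance closes"
    return fractions, unaccounted, diagnosis
```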

Lack of Reproducibility

Inconsistent results between extractions undermine method validation and reliability.

- Problem: High variability in recovery between replicate samples.

- Solution: Ensure consistent sample pre-treatment and that analytes are fully dissolved [35]. Do not allow the sorbent bed to dry out after conditioning and before sample loading [34] [35]. Control and maintain a slow, consistent flow rate (typically 1-2 mL/min) during critical loading and elution steps [34] [35]. Include soak steps of 1-5 minutes during conditioning and elution to allow for complete solvent-sorbent equilibration [35].

- Problem: Cartridge overload leading to breakthrough and analyte loss.

- Solution: Reduce the sample load or switch to a cartridge with higher capacity. As a general guide, silica-based sorbents have a capacity of ~5% of sorbent mass, while polymeric sorbents can be ~15% [34].
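The capacity rule of thumb above gives a quick way to size a cartridge. In this sketch, `retained_mass_mg` should include co-retained matrix components as well as the analytes. The `safety_factor` margin and the function name are hypothetical additions, not part of the cited guidance.

```python
# Approximate capacity as a fraction of sorbent mass (rule of thumb from the text)
CAPACITY_FRACTION = {"silica": 0.05, "polymeric": 0.15}

def min_sorbent_mass_mg(retained_mass_mg, sorbent_type, safety_factor=2.0):
    """Smallest sorbent mass (mg) expected to hold the retained load without breakthrough."""
    return safety_factor * retained_mass_mg / CAPACITY_FRACTION[sorbent_type]
```

For instance, 1 mg of retained material needs about 20 mg of silica-based sorbent before any safety margin, compared with roughly 7 mg of a polymeric sorbent.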

Impure Extractions (Inadequate Cleanup)

Inadequate cleanup leads to co-eluting interferences, causing ion suppression in LC-MS and inaccurate quantification.

- Problem: Matrix interferences are eluting with the target analyte.

- Solution: Optimize the wash solvent strength—it should be strong enough to remove impurities but not elute the analyte [37] [35]. For complex matrices, consider using a more selective sorbent mechanism (e.g., ion-exchange) or a mixed-mode sorbent [34] [37]. Implement sample pre-treatments such as protein precipitation, liquid-liquid extraction for lipids, or dilution to reduce matrix effects [37] [35].

- Problem: Contaminants leaching from the cartridge itself.

- Solution: Pre-wash the cartridge with elution solvent prior to the conditioning step to remove potential leachables [35].

Flow Rate Issues

Improper flow control affects retention efficiency and reproducibility.

- Problem: Flow rate is too slow or the cartridge is clogged.

- Solution: Filter or centrifuge samples to remove particulate matter before loading [34] [35]. For viscous samples, dilute with a compatible solvent to reduce viscosity [34]. If the flow is slow but not clogged, apply gentle positive pressure or vacuum within the manufacturer's limits [34].

- Problem: Flow rate is too fast, reducing retention.

- Solution: Use a manifold or pump to control and maintain a slower, optimal flow rate [34]. A typical stable flow rate for many procedures is below 5 mL/min [34].

Frequently Asked Questions (FAQs)

Q1: How do I choose the right sorbent for my application? The choice of sorbent depends on the analyte's chemical properties and the sample matrix. Use reversed-phase (C18, C8, polymeric) for non-polar neutral molecules; normal-phase (silica, cyano) for polar analytes in non-polar solvents; cation exchange for positively charged bases; and anion exchange for negatively charged acids [34]. For analytes with both non-polar and ionizable groups, mixed-mode sorbents are highly effective [37].
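The selection logic in Q1 can be written as a simple rule-based first pass. This is a hypothetical sketch. The `Analyte` descriptor and the `suggest_sorbent` function are illustrative names. The rules only encode the guidance above, plus a polymeric HLB fallback taken from Table 2. Any suggestion still needs experimental confirmation.

```python
from dataclasses import dataclass


@dataclass
class Analyte:
    """Hypothetical descriptor used only for sorbent pre-selection."""
    nonpolar: bool                     # significant hydrophobic character
    charge: int                        # net charge at the sample pH: +1, 0, or -1
    in_nonpolar_solvent: bool = False  # sample dissolved in a non-polar solvent


def suggest_sorbent(a: Analyte) -> str:
    """First-guess sorbent class following the Q1 guidance; confirm experimentally."""
    if a.nonpolar and a.charge != 0:
        return "mixed-mode (reversed-phase + ion exchange)"
    if a.charge > 0:
        return "cation exchange"          # positively charged bases
    if a.charge < 0:
        return "anion exchange"           # negatively charged acids
    if a.in_nonpolar_solvent and not a.nonpolar:
        return "normal-phase (silica, cyano)"
    if a.nonpolar:
        return "reversed-phase (C18, C8, polymeric)"
    return "no clear match: screen a polymeric HLB sorbent"


if __name__ == "__main__":
    print(suggest_sorbent(Analyte(nonpolar=True, charge=0)))
    print(suggest_sorbent(Analyte(nonpolar=True, charge=+1)))
```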

Q2: What are the primary causes of low recovery in dSPE? In dSPE, low recovery is often due to insufficient interaction time between the sorbent and analyte, incorrect sorbent selection, or inefficient centrifugation leading to incomplete phase separation. Ensure adequate vortexing time and speed to promote interaction, and confirm that the sorbent chemistry is appropriate for your analyte [36].

Q3: Why is MSPE considered advantageous for certain applications? MSPE simplifies the separation process by using an external magnet, eliminating the need for centrifugation or filtration, which can be time-consuming and lead to analyte loss [36]. The magnetic sorbents can often be regenerated and reused, making the process more cost-effective and environmentally friendly [36].

Q4: How can I improve the cleanliness of my final extract? If your wash step is not removing enough interference, try a wash with a water-immiscible solvent such as hexane or ethyl acetate. These solvents can remove non-polar matrix interferences while leaving the analyte on the sorbent, provided the analyte is insoluble in them [37]. Dry the cartridge thoroughly after aqueous washes and before applying a water-immiscible solvent or proceeding to elution [35].

Q5: My method was working but now shows poor recovery. What should I check? First, verify the performance of your analytical instrument with pure standards [37]. Then compare the lot numbers of your SPE sorbents, since performance can vary between manufacturing batches [37]. Finally, re-check every preparation step, including the pH of all solvents and samples: small pH deviations can change analyte ionization and therefore retention and elution.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for SPE Method Development

| Item | Function & Application |
|---|---|
| C18 (Octadecyl) Sorbent | Reversed-phase workhorse for retaining non-polar compounds and hydrocarbons; widely used for environmental and pharmaceutical analysis in aqueous matrices [34] [36]. |
| Mixed-Mode Sorbent | Combines two retention mechanisms (e.g., reversed-phase and ion exchange), offering superior selectivity for analytes with both hydrophobic and ionizable groups, leading to cleaner extracts [37]. |
| Hydrophilic-Lipophilic Balance (HLB) Sorbent | A water-wettable polymeric sorbent effective for a broad range of acidic, basic, and neutral compounds without requiring conditioning; ideal for unknown screening or multiple analyte classes [34] [36]. |
| Primary/Secondary Amine (PSA) Sorbent | Used primarily in dSPE for QuEChERS methods; effectively removes polar interferences such as fatty acids, sugars, and organic acids from food matrices [36]. |
| Magnetic Sorbents (e.g., Fe3O4@C18) | The core component of MSPE; provides a high-surface-area solid phase that can be rapidly separated from the sample solution using a magnet, streamlining the cleanup process [36]. |
| Graphitized Carbon Black (GCB) | Used to remove planar molecules, pigments, and sterols from samples; particularly effective for pigment cleanup in food analysis [36]. |
| Strong Cation/Anion Exchange Sorbents (SCX/SAX) | Provide high-capacity, selective retention of basic (SCX) or acidic (SAX) compounds based on ionic interactions, often at specific pH values [34]. |

Detailed Experimental Protocol: SPE Method Development for Aqueous Samples

This protocol provides a generalized framework for developing a reversed-phase SPE method suitable for extracting non-polar to moderately polar organic analytes from aqueous matrices, a common scenario in environmental and bioanalytical chemistry.

Materials:

- SPE manifolds (vacuum or positive pressure)

- Appropriate sorbents (e.g., 100 mg/1 mL or 500 mg/3 mL cartridges)

- HPLC-grade solvents (methanol, acetonitrile, water)

- Buffers for pH adjustment (e.g., phosphate, acetate)

- Sample vials and collection tubes

Step-by-Step Procedure:

- Sorbent Selection: Based on preliminary analyte characterization, select a suitable reversed-phase sorbent (e.g., C18 for highly non-polar, polymeric for broader range).

- Conditioning: Pass 2-3 column volumes of methanol (or another strong solvent compatible with the sorbent) through the cartridge to wet the surface and solvate the functional groups. Follow with 2-3 column volumes of water or a buffer matching the sample's starting pH. Do not allow the sorbent to run dry after this step [34] [35].

- Sample Loading: Adjust the sample pH so that the analytes are uncharged, which maximizes retention on reversed-phase sorbents. Load the sample at a controlled, slow flow rate (1-2 mL/min) to allow sufficient analyte-sorbent contact time and prevent breakthrough [34] [35].

- Washing: After sample loading, pass 2-3 column volumes of a weak solvent (e.g., 5-10% methanol in water, or a buffer) to remove undesired matrix components without eluting the analytes. For tougher cleanup, a water-immiscible solvent such as hexane can be highly effective at removing non-polar interferences [37]; dry the cartridge first, as described in the next step, before applying it.

- Drying: Remove residual water by applying full vacuum for 5-20 minutes or by passing air through the cartridge. This step is critical when the elution solvent is not miscible with water [35].

- Elution: Elute the analytes with 1-2 column volumes of a strong solvent (e.g., pure methanol, acetonitrile, or a mixture). For difficult-to-elute analytes, a stronger solvent or one modified with acid/base may be needed. Collect the eluate in a clean vial [34] [37].

- Reconstitution (if needed): If further concentration is required or the eluate solvent is incompatible with the analytical instrument, evaporate the solvent under a gentle stream of nitrogen and reconstitute the residue in a compatible mobile phase.

- Analysis: Analyze the purified extract using your chosen chromatographic method (e.g., LC-MS, GC-MS).
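The pH adjustment in the Sample Loading step can be quantified with the Henderson-Hasselbalch equation. The sketch below is minimal and assumes a monoprotic analyte with a known pKa, ignoring activity effects. The function names are hypothetical. Solving for a 99% neutral fraction reproduces the common guideline: adjust the pH about 2 units below the pKa for acids, or about 2 units above the pKa of the conjugate acid for bases.

```python
import math


def neutral_fraction(pH: float, pKa: float, kind: str) -> float:
    """Fraction of a monoprotic analyte present in its uncharged form.

    kind="acid": HA <-> A- + H+, pKa refers to HA.
    kind="base": BH+ <-> B + H+, pKa refers to the conjugate acid BH+.
    """
    if kind == "acid":
        return 1.0 / (1.0 + 10.0 ** (pH - pKa))
    if kind == "base":
        return 1.0 / (1.0 + 10.0 ** (pKa - pH))
    raise ValueError("kind must be 'acid' or 'base'")


def loading_pH(pKa: float, kind: str, target: float = 0.99) -> float:
    """pH at which the neutral fraction equals `target`.

    For acids, any pH at or below the returned value gives at least `target`;
    for bases, any pH at or above it does.
    """
    if not 0.0 < target < 1.0:
        raise ValueError("target must lie strictly between 0 and 1")
    shift = math.log10(1.0 / target - 1.0)  # negative whenever target > 0.5
    if kind == "acid":
        return pKa + shift
    if kind == "base":
        return pKa - shift
    raise ValueError("kind must be 'acid' or 'base'")


if __name__ == "__main__":
    # Hypothetical acidic analyte with pKa 4.5
    ph = loading_pH(4.5, "acid")
    print(f"load at pH <= {ph:.2f}; neutral fraction {neutral_fraction(ph, 4.5, 'acid'):.3f}")
```

The same function shows why elution can be driven by pH (Problem/Solution for incomplete elution): shifting the pH a few units the other way makes the ionized, non-retained form dominant.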

Optimization Notes: Always validate the method by collecting and analyzing fractions from the loading, wash, and elution steps to create a mass balance and identify where analyte loss occurs [37]. Systematically vary one parameter at a time (e.g., wash solvent strength, elution volume) to refine the method for maximum recovery and cleanliness.
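The mass-balance check in the Optimization Notes, together with the concentration achieved during reconstitution, determines how far the SPE step lowers the effective LOD. The sketch below is minimal and rests on two assumptions. First, analyte amounts are measured in each collected fraction of a spiked sample. Second, the instrumental LOD in the final extract is unchanged by the cleanup, with no additional matrix effects or blank contributions. All names are hypothetical.

```python
from typing import Dict


def mass_balance(spiked_ng: float, fractions_ng: Dict[str, float]) -> Dict[str, float]:
    """Percent of the spiked analyte found in each collected SPE fraction.

    The extra key "unaccounted" is the share not found in any fraction
    (e.g., analyte left on the sorbent, degradation, or evaporative loss).
    """
    if spiked_ng <= 0:
        raise ValueError("spiked amount must be positive")
    percent = {name: 100.0 * amount / spiked_ng for name, amount in fractions_ng.items()}
    percent["unaccounted"] = 100.0 - sum(percent.values())
    return percent


def effective_lod(extract_lod: float, sample_volume_ml: float,
                  final_volume_ml: float, recovery: float) -> float:
    """LOD referred back to the original sample after SPE enrichment.

    extract_lod: LOD in the final (reconstituted) extract, concentration units.
    recovery: fraction of analyte found in the elution fraction, 0 < recovery <= 1.
    """
    if not 0.0 < recovery <= 1.0:
        raise ValueError("recovery must be in (0, 1]")
    enrichment_factor = sample_volume_ml / final_volume_ml
    return extract_lod / (enrichment_factor * recovery)


if __name__ == "__main__":
    # Illustrative numbers only: 100 ng spiked, amounts found per fraction.
    balance = mass_balance(100.0, {"load": 5.0, "wash": 3.0, "elution": 85.0})
    for fraction, pct in balance.items():
        print(f"{fraction:12s} {pct:6.1f} %")
    recovery = balance["elution"] / 100.0
    print(effective_lod(0.10, sample_volume_ml=100.0, final_volume_ml=1.0, recovery=recovery))
```

Analyte in the load fraction points to breakthrough, analyte in the wash points to an over-strong wash, and a large unaccounted share points to incomplete elution or losses during drying and reconstitution.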

# Troubleshooting Guide: FAQs on Signal Enhancement and LOD Optimization

This guide addresses common experimental challenges in optimizing the Limit of Detection (LOD) for inorganic trace analysis, providing targeted solutions based on current research in chemical modification and interface engineering.

1. Why does my sensor show high background noise, leading to poor signal-to-noise ratio?

- Root Cause: Non-specific binding of signaling units or slow flow rates in assays can increase background interference. In photoelectrochemical (PEC) systems, inefficient charge separation causes rapid electron-hole recombination.

- Solution: Incorporate blocking agents such as bovine serum albumin (BSA) to passivate unused surface sites on nanoparticles. Include surfactants in running buffers and optimize flow dynamics to reduce non-specific interactions [38]. For PEC sensors, apply interface engineering to create efficient charge-transfer channels that suppress charge recombination [39].

2. How can I improve an assay's sensitivity without expensive external equipment or reagents?

- Root Cause: Conventional assay designs may not efficiently concentrate the analyte at the detection zone.

- Solution: Implement a test-zone pre-enrichment strategy. By changing the assembly order of a lateral flow assay (LFA) strip so that the sample is loaded before the conjugate pad is attached, you can pre-concentrate the analyte at the test line. This method has been shown to improve the visual LOD for biomacromolecules 10- to 100-fold without additional instruments or reagents [38].

3. My inorganic-organic composite material has weak interfacial compatibility, hurting mechanical properties. What can I do?

- Root Cause: Traditional organic modifiers can age and decompose, while unmodified inorganic particles often have poor compatibility with polymer matrices.

- Solution: Utilize facet engineering of inorganic particles. By controlling the exposure of specific crystal facets (e.g., the (102) facet of anhydrite), you can modulate the surface electron density and coordination environment. This enhances electron transfer and interfacial compatibility with polymers like polypropylene, dramatically improving mechanical properties such as tensile strain at break by up to 395% without organic modifiers [40].

4. What is the most reliable way to estimate the Limit of Detection (LOD) for my voltammetric method?

- Root Cause: Inconsistent definitions and calculation methods for LOD lead to unreliable estimates.

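- Solution: Follow a statistically rigorous procedure. Analyze at least 10 replicate blank samples (or low-concentration samples) to estimate the standard deviation \(s_0\), expressed in concentration units (the signal standard deviation divided by the calibration slope). Then calculate the LOD as \(\mathrm{LOD} = 3.3 \times s_0\), which corresponds to a 5% risk of both false positives and false negatives (\(3.3 \approx 2 \times 1.645\), where 1.645 is the one-sided 95% quantile of the standard normal distribution). This method is more reliable than simple signal-to-noise ratio measurements for concentration-based results [3] [41].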

5. How can I improve the sensitivity of my metal oxide semiconductor (MOS) gas sensor for trace gas detection?

- Root Cause: Low surface area, inefficient gas diffusion, or poor catalytic activity limit interaction with target gas molecules.

- Solution: Employ material-level engineering strategies. This includes designing porous nanostructures to increase surface area, doping with noble metal nanoparticles (e.g., Au, Pd) to enhance catalytic activity, and forming heterojunctions (e.g., ZnFe₂O₄/SnO₂) to improve charge separation and specificity [42].
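The blank-based LOD calculation in item 4 can be sketched as follows. The sketch assumes normally distributed blank signals with constant standard deviation near zero. It also assumes a linear calibration whose slope converts signal units into concentration units. The coefficient is computed from normal quantiles and comes to about 3.29 for 5% false-positive and false-negative risks, which is the 3.3 quoted above. With few replicates, a Student's t quantile would give a somewhat larger coefficient. The function name is hypothetical.

```python
import statistics
from statistics import NormalDist


def lod_from_blanks(blank_signals, slope, alpha=0.05, beta=0.05, min_replicates=10):
    """Estimate the LOD in concentration units from replicate blank signals.

    blank_signals: responses of independent blank (or low-level) replicates.
    slope: calibration slope (signal per concentration unit); converts the
           signal standard deviation into concentration units (s0).
    alpha, beta: accepted false-positive and false-negative risks.
    """
    if len(blank_signals) < min_replicates:
        raise ValueError(f"need at least {min_replicates} replicates")
    if slope == 0:
        raise ValueError("calibration slope must be non-zero")
    s0 = statistics.stdev(blank_signals) / abs(slope)
    z = NormalDist()
    k = z.inv_cdf(1 - alpha) + z.inv_cdf(1 - beta)  # about 3.29 for alpha = beta = 0.05
    return k * s0


if __name__ == "__main__":
    # Hypothetical blank responses and calibration slope, for illustration only.
    blanks = [0.012, 0.015, 0.011, 0.014, 0.013, 0.016, 0.012, 0.010, 0.014, 0.013]
    print(f"LOD = {lod_from_blanks(blanks, slope=0.25):.4f} concentration units")
```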

# Experimental Protocols for Key Enhancement Strategies

Protocol 1: Test-Zone Pre-enrichment for Lateral Flow Assays (LFA)

This protocol outlines the procedure for improving the LOD by modifying the strip assembly sequence, based on the method described for detecting miR-210 and hCG [38].

- Key Principle: Pre-concentrate the analyte at the test zone before introducing the signal probe (e.g., gold nanoparticles), increasing the local concentration and improving the capture efficiency.

- Materials:

- Nitrocellulose (NC) membrane

- Sample pad

- Conjugate pad

- Absorbent pad

- Phosphate running buffer (pH 7.4)

- Sample solution

- Gold nanoparticle (AuNP)-antibody/aptamer conjugates (DP-AuNPs)

- Procedure:

- Strip Assembly (Modified Order): Assemble the LFA strip components without the conjugate pad. Fix the sample pad, NC membrane (pre-coated with capture probes), and absorbent pad in sequence on a backing card.

- Sample Pre-enrichment: Apply 50-500 µL of sample diluted in phosphate running buffer to the sample pad. Allow it to migrate and be captured at the test zone on the NC membrane. This step takes approximately 6-8 minutes per 50 µL.

- Conjugate Pad Installation: After the sample has been fully enriched, attach the conjugate pad (pre-dried with DP-AuNPs) between the sample pad and the NC membrane.

- Signal Development: Apply running buffer to the sample pad. The buffer will rehydrate and carry the DP-AuNPs across the test zone, where they bind to the pre-captured analyte, generating a visible signal.

- Result Interpretation: Visually inspect or use a strip reader to quantify the signal at the test zone within 20 minutes of the initial sample application.
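The pre-enrichment time in Protocol 1 grows with sample volume, which is the main practical cost of loading more sample. The sketch below is minimal and assumes the 6-8 minutes per 50 µL figure holds linearly across the 50-500 µL range. Actual wicking times depend on the membrane, the pad materials, and sample viscosity. The function name is hypothetical.

```python
from typing import Tuple


def enrichment_time_min(sample_volume_ul: float,
                        minutes_per_50ul: Tuple[float, float] = (6.0, 8.0)) -> Tuple[float, float]:
    """Approximate (fastest, slowest) pre-enrichment time in minutes.

    Assumes time scales linearly with volume at the quoted 6-8 min per 50 uL.
    """
    if not 50 <= sample_volume_ul <= 500:
        raise ValueError("the protocol describes 50-500 uL sample volumes")
    scale = sample_volume_ul / 50.0
    fastest, slowest = minutes_per_50ul
    return fastest * scale, slowest * scale


if __name__ == "__main__":
    for volume in (50, 200, 500):
        low, high = enrichment_time_min(volume)
        print(f"{volume:4d} uL: {low:.0f}-{high:.0f} min")
```

Under this assumption a 500 µL sample needs roughly 60-80 minutes of pre-enrichment, so the volume should be chosen against the required LOD and the available assay time.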

Protocol 2: Enhancing Interfacial Compatibility via Facet Engineering of Inorganic Particles