Strategic Resource Allocation for Effective Environmental Scanning in Drug Development

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to optimize resource allocation for environmental scanning. It covers foundational principles, advanced methodological applications, common troubleshooting for optimization challenges, and validation techniques. By integrating predictive analytics, AI-driven tools, and strategic frameworks, the content demonstrates how to efficiently identify emerging trends, assess risks, and capitalize on opportunities, thereby enhancing R&D efficiency and strategic decision-making in the competitive biomedical landscape.

Understanding Environmental Scanning and Its Strategic Value in Biomedical Research

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary value of using a structured framework like PESTEL for environmental scanning?

A structured PESTEL framework transforms chaotic external data into actionable strategic insights. It provides a systematic way to examine Political, Economic, Social, Technological, Environmental, and Legal factors, and it acts as an early warning system that detects emerging opportunities and threats before they affect operations [1]. This approach brings clarity to the external business environment, helping organizations spot risks earlier, respond faster to change, and turn macro-level disruption into a competitive advantage [1].

FAQ 2: How does competitive intelligence (CI) integrate with PESTEL analysis?

Competitive intelligence focuses specifically on understanding competitors' moves, strategies, and weaknesses [2]. When integrated with the broader, macro-environmental focus of PESTEL, it creates a holistic view of the business landscape. Modern CI is evolving into holistic "Market & Competitive Intelligence" (M&CI), which analyzes adjacent industries, partner ecosystems, and regulatory shifts, connecting the dots that a narrow focus on direct competitors would miss [3]. For example, a company like Nike competes not just with Adidas, but also with technology firms and health apps [3].

FAQ 3: Our resource allocation for research is limited. Which environmental scanning activities should we prioritize?

Prioritize activities that directly inform your most critical strategic decisions. Begin by clearly defining the scope of your analysis, including geography and time horizon [1]. Focus resources on gathering high-quality information from credible sources like government reports, industry associations, and academic research [1]. Leveraging AI-powered CI tools can also maximize efficiency, as they can analyze massive volumes of unstructured data in seconds, automating routine tasks and surfacing insights faster than manual methods [3].

FAQ 4: We've collected environmental data, but our strategies remain unchanged. How do we transform insights into action?

The key is to deliberately connect insights to strategy development. Use PESTEL findings as direct input for your SWOT analysis, transforming external trends into concrete opportunities and threats [1]. Develop multiple scenarios based on key PESTEL factors to stress-test your strategic options [1]. Furthermore, adopting business wargaming—structured simulations to anticipate competitor moves—can help you create actionable "if-then" plans, ensuring your insights lead to prepared responses [3].

Troubleshooting Guides

Issue 1: Overcoming Data Overload and Poor-Quality Information

Problem Statement: Researchers are overwhelmed by the volume of available data and cannot verify its quality or relevance, leading to paralysis in decision-making.

Diagnosis: This is often caused by a lack of a defined scope for the scanning activity and over-reliance on a single type of data source.

Resolution Protocol:

- Define Scope & Boundaries: Clearly delineate the geographic and time horizons for your analysis. Decide if you are analyzing local, national, or global trends and whether the focus is on short-term (1-2 years) or long-term (3-5+ years) shifts [1].

- Diversify Information Sources: Move beyond a single source type. Gather data from a mix of:

- Authoritative External Sources: Government publications, economic forecasts, and industry reports [1].

- Front-Line Intelligence: Conduct structured interviews with sales, procurement, and operations teams who possess invaluable market intelligence [1].

- AI-Enhanced Tools: Implement competitive intelligence platforms that use natural language processing to sift through millions of documents like SEC filings, news, and expert call transcripts [2].

- Establish a Data Quality Checklist: Validate all information against criteria including source authority, timeliness, consistency across multiple sources, and relevance to your pre-defined scope.
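The checklist above can be sketched as a small validation routine. This is a minimal illustration, not a prescribed implementation: the `SourceItem` fields, the two-year age limit, and the two-source corroboration threshold are all assumptions chosen for the example and should be replaced with criteria that match your own scope.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SourceItem:
    title: str
    authoritative: bool         # e.g. government report or industry association
    published: date
    corroborating_sources: int  # independent sources reporting the same finding
    in_scope: bool              # matches the pre-defined geography and time horizon


def passes_checklist(item, today, max_age_days=730, min_corroboration=2):
    """Return (passed, failed_criteria) for one collected item.

    The thresholds are hypothetical defaults, not values from the source.
    """
    failures = []
    if not item.authoritative:
        failures.append("source authority")
    if (today - item.published).days > max_age_days:
        failures.append("timeliness")
    if item.corroborating_sources < min_corroboration:
        failures.append("consistency")
    if not item.in_scope:
        failures.append("relevance")
    return (not failures, failures)


items = [
    SourceItem("Regulator guidance update", True, date(2024, 3, 1), 3, True),
    SourceItem("Anonymous forum post", False, date(2019, 6, 15), 1, True),
]
today = date(2024, 9, 1)
for it in items:
    ok, failed = passes_checklist(it, today)
    print(it.title, "PASS" if ok else "FAIL: " + ", ".join(failed))
```

Returning the list of failed criteria, rather than a bare pass/fail flag, makes it easier to see whether rejected items share a common weakness such as a single uncorroborated source type.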

Issue 2: Integrating Scanning Insights into Resource Allocation and Project Planning

Problem Statement: Environmental scanning is treated as an academic exercise, and its findings fail to influence how resources, budgets, and personnel are assigned to R&D projects.

Diagnosis: The disconnect arises from a lack of formal processes to translate macro-trends into micro-level resource decisions.

Resolution Protocol:

- Formalize Cross-Functional Review: Assemble a team with representatives from R&D, strategy, finance, and competitive intelligence to review PESTEL/CI findings [1] [4].

- Conduct a Strategic Impact vs. Resource Demand Assessment: Use a framework to prioritize trends. For each significant trend, such as a new regulatory shift (Legal) or breakthrough technology (Technological), assess its potential impact on your research portfolio against the resource demand required to address it.

- Leverage Resource Optimization Tools: Use resource management software to model different scenarios.

- Tools like Epicflow offer features for competence management and smart resource allocation, ensuring the right personnel are assigned to projects based on the skills required by new strategic priorities [5].

- Forecast utilizes AI-assisted scheduling to analyze team members' skills, availability, and historical performance to optimally allocate resources in response to new initiatives [6].

Issue 3: Failure to Anticipate a Competitor's Strategic Move or Market Disruption

Problem Statement: An organization is blindsided by a competitor's product launch, a disruptive business model, or a sudden regulatory change.

Diagnosis: The competitive intelligence function is reactive, siloed, or relies on outdated manual tracking methods.

Resolution Protocol:

- Implement Real-Time Monitoring Systems: Adopt CI platforms that offer real-time data processing and alerts. Organizations that use real-time data enrichment reportedly make decisions 25% faster [3].

- Activate "Dark Data" Analysis: Use AI-driven tools to analyze your organization's unstructured data (e.g., customer service emails, archived documents, support call transcripts). This can uncover recurring complaints or emerging competitive threats that were previously invisible [3].

- Conduct Business Wargaming: Move from passive tracking to active prediction. Run structured simulations where teams role-play as key competitors to stress-test your strategies and anticipate their likely moves in dynamic scenarios [3].

The Researcher's Toolkit: Essential Solutions for Environmental Scanning

The following tools and platforms are essential for conducting effective environmental scanning and competitive intelligence.

Competitive & Market Intelligence Platforms

| Platform | Primary Function | Key Feature / Strategic Advantage |

|---|---|---|

| AlphaSense [2] | AI-powered market intelligence | Searches millions of documents (SEC filings, transcripts) using natural language processing. |

| Tegus [2] | Expert transcript library | Provides a vast, searchable database of expert interview transcripts on companies and industries. |

| PitchBook [2] | Private market data | Tracks VC, PE, and M&A activity; uses AI to surface trends in private company data. |

| Gartner [2] | Research and advisory | Offers industry-specific reports and strategic advisory services, notably its "Magic Quadrant" evaluations. |

| Expert Network Calls (ENC) [2] | Expert network aggregator | Provides a single point of access to a large pool of experts across multiple network providers. |

Resource & Project Management Tools

| Tool | Primary Function | Key Feature / Strategic Advantage |

|---|---|---|

| Epicflow [5] | AI-powered multi-project resource management | Features automatic task prioritization and a competence management system for optimal resource allocation. |

| Forecast [6] | AI-powered project & resource management | Uses machine learning for predictive resource scheduling and auto-assigning tasks based on skills and availability. |

| Float [6] | Visual resource planning | Offers a simple, visual resource scheduling interface with drag-and-drop functionality for quick resource reallocation. |

| ONES Resource [6] | Project resource management | Provides multi-dimensional Gantt views for cross-project resource planning and workload management. |

Experimental Protocols & Workflows

Protocol 1: Systematic PESTEL Analysis for Strategic Planning

Objective: To methodically identify and evaluate macro-environmental factors that could impact an organization's strategic goals, particularly in resource allocation for R&D.

Methodology:

- Step 1: Assemble a Cross-Functional Team. Include members from R&D, marketing, finance, and operations to gain diverse perspectives [1] [4].

- Step 2: Gather Information. Collect data from credible sources, including government reports, economic forecasts, academic research, and internal stakeholder interviews [1].

- Step 3: Analyze Each PESTEL Factor. Systematically examine each of the six dimensions. For each factor, distinguish between minor background conditions and significant directional shifts. Evaluate the specific organizational impact (opportunity, threat, or neutral) and rank factors by probability and potential impact [1].

- Step 4: Identify Interconnections. Look for relationships between different factors (e.g., how a technological breakthrough might trigger a new regulatory response) [1].

- Step 5: Connect to Strategy. Use the insights to inform SWOT analysis, develop strategic scenarios, and generate concrete strategic responses for resource allocation and project prioritization [1].
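The ranking described in Step 3 can be sketched in a few lines. The example below assumes a simple probability-times-impact score. The source says only that factors are ranked "by probability and potential impact", so the multiplication, the 1-5 impact scale, and the sample factors are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class PestelFactor:
    dimension: str      # one of the six PESTEL dimensions
    description: str
    probability: float  # 0.0-1.0, team estimate (assumed scale)
    impact: int         # 1 (minor) to 5 (major), team estimate (assumed scale)
    effect: str         # "opportunity", "threat", or "neutral"


def rank_factors(factors):
    """Sort factors by probability x impact, highest score first."""
    return sorted(factors, key=lambda f: f.probability * f.impact, reverse=True)


# Hypothetical factors for illustration only.
factors = [
    PestelFactor("Legal", "New data-exclusivity rules", 0.6, 4, "threat"),
    PestelFactor("Technological", "AI-assisted target discovery", 0.8, 5, "opportunity"),
    PestelFactor("Economic", "Stable reimbursement rates", 0.9, 1, "neutral"),
]

ranked = rank_factors(factors)
for f in ranked:
    print(f"{f.dimension:14} {f.effect:12} score={f.probability * f.impact:.1f}")
```

A high-probability but low-impact factor (the Economic row here) falls to the bottom, which matches the instruction to separate minor background conditions from significant directional shifts.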

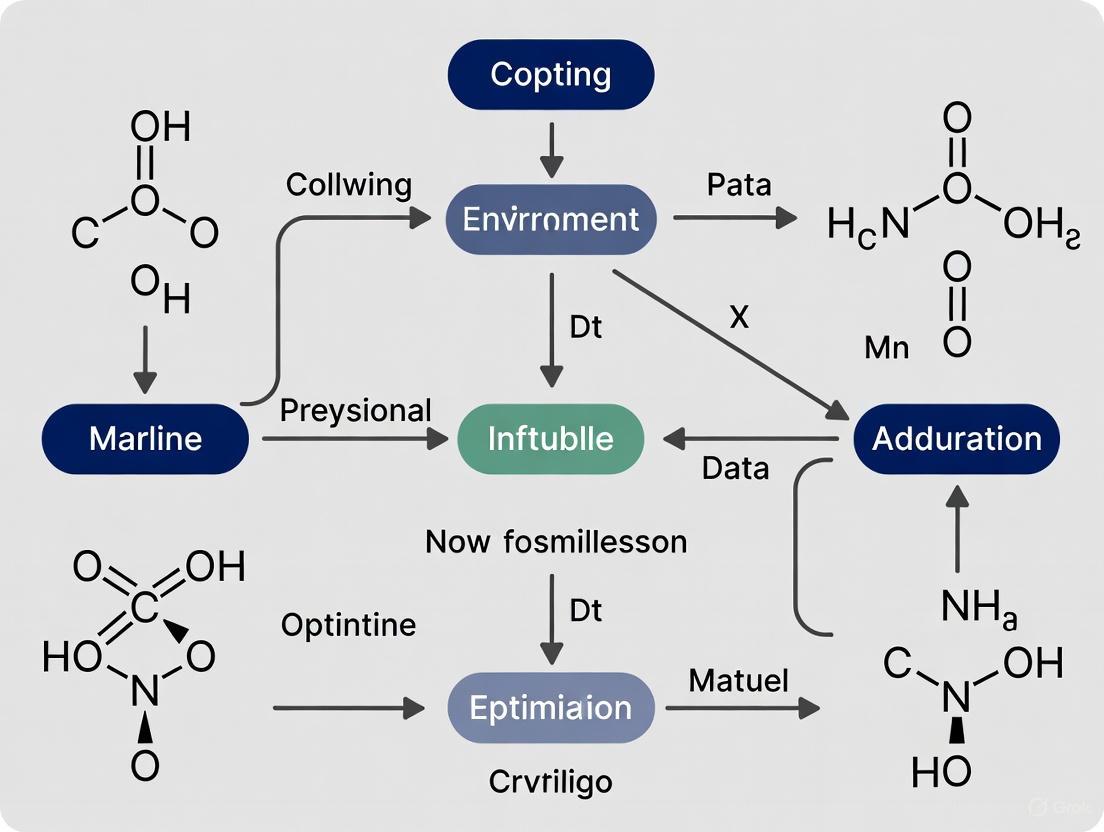

The following workflow diagram illustrates this systematic process:

Protocol 2: Integrating PESTEL Analysis with Resource Allocation

Objective: To create a direct linkage between macro-environmental trends and the allocation of R&D resources (personnel, budget, equipment).

Methodology:

- Step 1: From Trends to Strategic Initiatives. Translate the most critical PESTEL trends into proposed strategic R&D initiatives.

- Step 2: Impact & Resource Assessment. For each initiative, assess the potential impact on the organization and the estimated resource demand (FTE, budget, time).

- Step 3: Portfolio Prioritization Matrix. Plot each initiative on a matrix based on its strategic impact and resource demand to visualize and prioritize the portfolio.

- Step 4: Allocate & Optimize Resources. Use resource management tools to assign personnel based on competencies and availability, applying leveling or smoothing techniques to optimize the portfolio [5].

- Step 5: Implement Monitoring & Feedback Loop. Establish key performance indicators (KPIs) for the initiatives and continuously monitor the external environment for changes that might require reallocation.
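One way to make the prioritization matrix in Step 3 concrete is to classify each initiative into a quadrant. The sketch below assumes 1-5 scores for strategic impact and resource demand, a midpoint threshold of 3, and quadrant labels invented for illustration. None of these come from the source.

```python
def classify(impact, demand, threshold=3):
    """Place an initiative in an impact/demand quadrant.

    impact and demand are assumed to be 1-5 team scores; the quadrant
    labels are illustrative, not a standard taxonomy.
    """
    high_impact = impact >= threshold
    high_demand = demand >= threshold
    if high_impact and not high_demand:
        return "quick win"
    if high_impact and high_demand:
        return "major investment"
    if not high_impact and not high_demand:
        return "fill-in"
    return "deprioritize"


# Hypothetical initiatives: name -> (strategic impact, resource demand)
initiatives = {
    "Biomarker platform": (5, 4),
    "Regulatory dossier update": (4, 2),
    "Legacy assay refresh": (2, 4),
}

matrix = {name: classify(i, d) for name, (i, d) in initiatives.items()}
for name, quadrant in matrix.items():
    print(f"{name}: {quadrant}")
```

Initiatives in the high-impact, low-demand quadrant are natural first candidates for the allocation step, while high-demand, low-impact items are the first place to look when resources must be freed.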

This resource allocation logic is detailed in the following diagram:

Troubleshooting Guides and FAQs

This technical support center is designed to help researchers and scientists optimize their use of various scanning technologies within the drug development pipeline. The guidance is framed within the broader thesis of strategic resource allocation for environmental scanning research, ensuring that investments in these techniques yield maximum returns in risk mitigation and innovation identification.

FAQ: Scanning in Preclinical Development

Q1: Our histopathology results are inconsistent between animal model samples. What could be the root cause and how can we troubleshoot this?

Inconsistent histopathology results often stem from pre-analytical variables. Follow this systematic troubleshooting guide:

- Problem: Variable tissue morphology and staining artifacts.

- Potential Root Causes:

- Fixation Delay: Tissues not fixed promptly after collection, leading to autolysis.

- Fixation Time Inconsistency: Variable fixation durations across samples, altering antigenicity for IHC stains [7].

- Sectioning Thickness: Irregular paraffin section thickness on the microtome.

- Troubleshooting Steps:

- Standardize SOPs: Implement a strict standard operating procedure (SOP) from necropsy to slide mounting, specifying exact fixation times and conditions.

- Control Staining Batch: Include a control tissue sample in every staining batch (e.g., IHC, H&E) to validate assay performance [7].

- Adopt Digital Pathology: Utilize digital pathology systems to scan slides. This creates a permanent, high-resolution digital record, allowing for re-analysis and reducing observer bias. AI-powered analysis of these digital images can add consistency [7].

Q2: How can we better characterize a lead compound's crystallinity and formulation stability early on to avoid downstream failures?

Poor solid-form characterization is a major cause of formulation instability. Advanced material scanning techniques are critical.

- Problem: Unidentified polymorphic changes or particle size variations affecting drug stability and bioavailability.

- Troubleshooting with Scanning Electron Microscopy (SEM):

- Acquire High-Resolution Images: Use SEM to examine the morphology, surface texture, and microstructure of your API and formulated product [8].

- Analyze Particle Size Distribution: Consistent particle size within a batch ensures uniform dosing and drug release. SEM analysis is a key quality indicator for manufacturing processes [8].

- Perform Failure Analysis: If a batch fails stability tests, use SEM to visually inspect for changes in particle shape, surface morphology, or the presence of unexpected crystalline structures that indicate form conversion.
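Once particle diameters have been measured from SEM images, batch consistency can be summarized numerically. The sketch below uses the coefficient of variation as one possible consistency measure. The 10% acceptance limit and the sample diameters are hypothetical, and the measurement step itself (image segmentation) is outside the scope of this example.

```python
import statistics


def size_consistency(diameters_um, max_cv=0.10):
    """Summarize particle diameters (micrometres) measured from SEM images.

    max_cv is a hypothetical acceptance limit on the coefficient of
    variation; set it from your own product specification.
    """
    mean = statistics.mean(diameters_um)
    sd = statistics.stdev(diameters_um)  # sample standard deviation
    cv = sd / mean
    return {"mean_um": mean, "sd_um": sd, "cv": cv, "consistent": cv <= max_cv}


# Illustrative measurements only.
batch_a = [4.8, 5.1, 5.0, 4.9, 5.2]
batch_b = [2.1, 7.9, 4.0, 9.5, 3.3]

for label, batch in (("A", batch_a), ("B", batch_b)):
    summary = size_consistency(batch)
    print(f"Batch {label}: mean={summary['mean_um']:.2f} um, "
          f"CV={summary['cv']:.1%}, consistent={summary['consistent']}")
```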

FAQ: Strategic Horizon and Environmental Scanning

Q3: Our organization often reacts to competitor drug launches rather than anticipating them. How can we build a proactive scanning system?

Reactive postures stem from a lack of systematic horizon scanning. Implementing a structured environmental scanning process is key to strategic resource allocation.

- Recommended Framework: A structured, three-step approach is effective [9]:

- Define Scope: Identify the strategic decisions you need to support (e.g., pipeline priorities, therapeutic area focus). Determine relevant time horizons (e.g., 12-36 months before regulatory approval) and key drivers of change [9] [10].

- Apply Structure: Use frameworks like PESTLE (Political, Economic, Social, Technological, Legal, Environmental) or STEEP to categorize intelligence. Assign clear roles and responsibilities (e.g., a RACI chart) for continuous monitoring [9].

- Equip People & Tools: Invest in specialized platforms (e.g., ITONICS, AdisInsight) that aggregate data from regulatory documents (EMA/FDA), clinical trial databases, scientific publications, and patent filings [9] [10].

Q4: What is the difference between a "weak signal" and a "macro trend," and which should we allocate more resources to tracking?

Distinguishing between these is crucial for efficient resource allocation in your scanning activities.

- Macro Trends: These are broad, long-term directional shifts that are already widely recognized (e.g., "aging populations," "digital health"). They are useful for structuring strategic thinking but offer little competitive advantage as they are known to all players [9].

- Weak Signals: These are the first subtle signs of potential discontinuity or change, often observed in fringe experiments, unusual scientific publications, or nascent startup activities. Tracking weak signals provides the highest leverage for early risk detection and innovation opportunity identification [9].

- Resource Allocation Recommendation: Allocate significant resources to tracking and qualifying weak signals. By the time a trend becomes "macro," the opportunity to move first is often gone. Real foresight comes from acting on weak signals before competitors do [9].

Experimental Protocols for Key Scanning Methodologies

Protocol 1: Multiplex Immunohistochemistry (IHC) for Complex Disease Microenvironments

Objective: To simultaneously detect multiple protein markers on a single formalin-fixed paraffin-embedded (FFPE) tissue section to understand cell populations and their functional interactions within a disease microenvironment (e.g., a tumor).

Methodology:

- Sectioning: Cut FFPE tissue sections at 4-5 µm and mount on charged slides. Bake slides at 60°C for 1 hour.

- Deparaffinization and Antigen Retrieval: Deparaffinize in xylene and rehydrate through a graded ethanol series to water. Perform heat-induced epitope retrieval using a suitable buffer (e.g., citrate, EDTA).

- Multiplexed Staining Cycle:

- Blocking: Block endogenous peroxidases and non-specific binding sites.

- Primary Antibody Incubation: Apply the first primary antibody, optimally validated and titrated for multiplex IHC.

- Detection: Use a tyramide signal amplification (TSA) system with a fluorescent dye (e.g., Cy3, Cy5) for detection.

- Antibody Stripping: Apply a heat-based or chemical stripping step to remove the primary-secondary antibody complex without damaging the tissue or other antigens.

- Repetition: Repeat the multiplexed staining cycle (blocking, primary antibody incubation, detection, and stripping) for each subsequent antibody in the panel. Modern multiplexing technologies can analyze up to 6 or 7 different markers on the same section [7].

- Counterstaining and Mounting: Counterstain with DAPI to label nuclei and mount with an anti-fade mounting medium.

- Imaging and Analysis: Scan slides using a fluorescent slide scanner. Use digital pathology and AI-powered image analysis software to quantify and spatially map the different cell populations [7].

Protocol 2: Systematic Environmental/Horizon Scanning for Drug Pipeline Planning

Objective: To proactively identify, assess, and prioritize emerging drugs, technologies, and regulatory shifts that could impact the organization's drug development strategy and resource planning.

Methodology (Based on the AIFA Horizon Scanning System) [10]:

- Identification:

- Systematically gather information on medicines in development expected to receive marketing authorization within the next 12–36 months.

- Key Sources: EMA documents (PRIME designation, orphan drug status, scientific advice), commercial databases (e.g., AdisInsight), clinical trial registries, and company pipelines [10].

- Selection and Prioritization:

- Exclude products of low strategic interest (e.g., generics, biosimilars, known substances).

- Use a prioritization tool (PrioTool) to score remaining products. The AIFA model uses criteria scored on a 0-4 point scale (0-5 for some criteria) [10]:

- Disease severity and unmet therapeutic need.

- Potential clinical value.

- Estimated treatment population size and cost.

- Organizational impact on the healthcare system.

- Regulatory status (e.g., orphan drug designation adds 5 points).

- Products are categorized based on their total score for further action (e.g., "medicines of particular interest," "medicines for monitoring") [10].

- Assessment: Conduct a detailed assessment of high-priority products, evaluating the strength of clinical evidence, potential budget impact, and readiness of the healthcare system for implementation.

- Dissemination and Action: Share synthesized reports with key internal stakeholders (R&D, portfolio strategy, market access) to inform strategic planning, opportunity identification, and risk mitigation.
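The identification and exclusion steps above amount to a filter over a candidate list. The following sketch assumes each candidate carries an estimated number of months to marketing authorization and a product category. The `Candidate` structure and the sample entries are hypothetical, while the 12-36 month window and the excluded categories follow the protocol text.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    months_to_authorization: int  # estimated time until marketing authorization
    category: str                 # e.g. "new active substance", "generic"


# Categories the protocol treats as low strategic interest.
EXCLUDED = {"generic", "biosimilar", "known substance"}


def select(candidates, min_months=12, max_months=36):
    """Keep candidates inside the scanning window and outside excluded categories."""
    return [
        c for c in candidates
        if min_months <= c.months_to_authorization <= max_months
        and c.category not in EXCLUDED
    ]


# Hypothetical pipeline entries for illustration.
pipeline = [
    Candidate("Product A", 18, "new active substance"),
    Candidate("Product B", 24, "biosimilar"),
    Candidate("Product C", 48, "new active substance"),
    Candidate("Product D", 12, "new active substance"),
]

shortlist = select(pipeline)
print([c.name for c in shortlist])
```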

Data Presentation

Table 1: Prioritization Criteria for Horizon Scanning of Emerging Pharmaceuticals (Based on the AIFA PrioTool) [10]

| Criterion | Description | Scoring Scale |

|---|---|---|

| Disease Impact | Severity of the target disease and burden on patients/public health. | 0 - 3 points |

| Therapeutic Need | Level of unmet medical need; availability of existing treatments. | 0 - 4 points |

| Potential Clinical Value | Anticipated improvement in efficacy/safety over standard of care. | 0 - 4 points |

| Organizational Impact | Expected impact on healthcare delivery structures and processes. | 0 - 3 points |

| Estimated Population | Size of the patient population that may be eligible for treatment. | 0 - 4 points |

| Estimated Cost | Projected cost of the treatment per patient/course. | 0 - 4 points |

| Regulatory Status | Presence of designations like Orphan Drug or Advanced Therapy. | +5 points |
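A scoring routine based on Table 1 might look like the sketch below. It uses the per-criterion maxima listed in the table and the +5 regulatory bonus. The category cutoff of 15 points is a hypothetical value chosen for illustration, since the source does not state the thresholds that separate "medicines of particular interest" from "medicines for monitoring".

```python
# Maximum points per criterion, taken from Table 1.
MAX_POINTS = {
    "disease_impact": 3,
    "therapeutic_need": 4,
    "potential_clinical_value": 4,
    "organizational_impact": 3,
    "estimated_population": 4,
    "estimated_cost": 4,
}
REGULATORY_BONUS = 5  # added when a designation such as Orphan Drug is present


def total_score(scores, has_designation):
    """Validate criterion scores against Table 1 and return the total."""
    missing = set(MAX_POINTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for criterion, value in scores.items():
        if criterion not in MAX_POINTS:
            raise ValueError(f"unknown criterion: {criterion}")
        if not 0 <= value <= MAX_POINTS[criterion]:
            raise ValueError(f"{criterion} must be 0-{MAX_POINTS[criterion]}")
    return sum(scores.values()) + (REGULATORY_BONUS if has_designation else 0)


def categorize(total, interest_cutoff=15):
    """Map a total to a category; interest_cutoff is a hypothetical threshold."""
    if total >= interest_cutoff:
        return "medicines of particular interest"
    return "medicines for monitoring"


# Illustrative scores for one product.
example = {
    "disease_impact": 3,
    "therapeutic_need": 4,
    "potential_clinical_value": 3,
    "organizational_impact": 1,
    "estimated_population": 2,
    "estimated_cost": 2,
}
total = total_score(example, has_designation=True)
print(total, categorize(total))
```

Validating each score against its own maximum catches data-entry errors early, which matters because the criteria do not share a single scale.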

Table 2: Key "Research Reagent Solutions" for Advanced Scanning Techniques

| Item | Primary Function in Scanning | Application Context |

|---|---|---|

| Tyramide Signal Amplification (TSA) Kits | Enables highly sensitive, multiplexed detection of proteins by amplifying a fluorescent signal. | Essential for Multiplex IHC, allowing detection of 6-7 markers on one slide [7]. |

| Validated Primary Antibody Panels | Specifically bind to target proteins (e.g., immune cell markers, signaling proteins) in tissue. | Used in IHC and Multiplex IHC to characterize cell types and disease mechanisms [7]. |

| Digital Slide Scanner | Creates high-resolution digital images of entire histology slides for analysis and archiving. | Foundation of Digital Pathology; enables AI-based analysis and remote collaboration [7]. |

| SEM Sample Stubs and Conductive Coatings | Holds samples and provides a conductive surface to prevent charging under the electron beam. | Critical for Scanning Electron Microscopy (SEM) to analyze particle morphology [8]. |

| Spatial Transcriptomics Kits | Allows for mapping of all gene activity across a tissue sample, providing genomic context. | Used to identify novel drug targets and biomarkers by visualizing gene expression in situ [7]. |

Workflow and Relationship Visualizations

Drug development scanning workflow

Horizon scanning prioritization logic

In the context of environmental scanning research, which involves acquiring and using information about external events and relationships to guide future action, organizations face three interconnected core challenges: information overload, data quality issues, and resource constraints [11]. The digitalization of scientific work has exponentially increased the volume of available information, with one estimate suggesting the amount of information created every two days is roughly equivalent to that created from the beginning of human civilization until 2003 [11]. This systematic review aims to provide an overview of these challenges and present evidence-based strategies for optimizing resource allocation to address them, with a specific focus on creating effective technical support structures for researchers.

Understanding Information Overload

Definition and Theoretical Framework

Information overload occurs when information-processing demands exceed an individual's or organization's capacity to process them, leading to decreased decision quality and increased stress [11]. In scientific environments, this manifests when researchers cannot efficiently filter, process, or apply relevant information from the overwhelming volume available.

The theoretical understanding of information overload draws from several frameworks:

- Cognitive Load Theory: Suggests human working memory is limited to approximately seven ± two units of information, and overload occurs when information exceeds this capacity [11]

- Media Richness Theory: Proposes that different communication channels vary in their ability to convey information and reduce ambiguity [11]

- Technostress Concept: Identifies information overload as one of the main stressors caused by information and communication technologies (ICTs) [11]

Quantitative Impact on Scientific Productivity

Table 1: Measured Impact of Information Overload in Research Environments

| Metric | Impact Level | Consequence |

|---|---|---|

| Average feature adoption in scientific software | 24.5% (median 16.5%) | Three-quarters of developed features go unused due to usability issues [12] |

| Bioinformatics tool installation failure rate | 28% within 2-hour limit | Significant time lost before research can even begin [12] |

| Training cost for new researchers on complex software | $15,000 annually for 20 users | Senior researcher time diverted from actual research [12] |

| Error correction cost after product release | 100x more than fixing during design | Substantial financial impact on research budgets [12] |

Technical Support Center: Troubleshooting Guides and FAQs

Structural Framework for Technical Support

An effective technical support system for scientific environments should integrate multiple resource types to address different learning preferences and problem-solving approaches. Based on analysis of successful support models [13] [14], the following structure provides comprehensive assistance:

Table 2: Technical Support Resource Framework

| Resource Type | Function | Implementation Example |

|---|---|---|

| Application-Specific Support Centers | Provide targeted resources for specific techniques or instruments | Curated content with getting-started tips and troubleshooting help [13] |

| Direct Scientist Access | Enable researchers to consult with experienced scientists | "Ask a Scientist" programs with dedicated phone hours and submission portals [14] |

| Technical Documentation | Offer standardized protocols and application notes | Searchable databases of instruction manuals and technical materials [14] |

| Troubleshooting Guides | Address common experimental problems | Expert-created guides for improving results in techniques like western blotting, IHC, and IP [15] |

| Training Resources | Reduce cognitive load through structured learning | Webinars, selection guides, and compatibility charts for product selection [14] |

Frequently Asked Questions: Experimental Scenarios

Q: How can I reduce cognitive overload when learning new complex analysis software?

A: Research indicates that software with poor user interface design contributes significantly to cognitive overload [12]. Seek platforms that employ user-centered design principles, including:

- Consistent navigation patterns across tools

- High "memorability" in interface design

- Contextual help systems rather than separate complex manuals

- Progressive disclosure of advanced features to avoid overwhelming new users [16]

Q: What strategies help manage the constant influx of new relevant literature?

A: Scientists report feeling overwhelmed by the approximately 1.8 million new scientific articles published yearly (nearly 5,000 per day) [17]. Effective strategies include:

- Using filtered search engines with careful pre-screening criteria

- Establishing journal clubs to distribute reading burden and generate concise summaries

- Subscribing to curated science news feeds that highlight only the most relevant content

- Maintaining external tracking systems (to-do lists, physical reminders) to free mental capacity [17]

Q: How can our lab minimize decision fatigue when selecting reagents and protocols?

A: Decision fatigue drains cognitive resources needed for critical research decisions [17]. Countermeasures include:

- Establishing standardized protocols for common procedures to reduce repetitive decisions

- Creating preferred supplier lists for frequently ordered reagents

- Making high-impact decisions early in the day before decision fatigue accumulates

- Prioritizing decisions based on their potential impact on research goals [17]

Data Quality Assurance Protocols

Framework for Research Data Quality

High-quality research software is essential for ensuring data quality and reproducible results [18]. The following diagram illustrates the interconnected components of a robust data quality assurance framework:

Data Quality Assurance Workflow

Experimental Protocol: Quality Control Checkpoints

Objective: Implement systematic quality control measures throughout the experimental workflow to ensure data integrity and reproducibility.

Materials:

- Standardized documentation templates

- Version control system (e.g., Git)

- Data validation software or scripts

- Reference standards for calibration

Methodology:

- Pre-experimental Phase

- Define clear data quality metrics and acceptance criteria

- Establish version control protocols for all experimental protocols

- Document all reagent sources, lot numbers, and preparation dates

- Experimental Execution Phase

- Implement real-time data recording with timestamps

- Apply built-in validation checks for instrument outputs

- Include appropriate controls and reference standards in each run

- Post-experimental Phase

- Perform reproducibility analysis on subset of experiments

- Conduct peer review of raw data before analysis

- Archive both raw and processed data with complete metadata
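The "data validation software or scripts" listed under Materials could start as simply as the sketch below, which checks one run record against a few of the checkpoints above. The record field names (`timestamp`, `reagent_lot`, `control_value`) and the acceptance range are assumptions made for this example.

```python
from datetime import datetime


def validate_run(record, control_low, control_high):
    """Return a list of quality problems found in one run record.

    record is a dict with hypothetical keys: "timestamp" (ISO 8601 string),
    "reagent_lot", and "control_value". An empty list means the run passed.
    """
    problems = []

    timestamp = record.get("timestamp")
    if timestamp is None:
        problems.append("missing timestamp")
    else:
        try:
            datetime.fromisoformat(timestamp)
        except ValueError:
            problems.append("malformed timestamp")

    if not record.get("reagent_lot"):
        problems.append("missing reagent lot number")

    control = record.get("control_value")
    if control is None:
        problems.append("no control included in run")
    elif not control_low <= control <= control_high:
        problems.append("control outside acceptance range")

    return problems


# Illustrative records; the 0.9-1.1 acceptance range is hypothetical.
good = {"timestamp": "2024-05-02T09:30:00", "reagent_lot": "LOT-0421", "control_value": 1.02}
bad = {"timestamp": "02/05/2024", "reagent_lot": "", "control_value": 1.4}

print(validate_run(good, 0.9, 1.1))
print(validate_run(bad, 0.9, 1.1))
```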

Quality Control Checkpoints: The following diagram illustrates critical quality control checkpoints throughout the research lifecycle:

Quality Control Checkpoints

Resource Optimization Strategies

Resource Allocation Patterns for Scientific Environments

Effective resource orchestration in scientific environments requires strategic alignment of limited resources with research priorities. Research on green technology innovation efficiency has identified several resource allocation patterns that translate well to scientific settings [19]:

Table 3: Resource Allocation Patterns in Research Environments

| Pattern Type | Key Characteristics | Application to Scientific Research |

|---|---|---|

| Pressure Response Model (PRM) | Reactive resource allocation in response to external pressures | Allocating resources to address immediate compliance requirements or urgent experimental deadlines [19] |

| Active Competitive Model (ACM) | Proactive investment in strategic capabilities | Dedicating resources to develop novel methodologies or acquire cutting-edge instrumentation [19] |

| Stereotyped Development Model (SDM) | Following established patterns without innovation | Maintaining traditional research approaches without optimizing for efficiency [19] |

| Blind Development Model (BDM) | Unfocused resource allocation without clear strategy | Spreading resources too thinly across multiple research directions without clear prioritization [19] |

Experimental Protocol: Resource Efficiency Assessment

Objective: Systematically evaluate and optimize resource allocation across research activities to maximize output while minimizing waste.

Materials:

- Research activity tracking system

- Resource utilization metrics

- Output impact assessment framework

Methodology:

- Resource Mapping

- Catalog all available resources (personnel, equipment, reagents, computational)

- Track time allocation across different research activities

- Quantify material and reagent usage per experimental unit

- Efficiency Analysis

- Calculate output-to-input ratios for key research activities

- Identify bottlenecks and resource constraints

- Evaluate cost-benefit relationships for different resource allocations

Optimization Implementation

- Reallocate resources from low-efficiency to high-efficiency activities

- Implement monitoring systems for continuous assessment

- Establish feedback loops for iterative improvement
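As a minimal sketch of the Efficiency Analysis step, the output-to-input ratio can be computed per activity and used to rank candidates for reallocation. The `Activity` fields, the scoring units, and the sample numbers below are hypothetical placeholders, not values from the cited research.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    name: str
    cost: float    # input, e.g. person-hours or budget units (hypothetical unit)
    output: float  # output, e.g. a score for validated results (hypothetical unit)


def efficiency(activity):
    """Output-to-input ratio; returns 0.0 when no resources were spent."""
    return activity.output / activity.cost if activity.cost > 0 else 0.0


def rank_by_efficiency(activities):
    """Sort activities from most to least efficient."""
    return sorted(activities, key=efficiency, reverse=True)


# Illustrative data only.
activities = [
    Activity("assay_development", cost=120.0, output=30.0),
    Activity("literature_review", cost=40.0, output=20.0),
    Activity("pilot_screen", cost=200.0, output=20.0),
]

ranked = rank_by_efficiency(activities)
for a in ranked:
    print(f"{a.name}: {efficiency(a):.2f}")
```

Activities at the bottom of the ranking are candidates for review rather than automatic cuts, since a single ratio does not capture strategic value or long time horizons.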

The Scientist's Toolkit: Essential Research Solutions

Research Reagent Solutions

Table 4: Essential Research Reagents and Their Functions

| Reagent Category | Specific Examples | Primary Function | Quality Considerations |

|---|---|---|---|

| Cell Isolation Products | Immune cell isolation kits | Separation of specific cell populations from heterogeneous mixtures | Certification of purity and viability; validation for specific applications [17] |

| Cell Culture Supplements | Growth factors, cytokines | Promote cell growth, maintenance, and specific differentiation pathways | Batch-to-batch consistency; concentration verification; endotoxin testing [14] |

| Analysis Reagents | Antibodies, detection substrates | Enable visualization and quantification of specific targets | Specificity validation; application-specific testing; lot-to-lot consistency [15] |

| Specialized Buffers | Lysis buffers, assay buffers | Maintain optimal chemical environment for specific experimental conditions | pH stability; osmolarity verification; contaminant screening [14] |

| Nucleic Acid Tools | Primers, probes, sequencing kits | Genetic material analysis and manipulation | Purity confirmation; specificity validation; performance benchmarking [17] |

Integrated Solution Framework

Unified Workflow for Addressing Core Challenges

The interrelationship between information management, data quality, and resource optimization requires an integrated approach. The following diagram illustrates how these elements connect in an optimized research environment:

Figure: Integrated Challenge Management Framework

Implementation Roadmap

Based on UX maturity assessment research, scientific teams can implement the following phased approach to address these core challenges [12]:

Phase 1: Foundation (Months 1-6)

- Conduct current state assessment of information management practices

- Identify highest-impact data quality issues

- Map resource allocation patterns and identify inefficiencies

- Implement quick wins to reduce immediate pain points

Phase 2: Systematic Improvement (Months 7-18)

- Develop standardized protocols for critical research processes

- Implement monitoring systems for resource utilization

- Establish continuous improvement feedback loops

- Train team members on optimized workflows

Phase 3: Sustained Excellence (Ongoing)

- Regular review and refinement of systems

- Adoption of emerging technologies that enhance efficiency

- Knowledge transfer and onboarding optimization

- Cross-team collaboration and best practice sharing

Addressing the core challenges of information overload, data quality, and resource constraints requires a systematic approach that integrates technical solutions, process improvements, and cultural changes. By implementing structured technical support systems, rigorous quality control protocols, and strategic resource allocation patterns, scientific organizations can significantly enhance research efficiency and output quality. The frameworks and protocols presented here provide a foundation for building more resilient and productive research environments capable of navigating the complexities of modern science while optimizing limited resources for maximum impact.

FAQs: Core Concepts and Setup

Q1: What are the primary advantages of using AI over traditional statistical methods for data analysis in research?

AI, particularly machine learning (ML), excels at identifying complex, non-linear patterns within large and high-dimensional datasets that traditional statistics might miss [20]. Key advantages include:

- Predictive Power: ML models can predict outcomes, such as drug-target interactions or patient responses in clinical trials, with high accuracy (e.g., over 85% in some pharmaceutical applications) [21].

- Automation and Efficiency: AI can automate tedious processes like virtual screening of compounds, reducing drug discovery timelines from years to weeks and cutting clinical trial costs by up to 70% [21].

- Handling Unstructured Data: Natural Language Processing (NLP), a subset of AI, can analyze text from research papers, clinical notes, or social media to extract valuable insights [22].

Q2: When should I use traditional machine learning versus generative AI for my project?

The choice depends on your goal [20]:

- Use Traditional Machine Learning when:

- Your task is prediction or classification (e.g., predicting equipment failure from sensor data).

- You are working with highly specific, domain-knowledge data (e.g., medical images like MRIs).

- There are significant data privacy concerns with using external models.

- Use Generative AI when:

- Your goal is to create new content (e.g., generating novel molecular structures).

- You need to work with everyday language or images "off-the-shelf" (e.g., classifying product reviews).

- You want to augment a traditional ML model by generating synthetic data or helping with data cleaning.

Q3: What are the critical data requirements for a successful machine learning project?

Data is the foundation of any ML project. Key challenges and requirements include [23]:

- Quality and Quantity: ML models require large volumes of high-quality, accurately labeled training data. Noisy or poor-quality datasets severely impact model performance.

- Data Preparation: This is a complex and critical step, involving data gathering, consistent formatting, reduction (sampling, aggregating), and rescaling.

- Bias Mitigation: It is crucial to address biases in training data to ensure fair and equitable model predictions, especially in applications impacting diverse populations.

Troubleshooting Common Technical Issues

Q1: My model's performance is poor or inconsistent. What steps should I take?

This is often related to data or model design, so review both systematically.

Q2: My AI model is a "black box." How can I improve interpretability for regulatory submissions?

The "black box" problem, where the model's decision-making process is opaque, is a significant challenge in regulated fields like medicine and finance [23]. Mitigation strategies include:

- Use Simpler, Interpretable Models: Where possible, use models like decision trees or linear regression that are inherently more transparent [23].

- Employ Explainability Techniques: Utilize methods like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to explain individual predictions.

- Regulatory Dialogue: Engage early with regulators like the FDA or EMA. The FDA's "Digital Health Center of Excellence" provides guidance on demonstrating model credibility, which includes aspects of interpretability [22]. The EMA also emphasizes the need for explainability metrics, especially for "black-box" models [24].

Q3: How can I manage the computational cost and environmental impact of running large AI models?

The energy demand for AI training and inference (using a trained model) is a valid concern. A full-stack approach to efficiency is required [25]:

- Model Architecture: Use efficient architectures like Mixture-of-Experts (MoE), which activates only parts of the network for a given task, reducing computations.

- Quantization: Techniques like Accurate Quantized Training (AQT) represent numbers with fewer bits, reducing energy use without compromising quality.

- Optimized Inference: Technologies like speculative decoding use smaller models to draft responses verified by a larger model, improving speed and efficiency.

- Hardware: Leverage hardware optimized for AI, like Google's TPUs, which are designed for performance per watt.

Table 1: Environmental Impact of AI Inference (Example: Google Gemini Text Prompt)

| Metric | Comprehensive Footprint Estimate | Theoretical (Active Chip Only) Estimate |

|---|---|---|

| Energy per Prompt | 0.24 watt-hours (Wh) | 0.10 Wh |

| CO2e per Prompt | 0.03 grams (gCO2e) | 0.02 gCO2e |

| Water per Prompt | 0.26 milliliters (mL) | 0.12 mL |

| Equivalent To | Watching TV for <9 seconds | N/A |

Source: Adapted from [25]. Comprehensive estimates account for idle machines, data center overhead, and other real-world factors.
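To put the per-prompt figures in Table 1 into planning terms, they can be scaled to an expected prompt volume. The sketch below uses the comprehensive estimates from the table; the volume of 100,000 prompts is an arbitrary assumption for illustration, and the linear scaling ignores any variation between prompts.

```python
# Comprehensive per-prompt estimates taken from Table 1.
ENERGY_WH_PER_PROMPT = 0.24
CO2E_G_PER_PROMPT = 0.03
WATER_ML_PER_PROMPT = 0.26


def footprint(prompts):
    """Scale per-prompt estimates linearly and convert to kWh, kg, and litres."""
    return {
        "energy_kwh": prompts * ENERGY_WH_PER_PROMPT / 1000,
        "co2e_kg": prompts * CO2E_G_PER_PROMPT / 1000,
        "water_l": prompts * WATER_ML_PER_PROMPT / 1000,
    }


# Hypothetical workload: 100,000 text prompts.
estimate = footprint(100_000)
for metric, value in estimate.items():
    print(f"{metric}: {value:.1f}")
```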

Experimental Protocols & Methodologies

This section provides a detailed methodology for implementing an AI-driven approach to a common research challenge: optimizing clinical trial patient recruitment using real-world data.

Protocol: AI-Powered Patient Recruitment and Trial Matching

1. Objective: To accelerate clinical trial enrollment and improve diversity by using machine learning to identify and match eligible patients from Electronic Health Records (EHRs) and other data sources.

2. Prerequisites & Data Sources:

- Data Access: Approved access to EHR systems, clinical data warehouses, or anonymized patient datasets.

- Trial Protocol: A detailed protocol with clear inclusion and exclusion criteria.

- Computing Environment: A secure computing environment (e.g., HIPAA-compliant cloud or server) with access to ML libraries (e.g., Scikit-learn, TensorFlow/PyTorch).

3. Step-by-Step Workflow:

4. Detailed Methodology:

- Step 1: Define Eligibility Criteria. Translate the trial's protocol into structured, machine-readable logic. For example:

(DiagnosisCode == "C50.9") AND (Age >= 18) AND (Lab_Value_Creatinine < 1.5)

- Step 2: Extract & Preprocess Data. Extract relevant patient data from source systems. Preprocessing is critical and includes:

- Structuring Unstructured Data: Use NLP on clinical notes to extract key terms, medications, and family history.

- Handling Missing Data: Implement strategies like median/mode imputation or use algorithms that support missing values.

- Normalization: Scale numerical features (e.g., age, lab values) to a common range.

- Step 3: Feature Engineering. Create features that represent each patient's clinical profile. Examples:

- Demographics (age, gender).

- Comorbidities (encoded as binary features).

- Medication history.

- Key lab values and vital signs.

- Step 4: Model Training & Validation.

- Labeling: Create a labeled dataset by manually reviewing a subset of patient records to determine true eligibility (a "gold standard" set).

- Algorithm Selection: Start with supervised learning algorithms like Random Forests or Gradient Boosting Machines (XGBoost), which handle mixed data types well.

- Validation: Use k-fold cross-validation to assess performance metrics (Precision, Recall, F1-Score) to ensure the model generalizes well.

- Step 5: Deployment & Matching. Apply the validated model to the entire target patient population to generate a ranked list of potential candidates, prioritized by their predicted probability of eligibility.

- Step 6: Generate Recruitment List. The output is a list of patient IDs (for authorized users) or de-identified profiles for outreach, enabling the research team to focus on the most promising candidates.
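The core of Steps 1, 2, and 4 can be sketched with the Python standard library alone. This is an illustrative outline, not a production pipeline: the rule-based `is_eligible` check stands in for a trained model, and the field names, patient records, and gold-standard labels are all hypothetical.

```python
from statistics import median


def impute_median(values):
    """Replace None entries with the median of the observed values (Step 2)."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def is_eligible(patient):
    """Eligibility rule from Step 1: diagnosis C50.9, adult, creatinine < 1.5."""
    return (
        patient["diagnosis_code"] == "C50.9"
        and patient["age"] >= 18
        and patient["creatinine"] < 1.5
    )


def precision_recall_f1(y_true, y_pred):
    """Compare predictions with gold-standard labels (Step 4)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)
    fp = sum(1 for t, p in pairs if not t and p)
    fn = sum(1 for t, p in pairs if t and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical, de-identified records; None marks a missing lab value.
patients = [
    {"id": "P1", "diagnosis_code": "C50.9", "age": 54, "creatinine": 1.1},
    {"id": "P2", "diagnosis_code": "C50.9", "age": 17, "creatinine": 0.9},
    {"id": "P3", "diagnosis_code": "C34.1", "age": 61, "creatinine": 1.0},
    {"id": "P4", "diagnosis_code": "C50.9", "age": 47, "creatinine": None},
]

filled = impute_median([p["creatinine"] for p in patients])
for patient, value in zip(patients, filled):
    patient["creatinine"] = value

predicted = [is_eligible(p) for p in patients]
gold_standard = [True, False, False, False]  # hypothetical manual review labels
precision, recall, f1 = precision_recall_f1(gold_standard, predicted)
```

In this toy data the imputed creatinine value makes P4 look eligible, which the manual labels contradict. That is one reason candidates flagged on imputed values would normally be routed to manual review before outreach.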

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Frameworks for AI-Driven Research

| Tool / Solution | Type | Primary Function in Research |

|---|---|---|

| MLX [26] | Array Framework | Enables fast and flexible machine learning on Apple silicon hardware, ideal for prototyping and running models on Macs and iPads. |

| TensorFlow / PyTorch [23] | ML Framework | Open-source libraries for building and training deep learning models. They are the industry standard for complex AI research. |

| Generative AI Models (e.g., Claude, Gemini, Llama) [20] | Pre-trained Model | Useful for classifying text, generating reports, brainstorming molecular structures, and assisting with data cleaning and code generation. |

| Digital Twin [27] [24] | Computational Model | A virtual replica of a physical entity (e.g., a patient organ or a clinical trial control arm) used to simulate outcomes and optimize interventions without physical experiments. |

| IBM Watson Health [22] | Domain-Specific AI | An example of AI systems tailored for healthcare and life sciences, used for tasks like analyzing clinical trial protocols and suggesting patient eligibility. |

| Custom AI Hardware (e.g., TPU, GPU) [25] | Hardware | Specialized processors designed to accelerate the massive computations required for training and running large AI models. |

Frequently Asked Questions (FAQs) on Environmental Scanning

1. What is environmental scanning, and why is it a strategic necessity for R&D? Environmental scanning is the systematic collection, analysis, and dissemination of information on trends, signals, and developments within an organization's business environment [28]. It encompasses political, economic, social, technological, environmental, and legal (PESTEL) trends, alongside insights into competitors and markets [28]. For R&D, it is a strategic necessity because it enables organizations to recognize potential innovation opportunities and risks early, ensuring a proactive stance in market and innovation strategies [28]. This foundational knowledge helps R&D transition from an isolated function to one that is centrally woven into the organization's mission and corporate strategy [29].

2. Our R&D team is disconnected from market needs. How can scanning help? A common challenge is the R&D group being isolated, working in a "black box," and lacking direct connection to the customer [29]. Environmental scanning systematically addresses this by forcing conversations about customer needs and possible solutions [29]. It provides a mechanism for customer-oriented innovation by helping companies better understand their target groups’ changing needs and expectations, allowing them to offer relevant, innovative solutions [28]. A systematic scanning process replaces reliance on intermediaries with direct market insight.

3. We tend to favor incremental projects. How can a scanning process encourage bolder innovation? Research indicates that incremental projects account for more than half of an average company's R&D investment, even though bold bets deliver higher success rates [29]. This often stems from a mindset that views risk as something to be avoided rather than managed [29]. Environmental scanning combats this by revealing strategic options and highlighting promising ways to reposition the business through new platforms and disruptive breakthroughs [29]. By identifying emerging, broadly applicable technologies from outside the organization, scanning provides the external stimulus needed to justify and guide more ambitious, transformational R&D projects [29].

4. What are the primary methods for conducting an environmental scan? Several established methods can be used individually or in combination to create a comprehensive picture of the external environment [28]. Key methods include:

- PESTEL Analysis: A method that analyzes the Political, Economic, Social, Technological, Environmental, and Legal factors influencing the business environment [28].

- SWOT Analysis: Focuses on an organization's internal Strengths and Weaknesses as well as external Opportunities and Threats [28].

- Scenario Planning: Involves creating several hypothetical scenarios to examine various possible future developments, helping to prepare for different outcomes [28].

5. How can we effectively integrate scanning data into our R&D resource allocation decisions? The link between scanning and resource allocation is achieved through innovation portfolio oversight [30]. A strong R&D strategy manages a balanced portfolio that includes incremental improvements, adjacent opportunities, and long-term bets [30]. The insights from environmental scanning—such as emerging technologies or new regulatory challenges—directly inform this balancing act. They provide the data-driven justification to shift resources from "safe" but low-impact projects toward areas with the greatest potential for strategic return and future growth [30]. This ensures resources flow to R&D projects that address the most critical market and technological battlegrounds [29].

Troubleshooting Guides: Addressing Common Scanning Challenges

Problem: Information Overload from Scanning

Symptoms

- Inability to distinguish critical "weak signals" from mainstream trends [28].

- Paralysis in decision-making due to conflicting or excessive data.

- Resources wasted on collecting irrelevant information.

Investigation and Resolution

| Step | Action | Objective |

|---|---|---|

| 1. Define Scope | Use a framework like PESTEL to cluster information into predefined categories (e.g., Political, Technological) [28]. | To filter out noise and focus scanning activities on areas most relevant to strategic goals. |

| 2. Identify Sources & Drivers | Tag collected information with identified drivers and keywords. Analyze the value systems behind information publishers [28]. | To understand the context and potential bias of information, helping to prioritize credible sources. |

| 3. Leverage Technology | Use digital tools like AI and machine learning to analyze large datasets and identify patterns and relevant insights [28]. | To automate the analysis of large volumes of data and surface the most significant trends. |
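Step 1 of the table above, clustering incoming items into predefined PESTEL categories, can be prototyped with simple keyword matching before investing in AI or machine learning tooling. The sketch below is a deliberately simple stand-in for those tools; the keyword lists and sample headlines are hypothetical and would need tuning for a real scanning scope.

```python
import re

# Hypothetical starter keywords per PESTEL category.
PESTEL_KEYWORDS = {
    "Political": {"funding", "policy", "trade"},
    "Economic": {"investment", "inflation", "cost"},
    "Social": {"demographics", "patients", "public"},
    "Technological": {"ai", "crispr", "platform"},
    "Environmental": {"sustainability", "climate", "waste"},
    "Legal": {"patent", "regulatory", "approval"},
}


def tag_item(text):
    """Return the sorted PESTEL categories whose keywords appear in the text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return sorted(cat for cat, kws in PESTEL_KEYWORDS.items() if words & kws)


headlines = [
    "New AI platform speeds regulatory approval",
    "Quarterly cafeteria menu update",
]
tagged = {h: tag_item(h) for h in headlines}
```

Items that receive no tag can be dropped or sent to a low-priority queue, which is one way to filter noise before any deeper analysis.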

Prevention Best Practices

- Establish a continuous scanning process with regular review cycles, rather than treating it as a one-off event [28].

- Assign dedicated personnel or a team within the innovation management department to regularly scan for signals and perform deep dives [28].

Problem: Scanning Data Fails to Influence R&D Strategy

Symptoms

- Scanned information is collected in reports but not discussed in R&D strategy meetings.

- A persistent disconnect between the "scanning team" and the "strategy team."

- R&D projects continue to be prioritized based on historical patterns, not future signals.

Investigation and Resolution

| Step | Action | Objective |

|---|---|---|

| 1. Align with Corporate Strategy | Actively engage corporate-strategy leaders with R&D and scanning outputs. Provide clarity on long-term corporate goals that require R&D to realize [29]. | To ensure scanning is focused on revealing strategic options that align with the company's highest priorities. |

| 2. Facilitate Strategic Dialogue | Use scanning findings to force conversations between R&D, commercial, and strategy functions about core battlegrounds and customer solutions [29]. | To translate environmental data into strategic conversations about which markets will make or break the company. |

| 3. Establish Clear Governance | Implement a governance structure with clear decision rights. Define who sets strategy, approves initiatives, and monitors progress based on scanning insights [30]. | To create transparency and accountability, ensuring scanned information leads to timely and consistent decision-making. |

Prevention Best Practices

- The dialogue between R&D, commercial, and strategy functions cannot stop once the strategy is set. Leaders should continuously reexamine the strategic direction as the environment evolves [29].

- Use technology roadmaps and innovation portfolio matrices as tools to visually connect scanning data to strategic planning and resource allocation [30].

Experimental Protocols for Environmental Scanning

Protocol 1: Conducting a PESTEL Analysis

Objective: To systematically identify and evaluate macro-environmental factors that could impact the organization's R&D strategy and innovation potential.

Methodology

- Assemble a Cross-Functional Team: Include members from R&D, marketing, strategy, and regulatory affairs.

- Brainstorm Factors: For each PESTEL category (Political, Economic, Social, Technological, Environmental, Legal), brainstorm relevant trends, signals, and developments.

- Political: Changes in research funding, trade policies.

- Economic: Investment trends in specific technologies, economic cycles.

- Social: Shifting patient demographics, public acceptance of technologies.

- Technological: Emerging platform technologies (e.g., AI, CRISPR), advancements in adjacent fields.

- Environmental: Sustainability regulations, climate impact.

- Legal: Intellectual property law shifts, new regulatory pathways for drug approval [28].

- Analyze Impact and Uncertainty: Plot the significance of each factor on axes of potential impact on the organization and uncertainty about its future state.

- Identify Strategic Implications: Discuss what each high-impact factor means for current R&D projects and future capabilities. Ask: "How does this change what we need to develop?"
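The impact and uncertainty plot can be approximated in code by scoring each factor on both axes and assigning it to a quadrant. The scores, the 0.5 threshold, and the quadrant labels below are assumptions chosen for illustration; a team would agree on its own scale and wording.

```python
def classify_factor(impact, uncertainty, threshold=0.5):
    """Assign a factor to a quadrant of the impact/uncertainty grid.

    Both scores are assumed to lie between 0 and 1.
    """
    if impact >= threshold and uncertainty >= threshold:
        return "critical uncertainty"
    if impact >= threshold:
        return "high-impact trend"
    if uncertainty >= threshold:
        return "monitor"
    return "low priority"


# Hypothetical team scores: (factor, impact, uncertainty).
factors = [
    ("New regulatory pathways for drug approval", 0.9, 0.8),
    ("Emerging platform technologies", 0.8, 0.3),
    ("Trade policy changes", 0.3, 0.7),
    ("Economic cycles", 0.2, 0.2),
]

matrix = {name: classify_factor(i, u) for name, i, u in factors}
for name, quadrant in matrix.items():
    print(f"{quadrant:22s} {name}")
```

Factors in the "critical uncertainty" quadrant are natural inputs to a scenario planning exercise, while "high-impact trend" factors can feed directly into project planning.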

Protocol 2: Scenario Planning Workshop

Objective: To prepare the R&D organization for different possible futures, enhancing its adaptability and resilience.

Methodology

- Define Focal Question: Start with a critical strategic question for R&D (e.g., "How will we deliver therapeutics in 2035?").

- Identify Key Driving Forces: Use scanning data to pinpoint the two most critical and uncertain forces influencing the focal question (e.g., "Regulatory Centralization" vs. "Decentralization" and "Technology Platform Convergence" vs. "Fragmentation").

- Develop Scenario Frameworks: Plot these forces on axes to create a 2x2 matrix, defining four distinct future scenarios.

- Flesh Out Narratives: For each quadrant, develop a detailed narrative describing what that world would look like.

- Derive Strategic Options: Identify early warning signals for each scenario and develop a portfolio of "no-regret" and "strategic bet" R&D projects that would be valuable across multiple futures.
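The 2x2 scenario framework is simply the set of all combinations of the two driving forces. A minimal sketch, using the example forces from the protocol (the axis names are shortened here for readability):

```python
from itertools import product

# Two critical uncertainties, each with two opposing end states.
axes = {
    "Regulation": ("Centralization", "Decentralization"),
    "Technology platforms": ("Convergence", "Fragmentation"),
}


def build_scenarios(axes):
    """Return one dict per quadrant of the scenario matrix."""
    names = list(axes)
    return [
        dict(zip(names, combo))
        for combo in product(*(axes[name] for name in names))
    ]


scenarios = build_scenarios(axes)
for number, scenario in enumerate(scenarios, start=1):
    description = ", ".join(f"{k}: {v}" for k, v in scenario.items())
    print(f"Scenario {number}: {description}")
```

Each resulting dictionary is a skeleton for one narrative; the workshop then adds the detail, early warning signals, and candidate projects for that quadrant.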

Research Reagent Solutions: The Strategist's Toolkit

This table details key frameworks and concepts essential for effective environmental scanning and strategic linking.

| Tool/Concept | Function & Explanation |

|---|---|

| PESTEL Framework | A systematic guide to cluster and analyze macro-environmental information. It ensures comprehensive coverage of relevant external factors and helps filter information overload [28]. |

| Innovation Portfolio Matrix | A governance tool for overseeing a balanced mix of R&D projects. It helps prevent over-investment in incremental projects by ensuring resources are allocated to short, medium, and long-term bets based on scanned opportunities [30]. |

| Strategic Dialogue | A facilitated conversation between R&D, commercial, and strategy functions. Its purpose is to align on core battlegrounds and translate scanning data into concrete target product profiles and capability needs [29]. |

| Capability vs. Technology Map | A strategic planning tool to distinguish between technical abilities (capabilities) and the inputs that enable them (technologies). It ensures R&D builds future-proof abilities rather than just investing in soon-to-be-obsolete tools [29]. |

Figure: Strategic Scanning Process Workflow

Figure: Linking Environmental Data to R&D Outcomes

Frameworks and Tools for Implementing Efficient Scanning Systems

Frequently Asked Questions (FAQs) on Strategic Analysis

Q1: What is the core difference between a SWOT and a PESTEL analysis?

A1: The core difference lies in their focus. A SWOT analysis evaluates both internal and external factors; it examines internal Strengths and Weaknesses of your organization, and external Opportunities and Threats from the market environment [31] [32]. A PESTEL analysis examines only the external macro-environmental factors that can influence your organization: Political, Economic, Social, Technological, Environmental, and Legal forces [33] [34] [32]. PESTEL provides the external context, while SWOT assesses your organization's position within that context.

Q2: When should I use a PESTEL analysis versus a SWOT analysis?

A2: Use them together for a comprehensive view. A sound approach is to:

- Start with a PESTEL analysis to gain a detailed understanding of the broad external trends [32].

- Transfer the key external findings from the PESTEL into the Opportunities and Threats sections of your SWOT analysis [32].

- Identify your internal Strengths and Weaknesses relative to your ability to respond to those external factors [32].

Q3: What are common mistakes to avoid when conducting a SWOT analysis?

A3: Common pitfalls include [35]:

- Lacking a clear goal: Conducting the analysis without a specific objective leads to unfocused results.

- Being overly general: Using broad statements like "poor brand recognition" instead of data-backed, specific weaknesses.

- Ignoring external factors: Focusing too much on internal dynamics and underestimating market threats or new competitors.

- Misclassifying factors: Confusing internal weaknesses (which you can control) with external threats (which you cannot directly control).

- Treating it as a one-time activity: Failing to regularly update the analysis as internal and external conditions change.

Q4: Can a PESTEL analysis be applied to the pharmaceutical and drug development industry?

A4: Yes, it is highly relevant. The table below summarizes how PESTEL factors directly impact drug development.

Table: Application of PESTEL in Drug Development

| PESTEL Factor | Example in Drug Development & Research |

|---|---|

| Political | Changes in healthcare policy, government funding for research, political pressure on drug pricing [33]. |

| Economic | Inflation affecting R&D costs, economic downturns impacting investment, employment rates for hiring scientific talent [33]. |

| Social | Aging populations increasing demand for therapeutics, public opinion on genetic testing, shifting health consciousness [33]. |

| Technological | Advancements in AI for drug discovery, new laboratory equipment, developments in data analytics and cloud computing [33] [36]. |

| Environmental | Environmental regulations on chemical waste, impact of climate change on disease patterns, sustainable sourcing of raw materials [33]. |

| Legal | Patent and intellectual property laws, FDA regulatory approval processes (e.g., IND/NDA), occupational safety laws in labs, and liability issues [33] [37]. |

Integrated PESTEL-SWOT Analysis Protocol

This protocol provides a methodology for integrating PESTEL and SWOT analyses to optimize resource allocation for environmental scanning.

Objective

To systematically analyze the external landscape and internal capabilities to inform strategic decision-making and prioritize resource allocation in research and development.

Workflow Diagram

The following diagram illustrates the integrated, cyclical process of conducting a PESTEL-SWOT analysis.

Methodology

Step 1: Define Scope and Assemble Team. Clearly define the purpose and scope of the analysis (e.g., for a specific drug pipeline, a new research area, or overall R&D strategy). Assemble a diverse team with representatives from R&D, regulatory affairs, clinical operations, and commercial strategy to ensure multiple perspectives [35].

Step 2: Conduct the PESTEL Analysis. Brainstorm and document key factors for each PESTEL category relevant to your scope [33]. Use the table in FAQ Q4 as a starting point.

- Data Collection: Utilize resources like regulatory databases (e.g., FDA guidance), industry reports, scientific literature, and economic data [34].

- Quantitative Data: Summarize key quantitative findings for easy comparison.

Table: Example Quantitative Data from PESTEL Scan

| Factor Category | Metric | Current Value | Trend | Impact Level (H/M/L) |

|---|---|---|---|---|

| Economic | Average Cost of Phase 3 Clinical Trial | ~$20M | Increasing | H |

| Political | Number of Approved INDs (FY) | Value | Stable / Increasing | H |

| Social | Public Trust in Pharma (Index Score) | Value | Decreasing | M |

Step 3: Transfer Findings to SWOT. The key external trends identified in the PESTEL analysis become the initial list of external Opportunities (O) and Threats (T) for the SWOT framework [32]. For example, a favorable regulatory shift (Political) is an Opportunity, while a new competitor's drug approval (Legal/Competitive) is a Threat.

Step 4: Complete the SWOT Analysis. With the external factors defined, the team now identifies internal Strengths (S) and Weaknesses (W). These should be considered relative to the external context. For instance, a strong intellectual property portfolio (Strength) is key to capitalizing on a new market opportunity, while a lack of expertise in a new technological area like AI (Weakness) is a liability against a relevant Technological trend [35].

Step 5: Develop Strategic Actions and Allocate Resources. Use the completed SWOT matrix to formulate actionable strategies. The goal is to leverage Strengths to capitalize on Opportunities, use Strengths to mitigate Threats, fix Weaknesses that make you vulnerable to Threats, and address Weaknesses that prevent you from seizing Opportunities [38] [35]. This process directly informs where to allocate financial, human, and technical resources most effectively.
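The four strategy types in Step 5 amount to pairing each internal factor with each external factor. The sketch below enumerates those pairings as discussion prompts for the team; the SWOT entries reuse the examples from Steps 3 and 4, and the strategy labels are paraphrases rather than a fixed standard.

```python
from itertools import product

# Example entries drawn from the illustrations in Steps 3 and 4.
swot = {
    "S": ["Strong intellectual property portfolio"],
    "W": ["Lack of AI expertise"],
    "O": ["Favorable regulatory shift"],
    "T": ["New competitor's drug approval"],
}

# Internal/external pairings and the strategic question each one raises.
STRATEGY_TYPES = {
    ("S", "O"): "leverage strength to capture opportunity",
    ("S", "T"): "use strength to mitigate threat",
    ("W", "O"): "address weakness blocking opportunity",
    ("W", "T"): "fix weakness exposed to threat",
}


def candidate_strategies(swot):
    """List every (strategy type, internal factor, external factor) pairing."""
    rows = []
    for (internal, external), label in STRATEGY_TYPES.items():
        for i, e in product(swot[internal], swot[external]):
            rows.append((label, i, e))
    return rows


strategies = candidate_strategies(swot)
for label, internal, external in strategies:
    print(f"{label}: {internal} x {external}")
```

The output is a checklist, not a decision: the team still has to judge which pairings justify shifting financial, human, or technical resources.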

Troubleshooting Guide for Experimental Research

This guide provides a systematic approach to diagnosing and resolving issues in experimental research, a critical skill for efficient resource utilization.

Troubleshooting Workflow

The following diagram outlines a logical, step-by-step protocol for troubleshooting failed experiments.

Troubleshooting Protocol

Step 1: Repeat the Experiment. Unless prohibitively costly or time-consuming, repeat the experiment exactly. This controls for simple human error, such as pipetting mistakes or miscalculations [39].

Step 2: Consider Experimental Validity. Re-examine the scientific hypothesis and literature. Is there another plausible biological or chemical reason for the unexpected result? A failed experiment could, in fact, be a valid but unexpected discovery [40] [39].

Step 3: Verify Controls. Ensure appropriate controls were used and performed as expected. A positive control validates that the experimental system works, while a negative control helps identify background signal or contamination. If controls also fail, the issue is likely with the protocol or reagents [39].

Step 4: Check Equipment and Materials

- Reagents: Verify storage conditions (temperature, light sensitivity) and expiration dates. Visually inspect solutions for precipitates or cloudiness. Check for known issues with specific reagent batches [39].

- Equipment: Confirm proper calibration and functionality of all instruments (e.g., centrifuges, microscopes, plate readers) [40].

Step 5: Systematically Change Variables. If the problem persists, begin testing potential root causes. Generate a list of variables (e.g., concentration, incubation time, temperature, pH) and test them one at a time [39]. This isolation is critical for identifying the true source of error. Prioritize testing variables that are most likely to be the problem or are easiest to change [39].

Step 6: Document the Process. Meticulously document every step, change, and outcome in a lab notebook. This creates a record for future troubleshooting and ensures the problem can be permanently resolved [39].
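The one-variable-at-a-time approach in Step 5 can be planned in advance by generating a run list in which each run differs from the baseline in exactly one setting. The variable names, baseline values, and alternatives below are hypothetical examples.

```python
# Hypothetical baseline protocol settings.
baseline = {"concentration_uM": 10, "incubation_min": 30, "temperature_C": 37}

# Alternative values to try, listed in priority order
# (most likely culprit or easiest change first).
alternatives = {
    "concentration_uM": [5, 20],
    "incubation_min": [60],
    "temperature_C": [25],
}


def one_at_a_time(baseline, alternatives):
    """Build runs that each change a single variable relative to the baseline."""
    runs = []
    for variable, values in alternatives.items():
        for value in values:
            run = dict(baseline)  # copy so the baseline stays untouched
            run[variable] = value
            runs.append((variable, run))
    return runs


plan = one_at_a_time(baseline, alternatives)
for variable, run in plan:
    print(f"change {variable}: {run}")
```

Saving this plan alongside the results also supports Step 6, since it records exactly which setting was altered in each run.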

Research Reagent Solutions

Table: Essential Materials for Common Experimental Scenarios

| Item | Function | Example Application |

|---|---|---|

| Primary Antibody | Binds specifically to the protein of interest for detection. | Immunohistochemistry, Western Blot [39]. |

| Secondary Antibody | Conjugated to a marker; binds to the primary antibody for signal amplification. | Fluorescent imaging (e.g., Alexa Fluor conjugates) [39]. |

| Positive Control | A known sample that should produce a positive result; validates the experimental system. | Confirming assay functionality when test samples fail [39]. |

| Negative Control | A known sample that should not produce a signal; identifies background noise. | Detecting non-specific binding or contamination [39]. |

| Cell Viability Assay | Measures the health and proliferation of cells in culture. | Assessing cytotoxicity of new drug compounds (e.g., MTT Assay) [40]. |

Troubleshooting Guide: Power Automate for Research Monitoring

This guide addresses common issues researchers face when using workflow automation tools like Power Automate to set up real-time monitoring systems for scientific literature and news.

Flow Trigger Issues

Problem: My monitoring flow doesn't trigger

- Data Loss Prevention (DLP) Policies: Check if your flow violates organizational DLP policies, which can automatically suspend flows. Edit and save the flow; the flow checker will report any DLP violations [41].

- Connection Verification: Broken authentication is a common cause. Verify your connections via More > Connections in Power Automate and reauthenticate if the status shows an error [42] [41].

- Trigger Conditions: Custom trigger conditions might prevent execution. In the flow editor, check the Trigger Conditions in the trigger's Settings tab to ensure your data meets the defined criteria [41].

- Administrative Mode: If admin mode is enabled for your environment, all background processes (including flows) are disabled. An environment administrator must disable admin mode via the Power Platform Admin Center [41].

Problem: Flow triggers for old events when re-enabled

The behavior depends on your trigger type, as summarized in the table below [43] [41]:

| Trigger Type | Behavior When Flow Is Reactivated |
|---|---|
| Polling (e.g., Recurrence) | Processes all unprocessed/pending events that occurred while the flow was off. |
| Webhook | Processes only new events generated after the flow is turned back on. |

To avoid processing old items with a polling trigger, delete and recreate the flow instead of simply turning it off and on [41].
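The difference between the two trigger types can be illustrated with a toy Python model. This is a conceptual sketch only and does not reflect how Power Automate is implemented internally: the classes `PollingTrigger` and `WebhookTrigger` and the `publish` helper are hypothetical names invented for this example.

```python
class PollingTrigger:
    """Keeps a cursor into the event log; each poll returns everything after it."""

    def __init__(self):
        self.cursor = 0

    def poll(self, event_log):
        pending = event_log[self.cursor:]
        self.cursor = len(event_log)  # remember how far we have processed
        return pending


class WebhookTrigger:
    """Receives pushed events only while enabled; events sent while off are lost."""

    def __init__(self):
        self.enabled = True
        self.received = []

    def push(self, event):
        if self.enabled:
            self.received.append(event)


event_log = []
polling = PollingTrigger()
webhook = WebhookTrigger()


def publish(event):
    """Record the event at the source and push it to the webhook subscriber."""
    event_log.append(event)
    webhook.push(event)


publish("e1")
first = polling.poll(event_log)  # flow is on: picks up "e1"

webhook.enabled = False  # flow turned off; the polling flow simply stops polling
publish("e2")
publish("e3")

webhook.enabled = True  # flow turned back on
publish("e4")
backlog = polling.poll(event_log)

print(backlog)           # events seen by the polling trigger after reactivation
print(webhook.received)  # events seen by the webhook trigger overall
```

In this model the polling trigger catches up on `e2` and `e3` because its cursor survived the pause, whereas the webhook trigger never sees them. Recreating the flow corresponds to starting with a fresh cursor at the end of the log.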

Flow Execution and Performance Issues

Problem: Flow runs multiple times or creates duplicates

This can result from the "at-least-once" delivery design of cloud services. Design your flows to be idempotent to handle duplicate executions [41].

- Solution: Implement checks before creating items, such as verifying a SharePoint document doesn't already exist or using key constraints in Dataverse to prevent duplicate records [41].
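The check-before-create pattern can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the in-memory `AlertStore` class stands in for a real store such as a SharePoint library or a Dataverse table with a key constraint, and the names `dedup_key` and `create_if_absent` as well as the sample events are hypothetical.

```python
import hashlib


def dedup_key(source: str, item_id: str) -> str:
    """Derive a stable key from the fields that identify an item uniquely."""
    return hashlib.sha256(f"{source}|{item_id}".encode("utf-8")).hexdigest()


class AlertStore:
    """In-memory stand-in for a store that enforces a unique key."""

    def __init__(self):
        self._items = {}

    def create_if_absent(self, source: str, item_id: str, title: str) -> bool:
        """Create the item only if its key is new; return True when created."""
        key = dedup_key(source, item_id)
        if key in self._items:
            return False  # duplicate delivery: do nothing
        self._items[key] = title
        return True

    def __len__(self):
        return len(self._items)


store = AlertStore()
events = [
    ("pubmed", "12345", "New kinase inhibitor study"),
    ("pubmed", "12345", "New kinase inhibitor study"),  # delivered twice
    ("fda-news", "a-77", "Guidance update"),
]
created = [store.create_if_absent(*event) for event in events]
print(created, len(store))
```

Running the same event through `create_if_absent` any number of times leaves the store in the same state, which is the property that makes the flow safe under at-least-once delivery.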

Problem: Flow trigger is delayed

Polling triggers check for new data at set intervals. Delays can be caused by:

- License Plan: Flows on a Free plan may only run every 15 minutes, while paid plans (e.g., Flow for Office 365) run approximately every 5 minutes [41].

- Throttling: High frequency of calls to a connector can result in throttling. If a manual test triggers immediately, your flow is likely being throttled. Redesign the flow to use fewer actions if throttling is frequent [41].

Authentication Failures

Problem: Error codes 401 (Unauthorized) or 403 (Forbidden)

- Solution: In the failed run history, open the step showing the error. In the right pane, select View Connections and use the Fix connection link to update your credentials [42]. Passwords or authentication tokens can expire due to organizational policies [41].

Frequently Asked Questions (FAQs)

General

What is Power Automate and who is it for?

Power Automate is a cloud-based service for building automated workflows between applications and services. It serves two primary audiences: line-of-business users ("Citizen Integrators") and IT professionals who can empower business users to create their own solutions [43].

Which email addresses are supported?

As of November 2025, Power Automate supports only work or school email addresses; support for personal email accounts (e.g., Gmail, Outlook.com) ended after July 27, 2025 [43].

Can I connect to on-premises data sources or custom APIs?

Yes. You can connect to on-premises data sources (like SQL Server) using the on-premises data gateway. For custom REST APIs, you can create a custom connector [43].

Functionality for Research

How can I ensure my corporate or research data is protected?

Administrators can create Data Loss Prevention (DLP) policies that control which connectors can be used together, preventing data from being accidentally shared with unsanctioned services [43] [41].

Is there a way to troubleshoot flows more efficiently?

Yes. Use the Troubleshoot in Copilot feature, which provides a human-readable summary of errors and suggested solutions. You can also customize the run history view to display specific trigger outputs, making it faster to identify problematic runs [42].

Experimental Protocols for Automated Research Monitoring

Protocol 1: AI-Powered Environmental Scanning with Custom Assistants

This methodology enables automated processing of diverse data sources for foresight intelligence [44].

- Objective Setup: Define scanning parameters, including keywords, domains, and content types (news, patents, scientific publications).

- Information Retrieval: A custom AI assistant (e.g., one built on a large language model such as ChatGPT) gathers data from the specified sources.

- Analysis and Summarization: The assistant uses natural language processing (NLP) to analyze text, extract key insights, and condense lengthy documents.

- Trend Identification: The system analyzes data patterns over time to identify emerging trends and shifts.

- Centralization: Insights are fed into a centralized system (e.g., an Innovation OS) for organization, collaboration, and strategic decision-making [44].
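The steps above can be sketched as a small Python pipeline. This is a simplified illustration, not the method from [44]: plain substring matching stands in for the NLP analysis step, a hard-coded list stands in for information retrieval, and every name (`ScanConfig`, `Document`, `scan`) and every sample document is made up for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    month: str         # publication month, e.g. "2025-01"
    content_type: str  # "news", "patent", or "publication"
    text: str


@dataclass(frozen=True)
class ScanConfig:
    keywords: tuple[str, ...]       # objective setup: what to look for
    content_types: tuple[str, ...]  # objective setup: where to look


def matched_keywords(doc: Document, config: ScanConfig) -> list[str]:
    """Case-insensitive substring match; a stand-in for real NLP analysis."""
    lowered = doc.text.lower()
    return [kw for kw in config.keywords if kw.lower() in lowered]


def scan(documents: list[Document], config: ScanConfig) -> dict[str, Counter]:
    """Count keyword mentions per month across the allowed content types."""
    counts: dict[str, Counter] = {}
    for doc in documents:
        if doc.content_type not in config.content_types:
            continue  # outside the configured scope
        for kw in matched_keywords(doc, config):
            counts.setdefault(kw, Counter())[doc.month] += 1
    return counts


config = ScanConfig(
    keywords=("GLP-1", "gene therapy"),
    content_types=("news", "publication"),
)
# Made-up sample documents standing in for retrieved sources.
documents = [
    Document("2025-01", "news", "GLP-1 agonists enter new obesity trials"),
    Document("2025-02", "publication", "Long-term GLP-1 outcomes in adults"),
    Document("2025-02", "news", "Gene therapy and GLP-1 pipelines expand"),
    Document("2025-02", "patent", "GLP-1 delivery device"),
]

trend_counts = scan(documents, config)
for keyword, by_month in trend_counts.items():
    print(keyword, dict(sorted(by_month.items())))
```

The resulting per-month counts are the kind of structured output that could then be pushed into a central system for trend review. In practice the matching step would be replaced by whatever summarization or classification the assistant provides.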

Protocol 2: Real-Time Monitoring with an Innovation OS

This protocol provides a systematic approach for tracking specific technological developments [44].

- Signal Sourcing: Configure the system to aggregate signals from news, patent data, and scientific publications.

- Advanced Filtering: Use filtering options (timeline, source exclusion, content type) to narrow down signals and identify a specific area of interest (e.g., '5G Private Networks').

- Automated Tracking: Enable automated monitoring to track selected developments over time.

- Alert Configuration: Set up email alerts for significant changes, such as sudden spikes or declines in activity related to a tracked trend or technology.

- Analysis and Reporting: Use automated scoring (e.g., "Speed of Change") and aggregation clusters with AI-generated summaries to quickly understand developments and report to stakeholders [44].
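The alerting step, flagging sudden spikes or declines in activity, can be approximated with a simple z-score check. The sketch below is one possible approach and is not the scoring method used by any particular Innovation OS: the function name `detect_change`, the threshold of 3 standard deviations, and the weekly counts are all assumptions made for illustration.

```python
from statistics import mean, stdev
from typing import Optional


def detect_change(
    history: list[float], latest: float, threshold: float = 3.0
) -> Optional[str]:
    """Return "spike" or "decline" if latest deviates strongly from history, else None."""
    if len(history) < 2:
        return None  # not enough data to estimate variability
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Perfectly flat history: any movement is a change.
        if latest == mu:
            return None
        return "spike" if latest > mu else "decline"
    z = (latest - mu) / sigma
    if z >= threshold:
        return "spike"
    if z <= -threshold:
        return "decline"
    return None


# Hypothetical weekly mention counts for a tracked technology.
weekly_mentions = [10, 12, 11, 9, 13, 10]

for latest in (30, 11, 2):
    print(latest, detect_change(weekly_mentions, latest))
```

A result other than `None` is the point at which an email alert would be sent. The threshold controls the trade-off between missed signals and alert fatigue and would need tuning on real activity data.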

Workflow and System Diagrams

[Diagram: Automated Environmental Scanning Workflow]

[Diagram: Self-Driving Lab for Materials Discovery]

Research Reagent Solutions for Automated Discovery

Key components and systems enabling modern, automated research workflows.

| Item / System | Function in Research Automation |
|---|---|
| A-Lab (Berkeley Lab) | An automated facility where AI proposes new compounds and robots prepare and test them, creating a tight loop for rapid materials discovery [45]. |
| Self-Driving Lab (NC State) | A robotic platform using dynamic flow experiments and machine learning to run continuous, real-time chemical experiments, accelerating discovery [46]. |
| CRESt Platform (MIT) | A copilot system that uses multimodal AI (text, images, data) and robotic equipment to plan and execute high-throughput materials science experiments [47]. |
| Liquid-Handling Robot | Automates the precise dispensing and mixing of liquid precursors for sample preparation, a key component in self-driving labs [47]. |
| On-Premises Data Gateway | A software service that allows cloud workflows (e.g., in Power Automate) to securely connect to and access data from on-premises systems [43]. |
| Custom Connector | Allows researchers to extend workflow automation tools to connect to their own or third-party REST APIs, enabling integration with specialized scientific databases [43]. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: What are the typical performance improvements we can expect from AI in clinical trial data analysis?

A review of the current state of AI in clinical research reports several quantitative performance benchmarks, summarized in the table below [48].

Table 1: AI Performance Benchmarks in Clinical Trials

| Metric Area | Reported Improvement | Key Finding |
|---|---|---|
| Patient Recruitment | Enrollment rates improved by 65% [48] | AI-powered tools significantly reduce recruitment delays, which affect 80% of traditional studies [48]. |
| Trial Outcome Prediction | 85% accuracy in forecasting trial outcomes [48] | Predictive analytics models enhance trial planning and resource allocation [48]. |
| Trial Timeline & Cost | Timelines accelerated by 30–50%; costs reduced by up to 40% [48] | AI integration addresses systemic inefficiencies across the clinical trial lifecycle [48]. |
| Adverse Event Detection | 90% sensitivity for detecting adverse events using digital biomarkers [48] | Enables continuous monitoring and improved patient safety [48]. |

Q2: Our AI model for predicting patient enrollment performs well on training data but generalizes poorly to new trial sites. What could be the issue?

This is a classic sign of data bias or overfitting. The model may have learned patterns specific to the demographics or operational characteristics of the initial trial sites used for training. To troubleshoot, follow this protocol [48] [49]:

- Data Diversity Audit: Analyze the demographic, geographic, and clinical characteristic distributions in your training data versus the new sites. Ensure your training set is representative.

- Feature Importance Review: Use SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to identify which features are driving the predictions. Look for over-reliance on site-specific administrative codes or local practices.

- Prospective Validation: Implement a phased rollout where the model's predictions are monitored and compared against actual enrollment in a pilot phase at the new sites before full deployment.
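The data diversity audit can start with a simple comparison of categorical distributions between the training sites and the new sites. The sketch below uses total variation distance as one possible shift measure; the choice of metric, the function names, and the sample data are assumptions for illustration and are not prescribed by [48] or [49].

```python
from collections import Counter


def proportions(values: list[str]) -> dict[str, float]:
    """Return the share of each category in a list of categorical values."""
    if not values:
        raise ValueError("values must not be empty")
    counts = Counter(values)
    total = len(values)
    return {category: count / total for category, count in counts.items()}


def total_variation_distance(train_values: list[str], new_values: list[str]) -> float:
    """Half the sum of absolute differences in category shares (0 = identical, 1 = disjoint)."""
    p = proportions(train_values)
    q = proportions(new_values)
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)


# Made-up example: patient setting at the training sites versus the new sites.
train_setting = ["urban"] * 80 + ["rural"] * 20
new_site_setting = ["urban"] * 30 + ["rural"] * 70

shift = total_variation_distance(train_setting, new_site_setting)
print(f"Distribution shift for 'setting': {shift:.2f}")
```

Running the same comparison per feature (age band, region, referral source, and so on) gives a ranked list of where the new sites differ most from the training data. A large shift on a feature that the explainability review also flags as influential is a strong candidate for the generalization failure.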

Q3: How can we efficiently monitor and analyze regulatory announcements from multiple global jurisdictions?

Manual tracking is inefficient. The recommended methodology involves using specialized AI-powered regulatory change management platforms [50]. The core protocol involves:

- Business Profiling: Define your organization's specific profile within the platform, including relevant jurisdictions (e.g., US, EU, China) and "Areas of Focus" (e.g., "drug discovery," "clinical trials") [50].

- Automated Alerting: Set up customized alerts using the platform's "Views" feature. These pre-populate searches based on your profile, automatically filtering for relevant agencies and topics [50].

- Impact Analysis with Auto-Labeling: Leverage automated labeling to tag incoming regulatory documents according to your company's internal taxonomy (e.g., "Impact: High," "Affects: Phase-3 Protocol"). This immediately flags documents of interest [50].
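The auto-labeling idea can be illustrated with a small rule-based tagger in Python. This is a simplified stand-in: commercial platforms may use trained classifiers rather than keyword rules, and the function `auto_label`, the taxonomy entries, and the sample announcement are hypothetical.

```python
def auto_label(text: str, taxonomy: dict[str, list[str]]) -> list[str]:
    """Return every taxonomy label whose trigger terms appear in the text."""
    lowered = text.lower()
    labels = []
    for label, terms in taxonomy.items():
        if any(term.lower() in lowered for term in terms):
            labels.append(label)
    return labels


# Hypothetical internal taxonomy: label -> trigger terms.
taxonomy = {
    "Affects: Phase-3 Protocol": ["phase 3", "phase iii"],
    "Impact: High": ["final rule", "mandatory"],
}

announcement = "Agency issues final rule on Phase III endpoint reporting."
labels = auto_label(announcement, taxonomy)
print(labels)
```

Keeping the taxonomy as data rather than code lets regulatory affairs staff adjust labels and trigger terms without touching the tagging logic.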

Q4: What are the primary regulatory and ethical challenges when implementing AI for clinical data analysis?

The main barriers are not solely technical. The most significant challenges include [48] [51]:

- Algorithmic Bias: Models may perpetuate or amplify existing biases in training data, leading to inequitable outcomes [48].

- Regulatory Uncertainty: The regulatory landscape for AI-based Software as a Medical Device (SaMD) is still evolving, creating uncertainty for developers [48].

- Explainability (XAI): The "black box" nature of some complex models makes it difficult for clinicians and regulators to understand and trust the AI's decisions [49].

- Data Privacy & Security: Handling sensitive patient data requires strict adherence to HIPAA, GDPR, and other regulations, which AI systems must be designed to comply with [52].

Experimental Protocols for Key Analyses

Protocol 1: Systematic Workflow for AI-Powered Pattern Recognition in Clinical Trial Data

This protocol provides a detailed methodology for leveraging machine learning to identify patterns in complex clinical trial datasets, from data preparation to model deployment and monitoring [48] [49] [52].

Table 2: Research Reagent Solutions for AI-Driven Clinical Data Analysis

| Item Category | Specific Examples & Functions |
|---|---|
| Data Sources | Electronic Health Records (EHRs), Clinical Trial Management Systems (CTMS), Patient-Reported Outcome (PRO) data, Genomic/Proteomic datasets, Wearable device metrics [52]. |
| AI/ML Models | Convolutional Neural Networks (CNNs): For image/data analysis [49]. Natural Language Processing (NLP): To extract insights from unstructured text like clinical notes [52]. Predictive Analytics Models: For forecasting trial outcomes or patient risks [48]. |
| Validation Frameworks | SHAP/LIME: For model explainability and interpreting predictions [49]. Cohort Separation Tools: To ensure training and validation sets are statistically separate. Multi-center Data: For external validation to test model generalizability [49]. |
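As an illustration of cohort separation combined with multi-center external validation, the Python sketch below holds out entire sites for validation and then checks that no patient appears in both sets. It is a minimal example under assumed conventions: the record fields `patient_id` and `site`, the function names, and the sample records are hypothetical.

```python
def split_by_site(records: list[dict], validation_sites: set[str]):
    """Hold out whole sites so validation data comes from centers unseen in training."""
    train = [r for r in records if r["site"] not in validation_sites]
    validation = [r for r in records if r["site"] in validation_sites]
    return train, validation


def cohort_overlap(train: list[dict], validation: list[dict]) -> set[str]:
    """Return patient IDs that appear in both cohorts (should be empty)."""
    train_ids = {r["patient_id"] for r in train}
    validation_ids = {r["patient_id"] for r in validation}
    return train_ids & validation_ids


# Made-up records from three hypothetical trial sites.
records = [
    {"patient_id": "P1", "site": "A"},
    {"patient_id": "P2", "site": "A"},
    {"patient_id": "P3", "site": "B"},
    {"patient_id": "P4", "site": "C"},
]

train, validation = split_by_site(records, validation_sites={"C"})
leaked = cohort_overlap(train, validation)
print(len(train), len(validation), leaked)
```

Splitting by site rather than by random row reduces the chance that site-specific patterns leak into validation. The overlap check catches the remaining case where one patient is registered at more than one site.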