Strategic Coordination of Multidisciplinary Analysis Teams in Drug Development: A 2025 Framework for Enhanced Collaboration and Innovation


Abstract

This article provides a comprehensive framework for improving the coordination of multidisciplinary analysis teams in pharmaceutical research and drug development. It addresses the critical challenges of integrating diverse scientific disciplines—from medicinal chemistry and structural biology to clinical research and AI informatics—by exploring foundational team science principles, practical methodological applications, advanced troubleshooting strategies, and validation techniques. Tailored for researchers, scientists, and drug development professionals, the content synthesizes the latest research on team dynamics, digital tools, and collaborative models to enhance productivity, foster innovation, and accelerate the translation of research into marketable therapies. The guidance is designed to help teams navigate the complexities of modern, data-intensive drug discovery pipelines.

The Science of Team Science: Laying the Groundwork for Effective Multidisciplinary Collaboration

Understanding Multidisciplinary Team Dynamics in Drug Discovery

Frequently Asked Questions (FAQs)

General Team Dynamics

What are the most critical factors for effective multidisciplinary team coordination? Effective coordination relies on a balance between formal organizational structures and informal coordination practices [1]. Formal structures set the boundary conditions, while within these boundaries, self-managed sub-teams use informal practices like cross-disciplinary anticipation, workflow synchronization, and triangulation of findings to overcome knowledge boundaries [1].

How can teams overcome communication barriers between different scientific disciplines? Teams should use more precise language to avoid misunderstandings from domain-specific terminology [2]. Practicing active listening and contribution, as outlined in resources like the "Seven Norms of Collaboration," can be highly effective [3]. Encourage members to learn the "big picture" context of each other's work to foster mutual understanding [2].

What is the role of team leadership in fostering collaboration? Leaders must provide the right balance of formal and informal structures [1]. They should encourage teams to flexibly change their composition over time as scientific questions evolve and ensure an environment with sufficient resources to enable this flexibility [1]. Leadership also involves fostering trust and psychological safety among team members [3].

Troubleshooting Common Team Challenges

What should we do when sub-teams become stuck or face deadlocks? Actively seek the opinion of "team outsiders"—specialists not currently part of the sub-team [1]. Contributions from outsiders challenge sub-team members to rethink their processes and can foreground unexplored questions, often leading to productive restructuring and onboarding of new specialists to resolve deadlocks [1].

How can we manage different pacing and workflow priorities across disciplines? Pay explicit attention to the synchronization of workflows [1]. Specialists need to openly discuss temporal interdependencies and plan resources so that cross-disciplinary inputs and outputs are aligned. For example, pharmacologists needing several weeks to grow disease models must coordinate timelines with chemists who need to have compounds ready for testing [1].
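One way to make temporal interdependencies explicit is to plan backward from the date on which all inputs must be ready. The sketch below is illustrative only: the lead times, the target date, and the `latestStart` helper are hypothetical and are not taken from [1].

```javascript
// Hypothetical lead times, in days, before material is ready for a joint experiment.
const leadTimesDays = {
  pharmacology: 28, // e.g., growing a disease model
  chemistry: 10,    // e.g., preparing a compound batch
};

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Latest start date (YYYY-MM-DD, UTC) that still meets the shared target date.
function latestStart(targetDate, leadDays) {
  const targetMs = Date.parse(`${targetDate}T00:00:00Z`);
  return new Date(targetMs - leadDays * MS_PER_DAY).toISOString().slice(0, 10);
}

// Hypothetical date on which both inputs must be ready.
const testDate = "2025-06-30";
const startPlan = Object.fromEntries(
  Object.entries(leadTimesDays).map(([team, days]) => [team, latestStart(testDate, days)])
);

console.log(startPlan);
```

With these assumed numbers, pharmacology has to start 18 days before chemistry. Making that kind of offset visible is the point of a synchronization discussion.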

How do we handle conflicting data or assumptions between disciplines? Implement a practice of triangulating assumptions and findings across disciplines [1]. Scrutinize findings and assumptions by going back and forth across domains to ensure output constitutes useful input for others. This involves aligning experimental conditions and parameters and being sensitive to misunderstandings arising from domain-specific criteria [1].

Troubleshooting Guides

Problem: Siloed Thinking and Lack of Cohesion

| Observed Symptom | Recommended Action | Expected Outcome |

|---|---|---|

| Scientists prioritize domain-specific excellence over project goals [1]. | Facilitate big-picture context sessions where each discipline explains their role and dependencies [2]. | Team members understand project goals, leading to compromise for the common good [1]. |

| Miscommunication due to disciplinary jargon [2]. | Create a shared glossary of terms and encourage the use of precise language [2]. | Reduced misunderstandings and clearer communication [2]. |

| Lack of personal connection between team members. | Use structured team-building activities and personality assessments (e.g., 16 Personalities, CliftonStrengths) [3]. | Improved trust, psychological safety, and team cohesion [3]. |

Problem: Inefficient and Desynchronized Workflows

| Observed Symptom | Recommended Action | Expected Outcome |

|---|---|---|

| Experiments are delayed due to unready inputs from other disciplines [1]. | Implement formal workflow synchronization meetings to map out and align temporal interdependencies [1]. | Smoother workflow integration, fewer delays, and optimal resource use [1]. |

| Difficulty integrating data from different domains [1]. | Establish joint data review sessions focused on triangulation to align experimental findings and assumptions [1]. | More reliable, cross-validated data and stronger project conclusions [1]. |

| Team is resistant to changing its composition despite new challenges. | Empower teams to self-organize and formally restructure sub-teams around emerging scientific questions [1]. | An agile team that can dynamically adapt to new challenges and incorporate needed expertise [1]. |

Experimental Protocols for Team Coordination

Protocol 1: Cross-Disciplinary Anticipation Workshop

Objective: To prevent cross-domain inconsistencies by having specialists anticipate the requirements, procedures, and potential challenges of other domains.

Methodology:

- Preparation: Schedule a 2-hour workshop with representatives from all core disciplines on the team.

- Brainstorming: For a key upcoming project milestone, each discipline outlines their planned activities and lists their specific requirements from other teams (e.g., "As chemists, we need the biology team to provide the assay results in X format by this date").

- Anticipation Round: Each group then presents what they believe other disciplines will require from them. This is discussed in a plenary session.

- Gap Analysis: The facilitator leads a discussion to identify mismatches between the requirements each discipline stated in the brainstorming step and the requirements the other disciplines anticipated.

- Action Plan: Develop a concrete action plan to address identified gaps, assigning owners and deadlines.
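The gap analysis step can be captured as a simple comparison of two lists. The following is a minimal sketch, assuming the workshop notes have been reduced to short requirement labels. The labels and the `difference` helper are hypothetical.

```javascript
// Hypothetical workshop notes: what chemistry says it needs from biology,
// and what biology anticipated chemistry would need.
const statedNeeds = new Set(["assay results as CSV", "IC50 values", "cell line identity"]);
const anticipatedNeeds = new Set(["assay results as CSV", "raw plate images"]);

// Items present in `a` but missing from `b`, in insertion order.
function difference(a, b) {
  return [...a].filter((item) => !b.has(item));
}

const gaps = {
  // Requested by chemistry but not anticipated by biology.
  unanticipated: difference(statedNeeds, anticipatedNeeds),
  // Anticipated by biology but never requested by chemistry.
  unrequested: difference(anticipatedNeeds, statedNeeds),
};

console.log(gaps);
```

Each entry in `gaps` is a candidate item for the action plan, with an owner and a deadline.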

Protocol 2: Data Triangulation and Alignment Session

Objective: To establish the reliability of knowledge across different knowledge domains by aligning experimental parameters and scrutinizing findings.

Methodology:

- Pre-Session Data Sharing: Circulate relevant data sets (e.g., in vivo data from immunology, in vitro data from biochemistry) among sub-teams at least 48 hours in advance [1].

- Structured Meeting: Conduct a 90-minute session with a clear agenda:

  - Presentation: Each domain briefly presents their key findings and the experimental conditions used.

  - Cross-Examination: Teams from different domains ask clarifying questions, focusing on understanding how experimental conditions (e.g., buffer composition, cell lines, animal models) might influence the results.

  - Alignment: The group discusses discrepancies and works to align on a set of core, cross-disciplinary findings. Unexplained discrepancies are flagged for follow-up experiments.

- Documentation: A summary of the discussion, aligned findings, and action items is shared with the entire project team.

| Resource Category | Specific Tool / Resource | Function / Purpose |

|---|---|---|

| Team Science Frameworks | Collaboration & Team Science: A Field Guide [3] | Provides best practices, tips, and tools for working effectively in a research team, covering leadership, trust, and conflict. |

| Team Science Frameworks | "Seven Norms of Collaboration" [3] | Offers practical tips for effective listening and contributing in meetings and collaborative environments. |

| Formal Agreement Templates | Collaboration Agreement Template [3] | Helps teams explicitly define how they will collaborate, preemptively addressing potential conflicts over authorship, data sharing, and roles. |

| Personality & Style Assessments | 16 Personalities / Myers-Briggs [3] | Builds self-awareness and team understanding of different working and communication styles. |

| Personality & Style Assessments | CliftonStrengths [3] | Provides a shared language for articulating individual strengths and contribution styles. |

| Project Management Tools | Drug Discovery Guide (e.g., MSIP Excel Template) [4] | A flexible template to track and plan key experiments, de-risking a drug candidate by ensuring critical data is collected. |

| External Expertise | Contract Research Organizations (CROs) [4] | Provide efficient, highly experienced support for specialized studies (e.g., pharmacokinetics, toxicology), supplementing internal team capabilities. |

Multidisciplinary Team Coordination Workflow

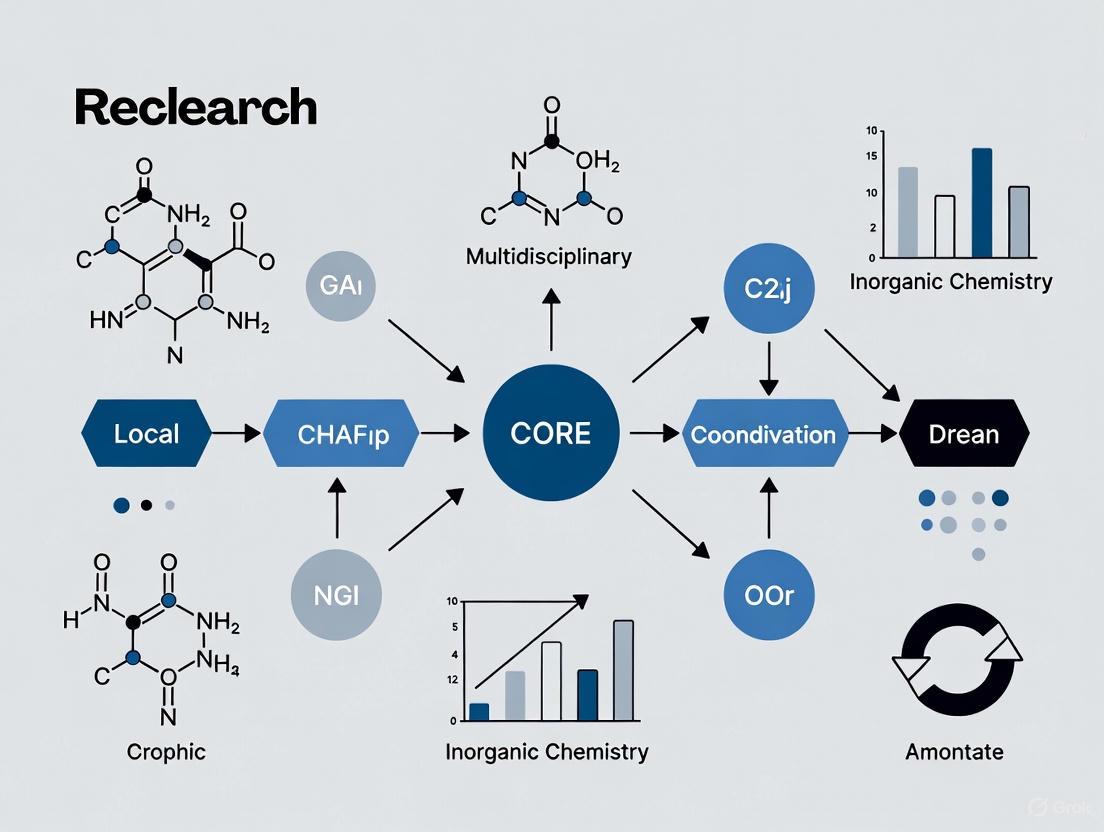

The diagram below illustrates the dynamic interplay between formal structures and informal practices that underpin successful multidisciplinary teams in drug discovery.

The Critical Balance Between Formal and Informal Coordination Mechanisms

In the high-stakes field of multidisciplinary drug development and research analysis, effective coordination is not merely beneficial—it is essential for success. Coordination is defined as "the integration of the activities of individuals and units into a concerted effort that works towards a common aim" [5]. For researchers, scientists, and drug development professionals, this translates to seamlessly integrating diverse expertise—from basic research and preclinical studies to clinical trials and applied research—to accelerate innovation and improve outcomes.

The complex landscape of modern research, particularly in drug development, demands a sophisticated approach to coordination. Multidisciplinary teamwork in non-hospital settings has demonstrated significant benefits, including improved self-management, self-efficacy, and patient satisfaction for chronic conditions, though effects on clinical outcomes require further investigation [6]. Furthermore, advancements in biotechnology have ushered in a new era characterized by increased collaborative efforts among academic institutions, pharmaceutical firms, hospitals, and foundations [7]. These partnerships are essential for addressing the increasingly complex health needs of patients and accelerating the pace of scientific discovery.

This technical support center provides frameworks, diagnostics, and protocols to help research teams strike the critical balance between formal coordination—structured, process-driven mechanisms—and informal coordination—flexible, relationship-based approaches [5]. By understanding and implementing both types of coordination mechanisms, multidisciplinary teams can enhance their collaborative potential, navigate the complexities of modern research environments, and ultimately drive more successful outcomes in drug development and scientific innovation.

Core Concepts: Formal and Informal Coordination

Defining Formal Coordination Mechanisms

Formal coordination refers to the structured, predefined systems and processes established by an organization to integrate activities and ensure alignment with institutional goals [5] [8]. These mechanisms are characterized by their deliberate design, explicit documentation, and adherence to established protocols. In research environments, formal coordination creates the essential scaffolding that supports reproducible science, regulatory compliance, and accountable resource management.

Formal coordination mechanisms encompass several distinct types that are particularly relevant to multidisciplinary research settings:

- Vertical Coordination: Occurs between different hierarchical levels within an organization, such as communication between principal investigators and research associates, ensuring that tasks and activities align with overarching research strategies [5].

- Horizontal Coordination: Takes place between individuals or departments at the same organizational level, such as collaboration between bioinformatics and wet lab teams, requiring effective communication and resource sharing to accomplish common objectives [5].

- Cross-functional Coordination: Coordinates activities between different functional areas or departments, essential for projects requiring contributions from multiple specialties, such as drug development projects that require integrated efforts from discovery, development, and clinical research teams [5].

Defining Informal Coordination Mechanisms

Informal coordination operates through social networks, relationships, and spontaneous interactions that develop organically within research environments [5]. Unlike their formal counterparts, these mechanisms are not mandated by institutional policy but emerge naturally from daily interactions among team members. They represent the vital human element that complements structured processes, enabling adaptability, trust-building, and creative problem-solving.

The "grapevine"—as informal communication is often called—manifests in several distinct patterns within research organizations [9] [10]:

- Cluster Networks: In this common form, a person receives information and chooses to share it with their trusted network clusters. This selective sharing based on trust often characterizes how preliminary research findings or methodological insights circulate among specialist subgroups before formal dissemination [9].

- Single-Strand Chains: Information passes sequentially from one person to another in a linear fashion. This pattern might occur when specific technical details or procedural updates are shared among team members with sequential dependencies in their workflows [10].

- Probability Chains: Individuals randomly share information with others without a predetermined pattern. This approach can be valuable for serendipitous connections or cross-pollination of ideas across disparate research domains [9].

Table: Comparison of Formal and Informal Coordination Mechanisms

| Characteristic | Formal Coordination | Informal Coordination |

|---|---|---|

| Basis | Formal systems, processes, and structures [5] | Social networks and relationships [5] |

| Reliability | High, with documented trails [9] [10] | Variable, with no documentation [9] |

| Speed | Slower, due to structured processes [9] [10] | Fast, often instantaneous [9] [10] |

| Flexibility | Low, bound by established rules [11] | High, adaptable to changing needs [11] |

| Primary Benefit | Accountability and consistency [11] | Enhanced morale and creativity [11] |

| Primary Risk | Rigidity and slow communication [9] [11] | Potential for misinformation [9] [11] |

Troubleshooting Guide: Common Coordination Challenges

This section addresses frequently encountered coordination breakdowns in multidisciplinary research teams, providing diagnostic questions and evidence-based solutions.

FAQ 1: How can we maintain research quality and reproducibility while accelerating our pace?

Diagnostic Questions:

- Are protocol deviations consistently documented and analyzed?

- Do team members bypass established processes to save time?

- Is there variability in experimental execution across team members?

Solution: Implement a balanced coordination framework with complementary formal and informal elements. Formal mechanisms ensure standardization, while informal channels facilitate quick problem-solving.

Formal Components:

- Establish detailed Standard Operating Procedures (SOPs) with version control

- Implement electronic lab notebooks with required data fields

- Create formal audit trails for critical reagent handling

Informal Components:

- Institute weekly "methods huddles" for rapid troubleshooting

- Create specialized communication channels (e.g., Slack groups) for technical issues

- Facilitate peer-to-peer observation and feedback sessions

FAQ 2: How do we overcome communication barriers between different scientific disciplines?

Diagnostic Questions:

- Do team members use discipline-specific jargon that others don't understand?

- Are there misunderstandings about methodological constraints across disciplines?

- Do team members from different specialties socialize or interact informally?

Solution: Create structured opportunities for informal interaction while establishing formal communication standards.

Formal Components:

- Develop a shared glossary of terms across disciplines

- Implement structured cross-training sessions with required attendance

- Establish formal templates for cross-disciplinary project updates

Informal Components:

- Facilitate informal "lunch and learn" sessions without formal agendas

- Create mixed-discipline teams for problem-solving exercises

- Establish interest-based groups (e.g., journal clubs) that span disciplines

FAQ 3: How can we better manage conflicts between research urgency and regulatory compliance?

Diagnostic Questions:

- Do researchers ever bypass approval processes to accelerate timelines?

- Is there tension between quality assurance staff and research staff?

- Are compliance requirements perceived as obstacles rather than safeguards?

Solution: Develop integrated workflows that embed compliance into research processes through both formal and informal mechanisms.

Formal Components:

- Establish clear, documented approval pathways with explicit turnaround times

- Create parallel processing systems for time-sensitive activities

- Implement formal compliance checkpoints integrated with research milestones

Informal Components:

- Facilitate regular informal meetings between compliance and research staff

- Establish mentorship pairings between experienced and junior researchers

- Create shared spaces that encourage spontaneous interactions across functions

Experimental Protocols: Investigating Coordination Effectiveness

Protocol: Measuring the Impact of Coordination Balance on Research Outcomes

Background: Systematic investigation requires validated methodologies to quantify how formal and informal coordination mechanisms affect research productivity, innovation, and team dynamics.

Objective: To evaluate the effects of different coordination approaches on multidisciplinary research team performance and identify optimal balances for various research contexts.

Materials:

- Multidisciplinary research teams (minimum 4 members from different disciplines)

- Project management and communication tracking software

- Team performance assessment surveys

- Output quality rating rubrics

- Data recording and analysis platform

Methodology:

- Baseline Assessment Phase (Weeks 1-2):

  - Document existing formal and informal coordination mechanisms

  - Map communication networks using survey tools

  - Establish baseline performance metrics

- Intervention Phase (Weeks 3-10):

  - Implement complementary formal and informal mechanisms

    - Formal: standardized reporting templates, regular progress reviews

    - Informal: structured social interactions, cross-disciplinary brainstorming

  - Track coordination activities and research progress

- Evaluation Phase (Week 11):

  - Measure outcome variables against baseline

  - Analyze relationship between coordination patterns and outcomes

  - Identify successful coordination balances for specific contexts

Table: Research Reagent Solutions for Coordination Experiments

| Item | Function | Application in Coordination Research |

|---|---|---|

| Communication Tracking Software | Records and analyzes team interactions | Quantifies formal and informal communication patterns [7] |

| Network Mapping Survey | Visualizes relationship structures | Identifies informal networks and communication pathways [7] |

| Team Performance Metrics | Assesses output quality and efficiency | Measures impact of coordination approaches on research outcomes [6] |

| Coordination Mechanism Inventory | Catalogs formal and informal processes | Provides baseline assessment of existing coordination approaches [5] |

Data Analysis and Interpretation Framework

Quantitative Measures:

- Communication frequency and patterns by mechanism type

- Research output quality scores

- Timeline adherence metrics

- Team satisfaction and psychological safety scores

Analytical Approach:

- Compare performance metrics across coordination conditions

- Conduct correlation analysis between coordination balance and outcomes

- Perform subgroup analysis by research phase and team composition
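For the correlation step, a Pearson coefficient is one common choice. The sketch below assumes each team contributes one pair of numbers, and the variable names and data values are hypothetical. It is not a prescribed analysis, and with only a handful of teams the coefficient should be read with caution.

```javascript
// Pearson correlation coefficient for two equal-length numeric arrays.
// Returns NaN if either array has zero variance.
function pearson(x, y) {
  if (x.length !== y.length || x.length < 2) {
    throw new Error("Inputs must have the same length (at least 2).");
  }
  const mean = (values) => values.reduce((sum, v) => sum + v, 0) / values.length;
  const meanX = mean(x);
  const meanY = mean(y);
  let sxy = 0;
  let sxx = 0;
  let syy = 0;
  for (let i = 0; i < x.length; i++) {
    const dx = x[i] - meanX;
    const dy = y[i] - meanY;
    sxy += dx * dy;
    sxx += dx * dx;
    syy += dy * dy;
  }
  return sxy / Math.sqrt(sxx * syy);
}

// Hypothetical per-team data: share of coordination events that were informal,
// and the team's output quality score from the rating rubric.
const informalShare = [0.2, 0.35, 0.5, 0.6, 0.8];
const qualityScore = [3.1, 3.4, 4.0, 3.9, 3.6];

console.log(pearson(informalShare, qualityScore).toFixed(2));
```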

Visualization: Coordination Mechanisms Workflow

[Figure: Coordination Mechanisms in Research Workflow. Formal and informal coordination mechanisms operate in parallel to support multidisciplinary research goals, with both pathways contributing to enhanced research outcomes.]

The critical balance between formal and informal coordination mechanisms is not a fixed formula but a dynamic equilibrium that must be continually assessed and adjusted based on research phase, team composition, and project requirements. Evidence suggests that both mechanistic (formal) and organic (informal) coordination approaches can positively impact project performance in open innovation R&D settings [8]. The most successful multidisciplinary research teams intentionally design and cultivate both types of coordination, recognizing their complementary strengths and compensating for their respective limitations.

For research teams seeking to optimize their coordination approaches, regular assessment of both formal structures and informal networks is essential. By applying the troubleshooting frameworks, experimental protocols, and visualization tools provided in this technical support center, teams can systematically enhance their coordination capabilities. The ultimate goal is creating research environments where formal mechanisms provide the necessary structure for rigor and reproducibility, while informal mechanisms foster the creativity, adaptability, and collaboration that drive scientific innovation forward.

Navigating Knowledge Boundaries and Thought Worlds Across Disciplines

Frequently Asked Questions (FAQs) for Multidisciplinary Analysis Teams

Q1: Why is the text on my data visualization axis labels difficult to read, and how can I fix it?

A1: Poor readability is often due to insufficient color contrast between the text and its background. This is not just a visual design issue but an accessibility one, as it can prevent team members with low vision from interpreting data correctly. The solution is to ensure your contrast ratio meets the Web Content Accessibility Guidelines (WCAG) Level AA thresholds. For most text, a minimum contrast ratio of 4.5:1 is required. For large text (at least 18.66px and bold, or at least 24px at any weight), a ratio of 3:1 is sufficient [12] [13] [14]. In charting libraries, set the text color explicitly, because the default may not provide enough contrast.
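The contrast ratio itself can be computed from two colors using the WCAG relative luminance definition. The sketch below assumes six-digit hex codes such as `#202124`. The function names are illustrative, and a dedicated checker remains the reference for compliance decisions.

```javascript
// Convert one 8-bit sRGB channel to its linear-light value.
// (Older WCAG text lists the threshold as 0.03928; for 8-bit values the result is the same.)
function linearize(channel8bit) {
  const c = channel8bit / 255;
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of a color given as "#RRGGBB".
function relativeLuminance(hex) {
  const n = parseInt(hex.replace("#", ""), 16);
  const r = (n >> 16) & 255;
  const g = (n >> 8) & 255;
  const b = n & 255;
  return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b);
}

// WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05), from 1 to 21.
function contrastRatio(hexA, hexB) {
  const la = relativeLuminance(hexA);
  const lb = relativeLuminance(hexB);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// Example: white axis labels on a dark grey chart background.
console.log(contrastRatio("#FFFFFF", "#202124").toFixed(1));
```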

Q2: How do I programmatically change axis text color in common charting libraries?

A2: The method depends on your library. Crucially, you often need to set the color within a textStyle object, not a general color property.

- Google Charts: Use the `textStyle` configuration within the axis object [15].

- D3.js: Use the `.style()` method on your text elements after they have been appended [16] [17].
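As a minimal sketch of both approaches, the block below builds a Google Charts options object and a small D3 helper. The color values come from this guide's palette. The chart, the `styleAxisText` helper name, and the `axisGroup` selection are assumptions for illustration.

```javascript
// Palette values from this guide; the chart they are applied to is hypothetical.
const LIGHT_TEXT = "#F1F3F4";
const DARK_BACKGROUND = "#202124";

// Google Charts: text color is set per element inside a `textStyle` object.
const googleChartOptions = {
  backgroundColor: DARK_BACKGROUND,
  hAxis: { textStyle: { color: LIGHT_TEXT } },
  vAxis: { textStyle: { color: LIGHT_TEXT } },
  legend: { textStyle: { color: LIGHT_TEXT } },
};
// In the browser, the object is passed at draw time, e.g. chart.draw(dataTable, googleChartOptions).

// D3.js: axis tick labels are SVG <text> elements, so their color is the `fill` style.
// `axisGroup` is assumed to be the <g> selection an axis was rendered into,
// e.g. svg.append("g").call(d3.axisBottom(xScale)).
function styleAxisText(axisGroup, color) {
  return axisGroup.selectAll("text").style("fill", color);
}
```

In a page that already loads D3, the helper would be called as `styleAxisText(axisGroup, LIGHT_TEXT)` after the axis has been drawn.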

Q3: My chart has a dark background. What are the best color choices for text and graphical elements?

A3: When using a dark background, choose light colors for foreground elements to achieve high contrast. For example, white (#FFFFFF) or light grey (#F1F3F4) text on a dark grey (#202124) background gives a contrast ratio of roughly 16:1 or 14:1, respectively, well above the 4.5:1 minimum for text. Graphical objects, such as chart lines or the borders of input fields, require a contrast ratio of at least 3:1 [18] [14]. Always use a contrast checker tool to validate your choices.

Q4: What constitutes "large text" for the different contrast requirements?

A4: "Large text" is defined by WCAG in two ways [13] [14]:

- Text that is at least 18.66 pixels (14pt) and bold.

- Text that is at least 24 pixels (18pt) in size, regardless of weight.
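These two rules translate directly into a small check. The sketch below uses the pixel thresholds stated above and the ratios from Table 1 of this guide. The function names are illustrative.

```javascript
// Large text per the two rules above: at least 24px, or at least 18.66px and bold.
function isLargeText(fontSizePx, isBold) {
  return fontSizePx >= 24 || (isBold && fontSizePx >= 18.66);
}

// Minimum text contrast ratio for a conformance level ("AA" by default, or "AAA").
function requiredTextContrast(fontSizePx, isBold, level = "AA") {
  const large = isLargeText(fontSizePx, isBold);
  if (level === "AAA") {
    return large ? 4.5 : 7;
  }
  return large ? 3 : 4.5;
}

// Example: a 12px regular-weight axis label needs the stricter ratio.
console.log(requiredTextContrast(12, false));
```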

Troubleshooting Guides

Guide 1: Resolving Low Color Contrast in Data Visualizations

Problem: Text or graphical elements in a chart have insufficient color contrast, making them hard to read and potentially excluding team members.

Investigation & Diagnosis:

- Identify Low-Contrast Elements: Visually scan charts for faded or hard-to-read text (axis labels, legends, data labels) and graphical elements (chart lines, data points).

- Measure Contrast Ratio: Use an online contrast checker (e.g., WebAIM's Contrast Checker [14]). Input the foreground (text) and background colors to get a numerical ratio.

- Compare Against Standards: Check if the ratio meets the required threshold.

Solution & Protocol:

- Adjust Colors: Choose a new foreground or background color from the approved palette that provides a higher contrast ratio.

- Implement in Code: Update your chart's configuration using the correct syntax for your library (see FAQ A2).

- Re-test: Always re-check the final implementation with a contrast checker to ensure compliance.

Guide 2: Implementing a Consistent and Accessible Color Palette

Problem: Inconsistent color usage across visualizations from different team members causes confusion and slows down analysis.

Investigation & Diagnosis: Audit existing charts and tools for color usage. Look for non-compliant contrast and inconsistent meaning (e.g., "red" means "high" in one chart and "error" in another).

Solution & Protocol:

- Define a Team Palette: Standardize a limited set of colors to be used by all team members. The provided palette (`#4285F4`, `#EA4335`, `#FBBC05`, `#34A853`, `#FFFFFF`, `#F1F3F4`, `#202124`, `#5F6368`) is designed for good contrast and distinctness.

- Document Color Meaning: Create a shared document that defines the semantic meaning of each color (e.g., `#34A853` for "go/success", `#EA4335` for "stop/error").

- Provide Implementation Examples: Share code snippets showing how to apply the palette in common libraries like Google Charts or D3.js.
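One way to share the palette and its meanings is a single module that maps semantic roles to hex codes, so chart code never hard-codes a color. This is a sketch under assumptions: the hex codes are the palette above, while the role names and the `colorFor` helper are hypothetical.

```javascript
// Hex codes come from the team palette in this guide. The role names are
// illustrative assumptions; adapt them to the team's own color documentation.
const TEAM_PALETTE = Object.freeze({
  primary: "#4285F4",
  error: "#EA4335",
  warning: "#FBBC05",
  success: "#34A853",
  textOnDark: "#FFFFFF",
  secondaryTextOnDark: "#F1F3F4",
  darkBackground: "#202124",
  border: "#5F6368",
});

// Look up a color by semantic role and fail loudly on unknown roles,
// so a typo cannot silently introduce an off-palette color.
function colorFor(role) {
  if (!Object.prototype.hasOwnProperty.call(TEAM_PALETTE, role)) {
    throw new Error(`Unknown palette role: ${role}`);
  }
  return TEAM_PALETTE[role];
}

console.log(colorFor("success"));
```

Because charts refer to roles such as `error` rather than to a hex code, a later palette change only has to be made in one place.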

Quantitative Data Tables

Table 1: WCAG 2.2 Color Contrast Requirements (Levels AA and AAA)

| Element Type | Text Size / Context | Minimum Contrast Ratio | WCAG Level |

|---|---|---|---|

| Normal Text | Less than 18.66px and not bold | 4.5:1 | AA |

| Large Text | 18.66px and bold, or 24px and larger | 3:1 | AA |

| Graphical Objects | User interface components (buttons, borders), chart elements | 3:1 | AA |

| Normal Text | Less than 18.66px and not bold | 7:1 | AAA |

| Large Text | 18.66px and bold, or 24px and larger | 4.5:1 | AAA |

Source: Compiled from WCAG understanding documents and WebAIM [18] [13] [14].

Table 2: Approved Color Palette with Contrast Examples

| Color Name | Hex Code | Example Use Case | Contrast with White | Contrast with Dark Grey |

|---|---|---|---|---|

| Blue | #4285F4 | Primary data series | 3.6:1 (Fails for text) | 4.5:1 (Passes for graphics) |

| Red | #EA4335 | Error states, negative trends | 3.9:1 (Fails for text) | 4.1:1 (Passes for graphics) |

| Yellow | #FBBC05 | Warnings, highlights | 1.7:1 (Fails) | 9.4:1 (Passes for text) |

| Green | #34A853 | Success states, positive trends | 3.1:1 (Fails for text) | 5.3:1 (Passes for graphics) |

| White | #FFFFFF | Text on dark backgrounds | N/A | 16.1:1 (Passes) |

| Light Grey | #F1F3F4 | Secondary text, backgrounds | 1.1:1 (Fails) | 14.5:1 (Passes) |

| Dark Grey | #202124 | Primary text, dark backgrounds | 16.1:1 (Passes) | N/A |

| Medium Grey | #5F6368 | Borders, inactive elements | 6.0:1 (Passes) | 2.7:1 (Fails) |

Note: Contrast ratios are approximate. Always verify with a checker [14].

Experimental Protocols

Protocol 1: Validating Color Contrast in a Multidisciplinary Team Environment

Objective: To establish a standardized, repeatable method for verifying that all data visualizations shared within the team meet minimum accessibility contrast standards.

Methodology:

- Tool Selection: Designate a specific contrast checking tool (e.g., WebAIM's Contrast Checker [14]) as the team standard.

- Sampling: For each new visualization, sample at least three critical text elements (e.g., main title, one axis label, one data label) and one graphical element (e.g., a key data line).

- Measurement:

  - Use the tool's eyedropper function or manually input Hex codes for foreground and background colors.

  - Record the computed contrast ratio for each sampled element.

- Validation: Check that each recorded ratio meets or exceeds the requirements outlined in Table 1. Document any failures.
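The validation step can be recorded in a structured form so that failures are easy to list. The sketch below assumes the ratios have already been measured with the team's contrast checker. The sample elements and values are hypothetical, and the thresholds are the Level AA rows of Table 1.

```javascript
// Hypothetical measurements recorded for one chart.
const samples = [
  { element: "main title", kind: "large-text", ratio: 16.1 },
  { element: "x-axis label", kind: "text", ratio: 3.6 },
  { element: "data label", kind: "text", ratio: 6.0 },
  { element: "key data line", kind: "graphic", ratio: 4.5 },
];

// Level AA minimums from Table 1.
const AA_MINIMUM = { text: 4.5, "large-text": 3, graphic: 3 };

// Annotate each sample with its required ratio and a pass/fail flag.
function validateSamples(records) {
  return records.map((record) => {
    const required = AA_MINIMUM[record.kind];
    if (required === undefined) {
      throw new Error(`Unknown element kind: ${record.kind}`);
    }
    return { ...record, required, pass: record.ratio >= required };
  });
}

const failures = validateSamples(samples).filter((result) => !result.pass);
console.log(failures);
```

With these assumed measurements, only the x-axis label falls below its threshold and would be documented as a failure.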

Required Reagents & Solutions:

- Software: Web browser with access to a designated contrast checker.

- Data: The visualization to be tested (e.g., a PNG image, or a live web page).

- Documentation: A shared lab notebook or digital file to record validation results.

Workflow and Relationship Visualizations

[Figure: Multidisciplinary Analysis Workflow]

[Figure: Visualization Accessibility Check]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Materials for Accessible Visualizations

| Item Name | Function / Application |

|---|---|

| WebAIM Contrast Checker | An online tool to calculate the contrast ratio between two hex colors and immediately determine WCAG compliance [14]. |

| Approved Color Palette | A pre-defined set of hex codes (e.g., #4285F4, #EA4335) that ensures visual consistency and accessibility across all team visualizations. |

| Google Charts Library | A widely used, well-documented JavaScript library for creating interactive charts, with configuration options for accessibility features like text color [19]. |

| D3.js Library | A powerful JavaScript library for producing custom, dynamic data visualizations, offering low-level control over styling and appearance [17]. |

| WCAG 2.2 Guidelines | The definitive international standard for web accessibility, providing the technical requirements for contrast that form the basis of this protocol [12] [18] [13]. |

Technical Support Center: Troubleshooting Common Team Coordination Issues

This support center provides practical solutions for researchers, scientists, and drug development professionals facing common coordination challenges in multidisciplinary R&D teams.

Troubleshooting Guides

Issue: Delays in Shared Data Analysis

- Problem Description: Multiple teams or institutions are struggling to analyze shared datasets due to inconsistent procedures, communication gaps, and incompatible data formats, leading to significant project delays.

- Diagnosis Steps:

- Identify Inconsistencies: Audit data handling and analysis procedures across all participating teams to identify points of divergence.

- Map Communication Channels: Diagram formal and informal communication pathways to locate bottlenecks or missing links.

- Assess Tool Compatibility: Inventory software, versions, and computational environments used by all collaborators.

- Resolution Actions:

- Establish a Common Framework: Develop and document a minimal set of standardized operating procedures (SOPs) for data management.

- Leverage Common Resources: Implement a shared project intranet or database with a unified interface to reduce communication and data transfer costs [20].

- Create a Communication Protocol: Schedule brief, regular sync meetings with a clear agenda to share updates and align on next steps [21].
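The tool-compatibility step in the diagnosis above can be partly automated. The following is a minimal sketch that assumes each team reports its software inventory as a simple mapping of tool name to version string. The team names, tools, and versions are hypothetical.

```python
from collections import defaultdict


def find_version_mismatches(inventory):
    """Return tools whose reported versions differ between teams.

    `inventory` maps team name -> {tool name -> version string}.
    The result maps each mismatched tool -> {team name -> version string}.
    """
    versions_by_tool = defaultdict(dict)
    for team, tools in inventory.items():
        for tool, version in tools.items():
            versions_by_tool[tool][team] = version
    return {
        tool: teams
        for tool, teams in versions_by_tool.items()
        if len(set(teams.values())) > 1
    }


# Hypothetical inventory gathered from three collaborating groups.
inventory = {
    "chemistry": {"R": "4.3.1", "python": "3.11"},
    "clinical": {"R": "4.1.0", "python": "3.11"},
    "informatics": {"python": "3.11"},
}

for tool, teams in find_version_mismatches(inventory).items():
    print(f"Version mismatch for {tool}: {teams}")
```

A report like this only flags divergent versions. Deciding which version becomes the standard remains a team decision that belongs in the SOPs.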

Issue: Breakdown in Interdisciplinary Communication

- Problem Description: Team members from different scientific domains (e.g., basic science, clinical research, data science) cannot communicate effectively, leading to misunderstandings and duplicated effort [22].

- Diagnosis Steps:

- Define Terminology: Have each discipline list their key terms and definitions to identify jargon and concepts that may be misunderstood.

- Gather Feedback: Use anonymous surveys or interviews to assess team members' satisfaction with collaboration and identify specific communication pain points [22].

- Resolution Actions:

- Develop a Shared Glossary: Create a living document of project-specific terms and definitions that is accessible to all team members.

- Facilitate Cross-Functional Understanding: Organize micro-learning sessions or job shadowing opportunities to build empathy and understanding across disciplines [21].

- Position Yourself as an Advocate: Empathize with team members and assure them that everyone is working toward a common goal [23].

Frequently Asked Questions (FAQs)

Q: Our multi-university collaboration feels inefficient. Is this normal? A: Yes, this is a documented challenge. Research shows that collaborations involving multiple universities impose significantly higher coordination costs than single-institution projects. These stem from institutional differences (e.g., pay scales, tenure requirements) and geographical distance, which can slow communication and consensus-building [20].

Q: What is the tangible impact of good team dynamics on research outcomes? A: Positive team dynamics are directly correlated with success. One study of multidisciplinary pilot awards found that the quality of team interactions was positively and significantly associated with the achievement of scholarly products like manuscripts and grant proposals (r = 0.64, p = 0.02) [22].

Q: How can we reduce the "coordination costs" in our team? A: Focus on developing shared knowledge. Teams that build a foundation of common understanding over time learn to communicate more effectively and save energy through a more efficient division of labor. This process decreases coordination costs and can lead to "super-efficiency," where the team's output becomes greater than the sum of individual contributions [24].

Q: What technological tools can help keep distributed R&D teams aligned? A: Utilize project management tools that offer real-time dashboards for visibility into progress and deadlines [21]. For time and expense tracking, integrated software solutions can provide insights into resource utilization, helping teams stay on budget and timeline [25].

Coordination Data and Impact

The following tables summarize quantitative findings on how team structure and dynamics influence research outcomes.

Table 1: Impact of Multiple Universities on Project Coordination and Outcomes [20]

| Variable | Single-University Projects | Multi-University Projects | Statistical Significance |

|---|---|---|---|

| Coordination Activities | More coordination activities reported | Fewer coordination activities reported | p < 0.01 |

| Project Outcomes | More project outcomes achieved | Fewer project outcomes achieved | p < 0.05 |

| Effect of Each Additional University | -- | 5.5% decrease in coordination; 3.3% decrease in outcomes | p < 0.01 |

Table 2: Association Between Team Dynamics and Scholarly Outputs [22]

| Team Dynamic Metric | Correlation with Achievement of Scholarly Products | Statistical Significance |

|---|---|---|

| Quality of Team Interactions | r = 0.64 | p = 0.02 |

| Team Collaboration Score | r = 0.43 | Not Significant (p-value not reported) |

| Satisfaction with Team Members | r = 0.38 | Not Significant (p-value not reported) |

Experimental Protocol: Assessing Team Coordination

This methodology details how to quantitatively and qualitatively assess coordination within a multidisciplinary research team.

1. Objective: To measure coordination activities, team dynamics, and their association with project outcomes in a research collaboration.

2. Background: Integrating diverse expertise requires creating a common language and managing task dependencies. Multi-university projects face higher coordination costs due to geographical and institutional barriers, complicating this integration [20].

3. Materials and Reagents:

- Survey Platform: Software such as Qualtrics for distributing and collecting survey responses [22].

- Communication Badges: Sociometric badges or similar tools to record meta-communication patterns (total silence, speaking, listening, overlap) during team meetings [24].

- Data Analysis Software: Statistical packages (e.g., R, SPSS) for analyzing survey, communication, and outcome data.

4. Procedure:

Step 1: Participant Recruitment

- Recruit all named members of the research team or project under study. Aim for a high response rate to ensure data reliability [22].

Step 2: Data Collection - Survey Administration

- Distribute a validated survey to all team members. Key metrics to collect include [22]:

- Satisfaction with Team Members: Rate satisfaction with each collaborator on a 5-point scale.

- Assessment of Team Collaboration: Use an 8-item scale to assess interpersonal processes and collaborative productivity.

- Quality of Team Interactions: Measure using the 18-item Team Performance Scale (TPS).

- Team Tenure: Record the length of time members have collaborated with one another.

Step 3: Data Collection - Objective Metrics

- Communication Tracking: Use sociometric badges during team meetings to objectively quantify communication patterns over time [24].

- Outcome Measurement: After a set period (e.g., 18 months), count tangible scholarly products (manuscripts, grant proposals, awarded grants) linked to the project [22].

Step 4: Data Analysis

- Aggregate individual survey responses at the team level by calculating median scores.

- Use correlation analysis (e.g., Pearson's r) to examine the relationship between team dynamic scores (e.g., quality of interactions) and the number of scholarly products achieved [22].

- Employ regression analysis to test the impact of the number of collaborating institutions on coordination activities and outcomes [20].
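The aggregation and correlation steps above can be sketched with the Python standard library alone. This is an illustrative outline, not the analysis code used in the cited studies. The team identifiers, scores, and product counts are hypothetical, and significance testing is left to a statistical package such as those listed under Materials.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean, median


def team_level_medians(responses):
    """Aggregate individual survey scores to one median score per team.

    `responses` is an iterable of (team_id, score) pairs.
    """
    by_team = defaultdict(list)
    for team_id, score in responses:
        by_team[team_id].append(score)
    return {team_id: median(scores) for team_id, scores in by_team.items()}


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical survey scores (quality of team interactions) per respondent.
responses = [
    ("team_a", 4), ("team_a", 5), ("team_a", 3),
    ("team_b", 2), ("team_b", 3),
    ("team_c", 4), ("team_c", 4),
]
# Hypothetical count of scholarly products per team after the follow-up period.
products = {"team_a": 3, "team_b": 1, "team_c": 2}

medians = team_level_medians(responses)
teams = sorted(medians)
r = pearson_r([medians[t] for t in teams], [products[t] for t in teams])
print(f"Team medians: {medians}")
print(f"Pearson r between interaction quality and products: {r:.2f}")
```

With only a handful of teams, a correlation estimate is unstable, so the result should be read alongside its p-value and the sample size.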

5. Safety and Ethics:

- Obtain Institutional Review Board (IRB) approval before beginning the study.

- Ensure participant anonymity and confidentiality for all survey and communication data.

Team Coordination Workflow

Workflow diagram: the pathway from team formation to outcomes, in which coordination acts as a critical mediator.

The Scientist's Toolkit: Key Research Reagents for Team Science

Table 3: Essential Materials and Tools for Coordinated Research

| Item | Function/Benefit |

|---|---|

| Project Management Software | Facilitates task planning, tracking, and transparency. Tools with real-time dashboards keep all members aligned on progress and deadlines [21]. |

| Shared Digital Workspace | A common intranet or platform reduces communication costs, provides a single source of truth for data and protocols, and leads to more systematic methods [20]. |

| Standardized Operating Procedures (SOPs) | Documented guidelines for data handling, communication, and analysis ensure consistency across teams and institutions, reducing errors and rework. |

| Communication Assessment Tools | Surveys (e.g., Team Performance Scale) and sociometric badges provide objective data on team dynamics, helping to identify and troubleshoot coordination issues [22] [24]. |

| Video Conferencing & Chat Platforms | Enable regular sync meetings and spontaneous communication, which are essential for building trust and maintaining awareness in distributed teams [21]. |

Building and Executing Your Collaborative Framework: Practical Tools and Processes

Frequently Asked Questions (FAQs)

Q1: Our multidisciplinary team is avoiding controversial topics and seems overly polite, slowing down our research. What stage are we likely in, and how can we advance? Your team is likely in the Forming stage. This initial phase is characterized by politeness, tentative joining, and a desire to avoid controversy as team members get acquainted and seek acceptance [26]. To advance, the team must consciously relinquish the comfort zone of non-threatening topics and risk the possibility of conflict [26]. Facilitate this by having the team leader or project guide provide clear structure, establish the team's mission and vision early, and create specific objectives and tasks to build a foundation of safety from which the team can progress [26] [27].

Q2: We are experiencing significant conflict over goals, roles, and how to handle our coupled variables. Is this normal, and how can we resolve it without damaging collaboration? Yes, this is a normal and expected part of the Storming stage [28]. As teams begin to organize tasks, interpersonal conflicts surface around leadership, power, and structural issues [26]. To resolve this constructively, confront conflict in a healthy manner [27]. Avoidance does not support team building. Instead, establish clear processes for conflict resolution, refocus on the team's shared goals, and clarify roles and responsibilities [26] [29]. Teach and encourage active listening skills, as moving from a "testing and proving" mentality to a problem-solving one is crucial for progression [26].

Q3: After a period of conflict, our team has agreed on processes and is working together more harmoniously. How can we solidify these new ways of working? Your team has entered the Norming stage, where members create new ways of doing and being together [26]. To solidify this, formalize the agreed-upon processes and procedures [27]. This is the time to develop a shared decision-making process and ensure problem-solving is a collaborative effort [26]. Leadership should shift to a more shared model, promoting team interaction and asking for contributions from all members to reinforce the collaborative work ethic and shared leadership [26].

Q4: What does a "Performing" team look like in a multidisciplinary research context, and how can we sustain it? A team in the Performing stage operates with true interdependence and flexibility [26]. In a research context, this means team members understand each other's strengths and weaknesses, roles are clear, and the team can organize itself to be highly productive [26] [28]. To sustain this, maintain team flexibility and ensure leadership (which is now shared) continues to observe and fulfill team needs [26]. Keep the team focused on its goals and celebrate accomplishments to maintain high commitment and satisfaction [26] [27]. Be aware that changes, such as a new member joining, can cause the team to cycle back to an earlier stage, so continuous attention to process is key [29].

Q5: How do we effectively conclude a project team while preserving knowledge and relationships for future collaborations? This final Adjourning stage requires managing the team's termination and transition [26]. To conclude effectively, the team should evaluate its efforts, tie up loose ends, and recognize and reward team achievements [26]. A planned conclusion should include recognition for participation and achievement, and provide an opportunity for members to say personal goodbyes [26]. This formal acknowledgement helps provide closure, manages feelings of termination, and helps carry forth collaborative learning to the next opportunity [26] [28].

Troubleshooting Guides

Diagnosis and Resolution of Common Team Dysfunctions

Problem: Lack of Shared Understanding and Conflicting Goals

- Symptoms: Miscommunication, misaligned efforts, disagreements about priorities, and tension between members from different disciplines (e.g., research vs. development) [30].

- Underlying Stage: This is common in the Storming stage but can persist if not resolved [29] [28].

- Root Cause: Team members come from different functional areas with their own jargon, objectives, and success metrics, leading to a lack of a unified direction [30].

- Solution:

- Re-establish Common Goals: Facilitate a session to redefine the team's overarching mission and how each discipline contributes to it [30].

- Clarify Interdependencies: Use a framework like Multidisciplinary Design Optimization (MDO) to explicitly map coupling variables (shared information), design variables (what each team controls), and response variables (team outputs) [31].

- Implement a Clear Communication Plan: Define how key information will be shared, including status updates and decision-making processes [27].

Problem: Ineffective Conflict Resolution Stifling Progress

- Symptoms: Arguments among members, avoidance of difficult discussions, vying for leadership, and a lack of consensus-seeking behaviors [26].

- Underlying Stage: Storming [26] [28].

- Root Cause: Inability to move from a "testing and proving" mentality to a problem-solving mentality, often exacerbated by a lack of trust and effective listening skills [26].

- Solution:

- Acknowledge the Conflict: Leaders should openly acknowledge that conflict is a normal part of team development [26].

- Establish Conflict Resolution Ground Rules: Define acceptable ways to voice disagreements and create a psychologically safe environment for discussion [28] [27].

- Focus on Interests, Not Positions: Guide the team to explore the underlying reasons for their stances and find mutually beneficial solutions [26].

Problem: Regression to an Earlier Stage After Making Progress

- Symptoms: A team that was working well (Norming/Performing) suddenly returns to Storming behaviors, such as increased conflict or confusion over roles [29] [28].

- Underlying Cause: A significant change, such as a new team member joining, a change in project scope, or a key member leaving [29] [28].

- Solution:

- Anticipate and Normalize Regression: Recognize that team development is not always linear and that regression is a common response to change [29].

- Revisit Team Charters and Processes: Quickly re-clarify goals, roles, and ground rules to re-stabilize the team [27].

- Re-integrate New Members: Formally onboard new members into the team's mission, culture, and established workflows [28].

Quantitative Data on Team Development and Effectiveness

Table 1: Behavioral Indicators and Leadership Needs Across Tuckman's Stages

| Stage | Observable Behaviors | Team Feelings & Thoughts | Critical Team Needs | Required Leadership Style |

|---|---|---|---|---|

| Forming [26] | Politeness, tentative joining, avoidance of controversy, discussion of irrelevant problems. | Excitement, optimism, suspicion, anxiety, uncertainty about roles. | Clear mission & vision, specific objectives, defined roles, ground rules. | Directive, provides structure and task direction [26]. |

| Storming [26] | Arguing, vying for leadership, lack of role clarity, power struggles, lack of progress. | Defensiveness, frustration, fluctuations in attitude, questioning team goals. | Conflict resolution, effective listening, clarification of team purpose, reestablishing ground rules. | Coaching, acknowledges conflict, teaches resolution methods, encourages shared leadership [26]. |

| Norming [26] | Agreement on processes, comfort with relationships, effective conflict resolution, balanced influence. | Sense of belonging, high confidence, trust, freedom to express and contribute. | Develop decision-making processes, shared problem-solving, utilization of all resources. | Participative & Supportive, facilitates collaboration, builds relationships [26]. |

| Performing [26] | Fully functional, self-organizing, flexible subgroups, understanding of strengths/weaknesses. | Empathy, high commitment, tight bonds, satisfaction, personal development. | Maintain flexibility, measure performance, continuous feedback. | Delegating, shared leadership is practiced; leader provides minimal direction [26]. |

| Adjourning [26] | Visible signs of grief, restless behavior, bursts of energy followed by lethargy. | Sadness, humor, relief. | Evaluate efforts, tie up loose ends, recognize and reward. | Supporting, provides closure, good listening, reflection [26]. |

Table 2: Impact of Cross-Functional Team Dynamics on Performance

| Factor | Impact on Performance | Supporting Evidence |

|---|---|---|

| Blended Skills & Perspectives | Can lead to a 35% performance edge over homogeneous teams [30]. | Research by McKinsey indicates diversity of thought drives groundbreaking achievements [30]. |

| Clear Objectives | Only 15% of employees are typically aware of their organization's most important goals, making clear goals a key differentiator for effective teams [32]. | A well-defined mission provides clarity and direction, allowing teams to prioritize efforts and reduce misalignment [32]. |

| Multidisciplinary Healthcare Teams | Found to reduce patient mortality, complications, length of hospital stay, and readmissions [33]. | A systematic review showed these teams improve patient outcomes and enhance the quality and coordination of care [33]. |

Experimental Protocols for Team Coordination

Protocol: Establishing a Team Charter and Defining Interdependencies

Purpose: To create a foundational document that aligns a multidisciplinary team during the Forming stage, explicitly defining shared goals, individual roles, and critical interdependencies to prevent Storming-stage conflicts.

Background: Effective teams begin with a clear structure and a shared understanding of their mission [26] [27]. For multidisciplinary teams working on complex problems, explicitly defining how the sub-teams are coupled is essential, because the shared dependencies must converge before a feasible solution for the entire system can be found [31].

Methodology:

- Kick-off Meeting: Convene all team members and key stakeholders.

- Define the Mission Statement: Collaboratively articulate the team's primary objective. For a research team, this could be "To optimize the design of a new drug delivery system through integrated computational modeling and in-vitro experimentation."

- Identify Key Variables (Adapted from MDO [31]):

- Shared Variables: Brainstorm and list all coupling variables (information shared between disciplines, e.g., physicochemical properties of a compound, efficacy data from a bioassay).

- Inputs and Outputs: For each sub-team, define their design variables (parameters they control) and response variables (outputs they produce).

- Establish Ground Rules: Document expectations for communication (e.g., response times, meeting schedules), decision-making, and conflict resolution [27].

- Document the Charter: Formalize all agreements into a single, living document.

Expected Outcome: A Team Charter that serves as a binding reference point, reducing ambiguity and setting the stage for effective collaboration.
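The variable-mapping step of this protocol lends itself to a small data structure that can be stored alongside the charter. The sketch below is one possible representation, loosely adapted from the MDO vocabulary used above. The class, field names, sub-teams, and variables are all hypothetical. It lists which sub-team supplies each shared variable and flags inputs that no sub-team currently produces.

```python
from dataclasses import dataclass, field


@dataclass
class SubTeam:
    name: str
    design_variables: set[str] = field(default_factory=set)    # parameters the team controls
    response_variables: set[str] = field(default_factory=set)  # outputs the team produces
    required_inputs: set[str] = field(default_factory=set)     # information needed from others


def map_couplings(teams):
    """Return (couplings, unresolved).

    couplings:  list of (producer, consumer, variable) triples, i.e. coupling variables.
    unresolved: list of (consumer, variable) pairs that no sub-team produces.
    """
    producers = {var: team.name for team in teams for var in team.response_variables}
    couplings, unresolved = [], []
    for team in teams:
        for var in sorted(team.required_inputs):
            if var not in producers:
                unresolved.append((team.name, var))
            elif producers[var] != team.name:
                couplings.append((producers[var], team.name, var))
    return couplings, unresolved


# Hypothetical two-discipline example.
chemistry = SubTeam(
    name="chemistry",
    design_variables={"scaffold"},
    response_variables={"logP"},
    required_inputs={"ic50"},
)
bioassay = SubTeam(
    name="bioassay",
    design_variables={"assay_concentration"},
    response_variables={"ic50"},
    required_inputs={"logP", "solubility"},
)

couplings, unresolved = map_couplings([chemistry, bioassay])
print("Coupling variables:", couplings)
print("Inputs with no owner:", unresolved)
```

Unresolved inputs are useful discussion points for the kick-off meeting, since they indicate information that a sub-team expects but nobody has agreed to deliver.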

Protocol: Conducting a Structured Norming Session

Purpose: To formally guide a team from the Storming stage into the Norming stage by establishing shared workflows and reinforcing psychological safety.

Background: The Norming stage is characterized by the establishment of processes and a conscious effort to resolve problems and achieve harmony [26] [29]. This protocol creates a dedicated forum for that work.

Methodology:

- Scheduling: Conduct this session after the team has navigated its initial major conflicts but before it is expected to perform at peak efficiency.

- Review and Reflect: Revisit the Team Charter and discuss what has worked well and what has been challenging during the initial project phase.

- Process Formalization:

- Workflow Mapping: Visually map the agreed-upon processes for data sharing, analysis, and decision-making. The diagram below can serve as a template.

- Role Clarification: Use a RACI chart (Responsible, Accountable, Consulted, Informed) to clarify involvement in key tasks [27].

- Feedback Practice: Run a short exercise where team members practice giving and receiving constructive feedback on a non-critical topic to strengthen communication skills [26].

Expected Outcome: Explicitly agreed-upon team processes, clarified roles, and strengthened interpersonal relationships that enable the team to become more self-sufficient and productive.
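A RACI chart produced in the role-clarification step can be checked for gaps before it is adopted. The sketch below assumes a common convention, which the protocol itself does not mandate: each task has exactly one Accountable person and at least one Responsible person. The task names and team members are hypothetical.

```python
VALID_ROLES = {"R", "A", "C", "I"}


def validate_raci(chart):
    """Check a RACI chart and return a list of human-readable problems.

    `chart` maps task name -> {person -> role letter}, where the role is
    one of "R", "A", "C", "I".
    """
    problems = []
    for task, assignments in chart.items():
        roles = list(assignments.values())
        for person, role in assignments.items():
            if role not in VALID_ROLES:
                problems.append(f"{task}: unknown role {role!r} for {person}")
        accountable = roles.count("A")
        if accountable != 1:
            problems.append(f"{task}: expected exactly one Accountable, found {accountable}")
        if roles.count("R") == 0:
            problems.append(f"{task}: no one is Responsible")
    return problems


# Hypothetical chart drafted during a norming session.
chart = {
    "Analyze bioassay data": {"Ana": "R", "Ben": "A", "Chen": "C"},
    "Approve compound shortlist": {"Ana": "A", "Ben": "A", "Chen": "I"},
}

for problem in validate_raci(chart):
    print(problem)
```

Any problems reported here point to tasks where ownership is ambiguous, which are the same places where role conflicts tend to resurface.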

Workflow and Process Visualization

Team Development Workflow with Regression Paths

The Scientist's Toolkit: Essential Reagents for Team Experiments

Table 3: Key Resources for Multidisciplinary Team Coordination

| Tool / Reagent | Function | Application Context |

|---|---|---|

| Team Charter | A foundational document that explicitly states the team's mission, goals, roles, responsibilities, and ground rules [27]. | Used in the Forming stage to create structure and direction, reducing ambiguity and setting expectations from the outset. |

| RACI Chart | A matrix (Responsible, Accountable, Consulted, Informed) that clarifies involvement in tasks and decisions, preventing role confusion [27]. | Critical during Storming and Norming to resolve power struggles and define clear ownership, especially in cross-functional teams. |

| MDO Framework | Multidisciplinary Design Optimization; a structured method for defining system coupling (shared variables), design controls, and team outputs [31]. | Applied in complex research (Forming/Norming) to map interdependencies between disciplines, ensuring technical coordination aligns with team structure. |

| Communication Plan | A defined protocol outlining how information is shared, including channels, frequency, and stakeholders for different update types [27]. | Essential in all stages but established in Forming; vital for maintaining Performing status by preventing misunderstandings and ensuring alignment. |

| Conflict Resolution Protocol | Pre-agreed ground rules for how disagreements will be handled, promoting healthy, constructive conflict rather than avoidance [26] [27]. | Primarily implemented for the Storming stage, but benefits all stages by creating psychological safety and a framework for problem-solving. |

| After-Action Review | A structured debrief process for evaluating team efforts, successes, and lessons learned upon project completion [26]. | The key activity for the Adjourning stage, providing closure, recognizing achievements, and capturing knowledge for future collaborations. |

Foundational Concepts and Quantitative Evidence

In multidisciplinary research teams, effectiveness is defined as the collective capacity to sustainably deliver results [34]. Research has identified specific team behaviors, or "health drivers," that are critical to performance, grouped into four core areas: Configuration, Alignment, Execution, and Renewal [34].

Studies indicate that 17 key health drivers explain between 69% and 76% of the differences between low- and high-performing teams across efficiency, results, and innovation metrics [34]. Among these, four drivers have the most significant impact:

- Trust: Teams with above-average trust were 3.3 times more efficient and 5.1 times more likely to produce results [34].

- Communication: Essential for coordinating complex tasks and preventing errors [35].

- Innovative Thinking: Encourages out-of-the-box solutions and open discussion of new ideas [34].

- Decision Making: Teams with above-average decision making were 2.8 times more innovative [34].

The table below summarizes the quantitative impact of these key drivers.

| Health Driver | Impact on Efficiency | Impact on Results Delivery | Impact on Innovation |

|---|---|---|---|

| Trust | 3.3x more efficient [34] | 5.1x more likely [34] | - |

| Decision Making | - | - | 2.8x more innovative [34] |

The Role of Psychological Safety

Psychological safety is the shared belief that the team is safe for interpersonal risk-taking [36]. It is a performance driver that enables team members to admit mistakes, share unconventional ideas, and ask questions without fear of ridicule or punishment [37]. It is distinct from trust: trust exists between individuals, whereas psychological safety describes the climate of the entire team [36].

Troubleshooting Guides and FAQs

FAQ: Addressing Common Team Coordination Issues

1. How can we improve decision-making clarity in our interdisciplinary team?

- Problem: Unclear decision-making roles lead to stagnation and confusion.

- Solution: Implement the DARE model (Deciders, Advisers, Recommenders, Executors) to clarify roles [34].

- Deciders: Have the final vote.

- Advisers: Provide input to shape the decision.

- Recommenders: Offer perspectives and present facts.

- Executors: Carry out the decision.

- Protocol: In a team meeting, use a whiteboard to map a recent or upcoming decision to the DARE roles. This visual exercise resolves ambiguity and ensures the right people are involved [34].

2. Our team meetings are unproductive, with uneven participation. How can we fix this?

- Problem: Dominant voices overshadow others, leading to lost ideas and disengagement.

- Solution: Establish and enforce team norms that promote psychological safety [37] [36].

- Protocol:

- Set Speaking Time Expectations: Explicitly encourage contributions from all members [36].

- Start with a "No Wrong Answers" Mindset: Frame brainstorming sessions as exploratory, not evaluative [36].

- Protect Time for Reflection: Build quiet reflection into meetings to allow slower, more deliberate thinkers to contribute [37].

3. A lack of trust is hindering collaboration and risk-taking. How can we rebuild it?

- Problem: Team members are reluctant to rely on one another or share unfinished work.

- Solution: Actively build cognitive trust (belief in competence) and affective trust (interpersonal bonds) [34].

- Protocol:

- Model Vulnerability: Leaders should acknowledge their own mistakes openly and without blame [37] [36].

- Create Bonding Experiences: Host informal sessions, like a "storytelling dinner," where team members share personal or professional experiences that shaped them [34].

- Reframe Mistakes: Publicly analyze setbacks as valuable learning data, not personal failures [37].

Advanced Diagnostic: Assessing Physiological Synchrony

For high-stakes research environments (e.g., clinical simulations, lab crises), Physiological Synchrony (PS) provides an objective measure of team dynamics. PS is the similarity in team members' physiological signals (e.g., heart rate), indicating implicit coordination and cohesion [38].

Experimental Protocol for PS Assessment [38]:

- Equipment Setup: Fit each team member with a wearable electrocardiogram (ECG) sensor to capture heart rate (HR) and heart rate variability (HRV) metrics like RMSSD and SDNN.

- Data Collection: Record data at short, regular intervals (e.g., every 5 seconds) during a cooperative team task and a baseline (non-interactive) task.

- Proximity Tracking: Use appropriate technology (e.g., indoor positioning systems) to automatically capture the physical distance between team members.

- Analysis: Calculate PS using dynamic time warping (DTW) to compare the physiological signals of team members over time. Compare PS during high-interaction tasks versus baseline.

Interpretation: Higher PS during cooperative tasks compared to baseline suggests strong team cohesion and non-verbal alignment. This data can be used to provide high-resolution feedback on team dynamics that traditional surveys might miss [38].
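The analysis step can be illustrated with a textbook dynamic time warping distance between two heart-rate series. This is a minimal sketch, not the pipeline from the cited study. It assumes that each signal is z-normalized first and that a smaller DTW distance is read as higher synchrony. Both choices are assumptions made for illustration, and the heart-rate values are invented.

```python
from statistics import mean, pstdev


def z_normalize(signal):
    """Rescale a signal to zero mean and unit variance (constant signals map to zeros)."""
    mu, sigma = mean(signal), pstdev(signal)
    if sigma == 0:
        return [0.0 for _ in signal]
    return [(value - mu) / sigma for value in signal]


def dtw_distance(a, b):
    """Classic dynamic time warping distance with absolute difference as local cost."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = minimal cumulative cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def synchrony_distance(signal_a, signal_b):
    """DTW distance between z-normalized signals; lower values suggest higher synchrony."""
    return dtw_distance(z_normalize(signal_a), z_normalize(signal_b))


# Invented heart-rate samples (beats per minute) for two team members.
member_1_coop = [72, 75, 80, 78, 74, 73]
member_2_coop = [70, 72, 78, 77, 73, 71]
member_1_base = [71, 71, 72, 70, 71, 72]
member_2_base = [69, 74, 70, 75, 68, 73]

print("Cooperative task:", round(synchrony_distance(member_1_coop, member_2_coop), 3))
print("Baseline task:   ", round(synchrony_distance(member_1_base, member_2_base), 3))
```

For a whole team, the same distance can be computed for every pair of members and averaged, then compared between the cooperative task and the baseline.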

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function | Key Features for Teams |

|---|---|---|

| DARE Framework | Clarifies decision-making roles. | Defines "Decider," "Adviser," "Recommender," and "Executor" roles for unambiguous accountability [34]. |

| Team Health Survey | Diagnoses team strengths and weaknesses. | Assesses 17 health drivers across 4 areas (Configuration, Alignment, Execution, Renewal) to identify gaps [34]. |

| Anara AI Platform | Unified research workflow collaboration. | AI chat with source verification, real-time collaborative editing, and team knowledge management [39]. |

| OSF (Open Science Framework) | Open-source project management. | Manages public/private sharing, version control, and connects with tools like GitHub and Zotero [40]. |

| Physiological Sensors (ECG) | Objectively measures team dynamics. | Provides automated, high-resolution data on team synchrony (PS) via heart rate and HRV metrics [38]. |

| Structured Debriefing Protocol | Guided post-task reflection. | Enables teams to analyze performance, reinforce learning, and improve future coordination [38]. |

Frequently Asked Questions (FAQs)

Q1: How do I access the KanBo Help Portal directly from the application? To access the KanBo Help Portal directly from the platform, select the Help icon on the Sidebar [41].

Q2: Where can I find a comprehensive guide on KanBo's basic features like the Home Page? The KanBo Help Portal contains a detailed KanBo Help Center with instructions and application usage tips for every user, from beginner to advanced [41]. This includes a specific article explaining that the KanBo Home Page, which you access by selecting the KanBo icon on the Sidebar, displays the current date, your total number of unread notifications, and the number of cards you have blocked [42].

Q3: What should I do if I encounter a technical error, such as a "401 Error"? The KanBo Help Portal has a dedicated Troubleshooting section for resolving technical issues [43]. For persistent problems, you can use the "Report a problem" button on the top of any Help Portal page, ask a question in the comments under a relevant article, or write an email to support@kanboapp.com [41].

Q4: What is a Card in KanBo and how is it structured? Cards are the fundamental building blocks in KanBo, representing tasks, projects, or important information [44]. A card has a front side that provides a visual summary and a detailed content view. The card's content is organized into three major sections on the Content tab: Card Details (on the left, showing descriptions, related cards, and users), Card Elements (in the middle, containing features like notes and to-do lists), and the Card Activity Stream (on the right, showing a history of all actions and comments) [44].

Troubleshooting Guides

Guide 1: Resolving Collaboration and Workflow Bottlenecks in Multidisciplinary Teams

Problem Statement: Research teams often face challenges with cross-functional communication, task coordination, and tracking dependencies, leading to delays and misalignment in complex projects like drug development or clinical trials [45] [46].

Required KanBo Features:

- Spaces & Cards: For organizing projects and individual tasks [44] [46].

- Card Relations: To establish dependencies between tasks (e.g., parent-child cards) [47] [46].

- Card Blockers: To identify and categorize stalled work [47].

- Comments & Mentions (@): For contextual, real-time communication [45] [46].

- Gantt Chart View: To visualize task timelines and dependencies [47] [46].

Step-by-Step Solution:

- Create a Dedicated Workspace: Set up a Workspace for your overarching research program (e.g., "Drug Development") and add all team members [47] [46].

- Structure the Project into Spaces: Within the Workspace, create Spaces for different project phases or disciplines (e.g., "Pre-Clinical Research," "Clinical Trial Phase 1") [47].

- Break Down Work into Cards: Create Cards within Spaces for every individual task, such as "Analyze Patient Data" or "Prepare Trial Protocol" [44] [46].

- Establish Task Dependencies: Use the Card Relations feature to link dependent tasks. For example, make "Analyze Patient Data" a parent card of "Generate Statistical Report" [47] [46].

- Identify and Resolve Blockers: If a task is stalled, use the Card Blocker feature to flag it (e.g., "Awaiting ethical approval") so the team can focus on resolution [47].

- Communicate Contextually: Use Comments and @Mentions inside the relevant Card to discuss issues and alert specific team members without switching to email [45] [46].

- Monitor Overall Progress: Switch to the Gantt Chart View to get a high-level overview of the project timeline, spot potential delays, and manage critical paths [47] [46].
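The dependency and blocker logic in the steps above can be illustrated with a minimal Python sketch. It does not use KanBo's API or data model: the `Card` class and the `work_order`, `blocked_cards`, and `ready_cards` helpers are hypothetical names introduced only to show how card relations and blockers together determine what a team can work on next.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Dict, List, Optional


@dataclass
class Card:
    """Hypothetical stand-in for a task card; not KanBo's actual data model."""
    title: str
    depends_on: List[str] = field(default_factory=list)  # titles that must finish first
    blocker: Optional[str] = None  # reason the card is stalled, if any
    done: bool = False


def work_order(cards: List[Card]) -> List[str]:
    """Return one valid order of work; raises graphlib.CycleError on circular relations."""
    graph = {card.title: set(card.depends_on) for card in cards}
    return list(TopologicalSorter(graph).static_order())


def blocked_cards(cards: List[Card]) -> Dict[str, str]:
    """Map each stalled card to the reason recorded for it."""
    return {card.title: card.blocker for card in cards if card.blocker}


def ready_cards(cards: List[Card]) -> List[str]:
    """Cards that are unfinished, unblocked, and whose dependencies are all done."""
    by_title = {card.title: card for card in cards}
    return [
        card.title
        for card in cards
        if not card.done
        and not card.blocker
        and all(by_title[dep].done for dep in card.depends_on)
    ]


# Example data reusing the task names from the steps above.
cards = [
    Card("Prepare Trial Protocol", done=True),
    Card("Analyze Patient Data", depends_on=["Prepare Trial Protocol"],
         blocker="Awaiting ethical approval"),
    Card("Generate Statistical Report", depends_on=["Analyze Patient Data"]),
]

print(work_order(cards))
print(blocked_cards(cards))
print(ready_cards(cards))
```

With this example data nothing is ready to start: the analysis card is blocked and the report depends on it. Clearing the blocker makes "Analyze Patient Data" the only ready card, which is the kind of signal a Gantt or blocker view is meant to surface.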

Guide 2: Troubleshooting Data and Document Management Issues

Problem Statement: Scientists struggle with managing vast datasets and research documents, leading to version control issues, difficulty in locating files, and compromised data integrity [46].

Required KanBo Features:

- Document Sources: To link external repositories like SharePoint [46].

- Card Documents & Space Documents: For attaching and organizing files directly within tasks and project areas [46].

- Activity Stream: To track all document-related activities [45] [46].

Step-by-Step Solution:

- Connect External Document Sources: In your Space, use the Document Management settings to Add a Document Source, such as your organization's SharePoint library, to centralize access [46].

- Attach Documents to Cards: For task-specific documents, use the Card Documents element to attach files directly to the relevant Card, ensuring all context is in one place [46].

- Maintain a Space Document Library: Use the Space Documents section to create a centralized repository for protocols, research data, and findings relevant to the entire project phase [46].

- Audit Document Activity: Monitor the Activity Stream within a Card or Space to see who uploaded, modified, or accessed a document, maintaining a clear audit trail [46].
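As a rough illustration of the audit step, the sketch below filters a list of activity records down to the history of one document. The `ActivityEvent` record and both helper functions are assumptions made for this example; they do not reflect how KanBo stores or exposes its Activity Stream.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class ActivityEvent:
    """Hypothetical activity record; field names are illustrative only."""
    timestamp: datetime
    user: str
    action: str    # "uploaded", "modified", or "accessed"
    document: str


def audit_trail(events: List[ActivityEvent], document: str) -> List[ActivityEvent]:
    """Return all events for one document, oldest first."""
    return sorted(
        (event for event in events if event.document == document),
        key=lambda event: event.timestamp,
    )


def last_modifier(events: List[ActivityEvent], document: str) -> Optional[str]:
    """Return the user who most recently uploaded or modified the document."""
    changes = [
        event for event in audit_trail(events, document)
        if event.action in {"uploaded", "modified"}
    ]
    return changes[-1].user if changes else None


# Example data with made-up users and file names.
events = [
    ActivityEvent(datetime(2025, 3, 2, 9, 0), "bo", "modified", "protocol_v2.docx"),
    ActivityEvent(datetime(2025, 3, 1, 14, 30), "ana", "uploaded", "protocol_v2.docx"),
    ActivityEvent(datetime(2025, 3, 3, 11, 15), "chen", "accessed", "protocol_v2.docx"),
    ActivityEvent(datetime(2025, 3, 1, 16, 0), "ana", "uploaded", "assay_results.csv"),
]

for event in audit_trail(events, "protocol_v2.docx"):
    print(event.timestamp.isoformat(), event.user, event.action)
print(last_modifier(events, "protocol_v2.docx"))
```

Keeping read-only access separate from changes, as `last_modifier` does, helps distinguish version control questions ("who changed this?") from access questions ("who has seen this?").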

System Requirements and Feature Reference Tables

Table 1: KanBo On-Premises Server Requirements

| Component | Minimum Requirement |

|---|---|

| Operating System | Windows Server 2016 or higher [48] |

| SharePoint | SharePoint 2019 or higher [48] |

| Authentication | All users managed by Active Directory [48] |

| Server | Must be part of your Windows Domain [48] |

| Browser (for installation) | Modern browser (Firefox, Edge, Chrome); Internet Explorer not supported [48] |

| Framework | .NET 8 hosting bundle installed [48] |

Table 2: Essential KanBo Features for Research Coordination

| Feature | Function | Use Case in Research |

|---|---|---|

| Spaces | Clusters for grouping related tasks (Cards) [46] | Organize work by research phase (e.g., "Compound Screening," "Trial Management") [47]. |

| Card Status | Indicates the progress of a task (e.g., To Do, In Progress, Completed) [47] | Track an experiment from "Hypothesis" to "Data Analysis" to "Conclusion" [47] [46]. |

| Gantt Chart View | Visualizes task timelines and dependencies [47] [46] | Plan and monitor the long-term schedule of a clinical trial [46]. |

| Card Relations | Breaks down complex tasks and links dependencies [47] | Map out a sequence of experimental procedures that must occur in a specific order [46]. |

| Activity Stream | Real-time feed of all actions in a Space or Card [45] | Provide transparency and allow team leaders to monitor project progress and team contributions [45] [46]. |

Experimental Protocol: Implementing a Digital Coordination Platform for a Research Team

Objective: To systematically implement KanBo as a digital coordination platform to enhance workflow efficiency, data management, and cross-functional collaboration within a multidisciplinary research team.

Methodology:

- Workspace & Space Configuration:

  - Create a primary Workspace for the research project (e.g., "Oncology Drug X Development") [46].

  - Inside, establish multiple Spaces using Space Templates for different workstreams: "Target Validation," "Pre-Clinical Studies," "Regulatory Submissions," and "Clinical Operations" [47] [46].

  - Assign team members with appropriate role-based access levels (Owner, Member, Visitor) to maintain data security [46].

- Task & Workflow Implementation:

  - Within each Space, create Cards for all individual tasks. Use Card Templates to standardize recurring tasks like "Weekly Data Review" [46].

  - Utilize the Kanban View to visualize the workflow of tasks across columns like "Backlog," "In Progress," and "Completed" [47].

  - Employ Card Relations to define dependencies (e.g., "Toxicity Report" cannot start until "Animal Study" is complete) [47] [46].

- Data & Document Integration:

- Communication & Monitoring:

Workflow Visualizations

Diagram 1: Troubleshooting Path for a Blocked Research Task

Diagram 2: Multidisciplinary Research Coordination with KanBo

The Scientist's Toolkit: Key Digital Platform Features

Table 3: Essential Digital Platform "Reagents" for Research Coordination

| Platform Feature | Function / Purpose |

|---|---|

| Workspace | A top-level container to organize an entire research program, grouping all related activities and teams [46]. |

| Space | A dedicated area within a Workspace for a specific project phase or discipline, containing clusters of tasks [47] [46]. |

| Card | The fundamental unit of work, representing a single task, experiment, or action item; the primary object for tracking and collaboration [44]. |

| Card Relation | A feature to link Cards, creating parent-child hierarchies or dependencies, which is critical for mapping complex experimental workflows [47] [46]. |

| Gantt Chart View | A visualization tool that displays tasks against a timeline, enabling project leads to manage schedules and dependencies across the entire project [47] [46]. |

| Document Source | A connection to an external document management system (e.g., SharePoint), centralizing research data and protocols within the platform [46]. |

Fostering Cross-Disciplinary Anticipation, Synchronization, and Triangulation

Technical Support Center: Troubleshooting Guides and FAQs

This section provides targeted support for common coordination challenges faced by multidisciplinary analysis teams.

Frequently Asked Questions (FAQs)

Q: What is the most significant organizational challenge when starting a new multidisciplinary research program?

- A: The most significant is the "appropriate organization challenge": the difficulty of fitting a new program within existing university department structures and funding agency requirements while minimizing the redundant processes and administrative duties that can reduce academic output [49].

Q: How can we improve communication between disciplines with different research cultures?

- A: Actively work to overcome the "discipline openness challenge." This involves fostering an environment where team members are receptive to theories and methods from outside their own field and developing a "shared theoretical framework" to facilitate deeper collaboration and a common language [49].

Q: Our team struggles with inefficient software development for research. What is a common pitfall?

- A: The "mutual understanding of software requirements challenge" is common. This occurs when there is a disconnect between the researchers' needs and the software developers' understanding of those needs, often due to differing terminologies and priorities [49].

Q: What is a key benefit of a multidisciplinary approach in a clinical setting?

- A: A key benefit is significantly improved patient outcomes. By combining expertise from various specialties, teams can achieve more accurate diagnoses, more effective treatment strategies, and better overall recovery or quality of life for patients [33].

Q: Why is troubleshooting a vital skill for managing multidisciplinary teams?

Troubleshooting Common Team Coordination Issues

Problem: Communication breakdowns and unclear responsibilities are slowing down research progress.

| Troubleshooting Step | Actions for Multidisciplinary Teams | Expected Outcome |

|---|---|---|

| 1. Identify the Problem | Gather information via team meetings; Question all team members; Identify symptoms (e.g., missed deadlines); Determine recent changes (e.g., new team member) [51]. | A clear, consensus-based understanding of the core issue, separating it from surface-level symptoms. |

| 2. Establish a Theory of Probable Cause | Question the obvious (e.g., are meeting notes shared?); Consider communication channels; Use a top-down (from project goals) or bottom-up (from individual tasks) approach to isolate the cause [51]. | A hypothesized root cause, such as a lack of a shared project management tool or undefined leadership for a specific task. |

| 3. Test the Theory | If the theory is a lack of documentation, check existing protocol repositories; Interview team members on their understanding of responsibilities [51]. | Confirmation or rejection of the hypothesized cause, potentially leading back to Step 1 for re-evaluation. |

| 4. Establish a Plan of Action | Develop a clear plan, such as implementing a shared lab notebook or defining a communication charter. Identify potential effects, including the need for team training [51]. | A documented set of steps to resolve the issue, with a rollback plan if the solution creates new problems. |

| 5. Implement the Solution | Roll out the new tool or protocol; Provide necessary training; Ensure leadership endorsement and participation [51]. | The proposed solution is put into practice across the team. |

| 6. Verify Full Functionality | Have the team use the new system for a trial period; Check if deadlines are met more reliably; Confirm with all members that communication has improved [51]. | Confidence that the solution has effectively resolved the original problem without negative side effects. |

| 7. Document Findings | Record the problem, the solution implemented, and the outcome. Share this with the entire team and archive it for future reference [51]. | Creation of an institutional memory to prevent recurrence and expedite future troubleshooting. |
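The seven steps form a loop, not a straight line, which a small state-transition sketch can make explicit. The `Step` enum and `next_step` function are hypothetical. The return from Step 3 to Step 1 follows the table above; sending a failed verification back to Step 4 is an assumption made for this example.

```python
from enum import IntEnum
from typing import Optional


class Step(IntEnum):
    IDENTIFY = 1    # Identify the Problem
    THEORIZE = 2    # Establish a Theory of Probable Cause
    TEST = 3        # Test the Theory
    PLAN = 4        # Establish a Plan of Action
    IMPLEMENT = 5   # Implement the Solution
    VERIFY = 6      # Verify Full Functionality
    DOCUMENT = 7    # Document Findings


def next_step(current: Step, succeeded: bool = True) -> Optional[Step]:
    """Return the step to perform next, or None once findings are documented."""
    if current is Step.TEST and not succeeded:
        return Step.IDENTIFY   # theory rejected: re-evaluate the problem (per the table)
    if current is Step.VERIFY and not succeeded:
        return Step.PLAN       # assumption: a failed trial period sends the team back to planning
    if current is Step.DOCUMENT:
        return None            # process complete
    return Step(current + 1)


# Walk through a case where the first theory is rejected and the second holds.
outcomes = iter([True, True, False, True, True, True, True, True, True, True])
step: Optional[Step] = Step.IDENTIFY
path = []
while step is not None:
    path.append(step.name)
    step = next_step(step, next(outcomes))
print(" -> ".join(path))
```

Writing the loop down this way makes the two re-entry points visible, which can help a team agree in advance on what happens when a theory or a fix does not hold up.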

Problem: Diagnosing complex, intertwined technical and methodological issues in a project.

| Troubleshooting Step | Actions for Complex Technical Issues | Expected Outcome |

|---|---|---|

| 1. Understanding the Problem | Ask open-ended questions to fully grasp the issue; Gather information from logs and data outputs; Reproduce the issue in a controlled environment [23]. | A deep, shared understanding of what is happening versus what is expected to happen. |

| 2. Isolating the Issue | Remove complexity by testing subsystems individually; Change one variable at a time; Compare the broken system to a known working version [23]. | The problem is narrowed down to a specific component, methodology, or interaction between disciplines. |

| 3. Find a Fix or Workaround | Propose a solution, such as a methodological adjustment or a software patch; Test the fix internally before full deployment; If a permanent fix isn't possible, establish a reliable workaround [23]. | A functional resolution is identified and validated, allowing the research to proceed. |
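The isolation step ("change one variable at a time; compare the broken system to a known working version") can be sketched as follows. The function name, the configuration keys, and the `works` check are all hypothetical; in practice `works` would rerun the analysis or test that exposes the problem.

```python
from typing import Any, Callable, Dict, List

_MISSING = object()  # sentinel for keys present in only one configuration


def isolate_breaking_changes(
    good: Dict[str, Any],
    broken: Dict[str, Any],
    works: Callable[[Dict[str, Any]], bool],
) -> List[str]:
    """Apply each difference between two configurations to the known-good one,
    one at a time, and report which single changes make the check fail."""
    culprits = []
    for key in sorted(set(good) | set(broken)):
        good_value = good.get(key, _MISSING)
        broken_value = broken.get(key, _MISSING)
        if good_value == broken_value:
            continue  # setting is unchanged, nothing to test
        candidate = dict(good)  # start from the working version every time
        if broken_value is _MISSING:
            candidate.pop(key, None)
        else:
            candidate[key] = broken_value
        if not works(candidate):
            culprits.append(key)
    return culprits


# Example: two made-up pipeline configurations and a stand-in check.
good_config = {"normalization": "quantile", "aligner": "v2.1", "batch_size": 32}
broken_config = {"normalization": "none", "aligner": "v2.2", "batch_size": 32}


def pipeline_works(config: Dict[str, Any]) -> bool:
    # Stand-in for rerunning the real analysis; here only normalization matters.
    return config.get("normalization") != "none"


print(isolate_breaking_changes(good_config, broken_config, pipeline_works))
```

This approach only finds problems caused by a single change. If the check fails only when two changes are combined, every individual test passes and the function returns an empty list, which is itself a useful hint that the issue lies in an interaction between components or disciplines.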

Quantitative Data on Multidisciplinary Research

| Theme | Specific Challenge | Description |

|---|---|---|

| Organization | The Appropriate Organization Challenge | Fitting a new program within university and funder structures with minimal redundancy. |

| Organization | The Strategic Support Challenge | Attracting support from department heads and university management. |

| Communication | The Internal Communication and Documentation Challenge | Ensuring efficient knowledge transfer and documentation within the team. |

| Multidisciplinarity | The Discipline Openness Challenge | Fostering receptiveness to theories and methods from other fields. |

| Multidisciplinarity | The Shared Theoretical Framework Challenge | Developing a common conceptual foundation for the research. |

| Software Development | The Mutual Understanding of Software Requirements Challenge | Bridging the gap between researcher needs and developer understanding. |

| Aspect | Documented Benefit / Challenge |

|---|---|

| Key Benefits | Decreased patient mortality and complications. |

| Key Benefits | Reduced hospital length of stay and readmissions. |

| Key Benefits | Enhanced patient satisfaction. |

| Key Benefits | Improved communication between healthcare disciplines. |

| Reported Challenges | Time allocation limitations for team rounds. |

| Reported Challenges | Hierarchical mentality between doctors and nurses. |

| Reported Challenges | Limited nurse involvement in decision-making. |

Experimental Protocols for Team Coordination

Objective: To systematically establish a new, publicly funded multidisciplinary research environment, anticipating and mitigating common challenges.

Methodology:

- Stakeholder Mapping and Engagement: Identify and secure support from key institutional leaders (e.g., department heads, vice-chancellors) to build strategic support.