Statistical Validation of Environmental Scanning in Drug Development: From Data to Decision-Making

Abstract

This article provides a comprehensive framework for the statistical validation of environmental scanning results, tailored for researchers, scientists, and drug development professionals. It bridges the gap between qualitative scanning processes and rigorous quantitative validation, addressing a critical need in evidence-based pharmaceutical research and development. The content spans from foundational concepts and methodological applications to troubleshooting common pitfalls and establishing robust validation protocols. By integrating statistical harmonization techniques, model validation methods, and phase-appropriate approaches, this guide empowers professionals to transform environmental data into statistically sound, strategic assets for optimizing drug development pipelines and mitigating risks.

Understanding Environmental Scanning and the Imperative for Statistical Validation

Defining Environmental Scanning in a Biomedical Context

In the complex, dynamic, and high-stakes field of biomedicine, decision-makers are consistently faced with rapid scientific growth and emerging challenges that demand evidence-based responses. Environmental scanning (ES) serves as a crucial methodological tool to navigate this landscape, enabling researchers, pharmaceutical developers, and healthcare policy makers to collect, analyze, and interpret internal and external data to identify important patterns, trends, and evidence [1]. Originally derived from business and information science disciplines, environmental scanning has been widely adopted in healthcare to assess internal strengths and weaknesses while evaluating external opportunities and threats [2] [3]. In essence, environmental scanning functions as an organizational radar system, providing systematic awareness of the scientific, competitive, and regulatory environment to inform strategic planning and future-oriented decision making [1] [3].

The value of environmental scanning in a biomedical context is particularly evident in its applications across diverse areas including cancer care, mental health, injury prevention, and quality improvement programs [1] [4]. More recently, environmental scanning has been applied to emerging fields such as the implementation of generative AI infrastructure for clinical and translational science, helping institutions understand the current landscape of technological adoption [5]. For drug development professionals and biomedical researchers, environmental scanning offers a structured approach to anticipate market shifts, identify research gaps, recognize collaborative opportunities, and avoid costly oversights in research and development pipelines.

Core Methodological Frameworks and Approaches

Comparative Analysis of Environmental Scanning Models

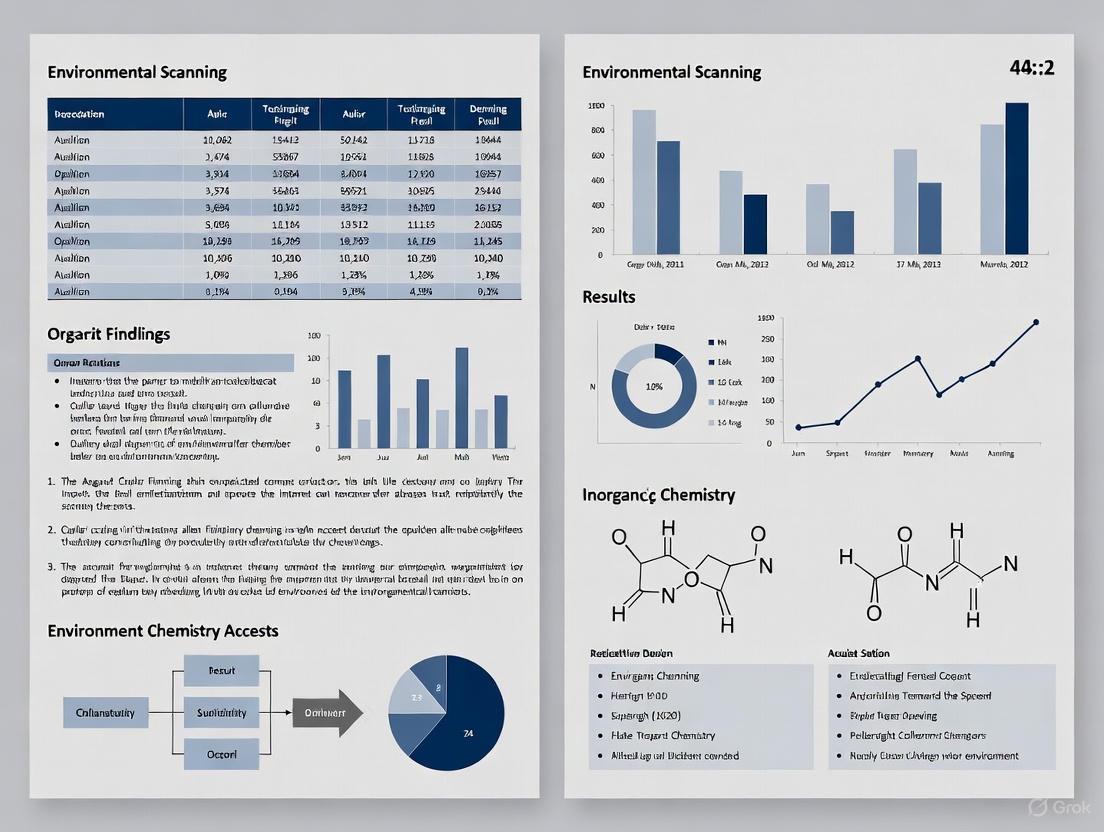

Environmental scanning methodologies in healthcare encompass a spectrum of approaches, from passive information gathering to active knowledge creation. A scoping review of healthcare literature identified that most studies propose six main steps for conducting an environmental survey in the healthcare system [1]. The models vary in their complexity, data collection methods, and intended applications, as summarized in Table 1.

Table 1: Comparative Analysis of Environmental Scanning Approaches in Biomedical Contexts

| Model/Framework | Primary Focus | Core Steps/Phases | Key Advantages | Reported Applications |

|---|---|---|---|---|

| Traditional Business-Derived ES | Internal strengths & weaknesses; External opportunities & threats | Varies; typically 6 main steps | Comprehensive assessment; Strategic planning | Cancer care, mental health, injury prevention [1] [4] |

| RADAR-ES Framework | Health services delivery research | 5 phases: Recognizing issue, Assessing factors, Developing protocol, Acquiring/analyzing data, Reporting results | Methodological rigor; Specific to HSDR; Evidence-informed | Research methodology development; Program planning [2] |

| 7-Step Public Health Model | Public health practice & research | 7 steps from leadership determination to dissemination | Practical application; Stakeholder engagement; Federal funding compliance | HPV vaccination projects; Cancer screening initiatives [4] |

| Passive Scanning Approach | Casual, opportunistic data collection | Collection of existing knowledge from established sources | Resource-efficient; Leverages existing knowledge | Literature reviews; Policy analysis; Database assessment [3] |

| Active Scanning Approach | Creating new knowledge | Organization takes action and analyzes reactions | Generates tailored, relevant data; Responsive to specific needs | Stakeholder interviews; Surveys; Focus groups [3] |

The RADAR-ES Methodological Framework

The RADAR-ES framework represents a recent advancement in environmental scanning methodology specifically developed for health services delivery research. This evidence-informed framework consists of five distinct phases that guide researchers through a systematic process [2]:

- Recognizing the Issue: Identifying and defining the focal area or research question that the environmental scan will address.

- Assessing Factors for ES: Evaluating contextual elements, resources, and constraints that will influence the scan's design and implementation.

- Developing an ES Protocol: Creating a structured plan that outlines methodology, data sources, and analytical approaches.

- Acquiring and Analyzing the Data: Systematically collecting and synthesizing information from diverse sources.

- Reporting the Results: Disseminating findings to appropriate stakeholders and decision-makers.

This framework is particularly valuable for biomedical researchers as it provides methodological rigor to a process that has often been inconsistently applied in healthcare settings. The framework addresses the notable gap in guidance for designing, implementing, and reporting environmental scans in health services research, offering a standardized approach that enhances comparability and quality [2].

Practical Implementation: The 7-Step Public Health Model

For biomedical practitioners seeking immediately applicable guidance, the 7-step model implemented in Kentucky's Human Papillomavirus (HPV) Vaccination Project offers a practical roadmap [4]:

- Determine Leadership and Capacity: Designate a coordinator or team with clear roles and responsibilities to champion the entire process.

- Establish Focal Area and Purpose: Specify the purpose to anchor the process and focus limited resources.

- Create and Adhere to a Timeline: Establish realistic timelines with incremental goals, accounting for institutional review board approvals and stakeholder availability.

- Determine Information Needs: Brainstorm all topics and resources that could inform the scan, acknowledging that the list may evolve throughout the process.

- Identify and Engage Stakeholders: Create a diverse, iterative list of key informants and organizations with relevant knowledge or influence.

- Gather and Analyze Information: Systematically collect and synthesize data from multiple sources using both quantitative and qualitative methods.

- Disseminate and Implement Findings: Share results with stakeholders and use evidence to inform strategic planning and decision-making.

This model emphasizes the dynamic nature of environmental scanning, describing it as "a dynamic process of comprehensive assessment aimed at exploring a health topic in a manner that makes connections not previously established and highlights barriers and facilitators not previously identified" [4].

Experimental Protocols and Data Collection Methodologies

Standardized Data Collection Workflow

The experimental protocol for environmental scanning in biomedical contexts typically follows a systematic workflow that integrates multiple data sources and analytical approaches. The process flow can be visualized as follows:

Diagram 1: Environmental scanning workflow for biomedical research

Quantitative Data from Recent Environmental Scans

Recent environmental scans in biomedical settings have yielded valuable quantitative insights, particularly in emerging fields like artificial intelligence implementation in healthcare. Table 2 summarizes key metrics from a comprehensive environmental scan of generative AI infrastructure across 36 institutions in the Clinical and Translational Science Awards (CTSA) Program [5].

Table 2: Quantitative Findings from Environmental Scan of Generative AI Implementation in Clinical and Translational Science (2025)

| Metric Category | Specific Measure | Reported Percentage | Sample Size (Institutions) | Implications for Biomedical Research |

|---|---|---|---|---|

| Stakeholder Involvement | Senior leadership involvement in GenAI decision-making | 94.4% | 36 | Top-down approach dominates AI adoption |

| | IT staff involvement | High (exact % not specified) | 36 | Technical expertise crucial for implementation |

| | Nurse involvement | Significantly lower than other stakeholders | 36 | Clinical end-users underrepresented |

| | Patient/community representative involvement | 0% at institutions without formal committees | 7 | Limited patient perspective in governance |

| Governance Structure | Institutions with formal GenAI oversight committees | 77.8% | 36 | Movement toward structured governance |

| | Centralized (top-down) decision-making approach | 61.1% | 36 | Centralized control preferred in early adoption |

| Ethical Considerations | Data security as primary concern | 53.0% | 36 | Data protection paramount in biomedical AI |

| | Lack of clinician trust as barrier | 50.0% | 36 | Implementation challenges beyond technology |

| | AI bias as significant concern | 44.0% | 36 | Recognition of algorithmic fairness issues |

| Adoption Status | Institutions in experimentation phase | 75.0% | 36 | Widespread exploration but limited production use |

| | Workforce receiving LLM training | 36.1% | 36 | Significant training gap exists |

| | Workforce desiring additional training | 83.3% | 36 | Strong demand for skill development |
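Percentages drawn from 36 institutions carry substantial sampling uncertainty, so it can help to report an interval alongside each point estimate. The sketch below computes a Wilson score interval using only the Python standard library. The count of 34 is an assumption back-calculated from the reported 94.4% of 36; the function name `wilson_interval` is illustrative and does not come from the cited scan.

```python
from statistics import NormalDist


def wilson_interval(successes: int, n: int, confidence: float = 0.95):
    """Wilson score interval for a binomial proportion."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    # Two-sided critical value of the standard normal distribution
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p_hat = successes / n
    denom = 1 + z**2 / n
    centre = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * (p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) ** 0.5 / denom
    return centre - half_width, centre + half_width


# Assumed count: 34 of 36 institutions (34 / 36 = 94.4%)
low, high = wilson_interval(34, 36)
print(f"Senior leadership involvement: 94.4% (95% CI {low:.1%} to {high:.1%})")
```

The Wilson interval is used here rather than the simpler normal-approximation interval because it behaves better for small samples and proportions near 0 or 1.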

Statistical Validation of Environmental Scanning Results

Methodological Considerations for Validation

The statistical validation of environmental scanning findings represents a critical yet underdeveloped aspect of biomedical research methodology. Proper validation ensures that identified trends and patterns reflect genuine phenomena rather than random variations or measurement artifacts. The complex, multivariate nature of environmental data in biomedical contexts necessitates specialized statistical approaches that can account for multiple variables, temporal correlations, and natural variability [6].

Statistical validation in environmental assessment must distinguish between impact-induced variability and changes triggered by natural causes, a challenge particularly relevant to biomedical studies evaluating the effects of interventions or environmental exposures [6]. Traditional statistical methods such as Before-After (BA), Before-After-Control-Impact (BACI), and Impact vs. Reference Site (IVRS) analyses each present limitations for validating environmental scanning results, including difficulties in locating appropriate control sites with similar environmental conditions and challenges in accounting for temporal and spatial variability [6].
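To make the BACI logic concrete, the following minimal sketch computes the basic BACI contrast: the change at the impact site minus the change at the control site. All readings are hypothetical, and the function name `baci_effect` is illustrative; a full analysis would also model temporal autocorrelation and uncertainty, which this sketch omits.

```python
from statistics import mean


def baci_effect(control_before, control_after, impact_before, impact_after):
    """BACI contrast: change at the impact site minus change at the control site."""
    impact_change = mean(impact_after) - mean(impact_before)
    control_change = mean(control_after) - mean(control_before)
    return impact_change - control_change


# Hypothetical readings: both sites drift upward by 2 units from natural causes,
# and the impact site gains a further 3 units after the intervention.
control_before = [10.0, 11.0, 9.0, 10.0]
control_after = [12.0, 13.0, 11.0, 12.0]
impact_before = [10.0, 10.0, 11.0, 9.0]
impact_after = [15.0, 16.0, 14.0, 15.0]

effect = baci_effect(control_before, control_after, impact_before, impact_after)
before_after_only = mean(impact_after) - mean(impact_before)
print(f"BACI effect: {effect:.1f}, naive before-after difference: {before_after_only:.1f}")
```

In this toy data the simple Before-After difference attributes the whole change to the intervention, while the BACI contrast removes the shared background drift. This only works when a comparable control site exists, which is the limitation noted above.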

Advanced Statistical Techniques

Novel statistical approaches have been developed specifically for the validation of environmental impact assessments, which can be adapted for biomedical scanning applications. The EIA algorithm represents one such advancement, offering a multivariate approach that summarizes information from multiple variables into a single statistical parameter while accounting for temporal autocorrelation in the data [6]. This algorithm uses polar coordinates to convert original variables into a multispecies vector, calculates the mean vector for the period before the impact, and determines confidence limits for the mean vector length, enabling researchers to identify significant changes following an intervention or event [6].

Additional statistical validation methods with relevance to biomedical environmental scanning include:

SIMEX (Simulation-Extrapolation) Procedure: A method that deliberately adds increasing amounts of simulated measurement error to the data, tracks how the resulting estimates change, and then extrapolates back to the case of zero measurement error, analogous to the method of standard additions used by analytical chemists [7]. This approach is particularly valuable for correcting measurement errors in environmental exposure data.

Hypothesis Testing for Comparative Performance: Statistical inference techniques including paired-sample tests, one-sample tests of the mean of a normal population, and two-sample tests for normal populations with unknown but equal variances [8]. These methods enable researchers to validate claims of outperformance between alternative methods or interventions.

Multivariate Statistical Approaches: Techniques that allow for the simultaneous analysis of multiple variables, addressing a limitation of traditional parametric and non-parametric tests that focus primarily on mean comparisons [6].
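As an illustration of the SIMEX idea listed above, the sketch below applies it to a simple linear regression whose predictor is measured with error. It is a simplified, standard-library version under stated assumptions: the measurement-error standard deviation `sigma_u` is treated as known, the data are simulated, and a quadratic through three points is used for the extrapolation. It is not the implementation from the cited work.

```python
import random


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def simex_slope(w, y, sigma_u, lambdas=(1.0, 2.0), n_rep=40, rng=None):
    """Return (naive_slope, simex_slope) for error-prone predictor w."""
    rng = rng or random.Random(0)
    points = [(0.0, ols_slope(w, y))]  # lambda = 0 is the naive estimate
    for lam in lambdas:
        extra_sd = lam**0.5 * sigma_u  # total error variance becomes (1 + lam) * sigma_u^2
        slopes = []
        for _ in range(n_rep):
            w_noisy = [wi + rng.gauss(0.0, extra_sd) for wi in w]
            slopes.append(ols_slope(w_noisy, y))
        points.append((lam, sum(slopes) / n_rep))
    # Extrapolation step: polynomial through the points, evaluated at lambda = -1
    target, estimate = -1.0, 0.0
    for i, (li, si) in enumerate(points):
        weight = 1.0
        for j, (lj, _) in enumerate(points):
            if i != j:
                weight *= (target - lj) / (li - lj)
        estimate += weight * si
    return points[0][1], estimate


# Simulated data (hypothetical): true slope 2.0, predictor observed with error
rng = random.Random(42)
true_slope, sigma_u = 2.0, 0.7
x = [rng.gauss(0.0, 1.0) for _ in range(2000)]
w = [xi + rng.gauss(0.0, sigma_u) for xi in x]
y = [true_slope * xi + rng.gauss(0.0, 0.5) for xi in x]

naive, corrected = simex_slope(w, y, sigma_u, rng=rng)
print(f"true {true_slope:.2f}, naive {naive:.2f}, SIMEX {corrected:.2f}")
```

The quadratic extrapolant typically recovers only part of the attenuation, so the corrected estimate should be read as less biased rather than unbiased.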

Table 3: Essential Research Reagents and Resources for Environmental Scanning in Biomedicine

| Tool Category | Specific Tool/Resource | Function in Environmental Scanning | Application Examples |

|---|---|---|---|

| Data Sources | Electronic databases (Web of Science, PubMed, Scopus, Embase) | Identification of peer-reviewed literature and published evidence | Comprehensive literature assessment; Research trend analysis [1] |

| | Gray literature sources (technical reports, conference proceedings) | Access to emerging research and unpublished findings | Identification of cutting-edge developments; Competitor intelligence |

| | Government and regulatory databases | Monitoring policy changes and regulatory guidelines | FDA approval tracking; Compliance assessment |

| Analytical Tools | Statistical software packages (R, Python with relevant libraries) | Data analysis, visualization, and statistical validation | Trend analysis; Multivariate statistical testing [6] |

| | Bibliometric analysis tools | Mapping research networks and knowledge domains | Identification of emerging research fronts; Collaboration opportunity mapping |

| | Content analysis software | Systematic analysis of qualitative data | Policy document analysis; Interview transcript coding |

| Stakeholder Engagement Tools | Structured interview guides | Systematic collection of expert knowledge | Key informant interviews; Delphi studies [2] |

| | Survey platforms and instruments | Quantitative data collection from stakeholder groups | Provider surveys; Patient preference assessment [4] |

| Methodological Frameworks | RADAR-ES framework | Guidance for designing, conducting, and reporting environmental scans | Health services research planning; Program development [2] |

| | PRISMA guidelines | Systematic review and scoping study conduct | Literature review methodology; Evidence synthesis [1] |

Environmental scanning represents a powerful methodological approach for biomedical researchers, drug development professionals, and healthcare decision-makers navigating an increasingly complex and rapidly evolving landscape. When properly implemented using structured frameworks such as RADAR-ES or the 7-step public health model, and validated through appropriate statistical techniques, environmental scanning provides invaluable evidence for strategic planning, resource allocation, and research priority setting [1] [2] [4]. As biomedical challenges grow in complexity, the systematic application of environmental scanning methodologies will become increasingly essential for translating research evidence into effective healthcare solutions and therapeutic advancements.

The integration of advanced statistical validation methods addresses a critical gap in current environmental scanning practice, enhancing the credibility and utility of scan findings for biomedical decision-making [7] [6] [8]. By adopting these rigorous approaches, biomedical researchers can ensure that their environmental scanning initiatives produce reliable, actionable intelligence to guide the future of drug development, clinical practice, and public health policy.

Validation is the cornerstone of trust in data-driven decision-making, whether in business intelligence or scientific research. This guide examines validation protocols across domains, comparing methodologies and the experimental data that underpin them, framed within the context of statistical validation for environmental scanning research.

Understanding Validity and Reliability

For researchers interpreting environmental scanning data, a precise understanding of validity and reliability is fundamental.

- Reliability refers to the consistency of a measurement. A reliable process yields the same result when the same data is analyzed repeatedly under identical conditions [9].

- Validity refers to the accuracy of a measurement. A valid process correctly captures the real-world phenomenon it is intended to measure [10] [9].

A metric can be reliable without being valid, producing consistently incorrect results. For trusted outcomes, both properties are essential [9]. Key types of validity include:

- Construct Validity: Does the test measure the theoretical concept it claims to? [10] [9]

- Internal Validity: Are the observed effects genuinely due to the intervention, not other factors? [10]

- External Validity: Can the findings be generalized beyond the specific study conditions? [10]
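The distinction between reliability and validity can be illustrated with a small simulation. The sketch below compares two hypothetical instruments measuring a known true value: one is precise but biased (reliable, not valid), the other is unbiased but noisy. All numbers are invented for illustration.

```python
import random
from statistics import mean, stdev

rng = random.Random(1)
true_value = 50.0

# Hypothetical instrument A: consistent readings, but offset by +5 units
biased_precise = [true_value + 5.0 + rng.gauss(0.0, 0.2) for _ in range(200)]
# Hypothetical instrument B: centred on the truth, but widely scattered
unbiased_noisy = [true_value + rng.gauss(0.0, 4.0) for _ in range(200)]


def summarize(readings, truth):
    """Bias reflects (lack of) validity; spread reflects (lack of) reliability."""
    return {"bias": mean(readings) - truth, "spread": stdev(readings)}


summary_a = summarize(biased_precise, true_value)
summary_b = summarize(unbiased_noisy, true_value)
print("A (reliable, not valid):", summary_a)
print("B (valid on average, not reliable):", summary_b)
```

Repeated measurement alone would make instrument A look trustworthy; only comparison against a known reference reveals its bias.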

Validation in Business and Environmental Intelligence

In business and environmental contexts, validation ensures that analytics and sustainability claims are both consistent and accurate.

Experimental Protocols for Data Validation

Advanced analytics platforms implement specific protocols to ensure reliability and validity [9]:

- Standardized KPI Definitions: A semantic layer is used to enforce consistent business logic and calculations across all queries, ensuring reliability.

- Real-Time Data Governance: Live connections to cloud data warehouses guarantee that reports reflect the current business state, supporting validity.

- Automated Model Monitoring: Frameworks continuously monitor AI models in production for "data drift," where changing real-world conditions make training data less representative, thus preserving validity over time.
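The source does not specify how drift is detected. One commonly used option is the Population Stability Index (PSI), which compares the binned distribution of a feature in current data against a reference sample. The sketch below is a minimal standard-library version; the bin count, the floor for empty bins, and the simulated data are assumptions chosen for illustration.

```python
import math
import random


def population_stability_index(reference, current, n_bins=10):
    """PSI between two samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width) if width > 0 else 0
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = bin_shares(reference), bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


rng = random.Random(7)
training = [rng.gauss(0.0, 1.0) for _ in range(2000)]
same_conditions = [rng.gauss(0.0, 1.0) for _ in range(2000)]
shifted_conditions = [rng.gauss(1.0, 1.0) for _ in range(2000)]  # mean has drifted

psi_same = population_stability_index(training, same_conditions)
psi_shifted = population_stability_index(training, shifted_conditions)
print(f"PSI without drift: {psi_same:.3f}, PSI with drift: {psi_shifted:.3f}")
```

A frequently quoted rule of thumb treats PSI values above roughly 0.2 to 0.25 as a sign of meaningful shift, but any alert threshold should be calibrated to the application.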

Supporting Experimental Data

Research demonstrates the tangible impact of these practices. A study on Jordanian manufacturing firms found that Business Intelligence (BI) capabilities do not directly enhance environmental performance. Instead, BI fully relies on Green Supply Chain Management (GSCM) practices to mediate its influence, showing that the validity of BI for sustainability goals depends on its integration into specific operational systems [11].

The table below summarizes key challenges and the technologies addressing them:

Table: Challenges and Solutions in Analytical Validation for Sustainability

| Challenge | Impact on Validation | Modern Solution | Documented Outcome |

|---|---|---|---|

| Data Silos [9] | Undermines construct validity by creating an incomplete picture. | Integrated platforms using governed data models. | Creates a single source of truth for reliable and valid reporting [9]. |

| Proxy Metrics [9] | Threatens criterion validity if the proxy does not align with the true outcome. | Semantic layers that define and enforce accurate business logic [9]. | Ensures metrics like "customer growth" are validly measured according to organizational definitions [9]. |

| Integrating BI for Environmental Goals [11] | BI alone lacks construct validity for driving sustainability. | Embedding BI within Green Supply Chain Management (GSCM) systems [11]. | Establishes an indirect, fully mediated pathway for BI to improve environmental performance [11]. |

Validation in Pharmaceutical Development and Discovery

In drug discovery, validation moves from data interpretation to confirming physiological reality, with stringent regulatory oversight.

Experimental Protocols for Target Engagement

A critical validation step is confirming that a drug candidate engages its intended target in a physiologically relevant environment. The Cellular Thermal Shift Assay (CETSA) protocol is widely used for this [12]:

- Compound Treatment: Intact cells or tissues are treated with the drug candidate.

- Heat Denaturation: Samples are heated, causing unbound proteins to denature and precipitate.

- Protein Analysis: The remaining stabilized target protein (bound to the drug) is quantified, often via high-resolution mass spectrometry.

- Data Validation: Dose-dependent and temperature-dependent stabilization of the target protein confirms direct binding engagement.

The U.S. Food and Drug Administration (FDA) emphasizes a risk-based framework for AI/ML use in drug development, requiring robust validation of AI models from nonclinical through postmarketing phases [13].

Supporting Experimental Data

The adoption of Digital Validation Tools (DVTs) in the pharmaceutical industry has reached a tipping point. In 2025, 58% of organizations reported using these systems, a significant jump from 30% the previous year. Another 35% are planning adoption within two years. The drivers for this shift are directly related to validation rigour: data integrity and audit readiness were cited as the two most valuable benefits [14].

In AI-driven drug discovery, studies demonstrate the power of validated workflows. A 2025 study used deep graph networks to generate over 26,000 virtual analogs, ultimately producing inhibitors with a >4,500-fold potency improvement over initial hits. This showcases a validated DMTA (Design-Make-Test-Analyze) cycle where in-silico predictions are rigorously confirmed by experimental testing [12].

Table: Quantitative Trends in Pharmaceutical Validation (2025)

| Validation Area | Metric | 2025 Benchmark | Significance |

|---|---|---|---|

| Digital Tool Adoption [14] | % of orgs using Digital Validation Tools (DVTs) | 58% | Indicates a mainstream shift towards digitized, more reliable validation processes. |

| Regulatory Submissions [13] | Number of drug applications with AI/ML components | >500 (2016-2023) | Shows the increasing reliance on advanced, validated AI models in formal regulatory contexts. |

| Hit-to-Lead Acceleration [12] | Potency improvement in optimized inhibitors | >4,500-fold | Demonstrates the validated output of AI-driven discovery cycles, compressing timelines from months to weeks. |

Comparative Analysis: Visualization of Validation Workflows

The following diagrams illustrate the core logical workflows for validating experiments in statistical and pharmaceutical contexts.

Statistical Experimental Validation Workflow

This workflow outlines the key stages for ensuring the validity of a statistical experiment, such as an A/B test, from initial design to final interpretation [10].
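A design-stage check that often belongs in such a workflow is a power analysis. The sketch below estimates the per-group sample size for a two-sided A/B test comparing two proportions, using the standard normal-approximation formula. The baseline and target rates are hypothetical, and the function name is illustrative rather than taken from any experimentation platform.

```python
import math
from statistics import NormalDist


def sample_size_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Approximate per-group n for a two-sided test of p1 versus p2."""
    if p1 == p2:
        raise ValueError("p1 and p2 must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)


# Hypothetical scenario: detect a lift from a 10% to a 12% response rate
n_small_effect = sample_size_two_proportions(0.10, 0.12)
n_large_effect = sample_size_two_proportions(0.10, 0.15)
print(f"per-group n for 10% vs 12%: {n_small_effect}")
print(f"per-group n for 10% vs 15%: {n_large_effect}")
```

Fixing the sample size before data collection is one of the simpler safeguards against stopping a test early when results happen to look favorable.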

Drug Target Validation Workflow

This workflow depicts the multi-stage process of validating a potential drug target, from initial computational screening to confirmation in complex biological systems [12].

The Scientist's Toolkit: Key Research Reagent Solutions

Essential tools and platforms for conducting validated research in data science and drug discovery are listed below.

Table: Essential Reagents and Platforms for Validated Research

| Tool Category | Example | Primary Function in Validation |

|---|---|---|

| Digital Validation Platforms [14] | Kneat | Streamlines validation lifecycle management for regulated industries, ensuring audit readiness and data integrity. |

| AI-Driven Analytics [9] | ThoughtSpot Spotter | Provides AI-generated insights backed by a semantic layer to ensure consistent (reliable) and accurate (valid) business metrics. |

| Target Engagement Assays [12] | CETSA (Cellular Thermal Shift Assay) | Validates direct drug-target interaction in physiologically relevant intact cells, bridging biochemical and cellular efficacy. |

| In-Silico Screening Suites [12] | AutoDock, SwissADME | Computationally prioritizes compounds based on predicted binding (docking) and drug-likeness (ADME), filtering libraries before costly wet-lab work. |

| Statistical Platform [15] [10] | Eppo, Statsig | Enables robust A/B testing and experimentation with safeguards against p-hacking, supports power analysis, and ensures statistical validity. |

In the field of environmental scanning for drug development, researchers face a triad of fundamental data challenges: non-uniform measures, heterogeneous sources, and measurement error. These issues critically hamper the statistical validation of environmental scanning results, potentially compromising the reliability of research outcomes and subsequent decision-making. As the industry increasingly adopts real-time monitoring and complex geospatial modeling, understanding and mitigating these data problems becomes paramount for ensuring research integrity and accelerating the development of new therapies.

This guide objectively compares current environmental monitoring systems and data handling methodologies, providing a framework for researchers to evaluate solutions based on standardized experimental data and protocols.

Comparative Analysis of Environmental Monitoring Systems

Environmental monitoring systems (EMS) are integrated platforms that connect sensors to a centralized data platform, automating collection, validation, and alerts across locations to turn raw readings into actionable intelligence [16]. The table below compares key systems used in research and regulated industries.

Table 1: Comparison of Environmental Monitoring Systems for Research and Drug Development

| Tool Name | Best For | Monitoring Parameters | Standout Feature | Key Consideration for Data Challenges |

|---|---|---|---|---|

| Envirosuite [17] | Large-scale industrial operations (mining, aviation) | Noise, air, water, dust [17] | Predictive analytics [17] | Addresses heterogeneous data via integration with IoT networks [17]. |

| Novatek Environmental Monitoring [17] | Pharmaceuticals, cleanroom facilities | Air quality, microbial contaminants [17] | Visual facility control tool for mapping sample points [17] | Mitigates measurement error via automated investigations and risk-based monitoring (FMEA) [17]. |

| Rotronic Monitoring System (RMS) [17] | Pharmaceuticals, manufacturing | Humidity, temperature, CO2 [17] | Flexible integration with third-party devices [17] | Combats non-uniform data by integrating diverse third-party devices via a converter [17]. |

| IBM Envizi ESG Suite [17] | Large enterprises, ESG reporting | Emissions, energy use [17] | AI-driven analytics for impact assessment [17] | Manages heterogeneous sources by integrating weather, geospatial, and enterprise data [17]. |

| Cleartrace [17] | Energy-intensive businesses | Carbon emissions, energy consumption [17] | AI-powered insights for decarbonization [17] | Uses AI to handle data from heterogeneous sources and provide unified analytics [17]. |

Understanding Core Data Challenges

Non-Uniform Measures

This challenge arises when data is collected using different units, scales, or procedural standards across studies or locations. In environmental scanning, this could manifest as differing units for air particulate matter (e.g., PM2.5 concentrations) or inconsistent water quality metrics. This lack of standardization complicates direct comparison and data aggregation, leading to significant integration hurdles in meta-analyses or large-scale geospatial models [18].
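A minimal harmonization step for non-uniform measures is to convert every record to one reference unit before aggregation and to fail loudly on unknown units. The sketch below assumes a small hypothetical conversion table for particulate concentrations; site names, values, and the `TO_UG_PER_M3` registry are invented for illustration.

```python
# Hypothetical conversion factors to micrograms per cubic metre
TO_UG_PER_M3 = {"ug/m3": 1.0, "mg/m3": 1000.0, "ng/m3": 0.001}


def harmonize(records):
    """Convert (site, value, unit) records to (site, value in ug/m3)."""
    harmonized = []
    for site, value, unit in records:
        # Normalize case, whitespace, and both Unicode forms of the micro sign
        key = unit.strip().lower().replace("\u00b5", "u").replace("\u03bc", "u")
        if key not in TO_UG_PER_M3:
            raise ValueError(f"unknown unit {unit!r} for site {site}")
        harmonized.append((site, value * TO_UG_PER_M3[key]))
    return harmonized


records = [
    ("site_a", 12.0, "\u00b5g/m3"),
    ("site_b", 0.012, "mg/m3"),
    ("site_c", 12000.0, "ng/m3"),
]
for site, value in harmonize(records):
    print(f"{site}: {value:.1f} ug/m3")
```

Rejecting unrecognized units, instead of passing values through unchanged, keeps silent scale errors out of downstream meta-analyses.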

Heterogeneous Sources

Modern research often integrates data from vastly different sources, including IoT sensors, remote sensing, laboratory instruments, and public databases [16] [18]. These sources generate data in various formats (e.g., continuous sensor streams, categorical lab results, image data) with differing spatial and temporal resolutions. The technical challenge lies in harmonizing these disparate data types into a cohesive dataset for analysis, a process fraught with risks of introducing error or misrepresenting original information [18].
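One small piece of this harmonization problem is aligning sources with different temporal resolutions. The sketch below aggregates 15-minute sensor readings to hourly means and joins them with hourly laboratory values. The timestamps, values, and function names are hypothetical, and averaging is only one of several defensible aggregation choices.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def hourly_means(readings):
    """Aggregate (timestamp, value) readings to the mean per clock hour."""
    buckets = defaultdict(list)
    for ts, value in readings:
        buckets[ts.replace(minute=0, second=0, microsecond=0)].append(value)
    return {hour: mean(values) for hour, values in buckets.items()}


def align(sensor_readings, lab_results):
    """Keep only the hours present in both sources, as (sensor_mean, lab_value)."""
    sensor_hourly = hourly_means(sensor_readings)
    return {
        hour: (sensor_hourly[hour], lab_value)
        for hour, lab_value in lab_results.items()
        if hour in sensor_hourly
    }


sensor = [
    (datetime(2025, 1, 1, 9, 0), 10.0),
    (datetime(2025, 1, 1, 9, 15), 12.0),
    (datetime(2025, 1, 1, 9, 30), 14.0),
    (datetime(2025, 1, 1, 9, 45), 16.0),
    (datetime(2025, 1, 1, 10, 0), 20.0),
    (datetime(2025, 1, 1, 10, 30), 22.0),
]
lab = {datetime(2025, 1, 1, 9): 0.8, datetime(2025, 1, 1, 11): 0.9}

aligned = align(sensor, lab)
print(aligned)
```

Hours that appear in only one source are dropped rather than imputed, which makes the loss of coverage visible instead of hiding it behind filled-in values.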

Measurement Error

Measurement error is any systematic or random deviation between the measured value and the true value. In epidemiology and environmental research, it is often incorrectly assumed that large sample sizes will compensate for measurement error or that its effect will always be to underestimate an exposure effect [19]. These are myths; measurement error can cause bias in any direction, reduce precision, and mask data features, regardless of sample size [19]. Errors can be differential or non-differential, and in multivariate settings, errors between variables may be dependent, further complicating their impact [19].

Experimental Protocols for System Validation

Validating an environmental monitoring system or data integration process requires rigorous experimental design to quantify its performance in handling these core challenges. The following protocols provide a framework for assessment.

Protocol 1: Accuracy and Reliability Testing

Objective: To evaluate a system's measurement accuracy against ground-truth reference instruments and its reliability over time [20].

Methodology:

- Co-location Study: Deploy the monitoring system(s) under test alongside reference-grade instruments in a controlled or representative environment.

- Data Collection: Collect simultaneous, paired measurements from both the test and reference systems over a sufficient period to capture environmental variability.

- Statistical Analysis: Calculate key performance metrics [20]:

  - Correlation Analysis: Assess the strength of the linear relationship between the test and reference data.

  - Regression Analysis: Evaluate the predictive capability and identify any systematic bias (slope and intercept).

  - Residual Error Calculation: Determine the average difference between observed (reference) and modeled (test) values to assess accuracy [20].
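The three metrics in Protocol 1 can be computed from paired readings with a few lines of code. The sketch below uses only the standard library and regresses the test readings on the reference readings. The sample data are hypothetical, and the sign convention for bias (test minus reference) is an assumption made for this example.

```python
import math


def colocation_metrics(reference, test):
    """Correlation, regression line, mean bias, and RMSE for paired readings."""
    if len(reference) != len(test) or len(reference) < 2:
        raise ValueError("need at least two paired readings")
    n = len(reference)
    mean_ref, mean_test = sum(reference) / n, sum(test) / n
    sxx = sum((r - mean_ref) ** 2 for r in reference)
    syy = sum((t - mean_test) ** 2 for t in test)
    sxy = sum((r - mean_ref) * (t - mean_test) for r, t in zip(reference, test))
    slope = sxy / sxx
    errors = [t - r for r, t in zip(reference, test)]  # test minus reference
    return {
        "r": sxy / math.sqrt(sxx * syy),
        "slope": slope,  # 1.0 means no proportional bias
        "intercept": mean_test - slope * mean_ref,  # 0.0 means no constant offset
        "mean_bias": sum(errors) / n,
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
    }


# Hypothetical co-location data: the test sensor reads 10% high plus a 2-unit offset
reference = [10.0, 20.0, 30.0, 40.0, 50.0]
test = [13.0, 24.0, 35.0, 46.0, 57.0]

metrics = colocation_metrics(reference, test)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this toy data the correlation is perfect even though the test sensor is clearly biased, which is why the regression and residual metrics are needed in addition to correlation.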

Protocol 2: Data Integration and Heterogeneity Handling

Objective: To assess a system's ability to ingest, harmonize, and manage data from heterogeneous sources.

Methodology:

- Data Ingestion Test: Feed the system with diverse data types (e.g., real-time sensor data from IoT endpoints, structured lab results from CSV files, spatial data from GIS) [17].

- Validation and QA/QC Check: Verify that the system's automated Quality Assurance/Quality Control (QA/QC) processes—such as range/spike/flatline detection and calibration tracking—function correctly across the different data streams [16].

- Output Analysis: Evaluate the system's success in creating a unified, queryable dataset and generating accurate, combined visualizations (e.g., heatmaps that overlay air quality and noise data) [16].
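The range, spike, and flatline checks named in the QA/QC step can be prototyped with simple rules, which also gives a reference against which a vendor system's flags can be compared. The sketch below is one possible rule set; the thresholds and readings are hypothetical, and production systems may define these checks differently.

```python
def qc_flags(values, valid_range, spike_threshold, flatline_run):
    """Return one set of flags per reading: 'range', 'spike', and/or 'flatline'."""
    low, high = valid_range
    flags = [set() for _ in values]
    run_start = 0  # index where the current run of identical values began
    for i, value in enumerate(values):
        if not low <= value <= high:
            flags[i].add("range")
        # A jump larger than the threshold is flagged; the return jump is flagged too
        if i > 0 and abs(value - values[i - 1]) > spike_threshold:
            flags[i].add("spike")
        if i > 0 and value != values[i - 1]:
            run_start = i
        if i - run_start + 1 >= flatline_run:
            for j in range(run_start, i + 1):
                flags[j].add("flatline")
    return flags


# Hypothetical temperature stream with an outlier, a stuck sensor, and a bad value
readings = [20.1, 20.3, 55.0, 20.2, 20.2, 20.2, 20.2, -5.0]
flags = qc_flags(readings, valid_range=(0.0, 50.0), spike_threshold=10.0, flatline_run=4)
for value, flag_set in zip(readings, flags):
    print(value, sorted(flag_set))
```

Feeding the same synthetic stream to the system under test and comparing its flags with these is one way to carry out the verification described in the list above.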

The workflow for deploying and validating a monitoring system, from sensor deployment to data-driven decision-making, can be visualized as follows:

Diagram 1: Environmental Monitoring System Workflow

The Researcher's Toolkit: Key Reagents and Materials

The following table details essential components of a modern, data-driven environmental monitoring system, explaining their role in addressing core data challenges.

Table 2: Essential Components of a Research-Grade Environmental Monitoring System

| System Component | Function & Role in Mitigating Data Challenges |

|---|---|

| Distributed Sensor Nodes [16] | Measure parameters (e.g., PM, gases, noise, temperature). Local data buffering prevents loss during comms failure, mitigating measurement gaps (a type of error) [16]. |

| LoRaWAN / LTE/5G Gateways [16] | Transmit sensor data to the platform. Long-range, low-power options enable deployment in varied locales, helping manage spatial heterogeneity [16]. |

| Centralized Data Platform [16] | The system's core for data ingest, storage, and management. Automated QA/QC checks (range/spike/drift) directly combat measurement error [16]. |

| Calibration Tracking [16] | Logs calibration certificates and schedules. Critical for identifying and correcting systematic measurement error over time [16]. |

| Open API / Webhooks [16] | Enable integration with other systems (EHS, CMMS, GIS). Key technology for standardizing and managing data from heterogeneous sources [16]. |

Statistical Validation and Uncertainty Management

Robust statistical validation is required to account for measurement error and the inherent uncertainty in integrated datasets. Key considerations include:

Spatial Autocorrelation (SAC): In geospatial modeling, SAC occurs when data points near each other are more similar than distant points. If it is ignored during model training and validation, nearby points leak information between training and test sets, which inflates performance metrics and masks poor generalization to new areas [18]. Spatial cross-validation techniques, which hold out spatially separated groups of samples, are therefore essential.

Uncertainty Estimation: For model inferences to be trustworthy, they must include uncertainty estimations. This is especially critical when dealing with "out-of-distribution" problems, where the input data for prediction differs from the data used for training, a common issue when integrating heterogeneous sources [18].
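One common form of spatial cross-validation is block cross-validation, where samples are grouped into spatial blocks and each block is held out in turn. The sketch below is a minimal illustration under assumptions: the function name, the square-grid blocking, and the coordinates are hypothetical, and [18] may recommend a different scheme.

```python
import math

def spatial_block_folds(coords, block_size):
    """Assign samples to square grid blocks and build one (train, test) fold per block."""
    blocks = {}
    for index, (x, y) in enumerate(coords):
        key = (math.floor(x / block_size), math.floor(y / block_size))
        blocks.setdefault(key, []).append(index)
    folds = []
    for key in sorted(blocks):
        test_idx = blocks[key]
        held_out = set(test_idx)
        train_idx = [i for i in range(len(coords)) if i not in held_out]
        folds.append((train_idx, test_idx))
    return folds

# Hypothetical sensor locations (km) forming three spatial clusters.
coords = [(0.5, 0.5), (1.2, 0.8), (9.1, 0.3), (9.7, 1.1), (0.4, 9.6), (1.0, 9.0)]
folds = spatial_block_folds(coords, block_size=5.0)
```

The block size should be chosen to be at least as large as the distance over which the data remain correlated. Blocking reduces leakage between training and test sets but does not remove it for points close to a block boundary.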

The logical relationship between data challenges, analytical pitfalls, and required validation techniques is summarized below:

Diagram 2: Data Challenges and Validation Pathways

Navigating the challenges of non-uniform measures, heterogeneous sources, and measurement error is a fundamental requirement for producing statistically valid environmental scanning results in drug development. While modern monitoring systems offer advanced features for data integration, QA/QC, and analytics, researchers must employ rigorous, standardized experimental protocols to validate these tools within their specific context.

A critical understanding of measurement error and its potential to bias results—in any direction—is essential. By systematically selecting appropriate tools, applying robust validation methodologies, and incorporating uncertainty quantification, researchers can enhance the reliability of their data, thereby strengthening the foundation of scientific and regulatory decisions.

In the realm of organizational management, environmental scanning provides the critical data and insights necessary for navigating a complex and interconnected global landscape [21]. For researchers and scientists, particularly in high-stakes fields like drug development, the principles of systematic scanning—traditionally applied in cybersecurity and strategic business planning—offer a robust framework for identifying technological opportunities, regulatory shifts, and competitive threats [22]. This process is not merely about data collection; it is a disciplined approach to informing strategic decisions, proactively mitigating risks, and optimizing the allocation of finite resources [22]. The efficacy of any scanning exercise, however, hinges on the statistical validation of its results, ensuring that subsequent strategic outcomes are built upon a foundation of reliable, actionable intelligence rather than observational noise.

The Strategic Imperative of Scanning

From Data to Strategic Insight

Environmental scanning transforms raw data into a strategic asset. The American Hospital Association's (AHA) annual Environmental Scan exemplifies this, combining data, surveys, and trend analysis to help health systems plan for the future and explore important issues with boards and community stakeholders [21]. This structured approach to sense-making is crucial for anticipating disruptions and aligning organizational resources with future realities. A comprehensive strategic risk management framework leverages scanning to protect an organization's future, enabling it to stay ahead of technological disruptions and respond effectively to global challenges [22].

The Critical Role of Validation

For researchers, the transition from scanning to strategic outcomes must be underpinned by statistical validation. An environmental scan's value is determined by the accuracy and rigor of its data collection and analysis methodologies. Without validation, organizations risk basing critical decisions on flawed or incomplete information. The process of validation involves quantitative risk assessment models, including probability assessment and impact evaluation, to ensure that identified risks and opportunities are real and significant [22].

Experimental Protocols for Scanning Tool Efficacy

To objectively compare scanning methodologies, a standardized experimental protocol is essential. The following provides a detailed framework suitable for evaluating a range of scanning tools, from network security to data analysis software.

Protocol for Comparative Analysis of Scanning Tools

This protocol is adapted from rigorous cybersecurity testing methodologies for broader application [23].

- Objective: To quantify and compare the accuracy and efficiency of different scanning tools or methodologies in a controlled environment.

- Experimental Setup: A dedicated, isolated test environment (e.g., a controlled network segment, a standardized dataset, or a software sandbox) is constructed. This environment contains a known set of targets (e.g., network services, data patterns, specific vulnerabilities) that the tools are expected to identify.

- Tool Selection: Select multiple scanning tools or methods for comparison. These could range from commercial and open-source software to different algorithmic approaches for data analysis.

- Execution: Each tool is executed against the identical test environment according to its standard operating procedures. To control for variability, the order of tool execution should be randomized across multiple experimental runs [24].

- Data Collection: The output from each tool is systematically recorded. Key performance indicators (KPIs) must be collected uniformly, including:

- True Positives (TP): Correctly identified targets.

- False Positives (FP): Non-targets incorrectly reported as targets (type I error).

- False Negatives (FN): Missed targets (type II error).

- Time to Completion: The total time taken to complete the scan.

- Resource Utilization: Computational resources consumed (e.g., CPU, memory).

- Analysis: The collected data is analyzed to calculate standard efficacy metrics:

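- Accuracy: (TP + TN) / (TP + FP + FN + TN), where TN (true negatives) is the number of non-targets correctly left unreported; TN must therefore be recorded alongside TP, FP, and FN.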

- Precision: TP / (TP + FP)

- Recall (Sensitivity): TP / (TP + FN)

- Efficiency: Targets identified per unit of time or resource.
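The metrics above follow directly from the recorded counts. The sketch below is illustrative: the function name and the example counts are hypothetical, and efficiency is expressed here as true positives per second, which is only one of several reasonable definitions.

```python
def efficacy_metrics(tp, fp, fn, tn, seconds):
    """Compute accuracy, precision, recall, and a simple efficiency figure."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        # Precision and recall are undefined when their denominators are zero.
        "precision": tp / (tp + fp) if (tp + fp) else float("nan"),
        "recall": tp / (tp + fn) if (tp + fn) else float("nan"),
        "targets_per_second": tp / seconds,
    }

# Hypothetical run: 60 real targets among 1,000 candidates, scanned in 90 seconds.
result = efficacy_metrics(tp=45, fp=5, fn=15, tn=935, seconds=90.0)
```

In this example accuracy is 0.98 even though a quarter of the real targets were missed (recall 0.75), which shows why accuracy alone is a weak summary when targets are rare.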

Visualizing the Experimental Workflow

The experimental protocol for comparing scanning tools can be visualized as a sequential workflow with key decision points, ensuring rigorous and repeatable testing. The diagram below outlines the process from setup to statistical analysis.

Comparative Analysis of Scanning Tools

A comparative analysis reveals significant differences in tool performance, which directly impacts strategic decision-making. The following data synthesizes findings from controlled experiments and market analyses.

Table 1: Comparative Efficacy of Port Scanning Tools from Experimental Data [23]

| Tool | Accuracy | False Positive Rate | False Negative Rate | Efficiency (Relative) |

|---|---|---|---|---|

| Nmap | No significant difference | No significant difference | No significant difference | Baseline |

| Zmap | No significant difference | No significant difference | No significant difference | Statistically Significant Difference |

| Masscan | No significant difference | No significant difference | No significant difference | Statistically Significant Difference |

Table 2: Popular Vulnerability Scanning Tools in 2025 [25]

| Tool Name | Primary Scanning Focus | Key Characteristics |

|---|---|---|

| Nessus | Network & Cloud | Comprehensive plugin library, ease of use, detailed reporting |

| Qualys VMDR | Cloud-based VM | Continuous asset discovery, threat prioritization, remediation workflow |

| Rapid7 InsightVM | Vulnerability Management | Real-time risk visibility, live dashboards, prioritization by impact |

| OpenVAS | Network & System | Open source, robust vulnerability checks, large community support |

| Acunetix | Web Application | Specialization in OWASP Top 10, user-friendly, DevSecOps integration |

| Burp Scanner | Web Application | Combines automated scanning with powerful manual testing tools |

| Nmap | Network Discovery | Fundamental port scanning utility, extensible with scripting engine |

| Invicti | Web Application | Proof-based scanning to automatically verify vulnerabilities, low false positives |

The experimental data in Table 1 indicates that while common port scanning tools do not differ significantly in raw accuracy, their operational efficiency varies considerably [23]. This finding is critical for strategic resource allocation; a tool that completes its task faster frees up computational resources for other tasks, thereby increasing overall organizational capacity. From a strategic perspective, the choice of tool must be aligned with the specific outcome desired—whether maximum accuracy is paramount, or whether speed and efficiency are more critical for the operational context.
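A claim that two tools differ significantly in efficiency needs a stated test. The source does not say which test [23] used, so the sketch below shows one assumption-light option, an exact two-sided permutation test on mean completion time. The function name and the timings are hypothetical and are not data from [23].

```python
from itertools import combinations

def exact_permutation_test(group_a, group_b):
    """Exact two-sided permutation test on the absolute difference in means."""
    pooled = group_a + group_b
    n_a, n_b = len(group_a), len(group_b)
    total = sum(pooled)
    observed = abs(sum(group_a) / n_a - sum(group_b) / n_b)
    extreme = 0
    count = 0
    # Enumerate every way of relabeling n_a of the pooled runs as "tool A".
    for idx in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in idx)
        diff = abs(sum_a / n_a - (total - sum_a) / n_b)
        if diff >= observed - 1e-9:  # tolerance guards against float rounding
            extreme += 1
        count += 1
    return observed, extreme / count

# Hypothetical completion times in seconds over six randomized runs per tool.
tool_a = [118.0, 121.5, 119.2, 123.1, 120.4, 122.0]
tool_b = [41.3, 39.8, 43.0, 40.5, 42.2, 38.9]
observed_diff, p_value = exact_permutation_test(tool_a, tool_b)
```

With six runs per tool there are 924 possible relabelings, so full enumeration is cheap. For larger experiments a random sample of relabelings is the usual substitute.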

Table 2 illustrates the diverse tooling landscape available for different scanning domains. Specialized tools like Acunetix and Invicti, which focus on web applications, employ techniques like proof-based scanning to minimize false positives, which is essential for efficient resource allocation in software development [25]. Conversely, platform solutions like Qualys VMDR offer continuous monitoring and workflow integration, which supports ongoing risk management and strategic oversight [25].

The Scientist's Toolkit: Essential Research Reagents for Scanning Validation

The rigorous evaluation of scanning methodologies requires a set of standardized "research reagents"—whether digital or analytical. The following table details key components of an experimental framework for validating scanning results.

Table 3: Research Reagent Solutions for Scanning Validation

| Reagent / Tool | Function in Experimental Protocol |

|---|---|

| Controlled Test Environment | Provides a known, reproducible baseline against which tool performance is measured. Eliminates environmental variability. |

| Known Exploited Vulnerabilities (KEV) Catalog | Serves as a ground-truth dataset for validating vulnerability scanners, ensuring they detect known, actively exploited weaknesses [25]. |

| OWASP Top 10 Reference Set | A standardized list of critical web application security risks used to validate the coverage and accuracy of web application scanners [25]. |

| Statistical Modeling Software | Used for probability assessment and impact evaluation in risk analysis, enabling the quantitative prioritization of findings [22]. |

| Dynamic SWOT Analysis Framework | An enhanced tool for strategic risk identification that incorporates temporal elements and quantitative metrics, linking weaknesses to strategic objectives [22]. |

| Risk Prioritization Matrix | A visual tool for categorizing identified risks based on quantitative metrics like likelihood and impact, guiding resource allocation for mitigation [22]. |

Linking Scanning to Strategic Outcomes

Informing Strategic Decision-Making

Validated scan data feeds directly into strategic planning. The AHA's Environmental Scan is explicitly designed to help health systems "plan for the future" and "consider ways our field can move forward together" [21]. In a research context, this could involve using trend data from literature scans to decide which drug development pathways to pursue. A dynamic SWOT analysis, enhanced with quantitative metrics, can identify emerging threats and opportunities that traditional methods miss, allowing for more nimble and evidence-based strategic decisions [22].

Enabling Proactive Risk Mitigation

Scanning shifts an organization from a reactive to a proactive posture. The primary benefit of vulnerability scanning, for instance, is identifying security weaknesses before they can be exploited [25]. This principle applies directly to drug development, where scanning for regulatory, competitive, and technological risks allows organizations to develop contingency plans. Effective strategic risk management involves developing a three-tiered approach: prevention strategies (proactive), response strategies (immediate), and recovery strategies (long-term) for when risks materialize [22].

Optimizing Resource Allocation

Perhaps the most direct strategic outcome of scanning is the ability to allocate resources more efficiently. A risk prioritization matrix that incorporates factors beyond simple probability and impact—such as risk velocity and interconnectivity—helps organizations focus their resources on the most impactful risks [22]. In tool selection, the data from Table 1 and Table 2 enables evidence-based decisions. Choosing a more efficient scanner, for example, optimizes computational resource allocation. Similarly, selecting a scanner with low false positives (e.g., Invicti) prevents the waste of developer hours on chasing non-existent problems, thereby conserving human resources [25].
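A prioritization matrix of this kind can be made explicit as a scoring rule. The sketch below is one possible formulation, not the method from [22]: the class, the multiplicative weighting, the weights, and the example risks are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    name: str
    probability: float      # estimated likelihood, 0 to 1
    impact: int             # 1 (minor) to 5 (severe)
    velocity: int           # 1 (slow onset) to 5 (immediate)
    interconnectivity: int  # 1 (isolated) to 5 (triggers many other risks)

def priority_score(risk, w_velocity=0.25, w_interconnectivity=0.25):
    """Expected impact, scaled up for fast-moving and highly connected risks."""
    base = risk.probability * risk.impact
    modifier = (1.0
                + w_velocity * (risk.velocity - 1) / 4
                + w_interconnectivity * (risk.interconnectivity - 1) / 4)
    return base * modifier

# Hypothetical risks identified by a scan.
risks = [
    Risk("Competitor approval", 0.5, 4, 1, 1),
    Risk("Regulatory guidance change", 0.3, 5, 5, 5),
    Risk("Supplier delay", 0.2, 2, 3, 3),
]
ranked = sorted(risks, key=priority_score, reverse=True)
```

Here the regulatory risk outranks the competitor risk despite a lower probability-times-impact product, because it would arrive quickly and affect many other activities. The weights should be agreed on before scoring so that they cannot be tuned to favor a preferred result.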

Strategic Integration Workflow

The journey from initial scanning to achieved strategic outcomes is a cohesive, integrated process. The following diagram maps this workflow, highlighting how raw data is transformed through analysis and validation into concrete actions that secure strategic advantages.

In the rigorous field of drug development, where regulatory scrutiny is high and R&D investments are substantial, a profound understanding of the external business environment is not merely advantageous—it is critical for de-risking projects and ensuring long-term viability. Strategic planning tools like the PESTLE and STEEP frameworks provide a systematic methodology for this essential external analysis [26]. These frameworks enable researchers and pharmaceutical professionals to move beyond internal laboratory data and clinical results to comprehend the macro-environmental forces that can dictate a drug's path to market and commercial success. Within the context of academic research on statistical validation, these models offer a structured hypothesis about the external environment, the accuracy of which can be tested and validated against real-world outcomes, thereby strengthening strategic decision-making with empirical evidence [7].

Demystifying the Frameworks: PESTLE and STEEP

Core Definitions and Factor Breakdown

PESTLE Analysis is a comprehensive strategic planning tool that examines six key macro-environmental factors: Political, Economic, Social, Technological, Legal, and Environmental [27] [28]. It serves as a foundational checklist to ensure that no critical external factor is overlooked when planning market entry, major investments, or long-term projects [29].

STEEP Analysis is a closely related framework that evaluates five external factors: Social, Technological, Economic, Environmental, and Political [30] [31]. It is often employed when Legal factors are considered under the Political and Environmental categories, or when a more streamlined analysis is sufficient.

The following table provides a detailed breakdown of each factor as it pertains to the pharmaceutical and research sectors.

Table 1: Detailed Factor Breakdown of PESTLE and STEEP Frameworks

| Factor | Description & Relevance | Key Considerations for Drug Development |

|---|---|---|

| Political | Government policies, political stability, trade agreements, and foreign trade policies [27] [32]. | Stability of regulatory bodies like the FDA/EMA; government healthcare policies; pricing and reimbursement regulations; tax incentives for R&D; political pressure on drug access [26]. |

| Economic | Economic growth, inflation, interest rates, exchange rates, and disposable income [27] [33]. | Funding for basic research; global economic conditions affecting healthcare budgets; cost of capital for long-term R&D; pricing pressures from payers; patient ability to pay for treatments [29]. |

| Social | Demographic shifts, cultural trends, health consciousness, population aging, and educational levels [27] [34]. | Aging populations increasing demand for chronic disease therapies; public trust in science; health literacy; cultural attitudes towards vaccination or genetic therapies; patient advocacy group influence [26]. |

| Technological | Technological advancements, automation, R&D activity, and technological infrastructure [27] [32]. | AI in drug discovery; high-throughput screening; advancements in biologics and gene therapy; clinical trial technologies; digital health and data analytics; manufacturing innovations [34] [29]. |

| Legal | Health and safety laws, consumer protection, employment law, and industry-specific regulations [27] [28]. | Patent and intellectual property law; regulatory compliance (e.g., GCP, GMP); data protection (GDPR, HIPAA); liability laws; antitrust regulations in pharma [33] [26]. |

| Environmental | Environmental protection laws, waste disposal, carbon footprint, and sustainability agendas [27] [32]. | Environmental impact of manufacturing processes; safe disposal of pharmaceutical waste; green chemistry initiatives; supply chain sustainability; environmental regulations on chemicals [34]. |

Comparative Analysis: PESTLE vs. STEEP

While PESTLE and STEEP are structurally similar, their distinctions are important for precise application. PESTLE offers a more granular view by explicitly separating Legal factors from Political ones, which is crucial in highly regulated industries like pharmaceuticals [27] [28]. STEEP, on the other hand, provides a consolidated framework, which can be advantageous for a high-level, initial environmental scan [30].

Variations of these frameworks exist to suit specific analytical needs. For instance, STEEPLE analysis incorporates an additional E for Ethical factors, covering issues like corporate social responsibility, ethical marketing, and bioethical considerations in clinical trials—a highly relevant extension for life sciences companies [34]. Another variant, STEEPLED, further includes D for Demographic factors [31].

Table 2: Framework Comparison and Selection Guide

| Framework | Factors Covered | Primary Strength | Ideal Use Case in Research/Drug Development |

|---|---|---|---|

| PESTLE | Political, Economic, Social, Technological, Legal, Environmental | Most comprehensive; explicitly addresses legal and regulatory complexities. | Planning for new drug launches; navigating international regulatory submissions; comprehensive risk assessment. |

| STEEP | Social, Technological, Economic, Environmental, Political | Streamlined and efficient for a high-level overview. | Early-stage research prioritization; initial assessment of a new market or therapeutic area. |

| STEEPLE | STEEP + Legal, Ethical | Incorporates ethical considerations, crucial for public trust and trial integrity. | Developing clinical trial protocols; public-private partnerships; addressing gene therapy or other sensitive research areas. |

Statistical Validation of Environmental Scanning

The Need for Validation in Strategic Frameworks

The qualitative insights generated from PESTLE/STEEP analyses, while valuable, introduce a layer of subjectivity. For the scientific community, particularly in a field grounded in evidence like drug development, it is imperative to validate that these identified factors are not only relevant but are also accurately weighted and predictive of real-world impacts [7]. Statistical validation transforms a subjective list of external factors into a quantitatively robust model of the business environment. This process helps confirm that the "signal" of a meaningful external trend has been correctly identified against the "noise" of irrelevant data, ensuring that strategic resources are allocated to monitor and respond to the most impactful variables [7].

Methodologies for Validating Analysis Results

Validating the results of a PESTLE analysis involves treating its output as a model to be tested. The following workflow outlines a structured protocol for this validation, drawing parallels with established empirical research methods.

Diagram 1: Workflow for Statistically Validating a PESTLE/STEEP Analysis.

Step 1: Define Factors and Operationalize Metrics. The first step is to translate the qualitative factors from the PESTLE analysis into quantifiable variables [29]. For example:

- Political Factor: "Stringency of FDA approval process" could be operationalized as "Percentage of New Drug Applications (NDAs) receiving a Complete Response Letter in a fiscal year."

- Economic Factor: "Pricing pressure" could be measured as "Annual percentage change in average net price for a therapeutic class."

- Social Factor: "Disease awareness" could be quantified as "Volume of related search queries on health information platforms over time."
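Two of the example metrics above reduce to simple calculations once the raw records are available. The sketch below is illustrative only: the function names, the outcome labels, and the figures are hypothetical and are not real FDA or pricing data.

```python
def percent_with_crl(decisions):
    """Percentage of NDA decisions in a period that were Complete Response Letters."""
    return 100.0 * sum(1 for d in decisions if d == "CRL") / len(decisions)

def annual_percent_change(series):
    """Year-over-year percentage change for a {year: value} mapping."""
    years = sorted(series)
    return {
        year: 100.0 * (series[year] - series[prev]) / series[prev]
        for prev, year in zip(years, years[1:])
    }

# Hypothetical inputs for one fiscal year and one therapeutic class.
decisions = ["approved", "approved", "CRL", "approved"]
net_price = {2021: 200.0, 2022: 190.0, 2023: 171.0}

regulatory_stringency = percent_with_crl(decisions)
pricing_pressure = annual_percent_change(net_price)
```

Writing the definition down as code forces decisions that a verbal definition leaves open, such as which fiscal-year boundary applies and how withdrawn applications are counted.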

Step 2: Data Collection and Sourcing. Gather time-series data for the defined metrics. Sources should be reliable and objective, including:

- Public Databases: FDA archives, clinicaltrials.gov, WHO data, OECD health statistics [32].

- Market Research Reports: IBISWorld, Pew Research Center, analyst reports [32] [29].

- Financial and Economic Data: SEC filings for public biotech companies, GDP and inflation data from central banks [32].

Step 3: Model Development and Hypothesis Formulation. Develop a statistical model that links the external PESTLE variables (independent variables) to key internal performance outcomes (dependent variables). For a drug development context, the dependent variable could be "Time from Phase I trial initiation to market approval." The hypothesis would be that specific PESTLE metrics (e.g., regulatory stringency, public funding levels) are significant predictors of this timeline [7].

Step 4: Statistical Analysis and Testing. Employ appropriate statistical techniques to test the model:

- Regression Analysis: To determine the strength and significance of the relationship between each PESTLE factor and the outcome variable [7].

- Time-Series Analysis: To understand how changes in external factors lead to lagged effects on outcomes.

- Measurement Error Correction: As highlighted in environmental health models, error in the measurement of external variables should be accounted for (e.g., with methods such as SIMEX, simulation-extrapolation) before standard statistical tests are applied; otherwise the estimated effects can be biased [7].
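The idea behind SIMEX can be shown on simulated data. The sketch below is a deliberately simplified version for a single predictor: it assumes the measurement-error standard deviation is known, uses only three noise levels, and extrapolates with the quadratic that passes through them. All names and values are illustrative, and a real analysis would normally rely on an established SIMEX implementation with more noise levels and standard errors.

```python
import random

def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx

def simex_slope(w, y, sigma_u, n_sim=50, seed=0):
    """Return (simex_estimate, naive_estimate) for the slope of y on an error-prone w.

    sigma_u is the assumed (known) standard deviation of the measurement error in w.
    """
    rng = random.Random(seed)
    slopes = {0: ols_slope(w, y)}  # lambda = 0 is the naive fit
    for lam in (1, 2):
        extra_sd = (lam ** 0.5) * sigma_u  # adds lam * sigma_u**2 of error variance
        fits = []
        for _ in range(n_sim):
            w_noisier = [v + rng.gauss(0.0, extra_sd) for v in w]
            fits.append(ols_slope(w_noisier, y))
        slopes[lam] = sum(fits) / n_sim
    # Quadratic through lambda = 0, 1, 2, evaluated at lambda = -1 (no error).
    simex = 3 * slopes[0] - 3 * slopes[1] + slopes[2]
    return simex, slopes[0]

# Simulated illustration: true slope 2.0, predictor observed with error SD 0.5.
data_rng = random.Random(42)
x_true = [data_rng.gauss(0.0, 1.0) for _ in range(2000)]
w_obs = [x + data_rng.gauss(0.0, 0.5) for x in x_true]
y_obs = [2.0 * x + data_rng.gauss(0.0, 0.5) for x in x_true]
simex_est, naive_est = simex_slope(w_obs, y_obs, sigma_u=0.5)
```

In this setup the naive slope is attenuated toward zero, and the extrapolated estimate recovers most, though not all, of the gap to the true value. The correction is only as good as the assumed error variance, so that assumption should be reported with the result.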

Step 5: Model Validation and Refinement. Validate the model by testing its predictive power on a new, out-of-sample dataset. The insights from this validation should be used to refine the original PESTLE framework, perhaps by re-weighting factors or eliminating those proven to be insignificant, creating a more accurate and evidence-based tool for future scanning [29].
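For time-ordered data, a simple form of out-of-sample validation is to fit on the earlier periods and score predictions on the later ones against a naive baseline. The sketch below assumes a single predictor and uses hypothetical values. The helper names are illustrative.

```python
import math

def fit_line(x, y):
    """Least-squares slope and intercept of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

# Hypothetical yearly observations: an external metric (x) and an outcome (y).
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]

# Time-ordered split: never train on periods that come after the test periods.
x_train, y_train = x[:6], y[:6]
x_test, y_test = x[6:], y[6:]

slope, intercept = fit_line(x_train, y_train)
model_rmse = rmse(y_test, [slope * v + intercept for v in x_test])

# Baseline: always predict the training mean. A useful model should beat this.
train_mean = sum(y_train) / len(y_train)
baseline_rmse = rmse(y_test, [train_mean] * len(y_test))
```

A factor whose inclusion does not reduce out-of-sample error relative to the baseline is a candidate for re-weighting or removal in the refined framework.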

The Scientist's Toolkit: Essential Reagents for Environmental Analysis

Just as a laboratory requires specific reagents and instruments to conduct research, the strategic analyst needs a defined set of tools to execute a statistically valid environmental scan.

Table 3: Key Research Reagent Solutions for Environmental Analysis

| Tool / Resource | Function | Application Example |

|---|---|---|

| Government & Regulatory Databases (e.g., FDA, EMA, data.census.gov) | Provides official data on regulations, approvals, and public demographics [32]. | Tracking approval timelines for a drug class to quantify "Political/Regulatory" factor volatility. |

| Economic Intelligence Platforms (e.g., IBISWorld, OECD iLibrary) | Supplies detailed industry reports and economic indicators [32]. | Sourcing data on R&D expenditure in biotechnology to model the "Economic" factor. |

| Social & Public Opinion Trackers (e.g., Pew Research Center) | Measures cultural trends, public attitudes, and demographic shifts [32]. | Quantifying "Social" acceptance of a novel therapy through public survey data. |

| Technology & Patent Analytics (e.g., Google Patents, scientific literature databases) | Tracks technological advancements and innovation landscapes. | Analyzing the growth rate of patents related to mRNA technology to assess the "Technological" factor. |

| Statistical Software (e.g., R, Python, Stata) | Performs regression, time-series analysis, and other statistical validation tests. | Building a predictive model to test the impact of interest rates ("Economic" factor) on biotech venture funding. |

| Structured Analytical Techniques (e.g., Hypothesis Testing) | Provides a formal framework to challenge and test assumptions within the analysis. | Testing the hypothesis that a change in data protection law ("Legal" factor) increases clinical trial costs. |

For the scientific and drug development community, the integration of PESTLE/STEEP frameworks with rigorous statistical validation represents a powerful synergy. It marries the comprehensive, qualitative understanding of the external environment with the quantitative rigor and objectivity demanded by the field. By adopting this evidence-based approach, organizations can move beyond simple checklist exercises and build dynamically validated models of their operating environment. This enables them to not only identify the critical external forces shaping the future of medicine but also to precisely measure their impact, thereby de-risking innovation and strategically navigating the complex journey from the lab to the patient.

Statistical Techniques for Harmonizing and Validating Scanning Data

Retrospective Harmonization of Multi-Source Data

Retrospective harmonization is a crucial process in research that involves integrating data from multiple, pre-existing studies or sources after the data has already been collected [35] [36]. This approach is indispensable in fields like drug development and environmental scanning, where pooling data from diverse cohorts can significantly increase statistical power, enable the study of rare outcomes, and validate findings across different populations [35] [36]. The core challenge of retrospective harmonization lies in reconciling variables that were measured using different instruments, coding schemes, or data formats across the original studies, with the goal of creating a unified, analysis-ready dataset [35].

This guide provides an objective comparison of methodological approaches and computational tools for retrospective harmonization, framing them within the critical context of statistical validation to ensure the reliability and integrity of the harmonized data.

Methodological Approaches: A Comparative Analysis

The process of retrospective harmonization can be broadly categorized into different methodological approaches, each with distinct workflows, advantages, and challenges. The following table summarizes the core characteristics of two primary methods.

Table 1: Comparison of Retrospective Harmonization Methodologies

| Feature | Manual Coding & ETL Process | R-Based Automated Reporting |

|---|---|---|

| Core Description | A structured, multi-stage process of Extraction, Transformation, and Loading of data from source systems into a harmonized database [36]. | Use of R and Quarto to create dynamic, transparent, and automated reports that document and execute the harmonization process [35]. |

| Primary Workflow | 1. Variable Mapping [36]; 2. Algorithm Development for Transformation [36]; 3. Automated ETL Execution [36]; 4. Quality Assurance via Sampling [36] | 1. Data Versioning Checks [35]; 2. Automated Data Validation [35]; 3. Variable Mapping & Validation [35]; 4. Dynamic Report Generation [35] |

| Key Advantages | High degree of control and customization [36]; can be automated for ongoing data collection [36]; secure, with role-based data access [36] | Enhanced transparency and reproducibility [35]; automated checks with packages like testthat and pointblank [35]; comprehensive documentation for collaborators [35] |

| Reported Challenges | Requires extensive knowledge of source variables [36]; time-consuming initial mapping process [36] | Limited functionality of specialized R packages (e.g., retroharmonize, Rmonize) [35]; can be complex for categorical data [35] |

| Ideal Application Context | Harmonizing active, ongoing cohort studies using platforms like REDCap [36]. | Creating auditable and collaborative harmonization reports for completed or static datasets [35]. |

Experimental Protocol: Manual Coding & ETL Process

The methodology employed by the LIFE and CAP3 cohort studies provides a detailed protocol for the Manual Coding and ETL approach [36].

1. Variable Mapping: Researchers with extensive knowledge of the source datasets hold working group sessions to identify variables that represent the same underlying construct (e.g., "smoking status") across the different sources. A mapping table is created, specifying the source variable, the destination variable, and any necessary recoding rules for value options [36].

2. Transformation Algorithm Development: An algorithm is developed to execute the mappings. For variables of the same type, a direct mapping is performed. For variables of different types, a user-defined mapping table is used to recode values from the source format to be consistent with the destination format [36].

3. ETL Implementation: A custom application (e.g., in Java) is developed to automatically extract data from the source systems (often via APIs, such as those provided by REDCap), transform it according to the mapping table, and load it into a unified, integrated database. This process is often run on a scheduled basis (e.g., weekly) [36].

4. Quality Assurance and Validation: Quality checks are routinely conducted. This involves pulling a random sample from the integrated database and cross-checking it against the original source data. Any errors are corrected at the source, and the integrity of the merged database is maintained by preventing direct data entry into it [36].
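The mapping-table and sampling steps of this protocol can be sketched as follows. The cited studies used a custom Java application against REDCap; the Python below is only an illustration of the logic, and every cohort name, variable name, and code value in it is hypothetical.

```python
import random

# Hypothetical mapping table: one rule per source cohort for a shared construct.
MAPPING = {
    "cohort_a": {"source_var": "smoke", "dest_var": "smoking_status",
                 "recode": {1: "current", 2: "former", 3: "never"}},
    "cohort_b": {"source_var": "smoking_cat", "dest_var": "smoking_status",
                 "recode": {"Y": "current", "EX": "former", "N": "never"}},
}

def transform(record, cohort):
    """Recode one source record into the harmonized format (None = unmapped value)."""
    rule = MAPPING[cohort]
    raw = record[rule["source_var"]]
    return {"id": record["id"], "cohort": cohort,
            rule["dest_var"]: rule["recode"].get(raw)}

def qa_sample_check(integrated, sources, sample_size, seed=0):
    """Re-derive a random sample of integrated rows from source and list mismatches."""
    rng = random.Random(seed)
    sample = rng.sample(integrated, min(sample_size, len(integrated)))
    return [(row["cohort"], row["id"]) for row in sample
            if transform(sources[(row["cohort"], row["id"])], row["cohort"]) != row]

# Hypothetical source records keyed by (cohort, id).
sources = {
    ("cohort_a", 1): {"id": 1, "smoke": 1},
    ("cohort_a", 2): {"id": 2, "smoke": 3},
    ("cohort_b", 1): {"id": 1, "smoking_cat": "EX"},
}
integrated = [transform(rec, cohort) for (cohort, _), rec in sources.items()]
mismatches = qa_sample_check(integrated, sources, sample_size=3)
```

Returning None for unmapped values, rather than guessing, keeps gaps in the mapping table visible so they can be resolved by the working group and corrected at the source.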

Experimental Protocol: R-Based Automated Reporting

Jeremy Selva's work on retrospective clinical data harmonization provides a protocol for a validation-heavy, R-centric workflow [35].

1. Data Versioning and Ingestion: The process begins by addressing data versioning issues. Using R packages like readr, data is imported, and functions like problems() are used to catch data import issues at the earliest stage [35].

2. Automated Data Validation: Robustness against changes in data versions is ensured by employing data validation frameworks. This can include R packages like testthat for unit-testing data assumptions and pointblank for defining and running data validation rules [35].

3. Variable Mapping and Validation: A workflow for mapping variables and validating these mappings is implemented. This may involve creating interactive tables for collaborator review and using validation functions to ensure the integrity of the merged data before final analysis [35].

4. Dynamic Report Generation: Finally, Quarto is used to automate the creation of comprehensive harmonization reports for each cohort. An R script renders Quarto documents, facilitating the creation of both reference documents and how-to guides, ensuring consistency and efficiency in reporting [35].

Workflow Visualization

The following diagram illustrates the core logical workflow of a retrospective data harmonization process, integrating key steps from both methodological approaches.

The Scientist's Toolkit: Essential Research Reagents & Software

Successful retrospective harmonization requires a suite of computational tools and reagents. The following table details key solutions used in the field.

Table 2: Essential Research Reagent Solutions for Data Harmonization

| Tool / Reagent | Type | Primary Function | Application Context |

|---|---|---|---|

| REDCap | Software Platform | A secure web application for building and managing online surveys and databases [36]. | Serves as a central data collection and management platform for multi-site studies; its API enables automated data extraction for ETL processes [36]. |

| Great Expectations | Python Library | An open-source tool for validating, documenting, and profiling data to maintain quality [37]. | Defines "expectations" (rules) for data (e.g., value ranges, non-null checks) and validates data against them at various pipeline stages, tracking quality over time [37]. |

| R & Quarto | Programming Language & Publishing System | Provides an environment for data cleaning, analysis, and generation of dynamic, reproducible reports [35]. | Used to create transparent harmonization reports, automate data validation checks (with testthat/pointblank), and document the entire mapping process [35]. |

| retroharmonize / Rmonize | R Packages | Specialized R packages designed to assist with the data harmonization process [35]. | Provide structured functions for common harmonization tasks, though they may have limitations with complex categorical or continuous data [35]. |

| Custom ETL Scripts (e.g., Java) | Custom Software | Bespoke programs written to perform the specific Extract, Transform, Load operations for a project [36]. | Automates the weekly or daily pooling of data from multiple sources into a unified database based on a predefined mapping table [36]. |

Statistical Validation of Harmonized Data

Statistical validation is the cornerstone of ensuring that the harmonization process has not introduced bias or error. Beyond the automated checks provided by tools like Great Expectations [37], several analytical methods are critical.

1. Data Quality Profiling: This involves generating summary statistics (e.g., means, medians, standard deviations, missing value counts) for key variables both before and after harmonization. Drastic shifts in these metrics can indicate problems in the transformation logic.

2. Coverage Analysis: As demonstrated in the LIFE and CAP3 harmonization project, it is essential to report the proportion of variables that were successfully harmonized. For example, in their study, 17 of 23 questionnaire forms (74%) harmonized more than 50% of the variables, providing a quantitative measure of comprehensiveness [36].

3. Comparative Analysis of Outcome Prevalence: A powerful validation technique is to use the harmonized data to test pre-specified, biologically plausible hypotheses. For instance, the LIFE/CAP3 study compared the age-adjusted prevalence of health conditions between the Jamaican and U.S. cohorts, demonstrating that the merged dataset could detect expected regional differences and thus was valid for investigating disease hypotheses [36].

4. Visualization for Validation: Heat maps can be effectively used to convey harmonization outcomes to collaborators and management. They provide a clear overview of cohort attributes, patient numbers, and variable availability, quickly highlighting gaps or inconsistencies in the harmonized dataset [35].
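As a rough illustration of the first two checks, the sketch below profiles a variable before and after harmonization and flags statistics that shift by more than a relative tolerance. The 5% tolerance, the function names, and the weight values are assumptions made for the example; only the 17-of-23 coverage figure comes from [36].

```python
import statistics

def profile(values):
    """Summary statistics for one variable; None marks a missing value."""
    present = [v for v in values if v is not None]
    return {
        "n": len(values),
        "missing": len(values) - len(present),
        "mean": statistics.mean(present),
        "median": statistics.median(present),
        "sd": statistics.stdev(present),
    }

def flag_shifts(before, after, rel_tol=0.05):
    """Names of statistics that changed by more than rel_tol after harmonization."""
    flagged = []
    for key in ("mean", "median", "sd"):
        base = before[key]
        if base != 0 and abs(after[key] - base) / abs(base) > rel_tol:
            flagged.append(key)
    if after["missing"] != before["missing"]:
        flagged.append("missing")
    return flagged

# Invented values: weight in kg at the source; after harmonization one value
# has wrongly been left in pounds by a faulty transformation.
source_kg = [70.0, 82.5, 64.0, None, 91.0]
harmonized_kg = [70.0, 82.5, 141.1, None, 91.0]
flags = flag_shifts(profile(source_kg), profile(harmonized_kg))

# Coverage as reported for the LIFE/CAP3 project: 17 of 23 forms.
coverage = 17 / 23
```

Here a single unconverted value is enough to move the mean, median, and standard deviation past the tolerance, which is the kind of drastic shift the profiling step is meant to surface. The coverage ratio evaluates to about 0.74, matching the 74% quoted above.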

When environmental scanning results are validated statistically, the choice of analytical framework shapes what can be concluded. Two families of latent variable methods are widely used for this purpose: Latent Factor Analysis (LFA), often applied through techniques such as Confirmatory Factor Analysis (CFA) and Latent Class Analysis (LCA), and Item Response Theory (IRT). While both approaches model latent constructs from observed variables, their philosophical foundations, mathematical formulations, and optimal application contexts differ significantly.

This guide provides an objective comparison of these methodologies, supported by experimental data and practical applications within environmental research. We examine their core properties, equivalence conditions, and performance across various research scenarios to help researchers, scientists, and development professionals select the most appropriate validation framework for their specific needs.

Theoretical Foundations and Comparative Framework

Core Conceptual Definitions

Latent Factor Analysis (LFA) is a family of structural equation modeling techniques that explain relationships between observed variables and their underlying latent constructs through covariance structures. It primarily uses first- and second-order moments of variable distributions [38]. Variants like Latent Class Analysis (LCA) identify distinct subgroups within populations based on response patterns, as demonstrated in environmental behavior research where five distinct classes of sustainable practice adoption were identified [39].

Item Response Theory (IRT) comprises mathematical models that characterize the relationship between latent traits (abilities, attitudes) and item-level responses, using full information from response patterns rather than just covariances [40]. IRT models include parameters for item characteristics (difficulty, discrimination, guessing) and person abilities, operating on the principle of local independence—where item responses are mutually independent conditional on the latent trait [40].
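To make the item parameters and the local independence principle concrete, the sketch below implements the three-parameter logistic (3PL) item response function and the likelihood of a binary response pattern. The logistic form is one common parameterization, and the item parameter values are invented for illustration.

```python
import math

def p_correct(theta, a, b, c=0.0):
    """3PL item response function: a = discrimination, b = difficulty,
    c = guessing. Setting c = 0 gives the 2PL model."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def pattern_likelihood(theta, responses, items):
    """Likelihood of a 0/1 response pattern given theta.

    Local independence means the joint probability is simply the product
    of the item-level probabilities."""
    like = 1.0
    for y, (a, b, c) in zip(responses, items):
        p = p_correct(theta, a, b, c)
        like *= p if y == 1 else 1.0 - p
    return like

# Invented (a, b, c) values for three items.
items = [(1.2, -0.5, 0.0), (0.8, 0.0, 0.2), (1.5, 1.0, 0.0)]
likelihood = pattern_likelihood(0.3, (1, 1, 0), items)
```

When the trait level equals an item's difficulty and there is no guessing, the response probability is exactly 0.5. A non-zero guessing parameter raises the lower asymptote of the curve.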

Key Differences and Similarities

The table below summarizes the fundamental differences between these approaches:

Table 1: Fundamental Comparison Between LFA and IRT

| Characteristic | Latent Factor Analysis (LFA) | Item Response Theory (IRT) |

|---|---|---|

| Primary Focus | Variable covariances and factor structures [38] | Item response probabilities and characteristics [40] |

| Statistical Foundation | Covariance-based modeling (linear models) | Probability-based modeling (non-linear models) [38] |

| Information Utilization | First- and second-order moments (limited information) | Full response pattern information [38] |

| Parameter Interpretation | Factor loadings, intercepts, residuals | Difficulty, discrimination, guessing parameters [40] |

| Model Flexibility | Multivariate systems with complex relationships [38] | Customized item response functions [38] |

| Invariance Properties | Population-dependent without specific equating | Strong item and population invariance [40] [41] |

Despite these differences, under specific conditions, certain LFA and IRT models are mathematically equivalent. A single-factor CFA with binary items is equivalent to a two-parameter normal ogive IRT model, while a single-factor CFA with polytomous items corresponds to Samejima's graded response model [38]. The connection is particularly strong between CFA and normal ogive IRT models, with Takane and de Leeuw (1987) providing algebraic proof of their equivalence [42].
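The binary-item equivalence can be checked numerically. The sketch below assumes a standardized latent factor and a latent response variable with unit variance, so that the residual variance is 1 minus the squared loading. Under that assumption a loading λ and threshold τ map to a normal ogive discrimination a = λ / sqrt(1 − λ²) and difficulty b = τ / λ. The function names and parameter values are illustrative.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()

def cfa_to_irt(loading, threshold):
    """Convert a standardized loading and threshold to normal ogive (a, b)."""
    a = loading / math.sqrt(1.0 - loading ** 2)
    b = threshold / loading
    return a, b

def p_cfa(theta, loading, threshold):
    """P(y = 1) from the factor model: y* = loading * theta + e,
    e ~ N(0, 1 - loading^2), and y = 1 when y* exceeds the threshold."""
    resid_sd = math.sqrt(1.0 - loading ** 2)
    return 1.0 - STD_NORMAL.cdf((threshold - loading * theta) / resid_sd)

def p_irt(theta, a, b):
    """Two-parameter normal ogive item response function."""
    return STD_NORMAL.cdf(a * (theta - b))

# Illustrative values: loading 0.6 and threshold 0.3 give a = 0.75 and b = 0.5.
a, b = cfa_to_irt(0.6, 0.3)
max_gap = max(abs(p_cfa(t / 10, 0.6, 0.3) - p_irt(t / 10, a, b)) for t in range(-30, 31))
```

The two functions agree to floating-point precision across the trait range, so `max_gap` is effectively zero. Other identification choices, such as fixing the residual variance instead of the total variance, change the conversion formulas but not the equivalence.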

Figure 1: Methodological Relationships Between LFA and IRT

Experimental Protocols and Applications

Environmental Behavior Assessment Using LCA

A study examining the relationship between environmental behavior, job satisfaction, and burnout employed Latent Class Analysis to identify distinct behavioral patterns among 537 professionals across various sectors [39]. The experimental protocol followed these key steps:

- Data Collection: Administered surveys measuring sustainable practices participation, job satisfaction metrics, performance indicators, and burnout assessments

- Class Enumeration: Systematically tested models with varying numbers of latent classes to determine the optimal solution

- Model Estimation: Used maximum likelihood estimation to identify class membership probabilities

- Validation: Examined class differences on external variables (job satisfaction, burnout)

- Interpretation: Characterized five distinct classes based on sustainable practice engagement levels
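Class enumeration is usually guided by an information criterion. The sketch below uses the Bayesian Information Criterion (BIC), one common choice; the source does not state which criterion the study in [39] applied. The sample size of 537 comes from the study, while the number of binary indicators and the log-likelihood values are invented placeholders, so the class count selected here says nothing about the study's five-class result.

```python
import math

def lca_n_params(n_classes, n_binary_items):
    """Free parameters of an LCA with binary indicators:
    (K - 1) class proportions plus K * J item response probabilities."""
    return (n_classes - 1) + n_classes * n_binary_items

def bic(log_likelihood, n_params, n_obs):
    """Bayesian Information Criterion; lower values are preferred."""
    return -2.0 * log_likelihood + n_params * math.log(n_obs)

N_OBS = 537      # sample size reported in [39]
N_ITEMS = 10     # hypothetical number of binary indicators

# Placeholder log-likelihoods of fitted models with 1 to 5 classes (invented).
log_likelihoods = {1: -3500.0, 2: -3300.0, 3: -3220.0, 4: -3200.0, 5: -3190.0}

bic_by_k = {k: bic(ll, lca_n_params(k, N_ITEMS), N_OBS)
            for k, ll in log_likelihoods.items()}
best_k = min(bic_by_k, key=bic_by_k.get)
```

The log-likelihood always improves as classes are added, so the penalty term is what stops the enumeration. With these placeholder values, the gain from a fourth class no longer offsets the extra parameters.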

The analysis revealed significant differences in job satisfaction across classes, with higher participation in sustainable behaviors generally associated with greater job satisfaction [39]. Although performance remained stable across classes, burnout levels varied significantly, demonstrating LCA's utility in identifying meaningful subgroups for targeted organizational interventions.

Agricultural Sustainability Measurement Using IRT

Research developing a farm-level agricultural sustainability index demonstrated IRT's application to environmental assessment [41]. The methodology proceeded as follows:

- Data Source: Farm Accountancy Data Network (FADN) with 8,928 farms in Germany

- Model Selection: Implemented graded response model within Bayesian framework using brms package in R

- Parameter Estimation: Employed Markov Chain Monte Carlo sampling through adaptive Hamiltonian Monte Carlo

- Validation: Conducted leave-one-out cross-validation, compared results with existing knowledge, tested robustness to missing data

- Linking: Simulated scale linking procedures to test expansion potential across regions with different data sets
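Scale linking places item parameters estimated in different samples on a common metric. The sketch below shows the classical mean-sigma method, offered as one illustration and not necessarily the procedure simulated in [41]; the anchor-item difficulties are invented.

```python
import statistics

def mean_sigma_link(b_source, b_target):
    """Slope A and intercept B that place the source scale on the target scale,
    estimated from the difficulties of items common to both calibrations."""
    A = statistics.pstdev(b_target) / statistics.pstdev(b_source)
    B = statistics.mean(b_target) - A * statistics.mean(b_source)
    return A, B

def to_target_scale(a, b, A, B):
    """Transform one item's discrimination and difficulty to the target scale."""
    return a / A, A * b + B

# Invented difficulty estimates for four anchor items calibrated in two regions;
# the second set is an exact linear transformation of the first.
b_region_1 = [-1.0, -0.2, 0.4, 1.2]
b_region_2 = [1.5 * b + 0.2 for b in b_region_1]

A, B = mean_sigma_link(b_region_1, b_region_2)
a_linked, b_linked = to_target_scale(1.2, 0.4, A, B)
```

Because the invented second calibration is an exact rescaling, the method recovers the slope 1.5 and intercept 0.2. With real estimates the recovery is only approximate, which is why linking quality is typically examined by simulation.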

The IRT approach successfully generated a farm-level sustainability index independent of the specific variables used, enabling comparisons across different variable sets and regions [41]. Results indicated positive relationships between farm size and sustainability, higher performance for crop and mixed farms, and below-average performance for livestock operations.

Scale Refinement Using IRT in Environmental Psychology

A study analyzing the Connectedness to Nature Scale (CNS) demonstrated IRT's utility for refining environmental psychology instruments [43]. The protocol included:

- Participants: 1008 participants from previous studies using Spanish-language CNS

- Dimensionality Assessment: Conducted factor analysis to verify unidimensionality assumption

- Item Calibration: Estimated discrimination and difficulty parameters using Samejima's graded response model

- Fit Evaluation: Employed S-χ² statistic to assess item-level model fit

- Local Independence: Tested for redundant items using LD χ²

- Validation: Second study with 321 participants confirmed reliability and validity of refined scale
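For readers unfamiliar with the graded response model used in the calibration step, the sketch below computes category probabilities for one ordinal item. It uses the logistic form of the model with invented parameter values and is not tied to the software used in [43].

```python
import math

def grm_category_probs(theta, a, thresholds):
    """Samejima's graded response model for one item.

    thresholds must be increasing; an item with K thresholds has K + 1
    ordered categories. Returns P(Y = k) for k = 0..K."""
    # Cumulative probabilities P(Y >= k), bounded by P(Y >= 0) = 1 and P(Y >= K + 1) = 0.
    cum = [1.0]
    cum += [1.0 / (1.0 + math.exp(-a * (theta - b))) for b in thresholds]
    cum += [0.0]
    # Each category probability is the difference of adjacent cumulative curves.
    return [cum[k] - cum[k + 1] for k in range(len(cum) - 1)]

# Invented parameters for a five-category Likert item.
probs = grm_category_probs(theta=0.0, a=1.3, thresholds=[-2.0, -1.0, 1.0, 2.0])
```

A higher discrimination parameter makes the category curves steeper, so the item separates respondents more sharply around its thresholds. Items with low discrimination contribute little, which is one basis for dropping them during scale refinement.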

The analysis identified seven items with appropriate discrimination and difficulty parameters, while six items demonstrated inadequate psychometric properties [43]. This led to a refined, more precise measurement instrument for connectedness to nature research.

Performance Comparison and Decision Framework

Quantitative Comparison of Methodological Properties

The table below summarizes experimental findings comparing LFA and IRT performance across key metrics:

Table 2: Experimental Performance Comparison of LFA and IRT

| Performance Metric | LFA/CFA Findings | IRT Findings | Experimental Context |

|---|---|---|---|

| Measurement Precision | Limited to covariance structure | Varies by ability level; quantifiable via information functions [40] | Conditional error assessment [44] |

| Model Flexibility | Excellent for multivariate systems [38] | Superior for customized item response functions [38] | Complex latent trait assessment [38] |

| Handling Missing Data | Typically requires complete data, imputation, or full-information maximum likelihood estimation | Robust to missing items under certain conditions [41] | Agricultural sustainability index [41] |

| Cross-population Comparison | Limited invariance without constraints | Strong invariance properties [40] [41] | Scale linking simulations [41] |

| Item Analysis Capability | Factor loadings, modification indices | Detailed discrimination, difficulty, guessing parameters [40] | Connectedness to Nature Scale refinement [43] |

| Implementation Complexity | Relatively straightforward | Computationally intensive, especially for complex models | Bayesian IRT with MCMC sampling [41] |
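The measurement precision row refers to information functions. The sketch below shows how they quantify precision at each trait level under a two-parameter logistic model, where item information is a² · P · (1 − P) and the standard error of the trait estimate is the inverse square root of the summed information. The item parameters are invented.

```python
import math

def p_2pl(theta, a, b):
    """Two-parameter logistic item response function."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b):
    """Fisher information of one 2PL item at trait level theta."""
    p = p_2pl(theta, a, b)
    return a ** 2 * p * (1.0 - p)

def standard_error(theta, items):
    """Conditional standard error of theta from the test information."""
    total = sum(item_information(theta, a, b) for a, b in items)
    return 1.0 / math.sqrt(total)

# Invented (a, b) pairs for a three-item scale centred near theta = 0.
items = [(1.5, -1.0), (2.0, 0.0), (1.0, 1.0)]
se_centre = standard_error(0.0, items)
se_extreme = standard_error(3.0, items)
```

The standard error is smallest where the item difficulties cluster and grows toward the extremes of the trait range. This is the sense in which IRT precision varies by ability level.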

Methodological Selection Guidelines

The choice between LFA and IRT depends primarily on research goals, data characteristics, and application context:

Figure 2: Methodological Selection Decision Framework

Essential Research Reagents and Computational Tools

Statistical Software and Packages

The table below outlines key computational tools for implementing LFA and IRT analyses:

Table 3: Essential Computational Tools for Latent Variable Modeling

| Tool Name | Primary Function | Key Features | Application Examples |

|---|---|---|---|

| Mplus | Structural equation modeling, LCA, IRT | Integrated framework for both LFA and IRT | Latent class analysis of environmental behavior [39] |

| IRTPRO | Item response theory analysis | Specialized IRT calibration and fit assessment | Connectedness to Nature Scale analysis [43] |

| brms (R package) | Bayesian regression models | Flexible IRT implementation in Stan | Agricultural sustainability measurement [41] |

| FACTOR | Exploratory factor analysis | Dimensionality assessment for ordinal data | Unidimensionality testing for IRT assumptions [43] |

| lavaan (R package) | Structural equation modeling | CFA and latent variable modeling | Covariance structure analysis [38] |

Latent Factor Analysis and Item Response Theory offer complementary approaches to statistical validation in environmental research. LFA, particularly through LCA, excels at identifying population subgroups and modeling complex multivariate relationships. IRT provides superior measurement precision, item-level analysis, and cross-population comparability, making it ideal for instrument development and latent trait assessment.