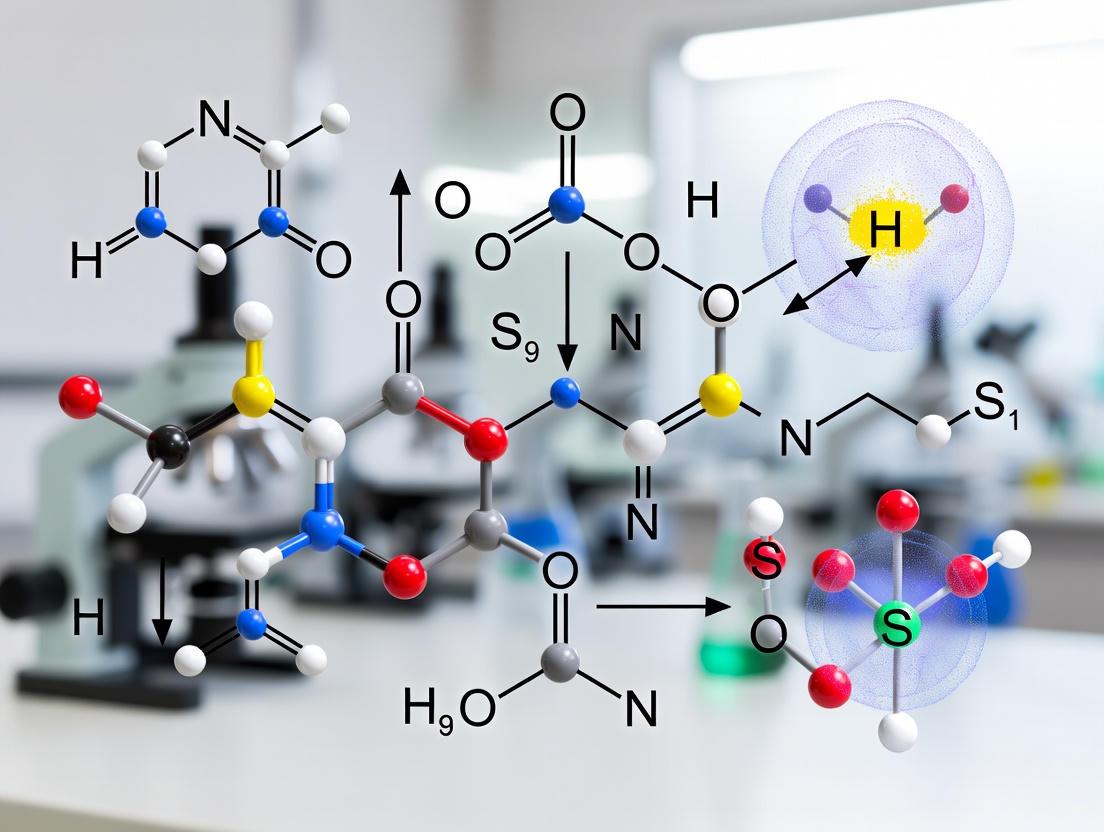

Resolving Linear Dependence in Quantum Chemistry: A Practical Guide to Managing Diffuse Functions for Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of linear dependence caused by diffuse basis sets in quantum chemical calculations. It covers the fundamental principles of why linear dependence occurs, outlines step-by-step methodological solutions for function removal, presents advanced troubleshooting techniques for complex systems, and establishes validation protocols to ensure computational accuracy remains intact. By synthesizing foundational theory with practical application, this guide enables more robust and reliable computational chemistry workflows, which are essential for computer-aided drug design and materials modeling.

Understanding the Linear Dependence Problem: Why Diffuse Functions Create Computational Challenges

Defining Linear Dependence in Quantum Chemistry Calculations

A technical guide for researchers tackling a common computational hurdle.

Linear dependence in the atomic orbital (AO) basis is a frequent challenge in quantum chemistry calculations, often triggered by the use of diffuse basis functions. This guide provides clear diagnostics and solutions to help you identify and resolve these issues, ensuring the robustness of your computational research.

What is Linear Dependence and What Causes It?

Linear dependence occurs when one or more basis functions in your atomic orbital set can be written as a linear combination of other functions in the same set. This makes the overlap matrix (S) singular or nearly singular, preventing the self-consistent field (SCF) procedure from converging [1] [2].

The primary cause is the use of diffuse basis functions, which are essential for accuracy but detrimental to numerical stability [2]. These functions have small exponents, causing them to decay slowly and become very similar in spatial regions where atoms are close, leading to a condition known as "over-completeness" of the basis set [1] [3].

- Why Diffuse Functions are a "Blessing and a Curse": While absolutely essential for an accurate description of properties like non-covalent interactions (NCI), they severely impact the sparsity of the density matrix and introduce linear dependencies [2]. Calculations on DNA fragments show that small basis sets without diffuse functions (e.g., STO-3G) exhibit significant sparsity, while medium-sized diffuse basis sets (e.g., def2-TZVPPD) can remove almost all usable sparsity and introduce linear dependence [2].
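
The effect of small exponents can be made concrete with the closed-form overlap of two normalized s-type Gaussian primitives. The sketch below is only an illustration: the exponents and the 2.0 bohr separation are arbitrary values chosen for this example, and `s_overlap` is a hypothetical helper name, not part of any package discussed here.

```python
import math


def s_overlap(alpha: float, beta: float, distance: float) -> float:
    """Overlap of two normalized s-type Gaussian primitives (atomic units)."""
    p = alpha + beta
    prefactor = (2.0 * math.sqrt(alpha * beta) / p) ** 1.5
    return prefactor * math.exp(-alpha * beta / p * distance**2)


# Two identical s functions on centers 2.0 bohr apart (illustrative values).
for exponent in (1.0, 0.1, 0.01):
    s = s_overlap(exponent, exponent, 2.0)
    # For the 2x2 overlap matrix [[1, s], [s, 1]] the smallest eigenvalue is 1 - s.
    print(f"exponent={exponent:5.2f}  overlap={s:.4f}  smallest eigenvalue={1.0 - s:.4f}")
```

As the exponent shrinks, the overlap approaches 1 and the smallest eigenvalue of the overlap matrix approaches 0, which is the near-singularity described above.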

How to Diagnose Linear Dependence

Most quantum chemistry software packages will automatically detect and report linear dependence. Here is what to look for in your output file.

1. Check for Warning Messages: The software will typically print an explicit warning. In Q-Chem, for example, look for a statement reporting that linear dependence was detected in the AO basis [1].

2. Compare the Number of Basis Functions: A clear sign is a reduction in the number of basis functions used in the calculation compared to the number originally specified. In the example reported in [1], the original basis had 495 functions, but one was removed due to linear dependence, resulting in 494 orthogonalized AOs.

3. Monitor the SCF Convergence: Difficulties in achieving SCF convergence, or large oscillations in the energy during the SCF cycle, can be an indirect symptom of underlying linear dependencies in the basis set [1].

How to Resolve Linear Dependence Issues

When you encounter linear dependence, you can apply the following troubleshooting strategies.

Solution 1: Adjust the Linear Dependency Threshold (Recommended)

Most programs have a keyword to control the threshold for removing linearly dependent functions. The default is often appropriate, but tightening it can resolve discrepancies between different software.

- Q-Chem: Use the `BASIS_LIN_DEP_THRESH` keyword. The default is `6` (meaning 1e-6). Tightening it (e.g., to `20` for 1e-20) can prevent the removal of functions, yielding energies consistent with other programs that use tighter defaults [1].

- ORCA: Use the `sthresh` keyword. The default in ORCA is 1e-7, which is tighter than in Q-Chem or Gaussian. Setting it to 1e-6 is often recommended for better SCF convergence and consistency [1].
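
To see why the threshold changes the number of functions a program keeps, the following sketch counts the overlap eigenvalues that survive a given cutoff. It is a generic illustration, not the code path of Q-Chem or ORCA; the 3x3 matrix is made up, and `qchem_style_threshold` and `count_retained` are hypothetical helper names. Only the mapping from an integer setting n to a threshold of 10^-n comes from the text above.

```python
import numpy as np


def qchem_style_threshold(n: int) -> float:
    """Map an integer setting n to the threshold 10**-n, as described for Q-Chem."""
    return 10.0 ** (-n)


def count_retained(overlap: np.ndarray, threshold: float) -> int:
    """Count overlap-matrix eigenvalues above the threshold (functions that survive)."""
    eigenvalues = np.linalg.eigvalsh(overlap)
    return int(np.sum(eigenvalues > threshold))


# Toy overlap matrix: functions 0 and 1 are almost identical (made-up numbers).
near_one = 1.0 - 1e-8
toy_overlap = np.array([
    [1.0, near_one, 0.2],
    [near_one, 1.0, 0.2],
    [0.2, 0.2, 1.0],
])

for n in (6, 7, 10):
    kept = count_retained(toy_overlap, qchem_style_threshold(n))
    print(f"threshold 1e-{n}: {kept} of {toy_overlap.shape[0]} functions kept")
```

Two programs that apply different cutoffs to the same basis can therefore end up with different numbers of functions, and different energies, which is why matching thresholds matters when comparing codes.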

Solution 2: Use a Less Diffuse Basis Set

If adjusting the threshold does not suffice, consider switching to a more compact basis set.

- Remove Diffuse Functions: Switch from an augmented basis (e.g., `aug-cc-pVDZ`) to its standard version (`cc-pVDZ`) [1].

- Use Specially Designed Basis Sets: The vDZP basis set is designed to minimize basis set superposition error (BSSE) and is generally more robust, often achieving accuracy near triple-ζ levels without the computational cost or linear dependence issues of larger, diffuse sets [4].

Solution 3: Employ Advanced Basis Set Techniques

For high-precision work where the accuracy that diffuse functions provide is non-negotiable, consider:

- Complementary Auxiliary Basis Set (CABS) Correction: This approach can help recover accuracy when using more compact, low quantum-number basis sets, thus avoiding the need for highly diffuse functions [2].

- Manual Basis Set Inspection: Be aware that some program libraries use pre-defined reductions in their default basis sets (e.g., a reduced form of `cc-pVDZ`). Using the basis set directly from the Basis Set Exchange and ensuring proper normalization can sometimes affect results [5].

Experimental Protocol: Systematically Addressing Linear Dependence

Follow this workflow to diagnose and resolve linear dependence in your calculations.

Troubleshooting Guide at a Glance

This table summarizes the common symptoms and their solutions.

| Symptom | Diagnostic Check | Recommended Solution |
|---|---|---|
| SCF convergence failure, large energy oscillations | Check output for "Linear dependence detected" warning [1]. | Adjust `BASIS_LIN_DEP_THRESH` in Q-Chem or `sthresh` in ORCA [1]. |
| Energy discrepancy between different software packages | Verify the number of basis functions used is the same in all programs. | Ensure consistent linear dependence thresholds across software (e.g., use 1e-6 in both Q-Chem and ORCA) [1]. |
| Need for high accuracy in Non-Covalent Interactions (NCIs) but facing linear dependence | Confirm the problem disappears when using non-diffuse basis sets. | Use a robust, compact basis set like vDZP or consider CABS corrections with a reduced basis [2] [4]. |

| Item | Function in Research |
|---|---|
| `BASIS_LIN_DEP_THRESH` (Q-Chem) | Controls the sensitivity for removing linearly dependent AOs. Larger thresholds (e.g., 1e-6) remove more functions, while tighter, smaller thresholds (e.g., 1e-10) remove fewer [1]. |
| `sthresh` (ORCA) | The threshold for the smallest allowed eigenvalue of the overlap matrix. Setting it to 1e-6 is often recommended for better consistency with other codes [1]. |
| vDZP Basis Set | A compact double-zeta basis set designed for minimal BSSE, offering near triple-zeta accuracy without the linear dependence issues of diffuse-augmented sets [4]. |
| Complementary Auxiliary Basis Set (CABS) | An advanced technique to recover accuracy when using compact basis sets, mitigating the need for diffuse functions that cause linear dependence [2]. |
| Basis Set Exchange (BSE) | A repository to obtain standardized basis set definitions, ensuring consistency and helping to diagnose issues related to internal program reductions [5]. |

Technical Support Center: Troubleshooting Guides and FAQs

This guide addresses common challenges researchers face when working with diffuse basis sets in electronic structure calculations, providing practical solutions to manage the trade-off between accuracy and computational cost.

Frequently Asked Questions (FAQs)

1. What are diffuse basis functions, and why are they considered a "blessing" for accuracy? Diffuse functions are atomic orbital basis functions with a small exponent, meaning they decay slowly and are spatially extended. They are essential for an accurate description of non-covalent interactions (NCIs), such as van der Waals forces, hydrogen bonding, and π-π stacking, which are critical in drug design and molecular recognition [2]. Without them, calculations on NCIs can suffer from large errors. For example, as shown in Table 1, diffuse functions are necessary to achieve chemically accurate results (errors < ~3 kJ/mol) for non-covalent interactions [2].

2. What is the "curse" associated with using diffuse functions? The primary "curse" is their detrimental impact on computational performance. Diffuse functions significantly reduce the sparsity (the number of near-zero elements) of the one-particle density matrix (1-PDM), even for large, insulating systems where the electronic structure is expected to be local [2]. This low sparsity undermines the efficiency of linear-scaling algorithms, leading to longer computation times, larger memory requirements, and more pronounced issues with linear dependence [2].

3. What is linear dependence, and why does it occur with diffuse functions? Linear dependence is a numerical issue where the basis functions used to describe the system are no longer linearly independent. In crystalline systems, high-quality molecular basis sets often contain functions that are too diffuse. When these are applied in a periodic context, the overlap between functions on adjacent atoms becomes excessive, causing the overlap matrix to become singular or ill-conditioned, which prevents the self-consistent field (SCF) procedure from converging [6].

4. My calculation with a large, diffuse basis set has failed due to linear dependence. What is the first thing I should check? First, verify if your system is appropriate for a diffuse basis set. For solid-state calculations, diffuse functions are often problematic. If your system is a molecule, consider whether you truly need a description of long-range electron density, such as for modeling anion stability, weak interactions, or excitation properties. If not, a less diffuse basis set may be more robust [6].

5. Are there automated methods to handle linear dependence in my calculations? Yes. For calculations with the CRYSTAL code, a projector-based method has been developed to automatically identify and remove linear dependence issues arising from large and diffuse basis sets. This allows for the use of high-quality molecular basis sets in solid-state calculations with minimal user intervention [6].

6. I need an accurate description of non-covalent interactions for my drug discovery project but cannot manage the cost of a fully augmented basis. What are my options? Consider multi-level approaches or composite methods. One promising solution is the use of the complementary auxiliary basis set (CABS) singles correction in combination with compact, low angular momentum (l-quantum-number) basis sets. This approach has shown promising results for recovering the accuracy for non-covalent interactions without the severe computational penalties of standard diffuse basis sets [2].

Troubleshooting Guide

| Symptom | Possible Cause | Recommended Solution |
|---|---|---|
| SCF convergence failure; "linear dependence" error message. | Overlap matrix is ill-conditioned due to highly diffuse functions in the basis set [6]. | 1. Automated Screening: Use code features (e.g., in CRYSTAL) that automatically project out linearly dependent components [6]. 2. Manual Pruning: Systematically remove the most diffuse basis functions from the set and re-test. |
| Calculation runs unacceptably slow or exhausts memory for medium-to-large systems. | Diffuse functions destroy sparsity in the 1-PDM, pushing the calculation out of the low-scaling regime [2]. | 1. Method Change: Switch to a compact, yet accurate, composite method like r2SCAN-3c or B97M-V/def2-SVPD [7]. 2. Advanced Correction: Employ the CABS singles correction with a compact basis set to regain accuracy [2]. |
| Inaccurate non-covalent interaction (NCI) energies. | Lack of diffuse functions in the basis set leads to improper description of long-range electron correlation [2]. | Use an augmented basis set. For example, use def2-TZVPPD or aug-cc-pVTZ instead of their non-augmented counterparts, as verified in Table 1 [2]. |
| Inconsistent results when comparing molecular and periodic calculations. | Different (or unoptimized) basis sets are used for the molecule and the solid, often due to linear dependence in the solid [6]. | Apply the same high-quality molecular basis to both system types, leveraging automated linear dependence removal tools in the periodic code for a consistent theoretical model [6]. |

Quantitative Data: The Accuracy vs. Basis Set Trade-Off

The following table summarizes key performance metrics for various basis sets, illustrating the "blessing" of accuracy and the "curse" of computational cost. Data is based on calculations using the ωB97X-V density functional [2].

Table 1: Basis Set Performance for the ASCDB Benchmark

| Basis Set | RMSD (NCI) (kJ/mol) | Relative Time (s) | Notes |
|---|---|---|---|
| def2-SVP | 31.51 | 151 | Small basis, large error for NCIs. |
| def2-TZVP | 8.20 | 481 | Medium basis, still significant error. |
| def2-QZVP | 2.98 | 1935 | Large basis, good accuracy, high cost. |
| def2-SVPD | 7.53 | 521 | Adding diffuse functions to SVP significantly improves NCI accuracy. |
| def2-TZVPPD | 2.45 | 1440 | Recommended: Excellent accuracy-to-cost ratio with diffuse functions. |
| aug-cc-pVDZ | 4.83 | 975 | Augmented Dunning basis, moderate accuracy. |
| aug-cc-pVTZ | 2.50 | 2706 | Recommended: High accuracy, but higher cost. |
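
One way to read the table is as a simple selection problem: pick the cheapest basis set whose NCI error meets a target. The sketch below transcribes the values from the table above; the function name `cheapest_within` and the error targets in the usage lines are hypothetical choices for illustration.

```python
# (basis set, NCI RMSD in kJ/mol, time in s), copied from the table above.
BASIS_TABLE = [
    ("def2-SVP", 31.51, 151),
    ("def2-TZVP", 8.20, 481),
    ("def2-QZVP", 2.98, 1935),
    ("def2-SVPD", 7.53, 521),
    ("def2-TZVPPD", 2.45, 1440),
    ("aug-cc-pVDZ", 4.83, 975),
    ("aug-cc-pVTZ", 2.50, 2706),
]


def cheapest_within(max_rmsd_kj: float):
    """Return the fastest basis set whose NCI RMSD is at or below the target, or None."""
    candidates = [row for row in BASIS_TABLE if row[1] <= max_rmsd_kj]
    if not candidates:
        return None
    return min(candidates, key=lambda row: row[2])[0]


print(cheapest_within(3.0))   # tight accuracy target
print(cheapest_within(10.0))  # looser accuracy target
```

This ranks only by the two columns shown; it does not account for linear dependence risk, which grows with the diffuse sets and has to be weighed separately.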

Experimental Protocols

Protocol 1: Assessing the Necessity of Diffuse Functions for a Given System

Objective: To determine if a project requires the use of diffuse basis functions to achieve reliable results.

Methodology:

- Geometry Optimization: Optimize the molecular structure of your system using a robust, medium-sized basis set (e.g., def2-TZVP).

- Single-Point Energy Comparison: Perform single-point energy calculations on the optimized geometry using two different basis sets:

  - Protocol A: A standard basis set without diffuse functions (e.g., def2-TZVP).

  - Protocol B: An augmented basis set with diffuse functions (e.g., def2-TZVPPD).

- Analysis: Compare the resulting energies and, if applicable, the non-covalent interaction energies of a complex versus its monomers. A difference of more than ~4 kJ/mol often indicates that diffuse functions are critical for your system [2].
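
The analysis step can be sketched as a small calculation. The example below assumes total energies in hartree from your own Protocol A and Protocol B runs; the numbers in the usage lines are placeholders invented for illustration, not computed results, and the function names are hypothetical. The ~4 kJ/mol tolerance is the value quoted above.

```python
HARTREE_TO_KJ_PER_MOL = 2625.4996  # standard conversion factor


def interaction_energy_kj(e_complex: float, e_monomers: list) -> float:
    """Supermolecular interaction energy in kJ/mol from total energies in hartree."""
    return (e_complex - sum(e_monomers)) * HARTREE_TO_KJ_PER_MOL


def diffuse_functions_matter(e_int_standard: float, e_int_augmented: float,
                             tolerance_kj: float = 4.0) -> bool:
    """True if the two basis sets disagree by more than the tolerance (kJ/mol)."""
    return abs(e_int_augmented - e_int_standard) > tolerance_kj


# Placeholder energies (hartree), invented for illustration only.
e_int_a = interaction_energy_kj(-152.7000, [-76.3450, -76.3450])  # Protocol A
e_int_b = interaction_energy_kj(-152.7500, [-76.3720, -76.3720])  # Protocol B
print(f"A: {e_int_a:.2f} kJ/mol, B: {e_int_b:.2f} kJ/mol, "
      f"diffuse functions needed: {diffuse_functions_matter(e_int_a, e_int_b)}")
```

This sketch applies no counterpoise or other BSSE correction; whether one is appropriate depends on the method and basis set in use.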

Protocol 2: Automated Removal of Linear Dependence in CRYSTAL

Objective: To enable the use of large, diffuse molecular basis sets in solid-state calculations without manual modification.

Methodology:

- Basis Set Selection: Choose a high-quality molecular basis set from a repository like the EMSL Basis Set Exchange.

- Input File Setup: Prepare a standard input file for CRYSTAL. The key is to activate the internal linear dependence treatment [6].

- Execution: Run the calculation. The modified CRYSTAL code will automatically:

  - Identify the linearly dependent components of the basis set.

  - Construct a projector to remove these components from the solution of the matrix equations.

  - Proceed with the SCF calculation using the now well-conditioned basis.

- Validation: Check the output for successful SCF convergence and verify that the total energy is physically reasonable. This method has been successfully applied to semiconductors, insulators, metals, and molecular crystals [6].
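
The idea of removing linearly dependent components before solving the matrix equations can be illustrated with textbook canonical orthogonalization. This is a generic sketch and should not be read as the algorithm implemented in CRYSTAL, whose details are not given here; the toy matrix, the default threshold, and the function name are assumptions made for this example.

```python
import numpy as np


def canonical_orthogonalizer(overlap: np.ndarray, threshold: float = 1e-6) -> np.ndarray:
    """Return X whose columns span the well-conditioned subspace, with X.T @ S @ X = I."""
    eigenvalues, eigenvectors = np.linalg.eigh(overlap)
    keep = eigenvalues > threshold  # discard near-null directions of S
    return eigenvectors[:, keep] / np.sqrt(eigenvalues[keep])


# Toy overlap matrix with one nearly redundant pair of functions (made-up numbers).
near_one = 1.0 - 1e-8
S = np.array([
    [1.0, near_one, 0.2],
    [near_one, 1.0, 0.2],
    [0.2, 0.2, 1.0],
])

X = canonical_orthogonalizer(S)
print("functions kept:", X.shape[1], "of", S.shape[0])
print("orthonormal in the reduced basis:", np.allclose(X.T @ S @ X, np.eye(X.shape[1])))
```

Transforming the Fock matrix as X.T @ F @ X then gives an ordinary eigenvalue problem in the reduced, well-conditioned space.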

Workflow and Pathway Visualization

Decision Workflow for Using Diffuse Functions

The following diagram outlines a logical workflow for deciding when and how to use diffuse functions in a computational project, incorporating troubleshooting steps.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational "Reagents" and Their Functions

| Item | Function / Purpose | Example(s) |
|---|---|---|
| Localized Basis Sets | A set of non-orthogonal atomic orbitals used to represent the wavefunction and electronic density. The quality dictates accuracy and cost. | Gaussian-type orbitals (GTOs), STO-3G, def2-SVP, def2-TZVP, cc-pVXZ [6] [7]. |
| Diffuse/Augmentation Functions | Specific type of basis function with a small exponent, providing a spatially extended "fuzzy" layer around atoms to capture long-range electronic effects. | Essential for anions, excited states, and non-covalent interactions [2]. |
| Density Functional (DFT) | The quantum mechanical method used to solve the electronic structure problem, defining the exchange-correlation energy. | ωB97X-V, B3LYP, r2SCAN-3c [2] [7]. |
| Linear Dependence Projector | An algorithmic tool that acts as a "filter" to automatically identify and remove linearly dependent components from a basis set before the SCF calculation. | Used in CRYSTAL code to enable the use of diffuse molecular basis sets in solids [6]. |
| Complementary Auxiliary Basis Set (CABS) | An auxiliary basis set used in perturbation-based corrections to recover electron correlation effects typically captured by diffuse functions, but at a lower cost. | Enables accurate NCI calculations with compact basis sets (e.g., CABS singles correction) [2]. |

Molecular Geometry and Close Atomic Distances Trigger Linear Dependence

Frequently Asked Questions (FAQs)

FAQ 1: What is linear dependence in the context of computational chemistry? Linear dependence occurs when the basis functions used in a quantum chemical calculation are no longer linearly independent. This often happens in systems with large, diffuse basis sets, where the overlap between basis functions on atoms that are in close proximity becomes significant. The consequence is that the overlap matrix becomes singular or nearly singular, causing the calculation to fail during the matrix diagonalization step [2].

FAQ 2: How do molecular geometry and atomic distances contribute to this problem? When atoms are very close together, their atomic orbitals, especially the diffuse ones, have substantial overlap. In certain molecular geometries, such as dense clusters or metal complexes with short bond distances, this effect is amplified. The diffuse functions, which have a broad spatial extent, are particularly prone to this, leading to a situation where the set of basis functions cannot be treated as independent, triggering linear dependence [2].

FAQ 3: Why are diffuse functions both a "blessing and a curse"? Diffuse basis functions are a blessing for accuracy because they are essential for correctly describing properties like non-covalent interactions, electron affinities, and excited states. However, they are a curse for sparsity and computational stability because they drastically reduce the sparsity of the one-particle density matrix and are the primary cause of linear dependence issues in calculations involving molecules with close atomic contacts [2].

FAQ 4: What are the symptoms of a linear dependency error in my calculation? Common symptoms include:

- Fatal errors during the self-consistent field (SCF) procedure related to matrix diagonalization.

- Error messages explicitly mentioning "linear dependence" in the basis set.

- Unphysical molecular orbitals or energies.

- Failure of the calculation to converge.

FAQ 5: What is the most direct way to resolve linear dependence caused by diffuse functions? The most straightforward troubleshooting step is to remove the diffuse functions from your basis set. This directly addresses the root cause by eliminating the most spatially extended functions that are creating the excessive overlap. You can then attempt your calculation again with a more compact basis [2].
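
The geometric effect described in FAQ 2 can be seen in a toy model: identical diffuse s-type Gaussians placed on a line of equally spaced centers. The sketch below is illustrative only. It assumes one normalized s primitive per center with a shared exponent (so each overlap reduces to exp(-a R^2 / 2)); the exponent and spacings are arbitrary example values.

```python
import numpy as np


def smallest_overlap_eigenvalue(positions_bohr, exponent: float) -> float:
    """Smallest eigenvalue of the overlap matrix for equal-exponent s Gaussians on a line."""
    x = np.asarray(positions_bohr, dtype=float)
    squared_distance = (x[:, None] - x[None, :]) ** 2
    # For two normalized s Gaussians with the same exponent a: S_ij = exp(-a * R_ij**2 / 2).
    overlap = np.exp(-0.5 * exponent * squared_distance)
    return float(np.linalg.eigvalsh(overlap)[0])


diffuse_exponent = 0.05  # illustrative small exponent
for spacing in (4.0, 2.0, 1.0):
    chain = [i * spacing for i in range(4)]
    value = smallest_overlap_eigenvalue(chain, diffuse_exponent)
    print(f"spacing {spacing:.1f} bohr: smallest eigenvalue {value:.2e}")
```

Shrinking the spacing drives the smallest eigenvalue toward zero, which is the point at which a program's linear dependence threshold starts to remove functions.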

Troubleshooting Guide: Resolving Linear Dependence

Issue: Calculation fails due to linear dependence in the basis set, suspected to be caused by close atomic distances and the use of diffuse functions.

Phase 1: Understand and Reproduce the Problem

Ask Diagnostic Questions:

- What is the specific error message?

- What basis set are you using? Does it include diffuse functions (e.g., "aug-", "-aug", "++", or names like "def2-SVPD")?

- What is the molecular system? Are there regions with very close interatomic distances (e.g., metal clusters, van der Waals complexes, or compressed geometries)?

Gather Information:

- Check your output file for the exact error and any warnings about small eigenvalues in the overlap matrix.

- Examine the molecular structure and identify any atoms separated by a distance significantly less than the sum of their van der Waals radii.
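
The structure check in the last item can be scripted. The sketch below flags atom pairs whose separation falls below a chosen fraction of the sum of their van der Waals radii. The radii are Bondi values for a few elements only, and the `fraction` parameter is an assumption of this example: ordinary covalent bonds are already far shorter than the van der Waals sum, so the cutoff has to be chosen with the chemistry of the system in mind.

```python
import itertools
import math

# Bondi van der Waals radii in angstrom (only a few elements, for illustration).
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52}


def close_contacts(atoms, fraction: float = 0.5):
    """Return (i, j, distance) for pairs closer than fraction * (r_i + r_j).

    atoms: list of (element symbol, (x, y, z)) with coordinates in angstrom.
    """
    hits = []
    for (i, (sym_i, xyz_i)), (j, (sym_j, xyz_j)) in itertools.combinations(enumerate(atoms), 2):
        distance = math.dist(xyz_i, xyz_j)
        if distance < fraction * (VDW_RADII[sym_i] + VDW_RADII[sym_j]):
            hits.append((i, j, round(distance, 3)))
    return hits


# Hypothetical geometry: one O-H pair at bonding distance, one distant H.
example = [("O", (0.0, 0.0, 0.0)), ("H", (0.0, 0.0, 0.96)), ("H", (0.0, 0.0, 5.0))]
print(close_contacts(example))
```

With the default fraction the bonded O-H pair is reported; lowering `fraction` restricts the output to contacts shorter than typical bonds.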

Reproduce the Issue:

- Run a single-point energy calculation on the problematic geometry using the same method and basis set to confirm the error persists.

Phase 2: Isolate the Issue

- Remove Complexity:

  - Change one thing at a time: Start by simplifying the basis set.

  - Remove diffuse functions: Perform the same calculation with a basis set that does not include diffuse functions. For example, switch from `aug-cc-pVTZ` to `cc-pVTZ`, or from `def2-TZVPPD` to `def2-TZVPP` [2].

- Result Interpretation: If the calculation completes successfully without diffuse functions, you have confirmed that the diffuse functions are the primary cause of the linear dependence.

Phase 3: Find a Fix or Workaround

Once you have isolated the issue, consider these solutions, ordered from the most direct to the more advanced.

Solution 1: Use a Compact Basis Set

- Action: Permanently switch to a basis set without diffuse functions for this specific system.

- When to Use: When the highest accuracy for properties like non-covalent interactions is not critical for your study.

- Trade-off: This solution sacrifices some accuracy for stability and speed. The data below shows the significant accuracy loss for non-covalent interactions (NCI) when diffuse functions are absent [2].

Solution 2: The CABS Singles Correction with a Reduced Basis

- Action: Employ the Complementary Auxiliary Basis Set (CABS) singles correction in conjunction with a compact, low angular momentum (l-quantum-number) basis set.

- When to Use: When you require higher accuracy but are facing linear dependence. This approach has been shown to provide promising results for non-covalent interactions while mitigating the "curse of sparsity" associated with large, diffuse basis sets [2].

- Trade-off: This is a more sophisticated method that may require specific functionality in your computational chemistry software.

Solution 3: Geometrical Intervention

- Action: If the close atomic distances are due to an unphysical or poorly optimized geometry, consider re-optimizing the molecular structure at a lower level of theory (with a smaller basis set) before proceeding.

- When to Use: When you suspect the input geometry itself is problematic.

- Trade-off: This may change the system you are studying, so it is not applicable if the close-contact geometry is intentional.

Basis Set Performance and Error Analysis

Table 1: Root-mean-square deviations (RMSD) for the ωB97X-V functional with various basis sets on the ASCDB benchmark, highlighting the importance of diffuse functions for accuracy, especially for non-covalent interactions (NCI). All values are in kJ/mol. Data from [2].

| Basis Set | Total RMSD (Basis Error) | NCI RMSD (Basis Error) | Has Diffuse Functions? |
|---|---|---|---|
| def2-SVP | 30.84 | 31.33 | No |
| def2-TZVP | 5.50 | 7.75 | No |
| def2-QZVP | 1.93 | 1.73 | No |
| def2-SVPD | 23.45 | 7.04 | Yes |
| def2-TZVPPD | 1.82 | 0.73 | Yes |
| aug-cc-pVDZ | 15.94 | 4.32 | Yes |
| aug-cc-pVTZ | 3.90 | 1.23 | Yes |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key computational tools and their functions in managing linear dependence.

| Item | Function / Description |
|---|---|
| Compact Basis Sets | Basis sets without diffuse functions (e.g., cc-pVTZ, def2-TZVP). Used to avoid linear dependence by reducing orbital overlap [2]. |
| CABS Singles Correction | A computational method that can recover correlation energy, allowing the use of smaller, more compact basis sets while maintaining accuracy [2]. |
| Geometry Optimization | The process of finding a stable molecular arrangement. A better-optimized geometry can sometimes alleviate pathologically short atomic distances. |
| Internal Coordinate System | A molecular representation used in computations. A well-defined coordinate system can improve numerical stability during calculations. |

Figure: Workflow for diagnosing and resolving linear dependence.

Figure: Relationship between molecular geometry and basis set locality.

The Role of Small Exponent Basis Functions in Creating Overlap

In computational chemistry, a basis set is a set of functions used to represent the electronic wave function, turning partial differential equations into algebraic equations suitable for computers [8]. Diffuse functions, also known as small exponent basis functions, are Gaussian-type orbitals with small exponents, giving flexibility to the "tail" portion of atomic orbitals far from the nucleus [8]. They are essential for accurate calculations of anions, dipole moments, and non-covalent interactions [8] [2].

However, in large molecular systems or when using very large basis sets, these diffuse functions can lead to linear dependence. This is an over-complete description of the space spanned by the basis functions, causing a loss of uniqueness in the molecular orbital coefficients and resulting in a poorly behaved or erratic Self-Consistent Field (SCF) calculation [9]. This guide provides protocols for identifying and resolving this issue.

Frequently Asked Questions (FAQs)

1. What is linear dependence in a basis set? Linear dependence occurs when your basis set is nearly over-complete. This means that at least one basis function can be represented as a linear combination of other functions in the set. In practice, this is detected by the presence of very small eigenvalues in the basis set overlap matrix (S) [9].

2. Why do diffuse functions cause linear dependence? Diffuse functions are spatially extended, leading to significant overlap between functions on different atoms in large systems. This overlap, when combined with a large number of functions, creates a near-redundant description of the electronic space, manifesting as linear dependence [2] [9].

3. What are the symptoms of linear dependence in a calculation? Common symptoms include:

- SCF convergence failure or extremely slow convergence.

- Erratic behavior during the SCF cycle.

- Warnings or errors about linear dependence from the software.

- The calculation projecting out near-degeneracies, resulting in fewer molecular orbitals than basis functions [9].

4. When should I consider removing diffuse functions? Removal is a practical consideration for large systems where linear dependence prevents SCF convergence. It is a trade-off between numerical stability and accuracy, particularly for properties like non-covalent interactions where diffuse functions are most beneficial [2].

Troubleshooting Guide: Diagnosing Linear Dependence

Follow this workflow to confirm if linear dependence is the cause of your calculation failure.

Experimental Protocol: Diagnosing Linear Dependence

Objective: To confirm the presence of linear dependence in the basis set by examining the overlap matrix eigenvalues.

- Run a Single-Point Energy Calculation: Perform a standard SCF calculation on your system. Let it run until it fails to converge or finishes with warnings.

- Scrutinize the Output Log: Search for keywords such as "linear dependence," "overlap matrix," "small eigenvalues," or "projecting out functions."

- Locate the Overlap Matrix Analysis: In the output, find the section that details the eigenvalues of the basis set overlap matrix. In Q-Chem, this analysis is performed automatically when potential linear dependence is detected [9].

- Apply the Threshold: Identify the smallest eigenvalue. If its value is smaller than the default threshold of 10⁻⁶, linear dependence is confirmed as the likely cause of the calculation failure [9].
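
The log-scrutiny step can be automated with a simple keyword scan. The sketch below searches for the phrases listed in the protocol above; the sample log text is invented for illustration and does not reproduce the exact output format of any program, and the function name is hypothetical.

```python
# Search phrases taken from the protocol above (matched case-insensitively).
KEYWORDS = (
    "linear dependence",
    "overlap matrix",
    "small eigenvalues",
    "projecting out functions",
)


def find_linear_dependence_hints(log_text: str):
    """Return (line number, line) for every log line that mentions a keyword."""
    hits = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        lowered = line.lower()
        if any(keyword in lowered for keyword in KEYWORDS):
            hits.append((number, line.strip()))
    return hits


# Invented sample log; real output files will look different.
sample_log = """Starting SCF
 Warning: Linear dependence detected in AO basis
SCF cycle 1
"""
for number, line in find_linear_dependence_hints(sample_log):
    print(f"line {number}: {line}")
```

To scan a real output file, read it with `open(path).read()` and pass the text to the function; the matching lines point to the section where the eigenvalue analysis is reported.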

Resolution Protocols: Removing Diffuse Functions

Once linear dependence is diagnosed, use these structured methods to resolve it.

Protocol 1: The Standard Basis Set Reduction

This is the most direct approach, switching to a basis set that does not include diffuse functions.

- Methodology: Replace your augmented basis set (e.g., `aug-cc-pVTZ`) with its non-augmented counterpart (e.g., `cc-pVTZ`). Similarly, replace a basis set with a 'D' for diffuse (e.g., `def2-TZVPPD`) with its standard version (e.g., `def2-TZVPP`) [2].

- Expected Outcome: Calculation stability is greatly improved, but at the cost of reduced accuracy for properties that require a good description of the electron tail, such as non-covalent interaction energies [2].
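
When many inputs need the same substitution, the renaming rule can be written down once. The helper below is a naming heuristic only, covering the families mentioned in this guide (the `aug-` prefix, def2 names ending in `D`, and Pople-style `+` markers); `strip_diffuse` is a hypothetical name, and other augmentation schemes are left untouched and need manual handling.

```python
def strip_diffuse(basis_name: str) -> str:
    """Map an augmented basis set name to its non-augmented counterpart (heuristic)."""
    lowered = basis_name.lower()
    if lowered.startswith("aug-"):
        return basis_name[len("aug-"):]   # aug-cc-pVTZ -> cc-pVTZ
    if lowered.startswith("def2-") and lowered.endswith("d"):
        return basis_name[:-1]            # def2-TZVPPD -> def2-TZVPP
    if "+" in basis_name:
        return basis_name.replace("+", "")  # 6-31+G* -> 6-31G*
    return basis_name                     # nothing recognized: leave unchanged


for name in ("aug-cc-pVTZ", "def2-TZVPPD", "def2-SVPD", "def2-TZVP"):
    print(f"{name} -> {strip_diffuse(name)}")
```

Always confirm that the resulting name exists in your program's basis set library before launching the calculation.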

Protocol 2: Selective Removal of High Angular Momentum Functions

A more nuanced approach that retains some diffuse functions while improving stability.

- Methodology: Manually edit the basis set to remove the most diffuse functions for high angular momentum quantum numbers (e.g., remove diffuse `f` and `g` functions while keeping diffuse `s` and `p`). This can often be done within the input file of the quantum chemistry software.

- Expected Outcome: Reduces the severity of linear dependence while preserving a significant portion of the accuracy gain from diffuse functions, particularly for properties dominated by valence and polarization effects.
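
The pruning rule can be expressed on a simple in-memory description of a basis set. The sketch below assumes a hypothetical `Shell` record (angular momentum label plus exponent) and made-up exponents; it does not read or write any real basis set file format. For each selected angular momentum it drops the shell with the smallest exponent, keeping at least one shell of that type.

```python
from typing import List, NamedTuple, Sequence


class Shell(NamedTuple):
    angular_momentum: str  # "s", "p", "d", "f", "g"
    exponent: float        # exponent of the most diffuse primitive in the shell


def prune_diffuse_high_l(shells: Sequence[Shell], prune: Sequence[str] = ("f", "g")) -> List[Shell]:
    """Remove the most diffuse shell for each angular momentum listed in `prune`."""
    result = list(shells)
    for label in prune:
        same_l = [shell for shell in result if shell.angular_momentum == label]
        if len(same_l) > 1:  # never remove the only shell of a given type
            result.remove(min(same_l, key=lambda shell: shell.exponent))
    return result


# Made-up exponents, for illustration only.
basis = [Shell("s", 0.05), Shell("p", 0.04), Shell("f", 1.2), Shell("f", 0.25), Shell("g", 0.9)]
for shell in prune_diffuse_high_l(basis):
    print(shell)
```

Here the diffuse `f` shell is removed, while the single `g` shell and the diffuse `s` and `p` shells are kept; after any such edit, rerun the overlap eigenvalue check to confirm the dependence is gone.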

Protocol 3: Adjusting the Linear Dependence Threshold

A last-resort method for systems where diffuse functions are absolutely necessary.

- Methodology: Force the calculation to proceed by instructing the program to use a larger, more aggressive threshold for identifying linear dependence, so that more near-dependent combinations are projected out. In Q-Chem, this is done by setting the `BASIS_LIN_DEP_THRESH` `$rem` variable to a value like `5` (threshold of 10⁻⁵) or `4` (10⁻⁴) [9].

- Expected Outcome: The SCF calculation may converge. However, this comes with a strong warning: this procedure projects out the near-linear dependencies, which can lead to a loss of accuracy, and the results should be treated with caution [9].

Quantitative Impact of Basis Set Choice

The table below summarizes the trade-off between accuracy and stability, using data from non-covalent interaction (NCI) benchmarks [2].

Table 1: Basis Set Error and Computational Cost for ωB97X-V Functional

| Basis Set | Diffuse Functions? | NCI RMSD (kJ/mol) | Relative SCF Time (s) | Recommended Use Case |
|---|---|---|---|---|
| cc-pVTZ | No | 12.73 | 573 | Stable calculations on large systems; lower accuracy on NCIs. |
| aug-cc-pVTZ | Yes | 2.50 | 2706 | High-accuracy studies of NCIs; prone to linear dependence in large systems. |
| def2-TZVP | No | 8.20 | 481 | An efficient alternative to cc-pVTZ. |
| def2-TZVPPD | Yes | 2.45 | 1440 | An accurate, often more efficient alternative to aug-cc-pVTZ. |

Data adapted from calculations on the ASCDB benchmark, referenced to aug-cc-pV6Z [2]. RMSD: Root-Mean-Square Deviation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Resources for Basis Set Troubleshooting

| Item | Function | Example Sources |
|---|---|---|
| Standard Basis Sets | Provide a balanced starting point for calculations without built-in linear dependence risks. | cc-pVXZ (X=D,T,Q,...), def2-SVP, def2-TZVP [8] [2]. |
| Augmented Basis Sets | Include diffuse functions for accurate anion, excited state, and non-covalent interaction calculations. | aug-cc-pVXZ, def2-SVPD, def2-TZVPPD [2]. |
| Basis Set Exchange | A repository to browse, download, and customize basis sets for various quantum chemistry software. | https://www.basissetexchange.org [2]. |
| Linear Dependence Threshold | A key computational parameter that controls sensitivity to linear dependence. | `BASIS_LIN_DEP_THRESH` in Q-Chem [9]. |

Frequently Asked Questions

Q1: What are the immediate signs that my quantum chemistry calculation has failed due to linear dependency?

The most common signs are fatal errors during the self-consistent field (SCF) procedure related to matrix singularity, a sudden and dramatic increase in computed energy, or convergence failure. In some software, a failed calculation might not throw an error but return physically meaningless results, such as wildly incorrect interaction energies for non-covalent complexes [10].

Q2: Why does removing diffuse functions resolve linear dependency issues?

Linear dependency occurs when basis functions on different atoms become too similar, making the overlap matrix singular or nearly singular. Diffuse functions have a large spatial extent, which increases the likelihood of such overlap, especially in systems with many atoms or small interatomic distances. Removing them increases the linear independence of the basis set, restoring numerical stability [2].

Q3: How does removing diffuse functions impact the accuracy of my results, particularly for non-covalent interactions?

Removing diffuse functions stabilizes calculations but sacrifices accuracy. They are essential for correctly modeling the weak electronic interactions in systems like drug-protein complexes. As shown in Table 1, unaugmented basis sets like def2-TZVP can have errors over 8 kJ/mol for NCIs, while augmented counterparts like def2-TZVPPD reduce this error below 2.5 kJ/mol [2].

Q4: Are there alternatives to completely removing diffuse functions to avoid linear dependency?

Yes, advanced techniques exist. One promising solution is to use the complementary auxiliary basis set (CABS) singles correction in combination with compact basis sets of low angular momentum (low l quantum number). This approach can help recover some of the accuracy lost when using less diffuse basis sets [2].

Q5: Can a calculation appear successful but still produce erroneous results due to prior failures?

Yes. Some software libraries may not properly clear error states from a previous failed calculation. A subsequent call for a property calculation might then return an erroneous value without any warning, as was demonstrated with the ALLPROPSdll function in REFPROP [10].

Troubleshooting Guide: Identifying and Resolving Linear Dependency

Problem: Your electronic structure calculation fails or produces nonsensical results, and the error log points to linear dependency in the basis set.

Step 1: Diagnose the Error

Consult your software's output log for specific error messages. Common indicators include:

- Error 121 in REFPROP: Input outside valid physical range (e.g., temperature above critical point) [10].

- #NUM! in Excel: A numerical overflow or operation on an impossibly large/small number, analogous to a failed quantum chemical calculation [11].

- #DIV/0! in Excel: Division by zero, analogous to a singular matrix inversion [11].

- Warnings about the overlap matrix being singular, non-positive-definite, or having a very high condition number.
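If the overlap matrix can be exported from your program, the last indicator can be checked directly. The following is a minimal sketch, assuming the matrix is available as a symmetric NumPy array; the function name and the 10⁻⁶ eigenvalue tolerance are assumptions chosen for illustration, not values taken from any particular package.

```python
import numpy as np

def diagnose_overlap(S, eig_tol=1e-6):
    """Summarize how close a symmetric overlap matrix is to being singular."""
    eigvals = np.linalg.eigvalsh(S)  # returned in ascending order
    smallest, largest = eigvals[0], eigvals[-1]
    return {
        "smallest_eigenvalue": float(smallest),
        "condition_number": float(largest / smallest) if smallest > 0 else float("inf"),
        "positive_definite": bool(smallest > 0),
        "n_below_tol": int(np.sum(eigvals < eig_tol)),
    }

# Two toy 2x2 overlap matrices: one healthy, one nearly linearly dependent.
well_conditioned = np.array([[1.0, 0.3], [0.3, 1.0]])
near_dependent = np.array([[1.0, 0.9999999], [0.9999999, 1.0]])

report_ok = diagnose_overlap(well_conditioned)
report_bad = diagnose_overlap(near_dependent)
print(report_ok)
print(report_bad)
```

A large condition number together with one or more eigenvalues below the tolerance points to near-linear dependence rather than to an unrelated SCF problem.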

Step 2: Confirm the Source is Diffuse Functions

Linear dependency is most pronounced in systems with many atoms and when using large, diffuse basis sets. To confirm:

- Check Basis Set: Are you using an "aug-" (augmented) basis set or a def2 basis set with a trailing "D" (diffuse), such as aug-cc-pVTZ or def2-TZVPPD?

- Check System Size: The problem is more likely in large molecular systems (>500 atoms) where the diffuse orbitals from distant atoms can linearly depend on each other [2].

- Visualize Sparsity: The one-particle density matrix (1-PDM) becomes significantly less sparse with diffuse basis sets, a key indicator of the problem as shown in Figure 1(c) for def2-TZVPPD [2].

Step 3: Implement a Solution

Follow this workflow to resolve the issue, starting with the least impactful method:

Step 4: Validate Results

After implementing a fix, you must verify that your results are physically meaningful and sufficiently accurate.

- Check Energy Convergence: Ensure the SCF energy has converged to a stable value.

- Compare Geometries: For geometry optimizations, check that bond lengths and angles are reasonable.

- Benchmark Interaction Energies: If studying non-covalent interactions, compare your results against known benchmark values or higher-level calculations to gauge the accuracy cost of removing diffuse functions. Refer to the accuracy benchmarks in Table 1 [2].

Table 1: Impact of Basis Set Diffuseness on Accuracy and Performance [2]

Root-mean-square deviations (RMSD) for the ωB97X-V functional on the ASCDB benchmark, referenced to aug-cc-pV6Z. NCI RMSD values highlight the critical need for diffuse functions for non-covalent interactions.

| Basis Set | RMSD (B) kJ/mol | NCI RMSD (B) kJ/mol | NCI RMSD (M+B) kJ/mol | SCF Time (s) |

|---|---|---|---|---|

| def2-SVP | 30.84 | 31.33 | 31.51 | 151 |

| def2-TZVP | 5.50 | 7.75 | 8.20 | 481 |

| def2-TZVPPD | 1.82 | 0.73 | 2.45 | 1440 |

| aug-cc-pVTZ | 3.90 | 1.23 | 2.50 | 2706 |

Table 2: Researcher's Toolkit for Basis Set Management

Key computational "reagents" and their roles in managing linear dependency and accuracy.

| Item | Function | Consideration for Linear Dependency |

|---|---|---|

| Compact Basis Set (e.g., def2-SVP) | A basis set without diffuse functions; the starting point for calculations. | Maximizes numerical stability and sparsity of the 1-PDM but sacrifices accuracy for properties like NCIs [2]. |

| Diffuse/Augmented Basis Set (e.g., aug-cc-pVTZ) | A basis set augmented with diffuse functions to better model the electron tail. | Essential for accurate NCIs but is the primary cause of linear dependency in large systems [2]. |

| Integration Grid | Numerical grid used for evaluating integrals in DFT calculations. | A coarse grid can sometimes cause convergence failure; increasing grid size can help before modifying the basis set. |

| CABS Singles Correction | A computational correction applied to recover electron correlation energy. | Can be used with compact basis sets as a potential solution to regain some accuracy lost by removing diffuse functions [2]. |

Experimental Protocol: Basis Set Dependency and Error Analysis

This protocol outlines the steps to systematically quantify the error introduced by removing diffuse functions, using non-covalent interaction energies as a benchmark.

Objective: To determine the trade-off between numerical stability and accuracy when using pruned versus diffuse basis sets for a target molecular system (e.g., a drug fragment interacting with a protein pocket).

Procedure:

- System Selection: Choose a model non-covalent complex relevant to your research (e.g., a substrate in an enzyme active site).

- Geometry Optimization: Optimize the geometry of the complex and its isolated monomers using a medium-sized, stable basis set (e.g., def2-SVP).

- Single-Point Energy Calculations: Using the optimized geometry, perform single-point energy calculations at a high level of theory (e.g., ωB97X-V) with a series of basis sets. The workflow should include:

  - A large, diffuse reference basis set (e.g., aug-cc-pVQZ).

  - The target diffuse basis set you wish to test (e.g., aug-cc-pVTZ).

  - A pruned version of the target basis set (e.g., cc-pVTZ).

  - A compact basis set (e.g., def2-SVP).

- Interaction Energy Calculation: For each basis set, calculate the interaction energy (ΔE) as: ΔE = E(complex) - E(monomer A) - E(monomer B).

- Error Analysis: Calculate the absolute error of each method/basis set combination by comparing its ΔE to the ΔE computed with the large reference basis set.

- Stability Check: Document any SCF convergence issues, linear dependency warnings, or other numerical problems encountered with each calculation.
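The interaction energy and error analysis steps of this procedure can be sketched in a few lines of Python. The helper names and the energies below are hypothetical: the numbers are made-up placeholders in hartree that only demonstrate the bookkeeping, not computed results for any real system or basis set.

```python
def interaction_energy(e_complex, e_monomer_a, e_monomer_b):
    """Supermolecular interaction energy, in the same units as the inputs."""
    return e_complex - e_monomer_a - e_monomer_b

def absolute_errors(delta_e_by_basis, reference_label):
    """Absolute deviation of each interaction energy from the reference value."""
    reference = delta_e_by_basis[reference_label]
    return {
        label: abs(delta_e - reference)
        for label, delta_e in delta_e_by_basis.items()
        if label != reference_label
    }

# Placeholder total energies (complex, monomer A, monomer B) in hartree.
# Illustrative values only; replace them with your own single-point results.
total_energies = {
    "reference": (-100.0100, -60.0000, -40.0000),
    "diffuse": (-99.9595, -59.9750, -39.9750),
    "pruned": (-99.9080, -59.9500, -39.9500),
}

delta_e = {label: interaction_energy(*e) for label, e in total_energies.items()}
errors = absolute_errors(delta_e, reference_label="reference")
for label, err in errors.items():
    print(f"{label}: |error in ΔE| = {err:.4f} hartree")
```

Keeping the stability notes from the last step alongside these errors (for example as an extra column in a spreadsheet) makes the accuracy versus stability trade-off explicit for each basis set.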

The following diagram illustrates this workflow:

Expected Outcome: The data will show a clear trend: compact basis sets (def2-SVP) are numerically stable but yield high errors in ΔE. As diffuseness increases (cc-pVTZ -> aug-cc-pVTZ), accuracy improves significantly, but the risk of numerical failure (linear dependency) increases, especially for larger systems. This quantitative analysis provides a justified basis for choosing a basis set for production calculations.

Practical Strategies for Removing Diffuse Functions Without Sacrificing Essential Accuracy

A technical guide for computational researchers tackling numerical instability in electronic structure calculations.

This resource provides targeted solutions for researchers encountering the challenge of linear dependence in quantum chemical calculations, a common problem when using diffuse basis sets essential for accurately modeling non-covalent interactions in drug development.

FAQs on Linear Dependence and Diffuse Functions

What is linear dependence in a basis set and why is it a problem?

Linear dependence occurs when one or more basis functions in your set can be expressed as a linear combination of other functions in the same set. This makes the overlap matrix (S) singular or ill-conditioned, preventing the self-consistent field (SCF) procedure from converging and halting your calculation [2].

Why do diffuse functions cause linear dependence?

Diffuse functions have Gaussian exponents with very small values (e.g., 0.0001, 0.0032), giving them a broad spatial distribution. When placed on atoms in molecules, these widespread functions on adjacent centers overlap strongly. This significant overlap leads to near-duplicate mathematical descriptions of the electron cloud, creating linear dependencies in the basis set [12] [2].
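To make this concrete, the overlap between two normalized s-type primitive Gaussians on different centers has a simple closed form, S = (2√(αβ)/(α+β))^(3/2) · exp(-αβR²/(α+β)). The short sketch below evaluates it for a tight, an intermediate, and a very diffuse exponent; the 2.8 bohr separation (about 1.5 Å) is an assumed, illustrative distance.

```python
import math

def s_gaussian_overlap(alpha, beta, distance_bohr):
    """Overlap of two normalized s-type primitive Gaussians separated by R (bohr)."""
    prefactor = (2.0 * math.sqrt(alpha * beta) / (alpha + beta)) ** 1.5
    return prefactor * math.exp(-alpha * beta / (alpha + beta) * distance_bohr ** 2)

R = 2.8  # assumed separation between neighbouring atoms, in bohr
for exponent in (1.0, 0.1, 0.0032):
    overlap = s_gaussian_overlap(exponent, exponent, R)
    print(f"exponent {exponent:>7}: overlap = {overlap:.4f}")
```

As the exponent shrinks, the overlap between functions on neighbouring atoms approaches 1, meaning the two functions describe almost the same region of space and become nearly interchangeable in the basis.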

How can I identify problematic, highly diffuse functions?

The primary method is to monitor the condition number of your basis set's overlap matrix during a calculation setup. A very high condition number signals ill-conditioning. Problematic functions are typically those with the smallest exponents. The table below lists examples of diffuse exponents identified in recent studies that may require scrutiny [12].

Table 1: Examples of Diffuse Function Exponents from Literature

| Function Type | Exponent Value | Context / Note |

|---|---|---|

| s and p functions | 0.0001 * 2^n | Example of an even-tempered expansion scheme [12]. |

| s and p functions | 0.0032 or smaller | Recommended smallest exponents for use with aug-cc-pVTZ [12]. |

| d functions | 0.0064 or smaller | Recommended smallest exponents for use with aug-cc-pVTZ [12]. |

| f functions | 0.0064 or smaller | Recommended smallest exponents for use with aug-cc-pVTZ [12]. |

| f functions (for Oxygen) | 0.0512, 0.1024 | Additional "tight" diffuse functions needed for electronegative atoms [12]. |
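The even-tempered scheme in the first row is easy to enumerate, which helps when deciding which exponents to inspect first. The sketch below generates the series 0.0001 · 2^n and lists the members at or below a chosen cutoff; the helper names, the series length of 8, and the use of 0.0032 as the cutoff are assumptions made for illustration.

```python
import math

def even_tempered_exponents(base=0.0001, ratio=2.0, count=8):
    """Exponents base * ratio**n for n = 0 .. count-1."""
    return [base * ratio ** n for n in range(count)]

def at_or_below(exponents, cutoff):
    """Exponents not larger than the cutoff, tolerant of floating-point rounding."""
    return [e for e in exponents if e < cutoff or math.isclose(e, cutoff)]

series = even_tempered_exponents()
to_inspect = at_or_below(series, cutoff=0.0032)
print("series:    ", [f"{e:.4f}" for e in series])
print("to inspect:", [f"{e:.4f}" for e in to_inspect])
```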

What is the "conundrum" of diffuse basis sets?

Diffuse basis sets present a "blessing and a curse" [2]. They are a blessing for accuracy because they are absolutely essential for obtaining correct interaction energies, especially for non-covalent interactions like those critical in drug binding [2]. However, they are a curse for sparsity because they drastically reduce the sparsity of the one-particle density matrix (1-PDM), increasing computational cost and memory requirements, and introduce the risk of linear dependence [2].

Troubleshooting Guide: Resolving Linear Dependence

Issue: SCF Convergence Failure Due to Linear Dependence

Symptoms:

- Calculation fails with errors related to the overlap matrix being singular, not positive definite, or ill-conditioned.

- SCF procedure oscillates wildly or fails to converge.

Solution 1: Prune the Most Diffuse Functions

The most direct fix is to manually remove the basis functions with the smallest exponents, which are the primary culprits.

- Step 1: Identify the basis set file (e.g., .nw, .bas, .gbs) you are using for your calculation.

- Step 2: Locate the most diffuse functions (those with the smallest exponent values) for each angular momentum type (s, p, d, f).

- Step 3: Create a new, modified basis set file by commenting out or deleting the lines corresponding to these functions. Start by removing the single most diffuse function (smallest exponent) and proceed cautiously.

- Step 4: Re-run your calculation with the pruned basis set. If linear dependence persists, remove the next most diffuse function and iterate.
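The pruning logic in Steps 2 to 4 can be prototyped before touching a real basis set file. The sketch below assumes a simplified in-memory representation (a dictionary mapping shell type to a list of uncontracted exponents); this layout, the helper name, and the exponent values are all hypothetical, and real basis set formats also carry contraction coefficients that must be removed together with the exponent.

```python
from copy import deepcopy

def prune_most_diffuse(basis, shell_type):
    """Return a copy of `basis` without the smallest exponent of one shell type."""
    pruned = deepcopy(basis)
    exponents = pruned[shell_type]
    if len(exponents) <= 1:
        raise ValueError(f"refusing to remove the last {shell_type} function")
    exponents.remove(min(exponents))
    return pruned

# Hypothetical uncontracted exponents for a single atom (illustrative values).
basis = {"s": [12.0, 2.5, 0.6, 0.15, 0.04], "p": [1.8, 0.4, 0.09, 0.02]}

pruned_once = prune_most_diffuse(basis, "s")
print("original s:", basis["s"])
print("pruned s:  ", pruned_once["s"])
```

Working on a copy keeps the original definition intact, so each pruning step can be compared against the unmodified basis set.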

Table 2: Pros and Cons of Manual Pruning

| Aspect | Manual Pruning |

|---|---|

| Advantage | Direct, transparent control; no "black box" procedures. |

| Disadvantage | Can be tedious and requires trial-and-error; may compromise accuracy if too many functions are removed. |

Solution 2: Use a Pre-Optimized, Robust Basis Set

Instead of manual pruning, use a basis set designed to balance accuracy and numerical stability. For example, the def2-TZVPPD or aug-cc-pVTZ basis sets have been shown to provide well-converged accuracy for non-covalent interactions while being more robust than larger sets [2].

Solution 3: Employ the CABS Singles Correction

A more advanced solution is to use a compact basis set (fewer diffuse functions) and correct for the resulting basis set incompleteness error. The Complementary Auxiliary Basis Set (CABS) singles correction can recover a significant portion of the accuracy lost by using a smaller basis set, helping to resolve the conundrum [2].

Experimental Protocol: Systematic Basis Set Evaluation

Objective: To evaluate the impact of progressively removing diffuse functions on the accuracy and stability of a quantum chemical computation.

Materials:

- A molecular system of interest (e.g., a drug fragment or a DNA base pair).

- Computational chemistry software (e.g., NWChem, Gaussian, Psi4, ORCA).

- A standard diffuse basis set (e.g., aug-cc-pVTZ).

Methodology:

- Baseline Calculation: Run a single-point energy calculation on your molecular system using the full, unmodified aug-cc-pVTZ basis set. Record the total energy and whether the calculation completed successfully.

- Systematic Pruning:

  a. Modify the basis set by removing the single most diffuse function (smallest exponent) for one angular momentum type.

  b. Run the single-point energy calculation again with this pruned basis set.

  c. Record the total energy, SCF convergence behavior, and any error messages.

  d. Repeat steps a-c, removing the next most diffuse function each time.

- Data Analysis:

- Plot the computed total energy against the number of diffuse functions removed.

- Note the point at which linear dependence warnings or failures first disappear.

- Identify the "sweet spot" where the energy is sufficiently converged (changes minimally with further additions) and the calculation remains stable.

The workflow for this protocol is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Basis Set Management

| Tool / Resource | Function / Purpose |

|---|---|

| Basis Set Exchange (BSE) | A primary online repository to browse, search, and download standard basis sets in formats for all major computational codes [2]. |

| Standard Basis Sets (e.g., def2-X, cc-pVXZ) | Pre-optimized families of basis sets that provide a controlled balance between accuracy and cost. The "X" indicates the level of completeness (e.g., DZ, TZ, QZ) [2]. |

| Augmented/Diffuse Basis Sets (e.g., aug-cc-pVXZ, def2-XPD) | Standard basis sets that have been explicitly augmented with diffuse functions of various angular momenta, making them suitable for modeling non-covalent interactions [2]. |

| Condition Number Analysis | A numerical procedure, often built into quantum chemistry software, that diagnoses the severity of linear dependence in the chosen basis set for a given molecular geometry. |

| CABS Singles Correction | A computational method that corrects for basis set incompleteness, allowing for the use of more compact basis sets while maintaining good accuracy [2]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What does the "ERROR CHOLSK BASIS SET LINEARLY DEPENDENT" message mean and what causes it?

This error indicates that the basis set used in your calculation contains functions that are not linearly independent, making the overlap matrix impossible to factorize [13]. This typically occurs when diffuse orbitals with small exponents are present and the atomic geometry brings these orbitals too close together [13].

Q2: How does the LDREMO keyword resolve linear dependency issues?

The LDREMO keyword systematically removes linearly dependent functions by diagonalizing the overlap matrix in reciprocal space before the SCF step [13]. It excludes basis functions corresponding to eigenvalues below a specified threshold (integer value × 10⁻⁵) [13].

Q3: Can I use LDREMO with parallel processing?

The LDREMO function removal information is only available in serial mode (single process) [13]. While calculations may run in parallel, you might need to switch to serial execution to diagnose LDREMO-related issues if your parallel job aborts without clear error messages [13].

Q4: What should I do if I encounter an "ILA DIMENSION EXCEEDED" error after implementing LDREMO?

This error is unrelated to linear dependency and indicates the system size requires increasing the ILASIZE parameter [13]. Consult your software documentation (e.g., CRYSTAL user manual, page 117) to adjust this dimension [13].

Q5: Are there functional and basis set combinations where modifying basis sets is not recommended?

Yes, composite methods like B973C are specifically designed for use with the mTZVP basis set [13]. Modifying such basis sets can introduce errors, and these combinations were primarily developed for molecular systems or molecular crystals, not bulk materials [13].

Troubleshooting Guide: LDREMO Implementation

Problem: Calculation fails with "ERROR * CHOLSK * BASIS SET LINEARLY DEPENDENT"

Diagnosis and Resolution Path:

Step-by-Step Resolution Protocol:

Initial Assessment: Confirm the basis set contains diffuse functions (exponents <0.1) that typically cause this issue [13].

Primary Intervention: Add LDREMO 4 to your input file below the SHRINK keyword. This removes functions with eigenvalues <4×10⁻⁵ [13].

Verification Step: Execute in serial mode to confirm the excluded basis functions are properly identified in the output [13].

Progressive Escalation: If linear dependency persists, gradually increase the threshold (e.g., LDREMO 8) to remove more functions [13].

Alternative Approach: For composite methods with optimized basis sets (e.g., B973C/mTZVP), consider switching to a different functional/basis set combination rather than modifying the basis [13].

Experimental Protocol for Systematic Function Removal

Objective: Implement and validate the LDREMO keyword for removing linearly dependent basis functions in electronic structure calculations.

Methodology:

Input File Modification:

- Insert LDREMO <integer> in the third section of the input file

- Position below SHRINK keyword

- Begin with integer value 4

Execution Parameters:

- Initial run: Serial execution mode

- Monitor output for removed function information

- Subsequent runs: Parallel execution if supported

Threshold Optimization:

- Systematic evaluation of integer values (4, 6, 8, 10)

- Documentation of eigenvalues for removed functions

- Energy convergence monitoring

Validation Metrics:

- Successful factorization of overlap matrix

- Maintenance of calculation accuracy

- Acceptable convergence behavior

Research Reagent Solutions

Table: Computational Components for Linear Dependency Resolution

| Component | Function | Implementation Notes |

|---|---|---|

| LDREMO Keyword | Systematically removes linearly dependent basis functions | Threshold = integer × 10⁻⁵; Start value = 4 [13] |

| B973C Functional | Composite method with built-in corrections | Requires specific mTZVP basis set; not recommended for modification [13] |

| mTZVP Basis Set | Molecular triple-zeta valence polarization basis | Contains diffuse functions that may cause linear dependence [13] |

| Serial Execution | Diagnostic mode for function removal verification | Essential for viewing LDREMO exclusion information [13] |

Linear Dependency Resolution Workflow

Table: LDREMO Parameter Optimization Guide

| Threshold | Eigenvalue Cutoff | Aggressiveness | Typical Use Case |

|---|---|---|---|

| 4 | 4×10⁻⁵ | Conservative | Initial attempt; minor dependencies |

| 6 | 6×10⁻⁵ | Moderate | Persistent linear dependence |

| 8 | 8×10⁻⁵ | Aggressive | Strong dependencies; complex systems |

| 10 | 10×10⁻⁵ | Very aggressive | Last resort before basis set change |
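To see how the integer translates into removed functions, the following sketch counts how many overlap-matrix eigenvalues fall below integer × 10⁻⁵ for each setting in the table. It is only an illustration of the threshold arithmetic: the eigenvalues are hypothetical, the helper name is an assumption, and the actual procedure works on the overlap matrix in reciprocal space rather than on a single list of numbers.

```python
import numpy as np

def count_removed(overlap_eigenvalues, ldremo_value):
    """Number of eigenvalues below ldremo_value * 1e-5."""
    cutoff = ldremo_value * 1e-5
    return int(np.sum(np.asarray(overlap_eigenvalues) < cutoff))

# Hypothetical overlap-matrix eigenvalues (illustrative, not from a real run).
eigenvalues = [2.0e-6, 4.5e-5, 7.0e-5, 9.5e-5, 3.0e-3, 0.8, 1.6]

removed = {value: count_removed(eigenvalues, value) for value in (4, 6, 8, 10)}
for value, n in removed.items():
    print(f"LDREMO {value:>2}: {n} function(s) below {value}e-5")
```

Each step up in the integer can remove additional functions, so it is worth recording the energy after every change rather than jumping straight to the largest value.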

Frequently Asked Questions (FAQs)

Q1: I need accurate interaction energies for my drug-like molecule but my calculations with a large, diffuse basis set keep failing to converge. What is a reliable alternative?

A1: Consider using a minimally-augmented basis set like ma-def2-TZVPP or applying a basis set extrapolation scheme. Diffuse functions, while often important for describing weak interactions, can cause SCF convergence issues and even increase basis set superposition error (BSSE) in some cases [14]. The ma-def2 series (minimally-augmented) is specifically designed for density functional theory (DFT) calculations of weak interactions, providing a good balance of accuracy and stability [14] [15]. Alternatively, basis set extrapolation from smaller basis sets can closely reproduce the results of more demanding calculations [14].

Q2: My project involves screening a large library of compounds. Are double-ζ basis sets ever acceptable for production-level DFT calculations?

A2: Yes, but the choice of double-ζ basis set is critical. Conventional double-ζ basis sets like 6-31G or def2-SVP can have substantial BSSE and basis set incompleteness error (BSIE) [4]. However, the recently developed vDZP basis set is designed to minimize these errors and has been shown to deliver accuracy close to triple-ζ levels for a wide variety of density functionals without system-specific reparameterization [4]. This makes it an excellent choice for efficient and accurate high-throughput screening.

Q3: How can I obtain a result close to the complete basis set (CBS) limit without the cost of a quadruple-ζ calculation?

A3: A two-point basis set extrapolation is an effective and established strategy. You can perform calculations with two basis sets of different qualities (e.g., def2-SVP and def2-TZVPP) and then extrapolate the energy to the CBS limit. For the B3LYP-D3(BJ) functional, using an exponential-square-root formula with an optimized exponent parameter (α) of 5.674 has been demonstrated to yield results comparable to more expensive CP-corrected calculations [14]. The formula for the extrapolation is:

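E_CBS = (E_Y * e^(-α*√X) - E_X * e^(-α*√Y)) / (e^(-α*√X) - e^(-α*√Y))

where X and Y are the cardinal numbers of the two basis sets (e.g., 2 for double-ζ, 3 for triple-ζ) [14]. The expression follows from eliminating A in the model E_X = E_CBS + A * e^(-α*√X) evaluated at both cardinal numbers; note that each energy is weighted by the exponential belonging to the other basis set.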
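A minimal Python sketch of this two-point extrapolation is given below. It assumes the exponential-square-root model E_X = E_CBS + A · e^(-α√X) and solves it for E_CBS from two energies; the function name is hypothetical, and the self-check uses synthetic energies generated from the model itself rather than real calculations.

```python
import math

def extrapolate_cbs(e_small, e_large, x_small=2, x_large=3, alpha=5.674):
    """Two-point exponential-square-root extrapolation to the CBS limit.

    Assumes E_X = E_CBS + A * exp(-alpha * sqrt(X)) for both basis sets.
    """
    w_small = math.exp(-alpha * math.sqrt(x_small))
    w_large = math.exp(-alpha * math.sqrt(x_large))
    # Each energy is weighted by the exponential of the *other* basis set.
    return (e_large * w_small - e_small * w_large) / (w_small - w_large)

# Self-check: energies generated from the model must return the known limit.
e_cbs_true, amplitude = -10.0, 500.0
e2 = e_cbs_true + amplitude * math.exp(-5.674 * math.sqrt(2))
e3 = e_cbs_true + amplitude * math.exp(-5.674 * math.sqrt(3))
recovered = extrapolate_cbs(e2, e3)
print(f"recovered CBS value: {recovered:.6f}")
```

The same function can be applied either to total energies or directly to interaction energies, as long as both inputs come from the same quantity computed with the two basis sets.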

Troubleshooting Guides

Problem: SCF Convergence Failure with Large, Diffuse Basis Sets

Issue: Your self-consistent field (SCF) calculation fails to converge when using a fully augmented basis set (e.g., aug-cc-pVTZ).

Solution:

- Switch to a minimally-augmented basis set. Use ma-def2-TZVPP, the minimally augmented counterpart of def2-TZVPP, in place of the fully augmented set [14] [15]. These basis sets add only a minimal number of diffuse functions, which mitigates the linear dependence that is often the root cause of convergence failures.

- Verify the basis set availability. In your input file, ensure the basis set is specified correctly and is available for all elements in your system. The ORCA manual provides a complete list of built-in basis sets [15].

Problem: Inaccurate Weak Interaction Energies with a Small Basis Set

Issue: The interaction energy you calculated for a host-guest complex or protein-ligand system is inaccurate due to using a small double-ζ basis set.

Solution:

- Adopt an optimized modern basis set. Use the vDZP basis set, which is explicitly designed to reduce BSSE and BSIE, pathologies common in small basis sets [4].

- Apply a basis set extrapolation protocol. If a triple-ζ calculation is feasible, perform a two-point extrapolation from def2-SVP and def2-TZVPP using the optimized parameter (α = 5.674 for B3LYP-D3(BJ)) [14]. This protocol has been validated on supramolecular systems containing up to 205 atoms.

- Apply Counterpoise (CP) correction. For conventional double-ζ basis sets, CP correction is considered mandatory for reliable interaction energies. Its benefit becomes less critical with triple-ζ basis sets and is often negligible with quadruple-ζ sets [14].

Experimental Protocols

Protocol 1: Basis Set Extrapolation for Weak Interaction Energies

This protocol outlines the steps to accurately calculate weak interaction energies using a basis set extrapolation technique, providing an alternative to large, diffuse basis sets [14].

- Objective: To compute the CBS limit of the interaction energy using a two-point extrapolation from the def2-SVP and def2-TZVPP basis sets.

- Software Requirement: A quantum chemistry package capable of single-point energy calculations (e.g., ORCA).

- Procedure:

  - Geometry Preparation: Obtain the geometry of the complex (AB) and the isolated monomers (A and B). Ensure the monomer geometries are extracted directly from the complex without further optimization (the "supermolecular method").

  - Single-Point Calculations:

    - Calculate the energy of the complex, E(AB), using the def2-SVP basis set.

    - Calculate the energy of monomer A, E(A), using the def2-SVP basis set.

    - Calculate the energy of monomer B, E(B), using the def2-SVP basis set.

    - Repeat all three calculations using the def2-TZVPP basis set.

  - Compute Raw Interaction Energies: For each basis set (def2-SVP and def2-TZVPP), calculate the uncorrected interaction energy: ΔE = E(AB) - E(A) - E(B).

  - Perform Two-Point Extrapolation:

    - Use the exponential-square-root formula with α = 5.674.

    - Let E_2 be the interaction energy from def2-SVP (cardinal number X=2).

    - Let E_3 be the interaction energy from def2-TZVPP (cardinal number X=3).

    - Calculate the extrapolated CBS energy, weighting each energy by the exponential of the other basis set:

E_CBS = (E_3 * e^(-5.674*√2) - E_2 * e^(-5.674*√3)) / (e^(-5.674*√2) - e^(-5.674*√3))

Protocol 2: Efficient Energy Calculations using the vDZP Basis Set

This protocol describes how to use the vDZP basis set for efficient and accurate single-point energy calculations on medium to large molecular systems [4].

- Objective: To perform a single-point energy calculation with a low-cost basis set that minimizes common errors.

- Software Requirement: Psi4 or another quantum chemistry package that supports the vDZP basis set.

- Procedure:

- Geometry Input: Provide a valid molecular geometry in the software's required format (e.g., Z-matrix, XYZ).

- Basis Set Specification: Set the orbital basis set to vDZP.

- Functional and Dispersion: Choose a density functional (e.g., B97-D3BJ, r2SCAN-D4, B3LYP-D4) and ensure an appropriate empirical dispersion correction (D3 or D4) is applied.

- Calculation Settings: It is recommended to use a fine integration grid (e.g., (99,590)) and a tight integral tolerance (e.g., 10⁻¹⁴) for improved accuracy [4].

- Run Calculation: Execute the single-point energy computation.

Quantitative Data for Basis Set Selection

Table 1: Performance Comparison of Selected Basis Sets on the GMTKN55 Thermochemistry Benchmark (Weighted Total Mean Absolute Deviation, WTMAD2) [4]

| Basis Set | ζ-quality | B97-D3BJ | r2SCAN-D4 | B3LYP-D4 | M06-2X |

|---|---|---|---|---|---|

| vDZP | Double | 9.56 | 8.34 | 7.87 | 7.13 |

| def2-SVP | Double | 12.90 | 11.16 | 10.72 | 9.49 |

| 6-31G(d) | Double | 18.77 | 15.90 | 15.20 | 13.83 |

| def2-QZVP | Quadruple | 8.42 | 7.45 | 6.42 | 5.68 |

Table 2: Basis Set Extrapolation Parameters for DFT (B3LYP-D3(BJ)) [14]

| Extrapolation Pair | Optimized α | Mean Absolute Error (kcal/mol) | Max Absolute Error (kcal/mol) |

|---|---|---|---|

| def2-SVP → def2-TZVPP | 5.674 | 0.19 | 0.83 |

Research Reagent Solutions

Table 3: Essential Computational Tools for Basis Set Studies

| Item / Software | Function / Purpose |

|---|---|

| ORCA | A quantum chemistry program with a comprehensive suite of built-in basis sets and functionalities for energy calculations and extrapolation [15]. |

| Psi4 | An open-source quantum chemistry software used for benchmarking and developing new methods, including support for the vDZP basis set [4]. |

| def2 Family Basis Sets | A widely used series of basis sets (e.g., SVP, TZVP, TZVPP) of varying quality, available for most elements, facilitating systematic studies [14] [15]. |

| vDZP Basis Set | A modern double-ζ basis set designed with deeply contracted valence functions and effective core potentials to minimize BSSE and BSIE, enabling fast, accurate calculations [4]. |

| GMTKN55 Database | A benchmark suite of 55 chemical datasets used to rigorously evaluate the general accuracy of quantum chemical methods across a wide range of properties [4]. |

Workflow and Relationship Diagrams

Basis Set Selection Strategy

Basis Set Extrapolation Workflow

Frequently Asked Questions

What does the "BASIS SET LINEARLY DEPENDENT" error mean?

This error occurs when the basis functions in your calculation are not all independent of one another. In essence, one or more basis functions can be represented as a linear combination of others. This mathematical linear dependence causes the overlap matrix to become singular (non-invertible), which halts the calculation [13].

Why would a pre-defined, built-in basis set cause this error?

Even built-in basis sets, which are often optimized for molecular systems, can cause this error in extended systems like crystals or surfaces. This is primarily due to the presence of diffuse functions with small exponents. In periodic systems, where atomic orbitals are closer together, these diffuse functions can overlap significantly, leading to linear dependence. A basis set that works for one geometry might fail for another where atoms are in closer proximity [13].

Is it safe to modify a built-in basis set?

Proceed with caution. Modifying a built-in set can introduce errors, especially if the set is part of a composite method (like the B973C functional with the mTZVP basis) where they were developed and optimized together. If your system is a bulk material rather than a molecule or molecular crystal, it is often better to choose a different, more suitable functional and basis set pair from the start rather than modifying an ill-suited one [13].

What is the LDREMO keyword and how do I use it?

The LDREMO keyword is a systematic way to remove linearly dependent functions before the SCF step. It works by diagonalizing the overlap matrix in reciprocal space and removing basis functions corresponding to eigenvalues below a defined threshold [13].

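In your CRYSTAL input file, the keyword is given as LDREMO <integer> and placed below the SHRINK keyword in the third section of the input. The <integer> value sets the threshold to <integer> × 10⁻⁵. A good starting value is 4. Note: Information on the removed functions is currently only available in serial mode (running with a single process) [13].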

Troubleshooting Guide

Initial Diagnosis

When you encounter a linear dependence error, your first step is to identify the likely cause. The following flowchart outlines the diagnostic process and potential solutions.

Detailed Experimental Protocols

Protocol 1: Using the LDREMO Keyword

This method is preferred for its systematic approach and is less prone to user error.

- Modify Input File: In your CRYSTAL input file, locate the third section (after the geometry and basis set definitions). Below the SHRINK keyword, add the LDREMO keyword followed by the integer threshold on the next line (for example, 4).

- Run in Serial Mode: Execute your CRYSTAL calculation using a single processor. Parallel runs may not output the necessary diagnostic information and can fail silently [13].

- Check Output: The output file will list the basis functions that have been excluded. If the error persists, gradually increase the integer value (e.g., to 5 or 6) to remove more functions.

- Troubleshoot ILASIZE: If using LDREMO leads to an "ILA DIMENSION EXCEEDED" error, you must increase the ILASIZE parameter in your input file as specified in the CRYSTAL user manual [13].
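The input edit in the first step can be scripted. The sketch below is a hypothetical helper, not part of CRYSTAL. It assumes the SHRINK keyword sits on its own line and is followed by exactly one line of parameters, which may not hold for every input, so check the result against the CRYSTAL user manual before running.

```python
def add_ldremo(input_text: str, threshold_integer: int = 4) -> str:
    """Insert an LDREMO block after the SHRINK block of a CRYSTAL input (sketch).

    Assumption: SHRINK is on its own line and is followed by exactly one
    parameter line. Inputs with additional SHRINK lines need manual editing.
    """
    lines = input_text.splitlines()

    # Leave the input untouched if LDREMO is already present.
    if any(line.strip().upper() == "LDREMO" for line in lines):
        return input_text

    for i, line in enumerate(lines):
        if line.strip().upper() == "SHRINK":
            insert_at = i + 2  # skip the keyword and its single parameter line
            lines[insert_at:insert_at] = ["LDREMO", str(threshold_integer)]
            return "\n".join(lines) + "\n"

    raise ValueError("SHRINK keyword not found in input")


# Placeholder fragment of a third input section, for illustration only.
example_section = "SHRINK\n8 8\nEND\n"
patched_section = add_ldremo(example_section, threshold_integer=4)
```

If the error persists, the same helper can be rerun on the original input with a larger integer (5 or 6), as the protocol suggests.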

Protocol 2: Manual Removal of Diffuse Functions

This hands-on approach gives you direct control but requires careful editing of the basis set.

- Identify Diffuse Functions: In your basis set definition, locate the shells (s, p, d) for each atom type. Identify the functions with the smallest exponent values (typically less than 0.1). These are the diffuse functions most likely causing the issue [13].

- Edit the Basis Set: Remove the entire shell (the line with the number of primitives and the subsequent lines of exponents and contraction coefficients) corresponding to the identified diffuse functions.

- Test the Calculation: Run the calculation with the modified basis set. Be aware that this modification may affect the accuracy of your results, as you are altering the basis.
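The identification step can be sketched in Python once the basis set has been read into memory. The `Shell` structure, the example exponents, and the function name below are hypothetical placeholders rather than a CRYSTAL parser; only the 0.1 cutoff comes from the protocol. The helper flags candidate shells for review and does not delete anything, since removing a shell changes the basis and should be a deliberate choice.

```python
from typing import List, NamedTuple, Tuple


class Shell(NamedTuple):
    """One basis-set shell: a label plus (exponent, coefficient) primitives."""
    label: str
    primitives: List[Tuple[float, float]]


def find_diffuse_shells(shells: List[Shell], cutoff: float = 0.1) -> List[Shell]:
    """Return shells whose smallest exponent is below the cutoff."""
    return [
        shell for shell in shells
        if min(exponent for exponent, _ in shell.primitives) < cutoff
    ]


# Made-up values for illustration; not taken from any published basis set.
example_shells = [
    Shell("s (contracted)", [(120.0, 0.05), (18.0, 0.30), (4.0, 0.70)]),
    Shell("sp", [(0.9, 1.0)]),
    Shell("sp (diffuse)", [(0.06, 1.0)]),
]

candidates = find_diffuse_shells(example_shells)
```

Here only the last shell is flagged, because its single exponent (0.06) is below the cutoff.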

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational "reagents" and concepts essential for understanding and resolving basis set linear dependence.

| Item Name | Function & Explanation |
|---|---|
| Basis Set | A set of mathematical functions (atomic orbitals) used to represent the electronic wavefunction in quantum chemical calculations. It is the fundamental "reagent" for the experiment. |
| Diffuse Functions | Basis functions with small exponents that are spatially extended. They are important for describing electrons far from the nucleus but are the primary cause of linear dependence in periodic systems [13]. |
| Overlap Matrix | A matrix representing the overlap between different basis functions in the system. Its invertibility is crucial for the calculation, and linear dependence prevents this. |
| LDREMO Keyword | A computational tool that automatically diagnoses and removes linearly dependent basis functions by analyzing the eigenvalues of the overlap matrix [13]. |
| ILASIZE Parameter | An internal memory parameter in CRYSTAL that may need to be increased when using LDREMO on larger systems to avoid dimension-related errors [13]. |
| Composite Method (e.g., B973C) | A pre-defined combination of a functional and a basis set (e.g., B973C/mTZVP) that is optimized to work together. Modifying the basis set in such a pair is not recommended [13]. |

Technical Support & Troubleshooting Hub

Frequently Asked Questions (FAQs)

Q1: What is "diffuse function removal" in the context of DNA fragment systems, and why is it critical? In DNA biochemistry, "diffuse function" can refer to the non-specific binding and activity of proteins or enzymes on non-target DNA sequences, which can interfere with the intended, specific function. Its removal—the process of eliminating these non-specific interactions or contaminants—is critical for achieving clean experimental results. For instance, in the preparation of pure circular DNA for expression vectors, the removal of linear DNA fragments (a contaminant) is essential because linear DNA is highly susceptible to degradation by exonucleases in the cytoplasm, whereas circular DNA is stable and replicatively competent [16]. Failure to remove this "diffuse" linear DNA can lead to failed transformations, inefficient transfection, and ambiguous data.

Q2: My enzymatic purification of circular DNA is inefficient, and I suspect linear DNA contaminants persist. What could be wrong? Several factors in the enzymatic digestion step could be at fault:

- Incorrect Enzyme Ratio or Units: The protocol may require optimization of the units of λ exonuclease and RecJf used relative to the amount of linear DNA contaminant [17].

- Incomplete Digestion Time: The incubation period of 16 hours at 37°C might be insufficient for your specific DNA preparation. Scaling up DNA quantity requires scaling up digestion time or enzyme units [17].

- Enzyme Inactivation: Ensure the enzymes are stored and handled correctly to prevent loss of activity. The reaction buffer conditions (1X λ exonuclease buffer) must be precisely followed [17].

Q3: After attempting to create nicked-circular DNA from a supercoiled plasmid, I see a significant amount of linear DNA on my gel. How can I fix this? The formation of linear DNA is a known side reaction during enzymatic nicking of supercoiled DNA, caused by double-strand breaks at the restriction site. To obtain pure nicked-circular DNA, you must actively remove the linear byproduct. Applying a post-nicking enzymatic cleanup step with λ exonuclease and RecJf is an effective solution. This combination will selectively digest the linear DNA fragments while leaving the nicked-circular DNA intact [17].

Q4: How does the phenomenon of "facilitated diffusion" relate to the purification of specific DNA-protein complexes? Facilitated diffusion is the process by which DNA-binding proteins like repair glycosylases (e.g., NEIL1) or transcription factors rapidly locate their specific target sites by combining three-dimensional diffusion with one-dimensional sliding or hopping along the DNA strand [18]. This process creates a "diffuse function" challenge: the protein spends most of its time non-specifically bound to and scanning non-target DNA. In a purified system, if your goal is to study only the specific protein-lesion complex, this non-specific binding represents a contaminating population. Understanding the kinetics of this process (e.g., the dissociation time of non-specific complexes, ~8 seconds for NEIL1) is essential for designing experiments, such as wash steps in pull-down assays, to remove these non-specifically bound proteins and avoid linear dependency in your binding data [19].
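As a rough aid for planning wash steps, the sketch below assumes that non-specific complexes dissociate with simple first-order kinetics and that the quoted ~8 s is the corresponding time constant. Both are modeling assumptions, not statements from the cited study, and the function names are hypothetical.

```python
import math


def fraction_nonspecific_remaining(wash_seconds: float, tau_seconds: float = 8.0) -> float:
    """Fraction of non-specific complexes still bound after a wash.

    Assumes single-exponential (first-order) dissociation with time constant tau.
    """
    return math.exp(-wash_seconds / tau_seconds)


def wash_time_for_fraction(target_fraction: float, tau_seconds: float = 8.0) -> float:
    """Wash duration (s) needed to reach the target remaining fraction."""
    if not 0.0 < target_fraction <= 1.0:
        raise ValueError("target_fraction must be in (0, 1]")
    return -tau_seconds * math.log(target_fraction)
```

Under these assumptions, reducing the non-specific population to 1% takes a wash of roughly 37 s (about 4.6 time constants).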

Troubleshooting Guide

| Problem | Potential Cause | Solution |
|---|---|---|
| Low yield of circular DNA after ligation | DNA fragment length is outside optimal range; short ligation time | Use linear dsDNA fragments between 450-950 bp for highest efficiency. Extend ligation duration, with 1 hour as a practical minimum [16]. |
| Persistent linear DNA contaminants in circular DNA preps | Inefficient enzymatic digestion; large scale of preparation | Treat DNA mixture with λ exonuclease (5 units) and RecJf (90 units) in 100 µL reaction volume. Incubate at 37°C for 16 hours [17]. |
| High mosaicism in transgenic models | DNA concentration toxicity; microinjection into pronucleus | For pronuclear microinjection, optimize DNA concentration to 1-3 ng/µL. Use linearized DNA fragments with dissimilar ends for higher integration efficiency [20]. |
| Biphasic kinetics in lesion excision assays | Competing non-specific protein binding to unmodified DNA | Account for facilitated diffusion. Under single-turnover conditions, the slow kinetic phase represents dissociation of non-specific complexes (τ ~8 s for NEIL1) [19]. |
| Highly restricted DNA diffusion in nucleus | DNA fragment size too large; binding to immobile obstacles | For studies requiring nuclear mobility, use DNA fragments <250 bp. Fragments >2000 bp are nearly immobile in the nucleoplasm [21]. |

The following tables consolidate key quantitative findings from the research, providing a quick reference for experimental design.

Table 1: DNA Size-Dependent Properties and Reaction Yields

| Parameter | Size / Condition | Quantitative Value | Reference / Context |
|---|---|---|---|
| Optimal Circular Vector Length | 450 - 950 bp | Relative yield up to 62% | [16] |
| Diffusion in Water (Dw) | 21 bp | 53 × 10⁻⁸ cm²/s | [21] |
| Diffusion in Water (Dw) | 6000 bp | 0.81 × 10⁻⁸ cm²/s | [21] |
| Diffusion in Cytoplasm (Dcyto/Dw) | 100 bp | 0.19 | [21] |
| Diffusion in Cytoplasm (Dcyto/Dw) | 250 bp | 0.06 | [21] |
| Diffusion in Cytoplasm (Dcyto/Dw) | >2000 bp | <0.01 | [21] |
| Molar Fraction of Single-Unit Circular Vector | 1 hr ligation (450-950 bp) | Band 1 (Monomer): ~70% | [16] |

Table 2: Protein-DNA Interaction Kinetics and Specificity

| Protein | Parameter | Value | Experimental Context |
|---|---|---|---|
| NEIL1 (Glycosylase) | Non-specific complex dissociation time (τ-ns) | ~8 s | Single Sp lesion excision in plasmid [19] |
| NEIL1 (Glycosylase) | Effective translocation distance | ~80 bp | Facilitated diffusion on DNA [19] |
| NEIL1 (Glycosylase) | Fraction of productive encounters (φ) | ~0.03 | Single Sp lesion excision in plasmid [19] |
| XPA (Damage Recognition) | KD for AAF-damaged DNA | 109 ± 5 nM | EMSA with 37 bp duplex [22] |
| XPA (Damage Recognition) | KD for non-damaged DNA | 253 ± 14 nM | EMSA with 37 bp duplex [22] |
| XPA (Damage Recognition) | Specificity for damage (dG-C8-AAF) | ~85-fold | Accounted for non-specific binding [22] |

Experimental Protocol: Enzymatic Removal of Linear DNA Contaminants

This protocol details a method for the selective removal of linear DNA from a mixture containing supercoiled or nicked-circular plasmid DNA, using a combination of λ exonuclease and RecJf [17].

Key Principle: λ exonuclease processively digests one strand of linear double-stranded DNA from the 5' to 3' direction. The resulting single-stranded DNA is then completely digested into mononucleotides by the single-strand-specific exonuclease RecJf. Critically, λ exonuclease cannot initiate digestion at nicks or gaps, leaving nicked-circular and supercoiled DNA intact [17].

Materials & Reagents

- DNA Sample: Mixture of supercoiled/nicked-circular and linear plasmid DNA.

- Enzymes: λ exonuclease and RecJf (commercially available, e.g., New England Biolabs).

- Buffers: 1X λ exonuclease reaction buffer.

- Purification Reagents: Phenol (pH >7.5), chloroform:isoamyl alcohol (24:1), ethanol.

- Equipment: Thermostatic water bath or heat block set to 37°C.

Step-by-Step Procedure

- Setup Reaction Mixture: In a microcentrifuge tube, combine:

- DNA mixture (e.g., 3.7 µg supercoiled + 3.3 µg linear).

- 1X λ exonuclease buffer.

- λ exonuclease (1 µL, 5 units/µL).

- RecJf (3 µL, 30 units/µL).

- Add nuclease-free water to a final volume of 100 µL.

- Incubation: Incubate the reaction mixture at 37°C for 16 hours (overnight).

- Enzyme Inactivation: Heat-inactivate the λ exonuclease by transferring the tube to 65°C for 10 minutes.

- Purification:

- Extract the reaction mixture with an equal volume of phenol and then with chloroform:isoamyl alcohol to remove proteins.

- Precipitate the purified DNA from the aqueous phase using ethanol.

- Resuspend the purified DNA pellet in an appropriate buffer (e.g., 1X PBS or TE buffer).

- Analysis: Evaluate the success of the digestion by analyzing an aliquot of the DNA sample before and after treatment using 1% agarose gel electrophoresis. The band corresponding to linear DNA should be completely absent post-digestion.
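The reaction setup above amounts to a small volume and unit calculation, sketched below in Python. The enzyme volumes and concentrations are those listed in the procedure. The 10X buffer stock and the DNA sample volume are assumptions introduced for illustration, since the protocol specifies only the final 1X concentration and the DNA mass.

```python
def reaction_mix(dna_volume_ul: float,
                 buffer_stock_x: float = 10.0,
                 final_volume_ul: float = 100.0) -> dict:
    """Compute component volumes and enzyme units for the digestion (sketch).

    dna_volume_ul  : volume of the DNA mixture added (hypothetical input)
    buffer_stock_x : concentration of the buffer stock (assumed 10X)
    """
    lambda_exo_ul, lambda_exo_units_per_ul = 1.0, 5.0   # from the procedure
    recjf_ul, recjf_units_per_ul = 3.0, 30.0            # from the procedure

    buffer_ul = final_volume_ul / buffer_stock_x  # dilutes the stock to 1X
    water_ul = final_volume_ul - (dna_volume_ul + buffer_ul + lambda_exo_ul + recjf_ul)
    if water_ul < 0:
        raise ValueError("components exceed the final reaction volume")

    return {
        "buffer_ul": buffer_ul,
        "water_ul": water_ul,
        "lambda_exo_units": lambda_exo_ul * lambda_exo_units_per_ul,
        "recjf_units": recjf_ul * recjf_units_per_ul,
    }


# Example with a hypothetical 20 µL DNA sample.
mix = reaction_mix(dna_volume_ul=20.0)
```

The enzyme totals come out to 5 units of λ exonuclease and 90 units of RecJf, consistent with the amounts quoted in the troubleshooting table.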

Experimental Workflow Visualizations

Diagram 1: Linear DNA Contaminant Removal Workflow

Diagram 2: Protein Facilitated Diffusion on DNA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for DNA Fragment Manipulation and Study

| Reagent / Tool | Function / Application | Key Characteristics |
|---|---|---|
| λ Exonuclease | Selective digestion of one strand of linear dsDNA. | Processive 5'→3' exonuclease; cannot initiate at nicks [17]. |
| RecJf Exonuclease | Digests the complementary ssDNA strand into nucleotides. | Single-strand-specific 5'→3' exonuclease; works synergistically with λ exonuclease [17]. |
| Covalently Closed Circular Plasmid | Stable expression vector for transfection; model substrate for repair studies. | Resistant to cytoplasmic exonuclease degradation [16] [19]. |
| Site-specific Lesion-containing DNA (e.g., Sp) | Defined substrate for studying DNA repair enzyme kinetics. | Allows precise measurement of excision rates and facilitated diffusion parameters [19]. |
| DNA Glycosylase (e.g., NEIL1) | Bifunctional enzyme for initiating Base Excision Repair (BER). | Excises oxidized bases via combined glycosylase/lyase activity; model for studying facilitated diffusion [19]. |
| Restriction Enzyme (e.g., EcoRI) + Ethidium Bromide | Generation of nicked-circular DNA from supercoiled plasmid. | Intercalation by EtBr causes enzyme to nick only one strand at its recognition site [17]. |

Advanced Troubleshooting: Resolving Persistent Linear Dependence and Associated Errors

A technical support guide for computational researchers

This guide provides targeted support for researchers facing the "ILASIZE limitation" error when using the LDREMO (Linear Dependency REMOval) procedure in computational chemistry software. This error typically occurs when diffuse functions in a basis set create near-linear dependencies, overwhelming the matrix conditioning algorithms.

Troubleshooting Guide

Problem: "ILASIZE Limit Exceeded" Error after LDREMO Execution

Error Signature: