Quantitative vs Qualitative Environmental Analysis: A Strategic Guide for Biomedical Research


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the strategic application of quantitative and qualitative environmental analysis. It explores the foundational principles of both methodologies, detailing their specific applications in areas like exposure assessment and analytical chemistry. The content further addresses troubleshooting common challenges, optimizing data quality, and validating methods using modern frameworks like White Analytical Chemistry (WAC). By comparing the strengths and limitations of each approach, this guide empowers professionals to design more robust, sustainable, and impactful research studies in biomedical and clinical contexts.

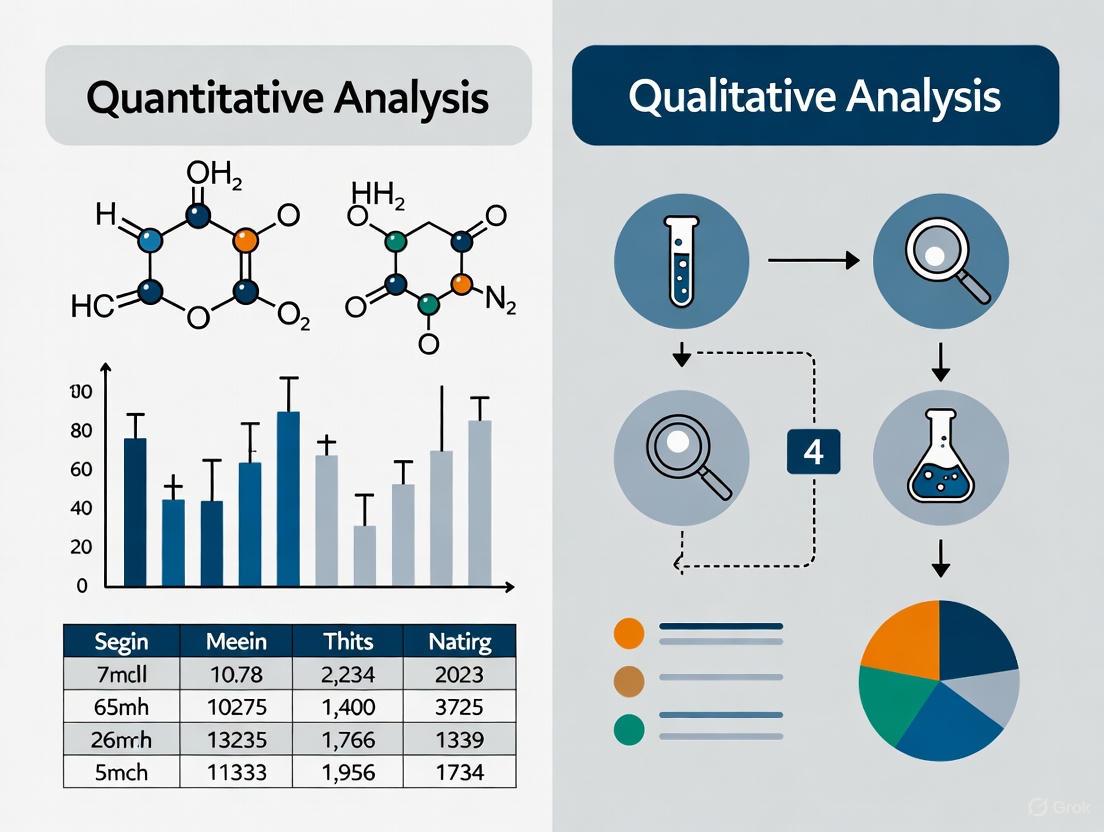

Core Principles: When to Use Qualitative vs Quantitative Analysis in Environmental Science

In environmental science, the pursuit of knowledge is guided by two distinct yet complementary paradigms: objective measurement and subjective understanding. The positivist approach of objective measurement seeks to quantify environmental realities through observable, consistent facts independent of human cognition [1]. In contrast, the interpretivist approach of subjective understanding explores how people perceive, experience, and ascribe meaning to their environmental surroundings, recognizing that reality is filtered through human interpretation [1].

This guide provides a structured comparison of these paradigms, detailing their methodological applications, experimental protocols, and appropriate contexts within environmental and public health research. We present synthesized quantitative data, standardized workflows, and essential research tools to equip scientists and drug development professionals with a comprehensive framework for selecting and implementing these approaches in their investigative work.

Core Definitions and Characteristics

Objective Measurement

Objective assessment uses clearly defined, standardized criteria to measure knowledge, skills, or performance through methods that yield a single correct answer or fixed scoring system [2]. This approach ensures consistency, fairness, and minimal bias across all participants or data points [3] [2].

Key Characteristics:

- Quantitative Focus: Deals with numerical data that is easily evaluated and measured [4].

- Fact-Based: Relies on information obtained through established facts or resources [4].

- Consistency: Results remain stable and predictable across different contexts and researchers [2].

- Verifiability: Data can be consistently verified through transparent methodologies and replication [4].

Subjective Understanding

Subjective assessment relies on open-ended responses and evaluator judgment to interpret reasoning, creativity, and complex thought processes [2]. It explores qualitative aspects of human experience that cannot be reduced to single answers [3].

Key Characteristics:

- Qualitative Focus: Concerned with interpretation-based data that explains why or how phenomena occur [4].

- Experience-Based: Derived from feelings, opinions, experiences, and personal thoughts [4].

- Context-Dependent: Findings may vary based on individual perspectives and situational factors [3].

- Interpretive Nature: Requires researcher interpretation to make sense of multifaceted responses [2].

Comparative Analysis: Applications and Limitations

Table 1: Comparative Analysis of Objective and Subjective Approaches

| Aspect | Objective Measurement | Subjective Understanding |
|---|---|---|
| Primary Data Type | Quantitative [4] | Qualitative [4] |
| Data Collection Methods | Multiple-choice questions, true/false, fill-in-the-blank, matching, GIS/GPS data, accelerometry [3] [2] [5] | Interviews (one-on-one, group), essays, observations, document analysis, focus groups [3] [2] [1] |
| Analysis Approach | Statistical analysis, standardized scoring, algorithmic processing [2] [5] | Thematic analysis, interpretation, pattern identification, narrative description [1] |
| Key Strengths | High reliability, consistency, scalability, efficient for large datasets, minimizes bias through standardization [3] [2] | Captures complex reasoning, explores underlying motivations, contextual richness, adapts to emerging themes [3] [2] |
| Principal Limitations | May overlook deeper conceptual understanding, restricts personal expression, limited capacity to capture creative thinking [2] | Time-intensive analysis, potential scoring inconsistency, vulnerable to various biases, challenging with large samples [3] [2] |
| Ideal Application Contexts | Testing factual knowledge, measuring specific physical parameters, large-scale studies, standardized assessments [3] [6] | Exploring perceptions, understanding complex behaviors, investigating social dimensions of environmental issues [7] [1] |

Table 2: Environmental Research Applications and Findings

| Research Domain | Objective Approach | Subjective Approach | Key Findings |
|---|---|---|---|
| Built Environment & Physical Activity | GIS-derived neighborhood measures, accelerometry [6] [5] | Self-reported perceptions of environment, interview responses [6] | Objective measures showed stronger associations with walking behaviors than subjective perceptions in comparative studies [6] |
| Environmental Health Exposures | Physical/chemical exposure monitoring, biological sampling [1] | Lay perception studies, community narratives, focus groups [1] | Qualitative data improve understanding of complex exposure pathways, including social factor influences [1] |
| Sustainability Behaviors | Resource consumption metrics, ecological footprint calculations | Surveys on environmental attitudes, values, and behavioral intentions [7] | Integrating well-being and behavior research helps anticipate behavioral responses to environmental policies [7] |
| Environmental Governance | Satellite imagery, regulatory compliance data | Q-methodology to identify stakeholder perspectives [8] | Mixed-methods reveal diverse social perspectives on resource management while highlighting methodological reporting gaps [8] |

Experimental Protocols and Methodological Standards

Objective Measurement Protocol: Built Environment and Physical Activity

Overview: This protocol details the objective assessment of relationships between built environment features and physical activity levels using Geographic Information Systems (GIS) and accelerometry [5].

Step-by-Step Workflow:

Participant Recruitment and Sampling:

- Recruit adult participants (≥18 years) without disabilities or long-term health conditions that significantly limit physical activity [5].

- Collect demographic information including age, gender, socioeconomic status, and ethnicity, ensuring diverse representation [5].

- Obtain informed consent and ethical approval following institutional guidelines.

Geocoding Participant Locations:

Built Environment Data Collection:

- Source objective built environment data from standardized databases, including:

  - Land use mix (diversity of residential, commercial, institutional uses)

  - Street connectivity (intersection density, street network design)

  - Access to destinations (parks, recreational facilities, retail)

  - Neighborhood walkability (composite indices) [5]

- Process data using consistent, reproducible GIS methodologies.
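Two of the built environment metrics listed above can be sketched in a few lines. The example below is a minimal illustration, not the method used in [5]: it assumes an entropy-based land use mix index (one common formulation) and a simple intersections-per-square-kilometre density. The function names, category names, and input units are hypothetical.

```python
import math


def land_use_mix(areas):
    """Entropy-based land use mix, scaled to the range 0..1.

    `areas` maps a land-use category to its area inside the
    participant's buffer (any consistent unit). 0 means a single use
    dominates completely; 1 means all categories are equally present.
    The entropy formulation is an assumption, not taken from the source.
    """
    total = sum(areas.values())
    k = len(areas)
    if total <= 0 or k < 2:
        return 0.0
    entropy = 0.0
    for area in areas.values():
        p = area / total
        if p > 0:  # a category with zero area contributes nothing
            entropy -= p * math.log(p)
    return entropy / math.log(k)


def intersection_density(n_intersections, buffer_area_km2):
    """Street connectivity expressed as intersections per square kilometre."""
    if buffer_area_km2 <= 0:
        raise ValueError("buffer area must be positive")
    return n_intersections / buffer_area_km2


# Hypothetical buffer around one geocoded participant address
mix = land_use_mix({"residential": 0.6, "commercial": 0.3, "institutional": 0.1})
density = intersection_density(n_intersections=42, buffer_area_km2=0.8)
```

Keeping each metric in a small pure function makes the GIS processing step easier to reproduce, because the same inputs always yield the same index value.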


Physical Activity Measurement:

- Utilize accelerometers (e.g., ActiGraph, Axivity) to objectively measure physical activity intensity and duration [5].

- Instruct participants to wear devices during waking hours for 4 to 7 days, including both weekdays and weekend days [5].

- Apply standardized data processing protocols to categorize activity into intensity levels (sedentary, light, moderate, vigorous) [5].
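The intensity categorization step can be sketched as a threshold lookup on accelerometer counts per minute. This is a minimal sketch under stated assumptions: the cut-points below are illustrative placeholders, not validated values, and should be replaced with the published cut-points appropriate to the device and population. The names `CUT_POINTS`, `classify_epoch`, and `minutes_by_intensity` are hypothetical.

```python
from bisect import bisect_right
from collections import Counter

# Hypothetical lower bounds (counts per minute) for light, moderate and
# vigorous activity. Illustrative only; substitute validated cut-points.
CUT_POINTS = (100, 2000, 6000)
LABELS = ("sedentary", "light", "moderate", "vigorous")


def classify_epoch(counts_per_minute, cut_points=CUT_POINTS):
    """Return the intensity label for a single one-minute epoch."""
    # bisect_right counts how many thresholds the value has reached,
    # which is exactly the index of the matching label.
    return LABELS[bisect_right(cut_points, counts_per_minute)]


def minutes_by_intensity(epochs, cut_points=CUT_POINTS):
    """Tally the number of one-minute epochs spent at each intensity."""
    return Counter(classify_epoch(c, cut_points) for c in epochs)


# Hypothetical five-minute excerpt from one participant's recording
summary = minutes_by_intensity([10, 150, 2500, 7000, 50])
```

Passing the cut-points as a parameter keeps the classification rule explicit, so the same recordings can be reprocessed if a different set of thresholds is adopted.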

Data Integration and Statistical Analysis:

- Merge built environment metrics with physical activity data using unique participant identifiers.

- Employ multivariate regression models adjusting for demographic covariates.

- Calculate association strengths using odds ratios, p-values, and model fit statistics [6].
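As a rough illustration of the merge and association steps above, the sketch below joins two per-participant records on a shared identifier and computes an unadjusted odds ratio with a Wald 95% confidence interval from a 2x2 table. It does not replace the covariate-adjusted regression models the protocol calls for; the function names and example counts are hypothetical.

```python
import math


def merge_by_id(environment, activity):
    """Inner-join two dicts keyed by participant ID.

    Participants missing from either source are dropped, so every
    merged record has both an environment metric and an activity value.
    """
    shared = environment.keys() & activity.keys()
    return {pid: (environment[pid], activity[pid]) for pid in sorted(shared)}


def odds_ratio(a, b, c, d, z=1.96):
    """Unadjusted odds ratio and Wald confidence interval.

    a: exposed with outcome      b: exposed without outcome
    c: unexposed with outcome    d: unexposed without outcome
    z=1.96 gives an approximate 95% interval.
    """
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cells must be positive")
    estimate = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(estimate) - z * se_log)
    upper = math.exp(math.log(estimate) + z * se_log)
    return estimate, lower, upper


# Hypothetical counts: high-walkability vs low-walkability residents,
# split by whether they met an activity guideline.
estimate, lower, upper = odds_ratio(a=20, b=10, c=10, d=20)
```

An interval that excludes 1 suggests an association in the unadjusted data; adjusted estimates from a regression model may differ once demographic covariates are included.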

Subjective Understanding Protocol: Qualitative Environmental Health Research

Overview: This protocol employs qualitative methods to understand how people perceive, interpret, and experience environmental health issues, providing depth and context to quantitative findings [1].

Step-by-Step Workflow:

Research Design and Question Formulation:

- Develop open-ended research questions focused on understanding perceptions, experiences, and beliefs about environmental exposures [1].

- Select appropriate qualitative methodologies (e.g., ethnography, phenomenology, grounded theory) based on research aims.

- Practice reflexivity by explicitly considering and documenting researchers' theoretical perspectives and potential biases [1].

Participant Selection and Recruitment:

- Use purposive sampling to identify information-rich participants who have experienced the environmental phenomenon under study [1].

- Consider theoretical sampling to develop emerging categories in iterative research designs.

- Continue recruitment until reaching theoretical saturation (no new themes emerge from additional data).
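One way to make the saturation criterion auditable is to track how many previously unseen codes each additional interview contributes. The sketch below is an assumption-laden aid, not a substitute for analytic judgment: the stopping rule (a run of consecutive interviews with no new codes) and the `run_length` default are hypothetical choices, as are the example codes.

```python
def new_codes_per_interview(coded_interviews):
    """Count the codes each interview adds that no earlier interview had."""
    seen = set()
    new_counts = []
    for codes in coded_interviews:
        fresh = set(codes) - seen
        new_counts.append(len(fresh))
        seen |= fresh
    return new_counts


def saturation_point(coded_interviews, run_length=2):
    """Return the 1-based interview number at which `run_length`
    consecutive interviews have added no new codes, or None if that
    never happens in the data collected so far."""
    streak = 0
    for number, n_new in enumerate(new_codes_per_interview(coded_interviews), start=1):
        streak = streak + 1 if n_new == 0 else 0
        if streak >= run_length:
            return number
    return None


# Hypothetical codes assigned to four successive interviews
interviews = [
    {"odor", "trust"},
    {"trust", "health"},
    {"odor"},
    {"health", "trust"},
]
counts = new_codes_per_interview(interviews)
stop_at = saturation_point(interviews, run_length=2)
```

Recording these counts alongside the analytic memo gives reviewers a transparent trail for the decision to stop recruiting.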

Data Collection Procedures:

- Conduct one-on-one interviews using open-ended questions that allow participants to express their perspectives without predetermined response categories [1].

- Consider supplementary methods such as focus groups, participant observation, or document analysis to triangulate findings.

- Audio-record and professionally transcribe interviews verbatim to preserve data integrity.

Qualitative Data Analysis:

- Immerse in data through repeated reading of transcripts to gain familiarity with content.

- Apply systematic coding procedures to identify key concepts, themes, and patterns.

- Use constant comparative analysis to refine categories and explore relationships between themes.

- Support interpretations with direct participant quotes and narrative descriptions in research outputs [1].

Theoretical Development and Validation:

- Develop theoretical explanations that connect findings to broader constructs in environmental health.

- Implement member checking by returning preliminary findings to participants for verification.

- Document analytical decisions and methodological transparency to establish trustworthiness.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Tools for Environmental Data Collection and Analysis

| Tool/Reagent | Primary Function | Research Application |
|---|---|---|
| Accelerometers (ActiGraph, Axivity) | Objective measurement of physical activity intensity, duration, and frequency through motion sensors [5] | Quantifying moderate-to-vigorous physical activity levels in built environment studies; validating self-reported activity data [5] |
| Geographic Information Systems (GIS) | Spatial analysis and mapping of environmental features; calculation of built environment metrics around specific locations [5] | Measuring walkability indices, land use mix, green space access, and destination density in neighborhood studies [6] [5] |
| Global Positioning System (GPS) Devices | Precise location tracking to document environmental exposures and activity spaces in real-time [5] | Linking specific geographical locations with physical activity patterns; identifying frequently visited destinations [5] |
| Qualitative Interview Guides | Structured protocols with open-ended questions to explore participant experiences and perceptions [1] | Investigating lay understandings of environmental health risks; exploring community concerns about local contamination [1] |
| Q-Methodology Sets | Structured sorting of statements to identify shared perspectives and subjective viewpoints [8] | Measuring social perspectives on sustainability issues, environmental governance, and resource management conflicts [8] |
| Audio Recording Equipment | High-fidelity capture of interview and focus group discussions for accurate transcription and analysis [1] | Preserving nuanced participant responses, emotional tones, and contextual details in qualitative data collection [1] |

Integration and Complementary Applications

The most robust environmental research often integrates both objective measurement and subjective understanding through mixed-methods designs [1]. This triangulation approach leverages the strengths of each paradigm while mitigating their respective limitations.

Successful Integration Strategies:

Sequential Explanatory Design: Objective measures identify patterns or relationships, followed by qualitative methods to explain the underlying mechanisms and contextual factors [1].

Convergent Parallel Design: Independent collection and analysis of quantitative and qualitative data, with integration during interpretation to develop comprehensive understanding [1].

Embedded Design: One paradigm provides supportive, contextualizing data within a study primarily based on the other paradigm [1].

Environmental health research exemplifies this integration, where qualitative data "improve understanding of complex exposure pathways, including the influence of social factors on environmental health, and health outcomes" [1]. Similarly, sustainability research benefits from integrating well-being and behavior studies to anticipate how people respond to environmental policies and changes [7].

The choice between objective measurement and subjective understanding depends primarily on the research question, purpose, and context. Objective approaches are optimal for standardized assessment of factual knowledge, physical parameters, and large-scale comparative studies [3] [2]. Subjective approaches are indispensable for exploring complex perceptions, motivations, and the human dimensions of environmental issues [3] [1].

Rather than positioning these paradigms as opposites, forward-looking environmental research recognizes their complementary value. The most impactful studies strategically employ both approaches to develop nuanced, comprehensive understandings of environmental challenges and solutions. By applying the appropriate methodological standards, experimental protocols, and analytical frameworks outlined in this guide, researchers can effectively leverage both paradigms to advance environmental science and policy.

In environmental analysis research, the choice between numerical data and descriptive information fundamentally shapes the research design, methodology, and interpretation of findings. Numerical data, often termed quantitative data, consists of information that can be counted or measured and expressed numerically. This type of data is used to quantify attitudes, opinions, behaviors, and other defined variables to formulate facts and uncover patterns in research [9]. Conversely, descriptive information, typically referred to as qualitative data, encompasses descriptive and conceptual findings collected through language, words, and narratives. It focuses on verbal accounts and detailed narratives of personal experiences, giving a richly textured view of social phenomena and insight into their complexity [9]. Within the context of environmental analysis, these two data forms serve complementary yet distinct roles in helping researchers, scientists, and drug development professionals understand complex ecological systems, environmental impacts, and sustainability challenges.

The distinction between these data types extends beyond mere format differences to encompass fundamentally different approaches to understanding reality. Quantitative research emphasizes objective measurements and the statistical, mathematical, or numerical analysis of data collected through polls, questionnaires, and surveys, or by manipulating pre-existing statistical data using computational techniques [10]. Qualitative research, in contrast, seeks to explore and understand the meaning individuals or groups ascribe to a social or human problem, with researchers building a complex, holistic picture, analyzing words, reporting detailed views of informants, and conducting the study in a natural setting [11]. This article provides a comprehensive comparison of these approaches, specifically framed within environmental analysis research, to guide professionals in selecting appropriate methodologies for their investigative needs.

Core Characteristics and Philosophical Underpinnings

Nature and Form of Data

The fundamental distinction between numerical and descriptive data lies in their basic form and characteristics. Numerical data is structured and statistical in nature, represented through numbers, values, and quantities that can be mathematically processed and analyzed. This data type is typically collected in controlled environments using standardized instruments designed to minimize bias and maximize reliability. The numerical nature of this data allows for precise measurement and comparison across different studies, time periods, or environmental contexts. For example, in environmental research relevant to drug development, quantitative approaches might measure concentrations of pharmaceutical compounds in water systems, pollution levels in various ecosystems, or statistically significant changes in biodiversity metrics [10].

Descriptive information, by contrast, is unstructured and conceptual, taking the form of words, descriptions, narratives, and visual representations rather than numerical values. This data type is inherently subjective and contextual, capturing nuances, complexities, and underlying meanings that numbers alone cannot convey. In qualitative environmental research, this might include detailed field observations of ecological systems, in-depth interviews with community members affected by environmental changes, or case studies analyzing the implementation of environmental policies [12]. The richness of descriptive information lies in its ability to capture the complexity of environmental phenomena in their natural settings, providing insights into the human dimensions of environmental issues that quantitative approaches might overlook.

Philosophical Foundations

The divergence between numerical and descriptive data extends to their underlying philosophical assumptions about knowledge and reality. Quantitative research typically aligns with a positivist paradigm, which operates on the assumption that reality is objective, singular, and separate from the researcher. This perspective maintains that environmental phenomena can be understood through observation and measurement, with the goal of developing generalizable truths that apply across different contexts. The researcher remains independent from what is being researched, aiming for objectivity and distance to prevent personal biases from influencing the results [10].

Qualitative research, conversely, generally embraces constructivist or interpretive frameworks, which posit that reality is socially constructed, multiple, and intertwined with the researcher. From this viewpoint, environmental understanding emerges through the interpretation of contextualized experiences, with researchers actively engaging with participants to co-construct meaning. Rather than seeking a single objective truth, qualitative approaches acknowledge multiple realities and perspectives, particularly valuable in environmental research when understanding diverse stakeholder viewpoints, cultural relationships with nature, or community-based environmental knowledge systems [11] [12].

Methodological Approaches and Research Designs

Quantitative Research Designs and Methods

Quantitative research employs several distinct designs tailored to different research questions in environmental analysis. Experimental designs utilize the scientific approach, establishing procedures that allow researchers to test hypotheses and systematically study causal relationships among environmental variables. True experiments involve randomly assigning subjects to different groups, implementing different interventions or conditions, and measuring outcomes to determine cause-effect relationships. For instance, researchers might employ experimental designs to test the efficacy of new environmental remediation techniques or to establish causal relationships between specific pollutants and biological effects [10] [12].

Quasi-experimental designs attempt to establish cause-effect relationships but lack random assignment of subjects to groups. Instead, participants are assigned to groups based on non-random criteria or pre-existing attributes. In environmental research, this might involve comparing ecosystems exposed to different levels of pollutants where random assignment is impossible, yet researchers still seek to draw inferences about potential causal mechanisms [10].

Descriptive quantitative designs focus on measuring variables and establishing associations without manipulating them. This observational approach includes methods like cross-sectional studies (analyzing variables at a single point in time), prospective or longitudinal studies (tracking variables and outcomes over extended periods), and case-control studies (comparing cases with certain attributes to controls without them). Environmental scientists might use these designs to document pollution patterns, track climate change indicators, or correlate environmental factors with public health outcomes without intervening in natural systems [10] [13].

Correlational designs examine relationships between variables without attributing causation. These studies measure two or more variables and determine how closely they are related, enabling predictions but not definitive causal conclusions. In environmental science, correlational research might explore relationships between industrial activity and air quality, between deforestation rates and rainfall patterns, or between agricultural practices and soil health indicators [10] [12].
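The strength of a linear relationship between two measured variables is commonly summarized with Pearson's correlation coefficient. The sketch below computes it from first principles so that each term is visible; the paired values are invented for illustration, and the coefficient describes association only, not causation.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sums of cross-products and squared deviations from the means
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical paired observations: an industrial activity index and
# a particulate concentration measured at the same sites
activity_index = [1.0, 2.0, 3.0, 4.0, 5.0]
particulates = [12.0, 15.0, 19.0, 22.0, 30.0]
r = pearson_r(activity_index, particulates)
```

A value near +1 or -1 indicates a strong linear association, while a value near 0 indicates little linear association; a third variable may still drive both series.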

Qualitative Research Approaches and Strategies

Qualitative research employs distinct methodological approaches tailored to understanding complex environmental phenomena in depth. Case studies provide intensive, detailed examination of a single instance or phenomenon, such as a specific environmental policy implementation, a particular ecosystem response to stress, or a community's adaptation to environmental change. This approach allows researchers to retain the holistic and meaningful characteristics of real-life events while providing rich, contextual insights that might be lost in broader quantitative studies [12] [13].

Observational research involves systematically watching and recording behavior, interactions, or processes in their natural settings without intervention. In environmental research, this might include ethnographic studies of human-environment interactions, direct observation of ecological systems, or participatory observation where researchers engage in environmental management activities alongside community members. The key strength of observational methods lies in their ability to capture authentic behaviors and processes as they naturally occur, free from the artificiality of controlled experimental settings [12].

Interview and focus group strategies utilize direct personal interaction to gather rich, detailed perspectives on environmental issues. Interviews may be structured, semi-structured, or unstructured, allowing for varying degrees of flexibility in exploring emergent topics. Focus groups facilitate group discussions that can reveal collective understandings, cultural values, or shared concerns about environmental challenges. These approaches are particularly valuable for understanding the human dimensions of environmental issues, including perceptions of risk, values associated with nature, and community responses to environmental policies or changes [11] [12].

Table 1: Comparative Analysis of Research Methods in Environmental Studies

| Method | Primary Function | Environmental Application Examples | Key Strengths | Principal Limitations |
|---|---|---|---|---|
| Experimental Design | Establish cause-effect relationships through variable manipulation | Testing efficacy of remediation techniques; determining pollutant toxicity | High internal validity; clear causal inference | Artificial conditions may not reflect real-world complexity; ethical constraints |
| Quasi-experimental Design | Approximate cause-effect relationships without random assignment | Comparing impacted vs. non-impacted ecosystems; evaluating policy implementations | Practical when randomization impossible; higher real-world relevance | Potential confounding variables; weaker causal claims |
| Descriptive Quantitative Design | Document and describe characteristics, frequencies, patterns | Environmental monitoring; biodiversity inventories; pollution mapping | Identifies patterns and trends; provides baseline data | Cannot establish causation; may miss contextual factors |
| Correlational Design | Identify relationships and predictive patterns between variables | Linking climate variables to ecosystem responses; connecting land use to water quality | Identifies interrelated factors; enables prediction | Correlation does not imply causation; third variable problems |
| Case Study | In-depth investigation of a single instance in its real-world context | Analyzing specific environmental disasters; studying successful conservation programs | Rich, contextual details; holistic perspective | Limited generalizability; potential researcher bias |
| Observational Research | Document natural behaviors and processes without intervention | Studying human-wildlife interactions; observing ecosystem recovery processes | Captures authentic behavior in natural context | Time-consuming; potential observer effect; interpretation challenges |
| Interviews/Focus Groups | Explore perspectives, experiences, and meanings in depth | Understanding stakeholder values; exploring community responses to environmental changes | Rich, detailed data; explores complexity of human perspectives | Small samples; potential social desirability bias; analysis complexity |

Data Collection Techniques and Instruments

Quantitative Data Collection Methods

Quantitative research in environmental science employs structured, standardized instruments designed to generate numerical data amenable to statistical analysis. Surveys and questionnaires represent one of the most common quantitative tools, utilizing closed-ended questions with predetermined response options that can be easily quantified and analyzed. Environmental researchers might deploy surveys to measure public attitudes toward conservation policies, assess community awareness of environmental issues, or gather standardized data on environmental behaviors across large populations. The strength of surveys lies in their ability to efficiently collect comparable data from large samples, though they may miss contextual nuances and depth of understanding [10] [13].

Systematic environmental measurements involve standardized protocols for collecting physical, chemical, or biological data using specialized instruments and precise methodologies. This might include air or water quality monitoring, biodiversity assessments using standardized sampling techniques, satellite imagery analysis for land use classification, or laboratory analysis of environmental samples. These methods prioritize accuracy, precision, and reproducibility, generating the robust numerical data required for environmental modeling, regulatory compliance determination, and trend analysis. The objectivity and comparability of systematic measurements make them indispensable for environmental monitoring and impact assessment [10].

Structured observations employ predefined categories and recording protocols to convert observable phenomena into quantifiable data. Unlike qualitative observations that seek rich description, structured observations use coding schemes, checklists, or rating scales to systematically record specific behaviors, events, or conditions. Environmental researchers might use structured observations to document wildlife behaviors according to established ethograms, classify land use patterns according to standardized categorization systems, or record human activities in natural areas using predetermined activity codes. This approach combines the real-world relevance of observation with the standardization required for quantitative analysis [12].
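A structured observation protocol can be represented as a fixed codebook plus a tally. The sketch below assumes a hypothetical set of activity codes for visitors in a natural area; it rejects any record that falls outside the codebook, which is the property that keeps the resulting counts comparable across observers.

```python
from collections import Counter

# Hypothetical codebook: short field code -> activity category
ACTIVITY_CODES = {
    "W": "walking",
    "C": "cycling",
    "F": "fishing",
    "P": "picnicking",
}


def tally_observations(records, codebook=ACTIVITY_CODES):
    """Convert a sequence of field codes into counts per category.

    Raises ValueError if a record uses a code that is not in the
    codebook, so free-text entries cannot leak into the dataset.
    Categories that were never observed are reported with a count of 0.
    """
    unknown = sorted({r for r in records if r not in codebook})
    if unknown:
        raise ValueError(f"codes not in codebook: {unknown}")
    counts = Counter(records)
    return {label: counts.get(code, 0) for code, label in codebook.items()}


# Hypothetical observation session
session_counts = tally_observations(["W", "W", "C", "P", "W"])
```

Reporting zero counts explicitly distinguishes "observed and absent" from "not recorded", which matters when sessions are later compared statistically.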

Qualitative Data Collection Approaches

Qualitative data collection methods prioritize depth, context, and richness of understanding through more flexible, emergent approaches. In-depth interviews utilize open-ended questions and conversational formats to explore participants' perspectives, experiences, and meanings in rich detail. In environmental research, interviews might explore how indigenous communities perceive environmental changes, how farmers make decisions about sustainable practices, or how policymakers balance economic and environmental considerations. The flexible nature of qualitative interviews allows researchers to pursue unexpected insights and adapt questioning to emergent themes, providing nuanced understanding of complex environmental issues [11] [12].

Focus groups facilitate group discussions that generate insights through participant interaction, revealing collective understandings, shared concerns, or divergent viewpoints on environmental topics. Well-suited for exploring complex issues where group dynamics mirror real-world decision-making contexts, focus groups might examine community responses to proposed environmental regulations, explore differing stakeholder perspectives on resource management conflicts, or identify shared values regarding landscape conservation. The group setting can stimulate participants to articulate, refine, or reconsider their views through interaction with others, generating data that reflects social processes rather than merely individual opinions [11].

Participant observation involves researchers immersing themselves in the setting or community being studied, participating in activities while systematically observing and recording details about the environment, interactions, and processes. Environmental anthropologists might use this approach to understand community-based resource management systems, while conservation biologists might employ it to study the implementation of conservation programs in specific cultural contexts. The extended engagement characteristic of participant observation allows researchers to develop trust, understand contextual factors, and observe processes over time, providing insights that would be inaccessible through more detached methods [12].

Document analysis systematically examines existing texts, records, and visual materials as sources of qualitative data. Environmental historians might analyze archival documents to understand historical landscape changes, while policy researchers might examine meeting minutes, reports, and media coverage to trace the development of environmental policies. This approach leverages existing materials as data sources, providing historical depth and contextual understanding without requiring new data collection, though researchers must critically consider the original purposes and potential biases within the documents [12].

Data Analysis and Interpretation Frameworks

Quantitative Analysis Techniques

Quantitative data analysis employs statistical methods to identify patterns, test hypotheses, and draw inferences from numerical data. Descriptive statistics provide summary measures that characterize the central tendency (mean, median, mode), variability (range, standard deviation), and distribution of datasets. Environmental scientists use descriptive statistics to summarize monitoring data, describe environmental conditions, and communicate basic patterns in accessible formats. These techniques transform raw numerical data into meaningful summaries that support initial interpretation and decision-making [10] [9].
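As a minimal sketch of these summary measures, the snippet below uses Python's standard `statistics` module. The nitrate readings and the variable names are hypothetical values chosen for illustration, not data from any study cited here.

```python
import statistics

# Hypothetical nitrate concentrations (mg/L) from eight monitoring visits.
nitrate_mg_l = [2.1, 2.4, 1.9, 2.4, 3.0, 2.2, 2.4, 5.6]

summary = {
    # Central tendency
    "mean": statistics.mean(nitrate_mg_l),
    "median": statistics.median(nitrate_mg_l),
    "mode": statistics.mode(nitrate_mg_l),
    # Variability
    "range": max(nitrate_mg_l) - min(nitrate_mg_l),
    "sample_sd": statistics.stdev(nitrate_mg_l),
}

for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

In this made-up series the single high reading pulls the mean (about 2.75) above the median (2.4), which is one reason monitoring summaries often report both.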

Inferential statistics enable researchers to draw conclusions about populations based on sample data, testing hypotheses and estimating how likely the observed patterns would be if chance alone were at work. Common inferential techniques include t-tests (comparing two groups), ANOVA (comparing multiple groups), correlation analysis (examining relationships between variables), and regression analysis (modeling and predicting relationships). Environmental researchers might use these methods to determine whether pollution levels differ significantly between sites, identify factors that predict ecosystem health, or model the relationship between climate variables and species distributions. These powerful techniques support generalizations beyond immediate data but require meeting specific statistical assumptions [10] [9].
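To illustrate the logic of a two-group comparison without relying on distributional assumptions, the sketch below uses a permutation test rather than the t-test named above. This is a swapped-in technique chosen because it needs only the standard library. The site readings, the function name `permutation_test`, and its parameters are all hypothetical.

```python
import random

def mean(values):
    return sum(values) / len(values)

def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        # Reassign site labels at random and recompute the difference.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
    # Add-one correction keeps the estimated p-value above zero.
    return (extreme + 1) / (n_permutations + 1)

# Hypothetical pollutant readings (mg/L) at two sites.
upstream = [4.1, 3.8, 4.4, 4.0, 3.9, 4.2]
downstream = [6.3, 5.9, 6.8, 6.1, 6.5, 6.0]

p_value = permutation_test(upstream, downstream)
print(f"Estimated p-value: {p_value:.4f}")
```

The returned value estimates how often a difference at least as large as the observed one would arise if the site labels were arbitrary. A small value suggests the two sites genuinely differ, though it says nothing about why.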

Multivariate analysis techniques examine complex relationships among multiple variables simultaneously, addressing the multidimensional nature of environmental systems. Methods such as factor analysis, cluster analysis, multidimensional scaling, and structural equation modeling help identify underlying patterns, group similar cases, or test complex theoretical models. Environmental scientists might apply these approaches to identify suites of correlated environmental stressors, classify ecosystems into functional types, or model the direct and indirect pathways through which human activities affect ecological outcomes. These sophisticated techniques can reveal patterns invisible to simpler analyses but require larger sample sizes and specialized expertise [9].

Qualitative Analysis Approaches

Qualitative analysis involves systematic approaches to identify patterns, themes, and meanings within non-numerical data. Thematic analysis provides a foundational approach for identifying, analyzing, and reporting patterns (themes) within qualitative data. It involves familiarization with data, generating initial codes, searching for themes, reviewing themes, defining and naming themes, and producing the analysis. Environmental researchers might use thematic analysis to identify recurring concerns in community responses to environmental projects, dominant frames in media coverage of conservation issues, or shared values expressed in interviews about landscape change. The flexibility of thematic analysis makes it widely applicable across various qualitative traditions [11] [9].

Content analysis systematically categorizes and counts the frequency of specific words, phrases, concepts, or themes within texts, bridging qualitative and quantitative approaches. While often producing numerical outputs (frequencies), it maintains qualitative attention to meaning and context. Environmental communication researchers might apply content analysis to media coverage of climate change, policy documents addressing sustainability, or public comments on environmental impact statements. The structured nature of content analysis enhances transparency and reproducibility while maintaining connection to qualitative meaning [9].
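The counting step of a dictionary-based content analysis can be sketched in a few lines. The codebook, the public comments, and the function name `count_categories` below are hypothetical. A real study would develop and validate its coding scheme rather than rely on a short keyword list.

```python
import re
from collections import Counter

# Hypothetical codebook: category -> keywords assigned to that category.
CODEBOOK = {
    "risk": {"flood", "drought", "risk"},
    "economy": {"jobs", "cost", "costs"},
}

def count_categories(texts, codebook=CODEBOOK):
    """Count keyword hits per category across a collection of texts."""
    counts = Counter()
    for text in texts:
        # Lowercase and split into simple word tokens.
        tokens = re.findall(r"[a-z']+", text.lower())
        for token in tokens:
            for category, keywords in codebook.items():
                if token in keywords:
                    counts[category] += 1
    return counts

# Hypothetical public comments on a proposed regulation.
comments = [
    "Flood risk keeps rising, and the costs fall on residents.",
    "The plan protects jobs but ignores drought.",
]

category_counts = count_categories(comments)
print(dict(category_counts))
```

Keyword counts like these capture frequency only. The qualitative attention to meaning and context described above still requires a researcher to read the passages behind each count.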

Narrative analysis examines the stories, accounts, and narratives people use to describe and make sense of environmental experiences and phenomena. This approach focuses on how people structure their experiences temporally, select and emphasize certain events, and position themselves within their stories. Environmental researchers might use narrative analysis to understand how communities remember and recount environmental disasters, how scientists describe their relationship with studied ecosystems, or how different stakeholders story the history of environmental conflicts. This approach reveals how meaning is constructed through storytelling and how narratives shape environmental understanding and action [11].

Discourse analysis examines how language constructs and reflects social realities, power relationships, and knowledge claims about the environment. Going beyond what people say to analyze how they say it, discourse analysis explores linguistic features, rhetorical strategies, and conversation structures. Environmental researchers might analyze how scientific certainty/uncertainty is discursively constructed in climate debates, how different stakeholders frame environmental problems and solutions, or how power relationships are enacted in environmental decision-making processes. This approach reveals how language shapes environmental understanding, identities, and social actions [11].

Table 2: Data Analysis Techniques Comparison

| Analysis Method | Primary Function | Typical Outputs | Software Tools | Environmental Application Examples |
|---|---|---|---|---|
| Descriptive Statistics | Summarize and describe basic features of datasets | Measures of central tendency, variability, frequency distributions | SPSS, R, Excel | Summarizing monitoring data; describing baseline environmental conditions |
| Inferential Statistics | Draw conclusions about populations from samples; test hypotheses | Significance tests, confidence intervals, effect sizes | SPSS, R, SAS | Comparing impacted and control sites; testing intervention effectiveness |
| Multivariate Analysis | Examine complex relationships among multiple variables | Classification systems, dimension reduction, causal models | R, SPSS, SAS | Identifying environmental gradients; modeling ecosystem responses to multiple stressors |
| Thematic Analysis | Identify, analyze, and report patterns/themes across qualitative datasets | Identified themes with supporting evidence and interpretations | NVivo, Dedoose, Atlas.ti | Analyzing stakeholder perspectives; identifying emergent concerns in community responses |
| Content Analysis | Systematically categorize and quantify content of texts | Frequencies of codes/categories; conceptual maps | NVivo, MAXQDA, CATMA | Tracking media framing of environmental issues; analyzing policy documents |
| Narrative Analysis | Examine story structure, content, and function in accounts | Narrative typologies; plot analyses; positioning analyses | NVivo, Dedoose | Understanding community environmental memories; analyzing scientist fieldwork stories |
| Discourse Analysis | Examine how language constructs social realities | Identified discursive patterns; rhetorical strategies; framing analyses | NVivo, Atlas.ti | Analyzing environmental debates; examining how scientific knowledge is communicated |

Visualization Methods for Different Data Types

Quantitative Data Visualization

Effective visualization of numerical data enables environmental researchers to communicate patterns, trends, and relationships clearly and efficiently. Bar and column charts represent categorical data with rectangular bars whose lengths/heights are proportional to the values they represent. These charts excel at comparing values across different categories, such as pollution levels across different sites, species counts across habitat types, or resource allocations across different conservation programs. For environmental data with long category labels, bar charts (horizontal bars) typically provide better readability than column charts (vertical bars) [14] [15].

Line charts display data points connected by straight lines, effectively showing trends over time. Environmental scientists frequently use line charts to visualize changes in climate variables, pollutant concentrations, population sizes, or ecosystem indicators across temporal scales. The connected points emphasize continuity and directionality, making line charts ideal for showing patterns, progressions, and forecasting future environmental conditions based on historical trends [15].

Scatter plots represent the relationship between two continuous variables by displaying data points on a horizontal and vertical axis. These visualizations help identify correlations, clusters, and outliers in environmental data, such as relationships between temperature and species richness, between pollutant concentration and distance from source, or between multiple environmental stressors. The distribution of points reveals the strength and direction of relationships, informing statistical analysis and model development [15].
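The strength and direction that a scatter plot shows visually can be summarized with Pearson's correlation coefficient. The sketch below computes it from first principles. The distance and PM2.5 values are hypothetical, and `pearson_r` is an illustrative helper name.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squares around the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data: distance from an emission source (km) and PM2.5 (ug/m3).
distance_km = [0.5, 1, 2, 4, 8]
pm25 = [42, 35, 27, 18, 12]

r = pearson_r(distance_km, pm25)
print(f"r = {r:.2f}")
```

A strongly negative coefficient here would match a downward-sloping point cloud. Because Pearson's r only captures linear association, a curved pattern in the scatter plot is a cue to consider a transformation or a rank-based measure instead.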

Histograms approximate the distribution of a continuous numerical dataset by dividing the data into bins (intervals) and counting frequency within each bin. Unlike bar charts that display categorical data, histograms visualize the underlying frequency distribution of continuous variables, helping environmental researchers assess normality, identify skewness, and detect outliers in measurements like pollutant concentrations, organism sizes, or environmental gradient responses [15].
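The binning behind a histogram is simple enough to sketch directly. The example below assumes equal-width bins and hypothetical lead concentrations. The function name `histogram_counts` is illustrative rather than taken from any plotting library.

```python
def histogram_counts(values, n_bins):
    """Split the data range into equal-width bins and count values per bin."""
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("all values are identical; cannot form bins")
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for v in values:
        # The maximum value falls on the last edge, so clamp it into the final bin.
        idx = min(int((v - lo) / width), n_bins - 1)
        counts[idx] += 1
    edges = [lo + i * width for i in range(n_bins + 1)]
    return edges, counts

# Hypothetical lead concentrations (ug/L) in eight tap-water samples.
lead_ug_l = [1, 2, 2, 3, 3, 3, 4, 9]

edges, counts = histogram_counts(lead_ug_l, n_bins=4)
for left, right, count in zip(edges, edges[1:], counts):
    print(f"{left:4.1f} to {right:4.1f}: {'#' * count}")
```

The empty third bin followed by a single value in the last bin is the kind of gap that flags a possible outlier and a right-skewed distribution.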

Qualitative Data Visualization

Visualization of qualitative data helps represent patterns, relationships, and conceptual structures emerging from descriptive information. Concept maps diagram proposed relationships between concepts, ideas, or themes, typically represented as boxes or circles connected by labeled arrows in a hierarchical structure. Environmental researchers might use concept maps to visualize stakeholder mental models of ecosystem functioning, theoretical frameworks guiding conservation programs, or interconnected factors affecting environmental decision-making. These visualizations help synthesize complex qualitative findings into coherent conceptual frameworks [11].

Flow charts and process diagrams illustrate sequences, procedures, or causal pathways identified in qualitative analysis. These visualizations might map environmental management decision processes, community adaptation strategies to environmental change, or implementation pathways for conservation interventions. By making processes explicit, these diagrams facilitate understanding of complex sequences and identify potential leverage points or bottlenecks in environmental systems [11].

Network diagrams display relationships and connections between actors, organizations, or concepts, represented as nodes (points) and edges (connecting lines). Environmental governance researchers might use network diagrams to visualize collaboration patterns among conservation organizations, information flows in environmental management systems, or influence relationships among stakeholders in environmental conflicts. These visualizations reveal structural patterns that might be difficult to discern from textual data alone [11].

The following diagram illustrates the decision-making process for selecting appropriate research methodologies based on study goals in environmental research:

Experimental Protocols and Methodological Rigor

Ensuring Quantitative Research Validity

Quantitative research employs specific protocols to ensure validity, reliability, and generalizability in environmental studies. Experimental controls involve managing variables to isolate cause-effect relationships, including control groups (not receiving experimental treatment), random assignment (distributing confounding variables equally across groups), and blinding (preventing bias in treatment administration or outcome assessment). In environmental experimental research, this might involve control sites matching experimental sites in all characteristics except the intervention, randomized placement of sampling units, or blinded assessment of environmental outcomes to prevent measurement bias. These controls strengthen causal inferences but present challenges in complex environmental systems where complete control is often impossible [10] [12].

Measurement reliability addresses the consistency and stability of data collection instruments and procedures. Environmental researchers establish reliability through methods like test-retest reliability (consistent results over time), inter-rater reliability (agreement between different observers or instruments), and internal consistency (coherence between multiple measurement items targeting the same construct). In environmental monitoring, this might involve calibrating instruments regularly, training multiple observers to consistent standards, or using multiple indicators to measure complex environmental concepts. High reliability ensures that measurements reflect actual phenomena rather than random error [10].
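Inter-rater reliability for categorical judgments is often summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The following is a minimal sketch. The two observers' stream-condition ratings are hypothetical, and `cohens_kappa` is an illustrative function name.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one label to each item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / n ** 2
    if expected == 1:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical condition ratings of ten stream reaches by two observers.
observer_1 = ["good"] * 5 + ["poor"] * 5
observer_2 = ["good"] * 4 + ["poor"] * 5 + ["good"]

kappa = cohens_kappa(observer_1, observer_2)
print(f"kappa = {kappa:.2f}")
```

Here the observers agree on 8 of 10 reaches, but half of that agreement would be expected by chance, so kappa comes out at 0.6 rather than 0.8.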

Sampling approaches in quantitative research prioritize statistical representativeness to support generalizations to broader populations. Probability sampling methods (simple random, systematic, stratified, cluster) ensure that each element of the population has a known, non-zero probability of selection. Environmental researchers might use stratified random sampling to ensure representation across different habitat types, systematic sampling along environmental gradients, or cluster sampling when complete population lists are unavailable. Appropriate sampling designs balance practical constraints with statistical requirements to support valid population inferences [10].
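A proportional stratified random sample can be sketched as follows. The plot inventory, the 10% sampling fraction, and the function name `stratified_sample` are assumptions made for illustration only.

```python
import random
from collections import Counter, defaultdict

def stratified_sample(units, stratum_of, fraction, seed=0):
    """Draw a simple random sample of the given fraction within each stratum."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for name in sorted(strata):
        members = strata[name]
        # Take at least one unit so small strata are never left out.
        k = max(1, round(fraction * len(members)))
        sample.extend(rng.sample(members, k))
    return sample

# Hypothetical inventory of 100 survey plots labeled by habitat type.
plots = (
    [("forest", i) for i in range(60)]
    + [("wetland", i) for i in range(30)]
    + [("grassland", i) for i in range(10)]
)

chosen = stratified_sample(plots, stratum_of=lambda plot: plot[0], fraction=0.1)
per_habitat = Counter(habitat for habitat, _ in chosen)
print(per_habitat)
```

Sampling within each habitat guarantees that the rare grassland plots are represented, which a simple random draw of ten plots from the full list would not.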

Ensuring Qualitative Research Trustworthiness

Qualitative research establishes methodological rigor through different frameworks emphasizing credibility, transferability, and confirmability rather than traditional validity and reliability. Triangulation uses multiple data sources, methods, investigators, or theories to cross-verify findings and overcome the limitations of single approaches. Environmental qualitative researchers might triangulate interview data with documentary evidence, combine observations with participatory mapping, or bring multiple disciplinary perspectives to interpret complex environmental phenomena. Convergence across different approaches strengthens confidence in findings, while discrepancies provide opportunities for deeper understanding [11] [12].

Reflexivity involves critical self-reflection by researchers about their assumptions, values, biases, and how these might shape the research process and interpretations. Environmental researchers practice reflexivity through maintaining research journals documenting decision processes, examining how their positionality (disciplinary background, institutional affiliation, personal values) might influence what they notice and how they interpret it, and openly addressing potential conflicts of interest in environmental controversies. Rather than attempting value-neutrality (as in quantitative approaches), reflexivity acknowledges and manages researcher subjectivity as an inherent part of qualitative inquiry [11].

Member checking involves returning preliminary findings to participants to verify accuracy, interpretation, and resonance with their experiences. In environmental research, this might involve sharing interview summaries with participants for correction, presenting preliminary community case study findings for community feedback, or collaborating with stakeholders in developing interpretations of shared environmental experiences. This process enhances factual accuracy, interpretive validity, and ethical respect for participants' meanings, though it requires balancing participant perspectives with researcher analytical insights [12].

Thick description provides detailed, contextualized accounts of research settings, participants, and phenomena that enable readers to assess transferability to other contexts. Unlike the standardized procedural descriptions in quantitative research, thick description in environmental studies might detail the physical setting, historical context, social dynamics, and researcher experiences in sufficient richness that readers can judge which elements might apply to other environmental contexts. This approach supports transferability through contextual understanding rather than statistical representativeness [12].

The Scientist's Toolkit: Essential Research Reagents and Materials

Quantitative Research Instruments

Table 3: Essential Tools for Quantitative Environmental Research

| Tool/Instrument | Primary Function | Application Examples | Technical Considerations |
|---|---|---|---|
| Environmental Sensor Networks | Automated collection of continuous physical, chemical, biological data | Air/water quality monitoring; climate data collection; ecosystem metabolism measurements | Calibration protocols; data management systems; sensor precision and detection limits |
| Laboratory Analytical Equipment | Precise quantification of environmental samples | Spectrophotometers; chromatographs; mass spectrometers; atomic absorption instruments | Standard operating procedures; quality assurance/control; sample preservation methods |
| Statistical Software Packages | Data management, statistical analysis, visualization | R, SPSS, SAS, Python libraries (pandas, scikit-learn) | Algorithm selection; assumption testing; reproducible coding practices |
| Geographic Information Systems (GIS) | Spatial data analysis, mapping, spatial statistics | Habitat mapping; land use change analysis; environmental impact assessment | Coordinate systems; spatial resolution; remote sensing data integration |
| Structured Survey Platforms | Standardized data collection from human participants | Online surveys; interview schedules; structured observation protocols | Sampling frames; question validation; response rate management |

Qualitative Research Instruments

Table 4: Essential Tools for Qualitative Environmental Research

| Tool/Instrument | Primary Function | Application Examples | Technical Considerations |
|---|---|---|---|
| Digital Recording Equipment | High-quality audio/video recording of interviews, focus groups, observations | Recording community meetings; documenting traditional ecological knowledge; capturing environmental practices | File management; transcription protocols; participant consent documentation |
| Qualitative Data Analysis Software | Organization, coding, analysis of textual, audio, visual data | NVivo, MAXQDA, Atlas.ti, Dedoose | Coding schema development; inter-coder reliability; data security and confidentiality |
| Field Note Systems | Systematic documentation of observations, reflections, contextual details | Ecological field journals; participant observation records; ethnographic field notes | Structured templates; reflective components; integration with other data sources |
| Participatory Research Tools | Collaborative knowledge production with stakeholders | Participatory mapping; community workshops; photovoice; future scenario exercises | Facilitation skills; power dynamics management; co-analysis processes |
| Interview/Focus Group Protocols | Flexible guides for qualitative data collection | Semi-structured interview guides; focus group question routes; participant recruitment materials | Question phrasing; sequencing; pilot testing; ethical considerations |

Integrated Mixed-Methods Approaches in Environmental Research

The most robust environmental research often integrates quantitative and qualitative approaches to leverage their complementary strengths. Sequential designs collect and analyze one type of data first, then use those findings to inform subsequent collection of the other data type. This might involve initial qualitative interviews to identify important variables later measured quantitatively, or initial quantitative surveys to identify outliers or patterns later explored qualitatively. Environmental researchers might begin with community interviews to understand local environmental concerns, then design quantitative surveys to measure the prevalence of these concerns across broader populations [11] [9].

Concurrent designs collect both quantitative and qualitative data simultaneously, then integrate findings during interpretation. This approach might combine quantitative ecological measurements with qualitative observations of management practices, or statistical analysis of environmental trends with discourse analysis of policy debates. The parallel data streams provide both statistical patterns and contextual understanding, offering a more comprehensive picture of complex environmental issues than either approach alone [11] [9].

Embedded designs use a primary method (quantitative or qualitative) supplemented by secondary method data to address different research questions. A primarily quantitative experimental study might embed qualitative interviews to understand implementation challenges, while a primarily qualitative case study might incorporate quantitative documentation to contextualize findings. Environmental researchers might embed qualitative process evaluation within quantitative impact assessments, or include quantitative background data to contextualize in-depth qualitative findings [11].

The integration of quantitative and qualitative approaches requires careful planning but offers significant benefits for environmental research. As noted in organizational research, "The integration of both quantitative and qualitative data enhances research validity, allowing for a comprehensive understanding of subjects through a mixed methods approach" [11]. This integration helps researchers balance breadth and depth, generalize while respecting context, and measure outcomes while understanding processes—all crucial for addressing complex environmental challenges.

The choice between numerical data and descriptive information in environmental research design should be guided by the specific research questions, purposes, and contexts of investigation. Quantitative approaches excel when research requires generalizable findings, precise measurement, hypothesis testing, or statistical modeling of environmental phenomena. Qualitative approaches prove indispensable when seeking to understand complex social-ecological systems, explore understudied phenomena, give voice to diverse perspectives, or develop contextualized understandings of environmental processes.

Rather than viewing these approaches as competing alternatives, environmental researchers increasingly recognize their complementary value within mixed-methods frameworks. As one analysis notes, "By integrating both methodologies, researchers can achieve a holistic perspective that captures the complexity of organizational climates," a principle equally applicable to environmental systems [11]. The most insightful environmental research often emerges from strategically combining numerical precision with descriptive richness to address the multifaceted nature of contemporary environmental challenges.

For drug development professionals and environmental researchers, methodological selection should align with specific investigation phases: quantitative methods for establishing prevalence, testing interventions, and modeling systems; qualitative methods for exploring complex phenomena, understanding stakeholder perspectives, and contextualizing findings; and integrated approaches for comprehensive environmental assessment and decision support. This strategic alignment ensures that research designs effectively address environmental questions while generating actionable knowledge for science, policy, and practice.

In environmental analysis research, the journey from initial observation to validated conclusion is structured around two distinct but complementary pillars: exploratory and confirmatory research. This dichotomy frames a fundamental cycle of scientific discovery, where exploratory research aims to generate new hypotheses and uncover patterns, while confirmatory research rigorously tests these pre-specified hypotheses [16] [17]. Within the context of comparing quantitative and qualitative environmental analysis, understanding this distinction is not merely academic; it is essential for designing robust studies, selecting appropriate methodologies, and drawing valid, reproducible conclusions that can inform drug development and environmental policy.

The synergy between these approaches forms the backbone of the scientific method. Exploratory research, often leaning on qualitative methods, delves into the depth and context of human experiences and complex environmental systems, asking "how" and "why" [18] [19]. In contrast, confirmatory research typically employs quantitative methods to measure variables, test causal relationships, and make generalizations, asking "how many" or "how much" [18] [19]. For researchers and scientists, clearly delineating between these modes of investigation is critical to maintaining scientific integrity: presenting exploratory findings as if they had been hypothesized in advance, a practice known as HARKing (Hypothesizing After the Results are Known), can lead to false positives and non-reproducible results [16] [20].

Core Concepts and Definitions

What is Exploratory Research?

Exploratory research is an initial, open-ended investigation into a subject where little prior empirical research exists. Its primary aim is to explore an idea, gather preliminary insights, and generate a broader understanding of a phenomenon, often leading to the development of testable hypotheses for future study [16]. This approach is particularly valuable when investigating complex, poorly understood environmental interactions or patient experiences where the relevant variables are not yet fully identified.

The defining characteristics of exploratory research include:

- Flexibility in Design: The research process is adaptive and can evolve as new findings emerge, allowing researchers to pursue interesting leads [19] [17].

- Generation of Theories and Hypotheses: Insights and theories are developed from the patterns found in the collected data, rather than from testing pre-existing theories [19].

- Focus on Sensitivity: The goal is to detect all possible strategies, theories, or connections that might be useful, casting a wide net to avoid missing promising avenues [17].

In environmental science, an exploratory study might use open-ended interviews with communities living near a potential pollutant source to understand their health concerns and experiences, thereby identifying key variables for later quantitative measurement.

What is Confirmatory Research?

Confirmatory research, also known as hypothesis-testing research, follows a structured, pre-specified approach to test specific ideas about the relationships between variables [16] [20]. It is conducted when substantial background knowledge exists, often built upon the findings of prior exploratory studies. The goal is to find compelling evidence for or against a pre-defined hypothesis, thereby drawing firm inferences about a population or phenomenon [16].

The defining characteristics of confirmatory research include:

- Rigid, Pre-specified Design: The study's structure, methods, and primary hypothesis are clearly defined before data collection begins, making the results replicable and comparable [19] [17].

- Testing of Existing Hypotheses: The research sets out to test a specific theory or hypothesis, with the results either supporting or rejecting it [21] [19].

- Focus on Specificity: The aim is to exclude all strategies or explanations that will prove useless, thereby minimizing false positives and justifying the economic and moral costs of further development, such as in clinical trials [17].

A confirmatory study in drug development, for instance, would be a tightly controlled, randomized clinical trial to test the efficacy and safety of a new compound identified in earlier exploratory preclinical investigations.

A Comparative Analysis: Objectives, Methods, and Outputs

The differences between exploratory and confirmatory research extend across the entire research lifecycle, from initial aims to final interpretations. The table below provides a structured, point-by-point comparison of these two research approaches.

Table 1: A Comprehensive Comparison of Exploratory and Confirmatory Research

| Aspect | Exploratory Research | Confirmatory Research |
|---|---|---|
| Primary Aim | To explore, discover, and generate new hypotheses and theories [16] [22]. | To test, confirm, and validate pre-existing hypotheses [16] [21]. |
| Research Question | "Why?" "How?" Focuses on depth and detailed understanding [19]. | "How many?" "How much?" "What is the relationship?" [19]. |
| Typical Data Type | Qualitative (words, images, narratives) or unquantified observations [18] [19]. | Quantitative (numerical, statistical) [18] [19]. |
| Common Methods | In-depth interviews, focus groups, observations, case studies, ethnography [18] [19]. | Controlled experiments, surveys with closed questions, structured observations [18] [19]. |
| Study Design | Flexible, adaptive, and often unstructured to accommodate new insights [16] [19]. | Rigid, pre-specified, and highly structured to minimize bias [16] [19]. |
| Sample Strategy | Smaller, often non-randomized samples (e.g., convenience samples) for in-depth understanding [18] [19]. | Larger, often randomized samples intended to be representative of a population [18] [19]. |
| Role of Researcher | Active participant in the process; subjectivity is acknowledged [19]. | Objective and detached to minimize bias and achieve consistency [19]. |
| Outputs | Detailed descriptions, rich insights, new concepts, and hypotheses for future testing [16] [19]. | Objective, empirical data, statistical conclusions, and generalizable findings [18] [19]. |
| Validity Emphasis | Conceptual validity through corroboration across different lines of experimentation [17]. | Internal and construct validity through controlled conditions and pre-specified designs [17]. |

Visualizing the Research Workflow

The following diagram illustrates the cyclical, complementary relationship between exploratory and confirmatory research within the scientific process.

Experimental Protocols and Data Analysis

Methodologies for Exploratory Research

Exploratory research relies on flexible, iterative protocols designed to capture depth and richness of data. A common methodology is the exploratory sequential design, often used in environmental health to understand complex phenomena.

Table 2: Key Research Reagent Solutions for Qualitative Exploration

| Reagent / Tool | Primary Function in Exploration |
|---|---|
| Semi-Structured Interview Guides | Provides a flexible framework of open-ended questions to explore participant experiences and views without constraining responses [19]. |
| Focus Group Protocols | Facilitates group discussion to explore shared views, social norms, and interactions on a specific topic [19]. |
| CAQDAS (Computer-Assisted Qualitative Data Analysis Software) | Organizes and manages non-numerical data, assisting researchers in coding and identifying emerging themes [18]. |
| Thematic Analysis Framework | A systematic method for identifying, analyzing, and reporting patterns (themes) within qualitative data [19]. |

Detailed Workflow:

- Data Collection: Researchers gather data through methods like in-depth interviews or focus groups. In a study on community response to an environmental change, researchers would conduct interviews in participants' homes or community centers to capture context [19].

- Data Organization: Data is transcribed and organized using CAQDAS [18].

- Coding and Theme Development: Researchers immerse themselves in the data, coding interesting features and systematically collating codes into potential themes. This is an iterative process of going back and forth between the dataset and the emerging themes [19].

- Hypothesis Generation: The final themes and patterns are used to construct a coherent narrative about the data and, crucially, to generate specific, testable hypotheses for future confirmatory research [16] [22].
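The bookkeeping behind the coding and theme development step in the workflow above can be sketched as a simple data structure. CAQDAS packages handle this internally, and the interpretive work of assigning codes and themes remains the researcher's. The excerpts, codes, theme names, and the function `collate_themes` below are all hypothetical.

```python
from collections import defaultdict

# Hypothetical coded excerpts: (participant id, code assigned by the analyst).
coded_excerpts = [
    ("P1", "odor complaints"),
    ("P1", "distrust of regulator"),
    ("P2", "odor complaints"),
    ("P2", "child asthma"),
    ("P3", "child asthma"),
    ("P3", "unanswered letters"),
]

# Hypothetical analyst decisions grouping codes into candidate themes.
code_to_theme = {
    "odor complaints": "perceived exposure",
    "child asthma": "health concerns",
    "distrust of regulator": "institutional trust",
    "unanswered letters": "institutional trust",
}

def collate_themes(excerpts, mapping):
    """Tally excerpts and distinct participants supporting each theme."""
    themes = defaultdict(lambda: {"excerpts": 0, "participants": set()})
    for participant, code in excerpts:
        theme = mapping.get(code, "unassigned")
        themes[theme]["excerpts"] += 1
        themes[theme]["participants"].add(participant)
    return themes

themes = collate_themes(coded_excerpts, code_to_theme)
for name, info in sorted(themes.items()):
    print(f"{name}: {info['excerpts']} excerpts, {len(info['participants'])} participants")
```

Tracking how many distinct participants support each theme helps during the iterative review, since a theme resting on a single voice may need to be merged, refined, or reported with that caveat.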

Methodologies for Confirmatory Research

Confirmatory research requires strict, pre-registered protocols to ensure objectivity and reproducibility. A prime example is the randomized controlled trial (RCT), the gold standard in clinical drug development and increasingly used in environmental toxicology.

Table 3: Essential Reagent Solutions for Confirmatory Analysis

| Reagent / Tool | Primary Function in Confirmation |
|---|---|
| Pre-Registration Protocol | A detailed, time-stamped plan filed before experimentation, specifying the hypothesis, primary outcome, sample size, and analysis plan to prevent HARKing and p-hacking [20] [17]. |
| Standardized Assays & Kits | Validated, reproducible measurement tools (e.g., ELISA kits for biomarker quantification) that ensure consistency and accuracy across samples and studies. |
| Statistical Analysis Software (e.g., R, SPSS) | Used to apply pre-specified statistical models, calculate significance (p-values), and produce estimates with a specified level of confidence (confidence intervals) [23]. |
| Blinding & Randomization Tools | Methods to eliminate bias, such as random number generators for assigning subjects to groups and blinding protocols for researchers and participants [24]. |
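As an illustration of the randomization and blinding tools listed in the table, the sketch below uses Python's standard `random` module to produce a balanced, reproducible allocation list and a set of neutral group codes. The function names, subject IDs, block size, and seeds are hypothetical choices made for this example; real studies typically rely on validated randomization services and keep the unblinding key with an independent party.

```python
import random


def block_randomize(subject_ids, arms=("treatment", "control"), block_size=4, seed=20240101):
    """Assign subjects to arms in shuffled, balanced blocks using a seeded RNG."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # fixed seed makes the allocation list reproducible for audit
    allocation = {}
    for start in range(0, len(subject_ids), block_size):
        # Each block contains every arm equally often, in random order.
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)
        for subject, arm in zip(subject_ids[start:start + block_size], block):
            allocation[subject] = arm
    return allocation


def blind_labels(allocation, seed=7):
    """Replace arm names with neutral codes (A, B, ...) so staff see only the code."""
    rng = random.Random(seed)
    arms = sorted(set(allocation.values()))
    codes = [chr(ord("A") + i) for i in range(len(arms))]
    rng.shuffle(codes)
    key = dict(zip(arms, codes))  # unblinding key, to be held separately from the study team
    blinded = {subject: key[arm] for subject, arm in allocation.items()}
    return blinded, key


subjects = [f"S{i:02d}" for i in range(1, 9)]  # hypothetical subject IDs
allocation = block_randomize(subjects)
blinded, key = blind_labels(allocation)
print(blinded)
```

Block randomization is used here because it keeps group sizes balanced throughout enrollment; simple (unrestricted) randomization would be an equally valid choice for large samples.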

Detailed Workflow:

- Pre-registration: The research hypothesis, primary and secondary outcome measures, sample size calculation, and statistical analysis plan are documented in a public repository before the study begins [17].

- Controlled Experimentation: The study is conducted under controlled conditions. For example, in a preclinical efficacy study, animals are randomly assigned to treatment or control groups, and researchers administering the treatment are often blinded to the group assignments [17].

- Confirmatory Data Analysis: Data is analyzed according to the pre-registered plan. This involves testing hypotheses using traditional statistical tools like significance testing (e.g., t-tests, ANOVA) and producing estimates with confidence intervals [23]. Deviation from the plan is considered a breach of confirmatory principles.

- Inference: Based on the statistical evidence, the pre-specified null hypothesis is either rejected or not rejected, providing rigorous evidence for or against the intervention's effect [21].
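The sample size calculation documented at pre-registration can be sketched as follows for the simplest case: a two-sided comparison of two group means with equal group sizes and a common standard deviation, using the normal approximation. The function name `n_per_group` and the default values for `alpha` and `power` are assumptions for illustration; the effect size and standard deviation must come from prior data or a justified minimum effect of interest.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Approximate subjects needed per group to detect a mean difference `delta`.

    Normal-approximation formula for a two-sided, two-sample comparison:
        n = 2 * ((z_{1-alpha/2} + z_{power}) * sigma / delta) ** 2
    """
    if delta <= 0 or sigma <= 0:
        raise ValueError("delta and sigma must be positive")
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_beta = std_normal.inv_cdf(power)           # quantile corresponding to the target power
    return math.ceil(2 * ((z_alpha + z_beta) * sigma / delta) ** 2)


# Example: detect a difference of half a standard deviation.
print(n_per_group(delta=0.5, sigma=1.0))
```

Because this uses the normal rather than the t distribution, it slightly underestimates the requirement for small samples; dedicated power-analysis software is the safer choice for the registered protocol.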

The following diagram contrasts the high-level workflows of these two research approaches.

The Critical Importance of Distinguishing Between the Two Aims

The failure to clearly separate exploratory and confirmatory research poses a significant threat to the credibility and reproducibility of scientific findings, particularly in fields like drug development and environmental science where the stakes are high.

The most salient risk is HARKing (Hypothesizing After the Results are Known) [16]. This occurs when a researcher formulates a hypothesis after seeing the results of an exploratory analysis and then presents it as if it were the original, a priori hypothesis. This practice inflates the false-positive rate because the hypothesis is tailored to fit the specific, often noisy, dataset rather than being tested against independent data [16] [20]. A finding that appears definitive in one study may then fail to generalize or to replicate in subsequent research.
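One way to see why selecting a hypothesis after looking at the data is risky is to compute the chance of at least one spurious "significant" result when several comparisons are examined. The sketch below assumes the tests are independent and that every null hypothesis is true, which is a simplification; the function name is hypothetical.

```python
def familywise_error_rate(n_tests, alpha=0.05):
    """Probability of at least one false positive across independent tests of true nulls."""
    return 1 - (1 - alpha) ** n_tests


for k in (1, 5, 20):
    print(f"{k:>2} comparisons -> {familywise_error_rate(k):.3f}")
```

Under these assumptions, a researcher who inspects 20 comparisons and reports only the one that reached p < 0.05 has roughly a 64% chance of having found at least one such result by chance alone.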

To mitigate these risks and improve translational success, particularly in preclinical research, the following reforms are urged [17]:

- Explicit Demarcation: All research protocols and publications should pre-specify whether they are exploratory or confirmatory.

- Tailored Standards: Journal editors and funding agencies should hold confirmatory studies to higher standards of internal and construct validity, similar to clinical trials, requiring pre-registration, large sample sizes, and fastidious design.

- Promotion of Confirmatory Studies: The research ecosystem should incentivize and fund a greater volume of confirmatory investigation to rigorously test the most promising leads generated by exploratory research.

Exploratory and confirmatory research are not in competition; they are essential, complementary partners in the scientific enterprise. Exploratory research generates the novel hypotheses and theories that drive innovation, while confirmatory research rigorously validates these insights, ensuring they are robust and reproducible [16] [22]. For researchers, scientists, and drug development professionals, the strategic integration of both modes is key to advancing knowledge.

The most powerful approach is often a mixed-methods design, which leverages the strengths of both to provide a more comprehensive understanding [18] [22]. For example, qualitative exploratory interviews can be used to discover the most relevant terms and concepts for a subsequent quantitative survey, the results of which can then be explained through further qualitative follow-up [22]. This triangulation enhances the overall validity and impact of the research.

Therefore, the path forward requires a conscious and disciplined application of both approaches. By clearly framing research aims, choosing methodologies aligned with those aims, and upholding the distinct principles of exploration and confirmation, the scientific community can produce findings that are both deeply insightful and reliably true, ultimately accelerating progress in environmental health and therapeutic development.

In environmental and pharmaceutical research, the choice between quantitative and qualitative methodologies is foundational, shaping the trajectory of an investigation from its core questions to its ultimate conclusions. Quantitative research focuses on numerical data to measure environmental phenomena, asking "how much" or "how many" to identify patterns and test hypotheses. In contrast, qualitative research deals with non-numerical information to understand underlying motivations, perceptions, and experiences, exploring the "why" and "how" [9]. For researchers and drug development professionals, selecting the appropriate approach is not merely an academic exercise; it is a critical strategic decision that determines the validity, applicability, and impact of their work in addressing complex environmental problems.

Core Concept Comparison: Quantitative vs. Qualitative Analysis

The table below summarizes the fundamental distinctions between these two research paradigms.

Table 1: Fundamental Differences Between Quantitative and Qualitative Research Approaches

| Aspect | Quantitative Research | Qualitative Research |
|---|---|---|
| Nature of Data | Numerical, countable, measurable [9] | Descriptive, textual, experiential [9] |
| Core Objective | To measure, predict, and generalize findings from a sample to a population [9] | To gain in-depth understanding, explore complexities, and interpret social phenomena [9] |
| Approach | Deductive (testing a hypothesis) [9] | Inductive (exploring ideas and forming theories) [9] |
| Data Collection Methods | Surveys, polls, experiments, controlled studies [9] | Interviews, focus groups, observations [9] |
| Analysis Techniques | Statistical analysis (e.g., descriptive and inferential statistics) [9] | Thematic analysis, coding, and interpretation [9] |

Application in Environmental and Pharmaceutical Research

The Quantitative Lens: Measuring the Measurable

Quantitative analysis excels at providing objective, comparable data that is essential for benchmarking and statistical modeling.

In environmental studies, this approach is used to track changes in climate metrics, pollution levels, and resource consumption. For instance, a systematic review of climate knowledge research found that the vast majority of studies employ quantitative methods to measure factual, objective knowledge about climate change through standardized instruments [25]. In pharmaceutical development, quantitative data is the bedrock of sustainability metrics. Companies measure solvent volumes, plastic waste, energy consumption, and carbon emissions to quantify their environmental footprint and track progress toward reduction goals [26]. The push for open data in environmental research, where journals now mandate the public sharing of data and code, further underscores the field's reliance on verifiable, quantitative evidence [27].

The Qualitative Lens: Understanding Context and Complexity

Qualitative analysis provides the crucial context behind the numbers, uncovering the human and systemic factors that drive environmental outcomes.

In impact assessment (IA), a shift toward "next-generation IA" requires incorporating social, cultural, and health equity implications. This necessitates robust qualitative methods to meaningfully include diverse knowledges and values, giving decision-makers confidence in the evidence base beyond pure numbers [28]. In the pharmaceutical industry, qualitative insights drive strategic sustainability initiatives. Understanding stakeholder motivations, corporate cultures, and the barriers to adopting green practices (like the pervasive use of virgin plastics) relies on methods like interviews and focus groups [26]. This deep exploration is vital for designing effective change management and policies.

The Power of Integration: Mixed-Methods Approach

The most robust research often integrates both methodologies, a practice known as a mixed-methods approach [9]. This combination allows the strengths of one method to compensate for the weaknesses of the other, leading to a more comprehensive understanding.

For example, a researcher might use a quantitative survey to identify a trend in a community's recycling behavior and then conduct qualitative focus groups to understand the underlying reasons for that trend. This integration enhances validity and reliability through triangulation—verifying a finding with multiple data sources [9].

Experimental Protocols and Workflows

Protocol 1: Quantitative Assessment of Environmental Knowledge

This protocol is adapted from methodologies used in systematic reviews of climate knowledge measurement [25].

1. Research Question & Hypothesis Formulation: Define a specific, measurable question (e.g., "What is the level of objective climate knowledge among university students in this region?"). Formulate a testable hypothesis.

2. Instrument Design: Develop a structured questionnaire with closed-ended questions. Types of questions include:

   - True/False or Multiple-Choice: To assess knowledge of factual statements (e.g., "The primary greenhouse gas is...").

   - Likert Scales: To gauge agreement with specific statements (e.g., "I believe human activity is a major cause of climate change" from Strongly Disagree to Strongly Agree).

3. Sampling & Data Collection: Use a random or stratified sampling method to recruit a sample large enough to give the planned tests adequate statistical power, as determined by an a priori sample size calculation. Administer the survey under standardized conditions.

4. Data Analysis: Employ statistical software (e.g., R, Stata). Conduct:

   - Descriptive Statistics: Calculate means, frequencies, and standard deviations to summarize the data.

   - Inferential Statistics: Use t-tests or ANOVA to compare knowledge scores across different demographic groups.

5. Reporting & Data Deposition: Report findings with clear statistical results. As per emerging journal policies, deposit raw data, analysis code, and documentation in a public repository like Harvard Dataverse or Zenodo [27].
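The descriptive and inferential steps of the analysis can be illustrated with a small sketch that uses only Python's standard library. The scores below are invented for illustration, and Welch's unequal-variance t-test is one reasonable choice for comparing two groups, not necessarily the test used in the cited studies. The sketch stops at the test statistic and degrees of freedom; the p-value would normally be obtained from a statistical package such as R or Stata.

```python
import math
from statistics import mean, stdev, variance


def describe(scores):
    """Summarize a group of scores with sample size, mean, and standard deviation."""
    return {"n": len(scores), "mean": mean(scores), "sd": stdev(scores)}


def welch_t(a, b):
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    # Squared standard error contributed by each group.
    se2_a = variance(a) / len(a)
    se2_b = variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1))
    return t, df


# Hypothetical climate-knowledge scores (0-10) for two demographic groups.
group_a = [7, 8, 6, 9, 7, 8]
group_b = [5, 6, 7, 5, 6, 4]

print(describe(group_a))
print(describe(group_b))
t_stat, dof = welch_t(group_a, group_b)
print(f"t = {t_stat:.3f}, df = {dof:.1f}")
```

For comparisons across more than two groups, the same descriptive summary applies, but the inferential step would use ANOVA as stated in the protocol.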

Protocol 2: Qualitative Analysis of Sustainability in Drug Development

This protocol is informed by practices discussed at industry conferences like ELRIG 2025 [26] and principles of qualitative impact assessment [28].

1. Problem Scoping: Identify a complex problem area (e.g., "Barriers to adopting green chemistry principles in early-stage drug discovery").

2. Study Design & Participant Recruitment: Choose a purposive sampling strategy to recruit key informants with relevant expertise (e.g., lab managers, medicinal chemists, procurement officers). Design a semi-structured interview guide or focus group protocol with open-ended questions.

3. Data Collection: Conduct in-depth interviews or focus groups. Record and transcribe the sessions verbatim. Maintain detailed field notes to capture non-verbal cues and contextual information.

4. Data Analysis: Perform a thematic analysis.

   - Familiarization: Immerse in the data by reading transcripts multiple times.

   - Coding: Generate initial codes that identify meaningful data segments.

   - Theme Development: Collate codes into potential themes, refining them to accurately represent the dataset.

   - Reflexivity: Continuously document the researcher's own biases and perspectives to ensure analytical rigor [9] [28].

5. Reporting: Present the findings as a rich, narrative account supported by direct quotations that illustrate the identified themes.

The following workflow diagram visualizes the decision process for selecting a research methodology based on the nature of the environmental problem.

The Scientist's Toolkit: Key Reagents and Solutions

Whether in a wet lab or a data lab, research requires specific "reagents" to generate evidence. The following table details essential solutions for conducting environmental and pharmaceutical sustainability research.

Table 2: Key Research Reagent Solutions for Environmental Analysis

| Research Reagent / Solution | Function / Application | Field of Use |
|---|---|---|
| Biodegradable Solvents [29] | Replace traditional, hazardous solvents in drug synthesis and manufacturing to reduce environmental toxicity and waste. | Eco-friendly Drug Manufacturing |
| Green Catalysts [29] | Increase the efficiency of chemical reactions, reducing energy consumption and unwanted byproducts. | Eco-friendly Drug Manufacturing |
| Structured Surveys & Questionnaires [9] [25] | Systematically collect standardized, quantifiable data from a large population for statistical analysis. | Environmental Knowledge, Attitudes and Practices (KAP) Studies |
| Semi-Structured Interview Guides [9] [28] | Provide a flexible framework for in-depth conversations to explore complex experiences, values, and perceptions. | Sustainability Impact Assessment, Barriers to Green Adoption |