Quantitative Techniques for Environmental Analysis: Methods, Applications, and Validation in Pharmaceutical Research

Abstract

This article provides a comprehensive overview of quantitative techniques essential for robust environmental analysis, with a specific focus on applications in pharmaceutical research and drug development. It explores the foundational principles of quantitative methods, details specific methodological approaches like chromatography and remote sensing, addresses common troubleshooting and optimization challenges, and provides a framework for the critical validation and comparative assessment of different techniques. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes current methodologies to support data-driven decision-making, ensure regulatory compliance, and enhance the reliability of environmental data in biomedical contexts.

What is Quantitative Environmental Analysis? Core Principles and Strategic Importance

Defining Quantitative Research in Environmental Science

Quantitative environmental science utilizes numerical data, statistical analysis, mathematical modeling, and measurement to systematically study environmental systems and human impact [1]. This approach provides the empirical foundation for defining planetary boundaries, setting emissions targets, and monitoring conservation efforts, offering verifiable metrics to assess ecological status and forecast change [1] [2].

Core Analytical Framework

The table below summarizes the primary quantitative approaches used in this field.

| Quantitative Approach | Key Function | Application Examples in Environmental Science |
|---|---|---|
| Statistical Analysis [2] | Summarizes data, tests hypotheses, and makes inferences from samples to populations. | Analyzing pollution concentration data; comparing biodiversity metrics between protected and unprotected areas. |
| Mathematical Modeling [1] | Simulates complex environmental processes to forecast future conditions and test scenarios. | Climate modeling; predicting the carrying capacity of an ecosystem. |
| Numerical Data & Measurement [1] | Provides the fundamental, verifiable metrics for assessing current ecological status. | Tracking greenhouse gas emissions; measuring deforestation rates via satellite imagery. |
| Bayesian Methods [2] | Enables systematic updating of predictions and conclusions as new data becomes available. | Incorporating prior evidence into conservation biology models for quicker reaction to emerging conditions. |
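The Bayesian row above describes updating conclusions as new data arrive. A minimal sketch of that idea, assuming a Beta prior on the probability that a water sample exceeds a regulatory limit and binomial monitoring data (the class name, the prior, and the counts are all hypothetical and chosen only for illustration):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about an exceedance probability."""
    alpha: float
    beta: float

    def update(self, exceedances: int, samples: int) -> "BetaBelief":
        # Conjugate Beta-binomial update: add successes and failures.
        if not 0 <= exceedances <= samples:
            raise ValueError("exceedances must lie between 0 and samples")
        return BetaBelief(self.alpha + exceedances,
                          self.beta + samples - exceedances)

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


# Hypothetical monitoring campaigns, analyzed one after the other.
prior = BetaBelief(1.0, 1.0)                       # uniform prior (assumption)
after_first = prior.update(exceedances=2, samples=10)
after_second = after_first.update(exceedances=5, samples=10)
print(f"posterior mean after campaign 1: {after_first.mean:.3f}")
print(f"posterior mean after campaign 2: {after_second.mean:.3f}")
```

Each campaign's posterior becomes the prior for the next one, which is the sense in which the estimate is revised as evidence accumulates.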

Key Quantitative Data in Environmental Research

The following table compiles critical quantitative metrics used to assess environmental health and human impact.

| Environmental Domain | Key Quantitative Metrics | Typical Measurement Units | Significance & Impact Scale |
|---|---|---|---|
| Climate Science [1] | Atmospheric CO2 Concentration, Global Mean Temperature, Sea Level Rise | parts per million (ppm), degrees Celsius (°C), millimeters (mm) | Used to define planetary boundaries and set international emissions reduction targets. |
| Ecosystem Health [1] | Species Richness, Population Abundance, Eutrophication (e.g., N/P levels) | Count of species, Number of individuals, milligrams per liter (mg/L) | Determines the carrying capacity of ecosystems and monitors the effectiveness of conservation efforts. |
| Pollution Tracking [1] [2] | Particulate Matter (PM2.5/PM10), Heavy Metal Concentration in Water | micrograms per cubic meter (µg/m³), micrograms per liter (µg/L) | Informs legally binding environmental standards and public health advisories. |

Experimental Protocol: Quantitative Analysis of Water Quality Parameters

This protocol provides a detailed methodology for the collection, preservation, and statistical analysis of water samples to assess pollutant levels, a common application in environmental monitoring [2].

Setting Up

- Reboot the field computer and data loggers 15 minutes before departure.

- Calibrate all portable meters (pH, conductivity, dissolved oxygen) according to manufacturer specifications using standard calibration solutions.

- Verify that all sample bottles are pre-cleaned, sterilized (if required for microbiological tests), and appropriately labeled.

- Arrange the workspace in the mobile lab van to ensure a clean, organized flow for sample processing.

Sampling and Data Collection

- Meet the participant: For community-based sampling, meet the volunteer at a pre-arranged, public location. Clearly explain the purpose of the study and obtain informed consent before proceeding.

- Instructions and practice: For volunteers, demonstrate the correct sampling technique at the first site. Have them perform a practice collection under your supervision before collecting samples for analysis.

- In-situ Measurements: At each sampling site, directly measure and record parameters like temperature, pH, dissolved oxygen, and conductivity using the pre-calibrated portable meters.

- Sample Collection: Collect water samples in appropriate bottles from predetermined depths and locations. Preserve samples immediately on ice in dark containers to prevent degradation during transport.

Monitoring and Data Management

- Monitor sample integrity during transport, ensuring the cooler temperature remains at 4°C.

- Record all field observations and measurements directly into a digital data sheet to minimize transcription errors.

- On-call researchers should be available during the sampling run to answer questions from volunteers and troubleshoot any equipment issues.

Laboratory Analysis and Data Storage

- Thank and debrief volunteers and provide a means for them to receive the final study results.

- Transport samples to the laboratory for further analysis (e.g., nutrient analysis, metal concentration).

- Save the data by uploading field records to the secure lab server. Perform a backup at the end of the day.

- Shut down the field lab by cleaning all equipment and restocking sampling supplies for the next run.

Exceptions and Unusual Events

- Participant Withdrawal: If a volunteer withdraws consent, their collected data and samples must be securely destroyed. Document the withdrawal.

- Sample Contamination: If a sample is compromised, document the incident, discard the sample, and note the reason in the sample log.

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below details key reagents and materials essential for conducting quantitative environmental research.

| Item Name | Function / Application |
|---|---|
| Standard Calibration Solutions | Used to calibrate portable meters (e.g., for pH, ions) to ensure the accuracy of field measurements. |
| Chemical Preservatives (e.g., acids, biocides) | Added to water samples immediately after collection to prevent chemical and biological degradation of the target analytes during storage. |
| Peer-Reviewed Laboratory Protocols [3] | Detailed, validated instructions for performing specific analytical procedures, ensuring experiments can be reproduced with minimal mistakes. |
| Statistical Software Packages [2] | Enable sophisticated data analysis, including descriptive statistics, inferential testing, and multivariate analysis, to interpret complex environmental data. |
| Bayesian Statistical Models [2] | Provide a framework for decision-making that incorporates prior evidence and systematically accounts for uncertainty in environmental predictions. |

The Role of Numerical Data and Statistical Methods in Environmental Assessment

Environmental assessment relies on numerical data and statistical methods to transform raw environmental observations into actionable evidence for researchers, scientists, and policy-makers. This quantitative approach enables objective evaluation of environmental status, trends, and risks, which is particularly crucial in pharmaceutical development where environmental factors can influence drug safety and efficacy. The complex nature of environmental systems, characterized by multi-pollutant exposures, spatial dependencies, and temporal variations, requires advanced statistical frameworks to accurately discern patterns, attribute causes, and predict outcomes [4]. This document outlines the key statistical methodologies, data visualization techniques, and experimental protocols that form the foundation of robust environmental assessment.

The shift from single-pollutant models to multi-pollutant mixture analysis represents a significant advancement in environmental epidemiology, better reflecting real-world exposure scenarios [4]. Concurrently, developments in data management practices and visualization tools have enhanced our ability to communicate complex environmental data to diverse audiences, from technical specialists to regulatory bodies and the public [5] [6]. These quantitative techniques provide the necessary framework for environmental impact assessments, risk analysis, and compliance monitoring in drug development and broader environmental applications.

Statistical Frameworks for Environmental Data Analysis

Methods for Analyzing Multi-Pollutant Mixtures

Human and ecological systems are typically exposed to complex mixtures of environmental contaminants that may interact, creating combined effects that differ from individual component impacts. Statistical methods have evolved to address the analytical challenges posed by these mixtures, including high dimensionality, correlation between pollutants, and potential interaction effects [4].

Table 1: Statistical Methods for Multi-Pollutant Mixture Analysis

| Method | Primary Application | Key Advantages | Limitations |
|---|---|---|---|
| Weighted Quantile Sum (WQS) Regression | Overall effect estimation of mixtures; identification of high-risk components | Reduces dimensionality; handles multicollinearity; provides component weights | Requires "directional consistency" (all effects in same direction) [4] |
| Bayesian Kernel Machine Regression (BKMR) | Flexible modeling of nonlinear exposure-response relationships; interaction analysis | Does not require pre-specified parametric forms; generates posterior inclusion probabilities (PIPs) for variable importance | Requires continuous exposures; computationally intensive for large datasets [4] |
| Toxicity Equivalency Analysis | Assessment of pollutants with similar mechanisms of action | Uses toxicological potency weighting; conceptually straightforward | Limited to compounds with established toxic equivalence factors [4] |

These methods address different aspects of the mixture analysis challenge. WQS regression constructs a weighted index representing the overall mixture effect while quantifying each component's contribution, making it particularly useful for identifying priority pollutants requiring intervention [4]. BKMR excels at visualizing complex exposure-response relationships and detecting interactions between mixture components without imposing linearity assumptions, valuable for understanding non-additive effects in environmental exposures relevant to pharmaceutical safety assessments [4].
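The toxicity equivalency approach listed in Table 1 reduces to a potency-weighted sum: each concentration is multiplied by its toxic equivalence factor (TEF) and the products are added. A minimal sketch follows; the compound names, TEF values, and concentrations are placeholders chosen for illustration, and a real assessment would take its TEFs from the applicable regulatory scheme.

```python
def toxic_equivalent(concentrations: dict[str, float],
                     tefs: dict[str, float]) -> float:
    """Return the toxic equivalent (TEQ) = sum of concentration x TEF."""
    missing = sorted(set(concentrations) - set(tefs))
    if missing:
        # The method only applies to compounds with an established TEF.
        raise KeyError(f"no TEF available for: {missing}")
    return sum(conc * tefs[name] for name, conc in concentrations.items())


# Placeholder values (assumptions, not measured data).
tefs = {"compound_A": 1.0, "compound_B": 0.1, "compound_C": 0.01}
sample_pg_per_g = {"compound_A": 2.0, "compound_B": 15.0, "compound_C": 40.0}

teq = toxic_equivalent(sample_pg_per_g, tefs)
print(f"TEQ = {teq:.2f} pg/g")
```

Raising an error for compounds without a TEF mirrors the limitation noted in the table: the method cannot be extended to substances whose relative potency has not been established.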

Handling Spatial and Temporal Dependencies

Environmental data often contain inherent spatial and temporal structures that must be accounted for in statistical analyses to avoid misleading conclusions. Spatial dependencies arise from the geographic nature of environmental phenomena, while temporal patterns manifest as trends, seasonality, and autocorrelation in time series data.

Non-parametric methods like the Mann-Kendall trend test are frequently employed for analyzing environmental time series because they do not require assumptions about data distribution and are less sensitive to outliers compared to parametric alternatives [7]. These methods are particularly valuable for assessing long-term environmental changes, such as groundwater quality trends or climate change indicators, which may inform environmental risk assessments for pharmaceutical manufacturing and disposal.
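To make the Mann-Kendall test concrete, the sketch below computes the S statistic, its tie-corrected variance, the continuity-corrected Z score, and a two-sided p-value from the normal approximation. It uses only the standard library. The nitrate series is invented for illustration, and the approximation is usually considered adequate only for series of roughly ten or more observations.

```python
import math
from collections import Counter


def mann_kendall(series: list[float]) -> tuple[int, float, float]:
    """Return (S, Z, two-sided p) for the Mann-Kendall trend test."""
    n = len(series)
    if n < 3:
        raise ValueError("need at least 3 observations")

    # S counts concordant minus discordant pairs (later value vs earlier).
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = series[j] - series[i]
            s += (diff > 0) - (diff < 0)

    # Variance of S under the null hypothesis, corrected for tied values.
    var_s = n * (n - 1) * (2 * n + 5)
    for t in Counter(series).values():
        var_s -= t * (t - 1) * (2 * t + 5)
    var_s /= 18.0

    # Continuity correction: shrink |S| by 1 before standardizing.
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0

    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return s, z, p_two_sided


# Hypothetical annual mean nitrate concentrations (mg/L) at one well.
nitrate = [2.1, 2.4, 2.3, 2.8, 3.0, 2.9, 3.4, 3.3, 3.9, 4.1]
s_stat, z_score, p_value = mann_kendall(nitrate)
print(f"S = {s_stat}, Z = {z_score:.2f}, p = {p_value:.4f}")
```

Because the statistic depends only on the signs of pairwise differences, a single extreme reading changes S by at most n - 1, which is the source of the robustness to outliers mentioned above.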

Spatial statistical approaches, including kriging and variogram analysis, enable researchers to model and interpolate environmental variables across geographic areas, supporting the identification of pollution hotspots and understanding of contaminant transport mechanisms [8]. These methods formally incorporate spatial autocorrelation, providing more accurate estimates at unsampled locations and proper uncertainty quantification.
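Variogram analysis starts from the empirical semivariogram, which averages half the squared difference between pairs of observations grouped by separation distance. The sketch below shows that first step only; fitting a variogram model and solving the kriging system are left out. The function name, binning scheme, and sample coordinates are assumptions made for illustration.

```python
import math
from collections import defaultdict


def empirical_semivariogram(points, values, lag_width, max_lag):
    """Return [(bin_center, semivariance, pair_count)] for each distance bin.

    Semivariance in a bin = sum((z_i - z_j)^2) / (2 * number_of_pairs).
    """
    if len(points) != len(values):
        raise ValueError("points and values must have the same length")

    half_sq_diff = defaultdict(float)
    pair_count = defaultdict(int)
    for i in range(len(points) - 1):
        for j in range(i + 1, len(points)):
            h = math.dist(points[i], points[j])
            if h >= max_lag:
                continue  # ignore pairs beyond the distance of interest
            b = int(h // lag_width)
            half_sq_diff[b] += 0.5 * (values[i] - values[j]) ** 2
            pair_count[b] += 1

    return [((b + 0.5) * lag_width, half_sq_diff[b] / pair_count[b],
             pair_count[b]) for b in sorted(pair_count)]


# Hypothetical sampling transect: coordinates in km, concentrations in µg/L.
sites = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
conc = [0.0, 1.0, 2.0, 3.0]
for center, gamma, n_pairs in empirical_semivariogram(sites, conc, 1.0, 4.0):
    print(f"lag ~{center:.1f} km: semivariance {gamma:.2f} ({n_pairs} pairs)")
```

A semivariance that grows with distance, as in this toy transect, indicates spatial autocorrelation: nearby sites resemble each other more than distant ones.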

Experimental Protocols and Analytical Workflows

Protocol 1: Weighted Quantile Sum Regression for Mixture Analysis

Weighted Quantile Sum (WQS) regression is a supervised method for estimating the overall effect of a mixture and identifying the relative importance of its components.

Step-by-Step Protocol:

Data Preparation and Preprocessing

- Compile exposure data for all mixture components and outcome measurement

- Address missing data using appropriate imputation methods

- Transform exposure variables to quartiles or deciles to reduce influence of extreme values

- Randomly split data into training (e.g., 40-50%) and validation sets

Bootstrap Sampling and Weight Estimation

- Perform 100-1000 bootstrap samples in the training set

- For each bootstrap sample, estimate a weight for each component through regression, constraining the weights to be non-negative, to sum to 1, and to act in a single pre-specified direction

- Calculate final weights as the mean of bootstrap weights

Model Fitting and Validation

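- Construct the WQS index using the final weights: $\mathrm{WQS} = \sum_{i} w_i q_i$, where $w_i$ is the weight and $q_i$ is the quantile score for component $i$

- Fit a regression model with the WQS index and covariates in the validation set: $g(\mu) = \beta_0 + \beta_1 \mathrm{WQS} + \sum_{j} \delta_j c_j$, where $g$ is the link function and $c_j$ are the covariates with coefficients $\delta_j$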

- Evaluate model performance using appropriate metrics (e.g., R², AUC, prediction error)

Interpretation and Reporting

- $\beta_1$ represents the overall mixture effect

- Component weights ($w_i$) indicate relative contribution to overall effect

- Components with highest weights are identified as potential drivers of mixture toxicity
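The index-construction step of the protocol can be sketched in a few lines. The example below scores each exposure into quartiles and forms the weighted sum of the scores. It does not estimate the weights: the bootstrap and constrained regression are omitted, and the weights shown are hypothetical inputs standing in for that estimation. The exposure names and values are also invented.

```python
def quantile_scores(values: list[float], n_quantiles: int = 4) -> list[int]:
    """Score each value 0..n_quantiles-1 by its rank (ties broken by order)."""
    order = sorted(range(len(values)), key=values.__getitem__)
    scores = [0] * len(values)
    for rank, idx in enumerate(order):
        scores[idx] = rank * n_quantiles // len(values)
    return scores


def wqs_index(exposures: dict[str, list[float]],
              weights: dict[str, float],
              n_quantiles: int = 4) -> list[float]:
    """Return the WQS index (sum of weight x quantile score) per subject."""
    if set(exposures) != set(weights):
        raise ValueError("exposures and weights must name the same components")
    if any(w < 0 for w in weights.values()) or abs(sum(weights.values()) - 1) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    lengths = {len(v) for v in exposures.values()}
    if len(lengths) != 1:
        raise ValueError("all exposures need one value per subject")

    scored = {name: quantile_scores(vals, n_quantiles)
              for name, vals in exposures.items()}
    n_subjects = lengths.pop()
    return [sum(weights[name] * scored[name][k] for name in weights)
            for k in range(n_subjects)]


# Hypothetical exposures for four subjects and assumed final weights.
exposures = {"lead": [1.0, 2.0, 3.0, 4.0], "cadmium": [4.0, 3.0, 2.0, 1.0]}
weights = {"lead": 0.75, "cadmium": 0.25}
print(wqs_index(exposures, weights))
```

The resulting index would then enter the validation-set regression as a single covariate, with its coefficient interpreted as the overall mixture effect.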

Protocol 2: Bayesian Kernel Machine Regression for Complex Relationships

BKMR provides a flexible framework for modeling exposure-response relationships without pre-specified parametric forms, accommodating nonlinearities and interactions.

Step-by-Step Protocol:

Model Specification

- Define the BKMR model: $Y_i = h(z_i) + \beta^{\top} X_i + \varepsilon_i$, where $h(\cdot)$ is an exposure-response function, $z_i$ is the vector of exposures, and $X_i$ are covariates

- Select appropriate kernel function (e.g., Gaussian kernel) to capture similarity between exposure profiles

- Specify prior distributions for model parameters
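To illustrate what the kernel in the specification step does, the sketch below evaluates a Gaussian kernel between exposure profiles, using one scale parameter per exposure so that an exposure with a scale of zero has no influence on similarity. This is a simplified illustration rather than the full model: in an actual BKMR fit the scale parameters are estimated from the data, whereas here they are assumed values, as are the exposure profiles.

```python
import math


def gaussian_kernel(z_a, z_b, scales):
    """K(z_a, z_b) = exp(-sum_m scales[m] * (z_a[m] - z_b[m])**2)."""
    if not (len(z_a) == len(z_b) == len(scales)):
        raise ValueError("profiles and scales must have equal length")
    return math.exp(-sum(r * (a - b) ** 2
                         for r, a, b in zip(scales, z_a, z_b)))


def kernel_matrix(profiles, scales):
    """Pairwise similarity matrix for a list of exposure profiles."""
    return [[gaussian_kernel(p, q, scales) for q in profiles]
            for p in profiles]


# Hypothetical standardized exposure profiles (two exposures per subject).
profiles = [[0.0, 0.0], [1.0, 5.0], [0.1, -3.0]]
scales = [1.0, 0.0]   # assumed: the second exposure is effectively excluded

for row in kernel_matrix(profiles, scales):
    print([round(k, 3) for k in row])
```

Subjects with similar profiles receive kernel values near 1, so the model assigns them similar values of the exposure-response function without any parametric form being specified.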

Model Fitting via Markov Chain Monte Carlo

- Implement MCMC algorithm to generate posterior distributions

- Run sufficient iterations (typically 10,000-50,000) with burn-in period

- Assess convergence using diagnostic statistics (Gelman-Rubin statistic, trace plots)
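The Gelman-Rubin statistic mentioned above compares between-chain and within-chain variance for a single parameter. A minimal sketch of the classic (non-split) version is shown here, applied to simulated draws rather than real BKMR output; the chain values and the random seed are arbitrary.

```python
import math
import random
import statistics


def gelman_rubin(chains: list[list[float]]) -> float:
    """Potential scale reduction factor for equal-length chains."""
    m, n = len(chains), len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least 2 chains of equal length >= 2")

    chain_means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(chain_means)

    # Pooled estimate of the posterior variance.
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)


# Simulated post-burn-in draws (illustrative only).
rng = random.Random(42)
mixed = [[rng.gauss(0.0, 1.0) for _ in range(2000)] for _ in range(3)]
stuck = [[rng.gauss(mu, 1.0) for _ in range(2000)] for mu in (0.0, 2.0, 4.0)]
print(f"well-mixed chains:   R-hat = {gelman_rubin(mixed):.3f}")
print(f"disagreeing chains:  R-hat = {gelman_rubin(stuck):.3f}")
```

Values close to 1 are consistent with convergence, while values well above 1 indicate that the chains have not yet explored the same distribution. The statistic should be read alongside trace plots rather than in isolation.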

Exposure-Response Visualization

- Estimate univariate exposure-response relationships by fixing other exposures at specific percentiles (e.g., median)

- Visualize bivariate exposure-response relationships to assess interactions

- Generate plots showing how the response changes as exposures vary

Variable Importance Assessment

- Calculate Posterior Inclusion Probabilities (PIPs) for each exposure

- Interpret each PIP as the posterior probability that the exposure is included in the model; a PIP above 0.5 is a commonly used, informal threshold for flagging an exposure as important

- Identify exposures most likely to drive health effects

Data Visualization for Environmental Assessment

Visualization Techniques for Different Data Types

Effective data visualization transforms complex environmental datasets into interpretable information that can drive decision-making in pharmaceutical development and environmental management.

Table 2: Environmental Data Visualization Methods

| Data Type | Recommended Visualizations | Applications in Environmental Assessment |
|---|---|---|
| Temporal Trends | Line charts, Area charts | Tracking pollutant concentrations over time, climate change indicators, compliance monitoring [5] |
| Spatial Patterns | Heat maps, Choropleth maps, 3D visualizations | Identifying pollution hotspots, species distribution, environmental justice assessments [5] |
| Comparative Analysis | Bar charts, Radar charts | Comparing emissions across regions/industries, multidimensional environmental performance [5] |
| Distributions | Histograms, Scatter plots | Analyzing pollution level distributions, relationships between environmental variables [5] |
| Proportions | Pie charts, Donut charts, Tree maps | Energy source composition, biodiversity contributions by region [5] |

Best Practices in Environmental Data Visualization

Implementing effective visualizations requires attention to design principles that enhance comprehension and accurate interpretation:

- Audience Appropriateness: Tailor complexity and terminology to the target audience (public, policymakers, scientific peers) [5]

- Strategic Color Use: Employ intuitive color schemes (e.g., green for vegetation, blue for water) with sufficient contrast for readability [5]

- Narrative Focus: Lead with key insights rather than raw data, ensuring visualizations serve the story (e.g., rising sea levels, conservation success) [5]

- Balanced Simplification: Reduce clutter without omitting critical details, using annotations to highlight key takeaways [5]

- Interactive Exploration: Implement drill-down capabilities, parameter adjustments, and temporal sliders to engage users in data exploration [5] [9]

Advanced visualization platforms like Infogram and Locus EIM offer AI-powered chart suggestions, interactive features, and custom branding options that facilitate the creation of compelling environmental data visualizations for regulatory submissions and stakeholder communications [5] [9].

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Environmental Assessment

| Tool/Category | Specific Examples | Function in Environmental Assessment |
|---|---|---|
| Statistical Software | R packages (gWQS, bkmr), Python libraries | Implementation of specialized statistical methods for mixture analysis and spatial-temporal modeling [4] |
| Data Visualization Platforms | Infogram, Tableau, Locus EIM, Ocean Data View | Creation of interactive maps, charts, and dashboards for environmental data exploration and communication [5] [9] [10] |
| Environmental Data Repositories | DataONE, CEBS, Comparative Toxicogenomics Database | Access to standardized environmental and toxicological datasets for comparative analysis and model validation [11] |
| Geospatial Tools | GIS+, Google Earth Engine, Argovis | Spatial analysis, interpolation, and mapping of environmental variables across geographic regions [9] [10] |
| Data Management Frameworks | FAIR Principles, Data Life Cycle Models | Ensuring research data integrity, accessibility, and reproducibility through structured management practices [6] |

Numerical data and statistical methods form the cornerstone of robust environmental assessment, providing the quantitative foundation for evidence-based decision-making in pharmaceutical development and environmental management. The advancement of mixture methods like WQS regression and BKMR has significantly improved our ability to analyze complex multi-pollutant exposures that better reflect real-world conditions [4]. When coupled with appropriate data visualization techniques and comprehensive research data management practices, these quantitative approaches enable researchers to transform raw environmental measurements into actionable insights for protecting human health and ecological systems.

The continued development and application of these quantitative methods will be essential for addressing emerging environmental challenges and fulfilling regulatory requirements in pharmaceutical development. By adhering to standardized protocols, implementing appropriate statistical frameworks, and effectively communicating results through strategic visualization, environmental researchers can generate reliable evidence to support drug safety assessments, environmental impact evaluations, and sustainability initiatives across the pharmaceutical industry.

Within the rigorous field of environmental analysis, the application of robust quantitative techniques is paramount for generating reliable and actionable evidence. This document delineates the core advantages of quantitative research—objectivity, measurability, and generalizability—and provides detailed application notes and protocols to implement these principles effectively in studies pertaining to environmental monitoring, resource management, and sustainable engineering. The structured approach outlined herein ensures that research findings are not only scientifically sound but also capable of informing policy and industrial practices [12].

Core Advantages and Their Application in Environmental Analysis

The strength of quantitative research lies in its systematic approach to data collection and analysis, which is critical for addressing complex environmental challenges.

2.1 Objectivity and Unbiased Results

Quantitative research is fundamentally built on objectivity. It utilizes numerical data, controlled methods, and standardized processes that minimize personal bias and influence [13]. This is achieved through consistent questions, structured answer options, and an overall measurement framework. In environmental analysis, this translates to data that reflects facts rather than opinions, making it indispensable for contentious areas such as carbon footprint analysis or environmental impact assessments where unbiased evidence is crucial for stakeholder trust and regulatory compliance [12].

2.2 Measurability, Accuracy, and Data Integrity

This advantage refers to the capacity to precisely quantify phenomena and verify the resulting data. Quantitative studies adhere to strict rules that underpin the confidence in the results, including replication, reliability, and data validation [13]. For environmental scientists, this allows for the precise tracking of pollutant concentrations, the modeling of resource consumption, and the verification of emission reduction strategies. Statistical techniques such as regression analysis and multivariate analysis reveal underlying patterns and relationships, supporting hypothesis testing and predictive modeling about environmental cause and effect [13] [12].

2.3 Generalizability of Findings

The ability to generalize findings from a sample to a broader population is a key strength of quantitative research. By employing random sampling, stratified sampling, and other well-planned methods, researchers can create datasets that are representative of large populations, such as a specific watershed, an urban airshed, or a regional ecosystem [13]. This generalizability is essential for developing large-scale environmental policies and management strategies, as it ensures that the insights gained from the study are applicable and reliable for the entire system of interest.
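As an illustration of the stratified sampling mentioned above, the following sketch draws a proportionally allocated random sample from each stratum, so that each habitat type is represented in proportion to its share of the sampling frame. The strata, site identifiers, sampling fraction, and seed are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(units, stratum_of, fraction, seed=0):
    """Draw a simple random sample of `fraction` of the units in each stratum."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    rng = random.Random(seed)          # fixed seed keeps the draw reproducible

    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)

    sample = []
    for name in sorted(strata):
        members = strata[name]
        k = max(1, round(fraction * len(members)))  # at least one per stratum
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical sampling frame: 60 upland and 40 wetland monitoring sites.
frame = [("upland", i) for i in range(60)] + [("wetland", i) for i in range(40)]
chosen = stratified_sample(frame, stratum_of=lambda site: site[0], fraction=0.1)
print(chosen)
```

Proportional allocation is only one option; sampling rare strata more heavily and reweighting afterwards is a common alternative when a small stratum is of particular interest.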

Table 1: Core Advantages of Quantitative Research in Environmental Analysis

| Key Advantage | Core Principle | Application in Environmental Analysis |
|---|---|---|
| Objectivity | Relies on numerical data and controlled methods to reduce personal bias [13]. | Provides unbiased data for environmental impact statements and regulatory compliance. |
| Measurability | Employs statistical analysis to reveal patterns, trends, and predictions [13]. | Tracks pollutant levels, models resource allocation, and forecasts climate change impacts. |
| Generalizability | Uses large sample sizes and probabilistic sampling to infer findings to a larger population [13]. | Enables the scaling of findings from a local study site to a regional or national policy. |

Experimental Protocols for Quantitative Environmental Analysis

The following protocols provide a framework for conducting sound quantitative environmental research.

3.1 Protocol: Lifecycle Assessment (LCA) for Sustainable Engineering

1. Goal and Scope Definition: Clearly define the purpose of the assessment and the system boundaries (e.g., "cradle-to-grave" for a product). Establish the functional unit for all comparisons (e.g., per 1 kg of material produced).

2. Inventory Analysis (LCI): Compile and quantify energy and material inputs, and environmental releases (outputs) for each stage of the product's life cycle. This involves data collection on resource extraction, manufacturing, transportation, use, and disposal.

3. Impact Assessment (LCIA): Evaluate the potential environmental impacts of the inventory items. This includes classifying emissions into impact categories (e.g., global warming potential, acidification, eutrophication) and modeling their respective contributions.

4. Interpretation: Analyze the results, check their sensitivity, and draw conclusions consistent with the goal and scope. This step should identify significant issues and provide actionable information for decision-makers [12].
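The inventory and impact assessment steps can be illustrated with a small calculation for a single impact category. The sketch below multiplies each inventoried emission by a characterization factor and sums the results per life-cycle stage, all expressed per functional unit. The stage names, emission quantities, and characterization factors are placeholders; real factors depend on the impact assessment method selected in the goal and scope step.

```python
def stage_impacts(inventory, factors):
    """Return {stage: impact} where impact = sum(emission x factor)."""
    impacts = {}
    for stage, emissions in inventory.items():
        impacts[stage] = sum(amount * factors[flow]
                             for flow, amount in emissions.items())
    return impacts


# Placeholder characterization factors, kg CO2-eq per kg emitted (assumed).
gwp_factors = {"CO2": 1.0, "CH4": 28.0, "N2O": 265.0}

# Hypothetical inventory, kg emitted per functional unit (1 kg of product).
inventory = {
    "raw materials": {"CO2": 1.20, "CH4": 0.004},
    "manufacturing": {"CO2": 0.60, "CH4": 0.006, "N2O": 0.001},
    "transport": {"CO2": 0.20},
}

by_stage = stage_impacts(inventory, gwp_factors)
total = sum(by_stage.values())
for stage, value in by_stage.items():
    print(f"{stage:>14}: {value:.3f} kg CO2-eq ({value / total:.0%} of total)")
print(f"{'total':>14}: {total:.3f} kg CO2-eq per functional unit")
```

Reporting the share of each stage supports the interpretation step, since it shows where in the life cycle an intervention would have the largest effect.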

3.2 Protocol: Quantitative Survey on Environmental Attitudes and Behaviors

1. Survey Design: Develop a structured questionnaire with closed-ended questions (e.g., Likert scales, multiple-choice) to ensure consistency and quantifiability. Pre-test the survey to identify and rectify ambiguities.

2. Sampling: Define the target population (e.g., residents of a specific region). Use a probability sampling method, such as stratified random sampling, to ensure the sample is representative and supports generalizability.

3. Data Collection: Administer the survey via digital platforms, telephone, or in-person interviews, maintaining consistent procedures across all respondents.

4. Data Analysis: Employ statistical software to analyze the data. Techniques include descriptive statistics (e.g., means, frequencies) to summarize responses and inferential statistics (e.g., chi-square tests, regression) to test hypotheses about relationships between variables, such as the link between demographic factors and recycling habits [13] [14].
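The chi-square test named in the data analysis step can be sketched for the simplest case, a 2 x 2 table relating a demographic factor to a recycling habit. The example computes the Pearson statistic without a continuity correction and obtains the p-value for one degree of freedom from the complementary error function. The survey counts are invented for illustration.

```python
import math


def chi_square_2x2(table):
    """Pearson chi-square test of independence for a 2x2 table.

    Returns (statistic, p_value) with 1 degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = [a + b, c + d]
    col_totals = [a + c, b + d]
    if 0 in row_totals or 0 in col_totals:
        raise ValueError("every row and column needs at least one response")

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected

    # For 1 degree of freedom, P(chi2 > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


# Hypothetical responses: rows = homeowners / renters,
# columns = recycles weekly / does not.
responses = [[30, 20], [20, 30]]
stat, p = chi_square_2x2(responses)
print(f"chi-square = {stat:.2f}, p = {p:.4f}")
```

The approximation behind the test is generally considered unreliable when expected cell counts are small, in which case an exact test is the usual alternative.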

Data Presentation and Visualization Protocols

Effective communication of quantitative findings is achieved through clear tables and diagrams.

4.1 Guidelines for Effective Table Design

Tables are used to present systematic overviews of results, providing a richer understanding of data where exact numerical values are important [15]. A well-constructed table should be clear and concise, meeting standard scientific conventions [14].

- Self-Explanatory: Include a clear title, numbered consecutively. The title should briefly explain what, where, and when the data represents [15].

- Structure: Rows should be ordered in a meaningful sequence, and comparisons are typically placed from left to right. Avoid crowding the table with non-essential data [15].

- Footnotes: Use footnotes for definitions of abbreviations, explanatory notes, and to highlight statistical significance [14] [15].

- Discussion: In the text, do not simply restate the table's contents. Instead, interpret and highlight the key findings and trends that the table reveals [14].

Table 2: Example Structure for Presenting Descriptive Statistics of an Environmental Dataset

| Variable | Mean | Standard Deviation | Median | Range | N |
|---|---|---|---|---|---|
| PM2.5 (μg/m³) | 12.5 | 4.2 | 11.7 | 5.2 - 28.9 | 1,200 |
| Water pH | 7.2 | 0.5 | 7.1 | 6.0 - 8.5 | 850 |
| Household Energy Consumption (kWh/month) | 350 | 120 | 330 | 150 - 900 | 500 |
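The columns of Table 2 can be produced with the Python standard library. The sketch below computes them for a short, made-up series of PM2.5 readings and formats the result as a table row; the readings are not the data behind Table 2.

```python
import statistics


def describe(values):
    """Return mean, sample standard deviation, median, range, and N."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    return {
        "mean": statistics.fmean(values),
        "sd": statistics.stdev(values),      # sample SD (n - 1 denominator)
        "median": statistics.median(values),
        "range": (min(values), max(values)),
        "n": len(values),
    }


# Hypothetical daily PM2.5 readings in µg/m³.
pm25 = [9.8, 11.2, 12.5, 10.4, 14.9, 13.1, 28.9, 8.7, 11.7, 12.0]
summary = describe(pm25)
low, high = summary["range"]
print(f"| PM2.5 (µg/m³) | {summary['mean']:.1f} | {summary['sd']:.1f} | "
      f"{summary['median']:.1f} | {low} - {high} | {summary['n']} |")
```

Reporting the median alongside the mean is useful for pollutant data, which are often right-skewed by occasional high readings such as the 28.9 value in this example.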

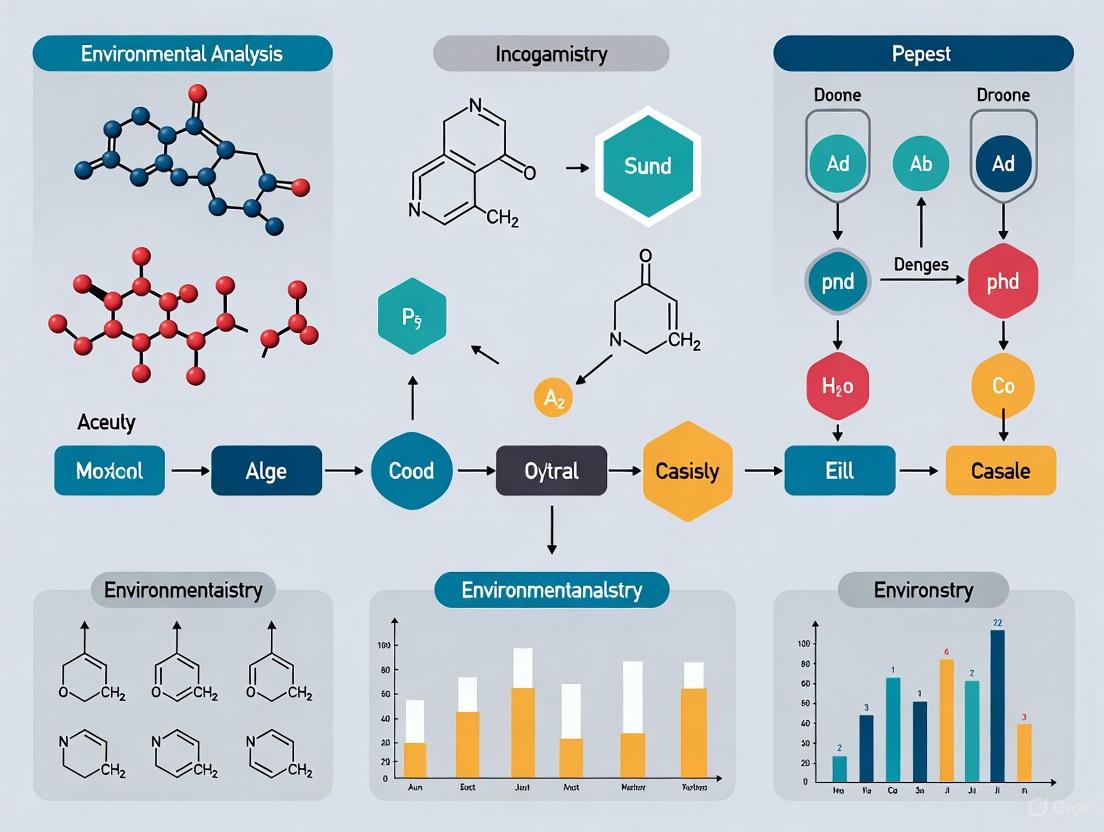

4.2 Experimental Workflow for an Environmental Monitoring Study The following diagram illustrates a generalized workflow for a quantitative environmental monitoring study, from hypothesis formulation to the application of findings.

The Scientist's Toolkit: Essential Reagents and Materials

This section details key resources commonly used in quantitative environmental analysis research.

Table 3: Key Research Reagent Solutions for Environmental Analysis

| Item / Solution | Function / Application |
|---|---|
| Statistical Software (R, Python, SPSS) | Used for data cleaning, statistical analysis (e.g., regression, multivariate analysis), and generating predictive models [13]. |
| Environmental Sampling Kits | Pre-packaged kits for field collection of water, soil, or air samples, ensuring standardized and uncontaminated collection. |
| Certified Reference Materials (CRMs) | Certified samples with known analyte concentrations used to calibrate instruments and validate analytical methods, ensuring data accuracy. |
| Mathematical Modeling Software | Enables the creation of models for sustainable engineering practices, such as optimizing resource allocation or simulating environmental impacts [12]. |
| Digital Data Collection Platforms | Supports large-scale, cost-efficient surveys and automated data gathering across diverse geographical regions [13]. |
| Laboratory Information Management System (LIMS) | Software-based system that tracks samples and associated data to ensure integrity and streamline workflow in analytical laboratories. |

In environmental analysis research, the choice between quantitative and qualitative methods represents a fundamental decision point that shapes all subsequent aspects of study design, data collection, and analytical interpretation. These methodological approaches represent distinct paradigms for investigating environmental phenomena, each with characteristic strengths and limitations. Quantitative research employs numerical data and statistical analysis to objectively measure variables and test predefined hypotheses, answering questions about "how much" or "how many" [16]. In contrast, qualitative research explores subjective experiences, meanings, and contexts through non-numerical data to understand "how" or "why" environmental phenomena occur [16]. Within environmental science, this distinction proves particularly significant when investigating complex socio-ecological systems where both biophysical measurements and human dimensions require integration.

The emerging field of sustainable engineering increasingly relies on quantitative methods for modeling environmental impacts, optimizing resource allocation, and developing decision support systems [12]. Simultaneously, qualitative approaches remain essential for understanding stakeholder perspectives, governance challenges, and behavioral dimensions of environmental problems [17]. Mixed-methods research, which strategically combines both approaches, has gained prominence in environmental studies as it can provide both statistical generalization and contextual depth, potentially canceling out the limitations of either methodology used alone [16].

Key Differences Between Quantitative and Qualitative Research Approaches

The methodological divide between quantitative and qualitative research extends throughout the entire research process, from initial design to final analysis. Understanding these distinctions enables environmental researchers to select the approach most aligned with their specific investigative goals and the nature of their research questions.

Table 1: Fundamental Differences Between Quantitative and Qualitative Research Approaches

| Characteristic | Quantitative Research | Qualitative Research |
|---|---|---|
| Research Aims | Measures variables and tests hypotheses through numerical data [16] | Explores subjective experiences and meanings through non-numerical data [16] |
| Data Collection Methods | Surveys, experiments, compilations of records and information, observations of specific reactions [16] | Interviews, focus groups, ethnographic studies, examination of personal accounts and documents [16] |
| Study Design | Structured, rigid designs; often based on random samples [16] | Flexible, emergent designs; typically uses smaller, context-driven samples [16] |
| Data Analysis | Statistical tools including cross-tabulation, trend analysis, and descriptive statistics [16] | Coding and interpreting narratives; identifying themes and patterns [16] |
| Sample Characteristics | Larger, often randomized samples [16] | Smaller, flexible, non-randomized samples [16] |
| Research Environment | Typically controlled settings [16] | Natural field settings (e.g., participants' homes) [16] |

The epistemological foundations of these approaches differ significantly. Quantitative methods typically embrace a positivist perspective, seeking objective measurement and causal explanation through mathematical representation of environmental phenomena [16]. Qualitative methods generally adopt an interpretivist stance, acknowledging that environmental realities are socially constructed and context-dependent, requiring researchers to interpret meanings and perspectives embedded in specific situations [16]. These philosophical differences manifest practically in how researchers frame questions, interact with subjects, and conceptualize validity.

Selecting the Appropriate Methodological Approach

Alignment with Research Questions

The most critical factor in methodological selection is the nature of the research question itself. Quantitative approaches prove most appropriate when researchers seek to measure environmental variables, establish statistical relationships, test hypotheses, or generalize findings to broader populations. Qualitative approaches excel when investigating complex processes, understanding perspectives of stakeholders, exploring understudied phenomena, or developing contextualized explanations.

Table 2: Exemplary Research Questions in Environmental Analysis

| Quantitative Research Questions | Qualitative Research Questions |

|---|---|

| What is the correlation between industrial effluent concentrations and aquatic biodiversity metrics in a watershed? | How do different stakeholder groups perceive the effectiveness of watershed conservation policies? |

| What percentage of a population adheres to recommended recycling guidelines across demographic segments? | Why do some communities maintain strong environmental traditions while others abandon them despite similar economic conditions? |

| How does the introduction of an emissions trading scheme quantitatively affect air pollution levels over time? | How do cultural factors influence the adoption of sustainable agricultural practices among smallholder farmers? |

The research purpose further guides methodological selection. When environmental research aims to confirm or validate existing theories or measure predefined variables, quantitative methods typically offer greater precision and statistical power. When the goal is to explore complex phenomena, generate new theoretical frameworks, or understand nuanced contextual factors, qualitative approaches provide the necessary flexibility and depth [16]. In environmental policy contexts, quantitative data often demonstrates the scale and distribution of problems, while qualitative data illuminates implementation challenges and social acceptance.

Practical Considerations in Method Selection

Several practical considerations influence the choice between quantitative and qualitative methods in environmental research:

Resource availability: Quantitative studies often require substantial resources for large-scale data collection, specialized equipment for environmental measurements, and statistical expertise, but can analyze large datasets efficiently once collected [16]. Qualitative studies may demand fewer participants but require significant time for data collection through interviews or observations, and specialized expertise in interpretive analysis [16].

Temporal dimensions: Quantitative methods can efficiently track changes over time through repeated measures designs, while qualitative approaches can provide rich understanding of processes and temporal sequences through longitudinal case studies.

Audience expectations: Decision-makers and regulatory bodies often prefer quantitative evidence for its perceived objectivity and generalizability, while communities and implementation teams may value qualitative insights for their contextual relevance and narrative power.

The choice between these approaches is not necessarily binary. Mixed-methods designs strategically combine quantitative and qualitative elements to leverage the strengths of both paradigms [16]. For example, an environmental study might employ quantitative methods to establish statistical relationships between pollution sources and health outcomes, while using qualitative approaches to understand community responses and adaptation strategies.

Quantitative Methods in Environmental Analysis: Protocols and Data Presentation

Experimental Protocols for Quantitative Environmental Analysis

Protocol 1: Systematic Environmental Monitoring and Data Collection

Objective: To establish standardized procedures for collecting quantitative environmental data that ensures consistency, reliability, and statistical validity.

Research Planning Phase

- Define specific, measurable environmental variables and their units of measurement

- Establish sampling framework (random, stratified, or systematic) based on research objectives

- Determine appropriate sample size using statistical power calculations

- Select measurement instruments and validate their precision and accuracy
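As an illustration of the power calculation mentioned in the planning list, the sketch below uses the standard normal-approximation formula for comparing two group means. The function name `sample_size_two_means` and the planning values are hypothetical; a real study would substitute variance estimates from pilot data and may need a t-based or design-specific calculation instead.

```python
import math
from statistics import NormalDist


def sample_size_two_means(sigma, delta, alpha=0.05, power=0.80):
    """Per-group n to detect a mean difference `delta` with a two-sided test.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})^2 * sigma^2 / delta^2
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = 2 * (z_alpha + z_power) ** 2 * sigma ** 2 / delta ** 2
    return math.ceil(n)  # round up so the target power is not undershot


# Hypothetical planning values: SD of 10 units, smallest difference of interest 5 units
n_per_group = sample_size_two_means(sigma=10.0, delta=5.0)
```

Halving the detectable difference roughly quadruples the required sample size, which is why the smallest difference of practical interest should be fixed before fieldwork begins.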

Data Collection Phase

- Implement standardized measurement procedures across all sampling locations

- Record metadata including temporal, spatial, and contextual factors

- Establish quality control protocols including replicate measurements and control samples

- Maintain detailed documentation of all procedures and any deviations

Data Management Phase

- Create structured database with consistent formatting and coding

- Implement data validation checks to identify outliers or errors

- Document all data transformations or calculations

- Preserve raw data while creating analysis-ready datasets
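One possible form of the validation check in the data management list is Tukey's interquartile-range rule, sketched below. The function name `flag_outliers_iqr`, the multiplier `k=1.5`, and the pH readings are assumptions for illustration; flagged values still need expert review before exclusion, since an extreme reading may be a genuine event.

```python
from statistics import quantiles


def flag_outliers_iqr(values, k=1.5):
    """Return (lower_fence, upper_fence, flagged) using Tukey's IQR rule."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles (default 'exclusive' method)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    flagged = [v for v in values if v < lower or v > upper]
    return lower, upper, flagged


# Hypothetical pH readings; 12.4 mimics a transcription or sensor error
raw_ph = [5.8, 6.1, 6.3, 6.5, 6.6, 6.8, 6.9, 7.0, 7.1, 12.4]
lower, upper, flagged = flag_outliers_iqr(raw_ph)

# The raw list is left untouched; the analysis-ready copy drops flagged values
analysis_ph = [v for v in raw_ph if v not in flagged]
```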

Protocol 2: Quantitative Analysis of Environmental Correlations

Objective: To identify and measure statistical relationships between environmental variables through systematic data analysis.

Data Preparation

- Screen data for missing values, outliers, and distributional characteristics

- Determine appropriate data transformations for non-normal distributions

- Create descriptive statistics for all variables (mean, median, standard deviation, range) [14]

Statistical Analysis Selection

- Select analytical techniques based on research questions and data characteristics

- For continuous variables: correlation analysis, regression modeling

- For categorical comparisons: t-tests, ANOVA, chi-square tests

- For complex multivariate relationships: factor analysis, multivariate regression

Interpretation and Validation

- Interpret statistical significance in context of practical importance

- Validate model assumptions through residual analysis

- Conduct sensitivity analyses to test robustness of findings

- Triangulate results with complementary qualitative data when possible

Quantitative Data Presentation Standards

Effective presentation of quantitative environmental data requires careful consideration of both tabular and graphical formats to communicate findings clearly and accurately.

Table 3: Descriptive Statistics for Environmental Monitoring Data

| Variable | Mean | Median | Standard Deviation | Variance | Range | Skewness | Kurtosis | N |

|---|---|---|---|---|---|---|---|---|

| PM2.5 (μg/m³) | 24.56 | 22.10 | 8.811 | 77.635 | 35 (8-43) | 0.341 | -0.709 | 145 |

| Water pH | 6.89 | 6.95 | 0.433 | 0.187 | 2.1 (5.8-7.9) | -0.218 | -0.918 | 89 |

| Soil Lead (mg/kg) | 142.33 | 118.75 | 67.234 | 4520.415 | 285 (25-310) | 1.018 | 0.885 | 203 |

| Biodiversity Index | 0.67 | 0.69 | 0.156 | 0.024 | 0.58 (0.32-0.90) | -0.105 | -0.642 | 56 |

When creating tables for quantitative environmental data, researchers should follow established principles of effective table design: number tables consecutively, provide clear brief titles, ensure column and row headings are unambiguous, present data in logical order, and include units of measurement for all variables [18] [14]. For quantitative data with natural ordering, presentation should follow that order (e.g., size, chronological sequence, or geographical logic) rather than alphabetical arrangement [18].
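The columns of a descriptive-statistics table can be computed with a short helper like the one below. This is a sketch only: the function name `describe` is hypothetical, and skewness and kurtosis use simple moment-based definitions (excess kurtosis, so a normal distribution scores 0). The source does not state which estimator produced the tabulated values, and statistical packages often apply small-sample corrections that give slightly different numbers.

```python
from statistics import mean, median, stdev, variance


def describe(values):
    """Summary statistics in the layout of a descriptive-statistics table."""
    n = len(values)
    m = mean(values)
    # Central moments for moment-based skewness and excess kurtosis
    m2 = sum((v - m) ** 2 for v in values) / n
    m3 = sum((v - m) ** 3 for v in values) / n
    m4 = sum((v - m) ** 4 for v in values) / n
    return {
        "n": n,
        "mean": m,
        "median": median(values),
        "sd": stdev(values),           # sample SD (n - 1 denominator)
        "variance": variance(values),  # sample variance, equal to sd ** 2
        "range": max(values) - min(values),
        "skewness": m3 / m2 ** 1.5,
        "kurtosis": m4 / m2 ** 2 - 3,  # excess kurtosis
    }
```

A quick consistency check on any such table is that the variance column equals the square of the standard deviation column, up to rounding.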

Graphical Presentation of Quantitative Environmental Data

Visual representation of quantitative data enhances comprehension of patterns, trends, and relationships in environmental datasets. Different graphical formats serve distinct communicative purposes:

Histograms display frequency distributions of continuous environmental variables (e.g., pollutant concentrations, temperature measurements) using contiguous bars that represent class intervals [19]. The area of each bar corresponds to the frequency of observations within that range, providing immediate visual understanding of distribution shape, central tendency, and variability [18].
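The class-interval counts behind a histogram can be sketched as follows, assuming equal-width intervals (in which case bar height and bar area are proportional). The function name `histogram_counts` and the choice of bin count are hypothetical; plotting libraries offer their own binning rules.

```python
def histogram_counts(values, n_bins):
    """Count observations in `n_bins` equal-width, contiguous class intervals."""
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("all values are identical; intervals are undefined")
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for v in values:
        # The maximum value is placed in the last interval rather than a new one
        idx = min(int((v - lo) / width), n_bins - 1)
        counts[idx] += 1
    edges = [lo + i * width for i in range(n_bins + 1)]
    return edges, counts
```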

Line diagrams effectively illustrate temporal trends in environmental parameters, showing changes in metrics such as air quality indices, species populations, or resource consumption over time [18]. These are essentially frequency polygons where class intervals represent temporal units (months, years, decades).

Scatter plots visualize correlations between two continuous environmental variables, such as the relationship between industrial activity and water quality parameters [18]. When points concentrate around a line or curve, they indicate a relationship between the variables, with correlation coefficients quantifying the strength and direction of association.

Frequency polygons represent distributions through points connected by straight lines, particularly useful for comparing multiple distributions simultaneously (e.g., pollution levels across different regions or time periods) [19].

For all graphical presentations, researchers must ensure proper labeling, appropriate scaling, and clear legends to prevent misinterpretation [18]. Visualizations should be self-explanatory with informative titles and axis labels that include units of measurement [18].

Qualitative Methods in Environmental Analysis: Protocols and Approaches

Methodological Protocols for Qualitative Environmental Research

Protocol 3: Conducting Qualitative Interviews for Stakeholder Analysis

Objective: To systematically collect rich, contextual data on perspectives, experiences, and values related to environmental issues.

Interview Protocol Development

- Develop semi-structured interview guide with open-ended questions

- Sequence questions to move from general to specific topics

- Include probing questions to elicit detailed responses

- Pilot test and refine questions for clarity and relevance

Data Collection Procedures

- Select participants through purposive sampling to ensure diverse perspectives

- Conduct interviews in settings comfortable for participants

- Record interviews with permission and take supplementary notes

- Maintain reflexivity by documenting researcher impressions and contextual factors

Data Management and Documentation

- Transcribe interviews verbatim while preserving conversational features

- Anonymize data to protect participant confidentiality

- Create system for tracking interviews and supporting materials

- Establish audit trail documenting methodological decisions

Protocol 4: Qualitative Data Analysis through Thematic Coding

Objective: To identify, analyze, and report patterns (themes) within qualitative environmental data.

Data Familiarization

- Read and re-read transcripts to gain immersion and intimate familiarity

- Note initial observations, patterns, and potential themes

- Document analytical memos capturing early interpretations

Systematic Coding

- Generate initial codes systematically across entire dataset

- Organize data relevant to each code while preserving context

- Refine codes through iterative review and comparison

- Collate codes into potential themes gathering all relevant data

Theme Development and Refinement

- Review candidate themes in relation to coded extracts and entire dataset

- Develop thematic map of analysis and define essence of each theme

- Identify compelling extract examples and analyze within and across themes

- Relate thematic analysis back to research question and literature

Analytical Rigor in Qualitative Environmental Research

Ensuring methodological rigor in qualitative environmental studies involves addressing credibility, transferability, dependability, and confirmability through specific techniques:

Triangulation uses multiple data sources, methods, investigators, or theories to cross-validate findings and reduce the risk of systematic biases [17].

Member checking returns preliminary findings to participants to verify accuracy and interpretive validity, strengthening the credibility of results.

Thick description provides detailed accounts of contexts and phenomena to allow readers to assess transferability to other settings.

Transparent documentation of methodological decisions, data collection processes, and analytical procedures creates an audit trail that supports dependability and confirmability.

Environmental researchers using qualitative methods should explicitly address their positionality and reflexivity, acknowledging how their backgrounds, assumptions, and relationships to the research topic might influence the research process [17]. This transparency enhances the integrity and trustworthiness of qualitative findings.

Integrated and Mixed-Method Approaches in Environmental Research

Mixed-Method Designs for Complex Environmental Questions

Many complex environmental problems benefit from methodological integration, where quantitative and qualitative approaches complement each other to provide more comprehensive understanding. Three common mixed-method designs in environmental research include:

Convergent parallel design: Quantitative and qualitative data are collected simultaneously but independently, then merged during interpretation to develop complete understanding of the research problem.

Explanatory sequential design: Quantitative methods identify patterns or relationships, followed by qualitative methods to explain or contextualize those patterns.

Exploratory sequential design: Qualitative investigation explores a phenomenon and identifies key variables, informing subsequent quantitative study that tests relationships in larger samples.

Q-methodology is a distinctive mixed-method approach increasingly applied in environmental sustainability research [17]. It combines qualitative depth with quantitative analytical rigor by systematically studying human subjectivity through factor analysis of individual viewpoints. In environmental applications, Q-methodology helps identify shared perspectives on sustainability issues, natural resource management conflicts, or environmental governance preferences across different stakeholder groups [17].
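A distinguishing feature of this kind of analysis is that participants, rather than statements, are correlated with one another before factors are extracted. The sketch below shows only that first step, using hypothetical rankings of five statements on a -2 to +2 scale; the names `pearson`, `qsort_correlations`, and the stakeholder labels are illustrative, and the factor extraction and rotation that follow in a full study are not shown.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def qsort_correlations(q_sorts):
    """Correlate participants pairwise across their statement rankings."""
    names = list(q_sorts)
    return {
        (a, b): pearson(q_sorts[a], q_sorts[b])
        for i, a in enumerate(names)
        for b in names[i + 1:]
    }


# Hypothetical Q-sorts: each list ranks the same five statements
q_sorts = {
    "farmer": [2, 1, 0, -1, -2],
    "regulator": [2, 0, 1, -1, -2],
    "developer": [-2, -1, 0, 1, 2],
}
r = qsort_correlations(q_sorts)
```

High positive correlations (here farmer and regulator) suggest a shared viewpoint, while strongly negative ones (farmer and developer) indicate opposing perspectives.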

Decision Framework for Methodological Selection

Environmental researchers can apply a systematic decision framework when selecting appropriate methodological approaches:

Clarify the research purpose: Is the goal exploration, description, explanation, prediction, or intervention?

Identify the knowledge gap: Does the research require breadth and generalization or depth and contextualization?

Consider resource constraints: What are the limitations regarding time, funding, expertise, and access?

Anticipate analytical requirements: What types of evidence will be most convincing to intended audiences?

Evaluate ethical dimensions: How will the methodological approach affect participants and communities?

This decision process should recognize that methodological choices are not permanent; initial qualitative exploration often informs subsequent quantitative verification, while unexpected quantitative findings may necessitate qualitative investigation to explain mechanisms or contextual factors.

Table 4: Research Tools and Methods for Environmental Analysis

| Research Tool | Primary Function | Application Examples |

|---|---|---|

| Geographic Information Systems (GIS) | Spatial data analysis and visualization | Mapping pollution distribution, land use changes, habitat fragmentation |

| Remote Sensing Platforms | Large-scale environmental monitoring | Tracking deforestation, urban expansion, water body changes |

| Environmental Sensors and Loggers | Continuous automated data collection | Monitoring air/water quality parameters, microclimate conditions |

| Statistical Analysis Software | Quantitative data analysis and modeling | Identifying trends, testing relationships, predicting environmental outcomes |

| CAQDAS (Computer-Assisted Qualitative Data Analysis Software) | Qualitative data organization and analysis | Coding interview transcripts, developing thematic frameworks |

| Stable Isotope Analysis | Tracing biogeochemical pathways | Identifying pollution sources, studying food webs, water cycling |

| Environmental DNA (eDNA) Methods | Biodiversity assessment through genetic material | Detecting species presence, measuring biodiversity, monitoring invasive species |

| Life Cycle Assessment Tools | Quantifying environmental impacts across product lifecycles | Comparing sustainability of materials, processes, or products |

The quantitative-qualitative dichotomy in environmental research represents not opposing camps but complementary approaches to understanding complex socio-ecological systems. Quantitative methods provide the precision, generalizability, and statistical power needed to measure environmental parameters, test interventions, and establish empirical relationships at scale. Qualitative approaches offer the contextual depth, conceptual richness, and phenomenological understanding necessary to interpret environmental behaviors, policies, and perceptions in real-world settings.

The most robust environmental research increasingly transcends simplistic methodological divisions, employing integrated approaches that combine numerical measurement with interpretive understanding. This integration acknowledges that environmental challenges exist simultaneously as biophysical phenomena measurable through scientific instruments and as social constructs shaped by human values, institutions, and experiences. Future methodological innovation in environmental research will likely focus not on privileging one approach over the other, but on developing more sophisticated frameworks for their strategic combination.

Environmental researchers stand to benefit from methodological flexibility—selecting and adapting approaches based on the specific nature of their research questions rather than disciplinary convention or technical familiarity. As environmental problems grow increasingly complex and interdisciplinary, the ability to strategically employ both quantitative and qualitative methods, either sequentially or in parallel, will become an essential competency for generating the comprehensive knowledge needed to address sustainability challenges.

Essential Statistical Concepts for Environmental Data Analysis

Environmental science is a multidisciplinary field that relies on quantitative techniques to understand complex natural systems, address sustainability concerns, and develop evidence-based solutions to environmental problems. The core of this approach lies in using statistical methods to transform raw environmental data into actionable knowledge, providing a reliable representation of reality to reduce uncertainties and inform policy-making [20] [2]. This involves a rigorous process of collecting, summarizing, presenting, and analyzing sample data to draw valid conclusions about population characteristics and make reasonable decisions [21]. The ability to understand and critically evaluate this statistical information—a skill known as statistical literacy—is fundamental for researchers, scientists, and professionals engaged in environmental analysis and drug development, enabling informed decisions and effective sustainability measures [22].

Foundational Statistical Concepts

Populations, Samples, and Data Types

In environmental data analysis, a population represents the complete collection of all elements or items of interest in a particular study, while a sample is a subset of that population, collected to represent the whole [21]. For instance, when studying groundwater contamination, the population might be all groundwater resources in a region, whereas samples would be specific water collections from multiple monitoring wells. A parameter is an unknown characteristic of the population (e.g., the true mean concentration of a pollutant), while a statistic is a function of sample observations used to estimate that parameter [21].

Environmental data can be classified into different types:

- Numerical data: Measurements like pollutant concentrations, temperature, or pH levels.

- Categorical data: Classifications such as soil type, land use category, or species presence/absence.

- Time series data: Measurements collected sequentially over time, common in climate and air quality monitoring.

- Spatial data: Georeferenced information used in geographical analyses.

Descriptive versus Inferential Statistics

Descriptive statistics quantitatively describe or summarize features of a dataset through measures of central tendency (mean, median, mode), measures of dispersion (range, variance, standard deviation), and graphical representations (histograms, scatter plots, box plots) [21]. These methods help researchers understand the basic patterns and distribution of their environmental data before proceeding to more complex analyses.

Inferential statistics employ probability theory to deduce properties of a population from sample data [21]. This process includes:

- Estimation: Calculating point estimates (single values) or interval estimates (ranges) for population parameters.

- Hypothesis testing: Making decisions about population parameters using sample statistics.

The transition from descriptive to inferential statistics enables environmental scientists to make predictions and draw conclusions that extend beyond their immediate data, which is particularly valuable when studying large environmental systems where investigating each member is impractical or expensive [21].
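The difference between a point estimate and an interval estimate can be made concrete with a confidence interval for a mean. The sketch below assumes a large-sample normal approximation; the function name `mean_confidence_interval` is hypothetical, and for small samples a t-distribution quantile would give a wider, more appropriate interval.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(sample, confidence=0.95):
    """Point estimate and normal-approximation interval for the population mean."""
    m = mean(sample)                                # point estimate
    se = stdev(sample) / math.sqrt(len(sample))     # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return m, (m - z * se, m + z * se)


# Hypothetical concentrations from five monitoring wells
estimate, (low, high) = mean_confidence_interval([20, 22, 24, 26, 28])
```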

Table 1: Key Statistical Concepts in Environmental Data Analysis

| Concept | Definition | Environmental Application Example |

|---|---|---|

| Population | Complete collection of elements of interest | All trees in a forest ecosystem |

| Sample | Subset of the population intended to be representative of the whole | Selected trees measured for growth rate |

| Parameter | Unknown population characteristic | True mean height of all trees in the forest |

| Statistic | Function of sample observations | Calculated mean height from sampled trees |

| Descriptive Statistics | Methods for summarizing and describing data | Calculating average air quality index values |

| Inferential Statistics | Methods for making conclusions about populations based on samples | Estimating total forest carbon storage from sample plots |

Core Statistical Tests and Their Applications

Hypothesis Testing Framework

Hypothesis testing is a formal procedure for investigating ideas about population parameters using sample statistics [21]. In environmental science, this process begins with defining a null hypothesis (H₀), which represents a default position or status quo (e.g., "the new pollutant has no effect on fish mortality"), and an alternative hypothesis (H₁), which contradicts the null hypothesis [21].

The testing procedure involves:

- Selecting an appropriate test statistic based on the data type and research question.

- Determining a critical region (rejection region) based on the chosen significance level (α), which represents the probability of rejecting a true null hypothesis (Type I error) [21].

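- Calculating the p-value: the probability, assuming the null hypothesis is true, of obtaining a test statistic at least as extreme as the one observed. Equivalently, it is the smallest significance level at which the null hypothesis would be rejected [21].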

Environmental researchers must also be aware of Type II error (β), which occurs when a false null hypothesis is not rejected [21]. The probability of correctly rejecting a false null hypothesis is known as the power of the test (1-β) [21].
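The quantities in this framework (test statistic, p-value, significance level, and power) can be tied together in a few lines. The sketch below uses a one-sample z-test under a large-sample normal approximation; the function names, the summary values, and the reference value of 25 are hypothetical, and the power formula ignores the negligible contribution of the far rejection tail.

```python
import math
from statistics import NormalDist


def one_sample_z_test(sample_mean, sample_sd, n, mu0, alpha=0.05):
    """Two-sided test of H0: population mean equals mu0."""
    se = sample_sd / math.sqrt(n)
    z = (sample_mean - mu0) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value, p_value < alpha  # True means H0 is rejected


def approx_power(delta, sample_sd, n, alpha=0.05):
    """Approximate power (1 - beta) to detect a true shift of size delta."""
    se = sample_sd / math.sqrt(n)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(abs(delta) / se - z_crit)


# Hypothetical summary data compared against a hypothetical reference value of 25
z, p_value, reject = one_sample_z_test(24.56, 8.811, 145, mu0=25.0)
power = approx_power(delta=2.0, sample_sd=8.811, n=145)
```

In this example the null hypothesis is not rejected, which does not prove it true: the power figure indicates how likely the study was to detect a shift of the stated size had one existed.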

Parametric and Non-Parametric Tests

Environmental researchers select statistical tests based on their data characteristics and research questions. Parametric tests make assumptions about the underlying population distribution (e.g., normality), while non-parametric tests make fewer assumptions and are useful when data are incomplete, contain many missing values, or are not normally distributed [21] [7].

Figure: Statistical Test Selection Workflow

Table 2: Common Statistical Tests in Environmental Research

| Test Type | Specific Test | Application | Data Requirements | Environmental Example |

|---|---|---|---|---|

| Parametric | One-sample t-test | Compare sample mean to known value | Continuous, normal distribution | Compare measured pollutant levels to regulatory standards |

| Parametric | Two-sample t-test | Compare means of two independent groups | Continuous, normal distribution, equal variances | Compare species richness in protected vs. developed areas |

| Parametric | ANOVA | Compare means of three or more groups | Continuous, normal distribution, homogeneity of variance | Test plant growth across multiple fertilizer treatments |

| Parametric | Linear Regression | Model relationship between variables | Continuous, linear relationship, normal errors | Predict ozone formation based on precursor pollutants |

| Parametric | Pearson Correlation | Assess linear relationship between two variables | Continuous, normal distribution | Examine relationship between temperature and species abundance |

| Non-Parametric | Mann-Whitney U | Compare two independent groups | Ordinal or continuous, non-normal | Compare sediment toxicity between two sites with small samples |

| Non-Parametric | Kruskal-Wallis | Compare three or more independent groups | Ordinal or continuous, non-normal | Test water quality differences across multiple watersheds |

| Non-Parametric | Spearman Correlation | Assess monotonic relationship | Ordinal or continuous, non-normal | Rank correlation between industrial activity and pollution levels |

Non-parametric tests such as the Mann-Kendall trend test are particularly valuable in environmental science for analyzing the large datasets produced by monitoring programs, because they do not assume a particular data distribution and are less sensitive to outliers [7].
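A minimal sketch of the Mann-Kendall test follows. It counts concordant minus discordant pairs (the S statistic) and converts S to a z-score with the usual continuity correction. The function name `mann_kendall` is hypothetical, and this version assumes no tied values and no serial correlation; monitoring data with ties or autocorrelation need the corrected variance or a seasonal variant.

```python
import math
from statistics import NormalDist


def mann_kendall(series):
    """Return (S, z, two-sided p-value) for a monotonic trend test."""
    n = len(series)
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = series[j] - series[i]
            s += (diff > 0) - (diff < 0)  # +1 if increasing, -1 if decreasing
    var_s = n * (n - 1) * (2 * n + 5) / 18  # variance of S without tie correction
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return s, z, p_value
```

Because only the sign of each pairwise difference is used, a single extreme reading changes S by at most n - 1, which is the source of the test's robustness to outliers.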

Advanced Analytical Approaches

Regression Analysis and Environmental Modeling

Regression analysis is a powerful statistical tool for examining relationships between environmental variables, enabling researchers to model the impact of various factors on environmental outcomes [23] [22]. Environmental applications range from simple linear models predicting deforestation rates based on economic drivers to complex multivariate approaches that account for multiple interacting factors.

Advanced regression techniques commonly used in environmental data analysis include:

- Multiple linear regression: Models the relationship between multiple predictor variables and a continuous response variable.

- Logistic regression: Predicts categorical outcomes, such as species presence/absence based on habitat characteristics.

- Nonlinear regression: Applies when relationships between variables follow nonlinear patterns common in ecological systems.

- Time series analysis: Accounts for temporal dependencies in environmental data collected over time [23].

These methods allow researchers to quantify effect sizes, identify significant drivers of environmental change, and generate predictive models for scenario planning and risk assessment.
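The simplest member of this family, ordinary least squares with one predictor, can be written out directly to show where the slope (effect size) and R² come from. The function name `fit_line` is hypothetical and the sketch omits standard errors and assumption checks, which a statistical package would normally supply.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x; returns R^2 as well."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, intercept, 1 - ss_res / ss_tot
```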

Spatial and Temporal Analysis

Environmental data often contain spatial and temporal dependencies that require specialized analytical approaches. Spatial statistics address the geographic component of environmental data through techniques such as spatial interpolation, spatial weighting, and spatial clustering [23]. These methods help identify patterns, hotspots, and spatial relationships that might not be apparent through non-spatial analyses.
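Inverse distance weighting (IDW) is one simple form of spatial interpolation and is sketched below as an example. The function name `idw`, the exponent of 2, and the station coordinates are assumptions; geostatistical methods such as kriging additionally model spatial covariance and provide uncertainty estimates.

```python
import math


def idw(stations, x, y, power=2):
    """Interpolate a value at (x, y) from a list of (x, y, value) stations."""
    num = den = 0.0
    for sx, sy, value in stations:
        d = math.hypot(x - sx, y - sy)
        if d == 0:
            return value  # exactly at a station: use its measurement
        w = 1 / d ** power  # nearer stations receive larger weights
        num += w * value
        den += w
    return num / den


# Hypothetical stations on a line, with measured concentrations 10 and 20
stations = [(0.0, 0.0, 10.0), (2.0, 0.0, 20.0)]
midpoint_estimate = idw(stations, 1.0, 0.0)
```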

Temporal analysis techniques, including time series analysis and forecasting, are essential for understanding trends, cycles, and seasonal patterns in environmental parameters such as air quality measurements, water quality indicators, and climate variables [23]. These approaches enable researchers to separate signal from noise in long-term monitoring data and make informed projections about future environmental conditions.
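A moving average is among the simplest ways to separate a slow trend from short-term noise. The sketch below is illustrative only: the function name `moving_average` is hypothetical, and a window matching the seasonal period (for example, 12 for monthly data) is a common but not universal choice.

```python
def moving_average(series, window):
    """Unweighted moving average; output is shorter than input by window - 1."""
    if window < 1 or window > len(series):
        raise ValueError("window must be between 1 and len(series)")
    return [
        sum(series[i:i + window]) / window
        for i in range(len(series) - window + 1)
    ]
```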

Experimental Design and Data Collection Protocols

Sampling Design for Environmental Studies

Proper sampling design is crucial for generating reliable environmental data. The sampling approach must consider representativeness, sample size, and potential biases to ensure valid statistical inferences [22]. Common environmental sampling designs include:

- Simple random sampling: Each potential sampling unit has an equal probability of selection.

- Stratified random sampling: The population is divided into homogeneous subgroups (strata), with random sampling within each stratum.

- Systematic sampling: Samples are collected at regular intervals in space or time.

- Cluster sampling: Intact groups (clusters) are randomly selected, and all units within clusters are sampled.

The choice of sampling design depends on research objectives, population characteristics, and practical constraints such as accessibility and resources. Environmental researchers must also carefully consider sample size determination to ensure adequate statistical power while optimizing resource allocation.
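As a sketch of how one of these designs might be implemented, the example below draws a stratified random sample with proportional allocation. The function name `stratified_sample`, the stratum names, and the fixed seed are hypothetical; the seed is used only to make the draw reproducible for documentation purposes.

```python
import random


def stratified_sample(strata, total_n, seed=0):
    """Draw a simple random sample within each stratum, sized by stratum share."""
    rng = random.Random(seed)
    population_size = sum(len(units) for units in strata.values())
    sample = {}
    for name, units in strata.items():
        # Proportional allocation; rounding can shift the total by a unit or two
        n_h = round(total_n * len(units) / population_size)
        sample[name] = rng.sample(units, n_h)
    return sample


# Hypothetical sampling frame: plot IDs grouped by land-cover stratum
strata = {
    "forest": [f"F{i}" for i in range(60)],
    "wetland": [f"W{i}" for i in range(30)],
    "urban": [f"U{i}" for i in range(10)],
}
plots = stratified_sample(strata, total_n=10)
```

Proportional allocation keeps each stratum's share of the sample equal to its share of the population; when a small stratum is of special interest, deliberate oversampling with later reweighting is a common alternative.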

Figure: Environmental Study Design Process

Quality Assurance and Quality Control (QA/QC)

Implementing robust QA/QC protocols is essential for generating reliable environmental data. Key components include:

- Field blanks: Samples containing analyte-free media exposed to sampling conditions to detect contamination.

- Duplicate samples: Paired samples collected simultaneously to assess measurement precision.

- Standard reference materials: Samples with known analyte concentrations to evaluate analytical accuracy.

- Calibration curves: Relationships between instrument response and known standard concentrations.

- Detection limits: Calculations of the minimum detectable concentration for each analytical method.

Documenting and reporting QA/QC results allows researchers to quantify and communicate measurement uncertainty, supporting appropriate interpretation of environmental data.
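Several of these QA/QC quantities reduce to short calculations, sketched below. The function names and numbers are hypothetical, and the conventions shown are assumptions rather than requirements of any particular program: relative percent difference (RPD) for duplicate precision, a least-squares calibration line, and a detection limit of 3.3 times the blank standard deviation divided by the calibration slope, which is one widely used convention.

```python
def relative_percent_difference(a, b):
    """Precision of duplicate samples, as a percentage of their mean."""
    return abs(a - b) / ((a + b) / 2) * 100


def calibration_line(concentrations, responses):
    """Least-squares slope and intercept of instrument response vs. concentration."""
    n = len(concentrations)
    mx, my = sum(concentrations) / n, sum(responses) / n
    sxx = sum((c - mx) ** 2 for c in concentrations)
    sxy = sum((c - mx) * (r - my) for c, r in zip(concentrations, responses))
    slope = sxy / sxx
    return slope, my - slope * mx


def detection_limit(sigma_blank, slope, factor=3.3):
    """Detection limit from blank variability and calibration sensitivity."""
    return factor * sigma_blank / slope


# Hypothetical standards (concentration units) and instrument responses
slope, intercept = calibration_line([0, 1, 2, 4], [0.1, 2.1, 4.1, 8.1])
unknown_concentration = (5.1 - intercept) / slope  # back-calculate a sample reading
```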

Statistical Software and Computing Tools

Environmental data analysts utilize various software tools for statistical analysis and data management:

- R: An open-source programming language and environment for statistical computing and graphics, particularly strong for environmental applications with specialized packages for ecological statistics, spatial analysis, and hydrology.

- Python: A general-purpose programming language with extensive data analysis libraries (pandas, NumPy, SciPy) and specialized environmental packages.

- GIS software: Geographic Information Systems for managing, analyzing, and visualizing spatial environmental data.

- Supercomputing resources: High-performance computing facilities, such as the OU Supercomputing Center for Education and Research, enable complex environmental modeling and large dataset analysis [11].

Several specialized data repositories support environmental research by providing access to quality-controlled datasets:

- DataONE (Data Observation Network for Earth): A distributed framework and cyberinfrastructure for open, persistent, secure access to Earth observational data [11].

- Comparative Toxicogenomics Database (CTD): Illuminates how environmental chemicals affect human health [11].

- Chemical Entities of Biological Interest (ChEBI): A freely available dictionary of molecular entities focused on 'small' chemical compounds [11].

- NIEHS Environmental Genome Project: Examines relationships between environmental exposures, inter-individual sequence variation in human genes, and disease risk [11].

Table 3: Essential Research Tools for Environmental Data Analysis

| Tool Category | Specific Tool/Resource | Primary Function | Application in Environmental Research |
|---|---|---|---|
| Statistical Software | R | Statistical computing and graphics | Data cleaning, analysis, visualization; specialized environmental packages |
| Statistical Software | Python with scientific libraries (pandas, SciPy) | Data manipulation and analysis | Automated data processing, machine learning applications |
| Spatial Analysis | GIS (Geographic Information Systems) | Spatial data management and analysis | Mapping environmental variables, spatial pattern analysis |
| Computing Resources | Supercomputing Centers | High-performance computing | Complex environmental models, large dataset processing |
| Data Repositories | DataONE | Earth observational data access | Climate, ecological, and environmental data discovery |
| Data Repositories | Comparative Toxicogenomics Database | Chemical-biological interactions | Understanding environmental chemical effects on health |
| Specialized Databases | Chemical Entities of Biological Interest (ChEBI) | Chemical compound dictionary | Identifying molecular entities in environmental samples |
| Research Protocols | Springer Protocols, Protocols.io | Reproducible laboratory methods | Standardized procedures for environmental sampling and analysis |

Mastering essential statistical concepts is fundamental for effective environmental data analysis. From basic descriptive statistics to advanced spatial and temporal modeling, these quantitative techniques provide the foundation for evidence-based environmental science and sustainability measurement. The increasing complexity of environmental challenges demands rigorous application of statistical methods, proper experimental design, and appropriate interpretation of results within environmental contexts. By developing statistical literacy and applying these concepts critically, environmental researchers, scientists, and drug development professionals can contribute meaningfully to understanding and addressing pressing environmental issues, from climate change and pollution to conservation and public health. Future directions in environmental statistics will likely involve continued development of methods for handling complex, high-dimensional datasets and integrating diverse data sources to better understand interconnected environmental systems.

Key Quantitative Methods and Their Real-World Applications in Research and Industry

Ultra-Fast Liquid Chromatography (UFLC) represents a significant technological advancement in analytical chemistry, offering dramatically reduced analysis times while maintaining high resolution and sensitivity. This technique is particularly valuable in environmental analysis, where researchers often need to detect and quantify trace-level contaminants in complex matrices quickly and reliably. UFLC achieves this performance by using stationary phases with small particle sizes (typically 1.5-3.0 μm) packed in shorter columns (30-50 mm) with reduced internal diameter (~2.0 mm), operated at elevated flow velocities and backpressures. The relationship between particle size and performance follows the van Deemter equation: decreasing the particle size significantly reduces the minimum plate height, allowing operation at higher flow velocities without sacrificing efficiency [24].

The application of UFLC to environmental monitoring provides researchers with the capability to conduct high-throughput screening of multiple samples, enabling more comprehensive environmental assessments and faster response to contamination events. As environmental concerns continue to grow, the implementation of faster, more efficient analytical techniques like UFLC becomes increasingly crucial for assessing ecosystem health and human exposure risks.

Theoretical Principles and Instrumentation

Fundamental Separation Principles

The enhanced performance of UFLC stems from fundamental chromatographic principles described by the van Deemter equation, which relates plate height (H) to the linear velocity of the mobile phase (u): H = A + B/u + Cu [24]. In this equation, A, B, and C represent the coefficients for eddy diffusion, longitudinal diffusion, and resistance to mass transfer, respectively. The A term is proportional to the particle diameter (dp), while the C term is proportional to dp². Therefore, reducing particle size significantly decreases the minimum plate height and shifts the optimum to higher velocities, enabling faster separations without loss of efficiency [24].
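
To make the particle size argument concrete, the sketch below evaluates the van Deemter equation, writing the linear velocity as u. Setting dH/du = 0 gives the optimum velocity u_opt = √(B/C) and the minimum plate height H_min = A + 2√(BC). The coefficient values and the helper `coefficients` are hypothetical and in arbitrary units; they encode only the stated proportionalities (A ∝ dp, C ∝ dp², B held constant).

```python
import math


def plate_height(u, a, b, c):
    """van Deemter plate height H = A + B/u + C*u for linear velocity u > 0."""
    return a + b / u + c * u


def optimum(a, b, c):
    """Velocity minimizing H (from dH/du = 0) and the plate height at that velocity."""
    u_opt = math.sqrt(b / c)
    h_min = a + 2 * math.sqrt(b * c)
    return u_opt, h_min


def coefficients(dp, a_scale=1.5, b=6.0, c_scale=0.05):
    """Hypothetical coefficients with A proportional to dp and C proportional to dp**2."""
    return a_scale * dp, b, c_scale * dp ** 2


for dp in (3.0, 1.5):
    u_opt, h_min = optimum(*coefficients(dp))
    print(f"dp = {dp} um: u_opt = {u_opt:.2f}, H_min = {h_min:.2f}")
```

Under these simplified assumptions, halving dp doubles the optimum velocity and halves the minimum plate height, which is the behavior the paragraph above describes qualitatively.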

The backpressure generated across the column is inversely proportional to the square of the particle size, creating practical limitations for further particle size reduction. When particle size is halved, pressure increases by a factor of four, making it challenging to use longer columns for increased resolution without specialized high-pressure hardware [24]. This relationship necessitates careful balancing of separation requirements with instrument capabilities when designing UFLC methods.
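
The pressure penalty can be estimated with a one-line scaling rule. This sketch assumes constant linear velocity and mobile phase viscosity, and it adds the standard assumption that backpressure also scales linearly with column length; the function name and default lengths are illustrative.

```python
def backpressure_ratio(dp_old_um, dp_new_um, length_old_mm=50.0, length_new_mm=50.0):
    """Factor by which column backpressure changes at constant linear velocity.

    Uses delta_P proportional to L / dp**2. The inverse-square dependence on
    particle size comes from the text; the length term is an added assumption.
    """
    return (dp_old_um / dp_new_um) ** 2 * (length_new_mm / length_old_mm)


# Halving the particle size on the same column
print(backpressure_ratio(3.0, 1.5))
# Halving the particle size and doubling the column length
print(backpressure_ratio(3.0, 1.5, length_old_mm=50.0, length_new_mm=100.0))
```

A quick check of this kind against the pump's pressure rating helps decide whether a planned change in particle size or column length is feasible on existing hardware.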

UFLC System Components

Modern UFLC systems incorporate several specialized components to handle the demands of high-speed separations:

- High-Pressure Pumps: Capable of delivering precise mobile phase gradients at pressures up to 15,000 psi or higher, with low dwell volumes to maintain separation integrity [24].

- Reduced Dispersion Tubing: Specialized small internal diameter capillaries and connections minimize dead volumes that can negatively affect narrow peaks [24].

- Rapid Injection Systems: Automated injectors designed for speed and minimal carryover between samples.

- Fast Detection Systems: Detectors with rapid acquisition rates and quick response times to accurately capture narrow peak profiles [24].

- Temperature Control: Column ovens with precise temperature control to maintain retention time reproducibility.

The following workflow diagram illustrates the typical components and process flow in a UFLC system:

Research Reagent Solutions and Materials

Successful implementation of UFLC methods requires specific reagents and materials optimized for high-speed separations. The following table details essential components for UFLC analysis:

Table 1: Essential Reagents and Materials for UFLC Analysis

| Component | Function | Specifications |
|---|---|---|
| Chromatography Column | Stationary phase support for compound separation | 30-50 mm length, 2.0 mm internal diameter, packed with 1.5-3.0 μm particles [24] |
| Mobile Phase Solvents | Liquid medium for transporting samples through the system | High-purity acetonitrile, methanol, and water; filtered and degassed [25] |
| Derivatization Agents | Enhance detection of target compounds | 6-aminoquinolyl-N-hydroxysuccinimidyl carbamate (AQC), N-(2-aminoethyl) glycine (AEG) [26] |
| Reference Standards | Method calibration and quantification | Certified reference materials of target analytes in appropriate matrices |
| Sample Preparation Materials | Extract and clean samples before analysis | Solid-phase extraction cartridges, filtration units (0.2 μm), centrifugation devices |

For environmental applications focusing on neurotoxin detection, specific derivatization agents have proven valuable. In the analysis of β-N-methylamino-L-alanine (BMAA) and its isomers in environmental samples, derivatizing agents including 6-aminoquinolyl-N-hydroxysuccinimidyl carbamate (AQC) and N-(2-aminoethyl) glycine (AEG) were synthesized and confirmed via nuclear magnetic resonance (NMR) spectroscopy to enhance the detection of isomeric neurotoxic compounds [26].

UFLC Protocol for Environmental Neurotoxin Analysis

Sample Preparation Protocol

- Sample Collection: Collect environmental samples (water, soil, or biological specimens) using clean, contaminant-free containers. For cycad-based samples, collect seeds, leaves, male cones, cyanobacterial symbionts, coralloid roots, or processed cycad seed flour [26].

- Extraction: Homogenize samples in appropriate extraction solvent (typically acidified methanol or aqueous ethanol) using a tissue homogenizer. Use a sample-to-solvent ratio of 1:10 (w/v).

- Cleanup: Centrifuge extracts at 10,000 × g for 15 minutes at 4°C. Transfer supernatant to clean tubes.

- Derivatization: Add 6-aminoquinolyl-N-hydroxysuccinimidyl carbamate (AQC) derivatizing agent to samples at a molar ratio of 1:5 (analyte:AQC). Heat mixture at 55°C for 10 minutes to complete derivatization [26].

- Filtration: Pass derivatives through 0.2 μm membrane filters before UFLC analysis to remove particulate matter.
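
The two ratios in the protocol above translate into simple bench calculations. The helper names below are hypothetical, and the analyte amount used for the AQC calculation would in practice be an estimate of the expected upper bound in the extract.

```python
def extraction_solvent_ml(sample_mass_g, ratio=10.0):
    """Solvent volume for a 1:ratio (w/v) extraction, i.e. ratio mL per gram of sample."""
    return sample_mass_g * ratio


def aqc_amount_nmol(analyte_nmol, molar_excess=5.0):
    """AQC needed for a 1:molar_excess (analyte:AQC) molar ratio."""
    return analyte_nmol * molar_excess


# Illustrative values: 0.5 g of homogenized tissue, 2 nmol of expected analyte
print(extraction_solvent_ml(0.5))
print(aqc_amount_nmol(2.0))
```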

UFLC Instrument Configuration and Method Parameters

Table 2: UFLC Instrument Parameters for Neurotoxin Separation

| Parameter | Specification |
|---|---|
| Column Type | C18 reverse phase (50 × 2.0 mm) |
| Particle Size | 1.8 μm |
| Mobile Phase A | 0.1% Formic acid in water |
| Mobile Phase B | 0.1% Formic acid in acetonitrile |
| Gradient Program | 5-95% B over 8 minutes |
| Flow Rate | 0.4 mL/min |
| Column Temperature | 40°C |
| Injection Volume | 5 μL |
| Detection | Fluorescence or mass spectrometry |
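
The parameters in Table 2 are enough to estimate a few derived quantities. The sketch below assumes a strictly linear gradient and uses the geometric (empty-tube) column volume, which overestimates the mobile phase volume inside a packed column and therefore gives a conservative flush time. All function names are illustrative.

```python
import math

COLUMN_LENGTH_MM = 50.0  # Table 2
COLUMN_ID_MM = 2.0       # Table 2
FLOW_ML_MIN = 0.4        # Table 2


def column_volume_ml(length_mm=COLUMN_LENGTH_MM, id_mm=COLUMN_ID_MM):
    """Geometric (empty-tube) column volume; 1 mm^3 equals 1 uL."""
    volume_ul = math.pi * (id_mm / 2) ** 2 * length_mm
    return volume_ul / 1000.0


def equilibration_minutes(n_volumes=10, flow_ml_min=FLOW_ML_MIN):
    """Time to pump n_volumes geometric column volumes at the given flow rate."""
    return n_volumes * column_volume_ml() / flow_ml_min


def percent_b(t_min, start=5.0, end=95.0, duration_min=8.0):
    """Mobile phase B percentage at time t for a linear gradient, held at the ends."""
    t = min(max(t_min, 0.0), duration_min)
    return start + (end - start) * t / duration_min


print(f"Column volume: {column_volume_ml() * 1000:.0f} uL")
print(f"Time for 10 column volumes: {equilibration_minutes():.1f} min")
print(f"%B at 4 min: {percent_b(4.0):.0f}")
```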

Separation and Quantification

- System Equilibration: Condition the UFLC system with initial mobile phase composition (95% A, 5% B) for at least 10 column volumes before analysis.

- Sample Analysis: Inject prepared samples using the specified parameters.

- Compound Identification: Identify target neurotoxins based on retention times: L-BMAA (5.4 min), AEG (5.6 min), and 2,4-DAB (6.1 min) [26].

- Quantification: Prepare calibration curves using reference standards at concentrations ranging from 10-1000 ng/mL. Calculate sample concentrations using linear regression analysis.
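
The identification and quantification steps above can be sketched as follows. The retention times come from the protocol; the ±0.05 min matching window, the function names, and the synthetic detector responses are assumptions made purely for illustration. Real calibration data should also be checked for linearity and residual structure before use.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for response = slope * conc + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def concentration(response, slope, intercept):
    """Invert the calibration line to get a concentration from a detector response."""
    return (response - intercept) / slope


RETENTION_MIN = {"L-BMAA": 5.4, "AEG": 5.6, "2,4-DAB": 6.1}  # from the protocol [26]


def identify(rt_min, tolerance_min=0.05):
    """Name of the target whose retention time is within tolerance, else None."""
    for name, expected in RETENTION_MIN.items():
        if abs(rt_min - expected) <= tolerance_min:
            return name
    return None


# Synthetic standards spanning 10-1000 ng/mL; responses are invented for illustration
standards = [10, 50, 100, 500, 1000]
responses = [2 * c + 1 for c in standards]
slope, intercept = fit_line(standards, responses)

peak_rt, peak_response = 5.42, 201.0  # hypothetical sample peak
print(identify(peak_rt), round(concentration(peak_response, slope, intercept), 1))
```

Because the three targets elute within 0.7 minutes of each other, the matching window must stay well below the 0.2 minute gap between L-BMAA and AEG to avoid ambiguous assignments.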

The following workflow summarizes the complete UFLC analytical process for environmental neurotoxins:

Application in Environmental Neurotoxin Quantification

UFLC has demonstrated exceptional utility in detecting and quantifying environmental neurotoxins, particularly cyanobacterial toxins such as β-N-methylamino-L-alanine (BMAA) and its isomers. Recent research applied UFLC to a range of environmental samples and characterized the distribution of these toxins across sample types [26].

Table 3: Quantitative Results of Neurotoxin Analysis in Environmental Samples Using UFLC

| Sample Type | BMAA Concentration | AEG Concentration | 2,4-DAB Concentration | Extraction Efficiency |
|---|---|---|---|---|
| Cycad Seeds | Detected | Detected | Detected | 85-92% |
| Cyanobacterial Symbionts | High levels | High levels | High levels | 88-95% |
| Coralloid Roots | Detected | Detected | Detected | 82-90% |
| Processed Cycad Flour | Below detectable limits | Below detectable limits | Below detectable limits | N/A |

The detection limit for these neurotoxic compounds using the UFLC method was established at approximately 6 × 10³ ng/mL, and levels of neurotoxic compounds in processed cycad seed flour fell below detectable limits [26]. These results support the use of UFLC for monitoring environmental toxins that may pose human health risks.

Quantitative precision reported for liquid chromatography workflows using data-independent acquisition showed coefficients of variation (CV) below 20% for 90% of precursors and 95% of proteins, with median CVs at the precursor level below 7% [27]. Precision of this order is what makes fast LC methods such as UFLC valuable for environmental monitoring programs requiring reproducible results across multiple sampling events and analytical batches.
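
For readers who want to compute the same precision metrics on their own replicate data, a minimal sketch follows. The function names are illustrative, and the 20% limit is taken from the criterion quoted above.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation of replicate measurements, in percent."""
    return stdev(values) / mean(values) * 100


def fraction_within(cvs, limit=20.0):
    """Fraction of analytes whose CV falls below the given limit."""
    return sum(cv < limit for cv in cvs) / len(cvs)


# Hypothetical replicate peak areas for one analyte across three injections
print(cv_percent([9.0, 10.0, 11.0]))
```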

Green Assessment of UFLC Methods

The environmental impact of analytical methods is an increasingly important consideration in laboratory practice. UFLC offers several advantages for green chromatography compared to conventional HPLC methods:

Table 4: Green Assessment of UFLC versus Conventional HPLC

| Parameter | Conventional HPLC | UFLC | Green Improvement |
|---|---|---|---|
| Analysis Time | 15-60 minutes | 1-10 minutes | 50-80% reduction [24] |
| Solvent Consumption | High (mL/min flow rates) | Low (μL/min flow rates) | 50-80% reduction [24] [25] |
| Energy Consumption | Extended run times | Short run times | Significant reduction [25] |
| Waste Generation | High volume | Low volume | Proportional reduction [25] |
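
The solvent savings in Table 4 can be checked with a simple per-run estimate of flow rate multiplied by run time. In the sketch below, the UFLC values are the flow rate and gradient time from the instrument parameters given earlier (0.4 mL/min, 8 minutes), while the conventional HPLC values (1.0 mL/min, 15 minutes) are a hypothetical comparison case, not figures from the cited sources. Equilibration and wash steps are ignored.

```python
def solvent_per_run_ml(flow_ml_min, run_time_min):
    """Mobile phase consumed in one run, ignoring equilibration and wash steps."""
    return flow_ml_min * run_time_min


def percent_reduction(conventional, fast):
    """Relative saving of the fast method versus the conventional one, in percent."""
    return (1 - fast / conventional) * 100


hplc_ml = solvent_per_run_ml(1.0, 15.0)  # hypothetical conventional HPLC run
uflc_ml = solvent_per_run_ml(0.4, 8.0)   # UFLC flow rate and gradient time from this article

print(f"HPLC: {hplc_ml:.1f} mL, UFLC: {uflc_ml:.1f} mL, "
      f"reduction: {percent_reduction(hplc_ml, uflc_ml):.0f}%")
```

Since waste volume tracks solvent consumption directly, the same calculation gives a first estimate of the waste reduction per run.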