Quality Control in Inorganic Analysis: 2025 Protocols for Precision, Compliance, and Innovation


Abstract

This article provides a comprehensive guide to modern quality control (QC) protocols for inorganic analytical laboratories, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of QC, from the international standard ISO 15189:2022 and the US CLIA regulations to core statistical concepts. The piece delves into practical methodologies, including the application of Internal Quality Control (IQC), advanced techniques like ICP-MS, and emerging trends such as Patient-Based Real-Time Quality Control (PBRTQC). It also offers strategies for troubleshooting common analytical problems, optimizing workflows through automation and data analytics, and validating methods through Proficiency Testing (PT) and measurement uncertainty, ensuring data is reliable, defensible, and fit for purpose in biomedical and clinical research.

The Pillars of Quality: Understanding Standards and Core Concepts for Inorganic Labs

Inorganic analytical laboratories operate within a complex framework of quality and safety standards. Adherence to these protocols is not merely about regulatory compliance but is fundamental to ensuring the accuracy, reliability, and safety of research and diagnostic outcomes. This section focuses on three pivotal sets of guidelines: the international quality standard ISO 15189:2022 for medical laboratories, the United States' Clinical Laboratory Improvement Amendments (CLIA), and the Environmental Protection Agency (EPA) guidelines governing environmental analysis and waste management [1]. The following troubleshooting guides and FAQs are designed to help researchers and scientists navigate specific, common challenges encountered when implementing these standards.

Troubleshooting Guides

Proficiency Testing (PT) Failure Investigation

Proficiency testing is a cornerstone of laboratory quality assurance, required by CLIA, ISO 15189, and EPA frameworks [1] [2]. A failure signals a potential issue in your analytical process.

Problem: Your laboratory has received an unsatisfactory result in an inorganic metals proficiency testing scheme.

Objective: To perform a systematic root cause analysis and implement corrective actions to prevent recurrence.

Experimental Protocol for Investigation:

Immediate Action and Documentation:

- Action: Quarantine all samples and patient results associated with the analytical run in question. Clearly document the failure and all subsequent investigation steps in your Quality Management System (QMS).

- Rationale: This prevents the reporting of potentially inaccurate data and ensures traceability [3].

Re-examine the PT Sample Handling:

- Action: Verify records for PT sample receipt. Check for any deviations from handling instructions (e.g., storage temperature, stabilization period).

- Rationale: Samples that are thermally compromised or improperly stored can degrade, leading to inaccurate results [2].

Review Preparation and Analysis Processes:

- Action: Retrace all steps documented in your procedure. Key points to re-examine include:

  - Pipettes and Volumetric Glassware: Check calibration certificates.

  - Reagents and Water Purity: Confirm that high-purity (e.g., ASTM Type I) water and trace metal-grade acids were used. Review certificates of analysis for elemental contamination levels [2].

  - Calibration: Verify that calibrators were freshly prepared and within expiration, and that the calibration curve met its acceptance criteria.

  - Instrument Performance: Review maintenance logs and quality control data from before, during, and after the PT analysis.


Investigate Potential Contamination Sources:

- Action: Analyze your method blanks from the PT run. Elevated levels in the blank indicate contamination. Common sources in inorganic analysis include reagents and water, labware and other apparatus, and the laboratory environment.

- Protocol: To identify the source, prepare and analyze blank samples in a clean room environment and compare results to those from the main lab.

Implement Corrective and Preventive Action (CAPA):

- Based on the root cause, implement a CAPA. This may involve recalculating and reporting results, retraining staff, changing a procedure, or introducing new controls [2].

Addressing Matrix Interference in EPA Analyses

Problem: Analysis of a soil sample for TCLP (Toxicity Characteristic Leaching Procedure) inorganic contaminants shows an elevated Lower Limit of Quantitation (LLOQ) that is above the regulatory limit.

Objective: Reduce the LLOQ to a level at or below the regulatory threshold to make a definitive compliance determination [4].

Experimental Protocol for Mitigation:

Avoid Unnecessary Dilution:

- Action: Review the sample preparation procedure. If the sample was diluted to bring it within the instrument's calibration range, explore whether a smaller dilution factor can be used.

- Rationale: High dilution factors directly elevate the reporting limit [4].
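The arithmetic behind this is direct: the LLOQ reported for the original sample is the instrument LLOQ multiplied by the total dilution factor. The minimal sketch below assumes a single dilution step and hypothetical values (an instrument LLOQ of 0.5 µg/L and a regulatory limit of 5 µg/L); the function names are illustrative only.

```python
def reported_lloq(instrument_lloq: float, dilution_factor: float) -> float:
    """LLOQ expressed for the undiluted sample, in the same units as instrument_lloq."""
    if dilution_factor < 1:
        raise ValueError("dilution factor must be >= 1")
    return instrument_lloq * dilution_factor


def max_dilution_for_limit(instrument_lloq: float, regulatory_limit: float) -> float:
    """Largest dilution factor that keeps the reported LLOQ at or below the limit."""
    return regulatory_limit / instrument_lloq


INSTRUMENT_LLOQ = 0.5   # µg/L, hypothetical
REGULATORY_LIMIT = 5.0  # µg/L, hypothetical

for df in (2, 10, 50):
    rl = reported_lloq(INSTRUMENT_LLOQ, df)
    status = "at or below limit" if rl <= REGULATORY_LIMIT else "ABOVE limit"
    print(f"dilution x{df}: reported LLOQ = {rl:g} µg/L ({status})")

print("largest usable dilution:", max_dilution_for_limit(INSTRUMENT_LLOQ, REGULATORY_LIMIT))
```

With these numbers, a 50-fold dilution would raise the reporting limit to 25 µg/L, five times the limit, which is why the smallest workable dilution should be used.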

Employ Sample Clean-up Methods:

- Action: Implement a validated clean-up procedure specific to the matrix and analytes of concern. For inorganic analysis, this could include additional filtration, centrifugation, or chelation techniques not in the original method.

- Rationale: Clean-up removes interfering substances that can cause elevated baselines or signal suppression/enhancement, allowing for a lower LLOQ [4].

Verify Instrument Performance:

- Action: Ensure the instrument is optimized for maximum sensitivity. This may involve cleaning the source, replacing nebulizers, or tuning the mass spectrometer for lower background noise.

- Rationale: Peak instrument condition is a prerequisite for achieving the lowest possible detection limits.

Documentation and Regulatory Reporting:

- Action: If, after all efforts, the LLOQ remains above the regulatory level for a specific contaminant (e.g., 2,4-dinitrotoluene, which, unlike the metals discussed here, is an organic constituent), the quantitation limit itself may become the regulatory level for that sample, as per EPA guidance [4]. This decision and all mitigation attempts must be thoroughly documented.

Implementing Risk Management for ISO 15189:2022 Compliance

Problem: A laboratory adopting the updated ISO 15189:2022 standard struggles to integrate the new requirement for a proactive, patient-centered risk management process [5].

Objective: To establish and document a risk management process that identifies, assesses, and mitigates potential risks to patient safety and result quality.

Experimental Protocol for Risk Management:

Risk Identification:

- Action: Conduct a process walk-through from sample collection to result reporting. Use techniques like brainstorming and flowcharting to identify potential failure points (e.g., mislabeled sample, incorrect data entry, reagent storage failure, loss of power to critical equipment).

- Rationale: A systematic review ensures comprehensive coverage of all operational areas [5] [3].

Risk Analysis and Evaluation:

- Action: For each identified risk, estimate its severity (impact on patient care) and its likelihood of occurrence. Use a risk matrix to prioritize which risks require immediate mitigation.

- Rationale: This focused approach ensures efficient use of resources on the most significant risks [6].
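A risk matrix is often implemented as a severity × likelihood score. The sketch below assumes 1-5 ordinal scales and hypothetical priority cut-offs (score of 15 or more is high, 8 or more is medium); these thresholds and the scores assigned to each example risk are illustrative, not taken from ISO 15189:2022, and each laboratory should define its own in its risk management procedure.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    severity: int    # assumed scale: 1 (negligible) to 5 (critical impact on patient care)
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)

    @property
    def score(self) -> int:
        return self.severity * self.likelihood

    @property
    def priority(self) -> str:
        # Hypothetical cut-offs; define these in your own risk procedure.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


# Failure points from the risk identification step, with hypothetical ratings.
register = [
    Risk("Mislabeled sample", severity=5, likelihood=3),
    Risk("Incorrect data entry", severity=4, likelihood=2),
    Risk("Reagent storage failure", severity=3, likelihood=2),
    Risk("Loss of power to -80 °C freezer", severity=4, likelihood=4),
]

# Highest scores first, so mitigation effort goes to the most significant risks.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk.score:>2}  {risk.priority:<6}  {risk.description}")
```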

Risk Mitigation (Treatment):

- Action: For high-priority risks, develop and implement control measures. For example:

  - Risk: Sample mix-up. Mitigation: Implement barcoding and dual-verification at accessioning.

  - Risk: Power outage to -80°C freezer. Mitigation: Install a backup generator and continuous temperature monitoring with alarms.

- Rationale: Mitigation actions directly reduce the severity or likelihood of a risk event [5].


Monitoring and Review:

- Action: Integrate risk review into management meetings. Use internal audits, non-conformances, and customer feedback to trigger updates to the risk register.

- Rationale: Risk management is a continuous process, not a one-time activity [3].

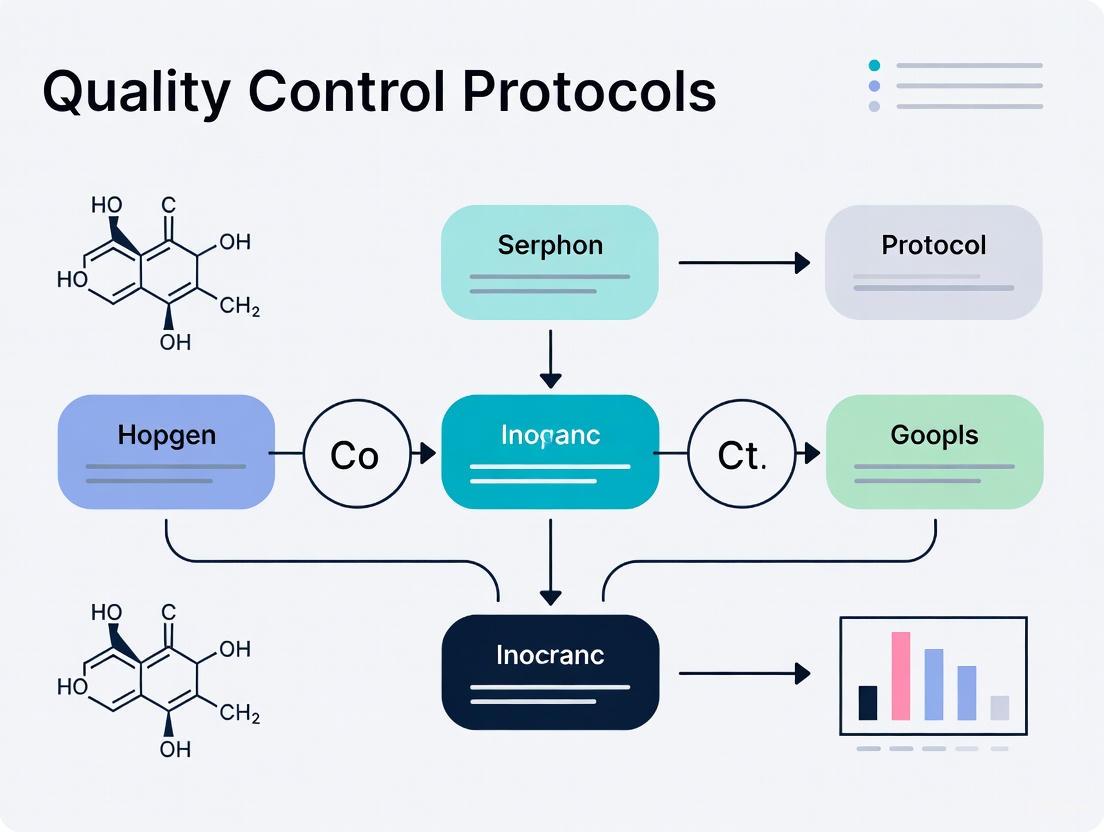

The following workflow visualizes the core processes and their relationships under the three regulatory frameworks discussed:

Frequently Asked Questions (FAQs)

Q1: Under EPA's performance-based approach (SW-846), can a Matrix Spike (MS) be used in place of a Laboratory Control Sample (LCS) for accuracy checks?

A1: While performance-based methodology may allow it under certain conditions, this is not recommended as a routine practice. The MS and LCS serve different primary purposes [4]. The LCS demonstrates that the laboratory can perform the analytical procedure correctly in a clean matrix, isolating laboratory performance. The MS demonstrates how the specific sample matrix affects the analytical method. Using an MS in place of an LCS is considered an occasional "batch saver" if the LCS fails or is unavailable, but you should not rely on it routinely, especially for multi-analyte methods [4].

Q2: What is the required frequency for running quality control (QC) samples like blanks, LCS, and MS/MSD under EPA's SW-846 guidelines?

A2: A typical frequency for many QC operations in EPA methods is once for every 20 samples (a 5% rate) [4]. However, the EPA recognizes that other frequencies may be appropriate. For long-term monitoring projects with a consistent matrix, MS/MSD analyses may be run less frequently. Any deviation from the 1-in-20 frequency must be clearly documented and justified in a sampling and analysis plan approved by the relevant regulatory authority [4].
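For batch planning, the 1-in-20 rule translates into a simple count. This sketch assumes the common convention that a partial group of fewer than 20 samples still needs its own set of QC samples (i.e., rounding up); the function name is hypothetical, and the convention should be confirmed in the applicable method or approved plan.

```python
import math


def qc_samples_per_batch(n_samples: int, frequency: int = 20) -> int:
    """Number of each QC sample type (blank, LCS, MS/MSD pair, ...) required
    when one is needed per `frequency` field samples or fraction thereof."""
    if n_samples <= 0:
        return 0
    return math.ceil(n_samples / frequency)


for n in (12, 20, 21, 45):
    print(n, "samples ->", qc_samples_per_batch(n), "of each QC type")
```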

Q3: Our lab is accredited to ISO 15189:2012. What are the most significant changes in the 2022 version we need to address before the December 2025 transition deadline?

A3: The key changes your lab must address are [5] [3] [6]:

- Enhanced Risk Management: A greater emphasis on establishing a proactive, patient-centered risk management process for all activities.

- Incorporation of POCT Requirements: Requirements for Point-of-Care Testing (previously in ISO 22870) are now integrated directly into the standard.

- Structural Re-alignment: The standard's structure has been aligned with ISO/IEC 17025:2017, and management system requirements (Clause 8) are now at the end of the document.

- Strengthened Ethical Requirements: Requirements for impartiality, confidentiality, and patient welfare have been strengthened [7].

Q4: What statistical methods are used to evaluate Proficiency Testing (PT) results, and what do the scores mean?

A4: The two primary statistical methods used per ISO 13528 are the z-score and the En-value [2].

- z-score: Used when all participants are evaluated against a common standard deviation for proficiency assessment, rather than against their own reported measurement uncertainty.

  - |z| < 2.0: Successful

  - 2.0 ≤ |z| < 3.0: Questionable/Suspect

  - |z| ≥ 3.0: Unsuccessful (requires corrective action)

- En-value: Used when laboratories report their own measurement uncertainty.

  - |En| ≤ 1.0: Successful

  - |En| > 1.0: Unsuccessful (requires corrective action)
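Both scores are simple to compute. The sketch below uses the standard definitions z = (x − X) / σ_pt and En = (x − X) / √(U_lab² + U_ref²), where x is the laboratory result, X the assigned value, σ_pt the standard deviation for proficiency assessment, and U_lab and U_ref expanded uncertainties (typically k = 2). The PT values are hypothetical, and the classification bands follow the list above.

```python
import math


def z_score(result: float, assigned: float, sigma_pt: float) -> float:
    """z = (x - X) / sigma_pt."""
    return (result - assigned) / sigma_pt


def en_number(result: float, assigned: float, u_lab: float, u_ref: float) -> float:
    """En = (x - X) / sqrt(U_lab^2 + U_ref^2), using EXPANDED uncertainties."""
    return (result - assigned) / math.sqrt(u_lab**2 + u_ref**2)


def classify_z(z: float) -> str:
    if abs(z) < 2.0:
        return "successful"
    if abs(z) < 3.0:
        return "questionable"
    return "unsuccessful"


def classify_en(en: float) -> str:
    return "successful" if abs(en) <= 1.0 else "unsuccessful"


# Hypothetical PT round: assigned value 50.0 µg/L, sigma_pt 2.5 µg/L, lab result 55.5 µg/L.
z = z_score(result=55.5, assigned=50.0, sigma_pt=2.5)
print(f"z = {z:.2f} ({classify_z(z)})")

# Same result scored with hypothetical expanded uncertainties U_lab = 4.0 and U_ref = 1.5 µg/L.
en = en_number(result=55.5, assigned=50.0, u_lab=4.0, u_ref=1.5)
print(f"En = {en:.2f} ({classify_en(en)})")
```

The example shows that the two schemes can disagree for the same result: a questionable z-score can coincide with an unsuccessful En-value when the laboratory's stated uncertainty is too small to cover the deviation.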

Q5: What are the updated personnel qualification rules for Lab Directors under the 2025 CLIA regulations?

A5: The 2025 CLIA updates tightened qualifications for Lab Directors, particularly for high-complexity testing [8] [9]. Key changes include:

- Removal of Equivalency: The pathway for demonstrating "equivalent" qualifications or board certifications has been removed.

- Specific Coursework: Pathways requiring a bachelor's or master's degree now have more specific semester-hour requirements in science and medical lab courses.

- New CE Requirement: MDs or DOs qualifying as Lab Directors for high-complexity testing must now have at least 20 continuing education hours in laboratory practice.

- Grandfathering: Existing personnel are typically grandfathered in if their employment is continuous.

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential materials for maintaining quality and preventing contamination in inorganic analytical work, a critical concern highlighted in the troubleshooting guides.

| Reagent/Material | Function in Inorganic Analysis | Key Quality Considerations |
|---|---|---|
| High-Purity Water (ASTM Type I) | Solvent for preparing standards, blanks, and sample dilutions; rinsing labware. | Essential for trace metal analysis to prevent contamination from ions (e.g., Na⁺, Ca²⁺, Cl⁻) present in lower-grade water [2]. |
| Trace Metal-Grade Acids | Sample digestion/dissolution, preservation, and preparation of calibration standards. | High-purity (multiple distillations) minimizes background levels of elemental contaminants. Certificates of Analysis should be reviewed for specific metal concentrations [2]. |
| Certified Reference Materials (CRMs) | Calibration of instruments, verification of method accuracy, and for use in Proficiency Testing schemes. | Must be traceable to a national metrology institute. CRMs validate the entire analytical process from sample preparation to instrumental analysis [2]. |
| Laboratory Control Samples (LCS) | Monitors the performance of the entire analytical method in a clean matrix, isolated from real-sample effects. | Prepared by spiking a known concentration of analyte into a clean, interference-free matrix. Recovery of the LCS indicates whether the lab can perform the method correctly [4]. |
| Matrix Spike (MS) / Matrix Spike Duplicate (MSD) | Assesses the effect of a specific sample matrix on methodological accuracy and precision. | Prepared by spiking analytes into actual patient/sample aliquots. Results identify matrix-related suppression or enhancement of the signal [4]. |
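The LCS and MS/MSD checks in this table reduce to a few standard calculations: LCS recovery as measured over spiked concentration, matrix spike recovery corrected for the analyte already present in the unspiked sample, and the relative percent difference (RPD) between the MS and MSD results. The acceptance windows below are placeholders and the results are hypothetical; actual limits come from the method or the laboratory's own control data.

```python
def lcs_recovery(measured: float, spiked: float) -> float:
    """Percent recovery of a laboratory control sample prepared in a clean matrix."""
    return 100.0 * measured / spiked


def ms_recovery(spiked_result: float, unspiked_result: float, spike_added: float) -> float:
    """Matrix spike recovery (%), subtracting the native analyte in the unspiked sample."""
    return 100.0 * (spiked_result - unspiked_result) / spike_added


def rpd(a: float, b: float) -> float:
    """Relative percent difference between duplicate results, e.g. MS and MSD."""
    return 100.0 * abs(a - b) / ((a + b) / 2.0)


def within(value: float, window: tuple[float, float]) -> bool:
    low, high = window
    return low <= value <= high


# Placeholder acceptance windows (%); use the limits specified for your method.
LCS_WINDOW = (80.0, 120.0)
MS_WINDOW = (75.0, 125.0)

# Hypothetical results, µg/L
lcs = lcs_recovery(measured=48.6, spiked=50.0)
ms = ms_recovery(spiked_result=61.0, unspiked_result=12.0, spike_added=50.0)
msd = ms_recovery(spiked_result=58.5, unspiked_result=12.0, spike_added=50.0)

print(f"LCS recovery {lcs:.1f} % (in window: {within(lcs, LCS_WINDOW)})")
print(f"MS {ms:.1f} %, MSD {msd:.1f} % (both in window: {within(ms, MS_WINDOW) and within(msd, MS_WINDOW)})")
print(f"MS/MSD RPD {rpd(61.0, 58.5):.1f} %")
```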

In inorganic analytical laboratories, the reliability of every result hinges on a fundamental understanding of core measurement concepts. The terms accuracy, precision, bias, error, and measurement uncertainty form the backbone of quality control protocols, yet they are frequently misunderstood or used interchangeably. In metrology, the science of measurement, each term has a distinct and critical meaning [10]. For researchers and drug development professionals, properly applying these concepts is not merely academic—it is essential for ensuring data integrity, regulatory compliance, and the safety of products and processes. This guide provides a practical framework for integrating these principles into daily laboratory practice, from foundational definitions to advanced troubleshooting of analytical methods.

Definitions and Key Terminology

Foundational Concepts

- Error: The difference between a measured value and the true value of the measurand. Since the true value is inherently indeterminate, error can never be known exactly [10] [11]. Error is an unavoidable aspect of all measurements and can be classified as random or systematic.

- Accuracy: The closeness of agreement between a measured value and a true value. Accuracy cannot be quantified directly because the true value is unknowable, but it can be estimated through uncertainty [10] [11] [12]. It is inversely related to the total error of the measurement.

- Precision: The degree of consistency and agreement among independent measurements of the same quantity under specified conditions. Precision describes the reproducibility or reliability of a result, is typically expressed as the standard deviation or relative standard deviation of replicate measurements, and is evaluated without reference to a true value [11] [12].

- Bias: A type of systematic error that represents a reproducible, consistent deviation from the true value. Bias is sometimes used synonymously with systematic error and can often be corrected for if identified [11].

- Measurement Uncertainty: A parameter that characterizes the dispersion of values that could reasonably be attributed to the measurand. It is a quantitative estimate of the doubt associated with a measurement result and is expressed in statistical terms, typically with a confidence interval [10] [11].

Visualizing Accuracy and Precision

The relationship between accuracy and precision is often illustrated using a target analogy. The following diagram clarifies these conceptual relationships and their connection to error types:

Key Parameter Comparison Table

Table 1: Comparison of core statistical concepts in analytical measurement

| Concept | Quantitative Expression | Primary Influence | Reduction Strategy | Known with Certainty? |
|---|---|---|---|---|
| Error | Measured Value - True Value [11] | Both random and systematic effects | Improve method design and calibration | No (true value is indeterminate) [10] |
| Accuracy | Cannot be directly quantified [10] | Total error (systematic + random) | Calibration against standards, bias correction | No |
| Precision | Standard deviation, variance, or relative standard deviation [12] | Random error | Replication, improved instrumentation | Yes (from repeated measurements) |
| Bias | Relative bias: $\frac{\text{Mean of measurements} - \text{True value}}{\text{True value}} \times 100\%$ [12] | Systematic error | Method validation, calibration, blank correction | No (requires reference) |
| Measurement Uncertainty | Combined standard uncertainty ($u_c$), expanded uncertainty ($U$) at a stated confidence level (e.g., $k=2$ for approximately 95%) [11] | All known significant error sources | Uncertainty budget analysis, improved methods | Yes (as an estimate) |

Uncertainty Components Table

Table 2: Types of measurement uncertainty evaluation

| Uncertainty Type | Evaluation Method | Common Sources | Statistical Treatment |
|---|---|---|---|
| Type A | Statistical analysis of series of observations [11] | Random variations, instrument noise | Standard deviation, ANOVA |
| Type B | Means other than statistical analysis of series [11] | Reference standard uncertainty, instrument resolution, environmental factors | Probability distributions based on experience/specifications |

Troubleshooting Guides

Systematic Approach to Measurement Problems

Effective troubleshooting in analytical laboratories requires a disciplined, systematic approach. The principle of "one thing at a time" is fundamental—changing only one variable at a time allows you to clearly identify which change resolved the problem and understand the root cause [13]. The following workflow provides a logical framework for diagnosing and resolving measurement quality issues:

Frequently Asked Questions (FAQs)

Q1: Our laboratory is consistently seeing higher than expected variation in repeated measurements of inorganic reference materials. What are the most likely causes and how should we proceed?

This indicates a precision problem, most likely stemming from random error sources. Begin by investigating the following:

- Instrument resolution: Check if the instrument's precision specifications are adequate for your measurement requirements [12].

- Environmental factors: Monitor laboratory temperature, humidity, and vibration, which can affect sensitive analytical instruments.

- Operator technique: Ensure consistent sample preparation and measurement technique across different analysts.

- Sample heterogeneity: Verify that samples are properly homogenized before analysis.

Systematically address one potential cause at a time, documenting the effect of each change. Implement regular precision checks using control charts to monitor measurement variability over time.

Q2: How often should we run quality control samples in our inorganic analysis workflow?

For many analytical programs, a typical frequency is once for every 20 samples (5%), but this should be determined based on a risk analysis [4]. Consider these factors when establishing QC frequency:

- The clinical significance and criticality of the analyte [14]

- The stability and robustness of the analytical method

- Regulatory requirements for your specific application

- The feasibility of re-analyzing samples if problems are detected [14]

Document your chosen frequency in your Quality Assurance Project Plan (QAPP) and have it approved by the relevant regulatory authority if necessary [4].

Q3: What is the practical difference between calculating Total Error versus Measurement Uncertainty for our quality control protocols?

- Total Error approaches focus on setting acceptability limits that account for both random (imprecision) and systematic (bias) errors combined. This model is often used in setting performance specifications in clinical laboratories.

- Measurement Uncertainty characterizes the dispersion of values that could reasonably be attributed to the measurand, expressed as a confidence interval [11].

According to recent IFCC recommendations, there remains a "major issue related to how bias should be handled" in uncertainty calculations [14]. For inorganic analytical laboratories, the uncertainty approach is increasingly required by international standards (ISO/IEC 17025) and provides a more statistically rigorous framework for comparing results against specifications or reference values.
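To make the contrast concrete, the sketch below computes both quantities from the same hypothetical bias and imprecision estimates. It assumes the widely used Westgard estimate of observed total error, TE = |bias| + 1.65 × CV (all in percent), and a simple uncertainty estimate that combines imprecision with the standard uncertainty of the bias estimate in quadrature and multiplies by k = 2. How bias should enter the uncertainty estimate is exactly the open issue noted above, so this is one possible treatment, not a prescribed method.

```python
import math


def total_error(bias_pct: float, cv_pct: float, z: float = 1.65) -> float:
    """Observed total error (%): |bias| + z * CV, a one-sided ~95 % estimate."""
    return abs(bias_pct) + z * cv_pct


def expanded_uncertainty(cv_pct: float, u_bias_pct: float, k: float = 2.0) -> float:
    """Relative expanded uncertainty (%): k * sqrt(CV^2 + u(bias)^2).

    Treats bias as corrected, keeping only the uncertainty of the bias estimate;
    this is one possible treatment, not a prescribed one.
    """
    return k * math.sqrt(cv_pct**2 + u_bias_pct**2)


# Hypothetical method: bias 2.0 %, CV 3.0 %, standard uncertainty of the bias estimate 1.0 %
te = total_error(bias_pct=2.0, cv_pct=3.0)
U = expanded_uncertainty(cv_pct=3.0, u_bias_pct=1.0)
print(f"Total error: {te:.2f} % (judged against a TEa goal)")
print(f"Expanded uncertainty (k = 2): +/- {U:.2f} % (reported with each result)")
```

The total error figure is a single pass/fail check against a TEa goal, whereas the expanded uncertainty is an interval attached to each reported result.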

Q4: We've identified a consistent bias in our atomic absorption spectroscopy results. How can we determine if this is a systematic error that needs correction?

A consistent, reproducible deviation from reference values likely indicates systematic error. Take these steps:

- Verify with reference materials: Analyze certified reference materials with matrices similar to your samples.

- Check calibration standards: Prepare fresh standards from independent sources to verify your calibration curve.

- Evaluate method blank: Ensure your blank correction is properly accounted for in calculations.

- Compare methods: If possible, analyze subsets of samples by a different analytical technique.

If the bias is consistent and significant, apply a correction factor and document this in your standard operating procedures. Remember that, unlike random errors, systematic errors cannot be reduced simply by increasing the number of observations [12].
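One common way to judge whether an observed bias against a CRM is significant is to compare the absolute difference between the mean result and the certified value with the expanded uncertainty of that difference, combining the standard uncertainty of the mean (s/√n) with the CRM's standard uncertainty. The sketch below assumes a coverage factor of 2 for both the certificate and the comparison; the function name, replicate results, and certificate values are hypothetical.

```python
import math
import statistics


def bias_check(results: list[float], certified: float, U_crm: float, k_crm: float = 2.0) -> dict:
    """Compare the mean of replicate CRM results with the certified value.

    Bias is flagged as significant when |mean - certified| exceeds the
    expanded uncertainty (k = 2) of that difference.
    """
    n = len(results)
    mean = statistics.mean(results)
    u_mean = statistics.stdev(results) / math.sqrt(n)  # standard uncertainty of the mean
    u_crm = U_crm / k_crm                               # certificate: expanded -> standard
    delta = abs(mean - certified)
    U_delta = 2.0 * math.sqrt(u_mean**2 + u_crm**2)
    return {
        "mean": mean,
        "bias_pct": 100.0 * (mean - certified) / certified,
        "delta": delta,
        "U_delta": U_delta,
        "significant": delta > U_delta,
    }


# Hypothetical: ten replicate AAS results (mg/kg) for a CRM certified at 25.0 +/- 0.6 mg/kg (k = 2).
replicates = [26.1, 25.8, 26.3, 25.9, 26.0, 26.4, 25.7, 26.2, 26.0, 25.9]
r = bias_check(replicates, certified=25.0, U_crm=0.6)
print(f"bias = {r['bias_pct']:.1f} %, |delta| = {r['delta']:.2f}, "
      f"U_delta = {r['U_delta']:.2f}, significant: {r['significant']}")
```

A "significant" flag only shows that the difference is larger than measurement uncertainty can explain; whether and how to apply a correction factor remains a decision for the SOP.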

Experimental Protocols and Methodologies

Protocol for Evaluating Measurement Uncertainty

Objective: To estimate the combined standard uncertainty of an analytical measurement procedure for inorganic analytes.

Materials:

- Certified reference materials

- Quality control samples

- All standard laboratory equipment and reagents

Procedure:

- Identify uncertainty sources: List all significant factors that could influence the measurement result (e.g., balance calibration, volumetric glassware, reference material purity, environmental conditions, operator technique).

Quantify uncertainty components:

- For Type A evaluations: Perform at least 10 replicate measurements of a homogeneous sample. Calculate the standard deviation as $s = \sqrt{\frac{\sum_{i=1}^{n}(x_i - \bar{x})^2}{n-1}}$ [12].

- For Type B evaluations: Use manufacturer specifications, calibration certificates, or literature data to estimate standard uncertainties. Convert stated uncertainties to standard uncertainties by dividing by the appropriate coverage factor (typically 2 for 95% confidence).

Calculate combined uncertainty: For uncorrelated input quantities, use the law of propagation of uncertainties (root-sum-of-squares method) to combine all significant uncertainty components: $$u_c(y) = \sqrt{\sum_{i=1}^{n}\left(\frac{\partial y}{\partial x_i}\right)^2 u^2(x_i)}$$ where $u_c(y)$ is the combined standard uncertainty of the result $y$, the partial derivatives $\partial y / \partial x_i$ are the sensitivity coefficients, and $u(x_i)$ are the standard uncertainties of the input quantities $x_i$ [11].

Report expanded uncertainty: Multiply the combined standard uncertainty by a coverage factor $k$ (typically $k=2$ for approximately 95% confidence) to obtain the expanded uncertainty $U = k \cdot u_c(y)$.

Documentation: Maintain records of all uncertainty evaluations for method verification and regulatory compliance.
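The procedure above can be sketched in code for a simple measurement model. The model, input values, and uncertainties below are hypothetical: a digest concentration taken as the mean of 10 replicate readings (Type A), a 50 mL volumetric flask with a ±0.05 mL tolerance treated as a rectangular distribution (divisor √3, the usual GUM treatment of a bare tolerance), and a balance whose certificate states an expanded uncertainty of 0.0002 g at k = 2. Sensitivity coefficients are estimated numerically by central differences rather than by differentiating the model by hand.

```python
import math
import statistics
from typing import Callable, Sequence


def type_a(replicates: Sequence[float]) -> float:
    """Type A standard uncertainty of a mean value: s / sqrt(n)."""
    return statistics.stdev(replicates) / math.sqrt(len(replicates))


def type_b_from_expanded(U: float, k: float = 2.0) -> float:
    """Type B: convert a certificate's expanded uncertainty U (coverage factor k)."""
    return U / k


def type_b_rectangular(half_width: float) -> float:
    """Type B: tolerance of +/- half_width with no other information (rectangular)."""
    return half_width / math.sqrt(3)


def combined_uncertainty(f: Callable[..., float], x: Sequence[float], u: Sequence[float]) -> float:
    """u_c(y) = sqrt(sum((df/dx_i)^2 * u(x_i)^2)) for uncorrelated inputs.

    Each sensitivity coefficient df/dx_i is estimated by a central finite
    difference with a step of one standard uncertainty.
    """
    total = 0.0
    for i, (xi, ui) in enumerate(zip(x, u)):
        if ui == 0:
            continue  # an input with zero uncertainty contributes nothing
        plus, minus = list(x), list(x)
        plus[i], minus[i] = xi + ui, xi - ui
        c_i = (f(*plus) - f(*minus)) / (2 * ui)
        total += (c_i * ui) ** 2
    return math.sqrt(total)


def result_model(c_inst: float, v_final: float, m_sample: float) -> float:
    """Hypothetical model: digest conc. (µg/L) x final volume (L) / sample mass (g) -> µg/g."""
    return c_inst * v_final / m_sample


readings = [50.2, 49.8, 50.5, 49.6, 50.1, 50.3, 49.9, 50.0, 50.4, 49.7]  # µg/L, hypothetical
x = [statistics.mean(readings), 0.050, 0.5000]
u = [
    type_a(readings),              # instrument repeatability (Type A)
    type_b_rectangular(0.00005),   # flask tolerance +/- 0.05 mL, expressed in L (Type B)
    type_b_from_expanded(0.0002),  # balance certificate U = 0.0002 g, k = 2 (Type B)
]

y = result_model(*x)
u_c = combined_uncertainty(result_model, x, u)
U_exp = 2 * u_c  # expanded uncertainty with k = 2
print(f"result = {y:.3f} µg/g, u_c = {u_c:.4f} µg/g, U (k=2) = {U_exp:.4f} µg/g")
```

For a pure product/quotient model like this one, the same u_c can be cross-checked by combining the relative standard uncertainties in quadrature, which is a useful sanity check on the numerical derivatives.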

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key materials for quality control in inorganic analytical laboratories

| Material/Reagent | Function | Quality Considerations |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide traceable standards for calibration and accuracy verification | Certification with stated uncertainty, matrix matching to samples, stability |
| Laboratory Control Samples (LCS) | Monitor analytical performance in a clean matrix | Known concentration, homogeneity, stability, prepared independently from calibration standards [4] |
| Matrix Spike/Matrix Spike Duplicate (MS/MSD) | Evaluate method performance in specific sample matrix | Representative matrix, appropriate spike concentration, account for native concentrations [4] |
| High-Purity Solvents and Acids | Sample preparation and dilution | Grade appropriate for application, verified lot-to-lot consistency, minimal contaminant levels |
| Stable Calibration Standards | Establish quantitative relationship between signal and concentration | Purity verification, appropriate solvent, stability monitoring, traceability |
| Method Blanks | Identify contamination from reagents or apparatus | Use high-purity water/solvents, process through entire analytical method |

Mastering the core statistical concepts of accuracy, precision, bias, error, and measurement uncertainty is fundamental to establishing robust quality control protocols in inorganic analytical laboratories. By implementing systematic troubleshooting approaches, following standardized experimental protocols, and utilizing appropriate research reagents, laboratories can generate reliable, defensible data that meets regulatory requirements and supports confident decision-making in research and drug development. Regular monitoring of these parameters through well-designed quality control practices provides early detection of methodological problems and ensures the ongoing validity of analytical results.

In inorganic analytical laboratories, establishing robust quality control (QC) protocols is fundamental to producing reliable data that supports critical decisions in drug development and research. These protocols are built on clearly defined performance specifications that align analytical methods with their intended clinical or research applications. The core objective is to minimize errors in the analytical phase, which, while less frequent than pre-analytical errors, have a disproportionately high potential to negatively impact patient care or research outcomes [15]. A structured approach to quality ensures that results are not only precise and accurate but also clinically meaningful.

Frequently Asked Questions (FAQs)

1. What is the difference between quality control (QC) and quality assurance (QA) in the laboratory?

- Quality Control (QC) refers to the routine technical activities that assess the precision and accuracy of your testing processes. This typically involves the daily use of control materials to monitor the stability of your analytical systems [16] [15].

- Quality Assurance (QA) is the broader, comprehensive system designed to ensure that the final results reported are reliable. It encompasses all aspects of the testing process, from sample collection to reporting, including QC, personnel training, equipment validation, and documentation [17].

2. How do I set a performance specification for a new analytical method?

Performance specifications should be based on the intended use of the test and follow a recognized hierarchy. The highest level of this hierarchy is based on the clinical effect on patient outcomes, followed by biological variation, and then other sources such as regulatory or professional recommendations [15]. The specification is often defined as a Total Allowable Error (TEa), which sets the maximum amount of error that can be tolerated before a result becomes clinically unusable [15].

3. What are the 2025 IFCC recommendations for Internal Quality Control (IQC)?

The latest IFCC recommendations, based on ISO 15189:2022, emphasize that laboratories must establish a structured plan for their IQC procedures [14]. This includes determining:

- Frequency of IQC: How often control materials are analyzed.

- Size of the series: The number of patient samples analyzed between two IQC events.

- Acceptability criteria: The statistical control rules used to judge whether a run is in control.

The plan should be risk-based, considering the clinical criticality of the analyte, the feasibility of sample re-analysis, and the robustness of the method as measured by Sigma-metrics [14].

4. What is a Sigma-metric and how is it used?

The Sigma-metric quantifies the performance of a method by combining its imprecision (CV), bias (inaccuracy), and the defined TEa [15]. With all three terms expressed in percent at the same concentration level, it is calculated as:

$$\text{Sigma} = \frac{\text{TEa} - |\text{Bias}|}{\text{CV}}$$

A higher Sigma value indicates a more robust and reliable method. Methods with a Sigma greater than 6 are considered world-class, while those below 3 are often inadequate for routine use without extensive QC.
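A minimal sketch of the calculation, assuming bias is entered as an absolute value (a negative bias consumes the error budget just as a positive one does) and using the performance bands stated above. The label "intermediate" for values between 3 and 6 is a placeholder, since the text does not name that band, and the method figures are hypothetical.

```python
def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa - |Bias|) / CV, all in percent at the same concentration level."""
    if cv_pct <= 0:
        raise ValueError("CV must be positive")
    return (tea_pct - abs(bias_pct)) / cv_pct


def sigma_category(sigma: float) -> str:
    """Bands from the text: > 6 world-class, < 3 often inadequate without extensive QC."""
    if sigma > 6:
        return "world-class"
    if sigma < 3:
        return "inadequate without extensive QC"
    return "intermediate"


# Hypothetical method: TEa 10 %, bias 1.5 %, evaluated at two levels of imprecision.
for cv in (1.2, 3.0):
    s = sigma_metric(tea_pct=10.0, bias_pct=1.5, cv_pct=cv)
    print(f"CV {cv} %: Sigma = {s:.2f} ({sigma_category(s)})")
```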

5. How is measurement uncertainty (MU) different from Total Error?

- Measurement Uncertainty is a metrological concept that quantifies the doubt about a measurement result. It is a parameter that defines an interval around the measured value within which the true value is believed to lie with a stated probability [17].

- Total Error is a model that combines random error (imprecision) and systematic error (bias) to evaluate whether a method meets a predefined performance goal (TEa) [14].

While related, they represent different philosophical approaches to quality. The new IFCC guidance cautions against confusing the two concepts [14].

Troubleshooting Guides

Issue 1: Unacceptable Performance in Proficiency Testing (PT)

Problem: Your laboratory consistently receives unsatisfactory scores in external proficiency testing (PT) schemes for a specific inorganic analyte.

| Investigation Step | Action | Documentation to Review |
|---|---|---|
| 1. Verify Result | Re-check calculations and transcription for the PT sample result. | PT submission form, instrument printout. |
| 2. Analyze QC Data | Review Internal QC (IQC) data from the day the PT sample was analyzed. Was the system in control? | Levey-Jennings charts, QC logs. |
| 3. Check Calibration | Verify the calibration status and traceability of the calibrators used. | Calibration certificates, standard operating procedures (SOPs). |
| 4. Method Comparison | Compare your method against a reference method, or analyze certified reference materials (CRMs). | CRM certificates, method validation reports [17]. |
| 5. Assess Bias | Calculate the systematic bias from the PT assigned value and from CRMs. | PT reports, CRM analysis data [15]. |

Corrective Actions:

- If a calibration error is identified, recalibrate using a fresh, traceable standard.

- If a persistent bias is found, refine the method or adjust the calibration procedure.

- Participate in measurement evaluation (ME) programs that use reference values from National Metrology Institutes (NMIs) for a more definitive assessment of accuracy [17].

Issue 2: Frequent QC Failures and Unstable Methods

Problem: Your Internal Quality Control (IQC) frequently triggers rejection rules, indicating an unstable analytical process.

| Investigation Step | Action | Potential Root Cause |
|---|---|---|
| 1. Rule Violation | Identify which specific QC rule was violated (e.g., 1:3s, 2:2s). | Random error (imprecision) or systematic shift/trend [14]. |
| 2. Check Reagents | Inspect reagent lots, preparation, and expiration dates. | Deteriorated or improperly prepared reagents; lot-to-lot variation [16]. |
| 3. Instrument Check | Perform maintenance and check for worn parts, source lamp degradation, or clogged tubing. | Instrument malfunction or wear-and-tear [16]. |
| 4. Control Material | Verify the control material was reconstituted and stored correctly. | Degraded or compromised control material. |

Corrective Actions:

- Standardize reagent and calibrator lot-change procedures to avoid simultaneous changes [16] [14].

- Increase the frequency of IQC during method setup and after any major maintenance.

- For methods with low Sigma-metrics (inherently poor performance), implement more stringent multi-rule QC procedures to reduce the risk of reporting erroneous results.

Issue 3: Inconsistent Results Between Technicians or Shifts

Problem: The same sample yields different results when analyzed by different personnel.

| Investigation Step | Action | Potential Root Cause |
|---|---|---|
| 1. SOP Review | Compare the actual practices of each technician against the written SOP. | Non-adherence to SOP; outdated or ambiguous SOP [16]. |
| 2. Training Records | Review training and competency assessment records for all involved staff. | Inadequate training or lack of standardization [16]. |
| 3. Observation | Observe each technician performing the assay from start to finish. | Variations in sample preparation, instrument operation, or data recording. |

Corrective Actions:

- Revise and clarify the SOP, incorporating feedback from technicians.

- Implement mandatory re-training and competency certification for all personnel.

- Use centralized digital dashboards and electronic documentation to enforce standardized workflows and improve traceability [16].

Experimental Protocols for Quality Assessment

Protocol 1: Calculating Sigma-Metrics for Method Evaluation

Purpose: To objectively evaluate the analytical performance of a method and guide QC design [15].

Materials:

- IQC data (for at least 20 days) to calculate the coefficient of variation (CV).

- Proficiency Testing (PT) or Certified Reference Material (CRM) data to calculate Bias.

- A defined Total Allowable Error (TEa) goal from a recognized source (e.g., based on biological variation).

Methodology:

- Calculate Imprecision: From your IQC data, calculate the mean (μ) and standard deviation (SD) for the analyte. Then compute the CV as: CV% = (SD / μ) * 100.

- Calculate Bias: Using PT data, calculate the average difference between your results and the assigned value (peer group mean or reference value). Bias% = (|Your Mean - Assigned Value| / Assigned Value) * 100.

- Select TEa: Choose an appropriate TEa% for the analyte based on the intended clinical use and relevant guidelines [15].

- Compute Sigma: Use the formula: Sigma = (TEa% - Bias%) / CV%.
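The four calculation steps above can be sketched in Python as follows. This is a minimal illustration rather than a validated implementation: it assumes the sample (n - 1) standard deviation for CV%, and, when several PT events are available, it averages the signed percent differences before the absolute value is taken in the Sigma formula (for a single PT sample this reduces to the Bias% expression given above). Function names and example numbers are hypothetical.

```python
import statistics


def cv_percent(iqc_results: list[float]) -> float:
    """Imprecision as CV% = (SD / mean) * 100, using the sample (n - 1) SD."""
    mean = statistics.mean(iqc_results)
    sd = statistics.stdev(iqc_results)
    return sd / mean * 100


def bias_percent(lab_results: list[float], assigned_values: list[float]) -> float:
    """Signed mean percent difference from the PT/CRM assigned values.

    For a single PT sample this equals (Your Result - Assigned) / Assigned * 100.
    """
    if not lab_results or len(lab_results) != len(assigned_values):
        raise ValueError("need one assigned value per laboratory result")
    diffs = [(x - ref) / ref * 100 for x, ref in zip(lab_results, assigned_values)]
    return statistics.mean(diffs)


def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa% - |Bias%|) / CV%."""
    return (tea_pct - abs(bias_pct)) / cv_pct


if __name__ == "__main__":
    # Hypothetical IQC and PT data for one analyte, and a hypothetical TEa of 10 %.
    cv = cv_percent([4.9, 5.1, 5.0, 5.2, 4.8])
    bias = bias_percent([10.3, 20.4], [10.0, 20.0])
    print(f"CV% = {cv:.2f}, Bias% = {bias:.2f}, Sigma = {sigma_metric(10.0, bias, cv):.2f}")
```

Keeping the sign during averaging means that positive and negative deviations from different PT events partly cancel, so the result reflects systematic rather than random error.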

Interpretation: Refer to the following table to interpret the Sigma-metric and determine the appropriate QC strategy:

| Sigma Metric | Level of Performance | Recommended QC Strategy |
|---|---|---|
| ≥ 6 | World-Class | Minimal QC; use a simple 1:3s rule with 2 controls per run [14]. |
| 5 to < 6 | Excellent | Good QC; use 1:3s/2:2s rules with 2 controls per run. |
| 4 to < 5 | Acceptable | Multirule QC (e.g., Westgard rules) with 2-4 controls per run. |
| 3 to < 4 | Marginal | Poor performance; needs an improved method or stringent QC with 4-6 controls per run. |
| < 3 | Unacceptable | Method is not suitable for clinical use; requires replacement or major improvement. |
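A lookup from a computed Sigma value to the bands in this table might look like the sketch below. It assumes half-open bands with inclusive lower bounds (for example, 5 ≤ Sigma < 6 counts as "Excellent"); the function name is hypothetical and the strategy strings simply restate the table.

```python
def qc_strategy(sigma: float) -> tuple[str, str]:
    """Map a Sigma-metric to (performance level, recommended QC strategy)."""
    bands = [  # (inclusive lower bound, level, strategy), highest band first
        (6.0, "World-Class", "Minimal QC: 1:3s rule, 2 controls per run"),
        (5.0, "Excellent", "1:3s/2:2s rules, 2 controls per run"),
        (4.0, "Acceptable", "Westgard multirule, 2-4 controls per run"),
        (3.0, "Marginal", "Improve the method or use stringent QC, 4-6 controls per run"),
    ]
    for lower, level, strategy in bands:
        if sigma >= lower:
            return level, strategy
    return "Unacceptable", "Not suitable for clinical use; replace or improve the method"


if __name__ == "__main__":
    for s in (6.4, 5.0, 4.2, 3.1, 2.5):
        print(s, *qc_strategy(s))
```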

Protocol 2: Using a Graphic Tool for TEa Source Selection

Purpose: To standardize the selection of the most appropriate Total Allowable Error (TEa) source that fits the actual analytical performance of a test [15].

Materials:

- Calculated Bias% and Sigma-metric from Protocol 1.

- Chart with Sigma-metric on the Y-axis and Bias% (of TEa) on the X-axis.

- Defined "objective area" on the chart representing optimal performance.

Methodology:

- For each analyte, plot a point on the chart using its calculated (Bias%, Sigma) coordinates.

- Apply a selection algorithm based on the hierarchy of quality specifications (e.g., Milan 2014 consensus).

Interpretation:

- If the point falls within the objective area, the current TEa source (e.g., biological variability) is appropriate.

- If the point falls outside the area, the analytical performance does not support the use of that TEa source. A re-evaluation is required, potentially selecting a TEa source from a lower level in the hierarchy (e.g., based on regulatory requirements or the state of the art) [15].
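The selection logic in Protocol 2 can be expressed as a short loop over the hierarchy of TEa sources. This is only a sketch: the protocol does not define the objective area numerically, so the minimum Sigma and maximum Bias% (of TEa) that bound it are left as parameters and the area is assumed to be rectangular. The TEa values in the example are hypothetical placeholders, not recommended specifications.

```python
from typing import Optional


def chart_point(tea_pct: float, bias_pct: float, cv_pct: float) -> tuple[float, float]:
    """Return (Bias% expressed as a percentage of TEa, Sigma-metric) for one TEa source."""
    bias_of_tea = abs(bias_pct) / tea_pct * 100
    sigma = (tea_pct - abs(bias_pct)) / cv_pct
    return bias_of_tea, sigma


def select_tea_source(
    sources: list[tuple[str, float]],
    bias_pct: float,
    cv_pct: float,
    min_sigma: float,
    max_bias_of_tea: float,
) -> Optional[tuple[str, float, float]]:
    """Walk the hierarchy from the top and return the first source whose point
    falls inside the (assumed rectangular) objective area; None if none does."""
    for name, tea_pct in sources:
        bias_of_tea, sigma = chart_point(tea_pct, bias_pct, cv_pct)
        if sigma >= min_sigma and bias_of_tea <= max_bias_of_tea:
            return name, bias_of_tea, sigma
    return None


if __name__ == "__main__":
    # Hypothetical TEa values (%), ordered from the highest to the lowest level.
    hierarchy = [
        ("biological variation", 6.0),
        ("regulatory requirement", 10.0),
        ("state of the art", 15.0),
    ]
    # Hypothetical objective area: Sigma >= 3 and Bias <= 50 % of TEa.
    print(select_tea_source(hierarchy, bias_pct=1.5, cv_pct=2.0,
                            min_sigma=3.0, max_bias_of_tea=50.0))
```

With these illustrative numbers the biological-variation point falls outside the area (Sigma 2.25), so the loop moves down one level, mirroring the re-evaluation step described above.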

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Inorganic Analysis |
|---|---|
| Certified Reference Materials (CRMs) | Provide an unambiguous traceability chain to international standards (SI units); used for method validation, calibration, and assigning values to in-house controls [17]. |
| Primary Calibration Standards | High-purity materials (e.g., metals, salts) with known stoichiometry, used to prepare primary calibrators with minimal measurement uncertainty [17]. |
| Third-Party Quality Control Materials | Independent controls not supplied by the instrument/reagent manufacturer; crucial for unbiased assessment of analytical performance and detecting reagent/calibrator lot-to-lot variation [16] [14]. |
| Isotopically Enriched Spikes | Essential for isotope dilution mass spectrometry (IDMS), a primary method for achieving high accuracy and low uncertainty in quantitative analysis [17]. |

Workflow Diagram: Quality Specification Implementation

The following diagram illustrates the logical workflow for defining and implementing performance specifications in an inorganic analytical laboratory.

The Role of a Robust Quality Management System (QMS) in Laboratory Accreditation

In the field of inorganic analytical laboratories, where the accuracy of a single result can impact drug development timelines and regulatory approvals, a robust Quality Management System (QMS) is not merely an administrative requirement but the fundamental backbone of technical competence and accreditation success. A QMS provides the formal framework that documents the processes, personnel, and procedures through which a laboratory ensures the consistent quality of its outputs [18]. For researchers and scientists working with complex inorganic analyses, implementing a rigorous QMS directly addresses the reproducibility crisis noted in scientific literature; a Nature survey found that over 70% of researchers have failed to reproduce another scientist's data, highlighting the critical need for systems that ensure reliable and reproducible results [18]. Accreditation against international standards like ISO/IEC 17025, which specifies requirements for laboratory competence, impartiality, and consistent operation, provides demonstrable proof of this reliability to regulatory authorities and clients alike [19] [20]. This article explores the integral role of a QMS in achieving and maintaining accreditation, framed within the context of quality control for inorganic analytical research.

The Integral Link Between QMS and Laboratory Accreditation

Understanding Laboratory Accreditation

Laboratory accreditation is a formal, independent assessment that verifies a laboratory's competence to perform specific types of testing, measurement, and calibration. It evaluates whether a laboratory operates competently and generates valid results according to internationally recognized standards [19] [21]. The primary standard for testing and calibration laboratories is ISO/IEC 17025, which serves as the baseline criteria for accreditation bodies worldwide [19] [20]. The process is designed to ensure that laboratories meet stringent requirements for their technical competence, impartiality, and consistent operation, thereby fostering trust in their reported results [22] [21].

The accreditation process typically follows a structured path, from initial application through onsite assessment and decision. While specifics vary by accrediting body—such as the College of American Pathologists (CAP) or The Joint Commission—common stages include application and self-assessment, document review, an onsite audit, and a final accreditation decision [23] [22] [21]. For laboratories, accreditation is not a one-time event but a continuous cycle of improvement, involving regular reassessments to maintain accredited status [22].

The QMS as the Engine for Accreditation Success

A robust QMS is not a separate entity from the pursuit of accreditation; rather, it is the very system that enables a laboratory to meet and demonstrate compliance with accreditation standards. The QMS provides the documented structure, processes, and evidence that assessors review to determine conformity. Key accreditation standards explicitly require the implementation of a QMS. For instance, ISO/IEC 17025:2017 includes major sections on structural, resource, process, and management requirements—all core components of a functioning QMS [20].

The management system requirements under ISO/IEC 17025 align with other quality standards such as ISO 9001 but are specifically tailored to the technical environment of testing and calibration laboratories [20]. A well-documented QMS directly satisfies these requirements by providing:

- Documented procedures for all laboratory activities

- Records of staff competence and training

- Equipment management and calibration records

- Processes for handling customer complaints and non-conformances

- Internal audit schedules and reports [20]

Without an effective QMS, a laboratory would lack the systematic evidence needed to demonstrate compliance during an accreditation assessment. The QMS serves as both the preparation tool and the proof of a laboratory's commitment to quality and technical excellence.

Core Components of a QMS for Analytical Laboratories

The 12 Quality System Essentials (QSEs) Framework

For inorganic analytical laboratories, an effective QMS can be structured around the 12 Quality System Essentials (QSEs), a comprehensive framework that covers all critical aspects of laboratory operations. These QSEs, modified from the World Health Organization's Laboratory Quality Management System Handbook, provide a practical blueprint for implementing and maintaining a robust quality system [18]. The table below outlines these 12 essential components and their implementation relevance to inorganic analytical laboratories.

Table 1: The 12 Quality System Essentials (QSEs) for Laboratories

| QSE Name | Description | Implementation Examples for Inorganic Labs |
|---|---|---|
| Organization | Management structure, roles, and quality culture | Organizational charts, quality manual, RASCI matrices, management reviews [18] |
| Facilities & Safety | Laboratory workspace, environmental conditions, safety protocols | Environmental monitoring, pest control, job hazard analysis, SDS management [18] |
| Personnel | Staff competence, training, and evaluation | Onboarding training, competency assessments, proficiency testing, continuing education [18] |
| Equipment | Instrument management, calibration, and maintenance | Preventive maintenance procedures, equipment qualification, calibration records [18] |
| Purchasing & Inventory | Control of reagents, standards, and supplies | Supplier qualification, inventory tracking, reagent certification [18] |
| Process Management | Standardized testing, calibration, and sampling methods | SOPs for inorganic analyses, method validation protocols [18] |
| Documents & Records | Control of manuals, procedures, and test records | Document control system, version control, archival procedures [18] |
| Information Management | Data handling, security, and LIMS implementation | LIMS deployment, data integrity measures, backup systems [24] [20] |
| Assessments | Internal audits, management reviews, and corrective actions | Audit schedules, assessment checklists, corrective action plans [18] [20] |
| Occurrence Management | Non-conforming work, incident investigation, and root cause analysis | Deviation reporting, out-of-specification results procedures [18] |
| Customer Satisfaction | Feedback mechanisms, service evaluation, and responsiveness | Client survey systems, complaint handling procedures [18] |
| Continual Improvement | Quality indicators, improvement projects, and preventive actions | Performance metrics, improvement initiatives, preventive action systems [18] |

QMS Workflow in the Laboratory Path

The QMS integrates seamlessly into the laboratory's three-phase path of workflow: pre-analytic, analytic, and post-analytic [18]. Each phase has specific quality requirements that the QMS addresses through the relevant QSEs. The following diagram illustrates how the QSEs align with the laboratory workflow to ensure quality at every stage, ultimately supporting accreditation readiness.

Technical Support Center: Troubleshooting Guides and FAQs for Inorganic Analysis

Troubleshooting Common Analytical Problems in Inorganic Chemistry

Inorganic analytical laboratories frequently encounter specific technical challenges that can compromise data quality and accreditation readiness. The following troubleshooting guide addresses common issues with key elements, drawing from established analytical knowledge and quality control principles.

Table 2: Troubleshooting Common Problems in Inorganic Analysis

| Element/Analyte | Common Problems | Root Cause | QMS-Based Solution | Preventive Action |
|---|---|---|---|---|
| Silver (Ag) | Low recoveries, precipitation | Formation of insoluble AgCl; photo-reduction of Ag+ to Ag0 [25] | Use HNO₃ or HF for sample prep; avoid Cl⁻ contamination; protect from light [25] | Document sample prep procedures; control environmental conditions |
| Arsenic (As) | Volatile losses, spectral interference | Loss as As₂O₃ (bp 460°C) or AsCl₃ (bp 130°C); ⁴⁰Ar³⁵Cl interference on ⁷⁵As in ICP-MS [25] | Use closed-vessel digestion; apply collision/reaction cell in ICP-MS; use hydride generation AAS [25] | Validate and document sample prep methods for volatile analytes |
| Barium (Ba) | Precipitation, low recovery | Formation of BaSO₄, BaCrO₄, or BaCO₃ [25] | Avoid combinations with SO₄²⁻, CrO₄²⁻, F⁻, or CO₃²⁻; maintain acidic pH [25] | Document chemical compatibility in SOPs; implement reagent checks |
| Lead (Pb) | Contamination, precipitation | Environmental contamination; use of glassware; formation of PbSO₄ or PbCrO₄ [25] | Use closed-container digestion; quartz/fused silica containers; avoid sulfate and chromate [25] | Environmental monitoring; documented container cleaning procedures |
| Chromium (Cr) | Difficulty dissolving samples, especially refractory materials | Chromite (FeO·Cr₂O₃), ignited chromic oxide pigments resistant to acid digestion [25] | Use appropriate fusion techniques (Na₂O₂, NaOH/KNO₃); know sample composition [25] | Method validation using CRM with real-world materials; document sample history |
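The ⁴⁰Ar³⁵Cl⁺ interference on ⁷⁵As⁺ listed in this table is easy to quantify. The short calculation below, using rounded monoisotopic masses (assumed literature values), estimates the resolving power m/Δm needed to separate the two ions. The result, several thousand, is far beyond the roughly unit-mass resolution of a conventional quadrupole, which is why collision/reaction cells or hydride generation are used instead of trying to resolve the peaks.

```python
# Rounded monoisotopic atomic masses in u (assumed literature values). Electron
# masses cancel because both ions carry a single positive charge.
M_AR40 = 39.9623831
M_CL35 = 34.9688527
M_AS75 = 74.9215946


def required_resolution(m_analyte: float, m_interferent: float) -> float:
    """Resolving power m / delta-m needed to separate two ions of the same nominal mass."""
    return m_analyte / abs(m_interferent - m_analyte)


m_arcl = M_AR40 + M_CL35
r_needed = required_resolution(M_AS75, m_arcl)
print(f"40Ar35Cl+ {m_arcl:.4f} u vs 75As+ {M_AS75:.4f} u -> R needed ~ {r_needed:,.0f}")
```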

Quality Control FAQs for Inorganic Laboratories

Q1: What is the purpose of analyzing a matrix spike (MS) sample versus a laboratory control sample (LCS), and why should we run both? [4]

The matrix spike (MS) measures method performance relative to the specific sample matrix, demonstrating the applicability of the analytical approach to the site-specific matrix. The laboratory control sample (LCS) demonstrates that the laboratory can perform the overall analytical approach in a matrix free of interferences, showing the analytical system is in control. Running both helps separate issues of laboratory performance from matrix effects, providing a more complete picture of data quality [4].
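The recoveries that distinguish the two checks are usually computed as below. This sketch uses the common percent-recovery and relative-percent-difference (RPD) definitions; acceptance limits are method-specific and are not assumed here, and the function names and values are illustrative only.

```python
def lcs_recovery(measured: float, true_value: float) -> float:
    """LCS %R: clean-matrix result against the known spiked concentration."""
    return measured / true_value * 100


def ms_recovery(spiked_result: float, unspiked_result: float, spike_added: float) -> float:
    """MS %R: the native sample contribution is subtracted before comparing to the spike."""
    return (spiked_result - unspiked_result) / spike_added * 100


def rpd(result_a: float, result_b: float) -> float:
    """Relative percent difference, e.g. between MS and MSD results."""
    return abs(result_a - result_b) / ((result_a + result_b) / 2) * 100


if __name__ == "__main__":
    # Hypothetical results in the same concentration units (e.g. ug/L).
    print(f"LCS %R = {lcs_recovery(9.6, 10.0):.1f}")
    ms, msd = 14.8, 15.2  # sample with native 5.0, spiked with 10.0
    print(f"MS %R = {ms_recovery(ms, 5.0, 10.0):.1f}, MSD %R = {ms_recovery(msd, 5.0, 10.0):.1f}")
    print(f"MS/MSD RPD = {rpd(ms, msd):.1f}%")
```

A low MS recovery alongside an acceptable LCS recovery points toward a matrix effect rather than a laboratory performance problem, which is exactly the separation the answer above describes.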

Q2: Why do many quality control procedures require running QC samples "once for every 20 samples?" [4]

The 1-in-20 (5%) frequency is a typical value that has been used in many EPA programs for years and provides a statistically meaningful sampling of data quality. However, regulations recognize that other frequencies may be appropriate with proper documentation and regulatory approval, particularly for long-term monitoring projects with consistent matrices [4].
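At the default 1-in-20 rate, the number of QC sets scales with batch size, and a partial group of fewer than 20 samples still needs its own set. The sketch below shows one hypothetical run order (method blank "MB" and LCS leading each group, MS/MSD prepared from the group's first sample); actual placement and frequency should follow the applicable method and any approved alternative.

```python
import math


def qc_sets_needed(n_samples: int, frequency: int = 20) -> int:
    """QC sets required at a 1-in-`frequency` rate; a partial group still needs one."""
    return math.ceil(n_samples / frequency)


def build_sequence(sample_ids: list[str], frequency: int = 20) -> list[str]:
    """Hypothetical run order: MB and LCS lead each group of up to `frequency`
    samples, and MS/MSD are prepared from the first sample of that group."""
    sequence: list[str] = []
    for start in range(0, len(sample_ids), frequency):
        group = sample_ids[start:start + frequency]
        sequence += ["MB", "LCS", *group, f"MS({group[0]})", f"MSD({group[0]})"]
    return sequence


if __name__ == "__main__":
    samples = [f"S{i:03d}" for i in range(1, 46)]  # 45 hypothetical field samples
    print(qc_sets_needed(len(samples)), "QC sets")
    print(build_sequence(samples)[:4], "...")
```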

Q3: What should we do when matrix interference effects cause elevated detection limits above regulatory limits? [4]

When the Lower Limit of Quantitation (LLOQ) exceeds regulatory limits, the quantitation limit may become the regulatory level, provided the laboratory has taken every possible step to keep the reporting limit as low as possible (avoiding unnecessary sample dilutions, using clean-up methods, etc.). This approach must be documented in the laboratory's standard operating procedures [4].

Q4: Can we use matrix spike (MS) recovery in place of laboratory control sample (LCS) recovery for establishing analytical process control? [4]

While performance-based methodology may allow using MS in place of LCS if acceptance criteria are as stringent, this practice has significant limitations. MS results are affected by matrix effects, and spike amounts may not be appropriate for native sample levels. The EPA recommends viewing this as an occasional "batch saver" rather than routine practice, as both forms of quality control are needed for comprehensive accuracy assessment [4].

Q5: How does a Laboratory Information Management System (LIMS) support our QMS and accreditation efforts? [24] [20]

A LIMS enhances QMS effectiveness and accreditation readiness through:

- Improved data integrity and security with audit trails and user access controls

- Streamlined document control, ensuring that the latest versions of SOPs are accessible

- Support for method validation by maintaining detailed validation records

- Ensuring traceability of measurements through calibration schedule management

- Managing quality control procedures by automating scheduling and recording of QC activities

- Support for corrective and preventive actions by tracking investigations and resolutions

- Automating reporting while ensuring compliance with accreditation requirements [24] [20]

The Scientist's Toolkit: Essential Research Reagent Solutions

For inorganic analytical laboratories pursuing accreditation, certain reagents and materials are essential for maintaining quality control and ensuring reliable results. The following table details key research reagent solutions and their functions within the quality framework.

Table 3: Essential Research Reagent Solutions for Quality Control in Inorganic Analysis

| Reagent/Material | Function in Quality Control | Application Examples | Quality Considerations |
|---|---|---|---|
| Certified Reference Materials (CRMs) | Method validation, accuracy verification, calibration | Quantifying analytes in unknown samples; testing method accuracy [25] | Traceability to national/international standards; documentation of uncertainty |
| High-Purity Acids | Sample digestion, matrix preparation | HNO₃ for Ag analysis; avoiding Cl⁻ contamination for silver [25] | Certified purity levels; supplier qualification; contamination control |
| Matrix Spike Solutions | Accuracy assessment in specific sample matrices | Evaluating matrix effects in environmental samples [4] | Appropriate concentration; stability documentation; traceable preparation |
| Laboratory Control Samples (LCS) | Monitoring laboratory performance without matrix effects | Verifying analytical system is in control [4] | Different matrix from samples; known concentrations; stability data |
| Quality Control Check Standards | Continuing calibration verification | Instrument performance monitoring every 15 samples or as required by method [4] | Independent source from calibration standards; appropriate concentration levels |
| Internal Standard Solutions | Correction for instrument fluctuations and sample matrix effects | ICP-MS analysis to correct for signal drift and matrix suppression/enhancement | Element not present in samples; does not interfere with analytes; consistent response |
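Internal standard correction is commonly applied by scaling each analyte signal by the ratio of the internal-standard response in a reference solution (for example, the calibration blank) to its response in the sample. The sketch below assumes that approach; the 70-120 % recovery window is a hypothetical placeholder, so the actual limits should come from your method or SOP.

```python
def is_recovery_pct(is_sample_cps: float, is_reference_cps: float) -> float:
    """Internal-standard response in the sample relative to the reference solution."""
    return is_sample_cps / is_reference_cps * 100


def correct_for_internal_standard(
    analyte_cps: float,
    is_sample_cps: float,
    is_reference_cps: float,
    window: tuple[float, float] = (70.0, 120.0),  # hypothetical acceptance window (%)
) -> float:
    """Scale the analyte signal for drift/suppression seen by the internal standard."""
    recovery = is_recovery_pct(is_sample_cps, is_reference_cps)
    low, high = window
    if not low <= recovery <= high:
        # Outside the window the correction is not trusted: dilute and re-analyse instead.
        raise ValueError(f"IS recovery {recovery:.1f}% outside {low}-{high}%")
    return analyte_cps * is_reference_cps / is_sample_cps


if __name__ == "__main__":
    # Hypothetical counts: the internal standard shows 20 % suppression in this sample.
    print(correct_for_internal_standard(8000.0, 80000.0, 100000.0))
```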

For inorganic analytical laboratories serving the research and drug development sectors, a robust Quality Management System is far more than a compliance requirement—it is a strategic asset that drives technical excellence, enhances reputation, and ensures the reliability of results that impact public health and scientific progress. The framework provided by the 12 Quality System Essentials, when properly implemented and integrated throughout the laboratory's workflow, creates a culture of quality that naturally leads to successful accreditation outcomes [18]. By addressing common technical challenges through systematic troubleshooting and maintaining rigorous quality control practices, laboratories can not only achieve accreditation against standards like ISO/IEC 17025 but also position themselves as leaders in generating reliable, reproducible scientific data. In an era increasingly focused on data integrity and reproducibility, investment in a comprehensive QMS represents the foundation upon which scientific credibility is built and maintained.

From Theory to Practice: Implementing Effective QC Strategies and Techniques

Frequently Asked Questions (FAQs)

What are the core components of an IQC strategy? An IQC strategy must define the types of control materials to be used, the frequency and timing of IQC measurements, the number of concentration levels tested, and the statistical rules (e.g., Westgard rules) used for acceptance or rejection of a run [26]. This strategy should be designed to detect changes in performance that could pose a risk to data quality.

How often should we run Internal Quality Controls? The frequency of IQC is not one-size-fits-all; it should be determined through a risk-based approach. Key factors to consider include the clinical or analytical significance of the test, the stability of the analytical method, the required timeframe for result reporting, and the feasibility of re-analyzing samples [14]. The laboratory must define the number of patient samples analyzed between two IQC events, known as the "series" [14].

What is the difference between a QC warning and a rejection? A warning (e.g., a 1₂ₛ rule violation) signals that a single control measurement has fallen outside the 2 standard deviation (SD) limit. It prompts the operator to be alert to potential problems. A rejection (e.g., a 1₃ₛ or 2₂ₛ rule violation) signifies a higher likelihood of an analytical error and requires the laboratory to stop patient reporting, investigate the cause, and apply corrective actions before results can be released [26].

Can we use the manufacturer's stated ranges for our controls? While manufacturer ranges are a good starting point, it is considered a best practice to establish your laboratory's own mean and standard deviation. Laboratories often operate with better precision than the manufacturer's wide, "forgiving" ranges. Establishing tighter, laboratory-specific ranges makes the QC procedure more sensitive, acting as an early warning system for instrument problems [27].

Why is a weekly review of QC data necessary if we check it daily? A daily review checks for immediate acceptance or rejection of a run. A weekly (or monthly) holistic review of Levey-Jennings charts is essential for identifying long-term trends (a gradual drift in results) and shifts (an abrupt change in the mean) that may not be apparent day-to-day. This proactive review helps detect problems before they cause a QC failure, ensuring greater long-term reliability of patient results [27].
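Establishing laboratory-specific limits and screening for the shifts and trends described above can be sketched as follows. Assumptions: at least 20 baseline results per control level (mirroring the 20-day minimum used in Protocol 1), the sample SD, and simple run-length counts whose alarm thresholds are left to the laboratory. The function names are hypothetical.

```python
import statistics


def control_limits(baseline: list[float]) -> dict[str, float]:
    """Laboratory mean, SD and the +/-1, 2, 3 SD lines for a Levey-Jennings chart."""
    if len(baseline) < 20:
        raise ValueError("assumed minimum of 20 baseline results not met")
    mean = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    limits = {"mean": mean, "sd": sd}
    for k in (1, 2, 3):
        limits[f"-{k}sd"] = mean - k * sd
        limits[f"+{k}sd"] = mean + k * sd
    return limits


def longest_run_one_side(values: list[float], mean: float) -> int:
    """Longest run of consecutive results on the same side of the mean (shift screen)."""
    best = run = 0
    prev_side = 0
    for v in values:
        side = (v > mean) - (v < mean)  # +1 above, -1 below, 0 exactly on the mean
        if side != 0 and side == prev_side:
            run += 1
        else:
            run = 1 if side != 0 else 0
        prev_side = side
        best = max(best, run)
    return best


def longest_monotonic_run(values: list[float]) -> int:
    """Longest run of consecutive results moving in one direction (trend screen)."""
    best = run = 1 if values else 0
    direction = 0
    for a, b in zip(values, values[1:]):
        step = (b > a) - (b < a)
        if step != 0 and step == direction:
            run += 1
        else:
            run = 2 if step != 0 else 1
        direction = step
        best = max(best, run)
    return best
```

In a weekly review, a long run on one side of the mean suggests a shift, while a long monotonic run suggests a gradual drift; both can appear before any single point breaches a rejection limit.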

Troubleshooting Guide: Addressing Common QC Problems

This guide provides a systematic approach to resolving frequent IQC issues.

| Problem | Potential Causes | Corrective Actions & Troubleshooting Steps |
|---|---|---|
| One control level is out of range | • Problem with the specific control vial (e.g., improperly mixed, evaporated, contaminated) • Instrument sampling error for that vial (e.g., bubble) • Random error | 1. Re-mix the control vial and repeat the analysis. 2. Open a new vial of the same control level and repeat. 3. Check other control levels and patient results for consistency. If they are acceptable, the issue is likely isolated to that vial [27]. |
| All levels of control are out of range for one analyte | • Calibration error • Expired or degraded reagents • Instrument malfunction specific to that test • Incorrect calibration factor | 1. Check reagent expiration dates and look for signs of contamination. 2. Verify calibration data and, if necessary, perform a new calibration. 3. Perform required instrument maintenance (e.g., probe cleaning, replacing lamps/filters). 4. Consult the instrument's troubleshooting manual [27]. |
| A shift (all results are suddenly higher/lower) | • New lot of calibrator or reagent • New calibration performed • Critical instrument maintenance performed (e.g., new light source) • Incorrect assignment of a new control lot's target value | 1. Review logs to correlate the shift with recent events (reagent lot change, calibration, maintenance). 2. If a new reagent lot was introduced, confirm it was validated properly. 3. If a new control lot was introduced, verify the assigned target and SD [27]. |
| A trend (gradual increase/decrease over days) | • Gradual instrument deterioration (e.g., aging lamp, clogging probe) • Deterioration of reagents or controls over time (especially after opening/reconstitution) • Environmental factors (e.g., room temperature fluctuation) | 1. Review maintenance records and perform unscheduled maintenance. 2. Check storage conditions and stability of reagents/controls. 3. Use the QC action log to identify patterns and pinpoint the root cause [27]. |
| Increased imprecision (high scatter) | • Instrument instability (e.g., intermittent faults) • Contaminated reagents or samples • Issues with sample/reagent delivery system • Operator technique variability | 1. Check for loose connections or intermittent errors in the instrument log. 2. Replace reagents with a new lot. 3. Ensure all operators are following standardized procedures [16]. |

The following table summarizes the essential elements that must be defined in a laboratory's IQC plan [26].

| IQC Component | Description & Considerations |
|---|---|
| Control Materials | Can be assayed (with stated target values) or unassayed. Use of third-party materials (independent of the instrument manufacturer) should be considered for independence. Materials should be commutable and mimic patient samples [14]. |
| Frequency & Timing | Based on a risk assessment considering the test's criticality, method stability, and required turnaround time. In continuous testing, IQC is scheduled at defined intervals or after critical events (e.g., calibration, maintenance) [26] [14]. |
| Concentration Levels | A minimum of two levels (normal and pathological) is recommended. For some tests, a third level is advised to monitor performance across the analytical measuring range [26]. |
| Statistical Procedures | Levey-Jennings Charts: Visual plot of control results over time. Westgard Rules: A multi-rule procedure using a combination of rules (e.g., 1₃ₛ, 2₂ₛ, R₄ₛ) to minimize false rejections while maintaining high error detection [26]. |
| Acceptance Criteria | Limits are set based on medical relevance and analytical performance goals (e.g., allowable total error). Tighter, laboratory-defined ranges are superior to wide manufacturer ranges for early error detection [26] [27]. |

Foundational Statistical Rules for IQC

This table details common statistical control rules used in the multi-rule QC procedure, explaining what they detect and their implications [26].

| Control Rule | Description | What It Detects |
|---|---|---|
| 1₂ₛ (Warning Rule) | One control measurement exceeds ±2 standard deviations (SD) from the mean. | Serves as a warning of potential problems. Triggers heightened scrutiny but does not reject the run. |
| 1₃ₛ (Rejection Rule) | One control measurement exceeds ±3 SD from the mean. | Detects large random errors or significant systematic errors. Typically results in run rejection. |
| 2₂ₛ (Rejection Rule) | Two consecutive control measurements for the same level exceed the same ±2 SD limit. | Detects systematic errors (shift in accuracy). |
| R₄ₛ (Rejection Rule) | The range between the highest and lowest control measurements in one run exceeds 4 SD. | Detects increased random error (imprecision). |
| 4₁ₛ (Rejection Rule) | Four consecutive control measurements for the same level exceed the same ±1 SD limit. | Detects a systematic trend or shift. |
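A minimal multirule evaluator built from the rules in this table might look like the sketch below. It works on z-scores, (result − mean) / SD, checks 1₃ₛ, 2₂ₛ and 4₁ₛ across consecutive runs for each control level and R₄ₛ across levels within the current run, and treats 1₂ₛ as a warning only. Assumptions: R₄ₛ follows the range definition given in the table (some implementations instead require one control above +2 SD and another below −2 SD), 2₂ₛ is checked within a level only, and the function names are hypothetical.

```python
def z_score(result: float, mean: float, sd: float) -> float:
    return (result - mean) / sd


def level_rejections(history: list[float]) -> list[str]:
    """Rules applied across consecutive runs for one control level (newest z-score last)."""
    rules = []
    if abs(history[-1]) > 3:
        rules.append("1_3s")
    last2, last4 = history[-2:], history[-4:]
    if len(last2) == 2 and (min(last2) > 2 or max(last2) < -2):
        rules.append("2_2s")
    if len(last4) == 4 and (min(last4) > 1 or max(last4) < -1):
        rules.append("4_1s")
    return rules


def evaluate_run(histories: dict[str, list[float]]) -> tuple[str, list[str]]:
    """Return ('accept' | 'warning' | 'reject', violated rules) for the current run."""
    rejections = []
    for level, history in histories.items():
        rejections += [f"{rule} ({level})" for rule in level_rejections(history)]
    current = [history[-1] for history in histories.values()]
    if len(current) >= 2 and max(current) - min(current) > 4:
        rejections.append("R_4s")
    if rejections:
        return "reject", rejections
    if any(abs(z) > 2 for z in current):
        return "warning", ["1_2s"]
    return "accept", []


if __name__ == "__main__":
    # Hypothetical control data: level 1 mean 50.0, SD 1.0; level 2 mean 200.0, SD 4.0.
    level1 = [z_score(r, 50.0, 1.0) for r in (50.4, 52.3, 52.6)]
    level2 = [z_score(r, 200.0, 4.0) for r in (201.0, 199.0, 202.0)]
    print(evaluate_run({"level 1": level1, "level 2": level2}))
```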

Workflow for Implementing and Managing IQC

The following diagram illustrates the continuous workflow for implementing and managing an effective Internal Quality Control strategy.

Research Reagent Solutions for IQC

This table lists essential materials and their functions for establishing a robust IQC system in an inorganic analytical laboratory.

| Item | Function in IQC |
|---|---|
| Third-Party Control Materials | Independent quality control samples not tied to a specific instrument manufacturer, used to provide unbiased assessment of analytical performance [14]. |
| Assayed & Unassayed Controls | Assayed: Comes with predetermined target values and ranges. Unassayed: Requires the laboratory to establish its own target values and ranges through validation [27]. |
| Calibrators | Solutions with known concentrations used to adjust the analyzer's response and establish the relationship between the signal and the analyte concentration. A change in lot can cause QC shifts [27]. |
| Levey-Jennings Charts | A graphical tool (a type of control chart) for plotting QC results over time against the laboratory's established mean and standard deviation lines, enabling visual detection of trends and shifts [26] [27]. |
| Peer Group Data | Data collected from multiple laboratories using the same analytical methods, equipment, and control lots. Allows a laboratory to compare its performance (bias) against a larger group [26]. |

Quality control (QC) is the cornerstone of generating reliable and defensible data in inorganic analytical laboratories. For techniques as sensitive as Inductively Coupled Plasma Optical Emission Spectroscopy (ICP-OES), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), and Ion Chromatography (IC), robust QC protocols are non-negotiable. These protocols are designed to monitor laboratory performance, identify potential errors, and ensure that results are accurate and precise. A comprehensive QC program includes the analysis of method blanks, laboratory control samples (LCS), matrix spikes (MS), and matrix spike duplicates (MSD), typically at a frequency of one for every 20 samples, to validate both the method's performance in a clean matrix and its applicability to the specific sample matrix of interest [4]. Adherence to these protocols within a quality assurance (QA) framework is essential for laboratories involved in critical fields such as drug development, environmental monitoring, and material sciences.

Troubleshooting Guides

Even with optimal QC practices, analysts may encounter instrumental or methodological issues. The following guides address common problems, their potential causes, and solutions.

Common ICP-OES Issues and Solutions

ICP-OES is a powerful technique for elemental analysis, but it can suffer from issues like poor precision, sample drift, and nebulizer clogging [28] [29].

Table 1: Troubleshooting Guide for Common ICP-OES Problems

| Problem | Potential Causes | Recommended Solutions |
|---|---|---|
| Poor Precision [28] [29] | Inefficient sample aerosolization; Nebulizer clogging; Pump tubing issues. | Check nebulizer mist for consistency; Clean or replace the nebulizer; Ensure pump tubing is secure and not worn. |
| Sample Drift [28] | Solid buildup in tubing; Degraded tubing from acidic samples. | Inspect and clean the sample introduction system; Replace tubing, especially after running acidic samples. |
| High Background/Noise | Contaminated sample introduction system; Dirty torch or injector. | Soak spray chamber and torch in 25% v/v detergent or 50% v/v HNO₃; Clean injector regularly, especially with high total dissolved solids (TDS) samples [29]. |
| Nebulizer Clogging [29] | High TDS samples; Particulates in sample. | Use an argon humidifier; Filter samples prior to analysis; Increase sample dilution; Use a specialized clog-resistant nebulizer. |
| Calibration Curve Issues [29] | Contaminated blank; Improper background correction; Outside linear range. | Ensure blank is clean; Examine spectra for correct peak alignment and background points; Work within the instrument's linear dynamic range. |
| Low Sensitivity | Incorrect wavelength; Worn-out injector; Improper plasma viewing position (axial/radial). | Verify wavelength selection and alignment; Inspect and clean or replace the injector; Use axial view for the best detection limits and radial view for complex or high-matrix samples [28]. |
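The precision and drift checks in Table 1 are usually triggered by two numbers: the relative standard deviation of replicate readings and the recovery of a continuing calibration verification (CCV) standard. A minimal sketch, assuming hypothetical limits of 3% RSD and a 90–110% CCV window (the real limits belong in the method SOP):

```python
from statistics import mean, stdev

# Hypothetical acceptance limits; replace with the values in your method SOP.
MAX_RSD = 3.0                # % RSD allowed for replicate readings
CCV_WINDOW = (90.0, 110.0)   # % recovery window for the CCV standard

def percent_rsd(replicates):
    """Relative standard deviation (%) of replicate intensity readings."""
    return 100.0 * stdev(replicates) / mean(replicates)

def ccv_recovery(measured, true_value):
    """Recovery (%) of a continuing calibration verification standard."""
    return 100.0 * measured / true_value

def diagnose(replicates, ccv_measured, ccv_true):
    """Return troubleshooting hints keyed to the rows of Table 1."""
    flags = []
    if percent_rsd(replicates) > MAX_RSD:
        flags.append("poor precision: inspect nebulizer, spray chamber, pump tubing")
    rec = ccv_recovery(ccv_measured, ccv_true)
    if not (CCV_WINDOW[0] <= rec <= CCV_WINDOW[1]):
        flags.append(f"drift: CCV recovery {rec:.1f}%, inspect tubing and recalibrate")
    return flags
```

For example, replicates of 1000, 1010, and 990 counts give 1.0% RSD, so only a CCV recovery outside the window would produce a hint.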

Common ICP-MS Issues and Solutions

ICP-MS offers exceptional sensitivity but requires careful attention to contamination, matrix effects, and interferences [30].

Table 2: Troubleshooting Guide for Common ICP-MS Problems

| Problem | Potential Causes | Recommended Solutions |
|---|---|---|
| High Background/Contamination [31] [30] | Impure acids/vials; Laboratory environment; Contaminated labware. | Use high-purity (trace metal grade) acids and reagents; Test vials for leaching; Use FEP or quartz labware instead of glass [31]. |
| Signal Suppression/Enhancement [30] | High matrix (e.g., >0.5% TDS); Presence of organic carbon. | Dilute sample; Use internal standards (e.g., Sc, Y, Li) to correct for suppression; Digest samples to remove organic carbon. |
| Polyatomic Interferences (e.g., ArCl⁺ on As⁺) [30] [25] | Plasma gas and matrix components forming interfering ions. | Use collision-reaction cell (CRC) technology with gases like Helium (KED mode) or Hydrogen; Consider triple-quadrupole ICP-MS for difficult interferences. |
| Isobaric & Doubly Charged Interferences [30] | Elements with overlapping masses (e.g., ¹¹⁴Cd and ¹¹⁴Sn); Elements with low 2nd ionization potential (e.g., Ba⁺⁺). | Choose an alternative, interference-free isotope; Mathematically correct for known isobaric overlaps; Examine full mass spectrum for doubly charged ion patterns. |
| Drift & Instability | Cone clogging; Maintenance disrupting equilibrium. | Avoid over-cleaning cones; Monitor performance via ratios (e.g., ⁵⁹Co⁺/³⁵Cl¹⁶O⁺); Clean cones only when sensitivity or interference removal deteriorates [30]. |
| Low Concentration Instability (e.g., for Be) [29] | Operation near detection limit; Suboptimal instrument tuning. | Use a closely matching internal standard (e.g., ⁷Li for Be); Optimize nebulizer gas flow to favor the low mass range. |
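The mathematical correction for a known isobaric overlap can be sketched for ¹¹⁴Cd/¹¹⁴Sn. This minimal sketch assumes natural tin isotopic composition with representative IUPAC abundances (¹¹⁴Sn 0.66%, ¹¹⁸Sn 24.22%), uses ¹¹⁸Sn as the interference-free monitor, takes blank-subtracted counts as input, and ignores the small difference in mass bias between m/z 114 and 118:

```python
# Representative natural abundances (atom %) of the two tin isotopes involved.
SN_ABUNDANCE = {114: 0.66, 118: 24.22}

def correct_cd114(counts_114, counts_sn118):
    """Remove the 114Sn contribution from the m/z 114 signal.

    The 114Sn signal is estimated from the measured 118Sn signal scaled by
    the natural abundance ratio 114Sn/118Sn (about 0.0273). Assumes natural
    Sn composition and equal mass bias at m/z 114 and 118.
    """
    factor = SN_ABUNDANCE[114] / SN_ABUNDANCE[118]
    return counts_114 - factor * counts_sn118
```

With 10,000 counts at m/z 114 and 100,000 counts of ¹¹⁸Sn, roughly 2,725 counts are attributed to tin. The same pattern applies to other overlaps once an interference-free monitor isotope is chosen.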

Ion Chromatography and Hyphenated Techniques

The hyphenation of Ion Chromatography (IC) with ICP-OES or ICP-MS is a powerful approach for speciation analysis, allowing for the determination of specific elemental species, such as oxyhalides (e.g., bromate, chlorate) [32]. This provides crucial information beyond total elemental concentration.

A key challenge in IC-ICP is ensuring seamless interfacing between the two instruments. Issues can arise from:

- Mobile Phase Incompatibility: The IC eluent (often a carbonate/bicarbonate buffer) must be compatible with the ICP's sample introduction system. High salt concentrations can clog the nebulizer and injector and suppress the analyte signal.

- Flow Rate Mismatch: The flow rate from the IC system must be within the optimal uptake rate for the ICP nebulizer.

- Data Synchronization: Precise timing and synchronization are required to correlate the IC chromatogram with the ICP elemental signal.

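Solutions include using a suppressor in the IC system to convert the eluent to water (hydroxide eluents) or weakly dissociated carbonic acid (carbonate/bicarbonate eluents) before it reaches the ICP, matching the IC flow rate to the nebulizer's optimal uptake rate, and using software that integrates the data from both instruments on a common time axis.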
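For data synchronization, the main correction is the delay between the IC outlet and the ICP nebulizer. A minimal sketch, assuming the delay is dominated by the transfer-line volume (volume = πr²L, delay = volume / flow rate) and ignoring connector and nebulizer dead volumes; the tubing dimensions and flow rate in the example are hypothetical, and in practice the offset is best confirmed by injecting a standard and comparing peak times:

```python
import math

def transfer_delay_s(tubing_id_mm, tubing_length_cm, flow_ml_min):
    """Seconds for eluent to travel from the IC outlet to the ICP nebulizer."""
    radius_cm = tubing_id_mm / 20.0                          # diameter in mm -> radius in cm
    volume_ml = math.pi * radius_cm ** 2 * tubing_length_cm  # cm^3 equals mL
    return 60.0 * volume_ml / flow_ml_min                    # minutes -> seconds

def align_to_ic_time(icp_times_s, delay_s):
    """Shift ICP time stamps back onto the IC retention-time axis."""
    return [t - delay_s for t in icp_times_s]
```

For 50 cm of 0.25 mm ID tubing at 0.5 mL/min, the transfer line holds about 0.025 mL and the delay is roughly 2.9 s.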

Sample Preparation and Contamination Control

A significant source of error in trace analysis occurs long before the sample reaches the instrument [31] [2].

Table 3: Common Sample Preparation Errors and Contamination Sources

| Source | Potential Contaminants | Prevention Strategies |
|---|---|---|
| Water [31] [2] | Wide range of inorganic ions. | Use ASTM Type I water for all trace analysis; Regularly validate water purification system output. |
| Acids & Reagents [31] [30] | Alkali, transition, and heavy metals. | Use high-purity (e.g., ICP-MS grade) acids; Check certificates of analysis; Consider sub-boiling distillation. |
| Labware [31] | Si, Na, B (from glass); Zn (from neoprene tubing); Adsorbed metals. | Use FEP, PFA, or quartz over glass; Segregate labware for high/low level use; Acid-leach new containers. |
| Laboratory Environment [31] [2] | Dust (Al, Si, Ca, Fe, Pb); Airborne particulates. | Perform critical steps in HEPA-filtered clean hoods or rooms; Control dust and corrosion. |
| Personnel [31] [2] | Na, K, Ca (sweat); Zn (glove powder); Pb, Cd (cosmetics, dyes). | Wear powder-free gloves; avoid wearing jewelry, makeup, or lotions in the lab. |
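Contamination from the sources in Table 3 shows up in procedural (method) blanks, so tracking blank statistics is a direct check on these prevention strategies. A minimal sketch, assuming the common 3σ convention for a blank-based detection limit and a hypothetical rule that flags any blank above half the reporting limit:

```python
from statistics import mean, stdev

def blank_statistics(blank_results):
    """Mean and 3-sigma detection limit from replicate procedural blanks."""
    return mean(blank_results), 3.0 * stdev(blank_results)

def blank_is_contaminated(blank_value, reporting_limit, fraction=0.5):
    """Flag a blank above an assumed fraction of the reporting limit."""
    return blank_value > fraction * reporting_limit
```

A rising blank mean points to a systematic source (water, acids, labware), while a growing standard deviation suggests sporadic contamination from the environment or handling.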

Frequently Asked Questions (FAQs)

1. How often should I run quality control samples such as method blanks, LCS, and MS/MSD? For many regulatory methods (e.g., EPA SW-846), a frequency of one per 20 samples is standard. However, the frequency should be justified in the project's Quality Assurance Project Plan (QAPP) and can be adjusted based on the project's scope and the stability of the sample matrix [4].

2. What is the difference between a Laboratory Control Sample (LCS) and a Matrix Spike (MS)? The LCS tests the performance of the entire analytical method in a clean, interference-free matrix (like reagent water). The MS tests the effect of the specific sample matrix on the analytical method's accuracy by spiking the analyte into the actual sample [4]. Both are crucial for a complete data quality assessment.

3. My ICP-MS calibration was perfect yesterday, but today it's unstable. What should I check first? Begin with the sample introduction system. Check for nebulizer clogs, ensure the pump tubing is not cracked or loose, and verify that the spray chamber is clean and draining properly. Also confirm that your argon supply and pressure are stable [29].

4. How can I prevent the loss of volatile elements like Arsenic (As) and Mercury (Hg) during sample preparation? Avoid open-vessel digestions or dry ashing. Use closed-vessel microwave digestion systems, which prevent the volatilization of species like AsCl₃ (bp 130 °C) [25]. For Hg, store samples in glass or fluoropolymer containers, as Hg vapor can diffuse through polyethylene [31].

5. Why is my silver (Ag) recovery always low, even when I prepare standards in nitric acid? This is likely due to trace chloride contamination and photoreduction. Even tiny amounts of chloride can cause Ag to precipitate as AgCl, which then photoreduces to metallic silver and plates onto the container walls. Ensure all acids and water are chloride-free, use quartz or FEP containers, and minimize the solution's exposure to light [25].

6. What is the best way to handle a high total dissolved solids (TDS) sample? Dilute the sample to keep the TDS below 0.2-0.5%. If dilution is not possible due to low analyte concentrations, use an argon humidifier to prevent salt deposition in the nebulizer, consider a specialized high-solids nebulizer, and increase the frequency of rinsing and maintenance of the sample introduction system and interface cones [29] [30].

7. When should I clean or replace my ICP-MS cones? Clean the sampler and skimmer cones when you observe a consistent loss of sensitivity for low-mass elements or a decline in the signal-to-background ratio for key isotopes (e.g., ⁵⁹Co⁺/³⁵Cl¹⁶O⁺). Avoid cleaning them too frequently, as a slight deposition can create a stable equilibrium that reduces drift [30].
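The arithmetic behind FAQs 1, 2, and 6 can be sketched in a few functions. This is a minimal illustration under stated assumptions: each batch of up to 20 samples gets one method blank, one LCS, and an MS/MSD pair spiked from the batch's first sample (a QAPP may specify otherwise); RPD is computed on the MS and MSD results rather than on their recoveries; and the default TDS limit of 0.2% is taken from the low end of the range in FAQ 6.

```python
import math

def build_run_sequence(sample_ids, batch_size=20):
    """Interleave QC so each batch carries a method blank (MB), an LCS,
    and an MS/MSD pair spiked from the batch's first sample (assumption)."""
    sequence = []
    for start in range(0, len(sample_ids), batch_size):
        batch = sample_ids[start:start + batch_size]
        n = start // batch_size + 1
        sequence += [f"MB-{n}", f"LCS-{n}"]
        sequence += batch
        sequence += [f"{batch[0]}-MS", f"{batch[0]}-MSD"]
    return sequence

def lcs_recovery(measured, true_value):
    """LCS recovery (%): clean matrix, so no native analyte is subtracted."""
    return 100.0 * measured / true_value

def spike_recovery(spiked_result, unspiked_result, spike_added):
    """Matrix spike recovery (%): the native analyte in the sample is subtracted."""
    return 100.0 * (spiked_result - unspiked_result) / spike_added

def rpd(ms_result, msd_result):
    """Relative percent difference between the MS and MSD results."""
    return 100.0 * abs(ms_result - msd_result) / ((ms_result + msd_result) / 2.0)

def min_dilution_factor(tds_percent, tds_limit_percent=0.2):
    """Smallest whole-number dilution bringing TDS to or below the limit."""
    if tds_percent <= tds_limit_percent:
        return 1
    # round() guards against float artefacts such as 1.1 / 0.1 = 11.000000000000002
    return math.ceil(round(tds_percent / tds_limit_percent, 9))

def dilution_is_feasible(analyte_conc, dilution, loq):
    """True if the diluted analyte concentration is still at or above the LOQ."""
    return analyte_conc / dilution >= loq
```

For example, a sample containing 2.0 µg/L analyte spiked with 10.0 µg/L that reads 11.5 µg/L gives 95% recovery; an MSD reading of 12.1 µg/L gives an RPD of about 5.1%. A 3.5% TDS brine needs at least an 18-fold dilution to reach 0.2%, and `dilution_is_feasible` shows whether the analyte survives that dilution or the humidifier and high-solids nebulizer route is needed instead.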

Essential Research Reagent Solutions

The purity of reagents is paramount in trace element analysis. The following table lists essential materials and their functions.

Table 4: Key Reagents and Materials for Trace Element Analysis

| Item | Function & Importance | Key Considerations |
|---|---|---|
| High-Purity Water (ASTM Type I) [31] [2] | Primary diluent for standards and samples; Rinsing agent. | Must have a resistivity of ≥18 MΩ·cm; Low total organic carbon (TOC) and bacterial count. Critical for blank levels. |
| Trace Metal Grade Acids [31] [30] | Sample digestion/dissolution; Sample preservation; Diluent for standards. | Nitric acid is generally the cleanest. HCl can have high impurities. Always check the certificate of analysis for elemental contamination levels. |
| Certified Reference Materials (CRMs) [31] [25] | Calibration; Verifying method accuracy and precision. | Must be from an accredited producer; Match the matrix of your samples as closely as possible; Use before expiration date. |
| Internal Standard Solution [28] | Corrects for signal drift and matrix suppression/enhancement in ICP-OES/MS. | Added to all standards and samples. Common IS elements: Sc, Y, In, Tb, Bi. Must be absent from the samples and free of spectral interferences. |
| Multi-Element Calibration Standards | Instrument calibration. | Should be prepared in the same acid matrix as samples. Can be purchased as certified solutions or prepared gravimetrically from single-element stocks. |
| FEP/PFA Labware [31] | Storage of standards and samples; Sample preparation. | Superior to glass and polypropylene for trace metal work due to lower leaching and adsorption characteristics. |
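The internal standard correction listed in Table 4 is a ratio: the analyte signal is scaled by how much the IS signal in the sample differs from its signal in the calibration blank or standards. A minimal sketch, assuming the analyte is suppressed or enhanced by the same factor as the IS, with a hypothetical 70–130% IS recovery window beyond which the sample would be diluted and rerun rather than corrected:

```python
def is_corrected(analyte_signal, is_signal_sample, is_signal_reference):
    """Scale the analyte signal by the internal-standard recovery.

    If the IS signal in a sample falls to 80% of its reference value, the
    analyte is assumed to be suppressed equally and is divided by 0.80.
    """
    return analyte_signal * is_signal_reference / is_signal_sample

def is_recovery_ok(is_signal_sample, is_signal_reference, window=(70.0, 130.0)):
    """Check IS recovery (%) against an assumed acceptance window."""
    recovery = 100.0 * is_signal_sample / is_signal_reference
    return window[0] <= recovery <= window[1]
```

An analyte reading of 8,000 counts in a sample whose IS signal dropped from 100,000 to 80,000 counts is corrected to 10,000 counts.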

Workflow and Quality Control Diagrams

[Figure: Analytical Workflow with Integrated QC]

Technical Support Center: PBRTQC Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

What is PBRTQC and how does it differ from traditional Internal Quality Control (IQC)? Patient-based real-time quality control (PBRTQC) uses real-time patient test results to monitor the stability and performance of analytical systems, whereas traditional IQC relies on separate control materials. Key differences: PBRTQC uses commutable samples (actual patient specimens), provides continuous real-time monitoring, offers significant cost savings by reducing the need for commercial control materials, and avoids the matrix effects that can affect traditional IQC materials [33] [34].

Why is the adoption of PBRTQC taking so long in clinical laboratories? Despite its advantages, PBRTQC adoption faces several barriers: most laboratorians do not understand the algorithms or how to optimize them; there is little knowledge of how fluctuations in the patient population affect PBRTQC; many laboratories expect immediate gains with minimal effort; and there are concerns about regulatory acceptance, although PBRTQC is acceptable under ISO 15189 and CAP accreditation standards [34].

Which analytes are best suited for initial PBRTQC implementation? Start with measurands under tight biological control, such as sodium, calcium, and potassium. These analytes are clinically important and vary relatively little with age, sex, or season. Studies have successfully implemented PBRTQC for alanine aminotransferase (ALT), albumin, calcium, ferritin, and sodium [33] [34].

How does artificial intelligence enhance PBRTQC performance? Advanced neural network models like PCRTQC-NN (Pre-classified Real-Time Quality Control with Neural Network) significantly improve systematic error detection. This model uses an autoencoder neural network to extract analytical features from testing instruments under error-free conditions, then identifies systematic errors by comparing reconstruction residuals. This approach has reduced the average number of patient samples until error detection by up to 37% for some analytes [35].

What are the most effective algorithms for PBRTQC? Algorithm effectiveness depends on the analyte and data distribution. The exponentially weighted moving average (EWMA) is particularly effective for monitoring inter-instrument comparability and detecting small shifts. The moving median is robust for handling skewed data but requires larger sample sizes (approximately 200 results). Moving average procedures with smaller block sizes can detect bias earlier for symmetrically distributed analytes [33] [36].
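The two algorithms named above can be sketched as streaming monitors that consume one patient result at a time. This is a minimal illustration, not a validated PBRTQC implementation: the smoothing factor `lam`, the block size, and the control limits are hypothetical parameters that would normally come from simulation on your own patient data, and starting the EWMA at the target mean is an assumption.

```python
from collections import deque
from statistics import median

class EWMAMonitor:
    """EWMA of patient results: z_t = lam * x_t + (1 - lam) * z_(t-1)."""

    def __init__(self, target, lower, upper, lam=0.05):
        self.z = target            # assumption: start at the population target mean
        self.lower, self.upper, self.lam = lower, upper, lam

    def update(self, x):
        """Add one result; return True if the EWMA leaves the control limits."""
        self.z = self.lam * x + (1.0 - self.lam) * self.z
        return not (self.lower <= self.z <= self.upper)

class MovingMedianMonitor:
    """Median of the most recent `block_size` results."""

    def __init__(self, block_size, lower, upper):
        self.window = deque(maxlen=block_size)
        self.lower, self.upper = lower, upper

    def update(self, x):
        """Add one result; return True if the moving median leaves the limits."""
        self.window.append(x)
        if len(self.window) < self.window.maxlen:
            return False           # block not yet full: no decision
        return not (self.lower <= median(self.window) <= self.upper)
```

A small `lam` gives each new result little weight, which smooths patient-to-patient variation so that a persistent small shift accumulates until it crosses a limit. The moving median ignores isolated extreme results entirely, which is why it tolerates skewed distributions but needs a larger block to respond.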

Troubleshooting Common PBRTQC Implementation Issues

Problem: Excessive false positive flags disrupting workflow

- Potential Cause: Control limits are too narrow for the patient population and algorithm used [34].

- Solution: Use simulation software to optimize PBRTQC parameters (block size, exclusion, truncation, and error detection limits) specifically for your patient population. Consider using algorithms more robust to outliers such as moving median or trimmed mean [34].
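The parameter optimization described above can be illustrated with a small simulation. This sketch uses synthetic, normally distributed results in place of a laboratory's own data (the mean, SD, truncation limits, bias, and tail probability are hypothetical, loosely sodium-like values) and a plain moving average; dedicated PBRTQC simulation tools model real patient distributions and more algorithms.

```python
import random
from collections import deque
from statistics import median

def moving_averages(values, block_size, lo, hi):
    """Moving averages of truncated results (values outside [lo, hi] are excluded)."""
    kept = [v for v in values if lo <= v <= hi]
    out, running = [], 0.0
    for i, v in enumerate(kept):
        running += v
        if i >= block_size:
            running -= kept[i - block_size]
        if i >= block_size - 1:
            out.append(running / block_size)
    return out

def control_limits(in_control, block_size, lo, hi, tail=0.001):
    """Limits at the lower/upper `tail` quantiles of in-control moving averages."""
    ma = sorted(moving_averages(in_control, block_size, lo, hi))
    k = int(tail * len(ma))
    return ma[k], ma[len(ma) - 1 - k]

def patients_to_detection(rng, mu, sd, bias, block_size, lo, hi, limits, max_n=5000):
    """Results received after a bias starts until the moving average is flagged."""
    window = deque(maxlen=block_size)
    while len(window) < block_size:          # pre-fill with in-control results
        v = rng.gauss(mu, sd)
        if lo <= v <= hi:
            window.append(v)
    for n in range(1, max_n + 1):
        v = rng.gauss(mu + bias, sd)         # results now carry a systematic bias
        if lo <= v <= hi:                    # truncated results are not averaged
            window.append(v)
            ma = sum(window) / block_size
            if not (limits[0] <= ma <= limits[1]):
                return n
    return None                              # not detected within max_n results

def evaluate_block_sizes(block_sizes, mu=140.0, sd=3.0, bias=2.0,
                         lo=130.0, hi=150.0, n_runs=200, seed=1):
    """Median results-to-detection for each candidate block size."""
    rng = random.Random(seed)
    baseline = [rng.gauss(mu, sd) for _ in range(20000)]
    summary = {}
    for n in block_sizes:
        limits = control_limits(baseline, n, lo, hi)
        runs = [patients_to_detection(rng, mu, sd, bias, n, lo, hi, limits)
                for _ in range(n_runs)]
        detected = [r for r in runs if r is not None]
        summary[n] = median(detected) if detected else None
    return summary
```

Calling `evaluate_block_sizes([10, 20, 50])` returns the median number of results to detection for each block size. Because the limits sit at the in-control tail quantiles, the per-average false-flag probability is held roughly constant, so the comparison is between detection speeds; widening `tail` trades faster detection for more false flags. Replacing `baseline` with historical patient results is the natural next step.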

Problem: Inability to detect small systematic errors

- Potential Cause: Using suboptimal algorithms insensitive to small bias shifts [35] [34].

- Solution: Implement exponentially weighted moving average (EWMA) which introduces weighting coefficients to improve sensitivity to small offsets. For advanced applications, consider neural network approaches like PCRTQC-NN that extract analytical features as discrete signals [35] [36].

Problem: Inconsistent performance across different patient populations

- Potential Cause: Fluctuations in patient population characteristics affecting PBRTQC calculations [34].

- Solution: Understand your patient population dynamics including sex-related variations, pre-analytical problems, and arrival patterns for different patient groups (inpatients, outpatients, critical care). Implement separate protocols for distinct patient populations if necessary [34].
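Separate protocols for distinct populations can be sketched as independent monitors keyed by patient group. This minimal sketch assumes each result arrives tagged with a stratum label and that each stratum has its own block size and limits; the strata names and numbers in the usage note are hypothetical.

```python
from collections import deque
from statistics import median

class StratifiedMedianMonitor:
    """Run an independent moving-median monitor for each patient stratum."""

    def __init__(self, settings):
        # settings: stratum name -> (block_size, lower_limit, upper_limit)
        self.settings = settings
        self.windows = {s: deque(maxlen=cfg[0]) for s, cfg in settings.items()}

    def update(self, stratum, value):
        """Add a result to its stratum; return True if that stratum is flagged."""
        block_size, lower, upper = self.settings[stratum]
        window = self.windows[stratum]
        window.append(value)
        if len(window) < block_size:
            return False                       # block not yet full: no decision
        return not (lower <= median(window) <= upper)
```

For example, with `{"outpatient": (3, 138, 142), "icu": (3, 130, 146)}`, a run of sodium results at 132 mmol/L stays in control for the ICU stratum but flags the outpatient stratum, so a shift in the ICU case mix does not trigger outpatient alarms.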

Problem: Software limitations restricting algorithm options