Phonon Dispersion Step Size Optimization: Advanced Techniques for Accurate and Efficient Calculations



Abstract

This article provides a comprehensive guide to optimizing step size and convergence parameters in phonon dispersion calculations, a critical step for obtaining accurate vibrational properties of materials. Tailored for researchers and computational scientists, we cover foundational principles of Brillouin zone sampling, compare methodological approaches like DFPT and finite displacement, and address common pitfalls in convergence. The content extends to advanced optimization strategies, including high-throughput frameworks and machine learning potentials, and concludes with robust validation protocols to ensure computational reliability against experimental and reference data, empowering efficient and precise materials discovery.

Understanding Phonon Calculations and Why Step Size Matters

In the calculation of phonon dispersion relations, q-point sampling refers to the selection of wave vectors within the first Brillouin zone at which the dynamical matrix is evaluated. The accuracy of the computed phonon spectrum is fundamentally governed by the density and distribution of these q-points. Electronic structure calculations use k-point sampling for electron wavefunctions. Phonon calculations instead use q-point sampling to capture the spatial variation of atomic vibrations throughout the crystal lattice. The central challenge lies in selecting a sufficient number and an appropriate arrangement of q-points to represent the real-space force constants accurately. These force constants are then Fourier transformed to build the dynamical matrix, and its eigenvalues give the phonon frequencies at any wave vector. This applies both along high-symmetry paths for dispersion curves and on dense uniform meshes for the phonon density of states (DOS).

The relationship between q-point sampling and phonon accuracy manifests through two distinct computational grids: (1) the coarse q-point grid used for explicit calculation of force constants, and (2) the fine q-point grid employed for Fourier interpolation of phonon frequencies. The coarse grid must be sufficiently dense to capture the decay of force constants with interatomic distance, while the fine grid determines the smoothness and resolution of the final phonon dispersion curves and DOS. Inadequate sampling in either grid introduces artifacts such as unphysical gaps, imaginary frequencies (where none should exist), and inaccurate thermodynamic properties derived from the phonon spectrum. For polar materials, additional complications arise from long-range dipole-dipole interactions that require special treatment through the inclusion of Born effective charges and dielectric tensors to properly account for LO-TO splitting at the Γ-point [1].

Theoretical Foundations: Why q-point Sampling Matters

The Role of q-points in Lattice Dynamics

The theoretical foundation of lattice dynamics establishes that the phonon frequencies, ω(q), for a wave vector q are eigenvalues of the dynamical matrix D(q), which is the Fourier transform of the real-space force constant matrix. The force constant matrix represents the second-order derivative of the total energy with respect to atomic displacements and must be converged with respect to the supercell size (or equivalently, the q-point mesh) to ensure that all significant interatomic interactions are captured. The fundamental connection between real-space and reciprocal-space representations dictates that a denser q-point mesh in reciprocal space corresponds to a larger supercell in real space, thereby incorporating longer-range force constants [2] [1].
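The following minimal sketch illustrates this real-space/reciprocal-space relationship. It assumes a hypothetical one-dimensional monatomic chain with mass m and lattice constant a, coupled by nearest-neighbour springs of stiffness K. The dynamical matrix is built as the mass-weighted Fourier sum of the force constants Φ(R). Its eigenvalue (here a 1×1 matrix) should reproduce the textbook dispersion ω(q) = 2√(K/m)|sin(qa/2)|. The function names are illustrative and do not come from any phonon package.

```python
import numpy as np

def dynamical_matrix_1d(q, ifc, mass, a=1.0):
    """D(q) = (1/m) * sum_R Phi(R) * exp(-i q R) for a 1D monatomic chain.

    ifc maps an integer cell offset n (so R = n * a) to the force constant Phi(R).
    """
    return sum(phi * np.exp(-1j * q * n * a) for n, phi in ifc.items()) / mass

def frequencies_1d(qs, ifc, mass, a=1.0):
    # With one atom per cell, D(q) is a scalar and it is its own eigenvalue.
    d = np.array([dynamical_matrix_1d(q, ifc, mass, a) for q in qs])
    # D(q) is real for a centrosymmetric IFC set; clip round-off negatives before sqrt.
    return np.sqrt(np.clip(d.real, 0.0, None))

K, m, a = 1.0, 1.0, 1.0
nn_ifc = {0: 2 * K, 1: -K, -1: -K}  # nearest-neighbour springs; acoustic sum rule holds
qs = np.linspace(-np.pi / a, np.pi / a, 101)
omega = frequencies_1d(qs, nn_ifc, m, a)
analytic = 2 * np.sqrt(K / m) * np.abs(np.sin(qs * a / 2))
print(np.max(np.abs(omega - analytic)))  # agrees to floating-point round-off
```

With several atoms per cell, D(q) becomes a 3N×3N Hermitian matrix, and a Hermitian eigensolver such as np.linalg.eigvalsh would replace the scalar square root.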

The computational process involves first calculating the force constants on a coarse q-point grid commensurate with the supercell used for the calculation. These force constants are then Fourier interpolated to a much finer q-point grid to produce smooth phonon dispersion curves along high-symmetry directions and detailed density of states spectra. This two-grid approach recognizes that the explicit calculation of force constants at every point needed for smooth curves is computationally prohibitive, while interpolation from a sufficiently converged coarse grid provides an efficient and accurate alternative [3].
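The two-grid idea can be illustrated on a toy model. The sketch below assumes a hypothetical 1D chain with unit mass and lattice constant, whose force constants decay as exp(-|n|/2) out to ten neighbours. It evaluates D(q) only on an N-point coarse grid. It then inverse Fourier transforms these values to recover the real-space force constants inside an N-cell supercell, which are aliased when N is too small. Finally, it interpolates onto 201 fine q-points. Odd N is used so that no force constant sits exactly on the supercell boundary; production codes typically handle that case with Wigner-Seitz-style weights.

```python
import numpy as np

DECAY, RMAX = 2.0, 10  # hypothetical model: Phi(n) = -exp(-|n|/DECAY) for 0 < |n| <= RMAX

def phi_true(n):
    """Real-space force constant between cells 0 and n (unit mass, unit lattice constant)."""
    if n == 0:
        # Acoustic sum rule: the on-site term balances all pair terms.
        return 2.0 * sum(np.exp(-k / DECAY) for k in range(1, RMAX + 1))
    return -np.exp(-abs(n) / DECAY) if abs(n) <= RMAX else 0.0

def d_exact(q):
    """Reference D(q); phi_true is even in n, so only cosine terms survive."""
    return sum(phi_true(n) * np.cos(q * n) for n in range(-RMAX, RMAX + 1))

def coarse_to_real_space(n_coarse):
    """Inverse Fourier transform of D sampled on an odd n_coarse-point grid."""
    if n_coarse % 2 == 0:
        raise ValueError("use an odd grid so no force constant sits on the boundary")
    qs = 2 * np.pi * np.arange(n_coarse) / n_coarse
    d_coarse = np.array([d_exact(q) for q in qs])
    offsets = np.arange(-(n_coarse // 2), n_coarse // 2 + 1)
    # Phi_N(n) = (1/N) sum_j D(q_j) exp(+i q_j n): the true IFCs folded into the supercell
    phi = np.array([np.mean(d_coarse * np.exp(1j * qs * n)) for n in offsets]).real
    return offsets, phi

def interpolate_d(q, offsets, phi):
    """Fourier interpolation of D at an arbitrary q from the supercell force constants."""
    return float(np.sum(phi * np.cos(q * offsets)))

fine_q = np.linspace(0.0, np.pi, 201)
exact_w = np.sqrt(np.clip([d_exact(q) for q in fine_q], 0.0, None))
errors = {}
for n_coarse in (3, 5, 9, 21):
    offsets, phi = coarse_to_real_space(n_coarse)
    w = np.sqrt(np.clip([interpolate_d(q, offsets, phi) for q in fine_q], 0.0, None))
    errors[n_coarse] = float(np.max(np.abs(w - exact_w)))
    print(f"coarse grid {n_coarse:2d}: max frequency error {errors[n_coarse]:.2e}")
```

For N = 21 the supercell already contains every non-zero force constant of this model, so the interpolation is exact up to round-off. Smaller N produce errors from force constants that do not fit inside the supercell.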

Sampling Requirements for Different Material Classes

The optimal q-point sampling strategy varies significantly across material classes due to differences in their bonding characteristics and structural complexity:

- Simple crystals (e.g., silicon, MgO) with short-range interactions typically require moderate q-point meshes (e.g., 4×4×4 to 6×6×6 for the coarse grid) to achieve convergence [4].

- Polar materials (e.g., GaAs, AlN) exhibit long-range dipole-dipole interactions that necessitate special treatment through the inclusion of Born effective charges and dielectric constants to properly account for the non-analytical term at the Γ-point, which leads to LO-TO splitting [1] [5].

- Complex and low-symmetry crystals (e.g., organic molecular crystals, metal-organic frameworks) often require denser q-point sampling due to their larger unit cells and more complicated vibrational spectra [6] [7].

- Low-dimensional materials (e.g., graphene, phosphorene) require specialized sampling approaches, such as the assume_isolated = '2D' flag in Quantum ESPRESSO, to avoid artifacts like imaginary acoustic frequencies near the Γ-point [5].

Table 1: Recommended Initial q-point Sampling Strategies for Different Material Systems

| Material Class | Coarse Grid Size | Fine Grid Size | Special Considerations |
|---|---|---|---|
| Simple Metals & Semiconductors | 4×4×4 to 6×6×6 | 20×20×20 to 30×30×30 | Standard sampling usually sufficient |
| Ionic Compounds (Polar) | 6×6×6 to 8×8×8 | 24×24×24 to 32×32×32 | Must include Born charges & dielectric tensor |
| Complex Oxides | 8×8×8 to 12×12×12 | 30×30×30 to 40×40×40 | Denser sampling due to complex unit cells |
| Organic Molecular Crystals | 2×2×2 to 4×4×4 | 20×20×20 to 30×30×30 | Large unit cells limit coarse grid density |
| 2D Materials | 4×4×1 to 8×8×1 | 20×20×1 to 30×30×1 | Use 2D isolation flag; anisotropic sampling |
| Metal-Organic Frameworks | 2×2×2 to 3×3×3 | 20×20×20 to 30×30×30 | Very large unit cells; ML potentials recommended |

Convergence Protocols and Methodologies

Two-Stage Convergence Procedure

Achieving accurate phonon dispersion relations requires a systematic, two-stage convergence approach that separately addresses the coarse grid for explicit force constant calculations and the fine grid for interpolation [3]:

Stage 1: Coarse Grid Convergence

- Select a fixed, sufficiently dense fine grid (e.g., 30×30×30) for final interpolation

- Perform a series of calculations with increasing coarse grid sizes (e.g., 2×2×2, 3×3×3, 4×4×4, etc.)

- For each coarse grid calculation, compute the phonon DOS using the fixed fine grid

- Compare the DOS profiles across different coarse grids, monitoring for changes in key features

- Identify the point where further increases in coarse grid density produce negligible changes in the DOS

- This converged coarse grid becomes the reference for all subsequent calculations

Stage 2: Fine Grid Convergence

- Fix the coarse grid at the converged value from Stage 1

- Perform calculations with increasing fine grid densities (e.g., 20×20×20, 30×30×30, 40×40×40, etc.)

- Monitor the smoothness of phonon dispersion curves and resolution of the DOS

- Identify the fine grid density where further increases no longer visually improve the smoothness of dispersion curves

- This converged fine grid provides the optimal balance between computational cost and result quality

This systematic approach ensures that both the explicit force constant calculation (coarse grid) and the interpolation accuracy (fine grid) are properly converged, eliminating potential artifacts from insufficient sampling in either aspect of the calculation.
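The following compact sketch shows the two-stage procedure, again on a hypothetical 1D chain with exponentially decaying force constants rather than a real material. Stage 1 increases the coarse grid while the fine grid stays fixed. Stage 2 increases the fine grid while the coarse grid stays at its converged value. Both stages stop once the Gaussian-smeared DOS changes by less than a tolerance between successive settings. Several choices here are illustrative and do not come from the references: the DOS distance (an integrated absolute difference), the smearing width, and the 0.02 tolerance.

```python
import numpy as np

DECAY, RMAX = 2.0, 10           # hypothetical IFC model: Phi(n) = -exp(-|n|/DECAY), |n| <= RMAX
OMEGA = np.linspace(0.0, 3.0, 301)
SIGMA = 0.1                     # Gaussian smearing width for the DOS (same units as omega)

def phi_true(n):
    if n == 0:  # acoustic sum rule
        return 2.0 * sum(np.exp(-k / DECAY) for k in range(1, RMAX + 1))
    return -np.exp(-abs(n) / DECAY) if abs(n) <= RMAX else 0.0

def d_exact(q):
    return sum(phi_true(n) * np.cos(q * n) for n in range(-RMAX, RMAX + 1))

def frequencies(n_coarse, n_fine):
    """Fourier-interpolate from an odd n_coarse grid onto an n_fine uniform grid."""
    qc = 2 * np.pi * np.arange(n_coarse) / n_coarse
    dc = np.array([d_exact(q) for q in qc])
    offs = np.arange(-(n_coarse // 2), n_coarse // 2 + 1)
    phi = np.array([np.mean(dc * np.exp(1j * qc * n)) for n in offs]).real
    qf = 2 * np.pi * np.arange(n_fine) / n_fine
    d = np.cos(np.outer(qf, offs)) @ phi  # phi is symmetric in n, so only cosines survive
    return np.sqrt(np.clip(d, 0.0, None))

def dos(freqs):
    """Gaussian-smeared DOS on the fixed OMEGA axis, normalised per mode."""
    g = np.exp(-((OMEGA[:, None] - freqs[None, :]) ** 2) / (2 * SIGMA**2))
    return g.mean(axis=1) / (np.sqrt(2 * np.pi) * SIGMA)

def converge(values, compute, tol=0.02):
    """Return the first value whose DOS barely changes at the next (denser) value."""
    prev_v, prev = None, None
    for v in values:
        cur = compute(v)
        if prev is not None and np.sum(np.abs(cur - prev)) * (OMEGA[1] - OMEGA[0]) < tol:
            return prev_v
        prev_v, prev = v, cur
    raise RuntimeError("no convergence over the tested range")

# Stage 1: increase the coarse grid at a fixed, dense fine grid.
coarse = converge(range(3, 25, 2), lambda n: dos(frequencies(n, 400)))
# Stage 2: increase the fine grid at the converged coarse grid.
fine = converge([25, 50, 100, 200, 400, 800], lambda m: dos(frequencies(coarse, m)))
print("converged coarse grid:", coarse, "converged fine grid:", fine)
```

In a real calculation, each call to `frequencies` stands in for a full force-constant calculation (Stage 1) or a matdyn/phonopy interpolation run (Stage 2). The loop structure is the part that carries over.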

Computational Parameters Interacting with q-point Sampling

The convergence of q-point sampling cannot be considered in isolation, as it interacts with several other computational parameters that must be simultaneously optimized [2] [6] [4]:

- Electronic k-point sampling: The accuracy of force constants depends on proper convergence of the underlying electronic structure calculation. When increasing supercell size for phonon calculations, the k-point sampling should be adjusted to maintain a consistent sampling density in reciprocal space. For example, if a primitive cell calculation uses a 12×12×12 k-point mesh, a 2×2×2 supercell should use a 6×6×6 mesh to maintain equivalent sampling [2].

- Energy cutoff (ENCUT): The plane-wave basis set size significantly impacts force and stress calculations. For phonon calculations, it is recommended to increase ENCUT by approximately 30% above the default values, with systematic testing in steps of 15% to ensure full convergence [2].

- Supercell size: The coarse q-point grid density is intrinsically linked to supercell size in finite-displacement methods. A 4×4×4 supercell calculation corresponds to a 4×4×4 q-point mesh for force constant calculations. The supercell must be large enough so that force constants decay to negligible values at the boundaries [1].

- Charge density grids: The Fourier grid used for representing charge density significantly impacts phonon frequencies, particularly for low-frequency modes in complex materials like organic molecular crystals. Poorly converged charge density grids can introduce errors of tens of wavenumbers in phonon frequencies and significantly alter normal mode eigenvectors [6].
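Two of the relationships above can be sketched directly, assuming diagonal supercells (each lattice vector multiplied by an integer). The first is rescaling the k-mesh for a supercell. The second is listing the q-points that a supercell samples exactly, namely those whose phase factor exp(iq·L) equals 1 for every supercell lattice vector L. Both helper names are hypothetical. The k-mesh rule rounds up when a subdivision does not divide evenly, so the sampling is never coarser than the reference.

```python
import itertools
import math
from fractions import Fraction

def supercell_kmesh(primitive_mesh, supercell):
    """k-mesh for a diagonal supercell with the same k-point density as the primitive mesh.

    Divides each subdivision by the supercell multiplier, rounding up when the
    division is not exact so the sampling is never coarser than the reference.
    """
    return tuple(max(1, math.ceil(k / s)) for k, s in zip(primitive_mesh, supercell))

def commensurate_qpoints(n1, n2, n3):
    """Reduced q-points (i/n1, j/n2, k/n3) sampled exactly by a diagonal n1 x n2 x n3 supercell."""
    return [(Fraction(i, n1), Fraction(j, n2), Fraction(k, n3))
            for i, j, k in itertools.product(range(n1), range(n2), range(n3))]

print(supercell_kmesh((12, 12, 12), (2, 2, 2)))  # (6, 6, 6), the example in the text
print(len(commensurate_qpoints(4, 4, 4)))        # 64 q-points for a 4x4x4 supercell
```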

Table 2: Key Parameters for Comprehensive Convergence Testing in Phonon Calculations

| Parameter | Convergence Metric | Typical Range | Interactions with q-points |
|---|---|---|---|
| Coarse q-grid | Phonon DOS profile, stability of soft modes | 2×2×2 to 8×8×8 | Primary factor for force constant accuracy |
| Fine q-grid | Smoothness of dispersion curves | 20×20×20 to 40×40×40 | Determines interpolation quality |
| k-point mesh | Total energy, forces | Scale with supercell size | Affects accuracy of force constants |
| ENCUT | Stress tensor, forces | +20-30% beyond default | Underlying basis for force accuracy |
| Supercell size | Force constant decay | 2×2×2 to 4×4×4 | Determines maximum real-space range |
| Charge grid | Low-frequency phonons | 2-4× planewave cutoff | Affects numerical accuracy of forces |

Practical Implementation Across Computational Frameworks

VASP Workflow for Phonon Dispersion

The VASP software package provides two primary approaches for phonon calculations: finite differences (IBRION=5,6) and density functional perturbation theory (DFPT, IBRION=7,8). The workflow for computing phonon dispersion with proper q-point sampling involves [2] [1]:

Force Constant Calculation: Perform calculation in a sufficiently large supercell using either finite displacements or DFPT to obtain the force constants. For finite differences, IBRION=6 is preferred as it uses symmetry to reduce computational cost.

QPOINTS Path Specification: Create a QPOINTS file containing a high-symmetry path in the Brillouin zone. Tools such as SeeK-path or pymatgen can generate appropriate paths with labels for specific crystal structures.

Phonon Dispersion Computation: Set LPHON_DISPERSION = .TRUE. in the INCAR file to compute the phonon dispersion along the specified path through Fourier interpolation of the force constants.

Phonon DOS Computation: For DOS calculations, create a QPOINTS file with a uniform mesh and set PHON_DOS > 0 to compute the phonon density of states, using either Gaussian smearing (PHON_DOS = 1) or the tetrahedron method (PHON_DOS = 2).
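A small helper for writing the high-symmetry path is sketched below. It assumes that the QPOINTS file accepts the same line-mode layout as a VASP KPOINTS file: a title line, the number of points per segment, "Line-mode", "Reciprocal", and then start/end pairs. The path is the common Γ-X-W-K-Γ-L route for an fcc primitive cell in the Setyawan-Curtarolo convention. The function name is hypothetical, and in practice SeeK-path or pymatgen would supply the path.

```python
FCC_PATH = [
    (("G", (0.0, 0.0, 0.0)), ("X", (0.5, 0.0, 0.5))),
    (("X", (0.5, 0.0, 0.5)), ("W", (0.5, 0.25, 0.75))),
    (("W", (0.5, 0.25, 0.75)), ("K", (0.375, 0.375, 0.75))),
    (("K", (0.375, 0.375, 0.75)), ("G", (0.0, 0.0, 0.0))),
    (("G", (0.0, 0.0, 0.0)), ("L", (0.5, 0.5, 0.5))),
]

def line_mode_file(segments, points_per_segment=40, title="fcc high-symmetry path"):
    """KPOINTS-style line-mode text: header, then one start/end pair per segment."""
    lines = [title, str(points_per_segment), "Line-mode", "Reciprocal"]
    for (start_label, start), (end_label, end) in segments:
        lines.append("{:.6f} {:.6f} {:.6f} ! {}".format(*start, start_label))
        lines.append("{:.6f} {:.6f} {:.6f} ! {}".format(*end, end_label))
        lines.append("")  # blank line between segments, as is customary
    return "\n".join(lines)

print(line_mode_file(FCC_PATH))
```

The number of points per segment only controls how smooth the plotted curves look. It does not affect the accuracy of the interpolated force constants.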

For polar materials, additional steps are required:

- Compute Born effective charges and the dielectric tensor in the primitive cell, either with DFPT (LEPSILON = .TRUE.) or from the response to a finite electric field (LCALCEPS = .TRUE.)

- Provide these tensors in the supercell calculation via PHON_BORN_CHARGES and PHON_DIELECTRIC

- Set LPHON_POLAR = .TRUE. to include long-range dipole-dipole corrections

Quantum ESPRESSO Protocol

Quantum ESPRESSO employs a DFPT-based approach for phonon calculations with a distinct workflow [5]:

Self-Consistent Field (SCF) Calculation: Perform a highly converged SCF calculation with pw.x, using an increased energy cutoff and dense k-point sampling.

Dynamical Matrix Calculation: Compute the dynamical matrix on a uniform q-point mesh using ph.x with ldisp = .true., specifying nq1, nq2, nq3 for the coarse grid.

Force Constant Transformation: Use q2r.x to perform the inverse Fourier transform of the dynamical matrices and obtain the real-space force constants.

Phonon Dispersion Interpolation: Employ matdyn.x to compute phonon frequencies along high-symmetry paths and on fine uniform meshes for the DOS.
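As an illustration of the ph.x and q2r.x steps, the helpers below write minimal input files. The namelist variables are standard Quantum ESPRESSO inputs: prefix, outdir, fildyn, tr2_ph, ldisp, nq1, nq2, nq3 for ph.x, and fildyn, zasr, flfrc for q2r.x. The function names, file names and default values are placeholders. In a real run, prefix and outdir must match the preceding pw.x calculation.

```python
def ph_input(prefix, nq, outdir="./tmp", fildyn="dyn", tr2_ph=1.0e-14):
    """ph.x input: dynamical matrices on a uniform nq1 x nq2 x nq3 coarse grid."""
    nq1, nq2, nq3 = nq
    return (
        f"phonons on a {nq1}x{nq2}x{nq3} q-grid\n"  # ph.x reads a title line first
        "&inputph\n"
        f"  prefix = '{prefix}'\n"
        f"  outdir = '{outdir}'\n"
        f"  fildyn = '{fildyn}'\n"
        f"  tr2_ph = {tr2_ph:.1e}\n"
        "  ldisp = .true.\n"
        f"  nq1 = {nq1}, nq2 = {nq2}, nq3 = {nq3}\n"
        "/\n"
    )

def q2r_input(fildyn="dyn", flfrc="fc.dat", zasr="crystal"):
    """q2r.x input: inverse Fourier transform of the dynamical matrices to real space."""
    return (
        "&input\n"
        f"  fildyn = '{fildyn}'\n"
        f"  zasr = '{zasr}'\n"
        f"  flfrc = '{flfrc}'\n"
        "/\n"
    )

print(ph_input("si", (4, 4, 4)))
print(q2r_input())
```

The flfrc file written by q2r.x is the force-constant file that matdyn.x later reads for the fine-grid interpolation.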

A critical consideration in Quantum ESPRESSO is ensuring the commensurability of k-point and q-point grids. The SCF calculation should use a k-point grid that is an integer multiple of the q-point grid to maintain consistency in the reciprocal space sampling.
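The check below is a minimal sketch of this condition. It assumes unshifted (Γ-centred) grids given as subdivision triples. With that setup, every k+q point formed from the coarse q-grid falls back onto the k mesh. Both helper names are hypothetical.

```python
import math

def is_commensurate(k_grid, q_grid):
    """True if each k-grid subdivision is an integer multiple of the matching q-grid one."""
    return all(k % q == 0 for k, q in zip(k_grid, q_grid))

def smallest_commensurate_kgrid(k_target, q_grid):
    """Round each k subdivision up to the nearest multiple of the q subdivision."""
    return tuple(math.ceil(k / q) * q for k, q in zip(k_target, q_grid))

print(is_commensurate((8, 8, 8), (4, 4, 4)))              # True
print(is_commensurate((6, 6, 6), (4, 4, 4)))              # False
print(smallest_commensurate_kgrid((6, 6, 6), (4, 4, 4)))  # (8, 8, 8)
```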

Specialized Sampling for Complex Materials

For materials with large unit cells such as metal-organic frameworks (MOFs) and organic molecular crystals, traditional DFT-based phonon calculations become computationally prohibitive. In these cases, alternative strategies emerge [8] [7]:

Machine Learning Potentials: Pre-trained universal potentials (e.g., MACE-MP-0) or fine-tuned specialized potentials (e.g., MACE-MP-MOF0 for MOFs) can accurately reproduce phonon properties while dramatically reducing computational cost.

Compressive Sensing Lattice Dynamics: This approach uses random displacement configurations with all atoms perturbed simultaneously, requiring fewer supercell calculations than the standard finite displacement method.

Strategic Sampling: For high-throughput screening, initial assessments can use smaller q-point grids (2×2×2 or 3×3×3) to identify promising candidates, followed by more converged calculations only for selected materials.
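The random-displacement sampling used by compressive sensing lattice dynamics can be sketched as follows. Every atom is moved simultaneously by a fixed-length vector in a random, isotropically distributed direction. The amplitude, the number of configurations, the toy two-atom cell and the helper name are all assumptions. The subsequent step of fitting force constants to the resulting DFT or MLIP forces (typically by sparse regression) is not shown.

```python
import numpy as np

def random_displacements(positions, amplitude=0.01, n_configs=5, seed=0):
    """Move every atom at once by `amplitude` (Å) along a random direction.

    positions: (n_atoms, 3) Cartesian coordinates in Å.
    Returns displaced copies with shape (n_configs, n_atoms, 3).
    """
    positions = np.asarray(positions, dtype=float)
    rng = np.random.default_rng(seed)
    # Normalised Gaussian vectors are uniformly distributed on the unit sphere.
    directions = rng.normal(size=(n_configs,) + positions.shape)
    directions /= np.linalg.norm(directions, axis=-1, keepdims=True)
    return positions[None, :, :] + amplitude * directions

# Hypothetical two-atom cell (Å); in practice the input would be the full supercell.
base = np.array([[0.0, 0.0, 0.0], [1.36, 1.36, 1.36]])
configs = random_displacements(base, amplitude=0.03)
print(configs.shape)  # (5, 2, 3)
```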

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key Research Reagent Solutions for q-point Convergence Studies

| Reagent/Software | Function in q-point Studies | Implementation Considerations |
|---|---|---|
| VASP (IBRION=5,6,7,8) | Finite difference & DFPT force constant calculation | Use IBRION=6 for symmetry-reduced displacements; PREC=Accurate recommended |
| Quantum ESPRESSO (ph.x) | DFPT dynamical matrix calculation | Ensure k-point and q-point grids are commensurate |
| Phonopy | Post-processing finite displacement calculations | Automated supercell generation and force constant extraction |
| SeeK-path | High-symmetry path generation for dispersion curves | Provides standardized labeling for reproducible research |
| pymatgen | Structure manipulation and analysis | Python library for creating supercells and k-point/q-point meshes |
| MACE-MP-0 | Machine learning potential for accelerated phonons | Particularly valuable for high-throughput screening of complex materials |
| ALIGNN | Direct phonon spectrum prediction | Graph neural network bypassing force constant calculation entirely |

Workflow Visualization and Decision Pathways

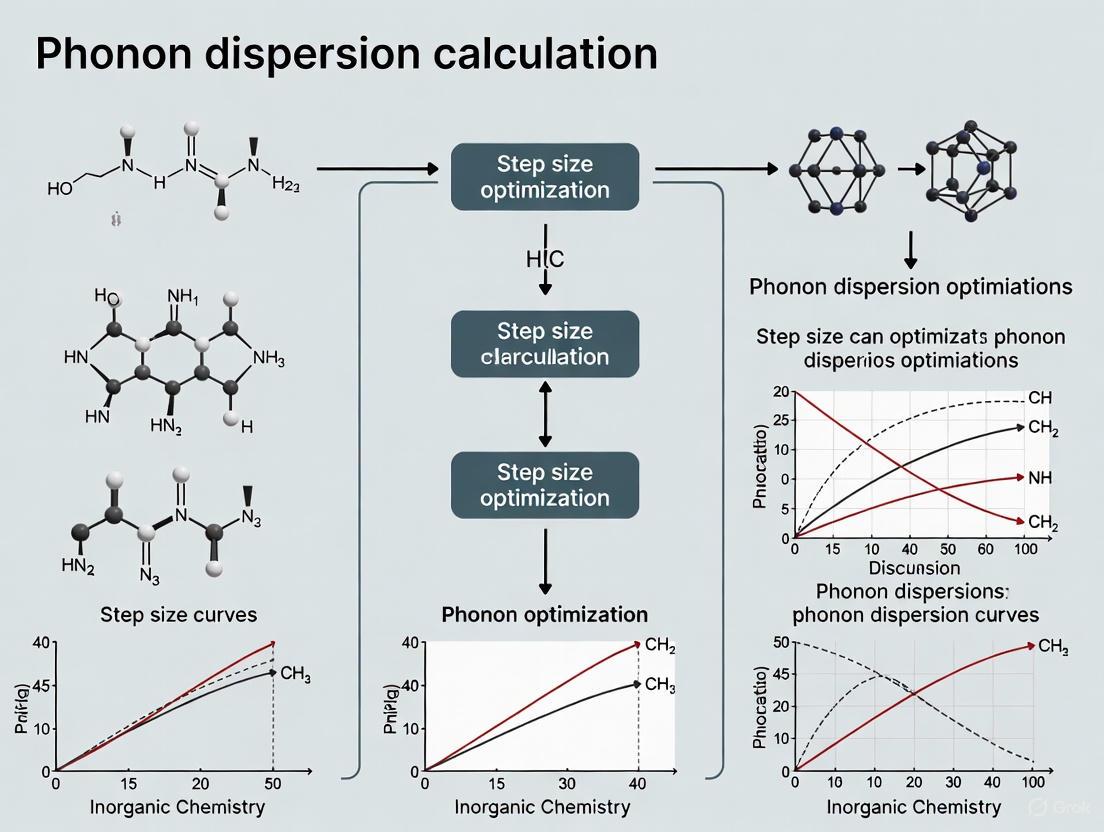

The computational workflow for determining optimal q-point sampling involves multiple decision points and validation steps. The following diagram illustrates the comprehensive protocol:

Figure: Phonon Calculation q-point Convergence Workflow

The relationship between computational parameters in phonon calculations forms an interconnected network where each parameter influences multiple aspects of the final result:

Figure: Computational Parameter Interrelationships in Phonon Calculations

Advanced Considerations and Emerging Methodologies

Machine Learning Accelerated Approaches

Recent advances in machine learning offer promising alternatives to traditional DFT-based phonon calculations [8] [7]. These approaches fall into two primary categories:

Direct Phonon Prediction: Graph neural networks (GNNs) such as ALIGNN (Atomistic Line Graph Neural Network) and Euclidean neural networks (E(3)-NN) can directly predict the phonon density of states and dispersion relations when trained on large phonon databases. These models bypass force constant calculations entirely, so that once trained they can estimate a phonon spectrum almost instantly.

Machine Learning Interatomic Potentials (MLIPs): Models like MACE (Multi-Atomic Cluster Expansion) learn the relationship between atomic configurations and potential energy surfaces, providing accurate forces for supercell calculations at a fraction of the computational cost of DFT. Specialized MLIPs such as MACE-MP-MOF0 fine-tuned for metal-organic frameworks demonstrate remarkable accuracy in reproducing phonon properties for complex materials.

The data efficiency of these machine learning approaches continues to improve, with some models achieving reliable predictions from training sets of just 1,200 materials. For high-throughput screening applications where computational efficiency is paramount, these methods enable phonon calculations for thousands of materials. Their accuracy approaches that of DFT, provided the materials lie within the domain covered by the training data.

High-Throughput Screening Protocols

For large-scale materials discovery projects targeting specific phonon-related properties (e.g., thermal conductivity, thermodynamic stability), a tiered screening approach optimizes computational resources [8] [7]:

- Initial Filtering: Apply simple geometric and compositional descriptors to identify candidate materials.

- Rapid Phonon Assessment: Use machine learning potentials or low-resolution q-point sampling (2×2×2) to identify materials with desired phonon characteristics.

- Validation Set Calculation: Perform fully converged DFT phonon calculations for a subset of promising candidates to validate the screening approach.

- Experimental Prioritization: Apply additional filters based on synthesizability and other practical considerations to generate final candidate lists for experimental investigation.

This tiered approach dramatically reduces the computational cost of screening large materials databases while maintaining confidence in the predictions through strategic validation calculations.

The accuracy of phonon dispersion calculations is inextricably linked to proper q-point sampling strategies. The systematic two-stage convergence protocol addresses coarse grid and fine grid requirements separately. Through it, researchers can achieve reliable phonon spectra while optimizing computational resources. The specific sampling requirements vary significantly across material classes. Polar materials demand special treatment for LO-TO splitting, and complex materials require balanced approaches that account for their large unit cells.

Emerging methodologies based on machine learning interatomic potentials present promising avenues for accelerating phonon calculations, particularly in high-throughput screening contexts. However, these approaches should be validated against traditional DFT calculations for each new class of materials until their reliability is firmly established. By adhering to the protocols and considerations outlined in this work, researchers can ensure the accuracy of their phonon dispersion calculations while making efficient use of computational resources.

In the domain of first-principles materials simulations, precise Brillouin zone sampling is a cornerstone for obtaining accurate results. This process is governed by two distinct but related concepts: k-points and q-points. k-points sample the electronic Brillouin zone for calculating ground-state electronic properties, whereas q-points sample the phonon Brillouin zone for lattice dynamical calculations such as phonon dispersions [9] [8]. Within the context of phonon dispersion calculation step-size optimization techniques, understanding the convergence behavior of both k-points and q-points is critical. The selection of sampling meshes directly influences the numerical precision of computed properties, including total energies, forces, and vibrational frequencies, thereby impacting the predictive power of high-throughput materials discovery workflows [10].

The challenge intensifies when dealing with metallic systems, where the discontinuity of the electronic occupation function at the Fermi surface leads to notoriously poor convergence with a uniform sampling mesh. Smearing techniques are often employed to mitigate this issue by smoothing the occupation function, effectively introducing a fictitious electronic temperature that accelerates k-point convergence, albeit at the cost of a slight deviation from the true zero-smearing limit [10]. This article establishes detailed protocols for assessing the convergence of these parameters, providing structured methodologies and metrics essential for reliable phonon calculations.

Defining k-points and q-points

k-points represent the wavevectors used to sample the electronic Brillouin zone (BZ) in Bloch's theorem, enabling the computation of electronic wavefunctions and eigenvalues in periodic crystals. The convergence of k-point sampling is vital for accurately determining total energies, electronic densities, and derived properties such as forces and stresses [10]. The density of the k-point mesh is inversely related to the size of the real-space unit cell; larger unit cells require fewer k-points for equivalent sampling density [11].
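The inverse relation between cell size and k-point count can be made concrete with the following sketch. It picks the number of subdivisions along each reciprocal lattice vector from a target spacing, N_i = max(1, ceil(|b_i| / Δk)). The spacing here includes the 2π factor in b_i, which is an assumption, because codes differ on this convention. The helper name is hypothetical.

```python
import numpy as np

def kmesh_from_spacing(lattice, spacing):
    """Subdivisions N_i = max(1, ceil(|b_i| / spacing)).

    lattice: 3x3 array whose rows are the real-space vectors a_i (Å).
    spacing: target k-point spacing in 1/Å, with b_i including the 2*pi factor.
    """
    recip = 2 * np.pi * np.linalg.inv(lattice).T  # rows b_i satisfy a_i . b_j = 2*pi*delta_ij
    lengths = np.linalg.norm(recip, axis=1)
    return tuple(int(max(1, np.ceil(length / spacing))) for length in lengths)

small = kmesh_from_spacing(5.0 * np.eye(3), 0.2)   # (7, 7, 7) for a 5 Å cubic cell
large = kmesh_from_spacing(10.0 * np.eye(3), 0.2)  # (4, 4, 4) for a 10 Å cubic cell
print(small, large)
```

Doubling the cell edge halves |b_i|, so the same spacing needs roughly half as many subdivisions along each direction.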

In contrast, q-points are the wavevectors used to sample the phonon Brillouin zone. They represent the periodicity of a phonon perturbation within a crystal lattice. Phonon properties, including dispersion curves and density of states, are calculated on a grid of these q-points [9] [8]. The force constants, which describe the interatomic interactions, are typically computed in a real-space supercell, and the quality of the phonon spectrum is contingent upon the convergence of the q-point mesh, which must be dense enough to capture all relevant vibrational modes [12] [13].

Table 1: Key Differences Between k-points and q-points

| Feature | k-points | q-points |
|---|---|---|
| Physical Meaning | Electronic wavevectors [9] | Phonon wavevectors [9] |
| Sampled Zone | Electronic Brillouin Zone | Phonon Brillouin Zone |
| Influences | Total energy, forces, stresses, electronic band structure | Phonon frequencies, dispersion, thermodynamic properties |
| Convergence Priority | Electronic structure, Hellmann-Feynman forces [10] | Dynamical matrix, vibrational spectra [8] |

Convergence metrics and criteria

The convergence of k-point and q-point sampling is evaluated by monitoring the changes in key physical properties as the sampling density increases. The primary metric is the total energy per atom, where the variation should fall below a predefined threshold, often chosen as 1 meV/atom for high-precision studies [10]. However, for phonon-specific calculations, forces on atoms become a more sensitive and critical metric. The convergence of the maximum force and root-mean-square (RMS) force across all atoms in the system must be assessed to ensure reliable ionic relaxation and force constant calculations [13].
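The force metrics above can be computed as in this minimal sketch. It takes an (n_atoms, 3) force array and returns the largest per-atom force magnitude and the RMS over atoms of the force magnitudes. Some codes define the RMS over all Cartesian components instead, which differs by a factor of √3. The force values and helper names are made up for illustration.

```python
import numpy as np

def force_metrics(forces):
    """Maximum and root-mean-square atomic force magnitudes for an (n_atoms, 3) array."""
    norms = np.linalg.norm(forces, axis=1)
    return norms.max(), np.sqrt(np.mean(norms**2))

def max_force_change(forces_a, forces_b):
    """Largest per-atom change in the force vector between two sampling meshes."""
    return np.linalg.norm(np.asarray(forces_b) - np.asarray(forces_a), axis=1).max()

forces = np.array([[3.0, 4.0, 0.0], [0.0, 0.0, 0.0]])  # made-up values, eV/Å
fmax, frms = force_metrics(forces)
print(fmax, frms)  # 5.0 and sqrt(12.5), about 3.536
```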

For metallic systems or narrow-gap semiconductors, the use of smearing techniques introduces an additional convergence parameter. The smearing width (SIGMA in VASP, for instance) must be optimized in tandem with the k-point mesh. The generalized free energy includes an entropic term that depends on both the smearing temperature and the derivatives of the electronic density of states at the Fermi energy [10]. The convergence protocol must therefore target the zero-smearing limit, requiring a systematic reduction of the smearing width as the k-point density increases.
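One way to estimate the zero-smearing limit from a few finite-σ runs is sketched below. It fits the free energy against σ² and reads off the intercept. This relies on the assumption that F(σ) is quadratic in σ at small σ, which is appropriate for Gaussian or Fermi-Dirac smearing. Methfessel-Paxton and cold smearing have different leading-order behaviour, so for those methods reducing σ and checking directly is safer. The data are synthetic.

```python
import numpy as np

def extrapolate_zero_smearing(sigmas, free_energies):
    """Linear fit of F against sigma**2; the intercept estimates the sigma -> 0 energy."""
    slope, intercept = np.polyfit(np.asarray(sigmas) ** 2, free_energies, 1)
    return intercept

# Synthetic data (eV): F(sigma) = -5.0 - 0.3 * sigma**2, invented for illustration.
sig = np.array([0.05, 0.10, 0.15, 0.20])
F = -5.0 - 0.3 * sig**2
print(extrapolate_zero_smearing(sig, F))  # approximately -5.0
```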

Table 2: Quantitative Convergence Guidelines for k-point Sampling (SCM/BAND Code) [11]

| KSpace Quality | Energy Error / Atom (eV) | CPU Time Ratio | Recommended For |
|---|---|---|---|
| Gamma-Only | 3.3 | 1 | Quick tests, large systems |
| Basic | 0.6 | 2 | --- |
| Normal | 0.03 | 6 | Insulators, wide-gap semiconductors |
| Good | 0.002 | 16 | Metals, narrow-gap semiconductors, geometry under pressure |
| VeryGood | 0.0001 | 35 | High-precision phonons, properties sensitive to sampling |

Experimental protocols for convergence testing

Protocol for k-point convergence

A rigorous k-point convergence study should be performed for each new material system. The following step-by-step protocol, adaptable for codes like VASP and Quantum ESPRESSO, ensures systematic testing.

- Initial Setup: Begin with a fully optimized crystal structure. Select a plane-wave energy cutoff (ENCUT) well above the required minimum to decouple basis set and BZ sampling errors [10] [13].

- Mesh Generation: Generate a series of Γ-centered k-point meshes with increasing density. A practical sequence is 2×2×2, 4×4×4, 6×6×6, 8×8×8, etc. For hexagonal systems, use a similar sequence like 6×6×4, 8×8×6, 10×10×8 to maintain consistent sampling density across lattice vectors [11].

- Smearing Selection: Choose an appropriate smearing method (e.g., Methfessel-Paxton, Marzari-Vanderbilt cold smearing) and an initial smearing width based on system type (e.g., 0.2 eV for metals, 0.01 eV for insulators) [10] [13].

- Property Calculation: For each k-point mesh in the sequence, perform a single-point energy calculation and extract the total energy, forces on atoms, and the stress tensor.

- Data Analysis: Plot the total energy per atom and the maximum force component as a function of the inverse number of k-points (or the k-spacing). The calculations are considered converged when these values change by less than the target thresholds (e.g., 1 meV/atom for energy and 1 meV/Å for forces) between successive mesh refinements.

- Smearing Optimization: Once a dense k-mesh is identified, reduce the smearing width and repeat the calculation to ensure the results are stable and approach the T = 0 K limit.
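The data-analysis step can be automated with a check like the following. It returns the first mesh whose energy per atom and maximum force both change by less than the thresholds (1 meV/atom and 1 meV/Å, as above) when moving to the next, denser mesh. The helper name and the numbers in the example are hypothetical.

```python
def first_converged_mesh(meshes, energies_per_atom, max_forces, de_tol=1e-3, df_tol=1e-3):
    """First mesh whose energy (eV/atom) and max force (eV/Å) change by less than the
    thresholds at the next, denser mesh; None if the series never converges."""
    for i in range(len(meshes) - 1):
        de = abs(energies_per_atom[i + 1] - energies_per_atom[i])
        df = abs(max_forces[i + 1] - max_forces[i])
        if de < de_tol and df < df_tol:
            return meshes[i]
    return None

# Hypothetical results from a Gamma-centred mesh series.
meshes = [(2, 2, 2), (4, 4, 4), (6, 6, 6), (8, 8, 8), (10, 10, 10)]
energies = [-5.4012, -5.4301, -5.4322, -5.4326, -5.4327]
fmax = [0.0500, 0.0120, 0.0031, 0.0012, 0.0010]
print(first_converged_mesh(meshes, energies, fmax))  # (8, 8, 8)
```

Here the 6×6×6 mesh already meets the energy criterion, but its maximum force still changes by about 2 meV/Å. This is why forces are the stricter test for phonon work.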

Protocol for q-point convergence in phonons

Converging the q-point mesh for phonon calculations is intrinsically linked to the size of the supercell used to compute the force constants.

- Force Constant Calculation: The force constants are calculated using either Density Functional Perturbation Theory (DFPT) or the finite-displacement method. In the finite-displacement method, this involves constructing supercells of increasing size (e.g., 2×2×2, 3×3×3, 4×4×4 of the primitive cell) and computing the Hessian matrix by displacing atoms and calculating the resulting forces [13] [8].

- Phonon Property Computation: Use the force constants from each supercell to compute the phonon dispersion and phonon density of states on a dense q-point mesh.

- Metric Tracking: Monitor the changes in key phonon properties with increasing supercell size. These include:

- The phonon frequencies at high-symmetry points (especially the lowest-frequency acoustic modes).

- The phonon density of states.

- The detection of any imaginary frequencies (soft modes) that may appear or disappear with improved sampling.

- Convergence Criterion: The q-point sampling is considered converged when the phonon frequencies at all high-symmetry points change by less than a target value and the overall shape of the phonon dispersion and DOS remains unchanged. Typical targets are 0.1 THz (about 3.3 cm⁻¹) or a stricter 1 cm⁻¹ (about 0.03 THz).
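The metric tracking and convergence criterion can be sketched as two small checks. The first compares frequencies at matching high-symmetry points between successive supercells, converting THz to cm⁻¹. The second flags modes below a small negative tolerance. The second check assumes that imaginary frequencies are reported as negative numbers, as phonopy and many other codes do. The −0.05 THz noise tolerance, the frequencies and the helper names are placeholders.

```python
import numpy as np

THZ_TO_CM1 = 33.35641  # 1 THz corresponds to 33.35641 cm^-1

def soft_mode_indices(freqs_thz, tol_thz=-0.05):
    """Indices of modes below tol_thz (imaginary modes stored as negative numbers)."""
    return np.flatnonzero(np.asarray(freqs_thz) < tol_thz)

def qpoint_converged(freqs_prev, freqs_next, tol_cm1=1.0):
    """True if all frequencies (THz, same q-points, branches sorted) change by < tol_cm1."""
    diff = np.abs(np.asarray(freqs_next) - np.asarray(freqs_prev)) * THZ_TO_CM1
    return bool(diff.max() < tol_cm1)

# Hypothetical frequencies (THz) at one high-symmetry point for two supercell sizes.
f_3x3x3 = [0.00, 0.00, 0.00, 4.21, 4.21, 15.30]
f_4x4x4 = [0.00, 0.00, 0.00, 4.20, 4.20, 15.31]
print(qpoint_converged(f_3x3x3, f_4x4x4))      # True: largest change is about 0.33 cm^-1
print(soft_mode_indices([-0.80, 0.01, 3.00]))  # [0]
```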

Figure 1: Workflow for k-point and q-point convergence testing. The protocol begins with geometry optimization, proceeds through iterative k-point convergence, and culminates in q-point convergence for phonon properties.

The scientist's toolkit: Essential research reagents and computational solutions

Successful Brillouin zone sampling and phonon calculations rely on a suite of software tools and computational "reagents." The following table details key solutions used in the field.

Table 3: Essential Computational Tools for Brillouin Zone Sampling and Phonon Calculations

| Tool Name | Type | Primary Function | Relevance to Sampling |
|---|---|---|---|
| VASP [13] | DFT Code | Electronic structure calculations | Implements k-points (KPOINTS) and q-points (QPOINTS/PHPOINTS) for DFPT and finite-difference phonons. |
| Quantum ESPRESSO [10] | DFT Code | Plane-wave pseudopotential DFT | Used with SSSP protocols for automated k-point and smearing parameter selection. |
| AiiDA [10] | Workflow Manager | Automating and managing simulation workflows | Enforces reproducible convergence tests and manages parameter optimization. |
| Phonopy [8] | Post-Processing Tool | Phonon analysis | Works with force constants from supercell calculations to produce phonon band structures and DOS. |
| pymatgen/ASE [13] [14] | Materials API | Structure manipulation and analysis | Scripting generation of k-point meshes, supercells, and automated analysis of convergence. |
| MACE MLIP [8] | Machine Learning Potential | Accelerated force prediction | Reduces number of DFT supercell calculations needed for phonon force constants. |

Advanced considerations and machine learning approaches

The computational cost of achieving fully converged q-point meshes, especially for large or low-symmetry unit cells, remains a significant bottleneck in high-throughput phonon studies [8]. The finite-displacement method requires numerous DFT calculations on large supercells, which can be prohibitively expensive.

Machine learning interatomic potentials (MLIPs) are emerging as a powerful strategy to overcome this barrier. Models such as MACE (Multi-Atomic Cluster Expansion) are trained on a subset of supercell structures where atoms are randomly perturbed, and the resulting interatomic forces are computed with DFT [8]. Once trained, the MLIP can predict forces for new configurations with near-DFT accuracy but at a fraction of the computational cost. This approach dramatically accelerates the construction of the force constant matrix, enabling efficient convergence tests of q-point sampling for a vast number of materials. Studies have demonstrated that universal MLIPs, trained on diverse materials, can successfully predict harmonic phonon properties, including full phonon dispersions and vibrational free energies [8] [14].

Furthermore, for systems with high symmetry, the choice of k-point grid type can impact efficiency and accuracy. A symmetric grid that samples only the irreducible wedge of the Brillouin zone can be more efficient for highly symmetric crystals like silicon or graphene. This is particularly important for properties like the electronic band structure, where including specific high-symmetry points (e.g., the 'K' point in graphene) is essential for capturing the correct physics [11].
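The saving from symmetry reduction can be illustrated with the simplest case. The sketch below uses only time-reversal symmetry, k → −k, which folds a Γ-centred grid roughly in half. A full point-group reduction, which is what makes high-symmetry crystals so cheap, would need a symmetry library such as spglib and is not attempted here. The grid is handled in integer indices so that the modulo comparison is exact. The helper name is hypothetical.

```python
import itertools

def irreducible_by_time_reversal(n1, n2, n3):
    """Count k-points of a Gamma-centred n1 x n2 x n3 grid after folding k -> -k.

    Points are integer grid indices, so -k is taken modulo the grid size exactly.
    Only time-reversal symmetry is applied, not the crystal point group.
    """
    representatives = set()
    for i, j, k in itertools.product(range(n1), range(n2), range(n3)):
        partner = ((-i) % n1, (-j) % n2, (-k) % n3)
        representatives.add(min((i, j, k), partner))
    return len(representatives)

print(irreducible_by_time_reversal(4, 4, 4))  # 36 of 64 points
```

For the 4×4×4 grid, the 8 points with every index in {0, 2} map onto themselves. The other 56 points pair up, giving 8 + 28 = 36.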

The Critical Role of Lattice Optimization as a Prerequisite for Phonon Calculations

Lattice optimization, encompassing the relaxation of both atomic positions and lattice vectors, is a non-negotiable prerequisite for obtaining physically meaningful results from subsequent phonon calculations. Within the context of phonon dispersion curve research, the accuracy of the optimized lattice parameters directly dictates the precision of the calculated vibrational frequencies and the resulting step sizes in the Brillouin zone. This foundational step ensures that the calculation of interatomic force constants (IFCs) originates from a true energy minimum, thereby guaranteeing the dynamical stability of the system under investigation. Failure to perform a rigorous geometry optimization can lead to the appearance of unphysical imaginary frequencies, which obscure the true vibrational characteristics and thermodynamic properties of the material [12] [15]. This article outlines the critical protocols and provides supporting data to establish robust pre-phonon calculation procedures.

Theoretical Foundation

Phonon calculations, particularly those employing the harmonic approximation, are fundamentally based on the analysis of the dynamical matrix, which is built from the second-order interatomic force constants (IFCs). These IFCs are formally defined as the second derivative of the total energy with respect to atomic displacements:

\[ \Phi_{ij}^{ab} = \frac{\partial^2 E}{\partial u_i^a \, \partial u_j^b} \]

where \(E\) is the total energy of the crystal, and \(u_i^a\) is the displacement of atom \(a\) in the Cartesian direction \(i\) [16]. The harmonic expansion built on this quantity is only physically meaningful at a mechanical equilibrium point, where the first derivatives of the energy (the forces on all atoms) vanish. Lattice optimization is the computational process that locates this equilibrium configuration. An unoptimized structure, with residual forces or non-optimal lattice parameters, violates the core assumption of the harmonic approximation. Consequently, the calculated phonon spectrum can exhibit imaginary frequencies that erroneously indicate a dynamically unstable structure, even for well-known stable crystals like silicon [15]. The choice of exchange-correlation functional can also significantly influence the optimized lattice parameter. For instance, PBEsol is often preferred over PBE for solid-state systems because it tends to give more accurate lattice constants and, by extension, more reliable phonon frequencies [16].
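A toy model shows why an unrelaxed lattice can produce imaginary modes. Consider a one-dimensional monatomic chain with nearest-neighbour Lennard-Jones bonds in reduced units (ε = σ = m = 1). All of these choices are hypothetical and far simpler than a DFT calculation.

- The force constant is the curvature k = V''(a) of the bond potential at the lattice spacing a. Here it is evaluated with a central finite difference of the energy.
- The dispersion is ω²(q) = (4k/m) sin²(qa/2).
- At the relaxed spacing, k is positive.
- If the chain is stretched past the potential's inflection point, k turns negative and ω² < 0 for every q ≠ 0. A phonon code would report these as imaginary frequencies.

```python
import math

def lj_energy(r, eps=1.0, sigma=1.0):
    """Lennard-Jones pair energy in reduced units (hypothetical toy bond)."""
    sr6 = (sigma / r) ** 6
    return 4.0 * eps * (sr6 * sr6 - sr6)

def force_constant(a, h=1e-4):
    """Bond force constant k = V''(a) from a central second difference."""
    return (lj_energy(a + h) - 2.0 * lj_energy(a) + lj_energy(a - h)) / h**2

def omega_squared(q, a, mass=1.0):
    """Monatomic-chain dispersion: omega^2 = (4k/m) sin^2(q a / 2)."""
    return 4.0 * force_constant(a) / mass * math.sin(q * a / 2.0) ** 2

a_relaxed = 2.0 ** (1.0 / 6.0)  # minimum of the pair potential
a_stretched = 1.30              # past the inflection point (26/7)**(1/6) ~ 1.245

for label, a in (("relaxed", a_relaxed), ("stretched", a_stretched)):
    w2 = omega_squared(math.pi / a, a)  # zone-boundary wavevector
    # A negative omega^2 is what phonon codes report as an imaginary frequency.
    omega = math.sqrt(w2) if w2 >= 0.0 else complex(0.0, math.sqrt(-w2))
    print(f"{label:>9}: k = {force_constant(a):8.3f}, omega(pi/a) = {omega}")
```

The stretched chain is still force-free by symmetry, but its lattice parameter is wrong. This mirrors the "residual stress, zero forces" situation described above.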

Table 1: Impact of Optimization on Calculated Properties

| Property | Unoptimized Structure | Optimized Structure |
|---|---|---|
| Atomic Forces | Non-zero, often significant | Close to zero (< 0.01 eV/Å) |
| Phonon Frequencies | Often spuriously imaginary (unphysical) | Real for dynamically stable structures (physically meaningful) |
| Lattice Parameters | Often over- or underestimated | Consistent with the chosen functional (agreement with experiment depends on the functional) |
| Predicted Stability | May be incorrectly predicted as unstable | Correctly assessed |

Experimental and Computational Protocols

Standardized Workflow for Lattice Optimization and Phonon Calculation

Adherence to a systematic workflow is paramount for the reproducibility and accuracy of phonon properties. The following protocol, summarized in the diagram below, outlines the essential steps from initial structure preparation to the final phonon analysis.

Diagram 1: Lattice Optimization and Phonon Calculation Workflow

Detailed Methodology for Key Steps

Step 1: Stringent Geometry Optimization. Run a geometry optimization without fixing the cell or the atomic coordinates. It is critical to optimize both the internal atomic coordinates and the lattice vectors (cell parameters) [12].

- Convergence Criteria: Set tight convergence thresholds. For nuclear degrees of freedom, a maximum force below 0.01 eV/Å is recommended. For lattice stress, a threshold of 0.0001 eV/ų helps remove the residual internal stress that can cause imaginary phonon modes, as demonstrated in graphene nanoribbon studies [17].

- Functional Selection: Use a density functional theory (DFT) functional that accurately reproduces lattice constants, such as PBEsol, which is designed for solids and often outperforms PBE in this regard [16].
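Before any phonon run, the two thresholds above can be checked automatically. The sketch below is a minimal version under stated assumptions:

- The function names are hypothetical.
- Forces are given as per-atom (Fx, Fy, Fz) tuples in eV/Å.
- Stress is a 3×3 tensor in GPa, since many codes report stress in GPa or kbar.
- The default tolerances are taken from the text.

The helper converts the stress with 1 eV/ų ≈ 160.2177 GPa. The 10⁻⁴ eV/ų threshold therefore corresponds to roughly 0.016 GPa (0.16 kbar).

```python
import math

EV_PER_A3_TO_GPA = 160.2176634  # 1 eV/Å^3 expressed in GPa

def max_force(forces):
    """Largest per-atom force norm (eV/Å)."""
    return max(math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces)

def max_stress(stress):
    """Largest absolute stress-tensor component (same units as the input)."""
    return max(abs(s) for row in stress for s in row)

def ready_for_phonons(forces, stress_gpa, fmax=0.01, smax_ev_a3=1e-4):
    """True if forces and stress are below the (assumed) thresholds.

    forces     : iterable of (Fx, Fy, Fz) in eV/Å
    stress_gpa : 3x3 stress tensor in GPa, converted here to eV/Å^3
    """
    stress_ev_a3 = [[s / EV_PER_A3_TO_GPA for s in row] for row in stress_gpa]
    return max_force(forces) < fmax and max_stress(stress_ev_a3) < smax_ev_a3

# Hypothetical output of a relaxation for a two-atom cell
forces = [(0.002, -0.001, 0.0), (-0.002, 0.001, 0.0)]
stress = [[0.005, 0.0, 0.0], [0.0, 0.005, 0.0], [0.0, 0.0, 0.005]]  # GPa
print(ready_for_phonons(forces, stress))
```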

Step 2: Force Calculation for Phonons. Once a fully optimized geometry is obtained, compute the forces needed for the phonon calculation.

- Supercell Generation: Use a tool like phonopy to create a set of supercells with small atomic displacements. A common supercell dimension is 4x4x4, but this should be tested for convergence [18].

- Force Calculations: Perform single-point energy and force calculations on each of the displaced supercells. These supercells must not be re-relaxed, because the forces induced by the small, finite displacements are the direct input for the force constants [18].

- Electronic Settings: Use a dense k-point sampling and a high energy cutoff. Accurate forces also require tight SCF convergence. The TOLDEE parameter (or its equivalent in other codes) should be set to a stringent value, e.g., 10 or higher, corresponding to an SCF energy threshold of 10⁻¹⁰ Hartree or smaller [18].

Step 3: Phonon Property Calculation. Post-process the calculated forces to obtain phonon properties.

- Force Constants: Collect the forces from all displaced supercells (e.g., into the FORCE_SETS file in phonopy). The second-order force constants are then constructed from this file [18].

- Band Structure and DOS: Calculate the phonon dispersion along high-symmetry paths in the Brillouin zone, together with the phonon density of states (DOS). The DOS sampling mesh (e.g., a 51x51x51 q-point grid) must be dense enough for convergence [17].

Table 2: Essential Computational "Reagents" for Phonon Calculations

| Research Reagent / Tool | Function / Purpose |
|---|---|
| DFT Code (VASP, CRYSTAL, QuantumATK) | Performs electronic structure calculations for geometry optimization and force evaluations. |
| Phonopy / Phono3py | Generates displaced supercells, post-processes forces to obtain phonon spectra, and calculates thermal properties. |
| hiPhive Package | Fits harmonic and anharmonic force constants using advanced sampling and regression techniques. |
| ShengBTE / FourPhonon | Solves the Boltzmann Transport Equation to compute lattice thermal conductivity, including 3ph and 4ph scattering. |
| Machine Learning Potentials (MACE) | Accelerates force calculations by learning the potential energy surface, reducing the need for expensive DFT. |

Advanced Considerations and Emerging Methodologies

High-Throughput and Anharmonic Workflows

For high-throughput screening or the calculation of anharmonic properties, the workflow must be automated and computationally efficient. Recent frameworks integrate multiple packages to create a seamless pipeline [16].

- Automated Workflows: Platforms like atomate automate the entire process, from structural optimization and force calculations to force constant fitting and thermal property computation. This ensures consistency across large sets of materials [16].

- Anharmonic IFCs: Properties like lattice thermal conductivity require higher-order (3rd- and 4th-order) force constants. Tools like hiPhive use compressive sensing to fit these anharmonic IFCs from a relatively small number of displaced configurations, bypassing the combinatorial explosion of the finite-displacement method [16].

- GPU Acceleration: Calculating phonon scattering rates is very expensive, especially for four-phonon processes. GPU-accelerated codes like FourPhonon_GPU address this cost and can achieve over 10x speedup [19].

The Role of Machine Learning

Machine learning is substantially reducing the computational cost of high-throughput phonon calculations.

- Machine Learning Interatomic Potentials (MLIPs): Models like MACE are trained on DFT data to predict interatomic forces with near-DFT accuracy at a fraction of the computational cost [8]. This makes it feasible to compute phonon properties for large numbers of materials and to screen vast chemical spaces rapidly.

- Data-Driven Training: Instead of using many single-atom displacements, MLIPs can be trained on a smaller number of supercells where all atoms are randomly perturbed. A universal MLIP trained on diverse materials can then predict accurate harmonic phonon properties for new compounds, significantly accelerating the workflow [8].

A meticulously executed lattice optimization is the cornerstone of reliable phonon calculations. The protocols detailed herein—emphasizing the optimization of both atomic positions and lattice parameters under stringent convergence criteria—provide a roadmap for obtaining physically sound phonon dispersions. As the field progresses towards high-throughput screening and the incorporation of strong anharmonicity, the integration of automated workflows, advanced force-constant fitting methods, and machine learning potentials will further cement the role of precise lattice optimization as an indispensable first step in computational lattice dynamics.

In phonon dispersion calculations, imaginary frequencies (conventionally plotted as negative values on the dispersion plot) are a primary indicator of non-physical results. They are often mistaken for a sign of computational failure. In fact, these artifacts frequently stem from a more fundamental issue: improper sampling during the preceding stages of geometry optimization and force constant calculation. This application note delineates the critical relationship between sampling techniques and the physical validity of phonon spectra. It provides researchers with structured protocols to identify, troubleshoot, and prevent these common pitfalls. In the broader context of step size optimization for phonon dispersion calculations, this document emphasizes that physically meaningful results depend on meticulous sampling strategy, not merely on computational power.

The Critical Link Between Sampling and Dynamical Matrix Accuracy

The harmonic phonon frequencies of a crystal are obtained by solving the eigenvalue problem derived from its dynamical matrix. The accuracy of this matrix is entirely contingent upon the accurate calculation of the force constants, which are the second derivatives of the total energy with respect to atomic displacements [20]. Any error in these force constants propagates directly into the phonon frequencies, potentially manifesting as imaginary modes.

Improper sampling can corrupt the force constants in several key ways:

- Insufficient Lattice Optimization: Phonons must be calculated on a fully relaxed geometry, including both atomic positions and lattice vectors. An inadequately optimized lattice retains residual stresses, causing the dynamical matrix to be solved for a non-equilibrium configuration, which often results in imaginary frequencies [12].

- Sparse k-Space Sampling: Using an insufficiently dense k-point grid during the initial geometry optimization can lead to an incorrect prediction of the equilibrium lattice parameter. This forces subsequent phonon calculations to be performed on a unit cell that is not at its true energy minimum [12].

- Inadequate Supercell Sampling for Force Constants: The finite-displacement method requires calculating forces in a supercell to capture the range of atomic interactions. Using a supercell that is too small fails to capture long-range force constants accurately, leading to an incomplete and erroneous dynamical matrix [8].

- Coarse q-Mesh for Scattering Rates: When calculating phonon-mediated properties like thermal conductivity, a coarse q-mesh in the reciprocal space fails to adequately sample the scattering phase space, leading to non-converged and often under-predicted physical properties [21].

Current Methodologies and Sampling Solutions

Recent research has produced advanced methodologies specifically designed to overcome sampling-related challenges. The table below summarizes several key approaches.

Table 1: Advanced Methodologies for Accelerated and Accurate Phonon Calculations

| Methodology | Core Principle | Key Sampling Innovation | Demonstrated Benefit |
|---|---|---|---|
| Machine Learning Universal Potentials [8] | Uses ML interatomic potentials (MLIPs) to predict forces. | Trains on a diverse dataset generated from random atomic perturbations (0.01–0.05 Å) in a subset of supercells. | Reduces the number of required DFT supercell calculations; enables high-throughput screening. |
| Sampling & Maximum Likelihood Estimation (MLE) [21] | Estimates phonon scattering rates from a small sample of processes. | Applies MLE to a randomly selected subset of 3-phonon and 4-phonon scattering processes. | Accelerates calculations by 3-4 orders of magnitude; enables converged results with a dense 32x32x32 q-mesh. |
| Minimal Molecular Displacement (MMD) [20] | Reformulates lattice dynamics in a basis of molecular coordinates. | Uses rigid-body motions and intramolecular vibrations as displacements, reducing the number of needed supercell force calculations. | Reduces computational cost by a factor of 4-10 while preserving accuracy, especially for low-frequency modes. |
| Foundation Model Fine-Tuning (MACE-MP-MOF0) [7] | Fine-tunes a general ML potential (MACE-MP-0) on a curated, diverse dataset. | Incorporates data from molecular dynamics, strained configurations, and optimization trajectories of 127 MOFs. | Corrects imaginary frequencies and accurately predicts phonon density of states for complex metal-organic frameworks. |

Experimental Protocol: Machine Learning Potential for High-Throughput Phonons

This protocol is adapted from workflows used to develop accurate MLIPs for phonon calculations in metal-organic frameworks (MOFs) and other systems [8] [7].

1. Objective: To generate a machine-learning potential that accurately predicts harmonic phonon properties while minimizing the number of required DFT force calculations.

2. Materials/Software:

- DFT software (e.g., VASP, Quantum ESPRESSO)

- Machine learning potential framework (e.g., MACE [8] [7])

- Atomic Simulation Environment (ASE) [7]

3. Procedure:

- Step 1: Dataset Curation. Select a diverse set of representative structures (e.g., 127 MOFs) covering the chemical and structural space of interest.

- Step 2: Structure Generation. For each material, generate a limited number of supercells (≈6). In these supercells, randomly perturb all atoms with displacements between 0.01 Å and 0.05 Å.

- Step 3: DFT Reference Calculations. Perform DFT calculations on these perturbed supercells to obtain accurate energies and forces, resulting in a dataset of several million force components.

- Step 4: Model Training. Train a universal MLIP (e.g., MACE model) on this aggregated dataset. The model learns the mapping from atomic structures to energies and forces.

- Step 5: Phonon Calculation. Use the trained MLIP to predict forces for the displacements required by the finite-difference method. Pass these forces to a phonon post-processing tool (e.g., phonopy) to construct the dynamical matrix and compute the phonon band structure.

4. Critical Sampling Parameters:

- Displacement Range: 0.01 - 0.05 Å.

- Perturbation Type: Collective random displacements of all atoms.

- Training Set Size: ~15,000 supercell structures with ~8 million force components.

5. Troubleshooting:

- Persistent imaginary frequencies: Fine-tune the pre-trained model on additional data from molecular dynamics or strain trajectories of the problematic material [7].

- Poor transferability: Ensure the training dataset encompasses a wide diversity of elements and bonding environments.
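Step 2 of the protocol, collective random displacements of all atoms in the 0.01–0.05 Å range, could be sketched in plain Python as follows.

The cited workflows do not specify how they draw directions and magnitudes. This sketch assumes a uniformly random direction per atom and a magnitude drawn uniformly from the stated range. The function names and the three-atom "supercell" are hypothetical. In practice, the perturbed positions would be written to a structure file (e.g., via ASE) and sent to the DFT code.

```python
import math
import random

def random_unit_vector(rng):
    """Direction uniformly distributed on the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    s = math.sqrt(1.0 - z * z)
    return (s * math.cos(phi), s * math.sin(phi), z)

def perturb_all_atoms(positions, dmin=0.01, dmax=0.05, seed=None):
    """Displace every atom by a random vector with |d| in [dmin, dmax] (Å)."""
    rng = random.Random(seed)
    perturbed = []
    for x, y, z in positions:
        ux, uy, uz = random_unit_vector(rng)
        d = rng.uniform(dmin, dmax)
        perturbed.append((x + d * ux, y + d * uy, z + d * uz))
    return perturbed

# Hypothetical usage: ~6 perturbed copies of one supercell (Cartesian Å)
supercell = [(0.0, 0.0, 0.0), (1.36, 1.36, 1.36), (2.72, 2.72, 0.0)]
training_configs = [perturb_all_atoms(supercell, seed=i) for i in range(6)]
```

Seeding each copy separately keeps the dataset reproducible, which helps when a later fine-tuning step needs to regenerate the same structures.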

Visualizing Sampling-Accelerated Workflows

The following diagram illustrates the core contrast between a traditional finite-displacement workflow and a sampling-accelerated approach, highlighting where strategic sampling is applied to reduce computational cost.

Diagram Title: Sampling Workflows for Phonon Calculations

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Computational Tools for Robust Phonon Calculations

| Item | Function in Phonon Calculations | Protocol Consideration |
|---|---|---|
| AMS/DFTB [12] | Performs geometry optimization and phonon calculations using semi-empirical quantum methods. | Select "Very Good" convergence and "Optimize Lattice" for rigorous pre-phonon optimization. Use "Symmetric" k-grid for highly symmetric systems. |
| MACE-MP-0 Model [7] | A foundation machine learning interatomic potential. | Provides a strong pre-trained starting point; can be fine-tuned on specific material classes (e.g., MOFs) to correct imaginary modes. |
| Phonopy [8] | A widely used code for phonon calculations using the finite displacement method. | Interfaces with MLIPs; requires a well-tested displacement distance (e.g., 0.01 Å) and a supercell size large enough to converge force constants. |
| Density Functional Theory (DFT) [8] [20] | The first-principles method for computing reference energies and forces. | Requires stringent numerical settings (high cutoff, dense k-mesh) and appropriate dispersion corrections for molecular crystals. |
| Curated Phonon Database (e.g., MDR) [8] | Repository of existing phonon data for materials. | Serves as a benchmark and training data source for developing new machine learning models. |

The path to physically sound phonon results is paved with meticulous sampling strategies. As demonstrated, improper sampling during geometry optimization or force constant calculation is a primary culprit for introducing imaginary frequencies and other non-physical artifacts. The advent of machine learning potentials and statistical estimation techniques offers a paradigm shift, enabling researchers to bypass traditional cost-accuracy trade-offs by leveraging intelligent, data-driven sampling. By adhering to the detailed protocols and leveraging the tools outlined in this document, researchers can effectively navigate common pitfalls, ensuring their phonon dispersion calculations are not only computationally efficient but also rigorously grounded in physical reality.

Choosing Your Method: DFPT, Finite Displacement, and Modern Machine Learning Approaches

Density Functional Perturbation Theory (DFPT) provides an efficient, analytical framework for computing the second-order derivatives of the total energy of a crystalline system with respect to various perturbations, most commonly atomic displacements for calculating lattice dynamical properties [22]. Unlike the finite-displacement method, which requires constructing and calculating forces in supercells commensurate with the phonon wavevector, DFPT directly calculates the dynamical matrix at any wavevector q within the primitive cell [23]. This makes DFPT particularly powerful for obtaining phonon band structures, as it avoids the intensive computational cost associated with large supercells.

The fundamental output of a DFPT phonon calculation is the dynamical matrix. For a generic point q in the Brillouin zone, the phonon frequencies \(\omega_{q\nu}\) and eigenvectors are obtained by solving the generalized eigenvalue problem defined by the dynamical matrix [24]. For polar materials, the long-range dipole-dipole interaction must be accounted for in the limit q→0 to correctly describe the splitting between longitudinal optical (LO) and transverse optical (TO) modes. This requires the additional calculation of Born effective charges (BECs) and the dielectric tensor within the same DFPT framework [24] [25].
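To see how BECs and the dielectric tensor enter, take the simplest case: a cubic crystal with two atoms per primitive cell and isotropic Born effective charges ±Z*. Here the non-analytic q → 0 term reduces to the textbook expression

ω_LO² = ω_TO² + Z*²e²/(ε₀ε∞Ωμ),

where Ω is the primitive-cell volume and μ the reduced mass. The sketch evaluates this expression in SI units and converts to cm⁻¹.

The input values are hypothetical and only loosely GaAs-like. Real DFPT codes apply the full tensorial, direction-dependent correction rather than this scalar form.

```python
import math

E_CHARGE = 1.602176634e-19   # elementary charge, C
EPS0 = 8.8541878128e-12      # vacuum permittivity, F/m
AMU = 1.66053906660e-27      # atomic mass unit, kg
C_CM = 2.99792458e10         # speed of light, cm/s

def lo_frequency(omega_to_cm, zstar, eps_inf, volume_a3, m1_amu, m2_amu):
    """LO frequency (cm^-1) of a cubic diatomic crystal from the q -> 0
    non-analytic term: w_LO^2 = w_TO^2 + Z*^2 e^2 / (eps0 eps_inf Omega mu)."""
    mu = m1_amu * m2_amu / (m1_amu + m2_amu) * AMU    # reduced mass, kg
    volume = volume_a3 * 1e-30                        # Å^3 -> m^3
    delta = zstar**2 * E_CHARGE**2 / (EPS0 * eps_inf * volume * mu)  # (rad/s)^2
    delta_cm = delta / (2.0 * math.pi * C_CM) ** 2    # convert to cm^-2
    return math.sqrt(omega_to_cm**2 + delta_cm)

# Hypothetical inputs: w_TO = 268 cm^-1, Z* = 2.07, eps_inf = 10.9,
# fcc cell with a = 5.65 Å (Omega = a^3 / 4), masses 69.72 and 74.92 amu
w_lo = lo_frequency(268.0, 2.07, 10.9, 5.65**3 / 4, 69.72, 74.92)
print(f"omega_LO ~ {w_lo:.1f} cm^-1")
```

If the Born effective charges are under-converged, the error propagates directly into ω_LO through Z*². This is one reason the k-point convergence discussed next matters for the LO-TO splitting.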

DFPT Convergence Studies and Grid Sampling Protocols

The accuracy of DFPT calculations is critically dependent on the sampling of the Brillouin zone. Two distinct grids must be converged: the k-point grid for the electronic wavefunctions and the q-point grid for the phonon wavevectors.

Electron Wavevector (k-point) Grid Convergence

The k-point grid density directly impacts the accuracy of the computed phonon frequencies, especially for properties like the LO-TO splitting. A systematic convergence study is essential.

A high-throughput study on 48 semiconducting materials established that a k-point grid density should be chosen with at least 1000 k-points per reciprocal atom (kpra) to achieve well-converged phonon frequencies and LO-TO splittings [26]. Using a symmetric, Γ-centered grid is crucial, as shifted grids that break symmetry can lead to significantly larger errors, sometimes exceeding 10 cm⁻¹, even at high densities [26].
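A kpra target can be turned into a concrete Γ-centred mesh. The sketch below assumes kpra is the number of k-points in the full grid multiplied by the number of atoms in the cell, the usual convention in high-throughput tools.

It distributes divisions in proportion to the reciprocal-lattice vector lengths and rounds up, so the product never falls short of the target. The proportional rule is similar in spirit to automatic-density helpers in high-throughput frameworks, but it is not their exact implementation. The function names are hypothetical. Lattice vectors are given as rows in Å.

```python
import math

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def reciprocal_lengths(lattice):
    """|b_i| = 2*pi*|a_j x a_k| / V for real-space lattice vectors (rows, Å)."""
    a1, a2, a3 = lattice
    volume = abs(dot(a1, cross(a2, a3)))
    return [2.0 * math.pi * math.sqrt(dot(c, c)) / volume
            for c in (cross(a2, a3), cross(a3, a1), cross(a1, a2))]

def mesh_from_kpra(lattice, natoms, kpra=1000):
    """Gamma-centred mesh (n1, n2, n3) with n1*n2*n3*natoms >= kpra.

    Divisions scale with the reciprocal-lattice lengths (assumed rule);
    rounding up guarantees the target density is met."""
    b = reciprocal_lengths(lattice)
    target_nk = kpra / natoms
    scale = (target_nk / (b[0] * b[1] * b[2])) ** (1.0 / 3.0)
    return tuple(max(1, math.ceil(scale * bi)) for bi in b)

# Example: two-atom fcc primitive cell with a = 5.43 Å
si = [(0.0, 2.715, 2.715), (2.715, 0.0, 2.715), (2.715, 2.715, 0.0)]
print(mesh_from_kpra(si, natoms=2, kpra=1000))
```

A mesh built this way is Γ-centred only if the code is told so explicitly. As noted above, shifted grids that break symmetry can degrade the phonon frequencies.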

Table 1: Convergence of Phonon Frequencies with k-point Grid Density

| k-point Grid Density (kpra) | Fraction of Converged Materials (F)* [%] | Typical Error in LO-TO Splitting | Recommendation |
|---|---|---|---|
| ~500 | ~40% | Significant, > 10 cm⁻¹ | Insufficient for most studies |
| ~1000 | > 80% | Well-converged | Recommended for high-throughput |
| ~1500 | > 95% | Fully converged | For high-precision studies |

*F is defined as the fraction of materials for which the phonon frequencies are converged within a predefined threshold [26].

Phonon Wavevector (q-point) Grid Convergence

The q-point grid determines the set of wavevectors used for the DFPT calculation itself. For property calculations beyond a single q-point, an interpolation strategy is employed: DFPT calculations are performed on a coarse q-grid, and a Fourier interpolation is used to obtain frequencies at any other point in the Brillouin zone [22].

The required density of the initial q-grid depends on the complexity of the system and the range of the interatomic force constants. A grid with a density of approximately 1000 q-points per reciprocal atom (qpra) is generally sufficient to converge phonon frequencies for thermodynamic properties to within a few cm⁻¹ [26]. For the subsequent interpolation to produce a smooth phonon dispersion or density of states (DOS), a much finer path or grid is used, which is computationally cheap once the force constants are known [22].
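The coarse-grid-plus-interpolation strategy can be demonstrated on a one-dimensional toy model with hypothetical springs to the 1st, 2nd and 3rd neighbours, unit mass and unit spacing. The procedure has three steps:

1. Sample the dynamical matrix on an N-point Γ-centred q-grid.
2. Apply an inverse discrete Fourier transform to obtain real-space terms on an N-cell "supercell".
3. Fourier-sum those terms back at arbitrary q.

The interpolation is exact when N is large enough to contain the full interaction range (here N > 6). When the grid is too small, the 3rd-neighbour term aliases onto the 1st-neighbour one. The result is still exact at the grid points but wrong between them.

```python
import cmath
import math

# Hypothetical chain: springs to 1st, 2nd, 3rd neighbours (unit mass, unit spacing)
SPRINGS = {1: 1.0, 2: 0.3, 3: 0.1}

def dyn_exact(q):
    """Exact D(q) = sum_n 2 C_n (1 - cos(n q)); omega(q) = sqrt(D(q))."""
    return sum(2.0 * c * (1.0 - math.cos(n * q)) for n, c in SPRINGS.items())

def real_space_terms(n_grid):
    """Inverse DFT of D(q) sampled on an n_grid-point Gamma-centred grid.
    Returns the (possibly aliased) real-space terms d(r), r = 0..n_grid-1."""
    qs = [2.0 * math.pi * k / n_grid for k in range(n_grid)]
    return [sum(dyn_exact(q) * cmath.exp(1j * q * r) for q in qs).real / n_grid
            for r in range(n_grid)]

def dyn_interpolated(q, n_grid):
    """Fourier-sum the real-space terms back to arbitrary q (minimum image)."""
    total = 0.0
    for r, d in enumerate(real_space_terms(n_grid)):
        if 2 * r == n_grid:
            # Images at +N/2 and -N/2 are equidistant: share the weight equally.
            total += d * math.cos(q * n_grid / 2)
        else:
            r_min = r if 2 * r < n_grid else r - n_grid
            total += d * math.cos(q * r_min)  # d(r) is symmetric, so cos suffices
    return total

for n_grid in (4, 8):
    err = abs(dyn_interpolated(0.3, n_grid) - dyn_exact(0.3))
    print(f"{n_grid}-point grid: |D_interp - D_exact| at q = 0.3 is {err:.2e}")
```

Real codes do the same thing with 3D force-constant matrices and symmetry-weighted images. The lesson carries over: the coarse q-grid must be dense enough that its real-space supercell spans the range of the interatomic force constants.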

Table 2: Convergence of Phonon DOS and Thermodynamic Properties with q-point Grid

| q-point Grid Density (qpra) | Convergence of Phonon Frequencies | Convergence of Thermodynamic Properties | Typical Use Case |
|---|---|---|---|
| ~500 | Moderate | Poor | Initial screening |
| ~1000 | Good (~1-5 cm⁻¹) | Good | Standard calculations |
| >1500 | Excellent (< 1 cm⁻¹) | Excellent | High-precision studies, complex materials |

Workflow for Systematic Convergence
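In code, a systematic convergence study reduces to a simple loop:

1. Densify the grid.
2. Recompute the quantity of interest.
3. Stop once successive changes fall below a tolerance.

In the sketch below, the `compute` callback is a hypothetical stand-in for a full DFPT or finite-displacement run. Here it is a cheap toy quantity: the mean frequency of a unit-mass, unit-spring chain on an n-point Γ-centred grid, whose converged value is 4/π. The grid sequence and tolerance are assumptions.

```python
import math

def converge(compute, grids, tol):
    """Return (grid, value) for the first grid whose result differs from the
    previous grid's result by less than tol; raise if none does."""
    previous = None
    for n in grids:
        value = compute(n)
        if previous is not None and abs(value - previous) < tol:
            return n, value
        previous = value
    raise RuntimeError("not converged on the supplied grids")

def mean_chain_frequency(n):
    """Toy stand-in for an expensive run: average of omega(q) = 2|sin(q/2)|
    over an n-point Gamma-centred grid q_k = 2*pi*k/n."""
    return sum(2.0 * abs(math.sin(math.pi * k / n)) for k in range(n)) / n

n_conv, value = converge(mean_chain_frequency, [4, 8, 16, 32, 64], tol=5e-3)
print(n_conv, value, 4.0 / math.pi)
```

A change smaller than the tolerance between two grids does not by itself guarantee convergence to the true limit. Checking one further grid, or several quantities at once (frequencies, free energy, LO-TO splitting), makes the test more robust.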

DFPT Implementation and Method Selection

DFPT is implemented in many major ab initio software packages, but the available features and methodological constraints vary.

Table 3: DFPT Implementation and Method Selection in CASTEP [25]

| Feature / Hamiltonian | DFPT (Phonon) | DFPT (E-field) | Finite Displacement (FD) |
|---|---|---|---|
| Ultrasoft Pseudopotentials (USP) | ✘ Not Available | ✘ Not Available | ✓ Available |
| Norm-Conserving Pseudopotentials (NCP) | ✓ Available | ✓ Available | ✓ Available |
| LDA, GGA Functionals | ✓ Available | ✓ Available | ✓ Available |
| DFT+U | ✘ Not Available | ✘ Not Available | ✓ Available |
| Hybrid Functionals (e.g., PBE0) | ✘ Not Available | ✘ Not Available | ✓ Available |

As shown in Table 3, a critical constraint in some codes, like CASTEP, is that DFPT is not implemented for use with ultrasoft pseudopotentials [27] [25]. In such cases, one must either use norm-conserving pseudopotentials, which require a higher plane-wave energy cutoff, or resort to the finite-displacement method [27].

Table 4: Recommended DFPT Calculation Methods for Target Properties [25]

| Target Property | Preferred Method | Key Settings |
|---|---|---|
| IR/Raman Spectrum | DFPT at q=0 with NCPs | Calculate Born effective charges and dielectric tensor |
| Phonon Dispersion or DOS | DFPT + Interpolation with NCPs | Use coarse q-grid; interpolate to fine path |
| Born Effective Charges (Z*) | DFPT E-field with NCPs | Direct calculation from mixed derivatives |
| Thermodynamic Properties | Same as Phonon DOS | Use interpolated DOS on dense q-grid |

Validation and Analysis of Results

Numerical Precision and Validation Indicators

High-throughput studies rely on automated indicators to flag potentially problematic calculations. Key indicators include [24]:

- Acoustic Sum Rule (ASR) breaking: The frequencies of the three acoustic modes at the Γ-point should be zero. A significant deviation (> 30 cm⁻¹) suggests a lack of convergence, often with respect to the plane-wave cutoff.

- Charge Neutrality Sum Rule (CNSR) breaking: For each Cartesian component, the sum of the Born effective charges over all atoms in the unit cell should be zero. A significant deviation (e.g., > 0.2 e) indicates potential issues.

- Imaginary Frequencies: Small negative frequencies near the Γ-point can be a numerical artifact of poor k- or q-point sampling, while large imaginary frequencies away from Γ may indicate a real structural instability.
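The charge-neutrality check can be automated in a few lines. The sketch below sums the Z* tensors over all atoms and reports the largest deviation from zero. It then applies one simple correction: subtracting the average excess from every atom.

The helper names and the example charges are hypothetical, with Z* tensors given as 3×3 nested lists in units of e. Several correction schemes are in use, and this uniform one is an assumption, not necessarily the scheme applied in [24].

```python
def neutrality_violation(zstars):
    """Sum of Born effective-charge tensors over all atoms (ideally zero)."""
    total = [[0.0] * 3 for _ in range(3)]
    for z in zstars:
        for a in range(3):
            for b in range(3):
                total[a][b] += z[a][b]
    return total

def max_violation(zstars):
    """Largest absolute component of the summed Z* tensor."""
    return max(abs(x) for row in neutrality_violation(zstars) for x in row)

def enforce_neutrality(zstars):
    """Subtract the mean excess tensor from every atom (uniform correction)."""
    total = neutrality_violation(zstars)
    n = len(zstars)
    return [[[z[a][b] - total[a][b] / n for b in range(3)] for a in range(3)]
            for z in zstars]

# Hypothetical, slightly non-neutral charges for a binary compound (units of e)
zstars = [
    [[2.10, 0.0, 0.0], [0.0, 2.10, 0.0], [0.0, 0.0, 2.10]],
    [[-2.02, 0.0, 0.0], [0.0, -2.02, 0.0], [0.0, 0.0, -2.02]],
]
print(max_violation(zstars))                      # deviation before correction
print(max_violation(enforce_neutrality(zstars)))  # deviation after correction
```

A deviation below the flagging threshold can be corrected silently. A large one usually signals an under-converged calculation, which a correction like this would only hide.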

Thermodynamic Properties from Phonons

Once a converged phonon density of states g(ω) is obtained, key thermodynamic properties can be calculated within the harmonic approximation [24]. These include the Helmholtz free energy (ΔF), the phonon contribution to the internal energy (ΔEph), the constant-volume specific heat (Cv), and the entropy (S). The expressions for these properties are standard integrals over the phonon DOS [24].
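For reference, define x = hν/(k_B T) for a mode of frequency ν at temperature T. The standard harmonic expressions per mode are:

- F = hν/2 + k_B T ln(1 − e^{−x})
- S = k_B [x/(e^{x} − 1) − ln(1 − e^{−x})]
- C_v = k_B x² e^{x}/(e^{x} − 1)²

The sketch below sums these over a discretised DOS given as (frequency in THz, number of modes) pairs. It is written in terms of e^{−x} so that high-frequency modes at low temperature do not overflow. The input layout, function name and DOS values are hypothetical.

```python
import math

H_EV_THZ = 4.135667696e-3   # Planck constant, eV per THz
KB_EV_K = 8.617333262e-5    # Boltzmann constant, eV/K

def harmonic_thermo(dos, temperature):
    """Free energy F (eV), entropy S (eV/K) and heat capacity Cv (eV/K)
    summed over a discretised DOS [(nu_THz, n_modes), ...]; T > 0 K."""
    kT = KB_EV_K * temperature
    F = S = Cv = 0.0
    for nu, weight in dos:
        if nu <= 0.0:
            continue  # skip zero-frequency and imaginary (negative) entries
        energy = H_EV_THZ * nu
        x = energy / kT
        em = math.exp(-x)  # e^{-x}; underflows harmlessly to 0 for large x
        F += weight * (0.5 * energy + kT * math.log1p(-em))
        S += weight * KB_EV_K * (x * em / (1.0 - em) - math.log1p(-em))
        Cv += weight * KB_EV_K * x * x * em / (1.0 - em) ** 2
    return F, S, Cv

# Hypothetical two-peak DOS: 3 modes at 5 THz and 3 modes at 10 THz
dos = [(5.0, 3.0), (10.0, 3.0)]
F, S, Cv = harmonic_thermo(dos, 300.0)
print(F, S, Cv / KB_EV_K)
```

Two limits make quick sanity checks. At high temperature C_v approaches k_B per mode (the Dulong-Petit limit). As T → 0, F reduces to the zero-point energy Σ hν/2.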

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 5: Key Computational Tools for DFPT Phonon Calculations

| Tool / Resource | Function / Purpose | Example Use Case |
|---|---|---|
| ABINIT | DFT/DFPT software package | High-throughput phonon database generation [24] [26] |
| CASTEP | DFT/DFPT software package | Phonon dispersion, DOS, and thermodynamic properties [27] [22] |
| Quantum ESPRESSO | DFT/DFPT software package | Phonon dispersion calculations with plane-wave basis [28] |
| Phonopy | Post-processing tool | Force constants analysis and phonon band structure plotting [23] |
| VASP | DFT/DFPT software package | Phonon calculations using the finite-displacement or DFPT approach [23] |
| PseudoDojo | Pseudopotential library | Provides consistent, high-quality norm-conserving pseudopotentials [24] |
| ASE (Atomic Simulation Environment) | Python library | Setting up and running phonon calculations via finite displacement [29] |
| Materials Project Database | Open data repository | Access to pre-computed phonon band structures and derived properties [24] |

Density Functional Perturbation Theory provides a robust and efficient framework for calculating lattice dynamical properties from first principles. The accuracy of these calculations is paramount and is primarily governed by the convergence of two key parameters: the electronic k-point grid and the phononic q-point grid. A systematic approach, starting with a Γ-centered k-point grid of at least 1000 points per reciprocal atom and a commensurate q-point grid for interpolation, forms the foundation of a reliable DFPT phonon study. Adherence to these protocols, combined with an understanding of the constraints inherent in different computational codes, enables researchers to produce predictive and validated results that can powerfully complement experimental data in materials science and drug development research.

The finite displacement method (FDM), also known as the frozen phonon approach, is a fundamental technique for calculating phonon properties in materials science. This method obtains the second derivatives of the system energy with respect to atomic displacements, known as interatomic force constants (IFCs), by numerically evaluating the forces resulting from finite atomic displacements [30]. The accuracy and reliability of FDM calculations critically depend on two key parameters: supercell size selection and displacement step magnitude. Proper selection of these parameters ensures accurate phonon spectra while maintaining computational efficiency, which is particularly important for high-throughput materials screening and complex systems in drug development research where predictive material properties guide formulation decisions.

Theoretical Background

Fundamentals of Phonon Calculations

In the harmonic approximation, the potential energy of a crystal lattice can be expanded as a Taylor series around the equilibrium positions:

\[ U = U_0 + \frac{1}{2} \sum_{ls\alpha,\, l't\beta} \frac{\partial^2 U}{\partial u_{ls\alpha} \, \partial u_{l't\beta}} \, u_{ls\alpha} u_{l't\beta} + \cdots \]

where the second derivatives represent the interatomic force constants (IFCs) [30]. Phonons represent the fundamental modes of collective atomic vibrations in periodic crystals and serve as quantum representations of lattice thermal vibrations. These quasiparticles play crucial roles in various material properties including thermodynamic stability, phase transition tendencies, heat capacity, Helmholtz free energy, and lattice thermal conductivity [30].

Finite Displacement Method Framework

The finite displacement method operates by:

- Creating atomic displacements: Systematically displacing atoms from their equilibrium positions in a supercell geometry

- Force calculations: Computing the Hellmann-Feynman forces on all atoms using density functional theory (DFT) or other electronic structure methods

- Force constant extraction: Utilizing finite difference techniques to obtain the IFCs in real space from the force-displacement relationships

The mathematical relationship between forces and displacements is given by:

\[ F_i = -\sum_j \Phi_{ij} u_j \]

where \(F_i\) represents the force on atom \(i\), \(u_j\) is the displacement of atom \(j\), and \(\Phi_{ij}\) are the interatomic force constants [30]. The indices run over both atoms and Cartesian directions.
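In the harmonic limit this relation can be inverted one column at a time: displacing a single atom j by u and recording all forces gives Φ_ij ≈ −F_i/u.

The toy below applies this to a periodic one-dimensional chain with nearest-neighbour harmonic springs. The spring constant, spacing and chain length are hypothetical. For atom 0 the exact column is (2k, −k, 0, …, 0, −k). The `chain_forces` routine stands in for a DFT force calculation on the displaced supercell, and both function names are hypothetical.

```python
def chain_forces(positions, spacing, k):
    """Forces (eV/Å) on a periodic 1D chain with nearest-neighbour springs."""
    n = len(positions)
    length = n * spacing
    forces = [0.0] * n
    for i in range(n):
        j = (i + 1) % n
        xj = positions[j] + (length if j == 0 else 0.0)  # periodic image
        stretch = xj - positions[i] - spacing
        forces[i] += k * stretch   # a stretched bond pulls atom i towards j
        forces[j] -= k * stretch   # and atom j towards i
    return forces

def force_constant_column(n_atoms, spacing, k, atom, u=0.01):
    """Phi_{i,atom} = -F_i / u from a single finite displacement of `atom`."""
    positions = [i * spacing for i in range(n_atoms)]
    positions[atom] += u
    return [-f / u for f in chain_forces(positions, spacing, k)]

column = force_constant_column(n_atoms=6, spacing=2.5, k=10.0, atom=0)
print(column)
```

In a real crystal, symmetry operations map this one column onto the columns of equivalent atoms. This is why codes such as Phonopy need only a few symmetry-inequivalent displacements.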

Supercell Size Selection

Fundamental Principles

Supercell size selection represents a critical compromise between computational cost and physical accuracy. The supercell must be sufficiently large to:

- Decouple periodic images: Ensure interactions between displaced atoms and their periodic images are negligible

- Capture long-range interactions: Account for the full decay length of atomic interactions, particularly important in metallic systems and polar materials with long-range electrostatic effects

- Be commensurate with the target q-points: for phonons at wavevectors \(\mathbf{q} = (\frac{n_1}{m_1}, \frac{n_2}{m_2}, \frac{n_3}{m_3})\) in reduced coordinates, traditional diagonal supercells require \(m_1 \times m_2 \times m_3\) primitive cells [30]

Diagonal vs. Nondiagonal Supercells

Table 1: Comparison of Supercell Approaches for Finite Displacement Method

| Aspect | Diagonal Supercell | Nondiagonal Supercell |
|---|---|---|
| Construction | Simple extension of the primitive cell along the lattice vectors | Transformation of the primitive cell by a nondiagonal supercell matrix |
| Size Requirement | \(m_1 \times m_2 \times m_3\) primitive cells for \(\mathbf{q} = (\frac{n_1}{m_1}, \frac{n_2}{m_2}, \frac{n_3}{m_3})\) | Least common multiple of \(m_1, m_2, m_3\) [30] |
| Computational Efficiency | Lower for large q-point sets | ~10x faster than the diagonal approach [30] |
| Implementation | Widely available in codes such as Phonopy, PHON, PHONON | Currently in specialized codes such as ARES-Phonon [30] |
| System Complexity Scaling | Becomes prohibitive for complex systems | More efficient with increasing system complexity [30] |
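The size rules in Table 1 can be evaluated directly from the reduced coordinates of a q-point. The sketch uses Python's `fractions` module, and its function names are hypothetical. It counts only the number of primitive cells. Constructing the actual nondiagonal supercell matrix, as in [30], is not shown.

```python
from fractions import Fraction
from math import lcm, prod

def denominators(q):
    """m_i for q = (n1/m1, n2/m2, n3/m3) given in reduced coordinates."""
    return [Fraction(c).limit_denominator(1000).denominator for c in q]

def diagonal_supercell_size(q):
    """Primitive cells in the smallest diagonal supercell: m1 * m2 * m3."""
    return prod(denominators(q))

def nondiagonal_supercell_size(q):
    """Primitive cells in the smallest nondiagonal supercell: lcm(m1, m2, m3)."""
    return lcm(*denominators(q))

q = (Fraction(1, 4), Fraction(1, 4), Fraction(1, 2))
print(diagonal_supercell_size(q), nondiagonal_supercell_size(q))  # prints: 32 4
```

The gap widens quickly for low-symmetry q-points on dense grids. This is the origin of the efficiency advantage listed in the table.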

Practical Selection Guidelines

- Convergence Testing: Always perform phonon frequency convergence tests with increasing supercell size [31]

- Minimal Size Criterion: Supercell should be large enough that force constants decay to negligible values at half the supercell size [31]

- Symmetry Considerations: Utilize crystal symmetry to reduce computational burden; symmetry-adapted displacements can significantly reduce the number of required calculations [23]

- Material-Specific Considerations:

- Polar materials: Require special treatment for long-range dipole interactions which may necessitate correction schemes or larger supercells [31]

- Metallic systems: May require larger supercells due to slow decay of interatomic forces

- Molecular crystals: Consider the molecular dimensions when selecting supercell size
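Once force constants are available, the minimal-size criterion above can be checked mechanically. This sketch makes several assumptions:

- The force constants have already been reduced to (pair distance in Å, Frobenius norm of the 3×3 block) pairs.
- "Half the supercell size" is taken as half the shortest supercell lattice-vector length. That is exact only for orthogonal cells.
- The function name, the 1% relative threshold and the example numbers are all hypothetical.

```python
def decays_within_half_cell(ifc_by_distance, supercell_lengths, rel_tol=0.01):
    """True if every off-site IFC block at or beyond half the shortest
    supercell length is below rel_tol times the largest off-site block."""
    cutoff = 0.5 * min(supercell_lengths)
    off_site = [(r, v) for r, v in ifc_by_distance if r > 0.0]
    largest = max(v for _, v in off_site)
    tail = [v for r, v in off_site if r >= cutoff]
    return all(v < rel_tol * largest for v in tail)

# Hypothetical (distance in Å, |Phi| in eV/Å^2) pairs from a converged fit
ifcs = [(0.0, 25.0), (2.35, 8.0), (3.84, 0.9), (4.50, 0.3),
        (5.43, 0.05), (6.65, 0.02)]
print(decays_within_half_cell(ifcs, [10.86] * 3))  # larger cell
print(decays_within_half_cell(ifcs, [7.68] * 3))   # smaller cell
```

Because this check reads off force constants from a single supercell, it is most informative when repeated for the next supercell size in the convergence series.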

The following workflow illustrates the systematic approach to supercell selection and convergence testing:

Displacement Step Guidelines

Optimal Displacement Magnitude

The displacement step size represents a critical parameter that balances numerical accuracy against anharmonic effects:

- Numerical Accuracy: Displacements that are too small amplify numerical noise in the finite-difference forces

- Anharmonic Effects: Displacements that are too large introduce significant higher-order terms beyond the harmonic approximation

- Typical Range: Displacement magnitudes typically range from 0.01 Å to 0.03 Å in practice [31]

Implementation Considerations

Table 2: Displacement Generation Methods and Characteristics

| Method | Mechanism | Advantages | Limitations |
|---|---|---|---|
| VASP Internal Driver (IBRION=6) | Automatic displacement generation with ELPHPOTGENERATE=True [31] | Integrated workflow, minimal user intervention | May generate more displacements than strictly necessary [31] |
| External Tools (phelel, phonopy) | Symmetry-adapted displacement generation with commands like phelel -d --dim 2 2 2 [31] | Optimal displacement sets, computational efficiency | Requires additional software and workflow integration |
| Positive-Negative Displacement Pairs | Using --pm option in phelel to generate ± displacements [31] | Improved numerical accuracy through cancellation of odd-order terms | Doubles the number of calculations |
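The cancellation claimed for positive-negative pairs can be checked on a toy potential. The sketch below uses a hypothetical 1D energy with made-up cubic and quartic coefficients (not fitted to any material). It compares the force constant recovered from a single +u displacement with the one recovered from a ± pair.

```python
# Hypothetical 1D toy potential E(u) = k u^2/2 + c u^3/6 + d u^4/24 (eV, Å)
K_TRUE = 10.0   # harmonic force constant, eV/Å^2
C3 = -30.0      # cubic anharmonic coefficient, eV/Å^3
D4 = 200.0      # quartic anharmonic coefficient, eV/Å^4

def force(u: float) -> float:
    """Exact restoring force F = -dE/du of the toy potential."""
    return -K_TRUE * u - 0.5 * C3 * u**2 - D4 * u**3 / 6.0

def k_one_sided(u: float) -> float:
    """Single +u displacement: error is exactly c*u/2 + d*u^2/6 for this potential."""
    return -force(u) / u

def k_plus_minus(u: float) -> float:
    """± pair: the cubic (c) contribution cancels, leaving d*u^2/6."""
    return -(force(u) - force(-u)) / (2.0 * u)

for u in (0.005, 0.01, 0.02, 0.03):
    print(f"u = {u:.3f} Å: one-sided error {k_one_sided(u) - K_TRUE:+.5f}, "
          f"± error {k_plus_minus(u) - K_TRUE:+.5f} eV/Å^2")
```

The ± error grows only quadratically with the step, which is why the extra calculations often pay off for strongly anharmonic materials.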

Step Size Optimization Protocol

- Initial Testing: Perform calculations with displacement steps of 0.005, 0.01, 0.015, 0.02, and 0.03 Å

- Stability Assessment: Monitor the stability of force constants with respect to displacement magnitude

- Anharmonicity Check: Evaluate the significance of higher-order terms by comparing positive and negative displacements

- Numerical Noise Assessment: Examine the smoothness of the force-displacement relationship

- Final Selection: Choose the smallest displacement that provides numerically stable results without significant anharmonic contributions
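The protocol above can be automated once forces for each candidate step are available. The sketch below is one possible implementation under stated assumptions. It uses a hypothetical 1D toy potential and models DFT noise as a worst-case force error of 0.002 eV/Å that pushes the + and − forces in opposite directions. A step counts as "stable" when its ± force constant changes by less than 1% at the next larger step, and the +/− asymmetry serves as the anharmonicity indicator with a 5% threshold. All thresholds are illustrative choices.

```python
from typing import Dict, Optional, Sequence, Tuple

STEPS = (0.005, 0.01, 0.015, 0.02, 0.03)  # candidate displacement steps, Å
K_TRUE, C3, D4 = 10.0, -30.0, 200.0       # hypothetical toy potential coefficients
FORCE_ERROR = 0.002                       # hypothetical worst-case force error, eV/Å

def measured_force(u: float) -> float:
    """Toy 'DFT' force: exact anharmonic force plus a worst-case error of FORCE_ERROR."""
    exact = -K_TRUE * u - 0.5 * C3 * u**2 - D4 * u**3 / 6.0
    return exact + (FORCE_ERROR if u > 0 else -FORCE_ERROR)

def estimates(u: float) -> Tuple[float, float, float]:
    """One-sided (+u), one-sided (-u) and ± force-constant estimates for step u."""
    f_plus, f_minus = measured_force(u), measured_force(-u)
    k_plus = -f_plus / u
    k_minus = f_minus / u
    k_pm = -(f_plus - f_minus) / (2.0 * u)
    return k_plus, k_minus, k_pm

def select_step(steps: Sequence[float] = STEPS, stability_tol: float = 0.01,
                anharmonic_tol: float = 0.05) -> Optional[float]:
    """Smallest step whose ± estimate is stable against the next step and whose
    +/- asymmetry (an anharmonicity indicator) is small; None if no step qualifies."""
    results: Dict[float, Tuple[float, float, float]] = {u: estimates(u) for u in steps}
    for u, u_next in zip(steps, steps[1:]):
        k_plus, k_minus, k_pm = results[u]
        stability = abs(results[u_next][2] - k_pm) / abs(k_pm)
        asymmetry = abs(k_plus - k_minus) / abs(k_pm)
        if stability < stability_tol and asymmetry < anharmonic_tol:
            return u
    return None

print(select_step())
```

With these assumed numbers the 0.005 Å step is rejected because noise makes it unstable, and 0.01 Å is chosen. The largest step has no successor to compare against and is never selected.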

Computational Workflows

Integrated Finite Displacement Methodology

The following diagram illustrates the complete workflow for finite displacement phonon calculations, incorporating both supercell generation and displacement protocols:

VASP-Specific Implementation

For VASP calculations, the following protocol ensures consistent results:

Geometry Optimization

- Use `IBRION=2` with an appropriate `ISIF` setting (2 for atomic positions only, 3 for full cell relaxation) [23]

- Employ tight convergence thresholds (`EDIFF=1E-8`; `EDIFFG=-0.001`, where the negative sign makes VASP stop once all forces fall below 0.001 eV/Å)

- Maintain symmetry with `ISYM=2` unless studying symmetry-broken systems
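The relaxation settings listed above can be collected into an INCAR file programmatically. The sketch below is a hypothetical helper (`RELAXATION_TAGS`, `incar_text` and `write_incar` are illustrative names, not part of any library). It uses the tags from this section plus `PREC=Accurate` from the troubleshooting advice in this article. `NSW=100` is an assumed value, added because VASP performs no ionic steps with its default `NSW=0`.

```python
from pathlib import Path

# Tags from the geometry-optimization guidance; NSW is an assumed value.
RELAXATION_TAGS = {
    "PREC": "Accurate",  # accurate precision for reliable forces
    "IBRION": 2,         # conjugate-gradient ionic relaxation
    "ISIF": 3,           # 3 = relax ions, cell shape and volume; use 2 for ions only
    "NSW": 100,          # assumed maximum number of ionic steps (VASP default is 0)
    "EDIFF": "1E-8",     # electronic convergence threshold, eV
    "EDIFFG": -0.001,    # negative value: stop when all forces < 0.001 eV/Å
    "ISYM": 2,           # keep crystal symmetry switched on
}

def incar_text(tags: dict) -> str:
    """Render tags as 'KEY = value' lines in the order given."""
    return "".join(f"{key} = {value}\n" for key, value in tags.items())

def write_incar(tags: dict, directory: str = ".") -> Path:
    """Write the rendered tags to <directory>/INCAR and return the path."""
    path = Path(directory) / "INCAR"
    path.write_text(incar_text(tags))
    return path
```

Usage: `write_incar(RELAXATION_TAGS, "relax")` writes `relax/INCAR`. Set `ISIF` to 2 when the cell must stay fixed.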

Finite Displacement Calculations

Post-Processing

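- Extract force constants with phonopy: `phonopy --fc vasprun.xml` [23]. This reads force constants already computed by VASP (e.g., DFPT runs with IBRION=7/8); forces from phonopy-generated displaced supercells are instead collected with `phonopy -f` into a FORCE_SETS file

- Generate phonon dispersion and density of states

- Calculate thermodynamic properties (Helmholtz free energy, heat capacity)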

The Scientist's Toolkit

Essential Software Solutions

Table 3: Computational Tools for Finite Displacement Method Calculations

| Software Tool | Primary Function | Key Features | Implementation Note |
|---|---|---|---|
| ARES-Phonon | Phonon calculations | Implements both diagonal and nondiagonal supercell methods [30] | ~10x faster for nondiagonal approach; interfaces with multiple DFT codes |
| Phonopy | Phonon analysis | Force constant extraction from finite displacements [23] | Works with VASP, QE; phonopy --fc vasprun.xml for DFPT data |
| phelel | Workflow management | Electron-phonon coupling calculations; displacement generation [31] | Use phelel -d --dim 2 2 2 -c POSCAR-unitcell --pm for ± displacements |
| VASP | DFT calculations | Electronic structure; force calculations with IBRION=6/7/8 [23] [31] | ELPHPOTGENERATE=True for electron-phonon potential |
| CASTEP | DFT calculations | Finite displacement and DFPT methods [25] | Use finite displacement for ultrasoft pseudopotentials, DFT+U |

Machine Learning Enhancement

Recent advances integrate machine learning potentials to accelerate finite displacement calculations:

- Synchronous Learning: Combining with machine learning potential software (e.g., ACNN) can reduce computation time by approximately 90% while maintaining accuracy comparable to first-principles calculations [30]

- Training Data Generation: The multiple displacement patterns naturally generate abundant datasets for machine learning potential training [30]

- Workflow Integration: ML potentials can replace DFT in force calculations after proper training and validation
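Before ML forces replace DFT in the workflow, the validation step can be made explicit. The sketch below is a minimal, hypothetical gate: `ml_forces` and `dft_forces` are any callables returning per-atom force vectors for a structure. The 0.03 eV/Å force-MAE threshold is an assumed value chosen for illustration, not a figure from [30].

```python
from typing import Callable, Sequence, Tuple

Forces = Sequence[Sequence[float]]  # one (Fx, Fy, Fz) triple per atom, eV/Å
ForceFn = Callable[[object], Forces]

def force_mae(predicted: Forces, reference: Forces) -> float:
    """Mean absolute error over all Cartesian force components (eV/Å)."""
    errors = [abs(p - r)
              for f_pred, f_ref in zip(predicted, reference)
              for p, r in zip(f_pred, f_ref)]
    return sum(errors) / len(errors)

def choose_force_backend(ml_forces: ForceFn, dft_forces: ForceFn,
                         validation_structures: Sequence[object],
                         max_mae: float = 0.03) -> Tuple[ForceFn, float]:
    """Use the ML potential only if its worst held-out force MAE is within max_mae."""
    worst = max(force_mae(ml_forces(s), dft_forces(s)) for s in validation_structures)
    return (ml_forces if worst <= max_mae else dft_forces), worst
```

Using the worst structure rather than the average keeps a single badly described chemistry from slipping through.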

Validation and Troubleshooting

Quality Assessment Metrics

- Acoustic Sum Rule: The correction needed to enforce the acoustic sum rule should be small (typically < 10 cm⁻¹ in the acoustic frequencies at Γ) [25]

- Imaginary Frequencies: Small imaginary frequencies may indicate numerical issues rather than true instabilities

- Convergence Testing: Systematic convergence with k-point sampling, supercell size, and displacement step

- Method Comparison: Where possible, compare with DFPT results at high-symmetry points
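A simple way to check and enforce the acoustic sum rule is sketched below. It assumes force constants stored as an array Phi[i, j, a, b] (atom i, atom j, Cartesian components a, b) for the supercell. The violation is measured as the largest row sum over j, which is zero when the rule holds, and it is repaired by resetting each self-interaction block. Production codes use more careful symmetric corrections, so treat this as illustrative only. Frequencies are in arbitrary units, and imaginary modes are returned as negative numbers.

```python
import numpy as np

def asr_violation(fc: np.ndarray) -> float:
    """Largest |sum_j Phi[i, j, a, b]|; zero when the acoustic sum rule holds."""
    return float(np.abs(fc.sum(axis=1)).max())

def enforce_asr(fc: np.ndarray) -> np.ndarray:
    """Simple fix: set each self block so every row of 3x3 blocks sums to zero."""
    fixed = fc.copy()
    for i in range(fc.shape[0]):
        off_diagonal = fixed[i].sum(axis=0) - fixed[i, i]
        fixed[i, i] = -off_diagonal
    return fixed

def gamma_frequencies(fc: np.ndarray, masses: np.ndarray) -> np.ndarray:
    """Mass-weighted Gamma-point frequencies; imaginary modes returned as negative values."""
    n_atoms = fc.shape[0]
    inv_sqrt_m = 1.0 / np.sqrt(np.repeat(masses, 3))
    dyn = fc.transpose(0, 2, 1, 3).reshape(3 * n_atoms, 3 * n_atoms)
    dyn = dyn * np.outer(inv_sqrt_m, inv_sqrt_m)
    eigvals = np.linalg.eigvalsh(0.5 * (dyn + dyn.T))  # symmetrize before diagonalizing
    return np.sign(eigvals) * np.sqrt(np.abs(eigvals))
```

After the correction, the three acoustic frequencies at Γ should vanish to numerical precision. The size of the change in those frequencies is the quantity the quality metric above refers to.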

Common Issues and Solutions

- Slow Convergence with Supercell Size: Consider the nondiagonal supercell approach or correction schemes for long-range interactions

- Imaginary Frequencies at Zone Center: Check geometry optimization convergence and symmetry preservation

- Numerical Instability in Forces: Tighten the electronic convergence criterion (`EDIFF=1E-8`) and use accurate precision settings (`PREC=Accurate`)

- Computational Burden: Implement symmetry-adapted displacements and consider machine learning acceleration

The finite displacement method remains a powerful approach for phonon calculations, particularly for systems where density functional perturbation theory faces limitations. Careful attention to supercell size selection and displacement step parameters ensures accurate and efficient calculations. The emergence of nondiagonal supercell methods and machine learning acceleration significantly enhances the applicability of FDM to complex materials systems, opening new possibilities for predictive materials design in pharmaceutical development and beyond. As computational resources advance and methodologies refine, the finite displacement method continues to evolve as an essential tool in the computational materials scientist's toolkit.

Leveraging Machine Learning Potentials (e.g., MACE) for High-Throughput Phonon Screening

High-throughput screening of material properties, such as phonon-mediated behaviors, is crucial for accelerating the discovery of materials for energy, thermal management, and electronic applications. Traditional methods for phonon calculations, primarily based on Density Functional Theory (DFT), are computationally prohibitive for large-scale screening, especially for complex systems with large unit cells like Metal-Organic Frameworks (MOFs) [32]. The finite-displacement method, which requires energy and force calculations on multiple supercells, exemplifies this computational bottleneck [33]. Machine Learning Interatomic Potentials (MLIPs) have emerged as a powerful tool to overcome this barrier, offering near-DFT accuracy at a fraction of the computational cost. Among these, the Multi-Atomic Cluster Expansion (MACE) architecture represents a state-of-the-art approach that enables rapid and accurate high-throughput phonon calculations across a diverse chemical space [33] [34]. These protocols detail the application of MLIPs, specifically MACE models, for high-throughput phonon screening, providing a framework integral to thesis research on optimizing phonon dispersion calculations.

Key Machine Learning Potential Models and Performance

The MACE architecture utilizes an equivariant message-passing graph tensor network, encoding many-body information of atomic features in each layer to achieve high-fidelity predictions of potential energy surfaces [32]. Several MACE models, fine-tuned for specific applications, have demonstrated exceptional performance in phonon calculations. The following table summarizes key models relevant for high-throughput screening.

Table 1: Key MACE Models for High-Throughput Phonon Calculations

| Model Name | Training Data | Target Materials | Key Phonon-Related Performance |
|---|---|---|---|
| MACE-MP-0 (Foundation Model) | MPtrj dataset (150k inorganic crystals) [32] | Broad inorganic materials | RMSE of 33 meV/atom in energies vs. DFT on QMOF database [32] |
| MACE-MP-MOF0 | Fine-tuned on 127 representative MOFs [32] | Metal-Organic Frameworks (MOFs) | Corrects imaginary phonon modes of MACE-MP-0; accurately predicts phonon density of states, thermal expansion, and bulk moduli [32] |
| Universal MACE (Study) | 2,738 crystal structures (77 elements), 15,670 supercells [33] [34] | Diverse unary and binary materials | MAE of 0.18 THz for vibrational frequencies; 86.2% accuracy for dynamical stability classification; MAE of 2.19 meV/atom for Helmholtz free energy at 300K [33] |

Quantitative Performance Comparison

The accuracy of MLIPs is critical for reliable phonon screening. The universal MACE model demonstrated superior performance in predicting key phonon properties when validated against a held-out set of 384 materials [33]. The table below quantifies its performance against DFT reference data.

Table 2: Quantitative Performance Metrics of a Universal MACE Model for Phonon Properties

| Property | Metric | Performance vs. DFT |
|---|---|---|
| Vibrational Frequencies | Mean Absolute Error (MAE) | 0.18 THz [33] |
| Dynamical Stability | Classification Accuracy | 86.2% [33] |
| Helmholtz Vibrational Free Energy (300K) | Mean Absolute Error (MAE) | 2.19 meV/atom [33] |
| Helmholtz Vibrational Free Energy (1000K) | Mean Absolute Error (MAE) | 9.30 meV/atom [33] |
| Polymorphic Transitions (300K) | Agreement with DFT | 16 out of 19 identified transitions [33] |
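The metrics in Table 2 can be made concrete with a short sketch. It shows one plausible way to compute them, under several assumptions: frequencies are compared mode by mode and pooled (the exact averaging in [33] may differ), imaginary modes are stored as negative numbers, and a structure counts as dynamically stable when no frequency lies below a hypothetical tolerance of -0.05 THz.

```python
from typing import Sequence

def frequency_mae(ml_thz: Sequence[float], dft_thz: Sequence[float]) -> float:
    """Mean absolute error over paired phonon frequencies (THz), pooled over all modes."""
    return sum(abs(a - b) for a, b in zip(ml_thz, dft_thz)) / len(dft_thz)

def is_dynamically_stable(freqs_thz: Sequence[float], tol: float = 0.05) -> bool:
    """Stable if no mode is imaginary beyond tol (imaginary modes stored as negative THz)."""
    return min(freqs_thz) > -tol

def stability_accuracy(ml_sets: Sequence[Sequence[float]],
                       dft_sets: Sequence[Sequence[float]], tol: float = 0.05) -> float:
    """Fraction of materials whose stable/unstable label from the MLIP matches DFT."""
    matches = sum(is_dynamically_stable(m, tol) == is_dynamically_stable(d, tol)
                  for m, d in zip(ml_sets, dft_sets))
    return matches / len(dft_sets)
```

The free-energy MAE rows follow the same pattern as `frequency_mae`, applied to per-atom free energies at a fixed temperature.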

Application Note: High-Throughput Phonon Screening Protocol

This protocol describes a complete workflow for high-throughput phonon screening of crystalline materials using a pre-trained universal MACE potential. The process efficiently predicts harmonic phonon spectra, dynamical stability, and vibrational free energies.

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for MLIP Phonon Screening

| Item/Tool | Function/Description | Application Note |
|---|---|---|
| Pre-trained MACE Model | A machine learning interatomic potential that maps atomic configurations to energies and forces. | The model (e.g., universal MACE) serves as the surrogate for DFT, providing the force constants for lattice dynamics [33] [34]. |
| Reference Crystal Structures | CIF files or POSCARs of the materials to be screened. | Structures must be pre-processed and validated. The protocol is most reliable for unary and binary systems [33]. |
| Supercell Generator | Software script/tool to construct supercells from primitive cells. | Required for the finite-displacement method. Supercell size must be converged to ensure accurate long-range force constants [13]. |
| Atomic Environment Descriptors | Numerical representations of the chemical environment around each atom. | Intrinsic to the MACE model. Ensures rotational and translational invariance of the potential [33]. |
| Phonon Post-Processing Code | Software (e.g., Phonopy, ALM) to calculate phonon dispersion and DOS from force constants. | Takes the force constants matrix computed via MACE and solves the eigenvalue problem to obtain phonon frequencies and modes [33]. |

Step-by-Step Screening Protocol

Input Structure Preparation

- Obtain the primitive crystal structure of the target material.

- Generate a `POSCAR` file for the primitive cell. For 2D materials, ensure a sufficient vacuum layer is included to prevent spurious interactions between periodic images [13].

- For the finite-displacement method, use a script (e.g., with `pymatgen`) to create a `3x3x3` supercell (or a size-converged one) and save it as a new `POSCAR` file [13].
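What such a supercell script does can be sketched without dependencies for the diagonal 3x3x3 case. The helper names below (`make_supercell`, `poscar_text`) are hypothetical. With pymatgen, the same step is typically `Structure.make_supercell([3, 3, 3])` followed by writing the structure to a `POSCAR` file. The sketch assumes fractional coordinates in [0, 1) and writes a VASP 5 style `POSCAR` (element line, count line, `Direct` coordinates).

```python
from itertools import product
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]

def make_supercell(lattice: Sequence[Sequence[float]], frac_coords: Sequence[Vec3],
                   species: Sequence[str], dims: Tuple[int, int, int] = (3, 3, 3)):
    """Diagonal supercell: scale each lattice vector and replicate the fractional coordinates."""
    new_lattice = [[x * n for x in vec] for vec, n in zip(lattice, dims)]
    new_coords: List[Vec3] = []
    new_species: List[str] = []
    for shift in product(*(range(n) for n in dims)):  # every cell (i, j, k) in the supercell
        for xyz, element in zip(frac_coords, species):
            new_coords.append(tuple((f + s) / n for f, s, n in zip(xyz, shift, dims)))
            new_species.append(element)
    return new_lattice, new_coords, new_species

def poscar_text(lattice, frac_coords, species, comment: str = "3x3x3 supercell") -> str:
    """VASP 5 style POSCAR with atoms grouped by element in first-appearance order."""
    elements = list(dict.fromkeys(species))
    lines = [comment, "1.0"]
    lines += ["  " + " ".join(f"{x:.10f}" for x in vec) for vec in lattice]
    lines.append(" ".join(elements))
    lines.append(" ".join(str(list(species).count(el)) for el in elements))
    lines.append("Direct")
    for el in elements:
        lines += ["  " + " ".join(f"{c:.10f}" for c in xyz)
                  for xyz, sp in zip(frac_coords, species) if sp == el]
    return "\n".join(lines) + "\n"
```

Grouping atoms by element matters because the POSCAR count line assigns consecutive coordinate lines to each element in order.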

Model Selection and Force Inference

- Select a suitable pre-trained MACE model (see Table 1). For general inorganic materials, a universal model like the one described in [33] is appropriate. For MOFs, use a specialized model like MACE-MP-MOF0 [32].

- Apply the selected MACE model to the supercell structure. The model predicts the total energy and, crucially, the forces on every atom in the supercell (obtained as the negative gradient of the predicted energy), which take the place of the DFT Hellmann-Feynman forces.

- To build the force constant matrix, small displacements are applied to each atom in the supercell. Traditionally, this requires a DFT calculation for each displacement. With MACE, the forces for these displaced configurations are obtained via rapid inference, drastically reducing computational time [33].
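The displacement step can be written so that the force source is interchangeable, which is what allows MACE inference to stand in for DFT. In the sketch below, `force_fn` is any function mapping Cartesian positions to forces. With an ML potential it would wrap the model's predictions (for example through an ASE calculator, a wrapper not shown here). The toy `spring_forces` model is hypothetical and only serves to check the result. The sketch displaces every atom along x, y and z with ± steps and does not use symmetry, unlike tools such as Phonopy that generate only symmetry-inequivalent displacements.

```python
from typing import Callable

import numpy as np

ForceFn = Callable[[np.ndarray], np.ndarray]  # (n_atoms, 3) positions -> (n_atoms, 3) forces

def force_constants_fd(positions: np.ndarray, force_fn: ForceFn, step: float = 0.01) -> np.ndarray:
    """Central-difference force constants Phi[i, j, a, b] = -dF_jb / du_ia (no symmetry used)."""
    n_atoms = len(positions)
    fc = np.zeros((n_atoms, n_atoms, 3, 3))
    for i in range(n_atoms):
        for a in range(3):
            plus, minus = positions.copy(), positions.copy()
            plus[i, a] += step
            minus[i, a] -= step
            # Forces on all atoms j respond to the displacement of atom i along axis a.
            fc[i, :, a, :] = -(force_fn(plus) - force_fn(minus)) / (2.0 * step)
    return fc

def spring_forces(positions: np.ndarray, reference: np.ndarray, k: float = 1.0) -> np.ndarray:
    """Hypothetical harmonic toy model: every atom pair is joined by an isotropic spring k."""
    d = positions - reference
    return -k * (len(d) * d - d.sum(axis=0))
```

Each atom costs six force evaluations here, so for a 100-atom supercell the surrogate is called 600 times. This is the step where fast ML inference replaces the most expensive DFT work.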

Phonon Property Calculation

- Use the set of forces from all displaced configurations to construct the force constant matrix using the finite-displacement method as implemented in tools like Phonopy.

- The force constants are mass-weighted and Fourier-transformed into the dynamical matrix, which is diagonalized at each q-point to obtain the phonon frequencies and eigenvectors across the Brillouin zone.

- From the phonon frequencies, compute derived properties including:
  - Phonon Dispersion Curves
  - Phonon Density of States (DOS)
  - Identification of Imaginary Frequencies (to assess dynamical stability)
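The diagonalization step and the imaginary-frequency check can be sketched for a model whose dynamical matrix is small. The code below assumes a hypothetical 1D diatomic chain (two atoms per cell, nearest-neighbor spring k, lattice constant 1) with force-constant blocks Phi(L) keyed by the cell offset L. It builds D(q) = sum_L Phi(L) exp(iqL) / sqrt(m_i m_j), diagonalizes it, and reports imaginary modes as negative frequencies, the sign convention Phonopy also uses in its output.

```python
from typing import Dict, Iterable, List, Sequence, Tuple

import numpy as np

FcBlocks = Dict[int, Sequence[Sequence[float]]]  # cell offset L -> Phi(L)[i][j]

def dynamical_matrix(q: float, fc_blocks: FcBlocks, masses: np.ndarray) -> np.ndarray:
    """D(q) = sum_L Phi(L) exp(i q L) / sqrt(m_i m_j) for a 1D lattice with unit spacing."""
    inv_sqrt_m = 1.0 / np.sqrt(masses)
    dyn = np.zeros((len(masses), len(masses)), dtype=complex)
    for shift, block in fc_blocks.items():
        dyn += np.asarray(block, dtype=float) * np.exp(1j * q * shift)
    return dyn * np.outer(inv_sqrt_m, inv_sqrt_m)

def frequencies(q: float, fc_blocks: FcBlocks, masses: np.ndarray) -> np.ndarray:
    """Sorted frequencies at q; negative values denote imaginary modes."""
    eigvals = np.linalg.eigvalsh(dynamical_matrix(q, fc_blocks, masses))
    return np.sign(eigvals) * np.sqrt(np.abs(eigvals))

def imaginary_modes(q_points: Iterable[float], fc_blocks: FcBlocks, masses: np.ndarray,
                    tol: float = 1e-6) -> List[Tuple[float, float]]:
    """(q, frequency) pairs whose frequency is imaginary beyond tol."""
    return [(q, w) for q in q_points for w in frequencies(q, fc_blocks, masses) if w < -tol]

def diatomic_chain(k: float) -> FcBlocks:
    """Hypothetical force-constant blocks: atom 0 and atom 1 joined by springs within
    the cell and across the cell boundary; Phi(-L) is the transpose of Phi(L)."""
    return {0: [[2 * k, -k], [-k, 2 * k]], 1: [[0.0, 0.0], [-k, 0.0]], -1: [[0.0, -k], [0.0, 0.0]]}
```

The small tolerance keeps round-off in the acoustic branch at Γ from being flagged. Real screening workflows choose a larger, physically motivated threshold.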

Thermodynamic Property Extraction

- Using the phonon DOS, calculate temperature-dependent thermodynamic properties within the harmonic approximation.

- Key outputs include:
  - Helmholtz Vibrational Free Energy
  - Entropy
  - Constant-Volume Heat Capacity

- These properties allow for the assessment of polymorphic phase stability at different temperatures [33].
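The harmonic thermodynamic quantities listed above follow from standard per-mode formulas with x = hν/k_BT. The sketch below assumes mode frequencies in THz collected on a uniform q-mesh of `n_qpoints` points and returns values per cell. It skips modes below a small cutoff, which discards the zero-frequency acoustic modes at Γ and any imaginary modes; that treatment is an assumption of the sketch. Divide by the number of atoms per cell to compare with per-atom values such as those in Table 2.

```python
import math
from typing import Iterable, Tuple

H_EV_PER_THZ = 4.135667696e-3  # Planck constant expressed as eV per THz
KB_EV = 8.617333262e-5         # Boltzmann constant, eV/K

def harmonic_thermo(freqs_thz: Iterable[float], temperature: float,
                    n_qpoints: int = 1, cutoff_thz: float = 1e-3) -> Tuple[float, float, float]:
    """Harmonic vibrational free energy (eV), entropy (eV/K) and heat capacity Cv (eV/K)
    per cell, from all mode frequencies on a q-mesh with n_qpoints points (T > 0)."""
    kt = KB_EV * temperature
    free_energy = entropy = heat_capacity = 0.0
    for nu in freqs_thz:
        if nu < cutoff_thz:  # skip zero-frequency acoustic modes at Gamma and imaginary modes
            continue
        energy = H_EV_PER_THZ * nu          # phonon quantum h*nu in eV
        x = energy / kt
        boltz = math.exp(-x)                # e^{-x}; underflows harmlessly to 0 at low T
        one_minus = -math.expm1(-x)         # 1 - e^{-x}, accurate for small x
        free_energy += 0.5 * energy + kt * math.log(one_minus)
        entropy += KB_EV * (x * boltz / one_minus - math.log(one_minus))
        heat_capacity += KB_EV * x * x * boltz / one_minus**2
    return free_energy / n_qpoints, entropy / n_qpoints, heat_capacity / n_qpoints
```

Writing every term with e^{-x} avoids overflow for stiff modes at low temperature. As checks, Cv approaches k_B per mode at high temperature and the free energy reduces to the zero-point energy as T approaches 0.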

The following workflow diagram summarizes this high-throughput screening process.

Figure 1: High-Throughput Phonon Screening Workflow. This diagram outlines the automated protocol for screening material phonon properties using a Machine Learning Interatomic Potential (MLIP).

Protocol for Fine-Tuning MACE Potentials for Custom Material Classes

For material classes not well-represented in universal models (e.g., complex MOFs, ternary compounds), fine-tuning a foundation model on a targeted dataset is necessary. This protocol details the process for developing a specialized MACE potential, such as MACE-MP-MOF0.

Research Reagent Solutions for Fine-Tuning

Table 4: Essential Tools for Fine-Tuning a MACE Model

| Item/Tool | Function/Description |

|---|---|

| Foundation MACE Model | A broadly pre-trained model (e.g., MACE-MP-0) which provides a robust starting point for transfer learning [32]. |