Overcoming Linear Dependency in Basis Sets: A Practical Guide for Robust Density of States Calculations in Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on diagnosing, resolving, and preventing linear dependency in basis sets, a common numerical instability in electronic structure calculations. Covering foundational theory to advanced applications, it details practical methodologies like automated dependency removal and basis set pruning. The guide further explores troubleshooting techniques for error mitigation and outlines validation protocols to ensure the reliability of calculated Density of States (DOS), a critical property for predicting drug-target interactions and material properties in pharmaceutical development.

Understanding Linear Dependency: What It Is and Why It Disrupts DOS Calculations

Defining Linear Dependency in the Context of Atomic Basis Sets

Frequently Asked Questions (FAQs)

What is linear dependency in a basis set? In computational chemistry, a basis set is a set of functions used to represent molecular orbitals. Linear dependency occurs when one basis function within the set can be represented as a linear combination of other functions in the same set [1] [2]. This situation is analogous to the mathematical concept where a set of vectors is linearly dependent if one vector can be written as a combination of the others [3].

Why is linear dependency a problem in calculations? Linear dependency causes the overlap matrix—which describes how basis functions interact—to become singular or nearly singular [4]. This leads to severe numerical instabilities, preventing the self-consistent field (SCF) procedure from converging and causing electronic structure programs to fail [4] [5]. It effectively means the basis set describes the same part of space multiple times without adding new information.

What are the common causes of linear dependency? The primary causes are:

- Using basis sets with diffuse functions, especially on atoms with large coordination numbers or in systems with atoms in close proximity [5].

- Incorporating extra "tight" functions (for core-electron properties) into a standard basis set, which can introduce functions with exponents too similar to existing ones [4].

- Using very large, uncontracted basis sets, where the probability of including functions with similar spatial extents is higher [4].

How can I avoid linear dependencies in my calculations? To minimize risk, use balanced, standard basis sets appropriate for your method and chemical system [5]. Avoid indiscriminately adding diffuse or tight functions unless necessary. For heavy elements, consider using effective core potentials (ECPs) to reduce the number of basis functions [5]. If linear dependency occurs, manually remove basis functions with very similar exponents [4] or use algorithms like the pivoted Cholesky decomposition to automatically detect and remove dependent functions [4].

Troubleshooting Guide: Identifying and Resolving Linear Dependency

Symptom: Calculation fails with errors related to linear dependency, overlap matrix, or SCF convergence.

Step 1: Diagnosis and Confirmation

Linear dependency is diagnosed by analyzing the eigenvalue spectrum of the overlap matrix of the basis functions [4]. Most electronic structure programs perform this check automatically and will issue a warning or error.

- What to look for: The error message often explicitly mentions "linear dependence," "overlap matrix," or eigenvalues falling below a pre-defined tolerance level [4].

- Manual Check: If you can access the overlap matrix eigenvalues, a very small eigenvalue (e.g., < 1×10⁻⁷) indicates that the corresponding eigenvector, which is a specific combination of your basis functions, is nearly redundant.
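The manual check can be sketched in a few lines of NumPy, assuming the overlap matrix is available as an array. The function names, the 1e-7 default tolerance, and the toy overlap matrix (normalized s-type Gaussians sharing one center) are illustrative assumptions, not the procedure of any particular program.

```python
import numpy as np

def s_overlap(exponents):
    """Overlap matrix of normalized s-type Gaussians on a single center (toy model)."""
    a = np.asarray(exponents, dtype=float)
    return (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5

def small_overlap_eigenvalues(S, tol=1e-7):
    """Return all eigenvalues of the symmetric matrix S and those below tol."""
    eigvals = np.linalg.eigvalsh(S)  # ascending order
    return eigvals, eigvals[eigvals < tol]

# Exponents 1.0 and 1.0001 are nearly identical, so one combination is redundant.
S = s_overlap([0.1, 1.0, 1.0001, 10.0])
eigvals, flagged = small_overlap_eigenvalues(S)
print("smallest eigenvalue:", eigvals[0])
print("eigenvalues below tolerance:", flagged.size)
```

In a real calculation the overlap matrix would come from the electronic structure program rather than from a closed-form expression.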

Step 2: Resolution Procedures

Once confirmed, apply the following methodologies to resolve the issue.

Method 1: A Priori Manual Removal of Suspect Functions This method is effective when linear dependency is caused by adding extra functions to a standard basis set.

- Identify Function Exponents: List the exponents (which control the spatial extent) of the basis functions for the problematic atom [4].

- Find Similar Exponents: Look for pairs of exponents, particularly from different parts of the basis set (e.g., the standard set and the added "tight" functions), that are very close in value percentage-wise [4].

- Remove One Function: From each pair of highly similar exponents, remove one function from your input.

- Re-run Calculation: The linear dependency errors should be resolved, often resulting in a lower and more physically meaningful Hartree-Fock energy [4].
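The exponent comparison in the steps above can be sketched as follows. This is a minimal illustration that assumes all exponents belong to the same angular momentum shell on the same atom; the helper name, the 5% tolerance, and the exponent values are hypothetical.

```python
from itertools import combinations

def similar_exponent_pairs(exponents, rel_tol=0.05):
    """Return (i, j, ratio) for exponent pairs whose ratio is within rel_tol of 1."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(exponents), 2):
        ratio = max(a, b) / min(a, b)
        if ratio - 1.0 < rel_tol:
            pairs.append((i, j, ratio))
    return pairs

# Hypothetical exponents: a standard set followed by two added "tight" functions.
standard = [0.12, 0.45, 1.80, 7.20]
tight = [7.35, 30.0]
for i, j, ratio in similar_exponent_pairs(standard + tight):
    print(f"functions {i} and {j} have exponent ratio {ratio:.3f}")
```

Each flagged pair is a candidate for removing one of its two functions; the choice of which to drop remains a judgment call.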

Method 2: Use of Advanced Algorithms (Recommended) A more robust and general solution is to use algorithms designed to cure basis set overcompleteness.

- Pivoted Cholesky Decomposition: This method can be implemented to automatically identify and remove linearly dependent basis functions during the calculation [4]. It requires only the overlap matrix and is more systematic than manual removal. Implementations are available in electronic structure codes like ERKALE, Psi4, and PySCF [4].
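To make the idea concrete, here is a minimal NumPy sketch of a pivoted Cholesky selection that uses only the overlap matrix. It is a textbook-style illustration, not the implementation found in ERKALE, Psi4, or PySCF; the function name, the 1e-8 tolerance, and the toy overlap matrix are assumptions.

```python
import numpy as np

def pivoted_cholesky_pivots(S, tol=1e-8):
    """Indices of basis functions kept by a pivoted Cholesky factorization of S."""
    S = np.asarray(S, dtype=float)
    n = S.shape[0]
    resid = np.diag(S).copy()  # residual diagonal of S - L @ L.T
    L = np.zeros((n, n))
    pivots = []
    for k in range(n):
        p = int(np.argmax(resid))
        if resid[p] <= tol:  # everything left is numerically redundant
            break
        L[:, k] = (S[:, p] - L[:, :k] @ L[p, :k]) / np.sqrt(resid[p])
        resid -= L[:, k] ** 2
        resid[p] = 0.0  # guard against round-off re-selecting p
        pivots.append(p)
    return sorted(pivots)

# Toy overlap matrix: normalized s-type Gaussians on one center,
# with exponents 1.0 and 1.00001 nearly duplicating each other.
a = np.array([1.0, 1.00001, 0.1, 10.0])
S = (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5
kept = pivoted_cholesky_pivots(S)
print("kept functions:", kept)
```

The functions whose indices are not returned are the ones that would be dropped from the basis for this particular system.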

Method 3: Basis Set Selection and Decontraction

- Avoid Over-augmentation: Be cautious when using heavily augmented basis sets (e.g., aug-cc-pV9Z with extra diffuse or tight functions) [4] [5]. For DFT, the def2 basis set family is often more robust than the augmented correlation-consistent family [5].

- Decontraction: In some cases, decontracting the basis set (using the primitive Gaussians directly) can help, but this may require higher numerical integration grids and can sometimes exacerbate linear dependency issues [5].

Step 3: Verification

After applying a fix, verify that:

- The calculation runs without linear dependency errors.

- The resulting energy is lower than that from the problematic calculation and is consistent with expectations for the system [4].

- The molecular properties (geometries, energies) are physically reasonable.

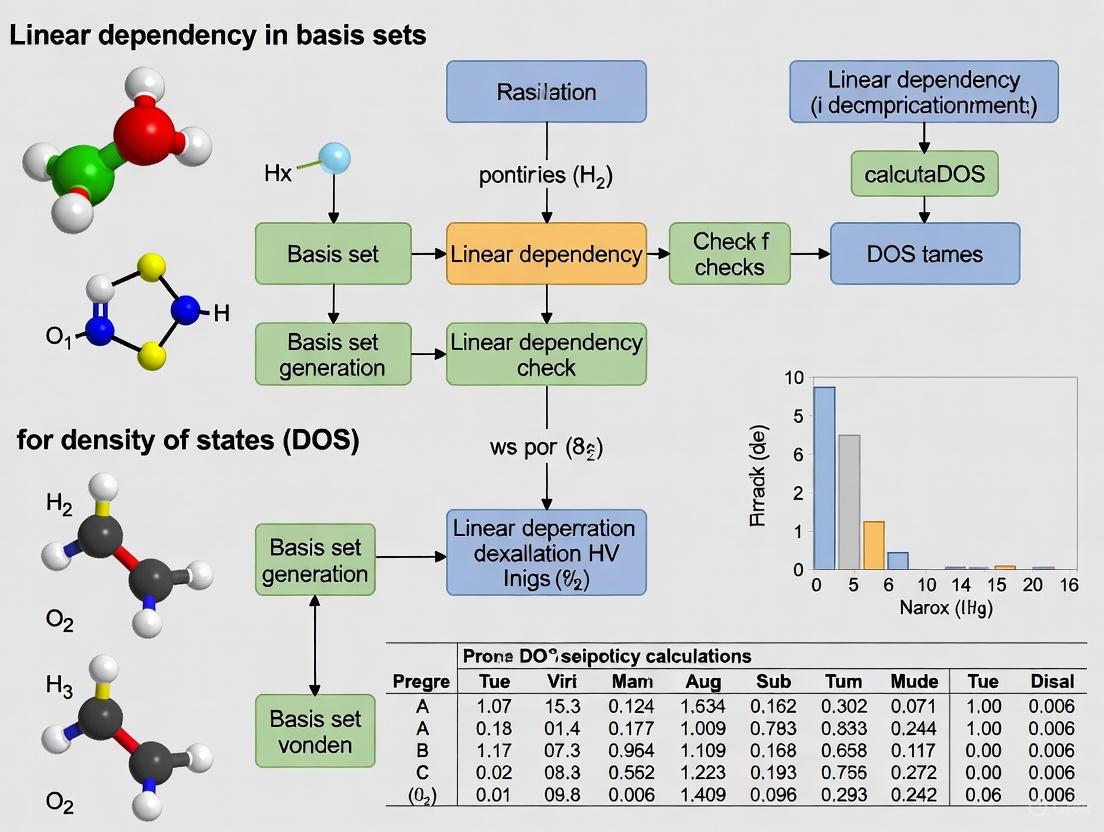

Visual Guide: Linear Dependency Workflow

The following diagram illustrates the logical process for diagnosing and resolving linear dependency issues in basis set calculations.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key "research reagents"—in this context, computational tools and basis sets—essential for working with atomic basis sets and mitigating linear dependency.

| Item/Reagent | Function/Explanation | Key Considerations |

|---|---|---|

| Pople Basis Sets (e.g., 6-31G*) [1] | Split-valence polarized sets. Efficient for HF/DFT on organic molecules. | Notation (e.g., 6-31+G*) indicates polarization (*) and diffuse (+) functions, which can introduce linear dependencies [1] [5]. |

| Dunning Correlation-Consistent (e.g., cc-pVNZ) [1] | Designed for systematic convergence to CBS limit in correlated calculations. | The aug- (augmented) versions include diffuse functions, increasing the risk of linear dependency [4] [5]. |

| Ahlrichs def2 Family (e.g., def2-TZVP) [5] | Balanced polarized basis sets covering most of the periodic table. Recommended for DFT. | More reliable and less prone to issues than older families for DFT. Appropriate auxiliary basis sets are readily available for RI approximations [5]. |

| Overlap Matrix [4] | A matrix of integrals representing the overlap between basis functions. The primary diagnostic tool. | Small eigenvalues of this matrix directly indicate linear dependencies within the basis set [4]. |

| Pivoted Cholesky Decomposition [4] | An algorithm to automatically detect and remove linearly dependent basis functions. | A general solution implemented in codes like Psi4 and PySCF. It uses the overlap matrix to customize the basis for a specific system [4]. |

| Effective Core Potentials (ECPs) [5] | Replaces core electrons with a potential, reducing the number of basis functions needed. | Recommended for heavy elements (beyond Kr) to reduce computational cost and mitigate linear dependency risks from large all-electron basis sets [5]. |

Troubleshooting Guide: Resolving Common Basis Set Issues

Scenario 1: SCF Convergence Failure in Anion or Excited-State Calculations

- Observed Problem: The Self-Consistent Field (SCF) procedure fails to converge, exhibits oscillatory behavior, or produces physically unrealistic results when studying anions, excited states, or systems with large, "soft" electron densities.

- Primary Cause: Lack of Diffuse Functions. Standard basis sets are designed to describe electrons close to the atomic nuclei. They lack the flexibility to describe the more diffuse electron clouds found in anions, excited states, or molecules with significant dipole moments [6] [1].

- Solution: Augment your basis set with diffuse functions.

- Action: For Pople-style basis sets (e.g., 6-31G), add one '+' sign for diffuse functions on heavy atoms or '++' for functions on all atoms, including hydrogen (e.g., 6-31++G*) [1] [7]. For Dunning-style correlation-consistent basis sets (e.g., cc-pVDZ), use the "aug-" prefix (e.g., aug-cc-pVDZ) [7].

- Rationale: Diffuse functions are Gaussian functions with very small exponents, giving them a more spatially extended shape. This allows the basis set to accurately represent the "tail" portion of the electron density that is distant from the atomic nuclei [6] [1].

Scenario 2: Unexpected Molecular Orbital Phase or Electron Density Distribution

- Observed Problem: The phase or shape of virtual orbitals (like the LUMO) appears inverted or significantly altered when switching to a larger basis set, particularly one that includes diffuse functions. The total electron density, however, remains largely consistent [8].

- Primary Cause: Arbitrary Phase of Individual Orbitals. The sign (red/blue color) of a single molecular orbital is arbitrary and has no physical meaning; only the square of the wavefunction (which gives the electron density) is physically observable [8].

- Solution: Focus on physically meaningful properties.

- Action: Ignore the phase of individual orbitals. Instead, analyze the total electron density, spatial orbital nodes, or orbital energies. Many visualization programs offer a "switch phases" option that inverts the colors for visualization clarity [8].

- Rationale: The electron density arises from the square of the orbital wavefunction, which eliminates the positive/negative phase information. The overall shape and nodal structure are what matter, not the specific color assignment of a lobe [8].

Scenario 3: Slow SCF Convergence or Erratic Behavior in Large Systems

- Observed Problem: When using very large basis sets, especially those with many diffuse functions on large molecules, the SCF calculation becomes slow to converge or behaves erratically.

- Primary Cause: Linear Dependence in the Basis Set. An over-complete basis set can lead to near-duplicate basis functions, causing numerical instability. The program detects this as very small eigenvalues in the basis set overlap matrix [9].

- Solution: Increase the linear dependence threshold.

- Action: In Q-Chem, adjust the BASIS_LIN_DEP_THRESH $rem variable. The default is 6 (threshold = 10⁻⁶). For a poorly behaved SCF, try lowering the setting to 5, which raises the threshold to 10⁻⁵ and projects out more near-redundant combinations of functions [9].

- Rationale: This threshold tells the program at what point to consider a basis function combination as numerically linearly dependent and remove it, stabilizing the calculation [9]. Because a larger threshold discards more of the basis, check that the results remain acceptably accurate.
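What such a threshold does can be illustrated with canonical orthogonalization, the textbook way of projecting out small overlap eigenvalues. The sketch below is not Q-Chem's internal code; the function name and the exponents are hypothetical, and the integer setting is mapped to a cutoff of 10 to the power of minus the setting, following the convention described above.

```python
import numpy as np

def canonical_orthogonalizer(S, lin_dep_thresh=6):
    """Build X with X.T @ S @ X = I, dropping overlap eigenvalues below 10**-lin_dep_thresh."""
    cutoff = 10.0 ** (-lin_dep_thresh)
    w, U = np.linalg.eigh(S)
    keep = w > cutoff
    X = U[:, keep] / np.sqrt(w[keep])  # scale each kept eigenvector by 1/sqrt(eigenvalue)
    return X, int(np.count_nonzero(~keep))

# Toy overlap matrix from hypothetical s-type exponents on one center.
a = np.array([0.1, 1.0, 1.005, 10.0])
S = (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5
for setting in (6, 5):
    X, n_removed = canonical_orthogonalizer(S, setting)
    print(f"setting {setting}: removed {n_removed}, kept {X.shape[1]}")
```

A smaller setting (larger cutoff) can only remove the same number of combinations or more, which is why it trades some basis flexibility for stability.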

Frequently Asked Questions (FAQs)

Q1: What exactly are diffuse functions, and when are they essential? A1: Diffuse functions are Gaussian basis functions with a very small exponent value. This small exponent means they decay slowly and are spatially extended, allowing them to describe regions of low electron density far from the nucleus. They are essential for the accurate calculation of:

- Anions and dipole moments [1].

- Excited states [9].

- Intra- and intermolecular bonding, particularly when involving weak interactions like hydrogen bonding or van der Waals forces [1].

- Any system with a "soft," diffuse electron cloud [6].

Q2: How do polarization functions differ from diffuse functions? A2: While both add flexibility to a basis set, they serve different purposes:

- Polarization Functions (e.g., d-functions on carbon, p-functions on hydrogen) allow the electron density to change its shape away from spherical symmetry. This is crucial for modeling the distortion of atomic electron clouds during chemical bond formation [6] [1]. They are denoted by * or (d,p) in basis set names [6].

- Diffuse Functions extend the "tail" of the electron density to describe electrons that are far from the nucleus, as discussed above [6].

Q3: What does the notation for Pople basis sets (e.g., 6-31+G) mean? A3: The notation is decoded as follows [1]:

- 6-31: Each core atomic orbital is described by a single contraction of 6 primitive Gaussians. The valence orbitals are split into two parts: an inner part with 3 primitives and an outer part with 1 primitive.

- +: A single set of diffuse functions is added to heavy atoms (anything except H and He). ++ adds them to hydrogen and helium as well.

- * or (d,p): Polarization functions are added. * typically means d-functions on heavy atoms, while (d,p) explicitly indicates d-functions on heavy atoms and p-functions on hydrogen [6] [1].
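The decoding rules above can be expressed as a small parser. This is only a sketch for simple names such as 6-31+G* or 6-311++G(d,p); the function name and the returned dictionary keys are hypothetical, and less common Pople variants are not handled.

```python
import re

_POPLE = re.compile(
    r"^(\d)-(\d+)(\+{0,2})G(\*{1,2}|\([a-z0-9]+(?:,[a-z0-9]+)?\))?$",
    re.IGNORECASE,
)

def decode_pople(name):
    """Decode a simple Pople basis set name into its components."""
    m = _POPLE.match(name.strip())
    if m is None:
        raise ValueError(f"not a recognized Pople-style name: {name!r}")
    core, valence, plus, pol = m.groups()
    pol = pol or ""
    return {
        "core_primitives": int(core),
        "valence_split": [int(c) for c in valence],
        "diffuse_on_heavy": len(plus) >= 1,
        "diffuse_on_hydrogen": len(plus) == 2,
        "polarization_on_heavy": pol != "",
        "polarization_on_hydrogen": pol == "**" or "," in pol,
    }

print(decode_pople("6-31+G*"))
print(decode_pople("6-311++G(d,p)"))
```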

Experimental Protocol: Basis Set Selection and Diagnostics for DOS Research

Objective: To establish a robust methodology for selecting appropriate basis sets and diagnosing linear dependency issues in Density of States (DOS) research, ensuring both accuracy and computational feasibility.

Methodology:

- Foundational Calculation: Begin geometry optimization and initial DOS calculation with a standard double-zeta polarized basis set (e.g., 6-31G* or cc-pVDZ) [1] [7].

- Inclusion of Diffuse Functions: For properties sensitive to long-range electron density (e.g., electron affinity, excited states, weak interactions), augment the basis set with diffuse functions (e.g., 6-31+G* or aug-cc-pVDZ) [6] [7].

- Basis Set Hierarchy: To systematically approach the Complete Basis Set (CBS) limit for highly accurate energy calculations, use a hierarchy of correlation-consistent basis sets (e.g., cc-pVDZ → cc-pVTZ → cc-pVQZ) and employ empirical extrapolation techniques [1] [7].

- Linear Dependency Check: When using large, diffuse basis sets on big systems, monitor the SCF convergence. For erratic behavior, enable the program's built-in linear dependence check and adjust the threshold (e.g., BASIS_LIN_DEP_THRESH in Q-Chem) if necessary [9].

- Result Validation: Compare key observables (e.g., HOMO-LUMO gap, orbital compositions, total energy) across different basis set levels to ensure results are converged with respect to basis set size.
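The protocol does not say which extrapolation scheme to use. As one common choice, the sketch below applies a two-point formula that assumes the energy approaches the CBS limit as E(X) = E_CBS + A/X³, where X is the cardinal number of the basis set (3 for triple-zeta, 4 for quadruple-zeta). This form is usually applied to correlation energies; the function name and the energies are hypothetical.

```python
def cbs_two_point(e_small, x_small, e_large, x_large):
    """Two-point extrapolation assuming E(X) = E_CBS + A / X**3."""
    w_large, w_small = x_large ** 3, x_small ** 3
    return (w_large * e_large - w_small * e_small) / (w_large - w_small)

# Hypothetical correlation energies (hartree) for cc-pVTZ (X=3) and cc-pVQZ (X=4).
e_tz, e_qz = -0.27510, -0.28340
print("estimated CBS correlation energy:", cbs_two_point(e_tz, 3, e_qz, 4))
```

The extrapolated value is only as reliable as the assumed functional form, so it complements rather than replaces the convergence comparison in the final step.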

Table: Common Basis Sets and Their Characteristics for DOS Studies

| Basis Set | Type | Polarization? | Diffuse? | Recommended Use Case in DOS Research |

|---|---|---|---|---|

| STO-3G | Minimal | No | No | Initial testing or very large systems where accuracy is secondary [1] [7]. |

| 6-31G* | Valence Double-Zeta | Yes (on heavy atoms) | No | Standard geometry optimizations; preliminary DOS scans [1] [7]. |

| 6-31+G* | Valence Double-Zeta | Yes (on heavy atoms) | Yes (on heavy atoms) | Anions, excited states, and systems with weak intermolecular interactions [1] [7]. |

| cc-pVDZ | Correlation-Consistent | Yes | No | Good starting point for post-Hartree-Fock (correlated) DOS calculations [1] [7]. |

| aug-cc-pVDZ | Correlation-Consistent | Yes | Yes | High-accuracy DOS for electron-affinity, excited-states, and Rydberg states [7]. |

The following workflow outlines the logical decision process for managing basis sets and diagnosing linear dependency:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Materials for Basis Set Studies

| Item | Function & Application |

|---|---|

| Pople-Style Basis Sets (e.g., 6-31G, 6-311G) | Split-valence basis sets efficient for Hartree-Fock and Density Functional Theory (DFT) calculations on large molecules. The intuitive notation (e.g., + for diffuse, * for polarization) makes them widely accessible [1] [7]. |

| Dunning's Correlation-Consistent Basis Sets (e.g., cc-pVXZ) | Systematic hierarchies (X = D, T, Q, 5...) designed for high-accuracy, systematically converging post-Hartree-Fock calculations toward the complete basis set (CBS) limit [1] [7]. |

| Diffuse Functions | "Augmenting" reagents for basis sets. Critical for describing anions, excited states, and weak interactions by modeling the distant "tail" of electron density [6] [1]. |

| Polarization Functions | "Shape-modifying" reagents for basis sets. Allow electron density to distort from atomic symmetry, essential for accurate description of chemical bonding and molecular polarization [6] [1]. |

| Effective Core Potentials (ECPs) (e.g., LanL2DZ, SDD) | Replace core electrons with a potential for heavy atoms, significantly reducing computational cost while maintaining accuracy for valence electron properties [7]. |

| Linear Dependence Threshold (e.g., BASIS_LIN_DEP_THRESH) | A diagnostic and corrective parameter. Used to stabilize SCF calculations by removing numerically redundant basis functions in large, over-complete basis sets [9]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental relationship between the density of states (DOS) and a material's mechanical properties? The electronic density of states, particularly its value at the Fermi level, N(Ef), is a useful descriptor of mechanical properties. A lower N(Ef) often indicates stronger, stiffer bonds with a covalent, directional nature, resulting in higher elastic moduli (both bulk and shear). N(Ef) therefore tends to correlate with ductility as measured by the Pugh ratio (G/B) [10].

Q2: Why might my calculated DOS appear physically incorrect or lack expected features? An insufficient quality DOS is often a consequence of inappropriate parameters in the underlying electronic structure calculation. To exhibit fine features of the electronic structure, such as Van Hove singularities or accurate band edges, the calculation must be well-converged with respect to critical parameters like the basis set and k-point sampling [11].

Q3: In plane-wave calculations, how does the basis set choice lead to incorrect results? The plane-wave basis set is truncated at a specified cutoff energy. If this cutoff is too low, the basis set is incomplete, leading to errors in the total energy and its derivatives, which directly impacts the accuracy of the derived DOS. At a fixed cutoff, the total energy also becomes discontinuous with respect to changes in cell size or shape, potentially causing jagged energy-volume curves and unphysical results [12].

Q4: What is a specific numerical instability related to internal coordinates, and how does it manifest? A common instability occurs when several atoms become co-linear during a geometry optimization. The internal coordinate system used by some optimizers has inherent limitations in handling such linear arrangements, leading to errors such as "FormBX had a problem" or "Linear angle in Bend/Tors," which can prevent the optimization from converging to a valid structure for subsequent DOS analysis [13].

Troubleshooting Guide: Common Errors and Solutions

This guide diagnoses common computational errors that can lead to numerical instability and an incorrect density of states.

Error: Discontinuous Energy and Incorrect DOS with Changing Volume

- Symptoms: Jagged energy-volume (E-V) curves; the volume for minimum energy does not coincide with the volume for zero pressure; inaccurate DOS across different volumes.

- Primary Cause: In plane-wave based calculations, using a fixed cutoff energy causes the number of plane waves in the basis set to change discontinuously as the unit cell volume or shape changes [12].

- Solution: Implement a finite basis set correction. This technique accounts for the difference between the number of states in an ideal, infinite basis and the number actually used. It allows for interpolation of results under the more physical condition of a fixed number of basis states [12].

- Verification: The parameter dEtot/d ln Ecut indicates convergence. A value of less than 0.1 eV/atom is sufficient for most calculations, while below 0.01 eV/atom is considered very well converged [12].
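If the program does not report this quantity directly, it can be estimated from a few single-point energies by finite differences. The sketch below is one rough way to do so and may differ from how a given code evaluates it internally; the function name and the numbers are hypothetical.

```python
import math

def dE_dlnEcut(cutoffs_eV, energies_eV_per_atom):
    """Finite-difference estimate of dE/d ln(Ecut) between consecutive cutoffs."""
    points = list(zip(cutoffs_eV, energies_eV_per_atom))
    return [
        (e2 - e1) / math.log(c2 / c1)
        for (c1, e1), (c2, e2) in zip(points, points[1:])
    ]

# Hypothetical total energies per atom at three cutoffs.
cutoffs = [300.0, 400.0, 500.0]
energies = [-5.00, -5.05, -5.06]
for c, slope in zip(cutoffs[1:], dE_dlnEcut(cutoffs, energies)):
    print(f"up to {c:.0f} eV: dE/dlnEcut = {slope:.4f} eV/atom")
```

The magnitude of each estimate can then be compared against the 0.1 and 0.01 eV/atom guidelines quoted above.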

Error: Basis Set Truncation and False Band Gap Features

- Symptoms: Inaccurate total energies; potential appearance of false band gaps or incorrect band gap widths in the DOS; poor convergence of material properties.

- Primary Cause: The DOS is derived from the electronic band structure, which is highly sensitive to the completeness of the basis set. Truncating the plane-wave basis set at too low an energy excludes important high-frequency components of the wavefunctions [12].

- Solution: Systematically increase the plane-wave cutoff energy until the total energy converges within a required tolerance. For properties like DOS that depend on absolute energy values, a high cutoff is essential [12].

- Protocol:

- Perform a series of single-point energy calculations on an identical structure.

- Gradually increase the plane-wave cutoff energy in each calculation.

- Plot the total energy versus the cutoff energy. The calculation is considered converged when the energy change falls below a predefined threshold (e.g., 1 meV/atom).
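The convergence test in the last step can be automated once the energies are collected. This is a minimal sketch; the function name, the default tolerance of 1 meV/atom, and the sample energies are illustrative assumptions.

```python
def first_converged_cutoff(cutoffs, energies_per_atom, tol=1e-3):
    """Return the first cutoff whose energy differs from the next one by less than tol (eV/atom)."""
    for i in range(len(cutoffs) - 1):
        if abs(energies_per_atom[i + 1] - energies_per_atom[i]) < tol:
            return cutoffs[i]
    return None  # not converged within the tested range

# Hypothetical single-point results on one fixed structure.
cutoffs = [300, 400, 500, 600]
energies = [-5.0000, -5.0500, -5.0605, -5.0608]
print("converged cutoff (eV):", first_converged_cutoff(cutoffs, energies))
```

A return value of None signals that higher cutoffs need to be tested before the DOS is computed.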

Error: Internal Coordinate Failure During Geometry Optimization

- Symptoms: Error messages such as "FormBX had a problem," "Error in internal coordinate system," or "Linear angle in Bend" during geometry optimization, preventing the acquisition of a valid structure for DOS calculation [13].

- Primary Cause: The optimizer's internal coordinate system (Z-matrix) fails when atoms become co-linear or near-co-linear, creating a mathematical singularity [13].

- Solution: Switch from internal coordinates to Cartesian coordinates for the optimization.

- Method: Use the keyword opt=cartesian in your input file. This method increases the number of optimization steps but completely avoids the linear dependency issue in the coordinate system [13].

- Alternative Workflow: If the system is large, perform a few initial steps with opt=cartesian, save the partially optimized structure, and then restart the optimization using the default (internal) method.

Error: "Variable index is out of range" in Z-Matrix

- Symptoms: Calculation termination with the error message "Variable index is out of range" [13].

- Primary Cause: An input error where a variable used to define an atom in the Z-matrix was not added to the variable list for optimization [13].

- Solution: Carefully check the Z-matrix specification in the input file. Ensure every variable (e.g., a bond length R1, an angle A1) used to define the geometry is also included in the variable list that follows the Z-matrix [13].

Error: "RedCar/ORedCr failed for GTrans" in Transition State Searches

- Symptoms: Failure during a QST2 transition-state search with the error "RedCar/ORedCr failed for GTrans" [13].

- Primary Cause: Typically caused by incorrect atom numbering between the reactant and product structures, or an internal program issue [13].

- Solution:

- Confirm Atom Ordering: Ensure the atom numbering is identical in the reactant and product configurations provided to the QST2 calculation.

- Use Alternative Methods: If the error persists, use the QST3 method (which requires specifying the reactant, product, and an initial guess for the transition state) or a Berny (TS) optimization instead [13].

Diagnostic Workflow for DOS Calculation Failures

The following diagram illustrates the logical process for diagnosing and resolving common issues that lead to an incorrect Density of States.

The Researcher's Toolkit: Essential Computational Reagents

The table below details key components and parameters critical for performing stable and accurate Density of States calculations.

Table 1: Essential "Research Reagents" for Stable DOS Calculations

| Item/Parameter | Function & Rationale | Convergence/Quality Check |

|---|---|---|

| Plane-Wave Cutoff Energy | Determines the highest kinetic energy plane wave in the basis set. A low value truncates the basis, leading to inaccurate energies and DOS [12]. | Converge total energy with respect to cutoff. Ensure dEtot/d lnEcut < 0.1 eV/atom [12]. |

| k-point Grid | Samples the Brillouin zone to integrate over wavevectors. A sparse grid fails to capture DOS features, causing false peaks or gaps [12] [11]. | Test DOS for changes with increasingly dense k-point grids until key features are stable. |

| Finite Basis Set Correction | A correction factor that mitigates energy discontinuities when cell parameters change, crucial for stable E-V curves and correct pressure calculations [12]. | Apply during cell optimization with a non-converged basis set. Check for smooth E-V curves. |

| Optimization Algorithm (opt=cartesian) | A solver that uses Cartesian coordinates instead of internal coordinates, avoiding numerical failure when atoms become co-linear [13]. | Use when errors like "FormBX had a problem" or "Linear angle in Bend" occur. |

| Pseudopotential (or PAW dataset) | Replaces core electrons to reduce computational cost. The "hardness" of the potential determines the required cutoff energy [12]. | Use softer pseudopotentials for lower cutoffs, but ensure transferability for target properties. |

FAQ: Common Questions on Linear Dependency

Q1: What is linear dependency in the context of computational chemistry? Linear dependency occurs when one basis function in your calculation can be expressed as a linear combination of other basis functions in the set. This creates a mathematical problem for solvers that require linearly independent functions, making the basis set "over-complete" rather than independent. This is analogous to having redundant equations in a linear system where one equation provides no new information [14] [15].

Q2: What are the immediate error messages indicating linear dependency? The specific error message varies by software, but common indicators include:

- "Matrix is singular" or "Matrix is numerically singular" [15]

- "Overlap matrix is singular"

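- "Last 2 dimensions of the array must be square" (a NumPy error raised when a non-square matrix is passed to a routine that requires a square one; it points to a malformed matrix in a Python-based workflow rather than to dependency itself) [15]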

- "SCF convergence failure" or "Wavefunction instability" without other obvious causes

- "Zero eigenvalues" or "Very small eigenvalues" detected in the overlap matrix

Q3: What computational conditions most often cause linear dependency? Linear dependency typically arises from using large basis sets, especially those with diffuse functions on heavy atoms or in systems with a large number of atoms. It is also common when using high-zeta basis sets (e.g., quadruple-zeta or higher) and when molecules have atoms in close proximity or near-symmetry [16].

Q4: How is linear dependency formally diagnosed? The most robust diagnostic method is to compute the rank of your basis set representation and check if it is less than the number of basis functions. A full-rank matrix indicates linear independence, while a reduced rank indicates dependency. This can be assessed by performing a Singular Value Decomposition (SVD) and inspecting for zero (or near-zero) singular values [15].

Q5: What is the relationship between basis set size and linear dependency? Larger basis sets, while generally providing higher accuracy, significantly increase the risk of linear dependency. This is a critical trade-off in computational design [16].

Table: Basis Set Choices and Linear Dependency Risk

| Basis Set Type | Typical Use Case | Relative Risk of Linear Dependency |

|---|---|---|

| Minimal (e.g., STO-3G) | Preliminary calculations | Very Low |

| Double-Zeta (e.g., cc-pVDZ) | Standard accuracy studies | Low |

| Triple-Zeta (e.g., cc-pVTZ) | High accuracy studies | Moderate |

| Quadruple-Zeta (e.g., cc-pVQZ) | Benchmark calculations | High |

| Augmented/Diffuse (e.g., aug-cc-pVXZ) | Anions, excited states, weak interactions | Very High |

Diagnostic and Solution Protocol

Diagnostic Workflow for Linear Dependency The following workflow provides a systematic method for diagnosing and resolving linear dependency issues in computational chemistry calculations.

Experimental Protocol 1: Matrix Rank Assessment for Linear Dependency

Objective: To determine if a set of vectors (basis functions) is linearly independent by calculating the rank of the matrix they form.

Procedure:

- Matrix Formation: Represent your basis set as a matrix A, where each column corresponds to a basis function evaluated at certain points.

- Rank Calculation: Use a numerical linear algebra library to compute the matrix rank. In Python, this is done with numpy.linalg.matrix_rank(A) [15].

- Interpretation: If rank(A) is less than the number of columns (basis functions), linear dependency exists. The difference between the number of columns and the rank indicates the number of redundant functions.

Sample Python Code Snippet:
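The snippet below is a minimal sketch of the procedure rather than a reference implementation. The matrix A is a toy example: three functions sampled on a hypothetical one-dimensional grid, with the third deliberately built as a combination of the first two.

```python
import numpy as np

# Columns are basis functions sampled on a grid of points (toy example).
x = np.linspace(-3.0, 3.0, 50)
f1 = np.exp(-0.5 * x**2)
f2 = np.exp(-2.0 * x**2)
f3 = 2.0 * f1 - 0.5 * f2  # deliberately a combination of f1 and f2
A = np.column_stack([f1, f2, f3])

rank = np.linalg.matrix_rank(A)
n_redundant = A.shape[1] - rank
print(f"rank = {rank}, columns = {A.shape[1]}, redundant functions = {n_redundant}")
```

numpy.linalg.matrix_rank decides the rank from the singular values using a default tolerance, so near-dependencies just above that tolerance are not counted as redundant.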

Experimental Protocol 2: Singular Value Decomposition (SVD) Diagnosis

Objective: To identify linear dependencies and quantify the degree of dependency using SVD.

Procedure:

- Matrix Formation: Construct matrix A as in Protocol 1.

- SVD Computation: Perform SVD: U, s, Vt = numpy.linalg.svd(A).

- Singular Value Analysis: Examine the singular values s. The number of non-zero singular values equals the matrix rank. Values very close to zero (e.g., < 1e-10) indicate numerical linear dependency [15].

- Condition Number: Calculate the condition number as max(s)/min(s). A very large condition number (> 1e12) suggests near-dependency.
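The steps of this protocol can be sketched as follows. The helper name and the toy matrix are hypothetical; the 1e-10 cutoff follows the example value quoted in the protocol and is treated here as an absolute threshold.

```python
import numpy as np

def svd_diagnosis(A, tol=1e-10):
    """Return singular values, the count below tol, and the condition number of A."""
    s = np.linalg.svd(A, compute_uv=False)  # singular values, descending
    n_small = int(np.sum(s < tol))
    cond = np.inf if s[-1] == 0.0 else s[0] / s[-1]
    return s, n_small, cond

# Toy matrix: the third column is a combination of the first two.
x = np.linspace(-3.0, 3.0, 50)
f1 = np.exp(-0.5 * x**2)
f2 = np.exp(-2.0 * x**2)
A = np.column_stack([f1, f2, 2.0 * f1 - 0.5 * f2])

s, n_small, cond = svd_diagnosis(A)
print("singular values:", s)
print("near-zero singular values:", n_small)
print("condition number:", cond)
```

Unlike the rank test, the singular values show how close to dependent the set is, which helps when choosing a removal threshold.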

Remedial Actions Workflow Once linear dependency is confirmed, this workflow guides you through potential solutions.

Research Reagent Solutions: Computational Tools

Table: Essential Computational Tools for Linear Dependency Management

| Tool Name | Function | Application Context |

|---|---|---|

| Matrix Rank Analysis | Determines the number of linearly independent basis functions [15] | Initial diagnosis of linear dependency |

| Singular Value Decomposition (SVD) | Identifies numerical dependencies and quantifies their magnitude [15] | Advanced diagnosis and condition number analysis |

| Basis Set Pruning | Removes high-exponent or diffuse functions that cause dependency | System-specific basis set optimization |

| Condition Number Calculator | Assesses numerical stability of the basis set [15] | Pre-calculation risk assessment |

| Gramian Determinant Test | Classical linear algebra test for independence (det(AᵀA) ≈ 0 indicates dependency) [15] | Alternative diagnostic method |

Practical Strategies and Software Solutions for Robust Calculations


FAQs on Linear Dependency and Basis Sets

What is linear dependency in a basis set, and why is it a problem? Linear dependency occurs when one or more basis functions in your set can be expressed as a linear combination of others. This makes the overlap matrix singular or nearly singular (ill-conditioned), which prevents the self-consistent field (SCF) procedure from converging. In practice, it often arises when using large, diffuse basis sets, as their widespread functions can become mathematically redundant [17].

The calculation failed with a "linear dependence" error. What are my first steps? Your immediate actions should be:

- Check Your Basis Set: Ensure you have not accidentally specified an overly diffuse basis set for all atoms if it is not needed.

- Inspect Molecular Geometry: A very small distance between two atoms (e.g., in a poorly optimized structure) can cause their basis functions to be nearly identical, triggering linear dependency.

- Review Output File: Look for warning messages early in the output, which often indicate the number of basis functions removed or the condition number of the overlap matrix.

I need diffuse functions for accuracy in non-covalent interactions, but they cause linear dependency. What can I do? This is a known challenge, often called the "conundrum of diffuse basis sets": they are a blessing for accuracy but a curse for numerical stability and computational efficiency [17]. You have several options:

- Use a Mixed Basis Set: Apply a more compact basis set for atoms that do not require a high level of description (e.g., carbon atoms in a solvent shell) and a diffuse set for key atoms (e.g., in the active site).

- Employ Auxiliary Basis Sets: Techniques like the Complementary Auxiliary Basis Set (CABS) singles correction can help achieve high accuracy with more compact, low quantum-number basis sets, thereby mitigating the linear dependency issue [17].
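As an illustration of the mixed-basis option, the sketch below assembles the basis-specification block that Gaussian reads when the route section uses the Gen keyword. The helper name `gen_basis_section` and the particular basis assignments are hypothetical choices for illustration. Each entry lists atoms (element symbols or center numbers) terminated by 0, then the basis set name, then a `****` separator.

```python
def gen_basis_section(assignments):
    """Build a Gaussian Gen basis block.

    assignments: list of (atoms, basis_name) pairs, where atoms is a list of
    element symbols or center numbers (hypothetical helper, for illustration).
    """
    lines = []
    for atoms, basis in assignments:
        lines.append(" ".join(str(a) for a in atoms) + " 0")  # atom list ends with 0
        lines.append(basis)
        lines.append("****")                                   # block separator
    return "\n".join(lines) + "\n"

# Compact basis on C and H, diffuse functions only on N and O (example choice)
section = gen_basis_section([
    (["C", "H"], "cc-pVDZ"),
    (["N", "O"], "aug-cc-pVDZ"),
])
print(section)
```

To treat atoms of the same element differently (for example, active-site versus solvent carbons), center numbers can be passed in place of element symbols.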

Troubleshooting Guide: Linear Dependency

Follow this workflow to systematically diagnose and resolve linear dependency issues in your calculations.

Detailed Methodologies

1. Diagnosing from the Output File

Examine your Gaussian output (.log file) immediately after the "Initialization" section and look for lines that report the eigenvalues of the overlap matrix. A very small eigenvalue (close to zero) confirms linear dependency. The output may also list the specific combinations of basis functions causing the issue.
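To see why a small overlap eigenvalue signals dependency, the following sketch builds the overlap matrix for a toy model of normalized s-type Gaussians on two centers and tracks its smallest eigenvalue as the centers approach. The exponents and distances are arbitrary illustrative values, and the model is not tied to any particular program's output.

```python
import numpy as np

def s_overlap(a, b, r2):
    """Overlap of two normalized s-type Gaussians with exponents a and b
    whose centers are separated by squared distance r2 (atomic units)."""
    p = a + b
    return (2.0 * np.sqrt(a * b) / p) ** 1.5 * np.exp(-a * b / p * r2)

def overlap_matrix(exponents, centers):
    n = len(exponents)
    S = np.empty((n, n))
    for i in range(n):
        for j in range(n):
            r2 = np.sum((centers[i] - centers[j]) ** 2)
            S[i, j] = s_overlap(exponents[i], exponents[j], r2)
    return S

def smallest_overlap_eigenvalue(distance):
    # Two centers, each carrying one compact and one diffuse s function
    exponents = np.array([1.0, 0.05, 1.0, 0.05])
    centers = np.array([[0.0, 0.0, 0.0]] * 2 + [[0.0, 0.0, distance]] * 2)
    return np.linalg.eigvalsh(overlap_matrix(exponents, centers))[0]

for d in (4.0, 1.0, 0.1):
    print(f"R = {d:4.1f} bohr   min eig(S) = {smallest_overlap_eigenvalue(d):.2e}")
```

The smallest eigenvalue shrinks as the two centers approach, because the diffuse functions on each center become nearly indistinguishable.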

2. Protocol for Systematic Basis Set Reduction

If linear dependency is detected, follow this empirical protocol to select an alternative basis set:

| Basis Set Characteristic | High-Risk Choice (Causes LD) | Recommended Alternative | Rationale |

|---|---|---|---|

| Diffuseness | aug-cc-pVXZ, def2-SVPD | cc-pVXZ, def2-SVP | Reduces functional overlap in space [17] |

| Size | def2-QZVPP, cc-pV5Z | def2-TZVP, cc-pVTZ | Fewer functions reduce redundancy risk |

| Element Applicability | Diffuse on heavy atoms | Diffuse only on key atoms (e.g., O, N) | Maintains accuracy where needed |

3. Protocol for Geometry Checking and Correction

- Visualize the structure using a molecular viewer (e.g., GaussView, Avogadro).

- Measure Interatomic Distances: Pay special attention to any distance shorter than 0.8 Å, as this is a common cause.

- Correct the Geometry:

- If from a crystallographic database, ensure no atoms are accidentally overlapped.

- If from a prior optimization, consider re-optimizing with a tighter convergence criterion or a different method.

- Manually adjust the problematic coordinates and restart the calculation.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" – the methods and basis sets essential for managing linear dependency.

| Item / Keyword | Function / Purpose | Application Note |

|---|---|---|

| Gen keyword | Allows specification of a mixed basis set for different atoms. | Critical for applying diffuse functions only where chemically necessary. |

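| ExtraBasis & ExtraDensityBasis | Add user-supplied functions to the primary basis set (ExtraBasis) or to the density-fitting basis set (ExtraDensityBasis). | Relevant to density fitting and auxiliary-basis approaches, which aim to improve accuracy without enlarging the primary basis set [17]. |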

| Integral IOp | Controls integral computation and printing options. | Advanced use; IOp(3/33=1) prints the one-electron integral matrices, including the overlap matrix, whose eigenvalues can then be analyzed for diagnosis. |

| SCF keyword | Controls the SCF convergence algorithm. | Using SCF=XQC can sometimes help convergence in difficult cases. |

| Compact Basis Sets (e.g., cc-pVDZ, def2-SVP) | A smaller, non-diffuse basis set for initial testing. | Use to establish a stable baseline before introducing diffuseness [17]. |

| CABS Singles Correction | A computational technique to recover correlation energy. | Enables the use of compact basis sets while maintaining accuracy for NCIs [17]. |

Frequently Asked Questions (FAQs)

1. What is manual basis set pruning and why is it necessary in computational chemistry? Manual basis set pruning is the process of intentionally removing specific basis functions to manage issues like linear dependence, particularly when using large, augmented basis sets (e.g., aug-cc-pVTZ). This is crucial for maintaining numerical stability in calculations, as linear dependence can cause convergence failures and inaccurate results in electronic structure modeling for DOS research [18].

2. How do I identify problematic functions that should be pruned?

Problematic functions are identified through linear dependence checks. Quantum chemistry packages typically compare the eigenvalues of the overlap matrix against a threshold and report near-linear dependencies; in Q-Chem, this threshold is controlled by the BASIS_LIN_DEP_THRESH variable. Functions contributing to these dependencies, often high angular momentum functions or diffuse functions in augmented sets, are candidates for pruning [18].

3. What are the best practices for selecting basis set pairings to avoid pruning? The most reliable strategy is to use a small basis set that is a proper subset of your target basis set. This not only enhances accuracy but also improves computational efficiency. For example, using 6-31G as the small basis for a 6-31G* target calculation is a validated pairing [18]. The table below summarizes recommended pairings.

4. My calculation with an aug-cc-pVTZ basis failed due to linear dependence. What should I do?

For large, augmented basis sets, it is recommended to use a predefined, properly truncated subset. In Q-Chem, the racc-pVTZ basis is designed as a subset of aug-cc-pVTZ specifically to avoid linear dependence issues. Switching to this predefined subset is a preferred solution over manual, ad-hoc pruning [18].

5. Can I use general or mixed basis sets in a dual-basis calculation to emulate pruning?

Yes, you can employ user-specified general or mixed basis sets in dual-basis calculations. The target basis is specified in the standard $basis section, while a smaller, secondary basis is placed in a $basis2 section. This is activated with BASIS2 = BASIS2_GEN or BASIS2 = BASIS2_MIXED and effectively allows you to perform calculations with a pruned basis set [18].

Research Reagent Solutions: Essential Basis Sets for Pruning

The following table lists key basis sets and their recommended subsets, which act as pre-validated "pruned" versions for stable calculations [18].

| Target Basis Set | Recommended Subset (Pruned) Basis | Primary Application Context |

|---|---|---|

| cc-pVTZ | rcc-pVTZ | High-accuracy correlation-consistent calculations |

| cc-pVQZ | rcc-pVQZ | Very high-accuracy correlation-consistent calculations |

| aug-cc-pVDZ | racc-pVDZ | Calculations requiring diffuse functions on smaller atoms |

| aug-cc-pVTZ | racc-pVTZ | Calculations requiring diffuse functions, avoiding linear dependence |

| aug-cc-pVQZ | racc-pVQZ | High-accuracy calculations with diffuse functions |

| 6-31G* | r64G, 6-31G | DFT and HF calculations on first- and second-row elements |

| 6-31G** | r64G, 6-31G | As above, with additional polarization |

| 6-31++G** | 6-31G* | Calculations with diffuse and polarization functions |

| 6-311++G(3df,3pd) | 6-311G*, 6-311+G* | Large basis set for high-level methods like MP2 or CCSD(T) |

Experimental Protocol: A Workflow for Managing Basis Set Linear Dependence

This protocol provides a step-by-step methodology for identifying and resolving linear dependency issues, a critical procedure for ensuring robust Density of States (DOS) research.

1. Problem Identification

- Objective: Determine if your electronic structure calculation is failing due to linear dependence in the basis set.

- Procedure:

a. Run a single-point energy or geometry optimization calculation with your target basis set (e.g., aug-cc-pVTZ).

b. Check the output log file for warnings or errors explicitly mentioning "linear dependence" or "overcomplete basis."

c. Note the reported condition number or the number of basis functions removed if the software attempts automatic remediation.

2. Basis Set Diagnosis and Selection

- Objective: Choose an appropriate strategy to resolve the linear dependence.

- Procedure:

a. Consult Predefined Pairings: First, consult the table of recommended basis set pairings above. If your target basis is listed, use its designated subset for the smaller basis in a dual-basis calculation or as a direct replacement [18].

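b. Dual-Basis Setup: If a predefined subset exists, configure a dual-basis calculation. Specify the target basis in the $basis group and the subset basis in the $basis2 group.

c. Linear Dependence Threshold: If you must use the full basis, adjust the linear dependence threshold so that numerically problematic functions are removed. In Q-Chem this is the BASIS_LIN_DEP_THRESH variable, which takes an integer n and sets the threshold to 10^-n; the default corresponds to 1.0E-06, and a larger threshold (e.g., 1.0E-05) removes more near-dependent functions at some cost in accuracy [18].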
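The effect of a linear dependence threshold can be illustrated with canonical orthogonalization, a standard technique in which eigenvectors of the overlap matrix with eigenvalues below the threshold are discarded before the basis is orthonormalized. The sketch below is a generic NumPy illustration under that assumption, not the internal implementation of Q-Chem or any other package; the function name and the toy overlap matrix are hypothetical.

```python
import numpy as np

def canonical_orthogonalizer(S, threshold=1e-6):
    """Return (X, n_removed) with X.T @ S @ X = I, discarding eigenvectors
    of the overlap matrix S whose eigenvalues fall below `threshold`."""
    eigvals, eigvecs = np.linalg.eigh(S)
    keep = eigvals > threshold
    # Scale each retained eigenvector by 1/sqrt(eigenvalue)
    X = eigvecs[:, keep] / np.sqrt(eigvals[keep])
    return X, int(np.sum(~keep))

# Toy overlap matrix (hypothetical): functions 0 and 1 are almost identical
S = np.array([[1.0, 1.0 - 1e-9, 0.2],
              [1.0 - 1e-9, 1.0, 0.2],
              [0.2, 0.2, 1.0]])
X, n_removed = canonical_orthogonalizer(S)
print("functions removed:", n_removed)
print("retained dimension:", X.shape[1])
```

Raising `threshold` removes more combinations and improves conditioning, but it also shrinks the variational space, which is why results should be validated after any change.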

3. Calculation and Validation

- Objective: Execute the modified calculation and validate the results.

- Procedure:

a. Run the calculation with the pruned or subset basis set.

b. Confirm the absence of linear dependence errors in the output log.

c. Compare key properties (e.g., total energy, HOMO-LUMO gap, DOS profile) with results from a stable, smaller basis set to ensure the new results are physically reasonable and not an artifact of aggressive pruning.
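One way to make the DOS comparison in the validation step quantitative is to broaden each set of orbital energies with Gaussians and integrate the absolute difference between the two curves. The sketch below assumes the orbital energies have already been parsed from the two output files; the energies shown are placeholder values, and the broadening width `sigma` is an arbitrary choice.

```python
import numpy as np

def gaussian_dos(energies, grid, sigma=0.1):
    """Gaussian-broadened DOS: one normalized Gaussian per orbital energy."""
    diff = grid[:, None] - np.asarray(energies)[None, :]
    weights = np.exp(-0.5 * (diff / sigma) ** 2)
    return weights.sum(axis=1) / (sigma * np.sqrt(2.0 * np.pi))

def dos_difference(e_ref, e_test, grid, sigma=0.1):
    """Integrated absolute difference between two broadened DOS curves."""
    delta = np.abs(gaussian_dos(e_ref, grid, sigma) - gaussian_dos(e_test, grid, sigma))
    return float(np.sum(delta) * (grid[1] - grid[0]))

# Placeholder orbital energies in eV; replace with values from your own outputs
e_small = np.array([-10.2, -8.1, -7.9, -1.0, 0.5])
e_pruned = np.array([-10.3, -8.2, -7.8, -1.1, 0.4])
grid = np.linspace(-15.0, 5.0, 2001)
print("integrated |DOS difference|:", dos_difference(e_small, e_pruned, grid))
```

A value near zero indicates that the two profiles agree within the chosen broadening; what counts as acceptable depends on the property being studied.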

The logical workflow for this protocol is summarized in the following diagram:

Workflow for Managing Basis Set Linear Dependence

Your Pivoted Cholesky FAQs Answered

Q1: What is the fundamental difference between a standard Cholesky decomposition and a pivoted Cholesky decomposition?

The standard Cholesky decomposition factorizes a symmetric positive-definite matrix (A) uniquely into the product of a lower triangular matrix and its transpose, (A = LL^T) [19] [20]. The pivoted Cholesky decomposition, also known as Cholesky decomposition with complete pivoting, introduces a permutation matrix (P) for enhanced numerical stability. It returns a permutation matrix (P) and a unique upper triangular matrix (R) such that (P^T A P = R^T R) [19]. The permutation is chosen to bring the largest remaining diagonal element to the pivot position at each iteration, which helps control round-off errors and is particularly crucial for positive semidefinite or ill-conditioned matrices [19].

Q2: How does the pivoting strategy work, and what is its statistical interpretation?

The pivoting strategy selects the pivot element at each step (k) by examining the submatrix (B = A(k:n, k:n)) and choosing the index (l) corresponding to the maximum value on the diagonal of (B) [19]. Rows and columns (k) and (l) are then swapped before proceeding with the standard Cholesky step.

If (A) is a variance or covariance matrix, the pivoting order has a clear statistical interpretation [19] [21]:

- The first pivot corresponds to the element with the largest variance.

- The second pivot is the index of the element with the largest variance conditioned on the first element.

- The third pivot is the index with the largest variance conditioned on the first two, and so on. This makes it a greedy algorithm for uncertainty reduction.

Q3: My decomposition is failing for a covariance matrix that should be positive semidefinite. How can I fix this?

This is a common issue often caused by numerical round-off errors making the matrix slightly indefinite. A practical solution is to add a small value (e.g., (1 \times 10^{-5})) to the diagonal of your covariance matrix (\Sigma) [21]. This is equivalent to adding a tiny amount of independent Gaussian noise to your process, which regularizes the matrix without significantly impacting the results. The pivoted Cholesky is more robust to such issues, but this regularization can ensure successful decomposition.
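A minimal sketch of this regularization, assuming NumPy: the helper tries a standard Cholesky factorization and, on failure, adds diagonal jitter that grows tenfold per retry. The function name, starting value, and retry policy are illustrative assumptions.

```python
import numpy as np

def cholesky_with_jitter(K, jitter=1e-5, max_tries=5):
    """Cholesky factorization that adds growing diagonal jitter on failure.

    Returns (L, added) where L @ L.T equals K + added * I.
    """
    n = K.shape[0]
    added = 0.0
    for _ in range(max_tries):
        try:
            return np.linalg.cholesky(K + added * np.eye(n)), added
        except np.linalg.LinAlgError:
            # Start at `jitter`, then increase tenfold on each retry
            added = jitter if added == 0.0 else added * 10.0
    raise np.linalg.LinAlgError("matrix is not positive definite even with jitter")

# Covariance pushed slightly indefinite by round-off (illustrative values)
K = np.array([[1.0, 1.0 + 1e-12],
              [1.0 + 1e-12, 1.0]])
L, added = cholesky_with_jitter(K)
print("jitter added:", added)
```

Returning the amount of jitter makes it easy to log how much the matrix was perturbed, which helps when judging whether the regularization is affecting results.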

Q4: When using pivoted Cholesky for low-rank approximation, how do I choose the stopping point (max_rank)?

In low-rank approximations, you can terminate the algorithm early after (K) steps, yielding a rank-(K) approximation [22]. The parameter max_rank controls the number of columns in the output. The accuracy can be controlled by a diagonal tolerance diag_rtol; the decomposition can be stopped when the next pivot element is less than diag_rtol multiplied by the first pivot element [22]. The optimal rank depends on your application's error tolerance.
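The pivoting rule and the two stopping criteria can be sketched in plain NumPy as below. This is an illustrative implementation of the greedy algorithm described above, not the code used by any particular library; the parameter names `max_rank` and `diag_rtol` are borrowed from the text, and the kernel parameters in the example are arbitrary.

```python
import numpy as np

def pivoted_cholesky(A, max_rank=None, diag_rtol=1e-8):
    """Greedy pivoted Cholesky of a symmetric PSD matrix.

    Returns (L, pivots) with A approximately equal to L @ L.T.
    """
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    max_rank = n if max_rank is None else min(max_rank, n)
    d = np.diag(A).copy()            # residual diagonal (conditional variances)
    L = np.zeros((n, max_rank))
    pivots = []
    first_pivot = d.max()
    for k in range(max_rank):
        p = int(np.argmax(d))        # largest remaining diagonal element
        if d[p] <= diag_rtol * first_pivot:
            return L[:, :k], pivots  # tolerance reached: stop early
        pivots.append(p)
        L[:, k] = (A[:, p] - L[:, :k] @ L[p, :k]) / np.sqrt(d[p])
        d -= L[:, k] ** 2
        d[p] = 0.0                   # guard against round-off at the pivot
    return L, pivots

# Squared-exponential covariance on 50 integer points (illustrative parameters)
idx = np.arange(50.0)
Sigma = np.exp(-(idx[:, None] - idx[None, :]) ** 2 / 10.0 ** 2)

L_tol, pivots = pivoted_cholesky(Sigma, diag_rtol=1e-6)  # stop by tolerance
L_5, _ = pivoted_cholesky(Sigma, max_rank=5)             # stop by rank
print("rank chosen by tolerance:", L_tol.shape[1])
print("max error (tolerance):", np.max(np.abs(Sigma - L_tol @ L_tol.T)))
print("max error (rank 5):", np.max(np.abs(Sigma - L_5 @ L_5.T)))
```

Because the residual of a positive semidefinite matrix is bounded by its largest diagonal entry, the tolerance-based stop directly controls the largest element-wise error of the approximation.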

Troubleshooting Common Experimental Issues

| Issue | Possible Cause | Solution |

|---|---|---|

| Decomposition fails for a covariance matrix | Numerical round-off errors make the matrix numerically indefinite. | Add a small regularization term (e.g., 1e-5 * np.eye(n)) to the matrix diagonal [21]. |

| Poor low-rank approximation accuracy | The chosen max_rank is too low, or the diag_rtol tolerance is too large. | Increase max_rank or tighten diag_rtol. Monitor the approximation error ( \|A - LL^T\| ). |

| Algorithm is too slow for large matrices | Using a naive Python implementation with nested loops. | Utilize highly optimized library functions (e.g., tfp.math.pivoted_cholesky) [22] or accelerate code with Numba [23]. |

Experimental Protocols & Workflows

Detailed Methodology: Probabilistic View for Gaussian Processes

The connection between pivoted Cholesky and Gaussian Processes (GPs) is foundational. In GP inference, the covariance matrix (K) must be inverted. The pivoted Cholesky decomposition (P^T K P = R^T R) is used for this, and the pivot order can be interpreted as a greedy selection of data points to maximize information gain (entropy reduction) [24]. A novel research direction involves using this to select the most informative points for sparse GPs.

Protocol: Generating a Low-Rank Approximation for a Covariance Matrix

This protocol uses the TensorFlow Probability function tfp.math.pivoted_cholesky [22].

- Define your covariance function and parameters. For example, use a squared-exponential kernel: (\Sigma_{ij} = \sigma_1^2 \exp(-(i - j)^2 / \sigma_2^2)).

- Construct the covariance matrix ( \Sigma ) for your data points.

- Set approximation parameters. Choose the maximum rank max_rank for your low-rank factor and a relative tolerance diag_rtol.

- Compute the decomposition. Call lr = tfp.math.pivoted_cholesky(matrix=Sigma, max_rank=max_rank, diag_rtol=diag_rtol).

- Use the result. The matrix lr is the low-rank factor such that ( \Sigma \approx lr \cdot lr^T ). This factor can be used for efficient linear algebra in preconditioners [22] [24].

Logical Workflow for Pivoted Cholesky Decomposition

The Scientist's Toolkit

| Research Reagent / Tool | Function in Experiment |

|---|---|

| TensorFlow Probability (TFP) | Provides the pivoted_cholesky function for computing (partial) pivoted decompositions, directly enabling low-rank approximation experiments [22]. |

| Covariance Matrix | The symmetric positive-semidefinite matrix being decomposed; often arises from kernel functions in GPs or correlation between basis functions [21]. |

| Permutation Matrix (P) | The output that defines the reordering of rows and columns to ensure numerical stability; interprets the sequence of conditional variances [19]. |

| Upper Triangular Matrix (R) | The unique decomposition factor such that (P^T A P = R^T R); the core output used for solving linear systems or low-rank approximation [19]. |

| Regularization Parameter | A small constant added to the matrix diagonal to ensure numerical positive definiteness and successful decomposition [21]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is linear dependency in basis sets, and why is it a critical problem in calculating Density of States (DOS) for drug discovery?

Linear dependency occurs when the basis functions used in a quantum chemical calculation are not linearly independent, leading to a numerically ill-conditioned overlap matrix. This is a critical problem because it causes instability in the self-consistent field procedure, resulting in inaccurate molecular orbital energies and, consequently, an unreliable Density of States (DOS). In drug discovery, an unreliable DOS directly compromises the prediction of key molecular properties like reactivity, binding affinity, and electronic excitation energies, which are essential for assessing a drug candidate's potential [25].

FAQ 2: My calculation for a large molecular system (e.g., a protein-ligand complex) failed with a linear dependency error. What are my primary options to resolve this?

When dealing with large molecules, linear dependency becomes more probable due to the large number of basis functions. Your primary options are:

- Use a different basis set: Switch to a smaller or more robust basis set, though this may reduce accuracy.

- Employ a plane wave basis set: Plane wave basis sets are inherently free from the linear dependency problems that can plague Gaussian-type orbital basis sets for large, densely packed molecular structures [25].

- Leverage local correlation methods: For wavefunction-based theories like CCSD(T), using domain-based local pair-natural orbital (DLPNO) methods can help manage the computational cost and potential instabilities when calculating interaction energies for large systems [25].

FAQ 3: How can I systematically assess if my dataset is suitable for training a machine learning model to predict molecular properties?

Before integrating datasets for machine learning, a rigorous Data Consistency Assessment (DCA) is crucial. This involves:

- Checking for distributional misalignments: Analyze if the molecular property endpoints (e.g., half-life, clearance) from different sources follow similar statistical distributions.

- Identifying annotation discrepancies: Detect inconsistent property annotations for the same molecules across different datasets.

- Analyzing chemical space coverage: Evaluate whether the combined data provides a representative and broad coverage of the chemical space of interest.

Tools like AssayInspector can automate this process by generating statistical summaries, visualizations, and diagnostic reports to identify outliers, batch effects, and dataset discrepancies [26].

Troubleshooting Guides

Issue 1: Inaccurate Non-Covalent Interaction Energies in Large Molecules

Problem Description: Calculations of non-covalent interaction energies (e.g., for protein-ligand binding or molecular crystal packing) for large molecules on the hundred-atom scale show significant discrepancies when comparing different high-level quantum mechanics methods, such as between Diffusion Monte Carlo (DMC) and CCSD(T) [25].

Diagnosis: A primary source of this discrepancy can be "overcorrelation" in the standard CCSD(T) method, particularly its (T) component. For large, polarizable molecules, the perturbative treatment of triple excitations in (T) can overestimate the attraction, leading to an interaction energy that is too strong. This is related to the method's difficulty with systems exhibiting large polarizabilities [25].

Resolution Protocol:

- Method Selection: For large molecules, consider using a modified coupled-cluster approach, such as CCSD(cT). This method includes selected higher-order terms that screen the bare Coulomb interaction, mitigating the overcorrelation issue seen in standard CCSD(T) without a significant increase in computational cost [25].

- Basis Set Check: Ensure your atom-centered Gaussian basis set is of sufficient quality and is not suffering from near-linear dependencies. For densely packed structures, using a plane wave basis set can provide an unbiased assessment and avoid these issues [25].

- Validation: Where possible, compare your results against other highly accurate methods like DMC or experimental data (if available) to validate the findings.

Table: Key Characteristics of Quantum Methods for Large Molecules

| Method | Key Feature | Advantage for Large Molecules | Consideration |

|---|---|---|---|

| CCSD(T) | "Gold standard"; perturbative triples | High accuracy for small systems | Can overcorrelate for large, polarizable systems [25] |

| CCSD(cT) | Screened triple excitations | Reduces overcorrelation; more robust for large systems | A modified approach to the standard (T) [25] |

| Plane Wave CCSD(T) | Uses plane wave basis set | Avoids linear dependency of Gaussian basis sets | Requires specialized computational setup [25] |

| DLPNO-CCSD(T) | Local correlation approximation | Makes CCSD(T) feasible for large molecules | Introduces local approximations that need checking [25] |

Issue 2: Data Heterogeneity Degrading Molecular Property Prediction Models

Problem Description: After integrating multiple public datasets to train a machine learning model for predicting molecular properties (e.g., ADME - Absorption, Distribution, Metabolism, and Excretion), the model's predictive performance decreases instead of improving.

Diagnosis: The degradation is likely caused by hidden inconsistencies between the datasets. These can include:

- Distributional misalignments: Differences in experimental protocols or conditions leading to shifts in property value distributions.

- Annotation discrepancies: The same molecule having different property values in different source datasets.

- Lack of representativeness: The combined data may not uniformly cover the chemical space, creating biases [26].

Resolution Protocol:

- Pre-Modeling Data Consistency Assessment (DCA): Use a tool like AssayInspector to systematically profile and compare all datasets you plan to integrate [26].

- Generate Diagnostic Reports: The tool provides alerts on conflicting annotations, significantly different endpoint distributions, and datasets with low molecular overlap.

- Informed Data Curation: Based on the DCA report, decide whether to:

- Exclude a dataset with irreconcilable differences.

- Standardize the data if discrepancies are minor and harmonizable.

- Stratify the training, treating data from different sources as separate groups.

The workflow below outlines this diagnostic process.

Data Integration Troubleshooting Workflow

Experimental Protocols & Reagent Solutions

Detailed Methodology: Data Consistency Assessment (DCA) for Molecular Property Data

This protocol is adapted from methodologies developed for robust molecular property prediction, utilizing the AssayInspector tool [26].

1. Objective: To systematically identify inconsistencies across multiple molecular property datasets prior to integration for machine learning model training.

2. Materials and Reagents:

- Datasets: Public or proprietary molecular property datasets (e.g., half-life, clearance, solubility).

- Software: The AssayInspector Python package (available at https://github.com/chemotargets/assay_inspector).

- Computational Environment: Standard Python data science stack (e.g., RDKit, SciPy, NumPy).

3. Procedure:

Step 3.1: Data Collection and Preprocessing

- Gather all datasets intended for integration.

- Apply consistent pre-processing: standardize molecular structures (e.g., using RDKit), normalize text-based annotations, and handle missing values uniformly.

Step 3.2: Configure and Run AssayInspector

- Load the standardized datasets into AssayInspector.

- Configure the tool to calculate relevant molecular descriptors (e.g., ECFP4 fingerprints, 1D/2D RDKit descriptors) and specify the property endpoint for comparison.

- Execute the analysis to generate a comprehensive report.

Step 3.3: Analyze the Diagnostic Output The tool generates three key types of diagnostics:

- Tabular Summary: Review key statistics (mean, standard deviation, quartiles) for the property endpoint across each dataset. Use the two-sample Kolmogorov-Smirnov test results to identify significantly different distributions.

- Visualization Plots: Examine plots for property distribution, chemical space (via UMAP projection), and dataset intersection to visually identify misalignments and outliers.

- Insight Report: Heed alerts flagging "conflicting datasets" (differing annotations for shared molecules), "dissimilar datasets" (based on descriptor profiles), and "divergent datasets" (with low molecular overlap).

Step 3.4: Make Data Integration Decisions Based on the DCA report, decide on the integration strategy: exclude problematic data, standardize values, or proceed with stratified learning.

4. Expected Output: A curated, consistent, and integrated dataset suitable for training reliable predictive models, along with a diagnostic report documenting all identified issues and corrective actions taken.
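Two of the checks in this protocol, distribution comparison and conflicting annotations, can be sketched by hand with NumPy. The code below does not use or reproduce the AssayInspector API; the function names, the relative tolerance, and the example values are hypothetical and serve only to show what the diagnostics measure.

```python
import numpy as np

def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between
    the empirical CDFs of samples a and b."""
    a, b = np.sort(a), np.sort(b)
    pooled = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, pooled, side="right") / a.size
    cdf_b = np.searchsorted(b, pooled, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))

def conflicting_annotations(ds1, ds2, rel_tol=0.2):
    """Molecules present in both datasets whose values differ by more than
    rel_tol relative to the larger magnitude."""
    shared = ds1.keys() & ds2.keys()
    return sorted(
        m for m in shared
        if abs(ds1[m] - ds2[m]) > rel_tol * max(abs(ds1[m]), abs(ds2[m]))
    )

# Hypothetical half-life values (hours), keyed by SMILES string
source_a = {"CCO": 1.0, "c1ccccc1": 4.0, "CC(=O)O": 2.0}
source_b = {"CCO": 1.1, "c1ccccc1": 9.0, "CCN": 3.0}

ks = ks_statistic(list(source_a.values()), list(source_b.values()))
conflicts = conflicting_annotations(source_a, source_b)
print("KS statistic:", ks)
print("conflicting molecules:", conflicts)
```

A full assessment would also convert the KS statistic into a p-value and compare chemical space coverage, which dedicated tools handle more thoroughly.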

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Reliable DOS and Property Prediction

| Item / Software | Function in Research |

|---|---|

| AssayInspector Package | A model-agnostic Python tool for systematic Data Consistency Assessment (DCA) prior to model training. It identifies outliers, batch effects, and annotation discrepancies across datasets [26]. |

| Plane Wave Basis Sets | An alternative to Gaussian-type orbital basis sets in quantum chemistry calculations. They are numerically stable and avoid the linear dependency problems that can occur in large molecules with traditional basis sets [25]. |

| CCSD(cT) Method | A modified coupled-cluster method that provides more accurate noncovalent interaction energies for large, polarizable molecules compared to the standard CCSD(T) by mitigating "overcorrelation" [25]. |

| Domain-based Local Pair-Natural Orbital (DLPNO) Methods | Approximations that reduce the computational cost of high-level ab initio methods like CCSD(T), making them applicable to large drug-like molecules while controlling for potential errors from local approximations [25]. |

Diagnosing Errors and Optimizing Basis Sets for Specific Targets

FAQ: Handling "Linear Dependency in Basis Set" Errors

What does the "linear dependency in basis set" error mean in simple terms?

In computational chemistry, a basis set is a collection of mathematical functions used to describe the behavior of electrons in a molecule. A "linear dependency" error occurs when two or more of these functions become so similar that the computer can no longer treat them as independent entities. This breaks the underlying mathematical procedure: solving the Schrödinger equation in a non-orthogonal basis requires inverting the overlap matrix (or its square root), and linear dependency makes that matrix singular, and therefore non-invertible [27].

What are the most common root causes for this error in drug discovery research?

This error frequently arises in projects relevant to drug discovery, such as modeling metalloenzyme active sites or large biomolecular complexes. The primary causes are:

- Overlapping Functions in Diffuse Basis Sets: Using large, diffuse basis sets (e.g., for modeling non-covalent interactions in protein-ligand binding) on atoms in close proximity can cause their basis functions to overlap excessively [28].

- Large Systems with Many Basis Functions: When studying large systems like protein-protein interfaces or supramolecular assemblies, the total number of basis functions can become very large. The probability of linear dependence increases with system size [28].

- Metal Complexes with High Coordination: Modeling transition metal complexes in catalysts or metallodrugs often involves many atoms and large basis sets, creating a prime scenario for this error, especially when using pseudopotentials or effective core potentials that add many diffuse functions [28].

What is a step-by-step diagnostic protocol to identify the root cause?

Follow this systematic diagnostic workflow to pinpoint the source of the linear dependency in your calculation.

Step 1: Inspect Molecular Geometry

- Methodology: Visually inspect your molecular structure file (e.g., .xyz, .gjf, .com) using a visualization tool like GaussView, Avogadro, or PyMOL.

- Diagnostic Action: Look for any two atoms with an improbably small interatomic distance (less than 0.1 Å is a strong indicator). This is a common issue with poorly optimized structures or files with manual input errors.

- Expected Outcome: A clean geometry in which all interatomic distances are consistent with typical bond lengths and nonbonded contacts.

Step 2: Analyze Basis Set Choice

- Methodology: Review the input file's route section to identify the chosen basis set. Cross-reference the basis set's definition in your quantum chemistry program's library (e.g., in Gaussian, ORCA).

- Diagnostic Action: Identify if you are using a basis set with highly diffuse functions, such as "aug-" (augmented) basis sets (e.g., aug-cc-pVDZ) or those with multiple diffuse shells. These are prone to causing linear dependence, especially in dense regions of the molecule.

- Expected Outcome: Confirmation that the basis set is appropriate for the system's size and electronic properties.

Step 3: Check for Redundant Atoms

- Methodology: Use software scripting (e.g., with Python or a command-line tool like obabel) to analyze the coordinate list in your input file.

- Diagnostic Action: Write a simple script to calculate all interatomic distances and flag any pair of atoms with identical or nearly identical coordinates (differences < 0.001 Å), which suggests a file corruption or input error.

- Expected Outcome: A unique set of atomic coordinates.
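The distance check described in this step can be sketched as follows. The script assumes coordinates in the standard XYZ layout (atom count, comment line, then one atom per line) and uses NumPy; the function names and the example structure, a water molecule with an accidentally duplicated oxygen, are hypothetical.

```python
import numpy as np

def read_xyz(text):
    """Parse the text of a standard XYZ file into (symbols, coordinates)."""
    lines = text.strip().splitlines()
    n_atoms = int(lines[0])
    symbols, coords = [], []
    for line in lines[2:2 + n_atoms]:       # skip the count and comment lines
        parts = line.split()
        symbols.append(parts[0])
        coords.append([float(x) for x in parts[1:4]])
    return symbols, np.array(coords)

def close_contacts(coords, threshold):
    """Return (i, j, distance) for every atom pair closer than threshold (Å)."""
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    i_idx, j_idx = np.triu_indices(len(coords), k=1)   # each pair once
    return [(int(i), int(j), float(dist[i, j]))
            for i, j in zip(i_idx, j_idx) if dist[i, j] < threshold]

xyz_text = """4
water with a duplicated oxygen (illustrative input)
O  0.000 0.000 0.000
H  0.757 0.586 0.000
H -0.757 0.586 0.000
O  0.000 0.000 0.0005
"""
symbols, coords = read_xyz(xyz_text)
duplicates = close_contacts(coords, threshold=0.001)
for i, j, d in duplicates:
    print(f"atoms {i} ({symbols[i]}) and {j} ({symbols[j]}) are {d:.4f} Å apart")
```

The same function with a larger threshold can flag physically implausible short contacts in addition to exact duplicates.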

Step 4: Verify Internal Coordinates

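- Methodology: Check which coordinate system your calculation uses. Redundant internal coordinates are often the default for geometry optimizations in programs like Gaussian.

- Diagnostic Action: In your input file, optionally add the %nosave directive so that scratch files are deleted at the end of the job, and use IOp(3/32=2) to change how linearly dependent functions are handled; comparing the resulting output log with that of the default run can help identify the problematic functions. Verify the meaning of this IOp in the reference for your Gaussian version, since internal options are version-dependent.

- Expected Outcome: The output log will specify the exact basis functions that are linearly dependent.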

Step 5: System Size Check

- Methodology: Perform a single-point energy calculation on a smaller, core fragment of your system (e.g., just the active site of an enzyme without the entire protein backbone).

- Diagnostic Action: If the error disappears with the smaller fragment, the root cause is the sheer number of basis functions in the full system.

- Expected Outcome: Successful calculation on the subsystem confirms the error is scale-related.

What are the proven solutions and workarounds?

Based on the root cause identified in the diagnostic steps above, implement the corresponding solution from the table below.

| Root Cause | Solution Category | Specific Protocol / Reagent | Key Function |

|---|---|---|---|

| Atoms too close | Geometry Correction | Use a molecular mechanics force field (e.g., UFF, GAFF via OpenFF/OpenMM [29]) for a preliminary geometry optimization to resolve steric clashes. | Provides a physically reasonable starting geometry. |

| Overly diffuse basis set | Basis Set Pruning | Use a segmented basis set or remove diffuse functions from heavy atoms not involved in the interactions of interest (e.g., gen keyword in Gaussian). | Reduces overlap between basis functions. |

| System too large | Model Simplification | Switch to a smaller, more computationally efficient method like GFN2-xTB [29] for initial geometry optimizations, then refine with a higher-level method. | Enables handling of large systems. |

| System too large | Advanced Modeling | Employ a Neural Network Potential (NNP) like Meta's eSEN or UMA models trained on the OMol25 dataset [28]. | Provides DFT-level accuracy for massive systems at a fraction of the cost. |

| General Prevention | Integral Accuracy | Use a finer integration grid. In Gaussian, use Int=UltraFine. | Improves numerical precision in matrix operations. |
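For the "Atoms too close" root cause in the table above, a quick pre-check of the input geometry can flag clashes before any quantum chemical job is submitted. The sketch below is a minimal illustration, not part of any package mentioned here: the function name `find_close_contacts`, the 0.7 Å cutoff, and the three-atom geometry are all assumptions chosen for the example.

```python
import math
from itertools import combinations

def find_close_contacts(atoms, min_distance=0.7):
    """Return (i, j, distance) for every atom pair closer than min_distance.

    atoms is a list of (element, (x, y, z)) tuples with coordinates in angstrom.
    The 0.7 angstrom default is an illustrative cutoff, not a recommended value.
    """
    contacts = []
    for (i, (_, pos_i)), (j, (_, pos_j)) in combinations(enumerate(atoms), 2):
        distance = math.dist(pos_i, pos_j)
        if distance < min_distance:
            contacts.append((i, j, distance))
    return contacts

# Hypothetical geometry: atom 2 is deliberately placed almost on top of atom 1.
atoms = [
    ("C", (0.000, 0.000, 0.000)),
    ("H", (1.089, 0.000, 0.000)),
    ("H", (1.200, 0.100, 0.000)),
]

clashes = find_close_contacts(atoms)
for i, j, distance in clashes:
    print(f"atoms {i} and {j} are only {distance:.3f} angstrom apart")
```

Any pair reported here is a candidate for the force-field pre-optimization listed in the table.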

How can I validate that the fix was successful?

After implementing a solution, you must verify that the error is resolved and the results are physically meaningful.

- Calculation Completion: The primary validation is that the job runs to completion without a "linear dependency" error.

- Output Log Scrutiny: Check the output log for warnings about the overlap matrix or the SCF procedure. A clean output is ideal.

- Result Rationality: Validate the results. Check that the final optimized geometry is sensible, energies are converged, and molecular properties (like frequencies) are physically reasonable. Compare against a known reliable calculation of a similar, smaller system if possible.

- Reproducibility: The calculation should be reproducible with consistent results across multiple runs.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for preventing and diagnosing linear dependency issues.

| Item / Resource | Function in Diagnosis/Prevention | Relevance to DOS Research |

|---|---|---|

| GFN2-xTB | A semiempirical quantum chemical method ideal for fast geometry optimizations and pre-screening of large molecular systems, avoiding initial linear dependency issues [29]. | Provides a robust starting geometry for subsequent high-level Density of States (DOS) calculation. |

| Neural Network Potentials (NNPs) | Pre-trained models (e.g., eSEN, UMA) can compute energies and forces with DFT-level accuracy for systems too large for conventional DFT, thus bypassing the basis set dependency entirely [28]. | Enables DOS analysis of massive biomolecular complexes or materials that are otherwise computationally intractable. |

| OMol25 Dataset | A massive, high-accuracy dataset of quantum chemical calculations. Can be used to validate the expected energy ranges and properties for your system [28]. | Serves as a benchmark for validating the accuracy of your own DOS computations and method choices. |

| Pseudopotentials / ECPs | Replace core electrons in heavy atoms with an effective potential, reducing the number of basis functions required and mitigating linear dependence risk [28]. | Crucial for including heavy elements (e.g., transition metals in catalysts) in DOS studies without overwhelming the calculation. |

| Int=UltraFine Grid | An input keyword in programs like Gaussian that increases the accuracy of integral calculations, helping to numerically stabilize the SCF procedure [29]. | A simple but effective fix that can resolve numerical instabilities that manifest as linear dependencies. |

Troubleshooting Guides

1. How do I resolve a "CHOLSK BASIS SET LINEARLY DEPENDENT" error?

This error indicates that the basis functions in your quantum chemical calculation are not linearly independent, which prevents the matrix solver from proceeding. The primary causes and solutions are outlined below.

| Cause | Description | Solution |

|---|---|---|

| Diffuse Functions | Presence of basis functions with very small exponents (highly diffuse) [30]. | Manually remove basis functions with exponents below a threshold (e.g., 0.1) [30]. |

| Molecular Geometry | Atoms positioned too close together, causing their basis functions to overlap excessively [30]. | Use the LDREMO keyword to systematically remove linearly dependent functions [30]. |
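The manual route in the table (removing functions with exponents below a threshold such as 0.1) can be sketched as a simple filter. The data layout, the function name `prune_diffuse`, and the exponent values below are hypothetical and only illustrate the idea. The sketch drops single-primitive shells only, on the assumption that contracted shells should not be altered.

```python
def prune_diffuse(shells, min_exponent=0.1):
    """Split shells into (kept, removed) based on a minimum exponent.

    Only uncontracted shells (one primitive) are candidates for removal,
    so contracted shells are always kept unchanged.
    """
    kept, removed = [], []
    for shell in shells:
        exponents = shell["exponents"]
        if len(exponents) == 1 and exponents[0] < min_exponent:
            removed.append(shell)
        else:
            kept.append(shell)
    return kept, removed

# Hypothetical basis for one atom; the numbers are illustrative only.
shells = [
    {"type": "s", "exponents": [13.01, 1.962, 0.4446]},  # contracted shell
    {"type": "s", "exponents": [0.122]},
    {"type": "p", "exponents": [0.727]},
    {"type": "s", "exponents": [0.0297]},                # very diffuse
]

kept, removed = prune_diffuse(shells)
print(f"kept {len(kept)} shells, removed {len(removed)}")
```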

Using the LDREMO Keyword:

The LDREMO keyword instructs the code to diagonalize the overlap matrix in reciprocal space and remove functions corresponding to eigenvalues below a defined threshold. The keyword takes a single integer argument, written here as LDREMO <integer>.

The threshold for removal is <integer> * 10^-5. It is recommended to start with a value of 4. This feature only works in serial mode (running with a single process) [30].
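The idea behind this kind of removal can be illustrated with a generic canonical-orthogonalization sketch in NumPy. This is not the program's own implementation: it works on a small real-space matrix rather than in reciprocal space, and the function name, the argument `ldremo_integer`, and the 3x3 overlap matrix are assumptions made for the example.

```python
import numpy as np

def drop_small_eigenvectors(S, ldremo_integer=4):
    """Build an orthonormalizing transform X from the overlap matrix S.

    Eigenvectors whose eigenvalue falls below ldremo_integer * 1e-5 are
    discarded, so X.T @ S @ X is the identity in the reduced space.
    """
    threshold = ldremo_integer * 1e-5
    eigvals, eigvecs = np.linalg.eigh(S)
    keep = eigvals > threshold
    X = eigvecs[:, keep] / np.sqrt(eigvals[keep])
    return X, int(np.count_nonzero(~keep))

# Hypothetical overlap matrix: functions 0 and 1 are nearly identical.
S = np.array([
    [1.0,     0.99999, 0.2],
    [0.99999, 1.0,     0.2],
    [0.2,     0.2,     1.0],
])

X, n_removed = drop_small_eigenvectors(S)
print(f"removed {n_removed} combination(s); reduced basis size = {X.shape[1]}")
```

In this example the difference of functions 0 and 1 has an overlap eigenvalue of about 1e-5, which lies below the 4e-5 threshold, so one combination is dropped.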

2. How do I fix an "ILA DIMENSION EXCEEDED - INCREASE ILASIZE" error?

This error occurs when the pre-allocated memory for handling integral arrays is insufficient for your system's size and basis set [30].

- Solution: Explicitly increase the ILASIZE parameter in your input file. The default value is often 6000. You will need to consult your specific quantum chemistry software's manual for the exact procedure to set this parameter, as it is program-dependent [30].

Frequently Asked Questions

Why am I encountering these errors even when using a built-in, optimized basis set?

Built-in basis sets, especially those designed for molecular systems (like mTZVP), often include diffuse functions to ensure high accuracy. While optimized, they are not immune to geometric factors. If atoms in your specific crystal or molecular structure are closer together than in the systems the basis set was tested on, it can trigger linear dependence [30].
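The geometric effect can be made concrete with the textbook overlap of two normalized s-type Gaussians. For two functions the overlap matrix [[1, S], [S, 1]] has eigenvalues 1 + S and 1 - S, so the smallest eigenvalue approaches zero as S approaches 1. The exponents and distances below are hypothetical values picked to contrast a compact and a diffuse function.

```python
import math

def s_overlap(alpha, beta, distance):
    """Overlap of two normalized s-type Gaussians.

    Exponents are in bohr^-2 and the center-to-center distance is in bohr.
    """
    p = alpha + beta
    prefactor = (2.0 * math.sqrt(alpha * beta) / p) ** 1.5
    return prefactor * math.exp(-alpha * beta / p * distance ** 2)

# Compare a compact function (exponent 1.0) with a diffuse one (exponent 0.05)
# as two identical functions on neighboring atoms are brought closer together.
for exponent in (1.0, 0.05):
    for distance in (4.0, 2.0, 1.0):
        s = s_overlap(exponent, exponent, distance)
        print(f"exponent={exponent:<5} R={distance:.1f} bohr  "
              f"S={s:.4f}  smallest eigenvalue={1 - s:.4f}")
```

The diffuse pair stays close to S = 1 over the whole range, which is why short contacts combined with small exponents are the typical trigger.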

I used LDREMO and now get an ILASIZE error. What should I do?

This is not uncommon. The LDREMO process can sometimes require additional memory resources, pushing the calculation past the default ILASIZE limit. You should address the ILASIZE error first by increasing the parameter as described in the troubleshooting guide. After adjusting ILASIZE, the calculation with LDREMO should proceed [30].

Are there functional-specific considerations for these errors?

Yes. Some composite functionals (e.g., B973C) are explicitly designed for use with specific basis sets (e.g., mTZVP). Modifying the basis set by removing functions to fix linear dependence can introduce errors and invalidate the functional's parameterization. If linear dependency persists, it may be more appropriate to select a different functional and basis set combination that is better suited for your system, such as those developed for bulk materials rather than isolated molecules [30].

Experimental Protocols

Protocol 1: Systematic Approach to Resolving Linear Dependence

- Initial Assessment: Run your calculation in serial mode to ensure all error messages are visible [30].

- First Remediation Step: Add the LDREMO 4 keyword to your input file and re-run the calculation [30].

- Handle Subsequent Errors: If an ILASIZE error appears, consult your software manual and increase the ILASIZE parameter in your input file. Re-run the calculation [30].

- Alternative Path (Advanced): If LDREMO is insufficient, consider manually editing the basis set to remove diffuse functions with the smallest exponents, but be aware of the potential impact on the functional's validity [30].
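The decision logic of this protocol can be sketched as a small log scanner that maps each known error to its next step. The marker strings are substrings of the two error messages quoted in this guide; the exact wording in a real output file may differ, and the function name and sample log are hypothetical.

```python
# Substrings of the error messages quoted in this guide, mapped to the
# remediation step suggested by the protocol. Real logs may word these differently.
REMEDIES = {
    "BASIS SET LINEARLY DEPENDENT": "add LDREMO 4 and re-run in serial mode",
    "INCREASE ILASIZE": "increase ILASIZE and re-run",
}

def next_steps(log_text):
    """Return the suggested remediation for every known error found in log_text."""
    upper = log_text.upper()
    return [remedy for marker, remedy in REMEDIES.items() if marker in upper]

# Hypothetical log excerpt, for illustration only.
sample_log = "SCF STARTED\nCHOLSK BASIS SET LINEARLY DEPENDENT\n"

steps = next_steps(sample_log)
for step in steps:
    print("suggested next step:", step)
```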

Protocol 2: Statistical Benchmarking for Method Selection

When calculating properties like second hyperpolarizability (γ) for nonlinear optics, a robust statistical approach is crucial for evaluating computational methods [31].

- Full-Factorial Design: Employ an in silico full-factorial experimental design to systematically evaluate the performance of different computational methods (e.g., HF, DFT with various functionals, CCSD) and basis sets [31].

- Basis Set Selection: The Sadlej-pVTZ basis set has been shown to deliver exceptional performance for hyperpolarizability calculations, as the presence of diffuse functions is mandatory [31].

- Method Evaluation: Move beyond the Mean Absolute Deviation (MAD) as a sole metric. Use parameters from linear correlation analysis (slope, intercept, and R²) to more accurately rate the performance of various computational methods against experimental reference data [31].
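To see why MAD alone can mislead, the sketch below compares two hypothetical methods that have the same MAD against the reference but very different correlation statistics. All numbers are invented for illustration, and the helper names are not taken from the cited study.

```python
def mean_absolute_deviation(calc, ref):
    """Mean absolute deviation between calculated and reference values."""
    return sum(abs(c - r) for c, r in zip(calc, ref)) / len(ref)

def linear_fit(calc, ref):
    """Least-squares fit calc = slope * ref + intercept.

    Returns (slope, intercept, r_squared).
    """
    n = len(ref)
    mean_x = sum(ref) / n
    mean_y = sum(calc) / n
    sxx = sum((x - mean_x) ** 2 for x in ref)
    syy = sum((y - mean_y) ** 2 for y in calc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(ref, calc))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared

# Hypothetical data in arbitrary units.
reference = [1.0, 2.0, 3.0, 4.0]
method_a = [1.5, 2.5, 3.5, 4.5]   # constant offset: systematic, easy to correct
method_b = [0.5, 2.5, 2.5, 4.5]   # scattered errors of the same magnitude

for name, values in (("A", method_a), ("B", method_b)):
    mad = mean_absolute_deviation(values, reference)
    slope, intercept, r2 = linear_fit(values, reference)
    print(f"method {name}: MAD={mad:.2f} slope={slope:.2f} "
          f"intercept={intercept:.2f} R2={r2:.2f}")
```

Both methods share the same MAD, yet only method A reproduces the reference trend perfectly, which is the distinction the slope, intercept, and R² are meant to expose.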

The Scientist's Toolkit

Research Reagent Solutions: Computational Components

In computational chemistry, the "reagents" are the methodological components selected for the calculation.

| Item | Function in "Experiment" |

|---|---|

| Basis Set (e.g., Sadlej-pVTZ, mTZVP) | A set of mathematical functions that describe the distribution of electrons in an atom or molecule. It is the fundamental basis for the quantum mechanical model [31]. |

| Functional (e.g., B973C, LC-BLYP) | In Density Functional Theory (DFT), this is the rule that defines the exchange-correlation energy, a key term that determines the accuracy of the calculation [30] [31]. |

| LDREMO Keyword | A computational "reagent" that automatically identifies and removes linearly dependent basis functions to ensure numerical stability [30]. |

| ILASIZE Parameter | A memory allocation parameter that defines the size for handling integral arrays, preventing memory overflow errors in large systems [30]. |

Workflow Visualization

The diagram below outlines a logical workflow for diagnosing and resolving the discussed errors, integrating both computational and statistical considerations.

Frequently Asked Questions

1. What is the single most recommended basis set for DFT calculations on drug-like molecules? For Density Functional Theory (DFT) calculations on drug-like molecules, the def2-TZVP basis set is highly recommended as a starting point [32]. It offers a good balance of accuracy and computational cost for systems of this size. The closely related def2-SVP basis set was used for the large-scale QMugs dataset of drug-like molecules [33].

2. My calculation failed due to "linear dependence" in the basis set. What should I do? Linear dependence occurs when basis functions are too similar, causing numerical instability [4]. To address this:

- For small molecules: Manually remove basis functions with very similar exponents, particularly high-exponent "tight" functions [4].

- General solution: Use a pivoted Cholesky decomposition, a method implemented in programs like Psi4 and ERKALE, to automatically identify and remove linearly dependent functions [4].
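The pivoted Cholesky idea can be sketched generically in NumPy: pivots are chosen greedily by the largest remaining diagonal element of the overlap matrix, and the procedure stops once that element drops below a tolerance. This is an illustrative sketch under stated assumptions, not the Psi4 or ERKALE implementation; the function name, the tolerance, and the example matrix are hypothetical.

```python
import numpy as np

def pivoted_cholesky_select(S, tol=1e-6):
    """Return sorted indices of basis functions kept by a pivoted Cholesky of S.

    A function is kept if, at the time it is picked, the part of it not yet
    spanned by previously kept functions has squared norm of at least tol.
    """
    n = S.shape[0]
    residual_diag = np.diag(S).astype(float)   # astype returns a writable copy
    L = np.zeros((n, n))
    pivots = []
    for k in range(n):
        p = int(np.argmax(residual_diag))
        if residual_diag[p] < tol:
            break                               # everything left is redundant
        pivots.append(p)
        # New Cholesky column for pivot p, with earlier columns projected out.
        L[:, k] = (S[:, p] - L[:, :k] @ L[p, :k]) / np.sqrt(residual_diag[p])
        residual_diag -= L[:, k] ** 2
    return sorted(pivots)

# Hypothetical overlap matrix: function 1 is almost a copy of function 0.
S = np.array([
    [1.0,       0.9999999, 0.2],
    [0.9999999, 1.0,       0.2],
    [0.2,       0.2,       1.0],
])

kept = pivoted_cholesky_select(S)
print("functions kept:", kept)
```

Unlike eigenvalue-based removal, this approach keeps a subset of the original functions rather than linear combinations of them.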

3. How do I choose between double-zeta, triple-zeta, and larger basis sets? The choice involves a trade-off between accuracy and computational cost, which is critical for large drug-like molecules.

- Double-zeta (e.g., def2-SVP, cc-pVDZ): A reasonable minimum, but may lack sufficient accuracy for some properties [16] [34].

- Triple-zeta (e.g., def2-TZVP, cc-pVTZ): Recommended for a good cost/accuracy balance in DFT. This is often the best choice for final production calculations [32] [34].

- Larger sets (Quadruple-zeta and beyond): Typically used for high-accuracy benchmark studies or for converging properties like atomization energies to the complete basis set (CBS) limit [16].

4. When are "diffuse functions" necessary, and when should I avoid them? Diffuse functions are essential for accurately modeling anions, weak intermolecular interactions, and electronic properties like dipole moments [35]. However, they significantly increase computational cost and can introduce or worsen linear dependencies, especially for large, polarizable molecules [4] [34]. Use them only when necessary.

5. Are older basis sets like 6-31G* still acceptable to use? They still run, but more modern basis sets are generally superior. It is recommended to avoid old Pople basis sets like 6-31G*, as many more modern basis sets perform better [32]. For DFT, the def2 family or the pcseg-n series are better choices [32] [34].

Troubleshooting Guide

| Problem | Likely Cause | Recommended Solution |

|---|---|---|

| SCF convergence failure | Basis set too large/diffuse, causing linear dependence [4] [34] | Switch to a smaller basis; remove diffuse functions; use Cholesky decomposition [4] |

| Inaccurate reaction energies | Inadequate basis set size | Upgrade from double-zeta to triple-zeta [32] |

| Poor description of anions or weak bonds | Lack of diffuse functions [35] | Use a basis set augmented with diffuse functions (e.g., def2-SVPD or aug-cc-pVDZ) |

| Unexpectedly high computational cost | Overly large basis for system size/method | Switch to a more efficient basis (e.g., pcseg-n for DFT) [34] |

Basis Set Comparison for Drug-Like Molecules

The following table summarizes key basis sets, highlighting their typical use cases and trade-offs in the context of studying drug-like molecules.

| Basis Set Family | Examples | Zeta-Level | Best For | Considerations |

|---|---|---|---|---|

| Karlsruhe (def2) | def2-SVP, def2-TZVP [32] | Double, Triple | General-purpose DFT on medium-to-large systems [33] [32] | Widely supported; good default choice [32] |

| Jensen (pcseg-n) | pcseg-1, pcseg-2 [34] | Double, Triple | DFT calculations; often outperforms Pople sets at similar cost [34] | Highly recommended for molecular properties in DFT [34] [35] |

| Dunning (cc-pVXZ) | cc-pVDZ, cc-pVTZ [7] | Double, Triple, etc. | High-accuracy wavefunction methods (e.g., CCSD(T)) [32] | Can be slow; use segmented variants for efficiency [34] |

| Pople | 6-31G*, 6-311+G [7] | Double, Triple | Legacy or method-specific (e.g., SMD solvation) | Considered outdated; modern alternatives are preferred [32] [34] |

Experimental Protocols

Protocol 1: Standard Workflow for Geometry Optimization and Energy Calculation of a Drug-like Molecule

This protocol is based on methodologies used to generate the QMugs dataset [33].

- Initial Conformer Generation: Use software like RDKit with the ETKDG method to generate an ensemble of initial molecular conformers [33].

- Conformer Optimization: Optimize the geometry of the generated conformers using a fast, semi-empirical method like GFN2-xTB [33].