Overcoming Interdisciplinary Feasibility Challenges in Biomedical System Analysis

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to diagnose and resolve interdisciplinary feasibility challenges in complex system analysis. It bridges foundational theories like Systems Thinking and Activity Theory with practical methodologies, including structured feasibility assessments and coordination frameworks like Multidisciplinary Design Optimization (MDO). By exploring common barriers—from knowledge gaps and conflicting terminologies to operational misalignments—and presenting proven troubleshooting strategies, this guide empowers teams to validate their collaborative efforts and enhance the impact of interdisciplinary knowledge flows in biomedical and clinical research.

Understanding Interdisciplinary Feasibility: Core Concepts and Critical Barriers

Defining Interdisciplinary System Analysis in a Biomedical Context

FAQs: Core Concepts and Common Challenges

What is Interdisciplinary System Analysis in a biomedical context? Interdisciplinary System Analysis is an approach that uses structured methods from systems engineering and systems science to understand and address complex problems in biomedical research [1]. It involves integrating knowledge, skills, methods, and tools from fields like medicine, biology, engineering, and data science to model complex systems, manage dynamic interactions, and identify optimal solutions [2] [1] [3]. This is essential for navigating the interconnected components within biological systems and healthcare environments.

Why is a systems approach crucial for troubleshooting interdisciplinary research? Biomedical systems are inherently complex, with numerous components that interact and change over time, leading to emergent behaviors [1]. A reductionist approach that examines parts in isolation is often inadequate. Systems analysis provides tools to model these interconnections and dynamic changes, making it possible to identify the root causes of problems that span multiple disciplines, such as an experimental failure involving both biological variability and instrumentation error [4] [1].

What are common reasons for failure in interdisciplinary experiments? Failures often stem from unanticipated interactions between system components. Specific causes can include:

- Improper Technique: Minor deviations in protocol, such as inconsistent aspiration during cell culture washes, can introduce significant variability [5].

- Reagent and Material Failure: Expired reagents, improper storage conditions, or low plasmid concentration can cause experiments like PCR or cloning to fail [6].

- Instrument Malfunction: Miscalibrated equipment or software bugs can produce anomalous results [5].

- Knowledge Gaps: Teams may lack a shared understanding of the preconditions or assumptions from different disciplines, leading to flawed experimental design [1].

How can our team effectively manage an interdisciplinary project? Successful management requires breaking down disciplinary silos and fostering collaboration [4] [3]. Key strategies include:

- Defining a Shared System Vision: Start by collectively defining the system's boundaries and project goals from multiple perspectives [7].

- Implementing Knowledge Management: Systematically capture and share organizational knowledge embedded in processes to prevent the loss of critical information [7].

- Promoting Collaborative Troubleshooting: Use structured sessions where team members from different fields work together to diagnose problems, leveraging diverse expertise to propose and evaluate hypotheses [5].

Troubleshooting Guides

Guide 1: A Structured Six-Step Diagnostic Process for General Experimental Failure

This general framework is adapted from laboratory troubleshooting principles and aligns with systems analysis methodologies [6] [1].

Table: Six-Step Diagnostic Process

| Step | Description | Key Systems Analysis Consideration |

|---|---|---|

| 1. Identify the Problem | Define the specific discrepancy between expected and observed outcomes without assuming a cause. | Clearly delineate the system boundaries where the problem is manifesting [1]. |

| 2. List Possible Causes | Brainstorm all potential explanations across disciplines (e.g., biological, chemical, engineering, computational). | Use interdisciplinary team discussions to identify a wide range of preconditions and variables [6] [3]. |

| 3. Collect Data | Gather existing data from controls, equipment logs, reagent records, and procedural notes. | This is analogous to gathering data on system components and their states to inform model building [1]. |

| 4. Eliminate Explanations | Use the collected data to rule out as many hypotheses as possible. | Systematically evaluate potential mediators and moderators within the system [6]. |

| 5. Check with Experimentation | Design targeted, small-scale experiments to test the remaining, most likely causes. | Treat this as a focused test of a specific hypothesized causal pathway within the larger system [1]. |

| 6. Identify the Root Cause | Analyze results from step 5 to confirm the cause and implement a corrective plan. | Identify the specific mechanism whose activation led to the failure, and update protocols accordingly [6]. |

Guide 2: Troubleshooting a Failed PCR (A Practical Example)

This guide applies the six-step process to a common laboratory technique.

Table: Troubleshooting a Failed PCR Reaction

| Step | Action and Questions to Ask |

|---|---|

| 1. Identify Problem | "No PCR product is detected on the agarose gel, while the DNA ladder is visible." |

| 2. List Causes | Consider each reaction component: Taq polymerase (inactive), MgCl₂ (wrong concentration), primers (degraded, wrong sequence), template DNA (degraded, low concentration, contaminants), dNTPs (degraded). Also consider equipment (thermal cycler block temperature inaccurate) and procedure (incorrect cycling program) [6]. |

| 3. Collect Data | • Controls: Did the positive control work? • Reagents: Check expiration dates and storage conditions of the PCR kit. • Procedure: Review lab notebook against manufacturer's protocol for deviations [6]. |

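| 4. Eliminate Causes | If the positive control worked and reagents were stored correctly, you can largely eliminate the master mix reagents as the source of failure. If the control ran in the same thermal cycler run as the failed sample, the cycler and the cycling program are unlikely causes as well. |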

| 5. Experiment | Test the integrity and concentration of the template DNA via gel electrophoresis and a spectrophotometer [6]. |

| 6. Identify Cause | If the template DNA is degraded or too dilute, this is the confirmed cause. The solution is to prepare a new, high-quality template. |
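
The elimination logic in steps 2 to 4 can be sketched in a few lines of Python. This is a minimal illustration, not a validated diagnostic tool: the observation labels (`positive_control_worked`, `positive_control_worked_in_same_run`, `template_intact_and_quantified`, `primers_verified`) and the mapping from observations to excluded causes are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    name: str
    # Hypothetical observation labels that rule this cause out when recorded.
    ruled_out_by: frozenset


def remaining_hypotheses(hypotheses, observations):
    """Step 4: keep only the causes that no recorded observation excludes."""
    return [h for h in hypotheses if not (h.ruled_out_by & observations)]


# Step 2: candidate causes, taken from the table above.
HYPOTHESES = [
    Hypothesis("inactive Taq polymerase", frozenset({"positive_control_worked"})),
    Hypothesis("wrong MgCl2 concentration", frozenset({"positive_control_worked"})),
    Hypothesis("degraded dNTPs", frozenset({"positive_control_worked"})),
    Hypothesis("incorrect cycling program",
               frozenset({"positive_control_worked_in_same_run"})),
    Hypothesis("degraded or dilute template DNA",
               frozenset({"template_intact_and_quantified"})),
    Hypothesis("degraded or wrong primers", frozenset({"primers_verified"})),
]

# Step 3: data collected from controls and records (assumed for illustration).
observations = frozenset(
    {"positive_control_worked", "positive_control_worked_in_same_run"}
)

still_open = [h.name for h in remaining_hypotheses(HYPOTHESES, observations)]
print(still_open)
```

With these assumed observations, only the template and primer hypotheses stay open, which is where step 5 directs the next targeted experiment.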

Guide 3: A Systems Analysis Approach to Implementation Failure

This guide is for troubleshooting complex, multi-level projects, such as implementing a new diagnostic technology in a clinical setting [1].

Table: Troubleshooting Implementation Failure with Systems Analysis

| Step | Description and Application |

|---|---|

| 1. Model the System | Develop a model (e.g., a causal loop diagram or process map) of the implementation process. Identify all components: people, workflows, technologies, and policies. |

| 2. Specify the Strategy | Clearly define the implementation strategy (e.g., "training clinicians"). Hypothesize the specific mechanism it should activate (e.g., "skill building") and the required preconditions (e.g., "clinicians have time to attend") [1]. |

| 3. Interrogate the Model | Use the model to trace why the strategy failed. Was the mechanism not activated due to missing preconditions? Was the mechanism activated but its effect attenuated by a different, unanticipated mechanism (e.g., low motivation)? Were there feedback loops (e.g., social learning) that influenced the outcome? [1] |

| 4. Adapt and Re-test | Based on the analysis, adapt the strategy (e.g., offer flexible training times) or address newly identified contextual barriers. Monitor the system's response to confirm the fix. |
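
The interrogation in step 3 follows a simple decision order: first check whether the mechanism was activated, then whether preconditions were missing, then whether something attenuated the effect. The sketch below encodes that order under stated assumptions: the `Strategy` class, the `interrogate` function, and the "training room available" precondition are hypothetical names introduced for illustration and do not come from the cited framework.

```python
from dataclasses import dataclass


@dataclass
class Strategy:
    name: str
    mechanism: str
    # Maps each precondition to whether it actually held during implementation.
    preconditions: dict


def interrogate(strategy, mechanism_activated, outcome_achieved):
    """Return a coarse diagnosis following step 3 of the table above."""
    missing = [p for p, held in strategy.preconditions.items() if not held]
    if not mechanism_activated:
        if missing:
            return "not activated: missing preconditions: " + ", ".join(missing)
        return "not activated: preconditions held, revisit the system model"
    if not outcome_achieved:
        return "activated but attenuated: look for competing mechanisms or feedback loops"
    return "strategy worked as hypothesized"


training = Strategy(
    name="training clinicians",
    mechanism="skill building",
    preconditions={
        "clinicians have time to attend": False,
        "training room available": True,  # hypothetical extra precondition
    },
)

diagnosis = interrogate(training, mechanism_activated=False, outcome_achieved=False)
print(diagnosis)
```

A diagnosis of missing preconditions points to step 4 adaptations such as flexible training times, while an "attenuated" diagnosis sends the team back to the model to look for unanticipated mechanisms.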

Workflow and Signaling Pathway Diagrams

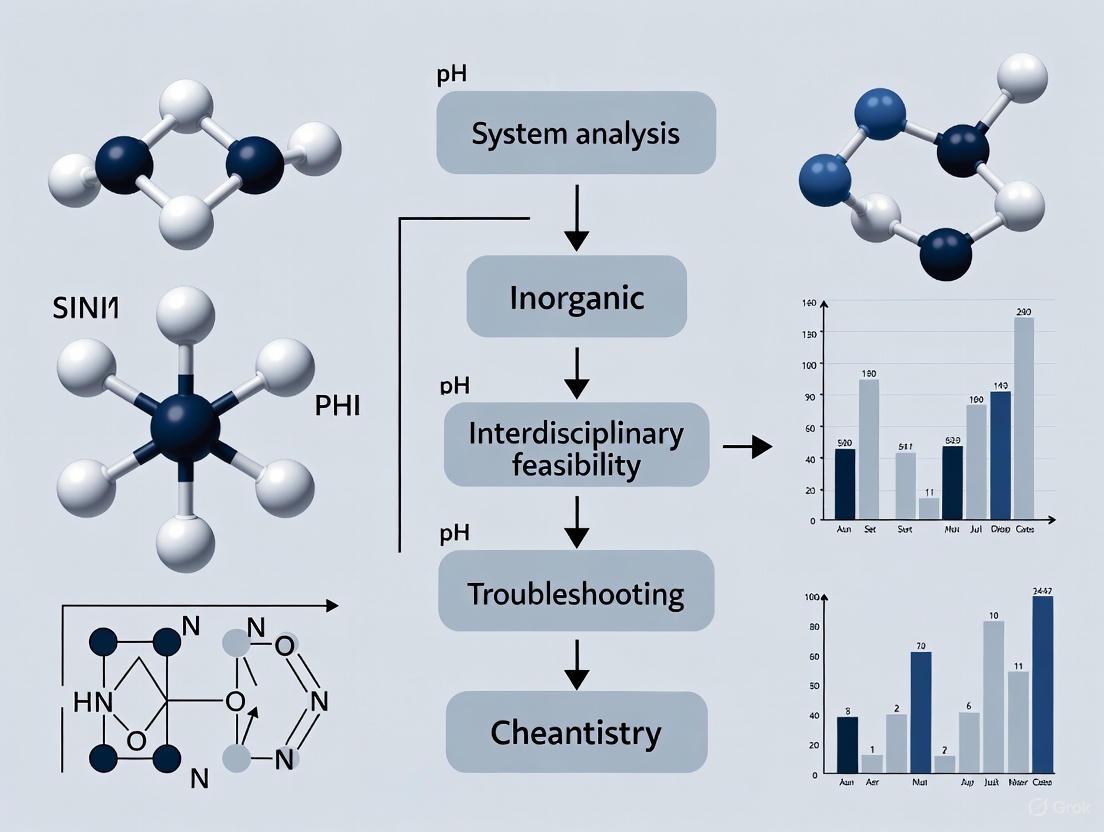

Diagram 1: Core Workflow of Interdisciplinary System Analysis

This diagram visualizes the systematic, iterative process of analyzing and solving complex biomedical problems.

Diagram 2: Troubleshooting Logic for Experimental Research

This diagram maps the decision-making pathway for diagnosing the source of an experimental error.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Reagents and Materials

| Item | Function in Experiment |

|---|---|

| PCR Master Mix | A pre-mixed solution containing Taq DNA Polymerase, dNTPs, MgCl₂, and reaction buffers. It simplifies PCR setup and improves reproducibility by ensuring consistent reagent quality and concentration [6]. |

| Competent Cells | Specially prepared bacterial cells (e.g., DH5α, BL21) that can take up foreign plasmid DNA. They are essential for cloning and plasmid propagation. Their transformation efficiency is critical for successful experiments [6]. |

| Plasmid Vectors | Small, circular DNA molecules used as carriers to clone, amplify, and express genetic material in competent cells. They contain essential elements like an origin of replication and antibiotic resistance genes [6]. |

| Restriction Enzymes | Enzymes that cut DNA at specific recognition sequences. They are fundamental tools for molecular cloning, allowing for the precise assembly of genetic constructs [5]. |

| Antibiotics for Selection | Antibiotics (e.g., Ampicillin, Kanamycin) are added to growth media to select for cells that have successfully taken up a plasmid containing the corresponding resistance gene [6]. |

| Agarose Gels | Used for gel electrophoresis to separate DNA fragments by size. This is a critical step for analyzing the products of PCR, restriction digestion, and checking DNA quality [6]. |

The Critical Role of Systems Thinking in Managing Complexity

Conceptual Foundations: From Linear to Systems Thinking

In the context of interdisciplinary feasibility research, a paradigm shift from linear thinking to systems thinking is fundamental for managing complexity effectively. Linear thinking approaches problems with a deterministic, step-by-step mindset, often treating components in isolation. This approach is inadequate for complex, dynamic systems where components interact in non-obvious ways [4].

Systems thinking, in contrast, involves understanding the entire system and the dynamic interplay of its constituent parts. It emphasizes iterative processes and adaptation over fixed predictions, which is essential for navigating uncertainty in research [4]. This perspective reveals outcomes and behaviors not readily apparent through isolated analysis of individual components, making it crucial for addressing complex interdisciplinary challenges [4].

For research on systems analysis, this means moving beyond optimizing single variables to understanding how changes ripple through the entire interconnected network of a project. This holistic view is a necessary evolution for tackling "wicked" problems that span multiple disciplines [4].

Troubleshooting Interdisciplinary Feasibility: A Systems Approach

Successful system analysis research requires anticipating and managing challenges that arise at the intersections of different disciplines, methodologies, and stakeholder perspectives. The following guide addresses common issues through a systems thinking lens.

Frequently Asked Questions

Q: Our interdisciplinary team is struggling with a unified understanding of the core research problem. Each discipline seems to be solving a different issue. How can we create alignment?

- A: This is a classic epistemic challenge in interdisciplinary research, where different disciplines hold varying assumptions about what constitutes central questions and valid knowledge [8]. To address this:

- Develop a Shared Conceptual Map: Before diving into solutions, facilitate workshops to co-create a high-level visual map of the system you are studying. This helps expose and integrate different mental models.

- Define a Unifying Goal: Clearly articulate a superordinate goal that transcends individual disciplinary objectives, such as "developing a feasible intervention to improve X outcome within Y constraints." The Mandala consortium, for example, anchored its work on the goal of transforming an urban food system to improve human and planetary health [8].

Q: Our project has successfully modeled a complex system, but our findings are not being adopted by stakeholders. What are we missing?

- A: This often indicates a gap in the social and symbolic dimensions of collaboration, where power dynamics and a lack of trust hinder the uptake of research [8]. The solution involves:

- Early and Continuous Engagement: Integrate stakeholders (e.g., community members, industry partners, policy makers) from the beginning of the research process, not just at the dissemination stage. This fosters a sense of shared ownership.

- Build Trust Through Transparency: Be transparent about research limitations and acknowledge different forms of expertise beyond academia. This helps overcome the "credibility tax" that external experts sometimes face [9].

Q: Our computational model is highly accurate on historical data, but fails when real-world conditions change unexpectedly. How can we make our analysis more resilient?

- A: This highlights the challenge of unanticipated change and the limitations of static models. Complex systems are adaptive, so the research design must be adaptive as well [10].

- Incorporate Scenario Planning: Move from single-point predictions to exploring multiple future scenarios. Use your model to test how the system behaves under various unexpected conditions.

- Design for Flexibility: Implement modular research designs and flexible tools that can adapt. In clinical trials, for instance, this means using interactive response technology (IRT) that allows for real-time adjustments to dosing or cohort management in response to new data [10].

Q: How can we effectively identify the most impactful points for intervention within a complex, interconnected system?

- A: Relying on linear, reductionist analysis often leads to local optimizations that create global problems.

- Use Leverage Point Analysis: Employ systems thinking tools like Causal Loop Diagrams (CLDs) to map the feedback loops governing system behavior. Interventions that alter the strength or direction of these feedback loops often have higher transformative potential [8].

- Look for Emergent Properties: Focus on understanding the interactions between components, not just the components themselves. The most promising intervention points are often found at the intersections of different sub-systems or disciplines [4].

Common Error Codes and Resolutions

| Error Code / Symptom | Root Cause (Systems Perspective) | Resolution Protocol |

|---|---|---|

| SILO-01: Divergent team goals | Social/Epistemic Misalignment: Disciplines working in parallel (multidisciplinary) rather than integrated (interdisciplinary) [8]. | Facilitate co-creation of a shared project vision and a systems map. Establish joint problem-definition workshops. |

| MODEL-02: Model predictions consistently deviate from reality | Over-reductionism: Model boundaries are too narrow, missing critical externalities or feedback loops [4]. | Conduct a boundary analysis. Engage stakeholders to identify missing links and expand the system model to include key influencing factors. |

| DATA-03: Incompatible data structures hinder integration | Lack of Interoperability: Data systems were designed in isolation without standards for exchange [11]. | Implement a three-layer interoperability framework (Data, Integration, Presentation) to standardize data exchange without overhauling legacy systems [11]. |

| STAKE-04: Stakeholder rejection of valid findings | Symbolic Dimension Failure: Power dynamics and lack of trust were not managed, leading to a deficit of collaborative legitimacy [8]. | Re-engage stakeholders through transparent dialogue. Acknowledge different expertise and incorporate their feedback into the research process. |

Methodologies and Experimental Protocols

Implementing systems thinking requires structured methodologies and tools. The table below differentiates key concepts often used interchangeably.

Table: Distinguishing Frameworks, Methodologies, and Tools

| Concept | Definition | Key Characteristics | Example in Systems Analysis |

|---|---|---|---|

| Framework | A flexible conceptual structure that organizes principles and guides analysis [12]. | Defines what to address, not how. Provides a mental model. | Systems Theory: Conceptualizes problems as interconnected components (inputs, processes, outputs, feedback) [12]. |

| Methodology | A systematic, step-by-step pathway for solving problems or achieving objectives [12]. | Prescriptive, sequential, and repeatable. Defines how to execute. | DMAIC (Define, Measure, Analyze, Improve, Control): A structured data-driven methodology from Six Sigma for process improvement [12]. |

| Tool | A specific technique or instrument used to execute tasks within a methodology or framework [12]. | Action-oriented, singular purpose. The "nuts and bolts" of implementation. | Causal Loop Diagram (CLD): A visual tool for mapping feedback loops and non-linear relationships within a system [8]. |

Protocol: Developing a Causal Loop Diagram (CLD) for Interdisciplinary Feasibility Analysis

Objective: To visually map the key variables and their causal relationships within a complex system, identifying reinforcing and balancing feedback loops that drive system behavior. This protocol is essential during the problem-structuring phase of research [8].

Materials:

- Whiteboard or digital modeling software.

- Multi-disciplinary team members.

- Domain experts and stakeholders.

Methodology:

- Define the Problem Scope: Clearly state the central problem or key behavior to be modeled (e.g., "low adoption rate of a new research protocol").

- Identify Key Variables: Brainstorm a list of 10-20 variables that are relevant to the problem. Variables should be nouns or noun phrases (e.g., "Project Trust," "Resource Allocation," "Communication Overhead").

- Map Causal Links: For each pair of connected variables, draw an arrow indicating the direction of influence.

  - Label the arrow with an "S" (Same) if the effect moves in the same direction as the cause: an increase in the cause leads to an increase in the effect, and a decrease leads to a decrease.

  - Label the arrow with an "O" (Opposite) if the effect moves in the opposite direction: an increase in the cause leads to a decrease in the effect, and vice versa.

- Identify Feedback Loops:

  - Reinforcing Loop (R): A cycle of causes and effects that amplifies change in one direction. These are engines of growth or collapse.

  - Balancing Loop (B): A cycle of causes and effects that seeks stability and counteracts change. These are goal-seeking structures.

- Analyze for Insight: Use the completed CLD to identify potential leverage points. Interventions that alter the structure of a key feedback loop often have the highest impact.
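
Once links carry "S" and "O" labels, a loop can be classified mechanically with the standard causal-loop convention: an even number of "O" links makes a loop reinforcing, and an odd number makes it balancing. The sketch below applies that rule. The variable names and links in the example (such as "Information Sharing" and "Corrective Training") are assumptions invented for illustration, not findings from the cited sources.

```python
def loop_polarity(loop, links):
    """Classify a closed loop as reinforcing ('R') or balancing ('B').

    loop  : ordered list of variable names; the last one links back to the first.
    links : dict mapping (cause, effect) -> 'S' (same) or 'O' (opposite).
    """
    opposite_count = 0
    for i, cause in enumerate(loop):
        effect = loop[(i + 1) % len(loop)]
        label = links[(cause, effect)]  # KeyError if the link was never drawn
        if label not in ("S", "O"):
            raise ValueError(f"unknown link label {label!r} on {cause} -> {effect}")
        if label == "O":
            opposite_count += 1
    # Even number of opposite links: the change feeds on itself (reinforcing).
    return "R" if opposite_count % 2 == 0 else "B"


# Hypothetical links for a small team-collaboration map.
links = {
    ("Project Trust", "Information Sharing"): "S",
    ("Information Sharing", "Project Trust"): "S",
    ("Protocol Errors", "Corrective Training"): "S",
    ("Corrective Training", "Protocol Errors"): "O",
}

trust_loop = loop_polarity(["Project Trust", "Information Sharing"], links)
error_loop = loop_polarity(["Protocol Errors", "Corrective Training"], links)
print(trust_loop, error_loop)
```

Classifying loops this way gives the team a quick consistency check on a whiteboard diagram before discussing leverage points.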

The workflow for this protocol, including its iterative nature, is visualized below.

The Researcher's Toolkit: Essential Reagents for Systems Analysis

This table details key conceptual "reagents" and tools necessary for conducting rigorous systems analysis in interdisciplinary research.

Table: Key Research Reagents for Systems Analysis

| Tool / Reagent | Function in Analysis | Application Context |

|---|---|---|

| Causal Loop Diagram (CLD) | Maps the causal relationships between variables in a system, highlighting feedback loops that drive system behavior [8]. | Used in the problem-structuring phase to develop a shared hypothesis about system dynamics. |

| Interoperability Framework | Provides a three-layer model (Data, Integration, Presentation) to enable disparate systems and data sources to work together [11]. | Critical for research projects that need to integrate heterogeneous data from multiple partners or legacy systems. |

| Stakeholder Collaboration Matrix | A framework for identifying relevant stakeholders and planning their engagement across epistemic, social, and symbolic dimensions [8]. | Ensures research is grounded in real-world needs and builds the necessary trust for implementation. |

| System Dynamics Modeling | A methodology for creating computer simulation models to test policies and scenarios in complex systems over time. | Used to simulate the long-term impacts of different interventions before committing resources to real-world trials. |

| Root Cause Analysis (RCA) | Functions as both a framework and a methodology for drilling down past symptoms to identify underlying systemic causes of problems [12]. | Applied when a project faces repeated failures or unexpected outcomes to address core issues, not just surface-level effects. |

The relationships between these core tools and the research lifecycle are shown in the following diagram.

FAQs on Feasibility Dimensions

1. What is the core purpose of assessing technical, operational, and economic feasibility? The core purpose is to systematically evaluate whether a proposed project or system is viable from multiple, critical perspectives before committing significant resources. This interdisciplinary analysis helps identify potential points of failure and confirm that the project is technically possible, operationally sustainable, and economically worthwhile, thereby de-risking the initiative [13] [14].

2. In the context of a new laboratory information management system (LIMS), what does technical feasibility assess? Technical feasibility for a new LIMS assesses whether the necessary technology, infrastructure, and expertise are available or obtainable. This includes evaluating software and hardware requirements, system compatibility with existing instruments, data interoperability standards (like HL7 or FHIR in healthcare), and the adequacy of in-house technical expertise to implement and maintain the system [15] [14].

3. How is operational feasibility different from technical feasibility? While technical feasibility asks "Can we build it?", operational feasibility asks "Will it be used effectively and integrated into our workflows?". It assesses human resources, organizational culture, management systems, and day-to-day processes to determine if the project will meet user needs and function smoothly within the existing operational environment [13] [14].

4. What are some common financial metrics used in an economic feasibility analysis? Common financial metrics used to evaluate economic feasibility include [13] [14]:

- Return on Investment (ROI): Measures the profitability of the investment.

- Net Present Value (NPV): Sums the present values of all projected cash flows, both inflows and outflows, discounted at a chosen rate.

- Internal Rate of Return (IRR): The discount rate that makes the NPV of a project zero.

- Payback Period: The time required to recover the initial investment costs.

5. A recurring technical failure in our interdisciplinary data pipeline is disrupting research. How should we troubleshoot this? This often points to a challenge in data interoperability. A structured troubleshooting approach is recommended [15]:

- Phase 1: Diagnosis: Use observability tools to gain real-time visibility into the pipeline and pinpoint where the failure occurs (e.g., data ingestion, transformation, or exchange).

- Phase 2: Analysis: Check for inconsistencies in data formats, protocols, or a lack of semantic understanding (common vocabularies) between different systems.

- Phase 3: Resolution: Implement or enforce industry-standard data formats (e.g., JSON, XML) and APIs for syntactic interoperability, and agree on common vocabularies or data models for semantic interoperability.

6. Our project is technically sound and funded, but user adoption is low. What operational factors should we re-examine? Low adoption typically indicates operational feasibility issues. Key areas to re-examine include [13] [14]:

- User Experience (UX): Is the system difficult or unintuitive for end users (scientists, technicians)?

- Change Management: Was sufficient training and support provided? Were users involved in the design process?

- Workflow Integration: Does the system disrupt established and efficient workflows instead of streamlining them?

- Maintenance and Serviceability: Is the system easy to maintain and troubleshoot without causing excessive downtime?

Troubleshooting Guides

Troubleshooting Guide 1: Resolving Technical Feasibility Challenges in System Integration

- Problem: Incompatible data systems and formats are creating silos, hindering data exchange, and preventing a holistic system analysis.

- Core Principle: Achieve data interoperability by ensuring systems can access, exchange, and cooperatively use data [15].

- Methodology:

- Assess the Current State: Map all existing systems and data flows, and identify specific interoperability gaps [15].

- Adopt Industry Standards: Leverage widely accepted data standards and protocols (e.g., HL7 for healthcare, JSON for web APIs) to ensure compatibility [15] [16].

- Implement API-Driven Architecture: Use APIs to enable seamless, real-time data exchange between different systems, both internal and external [15].

- Apply a Multi-Layer Interoperability Framework:

- Syntactic Interoperability: Ensure data exchange using compatible formats and protocols (e.g., XML, JSON) [15] [16].

- Semantic Interoperability: Use common data models, vocabularies, and ontologies to ensure the meaning of the data is preserved and understood consistently across all systems [15] [16].
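
The difference between the two layers can be made concrete with a small sketch. Parsing JSON and XML payloads is the syntactic step; mapping field names and units onto one shared record is the semantic step. Everything specific here is an assumption for illustration: the two lab payloads, the field names (`patient_id`, `subject`, `glucose_mg_dl`), and the choice of glucose as the measured value. The conversion factor follows from the molar mass of glucose (about 180.16 g/mol).

```python
import json
import xml.etree.ElementTree as ET

# 1 mmol/L of glucose is about 18.016 mg/dL (molar mass ~180.16 g/mol).
MMOL_L_TO_MG_DL_GLUCOSE = 18.016


def from_lab_a(payload):
    """Lab A (hypothetical) sends JSON with values already in mg/dL."""
    raw = json.loads(payload)  # syntactic step: parse the format
    # Semantic step: rename fields to the shared vocabulary.
    return {"subject_id": raw["patient_id"],
            "glucose_mg_dl": float(raw["glucose_mg_dl"])}


def from_lab_b(payload):
    """Lab B (hypothetical) sends XML and declares the unit as an attribute."""
    root = ET.fromstring(payload)  # syntactic step: parse the format
    glucose = root.find("glucose")
    value = float(glucose.text)
    unit = glucose.get("unit")
    # Semantic step: normalize the unit so values mean the same thing.
    if unit == "mmol/L":
        value *= MMOL_L_TO_MG_DL_GLUCOSE
    elif unit != "mg/dL":
        raise ValueError(f"unsupported unit: {unit!r}")
    return {"subject_id": root.findtext("subject"),
            "glucose_mg_dl": round(value, 2)}


lab_a_payload = '{"patient_id": "P001", "glucose_mg_dl": 90}'
lab_b_payload = (
    '<result><subject>P002</subject>'
    '<glucose unit="mmol/L">5.0</glucose></result>'
)

records = [from_lab_a(lab_a_payload), from_lab_b(lab_b_payload)]
print(records)
```

Both payloads parse without error on their own, yet without the unit normalization the two glucose values would differ by a factor of about 18 and any pooled analysis would be wrong. That is the kind of failure semantic interoperability is meant to prevent.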

The following workflow visualizes this structured approach to troubleshooting technical integration problems:

Troubleshooting Guide 2: Addressing Operational Feasibility and User Adoption Issues

- Problem: A technically sound system is facing low user adoption, leading to underutilization and failure to achieve projected operational benefits.

- Core Principle: Design for the user and integrate into existing workflows. Operational feasibility tests whether a project is sustainable from the organization's standpoint regarding processes and human resources [14].

- Methodology:

- Conduct a User-Centric Design Review: Gather feedback from end-users to identify pain points, usability issues, and features that do not align with their actual workflow needs [17].

- Evaluate Workflow Integration: Analyze how the system fits into daily routines. Does it create extra steps or disrupt efficient processes? [14]

- Audit Training and Support Systems: Determine if initial and ongoing training is adequate and accessible. Is there a clear support channel for troubleshooting? [14]

- Assess Maintenance and Serviceability: Review whether the system is designed for easy maintenance. High-wear components should be easily accessible, and documentation must be clear [18].

The logical relationship for diagnosing and resolving operational feasibility issues is outlined below:

Quantitative Data for Feasibility Analysis

The following table summarizes key financial metrics essential for conducting a rigorous economic feasibility analysis. These metrics provide a quantitative foundation for deciding whether a project is financially viable [13] [14].

| Financial Metric | Calculation / Definition | Feasibility Indicator |

|---|---|---|

| Return on Investment (ROI) | (Net Benefits / Total Costs) × 100 | A positive percentage indicates a profitable investment. Higher percentage is better. |

| Net Present Value (NPV) | Sum of the present values of all cash flows (inflows and outflows) | NPV > 0: The project is expected to generate value and is economically feasible. |

| Internal Rate of Return (IRR) | The discount rate that makes the NPV of all cash flows equal to zero. | IRR > the company's required rate of return (hurdle rate): The project is acceptable. |

| Payback Period | Initial Investment Cost / Annual Net Cash Inflow (assumes equal annual inflows) | Shorter payback periods are preferred, indicating a quicker recovery of the initial investment. |
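
The four metrics in the table can be computed with a short script. This is a minimal sketch under stated assumptions: the cash flows (a 100,000 outlay followed by four annual inflows of 40,000) and the 10% discount rate are invented for illustration, cash flows are assumed to fall at year ends, and the IRR is found by simple bisection rather than by any particular finance library.

```python
def roi_percent(total_benefits, total_costs):
    """ROI = (net benefits / total costs) x 100."""
    return (total_benefits - total_costs) / total_costs * 100


def npv(rate, cash_flows):
    """cash_flows[0] occurs now; cash_flows[t] occurs at the end of year t."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows, low=0.0, high=1.0, tol=1e-9):
    """Discount rate where NPV is zero, found by bisection on [low, high]."""
    f_low = npv(low, cash_flows)
    if f_low * npv(high, cash_flows) > 0:
        raise ValueError("NPV does not change sign on [low, high]")
    while high - low > tol:
        mid = (low + high) / 2
        f_mid = npv(mid, cash_flows)
        if f_low * f_mid <= 0:
            high = mid  # the root lies in [low, mid]
        else:
            low, f_low = mid, f_mid  # the root lies in [mid, high]
    return (low + high) / 2


def payback_period(initial_investment, annual_net_inflow):
    """Years to recover the investment, assuming equal annual inflows."""
    return initial_investment / annual_net_inflow


# Hypothetical project: 100,000 up front, 40,000 per year for four years.
cash_flows = [-100_000, 40_000, 40_000, 40_000, 40_000]

project_roi = roi_percent(total_benefits=160_000, total_costs=100_000)
project_npv = npv(0.10, cash_flows)
project_irr = irr(cash_flows)
project_payback = payback_period(100_000, 40_000)

print(f"ROI {project_roi:.1f}%  NPV {project_npv:,.0f}  "
      f"IRR {project_irr:.2%}  payback {project_payback:.1f} years")
```

Under these assumed figures the NPV at 10% is positive and the IRR exceeds the 10% rate, so both indicators in the table point the same way. With uneven inflows, the payback period should be computed from cumulative cash flows instead of the simple ratio.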

The Researcher's Toolkit: Key Reagents for Feasibility Analysis

This table details essential methodological "reagents" for designing and executing a robust feasibility study in system analysis research.

| Research Reagent | Function in the Feasibility Experiment |

|---|---|

| SWOT Analysis | A strategic planning tool used to identify and analyze the internal (Strengths, Weaknesses) and external (Opportunities, Threats) factors relevant to a project's feasibility [13] [14]. |

| Cost-Benefit Analysis (CBA) | A systematic process for calculating and comparing the total costs and total benefits of a project to determine its economic feasibility and justify its pursuit [13]. |

| PESTLE Analysis | A framework used to scan the external macro-environmental factors (Political, Economic, Social, Technological, Legal, Environmental) that could impact the project's feasibility and success [14]. |

| Sensitivity Analysis | A financial modeling technique used to understand how different values of an independent variable (e.g., project cost, timeline) impact a particular dependent variable (e.g., NPV), assessing the project's robustness to change [14]. |

| Interoperability Framework | A standardized architecture (e.g., based on syntactic, semantic, and organizational levels) that provides guidelines for achieving seamless data exchange between different systems, crucial for technical feasibility [15] [16]. |
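
Sensitivity analysis, as described in the table, amounts to recomputing a dependent variable while one input is varied. The sketch below varies the annual inflow of a project and reports the resulting NPV. The project figures (100,000 initial cost, 40,000 annual inflow, four years, 10% discount rate) and the multipliers are assumptions chosen only to show the technique.

```python
def npv(rate, cash_flows):
    """cash_flows[0] occurs now; cash_flows[t] occurs at the end of year t."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def npv_sensitivity(initial_cost, annual_inflow, years, rate, multipliers):
    """Return {multiplier: NPV} when the annual inflow is scaled by each multiplier."""
    results = {}
    for m in multipliers:
        flows = [-initial_cost] + [annual_inflow * m] * years
        results[m] = npv(rate, flows)
    return results


# Hypothetical base case; inflows tested at 70%, 85%, 100% and 115% of plan.
results = npv_sensitivity(
    initial_cost=100_000,
    annual_inflow=40_000,
    years=4,
    rate=0.10,
    multipliers=[0.70, 0.85, 1.00, 1.15],
)

for multiplier, value in results.items():
    verdict = "feasible" if value > 0 else "not feasible"
    print(f"inflow x{multiplier:.2f}: NPV {value:>10,.0f}  ({verdict})")
```

In this assumed case the project stays viable when inflows fall to 85% of plan but not at 70%, which tells the team how much forecasting error the economic case can absorb.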

Interdisciplinary collaboration is a critical driver of innovation in complex fields like drug discovery and system analysis. It integrates diverse scientific disciplines, areas of expertise, and fields of study to address complex health questions and yield a more comprehensive understanding of problems [19]. However, this integration process is frequently hampered by recurring collaboration barriers, primarily knowledge gaps and terminology conflicts.

These barriers stem from what researchers describe as vastly "diverging thought worlds" among specialists [20]. In drug discovery, for example, teams combine specialists from medicinal chemistry, structural biology, preclinical safety, and translational medicine—each with distinct scientific practices, problem-solving approaches, communication patterns, timelines, and technologies for knowledge creation [20]. Effective collaboration requires not just performing domain-specific work but successfully combining competences across these knowledge boundaries [20].

This technical support center provides actionable troubleshooting guidance to help researchers, scientists, and drug development professionals identify, diagnose, and overcome these recurring barriers within their interdisciplinary feasibility studies.

FAQs: Troubleshooting Common Collaboration Issues

Q1: What are the most common symptoms of terminology conflicts in an interdisciplinary team?

A: Teams experiencing terminology conflicts often display:

- Misinterpreted Requirements: Team members consistently deliver work that doesn't meet the expectations of colleagues from other disciplines due to differing interpretations of key terms [20].

- Communication Avoidance: Specialists hesitate to contribute in broad team discussions, preferring to communicate only within their own disciplinary subgroups [21].

- Repeated Clarifications: Meetings are dominated by efforts to clarify basic concepts rather than advancing scientific questions [20].

- Siloed Documentation: Teams produce documents with dense, discipline-specific jargon that is inaccessible to the wider team.

Q2: How can we distinguish between a true knowledge gap and a simple terminology conflict?

A: The table below outlines key diagnostic differences:

| Characteristic | Terminology Conflict | Fundamental Knowledge Gap |

|---|---|---|

| Primary Symptom | Misunderstandings in communication; assumptions about shared definitions [20] | Inability to align on common goals or methodological approaches [21] |

| Effect on Workflow | Causes delays and rework as outputs are misinterpreted [20] | Halts progress entirely, as critical path tasks cannot be defined or executed [21] |

| Resolution Focus | Creating shared glossaries and facilitating translation between domains [20] | Strategic onboarding of new expertise or interprofessional training [21] [22] |

| Team Climate | Frustration coupled with a willingness to engage | Disengagement, confusion, and a lack of collective problem-solving |

Q3: What specific strategies can help bridge terminology differences during technical discussions?

A: Effective strategies include:

- Cross-Disciplinary Anticipation: Specialists should consciously anticipate the procedures, requirements, and expectations of other domains. For example, a computational chemist should consider the synthesizability of a designed compound [20].

- Structured Dialogue Techniques: Implement "learning conversations" and structured feedback systems that explicitly allocate time for explaining disciplinary assumptions [23] [22].

- Visual Workflows: Use diagrams to create a shared, less language-dependent representation of processes and relationships (see "Visualization of Collaboration Workflows and Diagnostics").

- Glossary Co-creation: Develop a living, team-owned document that defines critical terms with examples from different disciplinary viewpoints.

Q4: Our team has identified a critical knowledge gap. What formal and informal steps should we take?

A: Address knowledge gaps through a balanced approach:

- Formal Action: The project leader should formally reconfigure the team structure to onboard the necessary specialists or sub-teams with the missing expertise [20].

- Informal Action: Encourage "triangulation," a practice where team members systematically cross-check assumptions and findings across disciplines to establish reliability [20]. Furthermore, foster an environment where team members feel empowered to seek knowledge from "sub-team outsiders" who can provide fresh perspectives [20].

Q5: What role does technology play in mitigating these collaboration barriers?

A: Technology is a key enabler:

- Collaboration Platforms: Use electronic health records, project management software, and secure communication apps to streamline information sharing and make workflows transparent [22].

- Digital Resources: Implement centralized, digital repositories for project documents, protocols, and glossaries to ensure a single source of truth [23].

- Data Integration Tools: Leverage platforms that facilitate the sharing of clinical trial data and real-world research data, which helps align different specialists around a common dataset [24].

Diagnostic Protocols for Identifying Collaboration Barriers

Protocol for Mapping Terminology Landscapes

Objective: To systematically identify and document discipline-specific terminology that may cause conflicts in an interdisciplinary team.

Materials Needed:

- Whiteboard or digital collaboration canvas

- Audio recorder for meetings

- Facilitator from a neutral discipline

Methodology:

- Stimulated Elicitation: Select a core project concept (e.g., "efficacy," "validation," "model"). Ask each specialist to write down their own definition and a key associated method.

- Round-Robin Explanation: In a team meeting, facilitate a session where each member explains their definition and method without interruption.

- Divergence Mapping: The facilitator maps the different definitions and highlights points of semantic conflict (e.g., where one term has multiple meanings) and semantic gaps (e.g., where a concept from one discipline has no equivalent in another).

- Glossary Formulation: Collaboratively draft a single working definition for each contested term for use in the project. Document disagreements in an appendix.

Expected Output: A project-specific glossary that clarifies terminology and explicitly notes areas where compromises have been made for interdisciplinary coherence.
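
The divergence-mapping step can be sketched in code, assuming the elicited definitions have been transcribed into simple records. The function name, record layout, and sample definitions below are hypothetical. Comparing definitions as exact strings is a crude stand-in for the facilitator's judgment about whether two definitions really differ.

```python
from collections import defaultdict


def map_divergence(definitions, disciplines):
    """Group elicited definitions per term and flag conflicts and gaps.

    definitions: iterable of (term, discipline, definition) tuples.
    disciplines: all disciplines represented on the team.
    """
    by_term = defaultdict(dict)
    for term, discipline, definition in definitions:
        by_term[term][discipline] = definition.strip().lower()

    report = {}
    for term, per_discipline in by_term.items():
        report[term] = {
            # Semantic conflict: the same term carries more than one meaning.
            "conflict": len(set(per_discipline.values())) > 1,
            # Semantic gap: some discipline offered no definition at all.
            "missing_in": sorted(set(disciplines) - set(per_discipline)),
        }
    return report


# Hypothetical elicitation results for two contested terms.
elicited = [
    ("validation", "data science", "Performance on a held-out dataset"),
    ("validation", "clinical", "Agreement with an accepted clinical standard"),
    ("model", "data science", "A trained statistical predictor"),
]
report = map_divergence(elicited, ["data science", "clinical", "chemistry"])
for term, flags in report.items():
    print(term, flags)
```

Terms flagged with a conflict are candidates for a negotiated working definition. Terms with entries under `missing_in` point to disciplines that may need the concept explained before the glossary is drafted.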

Protocol for Auditing Knowledge Boundaries

Objective: To visualize and assess the distribution of critical knowledge across the team, identifying potential gaps.

Materials Needed:

- Self-assessment questionnaires

- Knowledge mapping software (e.g., a simple spreadsheet or network tool)

Methodology:

- Skill & Knowledge Inventory: Create a list of all technical and methodological skills critical to the project's feasibility. Have each team member self-rate their proficiency (e.g., Expert, Proficient, Familiar, None).

- Dependency Matrix Analysis: Create a matrix linking project tasks to the required skills. Identify tasks where required skills are absent or available from only one team member (a "single point of failure").

- Flow Anticipation Workshop: For tasks with knowledge dependencies (e.g., the output of one specialist is the input for another), run a scenario-planning session to anticipate how uncertainties in one domain might impact work in another [20].

Expected Output: A knowledge map of the team that highlights critical dependencies and vulnerabilities, guiding targeted training or recruitment.
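
A minimal sketch of the dependency matrix analysis follows. It assumes self-ratings are stored per team member and that "Expert" or "Proficient" counts as covering a skill; that threshold, the data layout, and all names are illustrative assumptions rather than part of the protocol.

```python
# Assumption: only these self-rating levels count as covering a skill.
PROFICIENT_LEVELS = {"Expert", "Proficient"}


def audit_tasks(task_skills, self_ratings):
    """Flag tasks whose required skills are absent or held by one person.

    task_skills: {task: [required skill, ...]}
    self_ratings: {member: {skill: rating}}
    """
    findings = {}
    for task, skills in task_skills.items():
        for skill in skills:
            holders = sorted(
                member
                for member, ratings in self_ratings.items()
                if ratings.get(skill, "None") in PROFICIENT_LEVELS
            )
            if len(holders) == 0:
                findings[(task, skill)] = "gap"
            elif len(holders) == 1:
                findings[(task, skill)] = f"single point of failure: {holders[0]}"
    return findings


# Hypothetical team and task list.
ratings = {
    "Ana": {"PK modeling": "Expert", "assay design": "Familiar"},
    "Ben": {"assay design": "Proficient"},
    "Chi": {"assay design": "Expert"},
}
tasks = {
    "dose selection": ["PK modeling", "toxicology"],
    "screening": ["assay design"],
}
findings = audit_tasks(tasks, ratings)
for (task, skill), issue in findings.items():
    print(f"{task} / {skill}: {issue}")
```

Task and skill pairs that do not appear in the output are covered by at least two people under the assumed threshold.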

Visualization of Collaboration Workflows and Diagnostics

Interdisciplinary Feasibility Assessment Workflow

Cross-Disciplinary Synchronization Model

Research Reagent Solutions for Collaboration Analysis

The following table details key methodological "reagents" for diagnosing and treating collaboration barriers in interdisciplinary research.

| Tool / Method | Primary Function | Application Context |

|---|---|---|

| Terminology Glossary | Creates a shared vocabulary by defining discipline-specific terms in a project-specific context [20]. | Mitigates terminology conflicts; used at project kick-off and updated throughout. |

| Formal Sub-Teams | Structures work around specific scientific questions by grouping relevant, interdependent specialists [20]. | Provides clear boundaries and accountability for tackling complex, multi-faceted problems. |

| Cross-Disciplinary Anticipation | An informal practice where specialists proactively consider the needs and constraints of other domains in their work [20]. | Prevents workflow blockages and misaligned outputs (e.g., a compound that is difficult to synthesize). |

| Workflow Synchronization | The explicit alignment of timelines and pacing of activities across different disciplines [20]. | Ensures that cross-disciplinary inputs and outputs are available when needed, avoiding delays. |

| Triangulation | The practice of cross-checking research findings and assumptions across different disciplines and experimental setups [20]. | Enhances the reliability of knowledge and reveals hidden assumptions that could derail a project. |

| Interprofessional Training | Training programs where professionals learn about, from, and with each other to break down stereotypes and build mutual respect [22]. | Builds a foundation of shared understanding and improves long-term team communication and function. |

This case study analyzes the root causes of failure in clinical Artificial Intelligence (AI) collaborations, synthesizing lessons from recent high-profile setbacks in the healthcare and pharmaceutical sectors. The analysis reveals that technological limitations are rarely the primary culprit. Instead, persistent collaboration gaps between clinical and technical teams, misaligned incentives, and fundamental data challenges emerge as the dominant failure modes. This report translates these findings into a practical troubleshooting guide and resource toolkit, enabling researchers and drug development professionals to proactively diagnose and mitigate these risks in their own interdisciplinary system analysis research.

Quantitative Analysis of Failure Trends

Recent industry analyses quantify the significant challenges facing AI initiatives in biomedical fields. The data reveals a landscape where failure is common, and success requires navigating complex technical and commercial environments.

Table 1: AI Project Failure and Investment Trends (2025 Data)

| Sector / Metric | Reported Failure Rate or Investment Figure | Key Contributing Factor | Source |

|---|---|---|---|

| Corporate AI (Broad) | 95% of projects fail to demonstrate profit-and-loss impact. | Lack of alignment between technology and business workflows. | MIT Report [25] |

| AI Drug Development | $18+ billion invested, with few approved drugs reaching market. | Macroeconomic factors (e.g., high interest rates) and regulatory challenges drying up venture capital. | Fortune Analysis [26] |

| Business AI (Broad) | 42% of businesses scrapped the majority of their AI initiatives. | Leadership disconnect and unrealistic expectations. | TechFunnel [27] |

| Drug Candidate Failure | ~56% of drug candidates fail due to safety problems, such as toxicity. | Toxicity issues often detected too late in preclinical stages, creating a "death sentence" for development. | Drug Target Review [28] |

Table 2: Analysis of AI Drug Development Challenges

| Challenge Category | Specific Issue | Impact / Example |

|---|---|---|

| Commercial & Funding | Venture capital is drying up: in 2025, fewer than 20 deals were made, worth half the 2021 peak sum. | Companies like Recursion tabling drugs post-merger; BenevolentAI delisting. [26] |

| Technology & Validation | Scrutiny on technology readouts; mixed results in clinical trials. | Recursion's mid-stage trial for a neurovascular drug found it safe but lacking evidence of effectiveness, causing shares to fall. [26] |

| Process & Incentives | Misaligned incentives for early toxicity testing; the 10+ year drug development bottleneck. | Early-stage biotech focuses on efficacy data to secure funding, deferring complex safety questions. [28] |

Troubleshooting Guide: Root Causes and Protocols for Mitigation

This section provides a diagnostic and procedural framework for addressing the most common failure modes in clinical AI collaborations.

Collaboration Gap: Doctor-Engineer Misalignment

- Presenting Problem: AI models are technically sound but are rejected by clinical end-users or fail to integrate into clinical workflows. The system's outputs are deemed clinically irrelevant or unsafe.

- Root Cause: A fundamental disconnect between the clinical problem space and the engineering solution space. Doctors and engineers often struggle to find common ground, leading to poor implementation, loss of momentum, and broken follow-up systems. [29]

- Troubleshooting FAQs:

- Q: How can I tell if my project is suffering from a collaboration gap?

- A: Look for these key indicators: 1) Clinical team complaints that the tool is "unusable" or "doesn't fit our workflow," 2) Engineering team frustration that "doctors keep changing requirements," 3) Low adoption rates of a technically finished product, and 4) Protracted meetings where basic medical terminology or technical concepts require repeated explanation.

- Q: What is a proven methodology to bridge this gap?

- A: Implement a Structured, Sustained Collaboration Protocol.

- Protocol Objective: To create a shared mental model and common language between clinical and technical teams, ensuring the AI solution addresses a high-value clinical problem in a functionally viable way.

- Experimental/Methodology Protocol:

- Form a Tripartite Leadership Team: Establish a co-leadership model comprising a clinically active physician, a lead AI engineer, and a project manager fluent in both domains.

- Conduct Joint Problem-Framing Workshops: Before any coding begins, hold workshops to define the clinical problem in precise medical terms and jointly map the existing clinical workflow. Use process mapping techniques to identify specific pain points.

- Develop a "Shared Language" Glossary: Collaboratively build a living document defining key clinical terms (e.g., "hemodynamic instability," "treatment-resistant") and technical terms (e.g., "model confidence score," "feature importance") to ensure unambiguous communication.

- Create Rapid, Interactive Prototyping Cycles: Instead of long development cycles, build minimal viable products (MVPs) or interactive mock-ups for weekly or bi-weekly feedback sessions with clinical end-users. This validates utility and usability early.

- Establish a Continuous Feedback Loop: Use structured channels (e.g., dedicated Slack channels, weekly syncs) for ongoing feedback during development. Post-deployment, maintain a closed-loop system for reporting issues and implementing updates. [29]

Data Integrity and Domain Applicability Failures

- Presenting Problem: An AI model achieves high accuracy on internal test sets but fails dramatically in real-world validation, producing nonsensical or dangerously inaccurate outputs (hallucinations) when exposed to clinical data.

- Root Cause: The use of generic foundation models that are not purpose-built for the complexities of clinical data. These models fail to correctly interpret medical jargon, abbreviations, and the semi-structured nature of healthcare records. [30]

- Troubleshooting FAQs:

- Q: Our model is a state-of-the-art LLM. Why is it failing on clinical notes?

- A: State-of-the-art in general language does not equate to proficiency in the clinical domain. Clinical language is a specialized sub-language with unique challenges:

- Terminology & Context: Abbreviations are highly ambiguous (e.g., "AS" could mean "aortic stenosis" or simply be the capitalized word "as"). A model trained on general text (e.g., Wikipedia, Reddit) will lack the context to disambiguate. [30]

- Semi-Structured Data: Clinical notes are not pure prose; they contain implicit tables, lists, and structured data. Generic models trained on well-formed prose (e.g., books, news articles) struggle to parse this format, especially when formatting is lost. [30]

- Hallucinations: Without domain-specific grounding, generic models generate plausible-sounding but factually incorrect information, such as reporting that a patient's physical activity level was "two glasses of wine per week." [30]

- Q: What is the corrective protocol for this failure?

- A: Implement a Purpose-Built AI Model Strategy.

- Protocol Objective: To develop or fine-tune an AI model specifically equipped to handle the nuances, terminology, and structure of clinical data.

- Experimental/Methodology Protocol:

- Domain-Specific Pre-training or Fine-Tuning: Start with a base model and continue training it on a large, diverse corpus of clinical text (e.g., de-identified clinical notes, medical literature, lab reports). This teaches the model clinical language patterns.

- Implement Contextual Disambiguation Training: Actively train the model to interpret abbreviations and terms based on document type and context. For example, teach it that "PT" in a lab report likely means "prothrombin time" (a blood clotting test), in a rehab note means "physiotherapy," and in a consultation note means "patient." [30]

- Integrate Clinical Knowledge Guardrails: Anchor the model's reasoning to established, evidence-based clinical guidelines and curated medical knowledge. This prevents overgeneralization and hallucination by providing a factual framework. For example, a guideline-informed AI would know that chronic, baseline hypotension does not meet admission criteria, whereas a naive AI might recommend admission. [31]

- Build a Human-in-the-Loop Validation Workflow: Design workflows where AI outputs are paired with source evidence. For instance, when AI extracts a diagnosis, it also provides a link to the source text in the medical record, allowing a clinician to rapidly verify accuracy. This is critical for trust and compliance. [30] [31]
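
The disambiguation and source-evidence steps above can be illustrated together with a small sketch. A rule-based lookup stands in here for a trained model. The sense inventory, note-type labels, and function name are hypothetical, and the lab-report sense is assumed to be prothrombin time.

```python
import re

# Hypothetical sense inventory keyed by (abbreviation, note type).
SENSES = {
    ("PT", "lab_report"): "prothrombin time",
    ("PT", "rehab_note"): "physiotherapy",
    ("PT", "consultation_note"): "patient",
}


def expand_with_evidence(text, note_type, abbreviation="PT"):
    """Return each expansion together with the character span it came from."""
    expansion = SENSES.get((abbreviation, note_type))
    if expansion is None:
        return []  # Unknown context: defer to a human rather than guess.
    pattern = re.compile(rf"\b{re.escape(abbreviation)}\b", re.IGNORECASE)
    return [
        {"expansion": expansion, "span": m.span(), "source": text[m.start():m.end()]}
        for m in pattern.finditer(text)
    ]


note = "Pt tolerated session well. PT to continue."
hits = expand_with_evidence(note, "rehab_note")
for hit in hits:
    print(hit)
```

Returning the span alongside each expansion lets a review interface highlight the exact source text, so a clinician can check the interpretation quickly. An empty result for an unrecognized note type keeps the sketch from guessing.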

The "Last Mile" Problem: Clinical Integration and Trust

- Presenting Problem: A validated and accurate AI model is successfully deployed into the clinical environment, but adoption is low, and clinicians do not trust its outputs.

- Root Cause: The solution was designed as a technology push rather than a user-centered tool that fits seamlessly into the clinical workflow and earns trust through transparency.

- Troubleshooting FAQs:

- Q: Our model's accuracy metrics are excellent. Why don't clinicians trust it?

- A: Trust is built on transparency and understanding, not just metrics. Clinicians cannot risk patient safety on a "black box" recommendation. If the AI cannot explain why it reached a conclusion in a way that aligns with clinical reasoning, it will be met with skepticism.

- Q: What is the protocol for building trust and ensuring adoption?

- A: Implement a Human-AI Collaboration and Transparency Framework.

- Protocol Objective: To transition the AI from a black-box tool to a transparent "teammate" that enhances, rather than replaces, clinical decision-making.

- Experimental/Methodology Protocol:

- Provide Explainable AI (XAI) Outputs: Design the system interface to show not just the prediction, but also the supporting evidence. For example, highlight the specific phrases in the clinical note that contributed most to the model's decision (e.g., "recommended admission due to findings: 'new oxygen requirement,' 'tachycardia,' 'fever'"). [31]

- Design for Workflow Integration, Not Disruption: Integrate the AI tool directly into the Electronic Health Record (EHR) system. The output should appear in the context of the patient's chart, not in a separate, standalone application that requires clinicians to switch screens and break their workflow.

- Position AI as a Safety Net or Assistant: Frame the AI's role correctly. It excels at rapid data processing and pattern recognition, flagging potential issues a tired human might miss (e.g., "potential drug interaction detected" or "note mentions chest pain not yet on problem list"). This positions the AI as a cognitive aid, not a replacement. [31]

- Establish Clear Accountability and Oversight: Maintain a "human-in-the-loop" for final decision-making. Carefully drafted contracts and operational protocols must define accountability for errors. The clinician must always be the final decision-maker, with the AI acting as a powerful support tool. [30]
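
To make the idea of evidence-backed outputs concrete, the sketch below returns a recommendation together with the phrases that contributed most to it. It is a deliberately simplified stand-in for per-feature attribution, not an implementation of SHAP or LIME. The phrase weights, bias, and function name are hypothetical.

```python
# Hypothetical phrase weights, standing in for a fitted linear model.
WEIGHTS = {
    "new oxygen requirement": 1.4,
    "tachycardia": 0.9,
    "fever": 0.7,
    "ambulating": -0.8,
}
BIAS = -1.5


def explain(note, top_k=3):
    """Score a note and list the phrases that pushed the score up the most.

    Matching is plain substring search, so negation ("no fever") is NOT handled.
    """
    lowered = note.lower()
    contributions = {p: w for p, w in WEIGHTS.items() if p in lowered}
    score = BIAS + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    supporting = [phrase for phrase, weight in ranked[:top_k] if weight > 0]
    return {"recommend_admission": score > 0, "score": score, "evidence": supporting}


note = "Fever overnight, tachycardia on exam, new oxygen requirement this morning."
result = explain(note)
print(result["recommend_admission"], result["evidence"])
```

The clinician sees which findings drove the recommendation and can judge whether they match the chart. The final decision stays with the clinician, as the protocol requires.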

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and data "reagents" essential for building robust, clinically viable AI systems.

Table 3: Essential Research Reagents for Clinical AI Collaborations

| Research Reagent | Function / Explanation | Relevance to Failure Mitigation |

|---|---|---|

| Curated Clinical Guidelines (e.g., MCG) | Provides a framework of evidence-based medical knowledge to ground AI reasoning and prevent hallucinations or incorrect generalizations. [31] | Acts as a "knowledge guardrail," directly addressing the Data Integrity and Domain Applicability failure mode by ensuring clinical validity. |

| Domain-Specific Language Models (e.g., clinically trained NLP models) | AI models pre-trained or fine-tuned on massive datasets of clinical text (notes, reports, literature) to understand medical jargon, abbreviations, and context. [30] | The core solution for the Data Integrity and Domain Applicability failure mode, enabling accurate interpretation of semi-structured clinical data. |

| De-identified Clinical Data Corpus | A large, diverse, and high-quality dataset of real-world clinical records used for training and validating purpose-built models. Represents the "fuel" for clinical AI. | Fundamental for preventing overfitting and ensuring generalizability, a key aspect of the Data Integrity and Domain Applicability failure mode. |

| Structured Collaboration Framework (e.g., shared project glossary, joint workshops) | A methodological "reagent" that defines the processes, communication standards, and meeting structures for interdisciplinary teams. [29] | The primary tool for mitigating the Collaboration Gap failure mode (Doctor-Engineer Misalignment). |

| Explainable AI (XAI) Software Libraries | Tools and algorithms (e.g., SHAP, LIME) that help interpret complex AI models, showing which input features most influenced a given decision. | Critical for building the transparency required to solve the "Last Mile" Problem (Clinical Integration and Trust). |

| Human-in-the-Loop (HITL) Workflow Platform | A software platform that integrates AI outputs with human review tasks, ensuring a clinician can easily verify, override, and provide feedback on AI suggestions. [30] [31] | The operational backbone for implementing the trust-building protocols that address the "Last Mile" Problem. |

Workflow Visualization: From Failure to Success

The following diagram synthesizes the insights from this case study into a visual workflow, contrasting the pathological pathways leading to failure with the recommended protocols for success. This serves as a high-level diagnostic and strategic map for researchers.

A Methodological Toolkit for Assessing and Structuring Collaboration

Conducting a Comprehensive Interdisciplinary Feasibility Study

Frequently Asked Questions (FAQs)

1. What is the primary goal of an interdisciplinary feasibility study? The primary goal is to determine whether a complex research project is practical and viable before full implementation. It assesses if the necessary expertise, methods, and resources from different disciplines can be successfully integrated to address a multifaceted problem [32] [1].

2. What are common signs that our interdisciplinary project might be in trouble? Common signs include: researchers from different fields interpreting results in conflicting ways due to differing disciplinary criteria; difficulties in mastering both the explicit and tacit skills required across disciplines; and failure to agree on a common methodological approach for evaluation [32].

3. How can we effectively troubleshoot collaboration issues within our interdisciplinary team? Effective troubleshooting involves verifying the root of the problem through direct observation and questioning team members. Follow a logical process: identify the specific collaboration challenge, establish a theory for its probable cause, test your theory, and then develop a plan of action to resolve it [33] [34].

4. Why is it critical to document all steps during the feasibility phase? Documenting findings, actions, and outcomes is crucial for creating a record that can be referred to if similar problems arise later. It also helps in communicating what has already been tried to new team members or stakeholders, saving time and preventing repeated mistakes [33] [34].

5. Our project involves both predictive (engineering) and explanatory (behavioral) modeling. How can we reconcile these methods? Acknowledge this methodological difference as a point of convergence rather than conflict. Use a structured, process-oriented approach where the common research question guides decisions at each stage, allowing both types of models to provide complementary insights into the problem [32].

Troubleshooting Guides

Problem: Inability to Recruit Adequate Participants for a Clinical Feasibility Study

Issue: Difficulty enrolling a sufficient number of eligible participants in a study, for example, for a home-based rehabilitation program [35] or a new clinical evaluation method [36].

| Troubleshooting Step | Actionable Protocol | Expected Outcome |

|---|---|---|

| 1. Verify & Identify | Analyze recruitment data and interview staff to pinpoint specific bottlenecks (e.g., low eligibility, high refusal rates). | A clear understanding of the stage at which recruitment fails. |

| 2. Establish Theory of Cause | Form a hypothesis from commonly reported causes, which include patient travel time, lack of motivation, and preference for single-provider care [35] [36]. | A documented hypothesis for the low recruitment. |

| 3. Test the Theory | Survey potential participants or use focus groups to understand their reluctance. | Validated or refined reasons for non-participation. |

| 4. Plan & Implement Solution | Leverage digital platforms and collaborate with patient advocacy groups to widen reach [37]. For reluctant patients, emphasize the benefits of interdisciplinary care [36]. | A multifaceted recruitment strategy is launched. |

| 5. Verify & Document | Compare recruitment rates before and after implementing new strategies. Document the successful and unsuccessful approaches. | Improved recruitment and a knowledge base for future studies [34]. |
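
For the final verification step, one simple way to compare recruitment rates before and after a change is a two-proportion z-test, sketched below. The enrollment counts are hypothetical. With the small samples typical of pilot studies, an exact test may be more appropriate, so treat this as an illustrative check rather than a prescribed analysis.

```python
import math


def two_proportion_z(enrolled_before, approached_before, enrolled_after, approached_after):
    """Two-proportion z-test (pooled standard error) for a change in enrollment rate."""
    p1 = enrolled_before / approached_before
    p2 = enrolled_after / approached_after
    pooled = (enrolled_before + enrolled_after) / (approached_before + approached_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / approached_before + 1 / approached_after))
    z = (p2 - p1) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p1, p2, z, p_value


# Hypothetical counts: 30 of 100 enrolled before, 45 of 100 after the new strategy.
rate_before, rate_after, z, p_value = two_proportion_z(30, 100, 45, 100)
print(f"before={rate_before:.0%} after={rate_after:.0%} z={z:.2f} p={p_value:.3f}")
```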

Problem: Unexpected Results or System Behavior During Evaluation

Issue: The research prototype or intervention behaves in an unexpected way during the feasibility testing phase, making results difficult to interpret.

| Troubleshooting Step | Actionable Protocol | Expected Outcome |

|---|---|---|

| 1. Verify the Problem | Carefully note the specific unexpected symptom. Attempt to reproduce the issue consistently. Compare the system's behavior to its expected functioning. | A confirmed and reproducible problem. |

| 2. Establish Theory of Cause | Form a theory on the probable cause. In systems research, this often stems from not testing code thoroughly before experiments or from unaccounted contextual factors (preconditions) affecting the implementation mechanism [38] [1]. | A hypothesis linking a potential cause to the observed effect. |

| 3. Test the Theory | If a code issue is suspected, return to a version of the prototype that passed all tests and re-run experiments. If a contextual factor is suspected, use systems analysis methods to model and test the influence of different variables [1] [38]. | Identification of the root cause. |

| 4. Plan & Implement Solution | For code issues, fix the bug and add a test case to prevent regression. For contextual issues, adapt the strategy or model to account for the newly identified factor. | A corrected and more robust system or model. |

| 5. Verify & Document | Re-run the full suite of experiments with the fix in place. Ensure the unexpected behavior is resolved and that no new issues were introduced. Document the problem and solution. | Validated results and improved research documentation [38]. |

Quantitative Feasibility Data

The following data, synthesized from published feasibility studies, provides benchmarks for key metrics.

Table 1: Feasibility Metrics from Pilot Studies

| Feasibility Metric | REACH Rehabilitation Program [35] | Interdisciplinary Hip Evaluation [36] |

|---|---|---|

| Recruitment Rate | Not Specified | 81% of eligible patients enrolled |

| Retention/Adherence | 79.1% completed 6-month follow-up | 100% retention for primary outcome measures |

| Participant Satisfaction | Higher satisfaction reported in intervention group | Less decisional conflict post-evaluation |

| Time Burden | Not Specified | Interdisciplinary evaluation took 23.5 minutes longer on average |

| Key Feasibility Finding | Home-based, interdisciplinary intervention is feasible and positively perceived | The interdisciplinary evaluation model is clinically feasible |

Experimental Protocols

Protocol 1: Implementing a Home-Based Interdisciplinary Rehabilitation Program

This protocol is adapted from a feasibility study for survivors of critical illness [35].

- Team Assembly (Community of Practice): Form an interdisciplinary team including physical therapists, occupational therapists, dietitians, researcher-clinicians, and patient representatives.

- Training: Conduct joint training sessions for all professionals on the core concepts (e.g., Post-Intensive Care Syndrome) and the principles of the intervention.

- Intervention Design:

- Initiate the program with a handover from hospital to community-based therapists.

- Use a core outcome set (CoS) for standardized measurement.

- Physical therapy starts at home within one week of discharge, progressing to clinic-based training.

- Implement screening protocols to trigger referrals to occupational therapy (for fatigue, cognition, daily activities) and dietetics (for malnutrition risk).

- Evaluation: Employ a mixed-methods approach, collecting quantitative data (functional capacity, quality of life) and qualitative feedback from both patients and professionals.

Protocol 2: Applying Systems Analysis to Study Implementation Mechanisms

This protocol provides a structured approach to studying how and why an implementation strategy works within a complex system [1].

- Define the System and Strategy: Clearly describe the implementation context (the system) and the specific strategy being tested (e.g., a new training protocol for clinicians).

- Hypothesize Mechanisms: Formally state the hypothesized mechanism(s) through which the strategy is expected to work. For example, "Training will improve implementation outcomes through the mechanism of skill-building."

- Identify Preconditions and Moderators: Specify the factors necessary for the mechanism to activate (preconditions, e.g., clinicians can attend training) and factors that might influence the strength of the mechanism (moderators, e.g., clinicians' desire to learn).

- Model and Simulate: Use systems analysis methods (e.g., qualitative modeling, simulation) to map the relationships between the strategy, its mechanisms, preconditions, moderators, and outcomes. Simulate different scenarios to test the robustness of the hypothesis.

- Refine the Understanding: Use the results of the modeling and simulation to refine the understanding of the mechanism and guide potential adaptations to the implementation strategy.
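
The modeling and simulation step can be sketched with a small Monte Carlo simulation of the training example used above. Attendance is treated as the precondition and motivation as the moderator of skill-building. The adoption probabilities, parameter names, and functional form are all hypothetical and serve only to show how scenarios might be compared.

```python
import random


def simulate_uptake(n_clinicians, attend_prob, motivation, seed=0):
    """Fraction of clinicians who adopt the practice under a training strategy.

    attend_prob: chance the precondition holds (the clinician can attend).
    motivation: moderator in [0, 1] scaling how much skill the training builds.
    """
    rng = random.Random(seed)  # fixed seed so scenarios are reproducible
    baseline, max_gain = 0.10, 0.60  # hypothetical adoption probabilities
    adopters = 0
    for _ in range(n_clinicians):
        attended = rng.random() < attend_prob                    # precondition
        skill_gain = max_gain * motivation if attended else 0.0  # mechanism
        if rng.random() < baseline + skill_gain:                 # outcome
            adopters += 1
    return adopters / n_clinicians


# Compare scenarios in which the precondition is met never, sometimes, or always.
for attend_prob in (0.0, 0.5, 1.0):
    rate = simulate_uptake(10_000, attend_prob, motivation=0.8)
    print(f"attendance={attend_prob:.1f} -> adoption rate {rate:.3f}")
```

If adoption barely changes when attendance rises, the hypothesized skill-building mechanism is probably not the main driver. That would argue for revisiting the strategy before full implementation.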

Experimental Workflow Visualization

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Materials for Interdisciplinary Feasibility Research

| Item / Solution | Function / Rationale |

|---|---|

| Community of Practice (CoP) | A structured network of professionals from different fields that facilitates peer-to-peer learning, shares expertise, and co-creates the intervention, ensuring it is grounded in multiple disciplines [35]. |

| Core Outcome Set (CoS) | A standardized, agreed-upon set of measures collected across all study participants. This ensures that all disciplinary perspectives are measured consistently, allowing for integrated analysis [35]. |

| Systems Analysis Methods | A suite of qualitative or quantitative modeling techniques used to understand the interdependent relationships and dynamic changes within a complex system, helping to identify how and why an intervention works [1]. |

| Mixed-Methods Approach | A research design that integrates quantitative data (e.g., questionnaires, performance metrics) and qualitative data (e.g., interviews, open-ended feedback). This provides a more complete picture of feasibility, capturing both "what" happened and "why" [35]. |

| Automated Experimentation Pipeline | A fully scripted workflow that automates the entire experimental process, from building software artifacts to running tests and generating reports. This is critical for reproducibility and for efficiently obtaining incremental feedback during prototyping [38]. |

Applying the PIECES Framework to Diagnose System-Level Problems

What is the PIECES Framework and how can it help diagnose system-level issues in an interdisciplinary research environment?

The PIECES Framework is a structured checklist designed to comprehensively identify and classify problems within an existing information system. In the context of interdisciplinary feasibility research, it provides a common language and systematic approach for diagnosing issues that span multiple disciplines, such as those encountered in drug development. The acronym PIECES stands for Performance, Information (and Data), Economics, Control (and Security), Efficiency, and Service [39] [40].

For researchers and scientists, this framework is invaluable because it moves troubleshooting beyond isolated technical fixes to a holistic analysis. It ensures that all potential facets of a system problem—from data accuracy and processing speed to cost implications and user satisfaction—are systematically evaluated [40]. This is particularly crucial for novel and complex projects where the starting knowledge base is inherently limited, and information asymmetry can put research teams at a disadvantage [41].

How do I use the PIECES Framework to analyze a problem?

Using the PIECES Framework involves evaluating your system against each of its six categories. The following table provides a structured checklist of questions to guide your analysis. This ensures a comprehensive diagnostic process, helping you to pinpoint specific, actionable issues [39] [40].

| PIECES Category | Diagnostic Questions to Ask |
|---|---|
| Performance | Is system throughput insufficient? Is response time slower than expected for data analysis or simulation tasks? [39] |
| Information & Data | Are data outputs inaccurate, irrelevant, or difficult to produce? Are data inputs difficult to capture, error-prone, or captured redundantly? Is stored data poorly organized, insecure, or inaccessible for interdisciplinary analysis? [39] |
| Economics | Are operational costs unknown, untraceable, or too high? Are there missed opportunities to explore new research markets or improve current processes for better profitability? [39] |
| Control & Security | Is there too little control, leading to data editing errors, processing errors, or potential breaches of data integrity or privacy requirements (e.g., GxP)? Conversely, is there too much control, creating bureaucratic red tape that slows down research? [39] |
| Efficiency | Do people, machines, or computers waste time or materials? Is data redundantly input or processed, or is information redundantly generated? Is the effort required for routine tasks excessive? [39] |
| Service | Is the system difficult to learn or awkward to use? Is it inflexible to new experimental scenarios or incompatible with other laboratory systems? Does it produce unreliable or inconsistent results? [39] |
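One way to keep such a review systematic is to record each finding against a PIECES category and then list the categories that have no findings yet. The sketch below is only an illustration: the `Pieces` enum, the helper names, and the example findings are hypothetical and not part of the framework itself.

```python
from enum import Enum

class Pieces(Enum):
    PERFORMANCE = "Performance"
    INFORMATION = "Information & Data"
    ECONOMICS = "Economics"
    CONTROL = "Control & Security"
    EFFICIENCY = "Efficiency"
    SERVICE = "Service"

def group_findings(findings):
    """Group (category, note) pairs by category, keeping empty categories visible."""
    grouped = {category: [] for category in Pieces}
    for category, note in findings:
        grouped[category].append(note)
    return grouped

def categories_without_findings(grouped):
    """Return the names of categories for which nothing has been recorded."""
    return [category.value for category, notes in grouped.items() if not notes]

# Hypothetical findings from a review of a shared analysis platform.
findings = [
    (Pieces.PERFORMANCE, "Simulation batch does not finish overnight"),
    (Pieces.INFORMATION, "Assay results are re-keyed by hand into two systems"),
    (Pieces.EFFICIENCY, "Assay results are re-keyed by hand into two systems"),
    (Pieces.SERVICE, "Analysis tool cannot import the imaging team's file format"),
]

grouped = group_findings(findings)
gaps = categories_without_findings(grouped)
print(gaps)
```

An empty category means either that no problem exists there or that nobody has looked yet, so the list of gaps is a prompt for further questions rather than a clean bill of health.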

What is a logical troubleshooting methodology to follow after identifying potential problems with PIECES?

Once PIECES has helped identify the broad categories of problems, a structured troubleshooting methodology should be followed to effectively diagnose and resolve the root cause. The following workflow integrates the CompTIA methodology, a standard in IT support, with the analytical nature of research environments [34].

The detailed steps are as follows:

- Identify the problem: Gather information from users, error messages, and system logs. Question users to identify symptoms and determine what has changed recently. Duplicate the problem to confirm it and approach multiple issues one at a time [34].

- Establish a theory of probable cause: Question the obvious and consider multiple approaches. Use the PIECES classification to guide your hypotheses. Consult vendor documentation, scientific forums, and colleagues to form a data-backed theory [34].

- Test the theory to determine the cause: Perform diagnostic tests to confirm or deny your theory. This may involve checking individual system components, running simulations with known-good parameters, or isolating variables in a test environment. If the theory is disproven, return to the previous step and establish a new theory of probable cause [34].

- Establish a plan of action and implement the solution: Develop a clear plan to resolve the root cause. For complex systems, this may require a phased rollout, change management procedures, or a back-out plan to reverse changes if necessary. Then, carefully implement the solution [34].

- Verify full system functionality: Have end-users test the system in real-world scenarios to ensure the problem is resolved and no new issues were introduced. This is critical for ensuring data integrity in experimental workflows [34].

- Document findings, actions, and outcomes: Keep detailed records of the problem, the diagnostic process, the solution implemented, and any lessons learned. This documentation is invaluable for future troubleshooting and for building institutional knowledge [33] [34].
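The steps above can be sketched as a simple loop that tests one theory at a time, applies a fix only when a theory is confirmed, verifies the result, and records every step. This is a hedged illustration: the `Theory` and `TroubleshootingLog` classes, the `troubleshoot` function, and the instrument example are hypothetical and not part of the CompTIA methodology.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class Theory:
    description: str
    test: Callable[[], bool]   # returns True if the theory is confirmed
    fix: Callable[[], None]    # action intended to resolve this cause

@dataclass
class TroubleshootingLog:
    problem: str
    entries: List[str] = field(default_factory=list)

    def record(self, message: str) -> None:
        self.entries.append(message)

def troubleshoot(problem, theories, verify):
    """Work through theories in order; return (resolved, log)."""
    log = TroubleshootingLog(problem)
    log.record(f"Identified problem: {problem}")
    for theory in theories:
        log.record(f"Theory of probable cause: {theory.description}")
        if not theory.test():
            log.record("Theory disproven; establishing a new theory")
            continue
        log.record("Theory confirmed; implementing the planned fix")
        theory.fix()
        if verify():
            log.record("Verified: full system functionality")
            return True, log
        log.record("Fix applied, but verification failed")
    return False, log

# Hypothetical example: inconsistent readings from a shared instrument.
state = {"network_ok": True, "calibrated": False}
theories = [
    Theory("Data transfer failures", lambda: not state["network_ok"], lambda: None),
    Theory("Instrument out of calibration", lambda: not state["calibrated"],
           lambda: state.update(calibrated=True)),
]
resolved, log = troubleshoot("Inconsistent plate-reader data", theories,
                             verify=lambda: state["calibrated"])
print("\n".join(log.entries))
```

Keeping the log as part of the loop means the documentation step is produced as a by-product of the work rather than reconstructed afterwards.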

What are common interdisciplinary feasibility challenges and how can PIECES help address them?

Interdisciplinary projects face unique hurdles that the PIECES framework can help surface and manage. A key challenge is the fragmentation of knowledge and literature across different fields, which can lead to an incomplete understanding of project feasibility [41]. The table below outlines common challenges and maps them to the relevant PIECES categories.

| Challenge | Description | Relevant PIECES Categories |
|---|---|---|
| Knowledge Silos | Critical information and data are not effectively shared or are in incompatible formats across disciplines, leading to gaps and misunderstandings. [42] | Information, Service [39] |
| Communication Gaps | Inefficient communication between team members from different backgrounds slows progress and can lead to decision-making errors. [42] | Efficiency, Control, Service [39] |
| Unclear Ownership | Contribution and ownership of work can become obscured in collaborative teams, leading to friction and unmet expectations. [42] | Control, Service [39] |
| Tool & System Incompatibility | Research systems and software from different disciplines are not coordinated or are incompatible, creating workflow bottlenecks. [39] [42] | Performance, Efficiency, Service [39] |
| Navigating Regulatory Requirements | Difficulty in ensuring that novel, complex projects meet all regulatory compliance guidelines from various domains (e.g., GMP, GLP). [43] [44] | Control, Information [39] |
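The mapping in this table can also be read in the other direction: given a PIECES category flagged during diagnosis, which interdisciplinary challenges are worth checking? A minimal sketch, assuming the table is transcribed into a plain dictionary (the names `CHALLENGE_CATEGORIES` and `challenges_for` are illustrative):

```python
# Challenge -> PIECES categories, transcribed from the table above.
CHALLENGE_CATEGORIES = {
    "Knowledge Silos": {"Information", "Service"},
    "Communication Gaps": {"Efficiency", "Control", "Service"},
    "Unclear Ownership": {"Control", "Service"},
    "Tool & System Incompatibility": {"Performance", "Efficiency", "Service"},
    "Navigating Regulatory Requirements": {"Control", "Information"},
}

def challenges_for(category):
    """Return the challenges mapped to a PIECES category, in alphabetical order."""
    return sorted(
        challenge
        for challenge, categories in CHALLENGE_CATEGORIES.items()
        if category in categories
    )

print(challenges_for("Control"))
print(challenges_for("Economics"))
```

In this mapping no challenge is tied to Economics, which suggests that cost questions need to be raised separately rather than inferred from the collaboration challenges listed here.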

FAQs for the Research Scientist

Q: My experimental data analysis is taking too long, which is bottlenecking my research. What PIECES areas should I investigate? A: This primarily falls under Performance (throughput and response time) and Efficiency (wasted time and resources). Investigate your software's computational load, the potential for optimizing analysis algorithms, or whether hardware upgrades are needed. Also, check if data is being processed redundantly [39].

Q: My team is struggling with inconsistent data from a shared instrument. How can PIECES guide a solution? A: This touches multiple categories. Focus on Information (accuracy and timeliness of data), Control (potential processing errors or a lack of standard operating procedures), and Service (system reliability). A solution might involve implementing stricter data entry controls, regular calibration checks, and clearer user training [39].

Q: We are starting a new, highly interdisciplinary project. How can we use PIECES proactively? A: Use the PIECES checklist at the project's feasibility stage to anticipate potential problems [41] [40]. For example, you can define Information requirements for data sharing upfront, establish Control protocols for data integrity, and evaluate whether proposed systems will provide adequate Service to all user groups. This proactive application helps in designing a more robust and feasible project from the outset [39] [40].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key analytical techniques and their functions relevant to troubleshooting system-level issues in a pharmaceutical or biotech research context.

| Analytical Technique | Function in Troubleshooting |
|---|---|
| Differential Scanning Calorimetry (DSC) | Used to study thermal properties of drug formulations, helping to identify stability issues, polymorphism, and other solid-state characteristics that can cause manufacturing problems. [44] |
| Dynamic Vapor Sorption (DVS) | Measures how materials absorb and desorb moisture, which is critical for understanding the hygroscopicity and physical stability of APIs and formulations during development and storage. [44] |
| Laser Diffraction | Analyzes particle size distribution, a key parameter in troubleshooting tableting, compaction, and flowability issues in solid dosage form manufacturing. [44] |
| Raman Spectroscopy | Provides chemical and structural information about materials. It is used for identifying components, monitoring reactions, and detecting crystallization or contamination in complex mixtures. [44] |
| X-Ray Powder Diffraction (XRPD) | Determines the crystallographic structure of a material. Essential for identifying polymorphs in active pharmaceutical ingredients (APIs), which can significantly impact drug solubility and bioavailability. [44] |

Leveraging Multidisciplinary Design Optimization (MDO) for Team Coordination

Frequently Asked Questions (FAQs)

Q1: What is the core value of MDO for research team coordination, beyond computational automation? The greatest value of MDO often lies in the upfront process of problem formulation rather than in automated optimization alone. This process involves clarifying interdisciplinary relationships by identifying key variables, which provides a clear coordination roadmap before committing significant resources. It maps interdependencies, defines shared variables, and aligns coordination strategies with how teams actually work, preventing the pitfalls of siloed thinking and costly rework [45].

Q2: What are the main architectural strategies for MDO, and how do I choose between them? MDO architectures represent different trade-offs between computational efficiency and team autonomy. The strategic choice is between centralized efficiency and distributed flexibility [45].

- Centralized Approaches (e.g., All-at-Once, Simultaneous Analysis and Design): These improve computational efficiency but require tighter organizational control and simultaneous access to all disciplinary models.

- Distributed Approaches (e.g., Individual Discipline Feasible, Collaborative Optimization): These preserve team autonomy and data privacy at the cost of higher coordination overhead and more computational iteration between teams [45].

Q3: Our team struggles with late-stage integration problems. How can MDO help? MDO directly addresses the "Throw-It-Over-The-Wall" problem common in sequential workflows. By establishing a unified optimization framework that connects models from every discipline from the start, MDO allows you to catch interdisciplinary conflicts early, before they surface during integration. This reduces iteration loops and late-stage rework, because design decisions are grounded in full-system behavior from the outset [46].

Q4: What are the critical variable types we need to define to implement MDO? Clearly defining three key variable types is central to the MDO problem formulation process [45]:

- Design Variables: Parameters that each team directly controls and can adjust.

- Coupling Variables: Information that is shared between teams, representing the interdisciplinary dependencies.

- Response Variables: The output that each team produces from its analysis or experiments.
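A lightweight way to make these three variable types concrete is to write them down per discipline and derive the coupling map from them. The sketch below is a minimal illustration under stated assumptions: the `Discipline` class, the `coupling_map` helper, and the two example teams with their variable names are all hypothetical. It also assumes that a coupling variable is a response of one team that another team consumes as an input.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Discipline:
    name: str
    design_variables: List[str]   # parameters the team controls directly
    coupling_inputs: List[str]    # values the team needs from other teams
    responses: List[str]          # outputs the team produces

def coupling_map(disciplines):
    """Return {variable: (producer, [consumers])} for every coupling variable."""
    producers = {r: d.name for d in disciplines for r in d.responses}
    links = {}
    for d in disciplines:
        for var in d.coupling_inputs:
            # A producer of None means no team currently supplies this variable.
            links.setdefault(var, (producers.get(var), []))[1].append(d.name)
    return links

# Hypothetical two-team example.
formulation = Discipline(
    name="formulation",
    design_variables=["excipient_ratio", "particle_size"],
    coupling_inputs=["target_dose"],
    responses=["dissolution_rate"],
)
pharmacokinetics = Discipline(
    name="pharmacokinetics",
    design_variables=["dosing_interval"],
    coupling_inputs=["dissolution_rate"],
    responses=["target_dose", "plasma_exposure"],
)

links = coupling_map([formulation, pharmacokinetics])
unresolved = [var for var, (producer, _) in links.items() if producer is None]
print(links)
print(unresolved)
```

Any variable that ends up in `unresolved` is an input that some team expects but no team has committed to producing, which is precisely the kind of gap that problem formulation is meant to expose early.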

Troubleshooting Guides

Issue 1: Failure to Achieve Interdisciplinary Feasibility

Problem: Disciplines are optimizing for their local objectives, but their solutions are incompatible when brought together. The coupling variables do not converge, leading to an infeasible overall system design.

Solution:

- Verify Coupling Variable Identification: Ensure all parameters shared between disciplines (e.g., the output of one team's model that becomes an input for another's) are explicitly identified and defined as coupling variables [45].

- Check Architecture Fit: Your MDO architecture might be inappropriate. If using a distributed method like Collaborative Optimization (CO), confirm that the system-level optimizer is properly reconciling discrepancies in the shared variables. For highly coupled problems, a more centralized architecture might be necessary to enforce feasibility [45].

- Implement Convergence Monitoring: Introduce a formal process to track the values of coupling variables across optimization iterations. This helps identify which specific variables are failing to converge and which teams are involved in the deadlock.
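Convergence monitoring can be as simple as logging how much each coupling variable changes from one iteration to the next. The following is a minimal sketch using a Gauss-Seidel style fixed-point iteration between two toy analyses. The function `monitor_convergence`, the variable names `y1` and `y2`, and the linear models are illustrative assumptions, not part of any specific MDO architecture or library.

```python
def monitor_convergence(analyses, initial, tol=1e-6, max_iter=50):
    """Iterate the analyses in sequence and record per-variable changes.

    analyses -- list of (coupling_variable_name, function(state) -> new value)
    initial  -- dict with a starting guess for every coupling variable
    Returns (final_state, history, converged), where history holds one
    {variable: absolute change} dict per iteration.
    """
    state = dict(initial)
    history = []
    for _ in range(max_iter):
        residuals = {}
        for name, analyse in analyses:
            new_value = analyse(state)
            residuals[name] = abs(new_value - state[name])
            state[name] = new_value  # later analyses see the updated value
        history.append(residuals)
        if max(residuals.values()) < tol:
            return state, history, True
    return state, history, False

# Toy disciplinary models: each team's output depends on the other's.
analyses = [
    ("y1", lambda s: 0.5 * s["y2"] + 1.0),   # hypothetical team A model
    ("y2", lambda s: 0.25 * s["y1"] + 2.0),  # hypothetical team B model
]

state, history, converged = monitor_convergence(analyses, {"y1": 0.0, "y2": 0.0})

# The variable with the largest change in the last iteration is the one
# to examine first if the process stalls.
slowest = max(history[-1], key=history[-1].get)
print(converged, len(history), slowest)
```

In a real project each function call would stand for a full disciplinary analysis or experiment, so the history doubles as a record of which team's output is holding up agreement.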

Issue 2: High Computational Cost and Slow Iteration

Problem: Disciplinary analyses (e.g., complex simulations, wet-lab experiments) are so time-consuming or expensive that running the full MDO process is impractical.

Solution:

- Develop Surrogate Models: Replace high-fidelity, computationally intensive disciplinary models with faster, approximate surrogate models (also called metamodels). These can be built using data from a designed set of experiments [46] [47].