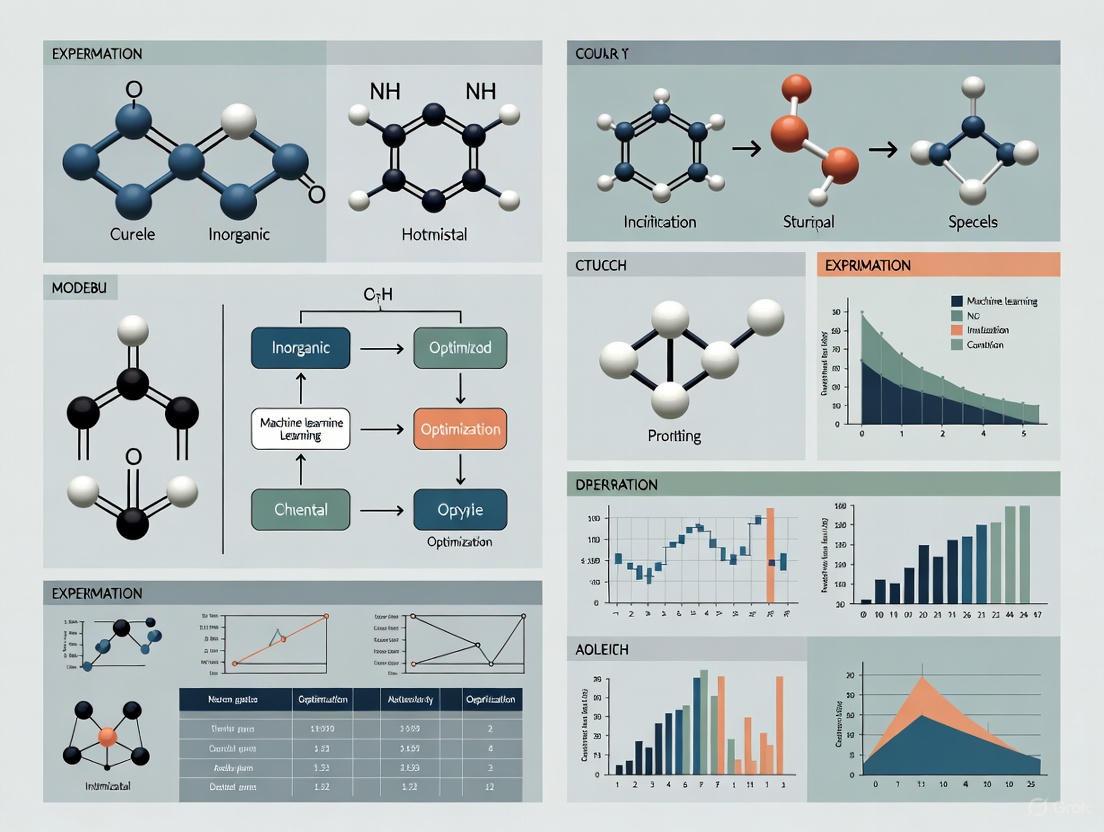

Optimizing Experimental Conditions in Machine Learning for Drug Discovery: A Guide for Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing experimental conditions in machine learning. It covers foundational principles, advanced methodological applications, practical troubleshooting for common challenges, and rigorous validation techniques. By synthesizing current best practices and real-world case studies, this resource aims to accelerate the development of robust, efficient, and reliable ML-driven experiments in biomedical research, ultimately reducing development timelines and costs while improving predictive accuracy.

Core Principles: Why Optimization is Crucial for ML in Drug Discovery

Troubleshooting Guides

Guide: Addressing High Experimental Costs and Resource Use

Problem: Experimental costs are exceeding budget, driven by high reagent use and inefficient designs.

Solution: Implement Design of Experiments (DOE) to replace One-Factor-at-a-Time (OFAT) approaches.

- Step 1: Identify all potential factors and responses for your assay or process.

- Step 2: Choose a screening design (e.g., fractional factorial) to identify the most influential factors with a minimal number of experimental runs [1].

- Step 3: Use response surface methodology (RSM) with a suitable design (e.g., a D-optimal design) to model interactions and find an optimal set of conditions [1].

- Step 4: Conduct a robustness test to determine how sensitive your optimized process is to small variations in factor levels [1].

Expected Outcome: Significantly reduced experimental runs and reagent consumption. Case studies show DOE can use 6 times fewer wells than a full factorial design and cut expensive reagent use by half while maintaining quality [1].
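To make Step 2 concrete, the sketch below builds the smallest useful fractional factorial for four two-level factors: a $2^{4-1}$ design with the (hypothetical) generator D = ABC, using only Python's standard library. It halves the run count of the full factorial while keeping main effects unaliased with two-factor interactions. For larger designs, dedicated DOE software should be used to choose generators and check the aliasing structure.

```python
from itertools import product

def fractional_factorial_2_4_1():
    """Return a 2^(4-1) design: full factorial in A, B, C with D = A*B*C.

    Levels are coded -1 (low) and +1 (high). The defining relation I = ABCD
    gives a resolution IV design: main effects are aliased only with
    three-factor interactions (e.g., A with BCD).
    """
    runs = []
    for a, b, c in product((-1, 1), repeat=3):
        runs.append({"A": a, "B": b, "C": c, "D": a * b * c})
    return runs

design = fractional_factorial_2_4_1()
print(f"{len(design)} runs instead of {2 ** 4} for the full factorial")
for run in design:
    print(run)
```

Every column is balanced (four runs at each level) and every pair of columns is orthogonal, which is what allows main effects to be estimated independently from so few runs.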

Guide: Overcoming Poor Clinical Trial Efficiency

Problem: Clinical trials are plagued by slow patient recruitment, high costs, and operational delays.

Solution: Leverage AI-driven tools and optimized operational models.

- Step 1: Utilize AI to analyze Electronic Health Records (EHRs) and real-world data to identify and pre-screen eligible patients, especially for rare diseases [2].

- Step 2: Implement AI-powered platforms to design adaptive clinical trials that can modify dosage or patient population mid-stream based on interim results [2].

- Step 3: Adopt tech-enabled Functional Service Provider (FSP) models. These partners provide specialized resources and technology (like automated data management) to reduce database lock times and manual effort [3].

- Step 4: Ensure complete data visibility and fluid data sharing with Contract Research Organizations (CROs) to enable real-time study adjustments [4].

Expected Outcome: Faster patient recruitment, reduced trial duration, and lower operational costs. Sponsors using FSP models have reported over 30% cost reductions in complex trial areas [3].

Frequently Asked Questions (FAQs)

FAQ 1: How can AI and Machine Learning (ML) realistically reduce drug discovery timelines?

AI and ML accelerate drug discovery by predicting molecular behavior, generating novel drug candidates, and repurposing existing drugs. For instance, AI platforms have designed a novel drug candidate for idiopathic pulmonary fibrosis in just 18 months, a process that traditionally takes many years [2]. ML models can also predict binding affinities and physicochemical properties of molecules, substantially shortening the time needed to identify promising drug candidates [5] [2].

FAQ 2: Our R&D productivity is declining despite increased spending. What strategic shifts can help?

The industry faces a core challenge: R&D investment is at record levels, but success rates are falling. The probability of success for a Phase 1 drug has dropped to 6.7% [6]. To counter this:

- Focus on "Right-to-Win": Strategically assess portfolios to focus on areas where you have a true competitive advantage and can build leading market positions [6].

- Data-Driven Trial Design: Design clinical trials as critical experiments with clear go/no-go criteria, rather than exploratory missions. Use AI to optimize trial designs for a higher likelihood of success [6].

- Process Excellence: Standardize and simplify data and content workflows across clinical, regulatory, and safety functions to eliminate manual efforts and inconsistencies [4].

FAQ 3: What is the regulatory stance on using AI in drug development?

The FDA recognizes the increased use of AI and is developing a risk-based regulatory framework to promote innovation while ensuring safety and efficacy. The Center for Drug Evaluation and Research (CDER) has an AI Council to oversee its activities and policy. For sponsors, it is crucial to follow FDA draft guidance, such as "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision Making for Drug and Biological Products" [7]. The FDA's experience with over 500 submissions containing AI components from 2016 to 2023 informs this evolving guidance [7].

FAQ 4: We have limited data for a new target. How can we optimize experiments effectively?

For scenarios with limited prior knowledge, a sequential DOE approach is highly effective:

- Begin with a highly fractional factorial design to screen a wide range of factors with a minimal number of runs.

- Use the results to identify key drivers.

- Follow with an optimization design focused only on those critical factors. A real-world example screened 22 factors in only 320 runs, a task that would have required millions of runs with a full factorial approach ($2^{22}$, about 4.2 million runs, even at only two levels per factor) [1].
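The "identify key drivers" step usually amounts to estimating each factor's main effect (mean response at the high level minus mean response at the low level) and ranking factors by its magnitude. This minimal sketch uses a hypothetical eight-run, two-level design for four factors; the responses are generated from an assumed model in which factor D has no effect, not taken from any real study.

```python
from itertools import product

def main_effects(design, responses):
    """Main effect of each factor = mean response at +1 minus mean at -1."""
    effects = {}
    for factor in design[0]:
        high = [y for run, y in zip(design, responses) if run[factor] == 1]
        low = [y for run, y in zip(design, responses) if run[factor] == -1]
        effects[factor] = sum(high) / len(high) - sum(low) / len(low)
    return effects

# Hypothetical 2^(4-1) screening design (D = ABC), coded -1/+1.
design = [{"A": a, "B": b, "C": c, "D": a * b * c}
          for a, b, c in product((-1, 1), repeat=3)]

# Synthetic responses from an assumed "true" model in which D has no effect.
responses = [10 + 3 * r["A"] - 0.5 * r["B"] + 2 * r["C"] for r in design]

effects = main_effects(design, responses)
ranked = sorted(effects, key=lambda f: abs(effects[f]), reverse=True)
print(effects)   # {'A': 6.0, 'B': -1.0, 'C': 4.0, 'D': 0.0}
print(ranked)    # ['A', 'C', 'B', 'D']
```

With real, noisy data the effects would be judged against an error estimate (for example, replicated runs or a half-normal plot) before dropping factors such as B or D from the optimization stage.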

Data Presentation: R&D Cost and Efficiency Metrics

Table 1: Quantitative Data on R&D Challenges and Efficiency Gains

| Metric | Value (Year or Context) | Source |
|---|---|---|
| Average Phase 1 Success Rate | 6.7% (2024) | [6] |
| Internal Rate of Return (IRR) for R&D | 1.2% (2022) | [1] |
| Capitalized Pre-launch R&D Cost | $161M to $4.54B per new drug | [1] |
| DOE Efficiency Gain | 6x fewer runs vs. full factorial | [1] |
| AI-Driven Candidate Design | 18 months for a novel drug candidate | [2] |
| FSP Model Cost Reduction | >30% in complex trials (e.g., rare diseases) | [3] |
| ML Prototype Time Prediction | >87% accuracy, <1 day average error | [8] |

| ML Prototype Time Prediction | >87% accuracy, <1 day average error | [8] |

Experimental Protocols

Protocol: AI-Augmented Virtual Screening for Hit Identification

Objective: To rapidly identify potential drug candidates from large chemical libraries using AI-based virtual screening.

Methodology:

- Data Curation: Compile a library of known active and inactive compounds against your target from public databases (e.g., ChEMBL, PubChem). Annotate compounds with relevant physicochemical descriptors.

- Model Training: Train a deep learning classifier (e.g., a Convolutional Neural Network) to distinguish between active and inactive molecules based on their structural features [2].

- Virtual Screening: Apply the trained model to screen an in-house or commercial virtual library of millions of compounds. The model will rank compounds based on their predicted probability of activity.

- Post-Screen Analysis: Select the top-ranking compounds for further analysis. Use additional AI tools to predict ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties to prioritize the most promising leads for experimental validation [5] [2].

Significance: This methodology can identify drug candidates in days, as demonstrated by platforms that found candidates for Ebola in less than a day, compared to months or years with traditional High-Throughput Screening (HTS) [2].
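A minimal sketch of the train-then-rank mechanics, under loud assumptions: compounds are represented as hypothetical random 64-bit fingerprint vectors (in practice these would come from a cheminformatics toolkit), "activity" follows an invented rule on four key bits, and a plain logistic-regression classifier trained with NumPy stands in for the deep learning model described above. It is not a validated screening model.

```python
import numpy as np

rng = np.random.default_rng(0)
N_BITS = 64
KEY_BITS = [3, 17, 29, 41]  # hypothetical "pharmacophore" bits

def make_fingerprints(n):
    """Random binary vectors standing in for real molecular fingerprints."""
    return rng.integers(0, 2, size=(n, N_BITS)).astype(float)

# Hypothetical labelled set: "active" if at least 2 of the 4 key bits are set.
X_train = make_fingerprints(500)
y_train = (X_train[:, KEY_BITS].sum(axis=1) >= 2).astype(float)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_logistic(X, y, lr=0.1, epochs=3000):
    """Logistic regression fitted by full-batch gradient descent."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):
        err = sigmoid(X @ w + b) - y
        w -= lr * X.T @ err / len(y)
        b -= lr * err.mean()
    return w, b

def screen(X_library, w, b, top_k=10):
    """Score every compound; return indices of the top_k predicted actives."""
    scores = sigmoid(X_library @ w + b)
    return np.argsort(scores)[::-1][:top_k], scores

w, b = train_logistic(X_train, y_train)
library = make_fingerprints(10_000)            # hypothetical virtual library
top_idx, scores = screen(library, w, b)
print(top_idx, np.round(scores[top_idx], 3))
```

The ranked indices would then feed the post-screen ADMET filtering step; swapping in a deep model changes only `train_logistic` and the scoring call.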

Protocol: Design of Experiments (DOE) for Cell Culture Media Optimization

Objective: To optimize a cell culture media formulation for maximum yield while minimizing the cost of expensive components.

Methodology:

- Factor Screening:

- Input: Select factors for screening (e.g., concentrations of growth factors, cytokines, glucose, lipids).

- Experimental Design: Use a two-level screening design, such as a fractional factorial or a Plackett-Burman design, to investigate a wide range of factors with a minimal number of experimental runs (one case study screened 22 factors in 320 runs) [1].

- Response: Measure cell density or viability.

- Output: Identify the 3-5 most critical factors influencing yield.

- Optimization:

- Input: The critical factors identified in the screening phase.

- Experimental Design: Use a response surface design (e.g., a Central Composite Design or a D-optimal design) to model the complex interactions between these factors and find the optimal concentration levels [1].

- Response: Measure cell yield and quality.

- Output: A predictive model that identifies peak conditions for yield and cost reduction.

- Robustness Testing:

- Input: The optimized factor levels.

- Experimental Design: Use a small set of experiments to vary the optimal levels slightly (e.g., ±10%) to test the process's sensitivity.

- Response: Measure yield consistency.

- Output: Verification that the process remains effective despite minor variations, a key requirement for regulatory approval [1].

Significance: This protocol can reduce media costs "by an order of magnitude" and increase cellular yield, turning a previously untenable process into a commercially viable one [1].
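The optimization and robustness phases can be sketched as fitting a full second-order (quadratic) response-surface model by least squares, solving for its stationary point, and then probing the predicted yield at ±10% around that optimum. The two coded factors and the yield function are hypothetical, and the data are noise-free for clarity; real runs would include replicated centre points to estimate error. For simplicity the ±10% is applied to the coded levels; with real factors, perturb the natural concentrations and re-code them.

```python
import numpy as np

def quadratic_terms(x1, x2):
    """Design matrix for a full second-order model: 1, x1, x2, x1*x2, x1^2, x2^2."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1**2, x2**2])

def true_yield(x1, x2):
    """Hypothetical response (coded units) with its maximum at (0.3, -0.2)."""
    return 80 - 5 * (x1 - 0.3) ** 2 - 8 * (x2 + 0.2) ** 2

# Face-centred central composite design for two coded factors; for k = 2 it is the 3 x 3 grid.
levels = np.array([-1.0, 0.0, 1.0])
x1, x2 = (g.ravel() for g in np.meshgrid(levels, levels))
coef, *_ = np.linalg.lstsq(quadratic_terms(x1, x2), true_yield(x1, x2), rcond=None)
b0, b1, b2, b12, b11, b22 = coef

# Stationary point of the fitted surface: set the gradient to zero.
hessian = np.array([[2 * b11, b12], [b12, 2 * b22]])
x_opt = np.linalg.solve(hessian, -np.array([b1, b2]))
is_maximum = np.all(np.linalg.eigvalsh(hessian) < 0)   # negative definite => maximum

def predict(p1, p2):
    return (quadratic_terms(np.atleast_1d(p1), np.atleast_1d(p2)) @ coef)[0]

# Robustness check: perturb each optimal level by +/-10% and compare predictions.
y_opt = predict(*x_opt)
perturbed = [predict(x_opt[0] * (1 + d1), x_opt[1] * (1 + d2))
             for d1 in (-0.1, 0.1) for d2 in (-0.1, 0.1)]
worst_drop_pct = 100 * (y_opt - min(perturbed)) / y_opt
print("optimum (coded):", x_opt.round(3), "maximum:", bool(is_maximum))
print(f"worst predicted yield drop under +/-10%: {worst_drop_pct:.4f}%")
```

A small predicted drop supports the robustness claim, but it should be confirmed with the physical robustness runs the protocol describes.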

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Research Reagents and Materials for Optimized Experimentation

| Item | Function | Application Note |
|---|---|---|
| Growth Factors & Cytokines | Signal proteins that regulate cell growth, differentiation, and survival. | A major cost driver in mammalian cell culture. DOE can optimize concentrations to halve usage while maintaining yield [1]. |
| AI-Generated Novel Compounds | Novel chemical entities designed by generative AI models to hit specific biological targets. | AI can design new molecules with desired properties, creating candidates not found in existing libraries [5] [2]. |
| Generic Reagents | Non-proprietary buffers, salts, and common chemicals. | Choosing non-proprietary equivalents follows the same cost-control logic as international non-proprietary name (INN) prescribing, a policy measure health systems use to control medicine costs without compromising quality [9]. |
| Biosimilars & Generics | Biologically similar or chemically identical versions of originator biologics/drugs. | Substitution with generics and biosimilars is a pivotal policy for health systems to manage pharmaceutical expenditure [9]. |

Core Concepts and Experimental Optimization Framework

The integration of Deep Learning (DL), Transfer Learning (TL), and Federated Learning (FL) into research protocols represents a paradigm shift in optimizing experimental conditions. These methodologies directly address critical bottlenecks in data efficiency, privacy, and resource allocation, all of which are acute in fields like drug development. The following table outlines the primary function of each paradigm and its role in experimental optimization.

| Paradigm | Primary Function | Role in Experimental Optimization |
|---|---|---|
| Deep Learning (DL) | Uses multi-layered neural networks to learn complex, hierarchical patterns from large-scale datasets. [10] | Provides the foundational model architecture for high-dimensional data analysis and prediction. |
| Transfer Learning (TL) | Leverages knowledge (e.g., pre-trained model weights) from a source domain to improve learning in a target domain with limited data. [10] | Dramatically reduces the data and computational resources required for new experiments by fine-tuning pre-existing models. [10] |
| Federated Learning (FL) | Enables model training across decentralized devices or data sources (e.g., different hospitals) without sharing the raw data itself. [11] [12] | Allows for collaborative experimentation on sensitive datasets while preserving data privacy and addressing data sovereignty concerns. [11] |
| Federated Transfer Learning (FTL) | Combines FL and TL to collaboratively train models across parties where features and data distributions may differ. [12] | Optimizes experiments involving multiple, heterogeneous data owners with limited local data, mitigating system and data heterogeneity. [12] |

A principled framework for integrating these paradigms is Bayesian Optimal Experimental Design (BOED). BOED uses probabilistic models to identify experimental designs expected to yield the most informative data, thereby maximizing the value of each experiment. It is particularly powerful for complex models where scientific intuition may be insufficient. [13] [14]

- Utility: BOED formalizes the search for optimal experimental parameters (e.g., stimulus selection, measurement timing) by framing it as an optimization problem, maximizing a utility function such as expected information gain. [13]

- Application to ML Paradigms: BOED can guide which data points to acquire for fine-tuning in TL, or determine the optimal frequency and aggregation methods for model updates in an FL setting. [13] [15]
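The expected-information-gain utility can be made concrete with a small discrete example. The sketch below computes $\mathrm{EIG}(x) = H[y \mid x] - \mathbb{E}_{\theta}\, H[y \mid \theta, x]$ exactly for a hypothetical binary-response model $p(y = 1 \mid \theta, x) = \mathrm{sigmoid}(x - \theta)$, where $x$ is a candidate stimulus intensity and $\theta$ an unknown threshold with a uniform prior over five values. The model, prior, and candidate grid are assumptions for illustration; realistic BOED problems usually require Monte Carlo or simulation-based EIG estimators.

```python
import math

def bernoulli_entropy(p):
    """Entropy (in nats) of a Bernoulli(p) outcome."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1 - p) * math.log(1 - p))

def expected_information_gain(x, thetas, prior):
    """EIG(x) = H[y | x] - E_theta H[y | theta, x] for a binary outcome.

    Assumed model: p(y = 1 | theta, x) = sigmoid(x - theta), e.g. whether a
    subject responds to a stimulus of intensity x given an unknown threshold.
    """
    p_given_theta = [1.0 / (1.0 + math.exp(-(x - t))) for t in thetas]
    p_marginal = sum(w * p for w, p in zip(prior, p_given_theta))
    expected_conditional = sum(w * bernoulli_entropy(p)
                               for w, p in zip(prior, p_given_theta))
    return bernoulli_entropy(p_marginal) - expected_conditional

thetas = [-2.0, -1.0, 0.0, 1.0, 2.0]           # hypothetical prior support
prior = [0.2] * 5                               # uniform prior over the threshold
candidates = [i / 2 for i in range(-12, 13)]   # candidate stimulus intensities
eig = {x: expected_information_gain(x, thetas, prior) for x in candidates}
best_x = max(eig, key=eig.get)
print(best_x, round(eig[best_x], 4))
```

Stimuli far outside the plausible threshold range produce nearly certain outcomes and therefore almost no information, which is why the optimal design lands inside the prior's support. In a sequential setting, the prior would be updated with each observed response and the EIG recomputed.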

Detailed Methodologies and Experimental Protocols

Protocol 1: Implementing Transfer Learning for Limited Data Experiments

This protocol is designed for scenarios with scarce labeled data, such as medical image analysis with a small dataset of MRI scans.

Procedure:

- Select a Pre-trained Model: Choose a large-scale pre-trained model (e.g., a CNN trained on ImageNet) as a feature extractor. The early layers of this model capture universal features like edges and textures. [10]

- Freeze Feature Extractor: Keep the weights of the pre-trained model's initial layers frozen to preserve the general knowledge they contain.

- Replace and Train Classifier: Replace the final, task-specific layers of the pre-trained model with new layers tailored to your target task (e.g., classifying MRI scans). Train only these new layers on your limited target dataset. [10]

- Optional Fine-Tuning: If computational resources allow, perform a subsequent round of training with a very low learning rate to fine-tune all layers of the network on the target data, potentially unlocking higher performance.
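The freeze-and-replace pattern can be illustrated without a deep learning framework. In this hedged NumPy sketch, a fixed random projection followed by ReLU stands in for the frozen pre-trained layers (a real workflow would load, for example, an ImageNet CNN in a framework such as PyTorch), and only a new logistic-regression head is trained on a small synthetic target dataset. All data, dimensions, and the learning rate are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(42)

# Stand-in for frozen pre-trained layers: fixed weights, never updated below.
W_frozen = rng.normal(size=(20, 64))

def extract_features(X):
    """Frozen feature extractor: ReLU(X @ W_frozen)."""
    return np.maximum(X @ W_frozen, 0.0)

# Small hypothetical target dataset: 80 labelled samples with 20 raw inputs each.
X_small = rng.normal(size=(80, 20))
y_small = (X_small[:, 0] + 0.5 * X_small[:, 1] > 0).astype(float)

F = extract_features(X_small)
F = (F - F.mean(axis=0)) / (F.std(axis=0) + 1e-8)   # standardize features

# New task-specific head (logistic regression): the only trainable parameters.
w_head, b_head = np.zeros(F.shape[1]), 0.0
W_snapshot = W_frozen.copy()
for _ in range(1000):
    p = 1.0 / (1.0 + np.exp(-(F @ w_head + b_head)))
    w_head -= 0.1 * F.T @ (p - y_small) / len(y_small)
    b_head -= 0.1 * np.mean(p - y_small)

p_final = 1.0 / (1.0 + np.exp(-(F @ w_head + b_head)))
train_acc = np.mean((p_final >= 0.5) == y_small)
print(f"frozen weights unchanged: {np.array_equal(W_snapshot, W_frozen)}")
print(f"training accuracy of the new head: {train_acc:.2f}")
```

Training accuracy on 80 samples is optimistic by construction; performance must be judged on held-out data, which is where the overfitting remedies in the troubleshooting guide come in.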

Protocol 2: Deploying Federated Learning for Collaborative Research

This protocol enables multiple institutions (e.g., in a drug discovery consortium) to collaboratively train a model without centralizing sensitive data.

Procedure:

- Initialize Global Model: A central server initializes a global machine learning model.

- Distribute Model: The server sends the current global model to all participating client devices or institutions.

- Local Training: Each client trains the model on its local, private dataset. No raw data leaves the client's device.

- Transmit Model Updates: Clients send only the updated model weights (or gradients) back to the central server.

- Aggregate Updates: The server aggregates these updates (e.g., using Federated Averaging) to produce an improved global model.

- Repeat: The distribution, local training, transmission, and aggregation steps are repeated for multiple communication rounds until the model converges. [11] [12]
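The protocol can be simulated in a few lines of NumPy. This hedged sketch assumes three hypothetical clients holding linear-regression data with different feature distributions (a simple non-IID setting), runs local gradient descent on each, and aggregates with Federated Averaging, weighting each client's model by its sample count. Setting `mu > 0` adds the proximal term used by FedProx, one of the heterogeneity-aware algorithms mentioned in the troubleshooting guide. Real deployments would use a framework such as Flower or PySyft with secure communication.

```python
import numpy as np

rng = np.random.default_rng(0)
TRUE_W = np.array([2.0, -1.0, 0.5])   # hypothetical ground-truth relationship

def make_client(n, shift):
    """Hypothetical private dataset; `shift` gives each client a different feature distribution."""
    X = rng.normal(loc=shift, size=(n, 3))
    y = X @ TRUE_W + 0.1 * rng.normal(size=n)
    return X, y

clients = [make_client(50, 0.0), make_client(120, 1.0), make_client(30, -1.0)]

def local_train(w_global, X, y, epochs=5, lr=0.05, mu=0.0):
    """Local gradient descent on a squared-error loss; the data never leave the client.
    mu > 0 adds the FedProx proximal term (mu / 2) * ||w - w_global||^2."""
    w = w_global.copy()
    for _ in range(epochs):
        grad = X.T @ (X @ w - y) / len(y) + mu * (w - w_global)
        w -= lr * grad
    return w

def federated_averaging(clients, rounds=50, mu=0.0):
    sizes = np.array([len(y) for _, y in clients], dtype=float)
    w_global = np.zeros(3)                                  # initialize global model
    for _ in range(rounds):
        # distribute, train locally, and send back model weights only
        local_ws = [local_train(w_global, X, y, mu=mu) for X, y in clients]
        # aggregate: average weighted by each client's number of samples
        w_global = np.average(local_ws, axis=0, weights=sizes)
    return w_global

print("FedAvg :", np.round(federated_averaging(clients), 2))
print("FedProx:", np.round(federated_averaging(clients, mu=0.1), 2))
```

Only the weight vectors cross the client boundary; the per-client `X` and `y` arrays are used exclusively inside `local_train`.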

Protocol 3: Bayesian Optimization for Hyperparameter Tuning

This protocol efficiently finds the optimal hyperparameters for your DL, TL, or FL model, minimizing the number of costly training runs.

Procedure:

- Define Search Space: Specify the hyperparameters to optimize (e.g., learning rate, batch size) and their plausible ranges.

- Choose Surrogate Model: Select a probabilistic surrogate model, typically a Gaussian Process, to approximate the objective function (e.g., validation accuracy).

- Select Acquisition Function: Choose an acquisition function (e.g., Expected Improvement) to decide the next hyperparameter set to evaluate by balancing exploration and exploitation.

- Iterate and Update: For each iteration, use the acquisition function to select the next hyperparameter configuration, run the training job, and update the surrogate model with the new result. Continue until a stopping condition is met. [13] [15]

Troubleshooting Guides and FAQs

Federated Learning

Q: Our global federated model is performing poorly due to non-IID (non-Independently and Identically Distributed) data across clients. What can we do?

- A: Non-IID data is a common challenge in FL. Several strategies can help:

- Use Advanced Aggregation Algorithms: Replace simple averaging with algorithms like FedProx or SCAFFOLD, which are explicitly designed to handle data heterogeneity by correcting for local client drift. [12]

- Employ Federated Transfer Learning (FTL): FTL techniques can help align the feature distributions across different clients, mitigating the impact of non-IID data. [12]

- Client Selection: Implement strategic client selection protocols that prioritize clients with more representative data distributions in each round.

Q: Communication bottlenecks are slowing down our federated learning process. How can we reduce communication latency?

- A: To improve communication efficiency:

- Model Compression: Apply techniques like quantization (reducing numerical precision of weights) or pruning (removing insignificant weights) to shrink the size of model updates before transmission. [11]

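- Structured Updates: Constrain model updates to a form that is cheap to transmit, such as a low-rank matrix or a random sparse mask, so they are more compressible.

- Increase Local Epochs: Perform more local training epochs on client devices between communication rounds, which reduces the total number of rounds required. With non-IID data, however, too many local epochs can increase client drift.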
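A minimal sketch of the quantization idea: an update vector is mapped to 8-bit integers with a per-tensor minimum and scale, cutting the payload of a float32 update to a quarter (plus two floats of metadata) at the cost of a bounded rounding error. The update values are hypothetical, and production FL frameworks provide their own compression options.

```python
import numpy as np

def quantize_8bit(update):
    """Uniformly quantize a float update to uint8 plus (min, scale) metadata."""
    lo, hi = float(update.min()), float(update.max())
    scale = (hi - lo) / 255 if hi > lo else 1.0
    q = np.round((update - lo) / scale).astype(np.uint8)
    return q, lo, scale

def dequantize_8bit(q, lo, scale):
    """Server-side reconstruction of the approximate update."""
    return q.astype(np.float32) * scale + lo

rng = np.random.default_rng(1)
update = rng.normal(scale=0.01, size=100_000).astype(np.float32)  # hypothetical weight update

q, lo, scale = quantize_8bit(update)
restored = dequantize_8bit(q, lo, scale)
print(f"payload: {update.nbytes} -> {q.nbytes} bytes")
print(f"max abs error: {np.abs(restored - update).max():.2e} (bound: {scale / 2:.2e})")
```

The worst-case error is half a quantization step, so the trade-off between bit width and accuracy can be reasoned about before deployment.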

Transfer Learning

Q: My model is overfitting after fine-tuning on a small target dataset. How can I prevent this?

- A: Overfitting is a key risk in transfer learning. Address it by:

- Stronger Regularization: Increase dropout rates, add L1/L2 regularization to the new layers, or use early stopping.

- Data Augmentation: Artificially expand your small target dataset using transformations like rotation, flipping, and scaling for images, or synonym replacement for text.

- Differential Learning Rates: Use a much lower learning rate for the pre-trained layers and a higher one for the newly added classifier layers. This gently fine-tunes the features without destroying them.
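A minimal NumPy sketch of data augmentation for image-like arrays: each call applies a random horizontal flip and a random rotation by a multiple of 90 degrees. Whether such transforms preserve the label depends on the modality (for example, left-right flips can change meaning in some anatomical images), so the transform set here is an assumption to adapt to your data.

```python
import numpy as np

def augment(image, rng):
    """Return a randomly flipped and rotated (multiple of 90 degrees) copy."""
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)
    out = np.rot90(out, k=int(rng.integers(0, 4)))
    return out.copy()

rng = np.random.default_rng(0)
scan = np.arange(16, dtype=float).reshape(4, 4)   # hypothetical 4x4 "scan"
augmented = [augment(scan, rng) for _ in range(8)]
print(len({a.tobytes() for a in augmented}), "distinct variants from one image")
```

Flips and 90-degree rotations only rearrange pixels, so intensities are preserved exactly; continuous rotations or scaling would require interpolation.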

Q: The performance of my transferred model is worse than expected. What are the potential causes?

- A: A common cause is domain mismatch. If the source domain (e.g., general images) is too dissimilar from your target domain (e.g., medical scans), the pre-trained features may not be relevant.

- Solution: Consider using a model pre-trained on a source domain closer to your target task. Alternatively, employ domain adaptation techniques, a sub-field of TL, to explicitly minimize the discrepancy between the source and target feature distributions. [12]

General Model Performance

Q: My model's performance is poor. How do I determine if the issue is with the data or the model architecture?

- A: Always start by investigating the data. [16] [17]

- Audit Your Data: Check for common data issues:

- Missing Values: Impute or remove samples with missing features. [16]

- Class Imbalance: Check if the data is skewed towards one class and use resampling or data augmentation to re-balance it. [16]

- Outliers: Use visualization tools like box plots to identify and handle outliers. [16]

- Feature Scaling: Ensure all input features are on a similar scale using normalization or standardization. [16]

- Proceed to Model Fixes: After verifying the data, proceed with a systematic approach:

- Feature Selection: Use feature selection methods such as univariate selection or tree-based feature importance, or dimensionality reduction such as Principal Component Analysis (PCA), to retain the most relevant information. [16]

- Model Selection: Try different families of algorithms to find the best fit for your data. [16]

- Hyperparameter Tuning: Systematically search for the optimal hyperparameters using methods like Bayesian Optimization. [16]

- Cross-Validation: Use k-fold cross-validation to ensure your model generalizes well and to check for overfitting/underfitting. [16]

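The data checks and cross-validation steps interact: preprocessing such as feature scaling should be fitted on the training folds only, otherwise statistics from the held-out fold leak into training. The NumPy-only sketch below illustrates this in a k-fold loop. The nearest-centroid classifier and the two-class dataset (one informative feature, one high-variance noise feature) are hypothetical stand-ins for your model and data.

```python
import numpy as np

def k_fold_indices(n, k, rng):
    """Shuffle 0..n-1 and split into k nearly equal folds."""
    return np.array_split(rng.permutation(n), k)

def cross_validate(X, y, k=5, seed=0):
    """k-fold CV of a nearest-centroid classifier with per-fold standardization."""
    rng = np.random.default_rng(seed)
    scores = []
    for test_idx in k_fold_indices(len(y), k, rng):
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[test_idx] = False
        X_tr, y_tr = X[train_mask], y[train_mask]
        # Scaling statistics come from the training folds only (no leakage).
        mu, sd = X_tr.mean(axis=0), X_tr.std(axis=0) + 1e-12
        Z_tr, Z_te = (X_tr - mu) / sd, (X[test_idx] - mu) / sd
        classes = np.unique(y_tr)
        centroids = np.array([Z_tr[y_tr == c].mean(axis=0) for c in classes])
        dists = ((Z_te[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        y_pred = classes[dists.argmin(axis=1)]
        scores.append(np.mean(y_pred == y[test_idx]))
    return np.array(scores)

rng = np.random.default_rng(1)
# Hypothetical two-class data whose features live on very different scales.
X = np.vstack([rng.normal([0, 0], [1, 100], size=(100, 2)),
               rng.normal([2, 0], [1, 100], size=(100, 2))])
y = np.array([0] * 100 + [1] * 100)
scores = cross_validate(X, y)
print(scores.round(2), f"mean={scores.mean():.2f} std={scores.std():.2f}")
```

The spread of fold scores is as informative as the mean: large variation across folds points to instability or too little data rather than a clear model winner.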

Workflow and System Diagrams

Federated Transfer Learning Workflow

Bayesian Optimal Experimental Design Logic

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and tools essential for implementing the discussed ML paradigms in an experimental research context.

| Item | Function |
|---|---|
| Pre-trained Models (e.g., on ImageNet) | Acts as a source of generalized feature extractors for vision tasks, providing a powerful starting point for Transfer Learning and drastically reducing required training data and time. [10] |
| Federated Learning Framework (e.g., PySyft, Flower) | Software libraries that provide the necessary infrastructure for secure, multi-party model training, including communication protocols and aggregation algorithms. [12] |
| Bayesian Optimization Library (e.g., Ax, BoTorch) | Provides the tools to implement Bayesian Optimal Experimental Design for tasks like hyperparameter tuning and optimal stimulus selection, maximizing information gain from experiments. [13] |
| Simulator Models | A computational model of the scientific phenomenon from which researchers can simulate data. This is a core requirement for applying BOED to complex, likelihood-free models common in cognitive science and biology. [13] |
| Data Augmentation Tools | Functions that generate synthetic training data through transformations (e.g., rotation, noise addition), helping to combat overfitting in data-scarce scenarios like Transfer Learning. [16] |

Core Concepts: Frequently Asked Questions (FAQs)

1. What is Bayesian Optimization, and when should I use it?

Bayesian Optimization (BO) is a powerful strategy for finding the global optimum of black-box functions that are expensive to evaluate and for which derivative information is unavailable [18] [19]. It is best suited for optimization problems over continuous domains with fewer than 20 dimensions [18]. You should consider using BO in the following situations [20] [21]:

- The objective function is a black-box (e.g., a complex simulation or a physical experiment).

- Each evaluation is costly in terms of time, computational resources, or money.

- The function is noisy.

- The function is multi-modal (has many local optima), so local optimization methods can easily get stuck.

- You have a limited budget for the number of function evaluations.

2. How does Bayesian Optimization differ from Grid Search or Random Search?

Unlike Grid Search or Random Search, which do not use past performance to inform future searches, BO uses a probabilistic model to incorporate all previous evaluations. This allows it to intelligently decide which parameter set to test next, dramatically improving search efficiency and reducing the number of expensive function evaluations required [22].

3. What are the core components of the Bayesian Optimization algorithm?

The BO algorithm consists of two fundamental components that work together:

- Surrogate Model: A probabilistic model built from all previous evaluations that approximates the expensive, unknown objective function. The most common choice is a Gaussian Process (GP) [18] [23] [20].

- Acquisition Function: A function that uses the surrogate model's predictions to determine the next most promising point to evaluate by automatically balancing exploration (probing uncertain regions) and exploitation (refining known good regions) [24] [20].

4. What are the most common acquisition functions and how do I choose?

The table below summarizes the most common acquisition functions.

| Acquisition Function | Mathematical Intuition | Best Used For |
|---|---|---|
| Expected Improvement (EI) [23] | Selects the point with the largest expected improvement over the current best value. | General-purpose optimization; offers a good balance between exploration and exploitation [23]. |
| Probability of Improvement (PI) [24] | Selects the point with the highest probability of improving upon the current best value. | Quickly converging to a known good region, but can get stuck in shallow local optima. |
| Upper Confidence Bound (UCB) [25] | Selects the point that maximizes the mean prediction plus a multiple of its standard deviation (uncertainty). | Explicitly controlling the exploration/exploitation trade-off with the β parameter. |
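The three acquisition functions can be written directly from a surrogate's posterior mean $\mu$ and standard deviation $\sigma$ at a point. The standard-library sketch below assumes maximization and includes the optional trade-off parameter `xi` for EI and PI; UCB is written exactly as in the table (mean plus $\beta$ standard deviations), although some formulations, such as GP-UCB, use $\sqrt{\beta}$ instead. The two candidate points are hypothetical.

```python
import math

def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization; xi > 0 shifts the balance toward exploration."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    return improvement * norm_cdf(z) + sigma * norm_pdf(z)

def probability_of_improvement(mu, sigma, best, xi=0.0):
    """Probability that f(x) exceeds best + xi under the posterior."""
    if sigma <= 0.0:
        return 1.0 if mu > best + xi else 0.0
    return norm_cdf((mu - best - xi) / sigma)

def upper_confidence_bound(mu, sigma, beta=2.0):
    """Mean plus beta standard deviations; larger beta means more exploration."""
    return mu + beta * sigma

# Two hypothetical candidates; the current best observed value is 0.9.
best = 0.9
safe = (1.0, 0.1)       # (posterior mean, posterior std): confident, slightly better
uncertain = (0.8, 0.5)  # lower mean but much more uncertain
for name, fn in [("EI", expected_improvement), ("PI", probability_of_improvement)]:
    print(name, round(fn(*safe, best), 4), round(fn(*uncertain, best), 4))
print("UCB", upper_confidence_bound(*safe), upper_confidence_bound(*uncertain))
```

In this example PI prefers the confident candidate, while EI and UCB prefer the uncertain one, which mirrors the table: PI tends to exploit, whereas EI and UCB give more weight to uncertainty.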

Troubleshooting Common Experimental Problems

Problem 1: The optimization process is converging to a sub-optimal solution (a local optimum).

- Potential Cause: Over-exploitation due to incorrect prior width or inadequate exploration. If the surrogate model's uncertainty is underestimated, the algorithm may over-exploit and miss the global optimum [25].

- Solutions:

- Adjust the prior: Widen the prior of the GP kernel's amplitude to allow the model to consider a broader range of function values [25].

- Tune the acquisition function: For UCB, increase the $\beta$ parameter to weight uncertainty more heavily, encouraging more exploration. For EI or PI, use a version that includes a trade-off parameter (like $\xi$ or $\epsilon$) to promote exploration [24] [21].

- Revisit initial sampling: Ensure your initial set of points (e.g., via Sobol sequences) is sufficiently large and space-filling to build a good initial surrogate model [20].

Problem 2: The optimization is slow, and the time between suggestions is too long.

- Potential Cause: Computational bottleneck in fitting the surrogate model or maximizing the acquisition function. The cost of fitting a Gaussian Process scales cubically, $O(n^3)$, with the number of observations $n$ [19].

- Solutions:

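- Use a different surrogate model: For high-dimensional problems (e.g., >20 parameters), consider Tree-structured Parzen Estimators (TPE) [22], whose cost grows more gently with the number of observations, or the SAASBO algorithm, which is designed for sample efficiency in high-dimensional spaces [20] but is itself computationally heavy per iteration and therefore suits small evaluation budgets.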

- Improve acquisition function maximization: Use an efficient numerical optimizer (e.g., L-BFGS) to maximize the acquisition function and confirm it is converging properly [25].

- Implement batched evaluations: Use a batch acquisition function to suggest multiple points for parallel evaluation, amortizing the cost of model fitting [18].

Problem 3: How do I handle experimental constraints in my optimization?

- Solution: Incorporate constraints directly into the BO loop. You can model constraints as separate black-box functions, $g_i(x)$, that must be non-negative ($g_i(x) \ge 0$). The acquisition function is then modified to only suggest points with a high probability of being feasible [20].
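One common way to make the acquisition function constraint-aware is to multiply EI by the probability that all constraints are satisfied, with each $g_i(x)$ modeled by its own Gaussian-process surrogate. The sketch below assumes the EI value and each constraint's posterior mean and standard deviation at a candidate point are already available, and treats the constraint models as independent; the numbers are hypothetical.

```python
import math

def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_feasible(mu_g, sigma_g):
    """P(g(x) >= 0) when a surrogate predicts g(x) ~ N(mu_g, sigma_g**2), sigma_g > 0."""
    return norm_cdf(mu_g / sigma_g)

def constrained_ei(ei_value, constraint_predictions):
    """Weight EI by the probability that every constraint g_i(x) >= 0 holds,
    treating the constraint surrogates as independent."""
    p_feasible = 1.0
    for mu_g, sigma_g in constraint_predictions:
        p_feasible *= probability_feasible(mu_g, sigma_g)
    return ei_value * p_feasible

# Hypothetical candidate: EI of 0.2, with predictions for two constraints.
print(constrained_ei(0.2, [(1.0, 0.5), (0.0, 1.0)]))
```

Points that are confidently infeasible get an acquisition value near zero, while points whose feasibility is uncertain are discounted rather than excluded, which still allows the constraint boundary to be learned.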

Detailed Experimental Protocol: Implementing a Standard BO Loop

This protocol provides a step-by-step methodology for setting up and running a Bayesian Optimization experiment, as commonly implemented in libraries like Ax, BoTorch, and GPyOpt [20].

Objective: Find the input x that minimizes (or maximizes) a costly black-box function f(x).

Materials and Software Requirements

- Programming Environment: Python 3.7 or higher.

- Key Libraries: A BO framework such as Ax [23], BoTorch, scikit-optimize, or GPyOpt.

- Computation: A computer cluster or cloud instance for expensive function evaluations.

Procedure

Define the Search Space:

- Precisely define the feasible region $\mathbb{X}$ for your parameters. This is typically a bounded, continuous space (e.g., $0 \le x \le 10$) or a mixed space of continuous, integer, and categorical parameters.

Initialize with Space-Filling Design:

- Evaluate the objective function $f(x)$ at an initial set of points $\{x_1, x_2, \ldots, x_n\}$. Do not use a grid.

- Method: Use a quasi-random, low-discrepancy sequence like a Sobol sequence to generate $n$ points (a common starting number is 10-20). This ensures the initial points are evenly spread across the search space [20].

- Record the observed values $y_i = f(x_i) + \varepsilon$, where $\varepsilon$ is observational noise. The set of all initial observations is $D_{1:n} = \{(x_i, y_i)\}_{i=1}^{n}$.

Begin the Sequential Optimization Loop (repeat until the evaluation budget is exhausted):

a. Build the Surrogate Model:
   * Using the current dataset $D$, train a Gaussian Process (GP) surrogate model $M$. The GP is defined by a mean function (often set to zero) and a covariance kernel (e.g., the Matérn or RBF kernel) [25] [20].
   * The GP provides a posterior predictive distribution for any new $x$: a mean $\mu(x)$ and variance $\sigma^2(x)$.

b. Calculate the Acquisition Function:
   * Using the GP posterior $M$, compute an acquisition function $\alpha(x)$ over the search space $\mathbb{X}$. A standard choice is Expected Improvement (EI) [23]: $\mathrm{EI}(x) = \mathbb{E}\left[\max\left(f(x) - f(x^{+}), 0\right)\right]$, where the expectation is taken over the GP posterior $f(x) \sim \mathcal{N}(\mu(x), \sigma^2(x))$ and $f(x^{+})$ is the best value observed so far. For $\sigma(x) > 0$ this has the closed form $\mathrm{EI}(x) = (\mu(x) - f(x^{+}))\,\Phi(z) + \sigma(x)\,\varphi(z)$ with $z = (\mu(x) - f(x^{+}))/\sigma(x)$, where $\Phi$ and $\varphi$ are the standard normal CDF and PDF.

c. Select the Next Evaluation Point:
   * Find the point $x_{n+1}$ that maximizes the acquisition function, $x_{n+1} = \arg\max_{x \in \mathbb{X}} \alpha(x)$. This requires solving an auxiliary optimization problem, typically with a standard optimizer like L-BFGS.

d. Evaluate the Objective Function:
   * Query the expensive black-box function at the new point to obtain $y_{n+1} = f(x_{n+1}) + \varepsilon$.

e. Update the Dataset:
   * Augment the dataset with the new observation: $D \leftarrow D \cup \{(x_{n+1}, y_{n+1})\}$.

Return the Best Solution:

- After the loop finishes, report the point in the final dataset $D$ with the best objective value, $x^{*} = x_{i^{*}}$ with $i^{*} = \arg\max_{i} y_i$. (The protocol is written for maximization; to minimize, negate $f$.)
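The loop above can be condensed into a short NumPy sketch. To stay self-contained it makes simplifying assumptions that frameworks such as Ax or BoTorch do not: GP hyperparameters (lengthscale, amplitude, noise) are fixed rather than fitted by marginal likelihood, the initial design is uniform random rather than Sobol (SciPy's `scipy.stats.qmc.Sobol` could supply one), EI is maximized over a dense grid instead of with L-BFGS, and the one-dimensional objective is a hypothetical stand-in for an expensive experiment.

```python
import math
import numpy as np

def rbf_kernel(A, B, lengthscale=0.2):
    """Squared-exponential covariance (unit amplitude) between two sets of 1-D inputs."""
    d = A[:, None] - B[None, :]
    return np.exp(-0.5 * (d / lengthscale) ** 2)

def gp_posterior(X, y, X_query, noise=1e-4):
    """Zero-mean GP posterior mean and standard deviation at X_query."""
    K = rbf_kernel(X, X) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))   # K^{-1} y
    K_s = rbf_kernel(X, X_query)
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)  # prior variance is 1
    return mu, np.sqrt(var)

_erf = np.vectorize(math.erf)

def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization: (mu - best) * Phi(z) + sigma * phi(z)."""
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

def objective(x):
    """Hypothetical expensive experiment: global peak at x = 0.7, local peak at x = 0.2."""
    return np.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * np.exp(-((x - 0.2) ** 2) / 0.01)

rng = np.random.default_rng(0)
grid = np.linspace(0.0, 1.0, 501)            # discretized search space [0, 1]
X_obs = rng.uniform(0.0, 1.0, size=4)        # initial design
y_obs = objective(X_obs)

for _ in range(20):                          # evaluation budget
    mu, sigma = gp_posterior(X_obs, y_obs, grid)          # a. surrogate
    ei = expected_improvement(mu, sigma, y_obs.max())     # b. acquisition
    x_next = grid[np.argmax(ei)]                          # c. next point
    X_obs = np.append(X_obs, x_next)                      # d-e. evaluate and update
    y_obs = np.append(y_obs, objective(x_next))

i_best = int(np.argmax(y_obs))
print(f"best x = {X_obs[i_best]:.3f}, f(x) = {y_obs[i_best]:.3f}")
```

The objective deliberately has a secondary peak near 0.2; EI's reward for uncertainty keeps the search exploring until the higher peak near 0.7 is found and refined.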

Workflow and Signaling Diagrams

Bayesian Optimization Core Workflow

Surrogate Model and Acquisition Function Interaction

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key computational "reagents" and tools required for implementing Bayesian Optimization in an experimental setting, such as drug discovery or materials science.

| Research Reagent / Tool | Function in the Experiment | Key Considerations |
|---|---|---|
| Gaussian Process (GP) Surrogate [18] [20] | Serves as a probabilistic substitute for the expensive true objective function, enabling prediction and uncertainty quantification at unobserved points. | Choice of kernel (e.g., RBF, Matérn) encodes assumptions about function smoothness. Hyperparameters (lengthscale, amplitude) critically affect performance [25]. |
| Expected Improvement (EI) Function [23] | The "decision-maker" that proposes the next experiment by balancing the pursuit of higher performance (exploitation) with reducing uncertainty (exploration). | The most widely used acquisition function due to its good practical performance and intuitive balance [23]. |
| Sobol Sequence Generator [20] | Produces the initial set of experiments. Its low-discrepancy property ensures the parameter space is uniformly and efficiently sampled before the sequential BO loop begins. | Superior to random or grid sampling for initial design. The number of initial points should be a multiple of the problem's dimensionality. |
| Numerical Optimizer (e.g., L-BFGS) | An auxiliary solver, usually run from multiple starting points because it is a local method, used to find the maximum of the acquisition function in each BO cycle. | Inadequate maximization is a common pitfall that can lead to poor performance; the optimizer must be robust [25]. |
| BO Software Framework (e.g., Ax, BoTorch) [23] | Provides a pre-fabricated, tested implementation of the entire BO loop, including GP fitting, acquisition functions, and numerical utilities. | Essential for ensuring experimental reproducibility, reliability, and leveraging state-of-the-art algorithms without building from scratch. |

Frequently Asked Questions (FAQs)

Q1: What are the most critical parameters to define at the start of a biomedical ML optimization problem?

The most critical parameters form the core of your optimization problem and fall into three main categories. First, model parameters are the internal variables that the ML algorithm learns from the training data, such as the weights in a neural network [26]. Second, hyperparameters are the configuration variables external to the model that you must set before the training process begins; these include the learning rate, the number of layers in a deep network, or the number of trees in a random forest [27]. Third, and specific to biomedical contexts, are domain parameters, which ensure the model is grounded in biological reality. These include the intended patient population, clinical use conditions, and integration into the clinical workflow [28].

Q2: Which performance metrics should I prioritize for a clinically relevant model? Metric selection must be driven by the model's intended clinical use. You should employ a portfolio of metrics to evaluate different dimensions of performance [29]. For technical performance, standard metrics like Area Under the Curve (AUC), F1 score, and logarithmic loss are common starting points [26]. However, to ensure clinical relevance, you must also define domain-specific metrics that measure clinical validity and utility, such as alignment with established biomedical knowledge and conformity with medical standards [29]. Furthermore, ethical metrics—including fairness (e.g., demographic parity), robustness to data shifts, and explainability—are non-negotiable for trustworthy biomedical AI [29].
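The three technical metrics named above can be computed without any ML library. The sketch below is a minimal pure-Python version on made-up predictions; in practice a tested library implementation (for example scikit-learn's metrics module) is preferable.

```python
import math


def roc_auc(y_true, y_score):
    """AUC as the probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, t in zip(y_score, y_true) if t == 1]
    neg = [s for s, t in zip(y_score, y_true) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_score(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


def log_loss(y_true, y_prob, eps=1e-15):
    total = 0.0
    for t, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)


y_true = [1, 0, 1, 1, 0, 0]
y_prob = [0.9, 0.2, 0.6, 0.4, 0.3, 0.7]           # predicted probability of the positive class
y_pred = [1 if p >= 0.5 else 0 for p in y_prob]   # 0.5 decision threshold (an assumption)

print(roc_auc(y_true, y_prob), f1_score(y_true, y_pred), log_loss(y_true, y_prob))
```

AUC and log loss use the probabilities directly, while F1 depends on the chosen threshold, which is itself a clinically meaningful design decision.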

Q3: What are the common constraints in biomedical ML, and how can I handle them? Biomedical ML projects face several unique constraints. Regulatory constraints are paramount, requiring adherence to good machine learning practices (GMLP) and standards for data security, such as 21 CFR Part 11, and robust design processes [28]. Data constraints are also frequent; these include limited dataset sizes, the need for training and test sets to be independent, and the requirement that datasets be representative of the intended patient population across factors like race, ethnicity, age, and gender [28]. Finally, resource constraints, such as computational capacity and energy requirements, can limit model complexity [29]. Addressing these often involves trade-offs, for instance, opting for a simpler, more explainable model over a black-box model to meet regulatory and ethical constraints [29].

Q4: How can I prevent my model from learning spurious correlations instead of true biological signals? To mitigate this risk, focus on data quality and model design. Your reference dataset must be well-characterized and clinically relevant to ensure the model learns meaningful features [28]. During model design, actively mitigate known risks like overfitting by using techniques such as regularization and dropout [26] [27]. Furthermore, tailor your model design to the available data and its intended use, and ensure it undergoes performance testing under clinically relevant conditions to validate that its predictions are biologically sound [28].

Troubleshooting Guides

Poor Model Generalization to New Patient Data

Symptoms: The model performs well on the training and internal test sets but shows significantly degraded performance when applied to new data from a different hospital or patient subgroup.

Diagnosis and Solutions:

Check Dataset Representativeness: The training data may not adequately represent the intended patient population.

- Solution: Apply sound statistical principles to create datasets that are representative of the end-user patient population in terms of genetics, race, ethnicity, age, and gender [28].

- Action: Conduct a thorough analysis of your dataset's demographics and clinical characteristics compared to the target population.

Assess Data Dependence: The test set may not be independent of the training data, leading to an overly optimistic performance assessment.

- Solution: Ensure that training and test datasets are independent by eliminating sources of dependence such as the same patient, data acquisition method, or data acquisition site [28].

- Action: Implement rigorous data splitting protocols that partition data at the patient or institution level, not just at the sample level.

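Review Model Robustness: The model may be overfitting to noise in the training data.

- Solution: Mitigate overfitting with techniques such as regularization and dropout [26] [27].

- Action: Compare performance on the training data with performance on held-out data; a large gap indicates overfitting.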
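The patient-level splitting protocol from "Assess Data Dependence" can be sketched in a few lines of pure Python. The record layout and the `patient_id` key are hypothetical; passing a site identifier as `group_key` gives an institution-level split instead.

```python
import random


def group_train_test_split(records, group_key, test_fraction=0.2, seed=0):
    """Split so that every group (patient, site, ...) lands entirely in train OR test."""
    groups = sorted({r[group_key] for r in records})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_test = max(1, round(len(groups) * test_fraction))
    test_groups = set(groups[:n_test])
    train = [r for r in records if r[group_key] not in test_groups]
    test = [r for r in records if r[group_key] in test_groups]
    return train, test


# Hypothetical data: 10 patients with 3 samples each.
records = [{"patient_id": f"P{i // 3}", "sample": i} for i in range(30)]
train, test = group_train_test_split(records, "patient_id", test_fraction=0.2)

train_patients = {r["patient_id"] for r in train}
test_patients = {r["patient_id"] for r in test}
print(len(train), len(test), train_patients & test_patients)  # overlap should be empty
```

A sample-level random split of the same records would almost certainly place samples from one patient on both sides, which is exactly the dependence the protocol aims to remove.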

Unacceptable Performance in Specific Patient Subgroups

Symptoms: The model performs well on average but fails for specific demographic or clinical subgroups, indicating potential bias.

Diagnosis and Solutions:

Audit for Bias: The training data may be skewed, and the model may not have been evaluated on important subgroups.

- Solution: Intentionally test the model's performance on important subgroups defined by race, ethnicity, age, or gender [28].

- Action: Disaggregate your model performance metrics by key demographic and clinical variables to identify performance gaps.

Implement Fairness Metrics: The optimization process may have only targeted overall accuracy.

- Solution: Incorporate ethical metrics like demographic parity or counterfactual fairness directly into your evaluation framework [29].

- Action: During model selection, choose models that not only have high overall accuracy but also minimize performance disparities across subgroups.
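Disaggregating metrics and computing a demographic parity gap can be sketched as follows. The subgroup labels and predictions are invented for illustration, and only accuracy, sensitivity and positive-prediction rate are shown.

```python
from collections import defaultdict


def metrics_by_group(y_true, y_pred, groups):
    """Accuracy, sensitivity and positive-prediction rate for each subgroup."""
    counts = defaultdict(lambda: {"n": 0, "correct": 0, "pos": 0, "tp": 0, "pred_pos": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["correct"] += int(t == p)
        c["pos"] += int(t == 1)
        c["tp"] += int(t == 1 and p == 1)
        c["pred_pos"] += int(p == 1)
    return {
        g: {
            "accuracy": c["correct"] / c["n"],
            "sensitivity": c["tp"] / c["pos"] if c["pos"] else float("nan"),
            "positive_rate": c["pred_pos"] / c["n"],  # input to demographic parity
        }
        for g, c in counts.items()
    }


def demographic_parity_difference(report):
    """Largest gap in positive-prediction rate between any two subgroups."""
    rates = [m["positive_rate"] for m in report.values()]
    return max(rates) - min(rates)


y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
report = metrics_by_group(y_true, y_pred, groups)
print(report, demographic_parity_difference(report))
```

In this toy case the parity gap is zero even though accuracy is 1.0 for subgroup A and 0.5 for subgroup B, which is why a single fairness metric should not replace disaggregated performance metrics.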

Model is a "Black Box" and Lacks Clinical Interpretability

Symptoms: Clinicians are hesitant to trust the model's predictions because the reasoning behind decisions is not transparent.

Diagnosis and Solutions:

Incorporate Explainability (XAI) Methods: The model design may prioritize prediction accuracy over interpretability.

- Solution: Use explainability techniques, both intrinsic (e.g., sparse rule sets) and post-hoc (e.g., feature importance scores, class activation maps), to probe the model's decision logic [29] [30].

- Action: Provide users with clear information on the basis for the model's decision-making and its known limitations [28].

Focus on Human-AI Team Performance: The evaluation may be focused solely on the AI model in isolation.

- Solution: Assess the performance of the human and AI as a team, rather than the AI model alone [28].

- Action: Conduct user studies to ensure the model's outputs are presented in a way that enhances, rather than hinders, clinical decision-making.
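Permutation feature importance is one widely used post-hoc explainability technique: shuffle one feature at a time and measure how much performance drops. The sketch below is a minimal pure-Python version with a hypothetical black-box model; it is not the specific method of the cited works.

```python
import random


def accuracy(model, X, y):
    return sum(int(model(x) == t) for x, t in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def black_box_model(x):
    # Hypothetical model that, unknown to the auditor, only uses feature 0.
    return int(x[0] > 0.5)


data_rng = random.Random(1)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [int(x[0] > 0.5) for x in X]
importances = permutation_importance(black_box_model, X, y)
print(importances)
```

A near-zero importance for a feature clinicians consider essential (or a high importance for an implausible one) is a useful prompt for the discussion of the model's known limitations.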

Key Parameters, Metrics, and Constraints in Biomedical ML Optimization

The following tables summarize core components of defining an optimization problem in biomedical machine learning.

Table 1: Key Parameter Categories in Biomedical ML Optimization

| Parameter Category | Description | Examples |
|---|---|---|
| Model Parameters | Internal variables learned by the model from the training data. | Weights and biases in a neural network [26]. |
| Hyperparameters | External configuration variables set before the training process. | Learning rate, number of hidden layers, number of trees in a random forest, dropout rate [26] [27]. |
| Domain Parameters | Variables that ground the model in the biomedical context and intended use. | Intended patient population, clinical use conditions, integration into clinical workflow [28]. |

Table 2: Core Metrics for Evaluating Trustworthy Biomedical ML

| Metric Category | Purpose | Specific Examples |
|---|---|---|
| Technical Performance | To evaluate the predictive accuracy and robustness of the model. | AUC, F1 Score, Logarithmic Loss, Confusion Matrix, kappa [26]. |
| Ethical & Safety | To ensure the model is fair, robust, and respects privacy. | Fairness (Demographic Parity), Robustness, Privacy Guarantees (e.g., Differential Privacy) [29]. |
| Domain Relevance | To ensure the model is clinically valid and useful. | Clinical Validity, Utility, Alignment with Biomedical Knowledge [29]. |

Table 3: Common Constraints in Biomedical ML Projects

| Constraint Type | Nature of the Limitation | Examples and Mitigations |
|---|---|---|
| Regulatory & Compliance | Legal and quality standards that must be met. | GMLP principles, FDA/EMA regulations (e.g., 21 CFR Part 11), data security and privacy laws (GDPR) [28]. |
| Data Quality & Availability | Limitations stemming from the training and testing data. | Limited dataset size, need for independent train/test sets, representativeness of patient population [26] [28]. |
| Resource & Technical | Computational and practical limits on model development. | Computational budget, energy requirements, model deployment infrastructure [29]. |
| Trade-off Constraints | Inherent tensions between different desirable model qualities. | Accuracy vs. Interpretability, Performance vs. Privacy, Fairness between subgroups (Fairness Impossibility Results) [29]. |

Experimental Workflow and Optimization Trade-offs

Workflow for Defining the Biomedical ML Optimization Problem

The following diagram outlines a high-level workflow for structuring an optimization problem in this domain.

The Trustworthiness Trade-Off Triangle

A fundamental challenge in biomedical ML optimization is navigating the inherent tensions between key objectives.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagent Solutions for Biomedical ML

| Tool / Reagent | Category | Function in the Experiment |
|---|---|---|
| High-Quality, Curated Datasets | Data | The foundational resource for training and testing models. Data must be accurate, complete, and representative of the intended patient population to maximize predictability [26] [28]. |
| Programmatic ML Frameworks | Software | Open-source libraries that provide the algorithms and computational structures for building and training models. Examples include TensorFlow, PyTorch, and Scikit-learn [26] [27]. |
| Optimization Algorithms | Software | The engines that adjust model parameters to minimize error. These range from gradient-based methods (e.g., Adam, SGD) for deep learning to population-based approaches for complex hyperparameter tuning [27]. |
| Performance Evaluation Metrics | Methodology | A defined set of quantitative measures (see Table 2) used to objectively assess the technical, ethical, and domain-specific performance of the model [29] [26]. |
| Reference Standards & Gold Standard Data | Data & Methodology | Independently generated, well-characterized datasets used to validate model performance and generalizability, helping to ensure the model captures true biological signals [26] [28]. |

Advanced Methods and Real-World Applications in Biomedical Research

Adaptive experimentation addresses a fundamental challenge in machine learning research and drug development: optimizing complex systems with vast configuration spaces where each evaluation is resource-intensive and time-consuming. In these "black-box" optimization problems, the relationship between inputs and outputs is not fully understood in advance. Platforms like Ax use machine learning to automate and guide this experimentation process, employing Bayesian optimization to actively propose new configurations for sequential evaluation based on insights gained from previous results. This enables researchers to efficiently identify optimal parameters for everything from AI model hyperparameters to molecular design configurations, significantly accelerating the research lifecycle while managing experimental constraints. [31] [32]

Ax Platform Architecture and Core Components

Ax is designed as a modular, open-source platform for adaptive experimentation. Its architecture centers on three high-level components that manage the optimization process: the Experiment tracks the entire optimization state; the GenerationStrategy contains methodology for producing new arms to try; and the optional Orchestrator conducts full experiments with automatic trial deployment and data fetching. [33]

Data Model and Workflow

The core data model revolves around several key objects that structure how optimization problems are defined and executed:

- SearchSpace: Defines the parameters to be tuned and their allowable values, including range parameters (int or float with bounds), choice parameters (set of values), and fixed parameters (single value). It can also include parameter constraints that define restrictions across parameters. [33]

- OptimizationConfig: Specifies the experiment's goals through one or multiple objectives to be minimized/maximized and optional outcome constraints that place restrictions on how other metrics can be moved. [33]

- Trial: Represents a single evaluation with one or more parameterizations (Arms). Trials progress through statuses from CANDIDATE to RUNNING to COMPLETED/FAILED. [33]

- BatchTrial: A special trial type for evaluating multiple arms jointly when results are subject to nonstationarity, requiring simultaneous deployment. [33]

The following diagram illustrates the core adaptive experimentation workflow in Ax:

Bayesian Optimization Engine

At its core, Ax employs Bayesian optimization as its default algorithm for adaptive experimentation. This approach is particularly effective for balancing exploration (learning how new configurations perform) and exploitation (refining configurations observed to be good). The Bayesian optimization loop follows these steps: [31]

- Evaluate candidate configurations by trying them out and measuring their effects

- Build a surrogate model using the collected data, typically a Gaussian Process (GP) that can make predictions while quantifying uncertainty

- Identify promising configurations using an acquisition function from the Expected Improvement (EI) family

- Repeat until finding an optimal solution or exhausting the experimental budget

This method excels in high-dimensional settings where covering the entire search space through grid or random search becomes exponentially more costly. [31]
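The evaluate / model / acquire / repeat loop can be illustrated with a compact NumPy sketch on a one-dimensional toy objective. This is a teaching simplification, not Ax's implementation: it assumes a fixed RBF length scale (no GP hyperparameter fitting), a uniform random initial design instead of Sobol, and a dense grid instead of a numerical optimizer such as L-BFGS for maximizing Expected Improvement.

```python
import math

import numpy as np


def rbf_kernel(a, b, length_scale=0.15):
    """Squared-exponential kernel between 1-D inputs a (n,) and b (m,) -> (n, m)."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)


def gp_posterior(x_train, y_train, x_query, jitter=1e-6):
    """Posterior mean and std of a zero-mean GP fitted to standardized targets."""
    y_mean, y_std = y_train.mean(), y_train.std() + 1e-12
    y_norm = (y_train - y_mean) / y_std
    K = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_norm))
    K_s = rbf_kernel(x_train, x_query)
    v = np.linalg.solve(L, K_s)
    mu = K_s.T @ alpha
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)
    return mu * y_std + y_mean, np.sqrt(var) * y_std


def expected_improvement(mu, sigma, best_y):
    """Closed-form Expected Improvement for maximization."""
    improvement = mu - best_y
    z = improvement / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + sigma * pdf


def bayesian_optimize(objective, n_init=4, n_iter=12, seed=0):
    rng = np.random.default_rng(seed)
    x = rng.uniform(0.0, 1.0, n_init)            # initial design (random here; Ax uses Sobol)
    y = np.array([objective(v) for v in x])
    grid = np.linspace(0.0, 1.0, 501)            # grid search over EI replaces a numerical optimizer
    for _ in range(n_iter):
        mu, sigma = gp_posterior(x, y, grid)             # surrogate model
        ei = expected_improvement(mu, sigma, y.max())    # acquisition function
        x_next = grid[np.argmax(ei)]
        x = np.append(x, x_next)                         # evaluate and repeat
        y = np.append(y, objective(x_next))
    return x[np.argmax(y)], y.max()


# Toy objective with its maximum at x = 0.3, standing in for an expensive experiment.
best_x, best_y = bayesian_optimize(lambda x: -(x - 0.3) ** 2)
print(best_x, best_y)
```

Frameworks such as Ax and BoTorch add the pieces omitted here: kernel hyperparameter fitting, noise modelling, batch acquisition and robust acquisition-function optimization.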

FAQs: Common Technical Issues and Solutions

Parameter Configuration Issues

Q: How do I handle parameter constraints in my search space? A: Ax supports linear parameter constraints for numerical parameters (int or float), including order constraints (x1 ≤ x2), sum constraints (x1 + x2 ≤ 1), or weighted sums. However, non-linear parameter constraints are not supported due to challenges in transforming them to the model space. For equality constraints, consider reparameterizing your search space to use inequality constraints instead. For example, if you need x1 + x2 + x3 = 1, define x1 and x2 with the constraint x1 + x2 ≤ 1, then substitute 1 - (x1 + x2) where x3 would have been used. [33]
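The reparameterization trick for the equality constraint can be sketched in plain Python. Rejection sampling here is only a stand-in for whatever proposals the optimizer makes; the point is that only `x1` and `x2` are tuned under the inequality, and `x3` is always derived, so the equality holds by construction.

```python
import random


def sample_relaxed_point(rng):
    """Propose (x1, x2) satisfying x1 + x2 <= 1, then derive x3 so that x1 + x2 + x3 = 1."""
    while True:
        x1, x2 = rng.random(), rng.random()
        if x1 + x2 <= 1.0:   # the linear inequality the optimizer enforces
            break
    x3 = 1.0 - (x1 + x2)     # substituted wherever x3 would have been used
    return x1, x2, x3


rng = random.Random(0)
points = [sample_relaxed_point(rng) for _ in range(100)]
print(points[:3])
```

For mixture problems (for example, solvent fractions that must sum to one), the objective function receives `x1` and `x2` from the optimizer and computes `x3` internally before running the experiment.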

Q: Why does Ax sometimes suggest parameterizations that violate my constraints? A: Ax predicts constraint violations based on available data, but these predictions aren't always correct, especially early in an experiment when data is limited. Since Ax proposes trials before receiving their actual measurement data, the observed metric values may differ from predictions. As the experiment progresses and more data is collected, the model's predictions of constraint violations become more accurate. [33]

Trial Management Problems

Q: What is the difference between Trial and BatchTrial, and when should I use each? A: Regular Trial contains a single arm and is appropriate for most use cases. BatchTrial contains multiple arms with weights indicating resource allocation and should only be used when arms must be evaluated jointly due to nonstationarity. For cases where multiple arms are evaluated independently (even if concurrently), use multiple single-arm Trials instead, as this allows Ax to select the optimal optimization algorithm. [33]

Q: How do I interpret the different trial statuses (CANDIDATE, STAGED, RUNNING, etc.)? A: Trial statuses represent phases in the experimentation lifecycle: CANDIDATE (newly created, modifiable), STAGED (deployed but not evaluating, relevant for external systems), RUNNING (actively evaluating), COMPLETED (successful evaluation), FAILED (evaluation errors), ABANDONED (manually stopped), and EARLY_STOPPED (stopped based on intermediate data). Trials generated via Client.get_next_trials enter RUNNING status once the method returns. [33]
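The lifecycle can be pictured as a small state machine. The sketch below is hypothetical: it mirrors the status names listed above, but the set of allowed transitions is an assumption made for illustration and is not taken from Ax's source code.

```python
from enum import Enum


class TrialStatus(Enum):
    CANDIDATE = "candidate"
    STAGED = "staged"
    RUNNING = "running"
    COMPLETED = "completed"
    FAILED = "failed"
    ABANDONED = "abandoned"
    EARLY_STOPPED = "early_stopped"


# Assumed transitions for illustration; terminal statuses have no outgoing edges.
ALLOWED = {
    TrialStatus.CANDIDATE: {TrialStatus.STAGED, TrialStatus.RUNNING, TrialStatus.ABANDONED},
    TrialStatus.STAGED: {TrialStatus.RUNNING, TrialStatus.ABANDONED},
    TrialStatus.RUNNING: {
        TrialStatus.COMPLETED,
        TrialStatus.FAILED,
        TrialStatus.ABANDONED,
        TrialStatus.EARLY_STOPPED,
    },
}


def transition(current: TrialStatus, new: TrialStatus) -> TrialStatus:
    """Return the new status, or raise if the move is not allowed."""
    if new not in ALLOWED.get(current, set()):
        raise ValueError(f"illegal transition {current.name} -> {new.name}")
    return new


status = transition(TrialStatus.CANDIDATE, TrialStatus.RUNNING)
status = transition(status, TrialStatus.EARLY_STOPPED)
print(status.name)
```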

Optimization and Analysis Challenges

Q: Can Ax handle multiple competing objectives in drug discovery projects? A: Yes, Ax supports multi-objective optimization through objective thresholds that provide reference points for exploring Pareto frontiers. For example, when jointly optimizing drug efficacy and toxicity, you can specify that even high efficacy values with toxicity beyond a feasibility threshold are not part of the Pareto frontier to explore. This helps balance trade-offs between competing objectives common in drug development. [33]
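The efficacy/toxicity example can be made concrete with a minimal Pareto filter. This is a hypothetical pure-Python sketch on made-up candidates, not Ax's multi-objective algorithm: it simply drops points outside the objective thresholds and then keeps the non-dominated ones.

```python
def pareto_front(candidates, efficacy_min, toxicity_max):
    """Non-dominated candidates (maximize efficacy, minimize toxicity) within the thresholds."""
    feasible = [
        c for c in candidates
        if c["efficacy"] >= efficacy_min and c["toxicity"] <= toxicity_max
    ]
    front = []
    for c in feasible:
        dominated = any(
            o["efficacy"] >= c["efficacy"]
            and o["toxicity"] <= c["toxicity"]
            and (o["efficacy"] > c["efficacy"] or o["toxicity"] < c["toxicity"])
            for o in feasible
        )
        if not dominated:
            front.append(c)
    return front


candidates = [
    {"name": "A", "efficacy": 0.90, "toxicity": 0.80},  # very effective but too toxic
    {"name": "B", "efficacy": 0.75, "toxicity": 0.30},
    {"name": "C", "efficacy": 0.60, "toxicity": 0.10},
    {"name": "D", "efficacy": 0.55, "toxicity": 0.35},  # dominated by B and C
    {"name": "E", "efficacy": 0.20, "toxicity": 0.05},  # below the efficacy threshold
]
front = pareto_front(candidates, efficacy_min=0.5, toxicity_max=0.5)
print([c["name"] for c in front])
```

Without the thresholds, A (highest efficacy) and E (lowest toxicity) would also be non-dominated; the thresholds focus the search on the clinically acceptable region.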

Q: How can I understand the influence of different parameters on my outcomes? A: Ax provides a suite of analysis tools including sensitivity analysis to quantify how much each input parameter contributes to results. You can also generate plots showing the effect of one or two parameters across the input space, visualize trade-offs between different metrics via Pareto frontiers, and access various diagnostic tables. These tools help researchers understand system behavior beyond just identifying optimal configurations. [31]

Troubleshooting Guides

Installation and Setup Issues

| Problem | Solution |
|---|---|
| Installation failures on different operating systems | Use `pip3 install ax-platform` for Linux. For Mac, first run `conda install pytorch -c pytorch` followed by `pip3 install ax-platform`. [34] |
| Missing dependencies or version conflicts | Ensure you have compatible Python (3.7+) and install core dependencies like PyTorch separately before installing Ax. |
| Database connectivity for production storage | Ax supports MySQL for industry-grade experimentation management. Configure connection parameters through Ax storage configuration. [34] |

Optimization Failures and Diagnostics

| Symptom | Possible Causes | Resolution Steps |
|---|---|---|
| Optimization not converging | Search space too large, insufficient trials, or noisy evaluations | Increase trial budget, adjust parameter bounds, implement replication to handle noise |
| Parameter suggestions seem random | Early optimization phase | Ax uses Sobol sequences initially for space-filling design before transitioning to Bayesian optimization |
| Constraint violations frequent | Model uncertainty high or constraints too restrictive | Increase optimization iterations, relax constraints if possible, or adjust acquisition function |
| Performance worse than random search | Misconfigured OptimizationConfig | Verify the objective direction (use a "-" prefix for minimization) and that metric names match those returned in `raw_data` |

Experimental Protocols and Methodologies

Standard Optimization Procedure

The following diagram details the core ask-tell optimization loop used in Ax:

Step-by-Step Protocol:

Initialize Client and Configure Experiment

Define Optimization Objective

Execute Optimization Loop

Retrieve Optimal Configuration
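The four protocol steps follow the ask-tell pattern. The sketch below uses a hypothetical `RandomSearchAskTell` class with random proposals to show the control flow only; it is not the Ax API, and a real Ax client would replace the random proposals with Sobol and Bayesian-optimization suggestions. It does follow the convention noted above that a "-" prefix on the objective means minimization.

```python
import random


class RandomSearchAskTell:
    """Hypothetical minimal ask-tell optimizer with random proposals (NOT the Ax API)."""

    def __init__(self, bounds, objective, seed=0):
        self.bounds = bounds                        # {"name": (low, high)}
        self.minimize = objective.startswith("-")   # "-loss" means minimize "loss"
        self.metric = objective.lstrip("-")
        self.rng = random.Random(seed)
        self.history = []                           # (parameters, observed value) pairs

    def ask(self):
        return {name: self.rng.uniform(lo, hi) for name, (lo, hi) in self.bounds.items()}

    def tell(self, parameters, raw_data):
        # Raises KeyError if the reported metric name does not match the objective.
        self.history.append((parameters, raw_data[self.metric]))

    def best(self):
        pick = min if self.minimize else max
        return pick(self.history, key=lambda item: item[1])


# Steps 1-2: initialize, configure the search space, define the objective.
opt = RandomSearchAskTell({"x": (0.0, 1.0)}, objective="-loss", seed=0)
# Step 3: optimization loop; the loss formula stands in for a real experiment.
for _ in range(50):
    params = opt.ask()
    opt.tell(params, {"loss": (params["x"] - 0.2) ** 2})
# Step 4: retrieve the best configuration observed.
best_params, best_loss = opt.best()
print(best_params, best_loss)
```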

Advanced Protocol: Multi-Objective Optimization with Constraints

For complex drug discovery scenarios with multiple competing objectives:

Configure Optimization with Multiple Metrics

Implement Early Stopping for Resource Efficiency

- Configure EarlyStoppingStrategy to halt unpromising trials early

- Particularly valuable for lengthy drug property calculations or clinical simulations
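A percentile-based stopping rule captures the idea behind early stopping. The sketch is hypothetical (not Ax's `EarlyStoppingStrategy` implementation) and assumes higher metric values are better and that each trial reports a learning curve indexed by step.

```python
def should_stop(trial_curve, peer_curves, step, percentile=50.0):
    """Stop a trial whose value at `step` falls below the given percentile of its peers."""
    peers = sorted(curve[step] for curve in peer_curves if len(curve) > step)
    if not peers:
        return False  # no comparable data yet, keep running
    k = min(len(peers) - 1, int(len(peers) * percentile / 100.0))
    return trial_curve[step] < peers[k]


# Intermediate values (e.g., a validation score after each step) from earlier trials.
peer_curves = [
    [0.20, 0.40, 0.60],
    [0.30, 0.50, 0.70],
    [0.25, 0.45, 0.65],
    [0.10, 0.30, 0.50],
]
print(should_stop([0.05, 0.10], peer_curves, step=1))  # lagging trial
print(should_stop([0.30, 0.60], peer_curves, step=1))  # promising trial
```

Stopping too aggressively can discard slow starters, so the percentile and the earliest step at which the rule applies are themselves settings worth validating.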

The Scientist's Toolkit: Research Reagent Solutions

Essential Ax Components for Drug Discovery Optimization

| Component | Function | Application Example |
|---|---|---|
| RangeParameter | Defines numeric parameters with upper/lower bounds | Molecular weight ranges, concentration levels |
| ChoiceParameter | Defines categorical parameters from a set of options | Functional groups, scaffold types, solvent choices |
| FixedParameter | Sets immutable parameters across all trials | Fixed core structure, invariant experimental conditions |
| ParameterConstraint | Applies linear constraints between parameters | Mass balance in mixtures, structural feasibility rules |
| OptimizationConfig | Specifies objectives and outcome constraints | Optimize efficacy while constraining toxicity |
| Gaussian Process | Surrogate model for predicting metric behavior | Modeling complex parameter-efficacy relationships |
| Expected Improvement | Acquisition function for trial selection | Balancing exploration of new regions vs. exploitation of known promising areas |

Performance Metrics and Comparative Analysis

Optimization Efficiency Benchmarks

| Metric | Random Search | Grid Search | Ax Bayesian Optimization |
|---|---|---|---|
| Trials to convergence (Hartmann6) | 150+ | 100+ | ~20-30 [35] |
| Parameter dimensionality support | Low-Medium | Very Low | High (100+ parameters) [31] |
| Constraint handling capability | Limited | Limited | Comprehensive [33] |
| Parallel trial evaluation | Basic | Limited | Advanced (synchronous & asynchronous) [36] |
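The Hartmann6 benchmark in the first row is a standard six-dimensional test function on the unit hypercube, with a known global minimum of about -3.32237. The sketch below defines it with its standard constants and computes a random-search baseline, which makes it easy to reproduce this kind of comparison for your own optimizer settings.

```python
import math
import random

ALPHA = [1.0, 1.2, 3.0, 3.2]
A = [
    [10.0, 3.0, 17.0, 3.5, 1.7, 8.0],
    [0.05, 10.0, 17.0, 0.1, 8.0, 14.0],
    [3.0, 3.5, 1.7, 10.0, 17.0, 8.0],
    [17.0, 8.0, 0.05, 10.0, 0.1, 14.0],
]
P = [
    [v * 1e-4 for v in row]
    for row in [
        [1312, 1696, 5569, 124, 8283, 5886],
        [2329, 4135, 8307, 3736, 1004, 9991],
        [2348, 1451, 3522, 2883, 3047, 6650],
        [4047, 8828, 8732, 5743, 1091, 381],
    ]
]
X_STAR = [0.20169, 0.150011, 0.476874, 0.275332, 0.311652, 0.6573]  # known global minimizer


def hartmann6(x):
    """Hartmann6 test function on [0, 1]^6 (to be minimized)."""
    return -sum(
        ALPHA[i] * math.exp(-sum(A[i][j] * (x[j] - P[i][j]) ** 2 for j in range(6)))
        for i in range(4)
    )


# Random-search baseline: best value found by 150 uniform random evaluations.
rng = random.Random(0)
best_random = min(hartmann6([rng.random() for _ in range(6)]) for _ in range(150))
print(hartmann6(X_STAR), best_random)
```

Trials-to-convergence figures depend on the stopping tolerance and on the random seed, so comparisons are most meaningful when averaged over several repetitions.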

Ax provides a robust, production-ready platform for adaptive experimentation that enables drug development researchers to efficiently optimize complex experimental conditions. By leveraging Bayesian optimization and providing comprehensive analysis tools, Ax addresses the core challenges of resource-intensive experimentation in machine learning research and drug discovery. Its modular architecture supports both simple optimization tasks and complex, multi-objective problems with constraints, making it particularly valuable for domains where experimental evaluations are costly or time-consuming. As adaptive experimentation continues to evolve, platforms like Ax will play an increasingly critical role in accelerating scientific discovery through data-driven optimization.

Optimizing Molecular Modeling and Virtual Screening with Deep Learning

Core Concepts & Optimization Challenges

What is the fundamental shift that Deep Learning introduces to traditional virtual screening?

Traditional virtual screening relies on a "search and scoring" framework, where heuristic algorithms explore binding conformations and physics-based or empirical scoring functions evaluate binding strengths. These methods are often simplified to meet the efficiency demands of large-scale screening, which can compromise accuracy [37].

Deep Learning (DL) circumvents this traditional framework. Instead of explicitly searching and scoring, DL models learn to directly predict binding affinities and poses from data. This data-driven approach can enhance both the accuracy and processing speed of virtual screening [37]. For instance, Graph Neural Networks (GNNs) can process molecular graphs to directly predict biological activity, capturing complex, hierarchical structural relationships that are difficult to model with traditional methods [38].

What are the key performance metrics for evaluating Deep Learning-based docking (DLLD) tools?

Evaluating a DLLD tool requires looking at multiple, interconnected metrics. Rather than focusing on a single number, consider the tool's performance across the following aspects [37]:

| Metric Category | Specific Metrics | Description and Significance |
|---|---|---|
| Pose Prediction Accuracy | Success Rate | The primary measure of a model's ability to predict the correct binding conformation of a ligand. |
| Screening Power | AUC (Area Under the Curve), F1 Score | Measures the model's ability to correctly rank active compounds over inactive ones, crucial for hit identification. |
| Computational Efficiency | Screening Time/Cost | The computational time required to screen a library of a given size; vital for practical application to large databases. |
| Physical Plausibility | Structural Checks (e.g., bond lengths, angles) | Assesses whether the generated molecular structures are physically realistic and chemically valid. |
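Pose-prediction success rate is commonly reported as the fraction of ligands whose predicted pose lies within 2 Å heavy-atom RMSD of the experimental pose. The sketch below assumes both poses share the same atom ordering and the same receptor frame (so no superposition is applied) and ignores symmetry-equivalent atoms; the coordinates are toy values.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD (Å) between two poses with identical atom ordering, no alignment applied."""
    if len(coords_a) != len(coords_b):
        raise ValueError("poses must have the same number of atoms")
    sq = sum((a - b) ** 2 for pa, pb in zip(coords_a, coords_b) for a, b in zip(pa, pb))
    return math.sqrt(sq / len(coords_a))


def pose_success_rate(predicted, reference, threshold=2.0):
    """Fraction of predicted poses within `threshold` Å RMSD of their reference pose."""
    hits = sum(rmsd(p, r) <= threshold for p, r in zip(predicted, reference))
    return hits / len(reference)


reference = [[(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)], [(0.0, 0.0, 0.0), (0.0, 1.5, 0.0)]]
predicted = [[(0.5, 0.0, 0.0), (2.0, 0.0, 0.0)], [(3.0, 0.0, 0.0), (3.0, 1.5, 0.0)]]
print(pose_success_rate(predicted, reference))
```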

Reported performance can be striking. For example, the VirtuDockDL pipeline, which uses a GNN, was reported to achieve an accuracy of 99%, an F1 score of 0.992, and an AUC of 0.99 on the HER2 dataset, outperforming other tools such as DeepChem (89% accuracy) and AutoDock Vina (82% accuracy) [38].

Where do traditional docking and virtual screening most commonly fail, creating a need for DL optimization?

Traditional methods face several persistent challenges that DL aims to address [39]:

- Inaccurate Scoring and Poses: A long-standing issue is the poor correlation between docking scores and actual experimental binding affinity. The top-ranked molecules from a docking screen often include many false positives (non-binders) and miss true binders (false negatives). Furthermore, the predicted binding pose (how the molecule sits in the pocket) is often incorrect, leading to a "garbage in, garbage out" problem for downstream simulations [39].

- The Multiparameter Optimization Problem: A strong binder does not make a drug. Tools must also optimize for absorption, distribution, metabolism, excretion (ADME), toxicity, and synthesizability simultaneously. Traditional pipelines often lack cleanly integrated models for these diverse parameters [39].

- Scalability to Ultra-Large Libraries: Commercially available chemical libraries now contain tens to hundreds of billions of compounds. Conventional docking tools like AutoDock Vina are not designed for this scale, making comprehensive screening computationally prohibitive [39].

Troubleshooting Common Experimental Issues

FAQ: Our DL model for activity prediction achieves high training accuracy but performs poorly on new, unseen data. What could be the cause?

This is a classic sign of overfitting, where your model has memorized the training data instead of learning generalizable patterns.

Troubleshooting Guide:

| Step | Question to Ask | Potential Solution |
|---|---|---|
| 1 | Is our training dataset large and diverse enough? | DL models require extensive data. Consider using large, diverse public datasets like Meta's Open Molecules 2025 (OMol25), which contains over 100 million high-accuracy quantum chemical calculations covering biomolecules, electrolytes, and metal complexes [40]. |
| 2 | Are we using the right molecular representations? | Relying solely on simple fingerprints may not be sufficient. Incorporate graph-based representations that preserve atomic and bond information, or use tools like RDKit to generate a wider array of molecular descriptors and fingerprints to provide a more complete picture to the model [41] [38]. |
| 3 | Is our model architecture overly complex? | Simplify the model by reducing the number of layers or neurons. Introduce or increase the dropout rate, a technique that randomly ignores a subset of neurons during training to prevent co-adaptation, as used in the VirtuDockDL GNN architecture [38]. |
| 4 | Are we properly validating the model? | Ensure you are using a held-out test set that is never used during training for final evaluation. Employ k-fold cross-validation to get a more robust estimate of model performance. |
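Step 4 can be sketched with a small pure-Python k-fold routine. It assumes the final held-out test set has already been set aside, so cross-validation only touches the development data; the majority-class "model" is a placeholder for your actual training function.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; every sample is tested exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train_idx, test_idx


def cross_validate(fit, score, X, y, k=5, seed=0):
    """Mean and per-fold scores of a fit/score pair across k folds."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(X), k, seed):
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        scores.append(score(model, [X[i] for i in test_idx], [y[i] for i in test_idx]))
    return sum(scores) / k, scores


def fit_majority(X_train, y_train):
    # Placeholder model: always predicts the most common training label.
    return max(set(y_train), key=y_train.count)


def accuracy(label, X_test, y_test):
    return sum(int(t == label) for t in y_test) / len(y_test)


X = [[i] for i in range(20)]
y = [1] * 14 + [0] * 6
mean_acc, fold_scores = cross_validate(fit_majority, accuracy, X, y, k=5)
print(mean_acc, fold_scores)
```

For molecular data, random folds can still leak near-duplicate scaffolds between train and test, so grouping folds by scaffold or assay series is worth considering.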

FAQ: Our DL-predicted binding poses are physically implausible, with incorrect bond lengths or angles. How can we fix this?

This challenge of physical plausibility is common in some DLLD models, which may prioritize success rates over local chemical realism [37].

Troubleshooting Guide:

| Step | Question to Ask | Potential Solution |
|---|---|---|
| 1 | Does the model incorporate physical constraints? | Move towards "conservative-force" models. Models like Meta's eSEN can be fine-tuned to predict conservative forces, which directly correspond to the physical forces acting on atoms, leading to more realistic geometries and better-behaved potential energy surfaces [40]. |
| 2 | Are we using high-quality training data? | The accuracy of the model is bounded by the accuracy of its training data. Utilize datasets like OMol25, which are calculated at high levels of quantum chemical theory (e.g., ωB97M-V/def2-TZVPD), ensuring high-quality ground-truth geometries and energies [40]. |
| 3 | Can we integrate a post-processing check? | Implement a rule-based filtering step to flag or discard poses with bond lengths or angles outside a chemically reasonable range. Tools like RDKit can be used for this validation [41]. |
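A rule-based bond-length filter (step 3) can be sketched without any chemistry toolkit. The acceptable ranges below are illustrative assumptions roughly centred on typical values (C-C about 1.54 Å, C=C about 1.34 Å, C-O about 1.43 Å); in practice they should be tuned to your chemistry, and a toolkit such as RDKit can supply elements, bond orders and coordinates.

```python
import math

# Illustrative acceptable ranges in Å, keyed by (element, element, bond order); assumed values.
BOND_LENGTH_RANGES = {
    ("C", "C", 1): (1.45, 1.60),
    ("C", "C", 2): (1.30, 1.40),
    ("C", "N", 1): (1.40, 1.52),
    ("C", "O", 1): (1.36, 1.48),
    ("C", "O", 2): (1.18, 1.26),
}


def flag_bad_bonds(elements, coords, bonds):
    """Return (i, j, length) for bonds whose length falls outside the allowed range."""
    flagged = []
    for i, j, order in bonds:
        key = tuple(sorted((elements[i], elements[j]))) + (order,)
        low, high = BOND_LENGTH_RANGES.get(key, (0.9, 2.0))  # loose fallback for unlisted pairs
        length = math.dist(coords[i], coords[j])
        if not low <= length <= high:
            flagged.append((i, j, round(length, 3)))
    return flagged


# Heavy atoms of an ethanol-like fragment with a stretched C-O bond.
elements = ["C", "C", "O"]
coords = [(0.0, 0.0, 0.0), (1.53, 0.0, 0.0), (1.53, 1.80, 0.0)]
bonds = [(0, 1, 1), (1, 2, 1)]
print(flag_bad_bonds(elements, coords, bonds))
```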

FAQ: Our virtual screening of a billion-compound library is too slow. How can we accelerate the process?

This is a computational scalability issue. Screening billions of compounds requires an optimized pipeline.

Troubleshooting Guide:

| Step | Question to Ask | Potential Solution |
|---|---|---|
| 1 | Can we use a cheaper pre-filter? | Implement a tiered screening strategy. Use a fast, lightweight ML model (e.g., a pre-trained GNN) to rapidly screen the entire billion-compound library and prioritize a few hundred thousand top candidates. This shortlist can then be processed with a more accurate, but slower, docking or DL tool [38]. |
| 2 | Are we leveraging hardware acceleration? | Ensure your software (e.g., PyTorch Geometric, TensorFlow) is configured to use GPUs. DL inference on GPUs can be orders of magnitude faster than CPU-based traditional docking [27] [38]. |
| 3 | Is our pipeline optimized for throughput? | Use tools designed for batch processing of large datasets. The VirtuDockDL pipeline, for example, is built for automation and can handle large-scale datasets efficiently [38]. |
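The tiered strategy in step 1 reduces to "score everything cheaply, rescore a shortlist expensively". In the sketch below both scoring functions are hypothetical stand-ins (the cheap one is a deliberately imperfect proxy), and a counter shows how many expensive evaluations the tiering actually requires.

```python
import heapq


def tiered_screen(library, cheap_score, expensive_score, shortlist_size, final_size):
    """Fast pre-filter over the whole library, slow rescoring of the shortlist only."""
    shortlist = heapq.nlargest(shortlist_size, library, key=cheap_score)   # tier 1
    return sorted(shortlist, key=expensive_score, reverse=True)[:final_size]  # tier 2


calls = {"expensive": 0}


def expensive_score(compound_id):
    # Stand-in for docking: pretend compound 4242 is the best binder.
    calls["expensive"] += 1
    return -abs(compound_id - 4242)


def cheap_score(compound_id):
    # Stand-in for a fast ML model: correlated with the expensive score but noisy.
    return -abs(compound_id - 4242) + (compound_id % 7)


library = list(range(100_000))
hits = tiered_screen(library, cheap_score, expensive_score, shortlist_size=1000, final_size=10)
print(hits[:3], calls["expensive"])
```

The shortlist size trades recall against cost: it must be large enough that true binders the cheap model slightly misranks still reach the expensive tier.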

Detailed Experimental Protocols & Workflows

Protocol: Implementing a Graph Neural Network for Virtual Screening

This protocol outlines the methodology for building a GNN-based screening pipeline, as demonstrated by tools like VirtuDockDL [38].

1. Molecular Data Processing:

- Input: Collect SMILES strings of compounds to be screened.

- Processing: Use the RDKit cheminformatics toolkit to convert SMILES strings into molecular graph objects.

- Graph Representation: Formalize each molecule as a graph $G = (V, E)$, where $V$ is the set of nodes (atoms) and $E$ is the set of edges (bonds) [38].

2. Feature Extraction and Engineering:

- Node Features: Encode atom-level information (e.g., atom type, degree, hybridization).

- Edge Features: Encode bond-level information (e.g., bond type, conjugation).

- Molecular Descriptors: Use RDKit to calculate global physicochemical descriptors such as Molecular Weight (MolWt), Topological Polar Surface Area (TPSA), and the octanol-water partition coefficient (MolLogP) [38].

3. GNN Model Architecture (Example):

- Core Layers: Implement specialized GNN layers (e.g., from PyTorch Geometric) for graph convolution operations.

- Key Operations:

- Linear Transformation & Batch Normalization: Stabilize the learning process.

- Activation Function: Apply a ReLU function to introduce non-linearity.

- Residual Connections: Add the input of a layer to its output to mitigate the vanishing gradient problem in deep networks.

- Dropout: Randomly deactivate a subset of neurons during training to prevent overfitting [38].

- Feature Fusion: The graph-derived features $h_{agg}$ are concatenated with the engineered molecular descriptors $f_{eng}$ and passed through a fully connected layer: $f_{combined} = \mathrm{ReLU}(W_{combine} \cdot [h_{agg}; f_{eng}] + b_{combine})$, where $[\,\cdot\,;\,\cdot\,]$ denotes concatenation [38].

4. Training and Validation:

- Training: Train the model on a labeled dataset (e.g., compounds with known activity against a target).

- Validation: Rigorously assess the model on a held-out test set using screening-power metrics such as AUC and F1 Score.
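The architecture in step 3 can be sketched as a NumPy forward pass: mean aggregation over bonded neighbours, a linear transform with ReLU, a residual connection, inverted dropout, a mean-pooled readout, and the feature-fusion layer $f_{combined} = \mathrm{ReLU}(W_{combine} \cdot [h_{agg}; f_{eng}] + b_{combine})$. This is an untrained illustration under stated assumptions, not VirtuDockDL's code: batch normalization is omitted, weights are random, and the node features and descriptor values are placeholders rather than RDKit output.

```python
import numpy as np

rng = np.random.default_rng(0)


def relu(x):
    return np.maximum(x, 0.0)


def normalized_adjacency(n_atoms, bonds):
    """Adjacency with self-loops, row-normalized so A_hat @ H averages over neighbours."""
    A = np.eye(n_atoms)
    for i, j in bonds:
        A[i, j] = A[j, i] = 1.0
    return A / A.sum(axis=1, keepdims=True)


def gnn_layer(H, A_hat, W, b, dropout_rate=0.0, training=False):
    out = relu((A_hat @ H) @ W + b)  # aggregate neighbours, linear transform, ReLU
    out = out + H                    # residual connection (same width in and out)
    if training and dropout_rate > 0:
        mask = rng.random(out.shape) >= dropout_rate
        out = out * mask / (1.0 - dropout_rate)  # inverted dropout keeps the expected scale
    return out


def predict_activity(node_features, bonds, descriptors, params, training=False):
    A_hat = normalized_adjacency(len(node_features), bonds)
    H = node_features
    for W, b in params["layers"]:
        H = gnn_layer(H, A_hat, W, b, dropout_rate=0.2, training=training)
    h_agg = H.mean(axis=0)                                   # graph-level readout
    f_combined = relu(params["W_combine"] @ np.concatenate([h_agg, descriptors])
                      + params["b_combine"])                 # feature fusion
    logit = params["w_out"] @ f_combined + params["b_out"]
    return 1.0 / (1.0 + np.exp(-logit))                      # probability of activity


d, n_desc, hidden = 4, 3, 8
params = {
    "layers": [(rng.normal(0.0, 0.1, (d, d)), np.zeros(d)) for _ in range(2)],
    "W_combine": rng.normal(0.0, 0.1, (hidden, d + n_desc)),
    "b_combine": np.zeros(hidden),
    "w_out": rng.normal(0.0, 0.1, hidden),
    "b_out": 0.0,
}
# Toy C-C-O graph; node features are [is_C, is_O, degree, is_aromatic] (placeholder encoding).
node_features = np.array([[1, 0, 1, 0], [1, 0, 2, 0], [0, 1, 1, 0]], dtype=float)
bonds = [(0, 1), (1, 2)]
descriptors = np.array([0.46, 0.20, 0.0])  # placeholder scaled MolWt, TPSA, MolLogP
p_active = predict_activity(node_features, bonds, descriptors, params)
print(p_active)
```

In a real pipeline, a library such as PyTorch Geometric provides batched, trainable versions of these layers; the sketch only shows how the pieces listed in step 3 fit together.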

Protocol: Integrated ML and Docking for Natural Inhibitor Discovery

This protocol summarizes a successful study that combined machine learning and molecular docking to identify natural inhibitors for epilepsy [42].

1. Machine Learning-Based Virtual Screening:

- Model Training: Train multiple machine learning models (e.g., Support Vector Machine, Random Forest) on a dataset of known active and inactive compounds.

- Model Selection: Evaluate and select the best-performing model. In the referenced study, a Random Forest model achieved 93.43% accuracy [42].

- Virtual Screening: Apply the trained model to screen a large library of phytochemicals (e.g., 9,000 compounds) to identify a shortlist of potential active compounds (e.g., 180 hits) [42].

2. Structure-Based Validation:

- Molecular Docking: Take the ML-prioritized hits and dock them into the binding site of the target protein (e.g., S100B) using tools like AutoDock Vina or similar.

- Analysis: Identify compounds that form significant and stable interactions within the binding pocket, confirming their potential as inhibitors [42].

| Resource Name | Type | Function and Application |
|---|---|---|
| RDKit | Software Library | An open-source toolkit for cheminformatics, used for processing SMILES strings, calculating molecular descriptors, generating fingerprints, and creating molecular graphs for DL models [41] [38]. |
| PyTorch Geometric | Software Library | A library built upon PyTorch specifically for deep learning on graphs and irregular structures. Essential for building and training GNNs for molecular data [38]. |
| OMol25 (Open Molecules 2025) | Dataset | A massive dataset from Meta FAIR containing over 100 million high-accuracy quantum chemical calculations. Used for pre-training or fine-tuning neural network potentials and property prediction models [40]. |
| VirtuDockDL | Software Pipeline | An automated Python-based pipeline that uses a GNN for virtual screening. It combines ligand- and structure-based screening with deep learning and is designed for user-friendliness and high throughput [38]. |
| eSEN / UMA Models | Pre-trained Models | Neural Network Potentials (NNPs) provided by Meta, pre-trained on the OMol25 dataset. They provide fast and accurate computations of molecular energies and forces, useful for geometry optimization and dynamics [40]. |
| ZINC15 / PubChem | Chemical Database | Public databases containing millions of commercially available compounds. Used for building virtual screening libraries [41]. |

Hyperparameter Tuning for Predictive Models in Toxicity and Efficacy Studies

In the field of computational toxicology and drug development, building predictive models for toxicity and efficacy is a critical task that can significantly accelerate research and reduce costs. Hyperparameter tuning is an essential step in this process, as it helps create models that are both accurate and reliable. This technical support center provides troubleshooting guides and FAQs to help researchers navigate common challenges in optimizing their machine learning experiments.

Frequently Asked Questions (FAQs)

Q1: My model achieves 99% training accuracy but fails on real-world toxicity data. What is the most likely cause?

This is a classic sign of overfitting. The model has likely learned the noise and specific patterns in your training data rather than generalizable relationships. Common causes include:

- Data Leakage: Information from your test set may have inadvertently been used during training, giving the model an unrealistic advantage [43].

- Insufficient Data Preprocessing: Issues such as improperly handled missing values (e.g., missing entries encoded as zeros) or unscaled features can mislead the model [44] [43].

- Overly Complex Model: The model architecture may be too complex for the amount of available training data.
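One common guard against preprocessing leakage is to put imputation and scaling inside a scikit-learn `Pipeline`. Cross-validation then refits them on each training fold only. Comparing training AUC with cross-validated AUC gives a quick overfitting check. The synthetic data and the 5% missingness rate in the sketch below are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic descriptors; ~5% of entries set to NaN (not 0) to mimic missing assay values.
X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.05] = np.nan

# Imputer and scaler are fit inside each CV training fold, never on the held-out fold.
pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(C=1.0, max_iter=1000)),
])

cv_auc = cross_val_score(pipe, X, y, cv=5, scoring="roc_auc").mean()
train_auc = roc_auc_score(y, pipe.fit(X, y).predict_proba(X)[:, 1])
print(f"train AUC={train_auc:.3f}  CV AUC={cv_auc:.3f}  gap={train_auc - cv_auc:.3f}")
```

A large gap between the two numbers is the same symptom described in Q1. A near-perfect cross-validated score on a hard endpoint is itself a reason to look for leakage.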

Q2: For predicting organ-specific toxicity, which hyperparameter tuning method should I start with to save time and computational resources?

For most scenarios in toxicity prediction, Bayesian Optimization is the recommended starting point. It is more efficient than Grid or Random Search because it builds a probabilistic model of your objective function and intelligently selects the next set of hyperparameters to evaluate based on previous results [45] [46]. This is crucial when using resource-intensive models like deep neural networks on large toxicology datasets from sources like TOXRIC or ChEMBL [47].
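The "probabilistic model plus intelligent selection" idea can be made concrete with a small from-scratch loop. It fits a Gaussian-process surrogate to the scores observed so far and picks the next point by expected improvement. This is an illustrative sketch, not how Optuna works internally (Optuna's default sampler is TPE, a different surrogate). The single tuned hyperparameter (log10 of logistic regression's `C`), the search range, and the synthetic data are assumptions.

```python
import numpy as np
from scipy.stats import norm
from sklearn.datasets import make_classification
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

X, y = make_classification(n_samples=400, n_features=20, n_informative=5, random_state=0)


def objective(log10_c: float) -> float:
    """Mean 3-fold CV AUC of logistic regression at C = 10**log10_c."""
    model = LogisticRegression(C=10 ** log10_c, max_iter=1000)
    return cross_val_score(model, X, y, cv=3, scoring="roc_auc").mean()


def expected_improvement(mu, sigma, best, xi=0.001):
    """Expected improvement over the best observed score (maximization)."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
bounds = (-4.0, 2.0)                             # log10(C) in [1e-4, 1e2]
grid = np.linspace(*bounds, 200).reshape(-1, 1)  # candidate points for the acquisition

# A few random evaluations first, then 10 surrogate-guided ones.
xs = list(rng.uniform(*bounds, size=3))
ys = [objective(x) for x in xs]
for _ in range(10):
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6,
                                  normalize_y=True, random_state=0)
    gp.fit(np.array(xs).reshape(-1, 1), ys)
    mu, sigma = gp.predict(grid, return_std=True)
    x_next = float(grid[np.argmax(expected_improvement(mu, sigma, max(ys))), 0])
    xs.append(x_next)
    ys.append(objective(x_next))

best_i = int(np.argmax(ys))
print(f"best C = {10 ** xs[best_i]:.4g}, CV AUC = {ys[best_i]:.3f}")
```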

Q3: I'm tuning a neural network for molecular toxicity classification. The training process is slow, making extensive tuning impractical. What can I do?

Implement Automated Early Stopping. This technique automatically halts the training of unpromising trials when their performance appears to have plateaued or is worse than other trials [48] [49]. Frameworks like Optuna provide built-in pruning algorithms (e.g., MedianPruner, HyperbandPruner) that can be integrated directly into your training loop to save significant computational time [49].
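The median stopping rule behind pruners such as Optuna's `MedianPruner` is simple enough to write out by hand. A running trial is stopped when its best validation score so far falls below the median of earlier trials' scores at the same epoch. The simplified, framework-free sketch below assumes higher scores are better. The validation curves are made-up illustrative values, and `should_prune` is a hypothetical helper, not Optuna's API.

```python
from statistics import median


def should_prune(history, completed, step, n_warmup_steps=2):
    """Prune if the best score up to `step` is below the median of completed
    trials' scores at the same step (maximization; no pruning during warm-up)."""
    if step < n_warmup_steps:
        return False
    peers = [h[step] for h in completed if len(h) > step]
    if not peers:
        return False
    return max(history[: step + 1]) < median(peers)


# Illustrative per-epoch validation AUCs of three finished trials.
completed = [
    [0.60, 0.70, 0.75, 0.78, 0.80],
    [0.62, 0.72, 0.77, 0.79, 0.81],
    [0.58, 0.66, 0.71, 0.74, 0.76],
]

# A new, weaker trial; each value stands in for "train one epoch, then validate".
new_trial_scores = [0.55, 0.58, 0.60, 0.61, 0.62]
history, pruned_at = [], None
for epoch, score in enumerate(new_trial_scores):
    history.append(score)
    if should_prune(history, completed, epoch):
        pruned_at = epoch
        break
print(f"pruned at epoch {pruned_at}")  # pruned at epoch 2
```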

Q4: How can I ensure my hyperparameter tuning process is reproducible for a scientific publication?

Reproducibility is a cornerstone of scientific research. To ensure your tuning is reproducible:

- Set a Random Seed: Always set the random seed for the random number generators in your code (e.g., in Python, using `random.seed()` and `numpy.random.seed()`).

- Use Fixed Splits: Use the same training/validation/test splits for all your experiments.

- Log Everything: Meticulously log the hyperparameters, random seed, data splits, and the resulting performance metrics for every single trial in your optimization study [48].
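The three practices above can be combined in a few lines: seed the global RNGs, derive one fixed split from the same seed and save it, and append every trial to a JSON-lines log. The file names, the temporary directory, and the logged score (a placeholder, not a result) are assumptions in the sketch below. PyTorch users would also call `torch.manual_seed`.

```python
import json
import os
import random
import tempfile

import numpy as np
from sklearn.model_selection import train_test_split

SEED = 42


def set_seeds(seed: int) -> None:
    """Seed Python's and NumPy's global RNGs."""
    random.seed(seed)
    np.random.seed(seed)


def log_trial(path, params, seed, score):
    """Append one trial record (hyperparameters, seed, metric) as a JSON line."""
    with open(path, "a") as fh:
        fh.write(json.dumps({"params": params, "seed": seed, "score": score}) + "\n")


set_seeds(SEED)

# One fixed split, reused by every trial and saved alongside the results.
indices = np.arange(1000)
train_idx, test_idx = train_test_split(indices, test_size=0.2, random_state=SEED)

log_dir = tempfile.mkdtemp()
np.savez(os.path.join(log_dir, "splits.npz"), train=train_idx, test=test_idx)
log_trial(os.path.join(log_dir, "trials.jsonl"),
          {"max_depth": 8, "n_estimators": 200}, SEED, 0.87)  # placeholder score
```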

Troubleshooting Guides

Problem 1: Overfitting During Hyperparameter Tuning

Symptoms: The model performs exceptionally well on the training/validation data used for tuning but shows a significant performance drop on a held-out test set or new experimental data [44].

Solutions:

- Review Data Splits: Double-check your data splitting procedure to ensure there is absolutely no leakage between the training and test sets. For time-series toxicological data, ensure a temporal cutoff is applied to prevent using future data for training [43].

- Increase Regularization: Tune hyperparameters that control model complexity and prevent overfitting. The table below lists key regularization hyperparameters for different model types:

| Model Type | Key Regularization Hyperparameters |
|---|---|
| Deep Neural Networks | Dropout Rate, L1/L2 Regularization Strength [46] |
| Tree-Based Models (e.g., Random Forest) | Maximum Depth, Minimum Samples per Split/Leaf, alpha (for XGBoost) [45] [49] |
| General Models | Regularization parameter C (in SVM, Logistic Regression) [49] |

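- Simplify the Model: Reduce the model's capacity by tuning parameters that control its size, such as the number of layers or units in a neural network or the number of boosting rounds in a gradient-boosted ensemble (adding trees to a random forest, by contrast, mainly reduces variance rather than causing overfitting).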
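A validation curve makes the regularization trade-off visible. For each value of one hyperparameter, it reports training and cross-validated scores, and a widening gap signals overfitting. The sketch below sweeps a random forest's `max_depth` with scikit-learn's `validation_curve`. The synthetic data (with 10% label noise via `flip_y`) and the depth values are assumptions. The same pattern works for `min_samples_leaf` or an SVM's `C`.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import validation_curve

# Synthetic data with 10% label noise, so deep trees have noise to memorize.
X, y = make_classification(n_samples=600, n_features=40, n_informative=6,
                           flip_y=0.1, random_state=0)

depths = [2, 4, 8, 16, 32]
train_scores, val_scores = validation_curve(
    RandomForestClassifier(n_estimators=100, random_state=0),
    X, y,
    param_name="max_depth",
    param_range=depths,
    cv=5,
    scoring="roc_auc",
)

train_mean, val_mean = train_scores.mean(axis=1), val_scores.mean(axis=1)
for d, tr, va in zip(depths, train_mean, val_mean):
    print(f"max_depth={d:>2}  train AUC={tr:.3f}  val AUC={va:.3f}  gap={tr - va:.3f}")

best_depth = depths[int(np.argmax(val_mean))]  # choose by validation score, not training score
```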

Problem 2: The Tuning Process is Too Slow

Symptoms: A single model training run takes hours/days, making it impossible to explore a wide hyperparameter space.

Solutions:

- Adopt a Smarter Search Algorithm: Replace exhaustive methods like Grid Search with Bayesian Optimization (e.g., using Optuna or Ray Tune) or at least Random Search to find good hyperparameters with fewer trials [48] [45].

- Implement Pruning/Early Stopping: As mentioned in the FAQs, use frameworks that support automated pruning to terminate underperforming trials early [49].

- Parallelize the Search: Use hyperparameter optimization libraries that support parallel computation. For example, Ray Tune and Optuna can distribute trials across multiple CPUs or machines, drastically reducing the total wall-clock time required [48] [49].
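Random search and single-machine parallelism are both available in scikit-learn's `RandomizedSearchCV`. It samples a fixed number of configurations from distributions instead of enumerating a grid, and `n_jobs=-1` spreads the fits over all local cores. Distributed search across machines would need a tool like Ray Tune or Optuna instead. The parameter ranges, `n_iter=20`, and the synthetic data in the sketch below are assumptions.

```python
from scipy.stats import loguniform, randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

X, y = make_classification(n_samples=800, n_features=30, n_informative=8, random_state=0)

param_distributions = {
    "n_estimators": randint(50, 300),       # integers in [50, 300)
    "max_depth": randint(2, 20),
    "min_samples_leaf": randint(1, 10),
    "max_features": loguniform(0.05, 1.0),  # fraction of features, log-uniform
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions,
    n_iter=20,        # 20 sampled configurations instead of an exhaustive grid
    cv=3,
    scoring="roc_auc",
    n_jobs=-1,        # evaluate candidates in parallel on all local CPU cores
    random_state=0,
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```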

Problem 3: Unstable or Poor Model Performance

Symptoms: High variance in model performance across different training runs or consistently low performance metrics.

Solutions:

- Check Data Quality and Preprocessing: This is a critical first step. Ensure missing values are handled correctly, features are properly scaled, and outliers are addressed. Visualize your data distributions to catch impossible values [44] [43].

- Tune the Learning Rate: The learning rate is often the most critical hyperparameter. If it is too high, training may diverge; if it is too low, training is slow and may stall in a poor local minimum. Search it on a log scale (e.g., between 1e-5 and 1e-1) [45] [46].

- Use Cross-Validation: Perform hyperparameter tuning using K-Fold Cross-Validation instead of a single train-validation split. This provides a more robust estimate of model performance and reduces the chance of your hyperparameters overfitting to a specific validation set [45].
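The last two points combine naturally: sweep the learning rate on a log-spaced grid and score each value with stratified K-fold cross-validation. The fold-to-fold standard deviation then shows how stable each setting is. The sketch below uses a linear `SGDClassifier` with a constant step size as a lightweight stand-in for a neural network. The model choice, the nine-point grid, and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=1000, n_features=25, n_informative=6, random_state=0)

learning_rates = np.logspace(-5, -1, num=9)  # 1e-5 ... 1e-1, evenly spaced in log10
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

results = {}
for lr in learning_rates:
    model = make_pipeline(
        StandardScaler(),
        # Constant step size eta0 = lr; tol=None runs all max_iter epochs.
        SGDClassifier(learning_rate="constant", eta0=lr, max_iter=50,
                      tol=None, random_state=0),
    )
    scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
    results[lr] = (scores.mean(), scores.std())  # mean and fold-to-fold spread

for lr, (mean, std) in results.items():
    print(f"lr={lr:.0e}  AUC={mean:.3f} +/- {std:.3f}")
best_lr = max(results, key=lambda k: results[k][0])
```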

Experimental Protocols

Protocol 1: Bayesian Optimization for a Toxicity Classification Model

This protocol outlines the steps for performing hyperparameter tuning using Bayesian Optimization with the Optuna framework on a dataset from a source like TOXRIC or ChEMBL [47] [49].


Methodology:

- Define the Objective Function: This function takes a `trial` object from Optuna as input. Inside the function, you use the `trial` object to suggest values for the hyperparameters you want to optimize. The function then builds and trains the model (e.g., a Random Forest or a Neural Network) using those suggested hyperparameters and returns a performance score (e.g., mean cross-validation accuracy) [49].

- Create a Study and Optimize: A "study" object is created in Optuna, which defines the direction of optimization (maximize or minimize the objective). The `optimize` method is then called on this study, which runs the Bayesian Optimization loop for a specified number of trials (`n_trials`). Optuna manages the probabilistic model and decides which hyperparameters to try next [49].

Example Code Snippet (Python using Optuna and Scikit-learn):

Protocol 2: Systematic Evaluation and Failure Analysis

After tuning, a rigorous evaluation is necessary to validate the model's generalizability and identify its weaknesses [43].


Methodology:

- Held-Out Test Set Evaluation: After selecting the best hyperparameters, retrain your model on the entire training set and evaluate it on a completely untouched test set. This provides the best estimate of its real-world performance [45].

- Failure Analysis: Go beyond aggregate metrics. Manually inspect the cases where your model makes incorrect predictions. Look for patterns: are there specific molecular substructures, toxicity endpoints (e.g., hepatotoxicity vs. cardiotoxicity), or ranges of experimental values where the model consistently fails [43]?

- Iterate: Use the insights from the failure analysis to refine your approach. This might involve collecting more data for the problematic subgroups, engineering new features, or adjusting the hyperparameter space and re-tuning.
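A minimal version of the evaluation and failure-analysis steps looks like this: retrain with the chosen hyperparameters on the full training set, score the untouched test set, and then break errors down by subgroup. In the sketch below the data are synthetic, `best_params` is a placeholder for tuned values, and the endpoint labels are assigned at random, so no real pattern should be expected. With real data, the subgroup column would come from annotations such as endpoint or scaffold family.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1200, n_features=30, n_informative=8, random_state=0)
rng = np.random.default_rng(0)
# Hypothetical per-compound subgroup label (randomly assigned here).
groups = rng.choice(["hepatotoxicity", "cardiotoxicity", "nephrotoxicity"], size=len(y))

X_train, X_test, y_train, y_test, g_train, g_test = train_test_split(
    X, y, groups, test_size=0.25, stratify=y, random_state=0
)

best_params = {"n_estimators": 200, "max_depth": 12}  # placeholder for tuned values
model = RandomForestClassifier(**best_params, random_state=0).fit(X_train, y_train)
pred = model.predict(X_test)

errors = pred != y_test
print(f"Overall test accuracy: {1 - errors.mean():.3f}")
for g in np.unique(g_test):
    mask = g_test == g
    print(f"{g:>15}: n={mask.sum():4d}  error rate={errors[mask].mean():.3f}")

# Test-set positions of misclassified compounds, for manual inspection.
misclassified = np.flatnonzero(errors)
```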

The Scientist's Toolkit: Research Reagent Solutions

This table details key digital "reagents" – databases and software tools – essential for building and tuning predictive toxicology models.

| Resource Name | Type | Primary Function in Toxicity Modeling |
|---|---|---|
| TOXRIC [47] | Database | Provides a comprehensive collection of compound toxicity data for training models on various endpoints (acute, chronic, carcinogenicity). |
| ChEMBL [47] | Database | A manually curated database of bioactive molecules with drug-like properties, providing bioactivity and ADMET data for model training. |
| DrugBank [47] | Database | Offers detailed drug data, including chemical structures, targets, and adverse reaction information, useful for feature engineering. |
| Optuna [49] | Software Framework | A hyperparameter optimization framework that simplifies the implementation of Bayesian Optimization and provides efficient sampling and pruning algorithms. |
| Ray Tune [48] | Software Library | A scalable library for hyperparameter tuning that supports distributed computing and integrates with various optimization algorithms and ML frameworks. |
| Scikit-learn [45] | Software Library | Provides implementations of standard ML models, GridSearchCV, and RandomizedSearchCV, serving as a foundational tool for building and tuning models. |

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

FAQ 1: How can AI/ML models predict solubilization technologies and optimize drug-excipient interactions? AI and machine learning (ML) models are trained on large datasets of molecular structures and their known physicochemical properties. These models can predict the solubility of new drug candidates and identify the most effective solubilization technologies or excipient combinations. This reduces reliance on traditional trial-and-error methods in formulation development, significantly accelerating early-stage research [50].

FAQ 2: What is the role of a "digital twin" in preclinical evaluation, and how does it accelerate research? A digital twin is a virtual model of a biological system, such as an organ, trained on multi-modal data. In preclinical evaluation, it acts as a personalized digital control arm by accurately forecasting organ function and generating the counterfactual outcome (the untreated effects). This enables a powerful paired statistical analysis, allowing for direct comparison between an observed treatment and the digital twin-generated outcome within the same organ. This method can reveal therapeutic effects missed by traditional studies and is designed to accelerate drug discovery by reducing the required study size [50].
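The "paired" part of this design means each organ contributes one observed (treated) value and one twin-forecast (untreated) value, so the test operates on within-organ differences rather than comparing two separate groups. The sketch below runs a generic paired t-test and a Wilcoxon signed-rank test with SciPy. All values are simulated, and the sample size and effect size are assumptions. This illustrates paired testing in general, not the specific method referenced in [50].

```python
import numpy as np
from scipy.stats import ttest_rel, wilcoxon

rng = np.random.default_rng(0)
n_organs = 12
# Simulated organ-function scores: twin forecast (untreated counterfactual) vs. observed (treated).
twin_forecast = rng.normal(50.0, 8.0, size=n_organs)
observed_treated = twin_forecast + rng.normal(3.0, 2.0, size=n_organs)  # simulated effect

diff = observed_treated - twin_forecast               # one paired difference per organ
t_res = ttest_rel(observed_treated, twin_forecast)    # parametric paired test
w_res = wilcoxon(observed_treated, twin_forecast)     # non-parametric alternative
print(f"mean paired difference = {diff.mean():.2f}")
print(f"paired t-test p = {t_res.pvalue:.3g}; Wilcoxon signed-rank p = {w_res.pvalue:.3g}")
```

Because between-organ variability (here, the spread of `twin_forecast`) cancels in the differences, a paired design can detect a given effect with fewer subjects than an unpaired comparison. This is the mechanism behind the smaller study sizes described above.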

FAQ 3: What are the primary barriers to wider AI adoption in the pharmaceutical industry? Key barriers include evolving regulatory guidance and the need to control specific risks associated with AI models. Regulatory approaches are centering on a risk assessment that evaluates how the AI model's behavior affects the final drug product's quality, safety, and efficacy for the patient. For regulated bioanalysis, controls must be in place to prevent hallucination (the generation of data not present in the source), which requires robust audit trails to ensure compliance [50].

Troubleshooting Common Experimental Issues