Mastering Inorganic Chemical Analysis: 2025 Guide to Techniques, Troubleshooting, and Validation

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on current inorganic chemical analysis techniques. It bridges foundational knowledge with advanced applications, covering core principles, hands-on methodological training, systematic troubleshooting for techniques like ICP-OES and GC, and robust validation strategies using Certified Reference Materials. The content synthesizes information from the latest 2025 symposia, peer-reviewed research, and professional training resources to offer a practical roadmap for enhancing analytical accuracy and efficiency in biomedical and clinical research.

Building Your Analytical Foundation: Core Principles and Emerging Techniques

Fundamental Principles of Combustion Chemistry and Result Interpretation

Combustion, commonly known as burning, is a high-temperature exothermic redox reaction between a fuel (the reductant) and an oxidant, usually atmospheric oxygen, that produces oxidized, often gaseous products in a mixture termed smoke [1]. The process is a chemical chain reaction that evolves both heat and light, which makes it fundamental to applications ranging from energy production and propulsion systems to industrial processes and safety engineering [2] [3]. For researchers in inorganic chemical analysis, understanding combustion principles is crucial for analyzing material transformations, energy release patterns, and emission products across scientific and industrial contexts.

The essential requirement for combustion to occur involves three main components: a fuel to be burned, a source of oxygen, and a source of heat [3]. Interestingly, while heat is necessary to initiate combustion, it is also a product of the reaction itself, creating a self-sustaining process under appropriate conditions [3]. The original substance consumed in the process is called the fuel, which can exist in solid, liquid, or gaseous states, while the oxidizer is typically oxygen from the air, though other oxidants are possible in specialized applications [1] [3].

Fundamental Chemical Principles

The Combustion Reaction Mechanism

At its core, combustion is an exothermic redox process that follows distinct chemical pathways. The reaction mechanism involves the rapid oxidation of fuel components, resulting in the release of substantial thermal energy. The general form of a hydrocarbon combustion reaction follows this pattern:

Fuel + Oxidizer → Oxidized Products + Heat [2]

For instance, when octane (a primary component of gasoline) undergoes complete combustion, the reaction proceeds as follows:

2C₈H₁₈(l) + 25O₂(g) → 16CO₂(g) + 18H₂O(g) [2]

This balanced equation demonstrates the stoichiometric relationship where the hydrocarbon fuel combines with oxygen to produce carbon dioxide and water vapor as the primary products, with significant heat release throughout the process.
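The stoichiometry above can be checked programmatically. The following minimal Python sketch assumes a pure hydrocarbon CxHy burning completely in O₂ and derives the smallest whole-number coefficients. The function name is illustrative and does not come from the cited sources.

```python
from fractions import Fraction
from math import lcm


def balance_hydrocarbon_combustion(x: int, y: int) -> tuple[int, int, int, int]:
    """Return the smallest integer coefficients (fuel, O2, CO2, H2O) for CxHy.

    Assumes complete combustion: CxHy + (x + y/4) O2 -> x CO2 + (y/2) H2O.
    """
    o2 = Fraction(x) + Fraction(y, 4)   # moles of O2 per mole of fuel
    h2o = Fraction(y, 2)                # moles of H2O per mole of fuel
    # Multiply through by the least common denominator to clear fractions.
    scale = lcm(o2.denominator, h2o.denominator)
    return (scale, int(o2 * scale), x * scale, int(h2o * scale))


# Octane, C8H18: reproduces 2 C8H18 + 25 O2 -> 16 CO2 + 18 H2O.
print(balance_hydrocarbon_combustion(8, 18))
```

The same function applied to methane, `balance_hydrocarbon_combustion(1, 4)`, returns the familiar 1 : 2 : 1 : 2 ratio.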

A critical concept in combustion chemistry is activation energy – the initial energy input required to initiate the chemical reaction [2]. This explains why combustible materials like gasoline do not spontaneously ignite when simply exposed to air; they require an initial energy source such as a spark, flame, or sufficient heat to overcome this activation barrier [2]. Once initiated, the exothermic nature of the reaction provides the necessary energy to sustain the process until either the fuel or oxidant is depleted.
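The cited sources do not give a formula for this, but one standard way to express the effect of the activation barrier is the Arrhenius equation, k = A·exp(−Ea/RT). The sketch below uses it to show how strongly the rate constant responds to temperature. The activation energy of 150 kJ/mol is a hypothetical value chosen only for illustration, not a property of any specific fuel.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)


def arrhenius_rate_constant(pre_exponential: float,
                            activation_energy_j_mol: float,
                            temperature_k: float) -> float:
    """Arrhenius rate constant k = A * exp(-Ea / (R * T))."""
    return pre_exponential * math.exp(-activation_energy_j_mol / (R * temperature_k))


def rate_ratio(activation_energy_j_mol: float, t_low_k: float, t_high_k: float) -> float:
    """Ratio k(t_high) / k(t_low); the pre-exponential factor cancels out."""
    return math.exp(-activation_energy_j_mol / R * (1.0 / t_high_k - 1.0 / t_low_k))


EA_HYPOTHETICAL = 150e3  # J/mol, assumed value for demonstration only
print(f"k(1000 K) / k(300 K) = {rate_ratio(EA_HYPOTHETICAL, 300.0, 1000.0):.2e}")
```

Under these assumptions the rate constant rises by many orders of magnitude between room temperature and flame-like temperatures. This is consistent with a fuel that is stable in air until an ignition source is supplied.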

Types of Combustion Reactions

Combustion processes are categorized based on their reaction completeness, environmental conditions, and physical characteristics:

Complete Combustion: Occurs with sufficient oxygen supply, allowing the fuel to react completely to produce carbon dioxide and water as the primary products [1]. This represents the ideal combustion scenario from an efficiency perspective.

Incomplete Combustion: Takes place when insufficient oxygen is available, or when the combustion process is quenched prematurely [1]. This results in partially oxidized products such as carbon monoxide, hydrogen, and carbon (soot or ash), which represent both energy inefficiency and environmental pollutants.

Smoldering: A slow, low-temperature, flameless form of combustion sustained by heat evolution when oxygen directly attacks the surface of condensed-phase fuel [1]. This typically incomplete combustion reaction occurs in materials like coal, cellulose, wood, and synthetic foams.

Spontaneous Combustion: Occurs through self-heating followed by thermal runaway, when internal exothermic reactions accelerate rapidly to ignition temperature [1]. White phosphorus, for example, can self-ignite in air at or near room temperature, while organic compost can generate enough heat to reach its combustion point.

Turbulent Combustion: Characterized by turbulent flame dynamics that enhance mixing between fuel and oxidizer, making it particularly relevant for industrial applications including gas turbines and internal combustion engines [1].

Experimental Methodologies in Combustion Research

Core Experimental Framework

Combustion research employs specialized methodologies to quantify reaction dynamics, emission profiles, and energy conversion efficiency. The experimental framework typically involves controlled environments where key parameters can be systematically manipulated and measured. Standardized protocols are essential for generating comparable, high-quality data across different research institutions [4].

The development of scientific predictive models represents a significant focus in contemporary combustion research, with experiments serving to validate and refine these models [4]. The systematic storage and management of experimental data through platforms like SciExpeM (Scientific Experiments and Models) enables large-scale analysis of multiple experiments and models, facilitating knowledge extraction and discovery [4]. This approach helps overcome traditional limitations of manual analysis by detecting systematic features or errors in models or data.

Data Acquisition and Measurement Techniques

Modern combustion analysis utilizes sophisticated measurement technologies to capture critical parameters during combustion events:

Laser Diagnostics: Advanced techniques including laser-induced fluorescence (LIF), particle image velocimetry (PIV), and coherent anti-Stokes Raman scattering (CARS) enable non-intrusive measurement of species concentrations, temperature fields, and flow velocities in reacting flows [5]. These optical methods provide high spatial and temporal resolution for analyzing flame structure and dynamics.

Pressure Analysis: Cylinder pressure curves are fundamental data sources in combustion analysis, providing information for calculating heat release rates, combustion timing, and cyclic variations [6]. High-resolution pressure transducers capture data at crank angle resolutions of one degree or finer for accurate characterization.

Emission Spectroscopy: Techniques for quantifying pollutant formation (NOx, CO, soot) during combustion processes provide critical data for environmental impact assessments [5]. These measurements help validate chemical kinetic mechanisms for pollutant formation and destruction.

Temperature Measurement: Both contact (thermocouples) and non-contact (pyrometry, CARS) methods track thermal profiles throughout combustion processes, providing essential data for energy balance calculations [6].

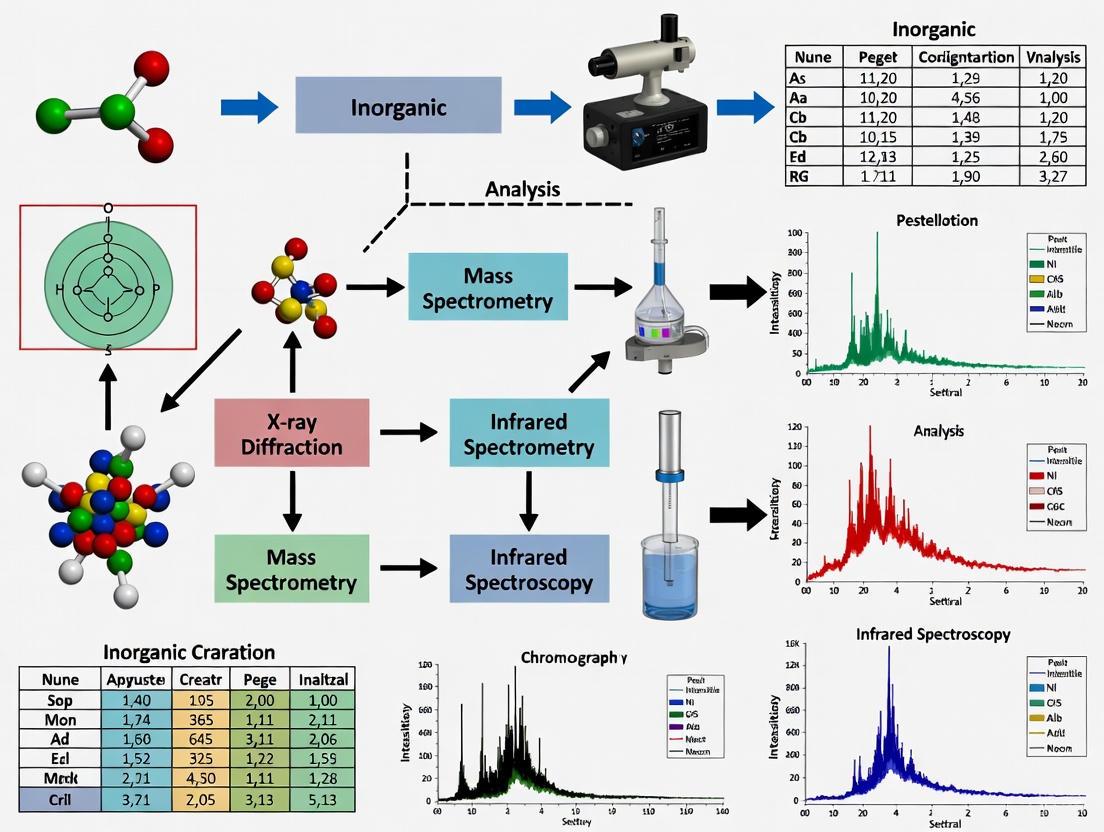

The following workflow diagram illustrates the sequential process of combustion data acquisition and analysis:

Combustion Data Analysis Workflow

Data Interpretation and Analysis Protocols

Combustion Data Classification

Combustion analysis generates diverse data types that require different interpretation approaches. The results from combustion experiments can be logically grouped into direct and indirect categories, each with distinct calculation methodologies and error propagation characteristics [6].

Table 1: Classification of Combustion Analysis Results

| Category | Data Type | Calculation Basis | Example Parameters | Error Sensitivity |
|---|---|---|---|---|
| Direct Results | Raw measured data | Derived directly from raw pressure curves | Maximum pressure, Pressure rise position, Knock detection, Misfiring, Combustion noise, Injection timing | Similar magnitude to signal errors |
| Indirect Results | Computed data | Complex calculations using raw data + additional parameters | Heat release rate, Indicated mean effective pressure (IMEP), Combustion temperature, Burn rate, Energy conversion | Error multiplication (order of magnitude higher) |

Critical Calculation Methodologies

The transformation of raw combustion data into meaningful parameters requires specialized calculation approaches:

Direct Result Calculations extract immediately observable parameters from primary measurement signals. For pressure-based measurements, this includes identifying maximum pressure values and their angular positions, calculating rates of pressure rise, detecting knock through high-frequency oscillations, identifying misfiring cycles, and analyzing combustion noise characteristics [6]. These calculations typically require crank angle resolution of one degree, with higher resolution needed for high-frequency phenomena like knock analysis.

Indirect Result Calculations involve more complex transformations that combine raw data with additional engine parameters and physical models. Key methodologies include:

- Heat Release Analysis: Calculated from the pressure curve using the first law of thermodynamics and requiring accurate determination of top dead center (TDC) and appropriate polytropic exponents [6].

- Indicated Mean Effective Pressure (IMEP): Represents the theoretical constant pressure that would produce the same net work as the actual cycle, highly sensitive to correct TDC determination [6].

- Combustion Temperature: Derived through thermodynamic relationships between pressure, volume, and composition data.

- Burn Rate Analysis: Calculates the rate of fuel mass conversion based on pressure development and thermodynamic relationships.

These indirect calculations are particularly sensitive to correct system parameterization, especially accurate TDC determination, appropriate polytropic exponents for heat release analysis, and proper zero-level correction for pressure signals [6].
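The sensitivity to TDC phasing can be illustrated with a small numerical experiment. The sketch below is a simplified illustration rather than the method of the cited sources. It builds a synthetic motored (non-firing) pressure trace, computes IMEP by trapezoidal integration of p dV, and evaluates a single-zone apparent heat release from the first law with a constant polytropic exponent. The engine geometry, the exponent of 1.35, and all function names are assumptions.

```python
import math


def cylinder_volume(theta_deg, bore=0.086, stroke=0.086, conrod=0.143, compression_ratio=10.0):
    """Slider-crank cylinder volume in m^3; theta_deg = 0 is firing TDC.

    The default geometry is hypothetical and only serves the demonstration.
    """
    area = math.pi * bore ** 2 / 4
    clearance = area * stroke / (compression_ratio - 1)
    crank = stroke / 2
    theta = math.radians(theta_deg)
    # Piston travel measured from its TDC position.
    travel = (crank * (1 - math.cos(theta)) + conrod
              - math.sqrt(conrod ** 2 - (crank * math.sin(theta)) ** 2))
    return clearance + area * travel


def imep(pressures, volumes, displaced_volume):
    """Indicated work (trapezoidal integral of p dV) divided by displaced volume, in Pa."""
    work = sum(0.5 * (pressures[i] + pressures[i + 1]) * (volumes[i + 1] - volumes[i])
               for i in range(len(volumes) - 1))
    return work / displaced_volume


def apparent_heat_release(pressures, volumes, gamma=1.35):
    """Per-interval net heat release in J: gamma/(gamma-1) p dV + 1/(gamma-1) V dp."""
    released = []
    for i in range(len(pressures) - 1):
        p_mid = 0.5 * (pressures[i] + pressures[i + 1])
        v_mid = 0.5 * (volumes[i] + volumes[i + 1])
        dv = volumes[i + 1] - volumes[i]
        dp = pressures[i + 1] - pressures[i]
        released.append(gamma / (gamma - 1) * p_mid * dv + v_mid * dp / (gamma - 1))
    return released


GAMMA = 1.35
angles = list(range(-180, 181))                      # 1 degree crank angle resolution
volumes = [cylinder_volume(a) for a in angles]
pressures = [1.0e5 * (volumes[0] / v) ** GAMMA for v in volumes]  # polytropic, no combustion
displaced = max(volumes) - min(volumes)

imep_true = imep(pressures, volumes, displaced)
# Same pressure samples, but the volume is evaluated with a 1 degree TDC error.
volumes_shifted = [cylinder_volume(a + 1) for a in angles]
imep_shifted = imep(pressures, volumes_shifted, displaced)
total_heat = sum(apparent_heat_release(pressures, volumes, GAMMA))

print(f"IMEP, correct TDC:        {imep_true:.3e} Pa")
print(f"IMEP, 1 degree TDC error: {imep_shifted:.3e} Pa")
print(f"Net heat release, motored: {total_heat:.3e} J")
```

With correct phasing, the motored trace yields essentially zero IMEP and zero net heat release, as expected for a cycle without combustion. The one-degree offset alone produces a spurious positive IMEP. This is the kind of error multiplication Table 1 attributes to indirect results.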

The Scientist's Toolkit: Essential Research Reagents and Materials

Combustion research requires specialized materials and analytical tools to conduct controlled experiments and accurate measurements. The following table details essential components of the combustion researcher's toolkit:

Table 2: Essential Research Materials for Combustion Experiments

| Category/Reagent | Chemical Formula/Specification | Primary Function | Application Context |
|---|---|---|---|
| Reference Fuels | | | |
| Hydrogen | H₂ | High-purity fuel for fundamental flame studies | Laminar flame speed measurements, kinetic mechanism validation |
| Octane | C₈H₁₈ | Primary reference component for gasoline surrogates | Automotive engine research, ignition delay studies |
| Oxidizers | | | |
| Synthetic Air | O₂/N₂ mixture | Controlled oxidizer for laboratory experiments | Fundamental combustion studies without atmospheric variability |
| Nitrous Oxide | N₂O | Specialized oxidizer in propellant systems | Rocket combustion studies, high-temperature oxidation processes |
| Analytical Standards | | | |
| Carbon Monoxide | CO | Calibration gas for emissions analysis | Sensor calibration, exhaust gas measurement validation |
| Nitrogen Oxides | NO/NO₂ | Reference standards for pollutant analysis | NOx formation studies, emissions control development |
| Catalytic Materials | | | |
| Platinum Catalysts | Pt | Oxidation catalyst for emissions control | After-treatment system research, catalytic combustion studies |
| Fire Safety Materials | | | |
| Flame Retardants | Various compounds | Materials for fire suppression studies | Fire safety research, combustion inhibition mechanisms |

Advanced Combustion Research Frontiers

Contemporary combustion research extends beyond traditional hydrocarbon fuels to address emerging energy and environmental challenges. Current investigative frontiers include:

Renewable and Biofuels: Detailed chemical kinetic mechanisms for biofuels and other renewable energy carriers, with emphasis on combustion efficiency and emission characteristics [5]. Research focuses on oxidation pathways of biofuels, ammonia, and other sustainable energy vectors.

Pollutant Formation and Reduction: Mechanistic studies of pollutant formation pathways, particularly nitrogen oxides (NOx), soot precursors, and carbon monoxide, toward developing effective reduction strategies [5]. This research directly addresses environmental impact mitigation in combustion systems.

Turbulent Combustion Interaction: Investigation of the complex coupling between turbulence and chemistry in practical combustion devices [5]. Advanced computational models bridge fundamental flame studies with engineering application requirements.

Fire Safety Science: Application of combustion principles to fire dynamics, material flammability, and suppression mechanisms for built environments and wildland interfaces [5]. This research directly informs safety standards and protection systems.

These research domains increasingly rely on advanced diagnostic techniques and computational tools to unravel complex interactions between chemical kinetics, transport phenomena, and system geometries across multiple spatial and temporal scales.

Quality Assurance and Data Validation

Robust quality assurance protocols are essential for generating reliable combustion data. Key considerations include:

Data Quality Management: Automated frameworks like SciExpeM address data quality challenges common in scientific repositories, including experimental errors, misrepresentation issues, data entry mistakes, and insufficient metadata [4]. These systems implement validation procedures to maintain data integrity throughout the research lifecycle.

Uncertainty Quantification: Critical evaluation of measurement uncertainties and their propagation through calculation pathways, particularly for indirect results where initial signal errors can magnify significantly [6]. Proper uncertainty characterization is essential for result interpretation and model validation.

Model Validation Protocols: Systematic comparison of computational model predictions with experimental measurements across a range of operating conditions [4]. This process identifies model limitations and guides refinement efforts to improve predictive capabilities.

The integration of these quality assurance measures throughout the experimental process ensures the generation of reliable, reproducible data that effectively supports combustion research and development objectives while maintaining scientific rigor.

Elemental analysis is a fundamental tool in scientific research and industrial quality control, providing critical data on the chemical composition of a vast range of materials. For researchers and drug development professionals, selecting the appropriate analytical technique is paramount for obtaining accurate, reliable, and relevant data. This section provides an in-depth examination of three core instrumental techniques: Organic Elemental Analyzers, Inductively Coupled Plasma Optical Emission Spectrometry (ICP-OES), and Inductively Coupled Plasma Mass Spectrometry (ICP-MS). Each technique possesses distinct operating principles, capabilities, and ideal application areas. Organic Elemental Analyzers are specialized for the rapid determination of key non-metallic elements in organic matrices. In contrast, ICP-OES and ICP-MS are plasma-based techniques renowned for their ability to perform multi-element analysis at trace and ultra-trace levels across diverse sample types, including biological and environmental materials. Understanding the strengths, limitations, and specific methodological requirements of these instruments is essential for effective application in research and development, particularly within regulated environments like pharmaceutical labs where compliance with standards such as ICH Q3D is critical [7] [8] [9]. The section frames this technical knowledge within the context of building effective training resources for inorganic chemical analysis techniques.

Organic Elemental Analyzers

Principle and Applications

Organic Elemental Analyzers determine the concentrations of key non-metallic elements—primarily carbon (C), hydrogen (H), nitrogen (N), oxygen (O), and sulfur (S)—in organic samples. The analysis is based on the high-temperature combustion principle, where the sample is rapidly combusted in a pure oxygen atmosphere at furnace temperatures exceeding 1,000 °C. This process quantitatively converts the sample into simple gaseous combustion products (e.g., CO₂, H₂O, N₂, SO₂). The resulting gas mixture is separated by specific adsorption columns and swept by an inert carrier gas to a detector, typically a Thermal Conductivity Detector (TCD), for quantification. Modern instruments incorporate features like patented ball valve technology for blank-free sample transfer and Advanced Purge and Trap (APT) technology to handle challenging C:N ratios of up to 12,000:1. These analyzers are designed for high reliability, minimal sample preparation, and secure, unattended 24/7 operation, making them ideal for high-throughput environments [10].

Key Specifications and Training

Sample Requirements and Throughput: These analyzers are designed for solid or liquid organic samples. They require minimal preparation, typically involving precise weighing into small capsules. The analysis is exceptionally fast, providing results for multiple elements in just a few minutes, which enables high sample throughput.

Detection Limits: The technique is primarily used for quantitative major component analysis, not ultra-trace detection. Results are typically reported as weight percentages of the measured elements in the sample.

To ensure optimal instrument operation and data quality, structured training is essential. Providers like Elementar offer tiered courses, from Level 1 (covering basic software operation, sample preparation, and system readiness assessment) to Level 2 (covering principles of analysis, routine maintenance, and troubleshooting of leaks, blockages, and exhausted chemicals) [11].
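Because results are reported as weight percentages, a useful plausibility check is to compare measured values with the theoretical composition of a known compound. The following sketch computes theoretical weight percentages from a molecular formula using standard atomic masses. Acetanilide (C₈H₉NO) is used only as an illustrative compound, and the function name is an assumption.

```python
# Standard atomic masses in g/mol, rounded to three decimals.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999, "S": 32.06}


def weight_percent(formula: dict[str, int]) -> dict[str, float]:
    """Theoretical weight percentage of each element in a molecular formula."""
    molar_mass = sum(ATOMIC_MASS[element] * count for element, count in formula.items())
    return {element: 100.0 * ATOMIC_MASS[element] * count / molar_mass
            for element, count in formula.items()}


acetanilide = {"C": 8, "H": 9, "N": 1, "O": 1}
for element, percent in weight_percent(acetanilide).items():
    print(f"{element}: {percent:.2f} %")
```

For acetanilide this gives roughly 71.09 % C, 6.71 % H, 10.36 % N, and 11.84 % O. Measured values from a well-functioning analyzer should agree with such theoretical figures within the laboratory's own acceptance criteria.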

ICP-OES and ICP-MS

Fundamental Principles

Both ICP-OES and ICP-MS use an argon inductively coupled plasma as a high-temperature (6000-10000 K) excitation and ionization source. However, they differ fundamentally in their detection mechanisms.

- ICP-OES (Inductively Coupled Plasma Optical Emission Spectrometry): The plasma excites the atoms and ions of the elements present in the sample. As these excited species return to lower energy states, they emit light at characteristic wavelengths. An optical spectrometer disperses this light, and its intensity at specific wavelengths is measured and quantified to determine elemental concentrations [12] [8].

- ICP-MS (Inductively Coupled Plasma Mass Spectrometry): The plasma serves to ionize the atoms in the sample. The resulting ions are then extracted into a mass spectrometer, which separates them based on their mass-to-charge ratio (m/z). A detector counts the number of ions at each specific m/z, providing extremely sensitive quantification and the ability to perform isotopic analysis [12] [13] [8].

Comparative Technical Specifications

The choice between ICP-OES and ICP-MS is primarily driven by required detection limits, sample matrix, and budget, as detailed in the table below.

Table 1: Technical comparison of ICP-OES and ICP-MS

| Parameter | ICP-OES | ICP-MS |
|---|---|---|
| Detection Principle | Measurement of emitted light [12] | Measurement of ion counts by mass [12] |
| Typical Detection Limits | Parts per billion (ppb) to parts per million (ppm) [12] | Parts per trillion (ppt) [12] |
| Dynamic Range | Up to 10^6 [12] | Up to 10^8 [12] |
| Isotopic Analysis | Not possible | Possible [12] |
| Sample Throughput | High, suitable for routine analysis [12] | Generally lower than ICP-OES |
| Tolerance to Dissolved Solids | High (can handle up to ~30% TDS) [14] | Low (typically requires <0.2% TDS) [12] |
| Primary Interferences | Spectral (overlapping emission lines) [12] | Isobaric (overlapping atomic masses) and polyatomic [12] |
| Initial Instrument Cost | Lower [12] | 2–3 times higher than ICP-OES [12] |
| Operational Complexity & Cost | Moderate; easier to operate and maintain [12] | High; requires skilled operators and ultra-pure reagents [12] |
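The dissolved-solids limits in the table translate directly into a minimum dilution for a given digest. The sketch below estimates an upper bound on total dissolved solids (TDS) from the sample mass and final volume, then computes the dilution needed to stay under a limit such as the 0.2% quoted for ICP-MS. It assumes the entire sample mass remains in solution, which overestimates TDS for organic matrices that are largely oxidized during digestion. The function names are illustrative.

```python
def tds_percent_upper_bound(sample_mass_g: float, final_volume_ml: float) -> float:
    """Upper-bound TDS in % (w/v), assuming all sample mass stays dissolved."""
    return 100.0 * sample_mass_g / final_volume_ml


def min_dilution_factor(tds_percent: float, limit_percent: float = 0.2) -> float:
    """Smallest dilution factor that brings TDS to or below the limit (never below 1)."""
    return max(1.0, tds_percent / limit_percent)


# Hypothetical digest: 0.5 g of sample made up to 50 mL.
tds = tds_percent_upper_bound(0.5, 50.0)
print(f"TDS upper bound: {tds:.2f} %, dilute at least {min_dilution_factor(tds):.1f}x for ICP-MS")
```

In this hypothetical case the digest sits at about 1% TDS, so at least a five-fold dilution would be needed before ICP-MS analysis. The same solution is far below the ~30% figure given for ICP-OES.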

Applications in Research and Industry

The sensitivity and multi-element capabilities of both techniques make them indispensable across numerous fields.

- ICP-OES Applications: Ideal for applications where high throughput and robust analysis of complex matrices are more critical than ultra-trace detection. Common uses include environmental monitoring (e.g., water quality), food safety, agricultural analysis, metallurgy, and pharmaceutical raw material testing [12] [14].

- ICP-MS Applications: Essential for scenarios demanding the highest sensitivity and isotopic information. It is the gold standard for ultra-trace metal analysis in clinical and toxicological research (e.g., blood, urine), pharmaceutical impurity testing per ICH Q3D guidelines, geochemical and cosmochemical studies, forensic science, and analysis of high-purity materials in the semiconductor industry [12] [7] [9]. Laser Ablation (LA) ICP-MS allows for direct solid microanalysis, which is crucial for geological samples like carbonates [13].

Experimental Protocols and Workflows

Sample Preparation: Microwave Digestion

For accurate ICP-OES and ICP-MS analysis of solid samples, proper digestion is critical to dissolve the sample into a clear aqueous solution and eliminate the organic matrix. Microwave-assisted acid digestion is the preferred modern method.

- Protocol Overview: A representative sample (typically 0.1-0.5 g) is weighed into a clean, chemically inert digestion vessel. Concentrated acids are added, most commonly nitric acid (HNO₃) alone or in combination with hydrochloric acid (HCl) or hydrogen peroxide (H₂O₂). For challenging matrices like silicates or alloys, hydrofluoric acid (HF) may be required. The sealed vessels are heated in the microwave under a precisely controlled temperature and pressure program. Temperatures range from 180°C for biological tissues to 280°C for refractory materials like ceramics, with hold times of 15-30 minutes [9].

- Innovations and Best Practices: Recent advancements include Single Reaction Chamber (SRC) technology, which allows simultaneous digestion of different sample types in the same run. To control contamination at ultra-trace levels, laboratories employ high-purity reagents, automated acid purification systems (sub-boiling distillation), automated dosing stations, and specialized acid steam cleaning systems for vessels [9].
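After digestion, the concentration measured in solution has to be converted back to a concentration in the original solid. The sketch below shows this arithmetic under the assumption of a simple workflow: digest, make up to a fixed volume, then dilute. The parameter names and example values are hypothetical.

```python
def sample_concentration_mg_per_kg(measured_ug_per_l: float,
                                   blank_ug_per_l: float,
                                   final_volume_ml: float,
                                   dilution_factor: float,
                                   sample_mass_g: float) -> float:
    """Convert a solution reading (ug/L) to the concentration in the solid (mg/kg)."""
    net_ug_per_l = measured_ug_per_l - blank_ug_per_l          # blank subtraction
    analyte_mass_ug = net_ug_per_l * (final_volume_ml / 1000.0) * dilution_factor
    return analyte_mass_ug / sample_mass_g                      # ug/g equals mg/kg


# Hypothetical run: 0.25 g digested to 50 mL, diluted 10x, reading 2.5 ug/L, blank 0.1 ug/L.
print(f"{sample_concentration_mg_per_kg(2.5, 0.1, 50.0, 10.0, 0.25):.2f} mg/kg")
```

With these example values the result is 4.8 mg/kg. Keeping the calculation in one function makes it easier to apply the same blank and dilution handling to every sample in a batch.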

A Standard Workflow for ICP-MS Analysis of Plant Material

The following workflow diagram outlines the key steps for determining trace metals in a plant material like cannabis, a challenging application due to low regulatory limits and a complex organic matrix [14].

Diagram: Trace Metal Analysis in Plant Material by ICP-MS

Key Experimental Details:

- Digestion Optimization: A high-temperature digestion (e.g., 230°C) is crucial for complete decomposition of the organic matrix, minimizing residual carbon that can cause spectral interferences [14].

- Matrix-Matched Calibration: To ensure accuracy, calibration standards must closely mimic the final digested sample solution. This includes matching the acid concentration and adding key matrix components found in the digest, such as carbon (as potassium hydrogen phthalate) and calcium, to compensate for non-spectral and spectral interferences [14].

- Interference Management: The Collision/Reaction Cell (CRC) in the ICP-MS is used to mitigate polyatomic interferences that would otherwise compromise the accuracy of results for elements like arsenic and lead [14] [7].
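The calibration step can be sketched in a few lines. The example below fits a straight line to the ratio of analyte signal to internal-standard signal and then quantifies a sample whose signals are both suppressed by the matrix. It uses ordinary least squares and synthetic counts. Both choices are simplifications for illustration and do not reproduce the procedure in the cited application.

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def quantify(analyte_counts: float, istd_counts: float, slope: float, intercept: float) -> float:
    """Concentration from the analyte / internal-standard signal ratio."""
    return (analyte_counts / istd_counts - intercept) / slope


# Synthetic matrix-matched standards (ug/L) with a constant internal-standard signal.
concentrations = [0.0, 1.0, 5.0, 10.0]
analyte = [50.0, 1050.0, 5050.0, 10050.0]
internal_standard = [10000.0] * 4
ratios = [a / i for a, i in zip(analyte, internal_standard)]
slope, intercept = fit_line(concentrations, ratios)

# Sample at 3 ug/L whose analyte and internal-standard signals are both suppressed by 20 %.
result = quantify(0.8 * 3050.0, 0.8 * 10000.0, slope, intercept)
print(f"Quantified concentration: {result:.3f} ug/L")
```

Because the suppression affects analyte and internal standard equally in this synthetic case, the ratio is unchanged and the sample still quantifies at 3 µg/L. Real matrices rarely behave this ideally, which is why the internal standard is used together with matrix matching.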

Enhancing ICP-OES Sensitivity for Trace Analysis

While ICP-MS offers superior sensitivity, ICP-OES can be a viable alternative for some trace applications when sensitivity is optimized. A key area for improvement is the sample introduction system. Research shows that using a high-efficiency nebulizer (e.g., the OptiMist Vortex), which employs an external impact surface to create a finer aerosol, can enhance ICP-OES sensitivity by approximately a factor of two compared to standard concentric nebulizers. This approach, combined with minimal post-digestion dilution, allows ICP-OES to meet challenging detection limits, such as analyzing toxic heavy metals (As, Cd, Pb, Hg) in cannabis products or high-purity metals for the semiconductor industry [14].
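The link between sensitivity and detection limit can be made explicit with the widely used 3σ convention, in which the detection limit is three times the standard deviation of replicate blank readings divided by the calibration slope. The sketch below applies it to hypothetical blank signals. It assumes the blank noise stays the same when the nebulizer is changed, which may not hold in practice.

```python
from statistics import stdev


def detection_limit(blank_signals: list[float], sensitivity: float) -> float:
    """3-sigma detection limit: 3 * SD(blank) / slope, in concentration units."""
    return 3.0 * stdev(blank_signals) / sensitivity


# Hypothetical replicate blank intensities (counts) and sensitivities (counts per ug/L).
blanks = [10.2, 9.8, 10.1, 9.9, 10.0]
lod_standard = detection_limit(blanks, sensitivity=100.0)
lod_enhanced = detection_limit(blanks, sensitivity=200.0)  # sensitivity doubled
print(f"Standard nebulizer LOD: {lod_standard:.4f} ug/L")
print(f"Enhanced nebulizer LOD: {lod_enhanced:.4f} ug/L")
```

Under the stated assumption, doubling the sensitivity halves the detection limit. This shows why a factor-of-two gain in the sample introduction system can matter for borderline trace applications.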

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key consumables and reagents essential for preparing samples for elemental analysis, particularly for ICP-OES and ICP-MS.

Table 2: Essential Research Reagents and Materials for Elemental Analysis

| Item | Function & Importance |
|---|---|
| High-Purity Acids (e.g., HNO₃, HCl) | Primary reagents for sample digestion. Must be trace metal grade to minimize background contamination and achieve low detection limits [9]. |
| Certified Reference Materials (CRMs) | Materials with certified elemental concentrations. Used for method validation and ensuring analytical accuracy [8]. |
| Multi-Element Calibration Standards | Used to establish calibration curves. Commercially available or custom-made from single-element stocks to match the analytical requirements [14]. |
| Internal Standard Solution | A known amount of an element not present in the samples is added to all standards and samples. Used to correct for instrument drift and matrix suppression/enhancement effects, especially in ICP-MS [14]. |
| Ultrapure Water (Type I) | Used for all sample dilutions and preparation of solutions. Essential for maintaining low blanks. |
| Microwave Digestion Vessels | Chemically inert, pressure-rated vessels (often PTFE or PFA) designed for safe and efficient high-temperature/pressure sample digestion [9]. |
| Gas Purification System | Removes impurities and moisture from argon and other gases, ensuring stable plasma operation and preventing detector damage [8]. |
| Automated Liquid Handling System | Improves precision of dilutions/standard preparation, enhances lab safety by reducing analyst exposure to acids, and increases throughput [9]. |
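Method validation with a CRM usually comes down to comparing the measured value with the certified value. The sketch below computes percent recovery and checks it against an acceptance window. The 80 to 120% window and the example concentrations are placeholders, since actual criteria depend on the method and the applicable guideline.

```python
def recovery_percent(measured: float, certified: float) -> float:
    """Percent recovery of a certified value."""
    return 100.0 * measured / certified


def within_window(recovery: float, low: float = 80.0, high: float = 120.0) -> bool:
    """True if recovery lies inside the (assumed) acceptance window."""
    return low <= recovery <= high


# Hypothetical CRM result: certified 1.00 mg/kg, measured 0.96 mg/kg.
rec = recovery_percent(0.96, 1.00)
print(f"Recovery: {rec:.1f} %, acceptable: {within_window(rec)}")
```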

Organic Elemental Analyzers, ICP-OES, and ICP-MS form a complementary suite of powerful techniques for elemental analysis. The choice of instrument is a strategic decision based on analytical requirements, sample type, and operational constraints. Organic Elemental Analyzers provide unmatched speed and efficiency for quantifying major non-metallic components in organic substances. ICP-OES stands out as a robust, cost-effective workhorse for routine multi-element analysis at ppm-ppb levels in complex matrices. ICP-MS is the undisputed champion for ultra-trace (ppt) analysis, isotopic studies, and meeting the most stringent regulatory limits. For researchers and drug development professionals, a deep understanding of these techniques' principles, capabilities, and associated workflows—from sample preparation via microwave digestion to advanced interference management—is fundamental to generating high-quality, reliable data. This knowledge forms the core of effective training and method development in modern analytical laboratories.

Handling and Synthesis of Air- and Moisture-Sensitive Compounds

The handling and synthesis of air- and moisture-sensitive compounds are critical skills in advanced inorganic and organometallic chemistry research. Many reactive species, including catalysts, hydrides, and organometallic complexes, undergo rapid decomposition upon exposure to atmospheric oxygen or moisture, leading to compromised experimental results, failed syntheses, and safety hazards. This guide provides a comprehensive framework for the safe and effective management of these compounds, specifically contextualized for researchers developing and applying inorganic chemical analysis techniques. Mastery of these techniques is foundational for ensuring sample integrity, obtaining reproducible analytical data, and advancing research in drug development and materials science.

Fundamental Principles and Definitions

Understanding the Risks

Compounds are classified as air- or moisture-sensitive if they react chemically with atmospheric oxygen (O₂), water vapor (H₂O), or both. These reactions can manifest as precipitation, color change, gas evolution, or generation of heat (exothermicity). The primary risks include:

- Product Degradation: Reaction with air/moisture alters chemical structure, rendering the compound useless for its intended application, such as catalysis or pharmaceutical development [15].

- Hazard Generation: Reactions can produce flammable gases (e.g., from water-reactive metals), toxic fumes, or cause pressure build-up in sealed containers [16].

- Analytical Interference: Contamination from decomposition products can skew results from sensitive analytical techniques like Fourier Transform Infrared (FTIR) spectroscopy, a key method for inorganic material analysis [17].

Key Quantitative Terms

Familiarity with the following terms is essential for protocol development and documentation [15]:

- Floor Life: The maximum time a Moisture-Sensitive Device (MSD) or compound can be exposed to the ambient factory environment (typically ≤30°C/60% RH) before its integrity is compromised. This concept is directly analogous to the safe exposure time for chemicals outside of a controlled atmosphere.

- Shelf Life: The total time a material can be stored in its properly sealed moisture barrier bag (MBB) without degrading.

- Manufacturer's Exposure Time (MET): The maximum allowable time from the completion of a drying process (e.g., baking) to the final sealing of the package.

Essential Equipment and Reagent Solutions

Successful work with sensitive materials requires a suite of specialized equipment and reagents. The following table details the core components of the researcher's toolkit.

Table 1: Essential Research Reagent Solutions and Equipment for Handling Air- and Moisture-Sensitive Compounds

| Item | Primary Function | Key Specifications & Notes |

|---|---|---|

| Glovebox | Provides an inert atmosphere (typically N₂ or Ar) for handling, weighing, and synthesizing compounds. | Maintains oxygen and moisture levels below 1 ppm; often includes an integrated cold trap and solvent purification system. |

| Schlenk Line | A dual-manifold vacuum/inert gas system for performing reactions, filtrations, and transfers under an inert atmosphere. | Standard glassware includes Schlenk flasks and bombs. Proficiency in technique is critical to prevent air ingress. |

| Moisture Barrier Bag (MBB) | A sealed, low-permeability bag used for storing moisture-sensitive components and chemicals [15] [18]. | Often used with desiccants and Humidity Indicator Cards (HIC). |

| Desiccant | A material that absorbs water vapor from a confined space, maintaining low relative humidity (RH) [15]. | Common types include silica gel, molecular sieves, and calcium chloride. |

| Humidity Indicator Card (HIC) | A card with sensitive dots that change color (e.g., blue to pink) to indicate the relative humidity level inside a sealed package [15]. | Used to verify the dryness of the storage environment before use. |

| Dry Cabinet | An enclosed storage cabinet that actively maintains a low-humidity environment [19] [15]. | Ideal RH for sensitive electronics and chemicals is ≤5% [15]. Can use desiccant or nitrogen purging [19]. |

| ESD-Safe Containers | Bags, trays, and boxes made from conductive or dissipative materials to prevent damage from electrostatic discharge [18]. | Vital for protecting sensitive solid-state electronic and organometallic compounds. |

| Heat Sealer | A device that creates an airtight seal on Moisture Barrier Bags, ensuring long-term integrity [15]. | A poor seal will drastically reduce the effective shelf life of stored items. |

Storage and Handling Protocols

Quantitative Storage Standards

Adherence to quantitative standards is non-negotiable for maintaining compound stability. The following table, adapted from industry standards for moisture-sensitive devices, provides a critical framework for managing chemical exposure.

Table 2: Moisture Sensitivity Levels (MSLs) and Corresponding Handling Requirements

| MSL | Floor Life at ≤30°C/60% RH | Required Handling Action |

|---|---|---|

| 1 | Unlimited at ≤30°C/85% RH | Standard handling; no special baking required. |

| 2 | 1 Year | Use within specified time. |

| 2a | 4 Weeks | Use within specified time. |

| 3 | 168 Hours | Use within one week after opening sealed package. |

| 4 | 72 Hours | Use within 72 hours after opening sealed package. |

| 5 | 48 Hours | Use within 48 hours after opening sealed package. |

| 5a | 24 Hours | Use within 24 hours after opening sealed package. |

| 6 | Mandatory bake before use | Must be baked prior to use. After baking, must be processed within the time limit specified on the label (e.g., before the next reflow cycle) [15]. |
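
The floor-life column of the table above lends itself to a simple exposure clock. The following is a minimal sketch, not a validated tracking tool: the function name `remaining_floor_life` is hypothetical, "1 Year" is assumed to mean 365 days, and MSL 1 and MSL 6 are treated as having no countdown (unlimited, and label-defined after baking, respectively).

```python
from datetime import datetime, timedelta

# Floor life at <=30 °C / 60% RH, copied from the MSL table.
# None means no countdown applies here (MSL 1: unlimited; MSL 6: bake first,
# then follow the limit printed on the label).
FLOOR_LIFE = {
    "1": None,
    "2": timedelta(days=365),   # assumption: "1 Year" taken as 365 days
    "2a": timedelta(weeks=4),
    "3": timedelta(hours=168),
    "4": timedelta(hours=72),
    "5": timedelta(hours=48),
    "5a": timedelta(hours=24),
    "6": None,
}

def remaining_floor_life(msl, opened_at, now):
    """Return the time left before floor life expires (negative if expired),
    or None when no countdown applies to this MSL."""
    limit = FLOOR_LIFE[msl]
    if limit is None:
        return None
    return limit - (now - opened_at)

opened = datetime(2025, 1, 6, 9, 0)
checked = datetime(2025, 1, 8, 9, 0)  # 48 hours after opening
print(remaining_floor_life("3", opened, checked))  # 5 days, 0:00:00
```

A negative result signals that the material has exceeded its floor life and should be routed to the baking procedure rather than used directly.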

Step-by-Step Handling and Inspection Protocol

The following workflow ensures the integrity of moisture-sensitive materials from receipt to use. This procedure is vital for maintaining the quality of research samples and precursors.

Title: Moisture-Sensitive Material Inspection Workflow

Detailed Protocol Steps:

- Incoming Quality Inspection: Upon receipt of a sealed Moisture Barrier Bag (MBB), immediately inspect its exterior for any signs of holes, gouges, tears, or punctures. Any compromise of the bag's integrity can lead to component exposure and potential degradation [15]. Also, verify the bag seal date to calculate the remaining shelf life.

- Opening and HIC Verification: Open the MBB in a controlled environment and immediately check the Humidity Indicator Card (HIC). The color of the indicator dots signifies the internal humidity [15]:

- Blue Dots: Indicate the Relative Humidity (RH) is within the safe, dry limit. The material can proceed to use.

- Pink Dots: Indicate the RH has been exceeded. The material must undergo a baking (drying) procedure before use. The specific time and temperature for baking are dictated by the material's sensitivity and package type [15].

- Post-Baking Inspection: After the baking cycle, the components should be inspected again. If the HIC still indicates high humidity, a second baking cycle may be necessary. Components that fail after multiple baking attempts should be rejected and returned to the supplier [15].
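
The decision points in the protocol above can be summarized as a small function. This is an illustrative sketch only: the name `next_action` and the limit `max_bake_cycles=2` are assumptions, since the protocol says "multiple baking attempts" without giving a number.

```python
def next_action(hic_color, bake_cycles_done=0, max_bake_cycles=2):
    """Map an HIC reading to the next handling step.

    hic_color: "blue" (dry) or "pink" (humidity limit exceeded).
    max_bake_cycles: hypothetical limit; the protocol only says "multiple".
    """
    if hic_color == "blue":
        return "use"
    if hic_color == "pink":
        if bake_cycles_done >= max_bake_cycles:
            return "reject"  # failed after repeated baking: return to supplier
        return "bake"
    raise ValueError(f"unknown HIC color: {hic_color!r}")

print(next_action("blue"))      # use
print(next_action("pink"))      # bake
print(next_action("pink", 2))   # reject
```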

Chemical Segregation and Storage Logic

Proper chemical storage is paramount for safety. Incompatible materials stored together can lead to violent reactions. The following diagram outlines the logical segregation strategy for common hazardous chemical classes.

Title: Chemical Segregation Logic for Safe Storage

Key Segregation Rules [16]:

- Acids and Bases: Must be stored separately from each other, as their reaction is highly exothermic.

- Flammables and Oxidizers: Must be strictly segregated. Oxidizers can provide oxygen and dramatically intensify a fire involving flammable materials.

- Flammable Acids (Organic) and Oxidizing Acids (Inorganic): Should be separated from each other.

- Water-Reactive and Pyrophoric Substances: Must be stored away from all water sources and require specialized handling procedures (e.g., using inert atmosphere gloveboxes for pyrophorics) [16].
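
The segregation rules above can be encoded as a lookup for a quick inventory check. The sketch below is a simplification under stated assumptions: the class labels (`"acid"`, `"organic_acid"`, and so on) and function names are hypothetical, and a real inventory system would rely on the safety data sheet for each chemical rather than a single class label.

```python
# Pairs of hazard classes that the rules above say must be kept apart.
INCOMPATIBLE = {
    frozenset({"acid", "base"}),
    frozenset({"flammable", "oxidizer"}),
    frozenset({"organic_acid", "oxidizing_acid"}),
}

# Classes that must additionally be kept away from all water sources.
WATER_SENSITIVE = {"water_reactive", "pyrophoric"}

def can_share_cabinet(class_a, class_b):
    """True if the two hazard classes are not listed as incompatible."""
    return frozenset({class_a, class_b}) not in INCOMPATIBLE

def needs_dry_storage(hazard_class):
    """True if the class must be stored away from water sources."""
    return hazard_class in WATER_SENSITIVE

print(can_share_cabinet("acid", "base"))           # False
print(can_share_cabinet("flammable", "flammable")) # True
print(needs_dry_storage("pyrophoric"))             # True
```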

Synthesis and Analysis Workflow

Executing synthetic procedures and preparing samples for analysis requires a meticulous, integrated approach that combines atmosphere control with standard chemical techniques. The following workflow charts the path from a stable starting material to a characterized, air-sensitive product.

Title: Air-Sensitive Compound Synthesis and Analysis Workflow

Detailed Methodologies:

- Synthesis Setup: All glassware should be thoroughly dried in an oven prior to use. The reaction is set up in a glovebox or on a Schlenk line. The Schlenk line technique involves repeatedly evacuating the flask and refilling it with an inert gas (typically nitrogen or argon) to remove atmospheric contaminants.

- Reaction Monitoring: For reactions in progress, samples can be withdrawn using air-tight syringes under a positive pressure of inert gas. Techniques like thin-layer chromatography (TLC) or in-situ spectroscopy can be used to monitor reaction progression without exposure to air.

- Product Work-up and Purification: Standard techniques like filtration, centrifugation, and crystallization must be adapted. Filtration can be performed using Schlenk fritted filters, and centrifugation can be done with sealed tubes. Recrystallization requires solvents that have been dried and degassed.

- Product Analysis: The analytical technique must be chosen based on the compound's sensitivity.

- FTIR Spectroscopy: This is a powerful tool for inorganic materials, providing information on chemical composition, structure, and phase identification [17]. Samples can be prepared as Nujol mulls between salt plates in a glovebox or in sealed, gas-tight transmission cells.

- X-ray Diffraction (XRD): For single-crystal XRD, a crystal is typically mounted under an inert oil and transferred to the diffractometer's cold stream, which is often under a nitrogen atmosphere.

- NMR Spectroscopy: Air-sensitive NMR samples are prepared in a glovebox, either in NMR tubes fitted with a J. Young valve or in standard tubes that are then sealed with a septum.

The rigorous handling and synthesis of air- and moisture-sensitive compounds form the bedrock of reliable research in inorganic chemistry and drug development. By integrating the precise quantitative standards for storage, the logical frameworks for safe chemical management, and the meticulous experimental workflows outlined in this guide, researchers can ensure the integrity of their compounds from synthesis through analysis. This disciplined approach directly translates to more reproducible analytical data, such as that obtained from FTIR and XRD, and ultimately accelerates the development of new materials and pharmaceutical agents. Proficiency in these techniques is not merely a technical skill but a fundamental component of the research methodology that underpins innovation in the field.

Cinematic Molecular Science via Electron Microscopy and Advanced Gas Sensing Materials

The field of inorganic chemical analysis is undergoing a revolutionary transformation, driven by the convergence of high-resolution imaging and intelligent sensing technologies. This whitepaper details two pivotal domains, advanced electron microscopy and next-generation gas sensing materials, that are redefining the capabilities of researchers in material science, chemistry, and drug development. These technologies provide unprecedented insights into molecular and atomic structures, enabling a "cinematic" view of processes previously beyond direct observation. For research professionals, mastering these techniques is no longer optional but essential for leading innovation in nanotechnology, semiconductor development, biologics, and environmental monitoring. This guide provides a comprehensive technical foundation, including quantitative market contexts, detailed experimental protocols, and visualization of workflows, serving as a critical training resource for advancing inorganic chemical analysis techniques.

The Electron Microscopy Revolution: Visualizing the Invisible

Market Dynamics and Key Segments

Electron microscopy (EM) has evolved from a specialized imaging tool to a cornerstone of modern analytical science. The global market, valued at US$4.54 billion in 2024, is projected to reach US$10.24 billion by 2034, growing at a compound annual growth rate (CAGR) of 8.52% [20]. This expansion is fueled by escalating demand in life sciences, nanotechnology, and semiconductor industries. The table below summarizes key quantitative market data for strategic planning of research resource allocation.

Table 1: Global Electron Microscopy Market Forecast and Segmental Analysis

| Parameter | 2024-2025 Data | Projected Growth/Forecast |

|---|---|---|

| Overall Market Size | US$4.54B (2024) [20] | US$10.24B by 2034 (CAGR: 8.52%) [20] |

| Leading Product Type (2024) | Scanning Electron Microscopes (SEM) (~41% share) [20] | Transmission Electron Microscopes (TEM) - Fastest growth (2025-2034) [20] |

| Leading Technology (2024) | Conventional Electron Microscopy (~50% share) [20] | Cryo-Electron Microscopy (Cryo-EM) - Fastest growth (2025-2034) [20] |

| Leading Application (2024) | Materials Science & Nanotechnology (~36% share) [20] | Life Sciences & Structural Biology - Fastest growth [20] |

| Leading End User (2024) | Academic & Research Institutes (~38% share) [20] | Pharma & Biotech Companies - Fastest growth [20] |

| Leading Region (2024) | North America (39% share) [20] | Asia Pacific - Fastest growing region [20] |

Key Technical Trends and Innovations

The technological landscape of electron microscopy is being reshaped by several key trends that enhance its capabilities and accessibility.

- AI and Automation Integration: Artificial intelligence is revolutionizing data acquisition and image processing. AI algorithms now enable intelligent adaptive sampling, automated image alignment, noise reduction, and feature recognition, drastically reducing manual intervention and accelerating analysis [20] [21]. For instance, Thermo Fisher Scientific's Krios 5 Cryo-TEM utilizes AI-driven automation to study molecular structures at unprecedented throughput and fidelity [20].

- The Rise of Cryo-Electron Microscopy (Cryo-EM): Cryo-EM has emerged as a transformative technology, particularly in structural biology. It allows for the imaging of biomolecules in their near-native, vitrified state at near-atomic resolution, overcoming the limitations of traditional crystallization methods [20] [21]. This is revolutionizing the study of proteins, viruses, and cellular complexes.

- Advanced 3D Imaging and Volume EM (vEM): Techniques like serial block-face SEM (SBF-SEM) and focused ion beam SEM (FIB-SEM) are enabling the detailed 3D reconstruction of samples, from nanomaterials to entire organelles and neural circuits [20]. This provides volumetric ultrastructural context that 2D imaging cannot capture.

- Correlative Microscopy: There is a growing trend towards linking electron microscopy with other modalities, such as light microscopy (Correlative Light and Electron Microscopy - CLEM). This allows researchers to navigate large samples using light microscopy and then zoom in for high-resolution structural detail with EM, providing a comprehensive view from macro- to nano-scale [20].

Experimental Protocol: Cryo-Electron Microscopy for Protein Structure Determination

The following protocol details the workflow for determining a protein's 3D structure using single-particle Cryo-EM, a cornerstone technique in modern structural biology.

Diagram 1: Cryo-EM analysis workflow for protein structure determination.

1. Protein Purification and Preparation

- Objective: Obtain a homogeneous, monodisperse protein solution at high purity (>95%).

- Procedure:

- Express the target protein in a suitable system (e.g., insect or mammalian cells).

- Purify using affinity (e.g., Ni-NTA for His-tagged proteins), ion-exchange, and size-exclusion chromatography (SEC).

- Use SEC buffer (e.g., 20 mM HEPES pH 7.5, 150 mM NaCl) to ensure buffer compatibility and particle stability. Confirm monodispersity via analytical SEC or dynamic light scattering.

2. Sample Vitrification (Grid Preparation)

- Objective: Rapidly freeze the sample in a thin layer of amorphous ice to preserve native structure.

- Procedure:

- Use a plasma cleaner (e.g., Gatan Solarus) to glow-discharge a holey carbon grid (e.g., Quantifoil R1.2/1.3) to render it hydrophilic.

- Apply 3-4 µL of protein solution (e.g., 0.5-3 mg/mL) to the grid.

- Blot excess liquid with filter paper for 2-5 seconds in an environment of >95% humidity.

- Plunge-freeze the grid rapidly into a liquid ethane/propane mixture cooled by liquid nitrogen using a vitrification device (e.g., Thermo Fisher Scientific Vitrobot).

- Store the grid under liquid nitrogen until data collection.

3. Automated Data Acquisition

- Objective: Collect thousands of high-quality, low-dose micrographs of individual protein particles.

- Procedure:

- Load the grid into a Cryo-TEM (e.g., Thermo Fisher Scientific Krios or Glacios) equipped with a direct electron detector (e.g., Gatan K3) and an energy filter.

- Use software (e.g., SerialEM or EPU) to automate the process.

- Set the microscope to a calibrated magnification (e.g., 105,000x, corresponding to a pixel size of ~0.82 Å).

- Use a low electron dose rate (~1.0 e⁻/Ų/frame) to minimize beam-induced damage.

- Collect movie stacks (e.g., 40 frames per exposure) from multiple, non-overlapping holes.
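
The example acquisition settings above imply a total exposure and a sampling limit that are worth checking before a session. The sketch below simply multiplies the per-frame dose by the frame count and applies the Nyquist relation (the finest resolvable detail is twice the pixel size). The function names are hypothetical, and the numbers are the illustrative values from the protocol, not recommendations.

```python
def total_dose(dose_per_frame, n_frames):
    """Accumulated dose per exposure in e-/Å², given dose per frame."""
    return dose_per_frame * n_frames

def nyquist_limit(pixel_size_angstrom):
    """Best theoretically attainable resolution in Å for a given pixel size."""
    return 2 * pixel_size_angstrom

dose = total_dose(dose_per_frame=1.0, n_frames=40)
limit = nyquist_limit(0.82)
print(f"total dose: {dose:.0f} e-/Å²")    # total dose: 40 e-/Å²
print(f"Nyquist limit: {limit:.2f} Å")    # Nyquist limit: 1.64 Å
```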

4. Image Processing and 3D Reconstruction

- Objective: Process the collected movie stacks to compute a high-resolution 3D density map.

- Procedure:

- Pre-processing: Use motion correction (e.g., MotionCor2) to align movie frames and correct for beam-induced motion. Estimate the contrast transfer function (CTF) parameters (e.g., using CTFFIND4 or Gctf).

- Particle Picking: Autopick particles from micrographs using template-based or AI-driven methods (e.g., in cryoSPARC or RELION). Extract ~1-2 million particle images.

- 2D Classification: Perform multiple rounds of 2D classification to remove non-particle images, aggregates, and contaminants, retaining a clean set of particles.

- Initial Model Generation: Generate an initial 3D model ab initio (e.g., in cryoSPARC) or by using a known homologous structure as a reference.

- 3D Heterogeneous Refinement: Separate structural heterogeneity (e.g., different conformations, bound/unbound states) by sorting particles into several 3D classes. Select the most homogeneous classes for high-resolution refinement.

- High-Resolution Reconstruction: Refine the selected particles against the initial model, then perform CTF refinement and Bayesian polishing to obtain a final, high-resolution 3D map. Report the global resolution using the Fourier Shell Correlation criterion (FSC = 0.143).
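
To illustrate how the FSC = 0.143 criterion turns a correlation curve into a single resolution figure, the sketch below finds the first point where a tabulated FSC curve drops below the threshold and interpolates linearly between the neighboring shells. Packages such as RELION and cryoSPARC compute and report this internally; the function name and the curve values here are invented for illustration.

```python
def resolution_at_threshold(freqs, fsc, threshold=0.143):
    """Return the resolution (Å) where the FSC curve first falls below threshold.

    freqs: spatial frequencies in 1/Å, ascending.
    fsc:   FSC value for each frequency shell.
    Returns None if the curve never crosses the threshold.
    """
    for i in range(1, len(fsc)):
        if fsc[i - 1] >= threshold > fsc[i]:
            # Linear interpolation between the two shells around the crossing.
            frac = (fsc[i - 1] - threshold) / (fsc[i - 1] - fsc[i])
            f_cross = freqs[i - 1] + frac * (freqs[i] - freqs[i - 1])
            return 1.0 / f_cross
    return None

# Invented example curve: crossing lies halfway between 0.3 and 0.4 1/Å.
freqs = [0.1, 0.2, 0.3, 0.4]
fsc = [1.0, 0.9, 0.243, 0.043]
print(f"{resolution_at_threshold(freqs, fsc):.2f} Å")  # 2.86 Å
```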

5. Model Building and Validation

- Objective: Build and validate an atomic model into the final EM density map.

- Procedure:

- If a known atomic structure exists, perform rigid-body docking into the map.

- For de novo model building, use software like Coot to trace the polypeptide chain and place amino acid side chains.

- Real-space refine the model against the map using Phenix or ISOLDE.

- Validate the model using metrics such as FSC, map-model correlation, and MolProbity to check for steric clashes and rotamer outliers.

The Scientist's Toolkit: Key Reagents for Electron Microscopy

Table 2: Essential Research Reagents and Materials for Electron Microscopy

| Item | Function/Application | Technical Notes |

|---|---|---|

| Holey Carbon Grids | Support film for samples in TEM/Cryo-EM. | Quantifoil or C-flat grids with defined hole size and spacing are standard for cryo-EM. |

| Cryogenic Storage Dewars | Long-term storage of vitrified grids under liquid nitrogen. | Maintains samples at -196°C so that the vitreous ice does not devitrify into crystalline ice. |

| Negative Stains (e.g., Uranyl Acetate) | Enhance contrast for conventional TEM of biological samples. | Heavy metal salts scatter electrons; requires careful handling and disposal. |

| Resin Kits (e.g., Epon, Spurr's) | For sample embedding in room-temperature TEM. | Provides structural support for ultra-thin sectioning with a microtome. |

| Cryo-Protectants (e.g., Trehalose) | Additive to buffer to improve particle stability and ice quality during vitrification. | Helps to preserve the native structure of delicate macromolecules. |

| Gold Nanoparticles (e.g., BSA-Gold) | Fiducial markers for tomographic 3D reconstruction. | Provides reference points for aligning tilt series images. |

Advanced Gas Sensing Materials: The Intelligent Nose

Market Dynamics and Material Fundamentals

The gas sensor industry is undergoing a parallel revolution, driven by demands for environmental monitoring, industrial safety, and non-invasive medical diagnostics. The market, valued at USD 2.90 billion in 2023, is expected to grow at a CAGR of 9.5% from 2023 to 2030 [22]. This growth is fueled by the integration of IoT, AI, and nanotechnology. The table below summarizes the core performance metrics and mechanisms of the most prevalent class of gas sensors: Metal-Oxide Semiconductors (MOS).

Table 3: Fundamentals and Performance Metrics of Metal-Oxide Semiconductor (MOS) Gas Sensors

| Parameter | Description | Typical Values/Examples |

|---|---|---|

| Primary Mechanism | Change in electrical resistance upon adsorption/desorption of gas molecules on the material surface [23]. | For n-type MOS (e.g., SnO₂), resistance decreases in reducing gases (e.g., CO, H₂) and increases in oxidizing gases (e.g., O₂, NO₂) [23]. |

| Sensitivity (S) | The ratio of sensor resistance in target gas to that in air (or vice versa) [23]. | S = Rgas/Rair for oxidizing gases; S = Rair/Rgas for reducing gases [23]. |

| Operating Temperature | Temperature range for optimal sensor performance, often requiring external heating [23]. | 200-400°C for pristine n-type MOS (e.g., SnO₂, WO₃) [23]. Doping/composites can lower this. |

| Response/Recovery Time | Response time: time for the sensor to reach 90% of its final signal change after gas exposure. Recovery time: time to return 90% of the way to the baseline after gas removal [23]. | Target: Seconds to a few minutes for rapid detection. |

| Key Material Strategies | Methods to enhance sensitivity, selectivity, and stability. | Nanostructuring, noble metal doping (Pd, Au), heterojunction formation (e.g., ZnFe₂O₄/SnO₂) [23]. |
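
The sensitivity and response-time definitions in the table can be applied to a recorded resistance trace as follows. This is a minimal sketch for an n-type sensor; the function names and the example trace are hypothetical, and the 90% criterion is applied to sampled points without interpolation.

```python
def response(r_air, r_gas, gas_type):
    """Sensor response S as defined in the table (n-type MOS)."""
    if gas_type == "reducing":
        return r_air / r_gas
    if gas_type == "oxidizing":
        return r_gas / r_air
    raise ValueError("gas_type must be 'reducing' or 'oxidizing'")

def t90(times, values, start_value, final_value):
    """Time from times[0] until the signal completes 90% of its change."""
    target = start_value + 0.9 * (final_value - start_value)
    rising = final_value > start_value
    for t, v in zip(times, values):
        reached = v >= target if rising else v <= target
        if reached:
            return t - times[0]
    return None  # the trace never reached 90% of the change

# Invented trace: resistance (kΩ) falling after exposure to a reducing gas.
times = [0, 5, 10, 15, 20]          # seconds since gas was introduced
r = [1000, 500, 200, 150, 100]      # kΩ
print(response(r_air=1000, r_gas=100, gas_type="reducing"))  # 10.0
print(t90(times, r, start_value=1000, final_value=100))      # 15
```

The same `t90` function gives the recovery time when it is applied to the trace recorded after the gas is removed, with the start and final values swapped.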

Key Technical Trends and Innovations

The field of gas sensing is being reshaped by material science and data-driven innovations.

- Nanomaterials and Novel Sensing Materials: The use of graphene, carbon nanotubes, metal-organic frameworks (MOFs), and nanostructured metal oxides (e.g., SnO₂ nanosheets, WO₃ nanowires) is leading to sensors with dramatically increased surface area, enhanced sensitivity, and faster response times [22] [24] [23].

- Wearable and Flexible Sensors: The integration of sensing materials into flexible substrates like textiles and polymers is enabling a new class of wearable gas sensors for personal health monitoring (e.g., detecting biomarkers in breath) and environmental exposure tracking [24] [25].

- IoT and Intelligent Sensing Networks: Gas sensors are increasingly becoming IoT-enabled nodes that transmit data to the cloud for real-time monitoring, predictive maintenance in industrial settings, and large-scale air quality mapping in smart cities [22] [25].

- AI and Machine Learning for Enhanced Selectivity: A major challenge for MOS sensors is selectivity in complex gas mixtures. AI and machine learning algorithms are now being deployed to analyze complex signal patterns from sensor arrays (e-noses), enabling the accurate identification and quantification of individual gases [22] [25].

Experimental Protocol: Fabrication of a Nanostructured MOS Gas Sensor

This protocol outlines the steps for creating a chemiresistive gas sensor based on palladium-doped tin oxide (Pd-SnO₂) for detecting reducing gases like acetone.

Diagram 2: Fabrication workflow for a nanostructured metal-oxide gas sensor.

1. Synthesis of Pd-SnO₂ Nanomaterial (Hydrothermal Method)

- Objective: To produce SnO₂ nanoparticles doped with palladium to enhance sensitivity and selectivity.

- Procedure:

- Dissolve 2.11 g of SnCl₄·5H₂O in 40 mL of deionized water under magnetic stirring.

- Separately, dissolve an appropriate amount of PdCl₂ (e.g., 1 at% relative to Sn) in 10 mL of DI water with a drop of HCl to aid dissolution.

- Slowly add the PdCl₂ solution to the SnCl₄ solution under vigorous stirring.

- Adjust the pH of the mixed solution to ~10 using aqueous NaOH (1 M), which will result in the formation of a white precipitate.

- Transfer the solution into a 100 mL Teflon-lined stainless-steel autoclave and heat at 180°C for 12 hours.

- Allow the autoclave to cool naturally. Collect the resulting precipitate via centrifugation, wash several times with ethanol and DI water, and dry in an oven at 60°C for 6 hours.

- Finally, calcine the powder in a muffle furnace at 500°C for 2 hours in air to obtain crystalline Pd-SnO₂ nanoparticles.
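
The "appropriate amount of PdCl₂" in the synthesis above follows from the tin precursor mass. The sketch below assumes that "1 at% relative to Sn" means a Pd:Sn molar ratio of 0.01 (one possible reading; Pd/(Pd+Sn) would give a marginally different value). Molar masses are rounded standard values, and the function name is hypothetical.

```python
M_SNCL4_5H2O = 350.60  # g/mol, SnCl4·5H2O
M_PDCL2 = 177.33       # g/mol, PdCl2

def pdcl2_mass_mg(sn_salt_mass_g, pd_atomic_percent):
    """Mass of PdCl2 (mg) for a given Pd:Sn molar ratio expressed in at%.

    Assumption: at% is interpreted as mol Pd per 100 mol Sn.
    """
    n_sn = sn_salt_mass_g / M_SNCL4_5H2O       # mol Sn
    n_pd = n_sn * pd_atomic_percent / 100.0    # mol Pd
    return n_pd * M_PDCL2 * 1000.0             # g -> mg

mass = pdcl2_mass_mg(2.11, 1.0)
print(f"{mass:.1f} mg PdCl2")  # 10.7 mg PdCl2
```

For the 2.11 g of SnCl₄·5H₂O used in the protocol (about 6.0 mmol Sn), this works out to roughly 10.7 mg of PdCl₂.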

2. Sensor Substrate Preparation

- Objective: To create a platform with interdigitated electrodes (IDEs) for resistance measurements.

- Procedure:

- Use a standard alumina (Al₂O₃) substrate (e.g., 5 mm x 5 mm) with a prefabricated gold or platinum IDE pattern.

- Clean the substrate sequentially in an ultrasonic bath with acetone, ethanol, and DI water for 10 minutes each, then dry with nitrogen gas.

3. Sensing Film Deposition and Annealing

- Objective: To form a stable, porous film of the sensing material across the electrodes.

- Procedure:

- Prepare a sensing ink by dispersing 10 mg of the synthesized Pd-SnO₂ powder in 1 mL of a 1:1 v/v mixture of DI water and ethanol. Add a drop of Nafion solution (5 wt%) as a binder.

- Sonicate the mixture for 30-60 minutes to form a homogeneous suspension.

- Deposit the ink onto the active area of the IDE substrate using drop-casting or spin-coating.

- Age the deposited film overnight at room temperature.

- Sinter the sensor chip on a hotplate at 400°C for 1 hour to remove the binder and stabilize the film, ensuring good electrical contact with the electrodes.

4. Sensor Testing and Data Acquisition

- Objective: To characterize the sensor's response to target gases (e.g., acetone) under controlled conditions.

- Procedure:

- Place the sensor in a sealed test chamber (e.g., a quartz tube inside a tube furnace) with electrical feedthroughs connected to a digital multimeter or source meter (e.g., Keithley 2450).

- Use mass flow controllers to mix a certified target gas (e.g., 100 ppm acetone in air) with synthetic air to achieve desired concentrations (e.g., 1-100 ppm).

- Set the operating temperature of the sensor using the tube furnace. The optimal temperature (e.g., 300°C for acetone) should be determined experimentally.

- Record the resistance of the sensor (Rair) in synthetic air until a stable baseline is achieved.

- Expose the sensor to the target gas concentration and record the resistance (Rgas) until it stabilizes.

- Purge the chamber with synthetic air and record the resistance until it recovers to the baseline.

- Calculate the sensor response (S) as S = Rair / Rgas for acetone (a reducing gas).
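
The gas-mixing step above reduces to a flow ratio between the certified cylinder and the synthetic air line. The following sketch assumes ideal mixing at a fixed total flow; the 500 sccm total flow and the function name `mfc_flows` are illustrative assumptions, not values from the protocol.

```python
def mfc_flows(target_ppm, cylinder_ppm, total_flow_sccm):
    """Return (target-gas flow, synthetic-air flow) in sccm for a dilution.

    Assumes ideal mixing and a constant total flow through the chamber.
    """
    if not 0 <= target_ppm <= cylinder_ppm:
        raise ValueError("target concentration must lie between 0 and the cylinder value")
    gas_flow = total_flow_sccm * target_ppm / cylinder_ppm
    return gas_flow, total_flow_sccm - gas_flow

# 10 ppm acetone from a 100 ppm cylinder at a hypothetical 500 sccm total flow.
print(mfc_flows(10, 100, 500))  # (50.0, 450.0)
```

At the low end of the range, the required gas flow can become very small (5 sccm for 1 ppm in this example), so it is worth checking that the value lies within the usable range of the controller fitted to the target-gas line.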

5. Data Analysis and Machine Learning Integration

- Objective: To improve selectivity and analyze complex data from sensor arrays.

- Procedure:

- Collect response data (resistance transients) for multiple gases and concentrations.

- Extract features from the response curves, such as steady-state response, response time, recovery time, and integral of the transient.

- Use these features to train a machine learning model (e.g., a Support Vector Machine or Random Forest classifier) on a labeled dataset to identify unknown gases in a mixture.
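
To show the shape of the classification step without external dependencies, the sketch below swaps in a nearest-centroid classifier for the Support Vector Machine or Random Forest named above. It is a deliberately simple stand-in, and the feature values (steady-state response, response time in seconds) and gas labels are invented for illustration.

```python
from math import dist

def fit_centroids(samples):
    """Average the feature vectors of each label.

    samples: list of (feature_vector, label) pairs.
    Returns {label: centroid_vector}.
    """
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, x in enumerate(features):
            acc[i] += x
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def classify(features, centroids):
    """Assign the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(features, centroids[label]))

# Invented training data: (steady-state response S, response time in s).
training = [
    ((8.0, 12.0), "acetone"),
    ((9.0, 14.0), "acetone"),
    ((3.0, 30.0), "ethanol"),
    ((4.0, 28.0), "ethanol"),
]
centroids = fit_centroids(training)
print(classify((7.5, 15.0), centroids))  # acetone
```

In practice the features would normally be scaled to comparable ranges before any distance-based method is applied, and the model would be evaluated on held-out measurements.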

The Scientist's Toolkit: Key Materials for Advanced Gas Sensors

Table 4: Essential Research Reagents and Materials for Advanced Gas Sensors

| Item | Function/Application | Technical Notes |

|---|---|---|

| Metal Oxide Precursors | Source material for synthesizing sensing layers. | E.g., SnCl₄, WO₃ powder, Zn(Ac)₂. Purity is critical for reproducible performance. |

| Noble Metal Dopants | Catalysts to enhance sensitivity and selectivity. | Chloride or nitrate salts of Palladium (Pd), Platinum (Pt), Gold (Au). |

| Interdigitated Electrode (IDE) Substrates | Platform for film deposition and electrical measurement. | Alumina substrates with Pt or Au electrodes are standard for high-temperature operation. |

| Flexible Polymer Substrates | Base for wearable and stretchable sensor devices. | Polyimide (PI), Polyethylene Terephthalate (PET), or Polydimethylsiloxane (PDMS). |

| Conductive Inks/Nanomaterials | Active sensing materials and conductive traces. | Dispersions of Graphene, Carbon Nanotubes (CNTs), or MXenes (Ti₃C₂Tₓ) [24] [25]. |

| Mass Flow Controllers (MFCs) | Precisely control gas concentration in test chambers. | Essential for generating accurate and reproducible gas mixtures for sensor calibration. |

The trajectories of electron microscopy and advanced gas sensing are clear: both are moving towards greater integration, intelligence, and accessibility. EM is evolving into an automated, AI-driven platform capable of visualizing dynamic processes at the atomic scale, while gas sensors are becoming distributed, intelligent nodes in a vast IoT network, providing real-time chemical intelligence. For researchers in inorganic chemical analysis, the mastery of these techniques is paramount. The detailed protocols and foundational knowledge provided in this whitepaper serve as a critical resource for training and development, empowering scientists to leverage these cinematic molecular science tools. This will undoubtedly accelerate breakthroughs across drug development, materials engineering, nanotechnology, and environmental science, shaping the future of scientific discovery.

Applied Methodologies: From Sample Preparation to Data Analysis in Practice

Optimizing Sample Preparation to Reduce Analytical Drawbacks

Effective sample preparation is the cornerstone of reliable inorganic chemical analysis. For researchers in drug development and materials science, suboptimal preparation can introduce significant analytical drawbacks, including inaccurate stoichiometry, analyte loss, and poor recovery rates, ultimately compromising data integrity and regulatory compliance. This guide details optimized protocols and methodologies to mitigate these challenges, ensuring that subsequent analysis by techniques such as Inductively Coupled Plasma Mass Spectrometry (ICP-MS) yields precise and accurate results. The procedures are framed within the essential context of building robust training resources for analytical techniques.

Key Parameters in Sample Preparation Optimization

The quality of the final analytical data is directly influenced by several critical parameters during sample preparation. The following table summarizes these factors and their impact.

Table 1: Key Parameters Influencing Digestion Quality and Analytical Outcomes

| Parameter | Optimization Consideration | Impact on Analysis |

|---|---|---|

| Temperature [26] | Controlled heating in microwave digestion systems to safely reach high temperatures. | Ensures complete sample digestion without evaporative loss of volatile analytes. |

| Pressure [26] | Use of sealed vessels to raise boiling points, with controlled venting. | Prevents analyte loss and allows for safer digestion of complex matrices. |

| Acid Selection & Concentration [26] | Matching the acid matrix to the sample type (e.g., high-carbon materials). | Critical for achieving clear, fully digested solutions and complete trace element recovery. |

| Sample Size [26] | Balancing sample mass to avoid overpressure or incomplete reactions. | Too large a sample can lead to overpressure; too small can hinder detection of low-level analytes. |

| Homogeneity & Distribution | Ensuring uniform distribution of the sample, as in spin-coated polymer films [27]. | Reduces relative standard deviation (RSD) and improves reproducibility. |

Detailed Experimental Protocols

Laser Ablation-ICP-MS for Nanoparticle Stoichiometry

This procedure enables accurate determination of nanoparticle composition with minimal sample quantity.

- Primary Materials: Nanoparticle sample (≈1 mg), appropriate polymeric solution (e.g., in a spin-coating compatible polymer), Si wafer, elemental aqueous stock solutions for matrix-matched standards [27].

- Sample Preparation:

- Dispersion: Disperse approximately 1 mg of the nanoparticle sample into the polymeric solution.

- Spin Coating: Deposit the mixture onto a clean Si wafer using a spin coater to create a thin, uniform polymer film containing evenly distributed nanoparticles.

- Standard Preparation: Prepare matrix-matched calibration standards by mixing elemental stock solutions with the same polymer solution and spin-coating them onto separate Si wafers following an identical procedure [27].

- Analysis & Validation:

- LA-ICP-MS Analysis: Ablate the prepared films using the optimized laser and ICP-MS parameters.

- Validation with RM: Use a reference material of known stoichiometry, such as yttria-doped zirconia ((ZrO₂)₀.₉₂(Y₂O₃)₀.₀₈), to validate the entire procedure. The experimentally determined stoichiometry should agree with the certified values [27].

- Performance Metrics: When thoroughly optimized, this method can achieve a relative standard deviation (RSD) of < 2% for standards and 3–8% for NP samples, with detection limits below 0.2 µg/g for all analyzed elements [27].
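
The validation step above compares a measured elemental ratio with the value implied by the reference formula. For (ZrO₂)₀.₉₂(Y₂O₃)₀.₀₈, each formula unit contains 0.92 Zr and 2 × 0.08 = 0.16 Y, so the expected Y:Zr atomic ratio is about 0.174. The sketch below converts mass concentrations to that ratio; the measured values are invented for illustration, and the function names are hypothetical.

```python
ATOMIC_MASS = {"Zr": 91.224, "Y": 88.906}  # g/mol

def expected_y_to_zr(x_y2o3):
    """Y:Zr atomic ratio for (ZrO2)_(1-x)(Y2O3)_x."""
    return 2 * x_y2o3 / (1 - x_y2o3)

def molar_ratio(conc_a, conc_b, elem_a, elem_b):
    """Atomic ratio a:b from mass concentrations given in the same units."""
    return (conc_a / ATOMIC_MASS[elem_a]) / (conc_b / ATOMIC_MASS[elem_b])

expected = expected_y_to_zr(0.08)
# Invented LA-ICP-MS results in µg/g, for illustration only.
measured = molar_ratio(108_000, 638_000, "Y", "Zr")
deviation = 100 * (measured - expected) / expected
print(f"expected {expected:.4f}, measured {measured:.4f}, deviation {deviation:+.2f}%")
```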

Microwave Digestion for Trace Metal Analysis

Optimized microwave digestion is crucial for converting samples into clear solutions suitable for ICP analysis.

- Primary Materials: Microwave digestion system with sealed vessels, high-purity acids (e.g., HNO₃, HCl), representative sample.

- Workflow:

- Sample Weighing: Precisely weigh an optimal sample mass into the digestion vessel. The amount should be small enough to prevent overpressure but sufficient for analyte detection [26].

- Acid Addition: Add the optimized mixture and volume of acids. The specific acid matrix is critical for efficient, complete digestion [26].

- Sealed Digestion: Run the microwave digestion program, which uses sealed vessels to safely reach high temperatures. The internal pressure elevates boiling points, allowing for more effective digestion without evaporative loss [26].

- Controlled Venting: After digestion, the system allows for controlled venting of excess gases to prevent sudden pressure changes and potential analyte loss [26].

- Dilution & Analysis: After cooling, dilute the resulting clear digestate to volume and proceed with ICP-OES or ICP-MS analysis.

- Key Considerations:
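
The dilution step in the workflow above determines how an instrument reading is converted back to a concentration in the original sample. The sketch below shows that back-calculation with an optional reagent-blank subtraction; the function name, units, and example numbers are assumptions chosen for illustration.

```python
def sample_concentration_mg_per_kg(measured_ug_per_l, final_volume_ml,
                                   sample_mass_g, blank_ug_per_l=0.0):
    """Convert a digestate reading (µg/L) to the solid-sample content (mg/kg).

    µg/L x L gives µg of analyte; µg per g of sample equals mg/kg.
    """
    net = measured_ug_per_l - blank_ug_per_l
    analyte_ug = net * final_volume_ml / 1000.0
    return analyte_ug / sample_mass_g

# Hypothetical example: 0.25 g sample diluted to 50 mL, reading 12.5 µg/L,
# reagent blank 0.5 µg/L.
result = sample_concentration_mg_per_kg(12.5, 50.0, 0.25, blank_ug_per_l=0.5)
print(f"{result:.2f} mg/kg")  # 2.40 mg/kg
```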

The logical relationship and workflow for developing and validating an analytical method, incorporating the above protocols, is outlined below.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of the protocols requires the use of specific, high-quality materials. The following table details key research reagent solutions.

Table 2: Essential Research Reagent Solutions and Materials for Sample Preparation

| Item | Function & Application |
|---|---|
| Matrix-Matched Standards [27] | Calibration standards prepared in a similar matrix to the sample (e.g., polymer film for NPs) to correct for matrix effects and enable accurate quantification. |
| High-Purity Acids [26] | Nitric (HNO₃), hydrochloric (HCl); used to digest samples in microwave systems. High purity is essential to prevent contamination of trace analytes. |
| Polymeric Solution for Spin Coating [27] | A polymer used to disperse and immobilize nanoparticle samples on a substrate (e.g., Si wafer), ensuring uniform distribution for LA-ICP-MS analysis. |
| Silicon Wafer Substrate [27] | Provides a flat, inert surface for depositing uniform thin films of polymer-embedded samples or standards for LA-ICP-MS. |
| Certified Reference Material (CRM) [27] | A reference material of known stoichiometry (e.g., yttria-doped zirconia) used to validate the accuracy of the entire analytical procedure. |
| Sealed Microwave Digestion Vessels [26] | Specialized containers that withstand high temperature and pressure, allowing for complete sample digestion without loss of volatile elements. |

Analytical Method Validation and Quality Control

A validated method is not merely tested but is demonstrably suitable for its intended use [28]. Validation provides evidence that the analytical procedure consistently yields reliable results that can be trusted for product release and regulatory submission.

- Following ICH Q2(R1) Guidelines: The validation process should assess characteristics such as accuracy, precision, specificity, detection limit, quantitation limit, linearity, and range [28]. The acceptance criteria for these parameters should be derived from historical data and justified by product specifications.

- The Importance of Assay Range: The valid assay range of the new method must be capable of "bracketing" the product specifications. For instance, the method must be accurate and precise not only at the specification limits but also well above and below them to reliably detect out-of-specification results [28].

- Accounting for Assay Bias: All analytical procedures, especially biological assays, have a degree of bias. It is critical to estimate this bias through recovery studies. As long as the bias is consistent and understood, release specifications can be adjusted to compensate for it, ensuring correct assessment of product quality [28].
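The recovery-based bias estimate and the range "bracketing" check described above can be sketched as follows. This is an illustrative outline under stated assumptions, not a prescribed ICH procedure: the function names, the spiked level, and the measured values are all hypothetical.

```python
def percent_recovery(measured, known):
    """Recovery of a spiked or certified amount, in percent."""
    if known <= 0:
        raise ValueError("known amount must be positive")
    return 100.0 * measured / known


def mean_bias_percent(recoveries):
    """Mean bias in percent: average recovery minus the ideal 100%."""
    return sum(recoveries) / len(recoveries) - 100.0


def range_brackets_spec(range_low, range_high, spec_low, spec_high):
    """True if the validated assay range extends beyond both specification limits."""
    return range_low < spec_low and range_high > spec_high


# Hypothetical recovery study: three measurements of a 10.0 µg/g spike
measured_values = [9.6, 9.7, 9.5]
recoveries = [percent_recovery(m, 10.0) for m in measured_values]
bias = mean_bias_percent(recoveries)
print(f"recoveries = {[round(r, 1) for r in recoveries]}, bias = {bias:.1f}%")

# Hypothetical validated range 2-50 µg/g against a 5-20 µg/g specification
print(range_brackets_spec(2.0, 50.0, 5.0, 20.0))
```

A consistent bias estimated this way is the quantity that would be considered when deciding whether release specifications need to be adjusted.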

The diagram below illustrates the critical relationship between product specifications, the required method performance, and the instrument's capabilities, which is fundamental to a successful validation.

Inductively Coupled Plasma-Optical Emission Spectroscopy (ICP-OES) has established itself as a cornerstone technique for elemental analysis in inorganic chemical research. The technique provides robust, rapid, multi-element analysis of solutions, with detection limits at part-per-billion (ng/mL) levels or below for most elements and the capability to analyze over 70 elements in a single run. [29] For researchers in drug development and materials science, ICP-OES offers the unique combination of wide dynamic range, excellent sensitivity, and relatively straightforward operation compared to other elemental analysis techniques. [30] The fundamental principle underlying ICP-OES involves using argon plasma operating at temperatures of 6000-10000 K to atomize and excite sample elements, then measuring the characteristic wavelength and intensity of light emitted as electrons return to lower energy states. [31] This emitted light provides both qualitative identification (based on wavelength) and quantitative determination (based on intensity) of elements present in the sample. [31]

Table 1: Key Performance Characteristics of Modern ICP-OES Systems

| Parameter | Typical Range | High-Performance Capability | Significance for Mass Fraction Determination |
|---|---|---|---|
| Detection Limits | ppt to ppb (ng/mL) for most elements [29] | Tens of ppt (pg/mL) for brightly emitting elements (Be, Mg, Ca, Sr, Ba) [29] | Enables trace element quantification in complex matrices |
| Dynamic Linear Range | 3-5 decades for some systems | Up to 8-10 decades with advanced detection [32] | Allows determination of major and trace elements in single run without dilution |
| Short-Term Precision | Typically ~1% RSD or better [33] | <0.2% RSD with high-performance protocols [33] | Essential for high-accuracy mass fraction determination |
| Analysis Time | <1 minute per sample after calibration [29] | Simultaneous multi-element detection [29] | High throughput for quality control and research applications |

The technique's robustness against matrix effects, especially in radially viewed configurations, makes it valuable for analyzing the complex samples encountered in pharmaceutical development and inorganic materials research. [32] While ICP-mass spectrometry (ICP-MS) offers lower detection limits, ICP-OES maintains distinct advantages for applications where its detection limits are sufficient, including lower instrument and maintenance costs, higher tolerance to total dissolved solids (up to 300 g/L NaCl with specialized introduction systems), and reduced susceptibility to severe matrix effects. [29] This technical guide provides a comprehensive framework for implementing ICP-OES specifically for high-accuracy elemental mass fraction determination, with detailed methodologies, validation protocols, and practical considerations for researchers.

Core Principles and Instrumentation

Fundamental Physics and Instrument Components

The analytical capability of ICP-OES stems from fundamental atomic processes occurring within high-temperature argon plasma. When sample aerosol enters the plasma, the extreme energy causes processes including vaporization, atomization, ionization, and excitation. [31] The core physical principle exploited is that excited atoms or ions emit photons of characteristic wavelengths when electrons transition from higher to lower energy states, with the intensity of emitted radiation proportional to the number of atoms/ions of that element. [31] According to Kirchhoff's Law, atoms and ions can only absorb the same energy that they emit, meaning they absorb and emit light at identical wavelengths. [31]

An ICP-OES instrument consists of four essential subsystems that must be properly optimized for high-accuracy work. First, the sample introduction system typically includes a peristaltic pump, nebulizer, and spray chamber, which collectively generate a fine, consistent aerosol from liquid samples. [32] The inductively coupled plasma source, sustained by a radio frequency (RF) generator and argon gas flow, provides the high-temperature environment (6000-10000 K) necessary for efficient atomization and excitation. [30] The wavelength separation system (typically an echelle spectrometer with high-resolution grating) disperses the polychromatic light from the plasma into individual wavelengths. [34] [32] Finally, the detection system (photomultiplier tubes or solid-state CCD/CMOS detectors) measures the intensity at specific wavelengths. [32]

The Critical Role of Resolution

Spectral resolution—defined as the full width at half maximum (FWHM) of an emission line—profoundly impacts analytical capability, particularly for complex matrices. [32] High resolution is essential for separating analyte wavelengths from potentially interfering spectral lines emitted by other elements in the sample, especially for line-rich matrices like rare earth elements, iron, tungsten, or uranium. [34] [32] The benefits of high resolution extend beyond mere interference avoidance; it also improves the signal-to-background ratio (SBR) by reducing the portion of background measured with the peak intensity, which directly enhances detection limits as they are inversely proportional to SBR. [32]
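The inverse relationship between detection limit and SBR can be illustrated with the widely used SBR-RSDB estimate, in which the detection limit equals k × (RSDB/100) × c₀ / SBR, with k = 3, RSDB the relative standard deviation of the background in percent, and c₀ the analyte concentration that produced the measured SBR. This is a sketch under that assumption; the source does not state which formula reference [32] uses, and the function names and intensities below are hypothetical.

```python
def signal_to_background_ratio(gross_intensity, background_intensity):
    """SBR = net analyte signal divided by background intensity."""
    if background_intensity <= 0:
        raise ValueError("background intensity must be positive")
    return (gross_intensity - background_intensity) / background_intensity


def detection_limit(conc, sbr, rsdb_percent, k=3.0):
    """Estimate the detection limit in the same units as `conc`.

    Assumes the SBR-RSDB approach: DL = k * (RSDB / 100) * conc / SBR.
    """
    if sbr <= 0:
        raise ValueError("SBR must be positive")
    return k * (rsdb_percent / 100.0) * conc / sbr


# Hypothetical measurement: 1.0 mg/L standard, 1% background RSD
sbr = signal_to_background_ratio(gross_intensity=11000.0, background_intensity=1000.0)
dl = detection_limit(conc=1.0, sbr=sbr, rsdb_percent=1.0)
print(f"SBR = {sbr:.1f}, estimated DL = {dl:.4f} mg/L")
```

Under this estimate, doubling the SBR at constant background noise halves the detection limit, which is why reducing the background measured under the peak pays off directly.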

Figure 1: ICP-OES Analytical Workflow

The critical importance of resolution is exemplified in rare earth element analysis, where emission spectra contain numerous closely spaced lines. In one documented case, accurate determination of lanthanum at 333.749 nm in a cerium matrix was impossible with low-resolution ICP-OES (<8 pm) due to incomplete separation from cerium's spectral lines. [34] Only high-resolution instrumentation (<5 pm) achieved sufficient separation to permit accurate quantification at parts-per-million levels. [34] Similarly, lutetium determination at 261.542 nm in gadolinium matrix required high resolution to separate the analyte peak from overlapping matrix spectral features. [34]

Implementing High-Accuracy Methodology

Sample Preparation Protocols

Proper sample preparation is the foundational step for achieving high-accuracy results, as errors introduced at this stage cannot be corrected later in the analytical process. For solid samples, digestion remains the most common preparation method. Recent trends emphasize greener approaches that reduce toxic solvent use and implement microextractions where possible. [35]

Plant Material Digestion Protocol (adapted from recent literature [35]):

- Sample Cleaning: Wash with tap water followed by distilled/deionized water to remove adhering particles.

- Drying: Oven-dry at 50-80°C until constant weight or freeze-dry to preserve volatile elements.

- Comminution: Grind dried samples to a homogeneous powder using grinders, blenders, or agate/porcelain mortars.

- Sieving: Pass powder through appropriate mesh sieve (typically <150 μm) to ensure uniform particle size.

- Digestion: Weigh 0.2-0.5 g accurately into digestion vessels. Add 5-10 mL nitric acid (HNO₃), potentially with additions of hydrogen peroxide (H₂O₂) or hydrochloric acid (HCl) depending on matrix.

- Microwave Digestion: Program with ramped temperature increase to 150-200°C over 20-30 minutes.

- Post-digestion Processing: Cool, transfer to volumetric flask, make to volume with deionized water. Possible filtration if undigested particles remain.
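Once the digest has been made to volume, the measured solution concentration has to be converted back to a mass fraction in the original solid. A minimal sketch of that arithmetic follows, assuming a blank-corrected concentration in mg/L, a final volume in mL, and a sample mass in g, so that the result is in µg/g (equivalently mg/kg). The function name, parameters, and numbers are hypothetical.

```python
def mass_fraction_ug_per_g(conc_mg_per_l, blank_mg_per_l, final_volume_ml,
                           sample_mass_g, dilution_factor=1.0):
    """Convert a measured solution concentration to a mass fraction in µg/g.

    (mg/L) * mL = µg of analyte in the flask; dividing by grams gives µg/g.
    `dilution_factor` covers any further dilution made after bringing to volume.
    """
    if sample_mass_g <= 0:
        raise ValueError("sample mass must be positive")
    net_conc = conc_mg_per_l - blank_mg_per_l
    return net_conc * final_volume_ml * dilution_factor / sample_mass_g


# Hypothetical example: 0.5 g of sample digested and made up to 50 mL
w = mass_fraction_ug_per_g(conc_mg_per_l=0.25, blank_mg_per_l=0.01,
                           final_volume_ml=50.0, sample_mass_g=0.5)
print(f"mass fraction = {w:.1f} µg/g")
```

Keeping the blank subtraction and dilution factor explicit makes it easier to trace each contribution when building an uncertainty budget.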

For high-purity rare earth matrices or specialized materials like NdFeB magnets, sample preparation follows similar principles but with specific considerations. High-purity cerium oxide (CeO₂) and gadolinium oxide (Gd₂O₃) are typically prepared at high concentrations (20-100 g/L) with appropriate dilutions for different impurity elements. [34] NdFeB magnet samples require acid digestion with nitric acid (5 mL HNO₃ for 0.5 g sample) to achieve complete dissolution. [34]

Table 2: Research Reagent Solutions for High-Accuracy ICP-OES

| Reagent/Material | Specification | Function in Analysis | Application Notes |
|---|---|---|---|
| Nitric Acid (HNO₃) | High-purity, trace metal grade | Primary digestion oxidant for organic matrices | Minimizes spectral interferences; forms soluble nitrate salts [35] |
| Hydrogen Peroxide (H₂O₂) | High-purity, 30% | Secondary oxidant in digestion | Enhances organic matter destruction when combined with HNO₃ [35] |
| Single-element Standard Solutions | Certified reference materials (NIST-traceable) | Calibration curve establishment | Spex CertiPrep solutions used in high-purity REE analysis [34] |
| Internal Standard Solution (Sc, Y, or In) | High-purity, mixed or single element | Correction for instrumental drift & matrix effects | Yttrium commonly used when its wavelengths don't overlap with analytes [30] |
| High-Purity Argon Gas | ≥99.996% | Plasma gas and aerosol transport | Sustains stable plasma; lower purity causes instability |

Calibration Strategies for High Accuracy

Calibration methodology selection critically impacts result accuracy, particularly for complex matrices. While external calibration with matrix-matched standards works for many applications, higher-accuracy approaches include:

Standard Addition Method: Particularly valuable for high-purity REE analysis and complex matrices where perfect matrix matching is challenging. [34] Because the calibration is built within the sample itself, standards and samples share an identical matrix, which effectively accounts for matrix effects. In practice, multiple aliquots of the sample are spiked with increasing known concentrations of analytes, and the measured signal is plotted against spike concentration. Extrapolating the fitted line to zero signal gives a negative x-intercept whose magnitude corresponds to the original analyte concentration in the measured solution. This method provided excellent accuracy for rare earth impurity determination in cerium and gadolinium matrices, with spike recoveries confirming method validity. [34]
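The standard addition extrapolation can be sketched with an ordinary least-squares line through the spiked measurements. The function name and the data are hypothetical, and the sketch assumes a linear response and spike concentrations expressed as added concentration in the measured solution; any dilution of the original sample has to be accounted for separately.

```python
def standard_addition(spike_conc, signals):
    """Fit signal = slope * spike + intercept and return (c0, slope, intercept).

    c0 = intercept / slope is the magnitude of the x-intercept, i.e. the
    analyte concentration already present in the measured solution.
    """
    n = len(spike_conc)
    if n < 3 or n != len(signals):
        raise ValueError("need at least three paired points")
    mean_x = sum(spike_conc) / n
    mean_y = sum(signals) / n
    sxx = sum((x - mean_x) ** 2 for x in spike_conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(spike_conc, signals))
    if sxx == 0:
        raise ValueError("spike concentrations must not all be equal")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        raise ValueError("non-positive slope; check data")
    return intercept / slope, slope, intercept


# Hypothetical data: spikes in mg/L, signals in counts/s
spikes = [0.0, 1.0, 2.0, 3.0]
signals = [200.0, 300.0, 400.0, 500.0]
c0, slope, intercept = standard_addition(spikes, signals)
print(f"c0 = {c0:.2f} mg/L (x-intercept at {-c0:.2f} mg/L)")
```

Using several spike levels rather than a single addition allows the linearity of the response to be checked before trusting the extrapolated value.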

Common Analyte Internal Standard (CAIS) Method: For achieving ultra-high precision with uncertainties <0.2%, the CAIS method calibrates the remaining effect of varying matrix concentration on the ratio of analyte to internal standard emission intensities. [33] This approach uses two emission lines (typically an atom line and an ion line) from the same element that respond differently to changes in matrix concentration. The reference ratio of these two lines is used to correct analyte signals, significantly reducing matrix-induced errors. [33]

Matrix Matching: When standard addition is impractical due to large sample numbers, careful matrix matching of calibration standards to samples provides a viable alternative. This requires thorough knowledge of the sample matrix composition and preparation of custom calibration standards that mimic this composition as closely as possible.

Optimization of Operational Parameters

Instrument parameters must be systematically optimized to achieve both high sensitivity and robustness—the ability to maintain accuracy despite variations in sample composition. [32] The magnesium ratio (Mg II 280.270 nm/Mg I 285.213 nm intensity ratio) serves as a valuable diagnostic for plasma robustness, with higher ratios (typically >5 for axial view, >8 for radial view) indicating more robust conditions that minimize matrix effects. [32] [33]
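A simple robustness check based on the magnesium ratio might look like the sketch below. The thresholds are taken from the typical values quoted above; the function names and intensities are hypothetical, and the sketch assumes background-corrected net intensities for both lines.

```python
# Typical minimum Mg II / Mg I ratios quoted in the text above
ROBUSTNESS_THRESHOLDS = {"axial": 5.0, "radial": 8.0}


def mg_ratio(intensity_mg2_280, intensity_mg1_285):
    """Mg II 280.270 nm / Mg I 285.213 nm net intensity ratio."""
    if intensity_mg1_285 <= 0:
        raise ValueError("Mg I intensity must be positive")
    return intensity_mg2_280 / intensity_mg1_285


def is_robust(ratio, view):
    """True if the ratio exceeds the typical threshold for the viewing mode."""
    return ratio > ROBUSTNESS_THRESHOLDS[view]


# Hypothetical net intensities (counts/s) from a radial-view measurement
ratio = mg_ratio(135000.0, 15000.0)
print(f"Mg II/Mg I = {ratio:.1f}, robust (radial): {is_robust(ratio, 'radial')}")
```

Tracking this ratio while varying RF power and nebulizer gas flow gives a quick indication of whether a parameter change moved the plasma toward or away from robust conditions.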

Table 3: Operational Parameter Optimization for High-Accuracy Work