Managing Disk Space for Large Basis Set Calculations: A Complete Guide for Computational Researchers


Abstract

This article provides computational researchers and drug development professionals with comprehensive strategies for managing the substantial disk space requirements of large basis set calculations. Covering foundational concepts through advanced optimization techniques, it explores how basis set selection directly impacts storage needs, presents practical management methodologies, offers troubleshooting for common storage issues, and outlines validation approaches to ensure calculation integrity. By implementing these data management strategies, scientists can maintain efficient workflows while leveraging the higher accuracy of advanced basis sets for more reliable research outcomes in biomedical and clinical applications.

Understanding Basis Sets and Their Storage Impact in Computational Chemistry

What Are Basis Sets? Core Definitions and Their Role in Quantum Chemistry Calculations

Core Definitions: Understanding Basis Sets

In theoretical and computational chemistry, a basis set is a set of functions (called basis functions) that is used to represent the electronic wave function in methods like Hartree-Fock or density-functional theory (DFT). This representation turns the partial differential equations of the quantum chemical model into algebraic equations suitable for efficient implementation on a computer [1].

In practical terms, within the linear combination of atomic orbitals (LCAO) approach, the molecular orbitals \( \psi_i \) are constructed as a linear combination of basis functions \( \phi_\mu \):

\[ \psi_i = \sum_{\mu} c_{\mu i} \phi_{\mu} \]

Here, \( c_{\mu i} \) are the molecular orbital coefficients determined by solving the Schrödinger equation [1]. The basis functions are typically centered on atomic nuclei, and using a finite set of them is a key approximation. Calculations approach the complete basis set (CBS) limit as the finite set is expanded towards an infinite, complete set of functions [1].

The Scientist's Toolkit: Common Types of Basis Functions

While several types of functions exist, Gaussian-type orbitals (GTOs) are by far the most common in modern quantum chemistry software for efficient computation [1] [2].

| Basis Function Type | Key Feature | Primary Use Context |

|---|---|---|

| Slater-type orbitals (STOs) | Better representation of electron density (exponential decay). | Theoretically motivated but computationally difficult [1]. |

| Gaussian-type orbitals (GTOs) | Efficient computation; product of two GTOs is another GTO. | Standard in most quantum chemistry programs [1] [2]. |

| Plane Waves | Natural periodicity. | Predominantly solid-state and periodic systems [1] [2]. |

| Numerical Atomic Orbitals | Defined on a numerical grid. | Specific methods and codes (e.g., ADF) [1]. |

Basis Set Hierarchies and Nomenclature

Basis sets are organized in hierarchies of increasing size and accuracy, which also lead to higher computational cost [1]. The table below summarizes this progression.

| Basis Set Tier | Example Names | Key Characteristics | Impact on Disk Space & Cost |

|---|---|---|---|

| Minimal | STO-3G, STO-4G | One basis function per atomic orbital. Fastest, least accurate. | Lowest disk usage, suitable for initial scans. |

| Split-Valence | 3-21G, 6-31G, 6-311G | Multiple functions for valence electrons. Good balance of cost/accuracy [3]. | Moderate increase in storage. 6-31G* is a common compromise [3]. |

| Polarized | 6-31G*, 6-31G(d,p) | Adds functions with higher angular momentum (e.g., d, f) [1]. | Significant increase in file sizes for integrals. |

| Diffuse | 6-31+G, 6-311++G | Adds functions with small exponents for "electron tails." Crucial for anions [1] [3]. | Further increases matrix sizes, especially with ++ for all atoms. |

| Correlation-Consistent | cc-pVDZ, cc-pVTZ, cc-pVQZ | Designed for systematic convergence to CBS limit for correlated methods [1] [4]. | High to very high disk usage (e.g., cc-pVQZ can have 400+ functions for acetone) [3]. |

| Augmented Correlation-Consistent | aug-cc-pV5Z | Adds multiple diffuse functions to correlation-consistent sets. | Extremely high disk usage, often for final, high-accuracy single-point calculations. |

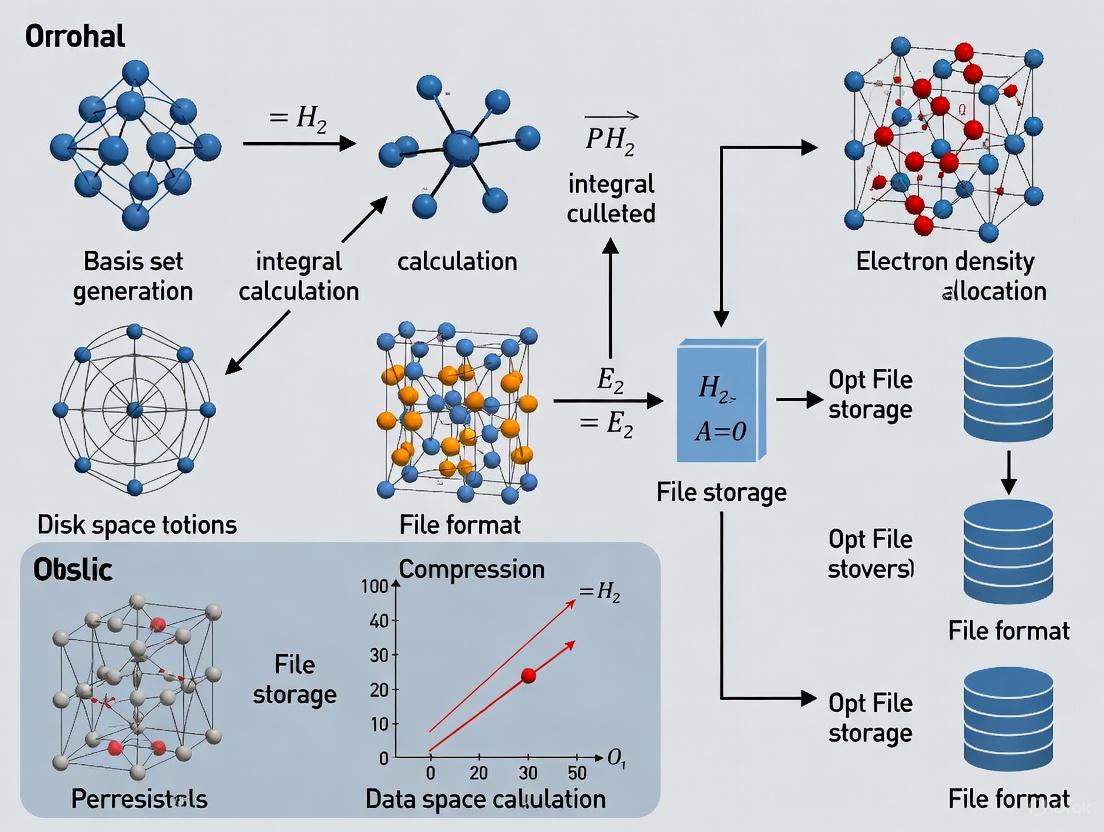

Workflow for Basis Set Selection and Management

The following diagram illustrates a decision workflow for selecting and managing basis sets in a research project, with consideration for managing computational resources.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: What does a basis set name like "6-31G" actually mean?

The notation for Pople-style basis sets is X-YZg. Here, X denotes the number of primitive Gaussians forming each core atomic orbital basis function. The Y and Z indicate that the valence orbitals are composed of two basis functions ("double-zeta"); the first is a linear combination of Y primitive Gaussians, and the second is a linear combination of Z primitives [1]. Asterisks indicate added polarization functions: a single * adds d-type polarization functions to atoms heavier than helium, while ** also adds p-type functions to hydrogen atoms [1] [3].

Q2: My calculation failed with a "disk space" error. How can I proceed?

Disk space issues often arise from large basis sets. The number of two-electron integrals scales roughly with the fourth power of the number of basis functions (N⁴) [5].

- Short-Term Fix: Use a smaller basis set. If you were attempting a calculation with a quintuple-zeta basis, try a triple-zeta basis first.

- Long-Term Strategy: Many computational chemistry packages (e.g., CP2K, Q-Chem) offer integral compression or "direct" methods that compute integrals on-the-fly instead of storing them to disk, at the cost of increased computation time [2]. Activating these options can drastically reduce disk usage.

- Check Your System: Ensure your scratch directory has sufficient free space and that the environment variables pointing to it are correctly set.

Q3: How do I choose the right basis set for my project?

The choice involves balancing accuracy and computational cost [2].

- For initial geometry optimizations: A polarized double-zeta basis set like 6-31G* often provides a good compromise between speed and reasonable accuracy [3].

- For final energy calculations: Use the largest correlation-consistent basis set your resources allow (e.g., cc-pVQZ or aug-cc-pVTZ) [1] [4]. Systematic studies using a sequence like cc-pVDZ → cc-pVTZ → cc-pVQZ allow for extrapolation to the complete basis set (CBS) limit [3].

- For systems with anionic character or weak interactions: Always include diffuse functions (e.g., 6-31+G* or aug-cc-pVDZ) [1] [3].

- For heavy elements: Consider using effective core potentials (ECPs) with matched basis sets like LANL2DZ or SDD, which reduce the number of explicit electrons and basis functions, saving disk space and computation time [3] [4].

Q4: I encountered an error about "mixed relativistic and non-relativistic basis sets." What does this mean?

This error occurs when you use basis sets designed for different theoretical treatments (relativistic vs. non-relativistic) on different atoms within the same molecule. This is common when modeling systems with heavy and light elements [6].

- Solution: Ensure consistency. Use relativistic basis sets (e.g., ANO-RCC) for all atoms, or use a workaround by specifying the basis set for each atom directly in your molecular coordinate (XYZ) input file to override inconsistent defaults [6].

Q5: What is the concrete impact of basis set size on a calculation?

The effect is profound, as shown in this Hartree-Fock data for an acetone molecule [3].

| Basis Set | Number of Basis Functions | Relative Computational Time |

|---|---|---|

| STO-3G | 26 | 0.05 |

| 6-31G | 48 | 0.3 |

| 6-31G* | 72 | 1 (Reference) |

| 6-311G* | 90 | 3 |

| 6-311++G** | 130 | 25 |

| cc-pVTZ | 204 | 82 |

| cc-pVQZ | 400 | 3400 |

As the basis set grows, the number of basis functions increases, leading to a dramatic increase in computational time and disk space required to store intermediate results [3] [5].
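The function counts in the table can be reproduced from per-atom counts. The sketch below is illustrative only: it assumes Cartesian (6d) polarization functions for 6-31G* and spherical (5d) functions for the other sets, and the names `FUNCTIONS_PER_ATOM`, `ACETONE`, and `count_basis_functions` are hypothetical helpers, not part of any package. Under these assumptions the 130-function entry matches the 6-311++G** counts.

```python
# Contracted basis functions per atom for a few common basis sets.
# Assumption: 6-31G* uses Cartesian (6d) polarization functions; the other
# sets use spherical (5d, 7f, ...) functions.
FUNCTIONS_PER_ATOM = {
    "STO-3G":     {"H": 1,  "C": 5,  "O": 5},
    "6-31G":      {"H": 2,  "C": 9,  "O": 9},
    "6-31G*":     {"H": 2,  "C": 15, "O": 15},
    "6-311G*":    {"H": 3,  "C": 18, "O": 18},
    "6-311++G**": {"H": 7,  "C": 22, "O": 22},
    "cc-pVTZ":    {"H": 14, "C": 30, "O": 30},
    "cc-pVQZ":    {"H": 30, "C": 55, "O": 55},
}

# Acetone, C3H6O, expressed as element -> atom count.
ACETONE = {"C": 3, "H": 6, "O": 1}


def count_basis_functions(formula, basis):
    """Sum the per-atom function counts over all atoms in the molecule."""
    per_atom = FUNCTIONS_PER_ATOM[basis]
    return sum(n_atoms * per_atom[element] for element, n_atoms in formula.items())


if __name__ == "__main__":
    for basis in FUNCTIONS_PER_ATOM:
        print(f"{basis:12s} {count_basis_functions(ACETONE, basis):4d}")
```

Running the same count for your own molecule before submitting a job gives a quick first indication of how large the calculation will be.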

Frequently Asked Questions

1. What is a basis set in computational chemistry and why is its choice critical? A basis set is a set of functions used to represent the electronic wave function, turning partial differential equations into algebraic equations suitable for computational implementation [1]. The choice is a critical trade-off between accuracy and computational cost. Using a larger, more accurate basis set increases the number of basis functions, which dramatically increases memory and disk space requirements [7] [8].

2. My calculation with a cc-pVQZ basis set failed due to insufficient disk space. What are my options? This is a common issue with large, extended basis sets. You have several options:

- Use a Smaller Basis Set: For initial calculations, consider using a polarized triple-zeta basis set like 6-311+G(d,p) or cc-pVTZ, which offer a good balance of accuracy and cost [1] [8].

- Leverage Basis Set Extrapolation: Perform calculations with two smaller basis sets (e.g., cc-pVDZ and cc-pVTZ) and extrapolate the results to the complete basis set (CBS) limit. This can provide accuracy comparable to a larger basis set calculation at a reduced cost [8] [9].

- Check System Resources: For Hartree-Fock calculations on a modern computer, limiting the total number of basis functions to less than 600 is a practical guideline for an overnight job [8].

3. When are diffuse functions necessary, and what is their computational impact? Diffuse functions are extended functions with small exponents that provide flexibility to the "tail" portion of atomic orbitals far from the nucleus [1]. They are essential for accurately modeling anions, systems with dipole moments, and weak intermolecular interactions [1] [9]. However, they significantly increase the number of basis functions and can lead to self-consistent field (SCF) convergence difficulties [9]. For weak interactions with triple-zeta basis sets, some studies suggest diffuse functions may be unnecessary if counterpoise correction is applied [9].

4. What is the difference between Pople-style and Dunning-style basis sets?

- Pople-style basis sets (e.g., 6-31G(d), 6-311++G(2df,2pd)) are split-valence sets often denoted as X-YZg. They are generally more efficient for Hartree-Fock and Density Functional Theory calculations [1].

- Dunning-style correlation-consistent basis sets (e.g., cc-pVXZ where X=D, T, Q, 5, 6) are designed to systematically converge post-Hartree-Fock (correlated) calculations to the complete basis set limit. They are typically the preferred choice for high-accuracy wavefunction-based methods [1] [8].

5. How can I manage disk space in very large calculations? For systems with hundreds of atoms, even standard triple-zeta basis sets can require terabytes of disk space [10]. Strategies include:

- Using in-core algorithms when possible, which are much faster and avoid disk I/O bottlenecks [8].

- Exploring hybrid approaches that combine distributed memory and compression techniques, which have been shown to extend the simulatable problem size in large-scale quantum simulations [11].

- Ensuring your storage is thick-provisioned if working in a virtualized environment, as thin provisioning can lead to system failure if the disk runs out of space while expanding [12].

Troubleshooting Guides

Problem: Calculation fails with "No space left on device" or "PSIO Error" during a CCSD or SAPT calculation with a large basis set.

- Explanation: Coupled-cluster (CCSD) and symmetry-adapted perturbation theory (SAPT) methods with large basis sets like cc-pVQZ generate enormous amounts of temporary data. A calculation with 678 orbitals (cc-pVQZ level) can easily exhaust multiple terabytes of disk space [13] [10].

- Solution:

  - Downgrade the Basis Set: Start with a cc-pVTZ or 6-311G* calculation to test the system's resource usage.

  - Use a Reduced System: Perform the calculation on a smaller model system or fragment of your molecule to estimate resource needs.

  - Increase Available Disk Space: If possible, allocate more storage, ensuring it is thick-provisioned to prevent dynamic allocation failures [12].

  - Optimize Software Settings: Consult your software documentation for options to reduce disk usage, such as increasing integral thresholds or using direct methods that recompute integrals instead of storing them.

Problem: Self-consistent field (SCF) calculations fail to converge with augmented basis sets.

- Explanation: The inclusion of diffuse functions (e.g., in 6-31+G* or aug-cc-pVDZ) can cause numerical instability in the SCF procedure, leading to convergence failure [9].

- Solution:

  - Use Minimal Augmentation: For weak interaction calculations with DFT, consider using minimal-augmented basis sets (e.g., ma-TZVPP) which add fewer diffuse functions and reduce BSSE and SCF issues [9].

  - Apply Numerical Stabilization: Use software options like "SCF=QC" (in Gaussian) or increased integral accuracy to aid convergence.

  - Start with a Stable Basis: First converge the SCF with a standard basis set (e.g., 6-31G*), then use the resulting orbitals as an initial guess for the calculation with the diffuse functions.

Basis Set Hierarchy and Resource Guide

The table below summarizes key basis sets, their characteristics, and approximate memory requirements (in megawords, MW) for a Ne atom calculation to help you plan your resources [8].

| Basis Set | Type | Key Characteristics | Approx. Memory for Ne Atom |

|---|---|---|---|

| STO-3G | Minimal | Fastest; 3 Gaussians per Slater-type orbital; poor accuracy [1]. | - |

| 3-21G | Split-Valence | Double-zeta for valence electrons; better than minimal [1] [4]. | - |

| 6-31G(d) | Polarized Double-Zeta | Adds d-type polarization functions to heavy atoms; good for geometry [1]. | 2 MW |

| 6-311+G(d,p) | Polarized Triple-Zeta with Diffuse | Triple-zeta valence, diffuse and polarization functions; good general purpose [1] [4]. | - |

| cc-pVDZ | Correlation-Consistent DZ | Designed for correlated methods; includes polarization [1] [4]. | 2 MW |

| cc-pVTZ | Correlation-Consistent TZ | More functions than cc-pVDZ; improved accuracy [1] [4]. | 3 MW |

| cc-pVQZ | Correlation-Consistent QZ | Higher angular momentum functions; for high accuracy [1] [4]. | 8 MW |

| cc-pV5Z | Correlation-Consistent 5Z | Near-complete basis set accuracy; very expensive [1] [8]. | 48 MW |

| cc-pV6Z | Correlation-Consistent 6Z | For the highest accuracy; extreme computational cost [8]. | 300 MW |

The Scientist's Toolkit: Essential Materials and Reagents

| Item | Function in Computational Experiments |

|---|---|

| Minimal Basis Sets (e.g., STO-3G) | Used for initial molecular structure searches and dynamics on very large systems due to low computational cost [1]. |

| Polarized Double-Zeta Sets (e.g., 6-31G*) | A standard choice for optimizing molecular geometries and calculating vibrational frequencies at the HF or DFT level [1] [8]. |

| Polarized Triple-Zeta Sets (e.g., 6-311+G(d,p), cc-pVTZ) | Used for single-point energy calculations, properties like electron density, and for initiating correlated methods. 6-311+G(d,p) is good for anions [1] [8]. |

| Correlation-Consistent Basis Sets (cc-pVXZ) | The primary choice for high-accuracy post-HF calculations (e.g., CCSD(T)) and for systematic convergence to the CBS limit via extrapolation [1] [9]. |

| Counterpoise (CP) Correction | A procedure to correct for Basis Set Superposition Error (BSSE), which is crucial for accurate calculation of weak interaction energies [9]. |

Experimental Protocol: Basis Set Extrapolation for Weak Interactions

This protocol is adapted from recent research for accurately calculating weak intermolecular interaction energies using a two-point basis set extrapolation, which can reduce the need for costly large basis sets [9].

1. Objective To obtain a highly accurate complete basis set (CBS) limit estimate for density functional theory (DFT) interaction energies using a computationally efficient extrapolation from smaller basis sets.

2. Materials and Computational Methods

- Software: A quantum chemistry package capable of DFT single-point energy calculations (e.g., ORCA, Gaussian, Psi4).

- Functional: B3LYP-D3(BJ) is recommended for its reliable treatment of weak interactions [9].

- Basis Sets: The standard def2-SVP and def2-TZVPP basis sets [9].

- System Preparation: Geometries of the monomer and complex structures.

3. Procedure

- Step 1: Geometry Preparation. Obtain or optimize the geometries of the isolated monomers (A and B) and the complex (AB).

- Step 2: Single-Point Energy Calculations. Perform single-point energy calculations for A, B, and AB using both the def2-SVP and def2-TZVPP basis sets.

- Step 3: Calculate Raw Interaction Energies. For each basis set (X), compute the raw interaction energy: \( \Delta E_{int}^{X} = E_{AB}^{X} - E_{A}^{X} - E_{B}^{X} \)

- Step 4: Apply Extrapolation. Use the exponential-square-root (expsqrt) formula to extrapolate the interaction energy to the CBS limit. The optimized parameter for B3LYP-D3(BJ) is \( \alpha = 5.674 \). \( \Delta E_{int}^{CBS} = \Delta E_{int}^{TZ} - \frac{ \Delta E_{int}^{TZ} - \Delta E_{int}^{DZ} }{ e^{-5.674 \cdot \sqrt{3}} - e^{-5.674 \cdot \sqrt{2}} } \cdot e^{-5.674 \cdot \sqrt{3}} \) (Note: Here, DZ corresponds to def2-SVP and TZ to def2-TZVPP).

4. Analysis and Validation The extrapolated result, \( \Delta E_{int}^{CBS} \), has been shown to be comparable in accuracy to a more expensive CP-corrected calculation with a larger, minimally-augmented basis set (ma-TZVPP) [9]. This protocol significantly reduces computational cost and SCF convergence issues.
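Steps 3 and 4 of the protocol can be sketched in a few lines of Python. This is a minimal illustration of the two-point expsqrt formula as stated above, not code from the cited study; the function names and the default cardinal numbers (2 for def2-SVP, 3 for def2-TZVPP) are assumptions made for the example.

```python
import math

# Parameter quoted in the protocol for B3LYP-D3(BJ).
ALPHA_B3LYP_D3BJ = 5.674


def interaction_energy(e_ab, e_a, e_b):
    """Raw interaction energy: E(AB) - E(A) - E(B), all in the same basis set."""
    return e_ab - e_a - e_b


def expsqrt_cbs(de_dz, de_tz, alpha=ALPHA_B3LYP_D3BJ, x_dz=2, x_tz=3):
    """Two-point exponential-square-root extrapolation to the CBS limit.

    de_dz and de_tz are interaction energies from the double-zeta and
    triple-zeta calculations; x_dz and x_tz are their cardinal numbers.
    """
    w_dz = math.exp(-alpha * math.sqrt(x_dz))
    w_tz = math.exp(-alpha * math.sqrt(x_tz))
    return de_tz - (de_tz - de_dz) / (w_tz - w_dz) * w_tz
```

Both inputs must be in the same energy unit; the result is returned in that unit. Because the formula is linear in the energies, it can be applied either to the interaction energies directly or to each total energy before taking the difference.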

Frequently Asked Questions

FAQ 1: Why do my computational chemistry calculations suddenly require so much more disk space?

The increase in disk space requirements is directly tied to the size of the basis set you are using. In quantum chemistry calculations, the number of two-electron integrals that must be computed and stored scales approximately with the fourth power of the number of basis functions [14]. This means that if you double the number of functions in your basis set, the disk space needed to store the integrals can increase by up to 16 times. This quartic growth is polynomial rather than exponential, but it is steep enough that modest increases in basis set size quickly exhaust available storage.
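The fourth-power scaling can be turned into a rough storage estimate. The sketch below counts symmetry-unique two-electron integrals for real basis functions (eight-fold permutational symmetry) and assumes 8 bytes per stored value. It ignores integral screening, index labels, and program-specific file layouts, so treat the result as an order-of-magnitude guide only; the function names are hypothetical.

```python
def unique_two_electron_integrals(n_basis):
    """Number of symmetry-unique integrals (ij|kl) for real basis functions."""
    pairs = n_basis * (n_basis + 1) // 2   # unique (ij) index pairs
    return pairs * (pairs + 1) // 2        # unique pairs of pairs


def integral_storage_gb(n_basis, bytes_per_integral=8):
    """Approximate storage in GB (10**9 bytes) if every unique integral is kept."""
    return unique_two_electron_integrals(n_basis) * bytes_per_integral / 1e9


if __name__ == "__main__":
    for n in (50, 100, 200, 400):
        print(f"N = {n:4d}: about {integral_storage_gb(n):10.3f} GB")
```

Comparing the estimate for N and 2N shows the ratio approaching 16 as N grows, which is the doubling rule described above.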

FAQ 2: What is the practical difference in resource requirements between a minimal basis set like STO-3G and a larger one like cc-pVQZ?

The difference is substantial. A minimal basis set uses the fewest possible functions to represent atomic orbitals, while a correlation-consistent polarized valence quadruple-zeta (cc-pVQZ) basis set uses a much larger number of functions, including multiple polarization layers [4]. For a first-row atom, the cc-pVQZ basis has significantly more primitives and contracted Gaussian functions than STO-3G. This directly translates to a massive increase in the number of integrals that need to be calculated and stored on disk during a computation.

FAQ 3: Which specific scratch files grow the most, and can I manage their location?

The Read-Write file (.rwf) is typically the largest scratch file and often benefits the most from being placed on a high-capacity, fast storage system [15] [16]. You can control the location of this and other scratch files using Link 0 commands like %RWF=path, %Int=path, and %D2E=path in your Gaussian input file. For very large calculations, you can even split the Read-Write file across multiple disks to mitigate storage bottlenecks on a single filesystem [15].

FAQ 4: Are there alternative methods that can reduce the disk space burden of large basis sets?

Yes, machine learning approaches are emerging as a powerful alternative. Frameworks like the Materials Learning Algorithms (MALA) package are designed to bypass direct Density Functional Theory (DFT) calculations, instead using machine-learned models to predict electronic properties [17]. Since these models do not need to compute and store the vast number of integrals required by traditional methods, they can operate at scales far beyond standard DFT, drastically reducing disk space requirements for large-scale simulations.

Troubleshooting Guide

Problem: Jobs fail due to insufficient disk space in the scratch directory.

Solution: Follow this systematic approach to diagnose and resolve the issue:

- Estimate Disk Needs: Before running a job, check the complexity of your basis set. The Gaussian website provides detailed lists of available basis sets [4]. Understand that moving from a double-zeta to a triple-zeta basis, or adding diffuse and polarization functions, will cause a sharp, non-linear increase in the number of integrals.

- Configure Scratch Directory: Point the `GAUSS_SCRDIR` environment variable at a filesystem with enough free space for the job's scratch files [15].

- Manage Scratch Files in Input:

  - Use the `%RWF` command in your Gaussian input file to explicitly direct the large Read-Write file to a specific, high-capacity disk [15].

  - For massive calculations, use the syntax `%RWF=loc1,size1,loc2,size2,...` to split the file across multiple disks, which can help overcome single-disk capacity limits [15].

- Clean Up Post-Processing: Scratch files are usually deleted after a successful run. However, they can accumulate from jobs that terminate abnormally [16]. Implement a regular cleanup policy for your scratch directory, such as clearing it at system boot time, to prevent wasted space from leftover files.
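A cleanup policy usually starts with finding out what is left behind. The following sketch lists scratch files older than a chosen age so they can be reviewed before deletion. The file suffixes are the Gaussian scratch types discussed in this article; the function name, the seven-day default, and the decision to report rather than delete are assumptions made for illustration.

```python
import os
import time


def find_stale_scratch_files(scratch_dir, max_age_days=7.0,
                             suffixes=(".rwf", ".int", ".d2e", ".skr")):
    """Return (path, size_in_bytes) for scratch files not modified recently.

    Nothing is deleted here: the caller decides what to do with the list.
    Results are sorted with the largest files first.
    """
    cutoff = time.time() - max_age_days * 86400
    stale = []
    for root, _dirs, files in os.walk(scratch_dir):
        for name in files:
            if not name.lower().endswith(suffixes):
                continue
            path = os.path.join(root, name)
            try:
                info = os.stat(path)
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if info.st_mtime < cutoff:
                stale.append((path, info.st_size))
    return sorted(stale, key=lambda item: item[1], reverse=True)
```

A typical call would pass the directory named by `GAUSS_SCRDIR`, for example `find_stale_scratch_files(os.environ["GAUSS_SCRDIR"])`, and print the result for manual review. Make sure no running job still owns a file before removing it.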

Problem: Need to run calculations with large basis sets on systems with limited local storage.

Solution: Utilize Gaussian's file splitting capabilities and consider architectural choices:

- Split Scratch Files: As a best practice, use the `%RWF`, `%Int`, and `%D2E` commands to distribute different scratch files across separate storage devices. This prevents any single disk from becoming a bottleneck and allows you to leverage smaller, faster disks for certain file types [15].

- Leverage High-Performance Computing (HPC) Resources: For production work requiring large basis sets, submit jobs to a cluster or supercomputer. These environments are typically configured with large, fast, and often centralized scratch storage systems (like network-attached storage) that are designed to handle the intensive I/O demands of quantum chemistry software [15].

Basis Set Complexity and Resource Implications

Table 1: Comparison of common basis set types and their general impact on computational resources.

| Basis Set Type | Example(s) | Key Characteristics | Typical Resource Impact (vs. Minimal Basis) |

|---|---|---|---|

| Minimal | STO-3G [4] | Fewest functions per atom. | Baseline (1x). |

| Split-Valence | 3-21G, 6-31G [4] | Different function counts for core vs. valence electrons. | Moderate increase in disk and memory. |

| Polarized | 6-31G(d), 6-31G** [4] | Adds functions with higher angular momentum (d, f orbitals). | Significant increase in number of integrals. |

| Diffuse | 6-31+G, aug-cc-pVDZ [4] | Adds functions for electron-rich regions (anions, lone pairs). | Further increases system size and integral count. |

| High-Zeta Correlation-Consistent | cc-pVTZ, cc-pVQZ, cc-pV5Z [4] | Multiple "zeta" levels and polarization functions for high accuracy. | Steep, high-order polynomial growth in disk space and CPU time; required for many advanced methods. |

Table 2: Scratch files used by Gaussian and their management strategies [15].

| File Type | Typical Filename | Purpose | Management Strategy |

|---|---|---|---|

| Checkpoint | .chk | Stores wavefunction, orbitals, and properties. | Use %Chk to save for post-processing analysis. |

| Read-Write | .rwf | Primary scratch for integrals and intermediate results. | Often the largest file; use %RWF to place on high-capacity storage or split across disks. |

| Integral | .int | Stores two-electron integrals (can be large). | Use %Int to specify an alternate location. |

| Integral Derivative | .d2e | Stores derivative integrals. | Use %D2E to specify an alternate location. |

| Scratch | .skr | General temporary scratch file. | Usually managed automatically by the system. |

Experimental Protocols for Resource Estimation

Protocol 1: Profiling Disk Usage for Different Basis Sets

- System Setup: Configure your Gaussian environment, ensuring `GAUSS_SCRDIR` is set to a monitored scratch directory [15].

- Molecular System Selection: Choose a small, standardized test molecule (e.g., a water molecule).

- Calculation Execution:

  - Run a series of single-point energy calculations on the same molecular geometry.

  - For each calculation, use a different basis set from the following series: STO-3G, 3-21G, 6-31G(d), 6-311+G(d,p), and cc-pVTZ [4].

  - Use the `%RWF=./myjob.rwf` command to give the Read-Write file a predictable name.

- Data Collection: After each job completes, check the output log for the "Final file size" of the Read-Write file or directly note the size of the `myjob.rwf` file before it is deleted.

- Analysis: Plot the disk usage (Read-Write file size) against the number of basis functions for your test molecule. This will visually demonstrate the steep, non-linear growth in storage requirements.
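The calculation series in Protocol 1 is easy to script. The sketch below writes one Gaussian input file per basis set; it is an illustration, not a validated workflow. The water geometry is approximate, the Hartree-Fock method and file names are arbitrary choices, and `make_input`, `safe_name`, and `write_inputs` are hypothetical helpers.

```python
import os

# Basis set series from the protocol.
BASIS_SETS = ["STO-3G", "3-21G", "6-31G(d)", "6-311+G(d,p)", "cc-pVTZ"]

# Approximate water geometry in angstroms (illustrative values only).
WATER_XYZ = """O   0.000000   0.000000   0.117300
H   0.000000   0.757200  -0.469200
H   0.000000  -0.757200  -0.469200"""


def make_input(basis, method="HF", rwf_path="./myjob.rwf"):
    """Build the text of a single-point input: Link 0 line, route section,
    title, charge/multiplicity, geometry, and the terminating blank line."""
    return (
        f"%RWF={rwf_path}\n"
        f"# {method}/{basis} SP\n"
        "\n"
        f"Water single point, {basis}\n"
        "\n"
        "0 1\n"
        f"{WATER_XYZ}\n"
        "\n"
    )


def safe_name(basis):
    """Replace characters that are awkward in file names with underscores."""
    return "".join(c if c.isalnum() else "_" for c in basis)


def write_inputs(directory):
    """Write one .gjf file per basis set and return the list of paths."""
    paths = []
    for basis in BASIS_SETS:
        path = os.path.join(directory, f"water_{safe_name(basis)}.gjf")
        with open(path, "w") as handle:
            handle.write(make_input(basis))
        paths.append(path)
    return paths
```

Because every input uses the same Read-Write file name, run the jobs one at a time and record the file size after each run, or pass a distinct `rwf_path` per job if you prefer to run them concurrently.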

Protocol 2: Implementing a Disk Space Mitigation Strategy

- Problem Identification: Identify a molecule and method (e.g., DFT with a cc-pVQZ basis set) that consistently fails due to lack of disk space on your current system.

- Strategy Formulation:

  - Option A (File Splitting): Modify the input file to use `%RWF=/disk1/job1.rwf,50GB,/disk2/job1.rwf,50GB` to split the Read-Write file across two different storage volumes [15].

  - Option B (Alternative Method): For a suitable system, use the MALA software package. Prepare input data, train a machine learning model to learn the electronic structure, and then use the model for inference on larger systems, monitoring the significantly reduced disk I/O [17].

- Validation: Run the calculation with the mitigation strategy in place and confirm successful completion. Compare the total runtime and result accuracy against the original, failing job (if available) to evaluate the trade-offs.

Research Reagent Solutions

Table 3: Key software and computational tools for managing large-scale calculations.

| Item | Function / Purpose | Reference / Source |

|---|---|---|

| Gaussian 16/09 | Quantum chemistry software package for electronic structure calculations. | [15] [16] |

| Basis Set Library (e.g., BSE) | Provides standardized basis set definitions for accurate and reproducible calculations. | [4] |

| Materials Learning Algorithms (MALA) | A machine learning framework that bypasses direct DFT to predict electronic properties, reducing disk I/O. | [17] |

| Linda Parallel Processing | Facilitates parallel computation across multiple nodes, which can help manage memory and disk load. | [15] |

Frequently Asked Questions

What is the primary trade-off when selecting a basis set? The choice of a basis set is almost always a trade-off between accuracy and computational cost (including CPU time, memory, and disk storage for storing wavefunctions, integrals, and other data) [18]. A larger, more accurate basis set will lead to significantly greater demands on computational resources.

My calculation with a large basis set fails to converge. What should I check? SCF convergence problems with large basis sets are common [19]. First, if your code represents the density on an auxiliary plane-wave or real-space grid (as CP2K does), ensure the plane-wave cutoff energy (or grid spacing) is sufficient. The cutoff must be high enough to accommodate the largest exponent in your basis set; an insufficient cutoff is a frequent cause of convergence failures and incorrect energies [19]. Second, large Gaussian-type orbital (GTO) basis sets can develop linear dependencies, making convergence difficult. Using basis sets designed for numerical stability (like MOLOPT) is recommended for production calculations [19].

When is a frozen core approximation appropriate, and when should I avoid it?

The frozen core approximation is recommended to speed up calculations, especially for heavy elements, and it generally does not significantly impact most results [18]. However, you should use an all-electron basis set (Core None) for:

- Calculations of properties at atomic nuclei [18].

- Calculations using Meta-GGA or Hybrid density functionals [18].

- Geometry optimizations under pressure [18].

How do I choose between different "zeta" levels? The basis set hierarchy, from least to most accurate and costly, is typically: SZ < DZ < DZP < TZP < TZ2P < QZ4P [18]. The table below summarizes common use cases.

| Basis Set | Full Name | Recommended Use Cases | Key Considerations |

|---|---|---|---|

| SZ | Single Zeta | Quick test calculations [18] | Results are often inaccurate [18]. |

| DZ | Double Zeta | Pre-optimization of structures [18] | Lacks polarization; poor for properties that depend on virtual orbitals [18]. |

| DZP | Double Zeta + Polarization | Geometry optimizations of organic systems [18] | Good for energy differences (error cancellation) [18]. |

| TZP | Triple Zeta + Polarization | Recommended default for best performance/accuracy balance [18] | Captures trends in properties like band gaps very well [18]. |

| TZ2P | Triple Zeta + Double Polarization | Accurate calculations; good virtual orbital space description [18] | More computationally demanding than TZP [18]. |

| QZ4P | Quadruple Zeta + Quadruple Polarization | Benchmarking and high-accuracy reference data [18] | Highly computationally intensive [18]. |

Troubleshooting Guides

Problem: Managing Disk Space in Large-Scale Calculations

Issue: Calculations with large basis sets (TZ2P, QZ4P) generate massive amounts of data, quickly exhausting available disk space and causing job failures [20].

Solution Strategy:

- Implement a Quota System: Use a script or your job scheduler to enforce a disk space quota for calculations, preventing any single job from consuming all available space.

- Proactive Monitoring: Set up alerts to trigger when disk usage reaches a critical threshold (e.g., 80%) [20]. This allows you to clean up files or terminate problematic jobs before a crash occurs.

- Selective Archiving: After a job completes successfully, archive only essential output files (e.g., final wavefunctions, converged geometries, and key properties). Delete large temporary files and checkpoints from failed calculations.
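The monitoring step can be prototyped with the Python standard library before wiring it into a scheduler or alerting system. This is a minimal sketch: the 80% default mirrors the example threshold above, and the function names are hypothetical.

```python
import shutil


def disk_usage_fraction(path):
    """Fraction (0.0 to 1.0) of the filesystem containing `path` that is in use."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


def check_scratch(path, threshold=0.80):
    """Return (over_threshold, fraction_used) for the filesystem holding `path`."""
    fraction = disk_usage_fraction(path)
    return fraction >= threshold, fraction


if __name__ == "__main__":
    over, fraction = check_scratch(".")
    status = "WARNING" if over else "ok"
    print(f"{status}: scratch filesystem is {fraction:.0%} full")
```

Run from cron or a job prologue, a check like this gives you time to archive or delete files before a long calculation fails partway through.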

Problem: Basis Set Selection for Target Properties

Issue: Selecting a basis set that is either too large (wasting resources) or too small (producing inaccurate results) for the property of interest.

Solution Strategy: Follow a systematic decision workflow to match the basis set to your research goal.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Purpose |

|---|---|

| TZP Basis Set | The recommended workhorse. Offers the best balance of accuracy and computational cost for a wide range of properties, including geometry optimizations and energy differences [18]. |

| Frozen Core Approximation | A "reagent" to reduce computation time. Keeps core orbitals frozen, significantly speeding up calculations for heavy elements without major accuracy loss for many properties [18]. |

| DZP Basis Set | An efficient choice for initial geometry optimizations, particularly for organic systems, before refining with a larger basis set [18]. |

| MOLOPT Basis Sets | Specially optimized GTO basis sets that constrain the overlap matrix condition number, improving numerical stability and SCF convergence in condensed-phase calculations [19]. |

| All-Electron Basis Set (Core None) | Essential for calculating properties sensitive to the core electron density, such as hyperfine couplings or when using specific density functionals like Meta-GGAs and Hybrids [18]. |

Experimental Protocols & Benchmarking

Protocol: Benchmarking Basis Set Accuracy and Cost

This protocol helps quantify the trade-off between accuracy and cost for your specific system.

- System Selection: Choose a representative, smaller model system that captures the essential chemistry of your larger research target.

- Basis Set Hierarchy: Run a single-point energy calculation on the same geometry using basis sets across the hierarchy: SZ, DZ, DZP, TZP, TZ2P, and QZ4P (the largest set, which serves as the reference).

- Data Collection: For each calculation, record:

- Total Energy: The final SCF energy.

- CPU Time: The total computation time.

- Disk Usage: The size of the output and scratch directories.

- Property of Interest: e.g., HOMO-LUMO gap, reaction energy.

- Data Analysis:

- Use the result from the largest basis set (e.g., QZ4P) as your reference value [18].

- Calculate the absolute error in energy (or your property) for each smaller basis set.

- Plot the error versus the computational cost (CPU time or disk usage) to visualize the trade-off.

The table below shows an example for a (24,24) carbon nanotube [18].

| Basis Set | Energy Error (eV/atom) | CPU Time Ratio |

|---|---|---|

| SZ | 1.8 | 1.0 |

| DZ | 0.46 | 1.5 |

| DZP | 0.16 | 2.5 |

| TZP | 0.048 | 3.8 |

| TZ2P | 0.016 | 6.1 |

| QZ4P | (reference) | 14.3 |
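One way to use such a benchmark is to pick the cheapest basis set that stays within an error tolerance. The sketch below hard-codes the values from the table above; the function name and the tolerance values are illustrative assumptions, and the QZ4P error is set to zero because it is the reference.

```python
# (basis set, energy error in eV/atom, CPU time ratio), copied from the table above.
# QZ4P is the reference calculation, so its error is zero by definition.
BENCHMARK = [
    ("SZ", 1.8, 1.0),
    ("DZ", 0.46, 1.5),
    ("DZP", 0.16, 2.5),
    ("TZP", 0.048, 3.8),
    ("TZ2P", 0.016, 6.1),
    ("QZ4P", 0.0, 14.3),
]


def cheapest_within(tolerance, rows=BENCHMARK):
    """Return the lowest-cost basis set whose error does not exceed `tolerance`."""
    candidates = [row for row in rows if row[1] <= tolerance]
    return min(candidates, key=lambda row: row[2])[0]


if __name__ == "__main__":
    for tol in (0.5, 0.05, 0.02):
        print(f"tolerance {tol} eV/atom -> {cheapest_within(tol)}")
```

The same selection can be repeated with disk usage in place of CPU time once that column has been recorded for your own system.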

Frequently Asked Questions

Why are my matrix files so large, and how can I reduce their size? Your files are likely storing dense matrices, where every single element (including zeros) is written to disk. In computational research, matrices are often sparse, meaning most of their elements are zero. A 25,000 x 48,401 matrix with a sparsity of 99.9% consumes 10 GB as a dense matrix but far less when stored in a sparse format [21]. Switch to a sparse matrix file format like the Matrix Market coordinate format, which only stores the non-zero entries [22].

What is the difference between the Array and Coordinate formats for matrices? The Array Format is for dense matrices and stores every matrix element in column-wise order. The Coordinate Format is for sparse matrices and stores only the non-zero elements, listing their row index, column index, and value for each [22].

How does the choice of integer data type impact my data storage? Choosing an integral data type determines the range of numbers you can store and the amount of disk space or memory required. Using a data type larger than necessary wastes space [23].

| Data Type Size (bits) | Common Name | Unsigned Integer Range | Common Usage |

|---|---|---|---|

| 8 | byte, octet | 0 to 255 | Single characters, small integers |

| 16 | word | 0 to 65,535 | Integers, pointers, UCS-2 characters [23] |

| 32 | doubleword, longword | 0 to 4,294,967,295 | Integers, pointers [23] |

| 64 | quadword, long long | 0 to 1.8e+19 | Large integers, pointers [23] |

How can I quickly estimate the file size of a dense matrix? You can estimate the size using this formula:

File Size (Bytes) = (Number of Rows) × (Number of Columns) × (Size of a Single Data Element in Bytes)

For example, a 50,000 x 50,000 matrix of 8-byte double-precision floating-point numbers would require 50,000 × 50,000 × 8 bytes = 20 GB. Storing it as 4-byte single-precision floats would halve the size to 10 GB.
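The dense estimate is simple enough to script. The sketch below also includes a rough coordinate-storage estimate for comparison; that second function is an assumption about binary storage (one value plus two 4-byte indices per non-zero entry) and does not describe the size of a text file in any particular format.

```python
def dense_matrix_bytes(rows, cols, bytes_per_element=8):
    """Size of a dense matrix: every element is stored, zeros included."""
    return rows * cols * bytes_per_element


def coordinate_bytes(nonzeros, bytes_per_value=8, bytes_per_index=4):
    """Rough binary size of coordinate storage: a value and two indices per non-zero."""
    return nonzeros * (bytes_per_value + 2 * bytes_per_index)


if __name__ == "__main__":
    dense = dense_matrix_bytes(50_000, 50_000)
    print(f"dense double precision: {dense / 1e9:.1f} GB")
    print(f"dense single precision: {dense_matrix_bytes(50_000, 50_000, 4) / 1e9:.1f} GB")
```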

Troubleshooting Guides

Issue: Running Out of Disk Space for Large Matrix Files

Problem: Your computational experiments involving large basis sets are generating matrix files that consume excessive disk space.

Solution: Implement sparse matrix storage. A matrix with a high percentage of zero elements is a candidate for sparse storage. The memory consumption of a matrix with 25,000 documents and 48,401 unique words was reduced from 10 GB to a fraction of that after conversion to a sparse format [21].

Methodology: How to Convert to a Sparse Format

- Determine Sparsity: Calculate the percentage of elements in your matrix that are zero. If sparsity is high (e.g., above 80-90%), sparse storage will be beneficial.

- Choose a Format: Select a standard sparse matrix format. The Matrix Market Coordinate Format is a widely supported, human-readable option [22].

- Write the File:

- The first line is a header (e.g., %%MatrixMarket matrix coordinate real general).

- The second line contains the number of rows, columns, and non-zero entries.

- All subsequent lines each contain one non-zero element's row index, column index, and value [22].

Example: Matrix Market File

This 5x5 matrix has only 8 non-zero entries out of 25 total elements [22].
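A file with this layout can be produced with a short script. The sketch below writes a 5x5 matrix with 8 non-zero entries in coordinate format using only the standard library; the function name and the numerical values are illustrative, and indices are 1-based as the format requires.

```python
def write_matrix_market(path, n_rows, n_cols, entries):
    """Write (row, col, value) triples with 1-based indices in coordinate format."""
    with open(path, "w") as handle:
        # Header line, then the size line: rows, columns, number of non-zeros.
        handle.write("%%MatrixMarket matrix coordinate real general\n")
        handle.write(f"{n_rows} {n_cols} {len(entries)}\n")
        for row, col, value in entries:
            handle.write(f"{row} {col} {value:.6e}\n")


# Illustrative 5x5 matrix: 8 non-zero entries out of 25 elements.
ENTRIES = [
    (1, 1, 1.0), (2, 2, 10.5), (3, 3, 0.015), (1, 4, 6.0),
    (4, 2, 250.5), (4, 4, -280.0), (4, 5, 33.32), (5, 5, 12.0),
]

if __name__ == "__main__":
    write_matrix_market("example.mtx", 5, 5, ENTRIES)
```

For production work, an established library reader and writer is usually preferable to a hand-written one; the point here is only to show how little information the coordinate format needs per entry.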

Issue: Managing Large Checkpoint Files from Long-Running Calculations

Problem: Checkpoint files from quantum chemistry packages (e.g., TURBOMOLE, VASP) are too large for available disk space or difficult to transfer.

Solution: Utilize compression and efficient file formats.

Methodology: A Protocol for Handling Checkpoint Files

- Use Built-in Compression: If your computational software supports it, enable compression for checkpoint files to reduce their size.

- Post-Process Files: After a job completes, compress large checkpoint files using utilities like gzip or tar for archiving. The Matrix Market website notes that most of the data files they distribute are compressed using gzip [22].

- Leverage Sparse Formats: For checkpoint data that is matrix-based (e.g., wavefunctions, density matrices in certain representations), explore if the software can output in a sparse format.

- Estimate Size: Before a large calculation, estimate the potential checkpoint file size. For a matrix-heavy output, use the dense matrix size estimation formula above as a starting point. Be aware that system limits (like ulimit in UNIX) can restrict maximum file sizes [24].

Issue: Selecting the Correct Integer Data Type for Data Storage

Problem: Incorrect integer data type selection leads to wasted disk space or, worse, integer overflow and corrupted data.

Solution: Match the data type to the range of values you need to store.

Methodology: Selecting an Integral Data Type

- Identify Value Range: Determine the minimum and maximum values your data can possess.

- Choose Signed or Unsigned: If all values are positive or zero, use an unsigned integer. If negative values are possible, you must use a signed integer (which uses one bit for the sign, reducing the positive range) [23].

- Select the Smallest Adequate Type: Refer to the data type table above and choose the smallest type that can accommodate your value range.

Example: If you are storing atomic indices in a molecule (e.g., 1 to 10,000), a 16-bit unsigned integer (range 0 to 65,535) is sufficient. Using a 64-bit integer would be inefficient.
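The selection rule can be expressed as a small helper. This is a sketch under the assumption that only the four standard widths (8, 16, 32, 64 bits) are available and that signed values use two's-complement ranges; the function names are illustrative.

```python
def smallest_unsigned_bits(max_value):
    """Smallest standard width (in bits) that holds values from 0 to max_value."""
    for bits in (8, 16, 32, 64):
        if 0 <= max_value <= 2**bits - 1:
            return bits
    raise ValueError("value does not fit in an unsigned 64-bit integer")


def smallest_signed_bits(min_value, max_value):
    """Smallest standard width that holds the range using two's complement."""
    for bits in (8, 16, 32, 64):
        if -(2 ** (bits - 1)) <= min_value and max_value <= 2 ** (bits - 1) - 1:
            return bits
    raise ValueError("range does not fit in a signed 64-bit integer")


if __name__ == "__main__":
    print(smallest_unsigned_bits(10_000), "bits for atomic indices up to 10,000")
```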

The Scientist's Toolkit: Essential Digital Materials

| Item | Function | Relevance to Large Basis Set Calculations |

|---|---|---|

| Sparse Matrix Library (e.g., SciPy) | Provides data structures and algorithms for efficient creation, storage, and manipulation of sparse matrices. | Crucial for handling the large, sparse matrices common in quantum chemistry and materials science simulations without running out of memory or disk space [21]. |

| Matrix Market Format | A simple, human-readable file exchange format for dense and sparse matrices. | An excellent standard for archiving matrix data or transferring it between different research groups and software packages [22]. |

| Harwell-Boeing Format | Another established format for exchanging sparse matrix data, using a fixed-length 80-column format for portability [22]. | A historical and widely recognized format for sparse matrices from scientific computations. |

| Gzip Compression Utility | A standard tool for file compression. | Significantly reduces the size of text-based data files (like matrices and checkpoints) for archiving and transfer [22]. |

| File Size Estimation Script | A custom script to estimate the file size of dense matrices before a calculation runs. | Helps researchers proactively manage disk space and avoid job failures due to a full disk. |

Workflow: Managing Large Numerical Data Files

The following diagram illustrates the decision process for handling large numerical data files effectively.

Practical Strategies for Efficient Basis Set Storage Management

Implementing Smart File Naming Conventions and Scalable Directory Structures

FAQs: Organizing Computational Research Data

What are the core components of an effective file naming convention?

An effective file name is a principal identifier that provides clues about the file's content, status, and version [25]. A robust naming convention includes these key components [26]:

- Project/Experiment Identifier: Clear project name (e.g., CatalysisStudy)

- Descriptive Content: What the file contains (e.g., Frequencies, Optimization)

- Date: In YYYYMMDD format for chronological sorting [26]

- Researcher Initials: Identify responsible team member

- Version Number: Sequential with leading zeros (e.g., v01, v02) [26]

How can proper file naming help manage large basis set calculations?

Strategic file naming provides immediate context about calculation parameters, helping researchers quickly identify relevant data without opening files. This is crucial when managing multiple similar calculations with different basis sets or theoretical methods.

Example: 20231125_Catalysis_FeComplex_cc-pVTZ_CCSD_Freq_v02.log immediately tells you the date, project, molecule, basis set, method, calculation type, and version.

What are the most common file naming mistakes that hinder research productivity?

- Overly complicated names that teams won't consistently use [27]

- Starting names with generic terms like "draft" or "final" [26]

- Using special characters (< > | [ ] & $ + \ / : * ? ") that cause cross-platform issues [27]

- Inconsistent date formats that prevent proper chronological sorting

- Omitting leading zeros in version numbers, breaking numerical order [26]

How should our research group implement a new file naming convention?

- Document standards in a README.txt file within project folders [26]

- Train all team members on the consistent format [27]

- Use batch renaming tools for existing files when possible [25]

- Apply conventions early in file lifecycle, ideally when creators generate files [27]

Troubleshooting Guides

Problem: Cannot Locate Specific Calculation Results

Symptoms: Spending excessive time searching for files; uncertainty about which version is most current; team members working with outdated files.

Diagnosis and Resolution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Verify naming convention adherence | Identify if problem stems from inconsistent naming |

| 2 | Search by core calculation parameters (basis set, method) | Locate files with specific technical attributes |

| 3 | Check date stamps and version numbers | Identify most recent version chronologically |

| 4 | Implement batch renaming for inconsistent files [25] | Apply consistent naming across all relevant files |

Prevention: Establish and document clear naming conventions that all team members follow [26]. Example: YYYYMMDD_Project_Molecule_BasisSet_Method_Type_Researcher_v##.ext
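A naming convention is easier to enforce when names are generated and parsed by code rather than typed by hand. The sketch below follows the example pattern above; the function names, the field names, and the researcher initials in the usage line are hypothetical.

```python
import re

FIELDS = ("date", "project", "molecule", "basis_set", "method",
          "calc_type", "researcher", "version")

# Characters flagged above as causing cross-platform issues, plus whitespace.
FORBIDDEN = re.compile(r'[<>|\[\]&$+\\/:*?"\s]')


def build_name(date, project, molecule, basis_set, method, calc_type,
               researcher, version, ext="log"):
    """Join the fields with underscores; the version gets a leading zero."""
    parts = [date, project, molecule, basis_set, method, calc_type,
             researcher, f"v{version:02d}"]
    for part in parts:
        if "_" in part or FORBIDDEN.search(part):
            raise ValueError(f"field {part!r} contains a separator or special character")
    return "_".join(parts) + "." + ext


def parse_name(filename):
    """Split a conforming file name back into its named fields."""
    stem, _, _ext = filename.rpartition(".")
    return dict(zip(FIELDS, stem.split("_")))


if __name__ == "__main__":
    name = build_name("20231125", "Catalysis", "FeComplex", "cc-pVTZ",
                      "CCSD", "Freq", "JD", 2)
    print(name, parse_name(name)["basis_set"])
```

Note that some basis set names contain characters on the forbidden list (for example `+` or `*` in Pople-style names), so a group adopting this scheme would need to agree on a substitute spelling for those.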

Problem: Disk Space Exhausted by Temporary Calculation Files

Symptoms: Calculations failing due to insufficient disk space; inability to determine which files can be safely archived or deleted.

Diagnosis and Resolution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Identify largest files by file extension and naming pattern | Locate primary space consumers |

| 2 | Check calculation output for completion status | Identify which temporary files can be safely removed |

| 3 | Archive completed calculations with clear naming | Free active disk space while maintaining data integrity |

| 4 | Implement naming that distinguishes active vs. archived work | Quickly identify calculation status from filename |

Prevention: Include status indicators in filenames (e.g., _ACTIVE_, _ARCHIVE_) and establish protocols for regular cleanup of temporary files.

Quantitative Data for Resource Planning

The following table summarizes typical disk space requirements for various molecular systems to assist with resource planning and allocation:

| Molecule | Point Group | Basis Set | Basis Functions | Maximum Disk Usage |

|---|---|---|---|---|

| Propane | C2v | AVQZ' | 480 | 53.0 GB |

| Acetone | C2v | AVQZ' | 500 | 61.5 GB |

| ClNO | Cs | AV5Z+2d1f(Cl) | 402 | 48.7 GB |

| Cyclopropane | C2v | AVQZ' | 420 | 30.9 GB |

| Pyrrole | C2v | AVQZ' | 550 | 89.8 GB |

| Benzene | D2h | AVQZ' | 660 | 96.0 GB |

| Furan | C2v | AVQZ' | 520 | 72.4 GB |

| Calculation Type | Memory Allocation Rule | Notes |

|---|---|---|

| General CCSD | Memory = (Number of basis functions)^4 / 131072 MB | Formula provides rough estimate |

| RHF Reference (CCMAN2) | 50% of general formula | Reduced due to symmetry |

| Forces or Excited States | 2x general formula | Increased memory requirements |

| CCMAN2 Exclusive Node | 75-80% of total available RAM | Optimal performance setting |
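The general CCSD rule from the table can be wrapped in a one-line helper. The divisor is consistent with storing N^4 eight-byte numbers and converting to megabytes (1024 × 1024 / 8 = 131072), although the source gives the formula only as a rough estimate. The `factor` argument is an illustrative way to apply the 50% and 2x adjustments listed in the table.

```python
def ccsd_memory_mb(n_basis, factor=1.0):
    """Rough CCSD memory estimate in MB: factor * N^4 / 131072."""
    return factor * n_basis**4 / 131072


if __name__ == "__main__":
    n = 480  # e.g. the propane entry in the disk usage table
    print(f"general:       {ccsd_memory_mb(n):,.0f} MB")
    print(f"RHF reference: {ccsd_memory_mb(n, 0.5):,.0f} MB")
    print(f"forces/excited: {ccsd_memory_mb(n, 2.0):,.0f} MB")
```

Because the estimate grows with the fourth power of the basis size, doubling the number of basis functions multiplies it by sixteen.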

Experimental Protocols

Protocol: Implementing a Research Group File Naming Convention

Purpose: Establish consistent, searchable, and informative file names across all research projects to improve data location, collaboration, and reproducibility.

Materials:

- Existing research files

- Batch renaming utility software [25]

- Team documentation (README.txt files)

Methodology:

- Inventory existing files to determine current naming patterns and identify gaps [26]

- Design naming convention using the format: YYYYMMDD_Project_Molecule_BasisSet_Method_Researcher_v##.ext

- Document the convention in a README.txt file within project folders [26]

- Train all team members on the new standard with hands-on examples

- Implement batch renaming of existing files using appropriate software tools [25]

- Establish quality control with regular checks for adherence

Expected Outcomes: Reduced time locating files; clearer version control; improved collaboration; easier data archival and retrieval.

Protocol: Managing Disk Space for Large Basis Set Calculations

Purpose: Proactively manage storage resources to prevent calculation failures due to insufficient disk space.

Materials:

- Storage monitoring tools

- Archival system (tape, cloud, or external storage)

- File naming convention system

Methodology:

- Estimate requirements using the formula: (Number of basis functions)^4 / 131072 MB [28]

- Monitor active calculations with clear naming indicating status (_RUNNING_, _COMPLETE_)

- Implement tiered storage: active projects on fast storage, completed work on archival storage

- Establish cleanup schedule for temporary files with naming that distinguishes temporary vs. permanent files

- Use calculation-specific folders with consistent structure across projects

Expected Outcomes: Fewer calculation failures due to disk space; efficient storage allocation; maintained access to important results.

The Scientist's Toolkit: Research Reagent Solutions

Computational Research Essentials

| Item | Function | Specification Guidelines |

|---|---|---|

| Batch Renaming Utility | Mass renaming of inconsistently named files [25] | Supports regular expressions; handles multiple files |

| README Template | Document naming conventions and folder structures [26] | Clear examples; rationale for standards |

| Disk Space Monitor | Track storage allocation in real-time | Alerts when thresholds exceeded |

| Calculation Estimator | Predict disk and memory needs [28] | Based on basis set size and method |

| Archive Manager | System for moving completed calculations to archival storage | Maintains metadata and accessibility |

Directory Structure Visualization

Diagram: Scalable Research Directory Structure

Diagram: File Naming Convention Logic

Diagram: Troubleshooting Workflow for Missing Files

Quantum chemistry calculations, particularly those employing large basis sets, generate substantial volumes of data. Managing the disk space required for these outputs is a critical challenge in computational research. This guide details practical methodologies for using lossless data compression to efficiently manage these files without the risk of data loss, ensuring the original results can be perfectly reconstructed from their compressed state [29].

Frequently Asked Questions (FAQs)

1. What is lossless compression and why is it important for quantum chemistry data? Lossless compression is a class of data compression that allows the original data to be perfectly reconstructed from the compressed data. This is essential for scientific data where every bit of numerical precision must be maintained for results to be valid, unlike lossy compression which sacrifices some data for greater compression [29].

2. Which types of quantum chemistry files are best suited for lossless compression? Text-based output files (e.g., log files containing energies, geometries, and vibrational frequencies) and checkpoints containing wavefunction data typically contain significant statistical redundancy, making them highly compressible. Binary files may also be compressed, though the achieved ratio can be lower.

3. How much disk space can I expect to save?

Savings depend heavily on the file type and content. Text-based output files can often be reduced to 25-40% of their original size (a compression ratio of 2.5:1 to 4:1), while compression ratios for binary files are generally lower. Note that compression affects only disk usage; memory limits are set separately, for example through the $rem variable MEM_TOTAL, which specifies the limit of the total memory the user's job can use [30].

4. Will compressing my output files affect my analysis workflows? Yes, you will need to decompress files before they can be read by standard analysis tools. It is most efficient to incorporate compression and decompression steps into automated scripting workflows rather than performing them manually.

5. What are the most common lossless compression algorithms for this task? Common general-purpose algorithms include DEFLATE (used in ZIP and gzip), LZMA (used in 7zip and xz), and BZIP2. These use a combination of techniques like dictionary-based algorithms (LZ77) and entropy encoding (Huffman coding) to reduce file size without losing information [29].

Troubleshooting Guides

Problem: Low Compression Ratio

Issue: Compressed file size is not significantly smaller than the original.

Diagnosis and Solutions:

- Cause: File is already compressed or contains random data. Some output formats or checkpoint files might already use a compressed format internally.

- Solution: Check the file type. Attempting to compress an already compressed file (e.g., a ZIP file) will not yield further savings. Focus compression efforts on plain text output and log files.

- Cause: The algorithm is not well-suited to the data.

- Solution: Experiment with different compression tools and algorithms (e.g., switch from gzip to xz or 7zip) as they use different methods and can produce varying results on different data types [29].

Problem: "Disk Full" Error During Compression

Issue: The compression process fails due to insufficient disk space.

Diagnosis and Solutions:

- Cause: Many compression utilities need space for both the original and new compressed file.

- Solution: Ensure you have free disk space equivalent to at least the size of the file you are compressing. For large-scale compression, work in batches. Quantum recommends keeping available disk space at 20% or more for optimal system performance [31].

Problem: SCF Convergence Issues with Large Basis Sets

Issue: When using large basis sets (e.g., QZV3P), the Self-Consistent Field (SCF) calculation fails to converge, or converges to an incorrect energy.

Diagnosis and Solutions:

- Cause: Inadequate CUTOFF value for the plane-wave grid. The CUTOFF value must be high enough to accommodate the largest exponent in your large basis set. An insufficient CUTOFF can lead to convergence failures and incorrect energies [32].

- Solution: Calculate the required CUTOFF. Multiply the largest exponent in your basis set by the REL_CUTOFF (default is 40). For example, an oxygen exponent of ~12 in a QZV3P set requires a CUTOFF of ~480 Ry [32].

- Cause: Increased linear dependencies and poor condition number in large Gaussian-type orbital (GTO) basis sets. Larger basis sets have a greater risk of numerical instability [32].
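The CUTOFF rule of thumb described above (largest exponent times REL_CUTOFF) is a single multiplication, shown here as a helper so it can be applied to a whole list of exponents. The function name and the example exponent list are illustrative; only the value of about 12 for oxygen and the default of 40 come from the text.

```python
def required_cutoff(exponents, rel_cutoff=40.0):
    """Estimate the CUTOFF in Ry as the largest exponent times REL_CUTOFF."""
    return max(exponents) * rel_cutoff


if __name__ == "__main__":
    # Hypothetical exponent list; only the largest value matters for the estimate.
    print(required_cutoff([0.3, 2.5, 12.0]), "Ry")
```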

Data Presentation: Compression Tools Comparison

The following table summarizes common lossless compression tools and their key characteristics for easy comparison.

| Tool | Primary Algorithm | Key Features | Best Use Cases |

|---|---|---|---|

| gzip | DEFLATE | Fast compression/decompression, universally available [29] | General-purpose, quick archiving of text-based log files |

| bzip2 | Burrows-Wheeler Transform | Generally higher compression than gzip, slower [29] | Archiving where size is prioritized over speed |

| 7zip / xz | LZMA | Very high compression ratios, slower compression [29] | Long-term storage of large datasets where maximum space savings are critical |

| ZIP | DEFLATE | Ubiquitous support, especially on Windows, can bundle multiple files [29] | Sharing multiple related files (e.g., input, output, and checkpoint files) |

Experimental Protocols

Protocol 1: Method for Benchmarking Compression Efficiency

Objective: To quantitatively evaluate the effectiveness of different lossless compression tools on a set of standard quantum chemistry output files.

Materials:

- A collection of output files from a quantum chemistry calculation (e.g., a Gaussian .log file, a checkpoint file).

- Access to command-line compression tools (gzip, bzip2, xz).

Methodology:

- Preparation: Note the original size (in MB) of each file to be tested.

- Compression: Compress each file using different tools. Example commands:

- gzip -k filename.log

- bzip2 -k filename.log

- xz -k filename.log

- Data Collection: Record the size of each resulting compressed file (e.g., filename.log.gz, filename.log.bz2, filename.log.xz).

- Calculation: For each file-tool combination, calculate the compression ratio and percentage reduction.

- Compression Ratio = Original Size / Compressed Size

- Percentage Reduction = [(Original Size - Compressed Size) / Original Size] * 100

- Analysis: Summarize results in a table to identify the most effective tool for your specific file types.
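The same benchmark can be run from Python, whose standard library includes the gzip, bzip2, and xz algorithms. The sketch below is an approximation of the command-line protocol: library defaults may differ from the command-line tools' defaults, and the sample data is a synthetic repeated line rather than real program output.

```python
import bz2
import gzip
import lzma

COMPRESSORS = {"gzip": gzip.compress, "bzip2": bz2.compress, "xz": lzma.compress}


def benchmark(data):
    """Return compression ratio and percentage reduction for each algorithm."""
    results = {}
    for name, compress in COMPRESSORS.items():
        compressed_size = len(compress(data))
        results[name] = {
            "ratio": len(data) / compressed_size,
            "reduction_pct": (len(data) - compressed_size) / len(data) * 100,
        }
    return results


# Synthetic, highly repetitive stand-in for a text log file.
SAMPLE = b" iteration    energy = -76.026700    delta = 1.0e-08\n" * 2000

if __name__ == "__main__":
    for tool, stats in benchmark(SAMPLE).items():
        print(f"{tool:6s} ratio {stats['ratio']:8.1f}  reduction {stats['reduction_pct']:5.1f}%")
```

To benchmark a real file, read its bytes with `open(path, "rb").read()` and pass them to `benchmark`; real log files will compress far less dramatically than this artificial sample.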

Protocol 2: Workflow for Managing Disk Space in Large-Scale Studies

Objective: To implement a systematic, automated approach for compressing and archiving data from a high-throughput study using large basis sets.

Materials:

- A high-performance computing (HPC) cluster or workstation.

- A batch scripting language (e.g., Bash, Python).

- A chosen compression tool (e.g., xz for high compression).

Methodology:

- Organization: After a calculation is complete, move all output files (log, checkpoint, etc.) for a single job into a dedicated directory named with a unique job identifier.

- Compression Script: Create a script that:

- Iterates over all job directories.

- Uses the chosen tool to compress all suitable files within the directory.

- Logs the compression activity and any errors.

- Data Integrity Check: The script should generate checksums (e.g., SHA-256) for the original files before compression. These checksums should be stored in a manifest file. After decompression in the future, the checksums can be verified to ensure data integrity.

- Cleanup: Once compression and verification are successful, the script can be configured to remove the original, uncompressed files to free up disk space.

- Decompression: For analysis, create a corresponding decompression script that unpacks the files and verifies their checksums against the manifest.
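The compression, integrity-check, and cleanup steps of this protocol might be combined as follows. This is a sketch under several assumptions: the manifest file name `MANIFEST.json`, the list of suffixes treated as compressible, and the function names are all hypothetical, and xz is used via Python's `lzma` module.

```python
import hashlib
import json
import lzma
import shutil
from pathlib import Path


def sha256_of_stream(handle, chunk_size=1 << 20):
    """Hash a binary stream in chunks so large files need little memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: handle.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def compress_job_dir(job_dir, suffixes=(".log", ".out"), remove_original=False):
    """Compress matching files to .xz, verify them, and write a checksum manifest."""
    job_dir = Path(job_dir)
    manifest = {}
    for source in sorted(job_dir.iterdir()):
        if source.suffix not in suffixes:
            continue
        with open(source, "rb") as raw:
            checksum = sha256_of_stream(raw)
        target = source.with_name(source.name + ".xz")
        with open(source, "rb") as raw, lzma.open(target, "wb") as packed:
            shutil.copyfileobj(raw, packed)
        # Decompress and re-hash before anything is deleted.
        with lzma.open(target, "rb") as packed:
            if sha256_of_stream(packed) != checksum:
                raise IOError(f"verification failed for {source}")
        manifest[source.name] = checksum
        if remove_original:
            source.unlink()
    (job_dir / "MANIFEST.json").write_text(json.dumps(manifest, indent=2))
    return manifest
```

Keeping `remove_original=False` as the default means a first run frees no space but also cannot lose data, which is a reasonable way to trial the script on a copy of a job directory.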

Workflow Diagram

The following diagram illustrates the logical workflow for the disk space management protocol described above.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and their functions relevant to managing data from calculations with large basis sets.

| Item | Function / Explanation |

|---|---|

| Basis Set (e.g., QZV3P) | A set of functions (Gaussian-type orbitals) used to represent molecular orbitals; larger sets like QZV3P offer higher accuracy but drastically increase computational cost and output size [32]. |

| SCF Convergence Algorithm (e.g., DIIS, CG) | A mathematical procedure to find a self-consistent solution to the quantum chemical equations; robust algorithms like CG with FULL_KINETIC preconditioner are often needed for stability with large basis sets [32]. |

| CUTOFF / REL_CUTOFF | Parameters defining the planewave grid used to represent the electron density; a sufficiently high CUTOFF (e.g., 480 Ry for QZV3P) is critical for accuracy with large basis sets [32]. |

| Lossless Compression Tool (e.g., XZ) | Software that reduces file size without data loss, essential for archiving the large output and scratch files from correlated methods (e.g., MP2, CCSD(T)) [30] [29]. |

| Checksum (e.g., SHA-256) | A unique digital fingerprint of a file; used to verify data integrity after compression and decompression, ensuring no corruption has occurred [29]. |

Automated Archiving Protocols for Completed Calculations and Interim Results

Frequently Asked Questions (FAQs)

Q: My calculation node has run out of disk space and jobs are failing. What is the first thing I should do?

A: The first step is to use the df -h command to identify which specific partition is full [33]. Once you know the affected partition, use commands like du -h --max-depth=1 | sort -h to find the largest directories and ls -laShr to list files within a directory by size, helping you pinpoint the data consuming the most space [34].

Q: What are the most common causes of disk space exhaustion in computational research?

A: The most common causes are [34]:

- Large result files: The primary output files from your calculations (e.g., checkpoint, wavefunction, or trajectory files) are much larger than anticipated.

- Interim data buildup: Numerous interim results from ab initio molecular dynamics (AIMD) or other sampling methods are retained [35].

- Log and backup files: An accumulation of system logs, application logs, and locally created backup files.

- Failed or old data: Data from old or failed experiments that were not purged.

Q: Is it safe to delete large files from my calculation directories to free up space?

A: You must exercise extreme caution. Never move or delete active datastore files or transaction logs, as this can cause irreversible data corruption [33]. Before deleting any calculation files, ensure you have a verified, archived copy in a separate storage location. If you are unsure about a file's importance, do not delete it [34].

Q: How can I automate the archiving process to prevent future disk space issues?

A: You can use system utilities like cron to schedule regular tasks [33]. A cron job can be configured to automatically run archiving scripts that compress and move completed calculation results and interim data to a long-term storage system, keeping your active workspace clear.

Troubleshooting Guide: High Disk Space Usage

Monitor Disk Usage

Proactive monitoring is key to avoiding emergencies. The following methods can be used:

- Command-Line Monitoring: Regularly run df -h to check disk space usage across all partitions [33] [34].

- Configure Alerts: Set up baseline alerts to send email notifications when available disk space falls below a predefined threshold (e.g., 2 GB) [33].

- Manual Checks: For a standalone system, use the administration UI to check hardware storage statistics [33].

Locate Large Files and Directories

If you receive an alert or a job fails, use these commands to find space-consuming items [34]:

- Identify Large Directories: du -h --max-depth=1 | sort -h

- List Large Files in a Directory: ls -laShr
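Where a scripted report is more convenient than the shell commands, a similar ranking of subdirectories can be produced in Python. This sketch sums apparent file sizes, which can differ from the block-based figures that du reports; the function name is illustrative.

```python
import os


def directory_sizes(root):
    """Return (name, bytes) for each immediate subdirectory, largest first."""
    sizes = {}
    for entry in os.scandir(root):
        if not entry.is_dir(follow_symlinks=False):
            continue
        total = 0
        for dirpath, _dirnames, filenames in os.walk(entry.path):
            for name in filenames:
                try:
                    # lstat avoids following symlinks out of the tree.
                    total += os.lstat(os.path.join(dirpath, name)).st_size
                except OSError:
                    pass  # file vanished or is unreadable; skip it
        sizes[entry.name] = total
    return sorted(sizes.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for name, size in directory_sizes(".")[:10]:
        print(f"{size / 1e9:10.3f} GB  {name}")
```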

Common Root Causes and Solutions

| Root Cause | Description | Solution |

|---|---|---|

| Large Result Files | Primary output files (e.g., from VASP calculations [35]) consuming excessive space. | Implement an automated protocol to archive completed calculations to dedicated storage. |

| Proliferation of Interim Data | Numerous files from AIMD sampling or other intermediate steps [35]. | Script a process to evaluate, compress, and archive interim results based on project phase completion. |

| Log File Accumulation | System and application logs filling the /var/log directory. | Schedule regular log rotation and compaction; safely delete older, non-essential log files [33]. |

| Local Backups | Local backup snapshots of websites or databases consuming space. | Purge unnecessary local backups after confirming successful transfer to a remote archive [34]. |

If the Appliance Runs Out of Space

If a partition reaches 100% capacity:

- Run df -h to confirm the full partition [33].

- If the /usr/tideway (or similar) partition is full, run du /usr/tideway | sort -nr | head -n 30 to find the largest files [33].

- If the datastore partition is full and some space remains, attempt to compact it using the --smallest first option [33].

- If no space remains, the only solution is to allocate a new, larger disk and migrate the data [33].

Experimental Protocol: Automated Archiving Workflow

Objective: To establish a standardized, automated method for archiving completed quantum-mechanical calculations and significant interim results to maintain sufficient disk space on active computation nodes.

Methodology:

Data Classification:

- Completed Calculations: Defined as jobs that have reached the "OPTIMIZED GROUND-STATE GEOMETRY" or other terminal state [35]. All output files are flagged for archiving.

- Interim Results: Data from "ab initio molecular dynamics (AIMD)" trajectories or other sampling methods are evaluated. Only key snapshots and summary statistics are retained for long-term analysis, while raw transient data is purged after a stability confirmation [35].

Archiving Procedure:

- Compression: Identified files are compressed into a single timestamped archive file using tar and gzip or bzip2.

- Transfer: The archive is transferred to a designated long-term storage system (e.g., a network-attached storage or a dedicated data server).

- Verification: A checksum (e.g., MD5) is generated for the archive before and after transfer to ensure data integrity.

- Local Cleanup: Upon successful transfer and verification, the original files are removed from the active computation node.

Automation via Cron:

- The above procedure is scripted (e.g., in a Bash or Python script).

- A cron job is scheduled to execute the script at regular intervals (e.g., daily at 2:00 AM) to ensure continuous disk maintenance [33].
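The archiving procedure above could be scripted roughly as follows. This is a sketch, not a tested production tool: the function names are hypothetical, the "transfer" is a plain file copy to a mounted archive directory (a real setup might use a network transfer instead), and the cron line in the comment uses placeholder paths.

```python
import hashlib
import shutil
import tarfile
import time
from pathlib import Path

# Example crontab entry (placeholder paths) to run a script daily at 2:00 AM:
#   0 2 * * * /usr/bin/python3 /path/to/archive_jobs.py


def file_md5(path, chunk_size=1 << 20):
    """MD5 of a file, read in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def archive_job(job_dir, archive_root, remove_original=False):
    """Tar and gzip a job directory, copy it to the archive, verify, clean up."""
    job_dir = Path(job_dir)
    archive_root = Path(archive_root)
    archive_root.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    local = job_dir.parent / f"{job_dir.name}_{stamp}.tar.gz"
    with tarfile.open(local, "w:gz") as tar:
        tar.add(job_dir, arcname=job_dir.name)
    checksum_before = file_md5(local)
    remote = archive_root / local.name
    shutil.copy2(local, remote)
    if file_md5(remote) != checksum_before:
        raise IOError(f"checksum mismatch after transfer of {local.name}")
    local.unlink()  # the verified copy now lives in the archive
    if remove_original:
        shutil.rmtree(job_dir)
    return remote
```

Originals are deleted only after the checksum of the transferred archive matches, and only when `remove_original=True` is passed explicitly.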

Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |

|---|---|

| cron Scheduler | A Unix-based job scheduler used to automate the execution of scripts at predefined times, essential for running archiving protocols without manual intervention [33]. |

| df -h & du Commands | Command-line utilities for monitoring disk usage (df -h) and identifying the size of files and directories (du), forming the basis of disk space troubleshooting [33] [34]. |

| tar / gzip | Standard Unix utilities for combining multiple files into a single archive file (tar) and compressing it (gzip or bzip2) to save storage space and bandwidth during transfer. |

| Checksum Tool (e.g., md5sum) | A program that generates a unique digital fingerprint (hash) for a file, used to verify that data was transferred without corruption. |

| LASSO Regression | A statistical method (Least Absolute Shrinkage and Selection Operator) that can be used in protocol optimization to automatically identify and retain the most significant data, reducing redundancy [35]. |

Core Concepts of Tiered Storage

Tiered storage is an architectural approach that organizes data across different types of storage media based on specific requirements for performance, cost, availability, and recovery [36]. This method is fundamental to Information Lifecycle Management (ILM), allowing organizations to reduce total storage costs while maintaining compliance and ensuring performance for critical applications [36].

Defining Data by Temperature

- Hot Data: Frequently accessed, mission-critical information that demands fast retrieval speeds, such as active calculation projects, ongoing analysis, or real-time processing data [37] [38]. This data typically resides on high-performance storage like Solid State Drives (SSDs) [37] [38].

- Warm Data: Information accessed occasionally but not daily, such as recently completed calculations or data from a few days prior that may be needed for verification [36]. Warm storage balances accessibility with cost-efficiency [38].

- Cold Data: Rarely accessed information that must be retained for compliance, reference, or potential future analysis, like completed research data, archival records, or raw datasets from concluded experiments [37] [39]. Cold storage prioritizes cost-effectiveness over retrieval speed [37].

Table: Storage Tier Characteristics Comparison

| Characteristic | Hot Storage | Warm Storage | Cold Storage |

|---|---|---|---|

| Access Frequency | Frequent, daily access [37] | Occasional, periodic access [36] | Seldom or never accessed [37] |

| Access Speed | Fast, low latency [37] [38] | Moderate retrieval times [38] | Slow, may take hours or days [37] |

| Storage Media | SSDs, NVMe [40] [38] | HDDs, lower-performance SSDs [40] | Tape, object storage, low-cost HDDs [37] [40] |

| Cost | Higher [37] | Moderate [36] | Lower [37] |

| Use Case Examples | Active calculations, real-time analysis [37] | Recent results, verification data [36] | Archived projects, compliance data [37] |

Implementing Tiered Storage for Computational Research

Tiered Storage Architecture

A multi-tiered storage architecture organizes storage media hierarchically, with the highest performance media at the top (Tier 0/1) and progressively more cost-effective, higher-capacity options at lower tiers [36].

Data Movement Workflow

The following diagram illustrates how data automatically transitions between storage tiers based on access patterns and predefined policies throughout its lifecycle.

Tiered Storage Implementation Methodology

Assessment and Planning Phase

Data Profiling: Analyze current data usage patterns to identify frequently accessed files versus dormant data [40] [41]. Use monitoring tools to track I/O activity and user behavior [40].

Performance Requirements Definition: Identify performance-critical applications that require low latency and high throughput [41]. Categorize computational workloads based on their storage performance needs.

Policy Creation: Establish tiering rules based on business needs and compliance requirements [40]. Define when data should transition between tiers based on access patterns [39].
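The profiling and policy steps above can be prototyped before committing to a storage product. The sketch below is illustrative only: the 30-day and 90-day thresholds and the names `classify_tier` and `profile_directory` are assumptions, and real policy engines typically track access metadata more reliably than file timestamps do.

```python
import time
from pathlib import Path
from typing import Dict, Optional

# Hypothetical cooling periods in days; tune them from observed access patterns.
HOT_MAX_AGE_DAYS = 30
WARM_MAX_AGE_DAYS = 90


def classify_tier(days_since_access: float) -> str:
    """Map the time since last use to a storage temperature."""
    if days_since_access < HOT_MAX_AGE_DAYS:
        return "hot"
    if days_since_access < WARM_MAX_AGE_DAYS:
        return "warm"
    return "cold"


def profile_directory(root: Path, now: Optional[float] = None) -> Dict[str, int]:
    """Return the total number of bytes that would fall into each tier under root."""
    now = time.time() if now is None else now
    totals = {"hot": 0, "warm": 0, "cold": 0}
    for path in root.rglob("*"):
        if path.is_file():
            info = path.stat()
            # Access times may not be updated on filesystems mounted with
            # noatime, so use the more recent of access and modification time.
            last_used = max(info.st_atime, info.st_mtime)
            totals[classify_tier((now - last_used) / 86400)] += info.st_size
    return totals
```

Running `profile_directory` on a project directory gives a first estimate of how much data each tier would need to hold under the chosen thresholds.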

Configuration and Deployment

Storage Tier Configuration: Set up different storage tiers within the storage management system [40]. Integrate with existing computational workflows and research applications.

Automation Setup: Implement policy engines or software-defined storage controllers that track metadata and initiate migrations automatically [40].

Testing and Validation: Verify that tiered storage operates seamlessly without disrupting research workflows [40]. Test data retrieval from cold storage to ensure acceptable performance.

Troubleshooting Guide

Common Issues and Solutions

Table: Tiered Storage Troubleshooting Guide

| Problem | Possible Causes | Solution Steps | Prevention Tips |

|---|---|---|---|

| Poor Tiered Performance | Misaligned tiering policies [40], Filter driver issues [42] | 1. Run Storage Tiers Optimization [43] 2. Verify filter drivers running (fltmc command) [42] 3. Review and adjust tiering policies | Regularly audit tiering rules [40] |

| Files Failing to Tier | Files in use [42], Sync pending [42], Network issues | 1. Check file access status 2. Verify initial upload completion [42] 3. Confirm network connectivity to cloud storage [42] | Ensure proper file closure in applications |

| Failed File Recalls | Network connectivity issues [42], Corrupt reparse points [42] | 1. Check internet connectivity [42] 2. Verify cloud storage accessibility [42] 3. Check event logs for specific error codes | Monitor network stability |

| Unexpected Storage Costs | Excessive data movement [40], Incorrect tier assignment | 1. Review data transition policies 2. Analyze access patterns 3. Adjust cooling periods | Implement centralized monitoring [40] |

Performance Monitoring

- Tiering Activity: Monitor Event IDs 9003, 9016, and 9029 in the Telemetry event log for tiering operations [42]

- Recall Activity: Track Event ID 9005, 9006, 9009, and 9059 for recall operations and reliability metrics [42]

- Capacity Planning: Regularly assess storage utilization and project future needs based on research growth trajectories [41]

Frequently Asked Questions (FAQs)

What is the minimum file size for tiering? The minimum supported file size is based on the file system cluster size (typically double the file system cluster size). For example, if the file system cluster size is 4 KiB, the minimum file size is 8 KiB [42].

How much can I save with tiered storage? Savings depend on the proportion of cold data and on the price gap between tiers. For example, if 80% of your data is cold and you move it from SSD to object storage, you can expect a cost reduction of approximately 70% based on typical cloud pricing [39].
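The arithmetic behind such an estimate can be made explicit. In the sketch below, the function name and the price ratio are assumptions: a 70% saving with 80% cold data corresponds to cold storage priced at about one eighth of hot storage per unit of capacity. Retrieval and data movement fees are ignored.

```python
def tiering_savings(cold_fraction: float, cold_to_hot_price_ratio: float) -> float:
    """Fractional cost reduction from moving the cold share of data to a cheaper tier.

    cold_fraction: share of total data that is cold (0 to 1).
    cold_to_hot_price_ratio: cold-tier price divided by hot-tier price per unit of capacity.
    """
    if not 0.0 <= cold_fraction <= 1.0:
        raise ValueError("cold_fraction must be between 0 and 1")
    if cold_to_hot_price_ratio < 0.0:
        raise ValueError("price ratio must be non-negative")
    # Only the cold share gets cheaper, by a factor of (1 - price ratio).
    return cold_fraction * (1.0 - cold_to_hot_price_ratio)


# 80% cold data, cold tier assumed to cost 1/8 of the hot tier.
print(f"{tiering_savings(0.8, 0.125):.0%}")  # prints 70%
```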

Does tiered storage impact query performance? Modern systems use caching mechanisms where frequently accessed cold data is cached locally after the first access, making subsequent queries nearly as fast as those on hot data [39].

How do I determine the right cooling period for my data? Analyze access patterns over time. Computational research data typically shows a sharp decline in access frequency after 30-90 days, which makes this range a reasonable starting point for an initial policy; adjust it once real access statistics are available.

Can I manually control what data tiers where? Yes, most tiered storage systems allow for manual policy assignment to specific datasets or file types to ensure critical research data remains in appropriate tiers.

Research Reagent Solutions

Table: Essential Storage Solutions for Computational Research

| Solution Type | Example Products/Services | Function | Best For |

|---|---|---|---|

| Hot Storage | Azure Hot Blobs [37], AWS S3 Standard [38], Google Cloud Persistent SSDs [38] | High-performance storage for active calculations | Ongoing basis set computations, real-time analysis |

| Warm Storage | Azure Cool Storage [38], AWS S3 Standard-IA [38] | Cost-effective storage for recently accessed data | Recent research data, verification datasets |

| Cold Storage | Amazon Glacier [37], Google Coldline [38], Azure Archive [38] | Low-cost archival for rarely accessed data | Completed research data, compliance archives |

| Storage Management | Apache Doris [39], Druva [36] | Automated data tiering and lifecycle management | Implementing policy-based storage optimization |

| Monitoring Tools | Built-in telemetry logs [42], Inventory management software [44] | Track storage utilization and access patterns | Capacity planning and performance optimization |

Distributed Storage Solutions for Multi-Node Computational Environments

Troubleshooting Guides

Guide 1: Resolving "No Space Left on Device" in a Distributed Cluster

Problem: A multi-node computational job has failed. The log files and system alerts indicate a "No space left on device" error on several worker nodes.

Diagnosis: This error occurs when the persistent disk or scratch space on one or more cluster nodes reaches full capacity. In computational research, this is frequently caused by large temporary files from basis set calculations, excessive logging, or unchecked data replication within the distributed file system [45] [46].

Solution:

- Identify the Full Disk: Connect to the affected node(s) and run `df -h` to check disk usage across all mount points. Identify which partition is at or near 100% capacity [45].

- Locate Large Files: On the full partition, use the `du` command to find the largest files or directories. For example, run `du /path/to/partition | sort -nr | head -n 30` to list the 30 largest items [45].

- Take Remedial Action:

  - If the /tmp directory is full: Safely delete unnecessary temporary files [45].

  - If log files are the issue: Implement log rotation or archive old logs to a different storage system [45].

  - If datastore files are growing rapidly: Compact the datastore if the system supports it (e.g., using a tool like `tw_ds_compact`). Schedule this compaction regularly to prevent recurrence [45].

- Resize the Disk: If files cannot be deleted, you may need to resize the disk. For a VM, this often involves stopping the instance, increasing the disk size via the cloud provider's console or CLI (e.g., `gcloud compute disks resize`), and then restarting the instance. You may also need to manually resize the file system to utilize the new space [46].
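When the same search has to run on many nodes, or from a job script, the "locate large files" step can also be done from Python. This is a minimal sketch; the function name `largest_files` is hypothetical, and unlike `du` it reports individual files rather than directory totals.

```python
import heapq
import os
from typing import List, Tuple


def largest_files(root: str, count: int = 30) -> List[Tuple[int, str]]:
    """Return up to `count` (size_in_bytes, path) pairs under root, largest first."""
    sizes = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full_path = os.path.join(dirpath, name)
            try:
                # Skip symbolic links so linked targets are not counted twice.
                if not os.path.islink(full_path):
                    sizes.append((os.path.getsize(full_path), full_path))
            except OSError:
                continue  # file vanished or is unreadable; ignore it
    return heapq.nlargest(count, sizes)


if __name__ == "__main__":
    for size, path in largest_files(".", count=10):
        print(f"{size / 1024**2:10.1f} MiB  {path}")
```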

Prevention:

- Implement proactive disk space monitoring and configure alerts for when usage exceeds a defined threshold (e.g., 80%) [45].

- Schedule regular cleanup jobs for temporary directories and implement log rotation policies.

- For distributed file systems like HDFS, monitor data replication levels to prevent unnecessary storage duplication [47].
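A threshold check of the kind described in the first prevention item might look like the sketch below. The function names and the list of mount points are assumptions, and a real deployment would send the warning to a monitoring or alerting system rather than print it. Note that the percentage is computed as used/total, which can differ slightly from the figure `df` reports on filesystems with reserved blocks.

```python
import shutil
from typing import Dict, List


def usage_percent(total: int, used: int) -> float:
    """Percentage of capacity in use; returns 0.0 for a zero-sized filesystem."""
    return 100.0 * used / total if total else 0.0


def mounts_over_threshold(mount_points: List[str],
                          threshold: float = 80.0) -> Dict[str, float]:
    """Return the mount points whose usage is at or above threshold percent."""
    over = {}
    for mount in mount_points:
        usage = shutil.disk_usage(mount)
        percent = usage_percent(usage.total, usage.used)
        if percent >= threshold:
            over[mount] = percent
    return over


if __name__ == "__main__":
    # "/" is only an example; list the scratch and data partitions of the node.
    for mount, percent in mounts_over_threshold(["/"]).items():
        print(f"WARNING: {mount} is {percent:.1f}% full")
```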

Guide 2: Troubleshooting Scratch Disk Space Exhaustion During Parallel Calculations

Problem: A large-scale basis set calculation fails mid-process, and the application logs indicate it ran out of scratch disk space.

Diagnosis: Quantum chemistry codes (e.g., BAND, VASP) often use scratch space to write temporary matrices and other intermediate data. The required scratch space can grow dramatically with the number of basis functions, k-points, and system size [48].

Solution:

- Check Application Logs: Verify that the error is related to scratch disk space and note which directory was used.

- Free Up Immediate Space: Clear other non-essential files from the scratch partition, if possible.

- Increase Available Scratch Space:

  - Add More Nodes: In distributed environments, increasing the number of nodes can distribute the scratch space requirement, as the needed space is often "fully distributed" across the cluster [48].

  - Reconfigure I/O Mode: Some software allows you to change how temporary matrices are written to disk. For instance, setting `Programmer Kmiostoragemode=1` can switch to a "fully distributed" storage mode, which can help manage space usage across nodes [48].

  - Use a Larger Shared Filesystem: If the scratch directory is on a shared network filesystem like NFS, consider upgrading to a larger or more performant distributed file system like Lustre or GlusterFS [49].

Prevention:

- Before running a job, estimate the required scratch space based on the system size and basis set. Monitor scratch space usage during initial test runs.

- Configure your computational software to use a dedicated, large-capacity, and high-performance scratch filesystem.
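A pre-flight estimate can be as simple as the sketch below. It is an illustrative upper bound only: it assumes a conventional calculation that stores every symmetry-unique two-electron integral over N real basis functions as an 8-byte value, which is not how BAND or VASP use scratch space, and codes using direct or density-fitting algorithms need far less. The function names and the safety factor are hypothetical.

```python
import shutil


def unique_two_electron_integrals(n_basis: int) -> int:
    """Number of symmetry-unique two-electron integrals for n_basis real basis functions."""
    pairs = n_basis * (n_basis + 1) // 2  # unique index pairs (mu, nu)
    return pairs * (pairs + 1) // 2       # unique pairs of pairs


def estimated_scratch_bytes(n_basis: int, bytes_per_value: int = 8) -> int:
    """Rough scratch estimate if every unique integral were written to disk."""
    return unique_two_electron_integrals(n_basis) * bytes_per_value


def has_enough_scratch(scratch_dir: str, n_basis: int,
                       safety_factor: float = 1.5) -> bool:
    """Compare free space in scratch_dir with the padded estimate."""
    free = shutil.disk_usage(scratch_dir).free
    return free >= safety_factor * estimated_scratch_bytes(n_basis)


if __name__ == "__main__":
    for n in (100, 500, 1000):
        print(f"{n:5d} basis functions: {estimated_scratch_bytes(n) / 1e9:10.2f} GB")
```

Under these assumptions, 1,000 basis functions already correspond to roughly 1 TB, which illustrates how quickly the requirement grows (approximately as N^4/8) when the basis set is enlarged.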

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary distributed storage patterns, and how do I choose one?

The choice of pattern depends on your data access patterns and consistency requirements, guided by the CAP theorem [47].

| Storage Pattern | Description | Best Use Case |

|---|---|---|

| Data Partitioning/Sharding | Splits a dataset into smaller fragments distributed across multiple nodes [47] [50]. | Horizontal scaling of databases; managing very large datasets that exceed a single node's capacity. |

| Data Replication | Maintains copies of data partitions on multiple nodes [47] [50]. | Ensuring high availability and fault tolerance for critical data. |

| Distributed File Systems | Provides a unified file system interface across multiple storage nodes (e.g., HDFS, CephFS, GlusterFS) [47] [49]. | Storing and processing large files in batch-oriented workloads (HPC, analytics). |

| Object Storage | Manages data as objects in a flat namespace, accessible via APIs (e.g., Amazon S3) [47]. | Storing unstructured data like checkpoints, model files, and general research data. |
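To make the first two patterns in the table concrete, the sketch below assigns a data item to a node by hashing its key (partitioning) and places copies on the following nodes (replication). It is a simplified illustration with hypothetical function names, not the placement algorithm of any particular system; simple modulo placement moves most keys when the node count changes, which is why production systems often use consistent hashing instead.

```python
import hashlib
from typing import List


def shard_for_key(key: str, n_shards: int) -> int:
    """Deterministically map a key (e.g. a file name) to one of n_shards partitions."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % n_shards


def replica_nodes(key: str, nodes: List[str], replication_factor: int = 3) -> List[str]:
    """Pick the primary node by hash, then the next nodes in order as replicas."""
    first = shard_for_key(key, len(nodes))
    count = min(replication_factor, len(nodes))  # cannot exceed the cluster size
    return [nodes[(first + i) % len(nodes)] for i in range(count)]


if __name__ == "__main__":
    cluster = ["node1", "node2", "node3", "node4", "node5"]
    print(replica_nodes("job_0001.chk", cluster))
```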

Storage Pattern Selection

FAQ 2: Which distributed storage solution is best for containerized drug discovery platforms?