Machine Learning vs DFT for Compound Stability Prediction: A Comprehensive Guide for Researchers



Abstract

This article provides a comparative analysis of Machine Learning (ML) and Density Functional Theory (DFT) for predicting compound thermodynamic stability, a critical task in materials science and drug development. We explore the foundational principles of both approaches, detail cutting-edge methodological frameworks like ensemble learning and bond-aware graph networks, and address key challenges such as DFT error correction and model generalizability. By examining validation strategies and performance metrics from recent research, this guide equips scientists with the knowledge to select and optimize computational strategies for accelerated and reliable stability assessment of new compounds, from inorganic materials to pharmaceutical candidates.

Understanding the Computational Battlefield: Core Principles of DFT and ML for Stability

Theoretical Foundation: From Formation Energy to Stability

The primary goal of computational screening in materials and drug discovery is to identify stable compounds. A fundamental metric for this assessment is the decomposition energy (ΔHd), which quantifies the thermodynamic stability of a material relative to competing compounds in its chemical space [1]. The formation energy (ΔHf) measures the energy of a compound relative to its constituent elements. ΔHd, by contrast, is determined by a convex hull construction in formation energy-composition space [1]. A compound with ΔHd ≤ 0 lies on the hull and is thermodynamically stable. A positive value indicates that the compound is unstable or metastable and will tend to decompose into competing phases [1] [2].

This distinction is critical. In the dataset studied in [1], ΔHf values span a wide range (-1.42 ± 0.95 eV/atom), whereas ΔHd operates on a much finer energy scale (0.06 ± 0.12 eV/atom) [1]. This makes stability prediction a subtle problem. A model can predict ΔHf with low error and still perform poorly on ΔHd if it does not capture the relative energy differences within a chemical space with high precision. Accurate prediction of ΔHd is therefore a more rigorous and application-relevant test for computational models [1].
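The following is a minimal sketch of the hull construction for a binary A-B system. It assumes formation energies per atom are already known and that the pure elements (x = 0 and x = 1) sit at 0 eV/atom. It follows the sign convention above: ΔHd is measured against the hull of all *other* phases, so it is negative for a stable compound and equals the energy above the hull for an unstable one. The function names, compound labels, and energies are toy values for illustration, not data from [1].

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull via Andrew's monotone chain, sorted by composition."""
    # Keep only the lowest-energy entry at each composition (e.g. the ground-state polymorph).
    best: Dict[float, float] = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))
    hull: List[Point] = []
    for p in sorted(best.items()):
        # Drop the last vertex while it does not make a counter-clockwise (convex) turn.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError(f"composition {x} lies outside the hull")


def decomposition_energy(entries: Dict[str, Point], name: str) -> float:
    """ΔHd of `name` relative to the hull built from all *other* entries."""
    x, e = entries[name]
    others = [p for n, p in entries.items() if n != name]
    return e - hull_energy(lower_hull(others), x)


# Toy binary A-B system (hypothetical formation energies in eV/atom).
entries = {
    "A": (0.0, 0.0), "B": (1.0, 0.0),
    "A3B": (0.25, -0.20), "AB": (0.50, -0.50), "AB3": (0.75, -0.30),
}
for name in ("A3B", "AB", "AB3"):
    dhd = decomposition_energy(entries, name)
    print(f"{name}: dHd = {dhd:+.3f} eV/atom -> {'stable' if dhd <= 0 else 'unstable'}")
```

With these toy energies, AB and AB3 come out stable (ΔHd ≈ -0.25 and -0.05 eV/atom). A3B lies about 0.05 eV/atom above the hull formed by A and AB.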

Performance Comparison: Machine Learning vs. Density Functional Theory

The following table summarizes the performance of various computational approaches for predicting compound stability, a key application where ΔHd is the target property.

Table 1: Performance Comparison of Stability Prediction Methods

| Method Category | Specific Model / Approach | Key Input Features | Performance on Stability Prediction (ΔHd) | Throughput |
|---|---|---|---|---|
| Compositional ML Models | ElemNet [1] | Elemental stoichiometry only | Poor performance; high rate of false stable predictions [1] | Very high (millions of compounds/day) |
| Compositional ML Models | Magpie [1] [2] | Statistical features from elemental properties (e.g., radius, electronegativity) | Poor performance; struggles in sparse chemical spaces [1] | Very high |
| Compositional ML Models | Roost [1] [2] | Chemical formula treated as a graph of elements | Poor performance; limited by compositional information alone [1] | Very high |
| Advanced ML Models | ECSG (Ensemble Framework) [2] | Electron configuration, elemental properties, and interatomic interactions | High accuracy (AUC = 0.988); high sample efficiency [2] | High |
| Structural ML Models | Structural Model [1] | Crystalline atomic structure | Nonincremental improvement over compositional models; capable of detecting stable materials efficiently [1] | Medium (requires known structure) |
| Traditional Computational | Density Functional Theory (DFT) [1] [2] | Atomic numbers and positions | High accuracy; considered the reference standard but not perfect [1] | Very low (days/weeks for large screens) |

Key Performance Insights

- The Accuracy Gap: ML models, particularly compositional ones, can predict formation energy (ΔHf) with accuracy approaching that of DFT. This does not translate into accurate stability predictions (ΔHd) [1]. The core issue is that ML errors lack the systematic error cancellation that DFT benefits from when comparing the energies of chemically similar compounds [1].

- The Structural Advantage: ML models that incorporate structural information show a "nonincremental improvement" in stability prediction compared to those using composition alone [1]. However, a significant constraint is that the ground-state structure is often unknown for novel compositions [1].

- Ensemble and Novel Frameworks: Recent models like ECSG, which combine multiple knowledge sources (electron configuration, atomic properties, interatomic interactions) through stacked generalization, demonstrate that carefully designed ML models can mitigate inherent biases and achieve high accuracy and sample efficiency [2].

Experimental Protocols for Validation

Benchmarking ML Models for Stability Prediction

A critical protocol for validating any model's utility for materials discovery is its performance on predicting stability via the convex hull construction [1].

- Dataset: Use a large, standardized database of calculated formation energies, such as the Materials Project (85,014 unique compositions used in one study) [1].

- Model Training: Train ML models to predict the formation energy (ΔHf) of compounds. Models can be compositional (e.g., Magpie, Roost, ElemNet) or structural [1].

- Stability Calculation: For a given chemical space (e.g., all compounds containing elements A and B), calculate the predicted ΔHf for all entries. Construct the convex hull from these predicted values.

- Performance Metric: Calculate the decomposition energy (ΔHd) for each compound using the ML-predicted hull. Compare the stability classification (stable/unstable) and the value of ΔHd against the ground-truth values derived from DFT-calculated hulls. Metrics include accuracy of stable identification and mean absolute error in ΔHd [1].
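The metric step can be sketched as follows. The sketch assumes ΔHd has already been computed for each compound from both the ML-predicted hull and the DFT-calculated hull. The function name, the 0.0 eV/atom stability threshold, and the toy values are illustrative assumptions. The false-positive rate is included because a high rate of false stable predictions is the failure mode reported for compositional models [1].

```python
from typing import Dict


def stability_metrics(dhd_true: Dict[str, float], dhd_pred: Dict[str, float],
                      threshold: float = 0.0) -> Dict[str, float]:
    """Compare ML-derived ΔHd with DFT-derived ΔHd (both in eV/atom).

    A compound counts as 'stable' when its ΔHd <= threshold.
    """
    tp = fp = tn = fn = 0
    abs_errors = []
    for name, true_val in dhd_true.items():
        pred_val = dhd_pred[name]
        abs_errors.append(abs(pred_val - true_val))
        true_stable, pred_stable = true_val <= threshold, pred_val <= threshold
        if pred_stable and true_stable:
            tp += 1
        elif pred_stable:
            fp += 1  # predicted stable, actually unstable
        elif true_stable:
            fn += 1  # missed a truly stable compound
        else:
            tn += 1
    n = len(abs_errors)
    return {
        "accuracy": (tp + tn) / n,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
        "mae_dhd": sum(abs_errors) / n,
    }


# Toy ΔHd values in eV/atom, not results from [1].
dft = {"c1": -0.10, "c2": 0.05, "c3": 0.20, "c4": -0.02}
ml = {"c1": -0.05, "c2": -0.01, "c3": 0.15, "c4": 0.03}
print(stability_metrics(dft, ml))
```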

Validation via First-Principles Calculations

For new ML-discovered stable compounds, the definitive validation involves first-principles calculations [2].

- Procedure: Take compositions predicted to be stable by the ML model (e.g., with negative ΔHd). Perform DFT calculations to determine their precise formation energy and atomic structure.

- Hull Construction: Construct a new, definitive convex hull using the DFT-calculated formation energies of the predicted compound and all other known compounds in the same chemical space.

- Success Criterion: The predicted compound is validated if it lies on the DFT-derived convex hull (ΔHd ≤ 0), confirming its thermodynamic stability [2].

Workflow and Relationship Diagrams

Convex Hull Construction for ΔHd

The following diagram illustrates the fundamental process of determining thermodynamic stability from formation energies.

Title: Determining Stability via Convex Hull

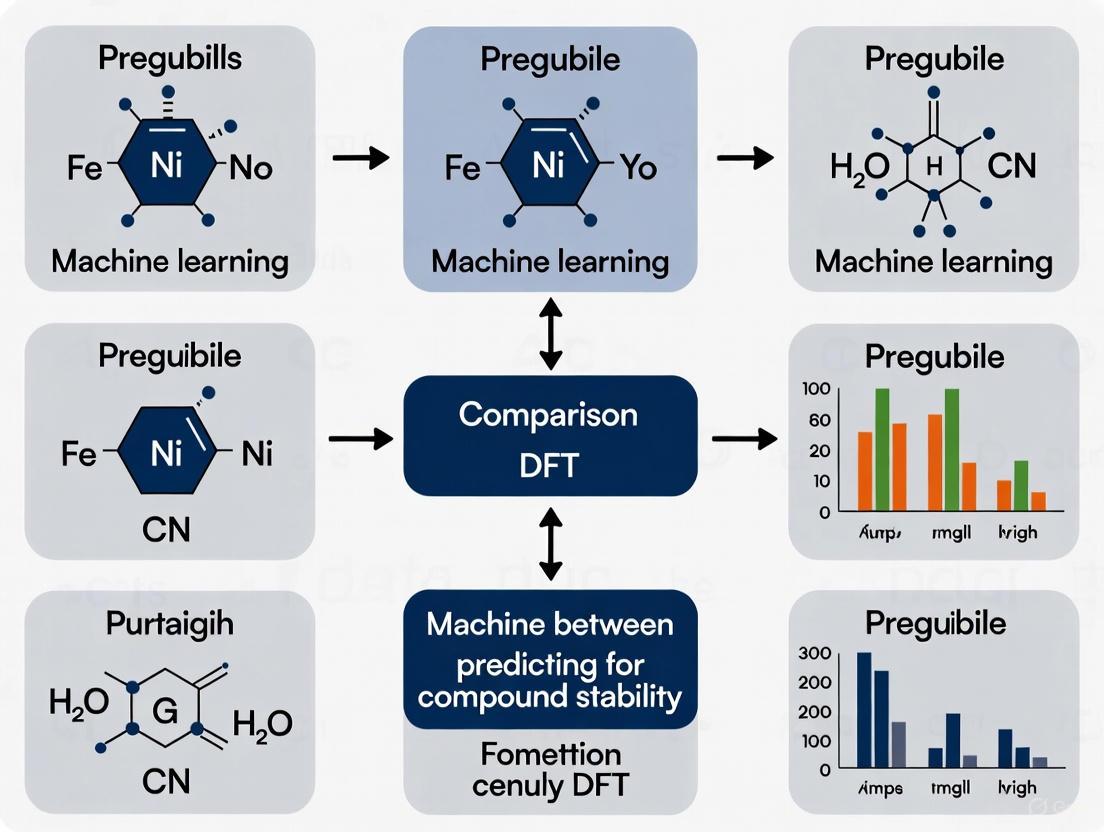

ML vs DFT Workflow for Stability Prediction

This diagram compares the typical workflows for predicting compound stability using Machine Learning and Density Functional Theory.

Title: ML vs DFT Stability Prediction Workflow

The Scientist's Toolkit: Essential Research Solutions

Table 2: Key Resources for Computational Stability Research

| Category | Item / Solution | Function & Application |
|---|---|---|
| Computational Frameworks | Compositional ML Models (e.g., Magpie, Roost, ElemNet) [1] | Predict formation energy and stability from chemical formula alone; useful for initial high-throughput screening. |
| Computational Frameworks | Structural ML Models [1] | Predict formation energy and stability using atomic structure information; higher accuracy but requires a known structure. |
| Computational Frameworks | Ensemble ML Frameworks (e.g., ECSG) [2] | Combine multiple models to reduce inductive bias and improve the accuracy and sample efficiency of stability predictions. |
| Reference Databases | Materials Project (MP) [1] [2] | A vast database of DFT-calculated properties for inorganic compounds, used for training ML models and benchmarking. |
| Reference Databases | Joint Automated Repository for Various Integrated Simulations (JARVIS) [2] | A database incorporating DFT data and ML tools for materials design, used for model training and testing. |
| Validation Software | Density Functional Theory (DFT) Codes | First-principles calculation software used as the gold standard to validate ML-predicted stable compounds [2]. |
| Experimental Platforms | High-Throughput Screening (HTS) Platforms | Automated experimental systems used to physically test the stability or activity of computationally predicted hits [3] [4]. |

In the computational discovery of new materials, density functional theory (DFT) has long served as the foundational workhorse for predicting thermodynamic stability. The convex hull is central to this process. It provides an unambiguous thermodynamic criterion for whether a compound can exist stably or will decompose into other phases. Constructed in formation energy-composition space, the convex hull is the lower convex envelope of the formation energies of all known phases. The phases that lie on it define the ground state of the chemical system. A compound's stability is quantified by its distance to the convex hull (ΔHd), the energy per atom by which the compound lies above or below the hull formed by the other phases. A compound on the hull is thermodynamically stable. Its ΔHd is 0 eV/atom when the hull includes the compound, or negative when it is measured against the hull of the competing phases alone. Positive values indicate instability or metastability.

The critical challenge in computational materials science lies in accurately calculating the formation energies that underpin this convex hull construction. While DFT provides a first-principles approach without empirical parameters, its predictive power faces limitations from systematic errors in exchange-correlation functionals and substantial computational costs. These challenges have motivated the emergence of machine learning (ML) approaches as potential alternatives or supplements. This article provides a detailed comparison of these methodologies, examining their performance in predicting compound stability through the lens of convex hull analysis, with a focus on accuracy, computational efficiency, and practical applicability in research settings.

Methodological Approaches: DFT and Machine Learning Protocols

Density Functional Theory: The Established Benchmark

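DFT calculates formation energies from first principles by solving the Kohn-Sham equations. These are a reformulation of the electronic many-body problem in which an approximate exchange-correlation functional accounts for electron-electron interactions. The standard protocol involves: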

- Total Energy Calculations: Using plane-wave or localized basis sets to compute the total energy of the compound and of its constituent elements in their reference states.

- Formation Energy Calculation: The formation enthalpy (ΔHf) is determined using the equation

$$\Delta H_f = H(\text{compound}) - \sum_i x_i \, H(\text{element } i)$$

where H(compound) is the enthalpy per atom of the compound, x_i is the atomic fraction of element i, and H(element i) is the enthalpy per atom of element i in its standard state [5].

- Convex Hull Construction: After calculating ΔHf for all competing phases in a chemical system, the convex hull is built as the lower envelope of formation energies across compositions. The distance from any phase to this hull defines its decomposition enthalpy (ΔHd) [1].
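The formation-energy step translates directly into code. The sketch below assumes DFT total energies at zero pressure stand in for the enthalpies H. The function name and all energies are placeholders, not real calculations.

```python
from typing import Dict


def formation_energy_per_atom(total_energy: float, composition: Dict[str, float],
                              elemental_refs: Dict[str, float]) -> float:
    """ΔHf per atom = E(compound)/atom - sum_i x_i * E(element i)/atom.

    total_energy   -- total energy of the simulation cell (eV)
    composition    -- number of atoms of each element in that cell
    elemental_refs -- energy per atom of each element in its reference state (eV/atom)
    """
    n_atoms = sum(composition.values())
    e_per_atom = total_energy / n_atoms
    # x_i = n_i / n_atoms is the atomic fraction of element i.
    reference = sum((n / n_atoms) * elemental_refs[el] for el, n in composition.items())
    return e_per_atom - reference


# Placeholder numbers for illustration only; they are not real DFT energies.
refs = {"Al": -3.75, "Ni": -5.50}
dhf = formation_energy_per_atom(-10.0, {"Al": 1, "Ni": 1}, refs)
print(f"{dhf:.3f} eV/atom")
```

Because the result is normalized per atom, doubling the cell and its total energy leaves ΔHf unchanged.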

High-throughput DFT databases like the Materials Project have automated this process, calculating hull distances for thousands of compounds, though these calculations remain computationally intensive, often requiring thousands to millions of CPU hours for comprehensive phase space exploration [1].

Machine Learning Approaches: Emerging Methodologies

Machine learning methods for stability prediction employ diverse strategies, each with distinct protocols:

Compositional Models: These use only the chemical formula as input, with features such as elemental fractions, atomic numbers, and physicochemical properties. They are trained by supervised learning on existing DFT databases. Representative models include Magpie (statistics of elemental properties), ElemNet (deep learning on stoichiometry), and Roost (a graph neural network over the elements in the formula) [1].

Structural Models: These incorporate atomic arrangement information, requiring known crystal structures. They typically demonstrate superior performance but are limited to compositions with pre-determined structures [1].

Hybrid DFT-ML Workflows: These employ ML as a pre-screening tool to identify promising candidates before DFT validation. For instance, in discovering low-work-function perovskites, researchers used ML to screen 23,822 candidates before performing high-precision DFT on a reduced subset, ultimately identifying 27 stable compounds [6].

Error-Correction Models: Some approaches train ML models to predict the discrepancy between DFT-calculated and experimental formation enthalpies. These models utilize neural networks with structured feature sets including elemental concentrations, atomic numbers, and interaction terms [5].
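The sketch below illustrates this error-correction (Δ-learning) idea with a single hypothetical descriptor per alloy. The study in [5] uses a neural network with a richer feature set; here an ordinary least-squares line is swapped in so the example stays self-contained. The enthalpy values are toy numbers, not data from that study.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit y ~ slope * x + intercept for one descriptor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Toy training data (eV/atom): one hypothetical descriptor per alloy.
descriptor = [0.00, 0.25, 0.50, 0.75]
h_dft = [-0.30, -0.45, -0.52, -0.40]
h_exp = [-0.32, -0.445, -0.49, -0.345]

# The model learns the DFT error (experiment minus DFT), not the enthalpy itself.
residuals = [e - d for e, d in zip(h_exp, h_dft)]
slope, intercept = fit_line(descriptor, residuals)


def corrected_enthalpy(h_dft_new: float, x_new: float) -> float:
    """Raw DFT enthalpy plus the learned correction."""
    return h_dft_new + slope * x_new + intercept


print(round(corrected_enthalpy(-0.40, 0.60), 3))
```

The model targets the residual rather than ΔHf. This keeps the correction small and lets the first-principles value carry most of the physics.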

Table 1: Key Machine Learning Model Types for Stability Prediction

| Model Type | Input Data | Advantages | Limitations |
|---|---|---|---|
| Compositional | Chemical formula only | Fast screening of novel compositions | Lower accuracy for stability prediction |
| Structural | Crystal structure | Higher accuracy for known structures | Requires predetermined atomic positions |
| Universal Interatomic Potentials | Atomic coordinates | Transferable across systems | Training is computationally intensive |
| Error-Correction | DFT results + experimental data | Improves DFT accuracy | Limited by experimental data availability |

Comparative Performance Analysis: Accuracy and Computational Efficiency

Formation Energy Prediction Accuracy

The predictive accuracy for formation energies varies significantly between methods:

DFT Performance: Standard DFT calculations with generalized gradient approximation (GGA) functionals typically achieve mean absolute errors (MAE) of 0.06-0.15 eV/atom for formation energies compared to experimental values. This error range becomes particularly significant for stability determination where energy differences between competing phases can be as small as 0.01 eV/atom [5] [1].

Machine Learning Models: Compositional ML models can approach or even surpass DFT-level accuracy for formation energy prediction. Recent benchmarks show MAE values of 0.08-0.12 eV/atom on test sets, comparable to DFT disagreements with experiment. However, this accuracy doesn't necessarily translate to reliable stability predictions [1].

Error-Correction ML: Machine learning approaches that correct DFT errors have demonstrated significant improvements. In one study, a neural network model reduced errors in formation enthalpy predictions for Al-Ni-Pd and Al-Ni-Ti systems, enabling more reliable phase stability determinations [5].

Table 2: Accuracy Comparison for Formation Energy and Stability Prediction

| Method | Formation Energy MAE (eV/atom) | Stability Prediction Accuracy | False Positive Rate |
|---|---|---|---|
| DFT (GGA) | 0.06-0.15 [5] [1] | High (benchmark) | Low |
| Compositional ML | 0.08-0.12 [1] | Variable, often poor [1] | High for some models [1] |
| Structural ML | 0.05-0.10 [1] | Improved over compositional [1] | Moderate |
| Universal Interatomic Potentials | ~0.05 [7] | Highest among ML approaches [7] | Low [7] |
| DFTB | Varies by system [8] | Good for pre-screening [8] | System-dependent |

Computational Efficiency and Resource Requirements

Computational cost represents a critical differentiator between methods:

DFT Calculations: A single DFT calculation for a medium-sized unit cell (50-100 atoms) can require hours to days on high-performance computing clusters, with comprehensive hull construction for a ternary system potentially needing hundreds to thousands of such calculations [8] [5].

DFTB Approach: The Density Functional Tight Binding method, as implemented in DFTB+CASM frameworks, can be up to an order of magnitude faster than DFT for predicting formation energies and convex hulls while maintaining reasonable accuracy for materials like SiC and ZnO [8].

Machine Learning Inference: Once trained, ML models can predict formation energies in milliseconds to seconds, enabling rapid screening of thousands of candidates. These figures, however, exclude the substantial one-time cost of training, which can require extensive datasets and computational resources [1] [7].

Hybrid Workflows: Combined ML-DFT approaches optimize the trade-off between speed and accuracy. For example, in perovskite discovery, ML pre-screening reduced 23,822 candidates to a manageable number for DFT validation, dramatically increasing efficiency [6].

Diagram 1: Hybrid ML-DFT workflow for efficient stability prediction. ML rapidly pre-screens large composition spaces, while DFT provides accurate validation for promising candidates.
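The funnel described in Diagram 1 can be sketched as two filters. `ml_prescreen`, `dft_validate`, the 0.05 eV/atom tolerance, and the DFT budget are hypothetical choices. In practice the two callables would wrap a trained model and a DFT-plus-hull workflow. The tolerance is set slightly above zero because ML errors are larger than many of the ΔHd values being classified.

```python
from typing import Callable, Iterable, List, Optional


def ml_prescreen(candidates: Iterable[str], ml_dhd: Callable[[str], float],
                 threshold: float = 0.05, budget: Optional[int] = None) -> List[str]:
    """Keep candidates whose ML-predicted ΔHd (eV/atom) is <= threshold,
    most stable first, truncated to the available DFT budget."""
    scored = sorted((ml_dhd(c), c) for c in candidates)
    kept = [c for score, c in scored if score <= threshold]
    return kept if budget is None else kept[:budget]


def dft_validate(shortlist: List[str], dft_dhd: Callable[[str], float]) -> List[str]:
    """Confirm candidates whose DFT-derived ΔHd is <= 0 (on the hull)."""
    return [c for c in shortlist if dft_dhd(c) <= 0.0]


# Hypothetical stand-ins for a trained model and a DFT + hull workflow (eV/atom).
ml_scores = {"X1": -0.08, "X2": 0.02, "X3": 0.30, "X4": 0.04, "X5": -0.01}
dft_scores = {"X1": -0.03, "X2": 0.06, "X4": -0.005, "X5": 0.01}

shortlist = ml_prescreen(ml_scores, lambda c: ml_scores[c], threshold=0.05, budget=3)
confirmed = dft_validate(shortlist, lambda c: dft_scores[c])
print(shortlist, confirmed)
```

With these toy scores, the budget of three keeps X1, X5, and X2, and only X1 survives DFT. X4, which DFT would also have confirmed, is lost to the budget cut. This shows the trade-off between DFT cost and recall.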

Stability Prediction Performance

Crucially, accurate formation energy prediction doesn't guarantee reliable stability determination:

The Stability Prediction Challenge: Stability depends on small energy differences between competing phases (ΔHd), typically 1-2 orders of magnitude smaller than formation energies themselves. While ΔHf spans -1.42±0.95 eV/atom on average, ΔHd averages just 0.06±0.12 eV/atom [1].

Error Cancellation in DFT: DFT benefits from systematic error cancellation when comparing chemically similar compounds, making it more reliable for stability prediction than absolute formation energies alone [1].
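The role of error correlation can be quantified with a simple Gaussian error model. This model is an assumption introduced here for illustration, not the analysis of [1]. Each phase's energy error has standard deviation σ and pairwise correlation ρ, and the compound decomposes into products with phase fractions w_j. The predicted ΔHd is then off by a Gaussian error with variance σ²(1 - ρ)(1 + Σ w_j²). Perfectly correlated errors (ρ = 1) therefore cancel exactly, while independent errors (ρ = 0) do not. The function name and parameter values are illustrative.

```python
import math
from typing import Sequence


def misclassification_probability(dhd_true: float, sigma: float,
                                  weights: Sequence[float], rho: float = 0.0) -> float:
    """Probability that energy errors flip the sign of ΔHd (stable <-> unstable).

    Assumed error model: every phase's energy error is Gaussian with standard
    deviation `sigma` (eV/atom) and pairwise correlation `rho` (0 <= rho <= 1);
    `weights` are the phase fractions of the decomposition products (sum to 1).
    The ΔHd error e_c - sum_j w_j e_j then has variance
    sigma^2 * (1 - rho) * (1 + sum_j w_j^2).
    """
    sw2 = sum(w * w for w in weights)
    sigma_eff = sigma * math.sqrt((1.0 - rho) * (1.0 + sw2))
    if sigma_eff == 0.0:
        return 0.0  # perfectly correlated errors cancel exactly
    # P(error pushes ΔHd across zero) = Phi(-|ΔHd| / sigma_eff)
    return 0.5 * math.erfc(abs(dhd_true) / (sigma_eff * math.sqrt(2.0)))


for rho in (0.0, 0.9, 1.0):
    p = misclassification_probability(0.06, 0.10, (0.5, 0.5), rho=rho)
    print(f"rho = {rho}: P(wrong stability label) = {p:.3f}")
```

Consider a compound 0.06 eV/atom above the hull (the mean ΔHd quoted above) that decomposes into two products in equal fractions. An error scale of σ = 0.10 eV/atom flips its stability label with a probability of roughly 31% if the errors are independent. If the errors are 90% correlated, the probability drops to about 6%.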

ML Limitations: Compositional ML models exhibit high false-positive rates, incorrectly predicting many unstable compounds as stable. This impedes their direct use for materials discovery without DFT verification [1] [7].

Universal Interatomic Potentials: Among ML approaches, universal interatomic potentials (UIPs) have shown the most promise for stability prediction, outperforming compositional and structural models in recent benchmarks [7].

Research Reagent Solutions: Computational Tools for Stability Prediction

Table 3: Essential Computational Tools for Stability Prediction

| Tool/Software | Type | Primary Function | Application Context |
|---|---|---|---|
| CASM | Software package | Clusters Approach to Statistical Mechanics | Automated construction of cluster expansions and phase diagrams [8] |
| DFTB+ | Software package | Density Functional Tight Binding calculations | Accelerated formation energy calculations [8] |
| EMTO-CPA | DFT code | Exact Muffin-Tin Orbitals with Coherent Potential Approximation | Total energy calculations for disordered alloys [5] |
| Matbench Discovery | Benchmarking framework | Evaluation platform for ML energy models | Standardized comparison of stability prediction methods [7] |
| Universal Interatomic Potentials | ML force fields | Interatomic potentials with broad element coverage | Structure relaxation and energy estimation across diverse chemistries [7] |

DFT remains the indispensable workhorse for reliable convex hull construction and thermodynamic stability prediction, particularly for its systematic error cancellation when comparing similar compounds. However, its computational expense limits comprehensive phase space exploration. Machine learning approaches, especially compositional models, show impressive formation energy prediction capabilities but face challenges with stability determination accuracy due to the subtle energy differences involved.

The most promising path forward lies in hybrid methodologies that leverage the respective strengths of both approaches. ML models excel at rapid screening of vast composition spaces, while DFT provides quantitative validation for promising candidates. Universal interatomic potentials represent particularly exciting developments, approaching the accuracy of DFT for structure relaxation and energy estimation at dramatically reduced computational cost.

As benchmarking frameworks like Matbench Discovery continue to standardize evaluation, and ML models incorporate more physical information, the synergy between machine learning and first-principles calculations will likely accelerate, enabling more efficient and accurate discovery of novel stable materials for technological applications.

Diagram 2: Taxonomy of computational methods for material stability prediction, showing the relationship between DFT, machine learning, and hybrid approaches.

The prediction of compound stability, a cornerstone of materials science and drug design, is undergoing a fundamental transformation. For decades, density functional theory (DFT) has served as the primary computational tool for determining material stability from quantum mechanical principles. While DFT has achieved notable successes—predicting properties like equilibrium volumes, elastic constants, and structural stability—its intrinsic energy resolution errors often limit predictive accuracy for critical applications such as formation enthalpies and phase stability, particularly in complex multi-element systems [5].

The emerging paradigm uses machine learning (ML) to build surrogate models that learn the relationship between a material's composition or structure and its properties from existing data. In favorable cases these models approach the accuracy of first-principles methods at a fraction of the computational cost. This shift from solving physical equations to learning patterns from data is a fundamental change in how computational prediction is approached. It enables rapid screening of vast chemical spaces that were previously inaccessible [9] [10].

Fundamental Comparison: DFT Versus ML Approaches

Core Principles and Methodologies

Density Functional Theory (DFT) operates from first principles, solving the Kohn-Sham equations to determine the electron density and total energy of a system. Apart from the choice of exchange-correlation functional, it requires no experimental input and provides a general description of electronic structure. This generality comes at significant computational expense: the cost of conventional Kohn-Sham DFT scales approximately as O(N³) with the number of electrons N [5] [11].
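A quick worked example shows what cubic scaling implies. It assumes the prefactor is unchanged and the electron count grows in proportion to the number of atoms, a simplification that ignores k-point sampling and basis-set details:

$$
t(N) \propto N^{3} \quad\Longrightarrow\quad \frac{t(2N)}{t(N)} = 2^{3} = 8,
\qquad \frac{t(100\ \text{atoms})}{t(50\ \text{atoms})} \approx 8
$$

By the same reasoning, a tenfold larger cell costs roughly 10³ = 1000 times more. This is why large-cell or exhaustive phase-space DFT studies quickly become impractical.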

Machine Learning (ML) for stability prediction employs statistical models trained on existing data (either experimental or computational) to identify patterns connecting compositional/structural features to stability. Unlike DFT, ML methods are empirically calibrated, with accuracy dependent on the quality and representativeness of training data. Their computational cost is primarily concentrated in the training phase, while prediction for new compounds is extremely fast [9] [12].

Table 1: Fundamental Comparison Between DFT and ML Approaches

| Aspect | Density Functional Theory (DFT) | Machine Learning (ML) |
|---|---|---|
| Theoretical Basis | Quantum mechanics principles | Statistical pattern recognition |
| Computational Scaling | O(N³) with the number of electrons | After training, roughly constant per compound (compositional models) or linear in atom count (structure-based models) |
| Data Requirements | None beyond fundamental constants | Large datasets of known compounds |
| Transferability | Universal in principle | Domain-dependent |
| Accuracy Limitations | Exchange-correlation functional error | Training data quality and coverage |
| Typical Applications | Detailed electronic structure analysis, small systems | High-throughput screening, large chemical spaces |

Performance Comparison: Accuracy and Efficiency

Recent studies directly comparing DFT and ML performance reveal a complex landscape where each approach excels in different regimes. For the prediction of MAX phase stability, ML classifiers including Random Forest (RFC), Support Vector Machine (SVM), and Gradient Boosting Tree (GBT) demonstrated remarkable efficiency, screening 4,347 potential MAX phases to identify 190 promising candidates. Subsequent DFT validation confirmed that 150 of these ML-predicted phases met thermodynamic and intrinsic stability criteria, representing a 79% success rate for the ML pre-screening [9].

In alloy thermodynamics, ML corrections to DFT have significantly improved accuracy. A neural network approach reduced errors in DFT-calculated formation enthalpies by systematically learning the discrepancy between DFT calculations and experimental measurements for binary and ternary alloys. The model was a multi-layer perceptron (MLP) regressor with three hidden layers, tuned with leave-one-out cross-validation to guard against overfitting [5].

Table 2: Quantitative Performance Comparison for Stability Prediction

| Method | Computational Time | Accuracy | Throughput | Key Limitations |
|---|---|---|---|---|
| DFT (Standard) | Hours to days per compound | ~80-90% for simple systems | Low (1-10 compounds/day) | Systematic functional errors |
| DFT with ML Correction | Minutes to hours + training | ~90-95% for trained systems | Medium (10-100 compounds/day) | Domain transfer requires retraining |
| Pure ML (Random Forest) | Seconds after training | ~85-92% for similar chemistry | High (1,000+ compounds/day) | Limited extrapolation capability |
| Pure ML (Neural Network) | Seconds after training | ~88-95% for similar chemistry | High (1,000+ compounds/day) | Large training data requirements |

Experimental Protocols and Methodologies

Machine Learning Workflow for Stability Prediction

The following diagram illustrates the comprehensive workflow for ML-assisted stability prediction, highlighting the iterative process of model development and validation:

Data Curation and Feature Engineering

The foundation of any successful ML model is high-quality, curated data. For MAX phase stability prediction, researchers compiled a dataset of 1,804 known MAX phase combinations with their stability labels, drawing from literature and experimental studies. Feature selection included elemental descriptors (electronegativity, atomic radius, valence electron count) and structural descriptors (lattice parameters, bonding characteristics) [9].

For alloy stability, the feature set typically includes elemental concentrations, weighted atomic numbers, and interaction terms to capture chemical complexity. As demonstrated in high-entropy alloy research, optimal descriptors often combine microstructure-based features (nearest-neighbor compositions, Voronoi volumes) with electronic-structure-based features (electrostatic potential, d-band center, Bader charges) to achieve the highest prediction accuracy [12].
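Composition-based descriptors of this kind are typically fraction-weighted statistics of elemental properties, in the spirit of Magpie. The sketch below computes three such statistics for one property, Pauling electronegativity, of Ti₂SnN. The choice of statistics and the simple formula parser (no parentheses or hydrates) are illustrative assumptions, not the descriptor set used in [9].

```python
import re
from typing import Dict

# Pauling electronegativities for the elements in the example.
PAULING_EN = {"Ti": 1.54, "Sn": 1.96, "N": 3.04}


def parse_formula(formula: str) -> Dict[str, float]:
    """Parse a simple formula such as 'Ti2SnN' into element counts."""
    counts: Dict[str, float] = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    return counts


def weighted_stats(formula: str, prop: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted mean, average absolute deviation, and range of a property."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fractions = {el: n / total for el, n in counts.items()}
    mean = sum(f * prop[el] for el, f in fractions.items())
    avg_dev = sum(f * abs(prop[el] - mean) for el, f in fractions.items())
    values = [prop[el] for el in counts]
    return {"mean": mean, "avg_dev": avg_dev, "range": max(values) - min(values)}


print(weighted_stats("Ti2SnN", PAULING_EN))
```

Repeating this over many elemental properties (radius, valence electron count, and so on) gives a fixed-length feature vector for any formula. Compositional models consume exactly this kind of vector.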

Model Training and Validation Protocols

The ML pipeline employs rigorous validation to assess generalizability. For alloy formation enthalpy prediction, researchers used both leave-one-out cross-validation (LOOCV) and k-fold cross-validation to detect overfitting. The neural network was a multi-layer perceptron (MLP) with three hidden layers, with hyperparameters optimized through systematic search [5].
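The k-fold loop can be sketched in pure Python; setting k to the number of samples gives LOOCV. `fit_mean` is a hypothetical placeholder for the MLP regressor. Any function that takes training data and returns a predictor can be passed to `cross_val_mae`. The data are toy values.

```python
import random
from typing import Callable, List, Sequence, Tuple

Predictor = Callable[[Sequence[float]], float]


def kfold_indices(n: int, k: int, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Shuffle range(n) and split it into k folds; k == n gives leave-one-out CV."""
    order = list(range(n))
    random.Random(seed).shuffle(order)
    folds = []
    for i in range(k):
        test = order[i::k]  # every k-th shuffled index forms one held-out fold
        held_out = set(test)
        train = [j for j in order if j not in held_out]
        folds.append((train, test))
    return folds


def cross_val_mae(X: Sequence[Sequence[float]], y: Sequence[float],
                  fit: Callable[[List[Sequence[float]], List[float]], Predictor],
                  k: int) -> float:
    """Mean absolute error on held-out folds; `fit` trains on a fold and returns a predictor."""
    errors: List[float] = []
    for train, test in kfold_indices(len(y), k):
        predict = fit([X[i] for i in train], [y[i] for i in train])
        errors.extend(abs(predict(X[i]) - y[i]) for i in test)
    return sum(errors) / len(errors)


def fit_mean(X_train: List[Sequence[float]], y_train: List[float]) -> Predictor:
    """Hypothetical placeholder model: always predicts the training-set mean."""
    mu = sum(y_train) / len(y_train)
    return lambda x: mu


# Toy features and targets.
X = [[0.1], [0.2], [0.3], [0.4]]
y = [1.0, 2.0, 3.0, 4.0]
print(cross_val_mae(X, y, fit_mean, k=len(y)))  # k == n: LOOCV
```

Comparing the held-out error with the training error exposes overfitting. Repeating the loop over hyperparameter settings gives the systematic search mentioned above.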

For MAX phase screening, multiple classifier types including Random Forest (RFC), Support Vector Machine (SVM), and Gradient Boosting Tree (GBT) were trained and compared. The models were evaluated using standard classification metrics (precision, recall, F1-score) with the best-performing model deployed for large-scale screening [9].

DFT Validation Protocols

Despite the rise of ML methods, DFT remains the validation standard for ML predictions due to its first-principles nature. For the 190 ML-predicted MAX phases, researchers performed full DFT calculations to verify thermodynamic and mechanical stability through formation energy calculations, elastic constant analysis, and phonon dispersion calculations [9].

The DFT workflow typically involves:

- Geometry optimization to find equilibrium structures

- Formation energy calculation relative to elemental phases

- Mechanical stability assessment via elastic constants

- Dynamic stability verification through phonon calculations

This comprehensive validation ensures that ML predictions satisfy fundamental physical constraints beyond statistical correlations.
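MAX phases crystallize in the hexagonal space group P6₃/mmc, so the mechanical-stability step can be expressed with the Born criteria for hexagonal symmetry. These are C11 > |C12|, 2C13² < C33(C11 + C12), C44 > 0, and C66 = (C11 - C12)/2 > 0. The sketch assumes the five independent elastic constants have already been computed with DFT. The function name and the numbers are placeholders, not values for any particular MAX phase.

```python
def born_stable_hexagonal(c11: float, c12: float, c13: float,
                          c33: float, c44: float) -> bool:
    """Born mechanical stability criteria for a hexagonal crystal (constants in GPa)."""
    c66 = 0.5 * (c11 - c12)
    return (
        c11 > abs(c12)
        and 2.0 * c13 ** 2 < c33 * (c11 + c12)
        and c44 > 0.0
        and c66 > 0.0
    )


# Hypothetical elastic constants (GPa).
print(born_stable_hexagonal(300.0, 80.0, 90.0, 280.0, 120.0))  # True
print(born_stable_hexagonal(300.0, 80.0, 90.0, 280.0, -5.0))   # False: C44 <= 0
```

This check covers only mechanical stability. Thermodynamic stability still comes from the hull, and dynamic stability comes from the absence of imaginary phonon modes.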

Case Studies: Experimental Validation and Performance

MAX Phase Discovery with ML Guidance

A landmark demonstration of the ML paradigm emerged from the discovery of Ti₂SnN, a previously unreported MAX phase. The research workflow began with ML screening of 4,347 potential MAX phase combinations, identifying 190 promising candidates. Subsequent DFT calculations verified that 150 possessed both thermodynamic and mechanical stability. From these, Ti₂SnN was selected for experimental synthesis, successfully produced through Lewis acid substitution reactions at 750°C [9].

This case exemplifies the power of the ML-DFT partnership. ML rapidly identified promising candidates from a vast chemical space, DFT provided rigorous physical validation, and experimental synthesis confirmed the prediction. The workflow concentrated experimental effort on a pre-validated target instead of relying on trial-and-error synthesis across thousands of possible compositions.

Alloy Thermodynamics with ML-Corrected DFT

In alloy systems, researchers have developed hybrid approaches that leverage the strengths of both methods. A neural network was trained to predict the discrepancy between DFT-calculated and experimentally measured formation enthalpies for binary and ternary alloys. When applied to Al-Ni-Pd and Al-Ni-Ti systems—important for high-temperature aerospace applications—the ML-corrected DFT showed significantly improved agreement with experimental phase diagrams compared to raw DFT calculations [5].

The success of this approach highlights that systematic DFT errors often follow recognizable patterns that ML can learn and correct, providing accuracy approaching experimental measurements while maintaining the generality of first-principles methods.

High-Entropy Alloy Descriptor Optimization

For complex multi-component systems like high-entropy alloys (HEAs), descriptor selection becomes critical. Research on C- or N-doped VNbMoTaWTiAl₀.5 HEAs systematically evaluated six types of microstructure-based descriptors and seven types of electronic-structure-based descriptors. Using linear regression with leave-one-out cross-validation, the optimal descriptor combinations achieved a cross-validated coefficient of determination (Q²) of 75% for C doping stability and 80% for N doping stability [12].
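Q² is the leave-one-out analogue of R²: Q² = 1 - PRESS/SS_tot. PRESS sums the squared errors of the predictions made for each sample by a model fitted without that sample. The sketch below is a single-descriptor version using ordinary least squares. The study in [12] fitted combinations of descriptors, and the data here are toy values.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit y ~ slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def loo_q2(x: Sequence[float], y: Sequence[float]) -> float:
    """Q^2 = 1 - PRESS / SS_tot, with PRESS from leave-one-out predictions."""
    n = len(y)
    press = 0.0
    for i in range(n):
        # Refit without sample i, then predict it.
        x_tr = [x[j] for j in range(n) if j != i]
        y_tr = [y[j] for j in range(n) if j != i]
        slope, intercept = fit_line(x_tr, y_tr)
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    y_mean = sum(y) / n
    ss_tot = sum((yi - y_mean) ** 2 for yi in y)
    return 1.0 - press / ss_tot


# Toy descriptor values and doping energies (arbitrary units).
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [1.1, 1.9, 3.2, 3.8, 5.1, 6.0]
print(f"Q2 = {loo_q2(x, y):.3f}")
```

Unlike R² on the training data, Q² can fall sharply, even below zero, when a descriptor fits the training points but fails to predict held-out ones. This makes it a stricter basis for comparing descriptor sets.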

This study demonstrated that no single descriptor adequately captures doping stability; instead, combinations of descriptors representing different aspects of the local chemical environment are necessary for accurate predictions.

Table 3: Case Study Performance Summary

| Case Study | ML Method | Dataset Size | Prediction Accuracy | Validation / Outcome |
|---|---|---|---|---|
| MAX Phase Screening | Random Forest Classifier | 1,804 training compounds | 79% success rate (150/190) | Ti₂SnN successfully synthesized |
| Alloy Enthalpy Correction | Neural Network (MLP) | Binary/ternary alloy datasets | Significant improvement over raw DFT | Improved phase diagram agreement |
| HEA Dopant Stability | Linear Regression + Feature Selection | DFT-calculated doping energies | Q² = 75-80% (cross-validated) | Physically interpretable descriptors |

Implementing ML-driven stability prediction requires both computational tools and conceptual frameworks. The following resources represent essential components of the modern computational materials scientist's toolkit:

Computational Infrastructure and Software

- DFT Packages (VASP, CASTEP): First-principles electronic structure codes for generating training data and validating ML predictions [11] [12]

- ML Libraries (scikit-learn, TensorFlow): Open-source machine learning frameworks for implementing classifiers and regression models

- Descriptor Generation Tools (pymatgen and its ChemEnv module): Software for calculating structural and chemical descriptors from atomic coordinates [12]

- High-Throughput Calculation Infrastructure: Automated workflow systems for managing thousands of concurrent DFT and ML calculations

Methodological Frameworks

- Genetic Algorithms for Feature Selection: Evolutionary approaches for identifying optimal descriptor combinations from large candidate pools [13]

- Cross-Validation Protocols (LOOCV, k-fold): Robust validation techniques to prevent overfitting and ensure model generalizability [5]

- Uncertainty Quantification Methods: Techniques for estimating prediction reliability and domain of applicability

- Transfer Learning Approaches: Methods for leveraging pre-trained models on new chemical systems with limited data [14]
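Genetic-algorithm feature selection can be sketched as an evolutionary search over binary descriptor masks. This is a minimal illustration only: the fitness function (5-fold cross-validated R² of a linear model), truncation selection, one-point crossover, and bit-flip mutation are assumed choices, not necessarily the operators used in [13], and the data are synthetic.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

def fitness(mask, X, y):
    """Mean 5-fold cross-validated R^2 of a linear model on the descriptors selected by mask."""
    if not mask.any():
        return -np.inf  # an empty descriptor set is invalid
    return cross_val_score(LinearRegression(), X[:, mask], y, cv=5, scoring="r2").mean()

def ga_select(X, y, pop_size=20, generations=15, mutation_rate=0.1, seed=0):
    rng = np.random.default_rng(seed)
    n_feat = X.shape[1]
    pop = rng.random((pop_size, n_feat)) < 0.5  # random binary masks (True = descriptor used)
    for _ in range(generations):
        scores = np.array([fitness(m, X, y) for m in pop])
        # Truncation selection: the best half survives unchanged (elitism)
        parents = pop[np.argsort(scores)[::-1][: pop_size // 2]]
        children = []
        while len(children) < pop_size - len(parents):
            a, b = parents[rng.integers(len(parents), size=2)]
            cut = rng.integers(1, n_feat)                 # one-point crossover
            child = np.concatenate([a[:cut], b[cut:]])
            child ^= rng.random(n_feat) < mutation_rate   # bit-flip mutation
            children.append(child)
        pop = np.vstack([parents, np.array(children)])
    scores = np.array([fitness(m, X, y) for m in pop])
    return np.flatnonzero(pop[np.argmax(scores)]), scores.max()

# 10 hypothetical candidate descriptors; only 0, 3 and 7 drive the synthetic target
rng = np.random.default_rng(1)
X = rng.normal(size=(80, 10))
y = X[:, 0] - 0.7 * X[:, 3] + 0.5 * X[:, 7] + rng.normal(scale=0.2, size=80)
selected, score = ga_select(X, y)
print("selected descriptors:", selected.tolist(), "CV R^2:", round(score, 3))
```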

The evidence from recent studies points toward a hybrid future rather than a complete replacement of DFT by ML. While ML demonstrates superior efficiency for high-throughput screening across vast chemical spaces, DFT provides the fundamental physical validation necessary for confident prediction. The most successful workflows leverage ML to identify promising regions of chemical space, then apply rigorous DFT validation to verify predictions before experimental synthesis.

This partnership paradigm acknowledges that data-driven approaches excel at pattern recognition across large datasets, while first-principles methods provide physical grounding and reliability outside training domains. As ML methodologies continue to mature and datasets expand, the balance may shift further toward data-driven approaches, but the fundamental need for physical validation will likely maintain DFT's role in the computational materials science toolkit.

For researchers and drug development professionals, this evolution enables unprecedented exploration of chemical space, dramatically accelerating the discovery timeline for new materials and therapeutic compounds. By understanding the complementary strengths and limitations of both approaches, scientists can strategically deploy these tools to maximize research efficiency and prediction reliability.

The discovery and design of new compounds, crucial for applications from drug development to energy storage, hinges on accurately predicting material stability. Traditionally, this domain has been dominated by first-principles methods grounded in physical law, primarily Density Functional Theory (DFT). DFT derives formation energies, and from them thermodynamic stability, directly from quantum mechanics [15]. In contrast, a newer paradigm has emerged: machine learning (ML) offers a data-driven methodology that identifies complex statistical patterns within existing datasets to make rapid stability predictions [1] [16]. This guide objectively compares the performance, experimental protocols, and underlying philosophies of these two approaches, providing scientists and researchers with a clear framework for selecting the appropriate tool for compound stability prediction.

Performance Comparison: Quantitative Analysis

Direct comparison of DFT and ML reveals a fundamental trade-off: computational speed versus physical fidelity and reliability. The table below summarizes their performance based on published data.

Table 1: Performance Comparison: DFT vs. Machine Learning for Stability Prediction

| Feature | Density Functional Theory (DFT) | Machine Learning (ML) |
|---|---|---|
| Underlying Philosophy | Physical laws (Quantum Mechanics) [15] | Statistical patterns from data [1] [16] |
| Primary Predictions | Enthalpy of formation (ΔHf) [15] | Stability (via learned ΔHf or direct classification) [1] [16] |
| Typical Workflow | Solving Kohn-Sham equations [15] | Feature extraction and model training [16] [17] |
| Computational Speed | Slow (Hours to days per structure) | Fast (Milliseconds per structure after training) [1] |
| Accuracy on Formation Energy | High, but with systematic errors [15] | Can approach DFT-level accuracy [1] |
| Accuracy on Stability (ΔHd) | Reliable, benefits from error cancellation [1] | Poor for compositional models; struggles with subtle energy differences [1] |
| Data Requirements | Minimal; requires only atomic structure | Large, curated datasets of known compounds [1] [16] |
| Interpretability | High; results from physical principles | Low; "black box" statistical model [18] |
| Best Use Case | Final stability validation, understanding mechanisms | High-throughput screening of candidate compositions [1] [16] |

A critical finding from recent studies is that accurate prediction of formation energy (ΔHf) does not guarantee accurate prediction of stability, which is determined by the decomposition enthalpy (ΔHd) [1]. The energy range of ΔHd is typically 1-2 orders of magnitude smaller than that of ΔHf, making it a much more subtle quantity to predict. DFT, despite its errors, benefits from a systematic cancellation of error when comparing energies of chemically similar compounds to determine stability. In contrast, ML models, particularly those based only on composition (compositional models), often fail to capture these delicate relative energies, leading to a high rate of false positives in stability prediction [1].

Experimental Protocols and Methodologies

The DFT-Based Workflow

The DFT approach is grounded in solving the electronic structure problem. The core protocol involves:

- Input Structure Preparation: Defining the crystal structure (atomic positions and lattice parameters) of the compound of interest [15].

- Total Energy Calculation: Using the Exact Muffin-Tin Orbital (EMTO) method or similar techniques in combination with the coherent potential approximation (CPA) to compute the total energy of the compound [15].

- Reference State Calculation: Calculating the total energy of each constituent element in its ground-state structure (e.g., FCC for Al, Ni, Pd; HCP for Ti) [15].

- Formation Enthalpy (ΔHf) Calculation: The formation enthalpy per atom is computed as $\Delta H_f = H_{\text{compound}} - \left[c_A H_A + c_B H_B + c_C H_C\right]$, where $H$ is the enthalpy per atom and $c_i$ is the atomic concentration of element $i$ [15].

- Stability Assessment via Convex Hull Construction: The key step for determining stability is the convex hull construction in formation enthalpy-composition space.

- Stable compounds lie on the convex hull—the lower convex envelope of all formation enthalpies in a chemical space.

- The decomposition enthalpy (ΔHd) is the energy difference between a compound and the convex hull. A positive ΔHd indicates instability [1].
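The formation-enthalpy step reduces to a weighted subtraction of elemental reference energies. A minimal sketch of that arithmetic; the per-atom enthalpies below are hypothetical numbers chosen only to show the calculation, not EMTO or VASP results:

```python
def formation_enthalpy(h_compound, fractions, h_elements):
    """ΔHf per atom = H(compound) - sum_i c_i * H(element_i), all enthalpies in eV/atom."""
    total = sum(fractions.values())
    if abs(total - 1.0) > 1e-8:
        raise ValueError(f"atomic fractions must sum to 1, got {total}")
    reference = sum(c * h_elements[el] for el, c in fractions.items())
    return h_compound - reference

# Hypothetical per-atom enthalpies (eV/atom), chosen only to illustrate the arithmetic
h_elements = {"Al": -3.75, "Ni": -5.57, "Ti": -7.89}
dhf = formation_enthalpy(-5.60, {"Al": 0.50, "Ni": 0.25, "Ti": 0.25}, h_elements)
print(f"ΔHf = {dhf:.2f} eV/atom")  # -> ΔHf = -0.36 eV/atom
```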

The following diagram illustrates the convex hull construction, a critical concept for stability assessment in both DFT and ML.

Diagram 1: The Convex Hull for Stability. Stable compounds (green) lie on the convex hull (blue line). Unstable compounds (red) lie above it; their decomposition enthalpy (ΔHd) is the vertical distance to the hull.
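For a binary system, the convex hull construction and ΔHd can be sketched in a few lines. This assumes the sign convention used earlier in this guide (ΔHd < 0 for stable compounds), obtained by building the hull from the pure elements and all competing phases while excluding the compound itself; the A-B phases and energies are hypothetical. Multicomponent systems need a general hull routine, such as the one behind pymatgen's PhaseDiagram class.

```python
import numpy as np

def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain), sorted by x."""
    hull = []
    for p in sorted(set(points)):
        # Drop the last vertex while it lies on or above the segment from hull[-2] to p
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull

def decomposition_enthalpy(x, dhf, competing):
    """ΔHd = ΔHf(compound) - E_hull(x), hull built from the pure elements plus competing phases only.
    Negative: stable (below the hull of its competitors). Positive: unstable, tends to decompose."""
    hull = lower_hull([(0.0, 0.0), (1.0, 0.0)] + list(competing))
    xs, es = zip(*hull)
    return dhf - float(np.interp(x, xs, es))

# Hypothetical A-B system: x = atomic fraction of B, ΔHf in eV/atom (illustrative numbers only)
phases = {"A3B": (0.25, -0.20), "AB": (0.50, -0.45), "AB3": (0.75, -0.15)}
results = {}
for name, (x, dhf) in phases.items():
    competing = [p for other, p in phases.items() if other != name]
    results[name] = decomposition_enthalpy(x, dhf, competing)
    print(f"{name}: ΔHd = {results[name]:+.3f} eV/atom")
```

With these illustrative numbers, AB lies below the line joining A3B and AB3 and comes out negative (stable), while A3B and AB3 both lie above the hull defined by the elements and AB and come out positive.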

The Machine Learning Workflow

The ML workflow for stability prediction relies on learning from existing data. A typical protocol for a compositional model (which uses only the chemical formula) is:

- Data Curation and Feature Engineering:

- Compile a large dataset of known compounds with their properties (e.g., formation energy, stability label) from databases like the Materials Project, OQMD, or ICSD [1] [16].

- For compositional models, features are created from the chemical formula. These can be simple elemental fractions (ElFrac), or more complex representations like Magpie, which include elemental properties (electronegativity, atomic radius) [1]. Advanced models like ElemNet or Roost use deep learning to learn the representation directly from stoichiometry [1].

- For structural models, features describing the crystal structure are also included [1].

- Model Training and Validation:

- A model (e.g., Gradient-Boosted Regression Trees, Neural Networks) is trained to map the input features to the target output, such as the formation enthalpy (ΔHf) or a stability label [16] [17].

- The dataset is split into training and test sets. Models are tuned with cross-validation on the training data and then evaluated on the held-out test set, using metrics such as Mean Absolute Error (MAE) for ΔHf and accuracy for stability classification [1] [15].

- Prediction and Validation:

- The trained model is used to predict the stability of new, unseen compositions.

- Predictions, especially for compounds identified as stable, are often validated with DFT calculations [16].
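The compositional workflow above can be sketched end to end with an ElFrac-style featurizer and gradient-boosted trees. Everything here is illustrative: the element list, the formula parser (simple formulas only), and the synthetic targets are assumptions standing in for a real Materials Project or OQMD dataset.

```python
import re
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

ELEMENTS = ["Li", "Na", "Mg", "Al", "Fe", "O", "S", "Cl"]  # hypothetical, truncated element list

def elfrac(formula):
    """ElFrac-style features: atomic fraction of each element in ELEMENTS.
    Handles simple formulas such as 'Fe2O3' (no parentheses or hydrates)."""
    counts = {}
    for el, n in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        if el not in ELEMENTS:
            raise ValueError(f"element {el} not in feature list")
        counts[el] = counts.get(el, 0.0) + (float(n) if n else 1.0)
    total = sum(counts.values())
    return np.array([counts.get(el, 0.0) / total for el in ELEMENTS])

# Synthetic dataset standing in for database entries (targets are NOT real formation energies)
rng = np.random.default_rng(0)
weights = rng.normal(-1.0, 0.5, size=len(ELEMENTS))

def random_formula():
    els = rng.choice(ELEMENTS, size=rng.integers(2, 4), replace=False)
    return "".join(f"{el}{rng.integers(1, 5)}" for el in els)

formulas = [random_formula() for _ in range(400)]
X = np.array([elfrac(f) for f in formulas])
y = X @ weights + 0.3 * X[:, 4] * X[:, 5] + rng.normal(scale=0.02, size=len(X))

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, random_state=0)
model = GradientBoostingRegressor(random_state=0).fit(X_tr, y_tr)
mae = mean_absolute_error(y_te, model.predict(X_te))
baseline_mae = mean_absolute_error(y_te, np.full_like(y_te, y_tr.mean()))
print(f"test MAE {mae:.3f} vs. mean-predictor baseline {baseline_mae:.3f}")
```

A low test MAE on ΔHf, as here, says nothing by itself about ΔHd accuracy; predicted energies still have to pass through a convex hull construction before stability can be judged [1].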

A key development is using ML not as a replacement for DFT, but as a correction tool. One study trained a neural network to predict the error between DFT-calculated and experimental formation enthalpies, using features like elemental concentrations, weighted atomic numbers, and interaction terms. This hybrid approach significantly improved the accuracy of phase stability predictions [15].
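The error-correction idea can be sketched as a regression on ΔH_error = ΔH_DFT − ΔH_exp using the feature types named above (concentrations, concentration-weighted atomic number, pairwise interaction terms). The network size, the Al-Ni-Pd-Ti element set, and the synthetic "experimental" and "DFT" enthalpies are assumptions for illustration only, not the model or data of [15]:

```python
import numpy as np
from itertools import combinations
from sklearn.compose import TransformedTargetRegressor
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

Z = np.array([13, 28, 46, 22])  # atomic numbers of Al, Ni, Pd, Ti

def correction_features(c):
    """Concentrations, concentration-weighted atomic number, and pairwise c_i*c_j interaction terms."""
    weighted_z = (c @ Z)[:, None]
    pairs = np.column_stack([c[:, i] * c[:, j] for i, j in combinations(range(c.shape[1]), 2)])
    return np.hstack([c, weighted_z, pairs])

# Synthetic stand-in data (eV/atom); the "DFT error" model below is invented for illustration
rng = np.random.default_rng(0)
c = rng.dirichlet(np.ones(4), size=300)
dhf_exp = -0.4 * c[:, 0] * c[:, 1] - 0.3 * c[:, 2] * c[:, 3]
dhf_dft = dhf_exp + 0.08 * c[:, 0] * c[:, 1] - 0.05 * c[:, 3] + 0.02 + rng.normal(scale=0.005, size=300)

F = correction_features(c)
train, test = slice(0, 240), slice(240, 300)
mlp = make_pipeline(StandardScaler(), MLPRegressor(hidden_layer_sizes=(32,), max_iter=3000, random_state=0))
model = TransformedTargetRegressor(regressor=mlp, transformer=StandardScaler())  # scale the small targets
model.fit(F[train], (dhf_dft - dhf_exp)[train])  # learn ΔH_error = ΔH_DFT - ΔH_exp

corrected = dhf_dft[test] - model.predict(F[test])  # subtract the predicted error
mae_raw = np.mean(np.abs(dhf_dft[test] - dhf_exp[test]))
mae_corrected = np.mean(np.abs(corrected - dhf_exp[test]))
print(f"MAE vs. experiment: raw DFT {mae_raw:.4f}, corrected {mae_corrected:.4f} eV/atom")
```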

The Scientist's Toolkit: Essential Research Reagents & Solutions

This section details the key computational "reagents" and tools essential for research in this field.

Table 2: Essential Computational Tools for Stability Prediction

| Tool / 'Reagent' | Function | Relevance to DFT/ML |
|---|---|---|
| DFT Codes (e.g., EMTO, VASP) | Solves the Kohn-Sham equations to compute total energy from first principles. | Core engine for DFT calculations [15]. |
| Materials Databases (e.g., MP, OQMD, ICSD) | Repository of computed (DFT) and experimental crystal structures and properties. | Source of ground-truth data for training and validating ML models [1] [16]. |
| Compositional Descriptors (e.g., ElFrac, Magpie) | Converts a chemical formula into a numerical vector for ML processing. | Critical input features for compositional ML models [1]. |
| Structural Descriptors | Encodes crystal structure geometry (e.g., symmetry, coordination) into a numerical representation. | Enables structural ML models, which show superior performance to compositional ones [1]. |
| ML Algorithms (e.g., XGBoost, Graph Neural Networks) | The statistical model that learns the relationship between input features and target properties. | Core engine for making ML-based predictions [1] [16]. |
| Convex Hull Construction Algorithm | Determines the thermodynamic ground state and decomposition energy of compounds. | Essential for determining stability from both DFT-calculated and ML-predicted energies [1]. |

The dichotomy between physical laws and statistical patterns is not a winner-take-all battle. The evidence shows that DFT remains the more reliable method for final stability validation due to its foundation in physical law and its robustness in calculating the subtle energy differences that determine stability [1]. However, its computational expense makes it ill-suited for screening vast chemical spaces.

Conversely, ML excels at high-throughput screening, rapidly identifying promising candidate materials from millions of possible compositions, but requires careful handling and is not yet reliable enough to be the sole arbiter of stability [1] [16].

The most promising path forward is a hybrid approach that leverages the strengths of both. This can take the form of ML models that correct systematic errors in DFT [15], or using ML for initial screening followed by high-fidelity DFT validation. This synergistic philosophy, combining the interpretability of physical laws with the power of statistical patterns, is poised to most effectively accelerate the discovery of new stable compounds for science and industry.

Building Predictive Models: Advanced ML Frameworks and Real-World Applications

The accurate prediction of compound stability represents a fundamental challenge in materials science and drug development. Traditional approaches, primarily relying on Density Functional Theory (DFT) calculations, establish the energy of compounds through computationally intensive quantum mechanical simulations. While DFT provides a valuable physical basis for stability assessment, its computational expense creates a significant bottleneck for high-throughput screening of novel compounds. The emergence of machine learning (ML) offers a promising alternative, capable of rapidly predicting stability by learning from existing materials data. However, the performance of these ML models depends critically on how the input materials are numerically represented, known as feature representation or descriptors [19].

The selection of input representation directly influences a model's accuracy, sample efficiency, and generalizability. Different representations encode varying degrees of chemical intuition and physical principles, from simple elemental compositions to sophisticated electron configurations and bond graphs. This guide objectively compares the performance of prominent representation strategies within the broader context of the machine learning versus DFT paradigm for compound stability prediction, providing researchers with the data needed to select appropriate representations for their specific applications.

Comparative Analysis of Input Representation Strategies

The pursuit of optimal material representations has led to several distinct strategies, each with unique strengths and limitations. The following sections and comparative data explore the most impactful approaches.

Table 1: Comparison of Input Representation Strategies for Stability Prediction

| Representation Type | Key Description | Encoded Information | Reported AUC/Performance | Sample Efficiency | Key Advantages |
|---|---|---|---|---|---|
| Elemental Composition (Magpie) [20] | Statistical features (mean, range, etc.) derived from elemental properties (atomic number, radius, etc.). | Atomic-scale properties and their statistical variations across a composition. | ~0.95 (Baseline) | Baseline | Simple, interpretable, requires no structural data. |
| Bond Graph (Roost) [20] | Chemical formula represented as a dense graph of atoms; message-passing with attention mechanisms. | Interactions between the elements of a composition (no crystal structure required). | ~0.96 (Baseline) | Baseline | Captures complex, non-local relationships between atoms. |
| Electron Configuration (ECCNN) [20] | Matrix representation of the electron configuration of constituent atoms, processed by a CNN. | Fundamental electron distribution across energy levels, an intrinsic atomic property. | ~0.97 (Baseline) | 7x more efficient than baseline models | Introduces less inductive bias; strong physical basis. |
| Ensemble with Stacked Generalization (ECSG) [20] | A "super learner" that combines Magpie, Roost, and ECCNN models. | Multi-scale knowledge: atomic, interatomic, and electronic structure. | 0.988 (AUC) | Achieves baseline accuracy with 1/7 the data | Mitigates individual model bias; state-of-the-art performance. |
| Graph Networks (GNoME) [21] | Graph representation of crystal structures, scaled with deep learning and active learning. | Structural and compositional information. | >80% precision (stable structures), ~11 meV/atom energy error | High (enabled discovery of 2.2M new structures) | Exceptional generalization; enables large-scale discovery. |

The Electron Configuration Paradigm: ECCNN and ECSG

The Electron Configuration Convolutional Neural Network (ECCNN) model introduces a representation based on the fundamental electron structure of atoms. The input is a matrix encoding the electron configuration of the material's constituent elements, which is then processed through convolutional layers to extract relevant features for stability prediction [20]. This approach leverages an intrinsic atomic property—the distribution of electrons in energy levels—that is directly related to an element's chemical reactivity and bonding behavior.
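To make the idea of an electron-configuration input concrete, here is a hypothetical, simplified encoding: each element contributes a row of subshell occupancies from the idealized Madelung (Aufbau) filling order, scaled by its atomic fraction. The actual ECCNN input matrix in [20] may be laid out differently; this sketch also ignores known filling exceptions such as Cr and Cu.

```python
import numpy as np

# Subshells in idealized Madelung (n + l, then n) filling order
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
             "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}

def aufbau_occupancy(z):
    """Idealized subshell occupancies for atomic number z (ignores exceptions such as Cr and Cu)."""
    if not 1 <= z <= 118:
        raise ValueError("atomic number must be between 1 and 118")
    occ = np.zeros(len(SUBSHELLS))
    remaining = z
    for i, sub in enumerate(SUBSHELLS):
        occ[i] = min(remaining, CAPACITY[sub[-1]])
        remaining -= occ[i]
        if remaining == 0:
            break
    return occ

def composition_matrix(composition, z_of, max_elements=4):
    """Hypothetical 2D input: one zero-padded row per element, occupancies scaled by atomic fraction."""
    if len(composition) > max_elements:
        raise ValueError("too many elements for the fixed matrix height")
    total = sum(composition.values())
    mat = np.zeros((max_elements, len(SUBSHELLS)))
    for row, (el, count) in enumerate(sorted(composition.items())):
        mat[row] = (count / total) * aufbau_occupancy(z_of[el])
    return mat

m = composition_matrix({"Fe": 2, "O": 3}, {"Fe": 26, "O": 8})  # Fe2O3 -> shape (4, 19)
```

A 2D array like this is what allows convolutional filters to scan for local patterns across neighbouring subshells and elements.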

The ECSG (Electron Configuration models with Stacked Generalization) framework represents a significant advancement by integrating multiple representations. It operates on the principle that models built on different domain knowledge bases (Magpie for atomic properties, Roost for interatomic interactions, and ECCNN for electron configuration) introduce different inductive biases. By combining them, ECSG creates a more robust and accurate "super learner" [20].
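Stacked generalization can be sketched with scikit-learn's StackingClassifier. In this minimal example, three generic classifiers stand in for Magpie, Roost, and ECCNN, the logistic-regression meta-learner is an assumed choice, and the data are synthetic. The meta-learner is trained on out-of-fold base predictions (cv=5), so it does not simply learn to trust base models that overfit the training set.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Synthetic "stable vs. unstable" labels; no real materials data
X, y = make_classification(n_samples=600, n_features=20, n_informative=8, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y, random_state=0)

# Diverse base learners standing in for models built on different domain knowledge
base_models = [
    ("gbt", GradientBoostingClassifier(random_state=0)),
    ("rf", RandomForestClassifier(n_estimators=200, random_state=0)),
    ("knn", KNeighborsClassifier(n_neighbors=15)),
]
stack = StackingClassifier(estimators=base_models, final_estimator=LogisticRegression(),
                           cv=5, stack_method="predict_proba")
stack.fit(X_tr, y_tr)

# AUC of each base model on its own, then of the stacked ensemble
aucs = {name: roc_auc_score(y_te, m.fit(X_tr, y_tr).predict_proba(X_te)[:, 1])
        for name, m in base_models}
aucs["stack"] = roc_auc_score(y_te, stack.predict_proba(X_te)[:, 1])
print({k: round(v, 3) for k, v in aucs.items()})
```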

The Power of Graph-Based Representations: GNoME and Roost

Graph-based representations conceptualize a material as a network of atoms (nodes) connected by bonds or interactions (edges). The Roost model treats the chemical formula as a complete graph and employs a graph neural network with an attention mechanism to capture the critical interatomic interactions that govern thermodynamic stability [20]. This approach effectively learns the relationships between atoms, moving beyond simple stoichiometry.
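The attention step can be sketched as a single message-passing update over the complete graph of a composition, where each element attends to every other element and the attention weights are scaled by the neighbours' atomic fractions, in the spirit of Roost's weighted attention. This is a simplified, untrained illustration: a single attention head, the random parameters W_gate and W_msg, and the stand-in embeddings are all assumptions.

```python
import numpy as np

def weighted_attention_update(h, w, W_gate, W_msg):
    """One message-passing step on the complete graph of a composition.

    h: (n, d) element embeddings; w: (n,) atomic fractions.
    Attention from i to j is proportional to w_j * exp(e_ij), normalized over j != i."""
    n = h.shape[0]
    if n == 1:
        return h.copy()  # a single element has no neighbours to attend to
    h_new = np.empty_like(h)
    for i in range(n):
        others = np.array([j for j in range(n) if j != i])
        pair = np.hstack([np.repeat(h[i][None, :], len(others), axis=0), h[others]])  # (n-1, 2d)
        e = pair @ W_gate                        # (n-1,) unnormalized attention scores
        a = w[others] * np.exp(e - e.max())      # fraction-weighted softmax numerator
        a /= a.sum()
        messages = np.tanh(pair @ W_msg)         # (n-1, d) pairwise messages
        h_new[i] = h[i] + a @ messages           # residual update
    return h_new

# Hypothetical example: LiFePO4 as a complete graph of 4 elements with random (untrained) parameters
rng = np.random.default_rng(0)
d = 8
h = rng.normal(size=(4, d))                      # stand-in embeddings for Li, Fe, P, O
w = np.array([1, 1, 1, 4]) / 7                   # atomic fractions
W_gate = rng.normal(size=2 * d)
W_msg = rng.normal(size=(2 * d, d))
h_out = weighted_attention_update(h, w, W_gate, W_msg)
```

Because the update depends only on the set of (element, fraction) pairs, reordering the elements in the formula only reorders the output rows, which is the permutation symmetry a composition model should respect.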

Scaling this paradigm, the GNoME (Graph Networks for Materials Exploration) project uses state-of-the-art graph networks trained through large-scale active learning. The model starts with diverse candidate structures generated through symmetry-aware substitutions and random structure search. The GNoME model filters these candidates, with promising structures evaluated by DFT. The resulting data is then fed back into the model in an iterative flywheel, dramatically improving performance over cycles [21].

Table 2: Performance Metrics of Scaled Graph Network (GNoME) [21]

| Metric | Initial Performance | Final Performance after Active Learning |
|---|---|---|
| Prediction Error | ~21 meV/atom | ~11 meV/atom |
| Hit Rate (Structures) | < 6% | > 80% |
| Hit Rate (Compositions) | < 3% | ~33% |
| Stable Discoveries | - | 2.2 million new structures |

Machine Learning as a Corrective Tool for DFT

Beyond operating as a standalone predictor, machine learning also enhances traditional DFT. One approach involves using ML to correct the intrinsic errors of DFT exchange-correlation functionals. A neural network model can be trained to predict the discrepancy (ΔH_error) between DFT-calculated and experimentally measured formation enthalpies [15]. The model uses a structured feature set including elemental concentrations, weighted atomic numbers, and interaction terms. Once trained, it can be applied to correct DFT outputs for new compounds, thereby improving the reliability of phase stability predictions without the cost of higher-fidelity calculations [15].

Experimental Protocols and Methodologies

Protocol for the ECSG Framework Ensemble Model

The ECSG framework's experimental validation followed a rigorous protocol [20]:

- Base Model Training: The three base models—Magpie (gradient-boosted regression trees), Roost (graph neural network), and ECCNN (convolutional neural network)—were trained on compositional and crystal structure data from materials databases.

- Stacked Generalization: The predictions from these base models were used as input features to train a meta-learner, which produced the final stability prediction.

- Performance Evaluation: The model was tested on data from the JARVIS database, with the primary metric being the Area Under the Curve (AUC) score for stability classification. The AUC quantified the model's ability to distinguish between stable and unstable compounds.

- Sample Efficiency Analysis: The researchers measured the amount of training data required for ECSG to achieve a performance level equivalent to that of existing standalone models.

- External Validation: The model's practical utility was demonstrated by deploying it to explore new 2D wide bandgap semiconductors and double perovskite oxides. The stability of the discovered materials was subsequently verified using first-principles DFT calculations.
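The sample-efficiency analysis can be framed as a learning-curve question: what fraction of the training data does a candidate model need to match a reference model trained on all of it? A minimal sketch, with a logistic-regression reference and a random-forest candidate as hypothetical stand-ins for the existing models and ECSG, on synthetic data:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

def min_fraction_to_reach(make_model, X_tr, y_tr, X_te, y_te, target_auc, fractions, seed=0):
    """Smallest training fraction whose test AUC reaches target_auc; (None, None) if never reached."""
    order = np.random.default_rng(seed).permutation(len(X_tr))  # nested subsets of the training set
    for f in fractions:
        idx = order[: int(round(f * len(X_tr)))]
        model = make_model().fit(X_tr[idx], y_tr[idx])
        auc = roc_auc_score(y_te, model.predict_proba(X_te)[:, 1])
        if auc >= target_auc:
            return f, auc
    return None, None

X, y = make_classification(n_samples=2000, n_features=20, n_informative=8, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y, random_state=0)

# Reference: a baseline model trained on ALL training data
baseline = LogisticRegression(max_iter=1000).fit(X_tr, y_tr)
target = roc_auc_score(y_te, baseline.predict_proba(X_te)[:, 1])

fractions = [0.05, 0.1, 0.2, 0.4, 0.7, 1.0]
frac, auc = min_fraction_to_reach(lambda: RandomForestClassifier(n_estimators=200, random_state=0),
                                  X_tr, y_tr, X_te, y_te, target, fractions)
print("fraction needed:", frac, "AUC:", auc)
```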

Protocol for Large-Scale Active Learning (GNoME)

The GNoME discovery pipeline involved a cyclic process of prediction and verification [21]:

- Candidate Generation:

- Structural Path: Generate candidate crystals via modifications (e.g., symmetry-aware partial substitutions) of known crystals.

- Compositional Path: Generate reduced chemical formulas by relaxing oxidation-state constraints.

- Model Filtration: Filter the large candidate pool (over 10⁹ candidates in the structural path) using the GNoME graph network. This involved volume-based test-time augmentation and uncertainty quantification via deep ensembles.

- DFT Verification: Evaluate the filtered candidates using DFT calculations (VASP) with standardized settings from the Materials Project. This step is computationally costly but provides ground-truth data.

- Active Learning Loop: Incorporate the energies of the relaxed structures from DFT back into the training dataset. This iterative process continuously improves the model's accuracy and generalization over multiple rounds.
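The generate, filter, verify, and retrain cycle can be sketched as a toy active-learning loop. In this illustration a cheap analytic function stands in for DFT, the spread across a random forest's trees stands in for GNoME's deep-ensemble uncertainty, and candidates are filtered by an optimistic lower bound (mean minus spread); the pool, threshold, and batch sizes are all hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def oracle_energy(X):
    """Stand-in for a DFT calculation: a hypothetical synthetic energy landscape (eV/atom)."""
    return np.sin(3 * X[:, 0]) + 0.5 * X[:, 1] ** 2 - 0.3 * X[:, 2]

rng = np.random.default_rng(0)
pool = rng.uniform(-1, 1, size=(20000, 3))                # hypothetical candidate descriptors
labeled = rng.choice(len(pool), size=50, replace=False)   # small initial "DFT" training set
threshold = -0.8                                          # hypothetical stability cut-off
hit_rates = []

for round_idx in range(5):
    model = RandomForestRegressor(n_estimators=100, random_state=round_idx)
    model.fit(pool[labeled], oracle_energy(pool[labeled]))
    unlabeled = np.setdiff1d(np.arange(len(pool)), labeled)
    # Spread across trees as a crude uncertainty estimate
    per_tree = np.stack([tree.predict(pool[unlabeled]) for tree in model.estimators_])
    score = per_tree.mean(axis=0) - per_tree.std(axis=0)  # optimistic lower bound on energy
    picks = unlabeled[np.argsort(score)[:50]]             # filter: most promising candidates
    verified = oracle_energy(pool[picks])                 # "DFT verification" of the picks
    hit_rates.append(float(np.mean(verified < threshold)))
    labeled = np.concatenate([labeled, picks])            # feed results back into training

print("hit rate per round:", [round(r, 2) for r in hit_rates])
```

The hit rate here plays the role of the precision figures in Table 2: the fraction of model-selected candidates that verification confirms as below the stability cut-off.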

Table 3: Key Computational Tools and Databases for Stability Prediction Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| Materials Project (MP) [20] [21] | Database | A vast repository of computed crystal structures and properties, serving as a primary source of training data. |
| JARVIS [20] | Database | Another integrated database for materials data, used for benchmarking model performance. |
| Open Quantum Materials Database (OQMD) [20] [21] | Database | Provides high-throughput DFT calculations for materials, used for training and validation. |
| Vienna Ab initio Simulation Package (VASP) [21] | Software | A widely used software package for performing DFT calculations to verify model predictions. |
| GNoME [21] | Machine Learning Model | A scaled graph network model for large-scale materials discovery. |
| ECSG Framework [20] | Machine Learning Model | An ensemble model combining multiple representations for high-accuracy stability prediction. |
| BigSolDB [22] | Database | A large-scale solubility dataset used for training property prediction models like FastSolv. |

The accurate prediction of compound stability is a cornerstone of materials science and drug discovery, critically influencing the efficiency of developing new functional materials and therapeutic agents. For years, Density Functional Theory (DFT) has been the primary computational tool for this task, providing insights into formation energies and phase stability from first principles. However, its predictive accuracy is often limited by intrinsic energy resolution errors, and its computational expense makes large-scale screening prohibitive [5]. Machine learning (ML) has emerged as a powerful alternative, capable of rapidly predicting material properties by learning from existing data. A pivotal study highlighted a critical caveat: while ML models can predict formation energies with DFT-like accuracy, their performance deteriorates sharply on the task that ultimately matters, predicting compound stability. This is a qualitatively harder problem rather than an incremental extension of formation-energy regression, and it underscores the need for more sophisticated architectures [23].

This comparison guide objectively evaluates three powerful ML architectures—Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), and Ensemble Methods—within the specific context of compound stability prediction. We dissect their performance, experimental protocols, and ideal use cases, providing researchers and drug development professionals with the data needed to select the optimal architecture for their discovery pipeline.

The selection of a model architecture fundamentally shapes the type of information it can process and its predictive capabilities. Below, we compare the core principles and strengths of GNNs, CNNs, and Ensemble Methods.

Graph Neural Networks (GNNs) are specifically designed for non-Euclidean, graph-structured data. They operate through message-passing and aggregation mechanisms, where each node in a graph (e.g., an atom in a molecule) updates its state by aggregating features from its neighboring nodes (e.g., bonded atoms). This makes them exceptionally well-suited for directly modeling molecular structures, capturing intricate relationships between atoms, bonds, and their topologies [24] [25].
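The message-passing and aggregation mechanism can be sketched as one sum-aggregation layer followed by a sum readout. The ethanol heavy-atom graph, the three-feature atom encoding, and the random weights are hypothetical choices for illustration; practical GNNs stack several learned layers and often add edge (bond) features.

```python
import numpy as np

def message_passing_layer(H, edges, W_self, W_nbr):
    """h_i' = ReLU(W_self h_i + W_nbr * sum_{j in N(i)} h_j) for an undirected list of bonds."""
    agg = np.zeros_like(H)
    for i, j in edges:  # each bond passes a message in both directions
        agg[i] += H[j]
        agg[j] += H[i]
    return np.maximum(0.0, H @ W_self.T + agg @ W_nbr.T)

def readout(H):
    """Sum pooling: a permutation-invariant molecule-level vector."""
    return H.sum(axis=0)

# Heavy-atom graph of ethanol (C-C-O); features: [is_C, is_O, number of attached H]
H0 = np.array([[1.0, 0.0, 3.0], [1.0, 0.0, 2.0], [0.0, 1.0, 1.0]])
edges = [(0, 1), (1, 2)]
rng = np.random.default_rng(0)
W_self, W_nbr = rng.normal(size=(4, 3)), rng.normal(size=(4, 3))  # untrained, hypothetical weights
H1 = message_passing_layer(H0, edges, W_self, W_nbr)
mol_vec = readout(H1)
```

Relabelling the atoms (and the bond list accordingly) leaves `mol_vec` unchanged, which is why graph models need no canonical atom ordering.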

Convolutional Neural Networks (CNNs) excel at processing data with spatial or grid-like structures, such as images. In materials science, CNNs are often adapted for composition-based models by using clever input representations. For instance, the Electron Configuration Convolutional Neural Network (ECCNN) represents a compound's elemental composition as a 2D matrix based on electron configuration data, using convolutional layers to extract spatially local patterns that may correlate with stability [20].

Ensemble Methods leverage the collective power of multiple base models (learners) to achieve greater robustness and accuracy than any single model could. The core idea is to reduce variance and bias by combining predictions. Stacked Generalization (Stacking) is a common technique where the predictions of several base models (e.g., a GNN, a CNN, and a gradient-boosting model) are used as inputs to a meta-learner, which makes the final prediction. This approach mitigates the limitations and inductive biases of individual models [20] [26].

Quantitative Performance Comparison

Experimental data from recent studies allows for a direct comparison of these architectures on tasks related to stability and property prediction. The following table summarizes key performance metrics.

Table 1: Performance Comparison of ML Architectures on Stability and Related Tasks

| Architecture | Model / Framework | Dataset / Task | Key Performance Metric | Result | Reference |
|---|---|---|---|---|---|
| Ensemble | ECSG (Electron Configuration with Stacked Generalization) | Predicting thermodynamic stability (JARVIS database) | AUC (Area Under the Curve) | 0.988 | [20] |
| Ensemble | Voting & Stacking (XGBoost & LightGBM) | Predicting asphalt volumetric properties | R² Score | Excellent values, further improved by ensemble | [26] |
| GNN | MetaboGNN | Liver metabolic stability prediction | RMSE (% parent compound remaining) | 27.91 (Human), 27.86 (Mouse) | [25] |
| GNN | GNN variants (GCN, GAT, GraphSAGE) | Learner performance prediction (across 4 datasets) | F1-Score | Consistently high (0.85-0.98), improved by ensemble | [24] |
| GNN + Ensemble | Boosting-GNN | Node classification on imbalanced datasets | Average Performance Improvement | +4.5% over base GNNs | [27] |
| CNN | ECCNN (Component of ECSG) | Predicting compound stability | Sample Efficiency | Achieved same accuracy with 1/7 the data | [20] |

The data reveals a compelling hierarchy. Ensemble methods, particularly those employing stacked generalization, achieve the highest predictive accuracy for stability classification, as evidenced by the near-perfect AUC of the ECSG framework [20]. GNNs demonstrate strong performance in modeling complex, structured data like molecules and educational interactions, with their effectiveness further enhanced when integrated into ensemble setups [24] [27]. CNNs show remarkable sample efficiency, a significant advantage in domains where labeled experimental data is scarce and costly to produce [20].

Detailed Experimental Protocols

To ensure reproducibility and provide a deeper understanding of the cited results, this section details the methodologies behind key experiments.

The ECSG Ensemble Framework for Thermodynamic Stability

The ECSG framework was designed to amalgamate models from distinct knowledge domains to mitigate individual inductive biases [20].

- Base-Level Models: The framework integrates three distinct models:

- Magpie: A model that uses statistical features (mean, deviation, range) of elemental properties (e.g., atomic radius, electronegativity) and is trained with XGBoost.

- Roost: A GNN-based model that represents the chemical formula as a graph of atoms and uses message-passing with an attention mechanism to model interatomic interactions.

- ECCNN: A custom CNN that uses a 2D matrix representation of electron configurations as input to capture intrinsic atomic properties.

- Meta-Level Model: The predictions from these three base models are used as input features for a meta-learner, which is trained to produce the final, refined prediction of compound stability.

- Data Source: The model was trained and validated on data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database.

- Evaluation Protocol: Performance was evaluated using the Area Under the Receiver Operating Characteristic Curve (AUC) to measure classification accuracy between stable and unstable compounds.

Diagram 1: ECSG ensemble framework workflow.

MetaboGNN for Metabolic Stability

MetaboGNN was developed to predict liver metabolic stability, a key parameter in drug discovery [25].

- Data Representation: Molecular structures were represented as graphs, with atoms as nodes and bonds as edges.

- Model Architecture: The core is a Graph Neural Network. A key innovation was the use of a Graph Contrastive Learning (GCL) pre-training step. This technique learns robust, transferable molecular representations by encouraging the model to produce similar embeddings for different augmented views of the same molecule and dissimilar embeddings for different molecules.

- Interspecies Data: The model was trained on parallel data from both human liver microsomes (HLM) and mouse liver microsomes (MLM), allowing it to explicitly account for and learn from interspecies enzymatic differences.

- Evaluation: Model performance was quantified using Root Mean Square Error (RMSE) between the predicted and experimentally measured percentage of the parent compound remaining after incubation.

Diagram 2: MetaboGNN training and prediction process.
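The contrastive objective described above is often implemented as an NT-Xent (normalized temperature-scaled cross-entropy) loss, as in GraphCL-style pre-training. Whether MetaboGNN uses exactly this loss is an assumption here; the sketch only shows how paired views are pulled together and other molecules pushed apart, using random embeddings in place of GNN outputs.

```python
import numpy as np

def nt_xent_loss(z1, z2, temperature=0.5):
    """NT-Xent loss for N paired views: z1[k] and z2[k] embed two augmentations of molecule k."""
    z = np.vstack([z1, z2])
    z = z / np.linalg.norm(z, axis=1, keepdims=True)  # unit vectors -> cosine similarity
    sim = z @ z.T / temperature
    np.fill_diagonal(sim, -np.inf)                     # a view is never compared with itself
    n = len(z1)
    positive = np.concatenate([np.arange(n, 2 * n), np.arange(n)])  # index of each row's partner view
    log_prob = sim[np.arange(2 * n), positive] - np.log(np.exp(sim).sum(axis=1))
    return float(-log_prob.mean())

# Random embeddings standing in for GNN outputs of 8 molecules
rng = np.random.default_rng(1)
z_a = rng.normal(size=(8, 16))
loss_aligned = nt_xent_loss(z_a, z_a + 0.05 * rng.normal(size=(8, 16)))  # views agree
loss_random = nt_xent_loss(z_a, rng.normal(size=(8, 16)))                # views unrelated
print(f"aligned views: {loss_aligned:.3f}, unrelated views: {loss_random:.3f}")
```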

Stable-GNN for Out-of-Distribution Generalization

This experiment addressed the challenge of GNN performance degradation under distribution shifts (Out-of-Distribution, OOD) [28].

- Core Problem: Standard GNNs assume training and test data are from the same distribution. The Stable-GNN model was designed to improve generalization to unseen, different test distributions.

- Methodology: The model learns sample weights that decorrelate features in a Random Fourier Features (RFF) space. Reweighting the training samples in this way removes spurious correlations between features, forcing the model to rely on genuine causal features for prediction.

- Outcome: The Stable-GNN model demonstrated not only superior performance on data from the training distribution but also significantly reduced prediction bias on data from unknown test distributions, outperforming standard GNN models.
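Random Fourier features approximate a kernel with an explicit finite-dimensional map, and decorrelation methods of this kind penalize the sample-weighted cross-covariance between the RFF maps of different features. A minimal sketch of both pieces, assuming an RBF kernel; the optimization of the sample weights themselves, which is the learned part of Stable-GNN, is omitted, and all data are synthetic.

```python
import numpy as np

def random_fourier_features(X, n_features=2000, gamma=0.5, seed=0):
    """Random Fourier features: z(x) @ z(y) ≈ exp(-gamma * ||x - y||^2) for the RBF kernel."""
    rng = np.random.default_rng(seed)
    W = rng.normal(scale=np.sqrt(2 * gamma), size=(X.shape[1], n_features))  # samples of the kernel's spectrum
    b = rng.uniform(0, 2 * np.pi, size=n_features)                          # random phases
    return np.sqrt(2.0 / n_features) * np.cos(X @ W + b)

def weighted_rff_cross_cov(a, b, w, n_features=50):
    """Squared Frobenius norm of the sample-weighted cross-covariance between RFF maps of a and b."""
    za = random_fourier_features(a[:, None], n_features, seed=1)
    zb = random_fourier_features(b[:, None], n_features, seed=2)
    w = w / w.sum()
    za_c, zb_c = za - w @ za, zb - w @ zb   # weighted centering
    cov = (za_c * w[:, None]).T @ zb_c      # (n_features, n_features)
    return float(np.sum(cov ** 2))

# Kernel check on two hypothetical points
pts = np.array([[0.0, 0.0], [0.5, -0.3]])
Z = random_fourier_features(pts, n_features=5000)
approx, exact = Z[0] @ Z[1], np.exp(-0.5 * (0.5**2 + 0.3**2))

# RFF cross-covariance detects nonlinear dependence that linear correlation misses
rng = np.random.default_rng(3)
a = rng.normal(size=2000)
uniform = np.ones_like(a)
dependent = weighted_rff_cross_cov(a, a**2 + 0.1 * rng.normal(size=2000), uniform)
independent = weighted_rff_cross_cov(a, rng.normal(size=2000), uniform)
print(f"kernel approx {approx:.3f} vs exact {exact:.3f}; dependent {dependent:.4f}, independent {independent:.4f}")
```

With uniform weights, the dependent pair (a and a² plus noise) gives a larger cross-covariance than the independent pair even though a and a² are nearly uncorrelated in the linear sense; a decorrelation method would adjust the weights to shrink such terms.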

The Scientist's Toolkit: Research Reagent Solutions

Moving from experimental protocols to practical implementation, the following table details key computational tools and datasets that function as essential "research reagents" in this field.

Table 2: Essential Resources for Compound Stability ML Research

| Resource Name | Type | Primary Function in Research | Relevance to Architectures |
|---|---|---|---|
| JARVIS Database | Database | Provides curated data on material properties (formation energies, structures) for training and validation. | All architectures (Ensemble, GNN, CNN) |
| Materials Project (MP) Database | Database | An extensive repository of DFT-calculated material properties; used as a benchmark and data source. | All architectures [23] |
| TUDataset & OGB | Dataset Library | Standardized graph datasets for benchmarking GNN performance on tasks like molecular property prediction. | GNN [28] |
| CETSA (Cellular Thermal Shift Assay) | Experimental Platform | Provides quantitative, in-cell validation of drug-target engagement; used for experimental ground-truth. | Validation for all architectures [29] |
| XGBoost / LightGBM | Software Library | High-performance implementations of gradient boosting, used as stand-alone models or as meta-learners in ensembles. | Ensemble [24] [26] |
| Random Fourier Features (RFF) | Algorithmic Technique | Approximates kernel functions to efficiently decorrelate features and improve model stability. | GNN (Stable-GNN) [28] |
| Graph Contrastive Learning (GCL) | Algorithmic Technique | A self-supervised learning method used to pre-train GNNs on graph data, improving generalizability. | GNN (MetaboGNN) [25] |

The experimental data clearly indicates that there is no single "best" architecture for all scenarios in compound stability prediction. The choice is dictated by the specific research constraints and goals.

- For Maximum Predictive Accuracy: Ensemble methods that strategically combine diverse models, such as the ECSG framework, currently set the state of the art. Their ability to mitigate the inductive bias of any single model makes them exceptionally powerful, albeit at the cost of increased complexity and computational requirements [20].

- For Native Molecular Representation: Graph Neural Networks are the architecture of choice when molecular structure is known and can be directly represented. Their native ability to model graph-structured data and recent advancements in improving their stability and generalizability make them a powerful tool for drug discovery applications [28] [25].

- For Data-Limited Regimes: Convolutional Neural Networks and other composition-based models offer a compelling advantage when labeled data is scarce. Their sample efficiency can accelerate early-stage screening projects [20].

The future of the field lies in the continued hybridization of these approaches. Frameworks that integrate GNNs or CNNs into sophisticated ensembles, supported by robust experimental validation tools like CETSA, will provide the most reliable and actionable predictions. This will ultimately compress discovery timelines and enhance the identification of novel, stable compounds and effective therapeutics.

The prediction of thermodynamic stability is a cornerstone in the discovery of new inorganic compounds. Traditional methods, primarily based on Density Functional Theory (DFT), establish stability by calculating a compound's decomposition energy (ΔHd) and its position on the convex hull of formation energies. [20] While foundational, DFT is hampered by significant computational costs and intrinsic errors in its energy functionals, which can limit its predictive accuracy for formation enthalpies and phase stability, particularly in complex ternary systems. [5]
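For a binary A-B system, the convex-hull construction behind ΔHd can be sketched in a few lines. This is a minimal sketch, assuming formation energies in eV/atom, composition x given as the fraction of B, and the pure elements at (0, 0) and (1, 0); multicomponent workflows would typically rely on a library such as pymatgen's PhaseDiagram class instead. Following the sign convention used in this article, ΔHd is measured against the hull of the competing phases only, so it is negative for a stable compound and positive for one lying above the hull. The compositions and energies in the example are made up.

```python
def cross(o, a, b):
    """z-component of (a - o) x (b - o); > 0 means a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (x, E_f) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(x, hull):
    """Linearly interpolate the hull energy at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1 and x1 > x0:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the hull range")


def decomposition_energy(target, competitors):
    """Delta H_d = E_f(target) minus the competing phases' hull energy at the target's x."""
    x, e_f = target
    return e_f - hull_energy(x, lower_hull(competitors))


elements = [(0.0, 0.0), (1.0, 0.0)]
competitors = elements + [(0.5, -0.4)]  # a hypothetical AB phase
print(decomposition_energy((1 / 3, -0.30), competitors))  # A2B below the hull: negative
print(decomposition_energy((1 / 3, -0.20), competitors))  # A2B above the hull: positive
```

Note that the second A2B candidate has a clearly negative formation energy yet is still unstable, which is exactly the ΔHf versus ΔHd distinction the stability problem hinges on.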

Machine learning (ML) offers a paradigm shift, providing a rapid and resource-efficient alternative. However, many ML models are built on specific, limited domain knowledge, which can introduce inductive biases and constrain their performance and generalizability. [20] This case study examines the Electron Configuration Stacked Generalization (ECSG) framework, an ensemble ML approach designed to mitigate these limitations. We will objectively evaluate its performance against alternative models and DFT, analyze its experimental protocols, and detail the practical tools required for its implementation.

The ECSG Framework: Architecture and Methodology

The ECSG framework is an ensemble method that integrates three distinct composition-based ML models, each grounded in a different domain of knowledge. This design aims to create a synergistic "super learner" that minimizes the individual biases of its components. [20]

Core Architecture and Base Models

The strength of ECSG lies in its combination of models that operate on different physical scales and principles. [20] The table below summarizes the three base-level models integrated into the ECSG framework.

Table 1: Base-Level Models in the ECSG Ensemble Framework

| Model Name | Underlying Domain Knowledge | Core Algorithm | Input Features |
|---|---|---|---|
| Magpie [20] | Atomic properties & their statistics | Gradient Boosted Regression Trees (XGBoost) | Statistical features (mean, deviation, range, etc.) of elemental properties like atomic number, mass, and radius. [20] |
| Roost [20] | Interatomic interactions & message passing | Graph Neural Network (GNN) | The chemical formula represented as a complete graph of its constituent atoms. [20] |
| ECCNN [20] | Fundamental electron configuration | Convolutional Neural Network (CNN) | A matrix encoding the electron configuration (energy levels and electron counts) of the material. [20] |
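To make the Magpie row concrete, the sketch below computes a few Magpie-style statistics (fraction-weighted mean, average deviation, minimum, maximum, range) of one elemental property for a composition. It is a simplified illustration under stated assumptions: atomic number is the only property used, the `ATOMIC_NUMBER` table covers just a handful of elements, and the regex parser handles only simple formulas without parentheses. The real Magpie feature set spans many more elemental properties, and the helper names here are hypothetical.

```python
import re

# Hypothetical minimal property table (real atomic numbers for a few elements).
ATOMIC_NUMBER = {"H": 1, "Li": 3, "C": 6, "O": 8, "Si": 14, "Ti": 22, "Fe": 26}


def parse_formula(formula):
    """Simple formula parser: element symbols followed by optional amounts, no parentheses."""
    counts = {}
    for el, amt in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[el] = counts.get(el, 0.0) + (float(amt) if amt else 1.0)
    return counts


def magpie_style_stats(formula, prop=ATOMIC_NUMBER):
    """Fraction-weighted statistics of one elemental property over a composition."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fracs = {el: amt / total for el, amt in counts.items()}
    values = [prop[el] for el in fracs]
    mean = sum(f * prop[el] for el, f in fracs.items())
    avg_dev = sum(f * abs(prop[el] - mean) for el, f in fracs.items())
    return {"mean": mean, "avg_dev": avg_dev, "min": min(values),
            "max": max(values), "range": max(values) - min(values)}


print(magpie_style_stats("Fe2O3"))
```

Because these features depend only on the formula, the same composition always maps to the same vector regardless of crystal structure, which is both the strength and the limitation of composition-based models.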

The Electron Configuration Convolutional Neural Network (ECCNN) is a novel contribution of the framework. Its input is a 118×168×8 matrix that encodes the electron configuration of the material. Because electron configuration is an intrinsic atomic property rather than a manually crafted descriptor, this representation may introduce less bias. The architecture consists of two convolutional layers with 64 filters each, batch normalization, max pooling, and fully connected layers for prediction. [20]
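As a rough illustration of what encoding the electron configuration means, the sketch below fills subshells by the Madelung (aufbau) rule and averages the per-subshell electron counts over a composition. This is a hypothetical, heavily simplified stand-in: it does not reproduce ECCNN's 118×168×8 input matrix, it ignores known aufbau exceptions such as Cr and Cu, and its element table covers only a few elements.

```python
import re

# Hypothetical minimal table of atomic numbers; extend as needed.
ATOMIC_NUMBER = {"H": 1, "Li": 3, "C": 6, "O": 8, "Si": 14, "Ti": 22, "Fe": 26}
L_LABELS = "spdf"

# Subshells (n, l) up to n = 7, l <= 3, ordered by the Madelung rule: n + l, then n.
SUBSHELLS = sorted(
    [(n, l) for n in range(1, 8) for l in range(min(n, 4))],
    key=lambda s: (s[0] + s[1], s[0]),
)


def electron_configuration(z):
    """Idealized aufbau filling; real exceptions (e.g. Cr, Cu) are ignored."""
    config, remaining = {}, z
    for n, l in SUBSHELLS:
        if remaining == 0:
            break
        k = min(remaining, 2 * (2 * l + 1))  # subshell capacity is 2(2l + 1)
        config[f"{n}{L_LABELS[l]}"] = k
        remaining -= k
    return config


def parse_formula(formula):
    """Simple formula parser without parentheses or hydrates."""
    counts = {}
    for el, amt in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[el] = counts.get(el, 0.0) + (float(amt) if amt else 1.0)
    return counts


def composition_ec_vector(formula):
    """Fraction-weighted electron counts per subshell, in Madelung order."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    vec = {f"{n}{L_LABELS[l]}": 0.0 for n, l in SUBSHELLS}
    for el, amt in counts.items():
        for sub, k in electron_configuration(ATOMIC_NUMBER[el]).items():
            vec[sub] += (amt / total) * k
    return vec


print(electron_configuration(26))
print({k: v for k, v in composition_ec_vector("Fe2O3").items() if v})
```

A learned model such as a CNN would then consume a tensor built from counts like these; how ECCNN actually lays out its three input dimensions is described in the source work, not here.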

The Stacked Generalization Workflow

The ECSG framework employs a specific meta-learning strategy to combine its base models. The following diagram illustrates this workflow.

Figure 1: ECSG Stacked Generalization Workflow. The framework integrates predictions from three base models (Magpie, Roost, ECCNN) operating on different principles. These predictions form a set of meta-features that are fed into a meta-model (a logistic regressor) to produce the final, refined stability prediction. [20]
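The stacking workflow in Figure 1 can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the ECSG code: the `centroid_learner` base models are hypothetical toy stand-ins for Magpie, Roost, and ECCNN, and the logistic meta-model is fitted by simple gradient descent rather than a library solver. The key point it shows is that the meta-model is trained only on out-of-fold predictions, so it never sees a base model's output for a sample that base model was trained on.

```python
import math
import random


def kfold(n, k, seed=0):
    """Shuffle sample indices and split them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def centroid_learner(j):
    """Hypothetical toy base learner: nearest class centroid on feature j."""
    def fit(X, y):
        pos = [x[j] for x, t in zip(X, y) if t == 1]
        neg = [x[j] for x, t in zip(X, y) if t == 0]
        if not pos or not neg:  # degenerate fold: always predict the class that was seen
            return lambda x: 1.0 if pos else 0.0
        mu1, mu0 = sum(pos) / len(pos), sum(neg) / len(neg)
        return lambda x: 1.0 if abs(x[j] - mu1) < abs(x[j] - mu0) else 0.0
    return fit


def oof_meta_features(learners, X, y, k=5):
    """One meta-feature row per sample, produced by base models that never saw that sample."""
    meta = [[0.0] * len(learners) for _ in X]
    for fold in kfold(len(X), k):
        held_out = set(fold)
        train = [i for i in range(len(X)) if i not in held_out]
        for j, fit in enumerate(learners):
            model = fit([X[i] for i in train], [y[i] for i in train])
            for i in fold:
                meta[i][j] = model(X[i])
    return meta


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def fit_logistic(Z, y, lr=0.5, epochs=500):
    """Logistic-regression meta-model fitted by batch gradient descent on log-loss."""
    w, b = [0.0] * len(Z[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for z, t in zip(Z, y):
            err = sigmoid(sum(wi * zi for wi, zi in zip(w, z)) + b) - t
            gw = [g + err * zi for g, zi in zip(gw, z)]
            gb += err
        w = [wi - lr * g / len(Z) for wi, g in zip(w, gw)]
        b -= lr * gb / len(Z)
    return lambda z: sigmoid(sum(wi * zi for wi, zi in zip(w, z)) + b)


def fit_stack(learners, X, y, k=5):
    """Meta-model on out-of-fold predictions; base models are then refit on all data."""
    meta_model = fit_logistic(oof_meta_features(learners, X, y, k), y)
    bases = [fit(X, y) for fit in learners]
    return lambda x: meta_model([m(x) for m in bases])


# Toy data: two noisy "views" of the same signal, label = 1 when x > 0.5.
X = [[i / 20, i / 20 + ((i * 7) % 5 - 2) / 100] for i in range(20)]
y = [1 if x[0] > 0.5 else 0 for x in X]
predict = fit_stack([centroid_learner(0), centroid_learner(1)], X, y)
print(round(predict([0.9, 0.9]), 3), round(predict([0.1, 0.1]), 3))
```

Training the meta-model on in-sample base predictions would instead reward whichever base model overfits most; the out-of-fold construction is what lets the second layer learn how much to trust each base model.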

Performance Comparison: ECSG vs. Alternatives

The ECSG framework has been benchmarked against its constituent models, outperforming each of them, and it also compares favorably with DFT in computational efficiency.

Quantitative Performance Metrics

Experimental validation on data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database shows that ECSG achieves state-of-the-art performance in classifying compound stability. [20]

Table 2: Quantitative Performance Comparison of Stability Prediction Models

| Model / Framework | AUC Score | F1 Score | Accuracy | Data Efficiency |
|---|---|---|---|---|
| ECSG (Ensemble) | 0.988 [20] | 0.755 [30] | 0.808 [30] | Uses only 1/7 of data to match performance of existing models [20] |
| ECCNN (Base model) | Not Reported | 0.726 [30] | 0.773 [30] | Standard |
| Roost (Base model) | Not Reported | 0.714 [30] | 0.761 [30] | Standard |
| Magpie (Base model) | Not Reported | 0.669 [30] | 0.722 [30] | Standard |
| Other ML (e.g., Neural Network for DFT error correction) | 0.886 (for enthalpy prediction) [5] | Not Reported | Not Reported | Standard |

The ensemble model's high Area Under the Curve (AUC) score of 0.988 signifies an excellent ability to distinguish between stable and unstable compounds. Furthermore, its exceptional data efficiency means it can achieve performance levels that other models require seven times more data to reach, drastically reducing the computational cost of data generation. [20]
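The table mixes a threshold-free ranking metric (AUC) with threshold-dependent ones (F1, accuracy). The sketch below, using made-up scores, shows how each is computed: AUC is the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, while F1 and accuracy depend on the decision threshold, assumed here to be 0.5.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 1/2."""
    pos = [s for s, t in zip(scores, labels) if t == 1]
    neg = [s for s, t in zip(scores, labels) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_and_accuracy(scores, labels, threshold=0.5):
    """Threshold the scores, then compute F1 for the positive (stable) class and accuracy."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, t in zip(preds, labels) if p == 1 and t == 1)
    fp = sum(1 for p, t in zip(preds, labels) if p == 1 and t == 0)
    fn = sum(1 for p, t in zip(preds, labels) if p == 0 and t == 1)
    accuracy = sum(1 for p, t in zip(preds, labels) if p == t) / len(labels)
    f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
    return f1, accuracy


scores = [0.9, 0.8, 0.3, 0.1]  # hypothetical predicted probabilities of stability
labels = [1, 0, 1, 0]          # 1 = stable, 0 = unstable
print(roc_auc(scores, labels), f1_and_accuracy(scores, labels))
```

Because AUC ignores the threshold, a model can rank compounds very well while its F1 at a fixed cut-off is noticeably lower, so the two kinds of metric should be read together.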

Comparison with DFT Workflows

While DFT remains the foundational method for stability assessment, ML frameworks like ECSG offer complementary advantages. The table below compares their key characteristics.

Table 3: ECSG vs. DFT for Stability Prediction

| Aspect | ECSG Framework | Traditional DFT |
|---|---|---|
| Primary Input | Chemical composition only [20] | Atomic composition and precise crystal structure |
| Computational Speed | Very fast (minutes to hours for prediction) | Slow (hours to days per compound) |
| Resource Cost | Low (after model training) | High (significant CPU/GPU resources) |
| Key Strength | High-throughput screening of compositional space; exceptional data efficiency [20] | High-fidelity energy calculations; provides electronic structure insights |
| Key Limitation | Relies on quality of training data; black-box nature | Systematic errors in formation enthalpies [5]; requires known structures |
| Typical Use Case | Rapid exploration of novel chemical spaces and pre-screening [20] | Detailed validation and investigation of specific candidate materials |

It is important to note that ML and DFT are not mutually exclusive. A common and powerful strategy is to use ML for high-throughput screening to identify promising candidates, which are then validated using high-precision DFT calculations. This hybrid approach has been successfully demonstrated in other studies, such as the discovery of stable low-work-function perovskite oxides. [6]
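The screen-then-validate strategy can be sketched as a simple ranking step. The function name, the probability threshold, the DFT budget, and the candidate compositions below are illustrative assumptions; in practice the cut-off is tuned to balance recall of stable compounds against the number of DFT calculations one can afford.

```python
def select_for_dft(candidates, threshold=0.5, budget=None):
    """Rank ML-screened candidates and keep those worth a DFT check.

    candidates: list of (composition, predicted_probability_stable) pairs.
    Returns the shortlist and the fraction of DFT calculations avoided.
    """
    shortlist = sorted(
        (c for c in candidates if c[1] >= threshold), key=lambda c: c[1], reverse=True
    )
    if budget is not None:
        shortlist = shortlist[:budget]  # cap the number of expensive DFT runs
    avoided = 1.0 - len(shortlist) / len(candidates) if candidates else 0.0
    return shortlist, avoided


candidates = [("A2B", 0.92), ("AB", 0.15), ("AB2", 0.71), ("A3B", 0.55)]
print(select_for_dft(candidates, threshold=0.6))
print(select_for_dft(candidates, threshold=0.5, budget=1))
```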

Experimental Protocols and Implementation

Detailed Workflow for Reproducing ECSG

The ECSG framework's implementation, as detailed in its associated GitHub repository, provides a clear pathway for training and prediction. [30] The following diagram and breakdown outline the key steps.

Figure 2: ECSG Experimental Workflow. The process involves data preparation, feature extraction, training base models with cross-validation, building the ensemble meta-model, and finally making predictions. [30]

- Step 1: Data Preparation: The input must be a CSV file containing at least two columns: `material-id` and `composition` (e.g., "Fe2O3"). For training, a third column, `target` (True/False for stability), is required. [30]

- Step 2: Feature Processing: Users can choose to generate features at runtime or load pre-processed feature files to save time on large datasets. This is handled by the `feature.py` script. [30]

- Steps 3 and 4: Model Training: The `train.py` script initiates the process. It trains the three base models (Magpie, Roost, ECCNN) using 5-fold cross-validation. [30] The predictions from these models on the validation folds are then used as features to train the meta-model, which is a logistic regressor. [20]

- Step 5: Prediction: The trained ECSG model can be used to predict the thermodynamic stability of new compounds using the `predict.py` script. Results are saved in a CSV file with a `target` column indicating the stability prediction. [30]
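The input and output conventions of Steps 1 and 5 can be illustrated with Python's standard `csv` module. This is a hypothetical sketch rather than the repository's own loader: only the column names (`material-id`, `composition`, `target`) come from the description above, while the function names, the True/False parsing, the example IDs, and the `predict_stable` callback are assumptions.

```python
import csv
import io


def read_compositions(text, training=False):
    """Parse an input CSV with columns material-id and composition (plus target when training)."""
    reader = csv.DictReader(io.StringIO(text))
    needed = ["material-id", "composition"] + (["target"] if training else [])
    missing = [c for c in needed if c not in (reader.fieldnames or [])]
    if missing:
        raise ValueError(f"missing required column(s): {missing}")
    rows = list(reader)
    if training:
        for row in rows:
            row["target"] = row["target"].strip().lower() == "true"
    return rows


def write_predictions(rows, predict_stable):
    """Write material-id, composition and a predicted True/False target as CSV text."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["material-id", "composition", "target"])
    writer.writeheader()
    for row in rows:
        writer.writerow({
            "material-id": row["material-id"],
            "composition": row["composition"],
            "target": bool(predict_stable(row["composition"])),  # hypothetical model call
        })
    return out.getvalue()


text = "material-id,composition,target\ndemo-1,Fe2O3,True\ndemo-2,FeO3,False\n"
rows = read_compositions(text, training=True)
print(write_predictions(rows, lambda comp: comp == "Fe2O3"))
```

Validating the required columns up front gives a clear error message before any expensive feature generation or training starts.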

The Researcher's Toolkit

Implementing the ECSG framework requires a specific software and hardware environment. The following table details the key requirements as specified in the official repository. [30]

Table 4: Essential Research Reagents and Solutions for ECSG Implementation

| Item / Resource | Function / Role | Specification / Version |
|---|---|---|
| ECSG GitHub Repository | Primary source for code and demo data | HaoZou-csu/ECSG [30] |
| Core Python Packages | Provides the computational backbone | Python (≥3.8), PyTorch (≥1.9.0, ≤1.16.0), scikit-learn, xgboost, pymatgen, matminer [30] |
| Key ML & Chemistry Libraries | Enables specific model operations and materials analysis | torch_geometric (for Roost GNN), torch-scatter (or custom functions), smact [30] |
| Computing Resources | Hardware for efficient model training and prediction | Recommended: 128 GB RAM, 40 CPU processors, 24 GB GPU, 4 TB disk storage [30] |

The ECSG framework represents a significant advancement in the machine learning-based prediction of thermodynamic stability for inorganic compounds. By strategically integrating models based on atomic properties, interatomic interactions, and fundamental electron configuration through stacked generalization, it achieves a level of performance and data efficiency that surpasses its individual components and other single-hypothesis models.

Its high AUC (0.988) and exceptional data efficiency make it a powerful tool for the rapid exploration of vast compositional spaces, acting as a highly effective pre-screening filter before more resource-intensive DFT validation. While DFT remains indispensable for providing deep physical insights and high-fidelity validation, ECSG establishes a compelling case for ensemble ML as a cornerstone in the modern materials discovery pipeline, accelerating the identification of novel, stable compounds for applications ranging from catalysis to energy technologies.

The accurate prediction of compound stability is a critical challenge in materials science and drug discovery. Traditional approaches, primarily based on Density Functional Theory (DFT), offer high fidelity but at prohibitive computational costs, consuming up to 70% of supercomputer allocations in the materials science sector [7]. This resource-intensive nature drives the demand for efficient alternatives, positioning machine learning (ML) as a transformative solution. ML models can produce results orders of magnitude faster than ab initio simulations, making them ideal for high-throughput screening campaigns where they act as efficient pre-filters for more demanding, high-fidelity methods [7].

This case study focuses on Bond-Aware Graph Networks for Molecular Metabolic Stability (MS-BACL), a model representative of advanced Graph Neural Networks (GNNs) that use Graph Contrastive Learning (GCL). We will objectively compare its performance and methodology against other state-of-the-art approaches, including the closely related MetaboGNN model and universal machine learning interatomic potentials (uMLIPs), within the broader context of accelerating stability prediction.

Quantitative Performance Comparison

Benchmarking is essential for evaluating ML models. Frameworks like Matbench Discovery address the disconnect between standard regression metrics and more task-relevant classification metrics for materials discovery [7]. The table below summarizes the predictive performance of MS-BACL and its key competitors on relevant biochemical and thermodynamic stability tasks.
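The disconnect between regression and classification metrics is easy to reproduce with toy numbers. In the sketch below, the hull distances are made up and the stability threshold of 0 eV/atom is an assumption. One set of predictions has a small MAE but misclassifies every compound near the hull, while another has a larger MAE yet classifies every compound correctly.

```python
def stability_metrics(e_hull_true, e_hull_pred, threshold=0.0):
    """Return (MAE, F1 for the 'stable' class) for predicted distances to the convex hull."""
    mae = sum(abs(t - p) for t, p in zip(e_hull_true, e_hull_pred)) / len(e_hull_true)
    true_stable = [t <= threshold for t in e_hull_true]
    pred_stable = [p <= threshold for p in e_hull_pred]
    tp = sum(a and b for a, b in zip(true_stable, pred_stable))
    fp = sum(b and not a for a, b in zip(true_stable, pred_stable))
    fn = sum(a and not b for a, b in zip(true_stable, pred_stable))
    f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
    return mae, f1


# Compounds close to the hull: tiny errors flip every stability label.
print(stability_metrics([0.01, -0.01, 0.02, -0.02], [-0.01, 0.01, -0.02, 0.02]))
# Compounds far from the hull: larger errors, but every label is correct.
print(stability_metrics([0.3, -0.3], [0.2, -0.2]))
```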

Table 1: Performance Comparison of Metabolic Stability Prediction Models

| Model Name | Architecture Type | Key Features | Reported Metric | Performance Value | Dataset Used |
|---|---|---|---|---|---|
| MS-BACL | Graph Neural Network | Bond-Aware, Graph Contrastive Learning | Not available | Not available | Not available |
| MetaboGNN | Graph Neural Network | GCL Pretraining, Interspecies Difference Learning | RMSE (HLM) | 27.91 (% remaining) | 2023 South Korea Data Challenge (3,498 train, 483 test molecules) [31] |