Machine Learning Prediction of Inorganic Compound Stability: Advanced Models, Applications, and Future Directions


Abstract

The prediction of inorganic compound thermodynamic stability is a critical challenge in accelerating the discovery of new materials for applications ranging from energy storage to drug development. This article provides a comprehensive overview of how machine learning (ML) is revolutionizing this field. We explore the foundational principles of stability prediction, detail cutting-edge methodological approaches including ensemble models and graph neural networks, and address key challenges such as data scarcity and model bias. A comparative analysis of model performance and validation strategies underscores the transformative potential of ML. For researchers and drug development professionals, this synthesis offers a practical guide to leveraging these computational tools to navigate vast compositional spaces and prioritize promising candidates for synthesis.

The Foundation of Stability: Why Machine Learning is Revolutionizing Inorganic Materials Discovery

The discovery and development of new inorganic compounds with tailored properties are fundamental to advancements in fields ranging from renewable energy to pharmaceuticals. However, this process is severely hampered by a fundamental computational bottleneck: the limitations of traditional density functional theory (DFT) and experimental methods in efficiently and accurately predicting thermodynamic stability. Thermodynamic stability, typically represented by decomposition energy (ΔHd), serves as a critical filter for identifying synthesizable materials from the vast compositional space of possible compounds [1]. Conventional approaches for determining this stability involve constructing a convex hull using formation energies derived from either experimental investigation or DFT calculations [1]. These methods, while foundational, are characterized by profound inefficiencies. DFT calculations consume substantial computational resources, while experimental synthesis and characterization are both time-intensive and costly [1]. This bottleneck fundamentally restricts the pace at which new materials can be discovered and validated, necessitating a paradigm shift toward more efficient computational strategies.
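The convex-hull construction described above can be sketched for a two-element system. This is a minimal illustration, not the procedure of any specific database: the A-B phases and their formation energies are hypothetical, and the function names (`lower_hull`, `hull_energy`, `energy_above_hull`) are chosen here for clarity.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Return the lower convex hull of (composition, energy) points."""
    best: Dict[float, float] = {}
    for x, energy in points:  # keep only the lowest-energy phase per composition
        best[x] = min(energy, best.get(x, energy))
    hull: List[Point] = []
    for p in sorted(best.items()):
        # Pop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(x: float, hull: List[Point]) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (x - x1) / (x2 - x1) * (y2 - y1)
    raise ValueError("composition lies outside the hull range")


def energy_above_hull(x: float, energy: float, hull: List[Point]) -> float:
    """Energy of a phase relative to the hull; zero means the phase is on the hull."""
    return energy - hull_energy(x, hull)


# Hypothetical A-B system; the pure elements define zero formation energy.
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.40),
    "AB3": (0.75, -0.30),
    "B": (1.0, 0.0),
}
hull = lower_hull(list(phases.values()))
e_hull = {name: energy_above_hull(x, e, hull) for name, (x, e) in phases.items()}
```

In this toy system AB and AB3 sit on the hull, while A3B lies above the line joining A and AB and would be expected to decompose into those two phases. Real phase diagrams have more than two components, so production workflows typically rely on a dedicated phase-diagram library rather than a hand-written hull.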

The Inherent Limitations of Traditional DFT

The Exchange-Correlation Problem

At the heart of DFT's limitations lies the exchange-correlation (XC) functional, a term that encapsulates the complex quantum mechanical interactions between electrons. Although DFT reformulates the computationally intractable many-electron Schrödinger equation into a tractable form, the exact expression for the universal XC functional remains unknown [2]. Scientists must therefore rely on approximations, of which hundreds exist, creating a "zoo of different XC functionals" from which researchers must select [2]. This approximation introduces significant errors that limit DFT's predictive power. Present XC functionals typically exhibit errors 3 to 30 times larger than the threshold for chemical accuracy (approximately 1 kcal/mol), which is necessary for reliably predicting experimental outcomes [2]. This margin of error is too large to shift the balance of molecule and material design from being driven by laboratory experiments to being driven by computational simulations.
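To put the chemical-accuracy threshold into the units used elsewhere in this article, the short conversion below expresses 1 kcal/mol and the quoted 3 to 30 times error range in eV. The constant and function names are chosen here for illustration; the conversion factors are standard values rounded to the digits shown.

```python
KJ_PER_KCAL = 4.184          # thermochemical calorie
KJ_PER_MOL_PER_EV = 96.485   # 1 eV per particle expressed in kJ/mol


def kcal_per_mol_to_ev(value: float) -> float:
    """Convert an energy in kcal/mol to eV per particle."""
    return value * KJ_PER_KCAL / KJ_PER_MOL_PER_EV


chemical_accuracy_ev = kcal_per_mol_to_ev(1.0)
# Error range quoted in the text: 3 to 30 times the chemical-accuracy threshold.
error_range_ev = (3 * chemical_accuracy_ev, 30 * chemical_accuracy_ev)
```

Chemical accuracy therefore corresponds to roughly 0.043 eV, and the quoted error range to roughly 0.13 to 1.3 eV.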

Specific Challenges in Practical Applications

The theoretical shortcomings of DFT translate into several critical practical challenges, particularly when modeling materials for specific applications like microwave absorption:

- Deviation from Real Atomic Configurations: DFT calculations often employ idealized atomic structures that deviate from real materials containing defects, interfaces, and complex microstructures. This idealization limits the reliability of DFT when interpreting microscopic mechanisms in specific systems [3].

- Inability to Model Alternating Electromagnetic Fields: Standard DFT frameworks cannot adequately calculate electronic states under alternating electromagnetic fields, which is a significant limitation for designing functional materials like microwave absorbers where dynamic field responses are crucial [3].

- Errors in Strongly Correlated Systems: The generalized functionals used in DFT produce substantial computational errors when dealing with strongly correlated electron systems, which are common in transition metal oxides and other important inorganic compounds [3].

Table 1: Key Limitations of Traditional DFT and Their Implications

| Limitation | Technical Description | Impact on Materials Discovery |
|---|---|---|
| Approximate XC Functional | No universal form known; must use approximations [2]. | Limited accuracy (errors 3 to 30 times larger than the chemical accuracy threshold); insufficient for predictive design [2]. |
| High Computational Cost | Computation scales cubically with the number of electrons [2]. | Limits system size and throughput; inefficient for exploring vast compositional spaces [1]. |
| Strong Correlation Errors | Inadequate treatment of strongly correlated electron systems [3]. | Reduced reliability for important material classes like transition metal oxides and f-electron systems [3]. |
| Static Ground-State Focus | Inability to calculate electronic states under alternating EM fields [3]. | Hinders design of functional materials (e.g., microwave absorbers) dependent on dynamic responses [3]. |

The Experimental Bottleneck

Traditional experimental approaches for determining compound stability face their own set of constraints. Establishing a convex hull for stability assessment requires extensive experimental investigation of compounds within a given phase diagram, a process that is inherently slow, resource-intensive, and low-throughput [1]. The "extensive compositional space of materials" means that the number of compounds that can be feasibly synthesized in a laboratory represents only "a minute fraction of the total space," creating a needle-in-a-haystack problem [1]. Furthermore, experimental characterization techniques often lack the spatial and temporal resolution needed for in situ observation of microscopic processes such as carrier dynamics, localized charge transfer, and polarization behavior [3]. This limitation restricts the fundamental understanding of structure-property relationships essential for rational materials design.

Machine Learning as a Pathway Through the Bottleneck

Ensemble Learning for Stability Prediction

Machine learning (ML) offers a promising avenue for circumventing the computational and experimental bottlenecks by enabling rapid and cost-effective predictions of compound stability [1]. Unlike DFT, ML models can screen potential compounds in seconds rather than hours or days. A particularly effective approach involves ensemble frameworks based on stacked generalization (SG), which amalgamate models rooted in distinct domains of knowledge to mitigate the inductive biases inherent in single-model approaches [1]. The Electron Configuration models with Stacked Generalization (ECSG) framework, for instance, integrates three distinct models:

- Magpie: Utilizes statistical features from elemental properties.

- Roost: Conceptualizes chemical formulas as graphs to capture interatomic interactions.

- ECCNN (Electron Configuration Convolutional Neural Network): Uses electron configuration as intrinsic input, reducing hand-crafted feature biases [1].

This ensemble approach has demonstrated remarkable efficacy, achieving an Area Under the Curve (AUC) score of 0.988 in predicting compound stability and requiring only one-seventh of the data used by existing models to achieve equivalent performance [1]. This represents a significant improvement in sample efficiency, dramatically accelerating the discovery process.
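For readers less familiar with the metric, the AUC can be read as the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The sketch below computes it directly from that pairwise definition; the labels and scores are made-up values for illustration, not results from [1].

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the fraction of (stable, unstable) pairs ranked correctly.

    Ties between a stable and an unstable score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one sample of each class")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical predictions: 1 = stable, 0 = unstable.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
auc = roc_auc(labels, scores)
```

The pairwise loop is quadratic in the number of samples, which is acceptable for a small check like this one; library implementations use a sort-based algorithm for large test sets.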

Enhancing DFT with Machine Learning

Beyond replacing DFT for initial screening, ML is also being integrated directly with DFT to enhance its accuracy. Novel approaches are using machine learning, trained on high-quality quantum many-body (QMB) data, to discover more universal XC functionals [4]. For example, including both the interaction energies of electrons and the potentials that describe how that energy changes at each point in space provides a stronger foundation for training ML models, as potentials highlight small system differences more clearly than energies alone [4]. In a significant milestone, Microsoft Research developed the Skala XC functional using a deep-learning approach trained on an unprecedented dataset of diverse, highly accurate molecular structures. This model reaches the accuracy required to reliably predict experimental outcomes, bridging the gap between QMB accuracy and DFT efficiency [2].

Table 2: Comparison of Traditional and ML-Enhanced Computational Approaches

| Aspect | Traditional DFT | ML for Stability Prediction | ML-Enhanced DFT |
|---|---|---|---|
| Primary Function | First-principles energy calculation [2] | High-throughput stability screening [1] | Accurate property prediction [2] |
| Computational Cost | High (cubic scaling) [2] | Very Low [1] | Moderate (higher than pure DFT for small systems) [2] |
| Key Innovation | Reformulation of Schrödinger equation [2] | Ensemble models (e.g., ECSG) [1] | Deep-learned XC functionals (e.g., Skala) [2] |
| Accuracy | Errors 3-30x the chemical accuracy threshold [2] | AUC = 0.988 for stability [1] | Reaches chemical accuracy (~1 kcal/mol) [2] |
| Data Dependency | Minimal for calculations | Requires training data [1] | Requires extensive high-accuracy QMB data [2] |

Experimental Protocols

Protocol 1: High-Throughput Stability Screening Using Ensemble ML

Purpose: To rapidly and accurately predict the thermodynamic stability of inorganic compounds using the ECSG framework.

Materials and Software: Python environment with PyTorch/TensorFlow, JARVIS or Materials Project database access, ECSG model architecture [1].

Procedure:

- Data Acquisition: Download formation energies and decomposition energies for inorganic compounds from the Materials Project or JARVIS database [1].

- Feature Encoding:

- Model Training:

- Independently train the three base models (ECCNN, Magpie, Roost) on the training dataset.

- ECCNN Architecture: Process the input matrix through two convolutional layers (64 filters of 5×5), followed by batch normalization, max pooling (2×2), and fully connected layers [1].

- Stacked Generalization:

- Use the predictions of the base models as input features for a meta-level model.

- Train the meta-learner to produce the final stability prediction [1].

- Validation:

- Evaluate model performance using Area Under the Curve (AUC) metrics on a held-out test set.

- Validate predictions against first-principles calculations for novel compounds [1].
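The stacked-generalization step of this protocol can be sketched in plain Python. This is a toy illustration under loud assumptions: the three base learners are one-feature decision stumps standing in for ECCNN, Magpie, and Roost, the meta-learner is a small logistic regression, and the data are synthetic. It shows only the mechanics (out-of-fold base predictions feeding a meta-learner), not the ECSG implementation from [1].

```python
import math
import random
from typing import Callable, List, Sequence

Row = Sequence[float]
Model = Callable[[Row], float]


def sigmoid(t: float) -> float:
    return 1.0 / (1.0 + math.exp(-t))


def fit_stump(X: List[Row], y: List[int], feature: int) -> Model:
    """Toy base learner: split one feature at its mean and return the
    fraction of stable samples on each side as a probability."""
    cut = sum(row[feature] for row in X) / len(X)
    left = [t for row, t in zip(X, y) if row[feature] <= cut]
    right = [t for row, t in zip(X, y) if row[feature] > cut]
    p_left = sum(left) / len(left) if left else 0.5
    p_right = sum(right) / len(right) if right else 0.5
    return lambda row: p_left if row[feature] <= cut else p_right


def fit_logistic(Z: List[List[float]], y: List[int], lr: float = 1.0,
                 epochs: int = 1000) -> Model:
    """Meta-learner: logistic regression fitted by batch gradient descent."""
    w, b, n = [0.0] * len(Z[0]), 0.0, len(Z)
    for _ in range(epochs):
        errors = [sigmoid(b + sum(wj * zj for wj, zj in zip(w, z))) - t
                  for z, t in zip(Z, y)]
        w = [wj - lr * sum(e * z[j] for e, z in zip(errors, Z)) / n
             for j, wj in enumerate(w)]
        b -= lr * sum(errors) / n
    return lambda z: sigmoid(b + sum(wj * zj for wj, zj in zip(w, z)))


def fit_stack(X: List[Row], y: List[int], k: int = 5) -> Model:
    """Train base learners with k-fold out-of-fold predictions, then a meta-learner."""
    n, n_features = len(X), len(X[0])
    oof = [[0.0] * n_features for _ in range(n)]
    for fold in range(k):
        held_out = range(fold, n, k)
        held = set(held_out)
        X_tr = [X[i] for i in range(n) if i not in held]
        y_tr = [y[i] for i in range(n) if i not in held]
        for f in range(n_features):
            model = fit_stump(X_tr, y_tr, f)
            for i in held_out:  # predictions only for samples the model never saw
                oof[i][f] = model(X[i])
    meta = fit_logistic(oof, y)
    base = [fit_stump(X, y, f) for f in range(n_features)]  # refit on all data
    return lambda row: meta([m(row) for m in base])


# Synthetic data: three descriptors per "compound", stable when their sum is large.
rng = random.Random(0)
X = [[rng.random() for _ in range(3)] for _ in range(300)]
y = [1 if sum(row) > 1.5 else 0 for row in X]
stack = fit_stack(X, y)
accuracy = sum((stack(row) > 0.5) == bool(t) for row, t in zip(X, y)) / len(X)
```

Training the meta-learner on out-of-fold predictions, rather than on predictions the base models made for their own training data, is what keeps the meta-learner from simply trusting whichever base model overfits most.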

Protocol 2: Developing a Machine-Learned XC Functional

Purpose: To enhance the accuracy of DFT by training a machine-learning model to approximate the exchange-correlation functional.

Materials and Software: High-performance computing cluster, quantum chemistry software (e.g., PySCF, Q-Chem), automated data generation pipeline [2].

Procedure:

- Reference Data Generation:

- Model Architecture Design:

- Training:

- Train the model on the generated dataset using substantial computational resources (e.g., via cloud computing platforms like Azure) [2].

- Employ optimization algorithms to minimize the difference between predicted and reference energies.

- Validation and Testing:

Table 3: Key Computational Tools and Databases for Stability Prediction

| Resource Name | Type | Primary Function | Relevance to Stability Prediction |
|---|---|---|---|
| Materials Project (MP) | Database | Repository of computed materials properties [1] | Provides formation energies for training ML models and constructing convex hulls [1]. |
| JARVIS | Database | Repository of computed materials properties [1] | Source of benchmark data for validating stability prediction models [1]. |
| ECSG Framework | Software | Ensemble machine learning model [1] | Predicts compound stability with high accuracy (AUC=0.988) and sample efficiency [1]. |
| Skala Functional | Software | Machine-learned XC functional [2] | Enhances DFT accuracy to chemical accuracy for predicting formation energies [2]. |
| Active Learning (AL) | Methodology | Strategy for optimizing data generation [3] | Selects the most informative data points for DFT calculations to improve ML training efficiency [3]. |
| Graph Neural Networks (GNNs) | Software | Neural networks for graph-structured data [3] | Transmits atomic information graphically, establishing correlations between atomic configurations and electronic properties [3]. |

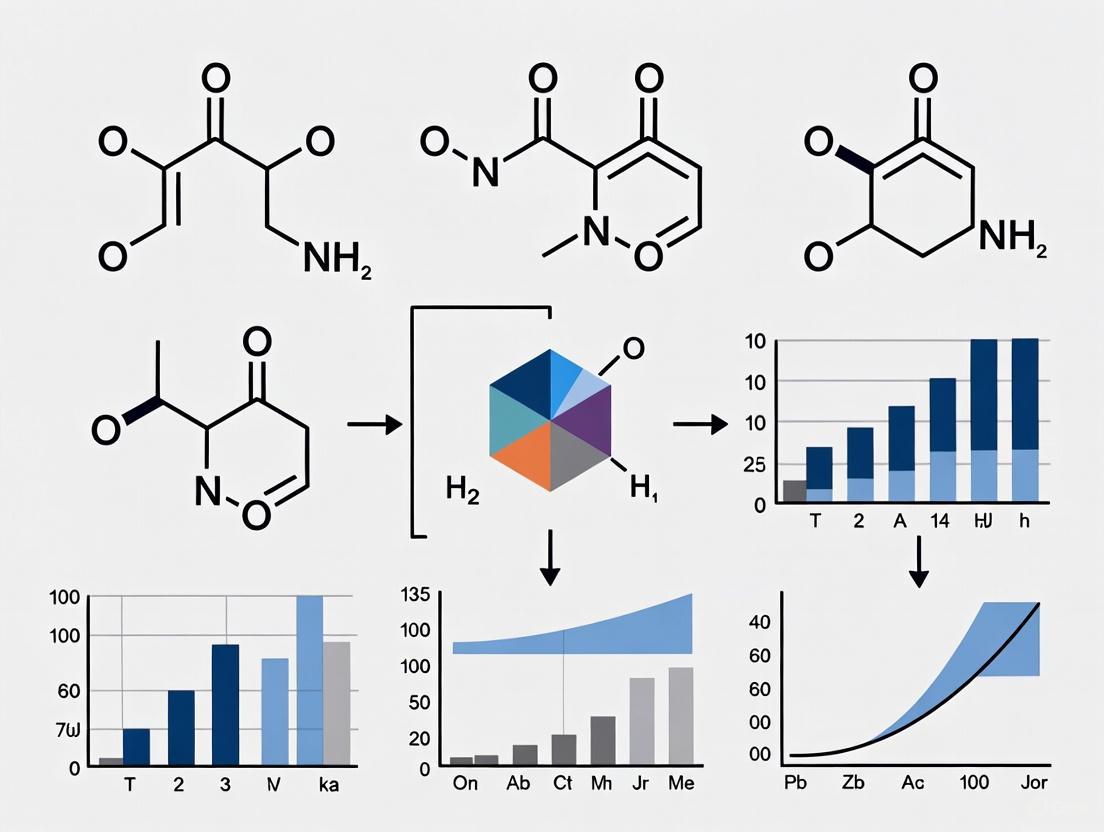

Workflow Visualization

Diagram 1: Overcoming the computational bottleneck in material discovery.

The computational bottleneck presented by traditional DFT and experimental methods has long constrained the pace of inorganic materials discovery. The limitations of DFT, particularly the unknown exact exchange-correlation functional, and the resource-intensive nature of experimental synthesis and characterization create a fundamental barrier to rapid innovation. However, the integration of machine learning, through both direct stability prediction and the enhancement of DFT itself, offers a transformative pathway forward. Ensemble models like ECSG enable high-throughput screening with remarkable accuracy and sample efficiency, while ML-derived XC functionals like Skala bring DFT calculations closer to experimental accuracy. These approaches, used in concert with traditional methods and validated through targeted experimentation, are poised to accelerate the discovery of next-generation materials for applications across science and technology.

The discovery of new inorganic compounds with desirable properties has long been a painstaking process, often described as searching for a needle in a haystack due to the vastness of compositional space [1]. Traditional methods for determining key properties like thermodynamic stability, crucial for predicting compound synthesizability, rely heavily on resource-intensive experimental investigations or Density Functional Theory (DFT) calculations [1]. The advent of high-throughput screening (HTS) has begun to change this landscape by generating massive amounts of chemical and biological data [5]. However, the true paradigm shift is being driven by the integration of machine learning (ML), which can rapidly learn from existing HTS data to predict material properties, dramatically accelerating the exploration of new compounds and reducing reliance on costly computations and experiments [1] [6]. This document details the application notes and protocols for employing ML in the HTS pipeline, specifically within the context of predicting inorganic compound stability.

The performance of various machine learning models in predicting material stability is quantified using standardized metrics. The following table summarizes key results from recent studies, providing a benchmark for comparison.

Table 1: Performance Metrics of Machine Learning Models for Compound Stability and Property Prediction

| Study Focus / Model Name | Key Metric | Performance Value | Dataset Used | Reference |
|---|---|---|---|---|
| Thermodynamic Stability (ECSG Ensemble) | Area Under the Curve (AUC) | 0.988 | JARVIS Database | [1] |
| Thermodynamic Stability (ECSG Ensemble) | Data Efficiency | Achieved equivalent accuracy with 1/7 of the data required by existing models | JARVIS Database | [1] |
| Electrochemical Window (Classification) | Accuracy | >0.98 | Over 16,000 Li-containing compounds | [7] |
| Electrochemical Window (Regression) | Mean Absolute Error (MAE) | 0.19 / 0.21 V (left/right ECW limits) | Over 16,000 Li-containing compounds | [7] |
| Selenium-Based Compounds Stability | Model Validation | R² = 0.92 (DFT validation) | 618 Se-based compounds | [6] |

Experimental Protocols for ML-Aided HTS

This section provides detailed methodologies for implementing machine learning in high-throughput screening workflows for inorganic compound stability.

Protocol: Data Collection and Curation from Public Repositories

Objective: To programmatically gather a large dataset of compound structures and properties for training machine learning models.

Materials: Computing environment with internet access and programming capabilities (Python recommended).

Procedure:

- Identify Data Source: Access a public repository such as PubChem [5] or materials-specific databases like the Materials Project (MP) or Open Quantum Materials Database (OQMD) [1].

- Construct Query URL: Use programmatic interfaces like the PubChem Power User Gateway (PUG)-REST. Construct a URL with the following components [5]:

- Base: https://pubchem.ncbi.nlm.nih.gov/rest/pug/

- Input: compound/smiles/COC/property

- Output: JSON

- Example: To retrieve data for aspirin (CID 2244), the URL would be: https://pubchem.ncbi.nlm.nih.gov/rest/pug/compound/cid/2244/JSON

- Automate Data Retrieval: For large datasets, write a script to iterate through a list of compound identifiers (e.g., CIDs, SMILES strings) and execute the queries.

- Data Parsing and Storage: Parse the returned data (e.g., in JSON, CSV, or ASN.1 format) and store it in a structured local database for further processing [5].

Notes: Always check the specific data licensing and usage terms provided by the repository.
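The URL construction and retrieval steps above might be scripted along the following lines, using only the Python standard library. The helper names (`build_pug_url`, `fetch_compound`, `build_urls`) are hypothetical, and the URL pattern is the one shown in the aspirin example; the network call is defined but not executed here.

```python
import json
from typing import Iterable, List, Union
from urllib.parse import quote
from urllib.request import urlopen

PUG_REST_BASE = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def build_pug_url(namespace: str, identifier: Union[int, str],
                  output: str = "JSON") -> str:
    """Assemble base + input + output into a PUG-REST compound URL."""
    # Percent-encode the identifier so characters in SMILES strings are URL-safe.
    encoded = quote(str(identifier), safe="")
    return f"{PUG_REST_BASE}/compound/{namespace}/{encoded}/{output}"


def fetch_compound(namespace: str, identifier: Union[int, str],
                   timeout: float = 30.0) -> dict:
    """Download and parse one JSON record (performs a network request)."""
    with urlopen(build_pug_url(namespace, identifier), timeout=timeout) as response:
        return json.load(response)


def build_urls(cids: Iterable[int]) -> List[str]:
    """Build one query URL per compound identifier (CID)."""
    return [build_pug_url("cid", cid) for cid in cids]


aspirin_url = build_pug_url("cid", 2244)
```

When looping `fetch_compound` over a long identifier list, it is advisable to pause between requests and to consult the repository's usage policy for request limits.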

Protocol: Feature Engineering and Model Selection for Stability Prediction

Objective: To prepare compound data and select an appropriate ML model architecture for accurate stability prediction.

Materials: Processed dataset of inorganic compounds; ML libraries (e.g., Scikit-learn, TensorFlow, PyTorch).

Procedure:

- Feature Representation: Choose a composition-based feature representation. Common approaches include [1]:

- Magpie: Calculate statistical features (mean, deviation, range) from a list of elemental properties like atomic number, mass, and radius.

- Roost: Represent the chemical formula as a graph of atoms to model interatomic interactions.

- Electron Configuration (EC): Encode the electron configuration of constituent elements into a matrix format suitable for convolutional neural networks (CNNs).

- Model Training:

- Single Models: Train individual models like Gradient Boosted Regression Trees (for Magpie features) or Graph Neural Networks (for Roost features) [1].

- Ensemble Framework (Recommended): For superior performance and reduced bias, use a stacked generalization framework. Train multiple base models (e.g., Magpie, Roost, and an Electron Configuration CNN) and use their outputs as inputs to a meta-learner model to make the final prediction [1].

- Model Validation: Validate model performance using a hold-out test set or cross-validation, reporting metrics such as AUC, accuracy, and MAE as applicable (see Table 1).
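As an illustration of the Magpie-style representation in the procedure above, the sketch below turns a chemical formula into fraction-weighted statistics of elemental properties. It is a simplified stand-in rather than the Magpie feature set itself: the five-element lookup table, the three properties, and the function names are chosen here for brevity, and the parser handles only simple formulas without parentheses.

```python
import re
from typing import Dict

# Small illustrative lookup: atomic number, atomic mass (u), Pauling electronegativity.
ELEMENT_DATA: Dict[str, Dict[str, float]] = {
    "O": {"Z": 8, "mass": 15.999, "chi": 3.44},
    "Na": {"Z": 11, "mass": 22.990, "chi": 0.93},
    "Cl": {"Z": 17, "mass": 35.45, "chi": 3.16},
    "Ca": {"Z": 20, "mass": 40.078, "chi": 1.00},
    "Ti": {"Z": 22, "mass": 47.867, "chi": 1.54},
}


def parse_formula(formula: str) -> Dict[str, float]:
    """Parse a simple formula such as 'CaTiO3' into element counts."""
    counts: Dict[str, float] = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def magpie_like_features(formula: str) -> Dict[str, float]:
    """Fraction-weighted mean, average deviation, and range of each property."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fractions = {el: n / total for el, n in counts.items()}
    features: Dict[str, float] = {}
    for prop in ("Z", "mass", "chi"):
        values = {el: ELEMENT_DATA[el][prop] for el in fractions}
        mean = sum(fractions[el] * v for el, v in values.items())
        features[f"{prop}_mean"] = mean
        features[f"{prop}_avg_dev"] = sum(
            fractions[el] * abs(v - mean) for el, v in values.items())
        features[f"{prop}_range"] = max(values.values()) - min(values.values())
    return features


nacl = magpie_like_features("NaCl")
catio3 = magpie_like_features("CaTiO3")
```

Every compound maps to a vector of the same length regardless of how many elements it contains, which is what makes this kind of descriptor convenient for tree-based models.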

Protocol: DFT Validation of ML Predictions

Objective: To computationally validate the stability of ML-predicted stable compounds.

Materials: First-principles calculation software (e.g., VASP, Quantum ESPRESSO); high-performance computing (HPC) resources.

Procedure:

- Candidate Selection: Select the top candidate compounds identified by the ML model as being thermodynamically stable.

- Structure Optimization: Perform geometry optimization to find the most stable crystal structure for each candidate.

- Energy Calculation: Calculate the formation energy and decomposition energy (ΔHd) of the compound. The decomposition energy is the energy difference between the compound and its competing phases on the convex hull [1].

- Stability Assessment: A compound is considered thermodynamically stable if its decomposition energy (ΔHd) is zero or negative, meaning it lies on the convex hull of the phase diagram (an energy above hull of 0 eV/atom). A positive ΔHd means the compound sits above the hull and is unstable with respect to its competing phases [1] [6].

Notes: This protocol is computationally expensive and should be applied to a shortlist of promising candidates filtered by the ML model.
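The energy calculation step reduces to simple arithmetic once the DFT total energies are available: the formation energy per atom is the compound's total energy minus the sum of the elemental reference energies, divided by the number of atoms. The sketch below assumes hypothetical energies for a compound AB2; the function name and all numbers are illustrative only.

```python
from typing import Dict


def formation_energy_per_atom(total_energy: float,
                              composition: Dict[str, int],
                              reference_energies: Dict[str, float]) -> float:
    """Formation energy in eV/atom.

    total_energy: DFT total energy of one formula unit (eV).
    composition: number of atoms of each element in the formula unit.
    reference_energies: energy per atom of each element in its reference phase (eV).
    """
    n_atoms = sum(composition.values())
    reference = sum(n * reference_energies[el] for el, n in composition.items())
    return (total_energy - reference) / n_atoms


# Hypothetical values for a compound AB2.
delta_hf = formation_energy_per_atom(
    total_energy=-16.5,
    composition={"A": 1, "B": 2},
    reference_energies={"A": -4.0, "B": -5.0},
)
```

A negative formation energy alone does not establish stability; the value still has to be compared against the competing phases on the convex hull, as described in the stability assessment step.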

Workflow Visualization

The following diagram illustrates the integrated ML-HTS workflow for stable compound discovery.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for ML-Driven HTS in Materials Science

| Category / Item Name | Function / Description | Relevance to Workflow |
|---|---|---|
| Public Data Repositories | | |
| Materials Project (MP) / OQMD | Databases of computed crystal structures and properties for inorganic materials. | Primary source of training data for stability prediction models [1]. |
| PubChem | Largest public repository of chemical structures and biological activity data. | Source for HTS bioassay data and compound information [5]. |
| Cambridge Crystallographic Data Centre (CCDC) | Repository for experimentally determined organic and metal-organic crystal structures. | Source of experimental structural data for validation and training [6]. |
| Computational Tools & Libraries | | |
| PUG-REST (PubChem) | A REST-style interface for programmatic access to PubChem data. | Enables automated, large-scale data retrieval for building datasets [5]. |
| DFT Software (VASP, Quantum ESPRESSO) | First-principles calculation packages for electronic structure. | Used for final validation of ML-predicted stable compounds [1] [6]. |
| ML Libraries (Scikit-learn, TensorFlow, PyTorch) | Open-source libraries for implementing machine learning algorithms. | Core environment for building, training, and deploying predictive models [1] [6]. |
| Feature Representation Models | | |
| Magpie | Generates statistical features from elemental properties. | Provides a robust and interpretable feature set for ML models [1]. |
| Roost (Representation Learning from Stoichiometry) | A graph neural network model that learns directly from a compound's stoichiometry. | Captures complex interatomic interactions directly from the composition [1]. |

The discovery and development of new inorganic compounds are fundamental to advancements in energy, computing, and healthcare. A critical first step in this process is the accurate prediction of a compound's thermodynamic stability, which determines its synthesizability and viability for real-world applications. Traditional methods for establishing stability, primarily through experimental synthesis or density functional theory (DFT) calculations, are notoriously resource-intensive and low-throughput, creating a major bottleneck in materials discovery [1].

The paradigm has shifted with the emergence of extensive materials databases and sophisticated machine learning (ML) models. These resources enable researchers to rapidly screen vast compositional spaces in silico, prioritizing the most promising candidates for further investigation. Among these resources, the Materials Project, the Open Quantum Materials Database (OQMD), and the Joint Automated Repository for Various Integrated Simulations (JARVIS) have become cornerstones of modern computational materials science. This Application Note provides a detailed protocol for leveraging these three key databases within a research workflow focused on machine learning prediction of inorganic compound stability.

Database Comparative Analysis

Each of the three major databases offers a unique set of data, tools, and capabilities. Their strategic integration is key to a robust research protocol. The table below summarizes their core characteristics for direct comparison.

Table 1: Key Characteristics of Major Materials Databases

| Feature | Materials Project | Open Quantum Materials Database (OQMD) | JARVIS |
|---|---|---|---|
| Primary Focus | High-throughput DFT calculations for materials design [8] | DFT-computed properties of stable and hypothetical materials [8] | Multimodal, multiscale infrastructure integrating DFT, force fields (FF), ML, and experiments [9] [10] |
| Example Data & Properties | Formation energy, crystal structure, band structure [8] | Formation energy, stability (energy above hull) [8] | DFT, force-field, and ML properties; experimental data from microscopy/cryogenics [9] [10] |
| Key Strength | Extensive and widely used; enables high-throughput screening [8] | Large volume of data (~341,000 materials); useful for ML model training [8] | Uniquely integrates computational and experimental data; includes beyond-DFT methods [9] [10] |
| Reported MAE vs. Experiments (Formation Energy) | ~0.078 eV/atom [8] | ~0.083 eV/atom [8] | ~0.095 eV/atom (without empirical corrections) [8] |

Experimental Protocols for ML-Based Stability Prediction

This section outlines a detailed, sequential protocol for a research project aiming to train a machine learning model to predict the thermodynamic stability of inorganic compounds.

Protocol 1: Data Acquisition and Unified Curation

Objective: To gather a coherent and consistent training dataset from multiple databases.

Reagents & Resources:

- Computational Environment: Python programming environment with key libraries: pymatgen (for accessing database APIs and manipulating structures) and matminer (for data retrieval and featurization) [8].

- Data Sources: Direct API access or downloadable datasets from the Materials Project, OQMD, and JARVIS websites.

Procedure:

- Data Retrieval: Access each database programmatically using their respective APIs. For stability prediction, key data to retrieve includes:

- Chemical Composition: The elemental formula of the compound (e.g., NaCl, TiO₂).

- Formation Energy (ΔHf): The energy of formation of the compound from its elements, in eV/atom.

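- Energy Above Hull (Ehull): The energy of the compound relative to the convex hull, in eV/atom, used here as the measure of decomposition energy (ΔHd) and therefore of thermodynamic stability. Compounds with Ehull = 0 eV/atom lie on the hull and are considered stable [1].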

- Crystal Structure (Optional): Atomic positions and lattice parameters for structure-based models.

- Data Unification: Merge datasets from the different sources. Critically, this requires:

- Resolving Nomenclature: Ensuring compound identifiers are consistent across databases.

- Handling Discrepancies: Different DFT calculation parameters (pseudopotentials, exchange-correlation functionals) can lead to varying property values. It is essential to note these differences and, for a unified model, decide on a strategy (e.g., applying a consistent correction scheme or training on data from a single source and using others for transfer learning).

- Data Labeling: For a classification task (stable vs. unstable), create a binary label where compounds with Ehull = 0 eV/atom are labeled "stable" and all others are "unstable."
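The unification and labeling steps can be sketched as follows. The record layout (`source`, `formula`, `e_hull`), the example values, and the rule of preferring a single source per composition are assumptions made for this illustration; they are one possible strategy, not a prescribed one. A small numerical tolerance is used when testing for Ehull = 0.

```python
import re
from functools import reduce
from math import gcd
from typing import Dict, List


def composition_key(formula: str) -> str:
    """Reduce a simple formula to a canonical key, e.g. 'Ti2O4' -> 'O2Ti1'."""
    counts: Dict[str, int] = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(amount) if amount else 1)
    divisor = reduce(gcd, counts.values())
    return "".join(f"{el}{n // divisor}" for el, n in sorted(counts.items()))


def unify_and_label(records: List[dict], preferred_source: str,
                    tol: float = 1e-8) -> List[dict]:
    """Keep one entry per composition and attach a binary stability label.

    Entries from the preferred source win; other sources are used only when
    the preferred source has no entry for that composition. The lowest Ehull
    among the retained entries represents the composition.
    """
    grouped: Dict[str, List[dict]] = {}
    for rec in records:
        grouped.setdefault(composition_key(rec["formula"]), []).append(rec)
    dataset = []
    for key, group in sorted(grouped.items()):
        chosen = [r for r in group if r["source"] == preferred_source] or group
        e_hull = min(r["e_hull"] for r in chosen)
        dataset.append({"composition": key, "e_hull": e_hull,
                        "stable": int(e_hull <= tol)})
    return dataset


# Hypothetical records; energies in eV/atom.
records = [
    {"source": "MP", "formula": "TiO2", "e_hull": 0.0},
    {"source": "MP", "formula": "TiO2", "e_hull": 0.031},     # a higher-energy polymorph
    {"source": "OQMD", "formula": "Ti2O4", "e_hull": 0.012},  # same composition, other source
    {"source": "JARVIS", "formula": "NaCl3", "e_hull": 0.25},
]
dataset = unify_and_label(records, preferred_source="MP")
```

Taking the minimum Ehull per composition reflects that a composition-based model can carry only one label per formula, so the lowest-energy polymorph decides it.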

Figure 1: The workflow for data acquisition, curation, and model training illustrates the protocol's logical flow and key decision points.

Protocol 2: Feature Engineering and Model Training

Objective: To convert raw composition data into a machine-readable format and train a predictive ML model.

Reagents & Resources:

- Featurization Tools: matminer provides numerous featurization methods [8].

- ML Libraries: scikit-learn for traditional models, PyTorch or TensorFlow for deep learning models like ElemNet [8] or ECCNN [1].

- Computational Resources: GPUs are highly beneficial for training deep learning models.

Procedure:

- Featurization: Convert the chemical composition of each compound into a numerical vector (descriptor). Common approaches include:

- Elemental Property Statistics (e.g., Magpie): For each element in a composition, properties like atomic number, radius, and electronegativity are used. Statistics (mean, range, variance) across the composition are calculated to form the feature vector [1].

- Atomic Fraction Vectors: Simple vectors of elemental fractions, as used in ElemNet [8].

- Electron Configuration (e.g., ECCNN): A more advanced method that encodes the electron configuration of constituent atoms into a matrix, capturing intrinsic atomic characteristics that may reduce model bias [1].

- Model Selection and Training:

- Baseline Models: Start with simpler models like Gradient Boosted Regression Trees (e.g., XGBoost) trained on Magpie features [1].

- Advanced Deep Learning: Employ deep neural networks like ElemNet (for composition) or graph neural networks like Roost (which models the composition as a graph of interacting atoms) [1].

- Ensemble Methods: For highest performance, use a stacked generalization (ensemble) framework. For example, the ECSG model combines the predictions of Magpie, Roost, and ECCNN models using a meta-learner, effectively mitigating the individual biases of each base model and achieving state-of-the-art accuracy (AUC > 0.98) [1].

- Model Validation: Perform rigorous k-fold cross-validation. The primary evaluation metric for stability classification is the Area Under the Receiver Operating Characteristic Curve (AUC-ROC).
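Because stable compounds are usually a minority of any dataset, k-fold splits are often stratified so that every fold keeps roughly the same stable-to-unstable ratio. The sketch below is one minimal way to generate such splits with the standard library; the function name and the 10-stable, 40-unstable example are assumptions for illustration.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def stratified_kfold(labels: Sequence[int], k: int = 5,
                     seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) for k stratified folds."""
    rng = random.Random(seed)
    folds: List[List[int]] = [[] for _ in range(k)]
    for cls in sorted(set(labels)):
        indices = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(indices)
        # Deal the shuffled indices of this class round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    for held_out in range(k):
        test = sorted(folds[held_out])
        train = sorted(i for f, fold in enumerate(folds) if f != held_out for i in fold)
        yield train, test


# Hypothetical labels: 10 stable (1) and 40 unstable (0) compounds.
labels = [1] * 10 + [0] * 40
splits = list(stratified_kfold(labels, k=5))
```

Each sample appears in exactly one test fold, so averaging the AUC over the five folds uses every compound once for evaluation.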

Protocol 3: Transfer Learning for Enhanced Experimental Accuracy

Objective: To bridge the gap between DFT-computed data and experimental observations, improving the real-world predictive accuracy of the ML model.

Rationale: Models trained solely on DFT data inherit DFT's systematic discrepancy with experiment (e.g., ~0.1 eV/atom MAE for formation energy). Transfer learning can mitigate this [8].

Procedure:

- Pre-training: Train a deep neural network (e.g., ElemNet architecture) on a large source dataset of DFT-computed properties, such as OQMD with ~341,000 materials [8]. This allows the model to learn a rich set of foundational features.

- Fine-Tuning: Take the pre-trained model and perform additional training (fine-tuning) on a smaller, high-quality dataset of experimental formation energies (e.g., the SSUB database with ~1,963 samples) [8]. The learning rate for this step should be very low.

- Validation: This approach has been shown to achieve an MAE of ~0.06 eV/atom against experimental data, outperforming the baseline DFT discrepancy and models trained from scratch on experimental data alone [8].
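The pre-train then fine-tune pattern can be demonstrated with a deliberately tiny model. In the sketch below a one-variable linear regressor stands in for the deep network, and both datasets are synthetic: the "DFT" data follow y = 2x, while the "experimental" data carry a systematic offset of 0.1. None of this reproduces the ElemNet experiments in [8]; it only shows how a low learning rate lets a small dataset correct a model that was initialized from a large one.

```python
import random
from typing import List, Tuple

Sample = Tuple[float, float]   # (descriptor, target energy)
Params = Tuple[float, float]   # (weight, bias)


def mse(params: Params, data: List[Sample]) -> float:
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(params: Params, data: List[Sample], lr: float, epochs: int) -> Params:
    """Batch gradient descent on the mean squared error."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


rng = random.Random(0)
# Large synthetic "DFT" set and small synthetic "experimental" set with an offset.
dft_data = [(x, 2.0 * x) for x in (rng.random() for _ in range(200))]
exp_data = [(x, 2.0 * x + 0.1) for x in (rng.random() for _ in range(20))]

pretrained = train((0.0, 0.0), dft_data, lr=0.1, epochs=1000)   # pre-training
fine_tuned = train(pretrained, exp_data, lr=0.01, epochs=200)   # low learning rate

error_before = mse(pretrained, exp_data)
error_after = mse(fine_tuned, exp_data)
```

Starting the second stage from the pre-trained parameters means the small experimental set only has to learn the correction, not the whole relationship.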

Figure 2: The transfer learning process improves experimental prediction accuracy by leveraging large DFT datasets.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools and Resources for ML-Driven Stability Prediction

| Item Name | Function/Description | Relevance to Protocol |
|---|---|---|
| Matminer | An open-source Python library for data mining in materials science [8]. | Used for retrieving data from databases, featurizing compositions (e.g., Magpie), and managing datasets. |
| JARVIS-Tools | A Python package for automating materials design workflows and integrating with simulation software like VASP and LAMMPS [10]. | Essential for setting up and analyzing DFT calculations to validate ML predictions or generate new data. |
| ALIGNN | Atomistic Line Graph Neural Network; a model that incorporates bond information for accurate property prediction [9] [10]. | Provides a state-of-the-art, readily available model for property prediction that goes beyond simple composition. |
| ElemNet | A deep neural network architecture that uses only elemental composition as input [8]. | Serves as a powerful deep learning baseline and is the core architecture for the transfer learning protocol. |
| ECSG Model | An ensemble framework combining models based on electron configuration (ECCNN), atomic statistics (Magpie), and graph attention (Roost) [1]. | Represents a cutting-edge approach for achieving maximum predictive accuracy and data efficiency in stability classification. |

From Data to Prediction: A Deep Dive into Machine Learning Models and Their Real-World Applications

In the field of machine learning (ML) for materials science, accurately predicting the stability of inorganic compounds is a critical step in accelerating the discovery of new materials. The choice of input representation—how a compound's chemical information is encoded for the ML model—fundamentally shapes the predictive performance, computational cost, and practical applicability of the approach. Two primary paradigms dominate this area: composition-based and structure-based models. Composition-based models use only the chemical formula, while structure-based models incorporate the geometric arrangement of atoms. This Application Note delineates the strengths, limitations, and optimal use cases for each representation type, providing detailed protocols for their implementation within research focused on machine learning prediction of inorganic compound stability [1] [11].

Technical Comparison of Model Representations

The following table summarizes the core characteristics of composition-based and structure-based input representations.

Table 1: Comparison of Input Representations for Stability Prediction

| Feature | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Input Data | Elemental stoichiometry (e.g., "CaTiO₃") [1] | Crystallographic Information File (CIF) containing atomic coordinates and lattice parameters [11] |
| Information Scope | Elemental proportions and their statistical properties [1] | 3D atomic structure, including bond lengths, angles, and symmetry [11] |
| Primary Advantage | High-throughput screening; applicable when structure is unknown [1] | Higher information fidelity; can distinguish between polymorphs [11] |
| Key Limitation | Cannot differentiate between different structural polymorphs of the same composition [1] | Requires a defined crystal structure, which is often the target of prediction [1] |
| Data Availability | Easily derived from chemical databases or formulated a priori [1] | Requires experimental determination (X-ray diffraction) or computationally expensive DFT relaxation [1] |
| Computational Cost | Generally lower, enabling rapid screening of vast compositional spaces [1] | Higher, due to the complexity of processing 3D structural data [11] |
| Sample Efficiency | High (e.g., can achieve AUC >0.98 with ~1/7th the data required by some structure models) [1] | Can require more data to learn structural relationships effectively [1] |
| Example Models | Magpie [1], Roost [1], ElemNet [1], ECCNN [1] | CGCNN [11], PU-GPT-embedding [11] |

Detailed Experimental Protocols

Protocol 1: Implementing a Composition-Based Stability Prediction Workflow

This protocol outlines the steps for building a super learner ensemble model (ECSG) for thermodynamic stability prediction using only compositional information [1].

Research Reagent Solutions:

- Databases: Materials Project (MP) or Open Quantum Materials Database (OQMD) for labeled stability data (e.g., decomposition energy, ΔHd) [1].

- Feature Generation Libraries: Python-based libraries for calculating Magpie features (atomic number, radius, electronegativity, etc.) and for encoding electron configurations.

- ML Frameworks: TensorFlow or PyTorch for building neural networks (ECCNN), and XGBoost for tree-based models.

- Validation Metric: Area Under the Curve (AUC) of the Receiver Operating Characteristic curve, targeting >0.98 [1].

Procedure:

- Data Curation: Download a dataset of inorganic compounds with known thermodynamic stability labels (e.g., stable if on the convex hull). Clean the data and split into training, validation, and test sets.

- Feature Engineering (Input Representation): a. Magpie Model: For each element in a compound, compute a set of elemental properties. Then, generate statistical features (mean, range, mode, etc.) across all elements in the compound to form the input vector [1]. b. Roost Model: Represent the chemical formula as a graph where nodes are elements, and use a message-passing graph neural network to learn the representation directly [1]. c. ECCNN Model: Encode the composition into a three-dimensional array (118 elements × 168 electron orbital features × 8 properties). This array serves as the input for a Convolutional Neural Network [1].

- Base Model Training: Independently train the three base models (Magpie, Roost, ECCNN) on the same training dataset.

- Stacked Generalization (Super Learner): a. Use the trained base models to generate predictions on the validation set. b. Use these predictions as new input features to train a meta-learner (e.g., a linear model or another simple classifier) to produce the final stability prediction [1].

- Model Validation: Evaluate the final ECSG model on the held-out test set, reporting AUC and other relevant metrics. Validate predictions with DFT calculations on a subset of novel compounds [1].
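The Magpie-style featurization in the procedure above can be illustrated with a minimal sketch. The property table `ELEMENT_PROPS`, the helper names, and the choice of statistics are assumptions made for illustration; a real workflow would draw on a full elemental property table and a much larger set of statistics.

```python
import re
from collections import Counter

# Hypothetical mini property table (atomic number Z and Pauling
# electronegativity chi); a real workflow would use a complete table.
ELEMENT_PROPS = {
    "Ca": {"Z": 20, "chi": 1.00},
    "Ti": {"Z": 22, "chi": 1.54},
    "O": {"Z": 8, "chi": 3.44},
}


def parse_formula(formula):
    """Parse a simple formula such as 'CaTiO3' (no parentheses) into counts."""
    counts = Counter()
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    return dict(counts)


def composition_stats(formula, prop):
    """Return fraction-weighted mean, range, and mode of one elemental property."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    values = {el: ELEMENT_PROPS[el][prop] for el in counts}
    mean = sum(counts[el] / total * values[el] for el in counts)
    value_range = max(values.values()) - min(values.values())
    # Mode is taken here as the property value of the most abundant element.
    mode = values[max(counts, key=counts.get)]
    return {"mean": mean, "range": value_range, "mode": mode}


features = composition_stats("CaTiO3", "Z")
print(features)
```

Concatenating such statistics over many elemental properties yields the fixed-length vector that a tree-based or dense model can consume.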

Composition-Based Super Learner Workflow

Protocol 2: Implementing a Structure-Based Synthesizability Prediction Workflow

This protocol describes using Large Language Model (LLM) embeddings of text-based crystal structure descriptions for positive-unlabeled (PU) learning of synthesizability [11].

Research Reagent Solutions:

- Structural Database: Materials Project (MP) for CIF files of synthesized and hypothetical structures [11].

- Text Description Tool: Robocrystallographer to convert CIF files into human-readable text descriptions [11].

- Embedding Model: OpenAI's `text-embedding-3-large` model to generate numerical vector representations of the text descriptions [11].

- PU-Learning Framework: A binary classifier (e.g., a neural network) designed for positive-unlabeled learning tasks.

Procedure:

- Data Preparation: a. Obtain CIF files for both synthesized (positive) and hypothetical (unlabeled) inorganic crystal structures from MP. Filter for structures with ≤30 atomic sites per unit cell (MP30) to manage complexity [11]. b. Use Robocrystallographer to generate a textual description for each CIF file. Example output: "The crystal structure is cubic, with a perovskite-like arrangement of corner-sharing TiO₆ octahedra, and Ca atoms occupying the cuboctahedral cavities." [11].

- Text Embedding Generation: For each text description, use the `text-embedding-3-large` model to compute a 3072-dimensional numerical vector. This vector encapsulates the semantic information of the structure [11].

- Model Training (PU-Classifier): Train a neural network classifier using the text embeddings as input. The model is trained to distinguish the known synthesized (positive) examples from the hypothetical (unlabeled) set, accounting for the PU-learning paradigm [11].

- Prediction and Explanation: a. Prediction: Use the trained PU-classifier to predict the synthesizability probability of new hypothetical structures. b. Explanation (Optional): For explainability, a fine-tuned LLM (StructGPT) can be used to generate natural language justifications for the predictions, highlighting structural features that influence synthesizability [11].
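The protocol does not specify which PU-learning correction is applied, so the sketch below uses the Elkan-Noto label-frequency correction as a stand-in. It assumes a classifier has already been trained to separate labeled (synthesized) from unlabeled structures; all scores and candidate names are hypothetical.

```python
def estimate_label_frequency(scores_on_heldout_positives):
    """Estimate c = P(labeled | truly positive) as the mean classifier score
    on held-out known positives (Elkan-Noto estimator)."""
    return sum(scores_on_heldout_positives) / len(scores_on_heldout_positives)


def corrected_probability(score, c):
    """Convert P(labeled | x) into an estimate of P(positive | x), capped at 1."""
    return min(score / c, 1.0)


# Hypothetical classifier scores on held-out synthesized structures.
heldout_positive_scores = [0.9, 0.7, 0.8]
c = estimate_label_frequency(heldout_positive_scores)

# Hypothetical raw scores for two unlabeled candidates.
candidate_scores = {"hypothetical-A": 0.72, "hypothetical-B": 0.20}
synthesizability = {
    name: corrected_probability(score, c)
    for name, score in candidate_scores.items()
}
print(synthesizability)
```

The correction rescales raw scores upward because a classifier trained on positive-versus-unlabeled data systematically underestimates the probability that an unlabeled structure is actually synthesizable.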

Structure-Based Synthesizability Prediction

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Resources for ML-Driven Stability Prediction

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| Materials Project (MP) [1] [11] | Database | Provides a vast repository of computed and experimental data for inorganic compounds, including formation energies and crystal structures, essential for training and benchmarking. |
| Joint Automated Repository for Various Integrated Simulations (JARVIS) [1] | Database | Another key database for DFT-computed properties, used for model training and validation. |
| Robocrystallographer [11] | Software Tool | Converts crystallographic information (CIF files) into standardized, human-readable text descriptions, enabling the use of NLP and LLMs for structure-based prediction. |
| OpenAI text-embedding-3-large [11] | AI Model | Generates high-dimensional numerical embeddings from text descriptions of crystal structures, serving as a powerful input representation for ML models. |
| Positive-Unlabeled (PU) Learning Framework [11] | Methodological Framework | Addresses the core challenge in synthesizability prediction, where only positive (synthesized) examples are known, and hypothetical materials are unlabeled. |
| Density Functional Theory (DFT) [1] | Computational Method | The computational benchmark for validating ML-predicted stable compounds, providing accurate formation and decomposition energies. |

The discovery of new inorganic compounds is fundamentally limited by the challenge of accurately and efficiently predicting thermodynamic stability. Traditional methods, primarily based on Density Functional Theory (DFT), are computationally intensive and time-consuming, creating a bottleneck in the materials development pipeline [1]. Machine learning (ML) has emerged as a powerful tool to accelerate this process by predicting stability directly from chemical composition or structural information, enabling the rapid screening of vast compositional spaces [12].

Two advanced architectures exemplify different and complementary approaches to this problem: the Electron Configuration Convolutional Neural Network (ECCNN), which leverages the intrinsic electronic structure of atoms, and the Representation Learning from Stoichiometry (Roost) model, which captures complex interatomic interactions. This application note details the operational principles, performance benchmarks, and implementation protocols for these two architectures within the context of a broader research thesis on ML-driven prediction of inorganic compound stability.

Electron Configuration Convolutional Neural Network (ECCNN)

The ECCNN architecture is a composition-based model designed to mitigate the inductive biases often introduced by hand-crafted feature sets. Its core premise is that electron configuration (EC) is an intrinsic atomic property crucial for understanding chemical properties and reaction dynamics, and it serves as the primary input for first-principles calculations [1].

Architectural Workflow: The input to ECCNN is a matrix of dimensions 118 (elements) × 168 × 8, encoded from the electron configurations of the constituent atoms in a material [1]. This input matrix undergoes feature extraction through two consecutive convolutional operations, each employing 64 filters with a kernel size of 5 × 5. The second convolutional layer is followed by a batch normalization (BN) operation and a 2 × 2 max pooling layer to stabilize training and reduce dimensionality. The extracted features are then flattened into a one-dimensional vector and passed through fully connected (dense) layers to produce the final prediction of thermodynamic stability, typically quantified by the decomposition energy (ΔHd) [1].

Table 1: ECCNN Architecture Specifications

| Component | Specification | Purpose |
|---|---|---|
| Input Dimension | 118 × 168 × 8 | Encoded electron configuration of the material |
| Convolutional Layers | 2 layers, 64 filters (5×5) | Feature extraction from electron configuration data |
| Pooling | 2 × 2 Max Pooling | Dimensionality reduction and translational invariance |
| Normalization | Batch Normalization | Stabilizes and accelerates training |
| Output | Stability (e.g., ΔHd) | Prediction of thermodynamic stability |
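To make the layer sequence concrete, the following sketch traces tensor shapes through two 5 × 5 convolutions and one 2 × 2 max pooling. It assumes the 118 × 168 axes are treated as spatial dimensions with 8 input channels and that the stride is 1; the padding mode is not stated in the description above, so both common choices are shown. The function names are illustrative.

```python
def conv_out(size, kernel, padding):
    """Output length of a stride-1 convolution along one axis."""
    return size if padding == "same" else size - kernel + 1


def eccnn_flatten_size(height=118, width=168, filters=64,
                       kernel=5, pool=2, padding="valid"):
    """Return (height, width, channels, flattened length) after the conv stack."""
    h, w = height, width
    for _ in range(2):  # two consecutive 5x5 convolutions
        h = conv_out(h, kernel, padding)
        w = conv_out(w, kernel, padding)
    h, w = h // pool, w // pool  # 2x2 max pooling with floor division
    return h, w, filters, h * w * filters


valid_shape = eccnn_flatten_size(padding="valid")
same_shape = eccnn_flatten_size(padding="same")
print("valid padding:", valid_shape)
print("same padding:", same_shape)
```

Under these assumptions the flattened vector feeding the dense layers has 281,600 entries with unpadded convolutions and 317,184 with "same" padding, which is worth knowing when sizing the first dense layer.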

Roost (Representation Learning from Stoichiometry)

The Roost model adopts a graph-based representation of materials. It conceptualizes a chemical formula as a complete graph (a graph where every pair of distinct vertices is connected by a unique edge), where nodes represent atoms and edges represent the interactions between them [1]. This structure is processed using a message-passing graph neural network (GNN) to learn rich representations for predicting properties.

Architectural Workflow (Message Passing): Roost operates under the Message Passing Neural Network (MPNN) framework [13]. For a graph representing a chemical formula:

- Each atom (node) $v$ is initialized with a feature vector, $h_v^0$.

- In the message-passing phase, each node aggregates "messages" ($m_v^{t+1}$) from its neighboring nodes. This is governed by a learnable message function, $M_t(\cdot)$, which considers the features of the node, its neighbors, and the connecting edges [13].

- Each node then updates its own feature vector using an update function, $U_t(\cdot)$, which combines its current state with the aggregated message: $h_v^{t+1} = U_t(h_v^t, m_v^{t+1})$ [13].

- This process is repeated for $K$ steps, allowing information to propagate across the graph. Finally, a readout function pools the node embeddings from the entire graph to generate a single, graph-level representation used for the stability prediction [1] [13].
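The message-passing steps above can be sketched on a tiny complete graph. This is a toy illustration: mean aggregation and simple averaging stand in for the learnable message and update functions, no attention weights are used, and the node features and stoichiometric weights are hypothetical.

```python
def message_passing(node_feats, steps=2):
    """Run K rounds of mean-aggregation message passing on a complete graph."""
    h = [list(v) for v in node_feats]
    n = len(h)
    for _ in range(steps):
        new_h = []
        for v in range(n):
            neighbors = [h[w] for w in range(n) if w != v]
            # Message: mean of neighbor features (stand-in for a learned M_t).
            msg = [sum(col) / len(neighbors) for col in zip(*neighbors)]
            # Update: average of own state and message (stand-in for U_t).
            new_h.append([(a + b) / 2 for a, b in zip(h[v], msg)])
        h = new_h
    return h


def readout(h, weights):
    """Permutation-invariant readout: weighted mean of node embeddings."""
    total = sum(weights)
    dim = len(h[0])
    return [sum(w * row[i] for w, row in zip(weights, h)) / total
            for i in range(dim)]


# Hypothetical two-dimensional node features for Ca, Ti, O in CaTiO3.
feats = [[20.0, 1.00], [22.0, 1.54], [8.0, 3.44]]
weights = [1.0, 1.0, 3.0]  # stoichiometric amounts used as readout weights
embedding = readout(message_passing(feats, steps=2), weights)
print(embedding)
```

Because both the aggregation and the readout are symmetric in the nodes, reordering the elements of the formula leaves the graph-level embedding unchanged, which is the property a composition model needs.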

Table 2: Roost Architecture Specifications

| Component | Specification | Purpose |
|---|---|---|
| Graph Representation | Complete Graph | Models all interatomic interactions |
| Core Mechanism | Message Passing Neural Network (MPNN) | Learns representations from graph structure |
| Key Feature | Attention Mechanism | Captures importance of different atomic interactions |
| Information Propagation | K-hop neighborhood (via K steps) | Allows information to travel across the graph |
| Readout | Permutation-invariant pooling | Generates a graph-level embedding from atoms |

Quantitative Performance Comparison

In experimental validations, models are typically evaluated on their ability to classify compounds as stable or unstable, often using the Area Under the Curve (AUC) of the Receiver Operating Characteristic curve. An ensemble framework named ECSG, which integrates ECCNN, Roost, and another model (Magpie), has demonstrated state-of-the-art performance [1].

Table 3: Performance Benchmarks of Stability Prediction Models

| Model / Framework | Key Input Representation | Reported Performance (AUC) | Sample Efficiency |
|---|---|---|---|
| ECSG (Ensemble) | Electron Configuration, Graph, Atomic Properties | 0.988 | Requires only 1/7 of data to match benchmark performance [1] |
| ECCNN (Base Model) | Electron Configuration | High (Contributes to ECSG) | Excellent [1] |
| Roost (Base Model) | Compositional Graph (Complete Graph) | High (Contributes to ECSG) | Good [1] |
| Universal Interatomic Potentials | Atomistic Structure | High (As per Matbench Discovery) | Varies by model [12] |
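Since AUC is the headline metric in this comparison, a small reference implementation may help when checking reported numbers. The sketch below computes AUC as the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, which is equivalent to the area under the ROC curve; the labels and scores are hypothetical.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as one half.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical labels (1 = stable) and predicted stability scores.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1]
print(roc_auc(labels, scores))
```

The pairwise form is quadratic in the number of samples, so it suits small checks; large benchmarks would typically use a sort-based routine from an established library.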

Experimental Protocols

Protocol 1: Training an ECCNN for Stability Classification

Objective: To train an ECCNN model from scratch to predict the thermodynamic stability of inorganic compounds using their chemical formulas.

Materials & Reagents:

- Training Dataset: A labeled dataset of inorganic compounds with known stability, such as from the Materials Project (MP) or JARVIS databases. The target variable is typically the decomposition energy (ΔHd) or a binary stable/unstable label derived from the convex hull [1] [12].

- Computing Environment: A machine with a GPU, Python 3.x, and deep learning libraries (e.g., TensorFlow/Keras or PyTorch).

Procedure:

- Data Preprocessing:

- Input Encoding: Convert the chemical formula of each compound into the ECCNN input tensor (118 × 168 × 8). This involves representing the electron configuration for each element present in the compound and normalizing the values [1].

- Target Definition: Calculate the target variable. For binary classification, define stable compounds as those with ΔHd ≤ 0 eV/atom (on the convex hull) and unstable otherwise [12].

- Data Splitting: Split the dataset into training, validation, and test sets (e.g., 80/10/10). Ensure splits are time-based or cluster-based to avoid data leakage for a prospective benchmark [12].
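One way to realize a cluster-based split is to keep every compound from the same chemical system in the same partition. The sketch below assumes that reading of "cluster-based"; the helper names and the example formulas are hypothetical, and a time-based split would instead sort by a database entry date.

```python
import random
import re


def chemical_system(formula):
    """Return the sorted element set of a formula, e.g. 'Fe2O3' -> 'Fe-O'."""
    return "-".join(sorted(set(re.findall(r"[A-Z][a-z]?", formula))))


def group_split(formulas, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split formulas into train/val/test so no chemical system is shared."""
    groups = {}
    for formula in formulas:
        groups.setdefault(chemical_system(formula), []).append(formula)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)  # reproducible shuffle of the systems
    cut1 = int(fractions[0] * len(keys))
    cut2 = cut1 + int(fractions[1] * len(keys))
    parts = (keys[:cut1], keys[cut1:cut2], keys[cut2:])
    return [[f for key in part for f in groups[key]] for part in parts]


formulas = ["Fe2O3", "FeO", "Fe3O4", "NaCl", "CaTiO3", "SrTiO3", "MgO",
            "Al2O3", "SiO2", "ZnS", "GaN", "LiF", "KBr"]
train_set, val_set, test_set = group_split(formulas)
print(len(train_set), len(val_set), len(test_set))
```

Because the fractions apply to chemical systems rather than to individual compounds, the realized sample proportions can deviate from 80/10/10 when some systems contain many entries.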

Model Construction:

- Implement the ECCNN architecture as described in the Electron Configuration Convolutional Neural Network (ECCNN) section above.

- Initialize the convolutional and dense layers with appropriate initializers (e.g., He normal).

Model Training:

- Compilation: Use the Adam optimizer and a loss function suitable for the task (e.g., binary cross-entropy for classification).

- Hyperparameter Tuning: Utilize optimization frameworks like Optuna or Hyperopt to tune key hyperparameters [14] [15].

- Learning Rate: Search in log space (e.g., 1e-5 to 1e-2).

- Number of Dense Layers/Units: Tune based on model performance and complexity.

- Batch Size: Adjust based on available GPU memory.

- Training Loop: Train the model on the training set, using the validation set for early stopping to prevent overfitting.
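Two of the training details above lend themselves to a short sketch: sampling the learning rate in log space and stopping early on a stalled validation loss. Plain random sampling is used here as a stand-in for Optuna or Hyperopt, and the patience value and loss curve are hypothetical.

```python
import math
import random


def sample_log_uniform(low, high, rng):
    """Draw a value uniformly in log10 space between low and high."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))


def early_stopping_epoch(val_losses, patience=3):
    """Return the epoch index at which training would stop.

    Training stops once `patience` epochs pass without a new best loss;
    if that never happens, the last epoch index is returned.
    """
    best, best_epoch = float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch
    return len(val_losses) - 1


rng = random.Random(0)
learning_rates = [sample_log_uniform(1e-5, 1e-2, rng) for _ in range(5)]

# Hypothetical validation-loss curve; the best value occurs at epoch 2.
val_losses = [0.9, 0.7, 0.6, 0.65, 0.66, 0.64, 0.7]
stop_epoch = early_stopping_epoch(val_losses, patience=3)
print(learning_rates, stop_epoch)
```

Sampling in log space gives each order of magnitude between 1e-5 and 1e-2 equal coverage, which a uniform draw on the raw scale would not.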

Model Evaluation:

Protocol 2: Implementing Roost for Prospective Materials Screening

Objective: To apply a pre-trained Roost model in a high-throughput virtual screening campaign to identify novel stable crystals.

Materials & Reagents:

- Candidate Dataset: A large, unlabeled dataset of hypothetical inorganic compositions, generated through substitution or other generative methods.

- Pre-trained Model: A Roost model that has been trained on a comprehensive database like the Materials Project or OQMD [1] [16].

- Validation Tool: Access to DFT codes (e.g., VASP, Quantum ESPRESSO) for final validation of top candidates [12].

Procedure:

- Candidate Generation:

- Define the chemical search space (e.g., all possible ternary oxides).

- Generate candidate chemical formulas, ensuring they are charge-balanced.
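A charge-balance filter for candidate formulas can be sketched as follows. The oxidation-state table is a deliberately small, hypothetical subset, and the check assigns a single oxidation state per element, so mixed-valence compounds such as Fe3O4 would be rejected by this simplified version.

```python
from itertools import product

# Hypothetical, deliberately small table of common oxidation states.
OXIDATION_STATES = {
    "Ca": [2],
    "Ti": [2, 3, 4],
    "Fe": [2, 3],
    "Na": [1],
    "O": [-2],
    "Cl": [-1],
}


def is_charge_balanced(composition):
    """True if some choice of one oxidation state per element sums to zero.

    `composition` maps element symbols to integer amounts, e.g. {"Fe": 2, "O": 3}.
    """
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False


candidates = [
    {"Ca": 1, "Ti": 1, "O": 3},
    {"Fe": 2, "O": 3},
    {"Na": 1, "Cl": 2},
]
balanced = [c for c in candidates if is_charge_balanced(c)]
print(balanced)
```

Applying the filter before model inference keeps the candidate pool small and avoids spending predictions on compositions that cannot be neutral under the assumed oxidation states.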

Model Inference & Screening:

- Graph Construction: For each candidate composition, build the complete graph representation required by Roost.

- Prediction: Use the pre-trained Roost model to predict the stability (e.g., energy above hull) for all candidates.

- Ranking: Rank the candidates based on the predicted stability metric.

Candidate Selection and Validation:

- Filtering: Apply a confidence threshold to select the top-ranked candidates predicted to be stable. Be aware that accurate regressors can still have high false-positive rates near the stability boundary, so classification metrics are crucial [12].

- DFT Validation: Perform full DFT relaxation and convex hull analysis on the top candidates to confirm their stability. This step is critical to verify the ML predictions [1] [12].

- Iteration: Use the DFT-validated results to potentially fine-tune the ML model, creating an active learning loop.

Protocol 3: Constructing an Ensemble Super-Learner (ECSG Framework)

Objective: To build the ECSG ensemble super-learner by stacking ECCNN, Roost, and Magpie to achieve maximum predictive accuracy and sample efficiency [1].

Procedure:

- Base Model Training:

- Independently train the three base models (ECCNN, Roost, and Magpie) on the same training dataset. Each model must be trained with its respective input representation (electron configuration, graph, and atomic property statistics).

Meta-Feature Generation:

- Use the trained base models to generate predictions (meta-features) on the validation set. The output from each model for each sample in the validation set becomes a new feature.

Meta-Learner Training:

- Train a meta-learner (a relatively simple model like logistic regression or a shallow decision tree) on the validation set using the base models' predictions as input and the true labels as the target.

Inference with the Ensemble:

- For a new, unseen composition, first get the predictions from all three base models.

- Feed these three predictions into the trained meta-learner to produce the final, consensus prediction of stability.
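The meta-learner step can be sketched with a small logistic regression trained on base-model probabilities. This is an illustrative stand-in written with the standard library only; the validation predictions, labels, and hyperparameters are hypothetical, and the published framework may use a different meta-learner.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_meta_learner(meta_features, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights on base-model outputs by gradient descent."""
    n_feat = len(meta_features[0])
    w, b = [0.0] * n_feat, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_feat, 0.0
        for x, y in zip(meta_features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / len(labels) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(labels)
    return w, b


def predict_stability(base_probs, w, b):
    """Combine the three base-model probabilities into one consensus score."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, base_probs)) + b)


# Hypothetical validation-set outputs from (ECCNN, Roost, Magpie) and labels.
val_meta = [[0.9, 0.8, 0.7], [0.2, 0.3, 0.1], [0.8, 0.6, 0.9], [0.1, 0.2, 0.3]]
val_labels = [1, 0, 1, 0]
w, b = train_meta_learner(val_meta, val_labels)
p_new = predict_stability([0.85, 0.75, 0.80], w, b)
print(round(p_new, 3))
```

Keeping the meta-learner this simple limits the risk that it overfits the comparatively small set of validation predictions it is trained on.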

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Item Name | Function / Application | Example Sources |
|---|---|---|
| Materials Databases | Provide training data (formation energies, structures) and benchmarking sets. | Materials Project (MP), Open Quantum Materials Database (OQMD), JARVIS, Alexandria [1] [17] |
| Hyperparameter Optimization Libraries | Automate the tuning of model parameters for optimal performance. | Optuna, Scikit-opt, Hyperopt [14] [15] |
| Graph Neural Network Libraries | Provide building blocks for implementing and training Roost and other GNN models. | PyTorch Geometric, Deep Graph Library (DGL) |
| Benchmarking Frameworks | Standardized evaluation of model performance in a discovery context. | Matbench Discovery [12] |
| DFT Software Packages | Provide high-fidelity validation of ML predictions and generate training data. | VASP, Quantum ESPRESSO, CASTEP |

Future Perspectives and Scaling

The field is moving towards larger models and datasets. Research into scaling laws for GNNs suggests that increasing model size and dataset volume can systematically improve prediction accuracy for atomistic properties [18]. Future work may involve developing foundational GNN models with billions of parameters trained on terabyte-scale datasets, akin to trends in large language models [18]. Furthermore, integrating network science to analyze material synthesis pathways presents a promising avenue to bridge the gap between predicting stable crystals and identifying feasible synthetic routes [19].

Ensemble learning, particularly stacked generalization (stacking), has emerged as a powerful machine learning technique to mitigate the inductive biases inherent in single-model approaches. This is critically important in the field of inorganic materials science, where accurately predicting properties like thermodynamic stability from composition alone remains challenging due to the complex, multi-scale factors governing material behavior [1]. Stacking integrates multiple, diverse base models into a super-learner, strategically reducing variance and bias to achieve more robust and accurate predictions than any single model could provide [20] [21].

This protocol outlines the application of stacked generalization for predicting the thermodynamic stability of inorganic compounds, using the recently developed Electron Configuration Stacked Generalization (ECSG) framework as a detailed case study [1] [22].

Key Concepts and Theoretical Foundation

The Bias-Variance Trade-off in Materials Prediction

A core challenge in machine learning is the bias-variance tradeoff [20] [21].

- Bias measures the average difference between a model's predictions and the true values, often resulting from overly simplistic assumptions (underfitting).

- Variance measures a model's sensitivity to fluctuations in the training data, leading to overfitting [20].

Ensemble methods like stacking address this by combining models to balance their individual weaknesses, yielding a lower overall error [21]. The main ensemble strategies are:

- Bagging (Bootstrap Aggregating): Trains multiple instances of the same model in parallel on different data subsets (e.g., Random Forest) to reduce variance [23].

- Boosting: Trains models sequentially, with each new model focusing on the errors of its predecessors (e.g., AdaBoost, XGBoost) to reduce bias [23].

- Stacking (Stacked Generalization): A heterogeneous parallel method that combines predictions from different types of base models using a meta-learner to produce final predictions [20]. This approach leverages diverse "knowledge domains" to create a more generalized and accurate super-learner [1].
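The trade-off described above is commonly written as a decomposition of the expected squared error. The notation is an assumption introduced here for illustration: $y = f(x) + \varepsilon$ with noise variance $\sigma^2$, and $\hat{f}$ denotes a model fitted on a random training set.

```latex
\mathbb{E}\left[\left(y - \hat{f}(x)\right)^2\right]
  = \underbrace{\left(\mathbb{E}[\hat{f}(x)] - f(x)\right)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\left[\left(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\right)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Bagging mainly targets the variance term, boosting mainly targets the bias term, and stacking can reduce both by letting a meta-learner weight base models whose errors differ.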

Application Note: The ECSG Framework for Stability Prediction

Framework Rationale

Predicting inorganic compound stability from composition alone is difficult. Single-model approaches often rely on specific domain knowledge (e.g., elemental fractions or graph representations of crystals), which can introduce substantial inductive bias and limit model generalizability [1]. The ECSG framework mitigates this by integrating three distinct modeling perspectives into a single stacked ensemble [1]:

- ECCNN (Electron Configuration Convolutional Neural Network): Utilizes fundamental electron configuration data.

- Roost (Representation Learning from Stoichiometry): Models the chemical formula as a graph of interacting atoms.

- Magpie (Materials Agnostic Platform for Informatics and Exploration): Employs statistical features of elemental properties.

The ECSG model demonstrated superior performance in predicting thermodynamic stability, quantified by the decomposition energy (ΔHd), on datasets from the Materials Project (MP) and Joint Automated Repository for Various Integrated Simulations (JARVIS) [1] [22].

Table 1: Performance Metrics of the ECSG Ensemble and its Constituent Models on Stability Prediction.

| Model / Framework | AUC Score | Key Input Features | Primary Domain Knowledge |
|---|---|---|---|
| ECSG (Ensemble) | 0.988 [1] | Multiple feature sets | Integrated multi-scale knowledge |
| ECCNN (Base Model) | - | Electron configuration matrix | Electronic structure |
| Roost (Base Model) | - | Chemical formula (graph) | Interatomic interactions |
| Magpie (Base Model) | - | Elemental property statistics | Atomic physical properties |

A critical advantage of ECSG is its remarkable sample efficiency. The framework achieved performance comparable to existing models using only one-seventh of the training data, significantly reducing computational resource requirements for model development [1].

Experimental Protocols

Workflow for Implementing Stacked Generalization

The following diagram illustrates the end-to-end workflow for implementing a stacking ensemble, modeled after the ECSG approach.

Protocol 1: Data Preparation and Feature Engineering

Objective: Prepare training data and generate diverse input features for base models.

Materials: Access to materials databases (e.g., Materials Project, OQMD, JARVIS) providing composition and formation energy or stability labels.

Data Collection:

- Compile a dataset of inorganic compounds with known thermodynamic stability labels (e.g., "stable" or "unstable" based on the convex hull). A sample from the Materials Project is suitable [22].

- Structure data as a CSV file with columns: `material-id`, `composition` (e.g., "Fe2O3"), and `target` (Boolean stability label) [22].

Feature Generation for Base Models:

- For ECCNN: Encode the electron configuration of each composition into a three-dimensional array (e.g., shape 118×168×8 representing elements, energy levels, and electron counts) [1]. This can be performed at runtime or preprocessed and saved.

- For Roost: Process the chemical formula into a graph representation where nodes are elements and edges represent interactions [1].

- For Magpie: Calculate a vector of statistical features (mean, range, variance, etc.) for a suite of elemental properties (e.g., atomic number, radius, electronegativity) for each composition [1].

- Note: To save computation time during cross-validation, precompute and save all features using a script like `feature.py` [22].

Protocol 2: Training the Stacking Ensemble with Cross-Validation

Objective: Train the ECSG ensemble model using k-fold cross-validation to generate unbiased meta-features.

Materials: Preprocessed feature sets from Protocol 1.

Split Data: Partition the entire dataset into k folds (e.g., k=5) [22] [23].

Train Base Models and Generate Meta-Features:

- For each fold `i` (where i = 1 to k):

  - Treat fold `i` as the validation set; the remaining k-1 folds are the training set.

  - Train each of the three base models (ECCNN, Roost, Magpie) on the k-1 training folds.

  - Use the trained models to predict probabilities (for classification) or values (for regression) on the held-out validation fold `i` [1] [23].

- After processing all k folds, you will have a complete set of out-of-sample predictions (meta-features) for the entire dataset from each base model.

Train the Meta-Model:

- Stack the out-of-sample predictions from all base models into a new feature matrix (the meta-feature set).

- Train the meta-model (e.g., logistic regression, XGBoost) using this meta-feature set and the original stability labels [1].

Final Model Fitting:

- Retrain each base model on the entire training dataset.

- These final base models and the trained meta-model together constitute the deployable ECSG ensemble [1].
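The out-of-fold procedure above can be sketched in a library-free form. The two toy learners are hypothetical placeholders for ECCNN, Roost, and Magpie; each is a function that fits on training data and returns a predict function. Folds are contiguous here for clarity, whereas in practice the data would normally be shuffled first.

```python
def kfold_indices(n_samples, k):
    """Split range(n_samples) into k contiguous folds of near-equal size."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def out_of_fold_predictions(X, y, base_learners, k=5):
    """Return one out-of-sample prediction per sample and per base learner."""
    meta = [[None] * len(base_learners) for _ in X]
    for fold in kfold_indices(len(X), k):
        held_out = set(fold)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        X_tr, y_tr = [X[i] for i in train_idx], [y[i] for i in train_idx]
        for j, fit in enumerate(base_learners):
            predict = fit(X_tr, y_tr)  # trained only on the k-1 other folds
            for i in fold:
                meta[i][j] = predict(X[i])
    return meta


def fit_mean_label(X_tr, y_tr):
    """Toy learner: always predicts the training fraction of stable labels."""
    rate = sum(y_tr) / len(y_tr)
    return lambda x: rate


def fit_threshold(X_tr, y_tr):
    """Toy learner: predicts 1.0 if x exceeds the training mean of x."""
    mean_x = sum(X_tr) / len(X_tr)
    return lambda x: 1.0 if x > mean_x else 0.0


# Hypothetical one-dimensional features and stability labels.
X = [0.1, 0.9, 0.2, 0.8, 0.3, 0.7, 0.4, 0.6, 0.05, 0.95]
y = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
meta_features = out_of_fold_predictions(X, y, [fit_mean_label, fit_threshold], k=5)
print(meta_features[:2])
```

Each row of `meta_features` comes from models that never saw that sample, which is what keeps the meta-model from learning to trust base models that merely memorized the training data.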

Protocol 3: Model Validation and Deployment for Discovery

Objective: Validate ensemble performance and screen new compounds.

Validation:

- Evaluate the ensemble on a held-out test set using metrics relevant to materials discovery: Area Under the Curve (AUC), F1 Score, and notably, the False Negative Rate (FNR). A low FNR is critical to avoid missing promising stable materials [22].
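The threshold-dependent metrics named above follow directly from confusion-matrix counts. A minimal sketch, with hypothetical labels and predictions where 1 denotes a stable compound:

```python
def stability_metrics(y_true, y_pred):
    """Compute precision, recall, F1, and false negative rate for binary labels."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    # FNR: share of truly stable compounds that the model labels unstable.
    fnr = fn / (fn + tp) if fn + tp else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "fnr": fnr}


y_true = [1, 1, 1, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 1]
metrics = stability_metrics(y_true, y_pred)
print(metrics)
```

FNR equals one minus recall, so lowering the decision threshold reduces missed stable compounds at the cost of more false positives that must be ruled out by DFT.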

Deployment for Screening:

- Input a new chemical composition.

- The trained base models generate their respective predictions.

- The meta-model takes these predictions as input and outputs the final, consensus stability prediction [22].

- Apply first-principles calculations (e.g., DFT) to top candidates for final validation, as performed in case studies on 2D semiconductors and double perovskites [1].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Ensemble Learning in Materials Science.

| Item Name | Function/Description | Example Use Case in Protocol |
|---|---|---|
| JARVIS/MP/OQMD Databases | Source of labeled training data (composition and stability). | Provides the composition and target labels for model training [1]. |
| ECCNN Feature Encoder | Encodes chemical composition into an electron configuration matrix. | Generates input features for the ECCNN base model [1]. |
| Roost Model | Graph neural network that learns from chemical formulas. | Serves as a base model capturing interatomic interactions [1]. |
| Magpie Feature Set | A set of statistical features from elemental properties. | Provides the feature vector for the Magpie base model (often using XGBoost) [1]. |
| Meta-Learner (e.g., Logistic Regression) | The model that learns to optimally combine base model predictions. | Final step in the stacking ensemble for robust prediction [1] [22]. |
| DFT Calculation (e.g., VASP) | First-principles validation for top candidate materials. | Final validation of model predictions before experimental synthesis [1]. |

Stacked generalization offers an effective strategy for the machine learning-driven discovery of inorganic materials. By integrating diverse modeling perspectives, the ECSG framework mitigates the inductive bias of single-model approaches, reaching an AUC of 0.988 for stability classification and matching existing models with roughly one-seventh of the training data [1]. The detailed application notes and protocols provided here offer a template for researchers to implement this strategy, accelerating the rational design of novel, thermodynamically stable compounds for advanced technological applications.

The discovery of new two-dimensional (2D) wide bandgap (WBG) semiconductors is pivotal for advancing next-generation nanoelectronics, deep-ultraviolet photodetectors, and flexible optoelectronics. A significant bottleneck in this discovery process is the rapid and accurate assessment of a material's thermodynamic stability, which determines its synthesizability and long-term viability. This application note details a machine learning (ML) framework, based on the Electron Configuration Stacked Generalization (ECSG) model, for predicting the stability of inorganic 2D WBG semiconductors. The protocol is designed to integrate seamlessly into a high-throughput computational screening pipeline, enabling researchers to efficiently identify promising candidate materials for experimental synthesis.

Machine Learning Framework for Stability Prediction

Model Architecture and Workflow

The prediction of compound stability is framed as a classification task, where the goal is to identify whether a hypothetical compound is thermodynamically stable based on its composition. The ECSG model employs a stacked generalization approach, which combines multiple base-level machine learning models to create a more accurate and robust super-learner [1]. This ensemble method mitigates the inductive biases inherent in any single model.

The framework integrates three distinct base models, each founded on different physical or chemical principles:

- ECCNN (Electron Configuration Convolutional Neural Network): This model uses the electron configuration of constituent elements as its primary input. The electron configuration defines the distribution of electrons in atomic energy levels and is a fundamental property that underlies chemical behavior and bonding. By leveraging this intrinsic atomic characteristic, the ECCNN model minimizes the reliance on manually crafted features, thereby reducing potential bias [1].

- Roost: This model represents a material's chemical formula as a graph, where atoms are nodes and their interactions are edges. It utilizes a graph neural network with an attention mechanism to capture the complex interatomic relationships that govern material stability [1].

- Magpie: This model uses a set of statistical features (e.g., mean, range, mode) derived from a wide array of elemental properties (e.g., atomic number, radius, electronegativity). These features provide a holistic, property-based representation of a material's composition [1].

The outputs of these three base models are then used as input features for a meta-learner, which is trained to produce the final, high-fidelity stability prediction. This architecture ensures that the model benefits from complementary perspectives on the factors governing stability [1].
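The stacking step described above can be sketched in a few lines. This is a minimal illustration, not the ECSG implementation: the logistic-regression meta-learner, the function names, and the toy probabilities are all assumptions, since the text here does not specify which meta-learner is used. The one detail that generally matters in stacked generalization is that the meta-learner is fitted on out-of-fold base-model predictions, so that it never sees predictions made on a model's own training data.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def fit_meta_learner(base_probs, labels, lr=0.5, epochs=2000):
    """Fit a logistic-regression meta-learner by batch gradient descent.

    base_probs: list of tuples, one stability probability per base model.
    labels:     list of 0/1 stability labels.
    """
    n_models = len(base_probs[0])
    n = len(labels)
    w = [0.0] * n_models
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_models
        grad_b = 0.0
        for x, y in zip(base_probs, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for j in range(n_models):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_meta(w, b, x):
    """Combine one candidate's base-model probabilities into a final score."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

# Hypothetical out-of-fold probabilities, ordered (ECCNN, Roost, Magpie).
oof_probs = [
    (0.90, 0.80, 0.70),
    (0.85, 0.60, 0.90),
    (0.20, 0.30, 0.10),
    (0.10, 0.40, 0.20),
    (0.70, 0.90, 0.80),
    (0.30, 0.20, 0.35),
]
labels = [1, 1, 0, 0, 1, 0]

w, b = fit_meta_learner(oof_probs, labels)
score_hi = predict_meta(w, b, (0.88, 0.75, 0.80))  # base models agree: stable
score_lo = predict_meta(w, b, (0.15, 0.25, 0.20))  # base models agree: unstable
```

The learned weights indicate how much the meta-learner trusts each base model, which is one way to see the "complementary perspectives" argument in practice.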

Quantitative Performance

The ECSG model has been rigorously validated on materials databases, demonstrating high predictive accuracy as summarized in Table 1.

Table 1: Performance Metrics of the ECSG Stability Prediction Model

| Metric | Score | Evaluation Context |
|---|---|---|
| Area Under the Curve (AUC) | 0.988 | Predictive performance on stability classification within the JARVIS database [1]. |
| Data Efficiency | ~1/7 of data required | Achieves performance equivalent to existing models using only one-seventh of the training data [1]. |

Experimental Protocol for Stability Prediction

This protocol outlines the steps for applying the ECSG framework to screen for stable 2D wide bandgap semiconductors.

Data Curation and Preprocessing

- Define Compositional Space: Identify the set of elements and the range of compositions to be explored for novel 2D WBG materials. For instance, the search may focus on ternary oxides or chalcogenides.

- Generate Candidate Formulas: Use combinatorial methods to generate potential chemical formulas within the defined space. Valence balance rules should be applied to filter out chemically implausible compositions.

- Encode Input Features: For each candidate formula, generate the three distinct input representations required by the base models:

  - For ECCNN: Encode the material's composition into a 118×168×8 matrix representing the electron configurations of the constituent elements [1].

  - For Roost: Represent the composition as a stoichiometrically weighted set of atoms.

  - For Magpie: Calculate a vector of statistical features from a list of elemental properties.
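To make the encoding step more concrete, the sketch below parses a simple formula into fractional stoichiometry (the kind of weighted element set a Roost-style model consumes) and computes Magpie-style statistics for one elemental property. The helper names and the small `ELEMENT_DATA` table are illustrative assumptions; a real pipeline would use a full elemental-property table, and the ECCNN matrix encoding is not reproduced here because its layout is not detailed in this section.

```python
import re

# Small illustrative lookup: atomic number (Z) and Pauling electronegativity (chi).
# A real featurizer would cover the whole periodic table and many more properties.
ELEMENT_DATA = {
    "Ba": {"Z": 56, "chi": 0.89},
    "Ti": {"Z": 22, "chi": 1.54},
    "O": {"Z": 8, "chi": 3.44},
    "Zn": {"Z": 30, "chi": 1.65},
    "S": {"Z": 16, "chi": 2.58},
}

def parse_formula(formula: str) -> dict:
    """Return fractional stoichiometry for a flat formula such as 'BaTiO3'.

    Parentheses and nested groups are not handled in this sketch.
    """
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {symbol: n / total for symbol, n in counts.items()}

def magpie_style_features(fractions: dict, prop: str) -> dict:
    """Composition-weighted statistics of one elemental property."""
    values = {el: ELEMENT_DATA[el][prop] for el in fractions}
    mean = sum(fractions[el] * v for el, v in values.items())
    avg_dev = sum(fractions[el] * abs(v - mean) for el, v in values.items())
    most_abundant = max(fractions, key=fractions.get)
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "range": max(values.values()) - min(values.values()),
        "mode": values[most_abundant],  # property of the most abundant element
    }

fracs = parse_formula("BaTiO3")           # {'Ba': 0.2, 'Ti': 0.2, 'O': 0.6}
feats = magpie_style_features(fracs, "Z")
```

Repeating `magpie_style_features` over every property in the table and concatenating the results gives the fixed-length vector the Magpie-style base model expects.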

Model Application and Prediction

- Load Pre-trained Models: Utilize the pre-trained ECCNN, Roost, and Magpie base models, as well as the stacked meta-learner.

- Execute Predictions: Run the encoded candidate materials through each of the three base models to obtain their initial stability probabilities.

- Meta-Level Prediction: Feed the outputs from the three base models into the meta-learner to generate the final, consensus stability score for each candidate.

- Screen Candidates: Rank candidates based on their predicted stability scores and select the top-ranked materials for further validation. A high score indicates a high probability of being thermodynamically stable.

Validation and Downstream Analysis

- First-Principles Validation: Perform Density Functional Theory (DFT) calculations on the top-ranked candidates to verify their stability by computing their energy above the convex hull [1] [24].

- Property Prediction: For validated stable compounds, use complementary ML models, such as the Multistage Ensemble Learning Rapid Screening Network (MELRSNet), to predict key electronic properties like bandgap width to confirm their suitability as WBG materials [24].

- Experimental Synthesis: The final, computationally validated candidates become high-priority targets for experimental synthesis and characterization.
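The energy above the convex hull used in the DFT validation step can be illustrated for a binary A-B system. The sketch below is a simplified, assumption-laden example: the formation energies are invented, the elemental end members are fixed at zero formation energy, and only two components are handled. Production workflows would normally rely on an established phase-diagram tool rather than hand-written geometry.

```python
def _cross(o, a, b):
    """2D cross product of vectors OA and OB (positive for a left turn)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def build_lower_hull(entries):
    """Lower convex hull of (x_B, formation energy per atom) points.

    The pure elements at x_B = 0 and x_B = 1 are added with zero energy.
    """
    lowest = {0.0: 0.0, 1.0: 0.0}
    for x, e in entries:
        lowest[x] = min(e, lowest.get(x, e))  # keep the lowest phase per composition
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()  # previous point lies on or above the new hull segment
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            t = (x - x0) / (x1 - x0)
            return e0 + t * (e1 - e0)
    raise ValueError("composition must lie in [0, 1]")

def energy_above_hull(hull, x, e):
    return e - hull_energy(hull, x)

# Hypothetical formation energies in eV/atom for three A-B compounds.
entries = [(0.25, -0.30), (0.50, -0.50), (0.75, -0.20)]
hull = build_lower_hull(entries)
e_hull_ab = energy_above_hull(hull, 0.50, -0.50)   # on the hull
e_hull_ab3 = energy_above_hull(hull, 0.75, -0.20)  # above the hull
```

In this toy system the compound at x_B = 0.75 sits about 0.05 eV/atom above the tie line between the x_B = 0.5 phase and pure B, so it would decompose into those two phases at equilibrium.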

The following workflow diagram illustrates the integrated computational and experimental validation process for identifying stable 2D wide-bandgap semiconductors.

The Scientist's Toolkit

This section catalogs the essential computational and data resources required to implement the stability prediction protocol.

Table 2: Essential Research Reagents & Computational Tools

| Tool/Resource | Function/Description | Application in Protocol |
|---|---|---|
| ECSG Model | An ensemble ML framework for stability prediction. | Core predictive model that integrates ECCNN, Roost, and Magpie [1]. |
| MELRSNet | A hierarchical ML framework for bandgap prediction. | Used to predict the ultrawide bandgap of stable candidates [24]. |
| Materials Project (MP) | A database of computed material properties. | Source of training data and a reference for DFT validation [1] [24]. |
| JARVIS Database | A repository for quantum-mechanical properties. | Used for model training and benchmarking [1]. |
| DFT (VASP) | Software for first-principles quantum mechanical calculations. | Used for final validation of thermodynamic stability via convex hull analysis [25] [24]. |
| High-Throughput Computing | Infrastructure for parallel computation. | Enables rapid screening of thousands of candidate materials. |

The integration of the ECSG machine learning framework provides a powerful, data-driven protocol for accelerating the discovery of stable 2D wide bandgap semiconductors. By leveraging ensemble learning and electron configuration features, this approach achieves high predictive accuracy with exceptional data efficiency. The outlined workflow, from data curation and ML screening to DFT validation, offers researchers a robust and actionable pathway to identify the most promising synthetic targets, thereby streamlining the transition from computational design to experimental realization.

The discovery of novel double perovskite oxides (DPOs), materials with the general formula A₂BB′O₆, is pivotal for advancing technologies in catalysis, energy storage, and optoelectronics [26] [27]. Their exceptional compositional and structural flexibility allows for the tailoring of specific properties [26]. However, this very flexibility creates a vast chemical space that is prohibitively expensive and time-consuming to explore using traditional experimental methods or even first-principles computational techniques like Density Functional Theory (DFT) [28] [1]. This case study, situated within a broader thesis on machine learning (ML) prediction of inorganic compound stability, details how a targeted ML workflow can overcome this bottleneck. We demonstrate a protocol for efficiently identifying stable, high-performance DPO candidates, thereby accelerating their development for practical applications.

Machine Learning Workflow & Methodology

The standard framework for ML-aided discovery involves a sequential process of data curation, model training, prediction, and experimental validation. The following diagram outlines a generalized workflow for discovering stable double perovskites with targeted properties.

Data Curation and Feature Engineering

The first critical step involves assembling a high-quality dataset for model training.

- Data Sources: Large computational databases, such as the Materials Project (MP) and the Open Quantum Materials Database (OQMD), are primary sources. These provide computed properties, including formation energy and band structure, for thousands of known compounds [1] [29]. For example, one study sourced 2,937 double perovskite entries from the MP database to train a model predicting the nature of the bandgap [29].

- Stability Labeling: The thermodynamic stability of a compound is often quantified by its Energy Above the Convex Hull (Eₕ). Compounds with Eₕ = 0 eV/atom are considered stable, while those with Eₕ > 0 are metastable or unstable [30]. A typical threshold for screening is Eₕ ≤ 50 meV/atom [30].

- Feature Selection: The input features for the ML model are crucial. They can include:

  - Elemental Properties: Statistical measures (mean, deviation, range) of atomic properties (e.g., ionic radius, electronegativity, electron affinity) for the A, B, and B′ sites [1].

  - Electronic Structure: Features derived from the electron configuration of constituent elements have been shown to highly correlate with stability [1].

  - Stability Descriptors: Traditional chemical descriptors like the Goldschmidt tolerance factor (t) and the octahedral factor (μ) are also used as inputs [29] [30].
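The two geometric descriptors and the Eₕ-based labeling above are simple enough to write out. The sketch below assumes the common convention of averaging the B and B′ radii for a double perovskite, uses approximate Shannon-type ionic radii as illustrative inputs, and maps the 50 meV/atom screening threshold onto a three-way label; the function names and the label wording are assumptions made for this example.

```python
import math

def tolerance_factor(r_a, r_b, r_b_prime, r_x):
    """Goldschmidt tolerance factor t = (r_A + r_X) / (sqrt(2) * (r_B_avg + r_X)).

    For A2BB'O6 the B-site radius is taken as the mean of r_B and r_B'
    (an assumed, commonly used convention).
    """
    r_b_avg = 0.5 * (r_b + r_b_prime)
    return (r_a + r_x) / (math.sqrt(2.0) * (r_b_avg + r_x))

def octahedral_factor(r_b, r_b_prime, r_x):
    """Octahedral factor mu = r_B_avg / r_X."""
    return 0.5 * (r_b + r_b_prime) / r_x

def stability_label(e_hull_ev, screen_ev=0.050, tol=1e-6):
    """Label a compound from its energy above the hull (eV/atom)."""
    if e_hull_ev <= tol:
        return "stable"        # on the convex hull
    if e_hull_ev <= screen_ev:
        return "metastable"    # passes the 50 meV/atom screen
    return "unstable"          # screened out

# Illustrative radii in angstroms for Ba2FeMoO6 (approximate, assumed values).
R_BA, R_FE, R_MO, R_O = 1.61, 0.645, 0.61, 1.40

t = tolerance_factor(R_BA, R_FE, R_MO, R_O)
mu = octahedral_factor(R_FE, R_MO, R_O)
labels = [stability_label(e) for e in (0.0, 0.032, 0.120)]
```

Values of t close to 1 are usually read as geometrically favorable for the perovskite structure, which is why t and μ are useful as cheap input features alongside the learned ones.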

Model Development and Key Algorithms

A hierarchical modeling approach is often employed to sequentially screen for stability and then predict target properties.

- Stability Classification: The first model is a classifier that predicts whether a hypothetical composition is stable. The XGBoost algorithm is widely used for this task. One model achieved an accuracy of 0.919, precision of 0.937, and recall of 0.935 in identifying stable perovskites [30].

- Property Regression: A second model predicts quantitative properties of the stable candidates identified in the first step. For predicting the Eₕ value, an XGBoost regression model can achieve a high coefficient of determination (R²) of 0.916 and a root mean square error (RMSE) of 24.2 meV/atom [30]. For electronic properties like band gap, a hierarchical ML screening process is effective, using one model to classify materials as wide band gap (Eg ≥ 0.5 eV) and a second regression model to predict the exact band gap value [28].

- Model Interpretation: Techniques like SHapley Additive exPlanations (SHAP) are used to interpret model predictions and identify the most influential features. For stability prediction, the highest occupied molecular orbital (HOMO) energy and the elastic modulus of the B-site element are often top contributors [30].
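For readers less familiar with the metrics quoted above, the following sketch shows how accuracy, precision, recall, F1, R², and RMSE are computed from predictions. The arrays are toy values invented for illustration and are unrelated to the cited studies; the function names are likewise hypothetical.

```python
import math

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = stable)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

def regression_metrics(y_true, y_pred):
    """Coefficient of determination (R^2) and root mean square error."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {"r2": 1.0 - ss_res / ss_tot, "rmse": math.sqrt(ss_res / n)}

# Toy stability labels (1 = stable) and toy E_hull values in eV/atom.
cls = classification_metrics([1, 1, 1, 0, 0, 1, 0, 1], [1, 1, 0, 0, 1, 1, 0, 1])
reg = regression_metrics([0.00, 0.02, 0.05, 0.10], [0.01, 0.02, 0.04, 0.12])
```

Precision matters most when DFT validation is the expensive step (few false positives reach it), while recall matters when missing a stable compound is the larger cost.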

High-Throughput Screening and Validation

The trained models are deployed to screen vast virtual libraries of candidate compositions.

- Virtual Library Generation: A constraint satisfaction technique can generate millions of hypothetical perovskite compositions (e.g., over 1.1 million) that satisfy basic chemical rules [30].

- ML Screening: The ML models rapidly screen this library. For instance, one study screened 23,822 candidate materials to identify those with a low work function, ultimately selecting 27 for high-precision DFT validation [31].

- Validation: Promising ML-predicted candidates are validated using higher-fidelity computational methods (e.g., hybrid-DFT or GW calculations) and, ultimately, by synthesis and experimental characterization [31].
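A toy version of the library-generation step is sketched below. It is a brute-force enumeration with a single charge-neutrality constraint, standing in for the constraint satisfaction technique mentioned above rather than reproducing it. The element lists and oxidation states are a small illustrative selection, and the function name is hypothetical.

```python
from itertools import combinations, product

# Illustrative site candidates with commonly assumed oxidation states.
A_SITE = {"Ba": [2], "Sr": [2], "La": [3]}
B_SITE = {"Mg": [2], "Fe": [2, 3], "Ti": [4], "Nb": [5], "Mo": [5, 6], "W": [6]}

def enumerate_double_perovskites(a_site, b_site, n_oxygen=6, oxygen_charge=-2):
    """Enumerate A2BB'O6 formulas whose cation charges balance the oxygen.

    Returns {formula: [charge assignments]}; B and B' are distinct elements.
    """
    target = -oxygen_charge * n_oxygen  # total cation charge required (+12)
    candidates = {}
    for a, a_states in a_site.items():
        for (b1, states1), (b2, states2) in combinations(b_site.items(), 2):
            for q_a, q_1, q_2 in product(a_states, states1, states2):
                if 2 * q_a + q_1 + q_2 == target:
                    formula = f"{a}2{b1}{b2}O6"
                    candidates.setdefault(formula, []).append(
                        {a: q_a, b1: q_1, b2: q_2}
                    )
    return candidates

candidates = enumerate_double_perovskites(A_SITE, B_SITE)
formulas = sorted(candidates)
```

Each surviving formula would then be featurized and passed to the stability classifier; additional constraints (for example a tolerance-factor window) can be added as further `if` conditions inside the loop.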

Key Findings & Data Presentation

Performance of ML Models in DPO Discovery

The application of the described workflow has yielded highly accurate and efficient models for predicting DPO properties. The table below summarizes the performance metrics of various ML models from recent studies.

Table 1: Performance Metrics of Machine Learning Models for Double Perovskite Property Prediction

| Prediction Task | ML Algorithm | Key Performance Metrics | Application Outcome | Source |
|---|---|---|---|---|
| Thermodynamic Stability (Classification) | XGBoost | Accuracy: 0.919, Precision: 0.937, F1-Score: 0.932 | Screened 682,143 stable perovskites from 1.1M+ virtual combinations | [30] |
| Energy Above Convex Hull, Eₕ (Regression) | XGBoost | R²: 0.916, RMSE: 24.2 meV/atom | Accurately predicted stability for DFT-validated candidates | [30] |
| Band Gap Nature (Direct/Indirect) | Light Gradient Boosting (LGBM) | Accuracy: 0.89, F1-Score: 0.90 | Identified 176 promising Br-based direct bandgap DPOs | [29] |
| Work Function (< 2.5 eV) | Ensemble ML | High-Precision Recall | Discovered 27 stable low-work-function perovskites; Ba₂TiWO₈ and Ba₂FeMoO₆ synthesized | [31] |
| Oxidation Temperature | XGBoost | R²: 0.82, RMSE: 75 °C | Identified multifunctional materials for harsh environments | [25] |

Experimental Validation: From Prediction to Synthesis

A critical measure of the workflow's success is the experimental validation of ML-predicted candidates.

- Case Study: Low-Work-Function Perovskites: An ML-guided study screened 23,822 candidate materials to find stable perovskites with a low work function. This led to the identification and subsequent successful synthesis of Ba₂TiWO₈ and Ba₂FeMoO₆ [31].

- Functional Performance: The synthesized materials demonstrated promising functional properties. Ba₂FeMoO₆ exhibited exceptional long-term cycling stability as a Li-ion battery electrode, enduring 10,000 cycles at a high current density [31]. Ba₂TiWO₈ showed catalytic activity for both NH₃ synthesis and decomposition under mild conditions [31].

Experimental Protocols

Protocol 1: Solid-State Synthesis of Polycrystalline Double Perovskite Oxides

This is a standard method for synthesizing gram-scale quantities of double perovskite powders [27].

Principle: High-temperature reaction of solid precursor powders to form the desired crystalline oxide phase through diffusion.

Materials:

- Precursor Oxides/Carbonates: High-purity (>99%) powders of carbonates (e.g., BaCO₃, SrCO₃) and oxides (e.g., TiO₂, Fe₂O₃, WO₃, MoO₃).

- Grinding Media: Agate mortar and pestle, or ball milling apparatus with zirconia vessels and balls.

- Furnace: High-temperature furnace capable of reaching 1200-1400°C.