Machine Learning in Inorganic Materials Synthesis: From Predictive Models to Autonomous Discovery


Abstract

This article comprehensively explores the transformative role of machine learning (ML) in accelerating and optimizing the synthesis of inorganic materials. It covers foundational concepts where ML addresses the traditional trial-and-error bottlenecks in techniques like chemical vapor deposition and hydrothermal methods. The review delves into advanced methodological frameworks, including hierarchical attention networks and generative models, for predicting optimal synthesis conditions and material properties. It critically examines challenges related to data quality, model interpretability, and generalizability, while also presenting validation strategies that demonstrate ML models can outperform human experts in predicting synthesizability. Finally, the article synthesizes key takeaways and future directions, highlighting the implications for developing more efficient, data-driven synthesis pipelines in materials science and related biomedical applications.

The Synthesis Bottleneck: How Machine Learning is Revolutionizing Traditional Materials Discovery

The synthesis of inorganic materials with tailored properties is a cornerstone of advancements in energy storage, catalysis, and electronics. However, achieving precise control over material structure and properties is fundamentally hindered by the multi-variable nature of synthesis processes. Techniques such as chemical vapor deposition (CVD) and hydrothermal synthesis are influenced by a complex interplay of numerous parameters, where slight variations can significantly impact the final product's phase, morphology, and functionality. This challenge creates a vast, high-dimensional parameter space that is difficult to navigate using traditional, intuition-based experimental approaches.

The integration of machine learning (ML) and data-driven methodologies is transforming this domain, offering powerful tools to deconvolute these complex relationships and accelerate the discovery and optimization of novel materials. This Application Note explores the specific challenges of multi-variable synthesis, provides detailed experimental protocols for navigating parameter spaces, and highlights how ML frameworks can establish robust, predictive links between synthesis conditions and material outcomes.

The Multi-Variable Synthesis Landscape

Inorganic materials synthesis is characterized by its sensitivity to a wide array of interdependent processing conditions. The following examples from recent research illustrate the breadth and criticality of these parameters.

Hydrothermal Synthesis of VS₂ and WSe₂

Hydrothermal synthesis, a popular solution-based route, is governed by several key variables. A systematic study on the hydrothermal growth of vanadium disulfide (VS₂) identified that precursor molar ratio, reaction temperature, time, and ammonia concentration are all decisive in controlling the morphology and phase purity of the resulting nanosheets [1]. The research demonstrated that optimizing these parameters could reduce the conventional reaction time from 20 hours to just 5 hours while maintaining phase purity, highlighting the profound impact of systematic parameter optimization [1].

Similarly, a study on tungsten diselenide (WSe₂) nanostructures found that reaction temperature and growth duration directly influence crystallite size, morphology, and the presence of impurities [2]. The study reported a clear morphological transition from aggregated particles to flake-like nanostructures with increasing temperature, while reaction time primarily affected crystal refinement and stacking [2].

Table 1: Key Parameters in Hydrothermal Synthesis of TMDCs and Their Effects

| Synthesis Parameter | Material System | Impact on Material Properties | Optimal Range / Observation |

|---|---|---|---|

| Precursor Molar Ratio (NH₄VO₃:TAA) | VS₂ [1] | Controls phase purity and structural integrity of nanosheets. | Ratios of 1:2.5, 1:5, 1:7.5, and 3:5 were systematically investigated. |

| Reaction Temperature | VS₂ [1] | Determines nucleation kinetics and growth rate. | Studied between 100°C and 220°C. |

| Reaction Temperature | WSe₂ [2] | Drives morphological transformation. | Increased temperature changed morphology from aggregated particles to flake-like nanostructures. |

| Reaction Time | VS₂ [1] | Impacts crystallinity and phase purity. | Time reduced from 20 h to 5 h while maintaining quality. |

| Reaction Time | WSe₂ [2] | Influences crystal refinement and stacking. | Studied between 36 h and 60 h. |

| Ammonia Concentration | VS₂ [1] | Affects interlayer spacing and deposition uniformity. | Volumes between 2 mL and 6 mL were evaluated. |
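To give a sense of how quickly this parameter space grows, the following minimal sketch enumerates a full-factorial grid over the four VS₂ variables in Table 1. The precursor ratios are the ones listed in the table; the specific temperature, time, and ammonia levels are assumed discretizations of the reported ranges, and `full_factorial` is a hypothetical helper, not part of the cited study.

```python
from itertools import product

# Precursor ratios as listed in Table 1; the other levels are assumed
# discretizations of the reported ranges, chosen only for illustration.
LEVELS = {
    "ratio_nh4vo3_to_taa": ["1:2.5", "1:5", "1:7.5", "3:5"],
    "temperature_c": [100, 140, 180, 220],
    "time_h": [1, 2, 3, 5, 10, 20],
    "ammonia_ml": [2, 4, 6],
}


def full_factorial(levels):
    """Return every combination of parameter levels as a list of dicts."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*levels.values())]


grid = full_factorial(LEVELS)
print(len(grid))   # number of distinct recipes in the grid
print(grid[0])     # first recipe in the enumeration
```

Even this coarse grid contains 4 × 4 × 6 × 3 = 288 distinct recipes, which illustrates why exhaustive experimental screening quickly becomes impractical.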

The Data Challenge in Synthesis Science

A significant hurdle in applying ML to materials synthesis is the quality and structure of available data. A critical reflection on text-mining attempts revealed that datasets compiled from literature sources often struggle with the "4 Vs" of data science: Volume, Variety, Veracity, and Velocity [3]. For instance, an effort to text-mine solid-state and solution-based synthesis recipes encountered issues such as inconsistent reporting of parameters, the use of synonyms for synthesis operations, and difficulties in automatically reconstructing balanced chemical reactions from text, resulting in a low overall extraction yield [3]. These inherent biases and inconsistencies in historical data can limit the performance of machine-learned models for predictive synthesis.

Machine Learning-Guided Synthesis Workflows

To overcome the limitations of traditional methods, a new paradigm combining high-throughput experimentation, robust data management, and ML modeling is emerging. This approach transforms the iterative "cook-and-look" cycle into a closed-loop, autonomous discovery process.

Generative Models for Inverse Design

Generative models represent a shift from screening known materials to creating novel ones. MatterGen, a diffusion-based generative model, directly generates stable, diverse inorganic crystal structures across the periodic table [4]. The model can be fine-tuned to steer the generation toward materials with desired chemistry, symmetry, and functional properties (e.g., mechanical, electronic, magnetic), effectively performing inverse design [4]. As a proof of concept, a material generated by MatterGen was synthesized, and its measured property was within 20% of the target value [4].

ML for Synthesis Parameter Optimization

Machine learning also optimizes synthesis pathways for target materials. ML-driven robotic laboratories—or "Robot scientists"—integrate AI and automated robotic systems to conduct experiments, analyze data, and optimize synthesis conditions with minimal human intervention [5]. These platforms can drastically accelerate the mapping of synthesis parameter spaces, such as optimizing temperature, time, and precursor compositions to achieve a desired crystalline phase or morphology [5].

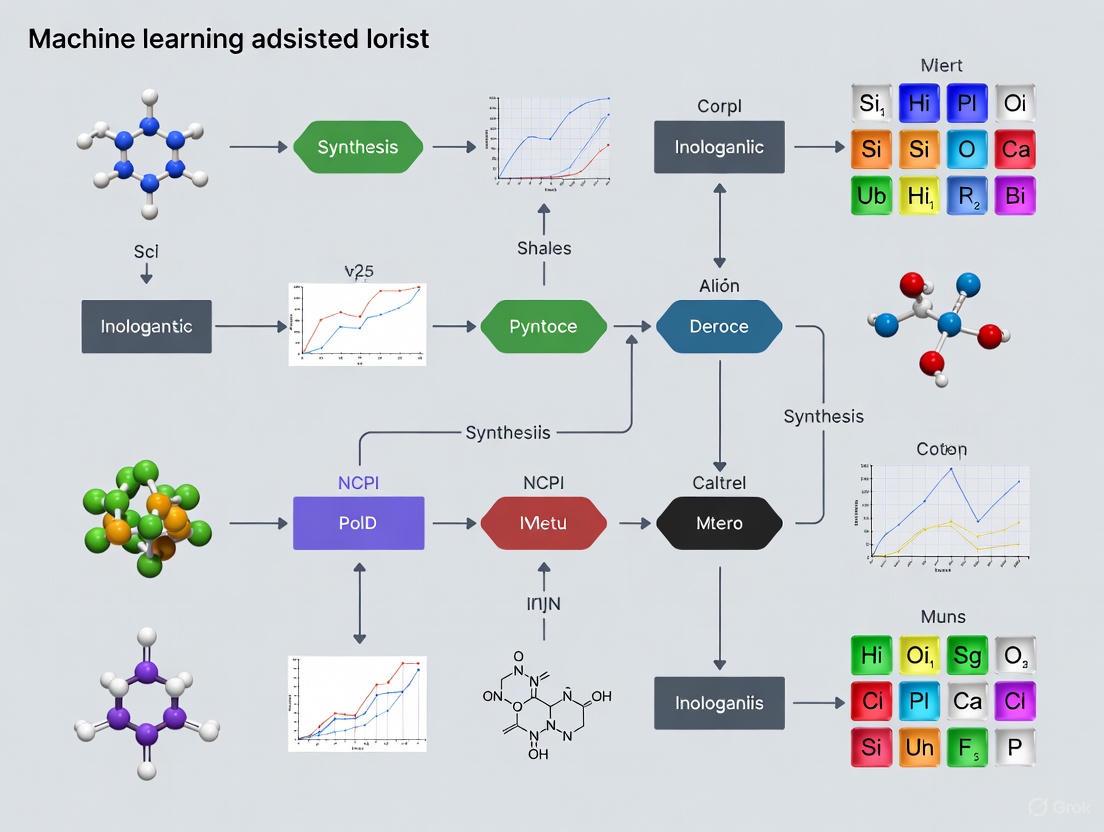

The diagram below outlines a comparative workflow between traditional and ML-accelerated approaches to synthesis optimization.

Detailed Experimental Protocol: Hydrothermal Synthesis of VS₂ Nanosheets

The following protocol, adapted from Shahzad et al., details the systematic hydrothermal synthesis of layered VS₂ nanosheets on a stainless-steel mesh substrate, providing a practical example of managing multiple synthesis variables [1].

Research Reagent Solutions

Table 2: Essential Materials and Their Functions for VS₂ Hydrothermal Synthesis

| Reagent/Material | Function in the Protocol | Specifications & Notes |

|---|---|---|

| Ammonium Metavanadate (NH₄VO₃) | Vanadium (V) precursor | Provides the metal source for VS₂ formation. |

| Thioacetamide (TAA, C₂H₅NS) | Sulfur (S) precursor | Decomposes under heat to release S²⁻ ions. |

| Ammonia Solution (NH₃·H₂O) | pH modifier and complexing agent | Facilitates dissolution of NH₄VO₃ and influences interlayer spacing. |

| Stainless Steel Mesh (316L) | Growth substrate | 3D porous scaffold for lateral growth of freestanding VS₂ nanosheets. |

| Deionized Water | Solvent | Reaction medium for hydrothermal synthesis. |

Step-by-Step Procedure

- Precursor Solution Preparation: Dissolve ammonium metavanadate (NH₄VO₃) and thioacetamide (TAA) in 30 mL of deionized water at a predetermined molar ratio (e.g., 1:2, 1:5, 1:7.5). Add a specified volume of ammonia solution (2–6 mL) to the mixture.

- Stirring: Magnetically stir the solution for 1 hour at room temperature until a homogeneous black solution is obtained, indicating the complete dissolution of NH₄VO₃.

- Autoclave Setup: Transfer the homogeneous solution into a 50 mL Teflon-lined stainless-steel autoclave. Insert a rectangular piece of stainless-steel mesh (e.g., 1.8 × 4.8 cm²), ensuring it is fully immersed in the solution.

- Hydrothermal Reaction: Seal the autoclave and place it in a preheated oven. Heat to the target reaction temperature (e.g., 100°C, 140°C, 180°C, or 220°C) for a specified holding time (e.g., ≤1, 2, 3, 5, 10, or 20 hours).

- Product Recovery: After the reaction, allow the autoclave to cool to room temperature naturally. Open the autoclave and carefully retrieve the stainless-steel mesh, which will now have freestanding VS₂ flakes grown on its surface (VS₂/SS).

- Washing and Drying: Thoroughly wash the retrieved VS₂/SS mesh multiple times with deionized water and ethanol to remove any residual ions and by-products. Dry the sample in a vacuum oven at 60°C for 12 hours.

Characterization and Validation

- Structural Analysis: Use X-ray diffraction (XRD) to confirm the crystal structure and phase purity of the synthesized VS₂. Compare the diffraction patterns with reference data.

- Morphological Analysis: Perform field emission scanning electron microscopy (FE-SEM) to analyze the morphology (e.g., nanosheets, hierarchical structures) and uniformity of the deposited material.

- Elemental Analysis: Conduct energy-dispersive X-ray spectroscopy (EDS) coupled with SEM for elemental mapping to confirm the V:S ratio and spatial distribution.

Machine Learning Integration

To implement an ML-guided optimization loop for this protocol:

- Data Logging: Record all synthesis variables (precursor ratios, temperatures, times, ammonia volumes) and corresponding characterization results (phase purity, morphology descriptors) in a structured database.

- Model Training: Use this dataset to train a machine learning model (e.g., a random forest regressor or a neural network) to predict material outcomes from synthesis parameters.

- Iterative Optimization: Employ optimization algorithms (e.g., Bayesian optimization) to suggest new synthesis parameter sets that are predicted to improve the material outcome, then validate these suggestions experimentally to close the loop.
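The loop above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the `SynthesisRun` record, the `phase_purity` score, and all numeric values are hypothetical placeholders, and a nearest-neighbour surrogate with a distance-based exploration bonus stands in for the random forest and Bayesian optimization mentioned in the text.

```python
import json
import math
from dataclasses import dataclass, asdict


@dataclass
class SynthesisRun:
    temperature_c: float
    time_h: float
    ammonia_ml: float
    phase_purity: float  # hypothetical 0-1 score, e.g. derived from XRD


# Assumed parameter ranges, used only to put the inputs on a common scale.
RANGES = (120.0, 19.0, 4.0)


def params(run):
    """Synthesis parameters of a logged run as a plain tuple."""
    return (run.temperature_c, run.time_h, run.ammonia_ml)


def distance(a, b):
    """Range-scaled Euclidean distance between two parameter tuples."""
    return math.sqrt(sum(((x - y) / r) ** 2 for x, y, r in zip(a, b, RANGES)))


def suggest(runs, candidates, kappa=0.5):
    """Pick the candidate with the best predicted score plus exploration bonus."""
    def score(x):
        nearest = min(runs, key=lambda r: distance(params(r), x))
        # Prediction = outcome of the nearest logged run; the bonus grows
        # with distance so that unexplored regions are also tried.
        return nearest.phase_purity + kappa * distance(params(nearest), x)
    return max(candidates, key=score)


# Placeholder records, not measured data.
runs = [
    SynthesisRun(100, 20, 2, 0.40),
    SynthesisRun(180, 5, 4, 0.90),
    SynthesisRun(220, 10, 6, 0.70),
]
log_line = json.dumps(asdict(runs[1]))  # one JSON record per logged run
candidates = [(140, 5, 4), (180, 3, 4), (220, 20, 2)]
next_run = suggest(runs, candidates)
print(log_line)
print(next_run)
```

In practice the surrogate would be replaced by a trained regressor with calibrated uncertainty, and each suggested run would be executed, characterized, and appended to `runs` before the next suggestion is made.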

Essential Tools for the Modern Synthesis Scientist

Navigating multi-variable synthesis requires a toolkit that spans from traditional characterization to advanced computational software.

Table 3: Key Software and Analytical Tools for Synthesis Research

| Tool Category | Example(s) | Application in Synthesis Research |

|---|---|---|

| Generative ML Models | MatterGen [4] | Inverse design of novel, stable inorganic crystal structures with target properties. |

| Data Analysis & ML Platforms | Python (scikit-learn, PyTorch), R [6] | Developing custom models for property prediction and synthesis parameter optimization. |

| Automated ML (AutoML) | AutoGluon, TPOT, H2O.ai [5] | Automating the process of model selection and hyperparameter tuning. |

| 3D Data Visualization & Analysis | Thermo Scientific Avizo Software [7] | AI-aided analysis and visualization of 3D microstructural data (e.g., from FIB-SEM). |

| High-Throughput Computation | Density Functional Theory (DFT) [4] [5] | Calculating material properties (e.g., formation energy) for database generation and model training. |

The challenge of multi-variable synthesis in materials science is being met by a new, data-driven paradigm. As detailed in this application note, the systematic investigation of synthesis parameters—coupled with machine learning models for generative design and parameter optimization—provides a powerful framework for navigating complex parameter spaces. The integration of automated robotic laboratories and high-throughput characterization will further accelerate this process, creating a closed-loop system where ML models not only predict but also drive experimental validation.

Future advancements will hinge on improving the quality, volume, and standardization of synthesis data [3], developing more interpretable ML models that provide chemical insights [5], and the wider adoption of these integrated workflows by the research community. By embracing these tools and methodologies, researchers can transform the art of materials synthesis into a more predictable and accelerated science, unlocking next-generation functional materials for a wide range of technological applications.

The discovery and synthesis of novel inorganic materials have traditionally been guided by experimental intuition and laborious, sequential trial-and-error. This process is often slow, resource-intensive, and unable to efficiently navigate the vastness of chemical space. However, a new paradigm is emerging, fueled by advances in data science and machine learning (ML). This paradigm shift moves materials design from a largely empirical endeavor to a rational, data-driven process. By leveraging large-scale computational and experimental data, machine learning is now poised to accelerate the entire materials design pipeline, from initial prediction to final synthesis, offering a systematic approach to finding the optimal material for any given application [8].

This document outlines the key components of this data-driven approach, providing application notes and protocols for researchers in inorganic materials synthesis and drug development. We detail the data sources, machine learning methodologies, and experimental frameworks that are enabling this transformative change.

The efficacy of any data-driven approach is contingent on the quality, volume, and diversity of the underlying data. For materials science, this data is housed in several key databases, which can be categorized as either repositories of known synthesized compounds or libraries of hypothetical materials.

Table 1: Key Databases for Data-Driven Materials Design

| Database Name | Type of Data | Key Features | Number of Materials/Entries |

|---|---|---|---|

| Materials Project [8] [9] | Computed Properties | Computed properties of known and predicted inorganic materials; includes analysis tools. | >200,000 materials [9] |

| Inorganic Crystal Structure Database (ICSD) [8] | Experimental Structures | A comprehensive collection of experimentally determined inorganic crystal structures. | >190,000 structures [8] |

| Cambridge Structural Database (CSD) [8] | Experimental Structures | A repository for small-molecule organic and metal-organic crystal structures. | >1.1 million structures [8] |

| Text-Mined Synthesis Recipes [3] | Experimental Procedures | A dataset of synthesis parameters (precursors, temperatures, times) extracted from scientific literature. | ~67,457 recipes (solid-state & solution) [3] |

| CoRE MOF Database [8] | Curated Experimental Structures | A collection of experimentally synthesized metal-organic frameworks, curated for computational readiness. | ~10,000 structures [8] |

A critical challenge is that these databases often have inherent biases and lack diversity in certain areas of chemical space. For instance, experimental MOF databases are concentrated in the small-pore region, while hypothetical databases cover more large-pore structures [8]. Understanding these distributions through unsupervised learning is a vital first step to avoid drawing incorrect conclusions from the data [8].

Core Methodologies and Protocols

Protocol 1: Multi-Fidelity Bayesian Optimization for Materials Screening

This protocol describes an alternative to the traditional "computational funnel," which requires pre-defined knowledge of method accuracy and fixed resource allocation. The Multi-Fidelity Bayesian Optimization (MFBO) approach dynamically learns the relationships between different data sources (e.g., cheap computational simulations and expensive experimental measurements) to reduce the total cost of optimization [10].

Application Note: This method is particularly valuable when high-fidelity experimental data is scarce and expensive, but large amounts of lower-fidelity computational data are available.

Procedure:

- Define the Optimization Goal: Identify the target material property to be optimized (e.g., battery capacity, catalytic activity) and specify the target (high) fidelity, which is typically the experimental measurement.

- Initialize the Model: Start with a small set of initial data points that span multiple fidelities. A multi-output Gaussian process is used as the core model to correlate the different fidelities [10].

- Iterative Optimization Loop:

a. Train the Model: Train the multi-output Gaussian process on all data collected so far.

b. Calculate Target Acquisition: Compute a standard acquisition function (e.g., Expected Improvement) based on the model's predictions at the target fidelity [10].

c. Select Next Sample and Fidelity: For the candidate points with the highest acquisition scores, identify the specific fidelity (experimental or computational) that, when measured, would most reduce the prediction variance at that point per unit cost. This is the core of the Targeted Variance Reduction (TVR) algorithm [10].

d. Execute Experiment/Calculation and Update: Perform the selected measurement and add the new data point (input parameters, fidelity, resulting property value) to the training set.

- Termination: Repeat the iterative optimization loop until the budget is exhausted or a performance threshold is met.
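The two decisions inside the loop (which point looks promising, and which fidelity to measure there) can be illustrated with a minimal sketch. It assumes Gaussian predictions, uses the standard closed-form Expected Improvement for maximization, and scores each fidelity by the Gaussian-conditioning variance reduction at the target fidelity divided by cost. The function names, the correlation-based covariance, and all numbers are assumptions for illustration, not the published TVR implementation.

```python
import math


def expected_improvement(mean, std, best):
    """Closed-form EI for maximization under a Gaussian prediction."""
    if std <= 0.0:
        return max(mean - best, 0.0)
    z = (mean - best) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best) * cdf + std * pdf


def variance_reduction(target_std, fidelity_std, correlation, noise_var):
    """Drop in target-fidelity variance after one observation of another
    fidelity at the same input (standard Gaussian conditioning)."""
    cov = correlation * target_std * fidelity_std
    return cov ** 2 / (fidelity_std ** 2 + noise_var)


def pick_fidelity(target_std, fidelities):
    """Return the name of the fidelity with the largest variance reduction per unit cost."""
    def gain_per_cost(f):
        gain = variance_reduction(target_std, f["std"], f["corr"], f["noise_var"])
        return gain / f["cost"]
    return max(fidelities, key=gain_per_cost)["name"]


# Hypothetical fidelities: costs and correlations are placeholders.
fidelities = [
    {"name": "experiment", "std": 1.0, "corr": 1.0, "noise_var": 0.01, "cost": 100.0},
    {"name": "simulation", "std": 1.0, "corr": 0.8, "noise_var": 0.01, "cost": 1.0},
]
ei = expected_improvement(mean=1.0, std=1.0, best=1.0)
choice = pick_fidelity(target_std=1.0, fidelities=fidelities)
print(ei, choice)
```

With these placeholder numbers the cheap simulation is preferred, because it removes most of the target variance at a small fraction of the experimental cost; as the correlation between fidelities drops, the balance shifts toward the experiment.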

Advantages:

- Cost Reduction: Reduces overall optimization cost by a factor of three on average compared to standard funnels by smartly allocating resources [10].

- Dynamic Learning: Does not require pre-specified knowledge of the accuracy or cost-ranking of different methods.

- Progressive: Allows for flexible termination and dynamic re-allocation of resources.

Protocol 2: Text-Mining Synthesis Recipes for Anomaly Detection

This protocol focuses on extracting synthesis insights from the vast body of scientific literature. The goal is not to build a predictive model, but to identify rare, anomalous recipes that defy conventional wisdom and can inspire new mechanistic hypotheses [3].

Application Note: This approach is useful when standard synthesis models fail to provide novel insights due to data limitations. The focus shifts from regression to knowledge discovery.

Procedure:

- Data Procurement: Obtain full-text permissions from scientific publishers and download papers published after 2000 in HTML/XML format [3].

- Identify Synthesis Paragraphs: Use a classifier to identify paragraphs in the manuscript that describe inorganic synthesis procedures based on the presence of specific keywords [3].

- Extract Targets and Precursors:

a. Replace all chemical compound names with a general `<MAT>` tag.

b. Use a BiLSTM-CRF (Bidirectional Long Short-Term Memory with a Conditional Random Field) neural network model, trained on manually annotated data, to label each `<MAT>` tag as a target material, precursor, or other (e.g., atmosphere, solvent) based on sentence context [3].

- Construct Synthesis Operations: Use Latent Dirichlet Allocation (LDA) to cluster synonyms and keywords into topics corresponding to specific synthesis operations (e.g., heating, mixing, drying). Extract associated parameters (temperature, time) [3].

- Compile Recipes: Combine the extracted information into a structured database (e.g., JSON format) [3].

- Anomaly Detection and Analysis: Manually or semi-automatically screen the compiled recipes to identify procedures that are unusual or run counter to established intuition. These anomalies become candidates for generating new scientific hypotheses about reaction mechanisms, which must then be validated experimentally [3].
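One way to make the final screening step semi-automatic is a robust outlier test on a single extracted parameter. The sketch below groups recipes by target and flags heating temperatures whose modified z-score (based on the median absolute deviation) exceeds the commonly used cutoff of 3.5. The record layout and all temperatures are hypothetical placeholders, and this heuristic is an assumption for illustration, not the screening procedure used in [3].

```python
import json
from statistics import median

# Hypothetical compiled recipes; the values are placeholders, not mined data.
recipes = [
    {"target": "BaTiO3", "operation": "heating", "temperature_c": 1100},
    {"target": "BaTiO3", "operation": "heating", "temperature_c": 1150},
    {"target": "BaTiO3", "operation": "heating", "temperature_c": 1200},
    {"target": "BaTiO3", "operation": "heating", "temperature_c": 1250},
    {"target": "BaTiO3", "operation": "heating", "temperature_c": 600},
]


def flag_anomalies(records, threshold=3.5):
    """Flag recipes whose temperature is a robust outlier for their target."""
    by_target = {}
    for record in records:
        by_target.setdefault(record["target"], []).append(record)

    flagged = []
    for group in by_target.values():
        temps = [r["temperature_c"] for r in group]
        med = median(temps)
        mad = median([abs(t - med) for t in temps])
        if mad == 0:
            continue  # no spread within this target, nothing to compare against
        for r in group:
            modified_z = 0.6745 * abs(r["temperature_c"] - med) / mad
            if modified_z > threshold:
                flagged.append(r)
    return flagged


anomalies = flag_anomalies(recipes)
print(json.dumps(anomalies, indent=2))
```

Flagged recipes are only candidates: each one still needs to be read in context, since an unusual value may reflect an extraction error rather than genuinely unconventional chemistry.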

Workflow Visualization

Diagram: Data-Driven Materials Optimization Workflow

Diagram: Text-Mining for Synthesis Insight

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Data-Driven Materials Synthesis

| Item / Solution | Function / Role in the Workflow |

|---|---|

| Hypothetical Materials Databases (e.g., ToBaCCo) [8] | Provides a large search space of computationally generated, potentially synthesizable structures for initial screening. |

| High-Throughput Screening (HTS) Robotics [11] | Automates the synthesis and characterization of thousands of material samples, generating the large-scale experimental data required for ML models. |

| Multi-Fidelity Machine Learning Model [10] | The core algorithm that fuses data from different sources (e.g., computation and experiment) to guide the discovery process and reduce costs. |

| Text-Mined Synthesis Database [3] | Serves as a knowledge base of historical synthesis procedures, enabling the analysis of trends and the detection of anomalous, high-value recipes. |

| Metal-Organic Framework (MOF) Precursor Libraries [8] | Well-defined sets of metal nodes and organic linkers used for the rational and combinatorial synthesis of porous materials. |

Synthesizability in Machine Learning-Assisted Inorganic Materials Synthesis

Definition of Key Concepts

Synthesizability

In the context of inorganic crystalline materials discovery, synthesizability is defined as a material's potential to be synthetically accessible through current laboratory capabilities, regardless of whether it has been synthesized and reported yet [12]. This distinguishes it from the mere existence of a material in databases, framing it as a forward-looking prediction crucial for guiding discovery efforts. The core challenge lies in the absence of a universal synthesizability principle, as the successful synthesis of a material depends on a complex interplay of thermodynamic stabilization, kinetic reaction pathways, selective nucleation, and non-physical considerations such as reactant cost and equipment availability [12].

Feature Engineering

Feature engineering is the process of creating, selecting, and transforming input variables (features) from raw data to significantly improve the performance and accuracy of machine learning models [13]. In materials science, this involves converting raw chemical composition, structural data, or synthesis conditions into meaningful representations that allow models to effectively learn underlying patterns. While deep learning can automate some feature learning, particularly for image or text data, domain-specific feature engineering remains critical for tabular and scientific data, offering benefits in model accuracy, reduced overfitting, enhanced interpretability, and greater computational efficiency [14] [13].

Historical Data

Historical data in this field refers to the comprehensive, cumulative record of previously synthesized and characterized inorganic crystalline materials, as cataloged in databases like the Inorganic Crystal Structure Database (ICSD) [12]. This data serves as the foundational positive set for training machine learning models. It encapsulates the implicit knowledge and constraints of solid-state chemistry learned through decades of experimental work, enabling models to infer the complex, multi-faceted rules governing successful synthesis without being explicitly programmed with physical laws [12] [5].

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Precision | Key Advantage | Key Limitation |

|---|---|---|---|

| SynthNN (Synthesizability Classification) [12] | 7x higher than DFT formation energy [12] | Learns chemistry directly from data; outperforms human experts | Requires a large database of known materials for training |

| DFT Formation Energy [12] | ~50% of synthesized materials captured [12] | Based on fundamental thermodynamic principles | Fails to account for kinetic stabilization; misses many viable materials |

| Charge-Balancing Proxy [12] | 37% of known materials are charge-balanced [12] | Computationally inexpensive and chemically intuitive | Inflexible; performs poorly for metallic/covalent materials and many ionic compounds |
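The charge-balancing proxy in the last row is simple enough to sketch directly. The version below assigns one oxidation state per element from a small lookup table and asks whether any assignment gives a net charge of zero. The `COMMON_OXIDATION_STATES` table is an assumed, deliberately incomplete list for illustration, and the function is a hypothetical helper rather than the implementation benchmarked in [12].

```python
from itertools import product

# Assumed, non-exhaustive table of common oxidation states.
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cl": [-1],
    "O": [-2],
    "S": [-2, 4, 6],
    "Ti": [2, 3, 4],
    "V": [2, 3, 4, 5],
    "Fe": [2, 3],
}


def is_charge_balanced(composition):
    """True if some single oxidation state per element gives zero net charge.

    `composition` maps element symbols to integer counts, e.g. {"Ti": 1, "O": 2}.
    """
    elements = list(composition)
    options = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*options):
        net = sum(q * composition[el] for q, el in zip(states, elements))
        if net == 0:
            return True
    return False


print(is_charge_balanced({"Na": 1, "Cl": 1}))   # NaCl
print(is_charge_balanced({"V": 1, "S": 2}))     # VS2
print(is_charge_balanced({"Fe": 3, "O": 4}))    # Fe3O4 (mixed-valence)
```

Fe₃O₄ is a well-known synthesized compound, yet it fails this check because it needs Fe²⁺ and Fe³⁺ simultaneously. This is one concrete way the proxy's inflexibility shows up.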

Table 2: Common Feature Engineering Techniques for Materials Data

| Technique Category | Example Methods | Application in Materials Science |

|---|---|---|

| Feature Creation [13] | Domain-specific, Data-driven, Synthetic | Creating features from domain knowledge (e.g., ionic radii, electronegativity) or combining existing features. |

| Feature Transformation [13] | Normalization, Scaling, Encoding, Logarithmic Transformation | Preparing categorical (e.g., space groups) and numerical (e.g., formation energy) data for model consumption. |

| Feature Selection [13] | Filter, Wrapper, Embedded Methods | Identifying the most relevant physical descriptors to simplify models and avoid overfitting. |

Experimental Protocols

Protocol: Building a Synthesizability Classification Model (SynthNN)

1. Objective: To train a deep learning model that classifies inorganic chemical formulas as synthesizable or unsynthesizable.

2. Data Acquisition and Curation:

- Positive Data: Extract chemical formulas of synthesized inorganic crystalline materials from the Inorganic Crystal Structure Database (ICSD) [12].

- Negative Data: Artificially generate a larger set of chemical formulas that are not present in the ICSD. Acknowledge that this set is "unlabeled" rather than definitively "unsynthesizable," as it may contain viable but undiscovered materials [12].

3. Feature Representation:

- Utilize the `atom2vec` framework. This method represents each chemical formula via a learned atom embedding matrix that is optimized alongside other neural network parameters [12].

- This approach allows the model to learn an optimal, task-specific representation of chemical compositions directly from the distribution of historical data, without relying on pre-defined human features [12].

4. Model Training with Positive-Unlabeled Learning:

- Implement a semi-supervised Positive-Unlabeled (PU) learning algorithm to account for the uncertain nature of the "negative" data [12].

- The algorithm treats artificially generated formulas as unlabeled data and probabilistically reweights them during training based on their likelihood of being synthesizable [12].

- The ratio of artificially generated formulas to synthesized formulas (referred to as `N_synth`) is a critical hyperparameter [12].

5. Model Validation:

- Evaluate model performance using standard classification metrics (e.g., precision, recall, F1-score) against the curated dataset [12].

- Conduct a head-to-head comparison against traditional methods (e.g., charge-balancing, DFT formation energy) and human experts to benchmark predictive precision and speed [12].
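The data-curation step of this protocol can be illustrated with a minimal sketch that draws random reduced formulas, discards any that already appear in the positive set, and stops once the unlabeled set is `n_synth` times the size of the positive set. The tuple-based formula representation, the sampling scheme, and the tiny element list are assumptions made for illustration; the procedure used in [12] may differ.

```python
import random
from functools import reduce
from math import gcd


def generate_unlabeled(known, elements, n_synth, max_count=4, seed=0):
    """Sample reduced formulas absent from `known` until there are
    n_synth unlabeled formulas per known (positive) formula."""
    rng = random.Random(seed)
    target_size = n_synth * len(known)
    unlabeled = set()
    while len(unlabeled) < target_size:
        chosen = sorted(rng.sample(elements, rng.choice([2, 3])))
        counts = [rng.randint(1, max_count) for _ in chosen]
        divisor = reduce(gcd, counts)  # reduce e.g. Na2Cl2 to NaCl
        formula = tuple((el, c // divisor) for el, c in zip(chosen, counts))
        if formula not in known:
            unlabeled.add(formula)
    return unlabeled


# Positive examples as alphabetically sorted (element, count) tuples.
known = {
    (("Cl", 1), ("Na", 1)),   # NaCl
    (("O", 2), ("Ti", 1)),    # TiO2
    (("S", 2), ("V", 1)),     # VS2
}
elements = ["Na", "Cl", "Ti", "O", "V", "S"]
unlabeled = generate_unlabeled(known, elements, n_synth=5)
print(len(unlabeled))
```

Because the generated set is only "unlabeled", a downstream PU-learning step would still need to down-weight members that look synthesizable rather than treat them all as true negatives.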

Protocol: Feature Engineering Workflow for Materials Data

1. Data Cleaning:

- Identify and handle missing values by determining the mechanism (MCAR, MAR, MNAR) and applying appropriate strategies like deletion or imputation, ensuring no data leakage from the test set [14].

- Correct inconsistencies in data entry or units of measurement.

2. Feature Creation:

- Domain-Specific Features: Create new features based on materials science knowledge, such as average electronegativity, ionic potential, or deviation from charge neutrality [13].

- Data-Driven Features: Use automated tools like `Featuretools` to generate new features by applying mathematical operations across related data points [13].

3. Feature Transformation:

- Encoding: Convert categorical variables (e.g., crystal system, presence of certain elements) into numerical form using techniques like one-hot encoding [13].

- Scaling: Normalize numerical features (e.g., atomic radius, molecular weight) to a consistent scale using standardization or min-max scaling to ensure they contribute equally during model training [13].

- Log Transformation: Apply to highly skewed numerical data (e.g., particle size distributions) to create a more balanced, normal-like distribution [14].

4. Feature Selection:

- Apply filter methods (e.g., correlation analysis with the target variable), wrapper methods (e.g., recursive feature elimination), or embedded methods (e.g., Lasso regularization) to select the most relevant feature subset and reduce model complexity [13].
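Several of these steps can be sketched with the standard library alone. The example below creates one domain-specific feature (composition-weighted mean Pauling electronegativity), one-hot encodes a categorical variable, standardizes a numerical feature using statistics fitted on the training split only (to avoid the leakage mentioned under data cleaning), and log-transforms a skewed quantity. The helper names and the truncated category list are assumptions for illustration; feature selection is omitted for brevity.

```python
import math
from statistics import mean, pstdev

# Pauling electronegativities for a handful of elements.
PAULING_EN = {"Li": 0.98, "Na": 0.93, "Ti": 1.54, "V": 1.63,
              "O": 3.44, "S": 2.58, "Cl": 3.16}
CRYSTAL_SYSTEMS = ["cubic", "hexagonal", "tetragonal"]  # truncated for the example


def mean_electronegativity(composition):
    """Composition-weighted average electronegativity of a formula."""
    total = sum(composition.values())
    return sum(PAULING_EN[el] * n for el, n in composition.items()) / total


def one_hot(value, categories):
    """Encode a categorical value as a 0/1 vector over known categories."""
    return [1 if value == c else 0 for c in categories]


def fit_scaler(train_values):
    """Compute standardization statistics from the training split only."""
    return mean(train_values), pstdev(train_values)


def standardize(values, mu, sigma):
    """Apply previously fitted statistics to any split."""
    return [(v - mu) / sigma for v in values]


def log10_transform(values):
    """Compress highly skewed positive values such as particle sizes."""
    return [math.log10(v) for v in values]


en_nacl = mean_electronegativity({"Na": 1, "Cl": 1})
encoded = one_hot("hexagonal", CRYSTAL_SYSTEMS)
mu, sigma = fit_scaler([1.0, 2.0, 3.0])        # training split
scaled_test = standardize([2.0, 4.0], mu, sigma)  # held-out split
print(en_nacl, encoded, scaled_test, log10_transform([10.0, 1000.0]))
```

Keeping `fit_scaler` separate from `standardize` makes it harder to accidentally compute scaling statistics on data that includes the test set.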

Workflow and Relationship Visualizations

Diagram 1: High-level workflow for ML-driven synthesizability prediction.

Diagram 2: The iterative process of feature engineering for materials data.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ML-Driven Materials Synthesis Research

| Resource / Tool | Type | Function / Application |

|---|---|---|

| Inorganic Crystal Structure Database (ICSD) [12] | Data Repository | Provides the foundational "historical data" of experimentally reported inorganic crystal structures for model training. |

| Atom2Vec [12] | Algorithm / Representation | Learns optimal numerical representations of chemical compositions directly from data, serving as a powerful feature creation method. |

| Featuretools [13] | Software Library | Automates feature engineering from structured data, enabling the creation of complex features for material compositions and properties. |

| Positive-Unlabeled (PU) Learning Algorithms [12] | Machine Learning Method | Enables robust model training when only positive (synthesized) examples are definitive, and negative examples are uncertain. |

| TPOT / AutoGluon [5] | Automated Machine Learning (AutoML) | Automates the process of model selection, hyperparameter tuning, and feature engineering, streamlining the ML pipeline. |

The acceleration of inorganic materials discovery through computational prediction has created an urgent bottleneck: the synthesis of predicted materials. While high-throughput calculations can screen thousands of hypothetical compounds, transforming these digital designs into physical reality requires synthesis recipes that conventional methods cannot provide. Text-mining the extensive body of scientific literature offers a promising path to building the knowledge base needed for predictive synthesis [3]. This Application Note details the methodologies, challenges, and analytical frameworks for extracting and leveraging text-mined synthesis data, contextualized within machine learning-assisted inorganic materials research. We provide experimental protocols for data extraction, curation, and modeling specifically tailored for researchers and scientists engaged in accelerated materials development.

Foundational Datasets and Their Characteristics

Between 2016 and 2019, pioneering efforts yielded two substantial datasets of inorganic synthesis procedures extracted from scientific literature. These form the cornerstone for data-driven synthesis planning.

Table 1: Key Text-Mined Inorganic Synthesis Datasets

| Synthesis Type | Number of Recipes | Source Publications | Extraction Yield | Primary Use Cases |

|---|---|---|---|---|

| Solid-State Synthesis | 31,782 | 53,538 paragraphs | 28% (15,144 with balanced reactions) | Precursor selection, temperature optimization, reaction pathway analysis [3] |

| Solution-Based Synthesis | 35,675 | Not specified | Not specified | Solvent selection, precursor interactions, nanoparticle synthesis [15] |

These datasets capture essential synthesis parameters including target materials, precursors, quantities, synthesis actions (mixing, heating, drying), and corresponding attributes (temperature, time, atmosphere) [15]. Each recipe is formatted to facilitate computational analysis, with many augmented with balanced chemical reactions enabling reaction energetics calculation using DFT-calculated bulk energies from databases like the Materials Project [3].

Text-Mining and Natural Language Processing Methodologies

The transformation of unstructured synthesis descriptions from scientific papers into structured, machine-readable data requires a sophisticated NLP pipeline.

Protocol: Natural Language Processing Pipeline for Synthesis Extraction

Objective: Convert prose descriptions of synthesis methods into structured recipes with identified targets, precursors, and synthesis operations.

Materials and Software Requirements:

- Full-text scientific publications in HTML/XML format (post-2000 recommended)

- Natural language processing libraries (e.g., SpaCy, NLTK)

- Bi-directional Long Short-Term Memory with Conditional Random Field (BiLSTM-CRF) model

- Latent Dirichlet Allocation (LDA) implementation for topic modeling

Procedure:

- Literature Procurement: Secure full-text permissions from publishers and download publications in parsable formats.

- Synthesis Paragraph Identification: Identify synthesis paragraphs using probabilistic classification based on keywords associated with inorganic materials synthesis.

- Materials Entity Recognition:

a. Replace all chemical compounds with a <MAT> placeholder tag.

b. Apply a BiLSTM-CRF model trained on manually annotated paragraphs to classify each <MAT> tag as target, precursor, or other based on sentence context clues [3].

- Synthesis Operation Classification:

a. Apply Latent Dirichlet Allocation to cluster synonymous keywords into topics corresponding to specific synthesis operations.

b. Classify sentence tokens into categories: mixing, heating, drying, shaping, quenching, or not an operation.

c. Extract relevant parameters for each operation type.

- Recipe Compilation: Combine extracted precursors, targets, and operations into a structured JSON database. Attempt to balance chemical reactions by including volatile atmospheric gases.
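The recipe compilation step can be illustrated with a minimal sketch of an element-conservation check. The recipe fields, the heating values, and the helper names (`parse_formula`, `side_totals`, `is_balanced`) are hypothetical and are not taken from the published pipeline; the parser only handles simple formulas without parentheses or hydrates.

```python
import re
from collections import Counter

def parse_formula(formula):
    """Count atoms in a simple formula such as 'BaCO3' (no parentheses or hydrates)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts

def side_totals(side):
    """Sum element counts over one reaction side given {formula: coefficient}."""
    total = Counter()
    for formula, coeff in side.items():
        for element, n in parse_formula(formula).items():
            total[element] += coeff * n
    return total

def is_balanced(reactants, products):
    """True when every element appears in equal amounts on both sides."""
    return dict(side_totals(reactants)) == dict(side_totals(products))

# Hypothetical structured recipe; field names and values are illustrative only.
recipe = {
    "target": "BaTiO3",
    "precursors": ["BaCO3", "TiO2"],
    "operations": [
        {"type": "mixing"},
        {"type": "heating", "temperature_C": 1100, "time_h": 12, "atmosphere": "air"},
    ],
}

reactants = {p: 1 for p in recipe["precursors"]}
# Without a volatile by-product the carbon and two oxygens are unaccounted for.
print(is_balanced(reactants, {"BaTiO3": 1}))
# Adding CO2 as a released gas closes the balance.
print(is_balanced(reactants, {"BaTiO3": 1, "CO2": 1}))
```

In this toy case the reaction only balances once CO2 is added as a product, which is the situation the volatile-gas step is meant to handle.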

Troubleshooting:

- For low yield in reaction balancing, verify precursor-target mappings and check for incomplete atmospheric condition reporting.

- For inaccurate operation classification, expand the training set of manually annotated synthesis paragraphs.

Figure 1: NLP workflow for extracting structured synthesis recipes from scientific literature.

Critical Data Quality Assessment

A retrospective evaluation of text-mined synthesis datasets against the "4 Vs" of data science reveals significant limitations that impact their utility for predictive modeling [3].

Table 2: Data Quality Assessment of Text-Mined Synthesis Datasets

| Dimension | Assessment | Impact on Predictive Modeling |

|---|---|---|

| Volume | 31,782 solid-state recipes; 35,675 solution-based recipes | Limited training data for ML models compared to diversity of possible inorganic materials [3] |

| Variety | Limited exploration of chemical space; anthropogenic biases toward known successful syntheses | Models capture how chemists have synthesized materials rather than fundamental principles [3] |

| Veracity | 28% extraction yield for solid-state recipes with balanced reactions; text-mining errors | Noisy labels impact model training; missing parameters require imputation [3] |

| Velocity | Static historical snapshot; does not incorporate latest publications | Inability to adapt to emerging synthesis strategies or newly reported materials [3] |

Analytical and Machine Learning Approaches

Protocol: Anomalous Recipe Analysis for Hypothesis Generation

Objective: Identify unusual synthesis procedures that defy conventional intuition to generate novel mechanistic hypotheses.

Materials:

- Text-mined synthesis database with structured recipes

- Computational materials data source (e.g., Materials Project) for calculated properties

- Statistical analysis software (e.g., Python, R)

Procedure:

- Feature Engineering: Compute features for each synthesis recipe including reaction thermodynamics, precursor properties, and synthesis conditions.

- Anomaly Detection: Apply isolation forests, one-class SVMs, or clustering algorithms to identify outliers in feature space.

- Manual Curation: Domain experts manually examine anomalous recipes to identify potentially novel synthesis strategies.

- Hypothesis Formulation: Develop testable mechanistic hypotheses based on anomalous patterns.

- Experimental Validation: Design targeted experiments to validate hypotheses derived from anomalous recipes.
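As a rough illustration of the anomaly detection step, the sketch below flags outliers on a single feature using modified z-scores based on the median absolute deviation. This is a deliberately simpler stand-in for isolation forests or one-class SVMs, not the method used in [3]; the recipe records, the feature name, and the 3.5 threshold are assumptions.

```python
from statistics import median

def robust_z_scores(values):
    """Modified z-scores based on the median absolute deviation (MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # All deviations collapse to zero, so nothing can be ranked as unusual.
        return [0.0 for _ in values]
    return [0.6745 * (v - med) / mad for v in values]

def flag_anomalies(recipes, feature, threshold=3.5):
    """Return recipes whose feature value is far from the bulk of the data."""
    scores = robust_z_scores([r[feature] for r in recipes])
    return [r for r, z in zip(recipes, scores) if abs(z) > threshold]

# Hypothetical heating temperatures for recipes of one target family.
recipes = [
    {"id": "r1", "heating_temp_C": 900},
    {"id": "r2", "heating_temp_C": 950},
    {"id": "r3", "heating_temp_C": 1000},
    {"id": "r4", "heating_temp_C": 925},
    {"id": "r5", "heating_temp_C": 975},
    {"id": "r6", "heating_temp_C": 300},
]
for r in flag_anomalies(recipes, "heating_temp_C"):
    print(r["id"], r["heating_temp_C"])
```

Flagged recipes are candidates for the manual curation step rather than confirmed discoveries; a multivariate detector would be needed to catch anomalies that only appear in combinations of features.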

Application Note: This approach successfully led to new mechanistic insights about solid-state reaction kinetics and precursor selection that were experimentally validated [3].

Protocol: Synthesis Outcome Prediction with Chemical Descriptors

Objective: Train machine learning models to predict synthesis outcomes such as reaction success, phase purity, or morphological characteristics.

Materials:

- Structured synthesis recipes with outcome annotations

- Chemical descriptors (e.g., elemental properties, ionic radii, electronegativity)

- Machine learning frameworks (e.g., scikit-learn, PyTorch)

Procedure:

- Data Preprocessing: Clean the dataset, handle missing values, and encode categorical variables.

- Descriptor Calculation: Compute features for precursors and target materials using compositional descriptors.

- Model Training: Implement random forest, gradient boosting, or graph neural network models to predict synthesis outcomes.

- Model Validation: Use k-fold cross-validation and hold-out test sets to evaluate model performance.

- Interpretation: Apply SHAP or LIME analysis to identify key factors influencing synthesis outcomes.
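The descriptor calculation step can be sketched as follows, assuming composition-weighted statistics of Pauling electronegativity as the descriptors. The small lookup table, the function names, and the choice of mean and range as features are illustrative; a real workflow would typically draw on a full elemental property table.

```python
import re

# Pauling electronegativities for a small illustrative subset of elements.
ELECTRONEGATIVITY = {"Li": 0.98, "O": 3.44, "Ti": 1.54, "Ba": 0.89, "Fe": 1.83}

def composition_fractions(formula):
    """Atomic fractions for a simple formula such as 'BaTiO3' (no parentheses)."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    total = sum(counts.values())
    return {element: c / total for element, c in counts.items()}

def electronegativity_features(formula):
    """Composition-weighted mean and element-wise range of electronegativity."""
    fractions = composition_fractions(formula)
    values = [ELECTRONEGATIVITY[element] for element in fractions]
    weighted_mean = sum(ELECTRONEGATIVITY[el] * f for el, f in fractions.items())
    return {"mean_en": weighted_mean, "range_en": max(values) - min(values)}

print(electronegativity_features("BaTiO3"))
print(electronegativity_features("LiFeO2"))
```

Feature vectors of this kind, computed for both precursors and targets, are what the tree-based models in the training step would consume.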

Figure 2: Machine learning workflow for predicting synthesis outcomes from text-mined recipes and chemical descriptors.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Text-Mining and Analysis

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| BiLSTM-CRF Model | Algorithm | Materials entity recognition from synthesis text | Identifies and classifies targets, precursors, and other materials in paragraphs [3] |

| Latent Dirichlet Allocation | Algorithm | Topic modeling for synthesis operation classification | Clusters synonymous keywords into synthesis action categories [3] |

| ULSA Framework | Framework | Unified language of synthesis actions | Standardizes representation of inorganic synthesis protocols [15] |

| ACE Transformer Model | Pre-trained Model | Converts prose synthesis descriptions into action sequences | Extracts synthesis protocols for heterogeneous catalysis; adaptable to other materials families [16] |

| Text-Mined Synthesis Dataset | Database | Structured compilation of synthesis recipes | Provides training data for predictive models and analysis of synthesis trends [15] |

Emerging Approaches and Standardization Guidelines

Recent advances in large language models (LLMs) offer promising avenues for improving synthesis protocol extraction. The ACE (sAC transformEr) model converts unstructured synthesis paragraphs into structured action sequences, capturing approximately 66% of the information as measured by Levenshtein similarity [16].
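To make the Levenshtein-based metric concrete, the following sketch computes an edit distance over sequences of synthesis actions and normalizes it to a similarity score. The normalization by the longer sequence length and the example action labels are assumptions; [16] may define the score differently.

```python
def levenshtein(a, b):
    """Edit distance between two sequences (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]

def similarity(a, b):
    """Normalized similarity in [0, 1]; 1.0 means identical sequences."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest

# Hypothetical annotated and model-extracted action sequences.
reference = ["mix", "dry", "calcine", "wash", "dry"]
predicted = ["mix", "calcine", "wash", "dry"]
print(similarity(reference, predicted))
```

Here the extracted sequence misses one drying step, so one edit out of five positions separates it from the annotation.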

Protocol: Guideline for Machine-Readable Synthesis Reporting

Objective: Improve text-mining efficiency by standardizing how synthesis procedures are reported in scientific literature.

Guidelines:

- Action-Oriented Language: Use consistent verb-noun structures for synthesis steps.

- Explicit Parameter Association: Clearly associate parameters with corresponding actions.

- Structured Format: Separate distinct synthesis steps into different sentences or paragraphs.

- Minimize Ambiguity: Avoid pronouns and implicit references to previously mentioned materials.

- Complete Specification: Include all relevant parameters for each synthesis action.

Application Note: Implementing these guidelines in synthesis reporting improved machine-reading accuracy significantly, with the ACE model showing enhanced performance on guideline-modified protocols compared to original texts [16].

This Application Note has detailed methodologies for extracting, processing, and leveraging text-mined synthesis data for predictive modeling in inorganic materials research. While current datasets face challenges in volume, variety, veracity, and velocity, they nonetheless provide valuable resources for understanding synthesis trends and generating novel hypotheses. The integration of improved natural language processing methods, coupled with standardized reporting guidelines, promises to enhance the utility of literature-mined synthesis data. As these approaches mature, they will play an increasingly important role in accelerating the discovery and synthesis of novel functional materials.

ML Frameworks in Action: Predictive Models, Optimization Algorithms, and Real-World Applications

The integration of machine learning (ML) into inorganic materials synthesis represents a paradigm shift, moving beyond traditional trial-and-error approaches towards data-driven discovery and optimization. This guide details practical protocols for applying three core ML algorithms—XGBoost, Support Vector Machines (SVMs), and Neural Networks (NNs)—to critical tasks in inorganic materials research, including synthesis outcome classification and property regression. By providing standardized application notes, performance benchmarks, and experimental workflows, this document serves as a practical toolkit for researchers and scientists aiming to accelerate materials development cycles.

The table below summarizes the documented performance of XGBoost, SVM, and Neural Network models in specific inorganic materials synthesis and property prediction tasks, providing a benchmark for algorithm selection.

Table 1: Performance Benchmarks of ML Algorithms in Inorganic Materials Research

| Algorithm | Application | Reported Performance | Key Advantages |

|---|---|---|---|

| XGBoost | Classification of MoS₂ growth status via CVD [17] [18] | High prediction accuracy (e.g., >88% accuracy, 0.91 AUROC) [18] | Handles mixed data types well; strong with small-medium datasets; high interpretability [18]. |

| SVM | Prediction of electrophoretic mobility of organic/inorganic compounds [19] | RMSE: 0.2569 (test set); superior to Multiple Linear Regression [19] | Effective in high-dimensional spaces; robust with small datasets [19] [20]. |

| SVM | Prediction of polymer mechanical/thermal properties [20] | Widely applied for property prediction and process optimization [20] | Versatile with kernel functions (RBF, polynomial) to model nonlinearity [20]. |

| Neural Network (HATNet) | Classification of MoS₂ synthesis & regression of CQD photoluminescent quantum yield [17] | 95% classification accuracy; MSE of 0.003 (inorganic CQYs) [17] | Automates feature engineering; captures complex, high-order parameter interactions [17]. |

| Neural Network (PFP) | Universal potential for atomistic simulations across 45 elements [21] | Accurately predicts properties like lithium diffusion in LiFeSO₄F [21] | High generalizability for property prediction across diverse chemical spaces [21]. |

| Neural Network (Federated) | Prediction of material formation energy from multi-source databases [22] | Model accuracy nearly equivalent to training on a single combined database [22] | Enables collaborative model training without sharing raw data (solves "data island" problem) [22]. |

Experimental Protocols and Workflows

Protocol 1: XGBoost for Synthesis Condition Optimization

This protocol outlines the use of XGBoost for classifying successful synthesis conditions, as applied in the chemical vapor deposition (CVD) of 2D materials like MoS₂ [18].

Data Collection and Preprocessing

- Input Features: Compile experimental parameters such as reaction temperature (°C), precursor type and concentration, carrier gas flow rate (sccm), chamber pressure (Pa), and reaction time (min) [17] [18].

- Target Variable: Define a binary classification label (e.g., "successful growth" vs. "failed growth") based on post-synthesis characterization (e.g., Raman spectroscopy, microscopy) [18].

- Data Cleansing: Handle missing values (e.g., through imputation or removal) and detect outliers (e.g., removing data points where target values deviate by >15% from others under identical parameters) [23]. Normalize or standardize numerical features.
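The replicate-based outlier rule in the data cleansing step might be implemented along the following lines. The sketch assumes a continuous target and interprets "deviate by >15% from others" as a relative deviation from the median of runs with identical parameters; the column names and values are hypothetical.

```python
from collections import defaultdict
from statistics import median

def drop_inconsistent_replicates(rows, feature_keys, target_key, tolerance=0.15):
    """Drop rows whose target deviates from their group median by more than `tolerance`."""
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[k] for k in feature_keys)].append(row)

    kept = []
    for members in groups.values():
        reference = median(r[target_key] for r in members)
        for r in members:
            # A zero reference makes a relative deviation undefined, so keep the row.
            if reference == 0 or abs(r[target_key] - reference) <= tolerance * abs(reference):
                kept.append(r)
    return kept

# Hypothetical runs: three replicates at one condition, one run at another.
runs = [
    {"temp_C": 750, "time_min": 30, "yield": 0.80},
    {"temp_C": 750, "time_min": 30, "yield": 0.82},
    {"temp_C": 750, "time_min": 30, "yield": 0.50},
    {"temp_C": 800, "time_min": 30, "yield": 0.90},
]
cleaned = drop_inconsistent_replicates(runs, ["temp_C", "time_min"], "yield")
print(len(cleaned))
```

Conditions with a single run pass through unchanged, since there is nothing to compare them against.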

Model Training with Hyperparameter Optimization

- Software Stack: Implement using Python libraries (e.g., xgboost, scikit-learn).

- Data Splitting: Split the dataset into training (e.g., 80%) and test (e.g., 20%) sets, ensuring similar feature distributions [23].

- Hyperparameter Tuning: Utilize a multi-algorithm ensemble optimization framework like Nevergrad. Key hyperparameters to optimize include:

- max_depth: Maximum depth of a tree (e.g., 3 to 10).

- learning_rate: Shrinks the feature weights to make the boosting process more conservative (e.g., 0.01 to 0.3).

- n_estimators: Number of boosting rounds.

- subsample: Fraction of samples used for fitting individual trees [23].

- Validation: Employ 5-fold cross-validation on the training set to assess model generalizability during tuning [23].


Model Evaluation and Interpretation

- Performance Metrics: Evaluate the final model on the held-out test set using accuracy, area under the ROC curve (AUROC), and confusion matrices [18].

- Interpretation: Apply SHapley Additive exPlanations (SHAP) to identify the most influential experimental parameters (e.g., reaction temperature is often critical) for model predictions [23].
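The evaluation metrics named above can be written out in a few lines of plain Python, which may help when checking library output. The labels, scores, and 0.5 decision threshold below are made-up values, and the AUROC is computed by direct pairwise comparison, which is only practical for small test sets.

```python
def confusion_matrix(y_true, y_pred):
    """Counts of true/false positives and negatives for binary labels (1 = successful growth)."""
    pairs = list(zip(y_true, y_pred))
    return {
        "tp": sum(1 for t, p in pairs if t == 1 and p == 1),
        "fp": sum(1 for t, p in pairs if t == 0 and p == 1),
        "fn": sum(1 for t, p in pairs if t == 1 and p == 0),
        "tn": sum(1 for t, p in pairs if t == 0 and p == 0),
    }

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)

def auroc(y_true, scores):
    """Probability that a random positive is scored above a random negative (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical held-out labels and predicted probabilities of successful growth.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

print(confusion_matrix(y_true, y_pred))
print(accuracy(y_true, y_pred), auroc(y_true, scores))
```

Accuracy depends on the chosen threshold, whereas AUROC summarizes ranking quality across all thresholds, which is why the two are usually reported together.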

XGBoost Synthesis Optimization Workflow

Protocol 2: Support Vector Machines (SVM) for Property Prediction

This protocol describes the use of SVM for regression (SVR) to predict properties like electrophoretic mobility or mechanical properties based on molecular or structural descriptors [19] [20].

Feature Engineering and Selection

- Descriptor Calculation: Compute molecular or crystal structure descriptors. These can be simple (e.g., atomic radius, elemental properties) or complex (quantum-chemical descriptors from DFT calculations) [19].

- Feature Selection: Use algorithms like the Successive Projections Algorithm (SPA) to select a subset of descriptors with low multi-collinearity to improve model performance and interpretability [19].

Model Training with Kernel Selection

- Data Preparation: Split data into training and test sets. Standardize features to have zero mean and unit variance, which is critical for SVM performance.

- Kernel Choice: Test different kernel functions (e.g., RBF, polynomial) to handle nonlinear relationships [20].

- Hyperparameter Tuning: Optimize key parameters via grid or random search. For an RBF kernel, this includes:

- C: Regularization parameter (controls trade-off between maximizing margin and minimizing error).

- gamma: Kernel coefficient (defines the influence of a single training example).

Validation and Prediction

- Validation: Use k-fold cross-validation on the training set to ensure model robustness.

- Testing: Evaluate the final SVR model on the test set using metrics like Root Mean Square Error (RMSE) and Coefficient of Determination (R²) [19].
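A short sketch can clarify why standardization and gamma matter for an RBF-kernel SVR. It implements feature standardization, the RBF kernel k(x, y) = exp(-gamma * ||x - y||²), and RMSE in plain Python; the function names and numeric values are illustrative, and in practice a library implementation would be used for the SVR itself.

```python
import math

def standardize(column):
    """Scale one feature column to zero mean and unit variance."""
    mean = sum(column) / len(column)
    std = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column))
    if std == 0:
        return [0.0 for _ in column]  # constant feature carries no information
    return [(v - mean) / std for v in column]

def rbf_kernel(x, y, gamma):
    """RBF kernel: similarity decays with squared Euclidean distance, at a rate set by gamma."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)

def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

# A larger gamma makes the same pair of points look less similar.
x, y = [1.0, 0.0], [0.0, 0.0]
print(rbf_kernel(x, y, gamma=0.1), rbf_kernel(x, y, gamma=10.0))
print(standardize([1.0, 2.0, 3.0]))
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```

Because the kernel depends on raw distances, an unscaled descriptor with a large numeric range would dominate the similarity, which is the reason standardization is treated as critical above.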

Protocol 3: Neural Networks for Advanced Synthesis and Property Prediction

This protocol covers the application of advanced neural networks, from specialized architectures like HATNet to universal potentials like PFP [17] [21].

Data Preparation for Deep Learning

- Input Representation: For synthesis optimization (e.g., with HATNet), input features are similar to XGBoost (experimental parameters) [17]. For atomistic simulations (e.g., with PFP), input is the atomic structure (element types and 3D coordinates) [21].

- Dataset Scaling: Deep learning often requires large datasets. For universal potentials, this involves aggregating massive datasets from diverse sources, including unstable and hypothetical structures to improve robustness [21].

Model Configuration and Training

- HATNet for Synthesis: Implement a Hierarchical Attention Transformer Network. This architecture uses a shared attention-based encoder with multi-head attention mechanisms to automatically learn complex interactions between synthesis parameters for multiple tasks (classification and regression) [17].

- Universal NNP (PFP): Employ a model that uses a neural network architecture designed to be element-agnostic, capable of handling any combination of 45 elements. The architecture should leverage higher-order geometric features and satisfy necessary physical invariances (e.g., rotation, translation) [21].

- Federated Learning: To train on distributed data without centralization, use a federated learning schema. A central server coordinates training by aggregating model parameter updates from multiple clients holding local datasets, thus preserving data privacy [22].
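The server-side aggregation in the federated learning step can be sketched as a sample-size-weighted average of client parameters, in the style of federated averaging. The aggregation rule actually used in [22] is not specified here, so the weighting scheme, the flat parameter lists, and the client sizes are assumptions.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighting each client by its local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]

# Two hypothetical clients holding 100 and 300 local samples.
client_weights = [[1.0, 2.0], [3.0, 4.0]]
client_sizes = [100, 300]
global_weights = federated_average(client_weights, client_sizes)
print(global_weights)
```

Only the parameter vectors and sample counts leave each client in this scheme; the raw local datasets are never transmitted.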

Simulation and Prediction

- Property Prediction: Use the trained network to predict properties like formation energy or band gap [22].

- Atomistic Simulations: Deploy the universal NNP in Molecular Dynamics (MD) or Nudged Elastic Band (NEB) simulations to study finite-temperature dynamics, diffusion pathways, and activation energies [21].

Neural Network Prediction and Simulation

Research Reagent and Computational Solutions

The table below lists key computational and experimental "reagents" essential for conducting ML-guided materials synthesis research.

Table 2: Essential Research Reagent Solutions for ML-Guided Materials Synthesis

| Category | Item | Function and Application Notes |

|---|---|---|

| Computational Frameworks | XGBoost Library [23] | Provides the core implementation of the XGBoost algorithm for classification and regression tasks. |

| Computational Frameworks | Nevergrad Optimization Library [23] | Enables gradient-free hyperparameter optimization for ML models, integrating algorithms like CMA-ES and PSO. |

| Computational Frameworks | Neural Network Potential (PFP) [21] | A universal potential for atomistic simulations across 45 elements, replacing DFT in large-scale MD. |

| Data Sources | Historical Synthesis Database [18] | A curated dataset of past experimental conditions and outcomes, serving as the foundational training data. |

| Data Sources | High-Throughput Computation Databases (e.g., Materials Project) [21] | Sources of DFT-calculated properties for training machine learning potentials and property predictors. |

| Software & Libraries | SHAP (SHapley Additive exPlanations) [23] | Provides post-hoc model interpretability, quantifying the contribution of each input feature to a prediction. |

| Software & Libraries | Federated Learning Framework [22] | A software architecture that enables multi-institutional model training without sharing raw local data. |

| Synthesis Parameters (Features) | Reaction Temperature [17] [18] | A critical continuous variable in CVD and hydrothermal synthesis, strongly influencing growth outcomes. |

| Synthesis Parameters (Features) | Precursor Concentration & Gas Flow Rates [17] | Continuous variables defining the chemical environment and mass transport during synthesis. |

| Synthesis Parameters (Features) | Chamber Pressure [17] | A key continuous parameter in vacuum-based synthesis techniques like CVD. |

The optimization of synthesis conditions for advanced inorganic materials represents a significant challenge in materials science, traditionally relying on time-consuming and costly trial-and-error experimentation [17]. The chemical vapor deposition (CVD) process, crucial for producing two-dimensional materials like molybdenum disulfide (MoS₂), is influenced by numerous interdependent factors including reaction temperature, chamber pressure, and carrier gas flow rate, creating a complex optimization landscape [17]. To address these challenges, Hierarchical Attention Networks (HATNet) have emerged as a transformative deep learning architecture capable of automatically capturing intricate, high-order feature dependencies within experimental parameters [17]. This application note details the implementation, performance, and experimental protocols for HATNet in machine learning-assisted inorganic materials synthesis research, providing scientists with practical frameworks for deploying these advanced architectures in their experimental workflows.

Core Architectural Principles of HATNet

HATNet fundamentally extends the capabilities of traditional machine learning approaches through its hierarchical multi-head self-attention (H-MHSA) mechanism, which systematically models relationships across different scales of feature abstraction [24]. Unlike conventional transformer architectures that compute attention across all patches or tokens simultaneously—leading to prohibitive computational complexity for large-scale material datasets—H-MHSA employs a structured, multi-tiered approach [24].

The processing pipeline operates through three distinct phases of feature relationship capture:

- Local Relationship Modeling: The input image or feature representation is first divided into small patches, with self-attention computed within localized groups to capture fine-grained, short-range dependencies [24]

- Global Dependency Modeling: These local patches are progressively merged into larger units, with self-attention calculated for the reduced set of merged tokens to model long-range, global dependencies across the feature space [24]

- Feature Aggregation: The locally and globally attentive features are intelligently aggregated to produce a unified representation that preserves both granular details and contextual understanding [24]

This hierarchical strategy dramatically reduces computational complexity from O(N²d) in standard transformers to O(NG₁² + N²/G₂²), where G₁ represents local window size and G₂ denotes the global merge factor, enabling efficient processing of high-dimensional material synthesis data [25].

Table 1: Performance Comparison of HATNet Against Traditional ML Methods in Material Synthesis

| Model | Task | Performance Metric | Value | Computational Efficiency |

|---|---|---|---|---|

| HATNet | MoS₂ Growth Classification | Accuracy | 95% [17] | Moderate |

| HATNet | CQD PLQY Estimation (Inorganic) | MSE | 0.003 [17] | Moderate |

| HATNet | CQD PLQY Estimation (Organic) | MSE | 0.0219 [17] | Moderate |

| XGBoost | MoS₂ Synthesis | Accuracy | Lower than HATNet [17] | High |

| SVM | Material Property Prediction | Limited in capturing complex dependencies [17] | N/A | High |

Application in Inorganic Materials Synthesis

MoS₂ Synthesis Optimization

In the chemical vapor deposition of MoS₂, HATNet has demonstrated exceptional capability in classifying synthesis outcomes based on experimental parameters. The network processes multiple interdependent variables including temperature gradients, precursor concentration ratios, pressure conditions, and gas flow rates, learning their complex interactions through its hierarchical attention mechanism [17]. The model achieves a remarkable 95% classification accuracy in predicting successful growth conditions, significantly outperforming traditional methods like XGBoost and support vector machines [17]. This performance advantage stems from HATNet's ability to automatically discover and weight the most critical parameter interactions without relying on manual feature engineering, which has traditionally limited the effectiveness of machine learning in synthesis optimization.

Carbon Quantum Dot Yield Estimation

For photoluminescent quantum yield (PLQY) estimation of carbon quantum dots, HATNet operates on hydrothermal synthesis parameters, capturing the nonlinear relationships between precursor compositions, reaction times, temperature profiles, and surface functionalization agents [17]. The architecture achieves a mean squared error of 0.003 on inorganic compositions and 0.0219 on organic compositions, demonstrating both high precision and adaptability across material classes [17]. This dual capability for classification and regression tasks within a unified framework positions HATNet as a versatile tool for materials scientists seeking to optimize synthesis conditions across diverse material systems.

Experimental Protocols and Methodologies

Data Preparation Protocol

Materials Synthesis Data Collection

- Collect comprehensive synthesis data including precursor specifications, temperature profiles, pressure conditions, reaction durations, and environmental parameters [17]

- For MoS₂ CVD synthesis: Document substrate preparation methods, sulfurization time, precursor evaporation rates, and quenching procedures [17]

- For CQD hydrothermal synthesis: Record precursor molar ratios, solvent compositions, autoclave specifications, heating rates, and cooling methods [17]

- Annotate all experimental outcomes with corresponding characterization data (SEM, TEM, PL spectra, XRD) [17]

Feature Preprocessing Pipeline

- Normalize all continuous parameters to zero mean and unit variance

- Encode categorical variables (e.g., substrate type, precursor source) using one-hot encoding

- Partition data into training (70%), validation (15%), and test (15%) sets maintaining temporal consistency if applicable

- Implement data augmentation through synthetic minority over-sampling for imbalanced outcome classes
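The first three preprocessing steps can be sketched with the standard library alone. The helper names, the fixed seed, and the example values are hypothetical; the over-sampling step is not shown, and a chronological split would replace the shuffle where temporal consistency matters.

```python
import random
from statistics import mean, pstdev

def zscore(values):
    """Normalize a continuous parameter to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]

def one_hot(values):
    """One-hot encode a categorical variable; returns (encoded rows, category order)."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values], categories

def split_indices(n, train=0.70, val=0.15, seed=0):
    """Shuffle row indices and cut them into train/validation/test partitions."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_train = round(train * n)
    n_val = round(val * n)
    return (indices[:n_train],
            indices[n_train:n_train + n_val],
            indices[n_train + n_val:])

temps = zscore([700.0, 750.0, 800.0])
encoded, categories = one_hot(["SiO2/Si", "sapphire", "SiO2/Si"])
train_idx, val_idx, test_idx = split_indices(100)
print(temps, categories, len(train_idx), len(val_idx), len(test_idx))
```

To avoid leakage, the normalization statistics should be computed on the training partition only and then applied to the validation and test partitions.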

HATNet Implementation Protocol

Model Configuration

Training Procedure

- Initialize model with He normal weight initialization

- Employ Adam optimizer with learning rate of 0.001, β₁=0.9, β₂=0.999

- Implement learning rate scheduling with reduction factor of 0.5 on validation loss plateau

- For classification tasks: Use categorical cross-entropy loss with class weighting for imbalanced datasets

- For regression tasks: Employ mean squared error loss with gradient clipping at norm 1.0

- Train for maximum 500 epochs with early stopping after 30 epochs without validation improvement

- Regularize using dropout rate of 0.1 and L2 weight decay of 0.0001
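The interplay between learning rate reduction and early stopping can be traced with a small, framework-independent sketch that replays a list of validation losses. The plateau patience of 10 epochs is an assumption (the procedure above gives only the reduction factor), and `replay_schedule` is a hypothetical helper rather than part of any training library.

```python
def replay_schedule(val_losses, lr=1e-3, factor=0.5, lr_patience=10,
                    stop_patience=30, max_epochs=500):
    """Replay validation losses and report when training would stop and at what learning rate."""
    best = float("inf")
    epochs_since_best = 0   # drives early stopping
    epochs_on_plateau = 0   # drives learning rate reduction
    epoch = 0
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        if loss < best:
            best = loss
            epochs_since_best = 0
            epochs_on_plateau = 0
        else:
            epochs_since_best += 1
            epochs_on_plateau += 1
            if epochs_on_plateau >= lr_patience:
                lr *= factor           # halve the learning rate on a plateau
                epochs_on_plateau = 0
        if epochs_since_best >= stop_patience:
            break                      # early stopping
    return {"stopped_epoch": epoch, "final_lr": lr, "best_val_loss": best}

# Hypothetical run: five improving epochs followed by a long plateau.
losses = [1.0, 0.9, 0.8, 0.7, 0.6] + [0.6] * 60
print(replay_schedule(losses))
```

With these settings the learning rate is reduced several times before the 30-epoch patience runs out, so the model gets a few chances to escape the plateau before training ends.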

Model Validation Protocol

Performance Assessment

- For classification: Compute accuracy, precision, recall, F1-score, and ROC-AUC

- For regression: Calculate mean squared error, mean absolute error, and R² coefficient

- Perform k-fold cross-validation (k=5) to ensure robustness across data splits

- Compare against baseline models (XGBoost, SVM, standard transformers) using paired statistical tests

Interpretability Analysis

- Extract and visualize attention weights from both local and global attention layers

- Identify highly weighted feature interactions for scientific insight

- Generate sensitivity analysis by perturbing input parameters and monitoring output changes

Research Reagent Solutions and Materials

Table 2: Essential Research Materials for HATNet-Assisted Material Synthesis

| Material/Reagent | Specification | Function in Experimental Setup | Supplier Considerations |

|---|---|---|---|

| Molybdenum Precursors | (NH₄)₂MoO₄, MoO₃, MoCl₅ | CVD precursor for MoS₂ synthesis [17] | Purity >99.99%, particle size <45μm |

| Sulfur Precursors | S powder, (C₂H₅)₂S | Sulfur source for chalcogenization [17] | Anhydrous, purity >99.98% |

| Carbon Quantum Dot Precursors | Citric acid, urea, glucose | Carbon source for hydrothermal synthesis [17] | ACS reagent grade, store in dry conditions |

| Substrates | SiO₂/Si, sapphire, graphene | Growth substrate for 2D materials [17] | RCA cleaned, surface characterization required |

| CVD System | 3-zone furnace, quartz tubes | Controlled environment for material growth [17] | Precise temperature control (±1°C), gas flow regulation |

| Hydrothermal Reactors | Teflon-lined autoclaves | High-pressure, high-temperature CQD synthesis [17] | Pressure-rated, corrosion-resistant |

| Characterization Tools | Raman, PL, SEM, TEM | Material property validation [17] | Calibration standards required |

Cross-Domain Validation and Adaptability

The effectiveness of HATNet architectures extends beyond inorganic materials synthesis, demonstrating robust performance across diverse scientific domains. In medical imaging, a HATNet variant achieved 98.73% accuracy in segmenting 24 distinct anatomical and pathological structures in panoramic dental radiographs, leveraging hierarchical multi-scale attention to balance global context and local precision [26]. For micro-expression recognition, Hierarchical Feature Aggregation Networks (HFA-Net) incorporating multi-scale attention blocks captured subtle facial dynamics through local feature extraction and global dependency modeling [27]. In histopathological image analysis, HATNet matched the classification accuracy of 87 U.S. pathologists in diagnosing breast biopsy specimens, utilizing holistic attention to learn representations from clinically relevant tissue structures without explicit supervision [28]. These cross-domain successes underscore HATNet's fundamental capability to model complex, hierarchical relationships across diverse data modalities, reinforcing its value as a versatile architecture for scientific discovery.

Implementation Considerations for Materials Research

Computational Infrastructure Requirements

- GPU memory: Minimum 8GB for small datasets, 16GB+ for large-scale synthesis optimization

- RAM: 32GB minimum, 64GB recommended for in-memory data processing

- Storage: Fast SSD storage for efficient data loading and augmentation

- Software: Python 3.8+, TensorFlow 2.8+ or PyTorch 1.12+, CUDA 11.6+

Integration with Experimental Workflows

- Establish automated data pipelines from laboratory instrumentation to feature storage

- Implement real-time prediction capabilities for guided synthesis optimization

- Develop visualization dashboards for attention weight interpretation and model diagnostics

- Create continuous learning frameworks for model refinement with new experimental data

Validation and Reproducibility Framework

- Document all hyperparameters and random seeds for experimental replication

- Version control for both code and dataset iterations

- Perform ablation studies to quantify contribution of architectural components

- Compare against domain-specific physical models where available

The integration of Hierarchical Attention Networks into inorganic materials synthesis research represents a paradigm shift in experimental optimization, moving from traditional trial-and-error approaches to data-driven, predictive science. The architectural flexibility, interpretability features, and demonstrated performance advantages of HATNet position it as a foundational tool for accelerating the discovery and development of advanced materials systems.

The discovery of novel inorganic crystalline materials is fundamental to technological progress in areas ranging from clean energy to quantum computing. A critical bottleneck in this process is synthesizability: determining whether a proposed chemical composition can be successfully synthesized in the laboratory. Traditional approaches relying on chemical intuition and trial-and-error are inefficient, often requiring extensive experimental resources [12] [29].

Machine learning, particularly deep learning, offers a transformative approach to this challenge. This Application Note details SynthNN, a deep learning model for synthesizability classification of inorganic crystalline materials directly from their chemical compositions. Framed within a broader thesis on machine learning-assisted inorganic materials synthesis, this document provides researchers with a comprehensive guide to the model's operational principles, performance benchmarks, and protocols for application within materials discovery workflows.

SynthNN Model Fundamentals

Problem Formulation and Learning Framework

SynthNN reformulates material discovery as a classification task, predicting whether a given inorganic chemical formula is synthesizable. The model is trained on data from the Inorganic Crystal Structure Database (ICSD), which contains compositions of previously synthesized and structurally characterized materials [12] [30].

A key challenge is the lack of confirmed negative examples; unsynthesizable materials are rarely reported. SynthNN addresses this through a Positive-Unlabeled (PU) learning approach. The training dataset is augmented with a large number of artificially generated 'unsynthesized' material compositions. The model treats these as unlabeled data and probabilistically reweights them according to their likelihood of being synthesizable [12].
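The reweighting idea can be sketched with a weighted cross-entropy loss. This is a minimal illustration of one common PU-learning formulation, in which each unlabeled example counts as a positive with weight `w` and as a negative with weight `1 - w`. The source does not specify SynthNN's exact weighting scheme, so the function name and the way `w` is supplied are assumptions.

```python
import math


def pu_weighted_loss(probs, labels, unlabeled_pos_weight):
    """Weighted binary cross-entropy for positive-unlabeled data.

    probs: predicted probability of "synthesizable" for each example
    labels: 1 for a known positive, 0 for an unlabeled example
    unlabeled_pos_weight: w in [0, 1] per example, the estimated chance that
        an unlabeled example is actually positive (ignored for positives)
    """
    eps = 1e-12
    total = 0.0
    for p, y, w in zip(probs, labels, unlabeled_pos_weight):
        p = min(max(p, eps), 1.0 - eps)  # clamp to keep log() finite
        if y == 1:
            total += -math.log(p)
        else:
            # Unlabeled: partly a positive (weight w), partly a negative (1 - w).
            total += -(w * math.log(p) + (1.0 - w) * math.log(1.0 - p))
    return total / len(probs)
```

With every `w` set to zero, this reduces to ordinary binary cross-entropy that treats all unlabeled compositions as negatives. Raising `w` softens the penalty for scoring an unlabeled composition as synthesizable.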

Architecture and Representational Learning

SynthNN leverages an atom2vec representation, which uses a learned atom embedding matrix optimized alongside other neural network parameters [12]. This approach allows the model to:

- Learn Optimal Representations: It discovers chemically relevant descriptors directly from the distribution of synthesized materials, without relying on pre-defined features or human bias [12].

- Infer Chemical Principles: Experimental evidence indicates SynthNN autonomously learns fundamental chemical concepts such as charge-balancing, chemical family relationships, and ionicity from the data alone [12] [30].
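To make the representation concrete, the sketch below turns a formula into element fractions and combines per-element embedding vectors into a single composition vector. It assumes a fraction-weighted sum and a simple formula grammar with no parentheses or hydrates. The source does not describe how SynthNN combines its atom embeddings, and in a real model the embedding values would be learned during training instead of being supplied by hand.

```python
import re


def composition_fractions(formula):
    """Parse a simple formula such as 'TiO2' into {element: atomic fraction}."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {symbol: n / total for symbol, n in counts.items()}


def embed(formula, atom_embeddings):
    """Fraction-weighted sum of per-element vectors (an assumed pooling choice).

    atom_embeddings: {element: list of floats}; trainable in a real model.
    """
    fractions = composition_fractions(formula)
    dim = len(next(iter(atom_embeddings.values())))
    vector = [0.0] * dim
    for symbol, fraction in fractions.items():
        for k, value in enumerate(atom_embeddings[symbol]):
            vector[k] += fraction * value
    return vector
```

For example, `embed("TiO2", {"Ti": [1.0, 0.0], "O": [0.0, 1.0]})` places one third of the weight on the Ti vector and two thirds on the O vector.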

The following diagram illustrates the core architecture and learning workflow of SynthNN.

Performance Benchmarking

SynthNN's performance has been rigorously evaluated against both computational baselines and human experts.

Quantitative Comparison Against Computational Methods

The table below summarizes the performance of SynthNN compared to a charge-balancing heuristic and random guessing; the SynthNN entry is reported relative to a DFT formation-energy baseline. Precision, a critical metric for discovery efficiency, is the proportion of materials predicted to be synthesizable that are in fact synthesizable.

Table 1: Performance comparison of synthesizability prediction methods [12]

| Method | Key Principle | Positive-Class Precision |
|---|---|---|
| SynthNN | Data-driven classification with deep learning | 7x higher than DFT formation energy |
| Charge-Balancing | Net neutral ionic charge based on common oxidation states | Similar to SynthNN for detecting unsynthesized materials, but poor overall (only 37% of known materials are charge-balanced) |
| Random Guessing | Predictions weighted by class imbalance | Baseline performance level |
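As a reminder of what the precision column measures, here is a minimal sketch of positive-class precision computed from binary predictions. The function name is illustrative. In a positive-unlabeled setting, the "actual" labels for unlabeled compositions are themselves uncertain, so a value computed this way is best read as an estimate.

```python
def positive_class_precision(predicted, actual):
    """Fraction of items predicted synthesizable that are labeled synthesizable.

    predicted, actual: sequences of 0/1 (or False/True) of equal length.
    Returns 0.0 when nothing is predicted positive.
    """
    true_positives = sum(1 for p, a in zip(predicted, actual) if p and a)
    predicted_positives = sum(1 for p in predicted if p)
    return true_positives / predicted_positives if predicted_positives else 0.0
```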

Comparison Against Human Experts

In a head-to-head materials discovery challenge involving 20 expert materials scientists, SynthNN demonstrated superior efficiency and accuracy [12]:

- Precision: Achieved 1.5x higher precision than the best human expert.

- Speed: Completed the discovery task five orders of magnitude faster than the best human expert.

Protocol for Applying SynthNN in Materials Discovery Workflows

This protocol outlines the steps for integrating SynthNN to screen candidate materials, a process that can be seamlessly incorporated into computational material screening or inverse design workflows [12].

Materials Screening Workflow

The typical screening workflow and the integration point of SynthNN are visualized below.

Step-by-Step Procedure

Input Preparation

- Action: Compile a list of candidate chemical formulas for screening. The input should be in a standardized text format (e.g., "CsCl", "TiO2").

- Notes: No structural information is required, enabling the screening of hypothetical, undiscovered materials.

Model Inference

- Action: Submit the list of chemical formulas to the SynthNN model for prediction.

- Output: The model returns a synthesizability classification (e.g., synthesizable/not synthesizable) and/or a probability score for each candidate.

Result Triage and Prioritization

- Action: Rank the candidate materials based on the model's synthesizability score and other desirable properties identified in prior screening steps.

- Notes: This step helps experimentalists focus resources on the most promising candidates that are both functional and likely synthesizable.

Experimental Validation

- Action: Proceed with laboratory synthesis of the high-priority candidates.

- Notes: Model predictions are not a guarantee of synthesizability. Experimental validation remains crucial, as synthesis outcomes can be influenced by kinetics, specific reaction conditions, and other factors not captured by the composition-based model [12] [29].
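The inference and triage steps can be sketched as a small ranking routine. Here `toy_score` is a stand-in lookup table with made-up scores, not the SynthNN model, and the 0.5 threshold is an arbitrary illustrative choice. A real workflow would pass in the trained model's prediction function instead.

```python
def rank_candidates(formulas, score_fn, threshold=0.5):
    """Score each formula, drop those below the threshold, sort best first."""
    scored = [(formula, score_fn(formula)) for formula in formulas]
    kept = [(formula, score) for formula, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


def toy_score(formula):
    """Placeholder scorer with invented values; NOT a synthesizability model."""
    table = {"CsCl": 0.95, "TiO2": 0.90, "XeO9": 0.05}
    return table.get(formula, 0.5)
```

Calling `rank_candidates(["XeO9", "TiO2", "CsCl"], toy_score)` returns the two candidates above the threshold in descending score order. Scores from other screening steps, such as a predicted property, could be combined into the sort key in the same way.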

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and data resources essential for working with synthesizability prediction models like SynthNN.

Table 2: Essential resources for computational synthesizability prediction

| Resource Name | Type | Function in Research |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [12] | Data Repository | Provides a comprehensive collection of experimentally reported crystalline structures, serving as the primary source of positive training data for models like SynthNN. |
| atom2vec [12] | Material Representation | A learned featurization method that converts chemical formulas into numerical vectors, allowing the model to discern patterns without pre-defined chemical rules. |
| Positive-Unlabeled (PU) Learning Algorithms [12] | Machine Learning Framework | Enables model training in scenarios with only confirmed positive examples and a set of unlabeled examples (which contain both positive and negative instances). |
| Text-Mining Pipelines (e.g., for solution-based synthesis) [31] | Data Extraction Tool | Automates the extraction of structured synthesis recipes and parameters from scientific literature, expanding the data available for more advanced synthesis prediction. |

The integration of human expertise with machine intelligence represents a paradigm shift in computational materials discovery. The Materials Expert-AI (ME-AI) framework is a structured approach to hybrid AI that strategically combines the computational power of artificial intelligence with the contextual understanding and intuitive reasoning of human materials scientists. This framework addresses a critical bottleneck in computationally accelerated materials discovery: while high-throughput methods can predict new materials, they provide little guidance on actual synthesis parameters such as precursors, reaction temperatures, and processing times [3].

The ME-AI framework operates on the core principle of augmentation rather than automation, positioning AI as a tool that enhances human capabilities rather than replacing them. This approach is particularly valuable in materials synthesis research, where anthropogenic biases, cultural factors in experimental reporting, and the complex, multi-dimensional nature of synthesis parameters present challenges for purely data-driven approaches [3]. By leveraging the complementary strengths of human intuition and machine intelligence, the ME-AI framework enables more efficient navigation of the complex synthesis space for novel inorganic materials.

Table 1: Core Principles of the ME-AI Framework

| Principle | Description | Application to Materials Synthesis |
|---|---|---|
| Transparency | All stages of the AI process must be documented and reproducible [32] | Clear documentation of training data sources, feature selection, and model parameters for synthesis prediction |
| Validity | AI outputs must be methodologically sound and contextually relevant [32] | Ensuring synthesis predictions align with chemical principles and experimental constraints |
| Reliability | Consistent performance across diverse materials systems and conditions [32] | Robust prediction of synthesis parameters for both known and novel material classes |
| Comprehensiveness | Inclusion of diverse data sources and experimental contexts [32] | Incorporating literature data, experimental failures, and anomalous results in training data |
| Reflective Agency | Human experts maintain oversight and critical engagement [32] | Scientist-in-the-loop validation of AI-generated synthesis recommendations |

Quantitative Foundations of Hybrid AI Performance

The performance of hybrid AI systems in scientific applications depends on multiple interdependent factors that collectively determine their effectiveness. Research on human-AI hybrid performance has identified 24 critical factors that influence outcomes, grouped into four primary clusters: technological capabilities, human factors, task characteristics, and organizational context [33]. Understanding these factors is essential for designing effective ME-AI systems for materials research.

Analysis of factor dependencies reveals that transparency and trust emerge as the most influential nodes in the performance network, with disproportionate impact on overall system effectiveness [33]. In materials synthesis applications, this translates to the AI system's ability to provide interpretable rationales for its synthesis recommendations and to establish a track record of reliable predictions. The complex, non-linear interdependencies between these factors mean that human-AI collaboration in materials science likely forms a dynamic, evolving system rather than a simple combination of inputs [33].

Table 2: Key Performance Factors for Human-AI Hybrid Systems in Materials Research

| Factor Category | Critical Factors | Impact on Materials Synthesis Research |
|---|---|---|
| Technological Capabilities | Transparency, interpretability, accuracy, reliability [33] | Determines how well scientists can understand and trust AI synthesis recommendations |
| Human Factors | Domain expertise, cognitive biases, trust calibration, mental models [33] | Affects how materials scientists interpret and apply AI-generated synthesis strategies |
| Task Characteristics | Complexity, structure, novelty, time constraints [33] | Influences which synthesis problems are suitable for AI assistance versus human expertise |
| Collaboration Dynamics | Communication protocols, role allocation, feedback mechanisms [33] | Shapes how human scientists and AI systems interact throughout the research process |

The quantitative performance of machine learning systems in materials science applications varies significantly based on data quality and algorithm selection. Studies applying multiple supervised learning algorithms to materials classification problems have found that classification and regression tree (CART) and logistic regression (LR) algorithms often demonstrate superior performance for structured materials data [34]. In one systematic analysis, the inclusion of additional feature types (e.g., cuticular traits beyond macroscopic traits) improved identification accuracy from approximately 75% to over 90%, highlighting the importance of comprehensive data collection for hybrid AI systems [34].

Application Notes: ME-AI Framework for Predictive Materials Synthesis

Protocol 1: Text-Mining Synthesis Recipes with Human-in-the-Loop Validation

Purpose: Extract and structure synthesis parameters from scientific literature to create training data for predictive synthesis models.

Experimental Workflow:

- Literature Procurement: Obtain full-text permissions from major scientific publishers (Springer, Wiley, Elsevier, Royal Society of Chemistry, etc.). Filter for papers with HTML/XML formats published after 2000 for optimal parsing [3].

- Synthesis Paragraph Identification: Implement probabilistic assignment based on paragraphs containing keywords associated with inorganic materials synthesis. Use manual annotation of 100+ synthesis paragraphs to validate classification accuracy [3].

- Materials Extraction: Replace all chemical compounds with `<MAT>` placeholders and implement a bi-directional Long Short-Term Memory neural network with conditional random field layer (BiLSTM-CRF) to identify targets, precursors, and reaction media based on sentence context clues [3].

- Synthesis Operation Classification: Apply Latent Dirichlet Allocation (LDA) to cluster synonyms for synthesis operations (e.g., "calcined," "fired," "heated"). Manually assign token labels for annotated sets (100+ paragraphs, 664+ sentences) to train classification models [3].

- Recipe Compilation: Combine extracted precursors, targets, and operations into a structured JSON database. Attempt to build balanced chemical reactions, including volatile atmospheric gases, for reaction energetics calculation [3].

- Human Expert Validation: Implement cyclical validation with domain experts reviewing anomalous recipes and random samples. Target extraction yield of 28-30% from classified synthesis paragraphs to balanced reactions [3].
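A few of these steps can be sketched in simplified form. The example below swaps in plain keyword matching for the probabilistic paragraph classifier and a crude regular expression for compound detection, and it does not attempt the BiLSTM-CRF role assignment described above. The keyword set, the regex, and all function names are assumptions for illustration. The regex will also match all-caps acronyms, which is one reason the protocol relies on trained models and expert review.

```python
import json
import re

# Illustrative keyword set; the source does not list the actual keywords used.
SYNTHESIS_KEYWORDS = {"calcined", "fired", "heated", "sintered", "annealed"}

# Crude pattern: two or more element-like tokens with optional integer counts.
FORMULA_PATTERN = re.compile(r"\b(?:[A-Z][a-z]?\d*){2,}\b")


def is_synthesis_paragraph(text, min_hits=1):
    """Keyword-based stand-in for the probabilistic paragraph classifier."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return len(words & SYNTHESIS_KEYWORDS) >= min_hits


def mask_materials(sentence):
    """Replace formula-like tokens with <MAT> and return them in order."""
    materials = FORMULA_PATTERN.findall(sentence)
    masked = FORMULA_PATTERN.sub("<MAT>", sentence)
    return masked, materials


def compile_recipe(target, precursors, operations):
    """Assemble one structured recipe record as a JSON string."""
    record = {"target": target, "precursors": precursors, "operations": operations}
    return json.dumps(record, sort_keys=True)
```

For the sentence "BaCO3 and TiO2 were mixed and calcined at 1000 C to form BaTiO3.", `mask_materials` returns the masked sentence together with the three extracted formulas. Deciding which formula is the target and which are precursors is the part that needs the context-aware model and the human validation loop.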

Text Mining Synthesis Data

Protocol 2: Anomaly Detection for Novel Synthesis Hypothesis Generation

Purpose: Identify anomalous synthesis recipes that defy conventional intuition to generate novel mechanistic hypotheses.

Experimental Workflow:

- Data Quality Assessment: Evaluate text-mined synthesis datasets against the "4 Vs" framework: Volume, Variety, Veracity, and Velocity. Acknowledge limitations in data completeness and representation [3].

- Feature Engineering: Encode qualitative traits (e.g., precursor types, synthesis methods) using label encoding or one-hot encoding to convert categorical variables into machine-readable formats [34].