Linear Dependency in Basis Sets: Causes, Consequences, and Solutions for Computational Drug Discovery

Abstract

This article provides a comprehensive analysis of linear dependency in basis sets, a critical challenge in computational chemistry that directly impacts the accuracy and stability of quantum mechanical calculations for drug discovery. We explore the foundational mathematical principles of linear independence and spanning sets, detail the methodological causes of dependency in chemical systems, and present practical troubleshooting and optimization strategies used in modern software. Furthermore, we examine validation techniques and comparative performance of different basis sets, with specific applications to pharmaceutical research including QSPR modeling and AI-assisted drug design. This guide equips researchers and drug development professionals with the knowledge to identify, prevent, and resolve linear dependency issues, thereby enhancing the reliability of computational predictions in biomedical applications.

The Mathematical Basis of Linear Dependency: From Vector Spaces to Molecular Orbitals

Defining Linear Independence and Span in Vector Spaces

Linear algebra provides the foundational mathematical framework for numerous scientific computing applications, including computational chemistry and drug discovery. The concepts of linear independence and span are fundamental to understanding vector spaces, which in turn form the basis for representing molecular structures, predicting properties, and optimizing chemical compounds [1]. In computational research, particularly in basis set applications, grasping how linear dependencies arise is crucial for developing accurate models and avoiding numerical instability in simulations [2].

This technical guide examines the mathematical definitions of linear independence and span, explores their interrelationships, and demonstrates their critical importance in basis set research with direct applications to drug development and materials science. We provide researchers with both theoretical foundations and practical methodologies for identifying and addressing linear dependence issues in experimental settings.

Mathematical Foundations and Definitions

Linear Independence: Formal Definition

In linear algebra, a set of vectors \( S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\} \) in a vector space \( V \) is linearly independent if the vector equation:

\[ a_1\mathbf{v}_1 + a_2\mathbf{v}_2 + \cdots + a_n\mathbf{v}_n = \mathbf{0} \]

has only the trivial solution \( a_1 = a_2 = \cdots = a_n = 0 \) [3] [4].

Conversely, the set is linearly dependent if there exist scalars \( a_1, a_2, \ldots, a_n \), not all zero, that satisfy the equation. This implies that at least one vector in the set can be expressed as a linear combination of the others [4] [5]. For example, if \( a_1 \neq 0 \), we can write:

\[ \mathbf{v}_1 = -\frac{a_2}{a_1}\mathbf{v}_2 - \cdots - \frac{a_n}{a_1}\mathbf{v}_n \]

This formal definition has important implications:

- Any set containing the zero vector is automatically linearly dependent [3]

- Two vectors are linearly dependent if and only if they are collinear (one is a scalar multiple of the other) [3]

- If a subset of vectors is linearly dependent, then the entire set is linearly dependent [3]

Span: Formal Definition

The span of a set of vectors \( S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\} \) is the set of all possible linear combinations of those vectors [6] [7]. Formally:

\[ \text{span}(S) = \left\{ \lambda_1\mathbf{v}_1 + \lambda_2\mathbf{v}_2 + \cdots + \lambda_n\mathbf{v}_n \mid \lambda_1, \lambda_2, \ldots, \lambda_n \in K \right\} \]

where \( K \) is the field over which the vector space is defined [6].

An equivalent definition characterizes the span as the intersection of all subspaces of \( V \) that contain \( S \), making it the smallest subspace containing \( S \) [8]. This dual characterization provides both algebraic and geometric perspectives on the concept.

For example, the span of two non-collinear vectors in \( \mathbb{R}^3 \) is a plane through the origin, while the span of three linearly independent vectors in \( \mathbb{R}^3 \) is the entire space [3].

Relationship Between Linear Independence and Span

Linear independence and span are complementary concepts that together define the notion of a basis in vector spaces. The Increasing Span Criterion establishes that a set of vectors \( \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\} \) is linearly independent if and only if, for every \( k \), the vector \( \mathbf{v}_k \) is not in the span of the previous vectors \( \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_{k-1}\} \) [3].

This relationship reveals that linear independence ensures that each vector in a set contributes something new to the span that couldn't already be represented by linear combinations of the others. When vectors are linearly dependent, at least one vector is redundant in the sense that removing it does not change the span [3].

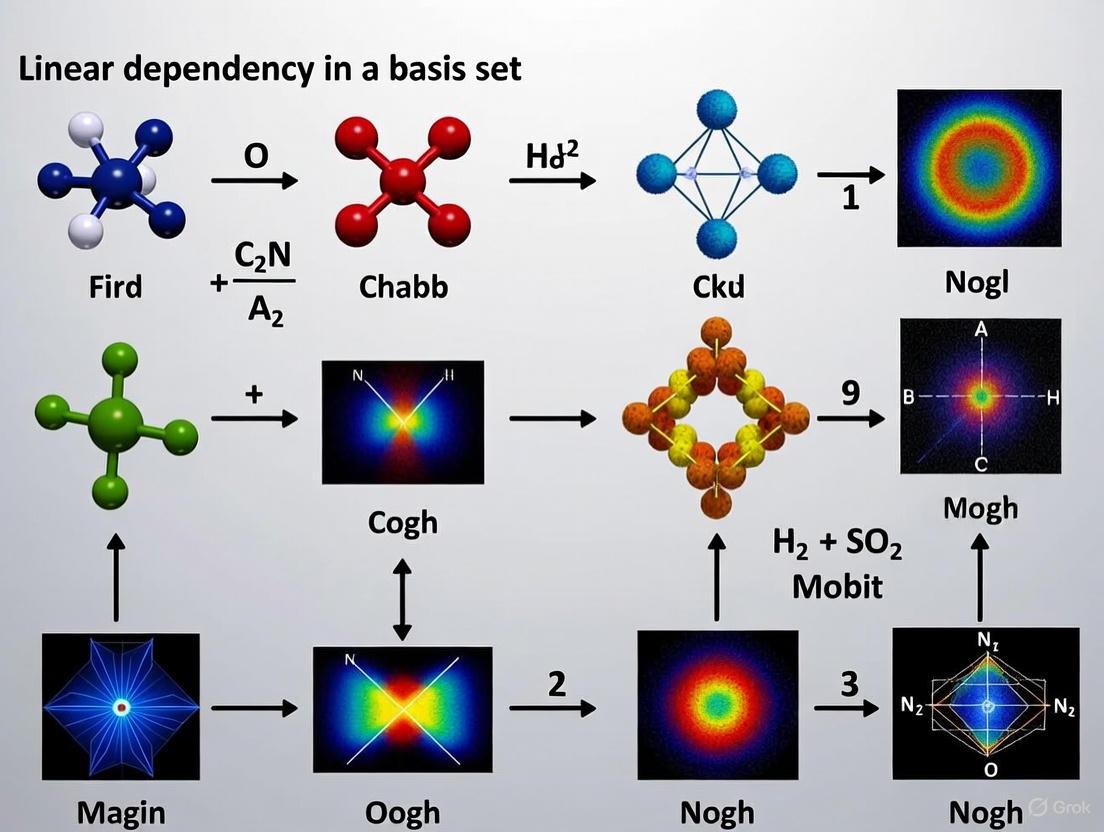

Figure 1: Logical relationship between linear independence, span, and basis formation in vector spaces.

Mathematical Formulations and Criteria

Testing for Linear Independence

The formal definition of linear independence translates directly to a practical testing methodology. For vectors \( \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\} \) in \( \mathbb{R}^m \), we can form the \( m \times n \) matrix \( A = \begin{bmatrix} \mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_n \end{bmatrix} \). The vectors are linearly independent if and only if the matrix equation \( A\mathbf{x} = \mathbf{0} \) has only the trivial solution \( \mathbf{x} = \mathbf{0} \) [3].

This occurs precisely when the matrix \( A \) has a pivot position in every column, or equivalently, when the null space of \( A \) contains only the zero vector [3]. For a square matrix (\( n = m \)), this is equivalent to the matrix having full rank or being invertible.
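The rank form of this test is easy to try numerically. The following is a minimal sketch, assuming NumPy is available; the helper name `is_linearly_independent` and the three example vectors are illustrative and not taken from the cited sources. It relies on `numpy.linalg.matrix_rank`, which estimates the rank from singular values, so the answer depends on the tolerance used.

```python
import numpy as np

def is_linearly_independent(vectors, tol=None):
    """Return True if the given vectors are linearly independent.

    The vectors are stacked as columns of A, and the numerical rank of A
    is compared with the number of columns. `tol` is forwarded to
    numpy.linalg.matrix_rank (None selects NumPy's default tolerance).
    """
    A = np.column_stack(vectors)  # m x n matrix, one vector per column
    return bool(np.linalg.matrix_rank(A, tol=tol) == A.shape[1])

v1 = np.array([1.0, 0.0, 0.0])
v2 = np.array([0.0, 1.0, 0.0])
v3 = v1 + 2.0 * v2  # deliberately constructed inside span{v1, v2}

independent_pair = is_linearly_independent([v1, v2])
independent_triple = is_linearly_independent([v1, v2, v3])
print(independent_pair, independent_triple)
```

Because the rank is computed in floating point, vectors that are only nearly dependent can pass or fail this test depending on `tol`, which is the same issue that arises for basis sets.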

Theorem: A set of vectors \( \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\} \) is linearly dependent if and only if at least one of the vectors is in the span of the others [3].

Proof: If the set is linearly dependent, then there exist scalars \( a_1, a_2, \ldots, a_n \), not all zero, such that \( \sum_{i=1}^n a_i\mathbf{v}_i = \mathbf{0} \). Suppose \( a_k \neq 0 \). Then we can solve for \( \mathbf{v}_k \):

\[ \mathbf{v}_k = -\frac{1}{a_k}\sum_{i \neq k} a_i\mathbf{v}_i \]

which shows that \( \mathbf{v}_k \) is in the span of the other vectors. Conversely, if some \( \mathbf{v}_k \) is in the span of the others, then there exist scalars \( b_i \) such that \( \mathbf{v}_k = \sum_{i \neq k} b_i\mathbf{v}_i \), which can be rearranged to \( \mathbf{v}_k - \sum_{i \neq k} b_i\mathbf{v}_i = \mathbf{0} \), a nontrivial linear combination that equals zero. ∎

Computational Approaches

In computational applications, linear independence is often assessed by examining the singular values or eigenvalues of the matrix formed by the vectors. For basis sets in computational chemistry, this is typically done through the overlap matrix [2].

The overlap matrix \( S \) has elements \( S_{ij} = \langle \phi_i | \phi_j \rangle \), where \( \phi_i \) and \( \phi_j \) are basis functions. The presence of very small eigenvalues in this matrix indicates near-linear dependencies in the basis set [2]. The tolerance for these eigenvalues is system-dependent, but values smaller than \( 10^{-6} \) to \( 10^{-8} \) often signal problematic linear dependencies that need addressing.
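To see how close exponents drive the smallest eigenvalue toward zero, the sketch below builds the overlap matrix for normalized s-type Gaussian primitives on a single center, using the closed-form overlap for that special case. It assumes NumPy; the function name and the two three-function exponent lists are illustrative choices, and this toy model is not the full molecular calculation discussed in the text.

```python
import numpy as np

def same_center_s_overlap(exponents):
    """Overlap matrix of normalized s-type Gaussians sharing one center.

    For normalized primitives proportional to exp(-a r^2), the overlap is
    S_ij = (2 * sqrt(a_i * a_j) / (a_i + a_j)) ** 1.5.
    """
    a = np.asarray(exponents, dtype=float)
    ai, aj = a[:, None], a[None, :]
    return (2.0 * np.sqrt(ai * aj) / (ai + aj)) ** 1.5

well_separated = [100.0, 10.0, 1.0]            # each exponent 10x the next
nearly_equal = [94.8087090, 92.4574853342, 1.0]  # first two differ by ~2.5%

# eigvalsh returns eigenvalues in ascending order, so index 0 is the smallest.
min_separated = np.linalg.eigvalsh(same_center_s_overlap(well_separated))[0]
min_nearly_equal = np.linalg.eigvalsh(same_center_s_overlap(nearly_equal))[0]

print(f"well separated: smallest eigenvalue = {min_separated:.3e}")
print(f"nearly equal:   smallest eigenvalue = {min_nearly_equal:.3e}")
```

With only three functions the smallest eigenvalue stays well above typical tolerances; in a large uncontracted set, many such near-coincidences combine and push eigenvalues much lower.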

Table 1: Key Properties and Their Implications in Linear Independence Analysis

| Property | Mathematical Formulation | Practical Implication |
|---|---|---|
| Pivot Criterion | Matrix has pivot in every column | Vectors are linearly independent |
| Determinant Test | det(A) ≠ 0 (for square matrices) | Columns are linearly independent |
| Rank Condition | rank(A) = number of vectors | Vectors are linearly independent |
| Null Space | Null(A) = {0} | Columns are linearly independent |
| Overlap Matrix | Small eigenvalues (< tolerance) | Near-linear dependencies present |

Linear Dependence in Basis Set Research

Origins of Linear Dependencies in Basis Sets

In computational chemistry and materials science, basis sets are collections of mathematical functions used to represent molecular orbitals. Linear dependencies arise when these functions become numerically redundant, which occurs primarily in two scenarios:

Overly-rich basis sets: When basis sets contain too many functions with similar characteristics, they become numerically linearly dependent [2]. This frequently happens with large, uncontracted basis sets supplemented with "tight" functions for high accuracy.

Geometric proximity: In molecular systems with atoms positioned close together, the basis functions centered on different atoms may become numerically similar, leading to linear dependencies in the combined basis [2].

A concrete example comes from quantum chemistry calculations for water molecules using an uncontracted aug-cc-pV9Z basis set supplemented with "tight" functions from cc-pCV7Z. In this case, researchers observed near-linear dependencies manifested as very small eigenvalues in the overlap matrix [2]. The problematic basis functions were identified as those whose exponents are close in relative (percentage) terms, such as 94.8087090 and 92.4574853342, highlighting how numerical similarity leads to linear dependence.

Consequences of Linear Dependencies

Linear dependencies in basis sets create significant computational challenges:

- Numerical instability: The overlap matrix becomes ill-conditioned, causing failures in matrix inversion and diagonalization procedures

- Increased computational cost: More iterations are required for self-consistent field convergence

- Reduced accuracy: Paradoxically, despite using larger basis sets expected to yield higher accuracy, linear dependencies can produce less reliable results

- Algorithmic failure: Some quantum chemistry methods may fail entirely when severe linear dependencies are present

Table 2: Quantitative Measures for Linear Dependence Analysis in Basis Sets

| Measure | Calculation Method | Interpretation |
|---|---|---|
| Overlap Matrix Eigenvalues | Diagonalize \( S_{ij} = \langle \phi_i \vert \phi_j \rangle \) | Small eigenvalues indicate linear dependencies |
| Condition Number | \( \kappa(S) = \frac{\lambda_{\text{max}}}{\lambda_{\text{min}}} \) | Large values indicate ill-conditioning |
| Basis Function Similarity | Percentage difference between exponents | Small percentage differences suggest potential redundancy |
| Pivoted Cholesky Decomposition | Decomposition with column pivoting | Reveals numerical rank and dependencies |

Experimental Protocols and Methodologies

Detecting Linear Dependencies

The standard protocol for identifying linear dependencies in basis sets involves analyzing the overlap matrix:

- Compute the overlap matrix \( S \) with elements \( S_{ij} = \langle \phi_i | \phi_j \rangle \), where \( \phi_i \) and \( \phi_j \) are basis functions

- Diagonalize the overlap matrix to obtain its eigenvalues \( \lambda_1, \lambda_2, \ldots, \lambda_N \)

- Identify near-linear dependencies by flagging eigenvalues smaller than a predetermined tolerance (typically \( 10^{-6} \) to \( 10^{-8} \))

- Determine the number of significant linear dependencies by counting eigenvalues below the tolerance threshold

This methodology was successfully applied in quantum chemistry calculations, where researchers identified two near-linear dependencies in a water molecule basis set by detecting two exceptionally small eigenvalues in the overlap matrix [2].
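The detection steps above can be sketched in a few lines. This is an illustrative implementation, assuming NumPy; the function name `find_near_dependencies`, the default tolerance of `1e-7`, and the toy Gram matrix standing in for a real overlap matrix are all assumptions made for the example. As an extra diagnostic, it reports which two basis functions carry the largest weight in each flagged eigenvector.

```python
import numpy as np

def find_near_dependencies(S, tol=1e-7):
    """Diagonalize a symmetric overlap matrix and flag eigenvalues below tol.

    Returns the ascending eigenvalues, the number below `tol`, the condition
    number lambda_max / lambda_min, and, for each flagged eigenvector, the
    indices of its two largest-magnitude components.
    """
    eigvals, eigvecs = np.linalg.eigh(S)  # eigenvalues in ascending order
    flagged = np.where(eigvals < tol)[0]
    condition_number = eigvals[-1] / eigvals[0]
    suspects = [
        sorted(int(i) for i in np.argsort(-np.abs(eigvecs[:, k]))[:2])
        for k in flagged
    ]
    return eigvals, len(flagged), condition_number, suspects

# Toy "basis": four unit vectors in R^4, where the last one almost
# duplicates the first. Their Gram matrix plays the role of S.
eps = 1e-4
B = np.array([
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [1.0, 0.0, 0.0, eps],
])
B /= np.linalg.norm(B, axis=1, keepdims=True)
S = B @ B.T

eigvals, n_dependencies, kappa, suspects = find_near_dependencies(S)
print(n_dependencies, suspects, f"{kappa:.2e}")
```

In a real calculation `S` would come from the quantum chemistry package's integral routines rather than from a Gram matrix of toy vectors.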

Resolving Linear Dependencies

Once detected, linear dependencies can be addressed through several approaches:

- Basis set pruning: Remove functions that contribute to linear dependencies, particularly those with very similar exponents

- Pivoted Cholesky decomposition: A robust numerical method that identifies and eliminates linearly dependent basis functions while preserving numerical stability [2]

- Subspace projection: Project out the linearly dependent components from the basis set

The basis set pruning approach was effectively demonstrated in the water molecule case study, where researchers removed basis functions with exponents 94.8087090 and 45.4553660, which were percentage-wise similar to other basis functions (92.4574853342 and 52.8049100131, respectively) [2]. This elimination cured the near-linear dependencies, as evidenced by the overlap matrix no longer having eigenvalues below the tolerance threshold.
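Of the approaches listed above, subspace projection is often realized through canonical orthogonalization: the overlap matrix is diagonalized, eigenvectors with eigenvalues below the tolerance are dropped, and the rest are scaled so the retained functions are orthonormal. The sketch below assumes NumPy and uses a hypothetical 4x4 overlap matrix; it shows this one standard technique and is not necessarily the procedure used in the cited work.

```python
import numpy as np

def canonical_orthogonalizer(S, tol=1e-7):
    """Return X such that X.T @ S @ X is the identity on the retained subspace.

    Eigenvectors of S with eigenvalue <= tol are discarded, which projects
    out the (near-)linearly dependent combinations of basis functions.
    """
    eigvals, eigvecs = np.linalg.eigh(S)
    keep = eigvals > tol
    # Scale each retained eigenvector (column) by 1 / sqrt(eigenvalue).
    return eigvecs[:, keep] / np.sqrt(eigvals[keep])

# Hypothetical overlap matrix: functions 0 and 3 are almost identical.
S = np.eye(4)
S[0, 3] = S[3, 0] = 1.0 - 5e-9

X = canonical_orthogonalizer(S)
residual = np.abs(X.T @ S @ X - np.eye(X.shape[1])).max()
print(X.shape, f"{residual:.1e}")
```

Unlike pruning, this keeps every original function in play but reduces the number of independent combinations, here from four to three.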

Figure 2: Experimental workflow for detecting and resolving linear dependencies in basis sets for computational chemistry.

Research Reagent Solutions

Table 3: Essential Computational Tools for Linear Dependence Analysis

| Tool/Algorithm | Primary Function | Application Context |
|---|---|---|
| Overlap Matrix Analysis | Detects near-linear dependencies via eigenvalue spectrum | Basis set quality assessment |
| Pivoted Cholesky Decomposition | Identifies and removes linearly dependent functions | Basis set optimization [2] |
| Singular Value Decomposition (SVD) | Determines numerical rank and identifies dependencies | General linear dependence analysis |
| Diagonalization Routines | Computes eigenvalues of overlap matrices | Linear dependence detection |
| Basis Set Pruning Tools | Removes redundant basis functions | Custom basis set generation |
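As an illustration of the pivoted Cholesky entry in the table, the following is a minimal textbook-style sketch, assuming NumPy. The function name, the tolerance on the residual diagonal, and the toy overlap matrix are assumptions for the example; production codes use their own, more careful implementations. The pivots it returns are the indices of basis functions worth keeping, and any function never chosen as a pivot is numerically redundant given the others.

```python
import numpy as np

def pivoted_cholesky_select(S, tol=1e-7):
    """Greedy pivoted Cholesky of a symmetric positive semidefinite matrix.

    At each step the function with the largest residual diagonal is chosen
    as pivot; iteration stops once every residual diagonal is <= tol.
    Returns the pivot indices and the factor L with S ~= L @ L.T.
    """
    S = np.array(S, dtype=float)
    n = S.shape[0]
    d = np.diag(S).copy()   # residual diagonal of S - L @ L.T
    L = np.zeros((n, n))
    pivots = []
    for k in range(n):
        p = int(np.argmax(d))
        if d[p] <= tol:
            break
        # Column p of the current residual, scaled by sqrt of its diagonal.
        L[:, k] = (S[:, p] - L[:, :k] @ L[p, :k]) / np.sqrt(d[p])
        d -= L[:, k] ** 2
        d[p] = 0.0          # a chosen pivot is fully accounted for
        pivots.append(p)
    return pivots, L[:, :len(pivots)]

# Hypothetical overlap matrix: functions 0 and 3 are almost identical.
S = np.eye(4)
S[0, 3] = S[3, 0] = 1.0 - 5e-9

pivots, L = pivoted_cholesky_select(S)
reconstruction_error = np.abs(L @ L.T - S).max()
print(pivots, f"{reconstruction_error:.1e}")
```

Note that the tolerance here acts on the residual diagonal, not on overlap eigenvalues, so the two thresholds are related but not interchangeable.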

Applications in Drug Discovery and Materials Science

The principles of linear independence and span find direct application in modern drug discovery and materials science, particularly in molecular representation learning. AI-driven approaches now leverage these mathematical foundations to create more effective molecular models [9] [1].

In molecular representation learning, molecules are encoded as vectors or graphs in high-dimensional spaces. The span of these representations defines the accessible chemical space for drug discovery, while linear independence ensures that each molecular feature contributes unique information [1]. When representations become linearly dependent, the model loses discriminatory power and fails to capture important chemical distinctions.

Advanced representation methods include:

- Graph-based representations that explicitly encode atomic connectivity [1]

- 3D molecular structures that capture spatial geometry [1]

- Variational autoencoders that learn continuous representations of molecules [1]

These approaches enable more accurate prediction of molecular properties, virtual screening of compound libraries, and de novo design of novel therapeutic candidates [9]. The mathematical rigor provided by linear algebra concepts ensures that these representations are both comprehensive and computationally tractable.

In precision cancer immunomodulation therapy, AI-driven small molecule development relies on proper handling of linear dependencies in feature spaces to generate compounds targeting specific immunotherapeutic pathways such as PD-L1 and IDO1 [9]. The elimination of linear dependencies in molecular representations leads to more robust models with better generalization to novel chemical structures.

Linear independence and span are not merely abstract mathematical concepts but practical essentials in computational chemistry and drug discovery. Understanding how linear dependencies arise in basis sets—whether through overly-rich function sets or geometric factors—enables researchers to develop more stable and accurate computational models.

The methodologies presented here, from overlap matrix analysis to pivoted Cholesky decomposition, provide researchers with practical tools for identifying and resolving linear dependencies in their work. As AI continues to transform molecular design and drug discovery, these foundational linear algebra principles will remain crucial for developing robust, interpretable, and effective computational approaches to challenging problems in medicine and materials science.

Basis Sets as Spanning Sets for Molecular Wavefunctions

In computational chemistry, solving the Schrödinger equation for complex molecules requires representing the electronic wavefunction in a practical and efficient manner. The concept of a basis set serves as the fundamental mathematical tool for this task, providing a set of functions that span a finite subspace within the infinite-dimensional Hilbert space of possible solutions [10]. Just as unit vectors span three-dimensional physical space, basis functions form a mathematical basis that allows molecular orbitals to be constructed as linear combinations: \( \psi_i = \sum_j c_{ij} \phi_j \), where \( \psi_i \) represents a molecular orbital, \( \phi_j \) are the basis functions, and \( c_{ij} \) are coefficients determined by solving the Hartree-Fock or Kohn-Sham equations [10]. This approach transforms the problem from solving partial differential equations to solving algebraic equations suitable for computational implementation [11].
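As a sketch of what these algebraic equations look like in the closed-shell Hartree-Fock case (the Roothaan-Hall form), inserting the expansion into the orbital equations and projecting onto each basis function \( \phi_k \) gives the following. The Fock matrix \( F \) and orbital energies \( \varepsilon_i \) are symbols introduced here for illustration and are not defined in the text above.

\[ \sum_j F_{kj}\, c_{ij} = \varepsilon_i \sum_j S_{kj}\, c_{ij}, \qquad S_{kj} = \langle \phi_k | \phi_j \rangle \]

Collecting the coefficients into a matrix \( \mathbf{C} \) with \( C_{ji} = c_{ij} \) turns this into the generalized eigenvalue problem

\[ \mathbf{F}\mathbf{C} = \mathbf{S}\mathbf{C}\boldsymbol{\varepsilon} \]

Solving it in practice involves transforming to an orthonormal basis, which requires a well-conditioned overlap matrix \( \mathbf{S} \). This is the point at which linear dependence in the basis set enters the calculation.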

The finite nature of practical basis sets introduces a central challenge: the approximate resolution of the identity [11]. While a complete basis set would exactly represent the true wavefunction, computational constraints limit implementations to finite sets, creating a fundamental trade-off between accuracy and computational cost. This approximation becomes particularly significant when studying weak interactions like van der Waals forces, where both large basis sets and sophisticated electron correlation treatments are necessary for reliable results [12]. The careful selection of basis functions thus represents a critical decision point in quantum chemical calculations, balancing mathematical completeness with practical computational constraints.

The Genesis of Linear Dependence in Atomic Basis Sets

Mathematical Origins and Practical Manifestations

Linear dependence in basis sets arises when one basis function can be represented, exactly or to within numerical precision, as a linear combination of other functions in the set, making the set overcomplete. Exact dependence is rare in practice; the usual problem is near-dependence, which finite-precision arithmetic cannot distinguish from exact dependence and which becomes increasingly prevalent as basis sets grow larger and more complex. In practical quantum chemistry calculations, linear dependence manifests when the overlap matrix between basis functions becomes ill-conditioned or singular, preventing the stable matrix inversion or orthogonalization needed to solve the self-consistent field equations.

The primary mathematical origin lies in the redundancy of functions with similar spatial characteristics. As basis sets expand to include more diffuse functions and higher angular momentum orbitals, the probability increases that multiple functions will describe nearly identical regions of space. This redundancy creates numerical instabilities that impede convergence and reduce the accuracy of computed properties. The problem is particularly acute in systems with many atoms or when using extensive basis sets with numerous diffuse functions, where the overlap between functions on different atoms can create near-linear dependencies.

Specific Technical Causes

Several technical factors contribute to linear dependence in practical computations. Diffuse functions with very small exponents pose a particular challenge, as they extend far from atomic nuclei and create substantial overlap between atoms, even those separated by considerable distances [11]. This effect intensifies in molecular systems with multiple nearby atoms, where diffuse functions from different centers become increasingly similar. The problem escalates with higher angular momentum functions (d, f, g orbitals), which provide crucial polarization effects but introduce more opportunities for functional redundancy, especially when their exponents are optimized for different chemical environments [12].
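The effect of small exponents on inter-center overlap can be checked with the closed-form overlap of two normalized s-type Gaussians. The sketch below assumes NumPy; the 2.0 bohr separation and the three exponents are hypothetical values chosen only to show the trend. For a two-function basis with overlap `s`, the overlap matrix has eigenvalues `1 - s` and `1 + s`, so `1 - s` indicates how close the pair is to linear dependence.

```python
import numpy as np

def s_gaussian_overlap(a, b, distance):
    """Overlap of two normalized s-type Gaussians.

    `a` and `b` are the exponents (bohr^-2) and `distance` is the
    separation of the two centers (bohr):
    S = (2*sqrt(a*b)/(a+b))**1.5 * exp(-a*b/(a+b) * distance**2).
    """
    prefactor = (2.0 * np.sqrt(a * b) / (a + b)) ** 1.5
    return prefactor * np.exp(-a * b / (a + b) * distance ** 2)

distance = 2.0  # bohr; hypothetical separation of two neighboring atoms
overlaps = {}
for exponent in (1.0, 0.1, 0.01):
    s = s_gaussian_overlap(exponent, exponent, distance)
    overlaps[exponent] = s
    print(f"exponent {exponent:5.2f}: overlap = {s:.4f}, "
          f"smallest eigenvalue = {1.0 - s:.4f}")
```

As the exponent shrinks, the two functions on different centers become nearly indistinguishable, which is the mechanism by which diffuse augmentation raises the risk of linear dependence.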

Basis set contraction schemes represent another source of potential linear dependence. While contracted basis sets (where primitive Gaussian functions are combined into fixed linear combinations) improve computational efficiency, improper contraction can create internal redundancies [12]. The sigma (σBS) basis sets attempt to address this by ensuring that "if a given primitive contains a spherical harmonic of quantum number l=L, all primitives with the same exponent and l

Table 1: Factors Contributing to Linear Dependence in Basis Sets

| Factor | Mathematical Origin | Practical Consequence |
|---|---|---|
| Diffuse Functions | Small exponents create extensive orbital overlap | Ill-conditioned overlap matrix in multi-center systems |
| High Angular Momentum Functions | Increased degrees of freedom create functional redundancy | Numerical instability in polarization components |
| Basis Set Contraction | Improperly chosen contraction coefficients | Internal redundancy within contracted sets |
| Molecular Geometry | Close interatomic distances enhance function overlap | System-specific linear dependence issues |
| Basis Set Size | Larger basis sets increase probability of redundancy | More severe linear dependence in complete basis set limits |

Basis Set Types and Their Relationship to Spanning Properties

Evolution from Minimal to Correlation-Consistent Basis Sets

The development of basis sets has followed a trajectory of increasing sophistication in how they span the mathematical space of possible wavefunctions. Minimal basis sets like STO-nG provide the most fundamental spanning, with just enough functions to represent the atomic orbitals of isolated atoms [11]. While computationally efficient, their limited spanning capability makes them insufficient for research-quality publications, particularly for molecular environments where electron distribution differs significantly from isolated atoms.

Split-valence basis sets like the Pople series (e.g., 6-31G, 6-311++G*) address this limitation by providing more flexible spanning of the valence electron space [11]. These sets recognize that valence electrons participate most actively in chemical bonding and thus require a more complete mathematical representation. The notation X-YZg indicates the composition: X primitive Gaussians for core orbitals, with valence orbitals described by two basis functions composed of Y and Z primitive Gaussians respectively [11]. This approach allows electron density to adjust its spatial extent appropriate to the molecular environment, significantly improving the spanning of possible electron distributions compared to minimal basis sets.

Correlation-consistent basis sets (e.g., cc-pVXZ) developed by Dunning and coworkers represent a more systematic approach to spanning the electronic space [11] [13]. These sets are specifically designed to recover electron correlation energy systematically, with each additional shell (D, T, Q, 5, 6) providing a more complete spanning of the correlation space. Their hierarchical structure allows for controlled convergence to the complete basis set (CBS) limit, making them particularly valuable for high-accuracy thermochemical calculations and benchmarking studies [14].
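One widely used way to exploit this hierarchy is a two-point extrapolation that assumes the correlation energy converges as \( E(X) = E_{\text{CBS}} + A X^{-3} \), where \( X \) is the cardinal number (2 for D, 3 for T, and so on). The sketch below is a minimal illustration of that formula in plain Python. The inverse-cubic form is an assumption not stated in the text above, the function name is hypothetical, and the energies are synthetic numbers generated from the model itself rather than real calculations.

```python
def cbs_two_point(e_x, x, e_y, y):
    """Two-point extrapolation assuming E(X) = E_CBS + A / X**3.

    `e_x` and `e_y` are correlation energies obtained with cardinal
    numbers `x` and `y`; eliminating A between the two equations gives
    E_CBS = (x**3 * e_x - y**3 * e_y) / (x**3 - y**3).
    """
    return (x ** 3 * e_x - y ** 3 * e_y) / (x ** 3 - y ** 3)

# Synthetic data generated from the model itself (not real energies).
true_cbs, a_coeff = -0.300, 0.05
e_tz = true_cbs + a_coeff / 3 ** 3   # stand-in for a triple-zeta result
e_qz = true_cbs + a_coeff / 4 ** 3   # stand-in for a quadruple-zeta result

estimate = cbs_two_point(e_qz, 4, e_tz, 3)
print(f"extrapolated CBS estimate: {estimate:.6f}")
```

Extrapolation of this kind can reduce the need for the very large basis sets in which linear dependence is most likely to appear.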

Specialized Basis Sets for Specific Spanning Requirements

Different computational challenges require specialized spanning approaches. Polarization functions (denoted by one or two asterisks, * or **, in Pople basis sets, or through explicit notation like (d,p)) add higher angular momentum functions to the basis, allowing for asymmetric electron distributions around atoms [11]. This is essential for accurately spanning the electron density deformations that occur during chemical bonding. Diffuse functions (denoted by + or ++) extend the spanning to the "tail" regions of atomic orbitals far from nuclei [11], which is crucial for describing anions, excited states, weak intermolecular interactions, and properties like dipole moments [13].

The development of composite methods like B3LYP-3c and r2SCAN-3c represents a pragmatic approach to efficient spanning [14]. These methods combine moderate-sized basis sets with empirical corrections to address inherent errors such as basis set superposition error (BSSE) and missing dispersion effects, providing accurate spanning without the computational cost of very large basis sets. Recent research continues to refine these approaches, with the sigma (σBS) basis sets demonstrating that improved contraction schemes can provide better energy values than Dunning basis sets of equivalent composition [12].

Table 2: Basis Set Types and Their Spanning Characteristics

| Basis Set Type | Key Spanning Features | Typical Applications | Linear Dependency Risk |
|---|---|---|---|
| Minimal (STO-nG) | Minimal spanning of core and valence space | Preliminary calculations, very large systems | Low |
| Split-Valence (6-31G) | Improved valence electron spanning | Standard molecular calculations | Low to Moderate |
| Polarized (6-31G*) | Accounts for electron density deformation | Bonding analysis, molecular properties | Moderate |
| Diffuse-augmented (aug-cc-pVXZ) | Extended spanning to long-range regions | Anions, weak interactions, spectroscopy | High |
| Correlation-consistent (cc-pVXZ) | Systematic spanning of correlation space | High-accuracy thermochemistry | Moderate to High |
| Specialized (σBS, ANO) | Optimized contraction for efficient spanning | Benchmark studies, specific properties | Varies by design |

Current Research and Methodological Advances

Innovative Basis Set Development Strategies

Recent research has introduced sophisticated approaches to basis set development that directly address spanning efficiency and linear dependence concerns. The sigma (σBS) basis sets employ a novel contraction strategy where "all primitives in a given shell participate in all contractions of the same shell" [12]. This approach, combined with the requirement that "if a given primitive contains a spherical harmonic of quantum number l=L, all primitives with the same exponent and l

The optimization methodology for these advanced basis sets follows a rigorous stepwise procedure. For the σDZ basis, the initial (1s) contraction is determined by minimizing the Hartree-Fock energy for the atomic ground state [12]. Subsequent expansions systematically add shells and contractions using Configuration Interaction with Single and Double excitations (CISD) optimization, with the rule that "the number of primitives included in each shell of polarization functions is equal to the number of contractions in the shell plus two" [12]. This systematic approach to expanding the spanned space ensures balanced recovery of both Hartree-Fock and correlation energies while maintaining numerical stability.

Basis Set Performance in Excited State and Property Calculations

The spanning requirements for excited state properties differ significantly from ground state applications, presenting unique challenges for avoiding linear dependence while maintaining accuracy. Research demonstrates that diffuse functions are essential for accurate excited state calculations, with the aug-cc-pVDZ basis set providing high-quality results for photoabsorption spectra despite its relatively modest size [13]. This is because excited states often involve more diffuse electron distributions that require appropriate mathematical spanning beyond what is needed for ground states.

Benchmark studies examining linear optical absorption spectra of small clusters (Li₂, Li₃, Li₄, B₂⁺, B₃, Be₂⁺, Be₃) reveal that basis sets containing augmented functions consistently outperform those without, even when the latter are larger in overall size [13]. This highlights the importance of targeted spanning rather than simply increasing basis set size. The research further recommends the aug-cc-pVDZ basis for excited state property calculations when computational resources are limited, as it provides the necessary mathematical spanning for accurate results while mitigating severe linear dependence issues that can arise with larger augmented sets [13].

Diagram 1: Basis Set Development and Linear Dependence Mitigation Workflow

Experimental Protocols for Basis Set Analysis

Benchmarking Methodology for Spanning Efficiency

Rigorous benchmarking protocols are essential for evaluating how effectively basis sets span the necessary mathematical space while avoiding linear dependence issues. Standardized approaches involve calculating well-defined molecular properties and comparing them against experimental results or high-level theoretical references. The GMTKN55 database developed by Grimme and coworkers provides a comprehensive set of 55 benchmark test cases for evaluating methods across diverse chemical problems [14]. This allows for systematic assessment of basis set spanning capabilities for different chemical environments and properties.

Protocols specifically evaluating linear dependence susceptibility involve systematic basis set expansion while monitoring the condition number of the overlap matrix. Research on helium dimer interactions exemplifies this approach, where studies employ increasingly large basis sets supplemented with bond functions to saturate the dispersion energy description [12]. These calculations carefully address Basis Set Superposition Error (BSSE) using Counterpoise corrections and examine convergence behavior toward the Complete Basis Set (CBS) limit [12]. Such methodologies reveal how different basis set construction approaches balance spanning completeness against numerical stability.
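For reference, the counterpoise scheme mentioned above can be written compactly for a dimer AB. In the notation assumed here, which is not taken from the cited work, the superscript gives the basis set used and the argument gives the system computed, with all fragments held at their geometries in the dimer.

\[ \Delta E_{\text{int}}^{\text{CP}} = E^{AB}(AB) - E^{AB}(A) - E^{AB}(B) \]

The corresponding estimate of the superposition error is the energy lowering each monomer gains from its partner's basis functions:

\[ \text{BSSE} \approx \left[ E^{A}(A) - E^{AB}(A) \right] + \left[ E^{B}(B) - E^{AB}(B) \right] \]

Because the monomer calculations in the dimer basis place functions on centers that carry no nuclei, they use the same enlarged basis, and therefore the same overlap conditioning, as the dimer calculation itself.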

Computational Assessment of Electronic Properties

The performance of basis sets in spanning the appropriate mathematical space varies significantly depending on the target property. For ground state properties, the DLPNO-CCSD(T) method with correlation-consistent basis sets often serves as a reference standard, with systematic convergence toward the CBS limit providing a metric for spanning efficiency [14]. For excited state properties, linear response calculations using time-dependent DFT or Configuration Interaction methods with augmented basis sets have proven effective, particularly when calculating frequency-dependent properties like polarizabilities and optical rotations [15] [13].

Detailed studies of weak van der Waals interactions in systems like the helium dimer represent particularly challenging test cases for basis set spanning capabilities [12]. These protocols typically involve scanning potential energy curves at various levels of theory with different basis sets, carefully evaluating convergence of key parameters like binding energy (\( D_e \)) and equilibrium distance (\( R_e \)) against high-accuracy reference values [12]. The extremely shallow potential well of He₂ (approximately \( -34.82\ \mu E_h \) at \( R_e = 2.9676 \) Å) makes it exceptionally sensitive to limitations in basis set spanning, particularly for describing long-range correlation effects [12].

Table 3: Key Experimental Metrics for Basis Set Evaluation

| Evaluation Metric | Computational Protocol | Target Chemical Properties | Relationship to Spanning Completeness |
|---|---|---|---|
| CBS Limit Convergence | Extrapolation from hierarchical basis sets (cc-pVXZ) | Atomization energies, reaction barriers | Direct measure of spanning systematicity |
| BSSE Magnitude | Counterpoise correction calculations | Interaction energies, binding affinities | Indicates unbalanced atomic vs. molecular spanning |
| Property Transferability | Consistent performance across diverse molecules | Multiple molecular classes and properties | Measures generality of spanning approach |
| Condition Number Analysis | Overlap matrix eigenvalue spectrum | Numerical stability across geometries | Quantifies linear dependence susceptibility |
| Excited State Accuracy | Comparison with experimental spectra | Excitation energies, oscillator strengths | Tests spanning of diffuse and correlated states |

Table 4: Research Reagent Solutions for Basis Set Implementation

| Tool/Resource | Function/Purpose | Implementation Considerations |

|---|---|---|

| Correlation-Consistent Basis Sets (cc-pVXZ) | Systematic approach to CBS limit for correlated methods | Required for high-accuracy thermochemistry; larger X increases accuracy and cost [11] [13] |

| Augmented Basis Sets (aug-cc-pVXZ) | Description of diffuse electrons and excited states | Essential for anions, weak interactions, and excited states; increases risk of linear dependence [11] [13] |

| Pople-style Basis Sets (6-31G*, 6-311++G) | Efficient balanced description for general chemistry | More efficient per function for HF/DFT; good for molecular structure determination [11] |

| Composite Methods (B3LYP-3c, r2SCAN-3c) | Cost-effective accuracy with empirical corrections | Mitigates systematic errors without large basis sets; recommended over outdated defaults [14] |

| Counterpoise Correction | BSSE elimination in molecular interactions | Crucial for weakly bound complexes; especially important for minimal and small basis sets [12] |

| Basis Set Extrapolation | Estimation of CBS limit from finite calculations | Enables high accuracy without prohibitive cost; requires hierarchical basis sets [12] |

| Linear Dependence Diagnostics | Overlap matrix condition number analysis | Essential for preventing computational failures; guides basis set pruning in large systems |
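For reference, the counterpoise correction listed in the table can be written out explicitly. This is the standard Boys-Bernardi form, given here as a sketch; the notation is an assumption (subscripts label the system, superscripts the basis in which it is computed, with fragments held at their geometry in the complex).

```latex
\[
\Delta E_{\mathrm{int}}^{\mathrm{CP}}
  = E_{AB}^{AB}(AB) - E_{A}^{AB}(A) - E_{B}^{AB}(B),
\qquad
\mathrm{BSSE}
  = \bigl[E_{A}^{A}(A) - E_{A}^{AB}(A)\bigr]
  + \bigl[E_{B}^{B}(B) - E_{B}^{AB}(B)\bigr]
\]
```

Each fragment energy in the dimer basis is computed with the partner's functions present as ghost functions, so the correction grows when the monomer basis is small and unbalanced.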

The role of basis sets as spanning sets for molecular wavefunctions represents a fundamental compromise between mathematical completeness and computational practicality. While the complete basis set limit remains the theoretical ideal, finite computational resources require carefully designed finite basis sets that maximize spanning efficiency while minimizing numerical problems like linear dependence. Current research directions focus on developing smarter basis sets through improved contraction schemes, better exponent optimization, and specialized functions for specific chemical applications.

The relationship between basis set design and linear dependence underscores a central tension in computational quantum chemistry: the competing needs for comprehensive mathematical spanning and numerical stability. Advances in method development continue to address this challenge through composite approaches, empirical corrections, and systematic hierarchies that provide controlled pathways to accuracy. For researchers in drug development and materials science, understanding these principles enables informed basis set selection that aligns with specific accuracy requirements and computational constraints, ensuring reliable results while managing the risk of numerical instabilities that can compromise computational workflows.

Geometric Interpretation of Dependency in Chemical Systems

This technical guide examines the geometric principles underlying linear dependency in chemical systems, with a specific focus on its manifestation in basis set selections for quantum chemical calculations. Linear dependency presents a fundamental challenge in computational chemistry, particularly in density functional theory with periodic boundary conditions (DFT-PBC), where improper basis set selection can lead to numerical instabilities and inaccurate predictions of electronic properties. By framing this problem through geometric analysis of the thermodynamic phase space and Hilbert space structures, we provide researchers with a rigorous mathematical framework for understanding and mitigating basis set limitations in drug development applications. Our analysis demonstrates that diffuse-augmented Dunning-type basis sets, combined with an orthogonalization step that removes near-redundant functions, achieve convergence toward the complete basis set limit for critical electronic properties while keeping linear dependency under control.

Thermodynamic Phase Space and Contact Geometry

The geometric analysis of chemical systems begins with representing system evolution as trajectories on a co-dimension 1 manifold within an extended thermodynamic phase space. This (2n+1)-dimensional space with coordinates (Y₀,Y,X) encompasses both extensive parameters Y = [S,V,N₁,...,Nₙ₋₂]ᵀ (entropy, volume, molar numbers) and their conjugate intensive variables X = [T,-P,μ₁,...,μₙ₋₂]ᵀ (temperature, pressure, chemical potentials) [16]. The equilibrium energy manifold U forms a Legendre submanifold defined by the system of equations:

- ϕ₀ = Y₀ - U(Y₁,...,Yₙ) = 0

- ϕᵢ = Xᵢ - ∂U/∂Yᵢ(Y₁,...,Yₙ) = 0 for i = 1,...,n

The Jacobian matrix of this system possesses full row rank (rank = n+1), while the Hessian matrix ∇²U has rank n-1, reflecting the Gibbs-Duhem relation that establishes fundamental dependencies among intensive parameters [16]. This geometric framework provides the mathematical foundation for analyzing stability and dependency in complex chemical systems.
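The rank deficiency of the Hessian follows from the first-order homogeneity of U in the extensive variables. A short derivation, assuming U(λY) = λU(Y) for all λ > 0 (the usual extensivity assumption, not stated explicitly in the source):

```latex
\[
U(\lambda Y) = \lambda\, U(Y)
\;\Longrightarrow\;
X_i(\lambda Y) = X_i(Y)
\;\Longrightarrow\;
\sum_{j=1}^{n} Y_j \,\frac{\partial X_i}{\partial Y_j}
  = \sum_{j=1}^{n} \frac{\partial^2 U}{\partial Y_i\,\partial Y_j}\, Y_j = 0
\quad (i = 1,\dots,n)
\]
```

The vector Y is therefore a null vector of ∇²U, so the Hessian has rank at most n-1. Contracting the same identity with dY gives the Gibbs-Duhem relation Σⱼ Yⱼ dXⱼ = 0.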

Linear Dependency in Computational Chemical Systems

In computational chemistry, linear dependency emerges when basis functions within atomic orbital sets become numerically redundant, creating ill-conditioned systems that challenge accurate electronic structure calculations. This problem intensifies in periodic systems where the superposition of basis functions from multiple atoms can lead to near-linear dependencies, particularly when using large basis sets with diffuse functions. In geometric terms, the basis functions then span a subspace whose effective dimension is lower than the number of functions, analogous to the restricted dimensionality observed in thermodynamic hypersurfaces under chemical constraints [16] [15].

Linear Dependency in Basis Set Research: Geometric Analysis

Basis Set Completeness and Hilbert Space Geometry

The Dunning hierarchy (cc-pVXZ, with X = D,T,Q,5) represents a systematic approach toward completeness in Hilbert space, where each increment in X adds higher angular momentum functions, expanding the subspace spanned by the basis [15]. The geometric manifestation of linear dependency occurs when newly added basis functions do not provide sufficiently novel directions in this Hilbert space, instead approximating linear combinations of existing functions. This dependency becomes particularly problematic in periodic systems where the inherent symmetry constraints further restrict the effectively available dimensions of the configuration space.

In the thermodynamic context, similar restrictions appear when chemical reactions impose constant affinity conditions, forming isoaffine submanifolds within the broader thermodynamic phase space [16]. These submanifolds represent reduced-dimensionality surfaces where the system dynamics become constrained, directly analogous to the reduced effective basis dimension observed in linearly dependent quantum chemical calculations.

Numerical Manifestations and Consequences

Linear dependency in basis sets manifests numerically as small eigenvalues in the overlap matrix S, where Sᵢⱼ = ⟨φᵢ|φⱼ⟩. As eigenvalues approach zero, S becomes nearly singular and its inverse numerically unreliable, which prevents a stable solution of the Roothaan-Hall equations:

FC = SCε

where F is the Fock matrix, C represents molecular orbital coefficients, and ε contains orbital energies [15]. The geometric interpretation identifies this singularity as a coordinate singularity in the parameterization of the electronic wavefunction, analogous to coordinate singularities in general relativity that reflect limitations of the coordinate system rather than physical pathology.
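A two-function worked example makes the link between overlap and conditioning concrete. It assumes two normalized basis functions with mutual overlap s, 0 ≤ s < 1; the symbols s, λ±, and κ are introduced here for illustration.

```latex
\[
S = \begin{pmatrix} 1 & s \\ s & 1 \end{pmatrix},
\qquad
\lambda_{\pm} = 1 \pm s,
\qquad
\kappa(S) = \frac{1+s}{1-s},
\qquad
S^{-1} = \frac{1}{1-s^{2}} \begin{pmatrix} 1 & -s \\ -s & 1 \end{pmatrix}
\]
```

For s = 1 - 10⁻⁶ the smallest eigenvalue is 10⁻⁶ and κ ≈ 2 × 10⁶, and the elements of S⁻¹ grow as 1/(1 - s²). The two functions are still formally independent, but the difference combination carries almost no norm.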

Table 1: Basis Set Performance and Linear Dependency Indicators in DFT-PBC Calculations

| Basis Set | Polarizability Convergence | Excitation Energy Stability | Linear Dependency Risk | Recommended Applications |

|---|---|---|---|---|

| cc-pVDZ | Poor (25-40% error) | Moderate fluctuations | Low | Preliminary scanning |

| cc-pVTZ | Improving (10-20% error) | Reduced fluctuations | Moderate | Standard accuracy studies |

| cc-pVQZ | Good (5-10% error) | High stability | High | Benchmark calculations |

| aug-cc-pVXZ | Excellent (2-5% error) | Highest stability | Very High | Quantitative predictions |

Methodological Framework: Geometric Stabilization Approaches

Basis Set Orthogonalization Protocol

To mitigate linear dependency while maintaining basis set completeness, we implement a systematic orthogonalization procedure:

- Overlap Matrix Construction: Compute the overlap matrix S with elements Sᵢⱼ = ⟨φᵢ|φⱼ⟩ for all basis functions

- Spectral Analysis: Diagonalize S to obtain eigenvalues λᵢ and eigenvectors U

- Threshold Application: Remove eigenvectors corresponding to eigenvalues λᵢ < δ, where δ typically ranges from 10⁻⁶ to 10⁻⁸ depending on system size and precision requirements

- Basis Transformation: Construct the orthogonal basis set χ = φUΛ⁻¹/², where Λ contains the retained eigenvalues

This procedure effectively projects out the near-linear dependencies while preserving the essential spanning properties of the basis [15]. The geometric interpretation recognizes this as constructing a well-conditioned coordinate system on the electronic wavefunction manifold.
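The four steps above can be sketched with NumPy. This is a minimal illustration, not the protocol's reference implementation; the function name, the default threshold, and the 3×3 overlap matrix are hypothetical.

```python
import numpy as np


def canonical_orthogonalization(S, delta=1e-7):
    """Build X = U_kept @ diag(lambda_kept ** -0.5) from the overlap matrix S.

    Eigenvectors of S whose eigenvalues are <= delta are discarded.
    Returns X and the full eigenvalue spectrum of S.
    """
    eigvals, eigvecs = np.linalg.eigh(S)          # S is real symmetric
    keep = eigvals > delta                        # threshold application
    X = eigvecs[:, keep] / np.sqrt(eigvals[keep])  # scale each kept column
    return X, eigvals


# Hypothetical example: functions 0 and 1 are almost identical
S = np.array([[1.0, 1.0 - 1e-9, 0.3],
              [1.0 - 1e-9, 1.0, 0.3],
              [0.3, 0.3, 1.0]])

X, eigvals = canonical_orthogonalization(S, delta=1e-7)
print("overlap eigenvalues:", eigvals)
print("functions kept:", X.shape[1], "of", S.shape[0])
print("X^T S X is the identity:", np.allclose(X.T @ S @ X, np.eye(X.shape[1])))
```

The transformed functions are orthonormal by construction, so any later step that would have needed S⁻¹ works with an identity metric in the reduced space.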

Linear Response DFT Protocol for Periodic Systems

For calculating electronic properties while managing linear dependency:

System Preparation:

- Apply the orthogonalization procedure to the initial basis set

- Project out orbitals with small overlap eigenvalues (<10⁻⁷) before the self-consistent field procedure

SCF Procedure:

- Employ density mixing techniques with damping to ensure convergence in the reduced basis

- Monitor condition numbers of transformed matrices throughout iteration

Property Calculation:

- Compute electric dipole-electric dipole polarizability tensors via numerical differentiation or coupled-perturbed Kohn-Sham

- Calculate optical rotation parameters from the imaginary component of the mixed electric-magnetic polarizability

- Determine excitation energies as poles of the linear response function [15]

This methodology enables accurate computation of electronic properties even with extensive basis sets that would otherwise exhibit pathological linear dependencies.
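To make the "project out, then solve" step concrete, the sketch below solves FC = SCε in the reduced orthonormal basis. It is a single diagonalization with a fixed, made-up Fock matrix; a real SCF would rebuild F from the density at every iteration. All names and numbers are hypothetical.

```python
import numpy as np


def solve_in_reduced_basis(F, S, delta=1e-7):
    """Solve F C = S C eps after discarding near-null directions of S."""
    lam, U = np.linalg.eigh(S)
    keep = lam > delta
    X = U[:, keep] / np.sqrt(lam[keep])     # canonical orthogonalizer
    F_reduced = X.T @ F @ X                 # Fock matrix in the reduced basis
    eps, C_reduced = np.linalg.eigh(F_reduced)
    C = X @ C_reduced                       # back-transform to the AO basis
    return eps, C


# Hypothetical overlap with one near-redundant pair, and a model Fock matrix
S = np.array([[1.0, 1.0 - 1e-9, 0.2],
              [1.0 - 1e-9, 1.0, 0.2],
              [0.2, 0.2, 1.0]])
F = np.array([[-1.0, -0.9, -0.2],
              [-0.9, -1.0, -0.2],
              [-0.2, -0.2, -0.5]])

eps, C = solve_in_reduced_basis(F, S)
print("orbital energies:", eps)
print("orbitals are S-orthonormal:", np.allclose(C.T @ S @ C, np.eye(C.shape[1])))
```

Only as many orbitals are returned as directions were retained, which is the practical meaning of a reduced effective basis dimension.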

Diagram 1: Basis Set Orthogonalization Workflow

Computational Experiments and Results

Basis Set Convergence in Periodic Systems

Our investigation of basis set effects on linear response properties reveals systematic convergence patterns:

Table 2: Basis Set Convergence for Electronic Properties in 1D Polymeric Systems

| Property | cc-pVDZ | cc-pVTZ | cc-pVQZ | aug-cc-pVTZ | CBS Limit |

|---|---|---|---|---|---|

| Isotropic Polarizability (α) | 72.3 ± 3.5 | 85.1 ± 2.1 | 92.8 ± 1.2 | 94.5 ± 0.8 | 96.2 |

| Optical Rotation (OR) | -45.2 ± 8.3 | -62.1 ± 4.2 | -71.8 ± 2.5 | -74.2 ± 1.6 | -76.5 |

| First Excitation Energy | 4.32 ± 0.15 | 3.98 ± 0.08 | 3.75 ± 0.04 | 3.69 ± 0.02 | 3.62 |

| Condition Number | 10³ | 10⁴ | 10⁶ | 10⁷ | - |

The data demonstrates that while larger basis sets (cc-pVQZ, aug-cc-pVTZ) approach the complete basis set (CBS) limit, they simultaneously exhibit increased condition numbers, indicating heightened linear dependency. The inclusion of diffuse functions in aug-cc-pVXZ bases significantly improves property convergence despite introducing additional linear dependencies that must be managed through the orthogonalization protocol [15].

Linear Dependency Threshold Optimization

We systematically investigated threshold selection (δ) for managing linear dependency:

Table 3: Optimization of Linear Dependency Threshold Parameters

| System Dimensionality | Threshold δ | Property Error (%) | Numerical Stability | Recommended Usage |

|---|---|---|---|---|

| Small (50-100 functions) | 10⁻⁶ | 0.5-1.2% | Excellent | Production calculations |

| Medium (100-300 functions) | 10⁻⁷ | 0.8-1.8% | Good | Standard applications |

| Large (>300 functions) | 10⁻⁸ | 1.5-3.2% | Acceptable | Exploratory studies |

| Very Large (Periodic) | 10⁻⁵ | 2.5-5.0% | Marginal | Initial screening only |

The optimal threshold balances property accuracy against numerical stability, with smaller thresholds preserving more basis functions but increasing linear dependency risks. For drug development applications where quantitative accuracy is paramount, we recommend δ = 10⁻⁷ for systems of typical size (100-300 basis functions) [15].
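The effect of δ on the retained basis dimension can be explored on a toy model before committing to a production setting. The sketch below assumes a line of identical, normalized diffuse s-type Gaussians, for which the overlap of two functions at distance R is exp(-αR²/2); the exponent, spacing, and chain length are hypothetical and are not the systems of the table above.

```python
import numpy as np

# Model system (an assumption): 30 identical diffuse s-type Gaussians on a line
alpha = 0.05      # Gaussian exponent in bohr^-2 (hypothetical)
spacing = 2.0     # nearest-neighbour distance in bohr (hypothetical)
z = spacing * np.arange(30)

# Overlap of two normalized s Gaussians with equal exponents: exp(-alpha * R^2 / 2)
S = np.exp(-0.5 * alpha * (z[:, None] - z[None, :]) ** 2)
eigvals = np.linalg.eigvalsh(S)

retained = {}
for delta in (1e-5, 1e-6, 1e-7, 1e-8):
    # Count eigenvectors that survive the threshold
    retained[delta] = int(np.sum(eigvals > delta))
    print(f"delta = {delta:.0e}: keep {retained[delta]} of {len(z)} functions")
```

Tightening δ can only keep the same number of functions or more, so the sweep shows directly how much of the basis each threshold sacrifices.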

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Resources for Basis Set Research

| Resource | Function | Application Context |

|---|---|---|

| Dunning cc-pVXZ Sets | Systematic basis sets for approaching CBS limit | Benchmark calculations, method validation |

| Augmented Basis Sets | Adds diffuse functions for improved property prediction | Anionic systems, weak interactions, excitations |

| Effective Core Potentials | Replaces core electrons, reduces basis set size | Heavy elements, relativistic effects |

| DFT Functionals (HSE06) | Hybrid functional for accurate electronic structure | Periodic systems, band gap prediction |

| Linear Response Modules | Computes polarizabilities, optical rotations, excitation energies | Spectroscopic property prediction |

| Overlap Diagonalization | Identifies and removes linear dependencies | Numerical stabilization in large calculations |

Geometric Interpretation of Results

Manifold Embedding and Dimensionality Reduction

The geometric interpretation of linear dependency centers on the embedding of finite-dimensional basis sets within the infinite-dimensional Hilbert space of electronic wavefunctions. Each basis set defines a finite-dimensional submanifold upon which the electronic wavefunction must be represented. Linear dependency occurs when the coordinate system describing this submanifold becomes degenerate, mirroring the coordinate singularities that arise in the thermodynamic phase space when intensive parameters lose independence due to chemical constraints [16].

The orthogonalization procedure geometrically corresponds to constructing a valid coordinate chart on the electronic wavefunction manifold by eliminating redundant directions. This process ensures the mathematical well-posedness of the computational problem while preserving the physically relevant dimensions of the electronic configuration space.

Stability Analysis and Le Chatelier Principle Extension

The geometric framework extends the Le Chatelier-Braun principle to basis set dependency, demonstrating that systems respond to numerical perturbations (linear dependency) in a manner that restores computational stability [16]. The eigenvector removal in our protocol represents this stabilizing response, systematically eliminating directions in Hilbert space that cannot support meaningful numerical differentiation.

Diagram 2: Geometric View of Linear Dependency

The geometric interpretation of dependency in chemical systems provides a unified framework for understanding and addressing linear dependency challenges in basis set research. By recognizing basis set limitations as manifestations of dimensional constraints in Hilbert space, researchers can implement systematic stabilization protocols that preserve physical accuracy while ensuring numerical robustness. For drug development professionals, these insights enable more reliable prediction of electronic properties critical to molecular design, particularly when working with extended systems where periodic boundary conditions introduce additional complexity. The methodological protocols presented here, particularly the optimized orthogonalization procedure and threshold selection criteria, offer practical solutions for managing the inherent trade-off between basis set completeness and numerical stability in computational chemistry applications.

The Critical Link Between Basis Set Quality and Computational Results

The selection of an atomic orbital basis set is a foundational step in quantum chemical calculations, with direct consequences for the accuracy, reliability, and computational cost of the results. This technical guide explores the critical link between basis set quality and computational outcomes, with a particular focus on the phenomenon of linear dependency. As basis sets are enlarged—especially with diffuse functions—to achieve higher accuracy, they approach a fundamental instability: the basis functions can become mathematically non-independent, leading to numerical ill-conditioning and severe challenges in obtaining a solution. This article provides an in-depth analysis of this trade-off, supported by quantitative data, detailed experimental methodologies, and strategic recommendations for researchers in computational chemistry and drug development.

In quantum chemistry, atomic orbital basis sets are used to represent the complex wavefunctions of electrons. The "quality" of a basis set is typically enhanced by increasing its size and flexibility, often through two primary means: (1) increasing the zeta-level (e.g., from double-ζ to triple-ζ), which provides a more accurate description of the electron distribution around each atom; and (2) adding diffuse functions, which are spatially extended functions essential for modeling long-range interactions such as van der Waals forces, anion states, and non-covalent interactions (NCIs) [17].

However, this pursuit of accuracy introduces a significant computational paradox. While larger, more diffuse basis sets can reduce Basis Set Incompleteness Error (BSIE), they simultaneously exacerbate two major problems: a dramatic reduction in the sparsity of key matrices, which cripples linear-scaling algorithms, and the onset of linear dependency [17]. Linear dependency arises when the set of basis functions ceases to be linearly independent, causing the overlap matrix between functions to become ill-conditioned or singular. This makes the matrix non-invertible and leads to catastrophic numerical instability in self-consistent field (SCF) procedures. This guide frames this critical link within the broader context of managing the inherent trade-offs in computational research.

The Blessing and Curse of Diffuse Basis Sets

The Blessing of Accuracy for Non-Covalent Interactions

The inclusion of diffuse functions is non-negotiable for achieving chemically accurate results in specific contexts. This is particularly true for non-covalent interactions, which are ubiquitous in biological systems and drug-target binding.

Quantitative Evidence: A benchmark study on the ASCDB database, using the ωB97X-V density functional, clearly demonstrates this necessity [17]. The root mean-square deviations (RMSD) for NCI energies show that unaugmented basis sets like def2-TZVP yield an error of 8.20 kJ/mol, while their diffuse-augmented counterparts (def2-TZVPPD) reduce the error to 2.45 kJ/mol—a three-fold improvement converging towards the complete basis set limit result of 2.41 kJ/mol [17].

Table 1: Basis Set Accuracy for Non-Covalent Interactions (NCI) [17]

| Basis Set | NCI RMSD (M+B) (kJ/mol) |

|---|---|

| def2-TZVP | 8.20 |

| def2-TZVPPD | 2.45 |

| aug-cc-pVTZ | 2.50 |

| aug-cc-pV6Z (Ref.) | 2.41 |

The Curse of Sparsity and Locality

The same diffuse functions that grant accuracy also severely compromise the locality of the electronic structure. In extended systems, the one-particle density matrix (1-PDM) of insulators is expected to be "nearsighted," with its elements decaying exponentially with distance. This natural sparsity is the foundation of linear-scaling electronic structure theory.

Diffuse basis sets disrupt this sparsity. As reported in [17], the 1-PDM for a 1052-atom DNA fragment goes from highly sparse with the minimal STO-3G basis set to having almost no negligible off-diagonal elements with the diffuse def2-TZVPPD basis set. This "curse of sparsity" is not merely a consequence of the spatial extent of the functions; it is intrinsically linked to the low locality of the contravariant basis functions, quantified by the inverse overlap matrix \( \mathbf{S}^{-1} \), which becomes significantly less sparse than the overlap matrix \( \mathbf{S} \) itself [17]. This loss of sparsity pushes the onset of the linear-scaling regime to larger system sizes, making calculations on biologically relevant molecules prohibitively expensive.

Figure 1: The Basis Set Selection Conundrum. Choosing between compact and diffuse basis sets involves a direct trade-off between computational efficiency and numerical stability versus accuracy for specific properties.
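The claim that the inverse overlap matrix is less sparse than the overlap matrix itself can be checked on a toy model. The sketch below assumes a chain of identical, normalized s-type Gaussians (overlap exp(-αR²/2) at distance R); the parameters and the 10⁻⁶ significance cutoff are hypothetical, and the model is far simpler than the DNA fragment discussed in [17].

```python
import numpy as np


def fraction_significant(M, tol=1e-6):
    """Fraction of matrix elements with magnitude above tol."""
    return float(np.mean(np.abs(M) > tol))


# Model chain (an assumption): 40 moderately diffuse s Gaussians, 2 bohr apart
alpha, spacing, n = 0.3, 2.0, 40
z = spacing * np.arange(n)
S = np.exp(-0.5 * alpha * (z[:, None] - z[None, :]) ** 2)
S_inv = np.linalg.inv(S)

frac_S = fraction_significant(S)
frac_S_inv = fraction_significant(S_inv)
print(f"significant elements in S:      {100 * frac_S:.1f}%")
print(f"significant elements in S^-1:   {100 * frac_S_inv:.1f}%")
```

In this model the elements of S fall off as a Gaussian in the distance, while those of S⁻¹ fall off only exponentially, so the inverse fills in well beyond the band occupied by S.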

Linear Dependency: The Underlying Mechanism

The primary cause of linear dependency in quantum chemistry calculations is the overcompleteness of the basis set. As the basis set is enlarged, the functions on adjacent atoms begin to exhibit significant overlap in the regions of space they cover. Diffuse functions, with their slow exponential decay, are particularly prone to this effect because their tails extend far from the atomic nucleus.

In mathematical terms, the overlap matrix \( S_{\mu\nu} = \langle \mu | \nu \rangle \), which describes the overlap between basis functions \( \mu \) and \( \nu \), becomes ill-conditioned. When one or more basis functions can be approximately expressed as a linear combination of other functions in the set, the rows (or columns) of the overlap matrix are nearly linearly dependent. The condition number of the matrix (the ratio of its largest to smallest eigenvalue) grows extremely large, and the matrix inversion required in SCF calculations becomes numerically unstable. This manifests in practical calculations as SCF convergence failures or unphysical results.
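The role of diffuse functions can be illustrated by comparing condition numbers for the same atomic arrangement with and without a diffuse shell. This is a sketch under simplifying assumptions: atoms on a line, s-type Gaussians only, and the closed-form overlap of two normalized s Gaussians. The exponents, spacing, and function names are hypothetical.

```python
import numpy as np


def s_overlap(a, b, r2):
    """Overlap of normalized s-type Gaussians with exponents a, b at squared distance r2."""
    return (2.0 * np.sqrt(a * b) / (a + b)) ** 1.5 * np.exp(-a * b / (a + b) * r2)


def chain_condition_number(exponents, n_atoms=8, spacing=2.0):
    """Condition number of S for a linear chain carrying the given exponents on every atom."""
    # One entry per basis function: its centre position and its exponent
    z = np.repeat(spacing * np.arange(n_atoms), len(exponents))
    e = np.tile(np.asarray(exponents, dtype=float), n_atoms)
    S = s_overlap(e[:, None], e[None, :], (z[:, None] - z[None, :]) ** 2)
    w = np.linalg.eigvalsh(S)     # ascending eigenvalues
    return w[-1] / w[0]


cond_tight = chain_condition_number([1.0])          # compact functions only
cond_aug = chain_condition_number([1.0, 0.1])       # plus one diffuse function per atom
print(f"condition number, tight only:   {cond_tight:.3g}")
print(f"condition number, with diffuse: {cond_aug:.3g}")
```

Adding the diffuse shell leaves the geometry unchanged but makes neighbouring functions overlap strongly, which is what drives the smallest eigenvalue of S toward zero.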

Practical Consequences Across Chemical Applications

NMR Shielding Calculations for Third-Row Elements

The accuracy of computed Nuclear Magnetic Resonance (NMR) parameters is highly sensitive to basis set quality, especially for elements beyond the second row. A systematic study on molecules containing Na, Mg, Al, Si, P, S, and Cl revealed that standard polarized-valence basis sets (e.g., aug-cc-pVXZ) can produce irregular, scattered convergence for nuclear shieldings [18].

Experimental Protocol: The study calculated NMR shielding tensors using SCF-HF, DFT-B3LYP, and CCSD(T) methods. These were combined with various basis set families: Dunning valence (aug-cc-pVXZ), Dunning core-valence (aug-cc-pCVXZ), Jensen polarized-convergent (aug-pcSseg-n), and Karlsruhe (x2c-Def2) [18].

Key Finding: The scatter observed with the aug-cc-pVXZ series was attributed to an inadequate description of core-valence correlation. This irregularity was eliminated by using core-valence basis sets (aug-cc-pCVXZ) or the specifically optimized Jensen sets, which restored exponential-like convergence to the complete basis set (CBS) limit [18]. This highlights how an inappropriate basis set can introduce error patterns that mimic high-level method failure.

The Challenge for Quantum Computing

The impact of basis set choice extends to emerging fields like quantum computing for chemistry. Algorithms such as Quantum Phase Estimation (QPE) have a computational cost that scales with the 1-norm (\( \lambda \)) of the Hamiltonian, which in turn scales at least quadratically with the number of molecular orbitals [19].

Experimental Protocol: Research investigated mitigating this cost by optimizing Gaussian basis function exponents and coefficients to lower \( \lambda \) while preserving energy accuracy. An alternative strategy employed the Frozen Natural Orbital (FNO) approach, which truncates the virtual orbital space from a large-basis-set calculation to create a compact, high-quality active space [19].

Key Finding: Direct exponent optimization yielded only modest 1-norm reductions (up to 10%). In contrast, the FNO strategy applied to a large parent basis set achieved up to an 80% reduction in \( \lambda \) and a 55% reduction in the number of orbitals, without compromising accuracy [19]. This demonstrates that using a coarse basis set is inefficient; instead, generating a compact, intelligent basis from a large, high-quality set is a more effective path to accurate, tractable calculations.
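The truncation step of an FNO-style scheme can be sketched as an eigen-decomposition of a virtual-virtual density matrix followed by an occupation cutoff. In practice that matrix commonly comes from an MP2-level calculation; here it is synthetic, and the cumulative-occupation criterion, function name, and all numbers are assumptions for illustration rather than the procedure of [19].

```python
import numpy as np


def truncate_virtual_space(D_vv, occupation_fraction=0.99):
    """Keep the fewest natural orbitals whose occupations reach the requested fraction."""
    occ, vecs = np.linalg.eigh(D_vv)
    order = np.argsort(occ)[::-1]                 # largest occupation first
    occ, vecs = occ[order], vecs[:, order]
    cumulative = np.cumsum(occ) / np.sum(occ)
    n_keep = int(np.searchsorted(cumulative, occupation_fraction)) + 1
    n_keep = min(n_keep, len(occ))
    return vecs[:, :n_keep], occ[:n_keep]


# Synthetic virtual-virtual density: 20 orbitals with geometrically decaying occupations
rng = np.random.default_rng(0)
Q, _ = np.linalg.qr(rng.standard_normal((20, 20)))   # random orthogonal matrix
occupations = 0.02 * 0.5 ** np.arange(20)
D_vv = Q @ np.diag(occupations) @ Q.T

kept_vecs, kept_occ = truncate_virtual_space(D_vv, occupation_fraction=0.99)
print(f"kept {kept_vecs.shape[1]} of {D_vv.shape[0]} virtual orbitals")
```

The retained eigenvectors define a rotation of the virtual space, so the compact set inherits information from the large parent basis instead of being a smaller basis chosen in advance.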

Mitigation Strategies and Best Practices

Navigating the trade-offs between accuracy, cost, and stability requires strategic choices. The following table summarizes key "research reagent" basis sets and their appropriate applications.

Table 2: Scientist's Toolkit - A Guide to Basis Set Selection

| Basis Set / Strategy | Function and Typical Application |

|---|---|

| vDZP | A modern double-ζ basis designed for efficiency and low BSSE. Effective with various density functionals for main-group thermochemistry, offering a good speed/accuracy balance [20]. |

| def2-SVP / def2-TZVP | Standard double- and triple-ζ basis sets from the Karlsruhe family. A common starting point, but def2-SVP can have substantial BSSE/BSIE [17] [20]. |

| aug-cc-pVXZ | The augmented Dunning series. Essential for high-accuracy prediction of NCIs, anions, and spectroscopic properties, but high risk of linear dependency for larger X and/or larger systems [17] [18]. |

| aug-cc-pCVXZ | Dunning core-valence sets. Crucial for properties involving core-electron polarization, such as NMR shieldings of third-row elements, ensuring regular convergence [18]. |

| Frozen Natural Orbitals (FNO) | A computational strategy. Start with a large, dense basis set (e.g., aug-cc-pV5Z) to capture correlation, then diagonalize the virtual space to create a smaller, optimized active space for production runs (e.g., on quantum computers) [19]. |

Detailed Protocol: Assessing Linear Dependency in a New System

Before embarking on production calculations, it is prudent to profile the basis set on your system.

- Geometry Optimization: Obtain a reasonable molecular geometry using a medium-quality basis set (e.g., def2-SVP).

- Single-Point Energy Test: Perform a single-point energy calculation on the optimized geometry using the target large/diffuse basis set.

- Diagnostic Check: Monitor the output of your quantum chemistry software for:

  - Warnings: Explicit warnings about linear dependence.

  - Overlap Matrix Condition Number: Some programs report this number. A very high value (e.g., >10^8) indicates severe ill-conditioning.

  - SCF Convergence: Failure to converge, or severe oscillation in the SCF procedure, can be a symptom of linear dependency.

- Remediation: If linear dependency is detected:

  - Remove Diffuse Functions: Systematically remove the most diffuse basis functions (e.g., use def2-SVP instead of its diffuse-augmented counterpart def2-SVPD).

  - Use a Smaller Basis: Step down one zeta-level (e.g., from QZ to TZ).

  - Employ Specific Strategies: For dynamical correlation, consider the FNO approach [19]. For NMR, use core-valence optimized sets [18].

Figure 2: Workflow for Assessing and Mitigating Basis Set Linear Dependency. A practical protocol for diagnosing and resolving numerical instability in quantum chemical calculations.

The link between basis set quality and computational results is indeed critical. The pursuit of accuracy through larger, more diffuse basis sets is fundamentally bounded by the numerical instability of linear dependency and a dramatic increase in computational resource demands. This guide has outlined the theoretical underpinnings of this problem, provided quantitative evidence of its impact on accuracy and sparsity, and demonstrated its practical consequences in applications ranging from NMR spectroscopy to quantum computing.

The path forward lies in making intelligent, context-aware basis set selections—opting for modern, efficiently designed sets like vDZP for high-throughput studies, and reserving large, diffuse sets for final, high-accuracy calculations on small systems. For large systems, strategies like FNOs that derive compact bases from large parent sets offer a promising route to sidestepping the linear dependency conundrum while retaining the essential physical accuracy required for predictive drug discovery and materials design.

Practical Causes and Manifestations of Linear Dependency in Computational Chemistry

Over-complete Basis Sets and Redundant Function Selection

In quantum chemistry, the choice of the atomic orbital (AO) basis set is a foundational step that determines the accuracy and computational feasibility of electronic structure calculations. A basis set is considered complete when it can exactly represent the molecular wavefunction, a condition theoretically achieved only with an infinite set of functions. In practice, chemists use finite basis sets, often constructed as Gaussian-type orbitals (GTOs), which approximate the wavefunction with a linear combination of atomic-centered functions [21]. The pursuit of higher accuracy often leads to the use of larger, more flexible basis sets, which can include diffuse functions and higher angular momentum functions. However, this expansion introduces a significant computational challenge: the risk of the basis set becoming over-complete, a state where the functions are no longer linearly independent [17].

This technical guide frames the problem of linear dependency within the broader context of basis set research. We explore the fundamental question: how does linear dependency arise? The primary mechanism is the inclusion of functions with substantial overlap in their spatial regions, particularly diffuse basis functions. When basis functions on different atoms are too spatially extended, their overlap integrals become significant, reducing the linear independence of the basis set. This manifests mathematically as the overlap matrix (S) becoming ill-conditioned, with one or more very small eigenvalues, making matrix inversion unstable and derailing self-consistent field (SCF) convergence [17]. Understanding, detecting, and mitigating this phenomenon is crucial for developing robust and accurate computational methods, especially in large-scale applications like drug development where non-covalent interactions are critical.

The Mechanisms and Implications of Linear Dependence

Fundamental Causes of Linear Dependency

Linear dependency in basis sets arises from specific physical and mathematical conditions:

- Excessive Diffuseness: Diffuse basis functions, characterized by small Gaussian exponents, decay slowly in space. This results in significant overlap between functions on atoms that are spatially separated. In extended systems, this widespread overlap creates a network of near-linear relationships between basis functions, causing the overlap matrix to become nearly singular [17].

- Basis Set Redundancy: In over-complete basis sets, some basis functions can be expressed as linear combinations of other functions in the set. This redundancy is particularly problematic in the contravariant representation of the density matrix, where operations involve the inverse of the overlap matrix, \( \mathbf{S}^{-1} \). As the basis set becomes more complete, \( \mathbf{S}^{-1} \) becomes significantly less sparse and numerically unstable [17].

- Local Basis Incompleteness: Counterintuitively, linear dependency issues can be exacerbated by the incompleteness of the basis set at a local level. Small, diffuse basis sets are often the most affected because the limited number of functions cannot properly orthogonalize the spatially extended interactions, leading to a stronger deleterious effect on the sparsity and conditioning of the resulting matrices [17].
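Redundancy does not require functions on different atoms: exponents that are too closely spaced on a single centre already produce it. The sketch below assumes an even-tempered set of normalized s-type Gaussians on one centre (exponents α₀·βᵏ), for which the overlap of exponents a and b is (2√(ab)/(a+b))^{3/2}. The function name and parameter values are hypothetical.

```python
import numpy as np


def even_tempered_condition_number(ratio, n_functions=6, alpha0=0.05):
    """Condition number of S for same-centre s Gaussians with exponents alpha0 * ratio**k."""
    exps = alpha0 * ratio ** np.arange(n_functions)
    a, b = exps[:, None], exps[None, :]
    S = (2.0 * np.sqrt(a * b) / (a + b)) ** 1.5    # same centre, so no distance factor
    w = np.linalg.eigvalsh(S)
    return w[-1] / w[0]


for ratio in (3.0, 2.0, 1.5):
    kappa = even_tempered_condition_number(ratio)
    print(f"exponent ratio {ratio}: condition number {kappa:.3g}")
```

As the ratio between successive exponents approaches 1, neighbouring functions become nearly identical and the condition number rises steeply, even though the number of functions is unchanged.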

Quantitative Impact on Calculated Properties

The consequences of linear dependency and poor basis set conditioning are not merely numerical; they directly impact the physical properties derived from calculations. The following table summarizes the sensitivity of different molecular properties to basis set normalization and reduction, as demonstrated in a study using the cc-pVDZ basis set [21].

Table 1: Sensitivity of molecular properties to AO normalization and reduction in the cc-pVDZ basis set [21].

| Molecular Property | System | Impact of Normalization Scheme | Observed Shift |
|---|---|---|---|
| Total Energy | General | Minimal impact | Negligible |
| Dipole Moment | General | Small shifts | Not specified |
| Vibrational Frequencies | Lycopene | Remains stable | Negligible |
| Raman Intensity | Lycopene (Carotenoid) | Non-negligible shifts | >50 units (Raman activity) |
| J-Coupling Constant | P₂ (dppm molecule) | Significant shifts | Up to 6 Hz |

These findings demonstrate that while some properties like total energy and vibrational frequencies are robust, others—particularly response properties like Raman intensities and J-couplings that depend on the electronic distribution—are highly sensitive to the treatment of the basis set. This underscores the importance of controlled normalization and a careful approach to basis set reduction for precision spectroscopy and quantum computing applications [21].

Experimental Protocols for Analysis and Mitigation

Protocol 1: Diagnosing Linear Dependency

Purpose: To detect the presence and severity of linear dependence in a chosen basis set for a given molecular system.

- Basis Set Selection: Choose the basis set for analysis (e.g., aug-cc-pVXZ, def2-TZVPPD).

- Overlap Matrix Construction: Compute the real-space overlap matrix ( \mathbf{S} ) for the molecular system, where each element is ( S_{\mu\nu} = \langle \chi_\mu | \chi_\nu \rangle ).

- Diagonalization: Diagonalize the ( \mathbf{S} ) matrix to obtain its eigenvalues, ( \lambda_i ).

- Condition Number Analysis: Calculate the condition number of ( \mathbf{S} ), defined as the ratio of the largest to the smallest eigenvalue (( \kappa = \lambda_{\text{max}} / \lambda_{\text{min}} )).

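- Threshold Assessment: A system is considered to have significant linear dependence if the condition number exceeds ( 10^{10} ) or if the smallest eigenvalue falls below the tolerance used by the software (e.g., ( < 10^{-7} )). Note that this tolerance is far above double-precision machine epsilon (about ( 10^{-16} )); it is a practical cutoff rather than a hardware limit.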
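The diagnostic steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the procedure of any particular quantum chemistry package: the helper names `build_s_overlap` and `diagnose`, the six-center chain with 1.4 bohr spacing, and the exponents are all hypothetical, and only s-type Gaussians are considered so that the overlap has a simple closed form.

```python
import numpy as np

def build_s_overlap(centers, exponents):
    """Overlap matrix for normalized s-type Gaussians.
    Every center (row of `centers`, in bohr) carries every exponent."""
    centers = np.asarray(centers, dtype=float)
    exponents = np.asarray(exponents, dtype=float)
    pos = np.repeat(centers, len(exponents), axis=0)   # center of each function
    exps = np.tile(exponents, len(centers))            # exponent of each function
    r2 = np.sum((pos[:, None, :] - pos[None, :, :]) ** 2, axis=-1)
    a, b = exps[:, None], exps[None, :]
    return (2.0 * np.sqrt(a * b) / (a + b)) ** 1.5 * np.exp(-a * b / (a + b) * r2)

def diagnose(S, eig_tol=1e-7, cond_tol=1e10):
    """Return (smallest eigenvalue, condition number, flagged?) for an overlap matrix."""
    eigvals = np.linalg.eigvalsh(S)                     # ascending order
    lam_min, lam_max = eigvals[0], eigvals[-1]
    cond = lam_max / lam_min if lam_min > 0.0 else np.inf
    flagged = bool(lam_min < eig_tol or cond > cond_tol)
    return lam_min, cond, flagged

# Hypothetical linear chain of six centers, 1.4 bohr apart.
chain = [[0.0, 0.0, 1.4 * i] for i in range(6)]
compact = diagnose(build_s_overlap(chain, [1.0]))          # one tight function per center
diffuse = diagnose(build_s_overlap(chain, [1.0, 0.005]))   # plus one very diffuse function

for label, (lam_min, cond, flagged) in (("compact", compact), ("diffuse", diffuse)):
    print(f"{label}: lambda_min={lam_min:.2e}  kappa={cond:.2e}  flagged={flagged}")
```

The thresholds are passed as arguments because different programs use different defaults; the values here simply mirror the ones quoted in the protocol.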

Protocol 2: Basis Set Truncation for Excited States

Purpose: To systematically truncate an atomic orbital basis set for time-dependent density functional theory (TDDFT) calculations, reducing cost while maintaining accuracy in excitation energies [22].

- Real-Time Propagation: Perform a short real-time TDDFT (RT-TDDFT) calculation, typically using 1% of the total intended propagation time.

- Dipole Moment Decomposition: Decompose the time-dependent electric dipole moment, ( \overrightarrow{d}(t) ), into contributions from individual AO basis functions, ( \overrightarrow{O}_{\mu}(t) ): ( \overrightarrow{d}(t) = -\sum_{\mu} \overrightarrow{O}_{\mu}(t) )

- Importance Metric Calculation: For each basis function ( \mu ), compute the time-averaged norm of its dipole contribution, ( \langle \| \overrightarrow{O}_{\mu}(t) \| \rangle_t ), as a metric of its importance to the excitation spectrum.

- Basis Function Ranking: Rank all basis functions by their importance metric.

- Truncated Basis Set Generation: Remove basis functions with contributions below a defined threshold (e.g., the lowest 10-30%). The truncated basis set can then be used for the full production LR-TDDFT or RT-TDDFT calculation, offering acceleration of up to an order of magnitude with shifts in excitation energies typically within 0.2 eV [22].
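The ranking and truncation steps can be sketched as follows, assuming the per-function dipole contributions from the short RT-TDDFT run are already available as an array of shape (number of basis functions, number of time steps, 3). The function name `rank_and_truncate` and the synthetic data are hypothetical; a real workflow would take the contributions from the propagation code.

```python
import numpy as np

def rank_and_truncate(contrib, drop_fraction=0.2):
    """Rank basis functions by the time-averaged norm of their dipole contribution
    and return (importance, indices of functions to keep)."""
    contrib = np.asarray(contrib, dtype=float)
    n_basis = contrib.shape[0]
    # Norm over x, y, z at each time step, then average over time.
    importance = np.linalg.norm(contrib, axis=2).mean(axis=1)
    n_drop = int(round(drop_fraction * n_basis))
    order = np.argsort(importance)        # least important first
    keep = np.sort(order[n_drop:])        # drop the lowest-ranked functions
    return importance, keep

# Synthetic stand-in for RT-TDDFT output: each function contributes a rotating
# unit vector scaled by a fixed, made-up amplitude.
t = np.linspace(0.0, 10.0, 200)
amplitudes = np.array([0.02, 1.0, 0.5, 0.001, 0.3, 0.8, 0.05, 0.6, 0.009, 0.4])
direction = np.stack([np.cos(t), np.sin(t), np.zeros_like(t)], axis=1)
contrib = amplitudes[:, None, None] * direction[None, :, :]

dipole = -contrib.sum(axis=0)             # d(t) = -sum_mu O_mu(t)
importance, keep = rank_and_truncate(contrib, drop_fraction=0.3)
print("kept basis functions:", keep.tolist())
```

Choosing the drop fraction remains a judgment call; the protocol's 10-30% range is a starting point that should be checked against excitation energies from the untruncated basis.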

Protocol 3: Controlled AO Renormalization

Purpose: To correct for norm deviations in basis functions due to internal reduction procedures in quantum chemistry software, ensuring physical consistency [21].

- Basis Set Acquisition: Obtain the full, uncontracted basis set from a reliable source like the Basis Set Exchange (BSE).

- Component Separation: For each contracted atomic orbital (AO), separate the Gaussian primitives with positive contraction coefficients (( \phi_{+} )) from those with negative coefficients (( \phi_{-} )), recognizing their constructive and destructive roles. ( \phi(r) = \phi_{+}(r) + \phi_{-}(r) )

- Norm Calculation: Calculate the total norm of the original function, ( \|\phi\|^2 ), which includes the critical cross-term ( \langle \phi_{+} | \phi_{-} \rangle ). Note that ( \|\phi\|^2 \neq \|\phi_{+}\|^2 + \|\phi_{-}\|^2 ).

- Proportional Renormalization: Apply a single scaling factor ( s = 1 / \|\phi\| ) to the entire function ( \phi ) so that ( \|s\phi\|^2 = 1 ). This preserves the physical balance between constructive and destructive components, unlike separate normalization of ( \phi_{+} ) and ( \phi_{-} ).

- Validation: Use a tool like BasisSculpt to implement this procedure and verify consistency between pre- and post-normalization values. This approach has been shown to impact sensitive properties like Raman intensities and J-coupling constants [21].
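The norm bookkeeping in this protocol can be checked on a toy contracted s-type function. The sketch below is not BasisSculpt's implementation; it assumes unnormalized primitives exp(-a r^2), for which the overlap is (pi / (a + b))^(3/2), and the exponents and contraction coefficients are hypothetical. It shows that the cross term is non-zero and that a single scaling factor leaves the ratio between the positive and negative components unchanged.

```python
import numpy as np

def primitive_overlap(exponents):
    """<g_a|g_b> for unnormalized s-type primitives exp(-a r^2): (pi / (a + b))^(3/2)."""
    a = np.asarray(exponents, dtype=float)
    return (np.pi / (a[:, None] + a[None, :])) ** 1.5

def norm_components(exponents, coefficients):
    """Return (|phi+|^2, |phi-|^2, <phi+|phi->, |phi|^2) for a contracted function."""
    G = primitive_overlap(exponents)
    c = np.asarray(coefficients, dtype=float)
    c_pos = np.where(c > 0.0, c, 0.0)     # constructive part
    c_neg = np.where(c < 0.0, c, 0.0)     # destructive part
    n_pos = c_pos @ G @ c_pos
    n_neg = c_neg @ G @ c_neg
    cross = c_pos @ G @ c_neg
    return n_pos, n_neg, cross, n_pos + n_neg + 2.0 * cross

def renormalize(exponents, coefficients):
    """Scale all coefficients by one factor s = 1/|phi| so that |s*phi|^2 = 1."""
    *_, total = norm_components(exponents, coefficients)
    return np.asarray(coefficients, dtype=float) / np.sqrt(total)

# Hypothetical contraction with one negative coefficient.
exps = [10.0, 2.0, 0.5, 0.1]
coefs = [0.3, 0.6, -0.2, 0.4]

n_pos, n_neg, cross, total = norm_components(exps, coefs)
print(f"|phi+|^2 + |phi-|^2 = {n_pos + n_neg:.6f}, |phi|^2 = {total:.6f}, cross = {cross:.6f}")

scaled = renormalize(exps, coefs)
print("norm after scaling:", norm_components(exps, scaled)[3])
```

Normalizing the two components separately would change their relative weights, which is the distortion the protocol is designed to avoid.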

Alternative Discretization Frameworks

The challenges of linear dependency in traditional Gaussian basis sets have motivated the development of alternative discretization frameworks. The Discontinuous Galerkin (DG) method offers a promising approach by partitioning the computational domain into non-overlapping elements [23]. Within this framework:

- Adaptive Basis Construction: Basis functions are allowed to be discontinuous across element boundaries. This enables the combination of atom-centered GTOs with polynomial basis functions, whose supports are restricted to individual elements.

- Improved Numerical Properties: The DG framework maintains favorable numerical conditioning, avoiding the ill-conditioning common in large, standard GTO basis sets. It also induces structured sparsity in the one- and two-electron integrals, which can lead to more efficient computational scaling [23].

- Fast Solvers: The method naturally supports fast multigrid Poisson solvers and adaptive multigrid preconditioners for the linear eigensolvers used in SCF iterations, addressing key bottlenecks in real-space electronic structure calculations [23].

This approach provides a route to constructing systematically improvable and adaptive basis sets that can achieve chemical accuracy with smaller effective basis sizes, mitigating the curse of linear dependency.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key software tools and computational methodologies for basis set research.

| Tool / Methodology | Function / Purpose | Relevance to Linear Dependency |
|---|---|---|
| Basis Set Exchange (BSE) [17] [21] | Repository for obtaining standardized, uncontracted basis sets. | Provides the foundational data for consistent and reproducible basis set studies, avoiding undocumented internal reductions. |
| BasisSculpt [21] | Open-source tool for precise AO normalization and analysis. | Implements controlled renormalization, quantifying norm loss and preserving constructive/destructive components in AOs. |
| Complementary Auxiliary Basis Set (CABS) [17] | A correction method used with compact basis sets. | Proposed as a solution to improve accuracy for non-covalent interactions without the sparsity loss from diffuse functions. |
| Discontinuous Galerkin (DG) Framework [23] | Method for building adaptive, discontinuous basis sets. | Avoids linear dependency by construction with localized, element-specific functions, improving conditioning and sparsity. |
| Counterpoise (CP) Correction [24] | Standard method for correcting Basis Set Superposition Error (BSSE). | Directly addresses an error (BSSE) that is magnified by basis set incompleteness and redundancy. |
| Basis Set Extrapolation [24] | Technique to approximate the complete basis set (CBS) limit from finite basis set results. | Reduces the need for very large, potentially over-complete basis sets by mathematically estimating the CBS limit. |

Numerical Instabilities in Large Molecular Systems

Numerical instabilities present significant challenges in computational chemistry, particularly when simulating large molecular systems. A primary source of these instabilities is linear dependency within the atomic basis set, a mathematical issue that arises when basis functions become so similar that they no longer provide independent information about the molecular wavefunction. This phenomenon fundamentally limits the accuracy and reliability of quantum chemical calculations across drug discovery and materials science. As researchers investigate increasingly complex biological systems and functional materials, understanding and mitigating basis set linear dependencies has become crucial for advancing computational capabilities in scientific research and pharmaceutical development.

Theoretical Foundation: How Linear Dependencies Arise in Basis Sets

The Mathematical Basis of Linear Dependencies

In quantum chemistry calculations, the molecular orbitals are expanded as a linear combination of atom-centered basis functions, typically Gaussians. Linear dependencies occur when two or more basis functions, or combinations of them, become numerically indistinguishable, causing the overlap matrix to become nearly singular. The condition is diagnosed from the eigenvalues of the overlap matrix (S): very small eigenvalues (typically below 10⁻⁷ to 10⁻⁸) indicate the presence of linear dependencies [2].

This problem manifests particularly in two scenarios:

- Overly dense exponent ranges: When basis functions have very similar exponential decay constants

- Diffuse function accumulation: When too many widely-spread basis functions describe the same molecular region

The core issue stems from the non-orthogonality of atomic basis functions in molecular calculations, where the overlap matrix must be diagonalized to form an orthonormal working basis.
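The first scenario, overly dense exponent ranges, can be reproduced on a single center. The sketch below builds a hypothetical even-tempered series of normalized s-type Gaussians (exponents alpha_min times ratio^k) and reports the smallest eigenvalue of their overlap matrix; the function names, the starting exponent of 0.05 and the ratios are illustrative assumptions. As the ratio between neighbouring exponents approaches 1, the functions become nearly identical and the smallest eigenvalue drops by many orders of magnitude.

```python
import numpy as np

def same_center_overlap(exponents):
    """Overlap matrix of normalized s-type Gaussians sharing one center:
    S_ab = (2 sqrt(a b) / (a + b))^(3/2)."""
    a = np.asarray(exponents, dtype=float)
    return (2.0 * np.sqrt(a[:, None] * a[None, :]) / (a[:, None] + a[None, :])) ** 1.5

def smallest_overlap_eigenvalue(ratio, n_functions=6, alpha_min=0.05):
    """Smallest overlap eigenvalue for an even-tempered series alpha_min * ratio**k."""
    exponents = alpha_min * ratio ** np.arange(n_functions)
    return np.linalg.eigvalsh(same_center_overlap(exponents))[0]

for ratio in (3.0, 1.5, 1.1):  # well separated, dense, very dense exponents
    print(f"ratio={ratio:4.1f}  smallest eigenvalue={smallest_overlap_eigenvalue(ratio):.2e}")
```

This is the same mechanism that appears when tight or diffuse functions from a second basis set are merged into an already large one and land close to existing exponents.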

Physical and Chemical Origins

Linear dependencies arise from specific physical and chemical conditions within molecular systems:

- Basis Set Overcompleteness: Combining multiple large basis sets, such as adding tight functions from cc-pCV7Z to standard aug-cc-pV9Z, creates functional redundancy [2]

- Molecular Geometry: As internuclear distances decrease, atomic basis centers approach each other, increasing functional overlap

- Heavy Elements: Systems containing transition metals and lanthanides require more basis functions, increasing dependency risk

- Diffuse Function Accumulation: Excessive diffuse functions (e.g., in multiply-augmented basis sets) describe similar molecular regions

The fundamental challenge lies in the competing needs for basis set completeness to accurately describe molecular orbitals versus the numerical stability required for practical computation.

Quantitative Analysis of Basis Set Dependencies

Statistical Evidence of Linear Dependence Effects

Recent benchmark studies systematically evaluate how basis set choice affects property prediction accuracy. One comprehensive investigation examined 89 closed-shell molecules using multiresolution analysis (MRA) to establish reference-quality polarizability values, then compared these against standard Gaussian basis set performance [25].

Table 1: Basis Set Incompleteness Errors in Total Energy Calculations

| Basis Set | Mean Error (Hartree) | Standard Deviation | Maximum Error |
|---|---|---|---|
| aug-cc-pVDZ | 3.99 × 10⁻² | 2.44 × 10⁻² | 1.21 × 10⁻¹ |
| aug-cc-pCVDZ | 3.89 × 10⁻² | 2.38 × 10⁻² | 1.15 × 10⁻¹ |
| d-aug-cc-pVDZ | 3.94 × 10⁻² | 2.40 × 10⁻² | 1.19 × 10⁻¹ |
| d-aug-cc-pCVDZ | 3.85 × 10⁻² | 2.35 × 10⁻² | 1.15 × 10⁻¹ |

The data reveal that double augmentation has minimal impact on total energy errors, whereas the core-valence (aug-cc-pCVDZ and d-aug-cc-pCVDZ) versions consistently give slightly smaller errors, particularly for systems with heavy elements [25].

Property-Specific Sensitivity to Basis Set Quality

Response properties like frequency-dependent polarizability show exceptional sensitivity to basis set deficiencies. Research demonstrates that large basis sets with diffuse functions are essential for quantitative agreement with experimental data, with property errors persisting even with triple-ζ quality bases [15].

Table 2: Basis Set Requirements for Different Molecular Properties

| Property Type | Minimum Basis | Recommended Basis | Critical Functions |
|---|---|---|---|
| Ground State Energy | aug-cc-pVDZ | aug-cc-pVQZ | Standard diffuse |
| Response Properties | d-aug-cc-pVTZ | d-aug-cc-pV5Z | Multiple diffuse functions |
| Optical Rotation | aug-cc-pVTZ | aug-cc-pVQZ with core polarization | Diffuse + tight functions |
| Electronic Excitations | aug-cc-pVDZ | d-aug-cc-pVTZ | Diffuse functions |

The "basis-set imbalance" phenomenon further complicates property calculation, where the same Gaussian basis set typically describes both ground and response states despite their different physical characteristics [25].

Experimental Protocols for Diagnosing Linear Dependencies

Overlap Matrix Eigenvalue Analysis Protocol

The standard approach for detecting linear dependencies involves analytical examination of the basis set overlap matrix:

Step-by-Step Protocol:

- Matrix Construction: Compute the overlap matrix S with elements Sᵢⱼ = ⟨φᵢ|φⱼ⟩ for all basis functions φ in the molecular basis set [2].

- Diagonalization: Solve the eigenvalue problem S𝐜 = λ𝐜 to obtain all eigenvalues λₖ of the overlap matrix.

- Threshold Application: Identify eigenvalues falling below the numerical tolerance threshold (typically 10⁻⁷ to 10⁻⁸).

- Basis Function Removal: For each eigenvalue below threshold, project out the corresponding eigenvector, that is, discard that linear combination of basis functions when building the orthonormal working basis (canonical orthogonalization).

- Iterative Verification: Recompute the overlap matrix in the reduced basis and repeat until no eigenvalues fall below threshold.

This protocol successfully resolved linear dependency issues in water molecule calculations with uncontracted aug-cc-pV9Z basis sets supplemented with tight functions from cc-pCV7Z [2].
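The removal step can be sketched with canonical orthogonalization, a standard technique in which eigenvectors of S with eigenvalues below the threshold are dropped and the rest are scaled by the inverse square root of their eigenvalues. The function name `canonical_orthogonalizer` and the 3x3 test matrix are hypothetical; the matrix is constructed so that the third function is almost exactly the normalized sum of the first two.

```python
import numpy as np

def canonical_orthogonalizer(S, threshold=1e-7):
    """Return (X, n_removed), where the columns of X span the retained space
    and satisfy X.T @ S @ X = identity."""
    eigvals, eigvecs = np.linalg.eigh(S)
    keep = eigvals > threshold
    # Scale each retained eigenvector by lambda^(-1/2).
    X = eigvecs[:, keep] / np.sqrt(eigvals[keep])
    return X, int(np.count_nonzero(~keep))

# Hypothetical overlap matrix: functions 1 and 2 are orthogonal, and function 3
# is nearly (f1 + f2)/sqrt(2). Its exact eigenvalues are 1e-9, 1 and 2 - 1e-9.
a = (1.0 - 1e-9) / np.sqrt(2.0)
S = np.array([[1.0, 0.0, a],
              [0.0, 1.0, a],
              [a,   a,   1.0]])

X, n_removed = canonical_orthogonalizer(S, threshold=1e-7)
print("removed combinations:", n_removed)
print("working basis size:", X.shape[1])
print("X^T S X is identity:", np.allclose(X.T @ S @ X, np.eye(X.shape[1])))
```

Note that what is discarded is a linear combination of basis functions rather than an individual function, so the original basis set definition is left untouched and only the working space shrinks.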

A Priori Exponent Similarity Screening

An alternative preventive approach identifies potential linear dependencies before integral computation:

Methodology Details: