Leveraging the Materials Project Database for Inorganic Materials in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on utilizing the Materials Project (MP) database for inorganic materials discovery and application. It covers foundational knowledge of the database's computationally-predicted data, practical methodologies for data access via the MP API, solutions to common technical challenges, and strategies for data validation. By synthesizing the latest database developments with real-world application scenarios, this guide aims to empower scientists to efficiently integrate high-throughput computational materials data into their biomedical research and development workflows, accelerating innovation in areas such as drug delivery systems and biomedical devices.

Understanding the Materials Project: A Gateway to Computed Inorganic Materials Data

The Materials Project (MP) represents a transformative, decade-long initiative funded by the Department of Energy to pre-compute the properties of inorganic crystals and molecules, creating an unparalleled open-access database to dramatically accelerate the process of materials discovery and design [1] [2]. By leveraging advanced high-throughput calculations on supercomputers and innovative data-mining algorithms, the MP provides researchers with predictive data on electronic, magnetic, elastic, and thermodynamic properties, enabling targeted experimentation and reducing the traditional timeline for materials development from decades to years [2] [3]. This in-depth technical guide details the core mission, architecture, data composition, and practical methodologies for utilizing the MP database, framed within the broader context of its indispensable role in modern inorganic materials research for applications ranging from next-generation batteries to carbon capture technologies [3].

Core Mission and Vision

The overarching mission of the Materials Project is to fundamentally reshape the scientific discovery process for materials science. It aims to replace intuitive, trial-and-error approaches with a data-driven paradigm where materials properties can be screened in silico before synthesis is ever attempted in a laboratory [3].

- Vision of Networked Discovery: The project envisions a future of "fundamentally networked and data-intensive scientific discovery," where computational results are immediately validated against high-order methodologies and experiments, and the accumulated knowledge of materials can be queried with something akin to a Google search [2]. The stated goal is to allow researchers to "find the set of compounds {X} which has the series of optimized properties {Y} to improve application Z" [2].

- Accelerating Materials Design: As a PuRe Data Resource, the MP makes high-quality, curated data easier to find, access, and reuse, directly supporting the goals of the Materials Genome Initiative to reduce the time and cost of bringing new materials to market [3].

- Global Research Impact: This resource empowers a global community of over 400,000 registered researchers and has been cited in more than 19,000 scientific publications, underscoring its profound impact on the field [3].

Database Architecture and Data Composition

The Materials Project database is a complex, multi-faceted resource built on a foundation of high-throughput first-principles calculations, primarily using density functional theory (DFT). Its architecture is designed to store, relate, and serve vast quantities of computed and experimental data through a user-friendly web interface and a powerful API.

Table 1: Core Materials Project Database Statistics and Content

| Category | Data Type | Scale/Count | Last Updated |
|---|---|---|---|
| Crystalline Materials | Known & Predicted Inorganic Crystals | >154,000 materials [3] | v2025.09.25 [4] |
| Molecular Data | Small Molecules | >172,000 molecules [3] | Not specified in sources |
| GNoME Materials | r2SCAN-calculated structures | ~30,000 added (v2025.04.10) [4] | v2025.04.10 [4] |
| Property Data | Bonding, oxidation states, electronic structure | Millions of associated properties [3] | Continuously updated [3] |

Data Generation and Computational Methods

The data within the MP is generated through automated high-throughput workflows run on Department of Energy supercomputers [3]. A key aspect of using the database effectively is understanding the different levels of theory used for the calculations, as this affects the accuracy and applicability of the data.

Table 2: Key Computational Methods and Data Types in the Materials Project

| Computational Functional | run_type Identifier | Description & Use Case |
|---|---|---|
| PBE (GGA) | GGA | A standard generalized gradient approximation functional; a workhorse for many early MP calculations. [5] |
| PBE+U | GGA+U | Incorporates a Hubbard U parameter to better describe strongly correlated electrons, such as in transition metal oxides. [5] |
| r2SCAN | r2SCAN | A modern meta-GGA functional that provides improved accuracy for formation energies and other properties; increasingly prioritized in new workflows. [4] |

The thermodynamic data presented in the MP, crucial for determining phase stability, is often a mixture derived from calculations using these different functionals. The database employs a defined hierarchy for this data [4]:

GGA_GGA+U_R2SCAN (Mixed) → r2SCAN (Pure meta-GGA) → GGA_GGA+U (Mixed)

This thermo_type determines which data is displayed on the Materials Explorer and served via the API by default [4].
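The effect of such a hierarchy can be illustrated with a short sketch. It assumes the three thermo types are tried in the order listed in the hierarchy and that the first one with data is used. The identifier strings, the `pick_default_thermo` helper, and the example numbers are illustrative assumptions, not part of the MP client.

```python
# Hierarchy as listed in the text, highest priority first. The exact strings
# are assumptions about how thermo_type values are spelled in API responses.
THERMO_HIERARCHY = ("GGA_GGA+U_R2SCAN", "R2SCAN", "GGA_GGA+U")


def pick_default_thermo(docs_by_type, hierarchy=THERMO_HIERARCHY):
    """Return (thermo_type, doc) for the highest-priority type that is present."""
    for thermo_type in hierarchy:
        doc = docs_by_type.get(thermo_type)
        if doc is not None:
            return thermo_type, doc
    raise KeyError("no thermodynamic data available for any known thermo_type")


# Hypothetical records for one material; the energies are placeholders.
example = {
    "GGA_GGA+U": {"formation_energy_per_atom": -1.20},
    "R2SCAN": {"formation_energy_per_atom": -1.31},
}
chosen_type, chosen_doc = pick_default_thermo(example)
print(chosen_type, chosen_doc["formation_energy_per_atom"])
```

In this toy case the mixed r2SCAN entry is absent, so the pure r2SCAN record is selected ahead of the GGA/GGA+U one.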

Distinguishing Computational and Experimental Data

A critical skill for researchers using the Materials Project is accurately discerning the origin of the data, as the vast majority of properties are computationally predicted.

- Primary Data Source: It is explicitly stated that "most of the data served by MP's API is computationally predicted by MP" [6]. These are the results of DFT calculations performed by the project itself.

- The theoretical Tag: For crystal structures, a theoretical tag of False in a material document indicates that the representative structure is the "same" - within a set of tolerances - as an experimentally obtained structure from a source like the Inorganic Crystal Structure Database (ICSD) [6]. It is vital to note that even these entries have been computationally "relaxed" using the experimental structure as an initial input, meaning the final atomic positions and properties are the result of a simulation [6].

- Sources of Experimental Data: The MP does incorporate some directly experimental data, though it is accessed through specific endpoints [6]. This includes:

  - Thermodynamical data from the "Thermo" app and its corresponding API endpoint.

  - Ion energies used for constructing Pourbaix diagrams.

  - Reference enthalpies of formation used in the Reaction Calculator.

  - Curated datasets available via portal.mpcontribs.org.

Table 3: Identifying Data Provenance in the Materials Project

| Data Type | Typical Origin in MP | How to Identify and Access |
|---|---|---|
| Crystal Structure | Primarily computational relaxation of ICSD entries or ab initio predictions. | theoretical tag in material document; icsd IDs in database_IDs field. [6] [7] |
| Electronic Properties | Computed by MP (e.g., Band structure, DOS). | Default data from summary endpoint; accessed via get_bandstructure_by_material_id or get_dos_by_material_id. [7] |
| Experimental Thermodynamics | Sourced from experimental literature. | Accessed via the /materials/{formula}/exp API endpoint or the "Thermo" app. [6] |
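As a rough illustration of the provenance check described above, the sketch below labels a material record from its theoretical flag and any ICSD identifiers. It assumes the record has already been converted to a plain dictionary and that ICSD IDs sit under an "icsd" key inside database_IDs. The `describe_provenance` helper and the example records are hypothetical.

```python
def describe_provenance(doc):
    """Summarize where a structure came from, based on two summary fields."""
    # Assumed layout: {"theoretical": bool, "database_IDs": {"icsd": [...]}}
    icsd_ids = (doc.get("database_IDs") or {}).get("icsd") or []
    theoretical = doc.get("theoretical")
    if theoretical is None:
        label = "unknown: record carries no theoretical flag"
    elif theoretical:
        label = "predicted: no matching experimental structure recorded"
    else:
        # Even here the stored geometry is a DFT relaxation of the
        # experimental starting structure, not the raw measurement.
        label = "experimentally observed structure, DFT-relaxed"
    return {"label": label, "icsd_ids": list(icsd_ids)}


# Hypothetical records; the identifiers are placeholders, not real lookups.
observed = {"theoretical": False, "database_IDs": {"icsd": ["icsd-0000"]}}
predicted = {"theoretical": True, "database_IDs": {}}
print(describe_provenance(observed))
print(describe_provenance(predicted))
```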

A Practical Workflow for Database Queries and Analysis

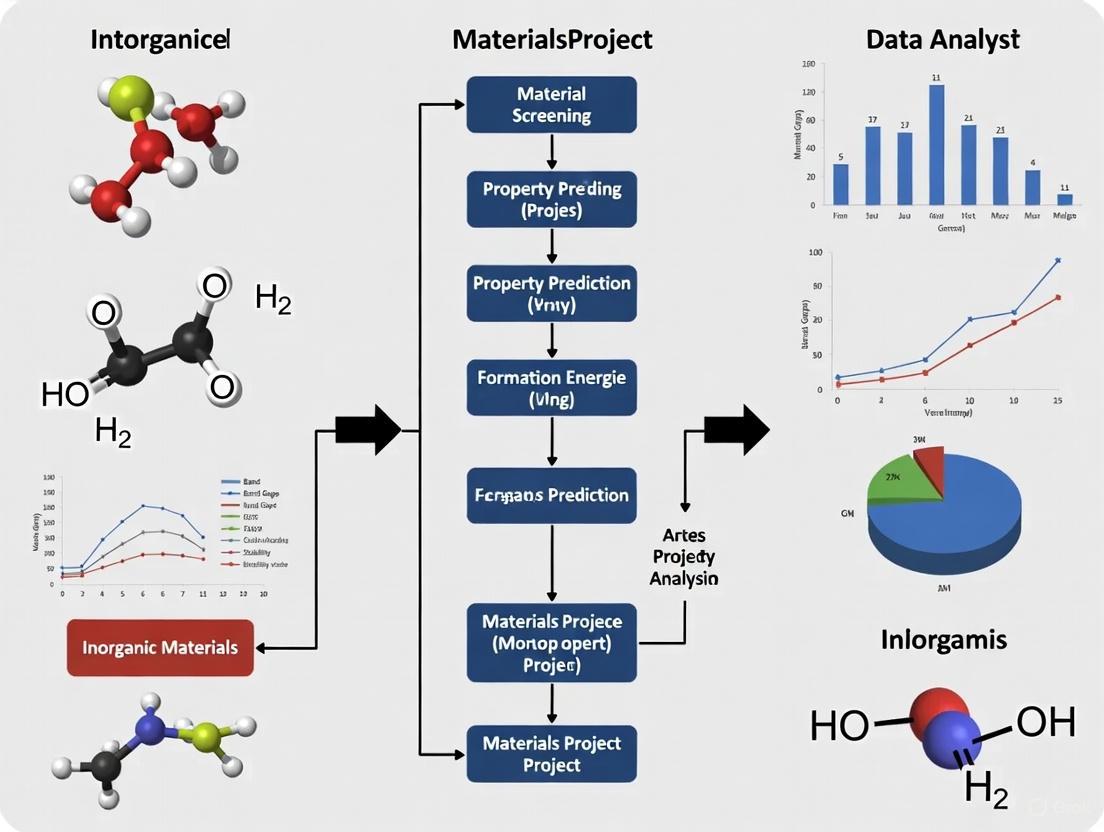

Effective utilization of the Materials Project requires interaction with its REST API using the dedicated Python client, MPRester. The following workflow and corresponding diagram illustrate a standard research query process.

Figure 1: A standardized workflow for querying the Materials Project database, from initial setup to final analysis.

Detailed Methodology for a Common Research Query

The following Python code exemplifies a detailed protocol for querying the database, mirroring the workflow in Figure 1. This example finds all stable compounds in the Si-O chemical system with a band gap greater than 0.5 eV.
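A minimal sketch of such a query is given here. It assumes the `mp-api` package is installed and that a recent client version exposes `mpr.materials.summary.search`; older releases may differ. The `MP_API_KEY` environment variable name, the open-ended `(min_gap, None)` range, and both helper functions are assumptions made for illustration. Because range filters are typically inclusive, a small offline helper applies the strict "greater than" condition.

```python
import os


def query_stable_gapped(chemsys="Si-O", min_gap=0.5, api_key=None):
    """Fetch stable entries in `chemsys` with band gap >= min_gap (needs network)."""
    # Imported lazily so the offline helper below works without mp-api installed.
    from mp_api.client import MPRester

    # MP_API_KEY is an assumed environment variable name for the key.
    key = api_key or os.environ.get("MP_API_KEY")
    with MPRester(key) as mpr:
        docs = mpr.materials.summary.search(
            chemsys=chemsys,
            is_stable=True,
            band_gap=(min_gap, None),  # assumed: None leaves the upper bound open
            fields=["material_id", "formula_pretty", "band_gap"],
        )
    return [
        {"material_id": str(d.material_id), "formula": d.formula_pretty,
         "band_gap": d.band_gap}
        for d in docs
    ]


def strictly_above(records, min_gap=0.5):
    """Keep records whose band gap is strictly above min_gap, widest gap first."""
    kept = [r for r in records
            if r["band_gap"] is not None and r["band_gap"] > min_gap]
    return sorted(kept, key=lambda r: r["band_gap"], reverse=True)


# Hypothetical records standing in for an API response (placeholder values).
sample = [
    {"material_id": "mp-A", "formula": "SiO2", "band_gap": 5.7},
    {"material_id": "mp-B", "formula": "SiO2", "band_gap": 0.5},
    {"material_id": "mp-C", "formula": "SiO", "band_gap": None},
]
for record in strictly_above(sample):
    print(record["material_id"], record["formula"], record["band_gap"])
```

With a valid key, `strictly_above(query_stable_gapped())` would chain the two steps. Requesting only the needed fields keeps the response small.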

Table 4: Essential Digital Tools and Concepts for Materials Project Research

| Tool or Concept | Function & Purpose | Technical Notes |
|---|---|---|
| MPRester Python Client | The primary interface for programmatically querying the MP database. | Enables complex searches with filters and is essential for automating data retrieval. [5] [7] |
| Materials Project API Key | A unique authentication token granting access to the API. | Required for using MPRester; obtained free of charge from the MP website profile page. [5] |
| Pymatgen Library | A powerful Python library for materials analysis. | Deeply integrated with MP; used for parsing MP data, structure manipulation, and advanced analysis like phase diagram construction. [7] |
| material_id (MP-ID) | The unique identifier for every material in the database (e.g., mp-149 for silicon). | The primary key for retrieving all data associated with a specific material. [5] [7] |
| Property Filters | Search parameters like elements, band_gap, energy_above_hull. | Allows for targeted discovery of materials meeting specific criteria without downloading the entire database. [5] |

Database Currency and Versioning

The Materials Project is a dynamic resource, with its underlying database undergoing regular updates and versioned releases. These updates can include new materials, corrections to existing data, and changes to data processing schemes [4].

- Versioning System: The MP uses a clear date-based versioning system (e.g., v2025.09.25). A detailed changelog is maintained, summarizing major changes, new content additions, and corrections for each version [4].

- Recent Updates and Implications for Research:

  - r2SCAN Functional: A significant ongoing effort is the incorporation of materials calculated with the more accurate r2SCAN functional. The database now accepts materials with only r2SCAN calculations, indicating a strategic shift in computational priorities [4].

  - GNoME Structures: The database has integrated tens of thousands of novel crystal structures predicted by Google's GNoME (Graph Networks for Materials Exploration) project. Researchers must accept a BY-NC (non-commercial) license to access this subset of the data [4].

  - Continuous Improvement: The changelog reveals an active process of quality control, including the deprecation of documents with unreasonable elastic moduli and fixes for bugs in data mapping [4]. Researchers are advised to consult the changelog to understand the precise dataset underlying their analysis.
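Because results depend on the release they were drawn from, it can help to record and compare version tags alongside an analysis. The sketch below assumes tags follow the `vYYYY.MM.DD` pattern shown above with no extra suffixes. The `parse_db_version` helper is hypothetical.

```python
from datetime import date


def parse_db_version(tag):
    """Convert a tag such as 'v2025.09.25' into a datetime.date."""
    # Assumes exactly three dot-separated integers after an optional 'v'.
    year, month, day = (int(part) for part in tag.lstrip("v").split("."))
    return date(year, month, day)


analysis_version = parse_db_version("v2025.04.10")
current_version = parse_db_version("v2025.09.25")
if current_version > analysis_version:
    days = (current_version - analysis_version).days
    print(f"database is {days} days newer than the analysis snapshot")
```

The `mp-api` client also exposes a `get_database_version()` method on `MPRester`, which, as far as the author of this sketch is aware, returns the live tag that could be stored with each result set.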

Applications and Impact on Materials Research

The predictive data provided by the Materials Project has become a cornerstone for innovation across numerous technological domains, enabling researchers to identify promising candidate materials with unprecedented speed.

- Next-Generation Energy Storage: The database has been "particularly important for battery technology," leading to the discovery of novel ionic conductors for solid-state batteries and improved Li-ion battery materials for energy storage [3]. The Battery Explorer application within MP allows for direct computational screening of electrode materials.

- Environmental Sustainability: Research using MP data has identified materials suited for carbon dioxide (CO2) capture to mitigate greenhouse gases, contributing directly to the development of sustainable and affordable technologies [3].

- Electronic and Functional Materials: Success stories include the discovery of ferroelectrics for switches and microelectronic devices, showcasing the utility of the database for optoelectronics and other advanced applications [1] [3].

The Materials Project has successfully established itself as an indispensable, high-throughput computational engine for the global materials research community. By providing open access to pre-computed properties for hundreds of thousands of known and predicted materials, it embodies a paradigm shift from serendipitous discovery to rational, data-driven materials design. Its core mission—to dramatically accelerate the journey from a material's conception to its practical application—is supported by a robust and ever-evolving database architecture, powerful programmatic interfaces, and a commitment to data quality and currency. As the database continues to integrate more accurate computational methods and expand its scope, it will remain a foundational resource for researchers and scientists working to solve the world's most pressing technological and environmental challenges.

The accuracy of computational materials discovery is fundamentally anchored in the choice of the exchange-correlation (XC) functional within density functional theory (DFT). For over a decade, large-scale materials databases, such as the Materials Project (MP), have relied predominantly on the Generalized Gradient Approximation (GGA), often supplemented with a Hubbard U parameter (GGA+U) to better describe localized electrons in transition metal compounds [8]. While this approach has enabled the calculation of properties for hundreds of thousands of materials, GGA and GGA+U possess well-documented systematic errors, particularly related to electron self-interaction, which can lead to inaccuracies in predicting formation energies, electronic structures, and magnetic properties [8] [9]. The quest for higher fidelity has now ushered in the era of meta-GGA functionals, with the regularized-restored strongly constrained and appropriately normed (r2SCAN) functional at the forefront, offering a superior balance of accuracy and numerical stability [9] [10].

This transition presents a significant practical challenge: the immense computational investment embodied in existing GGA(+U) databases. Recomputing millions of materials with the more computationally intensive r2SCAN (which has 4–5× the cost of GGA) is neither resource-efficient nor necessary, as the highest accuracy is often only critical for materials near the convex hull of stability [8]. Consequently, the materials science community requires robust methodologies to navigate this mixed-data landscape. This guide provides an in-depth technical overview of the frameworks and practices for effectively combining GGA+U and r2SCAN data, a capability that is now integral to the Materials Project database and vital for researchers pursuing inorganic materials design [11] [4].

Theoretical Foundations: Understanding the Functional Hierarchy

The Limitations of GGA and GGA+U

The Perdew-Burke-Ernzerhof (PBE) GGA functional and its GGA+U extension have been the workhorses of high-throughput DFT. However, their limitations are particularly pronounced in specific classes of materials:

- Strongly Correlated Systems: For materials with localized d or f orbitals, GGA often fails to accurately describe electronic correlations, leading to incorrect predictions of electronic and magnetic structure. GGA+U addresses this by introducing an on-site Coulomb correction, but its accuracy is highly dependent on the empirically determined U parameter [9] [8].

- Formation Energy Errors: The mean absolute error (MAE) in GGA(+U) formation energies is on the order of 50–200 meV/atom, even after the application of empirical corrections [8].

- Magnetic Properties: GGA tends to overestimate magnetic exchange coupling, while GGA+U tends to underestimate it, leading to corresponding inaccuracies in predicting magnetic transition temperatures [9].

The Advent of Meta-GGA: The r2SCAN Functional

The r2SCAN meta-GGA functional represents a significant step up Jacob's ladder of DFT approximations. It incorporates the kinetic energy density, allowing it to satisfy more physical constraints than GGA. Key advantages include:

- Improved Accuracy: r2SCAN has been shown to reduce errors in predicted formation energies of strongly-bound materials by approximately 50% compared to PBEsol GGA [8].

- Better Magnetic Predictions: For predicting Néel temperatures in antiferromagnetic materials, r2SCAN achieves a Pearson correlation coefficient of 0.98 with experimental values, drastically outperforming GGA and GGA+U [9].

- Numerical Stability: Unlike its predecessor SCAN, r2SCAN is designed with regularizations that mitigate numerical instabilities, making it more suitable for high-throughput computations [9] [10].

Table 1: Comparison of DFT XC Functionals for Materials Properties

| Functional | Formation Energy MAE | Typical Computational Cost | Key Strengths | Key Limitations |
|---|---|---|---|---|
| GGA (PBE) | ~50-200 meV/atom [8] | 1x (Baseline) | Broad applicability, speed | Systematic errors for correlated systems |
| GGA+U | Similar to GGA (varies with U) | ~1x | Improved description of localized states | U parameter is empirical and system-dependent |
| r2SCAN | ~25-50% lower than GGA [8] | 4-5x [8] | High accuracy for energies & magnetism | Higher computational cost, requires careful workflow |

The Mixing Scheme: A Bridge Between Levels of Theory

To leverage the existing investment in GGA(+U) calculations while incorporating the enhanced accuracy of r2SCAN, the Materials Project employs a sophisticated mixing scheme [11] [8]. The core idea is to treat electronic energies as the sum of a reference energy and a relative energy, enabling consistent cross-functional comparisons.

Core Principles of the Mixing Scheme

The scheme is built on two foundational rules [11]:

- Construction from a GGA(+U) Hull: The process begins with a convex hull built from GGA(+U) formation energies. When an r2SCAN calculation is available for a material, its formation energy is integrated by adding its relative energy difference ( \Delta E_{\text{ref}} ) to the GGA(+U) reference energy ( E_{\text{ref}} ) at that composition.

- Full r2SCAN Hull Construction: A convex hull can be constructed using only r2SCAN formation energies, but only if r2SCAN calculations exist for every reference structure on the stable hull. Missing GGA(+U) materials can be incorporated by adding their ( \Delta E_{\text{ref}} ) to the r2SCAN reference energy.

This approach avoids the pitfalls of "naive mixing," where simply replacing individual GGA(+U) energies with r2SCAN ones can cause severe distortions to the convex hull, such as incorrectly stabilizing or destabilizing phases [8].
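The arithmetic behind the first rule, and the reason naive mixing distorts the hull, can be shown with a toy calculation. This is a simplified reading of the rule as stated above, not the Materials Project implementation. The function name and all energies are made-up illustrative values in eV/atom.

```python
def mix_onto_gga_hull(e_ref_gga, e_ref_r2scan, e_material_r2scan):
    """Place an r2SCAN energy on a GGA(+U) hull via its offset from the reference.

    delta_e_ref is the material's r2SCAN energy relative to the r2SCAN energy
    of the reference structure at the same composition.
    """
    delta_e_ref = e_material_r2scan - e_ref_r2scan
    return e_ref_gga + delta_e_ref


# Hypothetical composition: one reference phase and one higher-energy polymorph.
e_ref_gga = -1.50       # reference phase, GGA(+U)
e_ref_r2scan = -1.62    # same reference phase, r2SCAN
e_poly_r2scan = -1.58   # polymorph, r2SCAN only

mixed = mix_onto_gga_hull(e_ref_gga, e_ref_r2scan, e_poly_r2scan)
naive = e_poly_r2scan   # naive mixing drops the r2SCAN number in unchanged

print(f"mixed energy: {mixed:.2f} (above the GGA reference: {mixed > e_ref_gga})")
print(f"naive energy: {naive:.2f} (below the GGA reference: {naive < e_ref_gga})")
```

In this example the polymorph sits 0.04 eV/atom above the reference in r2SCAN, and the mixed value preserves that ordering. The naive value would instead place the polymorph below the GGA(+U) reference, incorrectly stabilizing it.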

Workflow for Mixed Phase Diagram Construction

The following diagram illustrates the logical workflow for applying the GGA/GGA+U/r2SCAN mixing scheme, as implemented in the Materials Project.

Practical Implementation: Calculation Workflows and Data Retrieval

The r2SCAN Calculation Workflow

The Materials Project employs a specific two-step computational workflow to generate r2SCAN data efficiently [10]:

- Initial GGA Optimization: A full structural optimization is first performed using the PBEsol GGA functional. This step provides a reliable initial guess for the structure and charge density at a lower computational cost.

- Final r2SCAN Optimization: Using the PBEsol-optimized structure as input, a final structural optimization is performed with the r2SCAN functional. This two-step process significantly speeds up the overall meta-GGA calculation while maintaining high accuracy.

This workflow underscores the synergistic use of different levels of theory within a single framework.
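The chaining of the two steps can be sketched independently of any particular DFT engine. The plan below only records the functional for each stage and feeds the output of the first relaxation into the second. The `run_two_step` function, the `relax` callback signature, and the stand-in `fake_relax` are hypothetical. The VASP tag values are included as an assumption about how the functionals would typically be selected, and they are not a complete input set.

```python
# Assumed VASP tags: GGA = PS selects PBEsol, METAGGA = R2SCAN selects r2SCAN.
TWO_STEP_PLAN = (
    {"name": "pre-relax", "functional": "PBEsol", "vasp_tags": {"GGA": "PS"}},
    {"name": "final-relax", "functional": "r2SCAN", "vasp_tags": {"METAGGA": "R2SCAN"}},
)


def run_two_step(structure, relax, plan=TWO_STEP_PLAN):
    """Run each relaxation in order, passing the previous result forward."""
    current = structure
    for step in plan:
        current = relax(current, step)
    return current


def fake_relax(structure, step):
    """Stand-in for a real DFT relaxation: only records which step ran."""
    return {
        "label": structure["label"],
        "history": structure["history"] + [step["functional"]],
    }


result = run_two_step({"label": "example", "history": []}, fake_relax)
print(result["history"])
```

In a real workflow `relax` would submit a calculation and return the optimized structure, ideally reusing the converged charge density from the first step.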

Navigating the Materials Project Database

With the introduction of the mixing scheme, users must be aware of different data fields when querying the API. The critical distinction lies in the thermo_type parameter.

Table 2: Key Data Query Types in the Materials Project API

| Thermo Type | Description | Data Origin | Use Case |
|---|---|---|---|
| GGA_GGA+U_R2SCAN | Corrected formation energy using the mixing scheme. | Mix of GGA, GGA+U, and r2SCAN data. | Default choice for accurate phase stability analysis (e.g., building phase diagrams). |
| R2SCAN | Raw, uncorrected formation energy from a standalone r2SCAN calculation. | Pure r2SCAN calculation only. | Assessing the pure r2SCAN result for a single material; not for direct mixing. |

It is crucial to note that GGA_GGA+U_R2SCAN is the recommended and default thermodynamic data type as it ensures a consistent and comparable set of energies across the database [4] [12]. A query for a material like Ag₂O (mp-353) will return a formation energy of -0.314 eV/atom with GGA_GGA+U_R2SCAN, which is the mixed value, versus -0.169 eV/atom with R2SCAN, which is the raw value [12]. Furthermore, not all materials in a chemical system may have r2SCAN calculations; the mixing scheme ensures the best possible hull is constructed from all available data [12].
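A hedged sketch of how the two values might be retrieved and compared follows. It assumes a recent `mp-api` release in which `mpr.materials.thermo.search` accepts `material_ids`, `thermo_types`, and `fields`. Both helper functions are hypothetical, and the offline example simply reuses the Ag₂O numbers quoted above.

```python
def fetch_formation_energies(material_id, thermo_types, api_key=None):
    """Return {thermo_type: formation energy per atom} (needs network access)."""
    # Imported lazily so the offline comparison below runs without mp-api.
    from mp_api.client import MPRester

    with MPRester(api_key) as mpr:
        docs = mpr.materials.thermo.search(
            material_ids=[material_id],
            thermo_types=list(thermo_types),
            fields=["material_id", "thermo_type", "formation_energy_per_atom"],
        )
    # thermo_type may be an enum; fall back to the raw value if it is not.
    return {
        str(getattr(d.thermo_type, "value", d.thermo_type)): d.formation_energy_per_atom
        for d in docs
    }


def mixing_shift(energies, mixed="GGA_GGA+U_R2SCAN", raw="R2SCAN"):
    """Difference (mixed minus raw) between the two formation energies, in eV/atom."""
    return energies[mixed] - energies[raw]


# Values quoted in the text for Ag2O (mp-353), used here without a live query.
ag2o = {"GGA_GGA+U_R2SCAN": -0.314, "R2SCAN": -0.169}
print(f"mixed minus raw: {mixing_shift(ag2o):.3f} eV/atom")
```

A live call would look like `fetch_formation_energies("mp-353", ["GGA_GGA+U_R2SCAN", "R2SCAN"])`. The size of the shift is a reminder that the two numbers must not be compared or combined directly.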

For researchers embarking on projects utilizing mixed-fidelity data, the following tools and resources are essential.

Table 3: Essential Computational Tools and Resources

| Tool / Resource | Function | Relevance to GGA+U/r2SCAN Research |
|---|---|---|
| Materials Project API | Programmatic interface to query calculated material properties. | Retrieving GGA_GGA+U_R2SCAN and R2SCAN thermo_types for materials [12]. |
| pymatgen | Python library for materials analysis. | Contains compatibility classes for applying mixing scheme corrections to custom data. |
| VASP | Widely used DFT software package. | Primary engine for running r2SCAN calculations; requires specific settings for stability [9]. |
| Two-Step Workflow | PBEsol optimization followed by r2SCAN optimization [10]. | Standard protocol for generating new r2SCAN data efficiently and reliably. |
| MP Documentation | Official methodology documentation. | Reference for mixing scheme details, calculation parameters, and pseudopotential choices [11] [10]. |

The development of robust mixing schemes marks a pivotal advancement in the evolution of materials databases. It allows the community to strategically integrate higher-fidelity r2SCAN data into the vast existing landscape of GGA+U calculations, thereby enhancing the accuracy of phase stability predictions without necessitating a prohibitively expensive full recomputation. This hybrid data landscape, now actively supported by the Materials Project, empowers researchers to make more reliable predictions of material thermodynamics and properties.

The future trajectory points towards an increasing prevalence of meta-GGA and hybrid functional data. As of early 2025, the Materials Project has already incorporated tens of thousands of new r2SCAN calculations, including materials from the GNoME project, and has updated its data hierarchy to prioritize GGA_GGA+U_R2SCAN thermodynamic data [4]. For the practicing materials scientist, proficiency in navigating this landscape—understanding the theoretical underpinnings, the practical workflow for calculation, and the correct methods for data retrieval—is no longer optional but essential for cutting-edge computational materials design.

A Comprehensive Guide to Structural, Electronic, Thermodynamic, and Elasticity Data in Materials Project Databases for Inorganic Materials Research

The systematic development of advanced inorganic materials relies on the integration and analysis of four fundamental classes of data: structural, electronic, thermodynamic, and elastic properties. These data types form the cornerstone of computational materials science, enabling researchers to predict material behavior, stability, and performance across diverse applications from energy storage to information technology. The emergence of large-scale materials databases such as the Materials Project (MP), Inorganic Crystal Structure Database (ICSD), and Alexandria has created unprecedented opportunities for data-driven materials discovery [13]. These repositories aggregate calculated and experimental properties for hundreds of thousands of inorganic compounds, serving as essential resources for the materials science community.

Structural data encompasses the geometric arrangement of atoms in crystal lattices, including space group symmetry, lattice parameters, and atomic coordinates. Electronic data describes how electrons are distributed and behave in materials, governing properties like electrical conductivity and optical characteristics. Thermodynamic data quantifies energy relationships and phase stability, while elastic properties describe a material's response to mechanical stress. Together, these data types provide a comprehensive framework for understanding and predicting material performance [14] [15].

The integration of machine learning with these foundational datasets has accelerated materials discovery, enabling researchers to navigate the vast compositional space of potential inorganic compounds more efficiently than traditional experimental approaches alone [16] [17]. This guide provides a technical overview of these key data types, their computational and experimental determination, and their application in inorganic materials research within the context of modern materials databases.

Structural Data

Fundamental Concepts and Significance

Structural data forms the foundational layer of materials informatics, providing the atomic-level blueprint that determines virtually all other material properties. The Inorganic Crystal Structure Database (ICSD) represents the world's largest database for fully determined inorganic crystal structures, containing crystallographic data for published inorganic and organometallic structures alongside theoretically calculated structure models [18]. With over 16,000 new entries added annually, the ICSD provides an indispensable resource for materials science and crystallography research. Recent enhancements to the ICSD include expanded representation and analysis of coordination polyhedra, uniform naming and classification of minerals, and integration of external links to additional data sources [18].

Crystal structures are typically defined by their unit cell – the repeating unit comprising atom types (chemical elements), coordinates, and periodic lattice – which collectively describe the complete symmetry and geometry of the crystalline material [13]. The accurate determination of these structural parameters enables researchers to understand and predict material behavior across diverse applications.

Computational Approaches and Databases

Table 1: Major Structural Databases for Inorganic Materials

| Database Name | Primary Focus | Number of Structures | Key Features |
|---|---|---|---|
| ICSD | Experimentally determined inorganic crystal structures | 16,000 new entries annually | Mineral standardization, coordination polyhedra analysis [18] |
| Materials Project (MP) | Computationally derived structures | >130,000 | High-throughput DFT calculations, structural relationships [13] |
| Alexandria | Enhanced computed structures | 607,683 (in Alex-MP-20 dataset) | Combined with MP data for generative modeling [13] |
| JARVIS | Computational materials data | Not specified in sources | Used for benchmarking ML models [17] |

Generative models like MatterGen represent a significant advancement in structural prediction capabilities. This diffusion-based generative model creates stable, diverse inorganic materials across the periodic table by gradually refining atom types, coordinates, and the periodic lattice through a customized diffusion process [13]. MatterGen generates structures that are more than twice as likely to be new and stable compared to previous models, with generated structures being more than ten times closer to the local energy minimum according to Density Functional Theory (DFT) calculations [13].

The structural prediction workflow typically involves generating candidate structures, which are then relaxed using DFT to find their local energy minimum. The stability is assessed by calculating the energy above the convex hull defined by reference datasets such as Alex-MP-ICSD, which contains 850,384 unique structures recomputed from MP, Alexandria, and ICSD databases [13]. A structure is considered stable if its energy per atom after relaxation is within 0.1 eV per atom above this convex hull.
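The stability test can be made concrete with a toy binary system. The sketch below builds the lower convex hull of formation energy versus composition and measures how far a candidate lies above it, then applies the 0.1 eV/atom threshold. It is a pure-Python illustration restricted to two components. Real analyses would use a multi-component phase diagram tool such as pymatgen's, and all energies here are invented.

```python
def _cross(o, a, b):
    """2D cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (x, energy) points, ordered by composition x."""
    best = {}
    for x, energy in points:  # keep only the lowest energy per composition
        best[x] = min(energy, best.get(x, energy))
    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it lies on or above the chord to p.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Vertical distance from (x, energy) to the hull, by linear interpolation."""
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return energy - (e1 + (e2 - e1) * (x - x1) / (x2 - x1))
    raise ValueError("composition lies outside the hull's range")


def is_stable(x, energy, hull, tolerance=0.1):
    """Apply the 'within 0.1 eV per atom above the hull' criterion."""
    return energy_above_hull(x, energy, hull) <= tolerance


# Hypothetical A-B system: x is the fraction of B, energies in eV/atom.
references = [(0.0, 0.0), (0.5, -1.0), (1.0, 0.0), (0.75, -0.3)]
hull = lower_hull(references)
for candidate in [(0.25, -0.45), (0.75, -0.30)]:
    above = energy_above_hull(*candidate, hull)
    print(candidate, f"{above:.3f}", is_stable(*candidate, hull))
```

Here the reference at x = 0.75 is excluded from the hull because it lies above the line joining its neighbours, which is exactly the situation the energy-above-hull metric quantifies.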

Electronic Properties Data

Key Electronic Parameters and Their Determination

Electronic properties data encompasses fundamental characteristics that govern how materials interact with electrons and electromagnetic fields, critically influencing applications in electronics, optoelectronics, and energy conversion. Key electronic parameters include band gap, density of states, electronic conductivity, and magnetic properties. These properties are predominantly determined through computational approaches, particularly Density Functional Theory (DFT), which has become the standard method for predicting electronic structure of materials [14].

Band gap – the energy difference between the valence and conduction bands – determines whether a material behaves as a conductor, semiconductor, or insulator. This property can be calculated using DFT with various exchange-correlation functionals, though the accuracy depends heavily on the functional choice. For instance, screening materials for specific electronic applications often involves filtering based on band gap ranges, such as selecting materials with band gaps between 0.1 and 3.0 eV for semiconductor applications [19].

Machine Learning Approaches

Machine learning has emerged as a powerful tool for predicting electronic properties, significantly reducing computational costs compared to traditional DFT calculations. Ensemble machine learning frameworks based on electron configuration have demonstrated remarkable accuracy in predicting thermodynamic stability, which correlates strongly with electronic structure [17]. These models achieve high performance with significantly less data than previous approaches – requiring only one-seventh of the data used by existing models to achieve the same performance level [17].

The Electron Configuration Convolutional Neural Network (ECCNN) represents a novel approach that addresses the limited understanding of electronic internal structure in current models [17]. By using electron configuration information directly as input, ECCNN captures intrinsic atomic characteristics that influence electronic behavior with less inductive bias than models relying on manually crafted features. When combined with other models through stacked generalization in the ECSG framework, it achieves an Area Under the Curve score of 0.988 in predicting compound stability within the JARVIS database [17].

Thermodynamic Data

Fundamental Principles and Computational Methods

Thermodynamic data provides crucial information about the energy landscape and stability of materials, governing phase transitions, chemical reactions, and synthesizability. The Gibbs free energy (G) represents a central thermodynamic quantity, defining the maximum reversible work potential under constant temperature and pressure according to the fundamental equation G = E - TS, where E is the total energy, T is temperature, and S is entropy [20].

Traditional computational methods for determining thermodynamic properties include:

- Density Functional Theory (DFT): Calculates total energy from electronic structure

- Density Functional Perturbation Theory (DFPT): Extends DFT to compute vibrational properties and phonon modes essential for finite-temperature Gibbs free energy

- Molecular Dynamics and Monte Carlo simulations: Used in conjunction with thermodynamic integration for free energy calculations [20]

These methods, while accurate, are computationally demanding and time-consuming, creating bottlenecks in high-throughput materials discovery.

Machine Learning and Physics-Informed Approaches

Table 2: Thermodynamic Data Sources and Prediction Methods

| Data Source/Method | Data Type | Size | Application |
|---|---|---|---|
| NIST-JANAF Database | Experimental thermodynamic data | 694 materials | Gas phase materials at 1200 K [20] |
| PhononDB | Computational phonon data | 873 materials | Metal oxide compounds at varying temperatures [20] |
| ThermoLearn PINN | Multi-output prediction | N/A | Simultaneous prediction of G, E, and S [20] |
| Ensemble ML (ECSG) | Stability prediction | N/A | AUC of 0.988 for compound stability [17] |

Physics-Informed Neural Networks (PINNs) have emerged as particularly effective for thermodynamic prediction, especially in data-limited scenarios common in materials science. The ThermoLearn model exemplifies this approach, integrating the Gibbs free energy equation directly into its loss function to simultaneously predict all three thermodynamic quantities (G, E, and S) [20]. This multi-output model demonstrates a 43% improvement in normal scenarios and even greater enhancement in out-of-distribution regimes compared to next-best models, showcasing the value of incorporating physical principles into machine learning frameworks [20].
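The idea of folding the Gibbs relation into the loss can be sketched in a few lines. This is a minimal illustration rather than ThermoLearn's actual implementation: the function name `physics_informed_loss`, the weight `lam`, and the squared-error form are assumptions, and only the constraint G = E - TS is taken from the text above.

```python
def physics_informed_loss(pred, target, temperature, lam=1.0):
    """Squared-error data loss plus a penalty for violating G = E - T*S.

    pred and target are dicts with keys "G", "E", "S" in consistent units
    (for example eV and eV/K). lam is a hypothetical weight on the physics term.
    """
    # Data term: distance of each predicted quantity from its label.
    data_loss = sum((pred[k] - target[k]) ** 2 for k in ("G", "E", "S"))
    # Physics term: residual of the Gibbs relation on the predictions alone.
    residual = pred["G"] - (pred["E"] - temperature * pred["S"])
    return data_loss + lam * residual ** 2


# Illustrative numbers only (not taken from any dataset).
labels = {"G": -5.3, "E": -5.0, "S": 0.001}        # consistent at T = 300 K
consistent = {"G": -5.3, "E": -5.0, "S": 0.001}
inconsistent = {"G": -5.0, "E": -5.0, "S": 0.001}   # violates G = E - T*S
print(physics_informed_loss(consistent, labels, 300.0))
print(physics_informed_loss(inconsistent, labels, 300.0))
```

Because the physics term depends only on the predictions, it can in principle also be evaluated on unlabeled inputs, which is one reason such constraints tend to help in data-limited settings.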

For stability prediction specifically, ensemble machine learning methods based on stacked generalization have proven highly effective. These approaches combine models rooted in distinct domains of knowledge – such as the Magpie model (emphasizing statistical features of elemental properties), Roost (conceptualizing chemical formulas as graphs of elements), and ECCNN (based on electron configuration) – to create a super learner that mitigates individual model biases and enhances predictive performance [17].

Elastic Properties Data

Key Elastic Properties and Experimental Measurement

Elastic properties describe a material's response to mechanical stress and strain, providing crucial insights for structural applications, mechanical behavior, and even related properties like thermal conductivity. The elastic stiffness tensor (C̄̄) contains up to 21 independent coefficients (in Voigt notation) that define how stress relates to strain in the linear regime [14]. From these fundamental coefficients, derived properties include:

- Bulk modulus (B): Resistance to uniform compression

- Shear modulus (G): Resistance to shear deformation

- Young's modulus (E): Stiffness in tension/compression

- Poisson's ratio (ν): Lateral contraction per unit axial extension
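For the special case of a cubic crystal, which has only three independent constants (C11, C12, C44), the derived properties listed above follow from the standard Voigt-Reuss-Hill averages. The sketch below assumes cubic symmetry and inputs in GPa; the function name and the numerical inputs are illustrative and are not taken from the cited sources.

```python
def cubic_moduli(c11, c12, c44):
    """Voigt-Reuss-Hill polycrystalline moduli for a cubic crystal (inputs in GPa)."""
    bulk = (c11 + 2.0 * c12) / 3.0                    # Voigt and Reuss bounds coincide
    g_voigt = (c11 - c12 + 3.0 * c44) / 5.0           # upper bound on shear modulus
    g_reuss = 5.0 * (c11 - c12) * c44 / (4.0 * c44 + 3.0 * (c11 - c12))  # lower bound
    shear = 0.5 * (g_voigt + g_reuss)                 # Hill average
    young = 9.0 * bulk * shear / (3.0 * bulk + shear)
    poisson = (3.0 * bulk - 2.0 * shear) / (2.0 * (3.0 * bulk + shear))
    return {"B": bulk, "G": shear, "E": young, "nu": poisson}


# Illustrative elastic constants in GPa.
for name, value in cubic_moduli(166.0, 64.0, 80.0).items():
    print(f"{name} = {value:.3f}")
```

Lower-symmetry crystals need the full stiffness and compliance tensors for the same averages; the cubic case is shown only because the formulas are compact.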

Experimental determination of elastic properties employs several techniques, each with specific limitations:

- Brillouin spectroscopy: Requires transparent samples with complicated preparation

- Inelastic neutron/X-ray scattering: Time-consuming with stringent sample size requirements

- Resonant ultrasound spectroscopy (RUS): Accurate but needs large, properly oriented samples

- Impulse-stimulated light scattering: Requires multiple crystal orientations [14]

These experimental challenges make computational approaches particularly valuable for high-throughput screening of elastic properties.

Computational Prediction and Machine Learning

Table 3: Accuracy of Computational Methods for Elastic Properties

| Method | Bulk Modulus Error | Shear Modulus Error | Computational Cost | Recommendation |
|---|---|---|---|---|
| RSCAN (meta-GGA) | Most accurate overall | Most accurate overall | High | Recommended for highest accuracy [14] |
| PBESOL/Wu-Cohen (GGA) | High accuracy | High accuracy | Medium | Good balance of accuracy/speed [14] |
| PBE (GGA) | Least accurate | Least accurate | Medium | Discouraged for elastic properties [14] |
| MACE (ML potential) | ~1.5-2× worse than best DFT | ~1.5-2× worse than best DFT | 3-4 orders faster | Recommended for high-throughput [14] |
| CGCNN | MAE <13, R² ≈1 [19] | MAE <13, R² ≈1 [19] | Low | Suitable for large-scale prediction [19] |

Crystal Graph Convolutional Neural Networks (CGCNNs) have demonstrated remarkable effectiveness in predicting elastic properties of inorganic crystals. Recent studies trained two CGCNN models using shear modulus and bulk modulus data of 10,987 materials from the Matbench v0.1 dataset, achieving high accuracy (mean absolute error <13, coefficient of determination R² close to 1) with good generalization ability [19] [21]. These models were subsequently applied to predict elastic properties for 80,664 inorganic crystals, significantly expanding available elastic data resources for material design [19].

The selection of exchange-correlation functionals in DFT calculations significantly impacts the accuracy of computed elastic properties. Meta-GGA functionals like RSCAN provide the most accurate description overall, closely followed by PBESOL or Wu-Cohen GGA formulations [14]. The commonly used PBE functional offers the least accurate representation of elastic properties, making it poorly suited for such calculations despite its popularity for other material properties [14].

Experimental Protocols and Methodologies

Density Functional Theory Calculations

DFT represents the foundational computational method for determining structural, electronic, thermodynamic, and elastic properties of inorganic materials. The standard workflow involves:

Geometry Optimization: The crystal structure is relaxed to its ground state configuration by minimizing forces on atoms and stresses on the unit cell. This typically employs the PBE functional or more advanced functionals like PBESOL or RSCAN for improved accuracy [14].

Property Calculation: Once the ground state structure is obtained, various properties are computed:

- Electronic properties: Band structure, density of states using DFT with appropriate exchange-correlation functionals

- Elastic properties: Calculating the elastic stiffness tensor by applying small strains and determining the stress response

- Thermodynamic properties: Using Density Functional Perturbation Theory to obtain phonon dispersion and related thermal properties [20] [14]

Stability Assessment: Formation energies are calculated and used to construct convex hull diagrams to determine thermodynamic stability relative to competing phases [17] [13].
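For a binary system the convex hull construction reduces to a lower hull in the plane of composition versus formation energy. The sketch below is a simplified, self-contained illustration (production workflows typically rely on a phase-diagram library); the function names and the toy entries are assumptions, and the end members at x = 0 and x = 1 are assumed to be present.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via the monotone-chain method."""
    hull = []
    for p in sorted(points):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(entries):
    """entries: list of (x, formation energy per atom), x = fraction of element B."""
    lowest = {}
    for x, e in entries:                       # keep the lowest energy per composition
        lowest[x] = min(e, lowest.get(x, e))
    hull = lower_hull(list(lowest.items()))
    result = []
    for x, e in entries:
        for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
            if x1 <= x <= x2:                  # interpolate the hull energy at x
                e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
                result.append(e - e_hull)
                break
    return result


# Toy binary A-B system, energies in eV/atom (illustrative values).
entries = [(0.0, 0.0), (1.0, 0.0), (0.5, -1.0), (0.25, -0.2), (0.75, -0.4)]
print(energy_above_hull(entries))
```

Entries with zero energy above the hull are thermodynamically stable in this picture; positive values measure the driving force for decomposition into the neighboring hull phases.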

For accurate elastic property calculation, specific protocols have been established. Plane wave cut-off energies typically range from 330 to 800 eV based on convergence tests for relevant chemical elements, with k-point spacings of 0.04-0.05 Å⁻¹ ensuring well-converged results. Ultrasoft pseudopotentials generated on-the-fly using consistent exchange-correlation functionals maintain calculation consistency [14].

Machine Learning Implementation

Machine learning approaches for materials property prediction follow distinct protocols based on model architecture:

Ensemble Stability Prediction (ECSG Framework):

- Input Representation: Materials are represented through three complementary descriptors:
  - Magpie: Statistical features of elemental properties
  - Roost: Graph representation of chemical formulas
  - ECCNN: Electron configuration matrix (118×168×8) [17]

- Model Architecture:
  - ECCNN employs two convolutional operations with 64 filters (5×5)
  - Batch normalization and 2×2 max pooling after second convolution
  - Fully connected layers for final prediction [17]

- Stacked Generalization: Base model outputs are used as inputs to a meta-level model that produces final predictions [17]
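The stacking step can be illustrated with a tiny meta-learner trained on base-model outputs. This is a sketch under stated assumptions, not the ECSG implementation: the meta-model here is a plain logistic regression fitted by gradient descent, the three columns stand in for stability probabilities from three base models, and all numbers are made up. In practice the base outputs used for training the meta-model should come from held-out folds.

```python
import math


def train_meta_learner(base_outputs, labels, lr=0.5, epochs=2000):
    """Fit a logistic-regression meta-model on base-model probabilities.

    base_outputs: list of rows, one probability per base model.
    labels: 1 for stable, 0 for unstable.
    """
    n_models = len(base_outputs[0])
    weights, bias = [0.0] * n_models, 0.0
    n = len(labels)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_models, 0.0
        for row, y in zip(base_outputs, labels):
            z = bias + sum(w * p for w, p in zip(weights, row))
            err = 1.0 / (1.0 + math.exp(-z)) - y      # gradient of log-loss w.r.t. z
            grad_b += err
            for j, p in enumerate(row):
                grad_w[j] += err * p
        bias -= lr * grad_b / n
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
    return weights, bias


def predict(weights, bias, row):
    """Meta-level probability that a compound is stable."""
    z = bias + sum(w * p for w, p in zip(weights, row))
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical held-out outputs of three base models (columns) for six compounds.
rows = [[0.9, 0.8, 0.7], [0.8, 0.9, 0.6], [0.2, 0.1, 0.4],
        [0.3, 0.2, 0.1], [0.7, 0.6, 0.9], [0.1, 0.3, 0.2]]
labels = [1, 1, 0, 0, 1, 0]
weights, bias = train_meta_learner(rows, labels)
print(predict(weights, bias, [0.85, 0.8, 0.9]))
print(predict(weights, bias, [0.1, 0.2, 0.1]))
```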

CGCNN for Elastic Properties:

- Graph Representation: Crystals are represented as graphs with atoms as nodes and edges connecting nearby atoms

- Convolutional Layers: Multiple graph convolutional layers capture local chemical environments

- Pooling and Readout: Global pooling combines atom features into crystal-level representations for property prediction [19]
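The graph-representation step can be made concrete with a small neighbor search. The sketch below is an assumption-laden simplification: it handles only cubic cells, uses a fixed distance cutoff, and returns bare (i, j, distance) edges, whereas CGCNN itself attaches learned feature vectors to nodes and edges. The function name and example cell are hypothetical.

```python
import itertools
import math


def build_crystal_graph(frac_coords, cell_length, cutoff):
    """Edges (i, j, distance) between atoms closer than cutoff in a cubic cell.

    Periodic images are included by shifting atom j by -1, 0, or +1 cell
    lengths along each axis, so cutoff should not exceed cell_length.
    """
    edges = []
    shifts = list(itertools.product((-1, 0, 1), repeat=3))
    for i, ri in enumerate(frac_coords):
        for j, rj in enumerate(frac_coords):
            for shift in shifts:
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself but keep its periodic images
                delta = [(rj[k] + shift[k] - ri[k]) * cell_length for k in range(3)]
                dist = math.sqrt(sum(d * d for d in delta))
                if dist <= cutoff:
                    edges.append((i, j, dist))
    return edges


# CsCl-type arrangement: two atoms in a cubic cell of 4.0 Å (illustrative).
edges = build_crystal_graph([(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)], 4.0, 3.6)
print(len(edges), "directed edges")
```

Each atom in this example is connected to the eight nearest atoms of the other sublattice, which is the local environment that the convolutional layers would then aggregate.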

Computational Materials Discovery Workflow: This diagram illustrates the integrated computational approaches for predicting material properties and stability.

Generative Materials Design

The MatterGen framework implements a sophisticated protocol for inverse materials design:

- Diffusion Process: A customized diffusion process gradually refines atom types, coordinates, and the periodic lattice:
  - Coordinate diffusion uses a wrapped Normal distribution that respects periodic boundaries
  - Lattice diffusion approaches a cubic lattice with average atomic density
  - Atom types are diffused in categorical space with masked states [13]

- Adapter Modules for Fine-tuning: Tunable components injected into each layer enable conditioning on property labels

- Classifier-Free Guidance: Steers generation toward target property constraints [13]
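The wrapped Normal noise used for coordinate diffusion can be illustrated directly: adding Gaussian noise to a fractional coordinate and wrapping the result back into the unit cell is equivalent to sampling from a wrapped Normal distribution. The sketch below shows only this forward noising step; the function names, the fixed `sigma`, and the example coordinates are assumptions, and none of MatterGen's noise schedule or score network is represented.

```python
import math
import random


def wrap(x):
    """Map a fractional coordinate into [0, 1)."""
    w = x - math.floor(x)
    return 0.0 if w >= 1.0 else w   # guards against rounding up to exactly 1.0


def noise_fractional_coords(frac_coords, sigma, rng):
    """Add Gaussian noise to each fractional coordinate and wrap it into the cell."""
    return [[wrap(c + rng.gauss(0.0, sigma)) for c in atom] for atom in frac_coords]


rng = random.Random(0)                       # seeded for reproducibility
coords = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5], [0.98, 0.02, 0.5]]
noisy = noise_fractional_coords(coords, sigma=0.1, rng=rng)
for atom in noisy:
    print([round(c, 3) for c in atom])
```

Wrapping matters for atoms near a cell face: a coordinate of 0.98 pushed to 1.03 reappears at 0.03 instead of leaving the cell.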

Validation involves DFT relaxation of generated structures and assessment against the convex hull of reference datasets. Successful structures demonstrate energy within 0.1 eV per atom above the convex hull and low RMSD (<0.076 Å) from DFT-relaxed structures [13].

Research Reagent Solutions: Computational Tools and Databases

Table 4: Essential Computational Resources for Inorganic Materials Research

| Resource Name | Type | Primary Function | Access |
|---|---|---|---|
| Materials Project | Database | DFT-calculated properties for >130,000 materials | https://materialsproject.org [13] |
| ICSD | Database | Experimentally determined crystal structures | https://icsd.products.fiz-karlsruhe.de [18] |
| CASTEP | Software | DFT calculation with plane wave basis set | Commercial [14] |
| Phonopy | Software | Phonon calculations for thermodynamic properties | Open source [20] |
| CGCNN | Software/Model | Graph neural network for property prediction | Open source [19] |
| MatterGen | Generative Model | Stable material generation with property control | Not specified [13] |
| ThermoLearn | PINN Model | Multi-output thermodynamic prediction | https://github.com/Sudo-Raheel/ThermoLearn [20] |

These computational tools and databases form the essential "research reagents" for modern inorganic materials science. The Materials Project provides comprehensive DFT-calculated data for over 130,000 materials, serving as a foundational resource for high-throughput screening and machine learning [13]. The ICSD offers the largest collection of experimentally determined inorganic crystal structures, essential for validating computational predictions and understanding real-world material systems [18].

Specialized software packages enable specific property calculations: CASTEP implements DFT with various exchange-correlation functionals optimized for different material classes [14], while Phonopy computes phonon dispersion and thermodynamic properties essential for finite-temperature behavior [20]. Emerging machine learning tools like CGCNN provide fast, accurate property predictions, and generative models like MatterGen enable inverse design of materials with targeted characteristics [19] [13].

Materials Data Ecosystem: This diagram shows the relationships between data sources, computational methods, and property outputs in inorganic materials research.

The integration of these resources creates a powerful ecosystem for materials discovery, where traditional computational methods provide training data for machine learning models, which in turn enable rapid screening and generative design of novel materials with targeted properties. This synergistic approach accelerates the materials development cycle from years to months or weeks, particularly for applications in energy storage, catalysis, and electronic devices [16] [15].

The field of computational materials science is undergoing a transformative shift, driven by the convergence of large-scale deep learning and advanced quantum mechanical calculations. This section examines two of the most significant recent developments: the massive expansion of predicted stable materials through the GNoME (Graph Networks for Materials Exploration) project and the growing adoption of the r2SCAN density functional as a new standard for accuracy in materials databases. These developments are reshaping the Materials Project database, a cornerstone resource for inorganic materials research, enabling unprecedented exploration of chemical space and more reliable prediction of functional materials for applications from clean energy to information processing.

The GNoME Project: Scaling Deep Learning for Materials Discovery

The GNoME project represents a breakthrough in applying deep learning to materials discovery, achieving an order-of-magnitude improvement in prediction efficiency. By scaling up graph neural networks through large-scale active learning, GNoME has expanded the number of known stable crystals from approximately 48,000 to over 421,000, an almost tenfold increase in humanity's catalog of stable inorganic crystals [22]. This expansion includes the discovery of 2.2 million crystal structures deemed stable with respect to previous computational and experimental databases, of which 381,000 are new entries on the updated convex hull [22] [23].

The project employed two complementary discovery frameworks: a structure-based approach that modified existing crystals using advanced substitution techniques, and a composition-based approach that predicted stability from chemical formulas alone before generating candidate structures [22]. Through six rounds of active learning, where model predictions were verified using Density Functional Theory (DFT) calculations and then incorporated into subsequent training, the GNoME models achieved unprecedented accuracy, predicting energies to 11 meV atom⁻¹ and achieving a precision rate of over 80% for structure-based stable predictions [22].

Methodological Advances

Candidate Generation and Filtration

GNoME's success stems from its sophisticated candidate generation and filtration strategies, which enabled efficient exploration of combinatorially vast chemical spaces:

Symmetry-Aware Partial Substitutions (SAPS): This novel framework generalized common substitution approaches by enabling partial replacements of ions while respecting crystal symmetry. Using Wyckoff positions obtained through symmetry analysis, SAPS allowed partial replacements from 1 to all atoms of a candidate ion, considering only unique symmetry groupings at each level to control combinatorial growth [23]. This method proved particularly valuable for discovering complex structures like double perovskites (A₂BB′O₆) that would not be found through complete ionic substitutions [23].
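The combinatorial core of this idea can be sketched as enumerating which Wyckoff groups of a candidate ion are swapped together. The example below is a deliberately simplified illustration, not the GNoME implementation: it assumes the Wyckoff grouping has already been computed, uses hypothetical labels and site indices, and does not deduplicate symmetry-equivalent groupings as the text describes.

```python
from itertools import combinations


def partial_substitutions(wyckoff_groups, new_ion):
    """Enumerate symmetry-respecting partial substitutions of one ion.

    wyckoff_groups maps a Wyckoff label to the site indices it contains
    (all occupied by the ion being replaced). Whole groups are swapped
    together so that the crystal symmetry is preserved.
    """
    labels = sorted(wyckoff_groups)
    candidates = []
    for n_groups in range(1, len(labels) + 1):
        for chosen in combinations(labels, n_groups):
            sites = sorted(i for label in chosen for i in wyckoff_groups[label])
            candidates.append({"ion": new_ion, "groups": chosen, "sites": sites})
    return candidates


# Hypothetical B-site ion occupying two symmetry-distinct groups of four sites each.
b_sites = {"4a": [0, 1, 2, 3], "4b": [4, 5, 6, 7]}
for cand in partial_substitutions(b_sites, "B'"):
    print(cand["groups"], cand["sites"])
```

Replacing only one of the two groups yields an ordered B/B′ arrangement of the kind found in double perovskites, while replacing both recovers the ordinary full substitution.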

Relaxed Oxidation-State Constraints: For compositional discovery, GNoME introduced relaxed constraints on oxidation-state balancing instead of strict oxidation-state balancing, which had previously limited discovery of materials like Li₁₅Si₄ that deviate from conventional valence rules [23].
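A short check makes the Li₁₅Si₄ example concrete: with Li as +1 and Si as ±4, no assignment sums to zero, so a strict filter would discard the composition. The sketch below is illustrative only; the `tolerance` parameter is one hypothetical way to relax the constraint and is not described in the cited work, and the oxidation-state table is a minimal hand-written assumption.

```python
from itertools import product


def is_charge_balanced(composition, oxidation_states, tolerance=0):
    """Return True if some assignment of oxidation states has |net charge| <= tolerance.

    composition: element -> atom count; oxidation_states: element -> allowed states.
    tolerance=0 is strict balancing; a positive value is a hypothetical relaxation.
    """
    elements = list(composition)
    for states in product(*(oxidation_states[el] for el in elements)):
        charge = sum(composition[el] * s for el, s in zip(elements, states))
        if abs(charge) <= tolerance:
            return True
    return False


# Minimal, hand-written table of allowed oxidation states (assumption).
states = {"Li": [1], "Si": [-4, 4], "Na": [1], "Cl": [-1]}
print(is_charge_balanced({"Na": 1, "Cl": 1}, states))
print(is_charge_balanced({"Li": 15, "Si": 4}, states))
print(is_charge_balanced({"Li": 15, "Si": 4}, states, tolerance=1))
```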

Enhanced Substitution Probabilities: The team modified probabilistic models for ionic species substitution to prioritize novel discovery rather than likely substitutions based on existing data. By setting minimum probability values to zero and thresholding high-probability substitutions, the framework enabled efficient exploration of composition space through branch-and-bound algorithms [23].

Model Architecture and Training

GNoME utilized graph neural networks (GNNs) that treated crystal structures as graphs with atoms as nodes and bonds as edges. The models employed a message-passing formulation where aggregate projections were shallow multilayer perceptrons (MLPs) with swish nonlinearities [22]. A critical architectural insight was normalizing messages from edges to nodes by the average adjacency of atoms across the entire dataset, which significantly improved performance—reducing the mean absolute error (MAE) from the previous benchmark of 28 meV atom⁻¹ to 21 meV atom⁻¹ on the initial training set from the Materials Project [22].

The iterative active learning process served as a data flywheel, with each round of DFT verification improving subsequent model performance. The final models demonstrated emergent out-of-distribution generalization, accurately predicting structures with five or more unique elements despite their underrepresentation in the initial training data [22].

Key Findings and Experimental Validation

The GNoME discoveries have substantially diversified the known space of inorganic crystals. The project identified many materials with more than four unique elements—a region of chemical space that had previously proven difficult to explore [22]. Prototype analysis revealed that GNoME added more than 45,500 novel prototypes, representing a 5.6-fold increase beyond the approximately 8,000 prototypes previously known from the Materials Project [22].

Experimental validation confirmed the predictive power of the approach: 736 of the GNoME-predicted stable structures had already been independently experimentally realized, providing strong confirmation of the method's accuracy [22]. The phase-separation energy (decomposition enthalpy) distribution of discovered quaternary materials closely matched that of the Materials Project, indicating that the new materials are meaningfully stable with respect to competing phases rather than merely "filling in the convex hull" with marginally stable compounds [22].

Table: Key Quantitative Outcomes of the GNoME Project

| Metric | Pre-GNoME | Post-GNoME | Improvement |
|---|---|---|---|
| Known stable crystals | ~48,000 | 421,000 | ~10x |
| Novel stable crystals on convex hull | - | 381,000 | - |
| Prediction error (energy) | 28 meV atom⁻¹ (benchmark) | 11 meV atom⁻¹ | ~2.5x |
| Hit rate (structure-based) | <6% (initial) | >80% (final) | >13x |
| Hit rate (composition-based) | <3% (initial) | 33% (final) | >11x |
| Novel prototypes | ~8,000 | >45,500 | ~5.6x |

The r2SCAN Functional: A New Standard for Accuracy

Theoretical Background and Advantages

The r2SCAN (regularized-restored strongly constrained and appropriately normed) functional is a meta-GGA density functional that addresses fundamental limitations of the GGA (Generalized Gradient Approximation) and GGA+U functionals that have traditionally dominated materials databases. While GGA and GGA+U calculations are computationally efficient, they exhibit significant limitations, including:

- Self-interaction error: Improper treatment of electron self-repulsion, particularly problematic for localized d and f electrons [24]

- Inaccurate formation energies: Mean absolute errors of ~194 meV/atom for the PBE GGA functional, with particularly large errors in oxides and strongly bound systems [24]

- Non-universal Hubbard U: The +U correction is semi-empirical and system-dependent, with no precise definition of an "optimal" U value [24]

The r2SCAN functional achieves better numerical stability than its predecessor SCAN while maintaining high accuracy, with MAE of ~84 meV/atom for formation energies [24]. It provides more accurate predictions of formation energies, crystal volumes, magnetism, and band gaps, particularly for strongly bound compounds [24].

Integration into Materials Project Database

The Materials Project has progressively integrated r2SCAN calculations into its database, representing a significant shift in its computational approach:

- v2022.10.28: Initial incorporation of (R2)SCAN calculations as pre-release data, making them available for advanced users alongside default GGA(+U) data [4]

- v2024.12.18: Major update adding 15,483 GNoME-originated materials calculated using r2SCAN and modifying the definition of a "valid material" to accept those with only r2SCAN calculations [4]

- v2025.04.10: Addition of 30,000 GNoME-originated materials calculated using r2SCAN [4]

- Thermodynamic data hierarchy: Implementation of a new preference order for thermodynamic data: GGA_GGA+U_R2SCAN > R2SCAN > GGA_GGA+U, resolving display issues for materials with valid thermodynamic data that failed previous mixing schemes [4]
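When post-processing downloaded records, the preference order above can be applied with a small helper. This is a sketch, not part of the Materials Project client: the function name is hypothetical, and the label strings are assumed to match the mixed-scheme, r2SCAN-only, and GGA/GGA+U thermo types as they appear in the data being processed.

```python
# Most preferred first: mixed scheme, then r2SCAN-only, then GGA/GGA+U.
THERMO_PREFERENCE = ("GGA_GGA+U_R2SCAN", "R2SCAN", "GGA_GGA+U")


def pick_thermo_type(available):
    """Return the most preferred thermo type present in `available`, or None."""
    for thermo_type in THERMO_PREFERENCE:
        if thermo_type in available:
            return thermo_type
    return None


print(pick_thermo_type({"GGA_GGA+U", "R2SCAN"}))
print(pick_thermo_type({"GGA_GGA+U"}))
```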

This integration has required new approaches to data management, particularly regarding the GNoME structures, which are licensed for non-commercial use (BY-NC) and now require explicit acceptance of this license for access through Materials Project interfaces and APIs [4].

Table: r2SCAN Integration Timeline in Materials Project Database

| Database Version | Release Date | Key r2SCAN Additions |
|---|---|---|
| v2022.10.28 | October 2022 | Initial pre-release data available |
| v2024.12.18 | December 2024 | 15,483 GNoME r2SCAN materials; acceptance of materials with only r2SCAN calculations |
| v2025.02.12 | February 2025 | 1,073 Yb materials recalculated with Yb_3 pseudopotential and r2SCAN |
| v2025.04.10 | April 2025 | 30,000 GNoME r2SCAN materials |

Cross-Functional Transferability in Machine Learning Interatomic Potentials

The Transfer Learning Challenge

The coexistence of GGA/GGA+U and r2SCAN data in materials databases has created both opportunities and challenges for developing machine learning interatomic potentials (MLIPs). Foundation potentials (FPs) such as CHGNet, M3GNet, and GNoME have demonstrated remarkable transferability across diverse chemical spaces, but they inherit the limitations of their training data [24] [25].

The central challenge lies in the significant energy scale shifts and poor correlation (Pearson ρ ≈ 0.09) between GGA/GGA+U and r2SCAN total energies [24] [25]. These energy differences can reach tens of eV per atom—far beyond the precision targets of MLIPs (≈30 meV/atom)—creating a "negative transfer" problem where fine-tuning GGA-trained models directly on r2SCAN data actually degrades performance [25].

Energy Referencing Solution

Recent research has identified that the core issue stems from different energy references between functionals rather than fundamental physics discrepancies. The solution involves elemental energy referencing through a multi-step process:

- Pre-training: Train foundation potential (e.g., CHGNet) on extensive GGA/GGA+U data

- Reference calculation: Compute r2SCAN atomic reference energies (E_{AtomRef}^{r2SCAN}) via least-squares fitting using a subset of r2SCAN calculations

- Reference substitution: Replace the GGA AtomRef with the r2SCAN values in the pre-trained model

- Fine-tuning: Freeze the AtomRef and fine-tune the neural network components on r2SCAN data [25]

This approach aligns the energy scales before fine-tuning, reducing the initial prediction error from tens of eV to within tens of meV [25]. After alignment, the residuals between functionals show strong correlation (ρ ≈ 0.93), enabling stable and efficient transfer learning [25].

Performance and Data Efficiency

The energy referencing strategy dramatically improves data efficiency. With only 1,000 r2SCAN structures, transfer learning with proper energy referencing matches the accuracy achieved by training from scratch on 10,000 structures [25]. At full scale, this approach achieves energy MAE of 11.8 meV/atom and force MAE of approximately 36 meV/Å [25].

The scaling law analysis reveals a power-law relationship between dataset size and error, with transfer learning providing consistently lower errors across all dataset sizes compared to training from scratch [25]. This demonstrates that low-fidelity data builds the foundational chemical knowledge, while high-fidelity data enables refinement—when proper energy alignment is maintained.
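A power-law relationship of the form error = a · N^b becomes a straight line in log-log space, so its parameters can be estimated with an ordinary linear fit. The sketch below uses synthetic numbers generated from a known exponent, not data from [25]; the function name is an assumption.

```python
import math


def fit_power_law(sizes, errors):
    """Least-squares fit of error = a * size**b in log-log space; returns (a, b)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    intercept = y_mean - slope * x_mean
    return math.exp(intercept), slope


sizes = [100, 1000, 10000]
errors = [200.0 * n ** -0.5 for n in sizes]    # synthetic errors with exponent -0.5
a, b = fit_power_law(sizes, errors)
print(f"prefactor a = {a:.2f}, exponent b = {b:.3f}")
```

Fitting separate curves for transfer learning and for training from scratch would show a data-efficiency gain as a lower prefactor, a steeper exponent, or both.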

Experimental Protocols and Workflows

GNoME Discovery Workflow

The GNoME materials discovery process follows a structured, iterative workflow that combines candidate generation, neural network filtration, and DFT verification.

Diagram Title: GNoME Active Learning Workflow

The workflow begins with candidate generation using two parallel approaches. The structural pipeline employs symmetry-aware partial substitutions (SAPS) and enhanced probabilistic substitutions to generate novel crystal structures from existing materials [23]. Simultaneously, the compositional pipeline uses relaxed oxidation-state constraints to generate novel chemical formulas, then creates 100 random structures for each promising composition using ab initio random structure searching (AIRSS) [22].

Candidate structures are filtered through GNoME neural networks using volume-based test-time augmentation and uncertainty quantification through deep ensembles [22]. Promising candidates are clustered, and polymorphs are ranked for DFT evaluation using standardized Materials Project settings in VASP (Vienna Ab initio Simulation Package) [22]. Successfully relaxed structures are added to the database and incorporated into the training set for the next active learning round, creating the iterative improvement cycle.
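The filter, verify, retrain cycle described above can be expressed as a generic loop. The sketch below is a toy under heavy assumptions: `predict`, `verify`, and `retrain` are hypothetical placeholder callables, candidates are plain integers, and the "DFT" step is an analytic function. It shows only the control flow and how the hit rate can rise as verified data feeds back into the model.

```python
def run_active_learning(model, pool, predict, verify, retrain, rounds, budget):
    """Generic filter -> verify -> retrain loop returning the per-round hit rates."""
    labelled, hit_rates = [], []
    pool = list(pool)
    for _ in range(rounds):
        # Filtration: rank remaining candidates by predicted energy, keep the best.
        pool.sort(key=lambda c: predict(model, c))
        batch, pool = pool[:budget], pool[budget:]
        if not batch:
            break
        # Verification: run the expensive reference calculation on the batch.
        results = [(c, verify(c)) for c in batch]
        hit_rates.append(sum(1 for _, e in results if e <= 0.0) / len(results))
        # Flywheel: verified results join the training set for the next round.
        labelled.extend(results)
        model = retrain(model, labelled)
    return model, labelled, hit_rates


# Toy problem: candidates near 10 are "stable" (energy <= 0).
def true_energy(c):
    return (c - 10) ** 2 - 4


def toy_predict(center, c):
    return (c - center) ** 2


def toy_retrain(_, labelled):
    return min(labelled, key=lambda item: item[1])[0]


model, labelled, hit_rates = run_active_learning(
    model=0, pool=range(20), predict=toy_predict, verify=true_energy,
    retrain=toy_retrain, rounds=3, budget=4)
print(hit_rates)
```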

Cross-Functional Transfer Learning Protocol

For machine learning interatomic potentials, the cross-functional transfer learning protocol enables effective knowledge transfer from GGA to r2SCAN functionals.

Diagram Title: Cross-functional Transfer Learning Steps

The protocol begins with pre-training a foundation potential (FP) such as CHGNet on large-scale GGA/GGA+U datasets [25]. The critical step involves calculating r2SCAN-specific atomic reference energies (E_{AtomRef}^{r2SCAN}) by solving the least-squares equation E_{AtomRef}^{r2SCAN} = (AᵀA)⁻¹AᵀE_{DFT}, where A is the composition matrix and E_{DFT} is the vector of r2SCAN total energies for a set of representative structures [25]. These reference energies are then substituted into the pre-trained model, effectively shifting the energy baseline to align with r2SCAN. Finally, with the atomic reference energies frozen, the graph neural network components are fine-tuned on the target r2SCAN data, enabling the model to learn the residual corrections while maintaining proper energy scaling.
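The least-squares step for the atomic reference energies can be sketched without external libraries by solving the normal equations directly. This is a minimal illustration, not the CHGNet code: the function name is hypothetical, the composition matrix is assumed to have full column rank, and the toy energies are constructed from known reference values so the fit can be checked.

```python
def fit_atom_refs(compositions, energies):
    """Solve the normal equations (A^T A) x = A^T E for per-element reference energies.

    compositions: rows of atom counts per element (the matrix A).
    energies: total DFT energy of each structure (the vector E).
    """
    n = len(compositions[0])
    # Build the augmented system [A^T A | A^T E].
    aug = [[sum(row[i] * row[j] for row in compositions) for j in range(n)]
           + [sum(row[i] * e for row, e in zip(compositions, energies))]
           for i in range(n)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


# Toy two-element system; energies built from reference values -1.5 and -4.0 eV/atom.
compositions = [[1, 1], [2, 1], [1, 3]]
energies = [-5.5, -7.0, -13.5]
refs = fit_atom_refs(compositions, energies)
print(refs)
```

Subtracting the composition-weighted reference energies from each total energy leaves the residual that the neural network is fine-tuned to predict.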

Table: Key Computational Resources for GNoME and r2SCAN Research

| Resource/Software | Type | Primary Function | Relevance to Research |
|---|---|---|---|
| GNoME Models | Deep Learning Models | Prediction of crystal stability and formation energies | Provides state-of-the-art stability predictions; enables high-throughput screening of novel materials [22] [23] |
| r2SCAN Functional | Quantum Mechanical Method | High-fidelity DFT calculations | Offers improved accuracy for formation energies, electronic properties, and strongly-bound systems [24] |
| VASP (Vienna Ab initio Simulation Package) | DFT Software | First-principles quantum mechanical calculations | Used for DFT verification in GNoME active learning; industry standard for materials simulation [22] |
| CHGNet/M3GNet | Foundation Potentials | Machine learning interatomic potentials | Pre-trained models that can be fine-tuned for specific applications; enable rapid molecular dynamics [24] [25] |
| pymatgen | Python Library | Materials analysis and crystal generation | Provides symmetry analysis for SAPS; materials compatibility analysis; workflow management [23] |
| Materials Project API | Data Interface | Programmatic access to materials data | Enables retrieval of GNoME structures, r2SCAN calculations, and thermodynamic data [4] |

The integration of GNoME materials and r2SCAN calculations represents a paradigm shift in the Materials Project database and computational materials science broadly. The GNoME project has demonstrated that scaled deep learning can overcome traditional discovery bottlenecks, while the transition to r2SCAN reflects the field's increasing emphasis on accuracy and reliability. The development of cross-functional transfer learning protocols further bridges these advances, enabling the community to leverage existing GGA-based knowledge while advancing toward higher-fidelity computational materials design. As these technologies mature, they promise to accelerate the discovery of functional materials for energy storage, catalysis, electronics, and other critical applications, fundamentally expanding the boundaries of materials innovation.

In the context of inorganic materials research, particularly within materials databases such as the Materials Project, data provenance refers to the comprehensive documentation of the origin, history, and methodological lineage of a data point. It encompasses the complete narrative of how data was generated, what materials and processes were involved, and any transformations or analyses it underwent. In simpler terms, provenance details the "who, what, when, where, and how" of data creation and handling. As biological research has recognized, these details "determine an experiment’s results, specify how it can be reproduced, and condition our analyses and interpretations" [26]. The core challenge in modern materials science is effectively distinguishing between computationally-predicted data, derived from quantum mechanical calculations or machine learning models, and experimental data, obtained through direct physical measurement and characterization.

The absence of robust provenance tracking creates significant reproducibility problems. When data is shorn of its immediate context, the methodological information that was transparent to the original researcher becomes difficult to reconstruct, even by others within the same research group [26]. This reconstruction often relies on "private communications, rereading notebook entries, polling one's own or a group's collective memory" – all methods that are notoriously unreliable [26]. For materials databases serving diverse researchers and drug development professionals, establishing clear provenance is not merely administrative but fundamental to scientific integrity, enabling users to assess data quality, understand limitations, and build upon existing research with confidence.

Methodologies for Provenance Capture

Core Principles for Provenance Management

Establishing effective provenance requires adherence to several foundational principles. The most critical is real-time capture: provenance information must be recorded as the experiment or computation is planned, performed, and analyzed [26]. Post-hoc annotation is notoriously unreliable and often incomplete. The system must be designed for simplicity and integration with the researcher's natural workflow; the easier and more helpful the capture process is to the experimentalist or computationalist, the more routinely it will be adopted [26]. Furthermore, provenance frameworks must be flexible and extensible to accommodate the wide variety of experimental and computational practices across inorganic materials science without imposing stifling standards.

A successful implementation, as demonstrated in a nine-year maize genetics study, relies on a joint effort between experimentally and computationally inclined researchers [26]. Experimentalists must repeatedly demonstrate their workflows and critically test prototypes, while computationalists must observe these processes, identify unstated assumptions, and design minimally intrusive, efficient capture systems. This collaborative, iterative approach maximizes the practicality and adoption of the provenance framework.

Tracking Experimental Data Provenance

For experimental data pertaining to inorganic materials, provenance capture must document the entire lifecycle from synthesis to characterization.

- Unique Identification System: The heart of a robust provenance system is a unique identifier for every physical object and sample that contributes to data production [26]. This includes precursor materials, synthesized compounds, and characterized samples. Identifiers should be mnemonic, distinguishing between types of objects (e.g., 'S' for sample, 'P' for powder precursor) and should contain redundant information to guard against loss [26].

- Contemporaneous Data Recording: Every action or datum involving a tracked object should be recorded at the moment of the action or shortly thereafter, using systems like barcodes scanned into a spreadsheet on a tablet [26]. This contemporaneous collection is a primary safeguard against data corruption or loss.

- Detailed Methodological Capture: The provenance record must extend beyond sample identity to include the full experimental context:

  - Synthesis Parameters: Precursors, concentrations, temperatures, atmospheres, durations, and equipment used.

  - Processing Conditions: Annealing temperatures, pressing pressures, milling times, etc.

  - Characterization Techniques and Settings: For XRD, include the instrument model, radiation source, scan range, and step size. For spectroscopy, document the laser wavelength, power, and grating.
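The identifier and contemporaneous-recording principles above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the identifier layout (type prefix, year, serial, check digits), the weighted mod-97 checksum, and the function names are hypothetical choices, not a scheme prescribed by the cited study.

```python
from datetime import datetime, timezone

# Mnemonic prefixes; 'S' and 'P' follow the examples in the text.
PREFIXES = {"sample": "S", "precursor": "P"}


def check_digits(core):
    """Two redundant digits: position-weighted character sum modulo 97."""
    weighted = sum((i + 1) * ord(ch) for i, ch in enumerate(core))
    return f"{weighted % 97:02d}"


def make_identifier(kind, year, serial):
    """Build an identifier such as S2024-00017-NN (NN = check digits)."""
    core = f"{PREFIXES[kind]}{year:04d}-{serial:05d}"
    return f"{core}-{check_digits(core)}"


def is_valid(identifier):
    """Return True if the trailing check digits match the rest of the ID."""
    core, sep, check = identifier.rpartition("-")
    return bool(sep) and check == check_digits(core)


def log_action(identifier, action):
    """Record an action on a tracked object with a UTC timestamp."""
    if not is_valid(identifier):
        raise ValueError(f"identifier failed its check: {identifier!r}")
    return {
        "id": identifier,
        "action": action,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
```

The check digits are the redundant information: a mistyped or misread serial number fails validation at scan time instead of silently attaching data to the wrong sample.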

Tracking Computational Data Provenance

For computationally-predicted data, provenance must capture the digital workflow with similar rigor.

- Software and Code Versioning: Document the exact software versions (e.g., VASP 6.3.0, Quantum ESPRESSO 7.2), including any patches or modifications. For in-house scripts, use version control systems (e.g., Git) and record the specific commit hash.

- Input Parameters and Potentials: Record all input parameters for the calculation, such as exchange-correlation functionals (e.g., PBE, SCAN), convergence criteria (energy, force), k-point meshes, and cutoff energies. Critically, document the pseudopotential or basis set used, including its source and version.

- Workflow and Post-Processing: Capture the sequence of computational steps, such as structure relaxation followed by electronic structure analysis. Any post-processing scripts or transformations applied to the raw output data must also be recorded to ensure the final reported value is fully traceable.
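One way to hold the items listed above together is a small, serializable record. The sketch below is a hypothetical structure, not a Materials Project or workflow-manager schema; the field names and the idea of a short fingerprint are assumptions made for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CalculationProvenance:
    """Minimal provenance record for one calculation (hypothetical schema)."""
    code: str                  # e.g. "VASP"
    code_version: str          # e.g. "6.3.0", including any patch notes
    functional: str            # e.g. "PBE" or "SCAN"
    energy_cutoff_ev: float    # plane-wave cutoff energy
    kpoint_mesh: tuple         # e.g. (8, 8, 8)
    pseudopotential: str       # name, source, and version of the potential
    script_commit: str         # Git commit hash of in-house scripts
    workflow_steps: tuple = () # ordered steps, including post-processing

    def to_json(self):
        """Serialize with sorted keys so equal records give equal text."""
        return json.dumps(asdict(self), sort_keys=True)

    def fingerprint(self):
        """Short hash that changes whenever any recorded setting changes."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()[:12]
```

Storing the fingerprint next to each reported value gives a compact way to check later that two numbers were produced with identical settings.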

Visualization of Provenance Workflows

To make the complex relationships within provenance data understandable, clear visualizations are essential. The following diagrams, created using Graphviz with an accessible color palette, illustrate core workflows for managing and distinguishing data types.

Integrated Provenance Tracking Workflow

The following diagram outlines the overarching system for capturing and distinguishing computational and experimental data provenance within a materials database.

Diagram 1: Integrated workflow for computational and experimental data provenance.

Experimental Data Provenance Chain

This diagram details the specific chain of custody and transformation for experimental data, highlighting key tracking points.

Diagram 2: Detailed provenance chain for experimental data generation.

Data Presentation and Comparison Standards

Quantitative Data Comparison Framework

When presenting data within a materials database, especially for comparison between computational predictions and experimental validation, it is crucial to use appropriate numerical summaries and visualizations. The goal is to summarize the data for each group (e.g., computed vs. measured) and compute the differences between their central values [27].

Table 1: Numerical Summary for Comparing Computed and Experimental Lattice Parameters of a Perovskite Oxide

| Data Source | Sample Size (n) | Mean Lattice Parameter (Å) | Standard Deviation (Å) | Median (Å) | IQR (Å) |

|---|---|---|---|---|---|

| DFT Calculations (PBE) | 15 | 3.95 | 0.04 | 3.94 | 0.05 |

| Experimental (Literature) | 28 | 3.92 | 0.07 | 3.91 | 0.09 |

| Difference (Comp - Exp) | - | +0.03 | - | +0.03 | - |

The table structure follows established practices for relational research questions, clearly separating summaries for each group and highlighting the difference between them [27]. Notice that standard deviation and sample size are not provided for the difference, as these metrics lack meaning for a single comparative value [27].
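A summary of this shape can be produced with Python's standard statistics module. The sketch below is a minimal example; the function names are hypothetical, and the choice of the "inclusive" quartile method is an assumption, since the text does not state which IQR convention Table 1 uses.

```python
import statistics


def summarize(values):
    """Per-group summary: n, mean, sample standard deviation, median, IQR."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "sd": statistics.stdev(values),
        "median": median,
        "iqr": q3 - q1,
    }


def compare(computed, measured):
    """Summarize both groups and report differences of central values only."""
    comp, meas = summarize(computed), summarize(measured)
    return {
        "computed": comp,
        "experimental": meas,
        "difference": {
            "mean": comp["mean"] - meas["mean"],
            "median": comp["median"] - meas["median"],
        },
    }
```

Calling compare() on two lists of lattice parameters (in Å) returns the three rows of such a table; the difference row deliberately carries only the mean and median.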

Visualization for Comparative Data Analysis

Selecting the correct graph is vital for effective comparison. The choice depends on the data type, the number of groups, and the story you need to tell [28] [29].

Table 2: Selection Guide for Comparative Data Visualization

| Visualization Type | Primary Use Case | Best for Data Complexity | Accessibility Considerations |

|---|---|---|---|

| Boxplots [27] | Comparing distributions and identifying outliers across multiple groups. | Moderate to large datasets. | Use patterns or shapes in addition to color for different groups [30]. Ensure contrast ratio of 3:1 for adjacent data elements [30]. |

| Bar Charts [28] | Comparing categorical data or summary statistics (e.g., mean values) across groups. | Limited number of categories. | Directly label bars where possible instead of relying only on a color legend [30]. |

| Line Charts [28] | Displaying trends or changes in a variable over a continuous interval (e.g., temperature). | Time-series or sequential data. | Use different line styles (dashed, dotted) and markers in addition to color [30]. |

| 2-D Dot Charts [27] | Comparing individual data points across a few groups; ideal for small datasets. | Small to moderate amounts of data. | Ensure sufficient contrast between dots and background (4.5:1 for text) [31]. |

For the data summarized in Table 1, a boxplot would be the most appropriate choice as it effectively shows the distribution, central tendency, and spread of both the computational and experimental data sets, allowing for a direct visual comparison [27].

The Scientist's Toolkit: Essential Research Reagents and Materials

For experimental research in inorganic materials, particularly synthesis and characterization, a standard set of reagents and tools is fundamental. The following table details key items and their functions, which should be meticulously tracked as part of experimental provenance.

Table 3: Essential Research Reagents and Materials for Inorganic Synthesis

| Item/Reagent | Function / Purpose | Key Provenance Tracking Parameters |

|---|---|---|

| Metal Salt Precursors (e.g., Acetates, Nitrates, Chlorides) | Source of metal cations in the final inorganic compound. | Supplier, Purity (%), Lot Number, Chemical Formula, Molecular Weight. |

| Solvents (e.g., Water, Ethanol, Toluene) | Medium for chemical reactions and purification processes. | Supplier, Purity, Grade (e.g., ACS, Anhydrous), Lot Number. |

| Fuel Agents (e.g., Glycine, Urea) | Used in combustion synthesis methods to initiate and sustain exothermic reaction. | Supplier, Purity, Lot Number. |

| Gases (e.g., Argon, Nitrogen, Oxygen, Hydrogen) | Creating inert atmospheres or specific reactive environments during synthesis. | Supplier, Purity (e.g., 99.999%), Composition of gas mixture. |

| Crucibles & Boats (e.g., Alumina, Platinum) | Containers for high-temperature solid-state reactions. | Material composition, Volume/Capacity, Supplier. |

| Barcode/Labeling System | Provides unique identifiers for all samples and precursors, enabling traceability [26]. | Identifier schema, Label material (e.g., heat-resistant tags). |

| Electronic Lab Notebook (ELN) | Central digital platform for recording procedures, observations, and linking to data files. | Software name, Version, Data export format. |

The consistent use and documentation of these materials form the bedrock of reproducible experimental science. The unique identifier system, in particular, links these physical materials directly to the digital data they help generate, creating an auditable trail from raw powder to published result [26].

Practical Implementation: Accessing and Applying MP Data in Research Workflows

The Materials Project (MP) is a decade-long effort from the Department of Energy to compute and make publicly available the properties of inorganic crystals and molecules, with the goal of accelerating materials discovery for applications such as better batteries, solar energy, catalysts, and more [1]. At the heart of this initiative is the Materials Project Application Programming Interface (API), which provides programmatic access to this wealth of computationally generated data. For researchers, scientists, and development professionals working with inorganic materials, the MP API serves as a critical gateway to structured materials data that can inform research directions, validate hypotheses, and provide computational context for experimental work.

Installation and Initial Setup

Package Installation

The MP API is accessed through the mp-api Python client. Installation is straightforward using pip: run pip install mp-api in the target Python environment.

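Alternatively, for those who prefer installation from source, the package can be installed directly from the client's repository (github.com/materialsproject/api) by cloning it and running pip install -e . from the repository root.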

API Key Authentication

To use the API, you must obtain a unique API key from your Materials Project account dashboard after logging into the website [32]. The preferred method for instantiating the client uses Python's context manager for proper session management, with two primary authentication approaches:

Option 1: Direct API Key Passing

Option 2: Environment Variable

Table: Authentication Methods Comparison

| Method | Implementation | Security Consideration |

|---|---|---|

| Direct Key Passing | API key passed as string argument | Key visible in code |

| Environment Variable | Key set in MP_API_KEY environment variable | More secure, keeps key out of codebase |
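Both options can be combined in one small helper. The sketch below assumes the mp-api client exposes MPRester in mp_api.client and that the environment variable is named MP_API_KEY; the helper names resolve_api_key and open_client are hypothetical.

```python
import os

ENV_VAR = "MP_API_KEY"


def resolve_api_key(explicit_key=None, environ=None):
    """Prefer an explicitly passed key, else fall back to the environment."""
    environ = os.environ if environ is None else environ
    key = explicit_key or environ.get(ENV_VAR)
    if not key:
        raise RuntimeError(f"no API key given and {ENV_VAR} is not set")
    return key


def open_client(explicit_key=None):
    """Return an MPRester instance; use it as a context manager."""
    # Imported lazily so the key-resolution logic works without mp-api installed.
    from mp_api.client import MPRester
    return MPRester(resolve_api_key(explicit_key))
```

Typical use would be `with open_client() as mpr:` followed by queries inside the block, so the underlying session is closed when the block exits.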

Core API Endpoints for Materials Research

The MP API organizes data into specialized endpoints, each serving specific types of materials data [32]. Understanding these endpoints is crucial for efficient data retrieval.

Table: Essential API Endpoints for Materials Research

| Endpoint | Document Model | Primary Research Application |

|---|---|---|

| /materials/summary | SummaryDoc | Materials screening and property filtering |

| /materials/electronic_structure | ElectronicStructureDoc | Band structure, density of states, electronic properties |

| /materials/thermo | ThermoDoc | Thermodynamic properties and phase stability |

| /materials/xas | XASDoc | X-ray absorption spectroscopy data |

| /materials/elasticity | ElasticityDoc | Mechanical properties and elastic constants |

| /materials/surface_properties | SurfacePropDoc | Surface energies and properties |

| /materials/synthesis | SynthesisSearchResultModel | Synthesis recipes and conditions |

Basic Query Patterns and Methodologies

Querying by Material IDs

The most straightforward query retrieves data for specific Materials Project identifiers:
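A minimal sketch of such a lookup follows, assuming the client's materials.summary.search method accepts a material_ids list. The wrapper names are hypothetical; the client is passed in as an argument so the query logic stays separate from authentication.

```python
def summaries_by_id(mpr, material_ids):
    """Return summary documents for the given Materials Project IDs."""
    return mpr.materials.summary.search(material_ids=list(material_ids))


def run_live(api_key, material_ids=("mp-149",)):
    """Open a session with a real API key and run the lookup."""
    # Requires the mp-api package and network access.
    from mp_api.client import MPRester
    with MPRester(api_key) as mpr:
        return summaries_by_id(mpr, material_ids)
```

Here "mp-149" is the identifier for silicon; any list of valid identifiers can be substituted.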

This methodology is particularly useful when researchers have identified specific materials of interest through the MP website or previous research and need to retrieve comprehensive data for further analysis.

Property-Based Filtering

For materials discovery applications, property-based filtering enables identification of materials meeting specific criteria:
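As a sketch of this kind of screen, the function below filters on constituent elements and a band gap window. It assumes that materials.summary.search accepts elements and a (min, max) band_gap tuple; the function name is hypothetical.

```python
def screen_by_band_gap(mpr, elements, gap_range_ev):
    """Find materials containing the given elements within a band gap window."""
    low, high = gap_range_ev
    if low > high:
        raise ValueError("band gap range must be given as (min, max) in eV")
    return mpr.materials.summary.search(
        elements=list(elements),  # elements the material must contain
        band_gap=(low, high),     # inclusive window in eV
    )
```

A call such as screen_by_band_gap(mpr, ["Si", "O"], (0.5, 1.0)), with mpr an open MPRester session, would express the silicon-oxygen screen.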

This query identifies materials that contain both silicon and oxygen (possibly alongside other elements) and have band gaps between 0.5 eV and 1.0 eV, demonstrating a common materials screening workflow for electronic applications.

Field Selection for Efficient Data Retrieval

To optimize data retrieval performance, especially when dealing with large datasets, it's recommended to specify only the required fields:
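A minimal sketch of field selection, assuming the summary search accepts a fields list and returns documents whose requested fields are readable as attributes. The default field list and the function name are illustrative choices.

```python
DEFAULT_FIELDS = ("material_id", "band_gap", "volume")


def fetch_selected_fields(mpr, material_ids, fields=DEFAULT_FIELDS):
    """Request only the named fields and return them as plain dictionaries."""
    docs = mpr.materials.summary.search(
        material_ids=list(material_ids),
        fields=list(fields),  # restricts the payload to these fields
    )
    return [{name: getattr(doc, name) for name in fields} for doc in docs]
```

Converting the returned documents to plain dictionaries keeps downstream code, such as building a table, independent of the client's document classes.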

This methodology significantly improves response times by reducing data transfer. The fields_not_requested attribute of returned documents indicates which available fields were excluded.

Determining Computational Methods and Data Provenance

A critical aspect of using computational materials data is understanding which density functional theory (DFT) functional was used for structure relaxation and property calculation [5]. Different functionals (PBE, PBE+U, r2SCAN) have varying accuracies for different material systems.

This procedure enables researchers to trace property data to its computational source, with run_type values of "GGA" indicating PBE, "GGA_U" indicating PBE+U, and "r2SCAN" indicating r2SCAN calculations [5].
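The run-type-to-functional mapping can be written as a small lookup. In the sketch below, the mapping itself follows the text, while the retrieval step is an assumption: it supposes the core materials document exposes a run_types mapping from task ID to run type, which should be checked against the installed client version. The "GGA+U" spelling is accepted as well, since the label may be rendered either way.

```python
RUN_TYPE_TO_FUNCTIONAL = {
    "GGA": "PBE",
    "GGA_U": "PBE+U",
    "GGA+U": "PBE+U",  # alternative spelling of the same run type
    "r2SCAN": "r2SCAN",
}


def functional_for(run_type):
    """Translate a run type (string or enum-like object) to a functional name."""
    label = getattr(run_type, "value", run_type)
    return RUN_TYPE_TO_FUNCTIONAL.get(str(label), "unknown")


def functionals_by_task(run_types):
    """Map each task ID to the functional used for that calculation."""
    return {task_id: functional_for(rt) for task_id, rt in run_types.items()}


def fetch_run_types(mpr, material_id):
    """Assumed retrieval step: read `run_types` from the core materials doc."""
    docs = mpr.materials.search(material_ids=[material_id], fields=["run_types"])
    return dict(docs[0].run_types)
```

With an open session, functionals_by_task(fetch_run_types(mpr, "mp-149")) would list the functional behind each contributing calculation.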

Experimental vs Computational Data Distinction

Understanding the nature of MP data is crucial for appropriate research application. The majority of data served by the MP API is computationally predicted [6]. The theoretical tag in material documents indicates whether a material has an experimental counterpart in databases like ICSD, but this refers specifically to the structure comparison, not the properties.

Most property data on MP is computationally derived, though some experimentally obtained data exists in specific contexts:

- Thermodynamic data through the "Thermo" app and corresponding API endpoints

- Ion energies for Pourbaix diagram construction

- Reference enthalpies of formation in the Reaction Calculator

- Curated experimental datasets available through the MPContribs portal [6]

The Researcher's Toolkit: Essential API Concepts

Table: Key Concepts for Effective API Utilization

| Concept/Tool | Function in Research | Implementation Example |

|---|---|---|

| Material ID (mp-id) | Unique identifier for specific polymorphs | "mp-149" for silicon |

| Task ID | Reference to individual calculation | Tracing property provenance |

| Field Filtering | Optimizing data retrieval performance | fields=["material_id", "band_gap"] |

| Element Queries | Screening by chemical composition | elements=["Si", "O"] |

| Property Ranges | Filtering materials by property values | band_gap=(0.5, 1.0) |

| Convenience Functions | Simplified access to common data | get_structure_by_material_id(), get_dos_by_material_id() |

Advanced Query Methodologies

Not all MP data is available through the summary endpoint. Specialized data requires accessing specific endpoints:
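A generic dispatcher is one way to reach these endpoints. The sketch below assumes each endpoint in the table above is exposed as an attribute of mpr.materials with its own search method (for example mpr.materials.thermo); the accepted search arguments differ per endpoint, so the criteria are passed through unchanged. The function name is hypothetical.

```python
def search_endpoint(mpr, endpoint, **criteria):
    """Run a search against a named specialized endpoint."""
    client = getattr(mpr.materials, endpoint, None)
    if client is None:
        raise ValueError(f"unknown endpoint: {endpoint!r}")
    return client.search(**criteria)
```

For instance, search_endpoint(mpr, "thermo", material_ids=["mp-149"]) would request thermodynamic documents for silicon rather than its summary document.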

Chemical System Queries with Functional Specification

For researchers requiring data from specific computational methods:
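A sketch of such a query is given below. It assumes the thermo endpoint's search accepts a chemsys string and a thermo_types list, and that the labels take forms such as "GGA_GGA+U" or "R2SCAN"; both the parameter name and the label values should be verified against the installed mp-api version. The function name is hypothetical.

```python
def thermo_for_chemsys(mpr, elements, thermo_type="GGA_GGA+U"):
    """Fetch thermodynamic documents for a chemical system and one method."""
    # Chemical systems are written as dash-separated, alphabetized symbols.
    chemsys = "-".join(sorted(elements))
    return mpr.materials.thermo.search(
        chemsys=chemsys,
        thermo_types=[thermo_type],  # assumed functional/mixing-scheme label
    )
```

Requesting a single thermo type keeps energies on one consistent footing, which matters when comparing stability across a whole chemical system.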

This methodology ensures consistency in computational method selection across a chemical system, important for comparative studies.