Inorganic Crystal Structure Prediction: From Foundational Principles to AI-Driven Discovery in Materials and Pharmaceuticals

Abstract

This article provides a comprehensive overview of the principles and modern practices of inorganic crystal structure prediction (CSP), tailored for researchers, scientists, and drug development professionals. It begins by exploring the foundational challenges of navigating complex energy landscapes and the historical context of the field. The core of the article details the methodological spectrum, from established ab initio and global search algorithms to the transformative impact of machine learning interatomic potentials (MLIPs) and generative AI. It further addresses critical troubleshooting and optimization strategies for improving accuracy and computational efficiency, including error quantification and handling of complex systems like hydrates. Finally, the article establishes rigorous validation and benchmarking frameworks, such as the CSPBench suite, to objectively evaluate algorithmic performance. This guide synthesizes these elements to demonstrate how robust and accelerated CSP is enabling targeted materials design and de-risking pharmaceutical development.

The CSP Challenge: Navigating Energy Landscapes and Historical Foundations

Predicting the crystal structures of inorganic and organic materials from first principles represents one of the most formidable challenges in computational materials science and chemistry. The ability to accurately determine how atoms arrange themselves into periodic crystal lattices would revolutionize fields ranging from pharmaceutical development to the design of advanced functional materials. In pharmaceuticals, crystal structures directly influence critical properties such as drug solubility, stability, and bioavailability [1] [2]. For functional materials like organic semiconductors, electronic conductivity varies significantly with molecular arrangement, making crystal structure control paramount for achieving desired electronic properties [1]. Despite decades of research, crystal structure prediction (CSP) remains a grand challenge due to the vastness of chemical space, the subtlety of interatomic interactions, and the complex energy landscapes that contain numerous local minima [3] [4].

The core challenge of CSP lies in identifying the most stable crystal structure from an astronomical number of possible arrangements. Even for relatively simple molecules, the number of possible packing arrangements can be enormous, and the energy differences between competing polymorphs are often small, typically less than a few kilojoules per mole [3]. This precision requirement demands computational methods of exceptional accuracy. Recent advances have begun to transform CSP from a theoretical exercise into a more reliable and actionable procedure that can be used in combination with experimental evidence to direct crystal form selection and establish control [3]. This whitepaper examines the current state of CSP methodologies, with particular emphasis on machine learning and free-energy calculation approaches that are redefining the field's capabilities.

Fundamental Challenges in Crystal Structure Prediction

The Polymorphism Problem and Energy Landscape Complexity

The phenomenon of polymorphism—where the same chemical compound can exist in multiple crystal structures—presents a fundamental challenge for CSP. These different polymorphs can exhibit markedly different physical properties, with significant implications for material performance and regulatory approval. The case of ritonavir, an antiviral drug where a previously unknown polymorph emerged with dramatically reduced solubility, exemplifies the serious consequences of incomplete polymorph prediction [3]. The computational difficulty arises from the fact that crystal energy landscapes often contain multiple structures with very similar lattice energies but significantly different packing arrangements.

The stability relationships between polymorphs can be monotropic (one form is always the most stable) or enantiotropic (the relative stability changes with temperature). Accurately mapping these relationships requires free-energy calculations that account for temperature effects, not just static lattice energies [3]. For inorganic materials, additional complexity arises from the need to consider diverse bonding types, including metallic, ionic, and covalent bonding, often within the same material. The vastness of the chemical space to be explored has been described as "akin to exploring a multidimensional surface, one step at a time" [4].
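
To make the monotropic/enantiotropic distinction concrete, the sketch below assumes that the enthalpy and entropy differences between two forms are constant with temperature, so that ΔG(T) = ΔH − TΔS and the stability order flips at T_t = ΔH/ΔS. This is a textbook simplification with hypothetical numbers, not the free-energy methodology discussed later; the class and function names are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PolymorphPair:
    """Hypothetical form B relative to form A.

    dH = H_B - H_A in kJ/mol, dS = S_B - S_A in kJ/(mol K), both assumed constant in T.
    """
    dH: float
    dS: float

    def dG(self, T: float) -> float:
        # G_B - G_A under the constant-dH/dS approximation
        return self.dH - T * self.dS

    def transition_temperature(self) -> Optional[float]:
        # dG(T_t) = 0  =>  T_t = dH / dS; no physical crossing if dS == 0 or T_t <= 0
        if self.dS == 0:
            return None
        T_t = self.dH / self.dS
        return T_t if T_t > 0 else None


def classify(pair: PolymorphPair, T_min: float, T_max: float) -> str:
    """'enantiotropic' if the stability order flips inside (T_min, T_max), else 'monotropic'."""
    T_t = pair.transition_temperature()
    if T_t is not None and T_min < T_t < T_max:
        return "enantiotropic"
    return "monotropic"
```

For example, ΔH = 2.0 kJ/mol and ΔS = 5 J/(mol·K) give T_t = 400 K, so the pair is enantiotropic over a 200 to 500 K window. In practice the window of interest extends up to the melting point, and ΔH and ΔS themselves vary with temperature, which is why the full free-energy treatment is needed.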

Limitations of Conventional Computational Approaches

Traditional CSP methods have relied heavily on density functional theory (DFT) calculations and force fields for structure relaxation. While DFT can provide accurate results depending on the calculation level, it is computationally expensive, time-consuming, and requires extensive computational resources [1]. Force fields enable more rapid structural relaxation but often lack the accuracy of quantum mechanical methods [1]. These limitations become particularly acute when dealing with weak intermolecular interactions that are critical in organic crystals, such as van der Waals forces, hydrogen bonds, and π–π stacking [1]. Even minor variations in these interactions can give rise to entirely different crystal structures, making accurate prediction exceptionally difficult.

Table 1: Key Challenges in Crystal Structure Prediction

| Challenge Category | Specific Technical Hurdles | Impact on Prediction Accuracy |
|---|---|---|
| Energy Landscape | Multiple local minima, small energy differences (< a few kJ/mol) between polymorphs | High probability of missing the most stable form |
| Computational Cost | DFT calculations too expensive for comprehensive searches | Limits the scope of search-space exploration |
| Weak Interactions | Van der Waals forces, hydrogen bonding, π-π stacking in organic crystals | Difficulty capturing subtle stabilization effects |
| Temperature Effects | Free-energy calculations requiring thermodynamic integration | Static lattice energies insufficient for real-world conditions |
| Multi-component Systems | Hydrates and solvates with variable stoichiometry | Complexity beyond single-component crystals |

Modern Methodological Frameworks for CSP

Machine Learning-Enhanced Workflows

Recent breakthroughs in CSP have leveraged machine learning to improve prediction efficiency and accuracy. The SPaDe-CSP (Space group and Packing Density predictor for Crystal Structure Prediction) workflow exemplifies this approach, combining machine learning-based lattice sampling with structure relaxation via a neural network potential (NNP) [1] [2]. ML models first predict the most probable space groups and crystal densities, so that unstable, low-density candidates are filtered out before the computationally intensive relaxation step [2]. Specifically, the workflow uses two machine learning models, a space group predictor and a packing density predictor, which take molecular fingerprints (MACCSKeys) as input features and thereby reduce the generation of low-density, less stable structures [1].

The structure generation in SPaDe-CSP begins with predicting space group candidates and crystal density using trained LightGBM models. One of the predicted space group candidates is randomly selected, and lattice parameters are sampled within predetermined ranges. The density implied by the sampled space group and lattice parameters, computed from the molecular weight and the Z value, is checked against the predicted density tolerance; if it satisfies the criterion, molecules are placed in the lattice [1]. This initial structure generation continues until 1000 crystal structures are produced for each run. The generated structures are then optimized with a neural network potential (PFP version 6.0.0 in CRYSTAL_U0_PLUS_D3 mode) using the limited-memory BFGS (L-BFGS) algorithm with a force threshold of 0.05 eV/Å and up to 2000 iterations [1].
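
The density check in this step follows from ρ = Z·M/(N_A·V): with V in Å³ and M in g/mol, ρ in g/cm³ equals Z·M·1.6605/V. The sketch below samples lattices around that check. It is not the SPaDe-CSP code: the list of (space group, Z) candidates, the 3 to 20 Å length range, the 60 to 120° angle range, and the restriction to triclinic, monoclinic, and orthorhombic cells are assumptions made for illustration, and molecule placement is left out.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

G_CM3_PER_AMU_A3 = 1.66053907  # 1 / (N_A * 1e-24): converts (g/mol)/Å^3 to g/cm^3


def cell_volume(a, b, c, alpha, beta, gamma) -> Optional[float]:
    """Cell volume (Å^3) from lengths (Å) and angles (deg); None if the angles are inconsistent."""
    ca, cb, cg = (math.cos(math.radians(t)) for t in (alpha, beta, gamma))
    radicand = 1 - ca * ca - cb * cb - cg * cg + 2 * ca * cb * cg
    return a * b * c * math.sqrt(radicand) if radicand > 0 else None


def crystal_density(mol_weight: float, z: int, volume: float) -> float:
    """Density (g/cm^3) of Z molecules of molar mass mol_weight (g/mol) in volume (Å^3)."""
    return z * mol_weight * G_CM3_PER_AMU_A3 / volume


def sample_angles(space_group: int, rng: random.Random, lo=60.0, hi=120.0):
    """Angles consistent with the crystal system (only three systems handled in this sketch)."""
    if space_group <= 2:                      # triclinic: all angles free
        return tuple(rng.uniform(lo, hi) for _ in range(3))
    if space_group <= 15:                     # monoclinic, unique axis b: alpha = gamma = 90
        return (90.0, rng.uniform(lo, hi), 90.0)
    if space_group <= 74:                     # orthorhombic: all angles 90
        return (90.0, 90.0, 90.0)
    raise ValueError("higher-symmetry crystal systems are omitted from this sketch")


def sample_lattices(candidates: Sequence[Tuple[int, int]], mol_weight: float,
                    rho_pred: float, tol: float, n_structures: int,
                    length_range=(3.0, 20.0), seed: int = 0,
                    max_tries: int = 1_000_000) -> List[Tuple[int, int, tuple]]:
    """Draw (space group, Z, cell) tuples whose density lies within rho_pred +/- tol."""
    rng = random.Random(seed)
    accepted = []
    for _ in range(max_tries):
        if len(accepted) == n_structures:
            break
        sg, z = rng.choice(candidates)        # randomly pick one predicted space group
        lengths = tuple(rng.uniform(*length_range) for _ in range(3))
        angles = sample_angles(sg, rng)
        vol = cell_volume(*lengths, *angles)
        if vol is None:
            continue
        if abs(crystal_density(mol_weight, z, vol) - rho_pred) <= tol:
            accepted.append((sg, z, lengths + angles))  # molecule placement would follow here
    return accepted
```

For instance, `sample_lattices([(14, 4), (2, 2), (19, 4)], mol_weight=180.16, rho_pred=1.40, tol=0.05, n_structures=5)` returns five cells whose densities all fall within 1.40 ± 0.05 g/cm³. Rejecting cells before any molecule is placed is what keeps the filter cheap: a volume check costs almost nothing compared with an NNP relaxation.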

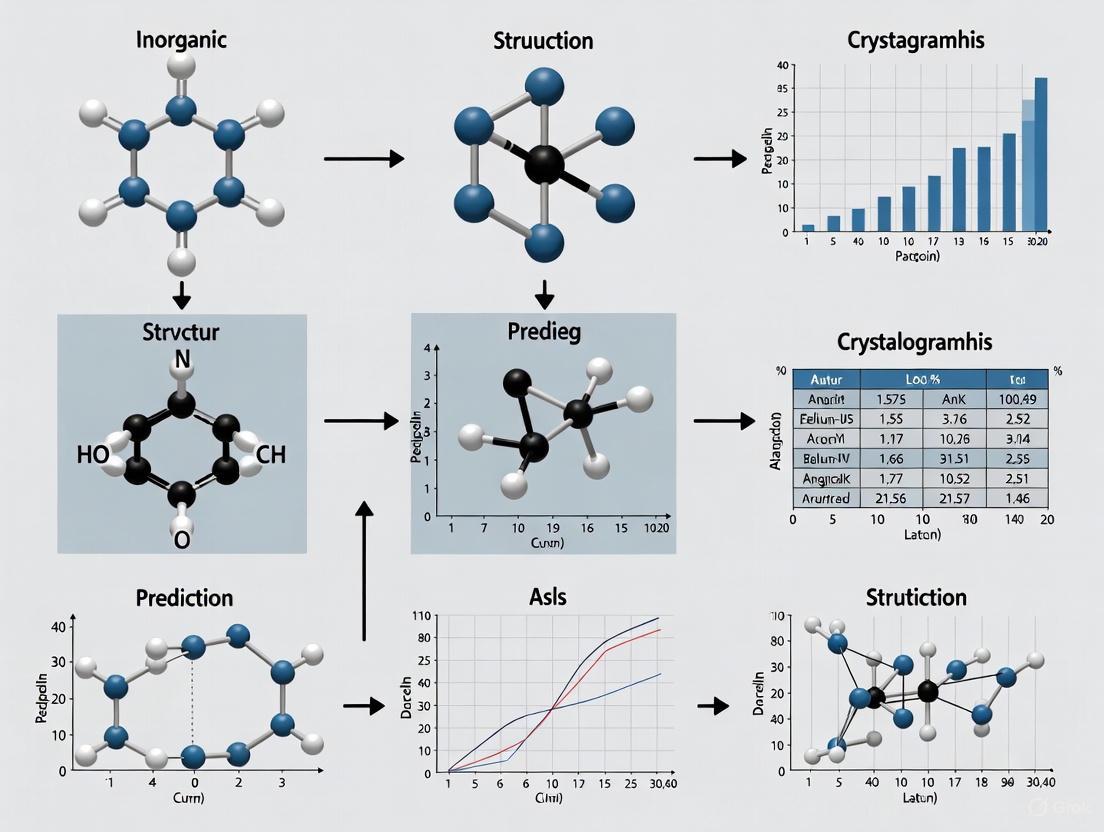

Figure 1: Machine Learning-Enhanced CSP Workflow. This diagram illustrates the SPaDe-CSP approach that uses ML-based filtering to reduce computational waste on unstable candidates [1] [2].

In tests on 20 organic crystals of varying complexity, the SPaDe-CSP approach achieved an 80% success rate, twice that of a random-sampling CSP baseline, demonstrating its effectiveness in narrowing the search space and increasing the probability of finding the experimentally observed crystal structure [1] [2]. The researchers also identified key structural descriptors that correlate linearly with success rate, indicating that both crystal-level and molecule-level structural features influence prediction effectiveness [2].

Advanced Free-Energy Calculation Methods

Accurately predicting crystal form stability under real-world conditions requires moving beyond static lattice energies to temperature-dependent free-energy calculations. State-of-the-art approaches now combine multiple computational techniques to achieve the necessary accuracy while remaining computationally feasible. The TRHu(ST) method (temperature- and relative-humidity-dependent free-energy calculations with standard deviations) exemplifies this composite approach: it combines the PBE0 + MBD + Fvib approach with an additional single-molecule correction, and it reduces CPU time requirements by blending force-field and ab initio calculations [3].

This methodology explicitly handles imaginary and very soft vibrational modes, hydrogen-bond stretch vibrations, and methyl-group rotations through enhanced sampling techniques [3]. For industrially relevant compounds, the calculated free energies now achieve standard errors of just 1–2 kJ mol⁻¹, making them sufficiently accurate for practical applications in polymorph risk assessment [3]. Perhaps most significantly, these advances enable the placement of crystal structures with different hydrate stoichiometries on the same energy landscape, with defined error bars, as a function of temperature and relative humidity [3].
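
How relative humidity enters such a landscape can be illustrated with the ideal-vapour relation for the water chemical potential, μ_w(RH) = μ_w(sat) + RT ln(RH). The sketch below assumes that relation and a hypothetical hydration free energy ΔG_sat (hydrate minus anhydrate minus n water molecules at saturation); it is not the TRHu(ST) implementation, which the source does not spell out.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol K)


def hydration_free_energy(dG_sat: float, n_water: int, T: float, rh: float) -> float:
    """G(hydrate) - G(anhydrate) - n * mu_water at relative humidity rh (0 < rh <= 1), kJ/mol.

    dG_sat is the same quantity at rh = 1 (water vapour at its saturation pressure).
    Assumes ideal water vapour: mu_water(rh) = mu_water(sat) + R*T*ln(rh).
    """
    return dG_sat - n_water * R * T * math.log(rh)


def critical_rh(dG_sat: float, n_water: int, T: float) -> float:
    """Relative humidity at which hydrate and anhydrate are equally stable.

    Above this value the hydrate is the more stable form.
    """
    return math.exp(dG_sat / (n_water * R * T))
```

With ΔG_sat = −3.0 kJ/mol for a monohydrate at 298.15 K, `critical_rh` gives about 0.30, so the hydrate becomes the stable form above roughly 30% RH. In a full calculation, ΔG_sat would itself come from the temperature-dependent free energies of both crystal structures.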

Table 2: Composite Free-Energy Calculation Components

| Calculation Component | Physical Effect Captured | Implementation in TRHu(ST) Method |
|---|---|---|
| PBE0 Functional | Hybrid DFT with 25% Hartree-Fock exchange | Improved electronic structure description |
| Many-Body Dispersion (MBD) | Long-range correlation effects | Critical for weak intermolecular forces |
| Vibrational Free Energy (Fvib) | Temperature-dependent vibrational contributions | Phonon calculations at finite temperature |
| Single-Molecule Correction | Conformational flexibility | Accounts for intramolecular degrees of freedom |
| Explicit Sampling | Anharmonic vibrations, methyl rotations | Enhanced sampling for specific modes |

A critical advancement in modern CSP is the rigorous quantification of computational errors, which has received almost no attention historically. By analyzing a carefully curated benchmark of experimental free-energy differences, researchers have established transferable error estimation parameters: standard deviation of the energy error per water molecule (σH₂O = 0.641 kJ mol⁻¹) and standard deviation of the energy error per atom (σat = 0.191 kJ mol⁻¹) for non-water atoms [3]. These parameters enable extrapolation of observed errors to chemical compounds not part of the benchmark, accounting for molecular size and chemical variability, which is essential for quantitative risk assessment in industrial applications.
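
One plausible way to turn these per-unit parameters into an error bar for a specific compound is to assume independent errors that add in quadrature, σ = √(N_at·σ_at² + N_H₂O·σ_H₂O²), and then to treat the error as Gaussian. Both the quadrature rule and the Gaussian assumption are ours for illustration; the source states only that the parameters account for molecular size and chemical variability.

```python
import math

SIGMA_H2O = 0.641  # kJ/mol per water molecule (benchmark value quoted above)
SIGMA_AT = 0.191   # kJ/mol per non-water atom (benchmark value quoted above)


def estimated_sigma(n_atoms: int, n_water: int = 0) -> float:
    """Assumed error model: independent per-atom and per-water errors summed in quadrature."""
    return math.sqrt(n_atoms * SIGMA_AT ** 2 + n_water * SIGMA_H2O ** 2)


def prob_order_correct(dG_pred: float, sigma: float) -> float:
    """P(the true free-energy difference has the same sign as dG_pred), Gaussian error assumed."""
    return 0.5 * (1.0 + math.erf(abs(dG_pred) / (sigma * math.sqrt(2.0))))
```

For a hypothetical compound with 50 non-water atoms and 2 water molecules, `estimated_sigma(50, 2)` is about 1.63 kJ/mol, the same order as the 1 to 2 kJ/mol standard errors quoted above; a predicted 2 kJ/mol gap then gives roughly an 89% chance that the computed stability order is right.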

Generative AI and Text-Guided Approaches

The most recent frontier in CSP involves generative artificial intelligence models that can navigate chemical space using textual descriptions alongside structural data. The Chemeleon model exemplifies this approach, employing denoising diffusion techniques for compound generation using textual inputs aligned with structural data via cross-modal contrastive learning [4]. This model bridges the gap between textual descriptions and crystal structure generation through a framework called Crystal CLIP, which aligns text embedding vectors with graph embeddings derived from equivariant graph neural networks (GNNs) [4].
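
Cross-modal contrastive alignment of this kind is commonly trained with a CLIP-style symmetric InfoNCE loss: matching text/structure pairs in a batch are pulled together and all other pairings are pushed apart. The sketch below implements that generic loss on plain Python lists. The source does not give Chemeleon's exact objective; the toy embeddings and the 0.07 temperature are assumptions.

```python
import math
from typing import List, Sequence


def _normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def _log_softmax(row: Sequence[float], idx: int) -> float:
    """log(softmax(row)[idx]), computed stably with the log-sum-exp trick."""
    m = max(row)
    log_z = m + math.log(sum(math.exp(x - m) for x in row))
    return row[idx] - log_z


def clip_loss(text_emb: Sequence[Sequence[float]],
              graph_emb: Sequence[Sequence[float]],
              temperature: float = 0.07) -> float:
    """Symmetric InfoNCE loss; text_emb[i] and graph_emb[i] describe the same crystal."""
    t = [_normalize(v) for v in text_emb]
    g = [_normalize(v) for v in graph_emb]
    n = len(t)
    # logits[i][j] = cosine similarity of text i and structure j, scaled by 1/temperature
    logits = [[sum(a * b for a, b in zip(t[i], g[j])) / temperature for j in range(n)]
              for i in range(n)]
    text_to_graph = -sum(_log_softmax(logits[i], i) for i in range(n)) / n
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    graph_to_text = -sum(_log_softmax(columns[j], j) for j in range(n)) / n
    return 0.5 * (text_to_graph + graph_to_text)
```

With three orthogonal unit vectors paired correctly, the loss is close to zero; reversing the order of the structure embeddings, so that two of the three pairs are mismatched, raises it to roughly 9.5.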

Another innovative architecture, CrystalFormer, represents a transformer-based autoregressive model specifically designed for space group-controlled generation of crystalline materials [5]. By explicitly incorporating space group symmetry, CrystalFormer significantly reduces the effective complexity of crystal space, which is essential for data- and compute-efficient generative modeling [5]. The model learns to generate crystals by directly predicting the species and coordinates of symmetry-inequivalent atoms in the unit cell, leveraging the prominent discrete and sequential nature of the Wyckoff positions [5].
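
The saving from working with symmetry-inequivalent atoms is easy to see by expanding an asymmetric unit with the space-group operations. The sketch below hard-codes the four general-position operations of P2₁/c (No. 14, standard setting) and only illustrates Wyckoff multiplicities; it is not CrystalFormer's tokenization or code, and the function names are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

Frac = Tuple[float, float, float]
SymOp = Callable[[float, float, float], Frac]

# General position 4e of P2_1/c (No. 14, unique axis b, cell choice 1)
P21_C_OPS: List[SymOp] = [
    lambda x, y, z: (x, y, z),
    lambda x, y, z: (-x, y + 0.5, -z + 0.5),
    lambda x, y, z: (-x, -y, -z),
    lambda x, y, z: (x, -y + 0.5, z + 0.5),
]


def _same_site(p: Frac, q: Frac, tol: float) -> bool:
    """True if two fractional positions coincide modulo lattice translations."""
    return all(min(abs(a - b), 1.0 - abs(a - b)) < tol for a, b in zip(p, q))


def expand_asymmetric_unit(atoms: Sequence[Tuple[str, Frac]],
                           ops: Sequence[SymOp] = P21_C_OPS,
                           tol: float = 1e-6) -> List[Tuple[str, Frac]]:
    """Apply every symmetry operation to each inequivalent atom and drop coincident copies."""
    cell: List[Tuple[str, Frac]] = []
    for species, (x, y, z) in atoms:
        for op in ops:
            pos = tuple(c % 1.0 for c in op(x, y, z))  # wrap into the unit cell [0, 1)
            if not any(s == species and _same_site(pos, q, tol) for s, q in cell):
                cell.append((species, pos))
    return cell
```

One carbon on the general position 4e yields four atoms in the cell, whereas an atom on the inversion centre at the origin (Wyckoff position 2a) yields only two. A generator that emits (Wyckoff position, species, free coordinates) therefore needs far fewer outputs than one that emits every atom in the cell.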

For property prediction directly from text descriptions, the LLM-Prop framework demonstrates that large language models can outperform traditional GNN-based approaches on several key metrics, despite having fewer parameters [6]. This approach fine-tunes the encoder part of T5 models on text descriptions of crystal structures, outperforming state-of-the-art GNN-based methods by approximately 8% on predicting band gap and 65% on predicting unit cell volume [6]. This surprising effectiveness of text-based approaches highlights potential limitations in how current GNNs capture critical crystallographic information such as space group symmetry and Wyckoff sites.

Experimental Protocols and Benchmarking

Data Curation and Model Training Standards

The foundation of reliable CSP lies in carefully curated datasets and standardized training protocols. For organic crystal prediction, researchers typically extract datasets from the Cambridge Structural Database (CSD) with stringent quality filters: Z' = 1, organic, not polymeric, R-factor < 10%, and no solvent present [1]. Additional filters based on statistical distributions of crystallographic parameters ensure data quality, with typical ranges including lattice lengths (2 ≤ a, b, c ≤ 50 Å) and angles (60 ≤ α, β, γ ≤ 120°) that encompass the vast majority (>97.9%) of initial search results while systematically removing extreme outliers [1]. For machine learning applications, the curated dataset is typically split into training and test subsets in an 8:2 ratio, with models evaluated using appropriate metrics: cross-entropy loss for space group prediction and L2 loss for density prediction [1].
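
The filtering and 8:2 split described above can be sketched as follows. The `CsdEntry` record is a hypothetical stand-in, not an object from the CSD Python API, and the R-factor is assumed to be stored in percent.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class CsdEntry:
    """Hypothetical, simplified stand-in for one CSD record."""
    refcode: str
    z_prime: float
    organic: bool
    polymeric: bool
    r_factor: float                                       # assumed to be in percent
    has_solvent: bool
    cell: Tuple[float, float, float, float, float, float]  # a, b, c (Å); alpha, beta, gamma (deg)


def passes_filters(e: CsdEntry) -> bool:
    """Quality filters and lattice-parameter ranges described in the text."""
    a, b, c, alpha, beta, gamma = e.cell
    return (e.z_prime == 1 and e.organic and not e.polymeric
            and e.r_factor < 10 and not e.has_solvent
            and all(2 <= x <= 50 for x in (a, b, c))
            and all(60 <= x <= 120 for x in (alpha, beta, gamma)))


def filter_and_split(entries: List[CsdEntry], train_fraction: float = 0.8,
                     seed: int = 0) -> Tuple[List[CsdEntry], List[CsdEntry]]:
    """Keep entries that pass the filters, shuffle reproducibly, and split 8:2 by default."""
    kept = [e for e in entries if passes_filters(e)]
    random.Random(seed).shuffle(kept)
    n_train = round(train_fraction * len(kept))
    return kept[:n_train], kept[n_train:]
```

Fixing the shuffle seed keeps the split reproducible across training runs, so model comparisons are made on the same test set.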

For inorganic materials, the Materials Project database serves as a primary source, typically filtered to structures containing 40 or fewer atoms in the primitive unit cell to capture diverse material properties and structural variations [4]. To assess model generalizability, chronological splitting of test sets—where models are evaluated on structures discovered after those in the training set—provides a more rigorous assessment of predictive capability for genuinely new materials [4].
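
A chronological split differs from an 8:2 random split only in the key used to partition the data. In the sketch below, the `Material` record and its `first_reported` date are hypothetical; the source does not say which date field defines when a structure was discovered.

```python
from datetime import date
from typing import List, NamedTuple, Tuple


class Material(NamedTuple):
    """Hypothetical simplified record; not the Materials Project API schema."""
    material_id: str
    n_sites: int            # atoms in the primitive cell
    first_reported: date    # assumed date on which the structure first appeared


def chronological_split(materials: List[Material], cutoff: date,
                        max_sites: int = 40) -> Tuple[List[Material], List[Material]]:
    """Train on structures reported before `cutoff`, test on those reported on or after it."""
    kept = [m for m in materials if m.n_sites <= max_sites]
    train = [m for m in kept if m.first_reported < cutoff]
    test = [m for m in kept if m.first_reported >= cutoff]
    return train, test
```

Because every test structure postdates every training structure, the model cannot benefit from near-duplicates of the test set that a random split would leave in the training data.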

Performance Metrics and Validation Standards

Robust validation of CSP methods requires multiple complementary metrics. For generative models, key evaluation criteria include:

- Validity: The proportion of structurally valid outputs that satisfy crystallographic constraints [4]

- Success Rate: The probability of finding experimentally observed crystal structures across multiple runs [1]

- Property Prediction Accuracy: Comparison between computed and experimental properties such as band gap, formation energy, and unit cell volume [6]
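
Validity and success rate reduce to simple counts once each structure has been checked. The sketch below uses one common structural-validity heuristic from generative-model benchmarks, requiring every interatomic distance (including periodic images) to exceed 0.5 Å. The threshold, the 27-cell image search (adequate for cells that are not strongly skewed), and the function names are assumptions; deciding whether an experimental structure was "found" would in practice require a structure-matching tool, so `success_rate` simply counts flags.

```python
import itertools
import math
from typing import Sequence, Tuple

Lattice = Sequence[Sequence[float]]  # three lattice vectors as rows, in Å


def cart(frac: Sequence[float], lattice: Lattice) -> Tuple[float, float, float]:
    """Fractional -> Cartesian coordinates: r = sum_k frac[k] * lattice[k]."""
    return tuple(sum(frac[k] * lattice[k][i] for k in range(3)) for i in range(3))


def min_interatomic_distance(frac_coords: Sequence[Sequence[float]], lattice: Lattice) -> float:
    """Smallest atom-atom distance, including images in the 26 neighbouring cells."""
    best = math.inf
    n = len(frac_coords)
    for i in range(n):
        for j in range(i, n):
            for shift in itertools.product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue                  # skip an atom's distance to itself
                d = [frac_coords[j][k] + shift[k] - frac_coords[i][k] for k in range(3)]
                best = min(best, math.dist((0.0, 0.0, 0.0), cart(d, lattice)))
    return best


def is_structurally_valid(frac_coords, lattice, min_dist: float = 0.5) -> bool:
    return min_interatomic_distance(frac_coords, lattice) > min_dist


def validity(structures, min_dist: float = 0.5) -> float:
    """Fraction of (frac_coords, lattice) pairs that pass the distance check."""
    return sum(is_structurally_valid(f, lat, min_dist) for f, lat in structures) / len(structures)


def success_rate(found_flags: Sequence[bool]) -> float:
    """Fraction of target systems whose experimental structure was found."""
    return sum(found_flags) / len(found_flags)
```

With 20 targets and 16 hits, `success_rate` returns 0.8, the 80% figure quoted earlier for SPaDe-CSP.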

The establishment of reliable experimental benchmarks for free-energy differences has been particularly significant for advancing CSP methodology. These benchmarks combine data from multiple sources: solid–solid free-energy differences obtained from solubility ratios, reversible phase transitions between polymorphs, and hydrate–anhydrate phase transitions as a function of relative humidity [3]. At phase-transition points, the free energies of two forms are equal by definition, providing critical reference points for validating computational methods.
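
The solubility route rests on the fact that each solid form is in equilibrium with its own saturated solution, so G(metastable) − G(stable) = RT ln(a_metastable/a_stable). The sketch below assumes the activity ratio can be replaced by the solubility ratio (activity coefficients cancel), a common approximation for dilute solutions; the function name is illustrative.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol K)


def dG_from_solubility(s_metastable: float, s_stable: float, T: float) -> float:
    """G(metastable) - G(stable) in kJ/mol from the solubility ratio at temperature T (K).

    Assumes the ratio of saturated-solution activities equals the solubility ratio,
    i.e. dG = R * T * ln(s_metastable / s_stable). Units of s cancel in the ratio.
    """
    return R * T * math.log(s_metastable / s_stable)
```

A form that is twice as soluble as the stable form at 298.15 K lies about 1.7 kJ/mol higher in free energy, comparable to the 1 to 2 kJ/mol standard errors quoted for the free-energy calculations above.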

Table 3: Key Reagent Solutions for Computational CSP

| Computational Tool | Type | Primary Function in CSP |
|---|---|---|
| Neural Network Potentials (PFP) | Force Field | Structure relaxation with near-DFT accuracy at reduced cost |
| MACCSKeys | Molecular Fingerprint | Feature representation for ML-based space group and density prediction |
| LightGBM | Machine Learning Model | Prediction of space group candidates and crystal densities |
| PyXtal | Python Library | Random crystal structure generation for baseline comparisons |
| Matlantis | Computational Platform | Pre-trained NNP for structure optimization |

The field of crystal structure prediction is undergoing a transformative period, driven by advances in machine learning, accurate free-energy calculations, and generative AI approaches. The integration of these methodologies is steadily closing the gap between computational prediction and experimental reality, making CSP an increasingly actionable tool for materials design and polymorph risk assessment. The ability to place crystal structures with different hydrate stoichiometries on the same energy landscape as a function of temperature and relative humidity represents a particular breakthrough for pharmaceutical applications [3].

Despite significant progress, important challenges remain. Accurately modeling the complex interplay between intra- and intermolecular interactions in flexible molecules requires further method development. Extending current approaches to multi-component systems, including solvates, co-crystals, and disordered materials, presents additional frontiers. The integration of CSP with experimental techniques in iterative design-make-test-analyze cycles promises to further accelerate materials discovery. As methods continue to mature, crystal structure prediction is poised to become an indispensable component of the materials development toolkit, potentially transforming discovery timelines across pharmaceuticals, energy materials, and advanced manufacturing.

In inorganic crystal structure prediction (CSP) research, the concept of an energy landscape provides a powerful framework for understanding the crystalline forms a chemical species can adopt. A crystal energy landscape represents the set of plausible crystal packings for a chemical species, mapping out the energetic relationship between different possible configurations and revealing the thermodynamic and kinetic behavior of crystal systems [7]. Computational exploration of these landscapes enables researchers to anticipate stable crystalline arrangements, rationalize polymorphic behavior, and guide the discovery of new functional materials. The core challenge in CSP lies in efficiently navigating these high-dimensional energy surfaces to identify the global minimum (the most thermodynamically stable crystal structure) while also characterizing metastable polymorphs that may have significant practical applications [7] [8].

The energy landscape approach has transformed materials discovery, with applications ranging from pharmaceutical development to organic electronics. Different polymorphs can exhibit dramatically different physical and chemical properties, including density, melting point, hardness, solubility, and bioavailability, making polymorph prediction crucial for industries where material performance is critical [8]. Late-appearing polymorphs have caused significant issues in the pharmaceutical industry, necessitating redesign of production processes and sometimes leading to market recalls [8]. By mapping the complete energy landscape, researchers can identify such risks early in development and design crystallization strategies to target specific polymorphs with desirable characteristics.

Fundamental Concepts and Terminology

Key Landscape Features

- Local Minima: States corresponding to crystal structures that are stable to small perturbations but may not be the most thermodynamically favorable overall. Each minimum represents a potential polymorph [9].

- Global Minimum: The lowest energy state on the landscape, corresponding to the thermodynamically most stable crystal structure under given conditions.

- Energy Barriers: The energy rise from a local minimum to the lowest saddle point connecting it to a neighboring minimum; these barriers determine the kinetic accessibility of different polymorphs [9].

- Basins of Attraction: Regions of the energy landscape that drain to a particular local minimum during energy minimization, defining the "catchment area" for each structure.

Disconnectivity Graphs

A disconnectivity graph is a specialized visualization tool that condenses the continuous, high-dimensional potential energy surface into a discrete representation of local minima and the energy barriers separating them [9]. In these graphs, the vertical axis represents energy, while the horizontal arrangement shows how minima are connected through transition states. Each branch tip represents a local minimum, and branches join at the lowest energy barrier connecting those minima [9]. This visualization reveals the overall organization of the landscape, showing which structures are easily interconvertible and which are separated by significant barriers.
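
The branch-joining rule lends itself to a union-find sweep: process saddle points in order of increasing energy and record each energy at which two previously separate groups of minima become connected. The sketch below assumes the minima and the transition states connecting them are already known (as they would be from a barrier-mapping method); the function name and toy energies are hypothetical, and plotting is omitted.

```python
from typing import Dict, List, Set, Tuple


def disconnectivity_merges(minima: Dict[str, float],
                           transition_states: List[Tuple[float, str, str]]):
    """Merge events (saddle energy, minima now connected), in order of increasing energy.

    minima maps each minimum to its energy (the branch-tip heights when plotting);
    transition_states lists (saddle energy, minimum a, minimum b). Two groups of minima
    join at the lowest saddle connecting them, which is where their branches meet.
    """
    parent = {m: m for m in minima}
    members: Dict[str, Set[str]] = {m: {m} for m in minima}

    def find(m: str) -> str:
        while parent[m] != m:
            parent[m] = parent[parent[m]]  # path halving keeps the trees shallow
            m = parent[m]
        return m

    events = []
    for energy, a, b in sorted(transition_states):
        ra, rb = find(a), find(b)
        if ra == rb:
            continue                       # already connected through a lower saddle
        parent[rb] = ra
        members[ra] |= members.pop(rb)
        events.append((energy, sorted(members[ra])))
    return events
```

For minima A, B and C with saddles at 6 (B-C), 10 (A-B) and 12 kJ/mol (A-C), B and C join at 6 kJ/mol and A joins them at 10 kJ/mol; the 12 kJ/mol saddle creates no new branch point because a lower route between A and C already exists.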

Quantitative Performance of CSP Methods

Recent large-scale validations demonstrate the remarkable progress in crystal structure prediction methodologies. The tables below summarize key performance metrics from landmark studies.

Table 1: Large-Scale Validation of CSP Methods (Taylor et al.)

| Validation Metric | Performance | Scope of Study |
|---|---|---|
| Experimental Structures Located | 99.4% | Over 1000 small, rigid organic molecules [7] |
| Structures Ranked as Most Stable | 74% | Accounting for thermal effects uncertainty [7] |
| Methodology | Force-field-based CSP with quasi-random sampling [7] | |

Table 2: Pharmaceutical-Relevant CSP Validation (Nature Communications Study)

| Validation Category | Performance | Dataset Characteristics |
|---|---|---|
| Single Polymorph Molecules | 100% success in sampling experimental structure | 33 molecules, RMSD < 0.50 Å for 25-molecule cluster [8] |
| Top-2 Ranking (Before Clustering) | 26 of 33 molecules [8] | Includes MK-8876, Target V, naproxen [8] |
| Multiple Polymorph Molecules | All known Z' = 1 polymorphs reproduced [8] | 33 molecules including ROY, Olanzapine, Galunisertib [8] |
| Methodology | Hierarchical ranking (FF → MLFF → DFT) [8] | 66 total molecules, 137 unique crystal structures [8] |

Methodologies for Mapping Energy Landscapes

The Monte Carlo Threshold Algorithm

The Monte Carlo threshold algorithm is a powerful method for mapping energy barriers between crystal structures that overcomes limitations of traditional CSP approaches [9]. Unlike standard methods that only locate local minima, this algorithm provides estimates of the energy barriers separating structures, offering insight into kinetic stability and polymorph interconversion pathways.

Experimental Protocol:

- Initialization: Begin from a local minimum structure on the energy landscape [9].

- Monte Carlo Sampling: Generate random perturbations to molecular translations, rotations, and unit cell parameters, accepting only moves that maintain the system's energy below a defined "lid" energy [9].

- Lid Energy Increment: Systematically increase the lid energy in increments (typically 5 kJ mol⁻¹), allowing the trajectory to access new energy basins separated by higher barriers [9].

- Trajectory Merging: Combine trajectories from multiple starting structures (often known polymorphs) to build a global picture of landscape connectivity [9].

- Disconnectivity Graph Construction: Analyze the connected minima at each energy level to create a comprehensive representation of the energy landscape [9].
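
The protocol can be illustrated on a one-dimensional toy landscape. The sketch below uses a tilted double well in place of a lattice-energy model and steepest descent in place of a real minimizer; the potential, step sizes, and quench interval are assumptions, and only the lid-increment logic mirrors the steps listed above.

```python
import random
from typing import Dict


def energy(x: float) -> float:
    """Toy tilted double well (kJ/mol); one coordinate stands in for all packing variables."""
    return 10.0 * (x * x - 1.0) ** 2 + 2.0 * x


def gradient(x: float) -> float:
    return 40.0 * x * (x * x - 1.0) + 2.0


def quench(x: float, lr: float = 0.005, steps: int = 500) -> float:
    """Steepest-descent minimization, standing in for a lattice-energy minimizer."""
    for _ in range(steps):
        x -= lr * gradient(x)
    return x


def threshold_run(x_start: float, lid_step: float = 5.0, n_lids: int = 3,
                  mc_steps: int = 20000, max_move: float = 0.1,
                  quench_every: int = 200, seed: int = 0) -> Dict[float, float]:
    """Map each minimum found (labelled by its rounded position) to the first lid that reached it.

    Lids are measured from the starting minimum, so a minimum first reached at lid L
    lies behind a barrier between L - lid_step and L.
    """
    rng = random.Random(seed)
    x_min = quench(x_start)
    e_start = energy(x_min)
    found = {round(x_min, 1): 0.0}
    for k in range(1, n_lids + 1):
        lid = k * lid_step
        x = x_min                              # each lid restarts from the initial minimum
        for step in range(1, mc_steps + 1):
            trial = x + rng.uniform(-max_move, max_move)
            if energy(trial) - e_start < lid:  # accept only moves that stay below the lid
                x = trial
            if step % quench_every == 0:       # periodically quench to see which basin we are in
                found.setdefault(round(quench(x), 1), lid)
    return found
```

Starting from the right-hand well, `threshold_run(1.0)` never reaches the other well with a 5 kJ/mol lid but does with a 10 kJ/mol lid, returning `{1.0: 0.0, -1.0: 10.0}`; the barrier is therefore bracketed between 5 and 10 kJ/mol (the toy potential's true barrier from that well is about 8 kJ/mol). Smaller lid increments tighten the bracket at extra cost.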

Table 3: Threshold Algorithm Parameters and Specifications

| Parameter | Typical Setting | Purpose/Rationale |
|---|---|---|
| Energy Lid Increment | 5 kJ mol⁻¹ [9] | Balance between precision and computational cost |
| Move Types | Translations, rotations, unit cell changes [9] | Sample crystal packing variables |
| Step Size Cutoffs | Chosen for similar energy changes across move types [9] | Ensure efficient sampling |
| Molecular Flexibility | Rigid molecules in current implementations [9] | Simplifies initial implementation |

Conformation-family Monte Carlo (CFMC)

The CFMC method maintains a database of low-energy structures clustered into families, with search biased toward the most promising regions [10]. This approach extends basic Monte Carlo methods by considering whole families of conformations rather than single structures.

Workflow:

- Database Initialization: Generate Nf random structures through random unit cell generation and molecular placement [10].

- Family-based Sampling: Select structures from the current "generative family" with Boltzmann-weighted probabilities [10].

- Structure Modification: Apply internal (local) or external (global) moves to create new trial structures [10].

- Family Assignment: Classify new structures into existing families or create new families based on structural similarity [10].

- Generative Family Update: Apply Metropolis criterion to potentially transition to exploring new families [10].
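
Two ingredients of this workflow, Boltzmann-weighted selection within a family and a Metropolis test for changing the generative family, can be sketched in a few lines. The source does not say how a family's energy is scored or which temperature is used; representing a family by its lowest-energy member and the `kT` argument are assumptions, and structure generation and similarity-based family assignment are omitted.

```python
import math
import random
from typing import Dict, List, Sequence


def boltzmann_pick(energies: Sequence[float], kT: float, rng: random.Random) -> int:
    """Index drawn with probability proportional to exp(-E / kT)."""
    e_min = min(energies)                      # shift energies to avoid overflow/underflow
    weights = [math.exp(-(e - e_min) / kT) for e in energies]
    return rng.choices(range(len(energies)), weights=weights, k=1)[0]


def metropolis_accept(e_current: float, e_trial: float, kT: float, rng: random.Random) -> bool:
    """Always accept downhill moves; accept uphill moves with probability exp(-dE / kT)."""
    d_e = e_trial - e_current
    return d_e <= 0 or rng.random() < math.exp(-d_e / kT)


def update_generative_family(families: Dict[str, List[float]], current: str,
                             kT: float, rng: random.Random) -> str:
    """Propose another family and switch to it under the Metropolis criterion.

    Hypothetical scoring: each family is represented by the energy of its lowest member.
    """
    proposal = rng.choice([name for name in families if name != current])
    if metropolis_accept(min(families[current]), min(families[proposal]), kT, rng):
        return proposal
    return current
```

The temperature controls the balance between exploitation and exploration: a small `kT` keeps the search inside the current low-energy family, while a larger one lets it hop to higher-energy families more often.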

Hierarchical Energy Ranking

Modern CSP workflows often employ a multi-stage approach to balance accuracy and computational cost [8]:

- Initial Sampling: Generate trial crystal structures using force-field-based methods [8].

- Machine Learning Refinement: Apply neural network potentials (e.g., MACE equivariant message-passing networks) for improved energy rankings [7].

- DFT Validation: Final ranking using periodic density functional theory with dispersion corrections [8].

- Free Energy Calculations: Evaluate temperature-dependent stability using free energy methods [8].

Diagram 1: Hierarchical Energy Ranking Workflow. This multi-stage approach combines computational efficiency with high accuracy.
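
The funnel logic of this workflow can be sketched as successive re-ranking of a shrinking pool. In the sketch below the energy functions are hypothetical placeholders for force-field, MLFF, and DFT evaluations, and the number of survivors per stage is an assumption; a real workflow would also re-relax structures at each level rather than just re-score them.

```python
from typing import Callable, Iterable, List, Sequence, Tuple

# (stage name, energy function, number of lowest-energy candidates to keep)
Stage = Tuple[str, Callable[[int], float], int]


def hierarchical_rank(candidates: Iterable[int],
                      stages: Sequence[Stage]) -> List[Tuple[int, float]]:
    """Re-rank a shrinking pool of candidates with progressively more expensive energy models."""
    pool = list(candidates)
    ranked: List[Tuple[int, float]] = []
    for _name, energy_fn, keep in stages:
        # Only survivors of the previous stage are evaluated with the costlier model.
        ranked = sorted(((c, energy_fn(c)) for c in pool), key=lambda t: t[1])[:keep]
        pool = [c for c, _ in ranked]
    return ranked
```

Because each model only sees the survivors of the previous stage, the expensive final step runs on a handful of structures instead of the full set. The risk is that a structure misranked by the cheap model is discarded early, which argues for generous cut-offs at the early stages.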

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Tools for Energy Landscape Mapping

| Tool/Resource | Function | Application Context |
|---|---|---|
| Global Lattice Energy Explorer (GLEE) [7] | Quasi-random sampling of crystal packing | Initial structure generation [7] |
| DMACRYS [9] | Lattice energy minimization with accurate force fields | Structure optimization with atomic multipoles [9] |
| Machine Learning Potentials [7] | Neural network corrections to force field energies | Improved energy rankings [7] |
| Cambridge Structural Database (CSD) [8] | Repository of experimental crystal structures | Validation and methodology development [8] |
| Distributed Multipole Analysis (DMA) [7] | Derivation of atom-centered multipoles | Electrostatic description for force fields [7] |
| Disconnectivity Graph Analysis [9] | Visualization of energy landscape connectivity | Interpretation of polymorph relationships [9] |

Applications and Implications for Drug Development

The ability to comprehensively map crystal energy landscapes has profound implications for pharmaceutical development and materials science. When CSP methods identify multiple low-energy minima close in energy, this indicates a significant risk of polymorphism that must be addressed during drug development [7]. Conversely, landscapes with a single deep global minimum suggest systems likely to be monomorphic under standard conditions. This predictive capability enables proactive risk management rather than reactive response to late-appearing polymorphs.

Energy landscape analysis also facilitates the targeted discovery of metastable polymorphs with enhanced functional properties. Recent studies have identified high-energy polymorphs through desolvation of solvates that exhibit exceptional properties for gas storage, molecular separations, and photocatalytic applications [9]. By understanding both the thermodynamic and kinetic aspects of these landscapes, researchers can design crystallization pathways to access these valuable metastable forms.

Diagram 2: Crystallization Pathways on Energy Landscape. Different processing conditions can lead to different polymorphic outcomes.

The field of energy landscape mapping continues to evolve rapidly, with several promising directions emerging. Machine learning approaches are being increasingly integrated throughout the CSP pipeline, from accelerated energy evaluations to the analysis of structure-function relationships that evade simple inspection [7]. The development of transferable, machine-learned energy potentials trained on large and diverse CSP datasets shows particular promise for improving predictive accuracy while maintaining computational efficiency [7].

Another significant frontier is the extension of these methods to more complex systems, including flexible molecules with multiple conformational degrees of freedom, co-crystals, and solvates [9]. Current rigid-molecule approaches provide valuable insight but must be expanded to address the full complexity of pharmaceutical compounds. As these methodological advances progress, energy landscape analysis is poised to become an increasingly central tool in rational materials design, enabling researchers to navigate the complex energy surfaces of molecular crystals with growing confidence and predictive power.

The comprehensive mapping of crystal energy landscapes represents a transformative capability in solid-state chemistry and materials science. By moving beyond simple local minimization to characterize the global connectivity and barriers within these high-dimensional surfaces, researchers can now rationalize polymorphic behavior, predict stable crystalline forms, and design targeted synthesis strategies for functional materials. As validation studies on increasingly large and diverse molecular sets demonstrate the reliability of these approaches [7] [8], energy landscape analysis is establishing itself as an essential component of computational materials discovery and pharmaceutical development.

Crystal structure prediction (CSP) represents a fundamental challenge in computational materials science and drug development, with methodologies diverging significantly between inorganic and organic domains. While both fields aim to determine the most stable crystalline arrangement of atoms or molecules from their chemical composition, their distinct chemical bonding, dominant interactions, and structural complexities necessitate specialized approaches [11] [1]. For inorganic crystals, the prediction problem primarily involves identifying global minima on energy landscapes defined by strong covalent (directional) and ionic bonds, often in binary compounds [11]. In contrast, organic CSP must navigate the subtler interplay of weak intermolecular forces and conformational flexibility in multi-component molecular systems, where accurate energy ranking demands exceptional precision [1] [12]. This technical guide examines the core methodological differences between these domains from the perspective of inorganic CSP research, to give researchers and pharmaceutical professionals a comprehensive comparison of current predictive capabilities and limitations.

Fundamental Distinctions in Chemical Nature and Bonding

The foundational differences between inorganic and organic crystals originate at the atomic and molecular level, directly influencing prediction strategies and computational challenges.

Inorganic crystals are typically characterized by strong covalent (directional) and ionic bonds that form extended atomic networks with specific coordination environments [11]. These materials often exhibit high symmetry and relatively simple unit cells, with atoms occupying precise crystallographic positions. The bonding strength creates deep, well-defined energy minima, making the potential energy landscape more discrete but often computationally expensive to evaluate with quantum mechanical methods [13].

Organic molecular crystals, however, are stabilized by significantly weaker intermolecular forces including van der Waals interactions, hydrogen bonds, and π-π stacking [1] [2]. These weaker interactions create a much flatter potential energy surface with numerous closely spaced local minima corresponding to different molecular packing arrangements. As noted in recent CSP research, "even minor variations in these interactions can give rise to entirely different crystal structures, making accurate prediction difficult" [1]. Additionally, organic molecules frequently exhibit considerable conformational flexibility due to rotatable bonds, exponentially increasing the configurational space that must be explored during prediction [1].

Table 1: Fundamental Chemical Distinctions Between Inorganic and Organic Crystals

| Characteristic | Inorganic Crystals | Organic Molecular Crystals |
|---|---|---|
| Primary Bonding | Strong covalent/ionic bonds | Weak intermolecular forces (van der Waals, hydrogen bonding) |
| Potential Energy Landscape | Deep, well-defined minima | Flat surface with numerous closely spaced minima |
| Energy Differences | Often significant between polymorphs | Small (a few kJ/mol) between polymorphs |
| Molecular Flexibility | Typically rigid atomic arrangements | Significant conformational flexibility |
| Symmetry | Generally high symmetry | Often lower symmetry |

Methodological Approaches in Crystal Structure Prediction

CSP methodologies for both domains share a common two-stage framework—structure generation followed by structure relaxation—but diverge significantly in their implementation details and technical emphasis.

Structure Generation and Search Algorithms

Inorganic CSP leverages sophisticated global optimization algorithms to navigate complex energy landscapes. Evolutionary algorithms like USPEX and particle swarm optimization methods like CALYPSO have demonstrated particular effectiveness [13]. These approaches iteratively generate and refine crystal structures while incorporating physical constraints and symmetry considerations. Recent advances include mathematical optimization-based search paradigms and template-based methods that exploit known structural motifs [11] [13]. The search space, while vast, is constrained by the relatively rigid nature of atomic coordination preferences.
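To make the generate-relax-select cycle concrete, here is a minimal evolutionary-search sketch. It is not USPEX or CALYPSO. It assumes a toy Lennard-Jones energy in a fixed cubic periodic cell (reduced units), a crude steepest-descent "relaxation", elitist selection, and simple cut-and-splice heredity plus random-displacement mutation. All function names and parameter values are hypothetical, chosen only for illustration.

```python
import numpy as np

def lj_energy_forces(pos, box, eps=1.0, sigma=1.0):
    """Lennard-Jones energy and forces in a cubic periodic cell (minimum image, no cutoff)."""
    energy, forces = 0.0, np.zeros_like(pos)
    for i in range(len(pos) - 1):
        d = pos[i + 1:] - pos[i]                 # vectors from atom i to atoms j > i
        d -= box * np.round(d / box)             # minimum-image convention
        r2 = np.sum(d * d, axis=1)
        inv6 = (sigma**2 / r2) ** 3
        energy += float(np.sum(4 * eps * (inv6**2 - inv6)))
        coef = (24 * eps * (2 * inv6**2 - inv6) / r2)[:, None]
        forces[i + 1:] += coef * d               # force on each partner j
        forces[i] -= np.sum(coef * d, axis=0)    # equal and opposite force on i
    return energy, forces

def relax(pos, box, steps=200, step=0.01, max_disp=0.1):
    """Crude steepest-descent local optimization with capped atomic displacements."""
    pos = pos.copy()
    for _ in range(steps):
        _, f = lj_energy_forces(pos, box)
        disp = step * f
        norm = np.linalg.norm(disp, axis=1, keepdims=True)
        disp *= np.minimum(1.0, max_disp / np.maximum(norm, 1e-12))
        pos = (pos + disp) % box
    return pos, lj_energy_forces(pos, box)[0]

def random_structure(n, box, rng, d_min=0.8):
    """Random atomic coordinates that respect a minimum interatomic distance."""
    pos = []
    while len(pos) < n:
        trial = rng.uniform(0.0, box, 3)
        if all(np.linalg.norm((trial - p) - box * np.round((trial - p) / box)) >= d_min
               for p in pos):
            pos.append(trial)
    return np.array(pos)

def heredity(a, b, box, rng):
    """Cut-and-splice: atoms of parent a below a random plane, plus the highest-x atoms of b."""
    cut = rng.uniform(0.0, box)
    from_a = a[a[:, 0] < cut]
    need = len(a) - len(from_a)
    from_b = b[np.argsort(b[:, 0])[len(b) - need:]]
    return np.vstack([from_a, from_b])

def evolve(n_atoms=8, box=2.5, pop_size=10, generations=10, seed=0):
    """Toy evolutionary search; returns the best (positions, energy) and the best-energy history."""
    rng = np.random.default_rng(seed)
    population = [relax(random_structure(n_atoms, box, rng), box) for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        population.sort(key=lambda s: s[1])            # fitness = relaxed energy
        best_history.append(population[0][1])
        parents = population[: pop_size // 2]           # elitist selection: keep the best half
        children = []
        while len(children) < pop_size - len(parents):
            i, j = rng.choice(len(parents), size=2, replace=False)
            if rng.random() < 0.5:
                child = heredity(parents[i][0], parents[j][0], box, rng)
            else:                                       # mutation: random atomic displacements
                child = (parents[i][0] + rng.normal(0.0, 0.2, (n_atoms, 3))) % box
            children.append(relax(child, box))
        population = parents + children
    population.sort(key=lambda s: s[1])
    best_history.append(population[0][1])
    return population[0], best_history

(best_pos, best_energy), history = evolve()
print(f"lowest energy found: {best_energy:.3f} (reduced LJ units)")
```

Because the parents survive unchanged into the next generation, the best energy can never increase from one generation to the next. Production codes add variable cell shapes, symmetry-constrained generation, fingerprint-based duplicate removal, and DFT or MLIP relaxation.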

Organic CSP must contend with the dual challenges of molecular conformation and packing arrangement. While random structure generation remains common, recent machine learning approaches have significantly improved efficiency. The SPaDe-CSP workflow exemplifies this progress, employing ML-based space group and packing density predictors to reduce the generation of low-density, unstable structures before computationally intensive relaxation [1] [2]. This "sample-then-filter" strategy narrows the search space by predicting the most probable space groups and crystal densities from molecular fingerprints, specifically adapting constraint strategies that have proven effective for inorganic systems [1].
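The filtering idea can be sketched in a few lines. The sketch assumes that trained classifiers and regressors (LightGBM models in SPaDe-CSP) have already produced space-group probabilities and a density estimate. The threshold, the relative density tolerance, the function name, and the example probabilities are all hypothetical and do not reproduce the published settings.

```python
def select_sampling_targets(sg_probs, predicted_density, prob_threshold=0.1, density_tol=0.1):
    """Keep space groups whose predicted probability passes a threshold (most likely first)
    and return a relative density window (g/cm^3) for sampling trial lattices."""
    kept = sorted((sg for sg, p in sg_probs.items() if p >= prob_threshold),
                  key=lambda sg: -sg_probs[sg])
    window = (predicted_density * (1 - density_tol), predicted_density * (1 + density_tol))
    return kept, window

# Hypothetical predictor outputs for one molecule: space-group number -> probability
probs = {14: 0.46, 2: 0.21, 19: 0.12, 15: 0.08, 61: 0.05}
groups, (rho_lo, rho_hi) = select_sampling_targets(probs, predicted_density=1.35)
print(groups, round(rho_lo, 3), round(rho_hi, 3))
```

Only the surviving space groups, and lattices whose density falls inside the window, would then be passed to the structure generator.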

Structure Relaxation and Energy Evaluation

The critical stage of structure relaxation and energy ranking highlights perhaps the most significant technical divergence between inorganic and organic CSP.

Inorganic CSP has increasingly embraced universal machine learning interatomic potentials (MLIPs) trained on extensive DFT datasets. These potentials accelerate structure relaxation while approaching DFT accuracy [13] [14]. Models such as M3GNet and other graph neural network-based potentials enable rapid exploration of compositional and configurational spaces [13]. Because bonding in inorganic systems is stronger, energy differences between viable polymorphs are often large enough to be captured reliably by these potentials.

Organic CSP faces a more formidable challenge as different polymorphs "are often separated by only a few kJ/mol per molecule in energy" [12]. This necessitates exceptional accuracy in thermodynamic stability evaluation. While neural network potentials like PFP and ANI have shown promise [1], many workflows still require dispersion-inclusive DFT for final ranking, creating computational bottlenecks [12]. Recent approaches like FastCSP demonstrate that universal MLIPs like the Universal Model for Atoms (UMA) can potentially eliminate the need for DFT re-ranking, but system-specific MLIPs currently achieve the most reliable results [12].

Table 2: Methodological Comparison of CSP Workflows

| Methodological Aspect | Inorganic CSP | Organic CSP |
|---|---|---|
| Primary Search Algorithms | Evolutionary algorithms (USPEX), particle swarm optimization (CALYPSO) | Random sampling, machine learning-guided sampling, genetic algorithms |
| Structure Generation Focus | Atomic placement with coordination constraints | Molecular packing with conformational flexibility |
| Key ML Applications | Universal MLIPs, composition-based generative models | Space group prediction, density prediction, specialized MLIPs |
| Accuracy Requirements | ~10-100 meV/atom for stability assessment | <1 kJ/mol per molecule (~0.01 eV per molecule) for polymorph ranking |
| Successful Workflows | CALYPSO, USPEX, GNOA, MatterGen | SPaDe-CSP, FastCSP, system-specific MLIP approaches |
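The energy units in the accuracy row are easy to mix up. 1 kJ/mol corresponds to about 0.0104 eV per entity (here, per molecule), so the equivalent per-atom tolerance for an organic molecule is smaller still. The small helper below makes the conversion explicit using the exact SI values of the Avogadro constant and the elementary charge. The 20-atom molecule is a hypothetical example.

```python
AVOGADRO = 6.02214076e23             # 1/mol (exact SI value)
ELEMENTARY_CHARGE = 1.602176634e-19  # J per eV (exact SI value)

def kj_per_mol_to_ev(e_kj_per_mol):
    """Convert an energy in kJ/mol to eV per entity (molecule or formula unit)."""
    return e_kj_per_mol * 1e3 / AVOGADRO / ELEMENTARY_CHARGE

def per_molecule_to_mev_per_atom(e_kj_per_mol, atoms_per_molecule):
    """Spread a per-molecule energy difference over the atoms of the molecule (meV/atom)."""
    return 1e3 * kj_per_mol_to_ev(e_kj_per_mol) / atoms_per_molecule

print(kj_per_mol_to_ev(1.0))                  # ~0.0104 eV per molecule
print(per_molecule_to_mev_per_atom(1.0, 20))  # ~0.52 meV/atom for a hypothetical 20-atom molecule
```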

Figure: Inorganic vs. organic CSP workflow comparison

Performance Benchmarking and Success Rates

Quantitative performance assessment reveals significant disparities in CSP capabilities across domains. Benchmark studies demonstrate that inorganic CSP algorithms successfully predict known structures with varying degrees of reliability, though "the performance of the current CSP algorithms is far from being satisfactory" according to recent evaluations [13]. Template-based methods achieve higher success when applied to structures similar to known templates, while ML potential-based approaches are becoming increasingly competitive with DFT-based methods [13].

For organic systems, the SPaDe-CSP workflow achieves an 80% success rate across 20 diverse organic molecules—double the success rate of random sampling approaches [1] [2]. This performance improvement stems directly from effective search space narrowing through machine learning guidance. Nevertheless, success rates remain strongly influenced by molecular and crystal complexity, with flexible molecules presenting persistent challenges [1].

The critical role of neural network potentials is increasingly evident in both domains. As noted in benchmark evaluations, ML potential-based CSP algorithms "are now able to achieve competitive performances compared to the DFT-based algorithms" with performance "strongly determined by the quality of the neural potentials as well as the global optimization algorithms" [13].

Experimental Protocols and Methodologies

Representative Inorganic CSP Protocol (CALYPSO/USPEX)

- Initialization: Define the chemical composition and build an initial population of structures from random lattices and atomic coordinates generated within randomly chosen space groups, while respecting minimum interatomic distances [13].

- Structure Generation: Apply evolutionary operations (heredity, mutation, permutation) or particle swarm optimization to generate candidate structures while preserving physical constraints [13].

- Local Optimization: Perform structure relaxation using DFT or MLIPs (e.g., M3GNet) to locate local energy minima. This typically involves ionic relaxation with fixed unit cell dimensions followed by full cell optimization [13].

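- Fitness Evaluation: Calculate formation energies relative to competing phases using the convex hull construction. Structures are ranked by their distance above the hull, and those lying well above it are discarded; for a fixed composition this reduces to ranking by energy (or enthalpy) per atom [13].

- Iteration: Repeat structure generation, local optimization, and fitness evaluation for multiple generations (typically 20-50) until convergence, indicated by repeated reappearance of the same low-energy structures [13].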
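The hull-distance criterion used in fitness evaluation can be illustrated for a binary A-B system. The sketch below is minimal. It assumes formation energies per atom are already available, describes composition by the fraction x of B, and builds the lower convex hull with Andrew's monotone chain algorithm. The phases and energies are hypothetical values for illustration only. Libraries such as pymatgen provide full multicomponent phase-diagram tools.

```python
def lower_hull(points):
    """Lower convex hull of (x, E) points using Andrew's monotone chain."""
    lowest = {}
    for x, e in points:                          # keep only the lowest energy per composition
        lowest[x] = min(e, lowest.get(x, e))
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:                        # convex turn: hull[-1] stays on the lower hull
                break
            hull.pop()                           # hull[-1] lies on or above the chord
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Hull energy at composition x by linear interpolation between hull vertices."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the range spanned by the hull")

def energy_above_hull(entries):
    """Map {name: (x_B, formation energy per atom)} to {name: distance above hull, eV/atom}."""
    hull = lower_hull(entries.values())
    return {name: e - hull_energy(hull, x) for name, (x, e) in entries.items()}

# Hypothetical formation energies (eV/atom); the pure elements define the zero reference
entries = {"A": (0.0, 0.0), "B": (1.0, 0.0), "A3B": (0.25, -0.15),
           "AB": (0.5, -0.40), "AB3": (0.75, -0.25)}
for name, dist in energy_above_hull(entries).items():
    print(f"{name:>3}: {dist:+.3f} eV/atom")
```

Here A3B sits 0.05 eV/atom above the hull segment joining A and AB, so a search would rank it below the on-hull phases.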

Representative Organic CSP Protocol (SPaDe-CSP)

- Input Preparation: Extract molecular structure from experimental data or perform conformational analysis. Generate SMILES string and convert to molecular fingerprint (e.g., MACCSKeys) [1].

- Machine Learning Guidance: Predict probable space groups and crystal density using trained LightGBM models. Apply probability threshold and density tolerance window to filter candidates [1] [2].

- Lattice Sampling: Generate crystal structures using PyXtal's 'from_random' function, but only for ML-predicted space groups and within the predicted density range [1].

- Structure Relaxation: Optimize generated structures using neural network potentials (PFP in CRYSTAL_U0_PLUS_D3 mode) with the L-BFGS algorithm (maximum 2000 iterations, force threshold 0.05 eV/Å) [1].

- Energy Ranking: Construct energy-density diagrams and identify low-energy polymorphs. Compare predicted structures with experimental data when available [1].
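Sampling "within the predicted density range" amounts to bounding the unit-cell volume. For Z molecules of molar mass M in a cell of density ρ, V = Z·M/(ρ·N_A). With M in g/mol, ρ in g/cm³, and V in Å³, this becomes V ≈ Z·M/(0.6022·ρ). The sketch below uses aspirin (M ≈ 180.16 g/mol) with Z = 4 purely as an illustration. The density window is a hypothetical predictor output, not a published value.

```python
AVOGADRO = 6.02214076e23  # 1/mol
CM3_TO_A3 = 1e24          # 1 cm^3 = 1e24 Å^3

def cell_volume_A3(density_g_cm3, molar_mass_g_mol, z):
    """Unit-cell volume (Å^3) holding z molecules at the given crystal density."""
    return z * molar_mass_g_mol / (density_g_cm3 * AVOGADRO) * CM3_TO_A3

def volume_window(rho_lo, rho_hi, molar_mass_g_mol, z):
    """Volume bounds for a density window; higher density means a smaller cell."""
    return (cell_volume_A3(rho_hi, molar_mass_g_mol, z),
            cell_volume_A3(rho_lo, molar_mass_g_mol, z))

# Hypothetical density window (g/cm^3) for aspirin with Z = 4
v_min, v_max = volume_window(1.30, 1.45, molar_mass_g_mol=180.16, z=4)
print(f"sample cells with {v_min:.0f} <= V <= {v_max:.0f} Å^3")
```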

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Computational Tools for Crystal Structure Prediction

| Tool/Resource | Type | Primary Application | Function |
|---|---|---|---|
| Cambridge Structural Database (CSD) | Database | Organic CSP | Provides experimental structural data for training ML models and validation [1] |
| Materials Project | Database | Inorganic CSP | Curated repository of computed inorganic crystal structures and properties [4] |
| Universal MLIPs (M3GNet, UMA) | Machine Learning Potential | Both (emphasis inorganic) | Accelerated structure relaxation with near-DFT accuracy across diverse compositions [13] [12] |
| Specialized MLIPs (PFP, ANI) | Machine Learning Potential | Organic CSP | Accurate energy evaluation for organic molecules with specific parameterization [1] [12] |
| MACCSKeys | Molecular Descriptor | Organic CSP | Molecular fingerprint representation for ML-based space group and density prediction [1] |
| CALYPSO/USPEX | Search Algorithm | Inorganic CSP | Global optimization for crystal structure prediction using particle swarm optimization (CALYPSO) and evolutionary algorithms (USPEX) [13] |
| Genarris | Search Algorithm | Organic CSP | Random structure generation for molecular crystals with duplicate removal [12] |

Emerging Trends and Future Directions

The convergence of artificial intelligence approaches is reshaping both inorganic and organic CSP landscapes. For inorganic materials, generative AI models like Chemeleon demonstrate the potential of text-guided generation using denoising diffusion techniques trained on both textual descriptions and structural data [4]. Similarly, MatterGen represents advances in diffusion-based generation specifically optimized for inorganic compounds [14]. Large language models like CrystaLLM show surprising capability in generating plausible inorganic structures through autoregressive modeling of CIF file tokens [15].

Organic CSP is benefiting from increasingly universal and accurate MLIPs that may remove the need for system-specific retraining. The FastCSP framework exemplifies this trend, leveraging the Universal Model for Atoms to provide "accurate, transferable modeling across diverse material systems" without molecule-specific fine-tuning [12]. This approach could obviate classical force field pre-screening or DFT-based re-ranking, substantially increasing workflow throughput.

Cross-pollination of methodologies between domains is also emerging as a fruitful direction. The inpainting generation method of CHGGen, initially developed for inorganic systems, shows promise for organic applications where host-guest interactions are relevant [14]. Similarly, constraint strategies successful in inorganic CSP are being adapted to organic contexts, as demonstrated by SPaDe-CSP's adaptation of density prediction to narrow search spaces [1].

The crystal structure prediction landscape reveals both stark contrasts and promising convergence points between inorganic and organic methodologies. Inorganic CSP exploits strong bonding and relatively rigid structural motifs to employ powerful global optimization algorithms. Organic CSP, by contrast, must navigate the subtler energy landscapes of weak intermolecular forces using machine learning guidance. Both domains are being transformed by neural network potentials that offer near-DFT accuracy at dramatically reduced computational cost, though organic applications demand exceptional precision for reliable polymorph ranking. Benchmark studies indicate substantial room for improvement in both domains. Even so, the trend toward universal models and cross-domain methodological transfer offers promising pathways for accelerated discovery. For pharmaceutical researchers and materials scientists alike, these advances promise increasingly reliable in silico crystal structure prediction, potentially transforming materials design and drug development pipelines.

The Critical Role of Polymorphism in Functional Materials and Pharmaceuticals

Polymorphism, the ability of a solid substance to exist in more than one crystal structure, is a fundamental phenomenon with profound implications for pharmaceutical development and advanced materials science. Differences in three-dimensional packing are often difficult to anticipate and can produce markedly different physicochemical properties, including melting point, solubility, dissolution rate, bioavailability, and stability [16]. In pharmaceuticals, approximately 85% of marketed drugs exhibit polymorphism, making it the rule rather than the exception in drug development [17]. The antiviral drug Ritonavir was withdrawn from the market after a more stable, less soluble polymorph unexpectedly appeared in the formulated product, with estimated losses exceeding US$250 million [17]. This well-documented case underscores the importance of polymorph control. More recently, in 2023, spontaneous crystallization was observed in certain bottles of cyclosporine oral solution, ultimately resulting in a product recall in 2024 due to concerns over content uniformity [18].

Beyond pharmaceuticals, polymorphism enables the engineering of tailored functionalities in advanced materials. Recent research demonstrates how different polymorphs of a highly luminescent benzofuranyl molecule exhibit dramatically different photonic properties: one polymorph functions as a flexible optical waveguide with 52% photoluminescence quantum yield, another as a rigid block exhibiting amplified spontaneous emission, and a third as a plate crystal ideal for highly luminant photonic devices [19]. This multifunctionality arising from a single chemical entity highlights the transformative potential of polymorph control in designing next-generation materials. The following sections provide a comprehensive technical examination of polymorphism's effects, characterization methodologies, and emerging prediction strategies, with particular emphasis on their integration within inorganic crystal structure prediction research frameworks.

Polymorphism in Pharmaceutical Development

Strategic Patent Considerations

The complex interplay between intellectual property strategy and polymorph science requires careful navigation throughout drug development. Critically, an initial patent application directed to a pharmaceutical compound itself constitutes prior art against subsequently filed polymorph patents [16]. Therefore, the compound patent specification should include a synthetic method for making the compound but should strategically exclude working examples reciting specific recrystallization conditions, generic disclosures of suitable recrystallization solvents or conditions, or general discussions concerning physical forms of the compound [16]. This approach preserves future patenting opportunities for specific polymorphs.

Polymorph characterization in patent applications requires meticulous documentation. Applications should include detailed information concerning recrystallization conditions and solvent mixtures that yield the specific polymorph, alongside comprehensive analytical data including X-ray powder diffraction (XRPD) spectra showing all peaks (strong, intermediate, and minor), differential scanning calorimetry (DSC) thermograms, and infrared (IR) spectra [16]. Claim strategy must balance scope with enforceability; claiming based on large numbers of XRPD peaks may create enforcement difficulties, as the patentee must establish that alleged infringing material contains each claimed peak [16]. Conversely, claiming by only a few major peaks may leave claims vulnerable to challenges for lack of written description or enablement [16]. A robust strategy pursues claims of varying scope to the polymorph characterized by: (1) the complete XRPD pattern, (2) major peaks only, (3) major and moderate peaks combined, and (4) melting point, DSC, and/or IR spectra either independently or together with XRPD information [16].

Table 1: Polymorph Patent Strategy Considerations

| Strategic Element | Key Consideration | Best Practice |
|---|---|---|
| Timing of Filing | Relationship to compound patent prior art date | Delay filing past compound patent date to maximize patent term for highly polymorphic compounds [16] |
| Claim Scope | Balance between breadth and enforceability | Pursue multiple claim sets of varying specificity [16] |
| Geographical Strategy | Divergent legal standards between regions | In Europe, focus on polymorphs with unexpected superior properties due to higher inventive step requirements [16] |
| Disclosure Content | Sufficiency for written description and enablement | Include XRPD peak tables with express teachings defining polymorph by major, intermediate, and minor peaks [16] |

The "Disappearing Polymorph" Phenomenon and Risk Mitigation

The phenomenon of "disappearing polymorphs" describes situations where a previously reproducible crystalline form becomes irreproducible over time, often coinciding with the emergence of a new, more stable polymorphic form [18]. This occurs because crystalline solids tend to evolve toward more thermodynamically stable packing arrangements, meaning initially discovered polymorphs may not represent the most stable form [18]. Trace contamination with seed crystals or partial dissolution followed by recrystallization during storage can trigger such conversions, potentially rendering the original form irreproducible [18].

A comprehensive solid form screening workflow is the primary risk mitigation strategy against disappearing polymorphs and unexpected polymorphic transitions. This screening is typically performed twice during drug development. The first screen takes place in the preclinical stage to select the solid form that proceeds to clinical trials. The second takes place in the clinical stage to characterize the solid form landscape comprehensively and identify potentially better forms [17]. A recent survey of 476 new chemical entities studied between 2016 and 2023 found an average of 5.5 crystal forms for free forms and 3.7 for salts, demonstrating how prevalent polymorphism is among pharmaceutical compounds [17]. The survey also identified increasing structural complexity and molecular weight of new chemical entities in recent years, which often makes crystallization and obtaining high-quality forms for development more difficult [17].

Polymorphism in Functional Materials Design

In functional materials, polymorphism provides a powerful tool for engineering specific physical and optical properties without altering chemical composition. Recent research on the highly luminescent compound 1,4-bis(benzofuran-2-yl)-2,3,5,6-tetrafluorophenylene (BFTFP) demonstrates this principle with exceptional clarity. BFTFP exhibits three distinct polymorphs (α, β, and γ) with dramatically different morphological and photonic characteristics [19].

The BFTFP_α polymorph forms flexible fibers that combine elastic flexibility and optical waveguiding with a 52% photoluminescence quantum yield, among the highest values reported for elastic organic single crystals [19]. The BFTFP_β polymorph grows as rigid blocks and exhibits amplified spontaneous emission under nanosecond pulsed-laser excitation, which is attributed to their rigidity and monomeric luminescence [19]. The BFTFP_γ polymorph forms platelet crystals that luminesce intensely from their basal facets, making them well suited to highly luminant photonic devices such as vertical cavity surface emitting lasers [19].

This polymorphism-induced multifunctionality demonstrates how crystal structure control enables the design of materials with tailored properties for specific applications. Similar principles apply to inorganic photostrictive materials, where constructing a polymorphic phase boundary significantly enhances performance for wireless microelectromechanical devices [20]. These examples underscore the critical importance of understanding polymorphic landscapes in functional materials development.

Experimental Methodologies for Polymorph Characterization

Comprehensive Polymorph Screening Protocol

A robust polymorph screening methodology integrates multiple analytical techniques to fully characterize the solid-form landscape. The following protocol, adapted from contemporary research on Tegoprazan, provides a framework for systematic polymorph investigation [18]:

Materials Preparation:

- Procure or synthesize all known solid forms (amorphous, polymorphs, hydrates, solvates) of the compound

- Verify identity and phase purity of each form using PXRD and DSC before experimentation

- For Tegoprazan, three solid forms were characterized: amorphous, Polymorph A (thermodynamically stable), and Polymorph B (metastable) [18]

Conformational Analysis:

- Construct conformational energy landscapes using relaxed torsion scans with appropriate force fields (e.g., OPLS4)

- Perform scans for key dihedral angles in 10° increments for each tautomeric form

- Calculate Boltzmann-weighted probabilities from relative energies

- Validate computational models with experimental solution structures from nuclear Overhauser effect (NOE)-based nuclear magnetic resonance (NMR) spectroscopy [18]
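The Boltzmann-weighting step converts relative conformer energies ΔE_i into populations p_i = exp(-ΔE_i/RT) / Σ_j exp(-ΔE_j/RT). The sketch below is minimal and assumes energies in kJ/mol at 298.15 K. The torsion-scan minima are hypothetical values, not the Tegoprazan results.

```python
import math

R_KJ = 8.314462618e-3  # gas constant, kJ/(mol K)

def boltzmann_weights(rel_energies_kj, temperature=298.15):
    """Population of each conformer from its relative energy (kJ/mol)."""
    e_min = min(rel_energies_kj)                  # shift by the minimum for numerical stability
    factors = [math.exp(-(e - e_min) / (R_KJ * temperature)) for e in rel_energies_kj]
    total = sum(factors)
    return [f / total for f in factors]

# Hypothetical conformer minima from a relaxed torsion scan (kJ/mol)
weights = boltzmann_weights([0.0, 2.5, 5.0, 12.0])
print([round(w, 3) for w in weights])
```

At room temperature RT ≈ 2.5 kJ/mol, so conformers more than roughly 10 kJ/mol above the minimum contribute well under 1% of the population.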

Intermolecular Interaction Assessment:

- Extract hydrogen-bonded dimers from crystal structures of identified polymorphs

- Perform single-point energy calculations using density functional theory with empirical dispersion corrections (e.g., wB97X-D3(BJ)/def2-TZVPP) [18]

- Compare stabilization energies to understand preferential packing motifs

Phase Behavior Analysis:

- Conduct solubility measurements across multiple solvents (e.g., methanol, acetone, water)

- Perform differential scanning calorimetry (DSC) to identify thermal events and phase transitions

- Monitor time-dependent phase transformations using powder X-ray diffraction (PXRD)

- Execute slurry experiments in relevant solvents with PXRD monitoring to observe solvent-mediated phase transformations [18]

Kinetic Profiling:

- Model transformation kinetics using the Kolmogorov–Johnson–Mehl–Avrami (KJMA) equation

- Derive empirical rate parameters for polymorphic conversions [18]

- Assess stability under accelerated conditions (e.g., 40°C/75% relative humidity)
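In one common form, the KJMA model gives the transformed fraction as X(t) = 1 - exp(-k t^n). A plot of ln[-ln(1 - X)] against ln t is therefore linear, with slope n (the Avrami exponent) and intercept ln k. The fitting sketch below applies ordinary least squares to that linearization. The fractions are synthetic, generated from assumed values k = 0.002 and n = 2, and are not data from the Tegoprazan study. Some authors write the rate term as (kt)^n instead, which changes the units and value of k.

```python
import math

def fit_kjma(times, fractions):
    """Fit X(t) = 1 - exp(-k * t**n) by linear least squares on the Avrami plot.
    Points with X = 0 or X = 1 are skipped because the linearization diverges there."""
    pts = [(math.log(t), math.log(-math.log(1.0 - x)))
           for t, x in zip(times, fractions) if t > 0 and 0.0 < x < 1.0]
    if len(pts) < 2:
        raise ValueError("need at least two points with 0 < X < 1")
    mx = sum(p[0] for p in pts) / len(pts)
    my = sum(p[1] for p in pts) / len(pts)
    sxx = sum((p[0] - mx) ** 2 for p in pts)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in pts)
    n = sxy / sxx                      # slope = Avrami exponent
    k = math.exp(my - n * mx)          # intercept = ln k
    return k, n

# Synthetic fractions (e.g., from PXRD peak areas) generated with k = 0.002 h^-n and n = 2
t_hours = [5, 10, 20, 30, 40]
x_obs = [1 - math.exp(-0.002 * t**2) for t in t_hours]
k, n = fit_kjma(t_hours, x_obs)
print(f"k = {k:.4g}, n = {n:.3f}")
```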

Table 2: Essential Analytical Techniques for Polymorph Characterization

| Technique | Key Information | Experimental Parameters |
|---|---|---|
| X-ray Powder Diffraction (XRPD) | Crystal structure fingerprint, phase identification | Scan range: 5-40° 2θ; Step size: 0.02°; Cu Kα radiation [18] |
| Differential Scanning Calorimetry (DSC) | Melting points, phase transitions, thermal stability | Heating rate: 10°C/min; Nitrogen purge gas [18] |
| Thermogravimetric Analysis (TGA) | Solvent/water content, decomposition profiles | Heating rate: 10°C/min; Nitrogen atmosphere |
| Single Crystal X-ray Diffraction | Definitive crystal structure determination | Low-temperature measurement (~100-150 K) [1] |
| Solid-state NMR (ssNMR) | Molecular conformation, dynamics | Cross-polarization magic angle spinning (CP-MAS) |
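XRPD peak positions are often compared as d-spacings rather than 2θ values, so that patterns collected at different wavelengths line up. Bragg's law, λ = 2d sin θ for a first-order reflection, gives d directly. The sketch below assumes Cu Kα1 radiation (λ ≈ 1.5406 Å), and the peak positions are hypothetical.

```python
import math

CU_KALPHA1 = 1.5406  # wavelength in Å

def d_spacing(two_theta_deg, wavelength=CU_KALPHA1):
    """Interplanar spacing (Å) from a peak position in degrees 2-theta via Bragg's law."""
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength / (2.0 * math.sin(theta))

for peak in (7.8, 15.6, 20.0, 26.5):   # hypothetical peak positions, degrees 2-theta
    print(f"2-theta = {peak:5.2f} deg -> d = {d_spacing(peak):.3f} Å")
```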

The Scientist's Toolkit: Essential Research Reagents and Equipment

Table 3: Essential Materials and Reagents for Polymorph Research

| Item | Function/Application | Specific Example |
|---|---|---|
| Multiple Solvent Systems | Polymorph screening via recrystallization | Methanol, acetone, water for solvent-mediated phase transformations [18] |
| Cambridge Structural Database | Reference crystal structure data | Access to >1.2 million organic crystal structures [1] |
| Neural Network Potentials | Efficient crystal structure relaxation | Pre-trained models (PFP, ANI) for near-DFT accuracy at lower cost [1] |
| High-Throughput Crystallization Platforms | Automated polymorph screening | 96-well plate systems with varying temperature and evaporation conditions |
| Structure Determination from Powder Diffraction | Solving crystal structures without single crystals | Structure solution followed by Rietveld refinement [18] |

Computational Prediction of Polymorphic Structures

Machine Learning-Enhanced Crystal Structure Prediction

Traditional crystal structure prediction (CSP) methods face significant challenges due to the computationally intensive nature of exploring potential energy landscapes. Recent advances integrate machine learning to dramatically improve prediction efficiency. The SPaDe-CSP workflow (Space group and Packing Density predictor for Crystal Structure Prediction) exemplifies this approach, combining machine learning-based lattice sampling with structure relaxation via neural network potentials [1].

This workflow employs two key machine learning models: a space group predictor and a packing density predictor, both trained on molecular fingerprints (MACCSKeys) derived from the Cambridge Structural Database [1]. These models reduce the generation of low-density, less stable structures by narrowing the search space before computationally expensive structure relaxation. In validation tests on 20 organic crystals of varying complexity, this approach achieved an 80% success rate—twice that of random CSP—demonstrating significant efficiency improvements [1].

The structure relaxation phase uses neural network potentials (e.g., PFP) trained on density functional theory data to achieve near-DFT accuracy at substantially reduced computational cost [1]. This combination of intelligent sampling and efficient relaxation addresses fundamental challenges in CSP, particularly for flexible organic molecules with multiple torsional degrees of freedom, where weak intermolecular interactions (van der Waals forces, hydrogen bonds, π-π stacking) dominate crystal packing [1].

Figure: Machine learning-guided crystal structure prediction workflow

Conformational Analysis and Energy Landscape Mapping

For flexible molecules, understanding conformational preferences is essential for accurate polymorph prediction. The Tegoprazan study demonstrates a CSP-independent strategy that combines computational and experimental approaches [18]. Researchers constructed conformational energy landscapes using relaxed torsion scans with the OPLS4 force field, exploring two key dihedral angles in 10° increments for each tautomeric form [18]. Boltzmann-weighted probabilities calculated from relative energies were compared with experimental solution structures derived from NOE-based NMR, revealing that dominant solution conformers corresponded closely to the packing motif of the stable Polymorph A [18].

This approach identified that polymorph selection in Tegoprazan is governed by solution-phase conformational preferences, tautomerism, and solvent-mediated hydrogen bonding [18]. Protic solvents favored direct crystallization of the stable Polymorph A, while aprotic solvents promoted transient formation of metastable Polymorph B [18]. Such insights provide a complementary framework to traditional CSP for guiding polymorph control in flexible drug molecules.

Regulatory and Quality Control Considerations

The regulatory landscape for polymorph control continues to evolve in response to well-publicized incidents like the Ritonavir case. While the FDA provides guidance for polymorphic forms in drug development, every drug candidate presents unique challenges, and no method provides absolute confidence that all potential solid forms have been identified [17]. This uncertainty was highlighted by the serendipitous discovery of a new Ritonavir polymorph (Form III) 24 years after the appearance of Form II, despite extensive previous characterization [17].

Quality control strategies must address both thermodynamic and kinetic factors influencing polymorphic stability. As demonstrated in the Tegoprazan study, solvent-mediated phase transformations follow predictable kinetics that can be modeled using approaches like the KJMA equation [18]. Understanding these transformation pathways enables the design of robust manufacturing processes that minimize the risk of unexpected polymorphic conversions during production or storage.

The integration of computational prediction with experimental validation represents the future of polymorph risk mitigation. As computational methods continue advancing, particularly through machine learning approaches, the pharmaceutical industry gains increasingly powerful tools for navigating the complex solid-form landscape early in development, potentially avoiding costly issues in later stages.

Polymorphism remains a critical consideration in both pharmaceutical development and functional materials design, presenting both challenges and opportunities. In pharmaceuticals, comprehensive polymorph screening and characterization are essential for ensuring product stability, efficacy, and regulatory compliance. In materials science, polymorph control enables the engineering of tailored physical and optical properties from a single chemical entity. Emerging computational approaches, particularly those integrating machine learning with efficient structure relaxation, are dramatically improving our ability to predict and control polymorphic outcomes. These advances, combined with robust experimental protocols and strategic intellectual property management, provide a framework for harnessing the power of polymorphism while mitigating associated risks across scientific and industrial domains.

The CSP Methodological Spectrum: From Ab Initio to Generative AI

Crystal structure prediction (CSP) is the fundamental challenge of determining the most stable crystalline arrangement of a material from its chemical composition alone [11]. The problem is a central pillar of theoretical crystal chemistry. In 1988, John Maddox famously described it as an ongoing "scandal" that scientists could not predict the structure of even the simplest crystalline solids from knowledge of their composition alone [21]. Approaches to this problem have since matured significantly, with computational methodologies that combine quantum mechanical accuracy with efficient global optimization algorithms.

For inorganic crystals specifically, the most critical aspect of CSP is developing an effective search algorithm to navigate the vast configuration space of possible atomic arrangements [11]. The field has evolved from early empirical methods to sophisticated guided-sampling algorithms and, more recently, data-driven approaches [11]. This technical guide examines the established workhorses in inorganic CSP: ab initio methods that provide accurate energy evaluations, and global search algorithms that efficiently explore potential energy landscapes to identify stable crystalline configurations.

Fundamental CSP Methodologies

Crystal structure prediction methodologies universally incorporate two fundamental algorithmic components: a method for assessing material stability (typically through energy evaluation) and a search algorithm for exploring the design space [11]. The effectiveness of any CSP approach depends on the careful integration of these two components.

The CSP Problem Formulation

The CSP problem can be formally stated as follows: given a chemical composition and optional external conditions (such as pressure or temperature), identify the crystal structure that minimizes the free energy of the system. In practice, the free energy is often approximated by the zero-temperature enthalpy H = E + PV. For inorganic systems, this involves determining:

- The crystal system and space group symmetry

- The lattice parameters (a, b, c, α, β, γ)

- The atomic positions within the unit cell

- The number of formula units per unit cell
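These variables are coupled: the six lattice parameters define the cell in which the atomic positions are expressed. As a minimal sketch (the function names are illustrative and not taken from any particular CSP code), the standard conversion from (a, b, c, α, β, γ) to Cartesian lattice vectors, with a along x and b in the xy-plane, is:

```python
import math

def lattice_vectors(a, b, c, alpha, beta, gamma):
    """Convert lattice parameters (lengths in Å, angles in degrees) to Cartesian
    lattice vectors, using the common convention: a along x, b in the xy-plane."""
    al, be, ga = (math.radians(t) for t in (alpha, beta, gamma))
    cx = c * math.cos(be)
    cy = c * (math.cos(al) - math.cos(be) * math.cos(ga)) / math.sin(ga)
    cz = math.sqrt(c * c - cx * cx - cy * cy)  # third vector must have length c
    return [
        [a, 0.0, 0.0],
        [b * math.cos(ga), b * math.sin(ga), 0.0],
        [cx, cy, cz],
    ]

def cell_volume(m):
    """Cell volume as the determinant of the 3x3 lattice matrix (rows = vectors)."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

# Example: a hexagonal cell with a = b = 3 Å, c = 5 Å, gamma = 120°
hexagonal = lattice_vectors(3.0, 3.0, 5.0, 90.0, 90.0, 120.0)
print(round(cell_volume(hexagonal), 3))
```

Libraries such as pymatgen provide equivalent, well-tested conversions. The sketch only shows which quantities a search algorithm actually manipulates.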

The complexity arises from the exponential growth of possible configurations with increasing number of atoms, making exhaustive search computationally intractable for all but the simplest systems.
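A simple count makes this scaling concrete. As an illustrative assumption (not a model that CSP codes use directly), discretize the cell into a grid of candidate sites and count the ways to place atoms of each species on them. Even before symmetry or relaxation is considered, the number grows combinatorially with the number of atoms:

```python
import math

def grid_configurations(n_sites, counts):
    """Ways to place atoms of each species on n_sites distinct grid points, with
    atoms of the same species indistinguishable:
    n_sites! / ((n_sites - N)! * prod(n_i!)), where N = sum(counts)."""
    n_atoms = sum(counts)
    ways = math.perm(n_sites, n_atoms)  # ordered placements of N labelled atoms
    for n in counts:
        ways //= math.factorial(n)      # remove swaps within each species (exact)
    return ways

# Growth with system size for a fixed 1:2 stoichiometry on a 1000-point grid
for fu in (1, 2, 4, 8):
    n = grid_configurations(1000, [fu, 2 * fu])
    print(f"{fu} formula units: ~10^{len(str(n)) - 1} configurations")
```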

Mathematical Optimization Paradigm

A mathematical optimization-based search paradigm has emerged as a powerful alternative approach to CSP [11]. This formulation treats CSP as a direct optimization problem, seeking to minimize the system's energy function E(x) subject to crystallographic constraints:

min E(x) subject to: x ∈ C

where x represents the crystallographic variables (lattice parameters, atomic positions, space group) and C represents the crystallographic constraints (symmetry operations, minimum interatomic distances, etc.). This formulation enables the application of powerful optimization techniques from mathematical programming to the CSP problem.
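One simple way to handle the constraint set C in practice, shown here as a sketch, is a penalty method: infeasible configurations are not forbidden outright but receive a large energy surcharge, so an unconstrained optimizer can be used. The code assumes a cubic periodic cell and a single minimum-distance constraint. The names `penalized_energy` and `min_image_distance`, the weight value, and the quadratic penalty form are illustrative choices, not taken from a specific CSP package:

```python
import itertools
import math

def min_image_distance(p, q, box):
    """Distance between two Cartesian points in a cubic periodic cell of edge `box`."""
    d2 = 0.0
    for pi, qi in zip(p, q):
        d = pi - qi
        d -= box * round(d / box)  # minimum-image convention
        d2 += d * d
    return math.sqrt(d2)

def penalized_energy(positions, box, energy_fn, d_min, weight=100.0):
    """E(x) plus a quadratic penalty for every atom pair closer than d_min."""
    penalty = 0.0
    for p, q in itertools.combinations(positions, 2):
        d = min_image_distance(p, q, box)
        if d < d_min:
            penalty += (d_min - d) ** 2
    return energy_fn(positions) + weight * penalty

def flat_energy(positions):
    """Placeholder energy model that ignores the configuration."""
    return 0.0

# Two atoms 0.2 Å apart across the periodic boundary of a 10 Å cell
print(penalized_energy([(0.1, 0.0, 0.0), (9.9, 0.0, 0.0)], 10.0, flat_energy, d_min=1.0))
```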

Ab Initio Methods for Energy Evaluation

Ab initio (first-principles) methods provide the foundation for accurate energy evaluation in modern CSP workflows. These quantum mechanical approaches compute material properties from fundamental physical laws, with few or no system-specific empirical parameters.

Density Functional Theory (DFT)

Density Functional Theory has become the cornerstone method for ab initio crystal structure prediction due to its favorable balance between accuracy and computational efficiency. DFT methods approximate the solution to the many-body Schrödinger equation by focusing on electron density rather than wavefunctions.

DFT codes commonly used in CSP workflows include VASP, ABINIT, Quantum ESPRESSO, and CASTEP. The ABINIT software suite, for example, calculates "optical, mechanical, vibrational, and other observable properties of materials" starting from the quantum equations of density functional theory [22]. It can handle "molecules, nanostructures and solids with any chemical composition" using "complete and robust tables of atomic potentials" [22].

Beyond Standard DFT: Advanced Electronic Structure Methods

For systems where standard DFT approximations prove insufficient, more sophisticated ab initio methods are employed:

| Method | Application in CSP | Strength |
|---|---|---|
| DFT+U | Strongly correlated electron systems | Partially corrects self-interaction error for localized d/f electrons |
| GW Approximation | Accurate band structures | Improved quasiparticle energies |
| Hybrid Functionals | Better electronic properties | Mixes a fraction of exact (Hartree-Fock) exchange with DFT exchange |
| RPA (Random Phase Approximation) | van der Waals bonding | Accurate treatment of dispersion forces |

ABINIT implements several of these advanced methods, including GW calculations for charged excitations and the Bethe-Salpeter approach for neutral optical excitations [23]. These methods let researchers go "beyond the standard DFT framework" when "correlated electrons are to be considered" [23].

Density Functional Perturbation Theory (DFPT)

Density-Functional Perturbation Theory (DFPT) provides an efficient framework for calculating response properties, including phonon spectra and elastic constants [23]. Because such properties are derivatives of the total energy with respect to a perturbation (an atomic displacement, a strain, or an electric field), DFPT allows ABINIT to address them directly, including "all dynamical effects due to phonons and their coupling" and "temperature-dependent properties due to phonons" [23].
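DFPT obtains such quantities from analytic derivatives of the total energy. The second derivative involved in a phonon calculation can be illustrated with a much cruder technique, a frozen-phonon finite difference, applied here to a Lennard-Jones dimer in reduced units as a stand-in for a DFT energy. This is not how ABINIT computes phonons; it is only a sketch of the derivative that DFPT targets:

```python
import math

def lj_energy(r, eps=1.0, sigma=1.0):
    """Lennard-Jones pair energy (reduced units), a toy stand-in for a DFT total energy."""
    sr6 = (sigma / r) ** 6
    return 4.0 * eps * (sr6 * sr6 - sr6)

def force_constant(energy, r0, h=1e-4):
    """Central finite-difference estimate of d^2E/dr^2 at r0."""
    return (energy(r0 + h) - 2.0 * energy(r0) + energy(r0 - h)) / (h * h)

r_eq = 2.0 ** (1.0 / 6.0)        # LJ equilibrium separation
k = force_constant(lj_energy, r_eq)
mu = 0.5                         # reduced mass of two unit masses
omega = math.sqrt(k / mu)        # harmonic vibrational frequency
print(f"k = {k:.3f}, analytic = {72 / 2 ** (1 / 3):.3f}, omega = {omega:.3f}")
```

For this potential the analytic force constant at the minimum is 72ε/r_eq², which the finite difference reproduces closely. DFPT avoids the choice of step size h and delivers the response at arbitrary wavevectors from a single unit cell.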

Global Search Algorithms

Global search algorithms form the exploratory engine of CSP, navigating the high-dimensional, multi-minima potential energy surface to identify low-energy crystal structures.

Evolutionary Algorithms: USPEX

The Universal Structure Predictor: Evolutionary Xtallography (USPEX) method represents one of the most successful evolutionary algorithm approaches to CSP. Since its development in 2004, USPEX has been used by over 10,600 researchers worldwide and has demonstrated superior performance in blind tests of inorganic crystal structure prediction [21].

Key Algorithmic Features:

- Population-based search maintains a diverse set of candidate structures

- Variation operators include heredity (mixing parent structures), mutation (perturbing structures), and lattice mutation

- Natural selection favors lower-energy structures for reproduction

- Fingerprint functions enable structural diversity preservation through a niching technique [21]

- Cell reduction technique eliminates unphysical regions of search space [21]

USPEX has proven efficient for systems with up to 100-200 atoms per unit cell, with difficulties for larger systems arising primarily from "the increasing cost of ab initio calculations for increasing system sizes, and also due to the rapidly increasing number of energy minima" [21].
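The ingredients listed above (locally relaxed candidates, heredity, mutation, selection, and duplicate removal) can be combined in a few dozen lines. The sketch below is a toy, not USPEX: the 2D Rastrigin function stands in for the energy landscape, gradient descent stands in for a DFT or MLIP relaxation, coordinate swapping is a simplified analogue of cut-and-splice heredity, and a plain coordinate distance replaces USPEX's fingerprint functions. All names and parameter values are hypothetical:

```python
import math
import random

def energy(x):
    """Toy multi-minimum landscape (Rastrigin); global minimum E = 0 at the origin."""
    return 10.0 * len(x) + sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x)

def relax(x, step=0.002, n_steps=300):
    """Local relaxation by gradient descent, standing in for a DFT/MLIP relaxation."""
    x = list(x)
    for _ in range(n_steps):
        x = [xi - step * (2.0 * xi + 20.0 * math.pi * math.sin(2.0 * math.pi * xi)) for xi in x]
    return x

def heredity(p1, p2, rng):
    """Child inherits each coordinate from one parent (simplified cut-and-splice)."""
    return [a if rng.random() < 0.5 else b for a, b in zip(p1, p2)]

def mutate(x, rng, sigma=1.0):
    """Random Gaussian perturbation of a parent structure."""
    return [xi + rng.gauss(0.0, sigma) for xi in x]

def evolve(n_dim=2, pop_size=12, n_gen=15, dup_tol=1e-3, seed=0):
    rng = random.Random(seed)
    pop = sorted((relax([rng.uniform(-5.0, 5.0) for _ in range(n_dim)])
                  for _ in range(pop_size)), key=energy)
    history = [energy(pop[0])]
    for _ in range(n_gen):
        parents = pop[: pop_size // 2]  # selection: the lower-energy half reproduces
        children = []
        while len(children) < pop_size:
            if len(parents) >= 2 and rng.random() < 0.7:
                child = heredity(*rng.sample(parents, 2), rng)
            else:
                child = mutate(rng.choice(parents), rng)
            children.append(relax(child))  # every offspring is relaxed before ranking
        # niching: discard near-duplicates (coordinate distance as a crude fingerprint)
        unique = []
        for s in sorted(parents + children, key=energy):
            if all(math.dist(s, u) > dup_tol for u in unique):
                unique.append(s)
        pop = unique[:pop_size]
        history.append(energy(pop[0]))
    return pop[0], history

best, history = evolve()
print([round(e, 4) for e in history])
```

Because the parents survive into the next ranking (elitism), the best energy can never increase from one generation to the next. Relaxing every candidate before selection means the algorithm compares local minima rather than raw random structures.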

Particle Swarm Optimization: CALYPSO

The CALYPSO (Crystal structure AnaLYsis by Particle Swarm Optimization) method applies particle swarm optimization (PSO) to crystal structure prediction. The approach is inspired by the collective behavior of bird flocks and fish schools, which it uses as a model for navigating the potential energy surface.

Algorithmic Characteristics:

- Swarm intelligence leverages collective behavior of structure population

- Local and global search balance through particle movement rules

- Structural similarity checking prevents premature convergence

- Symmetry constraints reduce search space dimensionality
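The core update behind these characteristics is easy to state: each particle's velocity combines inertia, a pull toward the best position that particle has seen, and a pull toward the best position the whole swarm has seen. The sketch below is a generic global-best PSO with an inertia weight, not CALYPSO's own variant. It omits symmetry constraints and similarity checking, and the tilted double-well energy and all parameter values are hypothetical:

```python
import random

def energy(x):
    """Toy tilted double well per coordinate; the global minimum is near x_i ≈ -1.04."""
    total = 0.0
    for xi in x:
        s = xi * xi - 1.0
        total += s * s + 0.3 * xi
    return total

def pso(n_dim=2, n_particles=20, n_iter=100, w=0.7, c1=1.5, c2=1.5, seed=1):
    rng = random.Random(seed)
    pos = [[rng.uniform(-2.0, 2.0) for _ in range(n_dim)] for _ in range(n_particles)]
    vel = [[0.0] * n_dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                  # best position seen by each particle
    pbest_e = [energy(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_e[i])
    gbest, gbest_e = pbest[g][:], pbest_e[g]     # best position seen by the swarm
    history = [gbest_e]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(n_dim):
                r1, r2 = rng.random(), rng.random()
                # inertia + pull toward own memory + pull toward the swarm's best
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            e = energy(pos[i])
            if e < pbest_e[i]:
                pbest[i], pbest_e[i] = pos[i][:], e
                if e < gbest_e:
                    gbest, gbest_e = pos[i][:], e
        history.append(gbest_e)
    return gbest, gbest_e, history

best_x, best_e, history = pso()
print([round(v, 3) for v in best_x], round(best_e, 4))
```

The inertia weight w balances global exploration (large w) against local refinement (small w), which is one concrete form of the local/global balance described above.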

Performance Comparison of Global Search Methods

Quantitative comparisons demonstrate the relative performance of different global search algorithms:

Table 1: Performance comparison of search algorithms for LJ clusters (adapted from USPEX documentation [21])

| System | Method | Success Rate (%) | Average Number of Structures |
|---|---|---|---|
| LJ38 | USPEX | 100 | 35 |
| LJ38 | PSO | 100 | 605 |
| LJ38 | Minima Hopping | 100 | 1190 |
| LJ55 | USPEX | 100 | 11 |
| LJ55 | PSO | 100 | 159 |
| LJ55 | Minima Hopping | 100 | 190 |
| LJ75 | USPEX | 100 | 2145 |
| LJ75 | PSO | 98 | 2858 |

Table 2: Performance comparison for TiO₂ with 48 atoms/cell [21]

| Method | Success Rate (%) | Number of Relaxations |
|---|---|---|
| USPEX (cell splitting) | 100 | 41 |
| USPEX (no symmetry) | 100 | 80 |
| PSO | Not specified | Not specified |

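These benchmarks, taken from the USPEX documentation, indicate that USPEX locates the global minimum with substantially fewer sampled structures than PSO or minima hopping (Table 1). Table 2 reports no PSO figures, so it mainly shows that, for TiO₂, USPEX with cell splitting needs about half as many relaxations as USPEX without symmetry.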

Integrated CSP Workflows

Successful crystal structure prediction requires careful integration of ab initio methods with global search algorithms into cohesive computational workflows.

Standard CSP Protocol
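A widely used way to organize such a workflow is a hierarchical funnel: generate many candidates, rank them with an inexpensive model (a force field or MLIP), and re-rank only a shortlist with a more accurate method such as dispersion-inclusive DFT. The sketch below captures only that control flow. `funnel`, `generate`, `cheap`, and `accurate` are hypothetical placeholders operating on 1D toy "structures", and real workflows also relax candidates and remove duplicates at each level:

```python
import random

def funnel(generate, cheap_energy, accurate_energy, n_candidates=500, n_keep=10):
    """Two-level screening: rank many candidates with a cheap model, then re-rank
    only the shortlist with an expensive, more accurate model."""
    candidates = [generate() for _ in range(n_candidates)]
    shortlist = sorted(candidates, key=cheap_energy)[:n_keep]
    return sorted(shortlist, key=accurate_energy)

# Hypothetical 1D stand-ins: the "accurate" energy has its minimum at x = 0.3,
# while the "cheap" model carries a small systematic error.
rng = random.Random(42)

def generate():
    return rng.uniform(-2.0, 2.0)

def accurate(x):
    return (x - 0.3) ** 2

def cheap(x):
    return accurate(x) + 0.05 * x

ranked = funnel(generate, cheap, accurate)
print(round(ranked[0], 3))
```

The design trade-off is the shortlist size: a larger `n_keep` makes it less likely that the cheap model's errors discard the true minimum, at the price of more expensive evaluations.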

Structure Relaxation and Convergence

Structure relaxation represents a computationally intensive component of CSP workflows. Conventional approaches typically rely on force fields or density functional theory (DFT) calculations [1]. Recent advances incorporate machine learning to accelerate this process:

"Neural network potentials (NNPs) trained on DFT data have gained attention for achieving near-DFT-level accuracy at a fraction of the cost." [1]

The relaxation process typically employs algorithms such as:

- Broyden-Fletcher-Goldfarb-Shanno (BFGS) for efficient local optimization

- Limited-memory BFGS (L-BFGS) for large systems

- Conjugate gradient methods

- FIRE (Fast Inertial Relaxation Engine), a damped molecular-dynamics-based minimizer
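Of the optimizers listed, FIRE is the simplest to write out in full. The sketch below follows the published FIRE update rules (velocity mixing toward the force, an adaptive time step, and a reset whenever the system moves uphill) with commonly quoted parameter values. It is applied to a toy two-atom Lennard-Jones system in reduced units. `lj_pair` and `fire_relax` are illustrative names, `dt_max` is a system-dependent assumption, and production codes such as ASE provide tested implementations:

```python
import math

def lj_pair(x, eps=1.0, sigma=1.0):
    """Energy and forces for two atoms on a line (x[0] < x[1]), LJ reduced units."""
    r = x[1] - x[0]
    sr6 = (sigma / r) ** 6
    energy = 4.0 * eps * (sr6 * sr6 - sr6)
    dvdr = 4.0 * eps * (-12.0 * sr6 * sr6 + 6.0 * sr6) / r
    return energy, [dvdr, -dvdr]  # F0 = +dV/dr, F1 = -dV/dr

def fire_relax(x, energy_forces, dt=0.02, dt_max=0.05, n_min=5, f_inc=1.1,
               f_dec=0.5, alpha_start=0.1, f_alpha=0.99, fmax=1e-4, max_steps=10000):
    x, v = list(x), [0.0] * len(x)
    alpha, n_pos = alpha_start, 0
    energy, f = energy_forces(x)
    for step in range(max_steps):
        if max(abs(fi) for fi in f) < fmax:
            return x, energy, step
        power = sum(fi * vi for fi, vi in zip(f, v))
        if power > 0.0:
            # steer the velocity toward the force direction
            f_norm = math.sqrt(sum(fi * fi for fi in f))
            v_norm = math.sqrt(sum(vi * vi for vi in v))
            v = [(1.0 - alpha) * vi + alpha * v_norm * fi / f_norm for vi, fi in zip(v, f)]
            n_pos += 1
            if n_pos > n_min:
                dt, alpha = min(dt * f_inc, dt_max), alpha * f_alpha
        else:
            # moving uphill (or at rest): stop, shrink the step, reset the mixing
            v, dt, alpha, n_pos = [0.0] * len(x), dt * f_dec, alpha_start, 0
        # semi-implicit Euler MD step with unit masses
        v = [vi + dt * fi for vi, fi in zip(v, f)]
        x = [xi + dt * vi for xi, vi in zip(x, v)]
        energy, f = energy_forces(x)
    return x, energy, max_steps

x_opt, e_opt, n_steps = fire_relax([0.0, 1.5], lj_pair)
print(x_opt[1] - x_opt[0], e_opt, n_steps)
```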

Convergence criteria typically include thresholds for:

- Energy change between iterations (e.g., < 10⁻⁵ eV/atom)

- Maximum force on atoms (e.g., < 0.01 eV/Å)

- Maximum stress components (e.g., < 0.1 GPa)
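These thresholds are usually applied together. A minimal check, using the example values above, might look like the following. It assumes forces are given per atom in eV/Å and stress as six Voigt components in GPa. Conventions vary between codes, and some test the largest force component rather than the per-atom force norm. `is_converged` is a hypothetical helper:

```python
import math

def is_converged(delta_e_per_atom, forces, stress_gpa,
                 e_tol=1e-5, f_tol=0.01, s_tol=0.1):
    """True when energy change, largest per-atom force norm, and largest stress
    component are all below their thresholds.

    delta_e_per_atom: energy change between the last two steps (eV/atom)
    forces: per-atom (fx, fy, fz) tuples in eV/Å
    stress_gpa: six Voigt components (xx, yy, zz, yz, xz, xy) in GPa
    """
    f_max = max(math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces)
    s_max = max(abs(s) for s in stress_gpa)
    return abs(delta_e_per_atom) < e_tol and f_max < f_tol and s_max < s_tol

print(is_converged(1e-6, [(0.001, 0.0, 0.0), (0.0, 0.002, 0.0)], [0.01] * 6))
```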

Successful implementation of CSP requires access to specialized software tools, databases, and computational resources.

Table 3: Essential Research Resources for CSP

| Resource | Type | Function | Examples |
|---|---|---|---|
| Ab Initio Codes | Software | Electronic structure calculations | VASP, ABINIT [22], Quantum ESPRESSO, CASTEP |
| Structure Predictors | Software | Global structure optimization | USPEX [21], CALYPSO |
| Structure Databases | Data Repository | Experimental and computed reference data | Cambridge Structural Database (CSD) [24], Materials Project [4] |
| Force Fields | Interatomic Potentials | Efficient energy evaluation | Classical FFs, Neural Network Potentials (NNPs) [1] |
| Analysis Tools | Software | Structure characterization | VESTA, Pymatgen [12] |

The Cambridge Structural Database (CSD) deserves special mention as "the world's largest curated repository of experimental crystal structures," containing "over 1.3 million accurate 3D structures derived from X-ray, neutron, and electron diffraction analyses" [24]. Its coverage is predominantly organic and metal-organic, and it serves as an essential resource for both method validation and knowledge-based approaches.

Applications and Validation

The accuracy and reliability of ab initio methods and global search algorithms have been demonstrated through numerous applications and rigorous blind tests.

Successful Predictions in Materials Science

Established CSP methodologies have enabled remarkable predictions of novel materials with exceptional properties:

High-Tc Superconductivity in H₃S: Calculations with the USPEX code predicted that H₃S, a sulfur hydride that hardly occurs at atmospheric pressure, forms at high pressure. The estimated Tc of its Im-3m phase at 200 GPa reached 191 to 204 K, a then-record superconducting temperature that was later verified experimentally [21].

Novel Alloy Phases: "Novel phases Al₃Sc₂ and AlTa₇, previously unknown, have been identified as stable" through USPEX-assisted searches [21].

Nitrogen-Rich Materials: Researchers from the University of South Florida used USPEX to discover sodium pentazolate, a new high-energy-density material in which the "pentazole anion is stabilized in the condensed phase by sodium cations at pressures exceeding 20 GPa" [21].

Performance in Blind Tests

The CCDC blind tests, which target organic molecular crystals, have provided a rigorous and objective assessment of CSP methodology for more than two decades [12]. Participants must predict the crystal structures of target compounds starting only from their chemical diagrams. The evolution of methodology across these tests reflects the growing sophistication of the field:

"In the early blind tests only classical force fields were used, whereas in more recent blind tests the use of dispersion-inclusive density functional theory (DFT) for final stability ranking has become an established best practice." [12]

The seventh and most recent blind test saw "the first use of machine learning interatomic potentials (MLIPs) for the CSP problem," showing that methodology continues to evolve while still relying on the basic framework of ab initio energy evaluation and global search [12].

Current Limitations and Future Directions

Despite significant advances, established CSP methodologies face several challenges that guide future development.

Persistent Challenges

System Size Limitations: Current methods remain limited to systems with "up to 100-200 atoms/cell" due to "the increasing cost of ab initio calculations for increasing system sizes, and also due to the rapidly increasing number of energy minima" [21].

Accuracy-Speed Tradeoff: The "high computational cost of dispersion-inclusive DFT methods limits the scale at which they can be applied" [12], necessitating hierarchical approaches that sacrifice some accuracy for speed.

Polymorph Energy Ranking: Correctly ranking polymorphs remains challenging because their energy differences are often smaller than ~4 kJ/mol [25]. For more than 50% of the polymorphic structures in the CCDC's database (the CSD), the energy difference between pairs of polymorphs is below ~2 kJ/mol [25].
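A unit conversion shows why these margins are demanding. With the exact SI values of the Avogadro constant and the elementary charge, 1 eV per particle corresponds to about 96.485 kJ/mol. A 2 kJ/mol difference is therefore roughly 21 meV per molecule or formula unit, comparable to RT ≈ 2.5 kJ/mol at room temperature, and only about 1 meV/atom for a 20-atom molecule. The constant names and helper function below are illustrative:

```python
AVOGADRO = 6.02214076e23             # 1/mol (exact, SI 2019)
ELEMENTARY_CHARGE = 1.602176634e-19  # C, so 1 eV = 1.602176634e-19 J (exact)
GAS_CONSTANT = 8.314462618e-3        # kJ/(mol K)

KJ_PER_MOL_PER_EV = AVOGADRO * ELEMENTARY_CHARGE / 1000.0  # about 96.485

def kj_per_mol_to_mev(e_kj_per_mol, atoms_per_unit=1):
    """Convert kJ/mol (per mole of molecules or formula units) to meV,
    optionally divided by the number of atoms in each unit."""
    return 1000.0 * e_kj_per_mol / KJ_PER_MOL_PER_EV / atoms_per_unit

print(f"2 kJ/mol = {kj_per_mol_to_mev(2.0):.1f} meV per molecule")
print(f"2 kJ/mol = {kj_per_mol_to_mev(2.0, atoms_per_unit=20):.2f} meV/atom (20-atom molecule)")
print(f"RT at 300 K = {GAS_CONSTANT * 300:.2f} kJ/mol")
```

Energy differences on the order of 1 meV/atom lie well within the typical error of approximate density functionals. This is why ranking, rather than search, often limits accuracy.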

Emerging Paradigms

The field is witnessing the emergence of complementary approaches that build upon established workhorses:

Machine Learning Potentials: Universal models like the "Universal Model for Atoms (UMA)" enable "accurate predictions of energies and forces at a fraction of the cost of quantum mechanical methods" [12].

Generative AI: "Generative diffusion models (e.g., DiffCSP and MatterGen)" offer new approaches to "crystal structure prediction (mapping from chemical formula as input to candidate crystal structures as output)" [4].

Topological Approaches: Methods like CrystalMath derive "governing principles for the arrangement of molecules in a crystal lattice" from geometric analysis of known structures, enabling "prediction of stable structures and polymorphs without relying on interatomic interaction models" [25].

These emerging methodologies do not replace established workhorses but rather integrate with them, creating hybrid approaches that leverage the strengths of both physics-based and data-driven paradigms.

The Rise of Machine Learning Interatomic Potentials (MLIPs) for Accurate and Fast Relaxation