Hybrid Materials 2025: Characterizing Emergent Properties for Breakthroughs in Drug Development

Abstract

This article explores the pivotal role of hybrid materials in modern drug discovery and development, with a specific focus on characterizing their emergent properties. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning foundational concepts, cutting-edge methodological applications, and optimization strategies. It details how the convergence of hybrid AI, quantum computing, and novel composite materials is creating a paradigm shift, enabling the precise simulation of molecular interactions, the development of advanced drug delivery systems, and the design of more effective therapeutics. The content synthesizes the latest 2025 research and real-world case studies to offer a validated, forward-looking perspective on the field.

Defining Hybrid Materials and Their Emergent Properties in a Biomedical Context

What Are Hybrid Materials? Bridging Classical and Quantum Domains for Drug Discovery

In the quest to accelerate and refine the process of drug discovery, hybrid materials have emerged as a transformative class of substances. They are fundamentally defined as systems that intricately combine organic and inorganic components at the nanometer or molecular scale, creating a new material with properties superior to those of its individual parts [1]. This synergy is particularly powerful in pharmaceutical and biomedical applications, where these materials can be engineered to exhibit tailored mechanical strength, specific bioactivity, and controlled drug release profiles. The "bridging" in the title refers to the integration of classical materials science with the burgeoning field of quantum-inspired computational design. This confluence is creating a new paradigm where the physical synthesis of advanced biomaterials is guided by quantum computing and artificial intelligence (AI), enabling researchers to explore molecular interactions and material properties with unprecedented speed and precision [2] [3].

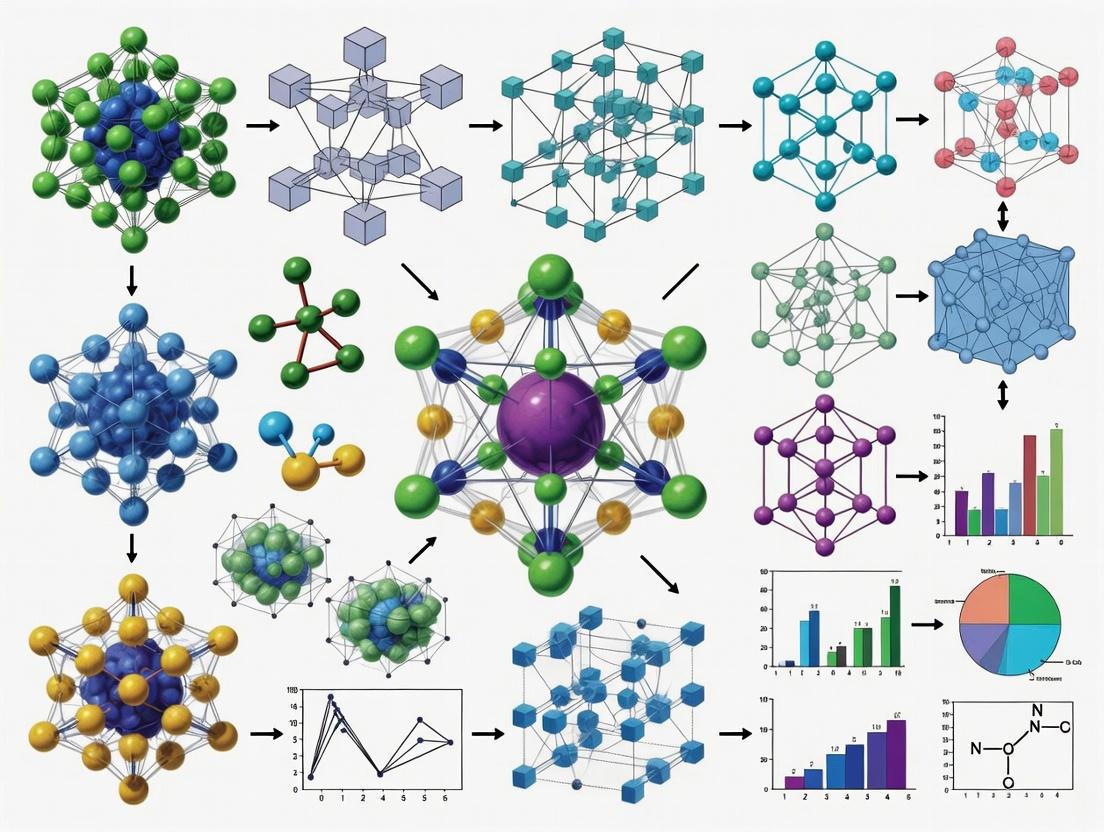

The investigation of hybrid materials is not confined to a single methodology. It is supported by a diverse "Scientist's Toolkit" that includes experimental synthesis, advanced computational modeling, and rigorous biological evaluation. The following diagram illustrates the core logical workflow that connects the fundamental concepts of hybrid materials to their ultimate application in drug discovery.

The Scientist's Toolkit: Key Reagents and Materials for Hybrid Material Research

The development and application of hybrid materials in drug discovery rely on a specific set of reagents and analytical techniques. The table below details key components of the research toolkit, drawing from experimental protocols used in recent studies.

Table 1: Essential Research Reagent Solutions for Hybrid Material Development

| Item Name / Category | Function / Role in Research | Example from Literature |
|---|---|---|
| Transition Metal Salts | Serve as the inorganic metal center, defining coordination geometry, redox activity, and often the core bioactivity (e.g., antimicrobial, anticancer). | Nickel(II) sulfate (NiSO₄) used as the metal precursor in a novel antimicrobial hybrid complex [4]. |
| Organic Ligands / Linkers | Coordinate with the metal center to form the hybrid structure; contribute to target binding (e.g., via hydrogen bonding) and modulate properties like solubility and electronic tunability. | 3-aminomethylpyridine and similar pyridine-based ligands used for synthesizing Ni(II) and other metal complexes [4]. |
| Structuring Agents / Sol-Gel Precursors | Direct the formation of the material's architecture during synthesis (e.g., porous frameworks) and can be used to create biocompatible coatings. | Ethylenediamine (ED) in cobalt phosphate hybrids; silicon/zirconium alkoxides in sol-gel synthesis for bioactive glasses and carriers [5] [1]. |
| Computational Modeling Software | Enables quantum chemical studies (e.g., NBO, FMO, RDG analysis) and molecular docking simulations to predict stability, reactivity, and binding affinity before synthesis. | Used to perform Hirshfeld surface and molecular docking analyses against P. aeruginosa targets (7PTF, 7PTG), predicting superior binding over ciprofloxacin [4]. |
| Characterization Techniques | Determine the crystal structure and the morphological, optical, and thermal properties of the synthesized hybrid material. | Single-crystal X-ray Diffraction (XRD), FT-IR spectroscopy, and thermal analysis (TG/DTG) [4] [5]. |

Comparative Performance: Hybrid Materials and AI-Driven Discovery Platforms

The true value of hybrid materials is demonstrated by comparing their performance against traditional approaches and among different next-generation strategies. The following tables quantify this performance across material properties and computational efficiency.

Table 2: Performance Comparison of Drug Discovery Approaches

| Discovery Approach | Key Performance Metrics | Reported Experimental Data & Results |
|---|---|---|
| Traditional Drug Discovery | Timeline: ~5 years to clinical candidate [6]. Efficiency: Requires synthesis of thousands of compounds [6]. Hit Rate: Low, high experimental burden. | High-throughput screening and structure-based design are resource-intensive [2]. |
| AI-Driven Discovery | Timeline: Compressed to ~2 years or less for some candidates [6]. Efficiency: Up to 70% faster design cycles, requiring 10x fewer compounds synthesized [6]. Hit Rate: Improved candidate selection. | Exscientia's CDK7 inhibitor candidate required only 136 synthesized compounds [6]. Model Medicines' GALILEO platform achieved a 100% hit rate (12/12 compounds) in validated in vitro antiviral assays [2]. |
| Quantum-Enhanced AI (Hybrid Approach) | Timeline: Projected to be highly accelerated. Efficiency: 21.5% improvement in filtering non-viable molecules vs. AI-only models [2]. Hit Rate: Capable of identifying active compounds for difficult targets. | Insilico Medicine's quantum-classical pipeline screened 100 million molecules, leading to 15 synthesized compounds and 2 with real biological activity against the difficult KRAS-G12D cancer target [2]. |

Table 3: Experimental Bioactivity of a Novel Nickel(II) Hybrid Material

| Assay Type | Test Details & Targets | Results & Comparative Performance |
|---|---|---|
| Antimicrobial Activity | Tested against Gram-positive and Gram-negative bacteria. | The Ni(II)-3AMP complex "notably outperformed ciprofloxacin" against pathogens like Pseudomonas aeruginosa and E. coli [4]. |
| Molecular Docking | Simulated binding against P. aeruginosa DNA gyrase targets (7PTF & 7PTG). | Showed "superior binding affinity... compared to ciprofloxacin," with highly favorable docking scores and multiple hydrogen bonds indicating stable interactions [4]. |
| Antioxidant Activity | Evaluated via ABTS and DPPH assays. | Demonstrated "higher efficacy... in ABTS compared to DPPH assays" [4]. |

Experimental Protocols: Methodologies for Synthesis and Analysis

Protocol 1: Synthesis of a Nickel(II)-Based Hybrid Complex

This protocol outlines the synthesis of a novel Ni(II) hybrid material with documented antimicrobial efficacy [4].

- Key Reagents: 3-aminomethylpyridine (organic ligand), nickel(II) sulfate (NiSO₄), concentrated sulfuric acid (H₂SO₄), distilled water.

- Procedure:

- Dissolve Nickel(II) sulfate (23.21 mg, 0.15 mmol) in water.

- Add this solution to a separate aqueous solution of 3-aminomethylpyridine (32.44 mg, 0.3 mmol).

- Add four drops of concentrated sulfuric acid (96%) to the mixture to create an acidic environment.

- Leave the solution under constant stirring for 15 minutes.

- Allow the mixture to stand undisturbed at room temperature for two weeks, during which light blue prismatic crystals of the hybrid complex form.

- Characterization Methods: The resulting crystals were characterized using Single-crystal X-ray Diffraction (XRD) to determine crystal structure, FT-IR spectroscopy to confirm chemical bonds, and thermal analysis (TG/DTG) to assess stability [4].
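The reagent quantities in the procedure above can be cross-checked with a short calculation. The sketch below is illustrative only: it assumes the nickel salt is weighed as anhydrous NiSO₄ (the protocol does not state a hydrate) and uses rounded standard atomic masses; the helper names are hypothetical.

```python
# Rounded standard atomic masses in g/mol.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007,
               "O": 15.999, "S": 32.06, "Ni": 58.693}


def molar_mass(composition):
    """Sum atomic masses for a {element: count} composition, in g/mol."""
    return sum(ATOMIC_MASS[element] * count
               for element, count in composition.items())


def mass_mg(amount_mmol, composition):
    """Mass in mg for an amount in mmol (mmol * g/mol = mg)."""
    return amount_mmol * molar_mass(composition)


# Assumption: anhydrous nickel(II) sulfate, NiSO4.
NISO4 = {"Ni": 1, "S": 1, "O": 4}
# 3-aminomethylpyridine, C6H8N2.
AMP = {"C": 6, "H": 8, "N": 2}

ni_mg = mass_mg(0.15, NISO4)
amp_mg = mass_mg(0.30, AMP)
ligand_to_metal = 0.30 / 0.15  # molar ratio used in the protocol

print(f"NiSO4: {ni_mg:.2f} mg, ligand: {amp_mg:.2f} mg, "
      f"ligand:metal = {ligand_to_metal:.0f}:1")
```

Under these assumptions the computed masses agree with the 23.21 mg and 32.44 mg stated in the protocol, and the ligand-to-metal molar ratio is 2:1.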

Protocol 2: Workflow for an AI-Driven & Quantum-Enhanced Discovery Pipeline

This protocol describes a computational hybrid approach, combining AI and quantum methods for in silico drug candidate screening [2].

- Key Tools: Quantum Circuit Born Machines (QCBMs) for molecular generation, deep learning models for screening and optimization.

- Procedure:

- Molecular Generation: Use a quantum-enhanced generator (QCBM) to create a vast and diverse virtual library of molecular structures.

- Initial Screening: Apply deep learning models to screen this massive library (e.g., 100 million molecules) based on predicted properties like binding affinity and solubility.

- Lead Refinement: Narrow down the list to a smaller set of candidates (e.g., 1.1 million) for more detailed classical computational analysis.

- Final Selection & Synthesis: Select the most promising candidates (e.g., 15 compounds) for chemical synthesis and subsequent in vitro biological testing.

- Output: The result is a shortlist of synthesized compounds with a high probability of biological activity, as demonstrated by the identification of a molecule with binding affinity to the challenging KRAS-G12D cancer target [2].
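To make the scale of this screening funnel concrete, the following sketch computes the fraction of candidates retained at each step. The counts are the example figures quoted in the procedure and in Table 2 (100 million screened, 1.1 million refined, 15 synthesized, 2 active); the stage labels and function name are hypothetical and not part of the cited pipeline.

```python
# Example funnel counts quoted in the text; labels are illustrative.
FUNNEL = [
    ("screened", 100_000_000),
    ("refined", 1_100_000),
    ("synthesized", 15),
    ("active", 2),
]


def stage_retention(stages):
    """Return (stage_name, fraction kept from the previous stage) pairs."""
    return [
        (name, count / previous_count)
        for (_, previous_count), (name, count) in zip(stages, stages[1:])
    ]


retention = stage_retention(FUNNEL)
overall = FUNNEL[-1][1] / FUNNEL[0][1]

for name, fraction in retention:
    print(f"{name:>12}: {fraction:.3e} of previous stage")
print(f"overall: {overall:.1e} of the initial library")
```

The in vitro hit rate among synthesized compounds in this example is 2 of 15, roughly 13%, while the overall yield from the virtual library is on the order of two per hundred million.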

The workflow below synthesizes the core components of the research toolkit and the experimental protocols into a single, integrated discovery pipeline, from conceptualization to final application.

The exploration of hybrid materials represents a fundamental shift in the approach to drug discovery. By strategically combining organic and inorganic components, and further bridging the physical and digital realms through AI and quantum computing, scientists are creating a powerful new paradigm. The data reported above suggest that these approaches, whether a novel Ni(II) complex reported to outperform ciprofloxacin [4] or an AI-generated small molecule, can outperform traditional methods in efficiency, success rate, and the ability to tackle previously "undruggable" targets. The future of the field lies in the deeper integration of these hybrid strategies, where iterative cycles of computational prediction and experimental validation will continue to accelerate the development of life-saving therapeutics.

Emergent properties represent a fundamental paradigm in materials science, where complex systems exhibit novel functionalities that are not simply the sum of their individual components' properties. In hybrid materials, this phenomenon arises from the intricate, often non-linear, interactions between chemically distinct organic and inorganic phases across multiple length scales. This guide compares the emergent properties and characterization data for four classes of hybrid materials, providing researchers and drug development professionals with a structured analysis of their performance relative to conventional alternatives.

Defining Emergence in Hybrid Material Systems

In condensed matter, complexity arises from emergent behaviors that cannot be understood by analyzing individual constituents in isolation. [7] These behaviors are the product of a material's multiscale organization, where hierarchical architectures and nonlinear interactions span from molecular to macroscopic domains. [7] The challenge and opportunity lie in characterizing these architectures to understand and engineer their emergent functions, which underpin the behavior of next-generation functional materials and adaptive technologies. [7]

In hybrid organic-inorganic materials, this synergy is particularly potent. These materials combine the distinct characteristics of different components, preserving their individual attributes while giving rise to emergent behaviors from their synergistic interactions. [8] [9] For instance, a purely inorganic polyoxometalate (POM) may possess catalytic activity, but when covalently bonded to a biomolecule, the resulting hybrid can exhibit entirely new properties such as enhanced biocompatibility, lower off-target toxicity, and novel bioactivity, paving the way for advanced therapeutic applications. [8]

Comparative Analysis of Emerging Hybrid Materials

The following section provides a data-driven comparison of four hybrid material systems where emergent properties are prominently displayed.

Table 1: Performance Comparison of Hybrid Materials with Conventional Counterparts

| Material System | Key Components | Synthesis Method | Emergent Property | Quantitative Performance Data | Primary Application |
|---|---|---|---|---|---|
| Rare Earth-HOF (REHM-HOF) [10] | Rare Earth Ions, Hydrogen-Bonded Organic Framework | Post-synthetic modification (Coordination & Ion Exchange) | Luminescence Response Sensing | High energy transfer efficiency via "antenna effect"; Tunable emission. [10] | Anti-counterfeiting, Chemical Sensing, Intelligent Detection |
| Glaphene [11] | Graphene, Silica Glass | Two-step, single-reaction chemical vapor deposition | Novel Semiconducting Behavior | Metallic (graphene) & insulating (silica) components form a semiconductor. [11] | Advanced Electronics, Photonics, Quantum Systems |
| POM-Biomolecule Hybrid [8] | Polyoxometalate (e.g., Lindqvist, Keggin), Biomolecule | Covalent post-functionalization (e.g., on AE-NH2 POM) | Enhanced Biocompatibility & Catalytic Activity | Lower off-target toxicity; Multi-electron transfer catalysis. [8] | Drug Delivery, Targeted Therapies, Bio-catalysis |
| Selenium-Based Hybrid [9] | Selenium Dibromide (SeBr₂), Cetyltrimethylammonium | Slow Evaporation at Room Temperature | Semiconducting & Enhanced Dielectric Properties | Optical Band Gap: ~3.30 eV; Phase transition at ~417 K. [9] | Advanced Electronic, Energy Storage, Dielectric Devices |

Table 2: Analysis of Advantages and Limitations

| Material System | Key Advantages | Current Limitations & Characterization Challenges |
|---|---|---|
| Rare Earth-HOF (REHM-HOF) [10] | Mild synthesis; Structural diversity; Precise anchoring of luminescent centers. [10] | Long-term stability; Multifunctional integration; Translation to real-world applications. [10] |
| Glaphene [11] | Atomically thin; New electronic properties from hybrid bonding; Beyond stacked 2D materials. [11] | Complex synthesis requiring custom high-temperature, low-pressure apparatus. [11] |
| POM-Biomolecule Hybrid [8] | Atomically precise; Tunable properties; Combines POM reactivity with biomolecule specificity. [8] | Understanding bio-interface; Long-term stability in biological environments. [8] |
| Selenium-Based Hybrid [9] | Straightforward synthesis; Stable framework; Tailorable electrical properties. [9] | Understanding charge transport mechanisms; Probing structure-property relationships at the atomic scale. [9] |

Experimental Protocols for Characterizing Emergent Properties

Protocol: Probing Emergent Luminescence in REHM-HOFs

The functionalization of Hydrogen-Bonded Organic Frameworks (HOFs) with rare-earth ions enables luminescence response sensing via the "antenna effect."

- Material Synthesis: Construct the HOF scaffold under mild synthesis conditions. Subsequently, introduce rare-earth ions (e.g., Eu³⁺, Tb³⁺) into the framework using post-synthetic modification strategies, primarily via coordination or ion exchange. [10]

- Energy Transfer Tuning: Optimize the energy transfer efficiency from the HOF "antenna" to the rare-earth ion luminescent centers. This step is crucial for enhancing the luminescence output and is a direct result of the synergistic interaction between the components. [10]

- Luminescence Spectroscopy: Characterize the emergent luminescent properties using photoluminescence spectroscopy. Measure the emission intensity, lifetime, and quantum yield.

- Sensor Testing: Expose the REHM-HOF to target analytes (chemical vapors, ions, or physical stimuli like temperature). Monitor the changes in the luminescence signal (e.g., intensity, wavelength shift, or lifetime) as the emergent responsive behavior. [10]
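The lifetime measurement in the spectroscopy step is commonly reduced to a decay-curve fit. The sketch below assumes a single-exponential decay, I(t) = I0 · exp(−t/τ), and recovers τ by linear least squares on ln I. The decay data are synthetic and the function name is hypothetical; real REHM-HOF decays may be multi-exponential and would need a more careful model.

```python
import math


def fit_lifetime(times, intensities):
    """Fit I(t) = I0 * exp(-t / tau) via least squares on ln(I).

    Returns (tau, I0). Assumes strictly positive intensities and a
    single-exponential decay.
    """
    log_i = [math.log(value) for value in intensities]
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(log_i) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, log_i))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_t
    return -1.0 / slope, math.exp(intercept)


# Synthetic, noise-free decay with an assumed lifetime of 0.5 ms.
TRUE_TAU_MS = 0.5
times_ms = [0.1 * k for k in range(20)]
counts = [1000.0 * math.exp(-t / TRUE_TAU_MS) for t in times_ms]

tau_ms, i0 = fit_lifetime(times_ms, counts)
print(f"fitted lifetime: {tau_ms:.3f} ms, initial intensity: {i0:.1f}")
```

In a sensing experiment, the same fit could be repeated before and after analyte exposure, with the change in τ serving as the response signal alongside intensity changes.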

Protocol: Verifying Emergent Electronic Properties in Glaphene

This protocol confirms the formation of a true hybrid 2D material with emergent semiconducting properties, verified against quantum simulations.

- Chemical Synthesis: Employ a two-step, single-reaction method. Use a liquid chemical precursor containing both silicon and carbon. Under low-pressure conditions, first grow graphene by tuning oxygen levels during heating, then shift conditions to favor the formation of a chemically bonded silica layer. [11]

- Structural Verification: Use techniques like transmission electron microscopy (TEM) and X-ray diffraction (XRD) to confirm the single, atom-thick compound structure and verify the unique interface bonding between graphene and silica. [11]

- Electronic Property Measurement: Use techniques like spectroscopic ellipsometry to determine the optical band gap, confirming a transition from a metal (graphene) and an insulator (silica) to a semiconductor (glaphene).

- Theoretical Validation: Conduct quantum mechanical simulations to model the hybrid system. Compare the simulated electronic structure and collective vibrations with experimental results to confirm the emergence of new properties not present in the individual parent materials. [11]
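A measured optical band gap can be related to an absorption edge and to a rough material class with a few lines of arithmetic. The sketch below is an illustration under stated assumptions: no band-gap value for glaphene is given here, so the ~3.30 eV figure reported for the selenium-based hybrid in Table 1 is used as the example input, and the 4 eV semiconductor/insulator cutoff is an arbitrary convention chosen for the sketch, not a value from the cited work.

```python
# Planck constant times speed of light, expressed in eV*nm.
HC_EV_NM = 1239.841984


def absorption_edge_nm(band_gap_ev):
    """Wavelength (nm) of a photon whose energy equals the band gap."""
    return HC_EV_NM / band_gap_ev


def classify(band_gap_ev, insulator_threshold_ev=4.0):
    """Rough label from band gap; the threshold is a convention, not a law."""
    if band_gap_ev <= 0:
        return "metal or semimetal"
    if band_gap_ev < insulator_threshold_ev:
        return "semiconductor"
    return "insulator"


edge_nm = absorption_edge_nm(3.30)  # selenium-based hybrid, Table 1
print(f"3.30 eV -> absorption edge near {edge_nm:.1f} nm, "
      f"class: {classify(3.30)}")
print("zero gap (graphene-like):", classify(0.0))
print("~9 eV (approximate value for silica):", classify(9.0))
```

A 3.30 eV gap corresponds to an absorption edge in the near-ultraviolet, which is why such materials are typically transparent across most of the visible range.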

The following workflow diagram illustrates the integrated experimental and computational approach for verifying emergent properties in a hybrid material like glaphene.

Protocol: Assessing Emergent Bioactivity in POM-Biomolecule Hybrids

This protocol evaluates the enhanced functionality and biocompatibility emerging from the covalent linkage of a POM to a biomolecule.

- Hybrid Synthesis: Select an amino-functionalized POM platform, such as AE-NH₂. Covalently conjugate the biomolecule (e.g., a peptide, antibiotic, or sugar) to the POM cluster via post-functionalization, using coupling agents to form stable amide or other bonds. [8]

- Structural Confirmation: Use nuclear magnetic resonance (NMR) and mass spectrometry to verify the successful formation of the conjugate and its molecular structure.

- Bioactivity Assay: Test the hybrid's biological activity (e.g., antitumor or antibacterial efficacy) in vitro and compare it to the unconjugated POM. The emergent property is often a different or enhanced activity profile. [8]

- Biocompatibility Assessment: Evaluate cytotoxicity against healthy human cell lines. The emergent property here is often significantly reduced off-target toxicity compared to the parent POM, a critical factor for clinical potential. [8]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Hybrid Materials Research

| Item / Reagent | Function & Role in Emergent Behavior |
|---|---|
| Rare Earth Salts (e.g., EuCl₃, TbCl₃) [10] | Serve as the luminescent centers in REHM-HOFs. Interaction with the HOF "antenna" enables emergent luminescence sensing. |
| HOF Organic Linkers (e.g., carboxylic acid derivatives) [10] | Form the crystalline, porous scaffold. Their structure dictates the assembly and enables post-synthetic modification with rare-earth ions. |
| Polyoxometalate (POM) Platforms (e.g., AE-NH₂) [8] | Act as the tunable inorganic building blocks. Covalent attachment of biomolecules leads to emergent biocompatibility and bioactivity. |
| 2D Material Precursors (e.g., Si/C precursor for glaphene) [11] | Enable the bottom-up synthesis of novel 2D hybrids. The chemical merger of different classes of materials (metal/insulator) creates emergent electronic properties. |
| Cetyltrimethylammonium Bromide (CTAB) [9] | Acts as an organic surfactant and structure-directing agent in selenium-based hybrids, guiding self-assembly and influencing dielectric properties. |
| Selenium Dibromide (SeBr₂) [9] | Provides the inorganic component with distinctive electronic properties. Its integration into a hybrid organic framework leads to emergent semiconducting and dielectric behavior. |

The study of emergent properties in hybrid materials is moving from observation to rational design. As characterization techniques like multimodal mapping and machine learning models improve, they bridge the gap between multiscale structure and function. [7] This progress enables the targeted engineering of hybrid materials, such as REHM-HOFs for advanced sensing or POM-biomolecule conjugates for precision therapy, where the whole is definitively greater than the sum of its parts. The future of the field lies in leveraging these insights to solve complex challenges in electronics, medicine, and energy.

The field of materials science is undergoing a profound transformation, driven by the convergence of novel material classes and advanced characterization technologies. Research into hybrid materials now focuses significantly on understanding and leveraging their emergent properties—complex behaviors that arise from the interaction of components rather than from the components themselves. This guide provides a comparative analysis of two pivotal classes at the forefront of this research: Hybrid AI-Quantum systems and Sustainable Bio-Nanocomposites.

The characterization of these materials demands sophisticated methodologies that bridge computational prediction and experimental validation. As researchers and drug development professionals well know, the accurate measurement of emergent phenomena—such as quantum coherence in superconducting materials or the controlled release of antimicrobials from nanocomposite films—is critical for translating fundamental research into practical applications. This guide objectively compares the performance, experimental protocols, and research tools essential for advancing the field of hybrid materials.

Performance Comparison of Key Material Classes

The following tables synthesize quantitative data and key characteristics for the two focal material classes, providing a basis for objective comparison.

Table 1: Performance and Characteristics of Hybrid AI-Quantum Material Systems

| Performance Metric | Hybrid AI-Quantum Systems | Key Experimental Findings |
|---|---|---|
| Quantum Advantage | Completed benchmark calculation in ~5 minutes vs. 10^25 years for classical supercomputer [12] | Google's Willow chip (105 qubits) demonstrated exponential error reduction [12] |
| Error Correction | Error rates reduced to record lows of 0.000015% per operation [12] | Algorithmic fault tolerance techniques reduced error correction overhead by up to 100x [12] |
| Qubit Performance | 105 physical qubits (Google Willow); 200 logical qubits targeted (IBM Quantum Starling, 2029) [12] | Microsoft Majorana 1 topological architecture demonstrated inherent stability [12] |
| Material Simulation | 12% performance improvement over classical HPC in medical device simulation [12] | IonQ 36-qubit computer outperformed classical methods [12] |
| Application Speed | Quantum Echoes algorithm ran 13,000x faster than classical supercomputers [12] | Out-of-time-order correlator (OTOC) algorithm demonstrated verifiable quantum advantage [12] |

Table 2: Performance and Characteristics of Sustainable Bio-Nanocomposites

| Performance Metric | Sustainable Bio-Nanocomposites | Key Experimental Findings |
|---|---|---|
| Antimicrobial Efficacy | CuO-based active films significantly reduced total viable bacterial counts, Gram-negative pathogens, and fungi [13] | Nano-Ag, ZnO, and CuO integrated into films disrupt cell membranes via reactive oxygen species [13] |
| Barrier Properties | Nanomaterials enhanced mechanical strength and barrier efficiency against oxygen and moisture [13] | Nano-clays used as oxygen scavengers delay oxidation-related spoilage [13] |
| Sensing Capability | pH-sensitive films with anthocyanins showed visible color changes as spoilage progressed [13] | Carbon nanotubes and metal oxide nanowires detected gases like ammonia and ethylene [13] |
| Shelf-life Extension | Active packaging with natural extracts (clove, cinnamon, rosemary oil) delayed microbial growth [13] | Multifunctional nano-packaging materials delivered active compounds (zerumbone, turmeric oil) [13] |
| Biodegradability | Integration with biodegradable matrices (chitosan, gelatin, alginate) supports circular economy [13] | Bio-based smart packaging made from renewable, biodegradable materials [13] |

Table 3: Cross-Domain Comparison of Research Maturity and Application Potential

| Characteristic | Hybrid AI-Quantum Systems | Sustainable Bio-Nanocomposites |
|---|---|---|
| Technology Readiness | Early R&D with rapid prototyping (AI-driven); limited to specialized labs [14] [12] | Advanced development with some commercial applications [13] |
| Primary Research Focus | Error correction, qubit stability, quantum advantage demonstration [15] [12] | Functional enhancement, safety validation, scalability [13] |
| Characterization Complexity | Extreme (requires ultra-low temp, coherence time measurement) [12] | Moderate (requires migration testing, toxicity assessment) [13] |
| Commercial Potential | $72B by 2035 (quantum computing forecast) [15] | Addressing $1.2B quantum communication market (2024) [15] |
| Key Limitation | Quantum resource requirements and coherence times [12] | Potential nanomaterial migration and environmental impact [13] |

Experimental Protocols for Characterizing Emergent Properties

Protocol for AI-Driven Fabrication of Quantum Materials

The AI-driven molecular-beam epitaxy (MBE) protocol represents a groundbreaking approach to creating delicate quantum materials like high-temperature iron selenide superconductors, which traditionally require exceptional craftsmanship [14].

Methodology Overview:

- Reinforcement Learning Framework: Unlike supervised learning, the AI uses a reward-maximizing model that filters better superconductors from lower-performing ones, using optimal results to iteratively improve its fabrication strategy without needing extensive pre-labeled datasets [14].

- Real-Time Adaptive Adjustment: The AI robot analyzes diffraction patterns during the atomic-layer deposition process, making instant adjustments to growth conditions that would typically require years of human experience to master [14].

- Iterative Material Optimization: The system focuses on finding which materials work best rather than comparing to a predetermined ideal, allowing it to discover novel fabrication approaches and potentially new quantum material phases [14].

Validation Measures:

- Successful fabrication of atomically thin superconducting quantum materials extending to wafer scales [14].

- Comparison of superconducting properties against materials produced by expert researchers (fewer than 10 groups globally successfully fabricate these materials) [14].
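The reward-maximizing idea in this protocol can be illustrated with a toy epsilon-greedy bandit, one of the simplest reinforcement learning schemes. This is not the method of the cited work [14]: the three "growth recipes", their quality scores, the noise level, and the function name are all hypothetical, and a real MBE controller would act on diffraction data rather than on a scalar reward drawn from a random generator.

```python
import random


def run_bandit(true_quality, rounds=2000, epsilon=0.1, noise=0.1, seed=0):
    """Epsilon-greedy search over a fixed set of candidate recipes.

    true_quality holds hidden mean rewards (hypothetical film-quality
    scores). Returns (pull counts, running reward estimates).
    """
    rng = random.Random(seed)
    n_arms = len(true_quality)
    counts = [0] * n_arms
    estimates = [0.0] * n_arms
    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: try a random recipe.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: use the recipe with the best estimate so far.
            arm = max(range(n_arms), key=lambda a: estimates[a])
        # Simulated noisy measurement of the grown film's quality.
        reward = rng.gauss(true_quality[arm], noise)
        counts[arm] += 1
        # Incremental update of the running mean reward.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return counts, estimates


counts, estimates = run_bandit([0.2, 0.5, 0.8])
best_recipe = max(range(len(estimates)), key=lambda a: estimates[a])
print("pulls per recipe:", counts)
print("best recipe index:", best_recipe)
```

The point of the sketch is that no labeled dataset or predefined "ideal" film is required: the loop only compares outcomes and shifts effort toward whatever has worked best so far.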

Protocol for Testing Bio-Nanocomposite Functional Performance

The characterization of sustainable bio-nanocomposites for smart food packaging focuses on measuring their active and intelligent functionalities, which emerge from the integration of nanomaterials with biodegradable matrices [13].

Methodology Overview:

- Active Functionality Assessment:

- Antimicrobial Testing: Measure reduction in total viable bacterial counts (e.g., Gram-negative pathogens) and spoilage fungi after exposure to nano-enabled films (e.g., containing CuO, nano-Ag, ZnO) [13].

- Barrier Performance Testing: Quantify oxygen transmission rates through nano-clay incorporated films to evaluate antioxidant preservation efficacy [13].

- Controlled Release Measurement: Monitor the release kinetics of active compounds (e.g., zerumbone, turmeric oil) from natural polymer-based films to inhibit oxidation [13].

- Intelligent Functionality Assessment:

- Freshness Sensing Validation: Expose pH-sensitive films (e.g., incorporating red cabbage anthocyanins) to spoilage metabolites and document visible color changes using standardized colorimetric scales [13].

- Gas Detection Sensitivity: Test carbon nanotube-based sensors against target volatile organic compounds (VOCs) like ammonia and ethylene to determine detection thresholds and response times [13].

- Traceability Performance: Evaluate RFID and NFC integration for temperature history monitoring and supply chain transparency throughout simulated distribution cycles [13].

Validation Measures:

- Documentation of shelf-life extension for perishable products (e.g., meats, seafood, fruits) compared to conventional packaging [13].

- Migration studies and lifecycle analyses to ensure consumer safety and regulatory compliance for nano-enabled materials [13].
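Antimicrobial results of the kind described above are often summarized as a log reduction in viable counts relative to an untreated control. The sketch below shows that standard calculation; the colony counts are made-up illustrative numbers, not data from the cited study [13], and the function names are hypothetical.

```python
import math


def log_reduction(cfu_control, cfu_treated):
    """log10 reduction in viable counts (CFU/mL) relative to a control."""
    return math.log10(cfu_control / cfu_treated)


def percent_reduction(cfu_control, cfu_treated):
    """Percentage of viable cells eliminated relative to a control."""
    return 100.0 * (1.0 - cfu_treated / cfu_control)


# Hypothetical counts for a control film and a CuO-containing film.
control_cfu = 1.0e6
treated_cfu = 1.0e3

log_red = log_reduction(control_cfu, treated_cfu)
pct_red = percent_reduction(control_cfu, treated_cfu)
print(f"log reduction: {log_red:.1f}, percent reduction: {pct_red:.1f}%")
```

Reporting both figures helps, because a percent reduction compresses large differences: 99.9% and 99.999% look similar but correspond to 3-log and 5-log reductions.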

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Essential Research Reagents and Materials for Hybrid Materials Research

| Research Reagent/Material | Function in Research | Application Context |
|---|---|---|
| Molecular-Beam Epitaxy (MBE) System | Precise atomic-layer deposition of quantum materials [14] | Fabrication of iron selenide superconductors [14] |
| Reinforcement Learning AI Platform | Self-optimization of material fabrication parameters without extensive labeled data [14] | Autonomous discovery of optimal quantum material growth conditions [14] |
| Quantum Chemistry Toolkits | Simulation of molecular behavior at subatomic level for material property prediction [16] | SandboxAQ's platform for battery material discovery [16] |
| Metal/Metal Oxide Nanoparticles (Ag, ZnO, CuO) | Provide antimicrobial activity through reactive oxygen species generation [13] | Active food packaging films for shelf-life extension [13] |
| Natural Polymer Matrices (Chitosan, Gelatin, Alginate) | Biodegradable substrates for nanomaterial integration [13] | Sustainable packaging with embedded sensing capabilities [13] |
| pH-Sensitive Anthocyanins | Visual freshness indicators through color change response to spoilage metabolites [13] | Intelligent packaging for real-time quality monitoring [13] |
| Carbon Nanotubes & Quantum Dots | High-sensitivity detection of gases and contaminants via electrical or optical signal changes [13] | Sensors for volatile organic compounds in intelligent packaging [13] |
| Graph Neural Networks (GNNs) | Prediction of complex material behavior like battery degradation from time-series data [16] | Performance forecasting for energy storage materials [16] |

The comparative analysis of Hybrid AI-Quantum Systems and Sustainable Bio-Nanocomposites reveals distinct yet complementary research trajectories. Quantum material systems demonstrate transformative potential for computational supremacy and complex material simulation but face significant characterization challenges related to error correction and stability. Conversely, bio-nanocomposites offer immediately applicable solutions for sustainability and smart functionality, with research priorities centered on safety validation and scalable manufacturing.

For researchers and drug development professionals, the convergence of these fields presents compelling opportunities. AI-quantum systems may eventually revolutionize molecular simulation for drug discovery, while bio-nanocomposites offer novel platforms for drug delivery and biomedical devices. The continued characterization of emergent properties in both material classes will undoubtedly yield unexpected discoveries and applications, driving the next generation of materials science innovation.

The pharmaceutical industry has reached a definitive inflection point in 2025, marked by the strategic integration of hybrid approaches that blend physical and computational research methodologies. This transformation is driven by mounting pressures including escalating research and development costs, declining R&D productivity, and unprecedented patent cliffs putting $236 billion in sales at risk by 2030 [17]. Simultaneously, technological advancements in artificial intelligence, quantum computing, and data analytics have matured to a point where they can deliver tangible value across the drug development pipeline.

Hybrid approaches no longer represent speculative future concepts but have become established, value-driving strategies. According to Deloitte's 2025 survey of biopharma R&D executives, 53% reported increased laboratory throughput and 45% saw reduced human error as direct results of digital modernization efforts [17]. The industry is witnessing a fundamental shift from siloed, sequential research to integrated, predictive environments where wet and dry lab insights continuously inform one another, creating an accelerated innovation cycle that is revolutionizing traditional pharmaceutical R&D models.

Quantitative Impact: Measuring the Hybrid Advantage

The transformative impact of hybrid R&D approaches is quantifiable across critical performance indicators. The following comparative analysis illustrates how integrated methodologies are enhancing productivity and output compared to traditional models.

Table 1: Performance Metrics Comparison Between Traditional and Hybrid R&D Approaches

| Performance Indicator | Traditional R&D | Hybrid R&D Approach | Data Source |

|---|---|---|---|

| Preclinical Timeline Reduction | Baseline | 25-50% reduction | World Economic Forum [18] |

| New Drug Discovery Influence | Not applicable | 30% of new drugs discovered using AI | World Economic Forum [18] |

| Clinical Trial Recruitment | 85% fail to recruit on time | 59% increase in hybrid trial adoption | Within3 [19] |

| Lab Throughput Improvement | Baseline | 53% of organizations report increase | Deloitte [17] |

| Human Error Reduction | Baseline | 45% of organizations report reduction | Deloitte [17] |

| Therapy Discovery Pace | Baseline | 27% report faster discovery | Deloitte [17] |

Table 2: Financial and Strategic Impact of Hybrid R&D Modernization

| Impact Category | Current Hybrid Performance | Future Projection | Source |

|---|---|---|---|

| Projected Pipeline Value | $197B in new modalities (60% of total) | Accelerated growth | BCG [20] |

| R&D IT Cost Savings | Up to 30% freed for reinvestment | Enables AI/automation scaling | McKinsey [21] |

| Lab Digitalization ROI | 37% track quantitative metrics | 80% sustaining/increasing investment | Deloitte [17] |

| AI Value Potential | Early implementation | $53B annual value across R&D chain | McKinsey [21] |

The data demonstrates that hybrid approaches are delivering substantial operational and financial benefits. Beyond these metrics, hybrid strategies are enhancing probability of technical success and improving portfolio decision-making by providing richer data sets and predictive capabilities [21]. Companies that have implemented integrated tech stacks report faster cycle times from drug discovery to market launch, with AI-driven tools accelerating molecule design and clinical development processes [21].

Core Hybrid Methodologies: Experimental Protocols and Workflows

Integrated Biological and Digital Target Identification

Objective: To identify and validate novel therapeutic targets by combining multi-omics data with AI-powered computational analysis.

Experimental Protocol:

- Multi-omics Data Collection: Generate transcriptomic, proteomic, and metabolomic profiles from patient-derived samples (e.g., tissues, biofluids) representing both disease and healthy states [17].

- Data Product Curation: Convert raw omics data into standardized research data products using FAIR principles (Findable, Accessible, Interoperable, Reusable) [17]. Apply structured ontologies for semantic consistency across datasets.

- Quantum-Enhanced Simulation: Employ quantum computing systems to model protein folding dynamics and binding site accessibility, with particular value for orphan proteins with limited experimental data [22].

- AI-Powered Target Prioritization: Implement ensemble machine learning models that integrate:

  - Genetic association signals from genome-wide association studies (GWAS)

  - Expression quantitative trait loci (eQTL) data

  - Protein-protein interaction networks

  - Literature-derived knowledge graphs

- Experimental Validation: Confirm computational predictions using CRISPR-based functional genomics in relevant cellular models, measuring impact on disease-relevant phenotypes.
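
The prioritization step in the protocol above can be sketched in a few lines. This is only an illustration: the gene names, evidence scores, and weights are hypothetical, and a plain weighted average stands in for the ensemble model, whose form the source does not specify.

```python
from dataclasses import dataclass


@dataclass
class TargetEvidence:
    gene: str
    gwas: float        # genetic association strength, scaled to [0, 1]
    eqtl: float        # eQTL support, scaled to [0, 1]
    ppi: float         # protein-protein interaction network support, [0, 1]
    literature: float  # knowledge-graph support, [0, 1]


# Hypothetical weights; a real pipeline would learn them from validated targets.
WEIGHTS = {"gwas": 0.4, "eqtl": 0.3, "ppi": 0.2, "literature": 0.1}


def priority_score(ev: TargetEvidence) -> float:
    """Weighted average of the four evidence channels."""
    return sum(w * getattr(ev, name) for name, w in WEIGHTS.items())


def rank_targets(evidence):
    """Return candidates sorted from highest to lowest priority."""
    return sorted(evidence, key=priority_score, reverse=True)


candidates = [
    TargetEvidence("GENE_A", gwas=0.9, eqtl=0.7, ppi=0.4, literature=0.2),
    TargetEvidence("GENE_B", gwas=0.3, eqtl=0.2, ppi=0.9, literature=0.8),
    TargetEvidence("GENE_C", gwas=0.6, eqtl=0.6, ppi=0.6, literature=0.6),
]
ranked = rank_targets(candidates)
for ev in ranked:
    print(f"{ev.gene}: {priority_score(ev):.2f}")
```

The top-ranked entries would then move on to the CRISPR-based validation step; changing the weights is the simplest way to test how sensitive the ranking is to any single evidence source.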

Figure 1: Hybrid Target Identification Workflow

AI-Guided Molecular Design with Experimental Validation

Objective: To accelerate the design and optimization of therapeutic candidates with desired properties using hybrid computational-experimental approaches.

Experimental Protocol:

- Generative Molecular Design: Utilize generative AI models trained on chemical structures and biological activity data to propose novel molecular entities with predicted target engagement [23].

- In Silico Property Prediction: Employ both classical force fields and emerging quantum computational methods to predict key drug properties including:

  - Binding affinity and specificity

  - Pharmacokinetic parameters

  - Toxicity and off-target effects [22]

- Automated Synthesis and Screening: Integrate computational outputs with automated laboratory systems for compound synthesis and high-throughput screening [17].

- Data Feedback Loop: Establish a closed-loop system where experimental results continuously refine and improve computational models through iterative learning [17].

- Lead Optimization: Use structure-activity relationship (SAR) data from both simulations and experiments to guide compound optimization with reduced cycle times [22].
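
The closed-loop idea in this protocol (design, make, test, learn) can be illustrated with a toy sketch. Everything here is an assumption made for illustration: `run_assay` is a hypothetical stand-in for automated synthesis and screening, each compound is reduced to a single numeric descriptor, and the "model" is a one-parameter least-squares fit rather than a generative AI system.

```python
def run_assay(descriptor: float) -> float:
    """Hypothetical stand-in for synthesizing and screening one compound."""
    return 2.0 * descriptor + 0.5 * descriptor ** 2


def fit_slope(measured: dict) -> float:
    """Least-squares fit of activity = w * descriptor (line through the origin)."""
    numerator = sum(x * y for x, y in measured.items())
    denominator = sum(x * x for x in measured)
    return numerator / denominator


def closed_loop(pool, initial_slope=1.0, batch_size=2, rounds=3):
    slope, measured, history = initial_slope, {}, []
    for _ in range(rounds):
        untested = [x for x in pool if x not in measured]
        # Design: rank untested candidates by predicted activity.
        batch = sorted(untested, key=lambda x: slope * x, reverse=True)[:batch_size]
        # Make and test: obtain "experimental" results for the selected batch.
        for x in batch:
            measured[x] = run_assay(x)
        # Learn: refit the model on all data collected so far.
        slope = fit_slope(measured)
        history.append(slope)
    return measured, history


pool = [0.1, 0.2, 0.4, 0.5, 0.8, 0.9]
measured, history = closed_loop(pool)
print("slope after each round:", [round(w, 3) for w in history])
```

The fitted slope changes after every round because each new batch of measurements is folded back into the model, which is the feedback behaviour the protocol describes.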

Table 3: Research Reagent Solutions for Hybrid Molecular Design

| Reagent/Technology | Function in Hybrid Workflow | Application Context |

|---|---|---|

| Quantum Processing Units | Enable precise molecular simulation at quantum level | Electronic structure calculation for small molecules & proteins [22] |

| Generative AI Platforms | Create novel molecular structures with optimized properties | De novo drug design beyond chemical space of training data [23] |

| Automated Synthesis Instruments | Physically produce computationally designed compounds | High-throughput analog synthesis for SAR exploration [17] |

| Multi-parameter Screening Assays | Provide experimental validation of predicted properties | Measure binding, functional activity, and early toxicity signals [17] |

| Electronic Lab Notebooks | Capture structured data for model refinement | Create FAIR data products for continuous AI training [21] |

Hybrid Clinical Trial Implementation

Objective: To enhance clinical trial efficiency, patient diversity, and data richness by combining traditional and decentralized elements.

Experimental Protocol:

- Digital Patient Recruitment: Implement AI-driven protocol optimization to address recruitment challenges, using predictive analytics to identify ideal trial sites and patient populations [24].

- Mixed Data Collection Framework:

  - Traditional site-based assessments for complex measurements

  - Remote patient monitoring through wearable sensors and mobile health technologies

  - Patient-reported outcomes collected via digital platforms [19]

- Real-World Evidence Integration: Incorporate real-world data from electronic health records, claims data, and patient registries to augment clinical trial findings and provide comparative effectiveness context [24].

- Adaptive Design Enablement: Utilize continuous data flow to inform potential trial modifications through adaptive design elements, optimizing trial parameters based on accumulating evidence [24].

- Patient-Centric Engagement: Deploy digital engagement tools to improve retention, with hybrid models balancing the convenience of remote participation with the value of selective in-person interactions [19].

Figure 2: Hybrid Clinical Trial Framework

Technological Infrastructure: The Hybrid R&D Stack

The successful implementation of hybrid R&D requires a sophisticated technological infrastructure that seamlessly connects computational and physical research environments. Modern pharma R&D organizations are building what McKinsey describes as a "next-generation technology stack" with four integrated layers [21]:

- Infrastructure Layer: Cloud-based resources providing scalability, security, and computational power for demanding AI and simulation workloads [21].

- Data Layer: Centralized data management systems that adhere to FAIR principles, enabling the integration and curation of diverse data types from clinical, omics, and real-world sources [21].

- Application Layer: Core systems including electronic data capture (EDC), clinical data management systems (CDMS), and electronic lab notebooks that operationalize research workflows [21].

- Analytics Layer: AI and machine learning tools that generate insights from integrated data, supporting decision-making from discovery through development [21].

This modular architecture enables organizations to maintain flexibility while maximizing existing resources. Leading companies are leveraging this infrastructure to achieve what Deloitte identifies as a "predictive lab environment" where AI, digital twins, and automation work together to guide scientific decisions [17]. In these advanced implementations, insights from physical experiments and in silico simulations inform each other in real time, significantly shortening experimental cycles by minimizing trial and error.

Emerging Frontiers: Quantum Computing and Novel Modalities

The hybrid approach is extending into frontier technologies that promise to further transform pharmaceutical R&D. Quantum computing represents a particularly promising frontier, with McKinsey estimating $200-500 billion in potential value creation for the life sciences industry by 2035 [22]. Because quantum processors encode quantum states natively, they are expected to handle first-principles calculations that scale poorly on classical hardware, enabling highly accurate molecular simulations without complete reliance on existing experimental data [22].

Major pharmaceutical companies are already exploring quantum applications through strategic partnerships:

- AstraZeneca is collaborating with Amazon Web Services, IonQ, and NVIDIA on quantum-accelerated computational chemistry workflows [22].

- Boehringer Ingelheim is working with PsiQuantum to calculate electronic structures of metalloenzymes critical for drug metabolism [22].

- Merck KGaA and Amgen are collaborating with QuEra to predict biological activity of drug candidates based on molecular descriptors [22].

Simultaneously, hybrid approaches are accelerating the development of novel therapeutic modalities, which now account for $197 billion, or 60%, of the pharmaceutical industry's total projected pipeline value [20]. The 2025 landscape shows particularly strong growth in antibodies (including monoclonal antibodies, antibody-drug conjugates, and bispecifics), recombinant proteins (driven by GLP-1 agonists), and nucleic acid therapies [20]. These advanced modalities benefit significantly from hybrid approaches as their complexity often exceeds what traditional empirical methods can efficiently address.

Implementation Challenges and Strategic Considerations

Despite the clear benefits, implementing hybrid R&D approaches presents significant organizational and technical challenges. Deloitte's survey reveals that only 11% of organizations have achieved a fully predictive lab environment where AI and automation are seamlessly integrated [17]. Common barriers include:

- Data Quality and Integration: Legacy data systems with limited interoperability create significant obstacles, and 84% of R&D executives acknowledge that new technologies require a robust data foundation [17].

- Cultural Resistance: Transitioning to data-driven decision-making requires substantial cultural change and upskilling of scientific staff [18].

- ROI Measurement: Just 37% of organizations use quantitative metrics to track return on investment from digital initiatives, making continued funding justification challenging [17].

- Regulatory Alignment: Evolving regulatory frameworks for AI-derived insights and hybrid trial methodologies require ongoing navigation and engagement [24] [25].

Successful organizations address these challenges through focused strategies including comprehensive lab modernization roadmaps aligned with R&D objectives, robust data governance, "research data product" development, and cultural change programs that foster digital adoption [17]. Companies that effectively implement these strategies are positioned to achieve what PwC identifies as "reinvented R&D": fundamentally changing the cost and timeline for bringing new drugs to market while expanding possibilities to address unmet medical needs [26].

The year 2025 indeed represents a definitive inflection point for pharmaceutical R&D, with hybrid approaches transitioning from promising pilots to core strategic capabilities. The integration of computational and experimental methods is delivering measurable improvements in research productivity, clinical efficiency, and portfolio value. Companies that have embraced this transformation are already seeing accelerated discovery timelines, enhanced probability of technical success, and improved decision-making across the development pipeline.

As hybrid methodologies continue to evolve, they will increasingly incorporate emerging technologies like quantum computing and advanced AI, further blurring the boundaries between physical and digital research. The organizations that will lead the pharmaceutical industry in the coming decade are those making strategic investments today in the technological infrastructure, data assets, and human capabilities needed to fully realize the potential of hybrid R&D. The revolution is no longer coming—it has arrived, and hybrid approaches are now the fundamental engine of innovation in pharmaceutical research and development.

Cutting-Edge Characterization Techniques and Real-World Biomedical Applications

The quest to understand and engineer complex materials is fundamentally a multiscale problem. Emergent properties in condensed matter and biological systems, such as catalytic activity, conductivity, or drug binding, arise from nonlinear interactions that span from the molecular to the macroscopic domain [7]. Traditional computational approaches, developed primarily for ideal crystalline solids, often fall short in describing the rich, hierarchical organization of soft materials, biomolecules, and disordered systems. Quantum-classical workflows aim to overcome these limitations by integrating the respective strengths of quantum and classical computing. They assign subproblems that are intractable for classical hardware, such as strongly correlated electronic structure, to quantum processors and leave the broader simulation context to classical resources, offering a promising path toward accurately modeling emergent properties in complex molecular systems. This guide compares emerging quantum-classical workflows, detailing their experimental protocols, performance metrics, and applicability to different research scenarios in materials characterization and drug development.

Comparative Analysis of Quantum-Classical Workflows

The landscape of quantum-classical workflows for molecular simulation has diversified significantly, with distinct approaches emerging from leading research groups and commercial entities. The table below provides a structured comparison of four prominent methodologies, highlighting their core functions, implementation details, and current performance benchmarks.

Table 1: Comparative Analysis of Quantum-Classical Workflows for Molecular Simulation

| Workflow Name / Provider | Core Computational Function | Algorithm/Implementation | Reported Performance & Advantages |

|---|---|---|---|

| IonQ Chemical Dynamics [27] | Calculating atomic-level forces for molecular dynamics | Quantum-Classical Auxiliary-Field Quantum Monte Carlo (QC-AFQMC) on trapped-ion qubits | More accurate force calculations vs. classical methods; enables carbon capture material design [27] |

| Quantinuum Error-Corrected Chemistry [28] | Scalable, fault-tolerant molecular energy calculations | Quantum Phase Estimation (QPE) with logical qubits on System Model H2; InQuanto software platform | First end-to-end error-corrected workflow; path to quantum advantage in chemistry [28] |

| IBM Periodic Materials [29] | Band gap calculation for periodic materials | Sample-based Quantum Diagonalization (SQD) of Extended Hubbard Model; LUCJ ansatz | Computes electronic properties (e.g., band gaps) for correlated materials beyond pure classical methods [29] |

| BQP/Classiq Digital Twin [30] | Solving linear systems for CFD & digital twins | Variational Quantum Linear Solver (VQLS) via automated circuit synthesis on CUDA-Q | Reduced qubit counts/circuit size vs. traditional quantum linear solvers; integrates with HPC [30] |

Detailed Experimental Protocols and Workflows

Quantum-Enhanced Force Calculations for Molecular Dynamics

Protocol Overview: This workflow, demonstrated by IonQ in collaboration with a global automotive manufacturer, focuses on calculating atomic-level forces to trace chemical reaction pathways, a critical capability for designing advanced materials like carbon capture substrates [27].

Step-by-Step Methodology:

- System Preparation: Define the molecular system and identify critical points on the potential energy surface where significant chemical changes occur (e.g., transition states).

- Quantum Computation: Execute the Quantum-Classical Auxiliary-Field Quantum Monte Carlo (QC-AFQMC) algorithm on a quantum processor (e.g., IonQ Forte) to compute the nuclear forces at these critical points. This differs from previous approaches that focused only on isolated energy calculations.

- Classical Integration: Feed the calculated forces into established classical computational chemistry workflows. These forces provide the critical gradients needed to map reaction pathways and estimate reaction rates with higher accuracy than purely classical methods.

- Analysis & Validation: Use the traced pathways to inform the design of new materials. Performance is validated by comparing simulated reaction rates and pathways against experimental data or high-level theoretical benchmarks.
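
To make the classical integration step concrete, the sketch below shows how externally computed forces plug into a standard velocity Verlet molecular dynamics loop. It is a minimal illustration under stated assumptions: `harmonic_force` is a placeholder for whatever routine supplies the forces (in the workflow above, the quantum QC-AFQMC calculation), and the one-dimensional harmonic system is chosen only because its exact solution is known.

```python
import math


def harmonic_force(x: float, k: float = 1.0) -> float:
    """Placeholder force provider; a hybrid workflow would call its
    quantum-computed force routine here instead."""
    return -k * x


def velocity_verlet(x, v, force_fn, mass=1.0, dt=0.01, steps=1000):
    """Integrate one particle in 1D using forces supplied by force_fn."""
    f = force_fn(x)
    trajectory = [(x, v)]
    for _ in range(steps):
        v_half = v + 0.5 * dt * f / mass   # half-step velocity update
        x = x + dt * v_half                # full-step position update
        f = force_fn(x)                    # new forces at the new position
        v = v_half + 0.5 * dt * f / mass   # complete the velocity update
        trajectory.append((x, v))
    return trajectory


def total_energy(x, v, mass=1.0, k=1.0):
    return 0.5 * mass * v * v + 0.5 * k * x * x


traj = velocity_verlet(x=1.0, v=0.0, force_fn=harmonic_force)
drift = abs(total_energy(*traj[-1]) - total_energy(*traj[0]))
analytic_x = math.cos(1000 * 0.01)  # exact position for unit mass and k
print(f"energy drift: {drift:.2e}, position error: {abs(traj[-1][0] - analytic_x):.2e}")
```

Because the integrator only needs a callable that returns forces, the force source can be swapped without touching the rest of the simulation, which is what makes this kind of quantum-classical split practical.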

Error-Corrected Molecular Energy Simulation

Protocol Overview: Quantinuum's workflow demonstrates a scalable, end-to-end pipeline for molecular energy calculations, incorporating quantum error correction (QEC) to enhance result fidelity—a critical step toward fault-tolerant quantum chemistry [28].

Step-by-Step Methodology:

- Problem Formulation: Select a target molecule and generate its second-quantized electronic Hamiltonian from molecular orbitals and integrals computed with a classical method such as Hartree-Fock.

- Error Correction Encoding: Map the problem onto logical qubits using an advanced quantum error-correcting code (e.g., the concatenated symplectic double code described in Quantinuum's research). This code is designed for high performance on Quantinuum's H2 processor, which features all-to-all qubit connectivity [28].

- Quantum Processing: Execute the Quantum Phase Estimation (QPE) algorithm on the error-corrected logical qubits to compute the ground-state energy of the molecular system.

- Decoding & Correction: Employ real-time, GPU-accelerated classical decoders (e.g., integrated via NVIDIA NVQLink) to detect and correct errors that occurred during the quantum computation, thereby improving logical fidelity [28].

- Result Verification: Compare the computed energy with known values for small molecules to validate the entire workflow's accuracy and scalability.
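
The relationship between the phase measured by QPE and the molecular energy can be illustrated with a small classical simulation of the ideal, noise-free readout. This is a textbook sketch, not Quantinuum's implementation: it assumes an exact eigenstate, a time-evolution unitary U = exp(-iHτ), and an illustrative energy value, and it ignores error correction entirely.

```python
import cmath
import math


def qpe_distribution(phase: float, n_bits: int) -> list:
    """Ideal probability of each n-bit readout for an eigenstate whose
    eigenvalue under U is exp(2*pi*i*phase)."""
    size = 2 ** n_bits
    probs = []
    for k in range(size):
        amplitude = sum(
            cmath.exp(2j * math.pi * j * (phase - k / size)) for j in range(size)
        ) / size
        probs.append(abs(amplitude) ** 2)
    return probs


def phase_to_energy(phase: float, evolution_time: float) -> float:
    """Invert exp(-i*E*tau) = exp(2*pi*i*phase), assuming -2*pi/tau < E <= 0."""
    return -2.0 * math.pi * phase / evolution_time


true_energy = -1.1     # illustrative ground-state energy in hartree (assumed)
evolution_time = 1.0   # tau, chosen so the phase does not wrap around
n_bits = 8

true_phase = (-true_energy * evolution_time / (2 * math.pi)) % 1.0
probs = qpe_distribution(true_phase, n_bits)
best_k = max(range(len(probs)), key=probs.__getitem__)
estimate = phase_to_energy(best_k / 2 ** n_bits, evolution_time)
resolution = 2 * math.pi / (evolution_time * 2 ** n_bits)
print(f"estimate {estimate:.4f} Ha, error {abs(estimate - true_energy):.4f} Ha, "
      f"resolution {resolution:.4f} Ha")
```

Each additional readout bit halves the energy resolution, which is why the verification step compares the result against known values for small molecules before scaling up.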

AI-Driven Neural Network Potentials as a Classical Benchmark

Protocol Overview: As a powerful classical baseline, workflows utilizing Neural Network Potentials (NNPs) trained on massive datasets like Meta's OMol25 demonstrate the current state-of-the-art in machine-learned molecular dynamics [31]. This approach is critical for contextualizing the potential of emerging quantum methods.

Step-by-Step Methodology:

- Dataset Curation: Assemble a massive and diverse dataset of quantum chemical calculations. The OMol25 dataset, for example, contains over 100 million calculations at the ωB97M-V/def2-TZVPD level of theory, covering biomolecules, electrolytes, and metal complexes [31].

- Model Training: Train an NNP (e.g., Meta's eSEN or UMA models) on this dataset. These models learn to predict potential energy surfaces and forces directly from atomic structures.

- Conservative Force Fine-Tuning: For accurate dynamics, a "direct-force" model is first trained and then fine-tuned to predict "conservative forces," that is, forces obtained as the negative gradient of the predicted energy. This keeps energies and forces mutually consistent and improves stability in simulations [31].

- Deployment in MD: The trained NNP is deployed in place of a traditional quantum mechanics calculator in molecular dynamics (MD) simulation packages, allowing for accurate simulations of large systems over long timescales at a fraction of the computational cost of ab initio MD.
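
The notion of conservative forces used in this workflow can be shown with a dependency-free sketch. The assumptions are explicit: a one-dimensional Lennard-Jones energy stands in for a trained NNP, and central finite differences replace the automatic differentiation that machine learning frameworks would normally use.

```python
def lennard_jones_energy(positions, epsilon=1.0, sigma=1.0):
    """Toy pairwise energy model standing in for a trained NNP (1D positions)."""
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = abs(positions[i] - positions[j])
            sr6 = (sigma / r) ** 6
            energy += 4.0 * epsilon * (sr6 ** 2 - sr6)
    return energy


def conservative_forces(energy_fn, positions, h=1e-5):
    """Forces as the negative energy gradient, F_i = -dE/dx_i,
    estimated here with central finite differences."""
    forces = []
    for i in range(len(positions)):
        plus = list(positions)
        minus = list(positions)
        plus[i] += h
        minus[i] -= h
        forces.append(-(energy_fn(plus) - energy_fn(minus)) / (2 * h))
    return forces


forces = conservative_forces(lennard_jones_energy, [0.0, 1.1, 2.3])
pair_forces = conservative_forces(lennard_jones_energy, [0.0, 1.0])
print("net force on three atoms:", sum(forces))
print("pair forces at r = 1.0:", pair_forces)
```

Forces derived this way sum to zero for an isolated system and conserve energy during dynamics. A model that predicts forces directly offers no such guarantee, which is the motivation for the fine-tuning step.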

The following diagram illustrates the structural relationship and data flow between these primary workflow types and their components.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of advanced molecular simulation workflows requires both specialized software and powerful hardware. The table below lists key resources as referenced in the latest research and commercial offerings.

Table 2: Essential Research Reagents & Computational Resources

| Category | Item / Platform | Function in Workflow |

|---|---|---|

| Software & Platforms | InQuanto (Quantinuum) [28] | Quantum computational chemistry platform for developing and running quantum simulations. |

| Software & Platforms | NVIDIA CUDA-Q [30] | Open-source platform for hybrid quantum-classical computing in HPC environments. |

| Software & Platforms | Classiq Platform [30] | Automates quantum circuit synthesis, optimizing for performance and resource usage. |

| Software & Platforms | WESTPA [32] | Weighted Ensemble Simulation Toolkit for enhanced sampling in molecular dynamics. |

| Datasets | OMol25 (Meta) [31] | Massive dataset of quantum chemical calculations for training neural network potentials. |

| Hardware | NVIDIA RTX 6000 Ada GPU [33] | Provides massive parallel processing (18k+ CUDA cores) and 48 GB VRAM for classical MD and AI model inference. |

| Hardware | NVIDIA H200 GPU [34] | Accelerates large-scale graph analysis and quantum compilation tasks in hybrid workflows. |

| Hardware | BIZON X5500 Workstation [33] | Customizable, multi-GPU workstation optimized for high-throughput molecular dynamics. |

The field of quantum-classical molecular simulation is rapidly advancing on multiple fronts. Workflows like IonQ's force calculation and Quantinuum's error-corrected chemistry are pushing the boundaries of what is possible with quantum processors for specific, impactful subproblems [27] [28]. Simultaneously, classical AI-driven approaches, powered by monumental datasets like OMol25, are setting a remarkably high bar for general-purpose molecular modeling [31]. The emerging consensus is that a synergistic, multi-scale strategy will be essential for tackling the grand challenge of emergent properties. Future progress will likely be driven by tighter integration between these paradigms—using quantum computers to generate high-fidelity training data for NNPs, or employing classical AI to reduce the resource burden on quantum processors—ultimately creating a unified computational toolkit for the design of next-generation functional materials and therapeutics.

The field of materials science and drug discovery is undergoing a transformative shift with the integration of artificial intelligence (AI) and deep learning. Traditional methods for characterizing molecular properties and biological activities have long relied on experimental assays that are often time-consuming, costly, and low-throughput. The emergence of hybrid materials with complex, tunable structures has further exacerbated this challenge, as their multifunctional nature demands sophisticated characterization approaches that can predict emergent properties before synthesis [35] [36]. In this context, AI-driven characterization represents a paradigm shift, enabling researchers to move from retrospective analysis to predictive design.

Deep learning models, particularly those based on graph neural networks (GNNs) and convolutional neural networks (CNNs), have demonstrated remarkable capabilities in extracting meaningful patterns from molecular structures and predicting their properties with high accuracy. These approaches are revolutionizing how researchers profile molecular behavior across diverse domains—from predicting the bioactivity of kinase inhibitors in drug discovery to forecasting the taste properties of small molecules in food chemistry [37] [38]. By learning directly from molecular representation data, these models can establish complex structure-property relationships that would be difficult to discern through traditional quantitative structure-activity relationship (QSAR) methods alone.

The application of these techniques to hybrid materials characterization is particularly promising. Metal-protein hybrid materials, for instance, represent a novel class of functional materials that exhibit exceptional physicochemical properties and tunable structures, rendering them valuable for diverse fields including materials engineering, biocatalysis, biosensing, and biomedicine [36]. AI-driven characterization can accelerate the design and development of these multifunctional and biocompatible hybrid materials by predicting their properties and performance before resource-intensive synthesis and testing.

This comparison guide provides an objective assessment of deep learning approaches for predictive molecular profiling, with a specific focus on their application within hybrid materials research. We present performance comparisons across multiple methodologies, detailed experimental protocols, and essential resources to equip researchers with the knowledge needed to implement these cutting-edge techniques in their characterization workflows.

Performance Benchmarking: Comparative Analysis of Deep Learning Approaches

Molecular Representation Strategies for Property Prediction

The performance of deep learning models in molecular profiling heavily depends on the choice of molecular representation. Different encoding strategies capture varying aspects of chemical structure, leading to significant differences in predictive accuracy across various tasks. Based on comprehensive benchmarking studies, several representation approaches have emerged as particularly effective for property prediction.

In a large-scale comparison study focused on taste prediction, GNN-based models demonstrated superior performance compared to other approaches [37]. The research evaluated multiple representation strategies on a dataset comprising 2,601 molecules with taste classifications. Consensus models that combined diverse molecular representations showed improved performance, with the molecular fingerprints + GNN consensus model emerging as the top performer. This highlights the complementary strengths of GNNs, which learn molecular representations directly from graph structures, and molecular fingerprints, which encode specific structural patterns as binary vectors.
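
A consensus of two representations can be formed in several ways; the sketch below uses simple soft voting (averaging class probabilities), which is an assumption rather than the method confirmed by the cited study. The class names and probability values are hypothetical.

```python
def consensus_probabilities(*model_outputs):
    """Average per-class probabilities from several models, molecule by molecule."""
    n_models = len(model_outputs)
    consensus = []
    for per_molecule in zip(*model_outputs):
        n_classes = len(per_molecule[0])
        consensus.append([
            sum(probs[c] for probs in per_molecule) / n_models
            for c in range(n_classes)
        ])
    return consensus


CLASSES = ["sweet", "bitter", "umami"]  # hypothetical label set

# Hypothetical predicted probabilities for two molecules from each model.
fingerprint_model = [[0.6, 0.3, 0.1], [0.2, 0.5, 0.3]]
gnn_model = [[0.8, 0.1, 0.1], [0.1, 0.3, 0.6]]

averaged = consensus_probabilities(fingerprint_model, gnn_model)
labels = [CLASSES[max(range(len(p)), key=p.__getitem__)] for p in averaged]
print(labels)
```

For the second molecule the two models disagree, and the averaged probabilities settle the call. This is the sense in which the two representations are complementary.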

For kinase profiling prediction, a critical task in drug discovery, a comprehensive benchmark evaluating 136,290 models found that descriptor-based machine learning models generally outperform fingerprint-based models by a small margin [38]. The study, which used a dataset of 141,086 unique compounds and 216,823 bioassay data points for 354 kinases, found that random forest (RF), an ensemble learning method, delivered the best overall predictive performance among conventional methods. Single-task graph-based deep learning models were generally inferior to conventional descriptor- and fingerprint-based machine learning models; however, the corresponding multi-task models significantly improved the average accuracy of kinase profile prediction.

Table 1: Performance Comparison of Molecular Representation Approaches for Property Prediction

| Representation Method | Prediction Task | Best Model | Performance Metric | Key Advantage |

|---|---|---|---|---|

| Graph Neural Networks (GNNs) | Taste prediction | Molecular fingerprints + GNN consensus | Outperformed single-representation models | Captures topological structure + specific features |

| Molecular Descriptors | Kinase profiling | Random Forest (RF) | Best among conventional ML | Physicochemical properties encoding |

| Molecular Fingerprints | Kinase profiling | Multi-task FP-GNN | AUC: 0.807 | Combines structural patterns with multi-task learning |

| Fusion Models | Kinase profiling | RF::AtomPairs + FP2 + RDKitDes | AUC: 0.825 | Ensemble approach maximizes predictive power |

| Convolutional Neural Networks (CNNs) | Drug-target interaction | CNN on SMILES strings | Varies by specific task | Processes textual molecular representations |

Regression vs. Classification for Continuous Biomarker Prediction

In computational pathology, the choice between regression and classification approaches for predicting continuous biomarkers from histopathology images has significant implications for model performance. A systematic comparison published in Nature Communications demonstrated that regression-based deep learning significantly enhances the accuracy of biomarker prediction compared to classification-based approaches, while also improving the predictions' correspondence to regions of known clinical relevance [39].

The study developed a contrastively-clustered attention-based multiple instance learning (CAMIL) regression approach and evaluated its performance for predicting homologous recombination deficiency (HRD)—a clinically relevant pan-cancer biomarker measured as a continuous score—from pathology images across nine cancer types. The regression approach consistently outperformed classification methods, with the CAMIL regression model achieving AUROCs above 0.70 in 5 out of 7 tested cancer types in The Cancer Genome Atlas (TCGA) cohort [39]. In external validation cohorts, the model achieved even higher AUROCs, reaching 0.96 in endometrial cancer (UCEC).
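
Since AUROC is the headline metric here, a short sketch may help show how a continuous regression output is scored with it. The data and the cutoff used to binarize the ground-truth scores are hypothetical; the function implements the standard rank-based definition of AUROC.

```python
def auroc(scores, labels):
    """Probability that a randomly chosen positive sample receives a higher
    score than a randomly chosen negative one (ties count as half)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


measured_scores = [12, 55, 30, 61, 8, 47]      # hypothetical ground-truth scores
predicted = [0.2, 0.9, 0.5, 0.7, 0.1, 0.4]     # hypothetical regression outputs
cutoff = 42                                     # hypothetical binarization cutoff

labels = [1 if s >= cutoff else 0 for s in measured_scores]
value = auroc(predicted, labels)
print(f"AUROC = {value:.3f}")
```

Only the evaluation labels are dichotomized in this setup; the model itself is still trained on and predicts continuous values.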

Table 2: Performance of Regression vs. Classification for HRD Prediction from Pathology Images

| Cancer Type | CAMIL Regression (AUROC) | CAMIL Classification (AUROC) | Graziani et al. Regression (AUROC) |

|---|---|---|---|

| Breast Cancer (BRCA) | 0.78 [0.75-0.81] | Lower than CAMIL Regression | Significantly lower (p ≤ 0.0167) |

| Colorectal Cancer (CRC) | 0.76 [0.65-0.87] | Lower than CAMIL Regression | Significantly lower (p ≤ 0.01) |

| Pancreatic Adenocarcinoma (PAAD) | 0.72 [0.62-0.81] | Lower than CAMIL Regression | Similar performance |

| Lung Adenocarcinoma (LUAD) | 0.72 [0.67-0.77] | Lower than CAMIL Regression | Similar performance |

| Endometrial Cancer (UCEC) | 0.82 [0.78-0.86] | Lower than CAMIL Regression | Similar performance |

Beyond quantitative performance metrics, regression-based prediction scores provided higher prognostic value than classification-based scores in a large cohort of colorectal cancer patients [39]. This demonstrates that preserving the continuous nature of biomarker measurements rather than dichotomizing them leads to more clinically relevant predictions—a critical consideration for molecular profiling in hybrid materials research where properties often exist along a continuum rather than in discrete categories.

Experimental Protocols: Methodologies for AI-Driven Characterization

Workflow for Regression-Based Deep Learning in Biomarker Prediction

The experimental protocol for implementing regression-based deep learning approaches follows a structured workflow that can be adapted for various molecular profiling tasks. The CAMIL regression method, which has demonstrated state-of-the-art performance for continuous biomarker prediction, combines self-supervised learning with attention-based multiple instance learning in a multi-stage process [39].

Data Preparation and Preprocessing: The initial phase involves collecting and preprocessing whole slide images (WSIs) of tissue specimens; for other molecular profiling tasks, this step could be adapted to other characterization data types. For histopathology applications, WSIs are divided into smaller patches or tiles of manageable size for neural network processing. Some tiles may contain less relevant tissue, necessitating careful curation. The ground truth continuous biomarker values (e.g., HRD scores, expression values, or other molecular measurements) are obtained through molecular genetic sequencing of corresponding tissue samples.
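The tiling and curation step can be sketched as follows. This is a minimal illustration, not the pipeline used in [39]: the slide is represented as a small grayscale grid, and the function names and background thresholds (`iter_tiles`, `is_informative`, `background_level`, `max_background_fraction`) are hypothetical choices made for the example.

```python
from typing import Iterator, List, Tuple

Image = List[List[float]]  # grayscale image: rows of pixel intensities in [0, 1]


def iter_tiles(image: Image, tile_size: int) -> Iterator[Tuple[int, int, Image]]:
    """Yield (top, left, tile) for non-overlapping square tiles.

    Partial tiles at the right and bottom edges are dropped.
    """
    height, width = len(image), len(image[0])
    for top in range(0, height - tile_size + 1, tile_size):
        for left in range(0, width - tile_size + 1, tile_size):
            tile = [row[left:left + tile_size] for row in image[top:top + tile_size]]
            yield top, left, tile


def is_informative(tile: Image,
                   background_level: float = 0.9,
                   max_background_fraction: float = 0.5) -> bool:
    """Keep a tile unless most of its pixels are near-white background.

    Both thresholds are assumed values for illustration only.
    """
    pixels = [p for row in tile for p in row]
    background = sum(1 for p in pixels if p >= background_level)
    return background / len(pixels) <= max_background_fraction


# Toy 4x4 "slide": the left half is tissue (dark), the right half is background.
slide = [
    [0.2, 0.3, 1.0, 1.0],
    [0.1, 0.4, 1.0, 1.0],
    [0.2, 0.2, 1.0, 0.95],
    [0.3, 0.1, 1.0, 1.0],
]
kept = [(top, left) for top, left, tile in iter_tiles(slide, 2) if is_informative(tile)]
print(kept)
```

In practice, real WSIs are gigapixel images read through a dedicated slide library, and tissue detection is usually more elaborate than a fixed intensity threshold.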

Feature Extraction with Self-Supervised Learning: A feature extractor trained through self-supervised learning (SSL) processes each tile to generate representative feature vectors [39]. This approach is particularly valuable when labeled data is scarce, as it allows the model to learn meaningful representations without extensive manual annotation. The self-supervised learning step enables the model to capture morphological features that may correlate with molecular biomarkers without direct supervision.

Attention-Based Multiple Instance Learning: The feature vectors from all tiles are aggregated using an attention-based multiple instance learning (attMIL) model. This approach assigns attention weights to each tile, effectively allowing the model to focus on the most informative regions while suppressing less relevant areas [39]. The attention mechanism provides interpretability by highlighting which regions contributed most significantly to the prediction.
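To make the aggregation concrete, the sketch below implements one common form of attention pooling, in which each tile's score is `w · tanh(V h)` and the scores are normalized with a softmax. This formulation is an assumption chosen for illustration; the source does not specify the exact attention architecture used in [39], and the parameters `V` and `w` would normally be learned rather than set by hand.

```python
import math
from typing import List, Sequence, Tuple


def attention_pool(features: Sequence[Sequence[float]],
                   V: Sequence[Sequence[float]],
                   w: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Aggregate tile feature vectors into one slide-level vector.

    Returns (pooled_vector, attention_weights). The weights sum to 1 and
    indicate how much each tile contributes to the pooled representation.
    """
    scores = []
    for h in features:
        # Hidden projection: tanh(V h), one value per row of V.
        hidden = [math.tanh(sum(v * x for v, x in zip(v_row, h))) for v_row in V]
        # Scalar attention score: w . tanh(V h).
        scores.append(sum(w_i * u_i for w_i, u_i in zip(w, hidden)))

    # Numerically stable softmax over the tile scores.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Attention-weighted sum of the tile features.
    dim = len(features[0])
    pooled = [sum(a * h[j] for a, h in zip(weights, features)) for j in range(dim)]
    return pooled, weights


# Three hypothetical tiles with 2-dimensional features.
tile_features = [[0.0, 0.0], [1.0, 1.0], [-1.0, -1.0]]
V = [[1.0, 0.0], [0.0, 1.0]]  # hand-set projection (would be learned)
w = [1.0, 1.0]                # hand-set scoring vector (would be learned)

pooled, weights = attention_pool(tile_features, V, w)
print(weights)
```

The returned weights are what an attention heatmap visualizes: tiles with larger weights contributed more to the slide-level representation.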

Regression and Continuous Value Prediction: The aggregated features are passed through a regression head that outputs a continuous prediction value. This preserves the rich information contained in continuous biomarker measurements rather than forcing them into artificial categorical bins. The model is trained using site-aware cross-validation splits to mitigate batch effects that commonly plague multi-site studies [39].
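One plausible reading of site-aware splitting is group-wise cross-validation, in which all samples from a contributing site land in the same fold so that no site appears in both training and test data. The sketch below follows that reading; the function name `site_aware_folds` and the greedy balancing rule are assumptions for illustration, not the procedure documented in [39].

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def site_aware_folds(sites: Sequence[str],
                     n_folds: int) -> List[Tuple[List[int], List[int]]]:
    """Return (train_indices, test_indices) pairs with sites kept intact.

    Each site is assigned to exactly one fold, so a model is never tested
    on samples from a site it saw during training.
    """
    by_site: Dict[str, List[int]] = defaultdict(list)
    for idx, site in enumerate(sites):
        by_site[site].append(idx)

    # Greedy balancing: place the largest remaining site in the smallest fold.
    folds: List[List[int]] = [[] for _ in range(n_folds)]
    for site in sorted(by_site, key=lambda s: (-len(by_site[s]), s)):
        smallest = min(range(n_folds), key=lambda k: len(folds[k]))
        folds[smallest].extend(by_site[site])

    splits = []
    for k in range(n_folds):
        test = sorted(folds[k])
        train = sorted(i for j, fold in enumerate(folds) if j != k for i in fold)
        splits.append((train, test))
    return splits


# Hypothetical site label for each of eight samples.
sites = ["A", "A", "B", "C", "C", "C", "D", "B"]
splits = site_aware_folds(sites, n_folds=2)
for train, test in splits:
    print("train:", train, "test:", test)
```

Because folds are built from whole sites, fold sizes can be uneven when a few sites contribute most of the samples.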

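In summary, the workflow proceeds in four stages: data preparation and tiling, self-supervised feature extraction, attention-based multiple instance learning aggregation, and regression to a continuous value.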

Benchmarking Protocol for Molecular Representation Comparison

Comprehensive benchmarking of different molecular representations and machine learning approaches requires a standardized protocol to ensure fair comparison. The large-scale kinase profiling study [38] and taste prediction research [37] provide robust methodologies that can be adapted for evaluating molecular profiling approaches for hybrid materials.

Dataset Curation and Splitting: The first critical step involves assembling a high-quality, diverse dataset with reliable experimental measurements. For kinase profiling, this involved collecting 141,086 unique compounds with 216,823 well-defined bioassay data points for 354 kinases from multiple sources including ChEMBL, PubChem, BindingDB, and ZINC [38]. For taste prediction, the dataset comprised 2,601 molecules from ChemTastesDB, classified into categories such as sweet, bitter, umami, sour, and salty [37]. The dataset is then randomly split into training (70-80%), validation (10%), and test sets (10-20%), ensuring representative distribution across categories.
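A random split that keeps category proportions representative can be sketched as a stratified split, shown here with an 80/10/10 ratio. The function name `stratified_split`, the fixed seed, and the toy label counts are assumptions for illustration; the cited studies may have used different tooling.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_split(labels: Sequence[str],
                     fractions: Tuple[float, float, float] = (0.8, 0.1, 0.1),
                     seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Split sample indices into train/validation/test sets per category.

    Each category is shuffled and divided separately, so every subset
    keeps roughly the same class proportions as the full dataset.
    """
    rng = random.Random(seed)
    by_label: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_label):
        indices = by_label[label]
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical taste labels for 100 molecules.
labels = ["sweet"] * 50 + ["bitter"] * 30 + ["umami"] * 20
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

For molecular data, a scaffold-based split is a stricter alternative when the goal is to test generalization to new chemotypes, though the source describes a random split.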

Molecular Representation Calculation: Several molecular representations are calculated for each compound, including the following.

- Fingerprints: Morgan fingerprints, PubChem fingerprints, Daylight fingerprints, RDKit fingerprints, ESPF fingerprints, and ErG fingerprints [37] [38]

- Molecular Descriptors: RDKit molecular descriptors capturing physicochemical properties

- Graph Representations: Molecular graphs with atomic and bond features for GNN-based approaches

Model Training and Evaluation: For each representation type, multiple machine learning and deep learning models are trained and evaluated using consistent validation protocols. The kinase profiling study evaluated 12 different ML and DL methods, including K-nearest neighbors (KNN), naive Bayes (NB), support vector machine (SVM), random forest (RF), XGBoost, deep neural networks (DNN), graph convolutional network (GCN), graph attention network (GAT), message passing neural networks (MPNN), Attentive FP, D-MPNN (Chemprop), and FP-GNN [38]. Performance is evaluated using area under the receiver operating characteristic curve (AUROC) and other relevant metrics, with statistical significance testing to validate differences between approaches.
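Since AUROC is the headline metric throughout these benchmarks, a small reference implementation may help clarify what it measures: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below uses the direct pairwise definition and hypothetical labels and scores; production code would typically rely on an established library routine instead.

```python
from typing import Sequence


def auroc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison.

    Counts the fraction of (positive, negative) pairs in which the positive
    example has the higher score; ties count as half a win. Runs in
    O(n_pos * n_neg) time, which is fine for small evaluation sets.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical binary labels and continuous model outputs.
y_true = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
print(round(auroc(y_true, scores), 3))
```

Because AUROC depends only on the ranking of scores, a regression model's continuous output can be evaluated against a binarized label without choosing a decision threshold.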

Implementing AI-driven characterization approaches requires familiarity with specific software tools, databases, and computational resources. The following table details essential components of the molecular profiling toolkit, drawn from the methodologies described in the benchmark studies.

Table 3: Research Reagent Solutions for AI-Driven Molecular Profiling

| Tool/Resource | Type | Function | Application Example |
|---|---|---|---|
| RDKit | Open-source cheminformatics software | Calculates molecular descriptors, fingerprints, and graph representations | Feature extraction for machine learning models [37] [38] |
| DeepChem | Deep learning library | Provides implementations of graph neural networks for molecular data | Building GNN models for property prediction [38] |
| DeepPurpose | Molecular modeling toolkit | Integrates multiple molecular representation methods and prediction models | Comparative analysis of representation strategies [37] |
| ChEMBL Database | Bioactivity database | Provides curated bioactivity data for kinase inhibitors and other targets | Training data for predictive models [38] |
| ChemTastesDB | Taste compound database | Contains taste classifications for organic and inorganic compounds | Training data for taste prediction models [37] |
| The Cancer Genome Atlas (TCGA) | Cancer genomics database | Provides histopathology images and molecular profiling data | Training regression models for biomarker prediction [39] |
| Molecular Fingerprints | Structural representation | Encodes molecular structures as binary vectors for machine learning | Input features for random forest and other ML models [37] [38] |
| Graph Neural Networks | Deep learning architecture | Learns molecular representations directly from graph structures | Capturing complex structure-property relationships [37] [38] |

The selection of appropriate tools depends on the specific characterization task. For predicting discrete categories, conventional machine learning models like random forest applied to molecular fingerprints may provide excellent performance with computational efficiency [38]. For more complex prediction tasks involving continuous properties or requiring interpretation of structure-property relationships, graph neural networks often deliver superior results despite their higher computational requirements [37]. The emerging best practice involves employing ensemble approaches that combine multiple representation strategies to leverage their complementary strengths.

The comprehensive comparison of deep learning approaches for molecular profiling reveals several strategic insights for researchers working in hybrid materials characterization. First, the choice of molecular representation significantly impacts model performance, with different representations excelling in specific tasks. Graph neural networks generally outperform other approaches for complex structure-property relationship modeling, while simpler fingerprint-based representations combined with random forest models can provide excellent performance with greater computational efficiency [37] [38].

Second, preserving the continuous nature of molecular properties through regression approaches rather than categorical classification enhances predictive accuracy and clinical relevance [39]. This is particularly important for hybrid materials research, where properties often exist along a continuum and subtle variations can significantly impact functionality.

Third, multi-task learning and ensemble methods consistently outperform single-model approaches by leveraging complementary information across related tasks and representation strategies [38]. Implementing these advanced architectures requires greater computational resources and expertise but delivers substantially improved performance for complex molecular profiling challenges.

As AI-driven characterization continues to evolve, these approaches will play an increasingly vital role in accelerating the design and development of novel hybrid materials with tailored properties. By implementing the benchmarking protocols and strategic recommendations outlined in this guide, researchers can harness the power of deep learning to unlock new frontiers in predictive molecular profiling.

The development of hybrid bio-nanocomposites represents a paradigm shift in materials science, merging renewable resources with nanotechnology to create sustainable advanced materials. These complexes combine bio-based polymers or natural fibers with nanoscale reinforcements, yielding emergent properties not present in individual components. Within this research context, advanced thermal and mechanical characterization techniques are indispensable for deciphering these emergent properties. Differential Scanning Calorimetry (DSC), Thermogravimetric Analysis (TGA), and Dynamic Mechanical Analysis (DMA) form a critical triad of analytical methods that provide complementary insights into the thermal transitions, degradation profiles, and viscoelastic behavior of these sophisticated materials. This guide objectively compares the performance of various hybrid bio-nanocomposites by synthesizing experimental data from recent studies, providing researchers with a standardized framework for evaluating material performance across different systems.

The "hybrid" nature of these materials often generates synergistic effects. For instance, natural fibers provide sustainability and low density, while nanofillers enhance mechanical strength and thermal stability, creating a new class of materials with property profiles superior to conventional composites. However, these emergent properties also present characterization challenges, as interface interactions, dispersion quality, and component compatibility dramatically influence final performance. The systematic application of DSC, TGA, and DMA allows researchers to not only quantify these properties but also understand the fundamental structure-property relationships governing material behavior, thereby accelerating the development of next-generation sustainable materials for automotive, aerospace, and biomedical applications.

Experimental Protocols for Core Characterization Techniques

Sample Preparation Methodologies

Consistent sample preparation is fundamental for obtaining reliable and comparable data across different material systems. The studies referenced herein generally follow a structured approach:

Material Selection and Pretreatment: Bio-based components (e.g., sisal fibers, chicken feather keratin, thermoplastic starch) typically undergo surface treatments to improve interfacial adhesion with polymer matrices. For example, sisal fibers are treated with a 5 wt.% NaOH solution for 4 hours, followed by thorough washing and drying at 80°C for 24 hours to remove moisture [40]. Keratin from chicken feathers is often mixed with halloysite nanoclays under dynamic conditions to create nanohybrid reinforcements [41].

Nanofiller Incorporation: Nanoscale reinforcements such as Carbon Nanotubes (CNTs), nanodiamonds (NDs), or nano-TiO₂ are integrated using methods designed to ensure homogeneous dispersion. Melt blending in a high-shear thermokinetic mixer is commonly employed for thermoplastics like polypropylene (PP) [42], while sonication and mechanical stirring are typical for epoxy-based systems [40].

Composite Fabrication: Hand lay-up followed by compression molding is standard for thermoset composites [40] [43]. For thermoplastics, melt blending followed by injection or compression molding into ASTM-standard test specimens is the norm [42] [44].

Standardized Instrumental Protocols

To ensure cross-study comparability, the following instrumental parameters represent consolidated standard practices derived from the cited research:

Differential Scanning Calorimetry (DSC)

- Purpose: Analyze thermal transitions (glass transition Tg, melting Tm, crystallization Tc), enthalpy changes, and degree of crystallinity.

- Standard Protocol: Samples (5-10 mg) are sealed in aluminum pans. The temperature program involves heating from -50°C to 200°C at a rate of 10°C/min under a nitrogen purge (50 mL/min). An isothermal hold erases thermal history, followed by controlled cooling at the same rate [42] [44].
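The degree of crystallinity mentioned above is commonly estimated from the DSC melting enthalpy as X_c (%) = 100 × (ΔH_m − ΔH_cc) / (w × ΔH_m0), where ΔH_m is the measured melting enthalpy, ΔH_cc the cold-crystallization enthalpy (zero if none is observed), w the weight fraction of the crystallizable polymer in the composite, and ΔH_m0 the literature melting enthalpy of the fully crystalline polymer. The sketch below applies this standard relation; the function name and the numerical inputs are hypothetical and do not come from the cited studies.

```python
def degree_of_crystallinity(dh_melt: float,
                            dh_ref: float,
                            matrix_fraction: float,
                            dh_cold_cryst: float = 0.0) -> float:
    """Return the degree of crystallinity in percent.

    dh_melt         measured melting enthalpy of the composite (J/g)
    dh_ref          melting enthalpy of the 100% crystalline polymer (J/g)
    matrix_fraction weight fraction of the polymer matrix, in (0, 1]
    dh_cold_cryst   cold-crystallization enthalpy (J/g), 0 if absent
    """
    if not 0.0 < matrix_fraction <= 1.0:
        raise ValueError("matrix_fraction must be in (0, 1]")
    if dh_ref <= 0.0:
        raise ValueError("dh_ref must be positive")
    return 100.0 * (dh_melt - dh_cold_cryst) / (matrix_fraction * dh_ref)


# Illustrative values only: a composite containing 80 wt.% polymer,
# a measured melting enthalpy of 80 J/g, and a reference value of 200 J/g.
x_c = degree_of_crystallinity(dh_melt=80.0, dh_ref=200.0, matrix_fraction=0.8)
print(f"{x_c:.1f} %")
```

Dividing by the matrix weight fraction matters for filled systems: without it, adding inert filler would appear to lower crystallinity simply by diluting the polymer.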

Thermogravimetric Analysis (TGA)

- Purpose: Determine thermal stability, decomposition temperatures, and filler content.

- Standard Protocol: Samples (5-15 mg) are heated in a platinum or alumina crucible from ambient temperature to 650-800°C at 10°C/min under a nitrogen atmosphere to prevent oxidative degradation [40] [42] [41].
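Two quantities frequently read from a TGA curve are the temperature at a given mass loss (for example 5%, often reported as an onset indicator) and the residual mass at the end of the run. The sketch below extracts both by linear interpolation. The function names and data points are hypothetical, and the curve is assumed to be normalized to 100% at the start.

```python
from typing import Optional, Sequence


def temperature_at_mass_loss(temps_c: Sequence[float],
                             mass_pct: Sequence[float],
                             loss_pct: float = 5.0) -> Optional[float]:
    """Return the temperature (deg C) at which the given mass loss is reached.

    Linearly interpolates between the two measured points that bracket the
    target mass. Returns None if the curve never reaches that loss.
    """
    target = 100.0 - loss_pct
    points = list(zip(temps_c, mass_pct))
    for (t0, m0), (t1, m1) in zip(points, points[1:]):
        if m0 > target >= m1:
            return t0 + (m0 - target) / (m0 - m1) * (t1 - t0)
    return None


def final_residue(mass_pct: Sequence[float]) -> float:
    """Residual mass (%) at the end of the run."""
    return mass_pct[-1]


# Hypothetical TGA trace: temperature (deg C) and remaining mass (%).
temps = [30.0, 200.0, 300.0, 350.0, 400.0, 700.0]
mass = [100.0, 99.0, 97.0, 93.0, 60.0, 12.0]

t5 = temperature_at_mass_loss(temps, mass, loss_pct=5.0)
residue = final_residue(mass)
print(t5, residue)
```

Note that under nitrogen the residue includes any char left by the organic components, so it approximates inorganic filler content only when the matrix and fibers leave negligible char.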

Dynamic Mechanical Analysis (DMA)

- Purpose: Characterize viscoelastic properties (storage modulus E', loss modulus E'', damping factor tan δ) as a function of temperature and/or frequency.

- Standard Protocol: Tests are performed in single cantilever or three-point bending mode on rectangular bars. A temperature ramp from 25°C to 160°C at 3°C/min, with a strain amplitude of 0.05% and a frequency of 1 Hz, is commonly used [45] [40] [42].
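The damping factor is defined as tan δ = E''/E', and the temperature of its peak is one widely used estimate of the glass transition temperature from a DMA temperature ramp. The sketch below computes both from tabulated moduli; the data values and function names are hypothetical and chosen only to illustrate the calculation.

```python
from typing import List, Sequence, Tuple


def tan_delta(storage_modulus: Sequence[float],
              loss_modulus: Sequence[float]) -> List[float]:
    """Damping factor tan(delta) = E'' / E' at each temperature point."""
    return [e_loss / e_store for e_store, e_loss in zip(storage_modulus, loss_modulus)]


def tg_from_tan_delta_peak(temps_c: Sequence[float],
                           storage_modulus: Sequence[float],
                           loss_modulus: Sequence[float]) -> Tuple[float, float]:
    """Return (temperature, tan delta) at the tan-delta maximum."""
    damping = tan_delta(storage_modulus, loss_modulus)
    peak = max(range(len(damping)), key=damping.__getitem__)
    return temps_c[peak], damping[peak]


# Hypothetical DMA ramp at 1 Hz: temperature (deg C), E' and E'' (MPa).
temps = [25.0, 60.0, 90.0, 120.0, 160.0]
e_storage = [3000.0, 2800.0, 1500.0, 300.0, 100.0]
e_loss = [90.0, 140.0, 300.0, 45.0, 10.0]

tg, peak_value = tg_from_tan_delta_peak(temps, e_storage, e_loss)
print(tg, round(peak_value, 3))
```

Other conventions, such as the E'' peak or the onset of the E' drop, generally give lower Tg values than the tan δ peak, so the chosen definition should be reported alongside the result.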