GPT Models for Chemical Data Extraction: A Performance Comparison and Practical Guide for Researchers

Abstract

The vast majority of chemical knowledge is locked within unstructured text in scientific literature and patents, creating a significant bottleneck for data-driven discovery. This article provides a comprehensive performance comparison of GPT models for extracting structured chemical data, from reactions and material properties to synthesis parameters. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of LLMs in chemistry, details practical extraction methodologies and agentic workflows, addresses key challenges like hallucination and cost optimization, and delivers a rigorous validation framework based on recent benchmarking studies. By synthesizing the latest research, this guide aims to empower scientists to select the right GPT model and strategy to efficiently unlock valuable chemical insights from text.

The New Frontier: How GPT Models are Revolutionizing Chemical Data Mining

The Unstructured Data Problem in Chemistry and Materials Science

In chemistry and materials science, a vast repository of scientific knowledge remains locked within unstructured natural language, primarily in the form of millions of published research papers and patents. This creates a significant bottleneck for data-driven research and the application of artificial intelligence in molecular design and materials discovery. While structured data is crucial for innovative and systematic materials design, only a minuscule fraction of available research data exists in usable structured forms. Quantitative analysis reveals a staggering disparity: millions of research papers are published annually compared to merely thousands of datasets deposited in chemistry and materials science repositories each year [1]. This massive imbalance highlights the immense untapped potential lying dormant in scientific literature—data that could accelerate the discovery of novel compounds, materials, and therapeutic agents if it could be efficiently extracted and structured [1].

The fundamental challenge stems from what researchers describe as a "death by 1000 cuts" problem. While automating extraction for one specific case might be manageable, the sheer scale of variations in reporting formats, terminology, and contextual presentation makes the overall problem intractable through traditional methods [1]. Rule-based approaches and smaller machine learning models trained on manually annotated corpora have historically struggled with the diversity of topics and reporting formats in chemical research [1]. As recently as 2019, researchers still faced significant challenges in reliably extracting chemical information from older PDF documents, with development timelines stretching to multiple months for each new use case [1].

LLMs as a Transformative Solution

The advent of large language models represents a paradigm shift in addressing chemistry's unstructured data challenge. Unlike previous approaches, LLMs can solve tasks for which they haven't been explicitly trained, presenting a powerful and scalable alternative for structured data extraction [1]. This capability is particularly valuable in scientific domains where labeled training data is scarce. Researchers have demonstrated that workflows that previously required weeks or months to develop can now be prototyped in a matter of days using LLMs [1].

The transformative potential of LLMs lies in their ability to understand complex scientific language and relationships that span multiple sentences or even different sections of a document. This capability enables them to identify and extract intricate scientific relationships that challenge traditional natural language processing methods [2]. Furthermore, LLMs can be augmented with external tools such as web search and synthesis planners, expanding their capabilities beyond simple text comprehension to functioning as active research assistants [3].

Performance Comparison of GPT Models and Alternatives

Quantitative Performance Metrics Across Chemical Domains

Table 1: Performance Comparison of LLMs on Chemical Data Extraction Tasks

| Model | Task Domain | Performance Metrics | Key Strengths | Limitations/Costs |
|---|---|---|---|---|
| GPT-4.1 | Thermoelectric Property Extraction | F1 ≈ 0.91 (thermoelectric), F1 ≈ 0.82 (structural) [4] | Highest extraction accuracy | Higher computational cost |
| GPT-4.1 Mini | Thermoelectric Property Extraction | Nearly comparable to GPT-4.1 [4] | Cost-effective for large-scale deployment | Slightly reduced accuracy |
| GPT-4.0 | Chemical-Disease Relation Extraction | F1 = 87% (precise extraction) [2] | Excellent for complex relationship identification | |
| GPT-3.5 | Polymer Property Extraction | Extracted >1 million property records [5] | Balanced performance and cost efficiency | |
| Claude-opus | Chemical-Disease Relation Extraction | Evaluated for comprehensive extraction [2] | Strong comprehensive extraction capabilities | |
| Claude 3.5 | Clinical Trial Data Extraction | Subject of ongoing RCT evaluation [6] | Potential for AI-human collaborative extraction | |
| Llama 2 | Polymer Property Extraction | Comparable extraction to GPT-3.5 [5] | Open-source alternative | |
| ChemDFM | General Chemical Tasks | Surpasses most open-source LLMs [7] | Domain-specific pre-training | Limited track record for extraction |

Benchmarking Against Human Expertise

The ChemBench framework shows how LLMs perform relative to human chemical expertise. When evaluated on a curated set of more than 2,700 question-answer pairs spanning diverse chemical topics, the best-performing LLMs outperformed, on average, the best human chemists included in the study [3]. However, the benchmark also revealed that models still struggle with some basic chemical tasks and often give overconfident predictions that may mislead users [3]. These weaknesses underscore the continued importance of human oversight and domain expertise in the data extraction pipeline.

Experimental Protocols and Workflow Architectures

Multi-Agent Extraction Workflow for Material Properties

Table 2: Agent Roles in Thermoelectric Data Extraction Pipeline

| Agent Name | Primary Function | Specific Responsibilities |
|---|---|---|
| MatFindr | Material Candidate Finder | Identifies promising material candidates in text |
| TEPropAgent | Thermoelectric Property Extractor | Extracts specific TE properties (ZT, Seebeck coefficient, etc.) |
| StructPropAgent | Structural Information Extractor | Identifies structural attributes (crystal class, space group, doping) |
| TableDataAgent | Table Data Extractor | Parses and extracts data from tables and captions |

Advanced extraction pipelines have evolved beyond simple prompting to sophisticated multi-agent architectures. The workflow for extracting thermoelectric and structural properties from scientific articles employs four specialized LLM-based agents operating within the LangGraph framework [4]. This approach demonstrated its efficacy by processing approximately 10,000 full-text scientific articles and creating a dataset of 27,822 property-temperature records with normalized units, spanning key thermoelectric properties including figure of merit (ZT), Seebeck coefficient, conductivity, resistivity, power factor, and thermal conductivity [4].

The preprocessing stage of this workflow is crucial for efficiency and accuracy. It involves extracting content from structured XML or HTML formats (preferable to PDF for consistent parsing), followed by removal of non-relevant sections such as "Conclusion" and "References" that typically don't contain material property information [4]. The remaining text is filtered using rule-based scripts with regular expression patterns to retain only sentences likely to contain thermoelectric or structural properties, significantly reducing token counts and computational costs for downstream processing [4].
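The preprocessing described above can be sketched as follows. This is a minimal illustration, not the pipeline from [4]: the keyword patterns, the `SKIP_SECTIONS` set, and the `filter_sentences` helper are all assumptions made for the example, and the sentence splitter is deliberately naive (it would split after abbreviations such as "Fig.").

```python
import re

# Hypothetical keyword patterns; a real pipeline would tune these per property.
PROPERTY_PATTERNS = [
    r"\bZT\b",
    r"\bfigure of merit\b",
    r"\bSeebeck\b",
    r"\bpower factor\b",
    r"\bthermal conductivity\b",
    r"\bresistivity\b",
    r"\bspace group\b",
]
PROPERTY_RE = re.compile("|".join(PROPERTY_PATTERNS), re.IGNORECASE)

# Section titles assumed to carry no property data (illustrative choice).
SKIP_SECTIONS = {"conclusion", "conclusions", "references"}


def filter_sentences(sections: dict[str, str]) -> list[str]:
    """Drop skipped sections, then keep only sentences matching a property pattern."""
    kept = []
    for title, text in sections.items():
        if title.strip().lower() in SKIP_SECTIONS:
            continue
        # Naive split: sentence-ending punctuation followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            if PROPERTY_RE.search(sentence):
                kept.append(sentence.strip())
    return kept


if __name__ == "__main__":
    article = {
        "Results": "The sample was annealed for 2 h. A peak ZT of 1.2 was reached at 773 K.",
        "Conclusion": "The Seebeck coefficient was high.",
    }
    print(filter_sentences(article))
```

Only the sentences that survive this filter would be sent to the LLM, which is where the token savings come from.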

Chemical Reaction Extraction from Patent Literature

For extracting chemical reaction data from patents, researchers have developed a specialized multi-stage pipeline. The process begins with identifying reaction-containing paragraphs using a Naïve-Bayes classifier that demonstrated superior performance (precision = 96.4%, recall = 96.6%) compared to a BioBERT model in cross-validation [8]. The reaction paragraphs are then processed by LLMs for named entity recognition (NER) to extract chemical reaction entities including reactants, solvents, workup, reaction conditions, catalysts, and products along with their quantities [8].

This approach demonstrated its value by not only extracting 26% additional new reactions from the same set of patents compared to previous non-LLM based methods but also by identifying wrong entries in previously curated datasets [8]. The final stages involve converting identified chemical entities in IUPAC format to SMILES format and performing atom mapping between reactants and products to validate the extracted reactions [8].

Polymer Property Extraction Framework

The extraction of polymer-property data presents unique challenges due to the expansive chemical design space and non-standard nomenclature. Researchers addressed this through a dual-stage filtering system to optimize computational efficiency when processing a corpus of 2.4 million full-text articles [5]. The first filter employs property-specific heuristic filters to detect paragraphs mentioning target polymer properties, which identified approximately 2.6 million paragraphs (~11% of total) as potentially relevant [5]. The second filter applies a NER filter to identify paragraphs containing all necessary named entities (material name, property name, property value, unit), further refining the set to about 716,000 paragraphs (~3% of total) containing complete extractable records [5].

This pipeline successfully extracted over one million records corresponding to 24 properties of more than 106,000 unique polymers from approximately 681,000 polymer-related articles, creating the largest such dataset currently available [5].
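The two filtering stages can be illustrated with a small sketch. The study in [5] uses a trained NER model for the second stage; here simple regular expressions stand in for it, so the patterns and function names below are assumptions chosen only to show the control flow.

```python
import re

# Stage 1 stand-in: property-specific heuristic (hypothetical keyword list).
PROPERTY_RE = re.compile(
    r"glass transition temperature|\bTg\b|tensile strength", re.IGNORECASE
)
# Stage 2 stand-ins for NER entity types (a real system would use a trained model).
MATERIAL_RE = re.compile(r"\bpoly\w+", re.IGNORECASE)
VALUE_UNIT_RE = re.compile(r"(-?\d+(?:\.\d+)?)\s*(°C|K|MPa|GPa)\b")


def heuristic_filter(paragraph: str) -> bool:
    """Stage 1: does the paragraph mention a target property at all?"""
    return bool(PROPERTY_RE.search(paragraph))


def ner_filter(paragraph: str) -> bool:
    """Stage 2: are material, property, value, and unit all present?"""
    return all(
        pattern.search(paragraph)
        for pattern in (MATERIAL_RE, PROPERTY_RE, VALUE_UNIT_RE)
    )


def select_paragraphs(paragraphs: list[str]) -> tuple[list[str], list[str]]:
    """Return the paragraphs surviving stage 1 and those surviving both stages."""
    stage1 = [p for p in paragraphs if heuristic_filter(p)]
    stage2 = [p for p in stage1 if ner_filter(p)]
    return stage1, stage2


if __name__ == "__main__":
    corpus = [
        "Polystyrene shows a glass transition temperature of 100 °C.",
        "The glass transition temperature depends on chain length.",
        "Samples were dried overnight.",
    ]
    stage1, stage2 = select_paragraphs(corpus)
    print(len(stage1), len(stage2))
```

Running the cheap heuristic first means the more expensive entity check, and later the LLM, only see paragraphs that already mention a target property.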

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for LLM-Based Chemical Data Extraction

| Component | Function | Implementation Examples |
|---|---|---|
| Preprocessing Tools | Convert documents to processable formats | XML/HTML parsers, Regular expressions, PDF-to-text converters (Nougat, Marker) [4] |
| Filtering Mechanisms | Identify relevant text segments | Naïve-Bayes classifiers [8], Heuristic filters [5], NER filters [5] |
| LLM Orchestration | Coordinate multiple specialized agents | LangGraph framework [4], Custom Python pipelines [4] |
| Domain-Specific Models | Handle chemical nomenclature | MaterialsBERT [5], ChemBERT [8], ChemDFM [7] |
| Validation Systems | Ensure extracted data quality | Cross-referencing with physical laws [1], Human verification [6], Atom mapping [8] |

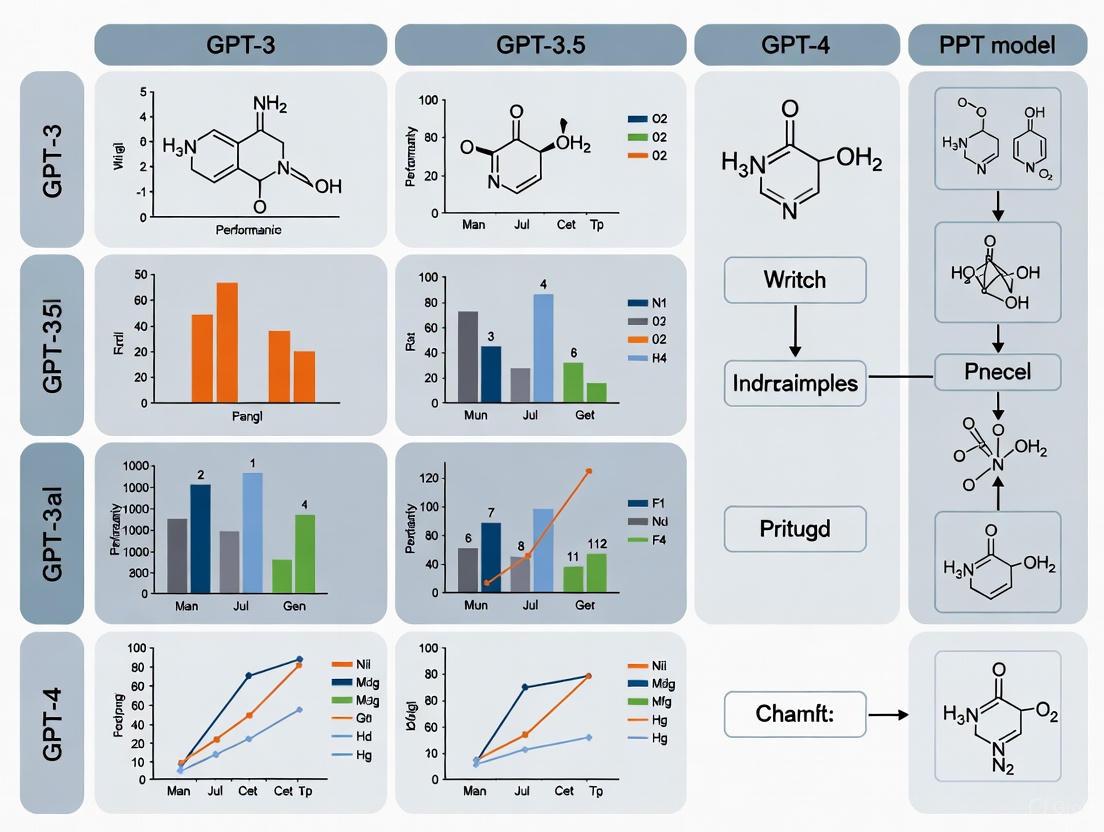

Workflow Visualization

Cost-Quality Tradeoffs and Optimization Strategies

The implementation of LLM-based extraction pipelines requires careful consideration of cost-quality tradeoffs. Research demonstrates that while GPT-4.1 achieves the highest extraction accuracy (F1 ≈ 0.91 for thermoelectric properties), GPT-4.1 Mini offers nearly comparable performance at a fraction of the cost, enabling more sustainable large-scale deployment [4]. One study processing approximately 10,000 full-text articles reported a total API cost of $112, highlighting the potential for cost-effective extraction at scale [4].

Optimization strategies identified across multiple studies include:

- Intelligent filtering to reduce token counts before LLM processing [5]

- Dynamic token allocation based on content complexity [4]

- Model selection tailored to specific extraction tasks [8]

- Early exit mechanisms for straightforward extractions [4]

For polymer property extraction, researchers found that applying a dual-stage filtering system reduced the number of paragraphs requiring LLM processing from 23.3 million to approximately 716,000 (just 3% of the original corpus), dramatically reducing computational costs while maintaining extraction quality [5].
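As a quick check of the figures quoted above, the share of paragraphs surviving each filter can be computed directly. The counts are the rounded values given in the text, so the percentages are approximate; the variable names are illustrative.

```python
# Rounded paragraph counts as quoted in the text for the polymer corpus [5].
total_paragraphs = 23_300_000
after_heuristic_filter = 2_600_000
after_ner_filter = 716_000


def share(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`."""
    return 100 * part / whole


if __name__ == "__main__":
    print(f"After heuristic filter: {share(after_heuristic_filter, total_paragraphs):.1f}%")
    print(f"After NER filter:       {share(after_ner_filter, total_paragraphs):.1f}%")
    print(f"Reduction factor:       {total_paragraphs / after_ner_filter:.1f}x")
```

The results, roughly 11% after the first stage and 3% after the second, agree with the percentages reported for the pipeline.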

The field of LLM-based chemical data extraction is rapidly evolving, with several promising directions emerging. The development of domain-specific foundation models like ChemDFM, trained on 34 billion tokens from chemical literature and fine-tuned using 2.7 million instructions, points toward more chemically aware AI systems [7]. These specialized models demonstrate significantly improved performance on chemical tasks while maintaining robust general abilities [7].

Future research needs to address several key challenges, including:

- Cross-document analysis to connect disjoint data published in separate articles [1]

- Multimodal approaches that integrate textual, tabular, and image data [1]

- Improved handling of implicit information and complex scientific relationships [2]

- Reduction of model "hallucinations" and overconfident predictions [3]

The integration of AI-human collaborative approaches, such as the randomized controlled trial evaluating Claude 3.5 for clinical trial data extraction, represents another promising direction that may combine the scalability of AI with the critical reasoning of human experts [6].

In conclusion, LLM-based approaches have demonstrated remarkable capabilities in addressing the unstructured data problem in chemistry and materials science, with GPT models showing particularly strong performance across diverse extraction tasks. As these technologies continue to mature and domain-specific models emerge, they hold the potential to dramatically accelerate materials discovery and drug development by unlocking the vast knowledge currently trapped in scientific literature.

Large Language Models (LLMs) represent a transformative technology for chemical information extraction, enabling researchers to convert unstructured scientific literature into structured, machine-readable data. These models operate on a fundamental principle of token-based text completion, where they process input text by breaking it into smaller units called tokens and predicting the most probable subsequent tokens based on patterns learned during training [1]. In chemical contexts, this process becomes particularly complex due to the specialized nomenclature, symbolic representations, and domain-specific knowledge required for accurate interpretation.

The application of LLMs to chemical data extraction addresses a critical bottleneck in materials informatics: while the vast majority of chemical knowledge exists in unstructured natural language formats, structured data remains essential for systematic materials design and discovery [1]. Traditional rule-based approaches to chemical information extraction have faced significant challenges in handling the diversity of reporting formats and terminology across chemical literature, requiring extensive manual customization for each new use case [1]. The emergence of LLMs has dramatically changed this landscape by providing a scalable alternative that can adapt to various extraction tasks without explicit retraining.

How LLMs Process Chemical Information

Tokenization and Chemical Representation

At the most basic level, LLMs process chemical information through tokenization, where input text is decomposed into discrete units that the model can understand. For general language, tokens typically represent words, subwords, or characters, but this process becomes particularly challenging with chemical terminology due to the prevalence of specialized notation, mathematical expressions, and structural representations [1]. Chemical formulas, systematic nomenclature, and notation such as SMILES (Simplified Molecular Input Line Entry System) strings often undergo suboptimal splitting during tokenization, which can limit model performance on chemical tasks [1].

Advanced chemical LLMs have begun addressing these limitations through specialized encoding procedures for molecular representations and equations. For instance, some models employ wrapping techniques where SMILES strings are enclosed within special tags (e.g., [STARTSMILES][ENDSMILES]) to signal that they should be treated differently from regular text [3]. This approach allows the model to recognize and process chemical structures as distinct entities rather than arbitrary character sequences, significantly improving performance on chemically-aware tasks.
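A minimal sketch of the wrapping idea is shown below. The tag strings are copied as written in the paragraph above and their exact spelling depends on the model; the `wrap_smiles` helper and the assumption that the SMILES strings in a passage are already known are illustrative only. Plain substring matching is used, so very short SMILES could also match inside ordinary words.

```python
import re

# Tag strings as written in the text above; exact spelling is model-specific.
START_TAG = "[STARTSMILES]"
END_TAG = "[ENDSMILES]"


def wrap_smiles(text: str, smiles_list: list[str]) -> str:
    """Enclose each known SMILES string in start/end tags in a single pass."""
    if not smiles_list:
        return text
    # Longest first, so a short SMILES does not pre-empt a longer one containing it.
    alternatives = sorted(set(smiles_list), key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(s) for s in alternatives))
    return pattern.sub(lambda m: f"{START_TAG}{m.group(0)}{END_TAG}", text)


if __name__ == "__main__":
    sentence = "Ethanol is CCO and benzene is c1ccccc1."
    print(wrap_smiles(sentence, ["CCO", "c1ccccc1"]))
```

Doing the substitution in one regular-expression pass avoids wrapping a fragment that already sits inside a tagged structure.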

Knowledge Representation and Reasoning in Chemical LLMs

LLMs demonstrate notable capabilities in chemical reasoning even though they are general-purpose models rather than chemistry-specific ones. This ability stems from training corpora that include large volumes of scientific text such as chemical literature, patents, and textbooks, allowing the models to develop internal representations of chemical concepts and relationships [3]. When processing chemical information, LLMs leverage these representations to perform tasks such as:

- Named Entity Recognition (NER) for identifying chemical compounds, properties, and conditions within unstructured text [8]

- Relationship extraction to establish connections between entities (e.g., linking a catalyst to its corresponding reaction) [1]

- Structured data population by extracting specific properties and their values into predefined schemas [4]
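Structured data population, the last task in the list above, usually means asking the model for JSON and then validating it against a fixed schema. The sketch below assumes a hypothetical `PropertyRecord` schema and made-up example values; the field names are not taken from any of the cited studies.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class PropertyRecord:
    """Hypothetical target schema for one extracted property value."""
    material: str
    property_name: str
    value: float
    unit: str
    temperature_k: Optional[float] = None


def parse_records(llm_output: str) -> list[PropertyRecord]:
    """Parse a JSON array from the model, skipping items that do not fit the schema."""
    records = []
    for item in json.loads(llm_output):
        try:
            temperature = item.get("temperature_k")
            records.append(
                PropertyRecord(
                    material=str(item["material"]),
                    property_name=str(item["property_name"]),
                    value=float(item["value"]),
                    unit=str(item["unit"]),
                    temperature_k=None if temperature is None else float(temperature),
                )
            )
        except (AttributeError, KeyError, TypeError, ValueError):
            # Missing field or non-numeric value: drop the item rather than guess.
            continue
    return records


if __name__ == "__main__":
    # Made-up model output: one valid item, one incomplete item.
    raw = (
        '[{"material": "Material A", "property_name": "ZT", "value": 1.1,'
        ' "unit": "", "temperature_k": 300},'
        ' {"material": "Material B", "value": "n/a"}]'
    )
    print(parse_records(raw))
```

Dropping malformed items instead of repairing them keeps the structured dataset free of values the model never actually produced.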

The reasoning capabilities of chemical LLMs were systematically evaluated in the ChemBench framework, which assessed models across diverse question types requiring knowledge, reasoning, calculation, and chemical intuition [3]. Surprisingly, the best-performing models in this evaluation outperformed expert human chemists on average, though they still struggled with certain basic tasks and exhibited overconfident predictions [3].

Performance Comparison of LLM Approaches

Extraction Accuracy Across Chemical Domains

Table 1: Performance Comparison of LLMs on Chemical Data Extraction Tasks

| Model | Extraction Task | Domain | Performance Metric | Score | Reference |
|---|---|---|---|---|---|
| GPT-4 | Table Data Extraction | Materials Science | F1 Score | 96.8% | [9] |
| GPT-4.1 | Thermoelectric Properties | Materials Science | F1 Score | 91% | [4] |
| GPT-4.1 | Structural Properties | Materials Science | F1 Score | 82% | [4] |
| Claude 3 Opus | Synthesis Condition Extraction | Metal-Organic Frameworks | Completeness | Highest | [10] |
| Gemini 1.5 Pro | Synthesis Condition Extraction | Metal-Organic Frameworks | Accuracy | Highest | [10] |
| GPT-4 Turbo | Synthesis Condition Extraction | Metal-Organic Frameworks | Logical Reasoning | Strong | [10] |
| Specialized Agentic Systems | Nanozymes Data Extraction | Nanomaterials | F1 Score | 80% | [11] |

The performance of LLMs varies significantly across different chemical domains and extraction tasks. For table data extraction from materials science literature, MaTableGPT achieved an exceptional F1 score of 96.8% by implementing specialized strategies for table representation and segmentation [9]. In the domain of thermoelectric materials, GPT-4.1 demonstrated strong performance with F1 scores of 91% for thermoelectric properties and 82% for structural attributes [4].

When evaluating synthesis condition extraction for metal-organic frameworks (MOFs), different models exhibited distinct strengths: Claude 3 Opus provided the most complete synthesis data, while Gemini 1.5 Pro achieved the highest accuracy and adherence to prompt requirements [10]. GPT-4 Turbo, while less effective in quantitative metrics, demonstrated superior logical reasoning and contextual inference capabilities [10].

Cost-Performance Tradeoffs

Table 2: Cost-Effectiveness Analysis of LLM Extraction Methods

| Extraction Method | GPT Usage Cost | Labeling Cost | Extraction Accuracy | Best Use Cases |
|---|---|---|---|---|
| Zero-Shot Learning | Low | None | Moderate (~80-85% F1) | Simple extraction tasks |
| Few-Shot Learning | Moderate (e.g., $5.97) | Low (10 I/O examples) | High (>95% F1) | Most balanced approach [9] |
| Fine-Tuning | High | High | Highest | Specialized, high-volume tasks |
| Agentic Systems | Variable | Moderate | Variable (F1 0.19-0.80) | Complex, multi-step extractions [11] |

The choice of learning method significantly impacts both performance and cost in chemical data extraction pipelines. Comprehensive evaluation reveals that few-shot learning emerges as the most balanced approach, delivering high extraction accuracy (>95% F1) while maintaining reasonable costs (approximately $5.97 per task with only 10 input-output examples required) [9]. This approach leverages a small number of annotated examples to guide the model without the extensive labeling requirements of full fine-tuning.
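To make the few-shot setup concrete, the sketch below assembles a prompt from an instruction, a handful of input-output examples, and the new input. The prompt layout and the `build_few_shot_prompt` helper are assumptions for illustration; they are not the prompt format used in [9].

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    query: str,
) -> str:
    """Concatenate an instruction, worked input/output pairs, and the new input."""
    parts = [instruction.strip(), ""]
    for index, (example_input, example_output) in enumerate(examples, start=1):
        parts += [
            f"Example {index} input:",
            example_input.strip(),
            f"Example {index} output:",
            example_output.strip(),
            "",
        ]
    # The prompt ends on "Output:" so the model completes the structured answer.
    parts += ["Input:", query.strip(), "Output:"]
    return "\n".join(parts)


if __name__ == "__main__":
    # Made-up table rows and JSON outputs, for illustration only.
    demo_examples = [
        ("Sample A | 1.2 | 773 K", '{"material": "Sample A", "ZT": 1.2, "T_K": 773}'),
        ("Sample B | 0.8 | 300 K", '{"material": "Sample B", "ZT": 0.8, "T_K": 300}'),
    ]
    print(
        build_few_shot_prompt(
            "Convert each table row to JSON.", demo_examples, "Sample C | 0.5 | 500 K"
        )
    )
```

With around ten such pairs the labeling effort stays small, which is the cost advantage the comparison above attributes to few-shot learning.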

Agentic systems demonstrate more variable performance, with specialized systems like nanoMINER achieving F1 scores of 0.80 on specific extraction tasks, while general-purpose agents may perform significantly worse (F1 scores as low as 0.19) [11]. This highlights the importance of domain adaptation in chemical information extraction, where tailored solutions often outperform general approaches.

Experimental Protocols and Methodologies

Workflow for Chemical Data Extraction

The extraction of chemical information from scientific literature typically follows a structured workflow that can be implemented through various technical approaches. A generalized agentic workflow for chemical data extraction proceeds in three steps.

Step 1: Data Collection and Preprocessing

The workflow begins with collecting digital object identifiers (DOIs) for relevant scientific articles through publisher APIs or keyword-based searches [4]. The full-text articles are retrieved in structured formats (XML or HTML) when available, as these enable more consistent parsing compared to PDF files. Preprocessing involves removing irrelevant sections (e.g., conclusions, references) and filtering sentences likely to contain target chemical information using rule-based pattern matching [4].

Step 2: Specialized Extraction Agents

Modern approaches employ multiple specialized LLM-based agents that work in concert:

- Material Candidate Finder: Identifies relevant material systems discussed in the text [4]

- Property Extractor: Focuses on extracting specific chemical and physical properties [4]

- Structural Information Extractor: Captures structural attributes such as crystal class, space group, and doping strategies [4]

- Table Data Extractor: Specifically processes tabular data, which often contains rich comparative information [9]
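The division of labor among these agents can be sketched in plain Python. This is not the LangGraph implementation from [4]: the function names and prompts are hypothetical, the table agent is omitted for brevity, and the `llm` argument stands for any function that sends a prompt to a model and returns its text reply.

```python
from typing import Callable

# Any function mapping a prompt string to a completion string.
# In practice this would wrap a chat-completion API call (not shown here).
LLM = Callable[[str], str]


def find_materials(text: str, llm: LLM) -> str:
    """Material candidate finder (hypothetical prompt)."""
    return llm(f"List the material systems discussed in this passage:\n{text}")


def extract_properties(text: str, materials: str, llm: LLM) -> str:
    """Property extractor (hypothetical prompt)."""
    return llm(
        f"For these materials: {materials}\n"
        f"Extract reported property values with units from:\n{text}"
    )


def extract_structure(text: str, materials: str, llm: LLM) -> str:
    """Structural information extractor (hypothetical prompt)."""
    return llm(
        f"For these materials: {materials}\n"
        f"Extract crystal class, space group, and doping from:\n{text}"
    )


def run_pipeline(text: str, llm: LLM) -> dict[str, str]:
    """Run the agents in sequence; skip the later calls if no material is found."""
    materials = find_materials(text, llm).strip()
    if not materials:
        return {}
    return {
        "materials": materials,
        "properties": extract_properties(text, materials, llm),
        "structure": extract_structure(text, materials, llm),
    }
```

Passing the model in as a plain callable keeps the sketch testable with a stub and leaves the choice of provider open.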

Step 3: Validation and Integration

Extracted data undergoes validation through techniques such as follow-up questioning to filter hallucinated information [9], cross-referencing between different sections of the paper, and logical consistency checks based on chemical principles. Validated records are then integrated into structured databases with normalized units and standardized terminology.
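Unit normalization and a basic consistency check might look like the sketch below. The unit table, function names, and the two plausibility rules (absolute temperature above zero, non-negative ZT) are illustrative assumptions, not the validation rules of any cited pipeline.

```python
# Conversion factors to microvolts per kelvin (illustrative subset of units).
SEEBECK_TO_UV_PER_K = {"uV/K": 1.0, "µV/K": 1.0, "mV/K": 1_000.0, "V/K": 1_000_000.0}


def normalize_seebeck(value: float, unit: str) -> float:
    """Convert a Seebeck coefficient to µV/K."""
    if unit not in SEEBECK_TO_UV_PER_K:
        raise ValueError(f"unknown Seebeck unit: {unit!r}")
    return value * SEEBECK_TO_UV_PER_K[unit]


def to_kelvin(value: float, unit: str) -> float:
    """Convert a temperature in K or °C to kelvin."""
    if unit == "K":
        return value
    if unit in ("°C", "C"):
        return value + 273.15
    raise ValueError(f"unknown temperature unit: {unit!r}")


def validate_record(
    seebeck_value: float,
    seebeck_unit: str,
    temp_value: float,
    temp_unit: str,
    zt: float,
) -> dict[str, float]:
    """Normalize units and reject records that are physically implausible."""
    temperature_k = to_kelvin(temp_value, temp_unit)
    if temperature_k <= 0 or zt < 0:
        raise ValueError("physically implausible record")
    return {
        "seebeck_uV_per_K": normalize_seebeck(seebeck_value, seebeck_unit),
        "temperature_K": temperature_k,
        "ZT": zt,
    }


if __name__ == "__main__":
    # Made-up values for illustration.
    print(validate_record(0.2, "mV/K", 500, "°C", 1.2))
```

Records that raise an error here would be routed to follow-up questioning or human review rather than written to the database.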

Evaluation Frameworks and Metrics

Rigorous evaluation of chemical LLM performance requires specialized benchmarks such as ChemBench, which comprises over 2,700 question-answer pairs spanning diverse chemical topics and difficulty levels [3]. This framework assesses models across multiple dimensions:

- Knowledge: Recall of factual chemical information

- Reasoning: Application of chemical principles to solve problems

- Calculation: Performing chemical computations

- Intuition: Demonstrating human-aligned chemical preferences

For extraction tasks, standard evaluation metrics include:

- Completeness: Whether all relevant parameters are extracted [10]

- Correctness: Accuracy of the extracted information [10]

- Characterization-free compliance: Adherence to instructions about what data to exclude [10]

- Groundedness: Whether questions and answers are properly anchored in the provided context [10]
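Many of the scores quoted in this article are F1 values, which combine precision and recall over extracted records. The sketch below computes them by exact matching against a hand-labeled gold set; the record tuples are made up, and exact matching is an assumption (real evaluations often allow tolerance on numeric values). Loosely, recall corresponds to completeness and precision to correctness, though the definitions in [10] may differ.

```python
def precision_recall_f1(extracted: set, gold: set) -> tuple[float, float, float]:
    """Exact-match precision, recall, and F1 between extracted and gold records."""
    true_positives = len(extracted & gold)
    precision = true_positives / len(extracted) if extracted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


if __name__ == "__main__":
    # Made-up (material, property, value) records for illustration.
    gold_records = {("A", "ZT", 1.0), ("B", "ZT", 0.8), ("C", "ZT", 0.5)}
    extracted_records = {("A", "ZT", 1.0), ("B", "ZT", 0.8), ("D", "ZT", 0.3)}
    p, r, f1 = precision_recall_f1(extracted_records, gold_records)
    print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
```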

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for LLM-Based Chemical Extraction

| Component | Function | Examples/Implementation |
|---|---|---|
| Chemical Text Representation | Standardized encoding of chemical structures | SMILES, SELFIES, InChI [12] |
| Named Entity Recognition | Identification of chemical entities | Specialized tags for molecules, units, equations [3] |
| Table Processing | Extraction of data from diverse table formats | JSON/TSV conversion, table splitting [9] |
| Multi-Agent Frameworks | Complex, multi-step extraction tasks | LangGraph, specialized agents for different property types [4] |
| Validation Mechanisms | Ensuring extracted data quality | Follow-up questioning, chemical rule checking [9] |
| Benchmarking Suites | Performance evaluation | ChemBench, ChemX, domain-specific benchmarks [3] [11] |

The field of chemical information extraction using LLMs is rapidly evolving, with several emerging trends shaping its future development. Multi-agent systems represent a promising direction, enabling more complex extraction workflows through specialized agents that collaborate on different aspects of the task [11] [4]. However, current benchmarks indicate that general-purpose agents still struggle with chemical domain adaptation, highlighting the need for continued development of chemistry-specific solutions [11].

Another significant trend is the integration of multimodal approaches that combine textual analysis with image processing for extracting information from figures, charts, and molecular diagrams [13] [11]. As noted in benchmarking studies, the ability of LLMs to accurately interpret data from scientific figures remains an area requiring improvement, pointing toward future opportunities for enhanced AI-assisted data extraction [13].

The development of specialized chemical representation methods continues to be crucial for improving model performance. Techniques that provide special treatment of molecular representations and equations have shown promise, though current benchmarking suites often fail to account for these specialized processing approaches [3].

In conclusion, LLMs have demonstrated remarkable capabilities in extracting chemical information from diverse sources, with performance often rivaling or exceeding human experts in specific tasks. However, significant challenges remain in handling domain-specific terminology, complex representations, and context-dependent ambiguities. The ongoing development of specialized benchmarks, extraction methodologies, and evaluation frameworks will be essential for advancing the field and realizing the full potential of LLMs in accelerating chemical research and discovery.

The evolution of Generative Pre-trained Transformer (GPT) models represents a pivotal shift in artificial intelligence applications for scientific research. Initially designed as general-purpose tools for natural language processing, these models are increasingly being adapted and specialized to tackle complex challenges in chemistry and materials science. This transition from generalist to chemically-aware systems addresses a critical bottleneck in data-driven research: the vast majority of chemical knowledge remains locked within unstructured natural language in scientific publications, making it inaccessible for computational analysis and machine learning [1]. The emergence of chemically-specialized systems marks a significant advancement in how researchers can extract structured, actionable data from text, enabling more efficient discovery and development of novel compounds and materials [1].

This transformation is driven by the unique requirements of chemical research, where specialized notations like SMILES strings, IUPAC nomenclature, and molecular formulas present interpretation challenges for general-purpose models [14]. Early GPT models often struggled with fundamental chemical representations, for example failing to recognize from context that "CO" denotes carbon monoxide rather than the state of Colorado, or that "Co" denotes cobalt rather than a company [14]. The latest generation of models has made substantial progress in bridging this gap, developing capabilities that range from precise chemical data extraction to autonomous experimental design and execution [15]. This guide examines the performance trajectory of GPT models in chemical applications, providing researchers with experimental data and methodologies for selecting appropriate models for their specific chemical data extraction needs.

Performance Comparison: Quantitative Metrics Across Chemical Tasks

Comprehensive benchmarking provides crucial insights into the evolving capabilities of GPT models for chemical research. The ChemBench framework, evaluating over 2,700 question-answer pairs across diverse chemical topics, reveals significant performance variations between models and human experts [3].

Table 1: Overall Performance on Chemical Knowledge and Reasoning Tasks

| Model/System | Overall Accuracy |
|---|---|
| Best LLM (Average) | >50% (outperformed best human) |
| Human Chemists (Expert) | <50% (average) |
| GPT-4 | Not reported |
| ChemDFM | Varied (outperformed GPT-4 on many tasks) |

Per-category scores for knowledge, reasoning, and calculation questions are not reported here.

The benchmarking results indicate that the best LLMs can outperform human chemists on average in terms of chemical knowledge and reasoning capabilities [3]. However, the models still exhibit significant weaknesses in specific areas, including basic tasks and providing overconfident predictions that require careful validation by domain experts [3].

Specialized Chemical Data Extraction

For specific chemical data extraction tasks, model performance varies considerably based on the complexity of the target information and the extraction methodology employed.

Table 2: Performance on Specific Chemical Data Extraction Tasks

| Task | Best Model | Performance Metrics | Key Limitations |
|---|---|---|---|
| Thermoelectric Property Extraction | GPT-4.1 | F1 ≈ 0.91 (thermoelectric), F1 ≈ 0.82 (structural) [4] | High computational cost for large-scale deployment |
| Chemical-Disease Relation Extraction | GPT-4.0 | F1 = 87% (precise extraction), F1 = 73% (comprehensive extraction) [2] | Struggles with implicit meaning in biomedical texts |
| SMILES to IUPAC Conversion | o3-mini (reasoning) | Significant improvement over near-zero accuracy of earlier models [16] | Requires validation of non-standard IUPAC names |
| NMR Structure Elucidation | o3-mini (reasoning) | 74% accuracy for molecules with ≤10 heavy atoms [16] | Performance decreases with molecular complexity |

Specialized extraction workflows demonstrate that model performance can be optimized through task-specific adaptations. The agentic workflow described by Ghosh and Tewari, which integrates dynamic token allocation and multi-agent extraction, achieved high accuracy in extracting thermoelectric and structural properties from thousands of full-text articles [4]. Similarly, sophisticated prompting strategies for chemical-disease relation extraction substantially improved performance for identifying complex relationship types beyond simple co-occurrence [2].

Chemical Reasoning and Structure Interpretation

Recent "reasoning models" represent a significant advancement in chemical reasoning capabilities, particularly for tasks requiring deep structural understanding and problem-solving.

Table 3: Performance on Chemical Reasoning Tasks (ChemIQ Benchmark)

| Model | Overall Accuracy (%) | Molecular Interpretation Tasks | Structure-Property Relationships |

|---|---|---|---|

| o3-mini (reasoning) | 28%-59% (depending on reasoning level) [16] | Significant improvement in SMILES understanding | Not reported |

| GPT-4o (non-reasoning) | 7% [16] | Poor performance on SMILES tasks | Not reported |

| Earlier GPT Models | Near-zero on SMILES to IUPAC [16] | Unable to interpret molecular structures | Not reported |

The dramatic performance improvement with reasoning models highlights how specialized training approaches can overcome previous limitations in molecular comprehension [16]. These models demonstrate reasoning processes that mirror human chemist approaches to problem-solving, suggesting a deeper conceptual understanding rather than superficial pattern recognition [16].

Experimental Protocols and Methodologies

Chemical Benchmarking Methodology

The ChemBench framework employs a rigorous methodology for evaluating chemical capabilities of LLMs [3]. The benchmark corpus consists of 2,788 question-answer pairs compiled from diverse sources, including manually crafted questions, university exams, and semi-automatically generated questions based on curated chemical databases [3]. Each question undergoes quality assurance review by at least two scientists in addition to the original curator, supplemented by automated checks [3].

The framework encompasses a wide range of topics from general chemistry to specialized fields like inorganic, analytical, and technical chemistry [3]. Questions are classified by the skills required to answer them: knowledge, reasoning, calculation, intuition, or combinations thereof [3]. Unlike benchmarks consisting primarily of multiple-choice questions, ChemBench includes both multiple-choice (2,544) and open-ended questions (244) to better reflect real-world chemistry research and education [3].

To address cost concerns for routine evaluations, ChemBench-Mini provides a curated subset of 236 questions that represent a diverse and balanced distribution of topics and skills from the full corpus [3]. This subset was used for human expert evaluations to contextualize model performance [3].

Agentic Data Extraction Workflow

Large-scale chemical data extraction employs sophisticated multi-agent workflows optimized for accuracy and computational efficiency [4]. The process begins with DOI collection and article retrieval, targeting approximately 10,000 open-access articles from major scientific publishers (Elsevier, RSC, Springer) using keyword searches for "thermoelectric materials," "ZT," and "Seebeck coefficient" [4].

The preprocessing pipeline utilizes automated Python scripts to extract key components from XML and HTML article formats, including full text, metadata, and tables [4]. Non-relevant sections like "Conclusion" and "References" are removed, and the remaining text is filtered using rule-based pattern matching to retain only sentences likely to contain thermoelectric or structural properties [4].
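The section removal and rule-based filtering described above can be sketched as follows. This is a minimal illustration, not the pipeline from [4]: the keyword pattern, the `SKIPPED_SECTIONS` set, and the `filter_sentences` helper are all assumptions made for the example, and the sentence splitter is deliberately naive.

```python
import re

# Hypothetical keyword pattern; the regular expressions used in [4] are not given in the text.
PROPERTY_PATTERN = re.compile(
    r"\b(ZT|figure of merit|Seebeck|thermal conductivity|power factor|space group)\b",
    re.IGNORECASE,
)
# Section titles treated as non-relevant (assumed spelling variants).
SKIPPED_SECTIONS = {"conclusion", "conclusions", "references"}


def filter_sentences(sections: dict[str, str]) -> list[str]:
    """Drop non-relevant sections, then keep sentences that match a property pattern."""
    kept = []
    for title, text in sections.items():
        if title.strip().lower() in SKIPPED_SECTIONS:
            continue
        # Naive split: sentence-ending punctuation followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            if PROPERTY_PATTERN.search(sentence):
                kept.append(sentence.strip())
    return kept
```

Filtering before any LLM call keeps prompts short, which is the stated reason the workflow stays token-efficient.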

The core extraction workflow employs four specialized LLM-based agents operating within a LangGraph framework [4]:

- Material Candidate Finder (MatFindr): Identifies potential material systems discussed in the text

- Thermoelectric Property Extractor (TEPropAgent): Focuses on extracting performance metrics (ZT, Seebeck coefficient, conductivity, etc.)

- Structural Information Extractor (StructPropAgent): Targets structural attributes (crystal class, space group, doping)

- Table Data Extractor (TableDataAgent): Specifically processes data presented in tabular format

This modular approach allows each agent to specialize in a well-defined sub-task, improving overall accuracy while managing computational costs through dynamic token allocation strategies [4].
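One way to picture dynamic token allocation is a completion budget that grows with the length of the cleaned input and is clamped to fixed bounds. The sketch below is an assumption-laden illustration: the function name, the four-characters-per-token estimate, the one-quarter output ratio, and the floor and ceiling values are hypothetical and are not taken from [4].

```python
def allocate_max_tokens(cleaned_text: str, floor: int = 256, ceiling: int = 4096) -> int:
    """Scale the completion budget with input length, clamped to [floor, ceiling]."""
    # Rough assumption: about 4 characters per token; a real tokenizer would be more accurate.
    approx_input_tokens = len(cleaned_text) // 4
    # Assumption: extracted records need about a quarter as many tokens as the input.
    budget = approx_input_tokens // 4
    return max(floor, min(ceiling, budget))
```

Short passages then receive the minimum budget, while very long articles are capped so that a single document cannot dominate API spend.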

Domain Adaptation Methodology

The development of chemically-specialized models like ChemDFM employs a systematic two-stage specialization process to bridge the gap between general-purpose LLMs and domain-specific requirements [14]. This methodology demonstrates how domain adaptation can transform general AI tools into chemically-aware research partners.

The first stage, domain pre-training, leverages the open-source LLaMA-13B model and conducts further pre-training using an extensive corpus of chemical literature containing 34 billion tokens extracted from over 3.8 million papers and 1,400 textbooks [14]. This exposure to domain-specific language and concepts builds foundational chemical knowledge.

The second stage, instruction tuning, refines the model using 2.7 million chemistry-focused instructions derived from chemical databases [14]. This phase specifically addresses the representational gap between natural language and specialized chemical notations by incorporating tasks such as molecular notation alignment, effectively training the model to seamlessly translate between diverse molecular representations like SMILES, IUPAC names, and molecular formulas [14].

This approach preserves the general reasoning capabilities of the underlying LLM while instilling deep chemical expertise, creating models that can understand both natural language instructions and chemical representations [14]. The success of this methodology highlights the importance of careful data curation and the value of domain expertise in AI development for scientific applications [14].

Table 4: Key Research Reagent Solutions for Chemical AI Applications

| Resource | Type | Primary Function | Application Examples |

|---|---|---|---|

| ChemBench | Evaluation Framework | Standardized assessment of chemical knowledge and reasoning [3] | Model comparison, capability gap identification |

| ChemDFM | Domain-Specific LLM | Chemistry-focused foundation model with specialized knowledge [14] | Research assistance, molecular design, literature analysis |

| Agentic Extraction Workflow | Methodology | Large-scale structured data extraction from literature [4] | Creating structured datasets from unstructured text |

| Coscientist | AI System | Autonomous design, planning, and execution of experiments [15] | Reaction optimization, automated experimentation |

| ChemIQ | Benchmark | Assessment of molecular comprehension and reasoning [16] | Evaluating SMILES understanding, structural reasoning |

| Reaxys/SciFinder | Database | Grounding LLM outputs in authoritative chemical information [15] | Synthesis planning, fact verification |

The evolution of GPT models from general-purpose tools to chemically-aware systems has substantially advanced their utility for chemical research. Current models demonstrate impressive capabilities in chemical knowledge recall, reasoning, and specialized data extraction, with the best models outperforming human chemists on average in benchmark evaluations [3]. The development of domain-adapted models like ChemDFM and sophisticated agentic workflows has enabled the extraction of structured chemical information from scientific literature at large scale [4] [14].

However, significant challenges remain. Models still struggle with basic tasks in some areas, provide overconfident predictions, and require careful validation by domain experts [3]. The computational resources needed for training and deployment present accessibility barriers, and comprehensive evaluation remains complex [14]. Future advancements will likely focus on improved numerical reasoning, multimodal capabilities for spectroscopic data interpretation, tighter integration with chemical tools and databases, and more efficient model architectures [14]. As these chemically-aware systems continue to evolve, they promise to transform from tools into collaborative research partners, accelerating discovery across chemical sciences and drug development.

Performance Comparison of GPT Models for Chemical Data Extraction Research

The automation of data extraction from scientific literature is revolutionizing fields like chemistry and materials science, where vast amounts of critical information remain locked in unstructured text. This guide objectively compares the performance of various Generative Pre-trained Transformer (GPT) models for extracting chemical data, specifically focusing on reaction data, material properties, and synthesis protocols. As large language models (LLMs) continue to evolve at a rapid pace, understanding their specific capabilities, limitations, and cost-performance trade-offs is essential for researchers, scientists, and drug development professionals seeking to implement these technologies in their workflows [17] [4].

Key Application Areas in Chemical Data Extraction

Material Properties Extraction

The automated extraction of material properties represents a core application where LLMs demonstrate significant utility. Successful implementations have focused on creating large, machine-readable datasets from scientific literature, coupling performance metrics with structural context that is often absent from existing databases [4]. Specific properties that have been successfully extracted include thermoelectric properties (figure of merit ZT, Seebeck coefficient, conductivity, resistivity, power factor, and thermal conductivity) and structural attributes (crystal class, space group, and doping strategy) [4] [18]. For perovskite materials, bandgap extraction has been a particular focus due to its critical importance for optoelectronic properties in solar cell research [19].

Synthesis Protocols and Reaction Data

Extracting synthesis parameters and reaction data represents another significant application area. LLMs have been deployed to extract structured information about synthesis conditions, doping procedures, and experimental parameters from full-text scientific articles [4] [19]. This capability is particularly valuable for creating comprehensive databases that link synthesis conditions with material properties, enabling more efficient materials discovery and optimization [18].

Experimental Protocols for Benchmarking GPT Models

Dataset Preparation and Curation

Benchmarking GPT models for chemical data extraction requires carefully curated datasets with ground truth annotations. The following methodologies have been employed in recent studies:

TrialReviewBench Construction: For clinical evidence synthesis, researchers created a benchmark from 100 published systematic reviews containing 2,220 clinical studies. This involved manual extraction of 1,334 study characteristics and 1,049 study results to serve as ground truth for evaluating extraction accuracy [20].

Thermoelectric Materials Corpus: For material properties extraction, researchers collected approximately 10,000 full-text scientific articles related to thermoelectric materials, focusing on open-access articles from major publishers including Elsevier, the Royal Society of Chemistry (RSC), and Springer. The preprocessing pipeline extracted key components such as full text, metadata, and tables from both XML and HTML formats, removing non-relevant sections like "Conclusion" and "References" [4].

Perovskite Bandgap Annotation: For bandgap extraction from perovskite literature, researchers developed specialized annotation protocols focusing on five different perovskite materials (three hybrid and two inorganic halide perovskites). This created a standardized evaluation framework for comparing model performance on extracting material-property relationships as [material, property, value, unit] quadruples [19].
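A [material, property, value, unit] quadruple maps naturally onto a small validated record type. The sketch below uses a standard-library dataclass. The class name, field names, and validation rule are illustrative assumptions and are not the annotation schema from [19].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PropertyRecord:
    """One [material, property, value, unit] quadruple (hypothetical schema)."""

    material: str
    property_name: str
    value: float
    unit: str

    def __post_init__(self) -> None:
        # Reject records with empty text fields so incomplete extractions fail early.
        for field_name in ("material", "property_name", "unit"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"{field_name} must not be empty")


def from_quadruple(quad: list[str]) -> PropertyRecord:
    """Build a record from a 4-item list of strings, converting the value to float."""
    material, property_name, value, unit = quad
    return PropertyRecord(
        material.strip(), property_name.strip().lower(), float(value), unit.strip()
    )


# Illustrative input only; not a record taken from the benchmark.
example = from_quadruple(["MAPbI3", "Bandgap", "1.55", "eV"])
```

Because the dataclass is frozen, records are hashable and can be placed in sets, which makes comparison against ground-truth annotations straightforward.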

Evaluation Metrics and Methodologies

Standardized evaluation metrics are critical for objective model comparison:

Accuracy Measurements: For structured data extraction, F1 scores, precision, and recall are calculated by comparing LLM-extracted data against manually curated ground truth [4] [18] [19].

Hallucination Assessment: For generative models, the tendency to produce values or texts not found in the original text (hallucination) is quantified by checking extracted information against source documents [19].

Cost-Benefit Analysis: Total API costs are calculated per record processed, enabling practical comparisons between models of different sizes and capabilities [4].
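The accuracy and hallucination checks above can be sketched in a few lines. This example assumes exact matching of records against the ground truth and a verbatim, case-insensitive substring test for hallucination. Both are simplifications chosen for illustration, and the cited studies may apply more lenient matching rules.

```python
import re

Record = tuple[str, str, str, str]  # (material, property, value, unit)


def precision_recall_f1(
    extracted: set[Record], truth: set[Record]
) -> tuple[float, float, float]:
    """Exact-match precision, recall, and F1 between extracted and ground-truth records."""
    true_positives = len(extracted & truth)
    precision = true_positives / len(extracted) if extracted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def _squash(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences do not matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def hallucination_rate(values: list[str], source_text: str) -> float:
    """Fraction of extracted values that do not appear verbatim in the source text."""
    if not values:
        return 0.0
    source = _squash(source_text)
    missing = [value for value in values if _squash(value) not in source]
    return len(missing) / len(values)
```

A substring test is a coarse proxy: a truncated value such as "1.5" would count as grounded in a text that contains "1.55", so a production check would likely compare parsed numbers and units instead.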

Diagram Title: LLM Benchmarking Workflow

Performance Comparison of GPT Models

Quantitative Performance Metrics

Table 1: Performance Comparison of GPT Models for Material Property Extraction

| Model | Extraction Task | F1 Score | Precision | Recall | Cost per 1M Tokens (Input/Output) | Context Window |

|---|---|---|---|---|---|---|

| GPT-4.1 | Thermoelectric properties | 0.91 | N/R | N/R | N/R | Up to 1M [21] |

| GPT-4.1 Mini | Thermoelectric properties | 0.889 | N/R | N/R | N/R | Up to 1M [21] |

| GPT-4 | Perovskite bandgaps | ~0.82* | N/R | N/R | $10/$30 [22] | 128K [22] |

| GPT-4o | General chemical data | N/R | N/R | N/R | $2.50/$10 [22] | 128K [22] |

| GPT-4o Mini | General chemical data | N/R | N/R | N/R | $0.15/$0.60 [22] | 128K [22] |

| GPT-3.5 Turbo | General chemical data | N/R | N/R | N/R | $0.50/$1.50 [22] | 16K [22] |

Note: N/R = Not Reported in Source; *Estimated from performance description [19]

Task-Specific Performance Analysis

Table 2: Specialized Performance Across Chemical Data Types

| Model | Material Properties | Structural Features | Synthesis Parameters | Clinical Evidence | Key Strengths |

|---|---|---|---|---|---|

| GPT-4.1 | Excellent (F1: 0.91) [4] | Very Good (F1: 0.82) [4] | Good [4] | N/R | Highest accuracy for complex extractions |

| GPT-4.1 Mini | Very Good (F1: 0.889) [18] | Very Good (F1: 0.833) [18] | Good [18] | N/R | Near-GPT-4.1 performance at lower cost |

| GPT-4 | Good (Comparable to QA MatSciBERT) [19] | Moderate [19] | Moderate [19] | 16-32% lower accuracy than specialized systems [20] | Strong general capabilities |

| GPT-4o | N/R | N/R | N/R | N/R | Multimodal, fast response |

| Ensemble Models | N/R | N/R | N/R | 65.6% exact agreement with clinicians [23] | Improved reliability |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for LLM-Based Chemical Data Extraction

| Research Component | Function | Implementation Example |

|---|---|---|

| LangGraph Framework | Enables multi-agent workflows for complex extraction tasks | Coordinates specialized agents (MatFindr, TEPropAgent, StructPropAgent) [4] |

| Pydantic Models | Defines structured output formats for extracted data | Creates validated schemas for resume data (e.g., Education, WorkExperience) [17] |

| Vector Database (FAISS) | Enables efficient retrieval of relevant text passages | Indexes tokenized article text for relevant paragraph retrieval [4] |

| Token Allocation System | Dynamically manages token distribution based on content complexity | Allocates max_tokens based on cleaned text length [4] |

| Regular Expression Filtering | Identifies sentences likely to contain target properties | Uses pattern matching to retain only relevant sentences [4] |

| Conditional Table Parsing | Extracts data from structured tables in scientific articles | Parses XML/HTML tables from Elsevier, RSC, and Springer formats [4] |

| Question Answering (QA) Models | Provides hallucination-resistant extraction for specific queries | Fine-tuned MatSciBERT for bandgap extraction [19] |

Workflow Architecture for Chemical Data Extraction

Diagram Title: Multi-Agent Extraction Architecture

Cost-Benefit Analysis for Research Implementation

When implementing GPT models for chemical data extraction at scale, cost considerations become paramount alongside performance metrics. The experimental data reveals significant cost differentials between models, with GPT-4.1 Mini offering nearly comparable performance to GPT-4.1 at a fraction of the cost, making it particularly suitable for large-scale deployment [18]. For example, a workflow processing ~10,000 full-text scientific articles achieved comprehensive data extraction at a total API cost of approximately $112, demonstrating the cost-effectiveness of carefully optimized model selection [4].
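The reported totals translate into a per-article cost with simple arithmetic, and the same formula can be used to estimate spend for other models. In the sketch below, the $112 and 10,000-article figures come from the text and the GPT-4o Mini prices come from Table 1. The per-article token counts are hypothetical placeholders, and `api_cost_usd` is an illustrative helper rather than part of any SDK.

```python
def api_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost of one request given token counts and per-million-token prices."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


# Reported figures: about $112 total for about 10,000 articles [4].
cost_per_article = 112 / 10_000  # about $0.011 per article

# Hypothetical workload: 8,000 input and 500 output tokens per article,
# priced at the GPT-4o Mini rates listed in Table 1 ($0.15 / $0.60 per 1M tokens).
estimated_total = 10_000 * api_cost_usd(8_000, 500, 0.15, 0.60)  # about $15
```

Estimates like this are only as good as the assumed token counts, so measuring a small sample of real articles before a full run is a sensible precaution.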

Specialized extraction pipelines like TrialMind have demonstrated a 63.4% reduction in data extraction time while simultaneously increasing accuracy by 23.5% compared to manual methods, highlighting the operational efficiency gains possible through well-designed LLM implementations [20]. These systems also show remarkable consistency, with ensemble models achieving 92% alignment with clinicians' "do/do not intervene" decisions in clinical evidence synthesis tasks [23].

This comparison indicates that, among the models benchmarked here, GPT-4.1 delivers the highest extraction accuracy for chemical data, particularly for thermoelectric and structural properties. However, GPT-4.1 Mini provides a compelling alternative for large-scale implementations where cost efficiency is paramount. The performance differences between models highlight the importance of task-specific evaluation, as models excel in different extraction scenarios. As LLM technology continues to evolve, specialized workflows that incorporate multiple AI agents, structured output validation, and dynamic token allocation should further improve the accuracy and efficiency of chemical data extraction systems. Researchers should consider both quantitative performance metrics and operational requirements when selecting models for specific chemical data extraction applications.

From Text to Structured Data: Practical GPT Workflows for Chemical Extraction

The vast majority of chemical knowledge is locked within unstructured natural language, such as scientific articles and research papers [1]. Converting this information into structured, actionable data is crucial for accelerating materials design, drug development, and scientific discovery. End-to-end extraction pipelines are integrated processes that move raw text data from its source to a final, consumable structured format, encompassing stages from ingestion and processing to storage and analysis [24]. Within chemical data extraction, the emergence of large language models (LLMs), particularly GPT-class models, offers a transformative shift from traditional manual curation and narrowly focused rule-based systems [1] [5]. This guide provides a performance-focused comparison of GPT models and alternative methodologies, offering researchers a clear framework for selecting and implementing extraction pipelines.

Pipeline Architecture and Workflow

An effective end-to-end extraction pipeline for chemical data involves a sequence of logical stages designed to maximize data quality and processing efficiency. The workflow must handle the specific challenges of scientific text, including complex nomenclature, implicit relationships, and data reported across multiple sentences [2] [5].

The following diagram illustrates the core workflow of a hybrid LLM-NER (Named Entity Recognition) pipeline for extracting chemical data from scientific literature.

Experimental Protocols for Performance Comparison

To objectively evaluate the performance of different extraction models, researchers employ standardized experimental protocols. The methodologies below are derived from recent, rigorous studies in chemical and biomedical data extraction.

Protocol 1: Chemical-Disease Relation (CDR) Extraction

This protocol, designed for precise and comprehensive relation extraction, tests the model's ability to identify complex relationships between chemicals and diseases from document-level text [2].

- Task Definition: The model must extract chemical-disease entity pairs and accurately classify their relationship type (e.g., "induced" or "treated"). The comprehensive extraction task further requires identifying side effects, accelerating factors, and mitigating factors.

- Dataset: A self-constructed dataset where models are evaluated on their ability to handle document-level context, as single sentences often fail to capture the full scope of these relationships [2].

- Workflow Variants: The study tests multiple workflows combining different modules:

  - Entity Extraction: Using either an LLM or a pre-trained NER model (ConNER).

  - Relation Extraction: Employing either a direct extraction method or a method guided by entity lists (Note_prompt).

  - Follow-up Inquiry: A step to reduce model "hallucination" by forcing a "yes/no" reflection on the extracted relations.

  - Semantic Disambiguation: Leveraging the model's internal knowledge to resolve synonyms and term variations [2].

- Evaluation Metrics: Precision, Recall, and F1-score.
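The follow-up inquiry step in this protocol can be sketched as a second pass that asks the model a yes/no question about each extracted relation and keeps only the confirmed ones. The prompt wording, the `confirm_relations` helper, and the `ask_llm` callable are assumptions for illustration. `ask_llm` stands in for whatever chat-completion client is in use and is not an API from [2].

```python
from typing import Callable

Relation = tuple[str, str, str]  # (chemical, relation type, disease)


def confirm_relations(
    relations: list[Relation],
    document: str,
    ask_llm: Callable[[str], str],
) -> list[Relation]:
    """Keep only the relations that the model confirms with a 'yes' on reflection."""
    confirmed = []
    for chemical, relation, disease in relations:
        # Hypothetical prompt wording; the study's actual prompts are not reproduced here.
        prompt = (
            f"Document:\n{document}\n\n"
            f"Does the document state that {chemical} {relation} {disease}? "
            "Answer yes or no."
        )
        answer = ask_llm(prompt).strip().lower()
        if answer.startswith("yes"):
            confirmed.append((chemical, relation, disease))
    return confirmed
```

Passing the model as a plain callable keeps the filter testable with a stub and independent of any particular provider SDK.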

Protocol 2: Large-Scale Polymer-Property Extraction

This protocol assesses the scalability and cost-effectiveness of models when processing millions of scientific paragraphs [5].

- Task Definition: Extract structured records of polymer properties (e.g., bandgap, refractive index, gas permeability) from a corpus of over 2.4 million full-text journal articles.

- Pipeline Architecture: A dual-stage filtering approach is used to optimize cost and efficiency:

  - Heuristic Filter: Identifies paragraphs mentioning target properties using manually curated keywords.

  - NER Filter (MaterialsBERT): Verifies the presence of all necessary entities (polymer name, property, value, unit) to confirm an extractable record exists.

- Models Compared: The filtered paragraphs are processed using MaterialsBERT (a domain-specific NER model), GPT-3.5, and Llama 2.

- Evaluation Metrics: Quantity of extracted records, quality (accuracy), processing time, and monetary/computational cost [5].
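The dual-stage filter in this protocol can be sketched as a cheap keyword check followed by an entity-completeness check. In the example below, `tag_entities` is a stand-in for a MaterialsBERT-style tagger, and the label names in `REQUIRED_LABELS` are hypothetical. Neither reflects the actual model interface used in [5].

```python
from typing import Callable, Iterable

Entity = tuple[str, str]  # (text span, label)
# Hypothetical label names for the four entities a complete record needs.
REQUIRED_LABELS = {"POLYMER", "PROPERTY", "VALUE", "UNIT"}


def passes_heuristic(paragraph: str, keywords: Iterable[str]) -> bool:
    """Stage 1: keep paragraphs that mention at least one target-property keyword."""
    lowered = paragraph.lower()
    return any(keyword.lower() in lowered for keyword in keywords)


def passes_ner(paragraph: str, tag_entities: Callable[[str], list[Entity]]) -> bool:
    """Stage 2: keep paragraphs whose tagged entities cover every required label."""
    labels = {label for _, label in tag_entities(paragraph)}
    return REQUIRED_LABELS <= labels


def select_paragraphs(
    paragraphs: Iterable[str],
    keywords: Iterable[str],
    tag_entities: Callable[[str], list[Entity]],
) -> list[str]:
    """Return paragraphs that pass both stages; the tagger runs only on stage-1 survivors."""
    keyword_list = list(keywords)
    return [
        paragraph
        for paragraph in paragraphs
        if passes_heuristic(paragraph, keyword_list) and passes_ner(paragraph, tag_entities)
    ]
```

Running the inexpensive keyword check first means the more costly tagger, and later the LLM, only see paragraphs that are likely to contain an extractable record.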

Performance Data and Comparative Analysis

The following tables consolidate quantitative results from key experiments, enabling a direct comparison of model performance across different extraction tasks.

Table 1: Performance on Chemical-Disease Relation (CDR) Extraction Tasks [2]

| Model | Task Type | Precision (%) | Recall (%) | F1-Score (%) |

|---|---|---|---|---|

| GPT-3.5 | Precise Extraction | 85 | 89 | 87 |

| GPT-4.0 | Precise Extraction | 84 | 88 | 86 |

| Claude-opus | Precise Extraction | 83 | 87 | 85 |

| GPT-3.5 | Comprehensive Extraction | 71 | 75 | 73 |

Table 2: Large-Scale Polymer-Property Extraction: Model Comparison [5]

| Model | Extraction Paradigm | Primary Strength | Key Limitation | Cost Consideration |

|---|---|---|---|---|

| GPT-3.5 | LLM (Zero/Few-shot) | High flexibility for complex relationships; eliminates need for annotated data | Prone to "hallucination"; output variability | Significant monetary cost at scale |

| Llama 2 | Open-source LLM | No API costs; customizable | Lower performance vs. commercial LLMs | High computational (environmental) cost |

| MaterialsBERT | NER Pipeline | High precision on entity recognition; lower cost | Struggles with cross-sentence relationships | Lower operational cost |

Table 3: Performance of Pipeline vs. Sequence-to-Sequence vs. GPT Models on Rare Disease RE [25]

| Model Paradigm | End-to-End F1-Score | Key Finding |

|---|---|---|

| Pipeline (NER → RE) | Highest | Well-designed pipeline models offer substantial performance gains at a lower cost and carbon footprint. |

| Sequence-to-Sequence | Slightly lower than Pipeline | Competitive performance, not far behind pipeline models. |

| GPT Models | Lowest (>10 F1 points behind Pipeline) | Despite having 8x more parameters, they underperform smaller conventional models when training data is available. |

The Scientist's Toolkit: Research Reagent Solutions

Building an effective extraction pipeline requires a combination of software tools and conceptual components. The following table details essential "research reagents" for constructing chemical data extraction pipelines.

Table 4: Essential Tools and Components for Chemical Data Extraction Pipelines

| Tool / Component | Type | Function in Pipeline | Example Tools / Models |

|---|---|---|---|

| LLMs (General-purpose) | Foundation Model | Performs zero-shot/few-shot extraction of entities and complex relationships. | GPT-3.5, GPT-4, Claude-opus [2] [5] |

| Domain-Specific NER Models | Pre-trained Model | Accurately identifies scientific entities (materials, properties) with high precision. | MaterialsBERT, ChemBERT [5] |

| Data Management Platform | Infrastructure | Stores and organizes extracted data and metadata for easy retrieval and analysis. | Expipe [26] [27] |

| Heuristic / Rule Engine | Software Component | Provides initial, high-recall filtering of relevant text passages before deep processing. | Custom keyword filters [5] |

| Real-Time Data Processing | Infrastructure | Handles streaming data transformation and ingestion for live data sources. | Apache Flink, Estuary Flow [24] [28] |

The performance comparison reveals a nuanced landscape for chemical data extraction. While GPT models demonstrate impressive capability, especially for complex relation extraction tasks where they can achieve F1-scores above 85% [2], they are not a universal solution. For large-scale, cost-sensitive extraction of well-defined entities, traditional pipeline approaches with domain-specific NER models like MaterialsBERT can be more effective and efficient [25] [5]. The optimal architecture often depends on the specific research goal: LLMs excel in flexibility and handling unseen relation types with minimal setup, whereas pipeline models offer superior performance and lower cost for well-defined, large-scale extraction tasks. Future progress will likely involve hybrid methods that leverage the strengths of both paradigms to build more accurate, efficient, and scalable chemical data extraction systems.

Specialized AI Agents for Targeted Extraction (Thermoelectric, Polymer, and Reaction Data)

The acceleration of materials discovery is fundamentally constrained by the vast quantity of scientific knowledge locked within unstructured text, tables, and figures in research articles. Traditional manual curation is unable to keep pace with the volume of published literature. The advent of large language models (LLMs) has initiated a paradigm shift, enabling the automated extraction of structured, actionable data. This guide objectively compares the performance of specialized AI agents, framing their capabilities within a broader thesis on the application of GPT models for chemical data extraction research. We synthesize experimental data from recent benchmarking studies to provide researchers with a clear comparison of accuracy, methodology, and applicability across key chemical domains.

Performance Comparison of Specialized AI Agents

The following tables summarize the performance metrics of various AI agents as reported in recent studies, providing a quantitative basis for comparison.

Table 1: Overall Performance of AI Agents on Chemical Data Extraction Tasks

| AI Agent / Model | Primary Domain | Reported Performance (F1 Score) | Key Strengths |

|---|---|---|---|

| GPT-4.1 [4] [18] | Thermoelectrics | 0.91 (Thermoelectric), 0.82-0.83 (Structural) | High accuracy, generalizable workflow |

| nanoMINER [29] | Nanomaterials & Nanozymes | 0.80 (Nanozymes), Up to 0.98 for specific parameters | High precision, multimodal integration (text + figures) |

| Single-agent (GPT-5) [11] | General Chemical (Nanozymes) | 0.58 (Nanozymes) | Robust document preprocessing |

| GPT-5 Thinking [11] | General Chemical | 0.19 (Complexes), 0.02 (Nanozymes) | Extended reasoning, but poor for direct extraction |

| SLM-Matrix [11] | General Materials | 0.39 (Complexes), 0.22 (Nanozymes) | Uses small language models |

| FutureHouse [11] | General | 0.06 (Complexes), 0.09 (Nanozymes) | Multi-agent, but low performance on chemical data |

Table 2: Detailed Extraction Performance of nanoMINER on Nanozyme Data [29]

| Extracted Parameter | Precision | Recall | F1 Score |

|---|---|---|---|

| Kinetic Parameters (Km, Vmax) | 0.98 | - | - |

| Minimal/Maximal Substrate Concentration | 0.98 | - | - |

| Chemical Formulas | - | - | ~1.00* |

| Coating Molecule Weight | 0.66 | - | - |

Note: *Normalized Levenshtein distance close to zero, indicating near-perfect extraction.
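For readers unfamiliar with the metric reported for chemical formulas in Table 2, the sketch below computes a Levenshtein (edit) distance and normalizes it by the length of the longer string. The normalization convention is an assumption, since [29] may define it differently, and both function names are illustrative.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(
                min(
                    previous[j] + 1,  # deletion
                    current[j - 1] + 1,  # insertion
                    previous[j - 1] + (char_a != char_b),  # substitution or match
                )
            )
        previous = current
    return previous[-1]


def normalized_levenshtein(a: str, b: str) -> float:
    """Edit distance divided by the longer length: 0.0 is identical, 1.0 is fully different."""
    longest = max(len(a), len(b))
    return levenshtein(a, b) / longest if longest else 0.0
```

Under this convention, an extracted formula identical to the reference scores 0.0, which corresponds to the "close to zero" result described in the note.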

Experimental Protocols and Workflows

The performance data presented above is derived from rigorously benchmarked experiments. This section details the methodologies employed by the top-performing agents.

Thermoelectric Property Extraction Protocol

The high-performing agent for thermoelectric data, as detailed by Ghosh and Tewari, follows a structured, multi-agent workflow [4] [18].

- DOI Collection and Article Retrieval: The process begins with collecting Digital Object Identifiers (DOIs) for relevant research articles by querying publishers (Elsevier, RSC, Springer) with keywords like "thermoelectric materials" and "ZT." Full-text articles are retrieved in structured XML or HTML formats where possible, as they are more reliably parsed than PDFs [4].

- Preprocessing: An automated Python pipeline extracts and cleans the article content. It removes non-relevant sections (e.g., "Conclusions," "References") and uses a rule-based script with regular expressions (generated with ChatGPT assistance) to filter sentences likely to contain thermoelectric or structural properties. This ensures downstream LLM prompts are focused and token-efficient [4].

- Multi-Agent Extraction (LangGraph Framework): The core extraction is handled by a system of four specialized agents [4]:

  - Material Candidate Finder (MatFindr): Identifies discussed material systems.

  - Thermoelectric Property Extractor (TEPropAgent): Extracts performance metrics (ZT, Seebeck coefficient, etc.).

  - Structural Information Extractor (StructPropAgent): Extracts attributes like crystal class and space group.

  - Table Data Extractor (TableDataAgent): Parses data from tabular formats.

- Benchmarking: The workflow was validated on a manually curated set of 50 papers, with GPT-4.1 achieving the highest accuracy [4] [18].

Multimodal Nanomaterial Data Extraction (nanoMINER)

The nanoMINER system employs a multi-agent, multimodal approach to achieve its high-precision extraction, particularly for nanomaterial and nanozyme data [29].

- PDF Processing and Tool Initialization: The system begins by processing input PDF documents. Specialized tools extract text, images, and plots. The YOLO model is used for visual data extraction to detect figures, tables, and schemes within these images [29].

- Strategic Text Segmentation: The extracted textual content is segmented into chunks of 2048 tokens to facilitate efficient processing [29].

- Multi-Agent Orchestration (ReAct Agent): A central ReAct agent, based on GPT-4o, orchestrates the workflow. It manages specialized tools and agents, performs function-calling, and merges incoming information. The key specialized components are [29]:

  - NER Agent: A fine-tuned Mistral-7B or Llama-3-8B model trained to extract essential entity classes (e.g., chemical formulas, crystal systems) from the text segments.

  - Vision Agent: Based on GPT-4o, this agent processes graphical images and non-standard tables, linking visual data with textual descriptions.

- Information Aggregation and Output: The main agent aggregates information from the NER and Vision agents. The final output is processed to ensure a structured and consistent format, such as a CSV file [29].
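The text segmentation step in this workflow amounts to slicing a token sequence into fixed-size windows. The sketch below takes an already-tokenized list, so the tokenizer itself is out of scope. The `chunk_tokens` helper is illustrative and is not the nanoMINER implementation.

```python
from typing import Sequence, TypeVar

T = TypeVar("T")


def chunk_tokens(tokens: Sequence[T], chunk_size: int = 2048) -> list[Sequence[T]]:
    """Split a token sequence into consecutive chunks of at most chunk_size tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [tokens[start:start + chunk_size] for start in range(0, len(tokens), chunk_size)]


# Whitespace splitting is only a stand-in for a real tokenizer in this example.
example_tokens = "Fe3O4 nanoparticles show peroxidase-like activity".split()
example_chunks = chunk_tokens(example_tokens, chunk_size=2)
```

Non-overlapping windows are the simplest choice. If entities tend to straddle chunk boundaries, a small overlap between neighbouring chunks is a common refinement.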

The Scientist's Toolkit: Research Reagent Solutions

Building and evaluating AI agents for chemical data extraction requires a suite of software tools and platforms. The following table details key "research reagents" used in the featured experiments.

Table 3: Essential Tools for AI Agent Development and Evaluation

| Tool / Platform | Function | Example Use Case |

|---|---|---|

| LangGraph [4] | Framework for building stateful, multi-agent applications. | Orchestrating the interaction between specialized agents (e.g., MatFindr, TEPropAgent). |

| tiktoken [4] | OpenAI's tokenizer for fast Byte Pair Encoding (BPE). | Counting tokens in cleaned text to manage prompt length and API costs. |

| marker-pdf SDK [11] | Converts PDFs into structured Markdown with high accuracy. | Document preprocessing in single-agent approaches to ensure reproducible text conversion. |

| YOLO Model [29] | Real-time object detection system. | Detecting and identifying figures, tables, and schemes within article PDFs for visual analysis. |

| ChemBench [3] | Automated evaluation framework for LLM chemical knowledge. | Benchmarking the fundamental chemical capabilities of LLMs before deploying them in agents. |

| ChemX [11] | A collection of 10 manually curated datasets for benchmarking. | Evaluating and comparing the performance of different agentic systems on nanomaterials and small molecules. |

| PolyInfo Database [30] | A large, curated database of polymer properties. | Serving as a high-fidelity data source for fine-tuning domain-specific models like PolySea. |

The effectiveness of GPT models in chemical data extraction is highly dependent on the format of the input data. The table below summarizes the performance of leading models across textual, tabular, and multi-modal inputs, highlighting their suitability for different chemical data extraction tasks.

| Input Format | Exemplar Model/Approach | Reported Performance (F1 Score) | Key Strengths | Primary Limitations |

|---|---|---|---|---|

| Textual (Scientific Prose) | GPT-4.1 (Agentic Workflow) [4] [18] | 0.91 (Thermoelectric), 0.82 (Structural) [4] | High accuracy for explicit data; captures cross-sentence context [4] [8] | Struggles with nuanced, subjective criteria [31] |

| Tabular Data | GPT-4.1 with Table Parser [4] | Integrated in full-text performance | Extracts rich quantitative data; normalizes units [4] | Performance is contingent on accurate table identification [4] |

| Images/Diagrams | RxnIM (Specialized MLLM) [32] | 0.88 (Reaction Component ID) [32] | Parses reaction schemes; interprets condition text [32] | Requires specialized training on synthetic data [32] |

| Multi-modal (PDF Graphics) | MERMaid (VLM Pipeline) [33] | 0.87 (End-to-End Accuracy) [33] | Integrates figures and text; generates knowledge graphs [33] | Performance depends on visual complexity [33] |

Experimental Protocols for Performance Benchmarking

Protocol for Text-Based Chemical Entity Extraction

This protocol, used to benchmark GPT-4.1 for extracting material properties, demonstrates a high-accuracy, agentic workflow [4] [18].

- Data Collection & Preprocessing: DOIs for ~10,000 thermoelectric material articles were collected from publishers (Elsevier, RSC, Springer). Full-text articles were retrieved in XML or HTML format. The text was preprocessed by removing non-relevant sections (e.g., "References," "Conclusion") and filtering sentences using a rule-based script with regex patterns for keywords related to material types and properties [4].

- Agentic Extraction Workflow: A multi-agent system was built using the LangGraph framework. Key agents included:

  - MatFindr: Identifies material candidates in the text.

  - TEPropAgent: Extracts thermoelectric properties (e.g., ZT, Seebeck coefficient).

  - StructPropAgent: Extracts structural attributes (e.g., crystal class, space group).

  - TableDataAgent: Parses data from tables and their captions [4].

- Benchmarking & Validation: Performance was validated on a manually curated set of 50 papers. The F1 score was calculated by comparing LLM-extracted data against the human-curated gold standard [4].
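The rule-based preprocessing step in this protocol (dropping non-relevant sections, then keeping only sentences that mention target properties) can be sketched as follows. The keyword list, the section names in `SKIP_SECTIONS`, and the naive sentence splitter are assumptions for illustration; the actual patterns used in [4] are not reproduced here.

```python
import re

# Hypothetical keyword pattern for thermoelectric properties.
PROPERTY_PATTERN = re.compile(
    r"\b(ZT|figure of merit|Seebeck coefficient|thermal conductivity|"
    r"electrical conductivity|power factor)\b",
    re.IGNORECASE,
)

# Section titles treated as non-relevant (assumed, lower-cased for comparison).
SKIP_SECTIONS = {"references", "conclusion"}


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' when followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def filter_sections(sections):
    """Keep keyword-bearing sentences from relevant sections.

    sections: dict mapping section title -> section text.
    """
    kept = []
    for title, text in sections.items():
        if title.strip().lower() in SKIP_SECTIONS:
            continue
        kept.extend(s for s in split_sentences(text) if PROPERTY_PATTERN.search(s))
    return kept
```

Filtering before the LLM call reduces the number of tokens sent to the model, at the risk of discarding sentences whose relevance is only clear from context.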

Protocol for Multi-Modal Reaction Image Parsing

This methodology outlines the training and evaluation of RxnIM, a specialized Multimodal Large Language Model (MLLM) for parsing chemical reaction images [32].

- Synthetic Dataset Generation: Due to the lack of large-scale labeled image data, a novel generation algorithm was used. Structured reaction data from the Pistachio database was used to create visual reaction components. These components were assembled into images following predefined reaction patterns, incorporating variations in font, line width, and layout. This process generated 60,200 synthetic training images with ground-truth data [32].

- Model Architecture & Training:

  - Architecture: RxnIM integrates a unified task instruction framework, a multimodal encoder to align image and text features, a specialized ReactionImg tokenizer, and an LLM decoder [32].

  - Training Strategy: A three-stage strategy was employed:

    - Pretraining: Object detection on the large-scale synthetic dataset.

    - Task Training: Training to identify reaction components and interpret conditions.

    - Fine-tuning: Enhancement on a smaller set of manually curated real images [32].

- Evaluation Metrics: The model was evaluated on reaction component identification (localization and role classification) and reaction condition interpretation (text recognition and meaning). Measured with a soft-match F1 score, it achieved an average of 88%, outperforming previous state-of-the-art methods by 5% [32].
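To make the F1 comparison against a gold standard concrete, the sketch below computes precision, recall, and F1 with a pluggable matching rule. The exact soft-match criterion used in [32] is not given in this guide; the `soft_match` function here assumes a case-insensitive string-similarity threshold, which is only one possible definition, and all names are hypothetical.

```python
from difflib import SequenceMatcher


def exact_match(pred, gold):
    """Strict criterion: strings must be identical."""
    return pred == gold


def soft_match(pred, gold, threshold=0.8):
    """Assumed soft criterion: case-insensitive similarity ratio >= threshold."""
    return SequenceMatcher(None, pred.lower(), gold.lower()).ratio() >= threshold


def f1_score(predicted, gold, match=exact_match):
    """Return (precision, recall, F1) using greedy one-to-one matching."""
    remaining = list(gold)  # gold items not yet matched to a prediction
    true_positives = 0
    for pred in predicted:
        for i, gold_item in enumerate(remaining):
            if match(pred, gold_item):
                true_positives += 1
                del remaining[i]  # each gold item can be matched only once
                break
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)
```

Calling `f1_score(predicted, gold, match=soft_match)` tolerates small formatting differences (for example capitalization or a missing degree sign) that the exact criterion would count as errors, which is why soft-match scores are typically higher than exact-match scores on the same output.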

Workflow Visualization for Chemical Data Extraction

Text-Centric Extraction Pipeline

The following diagram illustrates the automated, agent-based workflow for extracting structured material property data from scientific text [4].

Multi-Modal Image Parsing Workflow

This diagram details the end-to-end process for extracting machine-readable reaction data from images using a specialized MLLM such as RxnIM [32] or a VLM pipeline such as MERMaid [33].

The Scientist's Toolkit: Essential Research Reagents

This table lists key digital "reagents" and resources essential for building and executing chemical data extraction pipelines.

| Tool/Resource | Type | Primary Function in Extraction |

|---|---|---|

| GPT-4.1 / Claude 3.5 | General-Purpose LLM | Serves as the core reasoning engine for text comprehension, entity recognition, and data structuring in agentic workflows [6] [4]. |

| RxnIM | Specialized MLLM | A pre-trained model specifically designed for parsing chemical reaction images into structured data, eliminating the need for custom model training [32]. |

| LangGraph | Framework | Enables the orchestration of multi-agent AI systems where specialized LLM agents work collaboratively on complex extraction tasks [4]. |

| Pistachio/ORD | Chemical Database | Provides high-quality, structured reaction data that serves as a gold standard for validation and for generating synthetic training data [32] [8]. |

| ChemicalTagger | Rule-Based Parser | A grammar-based tool for chemical named entity recognition, often used as a benchmark or component in hybrid extraction pipelines [8]. |

| Nougat/Marker | PDF-to-Text Model | Converts scientific PDFs into structured, machine-readable markdown or XML, which is more reliable than raw PDF text extraction for downstream processing [4]. |

In chemical data extraction research, most valuable knowledge remains locked in unstructured text in scientific articles, a significant bottleneck for data-driven discovery [1]. To overcome this, researchers increasingly adapt Large Language Models (LLMs) such as GPT to this specialized domain through two main strategies: prompt engineering and fine-tuning [34] [1]. This guide compares the two methods in the specific context of chemical data extraction to help researchers and drug development professionals select the right approach for their projects.

What are Fine-Tuning and Prompt Engineering?

Fine-tuning is the process of further training a pre-trained LLM on a specialized, domain-specific dataset. This process adjusts the model's internal parameters (weights and biases), adapting its knowledge and behavior to a specific domain, such as understanding chemical nomenclature or extracting reaction parameters [35] [36].

Prompt engineering, in contrast, guides the model's output without altering its internal parameters. It consists of crafting and refining input prompts, by providing clear context, specific instructions, and examples, to elicit more accurate and relevant responses from the pre-trained model [35] [37].

At-a-Glance Comparison

The table below summarizes the core differences between fine-tuning and prompt engineering from the perspective of a chemical data extraction workflow.

| Feature | Prompt Engineering | Fine-Tuning |

|---|---|---|

| Definition | Modifying input prompts to guide the model’s output without changing its internal weights [35]. | Retraining a model on a specialized dataset to adapt its parameters for a specific domain [35]. |

| Core Method | Iterative refinement of prompt language, structure, and context [35] [38]. | Data preparation, hyperparameter adjustment, and supervised training on a labeled dataset [35] [39]. |

| Resource Investment | Lower; requires human expertise but minimal computational cost [39] [37]. | Higher; demands significant computational power, time, and a curated dataset [35] [39]. |

| Flexibility | High; prompts can be quickly adapted for different tasks or sub-domains [35] [38]. | Lower; the model becomes specialized and is less adaptable to new tasks without retraining [35] [37]. |

| Best for Chemical Data Extraction | Prototyping, extracting diverse data types, tasks where the model's base knowledge is sufficient [1]. | Specialized, high-volume tasks (e.g., property extraction), regulated outputs, and overcoming model limitations [38]. |

Quantitative Performance Comparison

Empirical studies, including those in scientific domains, provide concrete data on the performance trade-offs between these two methods. The following table summarizes key findings relevant to research applications.

| Performance Metric | Prompt Engineering | Fine-Tuning | Context & Findings |

|---|---|---|---|

| Output Quality (Cosine Similarity) | ~0.89 (with low statistical uncertainty) [34]. | ≥0.94 (consistently) [34]. | In a multi-agent AI for sustainable protein research, fine-tuning achieved higher mean cosine similarity to ideal outputs, though prompt engineering showed lower variance [34]. |

| Code Generation (MBPP Score) | Does not consistently outperform fine-tuned models [40]. | A fine-tuned model outperformed prompt-engineered GPT-4 by 28.3 percentage points [40]. | Although this result comes from code generation rather than chemistry, it demonstrates fine-tuning's potential for superior performance on specific, structured tasks [40]. |

| Inference Speed | Slower per request, especially with long, complex prompts [38]. | Fast per request after deployment, as it runs a specialized model [38]. | Fine-tuned models avoid the latency introduced by long prompts and data retrieval steps used in other methods like RAG [38]. |
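The cosine-similarity metric in the first row of the table compares an embedding of a model output with an embedding of an ideal output. A minimal sketch, assuming the embeddings are already available as plain lists of floats; the embedding model used in [34] is not specified here, and the function names are hypothetical:

```python
import math
import statistics


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_a * norm_b)


def summarize(scores):
    """Mean and population standard deviation of similarity scores over runs."""
    return statistics.mean(scores), statistics.pstdev(scores)
```

Reporting both the mean and the spread matters for the comparison in the table: one method can have the higher mean similarity while the other is more consistent from run to run.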

Experimental Protocols for Domain Adaptation

To implement and compare these strategies in a chemical data extraction pipeline, researchers can follow these detailed methodologies.

Protocol 1: Fine-Tuning for Chemical Information Extraction

This protocol is adapted from successful fine-tuning applications in scientific fields [35] [34].

- Data Selection and Preparation: Curate a high-quality dataset of text-label pairs. For chemistry, this could be sentences from scientific papers labeled with entities like `catalyst`, `yield`, or `reaction_temperature` [35] [1]. The data must be cleaned, de-duplicated, and formatted (e.g., into JSONL).

- Model and Hyperparameter Selection: Choose a base model (e.g., a version of GPT, Llama, or a model like CodeBERT for specific tasks). For resource efficiency, use Parameter-Efficient Fine-Tuning (PEFT) methods like Low-Rank Adaptation (LoRA), which freezes the pre-trained weights and trains only a small number of additional low-rank parameters [35] [36].

- Training and Optimization: The model is further trained on the curated dataset. Techniques like dropout and regularization are applied to mitigate overfitting, where the model memorizes the training data and fails to generalize [35].

- Validation: Continuously evaluate the model's performance on a held-out validation set to monitor for overfitting and adjust training parameters as needed [35].
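The data preparation and validation steps of this protocol can be sketched with the standard library alone. The record schema (`text`, `labels`), the function names, and the 80/20 split are assumptions for illustration; a given fine-tuning service or library will expect its own record format, so the JSONL layout should be adapted accordingly.

```python
import json
import random


def prepare_examples(labeled_sentences):
    """Normalize whitespace and drop empty or duplicate sentences.

    labeled_sentences: iterable of (text, labels) pairs, where labels is a
    dict such as {"catalyst": "Pd/C", "yield": "92%"}.
    """
    seen = set()
    examples = []
    for text, labels in labeled_sentences:
        cleaned = " ".join(text.split())
        key = cleaned.lower()  # case-insensitive de-duplication
        if not cleaned or key in seen:
            continue
        seen.add(key)
        examples.append({"text": cleaned, "labels": labels})
    return examples


def train_validation_split(examples, validation_fraction=0.2, seed=0):
    """Shuffle reproducibly and hold out a validation subset."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * validation_fraction)
    if len(shuffled) > 1:
        n_val = max(1, n_val)  # always hold out at least one example
    return shuffled[n_val:], shuffled[:n_val]


def to_jsonl(examples):
    """One JSON object per line; non-ASCII characters such as '°' are kept."""
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples)
```

Splitting after de-duplication keeps identical sentences from appearing in both the training and validation sets, which would otherwise hide overfitting.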

Protocol 2: Prompt Engineering for Structured Data Extraction

This protocol leverages frameworks for using LLMs in chemistry, emphasizing the synergy between domain expertise and model capabilities [1].

- Task Analysis and Prompt Design: Define the precise structured output required (e.g., a JSON object with specific keys for chemical properties). Design an initial prompt that provides clear instructions, context, and the desired output format.

- Iterative Refinement and In-Context Learning: Input the prompt and a sample text to the LLM. Analyze the output for errors or deviations. Refine the prompt by adding more explicit instructions, examples (few-shot learning), or chain-of-thought reasoning. This is a creative, trial-and-error process [35] [38].

- Domain-Specific Validation: Implement checks using chemical expertise to validate the model's outputs. This can include checks for chemical validity or adherence to physical laws, which provides a unique opportunity to guide and correct the LLM [1].
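The three steps above can be illustrated with a prompt template that includes one in-context example and a validator that applies simple physical sanity checks to the model's reply. This is a sketch under stated assumptions: the key names, the prompt wording, and the specific checks (yield between 0 and 100%, temperature above 0 K) are illustrative choices, and the call to the LLM itself is deliberately left out.

```python
import json

# Hypothetical prompt template with a single few-shot example.
PROMPT_TEMPLATE = """You are extracting reaction data from chemistry text.
Return only a JSON object with the keys: catalyst (string or null),
yield_percent (number or null), reaction_temperature_K (number or null).

Example text: {example_text}
Example output: {example_output}

Text: {text}
Output:"""

REQUIRED_KEYS = ("catalyst", "yield_percent", "reaction_temperature_K")


def build_prompt(text, example_text, example_output):
    """Fill the template; example_output is a dict serialized as JSON."""
    return PROMPT_TEMPLATE.format(
        text=text,
        example_text=example_text,
        example_output=json.dumps(example_output),
    )


def validate_response(raw_response):
    """Parse the model's reply and return (record, list_of_errors)."""
    try:
        record = json.loads(raw_response)
    except json.JSONDecodeError:
        return None, ["response is not valid JSON"]
    if not isinstance(record, dict):
        return None, ["response is not a JSON object"]
    errors = [f"missing key: {key}" for key in REQUIRED_KEYS if key not in record]
    yield_value = record.get("yield_percent")
    if yield_value is not None and not (
        isinstance(yield_value, (int, float)) and 0 <= yield_value <= 100
    ):
        errors.append("yield_percent must be between 0 and 100")
    temperature = record.get("reaction_temperature_K")
    if temperature is not None and not (
        isinstance(temperature, (int, float)) and temperature > 0
    ):
        errors.append("reaction_temperature_K must be above 0 K")
    return record, errors
```

The returned error list can be fed back into the next prompt iteration, which is one way to turn domain checks into the corrective signal described in the validation step.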

Workflow Visualization

The diagrams below illustrate the core workflows for fine-tuning and prompt engineering in a chemical data extraction context.

Fine-Tuning Workflow

Prompt Engineering Workflow

The Scientist's Toolkit: Research Reagent Solutions