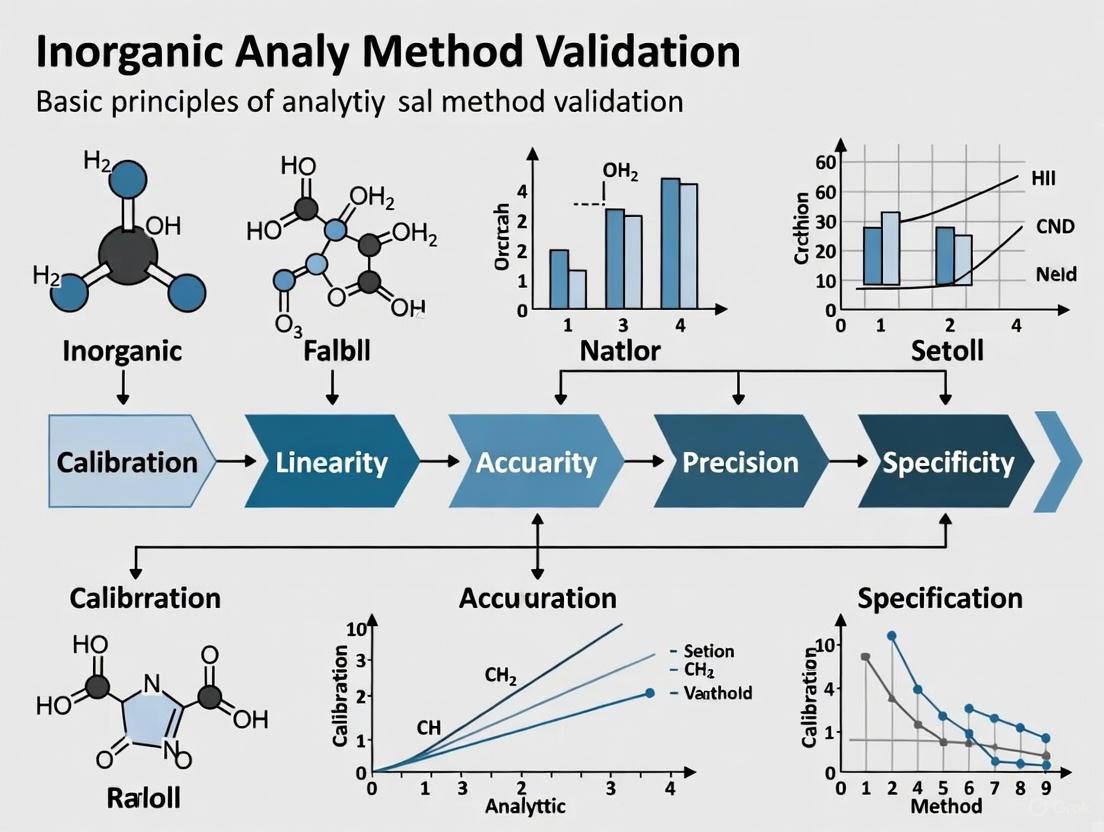

Fundamental Principles and Modern Practices for Validating Inorganic Analytical Methods

Abstract

This article provides a comprehensive guide to inorganic analytical method validation, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles outlined in international guidelines like ICH Q2(R2), detailing core validation parameters such as accuracy, precision, and specificity. The content explores practical methodological applications, including techniques like HPLC/ICP-MS, and addresses common troubleshooting and optimization challenges. Furthermore, it examines modern validation paradigms, including lifecycle management and the enhanced approach under ICH Q14, offering a comparative analysis to ensure regulatory compliance and data reliability in pharmaceutical and biomedical research.

Core Principles and Regulatory Frameworks for Inorganic Method Validation

Method validation is a fundamental process in analytical science and the cornerstone of reliable, trustworthy data. It is formally defined as the confirmation, by examination and the provision of objective evidence, that the particular requirements for a specific intended use are fulfilled [1]. This process ensures that an analytical method can produce results that meet the needs of its application, from drug development and manufacturing to environmental monitoring and food safety.

The "fitness-for-purpose" concept is the central guiding principle in modern method validation. It moves beyond a one-size-fits-all checklist and instead advocates for a tailored approach where the scope and stringency of validation are directly aligned with the method's intended application [1] [2]. This principle acknowledges that the rigorous validation required for a regulatory submission in pharmaceutical development differs from that needed for an early-stage research tool. This guide explores the purpose of method validation and elaborates on the practical application of the fitness-for-purpose concept, providing a structured framework for researchers and scientists.

The Core Purpose of Method Validation

The primary purpose of method validation is to establish, through laboratory studies, that the performance characteristics of an analytical method are suitable for its intended use. This process provides confidence that the method will consistently yield accurate and precise results under defined conditions, thereby ensuring data integrity [3].

In regulated industries, such as pharmaceuticals, method validation is not merely a scientific best practice but a regulatory requirement. It is critical for compliance with guidelines from agencies like the FDA and EMA, and frameworks such as ICH Q2(R2) [2] [3]. For drug development professionals, validated methods are indispensable for nonclinical safety studies, clinical trials, and regulatory submissions (e.g., IND, NDA, BLA) [4]. Ultimately, method validation is a key component of quality assurance, directly impacting consumer safety and product quality by ensuring that products meet specified purity, potency, and safety standards [5] [3].

The "Fitness-for-Purpose" Concept Explained

The fitness-for-purpose concept introduces a graded and flexible approach to validation. The core tenet is that the extent and depth of validation should be commensurate with the stage of product development and the specific decision-making role of the analytical data [1] [2].

A Tiered Validation Strategy

This concept recognizes that the requirements for a method evolve throughout a product's lifecycle, from early discovery to commercial release.

Fit-for-Purpose vs. Fully Validated Assays

The practical application of this concept is often framed as a choice between fit-for-purpose and fully validated assays, each serving distinct project phases [4].

Table: Comparison of Fit-for-Purpose and Fully Validated Assays

| Feature | Fit-for-Purpose Assay | Validated Assay |
|---|---|---|
| Primary Purpose | Early-stage research, feasibility testing, exploratory studies | Regulatory-compliant clinical data, commercial lot release |
| Level of Validation | Partial, optimized for specific study needs | Fully validated per FDA/EMA/ICH guidelines |
| Flexibility | High – can be adjusted and optimized as needed | Low – must follow strict, locked Standard Operating Procedures (SOPs) |
| Regulatory Status | Not required for early research; not suitable for submissions | Required for clinical trials and regulatory approvals |
| Typical Applications | Biomarker analysis, PK/PD screening, lead compound identification | GLP safety studies, clinical bioanalysis, IND/CTA submissions |

A Framework for Fitness-for-Purpose Validation

Implementing a fitness-for-purpose strategy involves a structured, multi-stage process. This lifecycle approach ensures the method remains suitable as requirements evolve.

The Method Validation Lifecycle

The validation process is iterative, often involving multiple rounds of validation as a product progresses from development to commercialization [1] [2].

Categorizing Assays for Validation

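A practical starting point is to classify the biomarker or analytical method into one of five categories, as this determines which performance parameters must be evaluated [1]. The table below shows four columns because the two qualitative classes (ordinal and nominal) share the same requirements and are grouped under "Qualitative".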

Table: Performance Parameters for Different Assay Categories

| Performance Characteristic | Definitive Quantitative | Relative Quantitative | Quasi-Quantitative | Qualitative |
|---|---|---|---|---|
| Accuracy | + | | | |
| Trueness (Bias) | + | + | | |
| Precision | + | + | + | |
| Reproducibility | + | | | |
| Sensitivity | + | + | + | + |
| Sensitivity Definition | LLOQ | LLOQ | LLOQ | |
| Specificity | + | + | + | + |
| Dilution Linearity | + | + | | |
| Parallelism | + | + | | |
| Assay Range | + | + | + | |
| Range Definition | LLOQ–ULOQ | LLOQ–ULOQ | | |

Abbreviations: LLOQ = lower limit of quantitation; ULOQ = upper limit of quantitation.

Experimental Protocols for Key Validation Parameters

For a method to be deemed fit-for-purpose, its critical performance characteristics must be experimentally verified. The following protocols outline standard methodologies for assessing these parameters.

Accuracy Profile for Definitive Quantitative Methods

For definitive quantitative assays, such as those used for inorganic ion analysis, the accuracy profile is a powerful fit-for-purpose tool. It is constructed from the total error, the sum of systematic error (bias) and random error (intermediate precision), and uses a β-expectation tolerance interval to show graphically the interval in which a stated proportion (e.g., 95%) of future results is expected to fall, relative to pre-defined acceptance limits [1].

Detailed Protocol:

- Preparation: Prepare 3-5 concentration levels of calibration standards and 3 levels of validation samples (VS), representing low, medium, and high concentrations on the calibration curve.

- Analysis: Analyze each VS in triplicate on 3 separate days to capture inter-day and intra-day variation.

- Calculation: For each concentration level, calculate the total error (bias + intermediate precision) and the β-expectation tolerance interval.

- Visualization: Plot the tolerance intervals for each concentration level. If the intervals fall entirely within the pre-defined acceptance limits (e.g., ±25% for biomarkers), the method is considered fit-for-purpose. The same profile also identifies the quantitation limits (LLOQ and ULOQ) and therefore the effective dynamic range [1].
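The calculation step above can be sketched in Python. This is a minimal illustration under stated assumptions, not the procedure used in [1]: it assumes a balanced design (the same number of replicates each day), estimates intermediate precision from a one-way ANOVA with day as the factor, and reports total error as the simple sum of absolute bias and intermediate-precision CV. The function name, the example data, and the 25% limit check are hypothetical, and the β-expectation tolerance interval itself is not computed here.

```python
from statistics import mean


def total_error(results_by_day, nominal):
    """Bias, intermediate precision, and total error (all in %) for one level.

    results_by_day: one list of replicate results per day (balanced design).
    nominal: the known concentration of the validation sample.
    """
    n_days = len(results_by_day)
    n_rep = len(results_by_day[0])
    day_means = [mean(day) for day in results_by_day]
    grand_mean = mean(day_means)

    # One-way ANOVA mean squares with day as the grouping factor.
    ss_within = sum(
        (x - m) ** 2 for day, m in zip(results_by_day, day_means) for x in day
    )
    ms_within = ss_within / (n_days * (n_rep - 1))
    ms_between = n_rep * sum((m - grand_mean) ** 2 for m in day_means) / (n_days - 1)

    # Variance components: repeatability and between-day (floored at zero).
    var_repeat = ms_within
    var_between = max(0.0, (ms_between - ms_within) / n_rep)
    sd_intermediate = (var_repeat + var_between) ** 0.5

    bias_pct = 100.0 * (grand_mean - nominal) / nominal
    cv_ip_pct = 100.0 * sd_intermediate / grand_mean
    return {
        "bias_pct": bias_pct,
        "cv_ip_pct": cv_ip_pct,
        "total_error_pct": abs(bias_pct) + cv_ip_pct,
    }


# Hypothetical validation sample: nominal 10.0 units, triplicates on 3 days.
vs_medium = [[9.8, 10.0, 10.2], [10.1, 10.3, 10.2], [9.9, 10.0, 10.1]]
profile = total_error(vs_medium, nominal=10.0)
within_limits = profile["total_error_pct"] <= 25.0  # example acceptance limit
```

Some laboratories apply a coverage factor to the precision term or replace the simple sum with a tolerance interval; the structure of the calculation stays the same, with one call per concentration level.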

Validation of a Specific Inorganic Analytical Method

A recent study on ion chromatography (IC) methods for determining sodium, potassium, phosphate, and sorbitol in phosphate syrup provides a model validation protocol for inorganic analysis [6].

Experimental Workflow and Reagents: The study utilized two separate IC systems:

- Cation Analysis: An IonPac CS16 column with 50 mM methanesulfonic acid as the mobile phase at a flow rate of 0.5 mL/min.

- Anion/Sorbitol Analysis: An IonPac AS19 column with mobile phases of 50 mM and 20 mM NaOH at a flow rate of 1.0 mL/min.

Validation Data Collection:

- Sensitivity: The limit of detection (LOD) for all analytes was determined via signal-to-noise ratio and found to be below 0.001 mM.

- Linearity: Excellent linearity was demonstrated with determination coefficients (R²) greater than 0.999 for all analytes.

- Precision: Both intra-day and inter-day precision were assessed, yielding relative standard deviations (RSD%) of no more than 1%.

- Accuracy: Measured via recovery studies, accuracy ranged from 98% to 101% for all ions, well within acceptable limits [6].

Table: Essential Research Reagents for IC Method Validation

| Reagent / Material | Function in the Analytical Method |
|---|---|
| Ion Chromatography System | Platform for separating and detecting ionic analytes. |
| IonPac CS16 Column | Stationary phase for the separation of cations (e.g., Na⁺, K⁺). |
| IonPac AS19 Column | Stationary phase for the separation of anions (e.g., PO₄³⁻) and sorbitol. |
| Methanesulfonic Acid (MSA) | Mobile phase electrolyte used for cation separation. |
| Sodium Hydroxide (NaOH) | Mobile phase electrolyte used for anion and sorbitol separation. |
| Certified Reference Standards | High-purity materials of Na⁺, K⁺, PO₄³⁻, and sorbitol used to prepare calibration standards for quantifying unknowns. |

Method validation, guided by the fitness-for-purpose principle, is an indispensable discipline in analytical science. It ensures that the data generated is not only scientifically sound but also relevant and reliable for its specific decision-making context. The move towards a flexible, lifecycle-based approach, as seen in graduated and generic validation strategies, allows for efficient resource allocation without compromising data quality. For researchers in drug development and inorganic analysis, mastering this concept is crucial for navigating the path from exploratory research to regulatory approval, ultimately ensuring that every analytical method is rigorously demonstrated to be fit for its intended purpose.

Analytical method validation provides documented evidence that a laboratory procedure is robust, reliable, and reproducible for its intended purpose throughout its lifecycle. This process is fundamental to pharmaceutical development and quality control, ensuring that analytical data generated for drug substances and products is trustworthy and meets regulatory standards. For researchers focused on inorganic analytical method validation, understanding these guidelines ensures that methods for analyzing metal impurities, elemental contaminants, or inorganic pharmaceutical ingredients are scientifically sound and regulatory-compliant. The global regulatory landscape for analytical procedures is primarily shaped by three major bodies: the International Council for Harmonisation (ICH) through its Q2(R2) guideline, the U.S. Food and Drug Administration (FDA), and the European Medicines Agency (EMA). While each authority has its specific implementation frameworks, substantial harmonization exists, particularly through the adoption of ICH standards, which provide a unified approach to validation parameters, terminology, and methodology.

Core Guidelines and Recent Updates

ICH Q2(R2): Validation of Analytical Procedures

The ICH Q2(R2) guideline, adopted by ICH in November 2023 and published as final FDA guidance in March 2024, provides the foundational framework for validating analytical procedures used in the testing of chemical and biological drug substances and products [7]. It is a revision of the earlier Q2(R1) standard and reflects modern analytical technologies and scientific understanding. This guideline outlines the core validation components that demonstrate an analytical procedure is suitable for its intended purpose, covering concepts such as accuracy, precision, specificity, and linearity [8]. The scope includes procedures for release and stability testing of commercial drug substances and products, and it can be applied to other analytical procedures within a risk-based control strategy [8]. In July 2025, ICH released comprehensive training materials to support global implementation and consistent application of both Q2(R2) and the related ICH Q14 guideline on analytical procedure development [9].

FDA Requirements

The FDA incorporates ICH guidelines into its regulatory framework. The agency has formally adopted the ICH Q2(R2) guideline, recognizing it as an acceptable standard for method validation [7]. For specific product areas, the FDA also provides supplemental guidance. For instance, the "Method Validation Guidelines" from the Office of Foods and Veterinary Medicine cover detecting microbial pathogens in foods and feeds and chemical methods for the Foods and Veterinary Medicine Program [10]. For biomarker assays, the FDA's 2025 guidance recommends using the approach described in ICH M10 for drug assays as a starting point, while acknowledging that biomarker assays require unique considerations for measuring endogenous analytes [11].

EMA Requirements

The EMA, representing the European Union, similarly adheres to ICH standards. The agency references ICH Q2(R2) in its scientific guidelines on specifications, analytical procedures, and analytical validation, which help medicine developers prepare marketing authorization applications for human medicines [12]. The EMA emphasizes that these guidelines apply to various analytical purposes, including assay, purity, impurity, identity, and other quantitative or qualitative measurements [8]. For advanced therapy medicinal products (ATMPs), the EMA provides additional specific guidelines requiring detailed quality documentation and characterization of the active substance [13].

Comprehensive Validation Parameters and Experimental Protocols

The following table summarizes the core validation parameters as outlined in ICH Q2(R2) and related guidelines, along with their definitions and methodological approaches essential for inorganic analytical methods.

Table 1: Core Analytical Method Validation Parameters and Protocols

| Validation Parameter | Definition | Typical Experimental Protocol & Methodology |
|---|---|---|
| Accuracy | Closeness of agreement between accepted reference/value and measured value [8] | For inorganic assays: Analyze a sample of known concentration (e.g., CRM) in triplicate. Compare measured vs. true value. Report as % recovery. For impurities: Spike drug substance/product with known impurity concentrations. Determine mean recovery (%) of added impurity. |
| Precision | Degree of agreement among individual test results (Repeatability, Intermediate Precision) [8] | Repeatability: Analyze multiple preparations (n=6) of a homogeneous sample. Calculate %RSD. Intermediate Precision: Vary analyst, day, equipment. Use ANOVA to assess variance components. |
| Specificity | Ability to assess analyte unequivocally despite potential interferences [8] | Chromatography: Compare chromatograms of blank, placebo, standard, and stressed samples. Resolve analyte peak from impurities. Spectroscopy: Demonstrate no interference from matrix at analyte's wavelength. |
| Detection Limit (LOD) | Lowest amount of analyte detectable, not quantifiable [8] | Signal-to-Noise: Typically 3:1 or 2:1 ratio. Standard Deviation Method: Analyze low-level samples, calculate LOD = 3.3σ/S (σ = standard deviation of the response, S = slope of calibration curve). |
| Quantitation Limit (LOQ) | Lowest amount of analyte quantifiable with precision/accuracy [8] | Signal-to-Noise: Typically 10:1 ratio. Standard Deviation Method: Analyze low-level samples, calculate LOQ = 10σ/S. Verify with precision/accuracy at LOQ level. |
| Linearity | Ability to produce results proportional to analyte concentration [8] | Prepare and analyze a minimum of 5 concentrations spanning the claimed range. Plot response vs. concentration. Calculate correlation coefficient, y-intercept, slope, and residual sum of squares. |
| Range | Interval between upper/lower concentration levels with precision, accuracy, linearity [8] | Established from linearity data, confirming precision, accuracy, and linearity are met at range limits. Typically 80–120% of test concentration for assay, LOQ–120% for impurities. |
| Robustness | Capacity to remain unaffected by small, deliberate parameter variations [8] | Vary key parameters (e.g., pH, mobile phase composition, temperature, flow rate) in a systematic design (e.g., DoE). Monitor impact on system suitability criteria (e.g., resolution, tailing). |
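The standard-deviation formulas in the LOD and LOQ rows can be illustrated with a short Python sketch. It assumes σ is taken as the standard deviation of replicate responses from a low-level sample and that the calibration slope S is already known; the function name and all numbers are hypothetical.

```python
from statistics import stdev


def lod_loq(sigma, slope):
    """Return (LOD, LOQ) using LOD = 3.3*sigma/S and LOQ = 10*sigma/S."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical replicate responses (signal units) of a low-level sample.
low_level_responses = [10.2, 9.8, 10.5, 9.9, 10.1, 9.5]
calibration_slope = 250.0  # hypothetical: signal units per mg/L

sigma = stdev(low_level_responses)
lod, loq = lod_loq(sigma, calibration_slope)
print(f"LOD = {lod:.4f} mg/L, LOQ = {loq:.4f} mg/L")
```

Other choices of σ, such as the residual standard deviation of the regression line, fit the same function; whichever estimate is used, the calculated LOQ still needs experimental confirmation of precision and accuracy at that level.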

Application to Inorganic Analytical Methods

For inorganic analytical methods, such as inductively coupled plasma (ICP) assays or ion chromatography, specific considerations apply:

- Specificity: Demonstrate resolution from other metal ions or inorganic components in the matrix. This may involve testing with potential interfering substances.

- Accuracy and Precision in Spike Recovery: For trace element analysis, accuracy is often established through spike recovery experiments or by analyzing certified reference materials (CRMs) that closely match the sample matrix.

- Linearity and Range: Prepare standard solutions across the specified range, including a blank. For techniques like ICP-MS, the dynamic range can be extensive but may require evaluation for potential detector saturation at high concentrations and sufficient signal at the lower end.

The Analytical Procedure Lifecycle Workflow

The following diagram illustrates the interconnected stages of the analytical procedure lifecycle, integrating development, validation, and ongoing monitoring as guided by ICH Q2(R2) and Q14.

This lifecycle view, reinforced by ICH Q14, encourages a holistic approach where method development (identifying critical method parameters) directly informs a more effective and risk-based validation strategy [9]. Continuous monitoring of method performance during routine use provides data to support future changes, which are then managed through a structured process to maintain the validated state.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials critical for successfully executing analytical method validation studies, particularly in the context of inorganic analysis.

Table 2: Essential Reagents and Materials for Analytical Method Validation

| Reagent/Material | Critical Function in Validation | Key Considerations for Inorganic Analysis |
|---|---|---|
| Certified Reference Materials (CRMs) | Establish accuracy and calibration traceability to SI units. | Use matrix-matched CRMs for recovery studies. Verify purity of inorganic salt standards for primary standard preparation. |
| High-Purity Solvents & Reagents | Minimize background interference and false positives; ensure method specificity. | Use trace metal grade acids, ultra-pure water (e.g., 18.2 MΩ·cm). Assess solvent blank contribution to LOD/LOQ. |
| Stable & Well-Characterized Sample Lots | Assess precision, robustness, and system suitability. | Ensure sample homogeneity and stability for the duration of testing, especially for metal speciation studies. |
| Chromatographic Columns & Stationary Phases | Define method selectivity, efficiency, and resolution for LC- or IC-based methods. | Select columns suitable for inorganic ions (e.g., ion-exchange). Document column performance (e.g., plate count, asymmetry) as system suitability. |
| System Suitability Standards | Verify chromatographic/spectroscopic system performance before validation runs. | Prepare mixture of key analytes and potential interferences. Establish pass/fail criteria (e.g., resolution, peak asymmetry, sensitivity). |

Navigating the global regulatory requirements for analytical method validation demands a thorough understanding of ICH Q2(R2), FDA, and EMA guidelines. These frameworks, while distinct in origin, are largely harmonized around core principles of accuracy, precision, specificity, and robustness. For scientists engaged in inorganic analytical method validation, successfully implementing these guidelines requires a lifecycle approach that integrates thoughtful procedure development (ICH Q14), rigorous experimental validation of all relevant parameters (ICH Q2(R2)), and the use of high-quality reagents and materials. By adhering to these structured protocols and understanding the strategic intent behind the guidelines, researchers can develop reliable, validated methods that not only meet regulatory scrutiny but also consistently generate high-quality data to support drug development and ensure patient safety.

In the field of inorganic analytical method validation, demonstrating that an analytical procedure is fit for its intended purpose is a fundamental requirement for regulatory compliance and scientific integrity. This process establishes, through laboratory studies, that the method's performance characteristics meet the requirements for the intended analytical application and provide assurance of reliability during normal use. At the core of this validation lie four essential parameters—accuracy, precision, specificity, and linearity—that form the foundation for generating reliable, reproducible, and meaningful analytical data. These parameters are critical across various industries, including pharmaceuticals, environmental monitoring, and food safety, where the validity of analytical results directly impacts product quality and public health. This technical guide examines these core validation parameters within the context of inorganic analytical method validation research, providing researchers, scientists, and drug development professionals with detailed methodologies and experimental protocols for their evaluation.

Accuracy

Accuracy measures the exactness of an analytical method, defined as the closeness of agreement between an accepted reference value and the value found in a sample [14] [15]. It is typically expressed as the percentage of analyte recovered by the assay and provides critical information about a method's trueness [16].

Experimental Protocol for Determining Accuracy

To document accuracy, guidelines recommend collecting data from a minimum of nine determinations over a minimum of three concentration levels covering the specified range (i.e., three concentrations, three replicates each) [15]. For drug substances, accuracy measurements are obtained by comparison to a standard reference material or a second, well-characterized method. For drug product assays, accuracy is evaluated by analyzing synthetic mixtures spiked with known quantities of components [15].

For the quantification of impurities, accuracy is determined by analyzing samples (drug substance or drug product) spiked with known amounts of impurities. When impurities are unavailable, method specificity becomes the primary demonstration of accuracy [15]. The data should be reported as the percentage recovery of the known, added amount, or as the difference between the mean and true value with confidence intervals (e.g., ±1 standard deviation) [15].
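The nine-determination design described above (three levels, three replicates each) reduces to a simple percentage-recovery calculation. The following Python sketch shows one way to tabulate it; the spike levels, added amounts, and measured values are hypothetical.

```python
from statistics import mean


def percent_recovery(measured, added):
    """Recovery of a known added amount, expressed in percent."""
    return 100.0 * measured / added


# Hypothetical spike study: level -> (amount added, measured replicates).
spike_study = {
    "80%": (8.0, [7.9, 8.0, 8.1]),
    "100%": (10.0, [9.9, 10.1, 10.0]),
    "120%": (12.0, [11.9, 12.1, 12.0]),
}

recoveries = {
    level: [percent_recovery(m, added) for m in results]
    for level, (added, results) in spike_study.items()
}
mean_recovery = {level: mean(vals) for level, vals in recoveries.items()}
n_determinations = sum(len(vals) for vals in recoveries.values())

for level, value in mean_recovery.items():
    print(f"{level}: mean recovery {value:.1f}%")
```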

Best Practices for Accuracy Assessment

- Use Certified Reference Materials (CRMs): When available, CRMs provide the most reliable basis for accuracy assessment [14]

- Spike Recovery Experiments: For matrices where CRMs are unavailable, spike known quantities of analyte into placebo or sample matrix [16]

- Standard Additions Method: Particularly valuable for complex matrices where matrix effects may impact results [14]

- Independent Method Comparison: Compare results with those from a second, well-characterized procedure [17]

Table 1: Accuracy Acceptance Criteria Based on Analyte Concentration

| Concentration Level | Typical Acceptance Criteria (% Recovery) | Application Context |
|---|---|---|
| Active Ingredient (100%) | 98.0–102.0% | Drug substance assay [15] |
| Impurity Quantification | 95.0–105.0% | Related substances testing |
| Trace Analysis | 80.0–120.0% | Residual solvents, heavy metals |

Precision

Precision of an analytical method is defined as the closeness of agreement among individual test results from repeated analyses of a homogeneous sample [15]. Precision is commonly evaluated at three levels: repeatability, intermediate precision, and reproducibility.

Experimental Protocols for Precision Assessment

Repeatability (Intra-assay Precision)

Repeatability refers to the ability of the method to generate the same results over a short time interval under identical conditions [15]. To document repeatability, guidelines suggest analyzing a minimum of nine determinations covering the specified range of the procedure (i.e., three concentrations, three repetitions each) or a minimum of six determinations at 100% of the test or target concentration [15]. Results are typically reported as percentage relative standard deviation (%RSD).
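As a small illustration of the %RSD calculation, the Python sketch below evaluates six hypothetical determinations at 100% of the target concentration using the sample standard deviation. The data and function name are assumptions made for this example.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation in percent (sample SD divided by mean)."""
    if len(values) < 2:
        raise ValueError("at least two results are needed")
    return 100.0 * stdev(values) / mean(values)


# Hypothetical assay results (% of label claim), six preparations.
assay_results = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
repeatability_rsd = percent_rsd(assay_results)
print(f"Repeatability: {repeatability_rsd:.2f}% RSD (n={len(assay_results)})")
```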

Intermediate Precision

Intermediate precision refers to the agreement between results from within-laboratory variations due to random events, such as different days, analysts, or equipment [15]. An experimental design should be used so the effects of individual variables can be monitored. Intermediate precision results are typically generated by two analysts who prepare and analyze replicate sample preparations, each using their own standards, solutions, and possibly different instruments [15].

Reproducibility

Reproducibility refers to the results of collaborative studies among different laboratories and is expressed as standard deviation [14]. This represents the highest level of precision assessment and is typically conducted for method standardization across multiple facilities [17].

Acceptance Criteria for Precision

Table 2: Precision Acceptance Criteria Based on Analyte Concentration

| Concentration Level | Acceptance Criteria (%RSD) | Precision Level |
|---|---|---|
| Active Ingredient (100%) | ≤1.0–2.0% | Repeatability [15] |
| Impurity Quantification | ≤5.0% | Intermediate precision |
| Trace Analysis | ≤10.0–15.0% | Reproducibility |

Specificity

Specificity is the ability to measure accurately and specifically the analyte of interest in the presence of other components that may be expected to be present in the sample [15]. For inorganic analysis, this ensures that a peak's response is due to a single component without interference from the sample matrix, excipients, impurities, or degradation products [16].

Experimental Protocol for Specificity Assessment

Specificity is demonstrated through resolution, plate number (efficiency), and tailing factor measurements [15]. For identification purposes, specificity is demonstrated by the ability to discriminate between other compounds in the sample or by comparison to known reference materials [15].

For assay and impurity tests, specificity can be shown by the resolution of the two most closely eluted compounds, typically the major component and a closely eluted impurity [15]. When impurities are available, demonstrate that the assay is unaffected by the presence of spiked materials. If impurities are unavailable, compare test results to a second well-characterized procedure [15].
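The chromatographic measures named above can be computed from peak retention times and widths. The Python sketch below uses the commonly cited pharmacopoeial-style formulas (resolution from baseline peak widths, plate number from the tangent method, tailing factor at 5% of peak height). These formulas are not taken from the cited sources, and the peak values are hypothetical.

```python
def resolution(t1, w1, t2, w2):
    """Rs = 2 * (t2 - t1) / (w1 + w2), using baseline peak widths."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def plate_number(t_r, w_base):
    """N = 16 * (tR / W)^2, using the baseline (tangent) peak width."""
    return 16.0 * (t_r / w_base) ** 2


def tailing_factor(w_005, f):
    """T = W0.05 / (2 * f).

    w_005: peak width at 5% of peak height.
    f: distance from the leading edge to the peak maximum at 5% height.
    """
    return w_005 / (2.0 * f)


# Hypothetical pair of adjacent peaks (times and widths in minutes).
rs = resolution(t1=5.0, w1=0.4, t2=6.0, w2=0.4)
n_plates = plate_number(t_r=5.0, w_base=0.4)
tailing = tailing_factor(w_005=0.30, f=0.12)
print(f"Rs = {rs:.2f}, N = {n_plates:.0f}, T = {tailing:.2f}")
```

Acceptance limits for these values are method-specific and would be set as system suitability criteria during development.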

Advanced Specificity Assessment Techniques

Modern specificity assessment incorporates powerful orthogonal techniques:

- Peak Purity Testing: Using photodiode-array (PDA) detection or mass spectrometry (MS) to demonstrate specificity by comparison to a known reference material [15]

- Chromatographic Specificity: Evaluation of representative chromatograms with appropriate labeling of individual components to demonstrate specificity [17]

- Forced Degradation Studies: Samples stored under relevant stress conditions (light, heat, humidity, acid/base hydrolysis, oxidation) help demonstrate specificity in the presence of degradation products [15]

Linearity

Linearity of an analytical method is its ability to elicit test results that are directly proportional to the analyte concentration in samples within a given range [17] [15]. The linear range of detectability depends on the compound analyzed and the detector used [17].

Experimental Protocol for Linearity Assessment

Linearity is typically established across a minimum of five concentration levels with appropriate minimum ranges specified by guidelines [15]. The working sample concentration and samples tested for accuracy should be within the demonstrated linear range [17].

Within the linear range, the detector response should remain proportional to concentration; for absorbance detectors this is the region in which Beer's law holds [17]. Data to be reported generally include the equation for the calibration curve line, the coefficient of determination (r²), residuals, and the curve itself [15].
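The reported quantities (calibration line, r², and residuals) follow from an ordinary least-squares fit. The Python sketch below implements that fit with the standard library only; the five concentration levels and responses are hypothetical, and the five-level check mirrors the minimum stated above.

```python
def linear_fit(conc, resp):
    """Ordinary least-squares line: returns slope, intercept, r2, residuals."""
    n = len(conc)
    if n < 5:
        raise ValueError("at least five concentration levels are expected")
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual = observed response minus response predicted by the line.
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, resp)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((y - mean_y) ** 2 for y in resp)
    r2 = 1.0 - ss_res / ss_tot
    return slope, intercept, r2, residuals


# Hypothetical calibration: concentration (% of target) vs. detector response.
levels = [50.0, 75.0, 100.0, 125.0, 150.0]
responses = [101.2, 150.8, 201.1, 250.9, 301.0]
slope, intercept, r2, residuals = linear_fit(levels, responses)
print(f"y = {slope:.4f}x + {intercept:.3f}, r2 = {r2:.6f}")
```

A high r² alone does not prove linearity, so inspecting the residuals for curvature or trends remains part of the assessment.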

Range Considerations

The range of an analytical procedure is the interval between the upper and lower concentrations of analyte for which the method has demonstrated suitable linearity, accuracy, and precision [16] [18]. ICH Q2(R2) specifies minimum ranges for different types of analytical procedures; the r² values shown alongside them are typical laboratory expectations rather than ICH requirements:

Table 3: Minimum Recommended Ranges for Analytical Procedures

| Analytical Procedure | Minimum Specified Range | Linearity Expectation (r²) |
|---|---|---|
| Assay of Drug Substance | 80–120% of test concentration | ≥0.998 [15] |
| Impurity Testing | Reporting level to 120% of specification level | ≥0.990 |
| Content Uniformity | 70–130% of test concentration | ≥0.998 |

Methodological Relationships and Workflow

The diagram below illustrates the logical relationships and workflow between the four essential validation parameters and their role in the overall method validation process:

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful validation of accuracy, precision, specificity, and linearity requires specific high-quality materials and reagents. The following table details essential components for conducting proper method validation studies:

Table 4: Essential Research Reagent Solutions for Method Validation

| Reagent/Material | Function in Validation | Application Examples |
|---|---|---|
| Certified Reference Materials (CRMs) | Establish accuracy through comparison with known reference values [14] | Drug substance assay, impurity quantification |
| High-Purity Analytical Standards | Evaluate linearity, prepare calibration curves, determine LOD/LOQ [17] | Method calibration, range establishment |
| Matrix-Matched Materials | Assess specificity by testing analyte detection in presence of sample matrix [15] | Specificity testing, recovery studies |
| Internal Standards | Improve precision by correcting for instrumental variations [14] | Precision testing, quantitative analysis |
| Reagents for Forced Degradation | Establish specificity through stress testing (acid, base, oxidants) [15] | Specificity demonstration, stability testing |

The four parameters of accuracy, precision, specificity, and linearity form an interdependent framework that ensures analytical methods generate reliable results fit for their intended purpose. Accuracy guarantees the closeness to true values, precision ensures result consistency, specificity confirms the method's ability to distinguish the analyte from interferences, and linearity establishes the proportional relationship between concentration and response across the method's working range. For researchers in inorganic analytical method validation, a thorough understanding and systematic application of the experimental protocols outlined in this guide provides the foundation for developing robust, reliable analytical methods that meet regulatory standards and scientific rigor. As regulatory frameworks evolve with initiatives like ICH Q2(R2) and ICH Q14, embracing a lifecycle approach to method validation that begins with clear objectives and incorporates risk-based principles will further enhance the reliability and sustainability of analytical methods in pharmaceutical development and quality control.

In the realm of inorganic analytical method validation, defining the lower limits of an assay is fundamental to understanding its capabilities and ensuring it is fit for purpose [19]. The Limit of Detection (LOD), Limit of Quantitation (LOQ), and the analytical range collectively describe the concentration interval over which a method can reliably detect and measure an analyte. These parameters are critical for researchers and drug development professionals who must guarantee the reliability of data used in decision-making processes, from assessing impurity profiles in active pharmaceutical ingredients (APIs) to monitoring environmental contaminants [20] [21].

This guide provides an in-depth examination of the core principles, calculation methodologies, and experimental protocols for establishing the LOD, LOQ, and range, framed within the context of analytical method validation.

Fundamental Definitions and Relationships

Core Concepts

Limit of Blank (LoB): The LoB is the highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested. It characterizes the background noise of the method. Statistically, the LoB is defined as the 95th percentile of the blank measurement distribution [19] [22]. It is calculated as:

$$\mathrm{LoB} = \mathrm{mean}_{\mathrm{blank}} + 1.645 \cdot \mathrm{SD}_{\mathrm{blank}}$$ [19]

This assumes a Gaussian distribution, where 95% of blank measurements will fall below this value.

Limit of Detection (LOD): The LOD is the lowest analyte concentration that can be reliably distinguished from the LoB. While the analyte can be detected at this level, it cannot be precisely quantified. The LOD is always greater than the LoB [19]. Per CLSI EP17 guidelines, a sample at the LOD concentration should be distinguishable from the LoB 95% of the time, accounting for both Type I (false positive) and Type II (false negative) errors [19] [23]. A common formula is:

$$\mathrm{LOD} = \mathrm{LoB} + 1.645 \cdot \mathrm{SD}_{\text{low-concentration sample}}$$ [19]

Limit of Quantitation (LOQ): The LOQ is the lowest concentration at which the analyte can not only be detected but also quantified with acceptable accuracy and precision [19] [24]. It is the level that meets predefined goals for bias and imprecision (e.g., a coefficient of variation of 20% or less) [21] [24]. The LOQ is equal to or greater than the LOD and often resides at a much higher concentration [19].
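The LoB and LOD formulas above can be sketched in a few lines of Python. This is a minimal illustration, assuming Gaussian-distributed results already expressed in concentration units. The function names and replicate values are hypothetical and do not come from the cited guidelines.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results):
    """LoB = mean_blank + 1.645 * SD_blank (parametric, assumes Gaussian blanks)."""
    return mean(blank_results) + 1.645 * stdev(blank_results)

def limit_of_detection(blank_results, low_sample_results):
    """LOD = LoB + 1.645 * SD of a low-concentration sample."""
    return limit_of_blank(blank_results) + 1.645 * stdev(low_sample_results)

# Hypothetical replicate results (e.g. in ug/L); real studies use more replicates
blanks = [0.02, 0.05, 0.03, 0.04, 0.01, 0.03, 0.04, 0.02, 0.03, 0.03]
low_sample = [0.10, 0.14, 0.12, 0.09, 0.13, 0.11, 0.12, 0.10, 0.13, 0.11]

lob = limit_of_blank(blanks)
lod = limit_of_detection(blanks, low_sample)
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}")
```

Because both terms added to the blank mean are positive, the sketch always returns an LOD above the LoB, consistent with the definitions above.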

The logical and statistical relationships between these parameters are illustrated in the following workflow:

Methodologies for Determining LOD and LOQ

There are multiple accepted approaches for determining LOD and LOQ, each with its specific applications and requirements as summarized in the table below.

Table 1: Overview of Methods for Determining LOD and LOQ

| Method | Basis of Calculation | Typical Applications | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Standard Deviation of the Blank & Slope [22] [25] [26] | Uses mean and SD of blank measurements and the slope of the calibration curve. | Instrumental methods where a reproducible blank is available. | Directly characterizes background noise; grounded in clear statistics. | Requires an analyte-free blank matrix, which can be challenging for complex samples [27]. |

| Standard Deviation of Response & Slope [22] [25] [26] | Uses the standard error of the regression (e.g., SD of y-intercepts or residual SD) and the slope of the calibration curve. | Quantitative instrumental methods, particularly chromatography [26]. | Does not require a true blank; uses data from the calibration curve. | Assumes the calibration curve is linear in the low-concentration range. |

| Signal-to-Noise Ratio (S/N) [22] [25] [28] | Compares the analyte signal to the background noise. | Chromatographic methods (HPLC, GC) and other techniques with a baseline. | Simple, intuitive, and widely used in industry for impurities [28]. | Can be subjective; dependent on instrument settings and baseline stability. |

| Visual Evaluation [22] [25] | Determination by the analyst of the lowest concentration that can be detected or quantified. | Non-instrumental methods (e.g., inhibition tests) or early method development. | Practical and straightforward for non-instrumental techniques. | Subjective and not suitable for formal validation of quantitative methods. |

Detailed Calculation Procedures

Based on Standard Deviation of the Blank and Slope

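This method relies on analyzing a sufficiently large number of replicate blank samples. The limits are then calculated as:

$$\mathrm{LOD} = \frac{3.3\,\sigma}{S}, \qquad \mathrm{LOQ} = \frac{10\,\sigma}{S}$$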

Where:

- σ = Standard deviation of the response from multiple measurements of the blank.

- S = Slope of the analytical calibration curve.

The multipliers 3.3 and 10 are endorsed by ICH Q2(R1) guidelines [26]. The factor 3.3 is approximately 2 × 1.645, which holds the probabilities of false positive (α) and false negative (β) errors to about 5% each [23]. The factor 10 places the LOQ at a level where σ/S, the standard deviation expressed in concentration units, is 10% of the reported concentration.

Based on Calibration Curve: Standard Deviation of Response and Slope

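This is a widely applicable approach, particularly in chromatography. The limits are calculated from the calibration data as:

$$\mathrm{LOD} = \frac{3.3\,\sigma}{S}, \qquad \mathrm{LOQ} = \frac{10\,\sigma}{S}$$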

Where:

- σ = Standard deviation of the response. This can be the standard error (SE) of the regression, the residual standard deviation (s_y/x), or the standard deviation of the y-intercept of the calibration curve [25] [26].

- S = Slope of the calibration curve.

An example using linear regression output from software like Excel is shown below. The standard error of the regression is used as the estimate for σ [26].

Table 2: Example LOD/LOQ Calculation from Calibration Curve Data

| Parameter | Value | Source |

|---|---|---|

| Slope (S) | 1.9303 | Linear regression of calibration curve (Area vs. Concentration) |

| Standard Error (σ) | 0.4328 | Linear regression output |

| LOD Calculation | 3.3 × 0.4328 / 1.9303 = 0.74 ng/mL | Derived value |

| LOQ Calculation | 10 × 0.4328 / 1.9303 = 2.24 ng/mL | Derived value |
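The calculation in Table 2 can be reproduced with a short script. The sketch below assumes an unweighted ordinary least-squares fit and uses the residual standard deviation as σ. The helper names `fit_line` and `lod_loq` are illustrative, not part of any cited procedure.

```python
from math import sqrt

def fit_line(x, y):
    """Ordinary least squares; returns slope, intercept, residual SD (s_y/x)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, sqrt(ss_res / (n - 2))

def lod_loq(sigma, slope):
    """LOD = 3.3*sigma/S and LOQ = 10*sigma/S."""
    return 3.3 * sigma / slope, 10 * sigma / slope

# Values taken from Table 2
lod, loq = lod_loq(sigma=0.4328, slope=1.9303)
print(round(lod, 2), round(loq, 2))  # 0.74 2.24
```

With raw calibration data, `fit_line(concentrations, areas)` would supply the slope and residual SD that are passed to `lod_loq`.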

Based on Signal-to-Noise Ratio

This approach is common in chromatographic techniques.

- LOD: The analyte concentration that yields a S/N ratio of 3:1 [25] [28].

- LOQ: The analyte concentration that yields a S/N ratio of 10:1 [25] [28].

The signal-to-noise ratio can be calculated as S/N = 2H/h, where H is the height of the analyte peak and h is the range of the background noise in a chromatogram over a distance equal to 20 times the width at half the height of the peak [23].
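As a rough illustration of the S/N = 2H/h expression, the sketch below takes h as the maximum-minus-minimum range of a baseline window. The detector readings, the peak height, and the function name are hypothetical; selecting the baseline window (20 peak widths at half height) is assumed to have been done beforehand.

```python
def signal_to_noise(peak_height, baseline):
    """S/N = 2H/h, with h taken as the max-min range of the baseline window."""
    noise_range = max(baseline) - min(baseline)
    return 2 * peak_height / noise_range

def classify(sn):
    """Apply the 3:1 (LOD) and 10:1 (LOQ) criteria."""
    if sn >= 10:
        return "at or above LOQ criterion"
    if sn >= 3:
        return "at or above LOD criterion"
    return "not detected"

# Hypothetical baseline readings from a blank region of the chromatogram
baseline = [0.5, 1.0, 0.75, 1.5, 1.25, 0.5]
sn = signal_to_noise(peak_height=5.0, baseline=baseline)
print(sn, classify(sn))
```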

Experimental Protocols and Validation

Determining LOD and LOQ is not merely a calculation; it requires a rigorous experimental design to ensure the results are statistically sound and reproducible.

General Workflow for Determination and Validation

The following diagram outlines a robust workflow for establishing and verifying LOD and LOQ, integrating recommendations from CLSI and ICH guidelines [19] [27].

Key Experimental Considerations

- Sample Replicates: Both CLSI EP17 and ICH guidelines emphasize the need for a sufficient number of replicates to obtain reliable estimates of standard deviation. While a manufacturer establishing a method might use 60 replicates, a laboratory verifying the method can typically use 20 replicates for both blank and low-concentration samples [19].

- Sample Matrix: The blank and low-concentration samples must be prepared in a matrix that is commutable with real patient or test samples to accurately reflect the method's performance in practice [19] [27].

- Precision Conditions: The experiment should capture the expected variability of the method, which may include testing over multiple days, using different reagent lots, and involving multiple operators to account for inter-assay variability [24].

- Verification: The calculated LOD and LOQ are only estimates until they are experimentally confirmed. This involves preparing and analyzing multiple replicates (e.g., n=6) at the proposed LOD and LOQ concentrations. For the LOQ, the results must meet predefined accuracy and precision criteria (e.g., bias within ±20% and CV no greater than 20%) [26] [28].
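The verification step can be expressed as a simple acceptance check. The sketch below assumes a 20% limit on both bias and CV, as in the example criteria above. The replicate values, nominal concentration, and function name are hypothetical.

```python
from statistics import mean, stdev

def verify_loq(results, nominal, max_bias_pct=20.0, max_cv_pct=20.0):
    """Compare replicate results at the proposed LOQ against bias and CV limits."""
    avg = mean(results)
    bias_pct = 100 * (avg - nominal) / nominal
    cv_pct = 100 * stdev(results) / avg
    passes = abs(bias_pct) <= max_bias_pct and cv_pct <= max_cv_pct
    return {"bias_pct": bias_pct, "cv_pct": cv_pct, "passes": passes}

# Hypothetical n=6 replicates prepared at a proposed LOQ of 2.24 ng/mL
replicates = [2.1, 2.4, 2.0, 2.3, 2.5, 2.2]
outcome = verify_loq(replicates, nominal=2.24)
print(outcome)
```

If the check fails, the proposed LOQ would be raised and the replicates repeated at the new concentration.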

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for LOD/LOQ Studies

| Item | Function in LOD/LOQ Determination | Critical Considerations |

|---|---|---|

| Analyte-Free Matrix | Serves as the "blank" sample for determining LoB and background signal. | Must be commutable with real samples; can be difficult to obtain for complex inorganic matrices [27]. |

| Primary Reference Standard | Used to prepare precise calibration standards and low-concentration samples. | High purity and known stoichiometry are essential for accurate concentration assignment. |

| Volumetric Glassware & Micro-pipettes | For accurate and precise preparation of sample dilutions, especially at very low concentrations. | Regular calibration is critical. Using class A glassware reduces uncertainty. |

| Chromatographic Solvents & Mobile Phases | In HPLC/IC methods, these create the analytical environment. The blank is often the mobile phase. | High-purity "HPLC-grade" solvents minimize baseline noise and ghost peaks, improving S/N. |

| Sample Preparation Equipment (e.g., filters, solid-phase extraction cartridges) | Used to process samples and blanks. | Can introduce contamination or adsorb the analyte, affecting LoB and LOD; recovery studies are essential. |

Defining the Analytical Range

The analytical range (or measurement range) is the interval between the lower limit of quantitation (LLOQ) and the upper limit of quantitation (ULOQ) within which an analytical procedure provides results with acceptable accuracy, precision, and linearity [21].

- The LLOQ is typically the LOQ of the method. For bioanalytical methods, the LLOQ is the lowest calibration standard with an analyte response at least five times that of the blank, and with precision and accuracy within ±20% [21].

- The ULOQ is the highest concentration of the calibration curve where the analyte response is reproducible and meets accuracy and precision goals (often within ±15%) [21].

- A method's range must cover all concentrations expected in study samples. The calibration curve should not be extrapolated below the LLOQ or above the ULOQ. Samples with concentrations exceeding the ULOQ must be diluted and re-analyzed [21].
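The reporting rules in the list above can be summarized as a small decision function. This is only a sketch; the LLOQ and ULOQ values and the wording of the returned labels are assumptions made for illustration.

```python
def classify_result(conc, lloq, uloq):
    """Decide how a measured concentration relates to the validated range."""
    if conc < lloq:
        return "below LLOQ"  # do not extrapolate the curve downward
    if conc > uloq:
        return "above ULOQ: dilute and re-analyze"
    return "within range"

# Hypothetical validated range of 2.24 to 200 ng/mL
for value in (1.0, 50.0, 350.0):
    print(value, classify_result(value, lloq=2.24, uloq=200.0))
```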

Establishing the LOD, LOQ, and range is a critical process in demonstrating that an analytical method is fit for its intended purpose. This requires a strategic choice of determination methodology, followed by a rigorous experimental protocol and statistical analysis. By adhering to established guidelines and empirically verifying calculated limits, researchers and scientists can ensure the generation of reliable, defensible data at the very extremes of an assay's capability, thereby solidifying the foundation for sound scientific and regulatory decision-making.

The Role of Robustness Testing in Method Validation

Within the structured framework of inorganic analytical method validation, robustness testing serves as a critical gatekeeper, determining whether a method transitions from a controlled development environment to reliable routine use. Robustness is formally defined as a measure of a method's capacity to remain unaffected by small but deliberate variations in procedural parameters listed in the method documentation [29]. This evaluation provides a clear indication of a method's suitability and reliability during normal application [30].

For researchers and drug development professionals, understanding robustness is not merely an academic exercise—it represents a practical necessity for ensuring data integrity and regulatory compliance. As highlighted in methodological guidelines, robustness traditionally may not be considered a validation parameter in the strictest sense because it is typically investigated during method development, once the method is at least partially optimized [30]. This strategic positioning during development allows parameters that significantly affect method performance to be identified early, enabling the establishment of appropriate system suitability tests and control limits. Investing resources in robustness testing during early phases ultimately saves considerable time, energy, and expense throughout the method's lifecycle by preventing future failures during transfer or validation.

Robustness Versus Ruggedness: Critical Distinctions

A precise understanding of terminology is essential for proper method validation, particularly in distinguishing between robustness and ruggedness. While these terms are often used interchangeably in casual scientific discourse, they represent distinct and measurable characteristics within formal validation frameworks [30].

Robustness addresses parameters internal to the method—those factors explicitly written into the procedure. In chromatographic methods, this includes specified parameters such as mobile phase pH (±0.1 units), flow rate (±10%), column temperature (±2°C), or detection wavelength [30]. The variations introduced during robustness testing are deliberate, controlled alterations of these method-specified parameters.

Ruggedness refers to parameters external to the method: environmental or operational factors not specified in the procedure. The United States Pharmacopeia (USP) defines ruggedness as "the degree of reproducibility of test results obtained by the analysis of the same samples under a variety of normal, expected operational conditions," including different laboratories, analysts, instruments, and reagent lots [30]. The term "ruggedness" is increasingly being replaced by "intermediate precision" in modern guidelines to harmonize with International Council for Harmonisation (ICH) terminology [30].

This distinction is crucial for designing appropriate validation studies. A simple rule of thumb: if a parameter is written into the method documentation, its evaluation falls under robustness testing; if it represents normal laboratory-to-laboratory variation (e.g., different analysts, instruments), it constitutes ruggedness or intermediate precision assessment [30].

Methodological Approaches to Robustness Testing

Key Parameters for Evaluation

Robustness testing requires systematic variation of critical method parameters that experience has shown most likely to impact analytical results. For inorganic analysis techniques such as ICP-OES and ICP-MS, the key parameters typically include [14]:

- RF power settings

- Nebulizer, spray chamber, and torch design and alignment

- Sampler and skimmer cone design and construction material

- Buffer composition and concentration

- Temperature (laboratory and spray chamber)

- Integration time

- Reaction/collision cell type or conditions

For chromatographic methods commonly used in pharmaceutical analysis, critical parameters expand to include [30]:

- Mobile phase composition (pH, buffer concentration, organic solvent proportion)

- Flow rate

- Detection wavelength

- Column characteristics (type, lot, temperature)

- Gradient profile variations

Experimental Design Strategies

Robustness testing has evolved from inefficient univariate approaches (changing one variable at a time) to sophisticated multivariate designs that evaluate multiple factors simultaneously. This approach not only improves efficiency but also reveals potential interactions between variables that might otherwise remain undetected [30]. Four common multivariate design approaches facilitate comprehensive robustness assessment:

- Screening designs efficiently identify critical factors affecting robustness, ideal for investigating larger numbers of factors [30]

- Comparative designs enable selection between different methodological alternatives [30]

- Response surface modeling helps optimize conditions to hit targets or maximize responses [30]

- Regression modeling quantifies the dependence of response variables on process inputs [30]

For robustness studies, screening designs are typically most appropriate. Among these, three specific methodologies have proven particularly effective:

Table 1: Comparison of Experimental Designs for Robustness Testing

| Design Type | Key Characteristics | Applications | Advantages | Limitations |

|---|---|---|---|---|

| Full Factorial | Investigates all possible combinations of factor levels (2^k runs for k factors at two levels) | Methods with ≤5 factors where comprehensive assessment is required | No confounding of effects; Identifies all interactions | Number of runs increases exponentially with additional factors |

| Fractional Factorial | Carefully chosen subset of the full factorial combinations (2^(k−p) runs) | Methods with >5 factors where resource constraints exist | Maintains efficiency while evaluating multiple factors | Some effects are aliased or confounded; Requires careful fraction selection |

| Plackett-Burman | Highly economical designs with run counts in multiples of 4 rather than powers of 2 | Screening many factors to identify critically important ones | Maximum efficiency for evaluating main effects | Cannot detect interaction effects; Limited to main effects only |

Each design approach offers distinct advantages, with the selection dependent upon the number of factors to investigate and the resources available. For most chromatographic methods, fractional factorial designs provide an optimal balance between comprehensiveness and practicality [30].
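To make the difference in run counts concrete, the sketch below generates a two-level full factorial design and a half-fraction in coded units (−1 for the low level, +1 for the high level). The half-fraction sets the last factor equal to the product of the others. That generator is a common choice and is assumed here, not prescribed by the cited sources; the function names are illustrative.

```python
from itertools import product

def full_factorial(k):
    """All 2^k combinations of k factors at coded levels -1 and +1."""
    return [list(run) for run in product((-1, 1), repeat=k)]

def half_fraction(k):
    """2^(k-1) runs: the last factor is the product of the first k-1 factors."""
    runs = []
    for base in product((-1, 1), repeat=k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(list(base) + [last])
    return runs

print(len(full_factorial(4)))  # 16 runs
print(len(half_fraction(4)))   # 8 runs
```

Halving the number of runs comes at the cost of aliasing: in this half-fraction the last factor's main effect cannot be separated from the highest-order interaction of the other factors.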

Implementation Workflow

The following diagram illustrates a systematic workflow for planning and executing robustness testing:

Practical Implementation Framework

Establishing Testing Parameters

Successful robustness testing begins with selecting appropriate parameters and variation ranges. The factors chosen should reflect those most likely to encounter normal variation during routine method use. The table below exemplifies parameters and typical variation ranges for a chromatographic method:

Table 2: Example Robustness Testing Parameters and Ranges for an HPLC Method

| Parameter | Nominal Value | Testing Range | Acceptance Criteria | Impact Assessment |

|---|---|---|---|---|

| Mobile Phase pH | 4.5 | ±0.2 units | Resolution >2.0 | High impact on selectivity |

| Flow Rate | 1.0 mL/min | ±10% | %RSD <2.0% | Moderate impact on retention |

| Column Temperature | 30°C | ±3°C | Peak symmetry 0.8-1.5 | Variable impact |

| Organic Modifier | 45% Acetonitrile | ±3% absolute | Retention time %RSD <2% | High impact on retention |

| Detection Wavelength | 254 nm | ±5 nm | No baseline disturbance | Low impact typically |

| Buffer Concentration | 25 mM | ±5 mM | Resolution maintained | Moderate impact on capacity factor |

Statistical Analysis and Interpretation

Following data collection through designed experiments, statistical analysis determines which parameter variations significantly affect method outcomes. For a two-level factorial design, the effect of each factor can be calculated as the difference between the average responses at the high and low levels of that factor [30]. Effects exceeding statistically determined thresholds or demonstrating practical significance require method modification or explicit control in the final documentation.
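The effect calculation described above can be sketched as follows, assuming a design matrix in coded units (−1 and +1) and one response per run. The two factors and the resolution values are hypothetical.

```python
from statistics import mean

def main_effects(design, responses):
    """Effect of each factor = mean response at +1 minus mean response at -1."""
    effects = []
    for j in range(len(design[0])):
        high = [y for run, y in zip(design, responses) if run[j] == 1]
        low = [y for run, y in zip(design, responses) if run[j] == -1]
        effects.append(mean(high) - mean(low))
    return effects

# Hypothetical 2^2 design: factor A = mobile phase pH, factor B = flow rate
design = [[-1, -1], [1, -1], [-1, 1], [1, 1]]
resolution = [2.4, 2.0, 2.5, 2.1]

effects = main_effects(design, resolution)
print([round(e, 2) for e in effects])  # [-0.4, 0.1]
```

In this made-up example, raising factor A lowers resolution by about 0.4 units, while factor B changes it by only about 0.1, so factor A would be the candidate for tighter control.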

The Monte Carlo approach provides an alternative robustness assessment strategy, particularly valuable for evaluating classifier robustness in machine learning applications. This method repeatedly perturbs input data with increasing noise levels while monitoring changes in model performance and parameters, effectively quantifying a method's tolerance to data variability [31].

Case Study: Robustness Testing for an Inorganic Analytical Method

In trace analysis using ICP-OES or ICP-MS, robustness testing might evaluate the impact of variations in RF power, nebulizer gas flow, and sample uptake rate on key performance metrics including accuracy, precision, and detection limits [14]. A fractional factorial design could efficiently examine these factors while assessing potential interactions.

For example, a method determining trace metals in pharmaceutical ingredients might test the robustness against variations in:

- Plasma RF power (±0.1 kW)

- Nebulizer flow rate (±5%)

- Sample introduction system (two different nebulizer types)

- Integration time (±50%)

The output measurements would include signal stability, matrix effects, and the method's sensitivity to slight alterations in these operational parameters, establishing the boundaries for reliable method operation [14].

The Researcher's Toolkit: Essential Materials and Reagents

Successful robustness testing requires careful selection of materials and reagents that mirror final method conditions. The following table outlines critical components:

Table 3: Essential Research Reagent Solutions for Robustness Testing

| Reagent/Material | Function in Robustness Testing | Critical Quality Attributes | Application Notes |

|---|---|---|---|

| Certified Reference Materials (CRMs) | Establish accuracy and traceability; Evaluate method bias | Certified purity and uncertainty; Stability | Select matrix-matched CRMs when available [14] |

| System Suitability Standards | Verify method performance under varied conditions | Well-characterized resolution and response | Should contain all key analytes at relevant concentrations |

| Chromatographic Columns | Evaluate column-to-column and lot-to-lot variability | Reproducible manufacturing specifications | Test at least two different column lots if possible |

| Buffer Components | Assess impact of pH and concentration variations | Pharmaceutical grade purity; Lot consistency | Prepare fresh solutions to avoid degradation effects |

| Mobile Phase Solvents | Determine effect of organic modifier variations | HPLC grade; Low UV absorbance; Controlled water content | Use consistent supplier unless specified otherwise |

| Sample Preparation Reagents | Test impact of extraction efficiency | High purity; Minimal background interference | Include in robustness study if variation expected |

Robustness testing does not exist in isolation but functions as an integral component of the comprehensive method validation framework. The relationship between robustness testing and other validation parameters can be visualized as follows:

As depicted, robustness testing serves as a bridge between method development and full validation, informing the establishment of system suitability tests that ensure the method's continued reliability [30]. The control limits derived from robustness studies become embedded within these system suitability tests, providing ongoing verification that the method remains within its demonstrated robust operating space [14] [30].

Robustness testing represents an indispensable component of analytical method validation, particularly within pharmaceutical development and inorganic analysis where method reliability directly impacts product quality and patient safety. By deliberately challenging method parameters within reasonable operating ranges, researchers can establish a method's resilient operational boundaries and define appropriate system suitability criteria.

The implementation of structured, statistically designed experiments—whether full factorial, fractional factorial, or Plackett-Burman designs—enables efficient evaluation of multiple factors and their potential interactions. This systematic approach to robustness assessment ultimately strengthens the method validation package, facilitates smoother technology transfer, and ensures generation of reliable, defensible analytical data throughout the method's lifecycle.

For researchers and drug development professionals, investing in comprehensive robustness testing during method development and validation represents not merely a regulatory compliance exercise, but a fundamental scientific practice that enhances methodological understanding and ensures data integrity long after method implementation.

Implementing and Applying Validated Inorganic Analytical Methods

The selection of appropriate analytical techniques is a cornerstone of reliable inorganic analysis in pharmaceutical and environmental research. The fundamental principle of method validation is to demonstrate that any analytical procedure is suitable for its intended purpose and will consistently yield reliable results [32]. This guide provides an in-depth technical comparison of four pivotal techniques—High-Performance Liquid Chromatography (HPLC), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), Gas Chromatography (GC), and UV-Vis Spectrophotometry—framed within the rigorous context of analytical method validation requirements for inorganic analysis. With the recent modernization of regulatory guidelines through ICH Q2(R2) and ICH Q14, the approach to method validation has evolved from a prescriptive checklist to a science- and risk-based lifecycle model [18]. This paradigm shift emphasizes building quality into methods from the initial development stages through the definition of an Analytical Target Profile (ATP), which prospectively summarizes the method's intended purpose and required performance characteristics [18].

Technical Comparison of Analytical Techniques

Table 1: Comparison of Key Analytical Techniques for Inorganic Analysis

| Technique | Primary Applications | Detection Limits | Key Strengths | Sample Requirements |

|---|---|---|---|---|

| HPLC | Separation of non-volatile compounds, bio-pharmaceuticals, ions | Variable (depends on detector) | High separation efficiency, biocompatible materials, handles complex mixtures | Liquid samples, may require derivatization |

| ICP-MS | Trace elemental determination, multi-element analysis | ppt (part-per-trillion) range [33] | Exceptional sensitivity, wide dynamic range, isotopic analysis capability | Liquid, solid (with laser ablation), gaseous samples [33] |

| GC | Volatile compounds, residual solvents, environmental contaminants | Variable (depends on detector) | High resolution for complex mixtures, excellent for volatile analytes | Volatile and thermally stable compounds |

| UV-Vis | Quantitative analysis of chromophores, concentration determination | ppm (part-per-million) range [34] | Simple operation, cost-effective, excellent for quantitative analysis | Requires light-absorbing species |

High-Performance Liquid Chromatography (HPLC)

Modern HPLC systems have evolved significantly to address diverse analytical challenges. The Agilent Infinity III LC Series exemplifies current capabilities with pressures up to 1300 bar and flow rates up to 5 mL/min, enabling faster analysis with improved resolution [35]. For biopharmaceutical applications, bio-inert systems constructed with MP35N, gold, ceramic, and polymers provide enhanced resistance to high-salt mobile phases under extreme pH conditions [35]. The Shimadzu i-Series represents trends toward compact, integrated systems with eco-friendly designs that reduce energy consumption while maintaining performance capabilities up to 70 MPa [35]. These advancements make contemporary HPLC particularly valuable for method validation parameters requiring specificity, precision, and accuracy in complex matrices.

Inductively Coupled Plasma Mass Spectrometry (ICP-MS)

ICP-MS has become the premier technique for trace elemental determinations, capable of analyzing approximately 80 elements from the periodic table with detection limits at or below the part-per-trillion (ppt) level [33]. The technique utilizes argon gas as a plasma source to generate ionization temperatures of 6000–10,000 K, efficiently ionizing most elements (>90%) in the hot plasma [33]. The instrumental configuration consists of several critical components: a sample introduction system (nebulizer and spray chamber), ICP-torch and RF coil, vacuum-interface system, interference removal system (collision/reaction cell), ion optics, mass spectrometer filtration system, and detector [33]. For method validation, understanding and controlling ICP-MS interferences—including isobars, polyatomic ions, doubly-charged ions, and physical effects—is essential for obtaining accurate results [33]. The technique's exceptional sensitivity makes it particularly valuable for validating methods requiring extremely low detection and quantitation limits.

Gas Chromatography (GC)

GC method validation ensures that quantitative analysis methods are reliable, accurate, and suitable for their intended purposes in regulated industries such as pharmaceuticals, environmental monitoring, and food safety [36]. The validation parameters for GC include specificity (ability to unambiguously identify target analytes without interference), linearity (typically with a correlation coefficient ≥0.999 across the working range), accuracy (evaluated through recovery studies, typically 98-102%), and precision (both repeatability and intermediate precision with RSD <2% and <3% respectively) [36]. Robustness testing deliberately varies chromatographic parameters such as carrier gas flow or oven temperature to assess the method's resilience to minor operational changes [36]. The use of high-accuracy standards is particularly critical in GC method validation to ensure precise calibration, minimize systematic errors, and meet stringent regulatory requirements from bodies such as the FDA and ICH [36].
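The acceptance criteria quoted above (correlation coefficient ≥0.999, recovery of 98-102%, repeatability RSD <2%) can be checked programmatically. The sketch below is one possible arrangement; the calibration and recovery data and the function names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev

def pearson_r(x, y):
    """Correlation coefficient between concentration and response."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def percent_recovery(measured, spiked):
    return 100 * mean(measured) / spiked

def percent_rsd(values):
    return 100 * stdev(values) / mean(values)

# Hypothetical calibration and spiked-recovery data
conc = [1, 2, 4, 8, 16]
area = [10.1, 19.8, 40.3, 79.9, 160.2]
spiked_results = [9.9, 10.1, 10.0]  # spiked at 10.0

checks = {
    "linearity": pearson_r(conc, area) >= 0.999,
    "accuracy": 98 <= percent_recovery(spiked_results, 10.0) <= 102,
    "repeatability": percent_rsd(spiked_results) < 2,
}
print(checks)
```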

UV-Vis Spectrophotometry

UV-Vis spectrophotometry remains a fundamental technique for quantitative analysis, particularly for compounds containing chromophores. A 2025 study validating a UV-Vis method for ascorbic acid determination in beverage preparations demonstrated excellent linearity (r²=0.995) with LOD and LOQ values of 0.429 ppm and 1.3 ppm respectively [34]. The precision results showed a %RSD of 0.126% with an accuracy (% recovery) of 103.5%, meeting pharmacopeia limits of 90-110% [34]. Similarly, a method for Rifampicin quantification in biological matrices validated according to ICH guidelines demonstrated excellent linearity (r²=0.999), LOD values of 0.25-0.49 μg/mL, and acceptable accuracy (%RE -11.62% to 14.88%) and precision (%RSD 2.06% to 13.29%) [37]. These performance characteristics make UV-Vis a valuable technique for methods where ultra-trace detection isn't required but cost-effectiveness, simplicity, and reliability are priorities.

Analytical Method Validation Framework

Core Validation Parameters

The validation of analytical methods, regardless of the specific technique, requires demonstration that established performance characteristics consistently meet predefined criteria for the intended applications [32] [18]. The core validation parameters defined in ICH Q2(R2) include:

- Accuracy: The closeness of agreement between the measured value and the true value [18]. For trace analysis, accuracy is best established through analysis of certified reference materials (CRMs) or comparison with independent validated methods [14].

- Precision: The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample [18]. This includes repeatability (same operating conditions), intermediate precision (different days, analysts, equipment), and reproducibility (between laboratories) [32].

- Specificity: The ability to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradation products, or matrix components [18].

- Linearity and Range: The ability to obtain test results proportional to analyte concentration within a given range [18]. The range is the interval between upper and lower concentration levels that have been demonstrated to be determined with suitable precision, accuracy, and linearity [36].

- Limit of Detection (LOD) and Limit of Quantitation (LOQ): LOD represents the lowest amount of analyte that can be detected but not necessarily quantified, while LOQ is the lowest amount that can be quantitatively determined with acceptable accuracy and precision [18]. For trace analysis, LOD is defined as 3SD₀ (where SD₀ is the standard deviation as concentration approaches zero), while LOQ is defined as 10SD₀ [14].

- Robustness: The capacity of a method to remain unaffected by small, deliberate variations in method parameters [18]. Robustness testing identifies critical operational parameters and establishes tolerances for their control [14].
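Several of these parameters reduce to short calculations. The sketch below is illustrative only: the function names and replicate values are hypothetical, and it assumes SD₀ can be approximated by the standard deviation of replicate blank measurements.

```python
import statistics

def percent_recovery(measured, certified):
    """Accuracy expressed as mean percent recovery against a certified value."""
    return 100.0 * statistics.mean(measured) / certified

def percent_rsd(values):
    """Precision expressed as percent relative standard deviation (sample SD / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def lod_loq_from_sd0(sd0):
    """LOD = 3*SD0 and LOQ = 10*SD0, the trace-analysis convention quoted above."""
    return 3.0 * sd0, 10.0 * sd0

# Hypothetical replicate results (ug/L) for a CRM certified at 10.0 ug/L
crm_replicates = [9.8, 10.1, 10.0, 9.9, 10.2]
certified_value = 10.0

# Hypothetical blank replicates (ug/L), used here as a stand-in for SD0
blank_replicates = [0.02, 0.05, 0.03, 0.04, 0.01]

recovery = percent_recovery(crm_replicates, certified_value)
rsd = percent_rsd(crm_replicates)
lod, loq = lod_loq_from_sd0(statistics.stdev(blank_replicates))

print(f"Recovery: {recovery:.1f}%  RSD: {rsd:.2f}%")
print(f"LOD: {lod:.3f} ug/L  LOQ: {loq:.3f} ug/L")
```

Whether the resulting recovery and %RSD are acceptable depends on the predefined criteria for the method's intended use, not on the calculation itself.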

Method Validation Lifecycle Approach

The contemporary approach to method validation emphasizes a lifecycle model beginning with the definition of an Analytical Target Profile (ATP) that prospectively summarizes the method's intended purpose and required performance characteristics [18]. This represents a significant evolution from the traditional prescriptive validation approach to a more scientific, risk-based framework that continues throughout the method's entire operational life [18]. The enhanced approach introduced in ICH Q14 allows for greater flexibility in post-approval changes through a science-based control strategy, while maintaining rigorous quality standards [18].

Experimental Protocols for Method Validation

Protocol for ICP-MS Method Validation in Biological Samples

The determination of trace elements like lead and cadmium in blood matrices requires rigorous validation to ensure clinical reliability. A validated method for blood lead (Pb-B) and cadmium (Cd-B) determination using ICP-MS incorporates several critical steps [38]:

- Sample Preparation: Deproteinization of blood samples by addition of 5% nitric acid to remove proteins and minimize the influence of the organic matrix on the results.

- Instrumentation Parameters: An ICP-MS system equipped with a Meinhard concentric glass nebulizer, a cyclonic spray chamber, and nickel-based interface cones, operated at an RF power of 1,075 W and a nebulizer gas flow of 0.8-1.0 L/min, monitoring the isotopes ²⁰⁴Pb, ²⁰⁶Pb, ²⁰⁷Pb, ²⁰⁸Pb, and ¹¹⁴Cd.

- Quality Control: Use of certified reference materials including BCR 634 Lyophilised Human Blood, Seronorm Trace Elements Whole Blood, and Recipe ClinChek Whole Blood Controls.

- Performance Characteristics: Method demonstrates detection limits of 0.16 μg/L for Pb-B and 0.08 μg/L for Cd-B with excellent correlation (r = 0.9988, P < 0.0001 for Pb-B) compared to reference methods [38].
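A correlation coefficient of the kind reported above can be computed from paired results for the same samples measured by both methods. The following is a minimal sketch with hypothetical paired values. It is not the cited study's data or procedure, and a high r alone does not rule out a constant or proportional bias between the two methods.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two paired result sets."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical blood lead results (ug/L) for the same samples
reference_method = [12.0, 25.0, 48.0, 95.0, 150.0]
icp_ms_method = [11.6, 25.9, 47.1, 96.3, 149.2]

r = pearson_r(reference_method, icp_ms_method)
print(f"r = {r:.4f}")
```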

Protocol for UV-Vis Method Validation

The validation of UV-Vis spectrophotometric methods follows ICH guidelines with specific experimental protocols:

- Specificity Testing: Verify the ability to quantify analyte unequivocally in the presence of expected matrix components through scanning across appropriate wavelength ranges [37].

- Linearity and Range: Prepare standard solutions across the concentration range (e.g., 10-18 ppm for ascorbic acid) and analyze them to establish a calibration curve with a correlation coefficient ≥0.999 [34].

- Accuracy and Precision: Perform recovery studies by spiking known amounts of analyte into sample matrix; calculate % recovery and %RSD for repeatability and intermediate precision [34] [37].

- LOD and LOQ Determination: Calculate based on signal-to-noise ratios of 3:1 for LOD and 10:1 for LOQ, or through statistical methods using standard deviation of the response and slope of the calibration curve [36] [34].
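The statistical route to LOD and LOQ can be sketched as follows. The example fits an ordinary least-squares calibration line and applies the ICH Q2 convention LOD = 3.3σ/S and LOQ = 10σ/S, where S is the slope. It assumes σ is taken as the residual standard deviation of the regression (one of several accepted choices), and the absorbance values are hypothetical.

```python
import math

def fit_calibration(conc, response):
    """Ordinary least-squares line; returns slope, intercept, residual SD, r^2."""
    n = len(conc)
    mean_x, mean_y = sum(conc) / n, sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    syy = sum((y - mean_y) ** 2 for y in response)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, response)]
    # n - 2 degrees of freedom: two parameters (slope, intercept) were fitted
    resid_sd = math.sqrt(sum(r * r for r in residuals) / (n - 2))
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, resid_sd, r_squared

# Hypothetical calibration standards (ppm) and absorbance readings
conc_ppm = [10.0, 12.0, 14.0, 16.0, 18.0]
absorbance = [0.402, 0.479, 0.561, 0.640, 0.718]

slope, intercept, sigma, r2 = fit_calibration(conc_ppm, absorbance)
lod_ppm = 3.3 * sigma / slope
loq_ppm = 10.0 * sigma / slope

print(f"slope={slope:.5f}  intercept={intercept:.4f}  r2={r2:.5f}")
print(f"LOD={lod_ppm:.3f} ppm  LOQ={loq_ppm:.3f} ppm")
```

The signal-to-noise approach mentioned above is an alternative that relies on measured baseline noise rather than on the regression statistics.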

Protocol for GC Method Validation

GC method validation requires systematic experimental approaches for each validation parameter:

- Specificity: Compare retention times of analytes in standard solutions to those in sample solutions to ensure accurate identification without interference [36].

- Linearity: Prepare and analyze at least five concentration levels from LOQ to 120% of working level; calculate correlation coefficient with acceptance criteria ≥0.999 [36].

- Robustness Testing: Deliberately vary chromatographic parameters including carrier gas flow rate (±10%), oven temperature (±2°C), and injection volume to assess method resilience [36].
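The linearity levels and robustness conditions described above can be laid out before any injections are made. The sketch below makes two assumptions not stated in the source: the concentration levels are evenly spaced, and the robustness conditions form a full-factorial grid of flow rate and oven temperature. The nominal values are hypothetical, and injection volume is omitted because no tolerance is given for it.

```python
from itertools import product

def linearity_levels(loq, working_level, n_levels=5):
    """Evenly spaced concentrations from the LOQ up to 120% of the working level."""
    upper = 1.2 * working_level
    step = (upper - loq) / (n_levels - 1)
    return [round(loq + i * step, 6) for i in range(n_levels)]

def robustness_conditions(flow_nominal, oven_nominal_c):
    """All combinations of carrier gas flow (+/-10%) and oven temperature (+/-2 C)."""
    flows = [round(flow_nominal * f, 6) for f in (0.9, 1.0, 1.1)]
    temps = [oven_nominal_c - 2.0, oven_nominal_c, oven_nominal_c + 2.0]
    return list(product(flows, temps))

# Hypothetical method settings
levels = linearity_levels(loq=0.5, working_level=10.0)
conditions = robustness_conditions(flow_nominal=1.0, oven_nominal_c=150.0)

print("Linearity levels:", levels)
for flow, temp in conditions:
    print(f"flow={flow:.2f} mL/min, oven={temp:.1f} C")
```

A full-factorial grid grows quickly as parameters are added, so a fractional design may be preferable when more than a few factors are varied.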

Essential Research Reagent Solutions

Table 2: Key Research Reagents for Analytical Method Validation

| Reagent/Material | Technical Function | Application Examples |
|---|---|---|
| Certified Reference Materials (CRMs) | Provides matrix-matched quality control with certified analyte concentrations | BCR 634 Lyophilised Human Blood for ICP-MS [38] |
| High-Purity Acids & Reagents | Minimizes contamination background in trace analysis | 5% HNO₃ for blood deproteinization in ICP-MS [38] |
| Internal Standard Solutions | Corrects for instrument drift and matrix effects | Rhodium or Iridium in ICP-MS blood analysis [38] |
| Chromatography Columns | Stationary phases for compound separation | Bio-inert columns for HPLC analysis of biopharmaceuticals [35] |
| Calibration Standards | Establishes quantitative relationship between response and concentration | Trace Metal Analysis Standards for ICP-MS and AAS [38] |

Technique Selection Strategy

Selecting the appropriate analytical technique requires systematic consideration of multiple factors aligned with the method's intended purpose:

- Define Analytical Requirements: Establish detection limit needs, required precision, analytical range, sample throughput, and matrix complexity before technique selection.

- Match Technique to Application: Reserve ICP-MS for ultra-trace elemental analysis (ppt levels), HPLC for non-volatile compound separation, GC for volatile compounds, and UV-Vis for routine quantification where sensitivity requirements are less stringent.

- Consider Infrastructure and Expertise: Evaluate available instrumentation, operational costs, and technical expertise required for each technique.

- Plan for Validation Early: Incorporate validation requirements during method development rather than as a final step, using risk assessment to focus validation efforts on critical method aspects [18].

- Implement Lifecycle Management: Establish procedures for ongoing method monitoring and controlled change management to maintain validated status throughout the method's operational life [18].

The selection of analytical techniques for inorganic analysis must be guided by both technical capabilities and validation requirements to ensure generated data meets quality standards. HPLC excels in separating complex mixtures of non-volatile compounds, ICP-MS provides unparalleled sensitivity for trace elemental analysis, GC offers high resolution for volatile compounds, and UV-Vis delivers cost-effective quantitative analysis. The modern validation framework emphasized in ICH Q2(R2) and Q14 promotes a systematic, lifecycle approach that begins with clear definition of analytical requirements and continues through controlled method changes. By integrating appropriate technique selection with rigorous validation practices, researchers can ensure their analytical methods consistently generate reliable, defensible data suitable for regulatory submissions and critical decision-making in pharmaceutical development and environmental monitoring.

Method development is a critical, systematic process in pharmaceutical analysis that transforms an analytical objective into a validated, reliable procedure [39]. Within the framework of inorganic analytical method validation research, this process ensures that the resulting method is not only scientifically sound but also meets stringent regulatory requirements for consistency, accuracy, and precision. A well-developed method serves as the foundation for quality control, stability studies, and bioavailability research, making its robustness paramount. This guide provides a step-by-step approach, from initial scoping to final optimization, specifically contextualized for researchers, scientists, and drug development professionals engaged in the analysis of inorganic compounds and related substances. The process demands a balance between scientific rigor and practical applicability, often requiring compromises between resolution, sensitivity, and analysis time.

Phase 1: Objective Definition and Scoping

The first phase focuses on precisely defining the analytical problem and establishing the boundaries of the method. A poorly defined objective inevitably leads to a method that, while technically functional, fails to address the core analytical need.

1.1 Define the Primary Analytical Question

Begin by articulating the fundamental question the method must answer. This involves identifying the specific analytes (e.g., active pharmaceutical ingredient (API), key inorganic impurities, degradants) and the primary goal of the analysis, such as assay/potency testing, impurity profiling, dissolution testing, or content uniformity [39]. The goal dictates the performance requirements; for instance, an impurity method requires higher sensitivity and the ability to resolve closely eluting compounds compared to an assay method.

1.2 Establish Method Requirements and Constraints

Formalize the criteria for success and the practical limitations of the method. This includes:

- Target Acceptance Criteria: Define the required specificity, accuracy, precision, linearity, range, and robustness based on regulatory guidelines (e.g., ICH Q2(R2)).

- Sample Characteristics: Determine the sample matrix's complexity, the physicochemical properties of the analytes (e.g., solubility, pKa, stability, chromophores), and the required sample preparation steps [39].

- Throughput and Resource Constraints: Consider the required sample throughput, available equipment (HPLC, IC, ICP-MS), and the operational environment (e.g., quality control lab versus research lab).

Phase 2: Method Selection and Initial Setup

With a clear objective, the next phase involves selecting the most appropriate analytical technique and initial conditions based on the nature of the analytes.

2.1 Technique Selection

The choice of technique is predominantly guided by the analytes' chemical nature. While Reverse Phase Chromatography is suitable for many organic molecules, inorganic analytes often require specialized techniques [39].

- Ion Exchange Chromatography: This is the preferred technique for the separation of inorganic anions and cations [39].

- Reverse Phase Ion Pairing Chromatography: Can be used for charged inorganic species when paired with an ion-pair reagent [39].

- Size Exclusion Chromatography: Applicable for analyzing inorganic compounds with higher molecular weights, such as certain polymers or complexes [39].

- Inductively Coupled Plasma-Mass Spectrometry (ICP-MS): For ultra-trace elemental analysis and speciation.

2.2 Initial Condition Selection

Consult available literature on the product or similar compounds to inform initial parameter choices [39]. Key considerations include:

- Column Selection: For ion analysis, a suitable ion-exchange column (e.g., with quaternary ammonium groups for anions) is essential [39]. Start with a 100-150 mm column length and a particle size of 3-5 µm for a balance of efficiency and speed [39].

- Detector Selection: If analytes have chromophores, a UV detector is suitable. For trace analysis of specific inorganic ions, more specialized detectors like conductivity (for IC) or mass spectrometry (e.g., the Vocus B CI-TOF-MS for volatile inorganic compounds) are indispensable [40] [39].

- Wavelength: For UV detection, use the λmax of the analyte for greatest sensitivity. Avoid wavelengths below 200 nm due to increased noise [39].

- Elution Mode: Choose between isocratic and gradient elution. Isocratic is simpler and adequate for samples with one or two components. Gradient elution is more effective for complex samples with a wide range of analyte polarities, as it helps achieve higher resolution and reduces retention times for strongly retained components [39].

Table 1: Comparison of Common Separation Modes for Inorganic Analytes

| Separation Mode | Best For | Stationary Phase Example | Mobile Phase |
|---|---|---|---|
| Ion Exchange [39] | Inorganic anions/cations | Quaternary Ammonium (for anions) | Aqueous buffer (e.g., Carbonate/Bicarbonate) |
| Reverse Phase Ion Pairing [39] | Charged species (strong acids/bases) | C18 Bonded | Buffer with Ion-Pair Reagent (e.g., Alkanesulfonate) |
| Size Exclusion [39] | High molecular weight analytes | Porous Polymer/Silica | Aqueous or Organic Solvent |

Phase 3: Parameter Optimization and Robustness Testing

Once initial separation is achieved, the method is systematically refined to improve resolution, efficiency, and speed. This phase employs structured experimentation.

3.1 The Optimization Workflow

The goal is to find the set of conditions that provides adequate resolution (Rs > 2.0 is generally desirable) in the shortest possible runtime. A systematic approach is far more efficient than one-factor-at-a-time (OFAT) experimentation.
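The resolution target can be checked directly from a chromatogram. The sketch below uses the standard baseline-width definition, Rs = 2(t₂ − t₁)/(w₁ + w₂), where t is retention time and w is baseline peak width in the same units. The peak values are hypothetical, and the source does not state which resolution formula it assumes.

```python
def resolution(t1, t2, w1, w2):
    """Resolution between two adjacent peaks from retention times and baseline widths."""
    return 2.0 * (t2 - t1) / (w1 + w2)

# Hypothetical adjacent peaks (all values in minutes)
rs = resolution(t1=4.20, t2=5.00, w1=0.35, w2=0.40)

print(f"Rs = {rs:.2f}")
print("Meets Rs > 2.0 target" if rs > 2.0 else "Below Rs > 2.0 target")
```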

3.2 Key Parameters for Optimization

The following parameters have the most significant impact on separation quality and are the primary levers for optimization [39]:

- Mobile Phase pH and Composition: For ionizable analytes, pH is the most critical parameter. It controls the degree of ionization and thus the analyte's retention. Adjusting the buffer concentration can also impact peak shape and retention.

- Gradient Program and Flow Rate: In gradient elution, the slope of the gradient (i.e., the rate of change of the strong solvent) is key to balancing resolution and runtime. Flow rate directly affects backpressure and analysis time.

- Column Temperature: Temperature influences retention, efficiency, and selectivity. Increasing temperature typically reduces retention time and backpressure and can improve peak shape.

Table 2: Parameter Optimization Guide

| Parameter | Primary Effect | Typical Adjustment Range | Considerations |

|---|---|---|---|