Environmental Scanning in Biomedical Research: A Guide to Methods, Applications, and Best Practices

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to environmental scanning methodologies. It covers foundational concepts, defines environmental scanning within a health services and research context, and explores its critical role in informing strategic decision-making. The piece details practical methodological frameworks like RADAR-ES, PESTLE, and SWOT, supported by real-world applications from clinical and translational science. It also addresses common challenges such as data overload and ethical considerations, and concludes with guidance on validating findings and comparing environmental scanning to related methodological approaches to ensure rigorous, actionable insights for biomedical innovation.

What is Environmental Scanning? Defining the Core Concept for Researchers

In the contemporary landscape of drug development and scientific research, the methodologies of Business Intelligence (BI) and environmental scanning have become indispensable for navigating complex data ecosystems and accelerating discovery. Business Intelligence comprises the technological processes, strategies, and tools that organizations use to analyze business information and transform raw data into meaningful, actionable insights [1] [2]. When systematically applied within a research context—particularly the rigorous framework of environmental scanning—these disciplines empower scientists and drug development professionals to convert vast, multi-source data into strategic intelligence. This technical guide delineates the core principles, processes, and applications of BI and environmental scanning, providing researchers with a structured methodology to enhance data-driven decision-making in scientific innovation.

Deconstructing Business Intelligence: Core Concepts and Processes

Defining the Business Intelligence Framework

Business Intelligence (BI) is a set of technological processes for the collection, management, and analysis of organizational data to yield insights that inform strategic and operational decisions [1]. It enables organizations to gain a comprehensive view of their operations and market context by combining data from internal sources (e.g., financial and operational data) and external sources (e.g., market data, competitor information) [2]. This integrated approach creates intelligence that would not be possible from any single data source alone. The ultimate objective of BI is to allow for the easy interpretation of large data volumes, helping organizations identify new strategic opportunities and achieve a competitive advantage [2].

The Evolution of Business Intelligence

The term "business intelligence" was coined in 1865 by Richard Millar Devens, who used it to describe how banker Sir Henry Furnese profited from receiving and acting upon environmental information before his competitors [2]. The modern conceptualization began to take shape in 1958, when IBM researcher Hans Peter Luhn defined intelligence as "the ability to apprehend the interrelationships of presented facts in such a way as to guide action towards a desired goal" [2]. The field matured technologically in the late 20th century with the development of data management systems, decision support systems (DSS), and eventually the sophisticated BI platforms in use today [1].

Key BI Processes and Workflows

The BI process typically follows a structured workflow that transforms raw data into actionable intelligence [1]:

- Data Identification and Sourcing: Recognizing relevant data from diverse sources including data warehouses, data lakes, cloud storage, industry statistics, supply chain systems, CRM platforms, and social media.

- Data Collection and Preparation: Gathering and cleaning data through manual collection (e.g., spreadsheets) or automated ETL (Extract, Transform, Load) programs.

- Data Analysis: Applying data mining, discovery, and modeling tools to identify trends, patterns, and anomalies.

- Data Visualization and Reporting: Presenting findings through reports, charts, and interactive dashboards using tools like Tableau, Cognos Analytics, or Microsoft Excel.

- Action Plan Development: Formulating actionable insights based on historical data analysis against key performance indicators (KPIs).
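The workflow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the records, the field names (`site`, `enrolled`, `target`), and the 80% KPI threshold are all hypothetical and do not come from the cited sources.

```python
from statistics import mean

# Hypothetical raw records, standing in for data pulled from several sources.
RAW_RECORDS = [
    {"site": "A", "enrolled": "42", "target": "50"},
    {"site": "B", "enrolled": "", "target": "40"},  # incomplete row
    {"site": "C", "enrolled": "18", "target": "30"},
]


def prepare(records):
    """Collection and preparation: drop incomplete rows and convert types."""
    clean = []
    for row in records:
        if row["enrolled"] and row["target"]:
            clean.append({
                "site": row["site"],
                "enrolled": int(row["enrolled"]),
                "target": int(row["target"]),
            })
    return clean


def analyze(clean, kpi_threshold=0.8):
    """Analysis: compute an enrollment rate per site and compare it to a KPI."""
    results = []
    for row in clean:
        rate = row["enrolled"] / row["target"]
        results.append({
            "site": row["site"],
            "rate": rate,
            "meets_kpi": rate >= kpi_threshold,
        })
    return results


def report(results):
    """Reporting: one text line per site plus the overall mean rate."""
    lines = [
        f"{r['site']}: {r['rate']:.0%} {'OK' if r['meets_kpi'] else 'BELOW KPI'}"
        for r in results
    ]
    lines.append(f"mean rate: {mean(r['rate'] for r in results):.0%}")
    return lines


RESULTS = analyze(prepare(RAW_RECORDS))
REPORT = report(RESULTS)
for line in REPORT:
    print(line)
```

Each function maps to one workflow stage, so a stage can be swapped out (for example, replacing the in-memory list with a database query) without touching the others.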

Business Intelligence vs. Business Analytics

A crucial distinction exists between Business Intelligence (BI) and Business Analytics (BA). BI is primarily descriptive, focusing on what has happened and what is currently happening in the business based on existing data. It answers questions like "How many new customers were acquired last month?" or "Is order size increasing or decreasing?" In contrast, Business Analytics is a subset of BI that is prescriptive and forward-looking, using statistical and predictive models to recommend what should be done to achieve desired outcomes [1]. For example, BA might predict which marketing strategies would most benefit the organization based on historical data patterns.

Table 1: Core Components of Business Intelligence Systems

| Component Category | Specific Elements | Function in BI Architecture |
|---|---|---|
| Data Management | Data Warehousing, Data Marts, Data Integration | Aggregates data from multiple sources into a centralized repository for analysis [1] [2]. |
| Analysis Techniques | Online Analytical Processing (OLAP), Data Mining, Process Mining | Supports multidimensional queries and pattern discovery in large datasets [1] [2]. |
| Reporting & Visualization | Dashboards, KPIs, Performance Metrics | Communicates insights through accessible visual formats for timely decision-making [2]. |
| Advanced Analytics | Predictive Modeling, Prescriptive Analytics, Text Mining | Uses statistical techniques to forecast future trends and optimize decisions [2]. |

Environmental Scanning: The Research Methodology Framework

Defining Environmental Scanning in Research Context

Environmental scanning is the systematic process of gathering, analyzing, and interpreting relevant data about the internal and external environment of an organization to predict future events and identify opportunities and threats [3]. For researchers and drug development professionals, it serves as a critical component of strategic planning, helping them understand how their scientific domain and market landscape are evolving. When properly implemented as a continuous process, environmental scanning enables research organizations to stay ahead of disruptive technologies, identify emerging research opportunities, and drive innovation through data-informed strategy [3].

Core Elements of Environmental Scanning

Effective environmental scanning in research environments focuses on four key elements [3]:

- Emerging Trends Monitoring: Tracking shifts in scientific paradigms, research methodologies, funding patterns, and publication trends that signal important developments in a field.

- New Market Entries and Technologies: Identifying new research tools, technological platforms, competitor institutions, and collaborative opportunities entering the scientific landscape.

- Substitute Offerings and Methodologies: Recognizing alternative approaches, technologies, or solutions that could potentially replace current research methodologies or therapeutic strategies.

- Forward-looking Indicators: Monitoring signals of change through grant announcements, regulatory guidance, patent applications, and scientific conference proceedings.

Structured Methodologies for Environmental Scanning

PESTEL Analysis Framework

The PESTEL analysis provides a comprehensive framework for scanning the macro-environmental factors affecting research organizations [3]:

- Political: Government policies on research funding, intellectual property laws, and regulatory approval pathways for new therapeutics.

- Economic: Economic conditions affecting research investment, healthcare funding models, and resource allocation for R&D.

- Social: Demographic trends, patient advocacy movements, and public acceptance of novel therapies or technologies.

- Technological: Advancements in research technologies, computational capabilities, and disruptive innovations in drug development platforms.

- Environmental: Environmental regulations affecting laboratory practices, sustainability initiatives, and green chemistry requirements.

- Legal: Compliance requirements, ethical guidelines, liability considerations, and legal frameworks governing research.
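One simple way to apply the six PESTEL categories during a scan is to tag each incoming finding by keyword. The sketch below assumes a hand-built keyword list; the `PESTEL_KEYWORDS` terms, the `tag_finding` helper, and the sample findings are hypothetical and would need tuning for a real domain.

```python
# Hypothetical keyword lists; a real scan would tune these to its own domain.
PESTEL_KEYWORDS = {
    "Political": ["funding policy", "government", "approval pathway"],
    "Economic": ["investment", "budget", "reimbursement"],
    "Social": ["patient advocacy", "demographic", "public acceptance"],
    "Technological": ["platform", "machine learning", "sequencing"],
    "Environmental": ["sustainability", "green chemistry", "waste"],
    "Legal": ["compliance", "liability", "patent"],
}


def tag_finding(text):
    """Return every PESTEL category whose keywords appear in the text."""
    lowered = text.lower()
    return [
        category
        for category, words in PESTEL_KEYWORDS.items()
        if any(word in lowered for word in words)
    ]


FINDINGS = [
    "New government approval pathway announced for gene therapies",
    "Venture investment in machine learning drug discovery platforms rises",
    "Stricter solvent waste rules push labs toward green chemistry",
]

TAGGED = {finding: tag_finding(finding) for finding in FINDINGS}
for finding, categories in TAGGED.items():
    print(categories, "-", finding)
```

A finding can carry more than one tag, which is usually desirable: the second sample is both an economic and a technological signal. Plain substring matching is crude, so the tags are best treated as a first pass that an analyst reviews.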

SWOT Analysis Framework

The SWOT analysis assesses an organization's internal Strengths and Weaknesses alongside external Opportunities and Threats [3]. For research institutions, this involves:

- Strengths: Core research competencies, specialized equipment, proprietary technologies, and distinguished scientific personnel.

- Weaknesses: Resource limitations, technical capability gaps, procedural inefficiencies, and knowledge deficits.

- Opportunities: Emerging research fields, collaborative partnerships, funding initiatives, and technological breakthroughs.

- Threats: Competitive pressures, funding cuts, regulatory challenges, and intellectual property conflicts.

Competitive Intelligence Gathering

Competitive intelligence involves systematically gathering and analyzing information about competitor activities, strategies, and innovations [3]. For drug development, this includes monitoring competitor clinical trials, publication outputs, patent applications, and regulatory submissions to identify market gaps and strategic opportunities.

Integrating BI and Environmental Scanning in Pharmaceutical Research

Strategic Applications in Drug Development

The integration of BI and environmental scanning creates a powerful framework for pharmaceutical research and development:

- Research Portfolio Optimization: Using BI tools to analyze historical research performance data combined with environmental scanning of emerging scientific trends to allocate resources to the most promising therapeutic areas.

- Clinical Trial Strategy: Applying environmental scanning to identify suitable trial sites, patient populations, and regulatory requirements while using BI to monitor trial progress and operational efficiency.

- Competitive Positioning: Combining BI analysis of internal capabilities with environmental scanning of competitor pipelines and market dynamics to identify strategic advantages.

- Technology Adoption Decisions: Using environmental scanning to identify emerging research technologies and BI to analyze their potential return on investment based on historical adoption patterns.

Quantitative Data Management in Research BI

Effective BI implementation in research requires systematic handling of quantitative data. The presentation of this data follows established statistical principles [4] [5]:

Table 2: Quantitative Data Presentation Methods in Research BI

| Presentation Method | Best Use Cases | Implementation Guidelines |
|---|---|---|
| Frequency Distribution Tables | Initial data organization, identifying patterns [4]. | 6-16 class intervals of equal size; clear headings with units specified [4] [5]. |
| Histograms | Displaying distribution of continuous data [4] [5]. | Contiguous bars with area proportional to frequency; horizontal axis as number line [5]. |
| Frequency Polygons | Comparing multiple distributions on same diagram [4]. | Points placed at midpoint of intervals connected by straight lines [5]. |
| Line Diagrams | Illustrating trends over time [4]. | Time on horizontal axis, measured variable on vertical axis; useful for research metrics. |
| Scatter Diagrams | Demonstrating correlation between two variables [4]. | Plotting paired measurements to visualize relationships and patterns. |
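The first and third rows of the table can be made concrete with a short sketch: building a frequency distribution with equal-size class intervals, and computing the interval midpoints at which a frequency polygon would place its points. The sample values (assay turnaround times in days) and the function name `frequency_table` are hypothetical.

```python
def frequency_table(values, n_classes=6):
    """Group values into n_classes equal-width class intervals.

    Returns a list of (lower, upper, midpoint, count) tuples. Each interval
    is half-open, [lower, upper), except the last one, which also includes
    its upper bound so that the maximum value is counted.
    """
    low, high = min(values), max(values)
    if high == low:
        raise ValueError("all values are identical; cannot form intervals")
    width = (high - low) / n_classes
    counts = [0] * n_classes
    for v in values:
        # Clamp so that the maximum value falls into the last interval.
        index = min(int((v - low) / width), n_classes - 1)
        counts[index] += 1
    table = []
    for i, count in enumerate(counts):
        lower = low + i * width
        upper = lower + width
        table.append((lower, upper, (lower + upper) / 2, count))
    return table


# Hypothetical data: assay turnaround times in days.
TURNAROUND_DAYS = [2, 3, 5, 7, 8, 8, 9, 11, 12, 14, 15, 17, 18, 20]
TABLE = frequency_table(TURNAROUND_DAYS, n_classes=6)

print("interval (days)   midpoint   frequency")
for lower, upper, midpoint, count in TABLE:
    print(f"{lower:5.1f} - {upper:5.1f}   {midpoint:8.1f}   {count:9d}")
```

The `count` column is what a histogram would draw as bar heights, and the `(midpoint, count)` pairs are the points a frequency polygon would connect with straight lines.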

Experimental Protocols for Environmental Scanning Research

For researchers implementing environmental scanning methodologies, the following protocol provides a structured approach:

Protocol Title: Comprehensive Environmental Scanning for Research Strategy Development

Objective: To systematically identify, analyze, and interpret external and internal factors affecting research direction and resource allocation.

Methodology:

- Define Scanning Boundaries: Establish the scope of the scan including therapeutic areas, technologies, timeframes, and geographic considerations.

- Identify Information Sources: Catalog relevant databases, scientific publications, patent repositories, conference proceedings, regulatory documents, and expert networks.

- Implement Data Collection: Deploy both automated tools (e.g., literature alerts, AI-based monitoring) and manual methods (e.g., expert interviews, conference attendance).

- Analyze and Synthesize: Apply structured frameworks (PESTEL, SWOT) to categorize findings and identify interrelationships.

- Validate Findings: Cross-reference information across multiple sources and consult with domain experts to confirm significance.

- Disseminate Intelligence: Distribute synthesized findings through reports, dashboards, and presentations tailored to different stakeholder groups.

Quality Control: Establish criteria for source credibility, implement cross-validation procedures, and document all methodologies for reproducibility.
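The validation and quality-control steps can be approximated with a simple corroboration rule: a finding counts as cross-validated once it has been seen in a minimum number of distinct source types. The sketch below is one possible reading of that step, not a prescribed procedure; the observations, the `validate` helper, and the two-source threshold are assumptions.

```python
from collections import defaultdict

# Hypothetical scan observations as (finding, source type) pairs.
OBSERVATIONS = [
    ("target X shows efficacy in preclinical models", "preprint"),
    ("target X shows efficacy in preclinical models", "conference abstract"),
    ("target X shows efficacy in preclinical models", "patent filing"),
    ("competitor Y pauses phase II trial", "social media"),
]


def validate(observations, min_sources=2):
    """Flag each finding as corroborated if it appears in enough distinct source types."""
    sources = defaultdict(set)
    for finding, source in observations:
        sources[finding].add(source)
    return {
        finding: {
            "sources": sorted(found),
            "corroborated": len(found) >= min_sources,
        }
        for finding, found in sources.items()
    }


VALIDATED = validate(OBSERVATIONS)
for finding, result in VALIDATED.items():
    status = "corroborated" if result["corroborated"] else "needs expert review"
    print(f"{status}: {finding} ({', '.join(result['sources'])})")
```

Findings that fail the threshold are not discarded; they are the natural candidates for the expert consultation described in the protocol.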

Visualization Frameworks for Research Intelligence

Business Intelligence Process Workflow

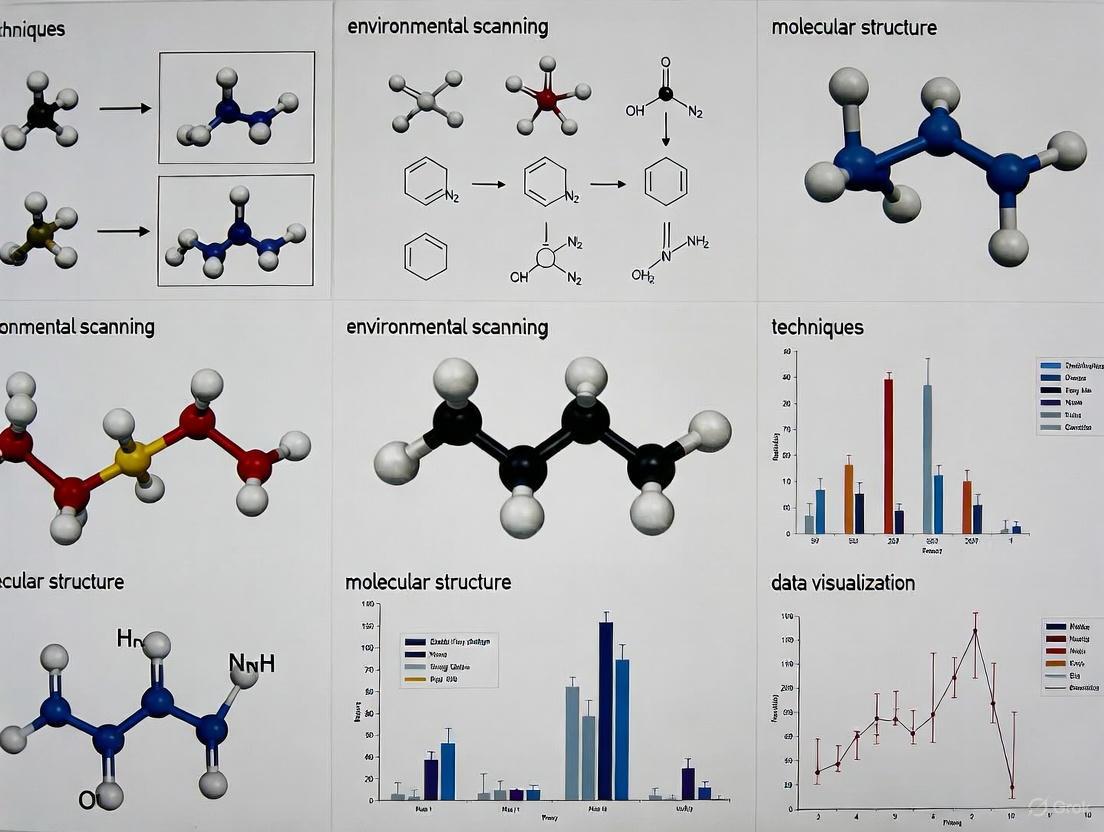

The following diagram illustrates the integrated workflow of business intelligence processes within research environments:

Environmental Scanning Framework

Diagram: The comprehensive environmental scanning framework essential for research strategy.

The Researcher's Toolkit: Essential Solutions for Intelligence Operations

Table 3: Research Reagent Solutions for Intelligence Operations

| Tool Category | Specific Solutions | Research Application & Function |
|---|---|---|
| Data Integration Tools | ETL Platforms, Data Warehouses, Data Lakes | Consolidate structured and unstructured research data from multiple sources for unified analysis [1] [2]. |
| Analytical Engines | OLAP Systems, Statistical Software, Predictive Modeling Tools | Enable multidimensional analysis of research data and predictive forecasting of research outcomes [1] [2]. |
| Visualization Platforms | BI Dashboards, Scientific Graphing Tools, Mapping Software | Transform complex research data into accessible visual formats for interpretation and decision-making [1] [4]. |
| Competitive Intelligence | Patent Databases, Publication Alert Systems, Clinical Trial Registers | Monitor competitor research activities, publication outputs, and intellectual property developments [3]. |
| Environmental Monitoring | AI Literature Scanners, Regulatory Tracking, Social Media Analytics | Systematically track external developments in science, technology, regulations, and market dynamics [3]. |

The strategic integration of Business Intelligence methodologies with systematic environmental scanning creates a powerful framework for advancing drug development and scientific research. By adopting these structured approaches to data collection, analysis, and interpretation, research organizations can transform disconnected information into actionable intelligence. This synthesis enables more effective strategic planning, optimized resource allocation, and enhanced competitive positioning in the rapidly evolving scientific landscape. As the volume and complexity of research data continue to grow, these disciplined approaches to intelligence gathering and analysis become increasingly essential for research organizations committed to innovation and scientific excellence.

Research and Development (R&D) serves as a critical engine for innovation, economic growth, and addressing complex societal challenges. Its purpose extends far beyond the laboratory; effective R&D directly informs evidence-based program development and strategic policy-making. In an era of rapid scientific advancement, environmental scanning has emerged as a vital methodological approach that enables R&D organizations to systematically collect, analyze, and utilize internal and external data. This process enhances strategic planning and ensures that R&D investments are aligned with evolving needs and opportunities [6] [7].

Environmental scanning is defined as "the acquisition and use of information about events, trends, and relationships in an organization's external environment, the knowledge of which would assist management in planning the organization's future course of action" [8]. For R&D-intensive fields like pharmaceutical development, this involves analyzing multiple domains including technological advancements, regulatory landscapes, funding priorities, and public health needs. By building a picture of where health and medicine are heading, environmental scanning helps organizations anticipate emerging issues and trends and keep pace with change, making it an indispensable tool for R&D managers and policy-makers [6].

Environmental Scanning Methodologies for R&D

Core Process Models

Environmental scanning employs structured methodologies to transform raw data into actionable intelligence. Most models propose six main steps for conducting an environmental scan in complex systems like healthcare R&D [6]. These steps have been refined through applications in various public health and research contexts:

- Step 1: Determine Leadership and Capacity: Designate a coordinator or team to champion the entire environmental scan process from development to dissemination with clear roles and responsibilities [7].

- Step 2: Establish Focal Area and Purpose: Specify a clear purpose to anchor the process and focus the organization's limited time, energy, and resources. This ensures the scan remains focused and its scope clear [7].

- Step 3: Create Timeline and Incremental Goals: Establish a timeline at the outset, planning activities to optimize the process and stay on task. This is particularly crucial for time-sensitive R&D fields [7].

- Step 4: Determine Information Needs: Brainstorm all topics and resources that could inform the environmental scan, casting a wide net to avoid missing critical information [7].

- Step 5: Identify and Engage Stakeholders: Create a diverse, iterative list of people or organizations that have information on each topic. Stakeholders are key to success and may expand the original list of topics [7].

- Step 6: Collect, Analyze, and Disseminate: Implement the information gathering through determined methods, analyze findings, and share results with stakeholders to inform strategic planning and decision-making [7].

Data Collection Frameworks

Environmental scanning integrates multiple strategies for information collection, employing mixed-methods approaches that combine quantitative and qualitative data [8] [7]. The specific methodology should be tailored to the R&D context and strategic objectives.

Table 1: Environmental Scanning Data Collection Methods for R&D

| Method Type | Specific Approaches | Application in R&D Context |
|---|---|---|
| Literature Assessment | Systematic reviews, gray literature analysis, patent databases | Identifying technological gaps, assessing competitive landscape, avoiding research duplication |
| Stakeholder Engagement | Key informant interviews, focus groups, surveys | Understanding user needs, clinical adoption barriers, practitioner perspectives |
| Policy Analysis | Legislative tracking, regulatory guideline review | Anticipating compliance requirements, identifying policy barriers or incentives |
| Data Analysis | Secondary data analysis, market research, clinical trends | Quantifying disease burden, identifying unmet medical needs, market sizing |

One published protocol for an environmental scan in medical research combined a formal information search with an explanatory design that draws on both quantitative and qualitative data. Surveys collected demographic information and participants' experience of, and interest in, research and scholarly activities; focus groups then gathered qualitative perspectives on research expansion [8].

R&D Funding Landscape and Policy Implications

Federal R&D Investment Priorities

Understanding the funding landscape is crucial for R&D planning and policy development. Federal support for R&D focuses on potential returns related to national defense, public health, public safety, the environment, energy security, and advancing knowledge generally [9].

Table 2: Federal R&D Funding Profile: FY2024-FY2026 Request (budget authority, in current dollars)

| Agency/Department | FY2024 Actual (in billions) | FY2025 Estimate (in billions) | FY2026 Request (in billions) | Percentage Change (FY2025-FY2026) | Primary Focus Areas |
|---|---|---|---|---|---|
| Department of Defense (DOD) | - | $91.9 | $112.9 | +23% | National security technologies, experimental development |
| National Institutes of Health (NIH) | - | $46.0 | $27.0 | -41% | Basic biomedical research, translational medicine |
| Department of Energy (DOE) | - | $19.9 | $16.7 | -16% | Energy security, basic physical sciences |
| NASA | - | $11.0 | $7.2 | -34% | Aeronautics, space technologies |
| National Science Foundation (NSF) | - | $7.0 | $3.1 | -55% | Fundamental science, engineering research |
| Total Federal R&D | - | $192.2 | $181.4 | -6% | Cross-cutting national priorities |

Source: CRS, calculated from Office of Management and Budget [9]

The distribution of R&D funding signals strategic priorities, with the majority concentrated in a subset of federal agencies. Approximately 92% of the total R&D funding requested in the President's FY2026 budget would go to five agencies, with DOD (62%) and NIH (15%) combined accounting for 77% of all proposed federal R&D funding [9].
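The percentage changes and funding shares quoted above can be recomputed directly from the table. The sketch below does so, assuming that the "five agencies" are the five listed in the table; because the table's dollar figures are already rounded to one decimal place, a recomputed value can differ from the published percentage by about a point (NSF is the visible case).

```python
# Figures in billions of dollars, copied from the table above: (FY2025, FY2026).
FUNDING = {
    "DOD": (91.9, 112.9),
    "NIH": (46.0, 27.0),
    "DOE": (19.9, 16.7),
    "NASA": (11.0, 7.2),
    "NSF": (7.0, 3.1),
}
TOTAL_FY2025, TOTAL_FY2026 = 192.2, 181.4


def percent_change(old, new):
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


def share(part, whole):
    """Part as a percentage of whole."""
    return part / whole * 100


CHANGES = {a: round(percent_change(old, new), 1) for a, (old, new) in FUNDING.items()}
SHARES = {a: round(share(new, TOTAL_FY2026), 1) for a, (_, new) in FUNDING.items()}
TOTAL_CHANGE = round(percent_change(TOTAL_FY2025, TOTAL_FY2026), 1)
TOP_FIVE_SHARE = round(share(sum(new for _, new in FUNDING.values()), TOTAL_FY2026), 1)

for agency in FUNDING:
    print(f"{agency:5s} change {CHANGES[agency]:+6.1f}%   share of FY2026 {SHARES[agency]:5.1f}%")
print(f"Total change {TOTAL_CHANGE:+.1f}%, listed agencies hold {TOP_FIVE_SHARE:.1f}% of the request")
```

Recomputing figures like this is a cheap validation step when a scan pulls numbers from secondary sources.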

Tax Policy as an R&D Incentive Mechanism

Beyond direct funding, tax incentives represent a critical policy tool for stimulating private-sector R&D investment. The research and development (R&D) tax credit directly reduces tax liability dollar-for-dollar, unlike deductions that only reduce taxable income [10].

Recent legislative changes have significant implications for R&D strategy:

- The One Big Beautiful Bill Act (OBBBA) provides relief to taxpayers by restoring the option to fully deduct domestic R&D expenses, reversing prior capitalization rules that mandated five-year amortization for domestic R&D [10].

- The law introduces Section 174A, which restores full expensing for domestic R&D expenditures for tax years beginning after December 31, 2024 [10].

- Small businesses may apply up to $500,000 of their R&D credits against both employer Social Security and Medicare taxes, providing crucial cash-flow benefits to early-stage organizations investing in R&D [10].
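The difference between a credit and a deduction is easiest to see with numbers. The following sketch is illustrative arithmetic only, not tax guidance: the income and R&D amounts are invented, a flat 21% rate is assumed, and the helper names are hypothetical. It also ignores carryforwards, eligibility rules, and every other detail of the actual credit calculation.

```python
TAX_RATE = 0.21  # assumed flat rate, for illustration only


def tax_with_deduction(income, deduction, rate=TAX_RATE):
    """A deduction lowers taxable income before the rate is applied."""
    return max(income - deduction, 0) * rate


def tax_with_credit(income, credit, rate=TAX_RATE):
    """A credit is subtracted from the tax bill itself, dollar for dollar."""
    return max(income * rate - credit, 0)


def payroll_offset(credit, cap=500_000):
    """Portion of a credit that could be applied against payroll taxes under the cap."""
    return min(credit, cap)


INCOME = 1_000_000   # hypothetical taxable income
AMOUNT = 100_000     # hypothetical deduction or credit of the same size

BASELINE = INCOME * TAX_RATE
SAVED_BY_DEDUCTION = BASELINE - tax_with_deduction(INCOME, AMOUNT)
SAVED_BY_CREDIT = BASELINE - tax_with_credit(INCOME, AMOUNT)

print(f"Tax with no relief:        ${BASELINE:,.0f}")
print(f"Saved by $100k deduction:  ${SAVED_BY_DEDUCTION:,.0f}")
print(f"Saved by $100k credit:     ${SAVED_BY_CREDIT:,.0f}")
print(f"Payroll offset for a $750k credit: ${payroll_offset(750_000):,.0f}")
```

Under these assumptions the same nominal amount is worth the full $100,000 as a credit but only the rate times the amount as a deduction.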

Experimental Protocols and Documentation Standards

Qualification Framework for R&D Activities

The R&D tax credit operates under specific qualification rules centered on a four-part test that provides a framework for defining legitimate R&D activities [10]:

- Permitted Purpose: Activities must aim to develop new or improved business components (function, performance, reliability, quality).

- Technological in Nature: The research must fundamentally rely on principles of physical, biological, engineering, or computer sciences.

- Elimination of Uncertainty: Activities must intend to discover information that eliminates technical uncertainty regarding capability, methodology, or design.

- Process of Experimentation: Substantially all activities must involve a process of evaluating alternatives through testing, modeling, simulation, or trial and error.

Documentation and Compliance Protocols

Recent court cases have emphasized the importance of proper documentation when claiming R&D credits. The following documentation practices are essential for both compliance and effective knowledge management [10]:

- Record Technical Uncertainties: Document the specific technical challenges and unknown factors at project inception.

- Document Experimentation Processes: Maintain detailed records of hypotheses tested, experimental designs, results, and iterative modifications.

- Associate Costs with Activities: Implement time-tracking systems that create a nexus between employee time, supply costs, and specific R&D projects.

Proposed changes to Form 6765 will require detailed information about business components or projects eligible for the R&D tax credit, including lists of qualified R&D business components, amount of qualified research expenses claimed for each, and descriptions of information sought and alternatives evaluated [10].
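Linking costs to specific projects, as described above, amounts to a roll-up of time and supply records by project. The sketch below shows one minimal way to do it; the record layout, project names, hours, and rates are all hypothetical, and it makes no claim about which costs actually qualify for the credit.

```python
from collections import defaultdict

# Hypothetical time-tracking and supply records; all names and amounts are invented.
TIME_ENTRIES = [
    {"employee": "chemist_1", "project": "assay-redesign", "hours": 12, "hourly_cost": 80},
    {"employee": "chemist_2", "project": "assay-redesign", "hours": 8, "hourly_cost": 75},
    {"employee": "engineer_1", "project": "formulation-v2", "hours": 20, "hourly_cost": 90},
]
SUPPLY_COSTS = [
    {"project": "assay-redesign", "amount": 450},
    {"project": "formulation-v2", "amount": 1200},
]


def costs_by_project(time_entries, supply_costs):
    """Roll up hours, wage cost, and supply cost for each project."""
    totals = defaultdict(lambda: {"hours": 0, "wages": 0, "supplies": 0})
    for entry in time_entries:
        project = totals[entry["project"]]
        project["hours"] += entry["hours"]
        project["wages"] += entry["hours"] * entry["hourly_cost"]
    for supply in supply_costs:
        totals[supply["project"]]["supplies"] += supply["amount"]
    # Add a combined total so each project has a single per-component figure.
    return {
        name: dict(t, total=t["wages"] + t["supplies"])
        for name, t in totals.items()
    }


PROJECT_COSTS = costs_by_project(TIME_ENTRIES, SUPPLY_COSTS)
for name, t in PROJECT_COSTS.items():
    print(f"{name}: {t['hours']} h, wages ${t['wages']}, supplies ${t['supplies']}, total ${t['total']}")
```

Keeping the roll-up keyed by project mirrors the per-component reporting described for Form 6765, so the same records serve both compliance and internal knowledge management.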

Visualization of Environmental Scanning in R&D

The following diagram illustrates the integrated environmental scanning process for R&D organizations, showing how data collection feeds into strategic decision-making for program and policy development.

Diagram: Environmental Scanning Process for R&D

Essential Research Toolkit for R&D Organizations

Table 3: Research Reagent Solutions for Strategic R&D Management

| Tool Category | Specific Solutions | Function in R&D Strategy |
|---|---|---|
| Information Synthesis Tools | Literature surveillance systems, patent analytics, data mining platforms | Identifying emerging technologies, assessing competitive landscape, detecting innovation opportunities |
| Stakeholder Engagement Platforms | Survey tools, interview protocols, focus group guides | Gathering diverse perspectives, validating assumptions, identifying barriers to adoption |
| Policy Analysis Frameworks | Legislative tracking systems, regulatory change alerts | Anticipating policy shifts, identifying compliance requirements, shaping advocacy positions |
| Financial Modeling Tools | R&D tax credit calculators, ROI analysis templates, portfolio optimization models | Quantifying financial impacts, optimizing resource allocation, demonstrating program value |
| Knowledge Management Systems | Electronic lab notebooks, project documentation platforms | Capturing institutional knowledge, supporting compliance requirements, facilitating collaboration |

Environmental scanning provides R&D organizations with a systematic approach to navigating complex and rapidly changing technological landscapes. By implementing structured scanning methodologies, organizations can transform scattered data into strategic intelligence that directly informs program development and policy decisions. Decision-making is the most important task of managers and policy-makers, and environmental scanning models support it by helping them collect, analyze, and interpret data and identify important patterns and trends, so that their decisions rest on evidence [6].

For the pharmaceutical and drug development sector, this integrated approach enables more responsive adaptation to regulatory changes, therapeutic area prioritization, and investment targeting. The critical purpose of R&D therefore expands beyond discovery to encompass strategic intelligence functions that ensure research activities remain aligned with evolving health needs, technological capabilities, and policy environments. Organizations that institutionalize environmental scanning as a core competency position themselves to allocate resources more effectively, anticipate market shifts, and ultimately deliver greater impact through their innovation portfolios.

Environmental scanning is a systematic process for monitoring an organization's external and internal environments to identify early signals of potential changes, opportunities, and threats [11]. For researchers, scientists, and drug development professionals, this practice is crucial for anticipating technological breakthroughs, regulatory shifts, and emerging public health needs. Within this discipline, understanding the hierarchy of signals—from faint early indicators to broad, transformative forces—provides a critical foundation for strategic foresight and proactive research and development planning [12] [13].

This guide details the core terminology of environmental scanning, focusing on three hierarchical concepts: weak signals, micro trends, and macro trends. We will define each concept, distinguish them based on key characteristics, and provide methodologies for their systematic identification and analysis within a research context, particularly relevant to the pharmaceutical and life sciences sectors.

Core Terminology and Hierarchical Relationship

Weak Signals

A weak signal is an early, fragmented indicator of a potential change that may become significant in the future [14]. These signals are often ambiguous, isolated, and emerge from the periphery of a given field. According to Ansoff (1975), they represent simple observations of discontinuities where the underlying causes and potential impacts are not yet fully understood [12]. In a research context, they are the first "storm warnings from tomorrow" [14].

- Characteristics: They are low in signal strength, high in uncertainty, and difficult to detect amidst noise.

- Examples in Drug Development: An early-stage preprint on a novel drug target mechanism; a single clinical trial with an unexpected, minor side effect; a niche patent filing for an unconventional drug delivery system.

Micro Trends

Micro trends are the first concrete signs of emerging patterns. They represent the consolidation of several related weak signals into an observable, more coherent development [12]. They are often the initial manifestation of a new direction within a specific domain or regional market.

- Characteristics: They have a more defined shape and direction than weak signals but are still limited in scope and duration.

- Examples in Drug Development: The growing adoption of a specific digital biomarker for a particular disease class in a specific region; a shift towards patient-centric clinical trial designs within a specific therapeutic area.

Macro Trends

Macro trends are broad, pervasive patterns of change that shape the landscape of entire industries and societies over a longer period [12]. They are powerful, overarching forces that are clearly observable and supported by substantial data. A macro trend is often composed of and reinforced by multiple converging micro trends.

- Characteristics: They are long-lasting, widespread, and impact all areas of life and business.

- Examples in Drug Development: The macro-trend of personalized medicine, driven by advances in genomics and proteomics; the shift towards value-based healthcare, emphasizing patient outcomes over service volume.

The Foresight Iceberg Model

The relationship between these concepts is effectively visualized using the "iceberg model" [12]. In this model, macro trends form the massive, deep foundation of the iceberg. Micro trends are closer to the surface, making up the structure that supports the visible tip. Weak signals are the faint ripples on the water's surface, the first hints of the iceberg's presence and movement. A fad or hype, by contrast, is like a small piece of ice on the surface with no substantial structure beneath it; it is of limited duration and strategic significance [12].

The following diagram illustrates this hierarchical relationship and the process of signal evolution:

Diagram: The Signal Evolution Hierarchy, showing the progression from weak signals to macro trends.

The table below provides a structured comparison of weak signals, micro trends, and macro trends across key dimensions relevant to research and drug development.

| Feature | Weak Signals | Micro Trends | Macro Trends |
|---|---|---|---|
| Definition | Early, fragmented hints of potential change [14] | Observable, concrete developments in specific domains [12] | Broad, pervasive patterns shaping societies and industries [12] |
| Effect Duration | Uncertain; may fade or evolve | 3-5 years [12] | 25-30 years [12] |
| Scope & Impact | Highly localized/niche; potential for high impact | Limited to specific regions, markets, or research fields [12] | Global; impacts all areas of life and business [12] |
| Detection Method | Broad horizon scanning, expert networks, analysis of preprints/patents [14] [11] | Analysis of publication trends, clinical trial registries, market research data [15] | Analysis of long-term datasets, demographic shifts, global policy directions [12] |
| Level of Uncertainty | Very High | Medium | Low |
| Example in Pharma | Single paper on AI-predicted protein folding | Adoption of continuous manufacturing for specific drug types | Global push for regulatory harmonization |

Methodologies for Identification and Analysis

Detecting Weak Signals

Detecting weak signals requires a proactive and systematic scanning strategy because they are easy to overlook due to cognitive biases like confirmation bias [14].

- Experimental Protocol: Passive and Active Environmental Scanning [11]

  - Define Scan Scope: Identify core research areas (e.g., oncology, neurology) and adjacent fields (e.g., materials science, AI).

  - Passive Scanning (Collecting Existing Knowledge):

    - Data Sources: Systematic reviews of preprint servers (e.g., bioRxiv), early-stage patent filings, scientific conference abstracts, and niche expert blogs.

    - Procedure: Use automated alerts with broad keywords. The goal is "casual and opportunistic" data collection from established external contacts and sources [11].

    - Analysis: Log signals in a centralized database (e.g., a dedicated foresight platform [13] or shared spreadsheet). Record the source, date, and initial observation without extensive early interpretation.

  - Active Scanning (Creating New Knowledge):

    - Data Sources: Engaging with key opinion leaders (KOLs) through structured interviews or focus groups; conducting small-scale exploratory surveys.

    - Procedure: The organization takes action by directly engaging with the environment to obtain "rigorous and objective" data and is willing to "revise or update existing knowledge" based on reactions [11].

    - Analysis: Compare insights from active scanning with findings from passive scanning to validate and enrich understanding.
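The logging and comparison steps in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed schema: the `SignalRecord` fields, the `scan_mode` labels, the `corroborated_tags` helper, and the two example entries are all hypothetical and chosen only to mirror the "source, date, and initial observation" guidance above.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class SignalRecord:
    """One logged observation. Field names are illustrative assumptions."""
    source: str        # where the signal was seen, e.g. a preprint or an interview
    observed_on: date  # date the signal was recorded
    observation: str   # initial observation, kept free of early interpretation
    scan_mode: str     # "passive" or "active"
    tags: List[str] = field(default_factory=list)


def corroborated_tags(log: List[SignalRecord]) -> List[str]:
    """Return tags that appear in both passive and active scanning, sorted."""
    passive = {t for r in log if r.scan_mode == "passive" for t in r.tags}
    active = {t for r in log if r.scan_mode == "active" for t in r.tags}
    return sorted(passive & active)


# Made-up entries for illustration only.
log = [
    SignalRecord("bioRxiv preprint", date(2024, 1, 15),
                 "New delivery vector reported", "passive", ["delivery", "vector"]),
    SignalRecord("KOL interview", date(2024, 2, 3),
                 "Expert mentions early vector work", "active", ["vector"]),
]
print(corroborated_tags(log))  # ['vector']
```

Tags seen in both scanning modes are candidates for closer follow-up; a shared spreadsheet with the same columns would serve the same purpose.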

Tracking and Validating Trends

Once weak signals are identified, the next step is to track their potential evolution into trends.

- Experimental Protocol: Trend Tracking through Automated Research [15]

  - Define Objectives & Metrics: Select consistent metrics aligned with strategic goals (e.g., researcher adoption rates of a new technology, shifting investor focus in a therapeutic area).

  - Questionnaire Design: Develop a streamlined survey with core tracking questions that remain consistent over time. Incorporate advanced methods like Key Driver Analysis to understand underlying factors.

  - Data Collection Waves: Launch research on a consistent schedule (e.g., quarterly, annually) to the same target audience parameters for reliable comparison.

  - Statistical Analysis: Use automated statistical testing to identify significant lifts or declines in key metrics between research waves, distinguishing meaningful shifts from random noise [15].
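As one possible reading of the statistical analysis step, the sketch below compares an adoption rate between two survey waves with a pooled two-proportion z-test. The choice of test is an assumption made here for illustration; the source does not specify which test a tracking platform applies, and the wave counts are invented.

```python
import math


def two_proportion_z_test(x1, n1, x2, n2):
    """Pooled two-proportion z-test for wave 1 (x1 of n1) vs wave 2 (x2 of n2).

    Returns (z, two_sided_p_value). Uses the normal approximation, so it is
    only appropriate for reasonably large samples.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("standard error is zero; proportions are all 0 or all 1")
    z = (p2 - p1) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 120 of 400 respondents report adoption in wave 1,
# 156 of 400 in wave 2.
z, p = two_proportion_z_test(120, 400, 156, 400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

When many metrics are tested in each wave, some correction for multiple comparisons is usually needed before calling a shift meaningful.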

The workflow for the entire process, from detection to strategic action, is shown below:

Diagram: Workflow for Identifying Signals and Trends.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key tools and platforms that facilitate effective environmental scanning and trend analysis in a research context.

| Tool / Solution | Function | Application in Research |
|---|---|---|
| Preprint Server Alerts (e.g., bioRxiv) | Passive scanning of non-peer-reviewed early research [11] | Detecting weak signals of novel mechanistic pathways or methodological innovations. |
| Patent Database Analytics | Tracking early-stage intellectual property and innovation landscapes. | Identifying weak signals in drug delivery systems or new therapeutic compound classes. |
| Automated Trend Tracking Platform (e.g., quantilope) | Systematic measurement of quantitative data over time with statistical testing [15] | Validating the growth of micro-trends, such as shifting clinician preferences for drug attributes. |
| Foresight Platform (e.g., FIBRES) | Centralized repository for collecting, clustering, and tracking signals and trends [13] | Enabling collaborative sense-making and monitoring the evolution of weak signals into micro trends. |
| Structured Expert Elicitation | Formal process for gathering and quantifying expert judgment [11] | Interpreting ambiguous weak signals and estimating the potential impact of emerging trends. |

For drug development professionals and researchers, mastering the distinction between weak signals, micro trends, and macro trends is not an academic exercise but a strategic imperative. A robust environmental scanning system that actively monitors for weak signals while tracking established trends enables organizations to move from a reactive to a proactive stance. This foresight is the foundation for building resilience, driving innovation, and ultimately delivering transformative therapies in an increasingly complex and fast-paced world. By implementing the methodologies and utilizing the tools outlined in this guide, research teams can better anticipate the future, ensuring they are not left behind as the scientific landscape evolves.

Environmental scanning (ES) is a crucial methodological approach in health services delivery research (HSDR), employed to examine a wide range of healthcare services, practices, policies, issues, programs, technologies, trends, and opportunities through the collection, synthesis, and analysis of existing and potentially new data from a variety of sources [16]. This process helps inform decision-making in shaping responses to current challenges and future health service delivery needs. Originating in the disciplines of business and information science in the 1960s, environmental scanning became an integral part of strategic planning to identify trends and potential threats to improve organizational performance [17]. Despite its widespread adoption in healthcare, a significant lack of methodological guidance has persisted, leading to inconsistent implementation and reporting of ES in the literature [16] [6].

The RADAR-ES framework emerges as a comprehensive solution to these challenges, providing researchers and health services stakeholders with a structured, evidence-informed methodological framework for conceptualizing, planning, and implementing environmental scans specifically in HSDR contexts [16] [18]. Developed through a rigorous process that adapted McMeekin et al.'s methodology for framework development, RADAR-ES integrates findings from literature reviews, stakeholder surveys, and Delphi studies with experts in the field [16]. This framework addresses a critical gap in health services research methodology by offering standardized guidance that enhances the consistency, quality, and trustworthiness of environmental scanning activities.

The RADAR-ES Framework: Core Components and Principles

Definition and Conceptual Foundation

The RADAR-ES framework operationalizes environmental scanning in health services research as "a methodology used to examine a wide range of healthcare services, practices, policies, issues, programs, technologies, trends, and opportunities through the collection, synthesis, and analysis of existing and potentially new data from a variety of sources to help inform decision-making in shaping responses to current challenges and future health service delivery needs" [16]. This comprehensive definition establishes ES as a distinct methodology rather than merely a data collection technique, emphasizing its role in evidence-informed decision-making for healthcare services.

The conceptual foundation of RADAR-ES distinguishes it from other methodological approaches commonly confused with environmental scanning, such as scoping reviews or mixed methods designs [16]. While these methodologies may share some characteristics with ES, RADAR-ES positions environmental scanning as a unique approach specifically focused on understanding current services, issues, trends, and other aspects of service delivery through the examination of both existing and new data sources [16]. This differentiation is crucial for researchers seeking to select the most appropriate methodological approach for their specific research questions in health services delivery.

The Five Phases of RADAR-ES

The RADAR-ES framework consists of five distinct phases that guide researchers through the entire process of conducting an environmental scan in health services research [16] [18]. These phases provide a logical sequence for conceptualizing, planning, implementing, and reporting ES findings:

Phase 1: Recognizing the Issue - This initial phase involves identifying and defining the specific health services issue, problem, or phenomenon that will be the focus of the environmental scan. Researchers establish the scope, context, and purpose of the scan during this foundational stage.

Phase 2: Assessing Factors for ES - In this phase, researchers conduct a preliminary assessment of factors relevant to the environmental scan, including available resources, data sources, stakeholder interests, and potential constraints that might influence the scanning process.

Phase 3: Developing an ES Protocol - This phase involves creating a comprehensive protocol that outlines the methodological approach, data collection strategies, analysis methods, and timeline for the environmental scan. The protocol serves as a roadmap for the entire ES process.

Phase 4: Acquiring and Analyzing the Data - During this phase, researchers implement the data collection strategies outlined in the protocol, gathering information from diverse sources, then synthesizing and analyzing this data to identify key patterns, trends, and insights.

Phase 5: Reporting the Results - The final phase focuses on effectively communicating the findings of the environmental scan to relevant stakeholders, including recommendations for how these findings should inform decision-making in health services delivery.

Table 1: The Five Phases of the RADAR-ES Framework

| Phase | Title | Key Activities |
|---|---|---|
| 1 | Recognizing the Issue | Problem identification, scope definition, context establishment |
| 2 | Assessing Factors for ES | Resource evaluation, stakeholder analysis, constraint identification |
| 3 | Developing an ES Protocol | Methodology selection, data collection planning, timeline creation |
| 4 | Acquiring and Analyzing the Data | Information gathering, synthesis, pattern identification |
| 5 | Reporting the Results | Findings communication, recommendation development, knowledge translation |

Methodological Workflow

The following diagram illustrates the sequential workflow and key decision points within the RADAR-ES framework's five-phase structure:

Detailed Methodological Protocols for RADAR-ES Implementation

Phase 1: Recognizing the Issue

The initial phase of RADAR-ES requires researchers to clearly identify and articulate the health services issue that will be the focus of the environmental scan. This process begins with a preliminary literature review to establish what is already known about the topic and to identify knowledge gaps [16]. Researchers should engage key stakeholders early in this phase to ensure the issue is relevant and meaningful to health services delivery contexts. This collaborative approach helps refine the research focus and establishes shared ownership of the scanning process.

Protocol implementation for this phase involves developing a clear problem statement that specifies the health services context, target population (if applicable), and the specific aspects of service delivery to be examined. Researchers should document the scope boundaries, including any limitations or exclusions, to maintain focus throughout the scanning process. Establishing explicit inclusion and exclusion criteria at this stage provides methodological rigor and ensures the environmental scan remains feasible within resource constraints [16].

Phase 2: Assessing Factors for ES

This assessment phase requires a systematic evaluation of internal and external factors that may influence the environmental scan. Internal factors include available expertise, budgetary constraints, timeframe, and technological resources [3]. External factors encompass the political, economic, social, technological, environmental, and legal (PESTEL) context that might affect the scanning process or its findings [3] [19]. This comprehensive assessment ensures the environmental scan is designed with realistic parameters and adequate support structures.

Methodologically, this phase incorporates tools such as stakeholder analysis matrices and resource inventories to systematically catalog relevant factors [16]. Researchers should identify potential data sources, including existing literature, gray literature, administrative data, expert opinions, and emerging information sources. The assessment should also evaluate potential barriers to data access and strategies to address these challenges. Documenting this assessment provides transparency and helps justify methodological decisions made in subsequent phases.

Phase 3: Developing an ES Protocol

The protocol development phase represents the core planning component of RADAR-ES, where researchers create a comprehensive roadmap for the entire environmental scan. The protocol should explicitly outline the methodological approach, including specific procedures for data identification, selection, extraction, and synthesis [16]. This includes detailing search strategies for literature databases, criteria for including or excluding information sources, and methods for documenting the search process to ensure reproducibility.

A robust ES protocol must also address ethical considerations, particularly when the scan involves human stakeholders or sensitive organizational data [16]. The protocol should establish quality assurance mechanisms, such as peer review of search strategies or independent double-screening of sources, to enhance the rigor and credibility of findings. Additionally, researchers should develop a timeline with specific milestones and deliverables, assigning clear responsibilities to team members to ensure accountability throughout the scanning process.
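Where a protocol uses independent double-screening, agreement between the two screeners is commonly summarized with Cohen's kappa. The sketch below is an illustrative assumption about how a team might check this; RADAR-ES itself is not stated to require kappa, and the include/exclude decisions shown are invented.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items.

    kappa = (observed agreement - chance agreement) / (1 - chance agreement)
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement from each rater's marginal label frequencies.
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions for ten candidate sources.
screener_1 = ["include", "include", "exclude", "exclude", "include",
              "exclude", "exclude", "exclude", "include", "exclude"]
screener_2 = ["include", "exclude", "exclude", "exclude", "include",
              "exclude", "include", "exclude", "include", "exclude"]
print(round(cohens_kappa(screener_1, screener_2), 3))
```

A low kappa during a pilot round is usually a prompt to tighten the inclusion and exclusion criteria before full screening begins.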

Phase 4: Acquiring and Analyzing the Data

During the data acquisition and analysis phase, researchers implement the strategies outlined in the ES protocol to gather and synthesize information from diverse sources. Data collection typically involves multiple approaches, including systematic literature searches, review of organizational documents, stakeholder interviews, surveys, and observation of service delivery environments [16]. The multi-method approach ensures comprehensive coverage of the issue from various perspectives, enhancing the validity of findings.

Analysis in environmental scanning follows an iterative process of data synthesis, pattern identification, and meaning-making [16]. Unlike systematic reviews that focus primarily on published literature, ES analysis integrates information from diverse sources to develop a holistic understanding of the current landscape. Analytical techniques may include thematic analysis for qualitative data, descriptive statistics for quantitative data, and triangulation across different data sources to verify findings. The analysis should identify not only current trends and practices but also emerging issues, innovations, and potential future developments in health services delivery.
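Triangulation across data sources can be approximated by counting how many distinct source types support each theme. The sketch below is a deliberately simple illustration: the `triangulate` helper, the two-source threshold, and the example themes are assumptions, and in practice the coding of themes would come from the qualitative analysis itself.

```python
from collections import defaultdict


def triangulate(findings, min_source_types=2):
    """Keep themes supported by at least `min_source_types` distinct source types.

    `findings` is an iterable of (theme, source_type) pairs.
    Returns {theme: sorted list of supporting source types}.
    """
    sources_by_theme = defaultdict(set)
    for theme, source_type in findings:
        sources_by_theme[theme].add(source_type)
    return {
        theme: sorted(types)
        for theme, types in sources_by_theme.items()
        if len(types) >= min_source_types
    }


# Hypothetical coded findings from a scan.
findings = [
    ("telehealth uptake", "published literature"),
    ("telehealth uptake", "stakeholder interview"),
    ("telehealth uptake", "organizational data"),
    ("staff shortages", "stakeholder interview"),
    ("staff shortages", "stakeholder interview"),
]
print(triangulate(findings))
```

Themes that fail the threshold are not discarded as wrong; they are simply flagged as resting on a single type of evidence.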

Phase 5: Reporting the Results

The final phase focuses on effectively communicating the environmental scan findings to relevant stakeholders. Reporting should be tailored to different audiences, including researchers, healthcare administrators, policy makers, and practitioners [16]. A comprehensive ES report typically includes an executive summary, introduction/methodology, detailed findings organized thematically, discussion of implications, and specific recommendations for decision-making. Visual representations such as matrices, maps, or diagrams can enhance understanding of complex relationships and patterns identified through the scan.

Beyond traditional reporting formats, knowledge translation activities should facilitate the application of ES findings to health services delivery contexts [16]. This may include presentations to stakeholder groups, development of policy briefs, creation of decision support tools, or workshop facilitation to discuss implementation strategies. Researchers should also consider disseminating findings through peer-reviewed publications to contribute to the methodological advancement of environmental scanning in health services research.

Table 2: Data Types and Sources for Environmental Scans in Health Services Research

| Data Category | Specific Sources | Application in Health Services Research |
|---|---|---|
| Published Literature | Academic journals, books, conference proceedings | Evidence-based practices, theoretical frameworks, methodological approaches |
| Gray Literature | Technical reports, working papers, government documents, theses | Policy contexts, unpublished initiatives, implementation experiences |
| Organizational Data | Annual reports, strategic plans, service statistics, performance metrics | Institutional contexts, resource allocation, service patterns |
| Stakeholder Input | Expert interviews, focus groups, surveys, deliberative dialogues | Practical insights, contextual understanding, consensus building |
| Digital Sources | Social media, websites, databases, registries | Emerging trends, public perceptions, innovation tracking |

The Researcher's Toolkit: Essential Methods and Analytical Frameworks

Complementary Analytical Frameworks

Environmental scanning in health services research frequently incorporates established analytical frameworks to structure the examination of internal and external factors. The RADAR-ES framework is compatible with several such approaches that help organize and interpret scan findings:

PESTEL Analysis: This framework examines macro-environmental factors across Political, Economic, Social, Technological, Environmental, and Legal domains [3] [19]. In health services research, political factors might include healthcare policies and regulations; economic factors encompass funding models and resource allocation; social factors consider demographic and cultural trends; technological factors address innovations in treatment and delivery; environmental factors involve physical infrastructure and spatial considerations; and legal factors include compliance requirements and liability issues.

SWOT Analysis: This approach assesses internal Strengths and Weaknesses alongside external Opportunities and Threats [3] [19]. For health services applications, strengths might include specialized expertise or efficient processes; weaknesses could involve resource limitations or access barriers; opportunities may encompass emerging technologies or partnership potential; and threats might include competing services or changing reimbursement models.

STEEP Analysis: Similar to PESTEL, this framework categorizes external factors into Social, Technological, Economic, Environmental, and Political domains [20]. This variation is particularly useful for scans focused on broader societal trends affecting health services delivery, such as aging populations, digital health adoption, economic constraints, climate health impacts, or health policy reforms.

These analytical frameworks provide structured approaches to categorize and make sense of the diverse information collected during an environmental scan, facilitating systematic comparison across different domains and identification of interrelationships between factors.

Successful implementation of RADAR-ES requires various tools and resources throughout the five phases. The following table details essential components of the researcher's toolkit for conducting environmental scans in health services research:

Table 3: Research Reagent Solutions for Environmental Scanning in Health Services

| Tool/Resource | Function | Application in RADAR-ES |
|---|---|---|
| Literature Databases | Access to peer-reviewed research | Systematic identification of published evidence during data acquisition |
| Gray Literature Search Protocols | Identification of unpublished materials | Locating organizational reports, policy documents, and implementation guides |
| Stakeholder Engagement Frameworks | Structured involvement of key informants | Ensuring relevant perspectives inform issue recognition and factor assessment |
| Data Management Systems | Organization and storage of diverse information | Managing multiple data types throughout acquisition and analysis phases |
| Qualitative Analysis Software | Systematic coding and interpretation of textual data | Supporting thematic analysis of interviews, documents, and other qualitative sources |
| Visualization Tools | Graphical representation of patterns and relationships | Communicating complex findings in reporting phase through maps and diagrams |

Applications in Health Services and Drug Development Contexts

The RADAR-ES framework has significant applicability across various health services and drug development contexts. In health services delivery research, environmental scanning can inform strategic planning, service development, policy formulation, and quality improvement initiatives [16] [6]. Specific applications include assessing community health needs, evaluating implementation readiness for new interventions, identifying barriers to service access, and mapping available resources for specific patient populations.

In drug development and pharmaceutical services, environmental scanning provides systematic approaches to monitor the rapidly evolving landscape of therapeutic innovations, regulatory changes, market dynamics, and healthcare system readiness for new treatments [6]. Environmental scans can identify emerging research priorities, track competitor activities, assess adoption barriers for novel therapies, and inform patient access strategies. The structured approach of RADAR-ES ensures these scanning activities produce comprehensive, reliable information to support evidence-based decision-making throughout the drug development lifecycle.

The methodology is particularly valuable for understanding complex health service environments where multiple factors interact to influence delivery outcomes. By systematically examining practices, policies, technologies, and trends from diverse data sources, RADAR-ES enables researchers and health service stakeholders to develop nuanced understandings of current challenges and future needs in healthcare delivery [16]. This comprehensive perspective is essential for developing responsive, effective strategies in dynamic healthcare environments characterized by rapid technological change, evolving patient expectations, and constrained resources.

The RADAR-ES framework represents a significant advancement in the methodology of environmental scanning for health services research. By providing a structured, five-phase approach to conceptualizing, planning, and implementing environmental scans, this framework addresses a critical gap in methodological guidance previously noted by researchers and stakeholders [16] [6]. The standardized procedures enhance the consistency, quality, and trustworthiness of ES findings, supporting more robust evidence-informed decision-making in health services delivery.

For researchers, scientists, and drug development professionals, RADAR-ES offers a comprehensive methodology for examining the complex landscapes in which healthcare services operate and new therapies are developed and implemented. The framework's flexibility allows adaptation to various contexts while maintaining methodological rigor, making it suitable for diverse applications across the health sector. As environmental scanning continues to evolve as a distinct methodology, RADAR-ES provides a solid foundation for further methodological refinement and application in addressing current and future challenges in health services delivery.

Environmental scanning represents a critical, systematic methodology within the drug development landscape, enabling organizations to navigate immense complexity and uncertainty. This technical guide delineates how structured scanning of scientific, technological, regulatory, and competitive environments facilitates the identification of emerging opportunities and the early detection of potential risks. By integrating quantitative models, data visualization, and strategic analysis, environmental scanning provides a foundational evidence-base for decision-making, from exploratory research through late-stage clinical trials. Framed within a broader thesis on environmental scanning techniques, this whitepaper offers drug development professionals a rigorous framework to enhance R&D efficiency, optimize resource allocation, and ultimately improve the probability of success in delivering new therapies.

In the context of drug development, environmental scanning is defined as the systematic process of collecting, analyzing, and interpreting external and internal data to inform strategic decision-making [6]. The drug development industry faces a critical paradox: despite monumental advancements in foundational sciences like genomics and biotechnology, the rate of new molecular entity approval has remained stagnant amid skyrocketing research and development expenditures [21]. This inefficiency is frequently compounded by an attrition rate of 40-50% for chemical entities even in Phase III clinical trials, representing catastrophic late-stage failures [21]. Environmental scanning serves as an organizational imperative to counter these trends by raising awareness of emerging pressures, including scientific breakthroughs, regulatory shifts, competitive landscapes, and evolving patient demographics [6].

The core value proposition of environmental scanning lies in its ability to transform raw data into actionable intelligence. For research scientists and development professionals, this translates to multiple strategic advantages:

- Informed Go/No-Go Decisions: Providing quantitative and qualitative evidence to guide portfolio prioritization.

- Strategic Resource Allocation: Directing investments toward the most promising targets and technologies.

- Risk Mitigation: Identifying potential obstacles early in the development lifecycle when course corrections are less costly.

- Opportunity Identification: Revealing novel therapeutic avenues, collaborative partnerships, or underserved markets.

Within a research framework, environmental scanning moves beyond passive observation to become an active, disciplined process that is integrated throughout the drug development value chain.

Core Principles and Methodological Framework

The application of environmental scanning in healthcare and drug development is not merely an ad-hoc activity but a structured process. A recent scoping review of the healthcare literature identified that the most practical models typically encompass six primary steps [6]. These steps provide a reproducible methodology for research teams.

Table 1: Core Steps in the Environmental Scanning Process for Drug Development

| Step | Process Description | Key Activities in Drug Development Context |
|---|---|---|
| 1. Data Collection | Systematic gathering of internal and external data [6]. | Mining scientific literature, patent databases, clinical trial registries, regulatory guidance, and competitive intelligence. |
| 2. Data Organization | Structuring and categorizing collected information for analysis [6]. | Using standardized taxonomies for therapeutic areas, technologies, and development phases. |
| 3. Data Analysis | Interpreting data to identify significant patterns and trends [6]. | Applying statistical models, trend analysis, and SWOT (Strengths, Weaknesses, Opportunities, Threats) frameworks. |
| 4. Interpretation | Deriving meaning from the analysis to understand implications [6]. | Assessing the strategic impact of a new scientific discovery or a competitor's clinical trial result. |
| 5. Strategic Planning | Integrating insights into the organization's decision-making and planning processes [6]. | Updating target product profiles, refining clinical development plans, or adjusting research priorities. |
| 6. Monitoring & Alerting | Continuously tracking the environment for changes and early warnings [6]. | Setting up automated alerts for specific keywords, competitors, or regulatory updates. |

The effectiveness of this process hinges on several core principles. It must be continuous rather than episodic, systematic to ensure comprehensive coverage, and integrated so that insights are fed directly into R&D and strategic planning workflows [6]. Furthermore, the process should leverage both passive scanning (broad monitoring) and active searching (focused inquiry for specific information) to balance serendipity with direction.
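The monitoring and alerting step can be illustrated with a small keyword matcher. This is a sketch under stated assumptions: the `match_alerts` function, the watchlist terms, and the item titles are hypothetical, and a production setup would more likely rely on the alerting features of the literature, registry, or regulatory sources themselves.

```python
import re


def match_alerts(items, watchlist):
    """Return (title, matched_terms) for each item mentioning a watchlist term.

    Matching is case-insensitive and respects word boundaries, so "trial"
    does not match "trials" unless both are listed.
    """
    patterns = {
        term: re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        for term in watchlist
    }
    hits = []
    for title in items:
        matched = [term for term, pattern in patterns.items() if pattern.search(title)]
        if matched:
            hits.append((title, matched))
    return hits


# Hypothetical feed items and watchlist.
items = [
    "Draft guidance on Continuous Manufacturing released",
    "Phase 2 readout for digital biomarker study",
    "Quarterly earnings summary",
]
watchlist = ["continuous manufacturing", "digital biomarker"]
for title, terms in match_alerts(items, watchlist):
    print(terms, "->", title)
```

Broad terms suit passive scanning, while narrow, specific terms suit active searching; the same function covers both by swapping the watchlist.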

The following diagram illustrates the cyclical and iterative nature of this process, highlighting how it fuels a continuous learning cycle.

Quantitative Modeling and Data Integration

A cornerstone of modern environmental scanning in drug development is the adoption of Model-Based Drug Development (MBDD). MBDD is a paradigm and mindset that promotes the use of mathematical models to delineate the path and focus of drug development [21]. In this framework, models serve as both the instruments and the aims of development, creating an iterative cycle where models inform strategy, and new data refines the models [21].

Key Modeling Concepts and Definitions

To ensure clarity, it is essential to distinguish between several related quantitative disciplines often referenced in this context:

- Pharmacokinetic-Pharmacodynamic (PK-PD) Modeling: A mathematical approach that links the change in drug concentration over time to the relationship between the concentration at the effect site and the intensity of the observed response [21].

- Exposure-Response Modeling: A similar approach to PK-PD modeling, where "exposure" can be a drug concentration or a summary metric (e.g., AUC), and "response" can be any efficacy or safety measure [21]. This term is often favored in clinical settings.

- Pharmacometrics: The scientific discipline that uses mathematical models based on biology, pharmacology, physiology, and disease for quantifying the interactions between drugs and patients [21]. It bridges data and information from various sources.

- Quantitative Pharmacology: A multidisciplinary approach that emphasizes the integration of relationships between diseases, drug characteristics, and individual variability across studies and development phases for rational decision-making [21].

- Model-Based Drug Development (MBDD): The overarching paradigm that encompasses the use of all available information and knowledge, formalized through models, to improve the efficiency and success rate of the entire drug development process [21].

The following workflow depicts how these quantitative elements integrate into a cohesive MBDD strategy, from pre-clinical to clinical stages.

Experimental Protocols for Key Modeling Exercises

To ground these concepts in practical application, below is a detailed methodology for a foundational environmental scanning activity: developing an integrated exposure-response model to inform Phase 3 trial design.

Protocol: Integrated Exposure-Response Analysis for Dose Selection and Trial Powering

Objective: To quantify the relationship between drug exposure (e.g., steady-state concentration) and primary clinical efficacy endpoint(s) and safety markers, enabling optimal dose selection and sample size calculation for a Phase 3 registrational trial.

Data Sources and Preparation:

- Source Data: Aggregate all available PK, PD, efficacy, and safety data from Phase 1 and Phase 2 studies.

- Data Formatting: Standardize the dataset into a format suited to non-linear mixed-effects modeling (e.g., .csv). Essential columns include STUDYID, USUBJID, TIMESTAMP, ACTIVITY (e.g., "Dosing", "PK Sample", "Efficacy Assessment"), DV (dependent variable, e.g., concentration, efficacy score), and relevant covariates (e.g., weight, renal function, disease severity) [21].

- Quality Control: Perform rigorous data cleaning and validation to identify and address outliers or missing data patterns.
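The data preparation items above can be sketched as a small structural check run before any modeling. This is a minimal illustration using only the Python standard library. The function name `check_dataset`, the covariate columns `WT` and `CRCL`, and the rule that dosing records may leave DV blank are assumptions made for the example, not requirements from the cited source.

```python
import csv
import io

# Column names follow the protocol text above. The covariate columns used in
# the example data (WT, CRCL) are hypothetical.
REQUIRED_COLUMNS = ["STUDYID", "USUBJID", "TIMESTAMP", "ACTIVITY", "DV"]
ALLOWED_ACTIVITIES = {"Dosing", "PK Sample", "Efficacy Assessment"}


def check_dataset(csv_text):
    """Return a list of human-readable problems found in the dataset."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        return [f"missing columns: {', '.join(missing)}"]
    problems = []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        if row["ACTIVITY"] not in ALLOWED_ACTIVITIES:
            problems.append(f"line {line_no}: unknown ACTIVITY {row['ACTIVITY']!r}")
        # Assumption: dosing records carry no observation, so DV may be blank.
        if row["ACTIVITY"] != "Dosing":
            try:
                float(row["DV"])
            except (TypeError, ValueError):
                problems.append(f"line {line_no}: DV is not numeric ({row['DV']!r})")
    return problems


example = """STUDYID,USUBJID,TIMESTAMP,ACTIVITY,DV,WT,CRCL
S01,S01-001,2024-01-01T08:00,Dosing,,72.5,95
S01,S01-001,2024-01-01T10:00,PK Sample,12.4,72.5,95
S01,S01-001,2024-01-15T09:00,Efficacy Assessment,n/a,72.5,95
"""

for problem in check_dataset(example):
    print(problem)
```

A check like this only catches structural problems. Outlier detection and missing-data patterns still need the subject-matter review described under Quality Control.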

Modeling Software and Tools:

- Primary Software: Utilize non-linear mixed-effects modeling software such as NONMEM, R (with the nlmixr package), Monolix, or Phoenix NLME.

- Scripting: Develop and version-control model script files for full reproducibility.

Model Development Steps:

- Step 1: Base Model Development. Develop a structural model describing the typical relationship between exposure and response. For continuous endpoints, this may be a linear, Emax, or logistic model. Estimate between-subject and residual variability.

- Step 2: Covariate Model Building. Systematically test the influence of patient demographics, pathophysiological factors, and other covariates on model parameters to understand sources of variability.

- Step 3: Model Evaluation. Validate the final model using diagnostic plots (e.g., observed vs. predicted, residuals), visual predictive checks (VPC), and bootstrap analysis.
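To make the structural model in Step 1 concrete, the sketch below fits an Emax curve, E = E0 + Emax * C / (EC50 + C), to pooled exposure-response data. It is deliberately simplified: it is an ordinary least-squares fit in plain Python that ignores between-subject variability and covariates, so it is not a substitute for the mixed-effects tools named above. The function names, the EC50 search grid, and the example data are all hypothetical.

```python
def emax_response(conc, e0, emax, ec50):
    """Emax model: baseline response plus a saturating drug effect."""
    return e0 + emax * conc / (ec50 + conc)


def fit_emax(concs, responses, ec50_grid):
    """Pooled least-squares fit of the Emax model.

    For a fixed EC50 the model is linear in E0 and Emax, so those two are
    solved in closed form and EC50 is chosen from the supplied grid.
    """
    n = len(concs)
    mean_y = sum(responses) / n
    best = None
    for ec50 in ec50_grid:
        x = [c / (ec50 + c) for c in concs]  # transformed exposure
        mean_x = sum(x) / n
        sxx = sum((xi - mean_x) ** 2 for xi in x)
        if sxx == 0:
            continue  # no spread in exposure, slope is not identifiable
        sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, responses))
        emax = sxy / sxx
        e0 = mean_y - emax * mean_x
        sse = sum((yi - (e0 + emax * xi)) ** 2 for xi, yi in zip(x, responses))
        if best is None or sse < best[0]:
            best = (sse, e0, emax, ec50)
    if best is None:
        raise ValueError("no usable EC50 candidate in the grid")
    return best[1], best[2], best[3]


# Hypothetical noise-free data generated from E0=2, Emax=10, EC50=5.
concs = [0.0, 1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
responses = [emax_response(c, 2.0, 10.0, 5.0) for c in concs]

e0_hat, emax_hat, ec50_hat = fit_emax(concs, responses, [float(v) for v in range(1, 21)])
print(f"E0={e0_hat:.2f}, Emax={emax_hat:.2f}, EC50={ec50_hat:.1f}")
```

Profiling over EC50 keeps the example free of optimizer dependencies. A real analysis would estimate all parameters jointly, together with the variability terms from Step 1.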

Simulation and Application:

- Virtual Population: Simulate a virtual patient population for the planned Phase 3 trial, reflecting the expected demographic and clinical characteristics.

- Trial Simulation: Simulate the clinical outcome for the virtual population across a range of proposed doses and sample sizes.

- Output Analysis: Calculate the probability of trial success (power) for each scenario. The final output is a data-driven recommendation for the Phase 3 dose and sample size, maximizing the chance of success while minimizing patient exposure to subtherapeutic or unsafe doses.
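The simulation steps above can be sketched as a Monte Carlo estimate of power across dose and sample-size scenarios. This is a minimal illustration under strong assumptions that are not from the source: a two-arm design (placebo versus one active dose), a normally distributed endpoint with a known residual standard deviation, a two-sided z-test, and no dropout or covariate effects. The function name `simulate_power` and the model-predicted effects in `predicted_effects` are hypothetical.

```python
import random
from statistics import NormalDist


def simulate_power(effect, sd, n_per_arm, n_trials=1000, alpha=0.05, seed=1):
    """Estimate power as the fraction of simulated two-arm trials in which a
    two-sided z-test (SD treated as known) rejects 'no difference'."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se = sd * (2 / n_per_arm) ** 0.5  # standard error of the mean difference
    successes = 0
    for _ in range(n_trials):
        placebo = sum(rng.gauss(0.0, sd) for _ in range(n_per_arm)) / n_per_arm
        active = sum(rng.gauss(effect, sd) for _ in range(n_per_arm)) / n_per_arm
        if abs((active - placebo) / se) > z_crit:
            successes += 1
    return successes / n_trials


# Hypothetical mean treatment effects, as an exposure-response model
# might predict for two candidate doses.
predicted_effects = {"low dose": 2.0, "high dose": 4.0}

for label, effect in predicted_effects.items():
    for n in (50, 100):
        power = simulate_power(effect, sd=8.0, n_per_arm=n)
        print(f"{label}, n={n} per arm: estimated power {power:.2f}")
```

In practice the simulated outcomes would be drawn from the fitted exposure-response model and the virtual population, including parameter uncertainty, instead of from a fixed effect size.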

Data Visualization for Environmental Intelligence

Effective communication of insights derived from environmental scanning is paramount. The choice of visualization should be dictated by the specific question the data is intended to answer [22]. Below are key chart types relevant to drug development data.

Table 2: Guide to Data Visualization for Environmental Scanning in Drug Development

| Visualization Goal | Recommended Chart Type | Application Example in Drug Development |

|---|---|---|

| Comparison | Bar Chart (Vertical/Horizontal) | Comparing efficacy endpoint values (e.g., mean change from baseline) across different dose groups in a Phase 2 trial [22]. |

| Distribution | Histogram or Density Plot | Visualizing the distribution of pharmacokinetic parameters (e.g., clearance) in a population to identify subpopulations [22]. |

| Relationship | Scatter Plot | Assessing the correlation between a biomarker level and clinical efficacy to support biomarker validation [22]. |

| Composition (Static) | Stacked Bar Chart | Showing the proportion of patients with different grades of adverse events (e.g., Mild, Moderate, Severe) per treatment arm [22]. |

| Composition (Over Time) | Stacked Area Chart | Illustrating the changing proportion of competitor assets across different therapeutic modalities (e.g., small molecule, mAb, cell therapy) over a 10-year period [22]. |

| Multivariate Analysis | Heat Map | Visualizing gene expression data across multiple patient samples or conditions to identify signature patterns for target identification [22]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of experiments that generate critical data for environmental scanning relies on a suite of essential reagents and tools. The following table details key materials used in foundational drug development assays.

Table 3: Key Research Reagent Solutions for Drug Development Assays

| Reagent/Material | Function and Application | Technical Specification Notes |

|---|---|---|

| Human Primary Cells | Provide a physiologically relevant in vitro system for target validation and toxicity screening. | Source (e.g., donor, tissue), passage number, and characterization (e.g., flow cytometry for cell surface markers) are critical. |

| ELISA/Singleplex Assay Kits | Quantify soluble biomarkers, cytokines, or therapeutic drug concentrations in plasma/serum/tissue lysates. | Validate for specificity, sensitivity, and dynamic range in the biological matrix of interest. |

| Phospho-Specific Antibodies | Detect activation states of signaling pathway targets (e.g., p-ERK, p-AKT) via Western Blot or IHC. | Specificity for the phosphorylated epitope must be confirmed via knockout/knockdown controls. |

| LC-MS/MS System | The gold standard for quantitative bioanalysis of small molecule drugs and their metabolites in biological fluids. | Method development must address selectivity, sensitivity, matrix effects, and linearity. |

| Flow Cytometry Panels | Characterize complex cell populations and their phenotypes in blood or tissue samples (e.g., immunophenotyping). | Panel design requires careful fluorochrome brightness and spillover compensation considerations. |

Environmental scanning, when executed as a disciplined, model-driven process, is indispensable for modern drug development. It provides the evidential backbone for strategic decisions, from initial target selection to late-stage clinical trial design. By systematically collecting and analyzing internal and external data, and leveraging quantitative frameworks like MBDD, organizations can illuminate the path forward, identifying promising opportunities while anticipating and mitigating risks that have historically plagued the industry. The integration of these techniques fosters a culture of evidence-based decision-making, ultimately enhancing R&D productivity and accelerating the delivery of new medicines to patients. For the research scientist and development professional, proficiency in these environmental scanning techniques is no longer a luxury but a fundamental component of professional competency.

Frameworks in Action: Applying PESTLE, SWOT, and RADAR-ES in Biomedicine

In the complex landscape of strategic management, environmental scanning provides a systematic approach for organizations to understand both internal and external factors that influence their performance and decision-making. For researchers, scientists, and drug development professionals, selecting the appropriate analytical framework is crucial for navigating regulatory requirements, technological advancements, and competitive pressures. This technical guide examines three predominant frameworks—PESTLE, STEEP, and SWOT—detailing their structures, applications, and methodological considerations within scientific and research contexts. These frameworks serve as foundational tools for strategic planning, enabling professionals to convert analytical insights into actionable strategies amid rapidly evolving technological and regulatory environments [23] [24].

Each framework offers a distinct lens for analysis: PESTLE investigates macro-environmental factors, STEEP provides a variant of this external analysis, and SWOT delivers a balanced assessment of both internal and external environments. Understanding their unique components, intersections, and appropriate applications is essential for organizations operating in research-intensive sectors like pharmaceutical development, where regulatory compliance, ethical considerations, and technological innovation significantly impact strategic outcomes [25] [26].

Core Framework Definitions and Components

PESTLE Analysis

PESTLE analysis represents a comprehensive macro-environmental scanning tool that examines six critical external domains. The acronym denotes Political, Economic, Social, Technological, Legal, and Environmental factors that collectively shape an organization's operating environment [27] [24]. This framework is particularly valuable for organizations requiring a structured approach to understanding external forces beyond their direct control.

Political factors encompass government policies, regulatory frameworks, and geopolitical dynamics that may impact organizational operations. For research and drug development, this includes regulatory approval processes, healthcare policies, and international trade agreements affecting material sourcing or technology transfer [24] [28]. Economic factors analyze macroeconomic conditions including inflation, interest rates, and economic growth patterns that influence research funding, capital investment, and market demand for developed products [27] [24]. Social factors investigate demographic trends, cultural attitudes, and population health characteristics that determine product acceptance and market needs [24] [28].

Technological factors evaluate innovations, research methodologies, and technological infrastructures that enable or disrupt existing development paradigms. In pharmaceutical contexts, this includes advancements in drug delivery systems, diagnostic technologies, and research instrumentation [24] [28]. Legal factors examine statutory requirements, compliance obligations, and judicial precedents governing industry operations, including intellectual property protection, liability considerations, and regulatory enforcement mechanisms [27] [24]. Environmental factors assess ecological influences, resource availability, and sustainability considerations that may affect manufacturing processes, supply chain logistics, and corporate social responsibility imperatives [27] [24].

STEEP Analysis

STEEP analysis provides a contextual framework for scanning external macro-environmental factors, structured around five analytical dimensions: Social, Technological, Economic, Environmental, and Political factors [23] [29]. While similar to PESTLE, STEEP typically excludes the dedicated legal dimension, instead integrating legal considerations within the political and environmental categories. This framework serves effectively for preliminary environmental scanning where legal factors are less dominant or can be appropriately incorporated within other domains.

Social factors in STEEP analysis focus on cultural norms, educational attainment, and workforce demographics that influence research directions and product development priorities [29]. Technological factors emphasize innovation trajectories, research and development activities, and technology transfer mechanisms that drive competitive advantage in knowledge-intensive industries [23] [29]. Economic factors examine capital availability, market stability, and investment patterns that determine the financial viability of research initiatives and development projects [29].

Environmental factors address ecological concerns, climate impacts, and sustainability requirements that increasingly shape research agendas, particularly in areas like green chemistry, environmental toxicology, and sustainable manufacturing processes [23] [29]. Political factors analyze governmental stability, policy orientations, and international relations that establish the regulatory context for scientific research and product commercialization [29].

SWOT Analysis

SWOT analysis represents a comprehensive strategic planning tool that evaluates both internal and external organizational environments. The framework synthesizes internal attributes (Strengths and Weaknesses) with external conditions (Opportunities and Threats) to provide a balanced strategic assessment [23] [30]. For research organizations and drug development teams, SWOT facilitates critical evaluation of capabilities, resources, and strategic positioning.

Strengths represent internal competencies, resources, and advantages that enhance an organization's competitive position. In research contexts, these may include specialized expertise, proprietary technologies, strong research networks, or distinctive intellectual property portfolios [30] [31]. Weaknesses constitute internal limitations, resource constraints, or competitive disadvantages that hinder performance. Examples include funding gaps, technical capability limitations, or organizational inefficiencies that impede research progress [30] [31].

Opportunities reflect external circumstances that could be leveraged for organizational advantage. These may include emerging research fields, funding initiatives, collaborative partnerships, or market needs aligning with organizational capabilities [30] [31]. Threats encompass external challenges that may jeopardize performance or competitiveness. For research organizations, these might include funding cuts, regulatory changes, competitive innovations, or technological disruptions that undermine current research approaches [30] [31].

Table 1: Comparative Framework Components

| Framework | Analytical Focus | Core Components | Primary Applications |

|---|---|---|---|

| PESTLE | External macro-environment | Political, Economic, Social, Technological, Legal, Environmental | Strategic planning, risk assessment, market entry decisions |