Environmental Monitoring Systems Performance Comparison 2025: A Strategic Guide for Research and Drug Development


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive, evidence-based comparison of environmental monitoring systems (EMS). It covers foundational principles, modern methodologies, best practices for troubleshooting, and a rigorous framework for system validation and selection. The guide synthesizes current market trends, technological advancements like AI and IoT integration, and regulatory requirements to empower professionals in making data-driven decisions that ensure product safety, data integrity, and compliance in biomedical research.

Understanding Environmental Monitoring Systems: Core Components and Critical Parameters

The field of environmental monitoring has undergone a fundamental transformation, evolving from relying on isolated data collection tools to operating sophisticated, connected networks. A modern Environmental Monitoring System (EMS) is an integrated architecture that links sensors and endpoints to a centralized data platform, transforming raw environmental readings into actionable intelligence through validation, visualization, and analysis [1]. This shift is driven by the convergence of Internet of Things (IoT) connectivity, advanced data analytics, and the pressing need for real-time decision-making in sectors ranging from pharmaceutical manufacturing to urban planning [2] [3].

This evolution represents a change in both technology and capability. Standalone tools, such as a portable sound level meter or a gas detector, capture measurements for a specific parameter at a single point in time [1]. In contrast, a connected monitoring system automates data collection from numerous such instruments, creating a continuous stream of validated information across multiple locations [1]. The core thesis of this guide is that this architectural shift—from tools to networks—yields significant, quantifiable gains in data accuracy, operational response time, and cost-effectiveness, which are critical for research and compliance-driven environments.

System Architecture: The Layers of a Modern EMS

A modern EMS functions as a layered network where each tier has a distinct role in moving data from the physical environment to the decision-maker. The architecture is typically composed of five key layers [1]:

- Endpoints & Sensors: These are the physical devices at the edge of the network, responsible for capturing environmental data. Examples include particulate matter (PM) sensors for air quality, pH sensors for water quality, Class 1 sound level meters for noise monitoring, and sensors for temperature and humidity [1] [4].

- Edge & Communications: This layer is responsible for moving data from sensors to the platform. It involves gateways and communication technologies like LoRaWAN (for low-power, long-range needs), LTE/5G (for higher bandwidth), or Wi-Fi [1] [2].

- Data Platform: Acting as the system's brain, this platform ingests data streams, stores time-series records, and performs automated Quality Assurance/Quality Control (QA/QC). This includes checks for range limits, spike detection, and calibration tracking to ensure data integrity [1].

- Visualization & Alerts: Here, raw data is transformed into actionable insights through dashboards, heatmaps, and trend analyses. User-defined thresholds can trigger automated alerts and initiate workflow escalations to enable immediate response [1] [5].

- Integrations: The most advanced systems are not siloed; they connect to other business and operational systems like Environmental Health and Safety (EHS) software, Computerized Maintenance Management Systems (CMMS), and Geographic Information Systems (GIS) via APIs and webhooks [1].
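
The automated QA/QC checks attributed to the data platform layer (range limits, spike detection, flatline detection) can be illustrated with a minimal sketch. The `Reading` type, the `qc_flags` function, and all thresholds below are hypothetical and chosen only for illustration; a real platform would tune them per sensor and parameter.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    timestamp: int  # seconds since start of logging (hypothetical unit)
    value: float    # e.g. temperature in degrees Celsius


def qc_flags(readings, low, high, max_step, flatline_run):
    """Return one set of QC flags per reading.

    range    : value outside [low, high]
    spike    : change from the previous value larger than max_step
    flatline : value unchanged for flatline_run consecutive readings
    """
    flags = []
    run = 1  # length of the current run of identical values
    for i, reading in enumerate(readings):
        found = set()
        if not (low <= reading.value <= high):
            found.add("range")
        if i > 0:
            previous = readings[i - 1].value
            if abs(reading.value - previous) > max_step:
                found.add("spike")
            run = run + 1 if reading.value == previous else 1
        if run >= flatline_run:
            found.add("flatline")
        flags.append(found)
    return flags


# Illustrative one-minute temperature readings (invented values).
values = [21.0, 21.1, 35.0, 21.2, 21.2, 21.2, 21.2, -50.0]
readings = [Reading(t * 60, v) for t, v in enumerate(values)]
flags = qc_flags(readings, low=-10.0, high=40.0, max_step=5.0, flatline_run=4)

for reading, found in zip(readings, flags):
    print(reading.timestamp, reading.value, sorted(found) or "ok")
```

Note that this simple step-based spike rule also flags the return to normal after a spike; a production check would more likely compare each value against a rolling median or similar baseline.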

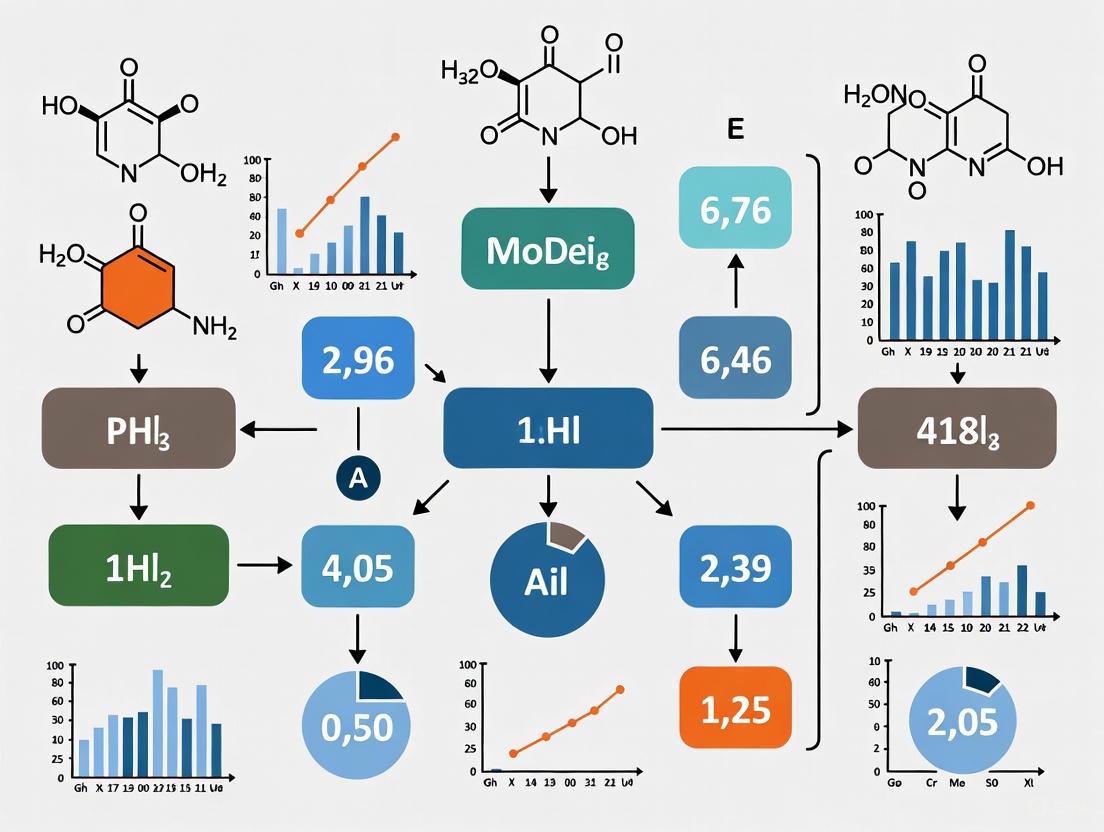

The following diagram illustrates the data flow and logical relationships between these layers.

Performance Comparison: Connected Networks vs. Standalone Tools

The transition from standalone tools to connected networks yields significant, quantifiable advantages across key performance indicators essential for research and industrial applications.

Table 1: Performance Comparison of Standalone Tools vs. Connected EMS Networks

| Performance Indicator | Standalone Tools | Connected EMS Network |
|---|---|---|
| Data Accuracy & Integrity | Relies on manual recording; high risk of human error (typos, omissions) [5]. | Automated data collection and transmission; automated QA/QC checks (range, spike, drift) [1]. |
| Problem Response Time | Delayed, as issues are only found during periodic manual checks [5]. | Immediate; real-time alerts trigger instant notifications for rapid response [5] [3]. |
| Regulatory Compliance | Manual data compilation for reports is time-consuming; harder to demonstrate compliance during audits [5]. | Automated report generation; complete audit trails from detection to resolution [5] [1]. |
| Operational Cost & ROI | High ongoing labor costs for data collection and entry; higher risk of costly batch failures [3]. | 40-60% reduction in monitoring labor; 60% reduction in contamination incidents; prevents major batch losses [3]. |
| Spatial & Temporal Coverage | Limited to the specific place and time of manual measurement; creates data gaps [1]. | Continuous, multi-point monitoring provides a holistic view of conditions across space and time [5] [1]. |

Experimental & Case Study Data

Case Study: QMRA for Legionella in Cooling Towers

A 2025 study leveraged a large-scale regulatory monitoring database to demonstrate the power of integrated data systems for public health protection. Researchers analyzed 105,463 monthly Legionella pneumophila test results from cooling towers in Quebec, Canada, to develop a Quantitative Microbial Risk Assessment (QMRA) model [6].

- Experimental Protocol: The methodology involved statistical modeling of site-specific variations in pathogen concentration from the extensive database. These models were integrated into a screening-level QMRA to predict human health risks from aerosol exposure [6].

- Key Findings: The analysis identified that maintaining an average L. pneumophila concentration below 1.4 × 10⁴ CFU L⁻¹ was necessary to meet a health-based target. It successfully identified 137 cooling towers at risk due to predicted rare peak concentrations above 10⁵ CFU L⁻¹, a finding only possible with large-scale, connected monitoring data [6]. This showcases how a networked EMS moves beyond simple compliance testing to proactive risk prediction.

Experiment: Non-Random Resampling for Monitoring Design

Academic research has validated methodologies for optimizing monitoring programs using existing data. A technique known as non-random resampling allows researchers to "experiment with the past" by artificially degrading a complete long-term dataset to determine the optimal design of a future monitoring program [7].

- Experimental Protocol:

  - Start with a complete, long-term monitoring dataset.

  - Subsample the data in non-random ways (e.g., reducing the number of sampling sites, shortening the monitoring duration, or decreasing the sampling frequency).

  - Calculate a key metric (e.g., population trend) for each subsample.

  - Compare the subsample metrics to the "true value" from the complete dataset to understand how different sampling strategies affect the accuracy and power of the monitoring program [7].

- Application: This approach helps determine the minimum monitoring length and frequency required to detect species trends with statistical confidence, maximizing the value of information gained for every dollar spent on monitoring [7].
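
The resampling protocol above can be sketched in a few lines. This is a toy illustration, not the method of the cited study: the dataset is synthetic, the metric is an ordinary least-squares trend, and the names `slope`, `results`, and the chosen durations and sampling steps are all assumptions made for the example.

```python
import random


def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Synthetic "complete" record: 30 annual counts with a known decline plus noise.
rng = random.Random(0)
years = list(range(1990, 2020))
counts = [500 - 2.0 * (year - 1990) + rng.gauss(0, 10) for year in years]
full_slope = slope(years, counts)  # treated as the "true value"

# Degrade the record in structured (non-random) ways: keep only the most
# recent `duration` years, and within them only every `step`-th year.
results = {}
for duration in (5, 10, 20, 30):
    for step in (1, 2, 5):
        xs = years[-duration:][::step]
        ys = counts[-duration:][::step]
        if len(xs) < 3:  # too few points for a meaningful trend
            continue
        results[(duration, step)] = slope(xs, ys) - full_slope

for (duration, step), error in sorted(results.items()):
    print(f"duration={duration:2d} y, every {step} y: trend error {error:+.2f}")
```

Comparing the trend error across designs shows which reductions in duration or frequency still recover the full-record trend, which is the question the resampling approach is meant to answer.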

The Research Toolkit: Essential Components for a Modern EMS

Table 2: Key Research Reagent Solutions and Components in a Modern EMS

| Item | Function in the EMS | Research Application Example |
|---|---|---|
| Air Quality Mapping Node | Networked sensors for particulate matter (PM1, PM2.5, PM10) and gases; provides georeferenced data for hotspot analysis [1]. | Urban air quality studies; tracking industrial emission dispersion [4] [2]. |
| Class 1 Sound Level Meter | Provides survey-grade accuracy for environmental noise monitoring; can be configured as a fixed node for continuous logging [1]. | Assessing community noise impact from construction or transport infrastructure [8]. |
| Multi-Gas Monitor | Configurable instrument for detecting a range of gases (e.g., CO, SO₂, VOCs); often used for mobile or task-based monitoring [1]. | Personal exposure studies in industrial settings; confined space entry monitoring [1]. |
| IoT Communication Gateway | Device that aggregates data from multiple sensors and transmits it to the cloud via cellular, LoRaWAN, or other wireless protocols [1] [2]. | Enabling real-time data collection from remote or distributed sensor networks. |
| Data Platform with QA/QC | Cloud-based software that performs automated data validation (range, spike, flatline checks) and manages device calibration records [1]. | Ensuring data integrity and creating a defensible, audit-ready record for research publications or regulatory submissions. |

The evidence from both industry implementation and scientific research confirms that modern Environmental Monitoring Systems represent a paradigm shift. The move from standalone tools to connected, intelligent networks is no longer a luxury but a necessity for research and industries where data integrity, speed, and compliance are paramount. The architectural framework of a modern EMS provides the scaffolding for turning environmental data into a strategic asset, enabling proactive risk management, enhancing operational efficiency, and ultimately supporting safer and more sustainable operations.

In environmental science, the ability to make data-driven decisions hinges on the performance of Environmental Monitoring Systems (EMS). These systems provide the critical data on air quality, water levels, and meteorological parameters that inform public health initiatives and environmental policy [9]. The architecture of an EMS—comprising its endpoints, communication networks, platform, and applications—directly determines the reliability, accuracy, and usability of the data it produces. For researchers and drug development professionals, selecting the right system is paramount, as environmental conditions can significantly impact sensitive processes and long-term studies. This guide provides an objective, data-driven comparison of different EMS architectural approaches, focusing on their operational performance and suitability for research applications.

System Architectures and Performance Comparison

Environmental Monitoring Systems can be broadly categorized by their core communication technology, which dictates their capabilities, scalability, and ease of integration with modern IT infrastructure. The table below compares two prevalent architectural paradigms.

Table 1: Performance Comparison of EMS Communication Architectures

| Feature | Traditional IPv4/Proprietary IoT Systems | Next-Generation IPv6-Based Systems |
|---|---|---|
| Network Protocol | IPv4 with potential Network Address Translation (NAT) or proprietary protocols [10] | Native IPv6 [10] |
| Key Differentiator | Mature, widely deployed technology [10] | Massively scalable address space for global device identification [10] |
| End-to-End Connectivity | Often indirect, requiring gateways for data aggregation [10] | Direct, peer-to-peer communication is possible [10] |
| Data Accessibility | Data typically routed through a central server for user access [10] | Users can access individual monitoring devices directly via a unique IP address [10] |
| Inherent Security | Relies on add-on security measures [10] | Incorporates IPSec security protocol at the protocol level [10] |
| Ideal Research Application | Localized, small-to-medium scale studies with centralized data logging | Large-scale, distributed sensor networks requiring granular, device-level access and management |

Quantitative data from an experimental IPv6-based monitoring system demonstrates its operational viability. The system successfully achieved continuous data acquisition for parameters like air quality, rainfall, water level, pH, wind speed, temperature, and humidity [9]. Furthermore, the implementation of a simplified IPv6 protocol stack on resource-constrained ARM hardware shows that advanced networking can be achieved even on cost-effective devices, making sophisticated monitoring accessible for more research budgets [10].

Experimental Protocols for EMS Performance Evaluation

To ensure the reliability and accuracy of an Environmental Monitoring System, a rigorous evaluation of its performance is essential. The following methodology outlines key experiments that can be used to benchmark an EMS in a research context.

Experiment 1: Endpoint Data Accuracy and Precision

- Objective: To validate the accuracy and precision of data collected by endpoint sensors against reference-standard instruments.

- Protocol: Co-locate the EMS sensors with calibrated, high-accuracy reference instruments in a controlled environmental chamber or a representative field location. Simultaneously record measurements (e.g., PM2.5 concentration, temperature, humidity) from both the EMS and the reference instruments at a fixed interval (e.g., every 5 minutes) over a minimum period of 14 days.

- Data Analysis: Calculate key statistical measures, including the mean absolute error (MAE) and root mean square error (RMSE), to quantify accuracy. Determine standard deviation and coefficient of variation for repeated measurements under stable conditions to assess precision. Linear regression can be used to establish a correlation coefficient (R²) between the EMS data and the reference data.
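
The statistics named in the data analysis step can be computed with the standard library alone. The sketch below uses invented PM2.5 readings; the function names and sample values are illustrative assumptions, and R² is computed as the squared Pearson correlation, which equals the R² of a simple linear regression.

```python
import math
from statistics import mean, stdev


def mae(ems, ref):
    """Mean absolute error between EMS and reference readings."""
    return mean(abs(e - r) for e, r in zip(ems, ref))


def rmse(ems, ref):
    """Root mean square error between EMS and reference readings."""
    return math.sqrt(mean((e - r) ** 2 for e, r in zip(ems, ref)))


def r_squared(ems, ref):
    """Squared Pearson correlation (R² of a simple linear regression)."""
    mean_e, mean_r = mean(ems), mean(ref)
    sxy = sum((e - mean_e) * (r - mean_r) for e, r in zip(ems, ref))
    sxx = sum((e - mean_e) ** 2 for e in ems)
    syy = sum((r - mean_r) ** 2 for r in ref)
    return sxy ** 2 / (sxx * syy)


def coefficient_of_variation(values):
    """Sample standard deviation relative to the mean (precision metric)."""
    return stdev(values) / mean(values)


# Paired, co-located readings in µg/m³ (invented values).
reference = [10.0, 12.0, 15.0, 20.0, 25.0]
ems = [11.0, 12.5, 14.0, 21.0, 26.5]

# Repeated EMS readings under stable chamber conditions (invented values).
stable = [20.1, 20.0, 19.9, 20.0, 20.0]

print(f"MAE  = {mae(ems, reference):.3f}")
print(f"RMSE = {rmse(ems, reference):.3f}")
print(f"R²   = {r_squared(ems, reference):.4f}")
print(f"CV   = {100 * coefficient_of_variation(stable):.2f} %")
```

MAE and RMSE are reported in the units of the measured parameter, so they should be calculated separately for each parameter rather than pooled.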

Experiment 2: Communication Reliability and Latency

- Objective: To assess the robustness of the communication layer and the timeliness of data transmission.

- Protocol: Deploy multiple EMS endpoints at varying distances from the data aggregation point or platform. For systems using wireless protocols like Wi-Fi or LPWAN, systematically test communication reliability in both line-of-sight and non-line-of-sight conditions. Measure data packet loss rate over a 24-hour cycle and average data transmission latency (time from sensor measurement to platform receipt) under different network loads.

- Data Analysis: Report the percentage of successfully transmitted data packets and average latency in milliseconds. The results can be visualized to show the relationship between signal strength, distance, and data reliability.
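
As a rough sketch of this analysis, assume each endpoint numbers its packets and stamps them at measurement time, and the platform logs the time of receipt. The tuple layout and the `link_stats` function are hypothetical, and the sketch assumes sensor and platform clocks are synchronized.

```python
from statistics import mean

# (sequence number, sent at [ms], received at [ms] or None if never received)
packets = [
    (1, 0, 120),
    (2, 1000, 1080),
    (3, 2000, None),  # lost packet
    (4, 3000, 3100),
    (5, 4000, 4100),
]


def link_stats(packets):
    """Return (delivery %, loss %, mean latency in ms) for one endpoint."""
    received = [p for p in packets if p[2] is not None]
    delivery = 100 * len(received) / len(packets)
    latency = mean(recv - sent for _, sent, recv in received)
    return delivery, 100 - delivery, latency


delivery, loss, latency = link_stats(packets)
print(f"delivered {delivery:.1f} %, lost {loss:.1f} %, mean latency {latency} ms")
```

Running the same calculation per endpoint, distance, and line-of-sight condition gives the data needed to relate signal conditions to reliability.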

Experiment 3: Platform Data Integrity and Storage Performance

- Objective: To verify that the platform layer correctly receives, stores, and processes data without corruption or loss.

- Protocol: Implement a script to generate a known set of "test" data points with unique identifiers and timestamps. Inject this data stream into the platform interface. After a set period, query the platform's database and export the stored data.

- Data Analysis: Compare the exported data with the original transmitted data. The data integrity rate is calculated as the percentage of data points that are perfectly matched in value, identifier, and timestamp. This test confirms the platform's reliability in handling the data pipeline.
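
The comparison step can be sketched as follows. The injection and export calls are platform-specific and are not shown; here the "export" is simulated by copying the sent data and deliberately dropping one point and altering another. All identifiers, timestamps, and function names are hypothetical.

```python
import random


def make_test_points(n, seed=0):
    """Generate n test points keyed by a unique identifier.

    Each value is a (unix_timestamp, reading) pair.
    """
    rng = random.Random(seed)
    return {
        f"test-{i:05d}": (1_700_000_000 + 60 * i, round(rng.uniform(15, 30), 2))
        for i in range(n)
    }


def integrity_rate(sent, exported):
    """Percentage of sent points found in the export with identical
    identifier, timestamp, and value."""
    matched = sum(1 for key, point in sent.items() if exported.get(key) == point)
    return 100 * matched / len(sent)


sent = make_test_points(1000)

# Simulated platform export: one point lost, one value corrupted.
exported = dict(sent)
del exported["test-00010"]
timestamp, value = exported["test-00020"]
exported["test-00020"] = (timestamp, value + 0.01)

rate = integrity_rate(sent, exported)
print(f"data integrity rate: {rate:.1f} %")
```

Exact equality is used deliberately: if the platform rounds or converts values on ingest, that should surface in this test and be documented rather than masked by a tolerance.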

The workflow for implementing and validating an EMS, incorporating these evaluation protocols, is illustrated below.

The Researcher's Toolkit: Essential Components for an EMS

Building or selecting a robust Environmental Monitoring System requires an understanding of its core components. The table below details the essential "research reagents"—the key hardware and software elements—that constitute a modern EMS.

Table 2: Essential Research Reagents for an Environmental Monitoring System

| Component | Function | Research Application Example |
|---|---|---|
| Sensors | Convert physical environmental parameters (e.g., PM2.5, temperature, pH) into electrical signals [9] [10]. | Measuring real-time exposure to particulate matter in a study on air quality and health outcomes [9]. |
| Microcontroller (e.g., Arduino) | Serves as the embedded brain of the endpoint; collects data from sensors, processes it, and manages communication [9]. | The core of a custom-built, cost-effective monitoring node for dense, hyper-local sensor deployment [9]. |
| IPv6 Network Stack | Software that enables the microcontroller to communicate over the internet using the IPv6 protocol, providing a globally unique address [10]. | Allows each sensor node in a vast network to be individually accessed and queried directly for granular data collection [10]. |
| Embedded Web Server | Software running on the microcontroller that allows remote users to access data and configure the device via a standard web browser [10]. | Enables researchers to view live data feeds and manage device settings in the field without physical retrieval. |
| Communication Modules (GSM/Wi-Fi) | Provide the physical layer for data transmission from the endpoint to the central platform or directly to the user [9]. | Transmitting field data from a remote water quality monitoring site to a central laboratory database in near real-time [9]. |

The logical relationship between these components, forming the architectural layers of the system, is shown in the following diagram.

The transition from traditional systems to modern, IP-based architectures represents a significant advancement in environmental monitoring technology. The data confirms that IPv6-based systems, with their global addressability and direct endpoint access, offer a scalable and robust framework for scientific research [10]. By applying the experimental protocols and performance metrics outlined in this guide—from assessing endpoint accuracy with MAE and RMSE to measuring communication packet loss—researchers can make objective, evidence-based decisions when implementing an EMS. This rigorous, data-driven approach to system selection and validation ensures that the resulting environmental data is reliable enough to support critical research and development efforts, from ensuring laboratory environmental controls to studying the ecological impact of new compounds.

In pharmaceutical manufacturing and research, environmental monitoring is a critical program designed to assess and control the cleanliness and safety of manufacturing facilities, particularly cleanrooms, to ensure they meet stringent quality standards [11]. The ultimate goal is to prevent microbial, particulate, and endotoxin/pyrogen contamination in sterile products, a principle enshrined in major international regulations from the FDA, EMA, and WHO [12] [13]. Modern guidelines, such as the EU GMP Annex 1, emphasize a holistic and proactive approach implemented through a Contamination Control Strategy, which is a planned set of controls derived from a deep understanding of the product and process [13]. Quality Risk Management principles are applied to identify, evaluate, and control potential risks to product quality, with environmental monitoring acting as a crucial verification tool that confirms the designed controls are effective and remain in a state of control [13]. This guide provides a comparative analysis of the key parameters (viable and non-viable particles, air quality, and physical factors like noise) within this framework.

Comparative Analysis of Monitoring Parameters & Regulatory Standards

The following parameters form the backbone of any environmental monitoring program in controlled environments. The limits and requirements are harmonized across major international regulations, though nuanced differences exist.

Non-Viable Particle Monitoring

Non-viable particles are inert materials such as dust, fibers, or skin flakes. While not living, they can act as vehicles for viable contaminants and disrupt unidirectional airflow [14]. Monitoring is performed using laser-based particle counters that provide real-time data on the concentration and size distribution of airborne particles, typically at sizes ≥0.5µm and ≥5.0µm [14] [15].

Table 1: Non-Viable Particle Limits for Cleanroom Classification and Monitoring (particles/m³ of air)

| Cleanroom Grade / Class | Particle Size | ISO Designation | EU GMP/WHO (At-Rest) | FDA (At-Rest) | Routine Monitoring Action Limit (EU GMP/WHO) |
|---|---|---|---|---|---|
| Grade A / Class 100 | ≥ 0.5 µm | ISO 5 | 3,520 [13] | 3,520 [13] | 3,520 [13] |
| Grade A / Class 100 | ≥ 5.0 µm | ISO 5 | Not specified (for classification) [13] | Not specified [13] | 29 [13] |
| Grade B / Class 1,000 | ≥ 0.5 µm | ISO 7 | 352,000 [13] | 352,000 [13] | 3,520 [13] |
| Grade B / Class 1,000 | ≥ 5.0 µm | ISO 7 | 2,930 [13] | Not specified [13] | 2,900 [13] |
| Grade C / Class 10,000 | ≥ 0.5 µm | ISO 8 | 3,520,000 [13] | 3,520,000 [13] | 352,000 [13] |
| Grade C / Class 10,000 | ≥ 5.0 µm | ISO 8 | 29,300 [13] | Not specified [13] | 29,000 [13] |
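
The classification concentrations for ISO 5, 7, and 8 in the table can be reproduced from the class formula in ISO 14644-1, C_n = 10^N × (0.1 / D)^2.08, where N is the ISO class number and D is the particle size in µm. The sketch below assumes that formula; the function names and the rounding to three significant figures are illustrative choices that happen to match the tabulated values.

```python
def iso_class_limit(iso_class, particle_size_um):
    """Maximum particles per m³ at or above particle_size_um for an ISO
    14644-1 class number, from C_n = 10**N * (0.1 / D)**2.08."""
    return 10 ** iso_class * (0.1 / particle_size_um) ** 2.08


def round_sig(value, digits=3):
    """Round to a number of significant figures (assumed convention)."""
    return float(f"{value:.{digits}g}")


for iso_class in (5, 7, 8):
    for size in (0.5, 5.0):
        raw = iso_class_limit(iso_class, size)
        print(f"ISO {iso_class}, >= {size} µm: {raw:,.1f} -> {round_sig(raw):,.0f}")
```

The formula only yields classification concentrations; the routine monitoring action limits in the last column are set by the regulations and are not derived from it.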

Viable (Microbiological) Particle Monitoring

Viable monitoring detects living microorganisms, such as bacteria, fungi, and spores, which pose a direct risk to product sterility [14]. This is assessed using methods like active air samplers, passive settling plates, and surface monitoring [11]. Results are expressed in Colony Forming Units (CFU).

Table 2: Action Limits for Viable (Microbiological) Monitoring

| Sample Type | Grade A / Class 100 | Grade B / Class 1,000 | Grade C / Class 10,000 | Grade D / Class 100,000 |
|---|---|---|---|---|
| Active Air (CFU/m³) | <1 [13] | 10 [13] | 100 [13] | 200 [13] |
| Settle Plates (CFU/4 hours) | <1 [13] | 5 [13] | 50 [13] | 100 [13] |
| Contact Plates (CFU/plate) | <1 [13] | 5 [13] | 25 [13] | 50 [13] |
| Glove Fingertips (CFU/plate) | <1 [13] | 5 [13] | - | - |
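
In a monitoring platform, limits like these are typically held as a lookup table that each incoming result is checked against. The sketch below encodes the table values; the key names, the function, and the decision rule are assumptions. In particular, it treats any recovered CFU in Grade A as an excursion and, for other grades, flags only counts strictly above the limit. Whether a count equal to the limit is an excursion is a site-specific decision that the table does not settle.

```python
# Action limits from the table above, in CFU per sample.
# Grade A is listed as "<1", encoded here as 1 with a special-case rule.
ACTION_LIMITS = {
    "active_air":    {"A": 1, "B": 10, "C": 100, "D": 200},
    "settle_plate":  {"A": 1, "B": 5,  "C": 50,  "D": 100},
    "contact_plate": {"A": 1, "B": 5,  "C": 25,  "D": 50},
    "glove":         {"A": 1, "B": 5},  # no limit listed for Grades C and D
}


def exceeds_action_limit(sample_type, grade, cfu):
    """Return True if a CFU count is an excursion under the assumed rule."""
    limit = ACTION_LIMITS[sample_type].get(grade)
    if limit is None:
        raise ValueError(f"no action limit listed for {sample_type} in Grade {grade}")
    if grade == "A":
        return cfu >= 1  # "<1" read as: any growth is an excursion
    return cfu > limit   # assumed: a count equal to the limit is not flagged


print(exceeds_action_limit("active_air", "A", 1))    # True
print(exceeds_action_limit("settle_plate", "B", 5))  # False
print(exceeds_action_limit("settle_plate", "B", 6))  # True
```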

Noise Monitoring

While not directly related to product sterility, noise monitoring is essential for occupational health in pharmaceutical and research facilities, particularly in areas with high-noise equipment [16] [17].

Table 3: Noise Exposure Limits and Parameters

| Parameter | Workplace (Occupational) | Environmental |
|---|---|---|
| Primary Standard | OSHA / EU Directive 2003/10/EC [17] | EU Directive 2002/49/EC [17] |
| Exposure Limit (8-hr TWA) | 85 dBA (Upper Action Value) [16] [17] | ~65 dBA (Daytime) [17] |
| Absolute Exposure Limit | 87 dBA [17] | ~55 dBA (Nighttime) [17] |
| Monitoring Equipment | Noise dosimeters, Sound level meters [17] | Noise Monitoring Terminals (NMTs) [17] |
| Key Objective | Prevent hearing loss in workers [16] | Manage community noise pollution [17] |
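
To compare a measured work pattern with the 8-hour values in the table, task-level sound levels have to be normalized to an 8-hour day. The sketch below assumes the equal-energy (3 dB exchange rate) approach associated with the EU directive, L_EX,8h = 10·log10((1/8)·Σ T_i·10^(L_i/10)). OSHA's TWA uses a 5 dB exchange rate and a different formula, which is not shown. The function name and task data are illustrative.

```python
import math


def lex_8h(tasks):
    """Daily noise exposure level normalized to 8 hours (equal-energy rule).

    tasks: iterable of (LAeq in dBA, duration in hours) pairs.
    """
    energy = sum(hours * 10 ** (level / 10) for level, hours in tasks)
    return 10 * math.log10(energy / 8.0)


# Invented shift: 4 h near a granulator, 4 h in a quiet control room.
shift = [(88.0, 4.0), (70.0, 4.0)]
exposure = lex_8h(shift)

print(f"L_EX,8h = {exposure:.1f} dBA")
print("above 85 dBA upper action value:", exposure > 85.0)
print("above 87 dBA exposure limit:", exposure > 87.0)
```

Because the scale is logarithmic, halving the time spent at a given level lowers the normalized exposure by about 3 dB under this rule.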

Performance Comparison of Monitoring Technologies

Conventional vs. Rapid Microbiological Methods

The core comparison in viable monitoring lies between traditional growth-based methods and emerging rapid technologies.

Table 4: Conventional vs. Real-Time Viable Particle Monitoring

| Feature | Traditional Active Air Sampling | Laser-Induced Fluorescence (LIF) |
|---|---|---|
| Technology Principle | Impaction onto agar media & incubation [11] | Optical particle counting & fluorescence detection [15] |
| Detection Metric | Colony Forming Units (CFU) [11] | Fluorescent optical particle count [15] |
| Time to Result | 2-5 days (incubation) [11] | Real-time (seconds/minutes) [15] |
| Data Continuity | Discrete, point-in-time samples [15] | Continuous, temporally-resolved data [15] |
| Correlation with Non-Viable Counts | Low to moderate correlation observed in studies [18] | Directly correlated, as it is an enhanced form of particle counting [15] |
| Intervention in Grade A | Required for media placement [15] | Minimal; instrument outside critical zone [15] |
| Primary Application | Compendial, compliance-based monitoring [15] | In-process control, root-cause investigation [15] |

Experimental Protocol: Correlation Study Between Non-Viable and Viable Counts

A key area of research involves determining if non-viable particle counts can predict microbial contamination, which would allow for more responsive control.

Objective: To investigate the correlation between the number of airborne colony-forming units (CFU) and non-viable particles (≥0.5µm and ≥5.0µm) during a simulated manufacturing process.

Methodology (Based on a Reviewed Study [18]):

- Study Design: Parallel measurements are taken in a controlled environment (e.g., an operational cleanroom).

- Simulated Process: A typical aseptic process, such as media fills or component assembly, is performed to generate environmental activity.

- Monitoring:

  - Viable Air Sampling: Active air samplers are placed at critical locations and operated for a set duration (e.g., 1 cubic meter of air per sample). Samples are incubated, and CFUs are counted [18].

  - Non-Viable Particle Counting: Laser particle counters are set to continuously monitor and log particle counts (particles/m³) at the same locations and times.

- Data Analysis: A statistical correlation analysis (e.g., Pearson or Spearman correlation coefficient) is performed between the paired datasets of CFU/m³ and particle counts for each size threshold.

Typical Findings: A narrative review of 11 studies found that the correlation between particles and CFU is inconsistent, reporting strong, moderate, low, or no correlation. This suggests particle counting cannot reliably replace conventional microbial surveillance [18].
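
The correlation analysis in the methodology can be sketched without external libraries. The paired data below are invented for illustration and do not come from the reviewed studies; the function names are assumptions. Spearman's coefficient is computed as the Pearson correlation of average ranks, which handles the tied CFU counts that are common at low contamination levels.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation coefficient."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Invented paired samples: particles/m³ (>= 0.5 µm) and CFU/m³.
particles = [1200, 3400, 800, 5600, 2100, 4300]
cfu = [0, 2, 0, 3, 1, 1]

print(f"Pearson r    = {pearson(particles, cfu):.2f}")
print(f"Spearman rho = {spearman(particles, cfu):.2f}")
```

With only a handful of paired samples, either coefficient is highly uncertain, which may be one reason the reviewed studies report such inconsistent results.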

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 5: Key Materials for Environmental Monitoring

| Item | Function |
|---|---|
| Tryptic Soy Agar (TSA) | General-purpose culture medium for the recovery of bacteria and fungi from air and surface samples [11]. |
| Sabouraud Dextrose Agar (SDA) | Selective culture medium designed for the enhanced recovery of fungi and yeast [11]. |
| Contact Plates | Contain solid culture media with a convex surface for sampling flat surfaces (equipment, gowns) [11]. |
| Neutralizing Agents | Additives (e.g., Lecithin, Polysorbate) in culture media to inactivate residual disinfectants for accurate sampling [11]. |
| Laser Particle Counter | Instrument for real-time counting and sizing of non-viable particles to verify cleanroom classification [14]. |
| Active Air Sampler | Instrument that draws a known volume of air onto a culture medium for viable microbial collection [11]. |
| Noise Dosimeter | Personal wearable device that measures an individual worker's cumulative noise exposure over a work shift [17]. |
| Class 1 Sound Level Meter / NMT | Precision instrument for accurate, short-term (sound level meter) or long-term, unattended (Noise Monitoring Terminal) noise measurements [17]. |

Workflow and Technology Visualization

[Figure: Conventional Viable Monitoring Workflow]

[Figure: Laser-Induced Fluorescence (LIF) Technology]

The landscape of environmental monitoring in pharmaceutical and research settings is defined by a clear regulatory framework that mandates rigorous control of non-viable particles, viable microorganisms, and occupational noise. While traditional, growth-based methods for viable monitoring remain the compendial standard, technological advancements like Laser-Induced Fluorescence offer compelling advantages in speed and data richness for in-process control and investigation. A robust monitoring program must be built on a foundation of Quality Risk Management, integrating data from both conventional and modern techniques to form a dynamic Contamination Control Strategy. This ensures not only compliance but also the proactive safeguarding of product quality and patient safety.

The global environmental monitoring market is undergoing a rapid transformation, moving from traditional manual methods toward integrated, real-time data systems. For researchers, scientists, and drug development professionals, this shift is not merely a matter of convenience but an operational imperative driven by regulatory pressure, technological advancement, and the critical need for data integrity. In pharmaceutical manufacturing, for instance, manual environmental monitoring (EM) can no longer keep pace with modern quality and compliance demands [3]. The convergence of Internet of Things (IoT) sensor technology, artificial intelligence (AI), and sophisticated data platforms is creating a new paradigm for environmental monitoring systems. This guide provides a performance comparison of these emerging real-time systems against traditional alternatives, framing the analysis within the broader context of academic and industrial research. The market data is unequivocal; the pharmaceutical environmental monitoring market alone was valued at USD 2.5 billion in 2024 and is anticipated to grow to USD 5.1 billion by 2033, exhibiting a compound annual growth rate (CAGR) of 8.7% [3]. This growth is fueled by the tangible benefits real-time systems offer, including enhanced accuracy, proactive risk management, and significant operational efficiencies.

The expansion of the environmental monitoring market is propelled by a confluence of powerful drivers that make the adoption of advanced systems a strategic necessity.

Quantitative Market Growth Projections

The following table summarizes the projected growth across various environmental monitoring segments, illustrating the sector's robust expansion.

Table 1: Environmental Monitoring Market Growth Projections (2025-2033)

| Market Segment | 2024/2025 Baseline Value | Projected Value | CAGR | Time Period | Primary Drivers |
|---|---|---|---|---|---|
| Global Pharmaceutical EM | USD 2.5 Billion [3] | USD 5.1 Billion [3] | 8.7% [3] | 2024-2033 | Regulatory tightening, competitive pressure, technological integration [3] |
| IoT Environmental Monitoring Tools | USD 0.11 Billion (2017) [19] | USD 21.49 Billion [19] | - | 2017-2025 | Demand for smarter solutions to reduce environmental impact [19] |
| IoT Sensor Technology | - | USD 4,760.2 Million [19] | 3.6% [19] | - | Stricter environmental regulations, pollution awareness, real-time data demand [19] |
| Soil Monitoring Market (Services Component) | - | - | 16.30% [20] | - | Need for professional data analysis and subscription-based dashboards [20] |

Primary Market Drivers

- Regulatory Tightening: Regulatory agencies worldwide are progressively tightening requirements. For example, the FDA has issued new guidelines recommending more frequent environmental monitoring in high-risk areas, emphasizing continuous rather than periodic checks [3]. Manual systems are incapable of delivering the frequency, consistency, and immediate response capabilities these regulations demand.

- Technological Integration and Competitive Advantage: The integration of IoT sensors, AI-powered analytics, and automation is a core growth driver. These technologies are transforming monitoring by enabling real-time data collection and analysis, which enhances accuracy, efficiency, and compliance [3]. Companies that implement these solutions report measurable improvements, such as a 60% reduction in contamination incidents and a 40% improvement in compliance rates [3].

- Demand for Sustainability and Operational Efficiency: There is a rapidly rising demand for IoT-based sustainability solutions, with a potential market opportunity expected to reach $250 billion by 2026 [19]. These systems help reduce greenhouse gas emissions and improve operational performance; a 1% increase in environmental performance correlates with a 0.114% increase in operational performance [19]. In agriculture, sensor networks with machine-learning analytics have achieved water savings of up to 30% and reduced fertilizer usage by 40% [20].

Comparative Analysis of Monitoring System Types

The performance characteristics of manual, sensor-based, and remote sensing systems vary significantly. The choice between them depends on the application's requirement for temporal resolution, spatial coverage, and data accuracy.

Performance Comparison of Monitoring Modalities

Table 2: Performance Comparison of Environmental Monitoring System Types

| Feature | Manual / Traditional Systems | IoT / Real-Time Sensor Systems | Remote Sensing (Satellite/Drone) |

|---|---|---|---|

| Temporal Resolution | Periodic (e.g., daily, weekly); low frequency [3] [21] | Continuous; high frequency (real-time) [3] [19] | Varies (snapshots); depends on satellite revisit cycles [21] |

| Data Latency | High (hours to days) for lab analysis [21] | Low (seconds to minutes) [19] | Moderate to high (requires data processing) [21] |

| Key Measured Parameters | Microbial contamination, particulate counts [3] | PM1, PM2.5, PM10, CO2, VOCs, NOx, temperature, humidity, pressure, water quality (pH, DO, turbidity) [22] [23] [21] | Chlorophyll-a, turbidity, total suspended solids, surface temperature, water color indices [21] |

| Typical Applications | Periodic cleanroom checks, compliance sampling [3] | Pharmaceutical cleanroom monitoring, smart agriculture, indoor air quality, perimeter water monitoring [3] [19] [1] | Large-scale water body assessment, ocean health, deforestation tracking, regional air quality events [19] [21] |

| Reported Accuracy | Subject to human error in collection and counting [3] | High (e.g., NDIR CO2 sensors are gold standard; particle counters ±10%) [22] [23] | Requires robust inversion models and atmospheric correction (e.g., Chl-a model R²=0.91) [21] |

| Advantages | Established protocols, no capital investment in advanced tech | Real-time alerts, predictive analytics, automated reporting, reduced labor [3] [19] | Large spatial coverage, synoptic view, access to remote areas [21] |

| Limitations | Reactive, high labor cost, unable to capture dynamic changes, prone to error [3] [21] | Initial investment, requires calibration and maintenance, potential connectivity needs [19] [21] | Susceptible to weather/cloud cover, measures surface/column data not in-situ, complex data processing [21] |

Experimental Protocols for System Validation

For research and compliance purposes, validating the performance of monitoring systems is crucial. The following are detailed methodologies cited in the literature for key application areas.

- Pharmaceutical Cleanroom Monitoring: A documented pilot implementation strategy runs real-time systems in parallel with manual processes to validate performance [3]. IoT sensors are deployed in critical Grade A/B zones to continuously track air quality, surface contamination, and personnel-related parameters, while traditional settle-plate and active air sampling continue alongside them. The two data sets are then compared to establish correlation and to validate the automated system's accuracy and reliability before full-scale deployment [3].

- Water Quality Monitoring: A research study used a genetic algorithm to optimize a support vector machine (GA-SVM) model for predicting water quality trends [21]. The protocol used 5,000 historical sensor records to train and validate the model, which achieved high prediction accuracy for the 2018-2023 period (RMSE = 0.04474, R² = 0.96580) [21]. This protocol supports the use of sensor data combined with AI for predictive monitoring.

- Air Quality Monitor Performance Testing: Independent evaluations, such as those by the South Coast Air Quality Management District (AQMD) Air Quality Sensor Performance Evaluation Center, test the accuracy of consumer-grade air monitors against reference instruments costing upwards of $20,000 [23]. Devices are placed in controlled environments and exposed to known concentrations of pollutants. Their readings are compared to those from high-fidelity reference analyzers, with performance metrics like ±10% accuracy for PM2.5 being reported for top-tier models [23].
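The comparison step shared by these protocols (automated readings against a manual or reference series) can be sketched as follows. This is a minimal illustration, not the method used in the cited studies: the function name `agreement_metrics` and the sample readings are hypothetical, and a real validation would also define acceptance criteria in advance.

```python
import math

def agreement_metrics(reference, candidate):
    """Return Pearson r, mean bias, and RMSE for paired readings."""
    if len(reference) != len(candidate) or len(reference) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    n = len(reference)
    mean_ref = sum(reference) / n
    mean_cand = sum(candidate) / n
    cov = sum((r - mean_ref) * (c - mean_cand) for r, c in zip(reference, candidate))
    var_ref = sum((r - mean_ref) ** 2 for r in reference)
    var_cand = sum((c - mean_cand) ** 2 for c in candidate)
    if var_ref == 0 or var_cand == 0:
        raise ValueError("correlation is undefined for a constant series")
    pearson_r = cov / math.sqrt(var_ref * var_cand)
    bias = mean_cand - mean_ref  # positive means the candidate reads high
    rmse = math.sqrt(sum((c - r) ** 2 for r, c in zip(reference, candidate)) / n)
    return {"pearson_r": pearson_r, "bias": bias, "rmse": rmse}

# Hypothetical paired PM2.5 readings in µg/m³ (illustrative values only)
reference = [10.0, 12.0, 15.0, 20.0, 25.0]
candidate = [11.0, 13.0, 16.0, 21.0, 26.0]
metrics = agreement_metrics(reference, candidate)
```

In this made-up example the candidate tracks the reference perfectly but reads 1 µg/m³ high, which is why correlation alone is not enough: bias and RMSE expose a constant offset that a high correlation would hide.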

Architecture of a Modern Real-Time Monitoring System

A modern Environmental Monitoring System (EMS) is a layered network that turns sensor readings into defensible decisions. Its architecture ensures data integrity from collection to action [1].

System Architecture and Data Flow

The following diagram visualizes the logical flow of data and actions in a real-time environmental monitoring system, integrating components from the sensor level to end-user applications.

Diagram Title: Real-Time Environmental Monitoring System Architecture

This architecture highlights a layered approach:

- Endpoints & Sensors: The physical devices that measure parameters like particulates, gases, water quality, and climate conditions [1]. Modern nodes include local data buffering to prevent loss during communication outages [1].

- Edge & Communications: The "edge layer" moves data via protocols like LoRaWAN, LTE/5G, or Wi-Fi, balancing coverage, power use, and cost [1].

- Data Platform: The system's core, responsible for data ingest, secure storage, and automated Quality Assurance/Quality Control (QA/QC) including range, spike, and drift analysis to maintain accuracy [1].

- Visualization & Alerts: Converts raw data into actionable insights through dashboards, heatmaps, and automated alerts with escalation workflows [1].

- Integrations: Ensures the EMS does not operate in isolation by connecting to other business systems (EHS, CMMS, ERP) via APIs to trigger automated actions [1].
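The range, spike, and drift checks named for the data platform can be illustrated with a short sketch. The thresholds, function names, and readings below are assumptions chosen for illustration; production platforms typically use more robust statistics and per-sensor configuration.

```python
from statistics import mean

def qc_flags(values, low, high, max_step):
    """Flag each reading as 'ok', 'range', or 'spike'.

    A reading is compared with the last accepted reading, so a single
    outlier does not cause the next good value to be flagged as well.
    Limitation: a genuine, sustained level shift would keep being flagged.
    """
    flags = []
    last_good = None
    for v in values:
        if v < low or v > high:
            flags.append("range")
        elif last_good is not None and abs(v - last_good) > max_step:
            flags.append("spike")
        else:
            flags.append("ok")
            last_good = v
    return flags

def drift_exceeded(values, window, tolerance):
    """True if the mean of the last `window` readings has moved away from
    the mean of the first `window` readings by more than `tolerance`."""
    if len(values) < 2 * window:
        return False
    return abs(mean(values[-window:]) - mean(values[:window])) > tolerance

# Hypothetical temperature readings in °C
readings = [20.0, 20.5, 21.0, 80.0, 21.2, -5.0, 21.5]
flags = qc_flags(readings, low=0.0, high=100.0, max_step=5.0)
drifting = drift_exceeded([20.0, 20.0, 20.0, 21.0, 22.0, 23.0], window=3, tolerance=1.5)
```

Flagging rather than deleting suspect readings keeps the raw record intact, which matters when the data may later be reviewed for compliance.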

The Researcher's Toolkit: Essential Components for Environmental Monitoring

Building or evaluating an environmental monitoring system requires an understanding of its core technological components. The table below details key "research reagent solutions"—the fundamental hardware, software, and sensing technologies that form the building blocks of modern systems.

Table 3: Essential Research Components for Environmental Monitoring Systems

| Component / Solution | Type | Primary Function | Key Specifications / Examples |

|---|---|---|---|

| NDIR CO₂ Sensor | Sensor | Precisely measures carbon dioxide (CO₂) levels; considered the gold standard for consumer-grade CO₂ monitoring [22] [23]. | Used in Aranet4 HOME and AirGradient One; long lifespan, requires less calibration [22] [23]. |

| Laser Scattering Particle Counter | Sensor | Measures particulate matter (PM1, PM2.5, PM10) by estimating particle concentration based on light scattering [22] [23]. | Plantower PMS5003/PMS6003; used in AirGradient One and PurpleAir Zen [22] [23]. |

| Gas Sensor (Metal Oxide) | Sensor | Detects relative changes in levels of volatile organic compounds (VOCs) and nitrogen oxides (NOx) [22]. | Sensirion SGP41; good at identifying sudden changes indicating a problem [22]. |

| LoRaWAN (Long Range Wide Area Network) | Communication Protocol | Provides long-range, low-power communication for distributed outdoor sensor deployments [1]. | Ideal where frequent data transmission is not critical; enables scalable deployment [1]. |

| QA/QC with Spike/Drift Detection | Software/Algorithm | Automatically validates incoming sensor data to maintain accuracy and flag instrument issues [1]. | Part of the data platform; uses range limits and drift analysis for automated data validation [1]. |

| Predictive Analytics (AI/ML) | Software/Algorithm | Uses historical and real-time data to forecast environmental trends and contamination risks [3] [19]. | Moves beyond reactive monitoring to predictive contamination control [3]. |

| Satellite Hyperspectral Imaging | Remote Sensing Tool | Enables large-scale mapping of soil and water parameters like organic carbon and chlorophyll-a [21] [20]. | Used in precision agriculture and ocean monitoring; provides high spatial resolution [21] [20]. |

The expansion of the environmental monitoring market is inextricably linked to the demonstrable superiority of real-time, connected systems over traditional manual methods. The drivers—regulatory demands, the proven ROI of advanced technologies, and the global push for sustainability—are not transient but foundational shifts. For the research and drug development community, the implications are clear: the future of environmental monitoring lies in integrated systems that provide continuous, validated, and actionable data. This transition enables a more proactive, predictive approach to quality control and environmental management, transforming data from a historical record into a strategic asset for safeguarding products, processes, and the planet.

For researchers and drug development professionals, navigating the complex regulatory environment for environmental monitoring systems is a critical component of ensuring product quality and patient safety. This guide provides a detailed comparison of three cornerstone frameworks governing this space: the U.S. Food and Drug Administration's 21 CFR Part 11 for electronic records and signatures, the European Union's Good Manufacturing Practice (GMP) Annex 1 on the manufacture of sterile medicinal products, and relevant ISO standards for environmental management and cleanroom classification.

Understanding the interplay between these frameworks is essential for designing robust monitoring systems, passing regulatory inspections, and facilitating global market access for pharmaceutical products. This analysis objectively compares the scope, technical requirements, and implementation approaches mandated by each regulatory body, providing a foundation for strategic decision-making in research and development.

The following table summarizes the primary focus and application context of each regulatory framework.

Table 1: Core Focus of the Regulatory Frameworks

| Framework | Primary Focus & Scope | Regulatory Context & Authority |

|---|---|---|

| FDA 21 CFR Part 11 | Establishes criteria for using electronic records and electronic signatures as equivalent to paper records and handwritten signatures [24]. | Mandatory for FDA-regulated industries (drugs, biologics, medical devices) when using electronic systems for required records [25]. |

| EU GMP Annex 1 | Provides supplementary guidelines for the manufacture of sterile medicinal products, with a comprehensive focus on contamination control strategies [26] [27]. | Legally enforced within the European Economic Area for all manufacturers of sterile human and veterinary medicinal products [26]. |

| ISO Standards (e.g., ISO 14644-1) | Specifies technical requirements for the classification of air cleanliness by particle concentration in cleanrooms and associated controlled environments [13]. | Internationally recognized standards, often adopted by reference by both FDA and EU GMP regulations for cleanroom classification and monitoring [13]. |

Technical Requirements for Environmental Monitoring

A critical area where these frameworks intersect is in the control and monitoring of manufacturing environments, particularly for sterile products. The following tables compare the specific technical requirements for non-viable and viable particle monitoring.

Non-Viable Particle Monitoring Limits

Non-viable particle monitoring is a key cleanroom control parameter. The limits for the highest grade of cleanroom (EU Grade A / ISO 5 / FDA Class 100) are compared below [13].

Table 2: Non-Viable Particle Limits for the Critical Zone (Grade A/ISO 5/Class 100)

| Framework | Particle Size ≥ 0.5 µm (particles/m³) | Particle Size ≥ 5.0 µm (particles/m³) | Monitoring State |

|---|---|---|---|

| EU GMP Annex 1 | 3,520 | Not specified for classification; Action limit of 29 for routine monitoring [13] | In-operation |

| FDA Guidance | 3,520 (Class 100) | Not specified [13] | In-operation |

| ISO 14644-1 | 3,520 (ISO 5) | 29 (ISO 5) | At-rest or In-operation (as specified) |

Key Insight: While harmonized on the 0.5 µm limit, a significant difference exists for 5.0 µm particles. The 2022 EU GMP Annex 1 introduces a strict action limit of 29 particles/m³ for routine monitoring, reflecting a risk-based focus on detecting rare but significant contamination events, whereas the 2004 FDA guidance does not specify a limit for this size [13].
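The 3,520 and 29 figures in the table are consistent with the class-limit formula commonly cited from ISO 14644-1, in which the maximum concentration for ISO class N at particle size D (in µm) is 10^N × (0.1/D)^2.08. The sketch below evaluates that formula; it is not taken from the sources cited here, so the exact values and rounding rules should be checked against the standard itself.

```python
def iso_class_limit(iso_class, particle_size_um):
    """Maximum particles per m³ at or above `particle_size_um` for an ISO class,
    using the commonly cited formula 10**N * (0.1 / D)**2.08."""
    return 10 ** iso_class * (0.1 / particle_size_um) ** 2.08

limit_05 = iso_class_limit(5, 0.5)  # roughly 3,517, tabulated as 3,520
limit_50 = iso_class_limit(5, 5.0)  # roughly 29
```

Seen this way, the ≥ 5.0 µm value of 29 is not an independent number: it follows from the same ISO 5 curve as the ≥ 0.5 µm limit.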

Viable (Microbiological) Monitoring Action Levels

Microbiological monitoring is essential for assessing the biological quality of the cleanroom environment. The action levels for the highest grade areas are as follows [13].

Table 3: Viable Particle Action Levels for the Critical Zone (Grade A/ISO 5/Class 100)

| Monitoring Method | EU GMP Annex 1 (Grade A) | FDA Guidance (Class 100) |

|---|---|---|

| Settle Plates (diameter 90 mm), CFU/4 hours | No growth expected | No growth expected (per table footnote) |

| Air Samples (CFU/m³) | No growth expected | No growth expected (per table footnote) |

| Contact Plates (diameter 55 mm), CFU/plate | No growth expected | - |

| Glove Print (5 fingers), CFU/glove | No growth expected | - |

Key Insight: Both frameworks enforce a near-zero tolerance for microbial contamination in the critical processing zone, with any growth triggering an investigation [13]. EU GMP Annex 1 specifies a more comprehensive set of methods, including explicit requirements for glove and garment monitoring.

Implementation and Compliance Approaches

The frameworks differ in their philosophical approach to ensuring quality, which directly impacts system implementation.

Foundational Philosophy and System Controls

- FDA 21 CFR Part 11: Procedural and Technical Security: The regulation mandates specific controls for systems handling electronic records. These include validation of systems for accuracy and reliability, secure audit trails that are time-stamped and tamper-evident, strict access controls via unique user IDs, and controls for electronic signatures to ensure they are legally binding [24] [25]. The focus is on data integrity and security within computerized systems.

- EU GMP Annex 1: Holistic Quality Risk Management: This guideline champions a proactive, holistic approach centered on a Contamination Control Strategy (CCS). The CCS is a planned set of controls for microorganisms, endotoxins, and particles, derived from a deep product and process understanding [13]. It is underpinned by Quality Risk Management (QRM), which is used to identify, evaluate, and control all potential risks to quality, positioning environmental monitoring as a verification tool for the overall CCS [13].

- ISO Standards: Technical and System Foundations: ISO standards provide the universal technical and managerial foundations. For example, the ISO 14644 series offers standardized methodologies for cleanroom classification and testing, while ISO 13485 specifies requirements for a quality management system for medical device manufacturers, which the FDA's Quality Management System Regulation (QMSR) now incorporates by reference [28] [13].
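To make "time-stamped and tamper-evident" concrete, the sketch below chains audit entries by hash so that editing any earlier record invalidates everything after it. Hash chaining is one possible technique, not something 21 CFR Part 11 prescribes, and the field names, user IDs, and timestamps are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry

def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(trail, user_id, action, timestamp):
    """Append an entry whose hash covers its content and the previous hash."""
    body = {
        "user_id": user_id,
        "action": action,
        "timestamp": timestamp,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS,
    }
    entry = dict(body, hash=_digest(body))
    trail.append(entry)
    return entry

def verify_trail(trail):
    """Recompute every hash and check each link to the previous entry."""
    prev_hash = GENESIS
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

trail = []
append_entry(trail, "analyst01", "acknowledged particle alarm", "2025-01-15T09:30:00Z")
append_entry(trail, "qa_lead02", "approved deviation report", "2025-01-15T10:05:00Z")
intact = verify_trail(trail)
```

A chain like this only shows that records have not been altered after the fact; access control, unique user IDs, and system validation remain separate requirements.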

Experimental and Monitoring Protocols

The following workflow diagram illustrates the typical process for establishing and maintaining an environmental monitoring program under these frameworks.

Diagram 1: Environmental Monitoring Program Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successfully implementing a compliant environmental monitoring system requires specific tools and materials. The following table details key components.

Table 4: Essential Materials for Environmental Monitoring and Control

| Item / Reagent | Primary Function | Application Context |

|---|---|---|

| Tryptic Soy Agar (TSA) Plates | Culture medium for the recovery of aerobic microorganisms via active air sampling and settle plates [13]. | Viable environmental monitoring in cleanrooms (Grade A/B/C/D). |

| Sabouraud Dextrose Agar (SDA) Plates | Culture medium for the recovery of fungi (molds and yeasts) [13]. | Viable environmental monitoring, particularly useful for monitoring in lower-grade areas and for detecting seasonal trends. |

| Neutralizing Agar | Culture medium containing agents to inactivate residual disinfectants (e.g., quaternary ammonium compounds) on surfaces. | Viable surface monitoring (contact plates, swabs) to ensure accurate microbial recovery without false negatives from disinfectant carryover. |

| Particle Counter | Instrument for measuring the concentration of non-viable airborne particles of specific sizes (e.g., ≥ 0.5 µm and ≥ 5.0 µm) [13]. | Non-viable particle monitoring for cleanroom classification and routine monitoring. Must be qualified and used with isokinetic probes in unidirectional airflow. |

| Microbial Identification System | Tools (genetic or biochemical) for identifying environmental isolates to the species level [13]. | Investigation of excursions and trend analysis. Essential for root cause analysis when a sterility test failure occurs. |

| Validated Software Platform | Computerized system for managing electronic records, data integrity, and audit trails [24] [25]. | Compliance with 21 CFR Part 11 for all electronic environmental monitoring records, signatures, and data. |

The regulatory frameworks of FDA 21 CFR Part 11, EU GMP Annex 1, and ISO standards, while overlapping in their goal of ensuring product quality, impose distinct and specific requirements. FDA 21 CFR Part 11 provides the foundational requirements for data integrity in computerized systems. EU GMP Annex 1 details a modern, risk-based contamination control strategy for sterile manufacturing. ISO standards, notably the 14644 series, supply the essential technical protocols for cleanroom classification and monitoring that are referenced by the other two regulatory bodies.

For researchers and developers, the key to success lies in an integrated approach. A robust environmental monitoring program must be built on a Contamination Control Strategy (CCS) as required by Annex 1, using the technical methods outlined in ISO standards, with all generated electronic data managed in compliance with 21 CFR Part 11. Understanding this interplay is paramount for designing effective experiments, selecting appropriate reagents and equipment, and ultimately achieving compliance in the global regulatory landscape.

Implementing EMS in Research and Drug Development: From Deployment to Data Integration

For researchers, scientists, and drug development professionals, the integrity of environmental monitoring data is paramount. The selection of a deployment model—encompassing both connectivity (Fixed vs. Mobile) and infrastructure (Cloud vs. On-Premise)—directly influences data accuracy, system reliability, and regulatory compliance. These choices form the foundational architecture of a monitoring network, determining how data is captured, transmitted, stored, and secured. Within the context of performance comparison for environmental monitoring systems, this guide provides an objective analysis of these critical technologies, supported by experimental data and structured methodologies to inform evidence-based decision-making.

Fixed vs. Mobile Connectivity for Environmental Monitoring

Connectivity forms the critical communication link between field sensors and data analysis platforms. The choice between Fixed and Mobile solutions dictates the reliability, speed, and location flexibility of your environmental data pipeline.

Core Technology and Performance Comparison

Fixed Wireless Access (FWA) provides a dedicated, line-of-sight connection by transmitting radio signals between a fixed antenna on the monitoring site and a nearby cell tower [29] [30]. This point-to-point or point-to-multipoint link is engineered for stability, often featuring service level agreements (SLAs) that guarantee uptime and performance [30]. In contrast, Mobile Broadband (4G LTE/5G) operates on a shared public network, where bandwidth is consumed competitively among all users in a coverage area, leading to potential network congestion and variable speeds [29] [30].

Table 1: Performance Comparison of Fixed and Mobile Connectivity

| Performance Metric | Fixed Wireless | Mobile Broadband |

|---|---|---|

| Typical Download Speed | Up to 10 Gbps dedicated [30] | "Up to" 100 Mbps, often 1-100 Mbps [30] |

| Typical Upload Speed | Symmetrical (equal to download) [30] | Asymmetrical (significantly slower than download) [30] |

| Reliability & Uptime | High; SLA-backed, monitored service [30] | Variable; best-effort, no guarantees [30] |

| Latency | Low and consistent [29] | Can fluctuate with network load |

| Data Caps | Typically no usage caps [30] | Often usage-capped, with throttling after a limit [30] |

Experimental Data and Research Findings

Empirical analysis of deployment factors confirms that platform competition and infrastructure are primary drivers for fixed broadband adoption [31]. Performance data from operational networks demonstrates that FWA provides a more reliable service at a fixed location, while mobile broadband offers superior location flexibility [29]. The "up to" speed advertised for mobile broadband can result in real-world performance as low as 1 Mbps in congested areas, making it unsuitable for high-frequency data transmission from multiple sensors [30]. Furthermore, fixed wireless is engineered with a "fade margin" to minimize performance impacts from weather, whereas mobile signals can be significantly degraded by building materials like metal [30].
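Whether a congested 1 Mbps link is actually a bottleneck depends on how many sensors report, how large each message is, and how often it is sent. The back-of-envelope sketch below makes that arithmetic explicit; the payload size, protocol overhead factor, and headroom are illustrative assumptions, not figures from the cited sources.

```python
def required_throughput_mbps(sensor_count, payload_bytes, samples_per_second,
                             overhead_factor=1.5):
    """Estimated uplink demand in Mbps; overhead_factor is an assumed
    allowance for protocol headers and retransmissions."""
    bits_per_second = sensor_count * payload_bytes * 8 * samples_per_second * overhead_factor
    return bits_per_second / 1_000_000

def link_is_sufficient(required_mbps, worst_case_mbps, headroom=0.5):
    """Treat a link as adequate only if demand fits within a fraction
    (headroom) of its worst-case throughput."""
    return required_mbps <= worst_case_mbps * headroom

# 50 sensors sending 200-byte messages once per second
low_rate = required_throughput_mbps(50, 200, 1)
# The same sensors streaming at 100 samples per second
high_rate = required_throughput_mbps(50, 200, 100)

low_rate_ok = link_is_sufficient(low_rate, worst_case_mbps=1.0)
high_rate_ok = link_is_sufficient(high_rate, worst_case_mbps=1.0)
```

Under these assumptions, slow scalar telemetry fits comfortably even on a degraded mobile link, while high-frequency streaming from the same sensors does not, which is the case the congestion warning applies to.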

Decision Workflow: Selecting a Connectivity Model

The following diagram outlines the logical decision process for researchers choosing between fixed and mobile connectivity, based on site-specific requirements.

Cloud vs. On-Premise Infrastructure for Data Management

The infrastructure model governs how the vast quantities of data collected by environmental sensors are stored, processed, and analyzed. This choice balances control against flexibility and operational overhead.

Architectural and Economic Comparison

In an On-Premise deployment, all hardware, software, and data storage are managed on the researcher's own infrastructure, behind the organization's firewall [32] [33]. This model provides complete local control. Cloud Computing relies on a third-party provider's servers, with resources accessed on-demand via the internet, typically through a subscription model [32] [33].

Table 2: Economic and Operational Comparison of Cloud and On-Premise Infrastructure

| Factor | On-Premise | Cloud |

|---|---|---|

| Upfront Cost | High initial investment in hardware and licenses [33] [34] | Low to none; pay-as-you-go subscription [33] [34] |

| Ongoing Maintenance | Continuous cost for space, power, and expert IT staff [32] [33] | Handled by the provider; reduces internal needs [33] [34] |

| Scalability | Limited; requires purchasing and installing new hardware [34] | Highly flexible; resources can be adjusted instantly [33] [34] |

| Upgrades | Costly; may require new hardware or system re-configurations [33] | Typically included in subscription; performed automatically [33] |

| Control & Customization | Complete control over data, systems, and upgrades [33] [34] | Limited by provider's standardized configurations [33] |

Security, Compliance, and Data Integrity Protocols

For environmental and drug development research, data security and regulatory compliance are non-negotiable.

- Security: On-premise infrastructure offers greater security control by keeping all data within a private infrastructure, avoiding exposure to third parties [32] [34]. While cloud security is a common concern, major cloud providers now often offer robust security measures that can surpass what individual organizations can afford on their own [33].

- Compliance: On-premise environments are often preferred in highly regulated industries (e.g., banking, government) as they allow direct verification that all controls and policies are followed, easing compliance with standards like HIPAA or FERPA [32] [34]. In a cloud model, enterprises must perform due diligence to ensure their third-party provider is fully compliant with all relevant regulatory mandates in their industry [32].

- Data Integrity and Access: On-premise systems operate independently from internet connectivity, ensuring seamless access to data and software even during external network outages [34]. Cloud computing, however, is entirely dependent on a stable internet connection; interruptions will cut off access to work resources and data [33] [34].

Decision Workflow: Selecting an Infrastructure Model

The logical pathway for selecting the appropriate data management infrastructure is guided by primary research constraints and objectives.

The Researcher's Toolkit: Essential Components of an Environmental Monitoring System

Building a robust environmental monitoring system requires the integration of specialized components. The table below details key research reagent solutions and hardware essential for assembling a functional monitoring network, as derived from real-world system architectures [4] [1].

Table 3: Research Reagent Solutions for Environmental Monitoring Systems

| Component | Function | Example Products / Specifications |

|---|---|---|

| Air Quality Sensors | Measure concentrations of critical air pollutants and particulates. | Sensors for PM1, PM2.5, PM10, SO2, NOX, O3, CO [4] [1]; e.g., dnota Bettair Air Quality Mapping System [1]. |

| Water Quality Probes | Track key physicochemical parameters of water bodies. | Probes for temperature, pH, conductivity, turbidity, dissolved oxygen [1]. |

| Acoustic Monitors | Quantify noise pollution levels with survey-grade accuracy. | Class 1 Sound Level Meters; e.g., Casella CEL-633.A1 for environmental noise monitoring [1]. |

| Multi-Gas Monitors | Detect and measure hazardous gases in mobile or task-based work zones. | Configurable multi-gas instruments; e.g., RAE Systems QRAE 3 or MultiRAE Plus [1]. |

| Communication Gateway | Transmit sensor data to the central platform securely. | Gateways using LoRaWAN (low power, long-range), LTE/5G (high bandwidth), or Wi-Fi [1]. |

| Data Platform & Analytics | The core system for data ingest, storage, QA/QC, visualization, and alerting. | Cloud or on-premise software with time-series database, dashboards, threshold alarms, and calibration tracking [1]. |

Integrated System Architecture and Experimental Protocol

A modern Environmental Monitoring System (EMS) is a layered network that automates the collection, validation, and analysis of environmental data across dispersed locations [1]. Understanding this architecture is a prerequisite for designing effective deployment experiments.

The system transforms raw sensor readings into actionable intelligence through a coordinated workflow across distinct layers [1]:

- Endpoints/Sensors: Measure environmental parameters (e.g., air, water, noise) [1].

- Edge & Communications: Transmit data via protocols like LoRaWAN or LTE/5G [1].

- Data Platform: Stores and validates time-series data, managing QA/QC and calibration [1].

- Visualization & Alerts: Converts data into dashboards and triggers actionable alarms [1].

- Integrations: Connects to other systems (EHS, CMMS) via APIs [1].
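The hand-off between the endpoint and communications layers is where readings are most easily lost during an outage. A minimal store-and-forward buffer, sketched below, shows one way an endpoint might hold readings locally until the link recovers. The class name, capacity, and `send` callback are hypothetical and do not describe any particular vendor's firmware.

```python
from collections import deque

class StoreAndForwardBuffer:
    """Holds readings locally and forwards them in order when the link is up."""

    def __init__(self, capacity):
        # When full, the oldest reading is discarded to make room for the newest.
        self._queue = deque(maxlen=capacity)

    def record(self, reading):
        self._queue.append(reading)

    def flush(self, send):
        """Send buffered readings oldest-first; stop at the first failure.

        `send` is a callable returning True on success. Returns the number
        of readings delivered; undelivered readings stay buffered.
        """
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)

buffer = StoreAndForwardBuffer(capacity=3)
buffer.record({"t": 0, "pm25": 4.1})
buffer.record({"t": 1, "pm25": 4.3})

sent_during_outage = buffer.flush(lambda reading: False)  # link down

delivered = []
def send_ok(reading):
    delivered.append(reading)
    return True

sent_after_recovery = buffer.flush(send_ok)  # link restored
```

A reading is removed only after `send` confirms success, so a failure mid-flush cannot drop data; the trade-off is that a bounded buffer will still lose the oldest readings in a long outage.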

Experimental Protocol for Deployment Model Comparison

To objectively compare the performance of different deployment models (Fixed vs. Mobile, Cloud vs. On-Premise) for environmental monitoring, researchers should adopt a structured experimental methodology.

- Objective: To quantify the impact of connectivity and infrastructure models on data reliability, transmission latency, and operational overhead in a continuous environmental monitoring scenario.

- Hypothesis: Fixed Wireless connectivity will provide lower latency and higher data reliability than Mobile Broadband, while Cloud infrastructure will offer greater scalability and lower initial setup costs than On-Premise solutions, though with potential long-term subscription costs and internet dependency.

- Methodology:

  - Site Selection: Establish two identical monitoring stations at a fixed location, equipped with matched sensors (e.g., particulate matter, noise levels).

  - Variable Control: One station will use Fixed Wireless connectivity, the other Mobile Broadband. Both will stream data to both a Cloud and an On-Premise data platform in a parallel setup.

  - Data Collection Period: Conduct continuous monitoring over a minimum of 30 days to capture normal and potential peak usage or adverse weather conditions.

  - Metrics Quantification:

    - Latency: Measure time intervals from sensor reading to platform receipt.

    - Data Packet Loss: Calculate the percentage of data packets that failed to transmit.

    - Uptime: Log total and unscheduled downtime for each connectivity model.

    - Cost Tracking: Document all upfront and ongoing costs for both infrastructure models.

    - Scalability Test: Simulate a data load increase to measure system response and resource scaling effort.

This protocol, emphasizing controlled variables and quantitative metrics, allows for an evidence-based selection of deployment models tailored to specific research needs and constraints.
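The latency, packet-loss, and uptime metrics from this protocol can be computed from simple transmission logs. The sketch below assumes each delivered packet is logged as a (sent, received) timestamp pair in seconds; the function name and sample values are hypothetical.

```python
from statistics import median

def summarize_link(deliveries, expected_count, outage_seconds, period_seconds):
    """Summarize one connectivity model over the monitoring period.

    deliveries: list of (sent_ts, received_ts) pairs for packets that arrived.
    expected_count: number of packets the station attempted to send.
    """
    latencies = [received - sent for sent, received in deliveries]
    return {
        "median_latency_s": median(latencies) if latencies else None,
        "packet_loss_pct": 100.0 * (expected_count - len(latencies)) / expected_count,
        "uptime_pct": 100.0 * (period_seconds - outage_seconds) / period_seconds,
    }

# Illustrative log: 5 packets attempted, 4 delivered, 1 hour of outage in 30 days
deliveries = [(0.0, 0.2), (1.0, 1.3), (2.0, 2.1), (3.0, 3.6)]
summary = summarize_link(
    deliveries,
    expected_count=5,
    outage_seconds=3600,
    period_seconds=30 * 24 * 3600,
)
```

Running the same summary for each station gives directly comparable figures; the median is used here because a few very slow packets would distort a mean.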

The accuracy and reliability of environmental monitoring systems are fundamentally dictated by the strategic placement of their core components: sensors, network nodes, and physical sampling probes. For researchers and drug development professionals, understanding this synergy is critical for generating defensible data, particularly under stringent regulatory frameworks. Optimal Sensor Placement (OSP) ensures that data collected from discrete points accurately represents the state of the entire system, whether for reconstructing a deformation field in a structure or determining the concentration of particulate matter in emissions [35]. Concurrently, the positioning of sensor nodes in a Wireless Sensor Network (WSN) is vital for maintaining data integrity during transmission, conserving energy, and ensuring complete coverage of the monitored area [36]. Furthermore, in stack emissions monitoring, the principle of isokinetic sampling—collecting a gas sample at the same velocity as the gas stream—is the cornerstone of extracting a representative sample, without which measurements of particulate matter are invalid [37] [38] [39]. This guide objectively compares the performance of different placement strategies and sampling techniques, providing a foundational resource for the design and validation of environmental monitoring systems in critical research and development applications.

Sensor and Node Placement Strategies for Maximum Efficacy

The placement of sensors and network nodes is a multi-objective optimization problem that directly impacts a system's performance, cost, and longevity. The strategies can be broadly classified into static and dynamic approaches, each with distinct advantages and trade-offs.

Static Placement Strategies

Static placement involves determining optimal node positions prior to network deployment. This approach is common in controlled environments and for applications with predictable operational patterns.

- Coverage-Oriented Placement: The primary goal is to ensure the sensor network covers the entire region of interest. The performance is measured by the area coverage percentage and the uniformity of node distribution. As demonstrated in structural health monitoring, a well-planned OSP can achieve high-accuracy shape sensing with a minimal number of strain gauges [35].

- Connectivity-Oriented Placement: This strategy focuses on maintaining a strongly connected network topology to ensure reliable data transmission. Key performance metrics include network connectivity strength and the average path length from sensor nodes to the base station. Research indicates that a hierarchical topology can significantly reduce energy consumption in multi-hop networks [36].

- Energy-Driven Placement: By strategically placing nodes, particularly data-aggregating cluster heads or base stations, the overall energy expenditure of the network can be minimized. This directly prolongs the network's operational lifetime. Studies show that optimal base-station placement can mitigate energy bottlenecks and balance the load across the network [36].

Table 1: Comparison of Static Node Placement Strategies

| Strategy | Primary Objective | Key Performance Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Coverage-Oriented | Maximize monitored area | Coverage percentage, node density | Ensures no blind spots, simple to model | May ignore network connectivity and energy use |
| Connectivity-Oriented | Ensure reliable data paths | Network connectivity, path length | Robust data transmission, reduced latency | May lead to over-provisioning of nodes |
| Energy-Driven | Prolong network lifetime | Total energy consumption, network lifetime | Cost-effective, sustainable for long-term use | Optimal placement is often NP-hard and complex to solve [36] |
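As a rough illustration of the coverage-oriented strategy, the sketch below greedily selects candidate sites that cover the most still-uncovered target points and reports the resulting coverage fraction. The function name `greedy_coverage`, the disc-shaped sensing model, and the grid used in the example are assumptions made for illustration; they are not taken from [35] or [36], and a real design would also weigh connectivity and energy use.

```python
from itertools import product
from math import hypot


def greedy_coverage(candidates, targets, radius, max_nodes):
    """Greedy set-cover heuristic for sensor siting (illustrative only).

    candidates: list of (x, y) sites where a sensor could be installed
    targets:    list of (x, y) points that should be monitored
    radius:     assumed sensing radius of one sensor (disc model)
    max_nodes:  upper bound on the number of sensors to place
    Returns (chosen_sites, coverage_fraction).
    """
    def covered_by(site, points):
        return {p for p in points
                if hypot(site[0] - p[0], site[1] - p[1]) <= radius}

    uncovered = set(targets)
    chosen = []
    while uncovered and len(chosen) < max_nodes:
        # Pick the site that covers the most points not yet covered.
        best = max(candidates, key=lambda c: len(covered_by(c, uncovered)))
        newly_covered = covered_by(best, uncovered)
        if not newly_covered:
            break  # no remaining site adds coverage
        chosen.append(best)
        uncovered -= newly_covered
    coverage = 1.0 - len(uncovered) / len(targets)
    return chosen, coverage


# Hypothetical 10 x 10 room sampled on a 1 m grid, sensors with a 3 m radius.
grid = list(product(range(10), range(10)))
sites, fraction = greedy_coverage(grid, grid, radius=3.0, max_nodes=6)
print(sites, round(fraction, 3))
```

A greedy heuristic is used here because, as the table notes, exact optimal placement is often computationally hard; the heuristic trades optimality for a simple, fast answer.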

Dynamic Placement Strategies

In many real-world applications, a placement that was optimal at deployment stops being optimal as conditions change, for example through node failures, shifting traffic patterns, or evolving monitoring requirements. Dynamic strategies allow the layout to be adjusted during network operation.

- Sink Repositioning: This involves moving the data collection point (sink) in response to changes in network traffic. This technique effectively prevents energy holes and bottlenecks that form around a static sink, balancing energy consumption and extending network lifetime [36].

- Coordinated Node Movement: For critical missions, multiple nodes can be repositioned in a coordinated manner. This is used to repair network partitions, improve coverage after node failures, or adapt to new event hotspots. While highly effective, this strategy requires complex coordination algorithms and is more feasible with mobile robotic nodes [36].

The choice between static and dynamic strategies depends on the application's constraints and requirements. Static methods are simpler and less costly to deploy, while dynamic methods offer superior adaptability and resilience in unpredictable environments.
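To make the sink-repositioning idea concrete, the sketch below picks, from a set of allowed sink positions, the one that minimizes a traffic-weighted sum of squared distances to the sensor nodes. The squared-distance cost is an assumed proxy for radio transmission energy, and `best_sink` and the example coordinates are hypothetical; [36] does not prescribe this particular cost function.

```python
def best_sink(candidate_positions, nodes):
    """Choose the sink position with the lowest traffic-weighted cost.

    candidate_positions: list of (x, y) positions the sink may occupy
    nodes: list of ((x, y), traffic_rate) tuples, one per sensor node
    Cost model (assumption): energy ~ traffic_rate * distance**2.
    """
    def cost(sink):
        return sum(rate * ((sink[0] - x) ** 2 + (sink[1] - y) ** 2)
                   for (x, y), rate in nodes)

    return min(candidate_positions, key=cost)


candidates = [(0, 0), (5, 0), (10, 0)]

# Balanced traffic: the midpoint is the cheapest location.
balanced = [((0, 0), 1.0), ((10, 0), 1.0)]
print(best_sink(candidates, balanced))

# Traffic shifts toward the right-hand node: the sink should follow it.
shifted = [((0, 0), 1.0), ((10, 0), 9.0)]
print(best_sink(candidates, shifted))
```

Re-running the selection whenever measured traffic changes is one simple way to approximate dynamic repositioning; the cost of physically moving the sink is ignored in this sketch.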

Isokinetic Sampling Probes (ISPs): The Gold Standard for Representative Stack Sampling

Isokinetic sampling is the reference method mandated by environmental protection agencies worldwide (e.g., US EPA Method 5, BS EN 13284-1) for determining particulate matter emissions from stationary sources [37] [38]. Its core principle is to extract a sample from a gas stream (such as a stack or duct) at a velocity identical to the velocity of the gas at the sampling point.

The Principle and Importance of Isokinetic Sampling

When sampling is isokinetic, the streamlines of the gas are not distorted as they enter the probe nozzle, ensuring that the concentration and size distribution of particles entering the probe are identical to those in the main gas stream. Non-isokinetic sampling leads to significant errors:

- Sub-isokinetic sampling (sample velocity < gas velocity) causes an over-representation of larger, heavier particles due to their inertia, leading to a positively biased measurement.

- Super-isokinetic sampling (sample velocity > gas velocity) results in an under-representation of larger particles, yielding a negatively biased measurement [39].
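The two bias cases above can be expressed as a simple ratio check. The sketch below computes the isokinetic ratio as nozzle velocity divided by stack velocity and labels the result. The function names are hypothetical, and the default tolerance of 10% is an assumption reflecting the acceptance window commonly applied with reference methods; the applicable standard should be consulted for the binding value.

```python
def isokinetic_ratio(nozzle_velocity, stack_velocity):
    """Return the isokinetic ratio in percent (100 means perfectly isokinetic)."""
    if stack_velocity <= 0:
        raise ValueError("stack_velocity must be positive")
    return 100.0 * nozzle_velocity / stack_velocity


def classify_sampling(ratio_pct, tolerance_pct=10.0):
    """Label a ratio relative to an assumed tolerance band around 100%."""
    if ratio_pct < 100.0 - tolerance_pct:
        # Sample velocity too low: large particles over-represented.
        return "sub-isokinetic"
    if ratio_pct > 100.0 + tolerance_pct:
        # Sample velocity too high: large particles under-represented.
        return "super-isokinetic"
    return "isokinetic"


# Hypothetical traverse point: 12.0 m/s in the stack, 10.2 m/s at the nozzle.
ratio = isokinetic_ratio(nozzle_velocity=10.2, stack_velocity=12.0)
print(round(ratio, 1), classify_sampling(ratio))
```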

The accuracy of this method is paramount, as it forms the basis for calibrating Continuous Emission Monitoring Systems (CEMS) and for demonstrating compliance with emission limit values (ELVs) [38].

Performance Analysis and Reliability

Despite its status as the standard reference method, the reliability of isokinetic sampling, particularly at low particulate concentrations, has been the subject of research. An analysis of data from 21 UK processes revealed critical insights into the distribution of particulate matter within the sampling train, a key indicator of potential measurement inaccuracies [38].

Table 2: Experimental Data on Particulate Distribution in Isokinetic Sampling

| Process Particulate Concentration | Mean Share of Collected Mass on Filter (%) | Mean Share of Collected Mass in Rinse (%) |
|---|---|---|
| < 5 mg/m³ | 19.3 | 80.7 |
| > 5 mg/m³ | 43.6 | 56.4 |

These data show that for low-concentration processes (<5 mg/m³), which are increasingly common due to stricter regulations, most of the collected particulate mass is found not on the primary filter but in the rinse of the probe and sampling train. This points to significant particulate bounce, blow-off, or condensation losses within the sampling system. The study found no strong correlation between this distribution and parameters such as stack velocity or isokinetic percentage, which highlights a fundamental methodological challenge at low concentrations and raises questions about the accuracy and uncertainty of the method in such contexts [38]. Other research corroborates these findings, suggesting that nozzle geometry and super-isokinetic practices can lead to an underestimation of emissions by up to 13% [38].

Experimental Protocols for Placement and Sampling

To ensure the validity and reproducibility of data, adherence to standardized experimental protocols is essential.

Protocol for Optimal Sensor Placement using the Modal Method

The Modal Method is a recognized technique for shape sensing and optimal sensor placement, particularly in Structural Health Monitoring (SHM) [35].

- Finite Element (FE) Model: Develop a detailed FE model of the structure to be monitored.

- Modal Analysis: Perform a modal analysis on the FE model to extract the mode shapes for both displacements \( \phi_d \) and strains \( \phi_s \).

- Mode Selection: Identify a reduced set of modes \( M \) that can accurately represent the static deformation under the expected load cases using a procedure like the least-squares fit of modal coordinates [35].

- Sensor Position Optimization: The optimal sensor positions are those that minimize the condition number of the strain modal shape matrix \( \phi_s \) or a similar criterion, ensuring the system of equations is well-conditioned for solving the inverse problem.

- Displacement Reconstruction: After placing sensors at the optimal locations and collecting strain data \( \varepsilon \), the displacement field \( u \) is reconstructed using the equation: \( u = \phi_d (\phi_s^T \phi_s)^{-1} \phi_s^T \varepsilon \) [35].
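The last two steps of this protocol can be sketched with NumPy. The example below assumes the displacement and strain mode-shape matrices are already available as arrays (rows are locations, columns are the retained modes); the function names, the exhaustive search over sensor combinations, and the toy matrices are illustrative assumptions rather than the procedure used in [35]. The least-squares solve is equivalent to the normal-equations form of the reconstruction formula when the strain matrix has full column rank.

```python
from itertools import combinations

import numpy as np


def best_sensor_rows(phi_s_candidates, n_sensors):
    """Exhaustively pick the candidate rows whose strain mode-shape
    sub-matrix has the lowest condition number (small problems only)."""
    rows = range(phi_s_candidates.shape[0])
    return min(
        combinations(rows, n_sensors),
        key=lambda r: np.linalg.cond(phi_s_candidates[list(r), :]),
    )


def reconstruct_displacement(phi_d, phi_s, strain):
    """Estimate displacements from measured strains.

    Solves phi_s @ q = strain for the modal coordinates q in the
    least-squares sense, then maps them to displacements via phi_d.
    """
    q, *_ = np.linalg.lstsq(phi_s, strain, rcond=None)
    return phi_d @ q


# Toy data: 3 candidate strain locations, 2 retained modes.
phi_s_all = np.array([[1.0, 0.0], [1.0, 0.001], [0.0, 1.0]])
phi_d = np.array([[0.2, 0.1], [0.5, -0.3], [0.9, 0.4]])

rows = best_sensor_rows(phi_s_all, n_sensors=2)
phi_s = phi_s_all[list(rows), :]

q_true = np.array([0.5, -1.2])          # assumed "true" modal coordinates
strain = phi_s @ q_true                 # noise-free synthetic measurement
print(rows, reconstruct_displacement(phi_d, phi_s, strain))
```

Exhaustive search grows combinatorially with the number of candidate locations, so larger problems would need a heuristic or an optimization routine instead.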

Protocol for US EPA Method 5 Isokinetic Sampling

This is the foundational protocol for particulate matter emissions measurement [37].

- Pre-test Preparation: Determine the sampling location and traverse points (Method 1), measure stack gas velocity (Method 2), and analyze gas composition for dry molecular weight (Method 3). Weigh the filter in a controlled environment.

- Equipment Setup: Assemble the sampling train, which includes a nozzle and probe, a filter in a heated oven, a series of impingers in an ice bath, a vacuum pump, and a dry gas meter for measuring sample volume.

- Isokinetic Sampling: Insert the probe into the stack and adjust the sample flow rate so that the velocity at the nozzle entrance equals the stack gas velocity at each traverse point. This is maintained throughout the sampling period.

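- Post-test Analysis: Recover the filter, then rinse the nozzle, probe liner, and front half of the filter holder into a container. Evaporate the rinse, then desiccate and weigh the residue and the filter. The total particulate mass is the filter weight gain plus the rinse residue. Measure the water collected in the impingers to determine the stack gas moisture content.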

- Calculation: Calculate the particulate concentration using the total mass collected and the total volume of dry gas sampled, corrected to standard conditions.
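The final calculation step can be sketched as follows. The example corrects the metered dry gas volume to standard conditions with the ideal gas law and divides the total collected mass by that volume. The standard conditions (293.15 K, 101.325 kPa), the function names, and the example readings are assumptions for illustration, and the sketch omits terms such as the orifice pressure differential that the full reference method includes; the applicable standard defines the binding formula and reference conditions.

```python
def volume_at_standard(v_meter_m3, meter_cal_factor, t_meter_k, p_meter_kpa,
                       t_std_k=293.15, p_std_kpa=101.325):
    """Correct a dry gas meter volume to assumed standard conditions.

    v_meter_m3:       volume registered by the dry gas meter (m^3)
    meter_cal_factor: dimensionless meter calibration factor
    t_meter_k:        mean absolute meter temperature (K)
    p_meter_kpa:      mean absolute meter pressure (kPa)
    """
    return (v_meter_m3 * meter_cal_factor
            * (t_std_k / t_meter_k)
            * (p_meter_kpa / p_std_kpa))


def particulate_concentration(filter_gain_mg, rinse_residue_mg, v_std_m3):
    """Total particulate mass (filter gain + rinse residue) per standard m^3."""
    if v_std_m3 <= 0:
        raise ValueError("v_std_m3 must be positive")
    return (filter_gain_mg + rinse_residue_mg) / v_std_m3


# Hypothetical run: 1.25 m^3 metered at 305 K and 100.2 kPa.
v_std = volume_at_standard(1.25, 0.995, 305.0, 100.2)
conc = particulate_concentration(filter_gain_mg=1.8, rinse_residue_mg=4.1,
                                 v_std_m3=v_std)
print(round(v_std, 3), round(conc, 2))
```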

The Researcher's Toolkit: Essential Research Reagent Solutions

Table 3: Key Equipment for Sensor Networks and Isokinetic Sampling

| Item | Function | Application Context |
|---|---|---|
| Strain Gauges / FOS | Measure surface strain at discrete points. | Optimal Sensor Placement for shape sensing in SHM [35]. |
| Arduino Nano 33 IoT / Microprocessors | Acts as a sensor node for data acquisition, processing, and wireless transmission. | Realizing a Wireless Sensor Network (WSN) [35]. |
| Isokinetic Sampling Probe (e.g., SUTO iTEC device) | Ensures representative sample extraction by matching stack and sample velocities. | Particle measurement in compressed air according to ISO 8573-4 [39]. |
| Type-S Pitot Tube | Measures stack gas velocity, which is critical for calculating isokinetic sampling rate. | US EPA Method 2 and integrated into the probe assembly [37]. |
| Heated Probe & Filter Oven | Maintains sample gas temperature above the dew point to prevent condensation and particulate loss. | US EPA Method 5 and BS EN 13284-1 [37]. |
| Impinger Train (Cold Box) | Cools and saturates the sample gas to condense and capture moisture. | Essential for determining stack gas moisture content [37]. |

Best Practices for Personnel, Surface, and Air Monitoring in Cleanrooms

In regulated industries such as pharmaceuticals, biotechnology, and medical devices, maintaining a controlled environment is paramount for product safety and efficacy. Cleanroom environmental monitoring (EM) is a critical system designed to collect and analyze data on particles and microorganisms in the air, on surfaces, and on personnel. Its primary goal is to provide sterility assurance during aseptic operations and ensure compliance with stringent Good Manufacturing Practice (GMP) and ISO standards [40]. A robust EM program acts as an early warning system, detecting contamination risks before they can compromise product batches.

The consequences of inadequate monitoring can be severe, leading to product recalls, regulatory fines, and potential harm to patients [41]. Furthermore, up to 80% of cleanroom contamination originates from the personnel working in the cleanroom, highlighting the need for comprehensive monitoring that encompasses air, surfaces, and people [41]. This guide details the best practices for these three critical areas, providing a framework for researchers and drug development professionals to build a data-driven contamination control strategy (CCS) that aligns with modern regulatory expectations.

Personnel Monitoring: Managing the Primary Contamination Source

Even in highly automated facilities, personnel represent the most significant variable and potential source of contamination in aseptic environments. Humans naturally shed up to 40,000 skin cells per minute, and movements can increase particle emission five to tenfold [41] [42]. Personnel monitoring is therefore not a matter of distrust but a scientific necessity to assess microbial shedding from gloves, gowns, and other exposed areas [42] [40].

Key Methods and Procedures

The cornerstone of personnel monitoring is contact plate sampling on critical gowning sites. Pre-filled nutrient media plates are pressed against the site so that microorganisms transfer onto the growth medium, where they are then cultured and counted [43].

- Sampling Technique: Trained personnel press contact plates (such as RODAC plates) against critical gowning sites—typically gloved fingers, chest, and forearms—for a defined period (e.g., 10 seconds) with gentle, even pressure [43] [44].

- Sample Handling: After sampling, plates must be accurately labeled for full traceability, stored in designated incubators, and all relevant details entered into a digital monitoring logbook [43].

- Training and Simulation: Effective monitoring requires thorough training. Immersive simulation-based training builds muscle memory and confidence, teaching operators proper techniques like avoiding touching agar surfaces and handling plates correctly to preserve sample integrity [43].

Experimental Protocol for Personnel Monitoring Validation

To validate the effectiveness of a personnel monitoring program and gowning procedures, the following experimental protocol can be implemented.

Table 1: Experimental Protocol for Personnel Monitoring Validation

| Protocol Step | Description | Critical Parameters |
|---|---|---|
| 1. Preparation | Ensure contact plates are within expiry and growth-promoting. Personnel must be fully gowned. | Media qualification (e.g., USP <61>), successful gowning certification [40]. |
| 2. Sampling | Apply contact plates to predefined critical sites for a specified duration. | Consistent pressure and contact time (e.g., 10 seconds) across all samples [44]. |
| 3. Incubation | Incubate plates under defined conditions for microbial growth. | Dual-temperature incubation (e.g., 20-25°C for fungi, 30-35°C for bacteria) for up to 5 days [44]. |
| 4. Data Analysis | Count Colony-Forming Units (CFU) and compare to established action limits. | Trend data over time; investigate any counts exceeding alert/action levels [40] [44]. |
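The data-analysis step in the table can be illustrated with a short sketch that compares CFU counts per sampling site against alert and action levels. The function names, site labels, and limit values below are hypothetical placeholders; actual limits must come from the facility's contamination control strategy and the applicable cleanroom grade.

```python
def classify_cfu(count, alert_limit, action_limit):
    """Label a CFU count relative to site-specific alert and action levels."""
    if alert_limit > action_limit:
        raise ValueError("alert_limit must not exceed action_limit")
    if count > action_limit:
        return "action"
    if count > alert_limit:
        return "alert"
    return "within limits"


def review_session(results, alert_limit, action_limit):
    """Return only the sampled sites that need follow-up.

    results: mapping of site name -> CFU count for one operator session
    """
    flagged = {}
    for site, count in results.items():
        status = classify_cfu(count, alert_limit, action_limit)
        if status != "within limits":
            flagged[site] = status
    return flagged


# Hypothetical session with placeholder limits (alert > 2, action > 5 CFU).
session = {"glove_left": 0, "glove_right": 1, "chest": 3, "forearm": 6}
print(review_session(session, alert_limit=2, action_limit=5))
```

Storing each session's flagged sites over time gives the raw material for the trending that the protocol calls for.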

Essential Research Reagent Solutions

Table 2: Key Reagents for Personnel Monitoring

| Item | Function | Application Notes |
|---|---|---|
| Contact Plates (RODAC) | Contains culture medium (e.g., TSA, SDA) for direct surface sampling. | Often include neutralizing agents (e.g., Letheen broth) to counter residual disinfectants [44]. |
| Neutralizing Diluent | Inactivates disinfectants on sampled surfaces to allow microbial growth. | Crucial for obtaining accurate results after cleaning cycles [44]. |
| Incubators | Provides controlled temperature for microbial growth. | Requires dual-temperature capability for recovery of different microbial types [44]. |

Figure 1: Personnel Monitoring Workflow. This diagram outlines the key steps for a personnel monitoring procedure, from preparation through to corrective action.

Surface Monitoring: Ensuring Microbial Control on Equipment and Facilities