Environmental Forecasting and Scenario Planning: Advanced Models for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive examination of environmental forecasting models and scenario planning methodologies, tailored for researchers and professionals in drug development and biomedical science. It explores the foundational principles of forecasting complex environmental systems, details cutting-edge hybrid methodologies that integrate quantitative data with qualitative expert judgment, and addresses critical challenges such as data imbalance and model uncertainty. By presenting rigorous validation frameworks and comparative analyses of traditional versus machine-learning approaches, this resource aims to equip scientists with the knowledge to leverage environmental forecasting for enhanced decision-making in pharmaceutical research, from assessing compound environmental risks to predicting climate-related health impacts.

The Foundations of Environmental Forecasting: From Concepts to Complex Systems

Defining Environmental Forecasting and Its Core Principles

Environmental forecasting is the systematic process of utilizing scientific data and models to predict future environmental conditions and changes [1]. It encompasses a wide array of natural systems, including atmospheric, hydrological, ecological, and geological processes, with the fundamental intention of providing actionable insights into potential environmental shifts [1]. This enables proactive measures for mitigation, adaptation, and sustainable resource management, serving as a critical tool for informing decision-making across various sectors from agriculture and urban planning to disaster preparedness and conservation efforts [1].

In the context of ecological systems, forecasting is formally defined as "the process of predicting the state of ecosystems, ecosystem services, and natural capital, with fully specified uncertainties, and is contingent on explicit scenarios of climate, land use, human population, technologies, and economic activity" [2]. Because all decision-making is ultimately based on what will happen in the future, environmental decision-making fundamentally depends on forecasts to make those predictions, and their uncertainties, explicit [2].

Core Principles of Environmental Forecasting

The practice of environmental forecasting is governed by several interconnected core principles that ensure scientific rigor and practical utility. These principles form the foundational framework for effective prediction and are summarized in the table below.

Table 1: Core Principles of Environmental Forecasting

| Principle | Description | Significance |
|---|---|---|
| Interdisciplinary Foundation | Draws upon established scientific principles across meteorology, ecology, geology, and social sciences [1]. | Provides a holistic understanding of complex environmental systems. |
| Uncertainty Quantification | Acknowledges and specifies uncertainties as probabilistic estimations rather than definitive pronouncements [1] [2]. | Enables informed risk assessment and management; builds trust through transparency. |
| Iterative Forecasting & Validation | Employs frequent iterative forecasts with out-of-sample testing against future observations [2]. | Accelerates scientific learning, improves model accuracy, and enables adaptive management. |
| Scenario-Based Planning | Develops plausible future scenarios based on different environmental and socio-economic drivers [1] [3]. | Allows exploration of a range of potential futures and assessment of associated risks and opportunities. |
| Actionable Communication | Tailors forecast dissemination to be accessible, understandable, and useful for specific stakeholders and decision-makers [1]. | Bridges the gap between science and policy, ensuring forecasts lead to tangible actions. |

These principles are operationalized through a structured workflow that integrates data, modeling, and stakeholder engagement to produce actionable insights for decision-making.

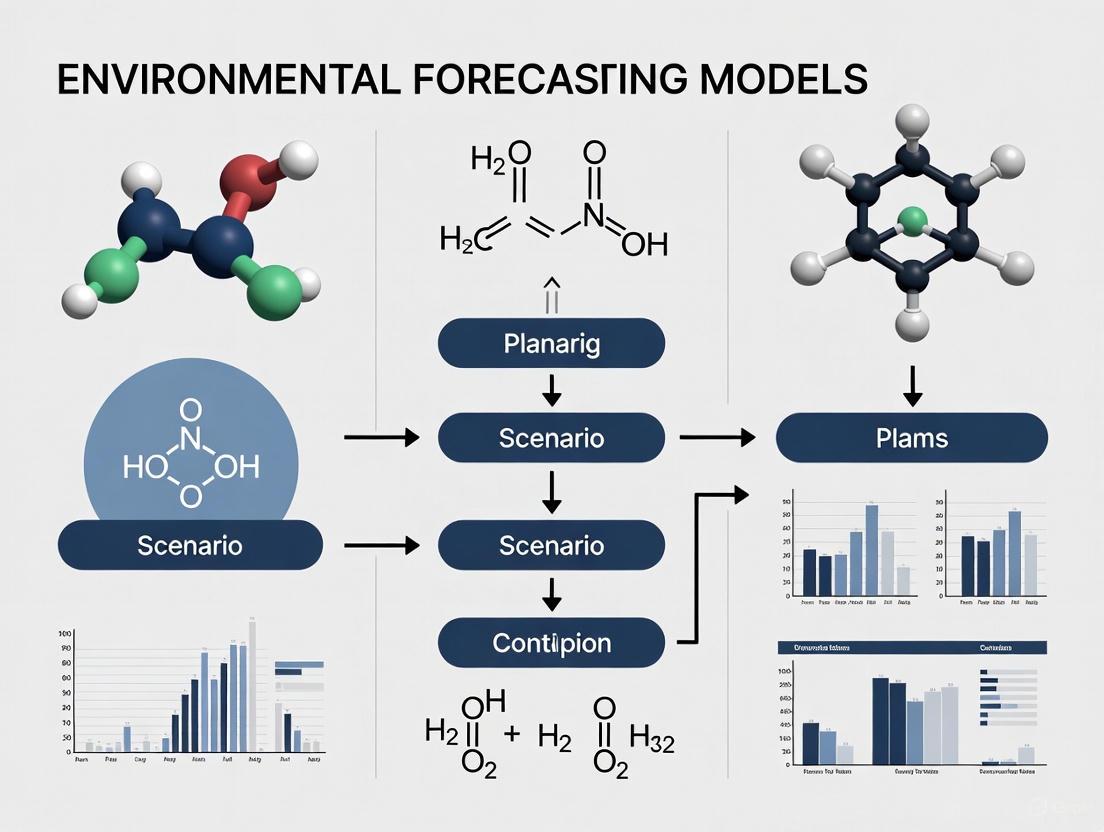

Figure 1: Environmental Forecasting Workflow. This diagram illustrates the iterative, multi-stage process of developing environmental forecasts, from defining goals to supporting adaptive management decisions.
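The "Iterative Forecasting & Validation" principle in Table 1 can be illustrated with a rolling-origin evaluation: at each time step the forecaster sees only past observations, issues a forecast, and is scored against the next observation. The sketch below is a minimal illustration, not a method from the cited sources. The series values and the two baseline forecasters (`persistence`, `climatology`) are assumptions chosen for the example.

```python
# Rolling-origin (out-of-sample) evaluation of two simple baseline forecasters.
# The observations below are made-up illustrative values.

def persistence(history):
    """Forecast the next value as the most recent observation."""
    return history[-1]

def climatology(history):
    """Forecast the next value as the mean of all past observations."""
    return sum(history) / len(history)

def rolling_origin_mae(series, forecast_fn, min_train=3):
    """Mean absolute error of one-step-ahead forecasts.

    For each t >= min_train, the forecast uses only series[:t],
    so every score is computed on data the forecaster has not seen.
    """
    errors = []
    for t in range(min_train, len(series)):
        prediction = forecast_fn(series[:t])
        errors.append(abs(series[t] - prediction))
    return sum(errors) / len(errors)

observations = [10.0, 12.0, 11.0, 13.0, 12.0, 14.0]
mae_persistence = rolling_origin_mae(observations, persistence)
mae_climatology = rolling_origin_mae(observations, climatology)
print(f"persistence MAE: {mae_persistence:.3f}")
print(f"climatology MAE: {mae_climatology:.3f}")
```

Comparing a candidate model against baselines such as these is one common way to check whether added model complexity actually improves out-of-sample skill.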

Scenario Planning as a Core Methodological Approach

Scenario planning is a critical methodology within environmental forecasting, defined as a decision-making process that identifies and plans for various future options [4]. By comparing and assessing different plausible narratives, it helps stakeholders make better decisions about possible future conditions and provides a framework for considering novel situations, not just those expected from past experience [3]. This approach is particularly valuable for managing high uncertainty in both environmental conditions and human systems, such as urban growth [4].

Application Protocol: Integrating Urban Growth Prediction with Flood Risk Scenarios

This protocol outlines a methodology for integrating urban growth prediction with sea-level rise scenarios to assess future flood exposure, advancing traditional scenario planning [4].

Objective: To predict potential future urban growth and flood risk scenarios and assess future urban flood exposure at multiple spatial scales (e.g., city and neighborhood levels).

Pre-Workshop Phase:

- Problem Scoping: The lead practitioner identifies the desired goals of the scenario development process and recruits relevant stakeholders [3].

- Data Acquisition:

  - Collect historical land cover data (e.g., from satellite imagery) for the region of interest over multiple time periods (e.g., 10-20 years).

  - Compile spatial explanatory variables: distance to roads, distance to city center, elevation, slope, zoning regulations, protected areas, etc.

  - Acquire current 100-year floodplain maps and sea-level rise (SLR) scenarios (e.g., low, high, extreme) from relevant authorities (e.g., NOAA) [4].

- Model Selection and Development:

  - Select a Land Change Model (LCM), such as the Land Transformation Model (LTM), which uses a GIS-based artificial neural network to predict future urban growth based on relationships between historic land cover and explanatory factors [4].

  - Calibrate the model using historical data.

Workshop Phase:

- Scenario Definition: Collaboratively define distinct urban growth scenarios with stakeholders [3]. Three common typologies are:

  - Business as Usual (BAU): Assumes future growth follows historical patterns without new interventions.

  - Growth as Planned: Assumes future growth adheres to the current land use plan.

  - Resilient Growth: Assumes all future development is directed outside of high-risk areas like the 100-year floodplain [4].

- Scenario Simulation: Run the calibrated LCM for each defined urban growth scenario to generate projected land use maps for the target year.

- Flood Risk Delineation: Overlay the projected urban growth maps with the various SLR scenarios to delineate future flood risk areas.

Post-Workshop Phase:

- Multi-Scalar Evaluation: Quantify the amount of projected urban growth exposed to flood risk for each scenario combination (e.g., BAU + High SLR) at both the city and neighborhood scales [4].

- Impact Assessment and Decision Support: Compare the results across scenarios to evaluate the efficacy of the current land use plan ("Growth as Planned") in mitigating future flood risk. Use this analysis to inform strategic planning, land use regulations, and infrastructure investments.
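The overlay and evaluation steps of this protocol can be sketched as a cell-by-cell comparison of two binary rasters. The example below is a toy illustration under stated assumptions: the 3x3 grids, the `cell_area_km2` parameter, and the function names are hypothetical, and a real workflow would read LCM output and SLR inundation layers with a GIS library rather than hard-coded lists.

```python
# Toy flood-exposure overlay: count projected urban cells inside each flood extent.
# Grids are hypothetical; 1 = projected urban (or flooded), 0 = otherwise.

urban_scenarios = {
    "BAU":               [[1, 1, 0], [1, 1, 0], [0, 0, 0]],
    "Growth as Planned": [[1, 0, 0], [1, 1, 0], [0, 1, 0]],
    "Resilient Growth":  [[0, 0, 0], [0, 1, 1], [0, 1, 1]],
}
slr_scenarios = {
    "low":  [[1, 0, 0], [0, 0, 0], [0, 0, 0]],
    "high": [[1, 1, 0], [1, 0, 0], [0, 0, 0]],
}

def exposed_cells(urban, flood):
    """Number of cells that are both projected urban and inside the flood extent."""
    return sum(
        u & f
        for urban_row, flood_row in zip(urban, flood)
        for u, f in zip(urban_row, flood_row)
    )

def exposure_table(urban_by_scenario, flood_by_scenario, cell_area_km2=1.0):
    """Exposed urban area for every (growth scenario, SLR scenario) combination."""
    return {
        (growth, slr): exposed_cells(urban, flood) * cell_area_km2
        for growth, urban in urban_by_scenario.items()
        for slr, flood in flood_by_scenario.items()
    }

table = exposure_table(urban_scenarios, slr_scenarios)
for (growth, slr), area in sorted(table.items()):
    print(f"{growth:18s} + {slr:4s} SLR: {area:.1f} km2 exposed")
```

Running the same function on grids clipped to individual neighborhoods would give the neighborhood-scale figures that the multi-scalar evaluation calls for.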

The Researcher's Toolkit: Essential Reagents & Solutions

Environmental forecasting relies on a suite of conceptual frameworks, computational tools, and data sources. The table below details key "research reagents" essential for conducting forecasting research and development.

Table 2: Essential Research Reagents and Solutions for Environmental Forecasting

| Tool/Reagent | Category | Function & Application |
|---|---|---|
| Land Change Models (LCMs) | Computational Model | Predicts future land use change based on historic data and spatial drivers; used for urban growth scenario planning [4]. |
| Global Climate Models (GCMs) | Computational Model | Simulates complex interactions within the Earth's climate system; used for long-term climate projections [1]. |
| Ensemble Forecasting | Methodological Framework | Utilizes multiple models or configurations to generate a range of possible futures; provides robust assessment of uncertainty [1]. |
| Integrated Assessment Models (IAMs) | Computational Model | Links environmental, economic, and social systems to explore interactions between human activities and the environment [1]. |
| Remote Sensing Data | Data Source | Provides real-time, spatially detailed environmental data (e.g., satellite imagery) for model initialization, calibration, and validation [1]. |
| Scenario Narratives | Qualitative Framework | Plausible, structured stories about the future; used in participatory scenario planning to explore decision options under deep uncertainty [3]. |
| Markov Chains | Statistical Model | Describes the probability of transitioning from one state (e.g., a land cover type) to another; used for predicting future statuses of environmental sustainability [5]. |
| Big Data Analytics | Analytical Technique | Processes vast, complex datasets to identify patterns and improve forecast accuracy; applied in supply chain sustainability and decision forecasting [6]. |
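To make the Markov chain entry in Table 2 concrete, the following sketch projects land cover shares forward with a fixed transition matrix. The three states, the transition probabilities, and the initial shares are all hypothetical values chosen for illustration; in practice the matrix would be estimated from pairs of historical land cover maps.

```python
# Minimal Markov chain projection of land cover shares.
# States and probabilities are hypothetical; each row of P sums to 1.

STATES = ["forest", "agriculture", "urban"]

# P[i][j] = probability that a cell in state i is in state j one time step later.
P = [
    [0.90, 0.08, 0.02],  # forest
    [0.05, 0.80, 0.15],  # agriculture
    [0.00, 0.00, 1.00],  # urban (treated as absorbing in this toy example)
]

def step(distribution, transition):
    """Advance the state distribution by one time step."""
    n = len(transition)
    return [
        sum(distribution[i] * transition[i][j] for i in range(n))
        for j in range(n)
    ]

def project(distribution, transition, steps):
    """Apply the transition matrix repeatedly."""
    for _ in range(steps):
        distribution = step(distribution, transition)
    return distribution

initial_shares = [0.6, 0.3, 0.1]
after_one_step = project(initial_shares, P, 1)
after_five_steps = project(initial_shares, P, 5)

for name, share in zip(STATES, after_five_steps):
    print(f"{name:12s} {share:.3f}")
```

A fixed matrix assumes that transition rates stay constant over time, which is a strong simplification; scenario work often varies the matrix per scenario instead.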

Concluding Synthesis

Environmental forecasting represents a critical nexus between scientific prediction and proactive decision-making. Its core principles—interdisciplinarity, uncertainty quantification, iterative validation, scenario planning, and actionable communication—provide a robust framework for navigating complex environmental challenges. As the field evolves, the integration of advanced technologies like artificial intelligence and big data analytics, coupled with a strong emphasis on co-production with stakeholders through methods like scenario planning, will further enhance its capacity to inform a more sustainable and resilient path forward [7] [6]. The protocols and tools detailed herein offer researchers and practitioners a foundational guide for applying these principles to pressing environmental problems.

Within environmental forecasting, Traditional Weather Prediction and Climate Risk Forecasting represent two distinct paradigms designed for different temporal scales and end-user applications. Traditional weather forecasting focuses on predicting the specific state of the atmosphere—such as temperature, precipitation, and wind—at a given location and time, typically from hours to about two weeks into the future [8] [9]. Its primary goal is to inform daily decisions and provide warnings for immediate extreme weather events. In contrast, climate risk forecasting is a broader process that predicts potential harms and opportunities arising from long-term alterations in climate patterns. It deals with statistics of weather over years, decades, or even centuries, focusing on shifts in averages, variability, and the frequency of extreme events to inform proactive strategic planning [10] [11].

The core distinction lies in their treatment of initial conditions and predictive certainty. Weather forecasting is an initial-value problem that depends heavily on precise, current atmospheric measurements; its accuracy decays rapidly beyond approximately one week because the atmosphere is chaotic [8] [9]. Climate risk forecasting, however, is a boundary-value problem. It is not concerned with predicting the weather on a specific date in the future but with characterizing the probable distribution of weather events over long periods based on external forcings, such as greenhouse gas concentrations [9]. This fundamental difference dictates their respective methodologies, applications, and the interpretation of their outputs, which is critical for researchers and drug development professionals relying on environmental data for project planning and risk assessment.
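The sensitivity to initial conditions described above can be demonstrated with a toy chaotic system. The sketch below uses the logistic map, which is not an atmospheric model and is chosen here only because it is a standard minimal example of chaos. The starting value, the perturbation size of 1e-9, and the 0.1 error threshold are arbitrary assumptions.

```python
# Toy demonstration of sensitivity to initial conditions using the logistic map.
# This is NOT a weather model; it only illustrates how tiny initial errors grow.

def logistic_trajectory(x0, r=4.0, steps=50):
    """Iterate x -> r * x * (1 - x), which is chaotic for r = 4."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

reference = logistic_trajectory(0.2)
perturbed = logistic_trajectory(0.2 + 1e-9)  # tiny "measurement error"

errors = [abs(a - b) for a, b in zip(reference, perturbed)]

# First step at which the two trajectories differ by more than 0.1, if any.
first_large_error = next((i for i, e in enumerate(errors) if e > 0.1), None)

print(f"initial error:         {errors[0]:.2e}")
print(f"largest error:         {max(errors):.2e}")
print(f"step where error >0.1: {first_large_error}")
```

Although individual trajectories diverge, long-run statistics of such a system (for example, the distribution of values visited) are far more stable, which mirrors why climate statistics can be projected even when specific weather cannot.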

Comparative Analysis: Core Characteristics and Outputs

The following table summarizes the quantitative and qualitative distinctions between traditional weather prediction and climate risk forecasting, highlighting their divergent objectives, methodologies, and outputs.

Table 1: Comparative Analysis of Traditional Weather Prediction and Climate Risk Forecasting

| Characteristic | Traditional Weather Prediction | Climate Risk Forecasting |
|---|---|---|
| Primary Objective | Predict specific atmospheric conditions for short-term decision-making and immediate hazard warnings [8]. | Assess long-term shifts in climate statistics (means, extremes) to inform strategic risk management and resilience planning [11]. |
| Forecasting Horizon | Hours to approximately 7-14 days [9]. | Seasonal outlooks to decades or even centuries [10] [11]. |
| Core Methodology | Numerical Weather Prediction (NWP) using physics-based models initialized with current atmospheric data [8] [12]. | Scenario analysis using Global Climate Models (GCMs) and statistical downscaling, often employing probabilistic approaches [13] [11]. |
| Nature of Output | Deterministic (e.g., max temperature 25°C) and increasingly probabilistic (e.g., 60% chance of rain) [8]. | Probabilistic and scenario-based (e.g., the likelihood of a 2°C temperature increase under a specific emissions pathway) [13] [11]. |
| Key Input Parameters | Current temperature, pressure, humidity, wind observations from stations, satellites, and radar [8]. | Greenhouse gas emission scenarios, ocean circulation patterns, atmospheric chemistry, and land-use changes [11] [12]. |
| Treatment of Initial Conditions | Critically important; models are frequently re-initialized with the latest data for accuracy [9]. | Less critical; models are run for long periods to reach their own equilibrium, independent of a specific starting weather state [9]. |
| Typical Spatial Resolution | High resolution (e.g., kilometers or less) to capture specific weather phenomena like thunderstorms [9]. | Coarser resolution (e.g., tens to hundreds of kilometers) due to computational constraints over long simulations [9]. |

This comparative framework underscores that the two approaches are complementary rather than interchangeable. For instance, a drug development professional might use a weather forecast to plan a critical shipment of temperature-sensitive clinical trial materials next week, while simultaneously using climate risk forecasts to assess the long-term viability of a raw material supply chain over the next 30 years.

Methodological and Application Frameworks

Methodological Workflows

The operationalization of these forecasting models follows distinct workflows, from data assimilation to the final output. The diagram below illustrates the core processes for both traditional weather prediction and climate risk forecasting.

Application in Scenario Planning and Risk Assessment

Climate Risk Forecasting is intrinsically linked to scenario analysis, a well-established method for developing strategic plans that are robust to a range of plausible futures [13]. For researchers and drug development professionals, this is a critical tool for enhancing strategic thinking and challenging "business-as-usual" assumptions. Scenarios are not predictions but hypothetical constructs that are plausible, distinctive, consistent, relevant, and challenging [13].

The process for applying scenario analysis to climate-related risks involves a structured protocol [13]:

- Governance and Scoping: Integrate scenario analysis into strategic planning and enterprise risk management processes. Identify key internal and external stakeholders.

- Materiality Assessment: Determine the organization's current and anticipated exposure to climate-related risks and opportunities (e.g., market shifts, policy changes, physical risks to operations) [13].

- Scenario Definition: Select and define a range of scenarios, such as a 2°C-aligned scenario or other plausible futures. Input parameters may include carbon price, energy mix, technology development, and policy strength [13].

- Impact Evaluation: Evaluate the potential effects on the organization's strategic and financial position under each scenario. This can be qualitative (narratives) or quantitative (using models to illustrate pathways and outcomes) [13].

- Response Identification: Use the results to identify decisions for managing identified risks and opportunities, such as adjusting strategic plans or supply chain logistics.

- Documentation and Disclosure: Document the process, key inputs, assumptions, analytical methods, outputs, and potential management responses.

In the specific context of drug development, scenario planning is invaluable for managing clinical supply chain unpredictability. Key application facets include [14]:

- Packaging Design Strategy: Determining optimal packaging (e.g., single-unit vs. multiple-unit packs) to manage expiry and shipping costs under varying enrollment scenarios.

- Sourcing/Manufacturing Strategy: Modeling alternative manufacturing plans and lead times to mitigate risks of waste from slow enrollment or batch loss.

- Distribution Strategy: Optimizing the depot network (global, regional, local) and evaluating the impact of changes in shipping frequency or protocol amendments that add new countries.

Experimental Protocols and Research Reagents

Protocol for Conducting a Climate Risk Scenario Analysis

This protocol provides a detailed methodology for researchers to assess the resilience of a strategic plan, such as a clinical trial program or supply chain, against future climate risks.

Title: Quantitative Climate Risk Scenario Analysis for Strategic Asset Resilience.

Objective: To evaluate the potential financial and operational impacts of a range of climate scenarios on a defined asset or portfolio over a 30-year horizon.

Materials: See the "Research Reagent Solutions" table in The Scientist's Toolkit section below.

Procedure:

System Boundary Definition:

- Clearly define the asset or system under analysis (e.g., a specific manufacturing facility, a key transportation route, a raw material sourcing region).

- Map the relevant value chain (upstream and downstream) and identify the geographic locations of critical nodes [13].

Scenario and Model Selection:

- Select at least two contrasting climate scenarios, such as the IPCC's SSP1-2.6 (strong mitigation) and SSP5-8.5 (high emissions) scenarios [13] [12].

- Acquire relevant climate model output data (e.g., from the Coupled Model Intercomparison Project - CMIP) for these scenarios, focusing on variables of interest (e.g., temperature extremes, precipitation, sea-level rise).

Data Processing and Downscaling:

- If the native resolution of the Global Climate Models (GCMs) is too coarse, apply statistical downscaling techniques to refine the climate projections to a location-specific level [11].

- Bias-correct the model data using historical observations to improve local accuracy.
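As a minimal illustration of the bias-correction step, the sketch below applies an additive mean-shift correction: the model's average error over a historical period is subtracted from its future projections. This is the simplest possible approach and is an assumption made for the example; the source does not specify a method, and techniques such as quantile mapping are often preferred in practice. All temperature values are invented.

```python
# Additive mean-bias correction of model output against historical observations.
# All values are hypothetical annual mean temperatures in degrees Celsius.
from statistics import mean

def mean_bias_correct(model_hist, obs_hist, model_future):
    """Subtract the model's mean historical bias from its future projections."""
    bias = mean(model_hist) - mean(obs_hist)
    return [value - bias for value in model_future]

obs_hist = [14.0, 15.0, 16.0]      # observed, historical period
model_hist = [16.0, 17.0, 18.0]    # model output, same historical period
model_future = [18.5, 19.0, 20.5]  # raw model projections

corrected = mean_bias_correct(model_hist, obs_hist, model_future)
print(corrected)
```

A mean shift corrects only the average; it leaves the modelled variance and the shape of the distribution untouched, which matters when the variables of interest are extremes.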

Impact Model Integration:

- Feed the processed climate data (e.g., projected heatwaves, flood frequency) into sector-specific impact models.

- Example for Health Research: Use climate-driven disease vector models (e.g., for malaria or dengue) to project future changes in clinical trial site suitability or patient population health.

- Example for Supply Chain: Use hydrological models and flood maps to assess the future risk of business interruption at a coastal manufacturing plant.

Financial and Operational Quantification:

- Translate physical impacts into financial metrics (e.g., cost of downtime, insurance premiums, capital expenditures for adaptation) or operational metrics (e.g., days of operation lost, changes in productivity).

- Apply a suitable discount rate to future costs and benefits for a Net Present Value (NPV) analysis [13].
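The NPV step discounts each year's cost or benefit back to the present: NPV is the sum over years t of C_t / (1 + r)^t, where C_t is the cash flow in year t and r is the discount rate. The sketch below implements that formula. The 30-year horizon matches the protocol's objective, but the annual loss figures, the scenario labels, and the 5% discount rate are hypothetical inputs.

```python
# Net present value of a stream of annual cash flows.
# Index 0 is the present year and is not discounted.

def npv(cash_flows, rate):
    """Sum of cash_flows[t] / (1 + rate) ** t over all years t."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))

# Hypothetical expected annual climate-related losses (in millions) over 30 years.
horizon_years = 30
annual_loss = {
    "strong mitigation": [1.0] * horizon_years,
    "high emissions": [2.5] * horizon_years,
}

discount_rate = 0.05  # assumed; the protocol asks for this to be sensitivity-tested
npv_by_scenario = {
    name: npv(losses, discount_rate) for name, losses in annual_loss.items()
}

for name, value in npv_by_scenario.items():
    print(f"{name:18s} NPV of losses: {value:.2f} million")
```

Because results can be sensitive to the chosen rate, rerunning `npv` over a range of discount rates is a natural first sensitivity test.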

Sensitivity and Uncertainty Analysis:

- Perform a sensitivity analysis on key assumptions, such as the discount rate, carbon price, or the pace of technological adaptation [13].

- Quantify uncertainty by using output from an ensemble of multiple climate models, rather than relying on a single model projection.

Reporting and Visualization:

- Document the process, key inputs, assumptions, analytical methods, and outputs.

- Visualize results using probabilistic risk curves (e.g., exceedance probability curves for cost impacts) and summary tables comparing scenarios.
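The ensemble-based uncertainty step and the exceedance probability curve mentioned above can be combined in a short sketch. It treats each ensemble member's estimated loss as equally likely, which is a simplifying assumption, and computes the share of members whose loss meets or exceeds each threshold. The loss values are invented for illustration.

```python
# Empirical exceedance probability curve from an ensemble of loss estimates.
# Assumes every ensemble member is equally likely (a simplification).

def exceedance_curve(losses):
    """Return (threshold, probability that loss >= threshold) pairs, sorted by threshold."""
    n = len(losses)
    return [
        (threshold, sum(1 for loss in losses if loss >= threshold) / n)
        for threshold in sorted(set(losses))
    ]

# Hypothetical loss (in millions) from five climate models under one scenario.
ensemble_losses = [2.0, 3.5, 1.0, 5.0, 3.5]
curve = exceedance_curve(ensemble_losses)

for threshold, probability in curve:
    print(f"P(loss >= {threshold:.1f}) = {probability:.1f}")
```

Plotting one such curve per scenario on shared axes gives the side-by-side comparison that the reporting step describes.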

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools, datasets, and models essential for conducting advanced climate risk and weather forecasting research.

Table 2: Essential Research Reagents for Environmental Forecasting

| Reagent / Tool Name | Type | Primary Function & Application | Source / Reference |
|---|---|---|---|
| Global Climate Models (GCMs) | Software Model | Simulate global climate system dynamics over decades/centuries under different forcing scenarios; used for climate projections [11] [12]. | E.g., Models from IPCC Assessment Reports (via CMIP) |
| Numerical Weather Prediction (NWP) Models | Software Model | Simulate short-term atmospheric physics for weather forecasting; initialized with real-time data [8] [9]. | E.g., WRF, GFS, IFS (ECMWF) |
| Statistical Downscaling Tools | Computational Method | Refine coarse GCM output to higher-resolution, location-specific climate information for local risk assessments [11]. | Various R/Python packages (e.g., climate4R, xclim) |
| Scenario Input Parameters | Data Set | Pre-defined sets of assumptions (carbon price, energy mix, policy) for consistent scenario analysis across studies [13]. | E.g., IEA, IPCC Scenarios |
| Probabilistic Forecasting Framework | Analytical Framework | A set of tools and metrics (e.g., EVC diagram) to evaluate the economic value of probabilistic forecasts of continuous variables [15]. | Custom development based on peer-reviewed literature [15] |

Understanding the distinct roles of traditional weather prediction and climate risk forecasting is imperative for researchers and drug development professionals navigating an increasingly volatile environmental landscape. Weather models provide the essential, high-resolution data needed for operational resilience—securing logistics, protecting infrastructure from immediate extremes, and ensuring the continuity of clinical trials. Climate risk models, coupled with rigorous scenario analysis, provide the foundation for strategic resilience—informing long-term investments, adapting supply chains, and evaluating the systemic risks that could impact drug development pipelines over the coming decades.

The integration of these tools allows for a comprehensive risk management approach. For instance, a pharmaceutical company can use climate risk scenarios to decide whether to build a new manufacturing facility in a region projected to face severe water stress, while relying on precise weather forecasts to protect the site's operations from an incoming hurricane. As the climate continues to change, the ability to leverage both forecasting paradigms will be a key differentiator in building robust, adaptable, and successful research and development enterprises.

The Critical Role of Scenario Planning in Navigating Deep Uncertainty

Scenario planning (SP) has emerged as a critical strategic tool for navigating deep uncertainty in environmental forecasting and resource management. It enables researchers and decision-makers to move beyond single-point predictions and explore a set of plausible futures shaped by specific trajectories of change [16]. Unlike technical modeling approaches that rely on forecasting, SP employs a structured "what-if" process to identify key uncertainties, potential impacts, and management responses under conditions where statistical predictions prove inadequate [17] [18]. This approach has become particularly valuable in climate adaptation and environmental management, where decision-makers must confront complex, non-linear systems and irreducible uncertainties about future states [16].

The fundamental strength of scenario planning lies in its ability to reconcile conflicting objectives between development needs and environmental concerns, particularly in domains like energy systems and natural resource management [19]. By creating multiple plausible futures rather than relying on a single prediction, SP helps organizations prepare for conceivable consequences, enabling them to become more adaptable and dynamic in their strategic planning [19]. This methodological approach has evolved significantly from its origins in post-World War II defense strategy to its current applications across ecosystem management, energy planning, public health, and climate adaptation [16].

Scenario Planning Methodologies and Typologies

Categorical Framework of Scenario Approaches

Scenario planning methodologies can be categorized into three distinct types based on their temporal orientation and underlying logic. The classification below reflects different philosophical approaches to addressing uncertainty and complexity in strategic planning [18].

Table 1: Scenario Planning Typologies and Characteristics

| Scenario Type | Temporal Direction | Planning Objective | Key Characteristics |
|---|---|---|---|
| Predictive Scenarios | Present → Future | Estimate probable future situations | Uses past and present knowledge; often quantitative; seeks most likely outcome |
| Exploratory Scenarios | Present → Future | Estimate plausible continuation of current trends | Based on current realities, knowledge, and major trends; includes trend and framing scenarios |
| Normative Scenarios | Future → Present | Identify paths to reach a particular vision of the future | Begins with a desirable (or sometimes undesirable) endpoint; works backward to identify necessary actions |

Predictive scenarios utilize historical and current data to forecast the most statistically probable futures, making them particularly useful for short-to-medium-term planning where system behaviors remain relatively stable [18]. In contrast, exploratory scenarios extend present realities and trends to envision plausible futures without assigning specific probabilities, making them valuable for considering a broader range of possibilities in complex systems [18]. Normative scenarios adopt a backcasting approach, starting with a specific vision of the future (often desirable) and working backward to identify the policies, innovations, and actions required to achieve or avoid that future state [18].

Participatory Scenario Planning (PSP) in Environmental Contexts

Within environmental forecasting, Participatory Scenario Planning (PSP) has gained prominence as a specialized approach that emphasizes stakeholder involvement in scenario development [16]. PSP recognizes that complex environmental challenges require integrating diverse forms of knowledge, including scientific expertise, local knowledge, and management experience. This approach builds consensus, trust, cooperation, and social learning among participants from various backgrounds [16]. Unlike technical modeling exercises conducted exclusively by experts, PSP treats scenario development as both a technical process and a mechanism for stakeholder engagement, creating buy-in for eventual implementation of adaptation strategies.

The distinctive feature of PSP lies in its ability to bridge the science-policy interface by facilitating direct interaction between researchers, policymakers, practitioners, and other stakeholders [16]. This collaborative process helps manage the intrinsic uncertainty of climate systems by incorporating both scientific uncertainty from climate model projections and management-based uncertainty derived from participants' practical experiences [16]. The outcome is typically a set of climate scenario narratives that represent plausible and divergent climate futures developed in concert with stakeholder management priorities.

Quantitative and Qualitative Integration in Scenario Development

Comparative Analysis of Scenario Development Approaches

The integration of quantitative and qualitative methods represents a sophisticated advancement in scenario planning methodology. Each approach brings distinct strengths and limitations to the forecasting process, as detailed in the following comparative analysis.

Table 2: Qualitative versus Quantitative Approaches in Scenario Planning

| Aspect | Qualitative Approaches | Quantitative Approaches | Integrated Approaches |
|---|---|---|---|
| Primary Focus | Expert judgment, narratives, stakeholder perspectives | Data patterns, statistical models, simulations | Combines data-driven foundations with expert insight |
| Key Strengths | Flexible, innovative, longer-term outlooks, identifies disruptive signals | Objective, reproducible, handles complex data relationships, validates patterns | Robust, comprehensive, balances creativity with analytical rigor |
| Key Limitations | Subjective, dependent on expert selection, challenging validation | Constrained by historical data, may miss emerging trends, assumes continuity | Resource-intensive, requires interdisciplinary collaboration |
| Time Horizon Effectiveness | More effective for long-term forecasts | Effectiveness decreases with longer time horizons | Maintains effectiveness across time horizons |

Hybrid Methodological Frameworks

Recent methodological innovations have focused on integrating qualitative and quantitative approaches to overcome their individual limitations. The Learning Scenario Development Model (LSDM) represents one such hybrid framework that combines machine learning techniques with expert judgment [19]. This approach begins with a quantitative foundation where data mining and machine learning algorithms analyze historical time-series data to identify hidden patterns and establish a "business as usual" (BAU) reference scenario [19]. The model then incorporates a qualitative layer where domain experts suggest modifications to input variables based on their understanding of emerging trends, policy interventions, and potential disruptions [19].
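The two-layer structure described above (a data-driven BAU baseline followed by expert modification) can be sketched in a few lines. This is not the LSDM itself: an ordinary least-squares linear trend stands in for the machine learning component, expert input is reduced to per-year multipliers, and all data values and function names are hypothetical.

```python
# Two-layer hybrid sketch: quantitative BAU baseline + qualitative expert adjustment.
# A linear trend is a stand-in for the ML model; this is not the LSDM algorithm.

def fit_linear_trend(years, values):
    """Ordinary least-squares fit of values = intercept + slope * year."""
    n = len(years)
    mean_year = sum(years) / n
    mean_value = sum(values) / n
    slope = sum(
        (y - mean_year) * (v - mean_value) for y, v in zip(years, values)
    ) / sum((y - mean_year) ** 2 for y in years)
    intercept = mean_value - slope * mean_year
    return slope, intercept

def bau_projection(years, values, future_years):
    """Quantitative layer: extend the historical trend unchanged."""
    slope, intercept = fit_linear_trend(years, values)
    return [intercept + slope * year for year in future_years]

def apply_expert_adjustments(baseline, multipliers):
    """Qualitative layer: scale each baseline value by an expert-supplied factor."""
    return [value * factor for value, factor in zip(baseline, multipliers)]

history_years = [2000, 2001, 2002, 2003, 2004]
history_values = [10.0, 12.0, 14.0, 16.0, 18.0]  # hypothetical indicator
future_years = [2005, 2010]

baseline = bau_projection(history_years, history_values, future_years)
# Hypothetical expert view: a policy intervention cuts the indicator by 10%, then 25%.
policy_scenario = apply_expert_adjustments(baseline, [0.90, 0.75])

print("BAU:   ", baseline)
print("Policy:", policy_scenario)
```

Keeping the two layers as separate functions makes the expert assumptions explicit and easy to document alongside the quantitative baseline.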

This integrated approach is particularly valuable for addressing the predictive limitations of purely quantitative models in complex, non-linear systems. As demonstrated in climate science, simpler physics-based models can sometimes outperform sophisticated deep-learning approaches in predicting regional surface temperatures, highlighting the importance of incorporating domain knowledge and physical laws into forecasting approaches [20]. Similarly, in ecological impact assessments, quantitative future climate scenarios derived from Global Climate Models must be carefully downscaled and interpreted through expert judgment to become useful for natural resource management decision-making [21].

Application Protocols for Environmental Forecasting

Standardized Participatory Scenario Planning Protocol

The following protocol outlines a standardized methodology for implementing Participatory Scenario Planning (PSP) in environmental forecasting contexts, synthesized from multiple systematic reviews of PSP applications [16]:

Phase 1: Foundation Building

- Stakeholder Mapping and Engagement: Identify and recruit diverse stakeholders representing scientific expertise, management experience, policy perspectives, and local knowledge. Ensure representation across relevant sectors, disciplines, and power dynamics.

- Problem Framing and Scope Definition: Collaboratively define the central challenge, geographic boundaries, time horizons, and key decision points. Establish shared terminology and objectives through facilitated dialogue.

- System Description: Characterize the current situation using strategic analysis tools (PESTEL, SWOT, systems mapping) to identify key variables, actors, relationships, and drivers of change.

Phase 2: Scenario Development

- Critical Uncertainty Identification: Through structured brainstorming and voting techniques, identify the two most critical uncertainties with the potential to significantly impact future outcomes.

- Scenario Framework Creation: Use a 2x2 matrix technique based on the axes defined by the critical uncertainties to establish four distinct scenario frameworks representing contrasting future conditions.

- Scenario Narrative Elaboration: Develop rich, descriptive narratives for each scenario quadrant, detailing how events, trends, and decisions might unfold in each future. Incorporate quantitative data where available to enhance credibility.
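The 2x2 framework step amounts to crossing the two poles of each critical uncertainty. A minimal sketch, with hypothetical uncertainty names and pole labels chosen only for illustration:

```python
from itertools import product

def build_scenario_matrix(axis_a, axis_b):
    """Cross the poles of two critical uncertainties into four scenario frames.

    Each axis is a (name, (pole_1, pole_2)) pair.
    """
    name_a, poles_a = axis_a
    name_b, poles_b = axis_b
    return [{name_a: pa, name_b: pb} for pa, pb in product(poles_a, poles_b)]

# Hypothetical critical uncertainties selected in the voting step.
frames = build_scenario_matrix(
    ("regulatory_stringency", ("lax", "strict")),
    ("regional_warming", ("slow", "rapid")),
)
for i, frame in enumerate(frames, start=1):
    print(f"Scenario {i}: {frame}")
```

Each resulting frame is only a skeleton; the narrative elaboration step supplies the events and trends that make it a scenario.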

Phase 3: Scenario Validation

- Internal Consistency Checking: Assess the logical coherence of each scenario's sequence of events and the compatibility of all elements within each scenario.

- Plausibility Testing: Challenge scenario assumptions and logic through expert review and reality testing against known physical processes and historical analogues.

- Divergence Confirmation: Verify that scenarios represent meaningfully different futures rather than variations of the same underlying narrative.

Phase 4: Consequence Analysis and Implementation

- Management Response Identification: For each scenario, identify robust adaptation strategies that would be effective across multiple futures and scenario-specific actions appropriate for particular futures.

- Decision Support Integration: Connect scenario insights to actual decision processes, policy development, and strategic planning cycles within participating organizations.

- Monitoring Framework Development: Establish indicators and signaling mechanisms to track which scenario(s) may be emerging over time to enable adaptive management.
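One way to separate robust strategies from scenario-specific ones is a regret table. The sketch below uses the minimax-regret criterion, which is a common robustness rule but not one the protocol itself prescribes; the strategies, scenario labels, and outcome scores are hypothetical.

```python
# Hypothetical outcome scores (higher is better) for each strategy in each scenario.
payoffs = {
    "expand_monitoring":    {"A": 6, "B": 7, "C": 5, "D": 6},
    "build_infrastructure": {"A": 9, "B": 2, "C": 8, "D": 1},
    "status_quo":           {"A": 4, "B": 4, "C": 3, "D": 5},
}
scenario_names = ["A", "B", "C", "D"]

# Best achievable score in each scenario, and the strategy that achieves it.
best_score = {s: max(p[s] for p in payoffs.values()) for s in scenario_names}
scenario_specific = {
    s: max(payoffs, key=lambda k: payoffs[k][s]) for s in scenario_names
}

# Regret: how far a strategy falls short of the best choice in a scenario.
max_regret = {
    strategy: max(best_score[s] - scores[s] for s in scenario_names)
    for strategy, scores in payoffs.items()
}

# The robust choice minimizes the worst-case regret across all scenarios.
robust = min(max_regret, key=max_regret.get)
print("Robust strategy:", robust, "| worst-case regret:", max_regret[robust])
print("Best per scenario:", scenario_specific)
```

In this toy table the robust strategy is never the best in scenarios A or C, which illustrates why the protocol asks for both robust and scenario-specific responses.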

Quantitative Climate Scenario Development Protocol

For researchers developing quantitative climate scenarios to inform ecological impact assessments, the following protocol provides a standardized methodology [21]:

Data Acquisition and Processing

- Source climate projection data from multiple Global Climate Models (GCMs) and downscaled derivatives relevant to the geographic region of interest.

- Apply bias correction and statistical downscaling techniques to align GCM outputs with local observational data.

- Process data to extract management-relevant variables (e.g., temperature extremes, drought indices, growing season length) at appropriate temporal and spatial scales.
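As an illustration of the bias-correction step, the sketch below applies mean-and-variance scaling, one of the simpler techniques; quantile mapping is a common alternative, and the protocol in [21] does not mandate either. All values are hypothetical temperatures in °C.

```python
from statistics import mean, pstdev

def variance_scaling(obs_hist, model_hist, model_future):
    """Rescale model output so its historical mean and spread match observations."""
    obs_mu, mod_mu = mean(obs_hist), mean(model_hist)
    scale = pstdev(obs_hist) / pstdev(model_hist)
    return [obs_mu + (x - mod_mu) * scale for x in model_future]

# Hypothetical data: the model runs 3 °C warm with twice the observed spread.
obs_hist = [14.0, 15.0, 16.0]
model_hist = [16.0, 18.0, 20.0]
model_future = [19.0, 21.0, 23.0]

corrected = variance_scaling(obs_hist, model_hist, model_future)
print(corrected)
```

The correction assumes that the bias estimated over the historical period stays constant in the future, which is itself a source of uncertainty worth recording.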

Uncertainty Characterization

- Represent climate model uncertainty by including projections from multiple models across the ensemble.

- Incorporate scenario uncertainty using multiple Representative Concentration Pathways (RCPs) or Shared Socioeconomic Pathways (SSPs).

- Quantify internal variability through analysis of multiple model runs or statistical techniques.

Scenario Construction

- Develop a reference scenario based on historical trends and "business as usual" projections.

- Create alternative scenarios representing plausible deviations from the reference case based on expert judgment about potential tipping points, non-linearities, and emergent constraints.

- Quantitatively express scenarios as ranges, probabilities, or threshold exceedances rather than single-point estimates.
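Expressing a scenario as a range and a threshold exceedance can be sketched as follows. The ensemble values and the 2.0 °C threshold are hypothetical, and the equal-weight fraction of members above the threshold is only a rough indicator, not a calibrated probability.

```python
from statistics import median, quantiles

def summarize_ensemble(projections, threshold):
    """Summarize an ensemble as a 5th-95th percentile range plus an exceedance fraction."""
    cuts = quantiles(projections, n=20, method="inclusive")  # cut points every 5%
    exceed = sum(p > threshold for p in projections) / len(projections)
    return {
        "p05": cuts[0],
        "median": median(projections),
        "p95": cuts[-1],
        "fraction_exceeding": exceed,
    }

# Hypothetical warming projections (°C) from ten ensemble members.
projections = [1.2, 1.5, 1.8, 2.0, 2.1, 2.4, 2.6, 3.0, 3.3, 3.9]
summary = summarize_ensemble(projections, threshold=2.0)
print(summary)
```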

Ecological Scenario Integration

- Link climate scenarios to ecological response models where possible, explicitly representing uncertainty in ecological processes.

- Frame scenarios to inform specific management decisions, such as application of the Resist-Accept-Direct framework for ecosystem intervention.

- Communicate scenarios through accessible visualizations and decision-support tools tailored to practitioner needs.

Essential Research Toolkit for Scenario Planning

Methodological Framework and Analytical Tools

The successful implementation of scenario planning requires a diverse toolkit of methodological frameworks, analytical techniques, and facilitation resources. The following table summarizes essential components for conducting rigorous scenario planning exercises in environmental forecasting contexts.

Table 3: Research Reagent Solutions for Scenario Planning

| Tool Category | Specific Methods/Techniques | Primary Function | Application Context |

|---|---|---|---|

| Methodological Frameworks | Intuitive Logics; Probabilistic Modified Trends; La Prospective | Provide structured processes for scenario development | Foundation setting; scenario generation; consequence analysis |

| System Analysis Tools | PESTEL; SWOT; Structural Analysis; Systems Mapping | Characterize current system state and key relationships | Initial system description; driver identification; relationship mapping |

| Forecasting Techniques | Delphi Method; Trend Impact Analysis; Cross-Impact Analysis | Extrapolate future developments from current trends | Exploratory scenario development; identifying emerging issues |

| Scenario Generation Methods | 2x2 Matrix; Morphological Analysis; Backcasting | Create contrasting scenario narratives and frameworks | Scenario framework creation; normative scenario development |

| Decision Support Tools | Robust Decision Making; Decision Scaling; Adaptation Pathways | Connect scenarios to specific decisions and policies | Consequence analysis; strategy development; implementation planning |

Specialized Analytical Techniques

Beyond the general methodological approaches, several specialized techniques enhance the analytical rigor of scenario planning processes:

Structural Analysis facilitates the organization of collective discussion to describe a system using a matrix of relationships, helping participants identify the most influential drivers within a complex system [18]. Morphological Analysis provides a systematic method for identifying and investigating the total set of possible configurations in a complex problem space, supporting the development of comprehensive scenario sets [18]. Cross-Impact Analysis enables the assessment of how different scenario elements and driving forces might interact, revealing secondary and tertiary consequences that might otherwise be overlooked [18].
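A relationship matrix of the kind used in Structural Analysis can be read with simple row and column sums. This is a minimal sketch assuming direct influence scores on a 0-3 scale; the drivers and scores are hypothetical, and dedicated tools may also consider indirect influences.

```python
# matrix[i][j] = strength of the direct influence of driver i on driver j (0-3).
drivers = ["temperature", "land_use", "regulation", "water_demand"]
matrix = [
    [0, 1, 0, 3],
    [1, 0, 0, 2],
    [0, 3, 0, 2],
    [0, 1, 1, 0],
]

# Row sums: how strongly a driver acts on the rest of the system.
influence = {d: sum(row) for d, row in zip(drivers, matrix)}
# Column sums: how strongly a driver is acted upon by the others.
dependence = {d: sum(col) for d, col in zip(drivers, zip(*matrix))}

most_influential = max(influence, key=influence.get)
most_dependent = max(dependence, key=dependence.get)
print("influence:", influence)
print("dependence:", dependence)
print("most influential:", most_influential, "| most dependent:", most_dependent)
```

Drivers with high influence and low dependence are natural candidates for scenario axes, since they shape the system without being determined by it.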

For quantitative scenario development, Linear Pattern Scaling (LPS) offers a straightforward technique for estimating local climate responses to global change, demonstrating particular utility for temperature projections where it can outperform more complex deep-learning approaches [20]. When employing machine learning techniques, feature selection algorithms help reduce problem dimensionality while ensuring investigation of all possible optimum solutions, forming a crucial component of Learning Scenario Development Models [19].
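Linear pattern scaling can be illustrated with a one-variable regression of local on global temperature change. The sketch below assumes a single location and hypothetical anomaly values; in practice the fit is repeated per grid cell on climate model output.

```python
def fit_pattern(global_anomaly, local_anomaly):
    """Least-squares fit of local = intercept + slope * global."""
    n = len(global_anomaly)
    g_bar = sum(global_anomaly) / n
    l_bar = sum(local_anomaly) / n
    sxy = sum((g - g_bar) * (l - l_bar) for g, l in zip(global_anomaly, local_anomaly))
    sxx = sum((g - g_bar) ** 2 for g in global_anomaly)
    slope = sxy / sxx
    return l_bar - slope * g_bar, slope

# Hypothetical training data: global-mean and local temperature anomalies (°C).
global_anomaly = [0.0, 0.5, 1.0, 1.5]
local_anomaly = [0.10, 0.85, 1.60, 2.35]

intercept, slope = fit_pattern(global_anomaly, local_anomaly)

# Scale the fitted pattern to a new global warming level.
local_at_2c = intercept + slope * 2.0
print(f"local warming per °C of global warming: {slope:.2f}")
print(f"projected local anomaly at +2.0 °C global: {local_at_2c:.2f}")
```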

Visualizing Scenario Planning Processes and Methodologies

Participatory Scenario Planning Workflow

Participatory Scenario Planning (PSP) incorporates stakeholder engagement throughout a structured four-phase process that moves from foundation building through scenario development, validation, and consequence analysis. This workflow emphasizes iterative refinement and practical application of scenarios for decision support [16].

Integrated Qualitative-Quantitative Scenario Development

The Learning Scenario Development Model (LSDM) integrates quantitative machine learning approaches with qualitative expert judgment to create robust multi-scenario forecasts. This hybrid methodology leverages the pattern recognition capabilities of data-driven algorithms while incorporating domain expertise about emerging trends and potential policy interventions [19].

Scenario planning represents an indispensable methodology for navigating deep uncertainty in environmental forecasting and resource management. By moving beyond single-point predictions to explore multiple plausible futures, scenario planning enables researchers and decision-makers to develop more robust strategies that remain effective across a range of possible future conditions. The integration of qualitative and quantitative approaches through frameworks like Participatory Scenario Planning and the Learning Scenario Development Model enhances both the credibility and relevance of scenarios for real-world decision-making [17] [19].

The critical value of scenario planning lies not in its ability to predict the future, but in its capacity to reframe strategic thinking, challenge mental models, and build organizational resilience in the face of uncertainty. As environmental challenges become increasingly complex and interconnected, scenario planning offers a structured yet flexible approach for engaging with deep uncertainty while maintaining scientific rigor and practical relevance. For researchers and professionals working at the intersection of environmental science and decision-making, mastering scenario planning methodologies is no longer optional—it is essential for developing effective strategies in an increasingly uncertain world.

Application Note 1: Forecasting Infectious Disease Outbreaks for Public Health Preparedness

Table 1: Global Infectious Disease Threat Landscape and Preparedness Status (2023-2024 Data)

| Metric Category | Specific Indicator | Value / Finding | Source |

|---|---|---|---|

| Outbreak Activity | Countries reporting re-emerging infectious disease outbreaks (2024) | Over 40 countries | WHO Disease Outbreak News [22] |

| Pathogen Monitoring | Priority pathogens with epidemic potential under WHO monitoring | More than 20 pathogens | WHO Disease Outbreak News [22] |

| Preparedness Funding | Annual shortfall in global pandemic preparedness funding | > $10 billion | World Bank, 2024 [22] |

| Antimicrobial Resistance | Direct deaths attributable to AMR (2019) | 1.27 million | The Lancet, 2022 [22] |

| Antimicrobial Resistance | Projected annual deaths by 2050 without action | 10 million | WHO AMR Fact Sheet [22] |

Experimental Protocol: Time-Series Forecasting for Epidemic Risk

Objective: To model and forecast regional epidemic risk using historical incidence data and optimized time-series algorithms.

Materials & Reagents:

- Epidemiological Data: Historical case counts (e.g., weekly/monthly incidence) for the pathogen of interest.

- Computational Environment: Software supporting statistical modeling and nonlinear optimization (e.g., R, Python with SciPy).

- Modeling Algorithms: Implementation of Simple Moving Average (SMA), Weighted Moving Average (WMA), Exponential Smoothing (ES), and Holt-Winters models.

Procedure:

- Data Curation: Collect and clean historical time-series data. Address missing values using imputation techniques.

- Model Implementation: Apply five core forecasting models:

- Simple Moving Average (SMA)

- Weighted Moving Average (WMA)

- Exponential Smoothing (ES)

- Holt-Winters Additive Model

- Holt-Winters Multiplicative Model

- Parameter Optimization: Employ nonlinear optimization techniques (e.g., L-BFGS-B algorithm) to estimate model-specific parameters (e.g., smoothing constants (α, β, γ) for Holt-Winters) that minimize error metrics.

- Model Validation: Partition data into training and validation sets. Evaluate model accuracy using Mean Absolute Error (MAE) and Mean Squared Error (MSE).

- Forecast Generation: Utilize the model with the lowest validation error to generate near-term forecasts (e.g., 6-12 months).

- Scenario Planning: Develop alternative forecasts based on different assumptions (e.g., increased transmission rates, intervention efficacy) to model potential outbreak trajectories [23].
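The procedure above can be sketched end to end for the three simpler models. This is a dependency-free illustration under stated assumptions: a coarse grid search stands in for L-BFGS-B, the Holt-Winters variants are omitted for brevity, and the weekly case counts are hypothetical.

```python
def sma(history, window=3):
    """Simple moving average of the last `window` points."""
    return sum(history[-window:]) / window

def wma(history, weights=(1, 2, 3)):
    """Weighted moving average; the most recent point gets the largest weight."""
    recent = history[-len(weights):]
    return sum(w * x for w, x in zip(weights, recent)) / sum(weights)

def ses(history, alpha):
    """Simple exponential smoothing; returns the final level as the forecast."""
    level = history[0]
    for x in history[1:]:
        level = alpha * x + (1 - alpha) * level
    return level

def rolling_errors(series, start, forecaster):
    """One-step-ahead errors for series[start:], using only earlier points."""
    return [series[t] - forecaster(series[:t]) for t in range(start, len(series))]

def mae(errors):
    return sum(abs(e) for e in errors) / len(errors)

def mse(errors):
    return sum(e * e for e in errors) / len(errors)

cases = [12, 15, 14, 18, 21, 19, 24, 27, 25, 30, 33, 31]  # hypothetical weekly counts
split = 8  # first 8 points for training, the rest for validation

# Parameter optimization on the training set (grid search as a stand-in optimizer).
alphas = [i / 20 for i in range(1, 20)]
best_alpha = min(
    alphas,
    key=lambda a: mse(rolling_errors(cases[:split], 1, lambda h: ses(h, a))),
)

# Model validation on the held-out points.
models = {"SMA": sma, "WMA": wma, "ES": lambda h: ses(h, best_alpha)}
scores = {}
for name, model in models.items():
    errors = rolling_errors(cases, split, model)
    scores[name] = {"MAE": mae(errors), "MSE": mse(errors)}

# Forecast generation with the model that has the lowest validation MAE.
best_model = min(scores, key=lambda n: scores[n]["MAE"])
next_forecast = models[best_model](cases)
print(scores)
print("selected:", best_model, "| next-period forecast:", round(next_forecast, 2))
```

Alternative scenario forecasts can then be produced by rerunning the selected model on series adjusted for the assumption being tested, for example a scaled-up recent incidence to represent increased transmission.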

Workflow Diagram: Infectious Disease Forecasting and Response Pipeline

Research Reagent Solutions: Disease Forecasting Toolkit

Table 2: Essential Components for an Infectious Disease Forecasting Framework

| Component / Reagent | Function / Application | Example / Specification |

|---|---|---|

| Epidemiological Data | Provides the foundational time-series data for model training and validation. | Case counts, mortality data, genomic surveillance data from health agencies (e.g., WHO, CDC). |

| Statistical Software | Platform for implementing forecasting models, optimization, and error analysis. | R, Python with libraries (Pandas, Statsmodels, Scikit-learn). |

| Computational Resources | Hardware for running potentially resource-intensive optimization and model simulations. | Multi-core processors, cloud computing services (AWS, Google Cloud). |

| Scenario Planning Framework | Structured methodology to develop and evaluate alternative future states based on model outputs. | Driver-based planning templates, assumption validation matrices [23]. |

Application Note 2: Assessing Pharmaceutical Environmental Risk and Antimicrobial Resistance

Table 3: Environmental Burden of Pharmaceuticals and Antimicrobial Resistance

| Risk Factor | Key Statistic | Implication | Source |

|---|---|---|---|

| Antimicrobial Use | Over 70% of antibiotics sold globally are used in animal agriculture. | Major driver of environmental AMR selection pressure. | WHO AMR Fact Sheet [22] |

| Pollution as Health Risk | Pollution is the world's largest environmental risk factor for disease and premature death. | Contextualizes the public health burden of pharmaceutical pollutants. | Global Risks Report 2025 [24] |

| Health Inequity | 92% of pollution-related deaths occur in low- and middle-income countries. | Highlights the disproportionate impact on vulnerable populations. | Global Risks Report 2025 [24] |

Experimental Protocol: Environmental Risk Assessment for Pharmaceutical Residues

Objective: To detect, quantify, and forecast the environmental impact and resistance selection potential of pharmaceutical residues.

Materials & Reagents:

- Water Samples: Surface, ground, and wastewater samples from relevant catchment areas.

- Detection Technology: Biosensors, LC-MS/MS, or Surface-Enhanced Raman Spectroscopy (SERS) platforms.

- Microbiological Media: Culture media for cultivating environmental bacterial isolates.

- Antibiotic Discs: Standardized discs with relevant antibiotics for susceptibility testing.

Procedure:

- Sample Collection: Deploy autonomous sensors or grab samples from strategic locations (e.g., downstream from pharmaceutical manufacturers, wastewater treatment plants) [25].

- Pollutant Detection:

- Utilize advanced detection techniques (e.g., biosensors, SERS) for rapid, sensitive identification of pharmaceutical residues [26].

- Employ mass spectrometry for precise quantification of pollutant concentrations.

- AMR Selection Assay:

- Isolate environmental bacteria from collected samples.

- Perform antibiotic susceptibility testing (AST) on isolates against a panel of clinically relevant antibiotics.

- Correlate MIC (Minimum Inhibitory Concentration) values with localized pollutant concentrations.

- Data Integration & Modeling:

- Integrate pollutant concentration data, AST results, and geospatial information into a central data-sharing platform [26].

- Use machine learning frameworks (e.g., Random Forest, LSTM networks) to model and forecast the relationship between pharmaceutical pollution levels and the emergence of AMR hotspots [27].

- Risk Projection: Forecast future AMR burdens under different pollution scenarios to inform regulatory thresholds and mitigation strategies.
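For the correlation step in the AMR selection assay, a rank-based measure is a reasonable first look because MIC values are typically reported on a doubling scale. The sketch below computes Spearman's rank correlation from scratch; the choice of Spearman is an assumption, and the concentrations and MIC values are hypothetical.

```python
from math import sqrt

def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks

def pearson(xs, ys):
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

def spearman(xs, ys):
    """Spearman correlation: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(xs), average_ranks(ys))

# Hypothetical paired measurements per sampling site.
residue_ng_per_l = [5, 20, 50, 120, 300]   # antibiotic residue concentration
mic_mg_per_l = [0.5, 1, 1, 4, 16]          # MIC of isolates from the same site

rho = spearman(residue_ng_per_l, mic_mg_per_l)
print(f"Spearman rho = {rho:.3f}")
```

A high coefficient here indicates association only; attributing resistance to a specific pollutant requires the modeling and controls described in the later steps.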

Workflow Diagram: Pharmaceutical Environmental Risk Assessment

Research Reagent Solutions: Environmental Risk Assessment Toolkit

Table 4: Essential Materials for Pharmaceutical Environmental Risk Analysis

| Component / Reagent | Function / Application | Example / Specification |

|---|---|---|

| Autonomous Sensors | For in-situ, real-time monitoring of water quality and specific contaminants. | Deployable sensor systems for urban waterways and effluent streams [25]. |

| Advanced Detection Kits | For sensitive and specific identification of pharmaceutical residues in complex environmental samples. | Biosensor kits, SERS substrates, immunoassay kits [26]. |

| Reference Standards | Certified analytical standards for quantifying specific pharmaceutical compounds via LC-MS/MS. | USP/EP certified active pharmaceutical ingredient (API) standards. |

| Data Integration Platform | A centralized system for storing, sharing, and analyzing heterogeneous environmental and AMR data. | Cloud-based data-sharing platforms with API access [26]. |

Application Note 3: Integrating Environmental Pollutant Detection with Public Health Surveillance

Table 5: Public Health Impact of Environmental Pollutants and Climate Change

| Risk Category | Key Statistic | Public Health Consequence | Source |

|---|---|---|---|

| Air Pollution | 7 million premature deaths annually are linked to air pollution. | Elevated burden of respiratory and cardiovascular diseases. | WHO, 2023 [22] |

| Climate-Related Poverty | Climate-related health risks could push 100 million people into poverty by 2030. | Exacerbates health inequities and vulnerability. | World Bank [22] |

| Disease Vector Spread | Vector-borne diseases are spreading to new regions due to warming climates. | Increased population exposure to diseases like dengue and malaria. | WHO Climate Change and Health [22] |

Experimental Protocol: Linking Pollutant Exposure to Population Health Outcomes

Objective: To establish a causal framework between environmental pollutant exposure and health outcomes using integrated data and forecasting models.

Materials & Reagents:

- Environmental Data: Air quality indices, water pollution measurements, soil contamination data.

- Health Data: De-identified electronic health records (EHRs), disease incidence registries, mortality records.

- Omics Technologies: Platforms for genomic, transcriptomic, or metabolomic profiling of biospecimens.

Procedure:

- High-Dimensional Data Collection:

- Collect high-dimensional environmental data (p variables from sensors, satellites) and health data (p variables from EHRs, omics) for n subjects or geographical units [28].

- Exposure Assessment:

- Leverage advanced detection technologies (nanotechnology, biosensors, multi-omics) to characterize the full spectrum of pollutant exposures [26].

- Use geospatial mapping to link exposure data to residential or clinical locations of populations.

- Data Integration and Feature Selection:

- Temporal Forecasting:

- Apply Long Short-Term Memory (LSTM) networks to the integrated environment-health dataset to forecast future disease incidence based on projected pollution trends and climate scenarios [27].

- Policy Threshold Identification:

- Use multi-criteria decision analysis (e.g., TOPSIS) and SHAP explainability analysis to identify critical policy thresholds for pollutant levels that optimize health and economic outcomes [27].
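The TOPSIS part of the threshold-identification step can be sketched as below. The candidate pollutant limits, criteria, weights, and scores are hypothetical, and the SHAP analysis mentioned above is not covered here.

```python
from math import sqrt

def topsis(matrix, weights, benefit):
    """Return a closeness score in [0, 1] for each alternative (row).

    benefit[j] is True if criterion j should be maximized, False if minimized.
    """
    n_crit = len(weights)
    # Vector-normalize each criterion column, then apply the weights.
    norms = [sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_crit)]
    weighted = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in matrix]
    columns = list(zip(*weighted))
    ideal = [max(c) if benefit[j] else min(c) for j, c in enumerate(columns)]
    anti_ideal = [min(c) if benefit[j] else max(c) for j, c in enumerate(columns)]
    scores = []
    for row in weighted:
        d_plus = sqrt(sum((v - i) ** 2 for v, i in zip(row, ideal)))
        d_minus = sqrt(sum((v - a) ** 2 for v, a in zip(row, anti_ideal)))
        total = d_plus + d_minus
        scores.append(d_minus / total if total else 0.0)
    return scores

# Hypothetical candidate limits scored on avoided cases (benefit) and cost (cost).
options = ["limit_10", "limit_25", "limit_50"]
matrix = [[900, 80], [600, 40], [200, 10]]
scores = topsis(matrix, weights=[0.5, 0.5], benefit=[True, False])
ranking = sorted(zip(options, scores), key=lambda pair: pair[1], reverse=True)
print(ranking)
```

The ranking depends directly on the chosen weights, so reporting results under several weightings is a sensible check before proposing a threshold.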

Workflow Diagram: Environmental Health Surveillance and Forecasting

Research Reagent Solutions: Environmental Health Surveillance Toolkit

Table 6: Essential Materials for Integrated Environmental Health Analysis

| Component / Reagent | Function / Application | Example / Specification |

|---|---|---|

| Portable Detection Devices | For on-site, rapid measurement of specific pollutants (e.g., heavy metals, particulate matter). | Hand-held biosensors, portable mass spectrometers, SERS-based field kits [26]. |

| Omics Profiling Kits | For uncovering molecular mechanisms linking exposure to health effects. | Microarrays, next-generation sequencing kits for transcriptomics, metabolomics assay panels. |

| Data Analytics Software | For handling high-dimensional data, performing feature selection, and running complex forecasting models. | IBM SPSS, DataRobot, R/Bioconductor packages for genomic data [28] [23]. |

| Geographic Information System | For spatial analysis and visualization of exposure data and health outcome clusters. | ArcGIS, QGIS with spatial statistics modules. |

Methodologies in Action: Building and Applying Forecasting Models

Within environmental forecasting and scenario planning, decision-makers increasingly face deep uncertainties arising from complex, interacting systems that change over time. This complexity leads to significant knowledge gaps and unpredictable surprises, making it difficult to specify appropriate models and parameters. Hybrid modeling has emerged as a powerful approach to mitigate this deep uncertainty by fitting data, models, and computational experiments together to simulate complex systems. By integrating quantitative data with qualitative expertise, these frameworks allow for an ongoing modeling process where uncertainty is gradually reduced through the dynamic adjustment of simulation systems with real-time data [29]. This integration is particularly critical for complex environmental systems, where both measurable data and human experiential knowledge are essential for robust forecasting and planning. Such approaches enable the exploration of diverse future scenarios, improving both prediction accuracy and system sensitivity to uncertain changes [29].

Theoretical Foundations of Hybrid Modeling

Core Principles and Definitions

Hybrid modeling intentionally integrates quantitative and qualitative methods within a single research project to answer the same overarching question [30]. In the context of environmental forecasting:

- Quantitative methods reveal measurable patterns and trends across large datasets, providing information on what is happening within environmental systems through statistical analysis and computational modeling.

- Qualitative methods provide essential context and reveal the why behind observed phenomena, delivering insights into motivations, decision-making processes, mental models, and contextual factors that pure numerical data cannot capture [30].

This integration moves beyond simply using both methods in the same project to a deliberate, planned integration where both data types work synergistically to provide a holistic understanding of complex environmental problems.

Addressing Deep Uncertainty in Complex Systems

Complex environmental systems involve various components and mechanisms that interact in non-linear ways and evolve over time, creating deep uncertainty. This uncertainty leaves decision-makers with severely limited knowledge and vulnerable to unexpected developments. Hybrid modeling frameworks are specifically designed to address these challenges by [29]:

- Developing multiple plausible models from a hybrid perspective

- Performing large-scale computational experiments to explore scenario diversity

- Incorporating real-time data into diverse forecasts to dynamically adjust simulations

- Enabling an ongoing modeling and analysis process where deep uncertainty is gradually mitigated

Hybrid Modeling Protocols for Environmental Forecasting

The successful implementation of hybrid modeling requires structured methodological approaches. The table below summarizes three primary research designs for integrating quantitative and qualitative evidence:

Table 1: Mixed-Method Research Designs for Hybrid Modeling

| Research Design | Sequence | Primary Application | Key Strengths |

|---|---|---|---|

| Explanatory Sequential [30] | Quant → Qual | Explain quantitative patterns with qualitative insights | Uses qualitative data to illuminate reasons behind quantitative trends |

| Exploratory Sequential [30] | Qual → Quant | Develop and test hypotheses in unfamiliar domains | Uses qualitative insights to inform subsequent quantitative validation |

| Convergent Parallel [30] | Quant + Qual Simultaneously | Triangulate findings from different methodological angles | Provides complementary evidence efficiently through simultaneous data collection |

Protocol 1: Explanatory Sequential Design for Scenario Validation

This design begins with quantitative analysis followed by qualitative investigation to explain or explore the quantitative findings in greater depth [30].

Phase 1: Quantitative Modeling and Scenario Generation

- Step 1.1: Develop multiple quantitative models (e.g., polynomial, sinusoidal, or hybrid functions) to capture non-linear and cyclical environmental relationships [31]

- Step 1.2: Perform computational experiments to generate diverse future scenarios and identify patterns or outliers requiring explanation

- Step 1.3: Document quantitative performance metrics (e.g., accuracy percentages, R² values) for each model variant [31]

Phase 2: Qualitative Expert Elicitation

- Step 2.1: Recruit domain experts (scientists, policymakers, local knowledge holders) through purposive sampling

- Step 2.2: Conduct semi-structured interviews or focus groups using quantitative results as discussion prompts

- Step 2.3: Specifically probe explanations for unexpected patterns, model limitations, and contextual factors absent from quantitative data

Phase 3: Integrated Analysis and Model Refinement

- Step 3.1: Systematically compare qualitative themes with quantitative patterns

- Step 3.2: Refine quantitative models based on qualitative insights about system mechanisms

- Step 3.3: Develop enriched scenario narratives that combine statistical projections with contextual understanding

Protocol 2: Dynamic Exploratory Hybrid Modeling

This advanced protocol fits data, models, and computational experiments together in an ongoing process to simulate complex systems with deep uncertainty [29].

Phase 1: System Characterization and Multi-Model Development

- Step 1.1: Map system components, relationships, and potential emergent behaviors through stakeholder engagement and literature review

- Step 1.2: Develop multiple plausible models from a hybrid modeling perspective, including:

- Polynomial functions to capture non-linear relationships between variables like temperature and energy metrics [31]

- Sinusoidal functions to represent cyclical or seasonal patterns in environmental data [31]

- Combined hybrid functions that integrate both approaches for improved accuracy in capturing complex system behavior [31]
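A combined polynomial-plus-sinusoidal function can be fitted by ordinary least squares because it is linear in its coefficients. The sketch below makes several assumptions not taken from [31]: the predictor is a month index rather than temperature, the cycle length is 12 months, the polynomial is quadratic, and the data are synthetic.

```python
from math import sin, cos, pi

def design_row(month):
    """Features: constant, linear and quadratic trend (in years), annual sine and cosine."""
    x = month / 12.0
    return [1.0, x, x * x, sin(2 * pi * x), cos(2 * pi * x)]

def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x

def fit_hybrid(months, values):
    """Least-squares coefficients via the normal equations (A^T A) c = A^T y."""
    rows = [design_row(m) for m in months]
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * y for r, y in zip(rows, values)) for i in range(k)]
    return solve_linear_system(ata, aty)

def predict(coeffs, month):
    return sum(c * f for c, f in zip(coeffs, design_row(month)))

# Synthetic four-year monthly series generated from known coefficients.
true_coeffs = [20.0, 0.5, -0.02, 3.0, -1.5]
months = list(range(48))
values = [predict(true_coeffs, m) for m in months]

fitted = fit_hybrid(months, values)
print([round(c, 4) for c in fitted])
print("forecast for month 50:", round(predict(fitted, 50), 3))
```

Fitting the polynomial-only and sinusoidal-only variants with the same routine (by dropping columns from `design_row`) gives the multiple plausible models that Step 1.2 calls for.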

Phase 2: Computational Experimentation and Scenario Exploration

- Step 2.1: Design and execute large-scale computational experiments to explore the diversity of possible future scenarios

- Step 2.2: Identify critical uncertainties and decision-relevant scenario clusters through quantitative output analysis

Phase 3: Dynamic Data Integration and Model Adjustment

- Step 3.1: Incorporate real-time monitoring data into diverse forecasts to dynamically adjust the simulation system

- Step 3.2: Continuously validate and refine models against observed system behavior

- Step 3.3: Facilitate ongoing stakeholder engagement to interpret results and inform decision-making in response to changing conditions
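One simple way to let incoming observations adjust a multi-model forecast is to reweight the candidate models by their recent errors. The sketch below uses exponential weighting, which is an assumption chosen for illustration; [29] does not prescribe a specific updating rule, and the model names, forecasts, observations, and `eta` value are hypothetical.

```python
from math import exp

def update_weights(weights, forecasts, observation, eta=0.5):
    """Down-weight each model in proportion to its squared error on the new observation."""
    raw = {
        name: w * exp(-eta * (forecasts[name] - observation) ** 2)
        for name, w in weights.items()
    }
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}

def combine(weights, forecasts):
    """Weighted-average forecast across models."""
    return sum(weights[name] * forecasts[name] for name in weights)

# Start with equal trust in each candidate model.
weights = {"polynomial": 1 / 3, "sinusoidal": 1 / 3, "hybrid": 1 / 3}

# Hypothetical stream of (model forecasts, observed value) pairs.
stream = [
    ({"polynomial": 10.0, "sinusoidal": 12.0, "hybrid": 11.0}, 11.1),
    ({"polynomial": 10.5, "sinusoidal": 12.5, "hybrid": 11.4}, 11.5),
    ({"polynomial": 11.0, "sinusoidal": 12.2, "hybrid": 11.9}, 12.0),
]

for forecasts, observation in stream:
    blended = combine(weights, forecasts)  # forecast issued before the observation
    weights = update_weights(weights, forecasts, observation)
    print(f"blended={blended:.2f} observed={observation} weights={weights}")
```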

[Figure: workflow of the dynamic exploratory hybrid modeling approach]

Protocol 3: Convergent Parallel Design for Decision Support

This design conducts qualitative and quantitative research simultaneously yet independently, then analyzes the results together to provide comprehensive decision support [30].

Phase 1: Parallel Data Collection

- Step 1.1 (Quantitative): Implement statistical models using historical data to project future environmental conditions under various parameterizations

- Step 1.2 (Qualitative): Conduct interviews, focus groups, and participatory workshops with stakeholders to identify concerns, preferences, and contextual constraints

Phase 2: Independent Analysis

- Step 2.1: Analyze quantitative data using appropriate statistical methods and visualization techniques

- Step 2.2: Analyze qualitative data using thematic analysis, coding, and narrative development

Phase 3: Results Integration

- Step 3.1: Compare and contrast findings from both methodological streams using joint displays

- Step 3.2: Identify areas of convergence, divergence, and complementarity between datasets

- Step 3.3: Develop integrated conclusions and recommendations that address both statistical projections and human dimensions

Successful implementation of hybrid modeling requires specific methodological tools and resources. The table below details key solutions for environmental forecasting applications:

Table 2: Research Reagent Solutions for Hybrid Modeling

| Category | Specific Tool/Technique | Function in Hybrid Modeling |

|---|---|---|

| Quantitative Functions | Polynomial Regression [31] | Captures non-linear relationships between environmental variables (e.g., temperature and energy metrics) |

| | Sinusoidal Functions [31] | Models cyclical or seasonal patterns in environmental data |

| | Hybrid Functions [31] | Combines multiple mathematical approaches to improve prediction accuracy of complex systems |

| Qualitative Methods | Framework Synthesis [32] | Provides a structured approach for analyzing and synthesizing qualitative evidence |

| | Meta-Ethnography [32] | Enables interpretation and translation of qualitative studies across contexts |

| | Thematic Analysis | Identifies, analyzes, and reports patterns within qualitative data |

| Integration Frameworks | DECIDE Evidence Framework [32] | Supports structured decision-making by integrating diverse types of evidence |

| | WHO-INTEGRATE Framework [32] | Provides methodology for developing guidelines using mixed-method evidence |

| | Logic Models [32] | Illustrates hypothesized relationships between interventions and outcomes |

| Computational Tools | Dynamic Exploratory Modeling [29] | Enables ongoing simulation adjustment through real-time data incorporation |

| | Scenario Exploration Tools | Facilitates analysis of diverse future scenarios under deep uncertainty |

Application in Environmental Forecasting: Case Example

The application of a hybrid modeling approach to energy forecasting demonstrates the practical implementation and benefits of this methodology.

Case Study: Energy Production and Consumption Forecasting

A recent study introduced an advanced mathematical methodology for predicting energy generation and consumption based on temperature variations in regions with diverse climatic conditions [31]. Using a comprehensive dataset of monthly energy production, consumption, and temperature readings spanning ten years (2010-2020), researchers applied polynomial, sinusoidal, and hybrid modeling techniques to capture the non-linear and cyclical relationships between temperature and energy metrics.

Quantitative Findings:

- The hybrid model, combining sinusoidal and polynomial functions, achieved 79.15% accuracy in estimating energy consumption using temperature as a predictor variable [31]

- This model effectively captured seasonal and non-linear consumption patterns, demonstrating significant improvement over conventional models

- The polynomial model for energy production yielded partial accuracy (R² = 0.65), highlighting the need for more advanced techniques to fully capture the temperature-dependent nature of energy production [31]
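For readers comparing model variants, the coefficient of determination reported above can be computed as follows. The observed and predicted values are hypothetical; the study's definition of "accuracy" is not given here, so only R² is shown.

```python
def r_squared(observed, predicted):
    """R² = 1 - SS_res / SS_tot, the share of variance explained by the model."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1 - ss_res / ss_tot

# Hypothetical observed versus predicted energy values.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
print(f"R² = {r_squared(observed, predicted):.2f}")
```

An R² of 0.65 therefore means that roughly a third of the variance in energy production is left unexplained by temperature in the polynomial model.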

Integration with Qualitative Expertise: Domain experts provided critical contextual understanding about:

- Socioeconomic factors influencing energy consumption patterns beyond temperature relationships

- Infrastructure limitations affecting the implementation of model recommendations

- Policy constraints and opportunities for applying forecasting results

[Figure: relationship between model components and outcomes in the energy forecasting application]

Implementation Guidelines and Best Practices

Planning and Coordination for Successful Integration

Effective hybrid modeling requires thoughtful coordination, particularly regarding timing and resource allocation [30]:

- Intentional Design: Plan qualitative and quantitative components together from the start, ensuring both methods align under the same research goal rather than producing disconnected insights

- Resource Allocation: Account for the additional time and resources required to manage multiple protocols, participant groups, and data types

- Methodological Alignment: Use each method for what it is best suited to: quantitative methods for measuring effects and patterns, qualitative methods for exploring reasons and contexts

Data Integration and Visualization Standards

The presentation of hybrid modeling results requires careful consideration to ensure clarity and accessibility:

- Structured Tables: Present quantitative data in clearly structured tables with defined headers and bodies, appropriate color differentiation, and adequate contrast for readability [33]

- Visualization Contrast: Ensure all visual elements, including diagrams, charts, and tables, meet a minimum color contrast ratio of 3:1 for graphical objects and user interface components [34]

- Responsive Design: Create visualizations that maintain readability across different devices and platforms, ensuring accessibility for all stakeholders [33]
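The 3:1 threshold above can be checked programmatically. The sketch below assumes the relative-luminance and contrast-ratio definitions from the WCAG 2.x guidelines, which the source does not name explicitly, and 8-bit sRGB colors. The function names are hypothetical.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1:1 (identical) up to 21:1."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def meets_graphics_threshold(rgb_a, rgb_b, minimum=3.0):
    """True if the pair reaches the minimum ratio for graphical objects."""
    return contrast_ratio(rgb_a, rgb_b) >= minimum

# Example: a light-gray chart line on a white background falls below 3:1.
print(meets_graphics_threshold((200, 200, 200), (255, 255, 255)))
print(meets_graphics_threshold((0, 90, 160), (255, 255, 255)))
```

A check like this can be run over a chart palette before publication so that low-contrast series are caught early.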

Validation and Continuous Improvement

Robust hybrid modeling implementations incorporate mechanisms for ongoing validation and refinement:

- Triangulation: Systematically compare findings from quantitative and qualitative streams to identify converging evidence, contradictions, and complementary insights

- Stakeholder Feedback: Engage domain experts and end-users in interpreting integrated results and refining modeling approaches

- Dynamic Updating: Establish processes for incorporating new data and insights to continuously improve model accuracy and relevance [29]

Machine Learning and AI in Geospatial Environmental Prediction

The integration of Artificial Intelligence (AI) and Machine Learning (ML) with geospatial analysis, an emerging field often termed Geospatial Artificial Intelligence (GeoAI), is fundamentally transforming environmental forecasting and scenario planning [35]. This paradigm shift enables researchers to process and analyze massive volumes of spatial data (from satellite imagery and IoT sensors to administrative records) at unprecedented scales and resolutions [36] [35]. For environmental scientists and policy-makers, these technologies provide powerful new capabilities for modeling complex systems, predicting future scenarios, and developing robust strategies for challenges ranging from climate change adaptation to sustainable resource management [19] [37]. By leveraging advanced algorithms including deep learning and computer vision, GeoAI facilitates more precise exposure assessment, dynamic scenario exploration, and higher-fidelity projections of environmental futures than previously possible [38] [35].

The core value of GeoAI for environmental prediction lies in its ability to uncover hidden patterns within complex, multi-dimensional datasets that traditional modeling approaches might overlook [19] [35]. For instance, deep learning models can analyze historical satellite imagery to track deforestation patterns, predict pest outbreaks, or model urban heat islands with increasing accuracy [38]. Furthermore, the integration of real-time data streams from in-situ sensors and citizen science initiatives creates living forecasting systems that continuously update and refine their predictions [39]. This technical evolution supports a critical methodological shift in environmental planning: from static predictions to dynamic, adaptive scenario planning under deep uncertainty [19] [40].

Key Applications and Quantitative Performance

GeoAI technologies are being deployed across diverse environmental domains with measurable impacts on prediction accuracy and operational efficiency. The table below summarizes the performance metrics for prominent applications in precision agriculture, a field that has extensively adopted these approaches.

Table 1: Performance Metrics of GeoAI Applications in Precision Agriculture (2025)

| Application Area | AI-GIS Technique Used | Estimated Yield Improvement (%) | Resource Savings (e.g., Water, Fertilizers) (%) | Sustainability Impact |
|---|---|---|---|---|
| Precision Crop Monitoring | Deep Learning on Satellite/UAV Imagery | +15–40% | Water: 18–30%; Fertilizers: 12–25% | Reduced Input Waste |
| Disease & Pest Detection | Image Recognition, Spatio-Climatic Modeling | +10–25% | Pesticide: 20–40% | Lower Environmental Toxicity |
| Soil & Water Resource Management | Predictive Analytics, Moisture Mapping | +8–14% | Water: 25–50% | Water Conservation |
| Climate Risk Assessment | AI-Driven Weather Forecasting & Risk Mapping | Yield Loss Avoidance (5–20%) | Disaster-Related Losses: Up to 40% | Climate Resilience |
| Farm Automation & Robotics | GIS-Guided Navigation, AI Scheduling | +10–20% | Labor: 30–70% | Reduced Carbon Footprint |

Beyond agriculture, climate modeling represents another critical application domain. Early climate models, such as those developed by Syukuro Manabe at the Geophysical Fluid Dynamics Laboratory, demonstrated remarkable forecasting accuracy decades before their predictions could be verified [41]. These models successfully predicted specific patterns of climate response including global warming from CO₂, stratospheric cooling, Arctic amplification (where the Arctic warms 2-3 times faster than the global average), land-ocean contrast (land warming approximately 1.5 times more than ocean), and delayed Southern Ocean warming [41]. The accuracy of these early physical models has established a foundation of confidence for contemporary AI-enhanced approaches, which now build upon this physical understanding with data-driven insights [41] [42].

For coastal and estuary management, GeoAI tools like Long Short-Term Memory (LSTM) networks are being deployed to forecast salinity changes and inundation patterns under various sea-level rise scenarios [39]. These models provide accessible alternatives to computationally expensive traditional hydrodynamic models, enabling more stakeholders to participate in climate adaptation planning [39]. Similarly, in urban planning, GeoAI integrates multiple data streams to model urban heat island effects, optimize resource allocation, and predict areas at greatest risk from extreme weather events [35].

Experimental Protocols and Methodological Frameworks

The Learning Scenario Development Model (LSDM) for Energy Forecasting

The Learning Scenario Development Model (LSDM) represents a sophisticated hybrid methodology that combines quantitative machine learning with qualitative expert judgment to develop robust environmental forecasts [19]. This approach was specifically designed to address the limitations of single-prediction models in complex systems like global natural gas markets, but its framework is readily adaptable to various environmental forecasting domains [19].

Table 2: LSDM Protocol Workflow for Environmental Forecasting

| Phase | Key Procedures | Data Inputs | Outputs/Deliverables |
|---|---|---|---|
| 1. Data Mining & Preprocessing | Data cleansing, dimensionality reduction, feature selection using algorithms like Principal Component Analysis | Historical time-series data (e.g., consumption, land use, climate variables) | Curated dataset, identified key predictor variables |
| 2. Business-as-Usual (BAU) Scenario Modeling | Apply machine learning algorithms (Neural Networks, Genetic Algorithms) to historical data to establish reference trends | Cleaned historical data, identified features | BAU scenario projection with confidence intervals |
| 3. Alternative Scenario Generation | Expert panels manipulate input variables based on policy interventions, emerging trends, or disruptive events | BAU model, qualitative expert judgments, policy targets | Multiple alternative scenarios (e.g., "Sprawl" vs. "Conservation") |
| 4. Scenario Validation & Refinement | Logical controls, accuracy checks, backtesting against historical periods | All scenario outputs, observational data | Validated scenario set with documented assumptions |

The LSDM protocol specifically addresses the challenge of integrating data-driven insights with expert knowledge, creating a structured process for generating scenarios that are both empirically grounded and cognizant of potential system disruptions that may not be evident in historical data alone [19]. In application, this methodology has demonstrated that hybrid models like bat-neural network (BNN) and genetic-neural network (GNN) can effectively capture complex nonlinear relationships in environmental systems while maintaining computational efficiency [19].
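The flow from a BAU projection to expert-adjusted alternatives and a backtest (Phases 2 to 4 in Table 2) can be illustrated with a deliberately simplified sketch. It is not the LSDM implementation from [19]: a least-squares linear trend stands in for the neural-network and genetic-algorithm models, the expert input is reduced to a single assumed annual percentage change, and the demand series and all names are hypothetical.

```python
import numpy as np

def fit_bau(years, values):
    """Least-squares linear trend; a simple stand-in for the Phase 2 ML models."""
    slope, intercept = np.polyfit(years, values, 1)
    return slope, intercept

def project(model, years):
    """Evaluate the fitted trend for the given years."""
    slope, intercept = model
    return slope * np.asarray(years, dtype=float) + intercept

def apply_expert_adjustment(bau, annual_change):
    """Compound an expert-specified fractional change per year onto the BAU path."""
    steps = np.arange(1, len(bau) + 1)
    return bau * (1.0 + annual_change) ** steps

def backtest_mape(years, values, holdout=3):
    """Refit without the last `holdout` years and report percent error on them."""
    model = fit_bau(years[:-holdout], values[:-holdout])
    pred = project(model, years[-holdout:])
    actual = values[-holdout:]
    return float(np.mean(np.abs((actual - pred) / actual)) * 100)

# Hypothetical historical series (arbitrary units).
years = np.arange(2010, 2021)
noise = np.array([0.4, -0.6, 0.2, 0.5, -0.3, 0.1, -0.4, 0.6, -0.2, 0.3, -0.1])
demand = 100 + 2.5 * (years - 2010) + noise

bau = project(fit_bau(years, demand), np.arange(2021, 2031))
scenarios = {
    "BAU": bau,
    "Conservation": apply_expert_adjustment(bau, -0.01),   # assumed -1% per year
    "Sprawl": apply_expert_adjustment(bau, 0.015),         # assumed +1.5% per year
}
print({name: round(float(path[-1]), 1) for name, path in scenarios.items()})
print(f"backtest MAPE: {backtest_mape(years, demand):.2f}%")
```

Keeping the expert adjustment as an explicit, named parameter makes each scenario's assumption easy to document, which is the deliverable Phase 4 asks for.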

GeoAI Protocol for Environmental Exposure Assessment

For environmental health researchers, the following protocol outlines a standardized approach for implementing GeoAI in exposure assessment studies, particularly those investigating relationships between place-based environmental factors and health outcomes [35]:

Diagram 1: GeoAI Environmental Assessment Workflow

Step 1: Data Acquisition and Curation

- Satellite-derived remote sensing data: Sources include Landsat (30 m resolution), MODIS (250 m to 1 km), and Copernicus programs, providing historical data back to 1985 with annual updates [35]. Key applications include land cover classification, greenspace quantification, and air pollution modeling through aerosol optical depth measurements [35].

- Administrative data: Government-collected datasets (e.g., National Census, American Community Survey) providing socioeconomic context at census tract or block group levels [35].

- Street View imagery: Platform-based imagery (Google Street View, Baidu, Mapillary) for characterizing built environment features, though temporal consistency may vary [35].

- IoT and sensor data: Real-time streams from environmental sensors, GPS-enabled devices, and participatory science initiatives providing high spatiotemporal resolution [39] [35].

Step 2: Data Preprocessing

- Satellite image correction: Apply algorithms to address cloud cover, atmospheric anomalies, and surface reflectance issues [35].

- Geocoding and normalization: Standardize spatial references across datasets and normalize predictors as required by specific algorithms [35].

- Temporal alignment: Ensure consistent timeframes across diverse data sources, particularly when integrating historical data with contemporary measurements [35].
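As a small illustration of the normalization and temporal-alignment steps above, the sketch below aggregates irregular sensor readings to monthly means, keeps only the months shared with a monthly satellite product, and z-scores the result. The variable names and values (PM2.5 readings, aerosol optical depth) are hypothetical, and monthly aggregation is an assumed choice rather than a requirement of [35].

```python
from collections import defaultdict
from datetime import date
from statistics import mean, pstdev

def monthly_means(readings):
    """Aggregate (date, value) pairs to {(year, month): mean value}."""
    buckets = defaultdict(list)
    for day, value in readings:
        buckets[(day.year, day.month)].append(value)
    return {key: mean(vals) for key, vals in buckets.items()}

def align(series_a, series_b):
    """Keep only the periods present in both series, in chronological order."""
    shared = sorted(set(series_a) & set(series_b))
    return shared, [series_a[k] for k in shared], [series_b[k] for k in shared]

def zscore(values):
    """Standardize to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

# Hypothetical ground-sensor readings and monthly satellite values.
sensor = [
    (date(2020, 1, 5), 10.0), (date(2020, 1, 20), 14.0),
    (date(2020, 2, 3), 20.0), (date(2020, 3, 9), 9.0),
]
satellite = {(2020, 1): 0.21, (2020, 2): 0.35, (2020, 4): 0.18}

periods, pm, aod = align(monthly_means(sensor), satellite)
print(periods)        # months available in both sources
print(zscore(pm))     # normalized sensor predictor
```

Months present in only one source are dropped here. Whether to drop or impute them is a study-specific decision that this sketch does not address.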

Step 3: Model Selection and Training

- Convolutional Neural Networks (CNNs): Preferred for image-based analysis (satellite imagery, Street View) for feature detection and classification [38] [35].

- Recurrent Neural Networks (e.g., LSTMs): Appropriate for time-series forecasting of environmental variables such as river discharge, salinity changes, or pollution levels [39].

- Spatially-explicit machine learning: Incorporate spatial dependence directly into ML algorithms through techniques such as spatial weighting or convolutional approaches [35].

- Training considerations: Ensure high-quality labeled feature data for supervised learning; address class imbalance through techniques like oversampling or weighted loss functions [35].
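The weighted-loss option mentioned under training considerations can be sketched as follows. This example assumes inverse-frequency class weights and a plain cross-entropy loss written without any deep learning framework. The class names and label counts are hypothetical, and oversampling, the other technique named above, is not shown.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """weight_c = n_samples / (n_classes * count_c), so rare classes weigh more."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * counts[c]) for c in counts}

def weighted_cross_entropy(probs, labels, weights):
    """Mean of -w[y] * log p(y); probs is a list of {class: probability} dicts."""
    total = sum(-weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    return total / len(labels)

# Hypothetical land-cover labels: 90 background pixels, 10 wetland pixels.
labels = ["background"] * 90 + ["wetland"] * 10
weights = inverse_frequency_weights(labels)
print(weights)

# An uninformative classifier that always predicts 50/50.
uniform = [{"background": 0.5, "wetland": 0.5}] * len(labels)
print(weighted_cross_entropy(uniform, labels, weights))
```

With this weighting the per-sample weights sum to the number of samples, so the overall loss scale is unchanged while each misclassified minority example costs more.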

Step 4: Validation and Uncertainty Quantification

- Spatial cross-validation: Employ spatial blocking techniques to avoid overestimation of performance due to spatial autocorrelation [35].

- Comparison with gold standards: Validate predictions against ground-truthed measurements or established monitoring networks [35].

- Uncertainty propagation: Quantify and communicate uncertainty from multiple sources including input data quality, model selection, and parameter estimation [35].
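Spatial blocking, as described in the first item above, can be sketched by assigning every observation to a square grid cell and holding out whole cells together. This is a minimal illustration under assumed conventions (projected coordinates in consistent units, round-robin assignment of blocks to folds, no buffer zone between training and test blocks). The function names are hypothetical.

```python
import math
from collections import defaultdict

def block_id(x, y, block_size):
    """Grid cell containing the point (x, y)."""
    return (math.floor(x / block_size), math.floor(y / block_size))

def spatial_block_folds(coords, block_size, n_folds):
    """Yield (train_idx, test_idx); all points in one block share a fold."""
    blocks = defaultdict(list)
    for i, (x, y) in enumerate(coords):
        blocks[block_id(x, y, block_size)].append(i)
    # Assign whole blocks to folds round-robin in sorted order (deterministic).
    fold_members = [[] for _ in range(n_folds)]
    for j, key in enumerate(sorted(blocks)):
        fold_members[j % n_folds].extend(blocks[key])
    for k in range(n_folds):
        test = sorted(fold_members[k])
        train = sorted(
            i for m, fold in enumerate(fold_members) if m != k for i in fold
        )
        yield train, test

# Hypothetical monitoring sites on a 10 x 10 grid, split into 5 x 5 unit blocks.
coords = [(x + 0.5, y + 0.5) for x in range(10) for y in range(10)]
folds = list(spatial_block_folds(coords, block_size=5.0, n_folds=2))
for train, test in folds:
    print(len(train), len(test))
```

Choosing a block size at least as large as the range of spatial autocorrelation in the residuals is a common rule of thumb. The value used here is arbitrary.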

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of GeoAI for environmental prediction requires both computational resources and specialized data assets. The following table catalogues essential "research reagents" for designing and executing GeoAI studies.

Table 3: Essential Research Reagent Solutions for GeoAI Environmental Prediction

| Resource Category | Specific Tools & Platforms | Primary Function | Access Considerations |
|---|---|---|---|
| Software & Computing Platforms | Python/R with libraries (GeoPandas, TensorFlow, PyTorch), QGIS, ArcGIS, Google Earth Engine | Data processing, model development, spatial analysis and visualization | Open-source options available; commercial platforms may offer enhanced support and integration |
| Satellite Data Products | Landsat, Sentinel, MODIS, Planet Labs constellations | Land cover classification, change detection, vegetation health monitoring, broad-area monitoring | Free tier available for major programs; high-resolution data may require purchase |
| Environmental AI Models | Pre-trained models for specific tasks (e.g., land cover classification, building footprint detection) | Transfer learning, model benchmarking, rapid prototyping | Varying licensing restrictions; some open-source models available |
| Computational Infrastructure | Cloud computing platforms (AWS, Google Cloud, Azure), High-Performance Computing (HPC) clusters | Processing large geospatial datasets, training complex deep learning models | Cost models vary; institutional access may be available |
| Citizen Science Data Platforms | GLOBE Mosquito Habitat Mapper, GLOBE Land Cover, iNaturalist | Ground-truthing, temporal monitoring, capturing hyper-local environmental phenomena | Data quality protocols essential; may require customization for specific research questions |