Ensuring Reliability in Inorganic Analysis: A Comprehensive Guide to Ruggedness Testing

Abstract

This article provides a complete framework for implementing ruggedness testing in inorganic analytical methods, tailored for researchers and drug development professionals. It covers foundational principles, distinguishing ruggedness from robustness, and outlines a step-by-step methodological approach incorporating risk assessment and experimental design. The guide also addresses common troubleshooting scenarios and details the process for achieving regulatory validation, ensuring methods produce reliable, reproducible data across different laboratories, instruments, and analysts.

Ruggedness Testing Fundamentals: Building a Foundation for Reliable Inorganic Analysis

In the field of inorganic analysis, the reliability of data is not merely a preference but a fundamental requirement. Ruggedness refers to the degree of reproducibility of test results obtained from the analysis of the same sample under a variety of normal, but variable, test conditions [1]. These conditions can include different laboratories, analysts, instruments, reagent lots, and elapsed assay times. The related concept of robustness is defined as a measure of an analytical procedure's capacity to remain unaffected by small but deliberate variations in method parameters [2] [1]. While the terms are sometimes used interchangeably in the literature, robustness typically refers to a method's resilience to intentional, controlled parameter changes within a single laboratory, whereas ruggedness often encompasses a broader assessment of its performance across unintended, real-world variations encountered during inter-laboratory studies.

For researchers and drug development professionals, establishing ruggedness is not merely an academic exercise—it is a practical necessity for regulatory compliance, method transfer between facilities, and ultimately, for ensuring the safety and efficacy of pharmaceutical products. A method that performs excellently under ideal, controlled conditions but fails to produce consistent results when subjected to normal laboratory variations is of little practical value. This guide explores how rigorous ruggedness testing serves as the cornerstone for generating inorganic data that can be trusted across time, instruments, and locations.

Experimental Protocols for Assessing Ruggedness

The Structured Approach to Ruggedness Testing

Implementing a ruggedness test requires a systematic, multi-stage process to ensure comprehensive evaluation of an analytical method. The approach must be thorough yet practical, balancing scientific rigor with resource constraints. The United States Pharmacopeia describes ruggedness as "the degree of reproducibility of test results obtained by the analysis of the same sample under a variety of normal test conditions" [1]. The International Council for Harmonisation (ICH) further clarifies that robustness/ruggedness provides "an indication of reliability during normal usage" [1].

The following workflow outlines the critical stages in designing and executing a proper ruggedness test:

Key Phases of Ruggedness Evaluation

Phase 1: Selection of Factors and Levels

The first critical step involves identifying which method parameters to test and determining appropriate variation ranges. Factors are typically selected from the analytical procedure description or environmental conditions that could reasonably vary during normal method use [1]. For inorganic analysis using techniques like ICP-OES or ICP-MS, critical parameters often include:

- RF power and integration time [2]

- Nebulizer, spray chamber, and torch design [2]

- Temperature (laboratory and spray chamber) [2]

- Concentration of reagents and mobile phase composition [2] [1]

- Sampler and skimmer cone design and construction material [2]

Factor levels (the specific values to be tested) should represent variations expected during method transfer between laboratories or instruments. For quantitative factors, extreme levels are typically chosen symmetrically around the nominal level described in the method procedure [1].

Phase 2: Experimental Design Selection

Proper experimental design is crucial for efficiently evaluating multiple factors simultaneously. Two-level screening designs are most commonly employed, including:

- Fractional Factorial (FF) Designs where the number of experiments (N) is a power of two [1]

- Plackett-Burman (PB) Designs where N is a multiple of four, allowing examination of up to N-1 factors [1]

The choice between designs depends on the number of factors being examined and considerations regarding the statistical interpretation of results. For example, examining 7 factors might utilize a Plackett-Burman design with 12 experiments or a Fractional Factorial design with 16 experiments [1].
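
As an illustration of the 12-experiment option, the sketch below builds a 12-run Plackett-Burman design from its commonly tabulated generator row by cyclic shifting. The factor names and the assignment of seven real factors plus four dummy columns are hypothetical choices made for this example, not taken from the cited work.

```python
# Commonly tabulated 11-element generator row for the 12-run Plackett-Burman design.
PB12_GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]


def plackett_burman_12():
    """Return the 12-run, 11-column design as a list of rows of +1/-1 levels."""
    n = len(PB12_GENERATOR)
    # Rows 1-11 are cyclic shifts of the generator row.
    rows = [[PB12_GENERATOR[(col - shift) % n] for col in range(n)]
            for shift in range(n)]
    # Row 12 sets every column to its low level.
    rows.append([-1] * n)
    return rows


design = plackett_burman_12()

# Hypothetical column assignment: 7 real factors, 4 dummy columns.
column_names = [
    "RF power", "integration time", "nebulizer flow",
    "spray chamber temperature", "acid concentration",
    "reagent lot", "cone material",
    "dummy 1", "dummy 2", "dummy 3", "dummy 4",
]

for run_number, row in enumerate(design, start=1):
    print(f"run {run_number:2d}:", " ".join("+" if level > 0 else "-" for level in row))
```

Every column holds six high and six low settings, and any two columns are orthogonal, which is what lets each effect be estimated independently of the others. The dummy columns carry no real change and can later serve as an error estimate.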

Phase 3: Response Measurement and Data Analysis

Both assay responses and system suitability test (SST) responses should be measured during ruggedness testing. For inorganic trace analysis, key responses typically include:

- Accuracy or Bias established through analysis of certified reference materials (CRMs) [2]

- Repeatability (single laboratory precision) expressed as standard deviation [2]

- Limit of Detection (LOD) defined as 3*SD₀, where SD₀ is standard deviation as analyte concentration approaches zero [2]

- Limit of Quantitation (LOQ) defined as 10*SD₀ [2]
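
The two limit definitions above reduce to simple arithmetic once SD₀ is available. The sketch below assumes that SD₀ can be approximated by the standard deviation of replicate blank measurements; that approximation and the blank readings themselves are illustrative assumptions.

```python
from statistics import stdev


def detection_limits(blank_readings):
    """Estimate LOD (3 * SD0) and LOQ (10 * SD0) from replicate blank readings."""
    sd0 = stdev(blank_readings)  # sample standard deviation, used here as SD0
    return {"sd0": sd0, "lod": 3 * sd0, "loq": 10 * sd0}


# Hypothetical blank readings in ppb.
blank_readings_ppb = [0.10, 0.12, 0.11, 0.09, 0.08]
limits = detection_limits(blank_readings_ppb)
print(f"SD0 = {limits['sd0']:.4f} ppb, LOD = {limits['lod']:.4f} ppb, LOQ = {limits['loq']:.4f} ppb")
```

In a ruggedness study the same calculation would be repeated for each experimental run, so that LOD and LOQ become responses whose sensitivity to each factor can be examined.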

The effect of each factor on the response (Eₓ) is calculated as the difference between the average responses when the factor was at its high level versus its low level [1]. These effects are then analyzed statistically, often using normal or half-normal probability plots to identify which factors exert statistically significant influence on method results [1].
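
The effect calculation can be sketched in a few lines. The example below uses a small four-run, three-factor half-fraction design with made-up recovery values; the factor names and responses are assumptions chosen only to make the arithmetic easy to follow.

```python
from statistics import mean


def factor_effects(design, responses, factor_names):
    """Effect of each factor: mean response at the high level minus mean at the low level."""
    effects = {}
    for col, name in enumerate(factor_names):
        high = [y for row, y in zip(design, responses) if row[col] > 0]
        low = [y for row, y in zip(design, responses) if row[col] < 0]
        effects[name] = mean(high) - mean(low)
    return effects


# Hypothetical 2^(3-1) fractional factorial design (third column = product of the first two).
factor_names = ["RF power", "nebulizer flow", "integration time"]
design = [
    [-1, -1, +1],
    [+1, -1, -1],
    [-1, +1, -1],
    [+1, +1, +1],
]
recoveries = [99.0, 101.0, 98.0, 102.0]  # hypothetical % recovery per run

effects = factor_effects(design, recoveries, factor_names)
for name, effect in effects.items():
    print(f"{name}: {effect:+.1f}")
```

With these numbers the effect of RF power is +3.0, of nebulizer flow 0.0, and of integration time +1.0. Whether any of these is significant still has to be judged statistically.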

Comparative Analysis: Ruggedness vs. Robustness

Conceptual and Practical Distinctions

While ruggedness and robustness are related concepts in method validation, understanding their distinctions is crucial for proper implementation. The table below compares their key characteristics:

Table 1: Comparison of Ruggedness and Robustness Testing

| Aspect | Ruggedness | Robustness |

|---|---|---|

| Definition | Reproducibility under a variety of normal test conditions [1] | Capacity to remain unaffected by small, deliberate variations in method parameters [2] [1] |

| Primary Focus | Inter-laboratory performance and transferability [3] [1] | Intra-laboratory parameter sensitivity [1] |

| Typical Variations | Different laboratories, analysts, instruments, reagent lots, days [1] | Controlled changes to operational parameters (pH, temperature, flow rate) [2] [1] |

| Testing Scope | Broader, assessing real-world variability [3] | Narrower, examining specific parameter effects [1] |

| Regulatory Emphasis | Method reproducibility and transfer between facilities [3] | Method resilience and parameter control limits [1] |

| Resource Requirements | Typically higher (multiple operators/environments) [3] | Typically lower (single laboratory) [3] |

Ruggedness Testing Versus Collaborative Trials

Ruggedness testing occupies a strategic middle ground between single-laboratory robustness testing and full collaborative trials. The comparative analysis reveals significant differences in approach and resource allocation:

Table 2: Method Evaluation Approaches Compared

| Characteristic | Ruggedness Testing | Collaborative Trials | Robustness Testing |

|---|---|---|---|

| Primary Objective | Estimate inter-laboratory uncertainty [3] | Establish reproducibility precision [3] | Identify critical parameters [1] |

| Number of Laboratories | One (with simulated variations) [3] | Multiple (typically 8-15) [3] | One [1] |

| Cost Factor | Much cheaper [3] | Expensive (~£30,000 per method) [3] | Least expensive [1] |

| Regulatory Standing | Screening tool [3] | Gold standard [3] | Method development aid [1] |

| Variation Type | Deliberate parameter perturbations [3] | Natural inter-laboratory differences [3] | Small, deliberate parameter changes [1] |

| Application Stage | Prior to full collaborative trial [3] | Final validation stage [3] | During method development/optimization [1] |

A key research initiative demonstrated that modified ruggedness tests could be applied to estimate measurement uncertainty across ten different chemical analyses covering trace elements, trace organic compounds, anions, and proximate analytes across a concentration range from 89 ppb to 56% [3]. This study highlighted the potential for ruggedness testing to provide uncertainty benchmarks comparable to those derived from far more expensive collaborative trials.

Essential Reagents and Materials for Ruggedness Evaluation

The Scientist's Toolkit for Ruggedness Studies

Conducting proper ruggedness tests requires specific materials and reagents designed to challenge method parameters under controlled conditions. The following table outlines essential components for a comprehensive ruggedness evaluation:

Table 3: Essential Research Reagent Solutions for Ruggedness Testing

| Reagent/Material | Function in Ruggedness Assessment | Application Examples |

|---|---|---|

| Certified Reference Materials (CRMs) | Establish accuracy/bias through analysis of materials with certified analyte concentrations [2] | Trace element analysis, method calibration verification |

| Homogenized Laboratory Samples | Provide consistent test material for multiple analysis runs under varied conditions [3] | Inter-laboratory comparison studies, long-term precision assessment |

| Reagents of Different Purity Grades/Lots | Evaluate method sensitivity to variations in reagent quality [1] | Testing impact on background levels, contamination risks |

| Alternative Chromatographic Columns | Assess separation performance across different column batches or manufacturers [1] | HPLC/IC method transfer studies, column longevity testing |

| Buffer Solutions at Varied pH | Determine method tolerance to mobile phase pH fluctuations [1] | ICP-MS stability testing, ion chromatography optimization |

Strategic Implementation of Ruggedness Testing

Implementing an effective ruggedness testing program requires strategic planning and execution. The modified ruggedness testing approach described in research involves experts pre-determining critical features of each analytical method, with perturbations based on uncontrolled variations likely between laboratories [3]. These deliberate variations are typically introduced using randomized combinations of perturbed levels, with approximately 20 complete analyses per method on homogenized laboratory samples [3].

For inorganic trace analysis, the ruggedness testing should specifically evaluate parameters critical to spectroscopic and spectrometric techniques, including RF power, nebulizer and torch design, sampler and skimmer cone configuration, reaction/collision cell conditions, and resolution capabilities [2]. The outcomes of these tests inform not only method validation documentation but also the establishment of system suitability test (SST) limits to ensure ongoing method performance [1].

Ruggedness testing represents a cornerstone of reproducible inorganic data because it bridges the gap between idealized method performance and real-world application. By systematically challenging analytical methods with the types of variations inevitably encountered in practice, researchers can develop truly robust methods that generate reliable data regardless of normal operational fluctuations. This approach ultimately strengthens the scientific validity of analytical results while providing a cost-effective strategy for ensuring method reliability throughout its lifecycle.

In the field of analytical chemistry, particularly within regulated environments like pharmaceutical development, the integrity of a single data point can have monumental consequences, influencing patient diagnoses or determining product safety [4]. Two analytical parameters—robustness and ruggedness—serve as critical safeguards to ensure methods consistently produce accurate and precise results. Although these terms are often used interchangeably, they represent distinct validation concepts that address different sources of methodological variability [5] [6]. A clear understanding of this distinction is fundamental for developing reliable analytical methods, especially for inorganic analysis where complex sample matrices introduce additional challenges.

This guide provides analytical scientists with a structured comparison of these essential validation parameters, supported by experimental approaches and data interpretation frameworks that ensure methodological reliability during technology transfer and routine application.

Conceptual Definitions and Key Distinctions

Robustness: Resistance to Internal Parameter Variations

Robustness is defined as the capacity of an analytical method to remain unaffected by small, deliberate variations in its internal method parameters [5] [4]. It represents an intra-laboratory assessment performed during method development to identify which operational parameters are most sensitive to change, thereby establishing acceptable control limits for each [1].

Examples of factors tested in robustness studies include:

- Mobile phase composition (e.g., pH, buffer concentration, organic modifier ratio) in chromatography [1]

- Operational parameters (e.g., flow rate, column temperature, detection wavelength) [1]

- Instrumental parameters (e.g., RF power, nebulizer flow, integration time) in ICP-OES/ICP-MS [2]

- Sample preparation variables (e.g., extraction time, temperature, solvent volume) [5]

Ruggedness: Resilience to External Laboratory Conditions

Ruggedness refers to the degree of reproducibility of test results when the same method is applied under a variety of normal, real-world conditions across different testing environments [6] [7]. It assesses the method's performance when subjected to broader, environmental variations typically encountered during method transfer between laboratories [4].

Examples of factors tested in ruggedness studies include:

- Different analysts with varying skill levels and techniques [7]

- Different instruments of the same type but from various manufacturers or models [4]

- Different laboratories with unique environmental conditions (temperature, humidity) [6]

- Different reagent batches, columns, or consumable lots [7]

- Testing performed on different days to account for temporal variations [7]

Table 1: Conceptual and Practical Distinctions Between Ruggedness and Robustness

| Feature | Robustness Testing | Ruggedness Testing |

|---|---|---|

| Primary Objective | Identify critical method parameters and establish their control limits [1] | Demonstrate method reproducibility under different testing environments [7] |

| Nature of Variations | Small, deliberate, and controlled changes to internal method parameters [4] | Broader, environmental factors representing real-world variability [4] |

| Testing Scope | Intra-laboratory (within the same lab) [4] | Inter-laboratory (between different labs) [4] |

| Typical Timing | During method development/optimization [6] [1] | Later in validation, often before method transfer [4] |

| Key Question | "How well does the method withstand minor tweaks to its defined parameters?" [4] | "How well does the method perform when used by different people on different equipment in different locations?" [4] |

| Regulatory Emphasis | ICH guidelines on method robustness [6] | USP definition of method ruggedness [6] |

Methodological Approaches and Experimental Designs

Strategic Application of Experimental Designs

Both robustness and ruggedness testing benefit tremendously from structured experimental design (DOE) approaches, which enable efficient evaluation of multiple factors simultaneously while minimizing the total number of required experiments [8] [9].

For robustness testing, two-level screening designs such as Plackett-Burman or fractional factorial designs are most frequently employed [8] [1]. These designs allow numerous factors (f) to be examined in a small number of experimental runs (N), with N as low as f + 1 when every column of the design carries a real factor [1]. The key advantage is the ability to identify which factors from a potentially large set have significant effects on method outcomes, thus directing attention to parameters that require tight control.
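
The relationship between the number of factors and the number of runs can be made concrete for Plackett-Burman designs, where the run count is a multiple of four and at most N - 1 columns are available. The helper below is a sketch; the function name and the optional `min_dummies` argument, which reserves columns for error estimation, are assumptions of this example.

```python
def plackett_burman_runs(n_factors, min_dummies=0):
    """Smallest multiple of four N such that N - 1 >= n_factors + min_dummies."""
    if n_factors < 1 or min_dummies < 0:
        raise ValueError("n_factors must be >= 1 and min_dummies >= 0")
    columns_needed = n_factors + min_dummies
    # Round (columns_needed + 1) up to the next multiple of four.
    return 4 * ((columns_needed + 4) // 4)


for f in (3, 7, 8, 11, 12):
    print(f"{f} factors -> {plackett_burman_runs(f)} runs")
print("7 factors with 1 dummy column ->", plackett_burman_runs(7, min_dummies=1), "runs")
```

Seven factors fit an 8-run design with no spare columns, while asking for at least one dummy column pushes the design to 12 runs, leaving four columns free for estimating experimental error.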

For ruggedness testing, nested designs or nested Analysis of Variance (ANOVA) approaches are often applied, particularly when assessing the impact of multiple external factors such as different analysts, instruments, and laboratories [6]. These designs help quantify the variance components attributable to each external factor, providing insight into the major sources of variability when methods are transferred.

Experimental Workflow for Robustness and Ruggedness Assessment

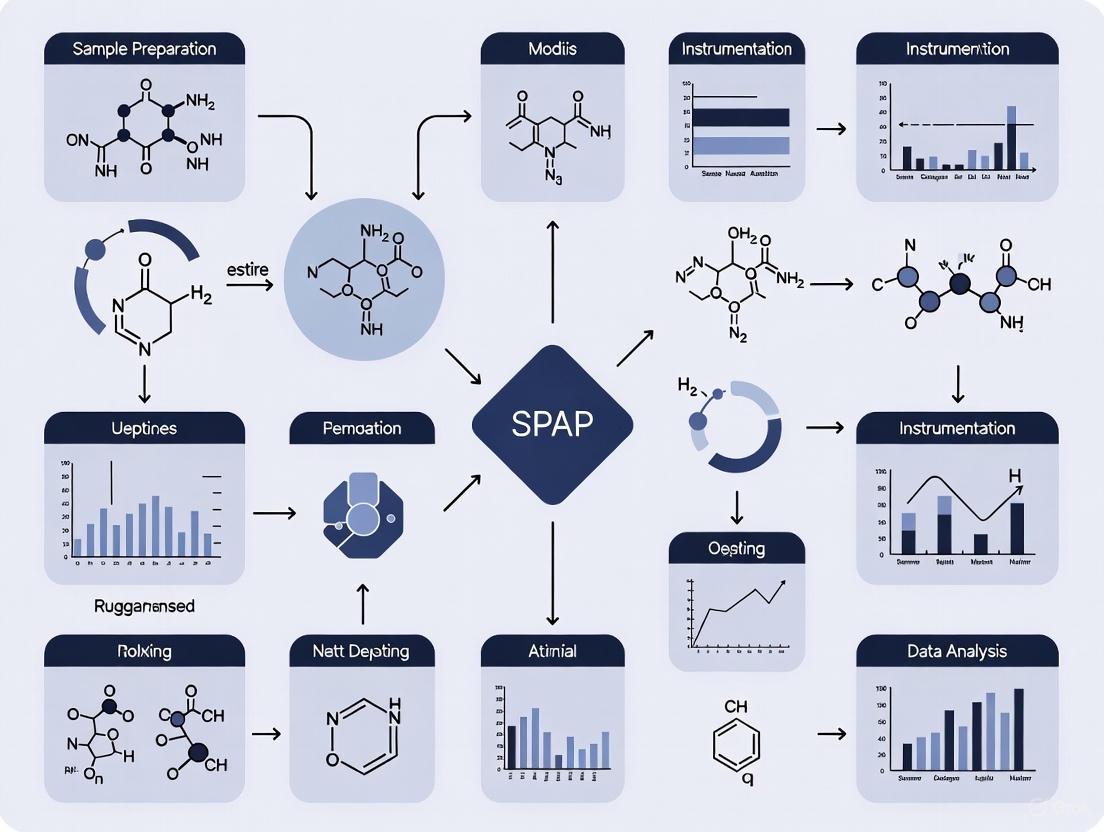

The following diagram illustrates the systematic workflow for planning and executing both robustness and ruggedness studies:

Practical Implementation of a Robustness Test: An HPLC Case Study

To illustrate a practical implementation, consider a robustness test for an HPLC assay of an active compound and related substances [1]. The objective is to determine the method's sensitivity to variations in critical operational parameters.

Selected Factors and Levels:

Table 2: Experimental Factors and Levels for an HPLC Robustness Test [1]

| Factor | Type | Low Level (-1) | Nominal Level (0) | High Level (+1) |

|---|---|---|---|---|

| Mobile Phase pH | Quantitative | 3.9 | 4.0 | 4.1 |

| Flow Rate (mL/min) | Quantitative | 0.9 | 1.0 | 1.1 |

| Column Temperature (°C) | Quantitative | 28 | 30 | 32 |

| Organic Modifier (%) | Mixture-related | 24 | 25 | 26 |

| Detection Wavelength (nm) | Quantitative | 298 | 300 | 302 |

| Buffer Concentration (mM) | Quantitative | 18 | 20 | 22 |

| Column Manufacturer | Qualitative | Supplier A | Nominal | Supplier B |

| Reagent Batch | Qualitative | Batch X | Nominal | Batch Y |

Experimental Design and Execution: A Plackett-Burman design with 12 experimental runs was selected to efficiently examine these eight factors [1]. The experiments were executed in a randomized sequence to minimize the impact of uncontrolled variables, or alternatively, in an anti-drift sequence if column aging was a concern. Responses measured included both assay results (e.g., percent recovery of the active compound) and system suitability parameters (e.g., critical resolution between compounds).
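
To make the execution step concrete, the sketch below translates one coded design row into instrument settings using the low and high levels from the factor table, and draws a randomized run order. The coded row, the seed, and the helper name `decode_run` are illustrative assumptions.

```python
import random

# Low (-1) and high (+1) levels taken from the factor table above.
LEVELS = {
    "Mobile Phase pH": {-1: 3.9, +1: 4.1},
    "Flow Rate (mL/min)": {-1: 0.9, +1: 1.1},
    "Column Temperature (°C)": {-1: 28, +1: 32},
    "Organic Modifier (%)": {-1: 24, +1: 26},
    "Detection Wavelength (nm)": {-1: 298, +1: 302},
    "Buffer Concentration (mM)": {-1: 18, +1: 22},
    "Column Manufacturer": {-1: "Supplier A", +1: "Supplier B"},
    "Reagent Batch": {-1: "Batch X", +1: "Batch Y"},
}


def decode_run(coded_row):
    """Map a row of coded levels (-1/+1) to the actual setting of each factor."""
    return {name: LEVELS[name][level] for name, level in zip(LEVELS, coded_row)}


# Hypothetical coded levels for the eight factors in one of the 12 runs.
coded_row = [+1, +1, -1, +1, +1, +1, -1, -1]
settings = decode_run(coded_row)
for name, value in settings.items():
    print(f"{name}: {value}")

# Randomized execution order for runs 1-12; the fixed seed keeps it reproducible.
rng = random.Random(2024)
run_order = rng.sample(range(1, 13), k=12)
print("execution order:", run_order)
```

Recording the seed alongside the protocol lets the execution sequence be reconstructed later. An anti-drift sequence would replace the random draw with a deliberately arranged order.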

Data Analysis and Interpretation: The effect of each factor (Eₓ) on the responses was calculated as the difference between the average results when the factor was at its high level versus its low level [1]. These effects were then statistically interpreted using normal probability plots or by comparing them to a critical effect value derived from dummy factors or from the error estimate of the design [1]. Factors demonstrating statistically significant effects were identified as critical and required tighter specification in the method documentation.

Essential Reagents and Materials for Testing

The reliability of both robustness and ruggedness studies depends on the consistent quality of research materials. The following table catalogues essential reagent solutions and materials required for executing these validation studies, particularly for inorganic analytical methods.

Table 3: Essential Research Reagent Solutions and Materials for Ruggedness and Robustness Testing

| Reagent/Material | Function in Testing | Critical Quality Attributes |

|---|---|---|

| Certified Reference Materials (CRMs) | Establish accuracy and traceability; evaluate method bias across different conditions [2] | Certified uncertainty, stability, matrix matching |

| HPLC/UPLC Columns (Multiple Lots) | Assess separation robustness to column variability; critical for chromatographic methods [1] | Stationary phase chemistry, lot-to-lot reproducibility, particle size |

| Chromatographic Mobile Phase Buffers | Evaluate robustness to pH and composition fluctuations [1] | pH accuracy, buffer capacity, purity, consistency |

| ICP-MS Calibration Standards | Verify instrumental response robustness across different instruments and days [2] | Elemental purity, stability, acid matrix compatibility |

| Sample Preparation Solvents & Reagents | Test extraction efficiency robustness to reagent quality and supplier variations [7] | Purity grade, low background contamination, supplier consistency |

| System Suitability Test Mixtures | Verify performance of instrumentation before validation experiments [6] | Stability, well-characterized response factors |

Data Interpretation and Regulatory Considerations

Statistical Analysis of Factor Effects

The data derived from robustness and ruggedness tests require appropriate statistical analysis to distinguish meaningful effects from random experimental noise. For robustness tests, the calculation of factor effects is followed by graphical analysis using normal probability plots or half-normal probability plots [1]. In these plots, non-significant effects tend to fall along a straight line, while significant effects deviate from this line.

Alternatively, statistical significance can be determined by comparing the absolute factor effects to a critical effect value [1]. This critical effect can be estimated from the standard error of the effects, often derived from dummy factors (in Plackett-Burman designs) or from the error estimate of the experimental design. Factors with effects exceeding the critical value are considered to have a statistically significant influence on the method performance.
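
One common way to obtain the critical effect from dummy factors is to take the root mean square of the dummy effects as the standard error and multiply it by a tabulated t value whose degrees of freedom equal the number of dummy columns. The sketch below follows that approach; the dummy effects, factor effects, and the 5% two-sided significance level are assumed for illustration.

```python
from math import sqrt


def critical_effect(dummy_effects, t_critical):
    """Critical effect = t * sqrt(mean of squared dummy effects)."""
    standard_error = sqrt(sum(e ** 2 for e in dummy_effects) / len(dummy_effects))
    return t_critical * standard_error


# Hypothetical effects estimated on four dummy columns of a 12-run design.
dummy_effects = [0.2, -0.1, 0.3, -0.2]
T_CRITICAL_DF4 = 2.776  # tabulated two-sided t value, alpha = 0.05, 4 degrees of freedom

e_crit = critical_effect(dummy_effects, T_CRITICAL_DF4)

# Hypothetical factor effects on % recovery.
factor_effects = {"Mobile Phase pH": 1.2, "Flow Rate": -0.3}
significant = [name for name, e in factor_effects.items() if abs(e) > e_crit]

print(f"critical effect = {e_crit:.3f}")
print("significant factors:", significant)
```

Here only the pH effect exceeds the critical value of roughly 0.59, so pH would be flagged for tighter control while flow rate would not.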

For ruggedness testing, Analysis of Variance (ANOVA) is particularly useful for quantifying the variance components attributable to different external factors such as analyst, instrument, and day [6]. This approach helps identify which factors contribute most to total method variability, guiding improvements for method transfer protocols.
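
A minimal sketch of the variance-component idea is given below for the simplest case: a single external factor (analyst) with the same number of replicates per analyst, treated as a one-way random-effects ANOVA. The data are invented, and a real study with several nested factors would need a fuller model.

```python
from math import sqrt
from statistics import mean


def variance_components(groups):
    """One-way random-effects ANOVA for balanced data: within- and between-group variance."""
    k = len(groups)
    n = len(next(iter(groups.values())))
    if any(len(values) != n for values in groups.values()):
        raise ValueError("this sketch assumes the same number of replicates per group")
    group_means = {g: mean(values) for g, values in groups.items()}
    grand_mean = mean(list(group_means.values()))
    ms_within = sum((x - group_means[g]) ** 2
                    for g, values in groups.items() for x in values) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means.values()) / (k - 1)
    var_between = max(0.0, (ms_between - ms_within) / n)  # negative estimates are set to zero
    return {
        "repeatability_var": ms_within,
        "between_group_var": var_between,
        "total_sd": sqrt(ms_within + var_between),
    }


# Hypothetical results (mg/L) from three analysts, two replicates each.
results_by_analyst = {
    "Analyst A": [10.0, 10.2],
    "Analyst B": [10.4, 10.6],
    "Analyst C": [9.8, 10.0],
}
components = variance_components(results_by_analyst)
for name, value in components.items():
    print(f"{name}: {value:.4f}")
```

In this made-up data set the between-analyst variance (about 0.083) is roughly four times the repeatability variance (0.02), which would point to analyst technique as the dominant source of variability.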

Regulatory Expectations and Compliance

Regulatory bodies like the FDA and EMA, along with international harmonization initiatives (ICH), emphasize the importance of demonstrating method validity [6]. While robustness testing is explicitly mentioned in the ICH Q2 guideline, ruggedness is often addressed under the broader concept of intermediate precision or reproducibility [6] [7].

A key regulatory outcome of robustness testing is the establishment of system suitability test (SST) limits [6] [1]. These predefined criteria must be met before the method can be used for actual sample analysis, ensuring the method is performing as validated. For instance, if a robustness test reveals that a small change in mobile phase pH significantly affects the resolution between two critical peaks, the method documentation should specify a tight pH range and include a resolution requirement in the SST.

Robustness and ruggedness, while complementary, address fundamentally different aspects of analytical method validation. Robustness represents the method's inherent stability to minor, intentional variations in its internal parameters, serving as an early warning system during development. Ruggedness demonstrates the method's practical resilience to the inevitable variations encountered in different real-world environments, proving its transferability and reproducibility.

A method may be robust but not rugged—surviving deliberate parameter changes in a single lab but failing when transferred to another instrument or analyst. Conversely, a method cannot be truly rugged without first being robust. Therefore, a systematic validation strategy incorporating both assessments is indispensable for developing reliable analytical methods that stand up to regulatory scrutiny and ensure consistent, high-quality data throughout the method lifecycle.

The Critical Role of Ruggedness in Regulatory Compliance and Method Transfer

In the highly regulated pharmaceutical and analytical industries, the reliability of data is paramount. Ensuring that an analytical method can consistently produce accurate and precise results across the varying conditions of real-world laboratories is a fundamental requirement of quality control systems. Within this framework, ruggedness and robustness emerge as two critical validation parameters that safeguard data integrity, though they address different aspects of method reliability [4].

Robustness is defined as the capacity of an analytical procedure to remain unaffected by small, deliberate variations in method parameters [6] [10]. It represents an internal, intra-laboratory check performed during method development. The goal is to identify which specific method parameters are most sensitive to change, thereby establishing a controlled range within which the method remains reliable. For example, in a High-Performance Liquid Chromatography (HPLC) method, robustness testing might involve deliberately altering the pH of the mobile phase, column temperature, or flow rate within a small, justifiable range to see if the results (e.g., retention time, peak shape) change significantly [10] [4].

In contrast, ruggedness is a measure of the reproducibility of test results obtained from the analysis of the same samples under a variety of normal, but variable, test conditions [6]. It evaluates the method's performance against broader, "environmental" factors such as different analysts, different instruments, different laboratories, different reagent lots, and different days [6] [7] [4]. Where robustness tests the method's stability under minor, controlled "stresses," ruggedness tests its consistency in the hands of different users and in different settings, making it the ultimate litmus test for a method's transferability and long-term utility [4].

Table 1: Core Definitions and Focus of Ruggedness and Robustness

| Aspect | Robustness | Ruggedness |

|---|---|---|

| Primary Focus | Stability under small variations in method parameters [10] | Reproducibility across different conditions, operators, and locations [7] |

| Type of Variations | Minor, deliberate changes (e.g., temperature, pH, flow rate) [10] | Larger, real-world factors (e.g., different analysts, instruments, labs) [10] |

| Typical Scope | Intra-laboratory (within one lab) [4] | Inter-laboratory (between multiple labs or analysts) [4] |

| Key Question | "How well does the method withstand minor tweaks to its procedure?" [4] | "How consistently does the method perform in different hands and different settings?" [4] |

Regulatory Imperative and the Method Transfer Process

Ruggedness is not merely a best practice; it is deeply embedded in global regulatory frameworks. While the International Council for Harmonisation (ICH) Q2 guideline is the definitive framework for analytical method validation and uses the term "intermediate precision" to cover the concept of within-laboratory variations, regulatory bodies explicitly demand proof of a method's reliability across different testing environments [7].

The U.S. Food and Drug Administration (FDA) requires robustness studies in submission packages, and the European Medicines Agency (EMA) expects extensive documentation of intermediate precision conditions [7]. Furthermore, the United States Pharmacopeia (USP) defines ruggedness explicitly as the degree of reproducibility of test results under a variety of normal conditions, such as different laboratories and analysts [6]. A method that has not been evaluated for ruggedness poses a significant risk to regulatory compliance and product approval.

The practical application of ruggedness is most evident during analytical method transfer, a formal process that qualifies a receiving laboratory to perform an analytical method that was previously validated by a sending laboratory [11]. This is a common and critical activity in the global pharmaceutical industry, occurring when production sites change, when analytical testing is outsourced, or when methods move from development to commercial manufacturing sites [11] [12].

Table 2: Common Analytical Method Transfer Approaches

| Transfer Approach | Description | Typical Use Case |

|---|---|---|

| Comparative Testing [11] [13] | A predetermined number of samples from the same lot are analyzed by both the sending and receiving units, and the results are compared against pre-defined acceptance criteria. | The most common approach; particularly useful when the method is already validated at the transferring site [11]. |

| Covalidation [11] [12] | The method is validated simultaneously at multiple sites. The receiving site participates in the validation study, typically by performing intermediate precision or reproducibility testing. | Suitable when a method is transferred from a development site to a commercial site before full validation is complete [12]. |

| Revalidation [11] [13] | The receiving laboratory repeats all or part of the original validation work. | Used when the sending laboratory is not involved or the original validation was not performed to ICH standards [11]. |

| Transfer Waiver [11] [13] | A formal transfer is waived based on a justified risk assessment. | Applicable for compendial methods (e.g., from USP) or when the receiving lab is already highly familiar with a very similar method [11]. |

The success of a method transfer hinges on excellent communication and thorough knowledge sharing from the sending to the receiving laboratory, including all method descriptions, validation reports, and tacit "tricks of the trade" not always captured in written procedures [11]. The transfer is governed by a detailed protocol that defines objective acceptance criteria, which are often based on the method's historical performance and validation data, particularly reproducibility [11].

Experimental Protocols for Assessing Ruggedness

A well-designed ruggedness study is systematic and statistically sound. The following section outlines a generalized protocol that can be adapted for various analytical techniques, including those used in inorganic analysis.

Key Factors and Experimental Design

The first step is to identify the critical factors to be evaluated. For a broad ruggedness study, these typically include [7] [4]:

- Analyst: Different analysts with varying levels of experience and technique.

- Instrument: Different models or units of the same type of instrument (e.g., two different ICP-OES or HPLC systems).

- Laboratory Environment: Different testing locations, accounting for potential differences in ambient temperature, humidity, and water purity.

- Reagent Lots/Batches: Different lots of critical reagents, solvents, or consumables.

- Time: Analyses performed on different days to account for instrument drift and other temporal variations.

To efficiently evaluate the impact of these multiple factors, structured experimental designs are recommended over testing one variable at a time [8] [7]. The Plackett-Burman design is a highly efficient screening design that allows up to N-1 factors to be investigated in only N experimental runs, where N is a multiple of four [8] [7]. This makes it ideal for initial ruggedness testing to identify which factors have a significant influence on the method's results.

Sample Analysis and Statistical Analysis Workflow

The following diagram illustrates the logical workflow for executing a ruggedness study, from design to final assessment.

The core of the analysis involves statistical tools to quantify and compare variability. Analysis of Variance (ANOVA) is a powerful technique that can determine if the differences observed between the results from different analysts, instruments, or days are statistically significant [7]. Furthermore, calculating the Relative Standard Deviation (RSD) or standard deviation of the results obtained across all the varied conditions provides a direct measure of the method's reproducibility [11] [7]. The observed variability is then compared against pre-defined acceptance criteria, which are based on the method's intended use and product specifications [11]. If the variability is within acceptable limits, the method is considered rugged for the tested factors.
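
The comparison against acceptance criteria can be sketched as follows. The pooled results and the 2.0% RSD limit are hypothetical; actual criteria come from the method's intended use and product specifications.

```python
from statistics import mean, stdev


def percent_rsd(results):
    """Relative standard deviation in percent: 100 * SD / mean."""
    return 100 * stdev(results) / mean(results)


# Hypothetical assay results (% of label claim) pooled across analysts, instruments, and days.
pooled_results = [99.8, 100.4, 99.5, 100.9, 100.1, 99.3]
MAX_RSD_PERCENT = 2.0  # hypothetical pre-defined acceptance criterion

rsd = percent_rsd(pooled_results)
is_rugged = rsd <= MAX_RSD_PERCENT
print(f"RSD = {rsd:.2f}% (limit {MAX_RSD_PERCENT}%) -> {'pass' if is_rugged else 'fail'}")
```

A single pooled RSD shows whether overall variability is acceptable, but not where it comes from. That breakdown is the role of the ANOVA step.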

Essential Research Reagent Solutions for Ruggedness Testing

The reliability of a ruggedness study is contingent on the quality and consistency of the materials used. The following table details key reagents and consumables that are critical for experiments, particularly in the context of inorganic analysis.

Table 3: Key Research Reagent Solutions for Analytical Testing

| Item | Function & Importance in Ruggedness |

|---|---|

| Certified Reference Materials (CRMs) [2] | Provides a benchmark with known analyte concentrations to establish method accuracy and track performance across different analysts and instruments. |

| High-Purity Reagents & Solvents [7] [2] | Minimizes background interference and variability. Testing different lots is a key part of ruggedness to ensure consistency isn't lot-dependent. |

| Standardized Calibration Solutions [2] | Ensures that all instruments and analysts are using the same baseline for quantification, reducing a major source of inter-laboratory bias. |

| Specified Chromatographic Columns [6] [4] | For separation techniques, the column's selectivity is critical. Ruggedness testing often involves using columns from different batches or manufacturers. |

| Consumables of Defined Quality (e.g., sampler cones, nebulizers) [2] | Worn or variably manufactured consumables in techniques like ICP-MS can drastically affect sensitivity and stability, making them a factor in ruggedness. |

Comparative Data and Real-World Impact

Quantitative Transfer Criteria

The ultimate demonstration of a method's ruggedness is its successful transfer to a new laboratory. The acceptance criteria for this transfer are specific and quantitative, directly reflecting the method's performance across sites. The following table summarizes typical acceptance criteria for common tests, illustrating how ruggedness is quantitatively measured in a regulatory context [11].

Table 4: Typical Acceptance Criteria for Analytical Method Transfer

| Test | Typical Transfer Acceptance Criteria |

|---|---|

| Identification | Positive (or negative) identification obtained at the receiving site [11]. |

| Assay | Absolute difference between the results from the sending and receiving sites is typically not more than 2-3% [11]. |

| Related Substances (Impurities) | Criteria vary with impurity level. For low-level impurities, recovery of 80-120% for spiked samples may be used. For higher levels (e.g., >0.5%), absolute difference criteria apply [11]. |

| Dissolution | Absolute difference in mean results is not more than (NMT) 10% at time points when <85% is dissolved, and NMT 5% when >85% is dissolved [11]. |

Case Studies and Cost-Benefit Analysis

Real-world case studies highlight the critical importance of ruggedness testing. For instance, a pharmaceutical company may discover during transfer that its HPLC method for impurity analysis is unexpectedly sensitive to minor column temperature fluctuations, a sensitivity that was not detected in earlier, single-lab validation [7]. Another common finding is that analyst technique significantly impacts the results of a complex sample preparation, necessitating enhanced training protocols to ensure consistency [7].

Investing in comprehensive ruggedness testing during method development provides a substantial return on investment. While it requires upfront resources, it prevents far more costly failures downstream. Benefits include [7]:

- Reduced regulatory submission delays, which can cost over $100,000 per day.

- Prevention of expensive manufacturing investigations when methods fail after transfer to a quality control lab.

- Lower risk of product recalls and associated brand reputation damage.

- Decreased need for method revalidation, saving approximately 60-80 hours of analyst time per method.

Ruggedness is a cornerstone of reliable analytical science, serving as the critical bridge between a method's theoretical validation and its practical, reproducible application in a globalized and regulated industry. It is the definitive proof that a method is not only scientifically sound but also practically deployable, ensuring that a drug product or material is assessed consistently whether tested in New York, Singapore, or Zurich. By integrating a robustness-first mindset during development, followed by rigorous ruggedness assessment, laboratories can future-proof their methods, guarantee data integrity, and ensure seamless regulatory compliance and successful method transfers throughout a product's lifecycle.

In the field of inorganic analysis, the reliability of analytical data is paramount, influencing critical decisions in drug development, environmental monitoring, and quality control. Ruggedness is sometimes defined in the same terms as robustness, as a measure of an analytical method's capacity to remain unaffected by small, deliberate variations in method parameters, demonstrating its reliability during normal usage conditions [8]. In the stricter sense used in this guide, a method's ruggedness is tested by examining its reproducibility under a variety of real-world conditions, such as different analysts, instruments, and laboratories [4]. For researchers and scientists developing inorganic analytical methods, understanding and testing for ruggedness is not merely a regulatory formality but a fundamental aspect of ensuring data integrity.

This concept is distinct from, yet complementary to, robustness, which is an internal, intra-laboratory study performed during method development to determine how sensitive a method is to small, premeditated changes in its parameters (e.g., mobile phase pH, flow rate, column temperature) [4]. While robustness testing identifies a method's sensitive parameters and establishes controllable limits, ruggedness testing validates that the method produces reproducible results when deployed across the expected range of operational environments. For inorganic analysis, which often involves techniques like ICP-OES, AAS, and ion chromatography, these tests are vital for confirming that complex sample matrices and variable environmental conditions do not compromise analytical results.

Key Variable Categories in Inorganic Analysis

The reliability of an inorganic analytical method can be influenced by a multitude of factors, which can be systematically categorized into environmental, instrumental, and analyst-related variables. A clear understanding of these categories allows for a more structured and effective ruggedness testing protocol.

Environmental Variables

Environmental variables pertain to the physical conditions of the laboratory space where the analysis is conducted. Although these factors are often external to the analytical instrument itself, they can have a profound impact on the stability of the analytical measurement and the properties of the samples and standards.

- Temperature: Fluctuations in ambient laboratory temperature can affect reaction kinetics, the stability of standard solutions, and the performance of sensitive instrumental components. Temperature also matters at the instrument level: in electrothermal AAS, for instance, the temperature program of the graphite furnace is a critical parameter that must be controlled precisely [8].

- Humidity: Variations in relative humidity can lead to the absorption of moisture by hygroscopic samples or standards, altering their effective concentration and leading to quantitative errors.

- Light Intensity: For light-sensitive analytes, such as certain metal complexes or species prone to photodegradation, uncontrolled light exposure during sample preparation or storage can result in analyte decomposition and inaccurate results.

Instrumental Variables

Instrumental variables are associated with the operational parameters of the analytical equipment and the reagents used. These are often the primary focus of robustness studies during method development [4].

- Flow Rate (e.g., in HPLC-ICP-MS): A minor shift in flow rate, for example from 1.0 mL/min to 1.1 mL/min, can change retention times and peak shapes, potentially affecting resolution and quantitative accuracy [4].

- Mobile Phase Composition: Small changes in the ratio of solvents or the pH of the mobile phase (e.g., a shift from pH 4.0 to 4.1) can significantly alter the separation efficiency and detector response in chromatographic techniques coupled with inorganic detection [8] [4].

- Gas Pressure/Purity (e.g., in ICP-MS): The purity and pressure of the plasma gas, auxiliary gas, and collision/reaction cell gases are critical for signal stability, sensitivity, and the effective reduction of polyatomic interferences.

- Reagent and Column Batches: Using reagents, columns, or consumables from different manufacturers or different lot numbers can introduce variability, as their performance characteristics may differ subtly [4].

Analyst Variables

Analyst variables encompass the human element and sample handling procedures in the analytical process. Ruggedness testing across different analysts is crucial for inter-laboratory reproducibility [4].

- Sample Preparation Technique: Variations in how different analysts perform steps such as weighing, dilution, digestion, and extraction can introduce significant variability. This includes differences in vortexing time, sonication duration, and filtration techniques.

- Calibration Practices: The preparation of calibration standards and the construction of the calibration curve can vary between analysts, impacting the final quantitative result.

- Instrument Operation and Maintenance: Differences in how analysts tune instruments, perform quality control checks, and conduct routine maintenance can affect long-term method performance.

Table 1: Key Variable Categories in Inorganic Analysis

| Category | Specific Examples | Potential Impact on Analysis |

|---|---|---|

| Environmental | Laboratory temperature, Humidity, Light intensity | Affects reaction kinetics, solution stability, and sample integrity [14]. |

| Instrumental | Flow rate, Mobile phase pH, Gas pressure, Reagent batch | Alters retention time, signal sensitivity, resolution, and detection limits [8] [4]. |

| Analyst | Sample preparation technique, Calibration practices | Introduces variability in recovery, precision, and accuracy [4]. |

Experimental Designs for Ruggedness Testing

A systematic approach to experimental design is essential for efficiently evaluating the numerous variables that can affect an analytical method. Chemometric tools, particularly factorial designs, are the most efficient and recommended approaches for this purpose [8].

Full Factorial Design

A two-level full factorial design is a powerful tool for a preliminary evaluation of factors. It involves testing all possible combinations of the chosen factors at two levels (e.g., high and low). This approach allows for the development of linear models and can estimate not only the main effect of each variable but also the interaction effects between them. For example, a 2³ full factorial design would test three variables (like pH, temperature, and flow rate) at two levels each, requiring 8 experimental runs. However, this design becomes impractical when the number of factors is high, as the number of experiments grows exponentially [8].
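As a minimal sketch of the 2³ example above, the eight runs can be enumerated and the main and interaction effects estimated as follows. The factor names are taken from the example, but the responses are hypothetical: they are generated from a made-up linear model so that the recovered effects can be checked by inspection.

```python
from itertools import product

factors = ["pH", "temperature", "flow_rate"]

# All 2^3 = 8 combinations of low (-1) and high (+1) levels
design = [dict(zip(factors, levels))
          for levels in product((-1, +1), repeat=len(factors))]

def effect(design, responses, names):
    """Main effect (one name) or interaction effect (several names).

    The contrast sign of a run is the product of its coded levels for the
    named factors; the effect is mean(response | +) - mean(response | -).
    """
    def sign(run):
        s = 1
        for n in names:
            s *= run[n]
        return s
    high = [y for run, y in zip(design, responses) if sign(run) > 0]
    low = [y for run, y in zip(design, responses) if sign(run) < 0]
    return sum(high) / len(high) - sum(low) / len(low)

# Hypothetical responses from an assumed model (illustration only)
responses = [
    100 + 2.0 * r["pH"] - 1.0 * r["flow_rate"] + 0.5 * r["pH"] * r["temperature"]
    for r in design
]

for names in (["pH"], ["temperature"], ["flow_rate"], ["pH", "temperature"]):
    print(" x ".join(names), round(effect(design, responses, names), 3))
```

Because an effect is the difference between the high-level and low-level averages, each estimate is twice the corresponding coefficient in the coded model.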

Plackett-Burman Design

When the number of factors to be investigated is large, the Plackett-Burman design is the most frequently recommended and employed approach for robustness and ruggedness studies [8]. This highly fractional factorial design screens up to N-1 factors in only N experimental runs, where N is a multiple of 4. While it is primarily used to estimate main effects and assumes interactions are negligible, it is extremely efficient for identifying the most influential variables from a large set early in the method validation process.
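A Plackett-Burman matrix can be built by cyclically shifting a generator row and appending a row of all low levels. The sketch below uses the commonly tabulated generator rows for 8 and 12 runs; the function name and dictionary are illustrative, not part of any particular statistics package, and in practice dedicated DoE software would normally produce the design.

```python
# Commonly tabulated first rows for 8-run and 12-run Plackett-Burman designs
GENERATORS = {
    8: "+++-+--",
    12: "++-+++---+-",
}

def plackett_burman(n_runs):
    """Return an n_runs x (n_runs - 1) matrix of coded levels (+1 / -1)."""
    gen = [1 if c == "+" else -1 for c in GENERATORS[n_runs]]
    k = len(gen)
    # Rows 1..k: the generator shifted cyclically by one position each time
    rows = [[gen[(j - i) % k] for j in range(k)] for i in range(k)]
    # Final row: every factor at its low level
    rows.append([-1] * k)
    return rows

design = plackett_burman(12)
for row in design:
    print(" ".join("+" if v > 0 else "-" for v in row))
```

Each column holds an equal number of high and low settings and is orthogonal to every other column, which is what allows each main effect to be estimated independently of the others. Columns not assigned to a real factor can be left as dummy factors.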

Response Surface Methodologies

For a more detailed exploration of critical factors, especially when response surfaces are curved, methodologies like Box-Behnken and Central Composite Designs are employed [8]. These designs are used after critical factors have been identified through screening designs to model quadratic relationships and find optimal method conditions.

Figure: Experimental Design Workflow for Ruggedness Testing

Protocols for Key Experiments

Implementing a structured protocol is key to generating meaningful and defensible ruggedness data. The following provides a detailed methodology for a ruggedness test, adaptable to techniques like ICP-OES or ion chromatography.

Defining Scope and Selecting Variables

- Objective: To determine the impact of X environmental, Y instrumental, and Z analyst variables on the accuracy and precision of the determination of [Target Analyte, e.g., Lead] in [Matrix, e.g., drinking water] using [Analytical Technique, e.g., ICP-OES].

- Variable Selection: Based on prior knowledge and robustness testing, select variables for assessment. For a multi-laboratory ruggedness test, analyst and instrument variables are typically included.

- Response Metrics: Define the critical response metrics to be monitored. These typically include accuracy (as % recovery of a known standard), precision (as % relative standard deviation, %RSD), and any other critical performance indicators like retention time or signal-to-noise ratio.

Experimental Setup and Execution

- Sample Preparation: A homogeneous batch of sample material, spiked with a known concentration of the target analyte, is distributed to all participating analysts or laboratories. A standard operating procedure (SOP) for sample preparation is provided, but analysts are permitted to use their normal techniques unless specified.

- Instrumental Analysis: Each analyst performs the analysis using their assigned instrument. Key instrumental parameters identified in the design (e.g., plasma power, nebulizer flow rate for ICP-OES) are varied between their designated high and low levels according to the experimental design matrix.

- Data Collection: Each participant reports the raw data for the predefined response metrics for every experimental run.

Data Analysis and Interpretation

- Statistical Analysis: The data collected from the experimental runs are analyzed using statistical software. For a Plackett-Burman or factorial design, the main effect of each variable is calculated.

- Main Effect Calculation: The main effect of a variable is the difference between the average response when the variable is at its high level and the average response when it is at its low level. A large absolute value of the main effect indicates that the variable has a substantial influence on the method's performance.

- Identification of Critical Factors: Variables that produce a statistically significant change (e.g., using ANOVA or a t-test) in the response metrics are deemed critical factors. The acceptance criteria should be defined a priori (e.g., a change in recovery of more than ±5% or a doubling of the %RSD may be considered significant).
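The main effect calculation and an a priori screening rule can be sketched as below. The design is a hypothetical four-run, three-factor half-fraction, the recovery values are invented, and the ±5% limit simply reuses the example criterion mentioned above; a formal study would pair this with a statistical significance test.

```python
def main_effects(design, responses, names):
    """Mean response at the high level minus mean response at the low level."""
    effects = {}
    for col, name in enumerate(names):
        high = [y for row, y in zip(design, responses) if row[col] > 0]
        low = [y for row, y in zip(design, responses) if row[col] < 0]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects

def flag_critical(effects, limit):
    """Label each variable by comparing |effect| with a pre-defined limit."""
    return {name: ("Critical" if abs(e) > limit else "Acceptable")
            for name, e in effects.items()}

# Hypothetical half-fraction design (third column = product of the first two)
names = ["nebulizer_flow", "plasma_power", "lab_temperature"]
design = [
    [-1, -1, +1],
    [+1, -1, -1],
    [-1, +1, -1],
    [+1, +1, +1],
]
recovery = [101.0, 95.0, 102.0, 96.0]  # % recovery, illustrative values

effects = main_effects(design, recovery, names)
judgment = flag_critical(effects, limit=5.0)  # assumed a priori limit of ±5%
for name in names:
    print(f"{name}: {effects[name]:+.1f}% -> {judgment[name]}")
```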

Table 2: Example Ruggedness Test Results for a Trace Metal Analysis Method (ICP-OES)

| Variable Tested | Variable Category | Effect on % Recovery | Effect on %RSD | Judgment |

|---|---|---|---|---|

| Plasma Power | Instrumental | +2.1% | +0.8% | Acceptable |

| Nebulizer Flow Rate | Instrumental | -6.5% | +3.2% | Critical |

| Different Analyst | Analyst | -1.8% | +1.5% | Acceptable |

| Laboratory Temperature | Environmental | +0.9% | +0.5% | Acceptable |

| Digestion Time | Analyst | -4.2% | +2.1% | Critical |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and materials essential for conducting rigorous inorganic analysis and ruggedness testing, along with their specific functions.

Table 3: Essential Research Reagent Solutions for Inorganic Analysis and Ruggedness Testing

| Reagent/Material | Function in Analysis |

|---|---|

| High-Purity Standards | Certified reference materials for accurate instrument calibration and quantification of target inorganic analytes. |

| Ultra-Pure Acids (HNO₃, HCl) | Used for sample digestion and dissolution to bring solid samples into solution without introducing contaminants. |

| Internal Standard Solution | Added to samples and standards to correct for instrument drift and matrix effects in techniques like ICP-MS. |

| Tuning Solutions | Used for daily optimization and performance verification of instruments like ICP-MS to ensure sensitivity and stability. |

| Mobile Phase Buffers | Critical for maintaining consistent pH and ionic strength in chromatographic separations (e.g., IC) to ensure reproducible retention times. |

| Certified Reference Materials (CRMs) | Real-world matrix-matched materials with certified analyte concentrations used for method validation and verifying accuracy. |

A systematic approach to identifying and testing key environmental, instrumental, and analyst variables is fundamental to developing rugged inorganic analytical methods. By employing structured experimental designs like Plackett-Burman and full factorial designs, researchers can efficiently screen a large number of factors to identify those that are critical to method performance [8]. The experimental data generated from these protocols not only fulfills regulatory requirements but, more importantly, builds a foundation of confidence in the analytical results. For drug development professionals and scientists, this rigorous validation ensures that methods will transfer successfully between laboratories and analysts, and will consistently produce reliable data throughout the method's lifecycle, thereby safeguarding product quality and patient safety.

Executing Ruggedness Studies: A Step-by-Step Methodology for Inorganic Methods

Incorporating a Risk-Based Approach to Identify Critical Method Parameters

In the field of inorganic analytical methods research, the reliability and reproducibility of methods are paramount. Analytical method ruggedness is defined as an experimental evaluation of how a method performs when subjected to variations in normal operating conditions, such as different analysts, instruments, or laboratory environments [15]. This concept is distinct from robustness, which focuses on a method's capacity to remain unaffected by small, deliberate variations in method parameters [7]. A risk-based approach to ruggedness testing systematically identifies critical method parameters that could significantly affect analytical results if they varied during routine use. This proactive strategy allows researchers to focus control efforts on factors that matter most, thereby enhancing method reliability and facilitating smoother method transfer between laboratories. Incorporating risk assessment early in method development represents a paradigm shift from traditional quality-by-testing (QbT) toward modern Analytical Quality by Design (AQbD) principles, which build quality into the method from the outset rather than testing for it at the end [16].

Theoretical Framework: Risk-Based Principles

Fundamental Concepts and Regulatory Context

The International Council for Harmonisation (ICH) defines risk as "the combination of the probability of occurrence of harm and the severity of that harm" [16]. In the context of analytical method development, this translates to identifying which parameters, if varied, could lead to method failure or unreliable results. A risk-based approach begins with defining the Analytical Target Profile (ATP)—a formal statement of the required method performance characteristics [16]. This patient-focused approach ensures that method development is driven by its intended purpose in pharmaceutical quality control.

The transition from Quality by Testing (QbT) to Analytical Quality by Design (AQbD) represents a fundamental shift in pharmaceutical quality control. While QbT employs an unstructured "trial-and-error" approach varying one factor at a time, AQbD incorporates prior knowledge, risk management, and design of experiments (DoE) throughout the analytical method life-cycle [16]. This systematic approach provides a deeper understanding of method parameters and their interactions, ultimately leading to the definition of a Method Operable Design Region (MODR) where method performance is guaranteed with a defined probability.

The Risk Assessment Process

Implementing a risk-based approach involves a structured process to identify, analyze, and evaluate potential risks to method performance [15] [16]:

- Parameter Identification: Systematically list all method parameters that might affect performance

- Risk Analysis: Assess the potential impact and probability of occurrence for each parameter

- Risk Evaluation: Prioritize parameters based on their risk level to determine which require experimental investigation

This process can be facilitated through various tools, including Failure Mode Effects Analysis (FMEA) and risk matrices, which provide visual representations of risk priorities. The output guides subsequent experimental designs by highlighting parameters with the highest potential impact on method performance.

Experimental Design for Ruggedness Testing

Selection of Factors and Levels

The first step in designing a ruggedness test is selecting which factors to investigate and determining appropriate levels for testing. Factors typically include instrumental parameters (e.g., column temperature, flow rate, detection wavelength), environmental conditions (e.g., temperature, humidity), and operational variables (e.g., analyst technique, reagent sources) [1]. For quantitative factors, two extreme levels are generally chosen symmetrically around the nominal level described in the method procedure. The interval between these levels should represent variations expected during method transfer between laboratories. For qualitative factors (e.g., column manufacturer, reagent batch), two discrete levels are compared, typically including the nominal level and an alternative [1].

Table 1: Example Factors and Levels for an HPLC Ruggedness Test

| Factor Type | Parameter | Low Level (-1) | Nominal Level (0) | High Level (+1) |

|---|---|---|---|---|

| Quantitative | Mobile phase pH | -0.2 units | As specified | +0.2 units |

| Quantitative | Column temperature | -3°C | As specified | +3°C |

| Quantitative | Flow rate | -0.1 mL/min | As specified | +0.1 mL/min |

| Quantitative | Detection wavelength | -3 nm | As specified | +3 nm |

| Qualitative | Column batch | Batch A | Reference batch | Batch B |

| Environmental | Ambient temperature | -5°C | As controlled | +5°C |

| Procedural | Extraction time | -10% | As specified | +10% |

| Procedural | Centrifuge speed | -5% | As specified | +5% |

Experimental Design Selection

Screening designs that efficiently evaluate multiple factors with minimal experiments are most appropriate for ruggedness testing. Fractional factorial (FF) and Plackett-Burman (PB) designs are commonly employed as they allow examining f factors in as few as f+1 experiments [1]. The choice between designs depends on the number of factors being investigated and considerations regarding the statistical interpretation of results. For example, a study examining 7 factors might use a Plackett-Burman design with 12 experiments: its 11 columns accommodate the 7 factors, and the remaining 4 columns are assigned to dummy factors that assist in statistical interpretation [1].

Table 2: Comparison of Experimental Designs for Ruggedness Testing

| Design Type | Number of Factors | Minimum Experiments | Interactions Estimated | Best Use Case |

|---|---|---|---|---|

| Full factorial | k | 2^k | All | Small number of factors (≤4) |

| Fractional factorial | k | 2^(k-p) | Some | Moderate factors (5-8) |

| Plackett-Burman | k | Multiple of 4 (≥ k+1) | None | Screening many factors (7-11) |

| One-factor-at-a-time | k | k+1 | None | Not recommended for ruggedness |
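The run counts in the comparison above follow from simple arithmetic, sketched here. The helper names and the `min_dummies` argument are illustrative assumptions, introduced only to show why a 7-factor study may use either 8 or 12 Plackett-Burman runs.

```python
def full_factorial_runs(k):
    """Two-level full factorial: every combination of k factors."""
    return 2 ** k

def fractional_factorial_runs(k, p):
    """Two-level 2^(k-p) fractional factorial."""
    return 2 ** (k - p)

def plackett_burman_runs(k, min_dummies=0):
    """Smallest multiple of 4 whose N - 1 columns hold k factors plus dummies."""
    n = 4
    while n - 1 < k + min_dummies:
        n += 4
    return n

k = 7
print("Full factorial:", full_factorial_runs(k))            # 128 runs
print("Fractional 2^(7-4):", fractional_factorial_runs(k, 4))  # 8 runs
n_sat = plackett_burman_runs(k)                 # saturated: no dummy columns
n_dum = plackett_burman_runs(k, min_dummies=1)  # leaves room for dummy factors
print("Plackett-Burman:", n_sat, "runs with", n_sat - 1 - k, "dummy columns")
print("Plackett-Burman:", n_dum, "runs with", n_dum - 1 - k, "dummy columns")
```

Requesting at least one dummy column moves the 7-factor design from 8 to 12 runs, which leaves 4 columns free for estimating experimental error.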

Methodologies and Protocols

Risk Assessment Protocol

Prior to experimental ruggedness testing, a systematic risk assessment should be conducted [15] [16]:

- Define the Analytical Target Profile (ATP): Clearly state the method's purpose and required performance characteristics

- Identify Potential Risk Parameters: Brainstorm all method parameters that might affect performance

- Assess Risk Priority: Evaluate each parameter based on its potential impact on method results and the probability of variation occurring

- Categorize Parameters: Classify parameters as high, medium, or low risk based on the assessment

- Select Parameters for Testing: Focus experimental designs on high and medium-risk parameters

This protocol ensures that experimental resources are directed toward factors most likely to affect method performance during transfer or routine use.
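The assessment, categorization, and selection steps can be sketched as a simple scoring exercise. The 1-to-5 scales, the impact × probability score, the thresholds, and the example parameters are all assumptions chosen for illustration; a real assessment would use the scoring scheme agreed by the project team, for example within an FMEA.

```python
from dataclasses import dataclass

@dataclass
class Parameter:
    name: str
    impact: int       # assumed scale: 1 (negligible) to 5 (severe)
    probability: int  # assumed scale: 1 (rare) to 5 (frequent)

    @property
    def score(self):
        return self.impact * self.probability

def categorize(param, high=15, medium=6):
    """Classify by risk score; the thresholds are illustrative assumptions."""
    if param.score >= high:
        return "high"
    if param.score >= medium:
        return "medium"
    return "low"

# Hypothetical parameters for an ICP-OES method
parameters = [
    Parameter("nebulizer flow rate", impact=5, probability=4),
    Parameter("digestion time", impact=4, probability=3),
    Parameter("ambient light", impact=1, probability=2),
]

ranked = sorted(parameters, key=lambda p: p.score, reverse=True)
selected = [p.name for p in ranked if categorize(p) in ("high", "medium")]
for p in ranked:
    print(f"{p.name}: score {p.score} ({categorize(p)})")
print("Selected for experimental testing:", selected)
```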

Ruggedness Testing Experimental Protocol

The experimental protocol for ruggedness testing involves several critical steps [1]:

- Experimental Sequence Definition: While random execution is often recommended, when drift or time effects occur (e.g., HPLC column aging), an anti-drift sequence that confounds time effects with less critical factors may be preferable

- Replication Strategy: Include replicated experiments at nominal conditions at regular intervals to monitor and correct for time-related drift

- Sample Selection: Measure solutions representative of the method's application, considering concentration ranges and sample matrices

- Response Measurement: Record both assay responses (e.g., content determinations) and system suitability test (SST) responses (e.g., resolution, peak asymmetry)

For practical reasons, experiments may be blocked by factors that are difficult to change frequently, such as column manufacturer. In such cases, all experiments at one factor level are performed before switching to the other level.
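One way the replicated nominal experiments can be used is to correct design results for a linear drift between two nominal measurements. The sketch below assumes an additive correction with linear interpolation; this is one simple option rather than a prescribed procedure, and the function name and data are hypothetical.

```python
def drift_corrected(measured, nom_begin, nom_before, nom_after):
    """Correct a block of design results for linear drift.

    measured   : results of the p design experiments run between two
                 nominal replicates, in execution order
    nom_begin  : nominal result at the very start of the study
    nom_before : nominal result measured just before this block
    nom_after  : nominal result measured just after this block
    """
    p = len(measured)
    corrected = []
    for i, y in enumerate(measured, start=1):
        # Nominal response expected at position i, by linear interpolation
        interpolated = ((p + 1 - i) * nom_before + i * nom_after) / (p + 1)
        corrected.append(y + nom_begin - interpolated)
    return corrected

# Hypothetical block: the signal drifts upward by 0.1 units per run
block = [100.1, 100.2, 100.3]
print(drift_corrected(block, nom_begin=100.0, nom_before=100.0, nom_after=100.4))
```

In this constructed example the three results differ only because of drift, so the corrected values all return to approximately 100.0.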

Data Analysis and Interpretation

Statistical Analysis of Factor Effects

The effect of each factor on the response is calculated as the difference between the average responses when the factor was at its high level and the average when it was at its low level [1]. For a factor X, the effect (E_X) on response Y is calculated as:

E_X = (Average Y at high X level) - (Average Y at low X level)

These effects are then subjected to statistical analysis to determine their significance. Two primary approaches are used:

- Graphical Analysis: Normal or half-normal probability plots can visually identify significant effects that deviate from a straight line formed by unimportant effects [1]

- Statistical Significance Testing: Critical effects can be determined using the algorithm of Dong or by using dummy factors included in Plackett-Burman designs to estimate experimental error [1]
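The dummy-factor approach can be sketched as follows: the effects computed for the dummy columns are treated as an estimate of experimental error, and a real effect is considered significant when its absolute value exceeds a critical effect derived from that error. The effect values are hypothetical, and the tabulated t value (two-sided, α = 0.05, 4 degrees of freedom) is hard-coded to keep the example dependency-free.

```python
from math import sqrt

def critical_effect(dummy_effects, t_crit):
    """Critical effect = t_crit * standard error estimated from dummy effects."""
    se = sqrt(sum(e ** 2 for e in dummy_effects) / len(dummy_effects))
    return t_crit * se

# Hypothetical effects on % recovery from a 12-run Plackett-Burman design
factor_effects = {
    "nebulizer_flow": -6.5,
    "plasma_power": 0.5,
    "digestion_time": -4.2,
}
dummy_effects = [0.3, -0.4, 0.2, -0.1]  # four dummy columns -> 4 degrees of freedom

T_CRIT_DF4 = 2.776  # tabulated two-sided t value, alpha = 0.05, df = 4
e_crit = critical_effect(dummy_effects, T_CRIT_DF4)

significant = [name for name, e in factor_effects.items() if abs(e) > e_crit]
print(f"Critical effect: {e_crit:.3f}")
print("Significant factors:", significant)
```

The number of dummy columns sets the degrees of freedom for the t value, which is one reason a design with spare columns may be preferred over a saturated one.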

Interpretation and Decision Making

Based on the statistical analysis, method parameters are categorized as [15]:

- Critical Parameters: Those with statistically significant effects on method responses—these require tight control in the method procedure

- Non-Critical Parameters: Those without significant effects—these can have more flexible control limits

The results also inform the definition of system suitability test (SST) limits to ensure the method remains in control during routine use. For critical parameters, the ruggedness test defines the acceptable operating ranges that still ensure method performance.

Comparative Analysis of Approaches

Traditional vs. Risk-Based Approach

The traditional QbT approach to method development differs significantly from the modern risk-based AQbD approach:

Table 3: Comparison of Traditional QbT and Risk-Based AQbD Approaches

| Aspect | Quality by Testing (QbT) | Risk-Based AQbD |

|---|---|---|

| Development Strategy | Unstructured "trial-and-error" | Systematic, based on prior knowledge and risk assessment |

| Experimental Approach | One-Factor-at-a-Time (OFAT) | Design of Experiments (DoE) |

| Robustness Assessment | Performed at end of development | Built into development process |

| Knowledge Space | Limited to working point | Comprehensive understanding of method operability region |

| Regulatory Submission | Working point with fixed parameters | Method Operable Design Region (MODR) |

| Post-Approval Changes | Often require regulatory approval | Within MODR only require notification |

Performance Metrics and Data Comparison

Studies incorporating risk-based approaches demonstrate superior method performance and reduced failure rates during transfer. Key comparative metrics include:

Table 4: Comparative Performance Data of Different Development Approaches

| Performance Metric | Traditional QbT | Risk-Based AQbD | Improvement |

|---|---|---|---|

| Method transfer success rate | 60-70% | 90-95% | +25-30 percentage points |

| Number of experiments required | High | Optimized | 40-60% reduction |

| Method understanding | Limited | Comprehensive | Significant enhancement |

| Post-approval change flexibility | Low | High | Substantial improvement |

| Long-term reliability | Variable | Consistently high | More predictable performance |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of risk-based ruggedness testing requires specific tools and materials:

Table 5: Essential Research Reagents and Solutions for Ruggedness Testing

| Item Category | Specific Examples | Function in Ruggedness Testing |

|---|---|---|

| Chromatographic Columns | Different batches from same manufacturer; Columns from different manufacturers | Evaluate separation consistency and column-related ruggedness |

| Mobile Phase Components | Multiple lots of buffers; Different sources of organic modifiers | Assess impact of reagent quality and source on method performance |

| Reference Standards | Certified reference materials; Working standards from different sources | Verify method accuracy and identify potential interferences |

| Sample Matrices | Representative placebo formulations; Actual patient samples with variations | Evaluate method specificity and matrix effects |

| System Suitability Test Solutions | Reference mixtures at specification limits | Monitor system performance and establish acceptance criteria |

| Statistical Software | JMP, Minitab, Design-Expert | Design experiments and analyze factor effects |

Workflow Visualization

Figure: Risk-Based Ruggedness Assessment Workflow

Figure: Experimental Design Selection Algorithm

Incorporating a risk-based approach to identify critical method parameters represents a significant advancement in ruggedness testing for inorganic analytical methods. This systematic methodology enables researchers to focus resources on parameters that truly impact method performance, leading to more robust and transferable methods. The implementation of risk assessment tools combined with structured experimental designs provides a science-based framework for method development that aligns with regulatory expectations and modern quality paradigms. As the pharmaceutical industry continues to evolve, the adoption of these approaches will be essential for developing reliable analytical methods that ensure product quality and patient safety throughout the method life-cycle.

In the field of inorganic analysis, the reliability of data is paramount, particularly in regulated industries such as pharmaceutical development. Ruggedness testing is a critical validation parameter that measures an analytical method's reproducibility under real-world conditions, such as variations between different analysts, instruments, laboratories, or days [4]. This concept is distinct from, yet complementary to, robustness testing, which investigates a method's performance when subjected to small, deliberate variations in internal method parameters (e.g., mobile phase pH or flow rate) [4]. A method that is both robust and rugged provides confidence in its transferability across laboratories and its long-term reliability for quality control.

This guide provides a practical comparison of three cornerstone techniques for inorganic analysis—Inductively Coupled Plasma Optical Emission Spectrometry (ICP-OES), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), and Ion Chromatography (IC). It is framed within the context of ruggedness testing, offering researchers and scientists a framework for selecting and validating methods that will yield consistent, defensible data. The objective is to equip drug development professionals with the knowledge to choose the right technology based on application needs and to understand the key factors that must be controlled to ensure method ruggedness.

Fundamental Principles

- ICP-OES: This technique relies on the excitation of atoms and ions in a high-temperature argon plasma. As these excited species return to their ground state, they emit light at characteristic wavelengths. The intensity of this emitted light is measured to identify elements and determine their concentrations [17] [18].

- ICP-MS: ICP-MS also uses a high-temperature plasma to atomize and ionize the sample. However, instead of measuring light emission, it separates the resulting ions based on their mass-to-charge ratio (m/z) using a mass spectrometer. This allows for extremely sensitive elemental and isotopic analysis [17] [19] [18].

- Ion Chromatography (IC): IC is a form of liquid chromatography that separates ions (such as anions, cations, or organic acids) based on their interaction with a stationary phase (ion-exchange resin). The separated ions are then detected and quantified, typically using conductivity detection.

Comparative Technical Specifications

The selection of an analytical technique is a balance between detection capability, sample tolerance, operational complexity, and cost. The table below summarizes the key characteristics of ICP-OES, ICP-MS, and IC.

Table 1: Technical Comparison of ICP-OES, ICP-MS, and Ion Chromatography

| Aspect | ICP-OES | ICP-MS | Ion Chromatography (IC) |
|---|---|---|---|
| Detection Principle | Optical Emission | Mass Spectrometry | Ion Exchange & Conductivity/Other Detectors |
| Typical Detection Limits | Parts per billion (ppb) [17] [20] | Parts per trillion (ppt) [17] [20] | Parts per billion (ppb) to low ppm |
| Dynamic Range | Up to 4-6 orders of magnitude [18] | Up to 8-9 orders of magnitude [17] [18] | 3-4 orders of magnitude |
| Multi-Element/-Ion Capability | Simultaneous multi-element [19] | Simultaneous multi-element & isotopic [19] [18] | Sequential multi-ion |
| Sample Tolerance (TDS) | High (up to 2-30%) [21] [20] | Low (typically <0.2-0.5%) [19] [20] | Moderate (requires clean, filtered samples) |
| Primary Interferences | Spectral (overlapping lines) [17] [20] | Isobaric (polyatomic ions), space charge [22] [17] | Co-eluting ions, matrix effects |
| Isotopic Analysis | Not available [18] | Available [18] | Not available |
| Operational Cost & Expertise | Moderate cost, simpler operation [17] [20] | High cost, requires skilled personnel [17] [19] | Moderate cost, requires chromatographic expertise |
| Key Applications | Environmental, metallurgy, food, major/minor elements [17] [18] | Ultra-trace elements, clinical, nuclear, isotopic tracing [19] [23] [18] | Water analysis, pharmaceutical impurities, food additives |
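The trade-offs in Table 1 can be expressed as a simple first-pass decision rule. The sketch below is only an illustration: the `AnalysisNeed` fields, the function name, and the 1 ppb sensitivity threshold are assumptions made for this example, while the 0.5% TDS cut-off is taken from the ICP-MS tolerance quoted in the table. A real selection would also weigh cost, throughput, and interferences.

```python
from dataclasses import dataclass


@dataclass
class AnalysisNeed:
    """Hypothetical summary of an application's requirements."""
    ionic_species: bool       # target is an anion/cation rather than a total element
    needs_isotopes: bool      # isotopic information is required
    required_lod_ppb: float   # required detection limit, in ppb
    tds_percent: float        # total dissolved solids of the prepared sample, in %


def suggest_technique(need: AnalysisNeed) -> str:
    """Return a first-pass technique suggestion based on Table 1."""
    if need.ionic_species:
        return "IC"
    # Assumed threshold: sub-ppb limits or isotopic work point to ICP-MS.
    if need.needs_isotopes or need.required_lod_ppb < 1.0:
        if need.tds_percent > 0.5:
            # ICP-MS tolerates only about 0.2-0.5% TDS per Table 1.
            return "ICP-MS (dilute sample first)"
        return "ICP-MS"
    return "ICP-OES"


if __name__ == "__main__":
    print(suggest_technique(AnalysisNeed(False, False, 50.0, 5.0)))
```

Note that dilution raises the effective detection limit in the original sample, so the "dilute first" branch would need to be checked against the required limit in practice.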

Key Factors and Levels for Ruggedness Testing

A ruggedness study assesses how variations in typical operating conditions affect a method's results. The factors and levels to investigate are technique-specific.

ICP-OES and ICP-MS Factors

For plasma-based techniques, key factors influencing ruggedness include plasma conditions and sample introduction parameters.

Table 2: Key Factors and Levels for Plasma-Based Technique Ruggedness Testing

| Factor Category | Specific Factor | Typical "Normal" Level | Suggested Variation for Ruggedness Testing |
|---|---|---|---|
| Plasma Conditions | RF Power | As per method optimization (e.g., 1000-1400 W) [22] | ± 50-100 W |
| | Nebulizer Gas Flow | As per method optimization (e.g., 0.63-0.85 L/min) [22] | ± 0.05 L/min |
| | Sample Uptake Rate | As per method setup (e.g., 1 mL/min) [22] | ± 0.1 mL/min |
| Interface & Ion Optics | Ion Lens Voltages | Optimized for sensitivity [22] | ± 5% of set voltage |
| Sample Characteristics | Matrix Composition (e.g., Acid strength, Carbon content) | Dilute acid (e.g., 2% HNO₃) | Variation in acid type/strength or addition of a matrix (e.g., carbon) [21] |
| | Total Dissolved Solids (TDS) | <0.2% for ICP-MS; <5% for ICP-OES [19] [20] | Intentional, controlled increase within a justifiable range |

Ion Chromatography Factors

For IC, the critical factors are related to the chromatographic separation and detection.

Table 3: Key Factors and Levels for Ion Chromatography Ruggedness Testing

| Factor Category | Specific Factor | Typical "Normal" Level | Suggested Variation for Ruggedness Testing |
|---|---|---|---|
| Eluent Conditions | Eluent Composition/Concentration | As per method (e.g., specific mM KOH or Na₂CO₃/NaHCO₃) | ± 5-10% of concentration |
| | Eluent pH | As per method (e.g., pH 4.0) | ± 0.1-0.2 units [4] |
| | Flow Rate | As per method (e.g., 1.0 mL/min) | ± 0.1 mL/min [4] |
| Separation System | Column Temperature | As per method (e.g., 30°C) | ± 2-5°C [4] |
| | Column Batch/Supplier | Single batch from one supplier | Different batches or suppliers [4] |
| Detection | Suppressor Current | As per manufacturer's recommendation | ± 5% of set current |
| | Detection Temperature | As per method (e.g., 35°C) | ± 2-5°C |
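In practice, each factor in the tables above is tested at a low and a high level around its nominal setting. The following sketch shows one way to turn coded levels (-1, 0, +1) into real instrument settings. The `Factor` class and `settings_for_run` helper are hypothetical names, and the nominal values and deltas are illustrative picks from the example ranges in Table 3, not recommended settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factor:
    """One ruggedness factor: nominal setting plus the tested deviation."""
    name: str
    nominal: float
    delta: float
    unit: str

    def level(self, coded: int) -> float:
        """Map a coded level (-1, 0, +1) to the real setting."""
        return self.nominal + coded * self.delta


# Illustrative values chosen from the example ranges in Table 3.
IC_FACTORS = [
    Factor("Eluent pH", 4.0, 0.2, "pH"),
    Factor("Flow rate", 1.0, 0.1, "mL/min"),
    Factor("Column temperature", 30.0, 2.0, "°C"),
]


def settings_for_run(factors, coded_row):
    """Translate one coded design row into named instrument settings."""
    return {f.name: round(f.level(c), 3) for f, c in zip(factors, coded_row)}


if __name__ == "__main__":
    # Low pH, high flow rate, high column temperature.
    print(settings_for_run(IC_FACTORS, [-1, 1, 1]))
```

Keeping the coded and real representations separate makes it straightforward to reuse the same design matrix for a different method by swapping only the factor list.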

Experimental Protocols for Key Studies

Protocol: Investigating Robust Plasma Conditions in ICP-MS

This protocol is based on a study exploring conditions that minimize matrix effects (a key aspect of robustness, which contributes to overall method ruggedness) [22].

- Objective: To identify operating conditions (nebulizer gas flow, RF power) that minimize matrix interferences and provide more accurate multi-element analysis.

- Materials: ICP-MS with cross-flow nebulizer; multi-element standard solution; matrix interferent solution (e.g., 6 mM Barium).

- Procedure:

  - Standard Optimization: Begin with "normal" conditions optimized for sensitivity (e.g., 1000 W power, 0.85 L/min nebulizer gas).

  - Interference Study: Introduce the matrix interferent and analyze the multi-element standard.

  - Parameter Variation: Systematically vary key parameters:

    - Nebulizer Gas Flow: Test a range from higher (e.g., 0.85 L/min) to lower flows (e.g., 0.60 L/min).

    - RF Power: Test a range from lower (e.g., 1000 W) to higher powers (e.g., 1400 W).

  - Data Analysis: For each set of conditions, calculate the "average interference" as the relative deviation from the expected analyte signal, averaged across all analytes. The conditions that minimize this average interference are deemed "robust" [22].

- Key Findings: A significant reduction in matrix effects was observed at lower nebulizer gas flows (e.g., 0.63 L/min) with only a factor of 2-3 reduction in sensitivity. Higher RF power also helped, but its effect was less critical than gas flow [22].
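The data-analysis step of this protocol can be sketched in a few lines. This is a minimal illustration, not the cited study's code: it assumes the relative deviation is taken as an absolute value (so that suppression and enhancement do not cancel), and the analyte signals shown are invented purely for demonstration.

```python
def average_interference(expected, measured):
    """Mean absolute relative deviation (%) from the expected signal, across analytes."""
    deviations = [
        abs(measured[analyte] - expected[analyte]) / expected[analyte] * 100.0
        for analyte in expected
    ]
    return sum(deviations) / len(deviations)


def most_robust(expected, results_by_condition):
    """Return the condition key with the lowest average interference."""
    return min(
        results_by_condition,
        key=lambda cond: average_interference(expected, results_by_condition[cond]),
    )


# Hypothetical signals (arbitrary units); keys are (RF power in W, nebulizer flow in L/min).
EXPECTED = {"Co": 100.0, "In": 100.0, "Pb": 100.0}
RESULTS = {
    (1000, 0.85): {"Co": 70.0, "In": 65.0, "Pb": 60.0},
    (1400, 0.63): {"Co": 98.0, "In": 95.0, "Pb": 97.0},
}

if __name__ == "__main__":
    for condition, signals in RESULTS.items():
        print(condition, round(average_interference(EXPECTED, signals), 1))
    print("Most robust:", most_robust(EXPECTED, RESULTS))
```

A fuller treatment would weigh the interference reduction against the accompanying loss of sensitivity before settling on final conditions.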

Protocol: High-Sensitivity Trace Analysis in ICP-OES

This protocol outlines an approach to enhance ICP-OES sensitivity to meet challenging detection limits, expanding its utility as a more rugged alternative to ICP-MS in some scenarios [21].

- Objective: To achieve detection limits for toxic elements in complex matrices (e.g., cannabis) that meet regulatory requirements using high-efficiency sample introduction.

- Materials: ICP-OES with axial view; high-efficiency nebulizer (e.g., V-Groove type with impact bead); microwave digestion system; nitric acid and hydrochloric acid.

- Procedure:

  - Sample Digestion: Digest 1.00 g of sample with 10 mL concentrated HNO₃ and 0.3 mL concentrated HCl at 230°C using a microwave digestion system to minimize residual carbon.

  - Matrix-Matched Calibration: Prepare calibration standards in a matrix that closely matches the digested sample. This is critical for accuracy and must include:

    - The same acid mixture and concentration.

    - ~1150 ppm carbon (as Potassium Hydrogen Phthalate) to compensate for carbon-based spectral interference.

    - ~600 ppm calcium to account for potential stray light effects [21].

  - Analysis: Use a high-efficiency nebulizer and cyclonic spray chamber to boost sensitivity. The large internal diameter of the selected nebulizer (~0.75 mm) avoids clogging, eliminating the need for filtration and enhancing method ruggedness [21].

- Key Findings: This approach, focusing on complete digestion and meticulous matrix-matching, allowed for the accurate quantification of challenging elements like Arsenic and Lead at low ppb levels, meeting stringent regulatory limits [21].
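The matrix-matching step requires converting a target carbon concentration into a mass of potassium hydrogen phthalate (KHP, C₈H₅KO₄). The sketch below works through that arithmetic under two stated assumptions: ppm is treated as mg/L (reasonable for dilute aqueous solutions), and the 100 mL standard volume is a hypothetical choice, since the source does not give one.

```python
# Standard atomic masses (g/mol), rounded to three decimals.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "K": 39.098, "O": 15.999}

# Potassium hydrogen phthalate, C8H5KO4.
KHP_FORMULA = {"C": 8, "H": 5, "K": 1, "O": 4}


def molar_mass(formula):
    """Molar mass in g/mol from an element-count mapping."""
    return sum(ATOMIC_MASS[element] * count for element, count in formula.items())


def carbon_fraction(formula):
    """Mass fraction of carbon in the compound."""
    return ATOMIC_MASS["C"] * formula["C"] / molar_mass(formula)


def khp_mass_mg(carbon_ppm, volume_ml):
    """Mass of KHP (mg) giving the target carbon level; assumes ppm == mg/L."""
    carbon_mg = carbon_ppm * volume_ml / 1000.0
    return carbon_mg / carbon_fraction(KHP_FORMULA)


if __name__ == "__main__":
    # Hypothetical 100 mL calibration standard at ~1150 ppm carbon.
    print(f"KHP molar mass: {molar_mass(KHP_FORMULA):.2f} g/mol")
    print(f"KHP needed: {khp_mass_mg(1150, 100):.1f} mg")
```

Because KHP is roughly 47% carbon by mass, a little over twice the target carbon mass must be weighed out, which comes to about 244 mg for this example volume.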

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Materials for Inorganic Analysis Methods

| Item | Function | Technique Application |
|---|---|---|
| High-Purity Acids (HNO₃, HCl) | Sample digestion and dilution; minimizing background contamination. | ICP-OES, ICP-MS, IC (sample prep) |
| Multi-element/Ion Standard Solutions | Instrument calibration and quality control. | ICP-OES, ICP-MS, IC |
| Internal Standard Solution (e.g., Sc, Y, In, Lu) | Corrects for instrument drift and matrix suppression/enhancement. | ICP-OES, ICP-MS |
| High-Purity Water (Type I) | Preparation of all solutions, blanks, and mobile phases. | All |
| Ion Chromatography Eluent | Mobile phase for separation (e.g., KOH, Na₂CO₃/NaHCO₃). | IC |
| Certified Reference Materials (CRMs) | Method validation and verification of accuracy. | All |
| High-Efficiency or Rugged Nebulizer | Sample introduction; robust nebulizers resist clogging from high matrix samples. | ICP-OES, ICP-MS |
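The internal standard entry in Table 4 relies on a simple ratio correction. The sketch below illustrates the idea under the usual assumption that drift and matrix effects change the analyte and internal standard signals by the same proportion. The function name and the count values are hypothetical.

```python
def internal_standard_correct(analyte_signal, is_signal, is_reference_signal):
    """Correct an analyte signal using internal standard recovery.

    is_reference_signal is the internal standard response measured in the
    calibration blank or standard; is_signal is its response in the sample.
    Returns (corrected_signal, recovery_fraction).
    """
    recovery = is_signal / is_reference_signal
    return analyte_signal / recovery, recovery


if __name__ == "__main__":
    # Hypothetical counts: the matrix suppresses the internal standard to 80%.
    corrected, recovery = internal_standard_correct(8000.0, 40000.0, 50000.0)
    print(f"Recovery: {recovery:.0%}, corrected signal: {corrected:.0f}")
```

Tracking the recovery alongside the corrected value is useful in a ruggedness study, since a recovery that shifts between analysts or instruments points to a matrix or drift sensitivity.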

Method Selection and Ruggedness Workflow

The following diagram illustrates a systematic workflow for selecting an analytical technique and key factors to consider for ensuring method ruggedness.

Selecting the appropriate analytical technique and rigorously testing its ruggedness are foundational to generating reliable data in drug development and other scientific fields. ICP-MS offers unrivalled sensitivity for ultra-trace analysis, ICP-OES provides robust performance for high-matrix samples at moderate cost, and Ion Chromatography is the definitive technique for ionic species. The experimental protocols and factor-level comparisons provided in this guide serve as a practical starting point for designing validation studies. By systematically investigating the critical factors outlined, scientists can develop methods that are not only scientifically sound but also transferable and reproducible, ensuring data integrity throughout a product's lifecycle.

In the validation of inorganic analytical methods, ruggedness testing is a critical parameter that demonstrates the reliability of an analytical procedure under minor, deliberate variations in method conditions [8]. It simulates the changes that can be expected when transferring a method between laboratories, instruments, or operators [24]. The primary goal is to identify factors that significantly influence the method's performance to establish acceptable operational tolerances. In this context, screening designs provide a systematic, efficient framework for this evaluation, allowing scientists to screen a large number of potential factors in a limited number of experimental runs [25] [26]. This approach is far superior to the traditional one-variable-at-a-time (OVAT) method, which is time-consuming, resource-intensive, and incapable of detecting interactions between factors.

Two-level screening designs, particularly Plackett-Burman and Fractional Factorial designs, have become the chemometric tools of choice for robustness studies [25] [8]. They enable researchers to estimate the effects of multiple factors (e.g., pH, temperature, mobile phase composition, instrument parameters) on critical analytical responses (e.g., peak area, resolution, detection limit) simultaneously. The efficiency of these designs stems from the sparsity-of-effects principle, which states that most processes are driven by a limited number of main effects and low-order interactions [27] [28]. For researchers and drug development professionals, mastering these designs is essential for developing robust, transferable, and reliable analytical methods, ultimately reducing method failure rates during technology transfer and regulatory submission.
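To make the idea concrete, the sketch below builds the classic 12-run Plackett-Burman design (up to 11 factors) from its standard cyclic generator row and estimates main effects as the difference between the mean response at the high and low levels. The response values in the usage example are simulated for illustration only, and the helper names are assumptions of this example.

```python
# Standard generator row for the 12-run Plackett-Burman design.
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """Return the 12 x 11 design: 11 cyclic shifts of the generator plus an all-low run."""
    n = len(PB12_GENERATOR)
    rows = [PB12_GENERATOR[-shift:] + PB12_GENERATOR[:-shift] for shift in range(n)]
    rows.append([-1] * n)
    return rows


def main_effects(design, responses):
    """Effect of each factor: mean response at +1 minus mean response at -1."""
    effects = []
    for j in range(len(design[0])):
        high = [y for row, y in zip(design, responses) if row[j] == 1]
        low = [y for row, y in zip(design, responses) if row[j] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects


if __name__ == "__main__":
    design = plackett_burman_12()
    # Simulated response: only factors 0 and 4 matter (sparsity of effects).
    responses = [100 + 3 * row[0] - 2 * row[4] for row in design]
    for index, effect in enumerate(main_effects(design, responses)):
        print(f"Factor {index}: effect = {effect:+.2f}")
```

Because the design columns are mutually orthogonal, each main effect is estimated independently of the others. In a real study, unused columns are often assigned to dummy factors to estimate experimental error.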

Theoretical Foundations of Fractional Factorial Designs

Basic Principles and Notation

Fractional Factorial Designs (FFDs) are a class of statistical experimental designs that form a subset (or fraction) of a full factorial design [27]. A full factorial design investigates all possible combinations of factors and their levels; for k factors each at 2 levels, this requires $2^k$ experimental runs. FFDs significantly reduce this number by testing only a carefully selected fraction of these combinations, chosen to maximize the information gained while confounding (aliasing) effects that are presumed negligible [27] [29].
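The difference between a full factorial and a fraction of it can be seen in a short sketch. Here a half fraction is built by running a full factorial in the first k-1 factors and setting the last factor equal to their product. That choice of generator is one common construction, used here as an assumption for illustration. It halves the run count at the cost of aliasing the last factor's main effect with the highest-order interaction of the others.

```python
from itertools import product


def full_factorial(k):
    """All 2^k combinations of k two-level factors, coded as -1/+1."""
    return [list(levels) for levels in product((-1, 1), repeat=k)]


def half_fraction(k):
    """Half fraction: full factorial in k-1 factors; last factor = product of the rest."""
    runs = []
    for base in full_factorial(k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(base + [last])
    return runs


if __name__ == "__main__":
    k = 4
    print(f"Full factorial runs: {len(full_factorial(k))}")
    print(f"Half-fraction runs:  {len(half_fraction(k))}")
    for run in half_fraction(k):
        print(run)
```

In the printed half fraction, the fourth column is identical to the product of the first three, which is exactly what aliasing means: the two effects cannot be separated from these runs alone.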

The standard notation for a two-level FFD is $2^{k-p}$, where [27]: