Ensuring Data Credibility: A Practical Guide to Cross-Validation of Inorganic Analysis Methods Between Laboratories

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to implement robust cross-laboratory validation for inorganic analysis methods. Covering foundational principles, methodological applications, troubleshooting, and comparative validation, it addresses the critical need for reproducibility and reliability in scientific data. By outlining standardized protocols, best practices for managing complex datasets, and strategies to overcome common challenges like reagent variability and instrumental drift, this guide aims to enhance data credibility, reduce wasted resources, and accelerate scientific progress in biomedical and clinical research.

The Critical Importance of Reproducibility in Inorganic Analysis

In analytical chemistry and the broader scientific field, the validity of new findings is confirmed through independent verification [1]. The terms reproducibility and replicability, often used interchangeably in everyday language, have distinct and critical meanings in a scientific context. According to the National Academies of Sciences, Engineering, and Medicine, reproducibility refers to obtaining consistent results using the same input data, computational steps, methods, and code [1]. In contrast, replicability means obtaining consistent results across studies aimed at answering the same scientific question, each of which has obtained its own data [1].

The scientific community employs various replication strategies to validate and build upon existing research. The American Society for Cell Biology (ASCB) has outlined a multi-tiered approach to defining reproducibility, which includes direct, analytic, systemic, and conceptual replication [2] [3]. These concepts form a framework for understanding how scientific claims are tested and confirmed, which is particularly crucial in analytical chemistry, where measurements must be reliable enough to serve as a foundation for future developments in fields like biomedical sciences, life sciences, and pharmaceutical development [2] [4].

Core Concepts and Definitions

Hierarchical Framework of Replication

Scientific replication exists on a spectrum, from exact duplication of previous work to conceptual reevaluation of underlying hypotheses. The analytical chemistry community primarily recognizes four distinct types of replication, each serving a different purpose in the validation process.

Comparative Definitions of Replication Types

Table 1: Defining the Spectrum of Scientific Replication

| Replication Type | Core Objective | Key Characteristics | Primary Applications in Analytical Chemistry |
|---|---|---|---|
| Direct Replication | Reproduce a previously observed result using identical experimental design and conditions [2] [3] | Same methods, same conditions, same experimental design [2] | Establishing that a finding is reproducible; giving greater validity to scientific findings [2] |
| Analytic Replication | Reproduce scientific findings through reanalysis of the original dataset [2] [3] | Uses original data from a study with rigorous reanalysis [2] | Verification of quality control; increasing confidence in data integrity; confirming original methodology [2] |
| Systemic Replication | Reproduce a published finding under different experimental conditions [3] | Process of reproducing a study while introducing certain consistent differences [2] | Establishing reliable positive or negative results; allowing refinement of experimental design [2] |
| Conceptual Replication | Evaluate validity of a phenomenon using different experimental conditions or methods [3] | Retesting the same hypothesis using different measures or experimental designs [2] | Validation of the underlying hypothesis; confidence in the finding; elimination of false positives [2] |

Distinguishing Precision and Reproducibility Terms

In analytical method validation, understanding the specific definitions of precision-related terms is crucial for proper implementation across laboratories.

Table 2: Precision Terminology in Analytical Method Validation

| Term | Definition | Testing Environment | Purpose |
|---|---|---|---|
| Intermediate Precision | Measures variability when the same method is applied within the same laboratory under different conditions (different analysts, instruments, days) [5] | Same laboratory | Assesses method stability under normal laboratory variations [5] |
| Reproducibility | Assesses consistency of a method across different laboratories [5] | Different laboratories | Demonstrates method transferability and global robustness [5] |
| Repeatability | Capacity to obtain the same result when analyses are performed by the same operators using the same systems under the same conditions [4] | Same laboratory, same conditions | Verifies reliability of results under identical conditions [4] |

Experimental Evidence and Data Comparison

Quantitative Assessment of Method Reproducibility

Interlaboratory studies provide concrete data on the reproducibility of analytical techniques. Research on the reproducibility of methods required to identify nanoforms of substances under the EU REACH framework offers valuable insights into the achievable accuracy of common analytical techniques.

Table 3: Reproducibility Data for Analytical Techniques from Interlaboratory Studies

| Analytical Technique | Measurement Purpose | Reproducibility (Relative Standard Deviation) | Maximal Fold Difference Between Laboratories |
|---|---|---|---|
| ICP-MS | Quantification of metal impurities [6] | Low RSDR [6] | <1.5 fold [6] |
| BET | Specific surface area [6] | Low RSDR [6] | <1.5 fold [6] |
| TEM/SEM | Size and shape characterization [6] | Low RSDR [6] | <1.5 fold [6] |
| ELS | Surface potential and isoelectric point [6] | Low RSDR [6] | <1.5 fold [6] |
| TGA | Water content and organic impurities [6] | Poorer reproducibility [6] | <5 fold [6] |
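
The two summary metrics in this table can be illustrated with a short calculation. The sketch below is a simplification: it takes one mean result per laboratory and reports the relative standard deviation of those means plus the largest ratio between any two laboratories. A full reproducibility RSD would also include the within-laboratory variance. The function name `interlab_summary` and the ICP-MS values are hypothetical and are not taken from the cited study.

```python
from statistics import mean, stdev


def interlab_summary(lab_means):
    """Summarise between-laboratory agreement from one mean result per lab."""
    grand_mean = mean(lab_means)
    # Relative standard deviation of the laboratory means, in percent
    rsd_percent = 100.0 * stdev(lab_means) / grand_mean
    # Largest ratio between any two laboratory means (max / min)
    max_fold = max(lab_means) / min(lab_means)
    return rsd_percent, max_fold


# Hypothetical ICP-MS results (mg/kg) reported by five laboratories
lab_means = [10.2, 9.8, 10.5, 9.6, 10.1]
rsd, fold = interlab_summary(lab_means)
print(f"Between-lab RSD: {rsd:.1f}%, maximal fold difference: {fold:.2f}")
```

Reporting both numbers is useful because the RSD describes typical scatter, while the fold difference exposes a single outlying laboratory that an average could hide.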

Comparative Performance in Different Testing Environments

The design of reproducibility testing significantly impacts the observed variability. A 2016 study comparing reproducibility standard deviations from collaborative trials and proficiency tests in food analysis found that prescribing a strict analytical procedure did not consistently reduce between-laboratory variability [7].

Table 4: Collaborative Trials vs. Proficiency Tests in Food Analysis

| Study Characteristic | Collaborative Trial | Proficiency Test |
|---|---|---|
| Method Specification | Strictly defined analytical procedure [7] | No prescribed procedure [7] |
| Expected Outcome | Expected smaller reproducibility standard deviation [7] | Expected larger reproducibility standard deviation [7] |
| Actual Finding (>10⁻⁷ mass fraction) | Slightly larger standard deviations [7] | Slightly smaller standard deviations [7] |
| Actual Finding (<10⁻⁷ mass fraction) | Slightly smaller standard deviations [7] | Slightly larger standard deviations [7] |

Methodologies for Cross-Validation Between Laboratories

Experimental Design for Cross-Validation Studies

Cross-validation between laboratories requires meticulous planning and execution. The ICH M10 guideline for bioanalytical method validation and study sample analysis emphasizes the importance of cross-validation when data from different methods or laboratories will be combined for regulatory submission and decision-making [8].

A robust cross-validation design should include:

- Sample Selection: Use enough samples (n>30) with concentrations that appropriately span the expected concentration range [8]

- Statistical Approaches: Employ multiple statistical methods including Bland-Altman plots, scatter plots, Deming regression, and Concordance Correlation Coefficient to visualize and quantify bias [8]

- Acceptance Criteria: Establish predefined criteria for equivalency, such as whether the 90% confidence interval (CI) of the mean percent difference of concentrations falls within ±30% [8]
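
The statistical checks in this list can be sketched in a few lines of Python. This is a minimal illustration, not a regulatory implementation: all function names and the paired data are hypothetical, the percent difference is taken relative to the pair mean (one of several conventions), and the confidence interval uses a normal approximation, which is only reasonable because the design calls for more than 30 samples. A t-distribution would be the more rigorous choice for smaller sets.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def percent_differences(lab_a, lab_b):
    """Percent difference of each paired result, relative to the pair mean."""
    return [100.0 * (b - a) / ((a + b) / 2.0) for a, b in zip(lab_a, lab_b)]


def mean_difference_ci(diffs, confidence=0.90):
    """Two-sided CI of the mean difference (normal approximation, n > 30)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    half_width = z * stdev(diffs) / sqrt(len(diffs))
    centre = mean(diffs)
    return centre - half_width, centre + half_width


def limits_of_agreement(diffs):
    """Bland-Altman 95% limits of agreement: mean difference +/- 1.96 SD."""
    centre, spread = mean(diffs), 1.96 * stdev(diffs)
    return centre - spread, centre + spread


def concordance_cc(x, y):
    """Lin's concordance correlation coefficient (population moments)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxx = sum((v - mx) ** 2 for v in x) / n
    syy = sum((v - my) ** 2 for v in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2.0 * sxy / (sxx + syy + (mx - my) ** 2)


def is_equivalent(lab_a, lab_b, limit=30.0):
    """True when the 90% CI of the mean percent difference lies within +/- limit."""
    low, high = mean_difference_ci(percent_differences(lab_a, lab_b))
    return -limit <= low and high <= limit


# Hypothetical paired concentrations: lab B reads 1-5% higher than lab A
lab_a = [5.0 + 3.0 * i for i in range(32)]
lab_b = [a * (1.03 + 0.01 * ((i % 5) - 2)) for i, a in enumerate(lab_a)]

diffs = percent_differences(lab_a, lab_b)
ci_low, ci_high = mean_difference_ci(diffs)
print(f"90% CI of mean % difference: {ci_low:.2f} to {ci_high:.2f}")
print(f"Limits of agreement: {limits_of_agreement(diffs)}")
print(f"CCC: {concordance_cc(lab_a, lab_b):.4f}")
print(f"Equivalent within 30%: {is_equivalent(lab_a, lab_b)}")
```

Deming regression is omitted here because it needs an assumed ratio of error variances between the two laboratories; a dedicated statistics package is usually the safer route for that step.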

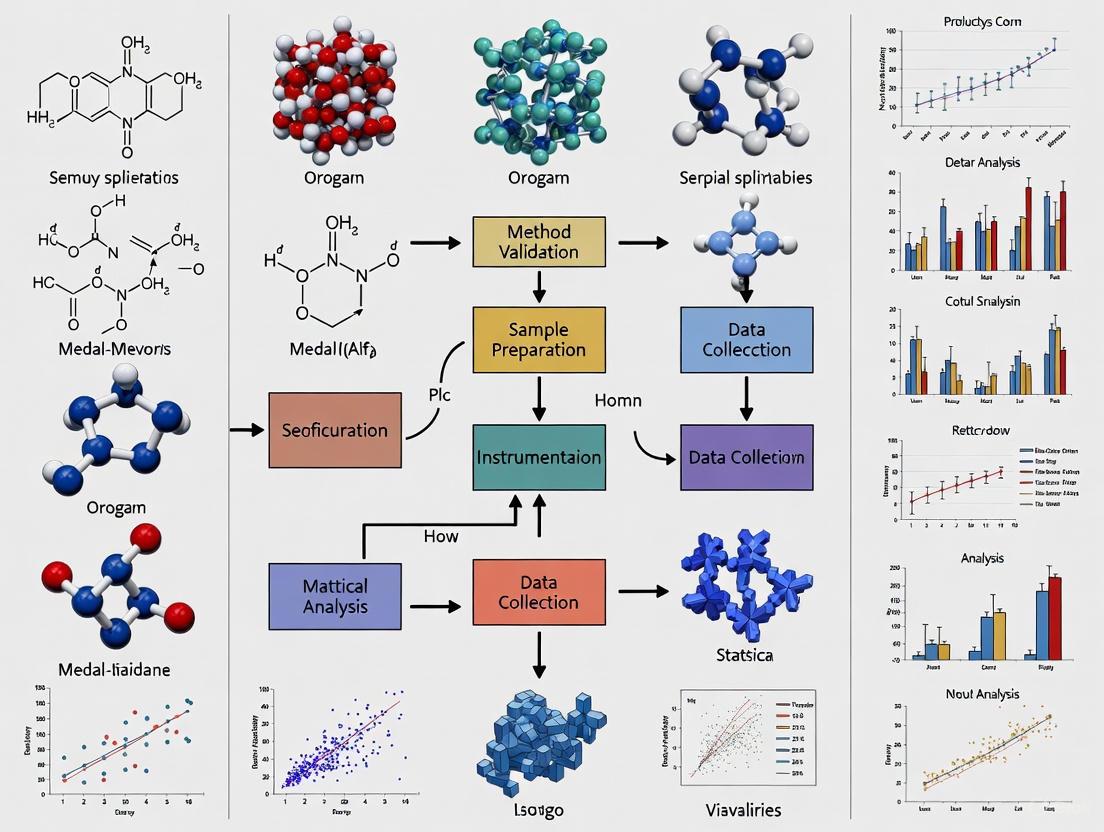

Workflow for Interlaboratory Cross-Validation

Implementing a structured workflow ensures comprehensive evaluation of analytical methods across multiple laboratories.

Research Reagent Solutions for Reproducibility Studies

Table 5: Essential Materials and Reagents for Cross-Laboratory Reproducibility Studies

| Reagent/Material | Function in Reproducibility Studies | Critical Quality Parameters |
|---|---|---|
| Authenticated Reference Materials | Provides traceable standards for method comparison between laboratories [3] | Documented provenance, purity verification, stability data [3] |
| Certified Calibration Standards | Ensures consistent quantification across different instrument platforms [8] | Certification documentation, concentration uncertainty, stability [8] |
| Quality Control Materials | Monitors analytical performance throughout the study [8] | Homogeneity, stability, matrix matching to study samples [8] |
| Characterized Cell Lines/Microorganisms | Provides biological reference materials for bioanalytical methods [3] | Authentication (phenotypic and genotypic), contamination screening, passage number control [3] |

The Reproducibility Crisis in Scientific Research

Scope and Impact

The scientific community faces significant challenges regarding reproducibility. A 2016 Nature survey of 1,576 researchers found that, in the field of biology alone, over 70% of researchers had tried and failed to reproduce the findings of other scientists, and approximately 60% had failed to reproduce their own findings [2] [3] [4]. This reproducibility crisis has far-reaching implications, including slower scientific progress, wasted time and money, decreased efficiency, and erosion of public trust in scientific research [2] [3].

The financial impact is substantial. A 2015 meta-analysis estimated that $28 billion per year is spent on preclinical research that is not reproducible [3]. When considering avoidable waste across biomedical research, as much as 85% of expenditure may be wasted due to factors that contribute to non-reproducible research, such as inappropriate study design and failure to adequately address biases [3].

Contributing Factors to Non-Reproducibility

Multiple interconnected factors contribute to the reproducibility crisis:

- Lack of access to methodological details, raw data, and research materials [2] [3]

- Use of misidentified, cross-contaminated, or over-passaged cell lines and microorganisms [2] [3]

- Inability to manage complex datasets [2]

- Poor research practices and experimental design [2] [3]

- Cognitive biases including confirmation bias, selection bias, and reporting bias [3]

- Competitive academic culture that rewards novel findings and undervalues negative results [3]

Best Practices for Enhancing Reproducibility

Framework for Improved Reproducibility

Addressing the reproducibility crisis requires systematic changes across the scientific research ecosystem. Based on evidence from multiple studies, the following practices significantly enhance reproducibility:

- Robust sharing of data and materials

- Use of authenticated biomaterials

- Enhanced training and education

- Transparent reporting

- Pre-registration of studies

Regulatory and Standards Framework

For analytical chemistry applications in regulated environments, method validation and verification provide structured approaches to ensure reproducibility:

- Method Validation: A comprehensive process proving an analytical method is acceptable for its intended use, required when developing new methods or transferring methods between labs [9]

- Method Verification: Confirms that a previously validated method performs as expected in a specific laboratory, used when adopting standard methods in a new lab [9]

Adherence to established guidelines such as ICH M10 for bioanalytical method validation provides a standardized framework for assessing method performance and bias between laboratories [8].

The concepts of direct, analytic, and systemic replication represent a hierarchy of approaches for validating scientific findings in analytical chemistry and related disciplines. Direct replication establishes fundamental reliability of findings, analytic replication verifies data integrity and analytical processes, while systemic replication tests the broader applicability of methods across different conditions and laboratories.

The experimental evidence demonstrates that well-established analytical techniques like ICP-MS, BET, TEM/SEM, and ELS generally show good interlaboratory reproducibility with relative standard deviations below 20% and maximal fold differences typically under 1.5 between laboratories [6]. However, the reproducibility crisis highlighted by surveys showing most researchers cannot reproduce others' work (or even their own) underscores the need for systematic improvements in how scientific research is conducted, reported, and validated [2] [3] [4].

Implementing robust cross-validation protocols between laboratories, following established methodological guidelines, promoting data and material sharing, and fostering a culture that values transparency and replication are essential steps toward enhancing reproducibility in analytical chemistry and building a more reliable foundation for scientific advancement.

The self-correcting mechanism of the scientific method depends fundamentally on the ability of researchers to reproduce the findings of published studies to strengthen evidence and build upon existing work. Reproducibility serves as the cornerstone of cumulative knowledge production, ensuring transparency in research practices and validating scientific claims. However, across multiple scientific disciplines, particularly in life sciences and biomedical research, concerns have grown regarding a perceived "reproducibility crisis" characterized by the frequent inability to replicate previously published findings. This phenomenon threatens the very foundation of scientific advancement and carries substantial economic and scientific consequences.

The American Society for Cell Biology (ASCB) has developed a multi-tiered approach to defining reproducibility, recognizing subtle differences in how the term is perceived throughout the scientific community. These include direct replication (reproducing results using the same experimental design and conditions), analytic replication (reproducing findings through reanalysis of the original dataset), systemic replication (reproducing published findings under different experimental conditions), and conceptual replication (evaluating the validity of a phenomenon using different experimental conditions or methods). While standardized definitions continue to evolve, the fundamental principle remains: scientific progress depends on the verification and confirmation of research outcomes through independent repetition.

Quantifying the Financial Burden of Irreproducible Research

Direct Economic Costs

The economic impact of irreproducible research represents a significant drain on scientific resources and research efficiency. A comprehensive analysis published in PLOS Biology estimated that the United States alone spends approximately $28 billion annually on preclinical research that cannot be reproduced [10] [11] [12]. This staggering figure was derived from 2012 data indicating that of the $114.8 billion spent annually on life sciences research in the U.S., approximately $56.4 billion (49%) was allocated to preclinical research. Applying a conservative irreproducibility rate of 50% yields the $28 billion estimate for wasted expenditures [10].

The analysis employed a probability bounds approach, estimating that the cumulative prevalence of irreproducible preclinical research lies between 18% (assuming maximum overlap between error categories) and 88.5% (assuming minimal overlap between categories), with a natural point estimate of 53.3% [10]. This indicates that potentially more than half of all preclinical studies may suffer from irreproducibility issues, though precise quantification remains challenging due to inconsistent definitions of reproducibility across studies and limitations in available data.
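
The headline figures above follow from simple arithmetic, reproduced here as a quick check. The sketch uses only the numbers quoted in the text; the variable names are illustrative.

```python
# Figures quoted in the text (billions of USD, 2012 data)
life_sciences_spend = 114.8
preclinical_spend = 56.4
irreproducibility_rate = 0.50  # the conservative rate applied in the estimate

# Share of life sciences spending that goes to preclinical research
preclinical_share = preclinical_spend / life_sciences_spend

# Spending on preclinical research that cannot be reproduced
wasted_spend = preclinical_spend * irreproducibility_rate

# Midpoint of the probability bounds (18% to 88.5%)
lower_bound, upper_bound = 18.0, 88.5
midpoint = (lower_bound + upper_bound) / 2.0

print(f"Preclinical share: {preclinical_share:.1%}")
print(f"Irreproducible spend: ${wasted_spend:.1f} billion")
print(f"Midpoint of bounds: {midpoint:.2f}%")
```

The midpoint works out to 53.25%, which the text reports rounded as 53.3%, and the 50% rate applied to $56.4 billion gives $28.2 billion, reported as $28 billion.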

Table 1: Estimated Financial Impact of Irreproducible Preclinical Research in the U.S.

| Metric | Value | Source/Notes |
|---|---|---|
| Annual U.S. expenditure on life sciences research | $114.8 billion | Based on 2012 data [10] |
| Annual U.S. expenditure on preclinical research | $56.4 billion | Approximately 49% of total life sciences research [10] |
| Estimated irreproducibility rate | 50% (conservative estimate) | Range of 18%-88.5% based on probability bounds analysis [10] |
| Annual cost of irreproducible preclinical research | $28 billion | Direct calculated financial impact [10] [11] [12] |
| Pharmaceutical industry replication cost per study | $500,000-$2,000,000 | Requires 3-24 months per study [10] |

Extended Economic and Opportunity Costs

Beyond these direct expenditures, irreproducible research generates substantial indirect costs and opportunity losses. The "house of cards" effect, wherein future research builds upon incorrect findings, may inflate the total economic impact to between $13.5 billion and $270 billion annually when accounting for wasted downstream resources and delayed scientific progress [13]. Pharmaceutical companies particularly suffer from developing drugs based on irreproducible findings, with medications like Prempro, Xigris, Plavix, and Avastin being approved despite pivotal clinical trials that later studies failed to reproduce [13].

The resource waste extends beyond financial considerations to encompass significant time investments from researchers. Surveys indicate that scientists spend approximately 30% of their total research time attempting to reproduce other researchers' findings [14]. For an early-career researcher on a two-year fellowship, this amounts to roughly 7.2 months of potentially unproductive effort that significantly impacts career progression in a system that often prioritizes novel findings over verification studies [14].

Primary Categories of Research Irreproducibility

The problem of irreproducible research stems from multiple interconnected factors rather than a single cause. Freedman et al. (2015) categorized the root causes of irreproducibility into four primary areas, estimating the prevalence of errors in each category [10]:

Table 2: Categories and Prevalence of Errors Leading to Irreproducible Research

| Error Category | Description | Prevalence Range | Midpoint Estimate |
|---|---|---|---|
| Study Design | Flaws in experimental design, including inadequate blinding, randomization, power calculations, and statistical analysis | 11%-27% | 19% |
| Biological Reagents and Reference Materials | Use of contaminated, misidentified, or over-passaged cell lines and microorganisms | 16%-36% | 26% |
| Laboratory Protocols | Insufficient methodological details, failure to account for environmental variables, lack of standardization | 12%-27% | 19% |
| Data Analysis and Reporting | Inappropriate statistical analysis, selective reporting of results, failure to publish negative findings | 14%-25% | 19% |

Contributing Systemic Factors

Beyond these categorical errors, several systemic factors within the scientific research environment contribute significantly to irreproducibility:

Competitive Research Culture: The academic research system disproportionately rewards novel, positive findings over negative results or replication studies. University hiring and promotion criteria often emphasize publication in high-impact journals, creating disincentives for researchers to pursue reproducibility studies [3] [15]. This "publish or perish" mentality sometimes encourages questionable research practices.

Insufficient Methodological Detail: Many publications fail to provide comprehensive methodological details necessary for other researchers to replicate experiments accurately. The Reproducibility Project: Cancer Biology found that replication teams often devoted extensive time to chasing down protocols and reagents that were inadequately described in original publications [16].

Cognitive Biases: Various subconscious biases affect research practices, including confirmation bias (interpreting evidence to confirm existing beliefs), selection bias (improper randomization), the bandwagon effect (accepting popular ideas without sufficient evaluation), and reporting bias (selectively revealing or suppressing information) [3].

Biological Complexity: Some irreproducibility stems from legitimate biological factors rather than methodological flaws. Treatment effects may depend on specific phenotypic characteristics, environmental conditions, or genetic backgrounds of model organisms. Highly standardized animal models, particularly inbred rodent strains, may produce results that cannot be generalized across different genetic backgrounds [16].

Experimental Design and Methodological Considerations

Standardized Experimental Protocols for Cross-Laboratory Validation

Implementing rigorous, standardized experimental protocols is essential for enhancing research reproducibility, particularly for cross-laboratory validation studies. The following methodological framework provides a foundation for designing reproducible experiments:

Preregistration of Study Designs: Researchers should preregister proposed scientific studies, including detailed methodologies and analysis plans, prior to initiating experiments. This approach encourages careful scrutiny of all research process components and discourages suppression of negative results that do not support initial hypotheses [3].

Comprehensive Methodological Reporting: Publications must include thorough descriptions of research methodologies, explicitly reporting key experimental parameters such as blinding procedures, instrumentation specifications, number of replicates, interpretation criteria, statistical analysis methods, randomization protocols, and criteria for data inclusion or exclusion [3]. The Reproducibility Project: Cancer Biology demonstrated that insufficient methodological detail represents a major obstacle to replicating published studies [16].

Authentication of Biological Materials: Researchers should implement rigorous authentication protocols for all biological reagents, including cell lines and microorganisms. This requires a multifaceted approach confirming phenotypic and genotypic traits while verifying the absence of contaminants. Starting experiments with traceable, authenticated reference materials and routinely evaluating biomaterials throughout the research workflow significantly enhances data reliability [3].

Robust Statistical Design: Studies must incorporate appropriate statistical power calculations during the design phase to ensure adequate sample sizes. Researchers should receive training in proper statistical methodology and experimental design to substantially improve the validity and reproducibility of their work [3]. Even well-designed replication studies require greater statistical power than original studies to confirm or refute previous results [16].
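
To make the power calculation concrete, the sketch below estimates the number of samples per group needed to compare two means. It uses the standard normal-approximation formula n = 2((z_alpha + z_power) / d)^2, where d is the standardized effect size (difference in means divided by the standard deviation). The function name and the example effect size are assumptions chosen for illustration; exact t-based calculations give slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist


def samples_per_group(effect_size, alpha=0.05, power=0.80):
    """Samples per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_alpha + z_power) / effect_size) ** 2.
    """
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_power = NormalDist().inv_cdf(power)
    return ceil(2.0 * ((z_alpha + z_power) / effect_size) ** 2)


# Hypothetical medium effect (d = 0.5) at two target power levels
original_n = samples_per_group(0.5, power=0.80)
replication_n = samples_per_group(0.5, power=0.95)
print(f"Original study (80% power): {original_n} per group")
print(f"Replication (95% power): {replication_n} per group")
```

Raising the target power from 80% to 95% increases the required sample size substantially, which is why a confirmatory replication typically needs more samples than the study it tests.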

Three-Stage Research Validation Model

A proposed solution to enhance reproducibility involves a three-stage research validation process that balances exploratory innovation with rigorous verification [16]. This model addresses the fundamental tension between preclinical researchers' need for freedom to explore knowledge boundaries and clinical researchers' reliance on reproducible findings to weed out false positives.

Stage 1: Exploratory Research: This initial phase allows researchers to generate and support hypotheses without the strict constraints of statistical rigor required for confirmatory studies. Researchers can "fool around" with preliminary studies without needing every experiment to achieve statistical significance, reducing wasted resources on premature verification [16].

Stage 2: Independent Confirmatory Study: Promising findings from exploratory research progress to rigorous independent verification conducted by a separate laboratory following the highest standards of methodological rigor. This stage requires higher statistical power than the original study to properly confirm or refute previous results [16].

Stage 3: Multi-Center Validation: Successful independently replicated findings advance to validation across multiple research centers, creating the foundation for human clinical trials to test new drug candidates or therapies. This stage establishes external validity across different experimental environments and research teams [16].

Essential Research Reagents and Materials Solutions

The integrity of research reagents and reference materials represents a critical factor in ensuring experimental reproducibility. Approximately 26% of irreproducible research stems from issues with biological reagents and reference materials, making this the single largest category contributing to replication failures [10]. Implementing rigorous standards for research materials management is therefore essential for enhancing reproducibility.

Table 3: Essential Research Reagent Solutions for Reproducible Science

| Reagent Category | Key Reproducibility Challenges | Recommended Solutions | Verification Methods |
|---|---|---|---|
| Cell Lines | Cross-contamination, misidentification, phenotypic drift through serial passaging, microbial contamination | Use low-passage authenticated stocks, regular mycoplasma testing, implement cell line banking | STR profiling, isoenzyme analysis, karyotyping, morphological validation |
| Microorganisms | Genetic drift, contamination, improper preservation | Use reference strains from reputable repositories, proper cryopreservation protocols | Phenotypic characterization, genotypic verification, contamination screening |
| Antibodies | Lot-to-lot variability, specificity issues, improper validation | Request validation data from suppliers, perform in-house verification, use renewable aliquots | Western blot confirmation, immunofluorescence validation, knockout/knockdown controls |
| Chemical Compounds | Purity variability, degradation, solvent effects | Source from certified suppliers, implement proper storage conditions, verify purity before use | Chromatographic analysis, mass spectrometry, functional validation |
| Reference Materials | Lack of traceability, insufficient characterization | Use certified reference materials, implement proper storage and handling | Regular quality control testing, comparison with standards |

The substantial financial and scientific costs of non-reproducible research demand systematic reforms across the scientific enterprise. With an estimated $28 billion annually wasted on irreproducible preclinical research in the U.S. alone, and potentially billions more in downstream costs from misdirected drug development programs, the economic imperative for change is clear [10] [13]. Beyond financial considerations, irreproducible research threatens scientific progress, delays development of life-saving therapies, and erodes public trust in science.

Addressing this multifaceted challenge requires coordinated efforts across multiple stakeholders. Researchers must adopt more rigorous experimental practices, including robust statistical design, comprehensive methodological reporting, and rigorous authentication of biological materials. Journals and publishers should implement more stringent reporting requirements and create publication avenues for negative results and replication studies. Funding agencies need to establish support mechanisms for replication studies and confirmatory research, while academic institutions must reform reward structures to value reproducibility alongside innovation.

As the scientific community works to enhance research reproducibility, it must balance the need for verification with preserving the creative, exploratory nature of scientific discovery. The goal is not to achieve perfect reproducibility—which would be neither possible nor desirable for cutting-edge research—but to create a research ecosystem that produces a sufficiently high level of reliable, verifiable knowledge to efficiently advance human health and scientific understanding [16]. Through collaborative efforts to implement standards, best practices, and cultural reforms, the scientific community can reduce the staggering costs of irreproducible research while accelerating the pace of meaningful discovery.

Method validation is a critical process in analytical chemistry, demonstrating that a particular procedure is suitable for its intended purpose. For researchers and scientists involved in the cross-validation of inorganic analysis methods between laboratories, understanding three core principles—specificity, accuracy, and precision—is fundamental to ensuring reliable, reproducible results. Regulatory bodies including the International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and others mandate rigorous validation to ensure data integrity and public safety [17] [18].

The objective of validation is to demonstrate through specific laboratory investigations that the performance characteristics of the method are both suitable for the intended analytical applications and reliable [18]. In the context of cross-validation between laboratories, these principles become even more crucial as they ensure that data generated at different sites can be combined and compared with confidence, a requirement explicitly addressed in guidelines such as ICH M10 for bioanalytical methods [8]. This article examines the core principles of specificity, accuracy, and precision, providing a structured comparison and experimental protocols relevant to inorganic analysis method validation.

Defining the Core Principles

Specificity

Specificity refers to the ability of an analytical method to assess unequivocally the analyte in the presence of components that may be expected to be present in the sample matrix [17] [18]. This typically includes impurities, degradation products, or other matrix components. In practical terms, a specific method can accurately measure the target analyte without interference from other substances. For inorganic analysis, this is particularly important when dealing with complex sample matrices where multiple ions or elements may co-exist and potentially interfere with the detection or quantification of the target analyte.

Accuracy

Accuracy is defined as the closeness of agreement between a test result and the accepted reference value or true value [17] [18]. It is typically expressed as percent recovery by the assay of a known amount of analyte added to the sample, or as the difference between the mean result and the accepted true value, accompanied by confidence intervals. Accuracy indicates the correctness of measurements and is often assessed by analyzing a standard of known concentration or by spiking a placebo with a known amount of analyte.
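
A spike-recovery calculation can be sketched as follows. The data are hypothetical and follow a commonly used layout of nine determinations across three concentration levels; the function name, the concentrations, and the 98-102% window used in the final check are illustrative assumptions rather than fixed requirements.

```python
from statistics import mean, stdev


def percent_recovery(measured, spiked):
    """Recovery of a known spiked amount, in percent."""
    return 100.0 * measured / spiked


# Hypothetical spike-recovery data: spiked level (mg/L) -> measured replicates
spike_results = {
    50.0: [49.6, 50.3, 49.9],
    100.0: [99.1, 100.8, 100.2],
    150.0: [148.7, 151.0, 149.8],
}

recoveries = [
    percent_recovery(measured, level)
    for level, replicates in spike_results.items()
    for measured in replicates
]
mean_recovery = mean(recoveries)
rsd = 100.0 * stdev(recoveries) / mean_recovery

print(f"Mean recovery: {mean_recovery:.1f}% (RSD {rsd:.2f}%)")
print(f"Within 98-102%: {98.0 <= mean_recovery <= 102.0}")
```

Pooling recoveries across levels gives one headline figure; reporting the mean recovery per level as well helps reveal concentration-dependent bias.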

Precision

Precision describes the closeness of agreement (degree of scatter) among a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [17] [18]. Precision is considered at three levels:

- Repeatability: Precision under the same operating conditions over a short time period (intra-assay precision).

- Intermediate Precision: Within-laboratory variations (different days, analysts, equipment, etc.).

- Reproducibility: Precision between different laboratories, often assessed through collaborative studies [18].

Unlike accuracy, which measures correctness, precision measures only the scatter of results. A method can be highly precise yet biased, producing tightly clustered values that sit far from the true value.

Comparative Analysis of Validation Parameters

The following tables summarize the key aspects, measurement approaches, and acceptance criteria for specificity, accuracy, and precision, providing a clear comparison of these fundamental validation parameters.

Table 1: Core Definitions and Measurement Approaches

| Parameter | Core Definition | Primary Measurement Approach | Key Interferences |

|---|---|---|---|

| Specificity | Ability to unequivocally assess analyte amidst potential interferents [18] | Analysis of samples with and without potential interferents; chromatographic peak purity assessment | Matrix components, impurities, degradation products, structurally similar compounds |

| Accuracy | Closeness of test results to the true value [18] | Comparison to reference standard; spike recovery experiments (% recovery) [18] | Systematic errors (bias), sample preparation losses, matrix effects |

| Precision | Closeness of agreement between individual test results [18] | Repeated measurements (same sample, same conditions); statistical analysis (SD, RSD) [18] | Random errors, instrument fluctuations, environmental variations |

Table 2: Experimental Design and Acceptance Criteria

| Parameter | Typical Experimental Design | Common Acceptance Criteria | Data Presentation |

|---|---|---|---|

| Specificity | Analyze blank matrix, analyte standard, and potential interferents individually and in combination | No interference observed at analyte retention time; resolution > 1.5 between analyte and closest eluting interference | Chromatograms/spectra overlay; resolution calculations |

| Accuracy | Minimum 9 determinations over minimum 3 concentration levels covering specified range [17] | Recovery typically 98-102% for drug substance; 95-105% for formulations; RSD < 2% [17] | % Recovery with confidence intervals; difference plots |

| Precision | Minimum 6 replicate preparations of homogeneous sample; intermediate precision with different analysts/days [17] | RSD ≤ 1% for drug substance; ≤ 2% for drug product for repeatability [17] | Mean, standard deviation (SD), relative standard deviation (RSD) |

Experimental Protocols for Cross-Validation Studies

Protocol for Specificity Assessment in Inorganic Analysis

Objective: To demonstrate that the analytical method can unequivocally quantify the target inorganic analyte(s) in the presence of potential interferents that may be present in the sample matrix.

Materials and Reagents:

- High-purity reference standards of target analytes

- Potential interfering substances (other ions, matrix components)

- Appropriate solvents and reagents of analytical grade

- Certified reference materials (when available)

Procedure:

- Prepare separate solutions of the target analyte at the working concentration.

- Prepare solutions of potential interfering substances at concentrations expected in sample matrices.

- Prepare a mixture containing the target analyte and all potential interferents.

- Analyze all solutions using the validated method.

- Compare chromatograms/spectra for peak purity, resolution, and any observed interferences.

Evaluation: The method is considered specific if there is no interference observed at the retention time/migration time of the target analyte, and the analyte peak is pure (as demonstrated by diode array detection or mass spectrometry). For techniques without separation, the signal must be attributable only to the target analyte.

Protocol for Accuracy Evaluation Using Spike Recovery

Objective: To determine the accuracy of the method for quantifying inorganic analytes in specific matrices.

Materials and Reagents:

- Stock standard solutions of target analytes

- Blank matrix (free of the target analytes)

- Appropriate calibration standards

Procedure:

- Prepare a blank sample matrix (e.g., purified water for water analysis, acid-digested sample for solid analysis).

- Spike the blank matrix with known quantities of target analytes at three concentration levels (low, medium, high) covering the specified range.

- Prepare at least three replicates at each concentration level.

- Analyze all samples using the validated method.

- Calculate the recovery for each spike level using the formula: Recovery (%) = (Measured Concentration / Spiked Concentration) × 100.

Evaluation: Calculate mean recovery and relative standard deviation at each concentration level. Compare results to established acceptance criteria (typically 95-105% recovery with RSD < 5%, though this may vary based on the analyte and matrix).
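The recovery formula and the evaluation step above can be sketched in a few lines of Python. This is a minimal illustration, not a validated calculation tool: the replicate values, the mg/L unit, and the function names `recovery_percent` and `summarize_level` are hypothetical, and the 95-105% / RSD < 5% limits are simply the typical criteria quoted above.

```python
from statistics import mean, stdev

def recovery_percent(measured, spiked):
    """Recovery (%) = (measured concentration / spiked concentration) x 100."""
    return measured / spiked * 100.0

def summarize_level(measured_values, spiked):
    """Return (mean recovery %, RSD %) for the replicates at one spike level."""
    recoveries = [recovery_percent(m, spiked) for m in measured_values]
    avg = mean(recoveries)
    rsd = stdev(recoveries) / avg * 100.0  # sample SD relative to the mean
    return avg, rsd

# Hypothetical triplicate results (mg/L) at low, medium, and high spike levels
spike_data = {
    1.0: [0.97, 1.02, 0.99],
    5.0: [4.90, 5.10, 5.05],
    10.0: [9.80, 10.10, 9.95],
}

for spiked, measured in spike_data.items():
    avg, rsd = summarize_level(measured, spiked)
    passed = 95.0 <= avg <= 105.0 and rsd < 5.0  # typical criteria, adjust per analyte
    print(f"{spiked:5.1f} mg/L: mean recovery {avg:.1f}%, RSD {rsd:.1f}%, pass={passed}")
```

Keeping the acceptance limits as explicit values in the script makes it easy for each participating laboratory to apply the same criteria.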

Protocol for Precision Assessment (Repeatability and Intermediate Precision)

Objective: To determine the precision of the method under different conditions, simulating inter-laboratory variation.

Materials and Reagents:

- Homogeneous sample material or reference material

- Consumables from different lots (if possible)

- Multiple analysts (for intermediate precision)

Procedure:

- Repeatability: A single analyst prepares and analyzes at least six independent samples from the same homogeneous sample batch on the same day using the same instrument.

- Intermediate Precision: Different analysts analyze the same homogeneous sample on different days, using different instruments (if available), and different reagent lots.

- Calculate the mean, standard deviation, and relative standard deviation for each set of results.

Evaluation: Compare the RSD values to established acceptance criteria. For inorganic analysis at concentration levels > 1 ppm, RSD values < 5% are often acceptable for repeatability, with slightly higher values acceptable for intermediate precision.
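As a rough sketch of the calculation step, the snippet below computes the RSD for a repeatability set and for results pooled across analysts and days. All values and labels (`analyst_A`, `day_1`, and so on) are hypothetical, and pooling every intermediate-precision result into one RSD is a simplifying assumption; a formal study might use an analysis of variance instead.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return stdev(values) / mean(values) * 100.0

# Hypothetical repeatability set: one analyst, one day, six preparations (mg/L)
repeatability = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0]

# Hypothetical intermediate-precision sets keyed by (analyst, day)
intermediate = {
    ("analyst_A", "day_1"): [10.1, 9.9, 10.0],
    ("analyst_B", "day_2"): [10.3, 10.4, 10.2],
    ("analyst_A", "day_3"): [9.7, 9.8, 9.9],
}

# Simplifying assumption: pool all results to get one overall RSD
pooled = [value for runs in intermediate.values() for value in runs]

print(f"Repeatability RSD: {rsd_percent(repeatability):.2f}%")
print(f"Intermediate precision RSD (pooled): {rsd_percent(pooled):.2f}%")
```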

Visualization of Method Validation Relationships

Diagram 1: Method validation parameter relationships showing how precision decomposes into sub-parameters.

Diagram 2: Method validation workflow from planning through lifecycle management, aligned with modern regulatory guidelines.

The Researcher's Toolkit for Method Validation

Table 3: Essential Research Reagent Solutions for Inorganic Analysis Method Validation

| Reagent/Material | Function in Validation | Quality Requirements | Application Notes |

|---|---|---|---|

| Certified Reference Materials (CRMs) | Establish traceability and accuracy; method calibration | Certified purity with uncertainty statements; NIST-traceable | Select matrix-matched CRMs when possible for best accuracy |

| High-Purity Analytical Standards | Preparation of calibration standards and spike solutions | ≥99.0% purity; properly characterized and stored | Verify purity and stability before use; prepare fresh solutions as needed |

| Ultra-Pure Solvents and Acids | Sample preparation and dilution; blank preparation | Trace metal grade; low background for target analytes | Always include method blanks to account for potential contamination |

| Matrix-Matched Quality Controls | Accuracy and precision assessment in relevant matrix | Consistent composition; well-characterized | Prepare at low, medium, and high concentrations for validation |

| Stable Isotope Standards | Internal standards for mass spectrometry methods | Isotopic purity >98%; chemical purity >95% | Essential for correcting matrix effects in ICP-MS analyses |

Regulatory Context and Cross-Validation Considerations

The recent updates to regulatory guidelines, particularly ICH Q2(R2) and ICH Q14, emphasize a lifecycle approach to analytical procedures [17]. These guidelines highlight the importance of the Analytical Target Profile (ATP) - a prospective summary of the method's intended purpose and desired performance characteristics [17]. For cross-validation of inorganic analysis methods between laboratories, establishing a clear ATP at the outset is crucial for harmonizing expectations and acceptance criteria across sites.

Cross-validation between laboratories presents unique challenges, particularly in establishing statistical criteria for equivalence. Recent publications highlight ongoing debates regarding appropriate acceptance criteria for cross-validation studies [8]. Some researchers propose standardized approaches involving sufficient samples (n>30) spanning the concentration range, with initial assessment of equivalency if the 90% confidence interval of the mean percent difference is within ±30%, followed by evaluation of concentration-related bias trends [8].

The presence of an imperfect gold standard can significantly impact measured validation parameters, particularly specificity [19]. Research demonstrates that decreasing gold standard sensitivity is associated with increasing underestimation of test specificity, with this effect magnified at higher prevalence of the measured condition [19]. This is particularly relevant for inorganic analysis methods where certified reference materials may have uncertainties that affect their use as gold standards.

Specificity, accuracy, and precision represent foundational principles of method validation that are particularly critical for cross-validation of inorganic analysis methods between laboratories. As regulatory guidelines evolve toward a more holistic, lifecycle approach, understanding the interrelationships between these parameters and their appropriate assessment becomes increasingly important for researchers and drug development professionals.

The experimental protocols and comparative data presented provide a practical framework for designing and evaluating cross-validation studies. By establishing clear acceptance criteria up-front through an Analytical Target Profile and employing rigorous statistical assessment of bias and trends, laboratories can ensure that methods perform consistently across sites, supporting the reliability of analytical data used in regulatory decision-making and pharmaceutical development.

Table of Contents

- Introduction

- Defining the Key Performance Criteria

- Experimental Protocols for Determination

- A Case Study in Cross-Validation

- The Scientist's Toolkit

- Conclusion

In the field of analytical chemistry, particularly in the cross-validation of methods between laboratories for inorganic analysis, the reliability of data is paramount. Cross-validation studies are essential to ensure that assay data from all study sites where sample analysis is performed can be compared throughout clinical trials or environmental monitoring programs [20]. For results to be trusted across different instruments, operators, and locations, the analytical methods must be rigorously characterized. This guide focuses on four foundational performance criteria—Limit of Detection (LOD), Limit of Quantitation (LOQ), Linearity, and Robustness—providing a comparative framework and detailed experimental protocols to ensure your methods are fit for purpose and yield comparable results in any laboratory setting.

Defining the Key Performance Criteria

The following table summarizes the core definitions and purposes of each key performance parameter.

| Parameter | Definition | Primary Purpose |

|---|---|---|

| Limit of Blank (LoB) | The highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested [21]. | To characterize the background noise of an assay and define the threshold above which a signal can be distinguished from the blank [21]. |

| Limit of Detection (LOD) | The lowest analyte concentration likely to be reliably distinguished from the LoB and at which detection is feasible [21]. | To determine the lowest concentration at which an analyte can be detected, but not necessarily quantified with acceptable precision [21] [22]. |

| Limit of Quantitation (LOQ) | The lowest concentration at which the analyte can not only be reliably detected but at which some predefined goals for bias and imprecision are met [21]. | To establish the lowest concentration that can be measured with acceptable accuracy, precision, and total error [21] [22]. |

| Linearity | The ability of an analytical procedure to obtain test results that are directly proportional to the concentration of analyte in the sample within a given range [22] [23]. | To demonstrate a directly proportional relationship between analyte concentration and instrument response, defining the working range of the assay [23]. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [22]. | To evaluate the reliability of an analytical method during normal usage and identify critical parameters that require strict control [22]. |

It is crucial to understand the relationship between LoB, LOD, and LOQ. The LoB is determined from blank samples and represents the assay's background noise. The LOD, which is a higher concentration than the LoB, is the point where an analyte can be reliably detected. The LOQ, which is equal to or higher than the LOD, is the level at which precise and accurate quantification begins [21]. The linearity of a method is typically validated across a range that extends from the LOQ to the upper limit of quantitation [22] [23].

Experimental Protocols for Determination

A robust cross-validation study requires standardized experimental protocols. The following section details the methodologies for determining each parameter, with supporting data presented in clear tables.

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

The CLSI EP17 guideline provides a standardized approach for determining LOD and LOQ, which is crucial for inter-laboratory consistency [21].

Protocol for LOD:

- Test Samples: Measure replicates (recommended n=60 for a manufacturer, n=20 for verification) of a blank sample (containing no analyte) and a low-concentration analyte sample [21].

- Calculation:

- First, calculate the LoB: LoB = mean_blank + 1.645 × SD_blank. This assumes a Gaussian distribution, where 95% of blank values will fall below this limit [21].

- Then, calculate the LOD: LOD = LoB + 1.645 × SD_low concentration sample. This ensures that 95% of measurements at the LOD concentration will exceed the LoB, minimizing false negatives [21].

- Verification: The provisional LOD is confirmed if no more than 5% of measurements from a sample with LOD concentration fall below the LoB [21].
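The LoB and LOD calculations and the verification check above can be sketched as follows. This is an illustrative implementation of the formulas as quoted here, not a substitute for the CLSI EP17 procedure itself; the function names and the short data lists are hypothetical (a real study would use the replicate counts given above).

```python
from statistics import mean, stdev

Z_95 = 1.645  # one-sided 95% Gaussian quantile used in both formulas

def limit_of_blank(blank_results):
    """LoB = mean of blanks + 1.645 x SD of blanks."""
    return mean(blank_results) + Z_95 * stdev(blank_results)

def limit_of_detection(lob, low_sample_results):
    """LOD = LoB + 1.645 x SD of the low-concentration sample."""
    return lob + Z_95 * stdev(low_sample_results)

def lod_verified(lob, results_at_lod, max_fraction_below=0.05):
    """True if no more than 5% of results at the LOD concentration fall below the LoB."""
    below = sum(1 for r in results_at_lod if r < lob)
    return below / len(results_at_lod) <= max_fraction_below

# Hypothetical, shortened data sets (arbitrary concentration units)
blanks = [0.0, 0.2, 0.1, 0.1]
low_sample = [0.4, 0.6, 0.5, 0.5]

lob = limit_of_blank(blanks)
lod = limit_of_detection(lob, low_sample)
print(f"LoB = {lob:.3f}, provisional LOD = {lod:.3f}")
print("LOD verified:", lod_verified(lob, low_sample))
```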

Protocol for LOQ:

- Test Samples: Analyze replicates of samples with concentrations at or just above the LOD [21].

- Assessment: The LOQ is the lowest concentration at which the analyte can be quantified with predefined acceptable levels of bias and imprecision (e.g., a specific percentage coefficient of variation, such as 20%) [21] [22]. It is determined by testing successively higher concentrations until these goals are met.

Alternative Approaches (Signal-to-Noise and Calibration Curve): For chromatographic methods, LOD and LOQ can be estimated from the signal-to-noise ratio. Typically, an LOD requires a signal-to-noise ratio of 3:1, while an LOQ requires a ratio of 10:1 [23]. Separately, both limits can be calculated from the calibration curve using the formulas LOD = 3.3 × (SD of response / slope of calibration curve) and LOQ = 10 × (SD of response / slope of calibration curve) [23].
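A minimal sketch of the slope-based formulas is shown below. It assumes the "SD of response" is taken as the residual standard deviation of an ordinary least-squares calibration line, which is one common choice but not the only one; the calibration data and function names are hypothetical.

```python
from math import sqrt

def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def residual_sd(x, y, slope, intercept):
    """Residual standard deviation with n - 2 degrees of freedom."""
    ss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return sqrt(ss / (len(x) - 2))

def lod_loq_from_calibration(x, y):
    """LOD = 3.3 * SD / slope and LOQ = 10 * SD / slope."""
    slope, intercept = fit_line(x, y)
    sd = residual_sd(x, y, slope, intercept)  # assumption: SD of response = residual SD
    return 3.3 * sd / slope, 10.0 * sd / slope

# Hypothetical low-level calibration data (concentration, instrument response)
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
resp = [2.1, 3.9, 6.2, 7.8, 10.0]
lod, loq = lod_loq_from_calibration(conc, resp)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (same units as conc)")
```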

The following table summarizes the experimental requirements for LOD and LOQ.

| Parameter | Sample Type | Recommended Replicates (Establishment) | Key Calculation / Criteria |

|---|---|---|---|

| LoB | Sample containing no analyte [21] | 60 [21] | LoB = mean_blank + 1.645 × SD_blank [21] |

| LOD | Sample with low concentration of analyte [21] | 60 [21] | LOD = LoB + 1.645 × SD_low concentration sample [21] |

| LOQ | Sample with low concentration at or above LOD [21] | 60 [21] | Lowest concentration meeting predefined bias and imprecision goals (e.g., %CV) [21] [22] |

Linearity and Range

The ICH Q2(R2) guideline outlines the process for demonstrating linearity [22].

Protocol:

- Preparation: Prepare a minimum of 5 standard solutions of the analyte at different concentrations, typically from 80% to 120% of the target concentration [23].

- Analysis: Analyze each concentration level in triplicate [23].

- Data Analysis: Plot the measured instrument response (e.g., peak area) against the known concentration of the standards.

- Assessment: Calculate the coefficient of determination (R²), which should typically be ≥ 0.999 [23]. The slope, y-intercept, and residual sum of squares of the regression line are also evaluated to confirm linearity.

Range: The range of an analytical procedure is the interval between the upper and lower concentrations of analyte for which it has been demonstrated that the analytical procedure has a suitable level of precision, accuracy, and linearity. It is normally derived from the linearity studies [23].
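The regression statistics named in the assessment step can be computed with a short script such as the one below. It is a sketch under stated assumptions: the five concentration levels (as % of target) and the responses, taken here as the mean of each triplicate, are hypothetical, and `linearity_stats` is an illustrative helper rather than part of any guideline.

```python
def linearity_stats(conc, response):
    """Least-squares slope, intercept, residual sum of squares, and R^2."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    rss = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, response))
    tss = sum((y - mean_y) ** 2 for y in response)
    return {"slope": slope, "intercept": intercept, "rss": rss, "r2": 1.0 - rss / tss}

# Hypothetical data: five levels from 80% to 120% of target,
# each response being the mean of a triplicate measurement
conc = [80.0, 90.0, 100.0, 110.0, 120.0]
response = [1602.0, 1795.0, 2004.0, 2198.0, 2401.0]

stats = linearity_stats(conc, response)
print(f"slope={stats['slope']:.3f}, intercept={stats['intercept']:.2f}, RSS={stats['rss']:.2f}")
print("meets R2 >= 0.999:", stats["r2"] >= 0.999)
```

Inspecting the residuals alongside R² is worthwhile, because a high R² alone can hide systematic curvature.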

The workflow for establishing linearity and range is systematic, as shown below.

Robustness

Robustness testing evaluates the method's reliability during normal use by introducing small, deliberate variations.

- Protocol:

- Identify Parameters: Select critical method parameters that could vary, such as pH of the mobile phase, temperature of the chromatographic column, flow rate, or wavelength detection [22] [23].

- Design Experiment: Systematically vary these parameters one at a time within a realistic, small range (e.g., flow rate ± 0.1 mL/min).

- Analysis: Analyze a sample (e.g., a system suitability test sample or a quality control sample) under each varied condition.

- Assessment: Compare the results (e.g., retention time, peak area, tailing factor, theoretical plates) to those obtained under standard conditions. The method is considered robust if the variations do not significantly affect the analytical results [22].

The following table illustrates how robustness can be tested for a liquid chromatography method.

| Parameter Varied | Example Variations | Measured Response |

|---|---|---|

| Mobile Phase pH | ± 0.1 units [22] | Retention time, peak shape, resolution |

| Column Temperature | ± 2°C [22] | Retention time, efficiency |

| Flow Rate | ± 0.1 mL/min [22] | Retention time, pressure, peak area |

| Detector Wavelength | ± 2 nm | Signal-to-noise ratio, peak area |
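One simple way to evaluate a one-factor-at-a-time robustness study is to express each varied-condition result as a percent change from the nominal result. The sketch below does this; the condition labels, the peak-area values, and the 2% tolerance are hypothetical placeholders, and what counts as a "significant" effect should come from the method's own acceptance criteria.

```python
def percent_change(nominal, varied):
    """Percent change of a varied-condition result relative to the nominal result."""
    return (varied - nominal) / nominal * 100.0

def robustness_report(nominal, varied_results, tolerance_pct):
    """Map each condition to (percent change, within tolerance?)."""
    report = {}
    for condition, value in varied_results.items():
        change = percent_change(nominal, value)
        report[condition] = (change, abs(change) <= tolerance_pct)
    return report

# Hypothetical peak areas under nominal and deliberately varied conditions
nominal_peak_area = 1000.0
varied_peak_areas = {
    "flow rate +0.1 mL/min": 985.0,
    "flow rate -0.1 mL/min": 1012.0,
    "column temperature +2 C": 996.0,
    "mobile phase pH +0.1": 1031.0,
}

report = robustness_report(nominal_peak_area, varied_peak_areas, tolerance_pct=2.0)
for condition, (change, ok) in report.items():
    print(f"{condition:28s} {change:+.1f}%  within tolerance: {ok}")
```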

A Case Study in Cross-Validation

A cross-validation study for the bioanalysis of lenvatinib in human plasma provides a concrete example of successfully applying these principles across multiple laboratories [20].

- Objective: To ensure that lenvatinib concentrations measured at five different global laboratories using seven distinct LC-MS/MS methods produced comparable pharmacokinetic data [20].

- Methodology: Each laboratory first validated its own method according to regulatory guidelines, establishing parameters like LOD, LOQ, linearity, and robustness. For the cross-validation, a central laboratory provided blinded quality control (QC) samples and clinical study samples with known concentrations. All laboratories then assayed these samples using their respective validated methods [20].

- Key Results: The study demonstrated high inter-laboratory consistency. The accuracy of QC samples was within ±15.3%, and the percentage bias for clinical study samples was within ±11.6% [20].

- Conclusion: This successful cross-validation confirmed that lenvatinib concentrations in human plasma could be reliably compared across different laboratories and clinical studies, despite variations in specific methodological details like sample extraction technique (protein precipitation, liquid-liquid extraction, or solid-phase extraction) and chromatographic conditions [20].

The process of such a multi-laboratory cross-validation study can be visualized as follows.

The Scientist's Toolkit

For researchers undertaking method validation and cross-validation studies, certain reagents and materials are essential. The following table details key items used in the cited lenvatinib study and their general functions in bioanalytical method development [20].

| Item | Function in the Analytical Method |

|---|---|

| Analyte Reference Standard | A high-purity substance used to prepare calibration standards and quality control samples; it is the benchmark for identifying and quantifying the target analyte [20]. |

| Internal Standard | A structurally similar analogue or stable isotope-labeled version of the analyte added to all samples to correct for variability during sample preparation and analysis [20]. |

| Blank Biological Matrix | The analyte-free biological fluid (e.g., human plasma) from the species of interest, used to prepare calibration curves and QC samples to mimic the study samples [20]. |

| Sample Extraction Materials | Materials for techniques like liquid-liquid extraction (LLE) or solid-phase extraction (SPE) to isolate and purify the analyte from the complex biological matrix, reducing interference [20]. |

| Chromatography Column | The heart of the separation system, where compounds are resolved based on their chemical interactions with the stationary phase [20]. |

| Mass Spectrometer | The detection system that identifies and quantifies analytes based on their mass-to-charge ratio, providing high specificity and sensitivity [20]. |

In the context of cross-validating inorganic analysis methods between laboratories, a deep and practical understanding of LOD, LOQ, linearity, and robustness is non-negotiable. These parameters form the bedrock of a reliable analytical method, ensuring that data generated in one lab is trustworthy and comparable to data generated in another. As demonstrated by the lenvatinib case study, a rigorous approach to method validation and cross-validation, guided by established protocols from CLSI and ICH, is key to success in global multi-site studies. By systematically defining, testing, and documenting these performance criteria, researchers and drug development professionals can ensure the integrity of their data, comply with regulatory standards, and advance scientific knowledge with confidence.

The Role of Cross-Validation in Preventing Overfitting and Data Leakage

In the rigorous world of scientific research, particularly in fields involving inorganic analysis methods and drug development, the reliability of predictive models and analytical procedures is paramount. Two pervasive threats to this reliability are overfitting and data leakage. Overfitting occurs when a model learns not only the underlying patterns in the training data (the "signal") but also the random fluctuations (the "noise"), leading to poor performance on new, unseen data [24]. Data leakage, a more insidious problem, happens when information from the validation or test set unintentionally influences the training process, creating overly optimistic and biased performance estimates [25] [26]. Within the specific context of cross-validation of inorganic analysis methods between laboratories, these issues can compromise the comparability of data across different sites and instruments, potentially derailing clinical trials and regulatory submissions.

Cross-validation (CV) serves as a powerful statistical technique to combat these challenges. It is a set of data sampling methods used by algorithm developers to avoid overoptimism in overfitted models and to estimate an algorithm's generalization performance—its ability to perform well on new, independent data [27]. This guide will objectively compare the performance of various cross-validation strategies, providing experimental data and detailed protocols to help researchers select the most appropriate method for validating their analytical and predictive models.

Core Concepts and Problems

Understanding Overfitting and Data Leakage

- Overfitting: A model is considered overfit when it fits the training dataset too closely, including its noise and outliers, but has a poor fit with new datasets [24]. This is akin to a student memorizing the answers to specific practice questions instead of understanding the underlying concept, and then failing a different test on the same topic. In machine learning, this manifests as high accuracy on the training set but significantly lower accuracy on a holdout test set [24].

- Data Leakage: This occurs when there is an improper overlap between the data used for model fitting and hyperparameter tuning and those used for testing [28]. This overlap biases the model's performance, making it uninformative regarding the model's true ability to generalize. A common cause is performing preprocessing steps (like scaling or feature selection) on the entire dataset before splitting it into training and validation sets [25]. Data leakage is a significant issue that can compromise model reliability and lead to non-reproducible findings, as noted in meta-analyses of scientific studies [26].

The Principle of Cross-Validation

Cross-validation addresses overfitting and leakage by systematically partitioning the available data to simulate training and testing on multiple subsets. The fundamental logic is illustrated below:

The core idea is to use the initial training data to generate multiple mini train-test splits. This process allows for hyperparameter tuning and performance estimation using only the original dataset while maintaining a holdout set for final evaluation [24]. By ensuring that the model is evaluated on data it was not trained on during each round, cross-validation provides a more realistic estimate of generalization error and helps prevent the model from learning spurious correlations.

Cross-Validation Techniques: A Comparative Analysis

Various cross-validation techniques have been developed to address different data structures and challenges. The table below provides a high-level comparison of the most common approaches.

Table 1: Comparison of Common Cross-Validation Techniques

| Technique | Core Principle | Advantages | Disadvantages | Ideal Use Case |

|---|---|---|---|---|

| K-Fold CV [27] | Randomly split data into K folds; each fold serves as a validation set once. | Reduces variance compared to LOOCV; computationally efficient. | Can be susceptible to bias with imbalanced datasets. | Standard practice for most tabular data with a balanced distribution. |

| Stratified K-Fold [25] | Ensures each fold preserves the same class distribution as the full dataset. | Provides more reliable performance metrics for imbalanced classes. | Only addresses imbalance in the target variable. | Classification problems with imbalanced datasets. |

| Leave-One-Out CV (LOOCV) [29] | K is set to the number of samples; each sample is a validation set once. | Low bias, uses almost all data for training. | High variance; computationally expensive for large datasets [29]. | Very small datasets where maximizing training data is critical. |

| Nested CV [25] [27] | Uses an outer loop for performance estimation and an inner loop for model selection. | Provides unbiased performance estimates when tuning hyperparameters. | Computationally very intensive. | Hyperparameter tuning and algorithm selection without a separate validation set. |

| Time Series Split [25] | Training set only includes data from prior to the validation set. | Preserves temporal order, prevents future data from influencing the past. | Not applicable to non-temporal data. | Time series forecasting and any data with a temporal component. |

| Leave-Profile-Out CV (LPOCV) [28] | All samples from a distinct group (e.g., a soil profile) are held out together. | Prevents data leakage from autocorrelated samples within the same group. | May increase the variance of the performance estimate. | Grouped data (e.g., samples from the same patient, lab, or profile). |
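The group-wise idea in the last row of the table applies directly to multi-laboratory data: all samples from one laboratory are held out together. The following sketch shows a plain-Python leave-one-group-out splitter; the function name and the laboratory labels are hypothetical, and the grouping variable could equally be a patient or a soil profile.

```python
def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices) for each distinct group.

    Every sample from the held-out group lands in the test set, so correlated
    samples from the same group never appear on both sides of a split.
    """
    for group in sorted(set(groups)):
        test_idx = [i for i, g in enumerate(groups) if g == group]
        train_idx = [i for i, g in enumerate(groups) if g != group]
        yield group, train_idx, test_idx

# Hypothetical sample-to-laboratory assignment
sample_labs = ["lab_1", "lab_1", "lab_2", "lab_2", "lab_2", "lab_3", "lab_3"]

for held_out, train_idx, test_idx in leave_one_group_out(sample_labs):
    print(f"hold out {held_out}: train on {train_idx}, test on {test_idx}")
```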

Quantitative Performance Comparison

The choice of cross-validation strategy has a direct and measurable impact on the reported performance of a model. The following table summarizes findings from various applied studies that highlight these differences.

Table 2: Impact of CV Strategy on Reported Model Performance

| Field of Study | Model / Prediction Task | Cross-Validation Strategy | Reported Performance | Key Finding | Source |

|---|---|---|---|---|---|

| 3D Digital Soil Mapping | Prediction of soil properties (e.g., CEC, clay) | Leave-Sample-Out CV (LSOCV) | 29-62% higher (with data augmentation) | LSOCV, which ignores vertical autocorrelation, produces overly optimistic metrics due to data leakage. | [28] |

| 3D Digital Soil Mapping | Prediction of soil properties (e.g., CEC, clay) | Leave-Profile-Out CV (LPOCV) | Baseline (more realistic) | LPOCV, which prevents leakage by holding out entire profiles, provides a more realistic performance estimate. | [28] |

| Major Depressive Disorder (MDD) | Predicting treatment outcomes with MRI | Meta-analysis incl. studies with data leakage | logDOR = 2.53 | Studies with data leakage significantly inflate pooled performance estimates in meta-analyses. | [26] |

| Major Depressive Disorder (MDD) | Predicting treatment outcomes with MRI | Meta-analysis excl. studies with data leakage | logDOR = 2.02 | After removing studies with leakage, the performance advantage of MRI over clinical data is smaller and less certain. | [26] |

Experimental Protocols for Robust Validation

Standard K-Fold Cross-Validation Protocol

This is a foundational protocol for general model evaluation [25] [27].

- Data Preparation: Begin with a cleaned dataset. For subject-based data, ensure partitions are at the subject level, not the sample level, to prevent data leakage [30].

- Shuffling and Stratification: Randomly shuffle the dataset. For classification problems, use stratified K-fold to maintain the same class distribution in each fold [25].

- Fold Creation: Split the data into K subsets (folds). Common values are K=5 or K=10 [27].

- Iterative Training and Validation: For each iteration i in 1 to K:

- Set fold i aside as the validation set.

- Use the remaining K-1 folds as the training set.

- Train the model on the training set. Crucially, any preprocessing (e.g., scaling, imputation) must be fit on the training set and then applied to the validation set to prevent data leakage [25].

- Evaluate the model on the validation set (fold i) and record the performance metric (e.g., accuracy, R²).

- Performance Calculation: Calculate the final model performance as the average of the K recorded performance scores.
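The K-fold protocol can be sketched in plain Python as below. To keep the example self-contained it assumes a deliberately simple model (a one-variable least-squares line), standardization as the preprocessing step, and mean absolute error as the metric; these choices and all function names are illustrative assumptions, not part of the protocol. The point to note is that the scaler is fit on the training folds only and then applied to the validation fold.

```python
import random
from statistics import mean, stdev

def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them into k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_scaler(values):
    """Learn standardization parameters (mean, SD) from training data only."""
    return mean(values), stdev(values)

def scale(values, scaler):
    m, s = scaler
    return [(v - m) / s for v in values]

def fit_line(x, y):
    """Least-squares slope and intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return slope, my - slope * mx

def k_fold_cv(x, y, k=5, seed=0):
    """Return (average validation MAE, per-fold MAE list)."""
    folds = k_fold_indices(len(x), k, seed)
    scores = []
    for i, val_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        scaler = fit_scaler([x[j] for j in train_idx])      # fit on training folds only
        x_train = scale([x[j] for j in train_idx], scaler)
        x_val = scale([x[j] for j in val_idx], scaler)      # apply, never refit
        slope, intercept = fit_line(x_train, [y[j] for j in train_idx])
        errors = [abs(y[j] - (intercept + slope * xv)) for j, xv in zip(val_idx, x_val)]
        scores.append(mean(errors))
    return mean(scores), scores

# Hypothetical data: a noisy linear relationship
rng = random.Random(42)
x = [float(i) for i in range(30)]
y = [2.0 * xi + 1.0 + rng.gauss(0.0, 0.5) for xi in x]
avg_mae, fold_mae = k_fold_cv(x, y, k=5)
print(f"mean validation MAE over 5 folds: {avg_mae:.3f}")
```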

Nested Cross-Validation for Hyperparameter Tuning Protocol

This protocol should be used when you need to both tune hyperparameters and obtain an unbiased estimate of the model's generalization error [25] [27].

- Define Loops: Establish an outer loop (for performance estimation) and an inner loop (for model selection). For example, use 5-fold CV for the outer loop and 3-fold CV for the inner loop.

- Outer Loop: Split the data into K folds. For each outer fold i:

- Set aside outer fold i as the test set.

- Use the remaining K-1 folds as the development set.

- Inner Loop:

- On the development set, perform a second, independent K-fold CV (the inner loop).

- Use this inner CV to train and validate models with different hyperparameters.

- Select the best-performing set of hyperparameters.

- Final Model Training and Evaluation:

- Train a new model on the entire development set using the optimal hyperparameters found in the inner loop.

- Evaluate this final model on the held-out outer test set (fold i) and record the performance.

- Final Performance: The unbiased performance estimate is the average of the scores from the outer test folds.
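A compact sketch of the nested procedure follows. It assumes a toy model (a slope through the origin with a ridge-style penalty `lam` as the only hyperparameter) and mean squared error as the metric, chosen purely to keep the example short; the function names and data are hypothetical. The structure is what matters: hyperparameters are chosen using the development set alone, and each outer test fold is used only once for scoring.

```python
import random
from statistics import mean

def make_folds(indices, k, seed):
    """Shuffle the given indices and deal them into k folds."""
    idx = list(indices)
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_slope(x, y, lam):
    """Penalized slope through the origin: sum(xy) / (sum(x^2) + lam)."""
    return sum(a * b for a, b in zip(x, y)) / (sum(a * a for a in x) + lam)

def mse(x, y, slope):
    return mean([(b - slope * a) ** 2 for a, b in zip(x, y)])

def inner_cv_score(x, y, dev_idx, lam, k, seed):
    """Inner loop: average validation MSE for one hyperparameter value."""
    folds = make_folds(dev_idx, k, seed)
    scores = []
    for i, val in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        slope = fit_slope([x[j] for j in train], [y[j] for j in train], lam)
        scores.append(mse([x[j] for j in val], [y[j] for j in val], slope))
    return mean(scores)

def nested_cv(x, y, lambdas, outer_k=5, inner_k=3, seed=0):
    """Return (average outer-test MSE, per-fold MSE list)."""
    outer_folds = make_folds(range(len(x)), outer_k, seed)
    outer_scores = []
    for i, test in enumerate(outer_folds):
        dev = [j for f, fold in enumerate(outer_folds) if f != i for j in fold]
        # Model selection sees the development set only
        best_lam = min(lambdas, key=lambda lam: inner_cv_score(x, y, dev, lam, inner_k, seed + 1))
        # Refit on the whole development set, then score once on the outer test fold
        slope = fit_slope([x[j] for j in dev], [y[j] for j in dev], best_lam)
        outer_scores.append(mse([x[j] for j in test], [y[j] for j in test], slope))
    return mean(outer_scores), outer_scores

# Hypothetical data: proportional response with noise
rng = random.Random(1)
x = [float(i) for i in range(1, 31)]
y = [3.0 * xi + rng.gauss(0.0, 1.0) for xi in x]
avg_mse, fold_mse = nested_cv(x, y, lambdas=[0.0, 1.0, 10.0, 100.0])
print(f"nested CV estimate of MSE: {avg_mse:.3f}")
```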

The workflow for this robust method is illustrated below:

Inter-Laboratory Cross-Validation Protocol for Bioanalytical Methods

This protocol is specific to validating that different laboratories or method platforms produce comparable results, as required in drug development [20] [31].

- Sample Selection: Select a set of samples (e.g., 100 incurred study samples) that cover the applicable range of concentrations, typically based on quartiles of in-study concentration levels [31].

- Blinded Analysis: Assay each sample once with each of the two bioanalytical methods or laboratories being compared. Analysts should be blinded to the expected concentrations.

- Statistical Equivalency Assessment:

- Calculate the percent difference in concentrations for each sample between the two methods.

- Compute the 90% confidence interval (CI) for the mean percent difference.

- Acceptability Criterion: The two methods are considered equivalent if both the lower and upper bounds of the 90% CI fall within ±30% [31].

- Subgroup Analysis: Perform a quartile-by-concentration analysis using the same ±30% criterion to check for biases at different concentration levels.

- Data Characterization: Create a Bland-Altman plot (percent difference vs. mean concentration) to visually characterize the agreement between the two methods and identify any concentration-dependent biases [31].
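The statistical assessment in this protocol can be sketched as follows. Several details are assumptions made for the example and are not taken from the cited protocol: the percent difference is computed relative to the mean of the two results, the confidence interval uses a large-sample normal approximation (a t-based interval would be more appropriate for small groups), the quartile groups are formed by ranking samples on their mean concentration, and the paired data are synthetic.

```python
import random
from statistics import NormalDist, mean, stdev

def percent_differences(lab_a, lab_b):
    """Percent difference of each pair, relative to the pair mean (assumed definition)."""
    return [100.0 * (b - a) / ((a + b) / 2.0) for a, b in zip(lab_a, lab_b)]

def ci90_of_mean(values):
    """Two-sided 90% CI for the mean, using a normal approximation."""
    z = NormalDist().inv_cdf(0.95)
    m = mean(values)
    half_width = z * stdev(values) / len(values) ** 0.5
    return m - half_width, m + half_width

def within_limit(ci, limit=30.0):
    """True if both CI bounds lie inside +/- limit percent."""
    low, high = ci
    return -limit <= low and high <= limit

def quartile_groups(lab_a, lab_b):
    """Rank pairs by mean concentration and split them into four groups."""
    pairs = sorted(zip(lab_a, lab_b), key=lambda p: (p[0] + p[1]) / 2.0)
    n = len(pairs)
    bounds = [round(q * n / 4) for q in range(5)]
    return [pairs[bounds[q]:bounds[q + 1]] for q in range(4)]

# Synthetic paired results for 100 samples (assumption): lab B reads about 5% high.
rng = random.Random(1)
lab_a = [rng.uniform(1.0, 250.0) for _ in range(100)]
lab_b = [a * (1.05 + rng.gauss(0, 0.08)) for a in lab_a]

overall = percent_differences(lab_a, lab_b)
ci_low, ci_high = ci90_of_mean(overall)
overall_ok = within_limit((ci_low, ci_high))

quartile_ok = []
for group in quartile_groups(lab_a, lab_b):
    a_vals, b_vals = zip(*group)
    quartile_ok.append(within_limit(ci90_of_mean(percent_differences(a_vals, b_vals))))

print(f"90% CI of mean % difference: [{ci_low:.2f}, {ci_high:.2f}]")
print("overall equivalent:", overall_ok)
print("equivalent within each quartile:", quartile_ok)
```

The same percent differences, plotted against the mean concentration of each pair, give the Bland-Altman view described in the final step.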

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Reagent Solutions for Cross-Validation Studies

| Item | Function | Example in Bioinformatics / Analytical Chemistry |
|---|---|---|
| Quality Control (QC) Samples | Samples with known concentrations used to ensure an assay run is performing within acceptance criteria and to assess accuracy and precision. | Prepared at Low, Mid, and High concentrations (LQC, MQC, HQC) in the same matrix as study samples [20]. |
| Incurred Study Samples | Actual study samples from dosed subjects. Used to demonstrate method reproducibility and for cross-validation between labs, as they may reveal matrix effects not seen in spiked QC samples. | Used in inter-laboratory cross-validation to confirm that both methods generate comparable data for the actual samples of interest [31]. |
| Internal Standard (IS) | A compound added in a constant amount to all samples and calibration standards in an assay to correct for variability during sample preparation and analysis. | ER-227326 (structural analogue) or 13C6 stable isotope-labeled lenvatinib in LC-MS/MS methods [20]. |
| Calibration Standards | A series of samples with known analyte concentrations used to construct the calibration curve, which defines the relationship between instrument response and concentration. | Prepared by spiking working solutions into blank human plasma at multiple levels covering the quantifiable range [20]. |
| Blank Matrix | The biological fluid free of the analyte of interest. Used to prepare calibration standards and QC samples to mimic the composition of real study samples. | Drug-free blank human plasma [20]. |

Cross-validation is an indispensable tool in the modern researcher's arsenal, directly addressing the critical problems of overfitting and data leakage. As demonstrated, the choice of cross-validation strategy is not merely a technicality but has a profound impact on the reliability and interpretability of model performance and analytical method equivalency. Simple holdout validation can be sufficient for very large datasets, but K-fold and stratified K-fold are generally more robust for most applications. When hyperparameter tuning is required, nested cross-validation is necessary to avoid optimistic bias. For specialized data structures like time series or grouped data (common in inter-laboratory studies), Time Series Split and Leave-Profile-Out CV are essential to prevent data leakage and obtain realistic performance estimates.

The experimental protocols and quantitative comparisons provided here offer a roadmap for researchers, scientists, and drug development professionals to implement these methods correctly. Adhering to these rigorous validation standards, particularly in the context of cross-laboratory studies, ensures that predictive models are truly generalizable and that bioanalytical data are comparable across sites. This, in turn, strengthens the integrity of scientific findings and supports the development of safe and effective new therapies.

Designing and Executing a Collaborative Cross-Validation Study

In the globalized landscape of pharmaceutical development and inorganic materials research, the reliability of analytical data across different laboratories is paramount. Cross-validation serves as a critical process to ensure that analytical methods produce comparable and reliable results when transferred between laboratories or when data from multiple sites are combined for regulatory submissions. This is especially crucial for global clinical trials or multi-center material analysis projects, where consistent data quality is non-negotiable. The ICH M10 guideline formally recognizes this need by explicitly addressing the assessment of bias between methods, moving beyond single-laboratory validation to ensure data consistency across the entire scientific ecosystem [8].

Understanding the foundational concepts of method variability is essential. As outlined in Table 1, analytical method performance is assessed through two key precision parameters: intermediate precision and reproducibility [5]. While both measure consistency, they operate at different scopes. Intermediate precision evaluates variability within a single laboratory under different conditions (different analysts, instruments, or days), acting as an initial robustness check. Reproducibility, a broader and more rigorous assessment, measures variability between different laboratories and is often established through interlaboratory studies or collaborative trials [32] [5]. A structured approach to cross-validation ensures that methods are not only precise locally but also transferable and robust on a global scale.

Table 1: Key Precision Parameters in Method Validation

| Parameter | Testing Environment | Variables Assessed | Primary Goal |
|---|---|---|---|
| Intermediate Precision | Same laboratory | Different analysts, instruments, days, reagents | Assess method stability under normal laboratory operational variations |
| Reproducibility | Different laboratories | Lab location, equipment, environmental conditions, analysts | Demonstrate method transferability and global robustness for regulatory acceptance |
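To make the distinction between within-laboratory and between-laboratory variability concrete, the sketch below separates the two with a balanced one-way analysis of variance, in the spirit of collaborative-trial statistics. It is an illustrative calculation only: the three laboratories, their replicate values, and the function name `precision_components` are hypothetical, and the within-laboratory term here reflects simple replicate scatter rather than a full intermediate-precision design with varied analysts, instruments, and days.

```python
from statistics import mean

def precision_components(results):
    """Balanced one-way ANOVA split into within-lab and between-lab variance.

    results maps a laboratory name to its list of replicate measurements;
    every laboratory must report the same number of replicates.
    Returns (within-lab SD, between-lab SD, reproducibility SD).
    """
    labs = list(results.values())
    p = len(labs)      # number of laboratories
    n = len(labs[0])   # replicates per laboratory
    if any(len(lab) != n for lab in labs):
        raise ValueError("balanced design required: equal replicates per lab")

    lab_means = [mean(lab) for lab in labs]
    grand_mean = mean(lab_means)

    # Mean square within laboratories (pooled replicate variance).
    ss_within = sum((v - m) ** 2 for lab, m in zip(labs, lab_means) for v in lab)
    ms_within = ss_within / (p * (n - 1))

    # Mean square between laboratories.
    ms_between = n * sum((m - grand_mean) ** 2 for m in lab_means) / (p - 1)

    var_within = ms_within
    var_between = max(0.0, (ms_between - ms_within) / n)  # truncate at zero
    var_reproducibility = var_within + var_between
    return var_within ** 0.5, var_between ** 0.5, var_reproducibility ** 0.5

# Hypothetical replicate results (same sample, arbitrary concentration units).
results = {
    "Lab A": [10.0, 10.2, 9.8],
    "Lab B": [10.5, 10.7, 10.3],
    "Lab C": [9.5, 9.7, 9.3],
}
s_within, s_between, s_reproducibility = precision_components(results)
print(f"within-lab SD:       {s_within:.3f}")
print(f"between-lab SD:      {s_between:.3f}")
print(f"reproducibility SD:  {s_reproducibility:.3f}")
```

In this made-up data set each laboratory is internally consistent (within-lab SD of 0.2) while the laboratory means differ, so the reproducibility SD is noticeably larger than the within-lab SD. That gap is what a cross-validation study is designed to expose.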

Foundational Concepts: Precision Parameters

The journey to a successfully established method begins with a clearly defined problem. In the context of cross-validation, the core problem is often the potential for systematic bias between two or more fully validated methods when data must be combined. This bias can stem from seemingly minor differences in sample preparation, instrumentation, or reagent sources. Without a formal cross-validation, such biases can remain undetected, jeopardizing the integrity of combined datasets and leading to incorrect conclusions in critical areas like pharmacokinetic analysis or material property certification.

The logical flow from problem definition to establishing a cross-validation strategy is systematic. The process starts by identifying the need to combine data, which leads directly to the requirement for demonstrating comparability between methods or laboratories. This requirement is formalized in a cross-validation plan, the execution of which determines the final outcome: whether data can be pooled or if method re-development is necessary. The following workflow diagram visualizes this decision-making pathway.

Experimental Protocols: A Case Study in Multi-Laboratory Cross-Validation

A definitive example of a well-executed cross-validation comes from a study supporting the global clinical development of lenvatinib, a multi-targeted tyrosine kinase inhibitor [20]. This study involved seven bioanalytical methods across five independent laboratories, providing a robust model for a structured approach from problem definition to method establishment.

Problem Definition and Method Establishment

The problem was the need to compare pharmacokinetic data from lenvatinib clinical trials conducted at different global sites. To address this, each of the five laboratories first independently established and validated its own Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS) methods for quantifying lenvatinib in human plasma. Each method was fully validated according to regulatory guidelines, ensuring that the foundational performance characteristics, such as accuracy, precision, and sensitivity, were met within each lab before the inter-laboratory comparison was attempted [20].

Cross-Validation Experimental Protocol

The core of the cross-validation study involved analyzing two types of samples across all participating laboratories [20]:

- Quality Control (QC) Samples: These are samples prepared with known concentrations of lenvatinib. They were used to directly assess the accuracy and precision of each method against a reference value.

- Clinical Study Samples: These were actual patient samples with blinded, unknown concentrations. Their analysis tested the method's performance under real-world conditions and allowed for a direct comparison of results between labs.

The specific methodologies developed at each laboratory, while all based on LC-MS/MS, varied in technique, as detailed in Table 2. This diversity makes the successful cross-validation particularly compelling: comparable results were obtained despite differences in sample volume, internal standard, extraction technique, and assay range.

Table 2: Methodological Variations in the Lenvatinib Cross-Validation Study

| Laboratory & Method | Sample Prep & Volume | Internal Standard (IS) | Extraction Technique | Assay Range (ng/mL) |
|---|---|---|---|---|
| Method A | 0.2 mL Plasma | ER-227326 (structural analogue) | Liquid-Liquid Extraction (Diethyl ether) | 0.1 - 500 |
| Method B | 0.05 mL Plasma | 13C6 lenvatinib (stable isotope) | Protein Precipitation | 0.25 - 250 |
| Method C | 0.1 mL Plasma | 13C6 lenvatinib (stable isotope) | Liquid-Liquid Extraction (MTBE-IPA) | 0.25 - 250 |
| Method D | 0.2 mL Plasma | ER-227326 (structural analogue) | Liquid-Liquid Extraction (Diethyl ether) | 0.1 - 100 |
| Method E1, E2, E3 | 0.1 mL Plasma | ER-227326 or 13C6 lenvatinib | Solid Phase Extraction or Liquid-Liquid Extraction | 0.25 - 500 |

Results and Establishment of Method Equivalence

The cross-validation was successful, confirming that the lenvatinib concentrations measured in human plasma were comparable across all laboratories. The accuracy for the QC samples was within ±15.3%, and the percentage bias for the clinical study samples was within ±11.6%, meeting pre-defined acceptance criteria [20]. This narrow range of bias demonstrated that despite the different methods, all laboratories could generate equivalent data, thereby validating the approach of combining pharmacokinetic data from their respective clinical trials.
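For readers who want to reproduce this kind of summary on their own data, the following sketch computes the percent bias of QC results against their nominal concentrations and reports the worst case. The QC values, laboratory names, and the ±20% limit are hypothetical placeholders; they are not the data or the acceptance criteria of the lenvatinib study, which are reported in [20].

```python
def percent_bias(measured, nominal):
    """Signed percent deviation of a measured value from its nominal value."""
    return 100.0 * (measured - nominal) / nominal

# Hypothetical QC results in ng/mL: nominal concentration -> result per laboratory.
qc_results = {
    0.75: {"Lab 1": 0.78, "Lab 2": 0.70},
    40.0: {"Lab 1": 41.2, "Lab 2": 38.6},
    200.0: {"Lab 1": 195.0, "Lab 2": 212.0},
}

# Percent bias for every (nominal, laboratory) combination.
biases = {
    (nominal, lab): percent_bias(value, nominal)
    for nominal, labs in qc_results.items()
    for lab, value in labs.items()
}

# The single largest deviation in absolute terms, with its sign preserved.
worst_bias = max(biases.values(), key=abs)

# Hypothetical acceptance limit; substitute the pre-defined criterion of the study plan.
ACCEPTANCE_LIMIT_PERCENT = 20.0
all_within_limit = all(abs(b) <= ACCEPTANCE_LIMIT_PERCENT for b in biases.values())

for (nominal, lab), bias in sorted(biases.items()):
    print(f"{lab} at {nominal} ng/mL: {bias:+.1f}%")
print(f"worst-case bias: {worst_bias:+.1f}%")
print("all QC results within limit:", all_within_limit)
```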

Statistical Approaches for Assessing Cross-Validation Data

While the lenvatinib study used percentage bias, the field is evolving towards more sophisticated statistical techniques, especially under the ICH M10 guideline. This guideline emphasizes the need to assess bias but does not prescribe fixed acceptance criteria, leading to an ongoing scientific debate on the best statistical practices [8].

Two prominent approaches have emerged:

- Standardized Prescriptive Approach: Nijem et al. propose a method where initial equivalency is met if the 90% confidence interval (CI) of the mean percent difference of concentrations falls within ±30%. This is followed by an assessment for concentration-dependent bias by analyzing the slope of the percent difference versus mean concentration curve [8].

- Contextual and Statistical Approach: Fjording, Goodman, and Briscoe argue that pass/fail criteria are inappropriate. They advocate for involvement of clinical pharmacology and biostatistics teams to design the cross-validation plan, using tools like Bland-Altman plots for visualizing bias and Deming regression for quantifying agreement, with the conclusion heavily weighted by the intended use of the data [8].
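The tools named in these two approaches can be illustrated with a short, dependency-free sketch. It computes Bland-Altman statistics on percent differences, the ordinary least-squares slope of percent difference against mean concentration (one way to look for concentration-dependent bias), and a Deming regression of one laboratory on the other. The paired values are hypothetical, the percent difference is taken relative to the pair mean, the 1.96 multiplier assumes roughly normal differences, and the Deming error-variance ratio `delta` defaults to 1 (equal measurement error in both laboratories); all of these are assumptions for the example rather than prescriptions from [8].

```python
from math import sqrt
from statistics import mean, stdev

def percent_differences(a, b):
    """Percent difference of each pair relative to the pair mean."""
    return [100.0 * (bi - ai) / ((ai + bi) / 2.0) for ai, bi in zip(a, b)]

def bland_altman(a, b):
    """Mean bias and approximate 95% limits of agreement, in percent."""
    diffs = percent_differences(a, b)
    bias, sd = mean(diffs), stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx

def deming(x, y, delta=1.0):
    """Deming regression of y on x; delta is the ratio of the error
    variance in y to the error variance in x. Returns (intercept, slope)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - my) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / (n - 1)
    slope = (syy - delta * sxx
             + sqrt((syy - delta * sxx) ** 2 + 4.0 * delta * sxy ** 2)) / (2.0 * sxy)
    return my - slope * mx, slope

# Hypothetical paired concentrations (ng/mL) from two laboratories.
lab_a = [1.0, 5.0, 20.0, 80.0, 200.0]
lab_b = [1.1, 5.2, 19.0, 84.0, 190.0]

bias, lower_loa, upper_loa = bland_altman(lab_a, lab_b)
pair_means = [(a + b) / 2.0 for a, b in zip(lab_a, lab_b)]
bias_trend = ols_slope(pair_means, percent_differences(lab_a, lab_b))
intercept, slope = deming(lab_a, lab_b)

print(f"mean bias: {bias:+.2f}%  limits of agreement: [{lower_loa:.2f}, {upper_loa:.2f}]")
print(f"slope of % difference vs mean concentration: {bias_trend:+.4f} % per ng/mL")
print(f"Deming fit: lab_b = {intercept:.3f} + {slope:.3f} * lab_a")
```

A Deming slope near 1 with an intercept near 0 suggests agreement across the range, whereas a clear trend in the percent differences points to concentration-dependent bias. How much deviation is tolerable is exactly the question on which the two approaches differ.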

The following diagram illustrates the key decision points in this statistical evaluation process, from data collection through to the final interpretation of method equivalence.

The Scientist's Toolkit: Essential Reagents and Materials

The execution of a cross-validation study, particularly for inorganic or bioanalytical methods, relies on a suite of essential research reagents and materials. The lenvatinib case study highlights several critical components [20]:

- Analytical Standard: A high-purity reference material of the analyte (e.g., lenvatinib) is fundamental for preparing calibration standards and QC samples to define the analytical curve.

- Internal Standard (IS): Either a stable isotope-labeled version of the analyte (e.g., 13C6-lenvatinib) or a structural analogue (e.g., ER-227326). The IS corrects for variability in sample preparation and instrument analysis.

- Blank Matrix: The analyte-free biological or material matrix (e.g., human plasma, solvent) used to prepare calibration standards and QC samples, matching the composition of real samples.

- Sample Extraction Reagents: Solvents and materials specific to the chosen extraction technique, such as Methyl tert-butyl ether (MTBE) and Isopropanol (IPA) for liquid-liquid extraction or Solid Phase Extraction (SPE) plates (e.g., Oasis HLB, MCX).

- LC-MS/MS Mobile Phase Components: High-purity solvents (Acetonitrile, Methanol) and additives (Formic Acid, Ammonium Acetate, Ammonium Hydroxide) essential for chromatographic separation and efficient ionization in the mass spectrometer.

Selecting and Preparing Certified Reference Materials (CRMs) and Samples