Composition-Based Machine Learning for Inorganic Stability: From Foundational Concepts to Advanced Discovery

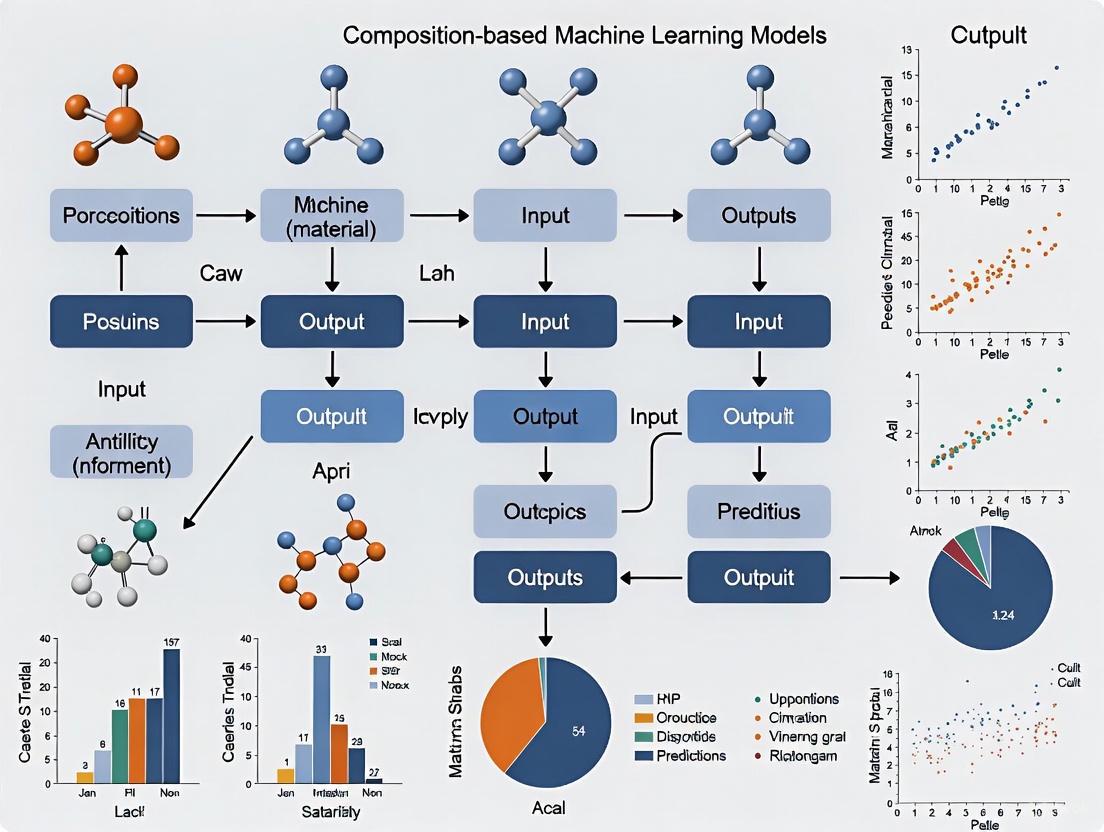

This article provides a comprehensive exploration of composition-based machine learning (ML) models for predicting the thermodynamic stability of inorganic materials.

Composition-Based Machine Learning for Inorganic Stability: From Foundational Concepts to Advanced Discovery

Abstract

This article provides a comprehensive exploration of composition-based machine learning (ML) models for predicting the thermodynamic stability of inorganic materials. Tailored for researchers and scientists, it covers the foundational principles of why stability prediction is a critical bottleneck in materials discovery and how ML offers a data-driven solution. The scope extends to detailed methodologies, including ensemble techniques and feature engineering, alongside critical discussions on overcoming common challenges like model bias and false-positive rates. Finally, the article presents rigorous validation frameworks and comparative analyses of state-of-the-art models, synthesizing key takeaways to guide the effective application of these tools in accelerating the development of novel functional materials, with implications for biomedical and clinical research.

The Why and What: Foundational Principles of Stability Prediction in Inorganic Materials

The discovery of new inorganic compounds with desirable properties has long been a fundamental challenge in materials science. The compositional space of potential inorganic materials is astronomically large, and searching it is often likened to finding a needle in a haystack [1]. The compounds that can feasibly be synthesized in a laboratory represent only a minute fraction of this space, creating a significant bottleneck in materials development [1]. The challenge stems from the combinatorial explosion that arises when elements from the periodic table are combined in varying proportions. Traditional approaches to exploring this space are inefficient: establishing thermodynamic stability typically requires resource-intensive experiments or density functional theory (DFT) calculations of the energy of every relevant compound in a given phase diagram [1]. These energy calculations consume substantial computational resources, which limits how efficiently new compounds can be explored.

Within this context, evaluating thermodynamic stability is a crucial strategy for narrowing the exploration space [1]. By identifying which compounds are thermodynamically stable, researchers can rule out a substantial proportion of materials that are difficult to synthesize or would not persist under the relevant conditions, markedly improving the efficiency of materials development [1]. Thermodynamic stability is typically represented by the decomposition energy (ΔHd), defined as the total energy difference between a given compound and the competing compounds in its chemical space [1]. This metric is obtained by constructing a convex hull from the formation energies of the compound and all other relevant phases in the same phase diagram. Machine learning (ML) offers a promising way to accelerate the discovery of new compounds by accurately predicting their thermodynamic stability, with significant savings in time and resources compared to traditional methods [1] [2].

Quantifying the Compositional Space Challenge

The Scale of the Exploration Problem

The challenge of navigating compositional space is fundamentally a problem of scale. To understand the magnitude, consider the combinatorial possibilities when mixing even a limited number of elements. For instance, a pool of just 10 elements already yields 120 distinct ternary element combinations, and sampling stoichiometric ratios on even a coarse grid multiplies this into thousands of candidate compositions. The V–Cr–Ti alloy system illustrates this complexity: conventional wisdom had limited exploration to Cr+Ti contents below 10 wt.%, whereas machine learning approaches have revealed promising composition regions with Cr+Ti contents as high as 60 wt.% [3].
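The counts in the example above can be checked directly. The sketch below is a minimal illustration that assumes a grid resolution of 1/10 for each atomic fraction and requires every element to be present; both choices are illustrative rather than taken from the cited studies, and `count_ternary_systems` and `count_grid_compositions` are hypothetical helper names.

```python
from math import comb


def count_ternary_systems(n_elements: int) -> int:
    """Number of distinct three-element chemical systems drawn from a pool of elements."""
    return comb(n_elements, 3)


def count_grid_compositions(n_components: int, steps: int) -> int:
    """Compositions on a grid of resolution 1/steps with every component strictly
    positive (stars and bars: C(steps - 1, n_components - 1))."""
    if steps < n_components:
        return 0
    return comb(steps - 1, n_components - 1)


systems = count_ternary_systems(10)        # element triples from 10 elements
per_system = count_grid_compositions(3, 10)  # ratios per triple at 0.1 resolution
print(systems, per_system, systems * per_system)  # 120 36 4320
```

Refining the grid to 1/100 gives C(99, 2) = 4,851 compositions per system, and drawing from all 118 elements gives 266,916 ternary systems, which is why exhaustive DFT screening quickly becomes impractical.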

Table 1: Traditional vs. ML-Accelerated Materials Discovery

| Aspect | Traditional Methods | ML-Directed Approach |
|---|---|---|
| Exploration Speed | Slow (months to years for systematic exploration) | Rapid (days to weeks for screening) |
| Resource Requirements | High (extensive experimental/DFT resources) | Low (efficient computational screening) |
| Data Efficiency | Requires full characterization of each compound | Achieves the same performance with one-seventh of the data [1] |
| Composition Space Coverage | Limited to small regions | Can explore vast, unexplored regions [3] |
| Bias in Exploration | Limited by researcher intuition and existing literature | Reduced through data-driven discovery [1] |

Performance Metrics of ML Approaches

The effectiveness of machine learning in addressing the compositional space challenge can be quantified through various performance metrics. Experimental results have validated the efficacy of ensemble ML models in accurately predicting compound stability, achieving an Area Under the Curve (AUC) score of 0.988 [1] [2]. Notably, these models demonstrate exceptional efficiency in sample utilization, requiring only one-seventh of the data used by existing models to achieve the same performance [1]. This data efficiency is particularly valuable for exploring composition spaces with limited existing experimental data.
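AUC here refers to the area under the receiver operating characteristic (ROC) curve for classifying compounds as stable or unstable. As a minimal sketch (not the evaluation code used in [1]), the AUC equals the probability that a randomly chosen stable compound receives a higher predicted score than a randomly chosen unstable one, which can be computed directly from pairwise comparisons:

```python
def roc_auc(labels, scores):
    """ROC AUC via the Mann-Whitney U statistic.

    labels: 1 for stable, 0 for unstable; scores: model outputs where higher
    means "more likely stable". Tied scores count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one stable and one unstable example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy example: three stable and three unstable compounds with predicted scores.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.3, 0.6, 0.4, 0.1]
print(roc_auc(labels, scores))  # 8 of 9 stable/unstable pairs ranked correctly
```

The pairwise loop costs O(n_pos × n_neg) and is meant for illustration; library routines such as scikit-learn's `roc_auc_score` compute the same quantity from sorted scores.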

Table 2: Quantitative Performance of ML Stability Prediction Models

| Model/Method | Reported Accuracy | Data Efficiency | Key Advantages |
|---|---|---|---|
| ECSG (Ensemble) | AUC 0.988 [1] [2] | Matches existing models' performance with about one-seventh of the training data [1] | Mitigates inductive bias through an ensemble approach |
| ElemNet | MAE 0.042 eV/atom (cross-validation) [3] | Trained on 341,000 compounds [3] | Deep neural network using only elemental composition |
| DFT Calculations | High, but computationally expensive | Requires a full calculation for each compound | Considered the benchmark for accuracy |
| Traditional Experimental | High, but low throughput | Extremely resource-intensive | Provides ground-truth validation |

Machine Learning Frameworks for Composition Space Navigation

Ensemble Approaches with Stacked Generalization

To address the core challenge of navigating compositional space, researchers have proposed machine learning frameworks based on ensemble methods and stacked generalization. The Electron Configuration models with Stacked Generalization (ECSG) framework integrates three distinct models into a super learner [1]. Combining the models offsets the limitations of each individual model and reduces inductive bias, improving the performance of the integrated model [1]. The framework's strength lies in combining models grounded in different knowledge sources so that they complement one another.

The ECSG framework incorporates three foundational models representing different domains of knowledge [1]:

Magpie: Emphasizes statistical features derived from various elemental properties, including atomic number, atomic mass, and atomic radius. The statistical features encompass mean, mean absolute deviation, range, minimum, maximum, and mode. This model is trained using gradient-boosted regression trees (XGBoost).

Roost: Represents the chemical formula as a complete graph of elements and uses a graph neural network with message passing to learn the interactions between them. An attention mechanism weights these interactions, allowing Roost to capture the interatomic interactions critical for determining thermodynamic stability.

ECCNN (Electron Configuration Convolutional Neural Network): A newly developed model intended to address the limited treatment of internal electronic structure in existing models. It takes electron configuration information as input and applies convolutional operations to extract features relevant to stability prediction.
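To make the Magpie-style statistics concrete, the sketch below computes fraction-weighted statistics of a single elemental property for one composition. It is a minimal illustration, not the Magpie implementation: it assumes that the mean and mean absolute deviation are weighted by atomic fraction and that the "mode" is the property value of the most abundant element, and the property table (atomic numbers only) is illustrative.

```python
def magpie_style_stats(amounts, prop):
    """amounts: element -> amount in the formula, e.g. {"Fe": 2, "O": 3}.
    prop: element -> value of one elemental property (here, atomic number)."""
    total = sum(amounts.values())
    frac = {el: n / total for el, n in amounts.items()}  # atomic fractions
    vals = {el: prop[el] for el in frac}
    mean = sum(frac[el] * vals[el] for el in frac)
    # Fraction-weighted mean absolute deviation from the weighted mean.
    avg_dev = sum(frac[el] * abs(vals[el] - mean) for el in frac)
    # Assumed "mode": property of the most abundant element (ties -> smaller value).
    top = max(frac.values())
    mode = min(vals[el] for el in frac if frac[el] == top)
    lo, hi = min(vals.values()), max(vals.values())
    return {"mean": mean, "avg_dev": avg_dev, "min": lo, "max": hi,
            "range": hi - lo, "mode": mode}


ATOMIC_NUMBER = {"Fe": 26, "O": 8}  # illustrative single-property lookup table
stats = magpie_style_stats({"Fe": 2, "O": 3}, ATOMIC_NUMBER)
print(stats)
```

For Fe₂O₃ (atomic fractions 0.4 and 0.6) this yields a mean atomic number of 15.2, a mean absolute deviation of 8.64, a range of 18, and a mode of 8. A full Magpie feature vector repeats these statistics for many elemental properties.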

The ensemble approach is particularly valuable because it compensates for the limitations of individual models that are constructed based on specific domain knowledge, which can introduce biases that impact performance [1]. By integrating multiple perspectives, the framework provides a more robust prediction capability essential for reliable navigation of compositional space.
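Stacked generalization can be sketched in a few dozen lines. The example below is a minimal, self-contained illustration and not the ECSG implementation: the two `centroid_fitter` base learners are hypothetical stand-ins for Magpie, Roost, and ECCNN, and the logistic-regression meta-learner is an assumption. The key idea it shows is that the meta-learner is trained on out-of-fold predictions, so it learns how to weight the base models from scores they produced on data they never saw.

```python
import math
import random


def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def kfold_indices(n, k, seed=0):
    # Shuffle sample indices once and deal them into k folds.
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def out_of_fold_predictions(X, y, base_fitters, k=5):
    # Level-1 data: row i holds each base model's score for sample i,
    # produced by a model trained without sample i.
    n = len(X)
    meta_X = [[0.0] * len(base_fitters) for _ in range(n)]
    for fold in kfold_indices(n, k):
        held_out = set(fold)
        X_tr = [X[i] for i in range(n) if i not in held_out]
        y_tr = [y[i] for i in range(n) if i not in held_out]
        for m, fit in enumerate(base_fitters):
            predict = fit(X_tr, y_tr)
            for i in fold:
                meta_X[i][m] = predict(X[i])
    return meta_X


def fit_logistic(Z, y, lr=0.5, epochs=500):
    # Meta-learner: logistic regression fitted by batch gradient descent.
    d, n = len(Z[0]), len(Z)
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * d, 0.0
        for z, t in zip(Z, y):
            err = sigmoid(sum(wj * zj for wj, zj in zip(w, z)) + b) - t
            gw = [g + err * zj for g, zj in zip(gw, z)]
            gb += err
        w = [wj - lr * g / n for wj, g in zip(w, gw)]
        b -= lr * gb / n
    return lambda z: sigmoid(sum(wj * zj for wj, zj in zip(w, z)) + b)


def centroid_fitter(feature):
    # Hypothetical base learner using one feature: the score is positive when
    # x lies closer to the "stable" class centroid than to the "unstable" one.
    def fit(X_tr, y_tr):
        pos = [x[feature] for x, t in zip(X_tr, y_tr) if t == 1]
        neg = [x[feature] for x, t in zip(X_tr, y_tr) if t == 0]
        if not pos or not neg:
            return lambda x: 0.0
        c1, c0 = sum(pos) / len(pos), sum(neg) / len(neg)
        return lambda x: abs(x[feature] - c0) - abs(x[feature] - c1)
    return fit


def fit_super_learner(X, y, base_fitters, k=5):
    meta = fit_logistic(out_of_fold_predictions(X, y, base_fitters, k), y)
    full_models = [fit(X, y) for fit in base_fitters]  # refit bases on all data
    return lambda x: meta([predict(x) for predict in full_models])


# Toy data: two descriptors per sample, label 1 = stable.
X = [[0.1 * i, 1.0 - 0.1 * i] for i in range(10)]
y = [1 if i >= 5 else 0 for i in range(10)]
model = fit_super_learner(X, y, [centroid_fitter(0), centroid_fitter(1)])
print(model([0.9, 0.1]), model([0.0, 1.0]))
```

Training the meta-learner on in-sample base predictions instead would reward base models that overfit, which is the main reason stacking relies on k-fold predictions. scikit-learn's `StackingClassifier` packages the same pattern for production use.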

Figure: ML Ensemble Framework for Stability Prediction

Composition-Based vs. Structure-Based Models

Machine learning models for predicting material properties can be broadly categorized into structure-based and composition-based models [1]. Structure-based models use richer inputs, including both the proportions of each element and the geometric arrangement of atoms. However, determining the precise structure of a compound can be challenging [1]. Composition-based models avoid this issue but are often perceived as inferior because they lack structural information. Nonetheless, recent research has shown that composition-based models can accurately predict material properties such as energy and band gap [1].

For navigating the compositional space of inorganic compounds, composition-based models offer significant advantages, particularly in the discovery of novel materials [1]. Because composition is known a priori, these models can substantially improve the efficiency of developing new materials. While databases like the Materials Project (MP) contain extensive structural information, such data are often unavailable or difficult to obtain for new, uncharacterized materials [1]. Structural information typically requires complex experimental techniques or computationally expensive methods such as Density Functional Theory (DFT). In contrast, compositional information can be generated simply by sampling the compositional space, making it well suited to high-throughput screening and the exploration of new materials [1].
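Because a composition-based model needs only the chemical formula, the first preprocessing step is usually to turn a formula string into normalized atomic fractions. A minimal sketch, assuming flat formulas without parentheses or hydrate notation (`parse_formula` is a hypothetical helper; libraries such as pymatgen provide full-featured formula parsing):

```python
import re

# One element symbol followed by an optional integer or decimal amount.
_TOKEN = re.compile(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?")


def parse_formula(formula):
    """Parse a flat formula such as 'Fe2O3' or 'V0.5Cr0.3Ti0.2' into atomic fractions."""
    counts = {}
    pos = 0
    for m in _TOKEN.finditer(formula):
        if m.start() != pos:  # unparsed characters, e.g. parentheses
            raise ValueError(f"cannot parse {formula!r} at position {pos}")
        element, amount = m.group(1), m.group(2)
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
        pos = m.end()
    if pos != len(formula) or not counts:
        raise ValueError(f"cannot parse {formula!r}")
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}


print(parse_formula("Fe2O3"))
print(parse_formula("V0.5Cr0.3Ti0.2"))
```

The resulting fraction dictionary is the common starting point for the Magpie, Roost, and electron-configuration representations described above.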

Experimental Protocols and Validation Methodologies

Workflow for ML-Directed Materials Discovery

Implementing machine learning to navigate compositional space requires a systematic workflow that integrates computational prediction with experimental validation. The following methodology has been applied effectively to the discovery of new inorganic compounds:

Data Collection and Preprocessing: Gather formation energies and structural information from existing databases such as the Materials Project (MP) and Open Quantum Materials Database (OQMD) [1] [3]. For composition-based models, extract elemental compositions and corresponding thermodynamic properties.

Feature Engineering: For electron configuration-based models like ECCNN, encode the electron configuration of each material as an input array; for ECCNN this array has a shape of 118 × 168 × 8 [1]. For other approaches, compute features such as statistical measures of elemental properties (Magpie) or graph representations of compositions (Roost).

Model Training and Validation: Train multiple base models (Magpie, Roost, ECCNN) using their respective feature representations. Employ stacked generalization to combine these models into a super learner. Validate using k-fold cross-validation and hold-out test sets, targeting performance metrics such as AUC and mean absolute error.

Composition Space Screening: Apply the trained model to screen unexplored compositional spaces. For example, in the study of V–Cr–Ti alloys, the model computed the enthalpy of formation (ΔHf) across the ternary composition space [3].

First-Principles Validation: Perform DFT calculations on the most promising candidate compositions to verify thermodynamic stability. This step is crucial for validating ML predictions before experimental synthesis [1].

Experimental Synthesis and Characterization: Finally, synthesize the predicted compounds using suitable methods, such as solid-state synthesis, and characterize their structure and properties. In the V–Cr–Ti system, for instance, this would involve arc-melting or powder metallurgy followed by microstructure analysis and mechanical testing [3].
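The exact layout of the 118 × 168 × 8 ECCNN input is not described here, so the sketch below shows only the general idea of an electron-configuration encoding under simplified, hypothetical assumptions: each element's configuration is approximated by filling subshells in Madelung (n + l) order, ignoring known exceptions such as Cr and Cu, and a composition is encoded as a 118 × 19 array whose row Z − 1 holds that element's subshell occupancies scaled by its atomic fraction. It is not the ECCNN encoding.

```python
# Subshells in Madelung (n + l) filling order; their capacities sum to 118.
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
             "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}
N_ELEMENTS = 118


def aufbau_occupancy(z):
    """Idealized ground-state subshell occupancies for atomic number z
    (exceptions to the Aufbau principle are deliberately ignored)."""
    if not 1 <= z <= N_ELEMENTS:
        raise ValueError(f"atomic number out of range: {z}")
    occupancy, remaining = [], z
    for sub in SUBSHELLS:
        fill = min(remaining, CAPACITY[sub[-1]])
        occupancy.append(fill)
        remaining -= fill
    return occupancy


def encode_composition(fractions, atomic_number):
    """Hypothetical encoding: one row per element (indexed by Z - 1), one column
    per subshell, entries = atomic fraction x electron count."""
    matrix = [[0.0] * len(SUBSHELLS) for _ in range(N_ELEMENTS)]
    for element, frac in fractions.items():
        z = atomic_number[element]
        matrix[z - 1] = [frac * n for n in aufbau_occupancy(z)]
    return matrix


Z = {"Fe": 26, "O": 8}  # illustrative lookup table
enc = encode_composition({"Fe": 0.4, "O": 0.6}, Z)
print(aufbau_occupancy(26)[:7])  # [2, 2, 6, 2, 6, 2, 6] -> 1s2 2s2 2p6 3s2 3p6 4s2 3d6
```

A convolutional model can then treat such an array like an image; the real ECCNN input carries additional structure captured by its extra dimensions.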

Figure: Workflow for ML-Directed Materials Discovery

Case Study: V–Cr–Ti Alloy Stability Prediction

A concrete example of ML-directed composition space navigation is the stability prediction of V–Cr–Ti alloys for nuclear applications [3]. The experimental protocol for this study involved:

Data Source and Model Selection: Utilizing the ElemNet model, a 17-layered fully connected deep neural network developed for predicting formation energy using only elemental composition [3]. The model was pretrained on enthalpies of formation of 341,000 compounds with unique elemental compositions determined by DFT calculations from the Open Quantum Materials Database.

Computational Methods: Operating the ElemNet code in energy-prediction mode based on the pretrained model in a Python 3.7 environment with extension modules including NumPy 1.21 and TensorFlow 1.14, considering the elemental composition of the metal alloy as the only input [3].

Validation Approach: Comparing ML-predicted formation enthalpies with the limited available DFT data for ternary V–Cr–Ti compounds, achieving excellent agreement with a mean absolute error of 0.015 eV/atom [3].

Stability Assessment: Computing the negative enthalpy of formation (-ΔHf) as a direct representation of stability and correlating these values with experimental data for ductile-brittle transition temperature (DBTT) and swelling behavior [3].

Discovery Outcome: Identifying a previously unexplored composition region with Cr+Ti ~ 60 wt.% that exhibits significantly lower DBTT compared to conventional compositions with less than 10 wt.% Cr+Ti content [3]. This demonstrates the power of ML approaches to reveal promising composition regions that conventional wisdom might overlook.
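The case study reports compositions in weight percent, whereas composition-only models typically take atomic fractions as input, so screening the ternary requires a unit conversion. The sketch below assumes standard atomic masses, a 5 wt.% grid with V as the balance element, and a hypothetical `predict_dhf` callable standing in for the trained model (it is not ElemNet's API); it ranks compositions by −ΔHf, the stability proxy used in the study.

```python
ATOMIC_MASS = {"V": 50.9415, "Cr": 51.9961, "Ti": 47.867}  # g/mol


def wt_to_atomic_fractions(wt_percent):
    # Convert mass percentages to atomic (mole) fractions, skipping absent elements.
    moles = {el: w / ATOMIC_MASS[el] for el, w in wt_percent.items() if w > 0}
    total = sum(moles.values())
    return {el: n / total for el, n in moles.items()}


def vcrti_grid(step=5):
    # V is the balance element; Cr and Ti run over a step-wt.% grid.
    for cr in range(0, 101, step):
        for ti in range(0, 101 - cr, step):
            yield {"V": 100 - cr - ti, "Cr": cr, "Ti": ti}


def screen(predict_dhf, step=5):
    """predict_dhf: hypothetical callable mapping atomic fractions to ΔHf in
    eV/atom, standing in for a trained composition model."""
    ranked = []
    for wt in vcrti_grid(step):
        stability = -predict_dhf(wt_to_atomic_fractions(wt))  # -ΔHf as proxy
        ranked.append((stability, wt))
    ranked.sort(key=lambda item: item[0], reverse=True)  # most stable first
    return ranked


print(wt_to_atomic_fractions({"V": 50, "Cr": 25, "Ti": 25}))
```

Any function of the atomic-fraction dictionary can be passed as `predict_dhf`; a 5 wt.% step yields 231 grid points, few enough to evaluate exhaustively before selecting candidates for DFT and experiment.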

Computational Tools and Databases

Successfully navigating the compositional space of inorganic compounds requires specialized computational tools and data resources. The table below details essential components of the modern computational materials scientist's toolkit.

Table 3: Essential Research Reagents for Composition-Based ML Studies

| Resource Name | Type | Function/Purpose | Key Features |
|---|---|---|---|
| Materials Project (MP) | Database | Provides calculated properties of known and predicted materials [1] | Formation energies, crystal structures, band gaps |
| Open Quantum Materials Database (OQMD) | Database | Contains DFT-calculated formation energies for training ML models [3] | 341,000+ compounds with formation energies |
| ElemNet | ML Model | Deep neural network for formation energy prediction [3] | 17-layer network using only elemental composition |
| ECSG Framework | ML Model | Ensemble model for stability prediction [1] | Combines Magpie, Roost, and ECCNN models |
| JARVIS Database | Database | Repository for DFT calculations and ML benchmarks [1] | Includes stability data for validation |
| compositions R Package | Software Tool | Compositional data analysis within log-ratio framework [4] | Consistent analysis and modeling of compositional data |

The computational prediction of stable compositions must ultimately be validated through experimental synthesis and characterization. Key experimental resources include:

Solid-State Synthesis Equipment: High-temperature furnaces, arc melters, and spark plasma sintering apparatus for synthesizing predicted compounds. These enable the preparation of samples with precise composition control.

Characterization Tools: X-ray diffraction (XRD) systems for structural validation, scanning electron microscopes (SEM) for microstructural analysis, and thermal analysis equipment for stability assessment.

Mechanical Testing Systems: Equipment for evaluating ductile-brittle transition temperatures (DBTT), particularly important for structural materials like V–Cr–Ti alloys [3].

The challenge of navigating the vast compositional space of inorganic compounds represents a fundamental bottleneck in materials discovery. Traditional experimental and computational approaches are insufficient to explore this space systematically due to resource constraints. Composition-based machine learning models offer a transformative approach to this problem, enabling efficient screening of compositional spaces and prediction of thermodynamic stability with remarkable accuracy [1] [2].

The ensemble framework combining electron configuration information with other knowledge domains has demonstrated exceptional performance, achieving AUC scores of 0.988 in stability prediction while requiring only one-seventh of the data used by existing models [1]. This approach has proven effective in identifying previously unexplored composition regions in diverse material systems, from two-dimensional wide bandgap semiconductors to double perovskite oxides and V–Cr–Ti alloys [1] [3].

As machine learning methodologies continue to evolve and materials databases expand, the navigation of compositional space will become increasingly efficient and comprehensive. This progression will accelerate the discovery of novel materials with tailored properties for applications ranging from nuclear energy to electronics and beyond. The integration of composition-based ML models with high-throughput experimentation and first-principles validation represents a paradigm shift in materials discovery, transforming it from a serendipitous process to a systematic, data-driven engineering discipline.

Thermodynamic stability determines the synthesizability and longevity of inorganic materials, guiding the discovery of new compounds for energy and technology applications. This whitepaper establishes the critical distinction between formation enthalpy and decomposition energy, demonstrating how the convex hull construction provides the definitive metric for stability assessment. Within composition-based machine learning research, the convex hull serves as both a source of training data and a benchmark for predictive model accuracy. We present quantitative comparisons of density functional theory (DFT) performance, detailed computational protocols, and visualization of the stability evaluation framework essential for researchers navigating complex chemical spaces.

Traditional materials thermodynamics has relied heavily on formation enthalpy (ΔHf) as a stability metric; ΔHf is the energy change when a compound forms from its constituent elemental phases [5]. However, this perspective proves incomplete for practical stability assessment. A compound competes thermodynamically not only with its elements but with all other compounds in its chemical space [5]. The decomposition energy (ΔHd) represents the energy change for a compound decomposing into the most stable combination of competing phases, providing the true determinant of thermodynamic stability [5] [6].

This distinction becomes crucial in high-throughput screening and machine learning, where accurate stability labels are prerequisite for model training [7]. The convex hull construction translates this theoretical principle into a computable metric, enabling the rapid classification necessary for exploring vast composition spaces [2] [7].

Theoretical Foundation: The Convex Hull in Composition Space

Geometric Definition and Stability Criterion

In geometric terms, the convex hull in materials science represents the minimum-energy envelope in energy-composition space [6] [8]. For a given set of points representing compounds, the convex hull is the smallest convex set containing all of them, analogous to the shape enclosed by a rubber band stretched around the points [8]. For stability analysis only the lower boundary of this set (the lower hull) matters, since it connects the lowest-energy phases across the composition range.

The energy above hull (Ehull) quantifies thermodynamic stability as the vertical distance from a compound's energy to this hull surface [6]. Compounds lying on the hull (Ehull = 0) are thermodynamically stable, while those above it (Ehull > 0) are unstable with respect to decomposition into the hull phases [5] [6].
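For a binary A–B system the hull and Ehull can be computed with a few lines of computational geometry. The sketch below is a minimal illustration using Andrew's monotone-chain algorithm (lower half only) on hypothetical formation energies; real multicomponent analyses would use a general N-dimensional hull, for example via pymatgen's `PhaseDiagram`.

```python
def _cross(o, a, b):
    # z-component of (a - o) x (b - o); > 0 means a counter-clockwise turn.
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """points: (x, E_f) pairs for A(1-x)B(x) with E_f in eV/atom, including the
    elemental end members (0.0, 0.0) and (1.0, 0.0)."""
    best = {}
    for x, e in points:  # keep only the lowest-energy polymorph at each x
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it does not make a strictly convex (left) turn.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x, e, hull):
    # Vertical distance from (x, e) to the hull segment spanning x.
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e - (e0 + (e1 - e0) * (x - x0) / (x1 - x0))
    raise ValueError("composition outside the hull range")


# Hypothetical formation energies (eV/atom) for an illustrative A-B system.
entries = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.4), (0.25, -0.1), (0.75, -0.25)]
hull = lower_hull(entries)
print(hull)                                   # A, AB, AB3, B lie on the hull
print(energy_above_hull(0.25, -0.1, hull))    # A3B sits about 0.1 eV/atom above it
```

In this toy system A₃B lies above the tie line between A and AB, so it would decompose into those two phases; AB and AB₃ have Ehull = 0.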

Decomposition Pathways and Their Prevalence

Analysis of 56,791 compounds from the Materials Project database reveals three distinct decomposition types [5] [9]:

Table 1: Classification and Prevalence of Decomposition Reactions

| Reaction Type | Description | Prevalence | Characteristic |
|---|---|---|---|
| Type 1 | Decomposition into elemental phases | 3% of compounds (81% of these are binaries) | ΔHd = ΔHf |
| Type 2 | Decomposition exclusively into other compounds | 63% of compounds | Compound bracketed by other compounds |
| Type 3 | Decomposition into both elements and compounds | 34% of compounds | Mixed decomposition products |

This distribution demonstrates that decomposition to elemental forms rarely determines compound stability, especially for non-binary systems where Type 2 reactions dominate [5]. This has profound implications for synthesis strategies, as Type 2 reaction thermodynamics are insensitive to adjustments in elemental chemical potentials [5].
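The classification in the table above can be expressed as a small rule on the set of decomposition products. The sketch below is a minimal illustration that assumes each product is given as an element-to-count dictionary and treats any single-element product as an elemental phase; `decomposition_type` is a hypothetical helper name.

```python
def decomposition_type(products):
    """products: decomposition products as element -> count dicts,
    e.g. [{"Ta": 3, "N": 5}]. Returns 1 (elements only), 2 (compounds only)
    or 3 (mixture of elements and compounds)."""
    if not products:
        raise ValueError("need at least one decomposition product")
    elemental = [len(p) == 1 for p in products]  # single-element product = element
    if all(elemental):
        return 1
    if not any(elemental):
        return 2
    return 3


# BaTaNO2 decomposes only into other compounds, so it is a Type 2 case.
batano2_products = [{"Ba": 4, "Ta": 2, "O": 9}, {"Ba": 1, "Ta": 2, "N": 4}, {"Ta": 3, "N": 5}]
print(decomposition_type(batano2_products))
```

Applied across a database, this rule is what produces prevalence statistics like those in the table.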

Computational Framework for Stability Assessment

Density Functional Theory Methodology

First-principles calculations using DFT provide the foundation for computational stability assessment. The generalized gradient approximation (GGA) with the Perdew-Burke-Ernzerhof (PBE) functional and the meta-GGA strongly constrained and appropriately normed (SCAN) functional represent standard approaches [5] [9].

Table 2: Performance of DFT Functionals for Stability Prediction

| Functional | Mean Absolute Difference (MAD) for ΔHf | MAD for ΔHd (646 reactions) | MAD for Type 2 Reactions (231 reactions) |
|---|---|---|---|
| PBE | 196 meV/atom | 70 meV/atom | ~35 meV/atom |
| SCAN | 88 meV/atom | 59 meV/atom | ~35 meV/atom |

For the most prevalent Type 2 decomposition reactions, both functionals achieve accuracy comparable to experimental uncertainty (~35 meV/atom) [5]. Correction schemes using fitted elemental reference energies provide negligible improvement (~2 meV/atom) for decomposition energy predictions [5] [9].

Convex Hull Construction Protocol

The convex hull construction protocol involves systematic evaluation of phase relationships:

Figure 1: Computational workflow for convex hull construction and stability assessment

Step 1: Data Collection. Compile all known and predicted compounds within the target composition space from crystallographic databases (ICSD, Materials Project, OQMD) [7]. For ternary and quaternary systems, ensure adequate coverage of the composition space.

Step 2: Energy Calculation. Compute DFT total energies for all structures using consistent computational parameters (functional, pseudopotentials, k-point mesh, convergence criteria) [6]. Normalize energies to eV/atom to enable comparison across different compositions.

Step 3: Hull Computation. Solve the N-dimensional convex hull problem using computational geometry algorithms. For a compound $A_{\alpha}B_{\beta}C_{\gamma}$, the decomposition energy is calculated as:

$$\Delta H_d = E_{ABC} - E_{A\text{-}B\text{-}C}$$

where $E_{A\text{-}B\text{-}C}$ represents the minimum-energy combination of competing phases with the same average composition as ABC [5].

Step 4: Stability Classification. Identify phases on the convex hull (thermodynamically stable) and above the hull (metastable or unstable). The energy above hull is calculated as the vertical distance to the hull surface [6].

Step 5: Decomposition Analysis. For unstable compounds, determine the specific decomposition reaction and products. For example, BaTaNO₂ decomposes as BaTaNO₂ → 2/3 Ba₄Ta₂O₉ + 7/45 Ba(TaN₂)₂ + 8/45 Ta₃N₅ [6], where the coefficients are fractions of atoms (they sum to 1) rather than numbers of formula units.
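The BaTaNO₂ reaction can be checked with exact rational arithmetic. The sketch below, a minimal illustration with hypothetical helper names, confirms that the atom-fraction weights reproduce the composition of BaTaNO₂ and shows how ΔHd from Step 3 follows once per-atom energies of the compound and products are known (no energies are assumed here).

```python
from fractions import Fraction


def atom_fractions(counts):
    # Formula-unit counts -> exact atomic fractions, e.g. {"Ta": 3, "N": 5} -> 3/8, 5/8.
    total = sum(counts.values())
    return {el: Fraction(n, total) for el, n in counts.items()}


def mix(products):
    # products: (atom-fraction weight, formula counts) pairs -> mixture composition.
    out = {}
    for weight, counts in products:
        for el, frac in atom_fractions(counts).items():
            out[el] = out.get(el, Fraction(0)) + weight * frac
    return out


def decomposition_energy(e_compound, products):
    # ΔHd per atom: E(compound) minus atom-fraction-weighted product energies (eV/atom).
    return e_compound - sum(w * e for w, e in products)


batano2 = {"Ba": 1, "Ta": 1, "N": 1, "O": 2}
products = [
    (Fraction(2, 3), {"Ba": 4, "Ta": 2, "O": 9}),   # Ba4Ta2O9
    (Fraction(7, 45), {"Ba": 1, "Ta": 2, "N": 4}),  # Ba(TaN2)2
    (Fraction(8, 45), {"Ta": 3, "N": 5}),           # Ta3N5
]
print(sum(w for w, _ in products) == 1, mix(products) == atom_fractions(batano2))  # True True
```

Reading the same coefficients as formula-unit amounts would not balance the reaction (for example, Ba would total 127/45 instead of 1), which is why the per-atom interpretation matters when computing ΔHd.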

Essential Research Reagents and Computational Tools

Table 3: Key Resources for Computational Stability Assessment

| Resource Category | Specific Tools/Databases | Function in Stability Research |
|---|---|---|
| DFT Codes | VASP, Quantum ESPRESSO | First-principles energy calculations |
| Materials Databases | Materials Project, OQMD, NRELMatDB | Source of reference structures and energies |
| Stability Analysis | pymatgen, Phonopy | Convex hull construction and phase analysis (pymatgen); phonon calculations for dynamical stability (Phonopy) |
| Machine Learning | CGCNN, MEGNet, iCGCNN | Graph neural networks for energy prediction |

Machine Learning Integration for Stability Prediction

Data Requirements and Model Architectures

Machine learning models for stability prediction require balanced training datasets containing both ground-state and higher-energy structures [7]. Graph neural networks (GNNs) that represent crystal structures as graphs with atoms as nodes and bonds as edges have emerged as particularly effective architectures [7].

The critical importance of data balance was demonstrated in models trained exclusively on ground-state structures from the ICSD, which showed significant errors (e.g., -0.733 eV/atom for PdN) when predicting energies of higher-energy polymorphs [7]. Incorporating hypothetical structures generated through ionic substitution or other structure prediction methods improves model performance for stability ranking [7].

Workflow for ML-Guided Materials Discovery

Figure 2: Machine learning workflow for stability prediction and materials discovery

Recent advances demonstrate remarkable efficiency in sample utilization, with some models requiring only one-seventh of the data used by existing approaches to achieve comparable performance (AUC score of 0.988) [2]. These models enable rapid exploration of uncharted composition spaces, particularly for complex systems like ternary transition metal compounds and double perovskite oxides [2] [10].

Experimental Validation and Synthesis Considerations

Interpreting Stability Metrics for Synthesis

The energy above hull provides crucial guidance for experimental synthesis:

- Ehull = 0 meV/atom: Thermodynamically stable; synthesizable under equilibrium conditions

- Ehull < 20-30 meV/atom: Likely synthesizable as metastable phases

- Ehull > 50 meV/atom: Increasingly challenging to synthesize

For example, BaTaNO₂ with Ehull = 32 meV/atom represents a metastable phase that can be synthesized despite its positive energy above hull [6].

Limitations and Complementary Techniques

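Computational stability assessments have limitations. DFT errors (~35 meV/atom for Type 2 reactions) are comparable to experimental uncertainty [5], but they are also comparable to the tens-of-meV/atom Ehull thresholds listed above, so small Ehull values should be interpreted with caution. Additionally, convex hull analysis assumes equilibrium conditions and does not account for kinetic barriers or non-equilibrium synthesis pathways, and hulls built from 0 K DFT energies omit finite-temperature contributions such as vibrational and configurational entropy.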

Successful research programs integrate computational stability predictions with experimental validation. The machine-learning directed approach has demonstrated reduced experimental effort in identifying new intermetallic compounds in systems like Y-Ag-In [11]. For ternary transition metal compounds, combining convex hull analysis with machine learning feature importance provides insights into structure-stability relationships [10].

The convex hull construction and decomposition energy provide the fundamental framework for assessing thermodynamic stability in inorganic materials. Moving beyond traditional formation enthalpy to decomposition energy reveals that most compounds compete thermodynamically with other compounds rather than elemental phases. Integration of these concepts with machine learning creates a powerful paradigm for accelerated materials discovery, enabling efficient navigation of vast composition spaces while grounding predictions in rigorous thermodynamics. As computational methods advance toward higher accuracy and machine learning models achieve greater data efficiency, this integrated approach promises to dramatically accelerate the discovery of stable materials for energy and technology applications.

The discovery and development of new inorganic compounds are fundamental to technological progress, from renewable energy systems to next-generation electronics. Traditionally, this process has relied on two core methodologies: experimental synthesis and density functional theory (DFT) calculations. However, the vast compositional space of potential materials makes exhaustive exploration with these methods prohibitively expensive and time-consuming. The compounds that can actually be synthesized in a laboratory represent only a minute fraction of the total compositional space, a predicament often likened to finding a needle in a haystack [1].

This article examines the intrinsic limitations and high costs associated with these conventional approaches. It further frames these challenges within the context of a promising solution: the use of composition-based machine learning (ML) models for predicting inorganic compound stability. By leveraging existing data, these models offer a pathway to significantly accelerate materials discovery while conserving substantial computational and experimental resources [1] [3].

The Computational Bottleneck: Limitations of Density Functional Theory

Fundamental Accuracy Challenges

DFT is a widely used methodology for calculating crucial material properties such as formation energy, which determines thermodynamic stability. Despite its popularity, DFT has well-documented limitations that affect its predictive accuracy. A primary issue is the intrinsic error of the exchange-correlation functionals, which can lead to an inadequate energy resolution. This is particularly problematic for calculating formation enthalpies and predicting phase stability, especially in ternary systems [12].

The method is known to struggle with several physical phenomena, including the correct description of weak, long-range interactions and spin-state energetics. These failures can lead to incorrect qualitative results, particularly in systems with strong electron correlation or complex magnetic properties. The errors are often unsystematic and highly functional-dependent, necessitating careful and computationally expensive validation [13].

Resource Intensiveness and Efficiency

Establishing thermodynamic stability typically requires constructing a convex hull using the formation energies of a target compound and all competing phases within the same phase diagram. DFT calculations for each of these points consume substantial computational resources, leading to low efficiency in exploring new compounds [1].

Table 1: Key Limitations of Density Functional Theory

| Limitation Category | Specific Challenge | Impact on Material Discovery |
|---|---|---|
| Fundamental Accuracy | Intrinsic errors in exchange-correlation functionals [12] | Limited reliability in predicting phase stability, especially for ternary systems [12] |
| | Inaccurate description of weak interactions (dispersion forces) [13] | Reduced predictive power for molecular crystals and layered materials |
| | Failures in spin-state energetics [13] | Incorrect predictions for magnetic materials and transition metal complexes |
| Computational Cost | High resource demand for total energy calculations [1] | Inefficient for rapid screening across vast compositional spaces |
| | Need for multiple calculations to build a convex hull [1] | Low throughput in determining thermodynamic stability |

The Experimental Synthesis Hurdle: Cost, Time, and Labor

The traditional experimental path to discovering new materials is characterized by its labor-intensive nature. For multicomponent alloys, such as the V-Cr-Ti systems studied for nuclear applications, conducting experiments across a wide range of elemental compositions is extremely laborious [3]. Each synthesis and subsequent characterization of properties, such as the ductile-brittle transition temperature (DBTT), requires significant investment of time, specialized equipment, and expert labor. This process becomes exponentially more challenging as the number of constituent elements increases, rendering the comprehensive exploration of complex compositional spaces, like those of high-entropy alloys, practically infeasible [3].

The Machine Learning Paradigm: A Path to Accelerated Discovery

Composition-Based Machine Learning Models

Machine learning offers a promising avenue for overcoming the bottlenecks of traditional methods. By learning from existing databases of calculated and experimental properties, ML models can predict material stability directly from chemical composition, bypassing the need for exhaustive DFT or immediate synthesis [1] [3]. This approach provides significant advantages in time and resource efficiency [1].

A key advantage of composition-based models is that they do not require precise structural information, which is often unavailable for new, unexplored materials. This allows for the rapid screening of vast compositional spaces using only the chemical formula as a starting point [1].

Table 2: Comparison of Traditional Methods and Machine Learning for Stability Prediction

| Aspect | Experimental Synthesis | Density Functional Theory | Composition-Based ML |
|---|---|---|---|
| Primary Input | Raw elements & synthesis conditions | Atomic structure & composition | Elemental composition |
| Time per Sample | Weeks to months [3] | Hours to days [1] | Seconds to minutes |
| Computational Cost | Low (but high equipment/lab cost) | Very High [1] | Low |
| Exploration Speed | Very Slow | Slow | Very High |
| Key Limitation | Laborious for multicomponent systems [3] | High resource demand [1] | Reliant on quality of training data |

Key Methodologies and Workflows

Several advanced ML frameworks have been developed specifically for predicting material stability. The ECSG (Electron Configuration models with Stacked Generalization) framework integrates three distinct models to mitigate individual biases and enhance predictive performance [1]:

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model that uses electron configuration as an intrinsic material descriptor.

- Roost: A model that represents a chemical formula as a graph of elements and uses message-passing to capture interatomic interactions.

- Magpie: A model that leverages statistical features from various elemental properties.

Another approach involves using pre-trained deep learning models like ElemNet, a 17-layer deep neural network trained on hundreds of thousands of compounds from databases like the Open Quantum Materials Database (OQMD). This model can predict formation energy using only elemental composition as input [3].
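Composition-only models of this kind take a fixed-length vector of element fractions as input. The sketch below shows that conversion under stated assumptions: the parser handles only flat formulas (no parentheses or hydrates), and the eight-element vocabulary `ELEMENTS` is a hypothetical stand-in for the longer fixed element list a model such as ElemNet would use.

```python
import re
from collections import defaultdict
from typing import Dict, List

# Hypothetical, truncated element vocabulary; a real model fixes a much longer list.
ELEMENTS: List[str] = ["Li", "O", "Ti", "V", "Cr", "Fe", "Ni", "Sr"]

def parse_formula(formula: str) -> Dict[str, float]:
    """Parse a flat formula such as 'SrTiO3' or 'V0.9Cr0.05Ti0.05' (no parentheses)."""
    counts: Dict[str, float] = defaultdict(float)
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    return dict(counts)

def fraction_vector(formula: str) -> List[float]:
    """Element fractions in ELEMENTS order; the entries sum to 1."""
    counts = parse_formula(formula)
    unknown = set(counts) - set(ELEMENTS)
    if unknown:
        raise ValueError(f"elements outside the vocabulary: {sorted(unknown)}")
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in ELEMENTS]

print(fraction_vector("SrTiO3"))
print(fraction_vector("V0.9Cr0.05Ti0.05"))
```

Because the vector is normalized, formulas such as SrTiO3 and Sr2Ti2O6 map to the same input, which is the intended behavior for a purely compositional model.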

The following diagram illustrates a generalized workflow for using ensemble machine learning to predict compound stability, from data sourcing to final validation.

Performance and Validation

The performance of these ML models is compelling. The ECSG framework has been reported to achieve an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database [1]. Notably, it demonstrated exceptional sample efficiency, requiring only one-seventh of the data used by existing models to achieve the same performance [1].
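For readers less familiar with the metric: ROC AUC equals the probability that a randomly chosen stable compound is scored higher than a randomly chosen unstable one, with ties counting one half. The minimal sketch below computes it directly from that definition; the labels and scores are hypothetical, and a library routine such as scikit-learn's `roc_auc_score` would normally be used on large datasets.

```python
from typing import Sequence

def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as the Mann-Whitney probability P(score_pos > score_neg).

    Ties count as one half. O(n_pos * n_neg), fine for small illustrations.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))

# Hypothetical labels (1 = stable) and model stability scores
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.95, 0.80, 0.40, 0.50, 0.30, 0.20, 0.10]
print(f"AUC = {roc_auc(labels, scores):.3f}")
```

Here one stable compound (score 0.40) is ranked below one unstable compound (0.50), so 11 of the 12 stable/unstable pairs are ordered correctly.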

In a case study on V–Cr–Ti alloys, predictions of negative enthalpy of formation (-ΔHf) from the ElemNet model qualitatively agreed with experimental ductile-brittle transition temperature (DBTT) data. The model accurately reproduced the trend of increasing DBTT with Cr content below 20 wt.%, and even suggested a previously unexplored compositional region with high Cr+Ti content (~60 wt.%) that may exhibit low DBTT [3].

Furthermore, ML models can be deployed to correct DFT errors. One study trained a neural network to predict the discrepancy between DFT-calculated and experimentally measured formation enthalpies for binary and ternary alloys, thereby systematically improving the reliability of first-principles predictions [12].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Composition-Based Machine Learning in Materials Research

| Resource Name | Type | Primary Function | Relevance to Stability Prediction |
|---|---|---|---|
| Materials Project (MP) [1] | Database | Repository of computed material properties (e.g., formation energies from DFT). | Provides high-quality training data for machine learning models. |
| Open Quantum Materials Database (OQMD) [3] | Database | Extensive collection of DFT-calculated thermodynamic properties for hundreds of thousands of compounds. | Serves as a key dataset for training models like ElemNet. |
| JARVIS [1] | Database | Joint Automated Repository for Various Integrated Simulations, includes DFT data. | Used for benchmarking and validating model performance. |
| ElemNet [3] | Pre-trained ML Model | A deep neural network for predicting formation energy from composition. | Allows rapid stability screening without training a new model from scratch. |
| ECCNN [1] | ML Model Architecture | A convolutional neural network using electron configuration as input. | Captures intrinsic electronic structure information to predict stability. |

The limitations of traditional methods for material discovery are clear. The high computational costs of DFT and the labor-intensive nature of experimental synthesis create significant bottlenecks in the exploration of vast inorganic compositional spaces. Composition-based machine learning models emerge as a powerful alternative, demonstrating the ability to predict thermodynamic stability with high accuracy and remarkable sample efficiency. By integrating diverse knowledge sources and leveraging large existing datasets, these models can accelerate the discovery of novel, stable compounds for technological applications, effectively navigating the challenging "needle in a haystack" problem of materials science.

The discovery of novel inorganic materials has long been characterized by expensive, inefficient trial-and-error approaches, creating a significant bottleneck for technological progress across fields from clean energy to information processing. Traditional methods for assessing thermodynamic stability and synthesizability—primarily through experimental investigation and density functional theory (DFT) calculations—consume substantial computational resources and time, resulting in low efficiency for exploring new compounds. The extensive compositional space of materials means the number of compounds actually synthesized represents only a minute fraction of the total possibility, creating a "needle in a haystack" challenge for researchers. This review examines the transformative paradigm shift driven by composition-based machine learning models, which accurately predict stability and synthesizability orders of magnitude faster than conventional approaches, dramatically accelerating the materials development pipeline.

The Limitations of Traditional Approaches

Computational and Experimental Bottlenecks

Traditional stability assessment relies on constructing convex hulls from the formation energies of a compound and all pertinent phases within the same phase diagram. Establishing these convex hulls typically requires experimental investigation or DFT calculations of compound energies, processes that consume substantial computational resources and have limited efficacy for exploring new compounds. While DFT has paved the way for extensive materials databases, its high computational cost remains prohibitive for large-scale exploration.

The Charge-Balancing Fallacy

Charge balancing has served as a commonly employed proxy for synthesizability: it filters out materials that cannot reach a net neutral ionic charge using common oxidation states. However, this chemically motivated approach has poor predictive accuracy. Among all inorganic materials that have already been synthesized, only 37% can be charge-balanced according to common oxidation states, and even among binary cesium compounds, which are typically governed by highly ionic bonds, only 23% of known compounds are charge-balanced. The rigid charge-neutrality constraint cannot account for the different bonding environments found across material classes such as metallic alloys, covalent materials, and ionic solids.
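The charge-balancing filter can be stated as a small search problem: does some assignment of common oxidation states make the formula net neutral? The sketch below brute-forces that question over a hypothetical, deliberately small oxidation-state table and assumes every atom of an element shares one state. Under these assumptions it rejects magnetite (Fe3O4), a mixed-valence oxide, and the intermetallic Ni3Al, both long-known synthesized compounds, which illustrates how rigid the criterion is.

```python
from itertools import product
from typing import Dict, List

# Hypothetical, deliberately small table of "common" oxidation states.
COMMON_STATES: Dict[str, List[int]] = {
    "Na": [1], "Cl": [-1], "O": [-2], "Sr": [2], "Ti": [2, 3, 4],
    "Fe": [2, 3], "Ni": [2], "Al": [3],
}

def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if one common oxidation state per element gives zero net charge.

    Every atom of an element is assumed to take the same state, so mixed-valence
    compounds are rejected by this strict version of the filter.
    """
    elements = list(composition)
    for states in product(*(COMMON_STATES[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False

examples = {
    "NaCl": {"Na": 1, "Cl": 1},
    "SrTiO3": {"Sr": 1, "Ti": 1, "O": 3},
    "Fe3O4": {"Fe": 3, "O": 4},
    "Ni3Al": {"Ni": 3, "Al": 1},
}
results = {name: is_charge_balanced(comp) for name, comp in examples.items()}
print(results)
```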

Machine Learning Frameworks for Stability Prediction

Ensemble Model with Stacked Generalization

The Electron Configuration models with Stacked Generalization (ECSG) framework integrates three foundational models based on distinct domains of knowledge to mitigate individual model biases. This super learner amalgamates:

- Magpie: Emphasizes statistical features derived from elemental properties (atomic number, mass, radius) using gradient-boosted regression trees (XGBoost)

- Roost: Conceptualizes chemical formulas as complete graphs of elements, employing graph neural networks with attention mechanisms to capture interatomic interactions

- ECCNN: A novel electron configuration-based convolutional neural network that delineates electron distribution within atoms, encompassing energy levels and electron counts

The ECSG framework achieves an Area Under the Curve score of 0.988 in predicting compound stability within the JARVIS database and demonstrates exceptional efficiency, requiring only one-seventh of the data used by existing models to achieve equivalent performance [1] [2].

Graph Networks for Materials Exploration (GNoME)

The GNoME approach scales machine learning for materials exploration through large-scale active learning, utilizing state-of-the-art graph neural networks trained at scale to reach unprecedented generalization levels. The framework employs two parallel pipelines:

- Structural candidates: Generated through modifications of available crystals using symmetry-aware partial substitutions (SAPS)

- Compositional models: Predict stability without structural information using reduced chemical formulas

Through iterative active learning, GNoME models have discovered 2.2 million structures stable with respect to previous work, with final models achieving prediction errors of 11 meV atom⁻¹ and hit rates above 80% with structure and 33% with composition only [14].

Deep Learning Synthesizability Classification

SynthNN adopts a positive-unlabeled (PU) learning framework to predict synthesizability directly from chemical compositions without structural information. The model utilizes atom2vec representations, where each chemical formula is represented by a learned atom embedding matrix optimized alongside all neural network parameters. This approach learns optimal representations directly from the distribution of previously synthesized materials without assumptions about factors influencing synthesizability. Trained on the Inorganic Crystal Structure Database (ICSD) augmented with artificially generated unsynthesized materials, SynthNN identifies synthesizable materials with 7× higher precision than DFT-calculated formation energies [15].

Quantitative Performance Comparison

Table 1: Performance Metrics of ML Models for Stability and Synthesizability Prediction

| Model | Approach | Key Metric | Performance | Data Efficiency |
|---|---|---|---|---|
| ECSG | Ensemble learning with electron configuration | AUC score | 0.988 | 7× better than existing models |
| GNoME | Graph neural networks with active learning | Hit rate (with structure) | >80% | Improves with data scaling |
| GNoME | Graph neural networks with active learning | Hit rate (composition only) | 33% per 100 trials | Improves with data scaling |
| SynthNN | Deep learning classification | Precision vs DFT | 7× higher than formation energy | Trained on ICSD + artificial data |
| Traditional DFT | First-principles calculations | Hit rate | ~1% | Computationally intensive |

Table 2: Discovery Scale Comparison Across Methods

| Method | Stable Materials Discovered | Time Scale | Computational Cost |
|---|---|---|---|
| Traditional experimental | 20,000 (ICSD) | Decades | Extremely high |
| DFT + substitutions | 48,000 | Years | High |
| GNoME (ML-guided) | 2.2 million | Active learning cycles | Orders of magnitude reduction |
| Human experts | Limited to specialized domains | Months to years | High personnel costs |

Detailed Methodologies and Experimental Protocols

ECSG Framework Implementation

The ECCNN base model processes electron configuration data encoded as a 118×168×8 matrix representing the electron configuration of materials. The architecture employs:

- Two convolutional operations with 64 filters of size 5×5

- Batch normalization following the second convolution

- 2×2 max pooling operation

- Flattening extracted features into a one-dimensional vector

- Fully connected layers for final prediction
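The layer list above can be sanity-checked with shape arithmetic. The sketch below traces tensor shapes through the described stack, assuming the 118×168×8 input is read as height × width × channels, stride-1 convolutions, and non-overlapping 2×2 pooling. The padding mode is not stated in the description, so both "same" and "valid" are shown, and the fully connected head is left out because its widths are not given.

```python
from typing import List, Tuple

Shape = Tuple[int, int, int]  # (height, width, channels)

def conv2d(shape: Shape, filters: int, kernel: int, padding: str) -> Shape:
    """Output shape of a stride-1 2D convolution."""
    h, w, _ = shape
    if padding == "same":
        return (h, w, filters)
    if padding == "valid":
        return (h - kernel + 1, w - kernel + 1, filters)
    raise ValueError(f"unknown padding: {padding}")

def max_pool(shape: Shape, size: int = 2) -> Shape:
    """Non-overlapping max pooling (floor division of spatial dims)."""
    h, w, c = shape
    return (h // size, w // size, c)

def trace_eccnn(padding: str) -> List[Tuple[str, object]]:
    """Trace shapes through the conv / BN / pool / flatten stack described above."""
    shape: Shape = (118, 168, 8)  # assumed H x W x C reading of the input
    steps: List[Tuple[str, object]] = [("input", shape)]
    shape = conv2d(shape, 64, 5, padding)
    steps.append(("conv 5x5, 64", shape))
    shape = conv2d(shape, 64, 5, padding)
    steps.append(("conv 5x5, 64 + batch norm", shape))  # batch norm keeps the shape
    shape = max_pool(shape)
    steps.append(("max pool 2x2", shape))
    steps.append(("flatten", shape[0] * shape[1] * shape[2]))  # fed to dense layers
    return steps

for pad in ("same", "valid"):
    print(pad, trace_eccnn(pad))
```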

After the foundational models are trained, their outputs serve as inputs to a meta-level model that produces the final predictions through stacked generalization. Validation via first-principles calculations demonstrates remarkable accuracy in correctly identifying stable compounds, particularly for two-dimensional wide bandgap semiconductors and double perovskite oxides [1].
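Stacked generalization trains a meta-learner on base-model predictions made out-of-fold, so the meta-learner never sees in-sample fits. The NumPy sketch below illustrates the mechanics only: three logistic-regression base learners, each on its own feature "view", stand in for Magpie, Roost, and ECCNN, and a logistic-regression meta-learner combines them. The data are synthetic and the model choices are assumptions, not the ECSG implementation.

```python
import numpy as np

def fit_logistic(X: np.ndarray, y: np.ndarray, lr: float = 0.5, epochs: int = 500) -> np.ndarray:
    """Batch gradient-descent logistic regression; the last weight is the bias."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    w = np.zeros(Xb.shape[1])
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)
    return w

def predict_proba(w: np.ndarray, X: np.ndarray) -> np.ndarray:
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return 1.0 / (1.0 + np.exp(-Xb @ w))

def out_of_fold(X: np.ndarray, y: np.ndarray, k: int = 5, seed: int = 0) -> np.ndarray:
    """Out-of-fold probabilities: each sample is scored by a model that never saw it."""
    idx = np.random.default_rng(seed).permutation(len(y))
    oof = np.zeros(len(y))
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        oof[fold] = predict_proba(fit_logistic(X[train], y[train]), X[fold])
    return oof

# Synthetic data: three feature "views" stand in for three knowledge domains.
rng = np.random.default_rng(42)
n = 400
views = [rng.normal(size=(n, 2)) for _ in range(3)]
y = (sum(v[:, 0] for v in views) + 0.5 * rng.normal(size=n) > 0).astype(float)

# Level 0: one base learner per view, scored out-of-fold.
meta_X = np.column_stack([out_of_fold(v, y) for v in views])
# Level 1: a meta-learner combines the three base predictions.
meta_w = fit_logistic(meta_X, y)
stacked = predict_proba(meta_w, meta_X)

for j in range(3):
    print(f"base {j} accuracy: {((meta_X[:, j] > 0.5) == (y == 1)).mean():.3f}")
print(f"stacked accuracy (in-sample for the meta-learner): {((stacked > 0.5) == (y == 1)).mean():.3f}")
```

Each base learner sees only one third of the signal, so the combined prediction should be noticeably more accurate than any single view, which is the intuition behind mitigating individual model biases.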

GNoME Active Learning Workflow

The GNoME active learning process implements:

- Candidate generation: Over 10⁹ candidates through strongly augmented substitutions and random structure search

- Model filtration: Using volume-based test-time augmentation and uncertainty quantification through deep ensembles

- Structure clustering: Polymorphs ranked for DFT evaluation

- Data flywheel: Verified structures incorporated into iterative training cycles

Through six rounds of active learning, initial hit rates of <6% (structural) and <3% (compositional) improved to >80% and 33% respectively. The final ensemble achieves 11 meV atom⁻¹ prediction error on relaxed structures, demonstrating the power of scaling laws in materials informatics [14].
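The generate, filter, verify, and retrain cycle can be illustrated with a one-dimensional toy. In the sketch below, a candidate is a single number, a quadratic function stands in for a DFT calculation ("stable" when negative), and a k-nearest-neighbor average stands in for the GNN ensemble; symmetry-aware substitutions, structure clustering, and uncertainty quantification are all omitted. All function names and parameters are hypothetical.

```python
import random
from typing import List, Tuple

def oracle_energy(x: float) -> float:
    """Hypothetical stand-in for a DFT calculation; 'stable' when the value is < 0."""
    return (x - 0.3) ** 2 - 0.01

def surrogate(train: List[Tuple[float, float]], x: float, k: int = 3) -> float:
    """k-nearest-neighbor energy estimate from all verified data so far."""
    nearest = sorted(train, key=lambda t: abs(t[0] - x))[:k]
    return sum(e for _, e in nearest) / len(nearest)

def active_learning(rounds: int = 6, pool: int = 200, budget: int = 10, seed: int = 0):
    """Per round: generate candidates, rank them with the surrogate, verify the
    top `budget` with the oracle, record the hit rate, and grow the training set."""
    rng = random.Random(seed)
    train = [(x, oracle_energy(x)) for x in (rng.random() for _ in range(5))]
    hit_rates: List[float] = []
    for _ in range(rounds):
        candidates = [rng.random() for _ in range(pool)]                      # generation
        chosen = sorted(candidates, key=lambda x: surrogate(train, x))[:budget]  # filtration
        verified = [(x, oracle_energy(x)) for x in chosen]                   # "DFT" check
        hit_rates.append(sum(e < 0 for _, e in verified) / budget)           # hit rate
        train.extend(verified)                                               # data flywheel
    return hit_rates, train

hit_rates, train = active_learning()
print([f"{h:.0%}" for h in hit_rates])
```

In this toy, random selection would hit the stable window (0.2 < x < 0.4) about 20% of the time; the hit rate per round shows how much the growing training set sharpens the filter.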

SynthNN Training Protocol

SynthNN employs semi-supervised learning to address the lack of recorded data on unsynthesizable materials:

- Positive examples: Synthesizable inorganic materials extracted from ICSD

- Unlabeled examples: Artificially generated unsynthesized materials, treated as unlabeled data and probabilistically reweighted according to their likelihood of being synthesizable

- Hyperparameter optimization: Including the embedding dimensionality and N_synth, the ratio of artificially generated to synthesized formulas

This positive-unlabeled learning approach generates predictions informed by the entire spectrum of previously synthesized materials rather than proxy metrics, better capturing the complex array of factors influencing synthesizability [15].
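One simple way to express probabilistic reweighting of unlabeled examples is a weighted binary cross-entropy in which each artificial formula counts as a negative with weight 1 - q, where q estimates the chance that it is actually synthesizable. The sketch below implements that loss; it is an assumed scheme for illustration, not the exact weighting used by SynthNN, and all probabilities are made up. `N_SYNTH` plays the role of the ratio of artificial to synthesized formulas.

```python
import math
from typing import List

def pu_weighted_bce(pos_probs: List[float], unl_probs: List[float],
                    unl_prior: List[float]) -> float:
    """Weighted binary cross-entropy for positive-unlabeled data (assumed scheme).

    pos_probs: model outputs for synthesized formulas (label 1, weight 1).
    unl_probs: model outputs for artificial formulas (label 0).
    unl_prior: estimated probability that each artificial formula is in fact
               synthesizable; its label-0 term is down-weighted by (1 - prior).
    """
    eps = 1e-12  # guards against log(0)
    loss = -sum(math.log(p + eps) for p in pos_probs)
    loss -= sum((1.0 - q) * math.log(1.0 - p + eps) for p, q in zip(unl_probs, unl_prior))
    total_weight = len(pos_probs) + sum(1.0 - q for q in unl_prior)
    return loss / total_weight

N_SYNTH = 2                   # hypothetical ratio of artificial to synthesized formulas
pos = [0.9, 0.8]              # outputs for two synthesized formulas
unl = [0.2, 0.1, 0.7, 0.3]    # N_SYNTH * len(pos) artificial formulas
prior = [0.0, 0.0, 0.9, 0.0]  # the third one looks likely to be synthesizable
assert len(unl) == N_SYNTH * len(pos)
print(round(pu_weighted_bce(pos, unl, prior), 4))
```

The third artificial formula receives a high score from the model, but because its prior is high, the loss barely penalizes it, which is the behavior reweighting is meant to produce.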

Visualization of ML Workflows

ML vs Traditional Discovery Workflow

Table 3: Key Databases and Computational Resources for ML-Driven Materials Discovery

| Resource | Type | Key Features | Application in Research |
|---|---|---|---|
| Materials Project (MP) | Materials Database | DFT-calculated properties for ~150,000 materials | Training data for stability prediction models |
| Open Quantum Materials Database (OQMD) | Materials Database | DFT-calculated properties for ~700,000 materials | Training data for stability prediction models |
| Inorganic Crystal Structure Database (ICSD) | Experimental Database | Experimentally characterized inorganic crystal structures | Ground truth for synthesizability models |
| JARVIS | Materials Database | DFT, ML, and experimental data for ~80,000 materials | Benchmarking model performance |
| Alexandria | DFT Database | >5 million DFT calculations for periodic compounds | Training advanced ML models and interatomic potentials |
| VASP | Simulation Software | First-principles DFT calculations | Ground truth verification for ML predictions |

| VASP | Simulation Software | First-principles DFT calculations | Ground truth verification for ML predictions |

Case Studies and Validation

Exploration of Multi-Component Systems

GNoME has demonstrated exceptional capability in discovering materials with 5+ unique elements, a combinatorially challenging space that previously escaped human chemical intuition. The model discovered 381,000 new entries on the updated convex hull from a total of 421,000 stable crystals, representing an order-of-magnitude expansion from all previous discoveries. Phase-separation energy analysis confirms these materials are meaningfully stable with respect to competing phases rather than merely "filling in the convex hull" [14].

Two-Dimensional Wide Bandgap Semiconductors

The ECSG framework successfully navigated unexplored composition space for two-dimensional wide bandgap semiconductors, with first-principles calculations validating the remarkable accuracy of identified stable compounds. The electron configuration approach proved particularly valuable for predicting stability in these systems where traditional domain knowledge provides limited guidance [1].

Human vs Machine Performance Benchmark

In a head-to-head material discovery comparison against 20 expert material scientists, SynthNN outperformed all experts, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best human expert. Remarkably, without prior chemical knowledge, SynthNN learned chemical principles of charge-balancing, chemical family relationships, and ionicity from the data distribution of synthesized materials [15].

The integration of composition-based machine learning models represents a fundamental paradigm shift in inorganic materials discovery, enabling rapid and cost-effective screening at unprecedented scales. Ensemble methods like ECSG, large-scale graph networks like GNoME, and synthesizability classifiers like SynthNN have demonstrated order-of-magnitude improvements in efficiency, accuracy, and scale compared to traditional approaches. As these models continue to benefit from scaling laws and improved algorithms, and as materials databases expand further, machine learning will become increasingly indispensable for identifying novel functional materials to address pressing technological challenges. The successful validation of ML-predicted compounds through first-principles calculations and experimental realization confirms these approaches are not merely computational exercises but represent a transformative advancement in materials science methodology.

In the field of inorganic materials research, machine learning (ML) has emerged as a transformative tool for predicting thermodynamic stability and accelerating the discovery of new compounds. These ML approaches primarily fall into two distinct categories: composition-based and structure-based models. Composition-based models predict material properties using only the chemical formula as input, making them exceptionally valuable for high-throughput screening of novel compounds whose crystal structures are unknown [1]. In contrast, structure-based models incorporate detailed information about atomic arrangements, bonding, and crystal symmetry, typically delivering higher accuracy for properties strongly influenced by structural characteristics [16]. Understanding the relative strengths, limitations, and appropriate application contexts of these two frameworks is essential for researchers developing ML-guided strategies for inorganic stability research. This guide provides a technical comparison of these approaches, detailing their underlying methodologies, performance characteristics, and implementation protocols.

Core Conceptual Frameworks and Technical Differentiation

Composition-Based Models: Leveraging Elemental Proportions and Properties

Composition-based models operate on the fundamental principle that a material's stability and properties are determined by its constituent elements and their relative proportions. These models use chemical formulas as their starting point, which are then transformed into quantitative descriptors using domain knowledge [1].

The feature engineering process typically involves calculating statistical measures (mean, variance, range, etc.) across various elemental properties for all elements in a compound. These properties often include atomic number, atomic mass, electronegativity, valence electron count, and electron affinity [1] [17]. For example, the Magpie model leverages such statistical features of elemental properties and employs gradient-boosted regression trees for prediction [1]. Advanced deep learning approaches like ElemNet bypass manual feature engineering by using deep neural networks to automatically learn relevant patterns directly from elemental compositions [1].

A more sophisticated approach incorporates electron configurations (EC) as intrinsic atomic characteristics that introduce less inductive bias. The Electron Configuration Convolutional Neural Network (ECCNN) framework encodes electron distributions into a matrix representation processed through convolutional layers to predict stability [1]. The primary advantage of composition-based models is their applicability in exploratory settings where only compositional space is being sampled, as they can significantly narrow down candidate materials before resource-intensive structure determination is attempted [1].

Structure-Based Models: Encoding Crystalline Architecture

Structure-based models recognize that atomic arrangement fundamentally influences material properties and stability. These approaches represent crystal structures as mathematical objects that capture bonding relationships and spatial configurations [16].

Graph Neural Networks (GNNs) have become the dominant architecture for structure-based prediction, representing crystals as graphs with atoms as nodes and bonds as edges [16] [18]. Models such as CGCNN, ALIGNN, and MEGNet operate on this principle, using message-passing between connected atoms to learn structure-property relationships [18]. ALIGNN extends this further by incorporating angular information through a line graph of atomic bonds, effectively capturing three-body interactions [18]. The most advanced frameworks, including CrysGNN, explicitly encode four-body interactions (atoms, bonds, angles, and dihedral angles) to comprehensively represent periodicity and structural characteristics [18].

Structure-based models generally achieve higher accuracy than composition-based approaches for properties strongly dependent on atomic arrangement, such as mechanical properties and thermodynamic stability [16] [18]. However, they require complete crystal structure information, which is often unavailable for new, unsynthesized materials, limiting their application in discovery workflows targeting completely novel compounds [1].

Table 1: Comparison of Model Frameworks for Predicting Inorganic Material Stability

| Feature | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Primary Input | Chemical formula | Crystallographic information (atomic coordinates, space group) |
| Key Descriptors | Elemental property statistics, electron configurations | Atomic bonds, angles, dihedral angles, periodicity |
| Common Algorithms | Random Forest, XGBoost, ECCNN, ElemNet | CGCNN, ALIGNN, MEGNet, CrysGNN |
| Primary Advantage | Applicable to unexplored composition spaces; no structure needed | Higher accuracy; captures structure-property relationships |
| Key Limitation | Lower accuracy for structure-sensitive properties | Requires complete structural data |
| Data Efficiency | High (e.g., 1/7 the data for similar performance [1]) | Lower (requires large datasets for training) |
| Interpretability | Moderate (feature importance) | Lower (complex architecture) |

Methodological Protocols and Implementation

Protocol for Composition-Based Stability Prediction

Step 1: Data Curation and Preprocessing

Collect a dataset of known materials with their chemical formulas and stability labels (e.g., decomposition energy or energy above the convex hull). Databases such as the Materials Project, OQMD, and JARVIS provide extensive training data. For experimental validation, curate stability measurements from the literature using natural language processing tools such as ChemDataExtractor [19].

Step 2: Feature Engineering

Convert chemical formulas into numerical descriptors using one of these approaches:

- Statistical Feature Method: For each element in the composition, calculate statistical measures (mean, standard deviation, range, etc.) across fundamental atomic properties including electronegativity, atomic radius, valence electron count, and electron affinity [1] [17].

- Electron Configuration Method: Encode the electron configuration of each element into a matrix representation capturing energy levels and electron occupations, then process through convolutional layers [1].

- Graph Representation Method: Represent the chemical formula as a dense graph of elements (as in Roost) and use message passing with attention mechanisms to learn compositional relationships [1].
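As a rough illustration of the electron configuration method, the sketch below derives ground-state subshell occupancies by filling subshells in Madelung (n + l) order and stacks one fraction-weighted occupancy row per element. It ignores known exceptions to the Aufbau order (for example Cr and Cu) and does not reproduce ECCNN's actual 118×168×8 encoding; the row layout is a hypothetical simplification.

```python
from typing import Dict, List, Tuple

# Subshells (n, l) with n <= 7 and l <= 3, sorted by the Madelung rule:
# increasing n + l, ties broken by increasing n.
SUBSHELLS: List[Tuple[int, int]] = sorted(
    ((n, l) for n in range(1, 8) for l in range(min(n, 4))),
    key=lambda nl: (nl[0] + nl[1], nl[0]),
)

def subshell_label(n: int, l: int) -> str:
    return f"{n}{'spdf'[l]}"

def aufbau_occupancy(z: int) -> List[int]:
    """Electrons per subshell for atomic number z (Aufbau exceptions ignored)."""
    occupancy, remaining = [], z
    for n, l in SUBSHELLS:
        filled = min(remaining, 2 * (2 * l + 1))  # capacity of an (n, l) subshell
        occupancy.append(filled)
        remaining -= filled
    return occupancy

def composition_matrix(comp: Dict[int, float]) -> List[List[float]]:
    """Hypothetical encoding: one row per element (sorted by Z), fraction-weighted occupancies."""
    total = sum(comp.values())
    return [[comp[z] / total * e for e in aufbau_occupancy(z)] for z in sorted(comp)]

fe = {subshell_label(n, l): e for (n, l), e in zip(SUBSHELLS, aufbau_occupancy(26)) if e}
print(fe)  # Fe: 1s2 2s2 2p6 3s2 3p6 4s2 3d6
matrix = composition_matrix({26: 2, 8: 3})  # Fe2O3: rows for O (Z = 8) and Fe (Z = 26)
```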

Step 3: Model Selection and Training

Select an appropriate algorithm based on dataset size and complexity:

- For smaller datasets (<10,000 samples), use ensemble methods like Random Forest or XGBoost [20] [21].

- For larger datasets, employ deep learning architectures like ECCNN or ElemNet [1].

- Implement stacked generalization by combining multiple models (e.g., Magpie, Roost, and ECCNN) to reduce inductive bias and improve performance [1].

Step 4: Validation and Interpretation

Validate model performance using cross-validation with compositional splits rather than random splits, to assess generalization to new chemical spaces [16]. Analyze feature importance to identify which elemental properties most strongly influence stability predictions.
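A compositional split can be implemented by grouping entries by chemical system (their set of elements) and keeping each group inside a single fold, so test folds contain only systems never seen in training. The sketch below does this with greedy fold balancing; the toy entries are illustrative, and stricter out-of-distribution schemes (for example, leaving out every compound that contains a given element) follow the same pattern.

```python
from collections import defaultdict
from typing import Dict, FrozenSet, List

def group_kfold(entries: Dict[str, List[str]], k: int) -> List[List[str]]:
    """Split formulas into k folds so that no chemical system spans two folds."""
    groups: Dict[FrozenSet[str], List[str]] = defaultdict(list)
    for formula, elements in entries.items():
        groups[frozenset(elements)].append(formula)
    folds: List[List[str]] = [[] for _ in range(k)]
    # Greedy balancing: largest chemical systems first, each into the smallest fold.
    for system in sorted(groups, key=lambda g: (-len(groups[g]), sorted(g))):
        min(folds, key=len).extend(groups[system])
    return folds

entries = {
    "Fe2O3": ["Fe", "O"], "FeO": ["Fe", "O"], "Fe3O4": ["Fe", "O"],
    "TiO2": ["Ti", "O"], "Ti2O3": ["Ti", "O"], "SrTiO3": ["Sr", "Ti", "O"],
    "NaCl": ["Na", "Cl"], "LiFePO4": ["Li", "Fe", "P", "O"],
}
folds = group_kfold(entries, k=3)
for i, fold in enumerate(folds):
    print(f"fold {i}: {fold}")
```

Note that Ti-O and Sr-Ti-O count as different systems here; a stricter split could also keep chemical subsystems together.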

Protocol for Structure-Based Stability Prediction

Step 1: Data Preparation

Obtain crystal structure information (CIF files) from databases like Materials Project, ICSD, or OQMD. The dataset should include structural information and target properties (e.g., energy above convex hull, formation energy).

Step 2: Structure Representation

Convert crystal structures into graph representations:

- Graph Construction: Represent atoms as nodes and bonds as edges within a cutoff radius (typically 5-8 Å) [18].

- Feature Assignment: Node features include atomic number, valence, and position; edge features include bond length and bond type [18].

- Higher-Order Interactions: For advanced models, compute bond angles (three-body) and dihedral angles (four-body) to comprehensively capture local environments [18].
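The graph-construction step can be sketched as a periodic neighbor search. The version below assumes an orthorhombic cell given by its three edge lengths and fractional coordinates in [0, 1); it enumerates enough periodic images to cover the cutoff and emits one edge per neighboring image, including images of the atom itself. General triclinic cells need full lattice vectors, and production code would typically rely on a library neighbor list instead.

```python
import itertools
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]

def neighbor_edges(frac_coords: List[Vec], cell: Vec, cutoff: float) -> List[Tuple[int, int, float]]:
    """Edges (i, j, distance) to every periodic image of atom j within `cutoff` of atom i.

    Assumes an orthorhombic cell with edge lengths `cell` (angstrom) and fractional
    coordinates in [0, 1).
    """
    reps = [math.ceil(cutoff / length) for length in cell]  # image range per axis
    edges: List[Tuple[int, int, float]] = []
    for i, fi in enumerate(frac_coords):
        for j, fj in enumerate(frac_coords):
            for shift in itertools.product(*(range(-r, r + 1) for r in reps)):
                if i == j and shift == (0, 0, 0):
                    continue  # an atom is not its own neighbor in the home cell
                d = math.sqrt(sum(((fj[k] + shift[k] - fi[k]) * cell[k]) ** 2 for k in range(3)))
                if d <= cutoff:
                    edges.append((i, j, d))
    return edges

# Hypothetical simple cubic cell, a = 3 angstrom, one atom, 5 angstrom cutoff
edges = neighbor_edges([(0.0, 0.0, 0.0)], (3.0, 3.0, 3.0), cutoff=5.0)
print(len(edges), sorted({round(d, 3) for _, _, d in edges}))
```

For this cell the search finds the 6 nearest neighbors at 3 Å and the 12 next-nearest at about 4.24 Å, while the 8 corner neighbors at about 5.20 Å fall outside the cutoff.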

Step 3: Model Architecture and Training

Select a GNN architecture based on property requirements:

- For general property prediction, use CGCNN or MEGNet [18].

- For properties sensitive to angular information, implement ALIGNN [18].

- For data-scarce scenarios, employ transfer learning from a model pre-trained on data-rich properties like formation energy [18].

- Implement attention mechanisms (e.g., EGAT) to prioritize important atomic interactions [18].

Step 4: Evaluation with OOD Testing

Assess model performance using out-of-distribution (OOD) testing methods that evaluate generalization to structurally or compositionally distinct materials, as random splits often overestimate performance due to dataset redundancy [16].

Performance Benchmarking and Comparative Analysis

Quantitative Performance Metrics

Table 2: Performance Comparison Across Model Architectures and Tasks

| Model Type | Specific Model | Target Property | Performance Metric | Score | Data Requirements |
|---|---|---|---|---|---|
| Composition-Based | ECSG (Ensemble) | Thermodynamic Stability | AUC | 0.988 [1] | ~1/7 of data for similar performance [1] |
| Composition-Based | RFC/XGBoost/SVM | MAX Phase Stability | Accuracy | High [20] | 1,804 compositions [20] |
| Structure-Based | coGN (MatBench) | Formation Energy | MAE | 0.017 eV/atom [16] | Large dataset with redundancy [16] |
| Structure-Based | coGN (MatBench) | Bandgap | MAE | 0.156 eV [16] | Large dataset with redundancy [16] |
| Structure-Based | ALIGNN | Formation Energy | MAE | Lower than CGCNN [18] | Extensive structural data [18] |
| Hybrid | CrysCo (CrysGNN + CoTAN) | Energy Above Hull | MAE | Outperforms SOTA [18] | Transfer learning enabled [18] |

Case Studies in Inorganic Materials Discovery

Case Study 1: Discovery of Ti₂SnN MAX Phase

Researchers employed a composition-based machine learning approach using Random Forest, Support Vector Machine, and Gradient Boosting Tree models to screen for stable MAX phases. The model was trained on 1,804 MAX phase combinations with stability labels and identified 190 promising candidates from 4,347 possibilities. First-principles calculations validated 150 of these as thermodynamically and intrinsically stable. This computational guidance enabled the experimental synthesis of Ti₂SnN at 750°C through Lewis acid substitution reactions, demonstrating the practical utility of composition-based screening for discovering previously unknown inorganic compounds [20].

Case Study 2: Exploring Two-Dimensional Semiconductors and Perovskites

The ECSG ensemble framework, which combines composition-based models including Magpie, Roost, and ECCNN, was applied to discover new two-dimensional wide bandgap semiconductors and double perovskite oxides. The model successfully identified novel perovskite structures that were subsequently validated using first-principles calculations, demonstrating remarkable accuracy in identifying stable compounds. This case highlights how composition-based models can effectively navigate unexplored composition spaces despite having no structural information about the target materials [1].

Case Study 3: Identifying Materials for Harsh Environments

A hybrid approach was developed to discover inorganic solids with high hardness and oxidation resistance for extreme environments. The methodology combined composition-based features with structural descriptors within an XGBoost framework. The resulting model screened 15,247 pseudo-binary and ternary compounds, identifying three promising candidates with both high hardness and excellent oxidation resistance. This successful application demonstrates the power of integrating both compositional and structural information for predicting complex material behaviors under demanding conditions [21].

Essential Research Reagents and Computational Tools

Table 3: Key Research Resources for Stability Prediction Research

| Resource Name | Type | Primary Function | Access |
|---|---|---|---|
| Materials Project | Database | Provides calculated structural and thermodynamic data for training | Online [1] [16] |
| JARVIS | Database | Contains DFT-calculated properties for materials including stability | Online [1] |
| OQMD | Database | Open Quantum Materials Database with formation energies | Online [1] [16] |
| ICSD | Database | Inorganic Crystal Structure Database with experimental structures | Subscription [17] |
| ChemDataExtractor | Software Tool | Automates extraction of experimental data from literature | Open Source [19] |
| ALIGNN | Model Framework | Graph neural network incorporating angular bond information | Open Source [18] |
| CGCNN | Model Framework | Crystal Graph Convolutional Neural Network for structure-based prediction | Open Source [18] |
| Magpie | Model Framework | Composition-based model using elemental property statistics | Open Source [1] |

The selection between composition-based and structure-based modeling frameworks depends critically on the research objectives and available data. Composition-based models provide an efficient starting point for exploring novel chemical spaces and conducting initial screening of candidate materials, requiring only chemical formulas as input. Their superior data efficiency makes them particularly valuable when exploring previously uncharted compositional territory. Structure-based models deliver higher accuracy for properties strongly influenced by atomic arrangements but require complete crystallographic information, limiting their application to materials with known structures.

For comprehensive inorganic stability research, a hierarchical approach is recommended: begin with composition-based screening to identify promising regions of compositional space, then apply structure-based methods for refined prediction once candidate materials are selected. Emerging hybrid frameworks that integrate both compositional and structural information represent the most promising direction, leveraging the respective strengths of both approaches while mitigating their individual limitations. As materials databases continue to expand and algorithms become more sophisticated, the integration of these complementary frameworks will increasingly accelerate the discovery of novel inorganic materials with tailored stability characteristics.

The How: Methodologies and Real-World Applications of ML Models

The discovery of novel inorganic materials with targeted properties is a central pursuit in materials science, yet it is perpetually challenged by the vastness of the compositional space. Traditional experimental methods and high-fidelity computational simulations, such as density functional theory (DFT), are often too time-consuming and resource-intensive for exhaustive exploration [1]. In this context, composition-based machine learning (ML) models have emerged as a powerful tool for rapid virtual screening and prediction of material properties, most notably thermodynamic stability [22]. Their performance, however, depends heavily on how the input chemical formula is represented, a process known as feature engineering. Feature engineering for inorganic materials has evolved from simple elemental statistics to more sophisticated, physics-informed representations such as electron configurations, each with distinct advantages and limitations. The central argument of this section is that the strategic design of input features is paramount for developing accurate, generalizable, and efficient ML models that can accelerate inverse design and stability research in inorganic chemistry.

The Foundation: Elemental Statistics and Classical Descriptors

The initial approaches to feature engineering for inorganic compounds leveraged readily available elemental properties. These methods transform a chemical formula into a vector of numerical features by computing statistical moments across various elemental attributes.

Core Methodology

For a given compound, a list of atomic properties is first assembled for each constituent element. These properties can include atomic number, atomic mass, atomic radius, electronegativity, group number, and more [1]. Subsequently, a set of statistical functions—such as mean, standard deviation, minimum, maximum, and mode—is applied to the list of values for each property, generating a comprehensive feature vector that describes the compound's compositional makeup [23]. This approach is exemplified by the Magpie (Materials-Agnostic Platform for Informatics and Exploration) descriptor set [1].
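A minimal sketch of this kind of featurization in Python follows. It is not the Magpie implementation: the property table holds only two properties (atomic number and Pauling electronegativity) for four elements, the statistics are weighted by atomic fraction, and "mode" is taken here as the property value of the most abundant element. All of these are simplifying assumptions for illustration.

```python
import math
from typing import Dict

# Illustrative subset of elemental properties: atomic number Z and Pauling electronegativity.
ELEMENT_PROPS: Dict[str, Dict[str, float]] = {
    "Fe": {"Z": 26, "electronegativity": 1.83},
    "O": {"Z": 8, "electronegativity": 3.44},
    "Na": {"Z": 11, "electronegativity": 0.93},
    "Cl": {"Z": 17, "electronegativity": 3.16},
}


def featurize(composition: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted statistics of each elemental property over a composition."""
    total = sum(composition.values())
    fractions = {el: amt / total for el, amt in composition.items()}
    most_abundant = max(fractions, key=fractions.get)
    features: Dict[str, float] = {}
    for prop in next(iter(ELEMENT_PROPS.values())):
        values = {el: ELEMENT_PROPS[el][prop] for el in fractions}
        mu = sum(fractions[el] * values[el] for el in fractions)
        var = sum(fractions[el] * (values[el] - mu) ** 2 for el in fractions)
        features[f"mean_{prop}"] = mu
        features[f"std_{prop}"] = math.sqrt(var)
        features[f"min_{prop}"] = min(values.values())
        features[f"max_{prop}"] = max(values.values())
        features[f"range_{prop}"] = features[f"max_{prop}"] - features[f"min_{prop}"]
        # "Mode" taken here as the property value of the most abundant element.
        features[f"mode_{prop}"] = values[most_abundant]
    return features


feats = featurize({"Fe": 2, "O": 3})  # Fe2O3
print(round(feats["mean_Z"], 2), feats["mode_Z"], feats["range_Z"])
```

Each property contributes the same fixed set of statistics, so every formula, whatever its number of elements, maps to a vector of the same length.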

Experimental Protocol and Data Presentation

The application of these descriptors typically follows a standard ML workflow. A dataset of compounds with known target properties (e.g., formation energies from the Materials Project or OQMD) is split into training and test sets [24]. A model, such as gradient-boosted regression trees (e.g., as implemented in XGBoost), is then trained to map the feature vectors to the target property [1], and the held-out test set is used to estimate how well the model generalizes to unseen compounds.
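The split-then-train workflow can be illustrated with a self-contained sketch. It assumes synthetic data (a single made-up descriptor and a smooth target) and uses plain gradient boosting with depth-1 trees (stumps) under squared loss. XGBoost adds second-order gradients, regularization, and deeper trees, none of which are reproduced here.

```python
import random
from typing import List, Tuple

Stump = Tuple[int, float, float, float]  # (feature index, threshold, left value, right value)


def train_test_split(X, y, test_fraction=0.2, seed=0):
    """Reproducibly shuffle the indices and hold out a fraction as the test set."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    n_test = int(len(X) * test_fraction)
    test_idx, train_idx = idx[:n_test], idx[n_test:]

    def pick(seq, ids):
        return [seq[i] for i in ids]

    return pick(X, train_idx), pick(y, train_idx), pick(X, test_idx), pick(y, test_idx)


def fit_stump(X: List[List[float]], residuals: List[float]) -> Stump:
    """Depth-1 regression tree that minimises squared error on the residuals."""
    mean_r = sum(residuals) / len(residuals)
    best: Stump = (0, float("inf"), mean_r, mean_r)  # fallback: constant prediction
    best_sse = float("inf")
    for j in range(len(X[0])):
        values = sorted({row[j] for row in X})
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2  # candidate threshold midway between neighbouring values
            left = [r for row, r in zip(X, residuals) if row[j] <= t]
            right = [r for row, r in zip(X, residuals) if row[j] > t]
            lv, rv = sum(left) / len(left), sum(right) / len(right)
            sse = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
            if sse < best_sse:
                best, best_sse = (j, t, lv, rv), sse
    return best


def predict_stump(stump: Stump, row: List[float]) -> float:
    j, t, lv, rv = stump
    return lv if row[j] <= t else rv


def fit_gbm(X, y, n_rounds=100, learning_rate=0.1):
    """Gradient boosting for squared loss: each stump is fitted to the current residuals."""
    base = sum(y) / len(y)
    preds = [base] * len(y)
    stumps: List[Stump] = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, preds)]  # negative gradient of 0.5 * (y - f)^2
        stump = fit_stump(X, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, row) for p, row in zip(preds, X)]
    return base, learning_rate, stumps


def predict_gbm(model, row: List[float]) -> float:
    base, learning_rate, stumps = model
    return base + learning_rate * sum(predict_stump(s, row) for s in stumps)


def mae(y_true: List[float], y_pred: List[float]) -> float:
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


# Synthetic stand-in data: one hypothetical descriptor per compound and a smooth target.
X = [[i / 10] for i in range(40)]
y = [-0.5 * row[0] + 0.2 for row in X]
X_tr, y_tr, X_te, y_te = train_test_split(X, y)
model = fit_gbm(X_tr, y_tr)
test_mae = mae(y_te, [predict_gbm(model, row) for row in X_te])
baseline_mae = mae(y_te, [sum(y_tr) / len(y_tr)] * len(y_te))
print(f"test MAE {test_mae:.3f} vs. mean-predictor MAE {baseline_mae:.3f}")
```

Comparing against a predictor that always outputs the training-set mean is a cheap sanity check that the model has learned something beyond the average target value.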

Table 1: Key Elemental Properties Used in Feature Engineering

| Category | Specific Examples | Role in Describing Material Behavior |
|---|---|---|
| Electronic Structure | Number of valence electrons, Electronegativity | Influences bonding type and strength, chemical reactivity |
| Spatial | Atomic radius, Atomic volume | Determines packing efficiency and structural stability |
| Energetic | Melting point, Boiling point | Correlates with bond strength and thermal stability |
| Periodic | Group number, Period number | Captures periodic trends and chemical similarity |

The Paradigm Shift: Electron Configuration as a Fundamental Representation

While elemental statistics provide a useful summary, they rely on pre-selected properties and may introduce human bias. Electron configuration (EC) offers a more fundamental representation by describing the distribution of electrons in atomic orbitals, which underlies all chemical behavior [25].

Theoretical Underpinnings

The electron configuration of an atom denotes the population of electrons in its atomic orbitals (e.g., 1s² 2s² 2p⁶ for neon). It is determined by the Aufbau principle, the Pauli exclusion principle, and Hund's rule, which collectively dictate the ground-state arrangement of electrons that minimizes the atom's total energy [26]. This configuration is intrinsically linked to an element's position in the periodic table and its chemical properties, including its common oxidation states and preferred bonding patterns [25].
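The filling order implied by the Aufbau principle can be made concrete with the Madelung (n + l) rule. The sketch below assumes that rule throughout, so it reproduces the idealized configuration only: Hund's rule (which governs spin arrangement within a subshell) is not modeled, and known exceptions such as chromium and copper come out in their textbook-Aufbau form (e.g., 4s2 3d4 for Cr rather than the observed 4s1 3d5).

```python
from typing import List, Tuple

CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}  # 2(2l + 1) electrons per subshell (Pauli principle)


def filling_order(max_n: int = 7) -> List[Tuple[int, str]]:
    """Subshells sorted by the Madelung rule: increasing n + l, ties broken by smaller n."""
    pairs = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    pairs.sort(key=lambda nl: (nl[0] + nl[1], nl[0]))
    return [(n, "spdf"[l]) for n, l in pairs]


def electron_configuration(z: int) -> str:
    """Idealised Aufbau ground-state configuration for atomic number z."""
    remaining, parts = z, []
    for n, letter in filling_order():
        if remaining == 0:
            break
        filled = min(CAPACITY[letter], remaining)
        parts.append(f"{n}{letter}{filled}")
        remaining -= filled
    return " ".join(parts)


print(electron_configuration(10))  # 1s2 2s2 2p6 (neon)
print(electron_configuration(26))  # 1s2 2s2 2p6 3s2 3p6 4s2 3d6 (iron)
```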

Encoding Methods for Machine Learning

To be used as input for an ML model, the electron configuration information for all atoms in a compound must be encoded into a numerical matrix. One advanced method involves creating a large matrix (e.g., 118 elements × 168 orbital slots × 8 channels) that comprehensively represents the electron occupancy for each element in a structured format [1]. This dense representation aims to provide the model with a more direct and less biased view of the electronic structure that governs interatomic interactions.
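To show the general idea of turning orbital occupancies into a numerical matrix, here is a deliberately simplified, hypothetical encoding. It is not the 118 × 168 × 8 scheme cited above: each row corresponds to an element (row z - 1 for atomic number z), each column to one of 22 subshells in Madelung filling order, and the entries are idealized Aufbau occupancies scaled by the element's atomic fraction. The symbol table covers only a few elements for brevity.

```python
from typing import Dict, List, Tuple

CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}
# Small illustrative subset of symbol -> atomic number.
SYMBOL_TO_Z = {"H": 1, "Li": 3, "O": 8, "Na": 11, "Cl": 17, "Fe": 26}


def subshells_in_filling_order(max_n: int = 7) -> List[Tuple[int, str]]:
    """Subshells (n, l-letter) sorted by the Madelung (n + l, n) rule."""
    pairs = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    pairs.sort(key=lambda nl: (nl[0] + nl[1], nl[0]))
    return [(n, "spdf"[l]) for n, l in pairs]


SUBSHELLS = subshells_in_filling_order()  # 22 subshells for n <= 7, l <= 3


def occupancy_vector(z: int) -> List[int]:
    """Idealised Aufbau occupancy of each subshell for element z (ignores exceptions like Cr, Cu)."""
    remaining, occ = z, []
    for _, letter in SUBSHELLS:
        filled = min(CAPACITY[letter], remaining)
        occ.append(filled)
        remaining -= filled
    return occ


def encode_composition(composition: Dict[str, float], n_elements: int = 118) -> List[List[float]]:
    """Row z-1 holds element z's occupancy vector scaled by its atomic fraction; other rows are zero."""
    total = sum(composition.values())
    matrix = [[0.0] * len(SUBSHELLS) for _ in range(n_elements)]
    for symbol, amount in composition.items():
        z = SYMBOL_TO_Z[symbol]
        frac = amount / total
        matrix[z - 1] = [frac * o for o in occupancy_vector(z)]
    return matrix


m = encode_composition({"Fe": 2, "O": 3})
print(len(m), len(m[0]))  # 118 22
```

Because every compound maps to a matrix of the same fixed shape, the encoding can be fed directly to a convolutional network, at the cost of a mostly sparse input.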

Table 2: Comparison of Feature Engineering Approaches for Inorganic Compounds

| Feature Type | Description | Advantages | Limitations |
|---|---|---|---|
| Elemental Statistics (e.g., Magpie) | Statistical moments (mean, variance, etc.) of elemental properties [1]. | Computationally lightweight; Intuitive; Good performance for many properties. | Relies on manual feature selection; May introduce bias; Limited transferability. |
| Graph Representations (e.g., Roost) | Treats chemical formula as a graph with message-passing between atoms [1]. | Effectively captures interatomic interactions. | Can be computationally intensive; Relies on the completeness of the graph model. |
| Electron Configuration (EC) | Direct use of orbital occupation data as a feature matrix [1] [23]. | Fundamental, physics-based input; Reduces manual feature bias. | Higher dimensionality requires more complex models (e.g., CNN); Less interpretable. |
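The message-passing idea behind graph representations such as Roost can be sketched with toy vectors. The sketch is loosely modeled on the idea of weighting attention by each element's fractional abundance and is not Roost's implementation: the attention logit (a dot product), the message (the neighbour's embedding itself), and the 2-D embeddings are hypothetical stand-ins for the learned networks a real model would use.

```python
import math
from typing import Dict, List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def message_passing_step(h: Dict[str, Vector], frac: Dict[str, float]) -> Dict[str, Vector]:
    """One round of fraction-weighted soft-attention updates over a fully connected element graph."""
    new_h: Dict[str, Vector] = {}
    for i, hi in h.items():
        others = [j for j in h if j != i]
        if not others:  # single-element composition: nothing to aggregate
            new_h[i] = list(hi)
            continue
        # Attention logits (toy dot product) re-weighted by each neighbour's atomic fraction.
        scores = {j: frac[j] * math.exp(dot(hi, h[j])) for j in others}
        norm = sum(scores.values())
        message = [sum(scores[j] / norm * h[j][d] for j in others) for d in range(len(hi))]
        new_h[i] = [a + m for a, m in zip(hi, message)]  # residual update
    return new_h


def pool(h: Dict[str, Vector], frac: Dict[str, float]) -> Vector:
    """Fraction-weighted sum of element embeddings -> one vector for the whole formula."""
    dim = len(next(iter(h.values())))
    return [sum(frac[i] * h[i][d] for i in h) for d in range(dim)]


# Toy 2-D embeddings for Fe2O3 (hypothetical; real models learn these).
frac = {"Fe": 0.4, "O": 0.6}
h = {"Fe": [1.0, 0.0], "O": [0.0, 1.0]}
for _ in range(2):
    h = message_passing_step(h, frac)
print(pool(h, frac))
```

The pooled vector is a fixed-length representation of the whole formula, which a downstream network would map to a property such as stability.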

Advanced Architectures and Ensemble Strategies

Relying on a single type of feature representation can limit model performance due to inherent inductive biases. Consequently, state-of-the-art research has moved towards ensemble frameworks that integrate multiple, complementary representations.

The ECCNN Model

The Electron Configuration Convolutional Neural Network (ECCNN) is designed to process the encoded electron configuration matrix [1]. The architecture typically involves:

- Input Layer: Accepts the encoded EC matrix.

- Convolutional Layers: Apply filters to extract localized spatial patterns from the EC matrix, effectively learning salient features from the electronic structure [1].

- Fully Connected Layers: Map the extracted features to a final prediction, such as decomposition energy or stability classification [1].
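The layer sequence listed above can be illustrated with a toy forward pass in plain Python. The weights are random and untrained, the 3 × 4 input is a made-up miniature of an EC matrix, and the kernel sizes and layer widths are arbitrary; the actual ECCNN architecture and its hyperparameters are not reproduced here.

```python
import math
import random
from typing import List

Matrix = List[List[float]]


def conv2d_valid(x: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D cross-correlation (what most deep-learning libraries call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(x) - kh + 1, len(x[0]) - kw + 1
    return [
        [sum(x[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw)) for j in range(out_w)]
        for i in range(out_h)
    ]


def relu(m: Matrix) -> Matrix:
    return [[max(0.0, v) for v in row] for row in m]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def forward(ec: Matrix, kernels: List[Matrix], dense_w: List[float], dense_b: float) -> float:
    """Conv -> ReLU -> flatten for each kernel, then one dense layer and a sigmoid."""
    features: List[float] = []
    for k in kernels:
        features.extend(v for row in relu(conv2d_valid(ec, k)) for v in row)
    if len(features) != len(dense_w):
        raise ValueError("dense_w must match the flattened feature length")
    return sigmoid(sum(f * w for f, w in zip(features, dense_w)) + dense_b)


# Toy 3 x 4 "EC matrix" (rows: elements, columns: subshells) with untrained random weights.
rng = random.Random(0)
ec = [[2, 2, 6, 0], [2, 2, 4, 0], [0, 0, 0, 0]]
kernels = [[[rng.uniform(-1, 1) for _ in range(2)] for _ in range(2)] for _ in range(2)]
dense_w = [rng.uniform(-0.1, 0.1) for _ in range(2 * 2 * 3)]
print(forward(ec, kernels, dense_w, 0.0))
```

In a trained model the kernels would be learned by backpropagation and a deep-learning framework would handle batching and multiple channels; the sketch only shows how convolution, a nonlinearity, flattening, and a dense layer compose into a single stability score.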

Ensemble Learning with Stacked Generalization

The ECSG (Electron Configuration models with Stacked Generalization) framework exemplifies the ensemble approach [1]. It operates on two levels:

- Base-Level Models: Three distinct models are trained independently: Magpie (elemental statistics), Roost (graph representation), and ECCNN (electron configuration). Each provides a unique "perspective" on the composition-property relationship.

- Meta-Learner: The predictions from these three base models are used as input features to train a final, super-learner model (e.g., a linear model or another simple predictor). This meta-model learns to optimally combine the strengths and compensate for the weaknesses of each base model [1].
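The two-level structure can be sketched with a logistic-regression meta-learner, one plausible choice of the "simple predictor" mentioned above. The base-model probabilities below are invented for illustration (an uninformative column, a weakly informative one, and a strongly informative one) and do not describe the real Magpie, Roost, or ECCNN models. In genuine stacked generalization these inputs should be out-of-fold predictions, i.e., produced by base models that did not see the corresponding samples during training, so that the meta-learner does not learn from overfit outputs.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_meta_learner(
    base_preds: List[List[float]], labels: List[int], lr: float = 0.5, epochs: int = 2000
) -> Tuple[List[float], float]:
    """Logistic-regression meta-learner; each column of base_preds is one base model's output."""
    n, k = len(labels), len(base_preds[0])
    w, b = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for p, y in zip(base_preds, labels):
            err = sigmoid(sum(wi * pi for wi, pi in zip(w, p)) + b) - y
            for j in range(k):
                grad_w[j] += err * p[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict_meta(model: Tuple[List[float], float], p: List[float]) -> float:
    w, b = model
    return sigmoid(sum(wi * pi for wi, pi in zip(w, p)) + b)


# Hypothetical out-of-fold stability probabilities from three base models (one column each).
base_preds = [
    [0.6, 0.7, 0.90], [0.4, 0.6, 0.80], [0.6, 0.6, 0.95], [0.4, 0.7, 0.85],
    [0.6, 0.4, 0.10], [0.4, 0.5, 0.20], [0.6, 0.5, 0.15], [0.4, 0.4, 0.05],
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]  # 1 = stable, 0 = unstable
meta = fit_meta_learner(base_preds, labels)
print("meta-learner weights:", [round(x, 2) for x in meta[0]])
```

The fitted weights lean on the most informative column, which is the mechanism by which stacking can compensate for weaker base models.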

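In outline, the ECSG ensemble works as follows: a chemical formula is passed in parallel to the three base models (Magpie, Roost, and ECCNN), and their predictions are combined by the meta-learner into a final stability prediction.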

Experimental Validation and Performance

The superiority of advanced feature engineering and ensemble methods is demonstrated through rigorous benchmarking against established datasets and traditional approaches.

Quantitative Performance Metrics

The ECSG ensemble framework, for instance, achieved an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database, significantly outperforming models based on single representations [1]. Furthermore, the integration of electron configuration features demonstrated remarkable sample efficiency, requiring only one-seventh of the training data to achieve performance equivalent to existing models that used the full dataset [1] [2].
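For readers less familiar with the metric, AUC can be computed directly from its probabilistic definition: the chance that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, with ties counting half. The quadratic pairwise loop below is chosen for clarity; practical implementations typically sort the scores instead, which gives the same value in O(n log n) time.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Labels: 1 = stable, 0 = unstable; scores are hypothetical model outputs.
print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1]))  # 0.75
```

Read this way, an AUC of 0.988 means the model ranks the stable member above the unstable member in roughly 98.8% of stable/unstable pairs.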

Table 3: Performance Comparison of Different Model Architectures

| Model / Framework | Key Features | Reported Performance | Reference |
|---|---|---|---|
| ElemNet | Deep learning on elemental composition only. | Lower accuracy, significant inductive bias. | [1] |
| Magpie (XGBoost) | Classical elemental statistics. | Good baseline, but limited by feature selection. | [1] |
| ECCNN | Electron configuration input with CNN. | High accuracy, reduces bias. | [1] |
| ECSG (Ensemble) | Combines Magpie, Roost, and ECCNN. | AUC = 0.988; High sample efficiency. | [1] [2] |

Case Studies in Materials Discovery

The practical utility of these models is validated through case studies. For example, the ECSG model was deployed to explore new two-dimensional wide bandgap semiconductors and double perovskite oxides [1]. The model successfully identified several novel, thermodynamically stable compounds, which were subsequently verified using first-principles DFT calculations, confirming the model's high accuracy and potential to guide experimental synthesis efforts [1].

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and datasets that are indispensable for research in this field.

Table 4: Essential "Research Reagent Solutions" for Computational Stability Prediction

| Name | Type | Function & Application |
|---|---|---|
| Materials Project (MP) | Database | Provides a vast repository of computed crystal structures and thermodynamic properties (e.g., formation energy) for training and benchmarking ML models [1]. |
| Open Quantum Materials Database (OQMD) | Database | Another extensive source of DFT-calculated data on inorganic materials, crucial for sourcing training data for stability prediction [24]. |
| Magpie | Descriptor Generator | Software for automatically generating a vector of statistical features from a chemical formula based on elemental properties [1] [23]. |
| JARVIS | Database & Tools | A repository including both computational and experimental data, used for model validation and development [1]. |
| matminer | Python Library | A platform for data mining in materials science that includes numerous feature extraction utilities and facilitates the connection between ML algorithms and materials data [23]. |

The evolution of feature engineering from elemental statistics to electron configuration representations marks a significant maturation in the field of composition-based machine learning for inorganic materials. This progression, driven by the need to reduce inductive bias and capture more fundamental chemical physics, has culminated in powerful ensemble frameworks that synergistically combine multiple knowledge domains. The experimental evidence is clear: models leveraging these advanced features, particularly within an ensemble strategy, achieve state-of-the-art predictive accuracy for thermodynamic stability while demonstrating remarkable data efficiency. As these tools become more accessible and integrated into high-throughput workflows, they hold the transformative potential to drastically accelerate the discovery and design of next-generation inorganic materials, from advanced semiconductors to robust catalyst systems, ultimately solidifying their role as an indispensable component in the materials researcher's toolkit.

The discovery of new inorganic materials with tailored stability properties is a cornerstone of advancements in energy storage, catalysis, and electronics. Traditional experimental methods and first-principles calculations, while accurate, are often prohibitively slow and resource-intensive for scanning vast compositional spaces. Composition-based machine learning (ML) models have emerged as a powerful tool to accelerate this discovery process, enabling the rapid prediction of properties like thermodynamic stability directly from a chemical formula. Among the plethora of ML algorithms, Gradient Boosting, Graph Neural Networks (GNNs), and Convolutional Neural Networks (CNNs) have demonstrated exceptional performance. This whitepaper provides an in-depth technical overview of these three core model architectures, framing them within the context of inorganic stability research. It details their fundamental principles, summarizes their predictive performance in recent studies, outlines experimental protocols for their application, and visualizes their operational workflows, serving as a scientific toolkit for researchers in materials science and drug development.

Theoretical Foundations of the Architectures

Gradient Boosting (XGBoost)