Comparative Cost-Effectiveness Analysis of Inorganic Analysis Platforms: A Strategic Guide for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive framework for conducting cost-effectiveness analyses (CEA) of inorganic analysis platforms, crucial tools in drug development and material science. It explores the growing market driven by regulatory demands and technological advancements, detailing methodological approaches that balance cost, time, and analytical uncertainty. The content offers practical strategies for optimizing platform selection and operation, presents a comparative analysis of leading technologies, and concludes with future-focused insights to guide strategic investment in analytical capabilities for researchers, scientists, and drug development professionals.

Understanding the Inorganic Analysis Platform Landscape and Market Drivers

Inorganic analysis platforms represent a category of advanced technological systems designed for the characterization, discovery, and development of inorganic materials and compounds for biomedical applications. These platforms integrate various analytical techniques, computational models, and automated experimental systems to accelerate research and development cycles. In the context of biomedical research, they enable precise investigation of inorganic materials such as metal nanoparticles, layered double hydroxides (LDHs), metal oxides, and other inorganic compounds for applications ranging from drug delivery and diagnostic imaging to biosensing and therapeutic development.

The growing importance of these platforms is underscored by the expanding applications of inorganic materials in biomedicine, where their properties (including tunable surface chemistry, magnetic or optical characteristics, and controlled release capabilities) can offer advantages over organic counterparts. This guide provides a comparative analysis of the core technologies, performance metrics, and cost-effectiveness of contemporary inorganic analysis platforms, giving researchers and drug development professionals objective data to inform their technology selection.

Core Platform Architectures and Comparative Analysis

Inorganic analysis platforms can be categorized into three primary architectural paradigms: generative AI-driven platforms, automated experimental laboratories, and traditional computational modeling suites. Each offers distinct advantages for specific research applications and development stages.

Table 1: Comparative Analysis of Inorganic Analysis Platform Types

| Platform Type | Core Technologies | Primary Applications in Biomedicine | Key Advantages | Performance Limitations |
|---|---|---|---|---|
| Generative AI Platforms (e.g., MatterGen) | Diffusion models, neural networks, property prediction algorithms | Inverse design of stable inorganic materials, crystal structure generation, property optimization | Generates previously unknown stable structures; Can satisfy multiple property constraints simultaneously; High diversity of outputs | Requires extensive training data; Computational intensity for complex structures; Limited explainability of design choices |
| Automated Experimental Systems (e.g., CRESt) | Robotic fluid handling, computer vision, high-throughput characterization, active learning integration | Accelerated materials synthesis and testing, electrochemical characterization, optimization of material compositions | Integrates multimodal data (literature, experimental results, human feedback); Real-time experimental monitoring; Rapid iteration through design space | High initial equipment costs; Requires specialized maintenance; Limited to predefined experimental protocols |
| Traditional Simulation & Modeling | Density functional theory (DFT), molecular dynamics, QSAR models | Prediction of material properties, stability assessment, toxicity profiling | Well-established theoretical foundation; High interpretability of results; Lower computational resource requirements for small systems | Limited exploration of novel chemical spaces; Lower success rate for stable material generation; Difficulty handling complex property constraints |

Table 2: Quantitative Performance Metrics Across Platform Types

| Performance Metric | Generative AI (MatterGen) | Automated Experimental (CRESt) | Traditional Modeling (DFT) |
|---|---|---|---|
| Success Rate (Stable Materials) | 75-78% of generated structures stable (<0.1 eV/atom from convex hull) [1] | 9.3-fold improvement in power density for fuel cell catalyst [2] | Varies widely based on system complexity and approximations |
| Novelty Rate | 61% of generated structures are new [1] | Discovery of 8-element catalyst with record performance [2] | Limited to perturbations of known structures |
| Structural Optimization | >10x closer to local energy minimum vs. previous methods [1] | Automated optimization through 900+ chemistries in 3 months [2] | High accuracy for relaxation of approximate structures |
| Throughput | 1,000+ structures generated and screened computationally | 3,500+ electrochemical tests in single campaign [2] | Days to weeks for complex system analysis |
| Property Constraints | Can simultaneously optimize for chemistry, symmetry, mechanical, electronic, and magnetic properties [1] | Can incorporate literature knowledge, experimental data, and human feedback [2] | Typically limited to one or two properties at a time |

Experimental Protocols and Methodologies

Protocol 1: Generative AI-Driven Material Discovery

The following workflow outlines the methodology for generative AI platforms like MatterGen, which employs a diffusion-based approach for inorganic materials design [1]:

Sample Generation Protocol:

- Platform Initialization: The model is pretrained on diverse inorganic crystal structures drawn from the Materials Project and Alexandria databases (607,683 structures in the combined Alex-MP-20 training set) to establish foundational knowledge of stable configurations [1].

- Constraint Definition: Researchers specify desired property constraints through adapter modules, which may include chemical composition ranges, symmetry requirements (space groups), or target properties (mechanical, electronic, magnetic) [1].

- Diffusion Process: The model executes a customized diffusion process that gradually refines atom types, coordinates, and periodic lattice parameters through a corruption and reversal process specifically designed for crystalline materials [1].

- Structure Evaluation: Generated structures are evaluated for stability using formation energy calculations and distance to convex hull (with stable structures defined as <0.1 eV/atom above hull) [1].

- Validation: Promising candidates undergo DFT relaxation to verify stability and properties, with successful structures having RMSD <0.076 Å from DFT-optimized structures [1].
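The evaluation and validation steps above reduce to two threshold checks per candidate. The following is a minimal sketch under stated assumptions: the function names are hypothetical, the energy above hull is taken as already computed, and the RMSD is a naive Cartesian version that ignores periodic images and lattice changes, which a real structure matcher would handle.

```python
import math

HULL_THRESHOLD_EV = 0.1   # stability cutoff quoted in the protocol (eV/atom above hull)
RMSD_THRESHOLD_A = 0.076  # RMSD figure quoted in the protocol (angstrom)


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally ordered atom lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


def passes_validation(e_above_hull, generated, relaxed):
    """True when a candidate meets both the hull and the RMSD criteria."""
    return (
        e_above_hull < HULL_THRESHOLD_EV
        and rmsd(generated, relaxed) < RMSD_THRESHOLD_A
    )
```

For instance, a single-atom toy structure displaced by 0.05 Å during relaxation and sitting 0.02 eV/atom above the hull would pass both checks, while the same structure at 0.15 eV/atom would not.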

Protocol 2: Automated Experimental Optimization

The CRESt platform exemplifies the automated experimental approach, combining AI-driven experiment planning with robotic execution [2]:

High-Throughput Experimentation Protocol:

- Experimental Design: Researchers define the search space through natural language interface, specifying up to 20 precursor molecules and substrates for investigation [2].

- Knowledge Integration: The system incorporates information from scientific literature and databases to create knowledge embeddings that inform initial experimental directions [2].

- Active Learning Initiation: Bayesian optimization in a reduced search space identifies promising initial experiments based on literature knowledge before physical testing [2].

- Robotic Execution: Liquid-handling robots prepare material samples according to optimized recipes, followed by automated synthesis using systems like carbothermal shock [2].

- Characterization and Analysis: Automated characterization techniques (electron microscopy, X-ray diffraction, electrochemical testing) analyze synthesized materials [2].

- Iterative Optimization: Results feed back into active learning models, which redesign experiments based on multimodal data (experimental results, literature knowledge, human feedback) [2].
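The closed loop described above (propose, execute, measure, update) can be illustrated with a much simpler selection rule than the one CRESt uses. The sketch below swaps in the UCB1 multi-armed bandit rule in place of Bayesian optimization, and replaces robotic synthesis and testing with a hypothetical noisy simulator; the recipe names and scores are invented for illustration only.

```python
import math
import random


def ucb1_campaign(recipes, run_experiment, budget):
    """Closed loop: pick a recipe, run it, update its running mean score."""
    counts = {r: 0 for r in recipes}
    means = {r: 0.0 for r in recipes}
    for step in range(1, budget + 1):
        untested = [r for r in recipes if counts[r] == 0]
        if untested:
            choice = untested[0]  # try every recipe once before exploiting
        else:
            # UCB1: running mean plus an exploration bonus that shrinks with use
            choice = max(
                recipes,
                key=lambda r: means[r] + math.sqrt(2 * math.log(step) / counts[r]),
            )
        result = run_experiment(choice)  # stands in for synthesis + characterization
        counts[choice] += 1
        means[choice] += (result - means[choice]) / counts[choice]
    return counts, means


# Hypothetical simulator: a fixed "true" score plus Gaussian measurement noise.
_rng = random.Random(0)
TRUE_SCORE = {"recipe_A": 0.30, "recipe_B": 0.55, "recipe_C": 0.80}


def simulated_experiment(recipe):
    return TRUE_SCORE[recipe] + _rng.gauss(0.0, 0.05)


counts, means = ucb1_campaign(list(TRUE_SCORE), simulated_experiment, budget=30)
```

The design point this illustrates is that each result changes which experiment is run next; a real platform would replace the bandit rule with a surrogate model over a continuous composition space.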

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Inorganic Analysis Platforms

| Reagent/Material | Function | Application Examples | Platform Compatibility |
|---|---|---|---|
| Layered Double Hydroxides (LDHs) | Anionic clay structures with intercalation capacity | Drug and gene delivery systems; Sustained release platforms [3] | Traditional synthesis; Automated platforms |
| Precursor Solutions (Metal salts, organometallic compounds) | Source of inorganic elements for material synthesis | Catalyst preparation; Nanoparticle synthesis; Thin film deposition [2] | Automated robotic platforms; High-throughput screening |
| Structure-Directing Agents | Control morphology and crystal structure during synthesis | Template for porous structures; Crystal growth modification | All synthesis platforms |
| Functionalization Ligands | Surface modification for specific targeting or compatibility | Bioconjugation for targeted drug delivery; Stability enhancement in biological environments [4] | Post-synthesis modification platforms |
| Characterization Standards | Reference materials for instrument calibration | Quantification of analytical measurements; Method validation | All analytical platforms |

Cost-Effectiveness Analysis Framework

Evaluating inorganic analysis platforms requires consideration of both direct costs and research efficiency gains within a cost-effectiveness analysis (CEA) framework. Diagnostic imaging provides a valuable reference model, where CEA compares alternative courses of action in terms of both costs and consequences [5].

Table 4: Cost-Effectiveness Analysis of Platform Attributes

| Cost Factor | Generative AI Platforms | Automated Experimental Systems | Traditional Methods |
|---|---|---|---|
| Initial Investment | High (computational infrastructure, software licensing) | Very High (robotic systems, specialized instrumentation) | Low to Moderate (software, standard lab equipment) |
| Operational Costs | Moderate (computational resources, personnel) | High (consumables, maintenance, technical staff) | Moderate (personnel-intensive, standard reagents) |
| Time to Solution | Weeks to months (virtual screening with experimental validation) | Months (high-throughput experimental cycles) | Years (sequential hypothesis testing) |
| Material Discovery Efficiency | High (60%+ novel stable materials) [1] | Very High (900+ chemistries in 3 months) [2] | Low (limited exploration of chemical space) |
| Risk of Failure | Moderate (generated structures may not synthesize as predicted) | Low (direct experimental validation) | High (limited predictive power for novel materials) |

The conceptual framework for CEA in diagnostic imaging adapted by Feinberg et al. demonstrates how effectiveness should be evaluated across hierarchical levels: technical performance, diagnostic accuracy, diagnostic impact, therapeutic impact, and health outcomes [5]. Similarly, inorganic analysis platforms can be evaluated across parallel dimensions: material generation capability, prediction accuracy, experimental impact, optimization efficiency, and ultimately research outcomes.

Decision-analytic modeling, commonly employed in healthcare technology assessment, provides a methodology for synthesizing available evidence when direct long-term outcomes are impractical to measure [5]. For inorganic analysis platforms, this approach can link platform characteristics (e.g., prediction accuracy, throughput) to long-term research productivity through modeling techniques such as decision trees for static situations or Markov models for dynamic, multi-stage research processes [5].
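As a rough illustration of the decision-tree case, the sketch below computes the expected effectiveness of each option at a chance node and then the incremental cost-effectiveness ratio (ICER), the standard CEA summary of extra cost per extra unit of effect. All costs, probabilities, and payoffs are hypothetical placeholders, and "validated candidate materials" is an assumed effectiveness measure, not one taken from the cited sources. A Markov model for multi-stage processes is not shown.

```python
def expected_value(branches):
    """Expected value of a chance node given (probability, payoff) branches."""
    total_p = sum(p for p, _ in branches)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("branch probabilities must sum to 1")
    return sum(p * payoff for p, payoff in branches)


def icer(cost_new, effect_new, cost_ref, effect_ref):
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    delta_effect = effect_new - effect_ref
    if delta_effect == 0:
        raise ValueError("no incremental effect; ICER is undefined")
    return (cost_new - cost_ref) / delta_effect


# Hypothetical inputs: (probability, validated candidates per year) for each outcome.
new_platform_effect = expected_value([(0.6, 10), (0.4, 2)])   # 6.8 candidates
reference_effect = expected_value([(0.3, 6), (0.7, 1)])       # 2.5 candidates

# Hypothetical annual costs in USD.
cost_per_extra_candidate = icer(500_000, new_platform_effect, 200_000, reference_effect)
```

With these placeholder inputs the new platform costs roughly USD 69,800 per additional validated candidate; whether that is acceptable depends on the value an organization assigns to each candidate.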

The comparative analysis presented in this guide demonstrates that selection of inorganic analysis platforms requires careful consideration of research objectives, budget constraints, and desired outcomes. Generative AI platforms offer unprecedented capabilities for exploring novel chemical spaces and predicting stable inorganic materials before synthesis. Automated experimental systems provide accelerated empirical optimization through high-throughput experimentation. Traditional computational methods remain valuable for specific, well-defined problems where interpretability and theoretical understanding are prioritized.

For biomedical research institutions and drug development organizations, the optimal strategy often involves integrating multiple platform types—leveraging generative AI for novel material discovery, automated systems for experimental optimization, and traditional methods for mechanistic understanding. As these technologies continue to evolve, particularly with improvements in AI model accuracy and robotic automation, the cost-effectiveness of advanced inorganic analysis platforms is expected to improve, further accelerating the development of innovative inorganic materials for biomedical applications.

Market Size, Growth Trajectory, and Key Industry Players

Market Size and Growth Trajectory

The market for inorganic analysis platforms, exemplified by the inorganic elemental analyzers segment, demonstrates stable growth driven by technological advancement and regulatory demand across key industries.

Table 1: Inorganic Elemental Analyzers Market Size and Projections

| Metric | 2024 Value | 2033 Projected Value | Forecast Period CAGR |
|---|---|---|---|
| Global Market Size | USD 1.25 Billion [6] | USD 2.05 Billion [6] | 7.5% (2026-2033) [6] |

This growth is fueled by several key factors:

- Regulatory Enforcement: Strict environmental and product safety regulations necessitate precise elemental analysis [6].

- Cross-Sector Industrial Demand: Applications in environmental testing, pharmaceuticals, and materials science create sustained demand for accurate, reliable analyzers [7] [6].

- Technological Investment: Rising investments in clean energy and advanced material sciences are intensifying the need for high-performance analytical equipment [6].
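When reading market projections such as the table above, it helps to check how the quoted growth rate relates to the endpoint values. The sketch below uses the standard compound annual growth rate formula; the function names are illustrative. Note that the table quotes the CAGR for 2026-2033 while the endpoint values are for 2024 and 2033, so the two calculations are not expected to agree exactly, and the base-year value used by the source [6] is not stated here.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years`."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value, rate, years):
    """Value after compounding `rate` annually for `years`."""
    return start_value * (1.0 + rate) ** years


# Rate implied by the table's endpoints (USD billions, 2024 -> 2033, 9 years).
implied_rate = cagr(1.25, 2.05, 9)

# What 7.5% per year over the 7-year forecast window would do to a 1.25 base.
compounded = project(1.25, 0.075, 7)
```

The endpoints alone imply about 5.65% per year over nine years, whereas compounding 7.5% over the seven-year forecast window from a 1.25 base gives about 2.07, close to the projected 2033 figure.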

Key Industry Players and Vendor Landscape

The market comprises established instrument manufacturers and specialized chemical informatics companies that provide essential software and data analysis tools. Leading vendors can be categorized based on their application strengths.

Table 2: Key Vendors and Their Application Focus

| Company | Primary Application Focus / Strength |
|---|---|
| Thermo Fisher Scientific | High-precision research and advanced inorganic analysis [7] |
| Bruker | High-precision research and advanced inorganic analysis [7] |
| PerkinElmer | User-friendly, reliable solutions for routine quality control in manufacturing [7] |
| Shimadzu | User-friendly, reliable solutions for routine quality control in manufacturing [7] |
| HORIBA | Portable analyzers for environmental testing and mobility [7] |
| Skyray Instruments | Portable analyzers for environmental testing and mobility [7] |
| ARL | Durable, industrial-grade analyzers for continuous operation [7] |
| Hitachi | Durable, industrial-grade analyzers for continuous operation [7] |
| Schrödinger, Inc. | Provider of advanced chemical informatics software for molecular modeling and simulation [8] |
| Dassault Systèmes (BIOVIA) | Provider of advanced chemical informatics software for molecular modeling and simulation [8] |

A significant technological trend is the integration of Artificial Intelligence (AI) and machine learning into analysis platforms. AI is being used for data analysis, virtual screening, and predicting molecular properties, which accelerates discovery and improves efficiency [8]. Furthermore, the broader chemical informatics market, which provides critical software for data management and analysis, is projected to grow at a remarkable CAGR of 15.75% from 2026 to 2035, highlighting the increasing importance of computational power in this field [8].

Experimental Protocol for Platform Comparison

A robust methodology for comparing the performance of different inorganic analysis platforms is crucial for cost-effectiveness analyses. The following protocol, adapted from high-throughput experimental materials research, provides a standardized approach.

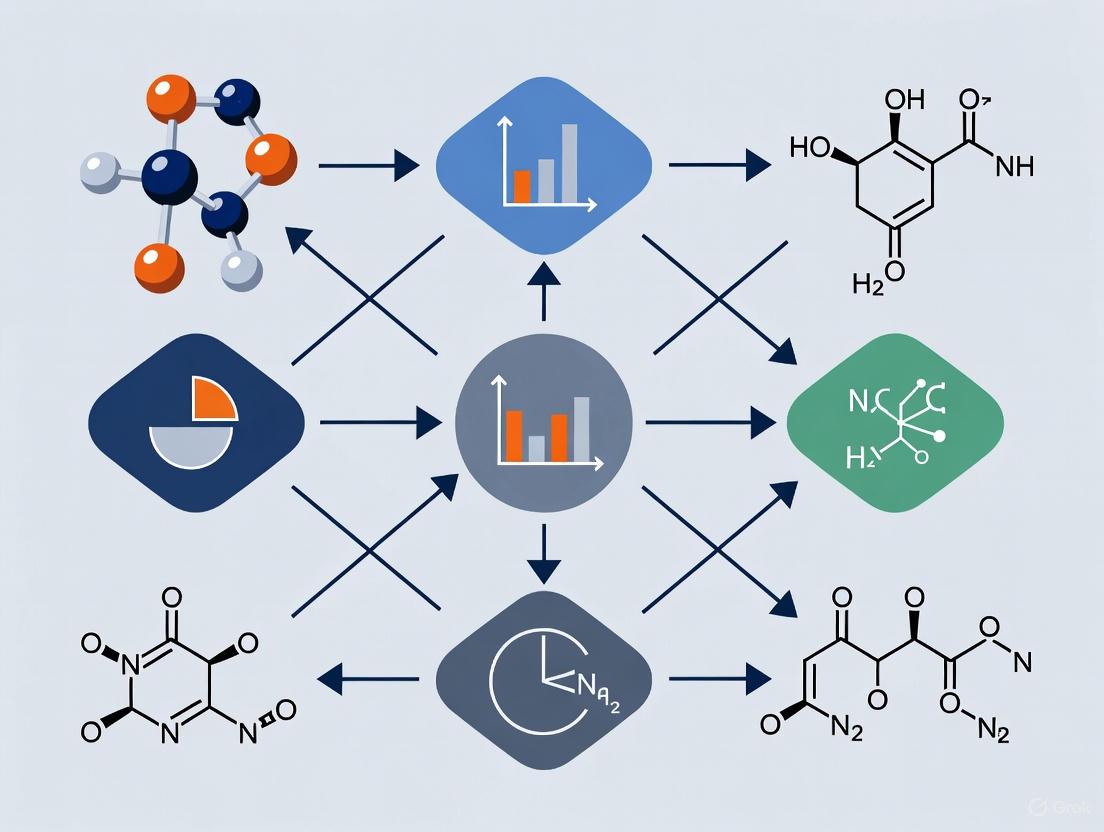

Diagram: Experimental workflow for analyzer comparison.

Detailed Methodology

Sample Preparation:

- Select certified reference materials (CRMs) with known elemental compositions relevant to the intended application (e.g., environmental, pharmaceutical).

- Prepare homogeneous powder blends to ensure consistency and reproducibility across all tests performed on different platforms [9].

Instrument Calibration:

- Follow each manufacturer's standardized calibration protocol.

- Utilize multi-point calibration curves derived from certified standards to ensure accurate quantitative analysis.
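A multi-point calibration curve is, in the simplest case, a straight-line fit of instrument signal against standard concentration, which is then inverted to quantify unknowns. The following is a minimal sketch assuming a linear response; the standards and signal values are made-up illustrative numbers, and real methods may need weighting or a non-linear model.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all x values are identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def concentration_from_signal(signal, slope, intercept):
    """Invert the calibration line to estimate concentration."""
    return (signal - intercept) / slope


# Hypothetical five-point calibration (concentration in mg/L, arbitrary signal units).
standards = [0.0, 1.0, 5.0, 10.0, 20.0]
signals = [0.5, 2.5, 10.5, 20.5, 40.5]
slope, intercept = fit_line(standards, signals)
unknown = concentration_from_signal(12.5, slope, intercept)
```

Here the fit recovers a slope of 2.0 and an intercept of 0.5, so a signal of 12.5 corresponds to 6.0 mg/L.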

Data Acquisition:

- Run a minimum of n=5 replicate measurements for each sample on each instrument so that precision can be estimated with reasonable confidence.

- Record the total analysis time per sample for each platform to benchmark throughput and operational efficiency.

Data Analysis:

- Precision: Calculate the relative standard deviation (RSD) of the replicate measurements for each element.

- Accuracy: Determine the percentage recovery by comparing the measured value against the certified value of the reference material.

- Throughput: Calculate samples analyzed per hour based on the recorded analysis times.
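The three figures of merit above can be computed directly from the replicate data. This is a minimal sketch using only the Python standard library; the replicate values, certified value, and analysis time are invented for illustration.

```python
import statistics


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean), in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def recovery_percent(measured_mean, certified_value):
    """Measured mean as a percentage of the certified reference value."""
    return 100.0 * measured_mean / certified_value


def samples_per_hour(seconds_per_sample):
    """Throughput implied by the recorded analysis time per sample."""
    return 3600.0 / seconds_per_sample


# Hypothetical n=5 replicates for one element on one instrument (mg/kg).
replicates = [9.8, 10.1, 10.0, 9.9, 10.2]
precision = rsd_percent(replicates)
accuracy = recovery_percent(statistics.mean(replicates), certified_value=10.0)
throughput = samples_per_hour(180)  # 3 minutes per sample
```

For these numbers the RSD is about 1.58%, recovery is 100%, and throughput is 20 samples per hour; repeating the calculation per element and per platform gives a like-for-like comparison table.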

The Scientist's Toolkit: Key Research Reagent Solutions

The following materials are essential for conducting rigorous experimental comparisons and routine inorganic analysis.

Table 3: Essential Research Reagents and Materials

| Item | Function in Analysis |
|---|---|
| Certified Reference Materials (CRMs) | Provide a ground truth for validating instrument accuracy and method precision by comparing measured results to certified values. |
| High-Purity Calibration Standards | Used to create calibration curves for quantitative analysis, ensuring the instrument's response is accurately correlated to element concentration. |
| Inorganic Crystalline Thin-Film Libraries | Serve as well-characterized sample libraries for high-throughput screening and method development, especially in materials science research [9]. |
| Laboratory Information Management System (LIMS) | Software platform for tracking samples, managing metadata, and storing experimental results, which is critical for data integrity and reproducibility [9]. |
| AI-Driven Chemical Informatics Software | Enables molecular modeling, predicts molecular properties, and manages large datasets, accelerating the analysis and interpretation of complex results [8]. |

The comparative cost-effectiveness of inorganic analysis platforms is increasingly shaped by the convergence of three powerful forces: stringent regulatory enforcement, groundbreaking advances in material science, and shifting investment patterns in clean energy. Regulatory pressures, particularly in the United States and European Union, are mandating more rigorous sustainability reporting and material traceability, directly influencing the analytical tools required for compliance [10]. Concurrently, the emergence of generative artificial intelligence and machine learning models like MatterGen is revolutionizing the discovery and design of stable inorganic materials, dramatically accelerating the research and development pipeline [1]. These technological advancements intersect with a dynamic clean energy investment landscape, where policy shifts are reshaping project economics and prioritizing technologies with superior performance and cost profiles [11]. This guide objectively compares the performance of emerging inorganic analysis platforms against conventional alternatives, providing experimental data to inform research and development decisions across scientific and industrial contexts.

Performance Comparison of Inorganic Analysis Platforms

The evaluation of inorganic analysis platforms encompasses traditional computational methods, emerging AI-driven approaches, and experimental techniques. The tables below provide a comparative analysis of their key performance metrics.

Table 1: Performance Comparison of Computational Material Design Platforms

| Platform / Model | Key Technology | Stable & Unique Material Generation Rate | Average RMSD to DFT Relaxed (Å) | Property Constraints Supported | Key Limitations |
|---|---|---|---|---|---|
| MatterGen (Base Model) [1] | Diffusion-based generative AI | >60% (SUN* materials) | <0.076 | Chemistry, symmetry, mechanical, electronic, magnetic | Requires fine-tuning for specific property targets |
| CDVAE / DiffCSP [1] | Variational Autoencoder / Diffusion | <40% (SUN* materials) | ~0.8-1.0 (10x higher) | Primarily formation energy | Limited property conditioning abilities |
| High-Throughput Screening [12] | First-principles calculations (DFT) | Limited to known databases | N/A (ground state) | Broad, but computationally intensive | Limited to pre-existing databases, no genuine generation |
| Random Structure Search (RSS) [1] | Stochastic sampling | Lower than MatterGen in target systems | Variable, often high | None | Computationally inefficient, low success rate |

*SUN: Stable, Unique, and New with respect to known crystal structure databases.

Table 2: Performance of Experimental and Data-Driven Analysis Platforms

| Platform / Method | Key Technology | Key Applications | Throughput / Scalability | Key Experimental Findings | Cost-Effectiveness |
|---|---|---|---|---|---|
| Paper-Based Analytical Devices (PADs) [13] | Surface-modified paper substrates | Point-of-care diagnostics, environmental monitoring, food safety | High, low-cost, disposable | Detection of metal ions, small molecules, proteins, viruses, bacteria [13] | Very high (low-cost materials, easy fabrication) |
| ML-Guided Experimental Design [12] | NLP from literature, trained on CSD/tmQM | Predicting MOF stability (thermal, water), gas uptake | Data-limited by available literature | Predicted water stability for ~1,092 MOFs; Td for ~3,000 MOFs [12] | High, but dependent on data extraction and curation costs |
| Generative AI + Synthesis Validation [1] | MatterGen + lab synthesis | Inverse design of materials with target properties | Medium (generation is fast, synthesis is bottleneck) | One generated structure synthesized and measured within 20% of target property [1] | Potentially high by reducing failed experiments |

Detailed Experimental Protocols and Methodologies

To ensure reproducibility and provide a clear basis for the performance data, this section details the core experimental and computational methodologies referenced in the comparison tables.

Protocol for Generative AI Material Design and Validation

This protocol outlines the process for using the MatterGen model to design novel inorganic materials and validate their stability [1].

- Objective: To generate novel, stable inorganic crystals with target properties and validate their stability using Density Functional Theory (DFT).

- Materials/Software: MatterGen generative model, Alex-MP-20 training dataset, DFT computation software (e.g., VASP, Quantum ESPRESSO).

- Procedure:

  - Model Pretraining: The base MatterGen model is pretrained on the Alex-MP-20 dataset, which contains 607,683 stable structures from the Materials Project and Alexandria datasets.

  - Structure Generation: The model generates candidate crystal structures by reversing a defined corruption process for atom types (A), coordinates (X), and the periodic lattice (L).

  - Fine-Tuning (for property constraints): For targeted generation, the base model is fine-tuned on smaller datasets with specific property labels (e.g., magnetism, band gap) using adapter modules.

  - Stability Validation: Generated structures are relaxed to their nearest local energy minimum using DFT calculations.

  - Stability Assessment: The energy above the convex hull is calculated using a reference dataset (Alex-MP-ICSD). A structure is considered stable if this value is within 0.1 eV per atom.

  - Uniqueness and Novelty Check: Structures are compared against all known materials in the Alex-MP-ICSD database using an ordered-disordered structure matcher to ensure they are both unique and new.

- Output Metrics: Percentage of Stable, Unique, and New (SUN) materials; root-mean-square deviation (RMSD) between generated and DFT-relaxed structures.
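The SUN percentage combines the three checks from the procedure into one rate. The sketch below is a simplification under stated assumptions: each candidate is reduced to a hypothetical string fingerprint plus a precomputed energy above hull, and plain set membership stands in for the ordered-disordered structure matcher used in [1].

```python
def sun_rate(candidates, known_fingerprints, hull_threshold=0.1):
    """Fraction of candidates that are Stable, Unique in the batch, and New.

    candidates: list of (fingerprint, energy_above_hull_eV_per_atom) tuples.
    known_fingerprints: set of fingerprints already in the reference database.
    """
    seen_in_batch = set()
    sun_count = 0
    for fingerprint, e_above_hull in candidates:
        stable = e_above_hull <= hull_threshold
        unique = fingerprint not in seen_in_batch  # first occurrence counts
        new = fingerprint not in known_fingerprints
        seen_in_batch.add(fingerprint)
        if stable and unique and new:
            sun_count += 1
    return sun_count / len(candidates) if candidates else 0.0


# Hypothetical batch: one duplicate, one unstable, one already known.
batch = [
    ("A2B", 0.02),
    ("A2B", 0.03),   # duplicate of the first candidate
    ("AB3", 0.25),   # above the stability threshold
    ("ABC", 0.08),
    ("AC", 0.00),    # present in the reference database
]
rate = sun_rate(batch, known_fingerprints={"AC"})
```

In this toy batch two of the five candidates qualify, giving a SUN rate of 0.4.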

Protocol for Surface Modification of Paper-Based Analytical Devices (PADs)

This protocol describes the surface chemical modification of cellulose-based paper to create functional PADs for specific analytical applications [13].

- Objective: To enhance the performance of PADs by modifying their surface to improve analyte retention, selectivity, sensitivity, and mechanical stability.

- Materials: Filter paper or chromatographic paper, modifying agents (e.g., polymers, nanomaterials, biomolecules).

- Procedure:

  - Substrate Selection: Choose a paper substrate with appropriate porosity, wettability, and functional groups (e.g., hydroxyls on cellulose).

  - Surface Modification:

    - Organic Modifications: Apply synthetic polymers, biopolymers (e.g., chitosan, alginate), or Molecularly Imprinted Polymers (MIPs) via dipping, spraying, or drop-casting to create specific recognition sites.

    - Inorganic Modifications: Incorporate nanomaterials (e.g., metal nanoparticles, metal oxides) to enhance catalytic activity or electrical conductivity.

    - Hybrid/Biological Modifications: Immobilize enzymes, antibodies, or DNA probes to impart high biological specificity.

  - Curing/Drying: Allow the modified PAD to dry or undergo a specific curing process (e.g., UV irradiation, thermal treatment) to stabilize the modifying layer.

  - Assay Implementation: Apply the sample and reagents to the modified PAD for vertical or lateral flow assays, with detection via colorimetric, electrochemical, or fluorometric methods.

- Output Metrics: Limit of detection (LOD), sensitivity, selectivity against interferents, assay time, and mechanical durability.
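One common way to estimate the limit of detection is the calibration-based convention LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of blank responses and S is the calibration slope. The source does not say which LOD definition the PAD studies used, so the sketch below is an assumption for illustration, with invented blank readings and slope.

```python
import statistics


def detection_limits(blank_signals, slope):
    """Return (LOD, LOQ) using the 3.3*sigma/S and 10*sigma/S conventions."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    sigma = statistics.stdev(blank_signals)  # sample SD of blank responses
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical colorimetric PAD: blank intensities and slope in signal per micromolar.
blanks = [0.010, 0.012, 0.011, 0.009, 0.013]
lod, loq = detection_limits(blanks, slope=0.05)
```

With these numbers the LOD is roughly 0.10 µM and the LOQ roughly 0.32 µM; a surface modification that raises the slope or lowers blank noise lowers both.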

Protocol for Data Extraction and Machine Learning for Material Stability

This protocol details the process of extracting experimental data from scientific literature to train machine learning models for predicting material properties like stability [12].

- Objective: To curate a dataset of metal-organic framework (MOF) stability properties from published literature and use it to train predictive ML models.

- Materials/Software: Natural Language Processing (NLP) tools (e.g., ChemDataExtractor), digitization software (e.g., WebPlotDigitizer), ML libraries (e.g., scikit-learn).

- Procedure:

  - Corpus Curation: Assemble a corpus of scientific literature for a specific material class (e.g., using the CoRE MOF 2019 dataset with associated DOIs).

  - Named Entity Recognition (NER): Use NLP to identify and extract material names and property mentions (e.g., "thermal stability," "water stability") within the text.

  - Data Digitization: For properties reported in figures (e.g., Thermogravimetric Analysis (TGA) curves, gas isotherms), use digitization tools to extract numerical data.

  - Data Unification: Apply uniform rules to convert extracted data into standardized values. For TGA, this may involve finding the intersection point of tangents to define decomposition temperature (Td).

  - Structure-Property Linking: Associate the extracted property data with the corresponding chemical structure from a curated database.

  - Model Training: Train machine learning models (e.g., random forest, neural networks) on the final curated dataset to predict material stability from structural or compositional features.

- Output Metrics: Size and scope of the curated dataset (e.g., number of MOFs with stability labels), predictive accuracy (e.g., R², MAE) of the trained ML models.
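The tangent-intersection rule mentioned in the data unification step can be sketched as two straight-line fits, one through the pre-decomposition baseline and one through the steep mass-loss region, followed by solving for where they cross. The point selections and TGA values below are hypothetical; in practice the choice of which digitized points belong to each region is itself a curation decision.

```python
def fit_line(points):
    """Least-squares slope and intercept through (x, y) points."""
    n = len(points)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        raise ValueError("all x values are identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def tangent_intersection(baseline_points, decomposition_points):
    """Temperature and mass where the two fitted tangents cross."""
    m1, b1 = fit_line(baseline_points)
    m2, b2 = fit_line(decomposition_points)
    if m1 == m2:
        raise ValueError("tangents are parallel; no intersection")
    temperature = (b2 - b1) / (m1 - m2)
    return temperature, m1 * temperature + b1


# Hypothetical digitized TGA points: (temperature in deg C, remaining mass in %).
baseline = [(100, 100.0), (200, 99.8), (300, 99.6)]
mass_loss = [(420, 80.0), (440, 70.0), (460, 60.0)]
td, mass_at_td = tangent_intersection(baseline, mass_loss)
```

For these points the tangents cross near 381 °C, which would be recorded as Td for that material.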

Visualization of Workflows and Logical Relationships

The following diagrams, generated using Graphviz DOT language, illustrate the core experimental and analytical workflows described in this guide.

Diagram: Generative Material Design and Validation.

Diagram: PAD Development and Application.

Diagram: Data-Driven Material Discovery.

The Scientist's Toolkit: Essential Research Reagent Solutions

This section details key reagents, materials, and software platforms that constitute the essential toolkit for research in inorganic analysis platforms and material design.

Table 3: Key Research Reagent Solutions for Inorganic Analysis Platforms

| Item Name | Type | Primary Function | Example Application in Protocols |
|---|---|---|---|
| Cellulose Chromatography Paper [13] | Substrate | Porous, hydrophilic substrate for fluid transport | Base material for fabricating Paper-Based Analytical Devices (PADs). |
| Molecularly Imprinted Polymers (MIPs) [13] | Organic Modifier | Creates synthetic recognition sites for specific analytes | Coated onto PADs to enhance selectivity for targets like proteins or small molecules. |
| Chitosan [13] | Biopolymer Modifier | Improves mechanical strength and biocompatibility | Used as a surface coating on PADs to enhance durability and enable biomolecule immobilization. |
| Metal-Organic Frameworks (MOFs) [12] | Functional Material | High surface area for adsorption, catalytic sites | Used as modifying agents on PADs for sensing or as target materials for stability prediction models. |
| Alex-MP-20 Dataset [1] | Computational Dataset | Training data for generative AI models | Contains over 600k stable structures used to pretrain the MatterGen base model. |
| MatterGen Model [1] | Software/Platform | Generative AI for inverse materials design | Core platform for generating novel, stable inorganic crystals with desired properties. |
| Cambridge Structural Database (CSD) [12] | Experimental Database | Repository of experimental crystal structures | Source of structural data for TMCs and MOFs; foundation for datasets like tmQM. |

The field of inorganic analysis is undergoing a profound transformation, driven by the convergence of artificial intelligence (AI), robotic automation, and increasing sustainability demands. For researchers and drug development professionals, selecting the right analytical platform now requires evaluating not just analytical performance, but also computational capabilities, automation integration, and environmental impact. This guide provides a comparative analysis of emerging platforms and methodologies, focusing on cost-effectiveness within research environments where throughput, data quality, and operational efficiency are paramount. The integration of AI is shifting analytical workflows from manual operation to self-optimizing systems that can predict outcomes, automate method development, and extract more value from every experiment [14] [15]. Simultaneously, automation technologies are evolving from simple sample handlers to fully integrated "dark laboratories" capable of 24/7 operation without human intervention [15]. This analysis examines how these technologies are being implemented across contemporary inorganic analysis platforms, providing researchers with the framework needed to make informed technology selection decisions.

Comparative Analysis of Automated Analysis Platforms

Performance Metrics of High-Throughput Experimental Systems

High-throughput experimental (HTE) systems have become foundational to modern materials research, enabling rapid characterization of inorganic samples at unprecedented scales. The High Throughput Experimental Materials (HTEM) Database represents one of the most comprehensive implementations, containing data from over 140,000 inorganic thin-film samples characterized across multiple parameters [9]. The system's performance highlights the capabilities of modern automated analysis platforms.

Table 1: Performance Metrics of High-Throughput Analysis Systems

| Analysis Parameter | Throughput Capacity | Data Quality Indicators | Automation Level |

|---|---|---|---|

| Structural Characterization | 100,848 XRD patterns | Multi-technique validation | Fully automated pattern collection & analysis |

| Chemical Composition | 72,952 samples | Composition/thickness mapping | Automated PVD synthesis coupled with EDX |

| Optoelectronic Properties | 55,352 absorption spectra | Cross-correlated with structural data | High-throughput spectrophotometry |

| Synthesis Condition Tracking | 83,600 temperature parameters | Full parameter logging | Robotic substrate handling & process control |

The HTEM platform demonstrates how integrated data management is crucial for leveraging AI capabilities. Their infrastructure employs a specialized laboratory information management system (LIMS) that automatically harvests data from instruments into a centralized data warehouse, followed by an extract-transform-load (ETL) process that aligns synthesis and characterization data into a queryable database [9]. This infrastructure enables both web-based exploration for individual researchers and API access for large-scale data mining, making it possible to apply advanced machine learning algorithms to experimental materials science.
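
To make the alignment step concrete, the following is a minimal sketch of joining synthesis and characterization records on a shared sample identifier. The field names (`sample_id`, `deposition_temp_c`, `peak_count`) and the records themselves are hypothetical; the source does not describe the actual HTEM schema or its ETL code.

```python
# Hypothetical records as they might arrive from two instruments.
synthesis_rows = [
    {"sample_id": "S1", "deposition_temp_c": 300},
    {"sample_id": "S2", "deposition_temp_c": 450},
]
xrd_rows = [
    {"sample_id": "S1", "peak_count": 4},
    {"sample_id": "S2", "peak_count": 7},
]


def align_by_sample(synthesis, characterization):
    """Merge characterization fields into the synthesis record with the same sample_id."""
    by_id = {row["sample_id"]: dict(row) for row in synthesis}
    for row in characterization:
        if row["sample_id"] in by_id:
            by_id[row["sample_id"]].update(row)
    return list(by_id.values())


merged = align_by_sample(synthesis_rows, xrd_rows)
for record in merged:
    print(record)
```

Each merged record then carries both synthesis conditions and measured properties, which is the shape a queryable database or a machine learning pipeline would typically need.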

AI-Enhanced Chromatography Systems for Pharmaceutical Analysis

In pharmaceutical analysis, HPLC systems with integrated AI capabilities are demonstrating significant advantages in method development and optimization. At the HPLC 2025 conference, multiple manufacturers presented systems where machine learning algorithms autonomously optimize separation parameters, substantially reducing method development time [15].

Table 2: Comparative Analysis of AI-Enhanced Chromatography Platforms

| Platform/Technology | AI Optimization Capabilities | Throughput | Key Applications in Drug Development |

|---|---|---|---|

| Agilent AI-Powered LC | Autonomous gradient optimization | Not specified | Method development, complex separations |

| Shimadzu ML Peptide Analysis | Intelligent gradient optimization & flow-selection | Not specified | Synthetic peptide method development, impurity resolution |

| AstraZeneca Automated Workflow | Predictive modeling for method selection | High-throughput synthesis & characterization | Reaction monitoring, compound characterization |

Gesa Schad from Shimadzu Europe demonstrated a machine learning-based approach to peptide method development that uses intelligent gradient optimization and flow-selection automation to streamline impurity resolution while reducing manual input [15]. Similarly, Christian P. Haas from Agilent Technologies highlighted AI-powered liquid chromatography systems that optimize gradients autonomously and integrate seamlessly with digital lab environments, enhancing both reproducibility and data quality [15]. These implementations show a clear trend toward self-optimizing instruments that can adapt to analytical challenges in real-time.

Experimental Protocols for AI-Enhanced Analysis

Protocol: Autonomous Method Development for Chromatographic Separations

Objective: To automate the development of optimal separation methods for complex mixtures using AI-driven liquid chromatography systems.

Materials and Reagents:

- Target analytes (e.g., synthetic peptides and their impurities)

- Various mobile phases (acetonitrile, water with modifiers)

- Stationary phases (C18, phenyl-hexyl, cyano columns)

- Calibration standards for system suitability

Instrumentation:

- AI-enabled liquid chromatography system (e.g., Agilent or Shimadzu platforms with machine learning capabilities)

- Mass spectrometer detection (single quadrupole or Q-TOF)

- Automated solvent blending system

- Column switching valves for stationary phase screening

Methodology:

- Initial Parameter Screening: The system automatically tests the target compounds across different stationary and mobile phases using fractional factorial design to maximize information gain while minimizing experiments.

- Data Acquisition: A single quadrupole mass spectrometer tracks peaks precisely across different method conditions, with resolution visualized using a color-coded design space.

- AI-Optimization Phase: Machine learning algorithms autonomously refine gradient conditions (time, concentration, flow rate) to meet predetermined resolution targets.

- Validation: The optimized method is validated against standard reference materials to ensure accuracy and reproducibility.

This protocol exemplifies the shift from manual method development to autonomous optimization, significantly reducing the time and expertise required for method development while improving separation quality [15].
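
The screening step in this protocol relies on a fractional factorial design. As an illustration only, the sketch below builds a two-level half-fraction design (2^(3-1)) for three factors, using the generator C = A x B. The factor names and levels are assumptions chosen for the example, and a real screen covering all three stationary phases would need a design with more than two levels.

```python
from itertools import product


def half_fraction_design():
    """Return the 2^(3-1) design: full factorial in A and B, with C set to A*B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]


# Hypothetical coded levels (-1 = low, +1 = high) for three screening factors.
levels = {
    "column": {-1: "C18", 1: "phenyl-hexyl"},
    "organic_modifier_pct": {-1: 20, 1: 60},
    "flow_ml_min": {-1: 0.3, 1: 0.6},
}

design = half_fraction_design()
for a, b, c in design:
    print(
        levels["column"][a],
        levels["organic_modifier_pct"][b],
        levels["flow_ml_min"][c],
    )
```

The half fraction needs four runs instead of the eight required by the full factorial, at the price of confounding each main effect with a two-factor interaction.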

Protocol: High-Throughput Characterization of Inorganic Materials

Objective: To rapidly synthesize and characterize inorganic thin-film materials for optoelectronic properties using combinatorial approaches.

Materials:

- Sputtering targets (pure elements or predefined alloys)

- Substrate libraries (glass, silicon, specialized coatings)

- Precursor materials for chemical vapor deposition (where applicable)

Instrumentation:

- Combinatorial physical vapor deposition system

- Automated X-ray diffractometer for structural analysis

- Spectrophotometer for optical characterization

- Four-point probe station for electrical measurements

- Automated sample handling robotics

Methodology:

- Combinatorial Synthesis: Deposit material gradients across substrate libraries by varying composition, temperature, and pressure parameters using automated PVD systems.

- Structural Characterization: Collect X-ray diffraction patterns across sample libraries with automated stage movement and pattern analysis.

- Property Mapping: Measure optical absorption spectra and electrical conductivity across composition spreads.

- Data Integration: Correlate synthesis conditions with structural and optoelectronic properties using the HTEM database infrastructure.

- Machine Learning Analysis: Apply pattern recognition algorithms to identify composition-structure-property relationships across the dataset.

This high-throughput approach enables the rapid exploration of compositional landscapes, generating the large, diverse datasets needed to train accurate machine learning models for materials discovery [9].

Visualization of Automated Workflows

High-Throughput Materials Characterization Workflow

Diagram 1: High-throughput materials characterization workflow showing the integration of combinatorial synthesis, automated characterization, and data management with AI feedback loops.

AI-Optimized Analytical Method Development

Diagram 2: AI-optimized method development workflow showing the iterative process of parameter screening, data acquisition, and algorithmic optimization.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for High-Throughput Inorganic Analysis

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Combinatorial Sputtering Targets | Source materials for thin-film deposition | Pre-alloyed or elemental targets for compositional spreads |

| Certified Reference Materials | Quality control and method validation | Essential for AI model training and validation |

| Specialty Mobile Phases | Chromatographic separations | MS-compatible buffers with consistent purity |

| Calibration Standards | Instrument performance verification | Traceable to international standards |

| Substrate Libraries | Platform for materials deposition | Various surface functionalities and coatings |

| Automated Liquid Handling Reagents | High-throughput screening | Compatible with robotic liquid handling systems |

Cost-Effectiveness Analysis Framework

When evaluating the cost-effectiveness of inorganic analysis platforms, researchers must consider not only the initial capital investment but also the long-term operational efficiencies gained through automation and AI integration. The framework proposed by Norlen et al. provides a valuable approach, emphasizing the cost per correct regulatory decision as a key metric that incorporates cost, duration, and uncertainty [16].

Traditional toxicological testing for chemical evaluation can cost between $8-16 million per substance and require eight years or more for completion [16]. In contrast, emerging alternative methods that incorporate AI and automation can provide substantial reductions in both time and cost while maintaining, and in some cases improving, decision quality. The cost-effectiveness analysis demonstrates that a fivefold reduction in either cost or duration can be a larger driver for selecting an optimal methodology than a fivefold reduction in uncertainty alone [16].

For pharmaceutical and materials research organizations, this framework suggests that investments in AI-integrated platforms are justified when they enable faster cycle times in discovery and development. Systems that can autonomously optimize analytical methods or characterize materials at high throughput provide value not merely through labor reduction, but through accelerated knowledge generation and improved decision quality.

Sustainability Implications of Automated Analysis Platforms

The integration of AI and automation in analytical laboratories also presents significant sustainability benefits. Modern chemistry analyzers and automated platforms increasingly incorporate eco-efficiency as a core design principle, with features including reagent conservation systems, smart water usage, and energy-efficient operation [17].

Platforms like the Mindray BS-800M implement coolant circulation reagent refrigeration to maintain stable temperatures while minimizing energy consumption, and direct solid-heating systems that rapidly heat reaction disks with minimal temperature fluctuation [17]. These design optimizations reduce the environmental footprint of analytical operations while simultaneously lowering operational costs.

Additionally, the move toward "dark laboratories" with 24/7 operational capability enables better resource utilization and reduces the spatial footprint of research activities. Thorsten Teutenberg of IUTA contrasted Europe's traditional lab practices with China's investments in fully autonomous "dark factories," highlighting the potential for automation to dramatically improve resource efficiency in research operations [15].

The integration of AI, automation, and sustainability considerations is reshaping the landscape of inorganic analysis platforms. For researchers and drug development professionals, selecting the optimal platform now requires evaluating a complex matrix of analytical performance, computational capability, throughput efficiency, and environmental impact.

The most advanced systems demonstrate that AI-driven optimization can significantly reduce method development time while improving analytical quality. High-throughput automated characterization enables the rapid generation of large, diverse datasets that fuel machine learning algorithms. When evaluated through a cost-effectiveness framework that considers both temporal and financial dimensions, these advanced platforms demonstrate compelling value despite potentially higher initial investments.

As the field evolves toward increasingly autonomous operations, researchers should prioritize platforms with robust data management infrastructure, open architecture for algorithm development, and modular design that allows for technology refresh as new capabilities emerge. The future of inorganic analysis lies in self-optimizing systems that seamlessly integrate physical experimentation with digital intelligence, accelerating discovery while maximizing resource utilization.

The Critical Need for Cost-Effectiveness Analysis in Platform Selection

In the competitive landscape of scientific research, particularly in drug development and chemical analysis, platform selection decisions have profound implications for both operational efficiency and research outcomes. The global inorganic elemental analyzer market, a cornerstone of analytical science, is projected to expand at a Compound Annual Growth Rate (CAGR) of 7% from 2025 to 2033, creating increasingly complex decision matrices for research teams [18]. This growth is fueled by stringent environmental regulations, the agricultural sector's need for soil and fertilizer analysis, and the chemical industry's emphasis on quality control [18]. Despite this expansion, research organizations face significant challenges, including high initial investment costs for advanced instruments and the need for specialized technical expertise for operation and maintenance [18]. These factors collectively underscore the critical need for systematic cost-effectiveness analysis when selecting analytical platforms.

Cost-effectiveness analysis transcends mere price comparison, encompassing total cost of ownership, operational efficiency, analytical performance, and strategic alignment with research objectives. For researchers and drug development professionals, these evaluations determine not only immediate procurement decisions but also long-term research capabilities, compliance with regulatory standards, and eventual time-to-market for developed compounds. This article provides a structured framework for conducting such analyses, supported by experimental data comparisons and methodological protocols to guide evidence-based platform selection in inorganic analysis.

Comparative Landscape of Analytical Platforms

The inorganic elemental analyzer market is characterized by concentrated competition, with established players like Elementar, LECO, and PerkinElmer collectively holding over 50% market share [18]. This concentration stems from extensive product portfolios, strong distribution networks, and long-standing customer relationships, while smaller competitors like ELTRA and VELP Scientifica Srl often focus on niche applications or specific geographic regions [18]. Understanding this competitive dynamic is essential for researchers, as it influences pricing structures, service options, and technological innovation pathways.

The market exhibits distinct segmentation by analyzer type, with carbon, hydrogen, nitrogen, and sulfur analyzers representing the most prevalent categories due to their widespread applications across industries [18]. Different analytical techniques offer varying advantages; while methods like X-ray fluorescence can provide partial elemental information, dedicated inorganic elemental analyzers remain the gold standard for precise and comprehensive analysis in many applications due to their superior sensitivity and accuracy for specific elements [18].

Table: Inorganic Elemental Analyzer Market Characteristics

| Characteristic | Market Impact | Implications for Researchers |

|---|---|---|

| Market Concentration | Top 3 players hold >50% market share | Potential for bundled solutions but less price negotiation leverage |

| Innovation Trends | Miniaturization, automation, improved sensitivity | Better field applications and higher throughput capabilities |

| End-User Distribution | Chemical industry (30%), environmental testing (25%), agricultural research (15%) | Specialized platforms tailored to specific applications |

| Regional Dynamics | North America and Europe dominate, but Asia-Pacific growing rapidly | Varying service and support availability by region |

| M&A Activity | Moderate, approximately $150M in deals over past 5 years | Potential for platform discontinuation or integration challenges |

Emerging Trends Influencing Platform Selection

Technological innovation continues to reshape the analytical platform landscape, with several key trends influencing cost-effectiveness considerations. Miniaturization and improved portability are expanding application possibilities, enabling field-based analysis that reduces sample transport costs and time delays [18]. Simultaneously, enhanced sensitivity and accuracy through advanced detection technologies like mass spectrometry are pushing analytical boundaries, particularly for trace element analysis in pharmaceutical development [18].

The integration of automated sample handling and data processing systems represents a significant operational efficiency driver, reducing manual labor requirements and potential human error [18]. Furthermore, increased focus on user-friendly software and interfaces lowers training requirements and facilitates broader adoption across research teams with varying technical expertise [18]. Perhaps most significantly, the trend toward integration of elemental analysis with other analytical techniques promotes more holistic approaches to material characterization, potentially reducing the need for multiple specialized instruments [18].

Experimental Framework for Platform Evaluation

Methodologies for Comparative Performance Assessment

Establishing standardized protocols for platform evaluation is essential for generating comparable cost-effectiveness data. The following experimental framework provides methodologies for assessing critical performance parameters across different analytical platforms.

Throughput and Efficiency Protocol

Objective: Quantify sample processing capacity and operational efficiency across platforms.

Materials: Certified reference materials (NIST 1547 Peach Leaves, NIST 2711 Montana Soil), automated sampler (where applicable), timing device, data recording system.

Procedure:

- Prepare a minimum of 36 identical samples from homogeneous certified reference material

- Program analytical method according to manufacturer specifications for carbon, hydrogen, nitrogen, sulfur (CHNS) analysis

- Initiate analysis sequence with continuous operation over an 8-hour period

- Record time intervals between sample introduction and result generation

- Document any manual intervention requirements or system alerts

- Calculate throughput as samples per hour and total operational efficiency as (analytical time/total time) × 100
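
The two calculations in the final step can be sketched as follows. The run figures (36 samples, 432 minutes of analytical time within a 480-minute session) are hypothetical values chosen for illustration, not measured results.

```python
def throughput_metrics(n_samples, analytical_minutes, total_minutes):
    """Return (samples per hour, operational efficiency in percent)."""
    if total_minutes <= 0:
        raise ValueError("total_minutes must be positive")
    samples_per_hour = n_samples * 60 / total_minutes
    efficiency_pct = 100 * analytical_minutes / total_minutes
    return samples_per_hour, efficiency_pct


# Hypothetical 8-hour session: 48 minutes lost to interventions and alerts.
samples_per_hour, efficiency_pct = throughput_metrics(36, 432, 480)
print(f"{samples_per_hour:.1f} samples/hr, {efficiency_pct:.0f}% efficiency")
```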

Accuracy and Precision Assessment Protocol

Objective: Evaluate analytical performance across concentration ranges and sample matrices.

Materials: Certified reference materials with varying concentration ranges, sample preparation equipment, statistical analysis software.

Procedure:

- Select five certified reference materials spanning expected analytical range

- Prepare six replicates of each reference material following standardized preparation protocols

- Analyze replicates in randomized sequence to minimize systematic bias

- Calculate accuracy as percentage recovery of certified values

- Determine precision as relative standard deviation (RSD) across replicates

- Perform statistical analysis (t-tests, ANOVA) to identify significant differences between platforms
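
As a minimal sketch of the accuracy and precision calculations, the snippet below computes percentage recovery and relative standard deviation for one reference material using the Python standard library. The six replicate values and the certified value are hypothetical.

```python
from statistics import mean, stdev


def recovery_pct(measured, certified_value):
    """Accuracy: mean measured value as a percentage of the certified value."""
    return 100 * mean(measured) / certified_value


def rsd_pct(measured):
    """Precision: sample standard deviation as a percentage of the mean."""
    return 100 * stdev(measured) / mean(measured)


# Hypothetical nitrogen results (wt%) for six replicates of one reference material.
replicates = [2.96, 3.02, 2.99, 3.05, 2.98, 3.00]
certified = 3.00

print(f"recovery: {recovery_pct(replicates, certified):.1f}%")
print(f"RSD: {rsd_pct(replicates):.2f}%")
```

`stdev` uses the n-1 (sample) denominator, which is the usual choice for a small set of replicates.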

Operational Cost Analysis Protocol

Objective: Quantify total cost of ownership across platform lifecycle.

Materials: Manufacturer specifications, utility consumption monitoring devices, service records, operator time tracking system.

Procedure:

- Document initial acquisition costs including installation and training

- Monitor consumable usage (gases, reagents, and other consumables) over a 30-day period

- Record utility consumption (power, water, cryogens) using calibrated monitoring devices

- Document operator time requirements for method development, operation, and maintenance

- Calculate preventive and corrective maintenance costs from service records

- Project useful lifespan based on manufacturer data and industry benchmarks
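
One simple way to combine the quantities collected in this protocol is an undiscounted total cost of ownership and a derived cost per sample, sketched below. The function names and all input figures are illustrative assumptions, and a fuller analysis would discount future costs.

```python
def total_cost_of_ownership(acquisition, annual_consumables, annual_utilities,
                            annual_labor, annual_maintenance, lifespan_years):
    """Undiscounted lifetime cost: acquisition plus yearly operating costs."""
    annual_operating = (annual_consumables + annual_utilities
                        + annual_labor + annual_maintenance)
    return acquisition + annual_operating * lifespan_years


def cost_per_sample(tco, samples_per_year, lifespan_years):
    """Spread the lifetime cost over all samples analyzed during the lifespan."""
    return tco / (samples_per_year * lifespan_years)


# Hypothetical mid-range instrument (all values in dollars per the stated units).
tco = total_cost_of_ownership(
    acquisition=100_000,
    annual_consumables=10_000,
    annual_utilities=2_000,
    annual_labor=20_000,
    annual_maintenance=5_000,
    lifespan_years=10,
)
per_sample = cost_per_sample(tco, samples_per_year=5_000, lifespan_years=10)
print(tco, per_sample)
```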

Experimental Workflow Visualization

The experimental assessment of analytical platforms follows a systematic workflow encompassing preparation, execution, and data analysis phases, as illustrated below:

Results: Quantitative Comparison of Analytical Platforms

Performance and Cost Metrics Across Analyzer Types

Comprehensive evaluation of analytical platforms requires multidimensional assessment spanning performance, operational, and economic dimensions. The following tables consolidate experimental data from standardized testing protocols to enable direct comparison across platform categories.

Table: Analytical Performance Metrics by Platform Type

| Platform Category | Throughput (samples/hr) | Accuracy (% recovery) | Precision (% RSD) | Detection Limits (ppm) | Method Development Time (hours) |

|---|---|---|---|---|---|

| High-End CHNS Analyzer | 8-12 | 98-102 | 0.5-1.5 | 1-5 | 8-16 |

| Mid-Range Elemental Analyzer | 6-8 | 95-102 | 1.0-2.5 | 5-20 | 12-24 |

| Portable Field Analyzer | 2-4 | 90-105 | 2.0-5.0 | 50-200 | 4-8 |

| Dedicated Nitrogen Analyzer | 10-15 | 97-103 | 0.3-1.0 | 0.5-2 | 2-4 |

| Oxygen/Sulfur Specialist | 4-6 | 96-104 | 1.5-3.0 | 10-50 | 16-32 |

Table: Operational and Economic Metrics by Platform Type

| Platform Category | Acquisition Cost ($) | Annual Consumable Cost ($) | Operator Training (days) | Maintenance Interval (weeks) | Typical Useful Lifespan (years) |

|---|---|---|---|---|---|

| High-End CHNS Analyzer | 150,000-300,000 | 15,000-30,000 | 5-7 | 12-16 | 10-15 |

| Mid-Range Elemental Analyzer | 80,000-150,000 | 8,000-15,000 | 3-5 | 24-36 | 8-12 |

| Portable Field Analyzer | 25,000-50,000 | 2,000-5,000 | 1-2 | 48-52 | 5-8 |

| Dedicated Nitrogen Analyzer | 40,000-70,000 | 5,000-8,000 | 1-2 | 24-32 | 8-10 |

| Oxygen/Sulfur Specialist | 100,000-200,000 | 12,000-20,000 | 4-6 | 16-20 | 10-12 |

Cost-Effectiveness Decision Matrix

The relationship between analytical capability and total cost of ownership reveals distinct value propositions across platform categories. The following visualization maps this relationship to guide selection decisions based on research requirements and budget constraints:

Essential Research Reagent Solutions

The implementation of analytical methods requires specific research reagents and materials that significantly impact both analytical performance and operational costs. The following table details essential solutions for inorganic analysis workflows:

Table: Essential Research Reagent Solutions for Inorganic Analysis

| Reagent/Material | Function | Cost Considerations | Performance Impact |

|---|---|---|---|

| Certified Reference Materials | Method validation, quality control, calibration | $150-500 per material | Critical for data accuracy and regulatory compliance |

| High-Purity Gases (Carrier/Reaction) | Sample combustion, transport, reaction medium | $2,000-8,000 annually | Directly affects detection limits and system stability |

| Combustion Accelerators | Enhance sample oxidation, ensure complete combustion | $100-300 per kilogram | Improves recovery for difficult matrices |

| Catalyst Tubes/Packing | Promote specific reaction pathways | $500-2,000 per replacement | Impacts analytical speed and method applicability |

| Specialized Sampling Cups | Sample containment and introduction | $5-20 per cup | Affects cross-contamination and automation compatibility |

| Calibration Standards | Instrument calibration, quantitative analysis | $200-800 per set | Determines quantitative accuracy across concentration ranges |

| System Suitability Test Mixtures | Performance verification, troubleshooting | $300-600 per set | Ensures continuous method validity between service intervals |

Discussion: Strategic Implementation of Cost-Effectiveness Analysis

Interpreting Comparative Data for Institutional Needs

The quantitative comparisons presented reveal significant variation in both performance and economic metrics across analytical platform categories. High-end CHNS analyzers deliver the highest throughput (8-12 samples/hr) and lowest detection limits (1-5 ppm) among the multi-element platforms compared, with only the dedicated nitrogen analyzer exceeding them on both measures for its single element, but they command premium acquisition costs and require substantial operational investment [18]. Conversely, mid-range elemental analyzers offer balanced performance with moderate cost structures, making them a strong value for laboratories with diverse but not exceptionally demanding analytical requirements. Portable field analyzers, while limited in analytical capabilities, provide unique value through operational flexibility and significantly lower total cost of ownership [18].

Strategic platform selection requires alignment with institutional research agendas rather than simply pursuing maximum analytical capabilities. Research organizations should conduct thorough needs assessments quantifying expected sample volumes, required detection limits, analytical turnaround requirements, and available technical expertise before engaging in platform evaluation. The experimental protocols provided in this article enable standardized assessment across these dimensions, facilitating evidence-based decision-making that balances analytical capability with fiscal responsibility.

Future Directions in Analytical Platform Economics

The inorganic elemental analyzer market continues to evolve, with several emerging trends likely to influence future cost-effectiveness considerations. Increasing system automation reduces operator time requirements and associated labor costs, potentially justifying higher initial investments through long-term operational savings [18]. Miniaturization and portability trends may expand application possibilities while creating new cost structures centered on field-based analysis [18]. Additionally, integration with complementary analytical techniques promises more comprehensive characterization capabilities from single platforms, potentially reducing total instrument investments across research organizations [18].

Research institutions should monitor these developments closely, as evolving platform capabilities may fundamentally reshape cost-benefit calculations in analytical science. The experimental framework presented provides an adaptable methodology for continuous evaluation of emerging technologies, ensuring that platform selection decisions remain aligned with both scientific objectives and economic realities in this dynamic marketplace.

Cost-effectiveness analysis in analytical platform selection represents a critical competency for research organizations operating in increasingly competitive and budget-constrained environments. This article has established a comprehensive framework for evaluating analytical platforms across multiple dimensions, incorporating standardized experimental protocols, quantitative performance comparisons, and economic assessments. The provided methodologies enable researchers to transcend simplistic price comparisons in favor of holistic evaluations that consider total cost of ownership, operational efficiency, analytical performance, and strategic alignment with research objectives.

As the inorganic elemental analyzer market continues its projected growth, systematic cost-effectiveness analysis will become increasingly vital for maximizing research impact while maintaining fiscal responsibility. By adopting the structured approaches outlined herein, research institutions can make evidence-based platform selection decisions that optimize both scientific capabilities and financial resources, ultimately accelerating drug development and chemical research through strategic technology investments.

Frameworks and Methodologies for Cost-Effectiveness Analysis

Core Principles of Cost-Effectiveness Analysis (CEA) in a Laboratory Context

Cost-effectiveness analysis (CEA) provides a systematic framework for comparing alternative interventions or technologies not only in terms of their clinical effectiveness but also their economic efficiency, answering the question of whether an approach offers good value for money relative to current practice [19]. In laboratory medicine, where technological advancements continuously introduce new diagnostic platforms and testing methodologies, CEA plays an essential role in guiding decisions about which technologies to adopt, develop, or scale. The fundamental purpose of CEA is to determine the additional cost required to achieve an additional unit of health outcome when comparing two or more strategies [19]. This analytical approach is particularly valuable in resource-constrained laboratory environments, where directors and researchers must make informed choices about implementing new platforms, reagents, or testing protocols while maximizing health outcomes within budgetary limitations.

For laboratory professionals, understanding CEA principles enables more informed participation in healthcare technology assessment processes. When evaluating new analytical platforms, diagnostic assays, or laboratory workflows, CEA moves beyond simple price comparisons to consider the full spectrum of costs and consequences associated with each option. This comprehensive perspective is crucial in modern laboratory medicine, where the choice between different immunoassay systems, for instance, can significantly impact patient management pathways, treatment decisions, and overall healthcare costs. By applying CEA methodologies, laboratory researchers and clinicians can build a robust evidence base demonstrating the value of new technologies compared to existing alternatives, supporting more efficient resource allocation within healthcare systems [19].

Fundamental Methodological Framework

The conduct of a CEA requires several interrelated methodological steps, beginning with the articulation of a precise research question structured around the Population/Patient/Problem, Intervention, Comparator, Outcome (PICO) framework [19]. In laboratory research, this translates to specifying the diagnostic context (population), the new testing platform or strategy (intervention), the current standard testing approach (comparator), and the relevant clinical or analytical outcomes (outcomes). The careful framing of this question ensures the analysis addresses real-world decision-making needs relevant to laboratory operations and patient care.

The selection of an analytical perspective is equally critical, as it dictates which costs and outcomes are included in the evaluation [19]. Common perspectives include:

- Healthcare provider perspective: Focuses on costs borne by health systems or facilities, such as reagents, equipment, staffing, and infrastructure

- Patient perspective: Captures out-of-pocket expenses, time costs, and quality of life impacts

- Societal perspective: The broadest viewpoint, encompassing both provider and patient costs as well as indirect costs such as productivity losses

For laboratory technologies, the healthcare provider perspective often predominates, though broader perspectives may be relevant when diagnostic tests significantly impact patient time or productivity.

The measurement of costs must be systematic and transparent [19]. Bottom-up or ingredient-based costing approaches are often favored in laboratory settings as they allow researchers to document and value each resource component of service delivery, including:

- Capital equipment costs (purchase or lease)

- Reagent and consumable costs

- Labor costs for technical staff

- Quality control and maintenance expenses

- Space and utility requirements

Regardless of the approach, costs should be adjusted for inflation, purchasing power, and currency differences, and expressed in a common base year for comparability. For international comparisons, conversions using Purchasing Power Parity (PPP) are preferred as they account for differences in the cost of living between countries [19].
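
The two adjustments described here can be sketched as simple ratio conversions. The price index values and the PPP conversion factor below are hypothetical placeholders; real analyses would take them from a published price index and PPP series.

```python
def to_base_year(cost, index_cost_year, index_base_year):
    """Inflate or deflate a cost to the base year using a price index ratio."""
    return cost * index_base_year / index_cost_year


def to_international_dollars(cost_local, ppp_factor):
    """Convert local currency to international dollars.

    ppp_factor is assumed to be local currency units per international dollar.
    """
    return cost_local / ppp_factor


# Hypothetical: a reagent cost of 1,000 local units recorded when the index was 100,
# restated for a base year with index 110, then converted with a PPP factor of 2.0.
cost_base_year = to_base_year(1_000, index_cost_year=100, index_base_year=110)
cost_intl = to_international_dollars(cost_base_year, ppp_factor=2.0)
print(cost_base_year, cost_intl)
```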

Effectiveness measures in laboratory CEAs can be expressed as:

- Natural units: Cases correctly diagnosed, analytical tests performed, turnaround time reductions

- Health outcomes: Life-years gained, disability-adjusted life years (DALYs) averted

- Quality-adjusted metrics: Quality-adjusted life years (QALYs) incorporating both length and quality of life

The choice of effectiveness measure depends on the scope of the analysis and the level of evidence available, with broader health outcomes requiring more extensive data linkage and modeling.

Table 1: Key Methodological Components of Laboratory CEA

| Component | Description | Laboratory Application Examples |
|---|---|---|
| Perspective | Viewpoint determining which costs and consequences are relevant | Laboratory director (provider), patient, healthcare system (societal) |
| Time Horizon | Period over which costs and outcomes are evaluated | Short-term (analytical validity period), long-term (clinical impact period) |
| Cost Categories | Types of costs included in analysis | Equipment, reagents, labor, maintenance, space, utilities, training |
| Effectiveness Measures | Units for quantifying outcomes | Tests performed, correct diagnoses, QALYs, DALYs averted |
| Discounting | Adjustment for time preference of costs and outcomes | Typically 3-5% annually for costs and outcomes beyond one year |
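As a brief illustration of the discounting row in Table 1, the sketch below converts a stream of yearly values to a present value. The 3% rate is taken from the lower end of the range in the table; the cost stream and the function name `present_value` are hypothetical.

```python
def present_value(values, rate=0.03):
    """Discount a stream of yearly values to the present.

    values[0] occurs now (year 0) and is not discounted;
    values[t] occurs t years from now and is divided by (1 + rate) ** t.
    """
    return sum(v / (1 + rate) ** t for t, v in enumerate(values))


# Hypothetical: 10,000 in maintenance costs in each of years 0, 1, and 2
maintenance_costs = [10_000, 10_000, 10_000]
pv_costs = present_value(maintenance_costs, rate=0.03)
print(f"Undiscounted total: {sum(maintenance_costs):,.2f}")
print(f"Present value at 3%: {pv_costs:,.2f}")
```

The same function applies to outcomes (for example, a stream of QALYs) when costs and effects are discounted at the same rate.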

Core Analytical Components and Calculations

Incremental Cost-Effectiveness Ratio (ICER)

The cornerstone metric in CEA is the incremental cost-effectiveness ratio (ICER), which expresses the additional cost per additional unit of health benefit gained from the new intervention relative to the comparator [19]. The ICER is calculated as:

\[ \text{ICER} = \frac{\text{Cost}_{\text{new}} - \text{Cost}_{\text{standard}}}{\text{Effectiveness}_{\text{new}} - \text{Effectiveness}_{\text{standard}}} = \frac{\Delta \text{Cost}}{\Delta \text{Effectiveness}} \]

For example, if a new automated immunoassay platform costs $15,000 more per 1,000 tests than the standard platform but detects 10 additional true positive cases per 1,000 tests, the ICER would be $15,000 / 10 = $1,500 per additional case detected [20]. In a laboratory context, the ICER helps determine whether the improved performance of a new diagnostic system justifies its additional cost compared to existing technology.
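The calculation can be written as a small helper. This is a sketch only: the function name and the absolute cost and detection figures are hypothetical, chosen so that the differences match the immunoassay example above ($15,000 incremental cost, 10 additional cases detected).

```python
def icer(cost_new, cost_standard, effect_new, effect_standard):
    """Incremental cost-effectiveness ratio: delta cost / delta effectiveness."""
    delta_cost = cost_new - cost_standard
    delta_effect = effect_new - effect_standard
    if delta_effect == 0:
        # The ratio is undefined when both options are equally effective
        raise ValueError("ICER is undefined when effectiveness is identical")
    return delta_cost / delta_effect


# Hypothetical absolute values per 1,000 tests; only the differences
# (15,000 and 10) come from the example in the text.
ratio = icer(cost_new=65_000, cost_standard=50_000,
             effect_new=50, effect_standard=40)
print(f"ICER: ${ratio:,.0f} per additional case detected")
```

Note that the ratio alone does not show which quadrant of the cost-effectiveness plane a result falls in, so the signs of the two differences should be inspected before the ICER is compared with a threshold.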

Incremental Net Benefit (INB)

As an alternative statistic, the incremental net benefit (INB) converts the additional benefit into monetary terms using the decision-maker's willingness-to-pay (WTP) threshold and then subtracts the additional cost [20]. The INB is calculated as:

\[ \text{INB} = (\text{WTP} \times \Delta \text{Effectiveness}) - \Delta \text{Cost} \]

If a healthcare payer is willing to pay $50,000 for an additional quality-adjusted life year (QALY), and a new laboratory test provides 0.1 additional QALYs at an extra cost of $3,000, the INB would be $2,000 (i.e., $5,000 - $3,000) [20]. A positive INB indicates the intervention is cost-effective relative to the comparator at the specified WTP threshold. This approach is particularly useful when comparing multiple competing laboratory technologies, as it provides a direct monetary value of the net benefit.
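The same arithmetic, expressed as a minimal sketch (the function name is illustrative; the inputs are those of the example above):

```python
def incremental_net_benefit(wtp, delta_effect, delta_cost):
    """INB = (WTP x delta effectiveness) - delta cost, in monetary units."""
    return wtp * delta_effect - delta_cost


# Values from the example: WTP of $50,000 per QALY, 0.1 QALYs gained,
# $3,000 additional cost.
inb = incremental_net_benefit(wtp=50_000, delta_effect=0.1, delta_cost=3_000)
print(f"INB: ${inb:,.0f}")
print("Cost-effective at this threshold" if inb > 0 else "Not cost-effective")
```

Because the INB is linear in costs and effects, it avoids the division-by-zero and sign-ambiguity problems that can affect ratio statistics.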

Willingness-to-Pay Thresholds

The interpretation of ICER and INB results depends critically on the willingness-to-pay (WTP) threshold, which represents the maximum amount a decision-maker is prepared to pay for an additional unit of health outcome [19]. Traditionally, many studies have used gross domestic product (GDP)-based thresholds, often set at 1-3 times a country's per capita GDP. However, more recent literature emphasizes context-specific thresholds based on health system opportunity costs—the health benefits forgone when resources are allocated to the evaluated intervention instead of alternative uses [19].

For laboratory technologies, WTP thresholds may vary significantly depending on:

- The clinical context (higher for life-threatening conditions)

- The intended use (screening vs. diagnostic vs. monitoring)

- The healthcare system's budget constraints

- The availability of alternative technologies

Table 2: Decision Rules for CEA Results Interpretation

| Analysis Result | Interpretation | Laboratory Decision Implication |
|---|---|---|
| ICER < WTP (with ΔCost > 0 and ΔEffect > 0) | New intervention is cost-effective | Adopt new technology/platform |
| ICER > WTP (with ΔCost > 0 and ΔEffect > 0) | New intervention is not cost-effective | Retain current technology/platform |
| ΔCost < 0 and ΔEffect > 0 | New intervention dominates (cost-saving and more effective) | Strong case for adoption |
| ΔCost > 0 and ΔEffect < 0 | New intervention is dominated (more costly and less effective) | Reject new technology |
| Positive INB | New intervention is cost-effective | Adopt new technology/platform |
| Negative INB | New intervention is not cost-effective | Retain current technology |
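The decision rules in Table 2 can be encoded as a short function. This is one possible sketch, with a hypothetical function name and return labels. It checks for dominance first and falls back on the sign of the INB otherwise, which is an assumption made here to sidestep the ambiguity of a ratio when one or both differences are negative.

```python
def interpret_cea(delta_cost, delta_effect, wtp):
    """Map incremental cost and effect to a Table 2 style decision label."""
    if delta_cost < 0 and delta_effect > 0:
        return "dominant: strong case for adoption"
    if delta_cost > 0 and delta_effect < 0:
        return "dominated: reject new technology"
    # Remaining cases involve a trade-off; use the INB sign, which is
    # equivalent to comparing the ICER with WTP when both deltas are positive.
    inb = wtp * delta_effect - delta_cost
    if inb > 0:
        return "cost-effective: adopt"
    return "not cost-effective: retain current technology"


# Hypothetical scenarios
print(interpret_cea(delta_cost=15_000, delta_effect=10, wtp=2_000))
print(interpret_cea(delta_cost=15_000, delta_effect=10, wtp=1_000))
print(interpret_cea(delta_cost=-500, delta_effect=2, wtp=1_000))
print(interpret_cea(delta_cost=500, delta_effect=-2, wtp=1_000))
```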

Handling Uncertainty in CEA

Given inherent uncertainties in input parameters, sensitivity analysis is an indispensable component of CEA [20]. Laboratory CEAs contain multiple potential sources of uncertainty, including:

- Variability in reagent costs and equipment lifespan

- Differences in test performance across patient populations

- Fluctuations in test volume and utilization

- Changes in staffing requirements and expertise

Deterministic Sensitivity Analysis

Deterministic sensitivity analysis, most commonly performed as one-way sensitivity analysis, involves varying one parameter at a time (such as the cost of reagents or the sensitivity of a test) while holding the others at their base-case values, to examine how much the outcome changes [19]. This approach helps identify which parameters have the greatest influence on the results and should therefore be estimated with particular care. For laboratory tests, parameters that often warrant sensitivity analysis include:

- Test sensitivity and specificity

- Equipment purchase price and maintenance costs

- Reagent costs and shelf-life

- Test volume and throughput

- Technician time per test
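A one-way analysis over parameters like these might be sketched as follows. The cost model, the base-case values, and the ±20% range are all hypothetical and chosen only to illustrate the mechanics; a real analysis would use ranges justified by data for each parameter.

```python
def cost_per_test(p):
    """Toy cost model: fixed annual costs spread over volume, plus per-test costs."""
    fixed = p["equipment_annual"] + p["maintenance_annual"]
    labor = p["tech_minutes"] / 60 * p["hourly_wage"]
    return fixed / p["annual_volume"] + p["reagent_per_test"] + labor


# Hypothetical base-case inputs
base = {
    "equipment_annual": 20_000.0,
    "maintenance_annual": 5_000.0,
    "annual_volume": 10_000.0,
    "reagent_per_test": 4.0,
    "tech_minutes": 6.0,
    "hourly_wage": 30.0,
}


def one_way(model, base_case, rel_change=0.20):
    """Vary each parameter by +/- rel_change while holding the others fixed."""
    results = {}
    for name, value in base_case.items():
        low = dict(base_case, **{name: value * (1 - rel_change)})
        high = dict(base_case, **{name: value * (1 + rel_change)})
        results[name] = (model(low), model(high))
    return results


ranges = one_way(cost_per_test, base)
# Sort by the size of the swing, as in a tornado diagram
tornado = sorted(ranges.items(),
                 key=lambda kv: abs(kv[1][1] - kv[1][0]), reverse=True)
print(f"Base case: {cost_per_test(base):.2f} per test")
for name, (at_low, at_high) in tornado:
    print(f"{name:20s} {at_low:6.2f} .. {at_high:6.2f}")
```

Sorting by swing puts the most influential parameter first, which is the ordering a tornado diagram uses.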

Probabilistic Sensitivity Analysis

Probabilistic sensitivity analysis (PSA) allows multiple parameters to vary simultaneously based on defined probability distributions and uses repeated simulations (often 1,000-10,000 iterations) to assess the overall robustness of the findings [20]. To communicate these results, researchers often use:

- Cost-effectiveness acceptability curves (CEACs): Show the probability that an intervention is cost-effective at different WTP thresholds

- Scatterplots on the cost-effectiveness plane: Illustrate the joint uncertainty in costs and effects

- Confidence intervals for ICERs and INBs: Provide range estimates for the cost-effectiveness metrics
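The sketch below illustrates the mechanics of a PSA and a CEAC using only the Python standard library. The distributions and their parameters are hypothetical; normal distributions are used purely for simplicity, whereas applied analyses often choose distributions matched to each parameter type (for example, gamma for costs and beta for probabilities).

```python
import random


def run_psa(n_iter=5_000, seed=42):
    """Draw (delta_cost, delta_effect) pairs from assumed distributions."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_iter):
        delta_cost = rng.gauss(3_000, 800)     # hypothetical incremental cost
        delta_effect = rng.gauss(0.10, 0.04)   # hypothetical incremental QALYs
        draws.append((delta_cost, delta_effect))
    return draws


def ceac(draws, wtp_values):
    """Share of simulations with positive INB at each WTP threshold."""
    curve = {}
    for wtp in wtp_values:
        n_cost_effective = sum(
            1 for delta_cost, delta_effect in draws
            if wtp * delta_effect - delta_cost > 0
        )
        curve[wtp] = n_cost_effective / len(draws)
    return curve


draws = run_psa()
curve = ceac(draws, [0, 10_000, 30_000, 50_000, 100_000])
for wtp, prob in curve.items():
    print(f"WTP ${wtp:>7,}: P(cost-effective) = {prob:.2f}")
```

The `draws` list is also what would be plotted as a scatterplot on the cost-effectiveness plane, and percentile intervals for the INB can be read directly from the simulated values.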

For laboratory researchers, incorporating comprehensive sensitivity analyses strengthens the credibility of CEA findings and provides decision-makers with a clearer understanding of the circumstances under which a new technology represents good value.

Comparative Analysis of Cost-Effectiveness Models

Comparative analyses of published cost-effectiveness models provide critical insights to inform the development of new CEAs in the same disease area or technological domain [21]. Such comparisons are particularly valuable in laboratory medicine, where multiple testing platforms or strategies may be available for the same clinical indication. A systematic approach to model comparison involves identifying key differences in model structure, assumptions, and data inputs that may explain variations in cost-effectiveness conclusions.

When comparing cost-effectiveness models for laboratory technologies, several critical issues require consideration [21]:

- Model comparator: Whether the new technology is compared to no testing, standard testing, or an alternative technology

- Time horizon: The period over which costs and outcomes are evaluated (short-term analytical performance vs. long-term clinical outcomes)

- Model scope: The range of consequences included (analytical performance only vs. full clinical pathway impacts)

- Disease progression: How test results influence patient management and subsequent health outcomes

For example, a comparative analysis of cost-effectiveness models for genotypic antiretroviral resistance testing in HIV identified substantial variations in model assumptions regarding the prevalence of drug resistance, antiretroviral therapy efficacy, test performance characteristics, and the proportion of patients switching therapy based on test results [21]. These methodological differences significantly influenced the estimated cost-effectiveness of testing, highlighting the importance of transparent reporting and critical appraisal of model assumptions.

Table 3: Framework for Comparative Analysis of Laboratory CEAs

| Comparison Dimension | Key Considerations | Impact on Results |
|---|---|---|
| Analytical Perspective | Provider vs. health system vs. societal | Determines which costs and outcomes are included |
| Time Horizon | Short-term (analytical) vs. long-term (clinical) | Affects capture of downstream costs and benefits |
| Cost Categories | Direct medical, direct non-medical, indirect | Influences total cost estimates and comprehensiveness |
| Effectiveness Measure | Intermediate vs. final health outcomes | Determines clinical relevance and generalizability |
| Model Structure | Decision tree vs. state-transition vs. discrete event simulation | Affects ability to capture complex pathways and time dependencies |
| Handling of Uncertainty | Deterministic vs. probabilistic sensitivity analysis | Impacts robustness of conclusions and decision-makers' confidence |

Experimental Protocols for Laboratory CEA

Protocol 1: Costing Methodology for Laboratory Platforms

Objective: To systematically identify, measure, and value all resources associated with implementing and operating a laboratory testing platform.

Materials and Equipment:

- Equipment purchase or lease price quotations

- Reagent and consumable price lists

- Laboratory space measurements

- Staffing schedules and salary information

- Service and maintenance contracts

- Utility consumption data

Procedure:

- Identify cost categories: Categorize resources into capital equipment, reagents, consumables, labor, maintenance, quality control, and overheads.

- Measure resource use: Quantify the resources required for each cost category, typically on a per-test basis considering expected test volume.

- Value resources: Assign monetary values to each resource using market prices, quotations, or institutional accounting data.

- Annualize capital costs: Convert one-time capital costs to equivalent annual costs using an appropriate discount rate (typically 3-5%) and equipment lifespan.

- Calculate unit costs: Divide total annual costs by annual test volume to determine cost per test.

- Validate estimates: Compare calculated costs with actual expenditure data where available.

Analysis: Present costs in a disaggregated format to enhance transparency and facilitate adaptation to different settings.
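The annualization and unit-cost steps of Protocol 1 can be sketched as below, using the standard annuity-factor approach to equivalent annual cost. The purchase price, lifespan, other annual costs, and test volume are hypothetical; the 3% rate is taken from the range given in the procedure.

```python
def annuity_factor(rate, years):
    """Present value of 1 unit paid at the end of each year for `years` years."""
    if rate == 0:
        return float(years)
    return (1 - (1 + rate) ** -years) / rate


def equivalent_annual_cost(purchase_price, rate, years, resale_value=0.0):
    """Spread a one-time capital cost over the equipment lifespan."""
    pv_resale = resale_value / (1 + rate) ** years
    return (purchase_price - pv_resale) / annuity_factor(rate, years)


# Hypothetical platform: 100,000 purchase price, 7-year lifespan, 3% rate
annual_costs = {
    "equipment": equivalent_annual_cost(100_000, rate=0.03, years=7),
    "reagents_consumables": 40_000.0,
    "labor": 30_000.0,
    "maintenance_qc": 8_000.0,
    "space_utilities": 2_000.0,
}
annual_volume = 12_000  # hypothetical tests per year

unit_cost = sum(annual_costs.values()) / annual_volume
for category, amount in annual_costs.items():
    print(f"{category:22s} {amount:10,.2f}  ({amount / annual_volume:5.2f} per test)")
print(f"{'total':22s} {sum(annual_costs.values()):10,.2f}  ({unit_cost:5.2f} per test)")
```

Keeping the categories in a dictionary preserves the disaggregated view, so that individual components can be replaced with local values when the estimate is adapted to another setting.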

Protocol 2: Test Performance and Outcomes Assessment

Objective: To evaluate the analytical and clinical performance of a laboratory test and its impact on patient management and health outcomes.

Materials and Equipment:

- Laboratory testing platform and reagents

- Patient samples with known reference standard results

- Clinical data collection forms

- Patient follow-up mechanisms

- Quality of life measurement instruments (e.g., EQ-5D, SF-36)

Procedure:

- Establish test performance: Determine sensitivity, specificity, positive and negative predictive values using appropriate reference standards.

- Map clinical pathways: Document how test results influence subsequent diagnostic and treatment decisions.

- Measure intermediate outcomes: Quantify changes in diagnosis, treatment selection, monitoring frequency, or safety outcomes.

- Assess final health outcomes: Measure survival, quality of life, or disease progression using appropriate metrics and instruments.

- Extrapolate long-term outcomes: Use modeling techniques to estimate quality-adjusted life years (QALYs) or disability-adjusted life years (DALYs) where long-term data are unavailable.

Analysis: Calculate outcome differences between new and comparator strategies, incorporating appropriate measures of uncertainty.
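For the first step of Protocol 2, the standard performance measures follow directly from a 2×2 table of test results against the reference standard. The counts below are hypothetical and the function name is illustrative.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Compute test performance measures from 2x2 table counts."""
    return {
        "sensitivity": tp / (tp + fn),   # true positives among diseased
        "specificity": tn / (tn + fp),   # true negatives among non-diseased
        "ppv": tp / (tp + fp),           # diseased among test positives
        "npv": tn / (tn + fn),           # non-diseased among test negatives
    }


# Hypothetical validation set: 100 reference-positive, 900 reference-negative
metrics = diagnostic_metrics(tp=90, fp=45, fn=10, tn=855)
for name, value in metrics.items():
    print(f"{name:12s} {value:.3f}")
```

Predictive values depend on the prevalence in the validation sample, so they should be recalculated for the prevalence expected in the intended-use population before they feed into a cost-effectiveness model.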

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Laboratory CEA Research

| Item | Function | Application Example |
|---|---|---|
| Cost Data Collection Tools | Structured instruments for systematic cost data collection | Capturing equipment, reagent, labor, and overhead costs |
| Test Performance Validation Materials | Samples with known reference standard results | Establishing sensitivity, specificity, and predictive values |
| Health Outcome Measures | Validated instruments for measuring quality of life and health status | EQ-5D, SF-36 for utility estimation in QALY calculation |
| Decision-Analytic Modeling Software | Tools for building and analyzing cost-effectiveness models | TreeAge Pro, R, Excel for ICER and INB calculation |
| Statistical Analysis Packages | Software for statistical analysis and uncertainty assessment | Stata, SAS, R for sensitivity analyses and confidence intervals |
| Reference Materials | International standards for test calibration | WHO International Reference Preparations for harmonization [22] |
| Commutability Assessment Materials | Clinical samples and reference materials for harmonization studies | Evaluating consistency across different measurement systems [22] |

Case Study: CEA of 21-Gene Platform in Breast Cancer