Beyond the Crystal Ball: How DFT and Machine Learning are Revolutionizing Crystal Structure Prediction

Abstract

This article provides a comprehensive overview of the current state of crystal structure prediction (CSP) using Density Functional Theory (DFT) and emerging machine learning (ML) methods. Tailored for researchers and drug development professionals, it explores the foundational principles of DFT, details cutting-edge methodological workflows and their applications in pharmaceuticals and materials science, addresses common computational challenges and optimization strategies, and offers a critical comparison of different predictive approaches. By synthesizing insights from recent high-impact studies, this review serves as a guide for leveraging computational predictions to accelerate the design of new drugs and functional materials.

The Foundation of Crystal Structure Prediction: Why Accurate Models Matter in Drug and Material Design

The three-dimensional atomic-level structure of a material, its crystal structure, serves as the foundational blueprint that dictates its key physicochemical properties. In pharmaceutical development, this structure determines critical drug performance characteristics such as solubility and stability, directly impacting bioavailability and shelf-life [1]. Concurrently, in materials science and condensed matter physics, the crystal structure governs electronic properties, enabling the design of advanced materials including organic semiconductors and superconductors [1] [2]. Accurately predicting and controlling these structures is therefore paramount for rational design in both fields.

Density Functional Theory (DFT) has long been the workhorse method for modeling crystal structures and predicting their properties from first principles. However, its predictive power has been fundamentally limited by approximations to the exchange-correlation (XC) functional, the term that accounts for quantum mechanical exchange and correlation effects in electron-electron interactions [3] [4]. This limitation has hindered the reliable in silico design of new drugs and materials. Recent advances are beginning to address this decades-old challenge. The integration of machine learning (ML) with DFT is markedly improving the accuracy of quantum calculations [3] [4], while novel experimental techniques such as ionic Scattering Factors (iSFAC) modelling are providing unprecedented experimental data on charge distribution within crystals [5]. This application note details these advanced protocols, providing researchers with the methodologies to leverage these advancements.

Experimental Protocols & Application Notes

Machine Learning-Augmented Density Functional Theory

The limited accuracy of traditional DFT functionals has been a major bottleneck. A transformative approach involves using machine learning to learn a more universal XC functional directly from highly accurate quantum data.

Protocol: Training a Machine-Learned XC Functional

- Reference Data Generation: Use high-accuracy wavefunction methods (e.g., CCSD(T)) to compute energies for a diverse set of molecular structures. Microsoft Research, in collaboration with expert partners, generated a dataset of ~150,000 highly accurate energy differences to serve as training labels [4].

- Model Architecture and Training: Design a deep learning architecture (e.g., Microsoft's "Skala" functional) that takes the electron density as input and predicts the XC energy. Unlike traditional "Jacob's Ladder" approaches that rely on hand-designed density descriptors, this architecture should learn relevant representations directly from the data [4].

- Validation: Assess the trained model's performance on a well-known benchmark dataset (e.g., W4-17). The Skala functional, for instance, has demonstrated the accuracy required to reliably predict experimental outcomes for main group molecules, a significant leap over traditional functionals [4].
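The core idea of fitting an XC functional to reference data can be shown with a deliberately tiny model. The sketch below is not Skala and uses no CCSD(T) data: it assumes a one-parameter functional of the LDA-exchange form E_x[n] = C ∫ n^(4/3) d³r, generates synthetic labels from hydrogen-like densities, and recovers C by least squares. A deep-learned functional replaces the single coefficient with a neural network, but training follows the same pattern of descriptor, label, and loss.

```python
import numpy as np

# Toy illustration only: the "functional" is a single coefficient C in
# E_x[n] = C * integral of n(r)^(4/3) d^3r (the LDA exchange form). Labels are
# synthetic (known LDA coefficient plus small noise), standing in for
# high-accuracy wavefunction reference data.
C_X_LDA = -0.75 * (3.0 / np.pi) ** (1.0 / 3.0)  # about -0.7386 (atomic units)

r = np.linspace(1e-4, 20.0, 20001)  # radial grid in bohr
dr = r[1] - r[0]


def density(zeta):
    """Hydrogen-like 1s density n(r) = zeta^3 / pi * exp(-2 * zeta * r)."""
    return zeta**3 / np.pi * np.exp(-2.0 * zeta * r)


def descriptor(n):
    """Integral of n^(4/3) over space for a spherical density (Riemann sum)."""
    return np.sum(4.0 * np.pi * r**2 * n ** (4.0 / 3.0)) * dr


# Hypothetical training "systems", each reduced to one descriptor and one label
zetas = np.array([0.8, 1.0, 1.3, 1.7, 2.2])
X = np.array([descriptor(density(z)) for z in zetas])
rng = np.random.default_rng(0)
y = C_X_LDA * X + rng.normal(0.0, 1e-4, X.size)  # synthetic reference energies

# "Training": closed-form least squares for the single parameter C
C_fit = float(np.dot(X, y) / np.dot(X, X))
print(f"fitted C = {C_fit:.4f}   (LDA value {C_X_LDA:.4f})")
```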

Protocol: Enhancing DFT with Potentials-Based ML Training

A specific innovation from the University of Michigan uses not just energies, but also potentials, to train ML models for constructing XC functionals.

- Data Collection: Obtain exact energies and potentials for a small set of atoms and simple molecules (e.g., 5 atoms, 2 simple molecules) using high-accuracy quantum many-body (QMB) calculations [3].

- Model Training: Train an ML model to approximate the XC functional using this compact dataset. Including potentials in the training data provides a stronger foundation, as they highlight small spatial differences in electron behavior more effectively than energies alone [3].

- Deployment in DFT: Integrate the newly trained ML-generated XC functional into standard DFT calculations. This approach has been shown to yield striking accuracy, matching or outperforming widely used XC approximations while remaining computationally efficient [3].
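Why potentials add information can be seen in the same kind of toy setting. Assuming again a one-parameter LDA-exchange form, the potential v_x(r) = δE_x/δn = (4/3) C n(r)^(1/3) supplies one label per grid point, so two hypothetical systems yield thousands of constraints instead of two. This illustrates the principle only; it is not the Michigan group's procedure, which derives exact energies and potentials from QMB calculations, and the equal weighting of the two label types is an arbitrary choice.

```python
import numpy as np

# Toy illustration only: fit C in E_x = C * integral n^(4/3) using energies AND
# potentials. The matching potential is v_x(r) = (4/3) * C * n(r)^(1/3).
C_X_LDA = -0.75 * (3.0 / np.pi) ** (1.0 / 3.0)
r = np.linspace(1e-4, 10.0, 2001)  # radial grid in bohr
dr = r[1] - r[0]


def density(zeta):
    """Hydrogen-like 1s density (hypothetical training system)."""
    return zeta**3 / np.pi * np.exp(-2.0 * zeta * r)


rng = np.random.default_rng(0)
zetas = [1.0, 1.5]  # only two hypothetical training systems

# Energy labels: one number per system
X_E = np.array([np.sum(4.0 * np.pi * r**2 * density(z) ** (4.0 / 3.0)) * dr for z in zetas])
y_E = C_X_LDA * X_E + rng.normal(0.0, 1e-3, X_E.size)

# Potential labels: one number per grid point per system
X_V = np.concatenate([(4.0 / 3.0) * density(z) ** (1.0 / 3.0) for z in zetas])
y_V = C_X_LDA * X_V + rng.normal(0.0, 1e-3, X_V.size)

# Joint least squares over both label types (equal weights)
X = np.concatenate([X_E, X_V])
y = np.concatenate([y_E, y_V])
C_energy_only = float(X_E @ y_E / (X_E @ X_E))
C_joint = float(X @ y / (X @ X))
print(f"{y_E.size} energy labels vs {y_V.size} potential labels")
print(f"energy-only C = {C_energy_only:.4f}, joint C = {C_joint:.4f}, LDA {C_X_LDA:.4f}")
```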

Machine Learning-Driven Crystal Structure Prediction (CSP)

Predicting the stable crystal structure of a molecule from its chemical composition alone remains a formidable challenge. The SPaDe-CSP workflow demonstrates how ML can drastically improve the efficiency of this process for organic molecules.

- Protocol: The SPaDe-CSP Workflow

- Data Curation and Model Training:

- Curate a dataset of known organic crystal structures from the Cambridge Structural Database (CSD), applying filters for data quality (e.g., R-factor < 10, Z' = 1) [1].

- Train two separate machine learning models:

- ML-Guided Lattice Sampling:

- For a new molecule, use the trained models to predict likely space group candidates and the target density.

- Use these predictions to filter randomly sampled lattice parameters, accepting only those that satisfy the predicted density tolerance. This "sample-then-filter" strategy significantly reduces the generation of low-density, unstable structures that are common in random searches [1].

- Structure Relaxation with Neural Network Potential (NNP):

- Validation: This workflow achieved an 80% success rate in finding the experimentally observed structure for 20 organic crystals of varying complexity, doubling the success rate of a random CSP approach [1].

- Data Curation and Model Training:

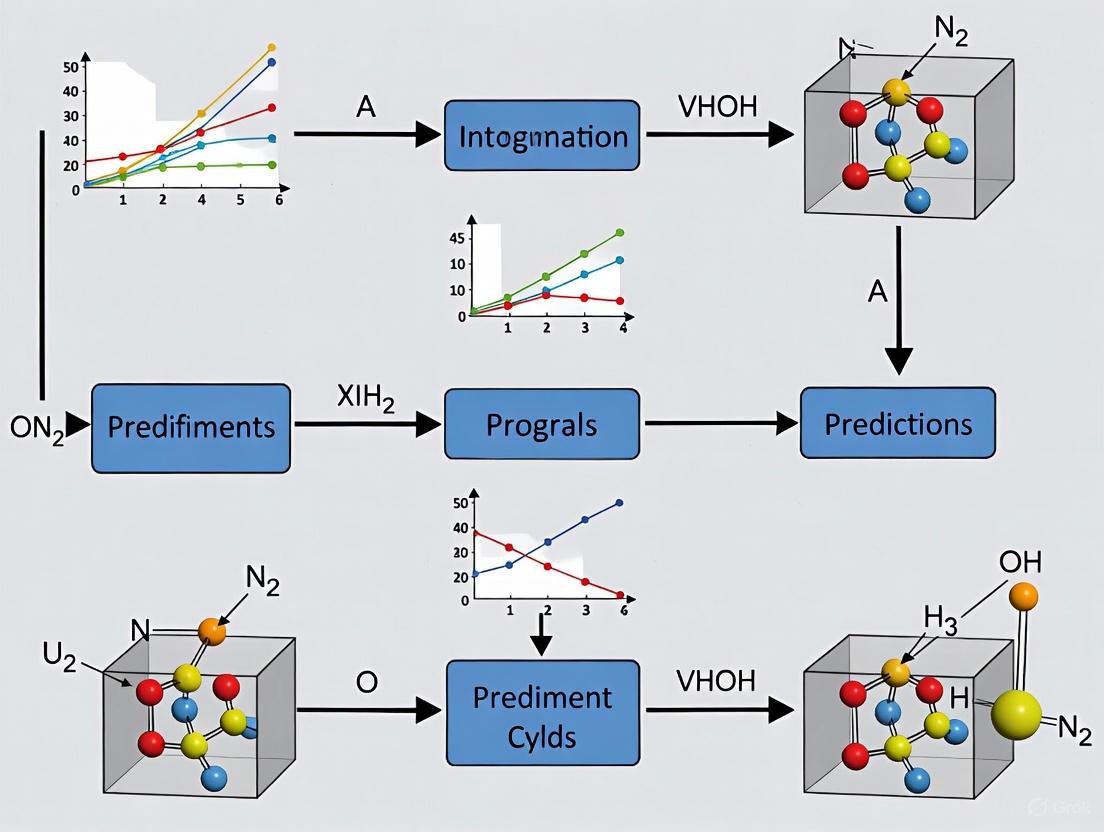

The following workflow diagram illustrates the SPaDe-CSP protocol:

Experimental Determination of Partial Atomic Charges

Atomic partial charges are crucial for understanding intermolecular interactions, reactivity, and material properties. The iSFAC modelling method provides a general experimental way to determine them.

- Protocol: iSFAC Modelling with Electron Diffraction

- Data Collection: Perform a standard 3D electron diffraction experiment on a single crystal of the compound of interest. The required crystal quality is generally the same as that used for conventional structure determination [5].

- Refinement Setup: For each atom, refine the standard nine parameters (three atomic coordinates and six anisotropic atomic displacement parameters). Additionally, introduce one new refinable parameter per atom to describe the fraction of its ionic scattering factor [5].

- Scattering Factor Calculation: For each atom, the total scattering factor is modelled as a combination of the theoretical scattering factor of the neutral atom and the theoretical scattering factor of its ionic form, weighted by the refined charge parameter [5].

- Refinement and Analysis: Refine the structure against the diffraction data with these additional parameters. The resulting value for each atom represents its experimental partial charge on an absolute scale, providing direct insight into bond polarity and charge transfer within the crystal [5].

Data Presentation and Analysis

Performance of CSP and ML-DFT Methodologies

The following table summarizes the performance of various advanced computational methods as reported in recent studies.

Table 1: Quantitative Performance of Advanced Computational Methods

| Method / Workflow | Key Innovation | Reported Performance | System Studied / Test Set |
|---|---|---|---|
| SPaDe-CSP [1] | ML-based lattice sampling & NNP relaxation | 80% success rate in CSP (2x improvement over random search) | 20 organic crystals |
| ML-DFT (University of Michigan) [3] | ML-trained XC functional using energies & potentials | Outperformed/matched widely used XC approximations | Small atoms & molecules |
| Skala Functional (Microsoft) [4] | Deep-learned XC functional from large, accurate dataset | Reached chemical accuracy (~1 kcal/mol) on W4-17 benchmark | Main group molecules |
| XDXD Framework [6] | End-to-end deep learning from XRD data | 70.4% match rate with RMSE < 0.05 at 2.0 Å resolution | 24,000 experimental structures from COD |

Experimental Partial Charges from iSFAC Modelling

The iSFAC method quantifies charge distribution, which is critical for understanding stability and solubility. The table below shows experimental partial charges for key functional groups, demonstrating the method's ability to reveal electronic details.

Table 2: Experimentally Determined Partial Charges via iSFAC Modelling [5]

| Compound | Functional Group / Atom | Experimental Partial Charge (e) | Chemical Interpretation |
|---|---|---|---|
| Ciprofloxacin | C18 (in COOH) | +0.11 | Typical for a carboxylic acid carbon |
| Ciprofloxacin | O1 (in C=O) | -0.27 | |
| Tyrosine (Zwitterion) | C9 (in COO⁻) | -0.19 | Negative charge due to electron delocalization in carboxylate |
| Tyrosine (Zwitterion) | N1 (in NH₃⁺) | -0.46 | Negative nitrogen charge balanced by positive protons (+0.39e, +0.32e, +0.19e) |
| Histidine (Zwitterion) | C6 (in COO⁻) | -0.25 | Negative charge due to electron delocalization in carboxylate |
| Histidine (Zwitterion) | O1 (in COO⁻) | -0.31 | Strong hydrogen bond acceptor |

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful execution of the described protocols requires leveraging specific computational and experimental resources.

Table 3: Key Research Reagent Solutions for Crystal Structure Research

| Reagent / Resource | Function / Application | Examples / Notes |
|---|---|---|
| Neural Network Potentials (NNPs) | Fast, accurate force fields for structure relaxation, trained on DFT data. | PFP, ANI [1]; achieve near-DFT accuracy at lower computational cost. |
| Pre-trained ML Models for CSP | Predict crystal properties (space group, density) to guide structure search. | Space group and packing density predictors in SPaDe-CSP [1]. |
| High-Accuracy Quantum Chemistry Datasets | Training data for developing ML-based quantum chemistry models. | W4-17, datasets generated by Microsoft/Prof. Karton [4]. |
| Electron Diffraction Instrumentation | Enables crystal structure determination from sub-micron crystals and iSFAC modelling. | Crucial for pharmaceuticals and materials where growing large crystals is difficult [5]. |
| Crystallographic Databases | Source of data for training ML models and validating predictions. | Cambridge Structural Database (CSD), Crystallography Open Database (COD) [1] [6]. |

Theoretical Foundations and Modern Challenges

Density Functional Theory (DFT) is a computational powerhouse for modeling and predicting material properties at the quantum mechanical level. Its fundamental premise is that the ground-state energy of a many-electron system can be uniquely determined by its electron density, a concept that dramatically simplifies the complex problem of electron-electron interactions. This approach transforms the intractable many-body Schrödinger equation into a solvable set of equations, known as the Kohn-Sham equations, making accurate quantum mechanical calculations possible for real-world materials [7].

Despite its widespread success, standard DFT approximations face several well-documented challenges. The "band gap problem" is particularly significant, where DFT tends to underestimate the energy separation between occupied and unoccupied electron states in semiconductors and insulators. This is especially pronounced in strongly correlated systems like metal oxides, where self-interaction errors lead to inaccurate descriptions of electronic properties [8]. Furthermore, accurately modeling weak intermolecular interactions, such as van der Waals forces crucial for molecular crystal stability, requires specialized dispersion corrections [9].

The computational cost of DFT, while far lower than that of higher-level quantum chemistry methods, remains a bottleneck for large systems and high-throughput screening. Dispersion-inclusive DFT is affordable for a handful of molecular crystal structures, but relaxing and ranking the thousands of candidates generated in a typical crystal structure prediction (CSP) search quickly becomes prohibitively expensive [9]. These limitations have driven the development of more advanced functionals and hybrid methodologies that combine DFT with other computational approaches.

Applications in Crystal Structure Prediction

DFT is indispensable for Crystal Structure Prediction (CSP), which aims to determine the most stable three-dimensional packing arrangements of molecules in a crystal lattice. The profound industrial significance of CSP stems from the phenomenon of polymorphism, where a single molecule can form multiple distinct crystal structures with vastly different physical properties—including solubility, bioavailability, stability, and optical characteristics. This is particularly critical in pharmaceutical development, where a drug's efficacy and safety profile can depend on its solid form [9].

The central challenge in CSP lies in the energy landscape: different polymorphs are often separated by only a few kJ/mol per molecule. Capturing these subtle energy differences requires exceptional accuracy from the computational method used for stability ranking [9]. Dispersion-inclusive DFT provides the necessary accuracy, but its direct application to relax and rank thousands of putative structures generated for a single compound is often computationally impractical [9].

This challenge has catalyzed the development of advanced workflows that leverage DFT data to train more efficient models. For instance, the FastCSP framework exemplifies a modern solution, using machine learning interatomic potentials (MLIPs) trained on extensive DFT datasets to perform geometry relaxation and stability ranking at a fraction of the computational cost while maintaining DFT-level accuracy [9]. Such approaches are transforming the feasibility of high-throughput CSP for industrial applications.

Table 1: Key Properties Predictable via DFT in CSP Context

| Property | Significance in CSP | Common DFT Approach |
|---|---|---|
| Lattice Energy | Primary metric for ranking polymorph stability at 0 K | Dispersion-inclusive functionals (e.g., PBE-D3) |
| Forces and Stresses | Essential for geometry optimization of crystal structures | Calculation of Hellmann-Feynman forces and stress tensors |
| Electronic Band Gap | Influences electronic properties for organic electronics | Hybrid functionals (e.g., HSE06) or DFT+U for correlated systems |
| Vibrational Frequencies | Enables calculation of finite-temperature free energy contributions | Density functional perturbation theory |

Integrated DFT and Machine Learning Protocols

The integration of DFT with machine learning (ML) represents a paradigm shift, creating powerful hybrid models that retain quantum mechanical accuracy while achieving dramatic computational speed-ups. Two primary methodologies have emerged: using DFT data to train ML models, and enhancing DFT calculations with ML-derived components.

Protocol: Developing a Machine Learning Interatomic Potential (MLIP)

This protocol outlines the steps for creating an MLIP to replace expensive DFT calculations in large-scale atomistic simulations [7] [10] [9].

Dataset Generation via DFT

- Objective: Create a diverse and representative set of atomic configurations and their corresponding DFT-calculated properties.

- Procedure:

  - Sample configurations from the chemical space of interest (e.g., molecular dynamics trajectories, different polymorphs).

  - Perform high-quality DFT calculations, with a dispersion treatment wherever weak interactions matter (e.g., using ωB97M-V, SCAN, or PBE-D3 functionals), to compute energies, forces, and stress tensors for each configuration [11] [10].

  - Implement rigorous quality control: discard structures with excessively high forces, monitor for spin contamination in open-shell systems, and ensure numerical precision in calculations [11].

Model Selection and Training

- Model Choice: Select a suitable MLIP architecture, such as a graph neural network that naturally handles atomic systems (e.g., the equivariant eSEN or the invariant, three-body M3GNet) [11] [10].

- Training: Train the model on the generated DFT dataset. The model learns to map atomic configurations (represented as graphs) directly to the DFT-calculated energies and forces.

- Validation: Assess model performance on a held-out test set of structures not seen during training. Key metrics include Mean Absolute Error (MAE) in energy (e.g., meV/atom) and forces (e.g., meV/Å) [11].
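The validation metrics named above can be computed as follows. This is a minimal sketch assuming energies in eV per structure and forces in eV/Å; it reports energy MAE normalized per atom and force MAE over all Cartesian components. Some studies instead average per-atom force-vector errors, so it is worth checking which convention a benchmark uses. The test-set numbers are hypothetical.

```python
import numpy as np


def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """MAE of per-atom energies in meV/atom; energies given in eV per structure."""
    e_pred, e_ref, n_atoms = (np.asarray(v, dtype=float) for v in (e_pred, e_ref, n_atoms))
    return 1000.0 * float(np.mean(np.abs(e_pred - e_ref) / n_atoms))


def force_mae(f_pred, f_ref):
    """MAE over all Cartesian force components in meV/Å; forces given in eV/Å.

    f_pred and f_ref are lists holding one (n_atoms, 3) array per structure.
    """
    diffs = [np.abs(np.asarray(p, float) - np.asarray(q, float)).ravel()
             for p, q in zip(f_pred, f_ref)]
    return 1000.0 * float(np.mean(np.concatenate(diffs)))


# Hypothetical held-out test set: two structures with 2 and 3 atoms
e_mae = energy_mae_per_atom([-10.02, -15.00], [-10.00, -15.03], [2, 3])
f_mae = force_mae(
    [np.array([[0.10, 0.0, 0.0], [-0.10, 0.0, 0.0]])],
    [np.array([[0.13, 0.0, 0.0], [-0.13, 0.0, 0.0]])],
)
print(f"energy MAE = {e_mae:.1f} meV/atom, force MAE = {f_mae:.1f} meV/Å")
```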

Deployment and Inference

- Use Case: Deploy the trained MLIP for tasks like molecular dynamics simulations or high-throughput CSP. The MLIP can predict energies and forces for new configurations several orders of magnitude faster than the original DFT calculations [9].

Protocol: Multi-Fidelity Machine Learning for Cost-Effective High-Fidelity Predictions

This protocol uses a multi-fidelity learning approach to reduce the need for large, expensive high-fidelity (e.g., SCAN functional) DFT datasets [10].

Data Collection and Fidelity Embedding

- Gather a large dataset of atomic configurations with properties calculated at a low-fidelity (lofi) level (e.g., using the PBE functional).

- For a subset of these configurations, obtain high-fidelity (hifi) data (e.g., using the more accurate but costly SCAN functional).

- Encode the fidelity information (e.g., lofi: 0, hifi: 1) as an integer input to the machine learning model [10].

Model Architecture and Training

- Use a graph-based model architecture, such as M3GNet, that incorporates a global state feature. This feature is used to embed the fidelity information as a vector [10].

- Train the model on the combined lofi and hifi dataset. The model learns the complex relationship between the different levels of theory and their associated potential energy surfaces [10].
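A linear analogue makes the fidelity-encoding idea concrete. In this sketch, which assumes a one-dimensional descriptor x and synthetic "PBE-like" and "SCAN-like" energy curves rather than real data, the integer fidelity label (lofi: 0, hifi: 1) becomes a one-hot switch for correction terms, while the shared terms are learned from all 220 labels (20 hifi labels, i.e. 10% coverage). M3GNet instead learns a dense fidelity embedding inside a graph network; the one-hot form here is roughly the linear-model counterpart of a learned embedding table.

```python
import numpy as np

rng = np.random.default_rng(42)


def lofi_energy(x):
    """Hypothetical 'PBE-like' energy curve."""
    return 0.5 * x**2 - 2.0 * x + 1.0


def hifi_energy(x):
    """Hypothetical 'SCAN-like' curve: lofi plus a smooth systematic correction."""
    return lofi_energy(x) + 0.3 + 0.1 * x


# 200 low-fidelity labels; 20 of those configurations also get high-fidelity labels
x_lo = rng.uniform(0.0, 4.0, 200)
x_hi = rng.choice(x_lo, 20, replace=False)
x = np.concatenate([x_lo, x_hi])
fidelity = np.concatenate([np.zeros(200, int), np.ones(20, int)])  # lofi: 0, hifi: 1
y = np.concatenate([lofi_energy(x_lo), hifi_energy(x_hi)]) + rng.normal(0.0, 0.01, 220)


def features(x, fidelity):
    """Shared descriptor features plus fidelity-switched correction features."""
    x = np.asarray(x, float)
    one_hot_hifi = (np.asarray(fidelity) == 1).astype(float)
    shared = np.stack([np.ones_like(x), x, x**2], axis=1)
    correction = one_hot_hifi[:, None] * np.stack([np.ones_like(x), x], axis=1)
    return np.hstack([shared, correction])


# Train on the combined lofi + hifi dataset in a single fit
coef, *_ = np.linalg.lstsq(features(x, fidelity), y, rcond=None)

# Query the model at the high-fidelity level on unseen configurations
x_test = np.linspace(0.0, 4.0, 50)
pred_hifi = features(x_test, np.ones_like(x_test, dtype=int)) @ coef
max_err = float(np.max(np.abs(pred_hifi - hifi_energy(x_test))))
print(f"max |error| of hifi predictions: {max_err:.3f}")
```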

Performance and Outcome

- The resulting multi-fidelity model can achieve accuracy comparable to a model trained exclusively on a much larger hifi dataset. Research shows that with only 10% coverage of hifi (SCAN) data, combined with lofi data, the model can match the accuracy of a model trained on a dataset with 8 times the amount of SCAN data [10].

Key Datasets and Functional Developments

The accuracy and scope of DFT and ML-DFT hybrid models are critically dependent on the quality and diversity of the underlying data. Recent years have seen the creation of massive, high-quality DFT datasets that serve as benchmarks and training resources.

Table 2: High-Fidelity DFT Datasets for Materials and Molecules

| Dataset | Scale and Composition | DFT Methodology | Primary Application |
|---|---|---|---|
| OMol25 [11] | ~83 million molecular systems; up to 350 atoms; 83 elements (H-Bi). | ωB97M-V/def2-TZVPD | Training generalizable ML interatomic potentials for diverse molecular systems. |
| OMC25 [12] [9] | Over 27 million molecular crystal structures; 12 elements; up to 300 atoms/unit cell. | Dispersion-inclusive DFT (PBE-D3) | Developing ML potentials for molecular crystal structure prediction. |
| MSR-ACC/TAE25 [13] | 76,879 total atomization energies for small molecules. | CCSD(T)/CBS (Wavefunction-based) | Training and benchmarking high-accuracy functionals (e.g., Skala). |

Alongside datasets, the development of more accurate exchange-correlation (XC) functionals remains a core area of research. The Skala functional, a deep learning-based XC functional developed by Microsoft Research, exemplifies a modern data-driven approach. Unlike traditional functionals designed with hand-crafted features, Skala learns complex non-local representations from vast amounts of high-accuracy reference data. Its key advantage is breaking the traditional trade-off between accuracy and efficiency, achieving chemical accuracy (errors below 1 kcal/mol) for small molecules while retaining the computational cost of scalable semi-local DFT [13].

Research Reagent Solutions

Table 3: Essential Computational Tools for DFT-based Crystal Structure Prediction

| Research 'Reagent' | Function / Purpose | Examples / Notes |
|---|---|---|
| DFT Code | Software that performs the core electronic structure calculation. | VASP, Quantum ESPRESSO, ORCA, CASTEP |
| Exchange-Correlation Functional | Approximates the quantum mechanical exchange-correlation energy. | PBE (GGA), SCAN (meta-GGA), ωB97M-V (hybrid meta-GGA) [11] [10] |
| Machine Learning Potential | Fast, accurate surrogate model trained on DFT data. | M3GNet, CHGNet, UMA, MACE [10] [9] |
| CSP Workflow Tool | Automates structure generation, relaxation, and ranking. | FastCSP (combines Genarris 3.0 and UMA potential) [9] |
| High-Fidelity Dataset | Provides benchmark-quality data for training or validation. | OMol25, OMC25, MSR-ACC [12] [13] [11] |

Advanced Application: Band Gap Engineering in Metal Oxides

Accurately predicting the band gaps of strongly correlated materials like metal oxides is a notorious challenge for standard DFT. The DFT+U approach, which adds a Hubbard correction term to account for localized electrons, is a widely used solution. A key protocol involves:

- Systematic U Parameter Scanning: Perform DFT+U calculations over a grid of (Ud/f, Up) values, where Ud/f corrects the metal d/f-orbitals and Up corrects the oxygen p-orbitals [8].

- Benchmarking against Experiment: Calculate the band gap and lattice parameters for each (Ud/f, Up) pair. Identify the optimal pair that minimizes the deviation from experimental values [8].

- Machine Learning Integration: Train supervised ML models (e.g., regression algorithms) on the results of the DFT+U calculations. The model learns to predict the band gap for any given (Ud/f, Up) pair, creating a fast surrogate model that generalizes well to related polymorphs at a fraction of the computational cost of a full DFT+U calculation [8].

This DFT+U+ML workflow provides a robust framework for the high-throughput screening and design of metal oxides with tailored electronic properties for applications in catalysis, electronics, and energy storage.
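The scan, selection, and surrogate steps can be sketched in Python. Everything physical here is a placeholder: mock_dft_u is a hypothetical analytic stand-in for a DFT+U run (a real workflow would call VASP or another code at each grid point), the experimental targets are invented, and the selection criterion (sum of relative gap and lattice-parameter errors) is one reasonable choice, not necessarily the one used in [8]. The surrogate is a quadratic polynomial regression; the regressor used in the cited work may differ.

```python
import itertools

import numpy as np


def mock_dft_u(u_d, u_p):
    """Hypothetical stand-in for a DFT+U calculation: returns (gap in eV, lattice a in Å)."""
    gap = 1.8 + 0.12 * u_d + 0.05 * u_p - 0.004 * u_d * u_p
    a = 4.59 + 0.004 * u_d - 0.002 * u_p
    return gap, a


EXP_GAP, EXP_A = 3.0, 4.59  # hypothetical experimental targets

# 1) Systematic scan over a (Ud/f, Up) grid, 0 to 12 eV in 1 eV steps
grid = list(itertools.product(range(0, 13), range(0, 13)))
results = {pair: mock_dft_u(*pair) for pair in grid}


# 2) Benchmark against experiment: pick the pair with the smallest combined deviation
def deviation(pair):
    gap, a = results[pair]
    return abs(gap - EXP_GAP) / EXP_GAP + abs(a - EXP_A) / EXP_A


best = min(grid, key=deviation)


# 3) ML surrogate: quadratic polynomial regression of the gap over the scanned grid
def design(u):
    u = np.atleast_2d(np.asarray(u, dtype=float))
    ud, up = u[:, 0], u[:, 1]
    return np.stack([np.ones_like(ud), ud, up, ud * up, ud**2, up**2], axis=1)


U = np.array(grid, dtype=float)
gaps = np.array([results[p][0] for p in grid])
coef, *_ = np.linalg.lstsq(design(U), gaps, rcond=None)


def predict_gap(u_d, u_p):
    """Cheap surrogate prediction for an arbitrary (Ud/f, Up) pair."""
    return float((design([u_d, u_p]) @ coef)[0])


print("best (Ud/f, Up):", best, "-> gap, a =", results[best])
print("surrogate gap at (5.5, 3.5):", round(predict_gap(5.5, 3.5), 3))
```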

The accurate prediction of crystal structures and their resultant properties is a cornerstone of modern materials science and drug development. This process relies heavily on a set of key computational properties that bridge the gap between atomic arrangement and macroscopic material behavior. For researchers using density functional theory (DFT) and related methods, three properties are particularly pivotal: the band gap, which governs electronic and optical characteristics; the density of states (DOS), which provides a detailed energy-level spectrum; and partial atomic charges, which quantify charge transfer and influence intermolecular interactions. This application note details modern protocols for the precise calculation of these properties, contextualized within the framework of DFT-based crystal structure prediction research. We synthesize recent methodological advances, benchmark performance across computational approaches, and provide structured workflows to enhance the reliability and reproducibility of predictions for scientific and industrial applications.

Quantitative Benchmarking of Key Properties

Performance of Electronic Structure Methods for Band Gap Prediction

Table 1: Benchmark accuracy of DFT and many-body perturbation theory (GW) for band gap prediction on a dataset of 472 non-magnetic solids [14].

| Method | Description | Mean Absolute Error (eV) | Systematic Bias | Computational Cost |
|---|---|---|---|---|
| mBJ (meta-GGA) | Best-performing meta-GGA functional [14] | ~0.3-0.4 (est.) | Moderate underestimation | Low |
| HSE06 (Hybrid) | Best-performing hybrid functional [14] | ~0.3-0.4 (est.) | Moderate underestimation | High |
| G0W0-PPA | One-shot GW with plasmon-pole approximation [14] | Marginal gain over mBJ/HSE06 | Starting-point dependent | Very High |
| QP G0W0 | Full-frequency quasiparticle G0W0 [14] | Significant improvement over G0W0-PPA | Reduced starting-point dependence | Very High |
| QSGW | Quasiparticle self-consistent GW [14] | Low | Systematic overestimation (~15%) | Extremely High |
| QSGŴ | QSGW with vertex corrections [14] | Most Accurate | Eliminates overestimation | Highest |

DFT+U Parameters for Accurate Metal Oxide Band Gaps

Table 2: Experimentally benchmarked Hubbard U parameters (eV) for DFT+U calculations on metal oxides [8]. Optimal pairs of Ud/f (for metal d/f orbitals) and Up (for oxygen 2p orbitals) are critical for accuracy.

| Material | Materials Project ID | Optimal Ud/f (eV) | Optimal Up (eV) |
|---|---|---|---|
| Rutile TiO2 | mp-2657 | 8 | 8 |
| Anatase TiO2 | mp-390 | 6 | 3 |
| c-ZnO | mp-1986 | 12 | 6 |
| c-ZnO2 | mp-8484 | 10 | 10 |
| c-ZrO2 | mp-1565 | 5 | 9 |
| c-CeO2 | mp-20194 | 12 | 7 |

Experimental Protocols

Protocol 1: Band Gap Calculation via DFT and Beyond

Principle: The band gap is a critical property for semiconductors and insulators. Standard DFT with local (LDA) or semi-local (GGA) functionals systematically underestimates band gaps, necessitating advanced functionals or many-body methods for quantitative accuracy [14] [8].

Methodology:

Initial Structure Optimization:

- Acquire the initial crystal structure from a reliable database (e.g., ICSD, Materials Project).

- Perform a full geometry relaxation using a GGA functional (e.g., PBE) to minimize forces on atoms and stresses on the unit cell.

Self-Consistent Field (SCF) Calculation:

- Using the optimized structure, perform a single-point SCF calculation with a high plane-wave cutoff energy and a dense k-point grid to obtain a converged charge density. Adhere to reproducible computational protocols to avoid the ~20% failure rate noted in bandgap calculations [15].
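One common way to set a "dense" k-point grid reproducibly is to fix a target spacing in reciprocal space rather than hand-picking subdivisions. The helper below is a sketch assuming lattice vectors in Å given as the rows of a 3×3 array; it mirrors the formula documented for VASP's KSPACING tag, N_i = max(1, ceil(|b_i| / kspacing)) with the 2π factor included in b_i. Convergence should still be checked by tightening the spacing (and raising the cutoff) until the total energy changes by less than a chosen tolerance.

```python
import math

import numpy as np


def kpoint_grid(lattice, kspacing=0.25):
    """k-point subdivisions (N1, N2, N3) from a target spacing in 1/Å.

    lattice: 3x3 array whose rows are the real-space lattice vectors in Å.
    The reciprocal vectors b_i include 2*pi, so a_i . b_j = 2*pi * delta_ij.
    """
    a = np.asarray(lattice, dtype=float)
    b = 2.0 * np.pi * np.linalg.inv(a).T  # rows are the reciprocal vectors b_i
    return tuple(max(1, math.ceil(np.linalg.norm(bi) / kspacing)) for bi in b)


# Example: a hypothetical 4 x 4 x 8 Å tetragonal cell
print(kpoint_grid(np.diag([4.0, 4.0, 8.0])))
```

Because the grid is derived from the cell, the same spacing gives a consistent sampling density across different polymorphs and supercells.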

Band Structure & Band Gap Calculation:

- Extract the band structure and the fundamental band gap from the Kohn-Sham eigenvalues.

- For higher accuracy, choose one of the following paths:

  - Path A: Hybrid Functional (HSE06). Repeat the SCF calculation with the HSE06 hybrid functional, which mixes a fraction of exact (Hartree-Fock) exchange into the short-range part of the exchange interaction, to obtain a more accurate gap [14].

  - Path B: Meta-GGA Potential (mBJ). Use the Tran-Blaha modified Becke-Johnson (mBJ) potential, a semi-local, semi-empirical exchange potential that improves gap prediction without the high cost of hybrids [14].

  - Path C: DFT+U for Correlated Systems. For transition metal oxides and other strongly correlated materials, apply the DFT+U method. Use a linear response calculation or consult literature benchmarks (see Table 2) to determine appropriate Ud and Up values for metal and oxygen orbitals, respectively [8].

  - Path D: GW Approximation. For the highest accuracy, perform a GW calculation on top of the DFT wavefunctions. Start with a one-shot G0W0 calculation, but note that full-frequency integration (QP G0W0) or self-consistent schemes (QSGW, QSGŴ) offer substantial improvements in accuracy, albeit at very high computational cost [14].

Data Analysis: The fundamental band gap is the energy difference between the conduction band minimum (CBM) and the valence band maximum (VBM), E_g = E_CBM - E_VBM. For G0W0, each quasiparticle energy is obtained from the linearized quasiparticle equation: E^QP = E^KS + Z * <φ|Σ(E^KS) - V_XC|φ>, where φ is the Kohn-Sham orbital, Σ is the self-energy, V_XC is the DFT exchange-correlation potential, and Z = [1 - ∂Σ/∂E]^(-1), evaluated at E^KS, is the renormalization factor [14].
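Both data-analysis steps reduce to a few lines of arithmetic. This sketch assumes eigenvalues in eV arranged as (k-points × bands), an insulator whose lowest n_occupied bands are filled at every k-point, and precomputed diagonal matrix elements of Σ, V_XC, and ∂Σ/∂E; the numbers in the example are hypothetical. In a real G0W0 run these quantities come from the GW code.

```python
import numpy as np


def band_gap(eigenvalues, n_occupied):
    """Return (E_CBM - E_VBM, VBM, CBM) from eigenvalues of shape (n_kpoints, n_bands)."""
    e = np.sort(np.asarray(eigenvalues, dtype=float), axis=1)
    vbm = e[:, n_occupied - 1].max()  # highest occupied level over all k-points
    cbm = e[:, n_occupied].min()      # lowest unoccupied level over all k-points
    return cbm - vbm, vbm, cbm


def qp_energy(e_ks, sigma_at_eks, vxc, dsigma_de):
    """Linearized quasiparticle energy E_QP = E_KS + Z * (Sigma(E_KS) - V_XC),
    with renormalization factor Z = 1 / (1 - dSigma/dE) evaluated at E_KS."""
    z = 1.0 / (1.0 - dsigma_de)
    return e_ks + z * (sigma_at_eks - vxc)


# Hypothetical data: 2 k-points, 3 bands, 2 occupied bands (indirect gap)
gap, vbm, cbm = band_gap([[-1.0, 0.0, 1.2], [-0.8, 0.2, 1.0]], n_occupied=2)
e_qp = qp_energy(e_ks=1.0, sigma_at_eks=-0.5, vxc=-1.0, dsigma_de=-0.25)
print(f"KS gap = {gap:.2f} eV (VBM {vbm:.2f}, CBM {cbm:.2f}); QP level = {e_qp:.2f} eV")
```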

Protocol 2: Machine Learning-Augmented Crystal Structure Prediction (CSP)

Principle: Predicting the stable crystal structure of an organic molecule from its chemical formula alone is a complex global optimization problem. Machine learning (ML) can drastically improve the efficiency of CSP by intelligently pruning the search space [1] [16] [17].

Methodology:

Machine Learning-Based Lattice Sampling (SPaDe):

- Input: Provide a SMILES string or other molecular representation of the organic molecule.

- Space Group Prediction: Input the molecular fingerprint (e.g., MACCSKeys) into a pre-trained ML classifier (e.g., LightGBM) to predict probable space group candidates. Filter candidates based on a probability threshold [1] [17].

- Packing Density Prediction: Use a separate pre-trained ML regression model on the same fingerprint to predict the target crystal density [1] [17].

- Structure Generation: Use the predicted space groups and density (within a tolerance window) to generate initial crystal structures, rejecting low-density, unstable candidates early.
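The density filter at the heart of the lattice-sampling step can be sketched with the relation ρ = Z·M / (N_A·V). The sketch assumes a monoclinic cell, uniform sampling ranges chosen purely for illustration, and a predicted density supplied by some upstream model; it only addresses lattice parameters, whereas a full workflow also places and orients the molecules in the cell. Z = 4 corresponds to P2₁/c with one molecule in the asymmetric unit.

```python
import numpy as np

G_PER_CM3 = 1e24 / 6.02214076e23  # converts (g/mol)/Å^3 to g/cm^3 (= 1e24 / N_A)


def sample_then_filter(molar_mass, z, target_density, tol=0.1, n_trials=20000, seed=0):
    """Randomly sample monoclinic cells and keep those near the predicted density.

    Assumptions (illustrative only): alpha = gamma = 90 degrees, a, b, c drawn
    uniformly from [3, 30] Å and beta from [60, 120] degrees.
    Returns an array of rows (a, b, c, beta, density) for accepted cells.
    """
    rng = np.random.default_rng(seed)
    a, b, c = rng.uniform(3.0, 30.0, size=(3, n_trials))
    beta = rng.uniform(60.0, 120.0, size=n_trials)
    volume = a * b * c * np.sin(np.radians(beta))   # monoclinic cell volume, Å^3
    density = z * molar_mass * G_PER_CM3 / volume   # crystal density, g/cm^3
    keep = np.abs(density - target_density) <= tol  # reject low/high-density cells early
    return np.column_stack([a, b, c, beta, density])[keep]


# Hypothetical molecule (M = 180.16 g/mol) in P2_1/c (Z = 4), predicted density 1.40 g/cm^3
cells = sample_then_filter(180.16, 4, 1.40)
print(f"accepted {len(cells)} of 20000 sampled cells")
```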

Structure Relaxation with Neural Network Potential (NNP):

- Relax the generated crystal structures using a neural network potential (e.g., PFP) pre-trained on DFT data. This achieves near-DFT accuracy at a fraction of the computational cost of full DFT relaxation [1].

- The relaxation is typically performed using the L-BFGS algorithm with a force threshold (e.g., 0.05 eV/Å) [1].
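The relaxation loop can be sketched with SciPy's L-BFGS-B optimizer. Because no NNP such as PFP is assumed to be available here, a four-atom Lennard-Jones cluster (parameters set to 1 and treated as eV and Å) stands in for the potential; only the optimizer logic and the 0.05 eV/Å force criterion carry over.

```python
import numpy as np
from scipy.optimize import minimize

EPS, SIGMA = 1.0, 1.0  # hypothetical Lennard-Jones parameters (treated as eV and Å)


def energy_and_forces(flat_positions):
    """Lennard-Jones stand-in for an NNP: total energy and per-atom forces."""
    pos = flat_positions.reshape(-1, 3)
    energy, forces = 0.0, np.zeros_like(pos)
    for i in range(len(pos)):
        for j in range(i + 1, len(pos)):
            rij = pos[i] - pos[j]
            r2 = float(rij @ rij)
            sr6 = (SIGMA**2 / r2) ** 3
            energy += 4.0 * EPS * (sr6**2 - sr6)
            fij = 24.0 * EPS * (2.0 * sr6**2 - sr6) / r2 * rij  # force on i due to j
            forces[i] += fij
            forces[j] -= fij
    return energy, forces


def relax(positions, fmax=0.05):
    """L-BFGS relaxation aiming for every per-atom force norm below fmax (eV/Å)."""
    def objective(x):
        e, f = energy_and_forces(x)
        return e, -f.ravel()  # gradient = -forces

    # SciPy's gtol bounds the largest gradient component; dividing by sqrt(3)
    # means that, when gtol stops the run, each per-atom force norm is <= fmax.
    result = minimize(objective, positions.ravel(), jac=True, method="L-BFGS-B",
                      options={"gtol": fmax / np.sqrt(3.0), "ftol": 1e-15, "maxiter": 1000})
    e, f = energy_and_forces(result.x)
    return result.x.reshape(-1, 3), e, float(np.linalg.norm(f, axis=1).max())


start = np.array([[0.0, 0.0, 0.0], [1.2, 0.0, 0.0], [0.6, 1.0, 0.0], [0.6, 0.35, 0.95]])
relaxed, energy, max_force = relax(start)
print(f"E = {energy:.4f} eV, max |F| = {max_force:.4f} eV/Å")
```

Swapping energy_and_forces for calls to an actual NNP would leave the optimizer logic unchanged.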

Landscape Analysis and Ranking:

- Plot the energy-density diagram of all relaxed structures.

- Identify the global minimum and low-energy polymorphs (typically within 7 kJ/mol). The structure corresponding to the global minimum is the predicted most stable crystal form [16].
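Filtering the relaxed landscape to a low-energy window is straightforward. The sketch assumes lattice energies already expressed in kJ/mol per molecule and uses hypothetical structure labels; in practice, deciding whether the experimental structure is among the survivors requires a structural comparison (for example, an RMSD over a molecular cluster), which is not shown.

```python
def low_energy_set(structures, window=7.0):
    """Return (label, relative energy, density) within `window` kJ/mol of the global minimum.

    structures: iterable of (label, lattice energy in kJ/mol per molecule, density in g/cm^3).
    The first entry of the returned list is the predicted most stable form.
    """
    ranked = sorted(structures, key=lambda s: s[1])
    e_min = ranked[0][1]
    return [(label, e - e_min, rho) for label, e, rho in ranked if e - e_min <= window]


# Hypothetical relaxed structures from a CSP run
landscape = [("A", -120.0, 1.35), ("B", -118.5, 1.38), ("C", -110.0, 1.30), ("D", -114.0, 1.32)]
for label, rel_e, rho in low_energy_set(landscape):
    print(f"{label}: +{rel_e:.1f} kJ/mol, {rho:.2f} g/cm^3")
```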

Data Analysis: The success of CSP is determined by whether the experimentally observed structure is found among the low-energy predicted structures. This ML-guided workflow (SPaDe-CSP) has been shown to double the success rate of CSP for organic molecules compared to random sampling [1] [17].

Protocol 3: Experimental Determination of Partial Atomic Charges via Electron Diffraction

Principle: Partial atomic charges are fundamental for understanding chemical bonding, reactivity, and intermolecular interactions. Unlike X-rays, electrons interact strongly with the electrostatic potential of a crystal, making electron diffraction intrinsically sensitive to charge distribution [5]. The iSFAC (ionic Scattering Factors) modeling method leverages this to assign absolute partial charges to every atom in a crystalline compound.

Methodology:

Data Collection:

- Grow a high-quality single crystal of the target compound (e.g., an antibiotic like ciprofloxacin or an amino acid like histidine).

- Collect a high-resolution 3D electron diffraction (ED) data set using a standard electron diffractometer.

Conventional Structure Refinement:

- Solve and refine the crystal structure using the ED data. This initial model includes atomic coordinates (x, y, z) and atomic displacement parameters (ADPs) for each atom, using theoretical scattering factors for neutral atoms.

iSFAC Modeling and Charge Refinement:

- Introduce one additional refinable parameter per atom: its partial charge.

- The scattering factor for each atom is modeled as a linear combination of the theoretical scattering factors of its neutral and ionic forms:

f_iSFAC = (1 - q) * f_neutral + q * f_ionic, where q is the refined partial charge [5].

- Refine all parameters (coordinates, ADPs, and charges) simultaneously against the observed ED reflection intensities.
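Because the iSFAC scattering factor is linear in q, the charge-fitting step can be illustrated in isolation. This is a simplified sketch: the curves f_neutral and f_ionic are hypothetical smooth functions rather than tabulated electron scattering factors, and q is fitted directly to synthetic "observed" scattering factors instead of being refined against reflection intensities together with coordinates and ADPs, as the real method does.

```python
import numpy as np

s = np.linspace(0.0, 1.0, 50)  # sin(theta)/lambda grid in 1/Å (hypothetical)

# Hypothetical smooth scattering-factor curves (real work uses tabulated values)
f_neutral = 2.0 * np.exp(-10.0 * s**2) + 1.0 * np.exp(-1.0 * s**2)
f_ionic = 2.6 * np.exp(-14.0 * s**2) + 1.0 * np.exp(-1.0 * s**2)


def isfac_f(q):
    """iSFAC model: f = (1 - q) * f_neutral + q * f_ionic."""
    return (1.0 - q) * f_neutral + q * f_ionic


def fit_charge(f_obs):
    """Least-squares q, using f_obs - f_neutral = q * (f_ionic - f_neutral)."""
    d = f_ionic - f_neutral
    return float(np.dot(d, f_obs - f_neutral) / np.dot(d, d))


rng = np.random.default_rng(1)
f_obs = isfac_f(-0.19) + rng.normal(0.0, 0.002, s.size)  # synthetic "observations"
q_fit = fit_charge(f_obs)
print(f"refined q = {q_fit:.3f}")
```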

Validation:

Data Analysis: The refined q parameter for each atom represents its experimental partial charge on an absolute scale. For example, in a carboxylate group (–COO⁻), the carbon atom may carry a negative charge (e.g., -0.19e in tyrosine) due to electron delocalization, a result that aligns with quantum chemistry but runs counter to classical chemical intuition [5].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational tools and datasets for property prediction and crystal structure research.

| Tool / Resource | Type | Primary Function | Application in Research |

|---|---|---|---|

| VASP [8] | Software Package | Ab-initio DFT/DFT+U calculations | Calculating electronic structure, band gaps, and DOS for periodic systems. |

| Quantum ESPRESSO [14] | Software Package | Plane-wave DFT and GW calculations | Performing initial DFT calculations and subsequent GW corrections for accurate band gaps. |

| Cambridge Structural Database (CSD) [1] | Data Repository | Curated repository of experimental organic and metal-organic crystal structures | Source of training data for machine learning models and experimental benchmarks for CSP. |

| Materials Project [8] | Data Repository | Database of computed properties for inorganic materials | Source of crystal structures and computed properties for benchmarking, e.g., providing IDs for specific polymorphs. |

| Neural Network Potentials (NNPs) [1] [18] | Computational Method | Machine-learned interatomic potentials | Accelerating structure relaxation in CSP with near-DFT accuracy and low cost (e.g., PFP model). |

| LightGBM [1] [17] | Software Library | Machine learning framework for classification and regression | Powering ML models for space group and crystal density prediction within CSP workflows. |

| iSFAC Modeling [5] | Experimental/Methodological Protocol | Refinement of partial charges from electron diffraction data | Experimentally determining absolute partial atomic charges for any crystalline compound. |

| OMC25 Dataset [18] | Dataset | >27 million DFT-relaxed molecular crystal structures | Training and benchmarking machine learning models for molecular crystal properties. |

The predictive computational determination of crystal structures, a discipline central to modern materials science and pharmaceutical development, hinges on the accurate quantification of intermolecular forces. Crystal Structure Prediction (CSP) aims to identify all possible crystalline forms (polymorphs) of a given molecule from first principles. The central challenge is that the stability of molecular crystals is governed by a delicate balance of weak intermolecular interactions, and the energy differences between competing polymorphs are frequently only 1–2 kJ mol⁻¹, less than the molar thermal energy at room temperature (RT ≈ 2.5 kJ mol⁻¹) [19]. This narrow energy window, combined with the complex energy landscape of molecular crystals, makes reliable polymorph prediction a formidable task for density functional theory (DFT) and other computational methods. This application note details the inherent challenges and provides structured protocols to improve the accuracy of CSP for drug development and materials design.
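A quick calculation puts the 1–2 kJ mol⁻¹ window in thermal units. The Boltzmann factor exp(−ΔE/RT) is used here only as a gauge of how small a gap is relative to thermal energy; it is not a prediction of which polymorph is observed. The function names are illustrative.

```python
import math

R_KJ = 8.314462618e-3  # molar gas constant, kJ mol^-1 K^-1

def thermal_energy(temperature_k: float) -> float:
    """Molar thermal energy RT in kJ/mol."""
    return R_KJ * temperature_k

def boltzmann_factor(delta_e_kj: float, temperature_k: float = 298.15) -> float:
    """exp(-dE/RT): how large an energy gap is compared with thermal energy."""
    return math.exp(-delta_e_kj / thermal_energy(temperature_k))

rt = thermal_energy(298.15)  # about 2.48 kJ/mol
for gap in (1.0, 2.0, 10.0):
    print(f"gap {gap:4.1f} kJ/mol: gap/RT = {gap / rt:.2f}, "
          f"exp(-gap/RT) = {boltzmann_factor(gap):.3f}")
```

Gaps of 1–2 kJ mol⁻¹ are below one RT, whereas a 10 kJ mol⁻¹ gap is roughly four RT.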

Fundamental Challenges in Quantifying Weak Interactions

The Physical Nature and Energy Scales of Weak Interactions

In structural chemistry, 'weak interactions' encompass all forces weaker than a covalent bond or a full ion-ion interaction in an ionic bond [19]. These forces are paramount for the stability of molecular crystals.

Table 1: Energy Scales of Intermolecular Interactions in Molecular Crystals, using Benzene as an Example [19]

| Energy Type | Description | Typical Magnitude (kJ mol⁻¹) |

|---|---|---|

| Total Molecular Energy | Total energy of an isolated molecule from quantum chemistry calculation | ~608,000 |

| Covalent Bond Energy | Sum total of all intramolecular covalent bond energies | ~5,463 |

| Sublimation Enthalpy | Total intermolecular interaction energy in the crystal | ~43 - 47 |

| Polymorph Energy Difference | Energy difference between different crystal forms of the same molecule | ~1 - 2 |

Physically, the cohesive energy in molecular crystals arises from a combination of:

- Coulombic forces between permanent charges and dipoles.

- Polarization forces from the mutual induction of dipoles.

- Dispersion (London) forces from correlated instantaneous dipoles.

- Pauli exclusion repulsion between closed electron shells [19].

Specific, chemically recognizable interactions are often singled out, though they represent particular combinations of the fundamental physical forces above:

- Hydrogen Bonds (D—H⋯A): Ranging from very weak (C—H⋯O, ~5 kJ mol⁻¹) to strong, charge-assisted bonds (~150 kJ mol⁻¹) [19].

- Halogen Bonds (Y—X⋯D): Involving a depletion of electron density (σ-hole) on halogens like Cl, Br, or I, with energies from 10 to 200 kJ mol⁻¹ [19].

- π-π Interactions: Governing the stacking of aromatic molecules, though their nature has been historically disputed [19].

A critical insight from such energy analyses is that the shortest, most conspicuous intermolecular contacts are often repulsive, representing a 'collateral damage' of the overall optimization of molecular packing, while a significant share of the cohesive energy is stored in structurally non-specific molecular contacts [19].

Computational Challenges for DFT

The accuracy required for reliable CSP pushes the limits of modern DFT. The grand challenge is the exchange-correlation (XC) functional, a universal term for which no exact expression is known [4]. Standard approximations typically have errors 3 to 30 times larger than the targeted chemical accuracy of about 1 kcal/mol (~4.2 kJ mol⁻¹) [4]. Even an error at the level of chemical accuracy exceeds the 1–2 kJ mol⁻¹ that typically separates polymorphs, which makes the correct ranking of predicted crystal structures exceptionally difficult. The problem is exacerbated for dispersion forces, which arise from long-range electron correlation that semilocal functionals do not capture; empirical dispersion corrections are therefore commonly added.
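The error scales quoted above can be converted and compared directly with a polymorph gap. This is an order-of-magnitude sketch: it compares absolute error figures, and errors on relative energies of similar structures can be smaller. Names are illustrative.

```python
KJ_PER_KCAL = 4.184  # thermochemical calorie

def error_scale_kj(multiple_of_chemical_accuracy: float) -> float:
    """Convert an error quoted as a multiple of 1 kcal/mol into kJ/mol."""
    return multiple_of_chemical_accuracy * 1.0 * KJ_PER_KCAL

polymorph_gap_kj = 2.0  # upper end of the typical 1-2 kJ/mol range

for label, multiple in [("chemical accuracy", 1),
                        ("standard XC, best case", 3),
                        ("standard XC, worst case", 30)]:
    err = error_scale_kj(multiple)
    print(f"{label:24s} {err:7.1f} kJ/mol = {err / polymorph_gap_kj:5.1f} x a 2 kJ/mol gap")
```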

Quantitative Data and Comparative Analysis

Table 2: Comparison of Computational Methods for CSP Energy Ranking

| Method | Key Principle | Typical Cost | Strengths | Weaknesses/Limitations |

|---|---|---|---|---|

| Classical Force Fields (FF) | Pre-defined analytical potentials (e.g., atom-atom) [19]. | Low | Fast; enables screening of vast configuration spaces. | Limited transferability; empirical parameterization; questionable physical meaning at microscopic level [19]. |

| Machine Learning Force Fields (MLFF) | Machine-learned potentials from high-accuracy quantum data [20]. | Medium | Good accuracy/cost balance; can capture complex interactions. | Dependent on quality and breadth of training data. |

| Density Functional Theory (DFT) | Solves for the electron density with an approximate XC functional [4]. | High | Generally good accuracy for diverse chemistry. | Accuracy limited by XC functional; poor treatment of dispersion without corrections; cost prohibitive for large systems. |

| Novel Deep-Learned DFT (e.g., Skala) | Deep learning of XC functional from vast, high-accuracy data [4]. | Medium to High | Reaches experimental accuracy for main group molecules; generalizes well. | Emerging technology; cost higher than meta-GGAs for small systems [4]. |

| PIXEL Method | Electron density partitioned into pixels; energy components calculated separately [19]. | Medium | Physically intuitive energy decomposition (Coulomb, polarization, dispersion). | Involves some empirical adjustments. |

Table 3: Key Metrics from a Large-Scale CSP Validation Study [20]

| Validation Metric | Result for 33 molecules with single known form (Z'=1) | Result after clustering similar structures |

|---|---|---|

| Success Rate (experimental structure found in top 10) | 33/33 (100%) | 33/33 (100%) |

| Success Rate (experimental structure ranked #1 or #2) | 26/33 (~79%) | Improved rankings for challenging cases (e.g., MK-8876, Target V) |

Experimental Protocols

Hierarchical Crystal Structure Prediction Protocol

This protocol describes a state-of-the-art CSP workflow, validated on a diverse set of 66 molecules, for robust polymorph prediction [20].

Workflow Diagram Title: CSP Hierarchical Prediction Workflow

Materials and Reagents:

- Software: CSP software implementing systematic search algorithms.

- Computational Resources: High-performance computing (HPC) cluster.

- Force Fields: Classical molecular mechanics force fields (for initial sampling).

- Machine Learning Force Field (MLFF): A pre-trained ML potential, such as one incorporating long-range electrostatics and dispersion [20].

- Quantum Chemistry Code: For periodic DFT calculations with dispersion-corrected functionals (e.g., r2SCAN-D3) [20].

Procedure:

Step 1. Systematic Crystal Packing Search:

- Input: A single, optimized molecular structure in a standard format.

- Method: Use a systematic search algorithm that employs a divide-and-conquer strategy, breaking down the crystallographic parameter space into subspaces based on space group symmetries (e.g., common Z'=1 space groups). Consecutively search each subspace to generate a vast set of initial crystal packing candidates [20].

Step 2. Initial Energy Ranking with Classical Force Field (FF):

- Method: Perform molecular dynamics (MD) simulations or geometry optimization on the candidate structures using a classical force field.

- Purpose: This step serves as a low-cost filter to identify a subset of low-energy, plausible crystal structures from the large pool generated in Step 1 [20].

Step 3. Re-ranking with Machine Learning Force Field (MLFF):

- Method: Take the shortlisted candidates from Step 2 and perform further structure optimization and energy evaluation using a machine learning force field.

- Purpose: MLFFs offer a better balance of accuracy and computational cost compared to classical FFs, providing a more reliable intermediate ranking before the final DFT step [20].

Step 4. Final Energy Ranking with Periodic Density Functional Theory (DFT):

- Method: Calculate the single-point energy or perform a limited optimization of the top-ranked candidates from Step 3 using periodic DFT with a robust functional and dispersion correction (e.g., r2SCAN-D3).

- Purpose: This is the highest level of theory in the protocol, providing the most accurate energy ranking for the final prediction of stable polymorphs [20].

Step 5. Clustering and Analysis:

- Method: Cluster the top-ranked DFT structures based on structural similarity (e.g., using RMSD₁₅ < 1.2 Å as a threshold) to remove trivial duplicates and identify genuinely unique polymorphs [20].

- Output: A final, curated list of predicted low-energy polymorphs, ranked by their DFT-calculated lattice energies.
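The control flow of this funnel (score, sort, truncate, repeat with a costlier model, then remove near-duplicates) can be sketched as below. Everything here is a hypothetical placeholder: the three energy functions are toy stand-ins for the FF, MLFF and DFT levels, and a Euclidean distance between made-up descriptors stands in for RMSD₁₅, which requires overlaying molecular clusters and is not implemented. Greedy deduplication is one simple choice, not necessarily the clustering used in [20].

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field
from typing import Callable, Sequence

@dataclass
class Candidate:
    """Hypothetical stand-in for one crystal-packing candidate."""
    name: str
    descriptor: tuple[float, ...]  # placeholder structural fingerprint
    energies: dict[str, float] = field(default_factory=dict)

def hierarchical_rank(candidates: Sequence[Candidate],
                      stages: Sequence[tuple[str, Callable[[Candidate], float], int]]) -> list[Candidate]:
    """Re-rank with progressively costlier energy models, keeping a shrinking shortlist."""
    pool = list(candidates)
    for label, energy_fn, n_keep in stages:  # cheapest model first
        for c in pool:
            c.energies[label] = energy_fn(c)
        pool = sorted(pool, key=lambda c: c.energies[label])[:n_keep]
    return pool

def deduplicate(ranked: Sequence[Candidate],
                distance: Callable[[Candidate, Candidate], float],
                threshold: float) -> list[Candidate]:
    """Keep a structure only if it differs from every lower-energy structure already kept."""
    unique: list[Candidate] = []
    for c in ranked:
        if all(distance(c, u) >= threshold for u in unique):
            unique.append(c)
    return unique

cands = [Candidate(f"s{i}", (float(i % 4), float(i // 4))) for i in range(12)]
stages = [
    ("ff", lambda c: c.descriptor[0] + 0.5 * c.descriptor[1], 8),                # toy classical FF
    ("mlff", lambda c: c.descriptor[0] - 0.2 * c.descriptor[1], 5),              # toy MLFF
    ("dft", lambda c: (c.descriptor[0] - 1.0) ** 2 + 0.1 * c.descriptor[1], 3),  # toy DFT
]
ranked = hierarchical_rank(cands, stages)
final = deduplicate(ranked, lambda a, b: math.dist(a.descriptor, b.descriptor), threshold=1.2)
print([c.name for c in final])
```

Only the shortlist from each stage is passed to the next, which is what keeps the expensive final level affordable.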

Protocol for Advanced DFT with Deep-Learned Functionals

This protocol outlines the use of emerging, high-accuracy deep-learned density functionals to achieve experimental-grade accuracy in energy evaluations.

Workflow Diagram Title: Deep-Learned DFT Functional Workflow

Materials and Reagents:

- Reference Data: A large, diverse dataset of molecular structures with corresponding highly accurate energies (e.g., atomization energies from high-level wavefunction methods, of the kind compiled in the W4-17 benchmark) [4].

- Software: A scalable pipeline for generating molecular structures and computing their energies. Access to substantial cloud or HPC compute resources is critical [4].

- Deep-Learning Architecture: A dedicated neural network architecture designed to learn the exchange-correlation (XC) functional from electron density data [4].

Procedure:

- Data Generation: Create a vast and chemically diverse training set. This involves generating a wide array of molecular structures and computing benchmark-quality energy labels with high-accuracy, computationally expensive wavefunction methods (e.g., protocols from wavefunction experts such as Prof. Amir Karton) [4].

- Model Training: Train a deep-learning model (e.g., Skala) to learn the mapping between the electron density of a molecule and its exchange-correlation energy. This step moves beyond traditional, hand-designed functionals by allowing the model to learn relevant features directly from the data [4].

- Validation: Rigorously test the trained model on well-established, independent benchmark datasets that were not part of the training process. The goal is to achieve chemical accuracy (~1 kcal/mol) for target properties [4].

- Application: Integrate the validated, deep-learned functional into the energy-evaluation step of CSP workflows. This delivers significantly more accurate energies within a DFT calculation, enhancing the reliability of polymorph ranking [4].
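The validation step amounts to two checks: the benchmark must be disjoint from the training data, and the error on it must reach the target. A minimal sketch, assuming molecules are tracked by identifiers and that the mean absolute error (MAE) is the metric compared with 1 kcal/mol; the identifiers and energies are hypothetical.

```python
import numpy as np

CHEMICAL_ACCURACY_KCAL = 1.0  # kcal/mol

def assert_held_out(train_ids, test_ids):
    """Fail if any benchmark entry also appears in the training set."""
    overlap = set(train_ids) & set(test_ids)
    if overlap:
        raise ValueError(f"benchmark entries leaked into training: {sorted(overlap)}")

def benchmark_errors(predicted, reference):
    """Mean and maximum absolute error (same units as the inputs)."""
    err = np.abs(np.asarray(predicted, float) - np.asarray(reference, float))
    return float(err.mean()), float(err.max())

def meets_chemical_accuracy(predicted, reference, tol=CHEMICAL_ACCURACY_KCAL):
    """True if the MAE on the benchmark is within tol."""
    mae, _ = benchmark_errors(predicted, reference)
    return mae <= tol

# Hypothetical atomization energies (kcal/mol) for a three-molecule held-out set.
assert_held_out(train_ids=["mol_a", "mol_b"], test_ids=["mol_x", "mol_y", "mol_z"])
pred = [100.5, 200.0, 50.0]
ref = [100.0, 201.0, 50.5]
mae, max_err = benchmark_errors(pred, ref)
print(f"MAE = {mae:.2f} kcal/mol, max = {max_err:.2f} kcal/mol")
```

Reporting the maximum error alongside the MAE helps flag outliers that an average can hide.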

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Modern CSP

| Tool / Reagent | Type | Primary Function in CSP |

|---|---|---|

| CrystalExplorer17 | Software Package | Enables visualization and quantitative analysis of intermolecular interactions via Hirshfeld surfaces and energy frameworks [19]. |

| Machine Learning Force Fields (MLFFs) | Computational Method | Accelerates and improves the accuracy of intermediate energy ranking in hierarchical CSP protocols [20]. |

| Dispersion-Corrected DFT (e.g., r2SCAN-D3) | Quantum Chemical Method | Provides a more physically realistic treatment of dispersion forces in final energy evaluations of crystal structures [20]. |

| Deep-Learned XC Functionals (e.g., Skala) | AI-Enhanced Quantum Chemical Method | Reaches experimental accuracy for energy calculations, overcoming a fundamental limitation of traditional DFT in CSP [4]. |

| Systematic Packing Search Algorithms | Software Algorithm | Efficiently explores the vast crystallographic space to generate initial candidate structures for a given molecule [20]. |

Modern CSP Workflows: Integrating DFT, High-Throughput Screening, and Machine Learning

The accurate prediction of crystal structures starting from only a molecular diagram remains a significant challenge in computational materials science and pharmaceutical development. [21] This process, known as Crystal Structure Prediction (CSP), is crucial across multiple industries, including pharmaceuticals, agrochemicals, organic semiconductors, and high-energy materials. [21] Traditional approaches based solely on Density Functional Theory (DFT) face a fundamental trade-off between accuracy and computational efficiency, particularly when exploring complex chemical spaces in which the number of possible configurations grows exponentially. [22] [23] The energy differences between polymorphs can be remarkably small (often less than ~4 kJ/mol, and smaller than ~2 kJ/mol in more than half of cases), which renders universal force fields inadequate for reliable CSP ranking. [21] This application note examines current methodologies that integrate traditional DFT workflows with emerging machine learning approaches to overcome these limitations.

Current Methodologies in Structure Exploration

Mathematical and Topological Approaches

Recent advances have demonstrated that mathematical principles can supplement or partially bypass traditional force field calculations for initial structure generation. The CrystalMath approach derives governing principles from analyzing geometric and physical descriptors in the Cambridge Structural Database (CSD), positing that in stable structures, molecules orient such that principal axes and normal ring plane vectors align with specific crystallographic directions. [21] This method enables prediction of stable structures and polymorphs without initial reliance on interatomic interaction models, significantly accelerating the preliminary exploration phase. [21]
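The descriptors named above (principal axes, ring-plane normals, and their angles to crystallographic directions) are straightforward to compute. The sketch below assumes unit masses unless masses are supplied and a lattice matrix with a, b, c as rows; it computes the descriptors only and does not reproduce CrystalMath's alignment rules or structure generation. Function names are hypothetical.

```python
import numpy as np

def principal_axes(coords, masses=None):
    """Principal moments and axes of inertia (axes as columns, ascending moment)."""
    x = np.asarray(coords, float)
    m = np.ones(len(x)) if masses is None else np.asarray(masses, float)
    r = x - np.average(x, axis=0, weights=m)
    second_moment = np.einsum("i,ij,ik->jk", m, r, r)  # sum_i m_i r_i r_i^T
    inertia = np.trace(second_moment) * np.eye(3) - second_moment
    moments, axes = np.linalg.eigh(inertia)
    return moments, axes

def ring_normal(ring_coords):
    """Unit normal of the best-fit plane through ring atoms (smallest singular vector)."""
    r = np.asarray(ring_coords, float)
    r = r - r.mean(axis=0)
    _, _, vt = np.linalg.svd(r)
    return vt[-1]

def angle_to_direction(vector, uvw, lattice):
    """Angle in degrees (0-90) between a molecular vector and lattice direction [uvw]."""
    d = np.asarray(uvw, float) @ np.asarray(lattice, float)  # Cartesian direction
    v = np.asarray(vector, float)
    cos_angle = abs(v @ d) / (np.linalg.norm(v) * np.linalg.norm(d))  # sign of an axis is arbitrary
    return float(np.degrees(np.arccos(np.clip(cos_angle, 0.0, 1.0))))

# Planar hexagon (benzene-like carbon ring, 1.39 Angstrom radius) in the xy plane of a cubic cell.
theta = np.radians(np.arange(0, 360, 60))
ring = np.column_stack([1.39 * np.cos(theta), 1.39 * np.sin(theta), np.zeros(6)])
cell = 5.0 * np.eye(3)
n = ring_normal(ring)
moments, axes = principal_axes(ring)
print(angle_to_direction(n, [0, 0, 1], cell), angle_to_direction(n, [1, 0, 0], cell))
```

For a planar ring, the axis with the largest moment of inertia coincides with the ring normal.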

Machine Learning Potentials for Enhanced Sampling

Machine learning interatomic potentials (MLIPs) have emerged as transformative tools for bridging the accuracy-efficiency gap in structure exploration. Neural network potentials (NNPs) like EMFF-2025 achieve DFT-level accuracy for predicting structures, mechanical properties, and decomposition characteristics of molecular crystals while dramatically reducing computational cost. [24] Universal MLIP architectures such as the Universal Model for Atoms (UMA), trained on extensive datasets like the Open Molecular Crystals 2025 (OMC25) dataset containing over 27 million molecular crystal structures, provide transferability across wide chemical spaces without system-specific retraining. [12] [25]

Table 1: Comparison of Structure Exploration Methods

| Method Type | Representative Approach | Key Features | Applications |

|---|---|---|---|

| Mathematical | CrystalMath [21] | Analyzes geometric descriptors from CSD; No interatomic potentials | Initial structure generation; Polymorph sampling |

| ML Potentials | EMFF-2025 [24] | Transfer learning with minimal DFT data; CHNO elements | High-energy materials; Decomposition studies |

| Universal MLIP | UMA [25] | Trained on OMC25 dataset; Equivariant graph neural network | High-throughput CSP for diverse organic molecules |

| Chemical-Space | Chemical-space completeness [22] | Generative models + MLFFs in closed-loop cycle | Targeted exploration of defined chemical systems |

High-Throughput Workflows

Integrated workflows like FastCSP combine advanced random structure generation with MLIP-based relaxation and ranking. [25] This open-source protocol uses Genarris 3.0 for candidate generation across multiple space groups and Z values, followed by UMA for systematic geometry relaxation and free energy evaluation. [25] The methodology achieves energy resolutions within 5 kJ/mol, over 94% recall of known polymorphs, and completes in hours what traditionally required days with DFT. [25]
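One way to read an "energy window plus recall" evaluation is sketched below: keep predicted structures within a window of the lowest energy, then count how many known polymorphs are reproduced among them. It assumes the structure matching (predicted to known form) has already been done by a separate comparison step; FastCSP's exact metric definitions may differ, and all identifiers and energies are hypothetical.

```python
def within_window(energies, window_kj=5.0):
    """IDs of predicted structures within window_kj of the lowest energy."""
    e_min = min(energies.values())
    return {sid for sid, e in energies.items() if e - e_min <= window_kj}

def polymorph_recall(energies, matches, known, window_kj=5.0):
    """Fraction of known polymorphs reproduced by a structure inside the energy window.

    energies : predicted structure id -> relative energy (kJ/mol)
    matches  : predicted structure id -> known polymorph it reproduces (precomputed)
    known    : set of experimentally known polymorph ids
    """
    kept = within_window(energies, window_kj)
    found = {matches[sid] for sid in kept if sid in matches}
    return len(found & set(known)) / len(known)

# Hypothetical ranking for one molecule with two known forms.
energies = {"p1": 0.0, "p2": 1.5, "p3": 4.9, "p4": 7.0}
matches = {"p2": "form I", "p4": "form II"}
known = {"form I", "form II"}
print(polymorph_recall(energies, matches, known))
```

Widening the window raises recall at the cost of more candidates to inspect.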

Energy Minimization Protocols and Convergence

Geometry Optimization Fundamentals

Geometry optimization is the process of changing a system's nuclear coordinates and potentially lattice vectors to minimize the total energy, typically converging to the nearest local minimum on the potential energy surface given the initial geometry. [26] This "downhill" movement on the PES requires careful monitoring of multiple convergence criteria to ensure physically meaningful results. [26]

Convergence Criteria and Optimization Parameters

Proper configuration of convergence parameters is essential for reliable geometry optimization. The AMS package implements comprehensive convergence monitoring for energy changes, Cartesian gradients, step sizes, and for lattice optimizations, stress energy per atom. [26] A geometry optimization is considered converged only when all the following criteria are met [26]:

- Energy change between steps < Convergence%Energy × number of atoms

- Maximum Cartesian nuclear gradient < Convergence%Gradient

- RMS of Cartesian nuclear gradients < 2/3 Convergence%Gradient

- Maximum Cartesian step < Convergence%Step

- RMS of Cartesian steps < 2/3 Convergence%Step
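The five criteria can be combined into a single check, as sketched below. This is a sketch that assumes gradients and steps are given as per-atom Cartesian arrays and that "maximum" means the largest absolute Cartesian component; the AMS implementation may measure these quantities differently, and the function name is hypothetical. The default tolerances follow the "Normal" row of Table 2.

```python
import numpy as np

def _rms(a):
    """Root mean square over all Cartesian components."""
    return float(np.sqrt(np.mean(np.square(a))))

def geometry_converged(delta_e, n_atoms, gradients, steps,
                       e_tol=1e-5, g_tol=1e-3, s_tol=0.01):
    """Return (converged, per-criterion results).

    delta_e   : energy change between the last two steps (Ha)
    gradients : (n_atoms, 3) Cartesian nuclear gradients (Ha/Angstrom)
    steps     : (n_atoms, 3) Cartesian displacements of the last step (Angstrom)
    """
    g = np.asarray(gradients, float)
    s = np.asarray(steps, float)
    checks = {
        "energy": abs(delta_e) < e_tol * n_atoms,
        "max_gradient": float(np.max(np.abs(g))) < g_tol,
        "rms_gradient": _rms(g) < (2.0 / 3.0) * g_tol,
        "max_step": float(np.max(np.abs(s))) < s_tol,
        "rms_step": _rms(s) < (2.0 / 3.0) * s_tol,
    }
    return all(checks.values()), checks

n = 10
ok, _ = geometry_converged(1e-6, n, np.full((n, 3), 1e-4), np.full((n, 3), 1e-3))
grad = np.full((n, 3), 1e-4)
grad[0, 0] = 2e-3  # one large gradient component
bad, detail = geometry_converged(1e-6, n, grad, np.full((n, 3), 1e-3))
print(ok, bad, detail)
```

A single large gradient component fails the maximum criterion even when the RMS criterion still passes, which is why both are monitored.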

Table 2: Standard Geometry Optimization Convergence Criteria [26]

| Quality Setting | Energy (Ha/atom) | Gradients (Ha/Å) | Step (Å) | StressEnergyPerAtom (Ha) |

|---|---|---|---|---|

| VeryBasic | 10⁻³ | 10⁻¹ | 1 | 5×10⁻² |

| Basic | 10⁻⁴ | 10⁻² | 0.1 | 5×10⁻³ |

| Normal | 10⁻⁵ | 10⁻³ | 0.01 | 5×10⁻⁴ |

| Good | 10⁻⁶ | 10⁻⁴ | 0.001 | 5×10⁻⁵ |

| VeryGood | 10⁻⁷ | 10⁻⁵ | 0.0001 | 5×10⁻⁶ |

Lattice Optimization and Advanced Features

For periodic systems, lattice degrees of freedom can be optimized alongside nuclear positions using Quasi-Newton, FIRE, or L-BFGS optimizers. [26] Advanced features like automatic restarts enable the optimization to recover when saddle points are detected; when PES point characterization is enabled and symmetry is disabled, the geometry can be automatically distorted along the lowest frequency mode and re-optimized. [26]

Workflow Integration and Visualization

The integration of structure exploration and energy minimization follows a logical progression from initial sampling to final refinement, incorporating both traditional and machine-learning enhanced methods.

Crystal Structure Prediction and Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for DFT Structure Prediction

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| OMC25 Dataset [12] | Training Data | Over 27 million DFT-relaxed molecular crystal structures | Training MLIPs for organic crystals |

| EMFF-2025 [24] | Neural Network Potential | DFT-level accuracy for CHNO systems | High-energy materials design |

| UMA (Universal Model for Atoms) [25] | Machine Learning Interatomic Potential | Transferable potential for diverse organic molecules | High-throughput CSP workflows |

| CrystalMath [21] | Topological Algorithm | Mathematical structure generation without force fields | Initial polymorph sampling |

| FastCSP [25] | Workflow Protocol | Integrated structure generation and MLIP relaxation | End-to-end crystal structure prediction |

| DP-GEN [24] | Active Learning Framework | Automated training data generation for NNPs | Developing specialized ML potentials |

| CSLLM [27] | Large Language Model | Synthesizability prediction and precursor identification | Experimental feasibility assessment |

Traditional DFT workflows for structure exploration and energy minimization are undergoing transformative enhancement through integration with machine learning approaches. While fundamental convergence criteria and optimization algorithms remain essential, MLIPs now enable efficient exploration of complex configurational spaces with near-DFT accuracy. [24] [25] The emergence of universal potentials, extensive training datasets, and specialized workflows like FastCSP is making high-throughput CSP increasingly accessible, potentially reducing computation times from days to hours while maintaining rigorous energy minimization standards. [25] Future developments will likely address current limitations in handling flexible molecules, multi-component systems, and further improving accuracy for fine polymorph distinctions, ultimately strengthening the bridge between computational prediction and experimental synthesis in materials design and pharmaceutical development.

High-throughput computational screening has emerged as a transformative approach in materials science, enabling the rapid identification of novel materials with tailored properties from vast chemical spaces. This paradigm is particularly powerful when applied to two important classes of materials: metal-organic frameworks (MOFs) and organic semiconductors. These materials share a common characteristic—extensive tunability through molecular building blocks—but present distinct challenges for accurate property prediction. For MOFs, the accurate prediction of electronic properties like band gaps remains challenging due to limitations of standard density functional theory (DFT) functionals [28]. Similarly, for organic semiconductors, the search for materials with targeted optoelectronic properties requires navigating intricate structure-property relationships [29]. This Application Note details protocols for high-throughput screening within the broader context of DFT-predicted crystal structures, providing researchers with methodologies to accelerate discovery while maintaining computational accuracy.

High-Throughput Screening of Metal-Organic Frameworks

Protocol for Electronic Property Prediction

Objective: To accurately predict band gaps and electronic properties of MOFs using multi-fidelity DFT calculations and machine learning.

Workflow Overview: The protocol involves a sequential approach combining different levels of theory, beginning with standard generalized gradient approximation (GGA) calculations and progressing to more accurate hybrid functionals, with machine learning models bridging the accuracy-efficiency gap [28].

Step-by-Step Procedure:

- Initial Structure Curation: Begin with a database of MOF crystal structures, such as the Quantum MOF (QMOF) Database, ensuring structural relaxation with consistent convergence criteria [28].

- Multi-Fidelity DFT Calculations:

- Perform initial band structure calculations using the PBE GGA functional.

- Select a representative subset for higher-fidelity calculations using meta-GGA (HLE17) and hybrid (HSE06*, HSE06) functionals.

- Computational Parameters: Use a plane-wave energy cutoff of 600 Ry (if using CP2K, whose CUTOFF keyword is specified in Rydberg), k-point sampling via the Monkhorst-Pack scheme, and a total energy convergence of at least 10⁻⁶ Ha [28] [30].

- Electronic Structure Analysis:

- Extract band gaps, density of states, and partial atomic charges.

- Compare results across functionals, noting that hybrid functionals typically increase predicted band gaps by ~0.05-0.10 eV per percent of Hartree-Fock exchange [28].

- Machine Learning Model Implementation:

- Train graph neural network models using the multi-fidelity DFT data.

- Use crystal structure graphs as input, with nodes representing atoms and edges representing bonds.

- Validate model performance against hold-out sets of hybrid-functional calculations.
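The graph-construction step can be sketched as follows. The sketch assumes that edges are drawn between atoms closer than a distance cutoff (a common proxy for "bonds" in crystal graph networks) and that periodic images come from the 26 neighboring cells. The function name and cutoff are illustrative, and node/edge features and the network itself are not shown.

```python
import itertools
import numpy as np

def crystal_graph_edges(frac_coords, lattice, cutoff):
    """Return edges (i, j, image, distance) for atom pairs closer than `cutoff`.

    frac_coords : (N, 3) fractional coordinates, assumed wrapped into [0, 1)
    lattice     : (3, 3) lattice vectors as rows (Angstrom)
    Searching only the 27 cells around the home cell is complete when the
    cutoff is smaller than every perpendicular width of the cell.
    """
    lat = np.asarray(lattice, float)
    cart = np.asarray(frac_coords, float) @ lat
    edges = []
    for image in itertools.product((-1, 0, 1), repeat=3):
        shift = np.asarray(image, float) @ lat
        for i in range(len(cart)):
            for j in range(len(cart)):
                if i == j and image == (0, 0, 0):
                    continue  # no self-loop within the home cell
                d = float(np.linalg.norm(cart[j] + shift - cart[i]))
                if d < cutoff:
                    edges.append((i, j, image, d))
    return edges

# Body-centred arrangement of two atoms in a 3 Angstrom cubic cell.
lattice = 3.0 * np.eye(3)
frac = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]
edges = crystal_graph_edges(frac, lattice, cutoff=2.7)
print(len(edges))
```

Each atom ends up with eight neighbors at about 2.60 Å; keeping the image offset on each edge lets the graph encode periodicity without duplicating atoms.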

Table 1: Comparison of DFT Functionals for MOF Band Gap Prediction

| Functional | Type | HF Exchange | Median Band Gap (eV) | Computational Cost | Recommended Use |

|---|---|---|---|---|---|

| PBE | GGA | 0% | Lowest (∼0.9, ∼2.93 bimodal) | Low | Initial screening, large databases |

| HLE17 | meta-GGA | 0% | Intermediate (∼0.86, ∼3.21 bimodal) | Moderate | Cost-effective improvement over PBE |

| HSE06* | Hybrid | 10% (short-range) | Intermediate-High | High | Balanced accuracy for semiconductors |

| HSE06 | Hybrid | 25% (short-range) | Highest (unimodal ∼4.0) | Very High | Benchmarking, final validation |

Protocol for Adsorption and Separation Properties

Objective: To identify MOFs with high performance for gas separation applications (e.g., argon from air) through a hierarchical screening strategy [31].

Workflow Overview: This protocol uses a two-step screening process that efficiently narrows thousands of structures to a handful of promising candidates by first filtering on structural descriptors, then evaluating separation performance via molecular simulations [31].

Step-by-Step Procedure:

- Database Preparation and Descriptor Calculation:

- Source structures from a curated database like the CoRE MOF 2019 database.

- Calculate key structural descriptors using software such as Zeo++:

- Largest Cavity Diameter (LCD)

- Pore-Limiting Diameter (PLD)

- Geometric Surface Area (GSA)

- Void Fraction (Φ)

- Density (ρ)

- Structural Pre-screening:

- Filter MOFs based on adsorbate kinetic diameters. For Ar/O₂/N₂ separation, exclude MOFs with PLD < 3.64 Å [31].

- Grand Canonical Monte Carlo (GCMC) Simulations:

- Set simulation parameters in RASPA 2.0: temperature = 298 K, adsorption pressure = 1 bar, desorption pressure = 0.1 bar.

- Use the Peng-Robinson equation of state for fugacity conversion.

- Treat MOF frameworks as rigid, using Lennard-Jones potentials for van der Waals interactions and the Ewald summation for electrostatic interactions [31].

- Performance Evaluation:

- Calculate key performance metrics: adsorption selectivity, working capacity, and adsorbent performance score.

- Identify top candidates and analyze common structural features (e.g., specific metal nodes, presence of open metal sites, optimal pore size ranges).
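The performance metrics follow from GCMC uptakes. The sketch below assumes commonly used definitions: adsorption selectivity as the ratio of adsorbed-phase to gas-phase mole ratios, working capacity as the uptake difference between adsorption and desorption pressures, and the adsorbent performance score as their product. Whether [31] uses exactly these forms is an assumption, and the uptake values are hypothetical rather than RASPA output.

```python
def adsorption_selectivity(n1, n2, y1, y2):
    """S_ads = (n1 / n2) / (y1 / y2): adsorbed-phase ratio over gas-phase mole-fraction ratio."""
    return (n1 / n2) / (y1 / y2)

def working_capacity(n_ads, n_des):
    """Delta N = uptake at the adsorption pressure minus uptake at the desorption pressure."""
    return n_ads - n_des

def adsorbent_performance_score(selectivity, delta_n):
    """APS = S_ads * Delta N."""
    return selectivity * delta_n

# Hypothetical uptakes (mol/kg) for a binary feed with mole fractions 0.2 / 0.8.
s = adsorption_selectivity(n1=1.0, n2=2.0, y1=0.2, y2=0.8)
dn = working_capacity(n_ads=3.0, n_des=1.0)
aps = adsorbent_performance_score(s, dn)
print(f"S = {s:.2f}, dN = {dn:.2f} mol/kg, APS = {aps:.2f}")
```

Combining the two metrics into one score penalizes materials that are highly selective but release little gas on regeneration, and vice versa.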

Diagram 1: MOF Screening Workflow for Gas Separation

High-Throughput Screening of Organic Semiconductors

Protocol for Optoelectronic Property Prediction

Objective: To computationally screen organic semiconductors for specific electronic applications (e.g., photovoltaics, transistors, light emitters) by predicting key electronic properties [29].

Workflow Overview: This protocol emphasizes a calibrated, multi-level approach. It begins with lower-level theory calculations on a large candidate set, then uses a carefully benchmarked relationship to predict properties at a higher level of theory, balancing accuracy and computational cost [29].

Step-by-Step Procedure:

- Candidate Generation:

- Generate molecular structures via combinatorial substitution of known core structures or from existing databases.

- Perform initial conformational analysis and geometry optimization using semi-empirical quantum mechanics (e.g., PM7) or low-level DFT.

- Methodology Benchmarking and Calibration:

- Select a diverse subset of 50-100 molecules.

- Calculate target properties (e.g., HOMO/LUMO energies, band gaps, excitation energies) using multiple levels of theory (semi-empirical, DFT with various functionals, wavefunction methods).

- If experimental data is available, establish a linear calibration curve to correct systematic errors.

- Example calibration: HOMO energies calculated with PM7 vs. B3LYP/6-31G* (R² > 0.9 is desirable) [29].
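The calibration step is an ordinary least-squares line plus an R² check. The sketch below uses synthetic HOMO energies (eV) standing in for PM7 and B3LYP/6-31G* values. The same fit applies when the higher-level reference is experimental data. Function names are illustrative.

```python
import numpy as np

def fit_calibration(low_level, high_level):
    """Fit high = slope * low + intercept; return (slope, intercept, r2)."""
    x = np.asarray(low_level, float)
    y = np.asarray(high_level, float)
    slope, intercept = np.polyfit(x, y, 1)
    pred = slope * x + intercept
    ss_res = np.sum((y - pred) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return float(slope), float(intercept), float(1.0 - ss_res / ss_tot)

def apply_calibration(values, slope, intercept):
    """Map cheap-method values onto the higher-level scale."""
    return slope * np.asarray(values, float) + intercept

# Synthetic benchmark subset: cheap-method vs higher-level HOMO energies (eV).
pm7_homo = [-9.0, -8.5, -8.0, -7.5]
b3lyp_homo = [-5.70, -5.32, -4.88, -4.52]
slope, intercept, r2 = fit_calibration(pm7_homo, b3lyp_homo)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f} eV, R^2 = {r2:.4f}")
corrected = apply_calibration([-8.2], slope, intercept)
```

Only if R² clears the chosen threshold should the cheap method plus this correction be used for the full screening set.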

- High-Throughput Property Calculation:

- Execute large-scale calculations using the calibrated, cost-effective method identified in benchmarking.

- Compute relevant optoelectronic properties:

- Frontier orbital energies (HOMO, LUMO)

- Band gaps / HOMO-LUMO gaps

- Excitation energies and oscillator strengths

- Reorganization energies (for charge transport)

- Multi-objective Screening:

- Apply application-specific filters. For semitransparent organic photovoltaics, simultaneously screen for high power conversion efficiency and specific visible light transmittance [32].

- Use Pareto front analysis to identify candidates balancing competing objectives.
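A Pareto front keeps every candidate that no other candidate beats on all objectives at once. This is a minimal O(n²) sketch, assuming both objectives are to be maximized. The keys "pce" and "avt" (average visible transmittance) and the values are hypothetical. A target-value objective can be handled by maximizing the negative absolute deviation from the target.

```python
def pareto_front(candidates, objectives):
    """Return candidates not dominated by any other (all objectives maximized)."""
    def dominates(a, b):
        return (all(a[k] >= b[k] for k in objectives)
                and any(a[k] > b[k] for k in objectives))
    return [c for c in candidates
            if not any(dominates(o, c) for o in candidates if o is not c)]

# Hypothetical screening results: efficiency (%) and visible transmittance (%).
cands = [
    {"id": "A", "pce": 10.0, "avt": 20.0},
    {"id": "B", "pce": 8.0, "avt": 30.0},
    {"id": "C", "pce": 7.0, "avt": 15.0},
    {"id": "D", "pce": 9.0, "avt": 25.0},
]
front = pareto_front(cands, ["pce", "avt"])
print([c["id"] for c in front])
```

Candidate C is dropped because A is at least as good on both objectives; A, B and D each represent a different trade-off.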

Table 2: Target Properties for Organic Electronics Applications

| Application Domain | Key Target Properties | Computational Method | Typical Benchmark Accuracy |

|---|---|---|---|

| Organic Photovoltaics | HOMO/LUMO levels of donor/acceptor, band gap, optical absorption | TD-DFT, calibrated DFT | ~0.1-0.3 eV vs experiment [29] |

| Light Emitters (OLEDs) | Excitation energy, oscillator strength, singlet-triplet gap (ΔE_ST) | TD-DFT, TDA-DFT | <0.2 eV for S₁ and T₁ levels [29] |

| Transistors | Frontier orbital energies, reorganization energy, transfer integrals | DFT, crystal packing prediction | R² > 0.8 for mobility trends [29] |

| Thermoelectrics | Seebeck coefficient, electrical conductivity, electronic band structure | Boltzmann transport theory | Qualitative ranking reliable [29] |

Diagram 2: Organic Semiconductor Screening Workflow

Table 3: Key Software and Databases for High-Throughput Screening

| Resource Name | Type | Primary Function | Access |

|---|---|---|---|

| CP2K | Software Package | DFT/MD calculations for periodic systems (MOFs, crystals) | Open Source [30] |

| Quantum ESPRESSO | Software Package | DFT calculations using plane-wave basis sets and pseudopotentials | Open Source [30] |

| RASPA | Software Package | Molecular simulation (GCMC, MD) for adsorption/diffusion | Open Source [31] |

| Zeo++ | Software Tool | Analysis of porous structures (pore diameters, surface area) | Open Source [31] |

| QMOF Database | Database | Curated DFT properties for thousands of MOFs | Public [28] |

| CoRE MOF 2019 | Database | Experimentally reported MOFs, prepared for simulation | Public [31] |

| Materials Project | Database & Web App | Platform for computed materials properties, including MOFs | Public [28] |

| PHONOPY | Software Tool | Calculation of phonon spectra and dynamic stability | Open Source [30] |

The prediction of crystal structures from a given chemical composition is a fundamental challenge in materials science and pharmaceutical development. Conventional Crystal Structure Prediction (CSP) methods, which typically rely on global optimization algorithms coupled with density functional theory (DFT) calculations, face significant limitations due to their computational expense and difficulty in exploring complex energy surfaces [33]. This computational bottleneck becomes particularly pronounced for organic molecular crystals, which may contain hundreds of atoms per unit cell and exhibit complex intermolecular interactions.

Machine learning (ML) has emerged as a transformative approach to overcome these limitations by providing data-driven methods that enhance prediction accuracy while drastically reducing computational costs [33]. This application note focuses on two specific ML classifiers—space group and packing density predictors—that have demonstrated remarkable effectiveness in narrowing the CSP search space. By integrating these classifiers into a comprehensive CSP workflow, researchers can significantly accelerate the discovery of stable crystal structures, enabling more efficient materials design and polymorph screening for pharmaceutical applications.
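To make "narrowing the search space" concrete, the sketch below shows one way classifier outputs could steer lattice sampling. Space groups are drawn in proportion to predicted probabilities (unlikely ones are dropped), and a target density is drawn from the predicted window and converted to a cell volume via V = Z·M/(ρ·N_A). The probabilities, the 0.05 cutoff and the function names are hypothetical; this is not the implementation of [34].

```python
import numpy as np

N_A = 6.02214076e23  # Avogadro constant, mol^-1

def sample_lattice_candidates(sg_probs, density_range, n, rng=None, min_prob=0.05):
    """Sample (space group, target density) pairs guided by classifier outputs.

    sg_probs      : space-group symbol -> predicted probability
    density_range : (low, high) predicted packing-density window, g/cm^3
    Space groups below min_prob are discarded and the rest renormalized.
    """
    rng = np.random.default_rng() if rng is None else rng
    kept = {sg: p for sg, p in sg_probs.items() if p >= min_prob}
    groups = list(kept)
    weights = np.array([kept[g] for g in groups], float)
    weights /= weights.sum()
    sgs = rng.choice(groups, size=n, p=weights)
    densities = rng.uniform(density_range[0], density_range[1], size=n)
    return list(zip(sgs.tolist(), densities.tolist()))

def cell_volume_from_density(z, molar_mass, density):
    """Unit-cell volume (Angstrom^3) for Z molecules of molar mass (g/mol) at density (g/cm^3)."""
    return z * molar_mass / (density * N_A) * 1e24  # 1 cm^3 = 1e24 Angstrom^3

# Hypothetical classifier outputs for one molecule.
sg_probs = {"P2_1/c": 0.55, "P-1": 0.25, "P2_12_12_1": 0.15, "C2/c": 0.04, "Pbca": 0.01}
samples = sample_lattice_candidates(sg_probs, (1.25, 1.40), n=200, rng=np.random.default_rng(0))
print(samples[:3])
```

Each sampled pair would then seed structure generation and relaxation; candidates outside the predicted density window are never generated, which avoids spending relaxations on loosely packed cells.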

Technical Specifications and Performance Metrics

Key ML Classifiers in CSP Workflows

Table 1: Machine Learning Classifiers for Crystal Structure Prediction

| Classifier Type | Architecture/Algorithm | Primary Function | Performance Metrics | Reference |
|---|---|---|---|---|
| Space Group Predictor | Machine learning model | Predicts probable space groups for a given chemical composition | Part of a workflow achieving an 80% CSP success rate | [34] |
| Packing Density Predictor | Machine learning model | Predicts likely packing densities to filter out unrealistic structures | Part of a workflow achieving an 80% CSP success rate | [34] |
| CrystalFormer | Transformer-based autoregressive model | Space group-controlled generation of crystalline materials | Enables systematic exploration of crystalline materials space | [35] |
| CGCNN with Transfer Learning | Crystal graph convolutional neural network | Predicts formation energies of pre-relaxed crystal structures | Core of ShotgunCSP, which achieved 93.3% prediction accuracy in benchmark tests | [36] |

Comparative Performance of ML-Enhanced CSP Methods

Table 2: Performance Comparison of ML-Accelerated CSP Methods

| Method Name | Key Components | Test System | Success Rate | Computational Advantage | Reference |
|---|---|---|---|---|---|
| ML-based Lattice Sampling | Space group + packing density predictors + neural network potential | 20 organic crystals of varying complexity | 80% (twice that of random CSP) | Reduces generation of low-density, less-stable structures | [34] |
| ShotgunCSP | Transfer learning with CGCNN + virtual library screening | 90 different crystal structures | 93.3% | Requires only single-shot screening rather than iterative DFT | [36] |
| CrystalMath | Topological principles + physical descriptors | Principles derived from 260,000+ CSD structures | n/a (mathematical approach without force fields) | Eliminates the need for system-specific force field parameterization | [21] |

Workflow Integration and Experimental Protocols

Integrated CSP Workflow with ML Classifiers

The ML classifiers for space group and packing density are typically deployed within a comprehensive CSP workflow that combines generative models with efficient structure relaxation techniques. The following diagram illustrates a representative workflow integrating these critical components:

Workflow for ML-Accelerated Crystal Structure Prediction. This diagram illustrates the integration of space group and packing density classifiers within a comprehensive CSP workflow, from chemical composition input to final structure prediction.

Protocol for Space Group Classification

Objective: Predict probable space groups for a target chemical composition to reduce the crystallographic search space.

Materials and Data Requirements:

- Chemical composition (elements and stoichiometry)

- Representative dataset of known crystal structures (e.g., Materials Project, OMC25, CSD)

- Elemental descriptors (e.g., from XenonPy library)

Procedure:

Data Preparation and Feature Extraction

- For the target composition, collect template crystal structures with similar composition ratios from reference databases [36].

- Convert chemical compositions into compositional descriptors (e.g., 290-dimensional descriptors from XenonPy).

- Apply clustering algorithms (e.g., DBSCAN) to identify templates with high compositional similarity to the target [36].
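The featurization step can be illustrated with a small sketch. It assumes the commonly used XenonPy composition featurizer, in which the 290-dimensional descriptor comes from pooling 58 elemental features with five statistics: weighted average, weighted sum, weighted variance, maximum and minimum (58 × 5 = 290). The code below reimplements only that pooling logic in plain Python and does not call XenonPy. The two-feature table `ELEMENT_FEATURES` (atomic number and Pauling electronegativity) is a hypothetical stand-in for the real 58-feature table.

```python
from typing import Dict, List

# Hypothetical elemental feature table (illustrative stand-in, NOT XenonPy data):
# [atomic number, Pauling electronegativity]
ELEMENT_FEATURES: Dict[str, List[float]] = {
    "Na": [11.0, 0.93],
    "Cl": [17.0, 3.16],
    "Mg": [12.0, 1.31],
    "O": [8.0, 3.44],
}


def composition_descriptor(composition: Dict[str, float]) -> List[float]:
    """Pool per-element features into one fixed-length composition vector.

    Layout: [weighted average | weighted sum | weighted variance | max | min],
    each block holding one entry per elemental feature.
    """
    total = sum(composition.values())
    elements = list(composition)
    n_feat = len(ELEMENT_FEATURES[elements[0]])
    avg, wsum, var, vmax, vmin = [], [], [], [], []
    for j in range(n_feat):
        values = [ELEMENT_FEATURES[el][j] for el in elements]
        counts = [composition[el] for el in elements]
        fracs = [c / total for c in counts]  # atomic fractions as weights
        mean = sum(f * v for f, v in zip(fracs, values))
        avg.append(mean)
        wsum.append(sum(c * v for c, v in zip(counts, values)))  # count-weighted
        var.append(sum(f * (v - mean) ** 2 for f, v in zip(fracs, values)))
        vmax.append(max(values))
        vmin.append(min(values))
    return avg + wsum + var + vmax + vmin
```

With a full 58-feature table, this layout yields 290 values per composition. Compositions whose descriptor vectors lie close together (for example, in Euclidean distance) are the ones a density-based clusterer such as DBSCAN would group with the target.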

Model Application

- Input the compositional features into the pre-trained space group classifier.

- The model outputs probabilities for likely space groups based on patterns learned from training data.

- Select the top-k most probable space groups for structure generation.
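Selecting the top-k space groups is straightforward once the classifier's probabilities are available. The sketch below assumes the classifier returns a mapping from space-group number to probability. Several details are illustrative assumptions rather than part of any published tool: the function name, the example probabilities, and the optional `min_coverage` argument, which keeps adding groups until their cumulative probability reaches a threshold.

```python
from typing import Dict, List


def select_space_groups(probs: Dict[int, float], k: int = 5,
                        min_coverage: float = 0.0) -> List[int]:
    """Return space-group numbers ranked by predicted probability.

    Always takes the k most probable groups (or all, if fewer exist), then
    keeps adding groups until their cumulative probability is >= min_coverage.
    """
    ranked = sorted(probs, key=lambda sg: probs[sg], reverse=True)
    selected: List[int] = []
    cumulative = 0.0
    for sg in ranked:
        if len(selected) >= k and cumulative >= min_coverage:
            break
        selected.append(sg)
        cumulative += probs[sg]
    return selected


# Hypothetical classifier output (probabilities invented for illustration).
example_probs = {14: 0.45, 2: 0.20, 19: 0.15, 15: 0.10, 61: 0.06, 4: 0.04}
```

For this hypothetical output, `select_space_groups(example_probs, k=2)` returns `[14, 2]`. Adding `min_coverage=0.85` extends the selection to `[14, 2, 19, 15]`, which is one way to widen coverage when the predictions look uncertain.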

Validation

- Compare predictions with known structures of analogous compounds.

- Cross-reference with structural preferences of constituent elements.
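One simple way to quantify the comparison with analogous compounds is a top-k hit rate. This is the fraction of reference compounds whose experimentally observed space group appears among the first k predicted space groups. The sketch below uses hypothetical compound names and predictions, and the metric is a generic choice rather than one prescribed by the cited studies.

```python
from typing import Dict, List


def top_k_hit_rate(predictions: Dict[str, List[int]],
                   observed: Dict[str, int], k: int) -> float:
    """Fraction of reference compounds whose observed space group is among
    the first k predicted space groups (missing predictions count as misses)."""
    hits = sum(1 for name, sg in observed.items()
               if sg in predictions.get(name, [])[:k])
    return hits / len(observed)


# Hypothetical ranked predictions and observed space groups for three analogues.
preds = {"analog_A": [14, 2, 19], "analog_B": [2, 14, 61], "analog_C": [19, 4, 14]}
obs = {"analog_A": 14, "analog_B": 61, "analog_C": 14}
```

A hit rate that rises sharply between small and large k suggests that the correct group is usually present but poorly ranked. That pattern argues for a larger k in the selection step.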

Troubleshooting Tips:

- For compositions with limited training data, consider transfer learning approaches.

- If predictions seem unreliable, expand the selection of probable space groups to ensure coverage.

Protocol for Packing Density Classification

Objective: Predict realistic packing density ranges to filter out improbable crystal structures during candidate generation.

Materials and Data Requirements:

- Molecular weight and approximate molecular volume

- Historical packing data for similar compounds

- Database of known molecular crystal densities (e.g., CSD)

Procedure:

Feature Calculation

- Compute molecular descriptors including molecular weight, approximate volume, and functional group composition.

- For organic molecules, calculate the molecular surface area and polarizability.
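Of the descriptors listed above, molecular weight can be computed directly from the molecular formula. In practice, a cheminformatics toolkit such as RDKit would normally supply this and the other descriptors (surface area, polarizability). The minimal parser below is a self-contained illustration only. It handles simple formulas without parentheses or hydrate notation, and it covers only the elements in its small mass table.

```python
import re
from typing import Dict

# Conventional standard atomic weights (g/mol) for a few common elements.
ATOMIC_MASS: Dict[str, float] = {
    "H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
    "F": 18.998, "S": 32.06, "Cl": 35.45, "Br": 79.904,
}


def molecular_weight(formula: str) -> float:
    """Molar mass (g/mol) of a simple formula such as 'C9H8O4'.

    Each match is an element symbol followed by an optional count; parentheses
    and hydrate dots are not supported in this sketch.
    """
    total = 0.0
    for symbol, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        total += ATOMIC_MASS[symbol] * (int(count) if count else 1)
    return total
```

For aspirin, `molecular_weight("C9H8O4")` gives about 180.16 g/mol.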

Density Prediction

- Input molecular features into the trained packing density classifier.

- The model outputs a predicted density range based on learned relationships between molecular characteristics and packing efficiency.

Application in Structure Generation

- Use the predicted density range to filter candidate structures during virtual library generation.

- Eliminate structures with densities outside the predicted range before proceeding to more computationally expensive relaxation steps.
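Filtering by density requires converting each candidate cell into a density. For Z molecules of molar mass M (g/mol) in a cell of volume V (Å³), the density is ρ = Z·M / (N_A·V·10⁻²⁴) g/cm³, which simplifies to roughly 1.6605·Z·M/V. The sketch below applies this formula to a list of candidates. Two inputs are assumptions for illustration: the candidate tuple layout, and the density window passed in, which would come from the packing density classifier.

```python
from typing import List, Tuple

AVOGADRO = 6.02214076e23  # mol^-1
A3_TO_CM3 = 1e-24         # 1 cubic angstrom = 1e-24 cm^3


def crystal_density(z: int, molar_mass: float, cell_volume_a3: float) -> float:
    """Density in g/cm^3 for z molecules of molar_mass (g/mol) in a cell of
    cell_volume_a3 cubic angstroms."""
    return z * molar_mass / (AVOGADRO * cell_volume_a3 * A3_TO_CM3)


def filter_by_density(candidates: List[Tuple[str, int, float]], molar_mass: float,
                      rho_min: float, rho_max: float) -> List[str]:
    """Keep IDs of candidates whose density lies within [rho_min, rho_max].

    Each candidate is a hypothetical (id, Z, cell volume in cubic angstroms) tuple.
    """
    kept = []
    for cid, z, volume in candidates:
        rho = crystal_density(z, molar_mass, volume)
        if rho_min <= rho <= rho_max:
            kept.append(cid)
    return kept
```

Consider M = 180.159 g/mol with a 1.3 to 1.6 g/cm³ window. A hypothetical Z = 4 cell of 850 Å³ has a density of about 1.41 g/cm³ and passes. A Z = 4 cell of 1100 Å³ (about 1.09 g/cm³) is discarded before any relaxation is attempted.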

Troubleshooting Tips:

- For flexible molecules, consider multiple conformers and their effect on packing.

- For salts and hydrates, adjust density expectations based on counterions or water content.

The Scientist's Toolkit

Table 3: Key Resources for ML-Accelerated Crystal Structure Prediction

| Resource Category | Specific Tools/Databases | Function/Purpose | Access Method |
|---|---|---|---|
| Reference Datasets | OMC25 (Open Molecular Crystals) | Provides over 27 million molecular crystal structures from DFT relaxation trajectories for training ML models | Publicly available [12] |
| Reference Datasets | Materials Project | Database of DFT-calculated properties for inorganic crystals | Publicly available [36] |
| Reference Datasets | Cambridge Structural Database (CSD) | Curated database of experimentally determined organic crystal structures | Commercial license [21] |
| ML Property Models | CGCNN (Crystal Graph Convolutional Neural Network) | Predicts formation energies of crystal structures | Open-source [36] |
| Generative Models | CrystalFormer | Transformer-based model for space group-controlled crystal generation | Research code [35] |
| Generative Models | Wyckoff Position Generator | Creates symmetry-restricted atomic coordinates | Custom implementation [36] |
| Element Descriptors | XenonPy Library | Provides 58 elemental features for quantifying chemical similarity | Python library [36] |

Advanced Methodologies and Case Studies

ShotgunCSP: A Case Study in Integrated ML Classification

The ShotgunCSP method illustrates how ML models can be combined with structure generation. Rather than running iterative DFT calculations, it screens virtually created crystal structures in a single shot with a machine-learning energy predictor [36]. The method has two key technical components: (1) transfer learning for accurate energy prediction of pre-relaxed crystalline states, and (2) generative models based on element substitution and symmetry-restricted structure generation.

Protocol for ShotgunCSP Implementation:

Pretraining Global Model

- Train a Crystal Graph Convolutional Neural Network (CGCNN) on diverse crystals from the Materials Project database (126,210 crystals with DFT formation energies).

- This global model learns to predict baseline formation energies across diverse crystal structures.

Transfer Learning for System Localization

- Generate several thousand virtual crystal structures for the target system using either element substitution or Wyckoff position generation.

- Perform single-point DFT calculations on these generated structures.

- Fine-tune the pretrained global model using this system-specific data to create a localized energy predictor.
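The element-substitution route to virtual structures can be sketched as a relabeling of a template's atomic sites. The code below is a simplified illustration, not ShotgunCSP's actual generator. It keeps the template's fractional coordinates and tries every one-to-one mapping between template and target elements. A mapping is accepted only if each element's atomic fraction is preserved. The function name and data layout are hypothetical. Lattice rescaling for the new elements, which a practical generator would likely need, is omitted.

```python
from collections import Counter
from itertools import permutations
from typing import Dict, List, Tuple

# A site is (element symbol, fractional coordinates); layout is hypothetical.
Site = Tuple[str, Tuple[float, float, float]]


def substitute_elements(template: List[Site],
                        target: Dict[str, int]) -> List[List[Site]]:
    """Enumerate relabeled copies of a template whose element ratios match target."""
    t_counts = Counter(el for el, _ in template)
    t_elems = sorted(t_counts)
    t_total = sum(t_counts.values())
    g_total = sum(target.values())
    if len(t_elems) != len(target):
        return []  # only one-to-one element mappings are considered
    variants = []
    for perm in permutations(sorted(target)):
        mapping = dict(zip(t_elems, perm))
        # Accept only mappings that preserve every atomic fraction; integer
        # cross-multiplication avoids floating-point comparisons.
        if all(t_counts[el] * g_total == target[mapping[el]] * t_total
               for el in t_elems):
            variants.append([(mapping[el], xyz) for el, xyz in template])
    return variants


# Toy templates (coordinates illustrative only).
rocksalt = [("Na", (0.0, 0.0, 0.0)), ("Cl", (0.5, 0.5, 0.5))]
ab2_like = [("Ti", (0.0, 0.0, 0.0)), ("O", (0.3, 0.3, 0.0)), ("O", (0.7, 0.7, 0.0))]
```

The two-site rock-salt template with target composition KBr yields two labelings, with K on either site. The TiO2-like toy template with target ZrO2 yields exactly one, because mapping O to Zr would change the atomic fractions.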

Virtual Library Creation and Screening

- Apply ML-based space group and packing density classifiers to generate promising candidate structures.

- Use the transfer-learned energy predictor to screen the virtual library and identify low-energy candidates.

- Perform full DFT relaxation only on the top-ranked candidates (typically fewer than a dozen structures).
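The screening step amounts to scoring every candidate once with the surrogate model and passing only the lowest-energy ones to DFT. In the sketch below, `predict_energy` stands in for the transfer-learned energy predictor and can be any callable that returns an energy. The `dedup_tol` rule treats candidates with nearly identical predicted energies as duplicates. It is a crude heuristic added for illustration and is not described in the source.

```python
from typing import Callable, Dict, List, Sequence, Tuple


def single_shot_screen(
    candidates: Sequence[Dict],
    predict_energy: Callable[[Dict], float],
    n_dft: int = 10,
    dedup_tol: float = 1e-3,
) -> List[Tuple[float, Dict]]:
    """Rank candidates by surrogate energy (one prediction each) and return up
    to n_dft (energy, candidate) pairs for full DFT relaxation."""
    # Predict once per candidate; sort on energy only so dicts are never compared.
    scored = sorted(((predict_energy(c), c) for c in candidates), key=lambda t: t[0])
    selected: List[Tuple[float, Dict]] = []
    for energy, cand in scored:
        # Heuristic: skip candidates within dedup_tol of an already selected
        # energy, treating them as likely duplicates of the same structure.
        if any(abs(energy - e) < dedup_tol for e, _ in selected):
            continue
        selected.append((energy, cand))
        if len(selected) == n_dft:
            break
    return selected
```

Because the surrogate is evaluated only once per structure, the cost of screening grows linearly with library size. Expensive DFT work is confined to the short list that is returned.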

This workflow achieved a prediction accuracy of 93.3% in benchmark tests on 90 different crystal structures while being significantly less computationally intensive than conventional methods [36].

CrystalMath: A Topological Alternative

For researchers seeking to avoid force field parameterization entirely, the CrystalMath approach offers a purely mathematical alternative based on topological and physical descriptors [21]. This method posits that in stable structures, molecules are oriented such that principal axes and normal ring plane vectors align with specific crystallographic directions, and heavy atoms occupy positions corresponding to minima of geometric order parameters.

The CrystalMath approach analyzes geometric relationships in over 260,000 organic molecular crystal structures from the Cambridge Structural Database to derive governing principles for molecular packing. By minimizing an objective function that encodes these orientations and atomic positions, and filtering based on van der Waals free volume and intermolecular close contact distributions, stable structures can be predicted without reliance on an interaction model [21].
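CrystalMath's full objective function is not reproduced here. As a hedged illustration of one geometric ingredient the description mentions, the sketch below computes a molecule's principal axes from its mass-weighted inertia tensor. It then measures the angle between one axis and a chosen crystallographic direction, already converted to Cartesian coordinates. Several choices are assumptions: the inertia-based definition, the function names, and the sign-insensitive angle. CrystalMath may define the axes differently, for example from heavy atoms only.

```python
import numpy as np


def principal_axes(coords: np.ndarray, masses: np.ndarray) -> np.ndarray:
    """Principal axes of inertia as rows, ordered from smallest to largest moment."""
    com = (masses[:, None] * coords).sum(axis=0) / masses.sum()
    r = coords - com
    # Second moment S_jk = sum_i m_i r_ij r_ik; inertia tensor I = tr(S) * 1 - S.
    s = np.einsum("i,ij,ik->jk", masses, r, r)
    inertia = np.trace(s) * np.eye(3) - s
    _, eigvecs = np.linalg.eigh(inertia)  # eigenvalues returned in ascending order
    return eigvecs.T


def alignment_angle(axis: np.ndarray, direction: np.ndarray) -> float:
    """Angle in degrees between an axis and a Cartesian lattice direction.

    The sign of an eigenvector is arbitrary, so the result lies in [0, 90].
    """
    cos = abs(np.dot(axis, direction)) / (np.linalg.norm(axis) * np.linalg.norm(direction))
    return float(np.degrees(np.arccos(np.clip(cos, 0.0, 1.0))))


# Toy molecule: three equal masses on a line along x.
coords = np.array([[-1.0, 0.0, 0.0], [0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
masses = np.array([12.0, 12.0, 12.0])
axes = principal_axes(coords, masses)
```

For a lattice direction [uvw], the Cartesian vector to compare against is u·a + v·b + w·c, where a, b and c are the cell vectors.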

Machine learning classifiers for space group and packing density are effective tools for addressing the computational bottlenecks of traditional crystal structure prediction. By narrowing the search space and prioritizing promising candidates, they let researchers explore structural possibilities far more efficiently. Their integration into complete workflows, such as the ML-based lattice sampling approach that achieves an 80% success rate [34], demonstrates their practical value in accelerating materials discovery and pharmaceutical development.

As ML models improve and datasets expand, these classification approaches are likely to play an increasingly important role in crystal structure prediction. They could enable the systematic exploration of crystalline materials space that has long been a goal of materials science [35]. The protocols and methodologies outlined in this application note offer practical guidance for implementing these approaches in crystal structure prediction workflows.

Alzheimer's disease (AD) is a severe neurodegenerative disorder and the most common cause of dementia in the elderly, characterized clinically by progressive memory loss and cognitive impairment [37]. The "Amyloid Cascade Hypothesis" posits that the accumulation of neurotoxic amyloid-β (Aβ) peptides in the brain is a critical molecular event in AD pathogenesis [37]. β-site amyloid precursor protein cleaving enzyme 1 (BACE-1) is the rate-limiting enzyme that initiates the production of Aβ peptides from the amyloid precursor protein (APP) [38] [37]. Consequently, BACE-1 is recognized as a premier therapeutic target for the design of disease-modifying Alzheimer's drugs, and immense efforts have been dedicated to discovering potent and selective BACE-1 inhibitors [39] [40]. This application note details how structure-based drug design, anchored by the analysis of protein-ligand co-crystal structures, has been leveraged to identify and optimize BACE-1 inhibitors, providing a protocol for modern drug discovery campaigns.

Structural Insights: Mapping the BACE-1 Binding Pocket

A foundational step in structure-based design is a deep understanding of the target's binding site. A systematic survey of 354 crystal structures of the BACE-1 catalytic domain in complex with ligands from the Protein Data Bank (PDB) has enabled a comprehensive mapping of the enzyme's binding pocket [39].