Beyond the Bias: Advanced Strategies for Correcting Inductive Bias in Materials Machine Learning


Abstract

This article provides a comprehensive guide for researchers and scientists on identifying, mitigating, and correcting inductive biases in materials machine learning. It explores the foundational sources of bias, from uneven data distributions to architectural priors in graph neural networks. The piece details cutting-edge methodological solutions, including entropy-targeted sampling and physics-informed learning, and offers a troubleshooting framework for diagnosing and optimizing biased models. Finally, it establishes rigorous validation and comparative analysis protocols to ensure models reflect true material capabilities rather than dataset artifacts, with direct implications for accelerating robust drug development and clinical research.

What is Inductive Bias? Understanding the Hidden Assumptions in Materials ML

What is Inductive Bias and Why Does It Matter in Materials ML?

In machine learning, and specifically in materials informatics, an inductive bias refers to the set of assumptions a learning algorithm uses to predict outputs for inputs it has not encountered before [1] [2]. These built-in assumptions enable the algorithm to prioritize one solution over another, independently of the observed training data [1].

Without inductive bias, learning from limited data would be impossible. As Mitchell (1980) explained, the problem of generalizing from training examples to unseen situations cannot be solved without making assumptions about the nature of the target function [1]. In practical terms, your model's architecture, training objective, and optimization method all embed specific inductive biases that influence what patterns it learns from your materials data.
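To make this concrete, the toy sketch below (hypothetical data, NumPy assumed available) fits two models to the same four training points. Both reproduce every training label exactly, so the data alone cannot choose between them. Only their built-in assumptions decide what each one predicts for an unseen input.

```python
import numpy as np

# Four hypothetical training points that lie exactly on the line y = x.
x_train = np.array([0.0, 1.0, 2.0, 3.0])
y_train = np.array([0.0, 1.0, 2.0, 3.0])

def predict_linear(x_new):
    # Inductive bias: the target is a straight line, so extrapolate the trend.
    slope, intercept = np.polyfit(x_train, y_train, deg=1)
    return slope * x_new + intercept

def predict_nearest_neighbour(x_new):
    # Inductive bias: the target is locally constant, so copy the closest label.
    return y_train[np.argmin(np.abs(x_train - x_new))]

# Both models reproduce every training label exactly...
assert np.allclose([predict_linear(x) for x in x_train], y_train)
assert np.allclose([predict_nearest_neighbour(x) for x in x_train], y_train)

# ...yet they disagree on an input far outside the training range.
print(predict_linear(10.0))             # approximately 10.0
print(predict_nearest_neighbour(10.0))  # 3.0
```

Neither answer is correct in the absence of physical knowledge. The point is that the extrapolation is dictated by each model's inductive bias rather than by the data.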

Real-World Impact on Materials Research: If your model's inductive biases misalign with the true underlying physics or chemistry of your materials system, you may develop models that appear accurate during validation but fail to guide successful synthesis or predict properties under new conditions. Understanding and correcting these biases is therefore essential for reliable materials discovery.

FAQ: Diagnosing and Troubleshooting Inductive Bias Issues

Q: My model performs well on validation data but fails on new experimental data. Could inductive bias be the problem?

A: Yes. This classic failure pattern often indicates shortcut learning, in which your model exploits superficial correlations in the training data rather than learning the underlying mechanisms [3] [4].

Troubleshooting Checklist:

- Verify data distribution alignment: Check if your training data adequately represents the full parameter space of your materials system

- Test with synthetic data: Create data where you know the ground truth physical relationships

- Implement the LCN-HCN diagnostic: Train a low-capacity network (LCN) first; if it performs nearly as well as your high-capacity network (HCN), shortcuts are likely present [4]

- Analyze saliency maps: Visualize what features your model focuses on for predictions [5]
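A minimal sketch of the LCN-HCN diagnostic, assuming NumPy and using two stand-ins chosen only for illustration: ordinary least squares plays the low-capacity network, and k-nearest-neighbour regression plays the high-capacity network. The `tol` threshold is an arbitrary assumption. In practice you would compare your actual LCN and HCN architectures on held-out data.

```python
import numpy as np

def fit_linear(X, y):
    """LCN stand-in: ordinary least squares with an intercept column."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Z: np.column_stack([Z, np.ones(len(Z))]) @ coef

def fit_knn(X, y, k=5):
    """HCN stand-in: k-nearest-neighbour regression (flexible, nonparametric)."""
    def predict(Z):
        dist = np.linalg.norm(Z[:, None, :] - X[None, :, :], axis=-1)
        nearest = np.argsort(dist, axis=1)[:, :k]
        return y[nearest].mean(axis=1)
    return predict

def r2(y, y_hat):
    return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - np.mean(y)) ** 2)

def lcn_hcn_diagnostic(X_tr, y_tr, X_val, y_val, tol=0.05):
    """Return (LCN R^2, HCN R^2, shortcut_suspected)."""
    s_lcn = r2(y_val, fit_linear(X_tr, y_tr)(X_val))
    s_hcn = r2(y_val, fit_knn(X_tr, y_tr)(X_val))
    # A simple model that nearly matches a flexible one suggests that a few
    # simple (possibly spurious) features carry most of the signal.
    return s_lcn, s_hcn, bool(s_hcn - s_lcn < tol)

# Hypothetical data: one target is linear in x, the other needs real capacity.
rng = np.random.default_rng(0)
X = rng.uniform(-2.0, 2.0, size=(600, 1))
y_easy = 3.0 * X[:, 0] + 0.05 * rng.normal(size=600)
y_hard = np.sin(3.0 * X[:, 0]) + 0.05 * rng.normal(size=600)
print(lcn_hcn_diagnostic(X[:400], y_easy[:400], X[400:], y_easy[400:]))
print(lcn_hcn_diagnostic(X[:400], y_hard[:400], X[400:], y_hard[400:]))
```

A raised flag is a prompt for closer inspection (for example, with saliency maps), not proof of a shortcut: some materials properties genuinely are simple functions of simple descriptors.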

Q: How do I choose model architectures with appropriate inductive biases for materials data?

A: Different architectures embed different fundamental assumptions:

Table: Architectural Inductive Biases for Materials Data

| Architecture | Core Inductive Bias | Best for Materials Tasks | Potential Limitations |
|---|---|---|---|
| Fully-Connected Networks | No spatial structure; global interactions | Small datasets; scalar property prediction | Poor scaling; misses local correlations |
| Convolutional Neural Networks | Translation equivariance; locality | Crystal structure classification; microstructure analysis | May struggle with long-range interactions |
| Graph Neural Networks | Relational inductive bias; permutation invariance | Molecular property prediction; complex composites | Computational intensity for large systems |
| Transformer/Attention | Global dependencies; context weighting | Multi-scale materials modeling | Data hunger; may overfit small datasets |
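The "permutation invariance" entry for graph neural networks can be checked numerically. This toy NumPy sketch (hypothetical descriptors and weights) contrasts a fully connected layer applied to a flattened list of atoms with a shared per-atom transform followed by sum pooling, the kind of readout many GNNs use.

```python
import numpy as np

# Hypothetical per-atom descriptors: 4 atoms x 3 features each.
atom_features = np.arange(12, dtype=float).reshape(4, 3)
W_flat = np.arange(12, dtype=float).reshape(12, 1)  # one weight per (atom slot, feature)
W_atom = np.array([[1.0], [2.0], [3.0]])            # weights shared by every atom

def fully_connected(x):
    # Flattening ties each weight to a fixed atom slot, so atom order matters.
    return x.reshape(-1) @ W_flat

def sum_pooled(x):
    # Same weights for every atom, then a sum over atoms: order cannot matter.
    return (x @ W_atom).sum(axis=0)

perm = np.array([2, 0, 3, 1])  # relabel (reorder) the atoms
print(fully_connected(atom_features), fully_connected(atom_features[perm]))  # [506.] [371.]
print(sum_pooled(atom_features), sum_pooled(atom_features[perm]))            # [140.] [140.]
```

Relabelling atoms is physically meaningless, so the fully connected output changing under `perm` is exactly the kind of assumption mismatch the table warns about.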

Q: What practical methods can reduce shortcut learning in materials property prediction?

A: Implement these evidence-based strategies:

Interpretability-Guided Inductive Bias [5]:

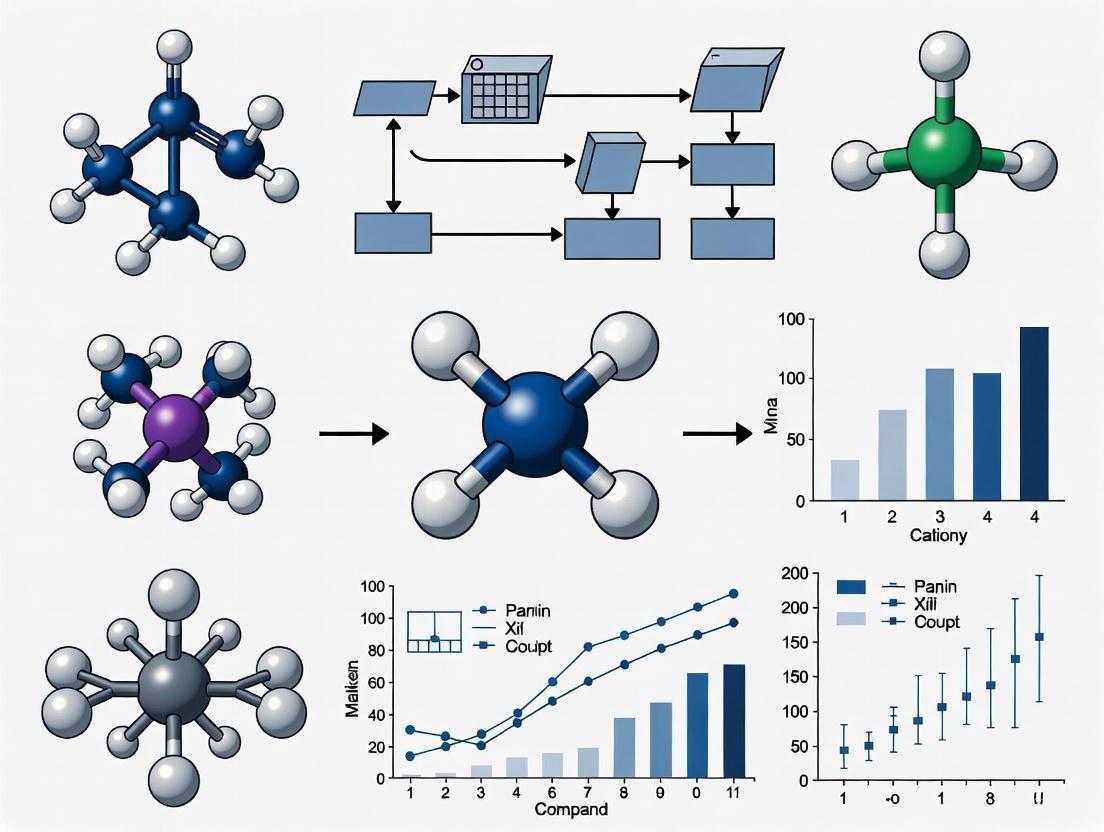

Interpretability-Guided Workflow

Two-Stage LCN-HCN Training [4]:

- Step 1: Train a low-capacity network (LCN) on your materials data.

- Step 2: Use the LCN's predictions to identify "suspiciously easy" samples.

- Step 3: Downweight these samples when training your final high-capacity network (HCN).
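The down-weighting step can be sketched as below. This is a minimal illustration that assumes NumPy, a classification setting, and one simple weighting rule: weight proportional to one minus the LCN's probability for the correct label, floored and normalized to mean 1. Reference [4] may use a different functional form.

```python
import numpy as np

def lcn_importance_weights(p_true, floor=0.05):
    """Per-sample weights for high-capacity network (HCN) training.

    p_true: the low-capacity network's (LCN's) predicted probability of the
    correct label for each training sample.
    """
    p_true = np.asarray(p_true, dtype=float)
    w = np.clip(1.0 - p_true, floor, None)  # "suspiciously easy" samples get small weights
    return w / w.mean()                      # normalize so the average weight is 1

def weighted_nll(p_true_hcn, weights, eps=1e-12):
    """Importance-weighted negative log-likelihood used as the HCN's loss."""
    p = np.asarray(p_true_hcn, dtype=float)
    return float(np.mean(weights * -np.log(p + eps)))

w = lcn_importance_weights([0.99, 0.60, 0.20])
print(np.round(w, 2))                    # approximately [0.12 0.96 1.92]
print(weighted_nll([0.9, 0.7, 0.4], w))
```

The floor keeps easy samples from being discarded entirely, and normalizing to mean 1 keeps the loss on the same scale as unweighted training.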

Experimental Protocols for Bias Detection and Correction

Protocol 1: Shortcut Hull Learning

Purpose: Systematically identify all potential shortcuts in high-dimensional materials data.

Methodology:

- Unified Representation: Formalize materials data shortcuts in probability space

- Model Suite: Employ diverse models with different inductive biases

- Collaborative Mechanism: Learn the minimal set of shortcut features (Shortcut Hull)

- Diagnosis: Map the complete shortcut landscape of your dataset

Materials-Specific Adaptation:

- Include domain-specific biases (e.g., periodic boundary conditions, symmetry operations)

- Test across multiple length scales (atomic, microstructural, bulk)
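The Shortcut Hull itself is learned collaboratively by a suite of models [3] and is not reproduced here. As a much cruder stand-in that conveys the idea of a "minimal set of shortcut features", the NumPy sketch below exhaustively searches small feature subsets for the smallest one that lets a linear model score nearly as well as the full feature set. The linear model, tolerance, subset-size limit, and data are all assumptions. Repeating the search with several model families would loosely mimic the multi-model suite.

```python
from itertools import combinations

import numpy as np

def r2_linear(X_tr, y_tr, X_val, y_val):
    """Validation R^2 of least squares (with intercept) on the given columns."""
    A = np.column_stack([X_tr, np.ones(len(X_tr))])
    coef, *_ = np.linalg.lstsq(A, y_tr, rcond=None)
    y_hat = np.column_stack([X_val, np.ones(len(X_val))]) @ coef
    return 1.0 - np.sum((y_val - y_hat) ** 2) / np.sum((y_val - y_val.mean()) ** 2)

def smallest_sufficient_subset(X_tr, y_tr, X_val, y_val, tol=0.02, max_size=3):
    """Smallest feature subset scoring within `tol` of the full feature set."""
    full_score = r2_linear(X_tr, y_tr, X_val, y_val)
    for size in range(1, max_size + 1):               # cost grows combinatorially
        for subset in combinations(range(X_tr.shape[1]), size):
            cols = list(subset)
            if r2_linear(X_tr[:, cols], y_tr, X_val[:, cols], y_val) >= full_score - tol:
                return subset
    return tuple(range(X_tr.shape[1]))

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4))                   # hypothetical descriptors
y = 2.0 * X[:, 0] + 0.1 * rng.normal(size=300)  # only feature 0 carries signal
print(smallest_sufficient_subset(X[:200], y[:200], X[200:], y[200:]))  # (0,)
```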

Protocol 2: LCN-HCN Training

Purpose: Prevent overreliance on simplistic correlations in complex materials systems.

Table: Implementation Framework

| Step | Procedure | Materials Research Application |
|---|---|---|
| 1. Capacity Calibration | Select LCN architecture that can only learn superficial features | Choose model too simple to capture true structure-property relationships |
| 2. Shortcut Detection | Train LCN and identify high-confidence predictions | Flag materials where simple features predict complex properties |
| 3. Importance Weighting | Downweight suspicious samples in HCN training | Focus HCN on challenging cases requiring deeper understanding |
| 4. Validation | Test OOD generalization | Validate on different material classes or synthesis conditions |

Key Insight: Solutions that seem "too good to be true" for complex materials problems usually are [4].

Essential Research Reagent Solutions

Table: Critical Tools for Inductive Bias Research

| Reagent/Tool | Function | Application Example |
|---|---|---|
| Low-Capacity Networks (LCN) | Shortcut detection | Identifying spurious correlations in microstructure-property data |
| Saliency Map Methods | Model decision interpretation | Verifying models use physically meaningful features |
| Shortcut Hull Learning | Comprehensive bias diagnosis | Mapping all potential shortcuts in high-throughput screening data |
| Multi-Model Suites | Bias comparison | Testing architectural assumptions across materials classes |
| Synthetic Data Generators | Controlled validation | Creating datasets with known ground-truth relationships |

Advanced Framework: Shortcut-Free Evaluation

Shortcut-Free Evaluation Pipeline

The Shortcut-Free Evaluation Framework (SFEF) enables unbiased assessment of your model's true capabilities [3]. This is particularly valuable for materials research where we need to understand if models are learning real physics or dataset-specific artifacts.

Implementation Guide:

- Diagnose existing datasets using Shortcut Hull Learning

- Construct shortcut-free benchmark datasets for your materials domain

- Evaluate models on these benchmarks to reveal their true architectural capabilities, independent of the shortcuts each architecture prefers

- Select optimal architectures based on genuine physical understanding

Key Quantitative Findings on Inductive Biases

Table: Empirical Evidence of Inductive Bias Effects

| Study Focus | Key Finding | Relevance to Materials ML |
|---|---|---|
| Architecture Comparison [7] | CNN and Transformer predictivity nearly equal when the training diet is held constant | Architecture choice less critical than training data quality |
| Training Diet Effect [7] | Variation in visual training data has the largest impact on brain predictivity | Data composition and preprocessing may outweigh model selection |
| Gradual Stacking [8] | Midas stacking improves reasoning despite similar perplexity | Training strategy can induce reasoning-friendly biases |
| LCN-HCN Method [4] | Two-stage approach reduces shortcut reliance by ~40% | Effectively mitigates spurious correlation learning |

Actionable Recommendations for Materials Researchers

Immediate Actions:

- Audit your datasets for potential shortcuts using LCN diagnostics

- Diversify your model suite to test different inductive biases

- Implement interpretability-guided training for physically consistent models

- Apply the "too-good-to-be-true" prior to skeptically evaluate surprisingly good results

Long-term Strategy:

- Develop materials-specific inductive biases encoding physical principles

- Create domain-appropriate shortcut-free benchmarks

- Establish bias-aware model selection protocols for your research group

By systematically addressing inductive biases, materials researchers can develop more robust, reliable models that capture real physical mechanisms rather than dataset artifacts, accelerating trustworthy materials discovery.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What are the most common types of data bias that affect materials prediction models?

Several specific types of data bias frequently lead to prediction failures in materials science [9]:

- Historical Bias: This occurs when your training data reflects past societal or research prejudices. For example, a model trained predominantly on data for oxide materials may perform poorly when predicting properties of novel metal-organic frameworks, because it has learned from a historically limited subset of chemistry [9].

- Selection Bias: This results from your data samples not being representative of the broader chemical or structural space. If you train a model only on data for highly stable, well-characterized crystals from a specific database (like the ICSD), it will likely produce skewed and inaccurate results when applied to metastable or novel crystal structures [9].

- Sampling Bias: A form of selection bias where the collected data does not accurately represent the target population. This often happens unintentionally, such as when data is gathered from literature that over-reports successful syntheses and under-reports failures, leading to a model that is overly optimistic about new material feasibility [9].

- Measurement Bias: This arises from incomplete or flawed data collection methods. For instance, an AI system that analyzes crystal structures might be biased if the primary characterization method (e.g., a specific type of electron microscopy) only works effectively for a certain class of materials, failing to capture the full picture for others [9].

Q2: My model performs well on validation data but fails in real-world testing. What could be wrong?

This classic sign of overfitting often stems from biased training data that does not adequately represent real-world conditions [10]. The model has likely learned the specific patterns of your limited dataset rather than the underlying physical principles of materials science.

- Primary Cause: Your training dataset likely lacks diversity. It may be based on a narrow range of synthesis conditions, a limited number of elements, or specific measurement techniques, causing the model to fail when confronted with the true variability of experimental environments [9] [11].

- Solution: Implement bias-correction algorithms and techniques like adversarial debiasing, which forces the model to ignore spurious correlations in the training data. Regularly refresh your datasets and retrain your models with new, representative data to prevent them from perpetuating outdated biases [9].

Q3: How can I check my dataset for proxy variables that might introduce bias?

Proxy variables are neutral-seeming features that indirectly correlate with protected characteristics, leading to skewed predictions [9].

- Identify Potential Proxies: Be vigilant for variables like a specific `synthesis_method` or `precursor_type` that might be strongly correlated with a desired material property in your training data but do not represent a fundamental causal relationship. A model might incorrectly learn to associate a specific furnace type with high material performance, a correlation that may not hold universally [9].

- Mitigation Strategy: Conduct a thorough correlation analysis of your dataset. Techniques like Principal Component Analysis (PCA) can help uncover hidden variable dependencies. For high-stakes predictions, it is vital to keep human experts "in-the-loop" to provide contextual understanding and ethical judgment, ensuring the model's decisions are based on real materials science, not statistical artifacts [9].
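One way to run the correlation analysis for a categorical candidate proxy, such as a furnace type, is the correlation ratio (eta squared). It measures how much of the target's variance is explained by group membership alone. A standard-library sketch; the column values below are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

def correlation_ratio(categories, values):
    """Eta squared: fraction of target variance explained by a categorical column."""
    groups = defaultdict(list)
    for category, value in zip(categories, values):
        groups[category].append(value)
    grand_mean = fmean(values)
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups.values())
    ss_total = sum((v - grand_mean) ** 2 for v in values)
    return ss_between / ss_total if ss_total > 0 else 0.0

furnace_type = ["A", "A", "B", "B"]  # hypothetical proxy column
performance = [1.0, 1.2, 3.0, 3.2]   # hypothetical target values
print(round(correlation_ratio(furnace_type, performance), 3))  # 0.99
```

A value near 1 does not prove the proxy is spurious. It flags a feature whose causal link to the property should be checked with a domain expert before the model is allowed to rely on it.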

Q4: What is a practical first step to mitigate bias in a new materials AI project?

The most effective initial step is to diversify your training data [9].

- Action: Actively seek and collect datasets from a wide variety of sources—different research groups, various synthesis protocols, multiple characterization techniques—to ensure a balanced and representative mix of all relevant material classes and conditions you intend the model to work with [9].

- Tool Integration: As demonstrated in the CRESt (Copilot for Real-world Experimental Scientists) platform, you can augment your data by incorporating information from diverse sources, including scientific literature, microstructural images, and experimental results. This multi-modal approach creates a more robust knowledge base for the AI, mimicking how human scientists integrate diverse information [12].

Troubleshooting Guide: Diagnosing and Correcting for Inductive Bias

Inductive bias refers to the necessary assumptions a learning algorithm uses to predict outputs of unseen inputs. The problem is not the existence of bias, but whether the specific biases are beneficial for accurately representing the material world or problematic because they create erroneous representations or unfair outcomes [13]. The following workflow provides a systematic approach to diagnosis and correction.

Diagnostic and Correction Workflow for Inductive Bias

Diagnostic Steps

Audit Training Data for Representativeness

- Objective: Determine if your dataset covers the relevant chemical, structural, and processing space.

- Protocol: Perform a statistical analysis (e.g., using PCA or t-SNE) to visualize the distribution of your data points in a reduced-dimensional space. Look for large, unexplored gaps that correspond to real-world material classes you care about. Compare the distribution of key features (e.g., elemental composition, crystal symmetry, band gap) in your data to their known distribution in broader materials literature or databases [9] [11].
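A minimal NumPy sketch of this audit. It assumes your materials have already been featurized into fixed-length vectors (the arrays below are synthetic stand-ins) and that a broader reference set, for example from a public database, is available. It fits PCA on the reference set and flags reference points with no training point nearby. The number of components and the `radius` are assumptions to tune.

```python
import numpy as np

def pca_fit(X, n_components=2):
    """Mean and principal axes of X via SVD of the mean-centred data."""
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:n_components]

def coverage_gaps(train, reference, n_components=2, radius=1.0):
    """Flag reference points with no training point within `radius` in PCA space."""
    mean, axes = pca_fit(reference, n_components)  # axes from the broader reference set
    z_train = (train - mean) @ axes.T
    z_ref = (reference - mean) @ axes.T
    dist = np.linalg.norm(z_ref[:, None, :] - z_train[None, :, :], axis=-1)
    return dist.min(axis=1) > radius                # True = unexplored region

rng = np.random.default_rng(0)
train = 0.1 * rng.normal(size=(50, 3))                        # one well-studied region
reference = np.vstack([0.1 * rng.normal(size=(50, 3)),        # the same region
                       10.0 + 0.1 * rng.normal(size=(50, 3))])  # an unexplored region
gaps = coverage_gaps(train, reference)
print(gaps.mean())  # 0.5: half of the reference set lies in a gap
```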

Check for Proxy Variables and Spurious Correlations

- Objective: Identify if the model is relying on non-causal, data-specific correlations for its predictions.

- Protocol: Use model interpretation tools like SHAP or LIME to understand which features are most important for the model's predictions. Scrutinize high-importance features that lack a direct, physically justified link to the target property. For example, if a model predicting catalyst performance overly relies on the `research_group` feature, it may be exploiting a proxy bias [9].
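SHAP and LIME require their own libraries, so this NumPy sketch swaps in a simpler, model-agnostic technique: permutation importance. It shuffles one column at a time and measures how much the score drops. The feature names and data are hypothetical, and the target is deliberately driven by the `research_group` code to mimic a proxy.

```python
import numpy as np

def permutation_importance(predict, X, y, rng, n_repeats=10):
    """Mean drop in R^2 when each column of X is shuffled (model-agnostic)."""
    def r2(y_hat):
        return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)
    baseline = r2(predict(X))
    drops = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])  # break this column's link to y
            drops[j] += baseline - r2(predict(X_perm))
    return drops / n_repeats

rng = np.random.default_rng(0)
n = 500
descriptor = rng.normal(size=n)                              # hypothetical physical descriptor
research_group = rng.integers(0, 3, size=n).astype(float)    # hypothetical group code
y = 2.0 * research_group + 0.1 * rng.normal(size=n)          # target leaks through the group
X = np.column_stack([descriptor, research_group])

# Fit a linear model (evaluated on the training data here, for brevity).
coef, *_ = np.linalg.lstsq(np.column_stack([X, np.ones(n)]), y, rcond=None)

def predict(Z):
    return np.column_stack([Z, np.ones(len(Z))]) @ coef

importance = permutation_importance(predict, X, y, rng)
print(importance.round(2))  # large value for research_group, near zero for descriptor
```

In a real audit, compute importances on held-out data so that overfitting does not inflate them.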

Test Model on Deliberate Edge Cases and New Experimental Data

- Objective: Evaluate the model's generalization capability beyond its training comfort zone.

- Protocol: Create a dedicated test set containing material compositions or structures that are under-represented in the training data. Better yet, use the model to propose new materials, then synthesize and test them in the lab, as done in autonomous discovery platforms like CRESt. A significant performance drop between validation and this real-world test is a key indicator of problematic inductive bias [12].
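One way to build such an edge-case test set is to hold out every class that is rare in the available data. A standard-library sketch, assuming each sample carries a categorical label such as a crystal system; the labels and the 10% threshold are hypothetical.

```python
from collections import Counter

def rare_class_holdout(labels, max_share=0.1):
    """Put every sample of a class rarer than `max_share` into the test set."""
    counts = Counter(labels)
    n = len(labels)
    rare = {c for c, k in counts.items() if k / n < max_share}
    train_idx = [i for i, c in enumerate(labels) if c not in rare]
    test_idx = [i for i, c in enumerate(labels) if c in rare]
    return train_idx, test_idx, rare

labels = ["cubic"] * 12 + ["hexagonal"] * 6 + ["monoclinic", "triclinic"]
train_idx, test_idx, rare = rare_class_holdout(labels)
print(sorted(rare), test_idx)  # ['monoclinic', 'triclinic'] [18, 19]
```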

Correction Actions

Diversify Data Sources and Apply Sampling Weights

- Methodology: Actively collect data from underrepresented regions of the materials space. If that's not immediately possible, assign higher sampling weights to rare classes during training to balance their influence on the model's learning process [9].
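A common way to set such weights is inverse class frequency, scaled so the weights sum to the number of samples. A standard-library sketch with hypothetical material-class labels; the exact weighting scheme is a design choice, not one prescribed by [9].

```python
from collections import Counter

def inverse_frequency_weights(labels):
    """Per-sample weights so that every class contributes equally in total."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return [n / (k * counts[c]) for c in labels]

weights = inverse_frequency_weights(["oxide"] * 3 + ["MOF"])
print([round(w, 3) for w in weights])  # [0.667, 0.667, 0.667, 2.0]
```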

Apply Bias-Correction Algorithms

- Methodology: Integrate technical methods like adversarial debiasing into your training pipeline. This technique involves training a companion model (the adversary) to predict a sensitive attribute (e.g., whether a material belongs to a historically over-represented class) from the main model's predictions. The main model is then trained to maximize predictive accuracy for the target property while minimizing the adversary's ability to predict the sensitive attribute, thus forcing it to learn features that are invariant to that bias [9] [14].

Implement Human-in-the-Loop Validation and Active Learning

- Methodology: For high-stakes predictions, do not rely solely on the AI. Establish a protocol where model outputs, especially for novel or high-uncertainty regions, are validated by a materials expert. Furthermore, use active learning frameworks, including reinforcement fine-tuning as seen in CrystalFormer-RL, where the model's own predictions are evaluated by discriminative models or experiments, and the results are fed back to iteratively improve the model [9] [15]. This creates a corrective feedback loop.
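The active-learning half of this loop often starts with uncertainty sampling: query the candidates on which an ensemble disagrees most. The NumPy sketch below uses bootstrapped straight-line fits as a deliberately simple ensemble. It is a generic illustration, not the CrystalFormer-RL procedure, and the data and candidate descriptors are hypothetical.

```python
import numpy as np

def bootstrap_linear_ensemble(x, y, rng, n_models=20):
    """Fit a straight line to each of n_models bootstrap resamples of (x, y)."""
    coefs = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x), size=len(x))  # sample with replacement
        coefs.append(np.polyfit(x[idx], y[idx], deg=1))
    return np.array(coefs)                           # rows: (slope, intercept)

def select_queries(coefs, candidates, n_queries=1):
    """Indices of the candidates on which the ensemble disagrees most."""
    preds = coefs[:, :1] * candidates[None, :] + coefs[:, 1:]  # (n_models, n_candidates)
    spread = preds.std(axis=0)
    return np.argsort(spread)[::-1][:n_queries]

rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 30)                # descriptor values already measured
y = 2.0 * x + 0.1 * rng.normal(size=30)      # hypothetical measured property
coefs = bootstrap_linear_ensemble(x, y, rng)
candidates = np.array([0.5, 0.9, 5.0])       # hypothetical untested materials
print(select_queries(coefs, candidates))     # [2]: the far-from-data candidate
```

The selected candidates are the ones to route to a materials expert or to the lab; their results are then added to `x` and `y` before refitting.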

Quantitative Data on Bias Impacts

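The table below summarizes documented impacts of data bias from domains outside materials science (hiring, medicine, facial recognition, lending, and recommendation systems), illustrating the tangible costs of inaction.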

| Bias Type | Real-World Example | Impact / Quantitative Cost |
|---|---|---|
| Historical Bias [9] | Hiring algorithm trained on male-dominated tech industry data. | Amazon scrapped an AI recruiting tool for penalizing resumes containing the word "women's" [9]. |
| Selection Bias [9] | Medical diagnostic tool trained on data from a single hospital. | Model performance dropped significantly when used in different regions with more diverse patient populations [9]. |
| Sampling Bias [9] [14] | Facial recognition trained on a dataset lacking diversity. | Higher error rates for darker skin tones; one model amplified a 33% gender disparity in images to 68% [14]. |
| Proxy Variable Bias [9] | Loan approval algorithm using ZIP code data. | Unfair discrimination against people from lower-income backgrounds, even with strong credit histories [9]. |
| Feedback Loop Bias [9] | Recommendation engine for content or products. | Creates a "filter bubble," reinforcing initial biases and limiting user exposure to new options [9]. |

Experimental Protocols for Bias Mitigation

Protocol 1: Reinforcement Fine-Tuning for Property-Guided Generation

This protocol is based on the CrystalFormer-RL methodology, which uses reinforcement learning (RL) to fine-tune a generative materials model, infusing knowledge from discriminative models to reduce bias and guide discovery toward stable, high-performance materials [15].

- Objective: Correct the bias of a pre-trained generative model to favor materials with specific, desirable properties (e.g., low energy above convex hull for stability, high dielectric constant).

- Pre-Trained Model: Start with a base generative model, such as CrystalFormer, which has been pre-trained on a broad dataset of crystal structures (e.g., the Alex-20 dataset) [15].

- Reward Model: Define a reward function, r(x), based on a discriminative model. This can be a Machine Learning Interatomic Potential (MLIP) to predict energy above hull (for stability) or a property prediction model for functional properties [15].

- RL Fine-Tuning: Use a reinforcement learning algorithm (e.g., Proximal Policy Optimization, PPO) to fine-tune the generative model. The objective is to maximize the expected reward of generated samples while preventing the model from straying too far from its original, general knowledge. This is formalized by maximizing the objective function [15]:

$$\mathcal{L} = \mathbb{E}_{x \sim p_\theta(x)}\left[ r(x) - \tau \ln \frac{p_\theta(x)}{p_{\text{base}}(x)} \right]$$

Here, pθ(x) is the current model, p_base(x) is the original pre-trained model, and τ is a parameter controlling the strength of the deviation penalty [15].

- Iteration: The fine-tuned model generates new candidate structures, which are evaluated by the reward model. The reward signals are then used to update the model's policy in the next iteration, creating a closed loop that progressively reduces bias toward unstable or low-performance materials [15].
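To see what the τ term does, the NumPy sketch below maximizes this objective exactly on a toy space of three hypothetical candidate structures. It uses the exact gradient with respect to softmax logits rather than sampled PPO updates. For this objective the optimum has the known closed form p*(x) ∝ p_base(x)·exp(r(x)/τ), so the result can be checked: small τ lets the rewards dominate, while large τ keeps the model near the base distribution. The probabilities, rewards, and step sizes are assumptions.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def objective(p, p_base, r, tau):
    """L = E_{x~p}[ r(x) - tau * ln(p(x) / p_base(x)) ] on a finite space."""
    return float(np.sum(p * (r - tau * np.log(p / p_base))))

def fine_tune(p_base, r, tau, lr=0.05, steps=20000):
    logits = np.log(p_base)                      # start from the pre-trained model
    for _ in range(steps):
        p = softmax(logits)
        f = r - tau * np.log(p / p_base)         # KL-penalized reward per candidate
        logits = logits + lr * p * (f - np.sum(p * f))  # exact gradient dL/dlogits
    return softmax(logits)

p_base = np.array([0.7, 0.2, 0.1])  # base model favours candidate 0
r = np.array([0.0, 1.0, 2.0])       # reward model favours candidate 2
tau = 0.5
p = fine_tune(p_base, r, tau)
closed_form = p_base * np.exp(r / tau) / np.sum(p_base * np.exp(r / tau))
print(np.round(p, 3), np.round(closed_form, 3))
print(objective(p_base, p_base, r, tau), objective(p, p_base, r, tau))
```

The fine-tuned distribution shifts mass toward the high-reward candidate without collapsing onto it; raising `tau` pulls it back toward `p_base`.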

Protocol 2: Multi-Modal Active Learning with the CRESt Platform

This protocol leverages the CRESt platform to combat bias by integrating diverse data sources, moving beyond single data streams that can create a narrow, biased view [12].

- Objective: Accelerate materials discovery while maintaining reproducibility and reducing bias by incorporating literature knowledge, experimental data, and human feedback.

- Platform Setup: Employ the CRESt platform, which integrates robotic synthesizers (e.g., liquid-handling robots, carbothermal shock systems), automated characterization tools (e.g., electron microscopy, electrochemical workstations), and multi-modal AI models [12].

- Knowledge Embedding: For a given recipe, the system creates a numerical representation (embedding) based on previous knowledge from scientific literature and databases before conducting the experiment [12].

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on this high-dimensional knowledge embedding space to identify a reduced search space that captures most of the performance variability [12].

- Bayesian Optimization (BO): Use BO in this reduced, knowledge-informed space to design the next best experiment. This is more efficient than standard BO, which can get lost in a vast, poorly defined parameter space [12].

- Feedback Loop: After the experiment, feed all newly acquired data (text, images, electrochemical results) and human feedback back into the model. This updates the knowledge base and refines the search space for the next iteration, creating a self-correcting system that mitigates initial data biases [12].
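The Bayesian optimization step can be sketched with a zero-mean Gaussian process and an upper-confidence-bound (UCB) acquisition, one common BO variant; CRESt's actual surrogate and acquisition function may differ. This NumPy sketch assumes the knowledge embeddings have already been projected into a low-dimensional space (for example, by PCA). The RBF kernel, length scale, β, and data are assumptions.

```python
import numpy as np

def rbf(A, B, length=1.0):
    """Squared-exponential kernel with unit prior variance."""
    sq_dist = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / length ** 2)

def gp_ucb_next(Z_obs, y_obs, Z_cand, beta=2.0, length=1.0, noise=1e-6):
    """Index of the candidate maximizing posterior mean + beta * posterior std."""
    K = rbf(Z_obs, Z_obs, length) + noise * np.eye(len(Z_obs))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))  # K^{-1} y
    K_s = rbf(Z_cand, Z_obs, length)                          # (n_cand, n_obs)
    mean = K_s @ alpha
    v = np.linalg.solve(L, K_s.T)                             # (n_obs, n_cand)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 0.0, None)    # prior variance is 1
    return int(np.argmax(mean + beta * np.sqrt(var)))

Z_obs = np.array([[0.0], [1.0]])          # reduced-space coordinates already tested
y_obs = np.array([0.0, 0.5])              # hypothetical measured performance
Z_cand = np.array([[0.0], [0.5], [3.0]])  # hypothetical untested recipes
print(gp_ucb_next(Z_obs, y_obs, Z_cand, beta=2.0))  # 2: explore the unvisited region
print(gp_ucb_next(Z_obs, y_obs, Z_cand, beta=0.0))  # 1: exploit the best predicted mean
```

Working in the reduced space keeps the GP small and its uncertainty meaningful, which is the efficiency argument made for the knowledge-informed search space.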

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and experimental components used in advanced, bias-aware materials AI research, as featured in the cited protocols.

| Item / Solution | Function in Bias-Aware Research |
|---|---|
| Pre-trained Generative Model (e.g., CrystalFormer) [15] | Provides a foundational, pre-existing distribution of crystal structures p_base(x) which serves as the starting point for reinforcement fine-tuning, helping to anchor the model in realistic chemistry. |
| Discriminative Reward Model (e.g., MLIP, Property Predictor) [15] | Acts as a source of truth or a "compass" to guide the generative model. It provides the reward signal r(x) that pushes the model to generate materials with desired properties, directly countering historical biases in the base training data. |
| Reinforcement Learning Algorithm (e.g., Proximal Policy Optimization) [15] | The engine of fine-tuning. It optimizes the objective function that balances maximizing reward with staying close to the base model, implementing the bias correction. |
| Multi-Modal Knowledge Base (as in CRESt) [12] | Aggregates text, images, and data from literature and experiments. By using diverse information sources, it reduces reliance on any single, potentially biased, data stream. |
| Automated Robotic Platform (High-Throughput Synthesis & Test) [12] | Rapidly generates large, diverse datasets of experimental results. This data is crucial for identifying and correcting gaps (selection bias) in existing theoretical or literature-based data. |
| Human-in-the-Loop Feedback [9] [12] | The researcher provides critical domain expertise, contextual understanding, and ethical judgment that pure AI models lack. This is essential for validating outputs, debugging irreproducibility, and ensuring the research remains aligned with its goals. |

FAQs on Experimental Design and Synthesis Bias

This FAQ addresses how systematic biases in experimental focus can skew research data and machine learning models, and provides strategies for mitigation.

1. How does "researcher intuition" actually introduce bias into materials science?

Researcher intuition often relies on heuristics (mental shortcuts or "rules of thumb") for efficient decision-making. While practical, these heuristics are susceptible to systematic errors and cognitive biases [16].

- Types of Problematic Heuristics: The availability heuristic leads scientists to choose synthesis methods that come to mind most easily, often those most published. The representativeness heuristic can cause assumptions that similar materials will have similar synthesis pathways, overlooking viable alternatives. The adjustment heuristic means the initial conditions (e.g., a commonly used precursor) heavily influence the final outcome, preventing sufficient exploration [16].

- Impact: This reliance on intuition and established convention can inadvertently limit the diversity of tested reactions and conditions, a form of "curricular bias" in experimental practice [16] [17].

2. What is the 'Easier-to-Synthesize' problem?

The 'Easier-to-Synthesize' problem is the tendency to prioritize and repeatedly investigate materials that are more straightforward to make, rather than those that might have optimal properties. This happens because:

- Synthesis is a Pathway Problem: Creating a material requires a specific, viable reaction path. A thermodynamically stable material is not necessarily synthesizable if the kinetic pathway involves competing phases or impurities [18]. For example, the multiferroic BiFeO₃ is notoriously difficult to synthesize without impurities like Bi₂Fe₄O₉, and the solid electrolyte LLZO readily forms the impurity La₂Zr₂O₇ at high temperatures [18].

- Convenience Overrides Optimization: Once a "good enough" synthesis route is established, it becomes the convention. A study of barium titanate (BaTiO₃) recipes found that 144 out of 164 entries used the same precursors (BaCO₃ + TiO₂), a route known for high temperatures and long reaction times, despite the potential for better alternatives [18].

3. How does this experimental bias affect machine learning (ML) in materials science?

ML models are trained on data from published scientific literature, which is a reflection of past experimental choices, not a comprehensive map of all possible chemical reactions.

- Amplification of Human Bias: An ML model trained on such a human-curated dataset can learn and reinforce these historical preferences. A study on vanadium borates found that a model trained on a human-generated dataset was less successful at predicting reaction outcomes than a model trained on a dataset with randomly generated conditions [19].

- Limited Discovery: This creates a feedback loop where ML models are better at proposing materials similar to known ones, potentially missing novel, high-performing materials that require unconventional synthesis routes [18] [19]. The scientific literature largely omits "negative results" (failed attempts), further skewing the data available for training [18].

Troubleshooting Guide: Identifying and Correcting for Bias

| Problem Symptom | Diagnosis | Corrective Action & Experimental Protocol |
|---|---|---|
| Low reproducibility or varying properties (e.g., surface area) in a published synthesis. | Likely unreported phase impurities or a narrow window of thermodynamic stability for the target material. | Action: Systematically vary key synthesis parameters to map the phase space. Protocol: As done for MOF-235/MIL-101, test different solvent ratios (e.g., DMF:ethanol), reactant stoichiometries (e.g., Fe:TPA), and temperatures. Use XRD and BET surface area analysis to correlate conditions with phase purity [20]. |
| ML model proposals are uncreative or fail in the lab. | Model is likely suffering from biased training data that over-represents certain pathways. | Action: Augment training data with deliberately diverse or random conditions. Protocol: As demonstrated for vanadium borates, incorporate randomly generated reaction conditions into your training sets. Actively seek out and test "negative data" or unconventional precursor combinations to break the model's reliance on historical biases [19]. |
| A common synthesis route is inefficient, costly, or unreliable. | The conventional method may be based on historical convenience rather than optimal performance. | Action: Employ statistical optimization methods to explore a wider parameter space efficiently. Protocol: Use methods like Orthogonal Experimental Design to screen multiple factors (e.g., power, time, concentration) simultaneously. This was used to optimize a microwave reactor, determining the best combination of power (200 W), time (100 min), and concentration (50 mmol/L) for MOF synthesis [21]. |
| Need to find a new synthesis pathway for a computationally predicted material. | The obvious pathway may be kinetically hindered; you need to find a "mountain pass" instead of going "over the top" [18]. | Action: Use a reaction network-based approach to generate hundreds of potential pathways. Protocol: Model alternative reaction pathways starting from different precursors, including rarely tested intermediate phases. Use thermodynamic modeling and machine learning to filter for low-energy-barrier routes that avoid problematic byproducts before lab validation [18]. |
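As a sketch of how an orthogonal design is analysed, the NumPy code below uses the standard L9(3^4) orthogonal array, which screens up to four three-level factors in nine runs instead of 81. Only three columns are used, for three hypothetical factors (power, time, and concentration, each at three coded levels). The "measured" responses are synthetic and additive, so this is not a reproduction of the study in [21]. Because every level of one factor meets every level of another exactly once, averaging the runs at each level isolates that factor's main effect.

```python
import numpy as np

# Standard L9(3^4) orthogonal array: 9 runs, 4 columns, levels coded 0, 1, 2.
L9 = np.array([
    [0, 0, 0, 0], [0, 1, 1, 1], [0, 2, 2, 2],
    [1, 0, 1, 2], [1, 1, 2, 0], [1, 2, 0, 1],
    [2, 0, 2, 1], [2, 1, 0, 2], [2, 2, 1, 0],
])
FACTORS = ["power", "time", "concentration"]  # hypothetical factor names
design = L9[:, :3]                             # one column per factor

def main_effects(design, response):
    """Mean response at each level of each factor (rows: factors, cols: levels)."""
    return np.array([[response[design[:, f] == level].mean() for level in range(3)]
                     for f in range(design.shape[1])])

# Synthetic, additive "lab results" standing in for the nine real runs.
true_effects = [[1.0, 3.0, 2.0], [0.0, 0.5, 2.0], [2.0, 1.0, 0.0]]
response = np.array([sum(true_effects[f][row[f]] for f in range(3)) for row in design])

best = {name: int(level)
        for name, level in zip(FACTORS, main_effects(design, response).argmax(axis=1))}
print(best)  # {'power': 1, 'time': 2, 'concentration': 0}
```

With real data the factors may interact, in which case main effects alone can mislead and a confirmation run at the chosen levels is still needed.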

Visualizing the Bias Cycle and Solution Strategy

The following diagram illustrates how experimental bias is perpetuated and where corrective strategies can be applied.

The Self-Reinforcing Cycle of Experimental Bias and Correction Points

The Scientist's Toolkit: Key Reagents & Methods for Unbiased Synthesis

The following table lists essential components and methods for developing robust and reproducible synthesis protocols.

| Item / Method | Function & Role in Mitigating Bias |
|---|---|
| DMF (N,N-Dimethylformamide) | A common solvent in MOF synthesis. Its ratio to other solvents (e.g., Ethanol) is a critical parameter determining phase purity, as variations can lead to different products (e.g., MOF-235 vs. MIL-101) [20]. |
| Orthogonal Experimental Design | A chemometric method that efficiently screens the individual and interactive effects of multiple experimental factors without testing every possible combination, saving resources while providing robust optimization data [21]. |
| Decision Table (Rough Set Theory) | A data analysis method for optimizing synthesis conditions. It helps identify core attributes (critical synthesis parameters) and allows for attribute reduction, simplifying complex optimization processes by focusing on the most impactful factors [22]. |
| X-ray Diffraction (XRD) | The primary technique for verifying the phase purity of a synthesized material. It is essential for diagnosing impurities and confirming the success of a synthesis, as shown in the identification of MIL-101 contamination in MOF-235 samples [20]. |
| BET Surface Area Analysis | A key characterization method to confirm the porous structure of materials like MOFs. It provides a quantitative measure that correlates with phase purity, where a higher-than-expected surface area can indicate the presence of a more porous impurity phase (e.g., MIL-101) [20]. |

Frequently Asked Questions (FAQs)

Q1: What are the most common architectural inductive biases in Graph Neural Networks (GNNs) for atomic systems, and why are they necessary?

GNNs designed for 3D atomic systems incorporate specific architectural inductive biases to respect the fundamental physical symmetries and properties of atomic structures. The most common biases are E(3)-invariance and E(3)-equivariance [23] [24]. E(3)-invariance ensures that model predictions for scalar properties (like energy) remain unchanged under rotations, translations, and reflections of the input structure. This is necessary because the energy of a molecule should not depend on its orientation in space. E(3)-equivariance ensures that predictions for vectorial properties (like a dipole moment) transform consistently with the input structure. These biases are necessary because they build the known laws of physics directly into the model architecture, reducing the hypothesis space the model must learn from and leading to better generalization, especially with limited data [24].
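Both properties can be checked numerically. In this NumPy sketch (hypothetical coordinates and charges), a Lennard-Jones-style energy built only from interatomic distances is unchanged by a random rotation or reflection plus a translation, while the dipole moment of a neutral charge set rotates along with the structure. E(3)-equivariant GNNs enforce such behaviour through their layers; here it follows simply from the choice of inputs.

```python
import numpy as np

def pair_energy(pos):
    """Toy Lennard-Jones-style energy that depends only on interatomic distances."""
    i, j = np.triu_indices(len(pos), k=1)
    r = np.linalg.norm(pos[i] - pos[j], axis=1)
    return float(np.sum(4.0 * (r ** -12 - r ** -6)))

def dipole(pos, charges):
    """Dipole moment sum_i q_i * r_i (translation-independent when sum(q) = 0)."""
    return charges @ pos

pos = np.array([[0.0, 0.0, 0.0], [1.1, 0.0, 0.0], [0.0, 1.2, 0.0],
                [0.0, 0.0, 1.3], [1.0, 1.0, 1.0]])   # hypothetical atoms
charges = np.array([0.4, -0.1, -0.1, -0.1, -0.1])   # neutral overall

rng = np.random.default_rng(0)
Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))  # random orthogonal matrix (may include a reflection)
t = np.array([0.3, -1.2, 2.0])                # arbitrary translation
moved = pos @ Q.T + t                         # apply x -> Q x + t to every atom

print(np.isclose(pair_energy(pos), pair_energy(moved)))                 # True: invariant
print(np.allclose(dipole(moved, charges), dipole(pos, charges) @ Q.T))  # True: equivariant
```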

Q2: My GNN model for molecule property prediction shows poor performance. What could be the issue?

Poor performance can stem from several issues related to an inadequate inductive bias or model architecture:

- Incorrect Symmetry Handling: If you are predicting a scalar property but your model is not E(3)-invariant, it will waste capacity learning to be invariant to rotations and translations, rather than focusing on the relevant chemical information [23] [24].

- Over-squashing or Over-smoothing: These are common issues in deep message-passing networks. Over-squashing occurs when information from a rapidly growing set of distant nodes must be compressed into fixed-size node vectors and is distorted or lost; over-smoothing occurs when node representations become nearly indistinguishable after many message-passing steps. Both are particularly problematic in large molecules where long-range interactions are important [25].

- Insufficient Geometric Information: Relying solely on the chemical graph (atoms and bonds) may not be enough. Many state-of-the-art models explicitly incorporate geometric information like interatomic distances, bond angles, and dihedral angles to better model atomic interactions [24].
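Over-smoothing can be observed even without learned weights. This minimal sketch assumes a parameter-free mean aggregation with self-loops (a simplification; real GNN layers add learned transforms and nonlinearities) on a hypothetical 6-atom chain, and tracks how far node embeddings sit from their centroid as steps accumulate.

```python
import numpy as np

def mean_aggregate(adj: np.ndarray, h: np.ndarray) -> np.ndarray:
    """One parameter-free message-passing step: average over each node and its neighbors."""
    a_hat = adj + np.eye(len(adj))              # add self-loops
    deg = a_hat.sum(axis=1, keepdims=True)
    return (a_hat @ h) / deg

def feature_spread(h: np.ndarray) -> float:
    """Mean distance of node embeddings from their centroid; near 0 means over-smoothed."""
    return float(np.linalg.norm(h - h.mean(axis=0), axis=1).mean())

# Hypothetical 6-atom chain graph with random 4-dimensional node features.
n = 6
adj = np.zeros((n, n))
for i in range(n - 1):
    adj[i, i + 1] = adj[i + 1, i] = 1.0

h = np.random.default_rng(0).normal(size=(n, 4))
spreads = []
for step in range(50):
    spreads.append(feature_spread(h))
    h = mean_aggregate(adj, h)

print([round(s, 4) for s in spreads[::10]])
```

On a connected graph the spread shrinks toward zero as steps accumulate, which is why stacking many layers can make node embeddings indistinguishable.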

Q3: How can I effectively model both local (covalent) and non-local (non-covalent) interactions in a single model?

An effective strategy is to use a multiplex graph representation, which uses separate graph layers to model different types of interactions [24]. For example:

- A local graph can be defined using chemical bonds or a small distance cutoff, and its message passing can incorporate complex geometric information like angles.

- A global graph can be defined using a larger distance cutoff to capture non-local interactions (e.g., van der Waals, electrostatic), and its message passing can rely primarily on pairwise distances for efficiency.

A fusion module, such as an attention mechanism, can then learn to combine the node embeddings from both layers for the final prediction. This approach is inspired by molecular mechanics, where energy is computed separately for local and non-local terms [24].
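The two layers differ mainly in how edges are built. The sketch below constructs both edge sets from atomic coordinates with two radius cutoffs; the cutoff values (1.7 and 5.0, in Angstrom) and the linear toy molecule are illustrative assumptions, and this is a generic construction rather than the exact graph-building code of PAMNet [24].

```python
import numpy as np

def radius_edges(positions: np.ndarray, cutoff: float) -> set:
    """Undirected edges (i, j) with i < j for every atom pair closer than `cutoff`."""
    d = np.linalg.norm(positions[:, None, :] - positions[None, :, :], axis=-1)
    i_idx, j_idx = np.where(np.triu(d < cutoff, k=1))  # upper triangle, no self-pairs
    return set(zip(i_idx.tolist(), j_idx.tolist()))

def multiplex_edges(positions, local_cutoff=1.7, global_cutoff=5.0):
    """Hypothetical two-layer construction: a short-range 'local' layer and a
    long-range 'global' layer. Cutoff values are illustrative assumptions."""
    return radius_edges(positions, local_cutoff), radius_edges(positions, global_cutoff)

# Toy linear chain of 5 atoms spaced 1.5 Angstrom apart.
pos = np.array([[1.5 * i, 0.0, 0.0] for i in range(5)])
local, global_ = multiplex_edges(pos)
print(sorted(local))   # → [(0, 1), (1, 2), (2, 3), (3, 4)]
print(len(global_))    # → 9
```

The local layer keeps only nearest neighbors (where angle-aware messages are affordable), while the global layer also links atoms up to three positions apart.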

Q4: What does "graph unlearning" mean in the context of materials science, and when would I need it?

Graph unlearning involves removing the influence of a subset of training data (e.g., specific atoms or molecules) from a trained GNN model. This is primarily driven by privacy regulations like GDPR, which grant individuals the "right to be forgotten" [26]. In a research context, you might need it to correct for biased data or to update a model after discovering that certain data points are erroneous. A successfully unlearned model should completely remove the information of the target data, maintain high performance on the original task (model utility), and be efficient to compute [26].

Troubleshooting Guides

Issue: Model Fails to Capture Long-Range Interactions in Large Molecules or Crystals

Problem: Predictions are inaccurate for properties that depend on interactions between atoms that are far apart in the graph but close in 3D space (e.g., in proteins or crystal materials).

Diagnosis Steps:

- Check the number of message-passing layers (K): Information can only propagate K hops in the graph. If K is smaller than the graph's diameter, some pairs of distant nodes cannot exchange information.

- Identify node bottlenecks: Certain graph structures can "squash" information from a large receptive field into a fixed-size vector, leading to information loss [25].

- Verify the graph construction: Ensure your graph includes edges for relevant non-covalent interactions (e.g., using a distance cutoff) and is not limited to just covalent bonds.
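The first diagnostic step can be automated. This sketch computes the diameter of a connected, unweighted graph with breadth-first search and compares it with K; the adjacency-dictionary format and the 8-atom chain are hypothetical choices for illustration.

```python
from collections import deque

def bfs_eccentricity(adj_list: dict, source) -> int:
    """Longest shortest-path distance (in hops) from `source` to any reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in adj_list[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return max(dist.values())

def graph_diameter(adj_list: dict) -> int:
    """Diameter of a connected, unweighted graph via BFS from every node."""
    return max(bfs_eccentricity(adj_list, v) for v in adj_list)

def check_receptive_field(adj_list: dict, num_layers: int) -> bool:
    """True if K message-passing layers can, in principle, connect every pair of nodes."""
    return num_layers >= graph_diameter(adj_list)

# Hypothetical 8-atom chain (e.g., a bonded backbone).
chain = {i: [j for j in (i - 1, i + 1) if 0 <= j < 8] for i in range(8)}
print(graph_diameter(chain))                       # → 7
print(check_receptive_field(chain, num_layers=4))  # → False
```

Running BFS from every node costs O(|V|(|V| + |E|)), which is acceptable for individual molecules but may need sampling for large periodic supercells.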

Solutions:

- Increase Model Depth: Carefully increase the number of message-passing layers K to be at least the diameter of the graph. Be wary of over-smoothing.

- Use a Multiplex Architecture: Implement a framework like PAMNet that explicitly adds a global interaction graph with a large cutoff to capture non-local edges efficiently [24].

- Incorporate Higher-Order Representations: Explore architectures beyond standard Message Passing Neural Networks (MPNNs), such as subgraph GNNs or spectral methods that can better capture long-range dependencies [25] [27].

Issue: Model is Not Data-Efficient

Problem: The GNN requires an impractically large amount of labeled training data to achieve good performance.

Diagnosis Steps:

- Evaluate the inductive bias: A weak inductive bias means the model has to learn more of the problem's structure from data. Check if your model properly encodes physical symmetries (E(3)-invariance/equivariance) and geometric constraints [23].

- Analyze the task: Property prediction for a new class of materials with limited data is a common challenge.

Solutions:

- Strengthen Physics-Based Biases: Adopt a geometric GNN that explicitly uses distances and angles, and guarantees E(3)-invariance. This directly encodes physical intuition [24].

- Employ Self-Supervised Learning (SSL): Use Graph Contrastive Learning (GCL) for pre-training. The model can learn rich representations from unlabeled molecular data by solving a pretext task, such as distinguishing between original and augmented graph views, before fine-tuning on the limited labeled data [27].

- Leverage Multi-Task Learning: Train a single model to predict multiple related properties simultaneously. This encourages the model to learn more robust and generalizable representations [25].
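The contrastive pre-training objective behind GCL can be sketched compactly. The function below is an InfoNCE-style loss: row i of each view is a positive pair and all other rows act as negatives. As an assumption for brevity, the "augmented views" are simulated by adding small Gaussian noise to stand-in embeddings, whereas real GCL pipelines augment the graphs themselves (e.g., node dropping or edge perturbation) and pass them through a GNN encoder.

```python
import numpy as np

def info_nce_loss(z1: np.ndarray, z2: np.ndarray, temperature: float = 0.5) -> float:
    """InfoNCE-style contrastive loss between two views of the same batch of graphs.

    Row i of z1 and row i of z2 embed two augmentations of graph i (a positive pair);
    every other row of z2 serves as a negative for row i of z1.
    """
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)   # unit vectors -> cosine similarity
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature                       # (batch, batch) similarity matrix
    logits = logits - logits.max(axis=1, keepdims=True)    # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))            # positives lie on the diagonal

rng = np.random.default_rng(0)
emb = rng.normal(size=(8, 16))                    # stand-in embeddings for 8 graphs
view_a = emb + 0.05 * rng.normal(size=emb.shape)  # hypothetical "augmented" views
view_b = emb + 0.05 * rng.normal(size=emb.shape)
mismatched = np.roll(view_b, shift=1, axis=0)     # every row paired with the wrong graph

print(info_nce_loss(view_a, view_b), info_nce_loss(view_a, mismatched))
```

Correctly matched views give a much lower loss than mismatched ones; pre-training minimizes this loss with respect to the encoder's parameters before fine-tuning on labeled data.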

Issue: Implementing Effective Graph Unlearning

Problem: You need to remove the data and influence of specific nodes (e.g., a particular molecule) from a trained GNN to comply with privacy requests or correct for bias, but retraining from scratch is too expensive.

Diagnosis Steps:

- Determine the unlearning scope: Identify if you need to remove nodes, edges, or entire graphs.

- Assess the neighborhood impact: Recognize that removing a node also affects the representations of its neighbors due to the message-passing mechanism [26].

Solutions:

- Use a Loss-Based Unlearning Method: Frameworks like Node-CUL (Node-level Contrastive Unlearning) directly optimize the model's embedding space instead of retraining [26].

- Follow a Two-Step Unlearning Process:

- Node Representation Unlearning: Adjust the embeddings of the target "unlearning" nodes so they become similar to unseen test nodes. This is done by pushing their embeddings away from same-class neighbors and pulling them towards different-class nodes [26].

- Neighborhood Reconstruction: Optimize the embeddings of the neighbors of the unlearning nodes to remove the influence of the forgotten node, thereby preserving the model's utility on the remaining graph [26].

Experimental Protocols & Methodologies

Protocol: Benchmarking GNN Architectures for Material Property Prediction

Objective: Compare the performance of different GNN architectures on a standardized set of material property prediction tasks.

Datasets:

- Materials Project: A large database of computed crystal structures and properties.

- QM9: A dataset of quantum chemical properties for ~134k small organic molecules.

Methodology:

- Data Preprocessing: Standardize the conversion of crystal structures (CIF files) or molecules (SDF files) into graph representations. Common node features include atomic number, valence, etc. Edge features can include bond type or distance.

- Model Training:

- Architectures: Train and evaluate the following model classes:

- Training Regime: Use a consistent data split (e.g., 80/10/10 train/validation/test), optimizer (e.g., Adam), and loss function (e.g., Mean Squared Error for regression) across all models.

- Evaluation: Compare models based on accuracy (e.g., Mean Absolute Error) and computational efficiency (training time, memory usage).
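A consistent split is easiest to guarantee by generating the index arrays once from a fixed seed and reusing them for every architecture. The helper below is a minimal sketch; the seed value and function names are illustrative assumptions.

```python
import numpy as np

def split_indices(n: int, fractions=(0.8, 0.1, 0.1), seed: int = 42):
    """Shuffle indices once with a fixed seed and cut them into train/val/test.

    Reusing the same seed (or saving the index arrays) gives every architecture
    the identical split.
    """
    assert abs(sum(fractions) - 1.0) < 1e-9
    perm = np.random.default_rng(seed).permutation(n)
    n_train = int(fractions[0] * n)
    n_val = int(fractions[1] * n)
    return perm[:n_train], perm[n_train:n_train + n_val], perm[n_train + n_val:]

def mae(y_true, y_pred) -> float:
    """Mean Absolute Error between true and predicted property values."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))

train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))  # → 800 100 100
print(mae([1.0, 2.0, 3.0], [1.5, 2.0, 2.0]))         # → 0.5
```

A purely random split can overestimate performance on unseen chemistries; the same helper can be applied per group if a cluster- or composition-based split is preferred.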

Table 1: Key Quantitative Metrics for GNN Benchmarking

| Metric | Description | Ideal Value |
|---|---|---|
| Mean Absolute Error (MAE) | Average absolute difference between predicted and true values. | Lower is better |
| Training Time (per Epoch) | Time required to process the entire training set once. | Lower is better |
| Inference Memory Usage | Maximum memory consumed during prediction on the test set. | Lower is better |
| Parameter Count | Total number of trainable parameters in the model. | Context-dependent |

Protocol: A Standard Message-Passing (MPNN) Workflow

The following diagram illustrates the core workflow of a Message Passing Neural Network (MPNN), a common framework for GNNs in materials science [25].

Detailed Steps:

- Graph Representation: Convert the atomic system into a graph \( G = (V, E) \), where atoms are nodes \( v \in V \) and chemical bonds/interactions are edges \( e \in E \). Initialize node features \( h_v^0 \) (e.g., atom type) and edge features \( e_{vw} \) (e.g., bond length) [25].

- Message Passing (K steps): For each node, iteratively aggregate information from its neighbors.

- Message Function (\( M_t \)): For each node \( v \), a message \( m_v^{t+1} \) is computed as the sum of messages from its neighbors \( N(v) \): \( m_v^{t+1} = \sum_{w \in N(v)} M_t(h_v^t, h_w^t, e_{vw}) \) [25].

- Update Function (\( U_t \)): Each node's embedding is updated using its previous embedding and the incoming message: \( h_v^{t+1} = U_t(h_v^t, m_v^{t+1}) \) [25]. After K steps, each node's embedding contains information from its K-hop neighborhood.

- Readout/Global Pooling: A permutation-invariant function \( R \) (e.g., sum, mean, or a learned function) pools all final node embeddings \( \{h_v^K\} \) into a single graph-level embedding: \( y = R(\{h_v^K \mid v \in G\}) \) [25].

- Prediction: The graph-level embedding \( y \) is passed through a final network (e.g., a fully-connected layer) to make a prediction, such as a material's formation energy.
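The steps above can be traced in a compact forward pass. This is a minimal sketch under stated assumptions: the weights are random and untrained, \( M_t \) and \( U_t \) are single tanh layers, node features are already HIDDEN-dimensional, and the readout \( R \) is a sum. It is not a specific published MPNN, but it does show that sum readout makes the prediction independent of how atoms are numbered.

```python
import numpy as np

rng = np.random.default_rng(0)
HIDDEN, K = 8, 3

# Random, untrained weights stand in for learned M_t and U_t (one set per step t).
W_msg = [rng.normal(scale=0.3, size=(2 * HIDDEN + 1, HIDDEN)) for _ in range(K)]
W_upd = [rng.normal(scale=0.3, size=(2 * HIDDEN, HIDDEN)) for _ in range(K)]
W_out = rng.normal(scale=0.3, size=HIDDEN)

def mpnn_forward(h0, edge_feat):
    """h0: (n, HIDDEN) initial node features h_v^0.
    edge_feat: dict {(v, w): e_vw}; each key means w is a neighbor sending to v."""
    h = h0
    for t in range(K):
        m = np.zeros_like(h)
        for (v, w), e_vw in edge_feat.items():
            # Message M_t(h_v^t, h_w^t, e_vw), summed over w in N(v).
            m[v] += np.tanh(np.concatenate([h[v], h[w], [e_vw]]) @ W_msg[t])
        # Update U_t(h_v^t, m_v^{t+1}) applied to all nodes at once.
        h = np.tanh(np.concatenate([h, m], axis=1) @ W_upd[t])
    y = h.sum(axis=0)           # readout R: permutation-invariant sum over nodes
    return float(y @ W_out)     # final linear layer -> scalar prediction

# Toy 3-atom graph: bonds 0-1 and 1-2 in both directions, bond lengths as e_vw.
h0 = rng.normal(size=(3, HIDDEN))
bonds = {(0, 1): 1.1, (1, 0): 1.1, (1, 2): 1.5, (2, 1): 1.5}
pred = mpnn_forward(h0, bonds)

# Relabel atoms: new index i holds old atom perm[i]; the prediction must not change.
perm = [2, 0, 1]
new_index = {old: new for new, old in enumerate(perm)}
bonds_relabelled = {(new_index[v], new_index[w]): d for (v, w), d in bonds.items()}
print(np.isclose(pred, mpnn_forward(h0[perm], bonds_relabelled)))  # → True
```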

Protocol: The Node-Level Contrastive Unlearning (Node-CUL) Process

This protocol details the method for removing a node's influence from a trained GNN, as described in [26]. The process is visualized in the diagram below.

Detailed Steps:

- Inputs: A pre-trained GNN model and a set of target nodes \( U \) to be unlearned.

- Node Representation Unlearning: For each unlearning node \( u \in U \), adjust its embedding \( z_u \) in the model's latent space [26].

- Push: Apply a loss term to push \( z_u \) away from the embeddings of its immediate neighbors that have the same class as \( u \). This disconnects \( u \) from its local context.

- Pull: Apply a loss term to pull \( z_u \) towards the embeddings of other nodes in the graph that have a different class. This encourages the model to treat \( u \) as an unknown/unseen node.

- Neighborhood Reconstruction: To maintain the model's utility, adjust the embeddings of all neighbors of the unlearning nodes [26].

- For each neighbor, apply a loss term to pull its embedding closer to the embeddings of its other neighbors (excluding the unlearning node). This repairs the local graph structure by removing the influence of \( u \).

- Output: The updated model where the unlearning nodes have no discernible influence, and the performance on the remaining graph is preserved.
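The push/pull logic can be illustrated on bare embedding vectors. This is a simplified sketch, not the Node-CUL loss from [26]: it assumes cosine similarity as the similarity measure, optimizes embeddings directly instead of GNN weights, and uses finite-difference gradients purely to stay short and dependency-free. All vectors are toy values.

```python
import numpy as np

def cos_sim(a: np.ndarray, b: np.ndarray) -> float:
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def unlearn_loss(z_u, same_class_nbrs, diff_class_nodes):
    """Push term (similarity to same-class neighbors, to be lowered) minus
    pull term (similarity to different-class nodes, to be raised)."""
    push = np.mean([cos_sim(z_u, z) for z in same_class_nbrs])
    pull = np.mean([cos_sim(z_u, z) for z in diff_class_nodes])
    return float(push - pull)

def reconstruct_loss(z_n, remaining_nbrs):
    """Pull a neighbor of u toward its other (non-u) neighbors."""
    return float(-np.mean([cos_sim(z_n, z) for z in remaining_nbrs]))

def numerical_grad(f, x, eps=1e-5):
    """Central finite differences; fine for a 3-D toy, far too slow for a real GNN."""
    g = np.zeros_like(x)
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = eps
        g[i] = (f(x + step) - f(x - step)) / (2 * eps)
    return g

def descend(f, x, lr=0.1, steps=100):
    for _ in range(steps):
        x = x - lr * numerical_grad(f, x)
    return x

rng = np.random.default_rng(0)
same = [np.array([1.0, 0.0, 0.0]) + 0.1 * rng.normal(size=3) for _ in range(3)]
diff = [np.array([0.0, 1.0, 0.0]) + 0.1 * rng.normal(size=3) for _ in range(3)]
z_u = np.array([0.9, 0.1, 0.0])           # u starts inside its own class cluster

f_u = lambda z: unlearn_loss(z, same, diff)
z_u_new = descend(f_u, z_u)                # step 1: node representation unlearning

others = [np.array([1.0, 0.2, 0.0]), np.array([0.8, -0.1, 0.1])]
z_n = np.array([0.5, 0.5, 0.5])            # a neighbor of u (toy values)
f_n = lambda z: reconstruct_loss(z, others)
z_n_new = descend(f_n, z_n)                # step 2: neighborhood reconstruction

print(f_u(z_u), f_u(z_u_new), f_n(z_n), f_n(z_n_new))
```

Both losses decrease: \( z_u \) drifts away from its own class cluster, and the neighbor's embedding moves toward its remaining neighbors.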

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Models for Geometric Deep Learning in Materials Science

| Item | Function & Purpose | Key Characteristics |
|---|---|---|
| Message Passing Neural Network (MPNN) Framework [25] | A general and flexible framework for building GNNs. It formalizes the "message-passing" paradigm, making it easier to implement and reason about new architectures. | Provides a scalable and intuitive blueprint for graph learning. Serves as the foundation for many specialized models. |
| E(3)-Invariant/Equivariant Layers [23] [24] | Neural network layers designed to build the symmetries of 3D space directly into the model. They ensure predictions are physically meaningful (e.g., energy is rotation-invariant). | Critical for data efficiency and physical correctness. Reduces the need for data augmentation and helps models generalize from limited data. |
| Graph Contrastive Learning (GCL) [27] | A self-supervised learning method. It generates multiple "views" of a graph via augmentations and trains the model to agree on these views, learning useful representations without labels. | Enables pre-training on vast unlabeled molecular databases. Useful for initializing models before fine-tuning on small, labeled datasets. |
| Multiplex Graph Representation [24] | A graph data structure that uses multiple layers to represent different types of interactions (e.g., local covalent vs. non-local van der Waals) within the same system. | Allows for efficient and accurate modeling of complex interactions in molecules and materials by applying specialized operations to each layer. |
| Influence Function & Unlearning Methods (e.g., Node-CUL) [26] | Mathematical and algorithmic frameworks to quantify the effect of a training data point on a model's predictions and to efficiently "remove" that influence without full retraining. | Essential for model compliance with data privacy regulations (e.g., "right to be forgotten") and for correcting biases in trained models. |

Foundations: Understanding Bias in Data and Models

What is inductive bias in the context of machine learning for materials science?

Inductive bias refers to the set of assumptions a model uses to predict outputs for inputs it has not encountered before. In materials machine learning (ML), these are the built-in preferences that guide how an algorithm generalizes from its training data to new, unseen materials or compounds [28]. Unlike pejorative social biases, inductive biases are often necessary for learning; they constrain the otherwise infinite hypothesis space and make learning tractable. For instance, a convolutional neural network assumes that local spatial patterns matter and that the same pattern should be recognized wherever it appears (translation equivariance), which is useful for analyzing crystal structures [28].

What is the core problem with bias in public materials databases?

The core problem is that these databases are often not perfectly representative of the vast, potential "materials universe." They can contain systematic distortions—such as over-representing certain classes of materials or properties—which are then learned and amplified by ML models [29] [30]. When a model trained on this biased data is deployed, it may fail to generalize accurately to materials that fall outside the scope of its skewed training set, leading to unreliable predictions and failed experimental guidance [30].

What are the common types of bias we might encounter?

The table below summarizes common bias types relevant to public materials databases.

| Bias Type | Description | Example in Materials Databases |
|---|---|---|
| Historical Bias [29] [30] | Bias embedded in the data due to past research priorities, measurement techniques, or cultural prejudices. | A database of superconducting materials is overwhelmingly composed of cuprates because these were the research focus for decades, under-representing newer iron-based or organic superconductors. |
| Selection/Representation Bias [29] [30] [31] | The sampling process does not accurately represent the target population, leading to skewed distributions. | A polymer database contains mostly rigid, high-strength polymers because flexible polymers were harder to characterize with older equipment, creating a gap in the data. |
| Measurement Bias [30] | Systematic errors introduced during the data collection or generation process. | Experimental formation energies in a database are consistently over-estimated due to a miscalibrated instrument used by a major contributing lab. |
| Confirmation Bias [29] | The tendency to search for, interpret, or favor data that confirms one's pre-existing beliefs or hypotheses. | A researcher selectively records data points that align with a predicted structure-property relationship, ignoring anomalous results. |
| Survivorship Bias [29] | Focusing on data points that have "survived" a selection process while ignoring those that did not. | A database of commercially successful catalysts only includes formulations that reached commercial use, omitting all the failed candidates and their valuable property data. |
| Evaluation Bias [30] | Arises when benchmarking or evaluating a model on a dataset that does not represent the real-world deployment scenario. | A model for predicting material hardness is only tested on pure elements and simple alloys, but is later used to screen complex high-entropy alloys where it performs poorly. |

Troubleshooting Guide: Identifying and Diagnosing Bias

FAQ: How can I tell if my model's poor generalization is due to database bias?

| Symptom | Potential Underlying Bias Cause | Diagnostic Experiment |
|---|---|---|
| High training accuracy, low validation/test accuracy on your hold-out set. | The model has overfitted to spurious correlations present only in the training data. | 1. Perform subgroup analysis: Check if performance drops are concentrated in specific material classes or property ranges that are under-represented in the training data. 2. Use model explanation tools (e.g., SHAP) to see if the model is relying on non-causal features for its predictions [30]. |
| The model performs well on one class of materials but fails on another. | Representation bias: The failed class was under-represented in the training database [30]. | 1. Analyze the distribution of your training data across relevant categories (e.g., crystal system, constituent elements). 2. Stratify your performance metrics by these categories to identify "blind spots." |
| The model makes accurate but ethically or scientifically problematic predictions (e.g., systematically underestimating properties for materials developed by certain institutions). | Historical bias: The training data reflects past inequities in resource allocation or research focus [32]. | 1. Conduct an audit for fairness across relevant sensitive attributes. 2. Trace the provenance of low-performing data subgroups to identify potential sources of bias in the data generation process [32]. |
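Stratifying performance metrics by category, as suggested in the diagnostics above, takes only a few lines. The sketch below reports per-category MAE together with sample counts so that poorly served, sparsely populated groups stand out; the band-gap values and crystal-system labels are hypothetical toy data.

```python
from collections import defaultdict

def stratified_mae(categories, y_true, y_pred):
    """Per-category MAE plus sample counts, to expose under-represented 'blind spots'."""
    errors = defaultdict(list)
    for cat, t, p in zip(categories, y_true, y_pred):
        errors[cat].append(abs(t - p))
    return {cat: (sum(e) / len(e), len(e)) for cat, e in errors.items()}

# Hypothetical band-gap predictions (eV) labelled by crystal system.
cats   = ["cubic", "cubic", "cubic", "cubic", "hexagonal", "triclinic"]
y_true = [1.0, 2.0, 1.5, 0.5, 3.0, 2.5]
y_pred = [1.1, 1.9, 1.5, 0.6, 2.5, 1.0]
report = stratified_mae(cats, y_true, y_pred)
for cat, (err, n) in sorted(report.items()):
    print(f"{cat:10s} n={n}  MAE={err:.2f}")
```

A pooled MAE would hide that the single triclinic sample is predicted far worse than the well-populated cubic class.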

FAQ: What are the practical steps for quantifying data bias in a database before training?

The following protocol provides a methodological framework for auditing a public materials database.

Experimental Protocol 1: Quantifying Representation Bias in a Public Database

- Objective: To systematically measure the coverage and balance of a public materials database across key dimensions to identify potential gaps and imbalances.

- Research Reagent Solutions:

- Database Access: Scripts (e.g., in Python) to programmatically access and query the database via its API (e.g., the Materials Project, AFLOWLIB, NOMAD).

- Analysis Environment: A computational environment (e.g., Jupyter Notebook) with standard data science libraries (Pandas, NumPy, Scikit-learn).

- Visualization Tools: Libraries for data visualization (Matplotlib, Seaborn, Plotly) to create histograms, scatter plots, and other charts.

- Methodology:

- Define Relevant Axes of Analysis: Identify the material features most relevant to your prediction task. Common axes include:

- Compositional: Ranges of atomic number, electronegativity, or percentage of a specific element.

- Structural: Crystal system (e.g., cubic, hexagonal), space group, or coordination number.

- Property-based: Ranges of band gap, bulk modulus, or formation energy.

- Compute Descriptive Statistics: For each axis, calculate basic statistics (mean, standard deviation, min, max) and generate distribution plots (histograms, kernel density estimates).

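- Measure Class Imbalance: For categorical axes (e.g., crystal system), calculate the frequency of each category. A low Shannon entropy or Gini impurity, relative to its maximum for the given number of categories, indicates high imbalance; both scores peak when all categories are equally represented.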

- Perform Dimensionality Reduction: Use techniques like PCA or t-SNE on the feature space (e.g., using composition fingerprints) to visualize the overall data density in a 2D or 3D projection. Sparse regions indicate under-explored areas of the materials space.

- Compare to a Reference: If available, compare the database distribution to a known, broader distribution (e.g., the theoretical space of all possible ternary compounds) to quantify coverage.

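The class-imbalance step can be scripted directly on a categorical column pulled from a database query. This minimal sketch computes Shannon entropy (in nats), entropy normalized by its maximum ln(k) for k categories, and Gini impurity; the label lists are hypothetical. Note that both scores are highest for a uniform distribution, so imbalance shows up as low values.

```python
import math
from collections import Counter

def imbalance_report(labels):
    """Shannon entropy, normalized entropy and Gini impurity of a categorical column.

    Both scores are HIGHEST for a perfectly balanced distribution, so a LOW value
    (relative to its maximum) signals imbalance.
    """
    counts = Counter(labels)
    n = sum(counts.values())
    probs = [c / n for c in counts.values()]
    entropy = -sum(p * math.log(p) for p in probs)           # nats
    max_entropy = math.log(len(counts)) if len(counts) > 1 else 1.0
    gini = 1.0 - sum(p * p for p in probs)
    return {"entropy": entropy, "normalized_entropy": entropy / max_entropy, "gini": gini}

balanced = ["cubic"] * 50 + ["hexagonal"] * 50
skewed = ["cubic"] * 95 + ["hexagonal"] * 5
print(imbalance_report(balanced))
print(imbalance_report(skewed))
```

The balanced column reaches a normalized entropy of 1.0 and a Gini impurity of 0.5, while the 95/5 column drops to roughly 0.29 and 0.095.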

Methodologies for Bias Mitigation and Robust Model Training

FAQ: My database is biased. How can I still train a robust model?

Once bias is identified, you can employ several mitigation strategies during the data preparation and model training phases.

Experimental Protocol 2: Mitigating Bias via Data-Centric Techniques

- Objective: To adjust the training data or the training process to reduce the model's reliance on biased patterns and improve its generalization to underrepresented groups.

- Research Reagent Solutions:

- Data Augmentation Libraries: Tools like pymatgen for generating derivative crystal structures or SMOTE (via imbalanced-learn) for creating synthetic samples in feature space.

- Reweighting Algorithms: Functions within ML frameworks (e.g., class_weight in Scikit-learn) or custom implementations for reweighting loss functions.

- Synthetic Data Generators: (Advanced) Generative models like VAEs or GANs trained on the existing data to create new, realistic material data points for sparse regions [30].

- Methodology:

- Data Augmentation: For under-represented material classes, create new training examples by applying symmetry-preserving transformations or adding small noise to existing structures and properties. This increases the effective sample size for these groups.

- Resampling:

- Oversampling: Randomly duplicate samples from the minority classes.

- Undersampling: Randomly remove samples from the majority classes.

- Note: Oversampling can lead to overfitting, while undersampling discards potentially useful data. Use cross-validation to assess impact.

- Algorithmic Fairness / Reweighting: Modify the model's learning algorithm to assign higher weights to samples from under-represented groups during the loss calculation. This forces the model to pay more attention to these samples.

- Synthetic Data Generation: Use techniques like SMOTE or generative models to create plausible, new data points in the feature-space regions that are sparse in the original database [30]. This can help "fill in the gaps" of the training distribution.
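Reweighting and random oversampling are simple enough to implement by hand. The sketch below uses inverse-frequency weights, n_samples / (n_classes * count), which mirrors the "balanced" heuristic used by scikit-learn's class_weight option, inside a weighted MSE loss, plus a duplicate-based oversampler. Function names and the toy labels are illustrative assumptions; for production work, imbalanced-learn provides tested resamplers.

```python
import random
from collections import Counter

def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * count_c)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}

def weighted_mse(y_true, y_pred, groups, weights):
    """MSE in which each sample's squared error is scaled by its group's weight."""
    num = sum(weights[g] * (t - p) ** 2 for t, p, g in zip(y_true, y_pred, groups))
    den = sum(weights[g] for g in groups)
    return num / den

def random_oversample(samples, labels, seed=0):
    """Duplicate minority-class samples at random until every class matches the majority."""
    rng = random.Random(seed)
    by_class = {}
    for s, c in zip(samples, labels):
        by_class.setdefault(c, []).append(s)
    target = max(len(v) for v in by_class.values())
    out = []
    for c, items in by_class.items():
        extra = [rng.choice(items) for _ in range(target - len(items))]
        out.extend((s, c) for s in items + extra)
    return out

labels = ["cubic"] * 8 + ["triclinic"] * 2
weights = balanced_class_weights(labels)
print(weights)  # → {'cubic': 0.625, 'triclinic': 2.5}
print(weighted_mse([1.0, 2.0], [1.0, 1.0], ["cubic", "triclinic"], weights))  # → 0.8
```

Duplicated samples carry no new information, so, as the note above says, cross-validation should be used to check that oversampling does not simply induce overfitting.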

FAQ: Are there specific model training techniques that can improve generalization despite biased data?

Yes, generalization techniques can be employed that make the model less sensitive to the specific, potentially biased, noise in the training data. However, recent research indicates that these techniques do not automatically guarantee fairness and can sometimes amplify existing biases if not applied carefully [33].

- Sharpness-Aware Minimization (SAM): This technique seeks parameter values that not only have low loss but also lie in a neighborhood of parameter space (a "flat region") where the loss remains consistently low. The resulting models are less sensitive to small perturbations of their weights, a property associated with better generalization [33].

- Differential Privacy (DP): DP training adds calibrated noise to the optimization process to prevent the model from memorizing individual data points. This can help mitigate overfitting to specific biased examples in the training set. The combination of DP with SAM (DP-SAT) has been shown to further improve the privacy-utility trade-off [33].

- Critical Consideration: It is crucial to monitor the model's performance across different subgroups after applying these techniques. As noted in the research, "generalization techniques can amplify model bias in both private and non-private models" [33]. Therefore, bias mitigation must be an explicit, measured goal.
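The core SAM update is a two-step procedure: perturb the weights toward higher loss within a small L2 ball of radius rho, then apply the gradient computed at that perturbed point to the original weights. The sketch below shows one such step on a toy quadratic loss with one sharp and one flat direction; lr, rho, and the loss are illustrative assumptions, and the DP variant mentioned above would additionally clip and noise the gradients.

```python
import numpy as np

def sam_step(w, grad_fn, lr=0.05, rho=0.05):
    """One SAM update: perturb toward higher loss, then descend from the original point."""
    g = grad_fn(w)
    eps = rho * g / (np.linalg.norm(g) + 1e-12)   # worst-case direction within an L2 ball
    g_sam = grad_fn(w + eps)                      # gradient at the perturbed weights
    return w - lr * g_sam                         # applied to the unperturbed weights

# Toy quadratic loss with one sharp (curvature 10) and one flat (curvature 0.1) direction.
curv = np.array([10.0, 0.1])
loss = lambda w: 0.5 * float(np.sum(curv * w ** 2))
grad = lambda w: curv * w

w0 = np.array([1.0, 1.0])
w = w0.copy()
for _ in range(100):
    w = sam_step(w, grad)
print(loss(w0), loss(w))
```

Each step costs two gradient evaluations, roughly doubling training cost compared with plain gradient descent.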

| Tool / Resource | Function | Relevance to Bias Mitigation |
|---|---|---|
| Materials Project API | Programmatic access to a vast database of computed material properties. | Enables the automated auditing of data distributions and the identification of gaps via scripts. |
| Pymatgen | A robust, open-source Python library for materials analysis. | Provides tools for canonicalizing crystal structures (reducing measurement bias) and generating symmetrically equivalent structures (data augmentation). |
| SHAP (SHapley Additive exPlanations) | A game theory-based approach to explain the output of any ML model. | Critical for diagnosing which features a model is using, helping to identify if it relies on spurious, biased correlations [30]. |
| imbalanced-learn | A Python toolbox for tackling datasets with class imbalance. | Provides implementations of resampling techniques like SMOTE and various undersampling/oversampling algorithms. |
| Fairlearn | An open-source project to help developers assess and improve the fairness of AI systems. | Contains metrics and algorithms for evaluating model performance across subgroups and for mitigating unfairness. |

Corrective Frameworks: From Data-Centric Sampling to Physics-Informed Architectures

Core Concepts and FAQs

What is the primary goal of Entropy-Targeted Active Learning (ET-AL)? The primary goal of ET-AL is to mitigate data bias in materials science datasets by strategically acquiring new data points that improve the diversity of underrepresented materials families or crystal systems. It uses an information entropy-based metric to measure and guide the correction of uneven data coverage, leading to more robust and generalizable machine learning models [34].

How does ET-AL differ from standard Active Learning? While standard active learning focuses on selecting the most informative samples to reduce model uncertainty (e.g., via uncertainty sampling), ET-AL specifically targets the improvement of dataset diversity. It uses an entropy-based metric to actively mitigate existing biases in the data distribution, rather than just optimizing for model performance [35] [34].

Within my thesis on inductive bias, how does ET-AL function as a data-level intervention? Inductive biases are the inherent assumptions (e.g., model architecture) that guide a learning algorithm. ET-AL acts as a complementary, data-level intervention. By directly curating a more balanced and physically representative dataset, it ensures that the inductive biases of the model are applied to a fairer data foundation, preventing the model from being misled by initial data imbalances and steering it toward more physically consistent and reliable predictions [36] [37].

What is the key entropy metric used in ET-AL? ET-AL employs an information entropy-based metric to quantify the bias in a dataset. This metric measures the uneven coverage across different materials families (e.g., crystal systems). A lower entropy value indicates a more biased dataset, where certain families are over- or under-represented. The active learning process then explicitly works to increase this entropy, thereby improving overall data diversity [34].

Implementation and Experimental Protocols

Workflow of the ET-AL Framework

The Entropy-Targeted Active Learning framework operates through an iterative loop of model training, entropy-based data selection, and targeted data acquisition. The diagram below illustrates this core workflow.

Quantitative Metrics for Bias and Performance

Effective implementation requires tracking specific metrics to quantify bias mitigation and model performance.

Table 1: Key Experimental Metrics for ET-AL Implementation

| Metric Category | Specific Metric | Description | Interpretation |
|---|---|---|---|
| Bias Measurement | Information Entropy of Dataset [34] | Quantifies the diversity and balance of data across different crystal systems or material families. | A higher entropy value indicates a more balanced and less biased dataset. |
| Model Performance | Prediction Accuracy / Mean Absolute Error (MAE) [38] | Measures the model's performance on a standardized test set, including hold-out samples from underrepresented groups. | Improved accuracy or reduced MAE indicates better generalization. |
| Downstream Impact | Stability Prediction "Hit Rate" [38] | The proportion of model-predicted stable materials that are verified as stable by DFT calculations. | A higher hit rate shows the model is more efficiently discovering stable materials. |

Step-by-Step Experimental Protocol

This protocol details the steps for implementing ET-AL in a materials discovery pipeline, drawing from large-scale active learning approaches [38].

Initialization:

- Begin with an initial dataset of materials (e.g., from established databases like the Materials Project). This dataset inherently contains biases in its coverage of different crystal systems [34].

- Train a baseline machine learning model (e.g., a Graph Neural Network) to predict target properties like formation energy or stability.

Candidate Generation:

- Generate a large and diverse pool of candidate materials. This can be achieved through methods like:

Entropy-Targeted Selection:

- Calculate Entropy: Compute the current information entropy of your dataset, broken down by a relevant category like crystal system.

- Identify Gaps: Identify which crystal systems or material families are most underrepresented (lowest contribution to overall entropy).

- Filter Candidates: From the candidate pool, prioritize materials that belong to these underrepresented groups. This selection can be guided by the model's uncertainty to combine diversity with informativeness.
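The selection step can be sketched as a greedy loop: for each slot in the acquisition budget, pick the candidate whose family would raise the dataset's Shannon entropy the most, and break ties with model uncertainty. This is an illustrative heuristic under stated assumptions (a hypothetical (id, family, uncertainty) tuple format and a greedy entropy-gain criterion), not the exact ET-AL metric from [34].

```python
import math
from collections import Counter

def shannon_entropy(counts: Counter) -> float:
    n = sum(counts.values())
    return -sum((c / n) * math.log(c / n) for c in counts.values() if c > 0)

def entropy_targeted_selection(train_families, candidates, budget):
    """Greedy sketch: repeatedly pick the candidate whose family most increases dataset
    entropy, breaking ties by model uncertainty.

    train_families: family labels (e.g. crystal systems) already in the dataset.
    candidates: list of (candidate_id, family, uncertainty) tuples (hypothetical format).
    """
    counts = Counter(train_families)
    pool = list(candidates)
    chosen = []
    for _ in range(min(budget, len(pool))):
        base = shannon_entropy(counts)

        def score(cand):
            _, fam, unc = cand
            trial = counts.copy()
            trial[fam] += 1
            return (shannon_entropy(trial) - base, unc)

        best = max(pool, key=score)
        pool.remove(best)
        chosen.append(best[0])
        counts[best[1]] += 1    # selected candidates count toward later picks
    return chosen

train = ["cubic"] * 20 + ["hexagonal"] * 5 + ["monoclinic"] * 1
cands = [("c1", "cubic", 0.9), ("c2", "hexagonal", 0.2),
         ("c3", "monoclinic", 0.1), ("c4", "monoclinic", 0.3)]
print(entropy_targeted_selection(train, cands, budget=2))  # → ['c4', 'c3']
```

The highly uncertain cubic candidate c1 is skipped because the cubic family is already over-represented; a different trade-off between diversity and uncertainty can be obtained by weighting the two terms instead of using a tie-break.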

Targeted Acquisition & Verification:

- Take the selected candidates and evaluate them using high-fidelity methods, typically Density Functional Theory (DFT) calculations [38].

- This step verifies the true properties of the candidates and is considered the "oracle" or expensive evaluation in the active learning loop.

Iteration and Model Update:

- Incorporate the newly acquired data (structures and their DFT-verified properties) into the training dataset.

- Retrain the machine learning model on this updated, more diverse dataset.

- Repeat the cycle from Candidate Generation through Iteration and Model Update until a stopping criterion is met, such as a sufficient entropy level in the dataset or convergence in model performance.

Troubleshooting Common Experimental Issues

Problem: The entropy of the dataset is not increasing significantly after multiple iterations.

- Potential Cause 1: The candidate generation process is not diverse enough and is unable to produce viable candidates for the underrepresented groups.

- Solution: Broaden candidate generation strategies. Incorporate random structure search methods in addition to substitution-based approaches to explore a wider chemical space [38].

- Potential Cause 2: The selection criteria are too focused on model uncertainty alone, which can overlook diverse but seemingly "simple" samples.

- Solution: Explicitly bias the query strategy to favor samples from low-entropy (underrepresented) groups, even if their uncertainty is not the absolute highest [34].

Problem: The downstream model performance is not improving despite an increase in dataset entropy.

- Potential Cause 1: The newly acquired data from underrepresented groups is noisy or contains errors.

- Solution: Validate the accuracy of your high-fidelity verification method (e.g., DFT settings) for these new material classes. Ensure consistency in data quality.

- Potential Cause 2: The model capacity is insufficient to capture the complexities of the newly integrated, diverse data.

- Solution: Consider scaling up your model architecture. Research has shown that larger graph network models, when trained on massive and diverse datasets, exhibit improved generalization and emergent capabilities [38].

Problem: The active learning process is computationally expensive.

- Potential Cause: Each cycle requires costly DFT verification.

- Solution: Implement a pre-filtering step. Use a fast, pre-trained model to screen out clearly unstable candidates before passing the most promising ones to the entropy-targeting step and subsequent DFT verification [38].

The Scientist's Toolkit: Essential Research Reagents

This table lists key computational tools and data resources essential for implementing ET-AL in materials informatics.

Table 2: Key Research Reagents and Computational Tools

| Item Name | Function / Description | Relevance to ET-AL Experiment |
|---|---|---|
| Graph Neural Networks (GNNs) | A class of deep learning models that operate on graph-structured data, ideal for representing crystal structures [38]. | The core model architecture for predicting material properties (e.g., energy) and guiding the discovery process. |
| Density Functional Theory (DFT) | A computational quantum mechanical method used to investigate the electronic structure of many-body systems. | Serves as the high-fidelity "oracle" to verify the stability and properties of candidate materials identified by the active learning loop [38]. |
| Materials Databases (e.g., Materials Project, OQMD) | Public databases containing computed properties for a vast number of known and predicted crystalline materials [38]. | Provides the initial, often biased, dataset for bootstrapping the ET-AL process and serves as a source for candidate generation via substitutions. |
| Information Entropy Metric | A quantitative measure of the uncertainty or diversity present in a dataset's distribution. | The central metric for ET-AL, used to quantify initial bias and track progress in mitigating it by improving data balance across material families [34]. |

FAQs and Troubleshooting Guide

This section addresses common challenges researchers face when implementing Shortcut Hull Learning (SHL) in materials science and drug development contexts.

Q1: What is the fundamental cause of shortcut learning in high-dimensional materials data, and why is it particularly problematic?

Shortcut learning arises from inherent biases in datasets, which cause models to exploit unintended correlations or "shortcuts" instead of learning the underlying scientific principles [3]. These shortcuts are spurious features that happen to be correlated with the prediction target in the training data but do not hold in real-world deployment settings [39]. In high-dimensional data, the number of potential features grows exponentially, creating a "curse of shortcuts" where it becomes impossible to manually account for all possible unintended correlations [3]. This is especially problematic in materials and drug discovery because it undermines model robustness and interpretability, leading to predictions that fail to generalize beyond the specific conditions of the training data.
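The mechanism can be reproduced on synthetic data. In the sketch below (entirely synthetic, with made-up feature names), a "core" feature carries a weak but genuine signal, while a "shortcut" feature agrees with the label 95% of the time in training and is independent of it at test time. A plain logistic regression, fit by gradient descent with NumPy, puts most of its weight on the shortcut, and its accuracy collapses toward chance once the correlation is broken.

```python
import numpy as np

def make_data(n, rng, shortcut_agreement):
    """Synthetic binary task: 'core' is weakly but genuinely predictive; 'shortcut' matches
    the label with probability shortcut_agreement."""
    y = rng.integers(0, 2, size=n)
    sign = 2 * y - 1
    core = 0.5 * sign + rng.normal(0.0, 1.0, size=n)
    agree = rng.random(n) < shortcut_agreement
    shortcut = np.where(agree, sign, -sign) + rng.normal(0.0, 0.1, size=n)
    return np.column_stack([core, shortcut]), y

def fit_logreg(X, y, lr=0.5, steps=3000):
    """Unregularized logistic regression via full-batch gradient descent (bias column appended)."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    w = np.zeros(Xb.shape[1])
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)
    return w

def accuracy(w, X, y):
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return float(np.mean((Xb @ w > 0).astype(int) == y))

rng = np.random.default_rng(0)
X_train, y_train = make_data(2000, rng, shortcut_agreement=0.95)
X_test, y_test = make_data(2000, rng, shortcut_agreement=0.5)  # shortcut no longer tracks the label
w = fit_logreg(X_train, y_train)
train_acc = accuracy(w, X_train, y_train)
test_acc = accuracy(w, X_test, y_test)
print(f"weights: core={w[0]:.2f} shortcut={w[1]:.2f}")
print(f"train acc={train_acc:.2f}  shifted test acc={test_acc:.2f}")
```

Nothing in the training loss distinguishes the two features; the model simply prefers whichever one is more predictive in the data it sees, which is exactly why the bias lives in the dataset rather than in the optimizer.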

Q2: How does Shortcut Hull Learning (SHL) fundamentally differ from traditional bias mitigation techniques like dataset balancing or fairness constraints?

SHL introduces a paradigm shift from traditional methods. Instead of manipulating predefined shortcut features or using correlation-based debiasing, SHL unifies shortcut representations in a probability space and defines a fundamental indicator called the shortcut hull (SH)—the minimal set of shortcut features [3]. It then employs a suite of models with diverse inductive biases to collaboratively learn this shortcut hull, enabling a comprehensive diagnosis of the dataset itself [3]. This contrasts with traditional methods that often only identify specific, pre-specified shortcuts and fail to provide a holistic view of all biases present in complex, high-dimensional data [40].

Q3: Our model performs well on validation data but fails on external test sets. What is the first step in diagnosing shortcut learning as the cause?

The first diagnostic step is to implement the core SHL protocol: apply a suite of diverse models (e.g., CNNs, Transformers, Graph Neural Networks) with different inductive biases to your data [3]. If these models, which have inherent preferences for different types of features, all exploit the same shortcut and fail similarly on the external test set, it strongly indicates a fundamental shortcut inherent in the dataset itself, rather than a failure of a specific model architecture. This helps shift the focus from model tuning to data quality and construction [3].
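The cross-model comparison in this first step can be reduced to a simple check on each model's generalization gap. The sketch below assumes you already have in-distribution and external-set accuracies for each architecture; the 0.10 gap threshold and the requirement that at least 75% of the suite fails are illustrative assumptions, not criteria defined by SHL, and the accuracies in the example are made up.

```python
def diagnose_shared_gap(scores, gap_threshold=0.10, min_fraction=0.75):
    """scores maps model name -> (in_distribution_accuracy, external_accuracy).
    Returns (dataset_level_suspected, gaps), where gaps[name] is the accuracy drop."""
    gaps = {name: iid - ext for name, (iid, ext) in scores.items()}
    failing = [name for name, gap in gaps.items() if gap >= gap_threshold]
    # A shortcut in the data is suspected when most architectures, despite their
    # different inductive biases, lose substantial accuracy on the external set.
    dataset_level = len(failing) >= min_fraction * len(gaps)
    return dataset_level, gaps

# Hypothetical accuracies for a four-model suite (illustrative numbers only).
suite = {
    "cnn": (0.92, 0.61),
    "vit": (0.94, 0.58),
    "gnn": (0.90, 0.63),
    "logreg": (0.85, 0.60),
}
suspected, gaps = diagnose_shared_gap(suite)
print(suspected, {k: round(v, 2) for k, v in gaps.items()})
```

If only one architecture shows a large gap, the failure is more plausibly model-specific, and tuning that model is a reasonable next step before revisiting the data.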

Q4: What are the most common types of shortcut features encountered in materials informatics?

Shortcut features can be categorized based on their causal relationship with the target property [39]. The table below summarizes the common types and their impact on materials machine learning (ML).

Table: Common Shortcut Feature Types in Materials Informatics

| Shortcut Type | Causal Structure | Example in Materials Science | Impact on Model Generalizability |
|---|---|---|---|
| Anti-Causal | The prediction target causes the shortcut feature. | A specific synthesis lab's "signature" (e.g., a subtle impurity profile) is correlated with a target material property because that lab predominantly produces high-performance samples. | Fails when applied to materials synthesized in new labs without that signature. |
| Common Cause | A shared, unobserved factor causes both the target and the shortcut. | The use of a specific brand of characterization equipment (which introduces its own artifacts) is correlated with the discovery of a new polymer phase because a leading research group uses that brand. | Fails when data from different equipment is introduced. |
| Direct Effect | The shortcut feature affects the target in the training context but not in deployment. | In virtual drug screening, a molecule's calculated molecular weight may be associated with activity in the training library but is not a causal factor for binding in a diverse chemical space. | Fails to identify active compounds outside the narrow weight range of the training set. |

Q5: How can we construct a shortcut-free dataset for reliably evaluating the global capabilities of our AI models?

Following the SHL paradigm, constructing a shortcut-free dataset is a systematic process [3]:

- Formalize the Problem: Define your intended solution (the intended partition σ(Y_Int) of your sample space) using domain knowledge.

- Diagnose with a Model Suite: Apply a diverse set of models (e.g., CNNs, Transformers, models with different inductive biases) to your initial dataset.

- Learn the Shortcut Hull (SH): Use the collaborative performance and failure modes of the model suite to identify the minimal set of shortcut features (the SH) present in your data.

- Data Intervention: Systematically modify or remove the identified shortcut features from the dataset. This may involve data augmentation, feature masking, or generating new samples that decouple the shortcut from the label.

- Validate with SFEF: Re-evaluate the same model suite on the new, intervened dataset using the Shortcut-Free Evaluation Framework (SFEF). A successful intervention is indicated by the models now demonstrating their true capabilities, which may challenge previous assumptions (e.g., CNNs outperforming Transformers on global tasks) [3].
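For a discrete, known shortcut attribute (for example a lab or instrument ID), the data-intervention step and a quick check of its effect can be sketched as follows. This is one simple intervention, subsampling every (shortcut, label) cell to the same size, and not the SHL procedure itself; `mutual_information` measures how much the attribute still tells you about the label and drops to zero once the cells are balanced. The record layout and example data are assumptions for illustration.

```python
import math
import random
from collections import Counter, defaultdict

def mutual_information(pairs):
    """Empirical mutual information (nats) between a discrete shortcut attribute and the label."""
    n = len(pairs)
    joint = Counter(pairs)
    s_marg = Counter(s for s, _ in pairs)
    y_marg = Counter(y for _, y in pairs)
    return sum((c / n) * math.log(c * n / (s_marg[s] * y_marg[y])) for (s, y), c in joint.items())

def decouple_by_resampling(records, seed=0):
    """records: list of (sample_id, shortcut_value, label). Subsamples every (shortcut, label)
    cell down to the smallest cell's size so the shortcut no longer predicts the label."""
    cells = defaultdict(list)
    for rec in records:
        cells[(rec[1], rec[2])].append(rec)
    shortcut_values = {s for s, _ in cells}
    labels = {y for _, y in cells}
    if len(cells) < len(shortcut_values) * len(labels):
        raise ValueError("a (shortcut, label) combination is empty; collect or generate samples for it first")
    k = min(len(v) for v in cells.values())
    rng = random.Random(seed)
    return [rec for v in cells.values() for rec in rng.sample(v, k)]

# Hypothetical data: lab "A" mostly reports label 1, lab "B" mostly label 0.
records = ([(i, "A", 1) for i in range(40)] + [(i, "A", 0) for i in range(40, 50)]
           + [(i, "B", 0) for i in range(50, 90)] + [(i, "B", 1) for i in range(90, 100)])
balanced = decouple_by_resampling(records)
print(mutual_information([(s, y) for _, s, y in records]))
print(mutual_information([(s, y) for _, s, y in balanced]))
```

Subsampling discards data, which is why generating new samples that populate the under-filled cells is often preferable. A zero score also only rules out this one attribute, not other members of the shortcut hull.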

Experimental Protocols and Methodologies

This section provides a detailed, step-by-step protocol for implementing the SHL diagnostic paradigm.

Protocol: Diagnosing Dataset Shortcuts with SHL

Objective: To identify the Shortcut Hull (SH) of a high-dimensional materials science dataset, enabling the creation of a robust, shortcut-free benchmark.

Table: Key Research Reagent Solutions for SHL Experiments

| Reagent (Conceptual) | Function in the SHL Workflow | Example Instantiations |
|---|---|---|
| Model Suite with Diverse Inductive Biases | To collaboratively learn and probe the dataset for different types of shortcuts. Each model's inherent preferences help uncover different subsets of the shortcut hull. | CNN-based models (biased towards local features), Transformer-based models (biased towards global attention), Graph Neural Networks, Linear Models [3] [41]. |
| Probabilistic Formalization Framework | To provide a unified, representation-agnostic space for defining shortcuts and the intended solution. | The probability space (Ω, F, ℙ) with defined random variables for input (X) and label (Y), and the formal definition of the intended partition σ(Y_Int) [3]. |
| Shortcut-Free Evaluation Framework (SFEF) | The final benchmarking environment that assesses the true capabilities of models after shortcuts have been mitigated. | A newly constructed dataset (e.g., a topological dataset for global capability assessment) where the shortcut hull has been empirically identified and removed [3]. |

Step-by-Step Procedure:

Problem Setup and Probabilistic Formalization:

- Define your sample space Ω (e.g., all possible material structures or molecular graphs in your domain of interest).

- Formally define your intended classification task by specifying the intended label partition σ(Y_Int) of the sample space [3].
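To make the partition language concrete, the toy below uses a finite sample space Ω of 4-bit strings, takes parity as a hypothetical stand-in for a global intended label Y_Int, and takes the first bit as a local shortcut. It is far smaller than any real materials space; it only shows that a biased sampling of Ω can make a shortcut partition indistinguishable from σ(Y_Int) on the training support while it is no better than chance over Ω as a whole.

```python
from itertools import product

# Toy sample space Omega: all binary strings of length 4 (stand-ins for structures).
OMEGA = list(product([0, 1], repeat=4))

def y_int(x):
    """Hypothetical intended label: a global property (parity of all bits)."""
    return sum(x) % 2

def shortcut(x):
    """Hypothetical shortcut: a purely local feature (the first bit)."""
    return x[0]

def agreement(f, g, support):
    """Fraction of points in `support` on which the two labelings coincide."""
    return sum(f(x) == g(x) for x in support) / len(support)

# A biased collection process only ever samples structures where the shortcut happens to match.
train_support = [x for x in OMEGA if shortcut(x) == y_int(x)]

print(agreement(shortcut, y_int, train_support))  # 1.0 on the training support
print(agreement(shortcut, y_int, OMEGA))          # 0.5 over the full sample space
```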

Assembly of the Diagnostic Model Suite:

- Carefully select a minimum of three to four model architectures with fundamentally different inductive biases. For materials data, this should include:

  - A CNN-based model (e.g., ResNet), biased towards learning local, translation-invariant features.

  - A Transformer-based model (e.g., Vision Transformer), biased towards learning global dependencies via self-attention.

  - A simple model (e.g., a heavily L1-regularized logistic regression), biased towards learning a sparse set of strongly predictive features [41].

Collaborative Learning and SH Identification:

- Train all models in the suite on the same training data split.

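- Evaluate all models on a carefully curated out-of-distribution (OOD) test set or through group-wise cross-validation that holds out entire groups (e.g., all samples from one lab or synthesis route), since random splits preserve the spurious correlations. The OOD set should be designed to break the suspected spurious correlations (e.g., materials from a different synthesis route or drugs from a different chemical library).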

- Analyze the performance gaps and error patterns across the model suite. Consistent failure on a specific type of OOD sample by all or most models is a key indicator of a dataset shortcut that forms part of the Shortcut Hull.
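The error-pattern analysis in this phase can be sketched as follows: flag OOD samples that all (or most) models in the suite misclassify, then tabulate the flagged samples by a metadata attribute such as synthesis route. The function names, the `min_models` threshold, the metadata field, and the example predictions are all hypothetical.

```python
from collections import Counter

def shared_failures(predictions, labels, min_models=None):
    """predictions: {model_name: list of predicted labels on the OOD set}.
    Returns indices misclassified by at least `min_models` models (default: every model),
    i.e. candidate evidence for a shortcut in the hull."""
    names = list(predictions)
    if min_models is None:
        min_models = len(names)
    flagged = []
    for i, y in enumerate(labels):
        n_wrong = sum(predictions[name][i] != y for name in names)
        if n_wrong >= min_models:
            flagged.append(i)
    return flagged

def failure_profile(flagged, groups):
    """Count shared failures per sample type (e.g. synthesis route) to see which type drives them."""
    return Counter(groups[i] for i in flagged)

labels = [1, 0, 1, 1, 0]
preds = {
    "cnn":    [0, 0, 1, 0, 0],
    "vit":    [0, 0, 1, 0, 1],
    "logreg": [0, 1, 1, 0, 0],
}
routes = ["route_B", "route_A", "route_A", "route_B", "route_A"]
flagged = shared_failures(preds, labels)
print(flagged, failure_profile(flagged, routes))
```

Errors made by only one model point to that model's own bias; errors concentrated in one sample type and shared across the whole suite are the pattern the protocol treats as a dataset shortcut.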

Data Intervention and SFEF Validation:

- Based on the identified SH, intervene on the dataset. This could involve:

  - Feature Removal: Cropping out or masking corrupted regions in images (e.g., watermarks in X-rays) [39].

  - Data Augmentation: Generating new samples where the shortcut feature is no longer correlated with the label.

  - Reweighting: Adjusting the loss function to de-emphasize samples that are likely to be learned via shortcuts.

- Construct your final shortcut-free dataset.

- Re-train and evaluate the same model suite on this new dataset within the SFEF. The results will now reflect the models' true capabilities on the intended task.
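For the reweighting option, one common choice (group-balanced weighting, assumed here rather than taken from [39]) is to weight each sample inversely to the size of its (shortcut, label) cell, so that shortcut-conflicting samples count more in the loss. The sketch below computes such weights and plugs them into a weighted binary cross-entropy; it assumes the shortcut attribute is discrete and has already been identified.

```python
import math
from collections import Counter

def balancing_weights(shortcuts, labels):
    """w_i = n / (K * count(cell_i)) over the K observed (shortcut, label) cells:
    every cell gets the same total weight and the weights average to 1."""
    cells = Counter(zip(shortcuts, labels))
    n, k = len(labels), len(cells)
    return [n / (k * cells[(s, y)]) for s, y in zip(shortcuts, labels)]

def weighted_log_loss(probs, labels, weights):
    """Binary cross-entropy in which each sample's term is scaled by its weight."""
    eps = 1e-12
    total = 0.0
    for p, y, w in zip(probs, labels, weights):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -w * (y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / sum(weights)

# Hypothetical batch: most samples from lab "A" carry label 1 (the shortcut-aligned majority).
shortcuts = ["A", "A", "A", "B"]
labels = [1, 1, 0, 0]
weights = balancing_weights(shortcuts, labels)
print([round(w, 3) for w in weights])
```

Unlike subsampling, reweighting keeps every sample; the trade-off is higher-variance gradients when some cells are very small.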

Workflow Visualization

The following diagram illustrates the core SHL diagnostic and mitigation workflow.

Quantitative Results and Data Presentation

The following table summarizes quantitative findings from the application of the SHL framework to evaluate global topological perception capabilities, challenging previously held beliefs in the field.

Table: Model Performance Comparison on a Shortcut-Free Topological Dataset [3]

| Model Architecture | Inductive Bias | Reported Performance on Biased Topological Datasets (Previous Work) | Performance on Shortcut-Free Topological Dataset (via SHL) | Key Implication |
|---|---|---|---|---|
| CNN-based Models (e.g., ResNet) | Local, translation-invariant features. | Considered weak in global capabilities; inferior to Transformers [3]. | Outperformed Transformer-based models [3]. | Model preference for local features in biased data did not indicate a lack of global capability. |
| Transformer-based Models (e.g., ViT) | Global dependencies via self-attention. | Considered superior in global capabilities [3]. | Underperformed compared to CNNs [3]. | The previously observed superiority was likely due to a preference for exploiting dataset-specific global shortcuts, not a fundamentally better global ability. |
| All Tested DNNs | Varies by architecture. | Less effective than humans at recognizing global properties [3]. | Surpassed human capabilities [3]. | Eliminating data shortcuts revealed that DNNs possess stronger intrinsic capabilities than previously assessed. |

Core Concepts FAQ

What are Physics-Informed Neural Networks (PINNs) and how do they differ from traditional neural networks?