Beyond the Atom: How Electron Configuration Models Are Predicting Compound Stability for Drug Development

Abstract

This article explores the transformative role of electron configuration-based models in predicting the thermodynamic and physicochemical stability of compounds, a critical challenge in materials science and drug development. We cover the foundational principles that link electron behavior to compound stability, detail cutting-edge machine learning methodologies like ensemble frameworks and specialized fingerprints, and address key limitations and optimization strategies. By presenting validation case studies and comparative analyses with traditional methods, we highlight the remarkable accuracy and efficiency these models bring to exploring uncharted chemical spaces, ultimately accelerating the discovery of novel therapeutic agents and biomaterials.

The Quantum Blueprint: How Electron Configuration Governs Compound Stability

Theoretical Foundations of Electron Configuration

Electron configuration describes the distribution of electrons of an atom or molecule in atomic or molecular orbitals [1]. In atomic physics and quantum chemistry, this configuration represents the arrangement of electrons in different shells and subshells around an atomic nucleus, providing a fundamental framework for understanding chemical behavior and properties [1]. The notation for expressing electron configuration contains three critical pieces of information: the principal quantum number (n), the orbital type subshell (s, p, d, f), and the number of electrons in that subshell indicated by a superscript [2]. For example, the electron configuration for phosphorus is written as 1s² 2s² 2p⁶ 3s² 3p³, which can be abbreviated as [Ne] 3s² 3p³ using the noble gas notation [1].
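
The notation described above can be read mechanically. The following is a minimal sketch, assuming configurations are written with Unicode superscripts and an optional noble-gas core; the function names `parse_configuration` and `total_electrons` are illustrative and do not come from the cited sources.

```python
import re

# Map Unicode superscript digits to ASCII so "2p⁶" can be read as "2p6".
_SUPERSCRIPTS = str.maketrans("⁰¹²³⁴⁵⁶⁷⁸⁹", "0123456789")
# Electron counts of the noble-gas cores used in abbreviated notation.
_NOBLE_GAS_CORES = {"He": 2, "Ne": 10, "Ar": 18, "Kr": 36, "Xe": 54, "Rn": 86}


def parse_configuration(text):
    """Return (core_electrons, [(n, subshell, count), ...]) for a configuration string."""
    text = text.translate(_SUPERSCRIPTS)
    core = 0
    match = re.match(r"\s*\[(\w+)\]", text)
    if match:
        core = _NOBLE_GAS_CORES[match.group(1)]
        text = text[match.end():]
    # Each term is: principal quantum number, subshell letter, electron count.
    terms = [(int(n), l, int(c)) for n, l, c in re.findall(r"(\d)([spdf])(\d+)", text)]
    return core, terms


def total_electrons(text):
    """Total number of electrons described by the configuration string."""
    core, terms = parse_configuration(text)
    return core + sum(count for _, _, count in terms)
```

Both the full and the abbreviated phosphorus configurations quoted above describe the same 15 electrons, which is a quick consistency check for any encoding built on this notation.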

The arrangement of electrons follows well-established principles governed by quantum mechanics. The Pauli exclusion principle states that no two electrons in the same atom can have identical values for all four quantum numbers, which limits each orbital to a maximum of two electrons with opposite spins [1] [2]. Hund's rule specifies that the lowest-energy configuration for an atom with electrons in a set of degenerate orbitals is the one with the maximum number of unpaired electrons with parallel spins [2] [3]. The Aufbau ("building-up") principle states that electrons occupy the lowest-energy orbitals available first, following a filling order in which orbital energy generally increases with the principal quantum number n [2] [3].

The energy of atomic orbitals increases with the principal quantum number n, but in multi-electron atoms, electron-electron repulsion causes subshells with different azimuthal quantum numbers (l) to differ in energy, with energy increasing within a shell in the order s < p < d < f [2]. The resulting filling order is based on experimental observation confirmed by theoretical calculations, and it explains why the 4s orbital fills before the 3d orbitals at the start of the fourth period, despite its higher principal quantum number [2] [3].
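
The filling order can be generated rather than memorized. The sketch below assumes the Madelung (n + l) rule and deliberately ignores the known exceptions (for example Cr and Cu), so it yields idealized configurations only; the function names are illustrative.

```python
LETTERS = "spdf"


def capacity(l):
    """Maximum electrons in a subshell: 2 spin states times (2l + 1) orbitals."""
    return 2 * (2 * l + 1)


def madelung_order(max_n=7):
    """Subshells (n, l) sorted by n + l, ties broken by lower n (Madelung rule)."""
    subshells = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    subshells.sort(key=lambda nl: (nl[0] + nl[1], nl[0]))
    return subshells


def ground_state_configuration(z):
    """Idealized configuration for atomic number z; known exceptions are ignored."""
    remaining, terms = z, []
    for n, l in madelung_order():
        if remaining == 0:
            break
        count = min(remaining, capacity(l))
        terms.append(f"{n}{LETTERS[l]}{count}")
        remaining -= count
    return " ".join(terms)
```

For scandium (Z = 21) this sketch places two electrons in 4s before the first 3d electron, which is the ordering discussed above.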

Table 1: Electron Capacity of Atomic Orbitals

| Orbital Type | Azimuthal Quantum Number (l) | Number of Orbitals | Maximum Electron Capacity |
|---|---|---|---|
| s | 0 | 1 | 2 |
| p | 1 | 3 | 6 |
| d | 2 | 5 | 10 |
| f | 3 | 7 | 14 |

Electron Configuration as a Descriptor in Computational Research

Fundamental Role in Compound Stability Prediction

Electron configuration serves as a crucial descriptor in predicting thermodynamic stability of inorganic compounds, providing significant advantages in machine learning approaches for materials discovery [4]. Unlike hand-crafted features based on specific domain knowledge, electron configuration represents an intrinsic atomic characteristic that introduces minimal inductive biases in predictive models [4]. The electron configuration delineates the distribution of electrons within an atom, encompassing energy levels and electron count at each level, which is fundamental for understanding chemical properties and reaction dynamics [4].

In recent computational frameworks, electron configuration has been successfully implemented as the foundation for ensemble machine learning models predicting compound stability. The Electron Configuration Convolutional Neural Network (ECCNN) represents a novel approach that utilizes electron configuration information as direct input to a convolutional neural network architecture [4]. This model specifically addresses the limited understanding of electronic internal structure in previous compound stability prediction models, capturing essential quantum mechanical information that directly influences bonding behavior and thermodynamic stability [4].

Advanced Computational Framework

The most current research demonstrates that ensemble frameworks based on stacked generalization (SG) effectively amalgamate models rooted in distinct domains of knowledge, with electron configuration serving as a critical component [4]. The ECCNN model processes electron configuration data in a matrix format with dimensions 118 × 168 × 8, encoded to represent the electron configurations of materials [4]. This input undergoes two convolutional operations, each with 64 filters of size 5×5, followed by batch normalization and 2×2 max pooling operations [4]. The extracted features are flattened into a one-dimensional vector, which is then processed through fully connected layers to generate stability predictions [4].
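
To make the layer sequence concrete, the following sketch tracks tensor shapes through the described operations. It is not the published implementation: it assumes the 118 × 168 axes are spatial and the 8 are input channels, that both convolutions use stride 1 without padding, and that pooling uses stride 2. None of these settings is stated in the text above.

```python
def window_output_shape(h, w, kernel, stride=1, padding=0):
    """Output height and width of a 2D convolution or pooling window."""
    out_h = (h + 2 * padding - kernel) // stride + 1
    out_w = (w + 2 * padding - kernel) // stride + 1
    return out_h, out_w


def eccnn_like_feature_size(h=118, w=168, filters=64):
    """Shape after conv 5x5 -> conv 5x5 -> batch norm -> 2x2 max pool (assumed settings)."""
    h, w = window_output_shape(h, w, kernel=5)            # first 5x5 convolution
    h, w = window_output_shape(h, w, kernel=5)            # second 5x5 convolution
    # Batch normalization leaves the shape unchanged.
    h, w = window_output_shape(h, w, kernel=2, stride=2)  # 2x2 max pooling
    return h, w, filters, h * w * filters                 # last value: flattened length
```

Under these assumptions the flattened vector passed to the fully connected layers has 55 × 80 × 64 = 281,600 entries. A different padding or stride choice would change that number.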

The integration of electron configuration with complementary descriptors creates a powerful predictive framework. When combined with models like Magpie (which incorporates statistical features from elemental properties) and Roost (which conceptualizes chemical formulas as complete graphs of elements), the resulting ensemble model significantly enhances predictive accuracy for compound stability [4]. This integrated approach, designated Electron Configuration models with Stacked Generalization (ECSG), effectively mitigates limitations of individual models and harnesses synergy that diminishes inductive biases, substantially improving the performance of the integrated model [4].

Experimental Methodologies and Validation

Data Preparation and Electron Configuration Encoding

The methodology for implementing electron configuration as a descriptor begins with comprehensive data preparation. For composition-based machine learning models, the initial step involves extracting chemical formula information and converting it into encoded electron configuration representations [4]. Each element's electron configuration is transformed into a standardized numerical format that captures the distribution of electrons across different orbitals and energy levels. This encoded representation serves as the direct input for the ECCNN model, structured as a three-dimensional matrix with dimensions 118 × 168 × 8, corresponding to the maximum number of elements and comprehensive orbital information [4].

The encoding process must preserve the quantum mechanical relationships between different orbitals, including the energy hierarchy dictated by the Aufbau principle and Madelung rule, where electrons fill orbitals in the order of increasing energy levels (1s, 2s, 2p, 3s, 3p, 4s, 3d, 4p, etc.) [2] [3]. This filling order is not strictly sequential by shell number due to the overlap of orbital energies, particularly the 4s orbital filling before 3d, which must be accurately represented in the encoding scheme to maintain physical meaningfulness [2].

Model Training and Validation Protocol

The experimental protocol for developing electron configuration-based stability prediction models follows a rigorous training and validation procedure. The ECCNN model architecture implements two consecutive convolutional operations with 64 filters of size 5×5, followed by batch normalization and 2×2 max pooling to extract hierarchical features from the electron configuration data [4]. After convolutional layers, the features are flattened and processed through fully connected layers to generate stability predictions [4].

Validation of the model employs comprehensive testing against established materials databases, primarily the Joint Automated Repository for Various Integrated Simulations (JARVIS) database [4]. The performance metric used is the Area Under the Curve (AUC) score, with the ECSG framework achieving an exceptional AUC of 0.988 in predicting compound stability [4]. Additional validation through first-principles calculations, particularly Density Functional Theory (DFT), confirms the model's accuracy in correctly identifying stable compounds [4]. This computational validation is essential for establishing predictive reliability before experimental synthesis.
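
For readers unfamiliar with the metric, the sketch below computes AUC for a binary stable/unstable classifier, assuming the usual receiver operating characteristic (ROC) definition: the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The labels and scores in the usage note are invented for illustration.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))
```

For example, `roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.3, 0.1])` evaluates to 0.75, because three of the four stable/unstable pairs are ranked correctly. An AUC of 0.988 therefore means nearly all such pairs are ordered correctly.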

Table 2: Performance Metrics of Electron Configuration-Based Models

| Model Type | Data Requirement | AUC Score | Key Advantages |
|---|---|---|---|
| ECSG Framework | About 1/7 of the data needed by existing models | 0.988 | Integrates multiple knowledge domains; minimal inductive bias |
| ECCNN | Moderate | Not specified | Direct utilization of electron configuration |
| Composition-based Models | High | Variable | No structural information required |
| Structure-based Models | Very High | Variable | Contains extensive geometric information |

Applications in Materials Discovery and Validation

Case Studies in Novel Materials Exploration

The application of electron configuration as a fundamental descriptor has demonstrated remarkable success in exploring uncharted compositional spaces for new materials. Research has validated this approach through two significant case studies: the discovery of new two-dimensional wide bandgap semiconductors and double perovskite oxides [4]. In these applications, the electron configuration-based machine learning framework successfully identified numerous novel perovskite structures with predicted stability, which were subsequently verified through first-principles DFT calculations [4]. This demonstrates the practical utility of electron configuration descriptors for navigating complex compositional spaces where traditional experimental approaches would be prohibitively time-consuming and resource-intensive.

The exceptional efficiency of electron configuration-based models represents a transformative advancement for materials discovery. Experimental results demonstrate that these models achieve equivalent predictive accuracy using only one-seventh of the data required by existing models [4]. This dramatic improvement in sample utilization efficiency enables rapid screening of candidate compounds and prioritization of the most promising candidates for experimental synthesis, significantly accelerating the materials development pipeline.

Breaking Traditional Rules in Organometallic Chemistry

Recent breakthroughs further underscore the importance of electron configuration as a fundamental descriptor, even when challenging established chemical rules. Researchers at the Okinawa Institute of Science and Technology have synthesized a novel organometallic compound that defies the longstanding 18-electron rule in organometallic chemistry—a stable 20-electron derivative of ferrocene, an iron-based metal-organic complex [5]. This discovery was enabled by a novel ligand system that stabilizes what was previously considered an improbable electron configuration [5].

This 20-electron ferrocene derivative exhibits unconventional redox properties due to the additional two valence electrons, enabling access to new oxidation states through the formation of an Fe-N bond [5]. This expansion of accessible oxidation states enhances the potential applications of ferrocene as a catalyst or functional material across various fields, from energy storage to chemical manufacturing [5]. Such discoveries highlight how electron configuration continues to serve as a fundamental descriptor for understanding and predicting chemical behavior, even when it challenges established textbook principles.

Research Reagents and Computational Tools

Table 3: Essential Research Resources for Electron Configuration Studies

| Resource Name | Type | Function/Application |
|---|---|---|
| Materials Project (MP) Database | Database | Provides extensive structural and energetic information for training and validation |
| Open Quantum Materials Database (OQMD) | Database | Source of formation energies and stability data for compounds |
| JARVIS Database | Database | Repository used for model validation and benchmarking |
| Density Functional Theory (DFT) | Computational Method | First-principles calculations for validating predicted stable compounds |
| ECCNN (Electron Configuration CNN) | Software Model | Convolutional neural network specifically designed for electron configuration data |
| Magpie | Software Model | Utilizes statistical features from elemental properties for stability prediction |
| Roost | Software Model | Graph neural network modeling interatomic interactions |
| Stacked Generalization Framework | Computational Framework | Ensemble method integrating multiple models for enhanced prediction |

The resources outlined in Table 3 represent essential components for research utilizing electron configuration as a fundamental descriptor for compound stability. The integration of comprehensive materials databases with specialized machine learning models and validation methods creates a robust infrastructure for accelerating materials discovery. The exceptional performance of the ECSG framework, achieving an AUC of 0.988 with significantly reduced data requirements, demonstrates the transformative potential of this approach for computational materials science [4]. As electron configuration continues to reveal unexpected chemical behavior, such as the stable 20-electron ferrocene derivatives that challenge traditional rules [5], its role as a fundamental descriptor provides critical insights for designing molecules with tailor-made properties and advancing sustainable chemistry through the development of green catalysts and next-generation materials [5].

The pursuit of novel materials with tailored properties for applications ranging from photovoltaics to drug development has long been a fundamental challenge in materials science. The extensive compositional space of potential compounds means that experimentally synthesizing and testing all possible materials is functionally impossible, often described as akin to finding a needle in a haystack [4]. At the heart of this challenge lies a critical relationship: the direct connection between a material's electronic structure—the quantum-mechanical arrangement of electrons within its constituent atoms—and its macroscopic thermodynamic stability and functional properties. Understanding this relationship is essential for predicting which compounds can be feasibly synthesized and will remain stable under specific conditions.

The thermodynamic stability of materials is quantitatively represented by the decomposition energy (ΔHd), defined as the total energy difference between a given compound and its competing compounds within a specific chemical space [4]. This metric is traditionally determined by constructing a convex hull using formation energies obtained through experimental investigation or computationally intensive density functional theory (DFT) calculations. While DFT provides valuable insights, its substantial computational requirements limit efficiency in exploring new compounds [4]. This limitation has accelerated the development of machine learning frameworks that leverage electron configuration data to predict material properties and stability with remarkable accuracy and resource efficiency, creating a powerful bridge from quantum mechanical principles to practical material design.
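
The convex hull construction can be sketched for the simplest case, a binary A-B system. The sketch assumes each phase is given as (fraction of B, formation energy per atom), that the pure elements sit at zero formation energy, and that a negative ΔHd means the compound lies below the hull of its competitors and is therefore stable. The function names and all numerical values in the usage note are illustrative.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        # Pop the last vertex while it lies on or above the line from hull[-2] to p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2 and x2 > x1:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("x lies outside the composition range of the hull")


def decomposition_energy(target, competitors):
    """Energy of `target` relative to the hull built from competing phases only."""
    x, energy = target
    return energy - hull_energy(lower_hull(competitors), x)
```

With competitors `[(0.0, 0.0), (1.0, 0.0), (0.5, -0.4)]`, a candidate at `(0.25, -0.3)` lies about 0.1 below the hull (stable under the assumed sign convention), while one at `(0.75, -0.1)` lies about 0.1 above it. Real phase diagrams have more components, but the principle is the same.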

Theoretical Foundations of Electron Configuration

Quantum Mechanical Principles

The electronic configuration of a molecule describes the distribution of electrons across its set of orbitals, forming the foundational model that explains and predicts molecular geometry, chemical reactivity, and physical properties [6]. This approximate yet indispensable description gives rise to key characteristics such as whether the configuration shell is open or closed and the multiplicity of the electronic state. While most stable organic molecules exhibit a closed-shell singlet ground state, species with unpaired electrons display unique chemical reactivity and can carry specialized functionalities including magnetism and conductivity [6].

The spin state of a system arises from a complex combination of electronic factors including Coulomb and Pauli repulsion, nuclear attraction, kinetic energy, orbital relaxation, and static correlation [6]. According to the Pauli exclusion principle, the wavefunction for a system of fermions must be antisymmetric with respect to the interchange of any two particles. This means that in molecular systems, all occupied orbitals describe all electrons simultaneously, and only the system as a whole possesses well-defined stationary states [6]. For systems with unpaired electrons, approximating the true zeroth-order wavefunction with just one state or configuration often proves insufficient, requiring multiconfigurational treatment for accurate description.
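
For two electrons, the antisymmetry requirement can be written out explicitly. In the expressions below, $\mathbf{x}_i$ denotes the combined space and spin coordinates of electron $i$, and $\phi_a$, $\phi_b$ are illustrative spin orbitals; these symbols are introduced here for the example only.

$$
\Psi(\mathbf{x}_1, \mathbf{x}_2) = -\,\Psi(\mathbf{x}_2, \mathbf{x}_1)
$$

$$
\Psi(\mathbf{x}_1, \mathbf{x}_2) = \frac{1}{\sqrt{2}} \left[ \phi_a(\mathbf{x}_1)\,\phi_b(\mathbf{x}_2) - \phi_b(\mathbf{x}_1)\,\phi_a(\mathbf{x}_2) \right]
$$

The second expression is a single Slater determinant, the simplest wavefunction that satisfies the first. It vanishes when $\phi_a = \phi_b$, which recovers the statement that two electrons cannot occupy the same spin orbital. A multiconfigurational treatment replaces this single determinant with a linear combination of several.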

Rules of Orbital Occupation and Spin Alignment

Several qualitative rules govern orbital occupation and spin alignment, though these hold consistently only in simple cases:

- The Aufbau Principle: This foundational principle states that electrons sequentially occupy atomic orbitals from lowest to highest energy, providing the standard electron filling order across the periodic table.

- Hund's Multiplicity Rule: Empirically derived from atomic spectra, this rule states that among different multiplets resulting from different configurations of electrons in degenerate orbitals, those with the greatest multiplicity have the lowest energy [6]. The original explanation invoked decreased electron-electron Coulomb repulsion in high-spin states due to opposing exchange interactions.

- Dynamic Spin Polarization: This concept describes how unpaired electrons induce spin polarization in nearby paired electrons, generating ferromagnetic coupling between spins separated by an odd number of atoms [6].

The interplay between these principles becomes particularly important in diradicals, where the ground state multiplicity—whether triplet or open-shell singlet—determines magnetic behavior critical for molecular electronics applications [6].
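
The multiplicity predicted by Hund's rule follows from simple counting. The sketch below fills a set of degenerate orbitals by singly occupying each one before pairing, then reports the number of unpaired electrons and the multiplicity 2S + 1. It encodes only the qualitative rule. As noted above, real diradicals can deviate from it, so the output is a first guess rather than a prediction.

```python
def hund_ground_state(electrons, degenerate_orbitals):
    """Unpaired electrons and multiplicity when filling degenerate orbitals by Hund's rule."""
    capacity = 2 * degenerate_orbitals
    if not 0 <= electrons <= capacity:
        raise ValueError("electron count exceeds the capacity of the orbital set")
    # Singly occupy every orbital first, then pair up the remainder.
    unpaired = electrons if electrons <= degenerate_orbitals else capacity - electrons
    total_spin = unpaired / 2               # S: each unpaired electron contributes 1/2
    multiplicity = int(2 * total_spin) + 1  # 2S + 1
    return unpaired, multiplicity
```

Two electrons in two degenerate orbitals give `(2, 3)`, the triplet that Hund's rule favors for a diradical, while three electrons in three p orbitals give `(3, 4)`, a quartet.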

Computational Frameworks for Stability Prediction

Traditional Quantum Mechanical Approaches

Traditional approaches to determining compound stability rely heavily on density functional theory (DFT) calculations, which compute total energies by approximately solving the many-electron Schrödinger equation with the electron configuration as input [4]. DFT serves as a crucial methodology for investigating structural, electronic, optical, and elastic behaviors of materials, particularly for optoelectronic applications [7]. For instance, DFT computations of double perovskite halides like Rb₂AgAsM₆ (M = Cl, F) enable researchers to forecast material properties, understand molecular reactions, and design novel materials with specific characteristics [7].

The process typically uses the pseudopotential plane-wave method as implemented in packages such as CASTEP, in which pseudopotentials represent the nuclei and core electrons and a plane-wave basis set describes the valence electrons [7]. These calculations yield essential electronic properties such as band structures and density of states, which directly influence functional properties like photovoltaic efficiency. However, establishing convex hulls to determine thermodynamic stability through these methods consumes substantial computational resources, resulting in low efficiency for exploring new compounds [4].

Machine Learning Revolution

Machine learning offers a faster route to materials discovery: it can accurately predict thermodynamic stability at a fraction of the time and computational cost of traditional methods [4]. The widespread use of DFT has itself enabled this approach by populating extensive materials databases such as the Materials Project (MP) and the Open Quantum Materials Database (OQMD), which provide large sample pools for training machine learning models [4].

Most existing models suffer from biases introduced through specific domain knowledge assumptions, which can limit their performance and generalizability [4]. For example, models assuming that material performance is determined solely by elemental composition may introduce large inductive bias, reducing effectiveness in predicting stability [4]. This limitation has motivated the development of more sophisticated frameworks that leverage fundamental electronic structure information while mitigating bias through ensemble approaches.

Table 1: Comparison of Computational Approaches for Stability Prediction

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Density Functional Theory (DFT) | Solves Schrödinger equation using electron configuration input; calculates formation energies for convex hull construction [4] | High physical accuracy; provides detailed electronic structure information | Computationally intensive; low throughput; requires significant expertise |
| Composition-Based Machine Learning | Uses chemical formula-based representations; requires feature engineering based on domain knowledge [4] | Fast prediction; high throughput; accessible for initial screening | Limited structural information; potential bias from feature selection |
| Structure-Based Machine Learning | Incorporates geometric arrangements of atoms in addition to composition [4] | More comprehensive information; potentially higher accuracy | Requires structural data often unavailable for new materials |
| Electron Configuration-Based ML | Uses fundamental electron configuration patterns as input features [4] [8] | Reduced feature engineering bias; physically meaningful descriptors | Complex model architecture; requires specialized encoding approaches |

The ECSG Framework: An Integrated Approach

Architecture and Implementation

To address limitations in existing approaches, researchers have proposed ECSG (Electron Configuration models with Stacked Generalization), an ensemble framework based on stacked generalization that amalgamates models rooted in distinct domains of knowledge [4]. This integrated approach constructs a super learner from three base models:

- Magpie: Emphasizes statistical features derived from various elemental properties, including atomic number, mass, radius, and others. These features capture diversity among materials and are processed using gradient-boosted regression trees (XGBoost) [4].

- Roost: Conceptualizes chemical formulas as complete graphs of elements, employing graph neural networks with attention mechanisms to learn relationships and message-passing processes among atoms, effectively capturing interatomic interactions [4].

- ECCNN (Electron Configuration Convolutional Neural Network): A newly developed model that addresses the limited understanding of electronic internal structure in current models. ECCNN uses electron configuration matrices as input, processed through convolutional operations to extract relevant features for stability prediction [4].
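
To illustrate the kind of statistical features the Magpie-style base model relies on, the sketch below computes fraction-weighted statistics of one elemental property over a composition. The element table is a tiny illustrative subset and the function name is hypothetical; the actual Magpie feature set covers many more properties and statistics.

```python
# Illustrative element data (atomic number, atomic mass in u); a real feature
# set would draw on many more elemental properties.
ELEMENT_DATA = {
    "Na": {"number": 11, "mass": 22.990},
    "Cl": {"number": 17, "mass": 35.45},
    "O": {"number": 8, "mass": 15.999},
    "Ti": {"number": 22, "mass": 47.867},
}


def composition_statistics(composition, prop):
    """Fraction-weighted mean and average deviation, plus min, max and range, of one property."""
    total = sum(composition.values())
    fractions = {el: amount / total for el, amount in composition.items()}
    values = {el: ELEMENT_DATA[el][prop] for el in composition}
    mean = sum(fractions[el] * values[el] for el in composition)
    avg_dev = sum(fractions[el] * abs(values[el] - mean) for el in composition)
    lo, hi = min(values.values()), max(values.values())
    return {"mean": mean, "avg_dev": avg_dev, "min": lo, "max": hi, "range": hi - lo}
```

Calling `composition_statistics({"Ti": 1, "O": 2}, "number")` yields a mean atomic number of 38/3 and a range of 14. Repeating this for each property produces the fixed-length feature vector that a gradient-boosted tree model can consume.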

The ECCNN architecture specifically uses a matrix shaped 118×168×8 encoded from the electron configuration of materials as input [4]. This input undergoes two convolutional operations with 64 filters of size 5×5, with the second convolution followed by batch normalization and 2×2 max pooling. The extracted features are flattened into a one-dimensional vector and fed into fully connected layers for prediction [4].

After these base models are trained, their outputs serve as the inputs to a meta-level model that produces the final prediction. This framework mitigates the limitations of the individual models: combining learners built on different assumptions reduces inductive bias and improves overall performance [4].
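
The mechanics of stacked generalization can be shown with toy components. In the sketch below, the base learners are simple one-feature threshold models standing in for Magpie, Roost, and ECCNN, and the meta-learner is a small logistic regression. Both are assumptions made for illustration, not the ECSG implementation. The essential step is that the meta-learner is trained on out-of-fold predictions, so it never sees a base prediction made on data the base model was fitted to.

```python
import math


def sigmoid(z):
    return 1 / (1 + math.exp(-z))


class ThresholdModel:
    """Toy base learner: scores one feature against the midpoint of the class means."""

    def __init__(self, feature):
        self.feature = feature

    def fit(self, X, y):
        pos = [row[self.feature] for row, label in zip(X, y) if label == 1]
        neg = [row[self.feature] for row, label in zip(X, y) if label == 0]
        pos_mean, neg_mean = sum(pos) / len(pos), sum(neg) / len(neg)
        self.midpoint = (pos_mean + neg_mean) / 2
        self.sign = 1.0 if pos_mean >= neg_mean else -1.0
        return self

    def predict(self, X):
        return [sigmoid(self.sign * (row[self.feature] - self.midpoint)) for row in X]


def out_of_fold_predictions(make_model, X, y, folds=3):
    """Predict each sample with a model that never saw it during training."""
    preds = [0.0] * len(X)
    for k in range(folds):
        test_idx = [i for i in range(len(X)) if i % folds == k]
        train_idx = [i for i in range(len(X)) if i % folds != k]
        model = make_model().fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        for i, p in zip(test_idx, model.predict([X[i] for i in test_idx])):
            preds[i] = p
    return preds


def fit_logistic(Z, y, lr=0.5, epochs=2000):
    """Meta-learner: logistic regression trained by stochastic gradient descent."""
    weights, bias = [0.0] * len(Z[0]), 0.0
    for _ in range(epochs):
        for row, label in zip(Z, y):
            error = sigmoid(bias + sum(w * v for w, v in zip(weights, row))) - label
            weights = [w - lr * error * v for w, v in zip(weights, row)]
            bias -= lr * error
    return weights, bias


def fit_stack(base_factories, X, y):
    """Train the meta-learner on out-of-fold base predictions, then refit the bases."""
    columns = [out_of_fold_predictions(f, X, y) for f in base_factories]
    meta_features = [list(row) for row in zip(*columns)]
    meta = fit_logistic(meta_features, y)
    bases = [f().fit(X, y) for f in base_factories]  # refit on all data for inference
    return bases, meta


def predict_stack(stack, X):
    bases, (weights, bias) = stack
    rows = zip(*[model.predict(X) for model in bases])
    return [sigmoid(bias + sum(w * v for w, v in zip(weights, row))) for row in rows]
```

A stack is built with, for example, `fit_stack([lambda: ThresholdModel(0), lambda: ThresholdModel(1)], X, y)`. The simple modulo-based fold assignment assumes every training fold contains both classes; a production setup would use stratified splitting.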

Performance and Validation

The ECSG framework demonstrates exceptional performance in predicting compound stability, achieving an Area Under the Curve (AUC) score of 0.988 within the Joint Automated Repository for Various Integrated Simulations (JARVIS) database [4]. This integrated approach shows remarkable efficiency in sample utilization, requiring only one-seventh of the data used by existing models to achieve equivalent performance [4]. This data efficiency is particularly valuable in materials science, where obtaining labeled training data often requires expensive computations or experiments.

The model's versatility has been demonstrated through exploration of new two-dimensional wide bandgap semiconductors and double perovskite oxides, unveiling numerous novel perovskite structures [4]. Subsequent validation using first-principles calculations confirms the high reliability of predictions, with the model showing remarkable accuracy in correctly identifying stable compounds [4]. This validation against established computational methods provides critical confidence in applying the framework to unexplored compositional spaces.

Experimental Protocols and Methodologies

Data Preparation and Feature Engineering

For electron configuration-based models, the input representation requires specialized encoding of composition information. The ECCNN model uses a matrix with dimensions 118×168×8, encoded from the electron configuration of materials [4]. This representation captures the fundamental electronic structure without introducing significant inductive biases associated with manually crafted features.

In related QSAR modeling for uranium coordination complexes, feature preparation includes structural properties such as coordination numbers for each ligand atom (N, O, F, Cl), molecular charge, number of water molecules through hydroxylation, molecular weight, and predicted physicochemical properties including aqueous solubility (logS), melting point (mp), boiling point (bp), and pyrolysis point (pp) [9]. These physicochemical properties are predicted based on molecular formula using neural network models specifically developed for inorganic compounds [9].

Model Training and Validation

Robust model development follows established guidelines such as the OECD QSAR validation principles [9]. With limited dataset sizes (e.g., 108 uranium complexes in the QSAR study), appropriate validation techniques are critical. Bootstrapping with 200 rounds of sampling provides internal validation, with hyperparameter optimization using libraries like Optuna [9].
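
A bootstrap validation loop can be sketched as follows. It assumes the common out-of-bag scheme, in which each round trains on a sample drawn with replacement and evaluates on the samples that were not drawn. Whether the cited study evaluated on out-of-bag samples is an assumption here, and the function name is illustrative.

```python
import random


def bootstrap_splits(n_samples, rounds=200, seed=0):
    """Yield (train_indices, out_of_bag_indices) for each bootstrap round."""
    rng = random.Random(seed)
    for _ in range(rounds):
        # Sample n indices with replacement; duplicates are expected.
        train = [rng.randrange(n_samples) for _ in range(n_samples)]
        drawn = set(train)
        out_of_bag = [i for i in range(n_samples) if i not in drawn]
        yield train, out_of_bag
```

With `n_samples=108` and the default 200 rounds, each round leaves roughly a third of the complexes out of the training sample on average, which gives an internal performance estimate without setting aside a fixed hold-out set.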

Y-randomization tests validate that model performance stems from actual structure-property relationships rather than chance correlations. This test involves training models on randomized endpoints and comparing performance between original and shuffled endpoints, with Z-scores over 3 indicating strong feature-endpoint correlations [9].
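
The Y-randomization test can be sketched with a deliberately simple model. Here a one-descriptor least-squares line stands in for the actual regressor, R² is the performance score, and the Z-score compares the real score with the distribution obtained after shuffling the endpoints. The model choice, the number of rounds, and the function names are assumptions for illustration.

```python
import random
import statistics


def r_squared(x, y):
    """R^2 of a one-variable least-squares line (squared Pearson correlation)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def y_randomization_z_score(x, y, rounds=100, seed=0):
    """Z-score of the real R^2 against R^2 values obtained with shuffled endpoints."""
    rng = random.Random(seed)
    shuffled_scores = []
    for _ in range(rounds):
        y_shuffled = list(y)
        rng.shuffle(y_shuffled)  # break the descriptor-endpoint pairing
        shuffled_scores.append(r_squared(x, y_shuffled))
    mean = statistics.fmean(shuffled_scores)
    spread = statistics.stdev(shuffled_scores)
    return (r_squared(x, y) - mean) / spread
```

A Z-score well above 3 indicates that the real model scores far better than models fitted to scrambled endpoints, which is the criterion described above.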

Table 2: Key Performance Metrics for Electron Configuration-Based Models

| Model | Application | Performance Metrics | Data Efficiency |
|---|---|---|---|
| ECSG Framework | Thermodynamic stability prediction | AUC: 0.988 [4] | Requires 1/7 the data of existing models for equivalent performance [4] |
| ECCNN | Physicochemical property prediction | BP: R²=0.88, MAE=222.65°C; LogS: R²=0.63, MAE=1.26; MP: R²=0.89, MAE=170.39°C; PP: R²=0.66, MAE=147.55°C [8] | Trained on 537-1647 compounds covering 72-98% of periodic table elements [8] |
| QSAR for Uranium Complexes | Stability constant prediction | R²=0.75 on external test set [9] | Developed with 108 complexes; applicability domain analysis for reliability assessment [9] |

Applicability domain analysis determines whether predictions are valid based on similarity to training data. Leverage values and warning thresholds identify outliers, ensuring reliable predictions only for compounds sufficiently similar to the training set [9].
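
Leverage-based applicability domain checks can be illustrated for the simplest case of a linear model with one descriptor and an intercept, where the leverage has a closed form. The warning threshold h* = 3(p + 1)/n used below is a common convention for Williams plots, with p descriptors and n training samples. Whether the cited study used exactly this threshold is an assumption, and the function names are illustrative.

```python
def leverages(x_train, x_query):
    """Leverage of each query point for a one-descriptor linear model with intercept."""
    n = len(x_train)
    mean = sum(x_train) / n
    sxx = sum((v - mean) ** 2 for v in x_train)
    # Closed form of the hat-matrix diagonal for simple linear regression.
    return [1 / n + (q - mean) ** 2 / sxx for q in x_query]


def warning_leverage(n_samples, n_descriptors):
    """Common Williams-plot threshold h* = 3(p + 1) / n."""
    return 3 * (n_descriptors + 1) / n_samples


def in_domain(x_train, x_query):
    """True where a query point's leverage does not exceed the warning threshold."""
    h_star = warning_leverage(len(x_train), 1)
    return [h <= h_star for h in leverages(x_train, x_query)]
```

With training descriptor values 1 through 10, queries at 5.5 and 10 fall inside the domain, while a query at 20 has a leverage far above the threshold and its prediction would be flagged as an extrapolation.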

Case Studies and Applications

Photovoltaic Material Design

DFT studies of double perovskite halides like Rb₂AgAsM₆ (M = Cl, F) demonstrate how computational modeling guides material design for optoelectronic applications [7]. These compounds exhibit direct bandgap characteristics, strong optical absorption in visible regions, and mechanical stability—properties essential for solar cell applications [7]. The SLME (Spectroscopic Limited Maximum Efficiency) metric, calculated using detailed balance theory that incorporates the entire solar spectrum and non-radiative limitations, predicts optimal efficiency and guides material selection [7].

The bandgap values of these materials, crucial for photovoltaic efficiency, can be tuned by substituting halide ions in the perovskite structure [7]. For instance, Cs₂AgInBr₆ demonstrates a direct bandgap of 1.57 eV with a power conversion efficiency of 26.9%, spurring research into additional silver perovskites with optimized bandgaps [7].

Uranium Adsorbent Development

QSAR modeling addresses critical environmental challenges by predicting stability constants for uranium coordination complexes, facilitating the design of efficient uranium adsorbents [9]. With terrestrial uranium resources finite and high-grade ores becoming scarce, extraction from seawater—containing approximately 4.5 billion tons of uranium—presents an attractive alternative [9].

The QSAR model developed using CatBoost regressor achieves R²=0.75 on external test sets after hyperparameter optimization, accurately predicting stability constants from molecular composition alone [9]. This approach enables efficient screening of candidate materials for safer and more sustainable uranium adsorption processes, potentially improving uranium collection from seawater and wastewater treatment.

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for Electron Configuration-Based Modeling

| Tool/Database | Type | Primary Function | Application in Stability Prediction |
|---|---|---|---|
| Materials Project (MP) | Database | Extensive repository of calculated material properties [4] | Training data source for machine learning models; reference for stability assessment |
| Open Quantum Materials Database (OQMD) | Database | Computed formation energies and structural information [4] | Provides decomposition energies for convex hull construction; training data for ML |
| CASTEP | Software | DFT package using pseudopotential plane-wave method [7] | First-principles validation of predicted stable compounds; electronic structure analysis |
| Magpie | Descriptor Tool | Calculates statistical features from elemental properties [4] [8] | Feature generation for composition-based machine learning models |
| JARVIS | Database | Repository containing various integrated simulations [4] | Benchmark dataset for model performance evaluation |
| CatBoost/XGBoost | Algorithm | Gradient-boosting frameworks for machine learning [9] | Implementation of regression models for property prediction |

The integration of electron configuration principles with machine learning frameworks represents a paradigm shift in materials discovery and design. The ECSG framework and related approaches demonstrate that leveraging fundamental quantum mechanical information through sophisticated computational models can dramatically accelerate the identification of stable compounds with desired properties. These methods successfully bridge the gap between atomic-scale electronic structure and macroscopic material behavior, enabling efficient exploration of vast compositional spaces that would be impractical through traditional experimental or computational approaches alone.

Future advancements will likely focus on expanding the integration of multiscale modeling, incorporating kinetics and synthesis parameters alongside thermodynamic stability. As databases grow and algorithms become more refined, the accuracy and applicability of these models will continue to improve, further solidifying the role of electron configuration-based approaches as indispensable tools in materials research and development. This progression will ultimately enable the targeted design of materials with optimized properties for specific applications across energy, electronics, and environmental technologies.

Within computational materials science and drug development, the systematic assessment of thermodynamic stability provides a crucial foundation for predicting compound viability. For researchers exploring uncharted chemical spaces, accurately evaluating stability represents a fundamental step in distinguishing promising candidates from those likely to decompose. The primary quantitative metric for this assessment is the decomposition energy (ΔHd), which measures a compound's energy relative to competing phases in its chemical space [4].

The integration of electron configuration data with modern machine learning (ML) frameworks has recently transformed stability prediction, enabling accurate assessments without resource-intensive experimental methods or density functional theory (DFT) calculations [4] [10]. This technical guide examines core stability metrics, computational methodologies leveraging electron configuration, and experimental validation protocols, providing researchers with a comprehensive framework for stability analysis within compound discovery pipelines.

Core Theoretical Concepts

Fundamental Metrics and Definitions

Thermodynamic stability describes the state of a material when it exists at the lowest possible energy level within its specific environmental conditions, indicating no inherent tendency to undergo spontaneous transformation or decomposition. In contrast, kinetic stability refers to a metastable state where transformation is impeded by energy barriers, despite the system not occupying the true global energy minimum [11].

The decomposition energy (ΔHd) quantitatively represents thermodynamic stability through the energy difference between a target compound and its most stable competing phases within the same chemical space. It is formally defined as the total energy difference between the compound and a combination of other compounds on the convex hull of the phase diagram [4]. A negative ΔHd indicates that a compound is stable against decomposition into other phases, while a positive value signifies inherent instability [4] [12].

Table 1: Key Stability Metrics and Their Significance in Materials Research

| Metric | Definition | Interpretation | Experimental Determination |
|---|---|---|---|
| Decomposition Energy (ΔHd) | Energy difference between compound and competing phases on convex hull [4] | Negative value indicates thermodynamic stability | DFT calculations, calorimetry |
| Formation Energy | Energy change when compound forms from constituent elements | Negative value suggests compound formation is favorable | DFT calculations, experimental synthesis |
| Gibbs Free Energy (ΔG) | Thermodynamic potential combining enthalpy and entropy effects (ΔG = ΔH − TΔS) | Negative ΔG indicates spontaneous process [13] | Isothermal Titration Calorimetry (ITC) |
| Soret Coefficient (ST) | Measures thermophoretic movement in temperature gradient [13] | Relates to hydration layer changes in biomolecular systems | Thermal Diffusion Forced Rayleigh Scattering (TDFRS) |

Thermodynamic Framework and Phase Diagrams

The convex hull construction in phase diagrams serves as the fundamental reference for thermodynamic stability assessment. When plotted on a formation-energy diagram, stable compounds reside on the convex hull surface, while metastable or unstable compounds appear above this boundary [4]. The vertical distance from any compound to the convex hull represents its decomposition energy, providing a direct visual representation of relative stability [4] [12].
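The hull construction can be made concrete with a small sketch for a hypothetical binary A–B system. It builds the lower convex hull of the competing phases and reports the target's energy relative to that hull, so a negative result corresponds to stability in the sense defined above. All compositions and energies are invented for illustration, and the function names are assumptions introduced here.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        # Drop the last vertex while it lies on or above the line to p
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull range")


def decomposition_energy(target, competing):
    """Energy of the target relative to the hull of its competing phases."""
    x, energy = target
    return energy - hull_energy(lower_hull(competing), x)


# (fraction of B, formation energy in eV/atom); all values are hypothetical
elements = [(0.0, 0.0), (1.0, 0.0)]
ab, a3b, ab3 = (0.5, -0.40), (0.25, -0.10), (0.75, -0.25)

dhd_ab3 = decomposition_energy(ab3, elements + [ab, a3b])
dhd_a3b = decomposition_energy(a3b, elements + [ab, ab3])
print(f"AB3: {dhd_ab3:+.3f} eV/atom, A3B: {dhd_a3b:+.3f} eV/atom")
```

In this toy system AB3 sits below the tie line between AB and B and is therefore stable, while A3B lies above the tie line between A and AB and would decompose into those two phases.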

For nanocrystalline alloys and specialized pharmaceutical formulations, thermodynamic stability may manifest through segregation-induced stabilization, where interface segregation lowers the system's Gibbs free energy, potentially creating a metastable state with finite grain size rather than a single crystal configuration [11]. This phenomenon illustrates how nanoscale effects can alter conventional thermodynamic relationships.

Computational Prediction Methods

Electron Configuration-Based Machine Learning

The ECSG (Electron Configuration models with Stacked Generalization) framework represents a significant advancement in stability prediction by integrating electron configuration data with ensemble machine learning [4] [10]. This approach combines three distinct models based on complementary domain knowledge:

- ECCNN (Electron Configuration Convolutional Neural Network): Processes electron configuration matrices (118×168×8) through convolutional layers to extract features related to atomic electronic structure [4]

- Magpie: Utilizes statistical features derived from elemental properties (atomic number, mass, radius) with gradient-boosted regression trees [4]

- Roost: Conceptualizes chemical formulas as complete graphs of elements, employing graph neural networks with attention mechanisms to capture interatomic interactions [4]

The ECSG framework implements stacked generalization, where predictions from these base models serve as inputs to a meta-learner that produces final stability classifications [4]. This ensemble approach mitigates individual model biases, achieving an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database [4] [10]. Remarkably, this framework demonstrated exceptional data efficiency, requiring only one-seventh of the training data used by existing models to achieve comparable performance [4].
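The meta-learning step can be sketched in a few lines. In this simplified illustration the three base models are replaced by synthetic noisy scores (stand-ins for ECCNN, Magpie, and Roost outputs, not the real models), and the meta-learner is a plain logistic regression trained by gradient descent; the published framework may use a different meta-learner and trains it on out-of-fold base-model predictions.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=0.5, epochs=500):
    """Logistic regression on base-model outputs via batch gradient descent."""
    n_models, n = len(base_preds[0]), len(labels)
    weights, bias = [0.0] * n_models, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_models, 0.0
        for row, y in zip(base_preds, labels):
            err = sigmoid(bias + sum(w * x for w, x in zip(weights, row))) - y
            for j, x in enumerate(row):
                grad_w[j] += err * x
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_stable(weights, bias, row):
    """Final ensemble decision from one row of base-model scores."""
    return sigmoid(bias + sum(w * x for w, x in zip(weights, row))) >= 0.5


# Synthetic stand-in data: label 1 = stable, 0 = unstable (illustrative only)
random.seed(0)
NOISE = (0.30, 0.35, 0.40)  # assumed noise level of each hypothetical base model
labels = [random.randint(0, 1) for _ in range(300)]
base_preds = [[0.2 + 0.6 * y + random.gauss(0.0, s) for s in NOISE] for y in labels]

# Train the meta-learner on 200 samples, evaluate on the remaining 100
weights, bias = fit_meta_learner(base_preds[:200], labels[:200])
hits = sum(
    predict_stable(weights, bias, row) == bool(y)
    for row, y in zip(base_preds[200:], labels[200:])
)
accuracy = hits / 100
print(f"meta-learner weights: {weights}, held-out accuracy: {accuracy:.2f}")
```

The learned weights show how much the meta-learner relies on each base model, which is the mechanism by which stacking can compensate for the bias of any single hypothesis.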

Graph Neural Networks and Upper-Bound Energy Methods

Graph Neural Networks (GNNs) have emerged as powerful tools for predicting formation and decomposition energies with mean absolute error approaching chemical accuracy (0.03–0.05 eV/atom) [14] [12]. These models represent crystal structures as graphs with atoms as nodes and bonds as edges, enabling effective learning of structural relationships [14] [12].

The upper-bound energy minimization approach provides an efficient strategy for screening stable structures by performing constrained DFT relaxations over only unit cell volume while fixing fractional atomic coordinates [12]. This method yields an upper-bound energy that serves as a reliable reference point, as full relaxation can only decrease the energy further. Scale-invariant GNN models can accurately predict this upper-bound energy (MAE ∼ 0.05 eV/atom), enabling efficient screening of potentially stable decorations before performing computationally expensive full relaxations [12].
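The screening logic follows directly from the bound: if the volume-only energy already sits at or below the hull, full relaxation can only lower it further. A minimal sketch, assuming each candidate comes with a predicted upper-bound energy and a reference hull energy at its composition (candidate names and values are hypothetical):

```python
def screen_by_upper_bound(candidates, margin=0.0):
    """Split candidates by predicted upper-bound energy relative to the hull.

    candidates: dict mapping name -> (upper_bound_energy, hull_energy) in eV/atom.
    margin: tolerance in eV/atom; a negative margin is more conservative and
            can offset the model's prediction error.
    """
    likely_stable, needs_relaxation = [], []
    for name, (e_upper, e_hull) in sorted(candidates.items()):
        # The fully relaxed energy is <= e_upper, so e_upper <= e_hull
        # implies the relaxed structure is also at or below the hull.
        if e_upper - e_hull <= margin:
            likely_stable.append(name)
        else:
            needs_relaxation.append(name)
    return likely_stable, needs_relaxation


# Hypothetical decorations with made-up energies (eV/atom)
candidates = {
    "decoration-1": (-1.32, -1.30),
    "decoration-2": (-1.10, -1.25),
    "decoration-3": (-0.98, -0.98),
}
likely_stable, needs_relaxation = screen_by_upper_bound(candidates)
print(likely_stable, needs_relaxation)
```

Candidates in the second list are not ruled out; they are simply the ones whose stability cannot be decided without a full relaxation.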

Table 2: Computational Methods for Stability Prediction

| Method | Key Features | Accuracy | Applications |
|---|---|---|---|
| ECSG Framework | Ensemble ML with electron configuration, elemental properties, and interatomic graphs [4] | AUC = 0.988, high data efficiency [4] [10] | Exploration of 2D wide bandgap semiconductors, double perovskite oxides [4] |
| Graph Neural Networks (GNN) | Scale-invariant models using crystal graphs as input [14] [12] | MAE = 0.03–0.05 eV/atom for formation energy [12] | Large-scale screening of hypothetical crystals, solid-state battery materials [14] [12] |
| Upper-Bound Energy Minimization | Volume-only relaxations providing energy upper bound [12] | >99% accuracy in identifying stable structures [12] | High-throughput discovery of solid-state battery electrolytes [12] |
| Density Functional Theory (DFT) | First-principles quantum mechanical calculations | Chemical accuracy benchmark | Validation of ML predictions, database generation [4] [12] |

Experimental Validation Protocols

Biomolecular Interaction Analysis

For drug development applications, Isothermal Titration Calorimetry (ITC) provides direct measurements of binding thermodynamics by quantifying heat changes during molecular interactions [13]. This technique directly determines Gibbs free energy (ΔG), enthalpy (ΔH), and entropy (ΔS) changes associated with binding events, offering comprehensive thermodynamic profiling for stability assessment [13].
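The quantities reported by ITC are linked by ΔG = ΔH − TΔS and, for a binding equilibrium, by the standard relation ΔG° = −RT ln K. The short sketch below applies both to made-up values; the enthalpy and entropy are illustrative placeholders, not measurements from the cited work.

```python
import math

R = 8.314462618  # molar gas constant, J mol^-1 K^-1


def gibbs_free_energy(delta_h, delta_s, temperature):
    """Delta G = Delta H - T * Delta S (J/mol, J/(mol K), K)."""
    return delta_h - temperature * delta_s


def association_constant(delta_g, temperature):
    """Equilibrium constant from Delta G = -R T ln K."""
    return math.exp(-delta_g / (R * temperature))


# Hypothetical binding event at 25 degrees C (illustrative values only)
delta_h = -40_000.0   # J/mol, exothermic binding
delta_s = -50.0       # J/(mol K), entropically unfavourable
temperature = 298.15  # K

delta_g = gibbs_free_energy(delta_h, delta_s, temperature)
k_assoc = association_constant(delta_g, temperature)
print(f"Delta G = {delta_g / 1000:.2f} kJ/mol, K = {k_assoc:.3g}")
```

Here the favourable enthalpy outweighs the entropic penalty, so ΔG is negative and the association constant is greater than one.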

Thermal Diffusion Forced Rayleigh Scattering (TDFRS) measures the Soret coefficient (ST), which quantifies thermophoretic behavior in response to temperature gradients [13]. For biomolecular systems, changes in ST often correlate with alterations in hydration shells upon binding, providing insights into solvation effects that contribute to complex stability [13].

The relationship between equilibrium thermodynamics and non-equilibrium thermophoretic behavior can be described by:

\[ S_T = \frac{1}{k_B T} \frac{dG}{dT} \]

This connection enables researchers to relate TDFRS measurements to Gibbs free energy changes determined via ITC [13].

Nanostructured Material Stability Assessment

Evaluating thermodynamic stability in nanocrystalline alloys requires distinguishing true equilibrium states from kinetically stabilized configurations [11]. Experimental protocols should include:

- Long-duration annealing studies to differentiate kinetic barriers from true thermodynamic minima [11]

- Temperature-dependent grain size analysis to identify equilibrium states [11]

- Multiple pathway approaches examining both "from below" (grain growth) and "from above" (grain refinement) stabilization [11]

True thermodynamic stability in nanostructured systems is confirmed when a material maintains a consistent finite grain size after extended thermal treatment across a range of temperatures, rather than exhibiting continuous grain growth [11].

Research Reagent Solutions and Materials

Table 3: Essential Research Reagents and Materials for Stability Studies

| Reagent/Material | Function and Application | Example Use Cases |
|---|---|---|
| EDTA (Ethylenediaminetetraacetic acid) | Chelating agent for validation studies [13] | Reference system for ITC validation [13] |
| Calcium Chloride (CaCl₂) | Ionic compound for chelation studies [13] | Model system for binding thermodynamics [13] |
| Bovine Carbonic Anhydrase I (BCA I) | Model enzyme for protein-ligand studies [13] | Binding studies with sulfonamide inhibitors [13] |
| Benzenesulfonamide Derivatives | Enzyme inhibitors for binding studies [13] | 4FBS and PFBS as model ligands [13] |
| CdZnTe (CZT) Detectors | Energy-resolving detectors for material decomposition [15] | Multi-material decomposition in CT imaging [15] |
| Double Perovskite Halides | Functional materials for optoelectronics [7] | Rb₂AgAsM₆ (M = Cl, F) for stability studies [7] |

The integration of electron configuration-based machine learning with traditional experimental validation provides researchers with a powerful toolkit for assessing thermodynamic stability and decomposition energy across diverse materials systems. The ECSG framework demonstrates how combining electronic structure information with complementary domain knowledge enables accurate stability predictions while significantly reducing computational resources [4] [10].

For drug development professionals, the correlation between equilibrium thermodynamics (ΔG from ITC) and non-equilibrium transport properties (ST from TDFRS) offers complementary approaches for evaluating molecular interaction stability [13]. For materials scientists, GNN-based methods and upper-bound energy minimization enable efficient screening of hypothetical crystals with accuracy rivaling DFT [14] [12].

As these computational and experimental methodologies continue to advance, they create new opportunities for accelerated discovery of stable compounds with tailored functional properties, from pharmaceutical formulations to energy materials and beyond. The ongoing refinement of these approaches promises to further bridge the gap between computational prediction and experimental realization in compound stability research.

The accurate prediction of compound stability represents a fundamental challenge in materials science, drug development, and inorganic chemistry. Traditional models for understanding atomic structure and material properties have laid important groundwork but face significant limitations in modern research contexts. The Bohr model of the atom, while revolutionary in its time, provides an incomplete picture of electron behavior that limits its predictive power for compound stability. Similarly, contemporary single-hypothesis machine learning approaches, though more advanced, introduce their own forms of bias and constraint that hamper their effectiveness in exploring novel chemical spaces. Within the specific context of electron configuration models for compound stability research, these limitations become particularly consequential, potentially restricting the discovery of new materials with tailored properties. This whitepaper examines the technical limitations of these traditional approaches and highlights emerging methodologies that offer more robust, accurate predictions for thermodynamic stability across diverse compound classes, providing researchers with frameworks for overcoming these historical constraints in their investigative work.

Fundamental Limitations of the Bohr Atomic Model

The Bohr model, developed by Niels Bohr in 1913, represents a seminal but ultimately limited approach to understanding atomic structure. The model depicted electrons orbiting the nucleus in fixed circular paths with quantized energies, analogous to planets orbiting a sun [16] [17]. While this represented a significant advancement over previous atomic models by incorporating quantum ideas, its limitations quickly become apparent when applied to modern compound stability research.

Technical Shortcomings for Stability Prediction

Failure with Multi-Electron Systems: The Bohr model was developed specifically for hydrogen-like atoms and cannot accurately describe the behavior of multi-electron atoms [18] [17]. This represents a fundamental limitation for compound stability research, as nearly all compounds of interest involve complex atoms with multiple electrons interacting in ways the model cannot capture.

Violation of the Heisenberg Uncertainty Principle: The model assumes that both an electron's position and momentum can be known simultaneously, which directly contradicts the Heisenberg Uncertainty Principle that is fundamental to quantum mechanics [18] [17]. This theoretical inconsistency undermines its use in precise predictive applications.

Inadequate Spectral Predictions: While the Bohr model could explain hydrogen's spectral lines, it cannot account for the spectral line splitting observed under magnetic fields (Zeeman effect) or the varying intensity of spectral lines [17]. These phenomena are crucial for spectroscopic analysis of compounds.

Oversimplified Electron Trajectories: The model restricts electrons to circular orbits, unlike the probabilistic orbital clouds described by quantum mechanics [18]. This simplification fails to capture the true spatial distribution of electrons that governs chemical bonding and stability.

Impact on Electron Configuration Understanding

The Bohr model introduced the concept of electron shells with fixed capacities (2, 8, 18, 32 electrons) based on principal quantum numbers [19] [20]. While this provided an initial framework for understanding periodicity, it offered no theoretical basis for why these specific numbers occur, beyond empirical observation. The model lacks any description of subshells (s, p, d, f) or orbital shapes that are essential for understanding molecular geometry and bonding behavior [18]. Furthermore, it cannot explain chemical bonding beyond simple ionic interactions based on electron transfers to achieve noble gas configurations, providing no insight into covalent bonding or more complex bonding paradigms relevant to modern materials science [19].

Table 1: Quantitative Limitations of the Bohr Model in Stability Research

| Limitation Category | Specific Technical Shortcoming | Impact on Stability Prediction |
|---|---|---|
| Electronic Structure | No theoretical basis for electron shell capacities | Unable to predict bonding behavior beyond simple ions |
| Spectral Analysis | Cannot explain Zeeman effect or line intensities | Limited utility in spectroscopic characterization |
| Mathematical Framework | Violates Heisenberg Uncertainty Principle | Fundamentally inconsistent with quantum mechanics |
| Chemical Bonding | No description of orbital overlap or hybridization | Cannot model covalent bonding or molecular geometry |
| Multi-electron Systems | Fails to account for electron-electron interactions | Inaccurate for all atoms beyond hydrogen |

Single-Hypothesis Machine Learning Approaches in Modern Research

Contemporary materials research has increasingly turned to machine learning approaches to predict compound stability, but many implementations suffer from limitations analogous to the oversimplifications in the Bohr model. Single-hypothesis machine learning models construct predictions based on a specific, narrow set of assumptions or domain knowledge, which can introduce significant inductive biases that limit their predictive accuracy and generalizability [4].

Theoretical Framework and Limitations

Single-hypothesis models typically incorporate specific domain knowledge that guides their architecture and feature selection. For example, the Roost model conceptualizes chemical formulas as complete graphs of elements, employing graph neural networks to learn relationships and message-passing processes among atoms [4]. While this approach captures interatomic interactions, it operates on the assumption that all nodes in a unit cell have strong interactions, which may not hold true for all compounds. Similarly, the Magpie model emphasizes statistical features derived from elemental properties but may overlook critical electronic structure considerations [4].

The fundamental challenge with these approaches is that "training a model can be likened to a search for the ground truth within the model's parameter space," but when models are "built on idealized scenarios," the actual ground truth may lie outside this constrained space [4]. This problem is particularly acute in materials science, where "the lack of well-understood chemical mechanisms" often leads researchers to make simplifying assumptions that do not reflect the true complexity of compound stability [4].

Practical Research Implications

The limitations of single-hypothesis approaches manifest in several practical challenges for researchers:

Poor Generalization Performance: Models trained on specific compositional spaces often fail to maintain accuracy when applied to novel compound classes or unexplored regions of chemical space [4].

Sample Inefficiency: Many existing models require substantial training data to achieve reasonable performance, with some needing seven times more data than ensemble approaches to achieve comparable accuracy [4] [10].

Limited Exploration Capability: The constrained hypothesis space of these models restricts their utility in discovering truly novel compounds, as they are biased toward chemical relationships already embedded in their architecture [4].

Table 2: Performance Comparison of Modeling Approaches for Stability Prediction

| Model Type | AUC Score | Data Efficiency | Novel Compound Discovery | Applicability Domain |
|---|---|---|---|---|
| Single-Hypothesis ML | 0.85–0.94 | Low (Requires ~7x more data) | Limited by built-in assumptions | Narrow, domain-specific |
| Ensemble ML (ECSG) | 0.988 | High (Achieves accuracy with minimal data) | Enhanced through reduced bias | Broad, adaptable to new spaces |
| First-Principles (DFT) | N/A (Theoretical maximum) | Very Low (Computationally intensive) | Excellent but resource-prohibitive | Universal in principle |
| Bohr Model Analogs | Not applicable | N/A | Minimal predictive utility | Essentially obsolete |
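Because the comparison above rests on AUC, it may help to recall how that score is computed. The sketch below uses the pairwise (Mann–Whitney) definition: the fraction of stable/unstable pairs in which the stable compound receives the higher score. Labels and scores are invented for illustration.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # stable compound ranked above unstable one
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))


# Hypothetical predictions: 1 = stable, 0 = unstable (illustrative only)
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
auc = roc_auc(labels, scores)
print(f"AUC = {auc:.3f}")
```

An AUC of 0.988 therefore means that a randomly chosen stable compound outranks a randomly chosen unstable one in about 98.8% of pairs.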

Experimental Protocols for Modern Stability Prediction

To address the limitations of traditional approaches, researchers have developed sophisticated experimental and computational protocols that integrate multiple perspectives on compound stability.

Ensemble Machine Learning Framework

The Electron Configuration models with Stacked Generalization (ECSG) framework represents a significant advancement over single-hypothesis approaches. This methodology employs stacked generalization to amalgamate models rooted in distinct domains of knowledge, creating a super learner that mitigates individual model biases [4].

Methodology Details:

Base Model Selection: Integrate three complementary models:

- Magpie: Emphasizes statistical features from elemental properties

- Roost: Conceptualizes chemical formulas as complete graphs of elements

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model incorporating electron configuration data

Input Representation: For the ECCNN component, electron configurations are encoded as a 118×168×8 matrix, representing the distribution of electrons within atoms across energy levels [4].

Architecture: The ECCNN processes this input through:

- Two convolutional operations with 64 filters of size 5×5

- Batch normalization following the second convolution

- 2×2 max pooling

- Flattening to a one-dimensional vector

- Fully connected layers for prediction [4]
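To get a feel for the size of the flattened feature vector, the layer sequence above can be traced with simple shape arithmetic. The source does not state the padding mode or stride, so this sketch assumes stride 1 and shows both common padding choices; those settings are assumptions, not details of the published architecture.

```python
def conv2d_shape(height, width, kernel, filters, padding):
    """Output shape of a stride-1 2D convolution."""
    if padding == "same":
        return height, width, filters
    if padding == "valid":
        return height - kernel + 1, width - kernel + 1, filters
    raise ValueError("padding must be 'same' or 'valid'")


def max_pool_shape(height, width, channels, pool):
    """Output shape of non-overlapping max pooling."""
    return height // pool, width // pool, channels


def eccnn_flat_size(input_shape=(118, 168, 8), padding="same"):
    """Length of the flattened vector after conv -> conv -> BN -> pool."""
    height, width, _ = input_shape
    height, width, channels = conv2d_shape(height, width, 5, 64, padding)
    height, width, channels = conv2d_shape(height, width, 5, 64, padding)
    # Batch normalization rescales activations but leaves the shape unchanged
    height, width, channels = max_pool_shape(height, width, channels, 2)
    return height * width * channels


flat_same = eccnn_flat_size(padding="same")
flat_valid = eccnn_flat_size(padding="valid")
print(flat_same, flat_valid)
```

Under either assumption the flattened vector has several hundred thousand entries, which is why the first fully connected layer dominates the parameter count in a network of this shape.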

Meta-Learning: The outputs of these foundational models construct a meta-level model that produces the final stability prediction, significantly enhancing accuracy while reducing data requirements [4] [10].

Electronic Structure Analysis Protocol

For noble gas complexes and other challenging systems, researchers have developed electronic structure-based protocols that offer quantitative design rules:

Descriptor Calculation Method:

Frontier Orbital Analysis: Calculate the energy difference between the highest occupied molecular orbital (HOMO) of the noble gas and the lowest unoccupied molecular orbital (LUMO) of the interacting fragment: Δ² = E_HOMO(Ng) − E_LUMO(fragment) [21]

Stability Correlation: Compounds with positive Δ² values are predicted to be thermodynamically stable, while systems with moderately negative Δ² values (-100 to -200 kcal mol⁻¹) may be metastable under low-temperature conditions [21].

Validation: This approach has been validated at the CCSD(T)/def2-TZVP level for a diverse set of 192 diatomic and polyatomic complexes, demonstrating strong correlation with dissociation free energies [21].
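The decision rule stated in this protocol can be written as a small function. The sketch encodes only the two ranges given in the text and deliberately returns no verdict elsewhere; the function name and the example orbital energies are hypothetical.

```python
def delta_descriptor(e_homo_ng, e_lumo_fragment):
    """Descriptor from the text: E_HOMO(noble gas) - E_LUMO(fragment), kcal/mol."""
    return e_homo_ng - e_lumo_fragment


def classify_by_descriptor(delta):
    """Apply the stated ranges; other values are left unclassified."""
    if delta > 0:
        return "predicted thermodynamically stable"
    if -200.0 <= delta <= -100.0:
        return "possibly metastable at low temperature"
    return "not covered by the stated rule"


# Hypothetical orbital energies in kcal/mol (illustrative only)
delta = delta_descriptor(e_homo_ng=-360.0, e_lumo_fragment=-210.0)
verdict = classify_by_descriptor(delta)
print(delta, verdict)
```

Keeping the uncovered ranges explicit avoids reading more into the descriptor than the reported correlation supports.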

QSAR Modeling for Coordination Complexes

For uranium coordination complexes and similar systems, Quantitative Structure-Activity Relationship (QSAR) modeling provides a specialized protocol:

Experimental Workflow:

Data Collection: Compile stability constants (logβ) from thermochemical databases and research articles, with careful comparison of values from different sources to resolve discrepancies [22].

Feature Preparation: Calculate descriptors including:

- Coordination numbers for each ligand atom (N, O, F, Cl)

- Molecular charge

- Number of water molecules through hydroxylation

- Physicochemical properties (water solubility, boiling point, melting point, pyrolysis point) predicted using neural networks for inorganic compounds [22]

Model Development: Implement machine learning algorithms (XGBoost, CatBoost) with hyperparameter optimization using libraries like Optuna, followed by rigorous applicability domain analysis to identify outliers [22].
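The feature-preparation step can be illustrated with a minimal sketch that turns one complex into a fixed-length numeric vector suitable for a gradient-boosting regressor. The record layout, field names, and example values are hypothetical, and the predicted physicochemical properties mentioned above are omitted for brevity.

```python
from dataclasses import dataclass

LIGAND_ATOMS = ("N", "O", "F", "Cl")  # descriptor order used in this sketch


@dataclass
class ComplexRecord:
    """One entry of a hypothetical stability-constant data set."""
    name: str
    coordination: dict  # ligand atom symbol -> coordination number
    charge: int         # overall molecular charge
    n_water: int        # number of water molecules
    log_beta: float     # target value (stability constant)


def to_feature_vector(record):
    """Coordination numbers in fixed atom order, then charge and water count."""
    features = [float(record.coordination.get(atom, 0)) for atom in LIGAND_ATOMS]
    features.append(float(record.charge))
    features.append(float(record.n_water))
    return features


# Placeholder record; the numbers are not taken from any database
example = ComplexRecord(
    name="example-complex",
    coordination={"O": 4, "F": 2},
    charge=0,
    n_water=2,
    log_beta=0.0,
)
features = to_feature_vector(example)
print(features)
```

Fixing the atom order up front keeps the columns aligned across records, which matters once the vectors are stacked into a training matrix.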

Table 3: Essential Resources for Compound Stability Research

| Resource Category | Specific Tools/Methods | Research Function | Technical Considerations |
|---|---|---|---|
| Computational Frameworks | ECSG (Ensemble with Stacked Generalization) | High-accuracy stability prediction with minimal data | Integrates Magpie, Roost, and ECCNN models; requires electron configuration encoding |
| Electronic Structure Codes | WIEN2k (FP-LAPW method), VASP, CCSD(T)/def2-TZVP | First-principles validation of predicted stable compounds | Computationally intensive; provides reference data for machine learning |
| Machine Learning Libraries | XGBoost, CatBoost, Scikit-learn, Optuna | Developing and optimizing QSAR and other predictive models | Critical for hyperparameter optimization and model validation |
| Materials Databases | Materials Project (MP), Open Quantum Materials Database (OQMD) | Training data source for machine learning models | Provide formation energies and structural information for known compounds |
| Electronic Descriptors | Δ² (HOMO-LUMO gap), Electron configuration matrices | Quantitative stability criteria for novel compounds | Enables rational design of stable materials through electronic structure analysis |
| Characterization Methods | DFT (PBE-GGA approximation), Spectral analysis | Validation of predicted compounds and experimental verification | Confirms structural, electronic, and thermodynamic properties |

Advanced Analytical Techniques and Emerging Approaches

Electron Configuration Encoding for Machine Learning

The Electron Configuration Convolutional Neural Network (ECCNN) represents a significant advancement in incorporating fundamental atomic properties into stability prediction. Unlike manually crafted features that may introduce inductive biases, electron configuration serves as an intrinsic atomic characteristic that provides a more direct representation of chemical behavior [4]. The ECCNN model specifically addresses the limited understanding of electronic internal structure in current models by directly processing electron configuration data structured as a three-dimensional matrix (118×168×8), where the dimensions represent elements (118), energy levels and electron distribution patterns (168), and additional electronic structure information (8) [4]. This approach demonstrates remarkable sample efficiency, achieving high accuracy with only one-seventh of the data required by comparable models, addressing a critical limitation in stability prediction where experimental data is often scarce [4] [10].

Electronic Descriptor Applications

For noble gas complexes and other challenging systems, researchers have established simple yet powerful electronic descriptors that correlate strongly with thermodynamic stability. The Δ² descriptor (Δ² = E_HOMO(Ng) − E_LUMO(fragment)) has shown remarkable predictive capability across diverse compound classes [21]. This approach extends Bartlett's seminal idea linking noble gas ionization energies to reactivity by incorporating the electron affinities of interacting fragments, creating a more comprehensive predictive framework. The methodology remains applicable to noble gas interactions with polyatomic electron-deficient fragments, with stability trends rationalized via Hoffmann's isolobal principle [21]. Validation studies confirmed that recently observed ArBO⁺ complexes fall within the predicted stability window, demonstrating the practical utility of this approach for guiding experimental discoveries in challenging chemical spaces.

First-Principles Validation Methods

Advanced computational approaches provide critical validation for stability predictions derived from both traditional and machine learning approaches. The Full Potential Linearized Augmented Plane Wave (FP-LAPW) method implemented in the WIEN2k code, within the framework of Density Functional Theory (DFT) using the PBE-GGA approximation, offers high-precision analysis of structural, mechanical, electronic, and thermal properties [23]. These methods enable researchers to:

- Calculate cohesive energy and phase stability across different structural configurations (cubic L1₂, hexagonal D0₁₉ and D0₂₄) [23]

- Determine electronic structure properties including density of states and band structure calculations

- Evaluate mechanical properties through elasticity constants and thermodynamic properties at high pressures

- Validate machine learning predictions through first-principles calculations, as demonstrated in the case of new two-dimensional wide bandgap semiconductors and double perovskite oxides [4]

This multi-faceted validation approach is particularly valuable for confirming the stability of novel compounds identified through machine learning screening before investing resources in experimental synthesis.

The Inductive Bias Problem in Stability Prediction

The prediction of thermodynamic stability for inorganic compounds represents a fundamental challenge in materials science and drug development. Traditional machine learning approaches for this task are often constrained by specific domain knowledge that introduces significant inductive biases, potentially limiting their predictive accuracy and generalizability. This technical guide examines how ensemble machine learning frameworks rooted in electron configuration theory can mitigate these biases while achieving exceptional predictive performance. Reported results show that the Electron Configuration models with Stacked Generalization (ECSG) framework achieves an Area Under the Curve score of 0.988 in stability prediction while requiring only one-seventh of the training data needed by conventional models to achieve equivalent performance [4] [10]. The integration of electron configuration as a fundamental physical descriptor provides a more chemically meaningful foundation for computational stability assessment across diverse compositional spaces.

The Thermodynamic Stability Prediction Challenge

Designing materials with specific properties has long posed a significant challenge in materials science, primarily due to the extensive compositional space of materials where laboratory-synthesizable compounds represent only a minute fraction of the total possibilities [4]. Thermodynamic stability, typically represented by decomposition energy (ΔHd), serves as a crucial filter for identifying synthesizable compounds, conventionally determined through resource-intensive experimental investigation or density functional theory (DFT) calculations [4]. The computation of energy via these methods consumes substantial computational resources, resulting in low efficiency for exploring new compounds.

Machine learning offers a promising avenue for expediting the discovery of new compounds by accurately predicting their thermodynamic stability, providing significant advantages in time and resource efficiency compared to traditional methods [4]. However, current machine learning approaches for stability prediction suffer from limitations in accuracy and practical application, largely due to inductive biases introduced by models built upon specific domain knowledge or idealized scenarios [4].

The Inductive Bias Problem in Materials Informatics

Inductive bias refers to the set of assumptions that a learning algorithm uses to make predictions beyond its training data [24]. In machine learning for materials science, these biases manifest through architectural choices, feature representations, and training methodologies that constrain how models generalize to novel compounds. All machine learning methods contain some inherent bias toward finding solutions in hypothesis space [24], but excessive or inappropriate biases can severely limit model performance.

In stability prediction, significant bias emerges when models rely on single hypotheses or idealized scenarios [4]. For instance, models assuming material performance is solely determined by elemental composition introduce large inductive biases that reduce effectiveness in predicting stability [4]. Similarly, graph neural networks that conceptualize chemical formulas as complete graphs of elements may incorporate invalid assumptions about atomic interactions [4]. The problem is particularly acute when prior knowledge is incomplete or partially incorrect, as is often the case in complex materials systems [24].

Electron Configuration as a Fundamental Descriptor

Theoretical Foundation

Electron configuration describes the distribution of electrons of an atom or molecule in atomic or molecular orbitals [1]. In atomic physics and quantum chemistry, this configuration follows quantum mechanical principles, with electrons occupying orbitals characterized by four quantum numbers: principal quantum number (n), angular momentum quantum number (l), magnetic quantum number (mₗ), and spin magnetic quantum number (mₛ) [25]. The electron configuration provides critical information about an atom's bonding capabilities, magnetic properties, and chemical behavior [1].

Conventionally represented through standard notation (e.g., 1s² 2s² 2p⁶ for neon), electron configurations follow three fundamental rules: the Aufbau Principle (filling orbitals from lowest to highest energy), the Pauli Exclusion Principle (no two electrons in an atom can share all four quantum numbers), and Hund's Rule (maximizing parallel spins within degenerate orbitals) [25]. Because these configurations fundamentally determine how elements interact and form compounds, they are a physically motivated alternative to manually crafted features for stability prediction.
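The filling rules above can be made concrete with a short sketch. It generates idealized ground-state configurations by filling subshells in Madelung (n + l) order, which is the usual statement of the Aufbau Principle, and caps each subshell at 2(2l + 1) electrons, as required by Pauli exclusion. The function names are hypothetical and are not taken from [4]. The sketch also ignores the well-known exceptions to the Madelung order (for example chromium and copper), so it should be read as an illustration rather than a reference table.

```python
# Illustrative sketch only: idealized ground-state configurations from the
# Madelung (n + l) filling order. Function names are hypothetical.
SUBSHELL_LETTERS = "spdf"
SUPERSCRIPTS = str.maketrans("0123456789", "⁰¹²³⁴⁵⁶⁷⁸⁹")


def madelung_order(max_n=7):
    """Subshells (n, l) sorted by n + l, ties broken by lower n (Aufbau order)."""
    subshells = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    return sorted(subshells, key=lambda nl: (nl[0] + nl[1], nl[0]))


def electron_configuration(z):
    """Return [(n, l, electrons), ...] for atomic number z.

    Each subshell holds at most 2 * (2l + 1) electrons (two spins per
    orbital). Hund's rule governs the arrangement within a subshell and
    does not change these subshell totals.
    """
    remaining = z
    filled = []
    for n, l in madelung_order():
        if remaining == 0:
            break
        electrons = min(remaining, 2 * (2 * l + 1))
        filled.append((n, l, electrons))
        remaining -= electrons
    return filled


def format_configuration(filled):
    """Render in conventional notation, ordered by shell and then subshell."""
    parts = []
    for n, l, electrons in sorted(filled):
        count = str(electrons).translate(SUPERSCRIPTS)
        parts.append(f"{n}{SUBSHELL_LETTERS[l]}{count}")
    return " ".join(parts)


print(format_configuration(electron_configuration(10)))  # neon
print(format_configuration(electron_configuration(15)))  # phosphorus
```

For neon this prints 1s² 2s² 2p⁶, matching the notation used above.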

Advantages for Stability Prediction

Compared to traditional descriptors derived from domain knowledge, electron configurations offer several distinct advantages for stability prediction:

Fundamental Physical Basis: Electron configurations represent intrinsic atomic properties that directly influence chemical bonding and compound stability, unlike statistically derived features [4].

Reduced Inductive Bias: As fundamental physical attributes, electron configurations introduce fewer assumptions about composition-property relationships compared to engineered features [4].

Comprehensive Representation: Electron configurations capture periodicity and chemical similarity patterns naturally present in the periodic table [8].

Transferability: Models based on electron configurations can potentially generalize to novel elements and compounds more effectively than element-specific models [8].

The ECSG Framework: Architecture and Implementation

To address the inductive bias problem in stability prediction, the Electron Configuration models with Stacked Generalization (ECSG) framework integrates three complementary modeling approaches through stacked generalization [4]. This ensemble method combines models grounded in distinct knowledge domains to mitigate individual model biases and harness synergistic effects that enhance overall performance.

The ECSG framework incorporates three base models:

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model leveraging electron configuration information

- Roost: A graph neural network modeling interatomic interactions

- Magpie: A feature-based model using statistical features of elemental properties

Table 1: Base Models in the ECSG Framework

| Model | Domain Knowledge | Architecture | Key Strengths |
|---|---|---|---|
| ECCNN | Electron configuration | Convolutional Neural Network | Fundamental electronic structure representation |
| Roost | Interatomic interactions | Graph Neural Network | Message-passing with attention mechanism |
| Magpie | Elemental properties | Gradient Boosted Regression Trees | Statistical representation of atomic features |

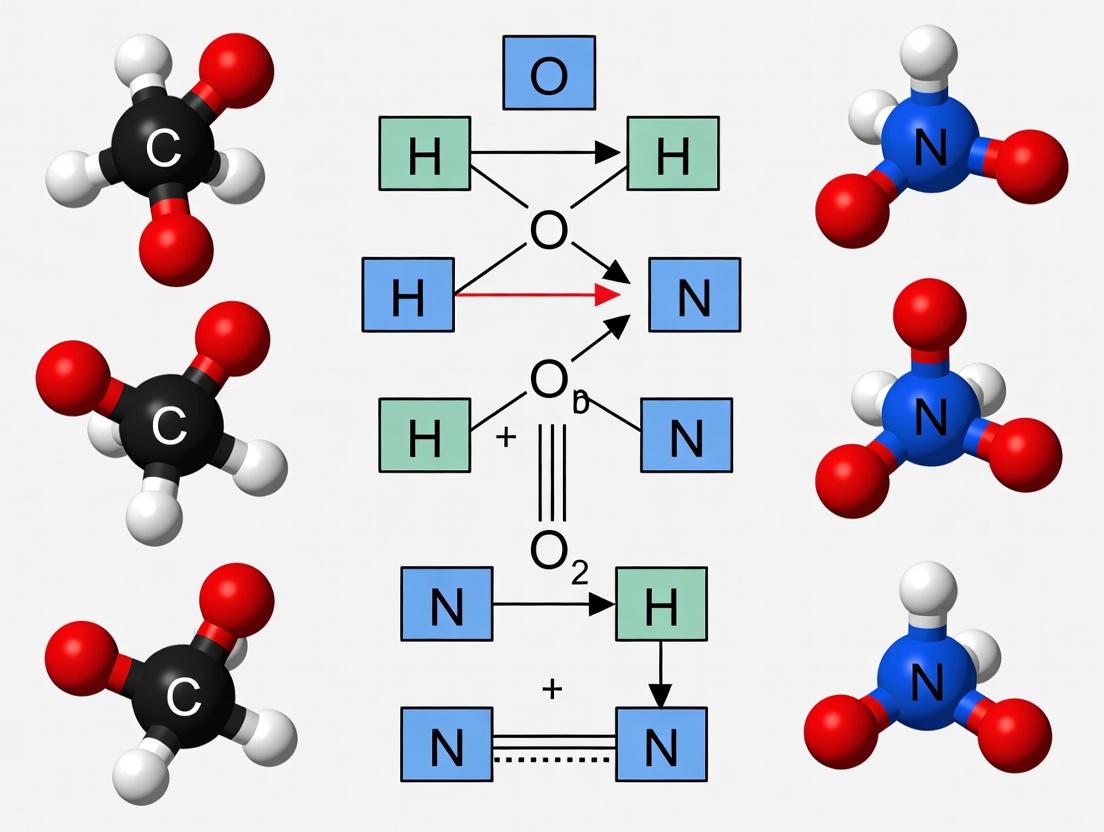

Figure 1: ECSG Framework Workflow showing integration of three base models through stacked generalization.

Electron Configuration Convolutional Neural Network (ECCNN)

Input Representation

The ECCNN model transforms chemical compositions into a structured electron configuration representation. For each element in a compound, the electron configuration is encoded as a matrix of dimensions 118 × 168 × 8, representing atomic numbers, orbital types, and occupancy states [4]. This structured encoding preserves the hierarchical nature of electron orbitals while maintaining compatibility with convolutional operations.

Network Architecture

The ECCNN architecture processes the electron configuration matrix through two consecutive convolutional operations, each employing 64 filters of size 5×5 [4]. The second convolution is followed by batch normalization and 2×2 max pooling to reduce spatial dimensions while preserving essential features. The extracted features are flattened into a one-dimensional vector and processed through fully connected layers to generate stability predictions.

Table 2: ECCNN Architecture Specifications

| Layer | Parameters | Activation | Output Shape |
|---|---|---|---|
| Input | - | - | 118 × 168 × 8 |
| Conv2D | 64 filters (5×5) | ReLU | 118 × 168 × 64 |
| Conv2D | 64 filters (5×5) | ReLU | 118 × 168 × 64 |
| BatchNorm | - | - | 118 × 168 × 64 |
| MaxPooling2D | 2×2 pool size | - | 59 × 84 × 64 |
| Flatten | - | - | 317,184 |
| Dense | 128 units | ReLU | 128 |
| Dense | 64 units | ReLU | 64 |
| Output | 1 unit | Linear | 1 |
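The layer shapes in Table 2 can be checked with plain arithmetic. The sketch below assumes stride-1 convolutions with "same" padding, which is what the unchanged 118 × 168 spatial size after each convolution implies, and non-overlapping 2×2 pooling. These settings and the helper names are assumptions made for illustration; the source does not state them. With them, the flattened length follows as 59 × 84 × 64 = 317,184.

```python
# Shape and parameter bookkeeping for the layer stack in Table 2.
# Assumes stride 1 and "same" padding (implied by the unchanged spatial size).


def conv2d_same(shape, filters):
    """Stride-1 convolution with 'same' padding keeps height and width."""
    height, width, _ = shape
    return (height, width, filters)


def max_pool2d(shape, pool):
    """Non-overlapping pooling divides height and width by the pool size."""
    height, width, channels = shape
    return (height // pool, width // pool, channels)


def conv2d_params(kernel, in_channels, filters):
    """Weights plus one bias per filter."""
    return kernel * kernel * in_channels * filters + filters


def dense_params(n_in, n_out):
    """Weights plus one bias per output unit."""
    return n_in * n_out + n_out


input_shape = (118, 168, 8)
conv1 = conv2d_same(input_shape, 64)
conv2 = conv2d_same(conv1, 64)
pooled = max_pool2d(conv2, 2)  # batch normalization leaves the shape unchanged
flat = pooled[0] * pooled[1] * pooled[2]

print("shapes:", conv1, conv2, pooled, flat)
print("conv params:", conv2d_params(5, 8, 64), conv2d_params(5, 64, 64))
print("first dense params:", dense_params(flat, 128))
```

Under these assumptions the first dense layer holds roughly 40.6 million parameters and dominates the model size, which is worth keeping in mind when choosing the pooling size or the width of the dense layers.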

Ensemble Integration via Stacked Generalization

Stacked generalization combines the three base models by using their predictions as input features to a meta-learner [4]. This approach enables the model to learn optimal combinations of the base models' strengths while mitigating their individual biases. The meta-learner is trained on out-of-fold predictions from the base models to prevent information leakage and ensure proper generalization.
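A minimal sketch of the out-of-fold procedure follows. The base learners here are small ridge regressions, each restricted to a different subset of synthetic features, standing in for ECCNN, Roost, and Magpie. That substitution, the synthetic data, and all function names are assumptions made for illustration and do not reproduce the implementation in [4].

```python
import numpy as np


def fit_ridge(X, y, alpha=1e-3):
    """Closed-form ridge regression with an appended bias column."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return np.linalg.solve(Xb.T @ Xb + alpha * np.eye(Xb.shape[1]), Xb.T @ y)


def predict_ridge(w, X):
    return np.hstack([X, np.ones((len(X), 1))]) @ w


def out_of_fold(X, y, views, n_folds=5, seed=0):
    """Each sample's meta-feature comes from a model that never saw it."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    oof = np.zeros((len(y), len(views)))
    for held_out in folds:
        train = np.setdiff1d(np.arange(len(y)), held_out)
        for j, cols in enumerate(views):
            w = fit_ridge(X[np.ix_(train, cols)], y[train])
            oof[held_out, j] = predict_ridge(w, X[np.ix_(held_out, cols)])
    return oof


# Synthetic regression task; each "base model" sees only two of six features.
rng = np.random.default_rng(42)
X = rng.normal(size=(400, 6))
true_w = np.array([1.0, -2.0, 0.5, 1.5, -1.0, 2.0])
y = X @ true_w + rng.normal(scale=0.1, size=400)
X_train, y_train, X_test, y_test = X[:300], y[:300], X[300:], y[300:]
views = [[0, 1], [2, 3], [4, 5]]

# 1) Out-of-fold predictions become the meta-features.
meta_features = out_of_fold(X_train, y_train, views)
# 2) The meta-learner is fitted on those meta-features only.
meta_w = fit_ridge(meta_features, y_train)
# 3) Base models are refitted on all training data for inference.
base_w = [fit_ridge(X_train[:, cols], y_train) for cols in views]
base_test = np.column_stack(
    [predict_ridge(w, X_test[:, cols]) for w, cols in zip(base_w, views)]
)
stacked_test = predict_ridge(meta_w, base_test)

base_mae = np.abs(base_test - y_test[:, None]).mean(axis=0)
stacked_mae = np.abs(stacked_test - y_test).mean()
print("base MAE:", base_mae.round(3), "stacked MAE:", round(float(stacked_mae), 3))
```

Because every row of the meta-feature matrix is produced by base models that were not trained on that row, the meta-learner sees predictions of the same quality it will receive at inference time. Fitting it on in-sample base predictions instead would reward whichever base model overfits most.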

Experimental Protocol and Validation

The ECSG framework was trained and validated using data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database [4], which contains comprehensive DFT-calculated formation energies and stability information for inorganic compounds. Additional validation was performed using data from the Materials Project (MP) and Open Quantum Materials Database (OQMD) [4].

The training dataset encompassed diverse inorganic compounds representing 87.5%-98% of elements in the periodic table, ensuring broad chemical space coverage [8]. Compounds were represented by their chemical formulas without structural information, aligning with realistic discovery scenarios where structural data is unavailable for novel compositions.

Performance Metrics and Benchmarking

Model performance was evaluated using multiple metrics with emphasis on Area Under the Curve (AUC) for stability classification. Additional metrics included precision-recall curves, F1 scores, and mean absolute error for continuous stability measures. The ECSG framework was benchmarked against state-of-the-art stability prediction models including ElemNet [4].
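For readers who want to see what these metrics compute, the sketch below implements AUC, F1, and mean absolute error from their definitions in plain Python. The labels and scores are made-up toy values and the function names are hypothetical; in practice a tested library implementation would normally be preferred.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def f1_score(labels, scores, threshold=0.5):
    """Harmonic mean of precision and recall at a fixed decision threshold."""
    predicted = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predicted) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predicted) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def mean_absolute_error(targets, predictions):
    return sum(abs(t - p) for t, p in zip(targets, predictions)) / len(targets)


# Toy example: 1 = stable, 0 = unstable; scores are predicted probabilities.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
print("AUC:", roc_auc(labels, scores))
print("F1 :", f1_score(labels, scores))
print("MAE:", mean_absolute_error([0.00, 0.05, -0.10], [0.02, 0.01, -0.07]))
```

AUC depends only on how the scores rank stable against unstable compounds, whereas F1 depends on the chosen threshold, which is one reason to report both.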

Table 3: Comparative Performance of Stability Prediction Models

| Model | AUC Score | Data Efficiency | Training Time | Generalization |
|---|---|---|---|---|
| ECSG (Proposed) | 0.988 | 1/7 data for equivalent performance | Moderate | Excellent |
| ElemNet | 0.94 | Baseline | Fast | Limited |
| Roost | 0.972 | Moderate | Moderate | Good |
| Magpie | 0.961 | High | Fast | Moderate |

Case Study Applications

Two-Dimensional Wide Bandgap Semiconductors

The ECSG framework was applied to explore novel two-dimensional wide bandgap semiconductors, successfully identifying multiple promising candidates with predicted stability confirmed through subsequent DFT validation [4]. The model efficiently navigated the complex compositional space of 2D materials while maintaining high accuracy in stability assessment.

Double Perovskite Oxides

In the challenging domain of double perovskite oxides, the ECSG framework demonstrated remarkable accuracy in identifying stable compounds, discovering numerous novel perovskite structures with confirmed stability [4]. This application highlighted the model's capability to handle complex multi-element systems with intricate stability relationships.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Resources for Electron Configuration-Based Stability Prediction

| Resource | Specifications | Application | Implementation Notes |
|---|---|---|---|
| Electron Configuration Encoder | 118 × 168 × 8 tensor representation | Input feature generation | Custom Python implementation |
| Convolutional Neural Network Framework | 64 filters (5×5), batch normalization, max pooling | Feature extraction from electron configurations | TensorFlow/PyTorch |
| Graph Neural Network Module | Message-passing with attention mechanism | Modeling interatomic interactions | Roost architecture |
| Feature Engineering Pipeline | Statistical features of elemental properties | Composition-based descriptor generation | Magpie feature set |
| Stacked Generalization Module | Meta-learner integration | Ensemble model combination | Scikit-learn compatible |
| DFT Validation Suite | First-principles calculations | Computational validation | VASP, Quantum ESPRESSO |

Methodological Guidelines

Electron Configuration Input Encoding

To implement electron configuration-based stability prediction, follow these encoding procedures:

Elemental Representation: For each element in the periodic table (Z=1-118), generate the complete electron configuration using standard notation [1].

Orbital Mapping: Map each orbital to a fixed position in a 3D tensor, preserving the n and l quantum number relationships.

Occupancy Encoding: Represent the electron occupancy of each subshell as a value between 0 and 1, obtained by dividing the number of electrons by the subshell's maximum capacity.

Composition Integration: For multi-element compounds, combine the electron configuration matrices through weighted averaging based on stoichiometric coefficients.
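The four encoding steps above can be sketched in a few lines. The layout used here, a 7 × 4 grid indexed by principal quantum number and subshell type, is a deliberately simplified, hypothetical stand-in and is not the 118 × 168 × 8 tensor described for ECCNN, whose exact construction is not given in this text. The small configuration table holds standard ground-state values for four elements only, and the formula parser handles flat formulas without parentheses.

```python
import re

SUBSHELLS = "spdf"

# Ground-state configurations for a few elements (illustrative subset).
CONFIGURATIONS = {
    "O": {"1s": 2, "2s": 2, "2p": 4},
    "Na": {"1s": 2, "2s": 2, "2p": 6, "3s": 1},
    "Cl": {"1s": 2, "2s": 2, "2p": 6, "3s": 2, "3p": 5},
    "Ti": {"1s": 2, "2s": 2, "2p": 6, "3s": 2, "3p": 6, "3d": 2, "4s": 2},
}


def parse_formula(formula):
    """Parse a flat formula such as 'TiO2' into {element: amount}."""
    amounts = {}
    for symbol, count in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        amounts[symbol] = amounts.get(symbol, 0.0) + (float(count) if count else 1.0)
    return amounts


def encode_element(symbol, max_n=7):
    """Orbital mapping plus occupancy encoding for one element.

    Row index = n - 1, column index = l; each cell is electrons divided by
    the subshell capacity 2 * (2l + 1), so values lie in [0, 1].
    """
    grid = [[0.0] * len(SUBSHELLS) for _ in range(max_n)]
    for orbital, electrons in CONFIGURATIONS[symbol].items():
        n, l = int(orbital[0]), SUBSHELLS.index(orbital[1])
        grid[n - 1][l] = electrons / (2 * (2 * l + 1))
    return grid


def encode_composition(formula, max_n=7):
    """Stoichiometry-weighted average of the element grids."""
    amounts = parse_formula(formula)
    total = sum(amounts.values())
    grid = [[0.0] * len(SUBSHELLS) for _ in range(max_n)]
    for symbol, amount in amounts.items():
        element_grid = encode_element(symbol, max_n)
        for i, row in enumerate(element_grid):
            for j, value in enumerate(row):
                grid[i][j] += (amount / total) * value
    return grid


encoded = encode_composition("TiO2")
for n, row in enumerate(encoded[:4], start=1):
    print(n, [round(v, 3) for v in row])
```

Weighted averaging keeps the input size fixed regardless of how many elements a compound contains, at the cost of discarding which element contributed which electrons. That trade-off is one reason complementary base models are useful in the ensemble.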

Model Training Protocol

The training process for the ECSG framework involves these critical steps:

Base Model Pretraining: Independently train each base model (ECCNN, Roost, Magpie) using k-fold cross-validation.

Meta-Feature Generation: Collect out-of-fold predictions from each base model to create the meta-feature dataset.

Meta-Learner Training: Train the meta-learner on generated meta-features using regularized regression or simple neural network architectures.

Integrated Fine-Tuning: Optionally fine-tune the entire system end-to-end with reduced learning rates to maintain base model integrity.

Validation and Interpretation

Figure 2: Stability Prediction Validation Pipeline illustrating the multi-stage confirmation process.

Implement rigorous validation through this multi-stage process:

Computational Validation: Confirm ECSG predictions using high-fidelity DFT calculations for top candidate materials [4].

Cross-Database Validation: Verify model performance across multiple materials databases (JARVIS, MP, OQMD) to assess transferability.

Prospective Testing: Apply the trained model to previously unexplored compositional spaces and validate predictions through targeted DFT.

Experimental Collaboration: Partner with synthesis teams for experimental validation of highest-confidence predictions.

The ECSG framework demonstrates that carefully designed ensemble approaches leveraging electron configuration theory can effectively address the inductive bias problem in thermodynamic stability prediction. By integrating complementary representations across different physical scales, the model achieves state-of-the-art performance while significantly improving data efficiency.

Future research directions should focus on extending electron configuration representations to include excited states and dynamic orbital interactions, incorporating kinetic factors alongside thermodynamic stability, and developing transfer learning approaches for specialized material classes. The integration of these advanced stability prediction models with autonomous synthesis platforms represents the next frontier in accelerated materials discovery for pharmaceutical and energy applications.

Building Predictive Power: Machine Learning Models and Their Biomedical Applications

In the pursuit of accelerating the discovery of new functional materials and compounds, researchers are increasingly turning to machine learning (ML) to predict properties such as thermodynamic stability. A significant challenge in this field is the effective representation of chemical information for computational models. Electron configuration, which describes the distribution of electrons in atomic or molecular orbitals, provides a fundamental physical representation of elements and compounds that directly influences their chemical behavior and stability [1]. The strategy used to encode this information is therefore a critical determinant of model performance and interpretability.

Encoding strategies transform raw chemical data into structured formats that machine learning algorithms can process. The core premise is that electron configurations capture essential quantum mechanical information that governs atomic interactions and bonding behavior—key factors determining whether a compound will form and remain stable [4] [8]. Unlike traditional feature engineering approaches that rely on manually curated domain knowledge, electron configuration-based encoding aims to leverage more fundamental atomic characteristics, potentially reducing inductive bias in predictive models [4]. This technical guide examines the encoding methodologies, experimental protocols, and implementations that enable researchers to utilize electron configuration as direct model input for compound stability research.

Fundamental Concepts of Electron Configuration