Beyond Energy Convergence: A Practical Guide to Validating DOS with k-Point Density

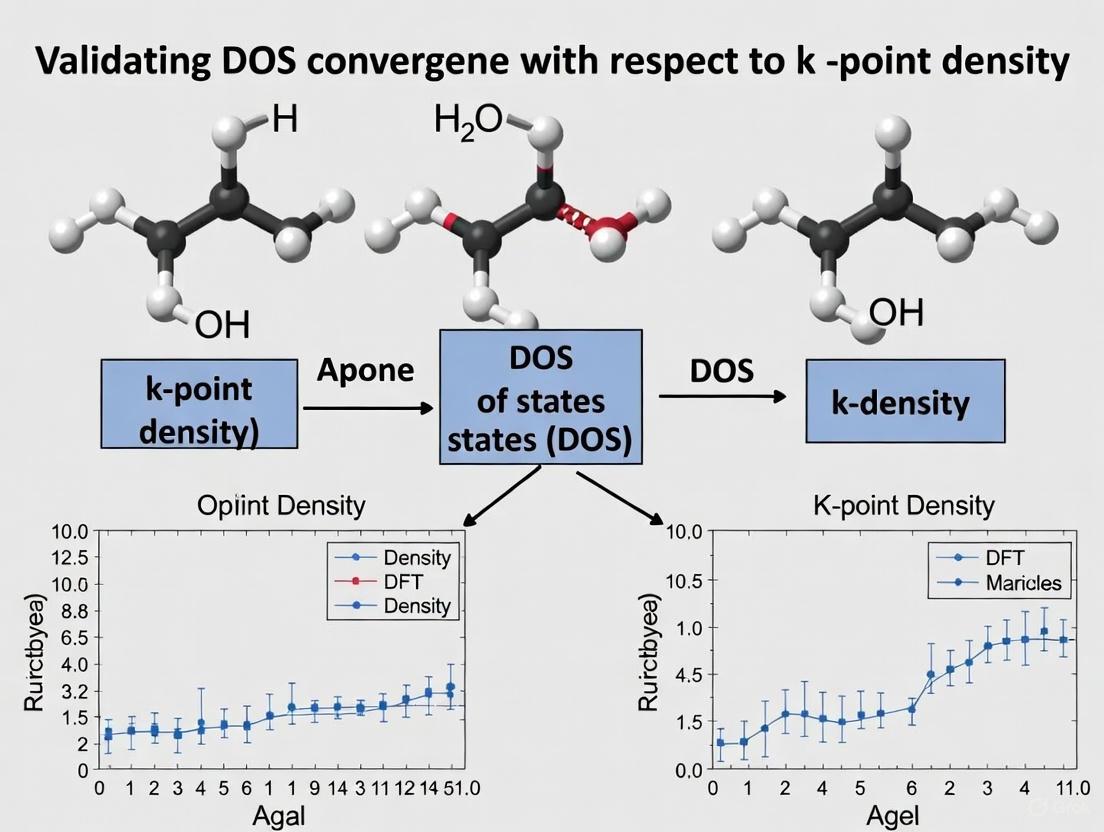



Abstract

This article provides a comprehensive guide for computational researchers on validating the convergence of the Density of States (DOS) with k-point sampling. While total energy often converges with a coarse k-grid, obtaining a smooth and accurate DOS requires a significantly denser sampling of the Brillouin zone. We explore the foundational reasons for this discrepancy, detail methodological best practices for converging the DOS, offer troubleshooting strategies for common pitfalls, and establish a rigorous framework for validating results. This systematic approach is crucial for ensuring the reliability of electronic structure calculations in materials science and drug development research.

Why Your DOS Needs More k-Points Than Your Total Energy

In computational materials science, accurately predicting electronic properties hinges on the precise calculation of the Density of States (DOS). This foundational quantity, which describes the number of electron states at each energy level, is critically sensitive to the sampling of the Brillouin zone (BZ) with k-points. Two philosophically distinct approaches have emerged for handling the data obtained from this sampling: integral smoothing and discrete sampling. The former relies on integral transforms and mathematical smoothing to approximate the continuous DOS from a finite set of points, while the latter depends on the raw density of discrete k-points to resolve electronic features. Within the broader thesis of validating DOS convergence with k-point density, this guide provides an objective comparison of these two methodologies, equipping researchers with the data and protocols needed to select the optimal approach for their investigations.

Theoretical Foundations

The Role of k-points in Density of States Calculations

The DOS is a central quantity in electronic structure theory, integral to determining a material's conductive, optical, and thermodynamic properties. In practice, the DOS is computed by integrating the electronic band structure across the Brillouin zone, a task accomplished numerically on a discrete mesh of k-points. The central challenge is that the DOS can be a rapidly varying function of energy, particularly for metals and semi-metals, while the band energies are known only at a finite number of k-points. The choice between achieving a smooth representation through integral smoothing versus a direct, potentially noisy representation via discrete sampling constitutes a fundamental methodological divide. The accuracy of either method is ultimately contingent on the k-point density, with insufficient sampling leading to significant qualitative and quantitative errors [1].
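For reference, the quantity being approximated can be written as follows. This is the standard textbook definition rather than anything specific to the cited studies; the symbols $\varepsilon_{n\mathbf{k}}$ (band energies), $w_{\mathbf{k}}$ (k-point weights summing to one), and $\Omega_{\mathrm{BZ}}$ (Brillouin-zone volume) are notation introduced here.

$$
g(E) = \sum_n \int_{\mathrm{BZ}} \frac{d^3k}{\Omega_{\mathrm{BZ}}}\, \delta\bigl(E - \varepsilon_{n\mathbf{k}}\bigr)
\;\approx\; \sum_n \sum_{\mathbf{k}} w_{\mathbf{k}}\, \delta\bigl(E - \varepsilon_{n\mathbf{k}}\bigr)
$$

The total energy integrates smooth, occupation-weighted quantities over the whole zone, whereas the DOS must resolve the delta functions energy by energy, which is one way to see why it demands a denser mesh.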

Defining the Two Approaches

- Integral Smoothing: This approach employs a mathematical transformation that inherently incorporates a smoothing operation. A powerful example is the use of an imaginary-time correlation function, which is connected to the dynamic structure factor via a two-sided Laplace transform. This mapping naturally provides a pathway to obtain smoothed, physically meaningful results without introducing significant bias, effectively acting as an integrated smoothing mechanism [2]. The core of this method lies in its integral nature, which can dampen the effects of noise and discrete sampling.

- Discrete Sampling: This method directly utilizes the eigenvalues computed at a finite set of discrete k-points in the Brillouin zone. The DOS is constructed by explicitly counting these states and often applying an external broadening function (e.g., Gaussian or Lorentzian) to the discrete energy levels to produce a continuous curve [1] [3]. The quality of the result is therefore directly tied to the density of the k-point mesh; a coarse mesh results in a spiky, unreliable DOS, while a sufficiently dense mesh resolves the true electronic structure.
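As a minimal sketch of the discrete sampling idea, the snippet below builds a Gaussian-broadened DOS from a list of eigenvalues. The function name `gaussian_dos`, its argument layout, and the one-dimensional tight-binding band used as input are illustrative assumptions, not taken from [1] or [3].

```python
import math

def gaussian_dos(eigenvalues, weights, energies, sigma):
    """Broadened DOS: sum over k-points and bands of w_k * Gaussian(E - eps_nk).

    eigenvalues: one list of band energies (eV) per k-point
    weights:     k-point weights, assumed to sum to 1
    energies:    energy grid (eV)
    sigma:       Gaussian standard deviation (eV)
    """
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    dos = []
    for e in energies:
        total = 0.0
        for w, bands in zip(weights, eigenvalues):
            for eps in bands:
                total += w * norm * math.exp(-0.5 * ((e - eps) / sigma) ** 2)
        dos.append(total)
    return dos

# Toy input (assumption): 1D tight-binding band eps(k) = -2 cos(k) on 8 k-points
nk = 8
kpts = [2.0 * math.pi * i / nk for i in range(nk)]
eigs = [[-2.0 * math.cos(k)] for k in kpts]
wts = [1.0 / nk] * nk
grid = [-4.0 + 0.01 * i for i in range(801)]
dos = gaussian_dos(eigs, wts, grid, sigma=0.1)
```

With only 8 k-points and a 0.1 eV width, the resulting curve is a set of isolated peaks, the spiky behavior described above. Increasing `nk` fills in the band; increasing `sigma` instead hides the gaps at the cost of resolution.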

Methodological Comparison

The core differences between integral smoothing and discrete sampling are best understood through their specific implementations, requirements, and outputs. The table below provides a direct, point-by-point comparison.

Table 1: Fundamental comparison between Integral Smoothing and Discrete Sampling methodologies.

| Feature | Integral Smoothing | Discrete Sampling |
|---|---|---|
| Core Principle | Leverages integral transforms (e.g., Laplace) for inherent noise suppression and smoothing [2]. | Direct summation over discrete k-points, often with an external broadening function [1]. |
| Primary Input | Data in a transformed domain (e.g., imaginary time). | Eigenvalues calculated on a k-point mesh in the Brillouin zone. |
| Handling of Noise | Robust; noise attenuation is a built-in feature of the integral mapping [2]. | Sensitive; requires fine k-point meshes or large broadening to suppress irregularities [2] [1]. |
| Computational Efficiency | Can achieve convergence with fewer k-points, offering potential for significant speed-ups [2]. | Requires increasingly dense k-point meshes for convergence, leading to cubic or faster growth in computational cost [2]. |
| Convergence Metric | Tests based on the smoothness and properties in the transformed domain (e.g., imaginary time) [2]. | Metrics based on the stability of the output DOS curve, such as mean squared deviation between successive calculations [1]. |
| Key Challenge | Requires transformation between domains, which can add complexity to the workflow. | Susceptible to "ringing" and artificial spikes if the k-point mesh is under-converged [2] [3]. |

Experimental Protocols for Validating Convergence

A critical component of DOS calculations is establishing a robust protocol to validate convergence. The methodologies for the two approaches differ significantly.

Convergence Protocol for Discrete Sampling:

- System Energy vs. DOS Convergence: First, recognize that the total energy of a system typically converges with far fewer k-points than the DOS. A mesh that is sufficient for energy convergence (e.g., where energy variations are below 0.05 eV) is almost always insufficient for a converged DOS [1].

- Quantitative Metric: A robust metric is the mean squared deviation (MSD) between DOS curves calculated at successive k-point densities. Compute the DOS for a series of increasingly dense k-point meshes (e.g., from 6x6x6 to 20x20x20). The MSD between the DOS at mesh $k$ and mesh $k-1$ is given by

$$
\mathrm{MSD}_k = \frac{1}{N}\sum_{i=1}^{N}\bigl[\mathrm{DOS}(k, i) - \mathrm{DOS}(k-1, i)\bigr]^2,
$$

where $N$ is the number of energy points and $i$ indexes the energy [1].

- Convergence Criterion: The DOS is considered converged when the MSD falls below a predefined threshold (e.g., 0.001) and stabilizes with increasing mesh density [1].
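The MSD test can be sketched in a few lines. The helper names and the synthetic DOS values below are hypothetical; the sketch assumes every DOS curve has been evaluated on the same energy grid, and it interprets "stabilizes" as "all later successive MSD values also stay below the threshold".

```python
def mean_squared_deviation(dos_a, dos_b):
    """MSD between two DOS curves sampled on the same energy grid."""
    if len(dos_a) != len(dos_b):
        raise ValueError("DOS curves must share one energy grid")
    return sum((a - b) ** 2 for a, b in zip(dos_a, dos_b)) / len(dos_a)

def first_converged_mesh(dos_by_mesh, threshold=1e-3):
    """Return the label of the first mesh from which every successive MSD
    stays below `threshold`, or None if no such mesh exists.

    dos_by_mesh: list of (label, dos_values), ordered from coarse to dense.
    """
    msds = [
        mean_squared_deviation(curr, prev)
        for (_, prev), (_, curr) in zip(dos_by_mesh, dos_by_mesh[1:])
    ]
    for i in range(len(msds)):
        if all(m < threshold for m in msds[i:]):
            return dos_by_mesh[i + 1][0]
    return None

# Synthetic three-point "DOS curves" (made-up numbers, for illustration only)
series = [
    ("6x6x6", [1.0, 2.0, 3.0]),
    ("8x8x8", [1.5, 2.5, 2.0]),
    ("10x10x10", [1.51, 2.49, 2.01]),
    ("12x12x12", [1.51, 2.49, 2.01]),
]
converged = first_converged_mesh(series)  # "10x10x10"
```

Because the MSD depends on the DOS normalization and on the broadening used, a threshold such as 0.001 is only meaningful when those settings are held fixed across the series.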

Convergence Protocol for Integral Smoothing:

- Domain Shift: The convergence test is formulated in the transformed domain (e.g., imaginary time) rather than the frequency/energy domain. This leverages the one-to-one mapping between the dynamic structure factor S(q,ω) and the imaginary-time density–density correlation function F(q,τ) [2].

- Rigorous Testing: Convergence is assessed by testing the smoothness and stability of the results within this imaginary-time domain. This approach can be combined with constraints-based noise attenuation techniques [2].

- Convergence Criterion: The parameters (e.g., broadening η) are considered converged when the results in the imaginary-time domain are stable, allowing for a smooth and unbiased transformation back to the energy domain without needing an infinitely dense k-point mesh [2].
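The mapping used in this protocol is usually written as the two-sided Laplace transform below. The form shown is the one commonly found in the imaginary-time literature and is reproduced here for orientation only; prefactor and unit conventions (here $\hbar = 1$) may differ from those in [2].

$$
F(q,\tau) = \int_{-\infty}^{\infty} d\omega\; S(q,\omega)\, e^{-\tau\omega}
$$

Because the kernel $e^{-\tau\omega}$ is smooth in $\omega$, sharp sampling artifacts in $S(q,\omega)$ are averaged out in $F(q,\tau)$, which is the sense in which the transform acts as a built-in smoothing step.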

Experimental Data and Case Studies

Quantitative Convergence Data for a Metal (Silver)

A study on silver, a prototypical metal, provides clear quantitative data on the convergence of DOS via discrete sampling. The system energy was found to be converged with a 7x7x7 k-point mesh (variations < 0.05 eV). However, the DOS required a much denser mesh to converge, as shown by the MSD metric [1].

Table 2: Convergence of Silver DOS with k-point mesh density, measured by Mean Squared Deviation (MSD). Data adapted from [1].

| k-point Mesh (NxNxN) | Cumulative MSD (vs. N=20) | Qualitative DOS Description |
|---|---|---|
| 6x6x6 | > 0.18 | Poorly converged, spiky, unreliable |
| 7x7x7 | N/A | Energy converged, DOS not converged |
| 13x13x13 | ~0.005 | Well-converged |
| 18x18x18 | ~0.001 | Highly converged |

The Case of Graphene: Sensitivity to k-point Inclusion

Graphene, a semi-metal, presents a unique case where the placement of k-points is as important as their density. A coarse 4x4x1 mesh produces a DOS composed of isolated spikes, with the Fermi level incorrectly positioned relative to the Dirac cone. Remarkably, switching to a 3x3x1 mesh that explicitly includes the high-symmetry K point (1/3, 1/3, 0) pins the Fermi level exactly at the Dirac cone vertex, even though this mesh is coarser. This highlights that a discrete sampling approach must consider both mesh density and symmetry. For a smooth DOS, meshes beyond 60x60x1 are often necessary [3].
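Whether a given mesh contains the K point can be checked with exact rational arithmetic. The sketch below assumes Γ-centered N x N x 1 meshes and the fractional coordinate (1/3, 1/3) for K quoted above; the function names are illustrative, and the mesh conventions of the code used in [3] may differ.

```python
from fractions import Fraction

def gamma_centered_mesh_2d(n):
    """Fractional (k1, k2) coordinates of an n x n x 1 Gamma-centered mesh."""
    return {(Fraction(i, n), Fraction(j, n)) for i in range(n) for j in range(n)}

def contains_point(mesh, point):
    """True if `point` lies on the mesh, modulo a reciprocal lattice vector."""
    target = tuple(Fraction(c) % 1 for c in point)
    return target in mesh

K = (Fraction(1, 3), Fraction(1, 3))
for n in (3, 4, 6, 60):
    print(n, contains_point(gamma_centered_mesh_2d(n), K))
# 3 True
# 4 False
# 6 True
# 60 True
```

Under these assumptions, only subdivisions divisible by 3 place a k-point exactly on K, which is consistent with the 3x3x1 mesh capturing the Dirac point while the denser 4x4x1 mesh misses it.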

Essential Research Workflows and Tools

The following diagram illustrates the logical workflow for selecting and applying either the Integral Smoothing or Discrete Sampling method, based on the nature of the system and the research goals.

Diagram 1: Decision workflow for DOS calculation methods

The Scientist's Toolkit: Key Research Reagents

In computational science, software and data sets serve as the essential "reagents" for conducting research. The following table details key resources relevant to the methods discussed in this guide.

Table 3: Essential computational tools and resources for DOS calculations and method validation.

| Item | Function & Relevance |
|---|---|
| CASTEP / SIESTA | Plane-wave and numerical basis-set DFT packages used for computing energies and DOS; provide practical examples of k-point convergence [1] [3]. |
| Atomate2 | A modular workflow platform for materials science that supports high-throughput DFT calculations and interoperability between different computational codes, facilitating systematic convergence studies [4]. |
| TM23 Data Set | A benchmark data set for 27 d-block elements; useful for testing the performance and transferability of computational methods across different types of metals [5]. |
| Mean Squared Deviation (MSD) | A quantitative metric for assessing the convergence of DOS curves with increasing k-point density in discrete sampling methods [1]. |
| Imaginary-Time Correlation Function F(q,τ) | The key quantity in the integral smoothing approach, providing a robust alternative domain for testing convergence and attenuating noise [2]. |

The comparative analysis presented in this guide reveals a clear trade-off. Discrete sampling is a direct and intuitive method but becomes computationally prohibitive for metals and complex systems, where its sensitivity to noise and mesh parameters necessitates expensive calculations. In contrast, integral smoothing offers a sophisticated pathway to computational efficiency and robust convergence, as demonstrated by methods that operate in the imaginary-time domain, potentially saving millions of CPU hours [2]. However, this efficiency comes at the cost of increased algorithmic complexity.

For the practicing researcher, the choice is not about finding a universally superior method but about selecting the right tool for the problem. For high-throughput screening of simple insulators, where directness is valued, discrete sampling with a standardized k-point mesh may be sufficient. For the study of metallic systems, materials under extreme conditions, or when computational efficiency is paramount, integral smoothing techniques represent the cutting edge, enabling accurate simulations that would otherwise be infeasible. As the field progresses, the integration of these robust, efficient methods into mainstream workflow platforms like Atomate2 will be crucial for advancing the high-throughput discovery and design of next-generation materials.

Understanding the Brillouin Zone and k-Space Sampling

In computational materials science, modeling infinite periodic systems like crystals relies on two fundamental concepts: the unit cell in real space and the Brillouin zone in reciprocal space. The unit cell, defined by three lattice vectors (a, b, c), is the repeating unit that generates the crystal through periodic boundary conditions (PBC); the primitive cell is the smallest such unit [6]. The (first) Brillouin zone (BZ) is the Wigner-Seitz primitive cell of the reciprocal lattice and contains every distinct wavevector k needed to label the Bloch states of the crystal [7].

k-space sampling refers to the discrete selection of points within the Brillouin zone necessary for numerical calculations. Because physical properties, such as energy, require integration over this continuous space, practical computations must use a finite set of k-points to approximate these integrals [7]. The choice of k-point grid is a critical convergence parameter that directly impacts the accuracy and numerical cost of electronic structure calculations for periodic systems [6].

Methods for k-Space Sampling

Several established schemes exist for generating efficient k-point sets, each with distinct strengths for different applications.

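Monkhorst-Pack Grids: This is one of the most common methods for generating k-points. It creates a uniform grid defined by three integers (e.g., M x N x O) that specify the number of points along each reciprocal lattice vector [6] [7]. In the original construction, grids with odd subdivisions contain the Γ point while grids with even subdivisions are offset from it; many codes also offer an explicitly Γ-centered variant. The density of this grid is the main convergence parameter.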

Special k-points and High-Symmetry Grids: For systems with high symmetry, it is possible to use non-uniform grids that sample only the irreducible wedge of the Brillouin zone. These "special k-points" can significantly reduce computational cost while maintaining accuracy [6] [7]. In some cases, the grid must be chosen to explicitly include specific high-symmetry points where key electronic events occur, such as band edges in hexagonal systems [8].

Automated and Adaptive Schemes: Modern high-throughput computing often employs automatically generated, very dense k-point sets to achieve high accuracy (e.g., better than 1 meV/atom) across many materials with different cell sizes and shapes [7]. More recently, adaptive and machine learning-based schemes have emerged to resolve fine structures in the BZ for properties like electronic transport and topological states [7].
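For the Monkhorst-Pack scheme, the original construction places points at fractional coordinates u_r = (2r - q - 1) / (2q) for r = 1..q along each reciprocal lattice vector, so whether Γ lies on the grid depends on the parity of q. The short sketch below generates such a grid and checks this; the function names are illustrative, and individual DFT codes may apply shifts or Γ-centering by default.

```python
from fractions import Fraction
from itertools import product

def monkhorst_pack(size):
    """Fractional k-points of the original Monkhorst-Pack grid.

    size: three subdivisions (q1, q2, q3); along each axis the points are
    u_r = (2r - q - 1) / (2q) for r = 1..q.
    """
    axes = [
        [Fraction(2 * r - q - 1, 2 * q) for r in range(1, q + 1)]
        for q in size
    ]
    return list(product(*axes))

def includes_gamma(kpts):
    """True if the Gamma point (0, 0, 0) is one of the grid points."""
    return any(all(c == 0 for c in k) for k in kpts)

even = monkhorst_pack((4, 4, 4))
odd = monkhorst_pack((3, 3, 3))
print(len(even), includes_gamma(even))  # 64 False
print(len(odd), includes_gamma(odd))    # 27 True
```

This parity effect is one reason the even/odd choice of grid can matter when a property depends on states at Γ or at other high-symmetry points.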

Table: Comparison of Common k-Space Sampling Methods

| Method | Key Feature | Primary Use Case | Considerations |
|---|---|---|---|
| Monkhorst-Pack (MP) | Uniform grid, optionally Γ-centered [6] | General-purpose calculations on diverse materials | Grid density must be converged; even/odd grid choice can matter [8] |
| Symmetric k-space grid | Samples only the irreducible Brillouin zone [6] | Highly symmetric crystal structures | Reduces number of points needed, lowering computational cost |
| Gamma-Only | Uses only the Γ-point (k=0) | Large supercells, surfaces, molecules in a box [7] | Only valid when the BZ is small enough that Γ-point is representative |
| High-Symmetry Paths | Points along lines connecting high-symmetry points | Band structure plots | Not for total energy; used for visualizing dispersion relations |

Convergence of the Density of States (DOS)

The Density of States (DOS) describes the number of electronic states available at each energy level and is a fundamental property for understanding electronic behavior. Accurately converging the DOS with respect to k-point sampling is a critical step in electronic structure calculations.

Protocol for DOS Convergence

A robust protocol for validating DOS convergence involves a systematic analysis [7]:

- Initial Calculation: Start with a coarse k-point grid (e.g., "Basic" or "Normal" quality in some codes [6]).

- Iterative Refinement: Repeatedly increase the density of the k-point grid (e.g., "Good", "VeryGood" [6]) while recalculating the DOS.

- Quantitative Comparison: Monitor key features of the DOS across calculations. These include:

- The position and shape of major peaks.

- The value of the DOS at the Fermi level (for metals).

- The fundamental band gap (for semiconductors/insulators).

- Convergence Criterion: The calculation is considered converged when these key features change by less than a pre-defined tolerance (e.g., 0.01-0.1 eV) between successive refinements.
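The protocol above amounts to a loop that stops once every monitored feature changes by less than its tolerance between successive meshes. The sketch below shows that control flow only; `extract_features` stands in for a real DOS calculation plus post-processing, and `toy_features` is a made-up model used purely to make the example runnable.

```python
def converged_mesh(extract_features, meshes, tolerances):
    """Return the first mesh for which all monitored features differ from the
    previous mesh by less than their tolerance, or None if none qualifies.

    extract_features: callable mesh -> dict of feature name -> value
    meshes:           mesh sizes ordered from coarse to dense
    tolerances:       dict of feature name -> allowed change
    """
    previous = None
    for mesh in meshes:
        current = extract_features(mesh)
        if previous is not None and all(
            abs(current[name] - previous[name]) < tol
            for name, tol in tolerances.items()
        ):
            return mesh
        previous = current
    return None

def toy_features(n):
    # Hypothetical stand-in for DOS features from an n x n x n calculation
    return {"peak_eV": -3.0 + 1.0 / n**2, "gap_eV": 1.1 + 0.5 / n**2}

mesh = converged_mesh(
    toy_features, [4, 8, 12, 16, 20], {"peak_eV": 0.01, "gap_eV": 0.01}
)  # 12 for this toy model
```

Requiring all features to pass at once guards against declaring convergence on a quantity that happens to settle early, such as a gap, while peak positions are still moving.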

The Scientist's Toolkit: Essential Components for k-Space Studies

Table: Key Computational Tools and Concepts

| Item / Concept | Function / Purpose |
|---|---|
| Kohn-Sham DFT | The workhorse method for computing the electronic structure of periodic systems [7]. |
| Plane-Wave Basis Set | A common choice of basis functions for expanding the electronic wavefunctions in periodic codes [7]. |
| Norm-Conserving Pseudopotentials | Replace core electrons to make plane-wave calculations feasible; used in high-throughput phonon databases [9]. |
| Brillouin Zone | The primitive cell in reciprocal space; must be sampled to compute total energies and DOS [7]. |
| Monkhorst-Pack Grid | A standard method for generating a uniform set of k-points for sampling the Brillouin zone [6] [7]. |
| DFPT | A method for efficiently calculating second-order derivatives, such as phonon spectra and dielectric properties [9]. |
| Phonon DOS | Describes the distribution of vibrational frequencies; calculated from the phonon band structure [9]. |

The following workflow outlines the systematic procedure for achieving a converged DOS:

System-Specific Considerations

The k-point density required for DOS convergence is not universal and depends heavily on the specific material and its electronic structure [6]:

- Metals vs. Insulators: Metals require denser k-point grids than insulators and semiconductors because the occupation changes abruptly at the Fermi surface, which makes the Brillouin-zone integrand discontinuous and slow to converge [6] [7].

- Unit Cell Size: A general rule is that smaller unit cells have larger Brillouin zones and thus require more k-points for adequate sampling. Conversely, large supercells and disordered systems approximated by large supercells can often be treated with very few k-points, sometimes only the Γ-point [6] [7].

- Crystal Symmetry: In systems like hexagonal crystals, important electronic features can be localized at specific high-symmetry points (e.g., the K-point). A standard Monkhorst-Pack grid might not sample these points unless the grid dimensions are chosen to be divisible by specific numbers (e.g., 3 for the K-point) [8]. For total DOS, a sufficiently dense grid will eventually recover the correct physics, but a smart grid choice accelerates convergence [8].

Comparative Performance of Electronic Structure Methods

While k-point sampling is a numerical parameter within a single calculation, the choice of the underlying electronic structure method itself is a physical approximation that greatly affects the result. This is particularly true for predicting band gaps.

Table: Benchmarking of Electronic Structure Methods for Band Gap Prediction

| Method | Theoretical Class | Typical Accuracy for Band Gaps | Computational Cost | Key Characteristic |
|---|---|---|---|---|
| LDA/GGA (e.g., PBE) | DFT (Jacob's Ladder rungs 1-2) | Systematic underestimation [10] | Low | Workhorse for geometry; not for accurate band gaps. |
| HSE06 | Hybrid-DFT (Jacob's Ladder rung 4) | Good accuracy [10] | Moderate | Mixes HF exchange; widely used in condensed matter. |
| mBJ | meta-GGA (Jacob's Ladder rung 3) | Good accuracy [10] | Moderate | Potentially the best performing semi-local functional [10]. |
| G₀W₀@LDA (PPA) | Many-Body Perturbation Theory | Marginal gain over best DFT [10] | High | Common one-shot GW; accuracy limited by PPA and starting point. |
| G₀W₀ (Full-Freq) | Many-Body Perturbation Theory | Dramatic improvement over PPA [10] | High | Replacing PPA with full-frequency integration improves accuracy. |
| QSGW | Self-Consistent MBPT | Systematic overestimation (~15%) [10] | Very High | Removes starting-point dependence but over-corrects the gap. |
| QSGWĜ | Self-Consistent MBPT with Vertex | Highest accuracy [10] | Extremely High | Adds vertex corrections; can flag questionable experiments [10]. |

Experimental Protocols in High-Throughput Studies

High-throughput (HT) computational frameworks have established standardized protocols to ensure consistency and reliability across thousands of calculations. The methodology used for the Materials Project phonon database is an exemplary protocol [9]:

- Software and Functional: Calculations are performed with the ABINIT software package using the PBEsol exchange-correlation functional, which has proven accurate for phonon frequencies [9].

- Brillouin Zone Sampling: The Brillouin zone is sampled using a Γ-centered grid with a density of approximately 1500 k-points per reciprocal atom. This high density is chosen to achieve a target total energy accuracy of better than 1 meV/atom [9].

- Convergence Checks: Strict convergence criteria are applied during the initial geometry optimization (forces < 10⁻⁶ Ha/Bohr). The validity of the phonon calculation itself is checked by enforcing physical sum rules, such as the Acoustic Sum Rule (ASR) and Charge Neutrality Sum Rule (CNSR). Significant breaking of these rules indicates a lack of numerical convergence [9].

This HT approach demonstrates that for automated, high-accuracy studies, a "one-size-fits-all" k-point density, calibrated to a stringent energy tolerance, is an effective strategy.
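A "k-points per reciprocal atom" target can be turned into grid subdivisions with a simple heuristic: choose N1, N2, N3 so that N1 x N2 x N3 x (number of atoms) is close to the target while keeping each N inversely proportional to the corresponding lattice-vector length. The function below is an illustrative sketch of that idea, not the routine used in [9]; it ignores cell angles and uses rounding, both of which are simplifying assumptions.

```python
def grid_from_kppa(lattice_lengths, n_atoms, kppa=1500):
    """Subdivisions (N1, N2, N3) such that N1*N2*N3*n_atoms is close to `kppa`.

    lattice_lengths: lengths of the three real-space lattice vectors (Angstrom)
    n_atoms:         number of atoms in the cell
    kppa:            target number of k-points per reciprocal atom
    """
    a, b, c = lattice_lengths
    n_target = kppa / n_atoms                      # desired total number of k-points
    scale = (n_target * a * b * c) ** (1.0 / 3.0)  # makes N_i proportional to 1/length
    return tuple(max(1, round(scale / length)) for length in (a, b, c))

# Two-atom cell with three equal 3.84 Angstrom lattice vectors
# (roughly the silicon primitive cell; value used for illustration)
print(grid_from_kppa((3.84, 3.84, 3.84), n_atoms=2))  # (9, 9, 9)
```

For this example the grid contains 729 points, i.e. 1458 k-points per reciprocal atom, close to the 1500 target. Larger cells automatically receive coarser grids, which is what makes a single density setting usable across many materials.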

How Band Dispersion and Electronic Structure Influence k-Point Requirements

In density functional theory (DFT) calculations for periodic systems, the selection of an appropriate k-point grid represents a fundamental computational parameter that directly controls the numerical integration over the Brillouin zone. This sampling density profoundly influences the accuracy and reliability of calculated electronic properties, particularly the density of states (DOS) and electronic band structure. The relationship between k-point sampling requirements and the underlying electronic structure of materials forms a critical research frontier in computational materials science, with significant implications for predicting material properties ranging from electronic transport to catalytic activity [11] [12].

The fundamental challenge stems from the intricate band dispersion relationships exhibited by different classes of materials. Crystalline solids with complex Fermi surfaces or rapidly varying electronic eigenvalues throughout the Brillouin zone necessitate considerably denser k-point sampling compared to materials with flatter band dispersions. This review systematically examines how electronic structure characteristics dictate k-point requirements, provides validated convergence protocols for different material classes, and establishes best practices for ensuring reproducible DOS calculations within high-throughput computational frameworks [12] [13].

Theoretical Foundations: Band Dispersion and Brillouin Zone Sampling

Electronic Band Structure Fundamentals

In periodic solids, electronic states are described by Bloch wavefunctions characterized by crystal momentum vectors (k-points) within the Brillouin zone. The relationship between energy and momentum for these states defines the band structure of a material, while the DOS describes the number of electronic states per unit energy [14]. The accuracy with which these properties are computed depends critically on adequately sampling the Brillouin zone with a sufficient density of k-points to capture all relevant electronic features.

Band dispersion refers to the energy variation of electronic states as a function of k-vector direction and magnitude throughout the Brillouin zone. Materials exhibit dramatically different dispersion characteristics: nearly-free electron systems like simple metals show gradual energy variations, while strongly correlated materials and those with complex Fermi surfaces often display rapid energy changes over small k-space regions [14] [11]. These differences directly impact k-point sampling requirements, as steeper band dispersions necessitate finer sampling to accurately resolve the electronic structure.

Brillouin Zone Integration and Special Point Methods

The theoretical foundation for modern k-point sampling schemes dates to seminal work by Baldereschi, Chadi-Cohen, and Monkhorst-Pack, who developed methods for efficient Brillouin zone integration using special k-point sets that exploit crystal symmetry [11]. The Monkhorst-Pack scheme, in particular, has become the standard approach for generating k-point meshes that systematically converge toward the continuum limit with increasing grid density [12].

The fundamental principle underlying these methods recognizes that different electronic properties converge at different rates with respect to k-point sampling. Total energy calculations typically converge more rapidly than band energies at specific k-points, while Fermi surface properties and dielectric responses often require exceptionally dense sampling [11]. This variability necessitates property-specific convergence testing, particularly for DOS calculations where insufficient sampling can artificially broaden or distort critical features.

Methodology: Establishing k-Point Convergence Protocols

Convergence Testing Framework

A robust protocol for establishing k-point requirements begins with systematic convergence tests where the property of interest is computed with progressively denser k-point meshes until the change falls below a predetermined threshold. For high-throughput databases like JARVIS-DFT and the Materials Project, this typically involves targeting a total energy convergence of 1 meV/atom or better, though stricter criteria may be necessary for specific electronic properties [12].

The automated convergence framework developed for the JARVIS-DFT database exemplifies this approach: first, the plane-wave cutoff energy is converged using a fixed k-point mesh, then k-point density is converged using the optimized cutoff. At each step, the k-point grid is progressively expanded while monitoring the total energy until the difference between successive calculations falls below 0.001 eV per cell [12]. This methodology has been applied to over 30,000 materials, providing extensive data on k-point requirements across diverse chemical spaces.
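The two-stage ordering described here (cutoff first at fixed k-mesh, then k-mesh at the converged cutoff) can be sketched as follows. `toy_energy` is a hypothetical stand-in for a DFT total-energy call, and the parameter ranges are arbitrary; only the control flow is meant to mirror the description of [12].

```python
def converge_parameter(energy_of, values, tol=1e-3):
    """Return the first value whose energy differs from the previous value's
    energy by less than `tol` (eV per cell)."""
    previous = None
    for value in values:
        energy = energy_of(value)
        if previous is not None and abs(energy - previous) < tol:
            return value
        previous = energy
    raise RuntimeError("not converged within the supplied range")

def toy_energy(cutoff_eV, n_k):
    # Hypothetical model energy (eV per cell); replace with a real DFT call
    return -10.0 + 2000.0 / cutoff_eV**2 + 0.5 / n_k**3

# Stage 1: converge the plane-wave cutoff on a fixed 4x4x4 mesh
cutoff = converge_parameter(lambda c: toy_energy(c, n_k=4), range(300, 1001, 50))
# Stage 2: converge the k-mesh using the cutoff found in stage 1
n_k = converge_parameter(lambda n: toy_energy(cutoff, n), range(4, 21, 2))
```

A criterion on total energy alone says nothing about the DOS, so a mesh selected this way is best treated as a starting point for a separate DOS convergence test.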

Computational Specifications for DOS Convergence

For DOS calculations specifically, additional considerations beyond total energy convergence come into play. The tetrahedron method with Blöchl corrections often provides superior accuracy for DOS calculations compared to Gaussian smearing, particularly for metals and narrow-gap semiconductors [15]. The method divides the Brillouin zone into tetrahedra whose corners are the calculated k-points and interpolates the eigenvalues linearly inside each one, which captures sharp features in the electronic structure better than broadening each eigenvalue individually.

The smearing width must be chosen to balance physical fidelity against numerical stability. For metals, a smearing width of 0.01-0.05 eV is typically appropriate, while insulators may use smaller values or the tetrahedron method exclusively [16] [15]. Importantly, the smearing width and k-point sampling are interrelated: a coarser k-point mesh may need a larger smearing value to produce an apparently smooth DOS, but this artificially broadens spectral features and obscures genuine electronic structure details.

Table 1: Standard Convergence Parameters for Different Material Classes

| Material Class | Energy Convergence Threshold | Typical k-point Density | Smearing Type | Special Considerations |
|---|---|---|---|---|
| Simple Metals (Na, Al) | 1 meV/atom | 4,000-6,000 k-points/Å⁻³ | Gaussian (0.05 eV) | Fermi surface sampling critical |
| Transition Metals | 1 meV/atom | 6,000-10,000 k-points/Å⁻³ | Tetrahedron | d-band features require dense sampling |
| Semiconductors (Si, GaAs) | 1 meV/atom | 3,000-5,000 k-points/Å⁻³ | Gaussian (0.01 eV) | Band edges must be resolved |
| Insulators (SiO₂, diamond) | 1 meV/atom | 2,000-4,000 k-points/Å⁻³ | Tetrahedron | Lower sampling often sufficient |
| Topological Insulators | 0.5 meV/atom | 8,000-12,000 k-points/Å⁻³ | Tetrahedron | Surface states need very dense sampling |

Comparative Analysis: k-Point Requirements Across Material Systems

Simple Metals Versus Transition Metals

The contrasting k-point requirements for simple metals versus transition metals highlight how electronic structure dictates sampling density. Simple metals like aluminum exhibit nearly-free electron behavior with gradual band dispersion, enabling reasonable DOS convergence with moderate k-point densities (4,000-6,000 k-points/Å⁻³) [12]. In contrast, transition metals feature complex d-band manifolds with rapidly varying dispersion near the Fermi level, necessitating denser sampling (6,000-10,000 k-points/Å⁻³) to accurately reproduce distinctive DOS features like the prominent d-band peak [12].

This distinction becomes particularly important for catalytic applications where the d-band center position relative to the Fermi level serves as a critical descriptor of catalytic activity. Inadequate k-point sampling can shift the calculated d-band center by several tenths of an electronvolt, potentially reversing predictions of catalytic efficacy [12]. For such applications, convergence testing should directly monitor the property of interest (d-band center) rather than relying solely on total energy convergence.
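Monitoring the d-band center directly requires only the d-projected DOS on an energy grid. The sketch below computes it as the first moment of that DOS; the function names and the Gaussian test band are illustrative assumptions, and the integration window (here the full grid) is a convention that varies between studies.

```python
import math

def trapezoid(y, x):
    """Trapezoidal integral of samples y over grid x."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))

def d_band_center(energies, d_dos, e_fermi=0.0):
    """First moment of the d-projected DOS relative to the Fermi level (eV)."""
    norm = trapezoid(d_dos, energies)
    first_moment = trapezoid([e * g for e, g in zip(energies, d_dos)], energies)
    return first_moment / norm - e_fermi

# Synthetic d-band (assumption): Gaussian centered 2.5 eV below the Fermi level
energies = [-10.0 + 0.01 * i for i in range(1501)]
d_dos = [math.exp(-0.5 * (e + 2.5) ** 2) for e in energies]
center = d_band_center(energies, d_dos)  # close to -2.5
```

Evaluating `d_band_center` for each mesh in a convergence series, and stopping when it changes by less than the accuracy the application needs, applies the property-specific test recommended above.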

Insulators, Semiconductors, and Low-Dispersion Systems

Materials with localized electronic states and flatter band dispersions—including many insulators, semiconductors, and strongly correlated systems—typically require less dense k-point sampling for DOS convergence. For instance, silicon band structure calculations achieve reasonable convergence with 8×8×8 Γ-centered k-point meshes for self-consistent field calculations, though denser sampling (16×16×16 or higher) may be necessary for highly accurate effective mass determinations [16] [17].

The presence of band gaps significantly influences convergence behavior. For gapped systems, the DOS within the gap region should be precisely zero, providing a sensitive metric for k-point convergence. Inadequate sampling can introduce spurious gap states or artificially narrow the fundamental band gap, particularly when using smearing methods without proper extrapolation to zero smearing width [15]. Hybrid functional calculations, which often employ reduced k-point sets due to computational constraints, require careful validation against standard DFT with full k-point convergence [17].

Low-Dimensional and Anisotropic Systems

Low-dimensional materials (surfaces, 2D materials, nanowires) and anisotropic crystals present unique challenges for k-point sampling due to their strongly direction-dependent band dispersion. For example, in layered materials like MoS₂, electronic dispersion is much stronger within the basal plane than perpendicular to it, necessitating anisotropic k-point sampling with higher density along in-plane directions [17].

The JARVIS-DFT database analysis reveals that anisotropic k-point meshes can reduce computational cost by 30-50% compared to isotropic sampling while maintaining equivalent accuracy for DOS calculations [12]. This optimization becomes particularly valuable in high-throughput computational workflows where thousands of materials must be screened efficiently. For such systems, the k-point grid should be scaled inversely with the magnitude of the real-space lattice vectors, with additional consideration of the anisotropy in effective mass tensors [18] [12].
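One simple way to obtain an anisotropic mesh is to fix a maximum spacing between k-points and let the number of subdivisions along each direction follow from the length of the corresponding reciprocal lattice vector. The sketch below does this with plain Python; the function names, the 0.2 Å⁻¹ spacing, and the approximate hexagonal cell (a = 3.16 Å, c = 12.30 Å, roughly bulk MoS₂) are illustrative assumptions.

```python
import math

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def kgrid_from_spacing(lattice, spacing):
    """Subdivisions (N1, N2, N3) so that the k-point spacing along each
    reciprocal lattice vector does not exceed `spacing`.

    lattice: three real-space lattice vectors (Angstrom)
    spacing: maximum k-point spacing (1/Angstrom, including the 2*pi factor)
    """
    a1, a2, a3 = lattice
    volume = abs(dot(a1, cross(a2, a3)))
    # |b_i| = 2*pi * |a_j x a_k| / V
    recip_lengths = [
        2.0 * math.pi * math.sqrt(dot(n, n)) / volume
        for n in (cross(a2, a3), cross(a3, a1), cross(a1, a2))
    ]
    return tuple(max(1, math.ceil(length / spacing)) for length in recip_lengths)

a, c = 3.16, 12.30
hexagonal = [(a, 0.0, 0.0), (-a / 2.0, a * math.sqrt(3.0) / 2.0, 0.0), (0.0, 0.0, c)]
print(kgrid_from_spacing(hexagonal, spacing=0.2))  # (12, 12, 3)
```

The long c axis yields a short reciprocal vector and therefore few subdivisions, so most of the sampling effort goes in-plane, where the dispersion is strongest.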

Table 2: k-Point Sampling Recommendations for Different DFT Codes

| Software Package | Automatic k-point Generation | DOS-Specific Settings | Band Structure Path Generation |
|---|---|---|---|
| VASP | KSPACING tag or Monkhorst-Pack meshes | ISMEAR = -5 (tetrahedron) for DOS | Line mode KPOINTS with high-symmetry path |
| JDFTx | bandstructKpoints utility | Fixed density from SCF calculation | Automated path with bandstructKpoints |
| GPAW | ASE.dft.kpoints special_points | occupations=FermiDirac(0.01) | ASE Cell.bandpath() method |
| SIESTA | kgrid_cutoff parameter | Increased kgrid for DOS calculations | Band structure from saved density |

Experimental Validation: Case Studies and Computational Evidence

High-Throughput Validation Across Material Classes

The JARVIS-DFT database provides comprehensive evidence for k-point requirements across diverse material systems, with calculations for over 30,000 materials establishing clear relationships between crystal structure, electronic complexity, and necessary sampling density [12]. This large-scale analysis reveals that k-point density distributions vary significantly across material classes, with approximately 15% of materials requiring exceptionally dense sampling (>8,000 k-points/Å⁻³) for accurate DOS convergence, while another 20% converge adequately with relatively sparse sampling (<3,000 k-points/Å⁻³) [12].

Machine learning models trained on this extensive dataset can predict k-point requirements for new materials with high accuracy, using features derived from crystal structure and elemental composition [12]. These models demonstrate that the number of atoms in the unit cell, symmetry, and the presence of specific elements (particularly transition metals and rare earths) serve as strong predictors of k-point requirements, enabling pre-screening before expensive computational campaigns.

Silicon Band Structure: A Prototypical Example

Silicon band structure calculations provide an instructive case study in k-point convergence. The standard protocol involves: (1) a self-consistent field (SCF) calculation with an 8×8×8 Γ-centered k-point mesh to obtain the converged charge density; (2) a non-self-consistent calculation along a high-symmetry path (Γ-X-W-K-Γ-L) with fixed charge density to compute band eigenvalues [16]. This approach yields the characteristic indirect band gap of silicon, with the valence band maximum at the Γ-point and the conduction band minimum near the X-point.

For DOS calculations specifically, the k-point requirement is actually more stringent than for band structure plots along symmetry lines. A 32×32×32 k-point mesh (32,768 k-points in the full Brillouin zone, before reduction by symmetry) is often necessary to fully converge the silicon DOS, particularly the precise shape of the valence band edge and the conduction band minimum [16] [15]. This represents a significantly denser sampling than required for total energy convergence alone, highlighting the property-specific nature of k-point requirements.

Metallic Systems and Fermi Surface Resolution

For metallic systems, k-point convergence presents unique challenges due to the discontinuous occupation of states at the Fermi level. The sharp Fermi surface requires particularly dense sampling to accurately resolve, with insufficient k-point meshes producing spurious peaks or dips in the DOS at the Fermi energy [15]. This has profound implications for predicting electronic transport properties, superconducting behavior, and magnetic instabilities.

In palladium, for example, the d-band peak lies immediately below the Fermi level, and its position and shape directly influence the material's catalytic properties. Convergence testing reveals that a 24×24×24 k-point mesh is necessary to converge the Pd DOS within 0.01 eV for energies near the Fermi level, while total energy convergence might be achieved with a coarser 12×12×12 mesh [15]. In this example the DOS mesh is twice as dense along each direction, which corresponds to eight times as many k-points in the full Brillouin zone. This exemplifies how properties derived from the DOS often require 2-4 times denser k-point sampling compared to total energy calculations.

Visualization: k-Point Convergence Workflow

The following diagram illustrates the systematic protocol for establishing k-point requirements for DOS calculations:

Research Reagent Solutions: Computational Tools for k-Point Optimization

Table 3: Essential Computational Tools for k-Point Convergence Studies

| Tool Name | Function | Application Context |
|---|---|---|
| VASP KPOINTS | Defines k-point meshes and band structure paths | VASP DFT calculations |
| JDFTx bandstructKpoints | Generates k-point paths and plotting scripts | JDFTx band structure calculations |
| ASE.dft.kpoints | Provides high-symmetry points and paths | ASE-based workflows (GPAW, etc.) |
| KpLib | Generates optimized generalized k-point grids | Efficient Brillouin zone sampling |
| AutoGR | Automatic grid refinement for k-points | Adaptive k-point convergence |
| JARVIS-DFT Convergence | Automated convergence testing | High-throughput DFT workflows |
| Materials Project | Pre-converged parameters database | Initial parameter estimation |

The relationship between band dispersion and k-point requirements underscores a fundamental principle in computational materials science: electronic structure complexity dictates computational cost. Materials with steep band dispersions, complex Fermi surfaces, or localized d/f-states necessitate significantly denser k-point sampling for DOS convergence compared to systems with gradual electronic dispersion. The systematic convergence protocols and comparative data presented herein provide researchers with validated approaches for establishing k-point requirements across diverse material systems.

For high-throughput computational screening, machine learning models trained on extensive convergence data offer promising approaches for predicting k-point requirements without exhaustive testing [12]. However, for critical applications where electronic properties determine material functionality, property-specific convergence testing remains essential. As computational methods continue to evolve toward more complex electronic structure treatments—including hybrid functionals, GW calculations, and spectroscopic simulations—attention to k-point convergence will remain indispensable for generating reliable, reproducible computational predictions.

In the realm of computational materials science, using density functional theory (DFT) to predict electronic properties requires careful convergence of numerical parameters. Among these, the sampling of the Brillouin zone with k-points is crucial. The density of states (DOS) provides deep insight into a material's electronic behavior, revealing whether a material is a metal, semiconductor, or insulator. Achieving a converged DOS is often more demanding than converging the total energy, and the required k-point density varies significantly between different material types [15] [1]. This guide objectively compares the k-point sampling requirements for metals, semiconductors, and insulators, providing validated protocols and data to guide researchers in efficiently obtaining accurate results.

Theoretical Background: k-Space Sampling and the DOS

The DOS quantifies the number of electronic states at each energy level. Computationally, it is obtained by integrating the electronic band structure over the Brillouin zone [1]. Since this integration is performed numerically at a finite set of k-points, the accuracy of the DOS depends heavily on the density of this sampling.

Insufficient sampling leads to an under-resolved DOS that may miss sharp features, a particular problem for metals and narrow-gap semiconductors where the occupancy changes abruptly at the Fermi energy \(E_F\) [19]. For metallic systems, the discontinuity at the Fermi surface means that a prohibitively large number of k-points is needed for convergence unless smearing methods are employed to artificially smooth the occupation function [19].

The following workflow illustrates the general process for achieving a converged DOS, which is more complex than converging the total energy:

Comparative Analysis of k-Point Requirements

The k-point grid required for convergence is not universal; it depends strongly on the material's electronic structure. The primary reason for this difference lies in the nature of their band dispersion and the presence of a band gap.

Metals

Challenge: Metals possess a partially filled band, leading to a sharp Fermi surface where electronic occupancies change discontinuously. This makes the DOS near \(E_F\) particularly sensitive to k-point sampling [19].

K-point Need: Metals require the densest k-point sampling among the three material classes. One study on bulk aluminum (a metal) showed that a 25×25×25 grid was needed to converge the total energy within 1 meV when using a small smearing [19].

Smearing Strategy: Using a larger smearing broadening (e.g., Methfessel-Paxton or cold smearing) is highly effective for metals. This smooths the discontinuity, allowing for convergence with a coarser grid (e.g., 13×13×13 for Al with a 0.43 eV broadening), significantly speeding up the calculation [19].

Semiconductors

Challenge: Semiconductors have a small but non-zero band gap. The DOS does not have a discontinuity, but the band edges need to be well-defined. Narrow-gap semiconductors behave similarly to metals and require finer sampling [20].

K-point Need: Semiconductors, especially narrow-gap ones, require a finer grid than insulators. For band gap prediction, a "Good" quality k-space is recommended, which can be significantly denser than a "Normal" grid sufficient for total energy [20].

Smearing Strategy: For gapped systems, a low broadening (e.g., 0.01 eV) with Fermi-Dirac or Gaussian smearing is appropriate to avoid artificially closing the band gap [19].

Insulators

Challenge: Insulators have a large band gap and typically possess smoothly varying DOS curves, making them less sensitive to the fineness of k-point sampling.

K-point Need: Insulators and wide-gap semiconductors have the lowest k-point requirement. A "Normal" k-space quality often suffices for obtaining a converged DOS and total energy [20].

Smearing Strategy: The Fermi-Dirac distribution with a very low smearing parameter (~0.01 eV) is suitable, as the occupation function is already nearly a step function [19].
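To make the effect of the broadening concrete, the sketch below evaluates Fermi-Dirac occupations at two widths. The function name and the sample eigenvalues are illustrative assumptions, not taken from any DFT code. The 0.43 eV value is borrowed from the aluminum example above and applied to Fermi-Dirac smearing purely for illustration.

```python
import numpy as np

def fermi_dirac(energies, e_fermi, width):
    """Fermi-Dirac occupation for energies (eV) with broadening width (eV)."""
    x = (np.asarray(energies, dtype=float) - e_fermi) / width
    # Clip the exponent so states far from E_F do not overflow exp().
    return 1.0 / (1.0 + np.exp(np.clip(x, -500.0, 500.0)))

# Made-up eigenvalues (eV) on both sides of a Fermi level placed at 0 eV.
levels = np.array([-0.5, -0.05, 0.0, 0.05, 0.5])

narrow = fermi_dirac(levels, e_fermi=0.0, width=0.01)  # gapped-system setting
broad = fermi_dirac(levels, e_fermi=0.0, width=0.43)   # metal-style broadening

print(np.round(narrow, 3))
print(np.round(broad, 3))
```

With the narrow width the occupations are close to a step function, so states 0.5 eV from the Fermi level are essentially full or empty. With the broad width the same states are partially occupied, which is what smooths the k-space integrand for metals and what would blur a band gap if used on a gapped system.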

Table 1: Summary of k-Point Requirements and Strategies for Different Material Types

| Material Type | Relative k-point Need | Key Challenge | Recommended Smearing | Typical Use Case |
|---|---|---|---|---|
| Metals | Very High | Fermi surface discontinuity | Methfessel-Paxton / Cold (Larger broadening) | Bulk Aluminum [19] |
| Semiconductors | Medium to High | Defining band edges, narrow gaps | Fermi-Dirac / Gaussian (Low broadening) | Narrow-gap materials [20] |
| Insulators | Low | Smooth DOS, large gap | Fermi-Dirac (Very low broadening) | Diamond, Anatase [20] |

Table 2: Quantitative Example of Convergence for a Metal (Silver) and an Insulator (Diamond)

| Material | Property | ~Converged k-grid | Property-Specific Note |
|---|---|---|---|
| Silver (Metal) [1] | Total Energy | 6x6x6 | Energy converged to within 0.05 eV. |
| Silver (Metal) [1] | Density of States (DOS) | 13x13x13 | Required >2x more points for smooth DOS. |
| Diamond (Insulator) [20] | Formation Energy | "Normal" Quality | Error of ~0.03 eV/atom. |
| Diamond (Insulator) [20] | Band Gap | "Good" Quality | Higher accuracy needed for band gap. |

Experimental Protocols for DOS Convergence

General Convergence Workflow

A robust methodology is essential for validating DOS convergence. The following protocol, adaptable for any material, is based on established computational practices [1]:

- Initial Energy Convergence: First, perform a standard convergence test for the total energy with respect to the k-point grid. This establishes a baseline grid (e.g., NxNxN).

- DOS-Specific Grid Refinement: Increase the k-point density significantly beyond the energy-converged grid. A common practice is to use a grid that is 1.5 to 2 times denser in each dimension.

- Iterative Comparison: Calculate the DOS with a series of increasingly dense k-point grids. For each new grid, quantitatively compare the resulting DOS to the previous one.

- Convergence Metric: A suitable metric is the mean squared deviation (MSD) between two consecutive DOS curves, calculated over a relevant energy range (e.g., from 8 eV below to 8 eV above the Fermi level) [1]. The DOS can be considered converged when the MSD falls below a predefined threshold (e.g., 0.001-0.005 in the squared units of the DOS).

- Visual Inspection: Alongside quantitative metrics, visually inspect the DOS curves. A converged DOS will appear smooth, with no significant changes in the shape or height of its peaks when the k-grid is further increased.
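The convergence metric in the protocol above can be sketched in a few lines of NumPy. This is a minimal illustration that assumes both DOS curves are tabulated on the same energy grid. The function name `dos_msd` and the placeholder curves are hypothetical, and in practice the arrays would be read from the DFT code's DOS output.

```python
import numpy as np

def dos_msd(energies, dos_a, dos_b, e_fermi=0.0, window=8.0):
    """Mean squared deviation between two DOS curves on a shared energy grid.

    Only points within +/- window (eV) of the Fermi level are compared.
    """
    energies = np.asarray(energies, dtype=float)
    dos_a = np.asarray(dos_a, dtype=float)
    dos_b = np.asarray(dos_b, dtype=float)
    mask = np.abs(energies - e_fermi) <= window
    if not mask.any():
        raise ValueError("no energy points inside the comparison window")
    return float(np.mean((dos_a[mask] - dos_b[mask]) ** 2))

# Placeholder curves standing in for DOS results from two k-point grids.
energies = np.linspace(-10.0, 10.0, 201)
dos_coarse = np.exp(-energies**2)
dos_fine = dos_coarse + 0.05   # uniform offset, so the MSD is 0.05**2

msd = dos_msd(energies, dos_coarse, dos_fine)
print(msd, msd < 0.005)
```

Restricting the comparison to a window around the Fermi level keeps deep core-like states or high empty bands from dominating the metric.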

Material-Specific Methodologies

For Metallic Systems (e.g., Silver):

- Smearing: Select an appropriate smearing method (Methfessel-Paxton or Cold) and a broadening parameter. The entropy contribution to the free energy should remain small [19].

- Focus Region: Pay close attention to the convergence of the DOS in the region immediately around the Fermi energy, as this is critical for metallic properties [1].

- Extrapolation: For the highest accuracy in the ground-state total energy, use the implemented extrapolation schemes to obtain the ( T \rightarrow 0 ) K energy from calculations performed with finite smearing [19].

For Semiconductors/Insulators (e.g., Anatase TiO₂):

- Smearing: Use the Fermi-Dirac distribution with a small smearing width (e.g., 0.01 eV) to avoid artificially affecting the band gap [19].

- Band Edges: Ensure the valence band maximum and conduction band minimum are stable with increasing k-points. Projected DOS (PDOS) calculations can be used to confirm the character of these bands is unchanged [21].

- Band Structure Validation: The k-point grid used for the self-consistent charge calculation (for the DOS) should be well-converged. The subsequent band structure calculation itself is performed along a high-symmetry path using a non-self-consistent calculation with hundreds of points along that path [21].

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

Table 3: Key Computational Tools and Methods for k-Point Convergence Studies

| Tool / Method | Function | Example Use Case |
|---|---|---|
| Monkhorst-Pack Grid | Scheme for generating uniform k-point meshes in the Brillouin zone. | Standard k-sampling for initial total energy and DOS calculations [18]. |
| Tetrahedron Method | An alternative integration scheme that can better capture features at high-symmetry points. | Important for systems like graphene where the physics is dominated by a specific k-point (the K-point) [20]. |
| Smearing Functions | Mathematical functions that smooth orbital occupations to accelerate convergence in metals. | Methfessel-Paxton smearing for force calculations in metals; Fermi-Dirac for insulators [19]. |
| Plane-Wave Codes (e.g., VASP, CASTEP) | DFT software packages that implement k-point sampling and DOS calculation. | High-throughput calculations for diverse material classes [1] [22]. |
| Mean Squared Deviation (MSD) | A quantitative metric to compare two DOS curves and assess numerical convergence. | Determining if a k-point grid is dense enough for a reliable DOS [1]. |
| Projected DOS (PDOS) | Decomposes the total DOS into contributions from specific atoms or atomic orbitals. | Identifying the atomic origin of states in the band edges (e.g., O-p vs. Ti-d states in TiO₂) [21]. |

This guide demonstrates that a one-size-fits-all approach to k-point sampling is ineffective. The validation of DOS convergence requires a systematic, material-aware protocol. Metals present the greatest challenge, necessitating dense grids coupled with strategic smearing. Semiconductors, particularly narrow-gap systems, also demand careful, property-specific convergence, while insulators are the most forgiving. By adopting the comparative frameworks, experimental protocols, and tools outlined herein, researchers can ensure the reliability of their computed electronic properties, forming a solid foundation for subsequent materials design and discovery efforts in fields ranging from electronics to drug development.

Common Artifacts of Insufficient k-Point Sampling in DOS Curves

Within density functional theory (DFT) calculations for periodic systems, the density of states (DOS) is a fundamental property that reveals the number of available electron states at each energy level. Achieving a converged DOS requires adequate sampling of the Brillouin zone using a k-point mesh. Insufficient k-point sampling introduces characteristic artifacts that obscure the true electronic structure, complicating the interpretation of material properties. This guide documents these common artifacts, providing a comparative analysis of their manifestation across different material classes, supported by experimental data and protocols essential for researchers validating DOS convergence.

Visual Artifacts and System-Specific Manifestations

Insufficient k-point sampling generates distinct visual artifacts in DOS curves, with severity and characteristics that depend on whether the material is a metal, semiconductor, or insulator.

General Artifacts from Coarse Sampling

- Spiky or Discontinuous DOS: The most direct artifact of a coarse k-point mesh is a DOS curve that appears as a series of sharp, discrete spikes rather than a smooth, continuous function. This occurs because the calculation only includes a limited set of discrete energy levels from the sparse k-point sampling, failing to integrate smoothly across the Brillouin zone [1] [3].

- Incorrect Fermi Level Placement: In metallic and semimetallic systems, the position of the Fermi level (Ef) is highly sensitive to k-point sampling. An inadequate mesh can pin the Fermi energy at an incorrect position, leading to a mischaracterization of the material as a metal or semiconductor [3]. For example, in graphene, a mesh that does not include the high-symmetry K point can miscalculate the Fermi level, showing it above or below the Dirac point [3].

- Poor Resolution of Critical Features: Key features like band edges, van Hove singularities, and peaks from localized states appear poorly resolved or may be missing entirely. This loss of detail prevents accurate extraction of band gaps and effective masses [1].
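The spiky-DOS artifact is easy to reproduce with a toy model. The sketch below uses a one-dimensional tight-binding chain, E(k) = -2t cos(k), as an assumed stand-in for a real band structure and builds the DOS by Gaussian broadening of the sampled eigenvalues. All names and parameters are illustrative.

```python
import numpy as np

def broadened_dos(eigenvalues, energy_grid, sigma):
    """Average of normalized Gaussians centred on the sampled eigenvalues."""
    diff = energy_grid[:, None] - eigenvalues[None, :]
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return gauss.mean(axis=1)

def chain_eigenvalues(n_k, hopping=1.0):
    """Band energies E(k) = -2 t cos(k) on a uniform n_k-point mesh."""
    k = 2.0 * np.pi * np.arange(n_k) / n_k
    return -2.0 * hopping * np.cos(k)

energy_grid = np.linspace(-3.0, 3.0, 601)
sigma = 0.05  # deliberately small broadening so the sampling is exposed

dos_coarse = broadened_dos(chain_eigenvalues(8), energy_grid, sigma)
dos_dense = broadened_dos(chain_eigenvalues(400), energy_grid, sigma)

inside_band = np.abs(energy_grid) < 1.5
print(dos_coarse[inside_band].min(), dos_dense[inside_band].min())
```

With 8 k-points the DOS drops to essentially zero between isolated peaks even though the band is continuous there, while the 400-point mesh gives a smooth curve. Increasing `sigma` would hide the spikes of the coarse mesh, but only by blurring genuine features such as the band-edge singularities.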

Material-Specific Artifacts

The impact of poor k-point sampling varies significantly with the material's electronic structure, as summarized in Table 1.

Table 1: Common Artifacts by Material Class

| Material Class | Example System | Common Artifacts from Low k-Point Sampling | Key Convergence Consideration |
|---|---|---|---|
| Metals & Semimetals | Silver (Ag), Graphene | Severe smearing of the Fermi edge, spiky DOS near Ef, incorrect Fermi level position [1] [3]. | Requires very dense sampling, especially near the Fermi surface. |
| Semiconductors | Diamond | Underestimation of band gap, poor definition of valence and conduction band edges [3]. | Moderate k-point density is often sufficient. |
| Insulators | Hexagonal Boron Nitride (hBN) | False peaks in the band gap, inaccurate band gap width [23]. | Coarser k-point grids may be adequate compared to metals. |

Graphene (Semimetal): A sparse k-point mesh (e.g., 4x4x1) results in a spiky, non-physical DOS that completely obscures the characteristic linear V-shape of the Dirac cone [3]. The Fermi level is incorrectly positioned unless the k-point mesh explicitly includes the high-symmetry K point in the Brillouin zone [3].

Silver (Metal): For silver, a k-point mesh that is sufficient for converging the total system energy (e.g., 7x7x7) is often insufficient for obtaining a smooth DOS. The DOS curve only becomes smooth and quantitatively accurate with a much denser mesh, such as 13x13x13 or finer [1].

Diamond (Insulator): As a non-metallic system with a large band gap, diamond's DOS converges more easily with k-points than metals. Artifacts primarily involve a lack of smoothness in the valence and conduction band peaks rather than a fundamental misrepresentation of the Fermi surface [3].

Quantitative Analysis of Convergence

Converging the DOS requires a different and typically more stringent standard than converging the total energy of a system.

Energy vs. DOS Convergence

The total energy is a variational quantity that often converges relatively quickly with the number of k-points. In contrast, the DOS is a detailed function of energy that requires a dense mesh to capture its full shape. In a study on silver, the total energy was converged to within 0.05 eV using a 7x7x7 k-point mesh. However, the DOS required a 13x13x13 mesh to achieve a smooth, well-converged curve [1]. In terms of total mesh points this is 13³ = 2,197 versus 7³ = 343, so the DOS needed roughly six times as many k-points as the energy.

Metrics for DOS Convergence

A suitable metric for quantifying DOS convergence is the Mean Squared Deviation (MSD) between DOS curves calculated with successive k-point meshes. One protocol defines the MSD as $$\mathrm{MSD} = \frac{1}{M}\sum_{i=1}^{M}\left[\mathrm{DOS}_{N}(E_i) - \mathrm{DOS}_{N-1}(E_i)\right]^2$$ where the sum runs over all \(M\) energy points \(E_i\), and the subscripts \(N\) and \(N-1\) label the current and the previous k-point mesh [1]. The convergence is considered acceptable when the MSD falls below a predefined threshold (e.g., 0.005 in the studied case of silver) [1]. The summed MSD relative to the finest available mesh is another robust metric [1].
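The second variant mentioned above, comparing every mesh against the finest one available, might look like the following sketch. The dictionary layout, the function name, and the synthetic curves are assumptions made for illustration. Real DOS arrays would have to share a single energy grid for this to be meaningful.

```python
import numpy as np

def msd_to_reference(dos_by_mesh):
    """MSD of every DOS curve relative to the curve from the finest mesh.

    dos_by_mesh maps an integer N (for an NxNxN mesh) to a DOS array.
    """
    finest = max(dos_by_mesh)
    reference = np.asarray(dos_by_mesh[finest], dtype=float)
    return {
        n: float(np.mean((np.asarray(dos, dtype=float) - reference) ** 2))
        for n, dos in sorted(dos_by_mesh.items())
        if n != finest
    }

# Synthetic stand-ins: the noise amplitude shrinks as the mesh gets denser.
rng = np.random.default_rng(0)
smooth = np.exp(-np.linspace(-4.0, 4.0, 161) ** 2)
dos_by_mesh = {
    n: smooth + rng.normal(0.0, 1.0 / n**2, smooth.size) for n in (6, 7, 13, 18)
}

deviations = msd_to_reference(dos_by_mesh)
for n, value in deviations.items():
    print(f"{n}x{n}x{n}: MSD vs finest = {value:.2e}")
```

Comparing against a fixed reference avoids the false sense of convergence that two equally poor consecutive meshes can give, at the cost of trusting that the finest mesh is itself adequate.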

Table 2: Quantitative Convergence Data for Silver (FCC)

| k-Point Mesh | Total Energy Convergence (eV) | Mean Squared Deviation (MSD) of DOS | Qualitative DOS Description |
|---|---|---|---|
| 6x6x6 | ~0.05 | >0.18 | Severely spiked, non-physical |
| 7x7x7 | Converged (Reference) | ~0.05 | Spiked, poor resolution |
| 13x13x13 | Negligible change | ~0.005 | Smooth, well-converged |
| 18x18x18 | Negligible change | ~0.001 | Highly accurate |

Experimental Protocols for DOS Convergence Testing

A standardized protocol is essential for rigorous validation of DOS convergence.

Workflow for Systematic Convergence

The following workflow, adapted from standard practice in computational materials science [1] [3], ensures a systematic approach.

Diagram 1: Workflow for systematic DOS convergence testing.

Detailed Methodology

The workflow consists of the following detailed steps, with critical notes for robust results:

- Converge Basis Set and Other Parameters: Prerequisite. Converge the basis set (e.g., plane-wave cutoff energy) and pseudopotential choices before k-point convergence testing. This isolates the k-point as the sole variable [1].

- Select Initial k-Point Mesh: Start with a coarse k-point mesh. The Monkhorst-Pack scheme is the standard method for generating k-point grids [3] [11]. For materials with metallic character, consider a denser starting point than for insulators.

- Perform DFT Calculation: Run a single-point (static) DFT calculation to achieve self-consistency for the electron density. Use a previously relaxed geometry to ensure forces are negligible [1].

- Calculate DOS: Compute the DOS using the converged charge density. A small broadening parameter (e.g., 0.1 eV) helps resolve fine features without excessive smearing [3].

- Increase k-Point Density: Systematically increase the density of the k-point mesh. For cubic systems, this can be an NxNxN series. For lower-symmetry systems, increase the sampling proportionally in all reciprocal lattice directions [11].

- Calculate Convergence Metric: Quantify the difference between the current DOS and the previous one using the MSD metric [1]. Restrict the analysis to an energy range of interest (e.g., from 8 eV below to 8 eV above the Fermi level) to focus on chemically relevant states [1].

- Check Convergence Criterion: If the MSD is below a pre-defined threshold (e.g., 0.005), the DOS can be considered converged. If not, return to the "Increase k-Point Density" step and repeat.
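The loop formed by the last three steps can be sketched as a small driver. Here `compute_dos` is a hypothetical user-supplied callable that would run the DFT and DOS steps for an NxNxN mesh and return the DOS on a fixed energy grid. The toy function below only imitates a sampling error that decays with N.

```python
import numpy as np

def converge_dos(compute_dos, meshes, threshold=0.005):
    """Return the first mesh whose DOS differs from the previous one by an
    MSD below threshold, together with the (mesh, MSD) history."""
    history = []
    previous = None
    for n in meshes:
        current = np.asarray(compute_dos(n), dtype=float)
        if previous is not None:
            msd = float(np.mean((current - previous) ** 2))
            history.append((n, msd))
            if msd < threshold:
                return n, history
        previous = current
    return None, history  # not converged within the tested meshes

# Toy stand-in for a DFT run: a smooth curve plus an error that decays as 1/N.
grid = np.linspace(-8.0, 8.0, 321)

def toy_dos(n):
    return np.exp(-grid**2 / 8.0) + 2.0 * np.sin(5.0 * grid) / n

converged_mesh, history = converge_dos(toy_dos, meshes=[4, 6, 8, 12, 16, 24])
print(converged_mesh, history)
```

Returning `None` when the list of meshes is exhausted makes a non-converged run explicit instead of silently accepting the last grid.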

The Scientist's Toolkit: Essential Research Reagents

This section details the key computational tools and concepts required for conducting DOS convergence tests.

Table 3: Key "Research Reagent" Solutions for DOS Studies

| Tool / Concept | Function & Explanation | Example Implementations |
|---|---|---|
| DFT Code | Software that performs the core electronic structure calculation to obtain energies and wavefunctions. | CASTEP, SIESTA, VASP, Quantum ESPRESSO [1] [3] |
| k-Point Sampling Scheme | A method for generating the discrete set of k-points in the Brillouin Zone. The Monkhorst-Pack scheme is the most common. | Monkhorst-Pack, Gamma-centered [3] [11] |
| DOS Calculation Utility | A post-processing tool that uses the output of a DFT code (eigenvalues, k-point weights) to generate the DOS. | Eig2DOS (SIESTA), OptaDOS (CASTEP), internal DOS modules in VASP/CASTEP [3] |
| Broadening Scheme | A mathematical function used to represent discrete energy levels as a continuous DOS. The width (broadening) controls smoothness. | Gaussian, Lorentzian, Methfessel-Paxton, Tetrahedron [1] [3] |
| Convergence Metric Script | A custom script (e.g., in Python) to calculate quantitative convergence metrics like Mean Squared Deviation (MSD). | Custom scripts using numpy/scipy in Python [1] |

Advanced Considerations and Diagnostic Flowchart

Beyond standard protocols, several advanced factors can influence convergence and the interpretation of artifacts.

Advanced Considerations

- High-Symmetry Point Inclusion: For certain systems, especially low-symmetry crystals or those with Dirac cones like graphene, including specific high-symmetry points in the k-mesh is more critical than a uniformly dense mesh. In graphene, a 3x3x1 mesh that includes the K point can yield a more accurate Fermi level than a denser mesh that misses it [3].

- Projected DOS (PDOS) Convergence: The atom-projected or orbital-projected DOS (PDOS) can converge at different rates than the total DOS. Localized states might require a different k-point density for convergence than delocalized band states [23].

- Supercell Calculations: For defective systems modeled with large supercells, the Brillouin zone shrinks, so fewer k-points are needed to reach the same sampling density in reciprocal space. In the limit of very large supercells, a single k-point (often Γ-point) calculation can be sufficient [23].
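The supercell point can be made concrete with a short calculation. Assuming a primitive-cell mesh is already converged, the sketch below divides each subdivision by the number of supercell repeats along that axis and rounds up. The function name is hypothetical, and this rule of thumb ignores any change in electronic structure caused by the defect itself.

```python
import math

def supercell_grid(primitive_grid, repeats):
    """Scale a converged primitive-cell mesh down for an m1 x m2 x m3 supercell,
    keeping at least the same k-point density in reciprocal space."""
    return tuple(max(1, math.ceil(n / m)) for n, m in zip(primitive_grid, repeats))

print(supercell_grid((12, 12, 12), (3, 3, 3)))     # moderate supercell
print(supercell_grid((12, 12, 12), (12, 12, 12)))  # very large supercell
```

The second case reduces to a single k-point per direction, which corresponds to the Γ-point-only limit described above.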

Diagnostic Flowchart

The following diagnostic chart provides a logical path for identifying and remedying the root cause of common DOS artifacts.

Diagram 2: Diagnostic flowchart for common DOS artifacts.

This guide has detailed the common artifacts—spikiness, incorrect Fermi level placement, and poor feature resolution—that arise from insufficient k-point sampling in DOS curves. The comparative data and protocols provided offer a clear, actionable roadmap for researchers. Adherence to the systematic workflow and utilization of the quantitative metrics outlined herein are essential for producing reliable, converged DOS results, forming a critical foundation for accurate electronic structure analysis within the broader context of computational materials discovery and validation.

Systematic Procedures for Achieving DOS Convergence

In the field of computational materials science, achieving a converged electronic density of states (DOS) is a fundamental prerequisite for obtaining reliable electronic properties. The discretization of the Brillouin zone through k-point sampling lies at the heart of this challenge, directly impacting the accuracy and computational cost of density functional theory (DFT) calculations. Two predominant schemes exist for defining this sampling: the traditional Monkhorst-Pack (MP) method and the more automated KpointDensity approach (often implemented as a k-spacing parameter). The central thesis of this guide is that while both schemes can achieve mathematically equivalent sampling, their practical implementation, convergence behavior, and suitability for high-throughput workflows differ significantly. The choice between them should be informed by the specific material system, target properties, and computational workflow constraints.

The fundamental challenge stems from approximating integrals over the Brillouin zone with finite sums. Insufficient sampling can lead to inaccurate total energies, forces, and—crucially for this discussion—a poorly converged DOS, which affects subsequent predictions of band gaps and other electronic properties [15]. This guide provides an objective comparison of the MP and KpointDensity schemes, supported by experimental data and detailed protocols, to help researchers make informed decisions when validating DOS convergence.

Scheme Fundamentals: Implementation and Workflow

The Monkhorst-Pack (MP) Scheme

The MP scheme generates a regular grid of k-points based on user-specified subdivisions (\(N_1\), \(N_2\), \(N_3\)) along the reciprocal lattice vectors [18]. The k-points are generated according to: $$\mathbf{k} = \sum_{i=1}^{3} \frac{n_i + s_i}{N_i}\,\mathbf{b}_i, \qquad n_i \in \{0, 1, \ldots, N_i - 1\}$$ where \(\mathbf{b}_i\) are the reciprocal lattice vectors, and \(s_i\) is an optional shift (often 0 or 0.5). A Gamma-centered mesh includes the Γ-point (0,0,0), while the standard MP mesh can be offset [18]. The number of subdivisions is typically chosen to be approximately inversely proportional to the length of the corresponding unit cell vector [18].
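As a minimal sketch of the formula above, the following function enumerates the fractional coordinates (n_i + s_i)/N_i and converts them to Cartesian k-points. It performs no symmetry reduction and does not fold points back into the first Brillouin zone, both of which production codes do. The function name and the simple cubic cell are illustrative assumptions.

```python
import itertools
import numpy as np

def mp_grid(subdivisions, shift=(0.0, 0.0, 0.0)):
    """Fractional k-points (n_i + s_i) / N_i for n_i = 0 .. N_i - 1."""
    n1, n2, n3 = subdivisions
    points = [
        [(i + shift[0]) / n1, (j + shift[1]) / n2, (k + shift[2]) / n3]
        for i, j, k in itertools.product(range(n1), range(n2), range(n3))
    ]
    return np.array(points)

gamma_centered = mp_grid((4, 4, 4))                   # contains (0, 0, 0)
shifted = mp_grid((4, 4, 4), shift=(0.5, 0.5, 0.5))   # avoids (0, 0, 0)

# Cartesian k-points follow from the reciprocal lattice vectors b_i (rows of B).
B = 2.0 * np.pi * np.eye(3)   # illustrative: simple cubic cell with a = 1
cartesian = gamma_centered @ B

print(len(gamma_centered), gamma_centered[0], shifted[0])
```

The shifted grid has the same number of points but never lands on Γ, which is why the choice of shift matters for systems whose key features sit at specific high-symmetry points.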

The KpointDensity Scheme

The KpointDensity scheme (known as KSPACING in VASP or specified via a k-point separation in codes like CASTEP) defines a uniform k-point spacing parameter, which automatically generates a grid to achieve that spacing throughout the Brillouin zone [18] [24]. The grid parameters \(N_i\) along each reciprocal lattice vector are derived from:

$$N_i = \max\left(1, \mathrm{round}\left(|\mathbf{b}_i| / \text{k-spacing}\right)\right)$$

where \(|\mathbf{b}_i|\) is the norm of the reciprocal lattice vector [25]. This approach directly ties the sampling density to the real-space cell dimensions.
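The spacing rule can be tried out with the sketch below, which derives the subdivisions from a real-space cell. Whether the reciprocal vectors carry the factor 2π differs between codes and is exposed here as a flag. Both the flag and the function name are assumptions for illustration, so the convention of the code in use should be checked before comparing spacing values.

```python
import numpy as np

def grid_from_spacing(lattice, spacing, two_pi=True):
    """Subdivisions N_i = max(1, round(|b_i| / spacing)).

    lattice holds the real-space vectors as rows (in Å); spacing is in Å^-1.
    two_pi selects whether b_i includes the factor 2*pi (convention-dependent).
    """
    lattice = np.asarray(lattice, dtype=float)
    reciprocal = np.linalg.inv(lattice).T  # rows are b_i without 2*pi
    if two_pi:
        reciprocal = 2.0 * np.pi * reciprocal
    lengths = np.linalg.norm(reciprocal, axis=1)
    return tuple(max(1, int(round(float(length) / spacing))) for length in lengths)

# Illustrative tetragonal cell: a = b = 3 Å, c = 12 Å.
cell = np.diag([3.0, 3.0, 12.0])
print(grid_from_spacing(cell, spacing=0.3))
```

The long c axis automatically receives fewer subdivisions than the short a and b axes, which is the behavior that makes spacing-based input convenient for workflows with varying cell shapes.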

Visualizing the k-Point Convergence Workflow

The following diagram illustrates a systematic workflow for validating DOS convergence, applicable to both MP and KpointDensity schemes:

Comparative Analysis: Performance and Practical Implementation

Key Characteristics and Operational Differences

The table below summarizes the fundamental differences between the two k-grid generation schemes:

| Feature | Monkhorst-Pack (MP) Scheme | KpointDensity Scheme |
|---|---|---|
| Primary Input | Subdivisions (N_1, N_2, N_3) along reciprocal lattice vectors [18] | Target k-point spacing (in Å⁻¹ or Bohr⁻¹) [24] |
| Grid Control | Direct, explicit control over grid dimensions | Indirect, automated control based on a spacing parameter |
| Basis | Reciprocal lattice vectors | Reciprocal lattice vector lengths and real-space cell size |
| Automation Level | Lower (requires manual dimension selection) | Higher (automatically adjusts to cell geometry) [25] |
| Typical Use Case | Systems with known symmetry, manual calculations | High-throughput workflows, variable cell geometries [12] [25] |

Performance Considerations and Convergence Behavior

Computational Cost and Convergence Stability: A coarser k-grid does not always make the Self-Consistent Field (SCF) cycle easier to converge. In some cases, a coarse grid (e.g., 2×2×1 for a MoS₂ monolayer) can fail to converge entirely, while a slightly denser grid (3×3×1) converges readily [26]. This occurs because a poor discretization can make the SCF minimization ill-behaved [26].

Material-Dependence: The required k-point density is highly material-specific. Metals, with their rapidly changing electronic states near the Fermi level, generally require denser sampling than insulators [3]. A high-throughput study of over 30,000 materials found that converged k-point density correlates with material parameters like density, band slope, number of band-crossings, and crystal system [12] [25].

Symmetry Considerations: MP grids can potentially break crystal symmetry if not chosen carefully, particularly for non-symmorphic space groups. The KpointDensity approach, as implemented in many codes, automatically respects the symmetry of the reciprocal lattice [18].

Quantitative Data from Systematic Studies

Large-scale convergence studies provide quantitative guidance for k-point selection. The table below summarizes recommended k-point spacings for different accuracy levels, as implemented in the CASTEP code [24]:

| Quality Setting | k-point Separation (Å⁻¹) | Typical Application |
|---|---|---|
| Coarse | 0.07 | Initial structure screening, quick tests |
| Medium | 0.05 | Standard property calculations |
| Fine | 0.04 | High-precision DOS, phonons, elastic constants |

The JARVIS-DFT database, generated through automated convergence of over 30,000 materials, provides statistical data for k-point line density (L) with a tolerance of 0.001 eV/cell [12] [25]. Machine learning models trained on this data can predict appropriate k-point densities and plane-wave cutoffs for new materials before DFT calculations are performed [12] [25].

Experimental Protocols for DOS Convergence Validation

Standard Convergence Protocol

- Initialization: Start with a structurally optimized system and a converged plane-wave cutoff energy [27].

- Parameter Sweep: Perform a series of single-point energy calculations while systematically increasing the k-point density.

  - For the MP scheme, increase the number of subdivisions (e.g., 2×2×2, 4×4×4, 6×6×6).

  - For the KpointDensity scheme, decrease the spacing parameter (e.g., 0.07, 0.05, 0.04, 0.03 Å⁻¹).

- Property Monitoring: For each calculation, monitor the convergence of the target properties, such as the total energy and the DOS itself.

- Convergence Criterion: The parameters are considered converged when the change in the target property (e.g., total energy) between successive calculations falls below a predefined threshold (e.g., 1 meV/atom) [12] [25].

DOS-Specific Convergence Checks

- Energy Smearing and Broadening: When calculating the DOS, a smearing width or broadening parameter is often applied. A finer k-grid allows for the use of a smaller broadening value, revealing more detail in the DOS [3] [15].

- Tetrahedron Method: For MP grids, using the tetrahedron method (with Blöchl corrections) for DOS integration generally provides a more accurate DOS than Gaussian broadening alone, especially with moderate k-grids [18] [15].

- Band Structure Verification: For complex systems with band crossings or topological features, the converged k-grid used for the DOS calculation should be validated against the band structure to ensure all relevant features are captured [3].
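The interplay between k-grid density and broadening can be seen in a toy example. The sketch below applies Gaussian broadening to a hypothetical one-dimensional tight-binding band, E(k) = -2 cos(k), sampled on a coarse and a fine grid. The band model, the function names, and the 0.1 eV width are illustrative assumptions rather than output from any DFT code.

```python
import numpy as np


def gaussian_dos(eigenvalues, weights, energies, sigma):
    """Gaussian-broadened DOS on an energy grid.

    eigenvalues : (n_k, n_bands) band energies in eV.
    weights     : (n_k,) k-point weights that sum to 1.
    energies    : (n_e,) energy grid in eV.
    sigma       : Gaussian width in eV.
    """
    eig = np.asarray(eigenvalues, dtype=float)
    w = np.asarray(weights, dtype=float)
    e = np.asarray(energies, dtype=float)
    # Distance of every grid energy from every eigenvalue: (n_e, n_k, n_bands).
    diff = e[:, None, None] - eig[None, :, :]
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    # Weight each k-point, then sum over k-points and bands.
    return np.einsum("ekb,k->e", gauss, w)


def toy_band(n_k):
    """Hypothetical 1D band E(k) = -2 cos(k) on a uniform grid of n_k points."""
    k = (np.arange(n_k) + 0.5) * 2.0 * np.pi / n_k
    return (-2.0 * np.cos(k))[:, None], np.full(n_k, 1.0 / n_k)


energies = np.linspace(-4.0, 4.0, 801)
step = energies[1] - energies[0]
dos_by_grid = {}
for n_k in (8, 64):
    eig, w = toy_band(n_k)
    dos_by_grid[n_k] = gaussian_dos(eig, w, energies, sigma=0.1)
    # Integrated DOS should recover one state per k-point-averaged band.
    print(n_k, float(np.sum(dos_by_grid[n_k]) * step))
```

With the same narrow broadening, the 8-point DOS breaks up into isolated spikes with empty gaps between them, while the 64-point DOS is continuous across the band. Both integrate to the same number of states, which is why an integrated quantity can look converged long before the DOS shape does.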

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

| Tool/Solution | Function in k-Point Convergence | Example Implementations |
|---|---|---|
| Automated Convergence Workflows | Systematically tests k-grid densities and evaluates property convergence. Essential for high-throughput studies. | JARVIS-DFT automation code [12] [25] |
| K-Point Prediction Models | Machine learning models that predict starting k-point parameters for new materials, reducing initial guesswork. | JARVIS-ML [12] [25] |
| Symmetry-Aware Grid Generators | Generates k-point grids that respect the crystal symmetry, ensuring efficient and correct sampling. | KpLib, autoGR [18] |
| Post-Processing and Analysis Tools | Extracts and visualizes DOS, band structures, and other electronic properties from calculation results. | Eig2DOS, gnubands (SIESTA) [3] |

The choice between Monkhorst-Pack and KpointDensity schemes is not merely syntactic but represents different approaches to managing computational complexity. For traditional, manual calculations on systems with known symmetry, the MP scheme provides explicit, fine-grained control. For high-throughput computational workflows or systems with varying cell sizes, the KpointDensity scheme offers automation and transferability, ensuring consistent sampling quality across diverse materials [12] [25].

Ultimately, the "right" initial k-grid is one that achieves property convergence within acceptable computational limits. Neither scheme is universally superior; the optimal choice depends on the research context. For DOS convergence specifically, the key is a systematic validation protocol that directly monitors the DOS and related electronic properties, rather than relying solely on total energy convergence. The methodologies and data presented herein provide a robust framework for this essential computational materials science procedure.

When validating the convergence of the density of states (DOS) with respect to k-point density, a robust convergence protocol is fundamental to obtaining reliable electronic structure calculations. The precision of the DOS directly affects subsequent materials characterization and property prediction, which makes systematic convergence testing an essential part of computational materials science. This guide compares convergence methodologies across major computational frameworks and provides data and protocols to help researchers establish definitive convergence criteria for DOS calculations, with particular emphasis on monitoring both energy convergence and feature stability in the resulting electronic structure.

Experimental Protocols for K-Point Convergence

Basic Convergence Testing Methodology

The fundamental approach to k-point convergence involves progressively increasing k-point grid density while monitoring target properties until changes fall below predetermined thresholds. The step-by-step protocol encompasses:

Initial Grid Selection: Begin with a coarse k-point grid (e.g., 2×2×2 or 4×4×4) based on system symmetry and lattice parameters [28]. For anisotropic systems, keep the number of k-points along each axis roughly inversely proportional to the length of the corresponding real-space lattice vector [29].

Progressive Refinement: Systematically increase k-point density through a sequence of calculations (e.g., 4×4×4 → 6×6×6 → 8×8×8, etc.) while maintaining consistent computational parameters [28] [30].

Convergence Thresholding: Establish energy-based convergence criteria prior to calculation, typically targeting thresholds of 1.0E-04 eV/atom for preliminary calculations, 1.0E-05 eV/atom for production quality, and 1.0E-06 eV/atom for metallic systems or optical properties [28].

DOS-Specific Monitoring: Beyond total energy, explicitly track DOS feature stability, including peak positions, band edges, and spectral shapes, which may require stricter convergence than total energy alone [30].

Automated Convergence Workflows

Multiple computational packages offer automated k-point convergence utilities:

AIMStools Implementation:

Alternatively, via command line: aims_workflow converge_kpoints geometry.in [28]

VASP Workflow Integration: K-point convergence can be prepended as a workflow add-on to property calculations through the "Convergence" dialog in VASP's workflow designer interface [30].

Script-Based Automation: Custom bash or Python scripts can automate file generation for sequential k-point calculations, extraction of target properties, and convergence analysis [29].

DOS Feature Stability Assessment

Beyond energy convergence, DOS-specific stability metrics must be monitored:

Feature Position Stability: Track movement of characteristic peaks, band edges, and van Hove singularities across k-point densities.

Spectral Smoothness: Quantify reduction in spurious oscillations with increasing k-point density.

Feature Preservation: Ensure emergent features at low k-point densities persist and stabilize at higher densities rather than representing numerical artifacts.
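The three checks above can be turned into simple numbers for comparing the DOS from two successive k-grids. The sketch below is one possible set of metrics, not a standard from any package: the function names are hypothetical, a uniform energy grid is assumed, and the two Gaussian curves are synthetic stand-ins for real DOS data.

```python
import numpy as np


def dos_change(energies, dos_coarse, dos_fine):
    """Integrated |DOS difference| relative to the integrated finer-grid DOS."""
    de = energies[1] - energies[0]  # assumes a uniform energy grid
    diff = np.sum(np.abs(dos_fine - dos_coarse)) * de
    norm = np.sum(np.abs(dos_fine)) * de
    return float(diff / norm)


def peak_shift(energies, dos_coarse, dos_fine):
    """Energy shift (eV) of the tallest DOS peak between the two grids."""
    return float(energies[np.argmax(dos_fine)] - energies[np.argmax(dos_coarse)])


def roughness(dos):
    """Sum of absolute second differences; larger means more oscillatory."""
    return float(np.sum(np.abs(np.diff(dos, n=2))))


# Synthetic stand-ins for DOS curves from two k-grids (not real DFT output).
energies = np.linspace(-1.0, 1.0, 201)
dos_a = np.exp(-0.5 * (energies / 0.1) ** 2)
dos_b = np.exp(-0.5 * ((energies - 0.05) / 0.1) ** 2)

print("peak shift (eV):", peak_shift(energies, dos_a, dos_b))
print("relative change:", dos_change(energies, dos_a, dos_b))
print("roughness:", roughness(dos_a), roughness(dos_b))
```

In practice each metric would be evaluated for every pair of successive grids, and the DOS treated as converged once all of them stay below thresholds chosen for the application.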

[Diagram: k-point convergence workflow protocol]

Quantitative Convergence Data Comparison

Total Energy Convergence Thresholds

Table 1: Standard convergence thresholds for k-point grids in electronic structure calculations

| Precision Level | Energy Threshold (eV/atom) | Typical Applications | Representative K-Grid |
|---|---|---|---|
| Sparse Sampling | 1.0E-04 | Initial geometry optimizations | 8×8×8 [28] |
| Production Quality | 1.0E-05 | Most electronic property calculations | 12×12×12 to 16×16×16 [28] |
| High Precision | 1.0E-06 | Metallic systems, optical spectra, phonons | 18×18×18 and higher [28] |

Representative Convergence Data

Table 2: K-point convergence data for a representative semiconductor system (adapted from AIMStools documentation)

| K-Grid | K-Point Density | Total Energy (eV) | Band Gap (eV) | SCF Cycles | Converged |
|---|---|---|---|---|---|
| 2×2×2 | 1.01 | -7900.073544 | 0.67 | 12 | True |
| 4×4×4 | 2.01 | -7901.237159 | 0.77 | 12 | True |
| 6×6×6 | 3.02 | -7901.317293 | 0.78 | 12 | True |
| 8×8×8 | 4.02 | -7901.327057 | 0.67 | 12 | True |
| 12×12×12 | 6.03 | -7901.328883 | 0.64 | 12 | True |
| 16×16×16 | 8.04 | -7901.328954 | 0.64 | 12 | True |

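The data show energy convergence at 12×12×12 for the 1.0E-04 eV/atom threshold: the tabulated total energy changes by only about 7×10⁻⁵ eV between 12×12×12 and 16×16×16, whereas the step from 8×8×8 to 12×12×12 still changes it by about 1.8×10⁻³ eV. Stricter thresholds require denser grids [28]. Notably, the band gap converges non-monotonically (rising from 0.67 eV to 0.78 eV before settling at 0.64 eV), which highlights the importance of property-specific testing beyond total energy.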
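The threshold check can be reproduced directly from the energies in Table 2. The sketch below is a minimal illustration: the helper `first_converged` is hypothetical, and because the table does not state the number of atoms in the cell, the differences are taken on the tabulated total energies rather than per atom.

```python
# Grid labels and total energies (eV) copied from Table 2.
grids = ["2×2×2", "4×4×4", "6×6×6", "8×8×8", "12×12×12", "16×16×16"]
energies = [
    -7900.073544,
    -7901.237159,
    -7901.317293,
    -7901.327057,
    -7901.328883,
    -7901.328954,
]


def first_converged(labels, values, threshold):
    """Return the first grid whose change to the next denser grid is below
    the threshold, or None if no tested grid qualifies."""
    for i in range(len(values) - 1):
        if abs(values[i + 1] - values[i]) < threshold:
            return labels[i]
    return None


# Energy change between each pair of successive grids.
deltas = [abs(b - a) for a, b in zip(energies, energies[1:])]
for label, delta in zip(grids[1:], deltas):
    print(f"-> {label}: |dE| = {delta:.6f} eV")

print(first_converged(grids, energies, 1.0e-4))
print(first_converged(grids, energies, 1.0e-5))
```

A result of `None` for the stricter threshold means that the sweep would have to be extended to denser grids before convergence at that level could be claimed.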

Research Reagent Solutions

Table 3: Essential computational tools for k-point convergence studies

| Tool / Solution | Function | Implementation Examples |
|---|---|---|
| Automated Workflow Managers | Streamline convergence testing | AIMStools KPointConvergence, VASP workflow add-ons [28] [30] |
| Scripting Frameworks | Custom convergence protocols | Bash/Python scripts for batch job generation [29] |
| Convergence Accelerators | Reduce computational cost | Initial state reuse from pre-converged calculations [31] |
| Stability Metrics | Quantify feature robustness | Adjusted stability measures, DOS smoothness parameters [32] [33] |
| Visualization Tools | Analyze convergence trends | Interactive plotting (aims_workflow -i), convergence charts [28] [30] |

DOS Feature Stability Assessment Protocol

Stability Metrics and Evaluation

The stability of selected features, whether in k-space sampling or in the electronic structure, can be quantified using adjusted stability measures that correct for agreement expected by chance. For DOS convergence, we adapt stability concepts from the feature selection literature [32] [33].

[Diagram: stability assessment relationship]

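The adjusted stability measure (ASM) is calculated as the average of the pairwise stability $S_A$ over all pairs among the $c$ feature subsets being compared:

$$\mathrm{ASM} = \frac{2}{c(c-1)} \sum_{i=1}^{c-1} \sum_{j=i+1}^{c} S_A(s_i, s_j)$$

where

$$S_A(s_i, s_j) = \frac{r - k_i k_j / n}{\min(k_i, k_j) - \max(0,\, k_i + k_j - n)}$$

with $r = |s_i \cap s_j|$, $k_i = |s_i|$, $k_j = |s_j|$, and $n$ the total number of features [32].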

This measure takes values in the interval (-1, 1], where positive values indicate stability better than random selection. The adjustment addresses a limitation of unadjusted measures, which inflate artificially as the feature subsets grow larger [32].
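A short sketch shows how the pairwise measure could be applied to DOS features. It rests on two assumptions: that the overall ASM is the mean of SA over all pairs of subsets, and that each k-grid's "selected features" are the indices of the energy bins in which a DOS peak was detected. The bin indices below are hypothetical.

```python
from itertools import combinations


def pairwise_stability(s_i, s_j, n):
    """SA(s_i, s_j) = (r - k_i*k_j/n) / (min(k_i, k_j) - max(0, k_i + k_j - n))."""
    s_i, s_j = set(s_i), set(s_j)
    r, k_i, k_j = len(s_i & s_j), len(s_i), len(s_j)
    denom = min(k_i, k_j) - max(0, k_i + k_j - n)
    if denom == 0:
        # The overlap cannot vary for these subset sizes, so SA is undefined.
        raise ValueError("SA is undefined for these subset sizes")
    return (r - k_i * k_j / n) / denom


def adjusted_stability(subsets, n):
    """Mean of SA over all pairs of subsets (assumed definition of ASM)."""
    pairs = list(combinations(subsets, 2))
    return sum(pairwise_stability(a, b, n) for a, b in pairs) / len(pairs)


# Hypothetical peak bins found on three k-grids, out of n = 20 energy bins.
peaks_per_grid = [{3, 7, 12}, {3, 7, 12}, {3, 8, 12}]
asm = adjusted_stability(peaks_per_grid, n=20)
print(round(asm, 4))
```

Note that with this formula even two identical subsets score below 1 when n is finite, so values are best compared across grids at fixed n rather than read as absolute.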

Comparative Performance Analysis

Computational Efficiency Comparison

Initializing from pre-converged states significantly enhances computational efficiency in convergence testing. In benchmark studies:

Gold Crystal K-Point Convergence: Calculations initialized from previous states required fewer than half as many self-consistent field iterations as calculations initialized from neutral atoms [31].

Water Molecule Calculations: High-accuracy calculations initialized from low-accuracy results converged in a single iteration versus six iterations from neutral atom start [31].

Biomedical Feature Selection: In the feature selection literature from which the stability measure is adapted, classifiers such as logistic regression showed higher feature selection stability than Random Forest, and stability decreased hyperbolically as data perturbation increased [33].

System-Specific Convergence Behavior

Different materials systems exhibit distinct convergence characteristics:

Semiconductors/Insulators: Generally converge with moderate k-point densities (8×8×8 to 12×12×12), though band gaps may show oscillatory convergence [28].

Metallic Systems: Require denser k-point sampling (16×16×16 and higher) due to Fermi surface effects [28].

Anisotropic Structures: Require k-point grids adapted to reciprocal lattice vector ratios rather than symmetric grids [29].

Magnetic Materials: May need specialized initialization protocols for different spin configurations [31].

This comparison guide demonstrates that robust DOS convergence requires monitoring both energy thresholds and feature stability metrics. Automated workflow tools like AIMStools and VASP add-ons provide standardized protocols, while custom scripting enables specialized analyses. The integration of stability assessment concepts from feature selection literature offers a more comprehensive convergence validation framework. Researchers should select convergence thresholds appropriate for their target applications, with production-quality DOS calculations typically requiring at least 1.0E-05 eV/atom energy convergence and stable electronic features across k-point densities. Implementation of state-reuse initialization protocols can significantly enhance computational efficiency during convergence testing cycles.