Benchmarking Density Functional Theory: A Comprehensive Guide to Accurate DOS Predictions

Abstract

This article provides a systematic comparison of Density Functional Theory (DFT) functionals for predicting the electronic Density of States (DOS), a critical property for understanding material behavior in drug development and biomedical research. We explore the foundational principles of DOS, evaluate the performance of popular functionals like PBE, B3LYP, and M06-2X, and address common accuracy challenges. The guide also covers advanced machine-learning correction techniques and provides a practical framework for validating predictions against experimental and high-fidelity computational data, empowering researchers to select optimal methodologies for their specific applications.

Understanding the Electronic Density of States: A Foundation for Material Properties

The Density of States (DOS) is a fundamental concept in solid-state physics and materials science, providing a simple yet highly informative summary of the electronic structure of a material. Formally, the DOS, denoted as $\mathcal{D}(\varepsilon)$, describes the number of electronic states available to be occupied at each energy level $\varepsilon$ [1] [2]. This quantity is crucial for understanding and predicting a material's behavior, as it directly influences key physical properties, including electrical conductivity, optical absorption, and thermal properties. The DOS can be decomposed into contributions from specific atoms or orbitals, known as the projected density of states (PDOS) or local density of states (LDOS), offering deeper insights into the contributions of different chemical species and atomic orbitals to the overall electronic structure [2]. For periodic crystals, the DOS is calculated by integrating over the Brillouin zone, summing over all bands $n$ and wavevectors $\mathbf{k}$ [2].
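
The Brillouin-zone sum can be made concrete as $\mathcal{D}(\varepsilon) = \sum_n \sum_{\mathbf{k}} w_{\mathbf{k}}\,\delta(\varepsilon - \varepsilon_{n\mathbf{k}})$, with k-point weights $w_{\mathbf{k}}$ summing to one (per cell and per spin channel). The Python sketch below is a minimal illustration, not any package's implementation: it assumes NumPy, assumes the band energies `eigs[k, n]` and weights are already available from a DFT run, and replaces each delta function with a Gaussian of hypothetical width `sigma`. The toy input is a one-band tight-binding chain.

```python
import numpy as np

def broadened_dos(eigs, weights, energies, sigma=0.05):
    """Total DOS per cell and spin channel, D(eps), on an energy grid.

    eigs:     (n_kpoints, n_bands) band energies eps_nk in eV
    weights:  (n_kpoints,) k-point weights summing to 1
    energies: 1D energy grid (eV)
    sigma:    Gaussian width (eV) standing in for the delta function
    """
    eigs = np.asarray(eigs, dtype=float)
    weights = np.asarray(weights, dtype=float)
    diff = energies[:, None, None] - eigs[None, :, :]  # shape (n_E, n_k, n_bands)
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return np.einsum("ekn,k->e", gauss, weights)        # weighted sum over k and n

# Toy input: one tight-binding band eps(k) = -2t cos(2 pi k) on a uniform 1D mesh
t = 1.0
kpts = (np.arange(200) + 0.5) / 200 - 0.5
eigs = (-2.0 * t * np.cos(2.0 * np.pi * kpts))[:, None]
weights = np.full(kpts.size, 1.0 / kpts.size)
energies = np.linspace(-4.0, 4.0, 2001)
dos = broadened_dos(eigs, weights, energies)

# The integral of D(eps) over energy equals the number of bands (here 1)
n_states = dos.sum() * (energies[1] - energies[0])
```

Integrating the result over energy returns the number of bands, which is a quick sanity check on the normalization of weights and broadening.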

The analysis of DOS reveals remarkable features of a material's electronic structure. Notably, it allows for the investigation of the $E$ vs. $\mathbf{k}$ dispersion relation near the band edges, the effective mass of charge carriers, Van Hove singularities (which appear as sharp features in the DOS at critical points where $\nabla_{\mathbf{k}}\,\omega_{ef} = 0$), and the effective dimensionality of the electrons [1] [3]. These features have a profound influence on the physical properties of materials and are essential for the interpretation of experimental data, such as fundamental absorption spectra, which yield information about critical points in the optical density of states [3].
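
The link between band dispersion, effective mass, and Van Hove singularities can be seen in a one-dimensional tight-binding band $E(k) = -2t\cos(ka)$, whose normalized DOS is $1/(\pi\sqrt{4t^2 - E^2})$. The Python sketch below (NumPy; the hopping $t$, the mesh size, and the units $\hbar = a = 1$ are illustrative assumptions) histograms the band energies and estimates the band-bottom effective mass from the curvature. The DOS piles up at the band edges, where $dE/dk = 0$, and the curvature gives $m^* = \hbar^2/(2ta^2)$.

```python
import numpy as np

t = 1.0  # hopping energy (illustrative); units hbar = a = 1

def band(k):
    """1D tight-binding dispersion E(k) = -2t cos(k)."""
    return -2.0 * t * np.cos(k)

# Dense uniform k-mesh over the first Brillouin zone [-pi, pi)
k = np.linspace(-np.pi, np.pi, 200_000, endpoint=False)
energies = band(k)

# Histogram estimate of the normalized DOS across the band [-2t, 2t]
hist, edges = np.histogram(energies, bins=80, range=(-2.0 * t, 2.0 * t), density=True)
edge_dos = hist[0]                 # bin at the band bottom, where dE/dk = 0
center_dos = hist[len(hist) // 2]  # bin near the band centre, where |dE/dk| is largest

# Effective mass at the band bottom from a central finite difference of E(k)
h = 1e-4
curvature = (band(h) - 2.0 * band(0.0) + band(-h)) / h**2  # d2E/dk2 at k = 0
m_eff = 1.0 / curvature  # analytic value 1 / (2t) = 0.5
```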

Computational Methods for DOS Calculation

The prediction of DOS relies heavily on computational methods, primarily Density Functional Theory (DFT), which provides a framework for solving the single-electron Kohn-Sham equations for the ground state electron density [2]. The accuracy of these predictions, however, is intrinsically linked to the choice of the exchange-correlation (XC) functional. This guide compares DOS predictions across three categories of methods: semi-local functionals, hybrid functionals, and empirical band-structure methods, the last of which are parameterized against experiment rather than built on an XC functional.

Key Functionals and Methodologies

- Semi-Local Functionals (LDA, GGA, meta-GGA): These include the Local Density Approximation (LDA) and Generalized Gradient Approximations (GGA), such as the Perdew-Burke-Ernzerhof (PBE) functional. They are computationally efficient but are known to underestimate band gaps due to their incomplete treatment of electronic self-interaction [4]. This underestimation can lead to an inaccurate description of electronic and optical properties.

- Hybrid Functionals: This category mixes a fraction of the exact Fock exchange with semi-local exchange and correlation. A prominent example is the PBE0 functional, which combines one-quarter Fock exchange with three-quarters PBE exchange and PBE correlation [4]. This mixing counteracts the band gap underestimation of semi-local functionals, but at a significantly higher computational cost. PBE0 contains no fitted parameters; B3LYP, by contrast, is a semi-empirical hybrid whose mixing parameters were fitted to experimental data [4].

- Empirical and Semi-Empirical Methods: Techniques like the empirical pseudopotential method (EPM), the k·p method, and the adjustable orthogonalized plane waves (AOPW) method use parameters adjusted to reproduce experimental results, such as optical data from critical points [3]. These methods were historically crucial for calculating DOS and optical properties with manageable computational resources before the widespread adoption of ab initio DFT.
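
Written out, the PBE0 mixing described in the hybrid-functional item is:

$$E_{xc}^{\mathrm{PBE0}} = \tfrac{1}{4}\,E_x^{\mathrm{HF}} + \tfrac{3}{4}\,E_x^{\mathrm{PBE}} + E_c^{\mathrm{PBE}}$$

The coefficient $\tfrac{1}{4}$ on the exact (Fock) exchange is the quantity set by the VASP tag `AEXX = 0.25` mentioned under Software and Protocols.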

Table 1: Comparison of Common Density Functional Approximations for DOS Calculation

| Functional Type | Representative Example(s) | Key Features for DOS | Band Gap Tendency | Computational Cost |
|---|---|---|---|---|
| Semi-Local GGA | PBE [4] | Computationally efficient; standard for initial screening | Underestimates [4] | Low |
| Hybrid | PBE0 [4] | Mixes in exact Hartree-Fock exchange; opens the gap relative to GGA | Larger than GGA; can overshoot experiment (Si: 1.84 eV vs. ~1.1 eV) [4] | High |
| Semi-Empirical Hybrid | B3LYP [4] | Parameters fitted to molecular data; good for molecules | Varies; generally more accurate than GGA | High |
| Empirical Parametric | Empirical Pseudopotential Method (EPM), k·p [3] | Parameters fitted to experimental optical data | Designed to match experiment | Low (once parameterized) |

Workflow for DOS Calculation

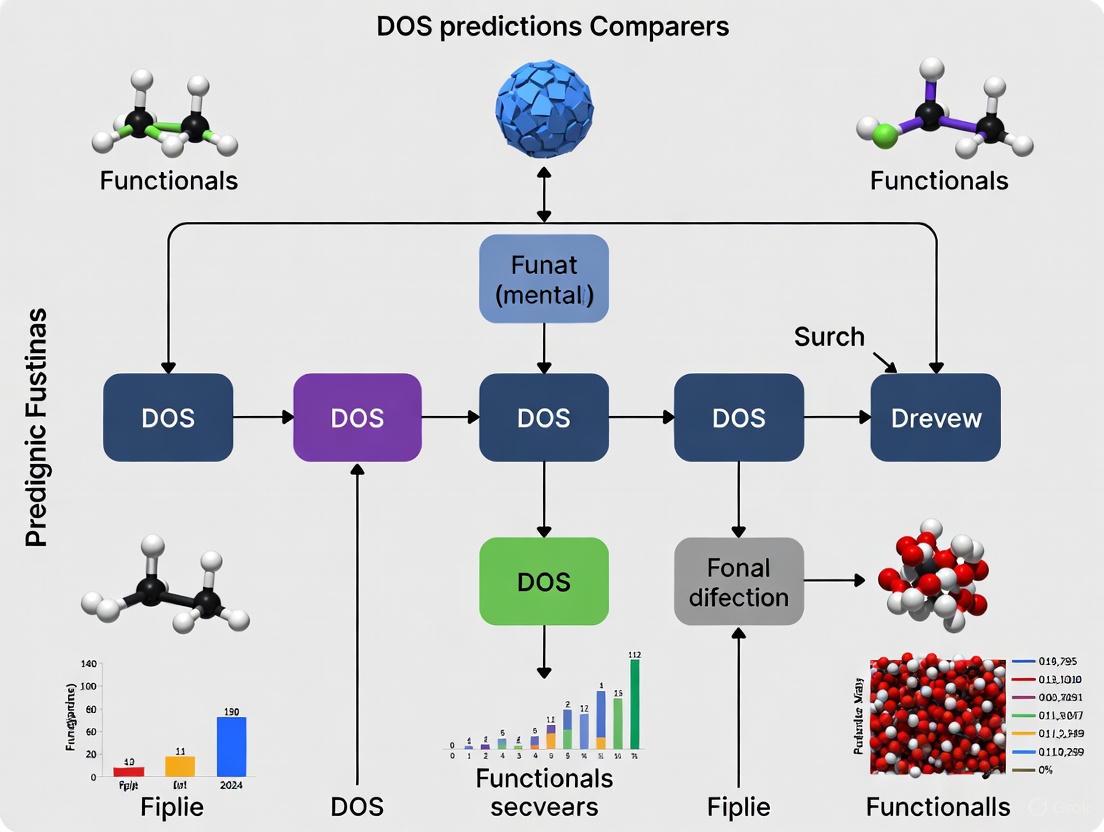

The following diagram illustrates a generalized computational workflow for calculating the Density of States using ab initio packages like VASP or Quantum ESPRESSO.

Diagram 1: Workflow for DOS calculation.

Software and Protocols

Different software packages implement these methodologies with specific protocols. For instance, in VASP, a typical workflow involves a self-consistent field (SCF) calculation followed by a non-SCF calculation to obtain the DOS. Key parameters include ISMEAR (smearing method), SIGMA (smearing width), and LORBIT (to enable orbital projections) [5] [4]. For hybrid functional calculations like PBE0, tags such as LHFCALC = .TRUE. and AEXX = 0.25 are used [4]. In Quantum ESPRESSO, the dos.x module calculates the DOS from a prior SCF calculation performed by pw.x. It requires an input file with a &DOS namelist, where parameters like degauss (broadening), DeltaE (energy grid step), and bz_sum (choice between 'smearing' or 'tetrahedra' for Brillouin zone summation) are specified [6].
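
As a hedged illustration of the two protocols, the Python sketch below writes a minimal VASP INCAR for the non-SCF DOS step of a semi-local calculation and a minimal `&DOS` namelist for `dos.x`. Tag values are illustrative, not recommendations. Beyond the tags named above, it uses the VASP tags `ICHARG = 11` (read the converged charge density and keep it fixed) and `NEDOS` (number of DOS grid points), and the `dos.x` variables `prefix`, `outdir`, and `fildos`; `ISMEAR = -5` selects the tetrahedron method with Blöchl corrections and `LORBIT = 11` requests lm-decomposed projections. Hybrid tags (`LHFCALC`, `AEXX`) are left out because, to our understanding, VASP does not support the `ICHARG = 11` route for hybrid functionals.

```python
def vasp_dos_incar(sigma=0.05, nedos=2001):
    """INCAR text for a non-self-consistent DOS run with a semi-local functional."""
    tags = {
        "ICHARG": 11,    # read CHGCAR from the preceding SCF run and keep it fixed
        "ISMEAR": -5,    # tetrahedron method with Bloechl corrections
        "SIGMA": sigma,  # smearing width in eV (used if ISMEAR selects smearing)
        "LORBIT": 11,    # write lm-decomposed projections for the PDOS
        "NEDOS": nedos,  # number of points on the DOS energy grid
    }
    return "\n".join(f"{key} = {value}" for key, value in tags.items())


def qe_dos_namelist(prefix, outdir="./tmp", bz_sum="smearing",
                    degauss=0.01, delta_e=0.01, fildos=None):
    """&DOS namelist for dos.x, run after a pw.x calculation with the same prefix."""
    if bz_sum not in ("smearing", "tetrahedra"):
        raise ValueError("bz_sum must be 'smearing' or 'tetrahedra' in this sketch")
    fildos = fildos if fildos is not None else f"{prefix}.dos"
    lines = [
        "&DOS",
        f"  prefix = '{prefix}'",
        f"  outdir = '{outdir}'",
        f"  bz_sum = '{bz_sum}'",
    ]
    if bz_sum == "smearing":
        lines.append(f"  degauss = {degauss}")  # broadening width, in Ry
    lines.append(f"  DeltaE = {delta_e}")        # energy grid step, in eV
    lines.append(f"  fildos = '{fildos}'")
    lines.append("/")
    return "\n".join(lines)


print(vasp_dos_incar())
print(qe_dos_namelist("si", bz_sum="tetrahedra"))
```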

Comparative Analysis of DOS Predictions

The choice of functional leads to significant differences in predicted DOS and, consequently, in derived material properties.

Band Gap and Electronic Structure

A clear demonstration of functional dependency is the calculation of the electronic band gap. For cubic diamond silicon, a PBE (GGA) calculation yields a band gap of 0.62 eV, severely underestimating the experimental value of about 1.1 eV. A PBE0 (hybrid) calculation on the same system predicts 1.84 eV [4], which moves the gap in the opposite direction and overshoots experiment by about 0.74 eV, a larger absolute error than the 0.48 eV of PBE. Hybrid functionals therefore correct the direction of the GGA error but do not guarantee quantitative gaps; the outcome depends on the fraction of exact exchange and on the material. The systematic underestimation of band gaps by semi-local functionals like PBE and LDA nonetheless limits their predictive power for classifying materials as metals, semiconductors, or insulators.
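
The error arithmetic for the silicon numbers above takes only a few lines. The Python sketch below uses exactly the gaps quoted in the text and treats the approximate experimental value of 1.1 eV as exact; it reports signed absolute and relative errors.

```python
E_EXP_SI = 1.1  # approximate experimental band gap of Si quoted in the text (eV)
predicted = {"PBE": 0.62, "PBE0": 1.84}  # gaps quoted in the text [4], eV

errors = {}
for functional, gap in predicted.items():
    abs_err = gap - E_EXP_SI      # signed error in eV
    rel_err = abs_err / E_EXP_SI  # signed fractional error
    errors[functional] = (abs_err, rel_err)
    print(f"{functional}: {gap:.2f} eV, error {abs_err:+.2f} eV ({rel_err:+.0%})")
# PBE: 0.62 eV, error -0.48 eV (-44%)
# PBE0: 1.84 eV, error +0.74 eV (+67%)
```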

Table 2: Example DOS-Derived Properties for BaXH₃ Hydrides from GGA-PBE [7]

| Material | Electronic Nature (from DOS) | Primary Contributors at Fermi Level | Hydrogen Gravimetric Capacity (wt%) |
|---|---|---|---|
| BaMoH₃ | Metallic | Mo 4d electrons [7] | 1.26 |
| BaTcH₃ | Metallic | Tc 4d electrons [7] | 1.24 |
| BaTaH₃ | Metallic | Ta 5d electrons [7] | 0.93 |

Optical Properties from DOS

The DOS is directly linked to a material's optical response. The imaginary part of the dielectric constant, $\epsilon_i(\omega)$, which describes optical absorption, can be written in terms of a combined optical density of states, $N_d(\omega)$ [3]:

$$\epsilon_i(\omega) = \frac{2\pi^2}{\omega}\,\bar{F}\,N_d(\omega)$$

where $\bar{F}$ is an average oscillator strength. This equation shows that structure in $\epsilon_i(\omega)$ originates from critical points (Van Hove singularities) in the joint DOS between occupied and unoccupied states [3]. Therefore, inaccuracies in the DOS, such as an underestimated band gap, will directly translate to errors in the predicted absorption spectra and other optical constants like reflectivity. Hybrid functionals, by improving the description of the DOS, generally yield more accurate optical properties.
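
A minimal Python sketch of this relation, assuming NumPy, vertical (k-conserving) transitions, a constant average oscillator strength $\bar{F}$ set to 1, and arbitrary units: it builds the joint DOS from hypothetical valence and conduction bands of a 1D two-band model, then applies the expression for $\epsilon_i(\omega)$ given above. The Gaussian width `sigma` is an illustrative stand-in for the delta function.

```python
import numpy as np

def joint_dos(e_val, e_cond, weights, omegas, sigma=0.02):
    """Joint DOS N_d(omega) for vertical transitions, Gaussian-broadened.

    e_val:   (n_k, n_v) occupied band energies
    e_cond:  (n_k, n_c) unoccupied band energies
    weights: (n_k,) k-point weights summing to 1
    """
    # Transition energies E_c - E_v for every k-point and band pair: (n_k, n_v, n_c)
    trans = e_cond[:, None, :] - e_val[:, :, None]
    diff = omegas[:, None, None, None] - trans[None, ...]
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return np.einsum("wkvc,k->w", gauss, weights)

def eps_imag(omegas, n_d, f_bar=1.0):
    """epsilon_i(omega) = (2 pi^2 / omega) * F_bar * N_d(omega)."""
    return 2.0 * np.pi**2 / omegas * f_bar * n_d

# Hypothetical two-band model with a direct gap of 1.0 at k = 0 (arbitrary units)
k = (np.arange(400) + 0.5) / 400 - 0.5
e_val = (-0.5 - 0.25 * (1.0 - np.cos(2 * np.pi * k)))[:, None]
e_cond = (0.5 + 0.5 * (1.0 - np.cos(2 * np.pi * k)))[:, None]
weights = np.full(k.size, 1.0 / k.size)

omegas = np.linspace(0.1, 4.0, 391)
n_d = joint_dos(e_val, e_cond, weights, omegas)
eps_i = eps_imag(omegas, n_d)
```

Below the direct gap the joint DOS, and hence $\epsilon_i$, vanishes, so an underestimated gap shifts the computed absorption onset to lower energy.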

Advanced Topics and Future Directions

Phonon Density of States

Beyond the electronic DOS, the phonon DOS is critical for understanding lattice dynamics and thermodynamic properties. Its calculation, for example in VASP, involves computing interatomic force constants in a supercell, followed by Fourier interpolation to build the dynamical matrix and diagonalize it to obtain phonon frequencies on a q-point mesh [5]. For polar materials, the long-range dipole-dipole interactions must be treated via Ewald summation, requiring input of the Born effective charges and the dielectric tensor to correctly capture the LO-TO splitting of optical phonon modes [5].
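
The sequence described above (force constants, Fourier sum into a dynamical matrix, diagonalization on a q-mesh, histogram into a DOS) can be illustrated on a one-dimensional diatomic chain with nearest-neighbour springs. This is a hedged Python/NumPy sketch, not the VASP procedure: the spring constant `K`, the masses, and the mesh are hypothetical, and there is no long-range dipole (LO-TO) correction, which a polar 3D crystal would need.

```python
import numpy as np

def dynamical_matrix(q, K=1.0, m1=1.0, m2=2.0):
    """2x2 dynamical matrix of a 1D diatomic chain (lattice constant a = 1).

    Springs K couple atom A (mass m1) to atom B (mass m2) in the same cell and
    to B in the neighbouring cell; the Fourier sum of these force constants
    gives the off-diagonal term.
    """
    off = -K * (1.0 + np.exp(-1j * q)) / np.sqrt(m1 * m2)
    return np.array([[2.0 * K / m1, off],
                     [np.conj(off), 2.0 * K / m2]])

qs = np.linspace(-np.pi, np.pi, 4000, endpoint=False)  # uniform q-point mesh
# Diagonalize at each q: eigenvalues are omega^2 (clip tiny negative round-off)
omega2 = np.array([np.linalg.eigvalsh(dynamical_matrix(q)) for q in qs])
freqs = np.sqrt(np.clip(omega2, 0.0, None))  # columns: acoustic, optical

# Phonon DOS as a normalized histogram over all branches and q-points
pdos, bin_edges = np.histogram(freqs.ravel(), bins=100, density=True)
```

At $q = 0$ the acoustic branch vanishes and the optical branch sits at $\sqrt{2K(1/m_1 + 1/m_2)}$, a convenient check on the construction.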

Machine Learning for DOS

An emerging frontier is the application of machine learning (ML) to predict the DOS. One approach is to learn the total DOS directly. A more scalable and transferable method is to learn the atom-projected local DOS (LDOS), $\mathcal{D}_i(\varepsilon)$, based on the principle of nearsightedness in electronic matter [2]. The total DOS is then the sum of these atomic contributions: $\mathcal{D}(\varepsilon) = \sum_i \mathcal{D}_i(\varepsilon)$. This approach can achieve high accuracy and is much faster than ab initio calculations, facilitating the high-throughput screening of materials' electronic structures [2].
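
The additive structure $\mathcal{D} = \sum_i \mathcal{D}_i$ is what lets a per-atom model scale to large systems. The toy Python sketch below illustrates only that structure: it fits an ordinary least-squares map from a hypothetical per-atom descriptor to an LDOS vector on an energy grid using synthetic data, then sums the predicted atomic contributions. Real models use physically motivated local descriptors, nonlinear regressors, and non-negative reference LDOS from DFT; none of that is reproduced here.

```python
import numpy as np

rng = np.random.default_rng(0)
n_grid, n_feat = 50, 4

# Synthetic stand-in for reference data: a hidden linear map from a per-atom
# descriptor to that atom's LDOS sampled on n_grid energies (not physical)
hidden_map = rng.normal(size=(n_feat, n_grid))
X_train = rng.normal(size=(200, n_feat))  # descriptors of 200 training atoms
Y_train = X_train @ hidden_map            # their reference LDOS vectors

# Fit the per-atom model by least squares: LDOS_i ~ x_i @ W
W, *_ = np.linalg.lstsq(X_train, Y_train, rcond=None)

# Predict each atom's LDOS in a new 8-atom structure and sum to the total DOS
X_new = rng.normal(size=(8, n_feat))
ldos_pred = X_new @ W              # shape (n_atoms, n_grid)
total_dos = ldos_pred.sum(axis=0)  # D(eps) = sum_i D_i(eps)
```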

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Computational DOS Studies

| Tool / Reagent | Function / Role | Example Use-Case |
|---|---|---|
| DFT Software (VASP, Quantum ESPRESSO) | Engine for performing first-principles electronic structure calculations. | Calculating eigenfunctions and eigenvalues to compute the DOS via the Brillouin-zone sum over bands and k-points [5] [6]. |
| Exchange-Correlation Functional | Approximates the quantum mechanical exchange-correlation energy. | PBE for rapid screening; PBE0 for accurate band gaps [4]. |
| Pseudopotential | Represents the effect of core electrons and nucleus, reducing computational cost. | Norm-conserving or PAW pseudopotentials for elements in a compound [7]. |
| k-point Mesh | A grid of points in the Brillouin zone for numerical integration. | Dense, uniform mesh for accurate DOS (e.g., in dos.x [6]). |
| Smearing / Tetrahedron Method | Method for Brillouin zone integration and dealing with Dirac deltas in DOS. | Gaussian smearing for metals; tetrahedron method for accurate DOS of insulators [6] [4]. |
| Post-Processing & Visualization (PyProcar) | Tool for plotting and analyzing DOS/PDOS from calculation outputs. | Comparing spin-up and spin-down DOS or PDOS from different atoms [8]. |

The Density of States (DOS) is a fundamental concept in condensed matter physics and materials science that describes the number of electronic states available at each energy level in a material [9]. It serves as a crucial bridge between a material's atomic structure and its macroscopic electronic, optical, and catalytic properties. Unlike band structure diagrams that display energy levels as a function of electron momentum, the DOS aggregates all allowed electronic states within small energy intervals, providing a compressed yet highly informative view of a material's electronic landscape [9]. This comprehensive guide examines DOS prediction methodologies across different computational functionals, comparing their performance, accuracy, and applicability to real-world material behavior prediction.

At its core, the DOS plot shares the same energy axis as band structure but replaces the wave vector (k) information with the density of available electronic states. Regions where bands are dense correspond to high DOS values, while sparse bands yield low DOS, and energy ranges completely devoid of bands result in zero DOS [9]. The position of the Fermi level within this distribution determines whether a material behaves as a metal (Fermi level within a high DOS region) or insulator/semiconductor (Fermi level within a DOS gap) [9]. The Projected Density of States (PDOS) extends this concept by decomposing the total DOS into contributions from specific atomic orbitals, enabling researchers to determine which atoms and orbitals dominate particular energy regions [9].
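
The Fermi-level criterion can be expressed as a small function. The Python sketch below is a hedged illustration: it assumes a DOS sampled on an energy grid, treats any value above a hypothetical threshold `tol` as a nonzero DOS, and reports either a metal or the width of the gap around $E_F$ (to grid resolution). Smearing in real DOS data blurs gap edges, so the threshold matters in practice.

```python
import numpy as np

def classify_from_dos(energies, dos, e_fermi, tol=1e-3):
    """Return ('metal', 0.0) if the DOS at E_F exceeds tol, else ('gapped', gap)."""
    energies = np.asarray(energies, dtype=float)
    dos = np.asarray(dos, dtype=float)
    # DOS at the Fermi level, linearly interpolated on the grid
    if np.interp(e_fermi, energies, dos) > tol:
        return "metal", 0.0
    has_states = dos > tol
    below = energies[has_states & (energies <= e_fermi)]
    above = energies[has_states & (energies > e_fermi)]
    if below.size == 0 or above.size == 0:
        raise ValueError("DOS grid does not bracket a gap around e_fermi")
    # Gap = lowest state above E_F minus highest state below E_F
    return "gapped", float(above.min() - below.max())

energies = np.linspace(-5.0, 5.0, 1001)
gapped_dos = np.where(np.abs(energies) >= 1.0, 1.0, 0.0)  # toy DOS with a 2 eV gap
print(classify_from_dos(energies, gapped_dos, e_fermi=0.0))
print(classify_from_dos(energies, np.ones_like(energies), e_fermi=0.0))
```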

Methodological Approaches to DOS Prediction

First-Principles Calculations

Density Functional Theory (DFT) stands as the cornerstone computational method for calculating electronic structures from first principles. The Materials Project employs standardized DFT workflows where relaxed structures undergo both uniform and line-mode non-self-consistent field (NSCF) calculations, typically using the GGA (PBE) functional, sometimes with a +U correction for strongly correlated systems [10]. The calculation hierarchy for determining band gaps prioritizes DOS-derived values over line-mode band structures, followed by static and optimization calculations [10]. However, conventional DFT methodologies face significant challenges in accurately predicting band gaps, typically underestimating them by approximately 40% due to approximations in exchange-correlation functionals and derivative discontinuity issues [10]. This systematic underestimation has motivated the development of more advanced functionals and alternative approaches.
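
The band-gap hierarchy can be expressed as an ordered lookup. In the Python sketch below, the source labels are hypothetical stand-ins for the four calculation types named above, not Materials Project field names, and the Materials Project's actual selection logic may include checks this sketch omits.

```python
# Hypothetical labels for the calculation types in the text, highest priority first
GAP_PRIORITY = ("dos", "line_mode_bandstructure", "static", "optimization")

def select_band_gap(gaps_by_source):
    """Return (source, gap) for the highest-priority source that reports a gap."""
    for source in GAP_PRIORITY:
        gap = gaps_by_source.get(source)
        if gap is not None:
            return source, gap
    raise KeyError("no band gap available from any calculation type")

print(select_band_gap({"static": 0.70, "dos": 0.61}))  # the DOS-derived value wins
```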

Machine Learning Innovations

Pattern Learning (PL) is a machine learning approach that sidesteps the computational cost of traditional DFT methods [11]. This method compresses DOS patterns from one-dimensional continuous curves into multi-dimensional vectors, then applies principal component analysis (PCA) to identify highly correlated DOS patterns across various metal systems [11]. The approach uses only four carefully selected features: the d-orbital occupation ratio, coordination number, mixing factor, and the inverse of Miller indices [11]. Whereas the cost of DFT scales as O(N³), where N is the number of electrons, the cost of the PL method is independent of electron count, reducing computation time from hours to minutes while maintaining 91-98% pattern similarity compared to DFT calculations [11].

Functional Forms for Disordered Systems

For disordered organic semiconductors, traditional DOS models have relied primarily on Gaussian and exponential functional forms, each with significant limitations [12] [13]. The Gaussian DOS model fails at high carrier concentrations, while the exponential DOS proves inadequate at low concentrations [12]. A novel DOS theory based on frontier orbital theory and probability statistics has recently emerged, proposing a Weibull distribution-based DOS that more accurately reflects the physical reality that states in disordered systems are localized only in the band tail of DOS while remaining extended in the center of the band [12]. This approach aligns with Anderson's localization theory and demonstrates superior performance in predicting charge carrier mobility across varying concentrations and electric fields [12].
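
For comparison, the three functional forms can be written down and checked for normalization. This Python sketch uses the common Gaussian form with width $\sigma$ and an exponential tail with characteristic energy $k_B T_0$. The exact Weibull-based expression of [12] is not given in this document, so `weibull_dos` below is a hypothetical stand-in (the standard Weibull density placed on an energy axis) for shape comparison only. All parameter values are illustrative, and each DOS integrates to the total site density `n_t`.

```python
import numpy as np

def gaussian_dos_model(E, n_t=1.0, e0=0.0, sigma=0.1):
    """Gaussian DOS centred at e0 with width sigma (eV)."""
    return n_t / (np.sqrt(2.0 * np.pi) * sigma) * np.exp(-((E - e0) ** 2) / (2.0 * sigma**2))

def exponential_dos(E, n_t=1.0, e0=0.0, kt0=0.05):
    """Exponential tail below the edge e0 with characteristic energy kt0 (eV)."""
    E = np.asarray(E, dtype=float)
    tail = n_t / kt0 * np.exp(np.minimum(E - e0, 0.0) / kt0)
    return np.where(E <= e0, tail, 0.0)

def weibull_dos(E, n_t=1.0, e_min=-0.5, scale=0.3, shape=2.0):
    """Hypothetical Weibull-shaped DOS starting at e_min (not the form used in [12])."""
    E = np.asarray(E, dtype=float)
    x = np.clip(E - e_min, 0.0, None) / scale
    density = n_t * (shape / scale) * x ** (shape - 1.0) * np.exp(-(x**shape))
    return np.where(E >= e_min, density, 0.0)

E = np.linspace(-3.0, 3.0, 60001)
dE = E[1] - E[0]
for f in (gaussian_dos_model, exponential_dos, weibull_dos):
    print(f.__name__, round(float(np.sum(f(E)) * dE), 3))  # each close to n_t = 1
```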

Table 1: Comparison of DOS Prediction Methodologies

| Method | Theoretical Basis | Computational Scaling | Key Advantages | Principal Limitations |
|---|---|---|---|---|
| DFT (GGA/PBE) | First Principles | O(N³) | First-principles accuracy without empirical parameters; Wide applicability | Band gap underestimation (~40%); High computational cost |
| Pattern Learning (ML) | Principal Component Analysis | Independent of electron count | Speed (minutes vs. hours); 91-98% pattern similarity | Requires training data; Feature selection critical |
| Novel DOS for Organics | Frontier Orbital Theory & Probability Statistics | Varies with implementation | Better mobility prediction; Physical basis in disorder | Parameter selection required; Less established |

Comparative Performance Analysis Across Functionals

Accuracy in Band Gap Prediction

The accuracy of DOS and consequent band gap predictions varies significantly across computational methods. Traditional DFT functionals like LDA and GGA systematically underestimate band gaps by approximately 50% according to literature, with internal testing by the Materials Project confirming roughly 40% underestimation [10]. Some known insulators are even incorrectly predicted to be metallic using these standard functionals [10]. The mBJ (modified Becke-Johnson) potential significantly improves upon standard GGA, as demonstrated in studies of CoZrSi and CoZrGe Heusler alloys where it provided more accurate electronic structure characterization for these thermoelectric materials [14].

Machine learning approaches offer a fundamentally different accuracy profile. In testing across binary alloy systems including Cu-Ni and Cu-Fe, the pattern learning method achieved pattern similarities of 91-98% compared to reference DFT calculations while operating independently of system size constraints [11]. For disordered organic semiconductors, the novel DOS model based on Weibull distributions demonstrated superior agreement with experimental mobility data across varying concentrations and electric fields compared to traditional Gaussian and exponential DOS models [12].

Table 2: Quantitative Accuracy Comparison of DOS Methods

| Material System | Method | Performance Metric | Result | Reference / Validation |
|---|---|---|---|---|
| Multi-component Alloys | Pattern Learning | Pattern Similarity | 91-98% | Compared to DFT calculations [11] |
| General Compounds | DFT (GGA/PBE) | Band Gap Error | ~40% underestimation | Internal test of 237 compounds [10] |
| Disordered Organic Semiconductors | Novel DOS Model | Mobility Prediction | Closer to experimental data | Across concentration and electric field variations [12] |
| Heusler Alloys (CoZrSi, CoZrGe) | GGA+mBJ | Electronic Structure | Half-metallic nature revealed | Good agreement with experimental trends [14] |

Computational Efficiency

The computational efficiency of DOS prediction methods varies dramatically, with significant implications for research throughput and applicability to high-throughput screening. Traditional DFT methods require substantial computational resources, with typical calculation times ranging from hours to days depending on system size and complexity [11]. The pattern learning method reduces this to minutes or less—demonstrated in the Cu-Ni system where accurate DOS predictions were obtained in under one minute on a single CPU core compared to two hours on 16 cores for DFT [11].
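
In core-time terms, the Cu-Ni timings quoted above correspond to a speed-up of more than

$$\frac{2\ \mathrm{h} \times 60\ \mathrm{min/h} \times 16\ \mathrm{cores}}{1\ \mathrm{min} \times 1\ \mathrm{core}} = 1920,$$

since the ML prediction took under one minute. This ratio covers prediction only; it leaves out the one-off cost of generating training data and fitting the model.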

For high-throughput materials screening, efficiency considerations extend beyond individual calculation time to encompass preprocessing, feature selection, and model training. The Materials Project's automated DFT workflow represents an optimized implementation for high-throughput computation, but still faces scalability challenges due to the fundamental O(N³) scaling of DFT [10]. Machine learning approaches dramatically improve scalability once trained, enabling rapid screening of thousands of materials without recurring quantum mechanical calculations [11].

Application-Specific Performance

Different DOS prediction methods excel in specific material domains. For ordered inorganic crystals like Heusler alloys, DFT with appropriate functionals (GGA+mBJ) successfully predicts key electronic properties including half-metallic behavior in CoZrSi and CoZrGe, which is crucial for their application in spintronics and thermoelectric domains [14]. The pattern learning method has demonstrated particular strength in metallic alloy systems, accurately reproducing DOS patterns across composition variations in Cu-Ni and Cu-Fe systems while capturing the effects of different crystal structures [11].

For disordered organic semiconductors, the novel DOS model based on probability statistics and frontier orbital theory outperforms both Gaussian and exponential DOS models in predicting charge carrier mobility dependencies on concentration and electric field [12] [13]. This improved performance stems from its more physical representation of the DOS distribution near the HOMO and LUMO orbitals, correctly representing states as localized only in the band tails while extended in the band center [12].

Experimental Protocols and Methodologies

DFT Calculation Workflow

Standardized protocols for DOS calculation using Density Functional Theory have been established by consortia like the Materials Project to ensure consistency and reproducibility [10]. The workflow begins with structure optimization to determine the lowest energy atomic configuration, followed by a self-consistent field (SCF) calculation with a uniform k-point grid (Monkhorst-Pack or Γ-centered for hexagonal systems) [10]. The charge density from this calculation is then used for subsequent non-self-consistent field (NSCF) calculations along two paths: a line-mode calculation for band structure visualization along high-symmetry lines, and a uniform calculation for DOS computation [10].
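
The uniform grids mentioned above are simple to generate. In the Python sketch below, the Monkhorst-Pack coordinates follow the standard formula $u_r = (2r - q - 1)/(2q)$, $r = 1, \dots, q$, along each reciprocal axis, and the Γ-centered variant uses $u_r = (r - 1)/q$ folded into $[-0.5, 0.5]$. It returns fractional coordinates with equal weights and performs no symmetry reduction, which production codes do. Note that an even Monkhorst-Pack grid does not contain Γ.

```python
import itertools
import numpy as np

def monkhorst_pack(n1, n2, n3, gamma_centered=False):
    """Fractional k-points and equal weights of an n1 x n2 x n3 uniform grid."""
    axes = []
    for q in (n1, n2, n3):
        r = np.arange(1, q + 1)
        if gamma_centered:
            u = (r - 1) / q
            u = u - np.round(u)  # fold into [-0.5, 0.5]; Gamma is always included
        else:
            u = (2 * r - q - 1) / (2 * q)  # Monkhorst-Pack coordinates
        axes.append(u)
    kpts = np.array(list(itertools.product(*axes)))
    weights = np.full(len(kpts), 1.0 / len(kpts))  # no symmetry reduction
    return kpts, weights

kpts, weights = monkhorst_pack(4, 4, 4)
print(kpts.shape, weights.sum())
```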

For DOS computation, a normalized DOS probability matrix can be defined from the calculated eigenvalues. The elements of this matrix represent probable values of each DOS level at given energy intervals, allowing for comprehensive electronic structure analysis [11]. The Materials Project provides both total DOS and elemental projections by default, with total orbital and elemental orbital projections available through their API [10]. Validation steps include recomputing band gaps from both DOS and band structure objects to address potential discrepancies arising from k-point sampling differences [10].
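
Why the two gap estimates can disagree is easy to reproduce in one dimension. The Python sketch below uses hypothetical bands whose conduction-band minimum sits at $k = 0.37$, off a 4-point Monkhorst-Pack mesh, so the uniform mesh overestimates the gap while a dense path through the zone finds it. In three dimensions the reverse can also happen, when an extremum lies off the high-symmetry path but on the uniform mesh, which is why comparing both estimates is a useful check.

```python
import numpy as np

def e_val(k):
    # Hypothetical valence band with its maximum (0 eV) at k = 0
    return -0.5 * (1.0 - np.cos(2 * np.pi * k))

def e_cond(k):
    # Hypothetical conduction band with its minimum (1 eV) at k = 0.37
    return 1.0 + 0.5 * (1.0 - np.cos(2 * np.pi * (k - 0.37)))

def gap(kpts):
    """Indirect gap sampled on the given k-points: min(E_c) - max(E_v)."""
    return e_cond(kpts).min() - e_val(kpts).max()

uniform = (2 * np.arange(1, 5) - 4 - 1) / (2 * 4)  # 4-point Monkhorst-Pack mesh
line = np.linspace(-0.5, 0.5, 1001)                # dense path through the zone

gap_uniform = gap(uniform)
gap_line = gap(line)
print(f"uniform mesh: {gap_uniform:.3f} eV, dense path: {gap_line:.3f} eV")
```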

Machine Learning Implementation

The pattern learning methodology for DOS prediction follows a structured pipeline comprising learning and prediction phases [11]. In the learning phase, DOS patterns from training systems are digitized into image vectors within a defined energy-DOS window (typically -10 eV to 5 eV for energy and 0 to 3 for DOS) [11]. Principal Component Analysis is then applied to identify the eigenvectors (principal components) that capture maximum variance in the training data, effectively creating a compressed representation of DOS patterns [11].
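
A minimal sketch of the learning phase, assuming NumPy: DOS curves are rasterized into flattened binary images inside the energy and DOS windows quoted above, and PCA is carried out through a singular value decomposition of the mean-centered image vectors. The rasterization rule (a pixel is 1 if it lies under the curve), the image resolution, and the Gaussian toy DOS are assumptions for illustration; [11] may digitize patterns differently.

```python
import numpy as np

E_WINDOW = (-10.0, 5.0)  # energy window (eV) quoted in the text
DOS_WINDOW = (0.0, 3.0)  # DOS window quoted in the text

def digitize_dos(energies, dos, n_e=150, n_d=30):
    """Rasterize a DOS curve into a flattened binary image (assumed scheme:
    a pixel is 1 if it lies under the curve)."""
    e_grid = np.linspace(*E_WINDOW, n_e)
    d_grid = np.linspace(*DOS_WINDOW, n_d)
    curve = np.interp(e_grid, energies, dos, left=0.0, right=0.0)
    image = (d_grid[:, None] <= curve[None, :]).astype(float)  # shape (n_d, n_e)
    return image.ravel()

def pca(vectors, n_components):
    """Principal components of row vectors via SVD of the mean-centered data."""
    mean = vectors.mean(axis=0)
    _, s, vt = np.linalg.svd(vectors - mean, full_matrices=False)
    explained = s**2 / np.sum(s**2)  # fraction of variance per component
    return mean, vt[:n_components], explained[:n_components]

# Toy training set: Gaussian "d-band" DOS curves with shifting centres
energies = np.linspace(-12.0, 6.0, 1000)
centres = np.linspace(-4.0, -1.0, 12)
train = np.array([digitize_dos(energies, 2.5 * np.exp(-0.5 * (energies - c) ** 2))
                  for c in centres])
mean, components, explained = pca(train, n_components=3)

# Compressed representation: coefficients of each pattern on the components
coeffs = (train - mean) @ components.T
```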

In the prediction phase for new materials, coefficients for the principal components are estimated through linear interpolation between the two most similar training systems based on selected features (d-orbital occupation ratio, coordination number, etc.) [11]. The predicted DOS pattern is reconstructed using these coefficients, followed by transformation to a DOS probability matrix and final DOS calculation [11]. This method successfully addresses the mathematical challenge of mapping relatively few input material labels (composition, structure) to numerous output DOS values across energy levels [11].
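
The prediction step can be sketched in the same spirit. Here PCA is applied directly to the DOS curves for brevity, the single feature is a hypothetical d-orbital occupation ratio, and the two nearest training systems in feature space are blended with distance-based weights. This weighting is an assumption about how "linear interpolation between the two most similar training systems" is carried out, not the published code.

```python
import numpy as np

def predict_coefficients(x_new, features, coeffs):
    """Blend the PCA coefficients of the two training systems nearest to x_new
    in feature space, giving the closer one the larger weight (assumed scheme)."""
    dist = np.linalg.norm(features - x_new, axis=1)
    i, j = np.argsort(dist)[:2]
    total = dist[i] + dist[j]
    if total == 0.0:
        return coeffs[i]
    w_i = dist[j] / total
    return w_i * coeffs[i] + (1.0 - w_i) * coeffs[j]

# Toy training set: DOS curves whose peak position tracks one hypothetical feature
energies = np.linspace(-10.0, 5.0, 600)
features = np.linspace(0.5, 1.0, 11)[:, None]  # e.g. a d-orbital occupation ratio
dos_train = np.array([np.exp(-0.5 * (energies + 6.0 * f) ** 2) for f in features[:, 0]])

# Learning phase (compressed): PCA of the training curves via SVD
mean = dos_train.mean(axis=0)
_, _, vt = np.linalg.svd(dos_train - mean, full_matrices=False)
components = vt[:4]
coeffs = (dos_train - mean) @ components.T

# Prediction phase: interpolate coefficients, then reconstruct the DOS curve
x_new = np.array([0.775])
c_new = predict_coefficients(x_new, features, coeffs)
dos_pred = mean + c_new @ components
```

Interpolating coefficients blends the two neighbouring patterns rather than shifting a peak, so this kind of scheme works best when training systems are densely spaced in feature space.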

Diagram 1: DOS Prediction Methodologies Workflow. This diagram illustrates the three primary computational approaches for predicting Density of States, showing their distinct workflows and application domains.

Research Reagent Solutions: Computational Tools for DOS Analysis

Table 3: Essential Computational Tools for DOS Research

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| WIEN2k | DFT Package | Full-potential electronic structure calculations | DOS calculation for Heusler alloys and ordered crystals [14] |
| Materials Project API | Database Interface | Access to precomputed DOS and band structures | High-throughput screening and validation [10] |
| BoltzTraP Code | Transport Properties Calculator | Thermoelectric coefficients from band structure | Transport property calculation [14] |
| pymatgen | Python Materials Library | Materials analysis and DFT input generation | Structure manipulation and DOS analysis [10] |
| Principal Component Analysis | Statistical Method | Dimensionality reduction for DOS patterns | Machine learning DOS prediction [11] |

The comparative analysis of DOS prediction methods reveals a complex landscape where different approaches excel in specific domains. Traditional DFT methods with standard functionals like GGA-PBE provide reasonable accuracy for many ordered inorganic materials while systematically underestimating band gaps [10]. The pattern learning approach represents a paradigm shift in computational materials science, offering unprecedented speed while maintaining high accuracy for metallic alloy systems [11]. For disordered organic semiconductors, novel DOS models based on physical principles beyond Gaussian and exponential distributions show promising improvements in predicting charge transport properties [12] [13].

Future research directions will likely focus on hybrid methodologies that combine the physical rigor of first-principles calculations with the speed of machine learning approaches. The development of more accurate exchange-correlation functionals remains crucial for addressing DFT's fundamental limitations in band gap prediction [10]. As computational resources expand and algorithms improve, the accurate prediction of DOS across diverse material classes will continue to enhance our ability to design materials with tailored electronic properties for specific applications in electronics, energy conversion, and quantum technologies.

Density Functional Theory (DFT) stands as the most widely employed computational method for modeling materials and molecular systems across chemistry, physics, and materials science due to its favorable balance of accuracy and computational cost [15] [16]. In principle, DFT is an exact theory; however, in practice, its application requires an approximation for the exchange-correlation (XC) energy functional, which encapsulates complex quantum mechanical electron-electron interactions [15]. The inexact treatment of these interactions is the primary source of systematic errors in DFT calculations, leading to delocalization or self-interaction error (SIE) where electrons incorrectly interact with themselves [16]. This error is particularly pronounced in systems with strongly correlated electrons, such as those containing transition metals or rare-earth elements with partially occupied d or f orbitals, and can significantly impact predictions of electronic structure, band gaps, reaction energies, and magnetic properties [16].

The development of XC functionals is often visualized using "Jacob's Ladder," a hierarchy that classifies functionals by their theoretical sophistication and the information they use, with each rung (LDA → GGA → meta-GGA → hybrid → etc.) generally offering improved accuracy at increased computational cost [16]. This guide provides a comparative analysis of the performance of different rungs on this ladder, focusing on their ability to predict one of the most fundamental electronic properties: the Density of States (DOS). We objectively compare the predictive performance of various functionals, supported by experimental and high-level theoretical data, and detail the methodologies used for their validation.

Functional Formalism and Classification

Table 1: Classification and Characteristics of Common DFT Approximations

| Functional Class | Representative Examples | Key Inputs | Systematic Error Tendencies |
|---|---|---|---|
| Local Density Approximation (LDA) | LSDA [17] [18] | Electron density (ρ) | Overbinding, severely underestimated band gaps |
| Generalized Gradient Approximation (GGA) | PBE [19] [16], BP86 [20] | ρ, Gradient of ρ (∇ρ) | Improved structures, but still underestimated band gaps |
| meta-GGA | SCAN, r2SCAN [16] [21] | ρ, ∇ρ, Kinetic energy density (τ) | Reduced self-interaction error; improved band gaps vs. GGA |
| Hybrid GGA | B3LYP [20] [22] [17], PBE0 [22] | ρ, ∇ρ, + a fraction of exact HF exchange | Better atomization energies and band gaps, but high computational cost |
| Screened Hybrid | HSE [16] [22] | ρ, ∇ρ, + screened HF exchange | Improved efficiency for solids; good band gaps and geometries |

The Hierarchy of Functionals: Jacob's Ladder

The following diagram illustrates the structure of Jacob's Ladder, connecting the different classes of functionals to their underlying formalisms.

Figure 1: Jacob's Ladder of DFT Functionals. This hierarchy arranges functionals from the simplest to the most complex, with each rung incorporating more physical information to improve accuracy. LDA uses only the local electron density, GGA adds its gradient, meta-GGA includes the kinetic energy density, and hybrid functionals incorporate a portion of non-local exact exchange from Hartree-Fock theory [16] [17] [18].

Quantitative Performance Assessment for Electronic Structure

Band Gap and DOS Prediction Accuracy

The band gap is a critical property derived from the DOS, and its inaccurate prediction is a classic failure of standard local and semi-local functionals.

Table 2: Performance Benchmark of Functionals for Electronic Structure Properties

| Functional | Class | Reported Performance (System) | DOS/Remarks |
|---|---|---|---|
| PBE | GGA | Severe band gap underestimation [19] [16] | Semiconducting character identified, but band gap values are notably decreased with doping [19]. |
| PBE+mBJ | GGA+Potential | Improved gap prediction [19] | Used with GGA to provide more accurate electronic and optical properties [19]. |
| B3LYP | Hybrid GGA | Better than PBE/BP86 for conformational distributions [20] | Shows improved agreement with experimental J-coupling constants, indirectly related to DOS [20]. |
| HSE06 | Screened Hybrid | Improved localization for d/f electrons [16] | More accurate electronic structure for rare-earth oxides (REOs) vs. GGA [16]. |
| r2SCAN | meta-GGA | High accuracy for REOs [16] | Delivers high accuracy for structural and electronic predictions; reduces SIE [16]. |

Case Study: Rare-Earth Oxides and Strong Correlation

Rare-earth oxides (REOs) present a severe test for DFT due to the highly localized, strongly correlated 4f electrons. A comprehensive assessment of 13 XC approximations for binary REOs provides clear performance trends [16]. Standard GGA functionals like PBE often fail qualitatively for such systems. The meta-GGA functionals, particularly SCAN and r2SCAN, demonstrate significant improvement by reducing the SIE without empirical parameters, leading to more accurate structural, electronic, and energetic predictions [16]. For the highest accuracy, especially in electronic structure, incorporating a Hubbard +U correction to address local correlation and spin-orbit coupling (SOC) for heavy elements is often critical [16]. While hybrid functionals like HSE06 also improve localization, their computational cost for periodic systems like REOs is substantially higher [16].
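
The text does not specify which +U formulation [16] used. As one common choice, the simplified rotationally invariant (Dudarev) form adds $E_U = \frac{U_{\mathrm{eff}}}{2}\sum_\sigma \mathrm{Tr}\left[\mathbf{n}^\sigma - \mathbf{n}^\sigma\mathbf{n}^\sigma\right]$ with $U_{\mathrm{eff}} = U - J$, which vanishes for integer orbital occupations and penalizes fractional ones. The Python sketch below evaluates this energy and the corresponding orbital potential shift $U_{\mathrm{eff}}(\tfrac{1}{2} - n_m)$ for diagonal example occupations; the $U_{\mathrm{eff}}$ value is illustrative.

```python
import numpy as np

def dudarev_energy(occ_matrices, u_eff):
    """E_U = (U_eff / 2) * sum over spins of Tr[n - n n], in eV.

    occ_matrices: on-site occupation matrices n^sigma (one per spin channel)
                  for the correlated d or f shell, each Hermitian
    u_eff:        effective Hubbard parameter U - J in eV
    """
    return 0.5 * u_eff * sum(float(np.trace(n - n @ n).real) for n in occ_matrices)

def dudarev_potential_shift(occupations, u_eff):
    """Orbital potential shift U_eff * (1/2 - n_m): occupied orbitals move down,
    empty ones move up, pushing localized states apart in the DOS."""
    return u_eff * (0.5 - np.asarray(occupations, dtype=float))

U_EFF = 4.0  # eV, illustrative
integer_occ = np.diag([1.0, 1.0, 0.0, 0.0, 0.0])  # one spin channel of a d shell
fractional_occ = np.diag([0.5] * 5)

print(dudarev_energy([integer_occ], U_EFF))        # 0.0: no penalty
print(dudarev_energy([fractional_occ], U_EFF))     # 2.5: fractional occupation penalized
print(dudarev_potential_shift([1.0, 0.0], U_EFF))  # [-2.  2.]
```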

Experimental and Theoretical Validation Protocols

Methodologies for Validating DFT Predictions

The following diagram outlines a generalized workflow for the experimental validation of DFT-predicted electronic structures.

Figure 2: Workflow for Validating DFT Predictions. The accuracy of DFT functionals is assessed by comparing their predictions against experimental data or results from high-level quantum chemistry methods [20] [15].

Key Validation Techniques

Validation via Free Energy and NMR: Unlike traditional validations based on single-point energies, a more rigorous test involves comparing the free energy surface generated by DFT-powered molecular dynamics with experimental observations. For instance, conformational distributions of hydrated peptides from DFT simulations can be validated by comparing calculated NMR scalar coupling constants (J-couplings) with experimental measurements via the Karplus relationship [20]. This approach validates the DFT functional's ability to accurately describe not just a minimum-energy structure, but the entire potential energy landscape relevant at finite temperatures.
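
The J-coupling comparison amounts to averaging a Karplus curve over the simulated dihedral angles. In the Python sketch below, the coefficients are the widely used Vuister and Bax parameterization for $^3J(\mathrm{H^N},\mathrm{H^\alpha})$, adopted here as an assumption; the study cited as [20] may use another set. The trajectory angles and the "measured" value are hypothetical.

```python
import numpy as np

# Karplus coefficients (Hz) for 3J(HN,Halpha): Vuister & Bax parameterization,
# an assumption here; the study cited as [20] may use different values
A, B, C = 6.51, -1.76, 1.60

def karplus_j(phi_deg):
    """3J(HN,Halpha) in Hz from backbone dihedral phi (degrees), theta = phi - 60."""
    theta = np.radians(np.asarray(phi_deg, dtype=float) - 60.0)
    return A * np.cos(theta) ** 2 + B * np.cos(theta) + C

def ensemble_j(phi_frames_deg):
    """Average the coupling over MD frames (not the coupling of the mean angle)."""
    return float(np.mean(karplus_j(phi_frames_deg)))

# Hypothetical phi values sampled from a trajectory, and a hypothetical measurement
phi_frames = np.array([-60.0, -120.0])
j_calc = ensemble_j(phi_frames)
j_exp = 7.0
print(f"J_calc = {j_calc:.2f} Hz, deviation = {j_calc - j_exp:+.2f} Hz")
```

The couplings are averaged frame by frame because the Karplus relation is nonlinear in $\phi$; the coupling of the average angle would generally differ.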

Validation Against High-Level Theory: For systems where experimental data are scarce or difficult to interpret, results from high-level ab initio wavefunction methods serve as the benchmark. These include CCSD(T) (coupled cluster with single and double excitations and perturbative triples) and FCI (full configuration interaction). CCSD(T) is widely regarded as the gold standard for molecular systems. FCI is exact within a given basis set but is affordable only for very small systems [15]. The errors of hybrid functionals, for example, can be quantified by comparing their total energies, electron densities, and first ionization potentials against these reference values [15].

Optical Property Validation: For solids and semiconductors, the calculated optical properties—such as the complex dielectric function, absorption coefficient, and refractive index—derived from the DOS and band structure can be directly compared to experimental spectroscopic data (e.g., UV-Vis, ellipsometry) [19]. This provides a sensitive test for the accuracy of the underlying electronic structure.
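The standard conversions from a computed dielectric function to the quantities measured by UV-Vis or ellipsometry can be sketched as follows. The sketch assumes ε₁ and ε₂ are already available on a photon-energy grid in eV, as most DFT codes can output them. It uses n + ik = √ε, the absorption coefficient α = 4πkE/(hc), and the normal-incidence reflectivity. The input values are toy numbers.

```python
import numpy as np

HC_EV_CM = 1.23984198e-4  # Planck constant times speed of light, in eV*cm


def optical_constants(energy_ev, eps1, eps2):
    """Return n, k, absorption coefficient alpha (cm^-1) and normal-incidence
    reflectivity R from the complex dielectric function eps1 + i*eps2."""
    eps = np.asarray(eps1, dtype=float) + 1j * np.asarray(eps2, dtype=float)
    nk = np.sqrt(eps)  # principal branch: n >= 0 and, for eps2 >= 0, k >= 0
    n, k = nk.real, nk.imag
    alpha = 4.0 * np.pi * k * np.asarray(energy_ev, dtype=float) / HC_EV_CM
    refl = ((n - 1.0) ** 2 + k**2) / ((n + 1.0) ** 2 + k**2)
    return n, k, alpha, refl


# Toy dielectric data at two photon energies (eV).
n, k, alpha, refl = optical_constants([1.0, 2.0], [4.0, 3.0], [0.0, 4.0])
print("n =", n, "k =", k, "alpha (cm^-1) =", alpha, "R =", refl)
```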

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Computational Tools and Concepts for DOS Studies

| Tool or Concept | Function & Role in DOS Analysis |
|---|---|
| Hybrid Functionals (e.g., B3LYP, PBE0) | Mix a fraction of exact Hartree-Fock exchange with GGA/meta-GGA exchange-correlation to reduce self-interaction error and improve band gap prediction [22] [17]. |
| DFT+U | Adds a Hubbard-type on-site Coulomb correction to treat strongly localized electrons (e.g., in d or f orbitals), crucial for accurate DOS of transition metal and rare-earth compounds [16]. |
| Modified Becke-Johnson (mBJ) Potential | A semi-empirical meta-GGA exchange potential, used in place of the standard semi-local exchange potential, that can significantly improve band gap predictions without the cost of hybrid functionals [19]. |
| Spin-Orbit Coupling (SOC) | A relativistic correction essential for heavy elements that splits electronic levels and correctly describes the degeneracy of states in the DOS [16]. |
| VASP, WIEN2k | Widely used software packages for electronic structure calculations of periodic solids, capable of computing total and projected DOS with high precision [19] [16]. |
| PCA-based DOS Mapping | A data-driven framework that can predict surface DOS from bulk DOS calculations, bypassing expensive slab-model simulations for high-throughput screening [23]. |

The systematic errors inherent in standard DFT approximations, particularly the self-interaction error, remain a fundamental challenge in computational materials science and chemistry. As demonstrated, the choice of XC functional systematically impacts the predicted Density of States, with higher-rung functionals on Jacob's Ladder generally offering improved accuracy at a higher computational cost. For general-purpose calculations, GGAs like PBE offer a good compromise, but for properties like band gaps or systems with strong electron correlation, meta-GGAs (r2SCAN) or hybrid functionals (HSE, B3LYP) are often necessary. The most severe cases, such as rare-earth oxides, require additional corrections like +U and SOC for qualitatively correct results [16].

The future of functional development and application lies in the continued systematic benchmarking against robust experimental and high-level theoretical data, as detailed in the validation protocols above. Furthermore, the emergence of machine learning approaches, such as linear mapping to predict surface DOS from bulk calculations, points toward a new paradigm of data-driven and computationally efficient electronic structure analysis [23].
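The linear-mapping idea can be illustrated with a generic sketch under stated assumptions. Bulk and surface DOS are sampled on a shared energy grid, and each set is compressed with PCA (via NumPy's SVD). A least-squares matrix then maps bulk PCA coefficients to surface PCA coefficients. This is not the specific framework of [23]. The function names, the Gaussian "bands", and the mixing matrix are hypothetical stand-ins for real training data.

```python
import numpy as np


def fit_pca_linear_map(bulk, surf, n_comp):
    """Fit a linear map from bulk-DOS to surface-DOS PCA coefficients.

    bulk, surf: (n_materials, n_energy) arrays sampled on a shared energy grid.
    """
    b_mean, s_mean = bulk.mean(axis=0), surf.mean(axis=0)
    # Leading principal axes = first rows of V^T from the SVD of centred data.
    pb = np.linalg.svd(bulk - b_mean, full_matrices=False)[2][:n_comp]
    ps = np.linalg.svd(surf - s_mean, full_matrices=False)[2][:n_comp]
    cb = (bulk - b_mean) @ pb.T  # bulk coefficients
    cs = (surf - s_mean) @ ps.T  # surface coefficients
    w, *_ = np.linalg.lstsq(cb, cs, rcond=None)  # least-squares linear map
    return b_mean, s_mean, pb, ps, w


def predict_surface(bulk, model):
    """Predict surface DOS curves from bulk DOS curves with a fitted model."""
    b_mean, s_mean, pb, ps, w = model
    return s_mean + ((bulk - b_mean) @ pb.T) @ w @ ps


# Synthetic stand-in data: each DOS is a mix of three Gaussian "bands".
energy = np.linspace(-10.0, 5.0, 300)


def band(center, width):
    return np.exp(-(((energy - center) / width) ** 2))


basis_bulk = np.stack([band(-6.0, 1.0), band(-3.0, 0.8), band(1.0, 1.2)])
basis_surf = np.stack([band(-5.5, 1.3), band(-2.5, 1.0), band(0.5, 1.5)])
mix = np.array([[1.0, 0.2, 0.0], [0.1, 0.9, 0.3], [0.0, 0.2, 1.1]])
rng = np.random.default_rng(0)
weights = rng.random((40, 3))
bulk = weights @ basis_bulk
surf = (weights @ mix) @ basis_surf

model = fit_pca_linear_map(bulk, surf, n_comp=3)
pred = predict_surface(bulk, model)
print("max |error| on training set:", np.abs(pred - surf).max())
```

Working in a few PCA coefficients rather than the full energy grid keeps the fitted map small. Because the synthetic data are exactly linear and rank 3, the fit is exact here. Real bulk/surface pairs would leave a residual that should be checked on held-out materials.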

Density Functional Theory (DFT) has become the most widely used first-principles method for modeling materials at the electronic level because it provides a reasonable balance between accuracy and computational cost. Within the Kohn-Sham approach to DFT, the most complex electron interactions are collected into an exchange–correlation (XC) energy functional, \( E_{\mathrm{XC}} \). The exact form of \( E_{\mathrm{XC}} \) is not known and must therefore be approximated. As a result, the accuracy of DFT predictions hinges on the choice of XC functional used to model electron–electron interactions. Perdew and coworkers proposed an illustrative hierarchy, referred to as Jacob's ladder, that ranks XC functionals in order of increasing accuracy by assigning \( E_{\mathrm{XC}} \) approximations to rungs on the ladder. Moving up the ladder, the theoretical rigor increases, the XC approximations become more complex, and the functionals depend on additional ingredients [16].

The five rungs of Jacob's ladder represent different levels of approximation sophistication. The first rung contains the Local Density Approximation (LDA), which depends only on the electron density (ρ) at each point in space. The second rung comprises Generalized Gradient Approximations (GGAs), which incorporate both the electron density and its gradient (∇ρ). The third rung introduces meta-GGAs, which further include the orbital kinetic energy density (τ) or the density Laplacian. The fourth rung consists of hybrid functionals that mix a portion of exact Hartree-Fock exchange with DFT exchange. The fifth and highest rung includes methods that incorporate virtual Kohn-Sham orbitals, such as double-hybrids which add MP2-like correlation [16] [24].

Figure 1: The five rungs of Jacob's Ladder in Density Functional Theory, representing increasing levels of sophistication in exchange-correlation approximations.

This progression up Jacob's Ladder generally yields improved accuracy for molecular and solid-state systems, though at increasing computational cost. The inexact treatment of electron exchange in local and semi-local functionals leads to a fundamental deficiency known as delocalization error or self-interaction error (SIE). This error is particularly severe for systems with partially occupied d or f states. The choice of \( E_{\mathrm{XC}} \) is therefore crucial for correctly describing their electronic structure, magnetic ground state, thermodynamic properties, and relative energies [16].

Theoretical Foundations of Functional Families

Local Density Approximation (LDA)

The Local Density Approximation represents the simplest and historically first practical exchange-correlation functional in DFT. LDA assumes that the exchange-correlation energy per electron at a point in space equals that of a uniform electron gas with the same density. The LDA functional thus depends only on the electron density (ρ) at each point in space, without considering how the density varies between points [24].

Common LDA functionals include the Vosko-Wilk-Nusair (VWN) parametrization, which incorporates correlation effects, and the Perdew-Wang 1992 (PW92) parametrization. The pure-exchange electron gas formula (Xonly) and the scaled exchange-only formula (Xalpha) represent exchange-only LDA variants. While LDA provides reasonable structural predictions and has good numerical stability, it systematically underestimates band gaps and tends to overbind molecules and solids, resulting in shortened bond lengths and lattice parameters [16] [24].
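What the first rung computes can be shown with a minimal sketch, assuming a spherical density on a radial grid in atomic units. The code evaluates the spin-unpolarized Dirac ("Xonly") exchange energy \( E_x = -C_x \int \rho^{4/3}\,d\mathbf{r} \) with \( C_x = \tfrac{3}{4}(3/\pi)^{1/3} \). The Xalpha variant rescales this by \( 3\alpha/2 \). The default α = 0.7 is an assumed, commonly used value, and α = 2/3 recovers the Dirac result. The hydrogen 1s density serves as a test case because its integral has a closed form.

```python
import numpy as np

# Dirac exchange constant for a spin-unpolarized electron gas, ~0.7386 hartree.
C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)


def trapezoid(y, x):
    """Trapezoidal rule (kept local to avoid NumPy version differences)."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))


def lda_exchange(r, rho):
    """Spin-unpolarized LDA ('Xonly') exchange energy in hartree for a spherical
    density rho(r) in atomic units: E_x = -C_x * integral of rho^(4/3)."""
    return -C_X * trapezoid(4.0 * np.pi * r**2 * rho ** (4.0 / 3.0), r)


def xalpha_exchange(r, rho, alpha=0.7):
    """Slater X-alpha exchange: the Dirac result scaled by 3*alpha/2."""
    return 1.5 * alpha * lda_exchange(r, rho)


# Hydrogen 1s density, rho(r) = exp(-2r)/pi, on a radial grid (bohr).
r = np.linspace(0.0, 30.0, 20001)
rho_h = np.exp(-2.0 * r) / np.pi
ex_num = lda_exchange(r, rho_h)
ex_exact = -C_X * (27.0 / 64.0) * np.pi ** (-1.0 / 3.0)  # closed-form integral
print(f"E_x(LDA) = {ex_num:.5f} Ha (analytic {ex_exact:.5f} Ha)")
```

For the one-electron hydrogen atom, the spin-polarized LSDA value is \( 2^{1/3} \) times larger in magnitude (about −0.268 Ha). That is still short of the exact exchange energy of −0.3125 Ha, and the remaining gap is the self-interaction error discussed above.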

Generalized Gradient Approximation (GGA)

Generalized Gradient Approximations improve upon LDA by incorporating information about how the electron density changes in space. GGA functionals thus depend on both the electron density and its gradient (∇ρ). This additional information allows GGAs to better describe inhomogeneous electron densities, generally improving molecular atomization energies, structural properties, and bond lengths compared to LDA [16] [24].

The Perdew-Burke-Ernzerhof (PBE) functional is one of the most widely used GGAs in solid-state physics, offering a good balance between accuracy and computational efficiency. Its variant PBEsol is revised for solids and surfaces. Other popular GGA functionals include Becke-Perdew 1986 (BP86), Becke-Lee-Yang-Parr (BLYP), and revised PBE (revPBE). GGAs typically reduce the overbinding tendency of LDA. For solids, however, PBE tends to overcorrect and slightly overestimate lattice parameters, which motivated PBEsol. GGAs also still significantly underestimate band gaps and struggle with strongly correlated systems [16] [24].
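The difference between PBE and PBEsol can be seen directly in the exchange enhancement factor \( F_x(s) \). This factor multiplies the LDA exchange energy density as a function of the dimensionless density gradient \( s \). The sketch below uses the published PBE form with κ = 0.804. The two functionals differ only in the gradient coefficient μ (≈0.2195 for PBE, 10/81 for PBEsol). The grid of s values is arbitrary.

```python
import numpy as np

KAPPA = 0.804                # large-s limit parameter, chosen to respect Lieb-Oxford
MU_PBE = 0.2195149727645171  # PBE gradient coefficient
MU_PBESOL = 10.0 / 81.0      # PBEsol restores the gradient-expansion value


def reduced_gradient(rho, grad_rho):
    """Dimensionless gradient s = |grad rho| / (2 (3 pi^2)^(1/3) rho^(4/3))."""
    kf_term = 2.0 * (3.0 * np.pi**2) ** (1.0 / 3.0)
    return np.abs(grad_rho) / (kf_term * rho ** (4.0 / 3.0))


def fx_pbe(s, mu=MU_PBE, kappa=KAPPA):
    """PBE-form exchange enhancement F_x(s) = 1 + kappa - kappa / (1 + mu s^2 / kappa).
    The GGA exchange energy density is rho * eps_x^LDA(rho) * F_x(s)."""
    s = np.asarray(s, dtype=float)
    return 1.0 + kappa - kappa / (1.0 + mu * s**2 / kappa)


s_vals = np.array([0.0, 0.5, 1.0, 2.0, 3.0])
print("PBE   :", np.round(fx_pbe(s_vals), 4))
print("PBEsol:", np.round(fx_pbe(s_vals, mu=MU_PBESOL), 4))
```

Both curves start at 1, the LDA limit, and saturate at 1 + κ. PBEsol's smaller μ gives a weaker gradient correction at every s > 0. That weaker correction is the mechanism behind its different lattice parameters.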

Meta-Generalized Gradient Approximation (meta-GGA)

Meta-GGAs constitute the third rung of Jacob's Ladder, incorporating additional information beyond density and its gradient. These functionals introduce dependence on the kinetic energy density (τ) or the Laplacian of the electron density (∇²ρ), providing more detailed information about the local electronic environment. This additional flexibility allows meta-GGAs to satisfy more theoretical constraints and achieve better accuracy for diverse chemical and material systems [16] [24].

The strongly constrained and appropriately normed (SCAN) functional and its regularized-restored variant (r2SCAN) represent significant advances in meta-GGA development. SCAN was constructed to satisfy all known exact constraints appropriate to a semi-local functional. r2SCAN retains most of them while substantially improving numerical stability. Other notable meta-GGAs include the Tao-Perdew-Staroverov-Scuseria (TPSS) functional and its revised version (revTPSS). Compared with GGAs, meta-GGAs can reduce self-interaction error and improve the description of strongly correlated systems. They often provide better band gaps and reaction barriers without the computational cost of hybrid functionals [16] [24].
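The extra meta-GGA ingredient can be made concrete with SCAN's iso-orbital indicator \( \alpha = (\tau - \tau_W)/\tau_{\mathrm{unif}} \). This indicator equals 0 in single-orbital regions and 1 in the uniform electron gas. The sketch assumes the positive kinetic energy density convention \( \tau = \tfrac{1}{2}\sum_i |\nabla\psi_i|^2 \) in atomic units. It checks both limits, using the hydrogen 1s orbital for the first. r2SCAN uses a regularized variant of α, which is not reproduced here.

```python
import numpy as np


def iso_orbital_indicator(rho, grad_rho, tau):
    """alpha = (tau - tau_W) / tau_unif in atomic units, with tau the
    positive kinetic energy density (1/2) sum_i |grad psi_i|^2."""
    tau_w = np.abs(grad_rho) ** 2 / (8.0 * rho)  # von Weizsaecker (one-orbital) limit
    tau_unif = 0.3 * (3.0 * np.pi**2) ** (2.0 / 3.0) * rho ** (5.0 / 3.0)
    return (tau - tau_w) / tau_unif


# Single-orbital region: hydrogen 1s, psi = exp(-r)/sqrt(pi).
r = np.linspace(0.1, 5.0, 50)
rho = np.exp(-2.0 * r) / np.pi
grad = -2.0 * rho                      # d rho / dr
tau = 0.5 * np.exp(-2.0 * r) / np.pi   # (1/2) |d psi / dr|^2
alpha_h = iso_orbital_indicator(rho, grad, tau)

# Uniform electron gas: no gradient, and tau equals tau_unif.
rho0 = np.array([0.01, 0.1, 1.0])
tau0 = 0.3 * (3.0 * np.pi**2) ** (2.0 / 3.0) * rho0 ** (5.0 / 3.0)
alpha_ueg = iso_orbital_indicator(rho0, np.zeros_like(rho0), tau0)
print("max |alpha| for H 1s:", np.abs(alpha_h).max(), "| uniform gas:", alpha_ueg)
```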

Hybrid Functionals

Hybrid functionals occupy the fourth rung of Jacob's Ladder by incorporating a fraction of exact Hartree-Fock exchange into the DFT exchange functional. This mixing helps address the self-interaction error inherent in pure DFT functionals and generally improves the prediction of electronic properties, including band gaps. Hybrid functionals typically follow the form \( E_{\mathrm{XC}}^{\mathrm{hybrid}} = a\,E_{\mathrm{X}}^{\mathrm{HF}} + (1-a)\,E_{\mathrm{X}}^{\mathrm{DFT}} + E_{\mathrm{C}}^{\mathrm{DFT}} \), where \( a \) is the mixing parameter [16] [24].

The Heyd-Scuseria-Ernzerhof (HSE06) functional is particularly popular in solid-state physics because it screens the long-range portion of Hartree-Fock exchange, making it computationally more efficient for extended systems. Other common hybrids include B3LYP (popular in quantum chemistry) and PBE0. While hybrid functionals significantly improve band gap predictions over semi-local functionals, they come with substantially higher computational cost due to the need to calculate non-local Hartree-Fock exchange [25] [16].
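The screening in HSE06 can be written explicitly. The Coulomb kernel is split with the error function into a short-range (SR) part and a long-range (LR) part, and exact exchange is admitted only at short range. The parameter values below are the standard HSE06 choices, quoted here for orientation: \( a = 1/4 \) and \( \omega \approx 0.11\ \text{bohr}^{-1} \), which is about 0.2 Å⁻¹.

$$\frac{1}{r} = \frac{\operatorname{erfc}(\omega r)}{r} + \frac{\operatorname{erf}(\omega r)}{r}$$

$$E_{\mathrm{XC}}^{\mathrm{HSE}} = a\,E_{\mathrm{X}}^{\mathrm{HF,SR}}(\omega) + (1-a)\,E_{\mathrm{X}}^{\mathrm{PBE,SR}}(\omega) + E_{\mathrm{X}}^{\mathrm{PBE,LR}}(\omega) + E_{\mathrm{C}}^{\mathrm{PBE}}$$

Setting \( \omega = 0 \) makes the short-range part the full interaction and recovers the global hybrid PBE0. Dropping the long-range exact exchange is what makes HSE06 cheaper than PBE0 for extended systems.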

Performance Comparison for Electronic Properties

Band Gap Prediction Accuracy

Accurately predicting band gaps remains a challenging task for DFT, especially because interpreting the Kohn-Sham gap as the fundamental band gap leads to systematic underestimation. A comprehensive benchmark study comparing many-body perturbation theory (GW methods) against density functional theory for the band gaps of 472 non-magnetic materials provides valuable insights into functional performance [25].

Table 1: Performance comparison of DFT and GW methods for band gap prediction across 472 materials

| Method | Category | Mean Absolute Error (eV) | Systematic Error | Computational Cost |
|---|---|---|---|---|
| LDA | LDA | ~1.0–1.5 (est.) | Severe underestimation | Low |
| PBE | GGA | ~1.0 (est.) | Severe underestimation | Low |
| mBJ | meta-GGA | Moderate | Moderate underestimation | Moderate |
| HSE06 | Hybrid | Moderate improvement over semi-local | Reduced underestimation | High |
| G₀W₀-PPA | Many-Body Perturbation Theory | Marginal improvement over best DFT | Small underestimation | Very High |
| QP G₀W₀ | Many-Body Perturbation Theory | Significant improvement | Small systematic error | Very High |
| QSGW | Many-Body Perturbation Theory | Good accuracy | ~15% overestimation | Extremely High |
| QSGŴ | Many-Body Perturbation Theory | Best overall accuracy | Minimal systematic error | Highest |

The benchmark results show that the mBJ meta-GGA potential and hybrid functionals like HSE06 significantly reduce the systematic underestimation of band gaps compared to LDA and GGA. However, these improvements often stem from (semi-)empirical adjustments rather than a rigorous theoretical basis. mBJ is the best-performing meta-GGA for band gaps, and HSE06 is the best-performing hybrid functional [25].
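The "Systematic Error" column in Table 1 can be quantified with simple statistics over a set of materials. A constant absolute offset shows up in the mean signed error (MSE). A proportional error, such as the ~15% overestimation attributed to QSGW, shows up as a constant mean signed relative error. The sketch below is hedged: the gap values are hypothetical, and it assumes all reference gaps are non-zero, as for a semiconductor/insulator set.

```python
import numpy as np


def gap_error_stats(predicted_ev, experimental_ev):
    """Mean absolute error and mean signed error (eV), plus mean signed relative
    error (%), of predicted band gaps against experiment."""
    pred = np.asarray(predicted_ev, dtype=float)
    expt = np.asarray(experimental_ev, dtype=float)
    if np.any(expt <= 0.0):
        raise ValueError("relative errors need non-zero experimental gaps")
    err = pred - expt
    return {
        "MAE_eV": float(np.mean(np.abs(err))),
        "MSE_eV": float(np.mean(err)),
        "MSRE_pct": float(100.0 * np.mean(err / expt)),
    }


# Hypothetical gaps (eV): one method is 0.8 eV too low everywhere,
# another overestimates every gap by 15%.
expt = np.array([1.1, 2.3, 3.4, 5.5])
s_under = gap_error_stats(expt - 0.8, expt)
s_over = gap_error_stats(1.15 * expt, expt)
print(s_under)
print(s_over)
```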

For systems with strong electron correlation, such as rare-earth oxides containing localized f-electrons, the selection of appropriate functionals becomes particularly important. A comprehensive assessment of thirteen exchange-correlation approximations for rare-earth oxides found that the r2SCAN meta-GGA functional delivers high accuracy for structural, electronic, and energetic predictions. The study also highlighted that +U and +SOC corrections are critical for accurate electronic structure modeling of these strongly correlated systems [16] [26].

Performance for Strongly Correlated Systems

Rare-earth oxides (REOs) present a particular challenge for DFT due to their highly correlated electronic structure, in which localized and itinerant states coexist. The 17 rare-earth elements consist of the lanthanide group plus Sc and Y. Their complex electronic interactions directly influence their physicochemical properties. REOs typically exhibit mixed valences, high oxygen-ion conductivity, and distinctive electronic properties. These features make them relevant for technological applications including catalysis, ionic conduction, and sensing [16].

Table 2: Functional performance for rare-earth oxides (structural, electronic, and energetic properties)

| Functional | Family | REO Structural Properties | REO Electronic Properties | REO Energetics | Recommended Usage |
|---|---|---|---|---|---|
| PBE/PBEsol | GGA | Good lattice parameters | Poor band gaps, severe SIE | Moderate formation energies | Standard solid-state calculations |
| SCAN | meta-GGA | Good accuracy | Improved band gaps, reduced SIE | Good accuracy | Accurate REO modeling |
| r2SCAN | meta-GGA | High accuracy | Good band gaps, reduced SIE | High accuracy | Recommended for REOs |
| HSE06 | Hybrid | High accuracy | Best DFT band gaps | High accuracy | When cost permits |

The assessment of functional performance for REOs shows that the SCAN family of meta-GGA functionals provides a promising compromise: enhanced chemical accuracy for only a marginal cost increase over GGA. These functionals reduce the self-interaction error for general materials and oxides, resulting in more accurate property predictions. For the most accurate electronic structure modeling of REOs, the study recommends r2SCAN with +U and spin-orbit coupling (SOC) corrections to properly account for strong correlation and relativistic effects [16].

Experimental Protocols and Computational Methodologies

Benchmarking Methodologies for Electronic Structure

Large-scale benchmarking studies follow rigorous computational protocols to ensure meaningful comparisons between different functionals. For the GW vs. DFT band gap benchmark, researchers adopted an extensive dataset of experimental band gaps for 472 non-magnetic semiconductors and insulators, using experimental crystal structures and geometries from the Inorganic Crystal Structure Database (ICSD) to facilitate direct comparison. This approach ensures that differences in predicted properties reflect functional performance rather than structural discrepancies [25].

The computational workflow typically begins with DFT calculations using local or semi-local functionals as a starting point. For GW calculations, four strategically chosen methods were implemented: (1) One-shot G₀W₀ using the Godby-Needs plasmon-pole approximation (PPA); (2) Full-frequency quasiparticle G₀W₀ (QP G₀W₀); (3) Full-frequency quasiparticle self-consistent GW (QSGW); and (4) QSGW with vertex corrections in the screened Coulomb interaction W (QSGŴ). These methods represent a hierarchy of computational cost and physical rigor in many-body perturbation theory [25].

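For plane-wave pseudopotential implementations, the linearized quasiparticle equation gives the quasiparticle energies as

$$\varepsilon_i^{\mathrm{QP}} = \varepsilon_i^{\mathrm{KS}} + Z_i \langle \phi_i^{\mathrm{KS}} | \Sigma(\varepsilon_i^{\mathrm{KS}}) - V_{\mathrm{XC}}^{\mathrm{KS}} | \phi_i^{\mathrm{KS}} \rangle$$

In this equation, \( Z_i = \left[1 - \langle \phi_i^{\mathrm{KS}} | \partial \Sigma(\omega)/\partial \omega |_{\omega = \varepsilon_i^{\mathrm{KS}}} | \phi_i^{\mathrm{KS}} \rangle \right]^{-1} \) is the renormalization factor and \( \Sigma \) is the self-energy. \( V_{\mathrm{XC}}^{\mathrm{KS}} \) is the KS exchange-correlation potential, and \( |\phi_i^{\mathrm{KS}}\rangle \) are the KS states. More advanced methods such as QSGW "quasiparticlize" the energy-dependent \( \Sigma \) by constructing a static Hermitian potential that replaces \( V_{\mathrm{XC}}^{\mathrm{KS}} \). They then solve the resulting effective KS equations self-consistently [25].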
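The linearized quasiparticle equation above is a one-line correction per state once the matrix elements are known. The sketch assumes the code has already supplied ⟨Re Σ(ε_KS)⟩, ⟨V_xc⟩ and ⟨∂Re Σ/∂ω⟩ at ε_KS for each state. All numbers below are hypothetical and are not taken from [25]. Applying the correction to the band edges shows how G₀W₀ opens the KS gap.

```python
def linearized_qp_energy(e_ks, sigma_ks, vxc_ks, dsigma_domega):
    """Linearized G0W0 quasiparticle energy (eV) for one KS state.

    sigma_ks      : <phi|Re Sigma(e_ks)|phi>, self-energy at the KS energy (eV)
    vxc_ks        : <phi|V_xc^KS|phi> (eV)
    dsigma_domega : <phi|d Re Sigma/d omega|phi> at e_ks (dimensionless)
    """
    z = 1.0 / (1.0 - dsigma_domega)  # renormalization factor Z_i
    return e_ks + z * (sigma_ks - vxc_ks), z


# Hypothetical matrix elements for a valence-band maximum and a conduction-band minimum.
e_vbm, z_v = linearized_qp_energy(0.0, -15.0, -14.6, -0.25)
e_cbm, z_c = linearized_qp_energy(5.0, -12.0, -12.8, -0.25)
print(f"Z = {z_v:.2f}; KS gap = 5.00 eV, QP gap = {e_cbm - e_vbm:.2f} eV")
```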

Figure 2: Computational workflow for systematic benchmarking of electronic structure methods, from initial DFT calculations to advanced GW approaches.

Treatment of Strongly Correlated Systems

For strongly correlated systems like rare-earth oxides, additional methodological considerations are essential. The standard approach uses DFT+U, which adds a Hubbard-type parameter U to account for the strong on-site Coulomb repulsion among localized 4f electrons. The +U term acts as an on-site correction to the Coulomb interaction and effectively penalizes fractional (delocalized) occupation of the correlated orbitals. For REOs with partially filled 4f levels, this potential drives the 4f electrons toward localization, improving the description of the electronic structure [16].
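The delocalization penalty can be made concrete with the simplified rotationally invariant (Dudarev) form of DFT+U: \( E_U = \tfrac{U_{\mathrm{eff}}}{2}\sum_\sigma \mathrm{Tr}[\mathbf{n}^\sigma - \mathbf{n}^\sigma \mathbf{n}^\sigma] \). This is one common DFT+U flavor, assumed here; the exact scheme used in [16] may differ. The sketch compares one 4f electron fully localized in a single orbital with the same electron smeared over all seven. U_eff = 5 eV is a hypothetical value.

```python
import numpy as np


def dudarev_energy(occ_up, occ_dn, u_eff):
    """Dudarev DFT+U correction (eV): E_U = (U_eff/2) * sum_sigma Tr[n - n n],
    for on-site occupation matrices n (7x7 for an f shell), U_eff = U - J."""
    total = 0.0
    for occ in (occ_up, occ_dn):
        n = np.asarray(occ, dtype=float)
        total += np.trace(n - n @ n)
    return 0.5 * u_eff * total


def dudarev_potential(occ, u_eff):
    """Orbital potential shifts U_eff * (1/2 - lambda) in the eigenbasis of n."""
    return u_eff * (0.5 - np.linalg.eigvalsh(np.asarray(occ, dtype=float)))


u_eff = 5.0  # hypothetical U_eff for a 4f shell (eV)
empty = np.zeros((7, 7))
localized = np.diag([1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])  # one 4f electron, one orbital
spread = np.eye(7) / 7.0                                  # same electron over 7 orbitals
e_loc = dudarev_energy(localized, empty, u_eff)
e_spread = dudarev_energy(spread, empty, u_eff)
shifts = dudarev_potential(localized, u_eff)
print(f"E_U localized = {e_loc:.3f} eV, smeared = {e_spread:.3f} eV")
print("potential shifts (eV):", shifts)
```

Integer occupations cost nothing, while fractional ones are penalized. The resulting potential pushes occupied orbitals down by U_eff/2 and empty ones up by U_eff/2, which is how +U opens a gap within the 4f manifold.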

Spin-orbit coupling (SOC) is another critical consideration for heavy-element systems like REOs. For heavier atoms with larger nuclear charges, spin-orbit interactions can become as strong as, or stronger than, electron-electron repulsion and may dominate spin-spin or orbit-orbit interactions. Consequently, physical and chemical properties can be strongly influenced by these relativistic effects. SOC can shift electronic levels and change the symmetry of electronic states. It also splits atomic p, d, and f levels into sublevels with total angular momentum \( j = l \pm 1/2 \). SOC is often neglected because of its added computational cost, but it is necessary for a qualitatively accurate electronic description of heavy-element systems [16].
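The splitting of atomic levels by SOC follows from \( \langle \mathbf{L}\cdot\mathbf{S} \rangle = [j(j+1) - l(l+1) - s(s+1)]/2 \). The sketch below evaluates this for a single electron with coupling \( H_{\mathrm{SO}} = \zeta\,\mathbf{L}\cdot\mathbf{S} \). The value of ζ is a hypothetical placeholder (set to 1 so energies read in units of ζ). For an f shell the code recovers the j = 7/2 (8 states) and j = 5/2 (6 states) sublevels seen in the DOS of rare-earth compounds.

```python
from fractions import Fraction


def soc_levels(l, zeta):
    """One-electron spin-orbit levels for a shell with orbital quantum number l.

    H_SO = zeta * L.S and <L.S> = [j(j+1) - l(l+1) - s(s+1)] / 2 with s = 1/2.
    Returns {j: (energy, degeneracy 2j + 1)}; energy is in the units of zeta.
    """
    s = Fraction(1, 2)
    levels = {}
    for j in (l + s, l - s):
        if j < 0:  # an s shell (l = 0) has only j = 1/2
            continue
        ls = (j * (j + 1) - l * (l + 1) - s * (s + 1)) / 2
        levels[j] = (zeta * ls, int(2 * j + 1))
    return levels


# f shell (l = 3) with a hypothetical coupling constant zeta = 1 (arbitrary units).
for j, (energy, degeneracy) in sorted(soc_levels(3, 1.0).items()):
    print(f"j = {j}: E = {energy:+.2f} zeta, degeneracy {degeneracy}")
```

The two sublevels are separated by ζ(2l + 1)/2, which is 3.5ζ for f states. Their degeneracy-weighted centre stays at zero, so SOC redistributes states in the DOS without shifting the shell's centre of gravity. For a single electron (ζ > 0), the j = l − 1/2 level lies lower.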

The comprehensive assessment of REOs typically involves comparing multiple methodological approaches: standard DFT, DFT+U, DFT+SOC, and DFT+U+SOC across different XC approximations (PBEsol, SCAN, or r2SCAN) and pseudopotential parameterizations (4f-band and 4f-core). This systematic approach allows researchers to quantify the performance, numerical accuracy, and computational efficiency of different methodological choices for specific properties and studies of REOs [16].

Research Reagents and Computational Tools

Table 3: Essential computational tools and methodologies for electronic structure calculations

| Tool/Method | Category | Function | Example Implementations |
|---|---|---|---|
| Plane-Wave Codes | Software Package | Solves Kohn-Sham equations using plane-wave basis sets | Quantum ESPRESSO, VASP |
| All-Electron Codes | Software Package | Performs electronic structure calculations with full electron treatment | Questaal, ADF |
| GW Implementations | Methodology | Computes quasiparticle energies beyond DFT | Yambo, Questaal |
| Pseudopotentials | Computational Tool | Reduces computational cost by representing core electrons | PAW pseudopotentials, Norm-conserving pseudopotentials |
| Hubbard U Correction | Methodology | Addresses self-interaction error in strongly correlated systems | DFT+U implementation in VASP, Quantum ESPRESSO |
| Spin-Orbit Coupling | Methodology | Accounts for relativistic effects in heavy elements | SOC implementations in VASP, ADF |

The selection of appropriate computational tools depends on the specific research goals and available resources. For high-throughput screening of materials, plane-wave pseudopotential codes like VASP and Quantum ESPRESSO with GGA or meta-GGA functionals offer a reasonable balance between accuracy and computational efficiency. For the highest accuracy in electronic structure prediction, especially for band gaps, many-body perturbation theory (GW methods) implemented in codes like Yambo or Questaal provides superior results, but at significantly higher computational cost [25] [16].

For molecular systems and quantum chemistry applications, all-electron codes like ADF with hybrid functionals often represent the preferred choice. The ADF software supports a wide range of density functionals, including LDA, GGA, meta-GGA, hybrid, meta-hybrid, and double-hybrid functionals, allowing researchers to systematically climb Jacob's Ladder based on their accuracy requirements and computational resources [24].

The systematic benchmarking of density functional families reveals a clear trade-off between computational cost and accuracy for electronic structure predictions. While LDA and GGA functionals offer computational efficiency, they systematically underestimate band gaps and struggle with strongly correlated systems. Meta-GGA functionals like SCAN and r2SCAN provide improved accuracy with only a modest increase in computational cost, making them attractive for solid-state calculations. Hybrid functionals like HSE06 further improve accuracy, particularly for band gaps, but at significantly higher computational expense [25] [16].

For the most accurate band gap predictions, many-body perturbation theory within the GW approximation currently represents the gold standard, with QSGŴ (including vertex corrections) achieving remarkable accuracy that can reliably flag questionable experimental measurements. However, the computational cost of such methods remains prohibitive for high-throughput materials screening [25].

For strongly correlated systems like rare-earth oxides, the recommended approach involves using meta-GGA functionals (particularly r2SCAN) with Hubbard U corrections and spin-orbit coupling to properly account for both strong correlation and relativistic effects. This balanced approach provides sufficient accuracy for most applications while maintaining reasonable computational efficiency [16].

As computational resources continue to improve and methodological advances emerge, the materials science community can expect increasingly accurate electronic structure predictions across broader classes of materials. The development of more efficient implementations of hybrid functionals and GW methods will make these higher-rung approaches more accessible for routine calculations, potentially revolutionizing our ability to predict and design materials with tailored electronic properties.

A Practical Guide to Functionals: From PBE to Hybrid Methods

Density Functional Theory (DFT) is a cornerstone of computational chemistry, enabling the study of molecular structures, energies, and properties. The accuracy of DFT calculations critically depends on the choice of the exchange-correlation functional. This guide provides an objective comparison of the performance of three widely used functionals—PBE, B3LYP, and M06-2X—across diverse chemical systems, with a special focus on properties relevant to drug development. We synthesize benchmark data from recent scientific literature to offer a clear, evidence-based guide for researchers in selecting the appropriate functional for their specific applications.

DFT approximates the solution to the many-electron Schrödinger equation by using the electron density as the fundamental variable. The exchange-correlation functional, which encapsulates quantum mechanical effects not described by classical electrostatics, is the key determinant of a functional's performance. The functionals discussed herein represent different generations of development:

- PBE: A Generalized Gradient Approximation (GGA) functional, PBE is a non-empirical, first-principles functional derived to obey certain physical constraints. It generally provides good structural properties but tends to underestimate reaction barriers and binding energies, particularly for non-covalent interactions [27].

- B3LYP: A hybrid GGA functional, B3LYP incorporates 20% exact Hartree-Fock (HF) exchange into the exchange-correlation energy. It has been immensely popular in organic and inorganic chemistry for decades due to its good overall performance for thermochemistry [28].

- M06-2X: A hybrid meta-GGA functional from the Minnesota suite, M06-2X includes a high percentage of HF exchange (54%) and is parameterized against a broad set of training data. It was specifically designed for accurate treatment of main-group thermochemistry, kinetics, and non-covalent interactions, with improved description of medium-range electron correlation [28].
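For reference, the conventional three-parameter definition of B3LYP shows where its 20% of exact exchange enters, in contrast to the 54% used by M06-2X. Implementations differ in which VWN correlation parametrization they use, so small energy differences between codes are expected. It is worth checking a given program's documentation before comparing numbers.

$$E_{\mathrm{XC}}^{\mathrm{B3LYP}} = (1-a_0)\,E_{\mathrm{X}}^{\mathrm{LSDA}} + a_0\,E_{\mathrm{X}}^{\mathrm{HF}} + a_{\mathrm{X}}\,\Delta E_{\mathrm{X}}^{\mathrm{B88}} + a_{\mathrm{C}}\,E_{\mathrm{C}}^{\mathrm{LYP}} + (1-a_{\mathrm{C}})\,E_{\mathrm{C}}^{\mathrm{VWN}}$$

with \( a_0 = 0.20 \), \( a_{\mathrm{X}} = 0.72 \), and \( a_{\mathrm{C}} = 0.81 \).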

The following diagram illustrates a general decision workflow for selecting a functional based on the primary chemical phenomenon of interest.

Performance Comparison Across Chemical Properties

Non-Covalent Interactions

Non-covalent interactions, such as dispersion and hydrogen bonding, are crucial in drug binding, supramolecular chemistry, and materials science.

Table 1: Performance on Non-Covalent Interactions

| Functional | Functional Type | Performance on Dispersion-Dominated π⋯π Interactions | Performance on Ionic Hydrogen-Bonding Clusters |
|---|---|---|---|
| PBE | GGA | Fails to describe dispersion without empirical correction (PBE-D) [29]. | Not reported |
| B3LYP | Hybrid GGA | Performs significantly less well for systems where dispersion interactions contribute significantly [30]. | Not reported |
| M06-2X | Hybrid meta-GGA | Underestimates interaction energies for curved π⋯π systems (e.g., corannulene dimer); works well for planar, non-eclipsed monomers [29]. | Excellent performance; low mean unsigned error for zwitterionic conformers (e.g., 0.85 kJ/mol for Br⁻·arginine) [30]. |
| B97-D | DFT-D (Empirical Dispersion) | Best performer for π⋯π interactions, including complex curved and eclipsed systems [29]. | Not reported |

For dispersion-dominated π⋯π interactions, such as those in polycyclic aromatic hydrocarbon (PAH) complexes, DFT-D functionals like B97-D are clearly superior, providing more accurate interaction energies than M06-2X, which tends to underestimate them, especially for curved systems [29]. In contrast, for systems involving ionic hydrogen bonding, as found in halide ion-amino acid clusters, the M06 suite of functionals (M06 and M06-2X) outperforms B3LYP. M06-2X, in particular, yields the lowest errors for the relative energies of zwitterionic conformers [30].

Electronic Properties and Excited States

Accurate prediction of electronic properties is vital for understanding spectroscopy and designing optical materials.

Table 2: Performance on Electronic and Excited State Properties

| Functional | Functional Type | Dipole Moment Accuracy (Conjugated Molecules) | Excitation Energy Accuracy (Biochromophores) |
|---|---|---|---|
| PBE | GGA | Not reported | Consistently underestimates vertical excitation energies (VEEs) relative to CC2 [31]. |
| B3LYP | Hybrid GGA | High accuracy; reproduces experimental dipole moments with anharmonic correction [32]. | Underestimates VEEs (MSA = -0.31 eV, RMS = 0.37 eV) [31]. |
| M06-2X | Hybrid meta-GGA | Yields larger deviations from experimental dipole moments [32]. | Overestimates VEEs (MSA = +0.25 eV, RMS = 0.31 eV) [31]. |
| ωhPBE0 | Range-Separated Hybrid | Not reported | Best performer; excellent agreement with CC2 (MSA = 0.06 eV, RMS = 0.17 eV) [31]. |

For calculating ground-state dipole moments of conjugated organic molecules, B3LYP demonstrates high accuracy when used with an appropriate basis set and anharmonic corrections [32]. For the excited states of biochromophores (e.g., from GFP or rhodopsin), the picture differs by functional class. Standard hybrid functionals like B3LYP and PBE0 systematically underestimate vertical excitation energies. M06-2X, a global hybrid with a high fraction of HF exchange, and long-range corrected functionals tend to overestimate them [31]. Newer, empirically adjusted range-separated functionals like ωhPBE0 and CAMh-B3LYP currently provide the best performance for this specific task [31].

Energetics, Geometries, and Drug-like Molecules

The accurate computation of reaction energies, barrier heights, and molecular geometries is fundamental to mechanistic studies and drug design.

Table 3: Performance on Energetics and Geometries

| Functional | Functional Type | Reaction Energy & Barrier Height MAE (BH9 Benchmark) | Molecular Geometry Accuracy (Triclosan Benchmark) |
|---|---|---|---|
| PBE | GGA | Not reported | Not reported |
| B3LYP | Hybrid GGA | Higher errors (MAE: 5.26 kcal/mol reaction energy, 4.22 kcal/mol barrier height) [33]. | Good performance, but outclassed by M06-2X [34]. |
| M06-2X | Hybrid meta-GGA | Moderate errors (MAE: 2.76 kcal/mol reaction energy, 2.27 kcal/mol barrier height) [33]. | Superior performance; most accurate for bond length prediction [34]. |
| Double-Hybrids (e.g., ωDOD) | Double-Hybrid | Near-CCSD(T) accuracy (MAE ~1.0–1.5 kcal/mol), but higher computational cost [33]. | Not reported |
| ML-DFT (DeePHF) | Machine-Learning | Best performer; achieves CCSD(T)-level precision, surpassing double-hybrids [33]. | Not reported |

For general main-group thermochemistry and kinetics, M06-2X shows a significant improvement over B3LYP, with mean absolute errors about half those of B3LYP for reaction energies and barrier heights [33]. In geometry optimization of drug-like molecules such as triclosan, M06-2X/6-311++G(d,p) has been shown to be superior to several other functionals, including B3LYP, providing bond lengths closest to experimental values [34]. For the highest accuracy in reaction energetics, machine learning-augmented DFT methods like DeePHF are emerging as powerful tools, achieving coupled-cluster quality at a fraction of the cost [33].

Experimental Protocols for Benchmarking

To ensure reproducibility and rigorous comparison, the following methodological details are typically employed in benchmark studies.

Protocol 1: Conformationally Flexible Anionic Clusters

- Objective: To assess the performance of functionals for predicting relative energies of canonical vs. zwitterionic tautomers and their conformers in halide-ion-amino acid complexes (e.g., Cl⁻·arginine) [30].

- Methodology:

- Geometry Optimization: Full optimization of all conformational isomers is performed using the target functionals (e.g., M06, M06-2X, B3LYP).

- Benchmark Calculation: Single-point energy calculations are performed on optimized geometries using a high-level ab initio method (MP2) with a large basis set to establish a benchmark.

- Error Analysis: The relative energies of conformers calculated by each DFT functional are compared against the MP2 benchmark. The mean unsigned error (MUE) is computed to quantify performance.

- Key Metrics: Mean unsigned error (MUE) in kJ/mol for relative conformer energies [30].
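The error analysis in Protocol 1 can be sketched as follows. The sketch makes one assumed design choice: both DFT and benchmark energies are measured from the benchmark's lowest-energy conformer. A functional that reorders the global minimum is then penalized rather than hidden. The reference conformer itself, whose error is zero by construction, is excluded from the averages. The energies are hypothetical values in kJ/mol.

```python
import numpy as np


def conformer_error_stats(e_dft, e_ref):
    """Errors of DFT relative conformer energies against a benchmark (e.g. MP2).

    Both sets are measured from the benchmark's lowest-energy conformer; that
    conformer (zero error by construction) is excluded from the statistics.
    """
    e_dft = np.asarray(e_dft, dtype=float)
    e_ref = np.asarray(e_ref, dtype=float)
    i0 = int(np.argmin(e_ref))
    d = (e_dft - e_dft[i0]) - (e_ref - e_ref[i0])
    d = np.delete(d, i0)
    return {
        "MSE": float(np.mean(d)),
        "MUE": float(np.mean(np.abs(d))),
        "RMS": float(np.sqrt(np.mean(d**2))),
    }


# Hypothetical relative energies (kJ/mol) for four conformers.
mp2 = [0.0, 5.0, 12.0, 20.0]
dft = [0.0, 6.0, 10.5, 21.0]
stats = conformer_error_stats(dft, mp2)
print(stats)
```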

Protocol 2: Dipole Moment Calculations in Conjugated Systems

- Objective: To evaluate the ability of functionals to predict experimental dipole moments in donor-acceptor substituted organic molecules [32].

- Methodology:

- Conformational Search & Averaging: For molecules with rotatable substituents, a conformational search is conducted. At higher temperatures (where rotation is unhindered), dipole moments are calculated as a Boltzmann average over all low-energy rotamers.

- Geometry and Frequency Calculation: Molecular geometries are optimized with very tight convergence criteria (the opt=vtight keyword in Gaussian). Anharmonic frequency calculations are then performed to obtain vibrationally averaged properties.

- Comparison: The computed dipole moments are directly compared to high-fidelity experimental gas-phase data.

- Key Metrics: Deviation from experimental dipole moments (in Debye) [32].
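The Boltzmann-averaging step of Protocol 2 can be sketched for a set of rotamers. The sketch assumes the experiment probes the orientationally averaged squared moment, so it reports \( \mu_{\mathrm{eff}} = \sqrt{\sum_i w_i \mu_i^2} \). A plain weighted average of \( \mu_i \) is a simpler variant if that assumption does not hold. Energies are taken as relative (ideally free) energies in kJ/mol, and all values are hypothetical.

```python
import numpy as np

R_KJ = 8.314462618e-3  # molar gas constant, kJ/(mol K)


def boltzmann_weights(rel_energies_kj, temperature_k):
    """Normalized Boltzmann populations from relative (free) energies in kJ/mol."""
    e = np.asarray(rel_energies_kj, dtype=float)
    w = np.exp(-(e - e.min()) / (R_KJ * temperature_k))  # shift avoids overflow
    return w / w.sum()


def effective_dipole(dipoles_debye, rel_energies_kj, temperature_k):
    """Effective dipole sqrt(<mu^2>) over rotamers, assuming the experiment probes
    the orientationally averaged squared moment."""
    w = boltzmann_weights(rel_energies_kj, temperature_k)
    mu = np.asarray(dipoles_debye, dtype=float)
    return float(np.sqrt(np.sum(w * mu**2)))


# Hypothetical rotamers: dipole magnitudes (D) and relative energies (kJ/mol).
mu_rot = [3.0, 4.0, 2.0]
e_rot = [0.0, 0.0, 50.0]
mu_eff = effective_dipole(mu_rot, e_rot, 298.15)
print(f"mu_eff(298 K) = {mu_eff:.3f} D")
```

The two degenerate rotamers contribute equally, while the high-energy one is effectively unpopulated at room temperature. At higher temperatures its weight grows, which is why the protocol repeats the average at the experimental temperature.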

Protocol 3: Interaction Energy for π⋯π Complexes

- Objective: To benchmark the performance of functionals for calculating interaction energies in stacked π-systems [29].

- Methodology:

- System Selection: A diverse set of complexes is chosen, including planar π⋯π dimers (e.g., from the S22 database), curved polycyclic aromatic hydrocarbons (PAHs), and mixed planar-curved systems.

- Geometry Optimization: The structures of the monomers and the complexes are fully optimized using the functionals under investigation.

- Interaction Energy Calculation: The interaction energy (ΔE) is calculated as the difference between the energy of the complex and the sum of the energies of the isolated monomers, applying Boys-Bernardi counterpoise correction to account for basis set superposition error (BSSE).

- Reference Data: Results are compared against high-level ab initio data or reliable experimental values where available.

- Key Metrics: Computed interaction energy (ΔE in kcal/mol) versus reference data [29].
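The interaction-energy step in Protocol 3 can be written out explicitly. The sketch below is a minimal illustration, assuming the energies come from separate single-point runs. The counterpoise-corrected term uses monomers at their in-complex geometries computed in the full dimer basis (ghost functions on the partner). A deformation term then refers the result to fully relaxed monomers, as the protocol's "isolated monomers" implies. All function names and numbers are hypothetical.

```python
HARTREE_TO_KCALMOL = 627.5095  # 1 Hartree in kcal/mol


def cp_interaction_energy(e_complex, e_a_ghost, e_b_ghost):
    """Boys-Bernardi counterpoise-corrected interaction energy (kcal/mol).

    e_complex : energy of the complex AB in the full dimer basis (Hartree)
    e_a_ghost : monomer A at its in-complex geometry, with ghost basis
                functions on B's atoms (Hartree)
    e_b_ghost : monomer B likewise, with ghost functions on A (Hartree)
    """
    return (e_complex - e_a_ghost - e_b_ghost) * HARTREE_TO_KCALMOL


def bsse_estimate(e_a_own, e_a_ghost, e_b_own, e_b_ghost):
    """BSSE (kcal/mol): artificial lowering of each monomer by the partner's basis."""
    return ((e_a_own - e_a_ghost) + (e_b_own - e_b_ghost)) * HARTREE_TO_KCALMOL


def deformation_energy(e_frag_in_complex_geom, e_frag_relaxed):
    """Energy (kcal/mol) needed to distort a relaxed monomer into its in-complex geometry."""
    return (e_frag_in_complex_geom - e_frag_relaxed) * HARTREE_TO_KCALMOL


# Hypothetical energies (Hartree) for a symmetric stacked dimer
de_cp = cp_interaction_energy(-462.5300, -231.2610, -231.2610)
de_total = de_cp + 2 * deformation_energy(-231.2600, -231.2605)
print(f"dE(CP) = {de_cp:.2f} kcal/mol; vs relaxed monomers = {de_total:.2f} kcal/mol")
```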

Essential Research Reagents and Computational Tools

The following table lists key computational "reagents" and methodologies essential for conducting benchmark studies in computational chemistry.

Table 4: Research Reagent Solutions for DFT Benchmarking

| Research Reagent | Function/Description | Example Use Case |
|---|---|---|
| Gaussian 09W/16 | A comprehensive software package for electronic structure modeling [32] [34]. | Used for geometry optimization, frequency, and energy calculations across all benchmark studies. |
| aug-cc-pVTZ / 6-311++G(d,p) | Large correlation-consistent (aug-cc-pVTZ) and Pople-style (6-311++G(d,p)) basis sets for high-accuracy calculations [32] [31] [34]. | Employed for final single-point energy or property calculations to minimize basis set error. |
| S22 Database | A curated set of 22 non-covalent complexes with reference interaction energies [29]. | Serves as a primary benchmark for testing functional performance on weak interactions like hydrogen bonds and dispersion. |
| DLPNO-CCSD(T) | A highly accurate, computationally efficient coupled-cluster method for large molecules [33]. | Used to generate near-CCSD(T) quality reference energies for training or validating machine-learning models like DeePHF. |
| COSMO Solvation Model | A continuum solvation model that calculates the screening charges in a conductor-like environment [27]. | Incorporated to evaluate and simulate the effects of a polar solvent environment on molecular properties and reaction energies. |

This guide synthesizes recent benchmark data to illuminate the strengths and weaknesses of common DFT functionals. The core finding is that there is no single "best" functional for all scenarios. The choice is inherently application-dependent:

- For general organic thermochemistry and kinetics, M06-2X broadly outperforms B3LYP.

- For non-covalent dispersion interactions, especially in complex π-systems, DFT-D methods (e.g., B97-D) are recommended.

- For calculating dipole moments of conjugated molecules, B3LYP with anharmonic corrections remains highly accurate.

- For excited-state properties of biochromophores, range-separated hybrids (e.g., ωhPBE0) show superior performance.

- For the highest-accuracy reaction energetics, emerging machine learning-augmented methods (e.g., DeePHF) are setting new standards.

Researchers are encouraged to use this comparative data as a starting point for selecting a functional, always considering the primary chemical interactions governing their system of interest.

The accuracy of quantum chemical calculations is paramount for their predictive power in materials science and drug development. Two properties that serve as critical benchmarks for computational methods are proton affinity (PA)—the negative of the enthalpy change when a molecule accepts a proton in the gas phase—and the band gap—the energy difference between the valence and conduction bands in a material [35] [36]. Accurately predicting PA is essential for understanding reaction mechanisms in catalysis and biochemistry, while reliable band gap predictions are crucial for developing semiconductors and optoelectronic devices [37] [36].

This guide objectively compares the performance of different computational approaches and functionals for predicting these properties, providing researchers with the data needed to select appropriate methods for their work.

Performance Analysis: Proton Affinity Predictions

Proton affinity calculations are sensitive to the treatment of nuclear quantum effects (NQEs) and electron-proton correlation [38]. The following sections compare the accuracy of traditional and advanced density functional theory (DFT) methods.

Traditional DFT Functionals for Proton Affinity

A benchmarking study on molecules including amines, amides, esters, and alcohols evaluated several popular exchange-correlation functionals against experimental PA values [39]. The results, summarized in Table 1, indicate that the M062X functional provides a slight advantage in accuracy.

Table 1: Performance of Selected DFT Functionals for Proton Affinity Prediction (using def2-TZVP basis set) [39]

| Functional | Mean Unsigned Error (MUE) | Key Characteristics |
|---|---|---|
| M062X | Minimum error | Slightly better performance, especially for molecules containing heteroatoms |
| B3LYP | Good results | Reliable, well-established functional |
| BP86 | Good results | Generalized gradient approximation (GGA) functional |
| PBEPBE | Good results | GGA functional |
| APFD | Overestimates values | Hybrid functional with dispersion correction |
| wB97XD | Overestimates values | Range-separated hybrid functional with dispersion correction |

The study also found that Grimme's dispersion corrections did not significantly improve PA predictions for small molecules, suggesting that the inherent parameterization of the functional itself is more critical for this property [39].
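Whichever functional is chosen, the PA itself follows from the definition given earlier: the negative of the enthalpy change for B + H⁺ → BH⁺. The sketch below is an illustration under stated assumptions, not the cited study's workflow. It assumes total enthalpies (electronic energy plus thermal enthalpy correction) in Hartree for B and BH⁺. It treats the bare proton as having no electronic energy and an enthalpy of 5/2 kT (3/2 kT translational plus kT for the PV term). The function name and example enthalpies are hypothetical.

```python
HARTREE_TO_KJMOL = 2625.4996       # 1 Hartree in kJ/mol
KB_HARTREE_PER_K = 3.166811563e-6  # Boltzmann constant in Hartree/K


def proton_affinity(h_base, h_protonated, temperature_k=298.15):
    """Gas-phase proton affinity (kJ/mol) for B + H+ -> BH+.

    h_base, h_protonated: total enthalpies (electronic energy plus thermal
    enthalpy correction) of B and BH+ in Hartree at temperature_k.
    """
    # A bare proton has no electrons: its enthalpy is 3/2 kT (translation) + kT (PV term)
    h_proton = 2.5 * KB_HARTREE_PER_K * temperature_k
    delta_h = h_protonated - h_base - h_proton
    return -delta_h * HARTREE_TO_KJMOL  # PA is the negative of the reaction enthalpy


# Hypothetical enthalpies (Hartree) of a base B and its protonated form BH+
pa = proton_affinity(h_base=-56.5400, h_protonated=-56.8627)
print(f"PA = {pa:.1f} kJ/mol")
```

Computed PAs from each functional can then be compared with experimental values using the same mean-unsigned-error statistic reported in Table 1.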

Advanced Methods: Nuclear Electronic Orbital DFT (NEO-DFT)

For properties intimately linked to hydrogen atoms, such as proton affinity, explicitly treating the quantum nature of the proton can enhance accuracy. Nuclear Electronic Orbital DFT (NEO-DFT) is an efficient method that does precisely this, treating selected protons as quantum particles similar to electrons [40] [38].

A large-scale benchmark study demonstrated that NEO-DFT significantly outperforms traditional DFT for PA predictions. Traditional DFT achieved a mean absolute deviation (MAD) of 31.6 kJ/mol from experimental values, whereas NEO-DFT combined with an electron-proton correlation functional reduced the MAD to as low as 5.0 kJ/mol with the best-performing settings [40]. The study also provided clear guidance on optimal parameter selection [40] [38]:

- Best Functional: The CAM-B3LYP exchange-correlation functional yielded the best results with an MAD of 6.2 kJ/mol.

- Electron-Proton Correlation: Both the LDA-type epc17-2 and the GGA-type epc19 functionals delivered comparable and accurate results.

- Electronic Basis Set: The def2-QZVP basis set achieved the highest accuracy (MAD = 5.0 kJ/mol), though def2-TZVP offers a good balance of accuracy and computational cost. Nuclear basis sets showed minimal impact on PA accuracy.

Experimental Workflow for Proton Affinity Validation

Computational predictions require validation against reliable experimental data. Techniques like Selected Ion Flow Drift Tube (SIFDT) mass spectrometry are used to determine PA and gas-phase basicity (GB) experimentally [35]. The workflow for these experiments is summarized in Diagram 1.

Diagram 1: Experimental SIFDT Workflow for Proton Affinity. This diagram illustrates the key steps in determining proton affinity using a Selected Ion Flow Drift Tube instrument [35].

Performance Analysis: Band Gap Predictions

Predicting band gaps is a known challenge for standard semi-local DFT approaches (LDA and GGA functionals such as PBE), which systematically underestimate this property. Advanced functionals have been developed to address this issue.

The Hybrid Functional Approach

Hybrid functionals, which mix a portion of exact Hartree-Fock exchange with DFT exchange, generally offer improved band gap predictions over semi-local functionals. A recent study revisited the reliability of hybrids for bulk solids and surfaces like Si(111) and Ge(111) [37] [41].

- Conventional Hybrids: Functionals like HSE06 often provide a significant improvement over standard semi-local functionals like PBE for fundamental band gaps.

- Optimally-Tuned Range-Separated Hybrids: A new generation of functionals, such as Wannier optimally-tuned screened range-separated hybrids (WOT-SRSH), has shown exceptional accuracy. These functionals can simultaneously and accurately predict both the fundamental gap (Eg) and the optical gap (Eopt) for bulk materials and their surfaces, a task that was previously challenging [37].
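The exchange mixing described above can be written compactly. As a reference sketch (standard textbook forms, not equations taken from the cited study), a global hybrid with exact-exchange fraction $a$, and an HSE-type screened hybrid that applies exact exchange only at short range (SR) and semi-local exchange at long range (LR), read:

$$
E_{xc}^{\text{hyb}} = a\,E_x^{\text{HF}} + (1-a)\,E_x^{\text{DFT}} + E_c^{\text{DFT}}
$$

$$
E_{xc}^{\text{HSE}} = a\,E_x^{\text{HF,SR}}(\omega) + (1-a)\,E_x^{\text{PBE,SR}}(\omega) + E_x^{\text{PBE,LR}}(\omega) + E_c^{\text{PBE}}, \qquad a = \tfrac{1}{4}
$$

with a range-separation parameter of $\omega = 0.11$ bohr$^{-1}$ in HSE06. Screened range-separated hybrids such as WOT-SRSH instead partition the Coulomb interaction as

$$
\frac{1}{r} = \frac{\alpha + \beta\,\operatorname{erf}(\gamma r)}{r} + \frac{1 - \alpha - \beta\,\operatorname{erf}(\gamma r)}{r},
$$

treating the first term with exact exchange and the second with semi-local exchange. The exact-exchange fraction is therefore $\alpha$ at short range and $\alpha + \beta$ at long range. Screening sets $\alpha + \beta = 1/\varepsilon_\infty$, and $\gamma$ is fixed by an optimal-tuning condition (formulated with Wannier functions in WOT-SRSH).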

Reproducibility and Computational Parameters

For band gap calculations of materials, the choice of computational parameters is critical for reproducibility and accuracy. A study on 340 3D materials found that standard protocols can lead to a ~20% failure rate during bandgap calculations [42]. Key parameters requiring careful attention are:

- Pseudopotentials: The pseudopotential that replaces the explicit treatment of core electrons must be chosen and validated carefully.

- Plane-Wave Cutoff Energy: The basis set cutoff energy must be converged.

- Brillouin-Zone Integration: A new protocol that minimizes interpolation errors by choosing k-point grids based on the second-derivative matrix of orbital energies was shown to be superior to established procedures [42].
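For context on the k-point parameter, a common established choice is a uniform Monkhorst-Pack grid. Its number of subdivisions along each reciprocal lattice vector is set from a target k-point spacing. The sketch below generates such a grid. It does not implement the second-derivative-based protocol of [42]. The spacing value and function names are illustrative assumptions, and symmetry reduction and k-point weights are omitted.

```python
import math

import numpy as np


def kgrid_from_spacing(lattice_angstrom, spacing_inv_angstrom=0.2):
    """Subdivisions (n1, n2, n3) so that |b_i| / n_i <= spacing (b_i includes the 2*pi factor)."""
    a = np.asarray(lattice_angstrom, dtype=float)  # rows are lattice vectors a1, a2, a3
    b = 2.0 * np.pi * np.linalg.inv(a).T           # rows are reciprocal vectors b1, b2, b3
    return tuple(max(1, math.ceil(np.linalg.norm(bi) / spacing_inv_angstrom)) for bi in b)


def monkhorst_pack(n1, n2, n3):
    """Fractional coordinates of an n1 x n2 x n3 Monkhorst-Pack grid (Gamma included for odd n)."""
    # u_r = (2r - n - 1) / (2n), r = 1..n, along each reciprocal axis
    axes = [(2.0 * np.arange(1, n + 1) - n - 1) / (2.0 * n) for n in (n1, n2, n3)]
    mesh = np.meshgrid(*axes, indexing="ij")
    return np.stack([m.ravel() for m in mesh], axis=1)  # shape (n1*n2*n3, 3)


# Hypothetical simple-cubic cell with a = 5 Angstrom
cell = 5.0 * np.eye(3)
n = kgrid_from_spacing(cell, 0.2)
kpts = monkhorst_pack(*n)
print(n, kpts.shape)
```

In a convergence study, the spacing would be reduced until the band gap changes by less than a chosen tolerance. The plane-wave cutoff is converged the same way.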

Selecting the right software and pseudopotentials is a fundamental step in computational research. The performance and capabilities of different codes can vary significantly.

Table 2: Comparison of Two Prominent Plane-Wave DFT Codes

| Feature | Quantum ESPRESSO | VASP |
|---|---|---|
| License & Cost | Free (GPL 2.0), open source | Commercial license required |
| Pseudopotentials | Not included by default; users source them from libraries (PSLibrary, pseudo-dojo) | Well-tested PAW potentials included by default |
| Key Strengths | Active user community and forums [43]; fast implementation of new methods [43]; hp.x for first-principles DFT+U calculations [43] | User-friendly interface and documentation [43]; robust handling of hybrid functionals [43]; good parallel scaling for large systems [43] |
| Notable Features | Effective Screening Method for charged slabs [43] | - |
| Considerations | Some property combinations not available (e.g., dipole + Hubbard U) [43]; spin-orbit coupling (SOC) only in non-collinear mode [43] | Implements approximations to accelerate hybrid calculations [43] |

Emerging Methods: Machine Learning for Electronic Properties

Beyond traditional quantum chemistry methods, machine learning (ML) is emerging as a powerful tool for predicting electronic properties at a fraction of the computational cost. Universal ML models are now being developed to predict the electronic density of states (DOS) across a wide chemical space [44].

For instance, the PET-MAD-DOS model, a transformer-based neural network, can predict the DOS for diverse systems ranging from inorganic crystals to organic molecules. While such universal models achieve semi-quantitative agreement, they can be fine-tuned with small, system-specific datasets to achieve accuracy comparable to bespoke models trained exclusively on that data, opening new avenues for high-throughput materials discovery [44]. The relationship between the DOS and bandgap makes these models particularly useful for initial screening of materials with desirable electronic properties.
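One simple way to turn a predicted DOS into a bandgap estimate for screening is to measure the empty window around the Fermi level. The sketch below assumes the DOS is sampled on an energy grid and that the Fermi level is known. The threshold `tol` and the function name are hypothetical choices, and this is not how PET-MAD-DOS itself reports gaps. The estimate is limited by the grid spacing and shrinks as broadening fills the gap.

```python
import numpy as np


def gap_from_dos(energies, dos, fermi_level, tol=1e-3):
    """Estimate the band gap from a sampled DOS curve.

    Returns 0.0 if states exist at the Fermi level (metal); otherwise the width
    of the window around the Fermi level where the DOS stays below tol.
    """
    e = np.asarray(energies, dtype=float)
    d = np.asarray(dos, dtype=float)
    nearest = int(np.argmin(np.abs(e - fermi_level)))
    if d[nearest] > tol:
        return 0.0  # states at the Fermi level: no gap
    filled = d > tol
    below = e[filled & (e < fermi_level)]
    above = e[filled & (e > fermi_level)]
    if below.size == 0 or above.size == 0:
        raise ValueError("energy window must contain states on both sides of the Fermi level")
    return float(above.min() - below.max())  # CBM - VBM


# Hypothetical DOS: two Gaussian "bands" centered at -2 eV and +2 eV
grid = np.linspace(-6.0, 6.0, 1201)
model_dos = np.exp(-((grid + 2.0) / 0.3) ** 2) + np.exp(-((grid - 2.0) / 0.3) ** 2)
print(f"estimated gap: {gap_from_dos(grid, model_dos, fermi_level=0.0):.2f} eV")
```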

The electronic density of states (DOS) is a fundamental quantity in computational materials science that quantifies the distribution of available electronic states at each energy level. It underlies critical optoelectronic properties such as conductivity, bandgap, and optical absorption spectra, making it instrumental for material discovery in domains ranging from semiconductor technology to photovoltaic device development [44]. Traditional density functional theory (DFT) calculations, while accurate, face significant computational bottlenecks that limit their application to large systems or high-throughput screening [45] [46]. Because the cost of conventional DFT typically scales cubically with system size, modeling complex materials such as nanoparticles and high-entropy alloys is substantially constrained [45].
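In practice, the DFT reference DOS that ML models are trained against is obtained from band energies. The delta functions in $\mathcal{D}(\varepsilon) = \sum_{\mathbf{k}} w_{\mathbf{k}} \sum_n \delta(\varepsilon - \varepsilon_{n\mathbf{k}})$ are replaced by Gaussians of width σ. The sketch below assumes eigenvalues arranged as (k-points × bands) and normalized k-point weights, and it omits any spin-degeneracy factor. The function name, σ, and the example eigenvalues are hypothetical.

```python
import numpy as np


def gaussian_dos(eigenvalues, energy_grid, sigma=0.1, kweights=None):
    """Gaussian-broadened DOS, D(E) = sum_k w_k sum_n g_sigma(E - eps_nk).

    eigenvalues : array (n_kpoints, n_bands) of band energies, e.g. in eV
    energy_grid : 1D array of energies at which to evaluate the DOS
    kweights    : k-point weights (normalized here); uniform if None
    """
    eps = np.atleast_2d(np.asarray(eigenvalues, dtype=float))
    n_k = eps.shape[0]
    if kweights is None:
        w = np.full(n_k, 1.0 / n_k)
    else:
        w = np.asarray(kweights, dtype=float) / np.sum(kweights)
    grid = np.asarray(energy_grid, dtype=float)
    diff = grid[:, None, None] - eps[None, :, :]  # shape (n_grid, n_k, n_bands)
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return np.einsum("gkb,k->g", gauss, w)  # states per unit energy (no spin factor)


# Hypothetical eigenvalues for 2 k-points and 3 bands (eV)
bands = np.array([[-1.0, 0.5, 2.0], [-0.8, 0.7, 2.3]])
grid = np.linspace(-4.0, 5.0, 901)
dos = gaussian_dos(bands, grid, sigma=0.15)
print(f"integrated states: {np.sum(dos) * (grid[1] - grid[0]):.3f}")
```

Integrating the broadened DOS over a wide window recovers the number of bands, which is a quick sanity check on the normalization.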

In recent years, machine learning (ML) approaches have emerged as powerful surrogates for DFT, offering comparable accuracy at a fraction of the computational cost [44]. Early efforts in this domain focused primarily on highly specialized models designed for specific properties in narrow regions of the chemical space [44]. These included interatomic potentials and models predicting bandgaps, charge densities, Hamiltonians, and DOS with limited transferability beyond their training domains. However, a significant paradigm shift has occurred with the development of universal machine learning models that generalize across extensive portions of the periodic table, spanning both molecular systems and extended materials [44]. This transition mirrors broader trends in artificial intelligence toward foundation models capable of addressing diverse tasks within a unified architecture.

This guide provides a comprehensive comparison of contemporary universal ML models for DOS prediction, examining their architectural approaches, performance benchmarks, and practical implementation methodologies. By synthesizing experimental data and evaluation protocols from cutting-edge research, we aim to equip computational researchers with the necessary framework to select and implement appropriate DOS prediction strategies for their specific scientific applications.

Comparative Analysis of Universal DOS Prediction Models

Architectural Approaches and Methodological Frameworks