Benchmarking Computational Methods: A Framework for Assessing Accuracy and Reliability in Drug Discovery


Abstract

This article provides a comprehensive framework for benchmarking the accuracy of computational methods in drug discovery, addressing a critical need for standardized assessment. Aimed at researchers and development professionals, it explores the foundational principles of benchmarking, reviews current methodological applications from QSAR to AI, outlines common pitfalls and optimization strategies, and establishes robust protocols for validation and comparative analysis. By synthesizing insights from recent studies and established guidelines, the content offers practical direction for selecting, validating, and improving computational tools to enhance the reliability of predictions in biomedical research.

The Critical Need for Benchmarking: Establishing Standards in Computational Drug Discovery

In computational biology and other data-driven sciences, researchers frequently face a choice between numerous methods for performing data analyses. This decision is critical, as method selection can significantly affect scientific conclusions and subsequent research directions [1]. The rapid expansion of computational techniques, with nearly 400 methods available for analyzing data from single-cell RNA-sequencing experiments at the time of one review, presents both an opportunity and a challenge [1]. Within this context, reproducibility, defined in genomics as the ability of bioinformatics tools to maintain consistent results across technical replicates, emerges as a fundamental problem that threatens scientific progress [2]. This article argues that rigorous, neutral benchmarking is non-negotiable for addressing this reproducibility crisis, with a specific focus on its role in evaluating Denial of Service (DoS) detection accuracy across computational methods in cybersecurity research.

The Reproducibility Crisis in Computational Science

Defining Reproducibility in Computational Contexts

In computational research, reproducibility and related concepts like replicability and robustness are often defined based on whether identical code and data are used [2]. Goodman et al. define methods reproducibility as the ability to precisely repeat experimental and computational procedures using the same data and tools to yield identical results [2]. In genomics, this translates to obtaining consistent outcomes across multiple runs of bioinformatics tools using the same parameters and genomic data [2].

The challenge extends to cybersecurity research, where computational methods must reliably detect and classify attacks such as Denial of Service (DoS), Distributed Denial of Service (DDoS), and Mirai attacks in IoT environments [3]. Variations in algorithm implementation, parameter settings, and data processing approaches can significantly impact the reproducibility of results, potentially leading to inconsistent security recommendations and vulnerable systems.

Bioinformatics tools can introduce both deterministic and stochastic variations that compromise reproducibility [2]. Deterministic variations include algorithmic biases, such as reference bias in alignment algorithms favoring sequences containing reference alleles [2]. Stochastic variations stem from intrinsic randomness in computational processes like Markov Chain Monte Carlo and genetic algorithms [2]. These variations can produce divergent outcomes even when analyzing identical datasets under identical conditions.

In cybersecurity, similar challenges exist where machine learning models for attack classification may produce inconsistent results due to variations in feature selection methods, data preprocessing techniques, or random initialization of algorithm parameters [3].

Benchmarking as the Cornerstone of Reproducibility

The Essential Role of Benchmarking

Benchmarking studies aim to rigorously compare the performance of different methods using well-characterized benchmark datasets to determine method strengths and provide recommendations for method selection [1]. Properly designed benchmarking serves as a crucial mechanism for:

- Identifying performance gaps between existing methods and ideal outcomes [4]

- Driving continuous improvement in method development and implementation [4]

- Establishing reliable standards for evaluating methodological claims [1]

- Enhancing competitive advantage for organizations implementing best practices [4]

Types of Benchmarking Studies

Benchmarking in computational sciences generally falls into three broad categories [1]:

- Method development benchmarks: Performed by method developers to demonstrate the merits of their new approach

- Neutral benchmarks: Conducted independently of method development by authors without perceived bias

- Community challenges: Organized initiatives such as those from DREAM, CAMI, and MAQC/SEQC consortia [1]

Neutral benchmarking studies are particularly valuable for the research community as they focus specifically on comparison rather than promoting a particular method [1].

Designing Rigorous Benchmarking Studies for DOS Accuracy

Defining Purpose and Scope

The purpose and scope of a benchmark should be clearly defined at the beginning of the study, as this fundamentally guides the design and implementation [1]. For DOS accuracy evaluation, this might include determining whether the focus is on detection sensitivity, classification accuracy, computational efficiency, or all of these factors. The choice of scope involves tradeoffs against available resources, with neutral benchmarks ideally being as comprehensive as possible [1].

Selection of Methods

The selection of methods for benchmarking should be guided by the study's purpose and scope [1]. A comprehensive neutral benchmark should include all available methods for a specific type of analysis, whereas a benchmark for new method development may only need to compare against a representative subset of state-of-the-art and baseline methods [1]. Inclusion criteria should be chosen without favoring any methods, and exclusion of widely used methods should be justified [1].

Selection and Design of Datasets

The selection of reference datasets represents a critical design choice in benchmarking [1]. For DOS accuracy research, this typically involves using well-characterized datasets such as CICDDoS2019, CICIoT2023, or Edge-IIoT [3] [5]. These datasets can include both simulated data with known "ground truth" and real-world experimental data capturing actual attack patterns [1] [3]. Including a variety of datasets ensures methods can be evaluated under a wide range of conditions [1].

Establishing Evaluation Criteria

A robust benchmarking study employs multiple evaluation criteria to assess different aspects of performance. For DOS accuracy research, key quantitative metrics typically include [3]:

- Accuracy: Overall correctness of the classification

- Precision: Proportion of true positives among all positive predictions

- Sensitivity/Recall: Ability to identify all actual attacks

- F1-Score: Harmonic mean of precision and recall

- Computational Efficiency: Training and prediction times

Secondary measures might include scalability, resource requirements, and usability factors [1] [3].

Experimental Protocol for Benchmarking DOS Detection Methods

Methodology for Comprehensive Evaluation

Based on established benchmarking principles and recent studies in IoT security, the following experimental protocol provides a framework for evaluating DOS accuracy across computational methods:

Data Preprocessing: Address class imbalance with techniques such as undersampling to prevent model bias [3]. Normalize features to ensure consistent scaling across the dataset.

Feature Selection: Implement multiple feature selection methods to compare their impact, including Chi-square, Principal Component Analysis (PCA), and Random Forest Regressor [3].

Model Training: Apply multiple machine learning algorithms to ensure comprehensive comparison, including Random Forest, Gradient Boosting, Naive Bayes, Decision Tree, and K-Nearest Neighbors [3]. Utilize cross-validation techniques to prevent overfitting.

Performance Evaluation: Measure all key metrics (accuracy, precision, sensitivity, F1-score) consistently across all methods and configurations [3]. Record computational efficiency metrics including training time and prediction time [3].

Statistical Analysis: Conduct significance testing to determine whether observed performance differences are statistically meaningful rather than incidental.
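
The statistical analysis step can be sketched with a paired sign-flip permutation test on per-fold scores. This is only one possible choice of test; the source does not name a specific procedure, and the function name, fold scores, and parameters below are hypothetical illustrations rather than results from any cited study.

```python
import random

def paired_permutation_test(scores_a, scores_b, n_permutations=10000, seed=0):
    """Two-sided paired permutation test on the mean score difference.

    Under the null hypothesis the two methods are interchangeable, so the
    sign of each per-fold difference can be flipped at random.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both methods must be scored on the same folds")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(sum(diffs) / len(diffs))
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_permutations):
        flipped = [d if rng.random() < 0.5 else -d for d in diffs]
        # Small tolerance so that floating-point noise does not hide ties.
        if abs(sum(flipped) / len(flipped)) >= observed - 1e-12:
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly positive.
    return (extreme + 1) / (n_permutations + 1)

# Hypothetical F1-scores of two models on the same 10 cross-validation folds.
model_a_f1 = [0.95, 0.96, 0.94, 0.97, 0.95, 0.96, 0.95, 0.94, 0.96, 0.95]
model_b_f1 = [0.90, 0.91, 0.90, 0.92, 0.91, 0.90, 0.91, 0.90, 0.91, 0.90]
p_value = paired_permutation_test(model_a_f1, model_b_f1)
```

Pairing by fold matters here: both methods see the same splits, so fold-to-fold difficulty cancels out of the comparison.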

Research Reagent Solutions for DOS Detection Benchmarking

Table: Essential Components for DOS Detection Experiments

| Research Reagent | Function | Example Specifications |
|---|---|---|
| Benchmark Datasets | Provide standardized data for training and evaluation | CICIoT2023, CICDDoS2019, Edge-IIoT [3] [5] |
| Feature Selection Algorithms | Identify most relevant features for classification | Chi-square, PCA, Random Forest Regressor [3] |
| Machine Learning Libraries | Implement classification algorithms | Scikit-learn, TensorFlow, PyTorch |
| Performance Metrics | Quantify detection accuracy and efficiency | Accuracy, Precision, F1-Score, Training Time [3] |
| Computational Environment | Standardize hardware/software configuration | CPU/GPU specifications, memory capacity, operating system |

Workflow for Comprehensive Benchmarking

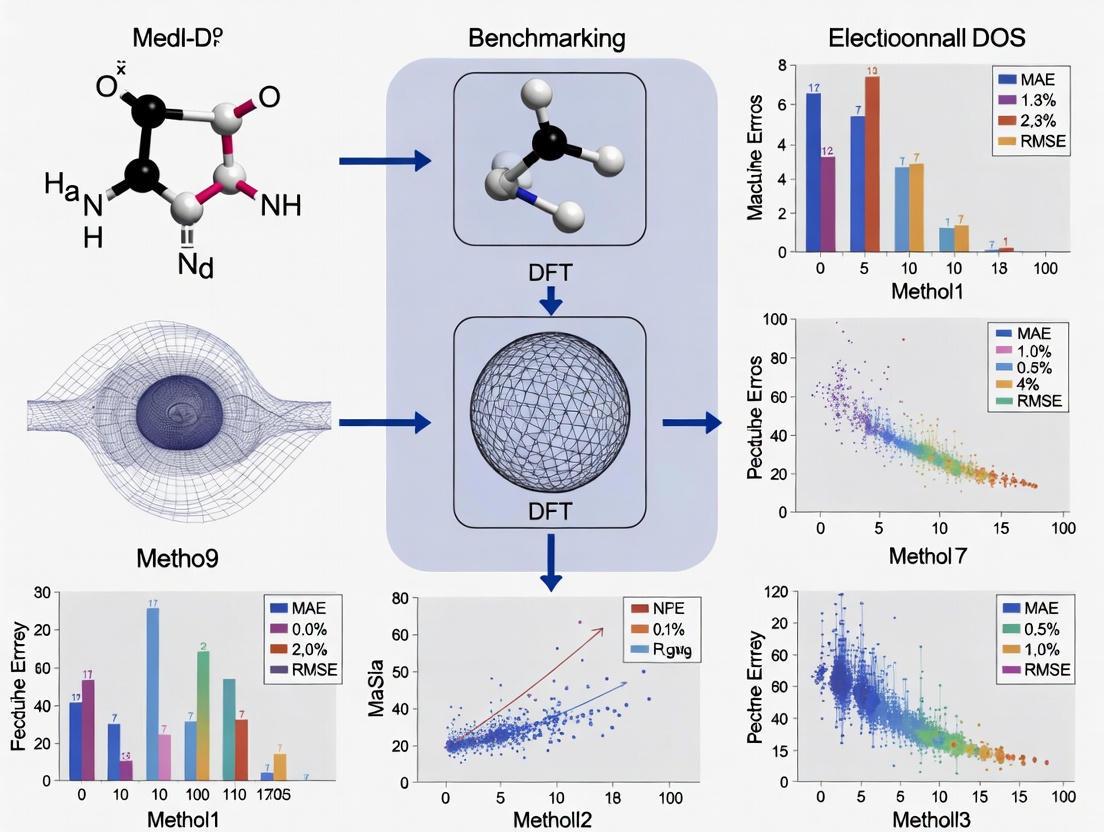

The following diagram illustrates the systematic workflow for conducting a rigorous benchmarking study of DOS detection methods:

Diagram: Benchmarking Workflow for DOS Accuracy Evaluation

Quantitative Results: Benchmarking DOS Detection Performance

Performance Comparison Across Machine Learning Algorithms

Table: Performance Metrics for DOS Detection Using Different Feature Selection Methods (Based on CICIoT2023 Dataset)

| Machine Learning Algorithm | Feature Selection Method | Accuracy (%) | Precision (%) | Sensitivity (%) | F1-Score (%) | Training Time Reduction* |
|---|---|---|---|---|---|---|
| Random Forest | Random Forest Regressor | 99.99 | 99.98 | 99.99 | 99.99 | 96.42% |
| Decision Tree | Random Forest Regressor | 99.99 | 99.97 | 99.98 | 99.98 | 98.71% |
| Gradient Boosting | Random Forest Regressor | 99.99 | 99.96 | 99.97 | 99.97 | 95.88% |
| K-Nearest Neighbors | Chi-square | 99.12 | 98.95 | 98.87 | 98.91 | 92.15% |
| Naive Bayes | PCA | 98.76 | 98.34 | 98.25 | 98.29 | 97.43% |

Note (*): Training time reduction is measured relative to previously reported results in the existing literature [3].

Comparative Analysis of Feature Selection Methods

Table: Impact of Feature Selection on DOS Classification Performance

| Feature Selection Method | Best-Performing Algorithm | Accuracy Achieved | Key Advantages | Computational Efficiency |
|---|---|---|---|---|
| Random Forest Regressor (RFR) | Random Forest | 99.99% | Identifies non-linear relationships, handles mixed data types | Moderate training time, fast inference |
| Chi-square | K-Nearest Neighbors | 99.12% | Computational efficiency, simple implementation | Fast execution, minimal overhead |
| Principal Component Analysis (PCA) | Naive Bayes | 98.76% | Dimensionality reduction, handles correlated features | Moderate execution time |

Analysis of Benchmarking Results for DOS Accuracy

Interpretation of Quantitative Findings

The benchmarking results demonstrate that traditional machine learning algorithms like Random Forest, Decision Tree, and Gradient Boosting can achieve exceptional accuracy (99.99%) in classifying DOS, DDoS, and Mirai attacks when paired with appropriate feature selection methods [3]. The Random Forest Regressor feature selection method consistently outperformed other approaches across multiple algorithms [3].

A critical finding from benchmarking studies is the tradeoff between accuracy and computational efficiency. While multiple algorithms achieved similar accuracy levels, the Decision Tree model demonstrated remarkable efficiency improvements with a 98.71% reduction in training time and a 99.53% reduction in prediction time compared to previously reported results [3]. This highlights the importance of including computational efficiency metrics alongside accuracy measures in benchmarking studies, particularly for resource-constrained IoT environments [3].
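
A minimal sketch of how such efficiency figures can be derived: time a call with a monotonic clock and express the new time as a percent reduction from a baseline. The helper names and the example timings are hypothetical. How [3] actually measured its timings is not described here.

```python
import time

def time_call(fn, *args, repeats=5):
    """Return the best wall-clock time in seconds over several repeats."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        best = min(best, time.perf_counter() - start)
    return best

def percent_reduction(baseline_seconds, new_seconds):
    """Percent reduction of new_seconds relative to baseline_seconds."""
    if baseline_seconds <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline_seconds - new_seconds) / baseline_seconds

# Hypothetical timings: a 100 s baseline against a 1.29 s run.
reduction = percent_reduction(100.0, 1.29)

# Timing an arbitrary stand-in workload (not a real training run).
elapsed = time_call(sum, range(100_000))
```

Taking the minimum over repeats reduces the influence of unrelated system load. A reduction quoted against a previously published number also reflects hardware differences, which is why the computational environment should be recorded alongside the timings.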

Challenges in Attack Classification

Benchmarking studies reveal inherent challenges in distinguishing between certain attack types. DOS and DDoS attacks present particular classification difficulties due to their shared network traffic characteristics [3]. In contrast, Mirai attacks are generally well-classified because of their distinct operational patterns [3]. These findings underscore how rigorous benchmarking can identify not just overall performance but also specific strengths and limitations of methods across different attack scenarios.

Visualization Framework for Benchmarking Analysis

Data Visualization Best Practices for Benchmarking Studies

Effective visualization of benchmarking results enhances interpretation and communication of findings. The following principles should guide visualization design [6]:

- Know your audience: Tailor visualizations to researchers, scientists, and drug development professionals

- Focus on the core message: Highlight key comparisons and performance differences

- Select appropriate visual encodings: Match visual representations to data types and relationships

- Use color effectively: Implement color palettes that enhance comprehension

- Avoid chartjunk: Eliminate unnecessary visual elements that don't convey information

Color Palette for Benchmarking Visualizations

Table: Recommended Color Palette for Data Visualizations

| Color Hex Code | Recommended Usage | Accessibility Considerations |
|---|---|---|
| #4285F4 | Primary data series, key metrics | Sufficient contrast against white backgrounds |
| #EA4335 | Highlighting performance gaps, anomalies | Meets enhanced contrast requirements [7] |
| #FBBC05 | Secondary data series, comparisons | Avoid with light backgrounds for text |
| #34A853 | Positive outcomes, best performers | Paired with dark text for labels |
| #FFFFFF | Background color | Provides clean canvas for data presentation |
| #F1F3F4 | Alternate backgrounds, gridlines | Subtle distinction from white |
| #202124 | Primary text, labels | Excellent readability on light backgrounds |
| #5F6368 | Secondary text, axis labels | Meets minimum contrast ratios [7] |

Performance Evaluation Framework

The following diagram illustrates the multi-dimensional evaluation framework necessary for comprehensive benchmarking of DOS detection methods:

Diagram: Multi-dimensional Evaluation Framework for DOS Detection

The reproducibility crisis in computational science represents a fundamental challenge to scientific progress, particularly in critical areas like DOS attack detection where accuracy directly impacts security outcomes. Through systematic examination of benchmarking methodologies and experimental results, this review demonstrates that rigorous benchmarking is non-negotiable for establishing reliable, reproducible computational methods.

The framework presented—encompassing careful scope definition, comprehensive method selection, appropriate dataset choice, and multi-dimensional evaluation criteria—provides a roadmap for conducting benchmarking studies that yield meaningful, actionable insights. As computational methods continue to evolve and proliferate, the scientific community must prioritize neutral benchmarking initiatives that objectively assess performance across diverse scenarios and requirements.

For researchers, scientists, and drug development professionals relying on computational methods, the implications are clear: benchmarking should be integrated as a fundamental component of method selection and validation processes. Only through such rigorous comparative evaluation can we advance toward truly reproducible computational science that generates reliable knowledge and drives meaningful innovation.

In computational research, the reliability of a model is governed by three foundational pillars: accuracy, bias, and applicability domain. Accuracy quantifies a model's predictive performance on a given task, often measured by metrics such as F1-score or area under the curve (AUC). Bias describes systematic errors that skew predictions, frequently arising from non-representative training data. The applicability domain (AD) defines the boundary within the chemical, biological, or feature space where the model's predictions are reliable; predictions for samples outside this domain are considered uncertain [8]. In the context of drug response prediction (DRP) and intrusion detection systems (IDS), rigorously defining these concepts is paramount for translating computational models into real-world applications. Benchmarking studies reveal a critical challenge: models that exhibit high accuracy on their native dataset often suffer significant performance drops when applied to external datasets, highlighting the limitations of internal validation and the necessity of cross-dataset generalization analysis [9]. This guide objectively compares the performance of various computational methods, detailing the experimental protocols and data that underpin these core concepts.

Experimental Protocols for Benchmarking

Standardized benchmarking requires rigorous, reproducible methodologies. The following protocols are commonly employed in computational research.

Cross-Dataset Generalization Analysis

This protocol tests a model's robustness and generalizability by training it on one dataset and evaluating it on a completely separate, unseen dataset.

- Objective: To assess real-world applicability and prevent over-optimistic performance estimates from internal cross-validation.

- Workflow: The process involves using a benchmark dataset composed of multiple source studies (e.g., CCLE, CTRPv2, GDSCv1 for DRP). Models are trained on the training split of a source dataset. The trained model is then used to make predictions on the test splits of all other target datasets without any retraining. Performance is measured on all these external tests [9].

- Key Output: Generalization metrics that quantify the performance drop from source to target datasets.
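
The key output of this protocol can be sketched as a small summary over a source-by-target score matrix. The dataset names and scores below are hypothetical placeholders, and the "drop" definition (within-dataset score minus mean external score) is one simple choice, not necessarily the metric used in [9].

```python
def generalization_summary(scores):
    """Summarize cross-dataset results.

    scores[source][target] is the metric obtained by a model trained on
    `source` and evaluated, without retraining, on the test split of `target`.
    """
    summary = {}
    for source, row in scores.items():
        within = row[source]
        external = [value for target, value in row.items() if target != source]
        mean_external = sum(external) / len(external)
        summary[source] = {
            "within": within,
            "mean_external": mean_external,
            "drop": within - mean_external,
        }
    return summary

# Hypothetical scores for three placeholder studies.
scores = {
    "study_A": {"study_A": 0.80, "study_B": 0.60, "study_C": 0.50},
    "study_B": {"study_A": 0.55, "study_B": 0.75, "study_C": 0.65},
    "study_C": {"study_A": 0.70, "study_B": 0.68, "study_C": 0.78},
}
summary = generalization_summary(scores)
best_source = max(summary, key=lambda s: summary[s]["mean_external"])
```

Ranking sources by mean external score, rather than by within-dataset score, is what lets a benchmark identify the training dataset that generalizes best.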

Defining the Applicability Domain

The Applicability Domain (AD) is defined using measures that reflect the reliability of individual predictions. These measures fall into two main categories:

- Novelty Detection: This approach flags objects that are unusual or dissimilar to the training set objects in terms of their explanatory variables (e.g., molecular descriptors). It is independent of the underlying classifier and relies on one-class classification to define a region of "known" objects [8]. Common methods include various distance measures to the training data.

- Confidence Estimation: This approach uses information from the trained classifier itself, most effectively through class probability estimates. A future object's distance to the decision boundary is a strong predictor of its probability of misclassification. Benchmarks have shown that class probability estimates consistently perform best at differentiating reliable from unreliable predictions [8]. Ensemble methods, like Random Forests, naturally provide a confidence score through the fraction of votes for a predicted class.
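
Both categories can be illustrated with a minimal sketch. It assumes a k-nearest-neighbour distance as the novelty measure and an ensemble vote fraction as the confidence measure. The threshold rule (a quantile of leave-one-out training distances), the function names, and the toy data are illustrative assumptions, not the procedure benchmarked in [8].

```python
import math
from collections import Counter

def knn_distance(x, training, k=3):
    """Mean Euclidean distance from x to its k nearest training points."""
    dists = sorted(math.dist(x, t) for t in training)
    return sum(dists[:k]) / k

def fit_threshold(training, k=3, quantile=0.95):
    """Distance threshold from leave-one-out distances within the training set."""
    loo = sorted(
        knn_distance(x, training[:i] + training[i + 1:], k)
        for i, x in enumerate(training)
    )
    return loo[min(len(loo) - 1, int(quantile * len(loo)))]

def in_domain(x, training, threshold, k=3):
    """Novelty detection: accept x only if it lies close to known objects."""
    return knn_distance(x, training, k) <= threshold

def vote_confidence(votes):
    """Confidence estimation: fraction of ensemble votes for the winning class."""
    label, count = Counter(votes).most_common(1)[0]
    return label, count / len(votes)

# Toy training set: a 5 x 5 grid of two-dimensional descriptors.
training = [(float(i), float(j)) for i in range(5) for j in range(5)]
threshold = fit_threshold(training)

inside = in_domain((2.5, 2.5), training, threshold)     # surrounded by training points
outside = in_domain((10.0, 10.0), training, threshold)  # far from all training points
label, confidence = vote_confidence(["attack"] * 8 + ["benign"] * 2)
```

The novelty check never consults the classifier, whereas the vote fraction comes entirely from it. That is the distinction drawn between the two categories above.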

Handling Class Imbalance and Data Preprocessing

For classification tasks, especially in intrusion detection, sophisticated preprocessing is critical.

- Class Imbalance: Techniques like SMOTE (Synthetic Minority Over-sampling Technique) are used to generate synthetic samples for the minority class, preventing model bias toward the majority class [10].

- Feature Skewness: Applying transformations such as the Quantile Uniform Transformation reduces feature skewness while preserving critical patterns (e.g., attack signatures in security data). This has been shown to achieve near-zero skewness (0.0003), outperforming log transformations (1.8642 skewness) [10].

- Feature Selection: A multi-layered approach combining correlation analysis, Chi-square statistics with p-value validation, and feature dependency examination enhances model discriminative power and efficiency [10].
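
The skewness step can be sketched in plain Python, assuming that the Quantile Uniform Transformation maps each value to its empirical quantile, as scikit-learn's `QuantileTransformer` does with `output_distribution="uniform"`. The feature values are hypothetical, and the sketch does not attempt to reproduce the skewness figures reported in [10].

```python
def skewness(values):
    """Population skewness: third central moment over variance**1.5."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5 if m2 > 0 else 0.0

def quantile_uniform(values):
    """Map each value to its empirical quantile in [0, 1].

    Tied values share the average of their ranks.
    """
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    denominator = max(len(values) - 1, 1)
    return [r / denominator for r in ranks]

# Hypothetical heavy-tailed feature, e.g. packet counts with one burst.
raw = [1, 2, 3, 4, 1000]
transformed = quantile_uniform(raw)

raw_skew = skewness(raw)
transformed_skew = skewness(transformed)
```

Because the transform depends only on rank order, it removes the influence of the extreme value while keeping the ordering of observations intact.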

Performance Comparison of Computational Methods

Performance in Intrusion Detection Systems

Table 1: Performance comparison of machine learning models for DDoS attack detection on various datasets.

| Model | Dataset | Accuracy (%) | Precision (%) | F1-Score (%) | Notes |
|---|---|---|---|---|---|
| Random Forest (RF) | CICIDS2017 | 98.9 | - | - | PCA-based feature selection [11] |
| Random Forest (RF) | CICDDoS2019 | 98.7 | - | - | PCA-based feature selection [11] |
| SVM | CICIDS2018 | 98.7 | - | - | PCA-based feature selection [11] |
| LSTM-FF (Hybrid) | CIC-DoS2017 | 99.7 | 99.5 | 97.5 | For Low-Rate DoS attacks, low FAR of 0.03% [12] |
| Weighted Ensemble (CNN, BiLSTM, RF, LR) | BOT-IOT | 100.0 | - | - | Integrated via soft-voting [10] |
| Weighted Ensemble (CNN, BiLSTM, RF, LR) | CICIOT2023 | 99.2 | - | - | Integrated via soft-voting [10] |
| RNN | CIC-DDoS2019 | 97.9 | - | - | With adaptive temporal windows [13] |

Deep learning models, particularly hybrids like LSTM-FF and ensembles, achieve top-tier accuracy in detecting sophisticated attacks like Low-Rate DoS [12]. Traditional machine learning models, especially Random Forest, remain highly competitive, often offering a superior balance of high accuracy and computational efficiency [11] [14].
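
The soft-voting integration noted for the weighted ensembles in Table 1 can be sketched as a weighted average of per-model class probabilities. The probabilities and weights below are hypothetical. The actual weighting scheme of the ensemble in [10] is not described here.

```python
def soft_vote(model_probabilities, weights):
    """Weighted soft voting.

    model_probabilities: one dict per model, mapping class label -> probability.
    weights: one non-negative weight per model.
    Returns the winning label and the combined probability per class.
    """
    if len(model_probabilities) != len(weights):
        raise ValueError("one weight per model is required")
    total_weight = sum(weights)
    combined = {}
    for probabilities, weight in zip(model_probabilities, weights):
        for label, p in probabilities.items():
            combined[label] = combined.get(label, 0.0) + weight * p / total_weight
    winner = max(combined, key=combined.get)
    return winner, combined

# Hypothetical outputs of three models for a single network flow.
outputs = [
    {"attack": 0.90, "benign": 0.10},  # confident model, given double weight
    {"attack": 0.40, "benign": 0.60},
    {"attack": 0.45, "benign": 0.55},
]
winner, combined = soft_vote(outputs, weights=[2.0, 1.0, 1.0])
```

In this example a majority (hard) vote would return "benign", since two of three models lean that way. Soft voting returns "attack" because it takes each model's confidence and weight into account.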

Performance in Drug Response Prediction

Table 2: Cross-dataset generalization performance of Drug Response Prediction (DRP) models.

| Source Dataset | Target Dataset | Generalization Performance | Key Insight |
|---|---|---|---|
| CCLE, gCSI, GDSCv1, GDSCv2 | Various | Substantial performance drop on unseen datasets | Highlights the importance of cross-dataset benchmarks [9] |
| CTRPv2 | Various | Highest generalization scores across target datasets | Most effective source dataset for training robust DRP models [9] |
| Random Forest / XGBoost | IEC 60870-5-104 / SDN | F1-Score: 93.57% / 99.97% | Often outperform deeper learning models despite simpler architecture [14] |

Benchmarking in DRP reveals that no single model consistently outperforms all others across every dataset. The source of the training data (e.g., CTRPv2) can be as critical to generalization performance as the model architecture itself [9].

Table 3: Key resources and datasets for benchmarking computational models.

| Resource Name | Type | Primary Function | Field of Application |
|---|---|---|---|
| CIC-DDoS2019 | Dataset | Provides labeled benign and sophisticated DDoS attack traffic for training and evaluating IDS models. | Network Security / IDS |
| BOT-IOT, CICIOT2023, IOT23 | Dataset | A set of benchmark datasets used to compare IoT attack detection models under diverse network scenarios. | IoT Security |
| CCLE, CTRPv2, gCSI, GDSC | Dataset | A collection of drug screening studies containing cell line viability data (AUC) in response to compound treatments. | Drug Discovery / DRP |
| SMOTE | Algorithm | Synthetically generates samples for the minority class to mitigate model bias caused by class imbalance. | Data Preprocessing |
| Quantile Uniform Transformation | Algorithm | Reduces skewness in feature distributions while preserving critical information like attack signatures. | Data Preprocessing |
| Principal Component Analysis (PCA) | Algorithm | Reduces the dimensionality of data, improving computational efficiency and sometimes model performance. | Feature Selection |
| IMPROVE Framework | Software | A standardized Python package and benchmarking framework for reproducible drug response prediction. | Drug Discovery / DRP |
| GPMin / GOFEE | Software | ML-assisted algorithms for accelerating local and global geometry optimization of surface and interface structures. | Computational Materials Science |

Workflow and Relationship Visualizations

Diagram 1: Generalized workflow for benchmarking computational methods, covering data preparation, model training, evaluation, and applicability domain definition.

Diagram 2: Core methods for defining the Applicability Domain (AD), showing the distinct approaches of novelty detection and confidence estimation.

The Role of Standardized Datasets and Benchmarking Frameworks

In computational sciences, particularly in data-intensive fields like drug discovery and cybersecurity, standardized datasets and benchmarking frameworks provide the foundational infrastructure for objective performance evaluation. These resources allow researchers to compare novel algorithms and computational methods against established baselines under consistent conditions, enabling accurate assessment of progress and practical utility [15]. A benchmarking dataset is formally defined as any resource explicitly published for evaluation purposes, publicly available or accessible upon request, and accompanied by clear evaluation methodologies [15]. This distinguishes them from general datasets used for unsupervised pre-training or novel dataset creation.

The critical importance of these tools stems from their role in mitigating experimental variability and ensuring reproducible findings. As computational approaches become increasingly integrated into high-stakes domains like pharmaceutical development, where experimental validation remains extraordinarily costly and time-consuming, robust benchmarking practices help prioritize the most promising candidates for further investigation [16] [17]. Furthermore, in cybersecurity applications such as intrusion detection systems for Internet of Things (IoT) environments, benchmarking enables researchers to evaluate both detection accuracy and computational efficiency—essential considerations for resource-constrained environments [3] [18].

Characteristics of Effective Benchmarking Datasets

Essential Qualities and Design Principles

Effective benchmarking datasets share several defining characteristics that ensure their utility and longevity within research communities. According to computational science literature, high-quality benchmarks should be:

- Standardized and validated collections specifically designed for evaluation purposes [15]

- Representative of real-world conditions to provide realistic assessment environments [15]

- Periodically updated to reflect evolving challenges and new threat vectors [15]

- Structured for scalability through category spaces and hierarchies that allow augmentation with additional samples and categories [15]

- Accompanied by clear evaluation metrics and methodologies to ensure consistent application [15]

The principle of diversity, richness, and scalability (DiRS) is particularly emphasized in domains like remote sensing and GeoAI, where benchmarks must demonstrate high within-class diversity, between-class similarity, and multiple semantic categories to support generalization and discrimination of fine-grained content [15].

Domain-Specific Benchmarking Examples

Table 1: Notable Benchmarking Datasets Across Computational Domains

| Domain | Dataset Name | Application Focus | Key Characteristics |
|---|---|---|---|
| IoT Security | CICIoT2023 [3] | DoS, DDoS, and Mirai attack classification | Comprehensive attack variants, realistic network traffic patterns |
| Medical Imaging | Abdomen-1K [15] | Computed tomography analysis | 1,112 CT scans with enhanced variety and diversity |
| Medical Imaging | Medical Segmentation Decathlon [15] | Multi-organ segmentation | 10 different segmentation challenges across various modalities |
| Code Migration | MigrationBench [19] | Java repository migration | 5,102 open-source Java 8 Maven repositories with test validation |
| Code Migration | Poly-MigrationBench [19] | Multi-language migration | .NET, Node.js, and Python repositories for cross-platform migration |
| Natural Language Processing | GLUE [15] | General language understanding | Diverse tasks extracted from news, social media, books, and Wikipedia |

Benchmarking Methodologies and Evaluation Metrics

Components of a Benchmarking Framework

A comprehensive machine learning benchmark typically consists of four core components: (1) a dataset providing standardized inputs; (2) an objective defining the task to be performed; (3) metrics to quantify progress toward objectives; and (4) reporting protocols to ensure consistent communication of results [15]. These components work synergistically to create environments where algorithmic performance can be objectively quantified and compared.
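
One hypothetical way to make the four components explicit in code is a small container that holds the dataset, objective, and metrics, and whose `evaluate` method plays the role of the reporting protocol by always emitting the same result structure. The class and field names are illustrative and do not correspond to any framework cited here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple

@dataclass
class Benchmark:
    name: str
    dataset: List[Tuple[Sequence[float], int]]  # (1) standardized inputs with labels
    objective: str                              # (2) the task to be performed
    metrics: Dict[str, Callable[[List[int], List[int]], float]]  # (3) metrics

    def evaluate(self, predict: Callable[[Sequence[float]], int]) -> dict:
        """(4) Reporting protocol: every model is reported in the same format."""
        y_true = [label for _, label in self.dataset]
        y_pred = [predict(features) for features, _ in self.dataset]
        report = {"benchmark": self.name, "objective": self.objective}
        for metric_name, metric in self.metrics.items():
            report[metric_name] = metric(y_true, y_pred)
        return report

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the reference labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Toy benchmark with a single feature and a threshold "model".
toy = Benchmark(
    name="toy-threshold",
    dataset=[((0.1,), 0), ((0.4,), 0), ((0.6,), 1), ((0.9,), 1)],
    objective="binary classification",
    metrics={"accuracy": accuracy},
)
report = toy.evaluate(lambda features: int(features[0] > 0.5))
```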

Frameworks like the Language Model Evaluation Harness from EleutherAI provide unified infrastructure to benchmark machine learning models on large numbers of evaluation tasks, structuring diverse datasets, configurations, and evaluation strategies in one place [20]. Similarly, Stanford's HELM (Holistic Evaluation of Language Models) takes a comprehensive approach by prioritizing scenarios and metrics based on societal relevance, coverage across languages, and computational feasibility [20].

Key Evaluation Metrics

Table 2: Common Evaluation Metrics in Computational Benchmarking

| Metric | Calculation | Interpretation | Optimal Use Cases |
|---|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correctness | Balanced class distributions |
| Precision | TP/(TP+FP) | Proportion of true positives among positive predictions | When false positives are costly |
| Recall (Sensitivity) | TP/(TP+FN) | Proportion of actual positives correctly identified | When false negatives are costly |
| F1-Score | 2×(Precision×Recall)/(Precision+Recall) | Harmonic mean of precision and recall | Imbalanced datasets |
| AUC-ROC | Area under ROC curve | Overall performance across classification thresholds | Comprehensive model assessment |

While accuracy remains commonly reported, it may be the least informative metric in scenarios with class imbalance, such as manufacturing datasets or cybersecurity threat detection [15]. In these contexts, precision, recall, and F1-score offer more nuanced insights into algorithm performance. For example, in IoT security research, the F1-score provides a balanced assessment of model capability in distinguishing between attack types and normal traffic [3].
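
The formulas in Table 2 translate directly into code. The sketch below applies them to a hypothetical imbalanced case to show how accuracy can look strong while recall and F1-score reveal a weak detector. The confusion-matrix counts are invented for illustration.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall and F1 from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical traffic sample: 990 benign flows and 10 attacks.
# The detector raises no false alarms but catches only 2 of the 10 attacks.
metrics = classification_metrics(tp=2, tn=990, fp=0, fn=8)
```

Here accuracy is 0.992 even though recall is only 0.2 and the F1-score is about 0.33, which is the situation the preceding paragraph warns about.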

Experimental Protocols in Computational Research

IoT Security Assessment Protocol

Research evaluating machine learning approaches for attack classification in IoT networks demonstrates a comprehensive benchmarking methodology [3]. The experimental protocol encompasses:

- Data Preprocessing: Addressing class imbalance through undersampling techniques to improve model reliability and generalizability [3]

- Feature Selection: Implementing multiple selection methods including Chi-square, Principal Component Analysis (PCA), and Random Forest Regressor to identify optimal feature subsets [3]

- Model Training: Applying five supervised machine learning algorithms (Random Forest, Gradient Boosting, Naive Bayes, Decision Tree, and K-Nearest Neighbors) under consistent conditions [3]

- Performance Evaluation: Measuring accuracy, precision, sensitivity, and F1-score metrics alongside computational efficiency indicators like training and prediction times [3]

This methodology revealed that the Random Forest Regressor feature selection method combined with Decision Tree classification achieved state-of-the-art performance (99.99% accuracy) while significantly improving computational efficiency—reducing training time by 98.71% and prediction time by 99.53% compared to previous studies [3].
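
The undersampling step of this protocol can be sketched as random undersampling to the size of the smallest class. This is a minimal illustration with hypothetical labels and a fixed seed. The exact undersampling variant used in [3] is not specified here.

```python
import random
from collections import Counter, defaultdict

def random_undersample(samples, labels, seed=0):
    """Randomly drop samples so every class matches the smallest class size."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)
    target_size = min(len(members) for members in by_class.values())
    balanced_samples, balanced_labels = [], []
    for label, members in by_class.items():
        # rng.sample draws without replacement, so no sample is duplicated.
        for sample in rng.sample(members, target_size):
            balanced_samples.append(sample)
            balanced_labels.append(label)
    return balanced_samples, balanced_labels

# Hypothetical imbalanced data: 90 benign flows and 10 DoS flows.
samples = list(range(100))
labels = ["benign"] * 90 + ["dos"] * 10
balanced_samples, balanced_labels = random_undersample(samples, labels)
class_counts = Counter(balanced_labels)
```

Undersampling should be applied to the training split only. Balancing the test split would change the class distribution against which the metrics are reported.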

IoT Security Benchmarking Workflow

Drug Discovery Evaluation Protocol

In computational drug discovery, benchmarking follows rigorous protocols to assess predictive accuracy for key physicochemical and absorption, distribution, metabolism, and excretion (ADME) properties [21]. Standard methodologies include:

- Experimental Data Curation: Compiling large, high-quality datasets from published studies and proprietary sources, acknowledging challenges related to experimental error and data volume [21]

- Method Comparison: Evaluating diverse computational approaches ranging from quantum mechanics calculations to machine learning models against standardized datasets [17] [22]

- Statistical Validation: Employing multiple error metrics including mean signed error (MSE), mean unsigned error (MUE), and maximum error (MAXE) to comprehensively quantify performance [22]

For proton affinity predictions, benchmarking studies systematically evaluate density functional theory (DFT) functionals (B3LYP, BP86, PBEPBE, APFD, wB97XD, M062X) using the flexible def2-TZVP basis set, comparing calculated values against experimental reference data from the NIST database [22]. These protocols identified the M062X functional as the most accurate of those tested for predicting proton affinities and gas-phase basicities across diverse molecular structures [22].
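The three error metrics named in the statistical validation step can be computed as in the sketch below. The sign convention (predicted minus reference) is an assumption, and the numbers are made up for illustration; they are not proton affinities from the cited study.

```python
def error_metrics(predicted, reference):
    """Return mean signed error (MSE), mean unsigned error (MUE), and maximum error (MAXE).

    Note: MSE here means mean *signed* error, as in the text, not mean squared error.
    Assumption: errors are defined as predicted - reference.
    """
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "MSE": sum(errors) / n,                  # reveals systematic over- or under-estimation
        "MUE": sum(abs(e) for e in errors) / n,  # typical magnitude of the error
        "MAXE": max(abs(e) for e in errors),     # worst case in the benchmark set
    }


# Illustrative values only (arbitrary units).
calc = [1.0, 2.0, 4.0]
expt = [2.0, 2.0, 2.0]
stats = error_metrics(calc, expt)
print(stats)
```

Reporting the signed and unsigned errors together is useful because a method can have a near-zero signed error while still showing large individual deviations that cancel out.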

Drug Discovery Benchmarking Workflow

Table 3: Essential Computational Tools for Benchmarking Studies

| Tool Category | Specific Tools | Primary Function | Application Context |

|---|---|---|---|

| Quantum Chemistry Software | Gaussian09 [22] | Thermochemistry calculations | Proton affinity predictions, molecular property computation |

| Evaluation Frameworks | Language Model Evaluation Harness [20] | Unified benchmarking framework | Evaluating generative capabilities and reasoning tasks |

| Evaluation Frameworks | Stanford HELM [20] | Holistic language model evaluation | Multi-metric assessment across diverse scenarios |

| Evaluation Frameworks | PromptBench [20] | Prompt engineering evaluation | Benchmarking prompt-level adversarial attacks |

| Evaluation Frameworks | DeepEval [20] | LLM evaluation platform | Regression testing and model evaluation on cloud |

| Dataset Repositories | Hugging Face [19] | Dataset hosting and sharing | Access to MigrationBench and Poly-MigrationBench |

| Dataset Repositories | GitHub [19] | Code and dataset distribution | Open-source benchmarking implementations |

Benchmarking Datasets as Research Reagents

In computational research, benchmarking datasets themselves function as essential research reagents, providing standardized substrates for method validation:

- CICIoT2023: Serves as a validated benchmark for IoT security research, containing diverse attack variants including Denial of Service (DoS), Distributed Denial of Service (DDoS), and Mirai attacks [3]

- MigrationBench: Provides 5,102 open-source Java 8 Maven repositories for evaluating code migration tools, with quality filters ensuring inclusion of projects with sufficient complexity and test coverage [19]

- Medical Segmentation Decathlon: Offers 10 different segmentation challenges across various imaging modalities and anatomical structures, testing algorithm robustness across diverse medical imaging tasks [15]

Challenges and Future Directions in Benchmarking

Despite their critical importance, benchmarking datasets and frameworks face several persistent challenges that limit their effectiveness and adoption. A significant issue across multiple domains is the limited availability of specialized public datasets. In manufacturing and cyber-physical systems, for example, the scarcity of tailored benchmarking datasets restricts standardized evaluation and fair algorithm comparison [15]. Similarly, medical imaging research suffers from insufficient large, representative labeled datasets due to privacy concerns, cost constraints, and data fragmentation across institutions [15].

Community-wide overfitting presents another fundamental challenge, particularly in computer vision and medical imaging, where researchers repeatedly optimize algorithms on the same public benchmarks, potentially inflating performance metrics without corresponding real-world improvements [15]. To mitigate this, evaluation on multiple public and private datasets is recommended, though this only partially addresses the underlying bias [15].

Future directions in benchmarking emphasize several promising approaches:

- Federated learning frameworks that enable secure access to sensitive data without compromising privacy, particularly valuable for healthcare applications [15]

- Dynamic benchmark development that evolves with changing requirements and emerging challenges, as exemplified by the DiRS principle in remote sensing [15]

- Multi-dimensional evaluation that moves beyond narrow accuracy metrics to assess computational efficiency, robustness, fairness, and practical deployability [3] [15]

- Cross-platform benchmarking that enables performance comparison across diverse computational environments and resource constraints [19]

As computational methods continue to advance, the role of standardized datasets and benchmarking frameworks will only grow in importance, providing the critical infrastructure needed to distinguish incremental optimization from genuine scientific progress across research domains.

Within computational methods research, particularly in fields requiring high-fidelity simulations like drug development, Multidisciplinary Design Optimization (MDO) faces a fundamental challenge: balancing model accuracy with computational cost. Multifidelity methods address this by strategically combining information sources of varying fidelity—from fast, approximate models to slow, high-accuracy simulations—to enable efficient and scalable design exploration [23]. The core challenge lies in selecting appropriate fidelity levels and coupling them effectively. Without rigorous benchmarking, comparing the performance of these numerous multifidelity methods remains difficult, hindering the adoption of robust optimization strategies in scientific and industrial applications. This guide provides a structured framework for assessing these methods, enabling researchers to make informed decisions when deploying multifidelity optimization for complex problems like drug design and molecular simulation.

A Standardized Benchmarking Framework

A comprehensive benchmarking framework is essential for the objective comparison of multifidelity optimization methods. According to community standards, test problems are classified into three levels [23]:

- L1 Problems: Computationally cheap analytical functions with known exact solutions, ideal for rapid prototyping and controlled algorithmic assessment.

- L2 Problems: Simplified engineering applications executable with reduced computational expense.

- L3 Problems: Complex engineering use cases, often involving multi-physics couplings.

This guide focuses on L1 analytical benchmarks, which provide a controlled environment for stress-testing algorithms. Their closed-form nature ensures high reproducibility and computational efficiency, and it isolates algorithmic behavior from numerical artifacts [23]. The global optima of these benchmarks are known by construction, allowing for precise quantification of optimization performance.

Core Analytical Benchmark Problems

The following suite of L1 benchmark problems is designed to capture mathematical challenges endemic to real-world computational tasks, including high dimensionality, multimodality, discontinuities, and noise [23].

Table 1: Suite of Analytical Benchmark Problems for Multifidelity Optimization

| Benchmark Problem | Key Mathematical Characteristics | Relevance to Real-World Applications |

|---|---|---|

| Forrester Function (Continuous & Discontinuous) | One-dimensional, strongly non-linear | Tests ability to model non-linear relationships between model fidelities. |

| Rosenbrock Function | Continuous, non-convex, curved parabolic valley | Represents problems with long, flat optimal regions and sharp gradients. |

| Rastrigin Function (Shifted & Rotated) | Highly multimodal, separable, scalable dimensionality | Mimics landscapes with many local optima, testing escape from suboptimal solutions. |

| Heterogeneous Function | Mixed properties (e.g., linear, quadratic, sinusoidal regions) | Challenges methods to adapt to varying local function behaviors. |

| Coupled Spring-Mass System | Physics-based, coupled interactions | Represents simple dynamical systems with interacting components. |

| Pacioreck Function with Noise | Affected by artificial noise | Tests robustness to uncertainties in function evaluations. |
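As a rough illustration of what an L1 problem looks like in code, the sketch below implements the textbook forms of two functions from the table. These are the standard unshifted, unrotated, single-fidelity definitions; the shifted and rotated variants and the lower-fidelity levels used in the benchmark suite [23] are not reproduced here.

```python
import math


def rosenbrock(x):
    """Textbook Rosenbrock function; global minimum 0 at x = (1, ..., 1)."""
    return sum(
        100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
        for i in range(len(x) - 1)
    )


def rastrigin(x):
    """Textbook Rastrigin function; global minimum 0 at the origin, many local minima."""
    return 10.0 * len(x) + sum(
        xi ** 2 - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x
    )


# The optima are known by construction, so optimizer output can be checked exactly.
print(rosenbrock([1.0, 1.0, 1.0]))  # value at the known optimum
print(rastrigin([0.0, 0.0]))        # value at the known optimum
print(rastrigin([1.0, 1.0]))        # a nearby local minimum with a higher value
```

Because both functions scale to any dimension, the same code can be reused to study how a method degrades as dimensionality grows.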

Defining Fidelity and Discrepancy

In a multifidelity setting, the function to be minimized is the highest-fidelity function, ( f_1(\mathbf{x}) ). The optimization leverages a hierarchy of ( L ) fidelity levels, ordered from ( f_1(\mathbf{x}) ) down to ( f_L(\mathbf{x}) ), the lowest-fidelity level available, with the lower-fidelity levels being cheaper to evaluate [23]. A critical aspect of these benchmarks is the discrepancy type, which describes the relationship between different fidelities. A linear discrepancy is simpler to model than a non-linear one, and the selected benchmarks allow for assessing how well methods can handle these relationships as the number of available fidelities changes [23].
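The notion of a linear discrepancy can be illustrated with the two-fidelity Forrester function that is widely used in the surrogate-modeling literature. The coefficients below (0.5, 10, and -5) are the commonly quoted ones and are an assumption here; the fidelity definitions in the benchmark suite [23] may differ.

```python
import math


def forrester_high(x):
    """High-fidelity Forrester function on [0, 1]."""
    return (6.0 * x - 2.0) ** 2 * math.sin(12.0 * x - 4.0)


def forrester_low(x, a=0.5, b=10.0, c=-5.0):
    """Low-fidelity variant: a scaled high-fidelity term plus a linear trend.

    Assumption: a, b, c follow the commonly quoted two-fidelity form.
    """
    return a * forrester_high(x) + b * (x - 0.5) + c


def discrepancy(x, a=0.5):
    """Additive discrepancy left after scaling; linear in x for this pair."""
    return forrester_low(x) - a * forrester_high(x)


# Locate the high-fidelity minimum on a fine grid (brute force is fine in 1-D).
grid = [i / 1000 for i in range(1001)]
x_best = min(grid, key=forrester_high)
print(x_best, forrester_high(x_best))
```

Since the additive discrepancy is a straight line, a method that models it with a simple linear correction can recover the high-fidelity function from very few expensive evaluations, which is why this pair is often used as a first sanity check.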

Quantitative Assessment and Performance Metrics

A rigorous assessment requires predefined metrics to quantify performance over measurable objectives. The proposed metrics evaluate both optimization effectiveness and global approximation accuracy [23].

Table 2: Performance Metrics for Multifidelity Optimization Assessment

| Metric Category | Specific Metric | Definition and Purpose |

|---|---|---|

| Optimization Effectiveness | Convergence Speed | Number of high-fidelity evaluations or total computational cost required to find the optimum. |

| Optimization Effectiveness | Solution Accuracy | Difference between the found optimum ( f(\mathbf{x}^*) ) and the known global optimum ( f^\star ). |

| Optimization Effectiveness | Robustness | Consistency of performance across multiple runs with different initial samples. |

| Global Approximation Accuracy | Mean Squared Error (MSE) | Average squared difference between the surrogate model and the high-fidelity function across the design space. |

| Global Approximation Accuracy | Coefficient of Determination (( R^2 )) | Proportion of variance in the high-fidelity model explained by the multifidelity surrogate. |
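The two global approximation metrics in the table can be computed as in the following sketch, which compares surrogate predictions with high-fidelity values at a set of validation points. The function names and the sample values are illustrative assumptions.

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between high-fidelity values and surrogate predictions."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when the reference values are constant")
    return 1.0 - ss_res / ss_tot


# Made-up validation data: high-fidelity values and surrogate predictions.
high_fidelity = [1.0, 2.0, 3.0, 4.0]
surrogate = [1.1, 1.9, 3.2, 3.8]

print(mean_squared_error(high_fidelity, surrogate))
print(r_squared(high_fidelity, surrogate))
```

For a fair comparison between methods, the validation points should be the same for every method and should not overlap with the points used to train the surrogate.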

Experimental Protocol for Method Comparison

To ensure a fair and meaningful comparison between different multifidelity optimization methods, the following experimental protocol is recommended.

Experimental Workflow

The diagram below outlines the logical workflow for a standardized benchmarking experiment.

Recommended Experimental Setup

For reproducible results, researchers should adhere to the following setup for the benchmark problems [23]:

- Initial Sampling: Use a space-filling design, such as Latin Hypercube Sampling (LHS), to generate an initial set of sample points for the lowest-fidelity model. The sample size should be a multiple of the problem's dimensionality.

- Infill Strategy: Define a consistent acquisition function (e.g., Expected Improvement, Lower Confidence Bound) for selecting new evaluation points based on the multifidelity surrogate model.

- Termination Criteria: Standardize stopping conditions, such as a maximum number of high-fidelity evaluations, a tolerance in solution improvement between iterations, or a computational budget.

- Repetitions: Perform multiple independent runs of each optimization method from different initial samples to account for stochasticity and compute robust performance statistics.
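The initial sampling and repetition steps above can be sketched with a small Latin Hypercube Sampling routine on the unit hypercube. This is a basic, unoptimized LHS written for illustration; the factor of 10 samples per dimension and the number of repetitions are assumptions, not values prescribed by [23].

```python
import random


def latin_hypercube(n_samples, n_dims, seed=0):
    """Basic Latin Hypercube Sampling on [0, 1)^n_dims.

    Each dimension is split into n_samples equal strata and every stratum
    receives exactly one point; strata are paired randomly across dimensions.
    """
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        column = [(k + rng.random()) / n_samples for k in range(n_samples)]
        rng.shuffle(column)
        columns.append(column)
    return [tuple(column[i] for column in columns) for i in range(n_samples)]


n_dims = 3
n_samples = 10 * n_dims  # assumption: sample size is 10 times the dimensionality
n_repetitions = 5        # assumption: number of independent runs

# One independent initial design per repetition, each with its own seed.
initial_designs = [
    latin_hypercube(n_samples, n_dims, seed=run) for run in range(n_repetitions)
]
print(initial_designs[0][:3])
```

Recording the seed of every run alongside the results allows each repetition to be regenerated exactly when a study is reproduced.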

The Scientist's Toolkit for Multifidelity Optimization

Implementing and testing multifidelity optimization methods requires a specific set of computational tools and resources.

Table 3: Essential Research Reagent Solutions for Multifidelity Benchmarking

| Item | Function in the Benchmarking Process |

|---|---|

| L1 Benchmark Code Suite | Pre-implemented analytical benchmark functions in languages like Python, MATLAB, or Fortran. Provides a standardized, ready-to-use testbed. [23] |

| Multifidelity Optimization Software | Frameworks such as mf2 or Dakota that provide built-in algorithms for multifidelity surrogate modeling (e.g., Co-Kriging) and optimization. |

| Performance Metric Calculators | Scripts to compute standardized metrics (see Table 2) from optimization history data, ensuring consistent evaluation across studies. |

| Color Contrast Checker | A tool like the WebAIM Color Contrast Checker to ensure all visualizations (e.g., convergence plots, surrogate models) meet accessibility standards (WCAG AA). [24] |

The systematic assessment of multifidelity optimization methods through standardized analytical benchmarks is a critical step toward their reliable application in computationally intensive fields like drug development. The framework presented here—encompassing a diverse suite of benchmark problems, quantitative performance metrics, and a detailed experimental protocol—provides researchers with the necessary tools for objective comparison.

Based on this benchmarking approach, the primary lessons are:

- No Single Best Method: The performance of a multifidelity method is highly dependent on the mathematical characteristics of the problem, such as modality and fidelity discrepancy.

- Controlled Testing is Crucial: L1 benchmarks are indispensable for understanding fundamental algorithmic behaviors before progressing to more expensive L2 or L3 engineering problems.

- Rigorous Protocol Ensures Fairness: Adherence to a standardized experimental setup, including initial sampling, termination criteria, and multiple runs, is fundamental for producing credible and comparable results.

This benchmarking framework equips scientists and engineers to select and tailor multifidelity optimization strategies that can significantly accelerate the discovery and development pipeline by making the most efficient use of computational resources across model fidelity levels.

A Landscape of Computational Tools: From QSAR and Docking to AI and Quantum Mechanics

The predictive assessment of physicochemical properties and toxicokinetic profiles is a critical step in the development of new chemical entities, particularly in the pharmaceutical and regulatory sectors. Quantitative Structure-Activity Relationship (QSAR) tools have emerged as indispensable computational methods for filling data gaps by estimating properties based on molecular structure, thereby reducing reliance on costly and time-consuming experimental testing. These tools operate on the fundamental principle that similar molecular structures exhibit similar biological activities and properties, a concept formally known as the similarity-property principle [25] [26]. The evolution of QSAR methodologies from simple linear regression models utilizing few physicochemical parameters to complex machine learning algorithms capable of processing thousands of chemical descriptors has significantly expanded their predictive capabilities and application domains [25].

The reliability of QSAR predictions is of paramount importance for regulatory acceptance and safety assessment. Consequently, benchmarking the predictive accuracy and applicability domains of these tools has become a central focus in computational toxicology and drug design research. This review objectively compares the performance of prominent QSAR tools, with particular emphasis on the OECD QSAR Toolbox, and examines the experimental protocols and benchmarking methodologies essential for validating their predictive capabilities for physicochemical and toxicokinetic properties.

The OECD QSAR Toolbox

The OECD QSAR Toolbox represents a comprehensive software solution developed through international collaboration to promote the regulatory acceptance of (Q)SAR methodologies [27] [28]. As a freely available application, it supports transparent chemical hazard assessment by providing functionalities for experimental data retrieval, metabolism simulation, and chemical property profiling. The Toolbox incorporates 62 databases covering approximately 155,000 chemicals and containing over 3.3 million experimental data points, making it one of the most extensive resources for chemical safety assessment [27].

The core workflow of the Toolbox involves: (1) identifying relevant structural characteristics and potential mechanisms or modes of action of a target chemical; (2) identifying other chemicals that share the same structural characteristics and/or mechanisms; and (3) using existing experimental data from these analogous chemicals to fill data gaps through read-across or trend analysis [28]. The system also incorporates various external QSAR models that can be executed to generate supporting evidence for chemical assessments [27].

Other QSAR Platforms and Frameworks

While the OECD QSAR Toolbox represents a major integrative effort, several other platforms and methodologies contribute to the QSAR landscape. OrbiTox, developed by Sciome, offers chemistry-based similarity searching, molecular descriptors, over a million data points, more than 100 QSAR models, and a built-in metabolism predictor [29]. Similarly, research continues to develop novel QSAR approaches such as Topological Regression (TR), which provides a statistically grounded, computationally fast, and interpretable technique for predicting drug responses while addressing the challenge of activity cliffs—pairs of structurally similar compounds with large differences in potency [30].

The development of robust QSAR models relies on specialized software packages for molecular descriptor calculation, including PaDEL, Mordred, and RDKit [30]. Deep-learning methods such as Chemprop utilize directed message-passing neural networks to learn molecular representations directly from graphs for property prediction, demonstrating particular utility in antibiotic discovery and lipophilicity prediction [30].

Table 1: Comparison of Major QSAR Platforms and Their Capabilities

| Platform | Primary Focus | Data Resources | Key Functionalities | Regulatory Acceptance |

|---|---|---|---|---|

| OECD QSAR Toolbox | Integrated chemical hazard assessment | 62 databases, 155K+ chemicals, 3.3M+ data points [27] | Profiling, read-across, metabolic simulator, QSAR model integration | High (OECD-developed) |

| OrbiTox (Sciome) | Read-across and QSAR modeling | 1M+ data points, 100+ QSAR models [29] | Chemistry-based similarity searching, metabolism prediction | Growing (Regulatory submissions focus) |

| Topological Regression | Drug response prediction | Dependent on input datasets [30] | Interpretable similarity-based regression, activity cliff handling | Research phase |

| Chemprop | Property prediction from molecular graphs | Dependent on input datasets [30] | Message-passing neural networks, embedded feature extraction | Research phase |

Experimental Protocols for QSAR Tool Benchmarking

Standardized Workflow for Predictive Assessment

The evaluation of QSAR tool performance requires carefully designed experimental protocols that ensure reproducibility and statistical significance. A robust benchmarking methodology typically follows these essential steps:

Dataset Curation and Preprocessing: High-quality datasets with well-characterized chemical structures and reliably measured experimental values for physicochemical and toxicokinetic properties form the foundation of any benchmarking study. The chemical diversity and structural complexity of the compounds in the dataset must adequately represent the application domain of interest [25]. Data preprocessing steps may include normalization, handling of missing values, and removal of duplicates.

Chemical Representation and Descriptor Calculation: Molecular structures are converted into machine-readable mathematical representations using various descriptor types. These may include classical molecular descriptors encoding specific computed or measured attributes, molecular fingerprints such as Extended-Connectivity Fingerprints (ECFPs) that encode chemical substructures, or graph representations that characterize 2D chemical structures as graphs with atoms as vertices and bonds as edges [30].

Chemical Category Formation: For read-across approaches, chemicals are grouped into toxicologically meaningful categories based on structural similarity, mechanistic similarity, or shared metabolic pathways [27]. The OECD QSAR Toolbox provides several profiling schemes (profilers) to identify the affiliation of target chemicals with predefined categories containing functional groups or alerts associated with specific mechanisms of action [27].

Model Application and Prediction: The curated dataset is processed through the QSAR tools being evaluated to generate predictions for the target properties. This may involve read-across from similar compounds with experimental data, application of QSAR models, or trend analysis within chemical categories [27].

Performance Validation and Statistical Analysis: Predictive performance is quantified by comparing tool predictions with held-out experimental data using statistical metrics. Common measures include accuracy, precision, sensitivity, and F1-score for classification endpoints, and correlation coefficients, root mean square error (RMSE), and mean absolute error (MAE) for continuous endpoints [3]. Cross-validation techniques are employed to ensure robust performance estimation [25].
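The validation step for continuous endpoints can be sketched as below, with a simple k-fold splitter plus RMSE and MAE. The model-fitting step is deliberately left out, and the function names and the shuffling scheme are assumptions made for illustration.

```python
import math
import random


def k_fold_indices(n_items, k, seed=0):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation."""
    if k < 2 or k > n_items:
        raise ValueError("k must be between 2 and the number of items")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, test


def rmse(y_true, y_pred):
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Each compound appears in exactly one test fold.
splits = list(k_fold_indices(n_items=10, k=5, seed=0))
for train_idx, test_idx in splits:
    print(sorted(test_idx))

# Made-up measured and predicted values for one fold.
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]), mae([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```

Random splitting is only one option. When a dataset contains many close analogues, grouping structurally similar compounds into the same fold gives a more conservative estimate of performance on new chemistry.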

The following diagram illustrates the generalized workflow for benchmarking QSAR tools:

Addressing Methodological Challenges

Robust benchmarking must account for several methodological challenges inherent to QSAR modeling. The applicability domain of each tool must be carefully considered to avoid extrapolation beyond the chemical space for which the tool was designed [25]. The presence of activity cliffs, where small structural modifications result in significant activity changes, can substantially impact predictive performance and requires specific handling strategies [30].

Class imbalance in datasets represents another critical challenge, as unequal representation of different activity classes can bias model performance. Techniques such as undersampling have been used successfully to address this issue in other classification settings, such as IoT attack detection [3]. Furthermore, feature selection methods including Chi-square tests, Principal Component Analysis (PCA), and Random Forest Regressor (RFR) can enhance model performance and computational efficiency by identifying the most relevant features, which in QSAR modeling are molecular descriptors [3].

Essential Research Reagent Solutions

The effective application of QSAR tools requires a suite of computational "research reagents" that facilitate various stages of the predictive workflow. These foundational resources enable everything from initial chemical representation to final model interpretation.

Table 2: Essential Research Reagent Solutions for QSAR Studies

| Research Reagent | Category | Primary Function | Examples/Implementations |

|---|---|---|---|

| Molecular Descriptors | Chemical Representation | Quantify structural and physicochemical features | PaDEL, Mordred, RDKit [30] |

| Molecular Fingerprints | Chemical Representation | Encode substructural patterns as bit strings | Extended-Connectivity Fingerprints (ECFPs) [30] |

| Profiling Schemes | Category Formation | Identify structural alerts and mechanism-based groups | OECD QSAR Toolbox Profilers [27] |

| Metabolic Simulators | Transformation Prediction | Predict biotic and abiotic transformation products | Built-in metabolism simulators [27] |

| Similarity Metrics | Read-Across | Quantify structural similarity between compounds | Tanimoto coefficient, Euclidean distance [30] |

| Feature Selection Methods | Model Optimization | Identify most relevant descriptors | Chi-square, PCA, Random Forest Regressor [3] |

Molecular descriptors and fingerprints serve as the fundamental language for representing chemical structures in machine-readable formats, enabling quantitative comparisons between compounds [30]. Profiling schemes, such as those implemented in the OECD QSAR Toolbox, facilitate the identification of structurally and mechanistically related compounds, forming the basis for read-across and category formation [27]. Metabolic simulators predict potential transformation products, which is crucial for toxicokinetic assessments as metabolites may exhibit different properties and activities compared to parent compounds [27].

Similarity metrics provide quantitative measures of structural resemblance, guiding the identification of suitable source compounds for read-across predictions [30]. Finally, feature selection methods enhance model interpretability and computational efficiency by identifying the most relevant molecular descriptors for specific predictive tasks [3].
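The role of a similarity metric in selecting read-across source compounds can be illustrated with the Tanimoto coefficient on binary fingerprints. In this sketch a fingerprint is represented as a set of "on" bit indices; the bit sets and compound names are hypothetical and were not computed from real molecules.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # assumption: two empty fingerprints are treated as dissimilar
    return len(a & b) / union


def nearest_analogues(target_fp, library, top_n=3):
    """Rank library compounds by Tanimoto similarity to the target, most similar first."""
    scored = [(name, tanimoto(target_fp, fp)) for name, fp in library.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]


# Hypothetical fingerprints.
target = {1, 2, 3, 4}
library = {
    "analogue_A": {1, 2, 3, 4},
    "analogue_B": {1, 2, 3, 5},
    "analogue_C": {7, 8},
}

ranked = nearest_analogues(target, library)
print(ranked)
```

In practice the fingerprints would come from a cheminformatics toolkit, and a minimum similarity threshold would usually be applied before a compound is accepted as a read-across source.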

Performance Benchmarking and Comparison

Quantitative Performance Assessment

Rigorous benchmarking studies provide valuable insights into the relative performance of different QSAR approaches. While direct comparative studies between the OECD QSAR Toolbox and alternative platforms are limited in the available literature, performance data from individual studies illustrate the capabilities of contemporary QSAR methodologies.

In the evaluation of QSAR models for biological activity prediction, topological regression (TR) has demonstrated comparable or superior performance to deep-learning-based QSAR models across 530 ChEMBL human target activity datasets, while offering enhanced interpretability through the extraction of an approximate isometry between chemical space and activity space [30]. Similarly, in IoT security, a field that poses similar classification challenges, machine learning approaches including Random Forest, Decision Tree, and Gradient Boosting have achieved accuracies of up to 99.99% with appropriate feature selection methods, illustrating what well-optimized predictive models can achieve [3].

The OECD QSAR Toolbox has demonstrated practical utility across diverse regulatory and industry applications. Case studies document its use in evaluating biocides under Regulation (EC) No 528/2012, assessing agrochemicals, supporting REACH regulatory submissions, and conducting preliminary screening of raw materials for cosmetics [27]. These real-world applications provide evidence of the Toolbox's predictive capabilities, though quantitative performance metrics for specific physicochemical and toxicokinetic properties are not uniformly reported in the available literature.

Computational Efficiency Considerations

Beyond predictive accuracy, computational efficiency represents a critical practical consideration, particularly for large-scale chemical assessments. Recent advances have demonstrated significant improvements in training and prediction times without compromising accuracy. For instance, optimized Decision Tree models have achieved a 98.71% reduction in training time and a 99.53% reduction in prediction time compared to previously reported results while maintaining superior accuracy [3]. Although these results come from a different application domain, they highlight the importance of computational efficiency in practical implementations of predictive algorithms.

Feature selection methods substantially impact computational efficiency. In the IoT attack classification study, which compared Chi-square, PCA, and Random Forest Regressor (RFR) feature selection, RFR outperformed the other two methods, contributing to both enhanced accuracy and reduced computational requirements [3]. The OECD QSAR Toolbox addresses efficiency challenges through its streamlined workflow, which incorporates theoretical knowledge, experimental data, and computational tools organized in a logical sequence to simplify the application of non-test methods [27].

The following diagram illustrates the relationship between key factors influencing QSAR tool performance:

Emerging Trends and Development Needs

The field of QSAR modeling continues to evolve, with several emerging trends shaping its future development. The integration of deep learning methodologies represents a significant advancement, offering enhanced capabilities for learning complex functional relationships between molecular descriptors and activity [25]. However, these approaches often face challenges in interpretability, prompting research into explainable AI techniques for molecular design [30].

The development of universal QSAR models capable of reliably predicting the properties of diverse chemical structures remains an aspirational goal. Achieving this objective requires addressing several fundamental challenges: (1) assembling sufficient structure-activity relationship instances to cope with the complexity and diversity of molecular structures and action mechanisms; (2) developing precise molecular descriptors that balance dimensionality with computational cost; and (3) implementing powerful and flexible mathematical models to learn complex structure-activity relationships [25].

Bibliometric analyses of QSAR publications reveal evolutionary trends in the field, including increases in dataset sizes, diversification of descriptor types, and growing adoption of advanced machine learning algorithms [25]. These trends reflect ongoing efforts to expand the applicability domains of QSAR models and enhance their predictive performance across broader chemical spaces.

This review has examined the current landscape of QSAR tools for predicting physicochemical and toxicokinetic properties, with particular focus on the OECD QSAR Toolbox as a comprehensive, regulatory-supported platform. The benchmarking of these tools requires carefully designed experimental protocols that address dataset curation, chemical representation, category formation, model application, and performance validation.

The OECD QSAR Toolbox distinguishes itself through its extensive data resources, integrative workflow combining multiple assessment approaches, and widespread adoption in regulatory contexts. While emerging approaches such as topological regression and deep learning-based models show promise for enhanced performance and interpretability, the Toolbox remains a cornerstone in computational toxicology due to its transparency, comprehensive functionality, and regulatory acceptance.

As the field advances, the convergence of larger and higher-quality datasets, more accurate molecular descriptors, and sophisticated modeling techniques will continue to improve the predictive ability, interpretability, and application domains of QSAR tools. These developments will further solidify the role of computational approaches in chemical safety assessment and drug discovery, providing efficient and effective means for predicting essential physicochemical and toxicokinetic properties.

Molecular docking is a cornerstone of computational drug discovery, enabling the prediction of how small molecules interact with biological targets. The accuracy of these predictions hinges on the docking protocols and scoring functions used to approximate binding affinity. However, the performance of the many available tools and functions can vary significantly with the target and scenario. This creates a critical need for rigorous benchmarking, that is, the systematic comparison of computational methods using standardized datasets and metrics, to provide actionable insights for researchers and drive method development forward. This guide objectively compares the performance of current docking and scoring methodologies, framing the findings within the broader thesis that robust benchmarking is fundamental for ensuring the accuracy and reliability of computational methods in structural biology and drug design.

Performance Comparison of Docking and Scoring Methods

The performance of docking tools and scoring functions is highly context-dependent, influenced by the protein target, the presence of resistance mutations, and the chemical space of the screened ligands. The following tables summarize key quantitative findings from recent benchmarking studies.

Table 1: Benchmarking Docking Tools and ML Rescoring against PfDHFR Variants [31]

| Target Variant | Docking Tool | Rescoring Method | Primary Metric (EF 1%) | Performance Summary |
|---|---|---|---|---|
| Wild-Type (WT) PfDHFR | AutoDock Vina | None (Default Scoring) | Worse-than-random | Poor initial screening performance |
| Wild-Type (WT) PfDHFR | AutoDock Vina | RF-Score-VS v2 | Better-than-random | Significant improvement with ML rescoring |
| Wild-Type (WT) PfDHFR | AutoDock Vina | CNN-Score | Better-than-random | Significant improvement with ML rescoring |
| Wild-Type (WT) PfDHFR | PLANTS | CNN-Score | 28 | Best overall enrichment for WT variant |
| Quadruple-Mutant (Q) PfDHFR | FRED | CNN-Score | 31 | Best overall enrichment for resistant Q variant |

EF 1%: Enrichment Factor at the top 1% of the screened library; a higher value indicates better ability to prioritize active compounds.
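The enrichment factor defined above can be sketched in a few lines. This is a minimal illustration, not the code used in [31]: the function name `enrichment_factor`, the rounding of the top slice (rounded down, at least one compound), and the toy ranking are all assumptions made for the example.

```python
import math

def enrichment_factor(is_active_ranked, fraction=0.01):
    """EF at `fraction`: hit rate in the top slice divided by the overall hit rate.

    `is_active_ranked` holds True/False labels ordered from best to worst score.
    """
    n_total = len(is_active_ranked)
    n_actives = sum(is_active_ranked)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty ranking with at least one active")
    # Assumption: the top slice is rounded down, but never below one compound.
    n_top = max(1, math.floor(n_total * fraction))
    hits_top = sum(is_active_ranked[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)

# Hypothetical rankings for a library of 40 actives and 1,200 decoys.
perfect = [True] * 40 + [False] * 1200   # every active ranked first
worst = [False] * 1200 + [True] * 40     # every active ranked last
ef_perfect = enrichment_factor(perfect)
ef_worst = enrichment_factor(worst)
```

Under this rounding assumption, the top 1% of a 1,240-compound library is 12 compounds, so a perfect ranking gives an EF 1% of 31 and a ranking with no actives in the top slice gives 0. An EF of 1 corresponds to random selection.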

Table 2: Pairwise Performance Comparison of MOE Scoring Functions [32]

| Scoring Function | Type | Best Docking Score (BestDS) | Best RMSD (BestRMSD) | RMSD of BestDS Pose (RMSD_BestDS) | DS of BestRMSD Pose (DS_BestRMSD) |
|---|---|---|---|---|---|
| Alpha HB | Empirical | Moderate | High Performance | Moderate | Moderate |
| London dG | Empirical | Moderate | High Performance | Moderate | Moderate |
| ASE | Empirical | Moderate | Moderate | Moderate | Moderate |
| Affinity dG | Empirical | Moderate | Moderate | Moderate | Moderate |
| GBVI/WSA dG | Force-Field | Moderate | Moderate | Moderate | Moderate |

Performance assessed on the CASF-2013 benchmark (195 complexes). The BestRMSD output, which measures pose prediction accuracy, was the most informative for distinguishing between scoring functions, with Alpha HB and London dG showing the highest comparability and performance [32].
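The four outputs in Table 2 can be read as different ways of picking a pose from the same docking run. The sketch below shows one way to derive them from a list of poses; the `Pose` type, the `summarize_poses` helper, and the example values are hypothetical, and it assumes that a lower (more negative) docking score is better.

```python
from typing import NamedTuple

class Pose(NamedTuple):
    score: float  # docking score; assumed here that lower is better
    rmsd: float   # RMSD to the crystallographic ligand pose, in angstroms

def summarize_poses(poses):
    """Return the four per-complex outputs compared in Table 2."""
    best_by_score = min(poses, key=lambda p: p.score)
    best_by_rmsd = min(poses, key=lambda p: p.rmsd)
    return {
        "BestDS": best_by_score.score,       # best docking score found
        "BestRMSD": best_by_rmsd.rmsd,       # closest pose to the crystal structure
        "RMSD_BestDS": best_by_score.rmsd,   # how accurate the top-scored pose is
        "DS_BestRMSD": best_by_rmsd.score,   # how well the most accurate pose scores
    }

# Hypothetical poses for a single complex.
poses = [Pose(-9.1, 3.4), Pose(-8.7, 1.2), Pose(-7.9, 0.8)]
summary = summarize_poses(poses)
```

In this toy case the top-scored pose is not the most accurate one (RMSD_BestDS is 3.4 while BestRMSD is 0.8), which is the kind of gap between scoring and pose accuracy that the pairwise comparison is designed to expose.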

Table 3: Impact of Training Data on ML Score Prediction (Chemprop) [33]

| Training Set Size | Sampling Strategy | Overall Pearson (AmpC) | logAUC (Top 0.01%) | Key Insight |
|---|---|---|---|---|
| 1,000 | Random | 0.65 | 0.49 (est.) | Low correlation, poor enrichment of top scorers |
| 100,000 | Random | 0.83 | 0.49 | High correlation does not guarantee good enrichment |
| 100,000 | Stratified | 0.76 | 0.77 | Strategic sampling significantly improves enrichment |
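A toy example helps show why a high overall correlation does not guarantee good enrichment of top scorers. The sketch below is purely illustrative: the data are synthetic, lower scores are assumed to be better, and `top_k_recall` is a simplified stand-in for the logAUC metric in Table 3, not that metric itself.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def top_k_recall(true_scores, predicted_scores, k):
    """Fraction of the true top-k (lowest scores) found in the predicted top-k."""
    true_top = sorted(range(len(true_scores)), key=lambda i: true_scores[i])[:k]
    pred_top = sorted(range(len(predicted_scores)), key=lambda i: predicted_scores[i])[:k]
    return len(set(true_top) & set(pred_top)) / k

# Synthetic docking scores for 100 molecules; molecules 0-4 are the true best.
true_scores = [float(i) for i in range(100)]
# A hypothetical model that is exact everywhere except for the five best molecules,
# which it mistakes for mid-ranked ones.
predicted_scores = list(true_scores)
for i in range(5):
    predicted_scores[i] = 20.0 + 0.1 * i

r = pearson(true_scores, predicted_scores)
recall = top_k_recall(true_scores, predicted_scores, k=5)
```

Here the correlation stays above 0.95 because 95 of the 100 predictions are exact, yet none of the five best molecules is recovered in the predicted top five. Errors concentrated in the extreme tail barely move a global statistic but dominate early-enrichment metrics.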

Experimental Protocols for Key Benchmarking Studies

Benchmarking Protocol for Antimalarial Target (PfDHFR)

A comprehensive benchmark was conducted to evaluate screening performance against both wild-type and drug-resistant Plasmodium falciparum dihydrofolate reductase (PfDHFR) [31].

- Protein Preparation: Crystal structures for WT (PDB: 6A2M) and quadruple-mutant (Q) PfDHFR (PDB: 6KP2) were obtained from the Protein Data Bank. Proteins were prepared with OpenEye's "Make Receptor" GUI by removing water molecules, ions, and redundant chains, then adding and optimizing hydrogen atoms [31].

- Benchmark Set Preparation: The DEKOIS 2.0 protocol was employed to create benchmark sets for each variant. Each set contained 40 known bioactive molecules and 1,200 structurally similar but presumed inactive decoys (a 1:30 ratio). Ligands were prepared with Omega to generate multiple conformations [31].

- Docking Experiments: Three docking tools were evaluated:

  - AutoDock Vina: Receptor and ligands were converted to PDBQT format. Grid boxes were centered on the binding site.

  - FRED: Required pre-generated ligand conformations.

  - PLANTS: Utilized SPORES for correct atom typing.

- Machine Learning Rescoring: The top poses from each docking tool were rescored using two pretrained ML scoring functions: RF-Score-VS v2 (Random Forest-based) and CNN-Score (Convolutional Neural Network-based).

- Performance Evaluation: Screening quality was assessed using:

  - Enrichment Factor at 1% (EF 1%): Measures the concentration of true actives in the top 1% of the ranked list.

  - pROC-AUC: Area under the semi-log ROC curve, assessing early enrichment.

  - pROC-Chemotype Plots: Evaluate the retrieval of diverse, high-affinity chemotypes.
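The pROC-AUC listed above rewards actives that are retrieved before many decoys have been seen. The sketch below uses one common formulation, the mean of -log10(false positive rate) at the rank of each active, and is not taken from [31]. The function name `proc_auc` and the floor of 1/(number of decoys) applied to a zero false positive rate are assumptions made for the example.

```python
import math

def proc_auc(is_active_ranked):
    """Mean of -log10(FPR) evaluated at the rank of each active.

    `is_active_ranked` holds True/False labels ordered from best to worst score.
    """
    n_decoys = sum(1 for a in is_active_ranked if not a)
    n_actives = len(is_active_ranked) - n_decoys
    if n_actives == 0 or n_decoys == 0:
        raise ValueError("need at least one active and one decoy")
    # Assumption: an FPR of zero is floored at 1/n_decoys so the log stays finite.
    floor = 1.0 / n_decoys
    decoys_seen = 0
    total = 0.0
    for active in is_active_ranked:
        if active:
            fpr = max(decoys_seen / n_decoys, floor)
            total += -math.log10(fpr)
        else:
            decoys_seen += 1
    return total / n_actives

# Hypothetical rankings for 40 actives and 1,200 decoys.
perfect = [True] * 40 + [False] * 1200
worst = [False] * 1200 + [True] * 40
auc_perfect = proc_auc(perfect)
auc_worst = proc_auc(worst)
```

Because the false positive rate is on a logarithmic axis, an active found after 1% of the decoys contributes 2.0 while one found after 50% contributes about 0.3. This is what makes the metric sensitive to early enrichment.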

Community Standard Benchmarking Guidelines

Essential guidelines for rigorous computational benchmarking, distilled from community best practices, include [34]:

- Define Purpose and Scope: Clearly state whether the benchmark is a "neutral" comparison or for demonstrating a new method's advantage. The selection of methods and datasets should flow from this purpose.

- Comprehensive Method Selection: Neutral benchmarks should strive to include all available methods, or at least a representative subset, with clear and unbiased inclusion criteria (e.g., software availability, usability).

- Use of Diverse Datasets: Incorporate a variety of benchmark datasets, including both simulated data (with known ground truth) and real experimental data. Datasets should reflect relevant biological and chemical challenges.

- Avoid Bias: Apply the same level of optimization and trouble-shooting to all methods being compared. Do not over-tune a new method while using out-of-the-box settings for competitors.

- Employ Robust Metrics: Use multiple, complementary performance metrics (e.g., EF, AUC, RMSD) to provide a holistic view of method performance and trade-offs.
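The guidelines above suggest a simple structural rule: every method is run on every dataset and scored with every metric through the same code path, so that no method receives special treatment. A minimal sketch of such a harness follows. The function `run_benchmark` and the toy methods, datasets, and metrics are all hypothetical placeholders.

```python
def run_benchmark(methods, datasets, metrics):
    """Score every method on every dataset with every metric.

    methods:  name -> callable(inputs) returning predictions
    datasets: name -> (inputs, ground_truth)
    metrics:  name -> callable(predictions, ground_truth) returning a float
    """
    results = {}
    for method_name, method in methods.items():
        for dataset_name, (inputs, truth) in datasets.items():
            predictions = method(inputs)
            for metric_name, metric in metrics.items():
                key = (method_name, dataset_name, metric_name)
                results[key] = metric(predictions, truth)
    return results

def mean_absolute_error(pred, truth):
    return sum(abs(p - t) for p, t in zip(pred, truth)) / len(truth)

def max_absolute_error(pred, truth):
    return max(abs(p - t) for p, t in zip(pred, truth))

# Hypothetical toy setup: two methods, one simulated and one experimental dataset.
methods = {
    "identity": lambda xs: list(xs),
    "constant_zero": lambda xs: [0.0 for _ in xs],
}
datasets = {
    "simulated": ([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]),
    "experimental": ([1.0, 2.0, 3.0], [1.5, 2.5, 2.0]),
}
metrics = {"mae": mean_absolute_error, "max_err": max_absolute_error}

results = run_benchmark(methods, datasets, metrics)
```

Keeping the full method by dataset by metric grid, rather than a single aggregated score, makes trade-offs visible and makes it harder to report only the combinations that favor one method.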

Workflow and Relationship Diagrams

[Diagram not reproduced: standard workflow for a structure-based virtual screening (SBVS) benchmarking study, from initial preparation to final evaluation.]

Machine Learning Rescoring Process

[Diagram not reproduced: process of applying machine learning scoring functions to refine the results of classical docking tools.]

Table 4: Key Software Tools and Databases for Docking Benchmarking

| Resource Name | Type | Primary Function in Benchmarking | Relevant Citation |
|---|---|---|---|
| DEKOIS 2.0 | Benchmark Dataset | Provides sets of known active molecules and carefully matched decoys to test screening enrichment. | [31] |
| PDBbind | Database | A comprehensive collection of protein-ligand complexes with binding affinity data, used for scoring function validation. | [32] |
| CASF-2013 | Benchmark Dataset | A curated subset of PDBbind used for the Comparative Assessment of Scoring Functions. | [32] |
| AutoDock Vina | Docking Tool | A widely used, open-source molecular docking engine. | [31] |
| FRED | Docking Tool | A docking tool that requires pre-generated ligand conformations and uses a rigorous scoring process. | [31] |
| PLANTS | Docking Tool | A docking tool that utilizes ant colony optimization algorithms for pose prediction. | [31] |
| CNN-Score | ML Scoring Function | A convolutional neural network-based scoring function for re-ranking docking poses. | [31] |
| RF-Score-VS v2 | ML Scoring Function | A random forest-based scoring function designed for virtual screening. | [31] |
| TDC Docking Benchmark | Benchmark Framework | Provides benchmarks and oracles for evaluating AI-generated molecules against target proteins. | [35] |
| CCharPPI Server | Evaluation Tool | Allows for the assessment of scoring functions independent of the docking process itself. | [36] |

The application of artificial intelligence and machine learning (AI/ML) in drug discovery has ushered in a new era of computational methods research. Central to this paradigm shift is the critical need to rigorously benchmark the accuracy of different approaches, particularly in predicting drug mechanisms of action (MOA) in oncology. DeepTarget emerges as a significant innovation in this landscape, representing a class of models that prioritize functional cellular context over purely structural predictions. Unlike traditional structure-based methods that predict protein-small molecule binding affinity from static structures, DeepTarget introduces a fundamentally different approach by integrating large-scale drug and genetic knockdown viability screens with omics data from matched cell lines [37]. This methodological divergence presents a unique opportunity for comparative benchmarking to determine optimal applications for different computational strategies in target identification.

Methodological Comparison: DeepTarget Versus Structural Approaches

Core Computational Frameworks

The fundamental difference between DeepTarget and structure-based methods lies in their underlying principles and data requirements:

DeepTarget's Functional Approach: DeepTarget operates on the hypothesis that CRISPR-Cas9 knockout (CRISPR-KO) of a drug's target gene mimics the drug's inhibitory effects across cancer cell lines [37]. It integrates three data types across cancer cell line panels: (1) drug response profiles, (2) genome-wide CRISPR-KO viability profiles, and (3) corresponding omics data (gene expression and mutation) [37]. The method calculates a Drug-Knockout Similarity (DKS) score through linear regression that corrects for screen confounding factors, quantifying the similarity between drug treatment and genetic perturbation effects [37].
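The idea behind a drug-knockout similarity score can be illustrated with a small NumPy sketch. This is not the published DeepTarget implementation: it assumes that confounder correction is done by regressing both profiles on the confounders and correlating the residuals, and the names `residualize`, `dks_sketch`, and `batch`, as well as the simulated data, are hypothetical.

```python
import numpy as np

def residualize(y, confounders):
    """Remove the part of `y` explained linearly by the confounders (plus intercept)."""
    design = np.column_stack([np.ones(len(y)), confounders])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return y - design @ beta

def dks_sketch(drug_response, ko_viability, confounders):
    """Correlation of drug and knockout profiles after confounder correction."""
    r_drug = residualize(drug_response, confounders)
    r_ko = residualize(ko_viability, confounders)
    return float(np.corrcoef(r_drug, r_ko)[0, 1])

# Simulated panel of 200 cell lines with one shared screen confounder.
rng = np.random.default_rng(0)
n_lines = 200
batch = rng.normal(size=n_lines)        # hypothetical confounder affecting all screens
dependency = rng.normal(size=n_lines)   # true dependence on the target gene

ko_target = dependency + batch                      # knockout of the true target
ko_other = rng.normal(size=n_lines) + batch         # knockout of an unrelated gene
drug = 0.8 * dependency + batch + 0.3 * rng.normal(size=n_lines)

score_target = dks_sketch(drug, ko_target, batch)
score_other = dks_sketch(drug, ko_other, batch)
```

In this simulation the shared confounder alone would make the drug profile correlate with every knockout profile. After correction, only the true target retains a high similarity, which is the motivation for correcting screen confounders before ranking candidate targets.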

Structure-Based Methods: Tools like RoseTTAFold All-Atom and Chai-1 represent the state of the art in predicting protein-small molecule binding affinity from structural information [37]. These methods rely on protein structures and chemical information to predict binding interactions but do not incorporate cellular context, interaction dynamics, or pharmacokinetics [37].

Key Differentiating Factors

DeepTarget's architecture incorporates several distinctive capabilities that structure-based methods do not explicitly address:

- Context-Specific Secondary Target Prediction: DeepTarget identifies secondary targets that contribute to efficacy even when primary targets are present, and those mediating responses specifically when primary targets are not expressed [37].

- Mutation-Specificity Prediction: The tool determines whether drugs preferentially target wild-type or mutant protein forms by comparing DKS scores in different genetic contexts [37].

- Pathway-Level Effects: Beyond direct binding interactions, DeepTarget captures indirect, pathway-level effects that emerge from cellular context [37].

Experimental Benchmarking: Protocols and Performance Metrics

Gold-Standard Dataset Curation

To enable rigorous benchmarking, researchers curated eight gold-standard datasets comprising high-confidence drug-target pairs focused on cancer drugs [37]. These datasets represent distinct validation scenarios:

- Clinical Resistance Pairs: Drug-target pairs where tumor mutations cause clinical resistance (COSMIC resistance, N=16; oncoKB resistance, N=28) [37].

- FDA-Approved Pairs: Targets with FDA approval for anti-cancer treatment (FDA mutation-approval, N=86) [37].

- High-Confidence Experimental Pairs: Targets validated by multiple independent reports (BioGrid Highly Cited, N=28) or designated high-confidence by scientific advisory boards (SAB, N=24) [37].

- Direct Interaction Pairs: Compounds with confirmed direct target interactions (DrugBank Active Inhibitors, N=90; DrugBank Active Antagonists, N=52) [37].

- Selective Inhibitors: Highly selective inhibitors based on binding profiles (SelleckChem selective inhibitors, N=142) [37].

Quantitative Performance Comparison

In comprehensive benchmarking across the eight gold-standard datasets, DeepTarget demonstrated superior performance against state-of-the-art structural methods:

Table 1: Benchmarking Performance Across Methodologies

| Computational Method | Mean AUC Across 8 Datasets | Relative Performance | Key Strength |
|---|---|---|---|
| DeepTarget | 0.73 | Reference | Cellular context integration |
| RoseTTAFold All-Atom | 0.58 | Outperformed by DeepTarget in 7/8 datasets | Structural binding prediction |
| Chai-1 (without MSA) | 0.53 | Outperformed by DeepTarget in 7/8 datasets | Protein-ligand interaction |

The benchmarking revealed that DeepTarget stratified positive versus negative drug-target pairs with significantly higher accuracy (mean AUC: 0.73) compared to RoseTTAFold All-Atom (0.58) and Chai-1 without multiple sequence alignment (0.53) [37]. DeepTarget outperformed these structural methods in 7 out of 8 tested datasets, demonstrating particularly strong performance in predicting clinically relevant targets and mutation-specific drug effects [37].
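The AUC values above measure how well a method's scores separate positive from negative drug-target pairs. This is equivalent to the probability that a randomly chosen positive pair is scored above a randomly chosen negative one. The sketch below computes it directly from that definition and averages it across datasets; the function names and toy scores are hypothetical and do not reproduce the data in [37].

```python
def roc_auc(scores, labels):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative pair")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def mean_auc(datasets):
    """Unweighted mean of per-dataset AUCs; `datasets` maps name -> (scores, labels)."""
    aucs = [roc_auc(scores, labels) for scores, labels in datasets.values()]
    return sum(aucs) / len(aucs)

# Hypothetical prediction scores for two small gold-standard sets.
toy_datasets = {
    "set_a": ([0.9, 0.8, 0.4, 0.3, 0.2], [1, 0, 1, 0, 0]),
    "set_b": ([0.7, 0.6, 0.5, 0.1], [1, 1, 0, 0]),
}
auc_a = roc_auc(*toy_datasets["set_a"])
overall = mean_auc(toy_datasets)
```

An AUC of 0.5 corresponds to random ordering and 1.0 to perfect separation. The unweighted mean treats every dataset equally regardless of its size, which is an assumption of this sketch. A size-weighted mean would give more influence to the larger gold-standard sets.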

Experimental Validation Case Studies

Case Study 1: Ibrutinib in BTK-Negative Solid Tumors

Experimental Protocol: Researchers tested DeepTarget's prediction that Ibrutinib (FDA-approved for blood cancers) kills lung cancer cells through secondary targeting of epidermal growth factor receptor (EGFR), despite the absence of its primary target, Bruton's tyrosine kinase (BTK) [38] [39]. The validation compared Ibrutinib's effects on cancer cells with and without cancerous mutant EGFR [38] [39].

Results: Cells harboring the mutant EGFR form were significantly more sensitive to Ibrutinib, confirming EGFR as a functionally relevant target in this context [38] [39]. This demonstrated DeepTarget's ability to identify context-specific targets that explain drug efficacy in unexpected cellular environments.

Case Study 2: Pyrimethamine Repurposing

Experimental Protocol: Researchers experimentally validated DeepTarget's prediction that pyrimethamine, an anti-parasitic drug, affects cellular viability through modulation of mitochondrial function [40].