Batch vs. Flow Reactors: An AI and Machine Learning Optimization Guide for Biomedical Research

Abstract

This article provides a comprehensive comparison of batch and flow reactor performance through the lens of modern machine learning (ML) optimization. Tailored for researchers, scientists, and drug development professionals, it explores the fundamental principles of both reactor types and delves into how ML algorithms—from real-time pattern recognition and predictive modeling to reinforcement learning and Bayesian optimization—are revolutionizing their operation. The scope ranges from foundational concepts and methodological applications to practical troubleshooting and rigorous validation, offering a clear roadmap for leveraging AI to enhance yield, purity, and efficiency in chemical synthesis and pharmaceutical development.

Batch vs. Flow Reactors: Core Principles and AI's Transformative Potential

In the pursuit of optimized chemical processes, the selection of a reactor type is a fundamental decision that significantly influences the efficiency, scalability, and success of research and development. For researchers, scientists, and drug development professionals engaged in machine learning (ML) optimization, the choice often narrows to two principal contenders: the established flexibility of batch reactors and the precise control of continuous flow reactors. This guide provides an objective comparison of their performance, supported by experimental data and detailed protocols, to inform data-driven decisions within modern ML-driven research frameworks.

Performance Comparison at a Glance

The core operational differences between batch and flow reactors lead to distinct performance characteristics, summarized in the table below.

Table 1: Key Performance Indicators for Batch and Flow Reactors

| Performance Parameter | Batch Reactor | Continuous Flow Reactor |
|---|---|---|
| Production Volume & Rate | Suitable for small to medium volumes; longer cycle times due to pauses between batches [1]. | Designed for large-scale, high-volume output; higher throughput and shorter processing times [1]. |
| Inherent Flexibility | High; allows reconfiguration and customization between batches. Ideal for R&D and niche markets [1] [2]. | Low; designed for a specific product type. Changes require significant equipment investment [1]. |
| Reaction Control & Mixing | Can suffer from poor heat and mass transfer, leading to hot spots and difficulties with exothermic reactions [3]. | Superior heat and mass transfer from high surface-to-volume ratios; precise control of residence time [3]. |
| Quality Control Approach | Quality checks at the end of a process; adjustments based on inspection of previous batches [1]. | Real-time, in-line monitoring with Process Analytical Technology (PAT); enables immediate corrections [1] [4]. |
| Typical Equipment & Footprint | Generally simpler, smaller equipment [1]. Stirred-tank reactors are common [2]. | More sophisticated equipment designed for prolonged operation [1]. Plug flow reactors (PFRs) and continuous stirred-tank reactors (CSTRs) are common [2]. |
| Operational Safety | Intuitive to operate, but large volumes of reactive material are present at once [3]. | Safer for hazardous reactions; only small volumes of reactive material are present in the system at any time [3] [5]. |

Experimental Protocols for Performance Evaluation

To generate quantitative data for ML model training, standardized experimental protocols are essential. Below are detailed methodologies for key performance tests.

Protocol: Evaluating Mixing and Plug Flow Performance

This protocol is used to quantify the flow behavior and mixing efficiency within a reactor, which is critical for predicting yield and selectivity.

- Objective: To characterize the Residence Time Distribution (RTD) and quantify deviation from ideal plug flow in a continuous flow reactor.

- Background: RTD is a critical parameter in reactor design. A narrow RTD indicates plug flow behavior, which is desirable for many reactions as it ensures all molecules have a similar residence time, improving product consistency and yield [6].

- Methodology:

- Tracer Introduction: A non-reactive tracer is injected as a pulse or step function into the reactor's inlet stream under steady-state flow conditions.

- In-line Monitoring: The tracer concentration at the reactor outlet is monitored in real-time using a suitable Process Analytical Technology (PAT) tool, such as an in-line UV/Vis spectrophotometer or conductivity meter [5].

- Data Collection: Concentration data is collected at a high frequency to construct the RTD curve, $E(t)$.

- Data Analysis: The mean residence time and variance of the RTD curve are calculated. The number of equivalent tanks-in-series can be used as a metric for plug flow performance, with higher values indicating behavior closer to ideal plug flow [7]. This metric can be directly used as an optimization target in ML frameworks.
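The moment analysis in the data-analysis step can be sketched in Python. With the normalized curve $E(t) = C(t)/\int_0^\infty C\,dt$, the mean residence time is $\tau = \int_0^\infty t\,E(t)\,dt$, the variance is $\sigma^2 = \int_0^\infty (t-\tau)^2E(t)\,dt$, and the equivalent number of tanks in series is $N = \tau^2/\sigma^2$. This is a minimal sketch assuming a pulse injection and a baseline-corrected outlet signal proportional to tracer concentration; the function name `rtd_metrics` and the synthetic test curve are illustrative, not part of the cited protocol.

```python
import math

import numpy as np


def _trapezoid(y: np.ndarray, x: np.ndarray) -> float:
    """Trapezoidal integral of y over x (written out to avoid NumPy trapz/trapezoid naming differences)."""
    return float(np.sum((y[1:] + y[:-1]) * np.diff(x)) / 2.0)


def rtd_metrics(t, c):
    """Return (mean residence time, variance, tanks-in-series N) from a pulse-tracer outlet curve.

    t: sample times in s; c: baseline-corrected outlet tracer signal (any consistent unit).
    """
    t = np.asarray(t, dtype=float)
    c = np.asarray(c, dtype=float)
    e = c / _trapezoid(c, t)                    # normalize so the area under E(t) is 1
    tau = _trapezoid(t * e, t)                  # first moment: mean residence time
    sigma2 = _trapezoid((t - tau) ** 2 * e, t)  # second central moment: spread of the RTD
    n_tanks = tau ** 2 / sigma2                 # larger N means closer to ideal plug flow
    return tau, sigma2, n_tanks


if __name__ == "__main__":
    # Synthetic check: outlet response of 5 ideal stirred tanks in series, total tau = 60 s.
    n, tau_true = 5, 60.0
    theta = tau_true / n
    t = np.linspace(0.0, 600.0, 6001)
    c = t ** (n - 1) * np.exp(-t / theta) / (math.factorial(n - 1) * theta ** n)
    tau, sigma2, n_tanks = rtd_metrics(t, c)
    print(f"tau = {tau:.1f} s, sigma^2 = {sigma2:.0f} s^2, N = {n_tanks:.2f}")
```

Because the synthetic input is the exact response of five ideal stirred tanks with a 60 s total residence time, the function should recover values close to τ = 60 s and N = 5. On real data, baseline drift and a truncated tail mainly affect the variance, and therefore N.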

Protocol: Autonomous Reaction Optimization with ML

This protocol leverages the dynamic control of flow reactors for rapid, AI-driven optimization.

- Objective: To autonomously discover optimal reaction conditions (e.g., temperature, residence time, catalyst concentration) for maximizing yield or selectivity.

- Background: Flow systems are uniquely suited for autonomous optimization due to their steady-state operation and seamless integration with PAT and control systems [3] [5].

- Methodology:

- System Setup: A continuous flow reactor is integrated with automated pumps, heaters, and PAT (e.g., inline IR or NMR spectroscopy) for real-time yield analysis [5].

- ML Integration: A machine learning algorithm, such as Bayesian optimization, is set up to control the process parameters. The algorithm's objective is to maximize the yield/selectivity signal from the PAT.

- Autonomous Experimentation: The ML algorithm sequentially selects new experimental conditions (e.g., by adjusting pump flow rates and heater temperature) based on all previous results, driving the system toward optimal conditions without human intervention [3] [7].

- Data Analysis: The performance of the optimization is evaluated by the number of experiments required to find the optimum and the final achieved yield/selectivity. This demonstrates the synergy between flow control and ML efficiency.
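The closed loop in this protocol can be illustrated with a minimal Bayesian optimization sketch: a Gaussian-process surrogate with a fixed squared-exponential (RBF) kernel and an expected-improvement acquisition function, maximized over random candidate points. The `simulated_yield` function is a hypothetical stand-in for the PAT yield signal, and the two inputs are assumed to be temperature and residence time scaled to [0, 1]; none of the names or settings come from the cited studies.

```python
import math

import numpy as np


def rbf_kernel(a, b, length_scale=0.2, variance=1.0):
    """Squared-exponential covariance between point sets a (n, d) and b (m, d)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and std of a zero-mean, unit-variance GP with fixed hyperparameters."""
    k = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_s = rbf_kernel(x_train, x_query)
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_s.T @ alpha
    v = np.linalg.solve(chol, k_s)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


_erf = np.vectorize(math.erf)


def expected_improvement(mean, std, best, xi=0.01):
    """Expected improvement over the best observation (maximization)."""
    improvement = mean - best - xi
    z = improvement / std
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + std * pdf


def simulated_yield(x):
    """HYPOTHETICAL stand-in for the PAT yield reading; x = scaled (temperature, residence time)."""
    return 0.9 * math.exp(-((x[0] - 0.7) ** 2 + (x[1] - 0.4) ** 2) / 0.1)


def optimize(objective, n_init=5, n_iter=15, n_candidates=2000, seed=0):
    """Sequential Bayesian optimization on the unit square; returns best point, best yield, history."""
    rng = np.random.default_rng(seed)
    x = rng.random((n_init, 2))                       # initial random experiments
    y = np.array([objective(p) for p in x])
    for _ in range(n_iter):
        y_scaled = (y - y.mean()) / (y.std() + 1e-9)  # standardize for the zero-mean GP
        candidates = rng.random((n_candidates, 2))
        mean, std = gp_posterior(x, y_scaled, candidates)
        ei = expected_improvement(mean, std, y_scaled.max())
        x_next = candidates[np.argmax(ei)]            # next condition proposed by the algorithm
        x = np.vstack([x, x_next])
        y = np.append(y, objective(x_next))           # real loop: set pumps/heater, wait, read PAT
    best = int(np.argmax(y))
    return x[best], y[best], y


if __name__ == "__main__":
    best_x, best_y, history = optimize(simulated_yield)
    print(f"best yield {best_y:.3f} at scaled conditions {np.round(best_x, 2)} after {len(history)} runs")
```

Hyperparameter fitting, equipment limits on the proposals, and the wait for steady state before each reading are left out for brevity. In a real campaign, the `objective` call would be replaced by writing new set points to the pumps and heater and reading the PAT signal.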

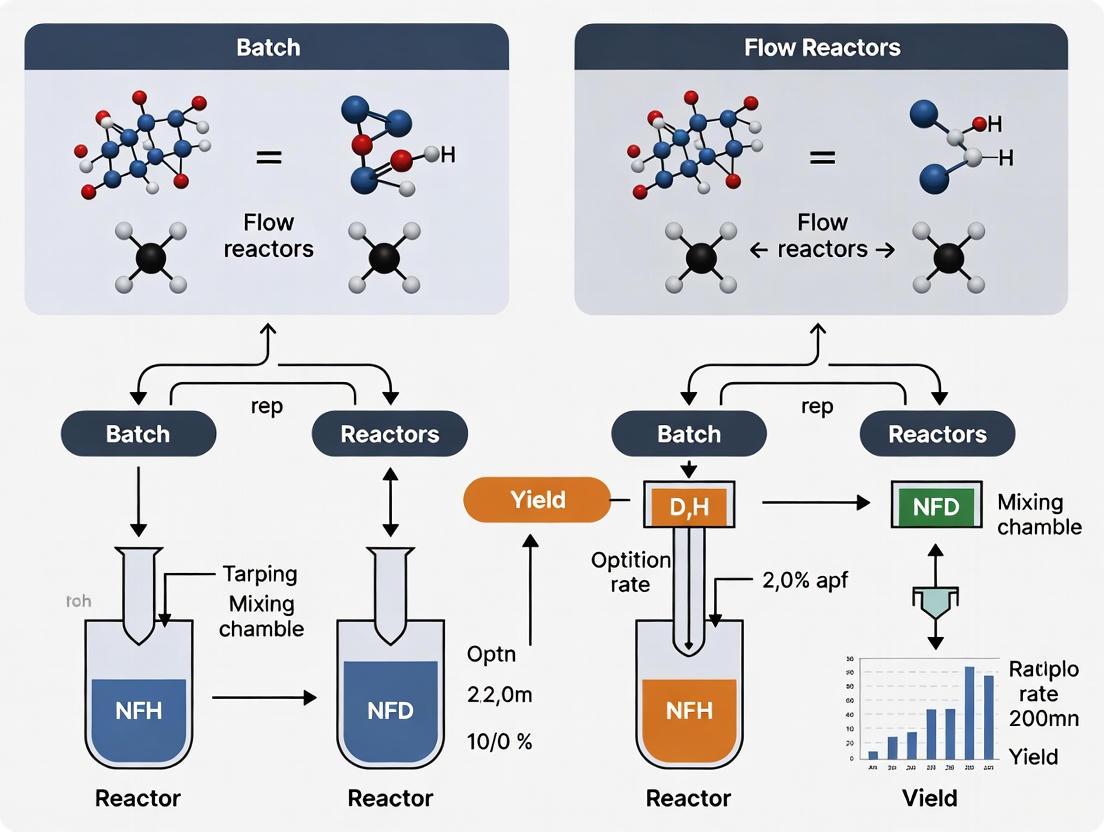

Visualizing the ML-Driven Optimization Workflow

The integration of ML with flow chemistry creates a powerful, closed-loop system for process discovery. The diagram below illustrates this automated workflow.

Diagram 1: Closed-loop ML optimization of flow chemistry. The ML algorithm autonomously proposes new experiments based on real-time analytical feedback, rapidly converging on optimal conditions.

A Researcher's Toolkit for Reactor Studies

Selecting the right tools is critical for conducting the experiments described above. The following table details essential research reagent solutions.

Table 2: Essential Research Reagents and Equipment for Reactor Studies

| Item | Function/Application | Relevance to ML & Optimization |
|---|---|---|
| Continuous Flow Microreactor | Tubing or chip-based reactor with high surface-to-volume ratio for precise reaction control [3]. | The core component for achieving the steady-state operation required for autonomous ML optimization. |
| Process Analytical Technology (PAT) | In-line sensors (e.g., IR, UV/Vis) for real-time monitoring of reaction conversion and purity [5]. | Provides the high-quality, real-time data stream that ML algorithms need to make informed decisions. |
| Automated Pump System | Delivers reagents to the flow reactor at precisely controlled rates [5]. | Acts as the "actuator" for the ML algorithm, allowing it to adjust parameters such as residence time dynamically. |
| Multi-fidelity Bayesian Optimization Algorithm | An ML technique that uses cheaper, low-fidelity simulations to reduce the number of expensive real-world experiments needed [7]. | Accelerates the discovery of optimal reactor geometries and process conditions by directing experiments to the most informative regions of the design space. |
| Additively Manufactured Reactors | 3D-printed reactors with complex, optimized internal geometries that are difficult or impossible to produce by conventional fabrication [7]. | Enables the physical implementation of ML-designed reactor geometries that enhance performance (e.g., via induced vortices). |

The Reactor Selection Framework for Research Goals

The choice between batch and flow is not absolute but should be guided by the specific research context. The following logic can aid in this decision.

Diagram 2: A decision framework for selecting between batch and flow reactors based on project requirements. This logic highlights the scenarios where flow reactors are particularly advantageous for ML-driven research.

In the context of ML optimization research, the balance between batch flexibility and flow control is increasingly tipping towards the latter. While batch reactors remain indispensable for flexible, small-scale R&D, continuous flow reactors combine control, safety, and efficient data generation in a way that is inherently compatible with machine learning. The ability of flow systems to integrate with PAT and support closed-loop, autonomous optimization makes them a powerful tool for accelerating discovery and development. As ML and additive manufacturing continue to advance, enabling the creation of previously infeasible, optimized reactor designs [7], the role of flow control in shaping the future of chemical research and manufacturing is set to expand further.

Inherent Strengths and Weaknesses for Pharmaceutical Synthesis

The selection of reactor configuration (batch or continuous flow) is a fundamental decision in pharmaceutical process development, influencing everything from reaction selectivity and safety to scalability and integration with modern optimization techniques like machine learning (ML). Historically, the manufacturing of fine chemicals and pharmaceuticals has been dominated by batch technologies because of their flexibility and the low cost and high profitability of multipurpose batch units [8]. However, the past decades have witnessed a significant shift, with continuous flow processes emerging as a powerful alternative, offering enhanced control, safety, and efficiency for many transformations [9]. This guide provides an objective comparison of batch and continuous flow reactors for pharmaceutical synthesis, framing their inherent strengths and weaknesses within the context of ML-driven reaction optimization. It summarizes key quantitative data, details experimental protocols, and visualizes the workflows that are reshaping modern process development.

Core Reactor Concepts and Comparative Analysis

A batch reactor is a transient system in which all reactants are added to a single vessel at the start of the process; the reaction proceeds until the desired conversion is reached, after which the products are removed [10]. Its operation is characterized by concentrations and conditions that change with clock time [8].

In contrast, a continuous flow reactor is a steady-state system where reactants are constantly pumped into the reactor, move through a catalyst bed or reaction channel, and products are continuously collected at the outlet [8]. This mode of operation provides consistent residence time and reaction parameters [11].
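The correspondence between batch clock time and flow residence time can be made concrete with a first-order reaction A → B with rate constant k. For ideal isothermal reactors, the textbook results are X = 1 - exp(-kt) for a batch reactor after time t, the same expression with t replaced by τ = V/Q for an ideal plug flow reactor, and X = kτ/(1 + kτ) for a single continuous stirred-tank reactor (CSTR). The sketch below assumes ideal mixing or ideal plug flow; the rate constant, volume, and flow rate are illustrative values.

```python
import math


def batch_conversion(k: float, t: float) -> float:
    """First-order conversion in an ideal isothermal batch reactor after clock time t."""
    return 1.0 - math.exp(-k * t)


def pfr_conversion(k: float, volume: float, flow_rate: float) -> float:
    """Ideal plug flow: every fluid element spends tau = V/Q in the reactor."""
    tau = volume / flow_rate
    return 1.0 - math.exp(-k * tau)


def cstr_conversion(k: float, volume: float, flow_rate: float) -> float:
    """Single ideal CSTR at steady state: X = k*tau / (1 + k*tau)."""
    tau = volume / flow_rate
    return k * tau / (1.0 + k * tau)


if __name__ == "__main__":
    k = 0.05         # 1/s, illustrative rate constant
    volume = 10.0    # mL, illustrative reactor volume
    flow_rate = 0.2  # mL/s, gives tau = 50 s
    print(f"batch, t = 50 s:  {batch_conversion(k, 50.0):.3f}")
    print(f"PFR, tau = 50 s:  {pfr_conversion(k, volume, flow_rate):.3f}")
    print(f"CSTR, tau = 50 s: {cstr_conversion(k, volume, flow_rate):.3f}")
```

With kτ = 2.5, the batch reactor and the ideal PFR both reach about 91.8% conversion, while the single CSTR reaches about 71.4%, which is one reason a narrow residence time distribution matters.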

Table 1: Fundamental Characteristics of Batch and Continuous Flow Reactors

| Characteristic | Batch Reactor | Continuous Flow Reactor |
|---|---|---|
| Operational Mode | Transient; concentrations change with time [8] | Steady-state; outlet composition is constant [8] |
| Production Scale | Suitable for small-scale and specialty production [10] | Ideal for large-scale, high-throughput production [10] |
| Flexibility | High; easy to change products and conditions between batches [12] | Low; designed for a specific set of operating parameters [10] |
| Reaction Control | Straightforward control in a single vessel [10] | Requires sophisticated control systems for steady operation [10] |
| Catalyst Handling | Catalyst separation from products is required [8] | Catalyst is often immobilized; no separation needed [8] [11] |
| Heat Transfer | Can be inefficient, especially upon scale-up [13] | Excellent due to high surface-area-to-volume ratio [11] |
| Residence Time | Variable, determined by batch duration | Precise and consistent control |

Table 2: Quantitative Performance Comparison for Select Pharmaceutical Reactions

| Reaction Type | Reactor Mode | Key Condition | Reported Yield | Key Advantage Demonstrated |
|---|---|---|---|---|
| Hydrogenation [11] | Batch | Not Specified | 49% | Baseline performance |
| Hydrogenation [11] | Continuous Flow | Not Specified | 95% | Suppression of side reactions |
| Organolithium [11] | Batch | -78 °C | 32% | Baseline performance |
| Organolithium [11] | Continuous Flow | -20 °C | 60% | Safer operation at higher temperatures |
| Diazotization [11] | Batch | Not Specified | 56% | Baseline performance |
| Diazotization [11] | Continuous Flow | Not Specified | 90% (1 kg in 8 h) | Safe handling of unstable intermediates |
| Hydride Reduction [11] | Batch | Not Specified | Not Specified | Baseline performance |
| Hydride Reduction [11] | Continuous Flow | Not Specified | 96% | Superior thermal control |
| Knoevenagel Condensation [14] | Continuous Flow | Algorithm-optimized flow rates | 59.9% | Autonomous ML-driven optimization |

Experimental Protocols and Data Generation

Robust experimental data is crucial for comparing reactor performance and training ML models. The following protocols illustrate how data is generated for both isolated reactions and autonomous optimization campaigns.

Protocol for Selective Nitroarene Hydrogenation

This protocol is adapted from studies comparing the catalytic hydrogenation of halogenated nitroarenes to haloanilines, valuable pharmaceutical intermediates, in both batch and flow modes [8].

- Objective: To selectively hydrogenate the nitro group in ortho-chloronitrobenzene (o-CNB) to produce o-chloroaniline (o-CAN) while minimizing dehalogenation.

- Batch Procedure:

- Reactor Setup: Charge a 100 mL stainless steel autoclave with a catalyst (e.g., Pd/C or Au/TiO₂) and an ethanol solution of o-CNB.

- Reaction Execution: Purge the reactor with hydrogen, then pressurize to the target pressure (e.g., 5-12 bar). Initiate the reaction with vigorous stirring and heating to 150 °C.

- Sampling & Analysis: Monitor reaction progress by sampling at intervals. Analyze samples via GC or HPLC to determine o-CNB conversion and selectivity to o-CAN versus dehalogenated by-products (aniline and nitrobenzene) [8].

- Continuous Flow (Gas-Phase) Procedure:

- Reactor Setup: Pack a fixed-bed glass reactor (15 mm inner diameter) with a supported catalyst (e.g., Au/Mo₂N or Au/TiO₂).

- Reaction Execution: Pre-heat the reactor to the target temperature (e.g., 150-220 °C). Feed a vaporized mixture of o-CNB in ethanol and hydrogen at atmospheric pressure through the catalyst bed.

- Analysis: Continuously analyze the effluent gas stream using online GC to measure steady-state conversion and selectivity [8].

- Key Findings: In these studies, Au-based catalysts gave 100% selectivity to o-CAN in continuous flow mode, whereas Pd/C in batch mode produced significant dehalogenation by-products (up to 20% aniline) [8]. Because the catalyst changes along with the operating mode, this comparison reflects the combined effect of catalyst choice and continuous gas-phase operation, not reactor type alone.
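The conversion and selectivity figures in this protocol follow from a mole balance on the GC or HPLC results. A minimal sketch, assuming the chromatographic areas have already been converted to moles (for example via calibration factors), that o-CAN, aniline, and nitrobenzene are the only products, and that each forms 1:1 from o-CNB; the function name and sample numbers are hypothetical and are not data from [8].

```python
def conversion_and_selectivity(n_cnb_initial, n_cnb_remaining, n_products):
    """Return (conversion of o-CNB, {product: selectivity}) from mole amounts.

    Selectivity is taken on a total-product basis; with 1:1 stoichiometry and a closed
    mass balance this equals selectivity on a converted-o-CNB basis.
    """
    converted = n_cnb_initial - n_cnb_remaining
    conversion = converted / n_cnb_initial
    total = sum(n_products.values())
    selectivity = {name: n / total for name, n in n_products.items()}
    return conversion, selectivity


if __name__ == "__main__":
    # Hypothetical GC result (mmol) for one batch sample.
    conv, sel = conversion_and_selectivity(
        n_cnb_initial=10.0,
        n_cnb_remaining=2.0,
        n_products={"o-CAN": 6.4, "aniline": 1.2, "nitrobenzene": 0.4},
    )
    print(f"conversion = {conv:.0%}")
    for name, s in sel.items():
        print(f"selectivity to {name} = {s:.0%}")
```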

Protocol for Autonomous Flow Reactor Optimization

This protocol details a self-optimizing flow system, integrating real-time analytics and Bayesian optimization, as demonstrated for a Knoevenagel condensation [14].

- Objective: Autonomously maximize the yield of 3-acetyl coumarin by optimizing reactant flow rates (affecting stoichiometry and residence time).

- Experimental Workflow:

- System Configuration:

- Pumps: Employ syringe pumps for reagent feeds (Salicylaldehyde + catalyst in EtOAc; Ethyl acetoacetate in EtOAc) and a dilution pump (DCM in Acetone).

- Reactor: Use a micromixer followed by a temperature-controlled capillary reactor.

- Analysis: Integrate a benchtop NMR spectrometer (e.g., Magritek Spinsolve Ultra) with a flow cell for online monitoring.

- Control: Connect all components to an automation system (e.g., HiTec Zang LabManager) running a Bayesian optimization algorithm [14].

- Optimization Cycle:

- The automation system sets new flow rates for the reagent pumps.

- The system is allowed to reach steady state, confirmed by consecutive NMR measurements showing a stable yield.

- The NMR software automatically quantifies the yield using qNMR, integrating specific signals for the aldehyde starting material and the product.

- The calculated yield is fed back to the Bayesian optimization algorithm.

- The algorithm uses this data to propose the next set of flow rates to test, balancing exploration of the parameter space and exploitation of promising regions [14].

- Key Findings: This closed-loop system performed 30 autonomous experiments, successfully navigating the parameter space to achieve a final yield of 59.9%, effectively demonstrating the trade-off between exploration and exploitation [14].
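Because both feeds pass through the same capillary, a single pair of pump flow rates fixes residence time and stoichiometry together. A minimal sketch of that mapping, assuming a hypothetical 2 mL reactor volume, hypothetical feed concentrations, ideal volumetric additivity at the mixer, and a dilution stream that joins downstream of the reactor; the source gives none of these values, and the class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class FlowSetpoint:
    q_aldehyde: float  # mL/min, feed 1 (salicylaldehyde + piperidine in EtOAc)
    q_ester: float     # mL/min, feed 2 (ethyl acetoacetate in EtOAc)


def residence_time_min(sp: FlowSetpoint, reactor_volume_ml: float) -> float:
    """Mean residence time tau = V / (Q1 + Q2), in minutes."""
    return reactor_volume_ml / (sp.q_aldehyde + sp.q_ester)


def molar_ratio(sp: FlowSetpoint, c_aldehyde_m: float, c_ester_m: float) -> float:
    """Ethyl acetoacetate : salicylaldehyde molar feed ratio at the mixer."""
    return (sp.q_ester * c_ester_m) / (sp.q_aldehyde * c_aldehyde_m)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    volume = 2.0             # mL
    c_ald, c_est = 0.5, 0.6  # mol/L
    for sp in (FlowSetpoint(0.2, 0.2), FlowSetpoint(0.1, 0.3), FlowSetpoint(0.4, 0.4)):
        print(sp, f"tau = {residence_time_min(sp, volume):.1f} min,",
              f"ratio = {molar_ratio(sp, c_ald, c_est):.2f}")
```

The first two set points share a 5 min residence time but differ threefold in molar ratio, while the first and third share a molar ratio but differ twofold in residence time; an optimizer acting directly on flow rates therefore explores both variables at once.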

Diagram: Autonomous Reactor Optimization Loop

The Machine Learning Optimization Context

The paradigm of reactor optimization is rapidly evolving with the integration of Machine Learning (ML) and artificial intelligence (AI). The inherent characteristics of batch and flow reactors present distinct opportunities and challenges in this data-driven landscape.

Data Generation and Quality: Continuous flow reactors are inherently more amenable to real-time, automated data acquisition. Their steady-state operation facilitates consistent sampling and integration with online Process Analytical Technology (PAT) like IR, UV-Vis, and NMR spectroscopy [14]. This enables the generation of high-quality, time-series data crucial for training ML models. In contrast, the transient nature of batch reactions can make consistent, automated sampling more complex, though not impossible.

Optimization Efficiency: ML-assisted approaches, particularly Bayesian optimization, have been successfully applied to optimize flow reactors with high-dimensional parameter spaces (e.g., geometry, flow rates, temperature) [7]. The ability of flow systems to quickly reach steady state allows rapid evaluation of each experimental condition proposed by the algorithm. A single autonomous flow system can efficiently navigate complex parameter spaces, as demonstrated by the optimization of a coiled-tube reactor's geometry using multi-fidelity Bayesian optimization, which led to a ~60% improvement in plug flow performance [7].

Scale-Up and Digital Twins: Flow chemistry enables a more straightforward "scale-up by numbering-up" approach. Once a process is optimized at a small scale, it can in principle be parallelized across identical units with little or no re-optimization. This aligns well with ML, where a highly accurate "digital twin" or surrogate model of a single reactor can be developed using computational fluid dynamics (CFD) and AI, as shown in the design of an optimized alcohol oxidation reactor [15]. This model can then predict the performance of a multi-reactor production plant, significantly reducing the time and cost of process development. Batch reactor scale-up, by contrast, often involves complex re-optimization because heat and mass transfer characteristics change in larger vessels [13], posing a greater challenge for predictive modeling.
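Under the numbering-up assumption, sizing a plant reduces to arithmetic on single-unit performance. A minimal sketch, assuming identical units that each reproduce the optimized single-reactor flow rate, feed concentration, and yield; the function `units_required` and all input values are hypothetical.

```python
import math


def units_required(target_kg_per_day, flow_ml_per_min, conc_mol_per_l,
                   yield_fraction, molar_mass_g_per_mol):
    """Return (number of identical parallel reactors, output per unit in kg/day)."""
    mol_per_min = flow_ml_per_min / 1000.0 * conc_mol_per_l * yield_fraction
    kg_per_day_per_unit = mol_per_min * molar_mass_g_per_mol * 60 * 24 / 1000.0
    return math.ceil(target_kg_per_day / kg_per_day_per_unit), kg_per_day_per_unit


if __name__ == "__main__":
    # Hypothetical: 10 mL/min of a 0.5 M feed, 90% yield, product M = 200 g/mol, target 5 kg/day.
    n_units, per_unit = units_required(5.0, 10.0, 0.5, 0.9, 200.0)
    print(f"{per_unit:.3f} kg/day per unit -> {n_units} units")
```

Each hypothetical unit delivers about 1.3 kg/day, so four units cover the 5 kg/day target. In practice, even flow distribution across the units must also be verified.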

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions and Equipment

| Item | Function in Reactor Systems | Relevance to ML/Optimization |
|---|---|---|
| Supported Metal Catalysts (e.g., Pd/C, Au/TiO₂) [8] | Facilitate heterogeneous catalytic reactions (e.g., hydrogenation); can be packed in fixed-bed flow reactors. | Catalyst properties (loading, support) become tunable parameters in optimization campaigns. |
| Immobilized Enzymes [16] | Enable biocatalysis in packed-bed flow reactors, often with high selectivity. | Expands the reaction space for sustainable synthesis; enzyme stability is a key optimization target. |
| Process Analytical Technology (PAT) (e.g., Benchtop NMR [14]) | Provides real-time, inline quantification of reaction conversion and yield. | Critical data source for feedback in autonomous optimization loops; enables high-frequency data generation. |
| Automation & Control System (e.g., LabManager [14]) | Interfaces with pumps, sensors, and valves to execute recipes and record data. | The hardware backbone that executes parameter changes dictated by ML algorithms. |
| Bayesian Optimization Algorithm [7] [14] | An ML strategy that efficiently explores complex parameter spaces to locate near-optimal conditions with fewer experiments. | The "brain" of autonomous systems, balancing exploration and exploitation to accelerate discovery. |

The choice between batch and continuous flow reactors is not a simple binary decision but a strategic one, dependent on the specific reaction, production scale, and safety considerations. Batch reactors offer unmatched flexibility for multipurpose facilities and early-stage development, while continuous flow reactors provide superior control, safety, and efficiency for many transformations, particularly those involving hazardous intermediates or extreme conditions.

The emergence of ML and AI as powerful tools in chemical engineering is strengthening the position of continuous flow systems for process intensification and optimization. The compatibility of flow chemistry with high-throughput data generation, real-time analytics, and autonomous decision-making creates a synergistic pathway for the future of pharmaceutical synthesis, enabling faster, safer, and more sustainable development of active pharmaceutical ingredients (APIs). As carrier material innovation, reactor design optimization, and data-driven process control continue to advance, the integration of flow chemistry with ML is poised to become a major trend in the pharmaceutical industry [16] [7].

The integration of Artificial Intelligence (AI) into chemical manufacturing and research represents a fundamental paradigm shift from reactive control to predictive optimization. For decades, batch processing has served as the unquestioned standard across pharmaceuticals, specialty chemicals, and materials science, relying on intuitive but inefficient cycles of charging reactants into vessels, heating, stirring, and quenching before purification [3]. This approach, while flexible, introduces significant variability, scale-up challenges, and inefficiencies that limit innovation speed and sustainability.

In the 21st century, continuous flow chemistry has emerged as a disruptive alternative, where reagents flow through tubes or microreactors instead of static flasks, enabling precise control of temperature, pressure, and mixing for superior safety, reproducibility, and scalability [3]. The convergence of flow chemistry with advanced AI and machine learning (ML) transforms chemical processes into data-rich, self-optimizing systems capable of autonomous experimentation and discovery. This evolution moves beyond reactive adjustments based on past data toward predictive systems that forecast optimal conditions, design novel reactor geometries, and accelerate development timelines from months to days.

This guide objectively compares the performance of batch versus flow reactors within ML-driven optimization research, providing experimental data, detailed methodologies, and essential tools for researchers navigating this transformative landscape.

Technical Comparison: Batch vs. Flow Reactors for ML-Driven Research

The core differences between batch and flow reactors create distinct advantages and limitations when integrated with machine learning optimization. Understanding these technical distinctions is crucial for selecting the appropriate platform for specific research applications.

Batch reactors dominate because of their simplicity and flexibility. A single vessel accommodates various reactions and volumes, allowing researchers to pause, add reagents, take samples, and observe progress. However, these advantages mask fundamental inefficiencies: large volumes are prone to hot spots, poor mixing, and difficulty removing heat from exothermic reactions. Scaling up often changes reaction dynamics dramatically, a notorious pain point in process development [3] [17]. For ML applications, batch processing generates data slowly, because each complete reaction provides only a single data point, and sampling during the reaction can itself alter the reaction conditions and give misleading results [17].

Continuous flow reactors pump reactants through narrow channels where high surface-to-volume ratios enable tight control over reaction parameters. Residence time—the duration molecules spend inside the reactor—is precisely tuned by adjusting flow rates, ensuring consistent environments and eliminating lot-to-lot variability [3]. This continuous operation generates high-density, real-time data streams ideal for ML algorithms, which can test dozens of variables simultaneously and identify optimal conditions far faster than human trial-and-error [3].

Table 1: Fundamental Characteristics of Batch vs. Flow Reactors for ML Optimization

| Characteristic | Batch Reactors | Flow Reactors |
|---|---|---|
| Processing Mode | Cyclical (charge-react-quench) | Continuous steady-state operation |
| Heat Transfer | Limited, prone to hot spots | Superior due to high surface-to-volume ratio |
| Scale-up Approach | Redesign process for larger vessels | "Numbering up" or running longer |
| Data Generation | Single point per experiment | Continuous real-time streams |
| ML Integration | Limited by slower data acquisition | Seamless with real-time analytics |
| Safety Profile | Large volumes under reaction conditions | Small volumes, inherent safety |
| Material Inventory | High (all materials committed at start) | Low (materials continuously fed) |

Experimental Performance Data: Quantitative Comparisons

Recent studies across academic and industrial laboratories provide quantitative evidence of the performance advantages when combining flow chemistry with ML optimization. The following experimental data demonstrates clear benefits in yield, optimization speed, and space-time productivity.

Table 2: Experimental Performance Comparison of ML-Optimized Reactions

| Reaction Type | Reactor Type | Optimization Method | Optimization Effort | Result | Source |
|---|---|---|---|---|---|
| Knoevenagel Condensation | Flow | Bayesian optimization with NMR | 30 iterations | 59.9% yield achieved autonomously | [14] |
| CO₂ Cycloaddition | Flow (3D-printed) | Reac-Discovery platform | Not reported | Highest reported space-time yield for a triphasic reaction | [18] |
| Hydrogenation (model) | Batch | Conventional DoE | Days to weeks | Limited by heat/mass transfer | [17] |
| Hydrogenation (flow) | Flow (fixed-bed) | Automated parameter screening | Hours | Safer, higher-pressure operation | [17] |
| Tracer flow experiment | 3D-printed coiled tube | Multi-fidelity Bayesian optimization | Not reported | ~60% improvement in plug flow performance | [7] |

A compelling case study from Magritek and HiTec Zang demonstrates a fully automated flow system optimizing a Knoevenagel condensation to produce 3-acetyl coumarin. The setup integrated an Ehrfeld microreactor system with a Spinsolve Ultra benchtop NMR spectrometer and LabManager automation, controlled by a Bayesian optimization algorithm [14]. The system autonomously varied flow rates of reactants, affecting both stoichiometry and residence time, while continuously monitoring conversion and yield via NMR. After 30 iterations, the algorithm achieved a 59.9% yield, demonstrating effective navigation of the parameter space through balanced exploration and exploitation [14].

Experimental Protocols: Methodologies for ML-Driven Reactor Optimization

Autonomous Flow Reactor Optimization with Bayesian Methods

Objective: To autonomously optimize chemical reaction conditions in a continuous flow reactor using real-time NMR monitoring and Bayesian optimization algorithms [14].

Materials & Equipment:

- Ehrfeld Modular Microreactor System (MMRS) with syringe pumps

- Magritek Spinsolve Ultra Benchtop NMR Spectrometer

- HiTec Zang LabManager and LabVision automation software

- Reactants: Salicylaldehyde, Ethyl acetoacetate

- Catalyst: Piperidine

- Solvents: Ethyl acetate, Acetone

Procedure:

- System Configuration: Prepare reactant solutions (Feed 1: salicylaldehyde with piperidine catalyst in ethyl acetate; Feed 2: ethyl acetoacetate in ethyl acetate). Load into syringe pumps.

- NMR Method Setup: Configure the qNMR template with the 1D EXTENDED+ protocol (4 scans, 6.55 s acquisition time, 15 s repetition time, 90° pulse).

- Automation Workflow: Program LabManager to control reactor parameters (flow rates, temperature) and trigger NMR measurements automatically.

- Optimization Loop: For each iteration:

- Adjust flow rates (0-1 mL/min range) as directed by Bayesian algorithm.

- Allow system to reach steady state (monitored via consecutive NMR measurements).

- Acquire and analyze NMR spectrum, calculating conversion and yield.

- Feed results to Bayesian algorithm to determine next parameter set.

- Termination: Continue for predetermined iterations or until yield convergence.
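Two steps in this loop, confirming steady state from consecutive NMR readings and converting integrals into a yield, can be sketched as follows. This assumes one aldehyde signal and one product signal are integrated and normalized per proton, and that the product forms 1:1 from salicylaldehyde with no other aldehyde-derived species, so yield = product / (product + remaining aldehyde). The window and tolerance values and the function names are hypothetical choices, not settings from [14].

```python
def nmr_yield(product_integral, product_protons, aldehyde_integral, aldehyde_protons):
    """Yield from relative integrals, assuming 1:1 conversion and no other aldehyde-derived species."""
    product = product_integral / product_protons
    aldehyde = aldehyde_integral / aldehyde_protons
    return product / (product + aldehyde)


def is_steady(yields, window=3, tolerance=0.01):
    """True if the last `window` yield readings agree within an absolute `tolerance`."""
    if len(yields) < window:
        return False
    recent = yields[-window:]
    return max(recent) - min(recent) <= tolerance


if __name__ == "__main__":
    readings = []
    # Hypothetical (product, aldehyde) integrals after a set-point change; one proton per signal.
    spectra = [(0.20, 0.80), (0.45, 0.55), (0.58, 0.42), (0.595, 0.405), (0.60, 0.40), (0.598, 0.402)]
    for prod, ald in spectra:
        readings.append(nmr_yield(prod, 1, ald, 1))
        if is_steady(readings):
            print(f"steady state after {len(readings)} spectra, yield = {readings[-1]:.1%}")
            break
```

With these hypothetical readings, the check passes at the sixth spectrum, once the last three yields agree to within one percentage point.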

AI-Driven Discovery of 3D-Printed Reactor Geometries

Objective: To discover and fabricate optimal reactor geometries using a digital platform combining parametric design, machine learning, and additive manufacturing [18].

Materials & Equipment:

- Reac-Discovery platform (Reac-Gen, Reac-Fab, Reac-Eval modules)

- High-resolution stereolithography 3D printer

- Immobilized catalyst systems

- Real-time NMR monitoring system

Procedure:

- Reac-Gen (Design): Generate reactor geometries using mathematical equations for periodic open-cell structures (POCS). Vary parameters: size (spatial boundaries), level threshold (porosity), resolution (voxel density).

- Reac-Fab (Fabrication): 3D-print validated structures using stereolithography. Functionalize with catalytic coatings.

- Reac-Eval (Testing): Load multiple printed reactors into self-driving laboratory platform. Conduct parallel evaluations with varying process parameters (flow rates, concentration, temperature).

- ML Optimization: Train two machine learning models simultaneously: one for process parameter optimization, another for reactor geometry refinement.

- Validation: Test optimized reactors for multiphase catalytic reactions (e.g., hydrogenation of acetophenone, CO₂ cycloaddition).
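The Reac-Gen step describes geometries as implicit equations evaluated on a voxel grid, with a level threshold controlling porosity. The sketch below illustrates that idea with the standard gyroid approximation f = sin x cos y + sin y cos z + sin z cos x. It is a hypothetical stand-in, not the Reac-Gen code: the function name is illustrative, and treating voxels with f ≤ threshold as solid (and the rest as open channel space) is an assumed convention.

```python
import numpy as np


def gyroid_porosity(level, cells=1, resolution=40):
    """Return (void fraction, solid voxel mask) for a gyroid lattice on a voxel grid.

    level: threshold t; voxels with f(x, y, z) <= t are solid (assumed convention).
    cells: unit cells per side (the 'size' parameter).
    resolution: voxels per unit cell per side (the 'resolution' parameter).
    """
    n = cells * resolution
    coords = np.arange(n) * (2.0 * np.pi / resolution)
    x, y, z = np.meshgrid(coords, coords, coords, indexing="ij")
    f = np.sin(x) * np.cos(y) + np.sin(y) * np.cos(z) + np.sin(z) * np.cos(x)
    solid = f <= level
    return 1.0 - solid.mean(), solid


if __name__ == "__main__":
    for t in (-0.6, -0.3, 0.0, 0.3, 0.6):
        porosity, _ = gyroid_porosity(t)
        print(f"level {t:+.1f}: porosity {porosity:.2f}")
```

Raising the threshold converts more voxels to solid and lowers porosity, and a threshold of zero splits the unit cell roughly in half. A printable part would additionally require meshing the voxel field, for example with a marching-cubes step.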

Visualization: AI-Optimization Workflows in Flow Chemistry

Self-Optimizing Flow Reactor Workflow

Diagram: Closed-Loop Autonomous Optimization in Flow Chemistry

AI-Driven Reactor Discovery Platform

Diagram: Integrated Digital Workflow for Reactor Discovery

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing AI-driven optimization in flow chemistry requires specialized materials and equipment. The following table details key research reagent solutions and their functions in advanced reactor systems.

Table 3: Essential Research Reagent Solutions for AI-Optimized Flow Chemistry

| Category | Specific Examples | Function in Research | Application Notes |
|---|---|---|---|
| Structured Reactors | 3D-printed POCS (Gyroid, Schwarz structures) | Enhance mass/heat transfer via engineered geometries | Fabricated via stereolithography; customizable void areas [18] |
| Heterogeneous Catalysts | Immobilized catalysts (50-400 µm particles) | Enable fixed-bed continuous flow reactions | Particle size chosen to avoid excessive pressure drop across the bed; suitable for pharma applications [17] |
| Analytical Integration | Benchtop NMR (e.g., Spinsolve Ultra) | Real-time reaction monitoring without deuterated solvents | Enables closed-loop optimization; provides quantitative data [14] |
| Automation Systems | LabManager, LabVision software | Control reactors, pumps, and analytical instruments | Modular interface for diverse laboratory equipment [14] |
| Optimization Algorithms | Bayesian optimization, Multi-fidelity GPs | Efficiently navigate complex parameter spaces | Balances exploration vs. exploitation; reduces experiment count [14] [7] |
| Flow Reactor Systems | Ehrfeld MMRS, H.E.L FlowCAT | Provide precise residence time control | Configurable fixed-bed reactors for hydrogenation [17] [14] |
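The optimization-algorithm row in this table describes Bayesian optimization as balancing exploration against exploitation. The sketch below illustrates that idea in a minimal single-fidelity form. It is not the multi-fidelity scheme of [7] or any vendor's implementation. A Gaussian process with a squared-exponential kernel is refit after every experiment, and expected improvement selects the next condition. The objective `toy_yield`, the length scale of 0.1, the three initial experiments, and the 201-point candidate grid are all hypothetical choices for illustration.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.1):
    """Squared-exponential covariance (unit variance) between two 1-D input arrays."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_query, noise=1e-6):
    """Posterior mean and std of a zero-mean GP fitted to mean-centered observations."""
    y_mean = y_obs.mean()
    K = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    K_s = rbf_kernel(x_obs, x_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs - y_mean))  # K^-1 (y - mean)
    mean = K_s.T @ alpha + y_mean
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)  # prior variance is 1
    return mean, np.sqrt(var)

def expected_improvement(mean, std, best, xi=0.01):
    """EI for maximization: high where the mean is high (exploit) or std is high (explore)."""
    improvement = mean - best - xi
    z = improvement / std
    cdf = 0.5 * (1.0 + np.array([math.erf(v / math.sqrt(2.0)) for v in z]))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + std * pdf

def toy_yield(x):
    """Hypothetical normalized yield vs. a scaled process variable, peaking at x = 0.63."""
    return float(np.exp(-((x - 0.63) ** 2) / 0.02))

def bayes_opt(objective, n_iter=15):
    candidates = np.linspace(0.0, 1.0, 201)   # discretized design space
    x_obs = np.array([0.1, 0.5, 0.9])         # initial experiments
    y_obs = np.array([objective(x) for x in x_obs])
    for _ in range(n_iter):
        mean, std = gp_posterior(x_obs, y_obs, candidates)
        x_next = candidates[np.argmax(expected_improvement(mean, std, y_obs.max()))]
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, objective(x_next))
    best = int(np.argmax(y_obs))
    return float(x_obs[best]), float(y_obs[best])

best_x, best_y = bayes_opt(toy_yield)
print(f"best condition = {best_x:.3f}, normalized yield = {best_y:.3f}")
```

Expected improvement rewards candidates with either a high predicted value or high uncertainty. This is how the loop can reach a good condition in far fewer experiments than a full grid. A multi-fidelity variant would additionally model evaluation cost and choose a fidelity level at each iteration.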

The paradigm shift from reactive batch processing to predictive flow optimization represents a fundamental transformation in chemical research methodology. The studies cited in this section indicate that AI-enhanced flow systems can outperform conventional approaches through autonomous optimization, enhanced reactor geometries, and real-time analytical feedback. The integration of machine learning with continuous flow chemistry enables researchers to navigate complex parameter spaces efficiently, discover novel reactor designs, and accelerate development timelines while improving sustainability and safety.

As these technologies mature, we anticipate broader adoption across pharmaceutical development, specialty chemicals, and materials science, ultimately leading to autonomous chemical plants operating with minimal human intervention. Researchers who adopt this approach stand to gain significant competitive advantages through faster development cycles, reduced waste, and access to previously inaccessible chemical space. The future of chemical optimization is predictive, not reactive, powered by the synergistic combination of flow chemistry and artificial intelligence.

The integration of artificial intelligence (AI) and machine learning (ML) into chemical reaction engineering is reshaping the fundamental approach to process optimization. Central to evaluating the success of these intelligent systems are four key performance metrics: Yield, Purity, Cycle Time, and Energy Use [19]. These metrics provide a quantitative framework for comparing the performance of traditional batch processing against increasingly prevalent continuous flow chemistry [20] [3]. Batch reactors, characterized by their transient operation where reactants are charged and products are removed after reaction completion, have long been the standard in pharmaceuticals and specialty chemicals due to their flexibility [20] [21]. In contrast, continuous flow reactors, where reagents are constantly fed through a catalyst bed or reactor channel, offer advantages in precise parameter control, safety, and scalability [20] [21]. The emergence of self-driving laboratories and AI-driven platforms like "Reac-Discovery" now enables the simultaneous optimization of both reactor process parameters and geometry, pushing the boundaries of these performance metrics [18]. This guide objectively compares how AI optimization impacts these core metrics in both batch and flow regimes, providing researchers and drug development professionals with the experimental data and methodologies needed for informed process selection.

Core Performance Metrics and Their Significance

In the context of AI-optimized chemical processes, these four metrics are critical for assessing economic, operational, and environmental performance.

- Yield measures the percentage of reactants successfully converted into the desired saleable product. AI systems aim to raise yield by optimizing operating conditions at every stage of the reaction [19].

- Purity tracks the weight-percent quality of the final product, indicating the process's selectivity and its ability to minimize byproducts. AI tools with early prediction capabilities can flag potential deviations before impurities take hold, allowing for corrective action [19].

- Batch Cycle Time captures the total hours from reactor charging to clean-out. For batch processes, AI can identify and eliminate idle time, freeing up capacity. In flow systems, AI optimizes residence time and flow rates to maximize throughput [19].

- Specific Energy Consumption reflects the gigajoules used per tonne of product produced. By tackling heating losses and optimizing utility systems, AI directly reduces utility costs and associated emissions [19].
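Each of the four metric definitions above reduces to simple arithmetic on an end-of-batch record. The sketch below assumes a hypothetical record layout. The field names and values are illustrative and do not come from [19]. It calculates the following quantities:

- yield against the stoichiometric maximum
- purity from a lab assay
- cycle time from charging to clean-out
- specific energy consumption in GJ per tonne

```python
from dataclasses import dataclass

@dataclass
class BatchRecord:
    """Hypothetical end-of-batch record; field names are illustrative, not a historian schema."""
    theoretical_product_kg: float  # stoichiometric maximum from the limiting reagent charged
    isolated_product_kg: float     # total mass of isolated product
    assay_wt_pct: float            # lab assay: wt% of desired compound in the isolated product
    charge_start_h: float          # timestamp (h) when reactor charging began
    cleanout_end_h: float          # timestamp (h) when clean-out finished
    energy_gj: float               # utility energy attributed to this batch

def batch_metrics(r: BatchRecord) -> dict:
    pure_product_kg = r.isolated_product_kg * r.assay_wt_pct / 100.0
    return {
        "yield_pct": 100.0 * pure_product_kg / r.theoretical_product_kg,
        "purity_wt_pct": r.assay_wt_pct,
        "cycle_time_h": r.cleanout_end_h - r.charge_start_h,
        # GJ per tonne of isolated product
        "specific_energy_gj_per_t": r.energy_gj / (r.isolated_product_kg / 1000.0),
    }

record = BatchRecord(theoretical_product_kg=1000.0, isolated_product_kg=900.0,
                     assay_wt_pct=98.0, charge_start_h=0.0, cleanout_end_h=18.0,
                     energy_gj=45.0)
metrics = batch_metrics(record)
print({k: round(v, 2) for k, v in metrics.items()})
```

For this hypothetical record the results are 88.2% yield, 98.0 wt% purity, an 18 h cycle, and 50 GJ/t.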

AI leverages real-time pattern recognition and predictive modeling to drive improvements in these areas. For instance, plants embedding these technologies into their reactors consistently achieve mid-single-digit improvements in yield, cycle time, and energy use—gains that multiply across hundreds of annual batches [19].

Comparative Performance of Batch and Flow Reactors

The underlying reactor technology significantly influences the potential for AI-driven optimization. The table below summarizes the general characteristics and AI optimization potential of each system.

Table 1: Fundamental Comparison of Batch and Flow Reactors

| Feature | Batch Reactors | Continuous Flow Reactors |
|---|---|---|
| Operation Mode | Transient; reactants charged, then products removed after reaction [20] | Steady-state; constant feed and product removal [20] |
| Reaction Phase | Primarily liquid phase [20] | Can be gas or liquid phase [20] |
| Heat Transfer | Limited by reactor volume; risk of hotspots [21] | Excellent due to high surface-to-volume ratio [21] |
| Mass Transfer/Mixing | Dependent on impeller design; can be uneven [21] | Highly efficient; short diffusion distances in small channels [20] |
| Scale-up | Often requires multiple vessels; can change reaction dynamics [19] [3] | Straightforward via "numbering up" or longer operation; consistent environment [21] [3] |
| Process Safety | Large volume of hazardous materials [21] | Small hold-up volume; inherently safer for hazardous reactions [21] |
| AI Optimization Focus | Reducing cycle time, improving yield/purity, energy management [19] | Optimizing residence time, flow rates, catalyst longevity, system stability [18] |

AI-Optimized Batch Reactor Performance

In batch systems, AI addresses inherent inefficiencies. Traditional control methods like PID loops are reactive, often forcing operators to use conservative setpoints that widen safety margins and slow down operations [19]. AI optimization flips this equation by combining real-time pattern recognition and predictive modeling to make proactive adjustments.

Table 2: Reported AI Performance Improvements in Batch Reactors

| Metric | Reported Improvement | Application Context |
|---|---|---|
| Yield | Mid-single-digit % increase [19] | General batch reactor operations |
| Cycle Time | Mid-single-digit % reduction [19] | General batch reactor operations |
| Energy Use | Mid-single-digit % reduction [19] | General batch reactor operations |
| Cycle Time | >40% reduction [22] | Solvent swap distillation column |
| Energy & Emissions | 25% reduction in energy use and Scope 1/2 emissions [22] | Real-time optimization in refineries |

AI systems map the normal rhythm of a batch and use the ideal "Golden Batch" as a dynamic benchmark. When sensor data drifts, the model flags it minutes rather than hours later, enabling corrective actions that protect yield and purity [19]. For example, in a solvent swap distillation column, a hybrid AI model using first principles and machine learning enabled predictive stoppage, reducing a cycle time of over twenty hours by more than 40% [22].
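The golden-batch comparison described above can be sketched as a per-time-point envelope built from historical good batches. A live batch is flagged at its first excursion from that envelope. This is a deliberately univariate simplification that rests on three assumptions:

- trajectories are already time-aligned
- a ±3σ band defines "normal"
- the temperature data are synthetic

Production systems may instead use multivariate or model-based monitoring.

```python
import numpy as np

def golden_envelope(good_batches: np.ndarray, k: float = 3.0):
    """Per-time-point mean +/- k standard deviations across aligned good-batch trajectories."""
    mean = good_batches.mean(axis=0)
    std = good_batches.std(axis=0, ddof=1)
    return mean - k * std, mean + k * std

def first_excursion(live: np.ndarray, lower: np.ndarray, upper: np.ndarray):
    """Index of the first sample where a (possibly partial) live trajectory leaves the envelope."""
    n = len(live)
    outside = (live < lower[:n]) | (live > upper[:n])
    hits = np.flatnonzero(outside)
    return int(hits[0]) if hits.size else None

# Hypothetical data: 20 good batches of a reactor temperature profile, sampled once per minute.
rng = np.random.default_rng(0)
t = np.arange(120.0)
profile = 20.0 + 60.0 * (1.0 - np.exp(-t / 30.0))
good = profile + rng.normal(0.0, 0.5, size=(20, t.size))
lower, upper = golden_envelope(good)

# A live batch that starts drifting upward by 0.5 degC/min after minute 90.
live = profile + np.where(t >= 90, 0.5 * (t - 90), 0.0)
print("first excursion at minute:", first_excursion(live, lower, upper))
```

The check runs on partial trajectories, so the drift is flagged a few minutes after it begins. End-of-batch lab analysis would catch it much later.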

AI-Optimized Flow Reactor Performance

Continuous flow reactors, with their steady-state operation and superior transport properties, provide an ideal platform for AI, particularly for heterogeneous catalytic reactions [20] [23]. AI and ML excel at optimizing the high-dimensional parameter spaces in flow chemistry, including process conditions and novel reactor geometries.

Table 3: AI-Driven Advancements in Continuous Flow Systems

| Metric / Achievement | System Details | AI Role & Impact |
|---|---|---|
| Space-Time Yield (STY) | Highest reported STY for triphasic CO₂ cycloaddition [18] | Self-driving lab (Reac-Discovery) optimized process and reactor topology simultaneously [18] |
| Plug Flow Performance | ~60% improvement vs. conventional designs [7] | ML-assisted discovery of coiled reactor geometries inducing vortical flow [7] |
| Catalytic Reactor Discovery | Hydrogenation of acetophenone and CO₂ cycloaddition [18] | Integrated platform for design, fabrication (3D printing), and evaluation of periodic open-cell structures [18] |
| Throughput & Scale | Production of ~50 kg/day of a cyanated product for Remdesivir [21] | Flow process enabled reaction at -30°C (vs. -78°C in batch) with a residence time of 2.5 min [21] |

A landmark study using the "Reac-Discovery" platform demonstrated AI's power to go beyond process parameters and optimize reactor geometry itself. The platform uses a self-driving laboratory to perform parallel multi-reactor evaluations with real-time NMR monitoring. For the CO₂ cycloaddition reaction, it achieved the highest reported space-time yield by simultaneously optimizing process descriptors and topological descriptors of 3D-printed periodic open-cell structures [18]. Another study used multi-fidelity Bayesian optimization to design novel coiled-tube reactors, resulting in a ~60% experimental improvement in plug flow performance compared to conventional designs by promoting mixing vortices at low flow rates [7].
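Space-time yield, the metric reported for the CO₂ cycloaddition result, is product output normalized by reactor volume and time. The minimal sketch below assumes STY is normalized by reactor volume. Some studies normalize by catalyst volume or mass instead, and the exact convention of [18] is not stated here. The flow rate, concentration, and molecular weight are hypothetical values.

```python
def space_time_yield(flow_ml_min, outlet_conc_mol_l, mw_g_mol, reactor_volume_ml):
    """STY in grams of product per litre of reactor volume per hour."""
    product_g_per_h = (flow_ml_min * 60.0 / 1000.0) * outlet_conc_mol_l * mw_g_mol
    return product_g_per_h / (reactor_volume_ml / 1000.0)

def residence_time_min(reactor_volume_ml, flow_ml_min):
    """Mean hydraulic residence time tau = V / Q."""
    return reactor_volume_ml / flow_ml_min

# Hypothetical operating point (not data from the cited studies)
sty = space_time_yield(flow_ml_min=1.0, outlet_conc_mol_l=0.5, mw_g_mol=100.0,
                       reactor_volume_ml=2.0)
tau = residence_time_min(2.0, 1.0)
print(f"STY = {sty:.0f} g/(L*h), residence time = {tau:.1f} min")
```

At fixed outlet concentration, lowering the flow rate lengthens residence time but reduces STY proportionally. An optimizer therefore has to balance higher conversion at long residence times against reactor productivity.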

Experimental Protocols and Methodologies

AI Optimization of a Batch Reactor: A Standard Protocol

The implementation of AI optimization in a batch reactor environment typically follows a disciplined, multi-phase path to ensure measurable returns and build operator confidence [19].

- Data Readiness Audit: The foundation is a unified and cleansed dataset. This involves inventorying every sensor, historian tag, and lab record, then cleansing gaps or calibration drift. The deliverable is a dataset that accurately reflects current operations without manual patchwork [19].

- Proof-of-Value Modeling: Algorithms—often blending first-principles equations with machine learning—are trained on historical batches and stress-tested against unseen scenarios. A successful model should predict end-of-batch quality within the lab's analytical error and show a clear economic upside [19].

- Pilot Run & Operator Training: The model runs in "advisory mode," providing recommendations while operators retain manual control. This phase builds trust and allows for fine-tuning of alarms and training needs [19].

- Closed-Loop Deployment: The vetted model is granted permission to write optimized setpoints directly back to the Distributed Control System (DCS) under strict safety overrides. Deployment typically starts with a narrow control envelope [19].

- Continuous Value Sustainment: The system requires periodic retraining and monitoring for drift. Logging every control action is crucial for regulatory audits. This sustained effort ensures compounding value as the model learns from each batch [19].
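The proof-of-value criterion in the second step is that the model predicts end-of-batch quality on unseen batches within the lab's analytical error. A chronological hold-out test can check this. In the sketch below, a plain least-squares model stands in for the hybrid first-principles/ML models the protocol describes. The features, the synthetic purity data, and the 0.3 wt% analytical-error threshold are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical historian extract: 200 batches x 3 standardized process features
# (e.g., peak temperature, feed time, catalyst charge) and lab-measured purity (wt%).
n_batches = 200
X = rng.normal(size=(n_batches, 3))
true_coef = np.array([0.8, -0.5, 0.3])
purity = 95.0 + X @ true_coef + rng.normal(0.0, 0.2, n_batches)

# Chronological split: fit on the first 160 batches, stress-test on the 40 most recent.
train, test = slice(0, 160), slice(160, None)
A_train = np.column_stack([np.ones(160), X[train]])   # intercept column + features
coef, *_ = np.linalg.lstsq(A_train, purity[train], rcond=None)

A_test = np.column_stack([np.ones(n_batches - 160), X[test]])
pred = A_test @ coef
rmse = float(np.sqrt(np.mean((pred - purity[test]) ** 2)))

ANALYTICAL_ERROR_WT_PCT = 0.3   # hypothetical lab assay repeatability (1 sigma)
print(f"hold-out RMSE = {rmse:.3f} wt%, within analytical error: {rmse <= ANALYTICAL_ERROR_WT_PCT}")
```

The test holds out the most recent batches rather than a random subset. This better mimics deployment on future operations, where raw materials and equipment condition may have drifted.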

AI-Driven Discovery of an Optimized Flow Reactor

The "Reac-Discovery" platform outlines a protocol for the integrated design and optimization of a catalytic flow reactor, demonstrating an advanced methodology [18].

- Reac-Gen (Parametric Design): The process begins with the digital construction of reactor geometries. A library of mathematical equations (e.g., for Gyroid, Schwarz structures) is used to generate Periodic Open-Cell Structures (POCS). Key parameters—size (S), level threshold (L), and resolution (R)—are varied to define the topology, influencing bounding box dimensions, porosity, and mesh fidelity [18].

- Reac-Fab (Additive Manufacturing): Designed structures are fabricated using high-resolution 3D printing (e.g., stereolithography). A predictive ML model validates the printability of each design before fabrication, ensuring structural viability [18].

- Reac-Eval (Self-Driving Laboratory Evaluation): The 3D-printed reactors are evaluated in a parallel, automated testing system. The self-driving lab varies process descriptors (e.g., flow rates, concentration, temperature) and uses real-time monitoring (e.g., benchtop NMR) to track reaction progress [18].

- Machine Learning Feedback Loop: Data from Reac-Eval is used to train two ML models: one for process optimization and another for reactor geometry refinement. This creates a closed-loop system where each experimental result informs the next cycle of design and fabrication, simultaneously optimizing both the reactor and the process it runs [18].
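The Reac-Gen step can be illustrated with the gyroid, one of the POCS families named above. The source does not give Reac-Gen's equation library, so this sketch makes two assumptions:

- the geometry follows the widely used trigonometric approximation of the gyroid surface, sin x cos y + sin y cos z + sin z cos x = L
- voxels with values at or above the level threshold L are void

Size S sets the number of unit cells per axis and resolution R sets the voxels per cell, giving (S·R)³ voxels in total.

```python
import numpy as np

def gyroid_field(size_cells: int, resolution: int) -> np.ndarray:
    """Sample sin x cos y + sin y cos z + sin z cos x on a voxel grid.

    size_cells (S): unit cells per axis in the bounding box.
    resolution (R): voxels per unit cell along each axis.
    """
    n = size_cells * resolution
    coords = (np.arange(n) + 0.5) * (2.0 * np.pi / resolution)   # voxel centres
    x, y, z = np.meshgrid(coords, coords, coords, indexing="ij")
    return np.sin(x) * np.cos(y) + np.sin(y) * np.cos(z) + np.sin(z) * np.cos(x)

def porosity(field: np.ndarray, level: float) -> float:
    """Void fraction, assuming voxels with field >= level are void and the rest solid."""
    return float(np.mean(field >= level))

field = gyroid_field(size_cells=2, resolution=32)
for level in (-0.6, -0.3, 0.0, 0.3, 0.6):
    print(f"level threshold L = {level:+.1f} -> porosity = {porosity(field, level):.3f}")
```

Under this approximation the field changes sign under inversion through the origin. As a result, L = 0 splits the cell into two equal volumes (porosity near 0.5), and raising L lowers the void fraction. This is how the level threshold controls porosity.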

Figure: AI-Driven Flow Reactor Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

The experimental protocols and case studies cited rely on a suite of specialized materials and technologies. The following table details key components essential for researchers working in this field.

Table 4: Essential Research Toolkit for AI-Optimized Reactor Systems

| Item / Technology | Function & Relevance in AI-Optimized Research |
|---|---|
| Periodic Open-Cell Structures (POCS) | Engineered, repeating unit cell architectures (e.g., Gyroids) that enable superior heat and mass transfer compared to packed beds; the primary target for geometry optimization in platforms like Reac-Discovery [18]. |
| Heterogeneous Catalysts (Immobilized) | Catalysts fixed onto a solid support, enabling their use in continuous flow packed-bed or structured reactors; their longevity and activity are critical for process stability [20] [18]. |
| Real-Time Process Analytical Technology (PAT) | Tools like inline NMR or IR spectroscopy that provide real-time data on conversion and selectivity; this high-frequency data is the essential fuel for AI/ML model training and decision-making [18] [3]. |
| High-Resolution 3D Printer | Enables the fabrication of complex, digitally-designed reactor geometries that are otherwise infeasible to produce; crucial for implementing AI-designed reactor topologies [18] [7]. |
| Hybrid ML Models | Algorithms that combine first-principles chemical engineering equations with data-driven machine learning; they improve model reliability in biased, noisy industrial environments and enhance scale-up predictions [24] [22]. |
| Self-Driving Laboratory (SDL) | An automated platform that integrates robotics, reactor systems, and PAT to perform continuous cycles of hypothesis, experimentation, and analysis; allows for the rapid, autonomous optimization of high-dimensional parameter spaces [18]. |

The objective comparison of AI performance metrics reveals a clear paradigm shift in chemical reaction engineering. AI brings measurable efficiency gains to traditional batch processes. These include mid-single-digit improvements in yield, cycle time, and energy use in general operations, and larger gains in specific cases such as a more than 40% reduction in solvent swap distillation cycle time [19] [22]. Its transformative potential, however, is most fully realized in continuous flow systems. The integration of AI with flow chemistry enables not just process optimization but also the generative discovery of novel reactor geometries, leading to step-change improvements in key metrics like space-time yield and plug flow performance [18] [7]. For researchers and drug development professionals, the choice between batch and flow is no longer solely based on traditional chemical engineering heuristics. The decision must now account for the powerful amplification effect provided by modern AI and ML tools. Flow reactors, with their superior transport properties and steady-state operation, provide a more data-rich and controllable environment for AI to exploit, paving the way for more autonomous, efficient, and sustainable chemical manufacturing.

Machine Learning in Action: Optimization Strategies for Batch and Flow Systems

The choice between batch and flow reactors presents a fundamental strategic decision in chemical manufacturing and drug development, with significant implications for process optimization using machine learning (ML). Batch processing, characterized by its cyclic, vessel-based approach, offers advantages for quality control and small-volume trials [25]. In contrast, continuous flow processing, where reactions occur in a continuous stream, provides enhanced mixing, superior temperature control, and improved scale-up potential [25]. Modern "augmented intelligence" frameworks combine data-driven optimization and machine learning with advances in computational fluid dynamics and additive manufacturing to design next-generation reactors with dramatically improved performance [7].

Artificial intelligence is transforming the optimization of these systems through real-time pattern recognition and closed-loop control. Industrial AI creates a continuous feedback loop that collects live data, analyzes it in real time, and automatically adjusts setpoints, balancing quality, throughput, energy use, and emissions simultaneously [26]. For researchers and drug development professionals, understanding the performance characteristics and ML optimization potential of each reactor type is crucial for designing efficient, scalable, and consistent processes.

Performance Comparison: Experimental Data

The following tables summarize key experimental findings and AI performance metrics for batch and flow reactor optimization, synthesizing data from recent studies.

Table 1: Experimental Performance Metrics for Conventional vs. AI-Optimized Reactors

| Reactor Design & Configuration | Key Performance Metric | Experimental Result | Context & Conditions |
|---|---|---|---|
| Conventional Coiled-Tube (Design 1) [7] | Plug Flow Performance | Baseline | Steady-state flow, Reynolds number (Re) = 50 |
| AI-Optimized Path & Cross-section (Design 4) [7] | Plug Flow Performance | ~60% improvement vs. Design 1 | Steady-state flow, Reynolds number (Re) = 50 |
| AI-Optimized Reactor [7] | Dean Vortex Formation | Fully developed at low Re (50) | Under steady-state flow; enhances radial mixing |
| Conventional Coiled-Tube [7] | Dean Vortex Formation | Only partially established near outlet | Under steady-state flow |
| Batch Process with AI Closed-Loop [26] | Off-Spec Batches | Marked reduction | Predictive quality modeling and dynamic adjustments |

Table 2: AI Model & Optimization Performance in Industrial Settings

| AI Strategy / Technology | Performance Gain | Application Context |
|---|---|---|
| Predictive Quality Modeling [26] | Fewer off-spec batches, tighter consistency | Anticipates deviations in batch processes |
| Dynamic Recipe Adjustments [26] | Yield improvements, fewer operator interventions | Responds to raw material quality shifts |
| Multi-fidelity Bayesian Optimization [7] | Identified high-performing reactor designs | Combined low/high-fidelity CFD simulations |
| AI Batch API (OpenAI) [27] | ~50% cost reduction on tokens | Large-scale, non-urgent AI inference workloads (computing cost, not reactor batches) |
| Quantization & Pruning [28] | Up to 73% reduction in model inference time | Optimized AI models for real-time tasks (computing speed, not reactor performance) |

Experimental Protocols & Methodologies

Multi-Fidelity Bayesian Optimization for Reactor Design

This methodology, used to discover high-performance reactor geometries, combines high-dimensional parameterizations, computational fluid dynamics (CFD), and multi-fidelity Bayesian optimization [7].

- Parameterization: The reactor geometry is defined using a high-dimensional parameter space. This can include both the coil path and the tube's cross-section, which can vary along the reactor's length.

- Objective Definition: A composite objective function is formulated for maximization. This typically includes:

- Plug Flow Performance: Approximated from computational residence time distributions using a tanks-in-series model.

- Non-ideality Penalty: Penalizes bimodal or asymmetrical residence time distributions.

- Multi-Fidelity CFD Simulation: The optimization uses CFD simulations of varying cost and accuracy (fidelities). Lower-fidelity simulations allow for cheaper exploration of the design space.

- Gaussian Process (GP) Modeling: GPs are used to model the simulation cost and the objective function across the design space.

- Iterative Optimization & Selection: An acquisition function, leveraging the GP models, selects the most promising design parameters and simulation fidelities to evaluate in each iteration. This process efficiently balances exploration of new regions with exploitation of known promising areas.

- Validation: Optimal designs are 3D-printed and experimentally validated using both tracer and reacting flow experiments to confirm performance improvements [7].
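The objective definition above approximates plug flow performance with a tanks-in-series model. A common way to get the equivalent number of tanks N from a residence time distribution E(t) is the method of moments, N = τ²/σ². Here τ is the mean residence time and σ² is the variance of the distribution. The source does not state whether [7] used moments or curve fitting, so treat this as an assumed approach. The sketch checks itself against an analytical 10-tank RTD.

```python
import math
import numpy as np

def trapezoid(y: np.ndarray, t: np.ndarray) -> float:
    """Trapezoidal-rule integral of samples y(t)."""
    return float(np.sum((y[1:] + y[:-1]) * np.diff(t)) / 2.0)

def tanks_in_series_number(t: np.ndarray, E: np.ndarray) -> float:
    """Equivalent number of ideal stirred tanks, N = tau^2 / sigma^2, from a sampled RTD."""
    area = trapezoid(E, t)                             # normalizes E if it does not integrate to 1
    tau = trapezoid(t * E, t) / area                   # mean residence time
    sigma2 = trapezoid((t - tau) ** 2 * E, t) / area   # RTD variance
    return tau ** 2 / sigma2

def tis_rtd(t: np.ndarray, tau: float, n: int) -> np.ndarray:
    """Analytical RTD of n equal ideal tanks in series with total mean residence time tau."""
    return (n / tau) ** n * t ** (n - 1) * np.exp(-n * t / tau) / math.factorial(n - 1)

# Synthetic check: an RTD generated from 10 tanks (tau = 60 s) should give N close to 10.
t = np.linspace(0.0, 300.0, 6001)
E = tis_rtd(t, tau=60.0, n=10)
print(f"N = {tanks_in_series_number(t, E):.2f}")
```

A larger N means a narrower RTD and behaviour closer to ideal plug flow. The non-ideality penalty for bimodal or asymmetric RTDs would be a separate objective term and is not shown.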

Closed-Loop AI for Batch-to-Batch Consistency

This protocol outlines the implementation of a real-time, closed-loop AI system for enhancing batch process consistency and reproducing "golden batch" performance [26].

- Data Foundation & Golden Batch Analysis: Historical process data encompassing sensor feeds, quality lab results, and operational setpoints from thousands of runs is aggregated. Machine learning models analyze this data to identify the subtle, nonlinear patterns that define optimal "golden batch" performance.

- Predictive Model Deployment: Soft-sensor models are deployed to run in real-time alongside the active batch process. These models continuously analyze live sensor data to predict final quality properties (e.g., viscosity, purity) hours in advance.

- Deviation Alerting & Root Cause Analysis: If the model predicts a trajectory toward an off-spec condition, it alerts operators and highlights the key process drivers (e.g., temperature ramp, catalyst rate) responsible for the predicted deviation.

- Closed-Loop Control Action: The system automatically calculates and implements fresh, optimized setpoints. These dynamic adjustments are written directly to the Distributed Control System (DCS) in real-time, correcting the process course without requiring manual intervention [26].

- Continuous Learning: The system incorporates the results of each batch (successful or otherwise) into its knowledge base, continuously refining its models and improving decision-making for subsequent runs.
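The closed-loop step writes new setpoints only within strict safety limits. The sketch below shows that guardrail logic in minimal form. It assumes the following design elements:

- a single setpoint
- a proportional correction driven by the soft-sensor prediction
- a per-action rate limit
- a hard envelope
- an audit record for every action

The gain, limits, and temperature/purity values are hypothetical. The actual write to the DCS is not shown because the source does not describe that interface.

```python
from dataclasses import dataclass, field

@dataclass
class SetpointController:
    """Hypothetical bounded setpoint updater; not a DCS interface or any vendor's API."""
    gain: float       # setpoint change per unit of predicted quality error (process-specific sign)
    max_step: float   # largest allowed change per control action
    lower: float      # safety envelope, lower bound
    upper: float      # safety envelope, upper bound
    audit_log: list = field(default_factory=list)

    def next_setpoint(self, current: float, predicted: float, target: float) -> float:
        proposed = current + self.gain * (target - predicted)
        step = max(-self.max_step, min(self.max_step, proposed - current))  # rate limit
        new = max(self.lower, min(self.upper, current + step))              # hard envelope
        self.audit_log.append({"current": current, "predicted": predicted,
                               "target": target, "proposed": proposed, "applied": new})
        return new

# Hypothetical: temperature setpoint (degC) corrected from predicted purity (wt%).
ctrl = SetpointController(gain=2.5, max_step=1.0, lower=75.0, upper=82.0)
sp = ctrl.next_setpoint(current=80.0, predicted=97.2, target=98.0)
print(sp, len(ctrl.audit_log))
```

In this example the rate limit cuts the proposed move of about +2 °C to +1 °C. On a second action from 81.8 °C, the hard upper bound of 82 °C takes over. The sign and size of the gain are process-specific and would come from the predictive model.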

Visualization of Workflows and Logical Relationships

Figure: AI-Driven Reactor Design & Optimization Workflow (integrated computational and experimental workflow for AI-assisted reactor discovery)

Figure: Closed-Loop AI Control for Batch Consistency (real-time feedback control system enabling autonomous batch process optimization)

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Materials and Computational Tools for AI-Driven Reactor Optimization Research

| Item / Solution | Function in Research |
|---|---|
| Additive Manufacturing (3D Printer) | Enables the fabrication of complex, optimized reactor geometries identified through computational design, allowing for rapid experimental validation [7]. |
| Computational Fluid Dynamics (CFD) Software | Simulates fluid flow, mixing, and residence time distributions within proposed reactor designs, providing the performance data for the AI optimization loop [7]. |
| Multi-Fidelity Bayesian Optimization Platform | A computational framework (e.g., using Gaussian Processes) that efficiently explores high-dimensional design spaces by strategically selecting which design simulations to run and at what level of fidelity [7]. |
| Distributed Control System (DCS) | The core industrial control system that operates the batch process. The AI system interfaces with the DCS to read live sensor data and implement optimized setpoints in a closed loop [26]. |
| Predictive Quality Modeling Software | Machine learning tools that create "soft sensors" to predict final batch quality from real-time process data, enabling proactive intervention [26]. |
| Tracer Compounds | Chemical substances used in residence time distribution experiments to characterize mixing efficiency and flow patterns in reactor prototypes [7]. |

The fields of chemical synthesis and process development are undergoing a significant transformation, driven by the integration of automation, advanced data analytics, and enabling technologies. High-Throughput Experimentation (HTE) has emerged as one of the most prevalent techniques for accelerating the discovery and optimization of chemical reactions, allowing researchers to explore vast reaction spaces in parallel, drastically reducing development time. [5] Concurrently, flow chemistry has established itself as a powerful tool that provides enhanced control over reaction parameters, improved safety profiles, and more straightforward scalability compared to traditional batch processes. [29] The convergence of these approaches with machine learning (ML) and artificial intelligence (AI) creates a powerful framework for autonomous optimization in chemical synthesis. This guide provides an objective comparison of batch versus flow reactors within the context of this modern paradigm, examining their respective capabilities for ML-driven optimization research through experimental data, protocols, and implementation frameworks.

Batch vs. Flow Reactors: A Systematic Comparison for Modern Optimization

The fundamental differences between batch and continuous flow chemistry significantly influence their suitability for autonomous optimization and High-Throughput Experimentation workflows. The table below summarizes the key characteristics of each approach from an optimization research perspective.

Table 1: Comparative Analysis of Batch and Flow Reactors for Optimization Research

| Factor | Batch Reactors | Continuous Flow Reactors |
|---|---|---|
| Process Control | Flexible mid-reaction adjustments; suitable for exploratory synthesis. [29] | Precise, automated control over residence time, temperature, and mixing; ideal for optimized processes. [29] |
| HTE Compatibility | High parallelism with multi-well plates; well-established for diverse condition screening. [5] | Typically serial operation; excels in screening continuous variables (e.g., time, concentration gradients) dynamically. [5] |
| Scalability | Challenging; requires re-optimization when moving from lab to production scale. [29] | Simpler; scale-up often involves increasing flow rates or operating time without changing reactor geometry. [5] [29] |
| Safety Profile | Higher risk for exothermic or hazardous reactions due to larger volumes. [29] | Enhanced safety; smaller in-process volumes minimize risks with hazardous intermediates or extreme conditions. [5] [29] |
| Data Generation for ML | Generates discrete data points from parallel experiments; suitable for initial screening. [5] | Excellent for generating transient (dynamic) data and continuous reaction profiles for kinetic studies and model training. [30] |
| Initial Cost & Setup | Lower initial investment; utilizes standard laboratory glassware. [29] [31] | Higher initial investment; requires specialized pumps, tubing, and reactors. [29] |
| Reaction Types | Highly flexible for diverse reaction types, including those with solids. [31] | Superior for photochemistry, electrochemistry, and highly exothermic reactions; challenges with solids. [5] [31] |

High-Throughput Experimentation in Flow Systems

Capabilities and Applications

Flow chemistry extends the capabilities of traditional HTE by enabling the efficient investigation of continuous variables such as temperature, pressure, and reaction time in a dynamic manner, which is challenging in batch-based microwell plates. [5] This approach widens the available process windows, giving access to chemistry that is extremely challenging under batch-wise high-throughput screening (HTS), including reactions using hazardous reagents or requiring elevated temperatures and pressures. [5] The technology has found impactful applications across various chemical disciplines, including:

- Photochemistry: Flow reactors enable efficient photochemical processes by minimizing light path length and precisely controlling irradiation time, overcoming the limitations of poor light penetration in batch. [5]

- Algorithmic Optimization: The precise control and automation of flow systems make them ideal platforms for feedback-driven optimization using machine learning algorithms. [5]

- Catalysis and Electrochemistry: Flow systems facilitate the study of catalytic cycles and electrochemical transformations with improved mass and electron transfer. [5]

Experimental Protocol: Photochemical Reaction Optimization in Flow

The following protocol, adapted from Jerkovic et al., outlines a typical workflow for developing and scaling a photochemical reaction using an integrated HTE and flow approach. [5]

Table 2: Key Reagents and Equipment for Photochemical Flow Optimization

| Item | Function/Description |
|---|---|
| 96-well Plate Batch Photoreactor | Initial high-throughput screening of reaction variables (e.g., photocatalysts, bases). |
| Vapourtec Ltd UV150 Photoreactor | Small-scale flow optimization and preliminary parameter testing. |
| Custom Two-Feed Flow Setup | Large-scale production with continuous reactant feeding and product collection. |
| Inline NMR/IR Spectroscopy | Real-time process analytical technology (PAT) for reaction monitoring. |
| Design of Experiments (DoE) | Statistical approach to efficiently explore parameter spaces and model responses. |

Methodology:

- Initial HTE Screening: A 96-well plate photoreactor was used to screen 24 photocatalysts, 13 bases, and 4 fluorinating agents for a flavin-catalyzed photoredox fluorodecarboxylation reaction. This brute-force approach identified several hits outside previously reported optimal conditions. [5]

- Batch Validation and DoE: The promising conditions from HTE were validated in a batch reactor and further optimized using a Design of Experiments (DoE) approach to model parameter interactions. [5]

- Homogenization Study: Additional photocatalyst screening was conducted to develop a homogeneous procedure suitable for continuous flow, avoiding clogging or fouling risks. [5]

- Flow Transfer and Optimization: The process was transferred to a small-scale flow photoreactor (2 g scale). Time-course ¹H NMR data were used to optimize the residence time, and a stability study determined the feed solution composition. [5]

- Scale-Up: A custom two-feed flow setup was employed for gradual scale-up, optimizing light power, residence time, and temperature. The process first reached 100 g scale and was then scaled to kilogram scale, producing 1.23 kg of product (92% yield) at a throughput of 6.56 kg per day. [5]
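The scale-up figures above can be cross-checked with simple continuous-flow arithmetic. Dividing the 1.23 kg collected by the 6.56 kg/day throughput implies roughly 4.5 h of production at that rate. The helper functions for residence time and daily throughput are generic sketches. The reactor volume, flow rate, concentration, and molecular weight in the example are hypothetical, not values from the cited study.

```python
def residence_time_min(reactor_volume_ml: float, total_flow_ml_min: float) -> float:
    """Mean residence time tau = V / Q, in minutes."""
    return reactor_volume_ml / total_flow_ml_min

def throughput_kg_per_day(total_flow_ml_min, limiting_conc_mol_l, mw_g_mol, yield_frac):
    """Product mass per day from a continuously operated flow reactor."""
    litres_per_day = total_flow_ml_min * 60.0 * 24.0 / 1000.0
    return litres_per_day * limiting_conc_mol_l * yield_frac * mw_g_mol / 1000.0

# Reported figures: 1.23 kg collected at 6.56 kg/day.
run_hours = 1.23 / 6.56 * 24.0
print(f"implied production time at the reported rate ~ {run_hours:.1f} h")

# Hypothetical operating point, for illustration only.
print(residence_time_min(reactor_volume_ml=50.0, total_flow_ml_min=5.0))  # minutes
print(throughput_kg_per_day(5.0, 0.5, 200.0, 0.92))                       # kg/day
```

The throughput formula shows why flow scale-up often means running longer or faster rather than redesigning the reactor. At fixed conversion, output scales linearly with flow rate and operating time.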

Autonomous and Machine Learning-Driven Optimization

Modeling and Simulation Toolkits

The integration of modeling and simulation is crucial for accelerating reactor optimization. The FlowMat toolbox is an open-source MATLAB/Simulink resource designed for modeling flow reactors using physics-based, data-driven, and hybrid approaches. [30] Its capabilities include:

- Modular Simulation: Users can build reactor models via a drag-and-drop interface, incorporating elements like transfer functions, tanks-in-series models, and axial-dispersion models. [30]

- Parameter Identification: The toolbox can use transient experimental data to identify key reaction parameters, reducing the time and cost associated with traditional kinetic studies. [30]

- Reactor Optimization: It supports the optimization of reactor operating points and configurations, including finding Pareto fronts for multi-objective optimization. [30]
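The Pareto-front capability mentioned above rests on the idea of non-dominated solutions. FlowMat itself is a MATLAB/Simulink toolbox. The following is a generic Python illustration of Pareto dominance, not FlowMat's API. It scores hypothetical operating points by conversion (maximized) and specific energy (minimized).

```python
def dominates(a, b, maximize):
    """True if a is at least as good as b on every objective and strictly better on one."""
    at_least_as_good = all((x >= y) if m else (x <= y) for x, y, m in zip(a, b, maximize))
    strictly_better = any((x > y) if m else (x < y) for x, y, m in zip(a, b, maximize))
    return at_least_as_good and strictly_better

def pareto_front(points, maximize):
    """Non-dominated subset of candidate operating points (brute force, O(n^2))."""
    return [p for p in points if not any(dominates(q, p, maximize) for q in points)]

# Hypothetical simulated operating points: (conversion %, specific energy kWh/kg)
candidates = {
    "A": (60.0, 1.0), "B": (68.0, 1.4), "C": (65.0, 1.5),
    "D": (55.0, 0.8), "E": (70.0, 2.0), "F": (62.0, 1.2),
}
front = pareto_front(list(candidates.values()), maximize=(True, False))
names = [name for name, p in candidates.items() if p in front]
print(names)  # ['A', 'B', 'D', 'E', 'F']
```

Point C drops out because B has both higher conversion and lower energy use. The remaining points are genuine trade-offs, and choosing among them requires an additional preference such as cost weighting.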

Experimental Protocol: AI-Assisted Reactor and Process Design

Zhang et al. demonstrated a comprehensive AI-assisted workflow for optimizing the continuous oxidation of 2-ethylhexanal (2-EHA) to 2-ethylhexanoic acid (2-EHAD), a process traditionally plagued by low efficiency in batch reactors. [15]

Methodology:

- Data Generation: A foundational dataset was generated using computational fluid dynamics (Fluent software) simulating the complex mass transfer, heat transfer, and reaction kinetics within the oxidation reactor. [15]

- Surrogate Model Development: A precise reactor surrogate model was developed using neural networks. This data-driven model could rapidly predict reactor performance based on structural parameters and operational conditions, bypassing the computational expense of full mechanistic simulations. [15]

- Multi-objective Optimization: The surrogate model was integrated into a full process simulation. Explainable AI techniques helped elucidate connections between design parameters. The system was optimized for multiple objectives, including conversion rate, yield, energy consumption, and equipment investment. [15]

- Validation and Analysis: The optimized continuous process achieved a conversion rate of 67.80%, a 25% improvement over initial methods. The AI-designed process demonstrated a 30-40% increase in economic profit and a 10-50% reduction in carbon emissions compared to traditional batch processes. [15]

Diagram: AI-Driven Reactor Optimization Workflow

Benchmarking Optimization Algorithms

The performance of optimization algorithms is critical for autonomous discovery. Schwarcz et al. created a benchmark problem for optimizing a nuclear reactor unit cell, a challenge with distinct local optima representing different physical regimes. [32] Their work demonstrated that reinforcement learning and neuroevolutionary algorithms could effectively navigate this complex, constrained optimization landscape, highlighting the potential of these approaches for chemical reactor optimization where multiple competing objectives exist. [32]
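
A toy illustration of why population-based search helps on landscapes with separated optima: the sketch below runs a simple (μ + λ) evolution strategy, a plain evolutionary algorithm rather than the neuroevolution or reinforcement-learning methods of [32], on a hypothetical one-dimensional objective with a local optimum near x = 1 and a higher, global optimum near x = 4. A greedy hill climber started at x = 0 is included for contrast. The objective, population sizes, and mutation schedule are all illustrative assumptions.

```python
import math
import random

def objective(x):
    """Hypothetical bimodal objective: local peak near x=1, global peak near x=4."""
    return math.exp(-(x - 1.0) ** 2) + 2.0 * math.exp(-2.0 * (x - 4.0) ** 2)

def hill_climb(x, step=0.05, iters=500):
    """Greedy local search: move to a neighbour only if it improves the objective."""
    for _ in range(iters):
        best = max((x - step, x + step), key=objective)
        if objective(best) <= objective(x):
            break
        x = best
    return x

def evolution_strategy(mu=5, lam=20, sigma=0.5, gens=40, lo=0.0, hi=5.0, seed=0):
    """(mu + lambda) ES: Gaussian mutation, keep the best mu of parents + offspring."""
    rng = random.Random(seed)
    parents = [rng.uniform(lo, hi) for _ in range(mu)]
    for _ in range(gens):
        offspring = [min(hi, max(lo, rng.choice(parents) + rng.gauss(0.0, sigma)))
                     for _ in range(lam)]
        parents = sorted(parents + offspring, key=objective, reverse=True)[:mu]
        sigma *= 0.95  # slowly shrink the mutation step to refine the optimum
    return parents[0]

x_local = hill_climb(0.0)
x_es = evolution_strategy()
print(f"hill climber:   x = {x_local:.2f}, f = {objective(x_local):.2f}")
print(f"(mu+lambda) ES: x = {x_es:.2f}, f = {objective(x_es):.2f}")
```

The hill climber stalls on the local peak near x = 1, whereas the population spreads its samples across the domain and retains candidates near the global peak. Real reactor-design landscapes are higher-dimensional and constrained, so this only illustrates the qualitative point.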

The integration of High-Throughput Experimentation, flow chemistry, and machine learning represents a paradigm shift in chemical synthesis and process development. While batch reactors maintain their utility for exploratory synthesis and reactions requiring maximum flexibility, continuous flow systems offer superior control, safety, and scalability for processes targeted for industrial translation. The capacity of flow reactors to generate high-quality, continuous data makes them particularly amenable to machine learning-driven optimization, enabling the rapid development of more efficient and sustainable chemical processes. As modeling tools like FlowMat become more accessible and AI-assisted workflows more refined, the synergy between these technologies is poised to significantly accelerate innovation across pharmaceutical, fine chemical, and materials science research.

The transition from traditional batch processing to continuous flow chemistry marks a significant shift in chemical engineering, particularly for pharmaceutical and specialty chemical production. This evolution is being accelerated by the integration of artificial intelligence (AI) and machine learning (ML), which enables the rapid design and optimization of continuous reactor systems that outperform conventional batch processes. Oxidation reactions, critical in synthesizing high-value chemicals and active pharmaceutical ingredients (APIs), often benefit substantially from continuous processing due to enhanced safety and improved mass/heat transfer characteristics.

This case study objectively compares the performance of an ML-optimized continuous oxidation reactor against traditional batch processing for the production of 2-ethylhexanoic acid (2-EHAD) from 2-ethylhexanol (2-EHA). We present quantitative experimental data, detailed methodologies, and the specific AI tools enabling this performance leap, providing researchers and drug development professionals with a framework for implementing similar advanced reactor design strategies.

Experimental Design & Methodologies

ML-Assisted Continuous Reactor Design Workflow

The development of the continuous oxidation reactor followed an integrated AI-driven workflow that combined chemical engineering fundamentals with data-driven algorithms [15]. The methodology can be decomposed into three principal phases, illustrated in the diagram below.

Diagram: AI-Driven Reactor Design Workflow

Phase 1: Surrogate Model Development

A high-fidelity mechanistic model incorporating computational fluid dynamics (CFD), mass transfer, heat transfer, and reaction kinetics was first developed [15]. Although accurate, this model was too computationally expensive to use directly in optimization. A neural network-based surrogate model was therefore trained on data generated by the mechanistic model, supplemented by a small number of targeted continuous oxidation experiments; experimental data were otherwise scarce because of long operating cycles and oxygen-related safety concerns [15]. The surrogate accurately predicted key performance metrics such as conversion and yield while reducing computational time by several orders of magnitude.
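
The surrogate idea can be sketched in a few lines. As a hedged stand-in, a hypothetical `expensive_model` function plays the role of the mechanistic CFD model, and a quadratic least-squares response surface replaces the neural network used in [15], purely to keep the example dependency-free. The yield curve, temperature range, and number of "expensive" runs are all assumptions.

```python
import math

def expensive_model(temp_c):
    """Stand-in for a slow mechanistic/CFD evaluation (hypothetical yield curve)."""
    return 0.92 * math.exp(-((temp_c - 125.0) / 80.0) ** 2)

def solve3(a, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= f * m[col][c]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):
        x[r] = (m[r][3] - sum(m[r][c] * x[c] for c in range(r + 1, 3))) / m[r][r]
    return x

def fit_quadratic(xs, ys):
    """Least-squares fit y ~ c0 + c1*z + c2*z**2 on centred, scaled inputs z."""
    mid = (max(xs) + min(xs)) / 2.0
    half = (max(xs) - min(xs)) / 2.0
    zs = [(x - mid) / half for x in xs]
    # Normal equations: sums of z**(i+j) on the left, sums of y*z**i on the right.
    ata = [[sum(z ** (i + j) for z in zs) for j in range(3)] for i in range(3)]
    aty = [sum(y * z ** i for z, y in zip(zs, ys)) for i in range(3)]
    c0, c1, c2 = solve3(ata, aty)

    def surrogate(x):
        z = (x - mid) / half
        return c0 + c1 * z + c2 * z * z

    # Vertex of the fitted parabola, mapped back to original units.
    x_opt = mid + half * (-c1 / (2.0 * c2))
    return surrogate, x_opt

train_t = [70.0 + 10.0 * i for i in range(12)]   # 12 "expensive" runs, 70-180 C
train_y = [expensive_model(t) for t in train_t]
surrogate, t_opt = fit_quadratic(train_t, train_y)

grid = [70.0 + 0.5 * i for i in range(221)]     # cheap dense sweep of the surrogate
max_err = max(abs(surrogate(t) - expensive_model(t)) for t in grid)
print(f"surrogate optimum ~ {t_opt:.1f} C, max abs error {max_err:.3f}")
```

Once fitted, the surrogate can be swept densely at negligible cost, which is what makes it usable inside an optimizer; a neural network plays the same role when the response is too complex for a low-order polynomial.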

Phase 2: Multi-Objective Optimization

The trained surrogate model was deployed within a multi-objective optimization framework. The algorithm simultaneously optimized reactor geometry (e.g., internal baffling, impeller design) and macroscopic process parameters (e.g., temperature, pressure, residence time) to maximize conversion and yield while minimizing energy consumption and equipment investment [15]. Explainable AI techniques were employed to identify the most influential design parameters and uncover hidden relationships between them [15].
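
Multi-objective optimization of this kind typically returns a set of non-dominated (Pareto-optimal) designs rather than a single answer. A minimal Pareto filter is sketched below; the candidate designs and their objective values are hypothetical, with conversion and yield to be maximized and energy use and capital cost to be minimized.

```python
from typing import NamedTuple

class Design(NamedTuple):
    name: str
    conversion: float  # maximise
    yield_: float      # maximise
    energy: float      # minimise (arbitrary units)
    capex: float       # minimise (arbitrary units)

def dominates(a: Design, b: Design) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = (a.conversion >= b.conversion and a.yield_ >= b.yield_
                and a.energy <= b.energy and a.capex <= b.capex)
    better = (a.conversion > b.conversion or a.yield_ > b.yield_
              or a.energy < b.energy or a.capex < b.capex)
    return no_worse and better

def pareto_front(designs):
    """Return the designs that no other design dominates (simple O(n^2) filter)."""
    return [d for d in designs
            if not any(dominates(o, d) for o in designs if o is not d)]

# Hypothetical candidates, e.g. from sweeping a surrogate model.
candidates = [
    Design("A", 0.68, 0.63, 120.0, 1.0),
    Design("B", 0.66, 0.62, 100.0, 0.9),
    Design("C", 0.60, 0.55, 130.0, 1.2),  # dominated by both A and B
    Design("D", 0.70, 0.64, 160.0, 1.4),
]
print([d.name for d in pareto_front(candidates)])  # → ['A', 'B', 'D']
```

Choosing among A, B, and D then requires a trade-off judgment (for example, an economic weighting), which is where techno-economic and life cycle analysis comes in.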

Phase 3: Process Integration and Assessment

The optimized reactor configuration was integrated into a full process simulation. A comprehensive technical, economic, and environmental impact analysis was then conducted from a life cycle perspective, comparing the ML-designed continuous process against traditional batch and alternative production methods [15].

Comparative Experimental Protocol: Batch vs. Continuous Flow

To generate objective performance data, the oxidation of 2-EHA to 2-EHAD was conducted under both traditional batch and the newly designed ML-optimized continuous conditions.

Batch Protocol:

- Reactor: Standard stirred-tank batch reactor.

- Catalyst: Pt-based catalyst in powder form (~10 microns) [33].

- Procedure: All reactants, including 2-EHA and catalyst, were charged into the reactor at the beginning. The reaction proceeded under controlled temperature (30-200°C) and pressure (0.1-3 MPa) with continuous oxygen sparging for a defined reaction time [15] [33].

- Monitoring: Substrate, product, and intermediate concentrations were monitored over time via periodic sampling.

Continuous Flow Protocol:

- Reactor: A series of ML-optimized continuous stirred-tank reactors (CSTRs) with tailored internal geometry.

- Catalyst: Immobilized Pt-based catalyst on a structured support (50-400 micron particles) [33].

- Procedure: Reactants were continuously pumped through the reactor system at optimized flow rates to achieve the target residence time. Oxygen was introduced co-currently. The system was operated until a steady state was reached, confirmed by consistent product output composition [15].

- Monitoring: In-line analytics (e.g., NMR [18] or IR spectroscopy) tracked conversion and yield in real time.
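
Two quantities in this protocol can be made explicit: the flow rate that gives a target residence time (Q = V/τ) and the steady-state criterion of "consistent product output composition". The sketch below declares steady state once the relative standard deviation of the last `window` in-line readings falls below a tolerance. The reactor volume, window length, tolerance, and readings are hypothetical choices, not values from [15].

```python
import statistics

def flow_rate_for(reactor_volume_ml, residence_time_min):
    """Total volumetric flow rate (mL/min) giving the target mean residence time, Q = V / tau."""
    return reactor_volume_ml / residence_time_min

def first_steady_index(readings, window=5, rel_tol=0.01):
    """Index of the first reading at which the trailing window's relative
    standard deviation drops below rel_tol, or None if it never does."""
    for end in range(window, len(readings) + 1):
        chunk = readings[end - window:end]
        mean = statistics.fmean(chunk)
        if mean > 0 and statistics.pstdev(chunk) / mean < rel_tol:
            return end - 1
    return None

# Hypothetical in-line conversion readings approaching a plateau.
conversion = [0.10, 0.35, 0.52, 0.61, 0.655, 0.671, 0.676, 0.678, 0.678, 0.679, 0.678]
print(flow_rate_for(50.0, 20.0))        # → 2.5
print(first_steady_index(conversion))   # → 9
```

In practice the window is often tied to a few residence times, since the outlet composition cannot settle faster than the reactor contents are replaced.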

Performance Comparison & Results

The table below summarizes the key quantitative performance metrics for the ML-optimized continuous reactor compared to the traditional batch process for the oxidation of 2-EHA to 2-EHAD.

Table 1: Quantitative Performance Comparison: Batch vs. ML-Optimized Continuous Flow

| Performance Metric | Traditional Batch Reactor | ML-Optimized Continuous Reactor | Improvement |
|---|---|---|---|
| Conversion Rate | ~54.2% (Baseline) | 67.8% [15] | +25% [15] |
| Economic Profit | Baseline | 30-40% higher [15] | +30-40% [15] |
| Carbon Emissions | Baseline | 10-50% lower [15] | -10 to -50% [15] |
| Process Safety | Large H₂/O₂ inventory; Lower pressure limits (5-10 bar) [33] | Small reagent hold-up; Higher pressure operation possible [33] | Inherently safer |
| Catalyst Handling | Powder filtration required [33] | Fixed-bed; No filtration [33] | Simplified operation |
| Reactor Downtime | Vessel cleaning between batches [33] | Continuous operation; Minimal downtime [33] | Increased productivity |
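
The conversion entry in the "Improvement" column is a relative change. Working back from the reported figures (67.8% conversion and a 25% relative gain) recovers the batch baseline and gives the absolute gain in percentage points:

```latex
X_{\text{batch}} = \frac{X_{\text{flow}}}{1 + 0.25} = \frac{67.8\,\%}{1.25} \approx 54.2\,\%,
\qquad
X_{\text{flow}} - X_{\text{batch}} \approx 67.8\,\% - 54.2\,\% = 13.6 \text{ percentage points}
```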

Analysis of Key Performance Drivers

The superior performance of the continuous system stems from several key factors unlocked by the ML-assisted design:

- Enhanced Mass Transfer: The continuous reactor's optimized geometry, characterized by a high surface-to-volume ratio, significantly improves oxygen mass transfer into the liquid reaction mixture. This is critical for aerobic oxidation reactions, which are often mass-transfer-limited [34]. The ML model specifically optimized parameters like power number, gas hold-up, and impeller geometry to maximize this effect [15].

- Precise Thermal Management: The continuous flow design enables excellent heat transfer, eliminating hot spots common in large-scale exothermic batch oxidations and allowing operation at more favorable, consistent temperatures [35].

- Optimal Catalyst Environment: The use of larger, immobilized catalyst particles (50-400 microns) in a fixed-bed configuration eliminates the need for post-reaction filtration, a significant bottleneck and cost driver in batch processing with fine catalyst powders [33]. The ML optimization ensured that the reactor geometry and flow parameters kept the pressure drop across this catalyst bed within acceptable limits.
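
Why particle size matters for a fixed bed can be quantified with the Ergun equation, a standard correlation for pressure drop through packed beds: ΔP/L = 150 μ (1 − ε)² u / (ε³ d_p²) + 1.75 ρ (1 − ε) u² / (ε³ d_p). The sketch below evaluates it for the ~10 µm batch powder and the 50-400 µm flow particles mentioned above. The liquid viscosity and density, bed voidage, and superficial velocity are assumed, illustrative values, not data from [15] or [33].

```python
def ergun_dp_per_length(d_p, u=1e-3, mu=1e-3, rho=850.0, eps=0.4):
    """Pressure gradient (Pa/m) across a packed bed from the Ergun equation.

    d_p: particle diameter (m); u: superficial velocity (m/s);
    mu: liquid viscosity (Pa*s); rho: liquid density (kg/m^3); eps: bed voidage.
    All default values are illustrative assumptions.
    """
    viscous = 150.0 * mu * (1.0 - eps) ** 2 * u / (eps ** 3 * d_p ** 2)
    inertial = 1.75 * rho * (1.0 - eps) * u ** 2 / (eps ** 3 * d_p)
    return viscous + inertial

for microns in (10, 50, 400):
    dp_bar_per_m = ergun_dp_per_length(microns * 1e-6) / 1e5
    print(f"{microns:>4} um particles: {dp_bar_per_m:8.3f} bar per metre of bed")
```

At these low velocities the viscous term dominates, so the pressure gradient scales roughly with 1/d_p². Packing the ~10 µm powder would therefore cost on the order of a thousand times more pressure per metre than 400 µm particles, which is why larger particles are used in flow.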

The Researcher's Toolkit

Implementing ML-assisted reactor design requires a suite of specialized reagents, software, and hardware. The following table details the key components of this research toolkit.

Table 2: Essential Research Reagents and Solutions for ML-Assisted Reactor Development

| Tool Category | Specific Example / Specification | Function & Importance |
|---|---|---|
| Catalyst Systems | Pt-based heterogeneous catalysts (50-400 µm for flow) [15] [33] | Facilitates the oxidation reaction; Particle size critical for flow hydrodynamics and pressure drop. |
| AI/ML Software Platforms | Summit optimization package [36], Python-based ML libraries (e.g., PyTorch, TensorFlow) | Enables implementation of optimization algorithms like Multi-Task Bayesian Optimization (MTBO) and neural network training. |
| Reactor Fabrication | High-resolution 3D Printing (Stereolithography) [18] | Allows rapid prototyping of complex, optimized reactor geometries (e.g., periodic open-cell structures). |
| Process Analytics (PAT) | Real-time Benchtop NMR [18], In-line IR/UV Spectroscopy | Provides continuous, high-frequency data on reaction progress, essential for training and validating ML models. |
| Computational Modeling | Computational Fluid Dynamics (CFD) Software [15] | Generates high-fidelity data on flow, mixing, and heat transfer for initial surrogate model training. |

Advanced ML Frameworks for Reactor Optimization

Beyond the specific case study, two advanced ML frameworks are proving particularly powerful for reactor optimization, as visualized in the diagram below.

Diagram: Advanced ML Frameworks for Reactor Optimization

A. Multi-Task Bayesian Optimization (MTBO)

This algorithm leverages pre-existing reaction data (auxiliary task) to accelerate the optimization of a new, but related, reaction system (primary task) [36]. For example, public data from Suzuki couplings can inform the optimization of a new C-H activation reaction. This approach is especially valuable when experimental data for the target reaction is scarce, reducing the number of required experiments by efficiently incorporating prior knowledge [36].
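
The core of MTBO is a surrogate that shares information between tasks. A minimal sketch is shown below. It assumes one common formulation: a zero-mean multi-task Gaussian process with an intrinsic coregionalization kernel, in which the covariance between two runs is a task-correlation term ρ multiplied by an ordinary RBF kernel on the reaction variable. It omits the acquisition-function step of a full Bayesian optimization loop, fixes the hyperparameters instead of fitting them, and uses a hypothetical response surface. It is not Summit's implementation.

```python
import math

def rbf(a, b, length=1.0):
    """Squared-exponential kernel on the (scaled) reaction variable."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(matrix, rhs):
    """Solve a dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [v] for row, v in zip(matrix, rhs)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def mtgp_mean(data, x_new, task_new, task_corr, noise=1e-4):
    """Posterior mean of a zero-mean multi-task GP with an ICM kernel.

    data: list of (x, task, y) with task 0 = primary, 1 = auxiliary.
    Covariance: B[t][t'] * rbf(x, x') with B = [[1, rho], [rho, 1]].
    """
    b = [[1.0, task_corr], [task_corr, 1.0]]
    k = [[b[ti][tj] * rbf(xi, xj) + (noise if i == j else 0.0)
          for j, (xj, tj, _) in enumerate(data)]
         for i, (xi, ti, _) in enumerate(data)]
    alpha = solve(k, [y for _, _, y in data])
    return sum(b[task_new][t] * rbf(x_new, x) * a
               for (x, t, _), a in zip(data, alpha))

def true_response(x):
    """Hypothetical response surface shared by both reactions."""
    return math.sin(x) + 1.0

auxiliary = [(x / 2.0, 1, true_response(x / 2.0)) for x in range(9)]  # dense, x = 0..4
primary = [(0.0, 0, true_response(0.0)), (4.0, 0, true_response(4.0))]  # only two runs
data = auxiliary + primary

for rho in (0.0, 0.9):
    pred = mtgp_mean(data, 2.0, 0, rho)  # no primary-task run near x = 2
    print(f"rho = {rho}: predicted {pred:.2f}, true {true_response(2.0):.2f}")
```

With ρ = 0 the primary-task prediction at x = 2 falls back toward the prior mean because no primary run lies nearby; with ρ = 0.9 the dense auxiliary data pull it close to the true value. In a real MTBO loop, ρ and the length scale would typically be learned from the data.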

B. Integrated Digital Platforms (Reac-Discovery)

For complex multiphase reactions, platforms like Reac-Discovery close the loop between design, fabrication, and testing. This platform uses:

- Reac-Gen: A digital module that generates reactor geometries from mathematical models (e.g., gyroid structures) defined by parameters such as size and level threshold, which control porosity and surface area [18].

- Reac-Fab: Fabricates the designed reactors by high-resolution 3D printing [18].

- Reac-Eval: A self-driving lab (SDL) that tests the reactors in parallel, using real-time NMR to monitor reactions and machine learning to simultaneously optimize both process parameters and the reactor's topological descriptors [18]. This enables the discovery of non-intuitive, high-performance reactor designs.
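
The role of the level threshold can be illustrated with the standard trigonometric approximation of the gyroid, sin x cos y + sin y cos z + sin z cos x = t. The sketch below assumes (hypothetically, since Reac-Gen's exact convention is not given here) that solid occupies the region where the gyroid function exceeds t, and estimates the void fraction by sampling one periodic unit cell on a regular grid.

```python
import math

def gyroid(x, y, z):
    """Trigonometric approximation of the gyroid surface (period 2*pi)."""
    return (math.sin(x) * math.cos(y) + math.sin(y) * math.cos(z)
            + math.sin(z) * math.cos(x))

def porosity(threshold, n=30):
    """Void fraction of one unit cell when solid is where gyroid(x, y, z) > threshold.

    Uses an n x n x n grid of cell-centred sample points.
    """
    step = 2.0 * math.pi / n
    coords = [(i + 0.5) * step for i in range(n)]
    solid = sum(1 for x in coords for y in coords for z in coords
                if gyroid(x, y, z) > threshold)
    return 1.0 - solid / n ** 3

for t in (-0.8, -0.4, 0.0, 0.4, 0.8):
    print(f"threshold {t:+.1f}: porosity {porosity(t):.2f}")
```

Because the gyroid function is odd, t = 0 splits the cell into equal solid and void volumes, and raising t shrinks the solid and increases porosity. The unit-cell size, by contrast, rescales the geometry without changing porosity in this approximation, but it changes the surface-to-volume ratio.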

This case study demonstrates that ML-assisted design of continuous oxidation reactors delivers substantial and quantifiable improvements over traditional batch processing. The data show a 25% relative increase in conversion (to 67.8%), 30-40% higher economic profit, and a 10-50% reduction in carbon emissions for the production of 2-EHAD [15]. These performance gains are driven by AI's ability to navigate complex, multi-dimensional optimization spaces, simultaneously refining reactor geometry and process parameters to overcome the mass and heat transfer limitations inherent in batch systems.

For researchers and drug development professionals, the adoption of ML-driven continuous flow chemistry represents more than an incremental improvement; it is a paradigm shift towards safer, more sustainable, and more economical chemical manufacturing. The experimental protocols and toolkits outlined provide an actionable roadmap for deploying these advanced methodologies, enabling the development of next-generation reactor systems that are intrinsically superior to their batch predecessors.