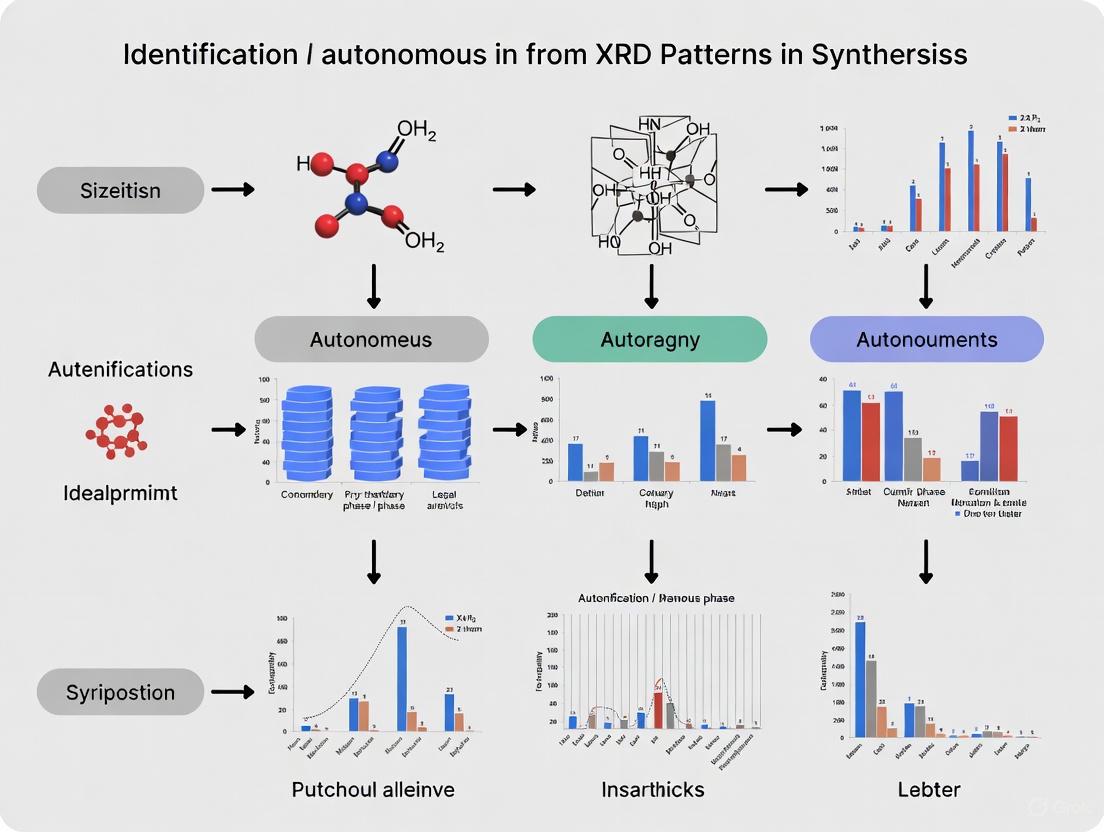

Autonomous Phase Identification from XRD Patterns: AI-Driven Methods for Accelerated Materials Discovery and Pharmaceutical Development

Abstract

This article comprehensively reviews the transformative role of autonomous methods in identifying crystalline phases from X-ray diffraction (XRD) patterns, a critical task in materials science and pharmaceutical development. It explores the foundational principles driving the shift from traditional, expert-dependent analysis to machine learning (ML) and probabilistic algorithms. The piece details cutting-edge methodologies, including probabilistic phase labeling with CrystalShift, deep neural networks trained on synthetic data, and hybrid approaches that integrate multiple data representations. It further addresses key challenges like experimental noise and multi-phase complexity, offering troubleshooting and optimization strategies. Finally, through comparative analysis of different techniques and their validation on experimental data, this review provides researchers and drug development professionals with a clear framework for implementing and validating these autonomous systems to accelerate discovery and ensure product quality and safety.

The Why and How: Foundations of Autonomous XRD Phase Analysis

X-ray diffraction (XRD) is a foundational technique for determining the atomic and molecular structure of crystalline materials, enabling researchers across pharmaceuticals, metallurgy, and materials science to understand critical material properties [1] [2]. For decades, the analysis of XRD patterns has relied heavily on manual interpretation and refinement techniques, most notably Rietveld refinement, a method that iteratively adjusts structural parameters until a calculated pattern matches the experimental data [3]. However, the emergence of high-throughput synthesis methodologies, including combinatorial thin-film libraries [4] and automated robotic laboratories [5], has exposed a critical bottleneck: traditional XRD analysis cannot keep pace with the rate at which samples are now produced. This disparity threatens to stall progress in autonomous materials discovery and drug development, where establishing precise composition-structure-property relationships is paramount [4]. This section examines the limitations of traditional XRD analysis, explores computational solutions, and details experimental protocols for autonomous phase identification.

The Fundamental Bottlenecks of Conventional XRD Analysis

Manual Expertise and the "Chemical Reasonableness" Problem

Interpreting XRD patterns reliably requires significant domain knowledge spanning crystallography, X-ray diffraction physics, thermodynamics, and solid-state chemistry [4]. Experienced specialists do not merely fit patterns; they draw on this knowledge to arrive at the "most reasonable" solutions. For instance, intensity deviations may indicate crystallographic texture or a polymorphic phase, while low-intensity peaks could signal minor phases or simply background noise [4]. This dependence on human expertise creates a major bottleneck, because manual analysis of the hundreds to thousands of samples in a typical combinatorial library is impractical and incompatible with autonomous discovery loops [4]. Furthermore, minimizing the difference between observed and reconstructed patterns is a straightforward optimization objective, but it does not guarantee a trustworthy solution with "chemical reasonableness" [4].

Table 1: Core Limitations of Traditional XRD Analysis

| Limitation Factor | Impact on Analysis Workflow | Consequence for High-Throughput Research |
|---|---|---|
| Manual Rietveld Refinement | Time-consuming, iterative process requiring expert supervision [3] | Creates a critical throughput bottleneck; incompatible with automated synthesis |
| Expert-Dependent Interpretation | Requires deep knowledge of crystallography, thermodynamics, and kinetics [4] | Introduces subjectivity and limits reproducibility; scarce expertise becomes a bottleneck |
| Handling of Complex Mixtures | Difficulty in deconvoluting overlapping peaks from multi-phase samples [6] | Impedes accurate phase mapping in complex material systems like multi-component oxides |
| Data Quality Dependency | High-quality, high-intensity data required for reliable manual analysis [6] | Makes analysis of low-intensity or noisy data from rapid scans unreliable |

Throughput Disparity and the Data Deluge

The core of the bottleneck is a simple disparity in speed. Robotic laboratories can synthesize and characterize hundreds of samples in weeks [5], while combinatorial libraries can contain thousands of compositionally varied samples [4]. Manual analysis cannot process this volume at a comparable rate. At the same time, high-throughput methodologies often produce "small datasets" by machine learning standards (hundreds to thousands of samples), which makes it difficult to apply large, data-hungry models [4]. The challenge is compounded by the difficulty of extracting advanced information such as lattice parameter changes, solid solution behavior, and texture from high-throughput datasets [4].

Paradigm Shift: Integrating AI and Machine Learning for Autonomous Phase Identification

From Manual Fitting to Unsupervised Machine Learning

Next-generation phase mapping algorithms are overcoming these bottlenecks by encoding domain-specific knowledge directly into automated optimization processes. One advanced approach, termed AutoMapper, uses an unsupervised optimization-based solver that integrates material science knowledge—including thermodynamic data from first-principles calculations, crystallography, and diffraction physics—directly into its loss function [4]. This workflow automates the identification of valid candidate phases by sourcing data from inorganic databases like the ICDD and ICSD, then filters them based on thermodynamic stability to eliminate physically unreasonable structures [4]. The solver employs a neural-network optimization to determine phase fractions and peak shifts, treating phase mapping not as a demixing problem but as a direct fitting process using simulated patterns from candidate phases [4].
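The idea of direct fitting against simulated candidate patterns can be illustrated with a much simpler stand-in than the solver described in [4]. The sketch below is not AutoMapper: it replaces the neural-network optimization with plain projected gradient descent, ignores the thermodynamic terms of the loss, and holds peak shifts fixed. The function names, Gaussian peak shape, and peak lists are assumptions made for illustration only.

```python
import math

def gaussian_pattern(two_theta, peaks, shift=0.0, width=0.15):
    """Simulate a 1D pattern as a sum of Gaussian peaks.

    peaks is a list of (position_deg, relative_intensity) pairs; shift moves
    every peak by the same amount, a crude stand-in for a lattice change.
    """
    return [
        sum(h * math.exp(-0.5 * ((x - (p + shift)) / width) ** 2) for p, h in peaks)
        for x in two_theta
    ]

def fit_fractions(observed, references, steps=500):
    """Fit non-negative phase weights w so that sum_j w[j] * references[j] ~ observed.

    Minimizes 0.5 * ||A w - b||^2 by gradient descent, clipping w at zero after
    each step. Assumes at least one reference pattern is not identically zero.
    """
    k, n = len(references), len(observed)
    # The trace of the Gram matrix bounds its largest eigenvalue, so 1/trace is a safe step.
    step = 1.0 / sum(v * v for ref in references for v in ref)
    w = [1.0 / k] * k
    for _ in range(steps):
        model = [sum(w[j] * references[j][i] for j in range(k)) for i in range(n)]
        resid = [m - o for m, o in zip(model, observed)]
        grad = [sum(r * references[j][i] for i, r in enumerate(resid)) for j in range(k)]
        w = [max(0.0, wj - step * g) for wj, g in zip(w, grad)]
    return w
```

With three hypothetical candidates and an observed pattern mixed from the first two, the fit assigns a weight near zero to the absent phase. A realistic solver would also optimize the `shift` parameter and penalize chemically implausible combinations, as described above.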

Table 2: AI-Driven Solutions for XRD Bottlenecks

| AI/ML Technology | Mechanism of Action | Resolved Bottleneck |
|---|---|---|
| Unsupervised Optimization (AutoMapper) | Encodes domain knowledge (thermodynamics, crystallography) into a neural-network loss function [4] | Replaces expert-dependent "chemical reasonableness" checks with automated, physics-informed constraints |
| Convolutional Neural Networks (XRD-AutoAnalyzer) | Provides rapid phase classification and confidence assessment from pattern data [6] [7] | Drastically reduces analysis time per sample from hours/days to seconds |
| Class Activation Maps (CAM) | Highlights specific 2θ regions most critical for phase identification [6] [7] | Guides adaptive data collection, focusing measurement time on diagnostically useful regions |
| Non-Negative Matrix Factorization (NMF) | Demixes observed XRD patterns into constituent phase patterns and their concentrations [4] | Enables automated decomposition of complex, multi-phase patterns without manual input |

Adaptive XRD: Closing the Loop Between Measurement and Analysis

A transformative advancement is adaptive XRD, which integrates an ML algorithm directly with a physical diffractometer to create a closed-loop system [6] [7]. This approach uses initial rapid scans to make preliminary phase predictions, then intelligently steers subsequent measurements to collect data that maximally improves classification confidence.

Autonomous XRD Workflow

This autonomous workflow enables the accurate detection of trace impurity phases and the identification of short-lived intermediate phases during in situ experiments, achievements that are challenging for conventional methods with fixed measurement protocols [6].

Experimental Protocols for Autonomous Phase Identification

Protocol 1: Automated Phase Mapping with Integrated Knowledge

The AutoMapper protocol demonstrates how to integrate materials science knowledge directly into an automated analysis pipeline [4].

- Candidate Phase Collection: Compile all relevant crystalline phases from authoritative databases (ICDD, ICSD), filtering for system-relevant chemistry (e.g., only oxides for an oxide library) [4].

- Thermodynamic Filtering: Calculate the energy above the convex hull for all candidate phases using first-principles calculations. Eliminate phases with energy >100 meV/atom as highly unstable under experimental conditions [4].

- Pattern Simulation: Generate reference XRD patterns for the remaining candidate phases, accounting for specific instrument parameters (e.g., polarization state of the X-ray source) [4].

- Optimization-Based Solving: Use a neural-network model with an encoder-decoder structure to solve for phase fractions and peak shifts. The model minimizes a composite loss function with three key components [4].

- Iterative Refinement: Prioritize solving "easy" samples (1-2 major phases) first, using these solutions to inform the analysis of more complex, multi-phase samples at phase boundaries [4].
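The candidate collection and thermodynamic filtering steps of this protocol can be sketched as a simple filter. The `CandidatePhase` record, its field names, and the function name are hypothetical. In practice the structures would come from ICDD or ICSD and the hull energies from first-principles calculations [4]; only the 100 meV/atom cutoff is taken from the protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CandidatePhase:
    formula: str               # label only; placeholder formulas are used in examples
    elements: frozenset        # chemical elements present in the phase
    e_above_hull_mev: float    # energy above the convex hull, in meV/atom

def filter_candidates(candidates, allowed_elements, max_e_above_hull_mev=100.0):
    """Keep phases built only from allowed elements and within the stability cutoff."""
    allowed = frozenset(allowed_elements)
    kept = []
    for phase in candidates:
        if not phase.elements <= allowed:
            continue  # contains an element outside the library's chemistry
        if phase.e_above_hull_mev > max_e_above_hull_mev:
            continue  # treated as too unstable to form under experimental conditions
        kept.append(phase)
    return kept
```

For example, with placeholder entries for a hypothetical A-B-O library, a nitride and a phase 140 meV/atom above the hull would both be dropped, while a phase sitting exactly at the cutoff is retained because the protocol only eliminates energies greater than 100 meV/atom.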

Protocol 2: ML-Driven Adaptive Data Acquisition

This protocol outlines the steps for implementing an adaptive XRD experiment, as validated on battery material systems like Li-La-Zr-O [6] [7].

- Initialization: Perform a rapid, low-resolution scan over a limited angular range (e.g., 2θ = 10° to 60°) to serve as the basis for initial ML predictions.

- Prediction and Confidence Assessment: Process the initial scan with a trained deep learning model (e.g., XRD-AutoAnalyzer) to identify potential phases and assign a confidence score (0-100%) to each prediction [6].

- Decision Point: If all suspected phases have a confidence >50%, proceed to final reporting. If not, initiate the adaptive loop [6].

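- Selective Rescanning via CAM: Compute class activation maps (CAMs) for the two most probable phases, then rescan at higher resolution (slower scan rate) the 2θ regions where the two CAMs differ by more than a set threshold (typically 25%) [6].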

- Range Expansion (if needed): If confidence remains low after resampling, iteratively expand the scan range by increments (e.g., +10°) to detect additional distinguishing peaks, up to a maximum of 140° [6].

- Ensemble Prediction: Aggregate predictions from all collected data subsets (initial scan, resampled regions, expanded ranges) into a final, confidence-weighted ensemble prediction for the most robust phase identification [6].
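The control flow of this protocol can be summarized in a short loop. This is a sketch under stated assumptions rather than the published implementation: `measure`, `predict`, and `resample` are hypothetical callbacks standing in for the diffractometer, the trained model, and the CAM-guided rescan, and the final ensemble step is omitted for brevity. Confidences are expressed as fractions, so the 50% threshold becomes 0.5.

```python
def all_confident(confidences, threshold):
    """True when at least one phase is predicted and every confidence exceeds the threshold."""
    return bool(confidences) and all(c > threshold for c in confidences.values())

def adaptive_scan(measure, predict, resample, start=(10.0, 60.0),
                  step=10.0, max_angle=140.0, threshold=0.5):
    """Run the scan / predict / rescan / expand loop until confident or out of range.

    measure(lo, hi)      -> list of data points for the 2-theta range [lo, hi]
    predict(pattern)     -> dict mapping phase name to confidence in [0, 1]
    resample(pattern, c) -> pattern with low-confidence regions re-measured
    """
    lo, hi = start
    pattern = measure(lo, hi)                      # rapid initial scan
    while True:
        confidences = predict(pattern)
        if all_confident(confidences, threshold):
            break
        pattern = resample(pattern, confidences)   # selective rescanning
        confidences = predict(pattern)
        if all_confident(confidences, threshold) or hi >= max_angle:
            break
        new_hi = min(hi + step, max_angle)
        pattern = pattern + measure(hi, new_hi)    # range expansion
        hi = new_hi
    return confidences, hi
```

Passing the instrument and model in as callbacks keeps the decision logic testable without hardware, which is convenient when tuning the threshold or step size offline.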

Table 3: Key Research Reagent Solutions for Autonomous XRD Workflows

| Tool / Resource | Function in Autonomous Workflow | Example / Source |
|---|---|---|
| Crystallographic Databases | Provides reference "fingerprints" for phase identification by matching peak positions and intensities [4] [8] | ICDD PDF, ICSD, Crystallography Open Database (COD) [3] |
| Thermodynamic Data | Filters candidate phases by stability, eliminating chemically unreasonable options and improving solution validity [4] | First-principles calculated energy above convex hull (e.g., from Materials Project) [4] |
| Robotic Synthesis Labs | Generates the high-throughput sample libraries that create the initial demand for automated analysis [5] | Samsung ASTRAL lab; fluid-handling and dispensing robots [5] [3] |
| Specialized XRD Instrumentation | Enables versatile measurement of powders, thin films, and solids; high-throughput capabilities are critical [8] | Malvern Panalytical Empyrean & Aeris systems; high-resolution detectors [2] [8] |
| Analysis Software Suites | Executes search-match algorithms, automated phase ID, and quantification, often with AI integration [2] | HighScore Plus; XRD-AutoAnalyzer [6] [8] |

The field of XRD analysis is undergoing a fundamental transformation, driven by the urgent need to keep pace with high-throughput synthesis. The traditional bottleneck of manual Rietveld refinement and expert-dependent interpretation is being dismantled by a new paradigm of autonomous phase identification. This paradigm integrates domain-specific knowledge directly into machine learning algorithms, employs adaptive data collection strategies, and leverages robotic automation. These advances are not merely about speed; they are about achieving new levels of reliability and insight in mapping composition-structure-property relationships. As these computational and experimental workflows mature and become more accessible, they will unlock truly autonomous materials discovery and drug development cycles, empowering researchers to navigate complex material systems with unprecedented efficiency and scale.

The integration of machine learning (ML) with X-ray diffraction (XRD) and pair distribution function (PDF) analysis represents a paradigm shift in materials characterization, moving toward fully autonomous phase identification in synthesis research. XRD provides detailed information on long-range order and crystal structure in materials, while PDF analysis is powerful for characterizing both long-range structures and local atomic distortions [3] [9]. Traditional analysis methods, such as Rietveld refinement for XRD, are highly effective but often labor-intensive and require expert knowledge, creating bottlenecks in high-throughput experimental workflows [3]. Machine learning addresses these limitations by automating interpretation, enhancing speed, and extracting subtle patterns from complex spectral data that might be challenging for conventional methods [10] [6].

The fundamental challenge in autonomous phase identification lies in developing models that are not only accurate but also robust, interpretable, and capable of quantifying their prediction uncertainty [11]. This technical guide explores the core principles underpinning how machine learning interprets XRD patterns and PDFs, focusing on the methodologies, architectures, and experimental protocols that enable reliable autonomous analysis within synthesis research. By coupling ML algorithms directly with physical diffractometers, researchers can now create adaptive characterization techniques that steer measurements toward features that improve phase identification confidence, fundamentally rethinking the measurement step itself [6].

Machine Learning for XRD Pattern Analysis

Core Workflows and Architectures

Machine learning applied to XRD pattern analysis typically follows a structured workflow encompassing data acquisition, preprocessing, model training, and phase identification. Convolutional Neural Networks (CNNs) have emerged as particularly effective architectures for this task due to their ability to recognize peak patterns and shapes within diffraction spectra [6]. The Bayesian-VGGNet model, for instance, has demonstrated robust performance by combining deep learning with uncertainty quantification, achieving 84% accuracy on simulated XRD spectra and 75% accuracy on external experimental data [11].

A particularly advanced application involves adaptive XRD driven by machine learning for autonomous phase identification. This approach integrates diffraction and analysis such that early experimental information guides subsequent measurements toward features that improve model confidence [6]. The workflow begins with a rapid initial scan, followed by iterative resampling and analysis until sufficient prediction confidence is achieved.

Addressing Data Scarcity and Enhancing Model Generalization

A significant challenge in ML for XRD analysis is data scarcity, as obtaining comprehensive experimental XRD datasets remains costly and time-consuming [11]. To address this, researchers have developed innovative data generation strategies such as Template Element Replacement (TER), which generates a perovskite chemical space containing physically unstable virtual structures to enhance model understanding of XRD-crystal structure relationships [11]. This approach has been shown to improve classification accuracy by approximately 5%, effectively circumventing the common accuracy degradation problem during dataset expansion.

The TER strategy leverages well-defined lattice archetypes to create richly varied virtual libraries. For perovskites, this utilizes the ABX₃ framework's chemically diverse substitution space to generate synthetic XRD patterns that closely resemble experimental data. When models are trained solely on virtual structure spectral data (VSS) and validated on real structure spectral data (RSS), results are often unsatisfactory. To bridge this gap, researchers create synthetic spectra data (SYN) by combining VSS and RSS, significantly reducing differences between synthetic and real data and substantially improving classification accuracy [11].

Uncertainty Quantification and Model Interpretability

For autonomous phase identification to be reliable in synthesis research, ML models must not only make accurate predictions but also quantify their uncertainty and provide interpretable results. Bayesian methods incorporated into deep learning models enable simultaneous prediction and uncertainty estimation, which is crucial for assessing confidence in autonomous phase identification [11]. Approaches such as variational inference, Laplace approximation, and Monte Carlo dropout have been successfully employed in XRD analysis models.
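As an illustration of how Monte Carlo dropout yields an uncertainty estimate, the sketch below summarizes the class probabilities from several stochastic forward passes of the same input. The forward passes themselves are assumed to come from a trained network with dropout left active at inference. The function name and the use of predictive entropy as the uncertainty score are illustrative choices, not details taken from [11].

```python
import math

def summarize_mc_predictions(samples):
    """Combine T stochastic forward passes into a prediction and an uncertainty.

    samples is a list of T probability vectors (one per pass, each summing to 1).
    Returns (predicted_class, mean_probabilities, predictive_entropy_in_nats).
    """
    t = len(samples)
    n_classes = len(samples[0])
    mean = [sum(s[c] for s in samples) / t for c in range(n_classes)]
    # Entropy of the averaged distribution: low when passes agree on one class.
    entropy = -sum(p * math.log(p) for p in mean if p > 0.0)
    predicted = max(range(n_classes), key=lambda c: mean[c])
    return predicted, mean, entropy
```

When the passes agree on one class the entropy is close to zero. When they split evenly between two classes it approaches ln 2, which could be used as a trigger for additional measurement.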

Interpretability is enhanced through techniques like SHAP (SHapley Additive exPlanations) and Class Activation Maps (CAMs), which highlight features in XRD patterns that contribute most to classification decisions [11] [6]. CAMs are particularly valuable in adaptive XRD, where they guide resampling decisions by identifying regions of the pattern that distinguish between the most probable phases [6]. This interpretability aligns model decisions with physical principles, building trust in autonomous systems and providing researchers with insights into the model's reasoning process.

Table 1: Key ML Architectures for XRD Analysis and Their Performance Characteristics

| Model Architecture | Application | Key Features | Reported Accuracy | Uncertainty Quantification |
|---|---|---|---|---|
| Bayesian-VGGNet [11] | Crystal structure & space group classification | Bayesian methods for uncertainty, VGG-style CNN | 84% (simulated), 75% (experimental) | Yes (variational inference, Laplace approximation) |
| XRD-AutoAnalyzer [6] | Phase identification in multi-phase mixtures | CNN with confidence assessment, CAM integration | High accuracy for trace phase detection | Yes (confidence scores) |
| Random Forest [10] | Multi-modal analysis (XRD & PDF) | Feature importance analysis, handles heterogeneous inputs | Varies by task and dataset | No (standard implementation) |
| Swin Transformer [12] | Space group prediction from radial images | Computer vision transformer architecture | 45.32% accuracy, 82.79% top-5 accuracy | No (standard implementation) |
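The table reports both plain (top-1) and top-5 accuracy for space group prediction. For readers unfamiliar with the distinction, the following minimal sketch shows how a top-k score is computed from per-class scores. The function name and toy inputs are illustrative and unrelated to the benchmark in [12].

```python
def top_k_accuracy(scores, labels, k=5):
    """Fraction of samples whose true label is among the k highest-scoring classes.

    scores is a list of per-class score lists, labels the true class indices.
    """
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)
```

With k=1 this reduces to ordinary accuracy. Larger k is informative when many classes are nearly indistinguishable from the pattern alone, as can happen with closely related space groups.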

Machine Learning for Pair Distribution Function (PDF) Analysis

Information Content and Extraction Approaches

Pair distribution function analysis provides rich information about local atomic arrangements in materials, complementing the long-range order information from XRD [10]. While PDF data contains detailed structural information, extracting this information through conventional methods like Rietveld refinement for small-box models and Reverse Monte Carlo (RMC) for big-box models often suffers from efficiency limitations [9]. Machine learning approaches address these challenges by directly mapping PDF patterns to structural characteristics.

Random forest models have proven particularly effective for extracting local structural information from PDF data. These models can be trained to predict key local environment descriptors including oxidation state, coordination number, and mean nearest-neighbor bond length of specific elements in complex materials [10]. The species-specificity of PDF analysis – focusing on the local environment around particular atomic species – makes it particularly valuable for understanding materials where local distortions play crucial roles in properties and functionality.

Recent innovations in PDF analysis include using backpropagation algorithms to fit neutron and X-ray PDF data of complex materials like ferroelectric perovskites [9]. This approach achieves fitting accuracy comparable to RMC while offering potential efficiency advantages by simultaneously optimizing tens of thousands of parameters and overcoming unstable convergence inherent in RMC's random perturbation. Furthermore, unsupervised ML techniques like non-negative matrix factorization (NMF) have shown effectiveness for decomposing PDFs into components that resemble partial (differential) PDFs of different chemical components in a system [10].
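To make the NMF decomposition concrete, the sketch below implements the classic multiplicative-update rule in pure Python. It assumes a data matrix whose rows are measured PDFs (shifted to be non-negative) and whose columns are points on the r grid. It is a generic textbook algorithm with hypothetical function names, not the specific procedure used in [10].

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def frobenius_error(v, w, h):
    """Frobenius norm of V - W H."""
    wh = matmul(w, h)
    return sum((x - y) ** 2 for rv, rw in zip(v, wh) for x, y in zip(rv, rw)) ** 0.5

def nmf(v, rank, iterations=500, seed=0, eps=1e-12):
    """Factor a non-negative matrix V (m x n) as W (m x rank) times H (rank x n).

    Rows of H play the role of component patterns and rows of W their weights
    in each sample. Uses multiplicative updates, which keep all entries >= 0.
    """
    rng = random.Random(seed)
    m, n = len(v), len(v[0])
    w = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(m)]
    h = [[rng.random() + 0.1 for _ in range(n)] for _ in range(rank)]
    for _ in range(iterations):
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(n)] for i in range(rank)]
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(rank)] for i in range(m)]
    return w, h
```

The factorization is not unique, so the recovered components only resemble physical partial PDFs up to scaling and mixing. That is one reason interpretation still benefits from domain knowledge.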

Comparative Information Content: PDF vs. XANES

Understanding the relative strengths of different characterization techniques is crucial for experimental design in synthesis research. Interpretable machine learning enables direct comparison of the information content in PDF versus other techniques like X-ray absorption near-edge spectroscopy (XANES). Research shows that XANES-only models often outperform PDF-only models, even for structural tasks, due to the rich structural information contained in XANES spectra and the utility of species-specificity [10].

However, when using the metal's differential-PDFs (dPDFs) instead of total-PDFs, this performance gap narrows significantly, highlighting the importance of data preprocessing and representation [10]. For tasks involving the prediction of oxidation states and local coordination environments, the combination of both techniques does not always lead to dramatic improvements, as the information content often overlaps, with XANES features frequently dominating the predictions when both modalities are used.

Table 2: ML Performance on PDF Analysis Tasks for Transition Metal Oxides

| Prediction Task | Input Modality | Key Findings | Relative Performance |
|---|---|---|---|
| Oxidation State [10] | XANES-only | Rich electronic structure information enables accurate oxidation state determination | High |
| Oxidation State [10] | PDF-only | Limited direct electronic structure information reduces effectiveness | Moderate |
| Coordination Number [10] | XANES-only | Pre-edge and edge features encode local coordination information | High |
| Coordination Number [10] | PDF-only | Local atomic distances provide coordination information | Moderate to High |
| Bond Length [10] | XANES-only | Extended fine structure (EXAFS region) contains distance information | Moderate |
| Bond Length [10] | PDF-only | Direct distance correlations enable accurate bond length prediction | High |
| Multi-task [10] | XANES + PDF (combined) | Information from XANES often dominates predictions | Context-dependent |

Multimodal Integration of XRD and PDF

Principles of Multimodal Machine Learning

Multimodal machine learning integrates heterogeneous data sources to extract more comprehensive materials characterization than possible with single techniques alone. For XRD and PDF analysis, this involves combining the long-range order information from XRD with the local structure insights from PDF [10]. The random forest algorithm has been particularly successful for this integration, as it can flexibly handle diverse input types with minimal numerical issues, providing an off-the-shelf solution for multimodal analysis [10].

The fundamental challenge in multimodal integration lies in the heterogeneous nature of information in different experiments and possible incompatibility of systematic errors [10]. Traditional methods struggle to integrate these heterogeneous datasets because they lack a priori knowledge about how to weight contributions from each measurement in the cost function. Machine learning circumvents this limitation by learning the optimal weighting directly from the data during training, enabling more effective fusion of complementary information.

Workflow for Multimodal XRD-PDF Analysis

The multimodal analysis workflow begins with data acquisition from both techniques, followed by feature extraction and alignment. For XRD data, this typically involves using the full diffraction pattern or key features derived from it, while PDF analysis uses the full PDF profile or specific peak characteristics. The machine learning model then learns to map relationships between these input features and target structural properties, leveraging complementary information to improve prediction accuracy and robustness.

Interpretability remains crucial in multimodal analysis, with feature importance analysis revealing how information is balanced between XRD and PDF inputs [10]. This analysis shows which technique contributes most significantly to specific predictions, guiding researchers in experimental design and helping determine when combining complementary techniques adds meaningful information to a scientific investigation. For many prediction tasks, one modality often dominates – XANES features frequently outweigh PDF data in combined models for local structure prediction, though this balance varies depending on the specific prediction task [10].

Key Databases and Computational Tools

Successful implementation of ML for XRD and PDF analysis requires access to comprehensive databases and specialized computational tools. Several key resources have emerged as standards in the field, enabling robust model training and validation.

Table 3: Essential Research Resources for ML-Driven XRD and PDF Analysis

| Resource Name | Type | Key Features/Applications | Access/Reference |
|---|---|---|---|
| SIMPOD [12] | Dataset | 467,861 crystal structures with simulated PXRD patterns; includes 1D diffractograms and 2D radial images | Publicly available benchmark |
| Inorganic Crystal Structure Database (ICSD) [11] | Database | Experimental crystal structures for training and validation | Subscription required |
| Materials Project [10] | Database | Theoretical spectra and structures; includes XANES calculated with FEFF | Publicly available |
| Crystallography Open Database (COD) [12] | Database | Open-access collection of crystal structures | Publicly available |
| Diffpy-CMI [10] | Software | PDF calculation from atomic coordinates | Open source |
| XRD-AutoAnalyzer [6] | ML Model | CNN for phase identification with confidence assessment | Research implementation |
| Bayesian-VGGNet [11] | ML Model | Bayesian CNN for XRD with uncertainty quantification | Research implementation |

Protocol for Adaptive XRD for Phase Identification

The following detailed protocol enables implementation of adaptive XRD for autonomous phase identification, based on validated experimental approaches [6]:

Initial Rapid Scan: Begin with a rapid XRD scan over a narrow angular range of 2θ = [10°, 60°], optimized to conserve scan time while including sufficient peaks for preliminary phase prediction.

ML Analysis and Confidence Assessment: Process the initial pattern using a trained CNN model (e.g., XRD-AutoAnalyzer) to predict potential phases and assess confidence levels for each identification. The confidence threshold for reliable identification is typically set at 50%.

CAM Calculation for Feature Importance: If confidence is below threshold, calculate Class Activation Maps (CAMs) to identify regions of the XRD pattern that most significantly contribute to the classification decision for the two most probable phases.

Targeted Resampling: Resample regions where the difference between CAMs of the top candidate phases exceeds a predetermined threshold (typically 25%). Use increased resolution (slower scan rate) in these regions to clarify distinguishing peaks.

Angular Range Expansion: If confidence remains low after resampling, expand the angular range systematically in +10° increments up to a maximum of 140° to detect additional distinguishing peaks.

Iterative Refinement and Ensemble Prediction: Continue iterative resampling and expansion until confidence thresholds are met or maximum angles are reached. For patterns with multiple expansions, use ensemble predictions weighted by confidence scores (Eq. 1):

\[ P_{\text{ens}} = \frac{\sum_{10}^{2\theta_i} c_i P_i}{n + 1} \]

where \(P_i\) represents each prediction over [10, 2θ_i], \(c_i\) is the confidence of that prediction, and \(n + 1\) gives the total number of 2θ-ranges included.

Validation: Cross-validate identified phases against known databases and structural models to ensure physical plausibility.

This protocol has demonstrated particular effectiveness for detecting trace amounts of materials in multi-phase mixtures and identifying short-lived intermediate phases during in situ synthesis studies, enabling the capture of transient states that would be missed by conventional approaches [6].
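Two steps of the protocol above are easy to express in code: selecting the regions to resample from the CAM difference, and forming the confidence-weighted ensemble of Eq. 1. The sketch below assumes that both CAMs are already normalized to [0, 1] on a shared 2θ grid and that each prediction \(P_i\) is a vector of class probabilities. Both are assumptions made for illustration, and the function names are hypothetical.

```python
def regions_to_resample(two_theta, cam_a, cam_b, threshold=0.25):
    """Return (start, end) 2-theta intervals where the two CAMs differ by more than threshold."""
    regions, start, end = [], None, None
    for x, a, b in zip(two_theta, cam_a, cam_b):
        if abs(a - b) > threshold:
            if start is None:
                start = x          # open a new region
            end = x                # extend the current region
        elif start is not None:
            regions.append((start, end))
            start = None
    if start is not None:          # close a region that runs to the end of the grid
        regions.append((start, end))
    return regions

def ensemble_prediction(predictions, confidences):
    """Confidence-weighted ensemble following Eq. 1.

    predictions holds one probability vector per 2-theta range, so its length
    is the number of ranges (n + 1 in Eq. 1). confidences holds the matching c_i.
    """
    n_ranges = len(predictions)
    n_classes = len(predictions[0])
    return [
        sum(c * p[k] for c, p in zip(confidences, predictions)) / n_ranges
        for k in range(n_classes)
    ]
```

Because the weights \(c_i\) are not normalized, the ensemble values need not sum to one. They are best read as scores for ranking candidate phases rather than as calibrated probabilities.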

Protocol for Multimodal XRD-PDF Analysis

For researchers seeking to integrate information from both XRD and PDF techniques, the following protocol provides a framework for multimodal analysis [10]:

Data Acquisition: Collect complementary XRD and PDF data from the same sample, ensuring consistent experimental conditions and sample environment.

Data Preprocessing:

- For XRD: Normalize patterns, correct for background, and optionally extract prominent features or use full pattern.

- For PDF: Compute PDFs from raw scattering data using established Fourier transform procedures, focusing on the differential-PDF (dPDF) for specific elements of interest when possible.

Feature Alignment: Align XRD and PDF data representations to ensure consistent length scales and resolution, creating a unified feature set for ML analysis.

Model Training: Train random forest or other suitable ML models on the combined feature set to predict target properties (oxidation state, coordination number, bond lengths). Use k-fold cross-validation to assess model performance.

Feature Importance Analysis: Calculate and interpret feature importance scores to understand the relative contribution of XRD versus PDF features for different prediction tasks.

Model Validation: Validate predictions against known structures or complementary characterization data to ensure reliability.
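The Fourier transform mentioned in the preprocessing step is the standard sine transform of the reduced structure function, \(G(r) = \frac{2}{\pi}\int_{Q_{\min}}^{Q_{\max}} Q\,[S(Q) - 1]\,\sin(Qr)\,dQ\). The sketch below evaluates it with the trapezoid rule. It assumes that \(F(Q) = Q[S(Q) - 1]\) has already been obtained from corrected and normalized scattering data, which is the harder part in practice and is normally handled by dedicated software. The function name is hypothetical.

```python
import math

def reduced_pdf(q, f, r_grid):
    """Compute G(r) = (2/pi) * integral of F(Q) sin(Q r) dQ by the trapezoid rule.

    q      : increasing Q values (inverse angstroms)
    f      : F(Q) = Q * (S(Q) - 1) sampled on q
    r_grid : distances (angstroms) at which to evaluate G
    """
    g = []
    for r in r_grid:
        total = 0.0
        for i in range(len(q) - 1):
            y0 = f[i] * math.sin(q[i] * r)
            y1 = f[i + 1] * math.sin(q[i + 1] * r)
            total += 0.5 * (y0 + y1) * (q[i + 1] - q[i])
        g.append(2.0 / math.pi * total)
    return g
```

As a sanity check, a synthetic signal \(F(Q) = \sin(Q r_0)\) produces a peak in \(G(r)\) at \(r_0\), with termination ripples whose width is set by the finite \(Q_{\max}\).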

This multimodal approach is particularly valuable for complex materials where neither technique alone provides sufficient insight, such as systems with both long-range and local disorder, or materials containing multiple elements with distinct local environments.
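The feature-combination and cross-validation steps of the protocol can be sketched without committing to a particular ML library. The helper names below are hypothetical, and peak-height scaling is just one simple way to put the two modalities on a comparable footing. The model itself (for example a random forest) would be trained on each `train` split and scored on the matching `test` split.

```python
import random

def combine_features(xrd, pdf):
    """Scale each modality by its largest absolute value, then concatenate."""
    def scale(values):
        peak = max(abs(x) for x in values) or 1.0   # avoid dividing by zero
        return [x / peak for x in values]
    return scale(xrd) + scale(pdf)

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]       # k roughly equal folds
    for i in range(k):
        test = folds[i]
        train = [j for fold in folds[:i] + folds[i + 1:] for j in fold]
        yield train, test
```

Keeping the XRD and PDF blocks contiguous in the combined vector makes it straightforward to sum feature importances per modality afterwards, which is what the feature importance step relies on.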

The field of machine learning for XRD and PDF analysis is rapidly evolving, with several emerging trends shaping its future development. Uncertainty-aware autonomous experimentation represents a particularly promising direction, where models not only identify phases but also quantify their confidence and strategically plan experiments to maximize information gain [6]. The integration of ML directly with diffractometers to create closed-loop, adaptive characterization systems marks a significant advancement beyond simply automating analysis to fundamentally rethinking the measurement process itself.

Multimodal data fusion approaches that combine XRD and PDF with complementary techniques like XANES, Raman spectroscopy, and electron microscopy will provide increasingly comprehensive materials characterization [10]. The development of interpretable, physics-informed models that incorporate domain knowledge and physical constraints will address current limitations of purely data-driven approaches, enhancing reliability and adoption in scientific research [3]. Furthermore, the creation of large-scale, standardized benchmarks like SIMPOD will accelerate progress by enabling fair comparison of methods and promoting reproducibility [12].

For synthesis research, these advancements translate to dramatically accelerated materials discovery and characterization cycles. Autonomous phase identification enables real-time monitoring of solid-state reactions, detection of transient intermediate phases, and intelligent guidance of synthesis pathways toward target materials [6]. As these technologies mature, they will increasingly transform materials characterization from a manual, expert-driven process to an automated, data-rich pipeline that seamlessly integrates with robotic synthesis platforms, closing the loop on autonomous materials discovery and development.

Closed-loop autonomous experimentation represents a paradigm shift in scientific research, enabling the rapid discovery and development of new materials and pharmaceutical compounds. These self-driving laboratories integrate robotic hardware for material handling and measurement with artificial intelligence that plans experiments, analyzes data, and iteratively refines hypotheses without human intervention [13]. This transformative approach is particularly impactful in fields requiring exploration of vast parameter spaces, such as materials synthesis and drug development, where traditional experimentation methods are often time-intensive and limited in scope.

The core value of autonomous experimentation lies in its ability to address high-dimensional optimization problems that would be intractable through manual investigation. By combining high-throughput screening (HTS) technologies with AI-driven decision-making, these systems can systematically navigate complex experimental landscapes, revealing non-intuitive relationships between synthesis parameters, structural properties, and functional performance [13]. Within the specific context of autonomous phase identification from X-ray diffraction (XRD) patterns, this methodology accelerates the establishment of critical composition-structure-property relationships that form the foundation of materials science and pharmaceutical development [4].

Core Technological Drivers

Automated Hardware and Robotics

The physical infrastructure for autonomous experimentation encompasses integrated robotic systems that handle sample preparation, processing, and characterization with minimal human intervention. These systems address key challenges in reproducibility and efficiency by executing standardized protocols with precision exceeding manual operations [14]. For powder X-ray diffraction analysis, specialized robotic arms with multifunctional end effectors can prepare samples by gently flattening powder surfaces using soft gel attachments, significantly reducing background noise in the critical low-angle region essential for analyzing materials like organic compounds and lead halide perovskites [14].

Advanced systems incorporate purpose-built components such as:

- Sample holders with frosted glass surfaces that prevent powder spillage while minimizing background intensity [14]

- Automated sample hotels with capacity for dozens of samples in temperature and humidity-controlled environments [14]

- Integrated actuators for instrument operation (e.g., opening/closing XRD instrument doors) [14]

- Flow chemistry systems for automated electrolyte formulation and disposal in electrochemical research [15]

This hardware automation enables continuous operation for extended durations (e.g., ~50 hours) while experimentally examining thousands of parameter combinations without manual intervention [15].

Machine Learning and AI Algorithms

Artificial intelligence serves as the decision-making engine of autonomous experimentation systems, with algorithms that range from supervised learning for classification to Bayesian optimization for experimental design. Several specialized ML approaches have been developed specifically for materials science applications:

Deep Learning for Mechanism Classification: Residual neural network (ResNet) architectures can automatically distill subtle features in voltammograms and probabilistically classify electrochemical mechanisms, yielding numerical propensity distributions compatible with automated experimentation [15]. Similar approaches have been adapted for XRD pattern analysis, enabling real-time phase identification during autonomous operation.

Automated Phase Mapping: Non-negative matrix factorization (NMF) and convolutional NMF approaches can identify constituent phases and reveal lattice parameter changes in combinatorial libraries [4]. Recent advances integrate thermodynamic data from first-principles calculations and crystallographic knowledge to ensure physically reasonable solutions [4].

Bayesian Optimization: Adaptive design of experiments using packages like Dragonfly allows efficient exploration of high-dimensional parameter spaces by suggesting new experimental conditions toward user-defined objectives [15]. These algorithms balance exploration of uncertain regions with exploitation of promising areas to maximize information gain.
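The exploration-exploitation balance can be illustrated with an upper-confidence-bound (UCB) acquisition rule, one common choice in Bayesian optimization. This sketch is not the Dragonfly API; it assumes a surrogate model has already produced a predicted mean and standard deviation for each candidate condition, and the function names and the `kappa` parameter are hypothetical.

```python
from typing import List, Sequence


def ucb_scores(means: Sequence[float], stds: Sequence[float], kappa: float = 2.0) -> List[float]:
    """Score each candidate as predicted mean plus kappa times predicted uncertainty."""
    return [m + kappa * s for m, s in zip(means, stds)]


def suggest_next(means: Sequence[float], stds: Sequence[float], kappa: float = 2.0) -> int:
    """Return the index of the candidate with the highest UCB score."""
    scores = ucb_scores(means, stds, kappa)
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical surrogate predictions for three candidate conditions.
predicted_means = [0.2, 0.8, 0.5]
predicted_stds = [0.05, 0.05, 0.40]

print(suggest_next(predicted_means, predicted_stds, kappa=0.0))  # pure exploitation
print(suggest_next(predicted_means, predicted_stds, kappa=2.0))  # favours uncertain regions
```

With `kappa = 0` the rule simply picks the best predicted mean; larger values shift the choice toward candidates whose outcome is still uncertain.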

Table 1: Machine Learning Approaches in Autonomous Experimentation

| Algorithm Type | Representative Methods | Applications in Autonomous Experimentation |
|---|---|---|
| Deep Learning | Residual Neural Networks (ResNet) [15], Convolutional Neural Networks (CNN) [3] [16] | Classification of electrochemical mechanisms [15], Phase identification from XRD patterns [3] |
| Unsupervised Learning | Non-negative Matrix Factorization (NMF) [4], Convolutional NMF [4] | Phase mapping in combinatorial libraries [4], Extraction of patterns from high-dimensional data [3] |
| Optimization Methods | Bayesian Optimization [15] | Adaptive experimental design [15], Parameter space exploration [13] |
| Computer Vision | AlexNet, ResNet, DenseNet, Swin Transformer [16] | Space group prediction from XRD radial images [16] |

Data Infrastructure and FAIRification

The effectiveness of autonomous experimentation systems depends critically on robust data management practices that ensure Findability, Accessibility, Interoperability, and Reusability (FAIR) of generated data. Automated FAIRification protocols convert experimental data into machine-readable formats with associated metadata, enabling efficient data reuse and collaboration across research communities [17].

Specialized tools have been developed to support this data lifecycle:

- eNanoMapper Template Wizard streamlines data entry through user-friendly online forms [17]

- Template Designer automates creation of custom data entry templates [17]

- NeXus format integrates all data and metadata into a single file and multidimensional matrix for interactive visualization [17]

- ToxFAIRy Python module enables automated data preprocessing and score calculation within Orange Data Mining workflows [17]

For XRD data analysis, benchmark datasets like SIMPOD (Simulated Powder X-ray Diffraction Open Database) provide 467,861 crystal structures with corresponding simulated powder X-ray diffractograms in both vector and radial-image formats, facilitating the development and validation of ML models for crystal structure determination [16].

Implementation in XRD Analysis

Workflow Integration

The integration of closed-loop autonomous systems for XRD analysis follows a structured workflow that connects synthesis, characterization, and decision-making into an iterative cycle. In a representative implementation for autonomous phase identification and mapping, each pass through this cycle refines the system's understanding of composition-structure relationships. At the AI decision point, the system determines whether sufficient data has been collected to update the predictive model or whether additional experiments are needed to reduce uncertainty in specific regions of the phase diagram.
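The decision step in this workflow can be sketched as a loop that keeps measuring the most uncertain composition until every region falls below an uncertainty threshold. This is a toy illustration under stated assumptions: the composition labels, the `measure` callback (which returns the model uncertainty after a new measurement), and the threshold are all hypothetical.

```python
from typing import Callable, Dict, List


def run_closed_loop(
    uncertainty: Dict[str, float],
    measure: Callable[[str], float],
    threshold: float,
    max_iterations: int = 50,
) -> List[str]:
    """Measure the most uncertain composition until all are at or below threshold."""
    state = dict(uncertainty)  # copy so the caller's dictionary is not modified
    measured: List[str] = []
    for _ in range(max_iterations):
        target = max(state, key=state.get)
        if state[target] <= threshold:
            break  # decision point: enough data, stop requesting experiments
        state[target] = measure(target)  # new XRD measurement updates the model
        measured.append(target)
    return measured


# Hypothetical starting uncertainties for three regions of a phase diagram.
initial = {"A-rich": 0.9, "B-rich": 0.2, "mixed": 0.6}
print(run_closed_loop(initial, measure=lambda composition: 0.1, threshold=0.3))
```

The `max_iterations` guard ensures the loop terminates even if a measurement fails to reduce the uncertainty.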

Domain Knowledge Integration

A critical advancement in autonomous XRD analysis is the integration of domain-specific knowledge directly into the optimization algorithms, ensuring that solutions are not just mathematically sound but also physically plausible. This integration occurs at multiple levels:

Crystallographic Knowledge: Automated phase mapping algorithms incorporate constraints from crystallography, such as space group symmetry and structure factor calculations, directly into their loss functions [4]. For example, the AutoMapper solver uses a weighted loss function with components for XRD pattern fitting (L_XRD), composition consistency (L_comp), and entropy-based regularization (L_entropy) to prevent overfitting [4].
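A weighted three-term loss of this kind can be sketched as follows. The functional forms are assumptions made for illustration (mean squared residual for the pattern fit, squared error for composition, Shannon entropy of the phase fractions as the regularizer), as are the weights and all names; the actual AutoMapper definitions are given in [4] and are not reproduced here.

```python
import math
from typing import Sequence


def xrd_loss(observed: Sequence[float], simulated: Sequence[float]) -> float:
    """Assumed form: mean squared residual between observed and simulated patterns."""
    return sum((o - s) ** 2 for o, s in zip(observed, simulated)) / len(observed)


def composition_loss(measured: Sequence[float], reconstructed: Sequence[float]) -> float:
    """Assumed form: squared error between measured and reconstructed composition."""
    return sum((m - r) ** 2 for m, r in zip(measured, reconstructed))


def entropy_loss(phase_fractions: Sequence[float]) -> float:
    """Assumed form: Shannon entropy, lower when fewer phases carry the weight."""
    return -sum(p * math.log(p) for p in phase_fractions if p > 0.0)


def total_loss(observed, simulated, measured_comp, reconstructed_comp, phase_fractions,
               w_xrd: float = 1.0, w_comp: float = 1.0, w_entropy: float = 0.1) -> float:
    """Weighted sum of the three components; the weights are hypothetical."""
    return (w_xrd * xrd_loss(observed, simulated)
            + w_comp * composition_loss(measured_comp, reconstructed_comp)
            + w_entropy * entropy_loss(phase_fractions))


# Hypothetical two-point pattern, binary composition, and two-phase solution.
print(total_loss([1.0, 0.5], [0.9, 0.6], [0.5, 0.5], [0.45, 0.55], [0.7, 0.3]))
```

Under these assumptions, a solution that fits the pattern equally well with fewer active phases receives a lower total loss, which is the intended regularizing effect.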

Thermodynamic Constraints: First-principles calculated thermodynamic data helps filter plausible candidate phases by eliminating highly unstable structures (e.g., those with energy above hull >100 meV/atom) [4]. This prevents the identification of physically unrealistic phases that might otherwise provide good pattern fits.
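The stability filter amounts to a simple threshold on the energy above the convex hull. In this sketch the candidate names and energies are hypothetical placeholders; only the 100 meV/atom cutoff comes from the text.

```python
from typing import Dict, List


def filter_by_hull_energy(candidates: Dict[str, float],
                          max_e_above_hull: float = 100.0) -> List[str]:
    """Keep candidates whose energy above hull (meV/atom) does not exceed the cutoff."""
    return [name for name, energy in candidates.items() if energy <= max_e_above_hull]


# Hypothetical candidate phases with energies above hull in meV/atom.
candidate_phases = {"phase_A": 0.0, "phase_B": 35.0, "phase_C": 180.0}
print(filter_by_hull_energy(candidate_phases))
```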

Experimental Considerations: Successful algorithms account for experimental factors such as X-ray beam polarization (fully plane-polarized for synchrotron sources vs. unpolarized for laboratory sources) and texture effects through appropriate modeling of diffraction intensity distributions [4].
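The effect of beam polarization on simulated intensities can be sketched with the standard polarization factor. The example assumes no monochromator correction, and for the synchrotron case it assumes the scattering plane is perpendicular to the polarization direction, where the factor is unity; the function name and source labels are hypothetical.

```python
import math


def polarization_factor(two_theta_deg: float, source: str) -> float:
    """Polarization factor applied to a simulated intensity at scattering angle 2-theta."""
    cos_sq = math.cos(math.radians(two_theta_deg)) ** 2
    if source == "laboratory":
        # Unpolarized beam: average over both polarization components.
        return (1.0 + cos_sq) / 2.0
    if source == "synchrotron":
        # Fully plane-polarized beam, scattering plane perpendicular to the polarization.
        return 1.0
    raise ValueError(f"unknown source type: {source}")


for angle in (10.0, 45.0, 90.0):
    print(angle, polarization_factor(angle, "laboratory"), polarization_factor(angle, "synchrotron"))
```

Applying the wrong factor mainly distorts relative intensities at higher angles, which is why the instrument configuration needs to be known before patterns are simulated.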

Table 2: Domain Knowledge Integration in Autonomous XRD Analysis

| Knowledge Domain | Integrated Information | Implementation in Autonomous Systems |
|---|---|---|
| Crystallography | Space group symmetry, Structure factors, Systematic absences | Constraints in loss functions during phase mapping [4], Candidate phase identification from structural databases [16] |
| Thermodynamics | Formation energies, Energy above convex hull, Phase stability | Filtering of implausible candidate phases [4], Prediction of stable phases in unexplored compositions [4] |
| XRD Physics | Polarization effects, Scattering factors, Peak broadening | Accurate simulation of diffraction patterns for different instrument configurations [4] [18], Modeling of peak shapes for crystallite size and microstrain [18] |
| Materials Chemistry | Bonding characteristics, Solid solution behavior, Oxidation states | Restriction of valid candidate structures based on chemical reasoning [4], Prediction of lattice parameter changes across composition spreads [4] |

Experimental Protocols and Methodologies

Protocol for Autonomous Electrochemical Mechanism Investigation

The investigation of molecular electrochemistry mechanisms serves as an exemplary protocol for closed-loop experimentation [15]:

Sample Preparation:

- Automated flow chemistry systems prepare electrolytes with precise concentrations of molecular electrocatalysts (e.g., 1 mM cobalt tetraphenylporphyrin) and substrates (e.g., 0-20 mM organohalide electrophiles) in dimethylformamide with 0.1 M supporting electrolyte [15]

- System operates within a glovebox to ensure compatibility with oxygen- and moisture-sensitive chemistry [15]

Experimental Measurement:

- Cyclic voltammetry measurements performed with automatic iR compensation using a commercial potentiostat controlled by a modified Hard Potato Python library [15]

- Each experimental condition combines six logarithmically-spaced scan rates (νmax/νmin = 10) with varied reactant concentrations [15]

- Measurement rate of approximately 1.2 minutes per CV enables examination of 2520 parameter combinations in ~50 hours [15]
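The measurement plan above can be checked with a short calculation. The sketch generates six logarithmically spaced scan rates spanning a factor of ten and confirms the campaign duration from the stated measurement rate; the lowest scan rate of 0.1 V/s and the function names are assumptions for illustration.

```python
from typing import List


def log_spaced(v_min: float, ratio: float, count: int) -> List[float]:
    """Return `count` logarithmically spaced values from v_min to v_min * ratio."""
    return [v_min * ratio ** (i / (count - 1)) for i in range(count)]


def campaign_hours(n_measurements: int, minutes_each: float) -> float:
    """Total measurement time in hours for a fixed time per measurement."""
    return n_measurements * minutes_each / 60.0


scan_rates = log_spaced(v_min=0.1, ratio=10.0, count=6)  # 0.1 V/s is a hypothetical minimum
print([round(rate, 3) for rate in scan_rates])
print(campaign_hours(2520, 1.2))  # 2520 CVs at about 1.2 minutes each
```

The product 2520 × 1.2 min is 3024 min, or about 50.4 hours, consistent with the roughly 50 hours quoted.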

Data Analysis:

- Deep learning model based on ResNet architecture analyzes voltammograms immediately after measurement [15]

- Model yields propensity distributions for five prototypical electrochemical mechanisms (E, EC, CE, ECE, DISP1) [15]

- Numerical propensity values (0-1) quantify mechanism likelihood instead of descriptive classifications [15]

Decision-Making:

- Bayesian optimization algorithm (Dragonfly package) suggests new experimental conditions based on current understanding [15]

- Adaptive workflow either identifies parameter combinations suitable for kinetic analysis or rules out mechanisms for negative controls [15]

- For confirmed EC mechanisms, system extracts second-order kinetic rate constants spanning 7 orders of magnitude [15]

Protocol for High-Throughput Toxicity Screening

Quantitative high-throughput screening (qHTS) represents another well-established autonomous protocol with applications in drug discovery and nanomaterials safety assessment [19] [17]:

Assay Configuration:

- Implementation in 1536-well plates with low-volume cellular systems (<10 μl per well) using high-sensitivity detectors [19]

- Panel of five toxicity endpoints: CellTiter-Glo (cell viability), DAPI (cell number), gammaH2AX (DNA damage), 8OHG (nucleic acid oxidative stress), and Caspase-Glo 3/7 (apoptosis) [17]

- Multiple exposure times (kinetic dimension) and concentration ranges (e.g., twelve-concentration dilution series) [17]

Data Processing:

- Automated FAIRification converts raw data into machine-readable formats with comprehensive metadata annotation [17]

- Calculation of multiple metrics: first statistically significant effect, area under curve (AUC), and maximum effect from dose-response data [17]

- ToxPi software normalizes metrics across endpoints and timepoints for comparability [17]
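Two of the dose-response metrics named above, area under the curve and maximum effect, can be sketched in a few lines, together with a plain min-max scaling step. The scaling is a simple stand-in for putting metrics on a common 0-1 scale and is not the ToxPi implementation; the concentration and effect values are hypothetical.

```python
from typing import List, Sequence


def area_under_curve(x: Sequence[float], y: Sequence[float]) -> float:
    """Trapezoidal area under a dose-response curve."""
    return sum((x[i + 1] - x[i]) * (y[i] + y[i + 1]) / 2.0 for i in range(len(x) - 1))


def max_effect(y: Sequence[float]) -> float:
    """Largest observed effect across the dilution series."""
    return max(y)


def min_max_normalize(values: Sequence[float]) -> List[float]:
    """Scale values to the 0-1 range; a constant input maps to all zeros."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


# Hypothetical log10 concentrations and fractional effects for one endpoint.
log_conc = [-2.0, -1.0, 0.0, 1.0, 2.0]
effect = [0.0, 0.05, 0.30, 0.80, 0.95]
print(area_under_curve(log_conc, effect), max_effect(effect))
print(min_max_normalize([1.2, 3.4, 2.1]))  # hypothetical AUCs for three materials
```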

Toxicity Scoring:

- Endpoint- and timepoint-specific toxicity scores compiled into integrated Tox5-score [17]

- Enables hazard-based ranking and grouping against well-known reference toxicants [17]

- Transparency in scoring allows visualization of each endpoint's contribution to overall toxicity assessment [17]

Essential Research Tools and Reagents

The implementation of closed-loop autonomous experimentation requires specialized materials and computational resources. The following toolkit outlines essential components for establishing such systems:

Table 3: Research Reagent Solutions for Autonomous Experimentation

| Tool/Reagent | Function | Application Examples |
|---|---|---|
| Flow Chemistry Systems | Automated electrolyte formulation and disposal with precise concentration control | Preparation of electrochemical research samples [15] |
| Multifunctional Robotic End Effectors | Sample preparation, loading/unloading, and instrument operation without attachment changes | Powder sample handling for automated XRD [14] |
| Specialized Sample Holders | Secure powder retention with minimal background contribution for high-quality XRD measurements | Frosted glass holders with embedded magnets for automated XRD [14] |
| Deep Learning Classification Models | Automated analysis of complex data patterns (voltammograms, XRD patterns) for mechanism identification | ResNet for electrochemical mechanism classification [15], CNN for XRD phase identification [3] |
| Bayesian Optimization Software | Adaptive experimental design through efficient parameter space exploration | Dragonfly package for suggesting new experimental conditions [15] |
| FAIR Data Management Tools | Automated data formatting, metadata annotation, and conversion to machine-readable formats | eNanoMapper Template Wizard, ToxFAIRy Python module [17] |
| Reference Materials Databases | Source of candidate structures for phase identification and validation | Crystallography Open Database (COD), Inorganic Crystal Structure Database (ICSD) [4] [16] |

Challenges and Future Directions

Despite significant advances, autonomous experimentation systems face several challenges that represent opportunities for future development. The integration of domain knowledge remains partially dependent on human expertise, particularly for evaluating solution "reasonableness" according to materials chemistry principles [4]. As one researcher notes, experienced specialists arrive at solutions not only based on fitting quality but also by leveraging comprehensive understanding of the investigated materials system [4].

Workforce development represents another critical challenge, as effective exploitation of autonomous research requires scientists comfortable working with artificial intelligence and robotics [13]. Current systems also struggle with transferring knowledge between different materials systems or experimental domains, limiting their generalizability.

Future advancements will likely focus on:

- Developing more sophisticated physics-informed machine learning models that intrinsically incorporate scientific knowledge [3] [18]

- Creating standardized interfaces and data formats to enable interoperability between autonomous systems from different vendors [13]

- Implementing more advanced decision-making algorithms that can reason about scientific novelty rather than just optimization [13]

- Establishing community-wide benchmark datasets and challenges to drive algorithmic improvements [16]

As these systems mature, network effects may emerge where interconnected autonomous laboratories collectively accelerate materials development, potentially reducing discovery and deployment timelines from decades to years or even months [13]. This paradigm shift promises to fundamentally transform how scientific research is conducted across academia, government laboratories, and industry.

In pharmaceutical development, a polymorph is a distinct crystalline form of a solid compound that possesses the same chemical composition but a different spatial arrangement of molecules or conformers in the crystal lattice [20]. Polymorphs belong to a broader family of solid-state forms that also includes hydrates, solvates, and amorphous forms, each exhibiting unique solid-state characteristics. The identification and control of these solid-state forms is not merely an academic exercise but a regulatory requirement with direct implications for drug safety, efficacy, and quality. Different polymorphs can demonstrate significant variations in key physicochemical properties including solubility, dissolution rate, chemical and physical stability, melting point, and hygroscopicity [21]. These differences can profoundly impact the bioavailability of a drug product, as the rate and extent of drug absorption can be altered by the solubility and dissolution characteristics of the specific polymorphic form. Consequently, polymorph screening has become a crucial step in pharmaceutical development to ensure the selection of the most thermodynamically stable and bioavailable form of an Active Pharmaceutical Ingredient (API) [21].

The case of the HIV drug Ritonavir stands as a cautionary tale within the industry, where the unexpected appearance of a previously unknown, less soluble polymorph years after product launch necessitated a costly reformulation and highlighted the potential risks associated with inadequate polymorph control [21]. Such incidents underscore why regulatory authorities worldwide require comprehensive understanding and control of the solid-state form of APIs and drug products throughout their lifecycle. X-ray Powder Diffraction (XRPD) has emerged as the primary analytical technique for this purpose due to its ability to provide a unique "fingerprint" for each crystalline phase based on its atomic arrangement, enabling both identification and quantification of polymorphic forms [20] [22]. This technical guide examines the critical applications of polymorph identification within the framework of USP general chapter 〈941〉, focusing on both established methodologies and emerging autonomous technologies that are reshaping pharmaceutical development.

USP 〈941〉 Standards: Principles and Requirements

United States Pharmacopeia (USP) general chapter 〈941〉 Characterization of Crystalline and Partially Crystalline Solids by X-Ray Powder Diffraction (XRPD) provides the standardized framework for applying X-ray diffraction in pharmaceutical analysis [23]. This harmonized standard, developed through the Pharmacopeial Discussion Group (PDG) involving USP, European Pharmacopoeia, and Japanese Pharmacopoeia, establishes universal testing methodologies and acceptance criteria to ensure consistency and reliability in polymorph identification across global regulatory submissions [23]. The chapter was officially updated and adopted on May 1, 2022, with revisions that include clarifying the term "crystallite," replacing "particle orientation" with "preferred orientation," specifying "elastically scattered X-rays" in the principles section, adding silver as a utilized radiation source, and including silicon powder or α-alumina as certified reference materials for instrument performance control [23].

Fundamental Principles and Instrument Requirements

USP 〈941〉 establishes that every crystalline form of a compound produces a characteristic X-ray diffraction pattern, whether derived from a single crystal or powdered material [22]. The fundamental principle underlying XRPD is Bragg's Law, which describes the specific geometrical conditions under which constructive interference occurs when X-rays interact with atomic planes in a crystal lattice, producing distinct diffraction peaks at angles that depend on the atomic arrangement [24]. The positions (angles) and relative intensities of these diffracted maxima provide the information necessary for both qualitative identification and quantitative analysis of crystalline materials [22].
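Bragg's Law, nλ = 2d sin θ, links each diffraction angle to an interplanar spacing d. The following sketch converts between 2θ and d for first-order reflections (n = 1), assuming Cu Kα1 radiation with λ = 1.5406 Å as the default; the function names are illustrative.

```python
import math

CU_K_ALPHA1 = 1.5406  # wavelength in angstrom


def two_theta_to_d(two_theta_deg: float, wavelength: float = CU_K_ALPHA1) -> float:
    """Interplanar spacing d (angstrom) for a first-order peak at the given 2-theta."""
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength / (2.0 * math.sin(theta))


def d_to_two_theta(d_spacing: float, wavelength: float = CU_K_ALPHA1) -> float:
    """Diffraction angle 2-theta (degrees) for a given d; requires d >= wavelength / 2."""
    return 2.0 * math.degrees(math.asin(wavelength / (2.0 * d_spacing)))


print(round(two_theta_to_d(30.0), 4))                       # d-spacing at 2-theta = 30 degrees
print(round(d_to_two_theta(two_theta_to_d(30.0), 1.0), 2))  # same spacing seen at 1.0 angstrom
```

Because d is a property of the crystal while 2θ depends on the wavelength, converting to d-spacing is what allows patterns recorded with different radiation sources to be compared.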

The chapter specifies critical instrument requirements to ensure analytical validity:

- Radiation Sources: Copper anodes are most commonly employed for organic substances, though molybdenum, iron, chromium, and silver may also be utilized, with appropriate filters to achieve practically monochromatized radiation [23] [22].

- Specimen Preparation: The specimen must be ground to a fine powder to improve randomness in crystal orientation, though caution is advised as grinding pressure may induce phase transformations [22].

- Instrument Performance Control: Silicon powder or α-alumina (corundum) are recommended as certified reference materials for verifying instrument performance and calibration [23].

- Angular Range: For most organic crystals, the diffraction pattern should be recorded from "as near to 0° as possible to at least 30°" in 2θ, though inorganic salts may require extending this range well beyond 40° to capture all relevant diffraction maxima [23].

Table 1: Key Requirements of USP 〈941〉 for Qualitative Phase Analysis

| Parameter | Requirement | Purpose |
|---|---|---|
| Angular Range | From as near to 0° as possible to at least 30° (2θ) for organic crystals | Capture sufficient diffraction maxima for identification |
| Angle Reproducibility | ±0.10° for 2θ values | Ensure measurement precision and pattern matching reliability |
| Reference Materials | Silicon powder or α-alumina (corundum) | Verify instrument performance and calibration |
| Pattern Comparison | Compare to reference data (e.g., PDF database or USP Reference Standard) | Identify crystalline phases present in sample |
| Sample Preparation | Grinding to fine powder, minimizing preferred orientation | Ensure representative diffraction pattern free from orientation bias |

Qualitative and Quantitative Analysis Specifications

For qualitative phase analysis, USP 〈941〉 requires comparison of the sample's diffraction pattern to "reference data" rather than "comparison data," specifically mentioning the International Centre for Diffraction Data (ICDD) Powder Diffraction File (PDF) containing over 60,000 crystalline materials as an appropriate resource [23] [22]. When a USP Reference Standard is available, it is preferable to generate a primary reference pattern on the same equipment under identical conditions. Agreement between sample and reference patterns should be within the calibrated precision of the diffractometer for diffraction angle (typically ±0.10° for 2θ values), while relative intensity variations may occur due to preferred orientation effects [22].
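The angular agreement check can be sketched as a nearest-peak comparison with a ±0.10° tolerance in 2θ. This is an illustration of the tolerance logic only, not a compendial procedure; the peak positions and function name are hypothetical, and relative intensities are deliberately ignored.

```python
from typing import List, Optional, Sequence, Tuple


def match_peaks(sample: Sequence[float], reference: Sequence[float],
                tolerance: float = 0.10) -> List[Tuple[float, Optional[float]]]:
    """Pair each reference peak (2-theta, degrees) with the nearest sample peak, if within tolerance."""
    matches: List[Tuple[float, Optional[float]]] = []
    for ref in reference:
        nearest = min(sample, key=lambda peak: abs(peak - ref), default=None)
        if nearest is not None and abs(nearest - ref) <= tolerance:
            matches.append((ref, nearest))
        else:
            matches.append((ref, None))  # reference peak not found in the sample
    return matches


# Hypothetical peak lists in degrees 2-theta.
sample_peaks = [10.05, 15.32, 20.00]
reference_peaks = [10.00, 15.30, 22.00]
for ref, found in match_peaks(sample_peaks, reference_peaks):
    print(ref, "matched" if found is not None else "missing", found)
```

A reference peak reported as missing would prompt a closer look rather than an automatic rejection, since weak reflections and preferred orientation can suppress individual peaks.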

For quantitative analysis, the chapter notes that "amounts of crystalline phases as small as 10% may usually be determined in solid matrices, and in favorable cases amounts of crystalline phases less than 10% may be determined" [23]. Quantitative measurements require careful preparation to avoid preferred orientation effects, and may employ internal standardization where a known amount of reference material is added to enable determination of the unknown substance relative to the standard [22]. The standard should have similar density and absorption characteristics to the specimen, and its diffraction pattern should not significantly overlap with that of the material being analyzed.

Technical Challenges in Pharmaceutical Polymorph Identification

Detection Sensitivity Limitations

A significant technical challenge in pharmaceutical polymorph identification arises when dealing with low-concentration APIs in final drug formulations. This is particularly problematic in high-potency drugs where the API represents only a small fraction of the total formulation mass. Research has demonstrated that conventional laboratory XRPD has a detection limit typically in the range of 2-5 w/w% for crystalline APIs in powder blends, making it insufficient for formulations with very low API concentrations [25].

A case study investigating tiotropium bromide monohydrate (the API in Spiriva inhalation powder) in a lactose matrix highlighted this limitation. At the commercial concentration of 0.4 w/w%, laboratory XRPD using CuKα1 radiation (λ = 1.54 Å) with a standard detector could not detect the characteristic diffraction peaks of the API, as their intensity was indistinguishable from background noise, even with optimized specimen preparation [25]. The marker peaks for tiotropium bromide monohydrate at P₁ = 10.51 nm⁻¹ and P₂ = 11.92 nm⁻¹ were barely visible even at 5 w/w% concentration and completely undetectable at the actual use concentration of 0.4 w/w% [25].

Advanced Solutions for Enhanced Sensitivity

To overcome these sensitivity limitations, synchrotron XRPD has been successfully employed for polymorph identification in low-concentration formulations. Synchrotron radiation offers high brightness and a highly parallel (low-divergence) beam, enabling significantly improved sensitivity and resolution compared to conventional laboratory sources [25]. In the tiotropium bromide case study, synchrotron XRPD performed at the BL19B2 beamline of the SPring-8 facility (using a wavelength of 1.0 Å) could unambiguously identify four different polymorphic forms present at 0.4 w/w% concentration in lactose powder blends [25].

The technical approach for synchrotron analysis included:

- Specimen Preparation: Filling powder blends into Lindemann glass capillaries (1.0 mm diameter) to ensure uniform packing and orientation [25].

- Exposure Time Extension: Utilizing 2-hour X-ray exposure (compared to 5 minutes for pure substances) to enhance diffraction intensity from the low-concentration API [25].

- Selective Detection: Masking regions on the imaging plate detector where strong lactose peaks appeared using lead tapes to prevent overexposure and destruction of the detector while accumulating diffraction from the API [25].

This approach enabled unambiguous identification of tiotropium bromide monohydrate and three anhydrate forms (I, II, and III) at the commercial concentration of 0.4 w/w%, demonstrating at least an order of magnitude improvement in detection limit compared to conventional laboratory XRPD [25].

Table 2: Comparison of XRPD Techniques for Polymorph Identification

| Parameter | Laboratory XRPD | Synchrotron XRPD |
|---|---|---|
| Typical Detection Limit | 2-5 w/w% [25] | ≤0.4 w/w% [25] |
| Radiation Source | Sealed X-ray tube (Cu, Mo, etc.) [22] | Synchrotron storage ring [25] |
| Beam Characteristics | Divergent, relatively low intensity | Highly parallel and intense [25] |
| Typical Measurement Time | 5-60 minutes | Up to several hours [25] |
| Accessibility | Widely available in industrial labs | Limited to large-scale facilities |
| Applications | Routine quality control, high-concentration APIs | Research, troubleshooting, low-concentration APIs [25] |

Autonomous Phase Identification: Machine Learning Approaches

The Need for Automation in XRD Analysis

The integration of X-ray diffraction into high-throughput experimentation (HTE) frameworks for materials discovery has created a significant bottleneck in data analysis. While modern synchrotron sources and automated laboratory instruments can generate XRD patterns at unprecedented rates, traditional analysis methods like Rietveld refinement are computationally intensive and require extensive expert knowledge, so they cannot keep pace with data acquisition in HTE workflows [26]. This analytical bottleneck becomes particularly problematic in autonomous materials research, where artificially intelligent agents require rapid, automated, and reliable analysis of XRD data to make real-time decisions about subsequent experiments [26].

To address these challenges, researchers have developed machine learning (ML) and artificial intelligence (AI) approaches for autonomous phase identification from XRD patterns. These methods aim to provide rapid, reliable structural determination that can be integrated into closed-loop experimental systems where AI agents design synthesis methods to obtain structures associated with desired target properties [26]. The ideal autonomous identification system must provide not only accurate phase labels but also quantitative probability estimates, which enable robust reasoning about composition-structure-property relationships and supply the uncertainty quantification needed for efficient phase space exploration [26].

Current Machine Learning Methodologies

Recent advances in autonomous phase identification have yielded several promising approaches:

CrystalShift Algorithm: This probabilistic algorithm employs symmetry-constrained optimization, best-first tree search, and Bayesian model comparison to quantify the posterior probability of potential phase combinations given a set of candidate phases [26]. Unlike neural network-based methods, CrystalShift requires only the experimental pattern and the candidate phases, without expensive training on synthetic patterns. The algorithm optimizes lattice parameters without breaking space group symmetry and uses Bayesian model comparison to generate probability estimates that naturally introduce an Occam's razor effect, preferring simpler models (fewer phases) as long as they adequately explain the data [26]. This approach has demonstrated robust probability estimates that outperform existing methods on both synthetic and experimental datasets, providing quantitative insights into materials' structural parameters that facilitate both expert evaluation and AI-based modeling [26].
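The final step of Bayesian model comparison, turning model evidences into posterior probabilities over phase combinations, can be sketched as follows. This is not CrystalShift code: it assumes the log evidence of each candidate combination has already been estimated, assumes a uniform prior over combinations, and uses hypothetical phase labels and values.

```python
import math
from typing import Dict


def posterior_probabilities(log_evidence: Dict[str, float]) -> Dict[str, float]:
    """Normalize log evidences into posterior probabilities under a uniform model prior."""
    peak = max(log_evidence.values())  # subtract the maximum for numerical stability
    weights = {name: math.exp(value - peak) for name, value in log_evidence.items()}
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


# Hypothetical log evidences: adding phases improves the fit only marginally,
# so the extra parameters lower the evidence of the larger models.
candidates = {"A": -120.0, "A+B": -121.5, "A+B+C": -125.0}
for name, probability in posterior_probabilities(candidates).items():
    print(f"{name}: {probability:.3f}")
```

Because the evidence integrates over each model's parameters, a combination with more phases is favoured only when the improvement in fit outweighs the added flexibility, which is the Occam's razor effect described above.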

Bayesian FusionNet Framework: This comprehensive framework implements a hybrid machine learning approach for autonomous phase identification through four key stages [27]:

- Pre-processing: Raw XRD data undergoes meticulous cleaning to eliminate noise, followed by normalization and smoothing procedures to ensure data integrity.

- Feature Extraction: A multi-faceted approach including peak identification (capturing position, intensity, and width), statistical features (mean, standard deviation, skewness, kurtosis), and Discrete Wavelet Transform to capture both high and low-frequency information.

- Feature Selection: A Hybrid Optimization Approach combining Kookaburra Optimization Algorithm (KOA) and White Shark Optimizer to ensure an optimal feature subset.

- Phase Identification: A Bayesian FusionNet integrating Improved GhostNetV2, Bayesian Neural Network (BNN), and Feedforward Neural Network (FNN), with outcomes aggregated by taking the mean to enhance reliability and accuracy [27].
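Two of the simpler operations in this pipeline, the statistical features and the mean aggregation of model outputs, can be sketched in plain Python. The example uses population moments (with non-excess kurtosis) and hypothetical intensity values and class probabilities; it does not reproduce the networks or optimizers of the framework in [27].

```python
import math
from typing import Dict, List, Sequence


def statistical_features(intensities: Sequence[float]) -> Dict[str, float]:
    """Mean, standard deviation, skewness, and kurtosis of a pattern (population moments)."""
    n = len(intensities)
    mean = sum(intensities) / n
    centered = [x - mean for x in intensities]
    std = math.sqrt(sum(c ** 2 for c in centered) / n)
    if std == 0.0:
        skewness = kurtosis = 0.0  # flat signal: higher moments are undefined, report zero
    else:
        skewness = sum(c ** 3 for c in centered) / n / std ** 3
        kurtosis = sum(c ** 4 for c in centered) / n / std ** 4
    return {"mean": mean, "std": std, "skewness": skewness, "kurtosis": kurtosis}


def aggregate_mean(model_outputs: Sequence[Sequence[float]]) -> List[float]:
    """Average per-class probabilities across models."""
    n_models = len(model_outputs)
    return [sum(column) / n_models for column in zip(*model_outputs)]


print(statistical_features([1.0, 2.0, 3.0, 4.0, 5.0]))
# Hypothetical class probabilities from three models for two candidate phases.
print(aggregate_mean([[0.6, 0.4], [0.8, 0.2], [0.7, 0.3]]))
```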

Deep Learning Methods: Conventional deep learning approaches typically create training datasets using crystallographic structure databases (ICSD, Materials Project) to simulate XRD patterns, then train convolutional neural networks to create phase labeling models [26]. Some methods employ detect-and-subtract approaches, iteratively detecting a phase, subtracting its signal from the XRD pattern, and repeating until the pattern is sufficiently reconstructed [26]. However, these methods can be vulnerable to experimental noise and strong peak overlap from distinct phases, and their probability estimates have yet to be demonstrated as robust for XRD phase labeling [26].

Integration with USP 〈941〉 Compliance

A critical requirement for any autonomous phase identification system in pharmaceutical applications is adherence to USP 〈941〉 standards. The algorithmic approaches must be validated against the compendial requirements for angular precision (±0.10° for 2θ values), reference pattern matching, and quantitative analysis thresholds [23] [22]. Machine learning models can be trained to recognize not only the presence of specific polymorphs but also to flag potential compliance issues such as:

- Presence of undesired polymorphic forms above threshold levels

- Shifts in diffraction angles indicative of lattice parameter changes

- Variations in relative peak intensities suggesting preferred orientation

- Appearance of amorphous content affecting crystallinity estimates

The probabilistic outputs from algorithms like CrystalShift provide natural uncertainty quantification that aligns with quality-by-design principles, enabling risk-based decision making about pharmaceutical product quality [26].

Experimental Protocols for Polymorph Identification

Standard Operating Procedure for USP 〈941〉 Compliance

For regulatory compliance and technical accuracy, the following detailed protocol should be implemented for polymorph identification:

Sample Preparation Methodology:

- Particle Size Reduction: Gently grind the specimen in a mortar to a fine powder to improve randomness in crystal orientation. Avoid excessive grinding pressure that may induce phase transformations, and verify the diffraction pattern of the unground sample if transformation risk is suspected [22].

- Specimen Loading: For powder diffractometers, load the prepared powder into a specimen holder using a back-loading technique to minimize preferred orientation. For plate-like or needle-like crystals that exhibit strong orientation bias, consider spray-drying or side-drift loading methods [22].

- Capillary Packing (Synchrotron): For synchrotron XRPD analysis of low-concentration APIs, uniformly pack powder blends into Lindemann glass capillaries (typically 1.0 mm diameter) using vibrational settling to ensure dense, consistent packing without segregation [25].

Instrument Calibration and Data Collection:

- Instrument Qualification: Verify diffractometer performance using silicon powder or α-alumina (corundum) certified reference material, confirming angular calibration and intensity response across the measurement range [23].

- Experimental Parameters:

  - Radiation: Cu Kα (Kα₁, λ = 1.5406 Å) for organic compounds, with nickel filter to remove Kβ radiation [22]

  - Voltage/Current: Typically 40 kV/40 mA for laboratory instruments, optimized for sample characteristics

  - Scan Range: 2-40° 2θ for most organic crystals, extended to 60° for inorganic materials [23] [22]

  - Step Size: 0.01-0.02° 2θ

  - Counting Time: 1-5 seconds per step for routine analysis, extended for low-concentration samples

- Low-Concentration Analysis (Synchrotron): For API concentrations below 1% w/w, utilize synchrotron radiation with extended exposure times (up to 2 hours) and selective detector masking to prevent overexposure from dominant excipient peaks [25].
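To make the data-collection parameters above concrete, the short sketch below converts the scan limits to d-spacings with Bragg's law (λ = 2d sin θ) and estimates the duration of a step scan. The helper names are hypothetical, and the calculation ignores instrument overhead such as motor movement between steps.

```python
import math

CU_KALPHA1_ANGSTROM = 1.5406  # wavelength quoted in the protocol above

def d_spacing(two_theta_deg, wavelength=CU_KALPHA1_ANGSTROM):
    """Bragg's law: d = lambda / (2 sin(theta)), with theta = 2-theta / 2."""
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength / (2.0 * math.sin(theta))

def scan_duration_s(start_deg, stop_deg, step_deg, seconds_per_step):
    """Total counting time for a step scan that includes both end points."""
    n_points = round((stop_deg - start_deg) / step_deg) + 1
    return n_points * seconds_per_step

# d-spacing window covered by a 2-40 degree scan
print(d_spacing(2.0), d_spacing(40.0))

# Duration of a 2-40 degree scan at 0.02 degree steps, 1 s and 5 s per step
print(scan_duration_s(2.0, 40.0, 0.02, 1.0) / 60.0, "min")
print(scan_duration_s(2.0, 40.0, 0.02, 5.0) / 3600.0, "h")
```

With these settings the scan covers d-spacings from roughly 44 Å down to about 2.25 Å, and the counting time ranges from about half an hour at 1 s per step to more than two and a half hours at 5 s per step, which is worth weighing against throughput targets when choosing the step size.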

Data Analysis and Interpretation:

- Pattern Processing: Apply smoothing and background subtraction to enhance signal-to-noise ratio while preserving legitimate diffraction peaks.

- Phase Identification: Compare processed pattern to reference data from ICDD PDF database or USP Reference Standard, matching both peak positions (within ±0.10° 2θ) and relative intensity ratios [22].

- Quantitative Analysis (if required): Employ internal standard method with reference material having similar absorption characteristics but non-overlapping diffraction pattern, establishing calibration curve across relevant concentration range [22].
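The peak-position check in the phase identification step can be expressed as a simple tolerance test. The sketch below is illustrative only: the function name and the peak lists are hypothetical, and a compliant workflow would also compare relative intensities, which is omitted here.

```python
def match_peaks(measured, reference, tolerance=0.10):
    """Pair each reference 2-theta position with the nearest measured peak.

    Returns one tuple per reference peak:
    (reference position, nearest measured position, offset, within tolerance).
    """
    results = []
    for ref in reference:
        nearest = min(measured, key=lambda m: abs(m - ref))
        offset = nearest - ref
        results.append((ref, nearest, offset, abs(offset) <= tolerance))
    return results

# Hypothetical peak lists in degrees 2-theta.
measured_peaks = [8.52, 12.31, 17.05, 25.80]
reference_peaks = [8.50, 12.25, 17.30]

report = match_peaks(measured_peaks, reference_peaks)
for ref, nearest, offset, ok in report:
    print(f"ref {ref:6.2f}  measured {nearest:6.2f}  offset {offset:+.2f}  {'match' if ok else 'MISMATCH'}")

all_matched = all(ok for _, _, _, ok in report)
```

A systematic offset of the same sign across all peaks usually points to specimen displacement or calibration drift rather than a different phase, so it is worth inspecting the offsets and not only the pass/fail flags.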

Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Polymorph Identification

| Material/Reagent | Specification | Function in Analysis |
|---|---|---|
| Silicon Powder | NIST-certified reference material (SRM 640e) | Instrument qualification and angular calibration standard [23] |
| α-Alumina (Corundum) | NIST-certified reference material (SRM 676a) | Instrument performance verification and intensity calibration [23] |
| Lindemann Glass Capillaries | 1.0 mm diameter, 0.01 mm wall thickness | Specimen containment for synchrotron XRPD analysis of low-concentration samples [25] |
| USP Reference Standards | Pharmacopeial reference standards for specific APIs | Primary reference pattern generation for compendial compliance [22] |
| International Centre for Diffraction Data (ICDD) | PDF-2 database with >60,000 reference patterns | Reference database for phase identification of unknown materials [22] |

The field of polymorph identification is rapidly evolving from traditional manual analysis toward integrated autonomous systems that combine advanced instrumentation with artificial intelligence. The convergence of high-throughput experimentation, advanced detection technologies, and machine learning algorithms is creating new paradigms for pharmaceutical development that can significantly reduce development timelines while improving product quality and regulatory compliance. Future developments will likely focus on the complete integration of autonomous phase identification systems with robotic synthesis platforms, enabling closed-loop materials discovery and optimization without human intervention [26].

For regulatory compliance, the challenge remains to establish validation frameworks for autonomous identification systems that satisfy the requirements of USP 〈941〉 and other global pharmacopeial standards. This will require collaborative efforts between pharmaceutical companies, regulatory authorities, and technology developers to establish standardized protocols for algorithm validation, uncertainty quantification, and system qualification. As these frameworks mature, autonomous polymorph identification will become an indispensable tool for ensuring drug quality, safety, and efficacy throughout the product lifecycle, from initial development to commercial manufacturing and beyond.

The critical applications of polymorph identification in drug development, framed within the context of USP 〈941〉 compliance and enabled by advancing autonomous technologies, represent a fundamental pillar of modern pharmaceutical quality systems. By leveraging these approaches, the industry can better manage the risks associated with solid-form variability while accelerating the development of robust, effective pharmaceutical products.

From Theory to Practice: A Guide to Autonomous Phase Identification Techniques

X-ray diffraction (XRD) stands as a powerful technique for determining a material's crystal structure and is increasingly being incorporated into artificially intelligent agents for autonomous scientific discovery [26]. However, a significant bottleneck exists in the rapid, automated, and reliable analysis of XRD data at rates that match the pace of experimental measurements at synchrotron sources [26] [28]. Traditional analysis methods, such as Rietveld refinement, are computationally involved, require extensive expert knowledge, and lack the robustness required for high-throughput experimentation (HTE) [26]. The presence of multiple phases in a single sample further complicates analysis, leading to overlapping peaks and potentially ambiguous phase assignments [26]. In autonomous materials research, errors in phase labeling directly impact the inferred scientific knowledge and the subsequent decisions made by AI agents. Therefore, a labeling algorithm that provides quantitative probability estimation is not just preferable but essential for robust and efficient AI-based phase space exploration [26].

CrystalShift has been developed specifically to address these challenges, serving as an efficient probabilistic algorithm for XRD phase labeling that complements HTE and fits seamlessly into autonomous workflows [26] [29]. Its core innovation lies in employing a hierarchy of symmetry-constrained optimizations, best-first tree search, and Bayesian model comparison to quantify the posterior probability of potential phase combinations given a set of candidate phases [26]. In contrast to neural network-based methods, CrystalShift requires only the experimental spectrum and a list of candidate phases, and does not require any expensive training based on synthetic spectra, making it both agile and robust [26]. The probability estimates from CrystalShift have been demonstrated to be more robust against noise than existing methods, can be easily calibrated, and exhibit higher predictive accuracy on both synthetic and experimental datasets [28].

Core Methodology of CrystalShift

Algorithmic Workflow and Components

The CrystalShift algorithm operates through a sophisticated, multi-stage workflow designed to efficiently and accurately identify phase combinations from a single XRD pattern. The process requires two primary inputs: the experimental XRD spectrum and a user-provided list of candidate phases [26]. The workflow, illustrated in the diagram below, integrates several advanced computational techniques to achieve probabilistic phase labeling.

The workflow begins with a best-first tree search algorithm that systematically explores possible phase combinations [26]. The search starts by evaluating all individual phases from the candidate pool. A symmetry-constrained pseudo-refinement lattice cell optimization algorithm then optimizes the lattice parameters of these candidate phases, without breaking space group symmetry, to minimize the difference between the simulated and experimental XRD spectrum [26]. Based on the residual from this refinement, the tree search algorithm selects the top-k most likely nodes and expands each of them by adding one additional candidate phase to form a new candidate phase combination. This refine-and-expand process repeats iteratively until a specified depth is reached, which corresponds to the maximum allowed number of coexisting phases [26].
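The refine-and-expand loop can be sketched in a few lines. This is a simplified illustration and not the CrystalShift code: the "refinement" here only fits non-negative phase activations to fixed reference patterns (no lattice or peak-width optimization), and the function names and toy patterns are assumptions.

```python
def fit_residual(pattern, refs, sweeps=200):
    """Squared residual after a non-negative least-squares fit of activations.

    Uses cyclic coordinate descent: each activation is updated in turn to its
    optimal non-negative value while the others are held fixed.
    """
    weights = [0.0] * len(refs)
    residual = list(pattern)
    for _ in range(sweeps):
        for j, ref in enumerate(refs):
            step = sum(r * e for r, e in zip(ref, residual)) / sum(r * r for r in ref)
            new_weight = max(0.0, weights[j] + step)
            delta = new_weight - weights[j]
            residual = [e - delta * r for e, r in zip(residual, ref)]
            weights[j] = new_weight
    return sum(e * e for e in residual)

def tree_search(pattern, candidates, max_depth=2, top_k=2):
    """Expand the top_k lowest-residual nodes by one phase per level."""
    evaluated = {}
    frontier = [()]  # root node: no phases assigned yet
    for _ in range(max_depth):
        level = {}
        for node in frontier:
            for name in candidates:
                if name in node:
                    continue
                combo = tuple(sorted(node + (name,)))
                if combo not in evaluated and combo not in level:
                    level[combo] = fit_residual(pattern, [candidates[n] for n in combo])
        evaluated.update(level)
        frontier = sorted(level, key=level.get)[:top_k]
    return sorted(evaluated.items(), key=lambda item: item[1])

# Toy candidate patterns (hypothetical); the "measurement" is 2*A + 1*B.
candidates = {
    "A": [1.0, 0.0, 0.0, 0.0, 0.0],
    "B": [0.0, 0.0, 1.0, 0.0, 0.0],
    "C": [0.0, 1.0, 0.0, 0.0, 1.0],
    "D": [0.0, 0.0, 0.0, 1.0, 0.0],
}
ranking = tree_search([2.0, 0.0, 1.0, 0.0, 0.0], candidates)
print(ranking[0])
```

Because only the top-k nodes are expanded at each level, the number of fits grows roughly linearly with depth instead of combinatorially with the size of the candidate pool. Ranking by residual alone always favors larger combinations, which is why the residuals feed into a model comparison step afterwards.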

Following the search process, the results feed into a Bayesian model comparison framework to generate probabilistic labels [26]. The evidence for each model, representing each phase combination, is calculated by marginalizing out all variables—including lattice parameters, phase activations, and peak width—in the likelihood function. This marginalization process is analytically intractable, so CrystalShift employs the Laplace approximation, which assumes the likelihood function to be locally Gaussian near the optimum [26]. A final softmax function is applied over all model evidence to generate the output as a probability distribution. A key feature of this framework is its inherent preference for sparseness, which prevents overfitting the XRD spectrum by adding phases that do not actually exist, adhering to the principle of Occam's razor [26].
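The evidence calculation and final normalization can be illustrated numerically. The sketch below assumes a diagonal Hessian so that the determinant is a simple product, and it uses made-up numbers for the log joint density and curvature; it shows the general Laplace formula, not CrystalShift's actual parameterization.

```python
import math

def laplace_log_evidence(log_joint_at_mode, hessian_diagonal):
    """Laplace approximation to the log evidence of one model.

    log Z ~= log p(D, theta*) + (d / 2) * log(2 * pi) - 0.5 * log det(H),
    where theta* is the optimum, d the number of parameters, and H the Hessian
    of the negative log joint density at theta* (assumed diagonal here).
    """
    d = len(hessian_diagonal)
    log_det = sum(math.log(h) for h in hessian_diagonal)
    return log_joint_at_mode + 0.5 * d * math.log(2.0 * math.pi) - 0.5 * log_det

def softmax(log_values):
    """Normalize log evidences into probabilities, shifted for numerical stability."""
    top = max(log_values)
    weights = [math.exp(v - top) for v in log_values]
    total = sum(weights)
    return [w / total for w in weights]

# Two hypothetical models that fit the data equally well at their optimum.
# The second carries two extra parameters (for example, an additional phase).
one_phase = laplace_log_evidence(-10.0, [100.0, 100.0])
two_phase = laplace_log_evidence(-10.0, [100.0, 100.0, 100.0, 100.0])

probabilities = softmax([one_phase, two_phase])
print(probabilities)
```

With equal fit quality, every additional sharply constrained parameter lowers the approximate evidence, so the simpler model receives most of the probability mass. A more complex model is preferred only when its improvement in fit outweighs that penalty.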

Key Differentiators from Alternative Approaches

CrystalShift differentiates itself from other phase identification methods through several key characteristics, as summarized in the table below.

Table 1: Comparison of CrystalShift with Alternative Phase Identification Approaches

| Method | Core Approach | Training Requirement | Probabilistic Output | Lattice Refinement | Handles Multi-Phase |
|---|---|---|---|---|---|
| CrystalShift | Symmetry-constrained optimization, best-first tree search & Bayesian model comparison | No | Yes, robust and calibratable | Yes, symmetry-constrained | Yes, up to specified limit |
| Traditional Rietveld | Least-squares refinement | No | No | Yes | Yes, but requires prior ID |
| Deep Learning (e.g., CNN) [30] | Neural network inference | Yes, large synthetic datasets | Possible via ensembles | Limited in some implementations | Varies by model |
| Non-negative Matrix Factorization (NMF) [4] | Matrix factorization | No | No | Limited (e.g., multiplicative shift only) | Yes, but requires phase number |
| AutoMapper [4] | Neural-network optimization | No | Not explicitly mentioned | Yes, with texture | Yes |

A primary differentiator is that CrystalShift requires no training data, unlike deep learning methods which often require generating large datasets of synthetic XRD patterns for training [26] [30]. Furthermore, while methods like convolutional non-negative matrix factorization (NMF) often use simple multiplicative peak shifting, CrystalShift models diffraction peak positions using all crystallographic parameters, providing a more physically accurate model, especially for non-cubic crystal systems [26]. This approach constrains the model without adding significant computational overhead and, when combined with regularization of lattice strain, peak width, and phase activation during optimization, ensures that the results are physically sound [26].
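A short calculation shows why a single multiplicative shift is not enough for low-symmetry cells. The sketch below uses the standard monoclinic d-spacing formula (unique axis b) with hypothetical lattice parameters and expands only the a axis by 1%. If one multiplicative factor could describe the shift, every reflection would show the same ratio.

```python
import math

def monoclinic_d(h, k, l, a, b, c, beta_deg):
    """Interplanar spacing for a monoclinic cell with unique axis b.

    1/d^2 = [h^2/a^2 + k^2 sin^2(beta)/b^2 + l^2/c^2
             - 2 h l cos(beta)/(a c)] / sin^2(beta)
    """
    beta = math.radians(beta_deg)
    sin2 = math.sin(beta) ** 2
    inv_d2 = (h * h / (a * a) + k * k * sin2 / (b * b) + l * l / (c * c)
              - 2.0 * h * l * math.cos(beta) / (a * c)) / sin2
    return 1.0 / math.sqrt(inv_d2)

def shift_ratios(cell, strained_cell, reflections):
    """Ratio of strained to unstrained d-spacing for each (h, k, l)."""
    return {
        hkl: monoclinic_d(*hkl, *strained_cell) / monoclinic_d(*hkl, *cell)
        for hkl in reflections
    }

# Hypothetical cell (a, b, c in angstrom, beta in degrees); only a changes.
cell = (9.8, 8.9, 6.8, 107.0)
strained = (9.8 * 1.01, 8.9, 6.8, 107.0)

ratios = shift_ratios(cell, strained, [(1, 0, 0), (0, 1, 0), (0, 0, 1), (1, 1, 0)])
for hkl, ratio in ratios.items():
    print(hkl, round(ratio, 5))
```

The (1 0 0) spacing grows by the full 1%, (0 1 0) and (0 0 1) do not move, and (1 1 0) falls in between. A model that refines the lattice parameters themselves captures this reflection-dependent behavior, whereas a single scale factor cannot.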

Experimental Protocols and Validation

Implementation and Experimental Setup

The robustness and performance of CrystalShift were validated through applications on both synthetic and experimental datasets. For experimental validation, one representative study involved analyzing eleven XRD patterns collected from a sample with distinct phases, specifically the CrₓFe₀.₅₋ₓVO₄ monoclinic phase as a thin film on a fluorine-doped tin oxide (SnO₂) substrate at different Fe-Cr ratios [26]. The monoclinic symmetry of this system produces complex peak shifting in the XRD pattern as a function of composition and strain, which cannot be accurately modeled by simpler methods like multiplicative peak shifting [26].