Assessing Synthesis Feasibility in Multi-Parameter Optimization: A Strategic Framework for Drug Discovery

Abstract

This article addresses the critical challenge of integrating synthetic feasibility assessment into the multi-parameter optimization (MPO) process in drug discovery. As generative chemistry and AI-driven design rapidly expand the explorable chemical space, ensuring that proposed compounds are practically synthesizable has become a major bottleneck. We explore the foundational principles of synthesizability scoring, from classical rule-based methods to modern machine learning approaches that incorporate human expert feedback. The article provides a methodological framework for applying Multi-Criteria Decision Analysis (MCDA) to balance synthetic feasibility with other critical parameters like potency, pharmacokinetics, and toxicity. Through troubleshooting guidance and comparative analysis of validation strategies, we equip researchers with practical tools to prioritize viable drug candidates, reduce late-stage attrition, and accelerate the development of innovative therapeutics.

The Synthesis Feasibility Imperative: Foundations and Challenges in Drug Discovery

Defining Synthesis Feasibility in the Context of Multi-Parameter Optimization

In modern drug discovery, multi-parameter optimization (MPO) has emerged as a critical framework for addressing the complex trade-offs inherent in developing viable therapeutic candidates. The process involves simultaneously balancing multiple, often competing, molecular properties (such as potency, selectivity, metabolic stability, and solubility) to identify compounds with the highest probability of clinical success [1]. Within this framework, synthesis feasibility serves as a crucial balancing criterion: it determines whether a theoretically optimal compound can be practically and efficiently synthesized, thus bridging computational design with laboratory reality.

The pharmaceutical industry faces a persistent productivity challenge, with the average cost per approved drug reaching $2.6 billion and development timelines spanning 10-15 years, coupled with a 90% failure rate in clinical trials [1]. This stark reality, often described as Eroom's Law (the inverse of Moore's Law), highlights the critical need for more efficient discovery approaches [1]. MPO, enhanced by artificial intelligence, represents a strategic response to this challenge, enabling researchers to navigate the vast chemical space of approximately 10³³ drug-like compounds to identify candidates that optimally balance multiple parameters, including synthetic accessibility [1].

Algorithmic Foundations of Multi-Objective Optimization

Theoretical Framework

Multi-parameter optimization in drug discovery represents a specialized application of multi-objective optimization (MOO), which addresses problems involving multiple conflicting objectives simultaneously [2]. The mathematical formulation of an MOO problem aims to find a vector of decision variables \(x^* \in X\) that optimizes a vector of \(k \geq 2\) objective functions:

\[\min_{x \in X} \bigl(f_1(x), f_2(x), \ldots, f_k(x)\bigr)\]

where \(X\) represents the feasible region of decision variables [2]. In pharmaceutical contexts, these objective functions typically represent molecular properties such as binding affinity, toxicity, solubility, and synthetic complexity.

For such problems, there is rarely a single solution that optimizes all objectives simultaneously. Instead, MOO identifies a set of Pareto optimal solutions—compounds where no objective can be improved without degrading at least one other objective [2]. The collection of these solutions forms a Pareto front, which defines the optimal trade-off surface in the multi-dimensional property space [2] [3].
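To make the dominance relation concrete, the following minimal sketch filters a small candidate set down to its Pareto-optimal members. The compound names and objective values are invented for illustration, and every objective is assumed to be expressed so that lower is better (for example, negated potency, predicted toxicity, and a synthetic complexity score).

```python
from typing import Dict, List, Sequence

# Hypothetical candidates; each list holds objective values where lower is better.
candidates: Dict[str, List[float]] = {
    "cmpd_A": [0.2, 0.7, 3.0],
    "cmpd_B": [0.4, 0.3, 2.0],
    "cmpd_C": [0.5, 0.8, 3.5],  # worse than cmpd_A on every objective
    "cmpd_D": [0.1, 0.9, 4.0],
}


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b on all objectives and strictly better on one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points: Dict[str, List[float]]) -> List[str]:
    """Return the names of all candidates that no other candidate dominates."""
    return sorted(
        name
        for name, p in points.items()
        if not any(dominates(q, p) for other, q in points.items() if other != name)
    )


front = pareto_front(candidates)
print(front)
```

This brute-force filter compares every pair of candidates, which is adequate for shortlists of a few thousand compounds; the evolutionary algorithms discussed in this section use more efficient non-dominated sorting.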

Key Optimization Algorithms in Drug Discovery

Table 1: Comparison of Multi-Objective Optimization Algorithms

| Algorithm | Optimization Approach | Key Features | Drug Discovery Applications |
|---|---|---|---|
| Multi-Objective Genetic Algorithm (MOGA) [4] | Evolutionary selection based on fitness | Mimics natural selection; handles non-convex spaces | Controller optimization; balanced molecular design |
| Multi-Objective Particle Swarm Optimization (MOPSO) [4] | Swarm intelligence based on particle movement | Efficient exploration/exploitation balance; fast convergence | Microgrid frequency regulation; chemical space exploration |
| Non-Dominated Sorting Genetic Algorithm (NSGA-II) [3] | Elite-preserving evolutionary algorithm with crowding distance | Good convergence; maintains solution diversity | Molecular design; balanced property optimization |
| Multi-Objective Resistance-Capacitance Optimization Algorithm (MORCOA) [3] | Physics-inspired using RC circuit transient response | Robust global optimization; handles many competing objectives | Engineering design; potential for complex molecular optimization |

These algorithms employ different strategies to approximate the Pareto front. A posteriori methods generate a representative set of Pareto optimal solutions before decision-makers select based on preferences, while a priori methods incorporate preferences before optimization [3]. The linear weighted sum technique represents a simplified approach that scalarizes multiple objectives into a single function, though it may struggle to find solutions in non-convex regions of the Pareto front [5].
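The limitation of the linear weighted sum can be seen in a toy two-objective case. In the sketch below (all points are invented, both objectives are minimized), P3 is Pareto optimal but lies in a non-convex region of the front, so no choice of weight ever selects it.

```python
# Three hypothetical candidates with two objectives to minimize.
# P3 is not dominated by P1 or P2, but it sits above the line joining them.
points = {"P1": (0.0, 1.0), "P2": (1.0, 0.0), "P3": (0.6, 0.6)}


def weighted_sum_winner(pts, w):
    """Return the candidate minimizing w * f1 + (1 - w) * f2."""
    return min(pts, key=lambda name: w * pts[name][0] + (1.0 - w) * pts[name][1])


# Sweep the weight from 0 to 1 and record every candidate that is ever selected.
selected = {weighted_sum_winner(points, i / 100) for i in range(101)}
print(sorted(selected))
```

For any weight, the better of P1 and P2 scores at most 0.5, while P3 always scores 0.6, which is why a posteriori methods that approximate the whole front are often preferred when such compromise candidates matter.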

Synthesis Feasibility as a Balancing Criterion

Defining the Synthesis Feasibility Parameter

Synthesis feasibility represents a critical balancing criterion in MPO, quantifying the practical synthesizability of a proposed molecular structure. This parameter integrates multiple chemical considerations, including step count, reaction complexity, commercial availability of starting materials, predicted yields, and purification challenges. In MPO frameworks, synthesis feasibility acts as a constraint function that balances ideal molecular properties against practical synthetic accessibility, preventing the selection of theoretically optimal but practically inaccessible compounds.

The importance of synthesis feasibility stems from its direct impact on discovery timelines and resource allocation. Compounds with low synthesis feasibility typically require extensive route development, difficult-to-source starting materials, or low-yielding transformations, creating bottlenecks in the critical design-make-test-analyze (DMTA) cycles that drive lead optimization [6]. By incorporating synthesis feasibility as an explicit parameter, research teams can prioritize compounds that balance optimal molecular properties with practical synthetic pathways.

Integration with Other Molecular Parameters

Synthesis feasibility must be balanced against the other key discovery parameters:

Potency-Synthesis Trade-off: Highly potent compounds may contain complex structural motifs that challenge synthetic feasibility. MPO balances this trade-off to identify synthetically accessible compounds with sufficient potency.

Selectivity-Complexity Relationship: Achieving selectivity often requires specific structural features that may complicate synthesis. MPO evaluates whether similar selectivity can be achieved with simpler, more synthetically accessible scaffolds.

ADMET-Synthesis Interplay: Compounds optimized for absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties may require structural modifications that impact synthetic feasibility [1].

Table 2: Key Parameters in Drug Discovery Multi-Parameter Optimization

| Parameter Category | Specific Metrics | Relationship to Synthesis Feasibility |
|---|---|---|
| Potency | IC₅₀, EC₅₀, Ki | Complex binding motifs often decrease synthetic accessibility |
| Selectivity | Selectivity index, panel screening | Specific recognition elements may require challenging syntheses |
| ADMET | Metabolic stability, permeability, solubility | Structural optimizations for ADMET may complicate synthesis |
| Physicochemical | LogP, PSA, molecular weight | Correlates with compound complexity and synthetic challenges |
| Synthesis Feasibility | Step count, complexity score, availability | Primary balancing criterion |

Experimental Protocols for Assessing Synthesis Feasibility

Retrosynthetic Analysis Protocol

Objective: To evaluate the synthetic accessibility of proposed compounds through systematic retrosynthetic analysis.

Materials:

- Compound structures in standardized representation (SMILES, SDF)

- Retrosynthetic analysis software (e.g., AiZynthFinder, ASKCOS)

- Chemical database access (e.g., Reaxys, SciFinder)

- Commercially available building block catalogs

Methodology:

- Input Structure Preparation: Convert target compounds to machine-readable formats and remove stereochemistry if not specified.

- Retrosynthetic Expansion: Apply retrosynthetic transformations to generate potential synthetic pathways using the chosen retrosynthesis algorithm.

- Route Scoring: Evaluate generated routes based on:

  - Number of synthetic steps

  - Commercial availability of building blocks (price, lead time)

  - Reaction feasibility scores (yield predictions, safety considerations)

  - Overall complexity assessment

- Feasibility Index Calculation: Compute a composite feasibility score incorporating all route parameters, with weighting based on organizational priorities.

- Comparative Analysis: Rank compounds by feasibility scores alongside other molecular properties to identify optimal balances.

Validation: Compare predicted feasible syntheses with literature-known routes for benchmark compounds to validate scoring accuracy.
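The route-scoring and feasibility-index steps above can be sketched as a weighted combination of normalized route descriptors. This is only an illustrative sketch: the `Route` fields, the weights, the 10-step cap, and the normalizations are all assumptions made for this example and are not taken from any particular retrosynthesis tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Route:
    """Hypothetical summary of one predicted synthetic route."""
    n_steps: int
    frac_blocks_available: float  # fraction of building blocks purchasable, 0..1
    mean_step_yield: float        # predicted average yield per step, 0..1
    complexity: float             # normalized complexity, 0 (easy) .. 1 (hard)


# Assumed weights reflecting organizational priorities; they sum to 1.
WEIGHTS = {"steps": 0.3, "availability": 0.3, "yield": 0.2, "complexity": 0.2}
MAX_STEPS = 10  # assumed cap: routes this long or longer get no credit for brevity


def feasibility_index(route: Route) -> float:
    """Composite score in [0, 1]; higher means more feasible."""
    step_term = max(0.0, 1.0 - route.n_steps / MAX_STEPS)
    overall_yield = route.mean_step_yield ** route.n_steps  # compounded over steps
    return (
        WEIGHTS["steps"] * step_term
        + WEIGHTS["availability"] * route.frac_blocks_available
        + WEIGHTS["yield"] * overall_yield
        + WEIGHTS["complexity"] * (1.0 - route.complexity)
    )


def compound_feasibility(routes: List[Route]) -> float:
    """Score a compound by its best route; no route found means a score of 0."""
    return max((feasibility_index(r) for r in routes), default=0.0)


short_route = Route(n_steps=3, frac_blocks_available=1.0, mean_step_yield=0.8, complexity=0.2)
long_route = Route(n_steps=9, frac_blocks_available=0.5, mean_step_yield=0.6, complexity=0.8)
print(round(compound_feasibility([short_route, long_route]), 3))
```

Scoring a compound by its best route rather than its average route reflects the fact that only one viable route is needed; teams that want robustness to route failure might instead reward compounds with several acceptable routes.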

High-Throughput Experimental Validation Protocol

Objective: To empirically validate synthesis feasibility predictions through standardized small-scale synthesis.

Materials:

- Proposed compound list with priority rankings

- Automated synthesis platform (e.g., Chemspeed, Unchained Labs)

- Standardized reaction kits for common transformations

- LC-MS systems for reaction monitoring and purification

- Building blocks from commercial suppliers or internal collections

Methodology:

- Compound Selection: Select diverse compounds spanning the predicted feasibility range (high, medium, low).

- Route Implementation: Execute predicted optimal synthetic routes on automated platforms.

- Success Monitoring: Track reaction progress, purification success, and final compound quality.

- Metric Calculation: Determine empirical feasibility scores based on:

  - Synthesis success rate (binary outcome)

  - Purified yield after optimization

  - Total synthesis time

  - Number of required purification steps

- Model Refinement: Use experimental results to refine computational feasibility predictions.

This protocol generates ground-truth data that validates and improves computational synthesis feasibility predictions, creating a feedback loop that enhances MPO decision-making.
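One simple way to check whether predictions and experiments agree is to compare the empirical success rate across the predicted feasibility bins. The sketch below uses made-up records and assumed bin boundaries (0.4 and 0.7); it is meant only to illustrate the bookkeeping.

```python
from collections import defaultdict

# Made-up illustrative records: (predicted feasibility in [0, 1], synthesis succeeded?)
results = [
    (0.90, True), (0.85, True), (0.80, False),
    (0.55, True), (0.50, False), (0.45, False),
    (0.20, False), (0.15, False), (0.10, False),
]


def bin_label(score: float) -> str:
    """Map a predicted score to a feasibility bin (boundaries are assumptions)."""
    if score >= 0.7:
        return "high"
    if score >= 0.4:
        return "medium"
    return "low"


def success_rate_by_bin(records):
    """Return the fraction of successful syntheses within each predicted bin."""
    counts = defaultdict(lambda: [0, 0])  # label -> [successes, attempts]
    for score, succeeded in records:
        entry = counts[bin_label(score)]
        entry[0] += int(succeeded)
        entry[1] += 1
    return {label: s / n for label, (s, n) in counts.items()}


rates = success_rate_by_bin(results)
print(rates)
```

If the success rate does not fall monotonically from the high bin to the low bin, that is a signal that the computational score needs recalibration before it is trusted inside the MPO ranking.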

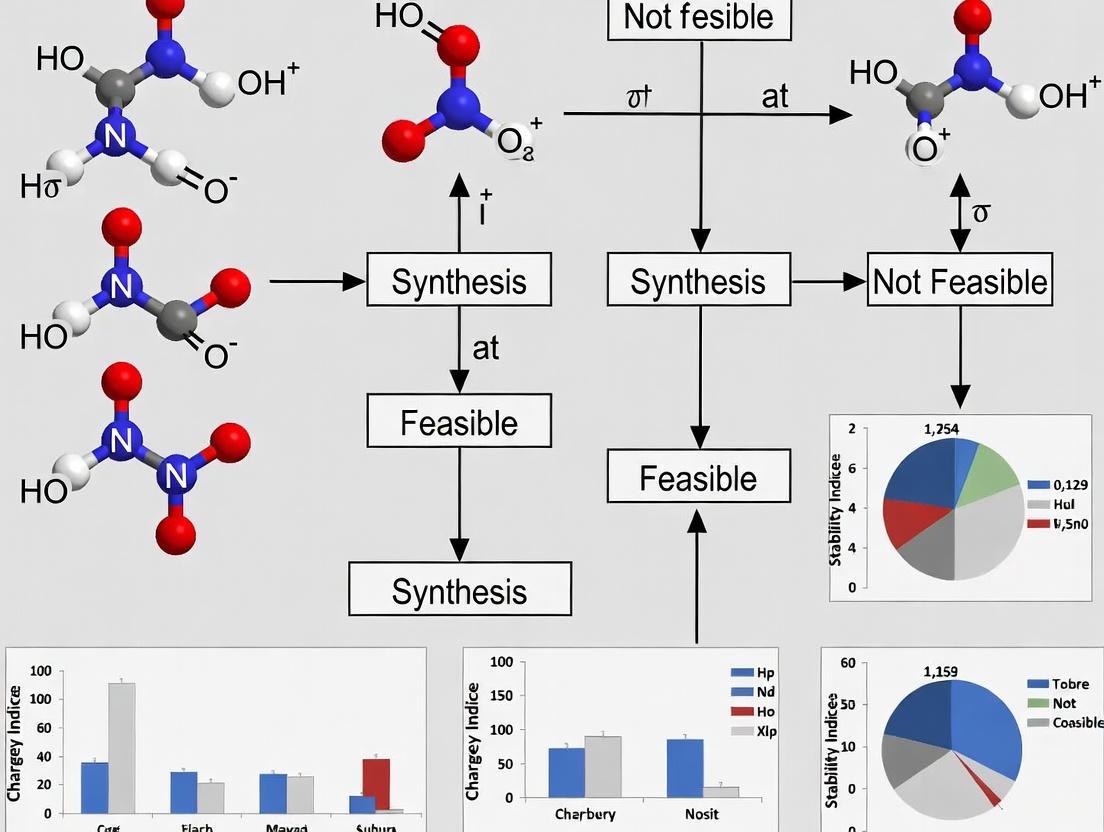

Visualization of Synthesis Feasibility Assessment

The following diagram illustrates the integrated workflow for assessing synthesis feasibility within the multi-parameter optimization framework:

Synthesis Feasibility Assessment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Synthesis Feasibility Assessment

| Tool Category | Specific Tools/Platforms | Function in Feasibility Assessment |
|---|---|---|
| Retrosynthetic Software | AiZynthFinder, ASKCOS, Synthia | Automated retrosynthetic analysis and route generation |
| Chemical Databases | Reaxys, SciFinder, PubChem | Building block availability and literature precedent checking |
| Automated Synthesis Platforms | Chemspeed, Unchained Labs | Empirical validation of predicted synthetic routes |
| AI-Based Design Tools | Generative chemical models (VAEs, GANs) [7] [1] | De novo molecular design with synthetic accessibility constraints |
| Reaction Prediction Tools | Molecular Transformer, Reaction Prediction | Prediction of reaction outcomes and potential side products |
| Building Block Sources | Enamine, Sigma-Aldrich, Mcule | Sourcing of starting materials for synthetic validation |

Comparative Performance of Optimization Approaches

Algorithm Performance Metrics

The effectiveness of different MPO approaches can be evaluated using standardized metrics that capture both computational efficiency and practical utility in identifying synthesizable compounds:

Table 4: Performance Comparison of Multi-Parameter Optimization Methods

| Algorithm | Pareto Front Quality | Computational Efficiency | Synthesis Feasibility Integration | Handling of Conflicting Objectives |
|---|---|---|---|---|
| MOGA [4] | Good diversity; moderate convergence | Moderate computational requirements | Requires explicit feasibility scoring | Effective for 2-5 objectives |
| MOPSO [4] | Excellent convergence; moderate diversity | Faster convergence than MOGA | Compatible with complex feasibility functions | Robust for 3-8 objectives |
| NSGA-II [3] | Excellent diversity preservation | Higher computational cost for large populations | Flexible constraint handling | Effective for highly conflicting objectives |
| MORCOA [3] | Robust global optimization; evenly distributed solutions | Efficient for high-dimensional problems | Physics-inspired approach to balance trade-offs | Superior for many competing objectives |

Case Study: AI-Driven Hit-to-Lead Optimization

Recent advances demonstrate the impact of integrating synthesis feasibility into MPO frameworks. In a 2025 study, deep graph networks were used to generate 26,000+ virtual analogs, resulting in sub-nanomolar MAGL inhibitors with over 4,500-fold potency improvement over initial hits [6]. This achievement exemplifies effective multi-parameter optimization, where synthesis feasibility was explicitly included as a constraint to ensure generated compounds were not only potent but also synthetically accessible.

The integration of generative AI models, including Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs), has further enhanced this capability [7] [1]. GANs pair a generator network that proposes new molecular structures with a discriminator network that evaluates their authenticity, while VAEs sample new structures from a learned latent space; both can drive the creation of novel compounds optimized for multiple parameters including synthetic accessibility [1].

Future Directions in Synthesis Feasibility Assessment

The field of synthesis feasibility assessment within MPO continues to evolve, with several emerging trends shaping its development. Reinforcement learning approaches are being applied to retrosynthetic planning, enabling systems to learn optimal disconnection strategies through iterative practice [1]. The integration of robust control theory from engineering disciplines, particularly μ-synthesis controllers that handle system uncertainties, offers promising approaches for managing the inherent uncertainties in synthetic route predictions [4].

Additionally, the emergence of synthetic data generation techniques, including Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs), enables the creation of expanded chemical datasets for training more accurate feasibility prediction models [7] [8]. These approaches facilitate the development of evaluation frameworks that assess synthetic data quality across multiple dimensions including fidelity, utility, and privacy [9], which can be adapted to evaluate synthesis feasibility predictions.

As these technologies mature, synthesis feasibility will play an increasingly important role as a balancing criterion in multi-parameter optimization, bridging computational design and practical synthesis and ultimately accelerating the discovery of viable therapeutic candidates.

The drug discovery pipeline is a high-stakes arena where the selection of infeasible candidates exacts a heavy toll, with approximately 90% of drug candidates failing in clinical development [10] [11]. This staggering attrition rate represents one of the most significant challenges facing pharmaceutical research and development today. The "cost of neglect"—the consequences of advancing suboptimal candidates—manifests in prolonged timelines, escalated expenses, and ultimately, failed treatments for patients in need.

Recent analyses reveal that 40-50% of clinical failures stem from lack of clinical efficacy, while approximately 30% result from unmanageable toxicity [10] [11]. These failures often originate not in the clinical setting but in the earliest stages of drug discovery, where inadequate candidate selection and optimization criteria set the stage for later derailment. This article examines how systematic feasibility assessment, particularly through innovative frameworks like Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) and Multi-Criteria Decision Analysis (MCDA), can address these critical failure points and reshape the future of drug development pipelines.

The High Stakes of Failure: Quantifying Pipeline Attrition

The drug development process is notoriously resource-intensive, typically requiring 10-15 years and $1-2 billion or more for each new drug approved for clinical use [10]. When candidates fail after entering clinical trials, these sunk costs represent significant financial losses and opportunity costs for pharmaceutical companies and academic institutions. The quantitative breakdown of failure causes provides critical insights for improving candidate selection.

Table 1: Primary Causes of Clinical Drug Development Failure [10] [11]

| Failure Cause | Failure Percentage | Primary Stage Impacted |
|---|---|---|
| Lack of Clinical Efficacy | 40-50% | Phase II/III Trials |
| Unmanageable Toxicity | ~30% | Phase I/II Trials |
| Poor Drug-Like Properties | 10-15% | Preclinical/Phase I |
| Lack of Commercial Interest & Poor Strategic Planning | ~10% | All Stages |

The distribution of failure causes highlights a critical insight: the majority of failures stem from fundamental flaws in candidate compounds rather than operational trial execution. This suggests that improving early-stage feasibility assessment could significantly impact overall pipeline productivity.

The STAR Framework: Rebalancing Drug Optimization Criteria

Current drug optimization processes predominantly emphasize potency and specificity through structure-activity relationship (SAR) studies, often at the expense of equally critical tissue exposure and selectivity considerations [10] [11]. This imbalanced approach frequently leads to selecting candidates that appear optimal in simplified biochemical assays but possess inherent flaws that manifest later in clinical development.

The STAR (Structure–Tissue Exposure/Selectivity–Activity Relationship) framework addresses this imbalance by systematically classifying drug candidates based on both potency/specificity and tissue exposure/selectivity profiles [10]. This classification enables more informed candidate selection and clinical dose strategy, directly addressing major failure causes.

Table 2: STAR Classification System for Drug Candidates [10]

| Class | Potency/Specificity | Tissue Exposure/Selectivity | Clinical Dose Need | Success Probability |
|---|---|---|---|---|
| Class I | High | High | Low | Superior efficacy/safety |
| Class II | High | Low | High | High toxicity risk |
| Class III | Low (Adequate) | High | Low-Medium | High but often overlooked |
| Class IV | Low | Low | N/A | Inadequate efficacy/safety |

The STAR framework reveals that Class III candidates—those with adequate specificity/potency but high tissue exposure/selectivity—represent a particularly valuable opportunity. These compounds often demonstrate favorable clinical success due to manageable toxicity profiles but are frequently overlooked in traditional optimization schemes that overemphasize potency metrics [10].
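The four STAR classes amount to a two-by-two decision on potency and tissue exposure/selectivity. A minimal sketch follows; the numeric cut-offs (a pIC50 of 7.0 and a tissue selectivity index of 2.0) are purely hypothetical placeholders, since appropriate thresholds depend on the target and indication.

```python
# Hypothetical thresholds, chosen only for illustration.
POTENCY_THRESHOLD_PIC50 = 7.0
SELECTIVITY_THRESHOLD_TSI = 2.0


def star_class(pic50: float, tissue_selectivity_index: float) -> str:
    """Assign a STAR class from potency and tissue exposure/selectivity."""
    high_potency = pic50 >= POTENCY_THRESHOLD_PIC50
    high_exposure = tissue_selectivity_index >= SELECTIVITY_THRESHOLD_TSI
    if high_potency and high_exposure:
        return "Class I"
    if high_potency:
        return "Class II"   # potent, but poor tissue exposure/selectivity
    if high_exposure:
        return "Class III"  # adequate potency, favorable exposure/selectivity
    return "Class IV"


print(star_class(6.2, 4.0))
```

A compound such as the one in the usage line, with modest potency but strong tissue selectivity, lands in Class III, the group that potency-driven triage tends to discard.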

Multi-Criteria Decision Analysis: A Systematic Approach to Candidate Selection

Multi-Criteria Decision Analysis (MCDA) provides a structured computational framework for evaluating drug candidates against multiple, often competing, objectives simultaneously [12]. In drug discovery, MCDA methodologies help balance critical criteria including pharmacokinetics, pharmacodynamics, toxicity, and synthesis feasibility—addressing the complex trade-offs that single-metric optimization approaches often miss.

The VIKOR method, one MCDA technique implemented in AI-powered Drug Design (AIDD) platforms, operates by identifying compromise solutions through a balanced evaluation of multiple criteria [12]. The method calculates utility (S) and regret (R) measures for each candidate:

- Utility: \(S_j = \sum_{i=1}^{n} w_i \, \dfrac{f_i^* - f_i(x_j)}{f_i^* - f_i^-}\)

- Regret: \(R_j = \max_{i} \left[ w_i \, \dfrac{f_i^* - f_i(x_j)}{f_i^* - f_i^-} \right]\)

These measures combine into an aggregated Q-score that ranks candidates based on their balanced performance across all criteria, with a preference parameter (v) allowing researchers to weight group benefit against individual regret [12].
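A compact sketch of the calculation is given below. The utility and regret terms follow the definitions above; the Q-score aggregation uses the standard VIKOR form, Q = v(S - S_min)/(S_max - S_min) + (1 - v)(R - R_min)/(R_max - R_min), which is assumed here because the text does not spell it out. The candidate values, criteria, and weights are invented for illustration.

```python
def vikor(scores, weights, benefit, v=0.5):
    """Return (S, R, Q) dictionaries; lower Q means a better compromise.

    scores:  {candidate: [value per criterion]}
    weights: criterion weights, assumed to sum to 1
    benefit: per criterion, True if higher is better, False if lower is better
    """
    names = list(scores)
    n = len(weights)
    # Ideal (f*) and anti-ideal (f-) values for each criterion.
    best = [(max if benefit[i] else min)(scores[k][i] for k in names) for i in range(n)]
    worst = [(min if benefit[i] else max)(scores[k][i] for k in names) for i in range(n)]

    S, R = {}, {}
    for k in names:
        terms = []
        for i in range(n):
            span = best[i] - worst[i]
            # A criterion on which all candidates tie contributes nothing.
            terms.append(0.0 if span == 0 else weights[i] * (best[i] - scores[k][i]) / span)
        S[k] = sum(terms)  # group utility
        R[k] = max(terms)  # individual regret

    def scaled(values, k):
        lo, hi = min(values.values()), max(values.values())
        return 0.0 if hi == lo else (values[k] - lo) / (hi - lo)

    Q = {k: v * scaled(S, k) + (1.0 - v) * scaled(R, k) for k in names}
    return S, R, Q


# Hypothetical criteria: pIC50 (higher better), solubility (higher better),
# synthetic step count (lower better).
scores = {"A": [8.0, 50.0, 9.0], "B": [7.5, 80.0, 4.0], "C": [6.0, 60.0, 6.0]}
S, R, Q = vikor(scores, weights=[0.4, 0.3, 0.3], benefit=[True, True, False])
ranking = sorted(Q, key=Q.get)
print(ranking)
```

In this toy set the most potent compound (A) is not the preferred compromise, because its low solubility and long synthesis outweigh its potency advantage under the chosen weights.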

Diagram 1: Integrated Feasibility Assessment Workflow. This workflow illustrates how combining MCDA evaluation with STAR classification can systematically identify high-potential candidates while flagging infeasible candidates for early termination.

Experimental Protocols for Feasibility Assessment

Protocol 1: Tissue Exposure and Selectivity Profiling

Objective: Quantify drug candidate distribution between diseased and healthy tissues to inform STAR classification [10].

Methodology:

- Administer candidate compounds to disease model organisms via relevant routes (oral, intravenous)

- Collect tissue samples (target organs, liver, kidney, brain) at predetermined timepoints

- Quantify compound concentrations using LC-MS/MS analysis

- Calculate tissue-to-plasma ratios and disease-to-healthy tissue selectivity indices

- Compare exposure profiles against efficacy thresholds and toxicity limits

Key Parameters:

- Maximum tissue concentration (C~max~)

- Area under concentration-time curve (AUC)

- Tissue selectivity index (TSI) = AUC~diseased tissue~/AUC~healthy tissue~

- Tissue-plasma partition coefficients
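The AUC and tissue selectivity index listed above can be computed directly from concentration-time data. The sketch below uses the linear trapezoidal rule and invented concentrations; sampling times, units, and values are assumptions for illustration only.

```python
def auc_trapezoid(times, concentrations):
    """Area under the concentration-time curve by the linear trapezoidal rule."""
    pairs = list(zip(times, concentrations))
    return sum((t1 - t0) * (c0 + c1) / 2.0 for (t0, c0), (t1, c1) in zip(pairs, pairs[1:]))


# Hypothetical sampling times (h) and tissue concentrations (ng/g).
times = [0.0, 1.0, 2.0, 4.0]
diseased_tissue = [0.0, 10.0, 8.0, 2.0]
healthy_tissue = [0.0, 5.0, 4.0, 1.0]

auc_diseased = auc_trapezoid(times, diseased_tissue)
auc_healthy = auc_trapezoid(times, healthy_tissue)
tsi = auc_diseased / auc_healthy  # tissue selectivity index
print(auc_diseased, auc_healthy, tsi)
```

The same helper can be applied to plasma data to obtain tissue-to-plasma AUC ratios.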

Protocol 2: Multi-Parameter Optimization Using MCDA

Objective: Systematically rank drug candidates based on multiple properties to identify optimal leads [12].

Methodology:

- Define evaluation criteria (potency, selectivity, solubility, metabolic stability, etc.)

- Assign weights to each criterion based on therapeutic area requirements

- Measure or compute each candidate's performance for all criteria

- Apply VIKOR method to calculate utility (S), regret (R), and aggregated Q-scores

- Rank candidates by Q-scores and identify compromise solutions

- Validate ranking against experimental data and adjust weights if necessary

Key Parameters:

- Ideal (\(f_i^*\)) and anti-ideal (\(f_i^-\)) values for each criterion

- Weight assignments (\(w_i\)) reflecting relative importance

- Preference parameter (\(v\)) balancing utility and regret

- Q-score ranking across candidate set

Research Reagent Solutions for Feasibility Assessment

Table 3: Essential Research Tools for Comprehensive Feasibility Assessment

| Reagent/Technology | Primary Function | Application in Feasibility Assessment |
|---|---|---|
| High-Throughput Screening (HTS) Platforms | Automated compound screening | Rapid potency and specificity assessment across multiple targets [10] |
| CRISPR-Based Target Validation Systems | Genetic target confirmation | Validates molecular target relevance to human disease before candidate optimization [11] |
| LC-MS/MS Instrumentation | Quantitative bioanalysis | Measures tissue exposure and selectivity parameters for STAR classification [10] |
| AI-Powered Drug Design (AIDD) Platforms | Generative molecule design with MCDA | Integrates multiple optimization criteria for balanced candidate selection [12] |
| Predictive ADMET Modeling Software | In silico property prediction | Estimates absorption, distribution, metabolism, excretion, and toxicity early in discovery [12] |
| Microsomal Stability Assays | Metabolic stability assessment | Evaluates compound susceptibility to metabolic degradation [10] |

The systematic integration of balanced optimization frameworks like STAR and computational decision-support tools like MCDA represents a paradigm shift in how the drug discovery community can address the persistent challenge of pipeline attrition. By moving beyond single-dimensional potency optimization to embrace multifaceted feasibility assessment, researchers can significantly improve the identification of candidates with genuine clinical potential.

The "cost of neglect"—continuing to advance infeasible candidates based on incomplete optimization criteria—remains substantial. However, the experimental protocols and analytical frameworks presented here offer tangible pathways to derisk drug development pipelines. Through earlier and more rigorous feasibility assessment that equally weights tissue exposure/selectivity with potency/specificity, and through systematic multi-criteria decision support, the field can progress toward more efficient and productive drug discovery ecosystems that deliver better medicines to patients in need.

For decades, the charge-balancing criterion has served as a foundational heuristic in the initial assessment of inorganic material synthesis feasibility. Rooted in fundamental physicochemical knowledge, this rule posits that synthesizable inorganic compounds should exhibit a net neutral ionic charge when constituent elements are considered in their common oxidation states. It has provided chemists with an intuitive, first-pass filter for prioritizing candidate materials from a vast and unexplored chemical space. However, within the rigorous context of modern materials science and drug development, reliance on such simplified empirical rules presents significant limitations. As the demand for novel functional materials accelerates, the scientific community is increasingly confronted with the inadequacy of traditional assessment methods. This article objectively examines the specific limitations of the charge-balancing criterion by comparing its performance against emerging data-driven machine learning (ML) techniques, placing this evolution within the broader study of synthesis feasibility assessment.

Quantitative Comparison: Traditional vs. Modern Assessment Methods

The following tables summarize the performance and characteristics of traditional charge-balancing versus modern computational and ML-based assessment methods, based on current research findings.

Table 1: Performance Comparison of Feasibility Assessment Methods

| Assessment Method | Theoretical Basis | Reported Accuracy/Performance | Key Limitations |
|---|---|---|---|
| Charge-Balancing Criterion | Empirical rule (net neutral charge) | Only 37% of observed Cs binary compounds in ICSD meet the criterion [13] | Neglects diverse bonding environments; fails for metallic/covalent materials [13] |
| Formation Energy (DFT) | Thermodynamics (comparative stability) | Challenging to predict feasibility based on energy alone; neglects kinetic stabilization [13] | High computational cost; does not account for kinetic barriers [13] |
| Machine Learning (FSscore) | Data-driven ranking via Graph Neural Network | Fine-tuned model sampled >40% synthesizable molecules while maintaining good docking scores [14] | Performance on very complex chemical scopes with limited labels can be challenging [14] |
| ML (SCScore, RAscore) | Data-driven (reaction data/templates) | Good performance on benchmarks approximating reaction path length [14] | Struggles with out-of-distribution data and predicting feasibility via synthesis predictors [14] |

Table 2: Characteristics of Data-Driven Synthesizability Scores

| Score Name | Type | Basis of Assessment | Differentiating Features |
|---|---|---|---|
| FSscore [14] | ML-based | Pre-trained on reactions, fine-tuned with human expertise | Fully differentiable; incorporates stereochemistry and human feedback [14] |
| SA Score [14] | Rule-based | Penalizes rare fragments and specific structural features | Fails to identify large, complex molecules with reasonable fragments [14] |
| SCScore [14] | ML-based | Predicts complexity via required reaction steps | Based on Morgan fingerprints; struggles with feasibility prediction [14] |
| SYBA [14] | ML-based | Classifies molecules as easy or hard to synthesize | Found to have sub-optimal performance in some assessments [14] |
| RAscore [14] | ML-based | Predicts feasibility relative to a synthesis predictor | Dependent on the performance of the upstream synthesis prediction tool [14] |

Experimental Protocols: Evaluating and Advancing Synthesis Feasibility

Methodology: Quantifying the Shortcomings of the Charge-Balancing Criterion

A critical experimental approach for validating the limitations of traditional assessment involves large-scale retrospective analysis of known materials.

- Data Source Curation: Researchers utilize established experimental crystal structure databases, primarily the Inorganic Crystal Structure Database (ICSD), as a ground-truth source for synthesizable inorganic materials [13].

- Validation Protocol: A set of experimentally observed compounds (e.g., all Cs binary compounds) is selected from the database. For each compound, researchers apply the charge-balancing criterion, calculating the net ionic charge using common oxidation states [13].

- Performance Metric Calculation: The percentage of experimentally observed compounds that meet the charge-balancing criterion is calculated. As evidenced in the results, this percentage can be remarkably low (e.g., 37%), demonstrating the criterion's failure to account for a majority of real-world synthesizable materials [13].
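The validation protocol above reduces to a small combinatorial check per compound. The sketch below is a simplified illustration: the oxidation-state table is a tiny hand-picked subset (an assumption, not a curated reference), and every atom of an element is assumed to share one oxidation state, so mixed-valence compounds are not handled.

```python
from itertools import product

# Small illustrative subset of common oxidation states (assumed for this example).
COMMON_OXIDATION_STATES = {
    "Cs": [1],
    "Cl": [-1],
    "O": [-2],
    "Au": [1, 3],
}


def is_charge_balanced(composition):
    """True if some assignment of common oxidation states gives zero net charge.

    composition maps element symbol -> number of atoms in the formula unit.
    """
    elements = list(composition)
    state_choices = (COMMON_OXIDATION_STATES[e] for e in elements)
    return any(
        sum(state * composition[e] for state, e in zip(states, elements)) == 0
        for states in product(*state_choices)
    )


# Known cesium binaries: the chloride and oxide pass, while the superoxide (CsO2)
# and the auride (CsAu) fail the rule despite being experimentally observed.
observed = {
    "CsCl": {"Cs": 1, "Cl": 1},
    "Cs2O": {"Cs": 2, "O": 1},
    "CsO2": {"Cs": 1, "O": 2},
    "CsAu": {"Cs": 1, "Au": 1},
}
passing = [name for name, comp in observed.items() if is_charge_balanced(comp)]
fraction_passing = len(passing) / len(observed)
print(passing, fraction_passing)
```

The fraction printed here applies only to this four-compound toy set and should not be confused with the 37% figure reported for the full ICSD analysis [13].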

Methodology: The FSscore Machine Learning Framework

The FSscore represents a modern, two-stage ML approach designed to overcome the limitations of rule-based methods.

Stage 1: Baseline Model Pre-training

- Data Acquisition: The model is pre-trained on a large-scale dataset of reactant-product pairs derived from chemical reaction databases. This data structure implicitly informs the model about synthetic difficulty through the relational nature of the reactions [14].

- Model Architecture: A Graph Attention Network (GAT) is used to process molecular structures. This architecture offers high expressivity and can capture crucial structural details, including stereochemistry and repeated substructures, which are often missed by simpler fingerprint-based methods [14].

- Training Objective: The model is framed as a ranking problem, learning from pairwise preferences where reactants are assumed to be more synthetically accessible than their products [14].
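The pairwise training objective can be illustrated with a generic logistic ranking loss. This is a sketch of the general technique, not the FSscore implementation: it assumes a scalar score where higher means harder to synthesize, and the exact loss used in [14] may differ.

```python
import math


def pairwise_ranking_loss(score_reactant: float, score_product: float) -> float:
    """Logistic loss on a (reactant, product) pair.

    Assumes higher score = harder to synthesize, so the loss is small when the
    product is scored well above its reactant and large when the order is reversed.
    """
    margin = score_product - score_reactant
    return math.log1p(math.exp(-margin))


correct_order = pairwise_ranking_loss(score_reactant=0.0, score_product=2.0)
tie = pairwise_ranking_loss(score_reactant=0.0, score_product=0.0)
wrong_order = pairwise_ranking_loss(score_reactant=2.0, score_product=0.0)
print(correct_order, tie, wrong_order)
```

Because only score differences enter the loss, the model learns a relative ordering rather than an absolute scale, which is what makes later fine-tuning on a handful of expert preference pairs possible.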

Stage 2: Fine-Tuning with Human Expertise

- Feedback Integration: The pre-trained model is fine-tuned using a relatively small set of binary preference labels (e.g., 20-50 pairs) provided by expert chemists, focusing the model on a specific chemical space of interest (e.g., natural products, PROTACs) [14].

- Active Learning Framework: The fine-tuning process can be embedded in an active-learning loop, where the model itself helps select the most informative pairs for the experts to label, optimizing the use of valuable human resources [14].

- Validation: The model's performance is evaluated by its ability to rank molecules by synthesizability and its utility in generative model pipelines, measured by the percentage of generated molecules deemed synthesizable by external sources (e.g., Chemspace) [14].
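One plausible way to pick informative pairs for expert labeling is uncertainty sampling: present the pairs whose current scores are closest, since the model is least sure of their order. The source does not specify the acquisition strategy used, so the sketch below is an assumption, and the molecule identifiers and scores are hypothetical.

```python
from itertools import combinations


def select_pairs_for_labeling(scores, k):
    """Return the k molecule pairs with the smallest absolute score difference."""
    pairs = combinations(sorted(scores), 2)
    return sorted(pairs, key=lambda pair: abs(scores[pair[0]] - scores[pair[1]]))[:k]


# Hypothetical current model scores for four candidate molecules.
current_scores = {"m1": 0.10, "m2": 0.15, "m3": 0.90, "m4": 0.88}
to_label = select_pairs_for_labeling(current_scores, k=2)
print(to_label)
```

Pairs with widely separated scores, such as m1 versus m3, are skipped because an expert's answer would rarely change the model's ordering.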

The logical workflow of this advanced methodology is outlined below.

Table 3: Essential Research Reagents and Computational Tools

| Item/Resource | Function in Research | Application Note |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Provides a curated source of experimentally synthesized inorganic structures, used as ground truth for validating assessment methods [13]. | Serves as the benchmark for evaluating the accuracy of both traditional and ML-based feasibility criteria. |
| Chemical Reaction Databases | Large collections of published chemical reactions (e.g., USPTO, Reaxys). Serve as the primary data source for pre-training ML models like FSscore and SCScore [14]. | The quality and scope of the database directly influence the model's generalizability and initial knowledge. |
| Graph Neural Network (GNN) Frameworks | Software libraries (e.g., PyTorch Geometric, Deep Graph Library) used to build models that learn directly from molecular graph structures [14]. | Enable the incorporation of stereochemistry and complex structural patterns into the feasibility assessment. |
| Density Functional Theory (DFT) Codes | Computational tools for calculating fundamental material properties, including formation energy, which is one input for assessing thermodynamic stability [13]. | Computationally intensive; often used in conjunction with, rather than as a replacement for, data-driven methods. |
| Expert Chemist Panels | Source of human feedback for fine-tuning ML models. Provide binary preferences on synthesizability within a focused chemical domain [14]. | Critical for transferring human intuition and domain-specific knowledge into the computational model. |

The transition from traditional, intuition-based assessment to quantitative, data-driven methods represents a paradigm shift in the field of synthesis feasibility. The empirical charge-balancing criterion, while simple, fails to account for the complex bonding environments and kinetic factors that govern real-world synthesis, as quantitatively demonstrated by its poor performance against experimental databases. Machine learning models, particularly those that can be refined with targeted human expertise like the FSscore, offer a powerful alternative. They integrate the relational knowledge from vast reaction corpora with the nuanced understanding of expert chemists, enabling more accurate and context-aware synthesizability predictions. This evolution is critical for accelerating the discovery of new functional materials and drug candidates, moving the field beyond intuition towards a more predictive and efficient science.

The pursuit of new chemical entities, particularly in pharmaceutical and materials science, demands robust methods for assessing synthetic feasibility. This evaluation is paramount for prioritizing research efforts and allocating resources efficiently. Charge-balancing, a fundamental principle in inorganic chemistry under which the ionic charges in a material must sum to zero when each element takes a common oxidation state, has long served as an initial proxy for synthesizability. [15] However, this criterion alone proves insufficient: only 37% of known synthesized inorganic crystalline materials satisfy it. [15] This stark limitation highlights the critical need to incorporate more sophisticated determinants, primarily structural complexity, chirality, and the availability of synthetic pathways, into feasibility assessment frameworks. These factors collectively influence not only whether a molecule can be made but also the practicality of its production at relevant scales and purities.

The global market for chiral technology, projected to grow from US$8.6 billion in 2024 to US$10.7 billion by 2030, underscores the economic significance of controlling these molecular features, particularly in pharmaceutical applications where enantiopurity directly impacts therapeutic efficacy and safety. [16] This review systematically compares how structural complexity, chirality, and available synthesis methods determine the feasibility of preparing target molecules, providing researchers with a structured approach to evaluating synthetic accessibility that goes beyond the charge-balancing criterion.

Structural Complexity: Synthetic Implications

Structural complexity encompasses molecular size, ring systems, stereocenters, and overall three-dimensional architecture. Comparative analyses between natural products (NPs) and synthetic compounds (SCs) reveal distinct evolutionary patterns and synthetic challenges. NPs have historically served as inspiration for synthetic campaigns, yet they possess unique structural characteristics that complicate their synthesis.

Table 1: Time-Dependent Structural Evolution of Natural Products vs. Synthetic Compounds

| Structural Feature | Natural Products Trend | Synthetic Compounds Trend | Synthetic Implications |
|---|---|---|---|
| Molecular Size | Increasing molecular weight, volume, and surface area over time [17] | Limited range governed by drug-like constraints [17] | Larger NPs require more synthetic steps and complex purification |
| Ring Systems | More rings, particularly non-aromatic and fused systems; increasing glycosylation [17] | More aromatic rings, especially 5- and 6-membered; stable energy conformations [17] | NP ring systems often require specialized cyclization strategies |
| Structural Diversity | High scaffold diversity and complexity [17] | Broader synthetic pathways but more constrained chemical space [17] | NP-inspired synthesis expands accessible chemical space |
| Synthetic Accessibility | Often lower due to complex fused ring systems [17] | Generally higher due to prevalence of synthetically tractable motifs [17] | Retrosynthetic analysis of NPs often reveals key strategic disconnections |

Natural products exhibit a clear trend toward increasing structural complexity over time, with modern NPs being larger and containing more complex ring systems than their historical counterparts. [17] This evolution reflects technological advancements in isolation and characterization techniques that enable scientists to identify more challenging structures. Conversely, synthetic compounds have evolved under different constraints, primarily governed by drug-like properties (as embodied in guidelines like Lipinski's Rule of Five) and synthetic accessibility. [17] This divergence creates a fundamental tension between biological relevance (often associated with NP-like structures) and synthetic feasibility.

The synthetic implications of structural complexity are profound. Complex NPs like (N,N)-spiroketals, which feature rigid three-dimensional architectures with multiple stereocenters, present significant synthetic challenges that require innovative methodologies. [18] Such structures are increasingly valued in drug discovery for their ability to interact with biological targets in specific ways, yet their synthesis often demands multi-step sequences with careful stereocontrol. The rise of pseudo-natural products, which combine NP fragments through arrangements not found in nature, represents one approach to balancing the biological relevance of NPs with the synthetic accessibility of SCs. [17]

Chirality: Analytical and Synthetic Challenges

Chirality introduces profound implications for synthetic feasibility, particularly in pharmaceutical applications where different enantiomers can exhibit vastly different biological activities. [16] The ability to control stereochemistry during synthesis and accurately determine enantiomeric purity represents a critical feasibility determinant.

Table 2: Chirality Analysis and Synthesis Methods

| Method Category | Specific Techniques | Application in Feasibility Assessment | Performance Considerations |
|---|---|---|---|
| Analytical Methods | Chromatography (HPLC, GC with chiral stationary phases), Chiroptical methods (ORD, CD, VCD), NMR with chiral solvating agents, Mass spectrometry [19] | Enantiomeric excess (ee) determination, absolute configuration assignment | Chromatography dominates practical applications; CD/VCD provide structural information |
| Synthetic Approaches | Asymmetric catalysis, Chiral pool/synthons, Chiral auxiliaries, Biocatalysis [20] [21] | Creating specific enantiomers with high optical purity | Asymmetric catalysis efficient but requires specialized ligands; biocatalysis offers sustainability |
| Industrial Production | Traditional separation, Asymmetric synthesis, Biological separation [21] | Scaling chirally pure compound manufacturing | Asymmetric preparation method growing at 6.9% CAGR [21] |

The concept of enantiomeric excess (ee), defined as ee = |[R] - [S]| / ([R] + [S]) × 100%, where [R] and [S] represent the concentrations of each enantiomer, serves as the primary metric for quantifying enantiopurity. [19] This measurement is essential for evaluating the success of asymmetric synthetic methods and ensuring product quality. Analytical techniques for ee determination have evolved significantly from Pasteur's manual separation of tartaric acid crystals to sophisticated instrumental methods. [19] Chromatographic methods with chiral stationary phases have emerged as the workhorse for routine analysis due to their reliability and precision, while chiroptical methods like vibrational circular dichroism (VCD) provide valuable structural information alongside enantiopurity assessment. [19]
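
The ee definition above translates directly into code. In this small sketch the inputs are hypothetical chiral HPLC peak areas, used on the assumption that peak area is proportional to concentration for the two enantiomers.

```python
def enantiomeric_excess(amount_r, amount_s):
    """Return ee in percent from the amounts (or peak areas) of the R and S enantiomers."""
    total = amount_r + amount_s
    if total <= 0:
        raise ValueError("total amount of enantiomers must be positive")
    return 100.0 * abs(amount_r - amount_s) / total


# Hypothetical peak areas from a chiral HPLC trace.
print(enantiomeric_excess(98.5, 1.5))   # strongly enriched sample
print(enantiomeric_excess(50.0, 50.0))  # racemic mixture
```

Because the formula uses the absolute difference, ee reports how enriched the sample is but not which enantiomer is in excess; that assignment has to come from the analytical method.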

Synthetic strategies for controlling chirality have similarly evolved. The field has progressed from relying on chiral pool starting materials to sophisticated catalytic asymmetric synthesis, where a substoichiometric amount of a chiral catalyst can impart stereochemistry to the product. [20] This approach is particularly powerful as it leverages kinetic resolution or asymmetric induction to favor formation of one enantiomer over the other. Recent innovations in biocatalysis have further expanded the toolbox for chiral synthesis, using enzymes and microorganisms to achieve highly selective transformations under mild conditions. [21] The global market growth for asymmetric preparation methods (projected at 6.9% CAGR) underscores the increasing adoption of these approaches in industrial applications. [21]

Synthesis Availability: Methods and Predictive Tools

The availability of reliable synthetic methods fundamentally determines whether a target molecule can be practically accessed. This spans from traditional organic transformations to cutting-edge catalytic systems and predictive computational tools.

Traditional vs. Modern Synthetic Methods

Traditional synthetic approaches, including SN2 reactions and stoichiometric chiral reagent control, continue to provide reliable access to many target structures. For instance, pro-chiral diynes/dienes can be synthesized through SN2 reactions between acetoacetanilide derivatives and propargyl/allyl bromide, with the reaction mechanism validated through intrinsic reaction coordinate (IRC) analysis. [22] These well-understood transformations offer predictability and often excel in robustness, particularly at scale.

Modern synthetic methods have dramatically expanded the scope of accessible structures. Palladium-catalyzed cascade reactions, such as the enantioconvergent aminocarbonylation and dearomative nucleophilic aza-addition developed for (N,N)-spiroketal synthesis, enable efficient construction of complex chiral architectures that would be challenging to access via traditional means. [18] Such methods achieve impressive yields (up to 99%) and enantioselectivities (up to 98% ee) while exhibiting broad functional group tolerance. [18] The dynamic kinetic asymmetric transformation (DyKAT) strategy is particularly powerful for converting racemic starting materials into enantiomerically enriched products. [18]

Predictive Tools for Synthesizability Assessment

Computational methods have revolutionized synthesizability assessment by enabling predictions before laboratory investment. Density functional theory (DFT) calculations provide insights into reaction mechanisms, transition states, and thermodynamic parameters, guiding the development of efficient synthetic routes. [22] For instance, DFT studies have elucidated why specific reaction pathways (e.g., deprotonation at methylene groups versus amide nitrogen) are favored in the synthesis of pro-chiral compounds. [22]

Machine learning approaches like SynthNN represent the cutting edge in synthesizability prediction. This deep learning model leverages the entire space of synthesized inorganic compositions to predict synthesizability with significantly higher precision than traditional metrics like charge-balancing or formation energy calculations. [15] Remarkably, SynthNN outperformed expert materials scientists in prediction precision (1.5× higher) while completing the task five orders of magnitude faster. [15] Such tools learn the underlying principles of synthesizability directly from data, capturing complex factors beyond simple heuristics.

Experimental Protocols and Methodologies

Protocol 1: SN2-Based Synthesis of Pro-Chiral Diynes/Dienes

The synthesis of pro-chiral 2-acetyl-N-aryl-2-(prop-2-yn-1-yl)pent-4-ynamides/-2-allyl-4-enamide derivatives exemplifies a practical approach to complex chiral structures: [22]

Reagents and Conditions:

- Acetoacetanilide derivatives (1a-g, 1 mmol)

- Propargyl/allyl bromide (2a-b, 3 equiv.)

- Potassium carbonate (3 equiv.)

- Acetonitrile (5-7 mL) as solvent

- Room temperature, 10 hours reaction time
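
The reagent quantities implied by the equivalents listed above follow from simple stoichiometry. The helper below is an illustrative sketch: the function name is hypothetical, and the molar masses are standard values for the neat reagents rather than figures taken from the cited protocol.

```python
def reagent_amount(limiting_mmol, equivalents, molar_mass_g_per_mol):
    """Return (mmol, mg) of a reagent for a given number of equivalents."""
    mmol = limiting_mmol * equivalents
    mass_mg = mmol * molar_mass_g_per_mol  # mmol x g/mol = mg
    return mmol, mass_mg


SCALE_MMOL = 1.0  # acetoacetanilide derivative, limiting reagent
MOLAR_MASS = {    # g/mol, neat compounds
    "propargyl bromide": 118.96,
    "allyl bromide": 120.98,
    "K2CO3": 138.21,
}

for name, molar_mass in MOLAR_MASS.items():
    mmol, mg = reagent_amount(SCALE_MMOL, 3.0, molar_mass)
    print(f"{name}: {mmol:.1f} mmol, {mg:.0f} mg")
```

If a reagent is supplied as a solution rather than neat, the mass to weigh out has to be corrected for its concentration.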

Experimental Procedure:

- Suspend acetoacetanilide derivative in acetonitrile

- Add potassium carbonate base and propargyl/allyl bromide

- Stir reaction mixture at room temperature for 10 hours

- Monitor reaction progress by TLC until complete consumption of starting material

- Precipitate crude product by adding water

- Filter and wash sequentially with water (2 × 20 mL) and n-hexane (2 × 10 mL)

- Characterize products using NMR spectroscopy and mass spectrometry

Key Optimization Insights:

- Lower equivalents of propargyl bromide (1-2 equiv.) resulted in incomplete reactions or mixture formation

- Base screening revealed K₂CO₃ as optimal, with Cs₂CO₃ leading to mixtures

- The method provides excellent yields across diverse acetoacetanilide substrates [22]

Protocol 2: Pd-Catalyzed Asymmetric Spiroketal Synthesis

The catalytic asymmetric synthesis of chiral (N,N)-spiroketals demonstrates advanced methodology for complex chiral systems: [18]

Reagents and Conditions:

- Racemic quinazoline-derived heterobiaryl triflates

- Alkylamines (2-phenylethan-1-amine derivatives)

- Palladium precursor: Pd(acac)₂ (7.5 mol%)

- Chiral ligand: JOSIPHOS-type (L4, 7.5 mol%)

- Base: Cs₂CO₃ (3.0 equiv.)

- Solvent: 1,2-dimethoxyethane (DME)

- Carbon monoxide atmosphere (10 atm)

- Temperature: 50°C

- Reaction time: 18 hours

Experimental Procedure:

- Charge racemic triflate substrate, amine, and base in reaction vessel

- Add Pd(acac)₂ and chiral ligand under inert atmosphere

- Purge reaction system with carbon monoxide

- Pressurize with CO (10 atm) and heat to 50°C with stirring

- Monitor reaction completion by TLC or LC-MS

- Purify products by flash chromatography

- Determine enantiomeric excess by chiral HPLC or SFC

Key Optimization Insights:

- Ligand screening identified JOSIPHOS-type as optimal (91% yield, 97% ee)

- Solvent effects significant: toluene and DCM increased yield but eroded enantioselectivity

- Base crucial for enantioselectivity: K₂CO₃, K₃PO₄, and NEt₃ gave lower ee

- Catalyst loading reduction to 5 mol% dropped yield to 51% despite maintained ee [18]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Complexity and Chirality Studies

| Reagent/Material | Function/Application | Specific Examples |
|---|---|---|
| Chiral Ligands | Control enantioselectivity in asymmetric catalysis | JOSIPHOS-type ligands (for Pd-catalyzed spiroketal synthesis, 97% ee) [18] |
| Transition Metal Catalysts | Enable key bond formations and cascade reactions | Pd(acac)₂ for aminocarbonylation [18] |
| Chiral Stationary Phases | Separate and analyze enantiomers | HPLC columns with chiral selectors for ee determination [19] |
| Biocatalysts | Sustainable chiral synthesis and resolution | Enzymes and microorganisms for biocatalysis [21] |
| Computational Tools | Predict synthesizability and reaction outcomes | SynthNN for synthesizability classification [15]; DFT for mechanism study [22] |
| Building Blocks | Provide chirality and complexity elements | Acetoacetanilide derivatives, propargyl/allyl bromides [22] |

Comparative Performance Analysis

The integration of structural complexity, chirality, and synthesis availability into feasibility assessment represents a significant advancement over traditional single-parameter approaches like charge-balancing. Charge-balancing alone achieves only 7% precision in identifying synthesizable materials, while machine learning approaches like SynthNN reach 49% precision—a 7-fold improvement. [15] This dramatic enhancement demonstrates the value of incorporating multiple feasibility determinants.
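
Precision here means the fraction of materials predicted to be synthesizable that actually are. The short sketch below shows the arithmetic behind the fold improvement; the counts are hypothetical and chosen only to reproduce the percentages quoted above.

```python
def precision(true_positives, false_positives):
    """Fraction of positive predictions that are correct."""
    predicted_positive = true_positives + false_positives
    if predicted_positive == 0:
        raise ValueError("no positive predictions were made")
    return true_positives / predicted_positive


# Hypothetical counts per 100 positive predictions, matching the quoted percentages.
baseline = precision(true_positives=7, false_positives=93)   # charge-balancing
model = precision(true_positives=49, false_positives=51)     # ML model
fold_improvement = model / baseline
print(f"{baseline:.2f} -> {model:.2f}, {fold_improvement:.1f}-fold")
```

Precision says nothing about how many synthesizable materials are missed, so it is usually read alongside recall when comparing screening methods.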

In asymmetric synthesis, modern catalytic methods consistently achieve enantioselectivities exceeding 90% ee for challenging transformations, with optimal systems reaching 97-98% ee for spiroketal formation. [18] These performance metrics make such methods competitive with or superior to traditional chiral resolution techniques, particularly when considering atom economy and step efficiency. The commercial growth of asymmetric preparation methods (projected at 6.9% CAGR) versus traditional separation methods (5.8% CAGR) reflects this performance advantage in industrial applications. [21]

Diagram 1: Synthesis Feasibility Assessment Workflow

Diagram 2: Structural Complexity Impact on Synthesizability

Synthesis feasibility assessment has evolved dramatically from simplistic criteria like charge-balancing to sophisticated multi-parameter frameworks incorporating structural complexity, chirality, and synthesis availability. The integration of computational prediction tools, advanced asymmetric catalysis, and detailed mechanistic understanding has created a powerful toolkit for evaluating synthetic accessibility before laboratory investment. As structural complexity continues to increase in target molecules, particularly in pharmaceutical applications, and regulatory requirements for enantiopurity become more stringent, these feasibility determinants will grow in importance. The ongoing development of machine learning approaches like SynthNN and innovative catalytic systems promises to further refine our ability to distinguish synthetically viable targets from those likely to consume disproportionate resources, ultimately accelerating the discovery and development of new molecular entities across diverse fields.

The Evolving Regulatory Landscape and Its Impact on Feasibility Requirements

The regulatory landscape governing scientific research and product development is in a constant state of evolution, directly shaping the feasibility requirements for bringing new innovations to market. For researchers, scientists, and drug development professionals, understanding this dynamic interplay is crucial for designing successful development strategies. This guide explores the current regulatory frameworks impacting feasibility assessments, with a specific focus on synthesis feasibility assessment research. As regulatory bodies worldwide intensify their focus on safety, efficacy, and ethical compliance, the criteria for deeming a project feasible have become more rigorous and complex. This analysis compares different regulatory pathways and the methodological approaches they necessitate, providing a structured overview of the protocols, data requirements, and strategic considerations essential for navigating this challenging environment.

The Regulatory Framework for Feasibility Assessment

Regulatory feasibility assessment serves as a critical bridge between innovation and market approval, ensuring that new products and technologies comply with necessary standards before significant resources are invested. Defined as the evaluation of a clinical trial or product development plan against the rules and compliances of a specific geographic region, this process assesses the compatibility of a design with current regulatory requirements, focusing on safety and efficiency [23]. Its primary impact is to build confidence between developers, regulators, and participants, ultimately streamlining the approval process and minimizing potential risks.

The following table summarizes the core components and strategic value of regulatory feasibility assessment:

Table 1: Core Components of Regulatory Feasibility Assessment

| Component | Strategic Consideration | Impact on Development |
|---|---|---|
| Target Disease & Study Design | Evaluating if the design (randomized, blind, controlled) is appropriate for the target disease and standard of care [23]. | Guides optimal clinical protocol design to answer key research questions. |
| Regulatory Hurdles | Identifying government-related issues and ensuring the trial is ethically/scientifically acceptable [23]. | Prevents costly delays by proactively addressing ethical and scientific concerns. |
| Patient Recruitment & Site Selection | Analyzing inclusion/exclusion criteria, recruitment challenges, and facility capabilities [23]. | Ensures timely enrollment and identifies operational bottlenecks early. |
| Staffing & Timeline | Verifying staff qualifications and assessing the realism of start-up and overall study timelines [23]. | Mitigates risks associated with inadequate resources or unrealistic planning. |

Levels of Feasibility Assessment

Feasibility assessments are conducted at three distinct levels of granularity [23]:

- Program Level: A high-level assessment conducted early in development to determine disease prevalence and regional suitability for research.

- Study Level: Evaluates whether a specific clinical trial can be conducted in a particular country or region, identifying potential risks.

- Site/Investigator Level: A granular evaluation of the suitability of a specific clinical trial site and investigator.

Comparative Analysis of Regulatory Pathways and Feasibility Requirements

Different regulatory pathways impose distinct feasibility requirements. A comparative analysis of key frameworks reveals varying approaches to early-stage development and risk mitigation.

Phase 0 / Microdosing Studies in Drug Development

The International Council for Harmonisation (ICH, formerly the International Conference on Harmonisation) M3 guidance outlines several exploratory clinical trial approaches, offering a spectrum of options for early human testing [24]. These approaches allow for the collection of critical human data with reduced preclinical requirements, thereby de-risking early development.

Table 2: Comparison of ICH M3 Exploratory Clinical Trial Approaches

| Feature | Approach 1: Single Microdose | Approach 2: Multiple Microdoses | Approach 5: Limited Therapeutic Dose |
|---|---|---|---|
| Dose Definition | ≤1/100th of NOAEL and ≤1/100th of pharmacologically active dose [24]. | Same as Approach 1 [24]. | Highest dose: < non-rodent NOAEL AUC [24]. |
| Cumulative Dose | 100 μg [24]. | 500 μg [24]. | Not specified by a fixed mass. |
| Dosing Regimen | Single dose [24]. | Up to 5 doses [24]. | Multiple doses, up to 14 days [24]. |
| Preclinical Toxicity Requirements | 14-day extended single-dose toxicity (GLP) [24]. | 7-day repeated-dose toxicity (GLP) [24]. | 14-day repeated-dose toxicity in rodent and non-rodent (GLP) [24]. |
| Key Strategic Application | Initial human PK data with minimal preclinical footprint. | Gathering preliminary data on metabolite formation. | Obtaining early pharmacodynamic (PD) and mechanism of action (MOA) data [24]. |

Supporting Experimental Data: A 2017 case study by GlaxoSmithKline (GSK) demonstrated the utility of a microdosing study to terminate the development of an anti-malarial drug. The study revealed an elimination half-life of 17 hours, which was deemed too short for the developmental objectives, preventing further investment in a non-viable candidate [24].
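
The dose definitions for Approaches 1 and 2 in Table 2 can be expressed as a simple screening check. This is a simplified reading of the table, not a restatement of the guidance: function names are hypothetical, and the NOAEL and pharmacologically active dose are assumed to be already converted to human-equivalent doses in micrograms.

```python
MAX_MICRODOSE_UG = 100.0    # per-dose ceiling, from the Approach 1 column
MAX_CUMULATIVE_UG = 500.0   # cumulative ceiling, from the Approach 2 column
MAX_DOSES = 5               # maximum number of doses under Approach 2


def is_microdose(dose_ug, noael_ug, active_dose_ug):
    """Per-dose test: within 100 ug and within 1/100th of both reference doses."""
    return (
        dose_ug <= MAX_MICRODOSE_UG
        and dose_ug <= noael_ug / 100.0
        and dose_ug <= active_dose_ug / 100.0
    )


def matching_approach(dose_ug, n_doses, noael_ug, active_dose_ug):
    """Return 'Approach 1', 'Approach 2', or None for a proposed regimen."""
    if not is_microdose(dose_ug, noael_ug, active_dose_ug):
        return None
    if n_doses == 1:
        return "Approach 1"
    if n_doses <= MAX_DOSES and dose_ug * n_doses <= MAX_CUMULATIVE_UG:
        return "Approach 2"
    return None


print(matching_approach(dose_ug=50, n_doses=1, noael_ug=10_000, active_dose_ug=8_000))
print(matching_approach(dose_ug=100, n_doses=5, noael_ug=20_000, active_dose_ug=20_000))
print(matching_approach(dose_ug=100, n_doses=6, noael_ug=20_000, active_dose_ug=20_000))
```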

Early Feasibility Studies (EFS) for Medical Devices

The U.S. Food and Drug Administration (FDA) runs an Early Feasibility Studies (EFS) Program for medical devices. An EFS is a limited clinical investigation of a device early in development, typically enrolling a small number of subjects to evaluate the device design concept regarding initial clinical safety and device functionality [25]. This pathway is appropriate when non-clinical testing is unavailable or inadequate to provide the information needed for further development, and it allows for potential device modifications based on early clinical insights [25].

Nucleic Acid Synthesis Screening Framework

A recent evolution in the regulatory landscape for life sciences research is the Framework for Nucleic Acid Synthesis Screening. Effective April 2025, federally funded researchers in the U.S. must procure synthetic nucleic acids and related equipment only from providers that adhere to new national safety standards designed to screen for "sequences of concern" (SOCs) [26] [27]. This framework directly impacts feasibility by adding a mandatory vendor compliance check to the material sourcing phase of research.

Experimental Protocols for Feasibility and Synthesizability Assessment

Protocol for a Customized Feasibility Study (Pharmaceutical QC)

For drug developers assessing new equipment or systems, a standardized protocol for a feasibility study is recommended [28].

- Objective: To confirm product compatibility and method performance with a new QC testing platform (e.g., for Mycoplasma detection, Endotoxin detection, or Sterility testing).

- Methodology: A partner or supplier's application laboratory performs the study using the manufacturer's product samples and microbial strains. The service typically includes 1 product and up to 2 strains, with options for expansion.

- Duration & Deliverable: The study runs for 4-6 weeks, concluding with a customized study report that provides evidence of compatibility and performance, supporting the investment decision [28].

Protocol for Predicting Material Synthesizability (SynthNN)

Predicting whether a hypothetical inorganic crystalline material is synthesizable is a major challenge. Reliance on the charge-balancing criterion alone has proven insufficient, as only 37% of known synthesized materials are charge-balanced [15]. A modern machine learning protocol, SynthNN, addresses this:

- Objective: To predict the synthesizability of an inorganic chemical formula without requiring structural information.

- Methodology: A deep learning model is trained on the Inorganic Crystal Structure Database (ICSD), which contains synthesized materials, augmented with artificially generated "unsynthesized" materials. The model uses a positive-unlabeled (PU) learning algorithm to handle the fact that some materials in the "unsynthesized" set may actually be synthesizable but not yet discovered.

- Validation: In a head-to-head comparison against 20 expert materials scientists and traditional computational methods such as DFT-calculated formation energies, SynthNN achieved 1.5× higher precision than the best human expert and 7× higher precision than the formation energy baseline, demonstrating its superior capability in identifying synthesizable materials [15].

Visualization of Regulatory Feasibility Workflows

The following diagram illustrates the key decision points and types of feasibility assessments in the regulated development lifecycle.

Diagram Title: Regulatory Feasibility Assessment Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and resources referenced in the experimental protocols and regulatory frameworks discussed.

Table 3: Key Research Reagents and Resources for Feasibility Assessment

| Item/Resource | Function in Feasibility Assessment | Relevant Context |
|---|---|---|
| Synthetic Nucleic Acids | Key reagents for genetic engineering and synthetic biology research. | Subject to new screening requirements under the Framework for Nucleic Acid Synthesis; must be sourced from compliant providers [26] [27]. |
| Validated QC Platforms | Microbiological testing systems for quality control (e.g., Sterility, Endotoxin detection). | Compatibility is verified via feasibility studies to ensure they meet a manufacturer's specific process needs before full implementation [28]. |
| Inorganic Crystal Structure Database (ICSD) | A comprehensive database of experimentally reported inorganic crystal structures. | Serves as the primary source of "synthesized" data for training machine learning models like SynthNN to predict new synthesizable materials [15]. |
| Microdosed Drug Candidate | A sub-therapeutic dose (≤100 μg) of a pharmaceutical compound. | Used in Phase 0 studies to obtain early human pharmacokinetic data without requiring extensive preclinical safety packages [24]. |

The regulatory landscape is unequivocally shaping the core of feasibility requirements across scientific disciplines. From the structured pathways of drug and device development to emerging frameworks governing synthetic biology, success is increasingly dependent on a proactive and informed approach to regulatory feasibility. The comparative data and protocols presented here underscore a consistent theme: early, strategic assessment using the appropriate tools—whether a customized QC study, a Phase 0 trial, or an AI-driven synthesizability prediction—is indispensable. For professionals navigating this complex environment, integrating these evolving requirements into the earliest stages of project planning is no longer optional but a fundamental component of feasible and successful research and development.

From Theory to Practice: Methodological Approaches for Feasibility Assessment

In modern drug discovery and materials science, the synthetic accessibility (SA) of a proposed molecule is a critical determinant of its practical potential. Rule-based computational methods have been developed to rapidly estimate the ease with which an organic compound can be synthesized, providing useful metrics for prioritizing candidates in virtual screening and generative design. Among these, the Synthetic Accessibility Score (SAscore) and related fragment-based assessment methods have gained prominence for their speed, interpretability, and correlation with expert judgment [29] [30].

These approaches are particularly valuable in synthesis feasibility assessment, where researchers must evaluate numerous candidate structures and weigh desired electronic or optical properties against practical synthesizability. Unlike retrosynthesis-based methods that require extensive reaction databases and computationally intensive analysis, rule-based methods like SAscore use molecular complexity metrics and fragment frequency analysis to provide rapid assessments suitable for high-throughput screening environments [29] [31].

Core Methodologies and Algorithms

SAscore: Original Framework and Calculation

The original SAscore, introduced in 2009, combines two complementary approaches to synthetic accessibility estimation: historical synthetic knowledge captured through fragment analysis and structural complexity penalties for challenging molecular features [29].

The SAscore is calculated using the following equation:

SAscore = fragmentScore - complexityPenalty

The fragmentScore component captures "historical synthetic knowledge" by analyzing the frequency of molecular substructures in previously synthesized compounds. This is based on the premise that fragments commonly found in existing chemical databases are likely easier to synthesize. The score is derived from statistical analysis of Extended Connectivity Fragments (ECFC_4) from approximately one million representative molecules in the PubChem database [29].

The complexityPenalty accounts for structurally complex features that typically present synthetic challenges:

- Size Complexity: Penalizes large molecules (number of atoms)

- Stereo Complexity: Accounts for chiral centers

- Ring Complexity: Penalizes non-standard ring fusions, bridgehead, and spiro atoms

- Macrocycle Complexity: Accounts for large rings (size > 8) [30]

The final score is scaled between 1 (easy to synthesize) and 10 (very difficult to synthesize), with a suggested threshold of 6.0 for distinguishing easy-to-synthesize from hard-to-synthesize compounds [32].
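The combination and scaling steps described above can be sketched as follows. The penalty forms and the raw-score bounds (`raw_min`, `raw_max`) are illustrative assumptions, not values taken from this article; only the overall shape (fragment score minus penalties, then rescaling so that 1 is easy and 10 is hard) follows the text.

```python
import math

def complexity_penalty(n_atoms, n_stereo, n_spiro, n_bridgehead, has_macrocycle):
    """Sum of assumed penalty terms for size, stereo, ring, and macrocycle complexity."""
    size = n_atoms ** 1.005 - n_atoms                # grows slowly with molecule size
    stereo = math.log10(n_stereo + 1)                # chiral centers
    ring = math.log10(n_spiro + 1) + math.log10(n_bridgehead + 1)
    macro = math.log10(2) if has_macrocycle else 0.0  # any ring larger than 8 atoms
    return size + stereo + ring + macro

def scale_to_1_10(raw, raw_min=-4.0, raw_max=2.5):
    """Linearly map a raw score to 1 (easy) .. 10 (hard) and clamp the result."""
    scaled = 11.0 - (raw - raw_min + 1.0) / (raw_max - raw_min) * 9.0
    return max(1.0, min(10.0, scaled))

def sa_score(fragment_score, **features):
    """fragmentScore minus complexityPenalty, rescaled to the 1-10 range."""
    raw = fragment_score - complexity_penalty(**features)
    return scale_to_1_10(raw)

easy = sa_score(1.5, n_atoms=10, n_stereo=0, n_spiro=0,
                n_bridgehead=0, has_macrocycle=False)
hard = sa_score(-2.0, n_atoms=60, n_stereo=6, n_spiro=1,
                n_bridgehead=2, has_macrocycle=True)
```

A small molecule with common fragments lands near the low end of the scale, while a large, stereochemically rich macrocycle with rare fragments is clamped at the upper bound.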

BR-SAScore: Building Block and Reaction-Aware Enhancement

BR-SAScore represents an evolution of the original method that explicitly incorporates building block information and reaction knowledge from synthesis planning programs. This enhancement addresses a key limitation of the original SAscore by differentiating between fragments inherent in available building blocks and those formed through chemical reactions [30].

The BR-SAScore calculation modifies the original framework:

BR-SAScore = BR-fragmentScore - complexityPenalty

Where BR-fragmentScore comprises:

- BScore: Building block fragment score derived from available starting materials

- RScore: Reaction-driven fragment score derived from known reaction transforms [30]

This distinction allows BR-SAScore to more accurately reflect actual synthetic pathways rather than relying solely on statistical fragment prevalence in databases.
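One way to picture the split between building-block and reaction-derived fragments is the routing sketch below. The lookup tables, the fragment names, the `unseen` default, and the use of a simple mean are all hypothetical; the source does not specify how BScore and RScore are aggregated.

```python
def br_fragment_score(fragments, b_scores, r_scores, unseen=-1.0):
    """Score each fragment from the table that matches its assumed origin.

    b_scores: hypothetical scores for fragments found in building blocks.
    r_scores: hypothetical scores for fragments formed by known reactions.
    """
    contributions = []
    for frag in fragments:
        if frag in b_scores:
            # Fragment is already present in an available building block.
            contributions.append(b_scores[frag])
        else:
            # Fragment has to be formed by a reaction; unknown ones are penalized.
            contributions.append(r_scores.get(frag, unseen))
    if not contributions:
        return 0.0
    return sum(contributions) / len(contributions)

b_scores = {"amide_core": 0.5}
r_scores = {"biaryl_bond": 0.2}
known = br_fragment_score(["amide_core", "biaryl_bond"], b_scores, r_scores)
unknown = br_fragment_score(["amide_core", "exotic_cage"], b_scores, r_scores)
```

The point of the sketch is only the routing: a fragment that can be bought as part of a building block is not penalized for being rare in a compound database.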

SYBA: Bayesian Fragment-Based Classification

SYBA (SYnthetic Bayesian Accessibility) employs a different statistical approach based on a Bernoulli naïve Bayes classifier. Unlike SAscore, which primarily uses frequency data from easy-to-synthesize compounds, SYBA incorporates both positive and negative examples in its training [32].

SYBA is trained on:

- Easy-to-Synthesize (ES) molecules from purchasable compound databases (ZINC15)

- Hard-to-Synthesize (HS) molecules generated using the Nonpher molecular morphing approach [32]

The SYBA score represents the log-ratio of probabilities that a compound belongs to the ES versus HS class, with positive values indicating easier synthesis. The method uses ECFP8 fragments and includes special handling for stereocenters [32].
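A minimal sketch of a Bernoulli naïve Bayes log-ratio score is given below. It assumes equal class priors, Laplace smoothing with a hypothetical `alpha`, and molecules represented as sets of opaque fragment identifiers; stereocenter handling is omitted. It illustrates the statistical idea, not the published SYBA implementation.

```python
import math

def train_fragment_log_odds(es_mols, hs_mols, alpha=1.0):
    """Return {fragment: (log-odds if present, log-odds if absent)}.

    es_mols, hs_mols: lists of sets of fragment identifiers for the
    easy-to-synthesize and hard-to-synthesize training classes.
    """
    vocab = set().union(*es_mols, *hs_mols)
    table = {}
    for frag in vocab:
        # Smoothed probability that the fragment occurs in a molecule of each class.
        p_es = (sum(frag in m for m in es_mols) + alpha) / (len(es_mols) + 2 * alpha)
        p_hs = (sum(frag in m for m in hs_mols) + alpha) / (len(hs_mols) + 2 * alpha)
        table[frag] = (math.log(p_es / p_hs), math.log((1 - p_es) / (1 - p_hs)))
    return table

def syba_like_score(mol_frags, table):
    """Sum log-odds over all known fragments; positive favors the ES class."""
    score = 0.0
    for frag, (if_present, if_absent) in table.items():
        score += if_present if frag in mol_frags else if_absent
    return score

es = [{"a", "b"}, {"a"}, {"a", "c"}]
hs = [{"x", "y"}, {"x"}, {"y", "c"}]
table = train_fragment_log_odds(es, hs)
easy_like = syba_like_score({"a"}, table)
hard_like = syba_like_score({"x", "y"}, table)
```

Because both the presence and the absence of each fragment contribute, the score is unbounded in both directions, and its sign indicates which class is more probable.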

Table 1: Comparison of Rule-Based Synthetic Accessibility Assessment Methods

| Method | Statistical Approach | Score Range | Key Components | Training Data |
|---|---|---|---|---|
| SAscore | Fragment frequency analysis | 1 (easy) to 10 (hard) | fragmentScore, complexityPenalty | 1M PubChem compounds [29] |
| BR-SAScore | Enhanced fragment analysis | N/A | BR-fragmentScore (BScore + RScore), complexityPenalty | Building blocks & reaction datasets [30] |
| SYBA | Bernoulli naïve Bayes classifier | -∞ to +∞ (positive = easier) | Fragment contributions, stereo score | ES: ZINC15, HS: Nonpher-generated [32] |

Experimental Validation and Performance Comparison

Validation Against Expert Assessment

The original SAscore was validated against assessments by experienced medicinal chemists for a set of 40 molecules. The method demonstrated very good agreement with human expert evaluation, achieving a correlation of r² = 0.89 between calculated and manually estimated synthetic accessibility [29].

This level of agreement is notable given the documented variability among expert chemists themselves. Studies have shown that correlation coefficients between different chemists ranking the same compounds typically range from 0.40 to 0.84, reflecting different backgrounds, research areas, and subjective experiences [29].
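For reference, the agreement statistic quoted above can be computed as the squared Pearson correlation between calculated and expert-assigned scores. The sketch below uses made-up numbers purely to show the calculation; they are not data from the cited study.

```python
def pearson_r_squared(xs, ys):
    """Squared Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return (sxy * sxy) / (sxx * syy)

# Hypothetical calculated scores and hypothetical averaged chemist rankings.
calculated = [2.1, 3.4, 4.8, 6.2, 7.9]
expert = [2.0, 3.9, 4.5, 6.8, 7.5]
r2 = pearson_r_squared(calculated, expert)
```

The same function can be applied to pairs of individual chemists to quantify inter-expert variability on a shared compound set.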

Comparative Performance Studies

Independent evaluations have compared the performance of these methods across diverse test sets. In one comprehensive assessment, SYBA demonstrated superior performance compared to SAscore and SCScore when using their default thresholds [32].

However, the study also found that when the SAscore classification threshold was optimized from its default of 6.0 to approximately 4.5, SAscore performed similarly to SYBA. This highlights the importance of calibrating thresholds for specific applications and chemical spaces [32].
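Threshold calibration of this kind can be sketched as a simple sweep over candidate cutoffs on a labeled set. The scores, labels, and candidate values below are hypothetical, and accuracy is used as the selection criterion only for illustration; other metrics may suit a given application better.

```python
def accuracy_at(threshold, scores, is_easy):
    """Fraction of molecules classified correctly when score <= threshold means 'easy'."""
    correct = sum((s <= threshold) == label for s, label in zip(scores, is_easy))
    return correct / len(scores)

def best_threshold(scores, is_easy, candidates):
    """Return the candidate threshold with the highest accuracy on the labeled set."""
    return max(candidates, key=lambda t: accuracy_at(t, scores, is_easy))

# Hypothetical SAscore-like values with known easy/hard labels.
scores = [2.0, 3.5, 4.5, 5.5, 5.8, 7.0]
is_easy = [True, True, True, False, False, False]
chosen = best_threshold(scores, is_easy, candidates=[4.0, 5.0, 6.0])
```

On this toy set the default-like cutoff of 6.0 misclassifies two hard molecules as easy, whereas a lower cutoff separates the classes cleanly.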

BR-SAScore has shown particular strength in predicting the output of synthesis planning programs. In tests across three different benchmark sets (TS1-TS3), BR-SAScore achieved superior accuracy and precision in identifying whether synthesis routes could be found by Retro* synthesis planning software compared to original SAscore and deep learning models [30].

Table 2: Quantitative Performance Comparison of Synthetic Accessibility Methods

| Method | Default Threshold | Reported Performance | Computation Speed | Key Strengths |
|---|---|---|---|---|
| SAscore | 6.0 | r² = 0.89 vs. medicinal chemists [29] | Very fast | Validated against expert judgment; interpretable [29] |
| BR-SAScore | N/A | Superior prediction of synthesis planning program success [30] | Fast (similar to SAscore) | Incorporates actual synthesis knowledge; better chemical interpretability [30] |
| SYBA | 0.0 | Similar to threshold-optimized SAscore [32] | Fast | Uses both positive and negative examples; Bayesian probability framework [32] |

Implementation Workflows and Integration

SAscore Calculation Workflow

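The computational workflow for calculating SAscore proceeds in four steps: the input structure is decomposed into ECFC_4 fragments; the fragment contributions are looked up and combined into the fragmentScore; the complexityPenalty is computed from size, stereo, ring, and macrocycle features; and the combined value is scaled to the 1 to 10 range.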

BR-SAScore Enhanced Workflow

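BR-SAScore extends this workflow by incorporating additional chemical knowledge sources: fragment scores derived from available building blocks (BScore) and from known reaction transforms (RScore) take the place of the single database-derived fragment score.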

Research Reagent Solutions and Essential Tools

Table 3: Essential Research Tools for Synthetic Accessibility Assessment

| Tool/Resource | Function in Research | Application Context |
|---|---|---|
| PubChem Database | Provides reference set of synthesized compounds for fragment frequency analysis [29] | Source of historical synthetic knowledge for SAscore |
| ZINC15 Database | Curated collection of commercially available compounds; source of easy-to-synthesize molecules for SYBA training [32] | Training and validation data for method development |
| RDKit | Open-source cheminformatics toolkit; provides fragmentation and descriptor calculation capabilities [31] | Essential for molecular manipulation and fingerprint generation |
| BRICS Implementation | Breaking Retrosynthetically Interesting Chemical Substructures; used for controlled molecule design [31] | Building block-based molecule assembly for validation studies |
| Nonpher Algorithm | Molecular morphing approach for generating complex, hard-to-synthesize molecules [32] | Creates training data for hard-to-synthesize compound classification |

Applications in Drug Discovery and Materials Science

Integration with Generative Molecular Design

Rule-based SA assessment methods have become integral components of generative molecular design pipelines. In drug discovery, they help prioritize generated structures that balance target activity with practical synthesizability [33] [34].

The speed of methods like SAscore (typically milliseconds per molecule) makes them particularly suitable for screening large virtual libraries. For example, when processing 200,000 molecules from the ChEMBL database, SAscore-based filtering reduced computation time from an estimated 239 days (with full synthesis planning) to approximately 79 minutes [30].
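The arithmetic behind these figures can be made explicit. The short calculation below uses only the numbers quoted above and assumes, for the per-molecule averages, that total time scales linearly with library size.

```python
n_molecules = 200_000

# 239 days of estimated full synthesis planning, expressed in minutes.
planning_minutes = 239 * 24 * 60
# Reported time with score-based filtering, in minutes.
filter_minutes = 79

# Overall speedup factor implied by the two totals.
speedup = planning_minutes / filter_minutes

# Implied average cost per molecule under the linear-scaling assumption.
planning_seconds_per_molecule = planning_minutes * 60 / n_molecules
filter_ms_per_molecule = filter_minutes * 60 * 1000 / n_molecules
```

The two totals imply a speedup of roughly 4,400-fold, with about 103 seconds per molecule for full planning versus about 24 milliseconds per molecule for filtering, consistent with the millisecond-scale cost noted above.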

Applications Beyond Pharmaceutical Research

While initially developed for drug-like molecules, these methods have found applications in materials informatics. Recent research has demonstrated the use of SAscore and SYBA for prioritizing organic semiconductor candidates in solar cell development [31].

In one study, researchers designed 10,000 organic semiconductors using the BRICS approach and used SAscore and SYBA to prioritize easily synthesizable candidates for organic solar cell applications. The results indicated that these scores are effective for screening generated structures and for focusing experimental effort on the most promising candidates [31].
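A screening step of this kind might look like the sketch below, which keeps candidates that pass both scores at their default thresholds (SAscore of 6.0 or lower, SYBA above 0.0) and ranks the survivors by SAscore. The candidate records, field names, and score values are hypothetical, and requiring both scores to agree is a design assumption rather than the procedure of the cited study.

```python
def prioritize(candidates, sa_max=6.0, syba_min=0.0):
    """Keep candidates that both scores consider accessible, easiest first.

    candidates: list of dicts with hypothetical keys 'name', 'sascore', 'syba'.
    """
    kept = [c for c in candidates
            if c["sascore"] <= sa_max and c["syba"] > syba_min]
    return sorted(kept, key=lambda c: c["sascore"])

# Hypothetical generated structures with precomputed scores.
library = [
    {"name": "cand-1", "sascore": 3.2, "syba": 25.0},
    {"name": "cand-2", "sascore": 7.4, "syba": -12.0},
    {"name": "cand-3", "sascore": 2.6, "syba": 40.0},
    {"name": "cand-4", "sascore": 5.1, "syba": -3.0},
]
shortlist = [c["name"] for c in prioritize(library)]
```

Requiring agreement between two independently trained scores is conservative: it discards borderline cases such as the fourth candidate, which passes one score and fails the other.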

Limitations and Future Directions

Despite their utility, current rule-based methods have limitations. The original SAscore can exhibit over-pessimism toward molecules containing chemical fragments that are common in building blocks but absent in the PubChem database [30]. Additionally, these methods generally do not account for recent advances in synthetic methodologies that might make previously challenging structures more accessible.

Future developments are likely to focus on dynamic SA assessment that incorporates temporal evolution of synthetic methodologies, integration with automated synthesis platforms, and specialized scoring functions for emerging chemical domains such as macrocycles, covalent inhibitors, and new modalities beyond small molecules [30] [35].

The progression from SAscore to BR-SAScore represents a promising direction—maintaining computational efficiency while incorporating more explicit chemical knowledge about available building blocks and known reaction transforms. This approach helps bridge the gap between purely statistical assessments and resource-intensive retrosynthetic analysis [30].

In modern drug discovery, the question of whether a proposed molecule can be feasibly synthesized is as crucial as its predicted bioactivity. Computer-Aided Synthesis Planning (CASP) tools can answer this but are computationally expensive, making them impractical for screening virtual libraries containing millions of compounds [36] [37]. Machine learning-driven synthetic accessibility scores have emerged as rapid, computational filters to address this bottleneck.

This guide provides an objective comparison of established and modern synthetic accessibility scores, focusing on their operational principles, performance data, and practical utility for researchers engaged in feasibility assessment within drug development pipelines. We frame this comparison within research on synthesis feasibility assessment as a charge-balancing criterion, where rapid and accurate synthesizability evaluation is paramount.

Core Scoring Algorithms: Mechanisms and Methodologies

Synthetic accessibility scores can be broadly categorized into structure-based approaches, which evaluate molecular feasibility using fragment occurrence, and reaction-based approaches, which leverage knowledge from reaction databases or CASP outcomes [36] [38].

SCScore (Synthetic Complexity Score)

- Core Concept: A reaction-based score that estimates molecular complexity as the expected number of synthetic steps required to produce a target. It operates on the principle that products are generally more complex than their reactants [37].

- Training Data: Trained on a dataset of 12 million reactions from the Reaxys database [36] [38].

- Model Architecture: Utilizes a neural network. Molecules are represented as 1024-bit Morgan fingerprints (radius 2) [36] [38].

- Output Range: A continuous score from 1 (simple) to 5 (complex) [36].
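The training principle stated above (products should score higher than their reactants) and the bounded output range can be sketched with two small functions. The pairwise hinge form, the `margin` value, and the sigmoid mapping onto 1 to 5 are assumptions made for illustration; the neural network that would produce the raw outputs is not shown.

```python
import math

def to_complexity_range(raw_output):
    """Map an unbounded model output onto the 1 (simple) to 5 (complex) range."""
    return 1.0 + 4.0 / (1.0 + math.exp(-raw_output))

def pairwise_hinge_loss(reactant_score, product_score, margin=0.25):
    """Zero when the product outscores the reactant by at least `margin`."""
    return max(0.0, margin + reactant_score - product_score)

# A reaction whose product is scored as more complex incurs no loss...
ok = pairwise_hinge_loss(reactant_score=2.0, product_score=3.0)
# ...while an inverted ordering is penalized in proportion to the violation.
violated = pairwise_hinge_loss(reactant_score=3.0, product_score=2.0)
midpoint = to_complexity_range(0.0)
```

Training on reaction pairs in this way needs no explicit step-count labels: the ordering between reactants and products is the only supervision signal.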

RAscore (Retrosynthetic Accessibility Score)

- Core Concept: A reaction-based score designed as a rapid classifier to predict the outcome of a specific CASP tool, AiZynthFinder. It answers a binary question: "Can AiZynthFinder find a synthetic route for this molecule?" [37].

- Training Data: Trained on over 200,000 molecules from the ChEMBL database, each labeled by AiZynthFinder as "solved" (synthesizable) or "unsolved" (non-synthesizable) [36] [38] [37].

- Model Architecture: Two primary models are available: a Neural Network and a Gradient Boosting Machine (e.g., XGBoost), providing flexibility in performance and interpretability [36] [38].

- Output Range: A score between 0 and 1, indicating the probability that a molecule is synthesizable as per AiZynthFinder [37].
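Used as a pre-filter, a classifier of this kind decides which molecules are worth sending to the expensive planner. The sketch below shows that two-stage pattern with stand-in callables; `predict_probability`, `run_casp`, and the 0.5 `cutoff` are hypothetical placeholders and not the RAscore or AiZynthFinder APIs.

```python
def triage(molecules, predict_probability, run_casp, cutoff=0.5):
    """Run the costly planner only on molecules the fast classifier favors.

    predict_probability: callable returning a value in [0, 1] per molecule.
    run_casp: callable performing full synthesis planning for one molecule.
    Molecules below the cutoff are recorded with None instead of being planned.
    """
    results = {}
    for mol in molecules:
        if predict_probability(mol) >= cutoff:
            results[mol] = run_casp(mol)
        else:
            results[mol] = None
    return results

# Stand-ins: fixed probabilities and a planner that logs every call it receives.
fake_probabilities = {"mol-A": 0.92, "mol-B": 0.08, "mol-C": 0.61}
planner_calls = []

def fake_planner(mol):
    planner_calls.append(mol)
    return "route found"

outcome = triage(["mol-A", "mol-B", "mol-C"], fake_probabilities.get, fake_planner)
```

Because the classifier is trained on one planner's outcomes, its probabilities reflect that planner's capabilities and building-block stock rather than synthesizability in an absolute sense.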

Modern and Alternative Scores

- SAscore (Synthetic Accessibility Score): A structure-based score combining a fragment score (based on ECFP4 fragment frequency in PubChem) with a complexity penalty (for features like stereocenters and macrocycles). It ranges from 1 (easy) to 10 (hard), and an implementation is distributed with RDKit in its Contrib directory [36] [38].

- SYBA (Synthetic Bayesian Accessibility): A structure-based score employing a Bernoulli naïve Bayes classifier. It is trained on easy-to-synthesize compounds from ZINC15 and hard-to-synthesize compounds generated using the Nonpher tool [36] [38].

Table 1: Summary of Core Synthetic Accessibility Scores

| Score | Underlying Approach | Core Principle | Training Data | Output Range |
|---|---|---|---|---|
| SCScore | Reaction-based | Molecular complexity as expected synthesis steps | 12M reactions from Reaxys | 1 (simple) to 5 (complex) |
| RAscore | Reaction-based | Prediction of CASP (AiZynthFinder) outcome | 200k molecules from ChEMBL | 0 to 1 (probability) |
| SAscore | Structure-based | Fragment frequency & structural complexity penalties | Molecules from PubChem | 1 (easy) to 10 (hard) |
| SYBA | Structure-based | Bayesian classification of easy/hard-to-synthesize structures | ZINC15 & Nonpher-generated molecules | Unbounded log-ratio score (positive = easier) |
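Because the four scores differ in range and direction, side-by-side comparison is easier after mapping each onto a common scale. The sketch below converts every score to a 0 to 1 "ease" value where higher means easier; the linear rescaling and the logistic squashing of the unbounded SYBA score are illustrative choices, not part of any of the published methods.

```python
import math

def ease_from_scscore(score):
    """SCScore: 1 (simple) .. 5 (complex) -> 1.0 .. 0.0."""
    return (5.0 - score) / 4.0

def ease_from_rascore(probability):
    """RAscore is already a 0..1 probability where higher means easier."""
    return probability

def ease_from_sascore(score):
    """SAscore: 1 (easy) .. 10 (hard) -> 1.0 .. 0.0."""
    return (10.0 - score) / 9.0

def ease_from_syba(score):
    """SYBA: unbounded, positive = easier; squashed with a logistic function."""
    return 1.0 / (1.0 + math.exp(-score))

# Hypothetical scores for a single molecule, expressed on the common scale.
profile = {
    "SCScore": ease_from_scscore(2.0),
    "RAscore": ease_from_rascore(0.8),
    "SAscore": ease_from_sascore(3.25),
    "SYBA": ease_from_syba(0.0),
}
```

A common scale makes disagreement between scores visible at a glance, but it does not make the scores interchangeable, since each was trained to answer a different question.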

Performance Comparison and Experimental Assessment