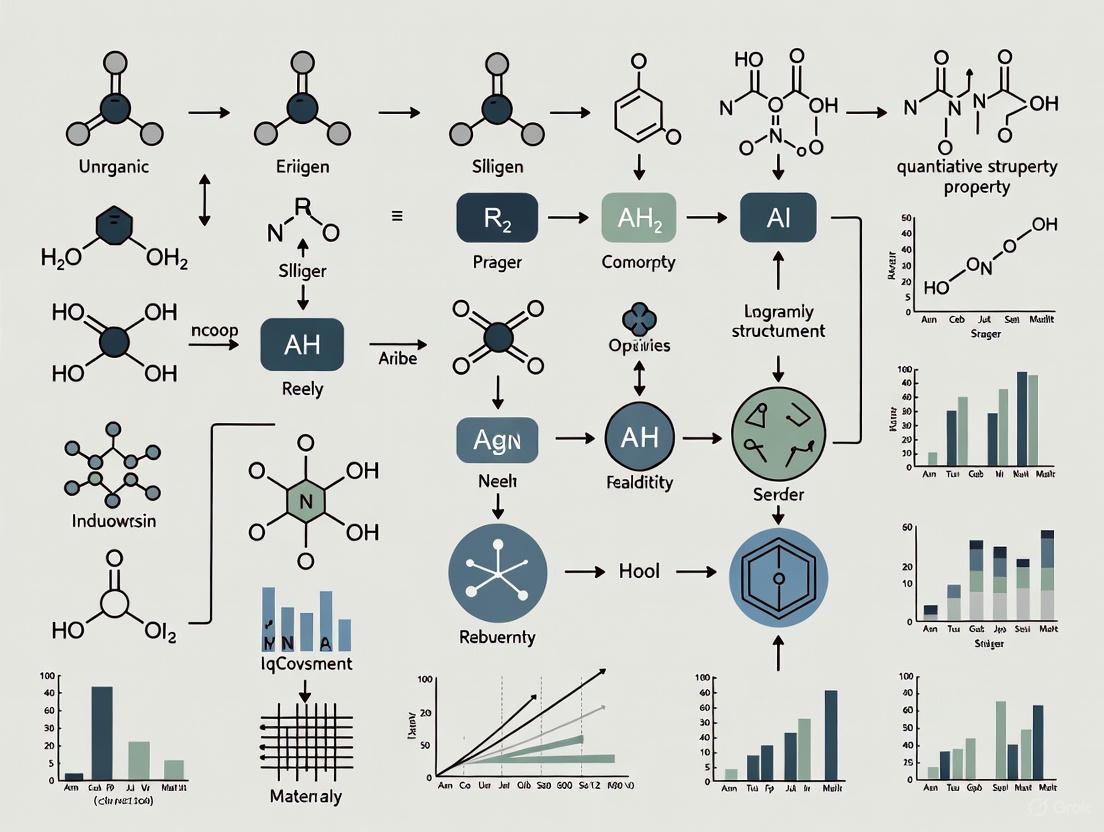

Advances in Quantitative Structure-Property Relationship (QSPR) Modeling for Inorganic Compounds: Methods, Applications, and Future Directions


Abstract

Quantitative Structure-Property Relationship (QSPR) modeling is a powerful computational tool that correlates the physicochemical properties of compounds with their molecular structures. While extensively developed for organic molecules, the application of QSPR to inorganic and organometallic compounds presents unique challenges and opportunities. This article provides a comprehensive overview of the foundational principles, methodological developments, and current applications of QSPR in inorganic chemistry. It explores the critical differences between modeling organic and inorganic substances, including descriptor selection, data set limitations, and algorithmic adaptations. By synthesizing recent benchmarking studies and novel research, this review offers practical guidance for troubleshooting model optimization, validating predictive performance, and expanding applicability domains. Aimed at researchers, scientists, and drug development professionals, this article highlights the potential of QSPR to accelerate the design and discovery of novel inorganic materials with tailored properties for biomedical, environmental, and industrial applications.

The Foundations of Inorganic QSPR: Bridging the Gap with Organic Chemistry

Quantitative Structure-Property Relationship (QSPR) is a computational modeling methodology used to correlate the structural characteristics of chemical compounds with their specific physical, chemical, or environmental properties [1]. This approach operates on the fundamental principle that a compound's molecular structure inherently determines its physicochemical properties [2]. By developing statistical models that utilize structural descriptors, QSPR enables the prediction of material behavior without requiring extensive physical laboratory testing, thereby serving as a powerful tool across chemical research, pharmaceutical development, and environmental science [2] [1].

The core assumption of QSPR theory establishes a direct relationship between molecular structure and observable properties, allowing researchers to mathematically describe how subtle structural changes affect properties ranging from simple boiling points to complex biological activities [2]. The methodology originated in medicinal chemistry and has since been adopted by environmental science for hazard assessment, playing an increasingly vital role in green chemistry by enabling rapid computational assessment of chemical properties [1].

The Core Principle: From Molecular Structure to Predictable Properties

Foundational Principle

The foundational principle of QSPR is that variations in molecular structure consistently correspond to changes in measurable physicochemical properties [2]. This structure-property relationship allows for the development of mathematical models that can predict properties for new, unsynthesized compounds based solely on their structural features. The principle applies to diverse properties including lipophilicity, solubility, molecular weight, topological polar surface area, bioavailability, and toxicity [3].

This principle extends beyond simple correlation to encompass complex multivariate relationships where multiple structural descriptors collectively determine property outcomes. For instance, in pharmaceutical applications, QSPR models can predict how structural modifications will affect a drug candidate's absorption, distribution, metabolism, excretion, and toxicity (ADMET) characteristics, providing crucial insights early in the development process [4].

Mathematical Foundation

The general QSPR equation takes the form of a mathematical model:

Property = f(structural descriptors) + error [5]

In this equation, the property represents the experimental response variable, structural descriptors are quantitative representations of molecular features, and the error term encompasses both model bias and observational variability. The function f can take various forms, including multiple linear regression, partial least squares analysis, artificial neural networks, or other machine learning algorithms [2] [5].
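As a minimal sketch of the general equation, the example below takes f to be a one-descriptor linear model fitted by ordinary least squares and treats the residuals as the error term. The descriptor and property values are made-up illustration data, and the function names are hypothetical rather than taken from any cited study.

```python
from statistics import mean


def fit_linear(x, y):
    """Ordinary least squares for property = intercept + slope * descriptor."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def r_squared(y, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_bar = mean(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, y_pred))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Hypothetical data: one structural descriptor and one measured property.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0]
measured = [2.1, 3.9, 6.2, 7.8, 10.0]

intercept, slope = fit_linear(descriptor, measured)
predicted = [intercept + slope * d for d in descriptor]
errors = [m - p for m, p in zip(measured, predicted)]  # the "error" term
r2 = r_squared(measured, predicted)

print(f"property = {intercept:.2f} + {slope:.2f} * descriptor, R2 = {r2:.4f}")
```

Swapping `fit_linear` for a partial least squares or neural network fit changes the form of f but leaves the structure of the equation unchanged.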

Table 1: Core Components of a QSPR Model

| Component | Description | Examples |
|---|---|---|
| Response Variable | The physicochemical property being modeled | Boiling point, solubility, retention index, toxicity [2] [6] |
| Structural Descriptors | Quantitative representations of molecular structure | Topological indices, electronic parameters, geometric descriptors [2] [3] |
| Algorithm | Mathematical method relating descriptors to property | Multiple Linear Regression (MLR), Artificial Neural Networks (ANN), Partial Least Squares (PLS) [2] [5] |
| Validation Metrics | Statistical measures of model performance | R², cross-validated R², mean absolute error, applicability domain [5] [7] |

Essential Methodologies and Descriptors

Molecular Descriptors and Their Calculation

Molecular descriptors are quantitative numerical values that encode specific structural and electronic information about molecules. These descriptors serve as the independent variables in QSPR models and can be categorized into several classes:

Topological Descriptors are derived from graph theoretical representations of molecular structure, where atoms represent vertices and bonds represent edges [3] [4]. These include:

- Degree-based indices: Randić, Zagreb, and Atom-Bond Connectivity (ABC) indices that capture molecular branching and connectivity patterns [3]

- Distance-based indices: Wiener index based on topological distances between vertices [4]

- Information-theoretic indices: Hosoya and Estrada indices based on graph spectra and edge arrangements [4]
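To make the graph-theoretical picture concrete, the following sketch computes three of the indices named above (first Zagreb, Randić, and Wiener) for hydrogen-suppressed graphs of n-butane and isobutane. The adjacency-list encoding and the function names are illustrative assumptions, not the interface of any descriptor package.

```python
from collections import deque
from math import sqrt


def degrees(adj):
    """Vertex degree = number of bonded heavy-atom neighbours."""
    return {v: len(nbrs) for v, nbrs in adj.items()}


def edges(adj):
    """Undirected edge set, each bond listed once."""
    return {tuple(sorted((u, v))) for u in adj for v in adj[u]}


def first_zagreb(adj):
    """M1 = sum over vertices of degree squared."""
    return sum(d * d for d in degrees(adj).values())


def randic(adj):
    """Randic index = sum over edges of 1 / sqrt(deg(u) * deg(v))."""
    deg = degrees(adj)
    return sum(1.0 / sqrt(deg[u] * deg[v]) for u, v in edges(adj))


def wiener(adj):
    """Wiener index = sum of shortest-path distances over all vertex pairs."""
    total = 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search from each vertex
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2  # every pair was counted twice


# Hydrogen-suppressed carbon skeletons of two C4H10 isomers.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

for name, graph in (("n-butane", n_butane), ("isobutane", isobutane)):
    print(name, first_zagreb(graph), round(randic(graph), 4), wiener(graph))
```

The two isomers share a molecular formula but give different index values (M1 of 10 versus 12, Wiener of 10 versus 9), which is how these descriptors encode branching.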

Three-Dimensional Descriptors capture stereochemical and electronic features through methods such as:

- Comparative Molecular Field Analysis (CoMFA): Examines steric and electrostatic fields around molecules [5]

- Quantum Chemical Descriptors: Derived from electronic structure calculations, including highest occupied and lowest unoccupied molecular orbital energies (HOMO-LUMO), dipole moments, and molecular polarizabilities [8] [9]

Fragment-Based Descriptors utilize group contribution approaches where molecular properties are estimated as the sum of contributions from constituent functional groups or substructures [5].
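The additive idea behind group contribution can be sketched in a few lines. The fragment names and contribution values below are hypothetical placeholders chosen for illustration; they are not fitted parameters from any published scheme.

```python
def group_contribution(fragment_counts, contributions, base=0.0):
    """Estimate a property as base + sum(count * contribution per fragment)."""
    return base + sum(
        count * contributions[fragment]
        for fragment, count in fragment_counts.items()
    )


# Hypothetical per-fragment contributions (arbitrary units).
contributions = {"CH3": 1.0, "CH2": 0.5, "OH": -1.5}

# Fragment counts for two alcohols.
ethanol = {"CH3": 1, "CH2": 1, "OH": 1}
propanol = {"CH3": 1, "CH2": 2, "OH": 1}

print(group_contribution(ethanol, contributions))
print(group_contribution(propanol, contributions))
```

In this scheme, extending the chain by one CH2 group shifts the estimate by exactly one CH2 contribution, which is the defining assumption of a purely additive model.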

Model Development Workflow

The QSPR modeling process follows a systematic workflow comprising four fundamental stages [5] [6]:

- Data Set Selection: Curating a high-quality, representative set of compounds with reliable experimental property data

- Descriptor Generation: Calculating molecular descriptors for all compounds in the data set

- Model Construction: Applying statistical and machine learning methods to relate descriptors to the target property

- Model Validation: Rigorously assessing model performance using internal and external validation techniques
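The internal-validation part of the last stage can be illustrated with leave-one-out cross-validation. The sketch below assumes a single-descriptor linear model and the common definition Q² = 1 - PRESS / SS_tot (other definitions exist in the literature); the data values are made up.

```python
from statistics import mean


def fit_linear(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def loo_q2(x, y):
    """Leave-one-out Q2: refit without each compound, then predict it."""
    press = 0.0  # predictive residual sum of squares
    for i in range(len(x)):
        x_train = x[:i] + x[i + 1:]
        y_train = y[:i] + y[i + 1:]
        intercept, slope = fit_linear(x_train, y_train)
        press += (y[i] - (intercept + slope * x[i])) ** 2
    y_bar = mean(y)
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1.0 - press / ss_tot


# Hypothetical descriptor/property pairs.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0]
measured = [2.1, 3.9, 6.2, 7.8, 10.0]

q2 = loo_q2(descriptor, measured)
print(f"Q2(LOO) = {q2:.4f}")
```

External validation follows the same pattern, except that the held-out compounds are set aside once before any fitting and are never used for model selection.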

Experimental Protocols in QSPR Analysis

Protocol 1: QSPR Model Development with Topological Indices

This protocol outlines the development of QSPR models using degree-based topological indices, as applied in pharmaceutical research for necrotizing fasciitis antibiotics and Parkinson's disease medications [3] [4].

Materials and Reagents:

- Chemical Structures: Molecular structures of compounds in the dataset, obtained from databases such as PubChem or ChemSpider [3]

- Software for Structure Drawing: KingDraw or equivalent chemical structure editing software [3]

- Computational Environment: MATLAB, R, or Python with appropriate chemical informatics libraries [6]

Methodology:

- Data Set Compilation: Select a homogeneous set of compounds with experimentally determined property values. For pharmaceutical applications, this may include known drugs with measured physicochemical properties (e.g., solubility, lipophilicity) [3] [4].

- Molecular Graph Representation: Represent each molecule as a hydrogen-suppressed graph where atoms are vertices and bonds are edges [4].

- Topological Index Calculation: Compute degree-based topological indices for each molecular graph:

  - Calculate Zagreb indices focusing on vertex degrees

  - Compute Randić connectivity index based on bond connectivity

  - Determine Atom-Bond Connectivity (ABC) index

  - Evaluate recently developed neighborhood degree-based indices [4]

- Descriptor Selection: Apply unsupervised and supervised variable selection techniques to identify the most relevant topological indices for the target property [7].

- Model Construction: Develop mathematical relationships using:

  - Linear, quadratic, and cubic regression models

  - Multiple linear regression with stepwise variable selection

  - Machine learning approaches including artificial neural networks when nonlinear relationships are suspected [3]

- Model Validation:

Protocol 2: QSPR for Chromatographic Retention Indices

This protocol details the development of QSPR models for predicting gas chromatographic retention indices of volatile organic compounds, as applied in food chemistry and environmental analysis [7] [6].

Materials and Reagents:

- Experimental Retention Index Data: Experimentally determined retention indices for a training set of compounds, preferably determined under standardized conditions [7]

- Computational Chemistry Software: Programs for molecular geometry optimization (e.g., Gaussian, MOPAC) [7]

- Descriptor Calculation Software: alvaDesc or equivalent molecular descriptor calculation package [7]

Methodology:

- Database Curation: Collect and curate experimental retention indices from reliable sources. For the quinoa seed VOC study, 61 volatile organic compounds were selected with retention indices determined using GC-IMS with an FS-SE-54-CB-1 capillary column [7].

- Molecular Geometry Optimization: Optimize molecular geometries using semiempirical or density functional theory (DFT) methods to obtain minimum energy conformations [7].

- Molecular Descriptor Calculation: Compute a comprehensive set of molecular descriptors (5,633 descriptors in the quinoa study) categorized into logical blocks including:

  - Constitutional descriptors (molecular weight, atom counts)

  - Topological descriptors (connectivity indices, path counts)

  - Geometrical descriptors (moment of inertia, molecular volume)

  - Electronic descriptors (partial charges, HOMO-LUMO energies)

  - Quantum chemical descriptors (polarizability, hardness) [7]

- Data Preprocessing: Remove non-informative descriptors including:

  - Constants or near-constant values

  - Descriptors with missing values

  - Highly correlated descriptors (using correlation analysis) [7]

- Data Set Division: Split the data set into training (approximately 80%) and test (approximately 20%) sets using statistical design methods to ensure representative distribution [7].

- Model Development: Apply multiple linear regression with feature selection techniques such as:

  - Stepwise regression

  - Genetic algorithm-based variable selection

  - Particle swarm optimization [7]

- Model Validation:

  - Internal validation using cross-validation (leave-one-out or k-fold)

  - External validation using the test set

  - Applicability domain definition using leverage approach [7]

- Mechanistic Interpretation: Analyze the significance of selected descriptors in relation to the retention mechanism to provide chemical insights [7].
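The leverage approach mentioned in the validation step can be sketched for the simplest case of one descriptor plus an intercept, where the leverage has the closed form h = 1/n + (x - mean)² / Sxx. The warning threshold h* = 3(p + 1)/n is the conventional choice for Williams plots and is assumed here; the training values are hypothetical. With several descriptors, the leverage would instead be computed from the full descriptor matrix as x(XᵀX)⁻¹xᵀ.

```python
from statistics import mean


def leverage(x_train, x_query):
    """Leverage of a query compound for a one-descriptor model with intercept."""
    n = len(x_train)
    x_bar = mean(x_train)
    sxx = sum((xi - x_bar) ** 2 for xi in x_train)
    return 1.0 / n + (x_query - x_bar) ** 2 / sxx


def warning_leverage(n_train, n_descriptors):
    """Conventional threshold h* = 3 * (p + 1) / n (assumed here)."""
    return 3.0 * (n_descriptors + 1) / n_train


def in_domain(x_train, x_query, n_descriptors=1):
    """True if the query compound falls inside the applicability domain."""
    h_star = warning_leverage(len(x_train), n_descriptors)
    return leverage(x_train, x_query) <= h_star


# Hypothetical training-set descriptor values.
train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]

print(in_domain(train, 5.5))   # close to the training mean
print(in_domain(train, 20.0))  # far outside the training range
```

A prediction for a compound flagged as outside the domain is an extrapolation and should be reported with that caveat.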

Table 2: Key Reagents and Computational Tools for QSPR Studies

| Category | Specific Tool/Reagent | Function in QSPR |
|---|---|---|
| Chemical Databases | PubChem, ChemSpider | Source of molecular structures and experimental properties [3] |
| Structure Drawing | KingDraw | Creation and visualization of molecular structures [3] |
| Geometry Optimization | Gaussian, MOPAC | Calculation of minimum energy molecular conformations [7] |
| Descriptor Calculation | alvaDesc, Dragon | Computation of molecular descriptors from chemical structure [7] |
| Statistical Analysis | MATLAB, R, Python | Model development and validation [6] |
| Specialized QSPR | CoMFA, COSMO-RS | 3D-QSPR and solvation-based prediction [2] [9] |

Advanced QSPR Frameworks and Applications

Integration with Read-Across Techniques

The quantitative Read-Across Structure-Property Relationship (q-RASPR) represents a significant advancement that integrates traditional QSPR with similarity-based read-across techniques [9]. This hybrid approach enhances predictive accuracy, particularly for compounds with limited experimental data, by incorporating chemical similarity information alongside structural descriptors.

The q-RASPR methodology follows these key steps:

- Similarity Assessment: Calculate pairwise similarity measures between all compounds in the data set

- Descriptor Integration: Combine conventional structural descriptors with similarity-based descriptors

- Model Development: Construct models using the augmented descriptor matrix

- Outlier Detection: Identify and exclude structurally distinct outliers to improve model robustness [9]
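The similarity assessment and descriptor integration steps can be sketched as follows. This is an illustration under stated assumptions, not the published q-RASPR procedure: compounds are represented as sets of hypothetical structural features, similarity is the Tanimoto coefficient, and the similarity-based descriptor is a similarity-weighted mean of the property values of the k closest training compounds.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two feature sets: |A & B| / |A | B|."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def read_across_descriptor(query, training, k=2):
    """Similarity-weighted mean property of the k most similar compounds.

    training: list of (feature_set, property_value) pairs.
    Returns None if the query shares no features with any neighbour.
    """
    scored = sorted(
        ((tanimoto(query, features), value) for features, value in training),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    total = sum(sim for sim, _ in scored)
    if total == 0:
        return None
    return sum(sim * value for sim, value in scored) / total


# Hypothetical feature sets and property values.
training = [
    ({"a", "b", "c"}, 1.0),
    ({"a", "b"}, 2.0),
    ({"x", "y"}, 10.0),
]
query = {"a", "b", "c", "d"}

ra_value = read_across_descriptor(query, training, k=2)
print(ra_value)  # appended to the conventional descriptors as an extra column
```

A compound for which the function returns `None`, or whose best similarity is very low, is a candidate for the structurally distinct outliers mentioned in the last step.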

This approach has demonstrated superior performance for predicting environmentally relevant properties of persistent organic pollutants, including partition coefficients and degradation rate constants [9].

Quantum QSPR (QQSPR)

Quantum QSPR represents a sophisticated approach that utilizes quantum mechanical density functions as molecular descriptors [10]. In this framework:

- Molecular structures are represented as quantum multimolecular polyhedra (QMP) with vertices formed by molecular density functions

- Molecular properties are calculated as quantum expectation values of Hermitian operators

- Similarity matrices derived from quantum similarity measures replace traditional descriptors [10]

This approach provides a theoretically rigorous foundation for property prediction that directly incorporates quantum mechanical principles, potentially offering advantages for modeling complex electronic properties [10].
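For orientation, the quantities involved can be written compactly. The expressions below are the standard textbook forms (an expectation value over a density function, the overlap quantum similarity measure, and the Carbó similarity index); the notation is an assumption made for this illustration and may differ from the formulation used in [10].

```latex
% Property as an expectation value of an operator \Omega over the density \rho
\langle \omega \rangle = \int \Omega(\mathbf{r})\, \rho(\mathbf{r})\, d\mathbf{r}

% Overlap quantum similarity measure between molecules A and B
Z_{AB} = \int \rho_A(\mathbf{r})\, \rho_B(\mathbf{r})\, d\mathbf{r}

% Carbo similarity index, normalised so that 0 < C_{AB} \le 1
C_{AB} = \frac{Z_{AB}}{\sqrt{Z_{AA}\, Z_{BB}}}
```

The matrix of all pairwise values of Z (or C) is the similarity matrix that takes the place of a conventional descriptor table.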

Applications in Inorganic Compounds Research

While many of the cited examples focus on organic and pharmaceutical compounds, the QSPR methodology can also be applied to inorganic compounds. The fundamental principle of linking molecular structure to physical properties carries over to inorganic systems, though descriptor selection may emphasize different features:

- Coordination Compounds: Topological descriptors can capture connectivity patterns in coordination polymers and metal-organic frameworks

- Organometallic Complexes: Electronic descriptors derived from quantum chemical calculations can predict catalytic properties and stability

- Main Group Compounds: Geometric descriptors may dominate models for predicting properties of main group clusters and extended solids

The protocols outlined above can be adapted to inorganic systems by selecting descriptors that capture the relevant structural and electronic features of inorganic compounds.

QSPR represents a powerful paradigm for connecting molecular structure to measurable physicochemical properties through mathematical modeling. The core principle—that molecular structure determines properties—enables the prediction of chemical behavior for both existing and novel compounds. As methodologies advance with innovations such as q-RASPR, quantum QSPR, and sophisticated machine learning approaches, the accuracy and applicability of QSPR models continue to expand. For inorganic compounds research, these methodologies offer a robust framework for accelerating discovery and optimization of materials with tailored properties, reducing reliance on resource-intensive experimental screening while providing fundamental insights into structure-property relationships.

Quantitative Structure-Property Relationship (QSPR) modeling serves as a cornerstone in computational chemistry, enabling the prediction of material behaviors from molecular descriptors. However, the fundamental chemical divide between organic and inorganic compounds necessitates distinct modeling approaches. While organic QSPR traditionally deals with carbon-based molecules possessing complex molecular architectures, inorganic QSPR confronts the challenge of representing extended periodic structures, diverse bonding environments, and metal-containing systems [11]. This section examines the core methodological differences between these domains in the context of advancing QSPR for inorganic compounds. Understanding these distinctions is critical for researchers and drug development professionals working with metallodrugs, catalytic materials, and hybrid organic-inorganic systems, where accurate property prediction can significantly accelerate discovery pipelines.

Theoretical Foundations and Key Concepts

Defining the Modeling Domains

Organic compound modeling primarily concerns molecules centered on carbon skeletons, typically featuring covalent bonding and discrete molecular structures. These compounds often exhibit predictable connectivity patterns that can be efficiently represented using graph-based approaches [11]. The QSPR models for organic compounds leverage descriptors that capture molecular branching, functional group presence, and electronic effects within finite molecules.

Inorganic compound modeling encompasses a vastly broader chemical space, including ionic solids, intermetallic compounds, coordination complexes, and extended periodic structures. Unlike organic molecules, inorganic materials frequently lack discrete molecular boundaries in their solid states, existing as extended crystal lattices with complex periodicity [12]. This fundamental structural difference necessitates descriptors that can represent infinite periodic systems, diverse coordination environments, and mixed bonding types.

Fundamental Modeling Challenges

The core challenge in inorganic materials modeling stems from the structural complexity and diversity of bonding environments. Where organic molecules predominantly feature covalent bonds with relatively predictable geometries, inorganic compounds can exhibit ionic, metallic, and covalent bonding, often within the same material [12]. This diversity complicates descriptor development, as no single representation adequately captures all bonding scenarios.

Additionally, inorganic materials frequently exist as thermodynamically metastable phases that are nonetheless synthesizable and functionally important. Traditional thermodynamic descriptors like formation energy alone often fail to predict synthesis feasibility for these systems, as kinetic factors play a crucial role in their formation and stability [12]. This contrasts with organic molecular stability, which is more reliably predicted from molecular structure alone.

Comparative Analysis of Modeling Approaches

Descriptor Selection and Application

Table 1: Comparison of Descriptors in Organic and Inorganic QSPR Modeling

| Descriptor Category | Organic Compound Applications | Inorganic Compound Applications | Key Differences |
|---|---|---|---|
| Topological Descriptors | Degree-based indices (Randić, Zagreb), connectivity indices; Predict physicochemical properties of antibiotics and drug candidates [3] | Limited application for extended crystal structures; More commonly used in organometallic complexes | Direct applicability to molecular graphs vs. challenge for periodic systems |
| Electronic Descriptors | HOMO/LUMO energies, molecular dipole moments, partial atomic charges | Band structure, density of states, Fermi level, formation energy from DFT [13] | Molecular orbital theory vs. band theory framework |
| Geometric Descriptors | Molecular volume, surface area, asphericity | Crystal symmetry (space group), lattice parameters, atomic packing factors [13] | Finite molecular geometry vs. infinite periodic lattice parameters |
| Thermodynamic Descriptors | Heats of formation, bond dissociation energies | Formation energy relative to convex hull, phase stability, synthesis feasibility [12] | Molecular stability vs. phase stability in chemical space |

Data Availability and Model Development

Table 2: Data Infrastructure for QSPR Model Development

| Aspect | Organic QSPR | Inorganic QSPR |
|---|---|---|
| Database Size & Diversity | Large, diverse databases with well-established molecular representations [11] | More modest databases in both number and content [11] |
| Representation Standards | SMILES, InChI, molecular graphs | CIF files, composition-based representations, crystal graphs |
| Experimental Data | Abundant physicochemical and biochemical data [7] [14] | Sparse, high-cost experimental data leading to class imbalance issues [12] |
| Software Compatibility | Mature software ecosystem for organic molecules [11] | Emerging tools often require specialized adaptation for inorganic systems |

Methodological Frameworks and Experimental Protocols

QSPR Workflow for Organic Compounds

The standard workflow for organic QSPR modeling involves carefully curated molecular datasets, descriptor calculation, and model validation following OECD principles [7]:

Protocol 1: Organic QSPR Model Development for Retention Index Prediction

- Data Curation: Compile experimental data for target property (e.g., retention indices of 61 volatile organic compounds in quinoa seeds) [7]

- Molecular Structure Optimization: Optimize molecular geometries using semi-empirical or DFT methods to obtain minimum energy conformations

- Descriptor Calculation: Compute 5,633+ molecular descriptors categorized into 33 logical blocks and 166 MACCS structural keys using software such as alvaDesc [7]

- Descriptor Filtering:

  - Remove non-informative descriptors (2,578 constant-value features)

  - Eliminate near-constant values (64 descriptors)

  - Exclude features with missing values (43 descriptors)

  - Result: 2,948 descriptors for supervised selection [7]

- Dataset Division: Split data into training set (48 compounds, ~80%) and test set (13 compounds, ~20%) using a balanced subset method [7]

- Model Development: Employ multivariate analysis to identify optimal 4-descriptor model through supervised variable selection

- Validation:

  - Internal validation: Cross-validation with various strategies

  - External validation: Predict test set compounds not used in training

  - Statistical metrics: R²train = 0.957, R²test = 0.954 [7]

- Applicability Domain: Define chemical space where model provides reliable predictions
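The descriptor filtering step and the counts reported above can be sketched and cross-checked as follows. The filter is an illustrative assumption: the 90% cut-off for "near-constant", the order of the checks, and the toy descriptor table are all hypothetical, and only the totals in the final lines come from the protocol.

```python
def filter_descriptors(table, near_constant_fraction=0.9):
    """Split descriptor columns into kept and dropped groups.

    table: dict mapping descriptor name -> list of values (None = missing).
    """
    kept = {}
    dropped = {"missing": [], "constant": [], "near_constant": []}
    for name, values in table.items():
        if any(v is None for v in values):
            dropped["missing"].append(name)
            continue
        counts = {}
        for v in values:
            counts[v] = counts.get(v, 0) + 1
        most_common = max(counts.values())
        if most_common == len(values):
            dropped["constant"].append(name)
        elif most_common / len(values) >= near_constant_fraction:
            dropped["near_constant"].append(name)
        else:
            kept[name] = values
    return kept, dropped


# Toy table with one column of each kind (hypothetical values).
toy = {
    "MW": [46.1, 60.1, 74.1, 88.2, 102.2, 58.1, 72.1, 86.1, 100.2, 44.1],
    "nX": [1] * 10,
    "nRing": [0] * 9 + [1],
    "logP": [0.1, None, 0.5, 0.9, 1.3, 0.2, 0.6, 1.0, 1.4, 0.0],
}
kept, dropped = filter_descriptors(toy)
print(sorted(kept), dropped)

# Bookkeeping check on the numbers reported in the protocol.
remaining = 5633 - 2578 - 64 - 43
train_fraction = 48 / (48 + 13)
print(remaining, round(train_fraction, 2))
```

The bookkeeping lines confirm that the reported counts are self-consistent: 2,948 descriptors remain after filtering, and the 48/13 split corresponds to roughly 79% and 21% of the 61 compounds.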

Advanced Inorganic Material Generation with MatterGen

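For inorganic materials, generative models such as MatterGen complement descriptor-based QSPR: instead of predicting properties for a fixed list of candidates, they generate candidate crystal structures conditioned on target properties: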

Protocol 2: Inorganic Material Generation with MatterGen

Base Model Pretraining:

- Dataset: Curate Alex-MP-20 dataset with 607,683 stable structures from Materials Project and Alexandria datasets [13]

- Architecture: Implement diffusion process generating atom types, coordinates, and periodic lattice simultaneously

- Training: Learn score network with invariant scores for atom types and equivariant scores for coordinates/lattice

Property-Guided Fine-tuning:

- Adapter Modules: Inject tunable components into base model layers to alter outputs based on property labels [13]

- Conditioning: Use classifier-free guidance to steer generation toward target properties (chemistry, symmetry, mechanical/electronic/magnetic properties)

- Multi-property Optimization: Generate materials satisfying multiple constraints (e.g., high magnetic density with low supply-chain risk) [13]

Stability Assessment:

- DFT Validation: Perform density functional theory calculations on generated structures

- Stability Metric: Define stable materials as those with energy ≤0.1 eV/atom above convex hull of reference dataset [13]

- Structure Matching: Use ordered-disordered structure matcher to identify new materials
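The stability metric can be illustrated for the simplest case of a binary A-B system, where the convex hull is a lower envelope in the plane of composition versus formation energy. The sketch below is a simplification under stated assumptions: real reference hulls span many elements, and the reference and candidate energies used here are hypothetical. Only the 0.1 eV/atom threshold is taken from the protocol.

```python
STABILITY_THRESHOLD = 0.1  # eV/atom above the hull, as in the protocol


def lower_hull(points):
    """Lower convex hull of (fraction of B, formation energy per atom) points."""
    best = {}
    for x, e in points:  # keep the lowest energy at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # last hull point lies on or above the new segment
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Hull energy at composition x by linear interpolation between vertices."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the range spanned by the references")


def energy_above_hull(hull, x, e):
    return e - hull_energy(hull, x)


def is_stable(hull, x, e, threshold=STABILITY_THRESHOLD):
    return energy_above_hull(hull, x, e) <= threshold


# Hypothetical reference phases: pure A, pure B, and two compounds.
references = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (1.0, 0.0)]
hull = lower_hull(references)

print(hull)
print(is_stable(hull, 0.25, -0.15))  # candidate slightly above the hull
print(is_stable(hull, 0.75, 0.0))    # candidate well above the hull
```

Note that the reference phase at composition 0.25 is itself not a hull vertex, because a mixture of pure A and the compound at 0.5 has lower energy at that composition.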

Experimental Validation:

- Synthesis: Select generated materials for laboratory synthesis

- Property Measurement: Compare measured properties with target values (e.g., within 20% of target) [13]

Cross-Domain Modeling with CORAL Software

For researchers working across both domains, the CORAL software offers a unified approach:

Protocol 3: Cross-Domain QSPR with Monte Carlo Optimization

- Data Preparation: Represent compounds via SMILES without distinction between organic and inorganic compounds [11]

- Descriptor Calculation: Use correlation weights of SMILES attributes as descriptors (DCW)

- Dataset Division: Split data into four subsets using Las Vegas algorithm:

  - Active training set (optimization of correlation weights)

  - Passive training set (evaluation of correlation weight suitability)

  - Calibration set (detection of optimization stagnation)

  - Validation set (final model evaluation) [11]

- Optimization Strategies:

  - Apply Index of Ideality of Correlation (IIC) for toxicity endpoints

  - Use Coefficient of Conformism of Correlative Prediction (CCCP) for partition coefficient and enthalpy models [11]

- Model Validation: Assess predictive potential through statistical analysis across multiple random splits
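The idea of a SMILES-based descriptor computed from correlation weights can be sketched as below. This is not the CORAL implementation: the tokenizer is a deliberately simple regular expression, the weights are hypothetical constants (in practice they are obtained by Monte Carlo optimization), and the four-way split uses a plain seeded shuffle in place of the Las Vegas algorithm named in the protocol.

```python
import random
import re

# Simplified SMILES tokenizer: bracket atoms, Br/Cl, single letters,
# ring-closure digits, and common bond/branch symbols.
TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|[A-Za-z]|\d|[=#()+\-/\\@.%]")


def smiles_attributes(smiles):
    """Split a SMILES string into attribute tokens."""
    return TOKEN.findall(smiles)


def dcw(smiles, weights):
    """Descriptor of correlation weights: sum of the weights of all tokens."""
    return sum(weights.get(tok, 0.0) for tok in smiles_attributes(smiles))


def four_way_split(items, seed=0):
    """Seeded random split into the four subsets used in the protocol."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    names = ("active_training", "passive_training", "calibration", "validation")
    return {name: shuffled[i::4] for i, name in enumerate(names)}


# Hypothetical correlation weights (unlisted tokens contribute zero).
weights = {"C": 0.5, "O": 1.0, "N": 0.75, "Cl": -0.25, "[Pt]": 2.0}

organic = "CCO"               # ethanol
inorganic = "Cl[Pt](Cl)(N)N"  # simplified cisplatin-like SMILES

print(smiles_attributes(inorganic))
print(dcw(organic, weights), dcw(inorganic, weights))
print(four_way_split(range(8)))
```

Because both compounds pass through the same tokenizer and weight table, no separate descriptor set is needed for the metal-containing structure, which is the cross-domain property the protocol relies on.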

Essential Research Reagent Solutions

Table 3: Key Research Tools for Organic and Inorganic Modeling

| Research Tool | Application Domain | Function | Examples |
|---|---|---|---|
| KingDraw | Organic Chemistry | Molecular structure drawing and visualization | Drawing NF antibiotic structures [3] |
| alvaDesc | Organic QSPR | Molecular descriptor calculation (5,633+ descriptors) | Calculating descriptors for VOC retention index prediction [7] |
| CORAL Software | Cross-Domain | QSPR/QSAR modeling with Monte Carlo optimization | Modeling octanol-water coefficients for mixed compound sets [11] |
| DFT Codes | Inorganic Materials | Electronic structure calculations for crystals | Formation energy, band structure calculations [13] [12] |
| MatterGen | Inorganic Materials | Generative model for stable inorganic crystals | Designing materials with target properties [13] |
| Paragraph2Actions | Organic Synthesis | Converting experimental text to action sequences | Extracting procedures from patents for training data [15] |

The fundamental divide between organic and inorganic compound modeling stems from intrinsic differences in chemical bonding, structural complexity, and available data infrastructure. Organic QSPR benefits from well-established molecular representations and abundant data, enabling precise property prediction using topological and electronic descriptors. In contrast, inorganic QSPR confronts the challenges of periodic structures, diverse bonding environments, and data scarcity, requiring specialized approaches like generative models and crystal graph representations. For researchers pursuing inorganic materials design, integration of generative AI with high-throughput experimentation and synthesis validation represents the most promising path forward. As both fields evolve, cross-domain approaches that leverage strengths from each domain will become increasingly valuable, particularly for emerging applications in hybrid organic-inorganic materials and metallodrug development.

The pursuit of novel materials through data-driven discovery is revolutionizing inorganic chemistry, yet this promise is constrained by a fundamental challenge: data scarcity. For many properties critical to the development of next-generation technologies, the available data is both scarcely populated and of variable quality, creating a significant bottleneck for machine learning (ML)-accelerated discovery [16]. This data landscape is characterized by a trade-off between enumerating hypothetical materials and studying those with existing synthesis data, with each approach presenting distinct challenges for building robust quantitative structure-property relationship (QSPR) models [16]. The problem is particularly acute for inorganic compounds and transition metal complexes (TMCs), where properties computed from widely used methods like density functional theory (DFT) can be highly sensitive to the chosen computational parameters, thus reducing data utility for discovery efforts [16]. This article examines the current state of inorganic compound databases within the context of QSPR research, evaluates methodological innovations overcoming data limitations, and provides a strategic framework for database utilization in predictive materials design.

The Data Scarcity Challenge in Inorganic Chemistry

Data scarcity in inorganic chemistry stems from multiple interconnected factors that limit the availability of high-fidelity data for QSPR modeling.

Methodological Limitations in Data Generation

The reliance on computational methods like DFT for high-throughput screening introduces significant data quality challenges. Different density functional approximations (DFAs) can yield varying results for the same compound, with errors often most pronounced in promising classes of functional materials exhibiting challenging electronic structure, such as those with strong multireference character [16]. For these systems, cost-prohibitive wavefunction theory (WFT) calculations may be necessary to obtain accurate properties, creating a fundamental tension between data quantity and fidelity [16]. This sensitivity to the chosen method biases the generated data and lowers its quality, which in turn limits its usefulness for discovery efforts.

Experimental Data Limitations

While high-throughput experimentation has advanced significantly, it remains time-intensive relative to computation and is often limited in scope to a single class of materials amenable to automated synthesis and characterization [16]. Except for structural data, experimental properties are seldom reported by multiple sources in a standardized format. Furthermore, positive publication bias creates a significant data imbalance, as negative results are often underrepresented in the literature [16]. This bias toward successful experiments limits the ability of models to learn from failures, which is crucial for predicting synthesis outcomes and materials stability.

Current Database Landscape and Utilization

Researchers navigating the sparse data landscape for inorganic compounds rely on both established repositories and innovative utilization strategies. The table below summarizes key databases and their applications in addressing data scarcity challenges.

Table 1: Key Databases and Applications in Inorganic Materials Research

| Database Name | Primary Content | Scale | Applications in QSPR | Notable Strengths |
|---|---|---|---|---|
| Cambridge Structural Database (CSD) [16] | Experimentally determined organic and metal-organic crystal structures | >100,000 TMCs [16]; 90,000 MOFs [16] | Assigning oxidation/spin states; Training ML models for property prediction | Large volume of curated experimental data |
| Materials Project [16] | Computed properties of inorganic materials | Not specified | High-throughput virtual screening; Materials design principles | Computed properties accessible for community use |
| CCDC Database [17] | Crystal structures from crystallographic studies | Not specified | Pretraining deep learning models for catalytic property prediction | Structural data for transfer learning |
| QM9 [17] | Quantum chemical properties for small organic molecules | Not specified | Baseline for molecular property prediction | Extensive quantum chemical calculations |
| Custom-tailored Virtual Databases [17] | Computer-generated molecular structures with topological indices | 25,000-30,000 molecules [17] | Pretraining deep learning models for catalytic activity prediction | Cost-efficient generation of large datasets |

When high-throughput, automated tools are unavailable, researchers increasingly turn to community data resources like the CSD [16]. For example, Taylor et al. curated a set of bimetallic complexes from the CSD with emergent metal-metal interactions that are challenging to predict with first-principles DFT modeling [16]. They used a subset of experimentally characterized complexes to train machine learning models that could identify promising candidates from the broader CSD, demonstrating how existing community resources can be mined to overcome data scarcity for specific challenging properties.

Methodological Innovations for Data Scarcity

Transfer Learning and Virtual Databases

Transfer learning (TL) has emerged as a powerful strategy for overcoming data limitations in catalysis research and inorganic chemistry [17]. This approach consists of transferring knowledge acquired from one task to another to enhance model performance with minimal data. A particularly innovative approach involves using custom-tailored virtual molecular databases composed of inorganic-like fragments for pretraining graph convolutional network (GCN) models [17].

Table 2: Methodological Approaches to Data Scarcity in Inorganic Chemistry

| Methodological Approach | Core Principle | Application Examples | Limitations |
|---|---|---|---|
| Transfer Learning from Virtual Databases [17] | Pretrain models on large virtual datasets; Fine-tune on limited experimental data | Predicting photocatalytic activity of organic photosensitizers | Domain shift between virtual and real molecules |
| Consensus Across Multiple DFAs [16] | Aggregate predictions from multiple density functionals | Identifying optimal DFA-basis set combinations using game theory | Increased computational cost |
| Multifidelity Modeling [16] | Combine high-cost accurate data with lower-cost approximations | Using both WFT and DFT data for improved predictions | Complex model integration |
| Natural Language Processing [16] | Extract structured data from scientific literature | Automated data extraction from thousands of manuscripts | Data quality and standardization issues |

Researchers have developed methods to construct these virtual databases by systematically combining molecular fragments or using reinforcement learning (RL)-based molecular generation [17]. For example, one study used 30 donor fragments, 47 acceptor fragments, and 12 bridge fragments to generate over 25,000 molecules, 94-99% of which were unregistered in PubChem [17]. To address the challenge of obtaining expensive quantum chemical or experimental properties for these virtual molecules, researchers have used readily calculable molecular topological indices as pretraining labels, which nonetheless improve predictive performance for real-world catalytic activity when used in transfer learning approaches [17].
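The systematic fragment-combination route can be sketched as a simple enumeration. The example below is a minimal illustration, assuming a single donor-bridge-acceptor connection pattern and placeholder fragment labels (`D1`, `B1`, `A1`, and so on are hypothetical, not the fragments used in [17]). That one pattern yields 30 × 12 × 47 = 16,920 combinations, fewer than the more than 25,000 molecules reported, so the original study presumably includes further connection patterns that this sketch does not attempt to reproduce.

```python
from itertools import product

# Hypothetical placeholder labels; real fragments are chemical substructures.
donors = [f"D{i}" for i in range(1, 31)]      # 30 donor fragments
acceptors = [f"A{i}" for i in range(1, 48)]   # 47 acceptor fragments
bridges = [f"B{i}" for i in range(1, 13)]     # 12 bridge fragments


def enumerate_dba(donors, bridges, acceptors):
    """Yield every donor-bridge-acceptor combination as a label string."""
    for donor, bridge, acceptor in product(donors, bridges, acceptors):
        yield f"{donor}-{bridge}-{acceptor}"


virtual_db = list(enumerate_dba(donors, bridges, acceptors))
print(len(virtual_db))  # 30 * 12 * 47 = 16920
```

In a real pipeline each label would be replaced by an actual structure assembly step and a check against existing registries such as PubChem.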

Addressing Electronic Structure Method Sensitivity

Several innovative approaches have been developed to address the challenge of electronic structure method sensitivity in data for ML models:

- Game Theory for Functional Selection: McAnanama-Brereton and Waller developed an approach using game theory to identify optimal DFA-basis set combinations, creating a recommender system that improves prediction consensus [16].

- Consensus Across Multiple DFAs: Another strategy involves leveraging consensus across multiple density functionals to improve prediction reliability and identify areas where high-cost methods are most necessary [16].

- Machine Learning for Multireference Character: Duan et al. used machine learning to detect strong multireference character in molecules, helping identify where conventional DFT methods would fail and more advanced wavefunction theory is needed [16].

The following diagram illustrates a transfer learning workflow from virtual molecular databases to real-world catalyst prediction:

Diagram 1: Transfer learning from virtual databases

Hybrid Computational-Experimental Approaches

Many fundamental electronic properties, such as the ground-state spin of a transition metal complex, remain challenging to determine by computation alone due to strong dependence on the method used [16]. In such cases, a combination of experimental data and computation can overcome these limitations [16]. For instance, Taylor et al. used an artificial neural network trained on DFT bond lengths to assign oxidation and spin states to transition metal complexes in the CSD, demonstrating how hybrid approaches can leverage both computational and experimental data strengths [16].

Experimental Protocols and Workflows

Virtual Database Creation and Transfer Learning Protocol

Objective: To create a transfer learning pipeline for predicting catalytic activity of inorganic compounds using virtual molecular databases.

Materials and Methods:

- Fragment Libraries: Prepare 30 donor fragments (aryl/alkyl amino groups, carbazolyl groups with various substituents), 47 acceptor fragments (nitrogen-containing heterocyclic rings, aromatic rings with electron-withdrawing groups), and 12 bridge fragments (π-conjugated fragments like benzene, acetylene, ethylene) [17].

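- Database Generation: Build the virtual database by systematically combining the donor, acceptor, and bridge fragments, or by using reinforcement learning (RL)-based molecular generation [17].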

- Pretraining Labels: Calculate 16 molecular topological indices (Kappa2, PEOE_VSA6, BertzCT, etc.) using RDKit and Mordred descriptor sets as pretraining labels [17].

- Model Architecture: Implement graph convolutional network (GCN) for molecular representation learning.

- Transfer Learning:

  - Step 1: Pretrain GCN on virtual database using topological indices as targets.

  - Step 2: Fine-tune pretrained model on limited experimental catalytic activity data.

  - Step 3: Evaluate model performance on test set of real-world catalysts.

Validation: Assess model performance using correlation coefficients and root-mean-square error between predicted and experimental catalytic activities [17].
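The two validation metrics named above can be computed in a few lines. This is a minimal sketch using only the standard library; the activity values are invented for illustration and do not come from [17].

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root-mean-square error between predictions and reference values."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


# Illustrative (made-up) catalytic activities on an arbitrary scale.
experimental = [0.10, 0.35, 0.50, 0.80]
predicted = [0.15, 0.30, 0.55, 0.75]

print(f"r = {pearson_r(experimental, predicted):.3f}")
print(f"RMSE = {rmse(experimental, predicted):.3f}")
```

Reporting both metrics is useful because a high correlation can coexist with a systematic offset that only the RMSE reveals.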

Consensus DFT Protocol for Improved Data Quality

Objective: To generate more reliable computational data for inorganic compounds through consensus across multiple density functionals.

Workflow:

- Functional Selection: Select multiple density functional approximations (DFAs) representing different rungs of Jacob's Ladder (e.g., LDA, GGA, meta-GGA, hybrid) [16].

- Consensus Calculation: Compute target properties using all selected DFAs and analyze distribution of results [16].

- Game Theory Application: Implement game theory approach to identify optimal DFA-basis set combinations that maximize consensus [16].

- Data Integration: Incorporate consensus values into materials database with metadata on method agreement.
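The consensus and data-integration steps can be sketched as follows. This is an illustrative example only: the energies, the complex names, and the 5 kcal/mol agreement threshold are assumptions, and the game-theory selection step described in [16] is not implemented here.

```python
from statistics import mean, stdev

# Hypothetical spin-splitting energies (kcal/mol) per complex and functional rung.
results = {
    "complex_A": {"LDA": -12.0, "GGA": -10.5, "meta-GGA": -11.0, "hybrid": -9.5},
    "complex_B": {"LDA": -20.0, "GGA": -5.0, "meta-GGA": 2.0, "hybrid": 15.0},
}


def consensus(values_by_dfa, max_spread=5.0):
    """Summarize one property across functionals.

    max_spread is an assumed agreement threshold (sample standard deviation).
    """
    values = list(values_by_dfa.values())
    spread = stdev(values)
    return {
        "mean": mean(values),
        "spread": spread,
        "agrees": spread <= max_spread,  # metadata on method agreement
    }


summary = {name: consensus(values) for name, values in results.items()}
for name, entry in summary.items():
    print(name, entry)
```

Entries flagged as not agreeing are natural candidates for recalculation with higher-cost methods before they enter a training set.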

The following workflow illustrates the hybrid computational-experimental approach for building robust QSPR models:

Diagram 2: Hybrid computational-experimental workflow

Table 3: Essential Computational and Experimental Resources for Inorganic Database Research

| Resource Category | Specific Tools/Resources | Function/Application | Key Features |
|---|---|---|---|
| Computational Databases | Materials Project [16] | High-throughput screening of inorganic materials | Computed properties for community use |
| | Cambridge Structural Database (CSD) [16] | Training ML models on experimental crystal structures | >100,000 transition metal complexes |
| Experimental Databases | CSD [16] | Source for experimental structural data | Curated crystal structures |
| | Micro-computed tomographies [18] | Digitization of real material morphologies | Precise morphology for diffusion simulations |
| Software & Algorithms | RDKit/Mordred descriptors [17] | Calculation of molecular topological indices | Cost-efficient pretraining labels |
| | Graph Convolutional Networks (GCN) [17] | Molecular representation learning | Transfer learning from virtual databases |
| | Lattice Boltzmann Model (LBM) [18] | Single-phase fluid flow simulation in porous media | GPU-accelerated computation |
| Molecular Generation | Systematic fragment combination [17] | Virtual database generation | Controlled exploration of chemical space |
| | Reinforcement learning molecular generator [17] | Directed exploration of chemical space | Reward based on molecular dissimilarity |

The landscape of inorganic compound databases is rapidly evolving from static repositories to dynamic platforms that integrate community feedback and continuous learning. Future developments will likely focus on creating more sophisticated feedback mechanisms where researcher interactions with model predictions are systematically incorporated to improve both data quality and model performance [16]. As these databases grow more comprehensive through the integration of virtual compounds, multifidelity data, and automated literature extraction, they will increasingly enable the discovery of robust materials with well-understood structure-property relationships [16].

The integration of physical models with machine learning approaches represents another promising direction for overcoming data scarcity. Such hybrid approaches can leverage the fundamental knowledge encoded in physical models to reduce the amount of empirical data needed for accurate predictions. Similarly, the use of large language models for automated data extraction from the vast body of existing scientific literature shows considerable potential for populating databases with previously inaccessible information [19].

In conclusion, while data scarcity remains a significant challenge in inorganic materials research, the development of innovative database generation strategies, transfer learning methodologies, and hybrid computational-experimental approaches is rapidly expanding the frontiers of what is possible. By strategically leveraging these emerging resources and techniques, researchers can accelerate the discovery and development of novel inorganic compounds with tailored properties for specific applications, from catalysis to energy storage and beyond.

The development of quantitative structure-property relationship (QSPR) models for inorganic compounds presents unique challenges and opportunities in materials science and drug development. Unlike organic molecules, inorganic systems often feature complex bonding patterns, periodicity, and diverse elemental compositions that require specialized descriptors for accurate characterization. This technical guide provides an in-depth examination of the core molecular descriptors essential for modeling inorganic compounds, framing them within the broader context of modern QSPR research. The descriptors covered herein enable researchers to correlate structural features with physical properties, biological activity, and materials performance, thereby accelerating the design of novel inorganic materials with tailored functionalities.

Topological Descriptors for Inorganic Systems

Topological descriptors quantify molecular structure using graph theory, representing atoms as vertices and bonds as edges. While originally developed for organic molecules, recent advances have extended their applicability to inorganic compounds.

Classical Topological Indices

Traditional graph-based indices provide a mathematical foundation for characterizing molecular structure, though their application to inorganic systems often requires modification:

- Wiener Index: The sum of the shortest path distances between all pairs of atoms in the molecular graph, useful for characterizing branching in molecular structures [20].

- Zagreb Indices: The first Zagreb index (M₁) is the sum of the squared vertex degrees, while the second (M₂) is the sum, over all edges, of the product of the degrees of the two adjacent vertices. These indices relate to the total π-electron energy of molecules [21].

- Randic Index: Also known as the connectivity index, it is defined as the sum of (dᵢdⱼ)^(−1/2) over all edges in the molecular graph, where dᵢ and dⱼ are the degrees of the two vertices joined by each edge [21].

Recent research has proposed novel topological indices specifically designed for inorganic compounds. The Tareq Index (TI) incorporates bond multiplicity and molecular connectivity to capture bonding patterns in inorganic acids, addressing limitations of traditional indices like Zagreb or Randic for these systems [22].
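The classical indices can be computed directly from an adjacency list. The sketch below is a minimal illustration using the standard definitions (Wiener as the sum of shortest-path distances over atom pairs, first Zagreb as the sum of squared degrees, second Zagreb and Randic as sums over edges). The example graph is an assumption chosen for simplicity: a hydrogen-suppressed SO₄ core treated as a star graph with bond multiplicity ignored, which is precisely the information that indices such as the Tareq Index are intended to add. The Tareq Index itself is not implemented here.

```python
from collections import deque


def degrees(adj):
    """Vertex degree for every atom in an adjacency-list graph."""
    return {v: len(neighbors) for v, neighbors in adj.items()}


def edge_set(adj):
    """Undirected edges, each stored once."""
    return {frozenset((u, v)) for u in adj for v in adj[u]}


def wiener_index(adj):
    """Sum of shortest-path distances over all unordered atom pairs (BFS)."""
    total = 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2  # each pair was counted from both ends


def first_zagreb(adj):
    return sum(d ** 2 for d in degrees(adj).values())


def second_zagreb(adj):
    deg = degrees(adj)
    return sum(deg[u] * deg[v] for u, v in map(tuple, edge_set(adj)))


def randic_index(adj):
    deg = degrees(adj)
    return sum((deg[u] * deg[v]) ** -0.5 for u, v in map(tuple, edge_set(adj)))


# Hydrogen-suppressed SO4 core: sulfur bonded to four oxygens (multiplicity ignored).
so4 = {"S": ["O1", "O2", "O3", "O4"],
       "O1": ["S"], "O2": ["S"], "O3": ["S"], "O4": ["S"]}

print(wiener_index(so4), first_zagreb(so4), second_zagreb(so4), randic_index(so4))
# -> 16 20 16 2.0
```

Because every S-O bond is treated identically, S=O and S-OH bonds are indistinguishable in this representation.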

Table 1: Classical Topological Indices and Their Applications to Inorganic Systems

| Index Name | Mathematical Definition | Application in Inorganic Systems | Limitations |
|---|---|---|---|
| Wiener Index | W = ½∑ᵢ∑ⱼ dᵢⱼ | Characterizes branching in molecular structures | Limited for periodic systems |
| First Zagreb Index | M₁ = ∑ᵢ dᵢ² | Correlates with total π-electron energy | Less sensitive to bond multiplicity |
| Randic Index | χ = ∑(dᵢdⱼ)^(−1/2) | Predicts boiling points and solubility | Designed primarily for hydrocarbons |
| Tareq Index (TI) | Incorporates bond multiplicity | Specific to inorganic acid molecules | Newly proposed, limited validation |

Statistical-Mechanical Interpretation

A significant theoretical advance shows that topological indices can be interpreted as molecular partition functions. Starting from generalized tight-binding Hamiltonians of molecular graphs, the indices emerge as the partition functions of molecules submerged in a thermal bath at extremely high temperature. This interpretation has been reported to substantially improve quantitative structure-property relations [23].

Electronic Structure Descriptors

Electronic descriptors derived from quantum chemical computations provide insights into reactivity, stability, and electronic properties of inorganic compounds.

Density Functional Theory (DFT) Based Descriptors

Low-cost quantum chemical computations using the DFT/COSMO approach enable the determination of theoretical molecular descriptor scales independent of experimental data. These descriptors have demonstrated good performance in LSER correlations of solvation-related thermodynamic and kinetic properties [24]:

- Volume (VCOSMO): Characterizes molecular volume within the COSMO solvation model

- Hydrogen Bond/Lewis Acidity (αCOSMO) and Basicity (βCOSMO): Quantify hydrogen bonding capability and Lewis acid-base properties

- Charge Asymmetry (δCOSMO): Captures charge distribution asymmetry in nonpolar molecular regions

These theoretical descriptor scales correlate linearly with established empirical scales (R² mostly above 0.8, and in some cases above 0.9), validating their physical relevance despite being derived purely computationally [24].

Table 2: Electronic Structure Descriptors for Inorganic Systems

| Descriptor Category | Specific Descriptors | Computational Level | Applications |
|---|---|---|---|
| DFT/COSMO Parameters | VCOSMO, αCOSMO, βCOSMO, δCOSMO | DFT/COSMO | Solvation properties, partition coefficients |
| Frontier Molecular Orbitals | HOMO/LUMO energies, band gap | Semi-empirical to DFT | Reactivity, conductivity, optical properties |
| Charge-Based Descriptors | Partial atomic charges, dipole moments | Various levels of theory | Polarity, intermolecular interactions |
| Surface Property Descriptors | CPSA, TPSA | Empirical to QM | Solubility, membrane permeability |

Electronic and Crystal Structure (ECS²) Model

The ECS² model predicts binary solid solution formation by prioritizing electronic structure similarity, with atomic size as a secondary factor. This approach significantly outperforms the traditional 15% Hume-Rothery size rule (84.5% vs. 70.7% reliability) and the Darken-Gurry model (84.5% vs. 72.4% reliability). The model uses crystal structures as surrogates for electronic structure and atomic sizes of elements, making it practical for predicting primary solid solutions [25].
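For reference, the 15% Hume-Rothery size rule that serves as the baseline above can be written as a one-line check. The sketch below implements only that baseline, not the ECS² model, whose electronic-structure criterion is not specified in enough detail here to reproduce. The metallic radii are approximate textbook values included for illustration.

```python
# Approximate metallic radii in angstroms (illustrative values, not from [25]).
RADII = {"Cu": 1.28, "Ni": 1.25, "Pb": 1.75}


def size_mismatch_percent(r_solvent, r_solute):
    """Relative atomic-size difference with respect to the solvent, in percent."""
    return abs(r_solute - r_solvent) / r_solvent * 100.0


def passes_size_rule(solvent, solute, limit=15.0):
    """Hume-Rothery size criterion: mismatch must not exceed `limit` percent."""
    return size_mismatch_percent(RADII[solvent], RADII[solute]) <= limit


for solute in ("Ni", "Pb"):
    mismatch = size_mismatch_percent(RADII["Cu"], RADII[solute])
    print(f"Cu-{solute}: {mismatch:.1f}% ->", passes_size_rule("Cu", solute))
```

The rule considers size alone, which is why a model that first screens for electronic-structure similarity can be more reliable.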

Fragment and Surface Descriptors

Property-Labelled Materials Fragments (PLMF)

PLMF descriptors represent inorganic crystals as 'coloured' graphs where vertices are decorated with atomic properties rather than just elemental symbols. The construction methodology involves several key steps [26]:

- Atomic Connectivity Determination: Partition crystal structure into atom-centered Voronoi-Dirichlet polyhedra using computational geometry

- Bonding Criteria: Atoms are connected if they share a Voronoi face AND the interatomic distance is shorter than the sum of Cordero covalent radii (within 0.25 Å tolerance)

- Graph Representation: Create adjacency matrix reflecting global topology including interatomic bonds and contacts

- Fragment Generation: Partition full graph into path fragments (linear strands of up to 4 atoms) and circular fragments (coordination polyhedra)

The PLMF approach incorporates diverse atomic properties including Mendeleev group/period numbers, valence electrons, atomic mass, electron affinity, thermal conductivity, heat capacity, ionization potentials, effective atomic charge, molar volume, chemical hardness, various radii, electronegativity, and polarizability [26].
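The dual bonding criterion described above reduces to a short predicate. In this minimal sketch the Voronoi face-sharing test is assumed to have been computed elsewhere and is passed in as a boolean; the function name, the covalent radii table (approximate Cordero values), and the example distances are illustrative assumptions rather than part of the published implementation [26].

```python
# Approximate Cordero covalent radii in angstroms (illustrative subset).
COVALENT_RADII = {"Ti": 1.60, "O": 0.66}


def is_bonded(elem_i, elem_j, distance, shares_voronoi_face, tolerance=0.25):
    """Return True only if both PLMF connectivity criteria are met.

    distance            interatomic distance in angstroms
    shares_voronoi_face result of a Voronoi-Dirichlet tessellation done elsewhere
    tolerance           slack added to the sum of covalent radii (angstroms)
    """
    if not shares_voronoi_face:
        return False
    max_length = COVALENT_RADII[elem_i] + COVALENT_RADII[elem_j] + tolerance
    return distance <= max_length


# Example distances are illustrative.
print(is_bonded("Ti", "O", 1.95, True))   # short contact across a shared face
print(is_bonded("O", "O", 2.50, True))    # shared face, but too long to be a bond
print(is_bonded("Ti", "O", 1.95, False))  # no shared face
```

Requiring both conditions prevents long contacts across large Voronoi faces from being counted as bonds.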

Surface Structure Descriptors for Electron Microscopy

AI-STEM represents an automated framework for identifying crystal structures and interfaces from atomic-resolution scanning transmission electron microscopy (STEM) images. The method employs a Bayesian convolutional neural network trained exclusively on simulated images, yet achieves high accuracy on experimental data [27].

The key innovation involves a Fourier-space descriptor (FFT-HAADF) that enhances lattice periodicity information while introducing translational invariance. The workflow involves:

- Local Patch Extraction: Sliding window scans whole image to extract local patches

- Fourier Transform: FFT calculation with pre- and post-processing steps

- BNN Classification: Bayesian neural network assigns symmetry and lattice orientation

- Uncertainty Estimation: Model uncertainty identifies bulk (low uncertainty) vs. interface regions (high uncertainty)

This approach successfully classifies common crystal structures (fcc, bcc, hcp) in various orientations and identifies interfaces without explicit training on defect structures [27].
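The translational invariance that motivates a Fourier-space descriptor can be demonstrated on a toy signal. The sketch below is a one-dimensional illustration under stated assumptions: it uses a naive discrete Fourier transform on a periodic row of "atomic columns" and omits the pre- and post-processing steps of the actual FFT-HAADF descriptor [27]. It shows only that the magnitude spectrum is unchanged when the lattice is shifted within the patch.

```python
import cmath


def dft_magnitudes(signal):
    """Magnitude of the discrete Fourier transform (naive O(n^2) version)."""
    n = len(signal)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                for t, x in enumerate(signal)))
        for k in range(n)
    ]


def circular_shift(signal, shift):
    """Shift a periodic signal to the right by `shift` samples."""
    shift %= len(signal)
    return signal[-shift:] + signal[:-shift]


# Toy 1D "lattice": one bright column every three pixels.
patch = [1.0, 0.0, 0.0] * 4
shifted = circular_shift(patch, 1)

original_spectrum = dft_magnitudes(patch)
shifted_spectrum = dft_magnitudes(shifted)

same = all(abs(a - b) < 1e-9 for a, b in zip(original_spectrum, shifted_spectrum))
print("magnitude spectrum unchanged by shift:", same)
```

The shift changes only the phase of each Fourier coefficient, so a classifier fed with magnitudes sees the same input regardless of where the sliding window happens to cut the lattice.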

Experimental Protocols and Methodologies

DFT/COSMO Descriptor Calculation Protocol

The following detailed methodology enables computation of molecular descriptors using low-cost quantum chemistry:

Computational Setup

- Software: Amsterdam Modeling Suite with ADF/COSMO-RS module

- Theory Level: Density Functional Theory (DFT)

- Solvation Model: COSMO (Conductor-like Screening Model)

- Geometry: Fully optimized molecular structures

Step-by-Step Procedure

Geometry Optimization

- Initial molecular structure preparation

- DFT optimization with appropriate basis set

- Convergence criteria: energy change < 10⁻⁵ Ha, gradient < 10⁻⁴ Ha/Å

COSMO Calculation

- Single-point energy calculation with COSMO solvation model

- Dielectric constant set to infinity (conductor limit)

- Obtain screening charge densities on molecular surface

Descriptor Extraction

- VCOSMO: Calculate from molecular volume in COSMO cavity

- αCOSMO and βCOSMO: Determine from hydrogen bond donor (acidity) and acceptor (basicity) capabilities, respectively, via surface screening charge analysis

- δCOSMO: Compute charge asymmetry in nonpolar regions

Validation

- Linear correlation with empirical scales (Abraham, Kamlet-Taft, Catalan)

- Identify and analyze statistical outliers

- Apply to LSER fitting of solvation-related properties

This methodology has been validated on sets of 128 non-ionic organic molecules and 47 ionic liquid ions, giving coefficients of determination (R²) mostly above 0.8, and in some cases above 0.9, against established empirical scales [24].
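The validation step (linear correlation with an empirical scale, followed by outlier identification) can be sketched with an ordinary least-squares fit. The data points are invented for illustration, and the two-standard-deviation residual cutoff is an assumed convention, not a value taken from [24].

```python
import math


def linear_fit(x, y):
    """Least-squares line y = slope * x + intercept, plus R^2 and residuals."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot, residuals


def flag_outliers(residuals, n_sigma=2.0):
    """Indices whose residual exceeds n_sigma residual standard deviations."""
    sigma = math.sqrt(sum(r ** 2 for r in residuals) / len(residuals))
    return [i for i, r in enumerate(residuals) if abs(r) > n_sigma * sigma]


# Made-up theoretical vs. empirical descriptor values; index 3 is deliberately off.
theoretical = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
empirical = [0.02, 0.21, 0.39, 0.95, 0.80, 1.01]

slope, intercept, r2, residuals = linear_fit(theoretical, empirical)
outliers = flag_outliers(residuals)
print(f"R^2 = {r2:.3f}, outlier indices = {outliers}")
```

Flagged compounds deserve chemical inspection rather than automatic removal, since an outlier may signal a limitation of the descriptor rather than a data error.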

PLMF Generation Protocol

The generation of Property-Labelled Materials Fragments follows this experimental workflow:

Input Data Preparation

- Crystallographic information files (CIF) for inorganic crystals

- Database of atomic properties (33+ physicochemical parameters)

Connectivity Analysis

- Voronoi-Dirichlet tessellation of crystal structure

- Bond determination using dual criteria:

  - Voronoi face sharing between atomic sites

  - Interatomic distance ≤ sum of Cordero covalent radii + 0.25 Å tolerance

Descriptor Computation

- Generate path fragments (maximum length 3) and circular fragments

- Calculate property statistics (min, max, sum, average, standard deviation) for each fragment

- Incorporate crystal-wide properties (lattice parameters, symmetry)

- Filter low-variance (<0.001) and highly correlated (r²>0.95) features

- Final descriptor vector contains 2,494 features

This approach has demonstrated predictive accuracy for eight electronic and thermomechanical properties, including metal/insulator classification, band gap energy, bulk/shear moduli, Debye temperature, and heat capacities [26].
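The descriptor computation stage can be illustrated for a single fragment and property. The sketch below is a simplified assumption-laden example: it uses one atomic property (Pauling electronegativity), a hypothetical O-Ti-O path fragment, and the population standard deviation. The published workflow [26] combines many properties and fragments into the final feature vector.

```python
from statistics import mean, pstdev, pvariance

# Pauling electronegativities for the elements in the example fragment.
ELECTRONEGATIVITY = {"Ti": 1.54, "O": 3.44}


def fragment_stats(fragment, prop):
    """Min, max, sum, average and standard deviation of one property."""
    values = [prop[element] for element in fragment]
    return {
        "min": min(values),
        "max": max(values),
        "sum": sum(values),
        "avg": mean(values),
        "std": pstdev(values),  # population form; an assumption of this sketch
    }


def drop_low_variance(features, threshold=0.001):
    """Keep only feature columns whose variance is at least `threshold`."""
    return {name: column for name, column in features.items()
            if pvariance(column) >= threshold}


stats = fragment_stats(("O", "Ti", "O"), ELECTRONEGATIVITY)
print(stats)

# Hypothetical feature columns across three crystals.
features = {"constant_feature": [1.0, 1.0, 1.0], "useful_feature": [0.0, 1.0, 2.0]}
print(list(drop_low_variance(features)))
```

A correlation filter (r² > 0.95) would follow the variance filter in the same manner, dropping one column from each highly correlated pair.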

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Inorganic Descriptor Calculation

| Tool/Software | Primary Function | Application in Inorganic Systems | Key Features |
|---|---|---|---|
| Amsterdam Modeling Suite | DFT/COSMO computations | Calculation of VCOSMO, αCOSMO, βCOSMO, δCOSMO descriptors | COSMO-RS module for solvation properties |
| CORAL Software | QSPR/QSAR model development | Modeling inorganic compounds and organometallic complexes | Monte Carlo optimization with target functions (IIC, CCCP) |
| AFLOW Repository | High-throughput computational materials data | Source of training data for machine learning models | Contains calculated properties for thousands of inorganic crystals |
| AI-STEM | Automated STEM image analysis | Crystal structure and interface identification from microscopy | Bayesian CNN trained on simulated images |
| PLMF Generator | Fragment descriptor calculation | Representation of inorganic crystals as property-labeled graphs | Voronoi-based connectivity analysis |
| MatterGen | Generative materials design | Inverse design of stable inorganic materials | Diffusion-based generation of crystal structures |

Advanced Applications and Emerging Approaches

Generative Models for Inverse Materials Design

Recent advances in generative models represent a paradigm shift in inorganic materials discovery. MatterGen, a diffusion-based generative model, directly generates stable, diverse inorganic materials across the periodic table and can be fine-tuned toward specific property constraints [13].

Key capabilities of MatterGen include:

- Stable Structure Generation: 78% of generated structures have an energy above the convex hull of less than 0.1 eV/atom

- Novelty: 61% of generated structures are new with respect to known databases

- Property Optimization: Fine-tuning enables generation of materials with target chemistry, symmetry, and mechanical/electronic/magnetic properties

- Synthesizability: Successful experimental validation of generated structures

This approach significantly outperforms previous generative models, more than doubling the percentage of generated stable, unique, and new (SUN) materials while producing structures more than ten times closer to their DFT-relaxed configurations [13].

QSPR/QSAR Modeling Strategies for Inorganics

Comparative studies reveal important differences in QSPR modeling strategies for organic versus inorganic compounds. Key considerations for inorganic systems include:

- Descriptor Optimization: The coefficient of conformism of correlative prediction (CCCP) generally provides better predictive potential for inorganic property models compared to the index of ideality of correlation (IIC) [11]

- Representation Challenges: Salts and disconnected structures require specialized handling compared to predominantly covalent organic molecules

- Data Scarcity: Inorganic compound databases are considerably more limited in both number and content compared to organic databases

Successful modeling of inorganic compounds often requires specialized representations such as the electronic and crystal structure (ECS²) approach, which prioritizes electronic structure compatibility through crystal structure similarity before applying size criteria [25].

The landscape of molecular descriptors for inorganic systems has evolved significantly from adaptations of organic chemistry descriptors to specialized approaches addressing the unique challenges of inorganic compounds. Topological indices with statistical-mechanical interpretations, DFT-derived electronic parameters, property-labeled fragment descriptors, and AI-based structural analysis tools collectively provide a comprehensive toolkit for quantitative structure-property relationship modeling. Emerging generative approaches now enable inverse design of inorganic materials with targeted properties, representing a transformative advancement in materials discovery. As these methodologies continue to mature and integrate, they promise to accelerate the development of novel inorganic compounds with optimized properties for applications spanning energy storage, catalysis, electronics, and pharmaceutical development.

The Critical Role of Data Curation and Standardization in Model Reliability

In the field of quantitative structure-property relationship (QSPR) research, the reliability of predictive models is paramount. For inorganic compounds and drug development applications, the adage "garbage in, garbage out" is particularly pertinent. Model reliability begins not with algorithmic sophistication but with the foundational practices of data curation and standardization. Recent perspectives highlight that while the importance of data curation is recognized across research domains, its discussion is only beginning to gain traction in materials science [28]. This technical guide examines the critical need for rigorous data curation standards, detailing methodologies and frameworks that ensure QSPR models for inorganic compounds achieve the reproducibility and accuracy required for scientific and regulatory acceptance.

The Data Quality Imperative in QSPR Modeling

The performance of QSPR models is intrinsically tied to the quality of the underlying data and the methodologies used for modeling [29]. Inaccurate or inconsistent data propagates through the modeling pipeline, compromising predictive accuracy and scientific validity. For inorganic compounds, this challenge is exacerbated by the complexity of crystalline structures, diverse synthesis conditions, and varied experimental protocols.

The consequences of poor data quality are profound. Without rigorous curation, even advanced machine learning algorithms produce models that fail to generalize beyond their training sets or provide unreliable predictions for regulatory decisions. Research indicates that embracing a culture of rigorous data curation is essential to promoting the reliability, reproducibility, and integrity of materials research, which in turn enables the development of trustworthy AI and machine learning models that depend on quality data [28].

The Standardization Gap in Materials Informatics

Despite established databases such as the Crystallography Open Database (COD) and the Cambridge Structural Database (CSD), inconsistent data reporting remains a significant obstacle. The absence of unified data curation standards leads to heterogeneous datasets with incompatible formats, missing metadata, and unvalidated entries. This heterogeneity creates artificial boundaries in data and hinders the development of robust, generalizable models [30] [28].

A Standardized Data Curation Pipeline

To address these challenges, we propose a sample data curation pipeline for materials chemistry. This workflow transforms raw, heterogeneous data into a curated, standardized resource suitable for reliable QSPR modeling; its stages are specified below.

Pipeline Stage Specifications

Data Curation and Cleaning

The initial stage involves rigorous data cleaning to identify and rectify inconsistencies, outliers, and errors. This process includes:

- Unit normalization: Ensuring all measurement units follow consistent systems (e.g., eV for formation energy, Å for lattice parameters)

- Outlier detection: Implementing statistical methods to identify and verify anomalous data points

- Conflict resolution: Addressing contradictory entries from different sources through systematic verification

- Missing data handling: Implementing appropriate imputation strategies or documenting omission reasons

For inorganic materials, particular attention must be paid to formation energy calculations and phase stability annotations, as these fundamentally impact model predictions [13].
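Two of the cleaning steps above, unit normalization and outlier detection, can be sketched as follows. The example assumes formation energies reported either in eV per atom or in kJ per mole of atoms, and uses the median-absolute-deviation (modified z-score) test with the commonly used 3.5 cutoff. Both the records and the choice of test are illustrative assumptions, not a prescribed standard.

```python
from statistics import median

KJ_PER_MOL_PER_EV = 96.485  # 1 eV per particle is about 96.485 kJ/mol


def to_ev(value, unit):
    """Convert an energy to eV; only the two units used here are supported."""
    if unit == "eV":
        return value
    if unit == "kJ/mol":
        return value / KJ_PER_MOL_PER_EV
    raise ValueError(f"unsupported unit: {unit}")


def mad_outliers(values, cutoff=3.5):
    """Indices whose modified z-score (based on the MAD) exceeds `cutoff`."""
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread, so nothing can be flagged
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - center) / mad > cutoff]


# Hypothetical formation energies for one compound from different sources.
records = [(-1.20, "eV"), (-115.8, "kJ/mol"), (-1.25, "eV"),
           (-1.18, "eV"), (-9.50, "eV")]

normalized = [to_ev(value, unit) for value, unit in records]
suspects = mad_outliers(normalized)
print([round(v, 3) for v in normalized], "suspect indices:", suspects)
```

Flagged entries should be verified against the original source before being corrected or excluded, in line with the conflict-resolution step.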

Structure Standardization

This critical stage ensures consistent representation of inorganic crystal structures:

- Format standardization: Converting diverse structure representations (CIF, POSCAR, etc.) to a unified format

- Symmetry analysis: Applying consistent space group identification and handling of disordered structures

- Descriptor calculation: Generating standardized molecular descriptors and fingerprints compatible with QSPR frameworks

The NFDI4Cat project exemplifies this approach by mapping data and metadata to relevant ontologies and vocabularies, then representing them semantically within the Resource Description Framework (RDF) to ensure machine-readability and cross-referencing capability [31].

Metadata Annotation

Comprehensive metadata annotation provides essential experimental context:

- Synthesis conditions: Documenting temperature, pressure, and precursor information

- Characterization methods: Specifying analytical techniques and instrumentation

- Computational parameters: Recording functional, basis set, and convergence criteria for DFT calculations

- Provenance tracking: Maintaining data lineage from origin through transformations
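One way to make these annotation categories concrete is a small record type. The sketch below is a hypothetical schema, not a community standard: the class name, field names, and required keys are assumptions chosen to mirror the four categories above.

```python
from dataclasses import dataclass, field

# Assumed minimum set of computational parameters to record for a DFT result.
REQUIRED_COMPUTATION_KEYS = ("functional", "basis_set", "convergence")


@dataclass
class CuratedRecord:
    material_id: str
    synthesis: dict          # temperature, pressure, precursors
    characterization: list   # analytical techniques and instruments
    computation: dict        # functional, basis set, convergence criteria
    provenance: list = field(default_factory=list)  # ordered data lineage

    def log_step(self, description: str) -> None:
        """Append one transformation to the provenance trail."""
        self.provenance.append(description)

    def missing_computation_keys(self) -> list:
        """Required computational parameters that were not recorded."""
        return [key for key in REQUIRED_COMPUTATION_KEYS
                if key not in self.computation]


# Illustrative entry with a deliberately incomplete computation block.
record = CuratedRecord(
    material_id="example-001",
    synthesis={"temperature_K": 1173, "pressure_atm": 1.0,
               "precursors": ["TiO2", "BaCO3"]},
    characterization=["powder XRD"],
    computation={"functional": "PBE", "convergence": "1e-5 Ha"},
)
record.log_step("imported from source file")
record.log_step("units normalized")
print(record.missing_computation_keys())  # -> ['basis_set']
```

Checking completeness at ingestion time is cheaper than discovering missing metadata after a model has been trained.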

Quality Validation

The final pre-modeling stage implements multi-faceted validation:

- Internal consistency checks: Verifying that related properties follow physical laws (e.g., energy-volume equations of state)

- Cross-reference validation: Comparing with established databases and literature values

- Expert review: Domain specialist verification of chemically plausible entries

Experimental Protocols for Data Curation

Use Case-Driven Methodology for Catalysis Research

The NFDI4Cat project has developed a comprehensive methodology for ensuring high-quality data and metadata in catalysis research, which serves as a model for inorganic compound QSPR. The protocol involves:

- Use Case Collection: Systematic gathering of research workflows and data from field researchers across biocatalysis, homogeneous catalysis, and heterogeneous catalysis [31]

- Quality Evaluation: Assessing collected use cases against established criteria for data and metadata quality

- Collaborative Refinement: Addressing identified issues through direct collaboration with researchers

- Standardization and Semantic Representation: Mapping standardized metadata to ontologies within the Resource Description Framework (RDF) [31]

This methodology ensures that the resulting data infrastructure comprehensively represents catalysis metadata while adhering to established standards.

OECD-Compliant QSPR Model Development

For regulatory acceptance, QSPR models must adhere to the OECD principles for validation. The following workflow illustrates the integration of curated data with model development to ensure reliability and reproducibility.

The q-RASPR (quantitative read-across structure-property relationship) approach exemplifies OECD-compliant modeling, integrating chemical similarity information with traditional QSPR. This methodology:

- Adheres to all five OECD principles for QSPR model validation [9]

- Defines predicted endpoints clearly and uses transparent, reproducible algorithms

- Determines applicability domains to ensure reliable predictions

- Demonstrates strong internal and external validation metrics [9]
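The applicability-domain requirement can be illustrated with the simplest possible definition, a descriptor-range (bounding-box) check. This is a sketch under stated assumptions: the q-RASPR studies cited above may define the domain differently (for example through similarity or leverage measures), and the descriptor values are invented.

```python
def descriptor_ranges(training_rows):
    """Per-descriptor (min, max) over the training set."""
    columns = list(zip(*training_rows))
    return [(min(column), max(column)) for column in columns]


def in_domain(row, ranges):
    """True if every descriptor of `row` lies inside the training range."""
    return all(low <= value <= high for value, (low, high) in zip(row, ranges))


# Hypothetical training descriptors: (molar mass in g/mol, mean electronegativity).
training = [(80.0, 2.1), (150.0, 2.8), (230.0, 2.4)]
ranges = descriptor_ranges(training)

print(in_domain((120.0, 2.5), ranges))  # inside both ranges
print(in_domain((400.0, 2.5), ranges))  # molar mass outside the training range
```

Predictions for compounds that fail the check are extrapolations and should be reported as such rather than silently returned.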

Model Reproducibility and Deployment Framework

Ensuring model reproducibility requires capturing the complete modeling workflow:

- Complete Code Preservation: Saving all code used for data preprocessing, feature generation, and model training

- Version Control: Documenting software library versions and dependencies

- Data and Model Serialization: Packaging models with all preprocessing steps for direct deployment

Tools like QSPRpred address these needs through automated serialization schemes that save models with required data pre-processing steps, enabling predictions directly from SMILES strings and significantly improving reproducibility and transferability [32].
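The idea of packaging preprocessing together with the model can be sketched with the standard library alone. The example below is not QSPRpred's API. It is a simplified stand-in that assumes a linear model on already-computed descriptors (rather than SMILES input), with hypothetical function names and placeholder parameters.

```python
import json
import os
import platform
import sys
import tempfile


def save_bundle(path, means, stds, coefficients, intercept):
    """Write preprocessing parameters, model weights, and environment info to one file."""
    bundle = {
        "preprocessing": {"means": means, "stds": stds},
        "model": {"coefficients": coefficients, "intercept": intercept},
        "environment": {
            "python": sys.version.split()[0],
            "platform": platform.platform(),
        },
    }
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(bundle, handle, indent=2)


def predict_from_bundle(path, descriptors):
    """Reload the bundle and apply scaling plus the linear model in one call."""
    with open(path, encoding="utf-8") as handle:
        bundle = json.load(handle)
    prep, model = bundle["preprocessing"], bundle["model"]
    scaled = [(x - m) / s for x, m, s in zip(descriptors, prep["means"], prep["stds"])]
    return model["intercept"] + sum(c * x for c, x in zip(model["coefficients"], scaled))


# Placeholder parameters for illustration only.
bundle_path = os.path.join(tempfile.gettempdir(), "qspr_bundle_example.json")
save_bundle(bundle_path, means=[1.0, 10.0], stds=[2.0, 5.0],
            coefficients=[0.5, -1.0], intercept=3.0)
prediction = predict_from_bundle(bundle_path, [3.0, 20.0])
print(prediction)
```

Because the scaling parameters travel with the coefficients, a later user cannot accidentally apply the model to unscaled or differently scaled descriptors.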

Quantitative Impact of Data Curation

The table below summarizes key quantitative findings on how data curation practices impact model performance and scientific outcomes.

Table 1: Quantitative Benefits of Data Curation and Standardization in Materials Informatics

| Metric Category | Specific Impact | Quantitative Improvement | Research Context |
|---|---|---|---|
| Generative Model Performance | Success rate of generating stable, unique, new materials | More than doubled percentage [13] | MatterGen model for inorganic materials design |
| Structural Accuracy | Distance to DFT local energy minimum (RMSD) | >10x closer to ground truth [13] | Comparison with previous generative models |
| Data Comprehension | Information retention with visual + text combination | 65% with visuals vs. 10% with text alone [33] | STEM education research |
| Model Reliability | Rediscovery of experimentally verified structures | >2,000 ICSD structures not seen during training [13] | Validation of generative model output |

Essential Research Reagent Solutions

The following toolkit details essential computational resources and their functions in implementing rigorous data curation and QSPR modeling for inorganic compounds.

Table 2: Essential Computational Tools for Data Curation and QSPR Modeling

| Tool/Resource Name | Type/Category | Primary Function in Data Curation & QSPR |
|---|---|---|
| OPERA | QSAR/QSPR Suite | Provides open-source, open-data QSAR models with predictions for toxicity endpoints and physicochemical properties aligned with OECD standards [29] |
| QSPRpred | Python API | Offers modular toolkit for QSPR modeling with comprehensive serialization of data preprocessing and model components for improved reproducibility [32] |
| NFDI4Cat Methodology | Framework | Establishes use case-driven approach for standardizing catalysis research data through semantic RDF representation [31] |
| Resource Description Framework (RDF) | Semantic Framework | Enables easy integration and cross-referencing of data, ensuring machine-readability and linked data capabilities [31] |
| MatterGen | Generative Model | Creates stable, diverse inorganic materials across periodic table with property constraints, demonstrating impact of quality training data [13] |
| q-RASPR | Modeling Approach | Integrates chemical similarity information with traditional QSPR to enhance predictive accuracy and robustness [9] |

Data curation and standardization are not preliminary administrative tasks but foundational scientific practices that directly determine the reliability and utility of QSPR models for inorganic compounds. As the field advances toward more complex generative models and AI-driven materials design, the principles outlined in this technical guide become increasingly critical. By implementing rigorous data curation pipelines, adopting standardized methodologies, and utilizing appropriate computational tools, researchers can ensure their QSPR models achieve the reproducibility, accuracy, and regulatory acceptance necessary to drive genuine scientific and technological progress in inorganic materials design and drug development.

Methodologies and Real-World Applications: Building Predictive QSPR Models for Inorganics

Quantitative Structure-Property Relationship (QSPR) modeling for inorganic compounds presents unique computational challenges that extend beyond traditional organic-focused approaches. While organic QSPR typically deals with covalent molecular structures, inorganic compounds encompass salts, organometallics, and complex ions characterized by ionic bonding, coordination geometry, metal-specific electronic effects, and diverse solvation behaviors. The descriptor calculation framework must capture these inorganic-specific features to build predictive models for properties such as catalytic activity, Lewis acidity/basicity, and materials performance [34].

Traditional molecular descriptors developed for drug discovery often fall short when applied to broader chemical spaces containing inorganic compounds. This limitation has driven the development of specialized descriptors and approaches that explicitly handle the structural and electronic complexities of inorganic systems [35]. This technical guide examines current methodologies for calculating meaningful descriptors for inorganic compounds within the context of QSPR research, addressing the particular challenges presented by salts, organometallics, and complex ions.

Fundamental Concepts and Inorganic-Specific Challenges

Key Differences from Organic Compound Descriptors

Inorganic compounds require descriptor calculation approaches that account for several unique characteristics. Coordination geometry and metal-ligand bonding are fundamental aspects not present in organic molecules. The variable coordination numbers and oxidation states of metal centers create diverse structural possibilities. Additionally, ionic interactions and lattice energies for salts, along with solvation effects in coordinating solvents, significantly influence properties and reactivity [34] [36].

For organometallic compounds, the presence of both organic and inorganic components necessitates descriptors that capture this hybrid character. Even simple organometallics such as diorganozincs (ZnR₂) in non-coordinating solvents adopt linear C-Zn-C arrangements, with angles of 180° (or flexing between 160° and 180°), as confirmed by Zn 1s HERFD-XANES spectroscopy [34]. This structural information is crucial for developing accurate electronic descriptors.

The Spectroscopic Silence Problem

Many metal centers, particularly closed-shell d¹⁰ Zn²⁺, are "spectroscopically quiet" for common techniques like NMR and UV-Vis, creating a significant challenge for experimental descriptor development. This limitation has driven innovation in X-ray spectroscopy methods, including X-ray absorption near edge structure (XANES) and valence-to-core X-ray emission spectroscopy (VtC-XES), which provide zinc-specific electronic structure information [34]. These techniques enable the development of metal-specific descriptors that directly probe the reactive center rather than relying on indirect measurements through peripheral atoms.

Computational Approaches and Descriptor Frameworks

Graph-Theoretic Representations for Inorganic Systems

Graph theory provides a mathematical foundation for representing inorganic structures, particularly for extended networks. In this approach, atoms correspond to vertices and bonds form the edges of the graph. For silicate networks (CSn), studies have applied degree-based topological indices including the Atom Bond Connectivity (ABC) Index, Atom Bond Sum Connectivity (ABS) Index, and Augmented Zagreb Index (AZI) to quantify structural complexity and connectivity patterns [36].

The mathematical formulations for these indices include:

- ABC Index: \( ABC(G) = \sum\limits_{uv \in E(G)} \sqrt{\frac{dg(u) + dg(v) - 2}{dg(u)\,dg(v)}} \) (quantifies molecular branching)

- Sum Zagreb Index (SZI): \( SZI(G) = \sum\limits_{v \in V(G)} (dg(v))^{3} \) (captures molecular complexity)

- Geometric Arithmetic Index (GAI): \( GAI(G) = \sum\limits_{uv \in E(G)} \frac{2\sqrt{dg(u)\,dg(v)}}{dg(u) + dg(v)} \) (relates to thermodynamic stability)
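The three formulas above translate directly into code once a structure is stored as an edge list. The following is a minimal sketch using only the standard library. The function names are illustrative, and the test graph is a toy 4-cycle chosen for easy hand-checking, not a silicate network.

```python
import math
from collections import Counter


def vertex_degrees(edges):
    """Return dg(v) for every vertex: the number of edges incident to it."""
    degrees = Counter()
    for u, v in edges:
        degrees[u] += 1
        degrees[v] += 1
    return degrees


def abc_index(edges):
    """Atom Bond Connectivity index, summed over edges."""
    dg = vertex_degrees(edges)
    return sum(math.sqrt((dg[u] + dg[v] - 2) / (dg[u] * dg[v])) for u, v in edges)


def szi_index(edges):
    """Sum of cubed vertex degrees, as defined in the SZI formula above."""
    dg = vertex_degrees(edges)
    return sum(d ** 3 for d in dg.values())


def gai_index(edges):
    """Geometric Arithmetic index, summed over edges."""
    dg = vertex_degrees(edges)
    return sum(2 * math.sqrt(dg[u] * dg[v]) / (dg[u] + dg[v]) for u, v in edges)


# Toy graph: a 4-cycle in which every vertex has degree 2.
square = [(0, 1), (1, 2), (2, 3), (3, 0)]
print(abc_index(square), szi_index(square), gai_index(square))
```

For the 4-cycle, each edge contributes sqrt(1/2) to ABC and exactly 1 to GAI, and each vertex contributes 2³ = 8 to SZI, which gives a quick sanity check before applying the functions to larger networks.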

Table 1: Topological Descriptors for Inorganic Network Structures

| Descriptor | Mathematical Formula | Structural Interpretation | Application Example |
|---|---|---|---|
| ABC Index | \( ABC(G) = \sum\limits_{uv \in E(G)} \sqrt{\frac{dg(u) + dg(v) - 2}{dg(u)\,dg(v)}} \) | Molecular branching | Silicate chain stability |
| SZI Index | \( SZI(G) = \sum\limits_{v \in V(G)} (dg(v))^{3} \) | Molecular complexity | Connectivity patterns in CSn |
| Wiener Index | \( W(G) = \sum\limits_{u < v} d(u,v) \) | Overall connectivity | Network compactness |
| GAI Index | \( GAI(G) = \sum\limits_{uv \in E(G)} \frac{2\sqrt{dg(u)\,dg(v)}}{dg(u) + dg(v)} \) | Thermodynamic stability | Structural robustness |
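Unlike the degree-based indices, the Wiener index depends on shortest-path distances d(u,v) between all vertex pairs. One way to compute it for an unweighted, connected graph is a breadth-first search from every vertex, sketched below with illustrative names and toy graphs rather than real silicate structures.

```python
from collections import deque


def wiener_index(edges):
    """Sum of shortest-path distances over all unordered vertex pairs.

    Assumes an unweighted, connected graph given as an edge list.
    """
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)

    total = 0
    for source in adjacency:
        # Breadth-first search yields hop distances from `source`.
        distance = {source: 0}
        queue = deque([source])
        while queue:
            current = queue.popleft()
            for neighbor in adjacency[current]:
                if neighbor not in distance:
                    distance[neighbor] = distance[current] + 1
                    queue.append(neighbor)
        total += sum(distance.values())
    # Each pair was counted once from each endpoint.
    return total // 2


path4 = [(0, 1), (1, 2), (2, 3)]           # toy path on four vertices
cycle4 = [(0, 1), (1, 2), (2, 3), (3, 0)]  # toy 4-cycle
print(wiener_index(path4), wiener_index(cycle4))
```

The cycle has the smaller value even though it has more edges, which illustrates why the index is read as a measure of compactness.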

For single-chain diamond silicates (CSn), these indices follow linear relationships with chain length (n): ABC = 0.1931 + 3.3555n, SZI = 9.8318 + 11.2095n, and GAI = 0.3407 + 4.4641n, enabling quantitative prediction of properties as structure expands [36].
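These linear fits can be evaluated for any chain length without rebuilding the graph. The short sketch below uses the coefficients exactly as quoted above; the dictionary and function names are illustrative.

```python
# (intercept, slope) pairs as quoted in the text for CSn chains.
LINEAR_FITS = {
    "ABC": (0.1931, 3.3555),
    "SZI": (9.8318, 11.2095),
    "GAI": (0.3407, 4.4641),
}


def predict_index(name, n):
    """Evaluate the quoted linear relationship for chain length n."""
    intercept, slope = LINEAR_FITS[name]
    return intercept + slope * n


for n in (1, 5, 10):
    values = {name: round(predict_index(name, n), 4) for name in LINEAR_FITS}
    print(n, values)
```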

Norm Indices for Universal Property Estimation

Norm indices represent a consistent descriptor framework applicable across diverse compound classes, including organics and inorganics. These indices are derived from the norm of matrices combining step matrices (encoding interatomic connections) with property matrices (capturing atomic characteristics) [37].

QSPR models based on norm indices have demonstrated robust predictive capability for critical properties (Pc, Vc, Tc), boiling points (Tb), and melting points (Tm) across diverse chemical spaces. The model for critical temperature exemplifies this approach: \( T_c = -641.511 + \sum\limits_{k=1}^{6} b_k I_k + n_h \sum\limits_{k=7}^{8} b_k I_k + w_s \sum\limits_{k=9}^{16} b_k I_k + s_m \sum\limits_{k=17}^{19} b_k I_k + s_s \sum\limits_{k=20}^{26} b_k I_k \), where Iₖ are norm indices and the modifiers handle non-hydrocarbon (nₕ), weak (wₛ), medium (sₘ), and strong (sₛ) stereochemical effects [37].
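The grouped structure of this model is easier to see in code. The sketch below evaluates the expression for given coefficients, norm indices, and modifier values. The coefficients bₖ are not listed in this text, so the values in the usage example are dummy placeholders, and the function and dictionary names are hypothetical.

```python
# Index ranges (1-based, inclusive) for each group of norm indices.
GROUPS = {
    "base": (1, 6),    # always included
    "n_h": (7, 8),     # scaled by the non-hydrocarbon modifier
    "w_s": (9, 16),    # scaled by the weak modifier
    "s_m": (17, 19),   # scaled by the medium modifier
    "s_s": (20, 26),   # scaled by the strong modifier
}
INTERCEPT = -641.511  # constant term quoted in the text


def critical_temperature(b, indices, modifiers):
    """Evaluate the grouped linear model.

    b, indices: sequences of 26 values (b_1..b_26 and I_1..I_26).
    modifiers: dict with keys "n_h", "w_s", "s_m", "s_s".
    """
    if len(b) != 26 or len(indices) != 26:
        raise ValueError("expected 26 coefficients and 26 norm indices")
    total = INTERCEPT
    for group, (first, last) in GROUPS.items():
        weight = 1.0 if group == "base" else modifiers[group]
        total += weight * sum(b[k - 1] * indices[k - 1] for k in range(first, last + 1))
    return total


# Dummy inputs for illustration only; not the fitted values from [37].
dummy_b = [1.0] * 26
dummy_indices = [1.0] * 26
no_modifiers = {"n_h": 0.0, "w_s": 0.0, "s_m": 0.0, "s_s": 0.0}
print(critical_temperature(dummy_b, dummy_indices, no_modifiers))
```

With every modifier set to zero, only the first six indices contribute, which corresponds to the base term of the model.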

Metal-Specific Electronic Descriptors

For organometallic compounds, metal-centered descriptors provide crucial information about reactivity. Research on diorganozincs has established three zinc-specific descriptors developed through X-ray spectroscopy and computational methods:

- Zinc-specific hardness (ηZn): Characterizes resistance to electron deformation

- Zinc-specific absolute electronegativity (χZn): Represents electron attraction tendency

- Zinc-specific global electrophilicity index (ωZn): Quantifies electrophilic capacity

These intrinsic descriptors capture Lewis acidity/basicity directly at the zinc center, independent of probe molecules, providing more accurate reactivity predictions than peripheral measurements [34].
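The exact zinc-specific definitions used in [34] are not reproduced here. As a hedged illustration, the sketch below applies the standard conceptual-DFT expressions, η = (I − A)/2 and χ = (I + A)/2 from Pearson's framework and ω = χ²/(2η) from Parr's electrophilicity index, with the frontier-orbital approximation I ≈ −E(occupied) and A ≈ −E(unoccupied). Treating the inputs as energies of zinc-containing orbitals is an assumption, and the numbers are placeholders.

```python
def conceptual_dft_descriptors(e_occupied, e_unoccupied):
    """Return (hardness, electronegativity, electrophilicity).

    Inputs are orbital energies in a common unit (e.g. eV); outputs use that unit.
    Uses I = -E(occupied) and A = -E(unoccupied) as an approximation.
    """
    ionization = -e_occupied
    affinity = -e_unoccupied
    hardness = (ionization - affinity) / 2.0
    electronegativity = (ionization + affinity) / 2.0
    electrophilicity = electronegativity ** 2 / (2.0 * hardness)
    return hardness, electronegativity, electrophilicity


# Placeholder orbital energies, not measured values.
eta, chi, omega = conceptual_dft_descriptors(e_occupied=-6.0, e_unoccupied=-2.0)
print(eta, chi, omega)
```

A wider gap between the two orbitals raises the hardness and, for a fixed electronegativity, lowers the electrophilicity index.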

Advanced Representation Learning Approaches

Molecular representation learning has catalyzed a paradigm shift from manually engineered descriptors to automated feature extraction using deep learning. Graph neural networks (GNNs) now provide sophisticated representations that naturally encode coordination geometry by treating atoms as nodes and bonds as edges [38].
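The atoms-as-nodes, bonds-as-edges encoding can be illustrated with a single neighbor-aggregation step, the operation on which GNN layers are built. The sketch below is deliberately simplified: it has no learned weights or nonlinearities, it encodes connectivity only (no 3D coordinates), and the fragment and feature choices are hypothetical.

```python
def aggregate_neighbors(node_features, edges):
    """One round of sum aggregation.

    Each atom's new vector is its own vector plus the sum of the
    vectors of the atoms it is bonded to.
    """
    updated = {atom: list(vec) for atom, vec in node_features.items()}
    for u, v in edges:
        # Bonds are undirected, so information flows both ways.
        for target, source in ((u, v), (v, u)):
            for i, value in enumerate(node_features[source]):
                updated[target][i] += value
    return updated


# Hypothetical C-Zn-C fragment; features are [atomic number, formal charge].
features = {"Zn": [30, 0], "C1": [6, 0], "C2": [6, 0]}
bonds = [("Zn", "C1"), ("Zn", "C2")]
updated = aggregate_neighbors(features, bonds)
print(updated)
```

After one step the zinc vector already reflects both carbon neighbors, while each carbon reflects only zinc; stacking further steps spreads information across longer paths.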

For inorganic compounds, 3D-aware representations that capture spatial geometry offer significant advantages. Equivariant models and learned potential energy surfaces provide physically consistent, geometry-aware embeddings that extend beyond static graphs. These approaches explicitly incorporate quantum mechanical properties and spatial relationships critical for modeling metal-centered reactivity and materials properties [38].

Experimental Protocols for Descriptor Development

X-Ray Spectroscopy Workflow for Metal-Specific Descriptors

The development of zinc-specific descriptors for diorganozincs exemplifies a robust protocol for creating metal-centered descriptors [34]:

Diagram 1: X-ray Spectroscopy Descriptor Workflow

Step 1: Sample Preparation - Prepare 0.1 M solutions of organometallic compounds (e.g., ZnEt₂, ZnPh₂, Zn(C₆F₅)₂) in non-coordinating solvents (toluene/hexane). Exclude water and oxygen using Schlenk line techniques.

Step 2: HERFD-XANES Spectroscopy - Collect Zn 1s high-energy-resolution fluorescence-detected XANES spectra at a synchrotron facility. Identify the characteristic sharp peak at ~9661 eV, which indicates linear C-Zn-C geometry.

Step 3: VtC-XES Measurements - Perform both non-resonant and resonant valence-to-core X-ray emission spectroscopy to identify zinc-containing occupied (OMO) and unoccupied (UMO) molecular orbitals.

Step 4: Computational Validation - Conduct density functional theory (DFT) and time-dependent DFT (TDDFT) calculations to validate geometric structures and electronic transitions observed experimentally.

Step 5: Descriptor Calculation - Calculate ηZn, χZn, and ωZn by combining experimental spectroscopy results with computational chemistry within Pearson's theoretical framework [34].

Topological Descriptor Calculation for Silicate Networks

For extended inorganic structures like single-chain diamond silicates (CSn), apply this computational protocol [36]:

Step 1: Graph Representation - Represent the silicate structure as a mathematical graph where silicon atoms correspond to vertices and Si-O-Si bonds form edges. For a CSn chain of dimension n, verify that the graph has 3n+1 vertices and 5n edges.

Step 2: Degree Calculation - Calculate the degree dg(v) of each vertex as the number of incident edges: \( dg(v) = |\{ e \in E(G) \mid e = uv,\ u \in V(G) \}| \)

Step 3: Index Computation - Compute topological indices using established formulas: