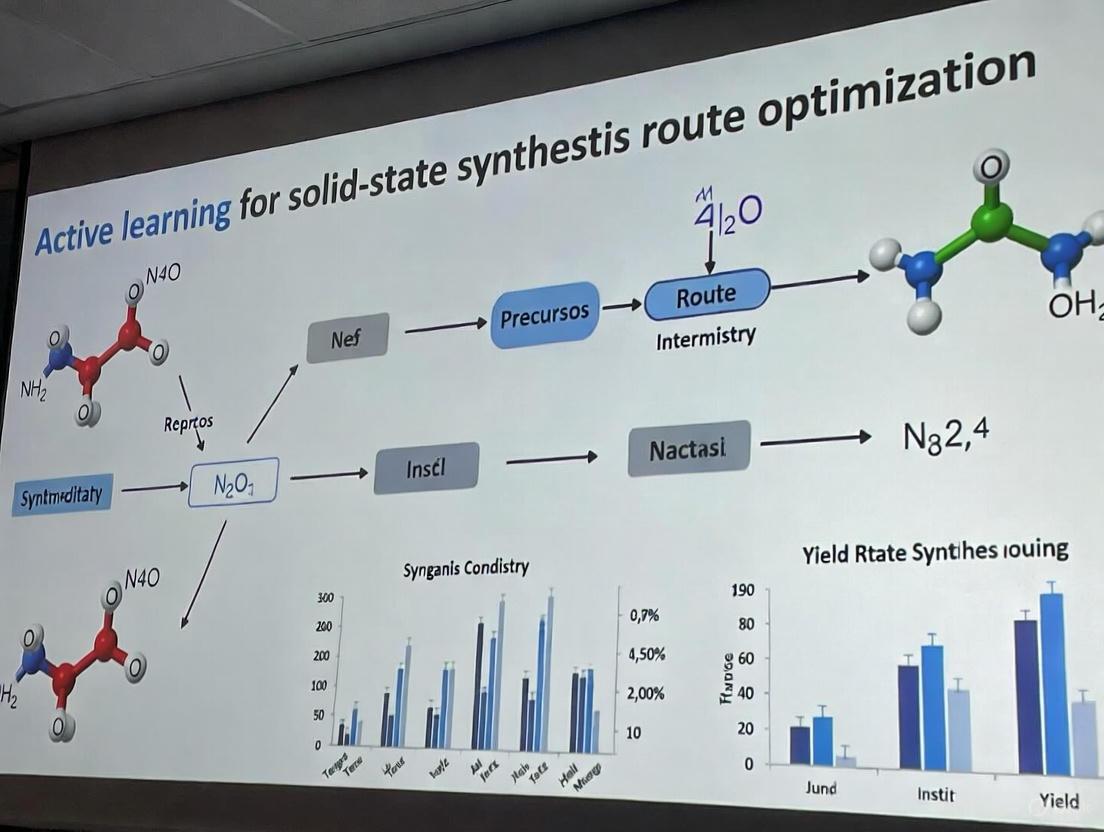

Active Learning for Solid-State Synthesis: AI-Driven Route Optimization in Materials Science and Drug Development


Abstract

This article explores the transformative role of active learning (AL), a subfield of artificial intelligence, in optimizing solid-state synthesis routes—a critical challenge in materials science and drug development. It covers the foundational principles of AL, which iteratively guides experiments to maximize information gain, and details its methodological implementation in autonomous laboratories. The content addresses common troubleshooting and optimization challenges, provides a comparative validation of various AL strategies against traditional methods, and highlights real-world successes, such as the A-Lab's demonstration of synthesizing 41 new inorganic materials. Aimed at researchers and scientists, this review underscores how AL accelerates the discovery of high-performance materials while significantly reducing experimental time and costs.

What is Active Learning? Foundations for Solid-State Synthesis

Core Concepts and Definitions

Active Learning (AL) represents a paradigm shift in scientific experimentation, moving from traditional passive data collection to an intelligent, iterative process where the learning algorithm itself selects the most informative data points to be labeled or experiments to be performed. This data-centric approach is designed to maximize model performance or knowledge gain while minimizing the often prohibitive cost of experimental synthesis and characterization [1].

Within the context of solid-state synthesis and materials discovery, AL functions as a closed-loop system. This system integrates computational prediction, robotic experimentation, and data analysis to accelerate the identification and optimization of novel materials [2]. The core principle involves using an agent—often a machine learning model—to decide which experiment to conduct next based on the data collected from all previous experiments. This is in stark contrast to one-time, static design-of-experiments or high-throughput screening that lacks a sequential decision-making component.

The fundamental components of an AL cycle are:

- Proxy Model: A surrogate model that predicts material properties or synthesis outcomes.

- Acquisition Function: A strategy that uses the model's state to quantify the potential value of any new experiment.

- Experimental Oracle: The automated or robotic system that executes the proposed experiment and returns results (e.g., yield, phase purity).

- Update Rule: The procedure for incorporating new data into the model to refine future predictions.

This methodology is particularly critical in fields like materials science and drug development, where the synthesis and characterization of a single sample can require extensive resources, expert knowledge, and time [1]. By intelligently selecting which experiments to run, AL can dramatically reduce the number of experiments required to achieve a research objective, such as discovering a new battery material or optimizing a catalytic reaction.
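The four components listed above can be sketched as a toy closed loop. This is a minimal illustration under stated assumptions, not the implementation of any cited platform: `oracle` is a hypothetical stand-in for a robotic experiment with an invented response surface, `proxy_model` is a simple nearest-neighbour average, and the exploration weight is arbitrary.

```python
import random

def oracle(temperature_c):
    """Hypothetical stand-in for the experimental oracle; returns a yield in [0, 1]."""
    # Toy response surface peaking at 900 C (an assumption, not measured data).
    return max(0.0, 1.0 - ((temperature_c - 900.0) / 300.0) ** 2)

def proxy_model(labeled, x, k=2):
    """Proxy model: average yield of the k nearest labeled experiments."""
    nearest = sorted(labeled, key=lambda point: abs(point[0] - x))[:k]
    mean = sum(y for _, y in nearest) / len(nearest)
    # Distance to the closest labeled point serves as a crude uncertainty proxy.
    uncertainty = abs(nearest[0][0] - x)
    return mean, uncertainty

def acquisition(labeled, x, exploration_weight=0.002):
    """Acquisition function: predicted yield plus an exploration bonus."""
    mean, uncertainty = proxy_model(labeled, x)
    return mean + exploration_weight * uncertainty

def run_active_learning(candidates, n_initial=2, budget=6, seed=0):
    rng = random.Random(seed)
    pool = list(candidates)
    rng.shuffle(pool)
    # Seed the loop with a few randomly chosen experiments.
    labeled = [(x, oracle(x)) for x in pool[:n_initial]]
    pool = pool[n_initial:]
    for _ in range(budget):
        if not pool:
            break
        chosen = max(pool, key=lambda x: acquisition(labeled, x))
        pool.remove(chosen)
        # Update rule: append the new observation to the labeled set.
        labeled.append((chosen, oracle(chosen)))
    return labeled

history = run_active_learning(range(600, 1201, 50))
best_temperature, best_yield = max(history, key=lambda point: point[1])
print(best_temperature, round(best_yield, 3))
```

In a real campaign the oracle call is the expensive step, which is why the loop spends its effort on choosing `chosen` rather than on evaluating every candidate.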

Foundational Principles and Algorithmic Strategies

Active learning strategies are grounded in several core principles, which are implemented through specific acquisition functions. The table below summarizes the primary principles and their corresponding algorithmic strategies used in AL for scientific domains.

Table 1: Foundational Principles and Corresponding Active Learning Strategies

| Principle | Description | Example AL Strategies |
|---|---|---|
| Uncertainty Estimation | Selects samples where the model's prediction is most uncertain, aiming to reduce model variance and improve overall accuracy. | Largest Cluster Maximum Distance (LCMD), Tree-based Uncertainty (Tree-based-R) [1]. |
| Diversity | Aims to select a set of data points that are representative of the entire input space, ensuring the model learns from a broad range of conditions. | Geometry-based (GSx), Euclidean Distance-based (EGAL) [1]. |
| Expected Model Change | Selects samples that are expected to cause the greatest change in the current model, thereby accelerating learning. | Expected Model Change Maximization (EMCM) [1]. |
| Representativeness | Selects samples that are similar to many other unlabeled points, ensuring the model learns from common scenarios. | Representative-Diversity hybrids (RD-GS) [1]. |
| Hybrid Strategies | Combines multiple principles (e.g., uncertainty and diversity) to balance exploration of the unknown with refinement of known areas. | RD-GS (Representativeness-Diversity) [1]. |

In practice, the choice of strategy depends heavily on the specific task and data characteristics. Benchmark studies have shown that in the early, data-scarce phase of a project, uncertainty-driven and diversity-hybrid strategies (like RD-GS) clearly outperform random sampling and geometry-only heuristics [1]. As the volume of labeled data increases, the performance advantage of specialized AL strategies tends to diminish, with all methods converging toward similar model accuracy.
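As an illustration of a geometry-only heuristic, the sketch below implements a greedy, distance-based selection in the spirit of GSx: each pick is the pool point farthest from everything already labeled or chosen. The function name, the two-dimensional feature vectors, and the example coordinates are assumptions made for illustration and are not taken from the benchmark in [1].

```python
import math

def gsx_select(labeled_x, pool_x, n_select):
    """Greedily pick pool points that are farthest from all reference points.

    labeled_x must be non-empty; points are tuples of floats.
    """
    chosen = []
    reference = list(labeled_x)
    remaining = list(pool_x)
    for _ in range(min(n_select, len(remaining))):
        # Score each candidate by the distance to its nearest reference point.
        pick = max(remaining, key=lambda x: min(math.dist(x, r) for r in reference))
        chosen.append(pick)
        reference.append(pick)   # later picks must also stay away from this one
        remaining.remove(pick)
    return chosen

labeled = [(0.0, 0.0), (1.0, 1.0)]
pool = [(0.5, 0.5), (3.0, 0.0), (1.0, 2.0), (3.0, 0.2)]
picks = gsx_select(labeled, pool, n_select=2)
print(picks)  # [(3.0, 0.0), (1.0, 2.0)]
```

Note that the second pick skips `(3.0, 0.2)` because it sits next to the first pick. The selection never consults a model, which is one plausible reason such heuristics stay closer to the random baseline when labeled data are scarce.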

Experimental Protocols: Implementation in a Self-Driving Lab

The following protocol details the implementation of an active learning cycle within an autonomous laboratory for solid-state synthesis, based on the landmark A-Lab system [3].

Protocol: Autonomous Synthesis and Optimization of Novel Inorganic Materials

Objective: To autonomously synthesize a target inorganic material predicted to be stable by computational screening, and to iteratively optimize the synthesis recipe to maximize target yield.

Primary Materials and Instruments:

Table 2: Research Reagent Solutions and Essential Materials for Solid-State Synthesis

| Item Name | Function/Description |
|---|---|
| Precursor Powders | High-purity solid powders of constituent elements or simple compounds. Serve as the starting materials for solid-state reactions. |
| Alumina Crucibles | Containers for holding powder mixtures during high-temperature heating. They are inert and withstand repeated heating cycles. |
| Box Furnaces | Provide controlled high-temperature environments necessary for solid-state synthesis reactions to occur. |
| Robotic Milling Apparatus | Automates the grinding and mixing of precursor powders to ensure homogeneity and improve reactivity. |
| X-ray Diffractometer (XRD) | The primary characterization tool used to identify crystalline phases present in the synthesis product and estimate their weight fractions. |

Methodology:

Target Identification and Initial Recipe Proposal:

- Input: A set of novel, computationally predicted stable materials is identified from ab initio databases (e.g., Materials Project, Google DeepMind) [3].

- Action: For each target material, an AI model trained on historical literature data using natural-language processing proposes up to five initial solid-state synthesis recipes. These recipes are based on chemical analogy to known materials [2] [3].

- Output: A set of proposed recipes specifying precursor powders and an initial synthesis temperature.

Robotic Synthesis Execution:

- A robotic arm transfers weighed precursor powders into a mixing vessel.

- An automated system mills the powders to create a homogeneous mixture.

- The mixture is transferred into an alumina crucible.

- A second robotic arm loads the crucible into one of four box furnaces for heating according to the proposed time-temperature profile [3].

Automated Product Characterization and Analysis:

- After cooling, the sample is automatically transferred to a grinding station and prepared for analysis.

- The sample is analyzed via X-ray Diffraction (XRD).

- The XRD pattern is interpreted by an ensemble of machine learning models trained on experimental structures, which identify the crystalline phases present [3].

- The phase identification is confirmed and quantified via automated Rietveld refinement. The weight fraction of the target material is calculated and reported.

Active Learning-Driven Iteration:

- Decision Point: If the target yield exceeds a pre-defined threshold (e.g., >50%), the process is deemed successful. If not, the AL loop is initiated [3].

- Algorithm: The Autonomous Reaction Route Optimization with Solid-State Synthesis (ARROWS³) algorithm is employed. This active learning system integrates ab initio computed reaction energies with the observed experimental outcomes [3].

- Hypothesis Generation: ARROWS³ uses two key hypotheses to propose a new, improved recipe:

  - It prioritizes reaction pathways that avoid intermediate phases with a low thermodynamic driving force (<50 meV per atom) to form the final target, as these can lead to kinetic traps [3].

  - It leverages a growing database of observed pairwise solid-state reactions to infer reaction pathways and prune the search space of possible recipes, avoiding redundant experiments [3].

- The newly proposed recipe is executed (returning to the Robotic Synthesis Execution stage), and the loop continues until the target is synthesized or all plausible recipes are exhausted.
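The decision point and the two pruning hypotheses can be sketched as a simple filter over candidate recipes. This is an illustration under stated assumptions and is not the ARROWS³ implementation: the precursor labels `A` to `D`, the `observed_pairs` dictionary, and the ranking rule (untested pairs first, then largest remaining driving force) are all hypothetical. Only the 50 meV per atom and 50% thresholds come from the text.

```python
from itertools import combinations

MIN_DRIVING_FORCE_MEV = 50.0  # threshold quoted in the text, meV per atom
YIELD_THRESHOLD = 0.5         # example success threshold from the text

def needs_optimization(target_weight_fraction):
    """Enter the active learning loop only if the yield threshold is not exceeded."""
    return target_weight_fraction <= YIELD_THRESHOLD

def propose_next(recipes, observed_pairs, tried):
    """Rank untried recipes, dropping those that contain a known kinetic trap.

    observed_pairs maps a frozenset of two precursors to the driving force
    (meV per atom) left to form the target after that pair has reacted.
    """
    ranked = []
    for recipe in recipes:
        if recipe in tried:
            continue  # avoid redundant experiments
        pairs = [frozenset(pair) for pair in combinations(recipe, 2)]
        known = [observed_pairs[p] for p in pairs if p in observed_pairs]
        if any(force < MIN_DRIVING_FORCE_MEV for force in known):
            continue  # a known pairwise reaction leaves too little driving force
        # Assumption: recipes with no observed pairs are explored first.
        score = min(known) if known else float("inf")
        ranked.append((score, recipe))
    ranked.sort(key=lambda item: item[0], reverse=True)
    return [recipe for _, recipe in ranked]

recipes = [("A", "B"), ("A", "C"), ("B", "C"), ("A", "B", "D")]
observed_pairs = {frozenset(("A", "B")): 20.0, frozenset(("A", "C")): 120.0}
tried = {("A", "B")}
next_recipes = propose_next(recipes, observed_pairs, tried)
print(next_recipes)  # [('B', 'C'), ('A', 'C')]
```

The recipe `("A", "B", "D")` is never run even though it is untried, because the previously observed `A`/`B` reaction already indicates a trap. That is how a pairwise reaction database can shrink the search space.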

Figure: Active Learning Cycle for Solid-State Synthesis

Quantitative Comparison of Active Learning Strategies

The effectiveness of different AL strategies can be quantitatively benchmarked, particularly when integrated with Automated Machine Learning (AutoML) frameworks that dynamically select and tune model types. The following data, derived from a comprehensive benchmark study on materials science regression tasks, compares the performance of various strategies in a small-data regime [1].

Table 3: Benchmarking of Active Learning Strategies with AutoML on Materials Datasets

| Strategy Type | Example Strategies | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random sampling | Converges with other methods | Selects points where model is most uncertain, rapidly improving accuracy. |
| Diversity-Hybrid | RD-GS | Clearly outperforms random sampling | Converges with other methods | Balances exploration of input space with representativeness. |
| Geometry-Only | GSx, EGAL | Performance closer to baseline | Converges with other methods | Focuses on spatial coverage of the feature space. |
| Baseline | Random-Sampling | Reference for comparison | Reference for comparison | Selects experiments randomly, lacking intelligent selection. |

Key Insight: The benchmark demonstrates that the choice of AL strategy is most critical during the early stages of an experimental campaign. Uncertainty-based and hybrid methods can rapidly steer the model toward high performance with fewer data points, leading to significant resource savings [1]. This underscores the importance of strategic experiment selection in resource-constrained environments like solid-state synthesis.

Advanced Architectures and Implementation Guidelines

The Role of Large Language Models (LLMs) as Cognitive Agents

Recent advances have introduced hierarchical, multi-agent systems powered by Large Language Models (LLMs) as the "brain" of autonomous laboratories. Frameworks like ChemAgents utilize a central task manager that coordinates role-specific agents (e.g., Literature Reader, Experiment Designer, Robot Operator) to conduct on-demand chemical research [2]. Similarly, Coscientist is an LLM-driven system capable of autonomously designing, planning, and executing complex chemical experiments by leveraging tool-use capabilities such as web searching, document retrieval, and code-based control of robotic systems [2]. These systems mark a significant step towards generalist autonomous research platforms.

Key Constraints and Mitigation Strategies

Despite their promise, autonomous laboratories face several constraints that must be addressed for widespread adoption [2]:

- Data Quality and Scarcity: AI model performance is heavily dependent on high-quality, diverse data. Noisy or scarce experimental data can hinder accurate predictions.

  - Mitigation: Develop standardized data formats, utilize high-quality simulation data, and employ uncertainty analysis.

- Model Generalization: Most AI models are highly specialized and struggle to generalize across different reaction types or material systems.

  - Mitigation: Train foundation models across different domains and use transfer learning to adapt to new data.

- LLM Reliability: LLMs can generate plausible but incorrect information ("hallucinations") without indicating uncertainty, potentially leading to failed experiments.

  - Mitigation: Implement targeted human oversight and robust fact-checking mechanisms within the agent workflow.

- Hardware Integration: A lack of modular hardware architectures makes it difficult to reconfigure platforms for different chemical tasks.

  - Mitigation: Develop standardized interfaces and extend mobile robotic capabilities to include specialized analytical modules.

Figure: Hierarchical Multi-Agent System for Autonomous Research

Active learning (AL) represents a paradigm shift in scientific experimentation, moving from traditional high-throughput screening to an intelligent, data-efficient approach that accelerates discovery while minimizing resource consumption. In the context of solid-state synthesis and materials science, AL addresses a critical bottleneck: the prohibitive cost and time required for experimental synthesis and characterization [4]. This methodology is particularly valuable for optimizing solid-state synthesis routes, where each experimental cycle can require expert knowledge, expensive equipment, and days of processing [3]. By integrating surrogate models, acquisition functions, and experimental validation into a closed-loop system, active learning enables researchers to navigate complex experimental spaces systematically, prioritizing the most promising experiments based on iterative model predictions [2].

The fundamental active learning cycle operates through three interconnected components: surrogate models that approximate complex physical systems, acquisition functions that quantify the potential value of new experiments, and experimental validation that grounds the process in empirical reality. This framework has demonstrated remarkable success in practical applications. For instance, in autonomous materials discovery platforms, active learning has achieved order-of-magnitude efficiency gains over traditional approaches, successfully synthesizing novel compounds with minimal human intervention [4] [3]. Similarly, in computational physiology, AL has reduced the computational costs of inverse parameter identification by strategically selecting training data for surrogate models [5].

Core Component 1: Surrogate Models

Definition and Purpose

Surrogate models, also known as metamodels or reduced-order models, are computationally efficient approximations of complex, high-fidelity simulation models or experimental processes. They serve as replacement models during the iterative optimization phases of active learning, where executing the full model repeatedly would be prohibitively expensive [5]. In solid-state synthesis optimization, surrogate models learn the relationship between synthesis parameters (e.g., precursor selection, temperature profiles, processing conditions) and experimental outcomes (e.g., phase purity, yield, material properties) [4]. By capturing the essential input-output relationships of the actual experimental process, these models enable rapid exploration of the synthesis parameter space while dramatically reducing the need for physical experiments.

The primary advantage of surrogate models lies in their computational efficiency. Once trained, they can generate predictions in seconds or milliseconds compared to hours or days for actual experiments or high-fidelity simulations. This speed advantage makes them ideal for active learning cycles that require numerous iterations to converge on optimal solutions [5]. For example, in biomechanical parameter identification, neural network surrogates have achieved speed improvements of several orders of magnitude compared to finite element simulations while maintaining high accuracy in predicting material behavior [5].

Model Selection and Implementation

Different surrogate model architectures offer distinct advantages depending on the nature of the modeling task:

- Recurrent Neural Networks (RNNs) are particularly effective for modeling time-dependent processes such as viscoelastic material behavior or kinetic processes in solid-state reactions. Their ability to maintain a persistent state makes them analogous to internal variables in constitutive models [5].

- Feed-Forward Neural Networks excel at capturing nonlinear static relationships, such as mapping material composition to final properties in hyperelastic metamodels [5].

- Gaussian Process Regression provides natural uncertainty quantification alongside predictions, making it valuable for Bayesian optimization approaches where uncertainty estimates guide exploration [5].

- Graph Neural Networks and Transformers have recently achieved unprecedented accuracy in predicting material properties across diverse chemical spaces, particularly when dealing with structured representations of materials [4].

The process for developing effective surrogate models begins with generating an initial training dataset using space-filling designs such as Latin Hypercube Sampling or Poisson disk sampling to ensure good coverage of the parameter space [5]. The model is then trained to minimize the difference between its predictions and the outputs from high-fidelity simulations or experiments. For dynamic processes, sequence-based loss functions that account for temporal evolution are typically employed [5].
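A space-filling initial design can be generated with a few lines of standard-library Python. The sketch below is a basic Latin Hypercube sampler. The two parameters (a furnace temperature and a dwell time) and their bounds are hypothetical examples, not values from the cited studies.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """Return n_samples points with exactly one sample per stratum in every dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)  # decouple the stratum order between dimensions
        columns.append([
            low + (s + rng.random()) / n_samples * (high - low)
            for s in strata
        ])
    return [tuple(column[i] for column in columns) for i in range(n_samples)]

# Hypothetical parameter space: temperature in C and dwell time in hours.
design = latin_hypercube(5, bounds=[(600.0, 1200.0), (1.0, 12.0)])
for temperature_c, dwell_h in design:
    print(round(temperature_c), round(dwell_h, 1))
```

Compared with plain random sampling, every fifth of the temperature range and every fifth of the dwell-time range is guaranteed to be visited once, which is the coverage property the initial training set relies on.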

Application in Solid-State Synthesis

In solid-state synthesis optimization, surrogate models can predict the outcome of proposed synthesis routes before physical execution. For instance, machine learning interatomic potentials have enabled microsecond-scale molecular dynamics simulations at near-density functional theory accuracy, revealing non-Arrhenius transport behavior and overturning established transport mechanisms [4]. These models learn from both computational data and experimental results, creating a compressed representation of the complex relationship between synthesis parameters and material outcomes.

Recent advances have integrated surrogate models with automated machine learning (AutoML) systems that automatically search across different model families and tune their hyperparameters [1]. This approach is particularly valuable in materials science, where experimentation and characterization are resource-intensive, making large-scale manual model tuning impractical. AutoML has been reported to work well for materials design, automatically selecting a well-performing surrogate model architecture for a given synthesis prediction task [1].
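The core idea of searching across model families can be shown with a deliberately small stand-in for an AutoML system: fit several candidate surrogates and keep the one with the lowest leave-one-out error. The three model families, the function names, and the toy data are assumptions for illustration. Real AutoML frameworks search far larger spaces and also tune hyperparameters.

```python
def fit_mean(xs, ys):
    mean = sum(ys) / len(ys)
    return lambda x: mean

def fit_linear(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    return lambda x: my + slope * (x - mx)

def fit_nearest(xs, ys):
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

MODEL_FAMILIES = {"mean": fit_mean, "linear": fit_linear, "nearest": fit_nearest}

def loo_error(fit, xs, ys):
    """Mean squared leave-one-out prediction error for one model family."""
    total = 0.0
    for i in range(len(xs)):
        model = fit(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        total += (model(xs[i]) - ys[i]) ** 2
    return total / len(xs)

def select_model(xs, ys):
    scores = {name: loo_error(fit, xs, ys) for name, fit in MODEL_FAMILIES.items()}
    return min(scores, key=scores.get), scores

# Toy data lying on a straight line (invented for the example).
xs = [700.0, 800.0, 900.0, 1000.0, 1100.0]
ys = [0.10, 0.30, 0.50, 0.70, 0.90]
best_name, scores = select_model(xs, ys)
print(best_name)  # linear
```

Because the selected family can change as new data arrive, an active learning strategy paired with AutoML has to remain useful even when the surrogate it queries is swapped between iterations.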

Core Component 2: Acquisition Functions

Role in Active Learning

Acquisition functions form the decision-making engine of the active learning loop, quantitatively evaluating which experiments or simulations would provide the maximum information gain if performed next. These functions serve as mathematical heuristics that balance the competing objectives of exploration (sampling from regions of high uncertainty) and exploitation (refining knowledge in promising regions) [1]. In solid-state synthesis optimization, acquisition functions analyze the predictions of surrogate models to identify the most "rewarding" synthesis conditions to test experimentally, thereby maximizing the efficiency of the experimental campaign [5].
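Two widely used acquisition functions make the exploration-exploitation balance concrete: expected improvement (EI) and the upper confidence bound (UCB). The sketch below assumes a surrogate that returns a Gaussian predictive mean and standard deviation for each candidate and that the objective (for example, target yield) is maximized. The candidate values are invented for the example, and the source does not state which acquisition function any cited platform uses.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected amount by which a candidate beats the best observed value."""
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(0.0, improvement)  # no uncertainty: improvement is deterministic
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)

def upper_confidence_bound(mean, std, kappa=2.0):
    """Optimistic estimate; larger kappa favours exploration."""
    return mean + kappa * std

best_yield = 0.60
exploit = (0.62, 0.01)   # slightly better prediction, very certain
explore = (0.55, 0.15)   # worse prediction, very uncertain

ei_exploit = expected_improvement(*exploit, best_so_far=best_yield)
ei_explore = expected_improvement(*explore, best_so_far=best_yield)
print(ei_explore > ei_exploit)  # True
```

In this example the uncertain candidate wins under EI even though its predicted yield is lower, because the chance of a large improvement outweighs the small, nearly guaranteed gain.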

The importance of well-designed acquisition functions cannot be overstated—they directly determine the data efficiency of the entire active learning process. Empirical studies have demonstrated that effective acquisition strategies can reduce the number of experiments required to reach a target level of performance by 60-70% compared to random sampling [1] [3]. For example, in alloy design and ternary phase-diagram regression, uncertainty-driven active learning has achieved state-of-the-art accuracy using only 30% of the data typically required by traditional approaches [1].

Classification and Comparison

Acquisition functions can be categorized based on their underlying mathematical principles:

Table 1: Classification of Acquisition Functions for Regression Tasks

| Category | Principle | Representative Methods | Strengths | Limitations |
|---|---|---|---|---|
| Uncertainty-Based | Selects points where model prediction uncertainty is highest | Monte Carlo Dropout [5], Query-by-Committee [5], LCMD, Tree-based-R [1] | Directly reduces model uncertainty; Simple to implement | May overlook data distribution structure |
| Diversity-Based | Maximizes coverage of the input feature space | GSx, EGAL [1] | Ensures representative sampling; Avoids redundancy | Ignores model uncertainty; May sample unimportant regions |
| Expected Model Change | Selects points that would most alter the current model | EMCM [1] | Focuses on model improvement; Efficient for complex models | Computationally intensive for large datasets |
| Hybrid Approaches | Combines multiple principles for balanced sampling | RD-GS [1] | Balances exploration and exploitation; Robust performance | More complex to implement and tune |
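To make the uncertainty-based row concrete, the following sketch implements a basic Query-by-Committee selection: a committee of simple models is trained on bootstrap resamples of the labeled data, and the candidate with the largest prediction variance is queried next. The straight-line committee members, the committee size, and the data points are illustrative assumptions.

```python
import random

def fit_line(points):
    """Least-squares line through (x, y) points; falls back to a constant."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    slope = sum((x - mx) * (y - my) for x, y in points) / sxx if sxx else 0.0
    return lambda x: my + slope * (x - mx)

def build_committee(points, n_members=20, seed=0):
    rng = random.Random(seed)
    # Each member sees a bootstrap resample (sampling with replacement).
    return [fit_line([rng.choice(points) for _ in points]) for _ in range(n_members)]

def disagreement(committee, x):
    """Variance of committee predictions at x."""
    predictions = [member(x) for member in committee]
    mean = sum(predictions) / len(predictions)
    return sum((p - mean) ** 2 for p in predictions) / len(predictions)

def query(committee, pool):
    return max(pool, key=lambda x: disagreement(committee, x))

# Invented labeled data clustered between x = 0 and x = 4.
labeled = [(0.0, 0.1), (1.0, 0.9), (2.0, 2.2), (3.0, 2.8), (4.0, 4.1)]
committee = build_committee(labeled)
next_x = query(committee, pool=[2.0, 10.0])
print(next_x)  # 10.0
```

The committee agrees near the centre of the labeled data and disagrees when extrapolating, so the far candidate is selected. The limitation noted in the table is visible here as well: the rule would keep choosing remote points regardless of whether that region matters.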

Performance Benchmarking

Recent comprehensive benchmarking studies have evaluated various acquisition functions in materials science regression tasks. These studies reveal that the relative performance of different strategies depends significantly on the stage of the active learning process and the specific characteristics of the dataset:

Table 2: Performance Comparison of Acquisition Functions in Materials Science Regression [1]

| Strategy Type | Early-Stage Performance | Late-Stage Performance | Consistency Across Datasets | Computational Overhead |
|---|---|---|---|---|
| Uncertainty-Driven (LCMD) | High | Medium | High | Low |
| Diversity-Hybrid (RD-GS) | High | Medium | Medium | Medium |
| Tree-Based (Tree-based-R) | High | High | High | Low |
| Geometry-Only (GSx, EGAL) | Low | Medium | Low | Low |
| Random Sampling | Low | Medium | High | Very Low |

Benchmark results indicate that early in the acquisition process when labeled data is scarce, uncertainty-driven and diversity-hybrid strategies clearly outperform geometry-only heuristics and random sampling [1]. These methods excel at selecting informative samples that rapidly improve model accuracy. However, as the labeled set grows, the performance gap narrows and all methods eventually converge, indicating diminishing returns from active learning under AutoML frameworks [1].

Interestingly, despite the development of sophisticated acquisition functions, empirical studies have found that in general settings, no single-model approach consistently outperforms entropy-based strategies [6]. This surprising result serves as a reality check for the field, suggesting that simple, well-understood acquisition functions may provide more robust performance across diverse applications than increasingly complex alternatives.

Core Component 3: Experimental Validation

Closing the Loop

Experimental validation represents the critical ground-truthing step that closes the active learning loop, transforming it from a computational exercise into a scientifically rigorous process. This phase involves executing the experiments selected by the acquisition function and measuring their outcomes to generate new labeled data points [3]. In solid-state synthesis, this typically entails robotic execution of proposed synthesis recipes followed by automated characterization of the resulting products [2]. The validation data serves dual purposes: it provides training examples to improve the surrogate model in subsequent iterations, and it progressively converges toward optimal synthesis conditions.

The importance of robust experimental validation cannot be overstated, as it ensures that the active learning process remains anchored in physical reality rather than diverging into computationally plausible but experimentally invalid regions of the parameter space. Autonomous laboratories like the A-Lab have demonstrated the power of tight integration between computational prediction and experimental validation, successfully synthesizing 41 of 58 novel target compounds through iterative optimization [3]. Their success rate of 71% underscores the effectiveness of this approach for accelerating materials discovery.

Validation Methodologies

Different experimental domains employ specialized validation techniques appropriate for their specific measurement requirements:

Solid-State Synthesis: Automated platforms like the A-Lab utilize robotic arms for sample preparation, transfer of precursors to crucibles, loading into box furnaces for heating, and subsequent grinding of products into fine powders for X-ray diffraction (XRD) analysis [3]. Phase identification and weight fractions are extracted from XRD patterns using probabilistic machine learning models trained on experimental structures, with confirmation through automated Rietveld refinement [3].

Biomechanical Parameter Identification: Experimental validation involves mechanical testing of material specimens under controlled deformation conditions while recording force responses. For inhomogeneous deformation states, digital image correlation techniques may be employed to capture full-field displacement data [5].

Chemical Synthesis: Automated platforms integrate robotic liquid handling systems with analytical instrumentation such as ultra-performance liquid chromatography-mass spectrometry (UPLC-MS) and benchtop nuclear magnetic resonance (NMR) spectroscopy [2]. Heuristic decision makers process orthogonal analytical data to mimic expert judgments, using techniques like dynamic time warping to detect reaction-induced spectral changes [2].
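Dynamic time warping (DTW) compares two signals while tolerating shifts along the axis, which is why it suits detecting genuine spectral changes rather than small peak drifts. The sketch below is the textbook dynamic-programming formulation. The short intensity traces are invented, and how the cited platform preprocesses spectra or thresholds the distance is not specified in the source.

```python
def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) dynamic time warping with absolute-difference cost."""
    n, m = len(a), len(b)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of: insertion, deletion, or match.
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]

reference = [0, 0, 1, 3, 1, 0, 0]   # trace before reaction
shifted   = [0, 0, 0, 1, 3, 1, 0]   # same peak, shifted by one point
new_peak  = [0, 0, 1, 3, 1, 2, 0]   # an additional feature appears

print(dtw_distance(reference, shifted))   # 0.0
print(dtw_distance(reference, new_peak))  # 1.0
```

A pointwise comparison would flag the shifted trace as different. DTW returns zero for it and a positive distance only when a new feature appears.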

A critical aspect of experimental validation is handling failed syntheses and unexpected outcomes. Rather than considering these as mere failures, sophisticated active learning systems analyze them to extract valuable information about synthesis barriers. Common failure modes in solid-state synthesis include slow reaction kinetics, precursor volatility, amorphization, and computational inaccuracies in phase stability predictions [3]. Documenting and learning from these failures provides direct and actionable suggestions for improving both computational screening techniques and synthesis design strategies.

Integrated Workflow and Protocols

Standard Operating Procedure

Implementing an effective active learning loop for solid-state synthesis optimization requires careful integration of the three core components into a seamless workflow. The following protocol outlines a standardized approach based on successful implementations in autonomous materials discovery platforms:

Phase 1: Initialization

- Define Target: Identify the target material to be synthesized, ensuring it is predicted to be thermodynamically stable or nearly stable (within 10 meV/atom of the convex hull) based on ab initio computations [3].

- Establish Baseline: Generate initial synthesis recipes using literature-inspired approaches, such as natural language processing models trained on historical synthesis data [3].

- Characterization Setup: Configure automated characterization instruments (e.g., XRD, spectroscopy) and validate measurement protocols using standard reference materials.
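The target-definition step amounts to a stability filter over computed energies above the convex hull. The sketch below assumes the energies have already been retrieved from a database. The target labels and energy values are hypothetical placeholders, and only the 10 meV/atom cutoff comes from the text.

```python
MAX_E_ABOVE_HULL_MEV = 10.0  # stability window quoted in the text, meV per atom

def select_targets(e_above_hull_mev, cutoff=MAX_E_ABOVE_HULL_MEV):
    """Keep candidates below the cutoff, most stable first."""
    stable = [(energy, name) for name, energy in e_above_hull_mev.items() if energy < cutoff]
    return [name for energy, name in sorted(stable)]

# Hypothetical candidates with invented energies above the hull (meV per atom).
candidates = {"target_A": 0.0, "target_B": 7.5, "target_C": 32.0, "target_D": 3.1}
targets = select_targets(candidates)
print(targets)  # ['target_A', 'target_D', 'target_B']
```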

Phase 2: Active Learning Cycle

- Surrogate Model Training: Train the selected surrogate model architecture on all available labeled data (both initial and accumulated from previous cycles).

- Candidate Generation: Use the trained surrogate model to predict outcomes for a large pool of candidate synthesis conditions within the predefined parameter space.

- Acquisition Function Evaluation: Apply the selected acquisition function to rank candidate experiments by their expected information gain or potential improvement.

- Experimental Execution: Robotically execute the top-ranked synthesis recipes, including precursor dispensing, mixing, heating according to specified profiles, and product handling [3].

- Automated Characterization: Perform structural and compositional analysis of synthesis products using integrated characterization tools.

- Data Integration: Extract phase identification and yield information from characterization data and add the newly labeled examples to the training dataset [3].

Phase 3: Termination and Analysis

- Convergence Check: Evaluate if stopping criteria have been met (e.g., target yield >50%, diminishing returns, or budget exhaustion).

- Result Validation: Perform additional characterization on optimized materials to confirm properties beyond phase purity.

- Knowledge Extraction: Analyze the accumulated data to extract insights about synthesis-structure-property relationships for future campaigns.
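The convergence check in Phase 3 can be written as a small function over the history of measured yields. The sketch below encodes the three example criteria named above. The default budget, patience window, and minimum gain are illustrative assumptions and would need to be set per campaign.

```python
def should_stop(yields, yield_target=0.5, budget=20, patience=3, min_gain=0.01):
    """Return the reason to stop, or None if the campaign should continue.

    yields: target weight fractions measured so far, in execution order.
    """
    if not yields:
        return None
    if max(yields) > yield_target:
        return "target_reached"
    if len(yields) >= budget:
        return "budget_exhausted"
    if len(yields) > patience:
        best_before = max(yields[:-patience])
        best_recent = max(yields[-patience:])
        # Diminishing returns: the last few experiments did not improve the best result.
        if best_recent - best_before < min_gain:
            return "diminishing_returns"
    return None

print(should_stop([0.10, 0.60]))                    # target_reached
print(should_stop([0.10, 0.20, 0.20, 0.20, 0.20]))  # diminishing_returns
print(should_stop([0.10, 0.20, 0.30, 0.40]))        # None
```

Checking the budget before the diminishing-returns rule keeps the reported reason unambiguous when both conditions hold at once.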

The following workflow diagram illustrates the integrated active learning process for solid-state synthesis optimization:

Implementing an active learning system for solid-state synthesis requires both computational and experimental resources:

Table 3: Essential Research Reagents and Resources for Active Learning-Driven Synthesis

| Resource Category | Specific Examples | Function in AL Workflow | Implementation Considerations |
|---|---|---|---|
| Computational Databases | Materials Project [3], Google DeepMind stability data [3] | Provides initial target screening and thermodynamic references | Ensure air-stability predictions for targets; Cross-reference multiple databases |
| Precursor Materials | High-purity oxide and phosphate powders [3] | Raw materials for solid-state synthesis | Characterize particle size, purity, and moisture content before use |
| Robotic Automation | Robotic arms for powder handling [3], Mobile sample transport robots [2] | Executes synthesis recipes with minimal human intervention | Implement collision avoidance and error recovery protocols |
| Heating Systems | Programmable box furnaces [3] | Performs solid-state reactions at controlled temperatures | Calibrate temperature profiles and monitor thermal uniformity |
| Characterization Instruments | X-ray diffractometers [3], UPLC-MS [2], Benchtop NMR [2] | Provides phase identification and yield quantification | Automate data analysis with ML models for real-time feedback |
| Surrogate Model Platforms | Bayesian optimization frameworks [5], AutoML systems [1] | Accelerates parameter space exploration | Select models appropriate for data type (RNN for kinetics, etc.) |
| Acquisition Functions | Uncertainty sampling [5], Diversity methods [1], Hybrid approaches [1] | Guides experiment selection | Balance exploration vs. exploitation based on campaign stage |

Case Study: A-Lab Implementation

The A-Lab autonomous materials discovery platform provides a compelling case study of the integrated active learning framework applied to solid-state synthesis optimization. Over 17 days of continuous operation, the A-Lab successfully synthesized 41 of 58 novel target compounds identified using large-scale ab initio phase-stability data [3]. This 71% success rate demonstrates the practical effectiveness of combining surrogate models, acquisition functions, and robotic validation.

The A-Lab implementation featured several innovative elements. For surrogate modeling, the system utilized multiple complementary approaches: natural-language models trained on literature data for initial recipe generation, and thermodynamic models informed by ab initio computations for active learning optimization [3]. Experiment selection was handled by ARROWS³ (Autonomous Reaction Route Optimization with Solid-State Synthesis), which integrated observed synthesis outcomes with computed reaction energies to predict optimal solid-state reaction pathways [3].

A key insight from the A-Lab implementation was the importance of handling failed syntheses as learning opportunities rather than mere failures. Analysis of the 17 unobtained targets revealed specific failure modes including slow reaction kinetics, precursor volatility, amorphization, and computational inaccuracies [3]. This analysis provided direct, actionable suggestions for improving both computational screening techniques and synthesis design strategies, highlighting that minor adjustments to the lab's decision-making algorithm could increase the success rate to 74%, with further improvements to 78% possible with enhanced computational techniques [3].

The following diagram illustrates the specific active learning workflow implemented in the A-Lab platform:

The integration of surrogate models, acquisition functions, and experimental validation represents a powerful framework for accelerating scientific discovery in solid-state synthesis and related fields. Current research directions focus on addressing several key challenges to further enhance the capabilities of active learning systems.

For surrogate models, emerging approaches include physics-informed neural networks that incorporate known physical constraints and conservation laws directly into the model architecture, improving extrapolation accuracy and data efficiency [4]. Transfer learning techniques are being developed to leverage knowledge from data-rich chemical domains to accelerate learning in data-scarce environments, particularly for multivalent systems where experimental data is limited [4]. For acquisition functions, recent benchmarks highlight the need for more robust evaluation methodologies that account for real-world constraints like batch parallelism and multi-fidelity data sources [6] [1].

The most significant advances are likely to come from improved integration of the three core components. Autonomous laboratories are increasingly adopting hierarchical multi-agent systems where different components specialize in specific tasks yet coordinate through a central planner [2]. For example, the ChemAgents framework features a central Task Manager that coordinates four role-specific agents (Literature Reader, Experiment Designer, Computation Performer, and Robot Operator) for on-demand autonomous chemical research [2]. Such architectures promise more robust and adaptable systems capable of handling the complex, multi-step decision-making required for real scientific discovery.

In conclusion, the core components of active learning—surrogate models, acquisition functions, and experimental validation—form a powerful framework for accelerating materials discovery and optimization. When thoughtfully integrated into a closed-loop system, these components enable researchers to navigate complex experimental spaces with unprecedented efficiency, as demonstrated by successful implementations in autonomous laboratories. As these technologies continue to mature, they promise to transform the pace and scope of scientific discovery across chemistry, materials science, and related fields.

The discovery and optimization of materials through solid-state synthesis are fundamentally constrained by the immense, high-dimensional space of possible experimental parameters. This space encompasses variations in chemistry, crystal structure, processing conditions, and microstructure [7]. The traditional approach of relying on trial-and-error or even high-throughput methods that attempt to densely populate this entire phase space is often impractical, time-consuming, and resource-intensive [7]. The central challenge is to efficiently guide experiments towards materials with desired properties without exhaustively testing every possible combination.

Active learning (AL), a paradigm from the fields of machine learning and statistical experimental design, offers a powerful solution to this challenge. It provides a systematic, iterative framework for making optimal decisions about which experiment to perform next. The core of this approach is an active learning loop: a surrogate model makes predictions about the material property of interest; these predictions, together with their associated uncertainties, are fed into a utility function (also called an acquisition function); the optimal point of this utility function dictates the next experiment or calculation to be performed [7]. The results of this experiment then augment the training data, and the loop continues until the target performance is met, dramatically reducing the number of experiments required.
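
The loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method of any cited study: `run_experiment` is a hypothetical stand-in for a real synthesis and characterization step, the surrogate is a toy nearest-neighbor predictor whose uncertainty is simply the distance to the closest observation, and the utility is an upper-confidence-bound style score.

```python
import math

def run_experiment(x):
    """Hypothetical stand-in for synthesis + characterization (toy 'purity')."""
    return math.exp(-((x - 0.62) ** 2) / 0.02)

def surrogate(x, data):
    """Toy surrogate: value of the nearest observation, with the distance
    to that observation used as a crude uncertainty estimate."""
    nearest_x, nearest_y = min(data, key=lambda d: abs(d[0] - x))
    return nearest_y, abs(nearest_x - x)

def utility(mean, uncertainty, kappa=2.0):
    """Trade off exploitation (mean) against exploration (uncertainty)."""
    return mean + kappa * uncertainty

def active_learning_loop(candidates, initial_points, budget, target):
    data = [(x, run_experiment(x)) for x in initial_points]
    for _ in range(budget):
        if max(y for _, y in data) >= target:
            break  # target performance met
        tried = {x for x, _ in data}
        pool = [x for x in candidates if x not in tried]
        if not pool:
            break  # nothing left to try
        # The maximizer of the utility dictates the next experiment.
        next_x = max(pool, key=lambda x: utility(*surrogate(x, data)))
        data.append((next_x, run_experiment(next_x)))  # augment training data
    return data

candidates = [i / 50 for i in range(51)]
history = active_learning_loop(candidates, [0.0, 1.0], budget=20, target=0.95)
best_x, best_y = max(history, key=lambda d: d[1])
print(f"{len(history)} experiments, best x={best_x:.2f}, best y={best_y:.3f}")
```

In a real campaign the toy surrogate would be replaced by a model with calibrated uncertainties, such as a Gaussian process, and `run_experiment` by the laboratory workflow.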

Active Learning Methodologies and Experimental Protocols

The following section details the core components for implementing an active learning strategy in a solid-state synthesis workflow.

The Active Learning Workflow for Synthesis Optimization

The active learning process for optimizing solid-state synthesis can be visualized as a cyclic workflow, where computational guidance and experimental validation are tightly integrated. This workflow is designed to efficiently navigate the parameter space by strategically selecting the most informative experiments.

Core Components of the Active Learning Protocol

Surrogate Models and Utility Functions

The surrogate model, often a machine learning regression model, learns the relationship between synthesis parameters and the target material property from the available data. In parallel, the model estimates the uncertainty of its predictions for unexplored parameter combinations. The choice of utility function is critical as it balances the exploration of uncertain regions with the exploitation of known high-performing regions [7]. Common functions include:

- Expected Improvement (EI): Selects points that offer the highest potential improvement over the current best observation [7].

- Upper Confidence Bound (UCB): Selects points based on a weighted sum of the predicted mean and its uncertainty.

- Density-Aware Methods: Recent advances, such as Density-Aware Greedy Sampling (DAGS), integrate data density with uncertainty estimation. This is particularly effective for large design spaces, as it prevents the sampling of outliers and focuses on the most promising, densely populated regions of the parameter space [8].
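
For reference, the first two utility functions have simple closed forms when the surrogate returns a Gaussian prediction with mean `mean` and standard deviation `std`. The sketch below assumes a maximization problem; the parameter names `xi` (an optional improvement margin) and `kappa` (the exploration weight) are illustrative choices, not taken from the cited works.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mean, std, best, xi=0.0):
    """Expected improvement over the current best observation (maximization)."""
    gain = mean - best - xi
    if std <= 0.0:
        return max(gain, 0.0)  # no uncertainty: improvement is deterministic
    z = gain / std
    return gain * normal_cdf(z) + std * normal_pdf(z)

def upper_confidence_bound(mean, std, kappa=2.0):
    """Weighted sum of the predicted mean and its uncertainty."""
    return mean + kappa * std

# Two candidates with the same predicted mean: the more uncertain one scores higher.
ei_certain = expected_improvement(mean=0.80, std=0.01, best=0.85)
ei_uncertain = expected_improvement(mean=0.80, std=0.10, best=0.85)
```

A larger `kappa` or `xi` pushes the search toward exploration, which is usually more useful early in a campaign than late.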

Queue Prioritization for Generative Workflows

In workflows that use generative AI to propose new candidate materials, an additional step of queue prioritization can be integrated. A dedicated active learning model can be used to score and rank the AI-generated candidates, ensuring that the most promising ones are synthesized and tested first. This prevents the workflow from expending resources on nonsensical or low-quality candidates and can significantly increase the number of high-performing candidates identified—in one case study, increasing the average from 281 to 604 out of 1000 novel candidates [9].
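
A minimal sketch of such a prioritization step is shown below. The candidate records, the `is_valid` flag, and the `predicted_property` field are hypothetical; in practice the score would come from the dedicated active learning model.

```python
import heapq

def prioritize_queue(candidates, score_fn, top_k):
    """Return the top_k candidates ranked by predicted score, best first."""
    return heapq.nlargest(top_k, candidates, key=score_fn)

def score(candidate):
    # Hypothetical rule: nonsensical candidates sink to the bottom of the
    # queue; the rest are ranked by the model's predicted property.
    if not candidate["is_valid"]:
        return float("-inf")
    return candidate["predicted_property"]

generated = [
    {"id": "cand-1", "predicted_property": 0.42, "is_valid": True},
    {"id": "cand-2", "predicted_property": 0.91, "is_valid": False},
    {"id": "cand-3", "predicted_property": 0.77, "is_valid": True},
    {"id": "cand-4", "predicted_property": 0.58, "is_valid": True},
]

queue = prioritize_queue(generated, score, top_k=2)
queue_ids = [c["id"] for c in queue]  # candidates to synthesize first
```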

Detailed Experimental Protocol: A Case Study in Wollastonite Synthesis

The following protocol is inspired by a recent study on the single-step solid-state synthesis of Wollastonite-2M using rice husk ash (RHA), adapted here within an active learning framework [10].

Application Note: Optimizing the synthesis of single-phase Wollastonite-2M from RHA and natural limestone. Targeted Property: Phase purity (minimization of secondary crystalline phases as determined by X-ray Diffraction).

Initial Dataset Creation:

- Define Parameter Ranges: Based on literature, define the initial search space.

- Design of Experiments (DoE): Use a space-filling design (e.g., Latin Hypercube Sampling) to select 10-15 initial (sintering temperature, sintering time, CaO:SiO₂ molar ratio) triplets from the predefined ranges.

- Initial Experimentation: Execute the synthesis and characterization for each initial data point.
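
The space-filling design step can be illustrated with a small Latin Hypercube sampler written in plain Python. The temperature and time bounds follow the ranges quoted in this protocol and the ratio bounds follow Table 1; the function name, the seed, and the choice of 12 samples are assumptions made for the example.

```python
import random

def latin_hypercube(bounds, n_samples, seed=0):
    """Draw n_samples points so that each parameter range is split into
    n_samples equal strata and every stratum is sampled exactly once."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds.values():
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)  # decouple the strata order between parameters
        columns.append([low + u * (high - low) for u in strata])
    names = list(bounds)
    return [dict(zip(names, row)) for row in zip(*columns)]

bounds = {
    "temperature_C": (1150.0, 1350.0),
    "time_h": (2.0, 8.0),
    "cao_sio2_ratio": (0.9, 1.1),
}
design = latin_hypercube(bounds, n_samples=12)
for point in design[:3]:
    print({k: round(v, 2) for k, v in point.items()})
```

Library implementations (for example the quasi-Monte Carlo samplers in SciPy) offer the same idea with additional optimizations.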

Active Learning Loop:

1. Model Training: Train a Gaussian Process Regression (GPR) model on the current dataset. The GPR is ideal as it provides inherent uncertainty estimates.

2. Candidate Selection: Using the GPR model, predict the phase purity and uncertainty for a large number of candidate parameter sets within the search space. Calculate the Expected Improvement for each candidate.

3. Next Experiment: Select the candidate parameter set with the highest Expected Improvement.

4. Synthesis Execution:

   - Mixing: Grind the RHA (source of SiO₂) and limestone (CaCO₃, source of CaO) in the selected molar ratio (e.g., ~1:1) using a ball mill for 30 minutes.

   - Pelletizing: Press the homogeneous powder mixture into pellets under uniaxial pressure.

   - Sintering: Heat the pellets in a furnace at the selected temperature (e.g., 1150-1350°C) for the selected duration (e.g., 2-8 hours), followed by free cooling in the air [10].

5. Characterization: Analyze the synthesized product using X-ray Powder Diffraction (XRD) to determine the phase composition and quantify the primary phase purity.

6. Data Augmentation: Add the new (parameters, purity) data pair to the training dataset.

7. Iteration: Repeat steps 1-6 until a predefined purity threshold is achieved or the experimental budget is exhausted.
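
Translating a selected CaO:SiO₂ molar ratio into weighed masses for the mixing step is a short calculation. The sketch below assumes that the RHA silica content is a mass fraction (about 0.90, as quoted for RHA in this article), that each mole of CaCO₃ yields one mole of CaO on decomposition, and that the limestone is pure CaCO₃ unless a lower fraction is supplied; the function and parameter names are illustrative.

```python
M_SIO2 = 60.08    # g/mol
M_CACO3 = 100.09  # g/mol

def batch_masses(mol_sio2, cao_sio2_ratio,
                 rha_sio2_fraction=0.90, limestone_caco3_fraction=1.0):
    """Masses (g) of RHA and limestone for a batch containing mol_sio2 moles
    of SiO2 at the requested CaO:SiO2 molar ratio."""
    rha_g = mol_sio2 * M_SIO2 / rha_sio2_fraction
    limestone_g = mol_sio2 * cao_sio2_ratio * M_CACO3 / limestone_caco3_fraction
    return rha_g, limestone_g

rha_g, limestone_g = batch_masses(mol_sio2=0.10, cao_sio2_ratio=1.0)
print(f"RHA: {rha_g:.2f} g, limestone: {limestone_g:.2f} g")
```

Because the measured SiO₂ content of the ash enters directly, characterizing each RHA batch before use matters for hitting the intended stoichiometry.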

Quantitative Data and Research Reagent Solutions

Synthesis Parameter Space and Performance Metrics

The optimization of solid-state synthesis involves navigating a multi-dimensional space of continuous and discrete parameters. The table below summarizes the key parameters, their typical ranges based on the wollastonite case study and general synthesis principles, and the target properties that can be optimized [10].

Table 1: Key Parameters and Target Properties in Solid-State Synthesis Optimization

| Parameter Category | Specific Parameter | Typical Range / Options | Measurable Target Property |
|---|---|---|---|
| Thermal Profile | Sintering Temperature | 1150 - 1350 °C [10] | Phase Purity (from XRD) |
| | Sintering Time | 2 - 8 hours [10] | Crystallite Size (from XRD Scherrer) |
| | Heating/Cooling Rate | 1 - 10 °C/min | Particle Morphology (from SEM) |
| Stoichiometry | CaO:SiO₂ Molar Ratio | 0.9:1 - 1.1:1 | Lattice Parameters (from XRD Rietveld) |
| | Dopant/Additive Concentration | 0 - 5 mol% | Bulk Density/Porosity |
| Processing | Grinding Time | 15 - 60 minutes | Target Functional Property (e.g., CO₂ uptake) |
| | Applied Pressure (for pellets) | 10 - 50 MPa | |
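
The crystallite size listed in the table is commonly estimated from XRD peak broadening with the Scherrer equation, D = Kλ / (β cos θ). The sketch below assumes Cu Kα radiation (λ = 0.15406 nm), a shape factor K = 0.9, and a peak width that has already been corrected for instrumental broadening; none of these values come from the wollastonite study.

```python
import math

def scherrer_size_nm(fwhm_deg, two_theta_deg,
                     wavelength_nm=0.15406, shape_factor=0.9):
    """Crystallite size (nm) from the peak FWHM and position, both in degrees 2-theta."""
    beta = math.radians(fwhm_deg)            # peak width in radians
    theta = math.radians(two_theta_deg / 2)  # Bragg angle
    return shape_factor * wavelength_nm / (beta * math.cos(theta))

size_nm = scherrer_size_nm(fwhm_deg=0.20, two_theta_deg=30.0)
print(f"Estimated crystallite size: {size_nm:.1f} nm")
```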

Research Reagent Solutions for Solid-State Synthesis

The following table lists essential materials and equipment required for setting up an active learning-driven solid-state synthesis laboratory, with a focus on the wollastonite case study.

Table 2: Essential Research Reagent Solutions for Solid-State Synthesis

| Item Name | Function / Application | Specific Example / Note |

|---|---|---|

| Rice Husk Ash (RHA) | Eco-friendly, high-purity (≈90% SiO₂) silica source for silicate synthesis. Reduces costs and utilizes agricultural waste [10]. | Should be characterized for SiO₂ content and impurities before use. |

| Calcium Carbonate (CaCO₃) | Common precursor for introducing CaO into the reaction. | High-purity powder; can be replaced by calcium hydroxide (Ca(OH)₂). |

| Planetary Ball Mill | Provides mechanical energy for homogenizing and reducing particle size of precursor mixtures, critical for reaction kinetics. | Milling time and speed are optimizable parameters. |

| High-Temperature Furnace | Provides the thermal energy required for solid-state diffusion and reaction to form the target crystalline phase. | Must be capable of reaching temperatures up to 1400-1500°C with precise control. |

| Uniaxial Press | Forms powder mixtures into dense pellets, increasing inter-particle contact and improving reaction yield. | Applied pressure is an optimizable processing parameter. |

| X-ray Diffractometer (XRD) | The primary characterization tool for verifying phase formation, quantifying purity, and determining crystal structure. | Essential for generating the target property data (e.g., phase purity) for the active learning model. |

The integration of active learning into solid-state synthesis represents a paradigm shift from empirically guided exploration to a principled, data-driven decision-making process. By leveraging surrogate models and utility functions to strategically select the most informative experiments, researchers can dramatically compress the time and resources required to discover and optimize new materials. The detailed workflow, protocols, and resource guides provided here offer a practical roadmap for implementing this powerful approach, turning the critical challenge of navigating vast parameter spaces into a manageable and efficient scientific endeavor.

Active Learning (AL) has emerged as a transformative paradigm in scientific research, strategically overcoming the inefficiencies of traditional trial-and-error and the high costs associated with exhaustive high-throughput screening (HTS). By integrating artificial intelligence (AI) with robotic experimentation, AL creates a closed-loop system that iteratively selects the most informative experiments, dramatically accelerating the discovery and optimization of novel materials and drug molecules [2] [11]. This approach is particularly powerful in solid-state synthesis and drug discovery, where it leverages machine learning models to guide experimental planning, execution, and analysis with minimal human intervention. This Application Note details the quantitative benefits of AL, provides executable protocols for its implementation, and visualizes its core workflows, framing these advances within the context of solid-state synthesis route optimization.

Quantitative Advantages of Active Learning

The following tables summarize performance data from recent, high-impact studies applying Active Learning across chemical and materials domains.

Table 1: Performance of Active Learning in Materials and Molecule Discovery

| Application Area | System / Method | Key Performance Metric | Result |
|---|---|---|---|
| Solid-State Materials Discovery | A-Lab [3] | Novel materials synthesized successfully | 41 out of 58 targets (71% success rate) |
| | | Duration of continuous operation | 17 days |
| Molecular Potency Optimization | ActiveDelta (99 datasets) [12] | Identification of more potent & diverse inhibitors | Outperformed standard exploitative AL |
| Virtual Screening Acceleration | Bayesian Optimization (100M library) [13] | Top ligands identified after screening | 94.8% of top-50k found after testing 2.4% of library |
| De Novo Drug Design | GM with AL (CDK2 target) [14] | Experimentally confirmed active molecules | 8 out of 9 synthesized molecules showed activity |

Table 2: Active Learning Methods and Their Applications

| AL Method / Architecture | Domain | Key Advantage |

|---|---|---|

| ActiveDelta (Paired Representation) [12] | Drug Discovery | Excels in low-data regimes; identifies more diverse inhibitors |

| ARROWS3 [3] | Solid-State Synthesis | Uses active learning grounded in thermodynamics to optimize synthesis routes |

| Bayesian Optimization (D-MPNN) [13] | Virtual Screening | Massive reduction in computational cost for docking massive libraries |

| Nested AL Cycles (VAE-based) [14] | De Novo Drug Design | Integrates generative AI with physics-based oracles for target engagement |

| Deep Batch AL (COVDROP/COVLAP) [15] | ADMET & Affinity Prediction | Maximizes joint entropy for batch diversity and model performance |
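
To put the virtual screening and A-Lab figures from Table 1 in perspective, the arithmetic below converts the reported percentages into absolute counts and an enrichment factor relative to random selection. All inputs are the values quoted in the table; the variable names are illustrative.

```python
library_size = 100_000_000   # compounds in the virtual library
fraction_tested = 0.024      # share of the library actually evaluated
top_k = 50_000               # size of the "top ligands" set
fraction_recovered = 0.948   # share of the top set that was found

compounds_tested = round(library_size * fraction_tested)
top_hits_found = round(top_k * fraction_recovered)
expected_random_hits = round(top_k * fraction_tested)  # random baseline
enrichment = fraction_recovered / fraction_tested

alab_success_pct = round(100 * 41 / 58)  # 41 of 58 targets

print(compounds_tested, top_hits_found, expected_random_hits)
print(f"enrichment over random selection: {enrichment:.1f}x")
print(f"A-Lab success rate: {alab_success_pct}%")
```

Evaluating 2.4 million compounds recovers 47,400 of the top 50,000, where random selection of the same number would be expected to recover about 1,200, a roughly 40-fold enrichment.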

Application Note: The A-Lab for Solid-State Synthesis

The A-Lab represents a landmark implementation of AL for autonomous solid-state synthesis, demonstrating a closed-loop workflow from computational target identification to synthesized material [3].

Workflow and Protocol

The A-Lab's operation is a continuous cycle of planning, execution, and learning. The following diagram illustrates this integrated workflow.

Detailed Experimental Protocol

Objective: To autonomously synthesize and optimize a novel, computationally predicted inorganic material.

Starting Requirements:

- Computational Targets: A set of air-stable, theoretically stable inorganic materials identified from the Materials Project or similar ab initio databases [3].

- Hardware: Integrated robotic station with powder handling capabilities, box furnaces for solid-state reactions, and an X-ray Diffractometer (XRD).

- Software & Data: AI models trained on historical synthesis literature, ML models for XRD phase identification, and an active learning algorithm (e.g., ARROWS3).

Procedure:

1. Target Input: Provide the A-Lab with the chemical formula of a target material predicted to be stable.

2. Initial Recipe Proposal:

   - A natural-language processing model analyzes historical data to propose initial solid-state synthesis recipes based on precursor analogy [3].

   - A second ML model recommends an initial synthesis temperature.

3. Robotic Execution:

   - The robotic system automatically dispenses, weighs, and mixes precursor powders in an alumina crucible [3].

   - A robotic arm transfers the crucible to a box furnace for heating under prescribed conditions (temperature, time, atmosphere).

4. Product Characterization & Analysis:

   - The product is characterized by XRD, and ML models analyze the diffraction pattern to identify the phases present and estimate the target yield [3].

5. Active Learning Cycle:

   - Decision Point: If the target yield is >50%, the experiment is concluded successfully.

   - If yield is <50%: The ARROWS3 active learning algorithm is triggered.

   - ARROWS3 integrates the observed reaction pathway (e.g., intermediate phases) with thermodynamic data from the Materials Project.

   - The algorithm prioritizes new precursor sets or conditions that avoid low-driving-force intermediates, proposing a new recipe with a higher probability of success [3].

6. Iteration: Steps 3-5 are repeated until either the target is successfully synthesized or a predefined number of recipe attempts is exhausted.
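
The control flow of this procedure (try a recipe, check the yield against the 50% threshold, request a new recipe on failure, stop after a fixed number of attempts) can be sketched as follows. This is not the A-Lab or ARROWS3 code: `run_recipe` and `propose_next` are hypothetical callables standing in for robotic execution plus XRD analysis and for the active learning step, and the toy yields are invented for the example.

```python
def optimize_target(initial_recipes, run_recipe, propose_next,
                    yield_threshold=0.5, max_attempts=5):
    """Try recipes until one exceeds the yield threshold or attempts run out.
    Returns (successful_recipe_or_None, history)."""
    history = []
    queue = list(initial_recipes)
    while queue and len(history) < max_attempts:
        recipe = queue.pop(0)
        outcome = run_recipe(recipe)          # e.g. {"yield": 0.35}
        history.append((recipe, outcome))
        if outcome["yield"] > yield_threshold:
            return recipe, history            # target obtained
        if not queue:
            suggestion = propose_next(history)  # active learning step
            if suggestion is not None:
                queue.append(suggestion)
    return None, history

# Toy stand-ins (hypothetical values, for illustration only).
toy_yields = {"recipe_A": 0.10, "recipe_B": 0.35, "recipe_C": 0.80}

def run_recipe(recipe):
    return {"yield": toy_yields[recipe]}

def propose_next(history):
    tried = {recipe for recipe, _ in history}
    remaining = [r for r in toy_yields if r not in tried]
    return remaining[0] if remaining else None

best_recipe, attempts = optimize_target(["recipe_A"], run_recipe, propose_next)
```

A real `propose_next` would use the observed intermediates and computed reaction energies in `history` rather than a fixed list.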

Application Note: Active Learning in Drug Discovery

AL has proven highly effective in various drug discovery stages, from virtual screening to hit optimization.

Workflow for Ligand Prioritization

A common application is using AL to efficiently prioritize compounds from large virtual or on-demand libraries. The workflow below, exemplified by tools like FEgrow, demonstrates this process [16].

Detailed Protocol: ActiveDelta for Molecular Optimization

Objective: To rapidly identify potent and chemically diverse inhibitors for a drug target using minimal experimental data.

Starting Requirements:

- Initial Data: A very small initial training set (e.g., 2 random data points) with measured binding affinity (e.g., Ki) from a larger database [12].

- Learning Pool: A larger pool of compounds ("learning set") without assay data.

- Model: A machine learning model capable of paired-input learning, such as the two-molecule variant of Chemprop (D-MPNN) or XGBoost with concatenated molecular fingerprints [12].

Procedure:

- Data Preparation:

- Start with a small initial training set \( D_{\text{train}} \).

- Form a paired training set by cross-merging all molecules in \( D_{\text{train}} \). Each data point is a molecular pair (A, B) with the label being the property difference (e.g., \( \Delta K_i = K_{i,B} - K_{i,A} \)) [12].

- Model Training:

- Train the paired model (e.g., ActiveDelta Chemprop) on this cross-merged dataset to learn molecular property differences.

- Candidate Selection:

- Identify the single most potent molecule \( M_{\text{best}} \) in the current \( D_{\text{train}} \).

- Create pairs \( (M_{\text{best}}, X) \) for every molecule X in the unlabeled learning set.

- Use the trained model to predict the potency improvement \( \Delta K_i \) for every pair.

- Iterative Batch Update:

- Select the molecule X from the learning set that is predicted to yield the greatest improvement over \( M_{\text{best}} \).

- This molecule is "assayed" (its label is acquired from the oracle) and added to \( D_{\text{train}} \).

- The model is retrained on the updated, cross-merged \( D_{\text{train}} \).

- Termination: The cycle repeats until a predefined number of iterations is completed or a desired potency level is reached.
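
The pairing and selection logic of this protocol can be illustrated without any chemistry libraries. The sketch below is not the ActiveDelta implementation: it assumes the property is expressed so that larger values mean higher potency (for example a pKi scale; with raw Ki the sign of the difference flips), it includes self-pairs in the cross-merge, and `predict_delta` is a hypothetical stand-in for the trained paired model.

```python
from itertools import product

def cross_merge(train):
    """All ordered pairs (A, B) from the training set, labeled with the
    property difference value(B) - value(A)."""
    return [((a, b), train[b] - train[a]) for a, b in product(train, repeat=2)]

def select_next(train, pool, predict_delta):
    """Pair the current best molecule with every pool molecule and pick
    the one with the largest predicted improvement."""
    m_best = max(train, key=train.get)
    return max(pool, key=lambda x: predict_delta(m_best, x))

# Toy data (hypothetical): 'oracle' plays the role of the assay.
oracle = {"m1": 5.0, "m2": 6.1, "m3": 7.4, "m4": 6.8, "m5": 8.2}
descriptor = {"m1": 0.1, "m2": 0.3, "m3": 0.5, "m4": 0.4, "m5": 0.9}

def predict_delta(a, b):
    # Stand-in for a paired model trained on cross_merge(train).
    return descriptor[b] - descriptor[a]

train = {"m1": oracle["m1"], "m2": oracle["m2"]}
pool = [m for m in oracle if m not in train]

pairs = cross_merge(train)                  # paired training set
chosen = select_next(train, pool, predict_delta)
train[chosen] = oracle[chosen]              # "assay" the selected molecule
pool.remove(chosen)
```

Because the paired set grows quadratically with the number of labeled molecules, even two or three initial measurements already give the model several training examples.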

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Resources for Implementing an Active Learning Laboratory

| Category | Item / Solution | Function / Description | Example Use Case |
|---|---|---|---|
| Computational & Data Resources | Ab Initio Databases (e.g., Materials Project) | Provides computationally predicted, stable target materials for synthesis [3]. | A-Lab target selection |
| | Historical Synthesis Databases | Trains natural-language models for initial recipe generation [2]. | Proposal of precursor combinations |
| | ChEMBL / SIMPD Datasets [12] | Provides bioactivity data for benchmarking and training AL models in drug discovery. | Ki prediction optimization |
| AI/ML Software | Natural Language Processing Models | Analyzes scientific text to propose synthesis routes by analogy [2]. | A-Lab recipe generation |
| | Bayesian Optimization Algorithms | Guides experiment selection by balancing exploration and exploitation [13]. | Virtual screening acceleration |
| | Paired Molecular Learning (ActiveDelta) | Directly predicts property improvements, excelling with small data [12]. | Potency optimization |
| Hardware & Automation | Robotic Powder Handling Systems | Automates precise dispensing and mixing of solid precursors [3]. | Solid-state synthesis |
| | Automated Box Furnaces | Provides controlled high-temperature environments for reactions [3]. | Solid-state synthesis |
| | Integrated XRD with ML Analysis | Enables rapid, automated phase identification and yield estimation [2] [3]. | Product characterization |
| Chemical Resources | On-Demand Compound Libraries (e.g., Enamine REAL) | Vast source of purchasable, synthetically accessible compounds for virtual screening [16]. | Seed library for de novo design |
| | Fragment Libraries | Structurally validated starting points for hit expansion using tools like FEgrow [16]. | Structure-based drug design |

Implementing an Active Learning Pipeline for Synthesis Optimization

Application Notes: Core Architectural Framework

The modern autonomous laboratory represents a paradigm shift in scientific research, transitioning from manual, sequential experimentation to a continuous, closed-loop operation driven by artificial intelligence (AI), robotics, and sophisticated workflow automation. This architecture is particularly transformative for active learning in solid-state synthesis route optimization, where it systematically explores vast parameter spaces to discover and optimize materials with unprecedented efficiency [17] [2].

Foundational Pillars of the Autonomous Lab

The architecture of an autonomous laboratory is built upon four tightly integrated technological pillars:

- The AI Brain: The central intelligence of the lab uses AI and Machine Learning (ML) for experimental planning, data analysis, and decision-making. In active learning cycles, AI models like Gaussian Process Regressors (GPR) propose the most informative subsequent experiments to achieve the research objective, such as optimizing for material properties like strength and ductility [18] [19]. The AI brain can also include Large Language Models (LLMs) that interpret scientific literature, design synthetic routes, and even generate executable code for robotic systems [2].

- The Robotic Body: Robotic systems provide the physical means to execute experiments with minimal human intervention. This includes:

  - Fixed Automation: Robotic arms and liquid handlers stationed at specific instruments for precise, high-throughput tasks like pipetting, weighing, and synthesis [17] [20].

  - Mobile Robots: Autonomous Mobile Robots (AMRs) that navigate the lab floor, transporting samples and materials between different analytical stations, thereby connecting isolated automated islands into a seamless workflow [17] [2].

- The Data Spine: A unified, cloud- or hybrid-capable data infrastructure, often centered around a Laboratory Information Management System (LIMS), is critical. It automatically captures, standardizes, and contextualizes all data generated by instruments and robots. This ensures data is FAIR (Findable, Accessible, Interoperable, and Reusable), forming the reliable foundation upon which AI models are built and trained [17] [20].

- The Connectivity Layer: The Internet of Things (IoT) sensors and a robust integration infrastructure provide real-time monitoring of the laboratory environment (temperature, humidity) and instrument status (predictive maintenance). This layer enables the seamless orchestration of all components, allowing the AI brain to perceive the state of the physical world and act upon it through the robotic body [17] [21].

The Active Learning Closed Loop

The synergy of these components enables the core operational paradigm: the active learning closed loop. In the context of solid-state synthesis, this loop operates as a continuous cycle of planning, execution, and learning [2] [19].

- AI-Driven Proposal: An AI model, trained on prior data and/or theoretical knowledge, analyzes the current state of knowledge and proposes one or several high-value synthesis experiments. These are selected via an acquisition function designed to maximize learning and progress toward multi-objective goals, such as maximizing both strength and ductility in a material [18].

- Robotic Execution: The proposed synthesis recipe is translated into actionable commands for the robotic systems. AMRs retrieve and deliver precursor powders to an automated synthesizer (e.g., a furnace), which executes the solid-state reaction under precisely controlled conditions [2] [22].

- Automated Analysis & Characterization: Upon synthesis completion, mobile robots transport the resulting sample to various analytical instruments. For solid-state materials, X-ray Diffraction (XRD) is crucial, and ML models can automatically analyze the diffraction patterns to identify phases and assess product quality [2].

- Data Integration & Model Update: The characterization results are automatically fed back into the LIMS and to the AI model. The model is then retrained with this new data, updating its understanding of the synthesis-property relationship. This updated model then proposes the next set of experiments, closing the loop and beginning the cycle anew [18] [2].

This architecture was demonstrated powerfully by "A-Lab," a fully autonomous solid-state synthesis platform that successfully synthesized 41 novel inorganic materials over 17 days of continuous operation by leveraging this exact closed-loop strategy [2].

Experimental Protocols

This section provides a detailed, executable protocol for implementing an active learning cycle aimed at optimizing the synthesis parameters of a functional solid-state material, such as a cathode or electrolyte for energy storage applications.

Protocol: Active Learning for Solid-State Synthesis Optimization

Objective: To autonomously discover the optimal solid-state synthesis parameters (e.g., annealing temperature, time, precursor mixing ratio) for a target material that maximizes one or more desired properties (e.g., ionic conductivity, phase purity, stability).

Prerequisites:

- A curated initial dataset of synthesis parameters and corresponding material properties (e.g., from literature, prior experiments, or high-throughput simulations).

- A calibrated and automated synthesis platform (e.g., a robotic furnace system like the Chemspeed ISynth).

- Integrated analytical instruments (e.g., XRD, SEM) with automated sample transfer capabilities.

- A deployed AI/ML model for prediction and active learning.

Materials:

- Precursor powders (e.g., metal oxides, carbonates).

- Milling media (e.g., zirconia balls).

- Crucibles or sample holders compatible with the robotic furnace.

Procedure:

Table 1: Step-by-Step Active Learning Protocol for Solid-State Synthesis.

| Step | Process | Details & Specifications | Duration |

|---|---|---|---|

| 1. Initialization | Load precursors & define search space. | Robotic system loads precursor powders into designated hoppers. The AI system is initialized with the boundaries of the parameter space to explore (e.g., temperature: 600-1200°C, time: 1-48 hours). | ~1 hour |

| 2. AI Experimental Proposal | Active learning cycle iteration. | The AI model (e.g., a Gaussian Process Regressor with Expected Hypervolume Improvement (EHVI) acquisition function) analyzes all existing data and selects the next synthesis condition(s) with the largest expected improvement toward the multi-objective goal [18] [19]. | < 5 minutes |

| 3. Automated Synthesis | Weighing, mixing, pelletizing, annealing. | 1. Dispensing & Weighing: Robotic arm dispenses precise masses of precursors into a synthesis vial. 2. Mixing: Vial is transferred to a mixer or mill for homogenization. 3. Pelletizing (Optional): Powder is automatically pressed into a pellet. 4. Annealing: AMR transports the pellet to a robotic furnace, which places it in a hot zone under the specified temperature and time profile. | 2 - 48 hours |

| 4. Automated Characterization | Sample transport & phase identification. | 1. Transport: After synthesis, the AMR retrieves the sample and delivers it to an XRD instrument. 2. Analysis: XRD pattern is collected and analyzed in real-time by a convolutional neural network (CNN) to determine phase purity and identity [2]. | ~30 minutes |

| 5. Data Processing & Model Update | Data integration & model retraining. | The synthesis parameters and characterization results (e.g., phase fraction, lattice parameters) are automatically stored in the LIMS. The AI model is updated with this new data point, refining its predictive capability for the next cycle [18]. | ~10 minutes |

| 6. Iteration | Return to Step 2. | The loop (Steps 2-5) repeats until a performance target is met, a specified number of iterations is completed, or the parameter space is sufficiently explored. | Continuous |
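
Step 2 of the protocol names the EHVI acquisition function. EHVI is built on the hypervolume indicator: the region of objective space dominated by the current Pareto front, measured from a reference point. The sketch below computes only the deterministic hypervolume improvement of a single candidate for two maximized objectives (for example normalized strength and ductility); EHVI itself averages this quantity over the surrogate's predictive distribution, which is not shown. The function names and example values are illustrative.

```python
def pareto_front(points):
    """Non-dominated points for two objectives, both maximized.
    Returned in order of decreasing first objective."""
    front = []
    for p in sorted(points, key=lambda q: (-q[0], -q[1])):
        if not front or p[1] > front[-1][1]:
            front.append(p)
    return front

def hypervolume_2d(points, ref):
    """Area dominated by the Pareto front and bounded below by ref."""
    area, prev_y = 0.0, ref[1]
    for x, y in pareto_front(points):
        if x > ref[0] and y > prev_y:
            area += (x - ref[0]) * (y - prev_y)  # add one horizontal strip
            prev_y = y
    return area

def hypervolume_improvement(points, candidate, ref):
    """Gain in dominated area if the candidate outcome were observed."""
    return hypervolume_2d(points + [candidate], ref) - hypervolume_2d(points, ref)

observed = [(0.8, 0.3), (0.5, 0.6)]   # (objective 1, objective 2)
reference = (0.0, 0.0)
gain_good = hypervolume_improvement(observed, (0.7, 0.5), reference)
gain_dominated = hypervolume_improvement(observed, (0.4, 0.2), reference)
```

A dominated candidate contributes zero improvement, which is why a hypervolume-based acquisition naturally steers experiments toward the trade-off frontier.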

Safety Notes:

- The entire process should be conducted within appropriate engineering controls (e.g., a fume hood or glovebox for air-sensitive materials) with robotic systems handling hazardous operations.

- Implement real-time monitoring and anomaly detection algorithms to halt experiments in case of instrument failure or unsafe conditions.
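
As one simple example of such a safeguard, the sketch below flags a furnace run for halting when the measured temperature deviates from the setpoint for several consecutive readings. The tolerance, the number of consecutive readings, and the function name are hypothetical and would need to be set for the specific equipment.

```python
def first_halt_index(readings_C, setpoint_C, tolerance_C=15.0, max_consecutive=3):
    """Return the index of the reading at which the run should be halted,
    or None if the temperature stays within tolerance."""
    streak = 0
    for i, temperature in enumerate(readings_C):
        if abs(temperature - setpoint_C) > tolerance_C:
            streak += 1
        else:
            streak = 0  # a single in-range reading resets the counter
        if streak >= max_consecutive:
            return i
    return None

drifting = [1200, 1203, 1230, 1240, 1250, 1201]
noisy = [1200, 1230, 1201, 1230, 1199]
halt_at = first_halt_index(drifting, setpoint_C=1200)
```

Requiring several consecutive out-of-range readings avoids halting on a single sensor glitch, at the cost of a slightly delayed response.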

Workflow Visualization

The following diagram illustrates the closed-loop, active learning process described in the protocol.

Diagram 1: Active learning closed loop for solid-state synthesis.

The Scientist's Toolkit: Research Reagent Solutions

The following table details the essential hardware and software components required to establish an autonomous laboratory for solid-state synthesis.

Table 2: Key Research Reagent Solutions for an Autonomous Solid-State Synthesis Laboratory.

| Item | Function / Role | Specific Examples & Notes |

|---|---|---|

| AI/ML Software Stack | Serves as the "brain" for planning experiments, analyzing data, and decision-making via active learning. | Gaussian Process Regressor (GPR): A robust surrogate model for predicting material properties and quantifying uncertainty [18]. Acquisition Function (e.g., EHVI): Guides the selection of next experiments in multi-objective optimization [18] [19]. LLM-based Agents (e.g., Coscientist, ChemCrow): For literature-based recipe design and natural language control of robots [2]. |

| Robotic Synthesis Platform | Automates the physical handling and processing of solid precursors and samples. | Chemspeed ISynth: An automated synthesizer for powder weighing, slurry mixing, and heat treatment [2]. Fixed Robotic Arms: For precise, repetitive tasks at a single station. Autonomous Mobile Robots (AMRs): For flexible transport of samples between instruments, creating a connected lab [17] [2]. |

| Automated Analytical Instruments | Provides rapid, high-throughput characterization of synthesized materials to generate feedback for the AI. | X-Ray Diffractometer (XRD) with ML analysis: For phase identification and quantification; critical for validating synthesis outcomes [2]. SEM/EDS: For automated microstructural and elemental analysis. |

| Laboratory Information Management System (LIMS) | Acts as the central "data spine," integrating and standardizing all experimental data. | Cloud-based LIMS (e.g., LabLynx): Enables remote access, real-time collaboration, and seamless data flow from all connected instruments and robots [17] [20]. |

| IoT Sensors & Edge Computing | Enables real-time environmental monitoring and low-latency, secure AI processing at the source of data generation. | Temperature/Humidity Sensors: To validate and log synthesis conditions. On-Premises GPU Cluster (Edge AI): For running AI models locally, ensuring operational resilience and fast response times for real-time control [17]. |

The discovery and synthesis of novel inorganic materials is crucial for technological advancement, yet the experimental realization of computationally predicted compounds remains a persistent bottleneck. Bridging this gap requires overcoming the traditional limitations of time-consuming, manual trial-and-error methods. This application note details a case study of the A-Lab, an autonomous laboratory that integrates artificial intelligence (AI), robotics, and active learning to accelerate the solid-state synthesis of novel inorganic powders. We frame the A-Lab's performance within a broader thesis on active learning, demonstrating its effectiveness in optimizing synthesis routes with minimal human intervention. Over 17 days of continuous operation, the A-Lab successfully synthesized 41 out of 58 target compounds identified using large-scale ab initio phase-stability data, achieving a 71% success rate and providing a scalable blueprint for the future of materials discovery [3] [2].

Autonomous Workflow & Experimental Design

The A-Lab operates via a continuous closed-loop cycle, seamlessly integrating computational prediction, robotic execution, and AI-driven learning. Its workflow synthesizes several advanced technologies to create an autonomous discovery pipeline.

The end-to-end process, from target selection to synthesis optimization, is illustrated below.

Detailed Experimental Protocols

Protocol: Target Selection and Preparation

- Objective: To identify novel, air-stable inorganic compounds for synthesis.

- Procedure:

- Computational Screening: Select target materials from the Materials Project and Google DeepMind databases. Targets are predicted to be on or near (<10 meV per atom) the thermodynamic convex hull of stable phases [3].

- Stability Assessment: Cross-reference targets to ensure they are predicted not to react with O₂, CO₂, and H₂O, ensuring compatibility with open-air handling in the lab [3].

- Precursor Selection: Input selected targets into a natural-language processing (NLP) model trained on a vast database of historical synthesis literature. The model proposes initial solid-state precursor sets based on chemical similarity to known compounds [3] [2].

- Temperature Calibration: A second machine learning model, trained on literature heating data, proposes an initial synthesis temperature [3].
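The two screening criteria in the procedure above (proximity to the convex hull and predicted air stability) can be sketched as a simple filter. This is a minimal sketch, not the A-Lab's code: the `Candidate` record and its field names are hypothetical and do not correspond to any database API, and the entries are placeholders rather than real compounds.

```python
from dataclasses import dataclass

HULL_TOLERANCE_MEV = 10.0  # "on or near" the hull: < 10 meV per atom, per the protocol


@dataclass
class Candidate:
    """Hypothetical record; field names are not from any database API."""
    label: str
    e_above_hull_mev: float  # energy above the convex hull, meV per atom
    reacts_with_air: bool    # predicted reaction with O2, CO2 or H2O


def screen_targets(candidates):
    """Keep candidates within the hull tolerance that are predicted air-stable."""
    return [
        c for c in candidates
        if c.e_above_hull_mev < HULL_TOLERANCE_MEV and not c.reacts_with_air
    ]


# Placeholder entries, not real data.
pool = [
    Candidate("target-A", 0.0, False),   # on the hull, air-stable: kept
    Candidate("target-B", 8.5, True),    # near the hull but air-sensitive: dropped
    Candidate("target-C", 32.0, False),  # too far above the hull: dropped
]
print([c.label for c in screen_targets(pool)])  # ['target-A']
```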

Protocol: Robotic Solid-State Synthesis

- Objective: To automatically execute powder synthesis recipes with high consistency.

- Procedure:

- Powder Dispensing: A robotic station accurately dispenses and weighs precursor powders from a curated library.

- Mixing and Milling: Precursors are transferred to a mixing apparatus and milled together to ensure homogeneity and good reactivity.

- Crucible Loading: The mixed powder is automatically transferred into an alumina crucible.

- Heating Cycle: A robotic arm loads the crucible into one of four available box furnaces. The furnace executes the heating profile (temperature, ramp rate, dwell time) as specified by the AI planner [3].

- Cooling: Samples are allowed to cool to ambient temperature within the furnace before robotic retrieval.
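The heating profile handed to the furnace (temperature, ramp rate, dwell time) can be represented as a small validated record. The sketch below is one possible representation under assumed field names and units; the numeric values are illustrative and are not taken from the A-Lab study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HeatingProfile:
    """Hypothetical recipe record; field names and units are assumptions."""
    temperature_c: float        # dwell temperature
    ramp_rate_c_per_min: float  # heating rate
    dwell_min: float            # hold time at temperature

    def __post_init__(self):
        if self.ramp_rate_c_per_min <= 0 or self.dwell_min < 0:
            raise ValueError("ramp rate must be positive and dwell non-negative")

    def minutes_until_cooling(self, ambient_c=25.0):
        """Time from furnace start to the end of the dwell (cooling excluded)."""
        ramp_min = max(self.temperature_c - ambient_c, 0.0) / self.ramp_rate_c_per_min
        return ramp_min + self.dwell_min


# Illustrative values only.
profile = HeatingProfile(temperature_c=825.0, ramp_rate_c_per_min=10.0, dwell_min=240.0)
print(profile.minutes_until_cooling())  # (825 - 25) / 10 + 240 = 320.0
```

Validating the profile before it reaches the furnace is a cheap guard against a planner emitting a physically meaningless recipe.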

Protocol: Automated Product Characterization and Analysis

- Objective: To identify the phases present in the synthesized product and quantify the yield of the target material.

- Procedure:

- Sample Preparation: A robotic arm transfers the cooled sample to a station where it is ground into a fine powder to prepare it for X-ray diffraction (XRD).

- XRD Data Collection: The powdered sample is mounted and its XRD pattern is collected automatically.

- Phase Identification: The XRD pattern is analyzed by probabilistic machine learning models trained on experimental structures from the Inorganic Crystal Structure Database (ICSD). For novel targets, patterns are simulated from computed structures and corrected for density functional theory (DFT) errors [3].

- Yield Quantification: Automated Rietveld refinement is performed to confirm identified phases and calculate the weight fraction (yield) of the target compound. A synthesis is deemed successful if the target yield exceeds 50% [3].
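The success criterion in the last step can be expressed in a few lines. This is a minimal sketch that assumes the refinement output is available as a mapping from phase name to weight fraction; the function name and the input format are hypothetical, and only the 50% threshold comes from the protocol.

```python
def evaluate_synthesis(weight_fractions, target, threshold=0.5):
    """Return (success, target_yield) from refined phase weight fractions.

    weight_fractions: dict mapping phase name -> weight fraction
    target: name of the target phase
    threshold: a run counts as successful if the yield exceeds this value
    """
    total = sum(weight_fractions.values())
    if total <= 0:
        raise ValueError("weight fractions must sum to a positive value")
    # Normalize in case the refined fractions do not sum exactly to 1.
    target_yield = weight_fractions.get(target, 0.0) / total
    return target_yield > threshold, target_yield


# Placeholder phase names and fractions, not real data.
success, _ = evaluate_synthesis({"target": 0.62, "impurity": 0.38}, "target")
failure, _ = evaluate_synthesis({"impurity-1": 0.7, "impurity-2": 0.3}, "target")
print(success, failure)  # True False
```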

Active Learning for Synthesis Optimization

When initial synthesis recipes fail, the A-Lab employs an active learning cycle to propose improved follow-up recipes. This process is governed by the ARROWS3 algorithm, which leverages thermodynamic data and observed reaction outcomes [3]. The logic of this optimization cycle is detailed below.

The algorithm is grounded in two key hypotheses:

- Pairwise Reactions: Solid-state reactions tend to proceed through intermediates formed by reactions between two phases at a time [3].

- Driving Force Maximization: Intermediate phases that leave only a small driving force (computed from Materials Project formation energies) to form the target should be avoided, as they lead to sluggish kinetics [3].

The lab continuously builds a database of observed pairwise reactions. This knowledge allows it to prune the search space of possible recipes and prioritize synthesis pathways with larger driving forces, increasing the likelihood of success in subsequent attempts [3]. The active learning loop identified improved synthesis routes for nine targets, six of which had yielded none of the target phase in their initial literature-inspired attempts [3].
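The pruning idea behind the two hypotheses can be illustrated with a deliberately simplified sketch. This is not the ARROWS3 implementation: the data structures, the pessimistic "worst observed pair" rule, and the 50 meV per atom cut-off are assumptions made for the example, and the precursor names and energies are placeholders.

```python
from itertools import combinations

MIN_DRIVING_FORCE_MEV = 50.0  # illustrative cut-off in meV per atom (an assumption)


def rank_precursor_sets(precursor_sets, observed_pairs, initial_force):
    """Prune and rank candidate precursor sets.

    precursor_sets: list of tuples of precursor names
    observed_pairs: dict mapping frozenset({a, b}) to the driving force
        (meV per atom) left to form the target after a and b have reacted
    initial_force: dict mapping each precursor tuple to its driving force
        when no pairwise reaction has been observed yet
    """
    scored = []
    for pset in precursor_sets:
        known = [
            observed_pairs[frozenset(pair)]
            for pair in combinations(pset, 2)
            if frozenset(pair) in observed_pairs
        ]
        # Be pessimistic: assume the least favourable observed intermediate forms.
        force = min(known) if known else initial_force[pset]
        if force >= MIN_DRIVING_FORCE_MEV:
            scored.append((force, pset))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [pset for _, pset in scored]


# Placeholder precursor names and energies, not real data.
candidates = [("P1", "P2", "P3"), ("P1", "P4", "P3"), ("P5", "P3")]
observed = {frozenset({"P1", "P2"}): 12.0}  # P1 + P2 leaves only 12 meV/atom
initial = {candidates[0]: 180.0, candidates[1]: 150.0, candidates[2]: 90.0}
print(rank_precursor_sets(candidates, observed, initial))
# [('P1', 'P4', 'P3'), ('P5', 'P3')]
```

Because the pairwise database grows with every experiment, a single observed low-driving-force pair removes every recipe that contains it, which is how the search space shrinks between rounds.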

Key Research Reagents & Solutions

The following table catalogues the essential materials, data, and software tools that constitute the core "research reagent" solutions for operating an autonomous laboratory like the A-Lab.

Table 1: Essential Research Reagents and Solutions for an Autonomous Materials Discovery Laboratory.

| Category | Item/Resource Name | Function and Application |

|---|---|---|

| Computational & Data Resources | Materials Project/DeepMind Database [3] | Provides ab initio computed phase stability data for target identification and thermodynamic driving force calculations. |

| | Literature Synthesis Database [3] [2] | A text-mined corpus of historical synthesis procedures used to train ML models for initial precursor and temperature selection. |

| | Inorganic Crystal Structure Database (ICSD) [3] | Source of experimental crystal structures for training ML models for automated XRD phase identification. |

| AI & Software Tools | Natural Language Processing (NLP) Models [3] | Analyzes chemical literature to propose initial synthesis recipes based on analogy to known materials. |

| | ARROWS3 Active Learning Algorithm [3] | The core optimization engine that uses thermodynamic data and experimental outcomes to propose improved synthesis routes after failures. |

| | Probabilistic ML Models for XRD [3] | Analyzes XRD patterns to identify crystalline phases and estimate their weight fractions in the product. |

| Hardware & Robotic Systems | Automated Powder Handling Station [3] | Precisely dispenses, weighs, and mixes solid precursor powders for synthesis. |

| | Robotic Furnace Station [3] | Automates the loading, heating, and unloading of samples from box furnaces. |

| | Automated XRD Station [3] [23] | Prepares powdered samples, collects XRD patterns, and performs subsequent analysis with minimal human intervention. |

Results & Performance Data

The performance of the A-Lab was quantitatively evaluated over a campaign targeting 58 novel inorganic compounds. The overall outcomes are summarized below.

Table 2: Summary of A-Lab Synthesis Outcomes Over 17 Days of Operation.

| Metric | Value | Details |

|---|---|---|

| Total Targets | 58 | Primarily oxides and phosphates from 33 elements and 41 structural prototypes [3]. |

| Successfully Synthesized | 41 | Compounds obtained as the majority phase (>50% yield) [3]. |

| Overall Success Rate | 71% | Demonstrated feasibility of autonomous discovery at scale [3]. |

| Success from Literature Recipes | 35 | Initial recipes proposed by NLP models were successful for 35 targets [3]. |

| Success from Active Learning | 6 | Targets successfully synthesized only after optimization via the ARROWS3 algorithm [3]. |

| Total Recipes Tested | 355 | Highlights the non-trivial nature of precursor selection, with only ~37% producing the target [3]. |
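The headline figures in the table can be cross-checked with a few lines of arithmetic. The inputs below are the values reported in the table; the variable names are chosen for this example only.

```python
total_targets = 58
from_literature = 35      # succeeded with the initial literature-inspired recipes
from_active_learning = 6  # succeeded only after active-learning optimization

synthesized = from_literature + from_active_learning
unobtained = total_targets - synthesized

print(synthesized, unobtained)                        # 41 17
print(f"{synthesized / total_targets:.1%}")           # 70.7%
print(f"{from_active_learning / total_targets:.1%}")  # 10.3%
```

The 71% success rate is therefore 41/58 rounded to the nearest percent, and the targets rescued by active learning account for roughly one in ten of the full target list.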

Analysis of the 17 unsuccessful syntheses revealed specific failure modes, providing actionable insights for improving both computational and experimental methods.

Table 3: Analysis of Synthesis Failure Modes for 17 Unobtained Targets.

| Failure Mode | Frequency | Description and Impact |

|---|---|---|

| Slow Kinetics | 11/17 | The most common issue, affecting reactions with low thermodynamic driving forces (<50 meV per atom), leading to incomplete reactions [3]. |

| Precursor Volatility | Not Specified | Volatilization of precursor materials during heating, altering the reactant stoichiometry and preventing target formation [3]. |

| Amorphization | Not Specified | Formation of non-crystalline products, which are not detected by XRD and hinder the assessment of synthesis success [3]. |

| Computational Inaccuracy | Not Specified | Inaccuracies in the ab initio computed stability data, meaning the target compound may not be stable under the experimental conditions [3]. |

Discussion

The A-Lab case study validates the powerful synergy between high-throughput computation, historical data, machine learning, and robotics. Its 71% success rate in synthesizing computationally predicted materials demonstrates that autonomous laboratories are no longer a futuristic concept but a present-day tool capable of accelerating materials innovation [3] [2].

The role of active learning, specifically the ARROWS3 algorithm, was critical in overcoming initial failures for about 10% of the targets (6 of 58). By leveraging a growing database of observed reactions and thermodynamic principles, the system efficiently navigated the complex solid-state synthesis space. This approach directly addresses the "data-scarcity" problem common in materials science by making intelligent, data-driven decisions on which experiments to perform next [19].

Future development of autonomous laboratories will focus on enhancing the generalization and robustness of AI models. This will involve training foundation models across different material classes, developing standardized hardware interfaces for modular robotic systems [2], and improving error-handling capabilities to manage unexpected experimental outcomes. The integration of large language models (LLMs) for higher-level experimental planning and reasoning also presents a promising frontier for further automating the scientific process [2].

In the field of solid-state synthesis route optimization, the experimental characterization of novel materials is both time-consuming and resource-intensive. Active learning (AL) has emerged as a powerful framework to accelerate this process by intelligently selecting which experiments to perform, thereby minimizing the number of costly syntheses required. Central to the success of any active learning strategy is the acquisition function (AF), a heuristic that guides the selection of the most informative data points to label next. The choice of acquisition function critically balances the exploration of uncertain regions with the exploitation of promising areas in the experimental space. This document provides detailed application notes and protocols for three fundamental families of acquisition functions—Expected Improvement, Uncertainty Sampling, and Diversity Methods—within the context of autonomous materials discovery platforms like the A-Lab [3].

Acquisition Function Fundamentals

An acquisition function, denoted \( U(\mathbf{x}) \), scores the utility of querying an unlabeled sample \( \mathbf{x} \). The goal is to select a subset of samples that maximizes model improvement under a fixed labeling budget \( B \) [24]. In pool-based active learning, given an unlabeled pool \( \mathcal{U} \) (written \( \mathcal{U}_t \) for its contents at round \( t \)), the core operation at each round \( t \) is:

\[ \mathbf{x}_t^* = \arg\max_{\mathbf{x} \in \mathcal{U}_t} \mathrm{AF}(\mathbf{x}; \theta_{t-1}) \]

where \( \mathrm{AF}(\mathbf{x}; \theta_{t-1}) \) is the acquisition function evaluated under \( \theta_{t-1} \), the model parameters from the previous round [25]. The selected point \( \mathbf{x}_t^* \) is then labeled by an oracle (e.g., a robotic synthesis and characterization step), and the model is retrained on the updated dataset.
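The select, label, retrain cycle described above can be sketched generically. This is a minimal sketch with toy stand-ins: the oracle is a made-up yield curve, the "model" simply remembers which points were tried, and the acquisition score is distance to the nearest tried point (a plain exploration heuristic chosen for brevity, not one of the acquisition functions discussed in this document). All names are hypothetical.

```python
def active_learning_loop(pool, oracle, fit, acquisition, budget):
    """Pool-based loop: pick the arg max of the acquisition function,
    query the oracle, retrain, and repeat until the budget is spent."""
    pool = list(pool)
    labeled = []
    model = fit(labeled)
    for _ in range(min(budget, len(pool))):
        x_star = max(pool, key=lambda x: acquisition(x, model))
        pool.remove(x_star)
        labeled.append((x_star, oracle(x_star)))  # e.g. one robotic synthesis run
        model = fit(labeled)                      # retrain on the updated dataset
    return labeled, model


# Toy stand-ins (all hypothetical): candidate temperatures in deg C.
def toy_oracle(t):
    return max(0.0, 1.0 - abs(t - 850) / 500)  # made-up yield curve


def toy_fit(labeled):
    return [x for x, _ in labeled]  # "model" = list of points already tried


def distance_to_nearest(x, tried):
    # Exploration score: prefer points far from anything already tried.
    return min((abs(x - t) for t in tried), default=float("inf"))


labeled, _ = active_learning_loop(
    [600, 700, 800, 900, 1000], toy_oracle, toy_fit, distance_to_nearest, budget=3
)
print([x for x, _ in labeled])  # [600, 1000, 800]
```

Swapping `distance_to_nearest` for a different scoring function changes the selection strategy without touching the loop itself, which is why the acquisition function is treated as a pluggable component.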

Core Acquisition Function Classes

Uncertainty Sampling

Uncertainty sampling is one of the most common strategies in active learning for classification tasks. It selects samples for which the current model is most uncertain about the predicted label [24]. The underlying principle is that labeling these ambiguous points will most effectively reduce the model's overall uncertainty.