Achieving SCF Convergence in Computational Drug Discovery: A Comprehensive Guide to Parameter Setting and Optimization

This article provides a comprehensive guide for researchers and drug development professionals on setting and optimizing parameters to achieve Self-Consistent Field (SCF) convergence in computational chemistry calculations.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on setting and optimizing parameters to achieve Self-Consistent Field (SCF) convergence in computational chemistry calculations. Covering foundational principles, advanced methodological applications, systematic troubleshooting, and rigorous validation techniques, it addresses critical challenges in electronic structure calculations for biomolecular systems. By synthesizing current methodologies and optimization strategies, this guide aims to enhance the reliability and efficiency of quantum chemical computations in pharmaceutical research, ultimately accelerating the drug discovery pipeline.

Understanding SCF Convergence: Fundamental Principles and Challenges in Quantum Chemistry for Drug Discovery

The Critical Role of SCF Convergence in Accurate Molecular Property Prediction

The Self-Consistent Field (SCF) method is an iterative computational procedure central to quantum chemical calculations based on Density Functional Theory (DFT) and other electronic structure methods. Its primary role is to solve for the electron density of a molecular system by ensuring that the computed electronic potential and the resulting electron density are mutually consistent [1]. The SCF cycle involves repeatedly constructing the Fock matrix from the current density, diagonalizing it to obtain new orbitals, and building a new density matrix until the input and output densities converge within a specified threshold [2].

Achieving robust SCF convergence is a prerequisite for obtaining accurate predictions of molecular properties. The electron density directly determines all ground-state electronic properties, including molecular energies, reaction barriers, vibrational frequencies, and spectroscopic parameters [3] [1]. Poor convergence not only prevents calculations from completing but can lead to qualitatively incorrect results, such as convergence to high-energy excited states rather than the ground state, or significant errors in predicted molecular geometries and energies [3]. Within the context of energy grid parameter research for distribution system operators (DSOs), the challenges of SCF convergence find a conceptual parallel in achieving stable convergence in smart grid energy flow optimizations, where iterative solvers must balance multiple constraints to reach optimal operational states [4] [5].

The SCF Convergence Challenge

Fundamental Causes of Convergence Failure

SCF convergence failures predominantly arise from two scenarios: oscillations in the early iterations, and trailing convergence, where small, persistent changes prevent the calculation from reaching the convergence threshold [3]. The former often occurs with poor initial guesses for the electron density, particularly for systems with complex electronic structures, such as those involving transition metals, open-shell radicals, or near-degenerate orbital energy levels [2]. The latter is a more insidious problem in which the SCF cycle appears to progress but never formally converges, often due to numerical instabilities or the presence of multiple states with similar energies [3].

For ΔSCF calculations targeting excited states, the challenge intensifies, because the procedure must converge to a saddle point of the electronic energy with respect to orbital variations rather than to a minimum [3]. This necessitates specialized convergence methods to ensure the solution remains on the desired excited state surface, which is crucial for modeling processes like charge-transfer excitations and core-hole spectroscopies where time-dependent DFT (TDDFT) often fails [3].

Impact on Molecular Property Prediction

The accuracy of nearly all quantum chemically derived properties depends directly on the quality of the converged SCF solution. Forces used in geometry optimization are derived from the Hellmann-Feynman theorem, which requires a fully variational wavefunction only obtained at SCF convergence. Vibrational frequencies determined from the Hessian matrix (second derivatives of energy with respect to nuclear positions) are particularly sensitive to convergence quality, as small residual errors in the electron density can significantly affect curvature of the potential energy surface [3]. Properties like NMR chemical shifts, electronic circular dichroism (ECD), and vibrational circular dichroism (VCD) require highly accurate electron densities and orbital energies, yet open-source implementations for predicting these properties remain scarce [3].

Table 1: Molecular Properties and Their Dependence on SCF Convergence Quality

| Molecular Property | Dependence on SCF Convergence | Typical Convergence Requirement |
|---|---|---|
| Total Energy | Direct dependence on electron density accuracy | 10⁻⁶ a.u. (default in ADF) [2] |
| Nuclear Gradients (Forces) | Requires variational wavefunction for Hellmann-Feynman theorem | 10⁻⁶ a.u. or tighter |
| Molecular Geometry | Depends on accurate forces | 10⁻⁶ a.u. or tighter |
| Vibrational Frequencies | Highly sensitive to Hessian matrix accuracy | 10⁻⁸ a.u. or tighter [3] |
| Electronic Properties (HOMO/LUMO, Dipole Moment) | Direct dependence on orbital energies and electron density | 10⁻⁶ a.u. |
| Spectroscopic Parameters (NMR, ECD, VCD) | Requires highly precise density and orbital energies | 10⁻⁸ a.u. or tighter [3] |

Quantitative Assessment of SCF Convergence

Convergence Criteria and Metrics

The primary metric for SCF convergence is the commutator of the Fock and density matrices ([F,P]), which theoretically should be zero at full self-consistency [2]. In practical implementations, convergence is considered achieved when the maximum element of this commutator falls below a specified threshold (SCFcnv), while the norm of the matrix falls below 10×SCFcnv [2]. The ADF package implements a secondary criterion (sconv2) that, when met, allows calculations to continue with only a warning if the primary criterion cannot be achieved [2].
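The two-part test described above can be sketched in a few lines of NumPy. This is an illustration only: the function name is hypothetical, the commutator is written as FPS − SPF (the usual form in a non-orthogonal basis), and the Frobenius norm is assumed for "the norm of the matrix"; a production code may define these details differently.

```python
import numpy as np

def commutator_converged(F, P, S, scf_cnv=1e-6):
    """Check the [F,P] criterion: max element < scf_cnv and norm < 10*scf_cnv.

    Returns (converged, max_abs_element, frobenius_norm).
    """
    R = F @ P @ S - S @ P @ F          # commutator in a non-orthogonal basis
    max_el = float(np.max(np.abs(R)))
    norm = float(np.linalg.norm(R))    # Frobenius norm (assumption)
    return (max_el < scf_cnv and norm < 10.0 * scf_cnv), max_el, norm

# Toy example in an orthonormal basis (S = identity).
F = np.array([[-1.0, 0.2], [0.2, 0.5]])
S = np.eye(2)

# A density built from an eigenvector of F commutes with F ...
_, C = np.linalg.eigh(F)
P_good = np.outer(C[:, 0], C[:, 0])
ok_good, max_good, _ = commutator_converged(F, P_good, S)

# ... while an arbitrary density does not.
P_bad = np.diag([1.0, 0.0])
ok_bad, max_bad, _ = commutator_converged(F, P_bad, S)
```

Monitoring both returned numbers per iteration makes it easier to tell genuine convergence from a single small element hiding a large overall residual.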

Table 2: Standard SCF Convergence Parameters in Quantum Chemistry Codes

| Parameter | Default Value in ADF | Function | Impact on Calculation |
|---|---|---|---|
| SCFcnv (Primary criterion) | 1.0×10⁻⁶ (Create mode: 1.0×10⁻⁸) | Threshold for maximum element of [F,P] commutator | Determines final convergence quality; tighter values needed for properties |
| sconv2 (Secondary criterion) | 1.0×10⁻³ | Fallback threshold when primary criterion not met | Allows continued computation with warning if moderate convergence achieved |
| Maximum Iterations (Niter) | 300 | Maximum SCF cycles before termination | Prevents infinite loops in problematic cases |
| DIIS N (Expansion vectors) | 10 | Number of previous cycles used in DIIS extrapolation | Critical for convergence acceleration; too small or large values can break convergence |

Performance of Acceleration Methods

Different SCF acceleration methods demonstrate varying performance characteristics across chemical systems. The mixed ADIIS+SDIIS method, used by default in ADF since 2016, typically provides optimal performance for most systems [2]. The LIST family of methods (LISTi, LISTb, LISTf) can be more effective for difficult cases but are sensitive to the number of expansion vectors [2]. The MESA method combines multiple acceleration techniques (ADIIS, fDIIS, LISTb, LISTf, LISTi, and SDIIS) and can be fine-tuned by disabling specific components for problematic systems [2].
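To make the role of the expansion vectors concrete, here is a minimal sketch of plain Pulay DIIS extrapolation, the idea underlying the SDIIS component. It is not the ADIIS+SDIIS, LIST, or MESA implementation of any package; the function name and the toy inputs are assumptions for illustration.

```python
import numpy as np

def diis_extrapolate(focks, errors):
    """Pulay DIIS: find coefficients c (sum(c) = 1) that minimize the norm of
    sum_i c_i * errors[i], then return the same combination of Fock matrices."""
    n = len(focks)
    B = np.zeros((n + 1, n + 1))
    for i in range(n):
        for j in range(n):
            B[i, j] = np.vdot(errors[i], errors[j])   # error overlap matrix
    B[:n, n] = -1.0                                   # Lagrange multiplier column
    B[n, :n] = -1.0                                   # constraint row: sum(c) = 1
    rhs = np.zeros(n + 1)
    rhs[n] = -1.0
    coeffs = np.linalg.solve(B, rhs)[:n]
    F_new = sum(c * F for c, F in zip(coeffs, focks))
    return F_new, coeffs

# Two stored iterations whose error vectors point in opposite directions.
focks = [1.0 * np.eye(2), 2.0 * np.eye(2)]
errors = [np.array([[0.4, 0.0], [0.0, 0.0]]),
          np.array([[-0.1, 0.0], [0.0, 0.0]])]
F_new, coeffs = diis_extrapolate(focks, errors)
```

In this toy case the weights 0.2 and 0.8 cancel the error exactly. With many stored vectors the matrix B can become ill-conditioned, which is one reason the number of expansion vectors matters.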

Experimental Protocols for SCF Convergence

Standard Protocol for Routine Systems

For systems with well-behaved convergence characteristics, the following protocol provides reliable performance:

- Initialization: Use the default electron density guess (typically superposition of atomic densities or extended Hückel theory).

- SCF Parameters: Set convergence threshold to 1.0×10⁻⁶ a.u. for geometry optimizations and 1.0×10⁻⁸ a.u. for frequency calculations [3] [2].

- Acceleration: Employ the default ADIIS+SDIIS method with 10 DIIS expansion vectors [2].

- Monitoring: Track both the maximum element and norm of the [F,P] commutator to ensure proper convergence [2].

- Validation: Confirm convergence within the specified maximum iterations (typically 50-100 cycles for well-behaved systems).

Advanced Protocol for Problematic Systems

For systems exhibiting convergence difficulties (oscillatory behavior, trailing convergence, or failure to converge):

Enhanced Initial Guess:

- Utilize machine learning-based density prediction where available [3]

- Employ fragment-based or computational results file (CRF) restart from similar systems

Modified SCF Parameters:

- Implement the MESA method with selective component disabling (e.g., MESA NoSDIIS for oscillatory cases) [2]

- Adjust DIIS expansion vectors (N=12-20) for LIST methods [2]

- For ADIIS, decrease thresholds (THRESH1 and THRESH2) to 0.001 and 0.00001 respectively to let A-DIIS approach the final solution [2]

Alternative Strategies:

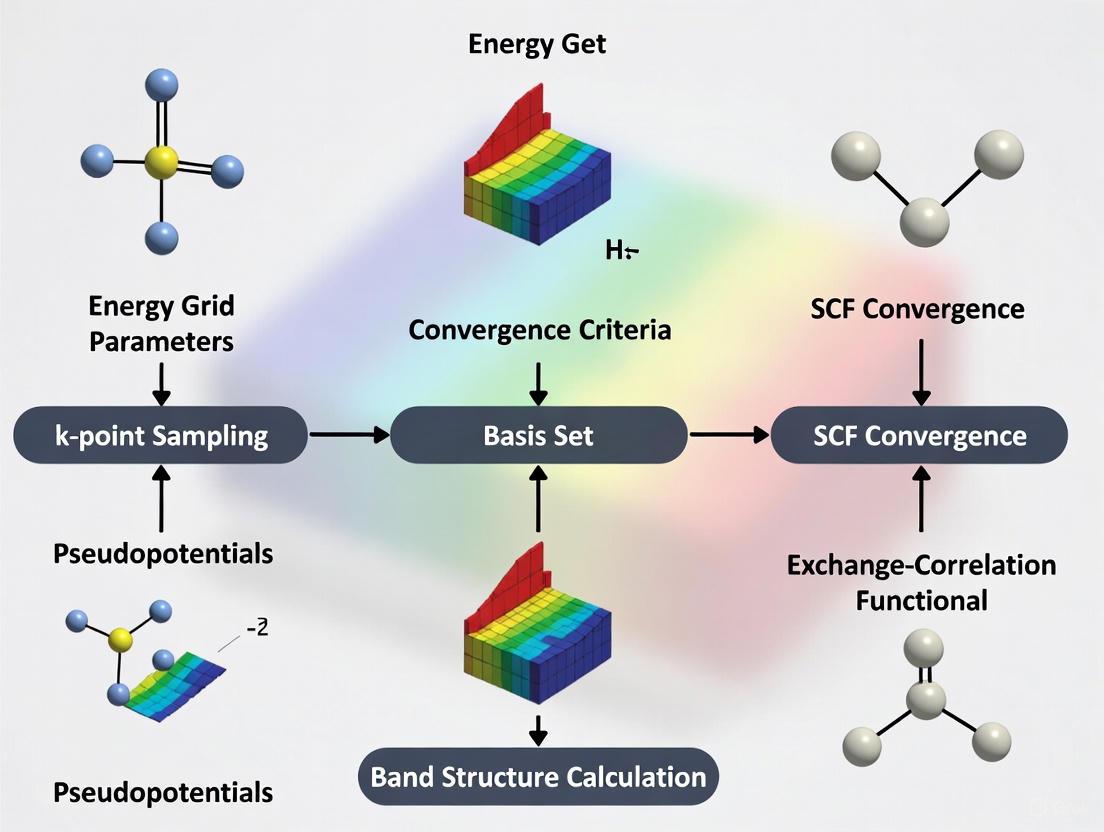

Diagram 1: SCF Convergence Workflow

Protocol for ΔSCF Excited State Calculations

For calculating excited states using the ΔSCF method:

- Initial Orbital Selection: Manually promote electrons to target the desired excited state configuration [3].

- Convergence Enforcement: Apply the Maximum Overlap Method (MOM) to maintain orbital character during iterations [3].

- Constrained DFT: Implement charge or spin constraints where appropriate for charge-transfer states [3].

- Validation: Confirm convergence to the intended saddle point, for example by checking that the electronic Hessian has the expected number of negative eigenvalues (one for a first-order saddle point).
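The orbital-selection step of the Maximum Overlap Method can be sketched as follows. This is a simplified illustration, not any package's implementation: the function name is hypothetical, and the projection measure (sum of squared overlaps with the previous occupied orbitals) is one common variant chosen here as an assumption.

```python
import numpy as np

def mom_select(C_old_occ, C_new, S, n_occ):
    """Pick the n_occ new orbitals that overlap most with the previously
    occupied orbitals, instead of filling the lowest-energy ones (aufbau)."""
    O = C_old_occ.T @ S @ C_new        # overlaps, shape (n_old_occ, n_new)
    p = np.sum(O ** 2, axis=0)         # projection of each new orbital
    return np.sort(np.argsort(p)[-n_occ:])

# Toy case: orthonormal basis, target configuration occupies orbitals 0 and 2.
S = np.eye(3)
C_old = np.eye(3)
C_old_occ = C_old[:, [0, 2]]

# New orbitals: orbitals 0 and 1 are slightly mixed by a small rotation.
theta = 0.1
C_new = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta),  np.cos(theta), 0.0],
                  [0.0,            0.0,           1.0]])

selected = mom_select(C_old_occ, C_new, S, n_occ=2)
```

Aufbau filling would occupy orbitals 0 and 1 and collapse to the ground state; the overlap criterion keeps orbitals 0 and 2 occupied.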

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for SCF Convergence Research

| Tool/Resource | Type | Primary Function | Application in SCF Research |
|---|---|---|---|
| ADF SCF Module [2] | Software Module | SCF convergence implementation | Provides production implementation of multiple acceleration methods |
| Libxc [3] | Software Library | Exchange-correlation functionals | Enables testing SCF convergence across functional types |
| Open Molecules 2025 [6] | Dataset | Training data for ML density guesses | Provides reference data for developing improved initial guesses |
| DeePMD-kit [1] | Software Framework | Neural network potentials | Alternative to DFT for large systems where SCF convergence is problematic |
| CREST [3] | Conformer Search | Metadynamics-based sampling | Generates diverse molecular geometries for testing SCF robustness |
| DP-GEN [7] | Active Learning | Automated potential generation | Framework for developing systems with guaranteed SCF convergence |

Advanced Applications and Future Directions

Machine Learning Approaches

Machine learning offers promising avenues for addressing SCF convergence challenges. ML-based electron density guesses can provide starting points much closer to the final solution, significantly reducing iteration counts [3]. Training such models requires large datasets of high-quality electron densities, such as the Open Molecules 2025 dataset with >100 million DFT calculations [6]. Transfer learning approaches, as demonstrated in the EMFF-2025 neural network potential, show that models pre-trained on diverse chemical systems can be specialized with minimal additional data [7].

Connection to Grid Parameter Optimization

The challenge of SCF convergence shares conceptual parallels with achieving convergence in smart grid energy management systems. Both involve iterative optimization of complex, nonlinear systems with multiple interacting components [5] [8]. Grid operators face analogous challenges in achieving convergence to stable operating points while managing distributed energy resources, where artificial neural networks (ANNs) and other AI methods are increasingly employed for optimization [5]. Research in either domain can inform the other, particularly in developing robust convergence accelerators and adaptive optimization parameters.

Diagram 2: SCF and Grid Convergence Analogy

Emerging Research Frontiers

Future research directions include developing universal SCF convergence accelerators that automatically adapt to system characteristics, eliminating the need for manual parameter tuning [3]. Hybrid quantum-classical algorithms may leverage quantum computers to calculate particularly challenging components of the SCF procedure. For drug development professionals, improved SCF convergence directly translates to more reliable prediction of protein-ligand binding energies, spectroscopic properties for characterization, and reaction mechanisms for synthetic planning [3]. The ongoing development of unified thermochemistry libraries and better implicit-solvent models will further increase the demands on SCF convergence for pharmaceutical applications [3].

Self-Consistent Field (SCF) theory represents a cornerstone of modern computational quantum chemistry, enabling the in silico modeling of chemical reactions and the first-principles design of novel materials and catalysts [9]. As the simplest, most affordable, and most widely-used category of electronic structure methods, SCF approaches include both Hartree-Fock (HF) theory and Kohn-Sham density functional theory (DFT) [10]. The mathematical foundation of these methods lies in solving the SCF equations through an iterative procedure that continues until the energy is minimized and the electron distribution becomes consistent with the potential it generates [9]. This application note provides a comprehensive overview of the mathematical foundations of SCF theory, with particular emphasis on its relevance to convergence research, specifically in setting energy grid parameters for Density of States (DOS) convergence studies essential for drug development and materials science applications.

Theoretical Framework

Basis Set Expansion and the LCAO Ansatz

In the HF and DFT approaches, the electronic wave function is formulated as a Slater determinant where electrons occupy a set of molecular orbitals (MOs). Central to the SCF methodology is the Linear Combination of Atomic Orbitals (LCAO) ansatz, where MOs are expanded in terms of normalized atomic orbital basis functions [9]:

[ \varphi_i^\alpha(\vec{r}) = \sum_{\mu=1}^{M} C_{\mu i}^\alpha \chi_\mu(\vec{r}) ]

[ \varphi_i^\beta(\vec{r}) = \sum_{\mu=1}^{M} C_{\mu i}^\beta \chi_\mu(\vec{r}) ]

Here, ( \varphi_i^\alpha ) and ( \varphi_i^\beta ) represent the α (spin-up) and β (spin-down) molecular orbitals, ( C_{\mu i}^\alpha ) and ( C_{\mu i}^\beta ) are the expansion coefficients, and ( \chi_\mu ) are the atomic orbital basis functions, with M indicating the total number of basis functions [9]. The basis functions are typically not orthonormal, with their overlap defined by the overlap matrix ( S_{\mu\nu} ):

[ \int d\vec{r} \, \chi_\mu(\vec{r}) \chi_\nu(\vec{r}) = S_{\mu\nu} \neq \delta_{\mu\nu} ]

Table 1: Comparison of SCF Method Theoretical Foundations

| Method | Theoretical Basis | Electron Correlation Treatment | Computational Cost | Typical Applications |
|---|---|---|---|---|
| Hartree-Fock (HF) | Wavefunction theory | Mean-field, exact exchange | Moderate | Reference calculations, molecular properties |
| Density Functional Theory (DFT) | Electron density | Approximate exchange-correlation functional | Low to moderate | Large systems, catalysis, materials |
| Local Density Approximation (LDA) | Uniform electron gas model | Local density dependence | Low | Metallic systems, preliminary studies |
| Generalized Gradient Approximation (GGA) | Electron density and gradient | Semi-local functional | Low to moderate | General purpose, molecular systems |
| Meta-GGA | Density, gradient, and kinetic energy density | Higher-order semi-local | Moderate | Improved accuracy for diverse systems |

Density Matrices and Electron Density

The electron density plays a fundamental role in quantum chemistry, particularly in DFT calculations. The spin-σ electron density can be expressed as [9]:

[ \rho^\sigma(\vec{r}) = \sum_{i=1}^{N_\sigma} |\varphi_i^\sigma(\vec{r})|^2 = \sum_{i=1}^{N_\sigma} \sum_{\mu\nu} C_{\mu i}^\sigma C_{\nu i}^\sigma \chi_\mu(\vec{r}) \chi_\nu(\vec{r}) = \sum_{\mu\nu} P_{\mu\nu}^\sigma \chi_\mu(\vec{r}) \chi_\nu(\vec{r}) ]

Here, ( N_\sigma ) represents the number of spin-σ electrons in the system, and the density matrix ( P^\sigma ) is defined as [9]:

[ P_{\mu\nu}^\sigma = \sum_{i=1}^{N_\sigma} C_{\mu i}^\sigma C_{\nu i}^\sigma ]

The total electron density is obtained from the sum of the α and β densities: ( \rho(\vec{r}) = \rho^\alpha(\vec{r}) + \rho^\beta(\vec{r}) ), with a corresponding total density matrix ( P = P^\alpha + P^\beta ) [9].
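A short numerical check ties these definitions together: integrating the density expression above over all space gives ( \sum_{\mu\nu} P_{\mu\nu}^\sigma S_{\mu\nu} = \mathrm{tr}(P^\sigma S) = N_\sigma ). The sketch below uses a hypothetical two-function basis with overlap 0.4; the helper names are illustrative.

```python
import numpy as np

def density_matrix(C, n_occ):
    """P_{mu nu} = sum over occupied i of C_{mu i} C_{nu i} (real orbitals)."""
    C_occ = C[:, :n_occ]
    return C_occ @ C_occ.T

def electron_count(P, S):
    """Integral of the density over all space: tr(P S)."""
    return float(np.trace(P @ S))

# Two non-orthogonal basis functions with overlap s (assumed value).
s = 0.4
S = np.array([[1.0, s], [s, 1.0]])

# Bonding and antibonding combinations, normalized so that C^T S C = 1.
C = np.column_stack([
    np.array([1.0, 1.0]) / np.sqrt(2.0 * (1.0 + s)),
    np.array([1.0, -1.0]) / np.sqrt(2.0 * (1.0 - s)),
])

P_alpha = density_matrix(C, n_occ=1)   # one spin-up electron
P_beta = density_matrix(C, n_occ=1)    # one spin-down electron
P_total = P_alpha + P_beta
n_total = electron_count(P_total, S)
```

Note that tr(P) alone is not the electron count in a non-orthogonal basis; the overlap matrix has to be included.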

SCF Equations and Eigenvalue Problems

The SCF equations manifest as generalized eigenvalue problems in the non-orthogonal atomic orbital basis set. For restricted calculations, these take the form of the Roothaan-Hall equations [9]:

[ \mathbf{F} \mathbf{C} = \mathbf{S} \mathbf{C} \mathbf{E} ]

For unrestricted open-shell systems, the Pople-Nesbet-Berthier equations yield a coupled set of generalized eigenvalue equations [9]:

[ \mathbf{F}^\alpha \mathbf{C}^\alpha = \mathbf{S} \mathbf{C}^\alpha \mathbf{E}^\alpha ] [ \mathbf{F}^\beta \mathbf{C}^\beta = \mathbf{S} \mathbf{C}^\beta \mathbf{E}^\beta ]

In these equations, ( \mathbf{F} ) represents the Fock matrix, ( \mathbf{S} ) is the overlap matrix, ( \mathbf{C} ) contains the molecular orbital coefficients, and ( \mathbf{E} ) is a diagonal matrix of orbital energies [9].
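One standard way to solve this generalized eigenvalue problem is symmetric (Löwdin) orthogonalization: transform with ( \mathbf{X} = \mathbf{S}^{-1/2} ), diagonalize the transformed Fock matrix, and back-transform the eigenvectors. The following is a minimal sketch with made-up 2×2 matrices; the function name is hypothetical.

```python
import numpy as np

def solve_roothaan_hall(F, S):
    """Solve F C = S C E for symmetric F and positive-definite S."""
    s_vals, U = np.linalg.eigh(S)
    X = U @ np.diag(s_vals ** -0.5) @ U.T        # X = S^(-1/2)
    eps, C_prime = np.linalg.eigh(X.T @ F @ X)   # ordinary eigenproblem
    C = X @ C_prime                              # back to the AO basis
    return eps, C

# Illustrative (not physical) Fock and overlap matrices.
F = np.array([[-1.0, -0.5], [-0.5, -0.8]])
S = np.array([[1.0, 0.4], [0.4, 1.0]])
eps, C = solve_roothaan_hall(F, S)
```

The resulting orbitals satisfy ( \mathbf{C}^T \mathbf{S} \mathbf{C} = \mathbf{1} ). For an unrestricted calculation the same routine would simply be applied to the α and β Fock matrices separately.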

SCF Convergence Protocols

Standard SCF Iterative Procedure

The solution of SCF equations typically employs an iterative procedure until self-consistency is achieved. The fundamental steps in this process are visualized in the following workflow:
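The loop (build the Fock matrix, diagonalize, form a new density, compare, repeat) can be illustrated with a deliberately simple toy model. Everything here is an assumption made for illustration: the model Fock matrix F = h + U·diag(P) in an orthonormal two-site basis, the parameter values, and simple linear damping as the only stabilization. It is a sketch of the iteration structure, not a Hartree-Fock or DFT implementation.

```python
import numpy as np

def toy_scf(h, U, n_occ=1, mix=0.5, tol=1e-10, max_iter=200):
    """Damped SCF for a toy mean-field model with F = h + U * diag(P).

    P is a per-spin density matrix in an orthonormal basis, so the overlap
    matrix is the identity and a plain symmetric eigensolver is enough.
    """
    # Initial guess: orbitals of the core Hamiltonian h.
    _, C = np.linalg.eigh(h)
    P = C[:, :n_occ] @ C[:, :n_occ].T
    for iteration in range(1, max_iter + 1):
        F = h + U * np.diag(np.diag(P))          # Fock matrix from current density
        _, C = np.linalg.eigh(F)                 # new orbitals
        P_out = C[:, :n_occ] @ C[:, :n_occ].T    # new density from occupied orbitals
        if np.max(np.abs(P_out - P)) < tol:      # input and output densities agree
            return P_out, iteration, True
        P = (1.0 - mix) * P + mix * P_out        # damping: mix old and new densities
    return P, max_iter, False

h = np.array([[0.0, -1.0], [-1.0, 0.5]])
P, n_iter, converged = toy_scf(h, U=2.0)

# Self-consistency check: F built from the final density commutes with it.
F_final = h + 2.0 * np.diag(np.diag(P))
residual = float(np.max(np.abs(F_final @ P - P @ F_final)))
```

Setting `mix=1.0` removes the damping, which is a convenient way to experiment with how slowly an undamped iteration can approach the same fixed point.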

Advanced Convergence Techniques

When standard SCF procedures fail to converge, several advanced strategies can be employed:

Initial Guess Modification: Changing the initial electron density guess is recommended when SCF calculations fail to converge, with options including superposition of atomic densities, fragment approaches, or results from previous calculations [10].

Convergence Algorithm Alteration: Switching between different convergence algorithms, such as damping, level shifting, or direct inversion in iterative subspace (DIIS) methods can stabilize convergence [10].

Dual-Basis Approaches: These methods facilitate large-basis quality results while requiring self-consistent iterations only in a smaller basis set, significantly improving computational efficiency [10].

SCF Meta-dynamics: This technique helps locate multiple solutions to the SCF equations and verifies that the obtained solution represents the lowest minimum [10].

Density of States Convergence Protocol

For DOS convergence research, particularly relevant for electronic structure analysis in drug development, the following detailed protocol is recommended:

Table 2: DOS Convergence Protocol Parameters

| Step | Parameter | Recommended Settings | Convergence Criterion | Remarks |
|---|---|---|---|---|
| Initialization | Basis Set | 6-31G* or def2-SVP | N/A | Balance between accuracy and cost |
| Initialization | K-points Grid | 3×3×3 (minimal) | N/A | For periodic systems |
| Initialization | Energy Grid | 0.5 eV resolution | N/A | Initial coarse grid |
| SCF Cycle | Max Iterations | 100-200 | Energy change < 10⁻⁶ Ha | Adjust based on system |
| SCF Cycle | Density Convergence | 10⁻⁶ a.u. | Density change < 10⁻⁵ | Critical for property accuracy |
| SCF Cycle | Mixing Scheme | DIIS with 0.1 damping | Stable convergence | Reduce damping if oscillating |
| DOS Refinement | K-point Grid | Increase to 6×6×6 | DOS features stable | Monitor band edges |
| DOS Refinement | Energy Grid | 0.05-0.01 eV | Peak positions stable | Focus on relevant energy window |
| DOS Refinement | Broadening | 0.1-0.05 eV | Physical peak width | Gaussian/Lorentzian mixing |

Procedure:

System Preparation:

- Define molecular geometry or crystal structure

- Select appropriate basis set considering accuracy requirements and computational resources

- Choose exchange-correlation functional appropriate for the system (e.g., PBE for metals, B3LYP for molecules)

Initial SCF Calculation:

- Perform calculation with moderate convergence criteria (energy change < 10⁻⁵ Ha)

- Use coarse k-point grid for periodic systems (e.g., 3×3×3)

- Employ minimal energy grid resolution (0.5 eV) for initial DOS calculation

Convergence Assessment:

- Monitor total energy changes between iterations

- Track density matrix convergence

- Verify orbital energy stability, particularly for frontier orbitals

Grid Refinement:

- Systematically increase k-point density until DOS features stabilize

- Refine energy grid resolution to 0.05-0.01 eV in relevant energy regions

- Adjust broadening parameters to physically meaningful values

Validation:

- Compare DOS with experimental data when available

- Verify integration of DOS gives correct electron count

- Check consistency of band gaps or HOMO-LUMO gaps with expected values
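The energy-grid and validation steps above can be sketched for a molecular (discrete-level) case. The orbital energies, the mid-gap reference energy, and the helper name below are hypothetical; the sketch assumes pure Gaussian broadening and two electrons per spatial orbital.

```python
import numpy as np

def gaussian_dos(levels, weights, grid, sigma):
    """DOS as a sum of normalized Gaussians of width sigma, one per level."""
    diff = grid[:, None] - np.asarray(levels, float)[None, :]
    g = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return g @ np.asarray(weights, float)

levels = [-10.2, -8.7, -8.5, -1.3, 0.4]   # hypothetical orbital energies (eV)
weights = [2.0] * 5                        # two electrons per spatial orbital
e_ref = -0.5                               # hypothetical mid-gap reference (eV)

# Fine grid: 0.01 eV spacing with 0.05 eV broadening.
step = 0.01
grid = np.arange(-15.0, 5.0, step)
dos = gaussian_dos(levels, weights, grid, sigma=0.05)

# Validation: integrating the DOS up to the reference energy should recover
# the number of electrons in the four levels below it (8).
n_electrons = float(np.sum(dos[grid < e_ref]) * step)

# The same broadening on the coarse 0.5 eV grid undersamples the peaks.
coarse_step = 0.5
coarse_grid = np.arange(-15.0, 5.0, coarse_step)
coarse_dos = gaussian_dos(levels, weights, coarse_grid, sigma=0.05)
n_coarse = float(np.sum(coarse_dos[coarse_grid < e_ref]) * coarse_step)
```

Comparing `n_electrons` with `n_coarse` shows why the grid spacing should be several times smaller than the broadening width before peak positions or integrated counts are trusted.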

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Component | Function/Purpose | Implementation Examples | Relevance to DOS Convergence |
|---|---|---|---|
| Basis Sets | Atomic orbital basis for MO expansion | Gaussian-type orbitals (GTOs), Slater-type orbitals (STOs), numerical AOs (NAOs) | Determines accuracy of wavefunction representation and computational cost |
| Exchange-Correlation Functionals | Approximate electron correlation in DFT | LDA, GGA (PBE), meta-GGA (SCAN), hybrid (B3LYP) | Critical for accurate electronic structure and DOS features |
| K-point Grids | Brillouin zone sampling for periodic systems | Monkhorst-Pack scheme, Gamma-centered | Essential for convergent DOS in materials and surfaces |
| Density Matrix | Electron density representation in basis | Construction from MO coefficients | Directly determines accuracy of calculated electron density |
| SCF Convergers | Algorithms for SCF convergence | DIIS, EDIIS, damping, level shifting | Enable stable convergence to ground state for accurate DOS |
| Energy Grid Parameters | DOS energy point discretization | Resolution, energy range, broadening | Controls resolution and smoothness of final DOS spectrum |
| Pseudopotentials/ECPs | Core electron approximation | Norm-conserving, ultrasoft, PAW | Reduces computational cost while maintaining valence electron accuracy |

Convergence Framework and Methodology

The convergence of SCF calculations, particularly in the context of DOS analysis for materials and drug development applications, requires a systematic approach. Recent research emphasizes convergence as problem-driven research that fosters deep integration across disciplines [11]. This is especially relevant when setting energy grid parameters for DOS convergence, where both technical parameters and physical understanding must be integrated.

The mathematical framework for assessing convergence involves monitoring multiple parameters:

Energy Convergence: The total energy difference between successive iterations should approach zero: [ \Delta E = |E_{n} - E_{n-1}| < \epsilon_E ]

Density Convergence: The change in the density matrix should diminish: [ \Delta P = ||P_{n} - P_{n-1}|| < \epsilon_P ]

DOS Convergence: The density of states should become invariant to further k-point or energy grid refinement: [ \text{DOS}(E, \text{grid}_{n}) - \text{DOS}(E, \text{grid}_{n-1}) \approx 0 ]
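These three checks translate directly into code. The sketch below is illustrative: the function names and default thresholds are assumptions, the matrix norm is taken to be the Frobenius norm, and DOS curves from different grids are compared by linear interpolation onto a common energy axis.

```python
import numpy as np

def scf_converged(E_new, E_old, P_new, P_old, eps_E=1e-6, eps_P=1e-5):
    """Energy and density-matrix criteria between successive iterations."""
    dE = abs(E_new - E_old)
    dP = float(np.linalg.norm(P_new - P_old))   # Frobenius norm (assumption)
    return (dE < eps_E) and (dP < eps_P)

def dos_change(E_ref, dos_ref, E_other, dos_other):
    """Largest pointwise DOS difference after interpolating onto E_ref."""
    return float(np.max(np.abs(dos_ref - np.interp(E_ref, E_other, dos_other))))

# Successive SCF iterations (made-up numbers, energies in Hartree).
P_old = np.diag([1.0, 0.0])
P_new = P_old + 1e-7
done = scf_converged(-76.0267605, -76.0267600, P_new, P_old)

# Same smooth model DOS sampled on a coarse and a fine energy grid.
def model_dos(E):
    return np.exp(-0.5 * (E / 0.3) ** 2)

E_fine = np.linspace(-1.0, 1.0, 201)
E_coarse = np.linspace(-1.0, 1.0, 21)
change = dos_change(E_fine, model_dos(E_fine), E_coarse, model_dos(E_coarse))
```

In a refinement loop, `change` would be recomputed after each grid refinement and the loop stopped once it falls below a tolerance appropriate to the property of interest.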

The relationship between these convergence criteria and the computational workflow can be visualized as follows:

For research focusing on drug development applications, particular attention should be paid to the accurate calculation of frontier orbital energies (HOMO-LUMO gap) and the DOS in the energy region relevant to molecular interactions, as these parameters directly influence binding affinity and reactivity predictions.

The mathematical foundations of Self-Consistent Field theory provide the essential framework for electronic structure calculations central to modern computational chemistry and materials science. The LCAO approach, combined with efficient iterative diagonalization techniques, enables the solution of the SCF equations for both molecular and periodic systems. For Density of States convergence research, careful attention to basis set selection, k-point sampling, energy grid parameters, and convergence criteria is essential for obtaining reliable results. The protocols and methodologies outlined in this application note provide researchers with a systematic approach to setting appropriate energy grid parameters for DOS convergence, facilitating accurate electronic structure calculations in drug development and materials design applications. As SCF methodologies continue to evolve, particularly with advances in linear-scaling algorithms and improved density functional approximations, the efficiency and applicability of these methods to larger and more complex systems will further expand their utility in scientific research and industrial applications.

Common Convergence Challenges in Biomolecular Systems and Transition Metal Complexes

A critical, yet often overlooked, step in computational chemistry is ensuring the convergence of the electron density of states (DOS) and related properties. This process involves defining appropriate energy grid parameters and computational settings to achieve a stable, accurate numerical solution of the electronic structure. Inefficient or incomplete convergence can lead to inaccurate energies, forces, reaction barriers, and spectroscopic predictions, fundamentally compromising the reliability of a simulation. These challenges are particularly acute in two important classes of systems: large, flexible biomolecules and electronically complex transition metal complexes (TMCs). This application note details the specific convergence challenges encountered in these systems and provides validated protocols to overcome them.

The table below summarizes the core sources of convergence difficulties for biomolecular systems and transition metal complexes, highlighting the distinct nature of the problems in each domain.

Table 1: Fundamental Convergence Challenges in Biomolecular and Transition Metal Systems

| System Characteristic | Biomolecular Systems (e.g., Proteins, DNA, in Solvent) | Transition Metal Complexes (TMCs) |
|---|---|---|
| Primary Challenge | System size and conformational flexibility [12] | Strong electron correlation and multi-configurational ground states [13] |
| Typical System Size | 2 to 350+ atoms per snapshot [12] | Varies, but often smaller (e.g., 10-100 atoms) |
| Key Electronic Structure Problem | Accurate treatment of diverse non-covalent interactions (electrostatics, dispersion) [12] | Description of near-degenerate d-orbitals, metal-ligand charge transfer, and spin states [13] |
| Impact on DOS/Energy Convergence | Slow convergence with basis set size; sensitivity to functional for dispersion; requires large integration grids [12] | High sensitivity to the choice of density functional approximation (DFA); instability in SCF cycles due to near-degeneracies [13] |
| Recommended Functional Class | Range-separated meta-GGAs (e.g., ωB97M-V) [12] | Multiple DFAs across Jacob's Ladder; consensus approach recommended [13] |

Protocols for DOS Convergence

Protocol for Biomolecular Systems

This protocol is optimized for achieving DOS and energy convergence in large biomolecular systems, including proteins, nucleic acids, and their complexes with ligands in explicit solvent.

1. System Preparation and Pre-Optimization

- Structure Source: Obtain initial coordinates from experimental databases (e.g., RCSB PDB) or generate using tools like Architector [12].

- Protonation and Tautomers: Use tools like Schrödinger to sample biologically relevant protonation states and tautomers [12].

- Solvation: Employ explicit solvent models (e.g., TIP3P) within a QM/MM framework where the core region is treated quantum mechanically and the environment with a molecular mechanical force field [14].

2. Electronic Structure Method Selection

- Density Functional: Use the ωB97M-V functional with the def2-TZVPD basis set. This range-separated hybrid meta-GGA functional provides a high-accuracy, balanced description of various interaction types prevalent in biomolecules [12].

- Integration Grid: Select a large, pruned grid (e.g., a (99,590) grid with 99 radial and 590 angular points per atom) to ensure accurate integration, which is critical for gradients and non-covalent interactions [12].

3. Self-Consistent Field (SCF) Convergence

- Algorithm: Use the Gaussian Smearing method with an initial smearing width of 0.01–0.05 eV to aid initial convergence by populating near-degenerate states, then reduce the width for the final calculation.

- Mixing Parameters: For difficult systems, increase the SCF density mixing parameter (e.g., to 0.05–0.10) or use a Kerker model to damp long-range charge oscillations.

- Fallback: If SCF fails, use the "Always Generate Initial Guess" option to recalculate the initial density from core Hamiltonians.
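The effect of smearing on near-degenerate levels can be illustrated with a small occupation routine. This is a sketch under stated assumptions: occupations follow the complementary-error-function form commonly associated with Gaussian smearing, the chemical potential is found by bisection, each level holds two electrons, and the orbital energies are made up.

```python
import math
import numpy as np

def gaussian_occupations(eps, n_elec, sigma, spin_deg=2.0):
    """Fractional occupations f_i = spin_deg * 0.5 * erfc((eps_i - mu) / sigma),
    with mu chosen by bisection so that the occupations sum to n_elec."""
    eps = np.asarray(eps, dtype=float)

    def occupations(mu):
        return np.array([spin_deg * 0.5 * math.erfc((e - mu) / sigma) for e in eps])

    lo = eps.min() - 10.0 * sigma      # electron count is ~0 here
    hi = eps.max() + 10.0 * sigma      # electron count is ~spin_deg * len(eps) here
    for _ in range(200):
        mu = 0.5 * (lo + hi)
        if occupations(mu).sum() < n_elec:
            lo = mu
        else:
            hi = mu
    return occupations(mu), mu

# Four levels (eV); the middle two are nearly degenerate.
eps = [-6.0, -3.00, -2.99, 1.0]
occ, mu = gaussian_occupations(eps, n_elec=4, sigma=0.05)
```

With integer occupations the two middle levels would be filled 2 and 0, and small changes in their ordering between iterations could flip the occupation pattern. Smearing shares the electrons between them, which is what stabilizes the early iterations; reducing `sigma` afterwards recovers the integer-occupation limit.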

4. DOS Calculation and Analysis

- Energy Grid: For post-processing DOS, set a fine energy grid (spacing of ≤ 0.01 eV). If using periodic boundary conditions, pair it with a k-point mesh dense enough that the DOS no longer changes on further refinement. For molecular clusters, a high density of energy points (e.g., 1000 points/eV) is recommended.

- Broadening: Apply a small Gaussian broadening (e.g., 0.01-0.05 eV) to the calculated DOS to smooth the distribution and aid in visualization and analysis, ensuring this does not obscure genuine physical features.

The following workflow diagram outlines the key steps and decision points in this protocol.

Protocol for Transition Metal Complexes

This protocol addresses the severe convergence challenges in TMCs, which stem from their complex electronic structure, including multi-reference character and narrow energy gaps.

1. Active Learning and System Sampling

- Design Space Construction: Build a space of synthetically accessible TMCs using ligand databases (e.g., Cambridge Structural Database). For chromophores, constrain to octahedral d⁶ Fe(II) or Co(III) centers with bidentate ligands [13].

- Consensus DFT Approach: To mitigate DFA bias, employ an ensemble of 23 density functionals across multiple rungs of Jacob's Ladder for property evaluation. This identifies candidates where predictions are robust [13].

2. Electronic Structure Method Selection

- Functional Selection: No single functional is universally best. Use a multi-functional consensus or select a hybrid functional known for reasonable performance on TMCs (e.g., a member of the Minnesota family). Always test sensitivity.

- Basis Set: Use a triple-zeta quality basis set with polarization functions (e.g., def2-TZVP). For metals, incorporate diffuse functions if studying excited states or anion properties.

- Integration Grid: Use an ultrafine grid (e.g., 150,000 points or more) to accurately capture the complex electron density around the metal center.

3. Advanced SCF Convergence

- Initial Guess: Use a fragment-based or atomic guess rather than a superposition of atomic densities (SAD) to provide a better starting point for the metal-ligand system.

- Smearing and Damping: Apply a larger initial smearing width (0.05–0.10 eV) than for biomolecules. Combine this with robust damping algorithms (e.g., a combination of Kerker and Thomas-Fermi screening).

- Stability Analysis: After initial convergence, perform a wavefunction stability check. If an unstable solution is found, re-optimize using the unstable wavefunction as a new guess.

4. DOS and Property Validation

- Multi-Reference Character: Calculate the %t1 diagnostic or the rND parameter from fractional occupation number DFT. A value of rND > 0.307 indicates strong multi-reference character, signaling that single-reference DFT may be inadequate [13].

- Target Properties: For chromophores, calculate the Δ-SCF absorption energy and ensure it falls within the target visible range (1.5–3.5 eV). Verify the ground state is low-spin to promote desired metal-to-ligand charge-transfer states [13].

- DOS Analysis: Analyze the projected DOS (PDOS) onto the metal d-orbitals and ligand orbitals to confirm the expected electronic structure and identify the HOMO-LUMO gap character.
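The validation checks in this step can be expressed as a simple filter. The sketch below is illustrative only: the `Candidate` record, its field names, and the three example complexes are hypothetical, while the 0.307 cutoff and the 1.5–3.5 eV window are the values quoted in the protocol.

```python
from dataclasses import dataclass

# Thresholds quoted in the protocol text.
MULTIREFERENCE_CUTOFF = 0.307
ABSORPTION_WINDOW_EV = (1.5, 3.5)


@dataclass
class Candidate:
    """Hypothetical record of computed properties for one complex."""
    name: str
    r_nd: float                  # nondynamical correlation metric from FON-DFT
    delta_scf_ev: float          # Delta-SCF absorption energy in eV
    ground_state_low_spin: bool


def validation_flags(c):
    """Return the reasons (if any) a candidate fails the validation step."""
    flags = []
    if c.r_nd > MULTIREFERENCE_CUTOFF:
        flags.append("strong multi-reference character")
    low, high = ABSORPTION_WINDOW_EV
    if not low <= c.delta_scf_ev <= high:
        flags.append("absorption energy outside target window")
    if not c.ground_state_low_spin:
        flags.append("ground state is not low-spin")
    return flags


# Made-up example values, for illustration only.
candidates = [
    Candidate("complex_A", 0.21, 2.4, True),
    Candidate("complex_B", 0.35, 2.9, True),
    Candidate("complex_C", 0.18, 4.1, False),
]
passed = [c.name for c in candidates if not validation_flags(c)]
```

Returning the list of failure reasons, rather than a bare boolean, makes it easier to see whether a candidate should be discarded or re-examined with a multi-reference method.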

The workflow for TMCs involves careful state preparation and validation, as shown below.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

The following table details key computational tools and datasets essential for conducting the research described in this application note.

Table 2: Key Research Reagent Solutions for Convergence Studies

| Tool/Resource Name | Type | Primary Function in Research | Application Context |

|---|---|---|---|

| Open Molecules 2025 (OMol25) [12] | Dataset | Provides over 100 million gold-standard DFT calculations for training and benchmarking machine learning interatomic potentials and validating methods. | Biomolecules, Electrolytes, Metal Complexes |

| Universal Model for Atoms (UMA) [12] | Pre-trained Model | A universal neural network potential trained on OMol25 and other datasets for fast, accurate energy and force predictions. | All System Types |

| Architector Package [12] | Software Tool | Generates initial 3D geometries for metal complexes combinatorially using GFN2-xTB, providing starting structures for high-level calculation. | Transition Metal Complexes |

| ωB97M-V/def2-TZVPD [12] | Computational Method | A high-accuracy density functional and basis set combination used for generating reference data in the OMol25 dataset. | All System Types |

| MiMiC Framework [14] | Simulation Framework | Enables highly efficient multi-scale QM/MM MD simulations by coupling different computational chemistry programs optimally. | Biomolecular Systems |

| rND (nondynamical correlation metric) [13] | Diagnostic Metric | Quantifies multireference character from fractional occupation number DFT; values > ~0.3 indicate potential single-reference DFT failure. | Transition Metal Complexes |

| Active Learning with 2D Efficient Global Optimization [13] | Computational Workflow | Balances exploration and exploitation to efficiently discover target molecules (e.g., chromophores) from vast chemical spaces. | Transition Metal Complexes |

Impact of Convergence Failures on Drug Discovery Timelines and Resource Allocation

Convergence failures, the breakdown in integrating data and methodologies across disciplines, represent a critical bottleneck in modern drug discovery. The traditional linear, siloed approach to research and development (R&D) contributes significantly to the sector's well-documented productivity crisis, characterized by unsustainable costs and extended timelines. Eroom's Law—the observation that the number of new drugs approved per billion US dollars spent has halved roughly every nine years since 1950—illustrates this worsening inefficiency [15]. This application note quantifies the impact of convergence failures on development timelines and resource allocation, provides validated experimental protocols to diagnose and remediate such failures, and establishes a framework for optimizing energy grid parameters to ensure robust Density of States (DOS) convergence in computational drug discovery.

Quantitative Impact of Convergence Failures

The failure to effectively integrate data from patents, scientific literature, clinical trials, and real-world evidence creates significant downstream inefficiencies and costs. The following tables summarize the quantitative impact on timelines, costs, and success rates.

Table 1: Impact of Traditional Silos vs. Convergent Approaches on Development Metrics

| Development Metric | Traditional Siloed Approach | Integrated Convergent Approach | Impact of Convergence |

|---|---|---|---|

| Average Timeline | 10–15 years [16] [15] | 5–7.5 years (Projected 50% reduction) [17] | 50% reduction |

| Average Cost per Approved Drug | $2.6 billion [15] | Significant reduction via early failure [17] [18] | Avoids costly late-stage failures |

| Clinical Trial Success Rate | ~10% (90% failure rate) [19] [15] | Increased via better target validation & patient stratification [18] | Potential for substantial improvement |

| Probability of Phase I to Approval | 13.8% overall (range 3.4–33.4%) [20] | Higher predicted success with integrated data [21] | Mitigates Phase II "graveyard" |

Table 2: Phase-by-Phase Attrition and Primary Causes of Failure

| Development Phase | Attrition Rate | Primary Causes of Failure (Often due to Convergence Gaps) |

|---|---|---|

| Preclinical | High (>99% of candidates fail) [16] | Poor target validation, unforeseen toxicity in animal models [20] [15] |

| Phase I | ~37% [15] | Safety and dosage issues in humans |

| Phase II | ~70% [15] | Lack of efficacy in patients—major convergence failure point |

| Phase III | ~42% [15] | Insufficient efficacy vs. standard of care, safety in larger population |
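As a rough consistency check, the per-phase attrition rates in the table can be multiplied together to estimate the probability that a candidate entering Phase I completes Phase III. The sketch assumes the phases are independent and ignores the regulatory review step, so it is an approximation rather than a reported figure.

```python
# Attrition rates per clinical phase, taken from the table above.
attrition = {"Phase I": 0.37, "Phase II": 0.70, "Phase III": 0.42}


def cumulative_success(rates):
    """Probability of surviving every phase, assuming independent phases."""
    p = 1.0
    for rate in rates.values():
        p *= 1.0 - rate
    return p


p_clinical = cumulative_success(attrition)
print(f"Phase I entry to Phase III completion: {p_clinical:.1%}")
```

The product, 0.63 × 0.30 × 0.58 ≈ 0.11, is in line with the roughly 10% clinical success rate quoted earlier, and it shows that Phase II contributes the largest single reduction.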

Experimental Protocols for Diagnosing Convergence Failures

Protocol: Integrated Data Audit for Target Validation

Purpose: To systematically identify gaps and inconsistencies in the biological, chemical, and clinical data supporting a proposed drug target before initiating costly screening campaigns.

Materials:

- Life Science Knowledge Graph (e.g., BenevolentAI Platform): Integrates public and proprietary data from scientific literature, patents, genomics, and clinical trials [22] [23].

- CETSA (Cellular Thermal Shift Assay) Kit: Validates direct target engagement of a drug molecule within intact cells, providing physiologically relevant confirmation [24].

- hiPSC-derived Disease Models: Human induced pluripotent stem cell-derived cells (e.g., neurons, microglia) for pathophysiologically relevant testing in complex diseases [20].

- AI-Powered Target Prediction Software: Uses machine learning to analyze genomic, proteomic, and patient data to identify and prioritize novel disease targets [15] [18].

Procedure:

- Data Mapping: Using the knowledge graph, map all known interactions, pathways, and genetic associations for the proposed target. Flag any inconsistencies between data types (e.g., genomic data suggests efficacy, but proteomic data does not) [23].

- Competitive Landscape Analysis: Audit patent and clinical trial databases to assess competitor activity and identify potential overlapping mechanisms or prior art that might limit the freedom to operate [23].

- Experimental Validation: In the hiPSC-derived disease model, perform CETSA assays to confirm that a known modulator of the target engages and stabilizes it in a dose-dependent manner [24] [20].

- Go/No-Go Decision: Integrate the computational and experimental findings. A "Go" decision requires consistent supporting evidence across all data pillars and confirmation of direct target engagement in a physiologically relevant human model.

Protocol: Quantum Chemical Calculation with DOS Convergence Monitoring

Purpose: To ensure robust and reproducible electronic structure calculations for in silico drug design by achieving DOS convergence, thereby preventing wasted computational resources and erroneous predictions.

Materials:

- High-Performance Computing (HPC) Cluster: Configured with quantum chemistry software (e.g., VASP, Gaussian).

- Molecular Dataset: A curated set of small molecule drug candidates with known electronic properties for validation.

Procedure:

- Initial Setup: Define the molecular system and select an appropriate basis set and exchange-correlation functional.

- Parameter Sweep: Systematically vary the energy grid cut-off (ECUT) and K-point mesh density parameters. These directly control the resolution and sampling of the energy space, critical for DOS convergence.

- Calculation Execution: For each parameter set, run a single-point energy calculation and extract the total energy and DOS.

- Convergence Criteria Check: Plot the total energy and the highest occupied molecular orbital (HOMO) – lowest unoccupied molecular orbital (LUMO) gap against the ECUT and K-point parameters. Convergence is achieved when these values change by less than a predefined threshold (e.g., 1 meV/atom) between successive parameter refinements.

- Validation: Once the optimal parameters are identified, run calculations on the validation dataset to ensure predicted properties (e.g., ionization potential, electron affinity) align with reference data.
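The convergence check in this procedure amounts to comparing successive refinements against a threshold. A minimal sketch, using made-up sweep data and a hypothetical helper `first_converged`, might look like this:

```python
def first_converged(params, values, threshold):
    """Return the first parameter whose value differs from the previous
    refinement by less than `threshold`, or None if that never happens."""
    for i in range(1, len(values)):
        if abs(values[i] - values[i - 1]) < threshold:
            return params[i]
    return None


# Hypothetical sweep: cutoff (eV) vs. total energy per atom and gap (both eV).
cutoffs = [300, 400, 500, 600, 700]
energy_per_atom = [-5.4210, -5.4302, -5.4331, -5.4338, -5.43385]
gaps = [2.10, 2.16, 2.18, 2.184, 2.1845]

threshold_ev = 1e-3  # 1 meV, as in the example criterion above
e_ok = first_converged(cutoffs, energy_per_atom, threshold_ev)
g_ok = first_converged(cutoffs, gaps, threshold_ev)

# Both quantities must be converged, so take the more demanding setting.
chosen = None if None in (e_ok, g_ok) else max(e_ok, g_ok)
```

In this toy data the total energy settles earlier than the gap, which illustrates why both quantities are worth tracking; the same function can be reused for a k-point sweep.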

Diagram 1: DOS convergence workflow.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Platforms for Integrated Discovery

| Research Reagent / Platform | Function & Application | Role in Preventing Convergence Failure |

|---|---|---|

| CETSA Assay Kits [24] | Measures drug-target engagement in intact cells and tissues under physiological conditions. | Bridges the gap between biochemical potency and cellular efficacy, a key point of failure. |

| hiPSC-Derived Cells & Organoids [20] | Provides human-specific, pathologically relevant models for efficacy and toxicity testing. | Reduces reliance on animal models, which have poor external validity for human responses. |

| Organs-on-Chips [20] | Microfluidic devices that recapitulate human organ-level physiology and tissue-tissue interfaces. | Enables more accurate human PK/PD modeling and assessment of complex drug effects. |

| AI-Driven Knowledge Graphs (e.g., BenevolentAI, Exscientia) [22] [23] | Integrates disparate data sources (patents, literature, omics, trials) to identify novel targets and connections. | Systematically identifies inconsistencies and gaps in the early hypothesis, forcing convergence. |

| Generative AI Chemistry Platforms (e.g., Insilico Medicine) [22] [15] | Designs novel molecular structures from scratch optimized for multiple properties (potency, ADMET). | Compresses design cycles and generates molecules optimized for both efficacy and developability. |

| Federated Data Platforms (e.g., Lifebit) [15] | Enables secure, compliant analytics across distributed clinical and genomic datasets without moving data. | Allows integration of real-world evidence into discovery while maintaining privacy, improving translational predictivity. |

Visualizing the Convergent Workflow

The following diagram illustrates an integrated, AI-driven drug discovery workflow designed to systematically prevent convergence failures by establishing continuous feedback loops between computational and experimental data.

Diagram 2: Convergent AI-driven discovery workflow.

Practical Implementation: Method Selection and Parameter Configuration Strategies for Stable Convergence

Self-Consistent Field (SCF) methods are fundamental computational procedures in quantum chemistry for determining molecular electronic structure. This application note provides a detailed comparative analysis of three prominent SCF convergence algorithms—DIIS, MultiSecant, and LIST methods—within the specific research context of setting energy grid parameters for Density of States (DOS) convergence. We present structured performance comparisons, detailed experimental protocols, and visualization tools to guide researchers in selecting and implementing optimal SCF methodologies for electronic structure calculations in materials science and drug development applications.

The Self-Consistent Field (SCF) procedure is an iterative computational method at the heart of modern quantum chemical calculations, particularly in Density Functional Theory (DFT). In Kohn-Sham DFT, the total electronic energy is expressed as a functional of the electron density, and the SCF method searches for a self-consistent electron density where the input and output densities converge [25]. The convergence is typically monitored through the self-consistent error, defined as the square root of the integral of the squared difference between the input and output density: \(\text{err}=\sqrt{\int dx \; \left(\rho_\text{out}(x)-\rho_\text{in}(x)\right)^2}\) [26].
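To make the error definition concrete, the integral can be approximated by a sum on a uniform grid. The sketch below uses toy one-dimensional Gaussian "densities"; the function name `scf_error` and the grid are illustrative assumptions, not part of any particular code.

```python
import math


def scf_error(rho_in, rho_out, dx):
    """Discretized err = sqrt( integral (rho_out - rho_in)^2 dx ) on a uniform grid."""
    return math.sqrt(sum((o - i) ** 2 for i, o in zip(rho_in, rho_out)) * dx)


# Toy 1D densities on a uniform grid (illustrative values only).
dx = 0.1
grid = [k * dx for k in range(-50, 51)]
rho_in = [math.exp(-x * x) for x in grid]
rho_out = [math.exp(-1.1 * x * x) for x in grid]

err = scf_error(rho_in, rho_out, dx)
```

The error vanishes only when input and output densities coincide at every grid point, which is why it is a stricter measure than the change in total energy alone.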

Achieving SCF convergence presents significant computational challenges, particularly for systems with complex electronic structures such as transition metal complexes, open-shell systems, and molecules with small HOMO-LUMO gaps. The choice of convergence algorithm directly impacts computational efficiency, stability, and reliability of results—factors critically important for DOS calculations where accurate convergence directly influences electronic property predictions [27]. This application note focuses on three principal algorithms—DIIS, MultiSecant, and LIST methods—providing researchers with practical implementation guidelines within the context of electronic structure calculations for materials and pharmaceutical development.

Comparative Algorithm Analysis

Algorithm Specifications and Mechanisms

Direct Inversion in the Iterative Subspace (DIIS) is one of the most widely used SCF convergence algorithms. The DIIS method accelerates convergence by constructing an optimal linear combination of previous trial density matrices or Fock matrices to generate an improved guess for the next iteration [26] [28]. This extrapolation procedure effectively reduces oscillations in the convergence path. Key parameters controlling DIIS performance include the number of previous vectors retained in the expansion (NVctrx), damping parameters (DiMix, DiMixMin, DiMixMax), and criteria for handling large expansion coefficients (CHuge, CLarge) [26].
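The extrapolation idea can be illustrated on a toy problem. The sketch below is a generic textbook-style DIIS applied to a hypothetical two-dimensional linear map `g`, not the implementation of any specific package: it keeps a short history of residuals, solves the constrained least-squares problem for the mixing coefficients, and forms the next guess from the stored outputs. The map is chosen so that plain iteration diverges.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= f * m[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def g(x):
    """Hypothetical linear 'SCF map'; plain iteration x <- g(x) diverges."""
    return [-0.9 * x[0] + 0.3 * x[1] + 1.0,
            0.2 * x[0] - 0.8 * x[1] + 0.5]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def diis(x, max_history=3, tol=1e-10, max_iter=50):
    """Iterate x <- sum_i c_i g(x_i), with c minimizing |sum_i c_i r_i|, sum c_i = 1."""
    outputs, residuals = [], []
    for iteration in range(1, max_iter + 1):
        gx = g(x)
        r = [a - b for a, b in zip(gx, x)]          # residual r = g(x) - x
        if dot(r, r) ** 0.5 < tol:
            return x, iteration
        outputs = (outputs + [gx])[-max_history:]     # keep a short history
        residuals = (residuals + [r])[-max_history:]
        m = len(residuals)
        # Lagrangian system: [[B, 1], [1^T, 0]] [c, lambda] = [0, 1]
        kkt = [[dot(residuals[i], residuals[j]) for j in range(m)] + [1.0]
               for i in range(m)]
        kkt.append([1.0] * m + [0.0])
        coeffs = solve_linear(kkt, [0.0] * m + [1.0])[:m]
        x = [sum(c * out[k] for c, out in zip(coeffs, outputs))
             for k in range(len(x))]
    raise RuntimeError("DIIS did not converge")


x_diis, n_iter = diis([0.0, 0.0])
```

The history cap plays the role that a parameter such as NVctrx plays in production codes. Real implementations add safeguards for ill-conditioned systems and large coefficients, which this sketch omits.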

MultiSecant Methods represent a class of quasi-Newton approaches that generalize the secant method to multidimensional problems. These methods build an approximate Jacobian matrix using information from previous iterations, effectively capturing the convergence landscape without explicit Jacobian calculation [26]. In the SCM software implementation, MultiSecant serves as an alternative to DIIS at similar computational cost per cycle and can offer improved convergence in problematic cases [26]. The method is particularly valuable for systems where DIIS exhibits oscillatory behavior.

LIST Methods (including LISTi, LISTb, and LISTd variants) constitute a family of algorithms implemented as alternatives within the DIIS framework [26]. These methods employ different strategies for handling the iterative subspace and managing the history of iterations. The LIST variants provide flexibility in managing the balance between convergence stability and computational overhead, with each variant employing distinct approaches to building and maintaining the iterative subspace.

Performance Comparison Table

Table 1: Comparative Characteristics of SCF Convergence Algorithms

| Algorithm | Computational Efficiency | Convergence Stability | Memory Requirements | Optimal Application Domain | Key Tunable Parameters |

|---|---|---|---|---|---|

| DIIS | High for standard systems | Moderate; prone to oscillations in difficult cases | Moderate (stores 5-10 previous vectors) | Standard molecular systems with reasonable HOMO-LUMO gaps | NVctrx, DiMix, CHuge, CLarge, Condition [26] |

| MultiSecant | Comparable to DIIS per cycle | High; robust for problematic convergence | Similar to DIIS | Systems with difficult convergence, metallic systems, small-gap semiconductors | Mixing parameters, history length [26] |

| LIST Methods | Variable by variant | Generally high with proper variant selection | Similar to DIIS | Systems requiring specialized subspace handling | Variant selection (LISTi, LISTb, LISTd), subspace management [26] |

| MultiStepper | Flexible, adaptive | High through preset pathways | Implementation-dependent | General purpose, black-box applications | Preset path configurations [26] |

Parameter Optimization Guidelines

Table 2: Key Algorithm Parameters and Optimization Recommendations

| Parameter | Algorithm | Default Value | Optimization Guidance | DOS Convergence Consideration |

|---|---|---|---|---|

| Iterations | All | 300 (SCM) [26], 50 (Q-Chem) [28] | Increase to 500+ for difficult systems | Essential for metallic systems with dense DOS near Fermi level |

| Mixing | All | 0.075 [26] | Reduce for oscillating systems; increase for monotonic convergence | Critical for DOS accuracy; affects orbital energy convergence |

| Criterion | All | Depends on NumericalQuality and \( \sqrt{N_\text{atoms}} \) [26] | Tighten to 1e-7 or 1e-8 for property calculations | Directly impacts DOS resolution; tighter criteria needed for accurate band edges |

| NVctrx | DIIS | Implementation-dependent | 6-10 for standard systems; reduce if unstable | Affects convergence stability for systems with complex DOS features |

| DiMix | DIIS | Implementation-dependent | Adaptive mixing often preferable | Influences convergence rate of valence and conduction band states |

| ElectronicTemperature | All | 0.0 [26] | 500-5000 K for metallic systems | Essential for smearing DOS near Fermi level in metallic systems |

Experimental Protocols for SCF Convergence

Standard Convergence Protocol for DOS Calculations

This protocol provides a systematic approach for achieving SCF convergence in Density of States calculations, particularly relevant for systems with challenging electronic structures.

Initialization Phase:

- System Preparation: Begin with a reasonable initial geometry. For DOS calculations, ensure the k-point grid is sufficiently dense to capture electronic structure features (typically 0.02 Å⁻¹ or finer spacing in reciprocal space).

- Initial Density Guess: Select an appropriate initial density strategy (InitialDensity parameter). Use atomic orbital superposition (psi) for molecular systems; consider frompot for periodic systems or metallic clusters [26].

- Baseline Parameters: Set initial SCF parameters to conservative values: Mixing = 0.05, Iterations = 200, and standard convergence criteria (Criterion = 1e-5 × √N_atoms for NumericalQuality = Normal) [26].

SCF Execution Phase:

- Algorithm Selection: Begin with DIIS algorithm for standard systems. For metallic systems or those with small HOMO-LUMO gaps (<0.1 eV), consider MultiSecant or LIST methods as primary alternatives.

- Convergence Monitoring: Track both energy change and density change between iterations. For DOS calculations, also monitor the stability of frontier orbital energies (HOMO and LUMO).

- Adaptive Adjustment: If oscillations occur after 20-30 iterations, reduce mixing parameter by 30-50% or switch to MultiSecant method. If convergence stagnates, gradually increase mixing parameter or consider LIST variant methods.
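The adaptive adjustment step can be sketched as a small heuristic. The oscillation test below (alternating signs in recent energy changes) and the function names are assumptions chosen for illustration; the 30–50% reduction range comes from the protocol.

```python
def is_oscillating(energies, window=6):
    """Heuristic: the last `window` energy changes strictly alternate in sign."""
    recent = energies[-(window + 1):]
    deltas = [b - a for a, b in zip(recent, recent[1:])]
    if len(deltas) < window:
        return False
    return all(d1 * d2 < 0 for d1, d2 in zip(deltas, deltas[1:]))


def adjust_mixing(mixing, energies, reduction=0.4, floor=0.005):
    """Reduce the mixing parameter by `reduction` when oscillations are detected."""
    if is_oscillating(energies):
        return max(mixing * (1.0 - reduction), floor)
    return mixing


# Made-up SCF energy histories (arbitrary units).
oscillating = [-10.0, -10.4, -10.1, -10.35, -10.15, -10.3, -10.2]
monotonic = [-10.0, -10.2, -10.3, -10.35, -10.37, -10.38, -10.385]

new_mixing = adjust_mixing(0.05, oscillating)
unchanged = adjust_mixing(0.05, monotonic)
```

A floor on the mixing value prevents repeated reductions from stalling the calculation entirely; if the floor is reached, switching algorithm is usually the better next step.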

Convergence Validation:

- Accuracy Verification: Confirm that final SCF error is at least one order of magnitude smaller than the desired DOS energy resolution.

- DOS Calculation: Proceed with DOS calculation only after stable SCF convergence is achieved, verifying that electronic temperature settings (if used) are appropriate for the system [26].

Troubleshooting Protocol for Problematic Systems

For systems exhibiting persistent SCF convergence failures, implement this structured troubleshooting approach:

Diagnosis Phase:

- Convergence Pattern Analysis: Examine the SCF energy progression. Oscillatory behavior suggests reducing mixing parameters; monotonic but slow convergence benefits from increased mixing or algorithm switching [27].

- Electronic Structure Analysis: Check the HOMO-LUMO gap at the final geometry. Gaps smaller than kT suggest implementing fractional occupancy smearing (ElectronicTemperature = 500-5000 K) [26].

- Wavefunction Stability: Perform an initial stability analysis using the ROBUST_STABLE algorithm, if available [28], to ensure ground state convergence.
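The comparison between the gap and kT is easy to make explicit. The sketch below converts a temperature in kelvin to a thermal energy in eV and applies the rule of thumb from the diagnosis step; the helper names are hypothetical, and the units a given program expects for its electronic temperature keyword should be checked in its documentation.

```python
K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def thermal_energy_ev(temperature_k):
    """Thermal energy kT in eV for a temperature in kelvin."""
    return K_B_EV_PER_K * temperature_k


def needs_smearing(gap_ev, temperature_k):
    """True when the HOMO-LUMO gap is smaller than kT at this temperature."""
    return gap_ev < thermal_energy_ev(temperature_k)


for t in (500, 5000):
    print(f"T = {t} K -> kT = {thermal_energy_ev(t):.3f} eV")
```

The quoted 500–5000 K range corresponds to kT of roughly 0.043–0.431 eV, so the upper end is a substantial perturbation and is best reserved for the early stages of a difficult calculation.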

Intervention Phase:

- Accuracy Enhancement: Increase integration grid density (NumericalQuality = Good or VeryGood), tighten the SCF convergence criterion to 1e-7 or tighter, and consider exact exchange-correlation potential evaluation [27].

- Algorithm Cycling: Implement a sequential algorithm strategy: begin with DIIS for initial convergence, then switch to MultiSecant or LIST methods if stagnation occurs near convergence.

- Degeneracy Handling: For systems with near-degenerate states, enable the Degenerate keyword with an appropriate energy width (default 1e-4 a.u.) to smooth orbital occupations [26].

Advanced Strategies:

- Damping Techniques: Implement aggressive damping (mixing = 0.01-0.02) for initial 10-15 iterations, gradually increasing to standard values.

- Fallback Protocol: If standard methods fail, employ specialized algorithms: RCA_DIIS or ADIIS_DIIS to establish initial convergence, switching to DIIS_GDM for final convergence [28].
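The damping technique described above can be written as a per-iteration schedule. This is a sketch under stated assumptions: the linear ramp shape, the `hold` and `ramp` lengths, and the function name are illustrative choices, and the target of 0.075 is the default mixing value listed in the parameter table.

```python
def mixing_schedule(n_iterations, start=0.01, target=0.075, hold=10, ramp=10):
    """Hold a heavily damped mixing value, then ramp linearly to the target."""
    schedule = []
    for it in range(n_iterations):
        if it < hold:
            schedule.append(start)
        elif it < hold + ramp:
            frac = (it - hold + 1) / ramp
            schedule.append(start + frac * (target - start))
        else:
            schedule.append(target)
    return schedule


sched = mixing_schedule(30)
```

Whether such a schedule can be passed directly to a program depends on the code; where it cannot, the same effect is often approximated with a restart that uses a larger mixing value.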

Table 3: Essential Research Reagent Solutions for SCF Convergence Studies

| Resource | Function in SCF Convergence | Implementation Examples | Application Context |

|---|---|---|---|

| Integration Grids | Numerical integration of XC functional | UltraFine grid (Gaussian) [29], Various grid levels (PySCF) [30] | Critical for accuracy; denser grids needed for difficult convergence |

| Basis Sets | Represent molecular orbitals | TZ2P, 6-31G(d), cc-pVDZ [27] [31] | Larger bases need tighter convergence criteria |

| XC Functionals | Define exchange-correlation energy | B3LYP, PBE, wB97XD [29] [31] | Hybrid functionals often need tighter convergence than GGAs |

| Relativistic Methods | Account for relativistic effects | ZORA, Pauli formalism [27] | Essential for heavy elements; ZORA preferred over Pauli |

| Solvation Models | Incorporate solvent effects | SCRF, COSMO, SMD | Implicit solvation can improve or hinder convergence |

| Dispersion Corrections | Account for van der Waals interactions | D2, D3, VV10 [29] | Can affect convergence stability in dense systems |

Visualization of SCF Algorithm Workflows

SCF Convergence Algorithm Decision Workflow

DOS Convergence Optimization Pathway

The selection and optimization of SCF convergence algorithms—DIIS, MultiSecant, and LIST methods—represent a critical step in ensuring accurate and efficient electronic structure calculations, particularly for Density of States determinations in materials research and drug development. DIIS offers robust performance for standard systems, MultiSecant provides enhanced stability for challenging metallic or small-gap systems, while LIST methods deliver specialized subspace handling capabilities. Through the implementation of the structured protocols, parameter optimization strategies, and diagnostic workflows presented in this application note, researchers can systematically address SCF convergence challenges within the specific context of DOS calculations. The integrated approach of algorithm selection, parameter tuning, and systematic troubleshooting enables reliable convergence across diverse chemical systems, forming a foundation for accurate electronic property predictions in both materials design and pharmaceutical development.

Optimal Mixing Parameter (SCF%Mixing) Configuration for Different Molecular Systems

The self-consistent field (SCF) method is the fundamental algorithm for solving the electronic structure problem in density functional theory (DFT) and Hartree-Fock calculations [32]. This iterative procedure requires the electron density or Hamiltonian to converge to a stable solution, but this process can be challenging, slow, or even divergent without proper parameterization [33] [32]. The choice of optimal mixing parameters—which control how the new density or Hamiltonian is constructed from previous iterations—varies significantly across different molecular systems and is crucial for computational efficiency and accuracy [33] [34].

For researchers focusing on density of states (DOS) convergence, which requires particularly high accuracy in electronic structure calculations [35], appropriate SCF mixing strategy is even more critical. This application note provides structured guidelines and experimental protocols for determining optimal SCF mixing parameters across diverse molecular systems, with special consideration for DOS-related research.

Theoretical Background: SCF Convergence and Mixing Strategies

The SCF Cycle and Convergence Monitoring

The SCF cycle represents an iterative loop where the Kohn-Sham equations must be solved self-consistently: the Hamiltonian depends on the electron density, which in turn is obtained from the Hamiltonian [33]. Starting from an initial guess, the code computes the Hamiltonian, solves the Kohn-Sham equations to obtain a new density matrix, and repeats until convergence is reached [33].

Convergence is typically monitored through two main criteria:

- Density Matrix Tolerance: The maximum absolute difference (dDmax) between matrix elements of the new and old density matrices, controlled by SCF.DM.Tolerance (default: 10⁻⁴) [33] [34]

- Hamiltonian Tolerance: The maximum absolute difference (dHmax) between matrix elements of the Hamiltonian, controlled by SCF.H.Tolerance (default: 10⁻³ eV) [33] [34]

Both criteria must be satisfied by default for convergence, though either can be disabled if necessary [34].
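The two criteria can be illustrated with plain nested lists standing in for the density and Hamiltonian matrices. The function names and the 2×2 example matrices are hypothetical; the default tolerances are the ones quoted above.

```python
def max_abs_diff(new, old):
    """Largest absolute element-wise difference between two matrices (lists of rows)."""
    return max(abs(a - b)
               for row_new, row_old in zip(new, old)
               for a, b in zip(row_new, row_old))


def scf_converged(dm_new, dm_old, h_new, h_old, dm_tol=1e-4, h_tol=1e-3):
    """Both criteria must hold, mirroring the default behaviour described above."""
    d_dmax = max_abs_diff(dm_new, dm_old)
    d_hmax = max_abs_diff(h_new, h_old)
    return (d_dmax < dm_tol and d_hmax < h_tol), d_dmax, d_hmax


# Toy matrices: the density matrix has settled, the Hamiltonian has not.
dm_old = [[1.0, 0.2], [0.2, 1.0]]
dm_new = [[1.00005, 0.2], [0.2, 0.99998]]
h_old = [[-0.5, 0.1], [0.1, 0.3]]
h_new = [[-0.5, 0.102], [0.102, 0.3]]

ok, d_dmax, d_hmax = scf_converged(dm_new, dm_old, h_new, h_old)
```

In this example the density matrix change is already below its tolerance while the Hamiltonian change is not, so the cycle would continue. This is the situation that requiring both criteria is meant to catch.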

Mixing Methods and Algorithms

SCF convergence relies heavily on the strategy for mixing the electron density or Hamiltonian between iterations. The two fundamental approaches are:

- Density Mixing: The density matrix (DM) is mixed between iterations [33] [34]

- Hamiltonian Mixing: The Hamiltonian (H) is mixed between iterations (typically provides better results) [33] [34]

Within these approaches, several algorithmic implementations exist:

- Linear Mixing: Uses a simple damping factor (SCF.Mixer.Weight); robust but inefficient for difficult systems [33]

- Pulay Mixing: Also known as Direct Inversion in the Iterative Subspace (DIIS); the default in many codes [33]

- Broyden Mixing: A quasi-Newton scheme that sometimes outperforms Pulay for metallic or magnetic systems [33]

The more sophisticated Pulay and Broyden methods retain a history of previous DMs or Hamiltonians (controlled by SCF.Mixer.History) to accelerate convergence [33] [34].
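The effect of the mixing weight is easiest to see on a one-variable model. In the sketch below, the scalar map `g` is a hypothetical stand-in for one SCF step; it has a fixed point at x = 1 and a slope of -1.5 there, so undamped iteration overshoots and diverges, while a smaller weight converges.

```python
def g(x):
    """Hypothetical scalar 'SCF map' with fixed point x* = 1 and slope -1.5."""
    return -1.5 * x + 2.5


def iterate(weight, x0=0.0, tol=1e-8, max_iter=200):
    """Linear mixing: x_new = (1 - weight) * x + weight * g(x).

    Returns (x, iterations), with iterations = None if not converged."""
    x = x0
    for n in range(1, max_iter + 1):
        x_new = (1.0 - weight) * x + weight * g(x)
        if abs(x_new - x) < tol:
            return x_new, n
        if abs(x_new) > 1e6:
            return x_new, None  # diverged
        x = x_new
    return x, None


x_damped, n_damped = iterate(0.3)   # error shrinks by a factor of 0.25 per step
x_plain, n_plain = iterate(1.0)     # no damping: error grows by 1.5 per step
```

For this map the error is multiplied by (1 − 2.5 × weight) at each step, so any weight between 0 and 0.8 converges and weights near 0.4 converge fastest. Real systems behave analogously, except that the relevant slopes are not known in advance, which is why history-based mixers are usually preferred.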

Optimal Mixing Parameters for Different System Types

Parameter Recommendations by System Category

Table 1: Optimal SCF Mixing Parameters for Different Molecular Systems

| System Type | Recommended Mixing Method | Optimal Weight | History Length | Special Considerations |

|---|---|---|---|---|

| Small Molecules (e.g., CH₄) | Pulay or Broyden [33] | 0.1-0.5 [33] | 2-4 [33] | Relatively easy to converge; default parameters often sufficient |

| Metallic Systems | Broyden [33] [36] | 0.1-0.3 [36] | 4-8 [36] | Requires smaller weights for stability; electron smearing recommended [32] [36] |

| Magnetic Systems (e.g., Fe clusters) | Broyden [33] | 0.1-0.3 (charge), 0.8 (spin) [36] | 4-8 [33] | For non-collinear calculations with difficult convergence, set mixing_angle=1.0 [36] |

| Open-Shell Systems | Pulay or Broyden [32] | 0.1-0.3 [32] | 4-8 [32] | Ensure correct spin multiplicity; strongly fluctuating errors may indicate improper electronic structure description [32] |

| Systems with Small HOMO-LUMO Gap | Broyden with electron smearing [32] | 0.05-0.2 [32] | 6-10 [32] | Fractional occupation numbers help overcome convergence issues [32] |

| Challenging/Divergent Systems | Linear or Pulay with reduced weight [32] | 0.015-0.09 [32] | 15-25 [32] | Use DIIS N=25, Cyc=30, Mixing=0.015, Mixing1=0.09 for slow but stable convergence [32] |

Advanced Mixing Techniques for Difficult Systems

For particularly challenging systems, additional techniques beyond standard mixing approaches may be necessary:

Electron Smearing: Applies fractional occupation numbers to distribute electrons over near-degenerate levels; particularly helpful for metallic systems or those with small HOMO-LUMO gaps [32] [36]. Keep the smearing value as low as possible and use successive restarts with reduced values [32].

Level Shifting: Artificially raises the energy of unoccupied orbitals; helpful for convergence but invalidates properties involving virtual orbitals (excitation energies, response properties, NMR shifts) [32].

U-Ramping for DFT+U: For systems using the DFT+U method, employ U-ramping with mixing_restart>0 and mixing_dmr=1 to improve convergence [36].

MESA Method: The MESA method combines multiple acceleration techniques (ADIIS, fDIIS, LISTb, LISTf, LISTi, and SDIIS) and can be particularly effective for problematic cases [2] [32].
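Returning to electron smearing, the way fractional occupations spread electrons over near-degenerate levels can be sketched with a Fermi-Dirac distribution. The function below is a minimal illustration, assuming spin-restricted levels that hold up to two electrons each; the level energies and the bisection bounds are made up for the example.

```python
import math


def fermi_occupations(levels_ev, n_electrons, sigma_ev):
    """Fractional occupations (0 to 2 per level) from Fermi-Dirac smearing.

    The Fermi level is located by bisection so occupations sum to n_electrons."""
    def occ(e, mu):
        arg = (e - mu) / sigma_ev
        if arg > 50.0:
            return 0.0
        if arg < -50.0:
            return 2.0
        return 2.0 / (1.0 + math.exp(arg))

    lo, hi = min(levels_ev) - 10.0, max(levels_ev) + 10.0
    mu = 0.5 * (lo + hi)
    for _ in range(200):
        mu = 0.5 * (lo + hi)
        if sum(occ(e, mu) for e in levels_ev) < n_electrons:
            lo = mu
        else:
            hi = mu
    return mu, [occ(e, mu) for e in levels_ev]


# One deep level, three near-degenerate levels sharing two electrons, one high level.
levels = [-6.0, -5.02, -5.00, -4.98, -2.0]
mu, occs = fermi_occupations(levels, n_electrons=4, sigma_ev=0.05)
```

With integer occupations, the SCF procedure would have to choose which of the three close-lying levels to fill and could flip between choices from one iteration to the next. Smearing removes that discontinuity, at the cost of a small, width-dependent error in the energy.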

Experimental Protocols for Parameter Optimization

General Workflow for Determining Optimal Mixing Parameters

The following diagram illustrates the systematic workflow for determining optimal SCF mixing parameters:

SCF Parameter Optimization Workflow

Protocol 1: Basic Mixing Parameter Screening

Purpose: To efficiently identify promising mixing parameter ranges for a new molecular system.

Materials and Setup:

- Quantum chemistry code (SIESTA, ADF, ABACUS, or VASP)

- Molecular structure file

- Baseline computational parameters (functional, basis set, k-points)

Procedure:

- Begin with a moderate number of maximum SCF iterations (e.g., 100) [33]

- Set mixing method to Pulay/DIIS (default in most codes) [33]

- Test a range of mixing weights (0.1, 0.2, 0.3, 0.5, 0.7) with default history length [33]

- For each parameter set, record:

- Number of iterations to convergence

- Final energy

- Convergence behavior (smooth, oscillatory, divergent)

- Computational time

- Identify the parameter set with the fewest iterations to convergence

- Verify final energies are consistent across parameter sets

Interpretation: Lower iteration counts indicate better performance, but consistent final energies must be confirmed to ensure physical validity.
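The selection logic of this protocol can be sketched as follows. The screening log is invented for illustration, and `pick_best` with its `energy_tol` parameter is a hypothetical helper rather than part of any listed code.

```python
def pick_best(results, energy_tol=1e-5):
    """Pick the converged run with the fewest iterations, after checking that
    all converged runs agree on the final energy within `energy_tol`."""
    converged = [r for r in results if r["converged"]]
    if not converged:
        return None
    energies = [r["energy"] for r in converged]
    if max(energies) - min(energies) > energy_tol:
        raise ValueError("converged runs disagree on the final energy")
    return min(converged, key=lambda r: r["iterations"])


# Hypothetical screening log for the weights suggested in the procedure.
results = [
    {"weight": 0.1, "converged": True, "iterations": 42, "energy": -76.432101},
    {"weight": 0.2, "converged": True, "iterations": 27, "energy": -76.432102},
    {"weight": 0.3, "converged": True, "iterations": 19, "energy": -76.432101},
    {"weight": 0.5, "converged": True, "iterations": 31, "energy": -76.432100},
    {"weight": 0.7, "converged": False, "iterations": 100, "energy": -76.40},
]
best = pick_best(results)
```

Raising an error when converged energies disagree is deliberate: a spread larger than the tolerance suggests that some runs reached a different electronic state, in which case the iteration count is not a meaningful basis for comparison.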

Protocol 2: Advanced Parameter Optimization for Challenging Systems

Purpose: To address systems that fail to converge with standard parameter screening.

Materials and Setup:

- As in Protocol 1, plus:

- Access to SCF acceleration methods (Broyden, LIST, MESA)

- Electron smearing capabilities

- Level shifting options

Procedure:

- If oscillations occur, reduce mixing weight by 50% and implement Pulay mixing with increased history length (4-8) [33]

- For persistent oscillations, switch to Broyden method with similar parameters [33]

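- For metallic systems or those with small HOMO-LUMO gaps: switch to Broyden mixing with electron smearing, a reduced weight (0.05-0.2), and a longer history (6-10), keeping the smearing value as low as possible [32]

- For magnetic systems: use Broyden mixing with a charge weight of 0.1-0.3 and a history length of 4-8 [33] [36]

- As a last resort for extremely difficult cases: use heavily damped settings such as DIIS N=25, Cyc=30, Mixing=0.015, Mixing1=0.09 for slow but stable convergence, or try the MESA method [32]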

Interpretation: Successful convergence should show monotonic decrease in energy and density/Hamiltonian changes. Consistent final energies across different methods validate the result.

Protocol 3: DOS-Specific Convergence Verification

Purpose: To ensure SCF convergence is adequate for accurate density of states calculations.

Background: DOS calculations often require higher k-point sampling and more stringent convergence criteria than total energy calculations [35]. The relationship between k-point sampling and DOS quality involves multiple factors: Brillouin zone integration scheme, k-point sampling fineness, energy grid fineness, DOS smoothing, and band dispersion [35].

Materials and Setup:

- Converged geometry from previous calculations

- Fine k-point grid appropriate for DOS calculations [35]

- High-energy cutoff if using plane-wave basis

Procedure:

- First, converge the system using Protocols 1 or 2 with standard k-point grid

- Increase k-point density by at least 2× in each direction for final DOS calculation [35]

- Use the optimal mixing parameters identified in step 1

- Verify convergence with tighter criteria (e.g., SCF.DM.Tolerance = 10⁻⁵) [33]

- Calculate DOS using tetrahedron method or appropriate smearing [35]

- Check DOS reproducibility with slightly different mixing parameters

Interpretation: The DOS should be stable with respect to small changes in mixing parameters and SCF convergence criteria.
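The reproducibility check in the last step can be sketched by broadening two sets of eigenvalues onto the same energy grid and comparing the curves. Everything here is illustrative: the eigenvalues are invented, Gaussian broadening stands in for whichever smearing scheme is used, and the grid spacing is chosen to be much finer than the broadening width.

```python
import math


def gaussian_dos(eigenvalues, e_min, e_max, n_points, sigma):
    """Gaussian-broadened DOS on a uniform energy grid."""
    step = (e_max - e_min) / (n_points - 1)
    grid = [e_min + k * step for k in range(n_points)]
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    dos = [sum(norm * math.exp(-0.5 * ((e - ev) / sigma) ** 2) for ev in eigenvalues)
           for e in grid]
    return grid, dos, step


def max_dos_difference(dos_a, dos_b):
    """Largest pointwise difference between two DOS curves on the same grid."""
    return max(abs(a - b) for a, b in zip(dos_a, dos_b))


# Eigenvalues (eV) from two hypothetical runs with slightly different mixing.
eig_run_a = [-5.0, -4.2, -1.0, 0.5]
eig_run_b = [-5.0002, -4.1999, -1.0001, 0.5001]

grid, dos_a, step = gaussian_dos(eig_run_a, -8.0, 4.0, 1201, sigma=0.1)
_, dos_b, _ = gaussian_dos(eig_run_b, -8.0, 4.0, 1201, sigma=0.1)

integrated = sum(dos_a) * step          # should recover the number of states
diff = max_dos_difference(dos_a, dos_b)
```

Integrating the DOS over the full window is a quick sanity check on the energy grid: if the result differs noticeably from the number of states, the grid is too coarse relative to the broadening width.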

Table 2: Essential Computational Tools for SCF Convergence Research

| Tool/Resource | Function/Purpose | Implementation Examples |
|---|---|---|
| Pulay/DIIS Mixer | Accelerates SCF convergence using history of previous steps [33] | SIESTA: SCF.Mixer.Method Pulay [33]; ADF: Default DIIS [2] |
| Broyden Mixer | Alternative acceleration method; sometimes superior for metallic systems [33] | SIESTA: SCF.Mixer.Method Broyden [33]; ABACUS: Default method [36] |
| Electron Smearing | Enables fractional occupancies for metallic systems [32] [36] | ABACUS: smearing_method and smearing_sigma [36]; ADF: Occupations settings [32] |
| MESA Algorithm | Combines multiple acceleration methods for difficult cases [2] [32] | ADF: AccelerationMethod MESA [2] |
| Level Shifting | Artificially raises virtual orbital energies to aid convergence [32] | ADF: Lshift parameter (enables OldSCF) [32] |
| Adaptive History Length | Controls how many previous steps are used in Pulay/Broyden [33] | SIESTA: SCF.Mixer.History [33]; ADF: DIIS N [2] |

Optimal configuration of SCF mixing parameters is system-dependent and crucial for efficient and accurate electronic structure calculations. Small molecules typically perform well with default parameters, while metallic, magnetic, and open-shell systems require more careful parameterization. For DOS calculations, which demand high accuracy in the electronic structure, verifying convergence with respect to mixing parameters is particularly important.

The protocols provided herein offer systematic approaches for determining optimal parameters across diverse molecular systems. By following these guidelines and understanding the underlying principles of SCF convergence, researchers can significantly improve the efficiency and reliability of their computational workflows, especially in the context of DOS convergence research for energy grid parameterization.

This application note provides detailed protocols for implementing two advanced computational techniques essential for researchers conducting electronic structure calculations, particularly in the context of density of states (DOS) convergence research. Achieving converged results in computational materials science and drug development requires sophisticated approaches to handle temperature effects and optimization processes. We focus specifically on finite electronic temperature methodologies, which account for thermal effects on electronic properties, and adaptive convergence criteria, which dynamically control iterative solvers to improve computational efficiency. These techniques are particularly valuable for setting energy grid parameters in DOS calculations where accuracy and computational cost must be carefully balanced.

The content is structured to provide immediately applicable knowledge, featuring comparative tables of methodological approaches, detailed experimental protocols, visualization of computational workflows, and essential research toolkits. This framework supports researchers in materials science and computational drug development who require robust methods for predicting material properties and behavior at finite temperatures.

Finite Electronic Temperature Methodology

Theoretical Foundation and Computational Approaches

Incorporating finite electronic temperature is crucial for simulating realistic material behavior, as it accounts for how thermal excitations influence electronic structure, magnetic properties, and transport phenomena. For Density of States (DOS) convergence research, this is particularly important as temperature effects can significantly alter electronic distributions near the Fermi level.
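At the level of a single set of eigenvalues, a finite electronic temperature enters through Fermi-Dirac occupations and a chemical potential chosen to conserve the electron count. The following sketch illustrates this under simplifying assumptions: spin-degenerate levels, a fixed list of eigenvalues in eV, and a bisection bracket of 1 eV beyond the outermost levels. The function names and the four example levels are hypothetical.

```python
import numpy as np

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K

def fermi_dirac(eigenvalues, mu, temperature):
    """Occupation 1/(exp((e - mu)/kT) + 1), written with tanh to avoid overflow."""
    x = (np.asarray(eigenvalues, dtype=float) - mu) / (K_B_EV * temperature)
    return 0.5 * (1.0 - np.tanh(0.5 * x))

def find_chemical_potential(eigenvalues, n_electrons, temperature, tol=1e-12):
    """Bisect for mu so that 2 * sum(f) equals n_electrons (spin-degenerate levels)."""
    lo = float(np.min(eigenvalues)) - 1.0  # assumed bracket; widen for very high T
    hi = float(np.max(eigenvalues)) + 1.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if 2.0 * fermi_dirac(eigenvalues, mid, temperature).sum() < n_electrons:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Hypothetical levels (eV) with a small gap around zero, half filled.
levels = np.array([-1.0, -0.1, 0.1, 1.0])
mu = find_chemical_potential(levels, n_electrons=4, temperature=300.0)
occupations = 2.0 * fermi_dirac(levels, mu, 300.0)
print(mu, occupations)
```

Raising the temperature in this example moves weight from the level just below the gap to the one just above it, which is the kind of redistribution near the Fermi level described above.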

Table 1: Comparison of Finite-Temperature Simulation Approaches for DOS Calculations

| Method | Key Principle | Temperature Treatment | Best Suited Materials | Computational Cost |
|---|---|---|---|---|
| Classical Heisenberg Model with Boltzmann distribution | Treats spin moments as classical vectors [37] | Boltzmann distribution for thermal fluctuations | General ferromagnets near T_C | Medium |
| Quantum-Corrected Approach with Bose-Einstein statistics | Incorporates magnon quantization effects [37] | Bose-Einstein distribution for magnon excitations | bcc Fe, other ferromagnets at low T | High |
| First-Principles with Thermal Lattice Vibrations | DFT-derived J_ij with thermal lattice effects [37] | CPA averaging of atomic displacements | Systems with strong electron-phonon coupling | Very High |
| Monte Carlo with Quantum Fluctuation-Dissipation | Modified Monte Carlo sampling [37] | Quantum fluctuation-dissipation ratio η_qt(T) | Low-temperature magnetic systems | High |

For DOS calculations, the finite-temperature electronic structure forms the foundation for understanding various material properties. As emphasized in electronic structure analysis, "The density of states of electrons is a simple, yet highly-informative, summary of the electronic structure of a material" [38]. When temperature effects are properly incorporated, researchers can more accurately predict effective mass, Van Hove singularities, and the effective dimensionality of electrons in materials.

Protocol: Implementing Finite Electronic Temperature in DOS Calculations

Objective: To incorporate finite electronic temperature effects in density of states calculations for body-centered cubic (bcc) iron, enabling more accurate prediction of magnetic and transport properties.

Materials and Computational Resources:

- First-principles calculation software (VASP, SPR-KKR)

- Monte Carlo simulation framework for magnetic systems

- High-performance computing resources

- Post-processing tools for DOS analysis

Procedure:

Initial Structure Relaxation

- Perform crystal structure relaxation using DFT code (e.g., VASP) with GGA-PBE functional [37]

- Utilize a k-point mesh of 24×24×24 and plane-wave basis set cutoff energy of 500 eV

- Confirm convergence of structural parameters to tolerances of 0.01 eV for energy and 0.01 eV/Å for forces

Phonon Calculations for Thermal Lattice Effects

- Compute force constants using density functional perturbation theory

- Employ supercells of varying sizes (2×2×2, 3×3×3, and 4×4×4) for comprehensive sampling

- Derive phonon dispersion curves and phonon density of states using Phonopy code [37]

- Calculate specific heat of the lattice using harmonic approximations

Temperature-Dependent Exchange Coupling Constants

- Evaluate magnetic exchange coupling constants (J_ij) using the Liechtenstein formula within the SPR-KKR code

- Incorporate thermal lattice vibration effects through the coherent potential approximation (CPA)

- Generate multiple atomic displacement configurations characterized by probabilities x_v for v = 1, …, N_v [37]

- Compute J_ij over the temperature range from 0 K to 1600 K at 25 K intervals

Monte Carlo Simulations with Quantum Corrections

- Implement classical Heisenberg model with temperature-dependent exchange interactions

- Apply the quantum fluctuation-dissipation ratio η_qt(T) to account for the Bose-Einstein statistics of magnons: η_qt(T) = ∫ [ℏω/2 + ℏω/(e^(ℏω/(k_B T)) − 1)] g_m(ω, T) dω [37]

- Execute Monte Carlo sampling with the modified probability distribution P({S_i}) = C × exp(−E/η(T)), where η(T) is set to η_qt(T)

- Calculate magnetization and magnetic energy as functions of lattice temperature
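The quantum fluctuation-dissipation ratio in the steps above can be evaluated numerically once a magnon spectrum is available. The sketch below makes several assumptions that are not taken from the source. It treats g_m as a magnon density of states normalised to unit area, works in energy units ε = ℏω, and uses a toy g_m ∝ √ε with a 0.3 eV cutoff in place of a computed spectrum. The function names are illustrative, and the acceptance rule is the standard Metropolis form with k_B T replaced by η.

```python
import numpy as np

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K

def eta_qt(energies, magnon_dos, temperature):
    """Integrate [e/2 + e/(exp(e/kT) - 1)] g_m(e) de on a uniform grid of e > 0 (eV)."""
    energies = np.asarray(energies, dtype=float)
    de = energies[1] - energies[0]
    g = np.asarray(magnon_dos, dtype=float)
    g = g / (g.sum() * de)  # normalise the DOS to unit area (assumption)
    kt = K_B_EV * temperature
    # Identity: e/2 + e/(exp(e/kT) - 1) == (e/2) / tanh(e/(2 kT)); tanh avoids overflow.
    integrand = 0.5 * energies / np.tanh(0.5 * energies / kt)
    return float(np.sum(integrand * g) * de)

def metropolis_acceptance(delta_e, eta):
    """Acceptance probability for P ~ exp(-E/eta)."""
    if delta_e <= 0.0:
        return 1.0
    return float(np.exp(-delta_e / eta))

# Toy magnon DOS (hypothetical): g_m ~ sqrt(e) up to a 0.3 eV cutoff.
grid = np.linspace(1e-3, 0.3, 3000)
toy_dos = np.sqrt(grid)

for temperature in (1.0, 300.0, 1000.0):
    print(temperature, eta_qt(grid, toy_dos, temperature), K_B_EV * temperature)
```

In the high-temperature limit the integrand tends to k_B T, so η_qt recovers the classical Boltzmann weight. At low temperature it saturates at the zero-point value, which is where the quantum correction matters.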

Finite-Temperature DOS Calculation

- Compute electronic structures while accounting for both thermal lattice vibration and thermal spin fluctuation effects

- Utilize the Kubo-Greenwood formula for temperature-dependent electrical resistivity as validation

- Confirm that spontaneous magnetization follows Bloch's T^(3/2) power law in low-temperature regime
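The Bloch-law check in the last step can be done with a linear least-squares fit of magnetization against T^(3/2). The following is a minimal sketch, assuming the low-temperature form M(T) = M0 (1 − B T^(3/2)). The synthetic data, the parameter values, and the function name `fit_bloch_law` are hypothetical and only serve to exercise the fit.

```python
import numpy as np

def fit_bloch_law(temperatures, magnetization):
    """Fit M(T) = M0 * (1 - B * T**1.5); returns (M0, B)."""
    t32 = np.asarray(temperatures, dtype=float) ** 1.5
    slope, intercept = np.polyfit(t32, np.asarray(magnetization, dtype=float), 1)
    m0 = intercept
    bloch_coefficient = -slope / m0
    return float(m0), float(bloch_coefficient)

# Synthetic low-temperature data generated from assumed (hypothetical) parameters.
m0_true, b_true = 2.2, 3.4e-6
temps = np.linspace(10.0, 300.0, 30)
mags = m0_true * (1.0 - b_true * temps ** 1.5)

m0_fit, b_fit = fit_bloch_law(temps, mags)
print(m0_fit, b_fit)
```

With simulated magnetization curves, the fit should be restricted to the low-temperature regime, since the T^(3/2) form is not expected to hold near the Curie temperature.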

Validation:

- Compare calculated Curie temperature with experimental value (1043 K for bcc Fe)

- Verify that spontaneous magnetization matches experimental curves across temperature range

- Confirm electrical resistivity trends align with experimental measurements

Finite Temperature DOS Calculation Workflow

Adaptive Convergence Criteria

Theoretical Framework and Method Selection

Adaptive convergence criteria dynamically control iterative processes in computational simulations, balancing solution accuracy with computational expense. For DOS convergence research, appropriate convergence criteria are essential for obtaining reliable results without excessive computational overhead.

Table 2: Adaptive Convergence Criteria for Iterative Methods

| Criterion Type | Mathematical Formulation | Applications | Advantages | Limitations |
|---|---|---|---|---|
| Absolute Difference | ⎸V(X_t) − V(X_{t+1})⎹ ≤ μ(1−β)/(2β) [39] | SDP for reservoir systems | Simple implementation | May converge slowly for flat value functions |
| Squared Difference | ∑(V(X_t) − V(X_{t+1}))² ≤ μ(1−β)/(2β) [39] | Multi-reservoir optimization problems | Faster convergence for smooth functions | More sensitive to outliers |
| Adaptive Grid Refinement | Metric-based cell subdivision [40] | CFD, unstructured hexahedral grids | Automatic focus on high-error regions | Complex implementation |
| Dual Certificate Violation | Branch-and-bound detection [41] | Total variation minimization | Avoids heuristic approaches | Requires dual problem formulation |

The fundamental challenge in convergence criterion selection lies in the balance between computational efficiency and solution accuracy. As noted in reservoir optimization studies, "an incremental solution strategy based on an iterative method will be effective if, and only if, the selection of the convergence criterion is adequate for the completion of the iteration process" [39]. Adaptive grid refinement methods have demonstrated particular value for complex systems where "the grid resolution needed to resolve the flow phenomena, as well as the precise position of these features, is uncertain" [40].

Protocol: Implementing Adaptive Convergence for DOS Calculations

Objective: To implement and validate adaptive convergence criteria for density of states calculations, reducing computational time while maintaining required accuracy.

Materials and Computational Resources:

- Electronic structure calculation software

- Grid refinement capabilities (isotropic and anisotropic)

- Programming environment for algorithm customization

- Convergence monitoring tools

Procedure:

Baseline Convergence Parameter Establishment

- Set initial tolerance value μ = 10⁻³ as reference point [39]

- Select discount factor β between 0 and 1 (typically 0.95-0.99)

- Determine maximum iteration count based on system complexity and computational constraints

Convergence Criterion Implementation

- Option A: Absolute Difference Criterion

  - Calculate D = ∑⎸V(X_t) − V(X_{t+1})⎹ across all states [39]

  - Compare against threshold μ(1−β)/(2β)

- Option B: Squared Difference Criterion

  - Calculate D = ∑(V(X_t) − V(X_{t+1}))² across all states [39]

  - Compare against threshold μ(1−β)/(2β)

- For grid-based DOS calculations, implement metric-based adaptive refinement:

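The two criteria can be written as a single check. In this sketch the threshold is read as μ(1−β)/(2β), and `v_old` and `v_new` stand for the values V(X_t) and V(X_{t+1}) over all states. The function names and example arrays are illustrative only.

```python
import numpy as np

def convergence_threshold(mu, beta):
    """Threshold mu * (1 - beta) / (2 * beta) for tolerance mu and discount factor beta."""
    return mu * (1.0 - beta) / (2.0 * beta)

def has_converged(v_old, v_new, mu=1e-3, beta=0.95, criterion="absolute"):
    """Return (converged, D) for the absolute (Option A) or squared (Option B) criterion."""
    diff = np.asarray(v_new, dtype=float) - np.asarray(v_old, dtype=float)
    if criterion == "absolute":
        d = float(np.sum(np.abs(diff)))
    elif criterion == "squared":
        d = float(np.sum(diff ** 2))
    else:
        raise ValueError("criterion must be 'absolute' or 'squared'")
    return d <= convergence_threshold(mu, beta), d

# Hypothetical successive iterates differing by 1e-4 in every state.
v_prev = np.array([1.0, 2.0, 3.0])
v_next = v_prev + 1e-4

print(has_converged(v_prev, v_next, criterion="absolute"))
print(has_converged(v_prev, v_next, criterion="squared"))
```

For the same pair of iterates the squared criterion is satisfied while the absolute one is not, because squaring small differences shrinks D. The two options are therefore not interchangeable at a fixed μ.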

Adaptive Refinement Process

- For initial iterations, use coarser convergence criteria to accelerate early progress

- Implement conditional refinement based on dual constraint violations for total variation minimization [41]

- Gradually tighten criteria as solution approaches convergence

- For DOS calculations, focus refinement on regions near Fermi level where higher resolution is critical
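Two of the steps above lend themselves to a short sketch: tightening the tolerance as iterations proceed, and concentrating energy-grid points near the Fermi level. Both functions below are hypothetical helpers, not part of any electronic structure package. The geometric schedule, the 0.5 eV window, and the coarse and fine spacings are assumed values chosen for illustration.

```python
import numpy as np

def tolerance_schedule(iteration, tol_start=1e-2, tol_final=1e-6, n_tighten=10):
    """Geometric interpolation from tol_start to tol_final over n_tighten iterations."""
    frac = min(iteration, n_tighten) / n_tighten
    return tol_start * (tol_final / tol_start) ** frac

def _segment(a, b, step):
    """Uniform points from a to b (inclusive) with spacing no larger than step."""
    n = max(int(np.ceil((b - a) / step - 1e-9)), 1)
    return np.linspace(a, b, n + 1)

def refined_energy_grid(e_min, e_max, e_fermi, window=0.5, coarse=0.1, fine=0.01):
    """Energy grid with fine spacing inside [e_fermi - window, e_fermi + window]."""
    lo, hi = e_fermi - window, e_fermi + window
    if not (e_min < lo and hi < e_max):
        raise ValueError("refinement window must lie strictly inside [e_min, e_max]")
    return np.concatenate([
        _segment(e_min, lo, coarse)[:-1],  # drop the shared endpoint
        _segment(lo, hi, fine)[:-1],
        _segment(hi, e_max, coarse),
    ])

energy_grid = refined_energy_grid(-5.0, 5.0, e_fermi=0.0)
print(len(energy_grid), [tolerance_schedule(i) for i in (0, 5, 10, 20)])
```

A uniform grid at the fine spacing over the same range would need roughly five times as many points, which gives a sense of the saving from local refinement.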

Convergence Monitoring and Dynamic Adjustment

- Track convergence rate throughout iterative process

- If oscillation detected, increase damping factor or adjust refinement aggressiveness

- For stochastic methods like Wang-Landau, modify the density of states estimate using distribution of random walkers without auxiliary modification factors [42]
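Oscillation detection can be as simple as counting direction reversals in the recent residual history. The sketch below interprets an increased damping factor as a reduced mixing weight. The window length, flip threshold, and halving factor are assumed values, and both function names are hypothetical.

```python
import numpy as np

def is_oscillating(residuals, window=6, min_flips=3):
    """True if the last `window` residuals change direction at least `min_flips` times."""
    recent = np.asarray(residuals[-window:], dtype=float)
    if recent.size < 3:
        return False
    steps = np.diff(recent)
    flips = int(np.sum(np.sign(steps[1:]) * np.sign(steps[:-1]) < 0))
    return flips >= min_flips

def adjust_mixing(weight, residuals, factor=0.5, min_weight=0.01):
    """Reduce the mixing weight (more damping) when the residual history oscillates."""
    if is_oscillating(residuals):
        return max(weight * factor, min_weight)
    return weight

oscillating_history = [1.0, 0.5, 0.8, 0.4, 0.7, 0.3]
monotone_history = [1.0, 0.5, 0.25, 0.125, 0.06, 0.03]

print(adjust_mixing(0.3, oscillating_history))
print(adjust_mixing(0.3, monotone_history))
```

The floor on the mixing weight keeps repeated reductions from stalling the iteration entirely.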

Validation and Accuracy Assessment

- Compare results obtained with adaptive criteria against reference calculations with stringent fixed criteria

- Verify that key DOS features (band edges, Van Hove singularities) are preserved

- Quantify computational time savings and any accuracy trade-offs

Troubleshooting:

- For oscillatory behavior: Increase discount factor β or implement moving average smoothing

- For premature convergence: Tighten tolerance μ or implement additional validation checks

- For excessive computation time: Loosen criteria in well-behaved regions or implement multi-level approach

Adaptive Convergence Decision Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Finite Temperature and Convergence Research

| Tool/Category | Specific Examples | Function/Purpose | Application Context |

|---|---|---|---|

| First-Principles Codes | VASP, SPR-KKR [37] | Electronic structure calculation | DFT-based property prediction |

| Monte Carlo Frameworks | Custom Heisenberg model implementations [37] | Statistical sampling of configurations | Finite temperature magnetism |

| Phonon Calculators | Phonopy [37] | Lattice vibration analysis | Thermal lattice effects |